Prompt stringlengths 10 31k | Chosen stringlengths 3 29.4k | Rejected stringlengths 3 51.1k | Title stringlengths 9 150 | Tags listlengths 3 7 |

|---|---|---|---|---|

I want to post ($\_GET as well as $\_POST) data by clicking on links (enclosed with `<a>`) and not the regular form 'submit' button. preferred language : PHP

I have seen this in a lot of todays websites,

where forms are submitted by clicking on buttons looking like hyperlinks.

so was wondering how it could be done.

Thanks in advance | [This post](http://ajaxian.com/archives/styling-buttons-as-links-allowing-you-to-post-away) on Ajaxian might help. It links to a pretty in depth blog post that shows you how to apply css to buttons so that they look like links.

The advantage here over using a proper link is the "fake link" really **is** a button, so it behaves exactly like a button, only it looks like a link. Spiders won't follow it, screen readers will treat it differently, it's a more "correct" thing to do in terms of launching a http post. | Forms are designed to be submitted with buttons. You can use JavaScript to make a link submit it, but this will break when JS is not available. Even if JavaScript is available, using a link will be using a control which won't show up when a screen reader is in "Forms Mode", leaving screen reader users without any obvious way to submit the form.

CSS is a safer alternative (see <http://tom.me.uk/scripting/submit.html>). | Posting data through hyperlinks | [

"",

"php",

"post",

"hyperlink",

""

] |

I would like to change a string in php from all upper case to normal cases. So that every sentence would start with an upper case and the rest would be in lower case.

Is there a simple way to do this ? | A simple way is to use [strtolower](http://php.net/strtolower) to make the string lower case, and [ucfirst](http://php.net/ucfirst) to upper case the first char as follows:

```

$str=ucfirst(strtolower($str));

```

If the string contains multiple sentences, you'll have to write your own algorithm, e.g. explode on sentence separators and process each sentence in turn. As well as the first char, you might need some heuristics for words like "I" and any common proper nouns which appear in your text. E.g, something like this:

```

$sentences=explode('.', strtolower($str));

$str="";

$sep="";

foreach ($sentences as $sentence)

{

//upper case first char

$sentence=ucfirst(trim($sentence));

//now we do more heuristics, like turn i and i'm into I and I'm

$sentence=preg_replace('/i([\s\'])/', 'I$1', $sentence);

//append sentence to output

$str=$sep.$str;

$sep=". ";

}

``` | Here's a function that will do it:

```

function sentence_case($s) {

$str = strtolower($s);

$cap = true;

for($x = 0; $x < strlen($str); $x++){

$letter = substr($str, $x, 1);

if($letter == "." || $letter == "!" || $letter == "?"){

$cap = true;

}elseif($letter != " " && $cap == true){

$letter = strtoupper($letter);

$cap = false;

}

$ret .= $letter;

}

return $ret;

}

```

Source:

<http://codesnippets.joyent.com/posts/show/715> | Change input from all upper case into a normal case | [

"",

"php",

""

] |

I am attempting to load document files into a document library in SharePoint using the CopyIntoItems method of the SharePoint Copy web service.

The code below executes and returns 0 (success). Also, the CopyResult[] array returns 1 value with a "Success" result. However, I cannot find the document anywhere in the library.

I have two questions:

1. Can anyone see anything wrong with my code or suggest changes?

2. Can anyone suggest how I could debug this on the server side. I don't have a tremendous amount of experience with SharePoint. If I can track what is going on through logging or some other method on the server side it may help me figure out what is going on.

**Code Sample:**

```

string[] destinationUrls = { Uri.EscapeDataString("https://someaddress.com/Reports/Temp") };

SPCopyWebService.FieldInformation i1 = new SPCopyWebService.FieldInformation { DisplayName = "Name", InternalName = "Name", Type = SPListTransferSpike1.SPCopyWebService.FieldType.Text, Value = "Test1Name" };

SPCopyWebService.FieldInformation i2 = new SPCopyWebService.FieldInformation { DisplayName = "Title", InternalName = "Title", Type = SPListTransferSpike1.SPCopyWebService.FieldType.Text, Value = "Test1Title" };

SPCopyWebService.FieldInformation[] info = { i1, i2 };

SPCopyWebService.CopyResult[] result;

byte[] data = File.ReadAllBytes("C:\\SomePath\\Test1Data.txt");

uint ret = SPCopyNew.CopyIntoItems("", destinationUrls, info, data, out result);

```

**Edit that got things working:**

I got my code working by adding "<http://null>" to the SourceUrl field. Nat's answer below would probably work for that reason. Here is the line I changed to get it working.

```

// Change

uint ret = SPCopyNew.CopyIntoItems("http://null", destinationUrls, info, data, out result);

``` | I think the issue may be in trying to set the "Name" property using the webservice. I have had some fail doing that.

Given the "Name" is the name of the document, you may have some success with

```

string targetDocName = "Test1Name.txt";

string destinationUrl = Uri.EscapeDataString("https://someaddress.com/Reports/Temp/" + targetDocName);

string[] destinationUrls = { destinationUrl };

SPCopyWebService.FieldInformation i1 = new SPCopyWebService.FieldInformation { DisplayName = "Title", InternalName = "Title", Type = SPListTransferSpike1.SPCopyWebService.FieldType.Text, Value = "Test1Title" };

SPCopyWebService.FieldInformation[] info = { i1};

SPCopyWebService.CopyResult[] result;

byte[] data = File.ReadAllBytes("C:\\SomePath\\Test1Data.txt");

uint ret = SPCopyNew.CopyIntoItems(destinationUrl, destinationUrls, info, data, out result);

```

Note: I have used the "target" as the "source" property. [Don't quite know why, but it does the trick](https://stackoverflow.com/questions/787610/how-do-you-copy-a-file-into-sharepoint-using-a-webservice/791847#791847). | I didn't understand very well what you're tying to do, but if you're trying to upload a file from a local directory into a sharepoint library, i would suggest you create a webclient and use uploadata:

Example (VB.NET):

```

dim webclient as Webclient

webClient.UploadData("http://srvasddress/library/filenameexample.doc", "PUT", filebytes)

```

Then you just have to check in the file using the lists web service, something like:

```

listService.CheckInFile("http://srvasddress/library/filenameexample.doc", "description", "1")

```

Hope it was of some help.

EDIT: Don't forget to set credentials for the web client, etc.

EDIT 2: Update metada fields using this:

```

listService.UpdateListItems("Name of the Library, batchquery)

```

You can find info on building batch query's in here: [link](http://msdn.microsoft.com/en-us/library/dd586543(office.11).aspx) | How do you use the CopyIntoItems method of the SharePoint Copy web service? | [

"",

"c#",

".net",

"web-services",

"sharepoint",

""

] |

I am trying to insert new lines into an Excel XML document. The entity that I need to insert is ` ` but whenever I insert that into PHP DOM, it just converts it to a normal line break.

This is what I am getting:

```

<Cell><Data>text

text2

</Data></Cell>

```

This is what I want:

```

<Cell><Data>text text2 </Data></Cell>

```

I cannot figure out how to insert a new line and get it to encode that way, or add that character without it either double encoding it, or converting it to a new line.

Thanks for the help! | Use a CDATA section... | The correct format for this appears to be " ". Note the trailing space. It works in our applications when the trailing space is entered. | Excel XML Line Breaks within Cell from PHP DOM | [

"",

"php",

"xml",

"excel",

"dom",

""

] |

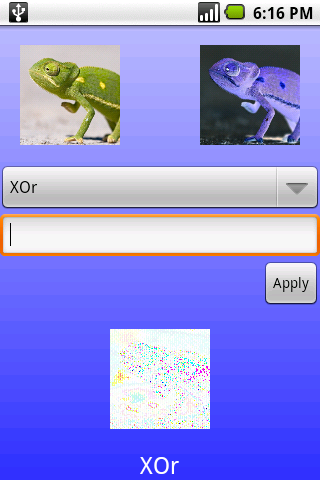

I'm trying to replicate some image filtering software on the Android platform. The desktop version works with bmps but crashes out on png files.

When I come to xOr two pictures (The 32 bit ints of each corresponding pixel) I get very different results for the two pieces of software.

I'm sure my code isn't wrong as it's such a simple task but here it is;

```

const int aMask = 0xFF000000;

int xOrPixels(int p1, int p2) {

return (aMask | (p1 ^ p2) );

}

```

The definition for the JAI library used by the Java desktop software can be found [here](http://java.sun.com/products/java-media/jai/forDevelopers/jai-apidocs/javax/media/jai/operator/XorDescriptor.html) and states;

```

The destination pixel values are defined by the pseudocode:

dst[x][y][b] = srcs[0][x][y][b] ^ srcs[1][x][y][b];

```

Where the b is for band (i.e. R,G,B).

Any thoughts? I have a similar problem with AND and OR.

Here is an image with the two source images xOr'd at the bottom on Android using a png. The same file as a bitmap xOr'd gives me a bitmap filled with 0xFFFFFFFF (White), no pixels at all. I checked the binary values of the Android ap and it seems right to me....

Gav

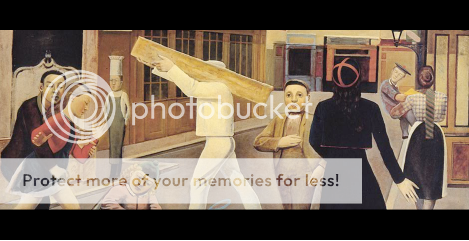

NB When i say (Same 32 bit ARGB representation) I mean that android allows you to decode a png file to this format. Whilst this might give room for some error (Is png lossless?) I get completely different colours on the output. | I checked a couple of values from your screenshot.

The input pixels:

* Upper left corners, 0xc3cbce^0x293029 = 0xeafbe7

* Nape of the neck, 0xbdb221^0x424dd6 = 0xfffff7

are very similar to the corresponding output pixels.

Looks to me like you are XORing two images that are closely related (inverted in each color channel), so, necessarily, the output is near 0xffffff.

If you were to XOR two dissimilar images, perhaps you will get something more like what you expect.

The question is, why do you want to XOR pixel values? | The png could have the wrong gamma or color space, and it's getting converted on load, affecting the result. Some versions of Photoshop had a bug where they saved pngs with the wrong gamma. | Bitwise operations on a png and bmp give different results? (Same 32 bit ARGB representation) | [

"",

"java",

"image-processing",

""

] |

I'm looking for a simple method to check if only one variable in a list of variables has a True value.

I've looked at this [logical xor post](https://stackoverflow.com/questions/432842/how-do-you-get-the-logical-xor-of-two-variables-in-python) and is trying to find a way to adapt to multiple variables and only one true.

Example

```

>>>TrueXor(1,0,0)

True

>>>TrueXor(0,0,1)

True

>>>TrueXor(1,1,0)

False

>>>TrueXor(0,0,0,0,0)

False

``` | There isn't one built in but it's not to hard to roll you own:

```

def TrueXor(*args):

return sum(args) == 1

```

Since "[b]ooleans are a subtype of plain integers" ([source](http://docs.python.org/library/stdtypes.html#numeric-types-int-float-long-complex)) you can sum the list of integers quite easily and you can also pass true booleans into this function as well.

So these two calls are homogeneous:

```

TrueXor(1, 0, 0)

TrueXor(True, False, False)

```

If you want explicit boolean conversion: `sum( bool(x) for x in args ) == 1`. | I think the sum-based solution is fine for the given example, but keep in mind that boolean predicates in python always short-circuit their evaluation. So you might want to consider something more consistent with [all and any](http://docs.python.org/library/functions.html#all).

```

def any_one(iterable):

it = iter(iterable)

return any(it) and not any(it)

``` | Check if only one variable in a list of variables is set | [

"",

"python",

"xor",

""

] |

In other words, does it matter whether I use <http://www.example.com/> or <http://wwW.exAmPLe.COm/> ?

I've been running into strange issues with host-names lately: I have an Apache2.2+PHP5.1.4 webserver, accessed by all kinds of browsers. IE6 users in particular (esp. when their UA string is burdened with numerous BHOs, no pattern yet) seem to have problems accessing the site (cookies disappear, JS refuses to load) when entering via <http://www.Example.com/>, but not <http://www.example.com/>

I've checked the [HTTP](http://www.faqs.org/rfcs/rfc2616.html) and [DNS](http://www.dns.net/dnsrd/rfc/) RFCs, my [P3P policies](https://stackoverflow.com/questions/389456/cookie-blocked-not-saved-in-iframe-in-internet-explorer), cookie settings and [SOP](http://en.wikipedia.org/wiki/Same_origin_policy); yet nowhere I've seen even a mention of domain names being case-sensitive.

(I know path and query string are case sensitive ( `?x=foo` is different from `?x=Foo` ) and treat them appropriately; am doing no parsing/processing on domain name in my code)

Am I doing something wrong or is this just some browser+toolbar crap I should work around? | Domain names are **not** case-sensitive; `Example.com` will resolve to the same IP as `eXaMpLe.CoM`. If a web server or browser treats the `Host` header as case-sensitive, that's a bug. | No, this shouldn't make any difference.

Check out the URL RFC Spec (<http://www.ietf.org/rfc/rfc1738.txt>). From section 2.1:

> For resiliency, programs interpreting

> URLs should treat upper case letters

> as equivalent to lower case in scheme

> names | Does HTTP hostname case (upper/lower) matter? | [

"",

"php",

"http",

"dns",

"case-sensitive",

""

] |

Hey all, just a quick question (should be an easy fix I think). In a WHERE statement in a query, is there a way to have multiple columns contained inside? Here is my code:

```

$sql="SELECT * FROM $tbl_name WHERE username='$myusername' and pwd='$pass'";

```

What I want to do is add another column after the WHERE (called priv\_level = '$privlevel'). I wasn't sure of the syntax on how to do that however.

Thanks for the help! | Read up on SQL. But anyways, to do it just add `AND priv_level = '$privlevel'` to the end of the SQL.

This might be a pretty big step if you're new to PHP, but I think you should read up on the [`mysqli` class in PHP](http://se.php.net/manual/en/mysqli.prepare.php) too. It allows much safer execution of queries.

Otherwise, here's a safer way:

```

$sql = "SELECT * FROM $tbl_name WHERE " .

"username = '" . mysql_real_escape_string($myusername) . "' AND " .

"pwd = '" . mysql_real_escape_string($pass) . "' AND " .

"priv_level = '" . mysql_real_escape_string($privlevel) . "'";

``` | Wrapped for legibility:

```

$sql="

SELECT *

FROM $tbl_name

WHERE username='$myusername' and pwd='$pass' and priv_level = '$privlevel'

";

```

Someone else will warn you about how dangerous the statement is. :-) Think [SQL injection](http://en.wikipedia.org/wiki/SQL_injection). | Multiple columns after a WHERE in PHP? | [

"",

"php",

"mysql",

"where-clause",

""

] |

I have a baseclass, `Statement`, which several other classes inherit from, named `IfStatement`, `WhereStatement`, etc... What is the best way to perform a test in an `if` statement to determine which sort of `Statement` class an instance is derived from? | ```

if (obj.getClass().isInstance(Statement.class)) {

doStuffWithStatements((Statement) obj));

}

```

The nice thing about this technique (as opposed to the "instanceof" keyword) is that you can pass the test-class around as an object. But, yeah, other than that, it's identical to "instanceof".

NOTE: I've deliberately avoided editorializing about whether or not type-instance-checking is the *right* thing to do. Yeah, in most cases, it's better to use polymorphism. But that's not what the OP asked, and I'm just answering his question. | ```

if(object instanceof WhereStatement) {

WhereStatement where = (WhereStatement) object;

doSomething(where);

}

```

Note that code like this usually means that your base class is missing a polymorphic method. i.e. `doSomething()` should be a method of `Statement`, possibly abstract, that is overridden by sub-classes. | Does Java have an "is kind of class" test method | [

"",

"java",

"reflection",

"inheritance",

"evaluation",

""

] |

I'm using BufferedReader and PrintWriter to go through each line of an input file, make a change to some lines, and output the result. If a line doesn't undergo a change, it's just printed as is to the output file. For some reason however, the process ends prematurely. The code looks something like this:

```

BufferedReader in = new BufferedReader(new FileReader("in.txt"));

FileOutputStream out = new FileOutputStream("out.txt");

PrintWriter p = new PrintWriter(out);

String line = in.readLine();

while(line!=null)

{

if(line is special)

do edits and p.println(edited_line);

else

p.println(line);

line = in.readLine();

}

```

However, for some odd reason, this process ends prematurely (actually prints out a half of a line) towards the very end of my input file. Any obvious reason for this? The while loop is clearly being ended by a null. And it's towards the end of my 250k+ line txt file. Thanks! | Where do you flush/close your PrintWriter or FileOutputStream ? If the program exits and this is not done, not all your results will be written out.

You need `out.close()` (possibly a `p.flush()` as well?) at the end of your process to close the file output stream | Try adding a p.flush() after the loop. | Problems with BufferedReader / PrintWriter? | [

"",

"java",

"file",

"bufferedreader",

""

] |

I'd like to write an applet (or a java Web start application) calling its server (servlet?) to invoke some methods and to send/retrieve data. What would be the best way/technology to send and to retrieve those message ? | Protocol:

If you don't care about interoperability with other languages, I'd go with RMI over HTTP. It has support right from the JRE, quite easy to setup and very easy to use once you have the framework.

For applicative logic, I'd use either:

1. The command pattern, passing objects that, when invoked, invoke methods on the server. This is good for small projects, but tends to over complicate as time goes by and more commands are added. Also, it require the client to be coupled to server logic.

2. Request by name + DTO approach. This has the benefit of disassociating server logic from the client all together, leaving the server side free to change as needed. The overhead of building a supporting framework is a bit greater than the first option, but the separation of client from server is, in my opinion, worth the effort.

Implementation:

If you have not yet started, or you have and using Spring, then Spring remoting is a great tool. It works from everywhere (including applets) even if you don't use the IOC container.

If you do not want to use Spring, the basic RMI is quite easy to use as well and has an abundance of examples over the web. | HTTP requests? Parameters in, xml out. | Applet (or WebStart application) calling a server : best practices? | [

"",

"java",

"applet",

"java-web-start",

""

] |

Having been googling for hours, I realize that users can use either xml file(orm.xml, I suppose?) or annotations in JPA, or both of them at the same time. I'm i correct?

So, My project use the second-level cache, which is not in the JPA specification. And I use annotations like:

**@org.hibernate.annotations.Cache(usage =

org.hibernate.annotations.CacheConcurrencyStrategy.READ\_WRITE

)**

for each entities.

However, I guess that the annotation above doesn't belongs to be JPA annotation, therefore, I don't know if there is anything equivalent in orm.xml? I have had a look at the xml schema of orm.xml, and there is not such hibernate element permitted in the schema.

If I can't do the second-level cache config in the orm.xml, is there any way other than in the annotation? The reason that I don't want to do it the annotation is because I have two applications, one use READ\_WRITE concurrency strategy and another use READ\_ONLY concurrency strategy.

Thanks in advance!!!

Kewei | So Therefore, I think the current solution for my problem is to replace all the annotations of each entities with Hibernate mapping files, so that for different deployment (application as well), we could use different Hibernate mapping files. | you can use the hibernate.cfg.xml, hibernate.properties or \*.hbm.xml files to turn on caching on specific objects with different caching strategies | Hibernate second-level cache in orm.xml? | [

"",

"java",

"xml",

"hibernate",

"orm",

"second-level-cache",

""

] |

I have some code:

```

int CalculateAckermann(int x, int y)

{

if(!x)

{

return y++;

}

if(!y)

{

return CalculateAckermann(x--,1);

}

else

{

return CalculateAckermann(x--, CalculateAckermann(x, y--));

}

}

```

Designed to calculate the ackermann function. Above a fairly low number of x and y the application causes a stack overflow because it recurses too deeply and results in pretty big numbers. How would I go about slowly calculating a solution? | As a note if you wish to just used the closed form, then the algorithms for m<4 are straightforward. If you wish to extend to tetration, then I suggest you write a fastpower algorithm probably using the binary method and then with that method you can write a tetration function. Which would look something like:

```

int Tetration(int number, int tetrate)

{

long int product=1;

if(tetrate==0)

return product;

product=number;

while(tetrate>1)

{

product=FastPower(number,product);

tetrate--;

}

return product;

}

```

Then you can cover cases up to n==4 and after that use the recursive definition and values of A(5,n) overflow at a ridiculous rate, so it's really of no concern. Although your teacher probably won't be satisfied with an algorithm such as this, but it will run much faster. In one of my discrete classes when I asked to write an algorithm to compute the fibonacci numbers and then find its O(n), I wrote the closed form and then wrote O(1) and got full credit, some professors appreciate clever answers.

What is important to note about the Ackerman function is it essentially defines the heirachy of additive functions on the integers, A(1,n) is addition , A(2,n) is multiplication, A(3,n) is exponentiation, A(4,n) is tetration and after 5 the functions grow too fast to be applicable to very much.

Another way to look at addition, multiplication, etc is:

```

Φ0 (x, y ) = y + 1

Φ1 (x, y ) = +(x, y )

Φ2 (x, y ) = ×(x, y )

Φ3 (x, y ) = ↑ (x, y )

Φ4 (x, y ) = ↑↑ (x, y )

= Φ4 (x, 0) = 1 if y = 0

= Φ4 (x, y + 1) = Φ3 (x, Φ4 (x, y )) for y > 0

```

(Uses prefix notation ie +(x,y)=x+y, *(x,y)=x*y. | IIRC, one interesting property of the Ackermann function is that the maximum stack depth needed to evaluate it (in levels of calls) is the same as the answer to the function. This means that there will be severe limits on the actual calculation that can be done, imposed by the limits of the virtual memory of your hardware. It is not sufficient to have a multi-precision arithmetic package; you rapidly need more bits to store the logarithms of the logarithms of the numbers than there are sub-atomic particles in the universe.

Again, IIRC, you can derive relatively simply closed formulae for A(1, N), A(2, N), and A(3, N), along the lines of the following (I seem to remember 3 figuring in the answer, but the details are probably incorrect):

* A(1, N) = 3 + N

* A(2, N) = 3 \* N

* A(3, N) = 3 ^ N

The formula for A(4, N) involves some hand-waving and stacking the exponents N-deep. The formula for A(5, N) then involves stacking the formulae for A(4, N)...it gets pretty darn weird and expensive very quickly.

As the formulae get more complex, the computation grows completely unmanageable.

---

The Wikipedia article on the [Ackermann function](http://en.wikipedia.org/wiki/Ackermann_function) includes a section 'Table of Values'. My memory is rusty (but it was 20 years ago I last looked at this in any detail), and it gives the closed formulae:

* A(0, N) = N + 1

* A(1, N) = 2 + (N + 3) - 3

* A(2, N) = 2 \* (N + 3) - 3

* A(3, N) = 2 ^ (N + 3) - 3

And A(4, N) = 2 ^ 2 ^ 2 ^ ... - 3 (where that is 2 raised to the power of 2, N + 3 times). | Calculating larger values of the ackermann function | [

"",

"c++",

"math",

""

] |

Iam using borland 2006 c++

```

class A

{

private:

TObjectList* list;

int myid;

public:

__fastcall A(int);

__fastcall ~A();

};

__fastcall A::A(int num)

{

myid = num;

list = new TObjectList();

}

__fastcall A::~A()

{

}

int main(int argc, char* argv[])

{

myfunc();

return 0;

}

void myfunc()

{

vector<A> vec;

vec.push_back(A(1));

}

```

when i add a new object A to the vector, it calls its destructor twice, and then once when vec goes out of scope , so in total 3 times.

I was thinking it should call once when object is added, and then once when vec goes out scope. | The expression `A(1)` is an r-value and constructs a new `A` value, the compiler may then copy this into a temporary object in order to bind to the `const` reference that push\_back takes. This temporary that the reference is bound to is then copied into the storage managed by `vector`.

The compiler is allowed to elide temporary objects in many situations but it isn't required to do so. | Try this:

```

#include <iostream>

#include <vector>

class A

{

private:

public:

A(int num)

{

std::cout << "Constructor(" << num << ")\n";

}

A(A const& copy)

{

std::cout << "Copy()\n";

}

A& operator=(A const& copy)

{

std::cout << "Assignment\n";

return *this;

}

A::~A()

{

std::cout << "Destroyed\n";

}

};

int main(int argc, char* argv[])

{

std::vector<A> vec;

vec.push_back(A(1));

}

```

The output on my machine is:

```

> ./a.exe

Constructor(1)

Copy()

Destroyed

Destroyed

>

``` | stl vectors add sequence | [

"",

"c++",

""

] |

I have an array of strings that are valid jQuery selectors (i.e. IDs of elements on the page):

```

["#p1", "#p2", "#p3", "#p4", "#p5"]

```

I want to select elements with those IDs into a jQuery array. This is probably elementary, but I can't find anything online. I could have a for-loop which creates a string `"#p1,#p2,#p3,#p4,#p5"` which could then be passed to jQuery as a single selector, but isn't there another way? Isn't there a way to pass an array of strings as a selector?

**EDIT:** Actually, there is [an answer out there already](https://stackoverflow.com/questions/201724/easy-way-to-turn-javascript-array-into-comma-separated-list/201733). | Well, there's 'join':

```

["#p1", "#p2", "#p3", "#p4", "#p5"].join(", ")

```

EDIT - Extra info:

It is possible to select an array of elements, problem is here you don't have the elements yet, just the selector strings. Any way you slice it you're gonna have to execute a search like .getElementById or use an actual jQuery select. | Try the [Array.join method](https://developer.mozilla.org/en/Core_JavaScript_1.5_Reference/Global_Objects/Array/join):

```

var a = ["#p1", "#p2", "#p3", "#p4", "#p5"];

var s = a.join(", ");

//s should now be "#p1, #p2, #p3, ..."

$(s).whateverYouWant();

``` | an array of strings as a jQuery selector? | [

"",

"javascript",

"jquery",

""

] |

A third party programm allows me to ask data from his ip:port. I ask the stuff via this classic code. Here is the constructor of my connection class:

```

public TcpConnection(String adress, Integer port) {

this.adress = adress;

this.port = port;

try {

socket = new Socket(adress, port);

System.out.println("Opening connection");

out = new PrintWriter(socket.getOutputStream(), true);

InputStream r = new DataInputStream(socket.getInputStream());

in = new BufferedReader(new InputStreamReader(socket.getInputStream()));

} catch (UnknownHostException e) {

// TODO: handle exception

} catch (IOException e) {

e.printStackTrace();

}

}

```

Then I read my socket with `in.read()` in a while loop.

It allows me to read the socket int by int,but I need a finner granularity. (Some info in the answer are encoded in 2 bytes, some in bit, some in 64 bytes ....). So i need to read 2 byte, then a bit. then another bit, then 1 byte and inspect each bit of this byte.

First I was thinking : "Fine, I convert the int I get in a String Binary representation",

( via `Integer.toBinaryString(whatIget)` )

buts it's just stupid and error prone.

I'm sure I'm missing something to address this casual problem.

Some idea ?

Edit2 : i remove the "when i read a int, i read 32 bit ( 4 bytes )" , because it's wrong and not the point of the question. Thus, Reader.read() read a int, if this method only read a byte, she's reading a 1/4 int ??!? | No. read() reads 8 bits / 1 byte at a time.

You can get byte, word, int, long, utf-8 string etc. from DataInputStream if you use a DataInputStream typed reference further on. Have

```

DataInputStream r = new DataInputStream(socket.getInputStream());

r.readLong();

r.readShort();

```

instead of

```

InputStream r = new DataInputStream(socket.getInputStream());

```

However, for words, int and long the the 'Endiannes' of the data matters. DataInputStream combines bytes in Big endian format where as you might have your data in little endian format. You might need to swap the bytes in the short/int/long after you read from the DIS. This is where [Integer.reverseBytes()](http://java.sun.com/javase/6/docs/api/java/lang/Integer.html#reverseBytes(int)) and its friends come in handy.

If you need bit sized data retrieval (for example read 6 bits, then 4, then 12, etc) you might want to check out the answers to [this recent question](https://stackoverflow.com/questions/1034473/java-iterate-bits-in-byte-array).

**Edit:** Removed the 8 bits as it is misleading in this context.

**Edit 2:** Rephrased my 3rd sentence. | You can not read only a bit: socket/TCP/IP work with byte as the smallest unit.

You'll have to read the byte containing the bit and use some bit shifting/masking to get the value of one bit:

```

int byte = r.read();

boolean bit1 = (byte && 0x01) != 0; // first bit (least significatn)

boolean bit2 = (byte && 0x02) != 0; // second bit

boolean bit3 = (byte && 0x04) != 0; // thrid bit

...

``` | read bits and not int from a socket | [

"",

"java",

"sockets",

""

] |

I'm using a convention of prefixing field names with an underscore. When I generate annotate entity classes with such fields I am stuck to using the underscore-prefixed property names in queries. I want to avoid that, and be able to do:

```

@Entity

public class Container {

private String _value;

}

// in a lookup method

executeQuery("from Container where value = ?", value);

```

Is that possible with JPA in general or Hibernate in particular?

---

**Update:** Still trying to remember why, but I need this to be annotated on fields rather than on getters. | You can annotate the getter:

```

@Entity

public class Container {

private String _value;

@Column

public String getValue()

{

return _value;

}

public void setValue( String value )

{

this._value = value;

}

}

``` | You could perhaps write subclasses of your generated entity classes, which have getter methods on them, and then configure the entity manager to use getter/setter access instead if field access? Then your getters/setters could have any name you liked. | Modifying property names in JPA queries | [

"",

"java",

"hibernate",

"jpa",

"annotations",

""

] |

What should be the most recommended datatype for storing an IPv4 address in SQL server?

**Or maybe someone has already created a user SQL data-type (.Net assembly) for it?**

I don't need sorting. | Storing an IPv4 address as a [`binary`](http://msdn.microsoft.com/en-us/library/ms188362.aspx)(4) is truest to what it represents, and allows for easy subnet mask-style querying. However, it requires conversion in and out if you are actually after a text representation. In that case, you may prefer a string format.

A little-used SQL Server function that might help if you are storing as a string is [`PARSENAME`](http://msdn.microsoft.com/en-us/library/ms188006.aspx), by the way. Not designed for IP addresses but perfectly suited to them. The call below will return '14':

```

SELECT PARSENAME('123.234.23.14', 1)

```

(numbering is right to left). | I normally just use varchar(15) for IPv4 addresses - but sorting them is a pain unless you pad zeros.

I've also stored them as an INT in the past. [`System.Net.IPAddress`](http://msdn.microsoft.com/en-us/library/system.net.ipaddress.aspx) has a [`GetAddressBytes`](http://msdn.microsoft.com/en-us/library/system.net.ipaddress.getaddressbytes.aspx) method that will return the IP address as an array of the 4 bytes that represent the IP address. You can use the following C# code to convert an [`IPAddress`](http://msdn.microsoft.com/en-us/library/system.net.ipaddress.aspx) to an `int`...

```

var ipAsInt = BitConverter.ToInt32(ip.GetAddressBytes(), 0);

```

I had used that because I had to do a lot of searching for dupe addresses, and wanted the indexes to be as small & quick as possible. Then to pull the address back out of the int and into an [`IPAddress`](http://msdn.microsoft.com/en-us/library/system.net.ipaddress.aspx) object in .net, use the [`GetBytes`](http://msdn.microsoft.com/en-us/library/system.bitconverter.getbytes.aspx) method on [`BitConverter`](http://msdn.microsoft.com/en-us/library/system.bitconverter.aspx) to get the int as a byte array. Pass that byte array to the [constructor](http://msdn.microsoft.com/en-us/library/t4k07yby.aspx) for [`IPAddress`](http://msdn.microsoft.com/en-us/library/system.net.ipaddress.aspx) that takes a byte array, and you end back up with the [`IPAddress`](http://msdn.microsoft.com/en-us/library/system.net.ipaddress.aspx) that you started with.

```

var myIp = new IPAddress(BitConverter.GetBytes(ipAsInt));

``` | What is the most appropriate data type for storing an IP address in SQL server? | [

"",

"sql",

"sql-server",

"types",

"ip-address",

"ipv4",

""

] |

After setting up a table model in Qt 4.4 like this:

```

QSqlTableModel *sqlmodel = new QSqlTableModel();

sqlmodel->setTable("Names");

sqlmodel->setEditStrategy(QSqlTableModel::OnFieldChange);

sqlmodel->select();

sqlmodel->removeColumn(0);

tableView->setModel(sqlmodel);

tableView->show();

```

the content is displayed properly, but editing is not possible, error:

```

QSqlQuery::value: not positioned on a valid record

``` | I can confirm that the bug exists exactly as you report it, in Qt 4.5.1, AND that the documentation, e.g. [here](https://doc.qt.io/qt-5/qsqltablemodel.html#details), still gives a wrong example (i.e. one including the `removeColumn` call).

As a work-around I've tried to write a slot connected to the `beforeUpdate` signal, with the idea of checking what's wrong with the QSqlRecord that's about to be updated in the DB and possibly fixing it, but I can't get that to work -- any calls to methods of that record parameter are crashing my toy-app with a BusError.

So I've given up on that idea and switched to what's no doubt the right way to do it (visibility should be determined by the view, not by the model, right?-): lose the `removeColumn` and in lieu of it call `tableView->setColumnHidden(0, true)` instead. This way the IDs are hidden and everything works.

So I think we can confirm there's a documentation error and open an issue about it in the Qt tracker, so it can be fixed in the next round of docs, right? | It seems that the cause of this was in line

```

sqlmodel->removeColumn(0);

```

After commenting it out, everything work perfectly.

Thus, I'll have to find another way not to show ID's in the table ;-)

**EDIT**

I've said "it seems", because in the example from "Foundations of Qt development" Johan Thelin also removed the first column. So, it would be nice if someone else also tries this and reports results. | Problem with QSqlTableModel -- no automatic updates | [

"",

"sql",

"database",

"qt",

"qt4",

""

] |

If a form is submitted but not by any specific button, such as

* by pressing `Enter`

* using `HTMLFormElement.submit()` in JS

how is a browser supposed to determine which of multiple submit buttons, if any, to use as the one pressed?

This is significant on two levels:

* calling an `onclick` event handler attached to a submit button

* the data sent back to the web server

My experiments so far have shown that:

* when pressing `Enter`, Firefox, Opera and Safari use the first submit button in the form

* when pressing `Enter`, IE uses either the first submit button or none at all depending on conditions I haven't been able to figure out

* all these browsers use none at all for a JS submit

What does the standard say?

If it would help, here's my test code (the PHP is relevant only to my method of testing, not to my question itself)

```

<!DOCTYPE html PUBLIC "-//W3C//DTD XHTML 1.0 Strict//EN"

"http://www.w3.org/TR/xhtml1/DTD/xhtml1-strict.dtd">

<html xmlns="http://www.w3.org/1999/xhtml" xml:lang="en" lang="en">

<head>

<meta http-equiv="Content-Type" content="text/html; charset=utf-8" />

<title>Test</title>

</head>

<body>

<h1>Get</h1>

<dl>

<?php foreach ($_GET as $k => $v) echo "<dt>$k</dt><dd>$v</dd>"; ?>

</dl>

<h1>Post</h1>

<dl>

<?php foreach ($_POST as $k => $v) echo "<dt>$k</dt><dd>$v</dd>"; ?>

</dl>

<form name="theForm" method="<?php echo isset($_GET['method']) ? $_GET['method'] : 'get'; ?>" action="<?php echo $_SERVER['SCRIPT_NAME']; ?>">

<input type="text" name="method" />

<input type="submit" name="action" value="Button 1" onclick="alert('Button 1'); return true" />

<input type="text" name="stuff" />

<input type="submit" name="action" value="Button 2" onclick="alert('Button 2'); return true" />

<input type="button" value="submit" onclick="document.theForm.submit();" />

</form>

</body></html>

``` | If you submit the form via JavaScript (i.e., `formElement.submit()` or anything equivalent), then *none* of the submit buttons are considered successful and none of their values are included in the submitted data. (Note that if you submit the form by using `submitElement.click()` then the submit that you had a reference to is considered active; this doesn't really fall under the remit of your question since here the submit button is unambiguous but I thought I'd include it for people who read the first part and wonder how to make a submit button successful via JavaScript form submission. Of course, the form's onsubmit handlers will still fire this way whereas they wouldn't via `form.submit()` so that's another kettle of fish...)

If the form is submitted by hitting Enter while in a non-textarea field, then it's actually down to the user agent to decide what it wants here. [The specifications](http://www.w3.org/TR/html401/interact/forms.html#submit-button) don't say anything about submitting a form using the `Enter` key while in a text entry field (if you tab to a button and activate it using space or whatever, then there's no problem as that specific submit button is unambiguously used). All it says is that a form must be submitted when a submit button is activated. It's not even a requirement that hitting `Enter` in e.g. a text input will submit the form.

I believe that Internet Explorer chooses the submit button that appears first in the source; I have a feeling that Firefox and Opera choose the button with the lowest tabindex, falling back to the first defined if nothing else is defined. There's also some complications regarding whether the submits have a non-default value attribute IIRC.

The point to take away is that there is no defined standard for what happens here and it's entirely at the whim of the browser - so as far as possible in whatever you're doing, try to avoid relying on any particular behaviour. If you really must know, you can probably find out the behaviour of the various browser versions, but when I investigated this a while back there were some quite convoluted conditions (which of course are subject to change with new browser versions) and I'd advise you to avoid it if possible! | HTML 4 does not make it explicit. HTML 5 [specifies that the first submit button must be the default](https://html.spec.whatwg.org/multipage/form-control-infrastructure.html#implicit-submission):

> 4.10.21.2 Implicit submission

>

> A `form` element's **default button** is the first [submit button](https://html.spec.whatwg.org/multipage/forms.html#concept-submit-button) in [tree order](https://dom.spec.whatwg.org/#concept-tree-order) whose [form owner](https://html.spec.whatwg.org/multipage/form-control-infrastructure.html#form-owner) is that `form` element.

>

> If the user agent supports letting the user submit a form implicitly

> (for example, on some platforms hitting the "enter" key while a text

> control is [focused](https://html.spec.whatwg.org/multipage/interaction.html#focused) implicitly submits the form), then doing so for a

> form, whose [default button](https://html.spec.whatwg.org/multipage/form-control-infrastructure.html#default-button) has [activation behavior](https://dom.spec.whatwg.org/#eventtarget-activation-behavior) and is not

> [disabled](https://html.spec.whatwg.org/multipage/form-control-infrastructure.html#concept-fe-disabled), must cause the user agent to [fire a `click` event](https://html.spec.whatwg.org/multipage/webappapis.html#fire-a-click-event) at that

> [default button](https://html.spec.whatwg.org/multipage/form-control-infrastructure.html#default-button).

If you want to influence which one is "first" while maintaining the visual order then there are a couple of approaches you could take.

An off-screen, unfocusable button.

```

.hidden-default {

position: absolute;

left: -9999px;

}

```

```

<form>

<input name="text">

<button name="submit" value="wanted" class="hidden-default" tabindex="-1"></button>

<button name="submit" value="not wanted">Not the default</button>

<button name="submit" value="wanted">Looks like the default</button>

</form>

```

Flexbox order:

```

.ordering {

display: inline-flex;

}

.ordering button {

order: 2;

}

.ordering button + button {

order: 1;

}

```

```

<form>

<input name="text">

<div class="ordering">

<button name="submit" value="wanted">First in document order</button>

<button name="submit" value="not wanted">Second in document order</button>

</div>

</form>

``` | How is the default submit button on an HTML form determined? | [

"",

"javascript",

"html",

"cross-browser",

"standards",

""

] |

I have the following code

```` ```

void reportResults()

{

wstring env(_wgetenv(L"ProgramFiles"));

env += L"\Internet Explorer\iexplore.exe";

wstringstream url;

url << "\"\"" << env.c_str() << "\" http://yahoo.com\"";

wchar_t arg[BUFSIZE];

url.get(arg, BUFSIZE);

wcout << arg << endl;

_wsystem(arg);

}

``` ````

Where arg is:

""C:\Program Files\Internet Explorer\iexplore.exe" <http://yahoo.com>"

The program functions as expected, launching IE and navigating to Yahoo, but the calling function (reportResults) never exits. How do I get the program to exit leaving the browser alive?

Thanks. | You want to use \_wspawn() instead of \_wsystem(). This will spawn a new process for the browser process. \_wsystem() blocks on the command that you create; this is why you're not getting back to your code. \_wspawn() creates a new, separate process, which should return to your code immediately. | The \_wsystem command will wait for the command in arg to return and returns the return value of the command. If you close the Internet Explorer window it will return command back to your program. | C++ system function hangs application | [

"",

"c++",

"process",

""

] |

i am using a ThreadPoolExecutor with a thread pool size of one to sequentially execute swing workers. I got a special case where an event arrives that creates a swing worker that does some client-server communication and after that updates the ui (in the done() method).

This works fine when the user fires (clicks on an item) some events but not if there occur many of them. But this happens so i need to cancel all currently running and scheduled workers. The problem is that the queue that is backing the ThreadPoolExecutor isnt aware of the SwingWorker cancellation process (at least it seems like that). So scheduled worker get cancelled but already running workers get not.

So i added a concurrent queue of type `<T extends SwingWorker>` that holds a reference of all workers as long as they are not cancelled and when a new event arrives it calls .cancel(true) on all SwingWorkers in the queue and submits the new SwingWorker to the ThreadPoolExecutor.

Summary: SwingWorkers are created and executed in a ThreadPoolExecutor with a single Thread. Only the worker that was submitted last should be running.

Are there any alternatives to solve this problem, or is it "ok" to do it like this?

Just curious... | One way to create a Single thread ThreadPoolExecutor that only executes last incoming Runnable is to subclass a suitable queue class and override all adding methods to clear the queue before adding the new runnable. Then set that queue as the ThreadPoolExecutor's work queue. | Why do you need a ThreadPoolExecutor to do this kind of job?

How many sources of different SwingWorkers you have? Because if the source is just one you should use a different approach.

For example you can define a class that handles one kind of working thread and it's linked to a single kind of item on which the user can fire actions and care inside that class the fact that a single instance of the thread should be running (for example using a singleton instance that is cleared upon finishing the task) | SwingWorker cancellation with ThreadPoolExecutor | [

"",

"java",

"multithreading",

"swing",

"threadpool",

""

] |

Is there a method built into .NET that can write all the properties and such of an object to the console?

One could make use of reflection of course, but I'm curious if this already exists...especially since you can do it in Visual Studio in the Immediate Window. There you can type an object name (while in debug mode), press enter, and it is printed fairly prettily with all its stuff.

Does a method like this exist? | The `ObjectDumper` class has been known to do that. I've never confirmed, but I've always suspected that the immediate window uses that.

EDIT: I just realized, that the code for `ObjectDumper` is actually on your machine. Go to:

```

C:/Program Files/Microsoft Visual Studio 9.0/Samples/1033/CSharpSamples.zip

```

This will unzip to a folder called *LinqSamples*. In there, there's a project called *ObjectDumper*. Use that. | You can use the [`TypeDescriptor`](https://learn.microsoft.com/en-us/dotnet/api/system.componentmodel.typedescriptor) class to do this:

```

foreach(PropertyDescriptor descriptor in TypeDescriptor.GetProperties(obj))

{

string name = descriptor.Name;

object value = descriptor.GetValue(obj);

Console.WriteLine("{0}={1}", name, value);

}

```

`TypeDescriptor` lives in the `System.ComponentModel` namespace and is the API that Visual Studio uses to display your object in its property browser. It's ultimately based on reflection (as any solution would be), but it provides a pretty good level of abstraction from the reflection API. | C#: Printing all properties of an object | [

"",

"c#",

"object",

"serialization",

"console",

""

] |

Hey people... trying to get my mocking sorted with asp.net MVC.

I've found this example on the net using Moq, basically I'm understanding it to say: when ApplyAppPathModifier is called, return the value that was passed to it.

I cant figure out how to do this in Rhino Mocks, any thoughts?

```

var response = new Mock<HttpResponseBase>();

response.Expect(res => res.ApplyAppPathModifier(It.IsAny<string>()))

.Returns((string virtualPath) => virtualPath);

``` | As I mentioned above, sods law, once you post for help you find it 5 min later (even after searching for a while). Anyway for the benefit of others this works:

```

SetupResult

.For<string>(response.ApplyAppPathModifier(Arg<String>.Is.Anything)).IgnoreArguments()

.Do((Func<string, string>)((arg) => { return arg; }));

``` | If you are using the stub method as opposed to the SetupResult method, then the syntax for this is below:

```

response.Stub(res => res.ApplyAppPathModifier(Arg<String>.Is.Anything))

.Do(new Func<string, string>(s => s));

``` | What would this Moq code look like in RhinoMocks | [

"",

"c#",

"asp.net-mvc",

"mocking",

"rhino-mocks",

"moq",

""

] |

I have the following situation in JavaScript:

```

<a onclick="handleClick(this, {onSuccess : 'function() { alert(\'test\') }'});">Click</a>

```

The `handleClick` function receives the second argument as a object with a `onSuccess` property containing the function definition...

**How do I call the `onSuccess` function (which is stored as string) -and- pass `otherObject` to that function? (jQuery solution also fine...)?**

This is what I've tried so far...

```

function handleClick(element, options, otherObject) {

options.onSuccess = 'function() {alert(\'test\')}';

options.onSuccess(otherObject); //DOES NOT WORK

eval(options.onSuccess)(otherObject); //DOES NOT WORK

}

``` | You really don't need to do this. Pass the function around as a string, i mean. JavaScript functions are first-class objects, and can be passed around directly:

```

<a onclick="handleClick(this, {onSuccess : function(obj) { alert(obj) }}, 'test');">

Click

</a>

```

...

```

function handleClick(element, options, otherObject) {

options.onSuccess(otherObject); // works...

}

```

But if you *really* want to do it your way, then [cloudhead's solution](https://stackoverflow.com/questions/984138/javascript-execute-anonymous-function-stored-in-string-with-argument/984171#984171) will do just fine. | Try this:

```

options.onSuccess = eval('function() {alert(\'test\')}');

options.onSuccess(otherObject);

``` | How can I call an anonymous function (stored in string) with an argument in JavaScript? | [

"",

"javascript",

"jquery",

""

] |

> **Possible Duplicate:**

> [Detect file encoding in PHP](https://stackoverflow.com/questions/505562/detect-file-encoding-in-php)

How can I figure out with PHP what file encoding a file has? | Detecting the encoding is really hard for all 8 bit character sets but utf-8 (because not every 8 bit byte sequence is valid utf-8) and usually requires semantic knowledge of the text for which the encoding is to be detected.

Think of it: Any particular plain text information is just a bunch of bytes with no encoding information associated. If you look at any particular byte, it could mean *anything*, so to have a chance at detecting the encoding, you would have to look at that byte in context of other bytes and try some heuristics based on possible *language* combination.

For 8bit character sets you can never be sure though.

A demonstration of heuristics going wrong is here for example:

<http://www.hoax-slayer.com/bush-hid-the-facts-notepad.html>

Some 16bit sets, you have a chance at detecting because they might include a byte order mark or have every second byte set to 0.

If you just want to detect UTF-8, you can either use mb\_detect\_encoding as already explained, or you can use this handy little function:

```

function isUTF8($string){

return preg_match('%(?:

[\xC2-\xDF][\x80-\xBF] # non-overlong 2-byte

|\xE0[\xA0-\xBF][\x80-\xBF] # excluding overlongs

|[\xE1-\xEC\xEE\xEF][\x80-\xBF]{2} # straight 3-byte

|\xED[\x80-\x9F][\x80-\xBF] # excluding surrogates

|\xF0[\x90-\xBF][\x80-\xBF]{2} # planes 1-3

|[\xF1-\xF3][\x80-\xBF]{3} # planes 4-15

|\xF4[\x80-\x8F][\x80-\xBF]{2} # plane 16

)+%xs', $string);

}

``` | mb\_detect\_encoding should be able to do the job.

<http://us.php.net/manual/en/function.mb-detect-encoding.php>

In it's default setup, it'll only detect ASCII, UTF-8, and a few Japanese JIS variants. It can be configured to detect more encodings, if you specify them manually. If a file is both ASCII and UTF-8, it'll return UTF-8. | Get file encoding | [

"",

"php",

"encoding",

"utf-8",

""

] |

```

<body>

<div> <?= $_POST['msg'] ?> </div>

<form id="frm" method="post">

<input type="hidden" name='msg' value='{"field0": "Im a string", "field1": 84, "field3": "so am I"}' />

<input type="submit" value="test" />

</form>

</body>

```

When the form is posted, the following string is displayed between the div tags.

> {\"field0\": \"Im a string\",

> \"field1\": 84, \"field3\": \"so am

> I\"}

Why are the escape characters being added?

Are they being added by the PHP server or the web client?

Can I do anything to prevent this?

Someone already mentioned the PHP function stripslashes. I'm using it for now but I want to get rid of the slashes all together. | Check whether your PHP configuration has [`magic_quotes_gpc`](http://www.php.net/manual/en/function.get-magic-quotes-gpc.php) activated, in such case the PHP server automatically adds slashes to GET/POST/cookie values... | I believe the problem is just one of escaping done by the tools you are using to output the string. For example:

```

var msg = dojo.toJson({field1: 'string', field2: 84, field3: 'another string'});

alert(msg);

```

will show the double quotes as unescaped. Similarly, running your first example while the browser is hooked up to a proxy like Charles, shows the double qoutes as unescaped.

So I believe this is just an auto-escape that Firebug/PHP does when showing you strings. | Why are escape characters being added to the value of the hidden input | [

"",

"php",

"html",

"json",

""

] |

Is there any way to prevent the `gethostbyname()` function not to read the nscd cache on Linux? | Not really an answer, but use `getaddrinfo(3)` instead :)

As far as `nscd` is concerned, here's from the `nscd.conf(5)` manual page:

```

enable-cache service <yes|no>

Enables or disables the specified service cache.

```

You'll have to find out what the correct `service` for DNS is. | Call `res_init()` before `gethostbyname()`. It seems ok. | Forcing non-cached gethostbyname() | [

"",

"c++",

"linux",

"caching",

""

] |

I'm working on a C# object copy constructor, part of which involves copying the contents of a KeyedCollection into a new KeyedCollection. This is what I have implemented currently:

```

class MyKeyedCollection : KeyedCollection<uint, DataObject>

{

protected override uint GetKeyForItem( DataObject do )

{

return do.Key;

}

}

class MyObject

{

private MyKeyedCollection kc;

// Copy constructor

public MyObject( MyObject that )

{

this.kc = new MyKeyedCollection();

foreach ( DataObject do in that.kc )

{

this.kc.Add( do );

}

}

}

```

This does the correct thing -- the collection is copied as expected. The problem is that it's also a bit slow. I'm guessing that the problem is that each .Add(do) requires a uniqueness check on the existing data, even though I know it's coming from a source that guarantees uniqueness.

How can I make this copy constructor as fast as possible? | Ok, how about a solution with a little unsafe code? Just for fun?

WARNINGS! This is coded for windows OS and 32 bit, but there is no reason this technique can't be modified to work for 64 bit or other OS's. Finally, I tested this on 3.5 framework. I think it will work on 2.0 and 3.0 but I didn't test. If Redmond changes the number, type, or order of instance variables between the revisions or patches, then this won't work.

But this is fast!!!

This hacks into the KeyedCollection, its underlying List<> and Dictionary<> and copies all the internal data and properties. Its a hack because to do this you have to access private internal variables. I basicly made some structures for KeyedCollection, List, and Dictionary that are those classes' private variables in the right order. I simply point an these structures to where the classes are and voila...you can mess with the private variables!! I used the RedGate reflector to see what all the code was doing so I could figure out what to copy. Then its just a matter of copying some value types and using Array.Copy in a couple places.

The result is **CopyKeyedCollection**<,>, **CopyDict**<> and **CopyList**<>. You get a function that can quick copy a Dictionary<> and one that can quick copy a List<> for free!

One thing I noticed when working this all out was that KeyedCollection contains a list and a dictionary all pointing to the same data! I thought this was wasteful at first, but commentors pointed out KeyedCollection is expressly for the case where you need an ordered list and a dictionary at the same time.

Anyway, i'm an assembly/c programmer who was forced to use vb for awhile, so I am not afraid of doing hacks like this. I'm new to C#, so tell me if I have violated any rules or if you think this is cool.

By the way, I researched the garbage collection, and this should work just fine with the GC. I think it would be prudent if I added a little code to fix some memory for for the ms we spend copying. You guys tell me. I'll add some comments if anyone requests em.

```

using System;

using System.Collections.Generic;

using System.Text;

using System.Runtime.InteropServices;

using System.Collections.ObjectModel;

using System.Reflection;

namespace CopyCollection {

class CFoo {

public int Key;

public string Name;

}

class MyKeyedCollection : KeyedCollection<int, CFoo> {

public MyKeyedCollection() : base(null, 10) { }

protected override int GetKeyForItem(CFoo foo) {

return foo.Key;

}

}

class MyObject {

public MyKeyedCollection kc;

// Copy constructor

public MyObject(MyObject that) {

this.kc = new MyKeyedCollection();

if (that != null) {

CollectionTools.CopyKeyedCollection<int, CFoo>(that.kc, this.kc);

}

}

}

class Program {

static void Main(string[] args) {

MyObject mobj1 = new MyObject(null);

for (int i = 0; i < 7; ++i)

mobj1.kc.Add(new CFoo() { Key = i, Name = i.ToString() });

// Copy mobj1

MyObject mobj2 = new MyObject(mobj1);

// add a bunch more items to mobj2

for (int i = 8; i < 712324; ++i)

mobj2.kc.Add(new CFoo() { Key = i, Name = i.ToString() });

// copy mobj2

MyObject mobj3 = new MyObject(mobj2);

// put a breakpoint after here, and look at mobj's and see that it worked!

// you can delete stuff out of mobj1 or mobj2 and see the items still in mobj3,

}

}

public static class CollectionTools {

public unsafe static KeyedCollection<TKey, TValue> CopyKeyedCollection<TKey, TValue>(

KeyedCollection<TKey, TValue> src,

KeyedCollection<TKey, TValue> dst) {

object osrc = src;

// pointer to a structure that is a template for the instance variables

// of KeyedCollection<TKey, TValue>

TKeyedCollection* psrc = (TKeyedCollection*)(*((int*)&psrc + 1));

object odst = dst;

TKeyedCollection* pdst = (TKeyedCollection*)(*((int*)&pdst + 1));

object srcObj = null;

object dstObj = null;

int* i = (int*)&i; // helps me find the stack

i[2] = (int)psrc->_01_items;

dstObj = CopyList<TValue>(srcObj as List<TValue>);

pdst->_01_items = (uint)i[1];

// there is no dictionary if the # items < threshold

if (psrc->_04_dict != 0) {

i[2] = (int)psrc->_04_dict;

dstObj = CopyDict<TKey, TValue>(srcObj as Dictionary<TKey, TValue>);

pdst->_04_dict = (uint)i[1];

}

pdst->_03_comparer = psrc->_03_comparer;

pdst->_05_keyCount = psrc->_05_keyCount;

pdst->_06_threshold = psrc->_06_threshold;

return dst;

}

public unsafe static List<TValue> CopyList<TValue>(

List<TValue> src) {

object osrc = src;

// pointer to a structure that is a template for

// the instance variables of List<>

TList* psrc = (TList*)(*((int*)&psrc + 1));

object srcArray = null;

object dstArray = null;

int* i = (int*)&i; // helps me find things on stack

i[2] = (int)psrc->_01_items;

int capacity = (srcArray as Array).Length;

List<TValue> dst = new List<TValue>(capacity);

TList* pdst = (TList*)(*((int*)&pdst + 1));

i[1] = (int)pdst->_01_items;

Array.Copy(srcArray as Array, dstArray as Array, capacity);

pdst->_03_size = psrc->_03_size;

return dst;

}

public unsafe static Dictionary<TKey, TValue> CopyDict<TKey, TValue>(

Dictionary<TKey, TValue> src) {

object osrc = src;

// pointer to a structure that is a template for the instance

// variables of Dictionary<TKey, TValue>

TDictionary* psrc = (TDictionary*)(*((int*)&psrc + 1));

object srcArray = null;

object dstArray = null;

int* i = (int*)&i; // helps me find the stack

i[2] = (int)psrc->_01_buckets;

int capacity = (srcArray as Array).Length;

Dictionary<TKey, TValue> dst = new Dictionary<TKey, TValue>(capacity);

TDictionary* pdst = (TDictionary*)(*((int*)&pdst + 1));

i[1] = (int)pdst->_01_buckets;

Array.Copy(srcArray as Array, dstArray as Array, capacity);

i[2] = (int)psrc->_02_entries;

i[1] = (int)pdst->_02_entries;

Array.Copy(srcArray as Array, dstArray as Array, capacity);

pdst->_03_comparer = psrc->_03_comparer;

pdst->_04_m_siInfo = psrc->_04_m_siInfo;

pdst->_08_count = psrc->_08_count;

pdst->_10_freeList = psrc->_10_freeList;

pdst->_11_freeCount = psrc->_11_freeCount;

return dst;

}

// these are the structs that map to the private variables in the classes

// i use uint for classes, since they are just pointers

// statics and constants are not in the instance data.

// I used the memory dump of visual studio to get these mapped right.

// everything with a * I copy. I Used RedGate reflector to look through all

// the code to decide what needed to be copied.

struct TKeyedCollection {

public uint _00_MethodInfo; // pointer to cool type info

// Collection

public uint _01_items; // * IList<T>

public uint _02_syncRoot; // object

// KeyedCollection

public uint _03_comparer; // IEqualityComparer<TKey>

public uint _04_dict; // * Dictionary<TKey, TItem>

public int _05_keyCount; // *

public int _06_threshold; // *

// const int defaultThreshold = 0;

}

struct TList {

public uint _00_MethodInfo; //

public uint _01_items; // * T[]

public uint _02_syncRoot; // object

public int _03_size; // *

public int _04_version; //

}

struct TDictionary {

// Fields

public uint _00_MethodInfo; //

public uint _01_buckets; // * int[]

public uint _02_entries; // * Entry<TKey, TValue>[]

public uint _03_comparer; // IEqualityComparer<TKey>

public uint _04_m_siInfo; // SerializationInfo

public uint _05__syncRoot; // object

public uint _06_keys; // KeyCollection<TKey, TValue>

public uint _07_values; // ValueCollection<TKey, TValue>

public int _08_count; // *

public int _09_version;

public int _10_freeList; // *

public int _11_freeCount; // *

}

}

}

``` | I just ran a test adding 10,000,000 items and to various collections, and the KeyedCollection took about 7x as long as a list, but only about 50% longer than a Dictionary object. Considering that the KeyedCollection is a combination of these two, the performance of Add is perfectly reasonable, and the duplicate-key check it runs is clearly not taking **that** much time. You might want to run a similar test on your KeyedCollection, and if it's going significantly slower, you can start looking elsewhere (check your `MyObject.Key` getter to make sure you're not getting overhead from that).

---

## Old Response

Have you tried:

```

this.kc = that.kc.MemberwiseClone() as MyKeyedCollection;

```

More info on MemberwiseClone [here](http://msdn.microsoft.com/en-us/library/system.object.memberwiseclone(VS.80).aspx). | Fastest way to copy a KeyedCollection | [

"",

"c#",

".net",

"optimization",

"collections",

"keyedcollection",

""

] |

Let's say I have a class:

```

class String

{

public:

String(char *str);

};

```

And two functions:

```

void DoSomethingByVal(String Str);

void DoSomethingByRef(String &Str);

```

If I call DoSomethingByVal like this:

```

DoSomethingByVal("My string");

```

the compiler figures out that it should create a temporary String object and call the char\* constructor.

However, if I try to use DoSomethingByRef the same way, I get a "Can't convert parameter from 'char \*' to 'String &'" error.

Instead, I have to explicitly create an instance:

```

DoSomethingByRef(String("My string"));

```

which can get preety annoying.

Is there any way to avoid this? | You need to pass by const reference:

For:

```

void DoSomethingByVal(String Str);

```

In this situation the compiler first creates a temporary variable. Then the temporary variable is copy constructed into the parameter.

For:

```

void DoSomethingByRef(String const& Str);

```

In this situation the compiler creates a temporary variable. But temporary variables can not be bound to a reference they can only be bound to a const reference so your original function can not be called. Note the std::string objects constructor takes a const reference is the parameter to the copy constructor and that is why the first version works as the temporary is bound to the const reference parameter of the copy constructor only. | Hmm, I'm not sure if

```

void DoSomethingByRef(String &Str);

DoSomethingByRef(String("My string"));

```

would actually compile. An rvalue can't bind to a reference to non-const (because a ref to non-const is stating you will modify the value, and it wouldn't make sense to modify a temporary). Are you sure you aren't doing:

```

String str("My string");

DoSomethingByRef(str);

```

If you want DoSomethingByVal to take an rvalue, the parameter has to be a reference to const:

```

void DoSomethingByRef(const String &Str);

``` | How to avoid explicitly calling a constructor when passing temporary object by reference in C++? | [

"",

"c++",

"pass-by-reference",

""

] |

I'm busy with programming a class that creates an index out of a text-file ASCII/BINARY.

My problem is that I don't really know how to start. I already had some tries but none really worked well for me.

I do NOT need to find the address of the file via the MFT. Just loading the file and finding stuff much faster by searching for the key in the index-file and going in the text-file to the address it shows.

The index-file should be built up as follows:

```

KEY ADDRESS

1 0xABCDEF

2 0xFEDCBA

. .

. .

```

We have a text-file with the following example value:

```

1, 8752 FW,

+++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

+++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++,

******************************************************************************,

------------------------------------------------------------------------------;

```

I hope that this explains my question a bit better.

Thanks! | It seems to me that all your class needs to do is store an array of pointers or file start offsets to the key locations in the file.

It really depends on what your Key locations represent.

I would suggest that you access the file through your class using some public methods. You can then more easily tie in Key locations with the data written.

For example, your Key locations may be where each new data block written into the file starts from. e.g. first block 1000 bytes, key location 0; second block 2500 bytes, key location 1000; third block 550 bytes; key location 3500; the next block will be 4050 all assuming that 0 is the first byte.

Store the Key values in a variable length array and then you can easily retrieve the starting point for a data block.

If your Key point is signified by some key character then you can use the same class, but with a slight change to store where the Key value is stored. The simplest way is to step through the data until the key character is located, counting the number of characters checked as you go. The count is then used to produce your key location. | Your code snippet isn't so much of an idea as it is the functionality you wish to have in the end.

Recognize that "indexing" merely means "remembering" where things are located. You can accomplish this using any data structure you wish... B-Tree, Red/Black tree, BST, or more advanced structures like suffix trees/suffix arrays.

I recommend you look into such data structures.

edit:

with the new information, I would suggest making your own key/value lookup. Build an array of keys, and associate their values somehow. this may mean building a class or struct that contains both the key and the value, or instead contains the key and a pointer to a struct or class with a value, etc.

Once you have done this, sort the key array. Now, you have the ability to do a binary search on the keys to find the appropriate value for a given key.

You could build a hash table in a similar manner. you could build a BST or similar structure like i mentioned earlier. | Making an index-creating class | [

"",

"c++",

"binary",

"indexing",

"ascii",

""

] |

I'm working on a web project that is multilingual. For example, one portion of the project involves some custom google mapping that utilizes a client-side interace using jquery/.net to add points to a map and save them to the database.

There will be some validation and other informational messaging (ex. 'Please add at least one point to the map') that will have to be localized.

The only options I can think of right now are:

1. Use a code render block in the javascript to pull in the localized message from a resource file

2. Use hidden fields with meta:resourcekey to automatically grab the proper localized message from the resource file using the current culture, and get the .val() in jquery when necessary.

3. Make a webservice call to get the correct message by key/language each time a message is required.

Any thoughts, experiences?

EDIT:

I'd prefer to use the .net resource files to keep things consistent with the rest of the application. | Ok, I built a generic web service to allow me to grab resources and return them in a dictionary (probably a better way to convert to the dictionary)...

```

<WebMethod()> _

<ScriptMethod(ResponseFormat:=ResponseFormat.Json, UseHttpGet:=False, XmlSerializeString:=True)> _

Public Function GetResources(ByVal resourceFileName As String, ByVal culture As String) As Dictionary(Of String, String)

Dim reader As New System.Resources.ResXResourceReader(String.Format(Server.MapPath("/App_GlobalResources/{0}.{1}.resx"), resourceFileName, culture))

If reader IsNot Nothing Then

Dim d As New Dictionary(Of String, String)

Dim enumerator As System.Collections.IDictionaryEnumerator = reader.GetEnumerator()

While enumerator.MoveNext

d.Add(enumerator.Key, enumerator.Value)

End While

Return d

End If

Return Nothing

End Function

```

Then, I can grab this json result and assign it to a local variable:

```

// load resources

$.ajax({

type: "POST",

url: "mapping.asmx/GetResources",

contentType: "application/json; charset=utf-8",

dataType: "json",

data: '{"resourceFileName":"common","culture":"en-CA"}',

cache: true,

async: false,

success: function(data) {

localizations = data.d;

}

});

```

Then, you can grab your value from the local variable like so:

localizations.Key1

The only catch here is that if you want to assign the localizations to a global variable you have to run it async=false, otherwise you won't have the translations available when you need them. I'm trying to use 'get' so I can cache the response, but it's not working for me. See this question:

[Can't return Dictionary(Of String, String) via GET ajax web request, works with POST](https://stackoverflow.com/questions/1033305/cant-return-dictionaryof-string-string-via-get-ajax-web-request-works-with-p) | I've done this before where there are hidden fields that have their values set on Page\_Init() and Page\_Load() with the appropriate values from the global and local resource files. The javascript code would then work with those hidden values.

**Code Behind**

```

this.hfInvalidCheckDateMessage.Value = this.GetLocalResourceObject("DatesRequired").ToString();

```

**Page.aspx**

```

$('#<%= btnSearch.ClientID %>').click(function(e) {

if (!RequiredFieldCheck()) {

var message = $("#<%= hfInvalidCheckDateMessage.ClientID %>").val();

alert(message);

e.preventDefault();

$("#<%= txtAuthDateFrom.ClientID %>").focus();

}

});

```

***Disclaimer***... Not sure if this was the best route or not, but it does seem to work well. | Localize javascript messages and validation text | [

"",

"asp.net",

"javascript",

"jquery",

"localization",

""

] |

Currently many of the links on our pages get changed to `href="javascript:void(0);"` on pageload, but if you're impatient (as most users are) you can click the links before the page loads and land on the clunkier, non-javascript, non-ajax pages.

I'm thinking about progressive enhancement a lot these days, and I predict the majority of our users will have javascript enabled (no data yet, we havn't yet launched alpha)

**Is it a bad idea to generate some indicator that a user has javascript enabled for the session, and then serve pages that assume javascript? (ie. have the server put `href="javascript:void(0);"` from the start)** | Why not just do this?

```

<a href="oldversion.htm" onclick="...something useful......; return false;">link</a>

```

`return false` tells the browser not to carry on to the url in the href.