file_name | prefix | suffix | middle | fim_type |
|---|---|---|---|---|

main.rs | // Copyright 2020 Xavier Gillard

//

// Permission is hereby granted, free of charge, to any person obtaining a copy of

// this software and associated documentation files (the "Software"), to deal in

// the Software without restriction, including without limitation the rights to

// use, copy, modify, merge, publish, di... | (&self, variable: Variable, state: &Self::State, f: &mut dyn DecisionCallback) {

if state.contains(variable.id()) {

f.apply(Decision{variable, value: YES});

f.apply(Decision{variable, value: NO });

} else {

f.apply(Decision{variable, value: NO });

}

}

... | for_each_in_domain | identifier_name |

main.rs | use clap::Clap;

use itertools::Itertools;

use num::integer::gcd;

use std::collections::{HashMap, HashSet};

use std::fmt;

use std::sync::atomic::{AtomicBool, Ordering};

static MAKE_REPORT: AtomicBool = AtomicBool::new(false);

macro_rules! write_report(

($($args:tt)*) => {

if MAKE_REPORT.load(Ordering::SeqC... | }

}

writeln_report!();

writeln_report!(r"その上で$a \leqq c, c > 0$となるような$a, c$を求める.");

writeln_report!(r"\begin{{itemize}}");

// Find a and c that satisfy the conditions.

let mut res = Vec::new();

for b in bs {

let do_report = b >= 0;

if do_report {

writeln_report!(

... | if has_zero { "0$, " } else { "$" },

nonzero.format(r"$, $\pm ")

); | random_line_split |

main.rs | use clap::Clap;

use itertools::Itertools;

use num::integer::gcd;

use std::collections::{HashMap, HashSet};

use std::fmt;

use std::sync::atomic::{AtomicBool, Ordering};

static MAKE_REPORT: AtomicBool = AtomicBool::new(false);

macro_rules! write_report(

($($args:tt)*) => {

if MAKE_REPORT.load(Ordering::SeqC... | a,

c

);

return true;

}

let left_failure = if !(-a < b) {

format!(r"$-a < b$について${} \not< {}$", -a, b)

} else if !(b <= a) {

format!(r"$b \leqq a$について${} \not\leqq {}$", b, a)

} else if !(a < c) {

format!(r"$a < ... | writeln_report!(

r"これは右側の不等式$0 \leqq {} \leqq {} = {}$満たす.",

b,

| conditional_block |

main.rs | use clap::Clap;

use itertools::Itertools;

use num::integer::gcd;

use std::collections::{HashMap, HashSet};

use std::fmt;

use std::sync::atomic::{AtomicBool, Ordering};

static MAKE_REPORT: AtomicBool = AtomicBool::new(false);

macro_rules! write_report(

($($args:tt)*) => {

if MAKE_REPORT.load(Ordering::SeqC... | for (a, b, _) in res {

if !first {

write_report!(", ");

}

first = false;

if b % 2 == 0 && disc % 4 == 0 {

if b == 0 {

write_report!(r"$\left({}, \sqrt{{ {} }}\right)$", a, disc / 4);

} else {

... | rue;

| identifier_name |

main.rs | use clap::Clap;

use itertools::Itertools;

use num::integer::gcd;

use std::collections::{HashMap, HashSet};

use std::fmt;

use std::sync::atomic::{AtomicBool, Ordering};

static MAKE_REPORT: AtomicBool = AtomicBool::new(false);

macro_rules! write_report(

($($args:tt)*) => {

if MAKE_REPORT.load(Ordering::SeqC... | t) = ", int, frac);

frac = frac.invert();

writeln_report!(r"{} + \frac{{ 1 }}{{ {} }}. \]", int, frac);

if notfound.contains(&frac) {

writeln_report!(

"${}$は${:?}$に対応するので,${:?}$は除く.",

frac,

map[&frac],

... | b$は{}であるから,",

disc,

if disc % 2 == 0 { "偶数" } else { "奇数" }

);

let bs = ((minb + 1)..0).filter(|x| x.abs() % 2 == disc % 2);

if bs.clone().collect_vec().is_empty() {

writeln_report!(r"条件を満たす$b$はない.");

return Err("no cands".to_string());

}

writeln_report!(r"条件を満たす$b$は... | identifier_body |

csr.rs | /*

Copyright 2020 Brandon Lucia <blucia@gmail.com>

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing... |

}

/// Take an edge list in and produce a CSR out

/// (u,v)

pub fn new(numv: usize, ref el: Vec<(usize, usize)>) -> CSR {

const NUMCHUNKS: usize = 16;

let chunksz: usize = if numv > NUMCHUNKS {

numv / NUMCHUNKS

} else {

1

};

/*TODO: Para... |

/*return the graph, g*/

g | random_line_split |

csr.rs | /*

Copyright 2020 Brandon Lucia <blucia@gmail.com>

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing... | {

v: usize,

e: usize,

vtxprop: Vec<f64>,

offsets: Vec<usize>,

neighbs: Vec<usize>,

}

impl CSR {

pub fn get_vtxprop(&self) -> &[f64] {

&self.vtxprop

}

pub fn get_mut_vtxprop(&mut self) -> &mut [f64] {

&mut self.vtxprop

}

pub fn get_v(&self) -> usize {

s... | CSR | identifier_name |

csr.rs | /*

Copyright 2020 Brandon Lucia <blucia@gmail.com>

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing... |

pub fn get_v(&self) -> usize {

self.v

}

pub fn get_e(&self) -> usize {

self.e

}

pub fn get_offsets(&self) -> &Vec<usize> {

&self.offsets

}

pub fn get_neighbs(&self) -> &[usize] {

&self.neighbs

}

/// Build a random edge list

/// This method re... | {

&mut self.vtxprop

} | identifier_body |

main.rs | #[macro_use]

extern crate clap;

extern crate ansi_term;

extern crate atty;

extern crate regex;

extern crate ignore;

extern crate num_cpus;

pub mod lscolors;

pub mod fshelper;

mod app;

use std::env;

use std::error::Error;

use std::io::Write;

use std::ops::Deref;

#[cfg(unix)]

use std::os::unix::fs::PermissionsExt;

use ... |

let r = write!(&mut std::io::stdout(), "{}{}{}", prefix, path_str, separator);

if r.is_err() {

// Probably a broken pipe. Exit gracefully.

process::exit(0);

}

}

}

/// Recursively scan the given search path and search for files / pathnames matching the pattern.

fn s... | random_line_split | |

main.rs | #[macro_use]

extern crate clap;

extern crate ansi_term;

extern crate atty;

extern crate regex;

extern crate ignore;

extern crate num_cpus;

pub mod lscolors;

pub mod fshelper;

mod app;

use std::env;

use std::error::Error;

use std::io::Write;

use std::ops::Deref;

#[cfg(unix)]

use std::os::unix::fs::PermissionsExt;

use ... | else if is_directory {

&ls_colors.directory

} else if is_executable(metadata.as_ref()) {

&ls_colors.executable

} else {

// Look up file name

let o_style =

component_path.file_name()

... | {

&ls_colors.symlink

} | conditional_block |

main.rs | #[macro_use]

extern crate clap;

extern crate ansi_term;

extern crate atty;

extern crate regex;

extern crate ignore;

extern crate num_cpus;

pub mod lscolors;

pub mod fshelper;

mod app;

use std::env;

use std::error::Error;

use std::io::Write;

use std::ops::Deref;

#[cfg(unix)]

use std::os::unix::fs::PermissionsExt;

use ... | (root: &Path, pattern: Arc<Regex>, base: &Path, config: Arc<FdOptions>) {

let (tx, rx) = channel();

let walker = WalkBuilder::new(root)

.hidden(config.ignore_hidden)

.ignore(config.read_ignore)

.git_ignore(config.read_ignore)

.... | scan | identifier_name |

doc_upsert.rs | //! The `doc upsert` command performs a KV upsert operation.

use super::util::convert_nu_value_to_json_value;

use crate::cli::error::{client_error_to_shell_error, serialize_error};

use crate::cli::util::{

cluster_identifiers_from, get_active_cluster, namespace_from_args, NuValueMap,

};

use crate::client::{ClientEr... | }

if let Some(i) = id {

if let Some(c) = content {

return Some((i, c));

}

}

}

None

});

let mut all_items = vec![];

for item in filtered.chain(input_args) {

let value =

serde_json::to_... | random_line_split | |

doc_upsert.rs | //! The `doc upsert` command performs a KV upsert operation.

use super::util::convert_nu_value_to_json_value;

use crate::cli::error::{client_error_to_shell_error, serialize_error};

use crate::cli::util::{

cluster_identifiers_from, get_active_cluster, namespace_from_args, NuValueMap,

};

use crate::client::{ClientEr... | (

state: Arc<Mutex<State>>,

engine_state: &EngineState,

stack: &mut Stack,

call: &Call,

input: PipelineData,

) -> Result<PipelineData, ShellError> {

let results = run_kv_store_ops(state, engine_state, stack, call, input, build_req)?;

Ok(Value::List {

vals: results,

span: cal... | run_upsert | identifier_name |

doc_upsert.rs | //! The `doc upsert` command performs a KV upsert operation.

use super::util::convert_nu_value_to_json_value;

use crate::cli::error::{client_error_to_shell_error, serialize_error};

use crate::cli::util::{

cluster_identifiers_from, get_active_cluster, namespace_from_args, NuValueMap,

};

use crate::client::{ClientEr... |

fn run_upsert(

state: Arc<Mutex<State>>,

engine_state: &EngineState,

stack: &mut Stack,

call: &Call,

input: PipelineData,

) -> Result<PipelineData, ShellError> {

let results = run_kv_store_ops(state, engine_state, stack, call, input, build_req)?;

Ok(Value::List {

vals: results,

... | {

KeyValueRequest::Set { key, value, expiry }

} | identifier_body |

doc_upsert.rs | //! The `doc upsert` command performs a KV upsert operation.

use super::util::convert_nu_value_to_json_value;

use crate::cli::error::{client_error_to_shell_error, serialize_error};

use crate::cli::util::{

cluster_identifiers_from, get_active_cluster, namespace_from_args, NuValueMap,

};

use crate::client::{ClientEr... |

if k.clone() == content_column {

content = convert_nu_value_to_json_value(&v, span).ok();

}

}

if let Some(i) = id {

if let Some(c) = content {

return Some((i, c));

}

}

}

... | {

id = v.as_string().ok();

} | conditional_block |

pipeline.fromIlastik.py | #!/usr/bin/env python

####################################################################################################

# Load the necessary libraries

###################################################################################################

import networkx as nx

import numpy as np

from scipy import spars... |

print('Topological graph ready!')

print('...the graph has '+str(A.shape[0])+' nodes')

####################################################################################################

# Select the morphological features,

# and set the min number of nodes per subgraph

# Features list:

# fov_name x_centroid y_... | random_line_split | |

pipeline.fromIlastik.py | #!/usr/bin/env python

####################################################################################################

# Load the necessary libraries

###################################################################################################

import networkx as nx

import numpy as np

from scipy import spars... |

else:

print('The graph does not exists yet and I am going to create one...')

pos = np.loadtxt(filename, delimiter="\t",skiprows=True,usecols=(1,2))

A = space2graph(pos,nn) # create the topology graph

sparse.save_npz(path, A)

G = nx.from_scipy_sparse_matrix(A, edge_attribute='weight')

d = g... | print('The graph exists already and I am now loading it...')

A = sparse.load_npz(path)

pos = np.loadtxt(filename, delimiter="\t",skiprows=True,usecols=(1,2)) # chose x and y and do not consider header

G = nx.read_gpickle(os.path.join(dirname, basename_graph) + ".graph.pickle")

d = getdegree(G)

cc =... | conditional_block |

save_hist.py | #!/usr/bin/env python

###########################################################################

# Replacement for save_data.py takes a collection of hdf5 files, and #

# builds desired histograms for rapid plotting #

#####################################################################... | (config, file, outfile):

nside = 64

npix = hp.nside2npix(nside)

# Binning for various parameters

sbins = np.arange(npix+1, dtype=int)

ebins = np.arange(5, 9.501, 0.05)

dbins = np.linspace(0, 700, 141)

lbins = np.linspace(-20, 20, 151)

# Get desired information from hdf5 file

d = h... | skyWriter | identifier_name |

save_hist.py | #!/usr/bin/env python

###########################################################################

# Replacement for save_data.py takes a collection of hdf5 files, and #

# builds desired histograms for rapid plotting #

#####################################################################... | q['llhcut_err_w'] = np.histogram2d(x[ecut], dllh[ecut], bins=(sbins,lbins),

weights=(w[ecut])**2)[0]

q['energy'] = np.histogram2d(x, r-fit, bins=(sbins,ebins))[0]

q['dist'] = np.histogram2d(x, xy, bins=(sbins,dbins))[0]

q['llh'] = np.histogram2d(x, dllh, bins=(sbins,lbins))[0]

q['energy... | weights=w[ecut])[0] | random_line_split |

save_hist.py | #!/usr/bin/env python

###########################################################################

# Replacement for save_data.py takes a collection of hdf5 files, and #

# builds desired histograms for rapid plotting #

#####################################################################... |

###############################################################################

## Notes on weights

"""

- Events that pass STA8 condition have a prescale and weight of 1.

- Events that pass STA3ii condition have a 1/2 chance to pass the STA3ii

prescale. Those that fail have a 1/3 chance to pass the STA3 prescale.... | if args.sky:

skyWriter(args.config, infile, outfile)

else:

histWriter(args.config, infile, outfile) | conditional_block |

save_hist.py | #!/usr/bin/env python

###########################################################################

# Replacement for save_data.py takes a collection of hdf5 files, and #

# builds desired histograms for rapid plotting #

#####################################################################... |

def histWriter(config, file, outfile):

# Bin values

eList = ['p','h','o','f']

decbins = ['0-12','12-24','24-40']

rabins = ['0-60','60-120','120-180','180-240','240-300','300-360']

# Build general list of key names to write

keyList = []

keyList += ['energy','energy_w','energy_z','energy_... | rDict = {'proton':'p','helium':'h','oxygen':'o','iron':'f'}

t1 = astro.Time()

print 'Building arrays from %s...' % file

t = tables.openFile(file)

q = {}

# Get reconstructed compositions from list of children in file

children = []

for node in t.walk_nodes('/'):

try: children += [nod... | identifier_body |

repocachemanager.go | package cache

import (

"context"

"encoding/json"

"fmt"

"net"

"strings"

"sync"

"time"

"github.com/go-kit/kit/log"

"github.com/pkg/errors"

"github.com/fluxcd/flux/pkg/image"

"github.com/fluxcd/flux/pkg/registry"

)

type imageToUpdate struct {

ref image.Ref

previousDigest string

previousRefre... | (ctx context.Context) ([]string, error) {

ctx, cancel := context.WithTimeout(ctx, c.clientTimeout)

defer cancel()

tags, err := c.client.Tags(ctx)

if ctx.Err() == context.DeadlineExceeded {

return nil, c.clientTimeoutError()

}

return tags, err

}

// storeRepository stores the repository from the cache

func (c *r... | getTags | identifier_name |

repocachemanager.go | package cache

import (

"context"

"encoding/json"

"fmt"

"net"

"strings"

"sync"

"time"

"github.com/go-kit/kit/log"

"github.com/pkg/errors"

"github.com/fluxcd/flux/pkg/image"

"github.com/fluxcd/flux/pkg/registry"

)

type imageToUpdate struct {

ref image.Ref

previousDigest string

previousRefre... | if c.now.After(deadline) {

toUpdate = append(toUpdate, imageToUpdate{ref: newID, previousRefresh: excludedRefresh})

refresh++

}

}

}

}

}

result := fetchImagesResult{

imagesFound: images,

imagesToUpdate: toUpdate,

imagesToUpdateRefreshCount: refresh,

im... | } else {

if c.trace {

c.logger.Log("trace", "excluded in cache", "ref", newID, "reason", entry.ExcludedReason)

} | random_line_split |

repocachemanager.go | package cache

import (

"context"

"encoding/json"

"fmt"

"net"

"strings"

"sync"

"time"

"github.com/go-kit/kit/log"

"github.com/pkg/errors"

"github.com/fluxcd/flux/pkg/image"

"github.com/fluxcd/flux/pkg/registry"

)

type imageToUpdate struct {

ref image.Ref

previousDigest string

previousRefre... | {

return fmt.Errorf("client timeout (%s) exceeded", r.clientTimeout)

} | identifier_body | |

repocachemanager.go | package cache

import (

"context"

"encoding/json"

"fmt"

"net"

"strings"

"sync"

"time"

"github.com/go-kit/kit/log"

"github.com/pkg/errors"

"github.com/fluxcd/flux/pkg/image"

"github.com/fluxcd/flux/pkg/registry"

)

type imageToUpdate struct {

ref image.Ref

previousDigest string

previousRefre... |

return entry, nil

}

func (r *repoCacheManager) clientTimeoutError() error {

return fmt.Errorf("client timeout (%s) exceeded", r.clientTimeout)

}

| {

return registry.ImageEntry{}, err

} | conditional_block |

sdss_sqldata.py | #!/usr/bin/env python3

# -*- coding: utf-8 -*-

"""

Created on Fri May 14 14:12:47 2021

@author: sonic

"""

#%%

import copy

import os, glob

import numpy as np

import pandas as pd

from astropy.io import ascii

import matplotlib.pyplot as plt

from ankepy.phot import anketool as atl # anke package

from astropy.cosmology imp... |

# elif line.startswith('N_SIM'):

# p.write(line.replace('0', '100'))

elif line.startswith('RESOLUTION'):

p.write(line.replace("= 'hr'", "= 'lr'"))

# elif line.startswith('NO_MAX_AGE'):

# p.write(line.replace('= 0', '= 1'))

... | p.write(line.replace(default, sdssdate)) | conditional_block |

sdss_sqldata.py | #!/usr/bin/env python3

# -*- coding: utf-8 -*-

"""

Created on Fri May 14 14:12:47 2021

@author: sonic

"""

#%%

import copy

import os, glob

import numpy as np

import pandas as pd

from astropy.io import ascii

import matplotlib.pyplot as plt

from ankepy.phot import anketool as atl # anke package

from astropy.cosmology imp... |

# plt.rcParams.update({'font.size': 14})

fig, axs = plt.subplots(2, 1, figsize=(9,12))

plt.rcParams.update({'font.size': 18})

ax_Mr = axs[1]

ax_lM = ax_Mr.twinx()

# ax_Mr.set_title('SDSS DR12 Galaxies Distribution')

# automatically update ylim of ax2 when ylim of ax1 changes.

ax_Mr.callbacks.connect("ylim_change... | """

Update the second axis according to the first axis.

"""

y1, y2 = ax_Mr.get_ylim()

ax_lM.set_ylim(Mr2logM(y1), Mr2logM(y2))

ax_lM.figure.canvas.draw() | identifier_body |

sdss_sqldata.py | #!/usr/bin/env python3

# -*- coding: utf-8 -*-

"""

Created on Fri May 14 14:12:47 2021

@author: sonic

"""

#%%

import copy

import os, glob

import numpy as np

import pandas as pd

from astropy.io import ascii

import matplotlib.pyplot as plt

from ankepy.phot import anketool as atl # anke package

from astropy.cosmology imp... | ax_Mr.set_xlabel('Spectroscopic redshift')

ax_lM.tick_params(labelsize=12)

ax_Mr.tick_params(labelsize=12)

ax_lM.set_ylabel(r'log($M/M_{\odot}$)')

ax_P = axs[0]

s = ax_P.scatter(photzout[:37578]['z_m1'], absolmag(photin[:37578]['f_SDSS_r'], photzout[:37578]['z_m1']), c=photfout['lmass'], cmap='jet', alpha=0.3, vmin... | ax_Mr.set_xlim(0.0075, 0.20)

ax_Mr.set_ylim(-15, -24)

ax_Mr.legend(loc='lower right')

# ax_Mr.set_title('Spectroscopically Confirmed')

ax_Mr.set_ylabel('$M_r$') | random_line_split |

sdss_sqldata.py | #!/usr/bin/env python3

# -*- coding: utf-8 -*-

"""

Created on Fri May 14 14:12:47 2021

@author: sonic

"""

#%%

import copy

import os, glob

import numpy as np

import pandas as pd

from astropy.io import ascii

import matplotlib.pyplot as plt

from ankepy.phot import anketool as atl # anke package

from astropy.cosmology imp... | (ax_Mr):

"""

Update the second axis according to the first axis.

"""

y1, y2 = ax_Mr.get_ylim()

ax_lM.set_ylim(Mr2logM(y1), Mr2logM(y2))

ax_lM.figure.canvas.draw()

# plt.rcParams.update({'font.size': 14})

fig, axs = plt.subplots(2, 1, figsize=(9,12))

plt.rcParams.update({'font.size': 18})

ax_Mr = a... | convert | identifier_name |

instance.go | // Copyright 2019 Yunion

//

// Licensed under the Apache License, Version 2.0 (the "License");

// you may not use this file except in compliance with the License.

// You may obtain a copy of the License at

//

// http://www.apache.org/licenses/LICENSE-2.0

//

// Unless required by applicable law or agreed to in writi... |

func (in *SInstance) fetchSysDisk() {

storage, _ := in.host.zone.getStorageByType(api.STORAGE_ECLOUD_SYSTEM)

disk := SDisk{

storage: storage,

ManualAttr: SDiskManualAttr{

IsVirtual: true,

TempalteId: in.ImageRef,

ServerId: in.Id,

},

SCreateTime: in.SCreateTime,

SZoneRegionBase: in.SZoneReg... | {

return nil

} | identifier_body |

instance.go | // Copyright 2019 Yunion

//

// Licensed under the Apache License, Version 2.0 (the "License");

// you may not use this file except in compliance with the License.

// You may obtain a copy of the License at

//

// http://www.apache.org/licenses/LICENSE-2.0

//

// Unless required by applicable law or agreed to in writi... | (bc billing.SBillingCycle) error {

return cloudprovider.ErrNotImplemented

}

func (self *SInstance) GetError() error {

return nil

}

func (in *SInstance) fetchSysDisk() {

storage, _ := in.host.zone.getStorageByType(api.STORAGE_ECLOUD_SYSTEM)

disk := SDisk{

storage: storage,

ManualAttr: SDiskManualAttr{

IsVir... | Renew | identifier_name |

instance.go | // Copyright 2019 Yunion

//

// Licensed under the Apache License, Version 2.0 (the "License");

// you may not use this file except in compliance with the License.

// You may obtain a copy of the License at

//

// http://www.apache.org/licenses/LICENSE-2.0

//

// Unless required by applicable law or agreed to in writi... | }

func (in *SInstance) ChangeConfig(ctx context.Context, config *cloudprovider.SManagedVMChangeConfig) error {

return errors.ErrNotImplemented

}

func (in *SInstance) GetVNCInfo() (jsonutils.JSONObject, error) {

url, err := in.host.zone.region.GetInstanceVNCUrl(in.GetId())

if err != nil {

return nil, err

}

ret ... | return cloudprovider.ErrNotImplemented | random_line_split |

instance.go | // Copyright 2019 Yunion

//

// Licensed under the Apache License, Version 2.0 (the "License");

// you may not use this file except in compliance with the License.

// You may obtain a copy of the License at

//

// http://www.apache.org/licenses/LICENSE-2.0

//

// Unless required by applicable law or agreed to in writi... |

for _, routerId := range routerIds.UnsortedList() {

request := NewConsoleRequest(in.host.zone.region.ID, fmt.Sprintf("/api/vpc/%s/nic", routerId),

map[string]string{

"resourceId": in.Id,

}, nil,

)

completeNics := make([]SInstanceNic, 0, len(nics)/2)

err := in.host.zone.region.client.doList(context.B... | {

nic := &in.PortDetail[i]

routerIds.Insert(nic.RouterId)

nics[nic.PortId] = nic

} | conditional_block |

lifecycle.go | package consumer

import (

"bufio"

"context"

"encoding/json"

"fmt"

"io"

"io/ioutil"

"time"

"github.com/pkg/errors"

log "github.com/sirupsen/logrus"

"go.etcd.io/etcd/clientv3"

"go.etcd.io/etcd/etcdserver/api/v3rpc/rpctypes"

"go.gazette.dev/core/broker/client"

pb "go.gazette.dev/core/broker/protocol"

pc "g... | func pickFirstHints(f fetchedHints) recoverylog.FSMHints {

for _, currHints := range f.hints {

if currHints == nil {

continue

}

return *currHints

}

return recoverylog.FSMHints{Log: f.spec.RecoveryLog()}

}

// fetchHints retrieves and decodes all FSMHints for the ShardSpec.

// Nil values will be returned wh... | // pickFirstHints retrieves the first hints from |f|. If there are no primary

// hints available the most recent backup hints will be returned. If there are

// no hints available an empty set of hints is returned. | random_line_split |

lifecycle.go | package consumer

import (

"bufio"

"context"

"encoding/json"

"fmt"

"io"

"io/ioutil"

"time"

"github.com/pkg/errors"

log "github.com/sirupsen/logrus"

"go.etcd.io/etcd/clientv3"

"go.etcd.io/etcd/etcdserver/api/v3rpc/rpctypes"

"go.gazette.dev/core/broker/client"

pb "go.gazette.dev/core/broker/protocol"

pc "g... |

var timeNow = time.Now

| {

if err == nil {

panic("expected error")

} else if err == context.Canceled || err == context.DeadlineExceeded {

return err

} else if _, ok := err.(interface{ StackTrace() errors.StackTrace }); ok {

// Avoid attaching another errors.StackTrace if one is already present.

return errors.WithMessage(err, fmt.Spr... | identifier_body |

lifecycle.go | package consumer

import (

"bufio"

"context"

"encoding/json"

"fmt"

"io"

"io/ioutil"

"time"

"github.com/pkg/errors"

log "github.com/sirupsen/logrus"

"go.etcd.io/etcd/clientv3"

"go.etcd.io/etcd/etcdserver/api/v3rpc/rpctypes"

"go.gazette.dev/core/broker/client"

pb "go.gazette.dev/core/broker/protocol"

pc "g... |

for i := range out.txnResp.Responses {

var currHints recoverylog.FSMHints

if kvs := out.txnResp.Responses[i].GetResponseRange().Kvs; len(kvs) == 0 {

out.hints = append(out.hints, nil)

continue

} else if err = json.Unmarshal(kvs[0].Value, &currHints); err != nil {

err = extendErr(err, "unmarshal FSMHin... | {

err = extendErr(err, "fetching ShardSpec.HintKeys")

return

} | conditional_block |

lifecycle.go | package consumer

import (

"bufio"

"context"

"encoding/json"

"fmt"

"io"

"io/ioutil"

"time"

"github.com/pkg/errors"

log "github.com/sirupsen/logrus"

"go.etcd.io/etcd/clientv3"

"go.etcd.io/etcd/etcdserver/api/v3rpc/rpctypes"

"go.gazette.dev/core/broker/client"

pb "go.gazette.dev/core/broker/protocol"

pc "g... | (err error, mFmt string, args ...interface{}) error {

if err == nil {

panic("expected error")

} else if err == context.Canceled || err == context.DeadlineExceeded {

return err

} else if _, ok := err.(interface{ StackTrace() errors.StackTrace }); ok {

// Avoid attaching another errors.StackTrace if one is alrea... | extendErr | identifier_name |

incident-sk.ts | /**

* @module incident-sk

* @description <h2><code>incident-sk</code></h2>

*

* <p>

* Displays a single Incident.

* </p>

*

* @attr minimized {boolean} If not set then the incident is displayed in expanded

* mode, otherwise it is displayed in compact mode.

*

* @attr params {boolean} If set then the incide... | }

private toggleSilencesWithComments(e: Event): void {

// This prevents a double event from happening.

e.preventDefault();

this.displaySilencesWithComments = !this.displaySilencesWithComments;

this._render();

}

private displayRecentlyExpired(

recentlyExpiredSilence: boolean

): TemplateRe... | (i: Incident) =>

html`<incident-sk .incident_state=${i} minimized></incident-sk>`

);

})

.catch(errorMessage); | random_line_split |

incident-sk.ts | /**

* @module incident-sk

* @description <h2><code>incident-sk</code></h2>

*

* <p>

* Displays a single Incident.

* </p>

*

* @attr minimized {boolean} If not set then the incident is displayed in expanded

* mode, otherwise it is displayed in compact mode.

*

* @attr params {boolean} If set then the incide... | (val: Silence[]) {

this._render();

this.silences = val;

}

/** @prop recently_expired_silence Whether silence recently expired. */

get incident_has_recently_expired_silence(): boolean {

return this.recently_expired_silence;

}

set incident_has_recently_expired_silence(val: boolean) {

// No nee... | incident_silences | identifier_name |

incident-sk.ts | /**

* @module incident-sk

* @description <h2><code>incident-sk</code></h2>

*

* <p>

* Displays a single Incident.

* </p>

*

* @attr minimized {boolean} If not set then the incident is displayed in expanded

* mode, otherwise it is displayed in compact mode.

*

* @attr params {boolean} If set then the incide... |

private classOfH2(): string {

if (!this.state.active) {

return 'inactive';

}

if (this.state.params.assigned_to) {

return 'assigned';

}

return '';

}

private table(): TemplateResult[] {

const params = this.state.params;

const keys = Object.keys(params);

keys.sort();

... | {

// No need to render again if value is same as old value.

if (val !== this.flaky) {

this.flaky = val;

this._render();

}

} | identifier_body |

incident-sk.ts | /**

* @module incident-sk

* @description <h2><code>incident-sk</code></h2>

*

* <p>

* Displays a single Incident.

* </p>

*

* @attr minimized {boolean} If not set then the incident is displayed in expanded

* mode, otherwise it is displayed in compact mode.

*

* @attr params {boolean} If set then the incide... |

}

/** @prop flaky Whether this incident has been flaky. */

get incident_flaky(): boolean {

return this.flaky;

}

set incident_flaky(val: boolean) {

// No need to render again if value is same as old value.

if (val !== this.flaky) {

this.flaky = val;

this._render();

}

}

priva... | {

this.recently_expired_silence = val;

this._render();

} | conditional_block |

parse.go | // Copyright 2015 The Prometheus Authors

// Licensed under the Apache License, Version 2.0 (the "License");

// you may not use this file except in compliance with the License.

// You may obtain a copy of the License at

//

// http://www.apache.org/licenses/LICENSE-2.0

//

// Unless required by applicable law or agreed to... | if op == allowedOp {

validOp = true

}

}

if !validOp && len(operators) > 0 {

p.errorf("operator must be one of %q, is %q", operators, op)

}

val := p.unquoteString(p.expect(itemString, ctx).val)

// Map the item to the respective match type.

var matchType labels.MatchType

switch op {

case it... | p.errorf("expected label matching operator but got %s", op)

}

var validOp = false

for _, allowedOp := range operators { | random_line_split |

parse.go | // Copyright 2015 The Prometheus Authors

// Licensed under the Apache License, Version 2.0 (the "License");

// you may not use this file except in compliance with the License.

// You may obtain a copy of the License at

//

// http://www.apache.org/licenses/LICENSE-2.0

//

// Unless required by applicable law or agreed to... |

// Extract values.

sign := 1.0

if t := p.peek().typ; t == itemSUB || t == itemADD {

if p.next().typ == itemSUB {

sign = -1

}

}

var k float64

if t := p.peek().typ; t == itemNumber {

k = sign * p.number(p.expect(itemNumber, ctx).val)

} else if t == itemIdentifier && p.peek().val == "stale" {

... | {

p.next()

times := uint64(1)

if p.peek().typ == itemTimes {

p.next()

times, err = strconv.ParseUint(p.expect(itemNumber, ctx).val, 10, 64)

if err != nil {

p.errorf("invalid repetition in %s: %s", ctx, err)

}

}

for i := uint64(0); i < times; i++ {

vals = append(vals, sequenceValu... | conditional_block |

parse.go | // Copyright 2015 The Prometheus Authors

// Licensed under the Apache License, Version 2.0 (the "License");

// you may not use this file except in compliance with the License.

// You may obtain a copy of the License at

//

// http://www.apache.org/licenses/LICENSE-2.0

//

// Unless required by applicable law or agreed to... |

var errUnexpected = fmt.Errorf("unexpected error")

// recover is the handler that turns panics into returns from the top level of Parse.

func (p *parser) recover(errp *error) {

e := recover()

if e != nil {

if _, ok := e.(runtime.Error); ok {

// Print the stack trace but do not inhibit the running application.... | {

token := p.next()

if token.typ != exp1 && token.typ != exp2 {

p.errorf("unexpected %s in %s, expected %s or %s", token.desc(), context, exp1.desc(), exp2.desc())

}

return token

} | identifier_body |

parse.go | // Copyright 2015 The Prometheus Authors

// Licensed under the Apache License, Version 2.0 (the "License");

// you may not use this file except in compliance with the License.

// You may obtain a copy of the License at

//

// http://www.apache.org/licenses/LICENSE-2.0

//

// Unless required by applicable law or agreed to... | (errp *error) {

e := recover()

if e != nil {

if _, ok := e.(runtime.Error); ok {

// Print the stack trace but do not inhibit the running application.

buf := make([]byte, 64<<10)

buf = buf[:runtime.Stack(buf, false)]

fmt.Fprintf(os.Stderr, "parser panic: %v\n%s", e, buf)

*errp = errUnexpected

} els... | recover | identifier_name |

mock_cr50_agent.rs | // Copyright 2022 The Fuchsia Authors. All rights reserved.

// Use of this source code is governed by a BSD-style license that can be

// found in the LICENSE file.

use {

anyhow::Error,

fidl_fuchsia_tpm_cr50::{

InsertLeafResponse, PinWeaverRequest, PinWeaverRequestStream, TryAuthFailed,

TryAuthR... |

/// Adds a successful TryAuth response.

pub(crate) fn add_try_auth_success_response(

mut self,

root_hash: Hash,

cred_metadata: Vec<u8>,

mac: Hash,

) -> Self {

let success = TryAuthSuccess {

root_hash: Some(root_hash),

cred_metadata: Some(cred... | {

self.responses.push_back(MockResponse::RemoveLeaf { root_hash });

self

} | identifier_body |

mock_cr50_agent.rs | // Copyright 2022 The Fuchsia Authors. All rights reserved.

// Use of this source code is governed by a BSD-style license that can be

// found in the LICENSE file.

use {

anyhow::Error,

fidl_fuchsia_tpm_cr50::{

InsertLeafResponse, PinWeaverRequest, PinWeaverRequestStream, TryAuthFailed,

TryAuthR... |

}

_ => {

panic!("Next mock response type was {:?} but expected TryAuth.", next_response)

}

};

}

// GetLog and LogReplay are unimplemented as testing log replay is out

// of scope for pwauth-credmgr integration tests... | {

resp.send(&mut std::result::Result::Ok(response))

.expect("failed to send response");

} | conditional_block |

mock_cr50_agent.rs | // Copyright 2022 The Fuchsia Authors. All rights reserved.

// Use of this source code is governed by a BSD-style license that can be

// found in the LICENSE file.

use {

anyhow::Error,

fidl_fuchsia_tpm_cr50::{

InsertLeafResponse, PinWeaverRequest, PinWeaverRequestStream, TryAuthFailed,

TryAuthR... | (

request: PinWeaverRequest,

next_response: MockResponse,

he_secret: &Arc<Mutex<Vec<u8>>>,

) {

// Match the next response with the request, panicking if requests are out

// of the expected order.

match request {

PinWeaverRequest::GetVersion { responder: resp } => {

match next... | handle_request | identifier_name |

mock_cr50_agent.rs | // Copyright 2022 The Fuchsia Authors. All rights reserved.

// Use of this source code is governed by a BSD-style license that can be

// found in the LICENSE file.

use {

anyhow::Error,

fidl_fuchsia_tpm_cr50::{

InsertLeafResponse, PinWeaverRequest, PinWeaverRequestStream, TryAuthFailed,

TryAuthR... | ),

};

}

PinWeaverRequest::RemoveLeaf { params: _, responder: resp } => {

match next_response {

MockResponse::RemoveLeaf { root_hash } => {

resp.send(&mut std::result::Result::Ok(root_hash))

.expect("faile... | }

_ => panic!(

"Next mock response type was {:?} but expected InsertLeaf.",

next_response | random_line_split |

hash_aggregator.go | // Copyright 2019 The Cockroach Authors.

//

// Use of this software is governed by the Business Source License

// included in the file licenses/BSL.txt.

//

// As of the Change Date specified in that file, in accordance with

// the Business Source License, use of this software will be governed

// by the Apache License, ... | {

var retErr error

if op.inputTrackingState.tuples != nil {

retErr = op.inputTrackingState.tuples.Close(ctx)

}

if err := op.toClose.Close(ctx); err != nil {

retErr = err

}

return retErr

} | identifier_body | |

hash_aggregator.go | // Copyright 2019 The Cockroach Authors.

//

// Use of this software is governed by the Business Source License

// included in the file licenses/BSL.txt.

//

// As of the Change Date specified in that file, in accordance with

// the Business Source License, use of this software will be governed

// by the Apache License, ... |

op.output.SetLength(curOutputIdx)

return op.output

case hashAggregatorDone:

return coldata.ZeroBatch

default:

colexecerror.InternalError(errors.AssertionFailedf("hash aggregator in unhandled state"))

// This code is unreachable, but the compiler cannot infer that.

return nil

}

}

}

func (op ... | {

op.state = hashAggregatorDone

} | conditional_block |

hash_aggregator.go | // Copyright 2019 The Cockroach Authors.

//

// Use of this software is governed by the Business Source License

// included in the file licenses/BSL.txt.

//

// As of the Change Date specified in that file, in accordance with

// the Business Source License, use of this software will be governed

// by the Apache License, ... | op.scratch.eqChains[eqChainSlot] = op.scratch.eqChains[eqChainSlot][:0]

}

}

if newGroupCount > 0 {

// We have created new buckets, so we need to append the heads of those

// buckets to the hash table.

copy(b.Selection(), newGroupsHeadsSel)

b.SetLength(newGroupCount)

op.ht.AppendAllDistinct(ctx, b)

}

... | op.ht.ProbeScratch.HashBuffer[newGroupCount] = op.ht.ProbeScratch.HashBuffer[eqChainSlot]

newGroupCount++ | random_line_split |

hash_aggregator.go | // Copyright 2019 The Cockroach Authors.

//

// Use of this software is governed by the Business Source License

// included in the file licenses/BSL.txt.

//

// As of the Change Date specified in that file, in accordance with

// the Business Source License, use of this software will be governed

// by the Apache License, ... | (ctx context.Context) error {

var retErr error

if op.inputTrackingState.tuples != nil {

retErr = op.inputTrackingState.tuples.Close(ctx)

}

if err := op.toClose.Close(ctx); err != nil {

retErr = err

}

return retErr

}

| Close | identifier_name |

watcher.go | // Licensed to Elasticsearch B.V. under one or more contributor

// license agreements. See the NOTICE file distributed with

// this work for additional information regarding copyright

// ownership. Elasticsearch B.V. licenses this file to you under

// the Apache License, Version 2.0 (the "License"); you may

// not use ... | "container": container,

})

}

// Delete

if event.Action == "die" {

container := w.Container(event.Actor.ID)

if container != nil {

w.bus.Publish(bus.Event{

"stop": true,

"container": container,

})

}

w.Lock()

w.deleted[event.Actor.ID] = time.... | delete(w.deleted, event.Actor.ID)

w.Unlock()

w.bus.Publish(bus.Event{

"start": true, | random_line_split |

watcher.go | // Licensed to Elasticsearch B.V. under one or more contributor

// license agreements. See the NOTICE file distributed with

// this work for additional information regarding copyright

// ownership. Elasticsearch B.V. licenses this file to you under

// the Apache License, Version 2.0 (the "License"); you may

// not use ... | {

return w.bus.Subscribe("stop")

} | identifier_body | |

watcher.go | // Licensed to Elasticsearch B.V. under one or more contributor

// license agreements. See the NOTICE file distributed with

// this work for additional information regarding copyright

// ownership. Elasticsearch B.V. licenses this file to you under

// the Apache License, Version 2.0 (the "License"); you may

// not use ... |

return res

}

// Start watching docker API for new containers

func (w *watcher) Start() error {

// Do initial scan of existing containers

logp.Debug("docker", "Start docker containers scanner")

w.lastValidTimestamp = time.Now().Unix()

w.Lock()

defer w.Unlock()

containers, err := w.listContainers(types.Containe... | {

if !w.shortID || len(k) != shortIDLen {

res[k] = v

}

} | conditional_block |

watcher.go | // Licensed to Elasticsearch B.V. under one or more contributor

// license agreements. See the NOTICE file distributed with

// this work for additional information regarding copyright

// ownership. Elasticsearch B.V. licenses this file to you under

// the Apache License, Version 2.0 (the "License"); you may

// not use ... | () map[string]*Container {

w.RLock()

defer w.RUnlock()

res := make(map[string]*Container)

for k, v := range w.containers {

if !w.shortID || len(k) != shortIDLen {

res[k] = v

}

}

return res

}

// Start watching docker API for new containers

func (w *watcher) Start() error {

// Do initial scan of existing c... | Containers | identifier_name |

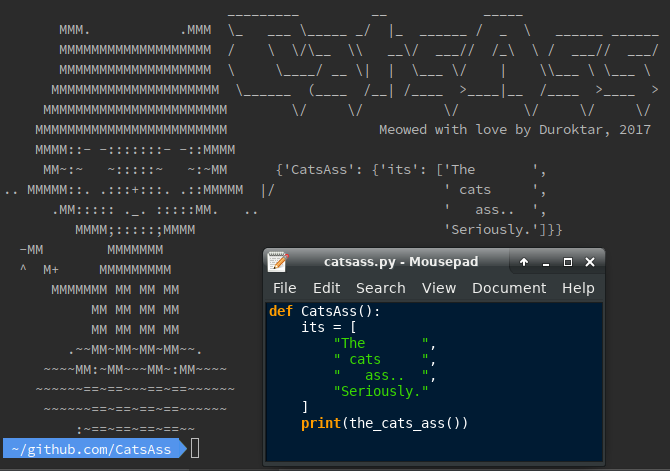

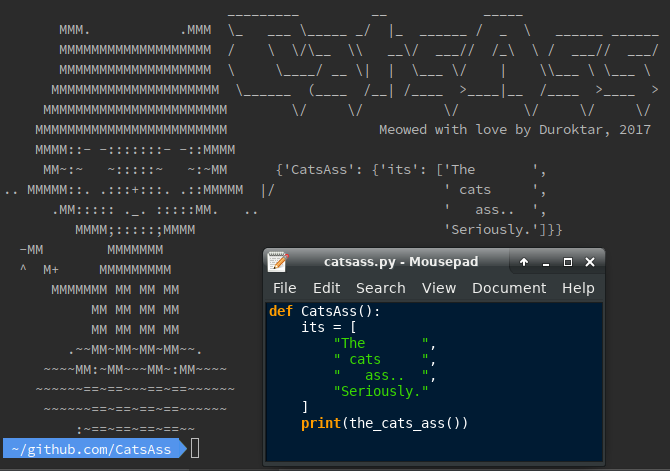

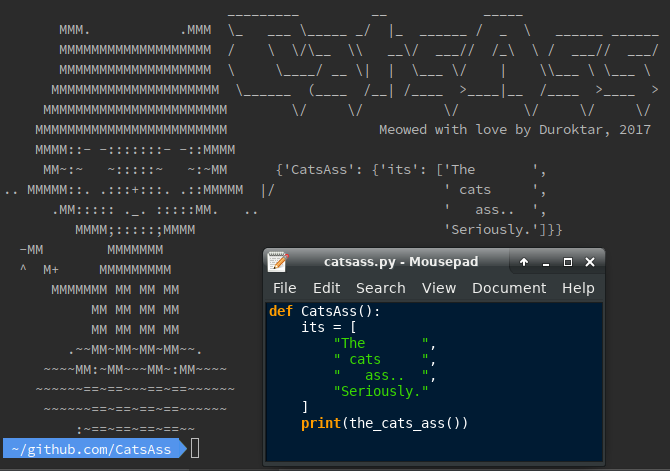

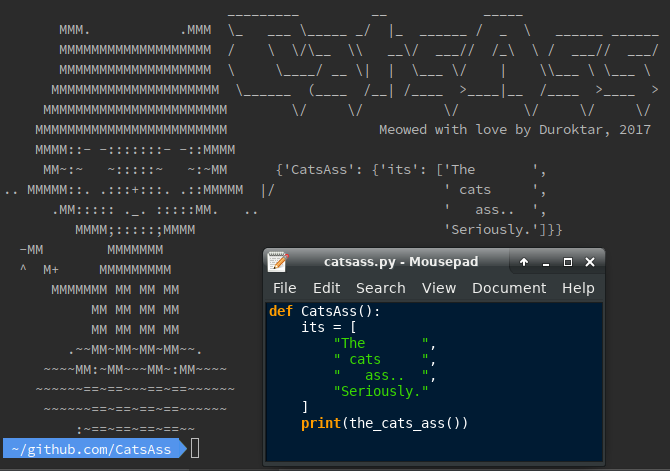

catsass.py | #!/usr/bin/env python

"""Seriously the cats ass. Seriously.

CatsAss is the cats ass for replacing multiple prints in

simple debugging situations.

----

----

*Requires Python 3.6*

"""

import os.path

from collections im... |

# === schrodingers_cat() ===

def schrodingers_cat(peek=False):

"""

Peek in the box for a 50/50 shot of retrieving your

desired output, while the other half of the time the

cat is dead and the function returns nothing at all.

If you decide not to peek, the cat -being neither

dead nor alive- r... | """

You really shouldn't be poking cats. But if you insist,

it is recommended to bring catnip as it's not unusual for

cats to attack dicks who poke them.

where:

I leave this as an exercise for the reader. But

a word of wisdom from my 1st grade teacher: never do

anything that yo... | identifier_body |

catsass.py | #!/usr/bin/env python

"""Seriously the cats ass. Seriously.

CatsAss is the cats ass for replacing multiple prints in

simple debugging situations.

----

----

*Requires Python 3.6*

"""

import os.path

from collections im... | if template is None:

# Option 1

template = Template({

"Name": self.ctx,

"Vars": self.values}, 1)

# Option 2

template = Template({self.ctx: self.values}, 1)

self.template = template

if cat is None:

cat ... | self.logo_colorz = logo_colorz

# TODO Should be public

Template = namedtuple("Template", "view offset") | random_line_split |

catsass.py | #!/usr/bin/env python

"""Seriously the cats ass. Seriously.

CatsAss is the cats ass for replacing multiple prints in

simple debugging situations.

----

----

*Requires Python 3.6*

"""

import os.path

from collections im... | (InterruptedError):

pass

if randint(1, 10) == 7:

mew = "You attempt to poke the cat but it attacks. " \

"Maybe if you gave it some catnip?"

raise BadCat(mew)

return __cat_whisperer()[where]

# === schrodingers_cat() ===

def schrodingers_cat(peek=Fals... | BadCat | identifier_name |

catsass.py | #!/usr/bin/env python

"""Seriously the cats ass. Seriously.

CatsAss is the cats ass for replacing multiple prints in

simple debugging situations.

----

----

*Requires Python 3.6*

"""

import os.path

from collections im... |

return rv

highlight = color_stuffs.get('highlight')

# Customz

cat_colorz = color_stuffs.get(self.coat) or {}

logo_colorz = color_stuffs.get(self.logo_colorz) or {}

# All this will be customizable in the next release.

title_colors = color_stuffs.get('title_... | rv.append("".join(line)) | conditional_block |

Modules.py | import requests

import re

from bs4 import BeautifulSoup

import json

import psycopg2

from datetime import datetime

import subprocess

import urllib3

urllib3.disable_warnings(urllib3.exceptions.InsecureRequestWarning)

from shutil import copyfileobj

import time

import xml.etree.cElementTree as etree

import ResolveRouter

... |

class ParseText:

"""

This class handles parsing of two entities:

\n\tText files containing one instance of a transcribed podcast or...

\n\tnohup files containing multiple instances of a transcribed podcast

"""

def nohupTranscriptionContent(filePath):

"""

This parses... | def runAutoCheck(dbConnection, maxConcurrent):

"""

runs an automatic check to see if any transcriptions need to be started or are already finished

and need to be reuploaded\n\n

Needs dbConnection & an integer representing the max concurrent transcriptions that can be run at a time\n\n

... | identifier_body |

Modules.py | import requests

import re

from bs4 import BeautifulSoup

import json

import psycopg2

from datetime import datetime

import subprocess

import urllib3

urllib3.disable_warnings(urllib3.exceptions.InsecureRequestWarning)

from shutil import copyfileobj

import time

import xml.etree.cElementTree as etree

import ResolveRouter

... | ():

"""

gets the number of running transcription processes

"""

try:

proc = subprocess.run("ps -Af|grep -i \"online2-wav-nnet3-latgen-faster\"", stdout=subprocess.PIPE, shell=True)

np = (len(str(proc.stdout).split("\\n")) - 3)

if(np == None):

... | numRunningProcesses | identifier_name |

Modules.py | import requests

import re

from bs4 import BeautifulSoup

import json

import psycopg2

from datetime import datetime

import subprocess

import urllib3

urllib3.disable_warnings(urllib3.exceptions.InsecureRequestWarning)

from shutil import copyfileobj

import time

import xml.etree.cElementTree as etree

import ResolveRouter

... |

def updateScript(dbconnection):

"""

scans all rss feeds for new

"""

cursor = dbconnection.cursor()

cursor.execute("select rss, name, source from podcasts;")

rssArray = cursor.fetchall()

for rss in rssArray:

print("checking name " + str(rss[1]))... | cursor = dbConnection.cursor()

cursor.execute("UPDATE transcriptions SET pending = TRUE WHERE id = %s;", (str(fileContent[1]),))  # parameterized to avoid SQL injection

dbConnection.commit()

cursor.close()

url = fileContent[0]

indexID = str(fileContent[1]) # g... | conditional_block |

Modules.py | import requests

import re

from bs4 import BeautifulSoup

import json

import psycopg2

from datetime import datetime

import subprocess

import urllib3

urllib3.disable_warnings(urllib3.exceptions.InsecureRequestWarning)

from shutil import copyfileobj

import time

import xml.etree.cElementTree as etree

import ResolveRouter

... | results = []

realTimeFactor = re.findall(r'Timing stats: real-time factor for offline decoding was (.*?) = ', fileContent)

results.append(realTimeFactor)

transcription = re.findall(r'utterance-id(.*?) (.*?)\n', fileContent)

transcriptionList = []

t... | random_line_split | |

preprocess.py | import numpy as np

import pandas as pd

import os.path

import sys, traceback

import random

import re

import string

import pickle

import string

from nltk.probability import FreqDist

MAX_TOKENS = 512

MAX_WORDS = 400

def truncate(text):

"""Truncate the text."""

# TODO fix this to use a variable instead of 511... | return final_string

def shortenText(text, all_words):

# print('shortenText')

count = 0

final_string = ""

try:

words = text.split()

for word in words:

word = word.lower()

if len(word) > 7:

if word in all_words:

count += 1

... | final_string = final_string[:-1]

except Exception as e:

# print("type error: " + str(e))

print("type error")

exit() | random_line_split |

preprocess.py | import numpy as np

import pandas as pd

import os.path

import sys, traceback

import random

import re

import string

import pickle

import string

from nltk.probability import FreqDist

MAX_TOKENS = 512

MAX_WORDS = 400

def truncate(text):

"""Truncate the text."""

# TODO fix this to use a variable instead of 511... | (filepath):

"""Read the CSV from disk."""

df = pd.read_csv(filepath, delimiter=',')

stop_words = ["will", "done", "goes","let", "know", "just", "put", "also",

"got", "can", "get", "said", "mr", "mrs", "one", "two", "three",

"four", "five", "i", "me", "my", "myself", "we", "our",

... | read_data | identifier_name |

preprocess.py | import numpy as np

import pandas as pd

import os.path

import sys, traceback

import random

import re

import string

import pickle

import string

from nltk.probability import FreqDist

MAX_TOKENS = 512

MAX_WORDS = 400

def truncate(text):

"""Truncate the text."""

# TODO fix this to use a variable instead of 511... |

if(flag):

final_string = final_string[:-1]

except Exception as e:

print("type error: " + str(e))

exit()

return final_string

def removePunctuationFromList(all_words):

all_words = [''.join(c for c in s if c not in string.punctuation)

for s in all_words]

# ... | final_string += word

final_string += ' '

flag = False | conditional_block |

preprocess.py | import numpy as np

import pandas as pd

import os.path

import sys, traceback

import random

import re

import string

import pickle

import string

from nltk.probability import FreqDist

MAX_TOKENS = 512

MAX_WORDS = 400

def truncate(text):

"""Truncate the text."""

# TODO fix this to use a variable instead of 511... |

def shortenText(text, all_words):

# print('shortenText')

count = 0

final_string = ""

try:

words = text.split()

for word in words:

word = word.lower()

if len(word) > 7:

if word in all_words:

count += 1

if(co... | words = text.split()

final_string = ""

flag = False

try:

for word in words:

word = word.lower()

if word not in stop_words:

final_string += word

final_string += ' '

flag = True

else:

flag = False

... | identifier_body |

lib.rs | //! A tiny and incomplete wasm interpreter

//!

//! This module contains a tiny and incomplete wasm interpreter built on top of

//! `walrus`'s module structure. Each `Interpreter` contains some state

//! about the execution of a wasm instance. The "incomplete" part here is

//! related to the fact that this is *only* use... | (&mut self, id: FunctionId, module: &Module) -> Option<&[u32]> {

self.descriptor.truncate(0);

// We should have a blank wasm and LLVM stack at both the start and end

// of the call.

assert_eq!(self.sp, self.mem.len() as i32);

self.call(id, module, &[]);

assert_eq!(self.s... | interpret_descriptor | identifier_name |

lib.rs | //! A tiny and incomplete wasm interpreter

//!

//! This module contains a tiny and incomplete wasm interpreter built on top of

//! `walrus`'s module structure. Each `Interpreter` contains some state

//! about the execution of a wasm instance. The "incomplete" part here is

//! related to the fact that this is *only* use... |

}

struct Frame<'a> {

module: &'a Module,

interp: &'a mut Interpreter,

locals: BTreeMap<LocalId, i32>,

done: bool,

}

impl Frame<'_> {

fn eval(&mut self, instr: &Instr) {

use walrus::ir::*;

let stack = &mut self.interp.scratch;

match instr {

Instr::Const(c) => ... | {

let func = module.funcs.get(id);

log::debug!("starting a call of {:?} {:?}", id, func.name);

log::debug!("arguments {:?}", args);

let local = match &func.kind {

walrus::FunctionKind::Local(l) => l,

_ => panic!("can only call locally defined functions"),

... | identifier_body |

lib.rs | //! A tiny and incomplete wasm interpreter

//!

//! This module contains a tiny and incomplete wasm interpreter built on top of

//! `walrus`'s module structure. Each `Interpreter` contains some state

//! about the execution of a wasm instance. The "incomplete" part here is

//! related to the fact that this is *only* use... | impl Interpreter {

/// Creates a new interpreter from a provided `Module`, precomputing all

/// information necessary to interpret further.

///

/// Note that the `module` passed in to this function must be the same as

/// the `module` passed to `interpret` below.

pub fn new(module: &Module) -> R... | }

| random_line_split |

lib.rs | //! A tiny and incomplete wasm interpreter

//!

//! This module contains a tiny and incomplete wasm interpreter built on top of

//! `walrus`'s module structure. Each `Interpreter` contains some state

//! about the execution of a wasm instance. The "incomplete" part here is

//! related to the fact that this is *only* use... |

}

// All other instructions shouldn't be used by our various

// descriptor functions. LLVM optimizations may mean that some

// of the above instructions aren't actually needed either, but

// the above instructions have empirically been required when

... | {

let ty = self.module.types.get(self.module.funcs.get(e.func).ty());

let args = (0..ty.params().len())

.map(|_| stack.pop().unwrap())

.collect::<Vec<_>>();

self.interp.call(e.func, self.module, &args);

... | conditional_block |

maze.rs | //! I would like to approach the problem in two distinct ways

//!

//! One of them is floodfill - the solution is highly suboptimal in terms of computational complexity,

//! but it parallelizes perfectly - every iteration step recalculates new maze path data based

//! entirely on the previous iteration. The approach has a probl... | // I have a feeling it is strongly suboptimal; actually, as both directions are encoded as 4

// bits, a precalculated table would be the best solution

let mut min = 4;

for dir in [Self::LEFT, Self::RIGHT, Self::UP, Self::DOWN].iter() {

let mut d = *dir;

if !self.has_... | pub fn min_rotation(self, other: Self) -> usize { | random_line_split |

maze.rs | //! I would like to approach the problem in two distinct ways

//!

//! One of them is floodfill - the solution is highly suboptimal in terms of computational complexity,

//! but it parallelizes perfectly - every iteration step recalculates new maze path data based

//! entirely on the previous iteration. The approach has a probl... | {

Empty,

Wall,

/// Empty field with known distance from the start of the maze

/// It doesn't need to be the closest path - it is the distance calculated using some path

Calculated(Dir, usize),

}

/// Whole maze representation

pub struct Maze {

/// All fields flattened

maze: Box<[Field]>,

/// ... | Field | identifier_name |

maze.rs | //! I would like to approach the problem in two distinct ways

//!

//! One of them is floodfill - the solution is highly suboptimal in terms of computational complexity,

//! but it parallelizes perfectly - every iteration step recalculates new maze path data based

//! entirely on the previous iteration. The approach has a probl... |

/// Rotates left

pub fn left(mut self) -> Self {

let down = (self.0 & 1) << 3;

self.0 >>= 1;

self.0 |= down;

self

}

/// Rotates right

pub fn right(mut self) -> Self {

let left = (self.0 & 8) >> 3;

self.0 <<= 1;

self.0 |= left;

self.0... | {

let h = match from_x.cmp(&to_x) {

Ordering::Less => Self::LEFT,

Ordering::Greater => Self::RIGHT,

Ordering::Equal => Self::NONE,

};

let v = match from_y.cmp(&to_y) {

Ordering::Less => Self::UP,

Ordering::Greater => Self::DOWN,

... | identifier_body |

darts.js | /*

* helps count the score when playing darts

* this is a tool used to count darts when

* you're playing real darts.

* it is free to use and serves as a demo for the moment

* please send mail to flottin@gmail.com if you need info

*

* Updated : 2017/02/04

*/

/*

* load game on document load

*/

$(document).... |

/*

* initialize game variables

*/

DG.prototype.init = function(){

DG.buttonEnable = true;

DG.numberPlay = 3;

DG.isEndGame = false;

DG.player1 = true;

DG.multi = 1

DG.lastScore = 0;

DG.keyPressed = 0;

DG.currentPla... | {

DG.scoreP1 = 0;

DG.scoreP2 = 0;

DG.game = 301

} | identifier_body |

darts.js | /*

* helps count the score when playing darts

* this is a tool used to count darts when

* you're playing real darts.

* it is free to use and serves as a demo for the moment

* please send mail to flottin@gmail.com if you need info

*

* Updated : 2017/02/04

*/

/*

* load game on document load

*/

$(document).... | (){

DG.scoreP1 = 0;

DG.scoreP2 = 0;

DG.game = 301

}

/*

* initialize game variables

*/

DG.prototype.init = function(){

DG.buttonEnable = true;

DG.numberPlay = 3;

DG.isEndGame = false;

DG.player1 = true;

DG.mul... | DG | identifier_name |

darts.js | /*

* helps count the score when playing darts

* this is a tool used to count darts when

* you're playing real darts.

* it is free to use and serves as a demo for the moment

* please send mail to flottin@gmail.com if you need info

*

* Updated : 2017/02/04

*/

/*

* load game on document load

*/

$(document).... | return strOut;

}

/*

* remaining darts

*/

DG.prototype.remainingDarts = function(){

DG.prototype.initDarts();

if ('player1' == DG.currentPlayer){

$(".numberPlayLeftP1")[0].innerText = DG.prototype.strRepeat('.', DG.numberPlay);

}else {

$(".numberPlayLeftP2")[0].innerText = DG.prototyp... | random_line_split | |

darts.js | /*

* helps count the score when playing darts

* this is a tool used to count darts when

* you're playing real darts.

* it is free to use and serves as a demo for the moment

* please send mail to flottin@gmail.com if you need info

*

* Updated : 2017/02/04

*/

/*

* load game on document load

*/

$(document).... |

DG.lastMulti = DG.multi

DG.result = $(DG.playerResult).val();

DG.result -= (DG.multi * DG.keyMark);

DG.prototype.saveData();

// initialize multi

DG.multi = 1

if (DG.result==1){

DG.prototype.endError()

return false;

}

if (DG.result == 0){

if(DG.lastMulti =... | {

DG.lastScore = $(DG.playerResult).val() ;

} | conditional_block |

table.ts | /**

* Copyright 2021, Yahoo Holdings Inc.

* Licensed under the terms of the MIT license. See accompanying LICENSE.md file for terms.

*/

import VisualizationSerializer from './visualization';

import { parseMetricName, canonicalizeMetric } from 'navi-data/utils/metric';

import { assert } from '@ember/debug';

import { ... |

export default class TableVisualizationSerializer extends VisualizationSerializer {

// TODO: Implement serialize method to strip out unneeded fields

}

// DO NOT DELETE: this is how TypeScript knows how to look up your models.

declare module 'ember-data/types/registries/serializer' {

export default interface Seri... | {

if (visualization.version === 2) {

return visualization;

}

injectDimensionFields(request, naviMetadata);

const columnData: Record<string, ColumnInfo> = buildColumnInfo(request, visualization, naviMetadata);

// Rearranges request columns to match table order

const missedRequestColumns: Column[] = [];... | identifier_body |

table.ts | /**

* Copyright 2021, Yahoo Holdings Inc.

* Licensed under the terms of the MIT license. See accompanying LICENSE.md file for terms.

*/

import VisualizationSerializer from './visualization';

import { parseMetricName, canonicalizeMetric } from 'navi-data/utils/metric';

import { assert } from '@ember/debug';

import { ... |

columns[tableIndex] = requestColumn;

} else if (requestColumn !== undefined && tableColumn === undefined) {

// this column only exists in the request

missedRequestColumns.push(requestColumn);

}

return columns;

}, [])

.filter((c) => c); // remove skipped columns

reque... | {

// If display name is custom move over to request

requestColumn.alias = tableColumn.displayName;

} | conditional_block |

table.ts | /**

* Copyright 2021, Yahoo Holdings Inc.

* Licensed under the terms of the MIT license. See accompanying LICENSE.md file for terms.

*/

import VisualizationSerializer from './visualization';

import { parseMetricName, canonicalizeMetric } from 'navi-data/utils/metric';

import { assert } from '@ember/debug';

import { ... | (request: RequestV2, naviMetadata: NaviMetadataService) {

const newColumns: RequestV2['columns'] = [];

request.columns.forEach((col) => {

const { type, field } = col;

if (type === 'dimension') {

const dimMeta = naviMetadata.getById(type, field, request.dataSource);

// get all show fields for dim... | injectDimensionFields | identifier_name |

table.ts | /**

* Copyright 2021, Yahoo Holdings Inc.

* Licensed under the terms of the MIT license. See accompanying LICENSE.md file for terms.

*/

import VisualizationSerializer from './visualization';

import { parseMetricName, canonicalizeMetric } from 'navi-data/utils/metric';

import { assert } from '@ember/debug';

import { ... | if (tableColumn === undefined || requestColumn === undefined) {

// this column does not exist in the table

return columns;

}

const { attributes } = tableColumn;

assert(

`The request column ${requestColumn.field} should have a present 'cid' field`,

requestColumn.cid !== undefined

... | // extract column attributes

const columnAttributes = Object.values(columnData).reduce((columns, columnInfo) => {

const { tableColumn, requestColumn } = columnInfo; | random_line_split |

lib.rs | pub use glam::*;

use image::DynamicImage;

pub use std::time;

pub use wgpu::util::DeviceExt;

use wgpu::ShaderModule;

pub use winit::{

dpi::{PhysicalSize, Size},

event::{Event, *},

event_loop::{ControlFlow, EventLoop},

window::{Window, WindowAttributes},

};

pub type Index = u16;

#[repr(u32)]

#[derive(Cl... |

}

pub fn resize(&mut self, device: &wgpu::Device, width: u32, height: u32) {

self.configure(

&device,

&SurfaceHandlerConfiguration {

width,

height,

sample_count: self.sample_count(),

},

);

}

pub fn con... | {

SampleCount::Single

} | conditional_block |

lib.rs | pub use glam::*;

use image::DynamicImage;

pub use std::time;

pub use wgpu::util::DeviceExt;

use wgpu::ShaderModule;

pub use winit::{

dpi::{PhysicalSize, Size},

event::{Event, *},

event_loop::{ControlFlow, EventLoop},

window::{Window, WindowAttributes},

};

pub type Index = u16;

#[repr(u32)]

#[derive(Cl... | (window: &Window) -> Self {

pollster::block_on(Self::new_async(window))

}

pub async fn new_async(window: &Window) -> Self {

let instance = wgpu::Instance::new(wgpu::Backends::PRIMARY);

let surface = unsafe { instance.create_surface(window) };

let adapter = instance

... | new | identifier_name |

lib.rs | pub use glam::*;

use image::DynamicImage;

pub use std::time;

pub use wgpu::util::DeviceExt;

use wgpu::ShaderModule;

pub use winit::{

dpi::{PhysicalSize, Size},

event::{Event, *},

event_loop::{ControlFlow, EventLoop},

window::{Window, WindowAttributes},

};

pub type Index = u16;

#[repr(u32)]

#[derive(Cl... |

pub fn create_render_pass_resources(&self) -> Result<RenderPassResources, wgpu::SurfaceError> {

Ok(RenderPassResources {

command_encoder: self.device.create_command_encoder(&Default::default()),

surface_texture: self.surface_handler.surface.get_current_frame()?.output,

... | {

self.surface_handler.multisample_state()

} | identifier_body |

lib.rs | pub use glam::*;

use image::DynamicImage;

pub use std::time;

pub use wgpu::util::DeviceExt;

use wgpu::ShaderModule;

pub use winit::{

dpi::{PhysicalSize, Size},

event::{Event, *},

event_loop::{ControlFlow, EventLoop},

window::{Window, WindowAttributes},

};

pub type Index = u16;

#[repr(u32)]

#[derive(Cl... | usage: wgpu::BufferUsages,

) -> wgpu::Buffer {

self.device

.create_buffer_init(&wgpu::util::BufferInitDescriptor {

label: Some(label),

contents: Self::as_buffer_contents(contents),

usage,

})

}

pub fn create_index_buffer... | fn create_buffer<T>(

&self,

label: &str,

contents: &[T], | random_line_split |

cloudLibUtils.js | /**

* Copyright 2023 F5 Networks, Inc.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agr... | (context, discoveryRpm) {

// not installed

if (typeof discoveryRpm === 'undefined') {

return Promise.resolve(true);

}

const options = {

path: '/mgmt/shared/iapp/package-management-tasks',

method: 'POST',

ctype: 'application/json',

why: 'Uninstall discovery worker'... | uninstallDiscoveryRpm | identifier_name |

cloudLibUtils.js | /**

* Copyright 2023 F5 Networks, Inc.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agr... |

function ensureUninstall(context) {

return getDiscoveryRpm(context, 'packageName')

.then((discoveryRpmName) => uninstallDiscoveryRpm(context, discoveryRpmName));

}

function cleanupStoredDecl(context) {

if (context.target.deviceType !== DEVICE_TYPES.BIG_IP || context.host.buildType !== BUILD_TYPES.CLO... | {

return getIsInstalled(context)

.then((isInstalled) => (isInstalled ? Promise.resolve() : install(context)));

} | identifier_body |

cloudLibUtils.js | /**

* Copyright 2023 F5 Networks, Inc.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agr... | const log = require('../log');

const util = require('./util');

const iappUtil = require('./iappUtil');

const constants = require('../constants');

const DEVICE_TYPES = require('../constants').DEVICE_TYPES;

const BUILD_TYPES = require('../constants').BUILD_TYPES;

const SOURCE_PATH = '/var/config/rest/iapps/f5-appsvcs/p... | const semver = require('semver');

const promiseUtil = require('@f5devcentral/atg-shared-utilities').promiseUtils; | random_line_split |

cloudLibUtils.js | /**

* Copyright 2023 F5 Networks, Inc.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agr... |

return response;

});

};

const install = function (context) {

log.info('Installing service discovery worker');

return Promise.resolve()

.then(() => getDiscoveryRpm(context, 'packageName'))

.then((discoveryRpmName) => uninstallDiscoveryRpm(context, discoveryRpmName))

... | {

throw new Error(`${failureMessage}: ${response.statusCode}`);

} | conditional_block |

ad_grabber_util.py | from time import sleep

from uuid import uuid1

from pprint import pprint

from shutil import copy2

from multiprocessing import Process, Queue, Pool, Manager

from ad_grabber_classes import *

from adregex import *

from pygraph.classes.digraph import digraph

import os

import json

import jsonpickle

import subprocess

import ... |

def process_results_legacy(refresh_count, output_dir, ext_queue, result_queue,\

num_of_workers=8):

"""

This function goes through all the bugs identified by the Firefox plugin and

aggregates each bug's occurrence in a given page. The aggregation is necessary

for duplicate ads on the same page.

"""

bug_d... | output_dir = args[0]

saved_file_name = args[1]

path = args[2]

bug = args[3]

curl_result_queue = args[4]

# subprocess.call(['curl', '-o', path , bug.get_src() ])

subprocess.call(['wget', '-t', '1', '-q', '-T', '3', '-O', path , bug.get_src()])

# Use the unix tool 'file' to check filetype

subpr_out = subp... | identifier_body |

ad_grabber_util.py | from time import sleep

from uuid import uuid1

from pprint import pprint

from shutil import copy2

from multiprocessing import Process, Queue, Pool, Manager

from ad_grabber_classes import *

from adregex import *

from pygraph.classes.digraph import digraph

import os

import json

import jsonpickle

import subprocess

import ... |

try:

bug.set_dimension(height, width)

dimension = '%s-%s' % (height, width)

# check all the images in the bin with the dimensions

m_list = target_bin[dimension]

dup = None

for m in m_list:

if check_duplicat... | target_bin = img_bin

LOG.debug(bug_filepath)

try:

height = subprocess.check_output(['identify', '-format', '"%h"',\

bug_filepath]).strip()

width = subprocess.check_output(['identify', '-format','"%w"',\

bug_filepath]).st... | conditional_block |

ad_grabber_util.py | from time import sleep

from uuid import uuid1

from pprint import pprint

from shutil import copy2

from multiprocessing import Process, Queue, Pool, Manager

from ad_grabber_classes import *

from adregex import *

from pygraph.classes.digraph import digraph

import os

import json

import jsonpickle

import subprocess

import ... | (session_results):

"""

i) Identify duplicate ads

ii) Bin the ads by their dimensions

iii) Keep track of the test sites and how many times they have displayed this

ad

"""

# bin by dimensions

ads = {}

notads = {}

swf_bin = {}

img_bin = {}

error_bugs = []

for train_category, cat_dict in session_... | identify_uniq_ads | identifier_name |

ad_grabber_util.py | from time import sleep

from uuid import uuid1

from pprint import pprint

from shutil import copy2

from multiprocessing import Process, Queue, Pool, Manager

from ad_grabber_classes import *

from adregex import *

from pygraph.classes.digraph import digraph

import os

import json

import jsonpickle

import subprocess

import ... |

stopped = 0

while stopped < len(workers_dict):

ack = ack_queue.get()

p = workers_dict[ack]

p.join(timeout=1)

if p.is_alive():

p.terminate()

LOG.debug('terminating process %d' % ack)