url stringlengths 58 61 | repository_url stringclasses 1 value | labels_url stringlengths 72 75 | comments_url stringlengths 67 70 | events_url stringlengths 65 68 | html_url stringlengths 46 51 | id int64 599M 1.83B | node_id stringlengths 18 32 | number int64 1 6.09k | title stringlengths 1 290 | labels list | state stringclasses 2 values | locked bool 1 class | milestone dict | comments int64 0 54 | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | closed_at stringlengths 20 20 ⌀ | active_lock_reason null | body stringlengths 0 228k ⌀ | reactions dict | timeline_url stringlengths 67 70 | performed_via_github_app null | state_reason stringclasses 3 values | draft bool 2 classes | pull_request dict | is_pull_request bool 2 classes | comments_text list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/3421 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3421/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3421/comments | https://api.github.com/repos/huggingface/datasets/issues/3421/events | https://github.com/huggingface/datasets/pull/3421 | 1,077,966,571 | PR_kwDODunzps4vuvJK | 3,421 | Adding mMARCO dataset | [

{

"color": "0e8a16",

"default": false,

"description": "Contribution to a dataset script",

"id": 4564477500,

"name": "dataset contribution",

"node_id": "LA_kwDODunzps8AAAABEBBmPA",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20contribution"

}

] | closed | false | null | 7 | 2021-12-13T00:56:43Z | 2022-10-03T09:37:15Z | 2022-10-03T09:37:15Z | null | Adding mMARCO (v1.1) to HF datasets. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3421/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3421/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3421.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3421",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/3421.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3421"

} | true | [

"Hi @albertvillanova we've made a major overhaul of the loading script including all configurations we're making available. Could you please review it again?",

"@albertvillanova :ping_pong: ",

"Thanks @lhbonifacio for adding this dataset.\r\nHi there, i got an error about mmarco:\r\nConnectionError: Couldn't re... |

https://api.github.com/repos/huggingface/datasets/issues/3447 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3447/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3447/comments | https://api.github.com/repos/huggingface/datasets/issues/3447/events | https://github.com/huggingface/datasets/issues/3447 | 1,082,539,790 | I_kwDODunzps5Ahj8O | 3,447 | HF_DATASETS_OFFLINE=1 didn't stop datasets.builder from downloading | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | null | 3 | 2021-12-16T18:51:13Z | 2022-02-17T14:16:27Z | 2022-02-17T14:16:27Z | null | ## Describe the bug

According to https://huggingface.co/docs/datasets/loading_datasets.html#loading-a-dataset-builder, setting HF_DATASETS_OFFLINE to 1 should make datasets "run in full offline mode". It didn't work for me. At the very beginning, datasets still tried to download the "custom data configuration" for JSON, even though I had already run the program once and cached all data into the same --cache_dir.

"Downloading" is not an issue when running on local disk, but it often crashes with cloud storage because (1) multiple GPU processes try to access the same file, AND (2) FileLocker fails to synchronize all processes due to storage throttling. 99% of the time, when the main process releases FileLocker, the file is not actually ready for access in cloud storage, which triggers "FileNotFound" errors in all other processes. Another way to resolve the problem would be to look into more reliable cloud storage, but that's out of scope here.

## Steps to reproduce the bug

```

export HF_DATASETS_OFFLINE=1

python run_clm.py --model_name_or_path=models/gpt-j-6B --train_file=trainpy.v2.train.json --validation_file=trainpy.v2.eval.json --cache_dir=datacache/trainpy.v2

```

## Expected results

datasets should stop all "downloading" behavior and reuse the cached JSON configuration. I think the problem is that part of the cache directory path, "default-471372bed4b51b53", is randomly generated and could change if some parameters change. I didn't find a way to use a fixed path to ensure that datasets reuses the cached data every time.
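
To illustrate the concern about the directory suffix: assuming the suffix is derived from a hash of the loading parameters rather than being truly random, identical parameters would always map to the same directory, and any change would produce a new one. The following is a minimal sketch under that assumption; `config_suffix`, its inputs, and the `trainpy.v3.train.json` file name are hypothetical, and this is not the hashing code used by `datasets`.

```python
import hashlib
import json


def config_suffix(params: dict, length: int = 16) -> str:
    """Return a deterministic hex suffix for loading parameters (hypothetical helper)."""
    # Serialize with sorted keys so that key order does not affect the hash.
    canonical = json.dumps(params, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()[:length]


run_a = {"train": "trainpy.v2.train.json", "validation": "trainpy.v2.eval.json"}
run_b = {"validation": "trainpy.v2.eval.json", "train": "trainpy.v2.train.json"}
# Hypothetical changed parameter, used only to show that the suffix moves.
run_c = {"train": "trainpy.v3.train.json", "validation": "trainpy.v2.eval.json"}

# Same parameters, in any key order, give the same suffix.
print(config_suffix(run_a) == config_suffix(run_b))
# Changing a single parameter gives a different suffix.
print(config_suffix(run_a) == config_suffix(run_c))
```

If the real suffix behaves this way, keeping the file paths and other loading arguments byte-for-byte identical between runs would be the way to land in the same cache directory.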

## Actual results

The log shows that datasets is still downloading into "datacache/trainpy.v2/json/default-471372bed4b51b53/0.0.0/c2d554c3377ea79c7664b93dc65d0803b45e3279000f993c7bfd18937fd7f426".

```

12/16/2021 10:25:59 - WARNING - datasets.builder - Using custom data configuration default-471372bed4b51b53

12/16/2021 10:25:59 - INFO - datasets.builder - Generating dataset json (datacache/trainpy.v2/json/default-471372bed4b51b53/0.0.0/c2d554c3377ea79c7664b93dc65d0803b45e3279000f993c7bfd18937fd7f426)

Downloading and preparing dataset json/default to datacache/trainpy.v2/json/default-471372bed4b51b53/0.0.0/c2d554c3377ea79c7664b93dc65d0803b45e3279000f993c7bfd18937fd7f426...

100%|██████████████████████████████████████████████████████████████████████████████████| 2/2 [00:00<00:00, 17623.13it/s]

12/16/2021 10:25:59 - INFO - datasets.utils.download_manager - Downloading took 0.0 min

12/16/2021 10:26:00 - INFO - datasets.utils.download_manager - Checksum Computation took 0.0 min

100%|███████████████████████████████████████████████████████████████████████████████████| 2/2 [00:00<00:00, 1206.99it/s]

12/16/2021 10:26:00 - INFO - datasets.utils.info_utils - Unable to verify checksums.

12/16/2021 10:26:00 - INFO - datasets.builder - Generating split train

12/16/2021 10:26:01 - INFO - datasets.builder - Generating split validation

12/16/2021 10:26:02 - INFO - datasets.utils.info_utils - Unable to verify splits sizes.

Dataset json downloaded and prepared to datacache/trainpy.v2/json/default-471372bed4b51b53/0.0.0/c2d554c3377ea79c7664b93dc65d0803b45e3279000f993c7bfd18937fd7f426. Subsequent calls will reuse this data.

100%|█████████████████████████████████████████████████████████████████████████████████████| 2/2 [00:00<00:00, 53.54it/s]

```

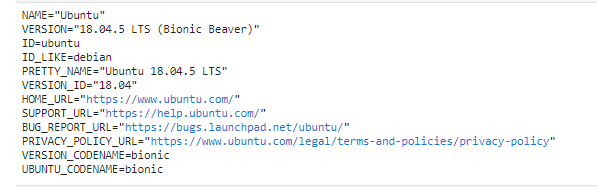

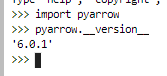

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 1.16.1

- Platform: Linux

- Python version: 3.8.10

- PyArrow version: 6.0.1

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3447/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3447/timeline | null | completed | null | null | false | [

"Hi ! Indeed it says \"downloading and preparing\" but in your case it didn't need to download anything since you used local files (it would have thrown an error otherwise). I think we can improve the logging to make it clearer in this case",

"@lhoestq Thank you for explaining. I am sorry but I was not clear abou... |

https://api.github.com/repos/huggingface/datasets/issues/2645 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2645/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2645/comments | https://api.github.com/repos/huggingface/datasets/issues/2645/events | https://github.com/huggingface/datasets/issues/2645 | 944,374,284 | MDU6SXNzdWU5NDQzNzQyODQ= | 2,645 | load_dataset processing failed with OS error after downloading a dataset | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | null | 2 | 2021-07-14T12:23:53Z | 2021-07-15T09:34:02Z | 2021-07-15T09:34:02Z | null | ## Describe the bug

After downloading a dataset such as opus100, processing fails with the following error:

OSError: Cannot find data file.

Original error:

dlopen: cannot load any more object with static TLS

## Steps to reproduce the bug

```python

from datasets import load_dataset

this_dataset = load_dataset('opus100', 'af-en')

```

## Expected results

there is no error when running load_dataset.

## Actual results

Specify the actual results or traceback.

Traceback (most recent call last):

File "/home/anaconda3/lib/python3.6/site-packages/datasets/builder.py", line 652, in _download_and_prep

self._prepare_split(split_generator, **prepare_split_kwargs)

File "/home/anaconda3/lib/python3.6/site-packages/datasets/builder.py", line 989, in _prepare_split

example = self.info.features.encode_example(record)

File "/home/anaconda3/lib/python3.6/site-packages/datasets/features.py", line 952, in encode_example

example = cast_to_python_objects(example)

File "/home/anaconda3/lib/python3.6/site-packages/datasets/features.py", line 219, in cast_to_python_ob

return _cast_to_python_objects(obj)[0]

File "/home/anaconda3/lib/python3.6/site-packages/datasets/features.py", line 165, in _cast_to_python_o

import torch

File "/home/anaconda3/lib/python3.6/site-packages/torch/__init__.py", line 188, in <module>

_load_global_deps()

File "/home/anaconda3/lib/python3.6/site-packages/torch/__init__.py", line 141, in _load_global_deps

ctypes.CDLL(lib_path, mode=ctypes.RTLD_GLOBAL)

File "/home/anaconda3/lib/python3.6/ctypes/__init__.py", line 348, in __init__

self._handle = _dlopen(self._name, mode)

OSError: dlopen: cannot load any more object with static TLS

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "download_hub_opus100.py", line 9, in <module>

this_dataset = load_dataset('opus100', language_pair)

File "/home/anaconda3/lib/python3.6/site-packages/datasets/load.py", line 748, in load_dataset

use_auth_token=use_auth_token,

File "/home/anaconda3/lib/python3.6/site-packages/datasets/builder.py", line 575, in download_and_prepa

dl_manager=dl_manager, verify_infos=verify_infos, **download_and_prepare_kwargs

File "/home/anaconda3/lib/python3.6/site-packages/datasets/builder.py", line 658, in _download_and_prep

+ str(e)

OSError: Cannot find data file.

Original error:

dlopen: cannot load any more object with static TLS

## Environment info

- `datasets` version: 1.8.0

- Platform: Linux-3.13.0-32-generic-x86_64-with-debian-jessie-sid

- Python version: 3.6.6

- PyArrow version: 3.0.0

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2645/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2645/timeline | null | completed | null | null | false | [

"Hi ! It looks like an issue with pytorch.\r\n\r\nCould you try to run `import torch` and see if it raises an error ?",

"> Hi ! It looks like an issue with pytorch.\r\n> \r\n> Could you try to run `import torch` and see if it raises an error ?\r\n\r\nIt works. Thank you!"

] |

https://api.github.com/repos/huggingface/datasets/issues/2928 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2928/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2928/comments | https://api.github.com/repos/huggingface/datasets/issues/2928/events | https://github.com/huggingface/datasets/pull/2928 | 997,941,506 | PR_kwDODunzps4r0yUb | 2,928 | Update BibTeX entry | [] | closed | false | null | 0 | 2021-09-16T08:39:20Z | 2021-09-16T12:35:34Z | 2021-09-16T12:35:34Z | null | Update BibTeX entry. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2928/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2928/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/2928.diff",

"html_url": "https://github.com/huggingface/datasets/pull/2928",

"merged_at": "2021-09-16T12:35:34Z",

"patch_url": "https://github.com/huggingface/datasets/pull/2928.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/2928"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/5825 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5825/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5825/comments | https://api.github.com/repos/huggingface/datasets/issues/5825/events | https://github.com/huggingface/datasets/issues/5825 | 1,697,327,483 | I_kwDODunzps5lKyl7 | 5,825 | FileNotFound even though exists | [] | open | false | null | 3 | 2023-05-05T09:49:55Z | 2023-05-07T17:43:46Z | null | null | ### Describe the bug

I'm trying to download https://huggingface.co/datasets/bigscience/xP3/resolve/main/ur/xp3_facebook_flores_spa_Latn-urd_Arab_devtest_ab-spa_Latn-urd_Arab.jsonl, which works fine in my web browser but somehow not with datasets. Am I doing something wrong?

```

Downloading builder script: 100%

2.82k/2.82k [00:00<00:00, 64.2kB/s]

Downloading readme: 100%

12.6k/12.6k [00:00<00:00, 585kB/s]

---------------------------------------------------------------------------

FileNotFoundError Traceback (most recent call last)

[<ipython-input-2-4b45446a91d5>](https://localhost:8080/#) in <cell line: 4>()

2 lang = "ur"

3 fname = "xp3_facebook_flores_spa_Latn-urd_Arab_devtest_ab-spa_Latn-urd_Arab.jsonl"

----> 4 dataset = load_dataset("bigscience/xP3", data_files=f"{lang}/{fname}")

6 frames

[/usr/local/lib/python3.10/dist-packages/datasets/data_files.py](https://localhost:8080/#) in _resolve_single_pattern_locally(base_path, pattern, allowed_extensions)

291 if allowed_extensions is not None:

292 error_msg += f" with any supported extension {list(allowed_extensions)}"

--> 293 raise FileNotFoundError(error_msg)

294 return sorted(out)

295

FileNotFoundError: Unable to find 'https://huggingface.co/datasets/bigscience/xP3/resolve/main/ur/xp3_facebook_flores_spa_Latn-urd_Arab_devtest_ab-spa_Latn-urd_Arab.jsonl' at /content/https:/huggingface.co/datasets/bigscience/xP3/resolve/main

```

### Steps to reproduce the bug

```

!pip install -q datasets

from datasets import load_dataset

lang = "ur"

fname = "xp3_facebook_flores_spa_Latn-urd_Arab_devtest_ab-spa_Latn-urd_Arab.jsonl"

dataset = load_dataset("bigscience/xP3", data_files=f"{lang}/{fname}")

```

### Expected behavior

Correctly downloads

### Environment info

latest versions | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5825/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5825/timeline | null | null | null | null | false | [

"Hi! \r\n\r\nThis would only work if `bigscience/xP3` was a no-code dataset, but it isn't (it has a Python builder script).\r\n\r\nBut this should work: \r\n```python\r\nload_dataset(\"json\", data_files=\"https://huggingface.co/datasets/bigscience/xP3/resolve/main/ur/xp3_facebook_flores_spa_Latn-urd_Arab_devtest_a... |

https://api.github.com/repos/huggingface/datasets/issues/3275 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3275/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3275/comments | https://api.github.com/repos/huggingface/datasets/issues/3275/events | https://github.com/huggingface/datasets/pull/3275 | 1,053,698,898 | PR_kwDODunzps4uiN9t | 3,275 | Force data files extraction if download_mode='force_redownload' | [] | closed | false | null | 0 | 2021-11-15T14:00:24Z | 2021-11-15T14:45:23Z | 2021-11-15T14:45:23Z | null | Avoids weird issues when redownloading a dataset due to cached data not being fully updated.

With this change, issues #3122 and https://github.com/huggingface/datasets/issues/2956 (not a fix, but a workaround) can be fixed as follows:

```python

dset = load_dataset(..., download_mode="force_redownload")

``` | {

"+1": 1,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3275/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3275/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3275.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3275",

"merged_at": "2021-11-15T14:45:23Z",

"patch_url": "https://github.com/huggingface/datasets/pull/3275.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3275"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/2047 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2047/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2047/comments | https://api.github.com/repos/huggingface/datasets/issues/2047/events | https://github.com/huggingface/datasets/pull/2047 | 830,626,430 | MDExOlB1bGxSZXF1ZXN0NTkyMTI2NzQ3 | 2,047 | Multilingual dIalogAct benchMark (miam) | [] | closed | false | null | 4 | 2021-03-12T23:02:55Z | 2021-03-23T10:36:34Z | 2021-03-19T10:47:13Z | null | My collaborators (@EmileChapuis, @PierreColombo) and I within the Affective Computing team at Telecom Paris would like to anonymously publish the miam dataset. It is associated with a publication currently under review. We will update the dataset with full citations once the review period is over. | {

"+1": 1,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2047/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2047/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/2047.diff",

"html_url": "https://github.com/huggingface/datasets/pull/2047",

"merged_at": "2021-03-19T10:47:13Z",

"patch_url": "https://github.com/huggingface/datasets/pull/2047.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/2047"

} | true | [

"Hello. All aforementioned changes have been made. I've also re-run black on miam.py. :-)",

"I will run isort again. Hopefully it resolves the current check_code_quality test failure.",

"Once the review period is over, feel free to open a PR to add all the missing information ;)",

"Hi! I will follow up right ... |

https://api.github.com/repos/huggingface/datasets/issues/630 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/630/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/630/comments | https://api.github.com/repos/huggingface/datasets/issues/630/events | https://github.com/huggingface/datasets/issues/630 | 701,636,350 | MDU6SXNzdWU3MDE2MzYzNTA= | 630 | Text dataset not working with large files | [] | closed | false | null | 11 | 2020-09-15T06:02:36Z | 2020-09-25T22:21:43Z | 2020-09-25T22:21:43Z | null | ```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir=model_args.cache_dir) if training_args.do_train else None

File "examples/language-modeling/run_language_modeling.py", line 144, in get_dataset

dataset = load_dataset("text", data_files=file_path, split='train+test')

File "/home/ksjae/.local/lib/python3.7/site-packages/datasets/load.py", line 611, in load_dataset

ignore_verifications=ignore_verifications,

File "/home/ksjae/.local/lib/python3.7/site-packages/datasets/builder.py", line 469, in download_and_prepare

dl_manager=dl_manager, verify_infos=verify_infos, **download_and_prepare_kwargs

File "/home/ksjae/.local/lib/python3.7/site-packages/datasets/builder.py", line 546, in _download_and_prepare

self._prepare_split(split_generator, **prepare_split_kwargs)

File "/home/ksjae/.local/lib/python3.7/site-packages/datasets/builder.py", line 888, in _prepare_split

for key, table in utils.tqdm(generator, unit=" tables", leave=False, disable=not_verbose):

File "/home/ksjae/.local/lib/python3.7/site-packages/tqdm/std.py", line 1129, in __iter__

for obj in iterable:

File "/home/ksjae/.cache/huggingface/modules/datasets_modules/datasets/text/7e13bc0fa76783d4ef197f079dc8acfe54c3efda980f2c9adfab046ede2f0ff7/text.py", line 104, in _generate_tables

convert_options=self.config.convert_options,

File "pyarrow/_csv.pyx", line 714, in pyarrow._csv.read_csv

File "pyarrow/error.pxi", line 122, in pyarrow.lib.pyarrow_internal_check_status

File "pyarrow/error.pxi", line 84, in pyarrow.lib.check_status

```

**pyarrow.lib.ArrowInvalid: straddling object straddles two block boundaries (try to increase block size?)**

It gives the same message for both the 200MB and 10GB .txt files, but not for the 700MB file.
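
As a rough illustration of what the error message describes, here is a toy block reader that fails when a single line starts in one block and is still unfinished at the end of the next one. It is only a sketch of the idea; `split_into_lines` is a hypothetical helper and is not how pyarrow's CSV reader is implemented.

```python
def split_into_lines(data: bytes, block_size: int) -> list:
    """Split bytes into lines while reading fixed-size blocks (illustrative only)."""
    lines = []
    leftover = b""  # unfinished line carried over from the previous block
    for start in range(0, len(data), block_size):
        block = data[start:start + block_size]
        is_last = start + block_size >= len(data)
        if leftover and b"\n" not in block and not is_last:
            # The pending line began in an earlier block and does not end in this one,
            # so it crosses two block boundaries.
            raise ValueError("line straddles two block boundaries; try a larger block_size")
        *complete, leftover = (leftover + block).split(b"\n")
        lines.extend(complete)
    if leftover:
        lines.append(leftover)
    return lines


data = b"short\n" + b"x" * 20 + b"\nend\n"
try:
    split_into_lines(data, block_size=8)
except ValueError as err:
    print("small blocks:", err)
print("large block:", len(split_into_lines(data, block_size=64)), "lines")
```

In this toy model the failure depends on the longest line relative to the block size, not on the total file size, which would be consistent with a 700MB file loading while a 200MB file does not.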

I can't upload the files due to size and copyright problems, sorry. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/630/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/630/timeline | null | completed | null | null | false | [

"Seems like it works when setting ```block_size=2100000000``` or something arbitrarily large though.",

"Can you give us some stats on the data files you use as inputs?",

"Basically ~600MB txt files(UTF-8) * 59. \r\ncontents like ```안녕하세요, 이것은 예제로 한번 말해보는 텍스트입니다. 그냥 이렇다고요.<|endoftext|>\\n```\r\n\r\nAlso, it gets... |

https://api.github.com/repos/huggingface/datasets/issues/1983 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1983/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1983/comments | https://api.github.com/repos/huggingface/datasets/issues/1983/events | https://github.com/huggingface/datasets/issues/1983 | 821,746,008 | MDU6SXNzdWU4MjE3NDYwMDg= | 1,983 | The size of CoNLL-2003 is not consistent with the official release. | [] | closed | false | null | 4 | 2021-03-04T04:41:34Z | 2022-10-05T13:13:26Z | 2022-10-05T13:13:26Z | null | Thanks for sharing the dataset! But when I use conll-2003, I have some questions.

The statistics of conll-2003 in this repo are:

\#train 14041 \#dev 3250 \#test 3453

While the official statistics are:

\#train 14987 \#dev 3466 \#test 3684
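
The gap per split can be computed directly from the two sets of numbers above. This is just the arithmetic; a reply in this thread attributes the gap in the train split to `-DOCSTART-` boundary lines, and whether the dev and test gaps have the same cause is an assumption here.

```python
# Sentence counts quoted in this issue.
repo = {"train": 14041, "dev": 3250, "test": 3453}
official = {"train": 14987, "dev": 3466, "test": 3684}

# Difference between the official count and the count in this repo, per split.
gaps = {split: official[split] - repo[split] for split in repo}
for split, gap in gaps.items():
    print(f"{split}: {official[split]} - {repo[split]} = {gap}")
# train: 14987 - 14041 = 946
# dev: 3466 - 3250 = 216
# test: 3684 - 3453 = 231
```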

Looking forward to your reply. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1983/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1983/timeline | null | completed | null | null | false | [

"Hi,\r\n\r\nif you inspect the raw data, you can find there are 946 occurrences of `-DOCSTART- -X- -X- O` in the train split and `14041 + 946 = 14987`, which is exactly the number of sentences the authors report. `-DOCSTART-` is a special line that acts as a boundary between two different documents and is filtered ... |

https://api.github.com/repos/huggingface/datasets/issues/5894 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5894/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5894/comments | https://api.github.com/repos/huggingface/datasets/issues/5894/events | https://github.com/huggingface/datasets/pull/5894 | 1,724,774,910 | PR_kwDODunzps5RSjot | 5,894 | Force overwrite existing filesystem protocol | [] | closed | false | null | 2 | 2023-05-24T21:41:53Z | 2023-05-25T06:52:08Z | 2023-05-25T06:42:33Z | null | Fix #5876 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5894/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5894/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5894.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5894",

"merged_at": "2023-05-25T06:42:33Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5894.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5894"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==8.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | rea... |

https://api.github.com/repos/huggingface/datasets/issues/3583 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3583/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3583/comments | https://api.github.com/repos/huggingface/datasets/issues/3583/events | https://github.com/huggingface/datasets/issues/3583 | 1,105,195,144 | I_kwDODunzps5B3_CI | 3,583 | Add The Medical Segmentation Decathlon Dataset | [

{

"color": "e99695",

"default": false,

"description": "Requesting to add a new dataset",

"id": 2067376369,

"name": "dataset request",

"node_id": "MDU6TGFiZWwyMDY3Mzc2MzY5",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20request"

},

{

"color": "bfdadc",... | open | false | null | 5 | 2022-01-16T21:42:25Z | 2022-03-18T10:44:42Z | null | null | ## Adding a Dataset

- **Name:** *The Medical Segmentation Decathlon Dataset*

- **Description:** The underlying data set was designed to explore the axis of difficulties typically encountered when dealing with medical images, such as small data sets, unbalanced labels, multi-site data, and small objects.

- **Paper:** [link to the dataset paper if available](https://arxiv.org/abs/2106.05735)

- **Data:** http://medicaldecathlon.com/

- **Motivation:** Hugging Face seeks to democratize ML for society. One of the growing niches within ML is the ML + Medicine community. Key data sets will help increase the supply of HF resources for starting an initial community.

(cc @osanseviero @abidlabs )

Instructions to add a new dataset can be found [here](https://github.com/huggingface/datasets/blob/master/ADD_NEW_DATASET.md).

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3583/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3583/timeline | null | null | null | null | false | [

"Hello! I have recently been involved with a medical image segmentation project myself and was going through the `The Medical Segmentation Decathlon Dataset` as well. \r\nI haven't yet had experience adding datasets to this repository yet but would love to get started. Should I take this issue?\r\nIf yes, I've got ... |

https://api.github.com/repos/huggingface/datasets/issues/817 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/817/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/817/comments | https://api.github.com/repos/huggingface/datasets/issues/817/events | https://github.com/huggingface/datasets/issues/817 | 739,145,369 | MDU6SXNzdWU3MzkxNDUzNjk= | 817 | Add MRQA dataset | [

{

"color": "e99695",

"default": false,

"description": "Requesting to add a new dataset",

"id": 2067376369,

"name": "dataset request",

"node_id": "MDU6TGFiZWwyMDY3Mzc2MzY5",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20request"

}

] | closed | false | null | 1 | 2020-11-09T15:52:19Z | 2020-12-04T15:44:42Z | 2020-12-04T15:44:41Z | null | ## Adding a Dataset

- **Name:** MRQA

- **Description:** Collection of different (subsets of) QA datasets all converted to the same format to evaluate out-of-domain generalization (the datasets come from different domains, distributions, etc.). Some datasets are used for training and others are used for evaluation. This dataset was collected as part of MRQA 2019's shared task

- **Paper:** https://arxiv.org/abs/1910.09753

- **Data:** https://github.com/mrqa/MRQA-Shared-Task-2019

- **Motivation:** Out-of-domain generalization is becoming (has become) a de facto evaluation for NLU systems

Instructions to add a new dataset can be found [here](https://huggingface.co/docs/datasets/share_dataset.html). | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 1,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/817/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/817/timeline | null | completed | null | null | false | [

"Done! cf #1117 and #1022"

] |

https://api.github.com/repos/huggingface/datasets/issues/915 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/915/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/915/comments | https://api.github.com/repos/huggingface/datasets/issues/915/events | https://github.com/huggingface/datasets/issues/915 | 753,118,481 | MDU6SXNzdWU3NTMxMTg0ODE= | 915 | Shall we change the hashing to encoding to reduce potential replicated cache files? | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

},

{

"color": "c5def5",

"default": fals... | open | false | null | 2 | 2020-11-30T03:50:46Z | 2020-12-24T05:11:49Z | null | null | Hi there. For now, we are using `xxhash` to hash the transformations to fingerprint and we will save a copy of the processed dataset to disk if there is a new hash value. However, there are some transformations that are idempotent or commutative to each other. I think that encoding the transformation chain as the fingerprint may help in those cases, for example, use `base64.urlsafe_b64encode`. In this way, before we want to save a new copy, we can decode the transformation chain and normalize it to prevent omit potential reuse. As the main targets of this project are the really large datasets that cannot be loaded entirely in memory, I believe it would save a lot of time if we can avoid some write.

If you have interest in this, I'd love to help :). | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/915/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/915/timeline | null | null | null | null | false | [

"This is an interesting idea !\r\nDo you have ideas about how to approach the decoding and the normalization ?",

"@lhoestq\r\nI think we first need to save the transformation chain to a list in `self._fingerprint`. Then we can\r\n- decode all the current saved datasets to see if there is already one that is equiv... |

https://api.github.com/repos/huggingface/datasets/issues/4327 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4327/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4327/comments | https://api.github.com/repos/huggingface/datasets/issues/4327/events | https://github.com/huggingface/datasets/issues/4327 | 1,233,840,020 | I_kwDODunzps5JiueU | 4,327 | `wikipedia` pre-processed datasets | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | null | 2 | 2022-05-12T11:25:42Z | 2022-08-31T08:26:57Z | 2022-08-31T08:26:57Z | null | ## Describe the bug

[Wikipedia](https://huggingface.co/datasets/wikipedia) dataset readme says that certain subsets are preprocessed. However it seems like they are not available. When I try to load them it takes a really long time, and it seems like it's processing them.

## Steps to reproduce the bug

```python

from datasets import load_dataset

load_dataset("wikipedia", "20220301.en")

```

## Expected results

To load the dataset

## Actual results

Takes a very long time to load (after downloading)

After `Downloading data files: 100%`. It takes hours and gets killed.

Tried `wikipedia.simple` and it got processed after ~30mins. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4327/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4327/timeline | null | completed | null | null | false | [

"Hi @vpj, thanks for reporting.\r\n\r\nI'm sorry, but I can't reproduce your bug: I load \"20220301.simple\"in 9 seconds:\r\n```shell\r\ntime python -c \"from datasets import load_dataset; load_dataset('wikipedia', '20220301.simple')\"\r\n\r\nDownloading and preparing dataset wikipedia/20220301.simple (download: 22... |

https://api.github.com/repos/huggingface/datasets/issues/1707 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1707/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1707/comments | https://api.github.com/repos/huggingface/datasets/issues/1707/events | https://github.com/huggingface/datasets/pull/1707 | 781,507,545 | MDExOlB1bGxSZXF1ZXN0NTUxMjE5MDk2 | 1,707 | Added generated READMEs for datasets that were missing one. | [] | closed | false | null | 1 | 2021-01-07T18:10:06Z | 2021-01-18T14:32:33Z | 2021-01-18T14:32:33Z | null | This is it: we worked on a generator with Yacine @yjernite, and we generated dataset cards for all missing ones (161), with all the information we could gather from the datasets repository, and using dummy_data to generate examples when possible.

Code is available here for the moment: https://github.com/madlag/datasets_readme_generator .

We will move it to a Hugging Face repository and to https://huggingface.co/datasets/card-creator/ later.

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 2,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 2,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1707/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1707/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/1707.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1707",

"merged_at": "2021-01-18T14:32:33Z",

"patch_url": "https://github.com/huggingface/datasets/pull/1707.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1707"

} | true | [

"Looks like we need to trim the ones with too many configs, will look into it tomorrow!"

] |

https://api.github.com/repos/huggingface/datasets/issues/3951 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3951/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3951/comments | https://api.github.com/repos/huggingface/datasets/issues/3951/events | https://github.com/huggingface/datasets/issues/3951 | 1,171,568,814 | I_kwDODunzps5F1Liu | 3,951 | Forked streaming datasets try to `open` data urls rather than use network | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | null | 1 | 2022-03-16T21:21:02Z | 2022-06-10T20:47:26Z | 2022-06-10T20:47:26Z | null | ## Describe the bug

Building on #3950, if you bypass the pickling problem you still can't use the dataset. Somehow something gets confused and the forked processes try to `open` urls rather than anything else.

## Steps to reproduce the bug

```python

from multiprocessing import freeze_support

import transformers

from transformers import Trainer, AutoModelForCausalLM, TrainingArguments

import datasets

import torch.utils.data

# work around #3950

class TorchIterableDataset(datasets.IterableDataset, torch.utils.data.IterableDataset):

    pass

def _ensure_format(v: datasets.IterableDataset) -> datasets.IterableDataset:

    return TorchIterableDataset(v._ex_iterable, v.info, v.split, "torch", v._shuffling)

if __name__ == '__main__':

    freeze_support()

    ds = datasets.load_dataset('oscar', "unshuffled_deduplicated_en", split='train', streaming=True)

    ds = _ensure_format(ds)

    model = AutoModelForCausalLM.from_pretrained("distilgpt2")

    Trainer(model, train_dataset=ds, args=TrainingArguments("out", max_steps=1000, dataloader_num_workers=4)).train()

```

## Expected results

I'd expect the dataset to load the url correctly and produce examples.

## Actual results

```

warnings.warn(

***** Running training *****

Num examples = 8000

Num Epochs = 9223372036854775807

Instantaneous batch size per device = 8

Total train batch size (w. parallel, distributed & accumulation) = 8

Gradient Accumulation steps = 1

Total optimization steps = 1000

0%| | 0/1000 [00:00<?, ?it/s]Traceback (most recent call last):

File "/Users/dlwh/src/mistral/src/stream_fork_crash.py", line 22, in <module>

Trainer(model, train_dataset=ds, args=TrainingArguments("out", max_steps=1000, dataloader_num_workers=4)).train()

File "/Users/dlwh/.conda/envs/mistral/lib/python3.8/site-packages/transformers/trainer.py", line 1339, in train

for step, inputs in enumerate(epoch_iterator):

File "/Users/dlwh/.conda/envs/mistral/lib/python3.8/site-packages/torch/utils/data/dataloader.py", line 521, in __next__

data = self._next_data()

File "/Users/dlwh/.conda/envs/mistral/lib/python3.8/site-packages/torch/utils/data/dataloader.py", line 1203, in _next_data

return self._process_data(data)

File "/Users/dlwh/.conda/envs/mistral/lib/python3.8/site-packages/torch/utils/data/dataloader.py", line 1229, in _process_data

data.reraise()

File "/Users/dlwh/.conda/envs/mistral/lib/python3.8/site-packages/torch/_utils.py", line 434, in reraise

raise exception

FileNotFoundError: Caught FileNotFoundError in DataLoader worker process 0.

Original Traceback (most recent call last):

File "/Users/dlwh/.conda/envs/mistral/lib/python3.8/site-packages/torch/utils/data/_utils/worker.py", line 287, in _worker_loop

data = fetcher.fetch(index)

File "/Users/dlwh/.conda/envs/mistral/lib/python3.8/site-packages/torch/utils/data/_utils/fetch.py", line 32, in fetch

data.append(next(self.dataset_iter))

File "/Users/dlwh/.conda/envs/mistral/lib/python3.8/site-packages/datasets/iterable_dataset.py", line 497, in __iter__

for key, example in self._iter():

File "/Users/dlwh/.conda/envs/mistral/lib/python3.8/site-packages/datasets/iterable_dataset.py", line 494, in _iter

yield from ex_iterable

File "/Users/dlwh/.conda/envs/mistral/lib/python3.8/site-packages/datasets/iterable_dataset.py", line 87, in __iter__

yield from self.generate_examples_fn(**self.kwargs)

File "/Users/dlwh/.cache/huggingface/modules/datasets_modules/datasets/oscar/84838bd49d2295f62008383b05620571535451d84545037bb94d6f3501651df2/oscar.py", line 358, in _generate_examples

with gzip.open(open(filepath, "rb"), "rt", encoding="utf-8") as f:

FileNotFoundError: [Errno 2] No such file or directory: 'https://s3.amazonaws.com/datasets.huggingface.co/oscar/1.0/unshuffled/deduplicated/en/en_part_1.txt.gz'

Error in atexit._run_exitfuncs:

Traceback (most recent call last):

File "/Users/dlwh/.conda/envs/mistral/lib/python3.8/multiprocessing/popen_fork.py", line 27, in poll

pid, sts = os.waitpid(self.pid, flag)

File "/Users/dlwh/.conda/envs/mistral/lib/python3.8/site-packages/torch/utils/data/_utils/signal_handling.py", line 66, in handler

_error_if_any_worker_fails()

RuntimeError: DataLoader worker (pid 6932) is killed by signal: Terminated: 15.

0%| | 0/1000 [00:02<?, ?it/s]

```
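
The final `FileNotFoundError` is what the builtin `open` raises when it is handed a URL instead of a local path. A minimal sketch of that failure mode, independent of `datasets` and `torch` (the helper name `try_builtin_open` is made up for illustration, and the exact exception subclass may differ by platform):

```python
url = "https://s3.amazonaws.com/datasets.huggingface.co/oscar/1.0/unshuffled/deduplicated/en/en_part_1.txt.gz"


def try_builtin_open(path):
    """Return "opened" on success, else the name of the OSError subclass raised."""
    try:
        with open(path, "rb"):
            return "opened"
    except OSError as err:
        # The builtin open treats the URL as a relative filesystem path.
        return type(err).__name__


result = try_builtin_open(url)
```

So the worker processes appear to be calling the plain builtin rather than a streaming-aware replacement for it.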

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 2.0.0

- Platform: macOS-12.2-arm64-arm-64bit

- Python version: 3.8.12

- PyArrow version: 7.0.0

- Pandas version: 1.4.1

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3951/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3951/timeline | null | completed | null | null | false | [

"Thanks for reporting this second issue as well. We definitely want to make streaming datasets fully working in a distributed setup and with the best performance. Right now it only supports single process.\r\n\r\nIn this issue it seems that the streaming capabilities that we offer to dataset builders are not transf... |

https://api.github.com/repos/huggingface/datasets/issues/3007 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3007/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3007/comments | https://api.github.com/repos/huggingface/datasets/issues/3007/events | https://github.com/huggingface/datasets/pull/3007 | 1,014,775,450 | PR_kwDODunzps4sns-n | 3,007 | Correct a typo | [] | closed | false | null | 0 | 2021-10-04T06:15:47Z | 2021-10-04T09:27:57Z | 2021-10-04T09:27:57Z | null | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3007/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3007/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3007.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3007",

"merged_at": "2021-10-04T09:27:57Z",

"patch_url": "https://github.com/huggingface/datasets/pull/3007.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3007"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/4560 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4560/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4560/comments | https://api.github.com/repos/huggingface/datasets/issues/4560/events | https://github.com/huggingface/datasets/pull/4560 | 1,283,558,873 | PR_kwDODunzps46TY9n | 4,560 | Add evaluation metadata to imagenet-1k | [

{

"color": "0e8a16",

"default": false,

"description": "Contribution to a dataset script",

"id": 4564477500,

"name": "dataset contribution",

"node_id": "LA_kwDODunzps8AAAABEBBmPA",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20contribution"

}

] | closed | false | null | 2 | 2022-06-24T10:12:41Z | 2022-09-23T09:39:53Z | 2022-09-23T09:37:03Z | null | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4560/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4560/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/4560.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4560",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/4560.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4560"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._",

"As discussed with @lewtun, we are closing this PR, because it requires first the task names to be aligned between AutoTrain and datasets."

] |

https://api.github.com/repos/huggingface/datasets/issues/2282 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2282/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2282/comments | https://api.github.com/repos/huggingface/datasets/issues/2282/events | https://github.com/huggingface/datasets/pull/2282 | 870,900,332 | MDExOlB1bGxSZXF1ZXN0NjI2MDEyMzM3 | 2,282 | Initialize imdb dataset from don't stop pretraining paper | [] | closed | false | null | 0 | 2021-04-29T11:17:56Z | 2021-04-29T11:43:51Z | 2021-04-29T11:43:51Z | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2282/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2282/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/2282.diff",

"html_url": "https://github.com/huggingface/datasets/pull/2282",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/2282.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/2282"

} | true | [] | |

https://api.github.com/repos/huggingface/datasets/issues/4981 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4981/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4981/comments | https://api.github.com/repos/huggingface/datasets/issues/4981/events | https://github.com/huggingface/datasets/issues/4981 | 1,375,086,773 | I_kwDODunzps5R9ii1 | 4,981 | Can't create a dataset with `float16` features | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | open | false | null | 7 | 2022-09-15T21:03:24Z | 2023-03-22T21:40:09Z | null | null | ## Describe the bug

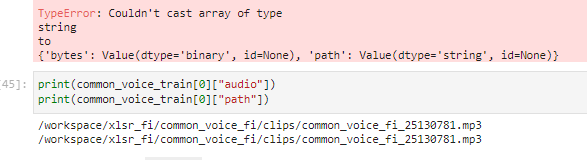

I can't create a dataset with `float16` features.

I understand from the traceback that this is a `pyarrow` error, but I don't see anything in the `datasets` documentation about how to do this successfully. Is it actually supported? I've tried older versions of `pyarrow` as well, with exactly the same error.

The bug seems to arise from `datasets` casting the values to `double` and then `pyarrow` doesn't know how to convert those back to `float16`... does that sound right? Is there a way to bypass this since it's not necessary in the `numpy` and `torch` cases?
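
For what it's worth, the detour through `double` should not lose information by itself, since every `float16` value is exactly representable as a double. A minimal stdlib-only sketch of that round trip (it does not use `pyarrow` or `datasets`, and `to_half_and_back` is a name made up for illustration):

```python
import struct


def to_half_and_back(x):
    """Round a Python float (a double) to float16, then widen it back to double."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


values = [0.0, 1.0, 2.0, 0.1]

# First pass: round each double to the nearest float16 value.
halves = [to_half_and_back(v) for v in values]

# Second pass: a value that is already a float16 survives the trip unchanged.
round_tripped = [to_half_and_back(h) for h in halves]
```

So the missing piece seems to be only the `double` to `halffloat` cast kernel, not anything about the values themselves.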

Thanks!

## Steps to reproduce the bug

Each of the snippets below raises this error with (as far as I can tell) exactly the same traceback:

```python

ArrowNotImplementedError: Unsupported cast from double to halffloat using function cast_half_float

```

```python

from datasets import Dataset, Features, Value

Dataset.from_dict({"x": [0.0, 1.0, 2.0]}, features=Features(x=Value("float16")))

import numpy as np

Dataset.from_dict({"x": np.arange(3, dtype=np.float16)}, features=Features(x=Value("float16")))

import torch

Dataset.from_dict({"x": torch.arange(3).to(torch.float16)}, features=Features(x=Value("float16")))

```

## Expected results

A dataset with `float16` features is successfully created.

## Actual results

```python

---------------------------------------------------------------------------

ArrowNotImplementedError Traceback (most recent call last)

Cell In [14], line 1

----> 1 Dataset.from_dict({"x": [1.0, 2.0, 3.0]}, features=Features(x=Value("float16")))

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/datasets/arrow_dataset.py:870, in Dataset.from_dict(cls, mapping, features, info, split)

865 mapping = features.encode_batch(mapping)

866 mapping = {

867 col: OptimizedTypedSequence(data, type=features[col] if features is not None else None, col=col)

868 for col, data in mapping.items()

869 }

--> 870 pa_table = InMemoryTable.from_pydict(mapping=mapping)

871 if info.features is None:

872 info.features = Features({col: ts.get_inferred_type() for col, ts in mapping.items()})

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/datasets/table.py:750, in InMemoryTable.from_pydict(cls, *args, **kwargs)

734 @classmethod

735 def from_pydict(cls, *args, **kwargs):

736 """

737 Construct a Table from Arrow arrays or columns

738

(...)

748 :class:`datasets.table.Table`:

749 """

--> 750 return cls(pa.Table.from_pydict(*args, **kwargs))

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/pyarrow/table.pxi:3648, in pyarrow.lib.Table.from_pydict()

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/pyarrow/table.pxi:5174, in pyarrow.lib._from_pydict()

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/pyarrow/array.pxi:343, in pyarrow.lib.asarray()

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/pyarrow/array.pxi:231, in pyarrow.lib.array()

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/pyarrow/array.pxi:110, in pyarrow.lib._handle_arrow_array_protocol()

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/datasets/arrow_writer.py:197, in TypedSequence.__arrow_array__(self, type)

192 # otherwise we can finally use the user's type

193 elif type is not None:

194 # We use cast_array_to_feature to support casting to custom types like Audio and Image

195 # Also, when trying type "string", we don't want to convert integers or floats to "string".

196 # We only do it if trying_type is False - since this is what the user asks for.

--> 197 out = cast_array_to_feature(out, type, allow_number_to_str=not self.trying_type)

198 return out

199 except (TypeError, pa.lib.ArrowInvalid) as e: # handle type errors and overflows

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/datasets/table.py:1683, in _wrap_for_chunked_arrays.<locals>.wrapper(array, *args, **kwargs)

1681 return pa.chunked_array([func(chunk, *args, **kwargs) for chunk in array.chunks])

1682 else:

-> 1683 return func(array, *args, **kwargs)

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/datasets/table.py:1853, in cast_array_to_feature(array, feature, allow_number_to_str)

1851 return array_cast(array, get_nested_type(feature), allow_number_to_str=allow_number_to_str)

1852 elif not isinstance(feature, (Sequence, dict, list, tuple)):

-> 1853 return array_cast(array, feature(), allow_number_to_str=allow_number_to_str)

1854 raise TypeError(f"Couldn't cast array of type\n{array.type}\nto\n{feature}")

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/datasets/table.py:1683, in _wrap_for_chunked_arrays.<locals>.wrapper(array, *args, **kwargs)

1681 return pa.chunked_array([func(chunk, *args, **kwargs) for chunk in array.chunks])

1682 else:

-> 1683 return func(array, *args, **kwargs)

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/datasets/table.py:1762, in array_cast(array, pa_type, allow_number_to_str)

1760 if pa.types.is_null(pa_type) and not pa.types.is_null(array.type):

1761 raise TypeError(f"Couldn't cast array of type {array.type} to {pa_type}")

-> 1762 return array.cast(pa_type)

1763 raise TypeError(f"Couldn't cast array of type\n{array.type}\nto\n{pa_type}")

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/pyarrow/array.pxi:919, in pyarrow.lib.Array.cast()

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/pyarrow/compute.py:389, in cast(arr, target_type, safe, options)

387 else:

388 options = CastOptions.safe(target_type)

--> 389 return call_function("cast", [arr], options)

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/pyarrow/_compute.pyx:560, in pyarrow._compute.call_function()

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/pyarrow/_compute.pyx:355, in pyarrow._compute.Function.call()

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/pyarrow/error.pxi:144, in pyarrow.lib.pyarrow_internal_check_status()

File ~/scratch/scratch-env-39/.venv/lib/python3.9/site-packages/pyarrow/error.pxi:121, in pyarrow.lib.check_status()

ArrowNotImplementedError: Unsupported cast from double to halffloat using function cast_half_float

```

## Environment info

- `datasets` version: 2.4.0

- Platform: macOS-12.5.1-arm64-arm-64bit

- Python version: 3.9.13

- PyArrow version: 9.0.0

- Pandas version: 1.4.4

| {

"+1": 1,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4981/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4981/timeline | null | null | null | null | false | [

"Hi @dconathan, thanks for reporting.\r\n\r\nWe rely on Arrow as a backend, and as far as I know currently support for `float16` in Arrow is not fully implemented in Python (C++), hence the `ArrowNotImplementedError` you get.\r\n\r\nSee, e.g.: https://arrow.apache.org/docs/status.html?highlight=float16#data-types",... |

https://api.github.com/repos/huggingface/datasets/issues/3888 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3888/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3888/comments | https://api.github.com/repos/huggingface/datasets/issues/3888/events | https://github.com/huggingface/datasets/issues/3888 | 1,165,435,529 | I_kwDODunzps5FdyKJ | 3,888 | IterableDataset columns and feature types | [

{

"color": "c5def5",

"default": false,

"description": "Generic discussion on the library",

"id": 2067400324,

"name": "generic discussion",

"node_id": "MDU6TGFiZWwyMDY3NDAwMzI0",

"url": "https://api.github.com/repos/huggingface/datasets/labels/generic%20discussion"

},

{

"color": "... | open | false | null | 8 | 2022-03-10T16:19:12Z | 2022-11-29T11:39:24Z | null | null | Right now, an IterableDataset (e.g. when streaming a dataset) doesn't need to know the list of columns it contains, nor their types: `my_iterable_dataset.features` may be `None`

However, it's often useful to know the column names and types. This helps you know what's inside your dataset without having to manually check a few examples, and it is useful for preparing a processing pipeline or training models.

Here are a few cases that lead to `features` being `None`:

1. when loading a dataset with `load_dataset` on CSV, JSON Lines, etc. files: type inference is only done when iterating over the dataset

2. when calling `map`, because we don't know in advance what's the output of the user's function passed to `map`

3. when calling `rename_columns`, `remove_columns`, etc. because they rely on `map`

Things we can consider, for each point above:

1.a infer the type automatically from the first samples on the dataset using prefetching, when the dataset builder doesn't provide the `features`

2.a allow the user to specify the `features` as an argument to `map` (this would be consistent with the non-streaming API)

2.b prefetch the first output value to infer the type

3.a don't rely on `map` directly and reuse the previous `features` and rename/remove the corresponding ones

The thing is that prefetching can take a few seconds, while the operations above are instantaneous since no data are downloaded. Therefore I'm not sure whether this solution may be worth it. Maybe prefetching could also be done when explicitly asked by the user
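
To make the prefetching idea (1.a / 2.b) concrete, here is a minimal sketch in plain Python. It is not the `datasets` implementation: `peek_features` is a hypothetical helper, and it records Python type names rather than real `Features` objects:

```python
import itertools


def peek_features(examples, n=5):
    """Infer {column: type name} from the first n examples without consuming them."""
    iterator = iter(examples)
    head = list(itertools.islice(iterator, n))

    features = {}
    for example in head:
        for column, value in example.items():
            # Keep the first type seen for each column.
            features.setdefault(column, type(value).__name__)

    # Chain the prefetched examples back in front of the rest of the stream.
    return features, itertools.chain(head, iterator)


def stream():
    yield {"text": "a", "label": 0}
    yield {"text": "b", "label": 1}
    yield {"text": "c", "label": 0}


features, examples = peek_features(stream(), n=2)
```

The cost is exactly the one mentioned above: the first `n` examples have to be fetched before `features` is available, which is why it may make sense to do this only when the user asks for it.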

cc @mariosasko @albertvillanova | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3888/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3888/timeline | null | null | null | null | false | [

"#self-assign",

"@alvarobartt I've assigned you the issue since I'm not actively working on it.",

"Cool thanks @mariosasko I'll try to fix it in the upcoming days, thanks!",

"@lhoestq so in order to address what’s not completed in this issue, do you think it makes sense to add a param `features` to `IterableD... |

https://api.github.com/repos/huggingface/datasets/issues/3562 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3562/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3562/comments | https://api.github.com/repos/huggingface/datasets/issues/3562/events | https://github.com/huggingface/datasets/pull/3562 | 1,098,341,351 | PR_kwDODunzps4wwa44 | 3,562 | Allow multiple task templates of the same type | [] | closed | false | null | 0 | 2022-01-10T20:32:07Z | 2022-01-11T14:16:47Z | 2022-01-11T14:16:47Z | null | Add support for multiple task templates of the same type. Fixes (partially) #2520.

CC: @lewtun | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3562/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3562/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3562.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3562",

"merged_at": "2022-01-11T14:16:46Z",

"patch_url": "https://github.com/huggingface/datasets/pull/3562.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3562"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/6030 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/6030/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/6030/comments | https://api.github.com/repos/huggingface/datasets/issues/6030/events | https://github.com/huggingface/datasets/pull/6030 | 1,803,864,744 | PR_kwDODunzps5Vd0ZG | 6,030 | fixed typo in comment | [] | closed | false | null | 2 | 2023-07-13T22:49:57Z | 2023-07-14T14:21:58Z | 2023-07-14T14:13:38Z | null | This mistake was a bit confusing, so I thought it was worth sending a PR over. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/6030/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/6030/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/6030.diff",

"html_url": "https://github.com/huggingface/datasets/pull/6030",

"merged_at": "2023-07-14T14:13:38Z",

"patch_url": "https://github.com/huggingface/datasets/pull/6030.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/6030"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==8.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | rea... |

https://api.github.com/repos/huggingface/datasets/issues/2896 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2896/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2896/comments | https://api.github.com/repos/huggingface/datasets/issues/2896/events | https://github.com/huggingface/datasets/pull/2896 | 993,613,113 | MDExOlB1bGxSZXF1ZXN0NzMxNzcwMTE3 | 2,896 | add multi-proc in `to_csv` | [] | closed | false | null | 2 | 2021-09-10T21:35:09Z | 2021-10-28T05:47:33Z | 2021-10-26T16:00:42Z | null | This PR extends the multi-proc method used in #2747 for `to_json` to `to_csv` as well.

Results on my machine post benchmarking on `ascent_kb` dataset (giving ~45% improvement when compared to num_proc = 1):

```

Time taken on 1 num_proc, 10000 batch_size 674.2055702209473

Time taken on 4 num_proc, 10000 batch_size 425.6553490161896

Time taken on 1 num_proc, 50000 batch_size 623.5897650718689

Time taken on 4 num_proc, 50000 batch_size 380.0402421951294

Time taken on 4 num_proc, 100000 batch_size 361.7168130874634

```
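
The relative improvement can be recomputed from the timings above. A quick check in plain Python, where the `timings` dictionary simply restates the numbers from the log and `improvement` is a helper named for illustration:

```python
# (num_proc, batch_size) -> seconds, copied from the benchmark output above.
timings = {
    (1, 10000): 674.2055702209473,
    (4, 10000): 425.6553490161896,
    (1, 50000): 623.5897650718689,
    (4, 50000): 380.0402421951294,
    (4, 100000): 361.7168130874634,
}


def improvement(baseline, candidate):
    """Fraction of wall-clock time saved relative to the baseline."""
    return (baseline - candidate) / baseline


same_batch_10k = improvement(timings[(1, 10000)], timings[(4, 10000)])
same_batch_50k = improvement(timings[(1, 50000)], timings[(4, 50000)])
best_vs_worst = improvement(timings[(1, 10000)], timings[(4, 100000)])
```

At equal batch size this gives roughly 37% and 39%; the larger figure corresponds to comparing the slowest single-process run with the fastest 4-process run (about 46%).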

This is a WIP as writing tests is pending for this PR.

I'm also exploring [this](https://arrow.apache.org/docs/python/csv.html#incremental-writing) approach for which I'm using `pyarrow-5.0.0`.

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2896/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2896/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/2896.diff",

"html_url": "https://github.com/huggingface/datasets/pull/2896",

"merged_at": "2021-10-26T16:00:41Z",

"patch_url": "https://github.com/huggingface/datasets/pull/2896.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/2896"

} | true | [

"I think you can just add a test `test_dataset_to_csv_multiproc` in `tests/io/test_csv.py` and we'll be good",

"Hi @lhoestq, \r\nI've added `test_dataset_to_csv` apart from `test_dataset_to_csv_multiproc` as no test was there to check generated CSV file when `num_proc=1`. Please let me know if anything is also re... |

https://api.github.com/repos/huggingface/datasets/issues/4350 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4350/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4350/comments | https://api.github.com/repos/huggingface/datasets/issues/4350/events | https://github.com/huggingface/datasets/pull/4350 | 1,235,505,104 | PR_kwDODunzps43zKIV | 4,350 | Add a new metric: CTC_Consistency | [] | closed | false | null | 1 | 2022-05-13T17:31:19Z | 2022-05-19T10:23:04Z | 2022-05-19T10:23:03Z | null | Add CTC_Consistency metric

Do I also need to modify the `test_metric_common.py` file to make it run on test? | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4350/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4350/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/4350.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4350",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/4350.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4350"

} | true | [

"Thanks for your contribution, @YEdenZ.\r\n\r\nPlease note that our old `metrics` module is in the process of being incorporated to a separate library called `evaluate`: https://github.com/huggingface/evaluate\r\n\r\nTherefore, I would ask you to transfer your PR to that repository. Thank you."

] |

https://api.github.com/repos/huggingface/datasets/issues/5413 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5413/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5413/comments | https://api.github.com/repos/huggingface/datasets/issues/5413/events | https://github.com/huggingface/datasets/issues/5413 | 1,524,591,837 | I_kwDODunzps5a32zd | 5,413 | concatenate_datasets fails when two dataset with shards > 1 and unequal shard numbers | [] | closed | false | null | 1 | 2023-01-08T17:01:52Z | 2023-01-26T09:27:21Z | 2023-01-26T09:27:21Z | null | ### Describe the bug

When using `concatenate_datasets([dataset1, dataset2], axis = 1)` to concatenate two datasets with shards > 1, it fails:

```

File "/home/xzg/anaconda3/envs/tri-transfer/lib/python3.9/site-packages/datasets/combine.py", line 182, in concatenate_datasets

return _concatenate_map_style_datasets(dsets, info=info, split=split, axis=axis)

File "/home/xzg/anaconda3/envs/tri-transfer/lib/python3.9/site-packages/datasets/arrow_dataset.py", line 5499, in _concatenate_map_style_datasets

table = concat_tables([dset._data for dset in dsets], axis=axis)

File "/home/xzg/anaconda3/envs/tri-transfer/lib/python3.9/site-packages/datasets/table.py", line 1778, in concat_tables

return ConcatenationTable.from_tables(tables, axis=axis)

File "/home/xzg/anaconda3/envs/tri-transfer/lib/python3.9/site-packages/datasets/table.py", line 1483, in from_tables

blocks = _extend_blocks(blocks, table_blocks, axis=axis)

File "/home/xzg/anaconda3/envs/tri-transfer/lib/python3.9/site-packages/datasets/table.py", line 1477, in _extend_blocks

result[i].extend(row_blocks)

IndexError: list index out of range

```

### Steps to reproduce the bug

`dataset = concatenate_datasets([dataset1, dataset2], axis=1)`

### Expected behavior

The datasets are correctly concatenated.

### Environment info

datasets==2.8.0 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5413/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5413/timeline | null | completed | null | null | false | [

"Hi ! Thanks for reporting :)\r\n\r\nI managed to reproduce the bug using\r\n```python\r\n\r\nfrom datasets import concatenate_datasets, Dataset, load_from_disk\r\n\r\nDataset.from_dict({\"a\": range(9)}).save_to_disk(\"tmp/ds1\")\r\nds1 = load_from_disk(\"tmp/ds1\")\r\nds1 = concatenate_datasets([ds1, ds1])\r\n\r\... |

https://api.github.com/repos/huggingface/datasets/issues/1797 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1797/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1797/comments | https://api.github.com/repos/huggingface/datasets/issues/1797/events | https://github.com/huggingface/datasets/issues/1797 | 797,357,901 | MDU6SXNzdWU3OTczNTc5MDE= | 1,797 | Connection error | [] | closed | false | null | 1 | 2021-01-30T07:32:45Z | 2021-08-04T18:09:37Z | 2021-08-04T18:09:37Z | null | Hi

I am hitting the error below. Any help would be appreciated, thanks.

`train_data = datasets.load_dataset("xsum", split="train")`

`ConnectionError: Couldn't reach https://raw.githubusercontent.com/huggingface/datasets/1.0.2/datasets/xsum/xsum.py` | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1797/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1797/timeline | null | completed | null | null | false | [

"Hi ! For future references let me add a link to our discussion here : https://github.com/huggingface/datasets/issues/759#issuecomment-770684693\r\n\r\nLet me know if you manage to fix your proxy issue or if we can do something on our end to help you :)"

] |

https://api.github.com/repos/huggingface/datasets/issues/4800 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4800/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4800/comments | https://api.github.com/repos/huggingface/datasets/issues/4800/events | https://github.com/huggingface/datasets/pull/4800 | 1,331,288,128 | PR_kwDODunzps48yIss | 4,800 | support LargeListArray in pyarrow | [] | open | false | null | 17 | 2022-08-08T03:58:46Z | 2022-10-20T16:34:04Z | null | null | ```python

import numpy as np

import datasets

a = np.zeros((5000000, 768))

res = datasets.Dataset.from_dict({'embedding': a})

'''

File '/home/wenjiaxin/anaconda3/envs/data/lib/python3.8/site-packages/datasets/arrow_writer.py', line 178, in __arrow_array__

out = numpy_to_pyarrow_listarray(data)

File "/home/wenjiaxin/anaconda3/envs/data/lib/python3.8/site-packages/datasets/features/features.py", line 1173, in numpy_to_pyarrow_listarray

offsets = pa.array(np.arange(n_offsets + 1) * step_offsets, type=pa.int32())

File "pyarrow/array.pxi", line 312, in pyarrow.lib.array

File "pyarrow/array.pxi", line 83, in pyarrow.lib._ndarray_to_array

File "pyarrow/error.pxi", line 100, in pyarrow.lib.check_status

pyarrow.lib.ArrowInvalid: Integer value 2147483904 not in range: -2147483648 to 2147483647

'''

```

Loading a large NumPy array currently raises the error above because the list offsets are stored as `int32`.

pyarrow already provides [LargeListArray](https://arrow.apache.org/docs/python/generated/pyarrow.LargeListArray.html), which uses 64-bit offsets, for this case.
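For reference, the overflow can be checked with plain arithmetic, without allocating the array. This is only a sketch: it assumes, as the traceback suggests, that the offsets are multiples of the row length (768), and the variable names are mine, not from the library.

```python
# Sketch only: reproduces the offset arithmetic from the traceback above
# without allocating the 5,000,000 x 768 array.
INT32_MAX = 2**31 - 1  # largest value an int32 offset can hold

n_rows, row_len = 5_000_000, 768  # shape used in the snippet above

# Assumed layout: offsets are 0, 768, 1536, ..., n_rows * row_len.
last_offset = n_rows * row_len

# First row boundary whose offset no longer fits in int32.
first_bad_row = INT32_MAX // row_len + 1
first_bad_offset = first_bad_row * row_len

print(last_offset > INT32_MAX)          # the final offset is out of range
print(first_bad_row, first_bad_offset)  # boundary where int32 overflows
```

The first out-of-range offset computed this way is the same value reported in the `ArrowInvalid` message, so any array of this width with more than roughly 2.8 million rows would need 64-bit offsets.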

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4800/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4800/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/4800.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4800",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/4800.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4800"

} | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_4800). All of your documentation changes will be reflected on that endpoint.",

"Hi, thanks for working on this! Can you run `make style` at the repo root to fix the code quality error in CI and add a test?",

"Hi, I have fixed... |

https://api.github.com/repos/huggingface/datasets/issues/4184 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4184/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4184/comments | https://api.github.com/repos/huggingface/datasets/issues/4184/events | https://github.com/huggingface/datasets/pull/4184 | 1,208,592,669 | PR_kwDODunzps42cB2j | 4,184 | [Librispeech] Add 'all' config | [] | closed | false | null | 27 | 2022-04-19T16:27:56Z | 2022-08-29T06:35:57Z | 2022-04-22T09:45:17Z | null | Add `"all"` config to Librispeech

Closed #4179 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4184/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4184/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/4184.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4184",

"merged_at": "2022-04-22T09:45:17Z",

"patch_url": "https://github.com/huggingface/datasets/pull/4184.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4184"

} | true | [

"Fix https://github.com/huggingface/datasets/issues/4179",

"_The documentation is not available anymore as the PR was closed or merged._",

"Just that I understand: With this change, simply doing `load_dataset(\"librispeech_asr\")` is possible and returns the whole dataset?\r\n\r\nAnd to get the subsets, I do st... |

https://api.github.com/repos/huggingface/datasets/issues/4408 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4408/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4408/comments | https://api.github.com/repos/huggingface/datasets/issues/4408/events | https://github.com/huggingface/datasets/pull/4408 | 1,248,687,574 | PR_kwDODunzps44ecNI | 4,408 | Update imagenet gate | [] | closed | false | null | 1 | 2022-05-25T20:32:19Z | 2022-05-25T20:45:11Z | 2022-05-25T20:36:47Z | null | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4408/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4408/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/4408.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4408",

"merged_at": "2022-05-25T20:36:47Z",

"patch_url": "https://github.com/huggingface/datasets/pull/4408.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4408"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._"

] |

https://api.github.com/repos/huggingface/datasets/issues/1446 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1446/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1446/comments | https://api.github.com/repos/huggingface/datasets/issues/1446/events | https://github.com/huggingface/datasets/pull/1446 | 761,060,323 | MDExOlB1bGxSZXF1ZXN0NTM1Nzg1NDk1 | 1,446 | Add Bing Coronavirus Query Set | [] | closed | false | null | 0 | 2020-12-10T09:20:46Z | 2020-12-11T17:03:08Z | 2020-12-11T17:03:07Z | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1446/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1446/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/1446.diff",