url | repository_url | labels_url | comments_url | events_url | html_url | id | node_id | number | title | labels | state | locked | milestone | comments | created_at | updated_at | closed_at | active_lock_reason | body | reactions | timeline_url | performed_via_github_app | state_reason | draft | pull_request | is_pull_request | comments_text |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/5263 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5263/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5263/comments | https://api.github.com/repos/huggingface/datasets/issues/5263/events | https://github.com/huggingface/datasets/issues/5263 | 1,455,252,626 | I_kwDODunzps5WvWSS | 5,263 | Save a dataset in a determined number of shards | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

}

] | closed | false | null | 0 | 2022-11-18T14:43:54Z | 2022-12-14T18:22:59Z | 2022-12-14T18:22:59Z | null | This is useful to distribute the shards to training nodes.

This can be implemented in `save_to_disk` and can also leverage multiprocessing to speed up the process | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5263/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5263/timeline | null | completed | null | null | false | [] |

https://api.github.com/repos/huggingface/datasets/issues/1105 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1105/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1105/comments | https://api.github.com/repos/huggingface/datasets/issues/1105/events | https://github.com/huggingface/datasets/pull/1105 | 757,024,162 | MDExOlB1bGxSZXF1ZXN0NTMyNDY4NDIw | 1,105 | add xquad_r dataset | [] | closed | false | null | 2 | 2020-12-04T11:19:35Z | 2020-12-04T16:37:00Z | 2020-12-04T16:37:00Z | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1105/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1105/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/1105.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1105",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/1105.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1105"

} | true | [

"looks like this PR includes changes in many more files than the ones for xquad_r, could you create a new branch and a new PR ?",

"Sure, I will close this then.\r\n"

] | |

https://api.github.com/repos/huggingface/datasets/issues/2828 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2828/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2828/comments | https://api.github.com/repos/huggingface/datasets/issues/2828/events | https://github.com/huggingface/datasets/pull/2828 | 977,181,517 | MDExOlB1bGxSZXF1ZXN0NzE3OTYwODg3 | 2,828 | Add code-mixed Kannada Hope speech dataset | [] | closed | false | null | 0 | 2021-08-23T15:55:09Z | 2021-10-01T17:21:03Z | 2021-10-01T17:21:03Z | null | ## Adding a Dataset

- **Name:** *KanHope*

- **Description:** *A code-mixed English-Kannada dataset for Hope speech detection*

- **Paper:** *https://arxiv.org/abs/2108.04616*

- **Data:** *https://github.com/adeepH/KanHope/tree/main/dataset*

- **Motivation:** *The dataset is amongst the very few resources available for code-mixed low-resourced Dravidian languages of India* | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2828/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2828/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/2828.diff",

"html_url": "https://github.com/huggingface/datasets/pull/2828",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/2828.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/2828"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/4250 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4250/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4250/comments | https://api.github.com/repos/huggingface/datasets/issues/4250/events | https://github.com/huggingface/datasets/pull/4250 | 1,219,093,830 | PR_kwDODunzps429yjN | 4,250 | Bump PyArrow Version to 6 | [] | closed | false | null | 4 | 2022-04-28T18:10:50Z | 2022-05-04T09:36:52Z | 2022-05-04T09:29:46Z | null | Fixes #4152

This PR updates the PyArrow version to 6 in setup.py and in the CI job files .circleci/config.yaml and .github/workflows/benchmarks.yaml.

This fixes the ArrayND error that exists in PyArrow 5. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4250/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4250/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/4250.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4250",

"merged_at": "2022-05-04T09:29:46Z",

"patch_url": "https://github.com/huggingface/datasets/pull/4250.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4250"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._",

"Updated meta.yaml as well. Thanks.",

"I'm OK with bumping PyArrow to version 6 to match the version in Colab, but maybe a better solution would be to stop using extension types in our codebase to avoid similar issues.",

"> but ma... |

https://api.github.com/repos/huggingface/datasets/issues/3135 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3135/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3135/comments | https://api.github.com/repos/huggingface/datasets/issues/3135/events | https://github.com/huggingface/datasets/issues/3135 | 1,033,294,299 | I_kwDODunzps49ltHb | 3,135 | Make inspect.get_dataset_config_names always return a non-empty list of configs | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

},

{

"color": "E5583E",

"default": fals... | closed | false | null | 2 | 2021-10-22T08:02:50Z | 2021-10-28T05:44:49Z | 2021-10-28T05:44:49Z | null | **Is your feature request related to a problem? Please describe.**

Currently, some datasets have a configuration, while others don't. It would be simpler for the user to always have configuration names to refer to.

**Describe the solution you'd like**

In that sense, `inspect.get_dataset_config_names` should always return at least one configuration name, be it `default` or `Check___region_1` (for community datasets like `Check/region_1`).

https://github.com/huggingface/datasets/blob/c5747a5e1dde2670b7f2ca6e79e2ffd99dff85af/src/datasets/inspect.py#L161

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3135/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3135/timeline | null | completed | null | null | false | [

"Hi @severo, I guess this issue requests not only to be able to access the configuration name (by using `inspect.get_dataset_config_names`), but the configuration itself as well (I mean you use the name to get the configuration afterwards, maybe using `builder_cls.builder_configs`), is this right?",

"Yes, maybe t... |

https://api.github.com/repos/huggingface/datasets/issues/1428 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1428/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1428/comments | https://api.github.com/repos/huggingface/datasets/issues/1428/events | https://github.com/huggingface/datasets/pull/1428 | 760,736,726 | MDExOlB1bGxSZXF1ZXN0NTM1NTE4MzIy | 1,428 | Add twi wordsim353 | [] | closed | false | null | 0 | 2020-12-09T22:59:19Z | 2020-12-11T13:57:32Z | 2020-12-11T13:57:32Z | null | Add twi WordSim 353 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1428/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1428/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/1428.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1428",

"merged_at": "2020-12-11T13:57:32Z",

"patch_url": "https://github.com/huggingface/datasets/pull/1428.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1428"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/3471 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3471/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3471/comments | https://api.github.com/repos/huggingface/datasets/issues/3471/events | https://github.com/huggingface/datasets/pull/3471 | 1,086,588,074 | PR_kwDODunzps4wLAk6 | 3,471 | Fix Tashkeela dataset to yield stripped text | [] | closed | false | null | 0 | 2021-12-22T08:41:30Z | 2021-12-22T10:12:08Z | 2021-12-22T10:12:07Z | null | This PR:

- Yields stripped text

- Fixes path for Windows

- Adds license

- Adds more info in dataset card

Close bigscience-workshop/data_tooling#279 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3471/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3471/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3471.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3471",

"merged_at": "2021-12-22T10:12:07Z",

"patch_url": "https://github.com/huggingface/datasets/pull/3471.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3471"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/5832 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5832/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5832/comments | https://api.github.com/repos/huggingface/datasets/issues/5832/events | https://github.com/huggingface/datasets/issues/5832 | 1,702,135,336 | I_kwDODunzps5ldIYo | 5,832 | 404 Client Error: Not Found for url: https://huggingface.co/api/models/bert-large-cased | [] | closed | false | null | 1 | 2023-05-09T14:14:59Z | 2023-05-09T14:25:59Z | 2023-05-09T14:25:59Z | null | ### Describe the bug

Running the [Bert-Large-Cased](https://huggingface.co/bert-large-cased) model raises an `HTTPError` with the following traceback:

```

HTTPError Traceback (most recent call last)

<ipython-input-6-5c580443a1ad> in <module>

----> 1 tokenizer = BertTokenizer.from_pretrained('bert-large-cased')

~/miniconda3/envs/cmd-chall/lib/python3.7/site-packages/transformers/tokenization_utils_base.py in from_pretrained(cls, pretrained_model_name_or_path, *init_inputs, **kwargs)

1646 # At this point pretrained_model_name_or_path is either a directory or a model identifier name

1647 fast_tokenizer_file = get_fast_tokenizer_file(

-> 1648 pretrained_model_name_or_path, revision=revision, use_auth_token=use_auth_token

1649 )

1650 additional_files_names = {

~/miniconda3/envs/cmd-chall/lib/python3.7/site-packages/transformers/tokenization_utils_base.py in get_fast_tokenizer_file(path_or_repo, revision, use_auth_token)

3406 """

3407 # Inspect all files from the repo/folder.

-> 3408 all_files = get_list_of_files(path_or_repo, revision=revision, use_auth_token=use_auth_token)

3409 tokenizer_files_map = {}

3410 for file_name in all_files:

~/miniconda3/envs/cmd-chall/lib/python3.7/site-packages/transformers/file_utils.py in get_list_of_files(path_or_repo, revision, use_auth_token)

1685 token = None

1686 model_info = HfApi(endpoint=HUGGINGFACE_CO_RESOLVE_ENDPOINT).model_info(

-> 1687 path_or_repo, revision=revision, token=token

1688 )

1689 return [f.rfilename for f in model_info.siblings]

~/miniconda3/envs/cmd-chall/lib/python3.7/site-packages/huggingface_hub/hf_api.py in model_info(self, repo_id, revision, token)

246 )

247 r = requests.get(path, headers=headers)

--> 248 r.raise_for_status()

249 d = r.json()

250 return ModelInfo(**d)

~/miniconda3/envs/cmd-chall/lib/python3.7/site-packages/requests/models.py in raise_for_status(self)

951

952 if http_error_msg:

--> 953 raise HTTPError(http_error_msg, response=self)

954

955 def close(self):

HTTPError: 404 Client Error: Not Found for url: https://huggingface.co/api/models/bert-large-cased

```

I have also tried running in offline mode, as [discussed here](https://huggingface.co/docs/transformers/installation#offline-mode)

```

HF_DATASETS_OFFLINE=1

TRANSFORMERS_OFFLINE=1

```

### Steps to reproduce the bug

1. `from transformers import BertTokenizer, BertModel`

2. `tokenizer = BertTokenizer.from_pretrained('bert-large-cased')`

### Expected behavior

Run without the HTTP error.

### Environment info

| # Name | Version | Build | Channel | |

|--------------------|------------|-----------------------------|---------|---|

| _libgcc_mutex | 0.1 | main | | |

| _openmp_mutex | 4.5 | 1_gnu | | |

| _pytorch_select | 0.1 | cpu_0 | | |

| appdirs | 1.4.4 | pypi_0 | pypi | |

| backcall | 0.2.0 | pypi_0 | pypi | |

| blas | 1.0 | mkl | | |

| bzip2 | 1.0.8 | h7b6447c_0 | | |

| ca-certificates | 2021.7.5 | h06a4308_1 | | |

| certifi | 2021.5.30 | py37h06a4308_0 | | |

| cffi | 1.14.6 | py37h400218f_0 | | |

| charset-normalizer | 2.0.3 | pypi_0 | pypi | |

| click | 8.0.1 | pypi_0 | pypi | |

| colorama | 0.4.4 | pypi_0 | pypi | |

| cudatoolkit | 11.1.74 | h6bb024c_0 | nvidia | |

| cycler | 0.11.0 | pypi_0 | pypi | |

| decorator | 5.0.9 | pypi_0 | pypi | |

| docker-pycreds | 0.4.0 | pypi_0 | pypi | |

| docopt | 0.6.2 | pypi_0 | pypi | |

| dominate | 2.6.0 | pypi_0 | pypi | |

| ffmpeg | 4.3 | hf484d3e_0 | pytorch | |

| filelock | 3.0.12 | pypi_0 | pypi | |

| fonttools | 4.38.0 | pypi_0 | pypi | |

| freetype | 2.10.4 | h5ab3b9f_0 | | |

| gitdb | 4.0.7 | pypi_0 | pypi | |

| gitpython | 3.1.18 | pypi_0 | pypi | |

| gmp | 6.2.1 | h2531618_2 | | |

| gnutls | 3.6.15 | he1e5248_0 | | |

| huggingface-hub | 0.0.12 | pypi_0 | pypi | |

| humanize | 3.10.0 | pypi_0 | pypi | |

| idna | 3.2 | pypi_0 | pypi | |

| importlib-metadata | 4.6.1 | pypi_0 | pypi | |

| intel-openmp | 2019.4 | 243 | | |

| ipdb | 0.13.9 | pypi_0 | pypi | |

| ipython | 7.25.0 | pypi_0 | pypi | |

| ipython-genutils | 0.2.0 | pypi_0 | pypi | |

| jedi | 0.18.0 | pypi_0 | pypi | |

| joblib | 1.0.1 | pypi_0 | pypi | |

| jpeg | 9b | h024ee3a_2 | | |

| jsonpickle | 1.5.2 | pypi_0 | pypi | |

| kiwisolver | 1.4.4 | pypi_0 | pypi | |

| lame | 3.100 | h7b6447c_0 | | |

| lcms2 | 2.12 | h3be6417_0 | | |

| ld_impl_linux-64 | 2.35.1 | h7274673_9 | | |

| libffi | 3.3 | he6710b0_2 | | |

| libgcc-ng | 9.3.0 | h5101ec6_17 | | |

| libgomp | 9.3.0 | h5101ec6_17 | | |

| libiconv | 1.15 | h63c8f33_5 | | |

| libidn2 | 2.3.2 | h7f8727e_0 | | |

| libmklml | 2019.0.5 | 0 | | |

| libpng | 1.6.37 | hbc83047_0 | | |

| libstdcxx-ng | 9.3.0 | hd4cf53a_17 | | |

| libtasn1 | 4.16.0 | h27cfd23_0 | | |

| libtiff | 4.2.0 | h85742a9_0 | | |

| libunistring | 0.9.10 | h27cfd23_0 | | |

| libuv | 1.40.0 | h7b6447c_0 | | |

| libwebp-base | 1.2.0 | h27cfd23_0 | | |

| lz4-c | 1.9.3 | h2531618_0 | | |

| matplotlib | 3.5.3 | pypi_0 | pypi | |

| matplotlib-inline | 0.1.2 | pypi_0 | pypi | |

| mergedeep | 1.3.4 | pypi_0 | pypi | |

| mkl | 2020.2 | 256 | | |

| mkl-service | 2.3.0 | py37he8ac12f_0 | | |

| mkl_fft | 1.3.0 | py37h54f3939_0 | | |

| mkl_random | 1.1.1 | py37h0573a6f_0 | | |

| msgpack | 1.0.2 | pypi_0 | pypi | |

| munch | 2.5.0 | pypi_0 | pypi | |

| ncurses | 6.2 | he6710b0_1 | | |

| nettle | 3.7.3 | hbbd107a_1 | | |

| ninja | 1.10.2 | hff7bd54_1 | | |

| nltk | 3.8.1 | pypi_0 | pypi | |

| numpy | 1.19.2 | py37h54aff64_0 | | |

| numpy-base | 1.19.2 | py37hfa32c7d_0 | | |

| olefile | 0.46 | py37_0 | | |

| openh264 | 2.1.0 | hd408876_0 | | |

| openjpeg | 2.3.0 | h05c96fa_1 | | |

| openssl | 1.1.1k | h27cfd23_0 | | |

| packaging | 21.0 | pypi_0 | pypi | |

| pandas | 1.3.1 | pypi_0 | pypi | |

| parso | 0.8.2 | pypi_0 | pypi | |

| pathtools | 0.1.2 | pypi_0 | pypi | |

| pexpect | 4.8.0 | pypi_0 | pypi | |

| pickleshare | 0.7.5 | pypi_0 | pypi | |

| pillow | 8.3.1 | py37h2c7a002_0 | | |

| pip | 21.1.3 | py37h06a4308_0 | | |

| prompt-toolkit | 3.0.19 | pypi_0 | pypi | |

| protobuf | 4.21.12 | pypi_0 | pypi | |

| psutil | 5.8.0 | pypi_0 | pypi | |

| ptyprocess | 0.7.0 | pypi_0 | pypi | |

| py-cpuinfo | 8.0.0 | pypi_0 | pypi | |

| pycparser | 2.20 | py_2 | | |

| pygments | 2.9.0 | pypi_0 | pypi | |

| pyparsing | 2.4.7 | pypi_0 | pypi | |

| python | 3.7.10 | h12debd9_4 | | |

| python-dateutil | 2.8.2 | pypi_0 | pypi | |

| pytorch | 1.9.0 | py3.7_cuda11.1_cudnn8.0.5_0 | pytorch | |

| pytz | 2021.1 | pypi_0 | pypi | |

| pyyaml | 5.4.1 | pypi_0 | pypi | |

| readline | 8.1 | h27cfd23_0 | | |

| regex | 2022.10.31 | pypi_0 | pypi | |

| requests | 2.26.0 | pypi_0 | pypi | |

| sacred | 0.8.2 | pypi_0 | pypi | |

| sacremoses | 0.0.45 | pypi_0 | pypi | |

| scikit-learn | 0.24.2 | pypi_0 | pypi | |

| scipy | 1.7.0 | pypi_0 | pypi | |

| sentry-sdk | 1.15.0 | pypi_0 | pypi | |

| setproctitle | 1.3.2 | pypi_0 | pypi | |

| setuptools | 52.0.0 | py37h06a4308_0 | | |

| six | 1.16.0 | pyhd3eb1b0_0 | | |

| smmap | 4.0.0 | pypi_0 | pypi | |

| sqlite | 3.36.0 | hc218d9a_0 | | |

| threadpoolctl | 2.2.0 | pypi_0 | pypi | |

| tk | 8.6.10 | hbc83047_0 | | |

| tokenizers | 0.10.3 | pypi_0 | pypi | |

| toml | 0.10.2 | pypi_0 | pypi | |

| torchaudio | 0.9.0 | py37 | pytorch | |

| torchvision | 0.10.0 | py37_cu111 | pytorch | |

| tqdm | 4.61.2 | pypi_0 | pypi | |

| traitlets | 5.0.5 | pypi_0 | pypi | |

| transformers | 4.9.1 | pypi_0 | pypi | |

| typing-extensions | 3.10.0.0 | hd3eb1b0_0 | | |

| typing_extensions | 3.10.0.0 | pyh06a4308_0 | | |

| urllib3 | 1.26.14 | pypi_0 | pypi | |

| wandb | 0.13.10 | pypi_0 | pypi | |

| wcwidth | 0.2.5 | pypi_0 | pypi | |

| wheel | 0.36.2 | pyhd3eb1b0_0 | | |

| wrapt | 1.12.1 | pypi_0 | pypi | |

| xz | 5.2.5 | h7b6447c_0 | | |

| zipp | 3.5.0 | pypi_0 | pypi | |

| zlib | 1.2.11 | h7b6447c_3 | | |

| zstd | 1.4.9 | haebb681_0 | | | | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5832/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5832/timeline | null | completed | null | null | false | [

"moved to https://github.com/huggingface/transformers/issues/23233"

] |

https://api.github.com/repos/huggingface/datasets/issues/6018 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/6018/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/6018/comments | https://api.github.com/repos/huggingface/datasets/issues/6018/events | https://github.com/huggingface/datasets/pull/6018 | 1,799,411,999 | PR_kwDODunzps5VOmKY | 6,018 | test1 | [] | closed | false | null | 1 | 2023-07-11T17:25:49Z | 2023-07-20T10:11:41Z | 2023-07-20T10:11:41Z | null | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/6018/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/6018/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/6018.diff",

"html_url": "https://github.com/huggingface/datasets/pull/6018",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/6018.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/6018"

} | true | [

"We no longer host datasets in this repo. You should use the HF Hub instead."

] |

https://api.github.com/repos/huggingface/datasets/issues/3881 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3881/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3881/comments | https://api.github.com/repos/huggingface/datasets/issues/3881/events | https://github.com/huggingface/datasets/issues/3881 | 1,164,452,005 | I_kwDODunzps5FaCCl | 3,881 | How to use Image folder | [

{

"color": "d876e3",

"default": true,

"description": "Further information is requested",

"id": 1935892912,

"name": "question",

"node_id": "MDU6TGFiZWwxOTM1ODkyOTEy",

"url": "https://api.github.com/repos/huggingface/datasets/labels/question"

}

] | closed | false | null | 8 | 2022-03-09T21:18:52Z | 2022-03-11T08:45:52Z | 2022-03-11T08:45:52Z | null | Ran this code

```

load_dataset("imagefolder", data_dir="./my-dataset")

```

`https://raw.githubusercontent.com/huggingface/datasets/master/datasets/imagefolder/imagefolder.py` missing

```

---------------------------------------------------------------------------

FileNotFoundError Traceback (most recent call last)

/tmp/ipykernel_33/1648737256.py in <module>

----> 1 load_dataset("imagefolder", data_dir="./my-dataset")

/opt/conda/lib/python3.7/site-packages/datasets/load.py in load_dataset(path, name, data_dir, data_files, split, cache_dir, features, download_config, download_mode, ignore_verifications, keep_in_memory, save_infos, revision, use_auth_token, task, streaming, script_version, **config_kwargs)

1684 revision=revision,

1685 use_auth_token=use_auth_token,

-> 1686 **config_kwargs,

1687 )

1688

/opt/conda/lib/python3.7/site-packages/datasets/load.py in load_dataset_builder(path, name, data_dir, data_files, cache_dir, features, download_config, download_mode, revision, use_auth_token, script_version, **config_kwargs)

1511 download_config.use_auth_token = use_auth_token

1512 dataset_module = dataset_module_factory(

-> 1513 path, revision=revision, download_config=download_config, download_mode=download_mode, data_files=data_files

1514 )

1515

/opt/conda/lib/python3.7/site-packages/datasets/load.py in dataset_module_factory(path, revision, download_config, download_mode, force_local_path, dynamic_modules_path, data_files, **download_kwargs)

1200 f"Couldn't find a dataset script at {relative_to_absolute_path(combined_path)} or any data file in the same directory. "

1201 f"Couldn't find '{path}' on the Hugging Face Hub either: {type(e1).__name__}: {e1}"

-> 1202 ) from None

1203 raise e1 from None

1204 else:

FileNotFoundError: Couldn't find a dataset script at /kaggle/working/imagefolder/imagefolder.py or any data file in the same directory. Couldn't find 'imagefolder' on the Hugging Face Hub either: FileNotFoundError: Couldn't find file at https://raw.githubusercontent.com/huggingface/datasets/master/datasets/imagefolder/imagefolder.py

``` | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3881/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3881/timeline | null | completed | null | null | false | [

"Even this example from the docs throws the same error:\r\n```\r\ndataset = load_dataset(\"imagefolder\", data_files=\"https://download.microsoft.com/download/3/E/1/3E1C3F21-ECDB-4869-8368-6DEBA77B919F/kagglecatsanddogs_3367a.zip\", split=\"train\")\r\n\r\n```",

"Hi @INF800,\r\n\r\nPlease note that the `imagefolder` feature enhanc... |

https://api.github.com/repos/huggingface/datasets/issues/3448 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3448/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3448/comments | https://api.github.com/repos/huggingface/datasets/issues/3448/events | https://github.com/huggingface/datasets/issues/3448 | 1,083,231,080 | I_kwDODunzps5AkMto | 3,448 | JSONDecodeError with HuggingFace dataset viewer | [

{

"color": "E5583E",

"default": false,

"description": "Related to the dataset viewer on huggingface.co",

"id": 3470211881,

"name": "dataset-viewer",

"node_id": "LA_kwDODunzps7O1zsp",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset-viewer"

}

] | closed | false | null | 3 | 2021-12-17T12:52:41Z | 2022-02-24T09:10:26Z | 2022-02-24T09:10:26Z | null | ## Dataset viewer issue for 'pubmed_neg'

**Link:** https://huggingface.co/datasets/IGESML/pubmed_neg

I am getting the error:

Status code: 400

Exception: JSONDecodeError

Message: Expecting property name enclosed in double quotes: line 61 column 2 (char 1202)

I have checked all files - I am not using single quotes anywhere. Not sure what is causing this issue.

Am I the one who added this dataset ? Yes

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3448/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3448/timeline | null | completed | null | null | false | [

"Hi ! I think the issue comes from the dataset_infos.json file: it has the \"flat\" field twice.\r\n\r\nCan you try deleting this file and regenerating it please ?",

"Thanks! That fixed that, but now I am getting:\r\nServer Error\r\nStatus code: 400\r\nException: KeyError\r\nMessage: 'feature'\r\n\r\n... |

https://api.github.com/repos/huggingface/datasets/issues/1097 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1097/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1097/comments | https://api.github.com/repos/huggingface/datasets/issues/1097/events | https://github.com/huggingface/datasets/pull/1097 | 756,955,729 | MDExOlB1bGxSZXF1ZXN0NTMyNDExNzQ4 | 1,097 | Add MSRA NER labels | [] | closed | false | null | 0 | 2020-12-04T09:38:16Z | 2020-12-04T13:31:59Z | 2020-12-04T13:31:58Z | null | Fixes #940 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1097/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1097/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/1097.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1097",

"merged_at": "2020-12-04T13:31:58Z",

"patch_url": "https://github.com/huggingface/datasets/pull/1097.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1097"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/707 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/707/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/707/comments | https://api.github.com/repos/huggingface/datasets/issues/707/events | https://github.com/huggingface/datasets/issues/707 | 713,954,666 | MDU6SXNzdWU3MTM5NTQ2NjY= | 707 | Requirements should specify pyarrow<1 | [] | closed | false | null | 7 | 2020-10-02T23:39:39Z | 2020-12-04T08:22:39Z | 2020-10-04T20:50:28Z | null | I was looking at the docs on [Perplexity](https://huggingface.co/transformers/perplexity.html) via GPT2. When you load datasets and try to load Wikitext, you get the error,

```

module 'pyarrow' has no attribute 'PyExtensionType'

```

I traced it back to datasets having installed PyArrow 1.0.1, but there's no pinning in the setup file.

https://github.com/huggingface/datasets/blob/e86a2a8f869b91654e782c9133d810bb82783200/setup.py#L68

Downgrading by installing `pip install "pyarrow<1"` resolved the issue. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/707/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/707/timeline | null | completed | null | null | false | [

"Hello @mathcass I would want to work on this issue. May I do the same? ",

"@punitaojha, certainly. Feel free to work on this. Let me know if you need any help or clarity.",

"Hello @mathcass \r\n1. I did fork the repository and clone the same on my local system. \r\n\r\n2. Then learnt about how we can publish o... |

https://api.github.com/repos/huggingface/datasets/issues/2112 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2112/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2112/comments | https://api.github.com/repos/huggingface/datasets/issues/2112/events | https://github.com/huggingface/datasets/pull/2112 | 841,098,008 | MDExOlB1bGxSZXF1ZXN0NjAwODgyMjA0 | 2,112 | Support for legal NLP datasets (EURLEX and ECtHR cases) | [] | closed | false | null | 0 | 2021-03-25T16:24:17Z | 2021-03-25T18:39:31Z | 2021-03-25T18:34:31Z | null | Add support for two legal NLP datasets:

- EURLEX (https://www.aclweb.org/anthology/P19-1636/)

- ECtHR cases (https://arxiv.org/abs/2103.13084) | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2112/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2112/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/2112.diff",

"html_url": "https://github.com/huggingface/datasets/pull/2112",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/2112.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/2112"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/3899 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3899/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3899/comments | https://api.github.com/repos/huggingface/datasets/issues/3899/events | https://github.com/huggingface/datasets/pull/3899 | 1,166,931,812 | PR_kwDODunzps40UzR3 | 3,899 | Add exact match metric | [] | closed | false | null | 1 | 2022-03-11T22:21:40Z | 2022-03-21T16:10:03Z | 2022-03-21T16:05:35Z | null | Adding the exact match metric and its metric card.

Note: Some of the tests have failed, but I wanted to make a PR anyway so that the rest of the code can be reviewed if anyone has time. I'll look into + work on fixing the failed tests when I'm back online after the weekend | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3899/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3899/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3899.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3899",

"merged_at": "2022-03-21T16:05:34Z",

"patch_url": "https://github.com/huggingface/datasets/pull/3899.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3899"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._"

] |

https://api.github.com/repos/huggingface/datasets/issues/4208 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4208/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4208/comments | https://api.github.com/repos/huggingface/datasets/issues/4208/events | https://github.com/huggingface/datasets/pull/4208 | 1,213,716,426 | PR_kwDODunzps42r7bW | 4,208 | Add CMU MoCap Dataset | [

{

"color": "0e8a16",

"default": false,

"description": "Contribution to a dataset script",

"id": 4564477500,

"name": "dataset contribution",

"node_id": "LA_kwDODunzps8AAAABEBBmPA",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20contribution"

}

] | closed | false | null | 11 | 2022-04-24T17:31:08Z | 2022-10-03T09:38:24Z | 2022-10-03T09:36:30Z | null | Resolves #3457

Dataset Request : Add CMU Graphics Lab Motion Capture dataset [#3457](https://github.com/huggingface/datasets/issues/3457)

This PR adds the CMU MoCap Dataset.

The authors didn't respond even after multiple follow-ups, so I ended up crawling the website to get the category, subcategory and description information. Some of the subjects do not have a category, subcategory or description. I am using a map from subject to categories, subcategories and description (a metadata file).

Currently, loading the dataset works for the "asf/amc" and "avi" formats since they have a single download link. However, "c3d" and "mpg" have multiple download links (part archives), and `dl_manager.download_and_extract()` extracts the files to multiple paths. Is there a way to extract these archives into one folder? Is there any other way to go about this?

Any suggestions/inputs on this would be helpful. Thank you.

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4208/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4208/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/4208.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4208",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/4208.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4208"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._",

"- Updated the readme.\r\n- Added dummy_data.zip and ran the all the tests.\r\n\r\nThe dataset works for \"asf/amc\" and \"avi\" formats which have a single download link for the complete dataset. But \"c3d\" and \"mpg\" have multiple... |

https://api.github.com/repos/huggingface/datasets/issues/1815 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1815/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1815/comments | https://api.github.com/repos/huggingface/datasets/issues/1815/events | https://github.com/huggingface/datasets/pull/1815 | 800,610,017 | MDExOlB1bGxSZXF1ZXN0NTY3MDY3NjU1 | 1,815 | Add CCAligned Multilingual Dataset | [] | closed | false | null | 7 | 2021-02-03T18:59:52Z | 2021-03-01T12:33:03Z | 2021-03-01T10:36:21Z | null | Hello,

I'm trying to add [CCAligned Multilingual Dataset](http://www.statmt.org/cc-aligned/). This has the potential to close #1756.

This dataset has two types - Document-Pairs, and Sentence-Pairs.

The datasets are huge, so I won't be able to test all of them. At the same time, a user might only want to download one particular language and not all. To provide this feature, `load_dataset`'s `**config_kwargs` should allow arbitrary keyword args, in this case `language_code`. This will be needed before the dataset is downloaded and extracted.

I'm expecting the usage to be something like -

`load_dataset('ccaligned_multilingual','documents',language_code='en_XX-af_ZA')`. Of course, at a later stage we can provide just two-character language codes. There is also an issue where one language has multiple files (`my_MM` and `my_MM_zaw` on the link), but before that the required functionality must be added to `load_dataset`.

It would be great if someone could either tell me an alternative way to do this, or point me to where changes need to be made, if any, apart from the `BuilderConfig` definition.

Additionally, I believe the tests will also have to be modified if this change is made, since it would not be possible to test for any random keyword arguments.

A decent way to go about this would be to provide all the options in a list/dictionary for `language_code` and use that to test the arguments. In essence, this is similar to the pre-trained checkpoint dictionary in `transformers`. That means writing dataset-specific tests, or adding something new to the dataset generation script to make it easier for everyone to add keyword arguments without having to worry about the tests.

Thanks,

Gunjan

Requesting @lhoestq / @yjernite to review. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1815/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1815/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/1815.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1815",

"merged_at": "2021-03-01T10:36:21Z",

"patch_url": "https://github.com/huggingface/datasets/pull/1815.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1815"

} | true | [

"Hi !\r\n\r\nWe already have some datasets that can have many many configurations possible.\r\nTo be able to support that, we allow to subclass BuilderConfig to add as many additional parameters as you may need.\r\nThis way users can load any language they want. For example the [bible_para](https://github.com/huggi... |

https://api.github.com/repos/huggingface/datasets/issues/3711 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3711/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3711/comments | https://api.github.com/repos/huggingface/datasets/issues/3711/events | https://github.com/huggingface/datasets/pull/3711 | 1,134,050,545 | PR_kwDODunzps4ymmlK | 3,711 | Fix the error of _load_table_data function in msr_sqa dataset | [] | closed | false | null | 0 | 2022-02-12T13:20:53Z | 2022-02-12T13:30:43Z | 2022-02-12T13:30:43Z | null | The `_load_table_data` function in the previous version is wrong: it should not split each row on commas. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3711/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3711/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3711.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3711",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/3711.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3711"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/4391 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4391/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4391/comments | https://api.github.com/repos/huggingface/datasets/issues/4391/events | https://github.com/huggingface/datasets/pull/4391 | 1,244,839,185 | PR_kwDODunzps44RpGv | 4,391 | Refactor column mappings for question answering datasets | [] | closed | false | null | 5 | 2022-05-23T09:13:14Z | 2022-05-24T12:57:00Z | 2022-05-24T12:48:48Z | null | This PR tweaks the keys in the metadata that are used to define the column mapping for question answering datasets. This is needed in order to faithfully reconstruct column names like `answers.text` and `answers.answer_start` from the keys in AutoTrain.

As observed in https://github.com/huggingface/datasets/pull/4367 we cannot use periods `.` in the keys of the YAML tags, so a decision was made to use a flat mapping with underscores. For QA datasets, however, it's handy to be able to reconstruct the nesting -- hence this PR.

cc @sashavor | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4391/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4391/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/4391.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4391",

"merged_at": "2022-05-24T12:48:48Z",

"patch_url": "https://github.com/huggingface/datasets/pull/4391.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4391"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._",

"> Thanks.\r\n> \r\n> I have no visibility about this, but if you say it is more useful for AutoTrain this way...\r\n\r\nThanks for the review @albertvillanova ! Yes, I need some way to reconstruct the original column names with a per... |

https://api.github.com/repos/huggingface/datasets/issues/4065 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4065/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4065/comments | https://api.github.com/repos/huggingface/datasets/issues/4065/events | https://github.com/huggingface/datasets/pull/4065 | 1,186,722,478 | PR_kwDODunzps41U5rq | 4,065 | Create metric card for METEOR | [] | closed | false | null | 1 | 2022-03-30T16:40:30Z | 2022-03-31T17:12:10Z | 2022-03-31T17:07:50Z | null | Proposing a metric card for METEOR | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4065/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4065/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/4065.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4065",

"merged_at": "2022-03-31T17:07:50Z",

"patch_url": "https://github.com/huggingface/datasets/pull/4065.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4065"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._"

] |

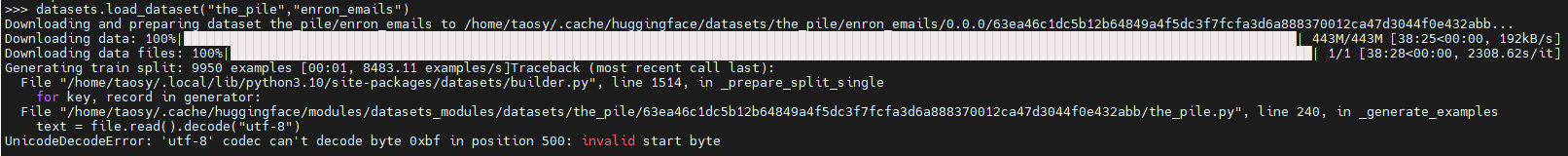

https://api.github.com/repos/huggingface/datasets/issues/5378 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5378/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5378/comments | https://api.github.com/repos/huggingface/datasets/issues/5378/events | https://github.com/huggingface/datasets/issues/5378 | 1,503,887,508 | I_kwDODunzps5Zo4CU | 5,378 | The dataset "the_pile", subset "enron_emails" , load_dataset() failure | [] | closed | false | null | 1 | 2022-12-20T02:19:13Z | 2022-12-20T07:52:54Z | 2022-12-20T07:52:54Z | null | ### Describe the bug

Running `datasets.load_dataset("the_pile","enron_emails")` fails.

### Steps to reproduce the bug

Run the code below in the Python CLI:

>>> import datasets

>>> datasets.load_dataset("the_pile","enron_emails")

### Expected behavior

Load dataset "the_pile", "enron_emails" successfully.

### Environment info

Copy-and-paste the text below in your GitHub issue.

- `datasets` version: 2.7.1

- Platform: Linux-5.15.0-53-generic-x86_64-with-glibc2.35

- Python version: 3.10.6

- PyArrow version: 10.0.0

- Pandas version: 1.4.3

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5378/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5378/timeline | null | completed | null | null | false | [

"Thanks for reporting @shaoyuta. We are investigating it.\r\n\r\nWe are transferring the issue to \"the_pile\" Community tab on the Hub: https://huggingface.co/datasets/the_pile/discussions/4"

] |

https://api.github.com/repos/huggingface/datasets/issues/832 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/832/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/832/comments | https://api.github.com/repos/huggingface/datasets/issues/832/events | https://github.com/huggingface/datasets/issues/832 | 740,077,228 | MDU6SXNzdWU3NDAwNzcyMjg= | 832 | [GEM] add WikiAuto text simplification dataset | [

{

"color": "e99695",

"default": false,

"description": "Requesting to add a new dataset",

"id": 2067376369,

"name": "dataset request",

"node_id": "MDU6TGFiZWwyMDY3Mzc2MzY5",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20request"

}

] | closed | false | null | 0 | 2020-11-10T16:53:23Z | 2020-12-03T13:38:08Z | 2020-12-03T13:38:08Z | null | ## Adding a Dataset

- **Name:** WikiAuto

- **Description:** Sentences in English Wikipedia and their corresponding sentences in Simple English Wikipedia that are written with simpler grammar and word choices. A lot of lexical and syntactic paraphrasing.

- **Paper:** https://www.aclweb.org/anthology/2020.acl-main.709.pdf

- **Data:** https://github.com/chaojiang06/wiki-auto

- **Motivation:** Included in the GEM shared task

Instructions to add a new dataset can be found [here](https://huggingface.co/docs/datasets/share_dataset.html).

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/832/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/832/timeline | null | completed | null | null | false | [] |

https://api.github.com/repos/huggingface/datasets/issues/4188 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4188/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4188/comments | https://api.github.com/repos/huggingface/datasets/issues/4188/events | https://github.com/huggingface/datasets/pull/4188 | 1,209,740,957 | PR_kwDODunzps42fpMv | 4,188 | Support streaming cnn_dailymail dataset | [] | closed | false | null | 2 | 2022-04-20T14:04:36Z | 2022-05-11T13:39:06Z | 2022-04-20T15:52:49Z | null | Support streaming cnn_dailymail dataset.

Fix #3969.

CC: @severo | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4188/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4188/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/4188.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4188",

"merged_at": "2022-04-20T15:52:49Z",

"patch_url": "https://github.com/huggingface/datasets/pull/4188.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4188"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._",

"Did you run the `datasets-cli` command before merging to make sure you generate all the examples ?"

] |

https://api.github.com/repos/huggingface/datasets/issues/3539 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3539/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3539/comments | https://api.github.com/repos/huggingface/datasets/issues/3539/events | https://github.com/huggingface/datasets/pull/3539 | 1,094,813,242 | PR_kwDODunzps4wlXU4 | 3,539 | Research wording for nc licenses | [] | closed | false | null | 1 | 2022-01-05T23:01:38Z | 2022-01-06T18:58:20Z | 2022-01-06T18:58:19Z | null | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3539/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3539/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3539.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3539",

"merged_at": "2022-01-06T18:58:19Z",

"patch_url": "https://github.com/huggingface/datasets/pull/3539.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3539"

} | true | [

"The CI failure is about some missing tags or sections in the dataset cards, and is unrelated to the part about non commercial use of this PR. Merging"

] |

https://api.github.com/repos/huggingface/datasets/issues/1802 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1802/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1802/comments | https://api.github.com/repos/huggingface/datasets/issues/1802/events | https://github.com/huggingface/datasets/pull/1802 | 797,924,468 | MDExOlB1bGxSZXF1ZXN0NTY0ODE4NDIy | 1,802 | add github of contributors | [] | closed | false | null | 3 | 2021-02-01T03:49:19Z | 2021-02-03T10:09:52Z | 2021-02-03T10:06:30Z | null | This PR adds each contributor's GitHub ID at the end of every dataset card. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1802/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1802/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/1802.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1802",

"merged_at": "2021-02-03T10:06:30Z",

"patch_url": "https://github.com/huggingface/datasets/pull/1802.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1802"

} | true | [

"@lhoestq Can you confirm if this format is fine? I will update cards based on your feedback.",

"On HuggingFace side we also have a mapping of hf user => github user (GitHub info used to be required when signing up until not long ago – cc @gary149 @beurkinger) so we can also add a link to HF profile",

"All the ... |

https://api.github.com/repos/huggingface/datasets/issues/836 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/836/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/836/comments | https://api.github.com/repos/huggingface/datasets/issues/836/events | https://github.com/huggingface/datasets/issues/836 | 740,187,613 | MDU6SXNzdWU3NDAxODc2MTM= | 836 | load_dataset with 'csv' is not working. while the same file is loading with 'text' mode or with pandas | [

{

"color": "2edb81",

"default": false,

"description": "A bug in a dataset script provided in the library",

"id": 2067388877,

"name": "dataset bug",

"node_id": "MDU6TGFiZWwyMDY3Mzg4ODc3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20bug"

}

] | closed | false | null | 8 | 2020-11-10T19:35:40Z | 2021-11-24T16:59:19Z | 2020-11-19T17:35:38Z | null | Hi All

I am trying to load a custom dataset and I am trying to load a single file to make sure the file is loading correctly:

dataset = load_dataset('csv', data_files=files)

When I run it I get:

Downloading and preparing dataset csv/default-35575a1051604c88 (download: Unknown size, generated: Unknown size, post-processed: Unknown size, total: Unknown size) tocache/huggingface/datasets/csv/default-35575a1051604c88/0.0.0/49187751790fa4d820300fd4d0707896e5b941f1a9c644652645b866716a4ac4...

I am getting this error:

6a4ac4/csv.py in _generate_tables(self, files)

78 def _generate_tables(self, files):

79 for i, file in enumerate(files):

---> 80 pa_table = pac.read_csv(

81 file,

82 read_options=self.config.pa_read_options,

~/anaconda2/envs/nlp/lib/python3.8/site-packages/pyarrow/_csv.pyx in pyarrow._csv.read_csv()

~/anaconda2/envs/nlp/lib/python3.8/site-packages/pyarrow/error.pxi in pyarrow.lib.pyarrow_internal_check_status()

~/anaconda2/envs/nlp/lib/python3.8/site-packages/pyarrow/error.pxi in pyarrow.lib.check_status()

**ArrowInvalid: straddling object straddles two block boundaries (try to increase block size?)**

The size of the file is 3.5 GB. When I try smaller files I do not have an issue. When I load it with 'text' parser I can see all data but it is not what I need.

There is no issue reading the file with pandas. Any idea what could be the issue?

When I am running a different CSV I do not get this line:

(download: Unknown size, generated: Unknown size, post-processed: Unknown size, total: Unknown size)

Any ideas?

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/836/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/836/timeline | null | completed | null | null | false | [

"Which version of pyarrow do you have ? Could you try to update pyarrow and try again ?",

"Thanks for the fast response. I have the latest version '2.0.0' (I tried to update)\r\nI am working with Python 3.8.5",

"I think that the issue is similar to this one:https://issues.apache.org/jira/browse/ARROW-9612\r\nTh... |

https://api.github.com/repos/huggingface/datasets/issues/113 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/113/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/113/comments | https://api.github.com/repos/huggingface/datasets/issues/113/events | https://github.com/huggingface/datasets/pull/113 | 618,590,562 | MDExOlB1bGxSZXF1ZXN0NDE4MjkxNjIx | 113 | Adding docstrings and some doc | [] | closed | false | null | 0 | 2020-05-14T23:14:41Z | 2020-05-14T23:22:45Z | 2020-05-14T23:22:44Z | null | Some doc | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/113/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/113/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/113.diff",

"html_url": "https://github.com/huggingface/datasets/pull/113",

"merged_at": "2020-05-14T23:22:44Z",

"patch_url": "https://github.com/huggingface/datasets/pull/113.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/113"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/4120 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4120/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4120/comments | https://api.github.com/repos/huggingface/datasets/issues/4120/events | https://github.com/huggingface/datasets/issues/4120 | 1,195,887,430 | I_kwDODunzps5HR8tG | 4,120 | Representing dictionaries (json) objects as features | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

}

] | open | false | null | 0 | 2022-04-07T11:07:41Z | 2022-04-07T11:07:41Z | null | null | In the process of adding a new dataset to the hub, I stumbled upon the inability to represent dictionaries whose key names are unknown in advance and may differ between samples, as originally asked in the [forum](https://discuss.huggingface.co/t/representing-nested-dictionary-with-different-keys/16442).

For instance:

```

sample1 = {"nps": {

"a": {"id": 0, "text": "text1"},

"b": {"id": 1, "text": "text2"},

}}

sample2 = {"nps": {

"a": {"id": 0, "text": "text1"},

"b": {"id": 1, "text": "text2"},

"c": {"id": 2, "text": "text3"},

}}

sample3 = {"nps": {

"a": {"id": 0, "text": "text1"},

"b": {"id": 1, "text": "text2"},

"c": {"id": 2, "text": "text3"},

"d": {"id": 3, "text": "text4"},

}}

```

the `nps` field cannot be represented as a Feature while maintaining its original structure.

@lhoestq suggested adding JSON as a new feature type, which would solve this problem.

It seems like an alternative solution would be to change the original data format, which isn't an optimal solution in my case. Moreover, JSON is a common structure that will likely be useful in future datasets as well. | {

"+1": 1,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4120/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4120/timeline | null | null | null | null | false | [] |

https://api.github.com/repos/huggingface/datasets/issues/5282 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5282/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5282/comments | https://api.github.com/repos/huggingface/datasets/issues/5282/events | https://github.com/huggingface/datasets/pull/5282 | 1,460,238,928 | PR_kwDODunzps5Det2_ | 5,282 | Release: 2.7.1 | [] | closed | false | null | 0 | 2022-11-22T16:58:54Z | 2022-11-22T17:21:28Z | 2022-11-22T17:21:27Z | null | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5282/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5282/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5282.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5282",

"merged_at": "2022-11-22T17:21:27Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5282.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5282"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/1647 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1647/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1647/comments | https://api.github.com/repos/huggingface/datasets/issues/1647/events | https://github.com/huggingface/datasets/issues/1647 | 775,525,799 | MDU6SXNzdWU3NzU1MjU3OTk= | 1,647 | NarrativeQA fails to load with `load_dataset` | [] | closed | false | null | 3 | 2020-12-28T18:16:09Z | 2021-01-05T12:05:08Z | 2021-01-03T17:58:05Z | null | When loading the NarrativeQA dataset with `load_dataset('narrativeqa')` as given in the documentation [here](https://huggingface.co/datasets/narrativeqa), I receive a cascade of exceptions, ending with

FileNotFoundError: Couldn't find file locally at narrativeqa/narrativeqa.py, or remotely at

https://raw.githubusercontent.com/huggingface/datasets/1.1.3/datasets/narrativeqa/narrativeqa.py or

https://s3.amazonaws.com/datasets.huggingface.co/datasets/datasets/narrativeqa/narrativeqa.py

Workaround: manually copy the `narrativeqa.py` builder into my local directory with

curl https://raw.githubusercontent.com/huggingface/datasets/master/datasets/narrativeqa/narrativeqa.py -o narrativeqa.py

and then load the dataset with `load_dataset('narrativeqa.py')`; everything works fine. I'm on datasets v1.1.3 using Python 3.6.10. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1647/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1647/timeline | null | completed | null | null | false | [

"Hi @eric-mitchell,\r\nI think the issue might be that this dataset was added during the community sprint and has not been released yet. It will be available with the v2 of `datasets`.\r\nFor now, you should be able to load the datasets after installing the latest (master) version of `datasets` using pip:\r\n`pip i... |

https://api.github.com/repos/huggingface/datasets/issues/5432 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5432/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5432/comments | https://api.github.com/repos/huggingface/datasets/issues/5432/events | https://github.com/huggingface/datasets/pull/5432 | 1,535,893,019 | PR_kwDODunzps5HhEA8 | 5,432 | Fix CI benchmarks by temporarily pinning Docker image version | [] | closed | false | null | 2 | 2023-01-17T07:15:31Z | 2023-01-17T08:58:22Z | 2023-01-17T08:51:17Z | null | This PR fixes CI benchmarks by temporarily pinning the Docker image version instead of using the "latest" tag.

It also replaces the deprecated `cml-send-comment` command with `cml comment create`.

Fix #5431. | {

"+1": 1,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5432/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5432/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5432.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5432",

"merged_at": "2023-01-17T08:51:17Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5432.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5432"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | rea... |

https://api.github.com/repos/huggingface/datasets/issues/3671 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3671/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3671/comments | https://api.github.com/repos/huggingface/datasets/issues/3671/events | https://github.com/huggingface/datasets/issues/3671 | 1,122,864,253 | I_kwDODunzps5C7Yx9 | 3,671 | Give an estimate of the dataset size in DatasetInfo | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

}

] | open | false | null | 0 | 2022-02-03T09:47:10Z | 2022-02-03T09:47:10Z | null | null | **Is your feature request related to a problem? Please describe.**

Currently, only some of the datasets provide `dataset_size`, `download_size`, `size_in_bytes` (and `num_bytes` and `num_examples` inside `splits`). I would like to get this information, or an estimate, for all the datasets.

**Describe the solution you'd like**

- get access to the git information for the dataset files hosted on the hub

- look at the [`Content-Length`](https://developer.mozilla.org/en-US/docs/Web/HTTP/Headers/Content-Length) for the files served by HTTP
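
The `Content-Length` idea could be sketched roughly as follows. This is only an illustration, not how `datasets` computes sizes: the helper names `content_length`, `remote_file_size` and `estimate_download_size` are hypothetical, and it assumes the server answers `HEAD` requests and advertises a size, which is not guaranteed (for example with chunked transfer encoding).

```python
from typing import Iterable, Mapping, Optional, Tuple
from urllib.request import Request, urlopen


def content_length(headers: Mapping[str, str]) -> Optional[int]:
    """Return the advertised Content-Length as an int, or None if absent or malformed."""
    for name, value in headers.items():
        # HTTP header names are case-insensitive.
        if name.lower() == "content-length":
            try:
                size = int(value.strip())
            except ValueError:
                return None
            return size if size >= 0 else None
    return None


def remote_file_size(url: str, timeout: float = 10.0) -> Optional[int]:
    """Send a HEAD request so the size is read without downloading the body."""
    request = Request(url, method="HEAD")
    with urlopen(request, timeout=timeout) as response:
        return content_length(dict(response.headers.items()))


def estimate_download_size(sizes: Iterable[Optional[int]]) -> Tuple[int, int]:
    """Sum the known sizes and count the files whose size is unknown."""
    sizes = list(sizes)
    known = [size for size in sizes if size is not None]
    return sum(known), len(sizes) - len(known)
```

Reporting the number of files with an unknown size alongside the total keeps the result honest as an estimate: a missing header lowers the total without making it look exact.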

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3671/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3671/timeline | null | null | null | null | false | [] |

https://api.github.com/repos/huggingface/datasets/issues/3193 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3193/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3193/comments | https://api.github.com/repos/huggingface/datasets/issues/3193/events | https://github.com/huggingface/datasets/issues/3193 | 1,041,971,117 | I_kwDODunzps4-Gzet | 3,193 | Update link to datasets-tagging app | [] | closed | false | null | 0 | 2021-11-02T07:39:59Z | 2021-11-08T10:36:22Z | 2021-11-08T10:36:22Z | null | Once datasets-tagging has been transferred to Spaces:

- huggingface/datasets-tagging#22

We should update the link in Datasets. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3193/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3193/timeline | null | completed | null | null | false | [] |

https://api.github.com/repos/huggingface/datasets/issues/1688 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1688/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1688/comments | https://api.github.com/repos/huggingface/datasets/issues/1688/events | https://github.com/huggingface/datasets/pull/1688 | 779,029,685 | MDExOlB1bGxSZXF1ZXN0NTQ5MDM5ODg0 | 1,688 | Fix DaNE last example | [] | closed | false | null | 0 | 2021-01-05T13:29:37Z | 2021-01-05T14:00:15Z | 2021-01-05T14:00:13Z | null | The last example from the DaNE dataset is empty.

Fix #1686 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1688/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1688/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/1688.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1688",

"merged_at": "2021-01-05T14:00:13Z",

"patch_url": "https://github.com/huggingface/datasets/pull/1688.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1688"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/2522 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2522/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2522/comments | https://api.github.com/repos/huggingface/datasets/issues/2522/events | https://github.com/huggingface/datasets/issues/2522 | 925,334,379 | MDU6SXNzdWU5MjUzMzQzNzk= | 2,522 | Documentation Mistakes in Dataset: emotion | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | null | 3 | 2021-06-19T07:08:57Z | 2023-01-02T12:04:58Z | 2023-01-02T12:04:58Z | null | As per documentation,

Dataset: emotion

Homepage: https://github.com/dair-ai/emotion_dataset

Dataset: https://github.com/huggingface/datasets/blob/master/datasets/emotion/emotion.py

Permalink: https://huggingface.co/datasets/viewer/?dataset=emotion

Emotion is a dataset of English Twitter messages with eight basic emotions: anger, anticipation, disgust, fear, joy, sadness, surprise, and trust. For more detailed information please refer to the paper.

But when we view the data, there are only 6 emotions: anger, fear, joy, sadness, surprise, and trust. | {

"+1": 1,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2522/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2522/timeline | null | completed | null | null | false | [

"Hi,\r\n\r\nthis issue has been already reported in the dataset repo (https://github.com/dair-ai/emotion_dataset/issues/2), so this is a bug on their side.",

"The documentation has another bug in the dataset card [here](https://huggingface.co/datasets/emotion). \r\n\r\nIn the dataset summary **six** emotions are ... |

https://api.github.com/repos/huggingface/datasets/issues/3615 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3615/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3615/comments | https://api.github.com/repos/huggingface/datasets/issues/3615/events | https://github.com/huggingface/datasets/issues/3615 | 1,111,576,876 | I_kwDODunzps5CQVEs | 3,615 | Dataset BnL Historical Newspapers does not work in streaming mode | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | null | 3 | 2022-01-22T14:12:59Z | 2022-02-04T14:05:21Z | 2022-02-04T14:05:21Z | null | ## Describe the bug

When trying to load in streaming mode, it "hangs"...

## Steps to reproduce the bug

```python

from datasets import load_dataset

ds = load_dataset("bnl_newspapers", split="train", streaming=True)

```

## Expected results

The code should be optimized, so that it works fast in streaming mode.

CC: @davanstrien

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3615/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3615/timeline | null | completed | null | null | false | [

"@albertvillanova let me know if there is anything I can do to help with this. I had a quick look at the code again and though I could try the following changes:\r\n- use `download` instead of `download_and_extract`\r\nhttps://github.com/huggingface/datasets/blob/d3d339fb86d378f4cb3c5d1de423315c07a466c6/datasets/bn... |

https://api.github.com/repos/huggingface/datasets/issues/4436 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4436/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4436/comments | https://api.github.com/repos/huggingface/datasets/issues/4436/events | https://github.com/huggingface/datasets/pull/4436 | 1,257,758,834 | PR_kwDODunzps449FsU | 4,436 | Fix directory names for LDC data in timit_asr dataset | [] | closed | false | null | 1 | 2022-06-02T06:45:04Z | 2022-06-02T09:32:56Z | 2022-06-02T09:24:27Z | null | Related to:

- #4422 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4436/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4436/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/4436.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4436",

"merged_at": "2022-06-02T09:24:27Z",

"patch_url": "https://github.com/huggingface/datasets/pull/4436.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4436"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._"

] |

https://api.github.com/repos/huggingface/datasets/issues/5102 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5102/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5102/comments | https://api.github.com/repos/huggingface/datasets/issues/5102/events | https://github.com/huggingface/datasets/issues/5102 | 1,404,746,554 | I_kwDODunzps5Turs6 | 5,102 | Error in create a dataset from a Python generator | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

},

{

"color": "7057ff",

"default": true,

"descript... | closed | false | null | 2 | 2022-10-11T14:28:58Z | 2022-10-12T11:31:56Z | 2022-10-12T11:31:56Z | null | ## Describe the bug

In HOW-TO-GUIDES > Load > [Python generator](https://huggingface.co/docs/datasets/v2.5.2/en/loading#python-generator), the code example defines the `my_gen` function, but when creating the dataset, an undefined `my_dict` is passed in.

```Python

>>> from datasets import Dataset

>>> def my_gen():

...     for i in range(1, 4):

...         yield {"a": i}