url | repository_url | labels_url | comments_url | events_url | html_url | id | node_id | number | title | labels | state | locked | milestone | comments | created_at | updated_at | closed_at | active_lock_reason | body | reactions | timeline_url | performed_via_github_app | state_reason | draft | pull_request | is_pull_request | comments_text |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/721 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/721/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/721/comments | https://api.github.com/repos/huggingface/datasets/issues/721/events | https://github.com/huggingface/datasets/issues/721 | 718,647,147 | MDU6SXNzdWU3MTg2NDcxNDc= | 721 | feat(dl_manager): add support for ftp downloads | [] | closed | false | null | 11 | 2020-10-10T15:50:20Z | 2022-02-15T10:44:44Z | 2022-02-15T10:44:43Z | null | I am working on a new dataset (#302) and encounter a problem downloading it.

```python

# This is the official download link from https://www-i6.informatik.rwth-aachen.de/~koller/RWTH-PHOENIX-2014-T/

_URL = "ftp://wasserstoff.informatik.rwth-aachen.de/pub/rwth-phoenix/2016/phoenix-2014-T.v3.tar.gz"

dl_manager.download_and_extract(_URL)

```

I get an error:

> ValueError: unable to parse ftp://wasserstoff.informatik.rwth-aachen.de/pub/rwth-phoenix/2016/phoenix-2014-T.v3.tar.gz as a URL or as a local path

I checked, and indeed you don't consider `ftp` as a remote file.

https://github.com/huggingface/datasets/blob/4c2af707a6955cf4b45f83ac67990395327c5725/src/datasets/utils/file_utils.py#L188

Adding `ftp` to that list does not immediately solve the issue, so there probably needs to be some extra work.

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/721/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/721/timeline | null | completed | null | null | false | [

"We only support http by default for downloading.\r\nIf you really need to use ftp, then feel free to use a library that allows to download through ftp in your dataset script (I see that you've started working on #722 , that's awesome !). The users will get a message to install the extra library when they load the ... |

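The `ValueError` reported in issue 721 is consistent with a URL-scheme check that does not list `ftp`. The following is a minimal sketch of that kind of check, not the code from `file_utils.py`: the helper name `is_remote_url` and the scheme tuple `DEFAULT_SCHEMES` are assumptions made for illustration.

```python
from urllib.parse import urlparse

# Hypothetical allow-list for illustration; the real list lives in file_utils.py.
DEFAULT_SCHEMES = ("http", "https", "s3")


def is_remote_url(url_or_filename, schemes=DEFAULT_SCHEMES):
    """Return True when the string parses as a URL with an accepted scheme."""
    return urlparse(url_or_filename).scheme in schemes


ftp_url = "ftp://wasserstoff.informatik.rwth-aachen.de/pub/rwth-phoenix/2016/phoenix-2014-T.v3.tar.gz"

# With the default schemes the FTP link is not recognised as remote.
rejected = is_remote_url(ftp_url)

# Adding "ftp" makes the check pass, but the download code still has to speak FTP.
accepted = is_remote_url(ftp_url, schemes=DEFAULT_SCHEMES + ("ftp",))
```

As the issue notes, passing the scheme check is only the first step: whatever performs the download must also handle the FTP protocol.
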
https://api.github.com/repos/huggingface/datasets/issues/4612 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4612/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4612/comments | https://api.github.com/repos/huggingface/datasets/issues/4612/events | https://github.com/huggingface/datasets/issues/4612 | 1,290,984,660 | I_kwDODunzps5M8tzU | 4,612 | Release 2.3.0 broke custom iterable datasets | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | null | 3 | 2022-07-01T06:46:07Z | 2022-07-05T15:08:21Z | 2022-07-05T15:08:21Z | null | ## Describe the bug

Iterating over examples from a custom iterable dataset fails due to a bug introduced in `torch_iterable_dataset.py` in release 2.3.0.

## Steps to reproduce the bug

```python

next(iter(custom_iterable_dataset))

```

## Expected results

`next(iter(custom_iterable_dataset))` should return examples from the dataset

## Actual results

```

/usr/local/lib/python3.7/dist-packages/datasets/formatting/dataset_wrappers/torch_iterable_dataset.py in _set_fsspec_for_multiprocess()

16 See https://github.com/fsspec/gcsfs/issues/379

17 """

---> 18 fsspec.asyn.iothread[0] = None

19 fsspec.asyn.loop[0] = None

20

AttributeError: module 'fsspec' has no attribute 'asyn'

```

## Environment info

- `datasets` version: 2.3.0

- Platform: Linux-5.4.188+-x86_64-with-Ubuntu-18.04-bionic

- Python version: 3.7.13

- PyArrow version: 8.0.0

- Pandas version: 1.3.5

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4612/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4612/timeline | null | completed | null | null | false | [

"Apparently, `fsspec` does not allow access to attribute-based modules anymore, such as `fsspec.asyn`.\r\n\r\nHowever, this is a fairly simple fix:\r\n- Change the import to: `from fsspec import asyn`;\r\n- Change line 18 to: `asyn.iothread[0] = None`;\r\n- Change line 19 to `asyn.loop[0] = None`.",

"Hi! I think... |

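The `AttributeError` in issue 4612 matches a general Python behaviour: a submodule only becomes an attribute of its package once something imports it. The sketch below reproduces that behaviour with a throwaway package; `demo_pkg` and its `asyn` submodule are hypothetical stand-ins built on the fly, not `fsspec` itself.

```python
import importlib
import sys
import tempfile
from pathlib import Path

# Build a throwaway package named demo_pkg (hypothetical) with one submodule, asyn.
pkg_root = Path(tempfile.mkdtemp())
(pkg_root / "demo_pkg").mkdir()
(pkg_root / "demo_pkg" / "__init__.py").write_text("")
(pkg_root / "demo_pkg" / "asyn.py").write_text("iothread = [object()]\nloop = [object()]\n")
sys.path.insert(0, str(pkg_root))
importlib.invalidate_caches()

import demo_pkg

# The package __init__ never imports asyn, so the attribute is missing here.
visible_before_import = hasattr(demo_pkg, "asyn")

# Importing the submodule explicitly mirrors the fix suggested in the comment.
from demo_pkg import asyn

asyn.iothread[0] = None
asyn.loop[0] = None
```

Accessing `demo_pkg.asyn` before the explicit import would raise the same kind of `AttributeError` as in the traceback, which is why the suggested fix imports the submodule by name.
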
https://api.github.com/repos/huggingface/datasets/issues/675 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/675/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/675/comments | https://api.github.com/repos/huggingface/datasets/issues/675/events | https://github.com/huggingface/datasets/issues/675 | 709,818,725 | MDU6SXNzdWU3MDk4MTg3MjU= | 675 | Add custom dataset to NLP? | [] | closed | false | null | 2 | 2020-09-27T21:22:50Z | 2020-10-20T09:08:49Z | 2020-10-20T09:08:49Z | null | Is it possible to add a custom dataset such as a .csv to the NLP library?

Thanks. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/675/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/675/timeline | null | completed | null | null | false | [

"Yes you can have a look here: https://huggingface.co/docs/datasets/loading_datasets.html#csv-files",

"No activity, closing"

] |

https://api.github.com/repos/huggingface/datasets/issues/2751 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2751/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2751/comments | https://api.github.com/repos/huggingface/datasets/issues/2751/events | https://github.com/huggingface/datasets/pull/2751 | 959,021,262 | MDExOlB1bGxSZXF1ZXN0NzAyMTk5MjA5 | 2,751 | Update metadata for wikihow dataset | [] | closed | false | null | 0 | 2021-08-03T11:31:57Z | 2021-08-03T15:52:09Z | 2021-08-03T15:52:09Z | null | Update metadata for wikihow dataset:

- Remove leading new line character in description and citation

- Update metadata JSON

- Remove no longer necessary `urls_checksums/checksums.txt` file

Related to #2748. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2751/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2751/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/2751.diff",

"html_url": "https://github.com/huggingface/datasets/pull/2751",

"merged_at": "2021-08-03T15:52:09Z",

"patch_url": "https://github.com/huggingface/datasets/pull/2751.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/2751"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/1232 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1232/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1232/comments | https://api.github.com/repos/huggingface/datasets/issues/1232/events | https://github.com/huggingface/datasets/pull/1232 | 758,180,669 | MDExOlB1bGxSZXF1ZXN0NTMzMzkyNTc0 | 1,232 | Add Grail QA dataset | [] | closed | false | null | 0 | 2020-12-07T05:46:45Z | 2020-12-08T13:03:19Z | 2020-12-08T13:03:19Z | null | For more information: https://dki-lab.github.io/GrailQA/ | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1232/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1232/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/1232.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1232",

"merged_at": "2020-12-08T13:03:19Z",

"patch_url": "https://github.com/huggingface/datasets/pull/1232.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1232"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/1127 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1127/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1127/comments | https://api.github.com/repos/huggingface/datasets/issues/1127/events | https://github.com/huggingface/datasets/pull/1127 | 757,229,684 | MDExOlB1bGxSZXF1ZXN0NTMyNjQwMjMx | 1,127 | Add wikiqaar dataset | [] | closed | false | null | 0 | 2020-12-04T16:26:18Z | 2020-12-07T16:39:41Z | 2020-12-07T16:39:41Z | null | Arabic Wiki Question Answering Corpus. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1127/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1127/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/1127.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1127",

"merged_at": "2020-12-07T16:39:41Z",

"patch_url": "https://github.com/huggingface/datasets/pull/1127.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1127"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/1848 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1848/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1848/comments | https://api.github.com/repos/huggingface/datasets/issues/1848/events | https://github.com/huggingface/datasets/pull/1848 | 803,826,506 | MDExOlB1bGxSZXF1ZXN0NTY5Njg5ODU1 | 1,848 | Refactoring: Create config module | [] | closed | false | null | 0 | 2021-02-08T18:43:51Z | 2021-02-10T12:29:35Z | 2021-02-10T12:29:35Z | null | Refactorize configuration settings into their own module.

This could be seen as a Pythonic singleton-like approach. Eventually a config instance class might be created. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1848/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1848/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/1848.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1848",

"merged_at": "2021-02-10T12:29:35Z",

"patch_url": "https://github.com/huggingface/datasets/pull/1848.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1848"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/5368 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5368/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5368/comments | https://api.github.com/repos/huggingface/datasets/issues/5368/events | https://github.com/huggingface/datasets/pull/5368 | 1,500,322,973 | PR_kwDODunzps5FpZyx | 5,368 | Align remove columns behavior and input dict mutation in `map` with previous behavior | [] | closed | false | null | 1 | 2022-12-16T14:28:47Z | 2022-12-16T16:28:08Z | 2022-12-16T16:25:12Z | null | Align the `remove_columns` behavior and input dict mutation in `map` with the behavior before https://github.com/huggingface/datasets/pull/5252. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5368/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5368/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5368.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5368",

"merged_at": "2022-12-16T16:25:12Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5368.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5368"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._"

] |

https://api.github.com/repos/huggingface/datasets/issues/1747 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1747/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1747/comments | https://api.github.com/repos/huggingface/datasets/issues/1747/events | https://github.com/huggingface/datasets/issues/1747 | 788,299,775 | MDU6SXNzdWU3ODgyOTk3NzU= | 1,747 | datasets slicing with seed | [] | closed | false | null | 2 | 2021-01-18T14:08:55Z | 2022-10-05T12:37:27Z | 2022-10-05T12:37:27Z | null | Hi

I need to slice a dataset using a random seed. I looked at the documentation here: https://huggingface.co/docs/datasets/splits.html

I could not find a seed option. Could you please show me how to get a different slice for each seed?

thank you.

@lhoestq | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1747/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1747/timeline | null | completed | null | null | false | [

"Hi :) \r\nThe slicing API from https://huggingface.co/docs/datasets/splits.html doesn't shuffle the data.\r\nYou can shuffle and then take a subset of your dataset with\r\n```python\r\n# shuffle and take the first 100 examples\r\ndataset = dataset.shuffle(seed=42).select(range(100))\r\n```\r\n\r\nYou can find more... |

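The reply to issue 1747 suggests shuffling with a seed and then selecting the first rows. The idea can be sketched in plain Python, under the assumption that rows are addressed by integer index; `seeded_subset` is a hypothetical helper and not how the library implements `shuffle` or `select`.

```python
import random


def seeded_subset(num_rows, subset_size, seed):
    """Return `subset_size` row indices drawn by a shuffle that `seed` makes repeatable."""
    indices = list(range(num_rows))
    # A dedicated Random instance keeps the shuffle independent of global state.
    random.Random(seed).shuffle(indices)
    return indices[:subset_size]


# The same seed reproduces the same subset; a different seed gives another one.
subset_a = seeded_subset(num_rows=1000, subset_size=100, seed=42)
subset_b = seeded_subset(num_rows=1000, subset_size=100, seed=42)
subset_c = seeded_subset(num_rows=1000, subset_size=100, seed=7)
```

Changing only the seed is enough to obtain a different but reproducible slice, which is what the question asks for.
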
https://api.github.com/repos/huggingface/datasets/issues/638 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/638/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/638/comments | https://api.github.com/repos/huggingface/datasets/issues/638/events | https://github.com/huggingface/datasets/issues/638 | 704,146,956 | MDU6SXNzdWU3MDQxNDY5NTY= | 638 | GLUE/QQP dataset: NonMatchingChecksumError | [] | closed | false | null | 1 | 2020-09-18T07:09:10Z | 2020-09-18T11:37:07Z | 2020-09-18T11:37:07Z | null | Hi @lhoestq, I know you are busy and there are other important issues. But if this is easy to fix, I am shamelessly wondering whether you could give me some help, so I can evaluate my models and restart my development cycle asap. 😚

datasets version: editable install of master at 9/17

`datasets.load_dataset('glue','qqp', cache_dir='./datasets')`

```

Downloading and preparing dataset glue/qqp (download: 57.73 MiB, generated: 107.02 MiB, post-processed: Unknown size, total: 164.75 MiB) to ./datasets/glue/qqp/1.0.0/7c99657241149a24692c402a5c3f34d4c9f1df5ac2e4c3759fadea38f6cb29c4...

---------------------------------------------------------------------------

NonMatchingChecksumError Traceback (most recent call last)

in

----> 1 datasets.load_dataset('glue','qqp', cache_dir='./datasets')

~/datasets/src/datasets/load.py in load_dataset(path, name, data_dir, data_files, split, cache_dir, features, download_config, download_mode, ignore_verifications, save_infos, script_version, **config_kwargs)

609 download_config=download_config,

610 download_mode=download_mode,

--> 611 ignore_verifications=ignore_verifications,

612 )

613

~/datasets/src/datasets/builder.py in download_and_prepare(self, download_config, download_mode, ignore_verifications, try_from_hf_gcs, dl_manager, **download_and_prepare_kwargs)

467 if not downloaded_from_gcs:

468 self._download_and_prepare(

--> 469 dl_manager=dl_manager, verify_infos=verify_infos, **download_and_prepare_kwargs

470 )

471 # Sync info

~/datasets/src/datasets/builder.py in _download_and_prepare(self, dl_manager, verify_infos, **prepare_split_kwargs)

527 if verify_infos:

528 verify_checksums(

--> 529 self.info.download_checksums, dl_manager.get_recorded_sizes_checksums(), "dataset source files"

530 )

531

~/datasets/src/datasets/utils/info_utils.py in verify_checksums(expected_checksums, recorded_checksums, verification_name)

37 if len(bad_urls) > 0:

38 error_msg = "Checksums didn't match" + for_verification_name + ":\n"

---> 39 raise NonMatchingChecksumError(error_msg + str(bad_urls))

40 logger.info("All the checksums matched successfully" + for_verification_name)

41

NonMatchingChecksumError: Checksums didn't match for dataset source files:

['https://dl.fbaipublicfiles.com/glue/data/QQP-clean.zip']

``` | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/638/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/638/timeline | null | completed | null | null | false | [

"Hi ! Sure I'll take a look"

] |
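
The traceback in issue 638 ends in a comparison between expected and recorded checksums. A minimal sketch of such a comparison follows; `sha256_of` and `find_bad_urls` are hypothetical helpers, the byte strings are stand-ins for real archive contents, and none of this is the library's `verify_checksums`.

```python
import hashlib


def sha256_of(data):
    """Return the hex SHA-256 digest of a bytes object."""
    return hashlib.sha256(data).hexdigest()


def find_bad_urls(expected, recorded):
    """Return URLs whose recorded checksum differs from the expected one."""
    return [url for url, checksum in expected.items() if recorded.get(url) != checksum]


url = "https://dl.fbaipublicfiles.com/glue/data/QQP-clean.zip"

# Stand-in payloads: the second one simulates a file that changed upstream.
expected = {url: sha256_of(b"original archive bytes")}
recorded_ok = {url: sha256_of(b"original archive bytes")}
recorded_changed = {url: sha256_of(b"archive bytes after an upstream update")}

matching = find_bad_urls(expected, recorded_ok)
mismatching = find_bad_urls(expected, recorded_changed)
```

A non-empty result is the situation that the error message reports: the hosted file no longer matches the checksum stored with the dataset metadata.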

https://api.github.com/repos/huggingface/datasets/issues/2158 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2158/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2158/comments | https://api.github.com/repos/huggingface/datasets/issues/2158/events | https://github.com/huggingface/datasets/issues/2158 | 848,506,746 | MDU6SXNzdWU4NDg1MDY3NDY= | 2,158 | viewer "fake_news_english" error | [

{

"color": "94203D",

"default": false,

"description": "",

"id": 2107841032,

"name": "nlp-viewer",

"node_id": "MDU6TGFiZWwyMTA3ODQxMDMy",

"url": "https://api.github.com/repos/huggingface/datasets/labels/nlp-viewer"

}

] | closed | false | null | 2 | 2021-04-01T14:13:20Z | 2022-10-05T13:22:02Z | 2022-10-05T13:22:02Z | null | When I visit the [Huggingface - viewer](https://huggingface.co/datasets/viewer/) web site, under the dataset "fake_news_english" I've got this error:

> ImportError: To be able to use this dataset, you need to install the following dependencies['openpyxl'] using 'pip install # noqa: requires this pandas optional dependency for reading xlsx files' for instance'

as well as the error Traceback.

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2158/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2158/timeline | null | completed | null | null | false | [

"Thanks for reporting !\r\nThe viewer doesn't have all the dependencies of the datasets. We may add openpyxl to be able to show this dataset properly",

"This viewer tool is deprecated now and the new viewer at https://huggingface.co/datasets/fake_news_english works fine, so I'm closing this issue"

] |

https://api.github.com/repos/huggingface/datasets/issues/5252 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5252/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5252/comments | https://api.github.com/repos/huggingface/datasets/issues/5252/events | https://github.com/huggingface/datasets/pull/5252 | 1,451,765,838 | PR_kwDODunzps5DCI1U | 5,252 | Support for decoding Image/Audio types in map when format type is not default one | [] | closed | false | null | 6 | 2022-11-16T15:02:13Z | 2022-12-13T17:01:54Z | 2022-12-13T16:59:04Z | null | Add support for decoding the `Image`/`Audio` types in `map` for the formats (Numpy, TF, Jax, PyTorch) other than the default one (Python).

Additional improvements:

* make `Dataset`'s "iter" API cleaner by removing `_iter` and replacing `_iter_batches` with `iter(batch_size)` (also implemented for `IterableDataset`)

* iterate over arrow tables in `map` to avoid `_getitem` calls, which are much slower than `__iter__`/`iter(batch_size)`, when the `format_type` is not Python

* fix `_iter_batches` (now named `iter`) when `drop_last_batch=True` and `pyarrow<=8.0.0` is installed

* lazily extract and decode arrow data in the default format

TODO:

* [x] update the `iter` benchmark in the docs (the `BeamBuilder` cannot load the preprocessed datasets from our bucket, so wait for this to be fixed (cc @lhoestq))

Fix https://github.com/huggingface/datasets/issues/3992, fix https://github.com/huggingface/datasets/issues/3756 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 1,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5252/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5252/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/5252.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5252",

"merged_at": "2022-12-13T16:59:04Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5252.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5252"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._",

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_5252). All of your documentation changes will be reflected on that endpoint.",

"Yes, if the image column is the first in the batch keys, it will ... |

https://api.github.com/repos/huggingface/datasets/issues/1068 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1068/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1068/comments | https://api.github.com/repos/huggingface/datasets/issues/1068/events | https://github.com/huggingface/datasets/pull/1068 | 756,417,337 | MDExOlB1bGxSZXF1ZXN0NTMxOTY1MDk0 | 1,068 | Add Pubmed (citation + abstract) dataset (2020). | [] | closed | false | null | 4 | 2020-12-03T17:54:10Z | 2020-12-23T09:52:07Z | 2020-12-23T09:52:07Z | null | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1068/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1068/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/1068.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1068",

"merged_at": "2020-12-23T09:52:07Z",

"patch_url": "https://github.com/huggingface/datasets/pull/1068.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1068"

} | true | [

"LGTM! ftp addition looks fine but maybe have a look @thomwolf ?",

"It's not finished yet, I need to run the tests on the full dataset (it was running this weekend, there is an error somewhere deep)\r\n",

"@yjernite Ready for review !\r\n@thomwolf \r\n\r\nSo I tried to follow closely the original format that me... |

https://api.github.com/repos/huggingface/datasets/issues/831 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/831/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/831/comments | https://api.github.com/repos/huggingface/datasets/issues/831/events | https://github.com/huggingface/datasets/issues/831 | 740,071,697 | MDU6SXNzdWU3NDAwNzE2OTc= | 831 | [GEM] Add WebNLG dataset | [

{

"color": "e99695",

"default": false,

"description": "Requesting to add a new dataset",

"id": 2067376369,

"name": "dataset request",

"node_id": "MDU6TGFiZWwyMDY3Mzc2MzY5",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20request"

}

] | closed | false | null | 0 | 2020-11-10T16:46:48Z | 2020-12-03T13:38:01Z | 2020-12-03T13:38:01Z | null | ## Adding a Dataset

- **Name:** WebNLG

- **Description:** WebNLG consists of Data/Text pairs where the data is a set of triples extracted from DBpedia and the text is a verbalisation of these triples (16,095 data inputs and 42,873 data-text pairs). The data is available in English and Russian

- **Paper:** https://www.aclweb.org/anthology/P17-1017.pdf

- **Data:** https://webnlg-challenge.loria.fr/download/

- **Motivation:** Included in the GEM shared task, multilingual

Instructions to add a new dataset can be found [here](https://huggingface.co/docs/datasets/share_dataset.html).

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/831/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/831/timeline | null | completed | null | null | false | [] |

https://api.github.com/repos/huggingface/datasets/issues/4797 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4797/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4797/comments | https://api.github.com/repos/huggingface/datasets/issues/4797/events | https://github.com/huggingface/datasets/pull/4797 | 1,330,000,998 | PR_kwDODunzps48uL-t | 4,797 | Torgo dataset creation | [] | closed | false | null | 1 | 2022-08-05T14:18:26Z | 2022-08-09T18:46:00Z | 2022-08-09T18:46:00Z | null | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4797/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4797/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/4797.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4797",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/4797.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4797"

} | true | [

"Hi @YingLi001, thanks for your proposal to add this dataset.\r\n\r\nHowever, now we add datasets directly to the Hub (instead of our GitHub repository). You have the instructions in our docs: \r\n- [Create a dataset loading script](https://huggingface.co/docs/datasets/dataset_script)\r\n- [Create a dataset card](h... |

https://api.github.com/repos/huggingface/datasets/issues/22 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/22/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/22/comments | https://api.github.com/repos/huggingface/datasets/issues/22/events | https://github.com/huggingface/datasets/pull/22 | 608,298,586 | MDExOlB1bGxSZXF1ZXN0NDEwMTAyMjU3 | 22 | adding bleu score code | [] | closed | false | null | 0 | 2020-04-28T13:00:50Z | 2020-04-28T17:48:20Z | 2020-04-28T17:48:08Z | null | This PR adds the BLEU score metric to the library. It can be tested by running the following code:

` from nlp.metrics import bleu

hyp1 = "It is a guide to action which ensures that the military always obeys the commands of the party"

ref1a = "It is a guide to action that ensures that the military forces always being under the commands of the party "

ref1b = "It is the guiding principle which guarantees the military force always being under the command of the Party"

ref1c = "It is the practical guide for the army always to heed the directions of the party"

list_of_references = [[ref1a, ref1b, ref1c]]

hypotheses = [hyp1]

bleu = bleu.bleu_score(list_of_references, hypotheses,4, smooth=True)

print(bleu) ` | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/22/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/22/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/22.diff",

"html_url": "https://github.com/huggingface/datasets/pull/22",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/22.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/22"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/2891 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2891/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2891/comments | https://api.github.com/repos/huggingface/datasets/issues/2891/events | https://github.com/huggingface/datasets/pull/2891 | 993,161,984 | MDExOlB1bGxSZXF1ZXN0NzMxMzkwNjM2 | 2,891 | Allow dynamic first dimension for ArrayXD | [] | closed | false | null | 9 | 2021-09-10T11:52:52Z | 2021-11-23T15:33:13Z | 2021-10-29T09:37:17Z | null | Add support for dynamic first dimension for ArrayXD features. See issue [#887](https://github.com/huggingface/datasets/issues/887).

The following changes allow the `to_pylist` method of `ArrayExtensionArray` to return a list of numpy arrays whose first dimension can vary.

@lhoestq Could you suggest how you would like the test suite extended? For now I have added only very limited testing. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2891/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2891/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/2891.diff",

"html_url": "https://github.com/huggingface/datasets/pull/2891",

"merged_at": "2021-10-29T09:37:17Z",

"patch_url": "https://github.com/huggingface/datasets/pull/2891.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/2891"

} | true | [

"@lhoestq, thanks for your review.\r\n\r\nI added test for `to_pylist`, I didn't do that for `to_numpy` because this method shouldn't be called for dynamic dimension ArrayXD - this method will try to make a single numpy array for the whole column which cannot be done for dynamic arrays.\r\n\r\nI dig into `to_pandas... |

https://api.github.com/repos/huggingface/datasets/issues/4611 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4611/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4611/comments | https://api.github.com/repos/huggingface/datasets/issues/4611/events | https://github.com/huggingface/datasets/pull/4611 | 1,290,940,874 | PR_kwDODunzps46rxIX | 4,611 | Preserve member order by MockDownloadManager.iter_archive | [] | closed | false | null | 1 | 2022-07-01T05:48:20Z | 2022-07-01T16:59:11Z | 2022-07-01T16:48:28Z | null | Currently, `MockDownloadManager.iter_archive` yields paths to archive members in an order given by `path.rglob("*")`, which might not be the same order as in the original archive.

See issue in:

- https://github.com/huggingface/datasets/pull/4579#issuecomment-1172135027

This PR fixes the order of the members yielded by `MockDownloadManager.iter_archive` so that it is the same as in the original archive. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4611/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4611/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/4611.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4611",

"merged_at": "2022-07-01T16:48:28Z",

"patch_url": "https://github.com/huggingface/datasets/pull/4611.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4611"

} | true | [

"_The documentation is not available anymore as the PR was closed or merged._"

] |

https://api.github.com/repos/huggingface/datasets/issues/3500 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3500/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3500/comments | https://api.github.com/repos/huggingface/datasets/issues/3500/events | https://github.com/huggingface/datasets/pull/3500 | 1,090,406,133 | PR_kwDODunzps4wXLTB | 3,500 | Docs: Add VCTK dataset description | [] | closed | false | null | 0 | 2021-12-29T10:02:05Z | 2022-01-04T10:46:02Z | 2022-01-04T10:25:09Z | null | This PR is a very minor followup to #1837, with only docs changes (single comment string). | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3500/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3500/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3500.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3500",

"merged_at": "2022-01-04T10:25:09Z",

"patch_url": "https://github.com/huggingface/datasets/pull/3500.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3500"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/516 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/516/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/516/comments | https://api.github.com/repos/huggingface/datasets/issues/516/events | https://github.com/huggingface/datasets/pull/516 | 681,846,032 | MDExOlB1bGxSZXF1ZXN0NDcwMTY5NTA0 | 516 | [Breaking] Rename formated to formatted | [] | closed | false | null | 0 | 2020-08-19T13:35:23Z | 2020-08-20T08:41:17Z | 2020-08-20T08:41:16Z | null | `formated` is not correct but `formatted` is | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/516/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/516/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/516.diff",

"html_url": "https://github.com/huggingface/datasets/pull/516",

"merged_at": "2020-08-20T08:41:16Z",

"patch_url": "https://github.com/huggingface/datasets/pull/516.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/516"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/148 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/148/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/148/comments | https://api.github.com/repos/huggingface/datasets/issues/148/events | https://github.com/huggingface/datasets/issues/148 | 619,590,555 | MDU6SXNzdWU2MTk1OTA1NTU= | 148 | _download_and_prepare() got an unexpected keyword argument 'verify_infos' | [

{

"color": "2edb81",

"default": false,

"description": "A bug in a dataset script provided in the library",

"id": 2067388877,

"name": "dataset bug",

"node_id": "MDU6TGFiZWwyMDY3Mzg4ODc3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20bug"

}

] | closed | false | null | 2 | 2020-05-17T01:48:53Z | 2020-05-18T07:38:33Z | 2020-05-18T07:38:33Z | null | # Reproduce

In Colab,

```

%pip install -q nlp

%pip install -q apache_beam mwparserfromhell

dataset = nlp.load_dataset('wikipedia')

```

get

```

Downloading and preparing dataset wikipedia/20200501.aa (download: Unknown size, generated: Unknown size, total: Unknown size) to /root/.cache/huggingface/datasets/wikipedia/20200501.aa/1.0.0...

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

<ipython-input-6-52471d2a0088> in <module>()

----> 1 dataset = nlp.load_dataset('wikipedia')

1 frames

/usr/local/lib/python3.6/dist-packages/nlp/load.py in load_dataset(path, name, version, data_dir, data_files, split, cache_dir, download_config, download_mode, ignore_verifications, save_infos, **config_kwargs)

515 download_mode=download_mode,

516 ignore_verifications=ignore_verifications,

--> 517 save_infos=save_infos,

518 )

519

/usr/local/lib/python3.6/dist-packages/nlp/builder.py in download_and_prepare(self, download_config, download_mode, ignore_verifications, save_infos, dl_manager, **download_and_prepare_kwargs)

361 verify_infos = not save_infos and not ignore_verifications

362 self._download_and_prepare(

--> 363 dl_manager=dl_manager, verify_infos=verify_infos, **download_and_prepare_kwargs

364 )

365 # Sync info

TypeError: _download_and_prepare() got an unexpected keyword argument 'verify_infos'

``` | {

"+1": 2,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 2,

"url": "https://api.github.com/repos/huggingface/datasets/issues/148/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/148/timeline | null | completed | null | null | false | [

"Same error for dataset 'wiki40b'",

"Should be fixed on master :)"

] |

https://api.github.com/repos/huggingface/datasets/issues/4881 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4881/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4881/comments | https://api.github.com/repos/huggingface/datasets/issues/4881/events | https://github.com/huggingface/datasets/issues/4881 | 1,348,495,777 | I_kwDODunzps5QYGmh | 4,881 | Language names and language codes: connecting to a big database (rather than slow enrichment of custom list) | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

}

] | open | false | null | 48 | 2022-08-23T20:14:24Z | 2023-01-03T08:32:35Z | null | null | **The problem:**

Language diversity is an important dimension of the diversity of datasets. To find one's way around datasets, being able to search by language name and by standardized codes appears crucial.

Currently the list of language codes is [here](https://github.com/huggingface/datasets/blob/main/src/datasets/utils/resources/languages.json), right? At about 1,500 entries, it is roughly at 1/4th of the world's diversity of extant languages. (Probably less, as the list of 1,418 contains variants that are linguistically very close: 108 varieties of English, for instance.)

Looking forward to ever increasing coverage, how will the list of language names and language codes improve over time?

Enrichment of the custom list by HFT contributors (like [here](https://github.com/huggingface/datasets/pull/4880)) has several issues:

* progress is likely to be slow (input required from reviewers, etc.)

* the more contributors, the less consistency can be expected among contributions. No need to elaborate on how much confusion is likely to ensue as datasets accumulate.

* there is no information on which language relates with which: no encoding of the special closeness between the languages of the Northwestern Germanic branch (English+Dutch+German etc.), for instance. Information on phylogenetic closeness can be relevant to run experiments on transfer of technology from one language to its close relatives.

**A solution that seems desirable:**

Connecting to an established database that (i) aims at full coverage of the world's languages and (ii) has information on higher-level groupings, alternative names, etc.

It takes a lot of hard work to do such databases. Two important initiatives are [Ethnologue](https://www.ethnologue.com/) (ISO standard) and [Glottolog](https://glottolog.org/). Both have pros and cons. Glottolog contains references to Ethnologue identifiers, so adopting Glottolog entails getting the advantages of both sets of language codes.

Both seem technically accessible & 'developer-friendly'. Glottolog has a [GitHub repo](https://github.com/glottolog/glottolog). For Ethnologue, harvesting tools have been devised (see [here](https://github.com/lyy1994/ethnologue); I did not try it out).

In case a conversation with linguists seemed in order here, I'd be happy to participate ('pro bono', of course), & to rustle up more colleagues as useful, to help this useful development happen.

With appreciation of HFT, | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4881/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4881/timeline | null | null | null | null | false | [

"Thanks for opening this discussion, @alexis-michaud.\r\n\r\nAs the language validation procedure is shared with other Hugging Face projects, I'm tagging them as well.\r\n\r\nCC: @huggingface/moon-landing ",

"on the Hub side, there is not fine grained validation we just check that `language:` contains an array of... |

https://api.github.com/repos/huggingface/datasets/issues/3387 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3387/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3387/comments | https://api.github.com/repos/huggingface/datasets/issues/3387/events | https://github.com/huggingface/datasets/pull/3387 | 1,071,836,456 | PR_kwDODunzps4vbAyC | 3,387 | Create Language Modeling task | [] | closed | false | null | 0 | 2021-12-06T07:56:07Z | 2021-12-17T17:18:28Z | 2021-12-17T17:18:27Z | null | Create Language Modeling task to be able to specify the input "text" column in a dataset.

This can be useful for datasets which are not exclusively used for language modeling and have more than one column:

- for text classification datasets (with columns "review" and "rating", for example), the Language Modeling task can be used to specify the "text" column ("review" in this case).

TODO:

- [ ] Add the LanguageModeling task to all dataset scripts which can be used for language modeling | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3387/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3387/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3387.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3387",

"merged_at": "2021-12-17T17:18:27Z",

"patch_url": "https://github.com/huggingface/datasets/pull/3387.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3387"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/6082 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/6082/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/6082/comments | https://api.github.com/repos/huggingface/datasets/issues/6082/events | https://github.com/huggingface/datasets/pull/6082 | 1,824,819,672 | PR_kwDODunzps5WkdIn | 6,082 | Release: 2.14.1 | [] | closed | false | null | 4 | 2023-07-27T17:05:54Z | 2023-07-27T17:18:17Z | 2023-07-27T17:08:38Z | null | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/6082/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/6082/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/6082.diff",

"html_url": "https://github.com/huggingface/datasets/pull/6082",

"merged_at": "2023-07-27T17:08:38Z",

"patch_url": "https://github.com/huggingface/datasets/pull/6082.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/6082"

} | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_6082). All of your documentation changes will be reflected on that endpoint.",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==8.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchma... |

https://api.github.com/repos/huggingface/datasets/issues/5648 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5648/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5648/comments | https://api.github.com/repos/huggingface/datasets/issues/5648/events | https://github.com/huggingface/datasets/issues/5648 | 1,629,253,719 | I_kwDODunzps5hHHBX | 5,648 | flatten_indices doesn't work with pandas format | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | open | false | null | 1 | 2023-03-17T12:44:25Z | 2023-03-21T13:12:03Z | null | null | ### Describe the bug

Hi,

I noticed that `flatten_indices` throws an error when the batch format is `pandas`. This is probably because `flatten_indices` uses `map` internally, which does not accept dataframes as the output of the transformation function.

### Steps to reproduce the bug

import numpy as np

import pandas as pd

import datasets

tabular_data = pd.DataFrame(np.random.randn(10,10))

tabular_data = datasets.arrow_dataset.Dataset.from_pandas(tabular_data)

tabular_data.with_format("pandas").select([0,1,2,3]).flatten_indices()

### Expected behavior

No error thrown

### Environment info

- `datasets` version: 2.10.1

- Python version: 3.9.5

- PyArrow version: 11.0.0

- Pandas version: 1.4.1 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5648/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5648/timeline | null | null | null | null | false | [

"Thanks for reporting! This can be fixed by setting the format to `arrow` in `flatten_indices` and restoring the original format after the flattening. I'm working on a PR that reduces the number of the `flatten_indices` calls in our codebase and makes `flatten_indices` a no-op when a dataset does not have an indice... |

https://api.github.com/repos/huggingface/datasets/issues/5537 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5537/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5537/comments | https://api.github.com/repos/huggingface/datasets/issues/5537/events | https://github.com/huggingface/datasets/issues/5537 | 1,587,567,464 | I_kwDODunzps5eoFto | 5,537 | Increase speed of data files resolution | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

},

{

"color": "BDE59C",

"default": fals... | open | false | null | 5 | 2023-02-16T12:11:45Z | 2023-04-07T17:32:45Z | null | null | Certain datasets like `bigcode/the-stack-dedup` have so many files that loading them takes forever right from the data files resolution step.

`datasets` uses file patterns to check the structure of the repository but it takes too much time to iterate over and over again on all the data files.

This comes from `resolve_patterns_in_dataset_repository` which calls `_resolve_single_pattern_in_dataset_repository`, which iterates on all the files at

```python

glob_iter = [PurePath(filepath) for filepath in fs.glob(PurePath(pattern).as_posix()) if fs.isfile(filepath)]

```

but calling `glob` on such a dataset is too expensive. Indeed it calls `ls()` in `hffilesystem.py` too many times.
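
As a rough sketch of the idea (not the actual `datasets` or `hffilesystem.py` code), the snippet below lists a toy filesystem once and then matches every pattern against that cached listing. `CountingFS` and `resolve_patterns` are hypothetical names, and `fnmatchcase` is only a stand-in for real glob semantics: its `*` also matches `/`.

```python
from fnmatch import fnmatchcase


class CountingFS:
    """Toy filesystem (hypothetical) that counts how often it is listed."""

    def __init__(self, files):
        self._files = list(files)
        self.listings = 0

    def find(self):
        # Each call stands in for an expensive remote listing.
        self.listings += 1
        return list(self._files)


def resolve_patterns(fs, patterns):
    """Resolve every pattern against a single cached listing of `fs`."""
    all_files = fs.find()  # one listing, reused for all patterns
    return {
        pattern: [f for f in all_files if fnmatchcase(f, pattern)]
        for pattern in patterns
    }


fs = CountingFS(
    ["data/train-0.json", "data/train-1.json", "data/test-0.json", "README.md"]
)
resolved = resolve_patterns(fs, ["data/train-*", "data/test-*", "*.md"])
```

With this shape the number of listings stays at one regardless of how many patterns are checked.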

Maybe `glob` can be more optimized in `hffilesystem.py`, or the data files resolution can directly be implemented in the filesystem by checking its `dir_cache` ? | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5537/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5537/timeline | null | null | null | null | false | [

"#self-assign",

"You were right, if `self.dir_cache` is not None in glob, it is exactly the same as what is returned by find, at least for all the tests we have, and some extended evaluation I did across a random sample of about 1000 datasets. \r\n\r\nThanks for the nice hints, and let me know if this is not exac... |

https://api.github.com/repos/huggingface/datasets/issues/1102 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1102/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1102/comments | https://api.github.com/repos/huggingface/datasets/issues/1102/events | https://github.com/huggingface/datasets/issues/1102 | 757,016,515 | MDU6SXNzdWU3NTcwMTY1MTU= | 1,102 | Add retries to download manager | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

}

] | closed | false | null | 0 | 2020-12-04T11:08:11Z | 2020-12-22T15:34:06Z | 2020-12-22T15:34:06Z | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1102/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1102/timeline | null | completed | null | null | false | [] | |

https://api.github.com/repos/huggingface/datasets/issues/3727 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3727/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3727/comments | https://api.github.com/repos/huggingface/datasets/issues/3727/events | https://github.com/huggingface/datasets/pull/3727 | 1,138,979,732 | PR_kwDODunzps4y34JN | 3,727 | Patch all module attributes in its namespace | [] | closed | false | null | 0 | 2022-02-15T17:12:27Z | 2022-02-17T17:06:18Z | 2022-02-17T17:06:17Z | null | When patching module attributes, only those defined in its `__all__` variable were considered by default (only falling back to `__dict__` if `__all__` was None).

However those are only a subset of all the module attributes in its namespace (`__dict__` variable).

This PR fixes the problem of modules that have non-None `__all__` variable, but try to access an attribute present in `__dict__` (and not in `__all__`).

For example, `pandas` has attribute `__version__` only present in `__dict__`.

- Before version 1.4, pandas `__all__` was None, thus all attributes in `__dict__` were patched

- From version 1.4, pandas `__all__` is not None, thus attributes in `__dict__` not present in `__all__` are ignored
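
A small standard-library sketch of the difference, for illustration only: `fake_module` is a hypothetical stand-in for a module such as pandas, and the two helper functions are not the patching code from this PR.

```python
import types

# Hypothetical stand-in for a third-party module that defines `__all__`.
fake_module = types.ModuleType("fake_module")
fake_module.__version__ = "1.4.0"
fake_module.read_csv = lambda path: path
fake_module.__all__ = ["read_csv"]


def attrs_from_all(module):
    """Names considered when `__all__` is preferred (fallback to `__dict__` if it is None)."""
    names = getattr(module, "__all__", None)
    return set(module.__dict__) if names is None else set(names)


def attrs_from_dict(module):
    """Names considered when the whole module namespace is used."""
    return set(module.__dict__)


only_all = attrs_from_all(fake_module)
whole_namespace = attrs_from_dict(fake_module)
```

Here `__version__` is missing from `only_all` but present in `whole_namespace`, which is the situation described for pandas 1.4 and later.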

Fix #3724.

CC: @severo @lvwerra | {

"+1": 1,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3727/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3727/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3727.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3727",

"merged_at": "2022-02-17T17:06:17Z",

"patch_url": "https://github.com/huggingface/datasets/pull/3727.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3727"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/538 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/538/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/538/comments | https://api.github.com/repos/huggingface/datasets/issues/538/events | https://github.com/huggingface/datasets/pull/538 | 688,015,912 | MDExOlB1bGxSZXF1ZXN0NDc1MzU3MjY2 | 538 | [logging] Add centralized logging - Bump-up cache loads to warnings | [] | closed | false | null | 0 | 2020-08-28T11:42:29Z | 2020-08-31T11:42:51Z | 2020-08-31T11:42:51Z | null | Add a `nlp.logging` module to set the global logging level easily. The verbosity level also controls the tqdm bars (disabled when set higher than INFO).

You can use:

```

nlp.logging.set_verbosity(verbosity: int)

nlp.logging.set_verbosity_info()

nlp.logging.set_verbosity_warning()

nlp.logging.set_verbosity_debug()

nlp.logging.set_verbosity_error()

nlp.logging.get_verbosity() -> int

```

And use the levels:

```

nlp.logging.CRITICAL

nlp.logging.DEBUG

nlp.logging.ERROR

nlp.logging.FATAL

nlp.logging.INFO

nlp.logging.NOTSET

nlp.logging.WARN

nlp.logging.WARNING

``` | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/538/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/538/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/538.diff",

"html_url": "https://github.com/huggingface/datasets/pull/538",

"merged_at": "2020-08-31T11:42:50Z",

"patch_url": "https://github.com/huggingface/datasets/pull/538.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/538"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/5762 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5762/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5762/comments | https://api.github.com/repos/huggingface/datasets/issues/5762/events | https://github.com/huggingface/datasets/issues/5762 | 1,670,326,470 | I_kwDODunzps5jjyjG | 5,762 | Not able to load the pile | [] | closed | false | null | 1 | 2023-04-17T03:09:10Z | 2023-04-17T09:37:27Z | 2023-04-17T09:37:27Z | null | ### Describe the bug

I get this error when trying to load the Pile dataset:

```

TypeError: Couldn't cast array of type

struct<file: string, id: string>

to

{'id': Value(dtype='string', id=None)}

```

### Steps to reproduce the bug

Please visit the following sample notebook

https://colab.research.google.com/drive/1JHcjawcHL6QHhi5VcqYd07W2QCEj2nWK#scrollTo=ulJP3eJCI-tB

### Expected behavior

The pile should work

### Environment info

- `datasets` version: 2.11.0

- Platform: Linux-5.10.147+-x86_64-with-glibc2.31

- Python version: 3.9.16

- Huggingface_hub version: 0.13.4

- PyArrow version: 9.0.0

- Pandas version: 1.5.3 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5762/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5762/timeline | null | completed | null | null | false | [

"Thanks for reporting, @surya-narayanan.\r\n\r\nI see you already started a discussion about this on the Community tab of the corresponding dataset: https://huggingface.co/datasets/EleutherAI/the_pile/discussions/10\r\nLet's continue the discussion there!"

] |

https://api.github.com/repos/huggingface/datasets/issues/3549 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3549/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3549/comments | https://api.github.com/repos/huggingface/datasets/issues/3549/events | https://github.com/huggingface/datasets/pull/3549 | 1,096,426,996 | PR_kwDODunzps4wqkGt | 3,549 | Fix sem_eval_2018_task_1 download location | [] | closed | false | null | 2 | 2022-01-07T15:37:52Z | 2022-01-27T15:52:03Z | 2022-01-27T15:52:03Z | null | This changes the download location of sem_eval_2018_task_1 files to include the test set labels as discussed in https://github.com/huggingface/datasets/issues/2745#issuecomment-954588500_ with @lhoestq. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3549/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3549/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3549.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3549",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/3549.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3549"

} | true | [

"Hi ! Thanks for pushing this :)\r\n\r\nIt seems that you created this PR from an old version of `datasets` that didn't have the sem_eval_2018_task_1.py file.\r\n\r\nCan you try merging `master` into your branch ? Or re-create your PR from a branch that comes from a more recent version of `datasets` ?\r\n\r\nAnd so... |

https://api.github.com/repos/huggingface/datasets/issues/3125 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3125/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3125/comments | https://api.github.com/repos/huggingface/datasets/issues/3125/events | https://github.com/huggingface/datasets/pull/3125 | 1,032,046,666 | PR_kwDODunzps4teNPC | 3,125 | Add SLR83 to OpenSLR | [] | closed | false | null | 0 | 2021-10-21T04:26:00Z | 2021-10-22T20:10:05Z | 2021-10-22T08:30:22Z | null | The PR resolves #3119, adding SLR83 (UK and Ireland dialects) to the previously created OpenSLR dataset. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3125/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3125/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3125.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3125",

"merged_at": "2021-10-22T08:30:22Z",

"patch_url": "https://github.com/huggingface/datasets/pull/3125.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3125"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/310 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/310/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/310/comments | https://api.github.com/repos/huggingface/datasets/issues/310/events | https://github.com/huggingface/datasets/pull/310 | 644,806,720 | MDExOlB1bGxSZXF1ZXN0NDM5MzY1MDg5 | 310 | add wikisql | [] | closed | false | null | 1 | 2020-06-24T18:00:35Z | 2020-06-25T12:32:25Z | 2020-06-25T12:32:25Z | null | Adding the [WikiSQL](https://github.com/salesforce/WikiSQL) dataset.

Interesting things to note:

- I have copied the function (`_convert_to_human_readable`) which converts the SQL query to a human-readable (string) format, as this is what most people will want when actually using this dataset for NLP applications.

- `conds` was originally a tuple but is converted to a dictionary to support differing types.
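
To make the two points above concrete, here is a hedged sketch rather than the code added in this PR. The operator tables are assumed to mirror the ones in the WikiSQL repository, the dictionary keys (`column_index`, `operator_index`, `condition`) are an assumed layout, and `conds_to_dict` / `to_human_readable` are hypothetical helper names.

```python
# Assumed to mirror the operator tables used by WikiSQL.
AGG_OPS = ["", "MAX", "MIN", "COUNT", "SUM", "AVG"]
COND_OPS = ["=", ">", "<", "OP"]


def conds_to_dict(conds):
    """Turn (column_index, operator_index, condition) triples into a dict of lists.

    Conditions are stored as strings so that mixed value types share one column type.
    """
    return {
        "column_index": [c[0] for c in conds],
        "operator_index": [c[1] for c in conds],
        "condition": [str(c[2]) for c in conds],
    }


def to_human_readable(sel, agg, conds, columns):
    """Render a WikiSQL-style query as a readable SQL string."""
    select = columns[sel]
    if AGG_OPS[agg]:
        select = f"{AGG_OPS[agg]} {select}"
    query = f"SELECT {select} FROM table"
    triples = zip(conds["column_index"], conds["operator_index"], conds["condition"])
    where = [f"{columns[col]} {COND_OPS[op]} {value}" for col, op, value in triples]
    if where:
        query += " WHERE " + " AND ".join(where)
    return query


columns = ["Player", "Team", "Points"]
conds = conds_to_dict([(1, 0, "Lakers"), (2, 1, 20)])
readable = to_human_readable(sel=0, agg=3, conds=conds, columns=columns)
```

In this example `readable` is `SELECT COUNT Player FROM table WHERE Team = Lakers AND Points > 20`.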

Would be nice to add the logical_form metrics too at some point. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/310/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/310/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/310.diff",

"html_url": "https://github.com/huggingface/datasets/pull/310",

"merged_at": "2020-06-25T12:32:25Z",

"patch_url": "https://github.com/huggingface/datasets/pull/310.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/310"

} | true | [

"That's great work @ghomasHudson !"

] |

https://api.github.com/repos/huggingface/datasets/issues/5046 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5046/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5046/comments | https://api.github.com/repos/huggingface/datasets/issues/5046/events | https://github.com/huggingface/datasets/issues/5046 | 1,391,372,519 | I_kwDODunzps5S7qjn | 5,046 | Audiofolder creates empty Dataset if files same level as metadata | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

},

{

"color": "7057ff",

"default": true,

"descript... | closed | false | null | 5 | 2022-09-29T19:17:23Z | 2022-10-28T13:05:07Z | 2022-10-28T13:05:07Z | null | ## Describe the bug

When audio files are at the same level as the metadata (`metadata.csv` or `metadata.jsonl`), `load_dataset` returns a `DatasetDict` with no rows but the correct columns.

https://github.com/huggingface/datasets/blob/1ea4d091b7a4b83a85b2eeb8df65115d39af3766/docs/source/audio_dataset.mdx?plain=1#L88

## Steps to reproduce the bug

`metadata.csv`:

```csv

file_name,duration,transcription

./2063_fe9936e7-62b2-4e62-a276-acbd344480ce_1.wav,10.768,hello

```

```python

>>> audio_dataset = load_dataset("audiofolder", data_dir="/audio-data/")

>>> audio_dataset

DatasetDict({

train: Dataset({

features: ['audio', 'duration', 'transcription'],

num_rows: 0

})

validation: Dataset({

features: ['audio', 'duration', 'transcription'],

num_rows: 0

})

})

```

I've tried the following, with no success:

- setting `split` to something else so I don't get a `DatasetDict`,

- removing the `./`,

- using `.jsonl`.

## Expected results

```

Dataset({

features: ['audio', 'duration', 'transcription'],

num_rows: 1

})

```

## Actual results

```

DatasetDict({

train: Dataset({

features: ['audio', 'duration', 'transcription'],

num_rows: 0

})

validation: Dataset({

features: ['audio', 'duration', 'transcription'],

num_rows: 0

})

})

```

## Environment info

- `datasets` version: 2.5.1

- Platform: Linux-5.13.0-1025-aws-x86_64-with-glibc2.29

- Python version: 3.8.10

- PyArrow version: 9.0.0

- Pandas version: 1.5.0

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5046/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5046/timeline | null | completed | null | null | false | [

"Hi! Unfortunately, I can't reproduce this behavior. Instead, I get `ValueError: audio at 2063_fe9936e7-62b2-4e62-a276-acbd344480ce_1.wav doesn't have metadata in /audio-data/metadata.csv`, which can be fixed by removing the `./` from the file name.\r\n\r\n(Link to a Colab that tries to reproduce this behavior: htt... |

https://api.github.com/repos/huggingface/datasets/issues/3690 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3690/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3690/comments | https://api.github.com/repos/huggingface/datasets/issues/3690/events | https://github.com/huggingface/datasets/pull/3690 | 1,127,493,538 | PR_kwDODunzps4yP2p5 | 3,690 | Update docs to new frontend/UI | [] | closed | false | null | 17 | 2022-02-08T16:38:09Z | 2022-03-03T20:04:21Z | 2022-03-03T20:04:20Z | null | ### TLDR: Update `datasets` `docs` to the new syntax (markdown and mdx files) & frontend (as how it looks on [hf.co/transformers](https://huggingface.co/docs/transformers/index))

| Light mode | Dark mode |

|-----------------------------------------------------------------------------------------------------|--------------------------------------------------------------------------------|

| <img width="400" alt="Screenshot 2022-02-17 at 14 15 34" src="https://user-images.githubusercontent.com/11827707/154489358-e2fb3708-8d72-4fb6-93f0-51d4880321c0.png"> | <img width="400" alt="Screenshot 2022-02-17 at 14 16 27" src="https://user-images.githubusercontent.com/11827707/154489596-c5a1311b-181c-4341-adb3-d60a7d3abe85.png"> |

## Checklist

- [x] update datasets docs to new syntax (should call `doc-builder convert`) (this PR)

- [x] discuss `@property` methods frontend https://github.com/huggingface/doc-builder/pull/87

- [x] discuss `inject_arrow_table_documentation` (this PR) https://github.com/huggingface/datasets/pull/3690#discussion_r801847860

- [x] update datasets docs path on moon-landing https://github.com/huggingface/moon-landing/pull/2089

- [x] convert pyarrow docstring from Numpydoc style to groups style https://github.com/huggingface/doc-builder/pull/89 (https://stackoverflow.com/a/24385103/6558628)

- [x] handle `Raises` section on frontend and doc-builder https://github.com/huggingface/doc-builder/pull/86

- [x] check imgs path (this PR) (nothing to update here)

- [x] doc examples block has to follow format `Examples::` https://github.com/huggingface/datasets/pull/3693

- [x] fix [this docstring](https://github.com/huggingface/datasets/blob/6ed6ac9448311930557810383d2cfd4fe6aae269/src/datasets/arrow_dataset.py#L3339) (causing svelte compilation error)

- [x] Delete sphinx related files

- [x] Delete sphinx CI

- [x] Update docs config in setup.py

- [x] add `versions.yml` in doc-build https://github.com/huggingface/doc-build/pull/1

- [x] add `versions.yml` in doc-build-dev https://github.com/huggingface/doc-build-dev/pull/1

- [x] https://github.com/huggingface/moon-landing/pull/2089

- [x] format docstrings; for example, the args format of `datasets.DatasetBuilder.download_and_prepare` looks wrong

- [x] create new github actions. (can probably be in a separate PR) (see the transformers equivalents below)

1. [build_dev_documentation.yml](https://github.com/huggingface/transformers/blob/master/.github/workflows/build_dev_documentation.yml)

2. [build_documentation.yml](https://github.com/huggingface/transformers/blob/master/.github/workflows/build_documentation.yml)

3. [delete_dev_documentation.yml](https://github.com/huggingface/transformers/blob/master/.github/workflows/delete_dev_documentation.yml)

## Note to reviewers

The number of changed files is large (100+) because I've converted all `.rst` files to `.mdx` files, and they compile fine on the svelte side (I also moved all the images to the [doc-imgs repo](https://huggingface.co/datasets/huggingface/documentation-images/tree/main/datasets)). For these files, you can simply review them on preprod and check that the rendering looks fine.

_Therefore, I'd suggest focusing on the changed_ **`.py`** and **CI files** (GitHub workflows, etc.; you can use [this filter here](https://github.com/huggingface/datasets/pull/3690/files?file-filters%5B%5D=.py&file-filters%5B%5D=.yml&show-deleted-files=true&show-viewed-files=true)) during the review and ignoring `.mdx` files. (If there's a bug in the `.mdx` files, we can always handle it in a separate PR afterwards.) | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 4,

"total_count": 4,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3690/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3690/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3690.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3690",

"merged_at": "2022-03-03T20:04:20Z",

"patch_url": "https://github.com/huggingface/datasets/pull/3690.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3690"

} | true | [

"We can have the docstrings of the properties that are missing docstrings (from discussion [here](https://github.com/huggingface/doc-builder/pull/96)) here by using your new `inject_arrow_table_documentation` onthem as well ?",

"@sgugger & @lhoestq could you help me with what should the `docs` section in setup.py... |

https://api.github.com/repos/huggingface/datasets/issues/3936 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3936/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3936/comments | https://api.github.com/repos/huggingface/datasets/issues/3936/events | https://github.com/huggingface/datasets/pull/3936 | 1,170,713,473 | PR_kwDODunzps40hE-P | 3,936 | Fix Wikipedia version and re-add tests | [] | closed | false | null | 1 | 2022-03-16T08:48:04Z | 2022-03-16T17:04:07Z | 2022-03-16T17:04:05Z | null | To keep backward compatibility when loading using "wikipedia" dataset ID (https://huggingface.co/datasets/wikipedia), we have created the pre-processed data for the same languages we were offering before, but with updated date "20220301":

- de

- en

- fr

- frr

- it

- simple

These pre-processed data can be accessed, e.g.:

```python

from datasets import load_dataset

ds = load_dataset("wikipedia", "20220301.frr", split="train")

```

The next step will be to offer pre-processed data for many other languages, available when loading with "wikimedia/wikipedia": https://huggingface.co/datasets/wikimedia/wikipedia | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3936/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3936/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/3936.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3936",

"merged_at": "2022-03-16T17:04:05Z",

"patch_url": "https://github.com/huggingface/datasets/pull/3936.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3936"

} | true | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_3936). All of your documentation changes will be reflected on that endpoint."

] |

https://api.github.com/repos/huggingface/datasets/issues/2659 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/2659/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/2659/comments | https://api.github.com/repos/huggingface/datasets/issues/2659/events | https://github.com/huggingface/datasets/pull/2659 | 946,155,407 | MDExOlB1bGxSZXF1ZXN0NjkxMzcwNzU3 | 2,659 | Allow dataset config kwargs to be None | [] | closed | false | null | 0 | 2021-07-16T10:25:38Z | 2021-07-16T12:46:07Z | 2021-07-16T12:46:07Z | null | Close https://github.com/huggingface/datasets/issues/2658

The dataset config kwargs that were set to None were simply ignored.

This was an issue when None has some meaning for certain parameters of certain builders, like the `sep` parameter of the "csv" builder, where None allows the separator to be inferred.
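
To illustrate the difference, here is a minimal sketch in plain Python. The function names and the default `sep=","` are hypothetical and chosen for illustration only; this is not the actual `datasets` code:

```python
def merge_dropping_none(defaults: dict, overrides: dict) -> dict:
    """Previous behavior as described above: overrides set to None are ignored."""
    kept = {key: value for key, value in overrides.items() if value is not None}
    return {**defaults, **kept}


def merge_keeping_none(defaults: dict, overrides: dict) -> dict:
    """Intended behavior: an explicit None replaces the default."""
    return {**defaults, **overrides}


defaults = {"sep": ","}  # hypothetical default, for illustration only
dropped = merge_dropping_none(defaults, {"sep": None})  # "sep" keeps its default
kept = merge_keeping_none(defaults, {"sep": None})  # "sep" is now None
```

With the first variant, a caller has no way to request the "infer the separator" behavior, because the explicit None never reaches the builder.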

cc @SBrandeis | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 1,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/2659/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/2659/timeline | null | null | false | {

"diff_url": "https://github.com/huggingface/datasets/pull/2659.diff",

"html_url": "https://github.com/huggingface/datasets/pull/2659",

"merged_at": "2021-07-16T12:46:06Z",

"patch_url": "https://github.com/huggingface/datasets/pull/2659.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/2659"

} | true | [] |

https://api.github.com/repos/huggingface/datasets/issues/1992 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1992/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1992/comments | https://api.github.com/repos/huggingface/datasets/issues/1992/events | https://github.com/huggingface/datasets/issues/1992 | 822,672,238 | MDU6SXNzdWU4MjI2NzIyMzg= | 1,992 | `datasets.map` multi processing much slower than single processing | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | open | false | null | 13 | 2021-03-05T02:10:02Z | 2023-06-08T12:31:55Z | null | null | Hi, thank you for the great library.

I've been using datasets to pretrain language models, and it often involves datasets as large as ~70G.

My data preparation is roughly two steps: `load_dataset`, which splits corpora into a table of sentences, and `map`, which converts each sentence into a list of integers using a tokenizer.

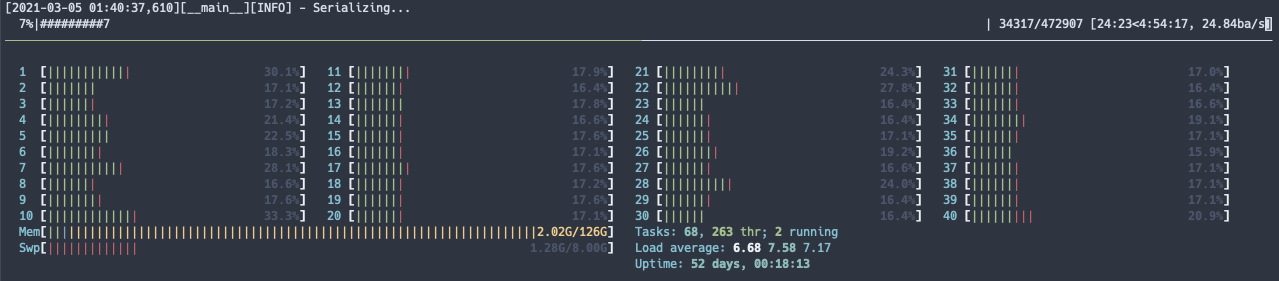

I noticed that the `map` function with `num_proc=mp.cpu_count() // 2` takes more than 20 hours to finish the job, whereas `num_proc=1` gets the job done in about 5 hours. The machine I used has 40 cores and 126G of RAM. No other jobs were running while `map` was running.

What could be the reason? I would be happy to provide any information needed to find the cause.
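
To narrow this down, one option is to time the same call on a small subset for several `num_proc` values. Below is a minimal sketch; `time_per_num_proc` and its `run` callback are hypothetical helpers, not part of `datasets`:

```python
import time
from typing import Callable, Dict, Iterable


def time_per_num_proc(run: Callable[[int], object], num_procs: Iterable[int]) -> Dict[int, float]:
    """Call run(num_proc) once per value and return the elapsed seconds for each."""
    timings = {}
    for num_proc in num_procs:
        start = time.perf_counter()
        run(num_proc)
        timings[num_proc] = time.perf_counter() - start
    return timings
```

For example, `time_per_num_proc(lambda n: dataset.select(range(10_000)).map(tokenize, num_proc=n), [1, 2, 4])`, where `dataset` and `tokenize` are placeholders for the dataset and tokenization function in question.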