# Overview

We fine-tuned two models with two different strategies: MiniCPM-2B with LoRA, and ChatGLM3-6B with QLoRA.

# ChatGLM3-6B QLoRA Fine-Tuning

## Dependencies

Install XTuner together with its DeepSpeed integration:

```
pip install -U 'xtuner[deepspeed]'
```

## Training

After setting the model path and the dataset in the config, run the XTuner training command:

```
xtuner train ${CONFIG_NAME_OR_PATH}
```

Training used about 13.5 GB of GPU memory and took about 4 hours.
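To put the 13.5 GB figure in context, the sketch below estimates how much memory the base model weights alone would occupy at different precisions. The parameter count of 6.2 billion is an assumed round figure for ChatGLM3-6B, not a number from this write-up, and the estimate ignores activations, LoRA adapter weights, optimizer state, and quantization metadata.

```python
def weight_memory_gib(num_params: float, bits_per_param: float) -> float:
    """Approximate memory needed for the model weights alone, in GiB."""
    return num_params * bits_per_param / 8 / 2**30


# Assumption: roughly 6.2e9 parameters for ChatGLM3-6B (not stated in this document).
ASSUMED_PARAMS = 6.2e9

if __name__ == "__main__":
    for label, bits in [("fp16", 16), ("int8", 8), ("4-bit (QLoRA base weights)", 4)]:
        print(f"{label}: {weight_memory_gib(ASSUMED_PARAMS, bits):.1f} GiB")
```

Storing the frozen base weights in 4 bits takes about a quarter of the fp16 footprint, which is the main reason QLoRA can fit a 6B-class model into a budget like the one reported above.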


## Model Files

The model files are uploaded to Hugging Face:

[Read_Comprehension_Chatglm3-6b_qlora](https://huggingface.co/KashiwaByte/Read_Comprehension_Chatglm3-6b_qlora/tree/main)


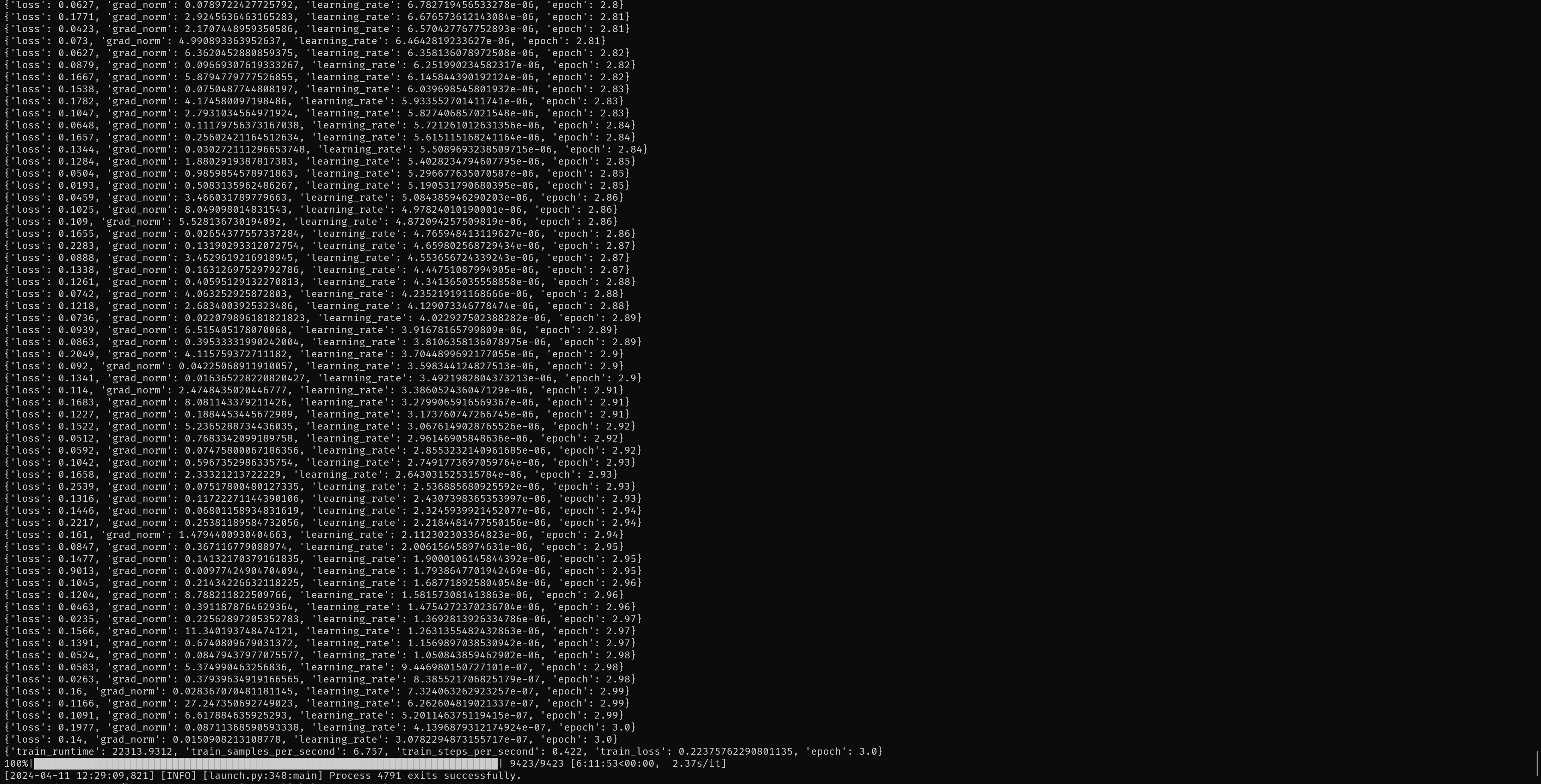

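# MiniCPM-2B LoRA Fine-Tuning

After setting the model path and the dataset, run the `train.sh` script to start training. Training used about 21.5 GB of GPU memory and took about 6 hours.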


The argparse hyperparameters of the training script, the DeepSpeed config, and the LoRA config are listed below.

Training script arguments (argparse):

```
--deepspeed ./ds_config.json \
--output_dir="./output/MiniCPM" \
--per_device_train_batch_size=4 \
--gradient_accumulation_steps=4 \
--logging_steps=10 \
--num_train_epochs=3 \
--save_steps=500 \
--learning_rate=1e-4 \
--save_on_each_node=True
```
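The two batch-related arguments combine into the number of samples consumed per optimizer step. A minimal sketch of that arithmetic follows; `num_gpus` is an assumption, since the number of devices used is not stated here.

```python
def effective_batch_size(per_device_batch: int, grad_accum_steps: int, num_gpus: int = 1) -> int:
    """Samples contributing to one optimizer step.

    Mirrors the relation DeepSpeed enforces:
    train_batch_size = train_micro_batch_size_per_gpu
                       * gradient_accumulation_steps * number of GPUs
    """
    return per_device_batch * grad_accum_steps * num_gpus


if __name__ == "__main__":
    # Values from the arguments above; num_gpus=1 is an assumption.
    print(effective_batch_size(per_device_batch=4, grad_accum_steps=4, num_gpus=1))  # prints 16
```

With the settings above, each GPU accumulates 4 micro-batches of 4 samples, so every additional GPU adds 16 samples to the optimizer step.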

DeepSpeed config (`ds_config.json`):

```
{
    "fp16": {
        "enabled": "auto",
        "loss_scale": 0,
        "loss_scale_window": 1000,
        "initial_scale_power": 16,
        "hysteresis": 2,
        "min_loss_scale": 1
    },
    "optimizer": {
        "type": "AdamW",
        "params": {
            "lr": "auto",
            "betas": "auto",
            "eps": "auto",
            "weight_decay": "auto"
        }
    },
    "scheduler": {
        "type": "WarmupDecayLR",
        "params": {
            "last_batch_iteration": -1,
            "total_num_steps": "auto",
            "warmup_min_lr": "auto",
            "warmup_max_lr": "auto",
            "warmup_num_steps": "auto"
        }
    },
    "zero_optimization": {
        "stage": 2,
        "offload_optimizer": {
            "device": "cpu",
            "pin_memory": true
        },
        "offload_param": {
            "device": "cpu",
            "pin_memory": true
        },
        "allgather_partitions": true,
        "allgather_bucket_size": 5e8,
        "overlap_comm": true,
        "reduce_scatter": true,
        "reduce_bucket_size": 5e8,
        "contiguous_gradients": true
    },
    "activation_checkpointing": {
        "partition_activations": false,
        "cpu_checkpointing": false,
        "contiguous_memory_optimization": false,
        "number_checkpoints": null,
        "synchronize_checkpoint_boundary": false,
        "profile": false
    },
    "gradient_accumulation_steps": "auto",
    "gradient_clipping": "auto",
    "steps_per_print": 2000,
    "train_batch_size": "auto",
    "min_lr": 5e-7,
    "train_micro_batch_size_per_gpu": "auto",
    "wall_clock_breakdown": false
}
```

LoRA config:

```
from peft import LoraConfig, TaskType

config = LoraConfig(
    task_type=TaskType.CAUSAL_LM,
    target_modules=["q_proj", "v_proj"],  # model-specific: check the attention layer names of your model
    inference_mode=False,  # training mode
    r=8,  # LoRA rank
    lora_alpha=32,  # LoRA alpha; see how LoRA works for its exact role
    lora_dropout=0.1,  # dropout ratio
)
```
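The roles of `r` and `lora_alpha` can be made concrete with a little arithmetic. The sketch below computes the standard LoRA scaling factor `lora_alpha / r` and the number of trainable adapter parameters. The hidden size (2304) and layer count (40) are assumed values for MiniCPM-2B and do not come from this write-up; substitute the values from the model's own config.

```python
def lora_scaling(r: int, lora_alpha: int) -> float:
    """Standard LoRA scaling applied to the low-rank update: alpha / r."""
    return lora_alpha / r


def lora_trainable_params(r: int, in_features: int, out_features: int,
                          modules_per_layer: int, num_layers: int) -> int:
    """Each adapted linear layer adds A (r x in) and B (out x r) matrices."""
    per_module = r * (in_features + out_features)
    return per_module * modules_per_layer * num_layers


if __name__ == "__main__":
    # r and lora_alpha come from the config above.
    print(lora_scaling(r=8, lora_alpha=32))  # prints 4.0

    # Assumptions: hidden size 2304 and 40 layers for MiniCPM-2B,
    # with square q_proj and v_proj matrices (2 adapted modules per layer).
    print(lora_trainable_params(r=8, in_features=2304, out_features=2304,
                                modules_per_layer=2, num_layers=40))
```

Because only `q_proj` and `v_proj` are adapted, the number of trainable parameters grows linearly with `r` and stays a small fraction of the full model.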

## Model Files

The model files are uploaded to Hugging Face:

[Read_Comprehension_MiniCPM2B](https://huggingface.co/KashiwaByte/Read_Comprehension_MiniCPM2B/tree/main)
