title | diff | body | url | created_at | closed_at | merged_at | updated_at |
|---|---|---|---|---|---|---|---|

Fix styling of `DataFrame` for columns with boolean label | diff --git a/doc/source/whatsnew/v1.5.0.rst b/doc/source/whatsnew/v1.5.0.rst

index 7f07187e34c78..cc44f43ba8acf 100644

--- a/doc/source/whatsnew/v1.5.0.rst

+++ b/doc/source/whatsnew/v1.5.0.rst

@@ -1042,6 +1042,7 @@ Styler

- Bug in :meth:`Styler.set_sticky` leading to white text on white background in dark mode (:issue... | - [x] closes #47838

- [x] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [x] All [code checks passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#pre-commit).

- [x]... | https://api.github.com/repos/pandas-dev/pandas/pulls/47848 | 2022-07-25T15:50:08Z | 2022-08-01T18:51:06Z | 2022-08-01T18:51:06Z | 2022-08-01T19:18:19Z |

DOC: Add spaces before `:` to cancel bold | diff --git a/pandas/core/computation/eval.py b/pandas/core/computation/eval.py

index d82cc37b90ad4..1892069f78edd 100644

--- a/pandas/core/computation/eval.py

+++ b/pandas/core/computation/eval.py

@@ -206,12 +206,11 @@ def eval(

The engine used to evaluate the expression. Supported engines are

- - N... | - [x] closes #47735

- [ ] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [ ] All [code checks passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#pre-commit).

- [ ]... | https://api.github.com/repos/pandas-dev/pandas/pulls/47847 | 2022-07-25T12:40:47Z | 2022-07-25T16:35:29Z | 2022-07-25T16:35:29Z | 2022-07-26T03:00:01Z |

BUG: fix bug where appending unordered ``CategoricalIndex`` variables overrides index | diff --git a/doc/source/whatsnew/v1.5.0.rst b/doc/source/whatsnew/v1.5.0.rst

index a1a2149da7cf6..5a95883e12fd1 100644

--- a/doc/source/whatsnew/v1.5.0.rst

+++ b/doc/source/whatsnew/v1.5.0.rst

@@ -407,7 +407,6 @@ upon serialization. (Related issue :issue:`12997`)

# Roundtripping now works

pd.read_json(a.to_js... | - [x] closes #24845

- [x] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [x] All [code checks passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#pre-commit).

- [x]... | https://api.github.com/repos/pandas-dev/pandas/pulls/47841 | 2022-07-24T22:42:35Z | 2022-08-16T18:38:20Z | 2022-08-16T18:38:20Z | 2022-08-16T18:38:55Z |

BUG Fixing columns dropped from multi index in group by transform GH4… | diff --git a/doc/source/whatsnew/v1.5.0.rst b/doc/source/whatsnew/v1.5.0.rst

index d138ebb9c02a3..7fc8b16bbed9a 100644

--- a/doc/source/whatsnew/v1.5.0.rst

+++ b/doc/source/whatsnew/v1.5.0.rst

@@ -1010,6 +1010,7 @@ Groupby/resample/rolling

- Bug in :meth:`DataFrame.resample` reduction methods when used with ``on`` wou... | …7787

- [x] closes #47787

- [x] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [x] All [code checks passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#pre-comm... | https://api.github.com/repos/pandas-dev/pandas/pulls/47840 | 2022-07-24T21:00:05Z | 2022-08-17T01:38:30Z | 2022-08-17T01:38:30Z | 2022-08-17T20:12:21Z |

DEPR: inplace keyword for Categorical.set_ordered, setting .categories directly | diff --git a/doc/source/user_guide/10min.rst b/doc/source/user_guide/10min.rst

index 0adb937de2b8b..c767fb1ebef7f 100644

--- a/doc/source/user_guide/10min.rst

+++ b/doc/source/user_guide/10min.rst

@@ -680,12 +680,12 @@ Converting the raw grades to a categorical data type:

df["grade"] = df["raw_grade"].astype("cate... | - [ ] closes #xxxx (Replace xxxx with the Github issue number)

- [x] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [x] All [code checks passed](https://pandas.pydata.org/pandas-docs/dev/development/con... | https://api.github.com/repos/pandas-dev/pandas/pulls/47834 | 2022-07-23T23:34:41Z | 2022-08-08T23:45:31Z | 2022-08-08T23:45:31Z | 2022-08-09T02:02:53Z |

DOC: added type annotations and tests to check for multiple warning match | diff --git a/pandas/_testing/_warnings.py b/pandas/_testing/_warnings.py

index 1a8fe71ae3728..e9df85eae550a 100644

--- a/pandas/_testing/_warnings.py

+++ b/pandas/_testing/_warnings.py

@@ -17,7 +17,7 @@

@contextmanager

def assert_produces_warning(

- expected_warning: type[Warning] | bool | None = Warning,

+ e... | - [x] closes #47829

- [x] Added [type annotations](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#type-hints) to new arguments/methods/functions.

| https://api.github.com/repos/pandas-dev/pandas/pulls/47833 | 2022-07-23T21:16:32Z | 2022-08-03T20:30:00Z | 2022-08-03T20:30:00Z | 2022-08-03T20:30:01Z |

TYP: pandas/io annotations from pandas-stubs | diff --git a/pandas/errors/__init__.py b/pandas/errors/__init__.py

index 08ee5650e97a6..e3f7e9d454383 100644

--- a/pandas/errors/__init__.py

+++ b/pandas/errors/__init__.py

@@ -189,7 +189,7 @@ class AbstractMethodError(NotImplementedError):

while keeping compatibility with Python 2 and Python 3.

"""

- de... | Copied annotations from pandas-stubs when pandas has no annotations | https://api.github.com/repos/pandas-dev/pandas/pulls/47831 | 2022-07-23T20:46:32Z | 2022-07-25T16:43:49Z | 2022-07-25T16:43:49Z | 2022-09-10T01:38:57Z |

TYP: pandas/plotting annotations from pandas-stubs | diff --git a/.pre-commit-config.yaml b/.pre-commit-config.yaml

index 92f3b3ce83297..f8cb869e6ed89 100644

--- a/.pre-commit-config.yaml

+++ b/.pre-commit-config.yaml

@@ -93,7 +93,7 @@ repos:

types: [python]

stages: [manual]

additional_dependencies: &pyright_dependencies

- - pyright@1.1.... | Copied annotations from pandas-stubs when pandas has no annotations | https://api.github.com/repos/pandas-dev/pandas/pulls/47827 | 2022-07-23T01:16:08Z | 2022-07-25T16:45:09Z | 2022-07-25T16:45:09Z | 2022-09-10T01:38:55Z |

REF: de-duplicate PeriodArray arithmetic code | diff --git a/pandas/core/arrays/period.py b/pandas/core/arrays/period.py

index 2d676f94c6a64..12352c4490f29 100644

--- a/pandas/core/arrays/period.py

+++ b/pandas/core/arrays/period.py

@@ -14,7 +14,10 @@

import numpy as np

-from pandas._libs import algos as libalgos

+from pandas._libs import (

+ algos as libalg... | and improved exception message

- [ ] closes #xxxx (Replace xxxx with the Github issue number)

- [ ] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [ ] All [code checks passed](https://pandas.pydata.o... | https://api.github.com/repos/pandas-dev/pandas/pulls/47826 | 2022-07-22T23:31:55Z | 2022-07-25T16:49:16Z | 2022-07-25T16:49:16Z | 2022-07-25T17:34:42Z |

Specify that both ``by`` and ``level`` should not be specified in ``groupby`` - GH40378 | diff --git a/doc/source/user_guide/groupby.rst b/doc/source/user_guide/groupby.rst

index 34244a8edcbfa..5d8ef7ce02097 100644

--- a/doc/source/user_guide/groupby.rst

+++ b/doc/source/user_guide/groupby.rst

@@ -345,17 +345,6 @@ Index level names may be supplied as keys.

More on the ``sum`` function and aggregation lat... | - [x] closes #40378

- [x] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [x] All [code checks passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#pre-commit).

- [ ... | https://api.github.com/repos/pandas-dev/pandas/pulls/47825 | 2022-07-22T19:53:23Z | 2022-08-08T18:52:13Z | 2022-08-08T18:52:13Z | 2022-08-08T18:52:19Z |

Manual Backport PR #47803 on branch 1.4.x (TST/CI: xfail test_round_sanity for 32 bit) | diff --git a/pandas/tests/scalar/timedelta/test_timedelta.py b/pandas/tests/scalar/timedelta/test_timedelta.py

index 7a32c932aee77..bedc09959520a 100644

--- a/pandas/tests/scalar/timedelta/test_timedelta.py

+++ b/pandas/tests/scalar/timedelta/test_timedelta.py

@@ -13,6 +13,7 @@

NaT,

iNaT,

)

+from pandas.comp... | Manual Backport PR #47803

- [x] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [x] All [code checks passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#pre-commit)... | https://api.github.com/repos/pandas-dev/pandas/pulls/47824 | 2022-07-22T19:43:21Z | 2022-07-23T11:07:06Z | 2022-07-23T11:07:06Z | 2022-07-23T18:13:06Z |

Fixed metadata propagation in Dataframe.idxmax and Dataframe.idxmin #… | diff --git a/pandas/core/frame.py b/pandas/core/frame.py

index e62f9fa8076d8..47203fbf315e5 100644

--- a/pandas/core/frame.py

+++ b/pandas/core/frame.py

@@ -11010,7 +11010,8 @@ def idxmin(

index = data._get_axis(axis)

result = [index[i] if i >= 0 else np.nan for i in indices]

- return data._c... | …28283

- [x] contributes to #28283 calling .__finalize__ on Dataframe.idxmax, idxmin

| https://api.github.com/repos/pandas-dev/pandas/pulls/47821 | 2022-07-22T15:56:37Z | 2022-07-25T16:50:52Z | 2022-07-25T16:50:52Z | 2022-07-25T16:50:59Z |

ENH: Add ArrowDtype and .array.ArrowExtensionArray to top level | diff --git a/pandas/__init__.py b/pandas/__init__.py

index eb5ce71141f46..5016bde000c3b 100644

--- a/pandas/__init__.py

+++ b/pandas/__init__.py

@@ -47,6 +47,7 @@

from pandas.core.api import (

# dtype

+ ArrowDtype,

Int8Dtype,

Int16Dtype,

Int32Dtype,

@@ -308,6 +309,7 @@ def __getattr__(name):

... | - [x] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [x] All [code checks passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#pre-commit).

Moved `ArrowIntervalType... | https://api.github.com/repos/pandas-dev/pandas/pulls/47818 | 2022-07-22T03:57:29Z | 2022-08-16T18:28:04Z | 2022-08-16T18:28:04Z | 2022-08-16T18:28:08Z |

CI/TYP: run stubtest | diff --git a/.pre-commit-config.yaml b/.pre-commit-config.yaml

index f65c42dab1852..e4ab74091d6b6 100644

--- a/.pre-commit-config.yaml

+++ b/.pre-commit-config.yaml

@@ -85,9 +85,9 @@ repos:

- repo: local

hooks:

- id: pyright

+ # note: assumes python env is setup and activated

name: pyrigh... | Stubtest enforces that pyi files do not get out of sync with the implementation.

stubtest and mypy seem to have a slight discrepancy (I had to change annotations so that they both error in the same cases).

stubtest needs pandas dev to be installed. This might not be something people want to do locally: if pandas d... | https://api.github.com/repos/pandas-dev/pandas/pulls/47817 | 2022-07-21T14:49:37Z | 2022-08-01T17:11:29Z | 2022-08-01T17:11:29Z | 2022-09-10T01:38:53Z |

TST: Addition test for get_indexer for interval index | diff --git a/pandas/tests/indexes/interval/test_indexing.py b/pandas/tests/indexes/interval/test_indexing.py

index e05cb73cfe446..74d17b31aff27 100644

--- a/pandas/tests/indexes/interval/test_indexing.py

+++ b/pandas/tests/indexes/interval/test_indexing.py

@@ -15,7 +15,11 @@

NaT,

Series,

Timedelta,

+ ... | - [x] closes #30178

- [x] [Tests added and passed]

- [x] All [code checks passed]

| https://api.github.com/repos/pandas-dev/pandas/pulls/47816 | 2022-07-21T14:14:21Z | 2022-07-26T16:38:56Z | 2022-07-26T16:38:56Z | 2022-07-26T16:39:11Z |

ENH/TST: Add argsort/min/max for ArrowExtensionArray | diff --git a/pandas/core/arrays/arrow/array.py b/pandas/core/arrays/arrow/array.py

index a882d3a955469..841275e54e3d6 100644

--- a/pandas/core/arrays/arrow/array.py

+++ b/pandas/core/arrays/arrow/array.py

@@ -22,8 +22,12 @@

pa_version_under4p0,

pa_version_under5p0,

pa_version_under6p0,

+ pa_version_un... | - [x] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [x] All [code checks passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#pre-commit).

| https://api.github.com/repos/pandas-dev/pandas/pulls/47811 | 2022-07-21T01:08:35Z | 2022-07-28T17:37:37Z | 2022-07-28T17:37:37Z | 2022-07-28T17:57:45Z |

BUG: fix SparseArray.unique IndexError and _first_fill_value_loc algo | diff --git a/doc/source/whatsnew/v1.5.0.rst b/doc/source/whatsnew/v1.5.0.rst

index 090fea57872c5..acd7cec480c39 100644

--- a/doc/source/whatsnew/v1.5.0.rst

+++ b/doc/source/whatsnew/v1.5.0.rst

@@ -1026,6 +1026,7 @@ Reshaping

Sparse

^^^^^^

- Bug in :meth:`Series.where` and :meth:`DataFrame.where` with ``SparseDtype``... | - [x] closes #47809

- [x] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [ ] All [code checks passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#pre-commit).

- [x]... | https://api.github.com/repos/pandas-dev/pandas/pulls/47810 | 2022-07-21T00:50:35Z | 2022-07-22T17:32:20Z | 2022-07-22T17:32:20Z | 2022-07-25T04:06:55Z |

DOC: add pivot_table note to dropna doc | diff --git a/pandas/core/frame.py b/pandas/core/frame.py

index ead4ea744c647..39bbfc75b0376 100644

--- a/pandas/core/frame.py

+++ b/pandas/core/frame.py

@@ -8564,7 +8564,9 @@ def pivot(self, index=None, columns=None, values=None) -> DataFrame:

margins : bool, default False

Add all row / columns (e... | - [ ] closes #47447

- [ ] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [ ] All [code checks passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#pre-commit).

- [ ... | https://api.github.com/repos/pandas-dev/pandas/pulls/47808 | 2022-07-21T00:04:01Z | 2022-08-01T19:24:30Z | 2022-08-01T19:24:30Z | 2022-08-01T19:24:37Z |

REF: re-use convert_reso | diff --git a/pandas/_libs/tslibs/np_datetime.pxd b/pandas/_libs/tslibs/np_datetime.pxd

index bfccedba9431e..c1936e34cf8d0 100644

--- a/pandas/_libs/tslibs/np_datetime.pxd

+++ b/pandas/_libs/tslibs/np_datetime.pxd

@@ -109,3 +109,10 @@ cdef bint cmp_dtstructs(npy_datetimestruct* left, npy_datetimestruct* right, int

cdef... | - [ ] closes #xxxx (Replace xxxx with the Github issue number)

- [ ] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [ ] All [code checks passed](https://pandas.pydata.org/pandas-docs/dev/development/con... | https://api.github.com/repos/pandas-dev/pandas/pulls/47807 | 2022-07-20T21:58:23Z | 2022-07-21T17:05:38Z | 2022-07-21T17:05:38Z | 2022-07-21T17:21:15Z |

CI/TYP: bump mypy and pyright | diff --git a/.pre-commit-config.yaml b/.pre-commit-config.yaml

index e4ab74091d6b6..dbddba57ef21c 100644

--- a/.pre-commit-config.yaml

+++ b/.pre-commit-config.yaml

@@ -93,7 +93,7 @@ repos:

types: [python]

stages: [manual]

additional_dependencies: &pyright_dependencies

- - pyright@1.1.... | No type changes this time (but there will be a few when numba allows us to use numpy 1.23). | https://api.github.com/repos/pandas-dev/pandas/pulls/47806 | 2022-07-20T21:33:36Z | 2022-08-01T19:27:16Z | 2022-08-01T19:27:16Z | 2022-09-10T01:38:50Z |

ENH/TST: Add isin, _hasna for ArrowExtensionArray | diff --git a/pandas/core/arrays/arrow/array.py b/pandas/core/arrays/arrow/array.py

index 8957ea493e9ad..ee8192cfca1ec 100644

--- a/pandas/core/arrays/arrow/array.py

+++ b/pandas/core/arrays/arrow/array.py

@@ -18,6 +18,7 @@

from pandas.compat import (

pa_version_under1p01,

pa_version_under2p0,

+ pa_version... | - [x] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [x] All [code checks passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#pre-commit).

| https://api.github.com/repos/pandas-dev/pandas/pulls/47805 | 2022-07-20T20:33:48Z | 2022-07-22T01:26:18Z | 2022-07-22T01:26:18Z | 2022-07-22T01:40:20Z |

TYP: Column.null_count is a Python int | diff --git a/pandas/core/exchange/column.py b/pandas/core/exchange/column.py

index 538c1d061ef22..c2a1cfe766b22 100644

--- a/pandas/core/exchange/column.py

+++ b/pandas/core/exchange/column.py

@@ -96,7 +96,7 @@ def offset(self) -> int:

return 0

@cache_readonly

- def dtype(self):

+ def dtype(self) ... | - [x] closes #47789

- [x] [Tests added and passed](https://pandas.pydata.org/pandas-docs/dev/development/contributing_codebase.html#writing-tests) if fixing a bug or adding a new feature

- [x] All [code checks passed](https://pandas.pydata.org/pandas-docs/dev/development/co... | https://api.github.com/repos/pandas-dev/pandas/pulls/47804 | 2022-07-20T17:12:56Z | 2022-07-21T17:07:43Z | 2022-07-21T17:07:43Z | 2022-07-21T17:07:46Z |

CLN: remove LegacyFoo from io.pytables | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index 54640ff576338..61e92f12e19af 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -321,6 +321,7 @@ or ``matplotlib.Axes.plot``. See :ref:`plotting.formatters` for more.

- :meth:`pandas.Series.str.cat` now ... | https://api.github.com/repos/pandas-dev/pandas/pulls/29787 | 2019-11-22T01:41:46Z | 2019-11-22T15:27:33Z | 2019-11-22T15:27:33Z | 2019-11-22T16:36:30Z | |

DEPR: change pd.concat sort=None to sort=False | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index 54640ff576338..ec8c6ab1451a5 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -341,6 +341,7 @@ or ``matplotlib.Axes.plot``. See :ref:`plotting.formatters` for more.

- Removed previously deprecated "ord... | https://api.github.com/repos/pandas-dev/pandas/pulls/29786 | 2019-11-22T00:59:02Z | 2019-11-25T23:38:55Z | 2019-11-25T23:38:55Z | 2019-11-25T23:40:33Z | |

CLN: catch ValueError instead of Exception in io.html | diff --git a/pandas/io/html.py b/pandas/io/html.py

index ed2b21994fdca..5f38f866e1643 100644

--- a/pandas/io/html.py

+++ b/pandas/io/html.py

@@ -896,7 +896,7 @@ def _parse(flavor, io, match, attrs, encoding, displayed_only, **kwargs):

try:

tables = p.parse_tables()

- except Exception as c... | Broken off from #28862. | https://api.github.com/repos/pandas-dev/pandas/pulls/29785 | 2019-11-21T23:07:29Z | 2019-11-22T15:31:18Z | 2019-11-22T15:31:18Z | 2019-11-22T16:33:34Z |
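
The narrowing in this row (catching `ValueError` rather than a bare `Exception`) can be illustrated with a minimal sketch. The function `parse_with_fallback` and the two parser callables are hypothetical and are not the pandas implementation; the point is only that an expected parse failure falls through to the next parser, while any other error type propagates instead of being swallowed.

```python
from typing import Callable, List, Optional, Sequence


def parse_with_fallback(
    parsers: Sequence[Callable[[str], List[str]]], text: str
) -> List[str]:
    """Try each hypothetical parser in order; fall through only on ValueError."""
    retained: Optional[ValueError] = None
    for parser in parsers:
        try:
            return parser(text)
        except ValueError as caught:
            # Expected "could not parse" failure: remember it, try the next parser.
            retained = caught
    if retained is None:
        raise ValueError("no parsers were supplied")
    # Every parser failed: re-raise the last expected failure.
    raise retained


def strict_parser(text: str) -> List[str]:
    # Hypothetical parser that rejects input without a comma.
    if "," not in text:
        raise ValueError("no comma found")
    return text.split(",")


def lenient_parser(text: str) -> List[str]:
    # Hypothetical parser that accepts anything.
    return [text]
```

With a bare `except Exception`, a programming error such as a `TypeError` inside a parser would be retained and silently retried; with `except ValueError` it surfaces immediately.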

DEPS: Removing unused pytest-mock | diff --git a/ci/deps/azure-36-32bit.yaml b/ci/deps/azure-36-32bit.yaml

index f3e3d577a7a33..cf3fca307481f 100644

--- a/ci/deps/azure-36-32bit.yaml

+++ b/ci/deps/azure-36-32bit.yaml

@@ -8,7 +8,6 @@ dependencies:

# tools

### Cython 0.29.13 and pytest 5.0.1 for 32 bits are not available with conda, installing below ... | Maybe I'm missing something, but I don't think we use `pytest-mock` (a grep for `mocker` found nothing), yet we have it in almost all builds.

The CI should tell if I'm wrong. | https://api.github.com/repos/pandas-dev/pandas/pulls/29784 | 2019-11-21T22:06:08Z | 2019-11-22T01:14:47Z | 2019-11-22T01:14:47Z | 2019-11-22T01:14:47Z |

DEPS: Unifying version of pytest-xdist across builds | diff --git a/ci/deps/travis-38.yaml b/ci/deps/travis-38.yaml

index 88da1331b463a..828f02596a70e 100644

--- a/ci/deps/travis-38.yaml

+++ b/ci/deps/travis-38.yaml

@@ -8,7 +8,7 @@ dependencies:

# tools

- cython>=0.29.13

- pytest>=5.0.1

- - pytest-xdist>=1.29.0 # The rest of the builds use >=1.21, and use pytest... | We use `pytest-xdist>=1.21` in all builds except this one. This applies the same pin to the 3.8 build, which was introduced in parallel with the standardization of dependencies.

xref: https://github.com/pandas-dev/pandas/pull/29678#discussion_r348873095 | https://api.github.com/repos/pandas-dev/pandas/pulls/29783 | 2019-11-21T22:04:20Z | 2019-11-22T15:25:53Z | 2019-11-22T15:25:53Z | 2019-11-22T15:25:56Z |

DOC: Updating documentation of new required pytest version | diff --git a/doc/source/development/contributing.rst b/doc/source/development/contributing.rst

index 042d6926d84f5..d7b3e159f8ce7 100644

--- a/doc/source/development/contributing.rst

+++ b/doc/source/development/contributing.rst

@@ -946,7 +946,7 @@ extensions in `numpy.testing

.. note::

- The earliest supported ... | Follow up of #29678

@jreback I think `show_versions` gets the version by importing the module. Correct me if I'm wrong, but I didn't see anything to update there. | https://api.github.com/repos/pandas-dev/pandas/pulls/29782 | 2019-11-21T22:02:34Z | 2019-11-22T15:26:49Z | 2019-11-22T15:26:49Z | 2019-11-22T15:26:53Z |

CLN - Change string formatting in plotting | diff --git a/pandas/plotting/_core.py b/pandas/plotting/_core.py

index da1e06dccc65d..beb276478070e 100644

--- a/pandas/plotting/_core.py

+++ b/pandas/plotting/_core.py

@@ -736,26 +736,23 @@ def _get_call_args(backend_name, data, args, kwargs):

]

else:

raise TypeError(

- ... | Changed the string formatting in pandas/plotting/*.py to f-strings.

- [x] tests passed

- [x] passes `black pandas`

- [x] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

ref #29547 | https://api.github.com/repos/pandas-dev/pandas/pulls/29781 | 2019-11-21T20:37:55Z | 2019-11-21T22:46:04Z | 2019-11-21T22:46:03Z | 2019-11-21T23:31:34Z |

CLN: typing and renaming in computation.pytables | diff --git a/pandas/core/computation/pytables.py b/pandas/core/computation/pytables.py

index 8dee273517f88..58bbfd0a1bdee 100644

--- a/pandas/core/computation/pytables.py

+++ b/pandas/core/computation/pytables.py

@@ -21,7 +21,7 @@

from pandas.io.formats.printing import pprint_thing, pprint_thing_encoded

-class Sco... | - [ ] closes #xxxx

- [ ] tests added / passed

- [ ] passes `black pandas`

- [ ] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

- [ ] whatsnew entry

| https://api.github.com/repos/pandas-dev/pandas/pulls/29778 | 2019-11-21T19:16:24Z | 2019-11-22T15:30:17Z | 2019-11-22T15:30:17Z | 2019-11-22T16:34:19Z |

TYP: io.pytables types | diff --git a/pandas/io/pytables.py b/pandas/io/pytables.py

index ba53d8cfd0de5..941f070cc0955 100644

--- a/pandas/io/pytables.py

+++ b/pandas/io/pytables.py

@@ -1404,7 +1404,7 @@ def _check_if_open(self):

if not self.is_open:

raise ClosedFileError("{0} file is not open!".format(self._path))

- ... | https://api.github.com/repos/pandas-dev/pandas/pulls/29777 | 2019-11-21T18:33:36Z | 2019-11-22T15:30:57Z | 2019-11-22T15:30:57Z | 2019-11-22T16:32:31Z | |

REF: avoid returning self in io.pytables | diff --git a/pandas/io/pytables.py b/pandas/io/pytables.py

index ba53d8cfd0de5..8926da3f17783 100644

--- a/pandas/io/pytables.py

+++ b/pandas/io/pytables.py

@@ -1760,18 +1760,11 @@ def __init__(

assert isinstance(self.cname, str)

assert isinstance(self.kind_attr, str)

- def set_axis(self, axis: i... | Another small step towards making these less stateful | https://api.github.com/repos/pandas-dev/pandas/pulls/29776 | 2019-11-21T18:18:39Z | 2019-11-22T15:29:00Z | 2019-11-22T15:29:00Z | 2019-11-22T16:38:49Z |

CLN:F-string in pandas/_libs/tslibs/*.pyx | diff --git a/pandas/_libs/tslibs/c_timestamp.pyx b/pandas/_libs/tslibs/c_timestamp.pyx

index 8512b34b9e78c..c6c98e996b745 100644

--- a/pandas/_libs/tslibs/c_timestamp.pyx

+++ b/pandas/_libs/tslibs/c_timestamp.pyx

@@ -55,11 +55,11 @@ def maybe_integer_op_deprecated(obj):

# GH#22535 add/sub of integers and int-array... | - [ ] closes #xxxx

- [x] tests added / passed

- [x] passes `black pandas`

- [x] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

- [ ] whatsnew entry

ref https://github.com/pandas-dev/pandas/issues/29547 | https://api.github.com/repos/pandas-dev/pandas/pulls/29775 | 2019-11-21T16:05:32Z | 2019-11-22T15:32:44Z | 2019-11-22T15:32:44Z | 2019-11-22T17:39:36Z |

Reenabled no-unused-function in setup.py | diff --git a/pandas/_libs/parsers.pyx b/pandas/_libs/parsers.pyx

index bbea66542a953..8f0f4e17df2f9 100644

--- a/pandas/_libs/parsers.pyx

+++ b/pandas/_libs/parsers.pyx

@@ -1406,59 +1406,6 @@ cdef inline StringPath _string_path(char *encoding):

# Type conversions / inference support code

-cdef _string_box_factoriz... | This re-enables a flag that was disabled 4 years ago in https://github.com/pandas-dev/pandas/commit/e9fde88c47aab023cafa6c98d5654db9dc89197f, which may be worth reconsidering even though we don't fail on warnings for extensions in CI

This yielded the following on master:

```sh

pandas/_libs/hashing.c:25313:20: warning:... | https://api.github.com/repos/pandas-dev/pandas/pulls/29767 | 2019-11-21T05:55:15Z | 2019-11-22T15:33:17Z | 2019-11-22T15:33:17Z | 2023-04-12T20:15:54Z |

DEPR: MultiIndex.to_hierarchical, labels | diff --git a/ci/deps/azure-macos-36.yaml b/ci/deps/azure-macos-36.yaml

index 831b68d0bb4d3..f393ed84ecf63 100644

--- a/ci/deps/azure-macos-36.yaml

+++ b/ci/deps/azure-macos-36.yaml

@@ -20,9 +20,9 @@ dependencies:

- matplotlib=2.2.3

- nomkl

- numexpr

- - numpy=1.13.3

+ - numpy=1.14

- openpyxl

- - pyarrow

... | https://api.github.com/repos/pandas-dev/pandas/pulls/29766 | 2019-11-21T05:15:03Z | 2019-11-25T23:12:05Z | 2019-11-25T23:12:05Z | 2019-11-25T23:30:21Z | |

REF: make Fixed.version a property | diff --git a/pandas/io/pytables.py b/pandas/io/pytables.py

index 7c447cbf78677..f060831a1a036 100644

--- a/pandas/io/pytables.py

+++ b/pandas/io/pytables.py

@@ -9,7 +9,7 @@

import os

import re

import time

-from typing import TYPE_CHECKING, Any, Dict, List, Optional, Type, Union

+from typing import TYPE_CHECKING, Any... | Make things in this file incrementally less stateful | https://api.github.com/repos/pandas-dev/pandas/pulls/29765 | 2019-11-21T04:14:30Z | 2019-11-21T13:31:20Z | 2019-11-21T13:31:20Z | 2019-11-21T15:44:45Z |
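
As a rough illustration of what "less stateful" can mean for a change like this: a value derived on demand through a property cannot go stale the way an attribute assigned by a separate setup call can. The class `Storer` and the attribute `raw_version` below are hypothetical stand-ins, not the `Fixed` class from `pandas/io/pytables.py`.

```python
from typing import Optional, Tuple


class Storer:
    """Hypothetical stand-in: the version is derived on access, not cached by a setter."""

    def __init__(self, raw_version: Optional[str]) -> None:
        self.raw_version = raw_version

    @property
    def version(self) -> Tuple[int, ...]:
        # Recomputed from the underlying attribute on every access.
        if self.raw_version is None:
            return (0, 0, 0)
        parts = tuple(int(x) for x in self.raw_version.split("."))
        # Pad a two-part version such as "0.15" to three components.
        return parts + (0,) if len(parts) == 2 else parts
```

Because nothing is cached, changing `raw_version` after construction is reflected the next time `version` is read.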

TST: add test for ffill/bfill for non unique multilevel | diff --git a/pandas/tests/groupby/test_transform.py b/pandas/tests/groupby/test_transform.py

index 3d9a349d94e10..c46180c1d11cd 100644

--- a/pandas/tests/groupby/test_transform.py

+++ b/pandas/tests/groupby/test_transform.py

@@ -911,6 +911,41 @@ def test_pct_change(test_series, freq, periods, fill_method, limit):

... | - [x] closes #19437

- [x] tests added / passed

- [x] passes `black pandas`

- [x] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

- [ ] whatsnew entry

| https://api.github.com/repos/pandas-dev/pandas/pulls/29763 | 2019-11-21T01:29:57Z | 2019-11-23T23:04:42Z | 2019-11-23T23:04:41Z | 2019-11-23T23:04:51Z |

TST: add test for rolling max with DatetimeIndex | diff --git a/pandas/tests/window/test_timeseries_window.py b/pandas/tests/window/test_timeseries_window.py

index 7055e5b538bea..02969a6c6e822 100644

--- a/pandas/tests/window/test_timeseries_window.py

+++ b/pandas/tests/window/test_timeseries_window.py

@@ -535,6 +535,18 @@ def test_ragged_max(self):

expected["... | - [x] closes #21096

- [x] tests added / passed

- [x] passes `black pandas`

- [x] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

- [ ] whatsnew entry

| https://api.github.com/repos/pandas-dev/pandas/pulls/29761 | 2019-11-21T01:05:23Z | 2019-11-25T22:50:54Z | 2019-11-25T22:50:54Z | 2019-11-30T01:14:42Z |

TST: add test for unused level indexing raises KeyError | diff --git a/pandas/tests/test_multilevel.py b/pandas/tests/test_multilevel.py

index f0928820367e9..44829423be1bb 100644

--- a/pandas/tests/test_multilevel.py

+++ b/pandas/tests/test_multilevel.py

@@ -583,6 +583,17 @@ def test_stack_unstack_wrong_level_name(self, method):

with pytest.raises(KeyError, match... | - [x] closes #20410

- [x] tests added / passed

- [x] passes `black pandas`

- [x] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

- [ ] whatsnew entry

| https://api.github.com/repos/pandas-dev/pandas/pulls/29760 | 2019-11-21T00:52:57Z | 2019-11-22T17:02:09Z | 2019-11-22T17:02:09Z | 2019-11-22T17:02:12Z |

STY: fstrings in io.pytables | diff --git a/pandas/io/pytables.py b/pandas/io/pytables.py

index 8afbd293a095b..b229e5b4e0f4e 100644

--- a/pandas/io/pytables.py

+++ b/pandas/io/pytables.py

@@ -368,9 +368,7 @@ def read_hdf(path_or_buf, key=None, mode: str = "r", **kwargs):

exists = False

if not exists:

- raise FileNo... | Honestly not sure why I've got such a bee in my bonnet about this file | https://api.github.com/repos/pandas-dev/pandas/pulls/29758 | 2019-11-20T23:26:32Z | 2019-11-23T23:10:38Z | 2019-11-23T23:10:38Z | 2019-11-23T23:33:08Z |

ANN: types for _create_storer | diff --git a/pandas/io/pytables.py b/pandas/io/pytables.py

index b229e5b4e0f4e..b8ed83cd3ebd7 100644

--- a/pandas/io/pytables.py

+++ b/pandas/io/pytables.py

@@ -174,9 +174,6 @@ class DuplicateWarning(Warning):

and is the default for append operations

"""

-# map object types

-_TYPE_MAP = {Series: "serie... | 1) Annotate return type for _create_storer

2) Annotate other functions that we can reason about given 1

3) Fixups to address mypy warnings caused by 1 and 2 | https://api.github.com/repos/pandas-dev/pandas/pulls/29757 | 2019-11-20T22:13:18Z | 2019-11-25T23:02:43Z | 2019-11-25T23:02:43Z | 2019-11-25T23:16:18Z |

CLN: io.pytables | diff --git a/pandas/io/pytables.py b/pandas/io/pytables.py

index 7c447cbf78677..9d2642ae414d0 100644

--- a/pandas/io/pytables.py

+++ b/pandas/io/pytables.py

@@ -1604,10 +1604,11 @@ class TableIterator:

"""

chunksize: Optional[int]

+ store: HDFStore

def __init__(

self,

- store,

... | This is easy stuff broken off of a tough type-inference branch | https://api.github.com/repos/pandas-dev/pandas/pulls/29756 | 2019-11-20T21:56:19Z | 2019-11-21T13:00:32Z | 2019-11-21T13:00:32Z | 2019-11-21T15:46:31Z |

Remove Ambiguous Behavior of Tuple as Grouping | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index 54640ff576338..cc270366ac940 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -313,6 +313,7 @@ or ``matplotlib.Axes.plot``. See :ref:`plotting.formatters` for more.

- Removed the previously deprecated ... | follow up to #18314 | https://api.github.com/repos/pandas-dev/pandas/pulls/29755 | 2019-11-20T20:54:13Z | 2019-11-25T23:44:13Z | 2019-11-25T23:44:13Z | 2019-11-29T21:33:40Z |

TYP: annotate queryables | diff --git a/pandas/core/computation/ops.py b/pandas/core/computation/ops.py

index 524013ceef5ff..41d7f96f5e96d 100644

--- a/pandas/core/computation/ops.py

+++ b/pandas/core/computation/ops.py

@@ -55,7 +55,7 @@ class UndefinedVariableError(NameError):

NameError subclass for local variables.

"""

- def __i... | Made possible by #29692 | https://api.github.com/repos/pandas-dev/pandas/pulls/29754 | 2019-11-20T20:16:30Z | 2019-11-21T13:02:31Z | 2019-11-21T13:02:31Z | 2019-11-21T15:45:35Z |

PERF: speed-up when scalar not found in Categorical's categories | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index a3d17b2b32353..00305360bbacc 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -343,6 +343,7 @@ Performance improvements

- Performance improvement in :meth:`DataFrame.replace` when provided a list of va... | I took a look at the code in core/category.py and found a usage of np.repeat for creating boolean arrays. np.repeat is much slower than np.zeros/np.ones for that purpose.

```python

>>> n = 1_000_000

>>> c = pd.Categorical(['a'] * n + ['b'] * n + ['c'] * n)

>>> %timeit c == 'x'

17 ms ± 270 µs per loop # master

... | https://api.github.com/repos/pandas-dev/pandas/pulls/29750 | 2019-11-20T18:23:43Z | 2019-11-21T13:39:55Z | 2019-11-21T13:39:55Z | 2019-11-21T13:45:24Z |

CLN: use f-strings in core.categorical.py | diff --git a/pandas/core/arrays/categorical.py b/pandas/core/arrays/categorical.py

index c6e2a7b7a6e00..9a94345a769df 100644

--- a/pandas/core/arrays/categorical.py

+++ b/pandas/core/arrays/categorical.py

@@ -73,10 +73,10 @@

def _cat_compare_op(op):

- opname = "__{op}__".format(op=op.__name__)

+ opname = f"_... | also rename an inner function from ``f`` to ``func``, which is clearer. | https://api.github.com/repos/pandas-dev/pandas/pulls/29748 | 2019-11-20T17:37:07Z | 2019-11-21T12:19:53Z | 2019-11-21T12:19:53Z | 2019-11-21T12:20:04Z |
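A minimal sketch of the `.format` to f-string conversion shown in the diff above, assuming the truncated replacement line is the direct f-string equivalent. The helper names `opname_format` and `opname_fstring` are hypothetical and exist only for this comparison; they are not part of pandas.

```python
import operator


def opname_format(op):
    # Old style: str.format with a named placeholder.
    return "__{op}__".format(op=op.__name__)


def opname_fstring(op):
    # New style: the expression is interpolated inline.
    return f"__{op.__name__}__"


# Both spellings should produce the same dunder name for each operator.
names = [opname_fstring(op) for op in (operator.eq, operator.lt, operator.ge)]
```

Both helpers return the same string for any callable with a `__name__`, so the change is purely stylistic.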

CI: fix imminent mypy failure | diff --git a/pandas/io/pytables.py b/pandas/io/pytables.py

index 4c9e10e0f4601..7c447cbf78677 100644

--- a/pandas/io/pytables.py

+++ b/pandas/io/pytables.py

@@ -2044,6 +2044,8 @@ def convert(

the underlying table's row count are normalized to that.

"""

+ assert self.table is not None

+

... | `pandas\io\pytables.py:2048: error: "None" has no attribute "nrows"` seen locally after merging master and running mypy | https://api.github.com/repos/pandas-dev/pandas/pulls/29747 | 2019-11-20T17:33:13Z | 2019-11-20T21:03:52Z | 2019-11-20T21:03:51Z | 2019-11-20T21:22:43Z |
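The mypy error quoted above comes from reading an attribute on a value typed as optional. A minimal sketch of how an `assert ... is not None` narrows the type, using hypothetical `Table` and `Column` classes rather than the real `pandas.io.pytables` objects:

```python
from typing import Optional


class Table:
    def __init__(self, nrows: int) -> None:
        self.nrows = nrows


class Column:
    # Declared Optional, so a type checker treats `self.table` as possibly None.
    table: Optional[Table] = None

    def row_count(self) -> int:
        # The assert narrows Optional[Table] to Table for the lines below;
        # at runtime it fails fast if the table was never set.
        assert self.table is not None
        return self.table.nrows


column = Column()
column.table = Table(nrows=3)
count = column.row_count()
```

Without the assert, a checker would flag `self.table.nrows` because `None` has no `nrows` attribute.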

DEPR: remove is_period, is_datetimetz | diff --git a/doc/source/reference/general_utility_functions.rst b/doc/source/reference/general_utility_functions.rst

index 9c69770c0f1b7..0961acc43f301 100644

--- a/doc/source/reference/general_utility_functions.rst

+++ b/doc/source/reference/general_utility_functions.rst

@@ -97,13 +97,11 @@ Scalar introspection

a... | deprecated in 0.24.0 | https://api.github.com/repos/pandas-dev/pandas/pulls/29744 | 2019-11-20T16:09:23Z | 2019-11-21T13:24:19Z | 2019-11-21T13:24:19Z | 2019-11-21T15:43:36Z |

io/parsers: ensure decimal is str on PythonParser | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index 54640ff576338..ee6a20d15ab90 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -487,6 +487,7 @@ I/O

- Bug in :meth:`Styler.background_gradient` not able to work with dtype ``Int64`` (:issue:`28869`)

- ... | - [x] closes #29650

- [x] tests added / passed

- [x] passes `black pandas`

- [x] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

- [x] whatsnew entry

| https://api.github.com/repos/pandas-dev/pandas/pulls/29743 | 2019-11-20T15:39:46Z | 2019-11-22T15:35:22Z | 2019-11-22T15:35:22Z | 2019-11-22T15:46:06Z |

TYP: disallow comment-based annotation syntax | diff --git a/ci/code_checks.sh b/ci/code_checks.sh

index edd8fcd418c47..7c6c98d910492 100755

--- a/ci/code_checks.sh

+++ b/ci/code_checks.sh

@@ -194,6 +194,10 @@ if [[ -z "$CHECK" || "$CHECK" == "patterns" ]]; then

invgrep -R --include="*.py" --include="*.pyx" -E 'class.*:\n\n( )+"""' .

RET=$(($RET + $?)) ; e... | https://api.github.com/repos/pandas-dev/pandas/pulls/29741 | 2019-11-20T15:32:52Z | 2019-11-21T16:27:40Z | 2019-11-21T16:27:39Z | 2019-11-21T16:27:40Z | |

DOC: fix typos | diff --git a/pandas/_libs/hashing.pyx b/pandas/_libs/hashing.pyx

index d3b5ecfdaa178..1906193622953 100644

--- a/pandas/_libs/hashing.pyx

+++ b/pandas/_libs/hashing.pyx

@@ -75,7 +75,7 @@ def hash_object_array(object[:] arr, object key, object encoding='utf8'):

lens[i] = l

cdata = data

- # kee... | Changes only words in comments; function names not touched. | https://api.github.com/repos/pandas-dev/pandas/pulls/29739 | 2019-11-20T15:09:31Z | 2019-11-20T16:31:34Z | 2019-11-20T16:31:34Z | 2019-11-20T16:40:39Z |

DOC: Add link to dev calendar and meeting notes | diff --git a/doc/source/development/index.rst b/doc/source/development/index.rst

index a523ae0c957f1..757b197c717e6 100644

--- a/doc/source/development/index.rst

+++ b/doc/source/development/index.rst

@@ -19,3 +19,4 @@ Development

developer

policies

roadmap

+ meeting

diff --git a/doc/source/developmen... | https://api.github.com/repos/pandas-dev/pandas/pulls/29737 | 2019-11-20T13:43:09Z | 2019-11-25T14:03:56Z | 2019-11-25T14:03:56Z | 2019-11-25T14:03:56Z | |

xfail clipboard for now | diff --git a/pandas/tests/io/test_clipboard.py b/pandas/tests/io/test_clipboard.py

index 4559ba264d8b7..666dfd245acaa 100644

--- a/pandas/tests/io/test_clipboard.py

+++ b/pandas/tests/io/test_clipboard.py

@@ -258,6 +258,7 @@ def test_round_trip_valid_encodings(self, enc, df):

@pytest.mark.clipboard

@pytest.mark.skipi... | xref #29676, https://github.com/pandas-dev/pandas/pull/29712. | https://api.github.com/repos/pandas-dev/pandas/pulls/29736 | 2019-11-20T12:54:01Z | 2019-11-20T14:09:51Z | 2019-11-20T14:09:50Z | 2019-11-20T14:09:54Z |

DEPR: enforce deprecations for kwargs in factorize, FrozenNDArray.ser… | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index 98d861d999ea9..90ad5f4f3237d 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -280,6 +280,10 @@ or ``matplotlib.Axes.plot``. See :ref:`plotting.formatters` for more.

- Removed the previously deprecated... | …achsorted, DataFrame.update, DatetimeIndex.shift, TimedeltaIndex.shift, PeriodIndex.shift

| https://api.github.com/repos/pandas-dev/pandas/pulls/29732 | 2019-11-20T03:25:04Z | 2019-11-20T12:24:31Z | 2019-11-20T12:24:31Z | 2019-11-20T15:26:10Z |

DEPR: change DTI.to_series keep_tz default to True | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index 98d861d999ea9..111b2e736b21c 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -275,6 +275,7 @@ or ``matplotlib.Axes.plot``. See :ref:`plotting.formatters` for more.

- :meth:`pandas.Series.str.cat` does... | We _also_ have a deprecation to remove the keyword altogether, but we can't enforce that until we give people time to stop passing `keep_tz=True` | https://api.github.com/repos/pandas-dev/pandas/pulls/29731 | 2019-11-20T03:22:20Z | 2019-11-20T12:26:25Z | 2019-11-20T12:26:25Z | 2019-11-20T15:23:20Z |

DEPR: remove reduce kwd from DataFrame.apply | diff --git a/pandas/core/frame.py b/pandas/core/frame.py

index 5baba0bae1d45..4c4254be7f4cb 100644

--- a/pandas/core/frame.py

+++ b/pandas/core/frame.py

@@ -6593,9 +6593,7 @@ def transform(self, func, axis=0, *args, **kwargs):

return self.T.transform(func, *args, **kwargs).T

return super().transfo... | Looks like #29017 was supposed to do this and I just missed the reduce keyword | https://api.github.com/repos/pandas-dev/pandas/pulls/29730 | 2019-11-20T02:33:53Z | 2019-11-20T21:05:19Z | 2019-11-20T21:05:19Z | 2019-11-20T21:13:55Z |

DOC: fix _validate_names docstring | diff --git a/pandas/io/parsers.py b/pandas/io/parsers.py

index 2cb4a5c8bb2f6..ff3583b79d79c 100755

--- a/pandas/io/parsers.py

+++ b/pandas/io/parsers.py

@@ -395,25 +395,22 @@ def _validate_integer(name, val, min_val=0):

def _validate_names(names):

"""

- Check if the `names` parameter contains duplicates.

-

-... | https://api.github.com/repos/pandas-dev/pandas/pulls/29729 | 2019-11-20T02:10:53Z | 2019-11-20T17:11:18Z | 2019-11-20T17:11:18Z | 2019-11-20T17:36:06Z | |

DEPR: remove nthreads kwarg from read_feather | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index f158c1158b54e..30d9a964b3d7e 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -320,6 +320,7 @@ or ``matplotlib.Axes.plot``. See :ref:`plotting.formatters` for more.

- Ability to read pickles containing... | https://api.github.com/repos/pandas-dev/pandas/pulls/29728 | 2019-11-20T01:05:28Z | 2019-11-20T17:07:19Z | 2019-11-20T17:07:19Z | 2019-11-20T17:35:41Z | |

DEPR: remove tsplot | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index f158c1158b54e..318fe62a73ed2 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -318,6 +318,7 @@ or ``matplotlib.Axes.plot``. See :ref:`plotting.formatters` for more.

- Removed the previously deprecated ... | https://api.github.com/repos/pandas-dev/pandas/pulls/29726 | 2019-11-19T23:20:10Z | 2019-11-20T16:33:48Z | 2019-11-20T16:33:48Z | 2019-11-20T16:34:59Z | |

DEPR: remove Index fastpath kwarg | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index f231c2b31abb1..89b85c376f5b4 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -377,6 +377,7 @@ or ``matplotlib.Axes.plot``. See :ref:`plotting.formatters` for more.

**Other removals**

- Removed the ... | https://api.github.com/repos/pandas-dev/pandas/pulls/29725 | 2019-11-19T23:17:20Z | 2019-11-27T04:49:13Z | 2019-11-27T04:49:13Z | 2020-04-29T02:55:05Z | |

DEPR: remove Series.valid, is_copy, get_ftype_counts, Index.get_duplicate, Series.clip_upper, clip_lower | diff --git a/doc/source/reference/frame.rst b/doc/source/reference/frame.rst

index 37d27093efefd..4540504974f56 100644

--- a/doc/source/reference/frame.rst

+++ b/doc/source/reference/frame.rst

@@ -30,7 +30,6 @@ Attributes and underlying data

DataFrame.dtypes

DataFrame.ftypes

DataFrame.get_dtype_counts

- D... | …cate, Series.clip_upper, Series.clip_lower

| https://api.github.com/repos/pandas-dev/pandas/pulls/29724 | 2019-11-19T23:15:01Z | 2019-11-21T13:29:12Z | 2019-11-21T13:29:12Z | 2019-11-21T15:50:36Z |

DEPR: enforce deprecations in core.internals | diff --git a/ci/deps/azure-windows-36.yaml b/ci/deps/azure-windows-36.yaml

index e3ad1d8371623..ec4aff41f1967 100644

--- a/ci/deps/azure-windows-36.yaml

+++ b/ci/deps/azure-windows-36.yaml

@@ -16,7 +16,7 @@ dependencies:

# pandas dependencies

- blosc

- bottleneck

- - fastparquet>=0.2.1

+ - fastparquet>=0.3.2... | Not sure if the whatsnew is needed for this; IIRC it was mainly pyarrow/fastparquet that were using these keywords | https://api.github.com/repos/pandas-dev/pandas/pulls/29723 | 2019-11-19T22:21:34Z | 2019-11-22T17:28:55Z | 2019-11-22T17:28:55Z | 2019-11-22T17:35:06Z |

DEPR: remove encoding kwarg from read_stata, DataFrame.to_stata | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index f158c1158b54e..467aa663f770a 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -324,6 +324,7 @@ or ``matplotlib.Axes.plot``. See :ref:`plotting.formatters` for more.

- Removed support for nexted renamin... | - [ ] closes #xxxx

- [ ] tests added / passed

- [ ] passes `black pandas`

- [ ] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

- [ ] whatsnew entry

| https://api.github.com/repos/pandas-dev/pandas/pulls/29722 | 2019-11-19T21:08:43Z | 2019-11-20T21:07:00Z | 2019-11-20T21:07:00Z | 2019-11-20T21:12:26Z |

DEPR: remove deprecated keywords in read_excel, to_records | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index f158c1158b54e..0e2b32c2c5d7c 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -315,6 +315,8 @@ or ``matplotlib.Axes.plot``. See :ref:`plotting.formatters` for more.

- :meth:`pandas.Series.str.cat` now ... | There are a handful of these PRs on the way and they're inevitably going to have whatsnew conflicts. I'm trying to group together related deprecations so as to have a single-digit number of PRs for these. | https://api.github.com/repos/pandas-dev/pandas/pulls/29721 | 2019-11-19T21:06:34Z | 2019-11-20T16:43:03Z | 2019-11-20T16:43:03Z | 2021-11-20T23:21:56Z |

DEPR: remove Series.from_array, DataFrame.from_items, as_matrix, asobject, as_blocks, blocks | diff --git a/doc/source/reference/frame.rst b/doc/source/reference/frame.rst

index 4b5faed0f4d2d..37d27093efefd 100644

--- a/doc/source/reference/frame.rst

+++ b/doc/source/reference/frame.rst

@@ -351,7 +351,6 @@ Serialization / IO / conversion

:toctree: api/

DataFrame.from_dict

- DataFrame.from_items

D... | https://api.github.com/repos/pandas-dev/pandas/pulls/29720 | 2019-11-19T20:53:22Z | 2019-11-20T17:15:10Z | 2019-11-20T17:15:10Z | 2019-11-20T17:38:29Z | |

Improve return description in `droplevel` docstring | diff --git a/pandas/core/generic.py b/pandas/core/generic.py

index 982a57a6f725e..4f45a96d23941 100644

--- a/pandas/core/generic.py

+++ b/pandas/core/generic.py

@@ -807,7 +807,8 @@ def droplevel(self, level, axis=0):

Returns

-------

- DataFrame.droplevel()

+ DataFrame

+ Data... | Use https://pandas-docs.github.io/pandas-docs-travis/reference/api/pandas.DataFrame.dropna.html#pandas.DataFrame.dropna

docstring as a template.

MINOR DOCUMENTATION CHANGE ONLY (ignored below)

- [ ] closes #xxxx

- [ ] tests added / passed

- [ ] passes `black pandas`

- [ ] passes `git diff upstream/master -u ... | https://api.github.com/repos/pandas-dev/pandas/pulls/29717 | 2019-11-19T19:41:10Z | 2019-11-19T20:54:14Z | 2019-11-19T20:54:14Z | 2020-03-26T06:44:04Z |

TST: Silence lzma output | diff --git a/pandas/tests/io/test_compression.py b/pandas/tests/io/test_compression.py

index d68b6a1effaa0..9bcdda2039458 100644

--- a/pandas/tests/io/test_compression.py

+++ b/pandas/tests/io/test_compression.py

@@ -140,7 +140,7 @@ def test_with_missing_lzma():

import pandas

"""

)

- subproces... | This was leaking to stdout when the pytest `-s` flag was used. | https://api.github.com/repos/pandas-dev/pandas/pulls/29713 | 2019-11-19T16:55:18Z | 2019-11-20T12:36:07Z | 2019-11-20T12:36:06Z | 2019-11-20T12:36:09Z |
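The diff above is truncated, so the exact change is not visible here. One common way to keep a child process from writing to the terminal when pytest runs with `-s` is to capture its streams. This is a sketch under that assumption, with a hypothetical child script:

```python
import subprocess
import sys

# Hypothetical child script: writes to both stdout and stderr.
child_code = "import sys; print('noise'); print('warning', file=sys.stderr)"

# Capturing both streams keeps them out of the parent's terminal,
# even when pytest itself is not capturing output.
result = subprocess.run(
    [sys.executable, "-c", child_code],
    stdout=subprocess.PIPE,
    stderr=subprocess.PIPE,
    check=True,  # raise CalledProcessError on a non-zero exit code
)

captured_stdout = result.stdout.decode().strip()
captured_stderr = result.stderr.decode().strip()
```

The captured text stays available for assertions, which is usually preferable to discarding it.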

CI: Fix clipboard problems | diff --git a/.travis.yml b/.travis.yml

index a11cd469e9b9c..b5897e3526327 100644

--- a/.travis.yml

+++ b/.travis.yml

@@ -31,19 +31,19 @@ matrix:

include:

- env:

- - JOB="3.8" ENV_FILE="ci/deps/travis-38.yaml" PATTERN="(not slow and not network)"

+ - JOB="3.8" ENV_FILE="ci/deps/travis-38.yaml" ... | - [X] closes #29676

Fixes the clipboard problems in the CI. With this PR we're sure they are being run, and they work as expected. | https://api.github.com/repos/pandas-dev/pandas/pulls/29712 | 2019-11-19T16:39:20Z | 2020-01-15T13:40:35Z | 2020-01-15T13:40:35Z | 2020-07-01T16:06:59Z |

Assorted io extension cleanups | diff --git a/pandas/_libs/src/parser/io.c b/pandas/_libs/src/parser/io.c

index aecd4e03664e6..1e3295fcb6fc7 100644

--- a/pandas/_libs/src/parser/io.c

+++ b/pandas/_libs/src/parser/io.c

@@ -9,7 +9,6 @@ The full license is in the LICENSE file, distributed with this software.

#include "io.h"

-#include <sys/types.h>

... | Giving these a look, there seem to be a lot of unused definitions / functions | https://api.github.com/repos/pandas-dev/pandas/pulls/29704 | 2019-11-19T06:16:01Z | 2019-11-20T12:58:14Z | 2019-11-20T12:58:14Z | 2020-01-16T00:35:18Z |

TYP: more annotations for io.pytables | diff --git a/pandas/io/pytables.py b/pandas/io/pytables.py

index 193b8f5053d65..9589832095474 100644

--- a/pandas/io/pytables.py

+++ b/pandas/io/pytables.py

@@ -520,16 +520,16 @@ def root(self):

def filename(self):

return self._path

- def __getitem__(self, key):

+ def __getitem__(self, key: str):

... | should be orthogonal to #29692. | https://api.github.com/repos/pandas-dev/pandas/pulls/29703 | 2019-11-19T02:12:24Z | 2019-11-20T13:12:12Z | 2019-11-20T13:12:12Z | 2019-11-20T15:16:57Z |

REF: use _extract_result in Reducer.get_result | diff --git a/pandas/_libs/reduction.pyx b/pandas/_libs/reduction.pyx

index f5521b94b6c33..ea54b00cf5be4 100644

--- a/pandas/_libs/reduction.pyx

+++ b/pandas/_libs/reduction.pyx

@@ -135,9 +135,8 @@ cdef class Reducer:

else:

res = self.f(chunk)

- if (not _is_sparse_a... | Working towards ironing out the small differences between several places that do roughly the same extraction. Getting the pure-python versions will be appreciably harder, kept separate.

This avoids an unnecessary `np.isscalar` call. | https://api.github.com/repos/pandas-dev/pandas/pulls/29702 | 2019-11-19T01:33:24Z | 2019-11-19T13:20:14Z | 2019-11-19T13:20:14Z | 2019-11-19T15:19:50Z |

format replaced with f-strings | diff --git a/pandas/core/groupby/generic.py b/pandas/core/groupby/generic.py

index 31563e4bccbb7..a3e2266184e2a 100644

--- a/pandas/core/groupby/generic.py

+++ b/pandas/core/groupby/generic.py

@@ -303,8 +303,7 @@ def _aggregate_multiple_funcs(self, arg, _level):

obj = self

if name in results:

... | - [x] tests added / passed

- [x] passes `black pandas`

- [x] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

ref #29547 | https://api.github.com/repos/pandas-dev/pandas/pulls/29701 | 2019-11-18T23:57:08Z | 2019-11-21T13:04:13Z | 2019-11-21T13:04:13Z | 2019-11-21T13:04:17Z |

BUG: Index.get_loc raising incorrect error, closes #29189 | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index 54640ff576338..76aecf8a27a2a 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -453,7 +453,7 @@ Indexing

- Bug in :meth:`Float64Index.astype` where ``np.inf`` was not handled properly when casting to an... | - [x] closes #29189

- [x] tests added / passed

- [x] passes `black pandas`

- [x] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

- [ ] whatsnew entry

cc @WillAyd | https://api.github.com/repos/pandas-dev/pandas/pulls/29700 | 2019-11-18T23:54:09Z | 2019-11-25T23:45:28Z | 2019-11-25T23:45:27Z | 2019-11-25T23:49:23Z |

REF: dont _try_cast for user-defined functions | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index 3b87150f544cf..db24be628dd67 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -511,6 +511,7 @@ Groupby/resample/rolling

- Bug in :meth:`DataFrame.groupby` losing column name information when grouping b... | Also add a whatsnew note for #29641 and a comment in core.apply that I've been meaning to get in | https://api.github.com/repos/pandas-dev/pandas/pulls/29698 | 2019-11-18T23:05:21Z | 2019-11-21T13:07:58Z | 2019-11-21T13:07:58Z | 2019-11-21T16:02:06Z |

CI: Use conda for 3.8 build | diff --git a/.travis.yml b/.travis.yml

index 048736e4bf1d0..0acd386eea9ed 100644

--- a/.travis.yml

+++ b/.travis.yml

@@ -30,11 +30,9 @@ matrix:

- python: 3.5

include:

- - dist: bionic

- # 18.04

- python: 3.8.0

+ - dist: trusty

env:

- - JOB="3.8-dev" PATTERN="(not slow and not... | Closes https://github.com/pandas-dev/pandas/issues/29001 | https://api.github.com/repos/pandas-dev/pandas/pulls/29696 | 2019-11-18T19:01:52Z | 2019-11-19T13:19:16Z | 2019-11-19T13:19:16Z | 2019-11-19T14:48:51Z |

TST: Split pandas/tests/frame/test_indexing into a directory (#29544) | diff --git a/pandas/tests/frame/indexing/test_categorical.py b/pandas/tests/frame/indexing/test_categorical.py

new file mode 100644

index 0000000000000..b595e48797d41

--- /dev/null

+++ b/pandas/tests/frame/indexing/test_categorical.py

@@ -0,0 +1,388 @@

+import numpy as np

+import pytest

+

+from pandas.core.dtypes.dtype... | - [x] closes #29544

- [x] tests added / passed

- [x] passes `black pandas`

- [x] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

- [ ] whatsnew entry

Split test cases in pandas/tests/frame/test_indexing into a subdirectory (like pandas/tests/indexing). The main file is still pretty huge. Will update... | https://api.github.com/repos/pandas-dev/pandas/pulls/29694 | 2019-11-18T17:45:11Z | 2019-11-19T15:07:02Z | 2019-11-19T15:07:02Z | 2019-11-19T15:07:06Z |

Removed compat_helper.h | diff --git a/pandas/_libs/internals.pyx b/pandas/_libs/internals.pyx

index 8e61a772912af..ba108c4524b9c 100644

--- a/pandas/_libs/internals.pyx

+++ b/pandas/_libs/internals.pyx

@@ -1,7 +1,7 @@

import cython

from cython import Py_ssize_t

-from cpython.object cimport PyObject

+from cpython.slice cimport PySlice_GetIn... | ref #29666 and comments from @jbrockmendel and @gfyoung looks like the compat header can now be removed, now that we are on 3.6.1 as a minimum | https://api.github.com/repos/pandas-dev/pandas/pulls/29693 | 2019-11-18T17:41:11Z | 2019-11-19T13:26:59Z | 2019-11-19T13:26:59Z | 2020-01-16T00:35:17Z |

REF: ensure name and cname are always str | diff --git a/pandas/io/pytables.py b/pandas/io/pytables.py

index 193b8f5053d65..1e2583ffab1dc 100644

--- a/pandas/io/pytables.py

+++ b/pandas/io/pytables.py

@@ -1691,29 +1691,37 @@ class IndexCol:

is_data_indexable = True

_info_fields = ["freq", "tz", "index_name"]

+ name: str

+ cname: str

+ kind_a... | Once this is assured, there are a lot of other things (including in core.computation!) that we can infer in follow-ups. | https://api.github.com/repos/pandas-dev/pandas/pulls/29692 | 2019-11-18T16:12:06Z | 2019-11-20T17:15:59Z | 2019-11-20T17:15:59Z | 2019-11-20T17:47:34Z |

BUG: Series groupby does not include nan counts for all categorical labels (#17605) | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index cb68bd0e762c4..75d47938f983a 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -183,6 +183,47 @@ New repr for :class:`pandas.core.arrays.IntervalArray`

pd.arrays.IntervalArray.from_tuples([(0, 1), ... | - [x] closes #17605

- [x] tests added / passed

- [x] passes `black pandas`

- [x] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

- [x] whatsnew entry

This is a simple, low-impact fix for #17605. | https://api.github.com/repos/pandas-dev/pandas/pulls/29690 | 2019-11-18T16:02:26Z | 2019-11-20T12:46:19Z | 2019-11-20T12:46:18Z | 2019-11-20T12:46:24Z |

CI: Fixing error in code checks in GitHub actions | diff --git a/ci/code_checks.sh b/ci/code_checks.sh

index d5566c522ac64..edd8fcd418c47 100755

--- a/ci/code_checks.sh

+++ b/ci/code_checks.sh

@@ -190,9 +190,9 @@ if [[ -z "$CHECK" || "$CHECK" == "patterns" ]]; then

invgrep -R --include="*.rst" ".. ipython ::" doc/source

RET=$(($RET + $?)) ; echo $MSG "DONE"

... | xref https://github.com/pandas-dev/pandas/pull/29546#issuecomment-554807243

It looks like one of the checks in `ci/code_checks.sh` uses a different encoding for spaces and other characters. I guess it was generated on Windows; Azure Pipelines is not sensitive to the different encoding, but GitHub Actions is.

... | https://api.github.com/repos/pandas-dev/pandas/pulls/29683 | 2019-11-18T02:50:06Z | 2019-11-18T04:41:16Z | 2019-11-18T04:41:16Z | 2019-11-18T04:41:16Z |

TYP: add string annotations in io.pytables | diff --git a/pandas/io/pytables.py b/pandas/io/pytables.py

index f41c767d0b13a..193b8f5053d65 100644

--- a/pandas/io/pytables.py

+++ b/pandas/io/pytables.py

@@ -9,7 +9,7 @@

import os

import re

import time

-from typing import List, Optional, Type, Union

+from typing import TYPE_CHECKING, Any, Dict, List, Optional, Ty... | This file is pretty tough, so for now this just goes through and adds `str` annotations in places where it is unambiguous (e.g. the variable is passed to `getattr`) and places that can be directly reasoned about from there. | https://api.github.com/repos/pandas-dev/pandas/pulls/29682 | 2019-11-17T23:42:21Z | 2019-11-18T00:34:45Z | 2019-11-18T00:34:45Z | 2019-11-18T01:28:47Z |
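The reasoning in the PR body above (a variable passed to `getattr` must be a string) can be illustrated with a small sketch. `Node` and `read_attr` are hypothetical names for illustration, not taken from `pandas.io.pytables`:

```python
class Node:
    kind = "column"


def read_attr(obj: object, name: str) -> object:
    # `name` is annotated as str because getattr only accepts string
    # attribute names; that makes the annotation unambiguous.
    return getattr(obj, name)


value = read_attr(Node(), "kind")

# Passing a non-string attribute name fails at runtime with TypeError.
try:
    getattr(Node(), 1)
    non_str_error = None
except TypeError as err:
    non_str_error = err
```

Once a parameter is known to be `str` this way, callers that pass it along can often be annotated by the same reasoning.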

BUG: IndexError in __repr__ | diff --git a/pandas/core/computation/pytables.py b/pandas/core/computation/pytables.py

index 13a4814068d6a..4eb39898214c5 100644

--- a/pandas/core/computation/pytables.py

+++ b/pandas/core/computation/pytables.py

@@ -2,7 +2,7 @@

import ast

from functools import partial

-from typing import Optional

+from typing impo... | By avoiding that `IndexError`, we can get rid of an `except Exception` in `io.pytables` | https://api.github.com/repos/pandas-dev/pandas/pulls/29681 | 2019-11-17T22:08:29Z | 2019-11-17T23:01:50Z | 2019-11-17T23:01:50Z | 2019-11-17T23:15:28Z |

Bug fix GH 29624: calling str.isalpha on empty series returns object … | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index c91ced1014dd1..58d1fef9ef5bf 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -354,7 +354,7 @@ Conversion

Strings

^^^^^^^

--

+- Calling :meth:`Series.str.isalnum` (and other "ismethods") on an empty... | …dtype, not bool

Added dtype=bool argument to make _noarg_wrapper() return a bool Series

- [x] closes #29624

- [x] tests added / passed

- [x] passes `black pandas`

- [x] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

- [x] whatsnew entry

| https://api.github.com/repos/pandas-dev/pandas/pulls/29680 | 2019-11-17T21:32:41Z | 2019-11-17T23:06:35Z | 2019-11-17T23:06:34Z | 2019-11-18T04:55:50Z |

CLN: Simplify black command in Makefile | diff --git a/Makefile b/Makefile

index 27a2c3682de9c..f26689ab65ba5 100644

--- a/Makefile

+++ b/Makefile

@@ -15,7 +15,7 @@ lint-diff:

git diff upstream/master --name-only -- "*.py" | xargs flake8

black:

- black . --exclude '(asv_bench/env|\.egg|\.git|\.hg|\.mypy_cache|\.nox|\.tox|\.venv|_build|buck-out|build|dist|... | Follow up to #29607 where `exclude` was added to `pyproject.toml`, so it no longer needs to be explicitly specified. Missed this reference; checked for additional missed references but didn't find any. | https://api.github.com/repos/pandas-dev/pandas/pulls/29679 | 2019-11-17T21:10:33Z | 2019-11-17T22:47:52Z | 2019-11-17T22:47:52Z | 2019-11-17T22:48:55Z |

DEPS: Unifying testing and building dependencies across builds | diff --git a/ci/deps/azure-36-32bit.yaml b/ci/deps/azure-36-32bit.yaml

index 1e2e6c33e8c15..f3e3d577a7a33 100644

--- a/ci/deps/azure-36-32bit.yaml

+++ b/ci/deps/azure-36-32bit.yaml

@@ -3,21 +3,25 @@ channels:

- defaults

- conda-forge

dependencies:

+ - python=3.6.*

+

+ # tools

+ ### Cython 0.29.13 and pytest 5... | - [X] closes #29664

In our CI builds we're using different versions of pytest, pytest-xdist and other tools. Looks like Python 3.5 was forcing part of this, since some packages were not available.

In this PR I standardize the tools and versions we use in all packages, with only two exceptions:

- In 32 bits we in... | https://api.github.com/repos/pandas-dev/pandas/pulls/29678 | 2019-11-17T20:19:47Z | 2019-11-21T13:06:07Z | 2019-11-21T13:06:07Z | 2019-11-21T13:07:04Z |

CLN: parts of #29667 | diff --git a/pandas/core/computation/eval.py b/pandas/core/computation/eval.py

index de2133f64291d..72f2e1d8e23e5 100644

--- a/pandas/core/computation/eval.py

+++ b/pandas/core/computation/eval.py

@@ -11,7 +11,7 @@

from pandas.core.computation.engines import _engines

from pandas.core.computation.expr import Expr, _... | Breaks off easier parts | https://api.github.com/repos/pandas-dev/pandas/pulls/29677 | 2019-11-17T19:49:22Z | 2019-11-18T00:20:37Z | 2019-11-18T00:20:37Z | 2019-11-18T00:46:42Z |

TYP: Add type hint for BaseGrouper in groupby._Groupby | diff --git a/pandas/core/groupby/groupby.py b/pandas/core/groupby/groupby.py

index 294cb723eee1a..3199f166d5b3f 100644

--- a/pandas/core/groupby/groupby.py

+++ b/pandas/core/groupby/groupby.py

@@ -48,7 +48,7 @@ class providing the base-class of operations.

from pandas.core.construction import extract_array

from panda... | Add a type hint in class ``_Groupby`` for ``BaseGrouper`` to aid navigating the code base. | https://api.github.com/repos/pandas-dev/pandas/pulls/29675 | 2019-11-17T18:10:47Z | 2019-11-17T20:55:54Z | 2019-11-17T20:55:54Z | 2019-11-18T06:48:39Z |

CI: Use bash for windows script on azure | diff --git a/ci/azure/windows.yml b/ci/azure/windows.yml

index dfa82819b9826..86807b4010988 100644

--- a/ci/azure/windows.yml

+++ b/ci/azure/windows.yml

@@ -11,10 +11,12 @@ jobs:

py36_np15:

ENV_FILE: ci/deps/azure-windows-36.yaml

CONDA_PY: "36"

+ PATTERN: "not slow and not network"

... | - Towards #26344

- This makes `windows.yml` and `posix.yml` steps pretty similar and they could potentially be merged/reduce duplication? (Will leave this for a separate PR)

- We also now use `ci/run_tests.sh` for windows test stage.

Reference https://github.com/pandas-dev/pandas/pull/27195 where I began this. | https://api.github.com/repos/pandas-dev/pandas/pulls/29674 | 2019-11-17T17:59:27Z | 2019-11-19T04:06:58Z | 2019-11-19T04:06:58Z | 2019-12-25T20:35:11Z |

CI: Pin black to version 19.10b0 | diff --git a/environment.yml b/environment.yml

index 325b79f07a61c..ef5767f26dceb 100644

--- a/environment.yml

+++ b/environment.yml

@@ -15,7 +15,7 @@ dependencies:

- cython>=0.29.13

# code checks

- - black>=19.10b0

+ - black=19.10b0

- cpplint

- flake8

- flake8-comprehensions>=3.1.0 # used by flake8... | This will pin black version in ci and for local dev.

(This will avoid code checks failing when a new black version is released)

As per comment from @jreback here https://github.com/pandas-dev/pandas/pull/29508#discussion_r346316578 | https://api.github.com/repos/pandas-dev/pandas/pulls/29673 | 2019-11-17T16:59:06Z | 2019-11-17T22:48:18Z | 2019-11-17T22:48:17Z | 2019-12-25T20:24:59Z |

REF: align transform logic flow | diff --git a/pandas/core/groupby/generic.py b/pandas/core/groupby/generic.py

index 6376dbefcf435..79e7ff5ea22ad 100644

--- a/pandas/core/groupby/generic.py

+++ b/pandas/core/groupby/generic.py

@@ -394,35 +394,39 @@ def _aggregate_named(self, func, *args, **kwargs):

def transform(self, func, *args, **kwargs):

... | Implement SeriesGroupBy._transform_general to match DataFrameGroupBy._transform_general, re-arrange the checks within the two `transform` methods to be in the same order and be more linear. Make the two _transform_fast methods have closer-to-matching signatures | https://api.github.com/repos/pandas-dev/pandas/pulls/29672 | 2019-11-17T16:40:28Z | 2019-11-19T13:30:18Z | 2019-11-19T13:30:18Z | 2019-11-19T15:20:55Z |

Extension Module Compat Cleanup | diff --git a/pandas/_libs/src/compat_helper.h b/pandas/_libs/src/compat_helper.h

index 078069fb48af2..01d5b843d1bb6 100644

--- a/pandas/_libs/src/compat_helper.h

+++ b/pandas/_libs/src/compat_helper.h

@@ -38,13 +38,8 @@ PANDAS_INLINE int slice_get_indices(PyObject *s,

Py_ssize_t *st... | - [ ] closes #xxxx

- [ ] tests added / passed

- [ ] passes `black pandas`

- [ ] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

- [ ] whatsnew entry

| https://api.github.com/repos/pandas-dev/pandas/pulls/29666 | 2019-11-17T03:46:26Z | 2019-11-18T13:37:18Z | 2019-11-18T13:37:18Z | 2019-11-18T17:18:44Z |

CLN: de-privatize names in core.computation | diff --git a/pandas/core/computation/align.py b/pandas/core/computation/align.py

index dfb858d797f41..197ddd999fd37 100644

--- a/pandas/core/computation/align.py

+++ b/pandas/core/computation/align.py

@@ -8,10 +8,11 @@

from pandas.errors import PerformanceWarning

-import pandas as pd

+from pandas.core.dtypes.gener... | Some annotations. I'm finding that annotations in this directory are really fragile; adding a type to one thing will cause a mypy complaint in somewhere surprising. | https://api.github.com/repos/pandas-dev/pandas/pulls/29665 | 2019-11-17T03:28:29Z | 2019-11-17T13:34:26Z | 2019-11-17T13:34:26Z | 2019-11-17T15:38:11Z |

CLN:f-string asv | diff --git a/asv_bench/benchmarks/io/csv.py b/asv_bench/benchmarks/io/csv.py

index adb3dd95e3574..b8e8630e663ee 100644

--- a/asv_bench/benchmarks/io/csv.py

+++ b/asv_bench/benchmarks/io/csv.py

@@ -132,7 +132,7 @@ class ReadCSVConcatDatetimeBadDateValue(StringIORewind):

param_names = ["bad_date_value"]

def s... | - [ ] closes #xxxx

- [x] tests added / passed

- [x] passes `black pandas`

- [x] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

- [ ] whatsnew entry

continuation of https://github.com/pandas-dev/pandas/pull/29571

ref #29547 | https://api.github.com/repos/pandas-dev/pandas/pulls/29663 | 2019-11-16T19:11:22Z | 2019-11-16T20:35:20Z | 2019-11-16T20:35:20Z | 2019-11-16T20:40:21Z |

CLN:F-strings | diff --git a/pandas/compat/__init__.py b/pandas/compat/__init__.py

index 684fbbc23c86c..f95dd8679308f 100644

--- a/pandas/compat/__init__.py

+++ b/pandas/compat/__init__.py

@@ -30,7 +30,7 @@ def set_function_name(f, name, cls):

Bind the name/qualname attributes of the function.

"""

f.__name__ = name

- ... | - [ ] closes #xxxx

- [x] tests added / passed

- [x] passes `black pandas`

- [x] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

- [ ] whatsnew entry

ref https://github.com/pandas-dev/pandas/issues/29547 | https://api.github.com/repos/pandas-dev/pandas/pulls/29662 | 2019-11-16T18:17:55Z | 2019-11-16T20:30:04Z | 2019-11-16T20:30:04Z | 2019-11-16T20:40:46Z |

CI: Forcing GitHub actions to activate | diff --git a/.github/workflows/activate.yml b/.github/workflows/activate.yml

new file mode 100644

index 0000000000000..f6aede6289ebf

--- /dev/null

+++ b/.github/workflows/activate.yml

@@ -0,0 +1,21 @@

+# Simple first task to activate GitHub actions.

+# This won't run until is merged, but future actions will

+# run on P... | GitHub actions won't start running until the first action is committed.

This PR adds a simple action with an echo, so we can activate GitHub actions risk free, and when we move an actual build, the action will run in the PR, and we can see that it works.

Tested this action in a test repo, to make sure it works: h... | https://api.github.com/repos/pandas-dev/pandas/pulls/29661 | 2019-11-16T14:56:22Z | 2019-11-16T20:39:06Z | 2019-11-16T20:39:06Z | 2019-11-16T20:39:15Z |

BUG: resolved problem with DataFrame.equals() (#28839) | diff --git a/doc/source/whatsnew/v1.0.0.rst b/doc/source/whatsnew/v1.0.0.rst

index 30a828064f812..bf30f2d356b44 100644

--- a/doc/source/whatsnew/v1.0.0.rst

+++ b/doc/source/whatsnew/v1.0.0.rst

@@ -451,6 +451,7 @@ Reshaping

- Fix to ensure all int dtypes can be used in :func:`merge_asof` when using a tolerance value. P... | The function was returning True in the case shown in the added test. The cause

of the problem was sorting the Blocks of the DataFrame by type, and then by

mgr_locs before comparison. This arranged the identical blocks

in the same way, which produced the same two lists of blocks.

Changing sorting order to (mgr_loc... | https://api.github.com/repos/pandas-dev/pandas/pulls/29657 | 2019-11-16T08:47:17Z | 2019-11-19T13:31:14Z | 2019-11-19T13:31:13Z | 2019-11-19T16:44:36Z |

TYP: annotations in core.indexes | diff --git a/pandas/core/indexes/api.py b/pandas/core/indexes/api.py

index a7cf2c20b0dec..f650a62bc5b74 100644

--- a/pandas/core/indexes/api.py

+++ b/pandas/core/indexes/api.py

@@ -1,4 +1,5 @@

import textwrap

+from typing import List, Set

import warnings

from pandas._libs import NaT, lib

@@ -64,7 +65,9 @@

]

-... | Changes the behavior of get_objs_combined_axis to ensure that it always returns an Index and never `None`, otherwise just annotations and some docstring cleanup | https://api.github.com/repos/pandas-dev/pandas/pulls/29656 | 2019-11-16T04:12:20Z | 2019-11-16T20:49:41Z | 2019-11-16T20:49:41Z | 2019-11-16T22:23:33Z |

CI: Fix error when creating postgresql db | diff --git a/ci/setup_env.sh b/ci/setup_env.sh

index 4d454f9c5041a..0e8d6fb7cd35a 100755

--- a/ci/setup_env.sh

+++ b/ci/setup_env.sh

@@ -114,6 +114,11 @@ echo "w/o removing anything else"

conda remove pandas -y --force || true

pip uninstall -y pandas || true

+echo

+echo "remove postgres if has been installed with c... | - [X] closes #29643

Not sure if this makes sense, but let's see if this is the problem. | https://api.github.com/repos/pandas-dev/pandas/pulls/29655 | 2019-11-16T02:59:58Z | 2019-11-16T16:47:14Z | 2019-11-16T16:47:14Z | 2019-11-16T16:47:14Z |

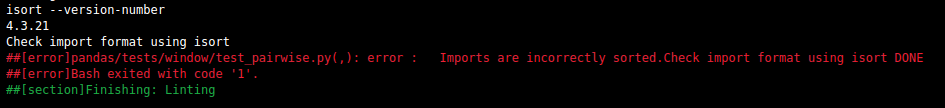

CI: Format isort output for azure | diff --git a/ci/code_checks.sh b/ci/code_checks.sh

index ceb13c52ded9c..cfe55f1e05f71 100755

--- a/ci/code_checks.sh

+++ b/ci/code_checks.sh

@@ -105,7 +105,12 @@ if [[ -z "$CHECK" || "$CHECK" == "lint" ]]; then

# Imports - Check formatting using isort see setup.cfg for settings

MSG='Check import format usin... | - [x] closes #27179

- [x] tests added / passed

- [x] passes `black pandas`

- [x] passes `git diff upstream/master -u -- "*.py" | flake8 --diff`

Current behaviour on master of isort formatting:

Fini... | https://api.github.com/repos/pandas-dev/pandas/pulls/29654 | 2019-11-16T02:59:26Z | 2019-12-06T01:10:29Z | 2019-12-06T01:10:28Z | 2019-12-25T20:27:02Z |

CI: bump mypy 0.730 | diff --git a/environment.yml b/environment.yml

index a3582c56ee9d2..b4ffe9577b379 100644

--- a/environment.yml

+++ b/environment.yml

@@ -21,7 +21,7 @@ dependencies:

- flake8-comprehensions>=3.1.0 # used by flake8, linting of unnecessary comprehensions

- flake8-rst>=0.6.0,<=0.7.0 # linting of code blocks in rst ... | xref https://github.com/pandas-dev/pandas/pull/29188#issuecomment-554524916 | https://api.github.com/repos/pandas-dev/pandas/pulls/29653 | 2019-11-16T01:39:31Z | 2019-11-17T14:27:19Z | 2019-11-17T14:27:19Z | 2019-11-20T13:09:07Z |
