hf_public_repos/transformers/docs/source

|

hf_public_repos/transformers/docs/source/en/pipeline_webserver.md

|

<!--⚠️ Note that this file is in Markdown but contains specific syntax for our doc-builder (similar to MDX) that may not be

rendered properly in your Markdown viewer.

-->

# Using pipelines for a webserver

<Tip>

Creating an inference engine is a complex topic, and the "best" solution will most likely depend on your problem space. Are you on CPU or GPU? Do you want the lowest latency, the highest throughput, support for many models, or a highly optimized setup for one specific model?

There are many ways to tackle this topic, so what we present here is a good default to get started, which may not be the optimal solution for you.

</Tip>

The key thing to understand is that we can use an iterator, just like you would [on a dataset](pipeline_tutorial#using-pipelines-on-a-dataset), since a webserver is basically a system that waits for requests and handles them as they come in.

Usually webservers are multiplexed (multithreaded, async, etc.) to handle various requests concurrently. Pipelines, on the other hand (and mostly the underlying models), are not well suited to parallelism: they take up a lot of RAM and inference is compute-intensive, so it's best to give them all the available resources while they are running.

We are going to solve that by having the webserver handle the light load of receiving

and sending requests, and having a single thread handling the actual work.

This example is going to use `starlette`. The actual framework is not really

important, but you might have to tune or change the code if you are using another

one to achieve the same effect.

Create `server.py`:

```py
from starlette.applications import Starlette
from starlette.responses import JSONResponse
from starlette.routing import Route
from transformers import pipeline
import asyncio


async def homepage(request):
    # Light work only: read the request, hand it to the model queue, wait for the answer.
    payload = await request.body()
    string = payload.decode("utf-8")
    response_q = asyncio.Queue()
    await request.app.model_queue.put((string, response_q))
    output = await response_q.get()
    return JSONResponse(output)


async def server_loop(q):
    # The model is loaded once and serves every request from the shared queue.
    pipe = pipeline(model="bert-base-uncased")
    while True:
        (string, response_q) = await q.get()
        out = pipe(string)
        await response_q.put(out)


app = Starlette(
    routes=[
        Route("/", homepage, methods=["POST"]),
    ],
)


@app.on_event("startup")
async def startup_event():
    q = asyncio.Queue()
    app.model_queue = q
    asyncio.create_task(server_loop(q))
```

Now you can start it with:

```bash

uvicorn server:app

```

And you can query it:

```bash

curl -X POST -d "test [MASK]" http://localhost:8000/

#[{"score":0.7742936015129089,"token":1012,"token_str":".","sequence":"test."},...]

```
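If you would rather query the server from Python, the `curl` call can be mirrored with the standard library. This is a minimal sketch: the helper names `build_request` and `query` are made up for illustration, and the URL assumes uvicorn's default host and port as in the command above.

```python
import urllib.request


def build_request(text: str, url: str = "http://localhost:8000/") -> urllib.request.Request:
    # Same request as the curl call: the raw UTF-8 text is sent as the POST body.
    return urllib.request.Request(url, data=text.encode("utf-8"), method="POST")


def query(text: str, url: str = "http://localhost:8000/") -> str:
    # Requires the server from server.py to be running.
    with urllib.request.urlopen(build_request(text, url)) as response:
        return response.read().decode("utf-8")
```

Calling `query("test [MASK]")` against the running server should return the same JSON string that `curl` prints.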

And there you go, now you have a good idea of how to create a webserver!

What is really important is that we load the model only **once**, so there are no copies

of the model on the webserver. This way, no unnecessary RAM is being used.

The queuing mechanism also enables more advanced techniques, such as accumulating a few items before running inference in order to use dynamic batching:

<Tip warning={true}>

The code sample below is intentionally written like pseudo-code for readability.

Do not run this without checking if it makes sense for your system resources!

</Tip>

```py
# Body of the `while True` loop in `server_loop`.
# Wait for the first request; it starts the batch.
(string, rq) = await q.get()
strings = [string]
queues = [rq]
while True:
    try:
        (string, rq) = await asyncio.wait_for(q.get(), timeout=0.001)  # 1ms
    except asyncio.TimeoutError:
        break
    strings.append(string)
    queues.append(rq)
outs = pipe(strings, batch_size=len(strings))
# Send each output back to the request that asked for it.
for rq, out in zip(queues, outs):
    await rq.put(out)
```

Again, the proposed code is optimized for readability, not for being the best code. First of all, there's no batch size limit, which is usually not a great idea. Next, the timeout is reset on every queue fetch, meaning you could wait much longer than 1ms before running the inference (delaying the first request by that much).

It would be better to have a single 1ms deadline.

Either way, the code always waits for 1ms even if the queue is empty, which might not be ideal since you probably want to start inference right away if nothing else is queued. But it may make sense if batching is really crucial for your use case. Again, there's really no one best solution.
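One way to address the first two points (a batch size limit and a single deadline) is sketched below using only `asyncio`. The helper name `collect_batch` and its default values are assumptions for illustration, not part of the server code above.

```python
import asyncio


async def collect_batch(q, max_batch_size=8, max_wait=0.001):
    """Gather up to max_batch_size items, waiting at most max_wait seconds overall."""
    # Block until the first request arrives; the deadline starts from here.
    items = [await q.get()]
    loop = asyncio.get_running_loop()
    deadline = loop.time() + max_wait
    while len(items) < max_batch_size:
        remaining = deadline - loop.time()
        if remaining <= 0:
            break
        try:
            items.append(await asyncio.wait_for(q.get(), timeout=remaining))
        except asyncio.TimeoutError:
            break
    return items


async def demo():
    q = asyncio.Queue()
    for i in range(5):
        q.put_nowait(f"request {i}")
    # A generous deadline keeps the demo deterministic on slow machines.
    first = await collect_batch(q, max_batch_size=3, max_wait=0.05)
    second = await collect_batch(q, max_batch_size=3, max_wait=0.05)
    return first, second


first_batch, second_batch = asyncio.run(demo())
```

In the server, each queued item would be a `(string, response_q)` tuple, so the batch can be unzipped into the inputs and the response queues before calling the pipeline.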

## Few things you might want to consider

### Error checking

There's a lot that can go wrong in production: out of memory, out of space,

loading the model might fail, the query might be wrong, the query might be

correct but still fail to run because of a model misconfiguration, and so on.

Generally, it's good if the server outputs the errors to the user, so

adding a lot of `try..except` statements to show those errors is a good

idea. But keep in mind it may also be a security risk to reveal all those errors depending

on your security context.
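As a minimal sketch of this trade-off, the wrapper below catches failures and decides how much detail to return. The names `run_safely`, `failing_pipe` and `expose_details` are hypothetical, and the status codes are only one reasonable choice.

```python
def run_safely(pipe, text, expose_details=False):
    """Return (status_code, body) instead of letting an exception escape."""
    try:
        return 200, pipe(text)
    except Exception as exc:
        # Only reveal the exception text when the security context allows it.
        message = str(exc) if expose_details else "inference failed"
        return 500, {"error": message}


def failing_pipe(text):
    # Stand-in for a pipeline call that fails, for example on a malformed query.
    raise ValueError("no mask token found")


ok = run_safely(lambda text: [{"sequence": text}], "test")
hidden = run_safely(failing_pipe, "test")
shown = run_safely(failing_pipe, "test", expose_details=True)
```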

### Circuit breaking

Webservers usually behave better when they do circuit breaking. This means they return proper errors when they're overloaded instead of waiting on the query indefinitely: return a 503 error right away rather than making the client wait a very long time, or a 504 after a long wait.

This is relatively easy to implement in the proposed code since there is a single queue.

Looking at the queue size is a basic way to start returning errors before your

webserver fails under load.
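A bounded queue is one simple way to do this check. The sketch below is framework-agnostic; `try_enqueue`, `MAX_QUEUE_SIZE` and the 202 status for accepted work are assumptions chosen for illustration.

```python
import asyncio

MAX_QUEUE_SIZE = 2  # hypothetical limit; tune it to your latency budget


def try_enqueue(q, item):
    """Return 202 if the item was queued, or 503 if the server is overloaded."""
    try:
        q.put_nowait(item)
    except asyncio.QueueFull:
        return 503
    return 202


async def demo():
    q = asyncio.Queue(maxsize=MAX_QUEUE_SIZE)
    # Nothing consumes the queue here, so the third request is rejected.
    return [try_enqueue(q, f"request {i}") for i in range(3)]


statuses = asyncio.run(demo())
```

In the request handler, a rejected item would translate into an early error response with status code 503 instead of waiting on the response queue.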

### Blocking the main thread

Currently PyTorch is not async aware, and computation will block the main

thread while running. That means it would be better if PyTorch was forced to run

on its own thread/process. This wasn't done here because the code is a lot more

complex (mostly because threads and async and queues don't play nice together).

But ultimately it does the same thing.

This would be important if the inference of single items were long (> 1s) because

in this case, it means every query during inference would have to wait for 1s before

even receiving an error.
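The idea of moving the blocking call off the event loop can be sketched with a single-worker thread pool. Here `slow_inference` is a stand-in for the real pipeline call, and the heartbeat task exists only to show that the loop keeps running while inference is in progress.

```python
import asyncio
import time
from concurrent.futures import ThreadPoolExecutor


def slow_inference(text):
    # Stand-in for a blocking pipe(text) call.
    time.sleep(0.05)
    return text.upper()


async def demo():
    loop = asyncio.get_running_loop()
    ticks = 0

    async def heartbeat():
        # Keeps counting only if the event loop is not blocked.
        nonlocal ticks
        while True:
            await asyncio.sleep(0.005)
            ticks += 1

    beat = asyncio.create_task(heartbeat())
    # A single worker keeps the "one model, one thread" property of the server loop.
    with ThreadPoolExecutor(max_workers=1) as executor:
        result = await loop.run_in_executor(executor, slow_inference, "hello")
    beat.cancel()
    return result, ticks


result, ticks = asyncio.run(demo())
```

Because the event loop stays responsive, requests arriving during inference can be queued or rejected immediately rather than waiting for the current item to finish.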

### Dynamic batching

In general, batching is not necessarily an improvement over passing 1 item at

a time (see [batching details](./main_classes/pipelines#pipeline-batching) for more information). But it can be very effective

when used in the correct setting. In the API, there is no dynamic

batching by default (too much opportunity for a slowdown). But for BLOOM inference -

which is a very large model - dynamic batching is **essential** to provide a decent experience for everyone.

| 0

|

hf_public_repos/transformers/docs/source

|

hf_public_repos/transformers/docs/source/en/perf_train_gpu_one.md

|

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on
an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the
specific language governing permissions and limitations under the License.

⚠️ Note that this file is in Markdown but contains specific syntax for our doc-builder (similar to MDX) that may not be

rendered properly in your Markdown viewer.

-->

# Methods and tools for efficient training on a single GPU

This guide demonstrates practical techniques that you can use to increase the efficiency of your model's training by

optimizing memory utilization, speeding up the training, or both. If you'd like to understand how GPU is utilized during

training, please refer to the [Model training anatomy](model_memory_anatomy) conceptual guide first. This guide

focuses on practical techniques.

<Tip>

If you have access to a machine with multiple GPUs, these approaches are still valid, plus you can leverage additional methods outlined in the [multi-GPU section](perf_train_gpu_many).

</Tip>

When training large models, there are two aspects that should be considered at the same time:

* Data throughput/training time

* Model performance

Maximizing the throughput (samples/second) leads to lower training cost. This is generally achieved by utilizing the GPU

as much as possible and thus filling GPU memory to its limit. If the desired batch size exceeds the limits of the GPU memory,

the memory optimization techniques, such as gradient accumulation, can help.

However, if the preferred batch size fits into memory, there's no reason to apply memory-optimizing techniques because they can

slow down the training. Just because one can use a large batch size, does not necessarily mean they should. As part of

hyperparameter tuning, you should determine which batch size yields the best results and then optimize resources accordingly.

The methods and tools covered in this guide can be classified based on the effect they have on the training process:

| Method/tool                                                | Improves training speed | Optimizes memory utilization |
|:-----------------------------------------------------------|:------------------------|:-----------------------------|
| [Batch size choice](#batch-size-choice)                    | Yes                     | Yes                          |
| [Gradient accumulation](#gradient-accumulation)            | No                      | Yes                          |
| [Gradient checkpointing](#gradient-checkpointing)          | No                      | Yes                          |
| [Mixed precision training](#mixed-precision-training)      | Yes                     | (No)                         |
| [Optimizer choice](#optimizer-choice)                      | Yes                     | Yes                          |
| [Data preloading](#data-preloading)                        | Yes                     | No                           |
| [DeepSpeed Zero](#deepspeed-zero)                          | No                      | Yes                          |
| [torch.compile](#using-torchcompile)                       | Yes                     | No                           |

<Tip>

Note: when using mixed precision with a small model and a large batch size, there will be some memory savings but with a

large model and a small batch size, the memory use will be larger.

</Tip>

You can combine the above methods to get a cumulative effect. These techniques are available to you whether you are

training your model with [`Trainer`] or writing a pure PyTorch loop, in which case you can [configure these optimizations

with 🤗 Accelerate](#using-accelerate).

If these methods do not result in sufficient gains, you can explore the following options:

* [Look into building your own custom Docker container with efficient software prebuilds](#efficient-software-prebuilds)

* [Consider a model that uses Mixture of Experts (MoE)](#mixture-of-experts)

* [Convert your model to BetterTransformer to leverage PyTorch native attention](#using-pytorch-native-attention)

Finally, if all of the above is still not enough, even after switching to a server-grade GPU like A100, consider moving

to a multi-GPU setup. All these approaches are still valid in a multi-GPU setup, plus you can leverage additional parallelism

techniques outlined in the [multi-GPU section](perf_train_gpu_many).

## Batch size choice

To achieve optimal performance, start by identifying the appropriate batch size. It is recommended to use batch sizes and

input/output neuron counts that are of size 2^N. Often it's a multiple of 8, but it can be

higher depending on the hardware being used and the model's dtype.

For reference, check out NVIDIA's recommendation for [input/output neuron counts](

https://docs.nvidia.com/deeplearning/performance/dl-performance-fully-connected/index.html#input-features) and

[batch size](https://docs.nvidia.com/deeplearning/performance/dl-performance-fully-connected/index.html#batch-size) for

fully connected layers (which are involved in GEMMs (General Matrix Multiplications)).

[Tensor Core Requirements](https://docs.nvidia.com/deeplearning/performance/dl-performance-matrix-multiplication/index.html#requirements-tc)

define the multiplier based on the dtype and the hardware. For instance, for fp16 data type a multiple of 8 is recommended, unless

it's an A100 GPU, in which case use multiples of 64.

For parameters that are small, consider also [Dimension Quantization Effects](https://docs.nvidia.com/deeplearning/performance/dl-performance-matrix-multiplication/index.html#dim-quantization).

This is where tiling happens, and the right multiplier can yield a significant speedup.
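When a dimension is under your control (for example, a padded vocabulary or a hidden size), rounding it up to the recommended multiple is simple arithmetic. The helper below is a sketch; the function name and the sample values are illustrative assumptions, not recommendations for a particular model.

```python
def round_up_to_multiple(value: int, multiple: int = 8) -> int:
    """Smallest multiple of `multiple` that is greater than or equal to `value`."""
    return ((value + multiple - 1) // multiple) * multiple


# Illustrative sizes only: pad to a multiple of 8 (fp16) or 64 (A100).
padded_vocab = round_up_to_multiple(50257, 8)
padded_hidden = round_up_to_multiple(1000, 64)
```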

## Gradient Accumulation

The **gradient accumulation** method aims to calculate gradients in smaller increments instead of computing them for the

entire batch at once. This approach involves iteratively calculating gradients in smaller batches by performing forward

and backward passes through the model and accumulating the gradients during the process. Once a sufficient number of

gradients have been accumulated, the model's optimization step is executed. By employing gradient accumulation, it

becomes possible to increase the **effective batch size** beyond the limitations imposed by the GPU's memory capacity.

However, it is important to note that the additional forward and backward passes introduced by gradient accumulation can

slow down the training process.

You can enable gradient accumulation by adding the `gradient_accumulation_steps` argument to [`TrainingArguments`]:

```py

training_args = TrainingArguments(per_device_train_batch_size=1, gradient_accumulation_steps=4, **default_args)

```

In the above example, your effective batch size becomes 4.

Alternatively, use 🤗 Accelerate to gain full control over the training loop. Find the 🤗 Accelerate example

[further down in this guide](#using-accelerate).

While it is advised to max out GPU usage as much as possible, a high number of gradient accumulation steps can result in a more pronounced training slowdown. Consider the following example. Suppose `per_device_train_batch_size=4` without gradient accumulation hits the GPU's limit. If you would like to train with batches of size 64, do not set `per_device_train_batch_size` to 1 and `gradient_accumulation_steps` to 64. Instead, keep `per_device_train_batch_size=4` and set `gradient_accumulation_steps=16`. This results in the same effective batch size while making better use of the available GPU resources.

For additional information, please refer to batch size and gradient accumulation benchmarks for [RTX-3090](https://github.com/huggingface/transformers/issues/14608#issuecomment-1004392537)

and [A100](https://github.com/huggingface/transformers/issues/15026#issuecomment-1005033957).
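To see why accumulation reproduces a larger batch, the toy example below compares the gradient of one batch of 8 samples with the sum of 4 micro-batch gradients, each scaled by `1 / accumulation_steps`. It is a pure-Python sketch on a one-parameter model with a mean squared error loss; the data and names are made up for illustration.

```python
def grad_mse(w, xs, ys):
    """Gradient of mean((w * x - y) ** 2) with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.0, 4.0, 6.0, 8.0, 10.0, 12.0, 14.0, 16.0]
w = 0.5

micro_batch_size = 2
accumulation_steps = len(xs) // micro_batch_size
effective_batch_size = micro_batch_size * accumulation_steps

# One large batch.
full_grad = grad_mse(w, xs, ys)

# Same samples in micro-batches, each gradient scaled by 1 / accumulation_steps.
accumulated = 0.0
for step in range(accumulation_steps):
    start = step * micro_batch_size
    chunk_x = xs[start : start + micro_batch_size]
    chunk_y = ys[start : start + micro_batch_size]
    accumulated += grad_mse(w, chunk_x, chunk_y) / accumulation_steps
```

The two gradients match, which is why the loss is divided by the number of accumulation steps in a hand-written training loop.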

## Gradient Checkpointing

Some large models may still face memory issues even when the batch size is set to 1 and gradient accumulation is used.

This is because there are other components that also require memory storage.

Saving all activations from the forward pass in order to compute the gradients during the backward pass can result in

significant memory overhead. The alternative approach of discarding the activations and recalculating them when needed

during the backward pass, would introduce a considerable computational overhead and slow down the training process.

**Gradient checkpointing** offers a compromise between these two approaches and saves strategically selected activations

throughout the computational graph so only a fraction of the activations need to be re-computed for the gradients. For

an in-depth explanation of gradient checkpointing, refer to [this great article](https://medium.com/tensorflow/fitting-larger-networks-into-memory-583e3c758ff9).

To enable gradient checkpointing in the [`Trainer`], pass the corresponding flag to [`TrainingArguments`]:

```py
training_args = TrainingArguments(
    per_device_train_batch_size=1, gradient_accumulation_steps=4, gradient_checkpointing=True, **default_args
)
```

Alternatively, use 🤗 Accelerate - find the 🤗 Accelerate example [further in this guide](#using-accelerate).

<Tip>

While gradient checkpointing may improve memory efficiency, it slows training by approximately 20%.

</Tip>

## Mixed precision training

**Mixed precision training** is a technique that aims to optimize the computational efficiency of training models by

utilizing lower-precision numerical formats for certain variables. Traditionally, most models use 32-bit floating point

precision (fp32 or float32) to represent and process variables. However, not all variables require this high precision

level to achieve accurate results. By reducing the precision of certain variables to lower numerical formats like 16-bit

floating point (fp16 or float16), we can speed up the computations. Because in this approach some computations are performed

in half-precision, while some are still in full precision, the approach is called mixed precision training.

Most commonly mixed precision training is achieved by using fp16 (float16) data types, however, some GPU architectures

(such as the Ampere architecture) offer bf16 and tf32 (CUDA internal data type) data types. Check

out the [NVIDIA Blog](https://developer.nvidia.com/blog/accelerating-ai-training-with-tf32-tensor-cores/) to learn more about

the differences between these data types.

### fp16

The main advantage of mixed precision training comes from saving the activations in half precision (fp16).

Although the gradients are also computed in half precision they are converted back to full precision for the optimization

step so no memory is saved here.

While mixed precision training results in faster computations, it can also lead to more GPU memory being utilized, especially for small batch sizes.

This is because the model is now present on the GPU in both 16-bit and 32-bit precision (1.5x the original model on the GPU).
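The 1.5x figure follows from keeping a 4-byte fp32 copy and a 2-byte fp16 copy of every weight. A back-of-the-envelope sketch, assuming a hypothetical 3B-parameter model and counting weights only (no activations, gradients or optimizer state):

```python
def weights_gb(n_params, mixed_precision):
    """Approximate GPU memory for model weights, in GB (1e9 bytes)."""
    fp32_bytes = 4 * n_params
    # Mixed precision keeps an extra half-precision copy of the weights.
    fp16_bytes = 2 * n_params if mixed_precision else 0
    return (fp32_bytes + fp16_bytes) / 1e9


n_params = 3e9  # hypothetical model size
fp32_only = weights_gb(n_params, mixed_precision=False)
with_fp16_copy = weights_gb(n_params, mixed_precision=True)
ratio = with_fp16_copy / fp32_only
```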

To enable mixed precision training, set the `fp16` flag to `True`:

```py

training_args = TrainingArguments(per_device_train_batch_size=4, fp16=True, **default_args)

```

If you prefer to use 🤗 Accelerate, find the 🤗 Accelerate example [further in this guide](#using-accelerate).

### BF16

If you have access to Ampere or newer hardware, you can use bf16 for mixed precision training and evaluation. While bf16 has worse precision than fp16, it has a much bigger dynamic range. In fp16 the biggest number you can have is `65504`, and any number above that will result in an overflow. A bf16 number can be as large as `3.39e+38` (!), which is about the same as fp32, because both use 8 bits for the exponent.
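These limits follow directly from how many exponent and mantissa bits each format has. The sketch below computes the largest finite value from the bit layout; the helper `max_finite` is illustrative and assumes the usual IEEE-style convention that the all-ones exponent is reserved for infinity and NaN.

```python
def max_finite(exponent_bits: int, mantissa_bits: int) -> float:
    """Largest finite value of an IEEE-style binary floating point format."""
    bias = 2 ** (exponent_bits - 1) - 1
    max_exponent = bias  # the all-ones exponent is reserved for inf/NaN
    return (2 - 2.0 ** -mantissa_bits) * 2.0 ** max_exponent


fp16_max = max_finite(exponent_bits=5, mantissa_bits=10)
bf16_max = max_finite(exponent_bits=8, mantissa_bits=7)
fp32_max = max_finite(exponent_bits=8, mantissa_bits=23)
```

bf16 and fp32 share the same exponent width, so their maxima differ only through the mantissa, while the narrower fp16 exponent caps the range several orders of magnitude lower.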

You can enable BF16 in the 🤗 Trainer with:

```python

training_args = TrainingArguments(bf16=True, **default_args)

```

### TF32

The Ampere hardware uses a magical data type called tf32. It has the same numerical range as fp32 (8-bits), but instead

of 23 bits precision it has only 10 bits (same as fp16) and uses only 19 bits in total. It's "magical" in the sense that

you can use the normal fp32 training and/or inference code and by enabling tf32 support you can get up to 3x throughput

improvement. All you need to do is to add the following to your code:

```python
import torch

torch.backends.cuda.matmul.allow_tf32 = True
torch.backends.cudnn.allow_tf32 = True
```

CUDA will automatically switch to using tf32 instead of fp32 where possible, assuming that the used GPU is from the Ampere series.

According to [NVIDIA research](https://developer.nvidia.com/blog/accelerating-ai-training-with-tf32-tensor-cores/), the

majority of machine learning training workloads show the same perplexity and convergence with tf32 training as with fp32.

If you're already using fp16 or bf16 mixed precision it may help with the throughput as well.

You can enable this mode in the 🤗 Trainer:

```python

TrainingArguments(tf32=True, **default_args)

```

<Tip>

tf32 can't be accessed directly via `tensor.to(dtype=torch.tf32)` because it is an internal CUDA data type. You need `torch>=1.7` to use tf32 data types.

</Tip>

For additional information on tf32 vs other precisions, please refer to the following benchmarks:

[RTX-3090](https://github.com/huggingface/transformers/issues/14608#issuecomment-1004390803) and

[A100](https://github.com/huggingface/transformers/issues/15026#issuecomment-1004543189).

## Flash Attention 2

You can speed up training by using the Flash Attention 2 integration in transformers. Check out the appropriate section in the [single GPU section](./perf_infer_gpu_one#Flash-Attention-2) to learn more about how to load a model with Flash Attention 2 modules.

## Optimizer choice

The most common optimizer used to train transformer models is Adam or AdamW (Adam with weight decay). Adam achieves

good convergence by storing the rolling average of the previous gradients; however, it adds an additional memory

footprint of the order of the number of model parameters. To remedy this, you can use an alternative optimizer.

For example, if you have [NVIDIA/apex](https://github.com/NVIDIA/apex) installed for NVIDIA GPUs, or [ROCmSoftwarePlatform/apex](https://github.com/ROCmSoftwarePlatform/apex) for AMD GPUs, `adamw_apex_fused` will give you the fastest training experience among all supported AdamW optimizers.

[`Trainer`] integrates a variety of optimizers that can be used out of the box: `adamw_hf`, `adamw_torch`, `adamw_torch_fused`, `adamw_apex_fused`, `adamw_anyprecision`, `adafactor`, or `adamw_bnb_8bit`. More optimizers can be plugged in via a third-party implementation.

Let's take a closer look at two alternatives to AdamW optimizer:

1. `adafactor` which is available in [`Trainer`]

2. `adamw_bnb_8bit` is also available in Trainer, but a third-party integration is provided below for demonstration.

For comparison, for a 3B-parameter model, like “t5-3b”:

* A standard AdamW optimizer will need 24GB of GPU memory because it uses 8 bytes for each parameter (8*3 => 24GB)

* Adafactor optimizer will need more than 12GB. It uses slightly more than 4 bytes for each parameter, so 4*3 and then some extra.

* 8bit BNB quantized optimizer will use only (2*3) 6GB if all optimizer states are quantized.
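The figures above are simple products of parameter count and bytes per parameter. A small sketch of that arithmetic, where the helper name is made up and 1 GB is taken as 1e9 bytes:

```python
def optimizer_state_gb(n_params: float, bytes_per_param: float) -> float:
    """Approximate optimizer state size in GB (1e9 bytes)."""
    return n_params * bytes_per_param / 1e9


n_params = 3e9  # a 3B-parameter model
estimates = {
    "adamw": optimizer_state_gb(n_params, 8),
    "adafactor": optimizer_state_gb(n_params, 4),  # lower bound; the real figure is slightly higher
    "adamw_bnb_8bit": optimizer_state_gb(n_params, 2),
}
```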

### Adafactor

Adafactor doesn't store rolling averages for each element in weight matrices. Instead, it keeps aggregated information

(sums of rolling averages row- and column-wise), significantly reducing its footprint. However, compared to Adam,

Adafactor may have slower convergence in certain cases.

You can switch to Adafactor by setting `optim="adafactor"` in [`TrainingArguments`]:

```py

training_args = TrainingArguments(per_device_train_batch_size=4, optim="adafactor", **default_args)

```

Combined with other approaches (gradient accumulation, gradient checkpointing, and mixed precision training), you can see up to a 3x improvement in memory use while maintaining the throughput! However, as mentioned before, the convergence of Adafactor can be worse than Adam.

### 8-bit Adam

Instead of aggregating optimizer states like Adafactor, 8-bit Adam keeps the full state and quantizes it. Quantization

means that it stores the state with lower precision and dequantizes it only for the optimization. This is similar to the

idea behind mixed precision training.

To use `adamw_bnb_8bit`, you simply need to set `optim="adamw_bnb_8bit"` in [`TrainingArguments`]:

```py

training_args = TrainingArguments(per_device_train_batch_size=4, optim="adamw_bnb_8bit", **default_args)

```

However, we can also use a third-party implementation of the 8-bit optimizer for demonstration purposes to see how that can be integrated.

First, follow the installation guide in the GitHub [repo](https://github.com/TimDettmers/bitsandbytes) to install the `bitsandbytes` library

that implements the 8-bit Adam optimizer.

Next you need to initialize the optimizer. This involves two steps:

* First, group the model's parameters into two groups - one where weight decay should be applied, and the other one where it should not. Usually, biases and layer norm parameters are not weight decayed.

* Then do some argument housekeeping to use the same parameters as the previously used AdamW optimizer.

```py
import bitsandbytes as bnb
from torch import nn
from transformers import TrainingArguments
from transformers.trainer_pt_utils import get_parameter_names

# `model` and `default_args` are assumed to be defined earlier in your script.
training_args = TrainingArguments(per_device_train_batch_size=4, **default_args)

# Apply weight decay to everything except biases and layer norm parameters.
decay_parameters = get_parameter_names(model, [nn.LayerNorm])
decay_parameters = [name for name in decay_parameters if "bias" not in name]
optimizer_grouped_parameters = [
    {
        "params": [p for n, p in model.named_parameters() if n in decay_parameters],
        "weight_decay": training_args.weight_decay,
    },
    {
        "params": [p for n, p in model.named_parameters() if n not in decay_parameters],
        "weight_decay": 0.0,
    },
]

# Reuse the hyperparameters of the previously used AdamW optimizer.
optimizer_kwargs = {
    "betas": (training_args.adam_beta1, training_args.adam_beta2),
    "eps": training_args.adam_epsilon,
    "lr": training_args.learning_rate,
}
adam_bnb_optim = bnb.optim.Adam8bit(optimizer_grouped_parameters, **optimizer_kwargs)
```

Finally, pass the custom optimizer as an argument to the `Trainer`:

```py

trainer = Trainer(model=model, args=training_args, train_dataset=ds, optimizers=(adam_bnb_optim, None))

```

Combined with other approaches (gradient accumulation, gradient checkpointing, and mixed precision training), you can expect to get about a 3x memory improvement and even slightly higher throughput than when using Adafactor.

### multi_tensor

pytorch-nightly introduced `torch.optim._multi_tensor` which should significantly speed up the optimizers for situations

with lots of small feature tensors. It should eventually become the default, but if you want to experiment with it sooner, take a look at this GitHub [issue](https://github.com/huggingface/transformers/issues/9965).

## Data preloading

One of the important requirements to reach great training speed is the ability to feed the GPU at the maximum speed it

can handle. By default, everything happens in the main process, and it might not be able to read the data from disk fast

enough, and thus create a bottleneck, leading to GPU under-utilization. Configure the following arguments to reduce the bottleneck:

- `DataLoader(pin_memory=True, ...)` - ensures the data gets preloaded into the pinned memory on CPU and typically leads to much faster transfers from CPU to GPU memory.

- `DataLoader(num_workers=4, ...)` - spawn several workers to preload data faster. During training, watch the GPU utilization stats; if it's far from 100%, experiment with increasing the number of workers. Of course, the problem could be elsewhere, so many workers won't necessarily lead to better performance.

When using [`Trainer`], the corresponding [`TrainingArguments`] are: `dataloader_pin_memory` (`True` by default), and `dataloader_num_workers` (defaults to `0`).

## DeepSpeed ZeRO

DeepSpeed is an open-source deep learning optimization library that is integrated with 🤗 Transformers and 🤗 Accelerate.

It provides a wide range of features and optimizations designed to improve the efficiency and scalability of large-scale

deep learning training.

If your model fits onto a single GPU and you have enough space to fit a small batch size, you don't need to use DeepSpeed

as it'll only slow things down. However, if the model doesn't fit onto a single GPU or you can't fit a small batch, you can

leverage DeepSpeed ZeRO + CPU Offload, or NVMe Offload for much larger models. In this case, you need to separately

[install the library](main_classes/deepspeed#installation), then follow one of the guides to create a configuration file

and launch DeepSpeed:

* For an in-depth guide on DeepSpeed integration with [`Trainer`], review [the corresponding documentation](main_classes/deepspeed), specifically the

[section for a single GPU](main_classes/deepspeed#deployment-with-one-gpu). Some adjustments are required to use DeepSpeed in a notebook; please take a look at the [corresponding guide](main_classes/deepspeed#deployment-in-notebooks).

* If you prefer to use 🤗 Accelerate, refer to [🤗 Accelerate DeepSpeed guide](https://huggingface.co/docs/accelerate/en/usage_guides/deepspeed).

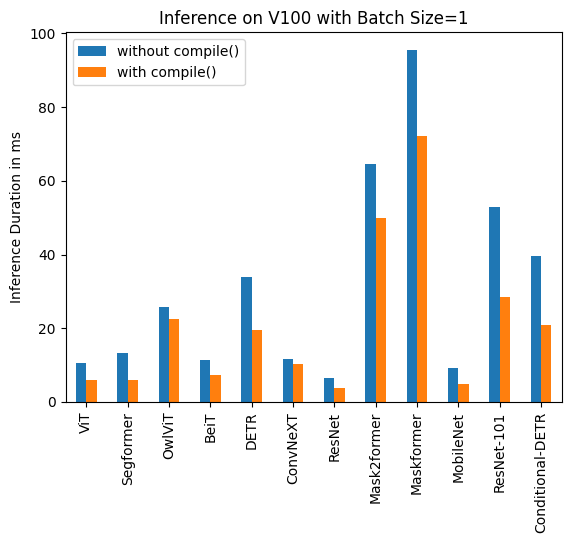

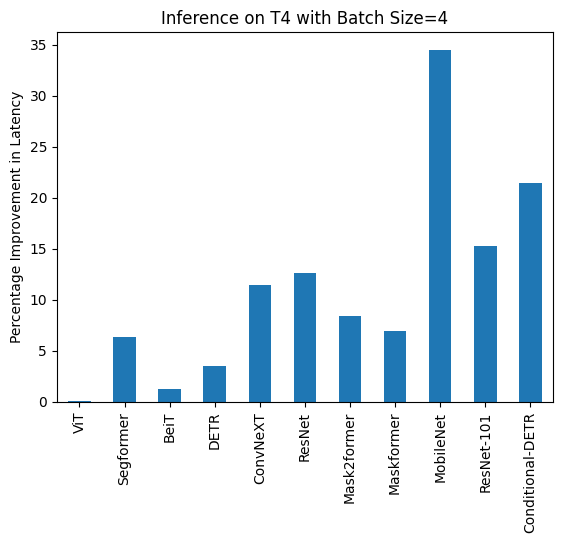

## Using torch.compile

PyTorch 2.0 introduced a new compile function that doesn't require any modification to existing PyTorch code but can

optimize your code by adding a single line of code: `model = torch.compile(model)`.

If using [`Trainer`], you only need to pass the `torch_compile` option in the [`TrainingArguments`]:

```python

training_args = TrainingArguments(torch_compile=True, **default_args)

```

`torch.compile` uses Python's frame evaluation API to automatically create a graph from existing PyTorch programs. After

capturing the graph, different backends can be deployed to lower the graph to an optimized engine.

You can find more details and benchmarks in [PyTorch documentation](https://pytorch.org/get-started/pytorch-2.0/).

`torch.compile` has a growing list of backends, which can be listed by calling `torchdynamo.list_backends()`; each backend has its own optional dependencies.

Choose which backend to use by specifying it via `torch_compile_backend` in the [`TrainingArguments`]. Some of the most commonly used backends are:

**Debugging backends**:

* `dynamo.optimize("eager")` - Uses PyTorch to run the extracted GraphModule. This is quite useful in debugging TorchDynamo issues.

* `dynamo.optimize("aot_eager")` - Uses AotAutograd with no compiler, i.e., just using PyTorch eager for the AotAutograd's extracted forward and backward graphs. This is useful for debugging, and unlikely to give speedups.

**Training & inference backends**:

* `dynamo.optimize("inductor")` - Uses TorchInductor backend with AotAutograd and cudagraphs by leveraging codegened Triton kernels. [Read more](https://dev-discuss.pytorch.org/t/torchinductor-a-pytorch-native-compiler-with-define-by-run-ir-and-symbolic-shapes/747)

* `dynamo.optimize("nvfuser")` - nvFuser with TorchScript. [Read more](https://dev-discuss.pytorch.org/t/tracing-with-primitives-update-1-nvfuser-and-its-primitives/593)

* `dynamo.optimize("aot_nvfuser")` - nvFuser with AotAutograd. [Read more](https://dev-discuss.pytorch.org/t/tracing-with-primitives-update-1-nvfuser-and-its-primitives/593)

* `dynamo.optimize("aot_cudagraphs")` - cudagraphs with AotAutograd. [Read more](https://github.com/pytorch/torchdynamo/pull/757)

**Inference-only backends**:

* `dynamo.optimize("ofi")` - Uses Torchscript optimize_for_inference. [Read more](https://pytorch.org/docs/stable/generated/torch.jit.optimize_for_inference.html)

* `dynamo.optimize("fx2trt")` - Uses NVIDIA TensorRT for inference optimizations. [Read more](https://pytorch.org/TensorRT/tutorials/getting_started_with_fx_path.html)

* `dynamo.optimize("onnxrt")` - Uses ONNXRT for inference on CPU/GPU. [Read more](https://onnxruntime.ai/)

* `dynamo.optimize("ipex")` - Uses IPEX for inference on CPU. [Read more](https://github.com/intel/intel-extension-for-pytorch)

For an example of using `torch.compile` with 🤗 Transformers, check out this [blog post on fine-tuning a BERT model for Text Classification using the newest PyTorch 2.0 features](https://www.philschmid.de/getting-started-pytorch-2-0-transformers).

## Using 🤗 Accelerate

With [🤗 Accelerate](https://huggingface.co/docs/accelerate/index) you can use the above methods while gaining full

control over the training loop and can essentially write the loop in pure PyTorch with some minor modifications.

Suppose you have combined the methods in the [`TrainingArguments`] like so:

```py
training_args = TrainingArguments(
    per_device_train_batch_size=1,
    gradient_accumulation_steps=4,
    gradient_checkpointing=True,
    fp16=True,
    **default_args,
)
```

The full example training loop with 🤗 Accelerate is only a handful of lines of code long:

```py
from accelerate import Accelerator
from torch.utils.data.dataloader import DataLoader

dataloader = DataLoader(ds, batch_size=training_args.per_device_train_batch_size)

if training_args.gradient_checkpointing:
    model.gradient_checkpointing_enable()

# Recent 🤗 Accelerate releases take `mixed_precision` rather than a boolean `fp16` flag.
accelerator = Accelerator(mixed_precision="fp16" if training_args.fp16 else "no")
model, optimizer, dataloader = accelerator.prepare(model, adam_bnb_optim, dataloader)

model.train()
for step, batch in enumerate(dataloader, start=1):
    loss = model(**batch).loss
    # Normalize so the accumulated gradient is an average over the micro-batches.
    loss = loss / training_args.gradient_accumulation_steps
    accelerator.backward(loss)
    if step % training_args.gradient_accumulation_steps == 0:
        optimizer.step()
        optimizer.zero_grad()
```

First we wrap the dataset in a [`DataLoader`](https://pytorch.org/docs/stable/data.html#torch.utils.data.DataLoader).

Then we can enable gradient checkpointing by calling the model's [`~PreTrainedModel.gradient_checkpointing_enable`] method.

When we initialize the [`Accelerator`](https://huggingface.co/docs/accelerate/package_reference/accelerator#accelerate.Accelerator)

we can specify if we want to use mixed precision training and it will take care of it for us in the [`prepare`] call.

During the [`prepare`](https://huggingface.co/docs/accelerate/package_reference/accelerator#accelerate.Accelerator.prepare)

call the dataloader will also be distributed across workers should we use multiple GPUs. We use the same [8-bit optimizer](#8-bit-adam) from the earlier example.

Finally, we can add the main training loop. Note that the `backward` call is handled by 🤗 Accelerate. We can also see

how gradient accumulation works: we normalize the loss, so we get the average at the end of accumulation and once we have

enough steps we run the optimization.
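To make the loss normalization concrete, here is a minimal sketch in plain Python (no PyTorch), assuming a toy one-parameter model `w * x` with a mean squared error loss. The data and the helper `grad_mse` are made up for illustration. It checks that summing gradients of losses scaled by `1 / accumulation_steps` matches the gradient of one large batch:

```py
# Toy model: prediction = w * x, loss = mean squared error.
def grad_mse(w, xs, ys):
    """Gradient of mean((w * x - y) ** 2) with respect to w."""
    n = len(xs)
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n


xs = [1.0, 2.0, 3.0, 4.0]  # hypothetical inputs
ys = [2.0, 4.1, 5.9, 8.2]  # hypothetical targets
w = 0.5
accumulation_steps = 4  # plays the role of gradient_accumulation_steps

# Gradient of a single batch containing all four samples.
full_grad = grad_mse(w, xs, ys)

# Four micro-batches of size 1: scale each gradient by 1 / accumulation_steps
# and sum them, which is what dividing the loss before backward() achieves.
accumulated = 0.0
for x, y in zip(xs, ys):
    accumulated += grad_mse(w, [x], [y]) / accumulation_steps

print(full_grad, accumulated)
```

Up to floating-point rounding the two values agree, which is why the effective batch size becomes `per_device_train_batch_size * gradient_accumulation_steps`.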

Implementing these optimization techniques with 🤗 Accelerate only takes a handful of lines of code and comes with the

benefit of more flexibility in the training loop. For full documentation of all features, have a look at the

[Accelerate documentation](https://huggingface.co/docs/accelerate/index).

## Efficient Software Prebuilds

PyTorch's [pip and conda builds](https://pytorch.org/get-started/locally/#start-locally) come prebuilt with the cuda toolkit

which is enough to run PyTorch, but it is insufficient if you need to build cuda extensions.

At times, additional effort may be required to pre-build some components, for instance if you're using libraries like `apex` that

don't come pre-compiled. In other situations, figuring out how to install the right cuda toolkit system-wide can be complicated.

To address these scenarios, PyTorch and NVIDIA released a new version of the NGC docker container which already comes with

everything prebuilt. You just need to install your programs on it, and it will run out of the box.

This approach is also useful if you want to tweak the pytorch source and/or make a new customized build.

To find the docker image version you want, start [with the PyTorch release notes](https://docs.nvidia.com/deeplearning/frameworks/pytorch-release-notes/)

and choose one of the latest monthly releases. Go into the release notes for the desired release, check that the environment's

components match your needs (including NVIDIA Driver requirements!), and then at the very top of that document go

to the corresponding NGC page. If for some reason you get lost, here is [the index of all PyTorch NGC images](https://ngc.nvidia.com/catalog/containers/nvidia:pytorch).

Next, follow the instructions to download and deploy the docker image.

## Mixture of Experts

Some recent papers reported a 4-5x training speedup and faster inference by integrating

Mixture of Experts (MoE) into Transformer models.

Since it has been discovered that more parameters lead to better performance, this technique makes it possible to increase the

number of parameters by an order of magnitude without increasing training costs.

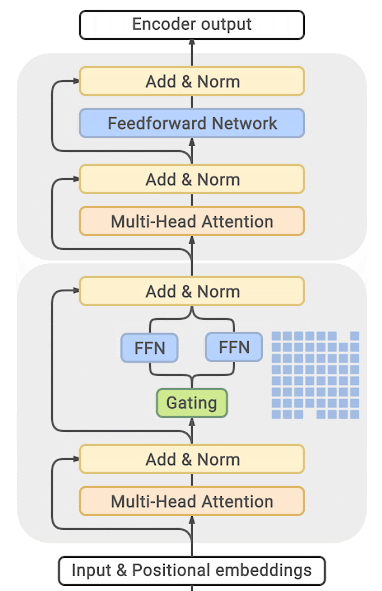

In this approach, every other FFN layer is replaced with an MoE layer which consists of many experts, with a gating function

that trains each expert in a balanced way depending on the input token's position in a sequence.

(source: [GLAM](https://ai.googleblog.com/2021/12/more-efficient-in-context-learning-with.html))
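As a rough illustration of the routing idea, here is a minimal sketch in plain Python. It assumes top-1 routing (each token goes to the single expert with the highest gate probability), which is only one of the schemes used in the papers listed below. The gate logits are hand-written stand-ins for what a learned gating function would produce, and each "expert" is reduced to a scalar multiplier:

```py
import math


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top1(gate_logits):
    """Return (expert_index, gate_weight) for a single token."""
    probs = softmax(gate_logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return best, probs[best]


# Hypothetical gate logits for 4 tokens over 3 experts.
token_gate_logits = [
    [2.0, 0.1, -1.0],
    [0.0, 1.5, 0.3],
    [-0.5, 0.2, 3.0],
    [1.0, 0.9, -2.0],
]

# Stand-in experts: a real MoE layer would use one FFN per expert.
experts = [lambda x: 1.0 * x, lambda x: 2.0 * x, lambda x: 3.0 * x]
token_values = [1.0, 1.0, 1.0, 1.0]

assignments = []
outputs = []
for value, logits in zip(token_values, token_gate_logits):
    idx, weight = route_top1(logits)
    assignments.append(idx)
    # Only the selected expert runs for this token.
    outputs.append(weight * experts[idx](value))

# Number of tokens each expert received; balanced training aims to even this out.
load = [assignments.count(i) for i in range(len(experts))]
print(assignments, load)
```

Because each token activates only one expert, the compute per token stays close to that of a single FFN, while the total parameter count grows with the number of experts.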

You can find exhaustive details and comparison tables in the papers listed at the end of this section.

The main drawback of this approach is that it requires staggering amounts of GPU memory - almost an order of magnitude

larger than its dense equivalent. Various distillation and other approaches have been proposed to overcome the much higher memory requirements.

There is a direct trade-off, though: you can use just a few experts with a 2-3x smaller base model instead of dozens or

hundreds of experts, leading to a 5x smaller model, and thus increase the training speed moderately while increasing the

memory requirements moderately as well.

Most related papers and implementations are built around TensorFlow/TPUs:

- [GShard: Scaling Giant Models with Conditional Computation and Automatic Sharding](https://arxiv.org/abs/2006.16668)

- [Switch Transformers: Scaling to Trillion Parameter Models with Simple and Efficient Sparsity](https://arxiv.org/abs/2101.03961)

- [GLaM: Generalist Language Model (GLaM)](https://ai.googleblog.com/2021/12/more-efficient-in-context-learning-with.html)

For PyTorch, DeepSpeed has built one as well: [DeepSpeed-MoE: Advancing Mixture-of-Experts Inference and Training to Power Next-Generation AI Scale](https://arxiv.org/abs/2201.05596), [Mixture of Experts](https://www.deepspeed.ai/tutorials/mixture-of-experts/) - blog posts: [1](https://www.microsoft.com/en-us/research/blog/deepspeed-powers-8x-larger-moe-model-training-with-high-performance/), [2](https://www.microsoft.com/en-us/research/publication/scalable-and-efficient-moe-training-for-multitask-multilingual-models/) and specific deployment with large transformer-based natural language generation models: [blog post](https://www.deepspeed.ai/2021/12/09/deepspeed-moe-nlg.html), [Megatron-Deepspeed branch](https://github.com/microsoft/Megatron-DeepSpeed/tree/moe-training).

## Using PyTorch native attention and Flash Attention

PyTorch 2.0 released a native [`torch.nn.functional.scaled_dot_product_attention`](https://pytorch.org/docs/master/generated/torch.nn.functional.scaled_dot_product_attention.html) (SDPA)

that allows using fused GPU kernels such as [memory-efficient attention](https://arxiv.org/abs/2112.05682) and [flash attention](https://arxiv.org/abs/2205.14135).
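For reference, the quantity these kernels compute is `softmax(Q @ K^T / sqrt(d)) @ V`. The sketch below is a deliberately naive plain-Python version operating on nested lists, without masking or dropout. It is not how PyTorch implements the operator; it only spells out the math that the fused kernels evaluate more efficiently:

```py
import math


def scaled_dot_product_attention(q, k, v):
    """q: [n_q][d], k: [n_k][d], v: [n_k][d_v] -> output: [n_q][d_v]."""
    d = len(q[0])
    out = []
    for q_row in q:
        # Similarity of this query with every key, scaled by sqrt(d).
        scores = [
            sum(a * b for a, b in zip(q_row, k_row)) / math.sqrt(d) for k_row in k
        ]
        # Numerically stable softmax over the keys.
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        # Weighted average of the value rows.
        out.append(
            [sum(w * v_row[j] for w, v_row in zip(weights, v)) for j in range(len(v[0]))]
        )
    return out


q = [[1.0, 0.0]]
k = [[1.0, 0.0], [1.0, 0.0]]  # two identical keys -> equal attention weights
v = [[1.0, 2.0], [3.0, 4.0]]
result = scaled_dot_product_attention(q, k, v)
print(result)
```

With two identical keys the weights are 0.5 each, so the output is the plain average of the two value rows.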

After installing the [`optimum`](https://github.com/huggingface/optimum) package, the relevant internal modules can be

replaced to use PyTorch's native attention with:

```python

model = model.to_bettertransformer()

```

Once converted, train the model as usual.

<Tip warning={true}>

The PyTorch-native `scaled_dot_product_attention` operator can only dispatch to Flash Attention if no `attention_mask` is provided.

By default, in training mode, the BetterTransformer integration **drops the mask support and can only be used for training that does not require a padding mask for batched training**. This is the case, for example, during masked language modeling or causal language modeling. BetterTransformer is not suited for fine-tuning models on tasks that require a padding mask.

</Tip>

Check out this [blogpost](https://pytorch.org/blog/out-of-the-box-acceleration/) to learn more about acceleration and memory-savings with SDPA.

| 0

|

hf_public_repos/transformers/docs/source

|

hf_public_repos/transformers/docs/source/en/autoclass_tutorial.md

|

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

specific language governing permissions and limitations under the License.

⚠️ Note that this file is in Markdown but contain specific syntax for our doc-builder (similar to MDX) that may not be

rendered properly in your Markdown viewer.

-->

# Load pretrained instances with an AutoClass

With so many different Transformer architectures, it can be challenging to create one for your checkpoint. As a part of 🤗 Transformers core philosophy to make the library easy, simple and flexible to use, an `AutoClass` automatically infers and loads the correct architecture from a given checkpoint. The `from_pretrained()` method lets you quickly load a pretrained model for any architecture so you don't have to devote time and resources to train a model from scratch. Producing this type of checkpoint-agnostic code means if your code works for one checkpoint, it will work with another checkpoint - as long as it was trained for a similar task - even if the architecture is different.

<Tip>

Remember, architecture refers to the skeleton of the model and checkpoints are the weights for a given architecture. For example, [BERT](https://huggingface.co/bert-base-uncased) is an architecture, while `bert-base-uncased` is a checkpoint. Model is a general term that can mean either architecture or checkpoint.

</Tip>
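The idea of inferring the architecture from the checkpoint can be pictured with a toy sketch. Everything below (`ToyAutoModel`, the registry, and the in-memory checkpoint table) is hypothetical and only mimics the general pattern of reading an architecture name from a checkpoint's configuration and dispatching to the matching class. It is not the library's implementation:

```py
class ToyBertModel:
    def __init__(self, config):
        self.config = config


class ToyDistilBertModel:
    def __init__(self, config):
        self.config = config


# Hypothetical registry: architecture name -> model class.
ARCHITECTURES = {"bert": ToyBertModel, "distilbert": ToyDistilBertModel}

# Hypothetical checkpoints: each one carries a config naming its architecture.
CHECKPOINTS = {
    "bert-base-uncased": {"model_type": "bert"},
    "distilbert-base-uncased": {"model_type": "distilbert"},
}


class ToyAutoModel:
    @classmethod
    def from_pretrained(cls, checkpoint_name):
        config = CHECKPOINTS[checkpoint_name]
        # The caller never names the architecture; the config decides.
        model_class = ARCHITECTURES[config["model_type"]]
        return model_class(config)


model = ToyAutoModel.from_pretrained("distilbert-base-uncased")
print(type(model).__name__)
```

The calling code stays the same when the checkpoint name changes, which is what makes code written against an `AutoClass` checkpoint-agnostic.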

In this tutorial, learn to:

* Load a pretrained tokenizer.

* Load a pretrained image processor.

* Load a pretrained feature extractor.

* Load a pretrained processor.

* Load a pretrained model.

## AutoTokenizer

Nearly every NLP task begins with a tokenizer. A tokenizer converts your input into a format that can be processed by the model.

Load a tokenizer with [`AutoTokenizer.from_pretrained`]:

```py

>>> from transformers import AutoTokenizer

>>> tokenizer = AutoTokenizer.from_pretrained("bert-base-uncased")

```

Then tokenize your input as shown below:

```py

>>> sequence = "In a hole in the ground there lived a hobbit."

>>> print(tokenizer(sequence))

{'input_ids': [101, 1999, 1037, 4920, 1999, 1996, 2598, 2045, 2973, 1037, 7570, 10322, 4183, 1012, 102],

'token_type_ids': [0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0],

'attention_mask': [1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1]}

```

## AutoImageProcessor

For vision tasks, an image processor processes the image into the correct input format.

```py

>>> from transformers import AutoImageProcessor

>>> image_processor = AutoImageProcessor.from_pretrained("google/vit-base-patch16-224")

```

## AutoFeatureExtractor

For audio tasks, a feature extractor processes the audio signal into the correct input format.

Load a feature extractor with [`AutoFeatureExtractor.from_pretrained`]:

```py

>>> from transformers import AutoFeatureExtractor

>>> feature_extractor = AutoFeatureExtractor.from_pretrained(
...     "ehcalabres/wav2vec2-lg-xlsr-en-speech-emotion-recognition"
... )

```

## AutoProcessor

Multimodal tasks require a processor that combines two types of preprocessing tools. For example, the [LayoutLMV2](model_doc/layoutlmv2) model requires an image processor to handle images and a tokenizer to handle text; a processor combines both of them.

Load a processor with [`AutoProcessor.from_pretrained`]:

```py

>>> from transformers import AutoProcessor

>>> processor = AutoProcessor.from_pretrained("microsoft/layoutlmv2-base-uncased")

```

## AutoModel

<frameworkcontent>

<pt>

Finally, the `AutoModelFor` classes let you load a pretrained model for a given task (see [here](model_doc/auto) for a complete list of available tasks). For example, load a model for sequence classification with [`AutoModelForSequenceClassification.from_pretrained`]:

```py

>>> from transformers import AutoModelForSequenceClassification

>>> model = AutoModelForSequenceClassification.from_pretrained("distilbert-base-uncased")

```

Easily reuse the same checkpoint to load an architecture for a different task:

```py

>>> from transformers import AutoModelForTokenClassification

>>> model = AutoModelForTokenClassification.from_pretrained("distilbert-base-uncased")

```

<Tip warning={true}>

For PyTorch models, the `from_pretrained()` method uses `torch.load()` which internally uses `pickle` and is known to be insecure. In general, never load a model that could have come from an untrusted source, or that could have been tampered with. This security risk is partially mitigated for public models hosted on the Hugging Face Hub, which are [scanned for malware](https://huggingface.co/docs/hub/security-malware) at each commit. See the [Hub documentation](https://huggingface.co/docs/hub/security) for best practices like [signed commit verification](https://huggingface.co/docs/hub/security-gpg#signing-commits-with-gpg) with GPG.

TensorFlow and Flax checkpoints are not affected, and can be loaded within PyTorch architectures using the `from_tf` and `from_flax` kwargs for the `from_pretrained` method to circumvent this issue.

</Tip>

Generally, we recommend using the `AutoTokenizer` class and the `AutoModelFor` class to load pretrained instances of models. This will ensure you load the correct architecture every time. In the next [tutorial](preprocessing), learn how to use your newly loaded tokenizer, image processor, feature extractor and processor to preprocess a dataset for fine-tuning.

</pt>

<tf>

Finally, the `TFAutoModelFor` classes let you load a pretrained model for a given task (see [here](model_doc/auto) for a complete list of available tasks). For example, load a model for sequence classification with [`TFAutoModelForSequenceClassification.from_pretrained`]:

```py

>>> from transformers import TFAutoModelForSequenceClassification

>>> model = TFAutoModelForSequenceClassification.from_pretrained("distilbert-base-uncased")

```

Easily reuse the same checkpoint to load an architecture for a different task:

```py

>>> from transformers import TFAutoModelForTokenClassification

>>> model = TFAutoModelForTokenClassification.from_pretrained("distilbert-base-uncased")

```

Generally, we recommend using the `AutoTokenizer` class and the `TFAutoModelFor` class to load pretrained instances of models. This will ensure you load the correct architecture every time. In the next [tutorial](preprocessing), learn how to use your newly loaded tokenizer, image processor, feature extractor and processor to preprocess a dataset for fine-tuning.

</tf>

</frameworkcontent>

| 0

|

hf_public_repos/transformers/docs/source

|

hf_public_repos/transformers/docs/source/en/peft.md

|

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

specific language governing permissions and limitations under the License.

⚠️ Note that this file is in Markdown but contain specific syntax for our doc-builder (similar to MDX) that may not be

rendered properly in your Markdown viewer.

-->

# Load adapters with 🤗 PEFT

[[open-in-colab]]

[Parameter-Efficient Fine Tuning (PEFT)](https://huggingface.co/blog/peft) methods freeze the pretrained model parameters during fine-tuning and add a small number of trainable parameters (the adapters) on top of it. The adapters are trained to learn task-specific information. This approach has been shown to be very memory-efficient with lower compute usage while producing results comparable to a fully fine-tuned model.

Adapters trained with PEFT are also usually an order of magnitude smaller than the full model, making it convenient to share, store, and load them.

<div class="flex flex-col justify-center">

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/peft/PEFT-hub-screenshot.png"/>

<figcaption class="text-center">The adapter weights for an OPTForCausalLM model stored on the Hub are only ~6MB compared to the full size of the model weights, which can be ~700MB.</figcaption>

</div>
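To get a feel for why adapters are so small, here is a back-of-the-envelope sketch for LoRA, one of the methods listed further down. LoRA adds two low-rank factors next to a frozen weight matrix, so the extra trainable parameters scale with the rank `r` rather than with the full matrix size. The layer size and rank below are assumptions chosen for illustration, not values taken from a specific checkpoint:

```py
def lora_param_count(d_in, d_out, r):
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix."""
    # Two low-rank factors: A has shape (r, d_in) and B has shape (d_out, r).
    return r * d_in + d_out * r


d_in = d_out = 1024  # assumed projection size
r = 8  # assumed LoRA rank

full = d_in * d_out  # parameters in the frozen weight matrix
adapter = lora_param_count(d_in, d_out, r)
print(full, adapter, full / adapter)
```

For these assumed sizes the adapter holds 64 times fewer parameters than the matrix it adapts, and adapters are usually attached to only a subset of the model's layers.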

If you're interested in learning more about the 🤗 PEFT library, check out the [documentation](https://huggingface.co/docs/peft/index).

## Setup

Get started by installing 🤗 PEFT:

```bash

pip install peft

```

If you want to try out the brand new features, you might be interested in installing the library from source:

```bash

pip install git+https://github.com/huggingface/peft.git

```

## Supported PEFT models

🤗 Transformers natively supports some PEFT methods, meaning you can load adapter weights stored locally or on the Hub and easily run or train them with a few lines of code. The following methods are supported:

- [Low Rank Adapters](https://huggingface.co/docs/peft/conceptual_guides/lora)

- [IA3](https://huggingface.co/docs/peft/conceptual_guides/ia3)

- [AdaLoRA](https://arxiv.org/abs/2303.10512)

If you want to use other PEFT methods, such as prompt learning or prompt tuning, or learn about the 🤗 PEFT library in general, please refer to the [documentation](https://huggingface.co/docs/peft/index).

## Load a PEFT adapter

To load and use a PEFT adapter model from 🤗 Transformers, make sure the Hub repository or local directory contains an `adapter_config.json` file and the adapter weights, as shown in the example image above. Then you can load the PEFT adapter model using the `AutoModelFor` class. For example, to load a PEFT adapter model for causal language modeling:

1. specify the PEFT model id

2. pass it to the [`AutoModelForCausalLM`] class

```py

from transformers import AutoModelForCausalLM, AutoTokenizer

peft_model_id = "ybelkada/opt-350m-lora"

model = AutoModelForCausalLM.from_pretrained(peft_model_id)

```

<Tip>

You can load a PEFT adapter with either an `AutoModelFor` class or the base model class like `OPTForCausalLM` or `LlamaForCausalLM`.

</Tip>

You can also load a PEFT adapter by calling the `load_adapter` method:

```py

from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "facebook/opt-350m"

peft_model_id = "ybelkada/opt-350m-lora"

model = AutoModelForCausalLM.from_pretrained(model_id)

model.load_adapter(peft_model_id)

```

## Load in 8bit or 4bit

The `bitsandbytes` integration supports 8bit and 4bit precision data types, which are useful for loading large models because it saves memory (see the `bitsandbytes` integration [guide](./quantization#bitsandbytes-integration) to learn more). Add the `load_in_8bit` or `load_in_4bit` parameters to [`~PreTrainedModel.from_pretrained`] and set `device_map="auto"` to effectively distribute the model to your hardware:

```py

from transformers import AutoModelForCausalLM, AutoTokenizer

peft_model_id = "ybelkada/opt-350m-lora"

model = AutoModelForCausalLM.from_pretrained(peft_model_id, device_map="auto", load_in_8bit=True)

```
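A rough way to see the savings is to multiply the parameter count by the bytes needed per parameter. The sketch below is a weight-only estimate in plain Python. It ignores activations, quantization constants, and any layers kept in higher precision, and it uses 350 million parameters as a nominal figure suggested by the checkpoint name:

```py
# Bytes needed to store one parameter in each format.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "4bit": 0.5}


def weight_memory_mb(num_params, dtype):
    """Approximate memory for the weights alone, in megabytes."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e6


num_params = 350_000_000  # nominal size, assumed from the name "opt-350m"
for dtype in BYTES_PER_PARAM:
    print(dtype, weight_memory_mb(num_params, dtype))
```

Under these assumptions, 8-bit loading halves the footprint of half-precision weights and 4-bit loading halves it again.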

## Add a new adapter

You can use [`~peft.PeftModel.add_adapter`] to add a new adapter to a model with an existing adapter as long as the new adapter is the same type as the current one. For example, if you have an existing LoRA adapter attached to a model:

```py

from transformers import AutoModelForCausalLM, OPTForCausalLM, AutoTokenizer

from peft import LoraConfig

model_id = "facebook/opt-350m"

model = AutoModelForCausalLM.from_pretrained(model_id)

lora_config = LoraConfig(
    target_modules=["q_proj", "k_proj"],
    init_lora_weights=False,
)

model.add_adapter(lora_config, adapter_name="adapter_1")

```

To add a new adapter:

```py

# attach new adapter with same config

model.add_adapter(lora_config, adapter_name="adapter_2")

```

Now you can use [`~peft.PeftModel.set_adapter`] to set which adapter to use:

```py

# `inputs` and `tokenizer` are assumed to be defined already
# (for example as in the "Enable and disable adapters" section below)

# use adapter_1
model.set_adapter("adapter_1")
output = model.generate(**inputs)
print(tokenizer.decode(output[0], skip_special_tokens=True))

# use adapter_2
model.set_adapter("adapter_2")
output_enabled = model.generate(**inputs)
print(tokenizer.decode(output_enabled[0], skip_special_tokens=True))

```

## Enable and disable adapters

Once you've added an adapter to a model, you can enable or disable the adapter module. To enable the adapter module:

```py

from transformers import AutoModelForCausalLM, OPTForCausalLM, AutoTokenizer

from peft import PeftConfig

model_id = "facebook/opt-350m"

adapter_model_id = "ybelkada/opt-350m-lora"

tokenizer = AutoTokenizer.from_pretrained(model_id)

text = "Hello"

inputs = tokenizer(text, return_tensors="pt")

model = AutoModelForCausalLM.from_pretrained(model_id)

peft_config = PeftConfig.from_pretrained(adapter_model_id)

# to initiate with random weights

peft_config.init_lora_weights = False

model.add_adapter(peft_config)

model.enable_adapters()

output = model.generate(**inputs)

```

To disable the adapter module:

```py

model.disable_adapters()

output = model.generate(**inputs)

```

## Train a PEFT adapter

PEFT adapters are supported by the [`Trainer`] class so that you can train an adapter for your specific use case. It only requires adding a few more lines of code. For example, to train a LoRA adapter:

<Tip>

If you aren't familiar with fine-tuning a model with [`Trainer`], take a look at the [Fine-tune a pretrained model](training) tutorial.

</Tip>

1. Define your adapter configuration with the task type and hyperparameters (see [`~peft.LoraConfig`] for more details about what the hyperparameters do).

```py

from peft import LoraConfig

peft_config = LoraConfig(
    lora_alpha=16,
    lora_dropout=0.1,
    r=64,
    bias="none",
    task_type="CAUSAL_LM",
)

```

2. Add adapter to the model.

```py

model.add_adapter(peft_config)

```

3. Now you can pass the model to [`Trainer`]!

```py

trainer = Trainer(model=model, ...)

trainer.train()

```

To save your trained adapter and load it back:

```py

model.save_pretrained(save_dir)

model = AutoModelForCausalLM.from_pretrained(save_dir)

```

## Add additional trainable layers to a PEFT adapter

You can also fine-tune additional trainable adapters on top of a model that has adapters attached by passing `modules_to_save` in your PEFT config. For example, if you want to also fine-tune the `lm_head` on top of a model with a LoRA adapter:

```py

from transformers import AutoModelForCausalLM, OPTForCausalLM, AutoTokenizer

from peft import LoraConfig

model_id = "facebook/opt-350m"

model = AutoModelForCausalLM.from_pretrained(model_id)

lora_config = LoraConfig(
    target_modules=["q_proj", "k_proj"],
    modules_to_save=["lm_head"],
)

model.add_adapter(lora_config)

```

<!--

TODO: (@younesbelkada @stevhliu)

- Link to PEFT docs for further details

- Trainer

- 8-bit / 4-bit examples ?

-->

| 0

|

hf_public_repos/transformers/docs/source

|

hf_public_repos/transformers/docs/source/en/perf_infer_cpu.md

|

<!--Copyright 2022 The HuggingFace Team. All rights reserved.

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

⚠️ Note that this file is in Markdown but contain specific syntax for our doc-builder (similar to MDX) that may not be

rendered properly in your Markdown viewer.

-->

# CPU inference

With some optimizations, it is possible to efficiently run large model inference on a CPU. One of these optimization techniques involves compiling the PyTorch code into an intermediate format for high-performance environments like C++. The other technique fuses multiple operations into one kernel to reduce the overhead of running each operation separately.

You'll learn how to use [BetterTransformer](https://pytorch.org/blog/a-better-transformer-for-fast-transformer-encoder-inference/) for faster inference, and how to convert your PyTorch code to [TorchScript](https://pytorch.org/tutorials/beginner/Intro_to_TorchScript_tutorial.html). If you're using an Intel CPU, you can also use [graph optimizations](https://intel.github.io/intel-extension-for-pytorch/cpu/latest/tutorials/features.html#graph-optimization) from [Intel Extension for PyTorch](https://intel.github.io/intel-extension-for-pytorch/cpu/latest/index.html) to boost inference speed even more. Finally, learn how to use 🤗 Optimum to accelerate inference with ONNX Runtime or OpenVINO (if you're using an Intel CPU).

## BetterTransformer

BetterTransformer accelerates inference with its fastpath (native PyTorch specialized implementation of Transformer functions) execution. The two optimizations in the fastpath execution are:

1. fusion, which combines multiple sequential operations into a single "kernel" to reduce the number of computation steps

2. skipping the inherent sparsity of padding tokens to avoid unnecessary computation with nested tensors

BetterTransformer also converts all attention operations to use the more memory-efficient [scaled dot product attention](https://pytorch.org/docs/master/generated/torch.nn.functional.scaled_dot_product_attention).
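The benefit of skipping padding depends on how uneven the sequence lengths in a batch are. The following plain-Python sketch, using made-up sequence lengths, compares how many token positions are processed when every sequence is padded to the longest one with how many are processed when only real tokens are kept, which is the effect nested tensors aim for:

```py
def padded_token_count(lengths):
    """Token positions processed when every sequence is padded to the longest."""
    return max(lengths) * len(lengths)


def nested_token_count(lengths):
    """Token positions processed when padding is skipped entirely."""
    return sum(lengths)


lengths = [12, 40, 7, 128]  # hypothetical sequence lengths in one batch
padded = padded_token_count(lengths)
real = nested_token_count(lengths)
wasted_fraction = 1 - real / padded
print(padded, real, round(wasted_fraction, 3))
```

In this made-up batch roughly 63% of the padded positions carry no information, so the more the lengths vary, the more there is to gain.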

<Tip>

BetterTransformer is not supported for all models. Check this [list](https://huggingface.co/docs/optimum/bettertransformer/overview#supported-models) to see if a model supports BetterTransformer.

</Tip>

Before you start, make sure you have 🤗 Optimum [installed](https://huggingface.co/docs/optimum/installation).

Enable BetterTransformer with the [`PreTrainedModel.to_bettertransformer`] method:

```py

from transformers import AutoModelForCausalLM

model = AutoModelForCausalLM.from_pretrained("bigcode/starcoder")

model.to_bettertransformer()

```

## TorchScript

TorchScript is an intermediate PyTorch model representation that can be run in production environments where performance is important. You can train a model in PyTorch and then export it to TorchScript to free the model from Python performance constraints. PyTorch [traces](https://pytorch.org/docs/stable/generated/torch.jit.trace.html) a model to return a [`ScriptFunction`] that is optimized with just-in-time compilation (JIT). Compared to the default eager mode, JIT mode in PyTorch typically yields better performance for inference using optimization techniques like operator fusion.

For a gentle introduction to TorchScript, see the [Introduction to PyTorch TorchScript](https://pytorch.org/tutorials/beginner/Intro_to_TorchScript_tutorial.html) tutorial.

With the [`Trainer`] class, you can enable JIT mode for CPU inference by setting the `--jit_mode_eval` flag:

```bash

python run_qa.py \
  --model_name_or_path csarron/bert-base-uncased-squad-v1 \
  --dataset_name squad \
  --do_eval \
  --max_seq_length 384 \
  --doc_stride 128 \
  --output_dir /tmp/ \
  --no_cuda \
  --jit_mode_eval

```

<Tip warning={true}>

For PyTorch >= 1.14.0, JIT-mode could benefit any model for prediction and evaluation since the dict input is supported in `jit.trace`.

For PyTorch < 1.14.0, JIT-mode could benefit a model if its forward parameter order matches the tuple input order in `jit.trace`, such as a question-answering model. If the forward parameter order does not match the tuple input order in `jit.trace`, like a text classification model, `jit.trace` will fail. The exception is caught so that execution falls back to running without JIT, and a log message notifies the user.

</Tip>

## IPEX graph optimization

Intel® Extension for PyTorch (IPEX) provides further optimizations in JIT mode for Intel CPUs, and we recommend combining it with TorchScript for even faster performance. The IPEX [graph optimization](https://intel.github.io/intel-extension-for-pytorch/cpu/latest/tutorials/features/graph_optimization.html) fuses operations like Multi-head attention, Concat Linear, Linear + Add, Linear + Gelu, Add + LayerNorm, and more.
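Operator fusion can be pictured with a toy example. The plain-Python sketch below uses a scalar weight and bias as a stand-in for a linear layer and compares a two-pass "Linear then GELU" computation with a single fused pass. It says nothing about how IPEX implements its fused kernels; it only shows that fusion removes the intermediate result while producing the same output:

```py
import math


def gelu(x):
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def linear_then_gelu(xs, w, b):
    """Unfused: two passes over the data, with an intermediate list in between."""
    hidden = [w * x + b for x in xs]  # pass 1: Linear
    return [gelu(h) for h in hidden]  # pass 2: GELU


def fused_linear_gelu(xs, w, b):
    """Fused: a single pass, no intermediate list is materialized."""
    return [gelu(w * x + b) for x in xs]


xs = [-1.0, 0.0, 0.5, 2.0]
unfused = linear_then_gelu(xs, w=1.5, b=0.1)
fused = fused_linear_gelu(xs, w=1.5, b=0.1)
print(unfused == fused)
```

On real hardware the saving comes from fewer kernel launches and fewer round trips to memory for the intermediate tensor.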

To take advantage of these graph optimizations, make sure you have IPEX [installed](https://intel.github.io/intel-extension-for-pytorch/cpu/latest/tutorials/installation.html):

```bash

pip install intel_extension_for_pytorch

```

Set the `--use_ipex` and `--jit_mode_eval` flags in the [`Trainer`] class to enable JIT mode with the graph optimizations:

```bash

python run_qa.py \
  --model_name_or_path csarron/bert-base-uncased-squad-v1 \
  --dataset_name squad \
  --do_eval \
  --max_seq_length 384 \
  --doc_stride 128 \
  --output_dir /tmp/ \
  --no_cuda \
  --use_ipex \
  --jit_mode_eval

```

## 🤗 Optimum

<Tip>

Learn more details about using ORT with 🤗 Optimum in the [Optimum Inference with ONNX Runtime](https://huggingface.co/docs/optimum/onnxruntime/usage_guides/models) guide. This section only provides a brief and simple example.

</Tip>

ONNX Runtime (ORT) is a model accelerator that runs inference on CPUs by default. ORT is supported by 🤗 Optimum which can be used in 🤗 Transformers, without making too many changes to your code. You only need to replace the 🤗 Transformers `AutoClass` with its equivalent [`~optimum.onnxruntime.ORTModel`] for the task you're solving, and load a checkpoint in the ONNX format.

For example, if you're running inference on a question answering task, load the [optimum/roberta-base-squad2](https://huggingface.co/optimum/roberta-base-squad2) checkpoint which contains a `model.onnx` file:

```py

from transformers import AutoTokenizer, pipeline

from optimum.onnxruntime import ORTModelForQuestionAnswering

model = ORTModelForQuestionAnswering.from_pretrained("optimum/roberta-base-squad2")

tokenizer = AutoTokenizer.from_pretrained("deepset/roberta-base-squad2")

onnx_qa = pipeline("question-answering", model=model, tokenizer=tokenizer)

question = "What's my name?"

context = "My name is Philipp and I live in Nuremberg."

pred = onnx_qa(question, context)

```

If you have an Intel CPU, take a look at 🤗 [Optimum Intel](https://huggingface.co/docs/optimum/intel/index) which supports a variety of compression techniques (quantization, pruning, knowledge distillation) and tools for converting models to the [OpenVINO](https://huggingface.co/docs/optimum/intel/inference) format for higher performance inference.

| 0

|

hf_public_repos/transformers/docs/source

|

hf_public_repos/transformers/docs/source/en/bertology.md

|

<!--Copyright 2020 The HuggingFace Team. All rights reserved.

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

specific language governing permissions and limitations under the License.

⚠️ Note that this file is in Markdown but contain specific syntax for our doc-builder (similar to MDX) that may not be

rendered properly in your Markdown viewer.

-->

# BERTology

There is a growing field of study concerned with investigating the inner workings of large-scale transformers like BERT

(that some call "BERTology"). Some good examples of this field are:

- BERT Rediscovers the Classical NLP Pipeline by Ian Tenney, Dipanjan Das, Ellie Pavlick:

https://arxiv.org/abs/1905.05950

- Are Sixteen Heads Really Better than One? by Paul Michel, Omer Levy, Graham Neubig: https://arxiv.org/abs/1905.10650

- What Does BERT Look At? An Analysis of BERT's Attention by Kevin Clark, Urvashi Khandelwal, Omer Levy, Christopher D.

Manning: https://arxiv.org/abs/1906.04341

- CAT-probing: A Metric-based Approach to Interpret How Pre-trained Models for Programming Language Attend Code Structure: https://arxiv.org/abs/2210.04633

In order to help this new field develop, we have included a few additional features in the BERT/GPT/GPT-2 models to

help people access the inner representations, mainly adapted from the great work of Paul Michel

(https://arxiv.org/abs/1905.10650):

- accessing all the hidden-states of BERT/GPT/GPT-2,

- accessing all the attention weights for each head of BERT/GPT/GPT-2,

- retrieving the heads' output values and gradients to compute head importance scores and prune heads, as explained

in https://arxiv.org/abs/1905.10650.
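
As a minimal sketch of the first two features, the snippet below requests hidden states and attention weights from a BERT model. To avoid downloading a checkpoint it builds a tiny, randomly initialized model; the configuration values and the `input_ids` are illustrative assumptions, and `attn_implementation="eager"` is set on the assumption that it keeps attention weights available on recent library versions.

```py
import torch
from transformers import BertConfig, BertModel

# Tiny random model (illustrative sizes, not a real checkpoint).
config = BertConfig(
    vocab_size=100,
    hidden_size=32,
    num_hidden_layers=2,
    num_attention_heads=4,
    intermediate_size=64,
    attn_implementation="eager",  # assumption: ensures attention weights are returned
)
model = BertModel(config)
model.eval()

# A single made-up sequence of 5 token ids.
input_ids = torch.tensor([[1, 2, 3, 4, 5]])

with torch.no_grad():
    outputs = model(input_ids, output_hidden_states=True, output_attentions=True)

# One tensor for the embedding output plus one per layer: (batch, seq_len, hidden_size).
hidden_states = outputs.hidden_states
# One tensor per layer: (batch, num_heads, seq_len, seq_len).
attentions = outputs.attentions

print(len(hidden_states), tuple(hidden_states[-1].shape))
print(len(attentions), tuple(attentions[0].shape))
```

With a pretrained checkpoint you would load the model with `from_pretrained` instead and pass the same two flags.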

To help you understand and use these features, we have added a specific example script, [run_bertology.py](https://github.com/huggingface/transformers/tree/main/examples/research_projects/bertology/run_bertology.py), which extracts information from and prunes a model pre-trained on

GLUE.

| 0

|

hf_public_repos/transformers/docs/source

|

hf_public_repos/transformers/docs/source/en/model_summary.md

|

<!--Copyright 2020 The HuggingFace Team. All rights reserved.

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with

the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on

an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the

specific language governing permissions and limitations under the License.

⚠️ Note that this file is in Markdown but contains specific syntax for our doc-builder (similar to MDX) that may not be

rendered properly in your Markdown viewer.

-->

# The Transformer model family

Since its introduction in 2017, the [original Transformer](https://arxiv.org/abs/1706.03762) model has inspired many new and exciting models that extend beyond natural language processing (NLP) tasks. There are models for [predicting the folded structure of proteins](https://huggingface.co/blog/deep-learning-with-proteins), [training a cheetah to run](https://huggingface.co/blog/train-decision-transformers), and [time series forecasting](https://huggingface.co/blog/time-series-transformers). With so many Transformer variants available, it can be easy to miss the bigger picture. What all these models have in common is they're based on the original Transformer architecture. Some models only use the encoder or decoder, while others use both. This provides a useful taxonomy to categorize and examine the high-level differences within models in the Transformer family, and it'll help you understand Transformers you haven't encountered before.

If you aren't familiar with the original Transformer model or need a refresher, check out the [How do Transformers work](https://huggingface.co/course/chapter1/4?fw=pt) chapter from the Hugging Face course.

<div align="center">

<iframe width="560" height="315" src="https://www.youtube.com/embed/H39Z_720T5s" title="YouTube video player"

frameborder="0" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope;

picture-in-picture" allowfullscreen></iframe>

</div>

## Computer vision

<iframe style="border: 1px solid rgba(0, 0, 0, 0.1);" width="1000" height="450" src="https://www.figma.com/embed?embed_host=share&url=https%3A%2F%2Fwww.figma.com%2Ffile%2FacQBpeFBVvrDUlzFlkejoz%2FModelscape-timeline%3Fnode-id%3D0%253A1%26t%3Dm0zJ7m2BQ9oe0WtO-1" allowfullscreen></iframe>

### Convolutional network

For a long time, convolutional networks (CNNs) were the dominant paradigm for computer vision tasks until the [Vision Transformer](https://arxiv.org/abs/2010.11929) demonstrated its scalability and efficiency. Even then, some of a CNN's best qualities, like translation invariance, are so powerful (especially for certain tasks) that some Transformers incorporate convolutions in their architecture. [ConvNeXt](model_doc/convnext) flipped this exchange around and incorporated design choices from Transformers to modernize a CNN. For example, ConvNeXt uses non-overlapping sliding windows to patchify an image and a larger kernel to increase its global receptive field. ConvNeXt also makes several layer design choices to be more memory-efficient and improve performance, so it competes favorably with Transformers!

### Encoder[[cv-encoder]]

The [Vision Transformer (ViT)](model_doc/vit) opened the door to computer vision tasks without convolutions. ViT uses a standard Transformer encoder, but its main breakthrough was how it treated an image. It splits an image into fixed-size patches and uses them to create an embedding, just like how a sentence is split into tokens. ViT capitalized on the Transformer's efficient architecture to demonstrate competitive results with the CNNs at the time while requiring fewer resources to train. ViT was soon followed by other vision models that could also handle dense vision tasks like segmentation as well as detection.
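
To make the patch idea concrete, here is a minimal pure-Python sketch that splits a single-channel image into non-overlapping, flattened patches. The `patchify` helper and the 4x4 toy image are hypothetical; a real ViT also handles color channels and applies a learned linear projection to each patch before adding position embeddings.

```py
def patchify(image, patch_size):
    """Split a 2D image (a list of rows) into flattened, non-overlapping square patches."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")

    patches = []
    # Walk over the top-left corner of every patch, row-major.
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [
                image[top + row][left + col]
                for row in range(patch_size)
                for col in range(patch_size)
            ]
            patches.append(patch)
    return patches


# Toy 4x4 "image" whose pixel values are 0..15.
image = [[row * 4 + col for col in range(4)] for row in range(4)]
patches = patchify(image, patch_size=2)

for patch in patches:
    print(patch)
```

Each flattened patch plays the role that a token plays in a sentence: the sequence of patches is what the encoder attends over.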

One of these models is the [Swin](model_doc/swin) Transformer. It builds hierarchical feature maps (like a CNN 👀 and unlike ViT) from smaller-sized patches and merges them with neighboring patches in deeper layers. Attention is only computed within a local window, and the window is shifted between attention layers to create connections to help the model learn better. Since the Swin Transformer can produce hierarchical feature maps, it is a good candidate for dense prediction tasks like segmentation and detection. The [SegFormer](model_doc/segformer) also uses a Transformer encoder to build hierarchical feature maps, but it adds a simple multilayer perceptron (MLP) decoder on top to combine all the feature maps and make a prediction.

Other vision models, like BeIT and ViTMAE, drew inspiration from BERT's pretraining objective. [BeIT](model_doc/beit) is pretrained by *masked image modeling (MIM)*; the image patches are randomly masked, and the image is also tokenized into visual tokens. BeIT is trained to predict the visual tokens corresponding to the masked patches. [ViTMAE](model_doc/vitmae) has a similar pretraining objective, except it must predict the pixels instead of visual tokens. What's unusual is 75% of the image patches are masked! The decoder reconstructs the pixels from the masked tokens and encoded patches. After pretraining, the decoder is thrown away, and the encoder is ready to be used in downstream tasks.
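
The 75% masking step can be sketched as a random split of patch indices into visible and masked sets. This is an illustration only: `random_patch_mask` is a hypothetical helper, and the 196-patch count is an assumed example (a 14x14 grid), not something this page specifies.

```py
import random


def random_patch_mask(num_patches, mask_ratio=0.75, seed=0):
    """Randomly split patch indices into (visible, masked) lists."""
    rng = random.Random(seed)
    num_masked = int(num_patches * mask_ratio)

    # Shuffle all indices, then take the first chunk as the masked set.
    shuffled = list(range(num_patches))
    rng.shuffle(shuffled)
    masked = sorted(shuffled[:num_masked])
    visible = sorted(shuffled[num_masked:])
    return visible, masked


visible, masked = random_patch_mask(num_patches=196, mask_ratio=0.75)
print(len(visible), "visible patches,", len(masked), "masked patches")
```

Only the visible patches would be fed to the encoder; the decoder then receives the encoded patches together with mask tokens at the masked positions.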

### Decoder[[cv-decoder]]

Decoder-only vision models are rare because most vision models rely on an encoder to learn an image representation. But for use cases like image generation, the decoder is a natural fit, as we've seen from text generation models like GPT-2. [ImageGPT](model_doc/imagegpt) uses the same architecture as GPT-2, but instead of predicting the next token in a sequence, it predicts the next pixel in an image. In addition to image generation, ImageGPT could also be finetuned for image classification.

### Encoder-decoder[[cv-encoder-decoder]]

Vision models commonly use an encoder (also known as a backbone) to extract important image features before passing them to a Transformer decoder. [DETR](model_doc/detr) has a pretrained backbone, but it also uses the complete Transformer encoder-decoder architecture for object detection. The encoder learns image representations and combines them with object queries (each object query is a learned embedding that focuses on a region or object in an image) in the decoder. DETR predicts the bounding box coordinates and class label for each object query.

## Natural language processing

<iframe style="border: 1px solid rgba(0, 0, 0, 0.1);" width="1000" height="450" src="https://www.figma.com/embed?embed_host=share&url=https%3A%2F%2Fwww.figma.com%2Ffile%2FUhbQAZDlpYW5XEpdFy6GoG%2Fnlp-model-timeline%3Fnode-id%3D0%253A1%26t%3D4mZMr4r1vDEYGJ50-1" allowfullscreen></iframe>

### Encoder[[nlp-encoder]]

[BERT](model_doc/bert) is an encoder-only Transformer that randomly masks certain tokens in the input to avoid seeing other tokens, which would allow it to "cheat". The pretraining objective is to predict the masked token based on the context. This allows BERT to fully use the left and right contexts to help it learn a deeper and richer representation of the inputs. However, there was still room for improvement in BERT's pretraining strategy. [RoBERTa](model_doc/roberta) improved upon this by introducing a new pretraining recipe that includes training for longer and on larger batches, randomly masking tokens at each epoch instead of just once during preprocessing, and removing the next-sentence prediction objective.
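
The difference between masking once and masking at each epoch can be sketched in a few lines. This is a simplified illustration: `mask_tokens` is a hypothetical helper, the 15% default masking probability comes from the BERT paper rather than this page, and BERT's additional rule of sometimes keeping or randomly replacing a selected token is omitted.

```py
import random

MASK_TOKEN = "[MASK]"


def mask_tokens(tokens, mask_prob=0.15, rng=None):
    """Replace each token with [MASK] with probability mask_prob.

    Returns the masked sequence and the labels (the original token at masked
    positions, None elsewhere), which are what the model is trained to predict.
    """
    if rng is None:
        rng = random.Random()
    masked, labels = [], []
    for token in tokens:
        if rng.random() < mask_prob:
            masked.append(MASK_TOKEN)
            labels.append(token)
        else:
            masked.append(token)
            labels.append(None)
    return masked, labels


tokens = "the quick brown fox jumps over the lazy dog".split()

# Static masking: mask once during preprocessing and reuse the result every epoch.
static_masked, static_labels = mask_tokens(tokens, rng=random.Random(0))

# Dynamic masking (as in RoBERTa): draw a fresh mask each epoch.
for epoch in range(3):
    epoch_masked, _ = mask_tokens(tokens, rng=random.Random(epoch))
    print(epoch, " ".join(epoch_masked))
```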

The dominant strategy to improve performance is to increase the model size. But training large models is computationally expensive. One way to reduce computational costs is using a smaller model like [DistilBERT](model_doc/distilbert). DistilBERT uses [knowledge distillation](https://arxiv.org/abs/1503.02531) - a compression technique - to create a smaller version of BERT while keeping nearly all of its language understanding capabilities.
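
As a rough sketch of the soft-target idea behind knowledge distillation, the snippet below compares temperature-softened teacher and student distributions with a KL divergence. The function names, logits, and temperature are illustrative assumptions, and this is not DistilBERT's full training objective, which combines several loss terms.

```py
import math


def softmax(logits, temperature=1.0):
    """Softmax over logits divided by a temperature (higher = softer distribution)."""
    scaled = [value / temperature for value in logits]
    largest = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(value - largest) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) between temperature-softened distributions."""
    teacher_probs = softmax(teacher_logits, temperature)
    student_probs = softmax(student_logits, temperature)
    return sum(
        p * (math.log(p) - math.log(q))
        for p, q in zip(teacher_probs, student_probs)
    )


teacher_logits = [2.0, 1.0, 0.1]  # made-up teacher outputs for 3 classes
student_logits = [0.5, 0.5, 0.5]  # an untrained student that predicts uniformly

print(distillation_loss(student_logits, teacher_logits))
print(distillation_loss(teacher_logits, teacher_logits))  # identical outputs give 0
```

Minimizing this quantity pushes the student to reproduce the teacher's full output distribution rather than only its top prediction.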

However, most Transformer models continued to trend towards more parameters, leading to new models focused on improving training efficiency. [ALBERT](model_doc/albert) reduces memory consumption by lowering the number of parameters in two ways: separating the larger vocabulary embedding into two smaller matrices and allowing layers to share parameters. [DeBERTa](model_doc/deberta) added a disentangled attention mechanism where the word and its position are separately encoded in two vectors. The attention is computed from these separate vectors instead of a single vector containing the word and position embeddings. [Longformer](model_doc/longformer) also focused on making attention more efficient, especially for processing documents with longer sequence lengths. It uses a combination of local windowed attention (attention only calculated from fixed window size around each token) and global attention (only for specific task tokens like `[CLS]` for classification) to create a sparse attention matrix instead of a full attention matrix.
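
The combination of local windowed attention and global attention can be sketched as a boolean mask. This is an illustrative toy, not Longformer's actual implementation: `longformer_attention_mask` is a hypothetical helper, and the sequence length, window size, and choice of position 0 as the global token (standing in for `[CLS]`) are assumptions.

```py
def longformer_attention_mask(seq_len, window, global_positions=()):
    """Return mask[i][j] == True where token i may attend to token j.

    Local attention: each token sees neighbors within window // 2 positions.
    Global attention: global tokens see every token, and every token sees them.
    """
    global_set = set(global_positions)
    half_window = window // 2
    mask = []
    for i in range(seq_len):
        row = []
        for j in range(seq_len):
            is_local = abs(i - j) <= half_window
            is_global = i in global_set or j in global_set
            row.append(is_local or is_global)
        mask.append(row)
    return mask


mask = longformer_attention_mask(seq_len=8, window=2, global_positions=[0])

for row in mask:
    print("".join("x" if allowed else "." for allowed in row))

allowed_pairs = sum(sum(row) for row in mask)
print(allowed_pairs, "of", 8 * 8, "pairs are computed")
```

The printed pattern is a diagonal band plus one full row and one full column, which is why the cost grows roughly linearly with sequence length instead of quadratically.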

### Decoder[[nlp-decoder]]

[GPT-2](model_doc/gpt2) is a decoder-only Transformer that predicts the next word in the sequence. It masks tokens to the right so the model can't "cheat" by looking ahead. By pretraining on a massive body of text, GPT-2 became really good at generating text, even if the text is only sometimes accurate or true. But GPT-2 lacked the bidirectional context from BERT's pretraining, which made it unsuitable for certain tasks. [XLNet](model_doc/xlnet) combines the best of both BERT and GPT-2's pretraining objectives by using a permutation language modeling objective (PLM) that allows it to learn bidirectionally.
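
Masking tokens to the right is usually expressed as a lower-triangular (causal) attention mask. The sketch below is a pure-Python illustration with hypothetical helper names and made-up uniform scores; it is not GPT-2's actual code.

```py
import math


def causal_mask(seq_len):
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]


def masked_softmax(scores, mask):
    """Row-wise softmax that assigns zero weight to masked-out positions."""
    weights = []
    for score_row, mask_row in zip(scores, mask):
        exps = [math.exp(s) if keep else 0.0 for s, keep in zip(score_row, mask_row)]
        total = sum(exps)
        weights.append([value / total for value in exps])
    return weights


seq_len = 3
scores = [[0.0] * seq_len for _ in range(seq_len)]  # uniform raw attention scores
weights = masked_softmax(scores, causal_mask(seq_len))

for row in weights:
    print([round(value, 3) for value in row])
```

Each row only distributes attention over the current and earlier positions, so the prediction for a token never depends on the tokens that follow it.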

After GPT-2, language models grew even bigger and are now known as *large language models (LLMs)*. LLMs demonstrate few- or even zero-shot learning if pretrained on a large enough dataset. [GPT-J](model_doc/gptj) is an LLM with 6B parameters trained on 400B tokens. GPT-J was followed by [OPT](model_doc/opt), a family of decoder-only models, the largest of which has 175B parameters and was trained on 180B tokens. [BLOOM](model_doc/bloom) was released around the same time, and the largest model in the family has 176B parameters and was trained on 366B tokens in 46 languages and 13 programming languages.

### Encoder-decoder[[nlp-encoder-decoder]]