
# -*- coding:utf-8 -*-

"""

weibo serializer

"""

from rest_framework import serializers

from account.models import User

from weibo.models.weibo import Image, Weibo, Comment

class ImageModelSerializer(serializers.ModelSerializer):

"""

Image Model Serializer

"""

user = serializers.SlugRelatedField(requ... |

<?php

/*

* This file is part of the lxpgw/logger.

*

* (c) lichunqiang <light-li@hotmail.com>

*

* This source file is subject to the MIT license that is bundled

* with this source code in the file LICENSE.

*/

namespace lxpgw\logger;

use Yii;

use yii\log\Logger;

use yii\log\Target;

/**

* ~~~

* 'log' => [

* ... |

require 'frap/version'

require 'frap/create_app'

require 'frap/create_resource'

require 'frap/commands/generate'

require 'frap/generators/config'

require 'frap/generators/flutter_config'

require 'frap/generators/flutter_resource'

require 'thor'

require 'yaml'

module Frap

class CLI < Thor

desc 'version', 'Display... |

package models

import (

"time"

"github.com/omnibuildplatform/omni-manager/util"

)

const (

ImageStatusStart string = "created"

ImageStatusDownloading string = "downloading"

ImageStatusDone string = "succeed"

ImageStatusFailed string = "failed"

)

type BaseImagesKickStart struct {

Label ... |

package com.android.iam.retrofittutorial;

import java.util.List;

import retrofit.Callback;

import retrofit.http.Field;

import retrofit.http.FormUrlEncoded;

import retrofit.http.POST;

public interface ApiInterface {

@FormUrlEncoded // annotation used in POST type requests

@POST("/retrofit/register.php") ... |

/*

* Copyright 2016 ksilin

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in wr... |

package com.nepal.adversify.domain.model;

public class LocationModel {

public int id;

public double lat;

public double lon;

}

|

/*

* Licensed to the Apache Software Foundation (ASF) under one or more

* contributor license agreements. See the NOTICE file distributed with

* this work for additional information regarding copyright ownership.

* The ASF licenses this file to You under the Apache License, Version 2.0

* (the "License"); you may ... |

import 'dart:async';

import '../data/data.dart';

import 'dart:collection';

import 'search_result.dart';

import 'package:flutter/material.dart';

import 'package:flutter/rendering.dart';

class SelectorPage extends StatefulWidget {

SelectorPage({Key key, this.title}) : super(key: key);

final String title;

@overrid... |

package org.pyx.base;

import com.google.common.base.CaseFormat;

import org.junit.Test;

import org.junit.runner.RunWith;

import org.springframework.boot.test.context.SpringBootTest;

import org.springframework.test.context.junit4.SpringRunner;

import static org.junit.Assert.assertEquals;

/**

* Created by pyx on 2018/... |

#[derive(Debug, PartialEq, PartialOrd, Ord, Eq, Clone, Copy)]

pub struct SynapseDelay(u8);

impl SynapseDelay {

pub fn new(delay: u8) -> Self {

assert!(delay > 0);

Self(delay)

}

pub fn get(self) -> u8 {

self.0

}

}

|

#include "Graphics.Layouts.h"

#include "Data.JSON.h"

#include <algorithm>

#include "Graphics.Types.h"

#include "Common.Properties.h"

#include "Graphics.Properties.h"

namespace graphics::Layout { void Draw(SDL_Renderer*, const nlohmann::json&); }

namespace graphics::Layouts

{

static std::map<std::string, nlohmann::json... |

package com.epam.drill.admin.api.agent

import com.epam.drill.admin.api.plugin.*

import kotlinx.serialization.Serializable

enum class AgentType(val notation: String) {

JAVA("Java"),

DOTNET(".NET"),

NODEJS("Node.js")

}

enum class AgentStatus {

NOT_REGISTERED,

ONLINE,

OFFLINE,

BUSY;

}

@Seri... |

<?php

namespace afrizalmy\BWI;

use afrizalmy\BWI\trait\KumpulanKata;

class BadWord

{

use KumpulanKata;

/**

* Uses the Levenshtein distance to measure how similar two words are

*

* @param array $wordCollect

* @param string $word

* @return boolean

*/

public function Leven... |
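According to the docblock, this method uses the Levenshtein distance to decide whether `$word` is close to any entry in `$wordCollect`. The method body is cut off here, so what follows is only a Python sketch of the standard dynamic-programming edit distance. It adds a hypothetical `is_similar_to_any` helper with an assumed `max_distance` threshold, and it is not the package's actual logic. PHP also ships a built-in `levenshtein()` function.

```python
def levenshtein(a: str, b: str) -> int:
    """Classic edit distance: minimum insertions, deletions and substitutions."""
    prev = list(range(len(b) + 1))  # distances from "" to each prefix of b
    for i, ca in enumerate(a, start=1):
        curr = [i]  # distance from a[:i] to ""
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete ca
                            curr[j - 1] + 1,      # insert cb
                            prev[j - 1] + cost))  # substitute (or keep)
        prev = curr
    return prev[-1]


def is_similar_to_any(word_collect, word, max_distance=1):
    """Hypothetical helper: True if word is within max_distance edits of any listed word."""
    target = word.lower()
    return any(levenshtein(target, w.lower()) <= max_distance for w in word_collect)
```

Only two rows of the DP table are kept at a time, so memory is O(len(b)) rather than O(len(a) * len(b)).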

/* eslint-env jest */

import React from 'react';

import { render, cleanup } from 'ink-testing-library';

import webpack from 'webpack';

import View from '../View';

jest.mock('date-fns', () => ({

format: (_builtAt: number, formatString: string) =>

formatString.replace('HH:mm:ss', '07:58:40').replace(/'/g, ''),

}))... |

package com.vanniktech.rxriddles.solutions

import io.reactivex.rxjava3.core.Observable

object Riddle23Solution {

fun solve(source: Observable<Any>)

= source.cast(String::class.java)

}

|

package pl.lodz.p.michalsosn.rest.support;

import pl.lodz.p.michalsosn.domain.sound.filter.Filter;

import java.io.IOException;

import java.util.stream.IntStream;

import static pl.lodz.p.michalsosn.entities.ResultEntity.SoundFilterResultEntity;

/**

* @author Michał Sośnicki

*/

public class SoundFilterChartPack {

... |

package java2bash.java2bash.commands.yum;

import java.util.ArrayList;

import java.util.List;

import java2bash.java2bash.commands.Snippet;

import java2bash.java2bash.commands.conditions.IfCondition;

import java2bash.java2bash.common.BashString;

public class YumIsInstalled implements Snippet {

/*

* Constants

*/

... |

<!-- Create SubCategory -->

<div class="reveal reveal-update" id="createitem-<?php echo e($id); ?>" data-reveal data-close-on-click="false"

data-close-on-esc="false">

<div class="notification callout"></div>

<h3>Create Subcategory</h3>

<h2> for the <?php echo e($name); ?> Category</h2>

<fo... |

package net.gesekus.newsaswebapp.domainmodel.inmemoryimpl

import net.gesekus.newsaswebapp.domainmodel._

import scala.util.Success

import scala.util.Try

import scala.util.Failure

object ChatRepository extends ChatRepository {

var chats: Map[ChatId, Chat] = Map()

def find(chatId: ChatId): Try[Chat] = {

chats... |

using Codelyzer.Analysis.Common;

using Codelyzer.Analysis.Model;

using Microsoft.CodeAnalysis.CSharp;

using Microsoft.CodeAnalysis.CSharp.Syntax;

namespace Codelyzer.Analysis.CSharp.Handlers

{

public class StructDeclarationHandler : UstNodeHandler

{

private StructDeclaration StructDeclaration { get => ... |

import logging

import threading

import queue

from performance.driver.core.config import Configurable

from performance.driver.core.eventbus import EventBusSubscriber

from performance.driver.core.events import TeardownEvent

from performance.driver.core import fsm

class State(fsm.State):

"""

The policy state provid... |

<?php

declare(strict_types=1);

namespace common\validators;

/**

* @inheritdoc

*

* @author Залатов Александр <zalatov.ao@gmail.com>

*/

class EachValidator extends \yii\validators\EachValidator {

const ATTR_RULE = 'rule';

}

|

#include "QuadTree.h"

QuadTree::QuadTree(glm::vec3 size)

{

size /= 2.0f;

_root = new QuadTreeNode(AABB(-size, size));

}

QuadTree::~QuadTree()

{

delete _root;

}

void QuadTree::addObject(RenderObject* object)

{

_root->addObject(object);

}

void QuadTree::addLight(Light* light)

{

_root->addLight... |

package br.com.zup.beta.microServico.repository;

import br.com.zup.beta.microServico.model.bloqueio.BloqueioCartao;

import org.springframework.data.jpa.repository.JpaRepository;

import org.springframework.stereotype.Repository;

@Repository

public interface BloqueioRepository extends JpaRepository<BloqueioCartao, Lon... |

using GraphQL.Types;

namespace Bench.GraphQLDotNet.Types

{

public class CharacterType : InterfaceGraphType

{

public CharacterType()

{

Name = "Character";

Field<NonNullGraphType<IdGraphType>>("id");

Field<StringGraphType>("name");

Field<ListGraphT... |

package com.example.productmanagement

import android.os.Bundle

import android.util.Log

import android.view.Menu

import android.view.MenuItem

import android.widget.ArrayAdapter

import androidx.core.content.contentValuesOf

import kotlinx.android.synthetic.main.add_produ_name.*

import kotlinx.android.synthetic.main.supe... |

package de.faweizz.topicservice.service.transformation.step

import de.faweizz.topicservice.service.transformation.TransformationStepData

class TransformationStepFactory {

fun instantiate(transformationStepData: TransformationStepData): TransformationStep {

val clazz = Class.forName(transformationStepData.... |

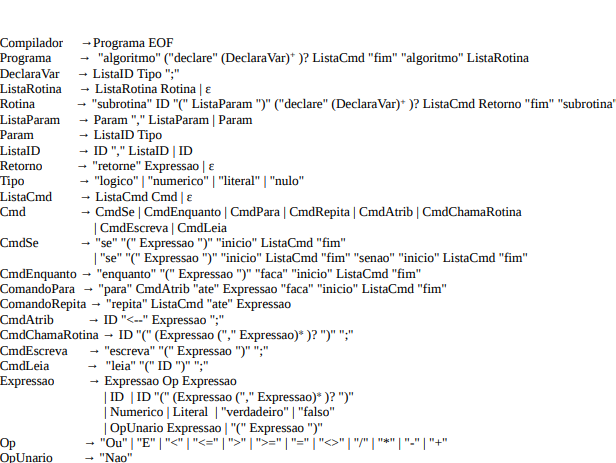

# Compiler for a language called ***Portugolo***

- Contains:

- [x] Lexical analyzer.

- [x] Syntax analyzer.

- [x] Semantic analyzer.

## Grammar of the PortuGolo language.

###... |

/*

* Copyright 2017, The Android Open Source Project

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applic... |

r-light .nav-tabs.control-sidebar-tabs > li > a:hover,

.control-sidebar-light .nav-tabs.control-sidebar-tabs > li > a:focus,

.control-sidebar-light .nav-tabs.control-sidebar-tabs > li > a:active {

background: #eff1f7;

}

.control-sidebar-light .nav-tabs.control-sidebar-tabs > li.active > a,

.control-sidebar-light .nav... |

class ImageUploader < CarrierWave::Uploader::Base

# Include RMagick or MiniMagick support:

# include CarrierWave::RMagick

# include CarrierWave::MiniMagick

include Cloudinary::CarrierWave unless ENV['CLOUDINARY_URL'].nil?

cloudinary_transformation transformation: [

{ width: 845, height: 597, crop: :limit... |

import { expect, assert } from "chai";

import axios, { AxiosRequestConfig, AxiosResponse } from 'axios'

import * as prep from "./testUtil"

import { testConfig } from './testConfig'

let config = {

headers: { "content-type": "application/ld+json" },

auth: testConfig.auth

}

async function createEntity() {

... |

#include <stdlib.h>

#include <string.h>

#include <sys/types.h>

#include <sys/socket.h>

#include "cerver/http/response.h"

#include "cerver/http/json.h"

// TODO: get the size of this when we start the server!!

char *default_header = "HTTP/1.1 200 OK\r\n\r\n";

HttpResponse *http_response_new (void) {

HttpResponse *... |

require 'rails_helper'

describe 'Zohoho::Connection' do

before :each do

@apikey = 'dummy_api_key'

@conn = Zohoho::Connection.new('CRM', @apikey, true)

vcr_configure('connection')

end

it 'should make a simple call' do

VCR.use_cassette('call', record: :new_episodes) do

@result = @conn.call('... |

import { APP_BASE_HREF, LocationStrategy, PathLocationStrategy, PlatformLocation } from "@angular/common";

import { Inject, Injectable, Optional } from "@angular/core";

import { MICRO_APP_NAME } from "./micro-app-name.token";

@Injectable()

export class MicroAppLocationStrategy extends PathLocationStrategy implements L... |

// SPDX-License-Identifier: BSD-3-Clause

/* Copyright 2020, Intel Corporation */

/*

* ep-listen.c -- the endpoint unit tests

*

* APIs covered:

* - rpma_ep_listen()

* - rpma_ep_shutdown()

*/

#include "librpma.h"

#include "ep-common.h"

#include "cmocka_headers.h"

#include "test-common.h"

/*

* listen__peer_NULL ... |

import { describe, beforeEach, it, expect, angularMocks } from 'test/lib/common';

import helpers from 'test/specs/helpers';

import '../history/history_srv';

import { versions, restore } from './history_mocks';

describe('historySrv', function() {

var ctx = new helpers.ServiceTestContext();

var versionsResponse = ... |

#!/usr/bin/env bash

if [ ! -f "$1" ]; then

cat <<EOF

File name is required!

Syntax is

$0 <class file>

EOF

exit 1

fi

FILENAME=$1

[ $DEBUG ] && echo "Converting $FILENAME"

printf "\x00\x00\x00\x32" | dd of=$FILENAME seek=4 bs=1 count=4 conv=notrunc 2> /dev/null

KLAZZNAME="$(echo $1 | sed -e "s/.class$//")"

[... |
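In the script above, the `printf ... | dd ... seek=4 bs=1 count=4 conv=notrunc` line overwrites bytes 4 to 7 of the class file in place. In the JVM class-file format, those bytes hold `minor_version` and `major_version`, two big-endian unsigned 16-bit values that follow the 4-byte magic `0xCAFEBABE`. The script therefore sets minor version 0 and major version 0x32 = 50, which is the Java 6 class format. Below is a Python sketch of the same patch. The magic-number check is an addition that the shell script does not perform.

```python
import struct

CLASS_MAGIC = b"\xca\xfe\xba\xbe"


def set_class_version(data: bytes, major: int = 0x32, minor: int = 0) -> bytes:
    """Return a copy of a .class file's bytes with its version fields replaced."""
    if data[:4] != CLASS_MAGIC:
        raise ValueError("not a Java class file (bad magic number)")
    # Bytes 4..5 are minor_version and bytes 6..7 are major_version, both big-endian u2.
    return data[:4] + struct.pack(">HH", minor, major) + data[8:]


def patch_file(path: str) -> None:
    """Rewrite only the 8-byte header in place, like dd with conv=notrunc."""
    with open(path, "r+b") as f:
        header = f.read(8)
        f.seek(0)
        f.write(set_class_version(header))
```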

/*

A convenient alternative is to declare an object (conventionally named exports) and add

properties to that whenever we are defining something that needs to be exported.

In the following example, the module function takes its interface object as an argument,

allowing code outside of the function to create it a... |

# Encoding: UTF-8

[{name: "Completions",

scope: "source.actionscript.2",

settings:

{completions:

["__proto__",

"_accProps",

"_accProps",

"_alpha",

"_currentframe",

"_droptarget",

"_focusrect",

"_framesloaded",

"_global",

"_height",

"_level",

... |

# Math.nCr(n,r) borrowed from Brian Candler's

# post in ruby_talk

def Math.nCr(n,r)

a, b = r, n-r

a, b = b, a if a < b # a is the larger

numer = (a+1..n).inject(1) { |t,v| t*v } # n!/a!

denom = (2..b).inject(1) { |t,v| t*v } # b!, where b = min(r, n-r)

numer/denom

end

class DiceAtLeastFive

def self.int_to_roll( serial, nu... |
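The `Math.nCr` helper in this file avoids computing three full factorials. After the swap, `a` is the larger of `r` and `n-r`, so the numerator multiplies only `a+1..n` (that is, n!/a!) and the denominator is b!. The quotient is therefore n!/(a! b!) = C(n, r). Below is the same trick sketched in Python and checked against the standard library's `math.comb` (Python 3.8+). It assumes integers with 0 <= r <= n.

```python
from math import comb, prod


def n_cr(n: int, r: int) -> int:
    """Binomial coefficient C(n, r) for 0 <= r <= n, mirroring the Ruby Math.nCr."""
    a, b = max(r, n - r), min(r, n - r)  # a is the larger
    numer = prod(range(a + 1, n + 1))    # n! / a!
    denom = prod(range(2, b + 1))        # b!
    return numer // denom                # exact, since C(n, r) is an integer


# Sanity check against the standard library over a small range.
assert all(n_cr(n, r) == comb(n, r) for n in range(20) for r in range(n + 1))
```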

module Steps

module Respondent

class ContactDetailsForm < BaseForm

attribute :address, StrippedString

attribute :postcode, StrippedString

attribute :postcode_unknown, Boolean

attribute :home_phone, StrippedString

attribute :mobile_phone, StrippedString

attribute :mobile_phone_u... |

<?php

/**

* Created by PhpStorm.

* User: usuario

* Date: 28/12/17

* Time: 11:11

*/

namespace Tests\AppBundle\Controller;

use AbstractBundle\Test\ApiTestCase;

class LoginControllerTest extends ApiTestCase

{

protected function setUp()

{

parent::setUp();

$this->client = $this->createClie... |

/* tag::catalog[]

Title:: Adding nodes to a subnet running threshold ECDSA

Goal:: Test whether removing subnet nodes impacts the threshold ECDSA feature

Runbook::

. Setup:

. System subnet comprising N nodes, the necessary NNS canisters, and the ECDSA feature enabled.

. Removing N/3 + 1 nodes from the subnet via pro... |

#include "AsyncEventQueue.hpp"

#include "jet/live/Utility.hpp"

namespace jet

{

void AsyncEventQueue::addLog(LogSeverity severity, std::string&& message)

{

std::lock_guard<std::mutex> lock(m_logQueueMutex);

m_logQueue.push(jet::make_unique<LogEvent>(severity, std::move(message)));

}

Lo... |

{-- snippet all --}

-- posixtime.hs

import System.Posix.Files

import System.Time

import System.Posix.Types

-- | Given a path, returns (atime, mtime, ctime)

getTimes :: FilePath -> IO (ClockTime, ClockTime, ClockTime)

getTimes fp =

do stat <- getFileStatus fp

return (toct (accessTime stat),

... |

<?php namespace ConnorVG\WolframAlpha;

/**

* The Wolfram Alpha Info Object

* @package WolframAlpha

*/

class WAInfo {

// define the sections of a response

public $text = '';

// Constructor

public function __construct() {

}

}

|

---

title: Frequently Asked Questions

aliases:

- "/start/faq/"

---

The following are short, sometimes superficial, answers to some of the most commonly asked questions about the Fluid Framework.

## What is the Fluid Framework?

The Fluid Framework is a collection of client libraries for building applications with d... |

<?php

namespace Relhub\BuildBundle;

use Symfony\Component\HttpKernel\Bundle\Bundle;

class RelhubBuildBundle extends Bundle

{

}

|

import cx from 'classnames'

import PropTypes from 'prop-types'

import React from 'react'

import {

customPropTypes,

getElementType,

getUnhandledProps,

META,

SUI,

useKeyOnly,

useKeyOrValueAndKey,

useMultipleProp,

useTextAlignProp,

useVerticalAlignProp,

useWidthProp,

} from '../../lib'

import GridCo... |

#self join with inner join

select a.customer_id, a.first_name, a.last_name, b.customer_id, b.first_name, b.last_name from customer a

inner join customer b on a.last_name = b.first_name;

#left join with inner join

select a.customer_id, a.first_name, a.last_name, b.customer_id, b.first_name, b.last_name from customer a

... |

package com.twitter.finagle.zookeeper

import com.google.common.collect.ImmutableSet

import com.twitter.common.net.pool.DynamicHostSet

import com.twitter.common.net.pool.DynamicHostSet.MonitorException

import com.twitter.common.zookeeper.{ServerSet, ServerSetImpl}

import com.twitter.concurrent.{Broker, Offer}

import co... |

package main

import (

"context"

"encoding/json"

"flag"

"fmt"

"io"

"log"

"net"

"net/http"

"os"

"os/signal"

"path/filepath"

"strings"

"time"

"unicode"

"github.com/sixt/gomodproxy/pkg/api"

"expvar"

_ "net/http/pprof"

)

func prometheusExpose(w io.Writer, name string, v interface{}) {

// replace all in... |

mutable struct Citizen <: Agents.AbstractAgent

id::Int64

pos::Int64

home::Int64

neuroticism::Float64

trust_authorities::Float64

fear::Float64

socialnorm::Float64

socialnorm_memory::Array{Any, 1}

behavior::Bool

behavior_buffer::Bool

quarantined::Bool

state::Symbol

tick... |

#!/bin/bash

# stopping existing node servers

echo "stopping existing node servers"

pm2 kill

# pkill node |

#include "FWCore/Framework/interface/ESProducer.h"

#include "FWCore/Utilities/interface/ESGetToken.h"

#include "FWCore/ParameterSet/interface/ParameterSet.h"

#include "CalibFormats/HcalObjects/interface/HcalDbService.h"

#include "CalibFormats/HcalObjects/interface/HcalDbRecord.h"

#include "DataFormats/HcalDetId/inter... |

using System;

using UnityEngine;

namespace CustomScripts.Gamemode.GMDebug

{

public class MoveTest : MonoBehaviour

{

public bool isMoving = false;

public float Speed;

public void StartMoving()

{

isMoving = true;

}

private void Update()

{

... |

// FIR_COMPARISON

class SomeObject<T, U>() {

var field : T? = null

}

class A {}

class C {}

fun <T: Comparable<T>, U> SomeObject<T, U>.compareTo(other : SomeObject<T, U>) : Int {

return 0;

}

fun some() {

val test = SomeObject<A, A>

test.<caret>

}

// ABSENT: compareTo |

using System;

using System.Collections.Generic;

namespace Northwind.Models

{

//Model for NORTHWIND.EMPLOYEES (0 rows)

public class Employees

{

public virtual int Id { get; set; } //EMPLOYEE_ID

public virtual string Lastname { get; set; } //LASTNAME

public virtual string Firstname { get; set; } //FIRSTNAME

p... |

require 'national_identification_number/swedish'

require 'national_identification_number/finnish'

require 'national_identification_number/norwegian'

require 'national_identification_number/danish'

|

class jigsaw_prj_t(object):

#------------------------------ obj. to hold projection data

def __init__(self):

self.radii = +1.E+00

self.prjID = ""

self.xbase = +0.E+00

self.ybase = +0.E+00

|

import { BASE_PATH, TEST_BASE_PATH } from "@/client/context";

import { insertUrlParam } from "@/lib/helper";

import React from "react";

type AppModeType = {

basePath: string;

testMode?: boolean;

switchMode: () => void;

};

export function computeMode(pathname?: string) {

return pathname?.startsWith(TEST_BASE_... |

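## Problem

**39. Combination Sum**

>Medium

Given an array candidates with no duplicate elements and a target number target, find all combinations of numbers in candidates that sum to target.

The same number in candidates may be chosen an `unlimited number of times`.

Notes:

* All numbers (including target) are positive integers.

* The solution set must not contain duplicate combinations.

Example 1:

```

Input: candidates = [2,3,6,7], target = 7,

The solution set is: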

[

[7],

[2,2,3]

]

```
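This problem is commonly solved with backtracking over a sorted candidate list. Below is a Python sketch; the original author's solution is not part of this excerpt. The recursion receives the current index rather than `index + 1`, which is what lets a number be reused. Sorting lets the loop stop early once a candidate exceeds the remaining sum.

```python
def combination_sum(candidates, target):
    """Return all unique combinations of candidates (each reusable) that sum to target."""
    candidates = sorted(candidates)
    results = []

    def backtrack(start, remaining, path):
        if remaining == 0:
            results.append(list(path))
            return
        for i in range(start, len(candidates)):
            value = candidates[i]
            if value > remaining:
                break  # sorted, so every later candidate is too large as well
            path.append(value)
            # Pass i (not i + 1) so the same number can be chosen again.
            backtrack(i, remaining - value, path)
            path.pop()

    backtrack(0, target, [])
    return results
```

For example, `combination_sum([2, 3, 6, 7], 7)` returns `[[2, 2, 3], [7]]`. Because each branch only looks at candidates from index `start` onward, every combination is produced once, in non-decreasing order.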

Example 2:

```

Input: candidates = [2,3,5], target = 8,

The solution set is:

[

[2,... |

#pragma once

#define MAX_CHAR 256

#define MAX_BIT 8

typedef unsigned int UINT;

typedef unsigned char UCHAR;

namespace JF

{

namespace JFStudy

{

struct SymbolInfo

{

UCHAR Symbol;

int Frequency;

};

struct HuffmanNode

{

SymbolInfo Data;

HuffmanNode* pLeft;

HuffmanNode* pRight;

};

str... |

// https://www.geeksforgeeks.org/count-possible-paths-top-left-bottom-right-nxm-matrix/

#include <bits/stdc++.h>

using namespace std;

int matrix_paths_rec(int r, int c) {

if(r==1 || c==1) return 1;

return matrix_paths_rec(r-1,c)+matrix_paths_rec(r,c-1);

}

int matrix_paths_dp(int r, int c) {

vector<vector... |
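The recursive version counts paths from the top-left cell to the bottom-right cell when each move goes one step right or one step down. Every cell in the first row or first column is reachable in exactly one way, and any other cell is reached either from above or from the left. The body of the DP version is cut off here, so the following is only a Python sketch of the same recurrence. It uses a single rolling row and adds the closed form C(r+c-2, r-1), which counts the ways to choose which r-1 of the r+c-2 moves go down.

```python
from math import comb


def matrix_paths_dp(r: int, c: int) -> int:
    """Count right/down paths from the top-left to the bottom-right of an r x c grid."""
    ways = [1] * c  # first row: each cell is reachable only by moving right
    for _ in range(1, r):
        for j in range(1, c):
            # ways[j] still holds the count from the row above; ways[j - 1] is the cell to the left.
            ways[j] += ways[j - 1]
    return ways[-1]


def matrix_paths_formula(r: int, c: int) -> int:
    """Closed form: choose which r - 1 of the r + c - 2 moves go down."""
    return comb(r + c - 2, r - 1)
```

The rolling row uses O(c) memory instead of a full r x c table, and it avoids the exponential running time of the plain recursion.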

using System;

namespace Immersion.Utility

{

[Flags]

public enum CollisionLayers : uint

{

Tracking = 0b00000010,

World = 0b00000100,

Players = 0b00001000,

Entities = 0b00010000,

Items = 0b00100000,

}

}

|

<?php

namespace App\Repository\Interfaces;

use Illuminate\Database\Eloquent\Model;

use Illuminate\Support\Collection;

interface IUserRepository extends IEloquentRepository {

}

|

export { default } from './ReactUtterances'

export { identifierTypes } from './ReactUtterances'

|

package emul

import (

"encoding/json"

"fmt"

"io/ioutil"

"os"

)

// Graph describes directed (one-way) connections

type Graph map[int32]map[int32]float32

// Config provides network topology and functions configuration

type Config struct {

conn Graph

workFunctions map[string]func(*Process, *Message)

init ... |

package com.xiangronglin.novel.rest.application.service.file

import org.slf4j.LoggerFactory

import org.springframework.beans.factory.annotation.Autowired

import org.springframework.core.io.InputStreamResource

import org.springframework.core.io.Resource

import org.springframework.http.MediaType

import org.springframewo... |

---

layout: single

title: "Constructions"

excerpt: "Grammar constructions"

permalink: /constructions/

sidebar:

nav: korean

---

[~ㄹ/을 수 있/없다](https://www.howtostudykorean.com/unit-2-lower-intermediate-korean-grammar/unit-2-lessons-42-50/lesson-45/#451)

is used to create the meaning of "one can..." or "one cannot..."

... |

module Queries

class Container::Autocomplete < Queries::Query

# @return [Arel::Table]

def table

::Container.arel_table

end

def base_query

::Container.select('containers.*')

end

def base_queries

queries = [

autocomplete_identifier_cached_exact,

autocomplete_... |

package kekmech.ru.mpeiapp.deeplink.di

import kekmech.ru.common_di.ModuleProvider

import kekmech.ru.mpeiapp.deeplink.DeeplinkHandler

import kekmech.ru.mpeiapp.deeplink.DeeplinkHandlersProcessor

import kekmech.ru.mpeiapp.deeplink.handlers.*

object DeeplinkModule : ModuleProvider({

factory {

val handlers = ... |

import React, { Component } from 'react';

import { Text, View, StyleSheet, Button, TextInput,TouchableOpacity,Icon } from 'react-native';

import { Constants } from 'expo';

export default class App extends Component {

state = {

inputValue1: "New Password",

inputValue: "Confirm Password"

};

_handleTe... |

# There is no DOM without doom.

## Getting started

```bash

npm install

npm run start

```

## Summary

Interacting with the DOM from vanilla JS.

Special attention to:

* Proper refactoring.

* Validation of types and properties on the manipulated DOM elements.

* Reducing recursion over the DOM.

* Handling of... |

# 821. Time Intersection

Difficulty: Medium

http://www.lintcode.com/en/problem/time-intersection/

Given two users' ordered online time series, where each section records the user's login time point x and offline time point y, find the time periods when both users are online at the same time, and output them in ascending... |
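Because both series are sorted, the overlaps can be found in a single merge-style pass with two pointers. The following Python sketch assumes each series is a list of `(login, logout)` pairs sorted by login time, with no overlaps inside a single series. It drops zero-length overlaps. The problem statement is cut off, so that boundary rule is an assumption.

```python
def time_intersection(seq_a, seq_b):
    """Return the (start, end) periods during which both users are online."""
    i = j = 0
    result = []
    while i < len(seq_a) and j < len(seq_b):
        start = max(seq_a[i][0], seq_b[j][0])
        end = min(seq_a[i][1], seq_b[j][1])
        if start < end:  # assumption: only overlaps of positive length are reported
            result.append((start, end))
        # Advance whichever interval ends first; it cannot overlap anything later.
        if seq_a[i][1] < seq_b[j][1]:
            i += 1
        else:
            j += 1
    return result
```

Each step advances one pointer, so the pass is O(len(seq_a) + len(seq_b)), and the output is already in ascending order.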

import BaseShapes from './baseshapes';

import {SquareRootOfTwo} from '../math';

import { DIAMOND as SVG_DIAMOND } from './svgshapefactory';

import { DIAMOND as CANVAS_DIAMOND } from './bitmapshapefactory';

export class Diamonds extends BaseShapes {

static get ShapeName() { return 'diamonds'; }

/**

* proc... |

#!/bin/sh

set -e

prod_zone_a1="10.100.100.135"

prod_zone_a2="10.100.101.234"

prod_zone_b1="10.100.100.111"

prod_zone_b2="10.100.101.14"

prod_zone_c1="10.100.100.158"

prod_zone_c2="10.100.101.177"

filename="iperf_prod_$(date +%Y-%m-%d_%Hh%Mm%Ss).md"

touch $filename

run_test() {

./iperf-k8s.sh -n esa-csc-s2-prd-... |

require 'ruby_event_store'

require "ruby_event_store/outbox"

require "ruby_event_store/outbox/cli"

require "ruby_event_store/outbox/metrics/null"

require "ruby_event_store/outbox/metrics/influx"

require_relative '../../../support/helpers/rspec_defaults'

require_relative '../../../support/helpers/schema_helper'

require_... |

-- South Africa

BEGIN;

UPDATE dim_calendar

SET hol_za = FALSE;

-- 1 January New Year's Day 1910

UPDATE dim_calendar

SET hol_za = TRUE

WHERE EXTRACT( DAY FROM calendar_date ) = 1

AND EXTRACT( MONTH FROM calendar_date ) = 1

AND EXTRACT( YEAR FROM calendar_date ) >= 1910

;

-- 21 March Human R... |

#!/usr/bin/env bash

docker rm -f movies-service

docker rmi movies-service

docker image prune

docker volume prune

docker build -t movies-service .

|

import React from "react";

import Post from './Post';

import posts from '../data/posts.json'

class Posts extends React.Component {

//todo fetch the first 8-9 posts to get the links to the pages

//todo - auto limit the number of posts from the fetch helper rather than from here in the Component

render() {

//console... |

#![cfg(feature = "test")]

use std::sync::Arc;

use sentry::{

protocol::{Breadcrumb, Level},

test::TestTransport,

ClientOptions, Hub,

};

use sentry_tower::SentryLayer;

use tower_::{ServiceBuilder, ServiceExt};

#[test]

fn test_tower_hub() {

// Create a fake transport for new hubs

let transport = Tes... |

using antlr.collections.impl;

namespace antlr

{

public class TokenStreamBasicFilter : TokenStream

{

protected internal BitSet discardMask;

protected internal TokenStream input;

public TokenStreamBasicFilter(TokenStream input)

{

this.input = input;

discardMask = new BitSet();

}

public virtual voi... |

#region Copyright Syncfusion Inc. 2001-2021.

// Copyright Syncfusion Inc. 2001-2021. All rights reserved.

// Use of this code is subject to the terms of our license.

// A copy of the current license can be obtained at any time by e-mailing

// licensing@syncfusion.com. Any infringement will be prosecuted under

// applic... |

/*

* Copyright 2020 Azavea

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in wr... |

pub mod pattern {

use std::io::Write;

pub fn find_matches(content: &str, pattern: &str, mut writer : impl Write) -> Result<(), std::io::Error>{

for (_line_no, line) in content.lines().enumerate() {

if line.contains(pattern){

// writeln!(writer, "{} : {}", _line_no, line)?;

writeln!... |

package de.sscholz.util

import com.badlogic.gdx.Gdx

import com.badlogic.gdx.graphics.OrthographicCamera

import com.badlogic.gdx.math.Matrix4

import com.badlogic.gdx.math.Vector2

import com.badlogic.gdx.utils.viewport.FitViewport

import com.badlogic.gdx.utils.viewport.Viewport

import de.sscholz.Global

import de.sscholz... |

#!/bin/sh

echo "Harden the managed servers"

# Reference:

# http://geekeasier.com/protect-ssh-server-with-installing-fail2ban-on-linuxubuntu/3774/

[ -d .git-crypt ] || (

git crypt init

git-crypt add-gpg-user ludovic.claude@chuv.ch

echo "Setup .gitattributes, see https://www.agwa.name/projects/git-crypt/"

... |

// rseip

//

// rseip - Ethernet/IP (CIP) in pure Rust.

// Copyright: 2021, Joylei <leingliu@gmail.com>

// License: MIT

use crate::epath::*;

use bytes::{BufMut, BytesMut};

use rseip_core::codec::{Encode, Encoder};

impl Encode for PortSegment {

#[inline]

fn encode_by_ref<A: Encoder>(

&self,

buf:... |

### ESS scaling schedule Example

This example launches an ESS scheduled task, which creates ECS instances at the scheduled time.

### Get up and running

* Planning phase

terraform plan

* Apply phase

terraform apply

* Destroy

terraform destroy |

(defproject client "0.1.0"

:description "Sharingio client: Web frontend for sharingio pair box creation"

:url "https://sharing.io"

:min-lein-version "2.0.0"

:dependencies [[org.clojure/clojure "1.10.0"]

[org.clojure/test.check "1.1.0"]

[com.gfredericks/test.chuck "0.2.10"]

... |

package com.github.xmlparser.util;

import org.junit.jupiter.api.BeforeAll;

import org.junit.jupiter.api.Test;

import org.junit.jupiter.api.TestInstance;

import java.io.File;

@TestInstance(TestInstance.Lifecycle.PER_CLASS)

class CommonUtilTest {

File csv;

@BeforeAll

public void setup() {

... |

#!/usr/bin/env bash

go build -o packages.exe main.go

./packages.exe

printf "======"

printf "\nworld package documentation (look at run.sh)\n"

printf "======\n"

go doc ./world

go doc ./world.PrintStartRoom

printf "Open => http://localhost:6060"

#godoc -http=:6060

#ss -lptn 'sport = :6060' |

#!/bin/sh

DOTFILES=~/.dotfiles

# Clone dotfiles repo

if [ ! -d "$DOTFILES" ]; then

env git clone https://github.com/josemarluedke/dotfiles.git $DOTFILES || {

echo "Error: git clone of dotfiles repo failed"

exit 1

}

fi

# Add global gitconfig

git config --global include.path $DOTFILES/config/global.gitconf... |

/**

* Copyright Soramitsu Co., Ltd. All Rights Reserved.

* SPDX-License-Identifier: GPL-3.0

*/

package jp.co.soramitsu.feature_main_impl.presentation.personaldataedit

import androidx.lifecycle.LiveData

import androidx.lifecycle.MutableLiveData

import androidx.lifecycle.viewModelScope

import jp.co.soramitsu.common.int... |

#[sht] command_prompt = py>

#[sht] command_shell = python -c

py> print('hello')

hello

|

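Data for 2020-07-28, 20:00

Status: 200

1. Medical insurance paid 1.2 billion yuan for confirmed and suspected COVID-19 patients

Weibo popularity: 2947954

2. Chen Xuedong denies joining The Rap of China

Weibo popularity: 1681168

3. Details in Nothing But Thirty

Weibo popularity: 1250865

4. Chinese and Indian troops have disengaged at most locations

Weibo popularity: 965050

5. Yaoshuige joins The Rap of China

Weibo popularity: 801412

6. Healing reading lists from celebrity writers

Weibo popularity: 730356

7. Sun Honglei calls Zhang Yixing his pride

Weibo popularity: 730356

8. Wang Manni resigns

Weibo popularity: 639879

9. Latest list of China's top 100 counties

Weibo popularity: 570567

10. Man who beat his former teacher 20 years later wants to apologize to the teacher in person

Weibo popularity: 565311

11. Zhou Yangqing responds... |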

import 'package:flutter/material.dart';

class Constants {

static const kTitleStyle = TextStyle(

color: Color(0xFF212B46),

fontSize: 19.0,

height: 1.3,

fontWeight: FontWeight.w700);

static const kSubtitleStyle = TextStyle(

color: Colors.black,

fontSize: 16.0,

height: 1.0,

... |
