html_url stringlengths 48 51 | title stringlengths 1 290 | comments listlengths 0 30 | body stringlengths 0 228k ⌀ | number int64 2 7.08k |

|---|---|---|---|---|

https://github.com/huggingface/datasets/issues/5474 | Column project operation on `datasets.Dataset` | [

"Hi ! This would be a nice addition indeed :) This sounds like a duplicate of https://github.com/huggingface/datasets/issues/5468\r\n\r\n> Not sure. Some of my PRs are still open and some do not have any discussions.\r\n\r\nSorry to hear that, feel free to ping me on those PRs"

] | ### Feature request

There is no operation to select a subset of the columns of the original dataset. The expected API follows.

```python

a = Dataset.from_dict({

'int': [0, 1, 2],

'char': ['a', 'b', 'c'],

'none': [None] * 3,

})

b = a.project('int', 'char') # usually, .select()

print(a.column_names) # std... | 5,474 |

https://github.com/huggingface/datasets/issues/5468 | Allow opposite of remove_columns on Dataset and DatasetDict | [

"Hi! I agree it would be nice to have a method like that. Instead of `keep_columns`, we can name it `select_columns` to be more aligned with PyArrow's naming convention (`pa.Table.select`).",

"Hi, I am a newbie to open source and would like to contribute. @mariosasko can I take up this issue ?",

"Hey, I also wa... | ### Feature request

In this blog post https://huggingface.co/blog/audio-datasets, I noticed the following code:

```python

COLUMNS_TO_KEEP = ["text", "audio"]

all_columns = gigaspeech["train"].column_names

columns_to_remove = set(all_columns) - set(COLUMNS_TO_KEEP)

gigaspeech = gigaspeech.remove_columns(column... | 5,468 |

https://github.com/huggingface/datasets/issues/5465 | audiofolder creates empty dataset even though the dataset passed in follows the correct structure | [] | ### Describe the bug

The structure of my dataset folder called "my_dataset" is : data metadata.csv

The data folder consists of all the mp3 files, and metadata.csv consists of file locations like 'data/...mp3' and transcriptions. There are 400+ mp3 files and corresponding transcriptions in my dataset.

When I run the follo... | 5,465 |

https://github.com/huggingface/datasets/issues/5464 | NonMatchingChecksumError for hendrycks_test | [

"Thanks for reporting, @sarahwie.\r\n\r\nPlease note this issue was already fixed in `datasets` 2.6.0 version:\r\n- #5040\r\n\r\nIf you update your `datasets` version, you will be able to load the dataset:\r\n```\r\npip install -U datasets\r\n```",

"Oops, missed that I needed to upgrade. Thanks!"

] | ### Describe the bug

The checksum of the file has likely changed on the remote host.

### Steps to reproduce the bug

`dataset = nlp.load_dataset("hendrycks_test", "anatomy")`

### Expected behavior

no error thrown

### Environment info

- `datasets` version: 2.2.1

- Platform: macOS-13.1-arm64-arm-64bit

- Pyt... | 5,464 |

https://github.com/huggingface/datasets/issues/5461 | Discrepancy in `nyu_depth_v2` dataset | [

"Ccing @dwofk (the author of `fast-depth`). \r\n\r\nThanks, @awsaf49 for reporting this. I believe this is because the NYU Depth V2 shipped from `fast-depth` is already preprocessed. \r\n\r\nIf you think it might be better to have the NYU Depth V2 dataset from BTS [here](https://huggingface.co/datasets/sayakpaul/ny... | ### Describe the bug

I think there is a discrepancy between depth map of `nyu_depth_v2` dataset [here](https://huggingface.co/docs/datasets/main/en/depth_estimation) and actual depth map. Depth values somehow got **discretized/clipped** resulting in depth maps that are different from actual ones. Here is a side-by-sid... | 5,461 |

https://github.com/huggingface/datasets/issues/5458 | slice split while streaming | [

"Hi! Yes, that's correct. When `streaming` is `True`, only split names can be specified as `split`, and for slicing, you have to use `.skip`/`.take` instead.\r\n\r\nE.g. \r\n`load_dataset(\"lhoestq/demo1\",revision=None, streaming=True, split=\"train[:3]\")`\r\n\r\nrewritten with `.skip`/`.take`:\r\n`load_dataset(\... | ### Describe the bug

When using the `load_dataset` function with streaming set to True, slicing splits is apparently not supported.

Did I miss this in the documentation?

### Steps to reproduce the bug

`load_dataset("lhoestq/demo1",revision=None, streaming=True, split="train[:3]")`

causes ValueError: Bad split:... | 5,458 |

https://github.com/huggingface/datasets/issues/5457 | prebuilt dataset relies on `downloads/extracted` | [

"Hi! \r\n\r\nThis issue is due to our audio/image datasets not being self-contained. This allows us to save disk space (files are written only once) but also leads to the issues like this one. We plan to make all our datasets self-contained in Datasets 3.0.\r\n\r\nIn the meantime, you can run the following map to e... | ### Describe the bug

I pre-built the dataset:

```

python -c 'import sys; from datasets import load_dataset; ds=load_dataset(sys.argv[1])' HuggingFaceM4/general-pmd-synthetic-testing

```

and it can be used just fine.

Now I wipe out `downloads/extracted` and it no longer works.

```

rm -r ~/.cache/huggingface... | 5,457 |

https://github.com/huggingface/datasets/issues/5454 | Save and resume the state of a DataLoader | [

"Something that'd be nice to have is \"manual update of state\". One of the learnings from training LLMs is that the ability to skip some batches whenever we notice a huge spike might be handy.",

"Your outline spec is very sound and clear, @lhoestq - thank you!\r\n\r\n@thomasw21, indeed that would be a wonderful extra fe... | It would be nice when using `datasets` with a PyTorch DataLoader to be able to resume a training from a DataLoader state (e.g. to resume a training that crashed)

What I have in mind (but lmk if you have other ideas or comments):

For map-style datasets, this requires to have a PyTorch Sampler state that can be sav... | 5,454 |

https://github.com/huggingface/datasets/issues/5451 | ImageFolder BadZipFile: Bad offset for central directory | [

"Hi ! Could you share the full stack trace ? Which dataset did you try to load ?\r\n\r\nit may be related to https://github.com/huggingface/datasets/pull/5640",

"The `BadZipFile` error means the ZIP file is corrupted, so I'm closing this issue as it's not directly related to `datasets`.",

"For others that find ... | ### Describe the bug

I'm getting the following exception:

```

lib/python3.10/zipfile.py:1353 in _RealGetContents │

│ │

│ 1350 │ │ # self.start_dir: Position of start of central directory ... | 5,451 |

https://github.com/huggingface/datasets/issues/5450 | to_tf_dataset with a TF collator causes bizarrely persistent slowdown | [

"wtf",

"Couldn't find what's causing this, this will need more investigation",

"A possible hint: The function it seems to be spending a lot of time in (when iterating over the original dataset) is `_get_mp` in the PIL JPEG decoder: \r\n

Briefly, there are several datasets that, when you iterate over them with `to_tf_dataset` **and** a data colla... | 5,450 |

https://github.com/huggingface/datasets/issues/5448 | Support fsspec 2023.1.0 in CI | [] | Once we find out the root cause of:

- #5445

we should revert the temporary pin on fsspec introduced by:

- #5447 | 5,448 |

https://github.com/huggingface/datasets/issues/5445 | CI tests are broken: AttributeError: 'mappingproxy' object has no attribute 'target' | [] | CI tests are broken, raising `AttributeError: 'mappingproxy' object has no attribute 'target'`. See: https://github.com/huggingface/datasets/actions/runs/3966497597/jobs/6797384185

```

...

ERROR tests/test_streaming_download_manager.py::TestxPath::test_xpath_rglob[mock://top_level-date=2019-10-0[1-4]/*-expected_path... | 5,445 |

https://github.com/huggingface/datasets/issues/5444 | info messages logged as warnings | [

"Looks like a duplicate of https://github.com/huggingface/datasets/issues/1948. \r\n\r\nI also think these should be logged as INFO messages, but let's see what @lhoestq thinks.",

"It can be considered unexpected to see a `map` function return instantaneously. The warning is here to explain this case by mentionin... | ### Describe the bug

Code in `datasets` is using `logger.warning` when it should be using `logger.info`.

Some of these are probably a matter of opinion, but I think anything starting with `logger.warning(f"Loading cached` clearly falls into the info category.

Definitions from the Python docs for reference:

* I... | 5,444 |

https://github.com/huggingface/datasets/issues/5442 | OneDrive Integrations with HF Datasets | [

"Hi! \r\n\r\nWe use [`fsspec`](https://github.com/fsspec/filesystem_spec) to integrate with storage providers. You can find more info (and the usage examples) in [our docs](https://huggingface.co/docs/datasets/v2.8.0/filesystems#download-and-prepare-a-dataset-into-a-cloud-storage).\r\n\r\n[`gdrivefs`](https://githu... | ### Feature request

First of all, I would like to thank the whole community who developed the dataset storage and made it freely available.

How can we integrate our OneDrive account, or any other cloud storage (like Google Drive, ...), with the **HF** datasets section?

For example, if I have **50GB** on my **Onedrive*... | 5,442 |

https://github.com/huggingface/datasets/issues/5439 | [dataset request] Add Common Voice 12.0 | [

"@polinaeterna any tentative date on when the Common Voice 12.0 dataset will be added ?",

"This dataset is now hosted on the Hub here: https://huggingface.co/datasets/mozilla-foundation/common_voice_12_0"

] | ### Feature request

Please add the Common Voice 12.0 datasets. Apart from English, a significant amount of audio data has been added to the other, smaller-language datasets.

### Motivation

The dataset link:

https://commonvoice.mozilla.org/en/datasets

| 5,439 |

https://github.com/huggingface/datasets/issues/5437 | Can't load png dataset with 4 channel (RGBA) | [

"Hi! Can you please share the directory structure of your image folder and the `load_dataset` call? We decode images with Pillow, and Pillow supports RGBA PNGs, so this shouldn't be a problem.\r\n\r\n",

"> Hi! Can you please share the directory structure of your image folder and the `load_dataset` call? We decode... | I am trying to create a dataset that contains about 9000 PNG images, 64x64 in size, all of them 4-channel (RGBA). When I call load_dataset(), the dataset is created from only 2 images. I cannot work out what is interfering.",

"Thanks for reporting, @HaoyuYang59.\r\n\r\nPlease note that these are different \"dataset\" objects: our docs refer to Hugging Face `datasets.Dataset` and not to TensorFlow `tf.data.Datase... | ### Describe the bug

In the [Split your dataset with take and skip](https://huggingface.co/docs/datasets/v1.10.2/dataset_streaming.html#split-your-dataset-with-take-and-skip), it states:

> Using take (or skip) prevents future calls to shuffle from shuffling the dataset shards order, otherwise the taken examples cou... | 5,435 |

https://github.com/huggingface/datasets/issues/5434 | sample_dataset module not found | [

"Hi! Can you describe what the actual error is?",

"working on the setfit example script\r\n\r\n from setfit import SetFitModel, SetFitTrainer, sample_dataset\r\n\r\nImportError: cannot import name 'sample_dataset' from 'setfit' (C:\\Python\\Python38\\lib\\site-packages\\setfit\\__init__.py)\r\n\r\n apart from t... | null | 5,434 |

https://github.com/huggingface/datasets/issues/5433 | Support latest Docker image in CI benchmarks | [

"Sorry, it was us:[^1] https://github.com/iterative/cml/pull/1317 & https://github.com/iterative/cml/issues/1319#issuecomment-1385599559; should be fixed with [v0.18.17](https://github.com/iterative/cml/releases/tag/v0.18.17).\r\n\r\n[^1]: More or less, see https://github.com/yargs/yargs/issues/873.",

"Opened htt... | Once we find out the root cause of:

- #5431

we should revert the temporary pin on the Docker image version introduced by:

- #5432 | 5,433 |

https://github.com/huggingface/datasets/issues/5431 | CI benchmarks are broken: Unknown arguments: runnerPath, path | [] | Our CI benchmarks are broken, raising `Unknown arguments` error: https://github.com/huggingface/datasets/actions/runs/3932397079/jobs/6724905161

```

Unknown arguments: runnerPath, path

```

Stack trace:

```

100%|██████████| 500/500 [00:01<00:00, 338.98ba/s]

Updating lock file 'dvc.lock'

To track the changes ... | 5,431 |

https://github.com/huggingface/datasets/issues/5430 | Support Apache Beam >= 2.44.0 | [

"Some of the shard files now have 0 number of rows.\r\n\r\nWe have opened an issue in the Apache Beam repo:\r\n- https://github.com/apache/beam/issues/25041"

] | Once we find out the root cause of:

- #5426

we should revert the temporary pin on apache-beam introduced by:

- #5429 | 5,430 |

https://github.com/huggingface/datasets/issues/5428 | Load/Save FAISS index using fsspec | [

"Hi! Sure, feel free to submit a PR. Maybe if we want to be consistent with the existing API, it would be cleaner to directly add support for `fsspec` paths in `Dataset.load_faiss_index`/`Dataset.save_faiss_index` in the same manner as it was done in `Dataset.load_from_disk`/`Dataset.save_to_disk`.",

"That's a gr... | ### Feature request

From what I understand, `faiss` already supports this [link](https://github.com/facebookresearch/faiss/wiki/Index-IO,-cloning-and-hyper-parameter-tuning#generic-io-support)

I would like to use a stream as input to `Dataset.load_faiss_index` and `Dataset.save_faiss_index`.

### Motivation

In... | 5,428 |

https://github.com/huggingface/datasets/issues/5427 | Unable to download dataset id_clickbait | [

"Thanks for reporting, @ilos-vigil.\r\n\r\nWe have transferred this issue to the corresponding dataset on the Hugging Face Hub: https://huggingface.co/datasets/id_clickbait/discussions/1 "

] | ### Describe the bug

I tried to download the dataset `id_clickbait`, but received this error message.

```

FileNotFoundError: Couldn't find file at https://md-datasets-cache-zipfiles-prod.s3.eu-west-1.amazonaws.com/k42j7x2kpn-1.zip

```

When I open the link in a browser, I get this XML data.

```xml

<?xml versi... | 5,427 |

https://github.com/huggingface/datasets/issues/5426 | CI tests are broken: SchemaInferenceError | [] | CI test (unit, ubuntu-latest, deps-minimum) is broken, raising a `SchemaInferenceError`: see https://github.com/huggingface/datasets/actions/runs/3930901593/jobs/6721492004

```

FAILED tests/test_beam.py::BeamBuilderTest::test_download_and_prepare_sharded - datasets.arrow_writer.SchemaInferenceError: Please pass `feat... | 5,426 |

https://github.com/huggingface/datasets/issues/5425 | Sort on multiple keys with datasets.Dataset.sort() | [

"Hi! \r\n\r\n`Dataset.sort` calls `df.sort_values` internally, and `df.sort_values` brings all the \"sort\" columns in memory, so sorting on multiple keys could be very expensive. This makes me think that maybe we can replace `df.sort_values` with `pyarrow.compute.sort_indices` - the latter can also sort on multipl... | ### Feature request

From discussion on forum: https://discuss.huggingface.co/t/datasets-dataset-sort-does-not-preserve-ordering/29065/1

`sort()` does not preserve ordering, and it does not support sorting on multiple columns, nor a key function.

The suggested solution:

> ... having something similar to panda... | 5,425 |

https://github.com/huggingface/datasets/issues/5424 | When applying `ReadInstruction` to custom load it's not DatasetDict but list of Dataset? | [

"Hi! You can get a `DatasetDict` if you pass a dictionary with read instructions as follows:\r\n```python\r\ninstructions = [\r\n ReadInstruction(split_name=\"train\", from_=0, to=10, unit='%', rounding='closest'),\r\n ReadInstruction(split_name=\"dev\", from_=0, to=10, unit='%', rounding='closest'),\r\n R... | ### Describe the bug

I am loading datasets from custom `tsv` files stored locally and applying split instructions for each split. The ReadInstruction is applied correctly, but I was expecting the result to be a `DatasetDict`; instead it is a list of `Dataset`.

### Steps to reproduce the bug

Steps to reproduc... | 5,424 |

https://github.com/huggingface/datasets/issues/5422 | Datasets load error for saved github issues | [

"I can confirm that the error exists!\r\nI'm trying to read 3 parquet files locally:\r\n```python\r\nfrom datasets import load_dataset, Features, Value, ClassLabel\r\n\r\nreview_dataset = load_dataset(\r\n \"parquet\",\r\n data_files={\r\n \"train\": os.path.join(sentiment_analysis_data_path, \"train.p... | ### Describe the bug

Loading a previously downloaded & saved dataset as described in the HuggingFace course:

issues_dataset = load_dataset("json", data_files="issues/datasets-issues.jsonl", split="train")

Gives this error:

datasets.builder.DatasetGenerationError: An error occurred while generating the dataset... | 5,422 |

https://github.com/huggingface/datasets/issues/5421 | Support case-insensitive Hub dataset name in load_dataset | [

"Closing as case-insensitivity should be only for URL redirection on the Hub. In the APIs, we will only support the canonical name (https://github.com/huggingface/moon-landing/pull/2399#issuecomment-1382085611)"

] | ### Feature request

The dataset name on the Hub is case-insensitive (see https://github.com/huggingface/moon-landing/pull/2399, internal issue), i.e., https://huggingface.co/datasets/GLUE redirects to https://huggingface.co/datasets/glue.

Ideally, we could load the glue dataset using the following:

```

from d... | 5,421 |

https://github.com/huggingface/datasets/issues/5419 | label_column='labels' in datasets.TextClassification and 'label' or 'label_ids' in transformers.DataColator | [

"Hi! Thanks for pointing out this inconsistency. Changing the default value at this point is probably not worth it, considering we've started discussing the state of the task API internally - we will most likely deprecate the current one and replace it with a more robust solution that relies on the `train_eval_inde... | ### Describe the bug

When preparing a dataset for a task using `datasets.TextClassification`, the output feature is named `labels`. When preparing the trainer using the `transformers.DataCollator`, the default column name is `label` for a binary problem or `label_ids` for a multi-class problem.

It is required to rename the column... | 5,419 |

https://github.com/huggingface/datasets/issues/5418 | Add ProgressBar for `to_parquet` | [

"Thanks for your proposal, @zanussbaum. Yes, I agree that would definitely be a nice feature to have!",

"@albertvillanova I’m happy to make a quick PR for the feature! let me know ",

"That would be awesome ! You can comment `#self-assign` to assign you to this issue and open a PR :) Will be happy to review",

... | ### Feature request

Add a progress bar for `Dataset.to_parquet`, similar to how `to_json` works.

### Motivation

It's a bit frustrating to not know how long a dataset will take to write to file and if it's stuck or not without a progress bar

### Your contribution

Sure I can help if needed | 5,418 |

https://github.com/huggingface/datasets/issues/5415 | RuntimeError: Sharding is ambiguous for this dataset | [] | ### Describe the bug

When loading some datasets, a RuntimeError is raised.

For example, for "ami" dataset: https://huggingface.co/datasets/ami/discussions/3

```

.../huggingface/datasets/src/datasets/builder.py in _prepare_split(self, split_generator, check_duplicate_keys, file_format, num_proc, max_shard_size)

... | 5,415 |

https://github.com/huggingface/datasets/issues/5414 | Sharding error with Multilingual LibriSpeech | [

"Thanks for reporting, @Nithin-Holla.\r\n\r\nThis is a known issue for multiple datasets and we are investigating it:\r\n- See e.g.: https://huggingface.co/datasets/ami/discussions/3",

"Main issue:\r\n- #5415",

"@albertvillanova Thanks! As a workaround for now, can I use the dataset in streaming mode?",

"Yes,... | ### Describe the bug

Loading the German Multilingual LibriSpeech dataset results in a RuntimeError regarding sharding with the following stacktrace:

```

Downloading and preparing dataset multilingual_librispeech/german to /home/nithin/datadrive/cache/huggingface/datasets/facebook___multilingual_librispeech/german/... | 5,414 |

https://github.com/huggingface/datasets/issues/5413 | concatenate_datasets fails when two dataset with shards > 1 and unequal shard numbers | [

"Hi ! Thanks for reporting :)\r\n\r\nI managed to reproduce the hub using\r\n```python\r\n\r\nfrom datasets import concatenate_datasets, Dataset, load_from_disk\r\n\r\nDataset.from_dict({\"a\": range(9)}).save_to_disk(\"tmp/ds1\")\r\nds1 = load_from_disk(\"tmp/ds1\")\r\nds1 = concatenate_datasets([ds1, ds1])\r\n\r\... | ### Describe the bug

When using `concatenate_datasets([dataset1, dataset2], axis = 1)` to concatenate two datasets with shards > 1, it fails:

```

File "/home/xzg/anaconda3/envs/tri-transfer/lib/python3.9/site-packages/datasets/combine.py", line 182, in concatenate_datasets

return _concatenate_map_style_data... | 5,413 |

https://github.com/huggingface/datasets/issues/5412 | load_dataset() cannot find dataset_info.json with multiple training runs in parallel | [

"Hi ! It fails because the dataset is already being prepared by your first run. I'd encourage you to prepare your dataset before using it for multiple trainings.\r\n\r\nYou can also specify another cache directory by passing `cache_dir=` to `load_dataset()`.",

"Thank you! What do you mean by prepare it beforehand... | ### Describe the bug

I have a custom local dataset in JSON form. I am trying to do multiple training runs in parallel. The first training run runs with no issue. However, when I start another run on another GPU, the following code throws this error.

If there is a workaround to ignore the cache I think that would ... | 5,412 |

https://github.com/huggingface/datasets/issues/5408 | dataset map function could not be hash properly | [

"Hi ! On macos I tried with\r\n- py 3.9.11\r\n- datasets 2.8.0\r\n- transformers 4.25.1\r\n- dill 0.3.4\r\n\r\nand I was able to hash `prepare_dataset` correctly:\r\n```python\r\nfrom datasets.fingerprint import Hasher\r\nHasher.hash(prepare_dataset)\r\n```\r\n\r\nWhat version of transformers do you have ? Can you ... | ### Describe the bug

I followed the [blog post](https://huggingface.co/blog/fine-tune-whisper#building-a-demo) to fine-tune a Cantonese transcription model.

When using the map function to prepare the dataset, the following warning pops up:

`common_voice = common_voice.map(prepare_dataset,

remove_... | 5,408 |

https://github.com/huggingface/datasets/issues/5407 | Datasets.from_sql() generates deprecation warning | [

"Thanks for reporting @msummerfield. We are fixing it."

] | ### Describe the bug

Calling `Datasets.from_sql()` generates a warning:

`.../site-packages/datasets/builder.py:712: FutureWarning: 'use_auth_token' was deprecated in version 2.7.1 and will be removed in 3.0.0. Pass 'use_auth_token' to the initializer/'load_dataset_builder' instead.`

### Steps to reproduce the ... | 5,407 |

https://github.com/huggingface/datasets/issues/5406 | [2.6.1][2.7.0] Upgrade `datasets` to fix `TypeError: can only concatenate str (not "int") to str` | [

"I still get this error on 2.9.0\r\n<img width=\"1925\" alt=\"image\" src=\"https://user-images.githubusercontent.com/7208470/215597359-2f253c76-c472-4612-8099-d3a74d16eb29.png\">\r\n",

"Hi ! I just tested locally and or colab and it works fine for 2.9 on `sst2`.\r\n\r\nAlso the code that is shown in your stack t... | `datasets` 2.6.1 and 2.7.0 started to stop supporting datasets like IMDB, ConLL or MNIST datasets.

When loading certain datasets using 2.6.1 or 2.7.0, you may get this error:

```python

TypeError: can only concatenate str (not "int") to str

```

This is because we started to update the metadat... | 5,406 |

https://github.com/huggingface/datasets/issues/5405 | size_in_bytes the same for all splits | [

"Hi @Breakend,\r\n\r\nIndeed, the attribute `size_in_bytes` refers to the size of the entire dataset configuration, for all splits (size of downloaded files + Arrow files), not the specific split.\r\nThis is also the case for `download_size` (downloaded files) and `dataset_size` (Arrow files).\r\n\r\nThe size of th... | ### Describe the bug

Hi, it looks like whenever you pull a dataset and get size_in_bytes, it returns the same size for all splits (and that size is the combined size of all splits). It seems like this shouldn't be the intended behavior since it is misleading. Here's an example:

```

>>> from datasets import load_da... | 5,405 |

https://github.com/huggingface/datasets/issues/5404 | Better integration of BIG-bench | [

"Hi, I made my version : https://huggingface.co/datasets/tasksource/bigbench"

] | ### Feature request

Ideally, it would be nice to have a maintained PyPI package for `bigbench`.

### Motivation

We'd like to allow anyone to access, explore and use any task.

### Your contribution

@lhoestq has opened an issue in their repo:

- https://github.com/google/BIG-bench/issues/906 | 5,404 |

https://github.com/huggingface/datasets/issues/5402 | Missing state.json when creating a cloud dataset using a dataset_builder | [

"`load_from_disk` must be used on datasets saved using `save_to_disk`: they correspond to fully serialized datasets including their state.\r\n\r\nOn the other hand, `download_and_prepare` just downloads the raw data and convert them to arrow (or parquet if you want). We are working on allowing you to reload a datas... | ### Describe the bug

I use `load_dataset_builder` to create a builder and run `download_and_prepare` to upload it to S3. However, when trying to load it, the `state.json` files are missing. Complete example:

```python

from aiobotocore.session import AioSession as Session

from datasets import load_from_disk, load_da... | 5,402 |

https://github.com/huggingface/datasets/issues/5399 | Got disconnected from remote data host. Retrying in 5sec [2/20] | [] | ### Describe the bug

I get this error while trying to upload my image dataset, stored as a CSV file, to Hugging Face by running the code below. The dataset consists of a little over 100k image-caption pairs.

### Steps to reproduce the bug

```

df = pd.read_csv('x.csv', encoding='utf-8-sig')

features = Features({

'link': Ima... | 5,399 |

https://github.com/huggingface/datasets/issues/5398 | Unpin pydantic | [] | Once `pydantic` fixes their issue in their 1.10.3 version, unpin it.

See issue:

- #5394

See temporary fix:

- #5395 | 5,398 |

https://github.com/huggingface/datasets/issues/5394 | CI error: TypeError: dataclass_transform() got an unexpected keyword argument 'field_specifiers' | [

"I am still getting the same error:\r\n\r\n`python -m spacy download fr_core_news_lg\r\n`.\r\n`import spacy`",

"@MFatnassi, this issue and the corresponding fix only affect our Continuous Integration testing environment.\r\n\r\nNote that `datasets` does not depend on `spacy`."

] | ### Describe the bug

While installing the dependencies, the CI raises a TypeError:

```

Traceback (most recent call last):

File "/opt/hostedtoolcache/Python/3.7.15/x64/lib/python3.7/runpy.py", line 183, in _run_module_as_main

mod_name, mod_spec, code = _get_module_details(mod_name, _Error)

File "/opt/hoste... | 5,394 |

https://github.com/huggingface/datasets/issues/5391 | Whisper Event - RuntimeError: The size of tensor a (504) must match the size of tensor b (448) at non-singleton dimension 1 100% 1000/1000 [2:52:21<00:00, 10.34s/it] | [

"Hey @catswithbats! Super sorry for the late reply! This is happening because there is data with label length (504) that exceeds the model's max length (448). \r\n\r\nThere are two options here:\r\n1. Increase the model's `max_length` parameter: \r\n```python\r\nmodel.config.max_length = 512\r\n```\r\n2. Filter dat... | Done in a VM with a GPU (Ubuntu) following the [Whisper Event - PYTHON](https://github.com/huggingface/community-events/tree/main/whisper-fine-tuning-event#python-script) instructions.

Attempted using [RuntimeError: he size of tensor a (504) must match the size of tensor b (448) at non-singleton dimension 1 100% 1... | 5,391 |

https://github.com/huggingface/datasets/issues/5390 | Error when pushing to the CI hub | [

"Hmmm, git bisect tells me that the behavior is the same since https://github.com/huggingface/datasets/commit/67e65c90e9490810b89ee140da11fdd13c356c9c (3 Oct), i.e. https://github.com/huggingface/datasets/pull/4926",

"Maybe related to the discussions in https://github.com/huggingface/datasets/pull/5196",

"Maybe... | ### Describe the bug

Note that it's a special case where the Hub URL is "https://hub-ci.huggingface.co", which does not appear if we do the same on the Hub (https://huggingface.co).

The call to `dataset.push_to_hub(` fails:

```

Pushing dataset shards to the dataset hub: 100%|██████████████████████████████████... | 5,390 |

https://github.com/huggingface/datasets/issues/5388 | Getting Value Error while loading a dataset.. | [

"Hi! I can't reproduce this error locally (Mac) or in Colab. What version of `datasets` are you using?",

"Hi [mariosasko](https://github.com/mariosasko), the datasets version is '2.8.0'.",

"@valmetisrinivas you get that error because you imported `datasets` (and thus `fsspec`) before installing `zstandard`.\r\n... | ### Describe the bug

I am trying to load a dataset using the Hugging Face Datasets `load_dataset` method. I am getting the ValueError shown below. Can someone help with this? I am using a Windows laptop and a Google Colab notebook.

```

WARNING:datasets.builder:Using custom data configuration default-a1d9e8eaedd958cd

---... | 5,388 |

https://github.com/huggingface/datasets/issues/5387 | Missing documentation page : improve-performance | [

"Hi! Our documentation builder does not support links to sections, hence the bug. This is the link it should point to https://huggingface.co/docs/datasets/v2.8.0/en/cache#improve-performance."

] | ### Describe the bug

Trying to access https://huggingface.co/docs/datasets/v2.8.0/en/package_reference/cache#improve-performance, the page is missing.

The broken link appears here: https://huggingface.co/docs/datasets/v2.8.0/en/package_reference/loading_methods#datasets.load_dataset.keep_in_memory

### Steps to reproduce t... | 5,387 |

https://github.com/huggingface/datasets/issues/5386 | `max_shard_size` in `datasets.push_to_hub()` breaks with large files | [

"Hi! \r\n\r\nThis behavior stems from the fact that we don't always embed image bytes in the underlying arrow table, which can lead to bad size estimation (we use the first 1000 table rows to [estimate](https://github.com/huggingface/datasets/blob/9a7272cd4222383a5b932b0083a4cc173fda44e8/src/datasets/arrow_dataset.... | ### Describe the bug

`max_shard_size` parameter for `datasets.push_to_hub()` works unreliably with large files, generating shard files that are way past the specified limit.

In my private dataset, which contains unprocessed images of all sizes (up to `~100MB` per file), I've encountered cases where `max_shard_siz... | 5,386 |

https://github.com/huggingface/datasets/issues/5385 | Is `fs=` deprecated in `load_from_disk()` as well? | [

"Hi! Yes, we should deprecate the `fs` param here. Would you be interested in submitting a PR? ",

"> Hi! Yes, we should deprecate the `fs` param here. Would you be interested in submitting a PR?\r\n\r\nYeah I can do that sometime next week. Should the storage_options be a new arg here? I’ll look around for anywh... | ### Describe the bug

The `fs=` argument was deprecated from `Dataset.save_to_disk` and `Dataset.load_from_disk` in favor of automagically figuring it out via fsspec:

https://github.com/huggingface/datasets/blob/9a7272cd4222383a5b932b0083a4cc173fda44e8/src/datasets/arrow_dataset.py#L1339-L1340

Is there a reason the... | 5,385 |

https://github.com/huggingface/datasets/issues/5383 | IterableDataset missing column_names, differs from Dataset interface | [

"Another example is that `IterableDataset.map` does not have `fn_kwargs`, among other arguments. It makes it harder to convert code from Dataset to IterableDataset.",

"Hi! `fn_kwargs` was added to `IterableDataset.map` in `datasets 2.5.0`, so please update your installation (`pip install -U datasets`) to use it.\... | ### Describe the bug

The documentation on [Stream](https://huggingface.co/docs/datasets/v1.18.2/stream.html) seems to imply that IterableDataset behaves just like a Dataset. However, examples like

```

dataset.map(augment_data, batched=True, remove_columns=dataset.column_names, ...)

```

will not work because `.colu... | 5,383 |

https://github.com/huggingface/datasets/issues/5381 | Wrong URL for the_pile dataset | [

"Hi! This error can happen if there is a local file/folder with the same name as the requested dataset. And to avoid it, rename the local file/folder.\r\n\r\nSoon, it will be possible to explicitly request a Hub dataset as follows:https://github.com/huggingface/datasets/issues/5228#issuecomment-1313494020"

] | ### Describe the bug

When trying to load `the_pile` dataset from the library, I get a `FileNotFound` error.

### Steps to reproduce the bug

Steps to reproduce:

Run:

```

from datasets import load_dataset

dataset = load_dataset("the_pile")

```

I get the output:

"name": "FileNotFoundError",

"message... | 5,381 |

https://github.com/huggingface/datasets/issues/5380 | Improve dataset `.skip()` speed in streaming mode | [

"Hi! I agree `skip` can be inefficient to use in the current state.\r\n\r\nTo make it fast, we could use \"statistics\" stored in Parquet metadata and read only the chunks needed to form a dataset. \r\n\r\nAnd thanks to the \"datasets-server\" project, which aims to store the Parquet versions of the Hub datasets (o... | ### Feature request

Add extra information to the `dataset_infos.json` file to include the number of samples/examples in each shard, for example in a new field `num_examples` alongside `num_bytes`. The `.skip()` function could use this information to ignore the download of a shard when in streaming mode, which AFAICT... | 5,380 |
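
A minimal sketch of the idea in this request, assuming per-shard example counts were available (for instance from the proposed `num_examples` field). The helper name `plan_skip` is hypothetical and not part of `datasets`; it only illustrates how whole shards could be skipped without downloading them.

```python
from typing import List, Tuple


def plan_skip(shard_num_examples: List[int], n: int) -> Tuple[int, int]:
    """Return (index of the first shard to read, examples left to skip inside it).

    Hypothetical helper: shards that lie entirely before the n-th example
    never need to be downloaded.
    """
    remaining = n
    for index, count in enumerate(shard_num_examples):
        if remaining < count:
            # The n-th example lives in this shard.
            return index, remaining
        remaining -= count
    # n is at or past the end of the dataset: nothing left to read.
    return len(shard_num_examples), 0


# Skipping 320 examples over shards of 100, 250 and 80 examples:
# shard 0 is skipped entirely, and 220 examples are skipped inside shard 1.
first_shard, offset = plan_skip([100, 250, 80], 320)
```

With such a plan, only the shard at `first_shard` and the ones after it would have to be opened in streaming mode.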

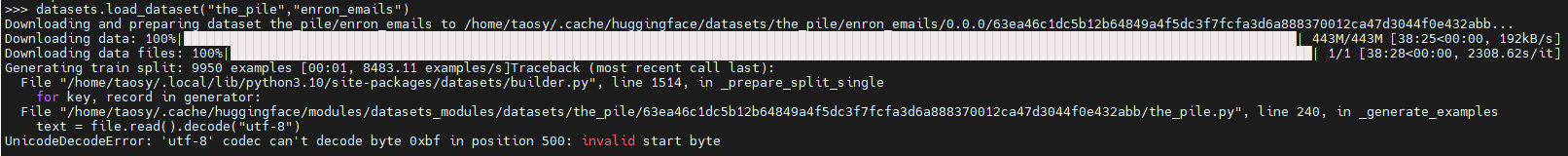

https://github.com/huggingface/datasets/issues/5378 | The dataset "the_pile", subset "enron_emails" , load_dataset() failure | [

"Thanks for reporting @shaoyuta. We are investigating it.\r\n\r\nWe are transferring the issue to \"the_pile\" Community tab on the Hub: https://huggingface.co/datasets/the_pile/discussions/4"

] | ### Describe the bug

Running `datasets.load_dataset("the_pile", "enron_emails")` fails.

### Steps to reproduce the bug

Run below code in python cli:

>>> import datasets

>>> datasets.load_dataset(... | 5,378 |

https://github.com/huggingface/datasets/issues/5374 | Using too many threads results in: Got disconnected from remote data host. Retrying in 5sec | [

"The data files are hosted on HF at https://huggingface.co/datasets/allenai/c4/tree/main\r\n\r\nYou have 200 runs streaming the same files in parallel. So this is probably a Hub limitation. Maybe rate limiting ? cc @julien-c \r\n\r\nMaybe you can also try to reduce the number of HTTP requests by increasing the bloc... | ### Describe the bug

`streaming_download_manager` seems to disconnect if too many runs access the same underlying dataset 🧐

The code works fine for me if I have ~100 runs in parallel, but disconnects once scaling to 200.

Possibly related:

- https://github.com/huggingface/datasets/pull/3100

- https://github.com/... | 5,374 |

https://github.com/huggingface/datasets/issues/5371 | Add a robustness benchmark dataset for vision | [

"Ccing @nazneenrajani @lvwerra @osanseviero "

] | ### Name

ImageNet-C

### Paper

Benchmarking Neural Network Robustness to Common Corruptions and Perturbations

### Data

https://github.com/hendrycks/robustness

### Motivation

It's a known fact that vision models are brittle when they meet with slightly corrupted and perturbed data. This is also corre... | 5,371 |

https://github.com/huggingface/datasets/issues/5363 | Dataset.from_generator() crashes on simple example | [] | null | 5,363 |

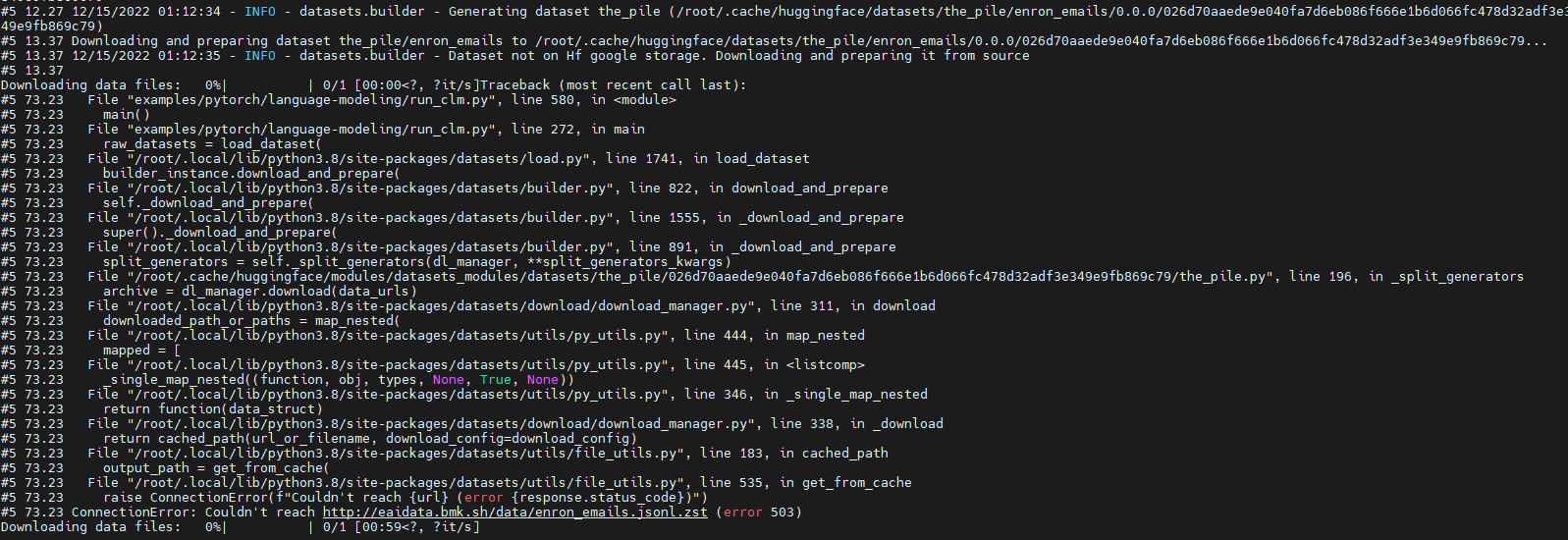

https://github.com/huggingface/datasets/issues/5362 | Run 'GPT-J' failure due to download dataset fail (' ConnectionError: Couldn't reach http://eaidata.bmk.sh/data/enron_emails.jsonl.zst ' ) | [

"Thanks for reporting, @shaoyuta.\r\n\r\nWe have checked and yes, apparently there is an issue with the server hosting the data of the \"enron_emails\" subset of \"the_pile\" dataset: http://eaidata.bmk.sh/data/enron_emails.jsonl.zst\r\nIt seems to be down: The connection has timed out.\r\n\r\nPlease note that at t... | ### Describe the bug

Running the "GPT-J" model with the "the_pile" dataset fails.

The failure output is below:

It looks like the cause is that "http://eaidata.bmk.sh/data/enron_emails.jsonl.zst" is unreachable.

### Steps to ... | 5,362 |

https://github.com/huggingface/datasets/issues/5361 | How concatenate `Audio` elements using batch mapping | [

"You can try something like this ?\r\n```python\r\ndef mapper_function(batch):\r\n return {\"concatenated_audio\": [np.concatenate([audio[\"array\"] for audio in batch[\"audio\"]])]}\r\n\r\ndataset = dataset.map(\r\n mapper_function,\r\n batched=True,\r\n batch_size=3,\r\n remove_columns=list(dataset.... | ### Describe the bug

I am trying to concatenate audios in a dataset, e.g. `google/fleurs`.

```python

print(dataset)

# Dataset({

# features: ['path', 'audio'],

# num_rows: 24

# })

def mapper_function(batch):

# to merge every 3 audio

# np.concatenate(audios[i: i+3]) for i in range(i, len(batc... | 5,361 |

https://github.com/huggingface/datasets/issues/5360 | IterableDataset returns duplicated data using PyTorch DDP | [

"If you use huggingface trainer, you will find the trainer has wrapped a `IterableDatasetShard` to avoid duplication.\r\nSee:\r\nhttps://github.com/huggingface/transformers/blob/dfd818420dcbad68e05a502495cf666d338b2bfb/src/transformers/trainer.py#L835\r\n",

"If you want to support it by datasets natively, maybe w... | As mentioned in https://github.com/huggingface/datasets/issues/3423, when using PyTorch DDP the dataset ends up with duplicated data. We already check for the PyTorch `worker_info` for single node, but we should also check for `torch.distributed.get_world_size()` and `torch.distributed.get_rank()` | 5,360 |
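
One way to picture the rank-aware check described above, as a rough sketch rather than the library's implementation: each process keeps only every `world_size`-th example, starting at its own `rank`. The function `shard_for_rank` is a hypothetical name; in a real DDP job the two integers would presumably come from `torch.distributed.get_rank()` and `torch.distributed.get_world_size()`.

```python
from itertools import islice
from typing import Iterable, Iterator, TypeVar

T = TypeVar("T")


def shard_for_rank(examples: Iterable[T], rank: int, world_size: int) -> Iterator[T]:
    """Yield the examples assigned to one process (hypothetical helper).

    Process `rank` takes examples rank, rank + world_size, rank + 2 * world_size, ...
    so no example is seen by two processes.
    """
    return islice(examples, rank, None, world_size)


# Three processes splitting ten examples between them.
per_rank = [list(shard_for_rank(range(10), rank, 3)) for rank in range(3)]
```

Note that this still iterates over the whole stream in every process; it only avoids duplicated examples, not duplicated reads.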

https://github.com/huggingface/datasets/issues/5354 | Consider using "Sequence" instead of "List" | [

"Hi! Linking a comment to provide more info on the issue: https://stackoverflow.com/a/39458225. This means we should replace all (most of) the occurrences of `List` with `Sequence` in function signatures.\r\n\r\n@tranhd95 Would you be interested in submitting a PR?",

"Hi all! I tried to reproduce this issue and d... | ### Feature request

Hi, please consider using `Sequence` type annotation instead of `List` in function arguments such as in [`Dataset.from_parquet()`](https://github.com/huggingface/datasets/blob/main/src/datasets/arrow_dataset.py#L1088). It leads to type checking errors, see below.

**How to reproduce**

```py

... | 5,354 |

https://github.com/huggingface/datasets/issues/5353 | Support remote file systems for `Audio` | [

"Just seen https://github.com/huggingface/datasets/issues/5281"

] | ### Feature request

Hi there!

It would be super cool if `Audio()`, and potentially other features, could read files from a remote file system.

### Motivation

Large amounts of data is often stored in buckets. `load_from_disk` is able to retrieve data from cloud storage but to my knowledge actually copies the datas... | 5,353 |

https://github.com/huggingface/datasets/issues/5352 | __init__() got an unexpected keyword argument 'input_size' | [

"Hi @J-shel, thanks for reporting.\r\n\r\nI think the issue comes from your call to `load_dataset`. As first argument, you should pass:\r\n- either the name of your dataset (\"mrf\") if this is already published on the Hub\r\n- or the path to the loading script of your dataset (\"path/to/your/local/mrf.py\").",

"... | ### Describe the bug

I am trying to define a custom configuration with an `input_size` attribute, following the instructions in "Specifying several dataset configurations" at https://huggingface.co/docs/datasets/v1.2.1/add_dataset.html

But when I load the dataset, I got an error "__init__() got an unexpected keyword argument... | 5,352 |

https://github.com/huggingface/datasets/issues/5351 | Do we need to implement `_prepare_split`? | [

"Hi! `DatasetBuilder` is a parent class for concrete builders: `GeneratorBasedBuilder`, `ArrowBasedBuilder` and `BeamBasedBuilder`. When writing a builder script, these classes are the ones you should inherit from. And since all of them implement `_prepare_split`, you only have to implement the three methods mentio... | ### Describe the bug

I'm not sure this is a bug or if it's just missing in the documentation, or i'm not doing something correctly, but I'm subclassing `DatasetBuilder` and getting the following error because on the `DatasetBuilder` class the `_prepare_split` method is abstract (as are the others we are required to im... | 5,351 |

https://github.com/huggingface/datasets/issues/5348 | The data downloaded in the download folder of the cache does not respect `umask` | [

"note, that `datasets` already did some of that umask fixing in the past and also at the hub - the recent work on the hub about the same: https://github.com/huggingface/huggingface_hub/pull/1220\r\n\r\nAlso I noticed that each file has a .json counterpart and the latter always has the correct perms:\r\n\r\n```\r\n-... | ### Describe the bug

For a project on a cluster, several of us share the same cache for the datasets library, and we have a problem with the permissions on the data downloaded in the cache.

Indeed, it seems that the data is downloaded by giving read and write permissions only to the user launching the com... | 5,348 |
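
A possible way to make a downloaded file respect the process `umask`, sketched with the standard library only. This assumes the restrictive permissions come from temporary files (which are created owner-only); `current_umask` and `apply_umask` are hypothetical helpers, not functions from `datasets`.

```python
import os
import stat
import tempfile


def current_umask() -> int:
    """Read the process umask (os.umask can only be read by setting it)."""
    mask = os.umask(0)
    os.umask(mask)  # restore the original value immediately
    return mask


def apply_umask(path: str, base_mode: int = 0o666) -> None:
    """Give `path` the permissions a regular file would get under the umask."""
    os.chmod(path, base_mode & ~current_umask())


# Temporary files are created with owner-only permissions on POSIX systems.
fd, tmp_path = tempfile.mkstemp()
os.close(fd)
apply_umask(tmp_path)
mode = stat.S_IMODE(os.stat(tmp_path).st_mode)
expected_mode = 0o666 & ~current_umask()
os.remove(tmp_path)
```

The behaviour shown here is for POSIX systems; permission bits work differently on Windows.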

https://github.com/huggingface/datasets/issues/5346 | [Quick poll] Give your opinion on the future of the Hugging Face Open Source ecosystem! | [

"As the survey is finished, can we close this issue, @LysandreJik ?",

"Yes! I'll post a public summary on the forums shortly.",

"Is the summary available? I would be interested in reading your findings."

] | Thanks to all of you, Datasets is just about to pass 15k stars!

Since the last survey, a lot has happened: the [diffusers](https://github.com/huggingface/diffusers), [evaluate](https://github.com/huggingface/evaluate) and [skops](https://github.com/skops-dev/skops) libraries were born. `timm` joined the Hugging Face... | 5,346 |

https://github.com/huggingface/datasets/issues/5345 | Wrong dtype for array in audio features | [

"After some more investigation, this is due to [this line of code](https://github.com/huggingface/datasets/blob/main/src/datasets/features/audio.py#L279). The function `sf.read(file)` should be updated to `sf.read(file, dtype=\"float32\")`\r\n\r\nIndeed, the default value in soundfile is `float64` ([see here](https... | ### Describe the bug

When concatenating/interleaving different datasets, I stumble into an error because the features can't be aligned. After some investigation, I understood that the audio arrays had different dtypes, namely `float32` and `float64`. Consequently, the datasets cannot be merged.

### Steps to repro... | 5,345 |

https://github.com/huggingface/datasets/issues/5343 | T5 for Q&A produces truncated sentence | [] | Dear all, I am fine-tuning T5 for Q&A task using the MedQuAD ([GitHub - abachaa/MedQuAD: Medical Question Answering Dataset of 47,457 QA pairs created from 12 NIH websites](https://github.com/abachaa/MedQuAD)) dataset. In the dataset, there are many long answers with thousands of words. I have used pytorch_lightning to... | 5,343 |

https://github.com/huggingface/datasets/issues/5342 | Emotion dataset cannot be downloaded | [

"Hi @cbarond there's already an open issue at https://github.com/dair-ai/emotion_dataset/issues/5, as the data seems to be missing now, so check that issue instead 👍🏻 ",

"Thanks @cbarond for reporting and @alvarobartt for pointing to the issue we opened in the author's repo.\r\n\r\nIndeed, this issue was first ... | ### Describe the bug

The emotion dataset gives a FileNotFoundError. The full error is: `FileNotFoundError: Couldn't find file at https://www.dropbox.com/s/1pzkadrvffbqw6o/train.txt?dl=1`.

It was working yesterday (December 7, 2022), but stopped working today (December 8, 2022).

### Steps to reproduce the bug

... | 5,342 |

https://github.com/huggingface/datasets/issues/5338 | `map()` stops every 1000 steps | [

"Hi !\r\n\r\n> It starts using all the cores (I am not sure why because I did not pass num_proc)\r\n\r\nThe tokenizer uses Rust code that is multithreaded. And maybe the `feature_extractor` might run some things in parallel as well - but I'm not super familiar with its internals.\r\n\r\n> then progress bar stops at... | ### Describe the bug

I am passing the following `prepare_dataset` function to `Dataset.map` (code is inspired from [here](https://github.com/huggingface/community-events/blob/main/whisper-fine-tuning-event/run_speech_recognition_seq2seq_streaming.py#L454))

```python3

def prepare_dataset(batch):

# load and res... | 5,338 |

https://github.com/huggingface/datasets/issues/5337 | Support webdataset format | [

"I like the idea of having `webdataset` as an optional dependency to ensure our loader generates web datasets the same way as the main project.",

"Webdataset is the one of the most popular dataset formats for large scale computer vision tasks. Upvote for this issue. ",

"Any updates on this?",

"We haven't had ... | Webdataset is an efficient format for iterable datasets. It would be nice to support it in `datasets`, as discussed in https://github.com/rom1504/img2dataset/issues/234.

In particular it would be awesome to be able to load one using `load_dataset` in streaming mode (either from a local directory, or from a dataset o... | 5,337 |

https://github.com/huggingface/datasets/issues/5332 | Passing numpy array to ClassLabel names causes ValueError | [

"Should `datasets` allow `ClassLabel` input parameter to be an `np.array` even though internally we need to cast it to a Python list? @lhoestq @mariosasko ",

"Hi! No, I don't think so. The `names` parameter is [annotated](https://github.com/huggingface/datasets/blob/582236640b9109988e5f7a16a8353696ffa09a16/src/d... | ### Describe the bug

If a numpy array is passed to the names argument of ClassLabel, creating a dataset with those features causes an error.

### Steps to reproduce the bug

https://colab.research.google.com/drive/1cV_es1PWZiEuus17n-2C-w0KEoEZ68IX

TLDR:

If I define my classes as:

```

my_classes = np.array(['on... | 5,332 |

https://github.com/huggingface/datasets/issues/5326 | No documentation for main branch is built | [] | Since:

- #5250

- Commit: 703b84311f4ead83c7f79639f2dfa739295f0be6

the docs for main branch are no longer built.

The change introduced only triggers the docs building for releases. | 5,326 |

https://github.com/huggingface/datasets/issues/5325 | map(...batch_size=None) for IterableDataset | [

"Hi! I agree it makes sense for `IterableDataset.map` to support the `batch_size=None` case. This should be super easy to fix.",

"@mariosasko as this is something simple maybe I can include it as part of https://github.com/huggingface/datasets/pull/5311? Let me know :+1:",

"#self-assign",

"Feel free to close ... | ### Feature request

Dataset.map(...) allows batch_size to be None. It would be nice if IterableDataset did too.

### Motivation

Although this may seem like a spurious request, given that `IterableDataset` is meant for larger-than-memory datasets, there are a couple of reasons why it might be nice.

One is th... | 5,325 |
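
A rough sketch of the requested semantics, written against plain Python iterables rather than `IterableDataset`. The helper `iter_batches` is hypothetical; it treats `batch_size=None` as "one batch containing every example", mirroring how the request describes `Dataset.map`.

```python
from itertools import islice
from typing import Iterable, Iterator, List, Optional, TypeVar

T = TypeVar("T")


def iter_batches(examples: Iterable[T], batch_size: Optional[int]) -> Iterator[List[T]]:
    """Group examples into batches; `None` means a single batch with everything."""
    iterator = iter(examples)
    if batch_size is None:
        batch = list(iterator)  # loads the whole stream into memory
        if batch:
            yield batch
        return
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch


fixed = list(iter_batches(range(5), 2))
whole = list(iter_batches(range(5), None))
```

The `None` case only makes sense when the stream is known to fit in memory, which is the caveat raised in the motivation above.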

https://github.com/huggingface/datasets/issues/5324 | Fix docstrings and types in documentation that appears on the website | [

"I agree we have a mess with docstrings...",

"Ok, I believe we've cleaned up most of the old syntax we were using for the user-facing docs! There are still a couple of `:obj:`'s and `:class:` floating around in the docstrings we don't expose that I'll track down :)",

"Hi @polinaeterna @albertvillanova @stevhliu... | While I was working on https://github.com/huggingface/datasets/pull/5313 I've noticed that we have a mess in how we annotate types and format args and return values in the code. And some of it is displayed in the [Reference section](https://huggingface.co/docs/datasets/package_reference/builder_classes) of the document... | 5,324 |

https://github.com/huggingface/datasets/issues/5323 | Duplicated Keys in Taskmaster-2 Dataset | [

"Thanks for reporting, @liaeh.\r\n\r\nWe are having a look at it. ",

"I have transferred the discussion to the Community tab of the dataset: https://huggingface.co/datasets/taskmaster2/discussions/1"

] | ### Describe the bug

Loading certain splits () of the taskmaster-2 dataset fails because of a DuplicatedKeysError. This occurs for the following domains: `'hotels', 'movies', 'music', 'sports'`. The domains `'flights', 'food-ordering', 'restaurant-search'` load fine.

Output:

### Steps to reproduce the bug

```

... | 5,323 |

https://github.com/huggingface/datasets/issues/5317 | `ImageFolder` performs poorly with large datasets | [

"Hi ! ImageFolder is made for small scale datasets indeed. For large scale image datasets you better group your images in TAR archives or Arrow/Parquet files. This is true not just for ImageFolder loading performance, but also because having millions of files is not ideal for your filesystem or when moving the data... | ### Describe the bug

While testing image dataset creation, I'm seeing significant performance bottlenecks with imagefolders when scanning a directory structure with a large number of images.

## Setup

* Nested directories (5 levels deep)

* 3M+ images

* 1 `metadata.jsonl` file

## Performance Degradation Point... | 5,317 |

https://github.com/huggingface/datasets/issues/5316 | Bug in sample_by="paragraph" | [

"Thanks for reporting, @adampauls.\r\n\r\nWe are having a look at it. "

] | ### Describe the bug

I think [this line](https://github.com/huggingface/datasets/blob/main/src/datasets/packaged_modules/text/text.py#L96) is wrong and should be `batch = f.read(self.config.chunksize)`. Otherwise it will never terminate because even when `f` is finished reading, `batch` will still be truthy from the l... | 5,316 |
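
For context, a self-contained sketch of chunked paragraph reading that does terminate. This is not the code in `text.py`; `iter_paragraphs` is a hypothetical function that shows the pattern the report argues for, where the loop condition depends on a fresh `f.read(...)` each time.

```python
import io
from typing import Iterator, TextIO


def iter_paragraphs(f: TextIO, chunksize: int = 16) -> Iterator[str]:
    """Yield blank-line-separated paragraphs, reading `chunksize` characters at a time."""
    buffer = ""
    while True:
        chunk = f.read(chunksize)
        if not chunk:
            # End of file: an empty read is the only exit condition.
            break
        buffer += chunk
        # Everything before the last separator is complete; keep the tail.
        *complete, buffer = buffer.split("\n\n")
        for paragraph in complete:
            if paragraph:
                yield paragraph
    if buffer:
        yield buffer


paragraphs = list(iter_paragraphs(io.StringIO("a b\n\nc d\n\ne"), chunksize=4))
```

Because the tail of each chunk is carried over in `buffer`, a separator split across two reads is still detected.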

https://github.com/huggingface/datasets/issues/5315 | Adding new splits to a dataset script with existing old splits info in metadata's `dataset_info` fails | [

"EDIT:\r\nI think in this case, the metadata files (either README or JSON) should not be read (i.e. `self.info.splits` should be None).\r\n\r\nOne idea: \r\n- I think ideally we should set this behavior when we pass `--save_info` to the CLI `test`\r\n- However, currently, the builder is unaware of this: `save_info`... | ### Describe the bug

If you first create a custom dataset with a specific set of splits, generate metadata with `datasets-cli test ... --save_info`, then change your script to include more splits, it fails.

That's what happened in https://huggingface.co/datasets/mrdbourke/food_vision_199_classes/discussions/2#6385f... | 5,315 |

https://github.com/huggingface/datasets/issues/5314 | Datasets: classification_report() got an unexpected keyword argument 'suffix' | [

"This seems similar to https://github.com/huggingface/datasets/issues/2512 Can you try to update seqeval ? ",

"@JonathanAlis also note that the metrics are deprecated in our `datasets` library.\r\n\r\nPlease, use the new library 🤗 Evaluate instead: https://huggingface.co/docs/evaluate"

] | https://github.com/huggingface/datasets/blob/main/metrics/seqeval/seqeval.py

> import datasets

predictions = [['O', 'O', 'B-MISC', 'I-MISC', 'I-MISC', 'I-MISC', 'O'], ['B-PER', 'I-PER', 'O']]

references = [['O', 'O', 'O', 'B-MISC', 'I-MISC', 'I-MISC', 'O'], ['B-PER', 'I-PER', 'O']]

seqeval = datasets.load_metri... | 5,314 |

https://github.com/huggingface/datasets/issues/5306 | Can't use custom feature description when loading a dataset | [

"Forgot to actually convert the feature dict to a Feature object. Closing."

] | ### Describe the bug

I have created a feature dictionary to describe my datasets' column types, to use when loading the dataset, following [the doc](https://huggingface.co/docs/datasets/main/en/about_dataset_features). It crashes at dataset load.

### Steps to reproduce the bug

```python

# Creating features

task_... | 5,306 |

https://github.com/huggingface/datasets/issues/5305 | Dataset joelito/mc4_legal does not work with multiple files | [

"Thanks for reporting @JoelNiklaus.\r\n\r\nPlease note that since we moved all dataset loading scripts to the Hub, the issues and pull requests relative to specific datasets are directly handled on the Hub, in their Community tab. I'm transferring this issue there: https://huggingface.co/datasets/joelito/mc4_legal/... | ### Describe the bug

The dataset https://huggingface.co/datasets/joelito/mc4_legal works for languages like bg with a single data file, but not for languages with multiple files like de. It shows zero rows for the de dataset.

joelniklaus@Joels-MacBook-Pro ~/N/P/C/L/p/m/mc4_legal (main) [1]> python test_mc4_legal.... | 5,305 |

https://github.com/huggingface/datasets/issues/5304 | timit_asr doesn't load the test split. | [

"The [timit_asr.py](https://huggingface.co/datasets/timit_asr/blob/main/timit_asr.py) script iterates over the WAV files per split directory using this:\r\n```python\r\nwav_paths = sorted(Path(data_dir).glob(f\"**/{split}/**/*.wav\"))\r\nwav_paths = wav_paths if wav_paths else sorted(Path(data_dir).glob(f\"**/{spli... | ### Describe the bug

When I use the function ```timit = load_dataset('timit_asr', data_dir=data_dir)```, it only loads the train split, not the test split.

I tried changing the directory and file names for the test split from lower case to upper case, but that does not work either.

```python

DatasetDict({

train: Datase... | 5,304 |

https://github.com/huggingface/datasets/issues/5298 | Bug in xopen with Windows pathnames | [] | Currently, `xopen` function has a bug with local Windows pathnames:

From its implementation:

```python

def xopen(file: str, mode="r", *args, **kwargs):

file = _as_posix(PurePath(file))

main_hop, *rest_hops = file.split("::")

if is_local_path(main_hop):

return open(file, mode, *args, **kwarg... | 5,298 |

https://github.com/huggingface/datasets/issues/5296 | Bug in xjoin with Windows pathnames | [] | Currently, `xjoin` function has a bug with local Windows pathnames: instead of returning the OS-dependent join pathname, it always returns it in POSIX format.

```python

from datasets.download.streaming_download_manager import xjoin

path = xjoin("C:\\Users\\USERNAME", "filename.txt")

```

Join path should be:

... | 5,296 |

https://github.com/huggingface/datasets/issues/5295 | Extractions failed when .zip file located on read-only path (e.g., SageMaker FastFile mode) | [

"Hi ! Thanks for reporting. Indeed the lock file should be placed in a directory with write permission (e.g. in the directory where the archive is extracted).",

"I opened https://github.com/huggingface/datasets/pull/5320 to fix this - it places the lock file in the cache directory instead of trying to put in next... | ### Describe the bug

Hi,

`load_dataset()` does not work with .zip files located in a read-only directory. It looks like this is because Dataset creates a lock file in the [same directory](https://github.com/huggingface/datasets/blob/df4bdd365f2abb695f113cbf8856a925bc70901b/src/datasets/utils/extract.py) as the .zip file.

... | 5,295 |

https://github.com/huggingface/datasets/issues/5293 | Support streaming datasets with pathlib.Path.with_suffix | [] | Extend support for streaming datasets that use `pathlib.Path.with_suffix`.

This feature will be useful e.g. for datasets containing text files and annotated files with the same name but different extension. | 5,293 |
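
As an illustration of the use case, the sketch below pairs a text file with its annotation file by swapping the extension. The `.ann` extension, the file names, and the `swap_suffix` helper are made up for the example; the URL variant only shows one way the same operation could work on remote paths.

```python
import posixpath
from pathlib import PurePosixPath

# Local-style path: pathlib handles the suffix swap directly.
text_file = PurePosixPath("data/train/sample_001.txt")
annotation_file = text_file.with_suffix(".ann")


def swap_suffix(url: str, suffix: str) -> str:
    """Replace the extension of a URL-like string (hypothetical helper)."""
    root, _ = posixpath.splitext(url)
    return root + suffix


remote_annotation = swap_suffix("https://example.com/data/sample_001.txt", ".ann")
```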

https://github.com/huggingface/datasets/issues/5292 | Missing documentation build for versions 2.7.1 and 2.6.2 | [

"- Build docs for 2.6.2:\r\n - Commit: a6a5a1cf4cdf1e0be65168aed5a327f543001fe8\r\n - Build docs GH Action: https://github.com/huggingface/datasets/actions/runs/3539470622/jobs/5941404044\r\n- Build docs for 2.7.1:\r\n - Commit: 5ef1ab1cc06c2b7a574bf2df454cd9fcb071ccb2\r\n - Build docs GH Action: https://github... | After the patch releases [2.7.1](https://github.com/huggingface/datasets/releases/tag/2.7.1) and [2.6.2](https://github.com/huggingface/datasets/releases/tag/2.6.2), the online docs were not properly built (the build_documentation workflow was not triggered).

There was a fix by:

- #5291

However, both documentati... | 5,292 |

https://github.com/huggingface/datasets/issues/5288 | Lossy json serialization - deserialization of dataset info | [

"Hi ! JSON is a lossy format indeed. If you want to keep the feature types or other metadata I'd encourage you to store them as well. For example you can use `dataset.info.write_to_directory` and `DatasetInfo.from_directory` to store the feature types, split info, description, license etc."

] | ### Describe the bug

Saving a dataset to disk as json (using `to_json`) and then loading it again (using `load_dataset`) results in features whose labels are not type-cast correctly. In the code snippet below, `features.label` should have a label of type `ClassLabel` but has type `Value` instead.

### Steps to re... | 5,288 |

https://github.com/huggingface/datasets/issues/5286 | FileNotFoundError: Couldn't find file at https://dumps.wikimedia.org/enwiki/20220301/dumpstatus.json | [

"I found a solution \r\n\r\nIf you specifically install datasets==1.18 and then run\r\n\r\nimport datasets\r\nwiki = datasets.load_dataset('wikipedia', '20200501.en')\r\nthen this should work (it worked for me.)",

"I have the same problem here but installing datasets==1.18 wont work for me\r\n"

] | ### Describe the bug

I follow the steps provided on the website [https://huggingface.co/datasets/wikipedia](https://huggingface.co/datasets/wikipedia)

$ pip install apache_beam mwparserfromhell

>>> from datasets import load_dataset

>>> load_dataset("wikipedia", "20220301.en")

however this results in the follo... | 5,286 |

https://github.com/huggingface/datasets/issues/5284 | Features of IterableDataset set to None by remove column | [

"Related to https://github.com/huggingface/datasets/issues/5245",

"#self-assign",

"Thanks @lhoestq and @alvarobartt!\r\n\r\nThis would be extremely helpful to have working for the Whisper fine-tuning event - we're **only** training using streaming mode, so it'll be quite important to have this feature working t... | ### Describe the bug

The `remove_column` method of the IterableDataset sets the dataset features to None.

### Steps to reproduce the bug

```python

from datasets import Audio, load_dataset

# load LS in streaming mode

dataset = load_dataset("librispeech_asr", "clean", split="validation", streaming=True)

... | 5,284 |

https://github.com/huggingface/datasets/issues/5281 | Support cloud storage in load_dataset | [

"Or for example an archive on GitHub releases! Before I added support for JXL (locally only, PR still pending) I was considering hosting my files on GitHub instead...",

"+1 to this. I would like to use 'audiofolder' with a data_dir that's on S3, for example. I don't want to upload my dataset to the Hub, but I wo... | Would be nice to be able to do

```python

data_files=["s3://..."] # or gs:// or any cloud storage path

storage_options = {...}

load_dataset(..., data_files=data_files, storage_options=storage_options)

```

The idea would be to use `fsspec` as in `download_and_prepare` and `save_to_disk`.

This has been reque... | 5,281 |

https://github.com/huggingface/datasets/issues/5280 | Import error | [

"Hi ! Can you run\r\n```python\r\nimport platform\r\nprint(platform.python_version())\r\n```\r\nto see what it returns ?",

"Hi,\n\n3.8.13\n\nGet Outlook for Android<https://aka.ms/AAb9ysg>\n________________________________\nFrom: Quentin Lhoest ***@***.***>\nSent: Tuesday, November 22, 2022 2:37:02 PM\nTo: huggingfa... | https://github.com/huggingface/datasets/blob/cd3d8e637cfab62d352a3f4e5e60e96597b5f0e9/src/datasets/__init__.py#L28

Hi,

I get an error at the line above. I have Python 3.8.13 and the message says I need python>=3.7, which is satisfied, so I think the if statement is not working properly (or the message is wrong). | 5,280 |

https://github.com/huggingface/datasets/issues/5278 | load_dataset does not read jsonl metadata file properly | [

"Can you try to remove \"drop_labels=false\" ? It may force the loader to infer the labels instead of reading the metadata",

"Hi, thanks for responding. I tried that, but it does not change anything.",

"Can you try updating `datasets` ? Metadata support was added in `datasets` 2.4",

"Probably the issue, will ... | ### Describe the bug

Hi, I'm following [this page](https://huggingface.co/docs/datasets/image_dataset) to create a dataset of images and captions via an image folder and a metadata.json file, but I can't seem to get the dataloader to recognize the "text" column. It just spits out "image" and "label" as features.

B... | 5,278 |

https://github.com/huggingface/datasets/issues/5276 | Bug in downloading common_voice data and small chunk of it to one's own hub | [

"Sounds like one of the file is not a valid one, can you make sure you uploaded valid mp3 files ?",

"Well I just sharded the original commonVoice dataset and pushed a small chunk of it in a private rep\n\nWhat did go wrong?\n\nHolen Sie sich Outlook für iOS<https://aka.ms/o0ukef>\n________________________________... | ### Describe the bug

I'm trying to load the common voice dataset. Currently there is no way to download just part of the data, and I need only one part of it, without downloading the entire dataset.

Help please?

https://github.com/huggingface/moon-landing/pull/4609",

"FYI there are still 2k+ weekly users on `datasets` 2.6.1 which doesn't support the string label format for... | After an internal discussion (https://github.com/huggingface/moon-landing/issues/4563):

- YAML integer keys are not preserved server-side: they are transformed to strings

- See for example this Hub PR: https://huggingface.co/datasets/acronym_identification/discussions/1/files

- Original:

```yaml

... | 5,275 |

https://github.com/huggingface/datasets/issues/5274 | load_dataset possibly broken for gated datasets? | [

"@BradleyHsu",

"Btw, thanks very much for finding the hub rollback temporary fix and bringing the issue to our attention @KhoomeiK!",

"I see the same issue when calling `load_dataset('poloclub/diffusiondb', 'large_random_1k')` with `datasets==2.7.1` and `huggingface-hub=0.11.0`. No issue with `datasets=2.6.1` a... | ### Describe the bug

When trying to download the [winoground dataset](https://huggingface.co/datasets/facebook/winoground), I get this error unless I roll back the version of huggingface-hub:

```

[/usr/local/lib/python3.7/dist-packages/huggingface_hub/utils/_validators.py](https://localhost:8080/#) in validate_rep... | 5,274 |

https://github.com/huggingface/datasets/issues/5273 | download_mode="force_redownload" does not refresh cached dataset | [] | ### Describe the bug

`load_dataset` does not refresh the dataset when features are imported from an external file, even with `download_mode="force_redownload"`. The bug is not limited to nested fields, but it is more likely to occur with them.

### Steps to reproduce the bug

To reproduce the bug 3 files are ne... | 5,273 |

https://github.com/huggingface/datasets/issues/5272 | Use pyarrow Tensor dtype | [

"Hi ! We're using the Arrow format for the datasets, and PyArrow tensors are not part of the Arrow format AFAIK:\r\n\r\n> There is no direct support in the arrow columnar format to store Tensors as column values.\r\n\r\nsource: https://github.com/apache/arrow/issues/4802#issuecomment-508494694",

"@wesm @rok its b... | ### Feature request

I was going through the discussion on converting tensors to lists.

Is there a way to leverage pyarrow's Tensors for nested arrays / embeddings?

For example:

```python

import pyarrow as pa

import numpy as np

x = np.array([[2, 2, 4], [4, 5, 100]], np.int32)

pa.Tensor.from_numpy(x, dim_names=["dim1... | 5,272 |

https://github.com/huggingface/datasets/issues/5270 | When len(_URLS) > 16, download will hang | [

"It can fix the bug temporarily.\r\n```python\r\nfrom datasets import DownloadConfig\r\nconfig = DownloadConfig(num_proc=8)\r\nIn [5]: dataset = load_dataset('Freed-Wu/kodak', split='test', download_config=config)\r\nDownloading and preparing dataset kodak/default to /home/wzy/.cache/huggingface/datasets/Freed-Wu__... | ### Describe the bug

```python

In [9]: dataset = load_dataset('Freed-Wu/kodak', split='test')

Downloading: 100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 2.53k/2.53k [00:00<00:00, 1.88MB/s]

[1... | 5,270 |

https://github.com/huggingface/datasets/issues/5269 | Shell completions | [

"I don't think we need completion on the datasets-cli, since we're mainly developing huggingface-cli",

"I see."

] | ### Feature request

Like <https://github.com/huggingface/huggingface_hub/issues/1197>, datasets-cli may need it, too.

### Motivation

See above.

### Your contribution

Maybe. | 5,269 |
