55th International Mathematical Olympiad

PROBLEMS SHORT LIST WITH SOLUTIONS

IMO 2014

Cape Town - South Africa

Shortlisted Problems with Solutions

$55^{\text {th }}$ International Mathematical Olympiad Cape Town, South Africa, 2014

Note of Confidentiality

The shortlisted problems should be kept strictly confidential until IMO 2015.

Contributing Countries

The Organising Committee and the Problem Selection Committee of IMO 2014 thank the following 43 countries for contributing 141 problem proposals.

Australia, Austria, Belgium, Benin, Bulgaria, Colombia, Croatia, Cyprus, Czech Republic, Denmark, Ecuador, Estonia, Finland, France, Georgia, Germany, Greece, Hong Kong, Hungary, Iceland, India, Indonesia, Iran, Ireland, Japan, Lithuania, Luxembourg, Malaysia, Mongolia, Netherlands, Nigeria, Pakistan, Russia, Saudi Arabia, Serbia, Slovakia, Slovenia, South Korea, Thailand, Turkey, Ukraine, United Kingdom, U.S.A.

Problem Selection Committee

Johan Meyer

Ilya I. Bogdanov

Géza Kós

Waldemar Pompe

Christian Reiher

Stephan Wagner

Problems

Algebra

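A1. Let $z_{0}<z_{1}<z_{2}<\cdots$ be an infinite sequence of positive integers. Prove that there exists a unique integer $n \geqslant 1$ such that

$$z_{n}<\frac{z_{0}+z_{1}+\cdots+z_{n}}{n} \leqslant z_{n+1}.$$

(Austria)

A2. Define the function $f:(0,1) \rightarrow(0,1)$ by

$$f(x)= \begin{cases}x+\frac{1}{2} & \text{if } x<\frac{1}{2}, \\ x^{2} & \text{if } x \geqslant \frac{1}{2}.\end{cases}$$

Let $a$ and $b$ be two real numbers such that $0<a<b<1$. We define the sequences $a_{n}$ and $b_{n}$ by $a_{0}=a, b_{0}=b$, and $a_{n}=f\left(a_{n-1}\right), b_{n}=f\left(b_{n-1}\right)$ for $n>0$. Show that there exists a positive integer $n$ such that

$$\left(a_{n}-a_{n-1}\right)\left(b_{n}-b_{n-1}\right)<0.$$

(Denmark)

A3. For a sequence $x_{1}, x_{2}, \ldots, x_{n}$ of real numbers, we define its price as

$$\max _{1 \leqslant i \leqslant n}\left|x_{1}+\cdots+x_{i}\right|.$$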

Given $n$ real numbers, Dave and George want to arrange them into a sequence with a low price. Diligent Dave checks all possible ways and finds the minimum possible price $D$. Greedy George, on the other hand, chooses $x_{1}$ such that $\left|x_{1}\right|$ is as small as possible; among the remaining numbers, he chooses $x_{2}$ such that $\left|x_{1}+x_{2}\right|$ is as small as possible, and so on. Thus, in the $i^{\text {th }}$ step he chooses $x_{i}$ among the remaining numbers so as to minimise the value of $\left|x_{1}+x_{2}+\cdots+x_{i}\right|$. In each step, if several numbers provide the same value, George chooses one at random. Finally he gets a sequence with price $G$.

Find the least possible constant $c$ such that for every positive integer $n$, for every collection of $n$ real numbers, and for every possible sequence that George might obtain, the resulting values satisfy the inequality $G \leqslant c D$.

(Georgia)

A4. Determine all functions $f: \mathbb{Z} \rightarrow \mathbb{Z}$ satisfying

$$f(f(m)+n)+f(m)=f(n)+f(3 m)+2014$$

for all integers $m$ and $n$.

(Netherlands)

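A5. Consider all polynomials $P(x)$ with real coefficients that have the following property: for any two real numbers $x$ and $y$ one has

$$\left|y^{2}-P(x)\right| \leqslant 2|x| \quad \text{if and only if} \quad\left|x^{2}-P(y)\right| \leqslant 2|y|.$$

Determine all possible values of $P(0)$.

(Belgium)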

A6. Find all functions $f: \mathbb{Z} \rightarrow \mathbb{Z}$ such that

$$n^{2}+4 f(n)=f(f(n))^{2}$$

for all $n \in \mathbb{Z}$.

(United Kingdom)

Combinatorics

C1. Let $n$ points be given inside a rectangle $R$ such that no two of them lie on a line parallel to one of the sides of $R$. The rectangle $R$ is to be dissected into smaller rectangles with sides parallel to the sides of $R$ in such a way that none of these rectangles contains any of the given points in its interior. Prove that we have to dissect $R$ into at least $n+1$ smaller rectangles.

(Serbia)

C2. We have $2^{m}$ sheets of paper, with the number 1 written on each of them. We perform the following operation. In every step we choose two distinct sheets; if the numbers on the two sheets are $a$ and $b$, then we erase these numbers and write the number $a+b$ on both sheets. Prove that after $m 2^{m-1}$ steps, the sum of the numbers on all the sheets is at least $4^{m}$.

(Iran)

C3. Let $n \geqslant 2$ be an integer. Consider an $n \times n$ chessboard divided into $n^{2}$ unit squares. We call a configuration of $n$ rooks on this board happy if every row and every column contains exactly one rook. Find the greatest positive integer $k$ such that for every happy configuration of rooks, we can find a $k \times k$ square without a rook on any of its $k^{2}$ unit squares.

(Croatia)

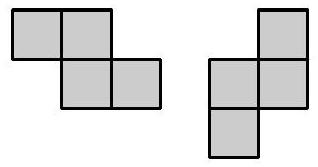

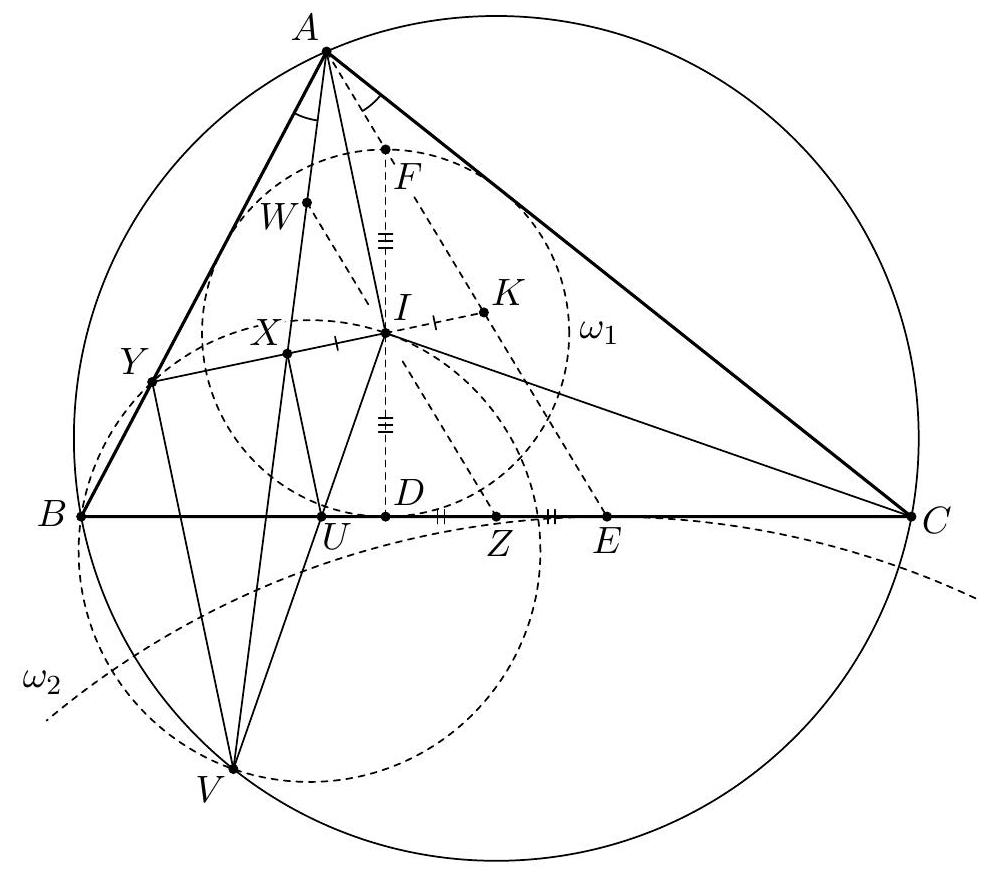

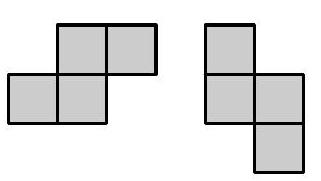

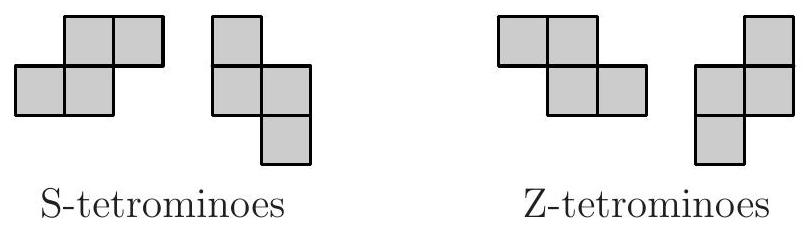

C4. Construct a tetromino by attaching two $2 \times 1$ dominoes along their longer sides such that the midpoint of the longer side of one domino is a corner of the other domino. This construction yields two kinds of tetrominoes with opposite orientations. Let us call them S- and Z-tetrominoes, respectively.

(Figure: S-tetrominoes and Z-tetrominoes.)

Assume that a lattice polygon $P$ can be tiled with S-tetrominoes. Prove that no matter how we tile $P$ using only S- and Z-tetrominoes, we always use an even number of Z-tetrominoes.

(Hungary)

C5. Consider $n \geqslant 3$ lines in the plane such that no two lines are parallel and no three have a common point. These lines divide the plane into polygonal regions; let $\mathcal{F}$ be the set of regions having finite area. Prove that it is possible to colour $\lceil\sqrt{n / 2}\rceil$ of the lines blue in such a way that no region in $\mathcal{F}$ has a completely blue boundary. (For a real number $x$, $\lceil x\rceil$ denotes the least integer which is not smaller than $x$.)

C6. We are given an infinite deck of cards, each with a real number on it. For every real number $x$, there is exactly one card in the deck that has $x$ written on it. Now two players draw disjoint sets $A$ and $B$ of 100 cards each from this deck. We would like to define a rule that declares one of them a winner. This rule should satisfy the following conditions:

- The winner only depends on the relative order of the 200 cards: if the cards are laid down in increasing order face down and we are told which card belongs to which player, but not what numbers are written on them, we can still decide the winner.

- If we write the elements of both sets in increasing order as $A=\left\{a_{1}, a_{2}, \ldots, a_{100}\right\}$ and $B=\left\{b_{1}, b_{2}, \ldots, b_{100}\right\}$, and $a_{i}>b_{i}$ for all $i$, then $A$ beats $B$.

- If three players draw three disjoint sets $A, B, C$ from the deck, $A$ beats $B$ and $B$ beats $C$, then $A$ also beats $C$.

How many ways are there to define such a rule? Here, we consider two rules as different if there exist two sets $A$ and $B$ such that $A$ beats $B$ according to one rule, but $B$ beats $A$ according to the other.

(Russia)

C7. Let $M$ be a set of $n \geqslant 4$ points in the plane, no three of which are collinear. Initially these points are connected with $n$ segments so that each point in $M$ is the endpoint of exactly two segments. Then, at each step, one may choose two segments $A B$ and $C D$ sharing a common interior point and replace them by the segments $A C$ and $B D$ if none of them is present at this moment. Prove that it is impossible to perform $n^{3} / 4$ or more such moves.

(Russia)

C8. A card deck consists of 1024 cards. On each card, a set of distinct decimal digits is written in such a way that no two of these sets coincide (thus, one of the cards is empty). Two players alternately take cards from the deck, one card per turn. After the deck is empty, each player checks if he can throw out one of his cards so that each of the ten digits occurs on an even number of his remaining cards. If one player can do this but the other one cannot, the one who can is the winner; otherwise a draw is declared.

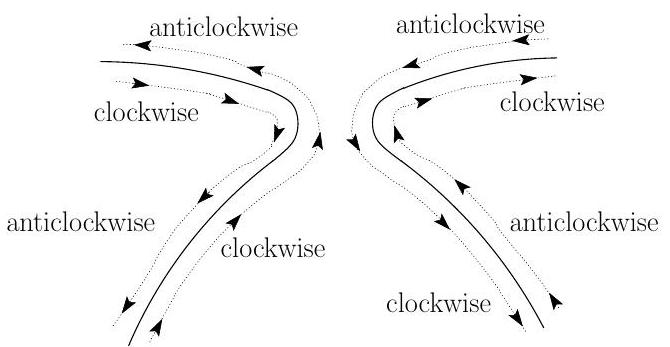

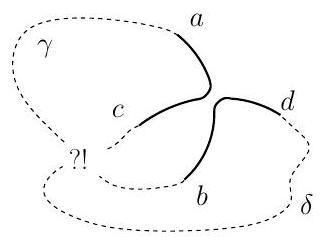

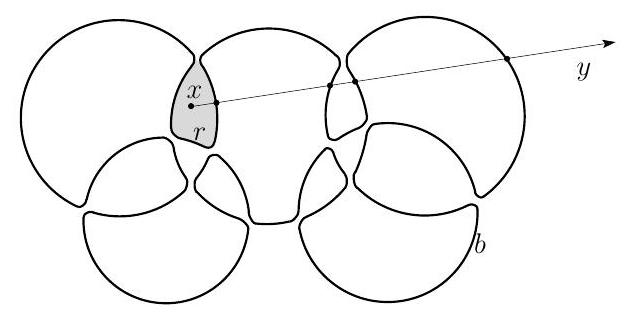

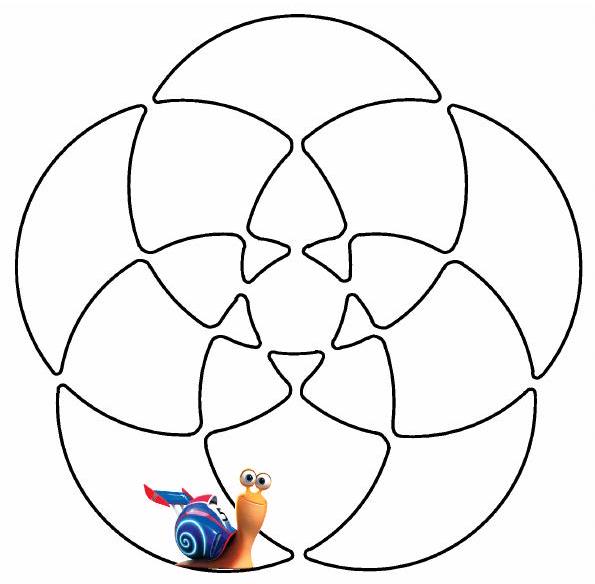

Determine all possible first moves of the first player after which he has a winning strategy.

(Russia)

C9. There are $n$ circles drawn on a piece of paper in such a way that any two circles intersect in two points, and no three circles pass through the same point. Turbo the snail slides along the circles in the following fashion. Initially he moves on one of the circles in clockwise direction. Turbo always keeps sliding along the current circle until he reaches an intersection with another circle. Then he continues his journey on this new circle and also changes the direction of moving, i.e. from clockwise to anticlockwise or vice versa.

Suppose that Turbo's path entirely covers all circles. Prove that $n$ must be odd.

Geometry

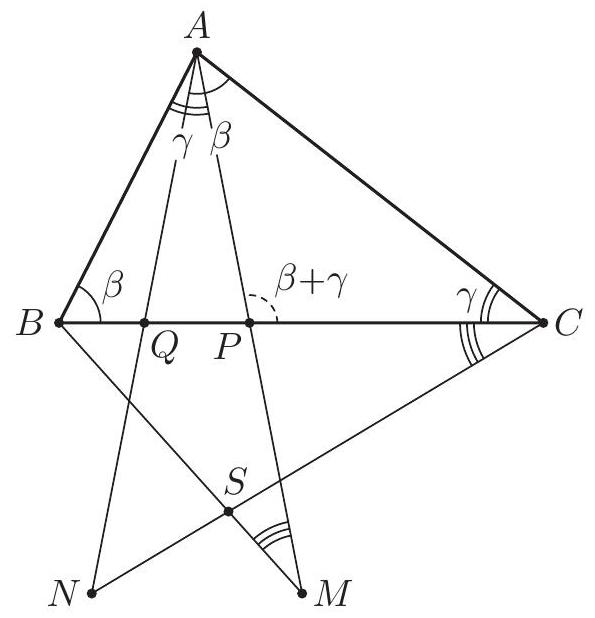

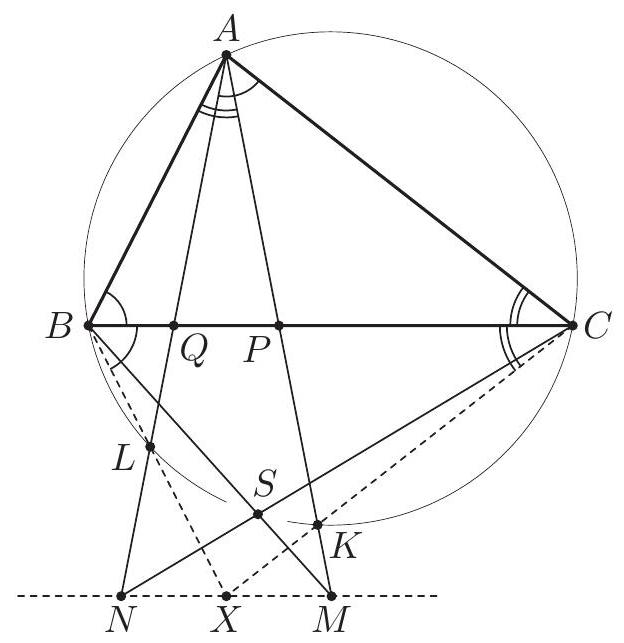

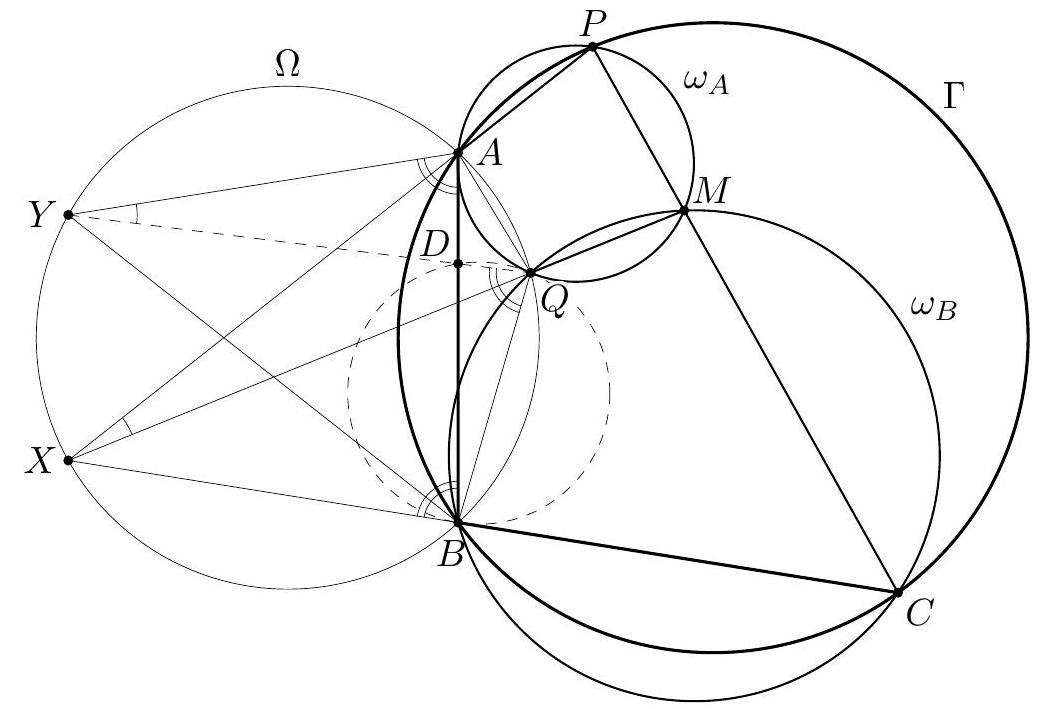

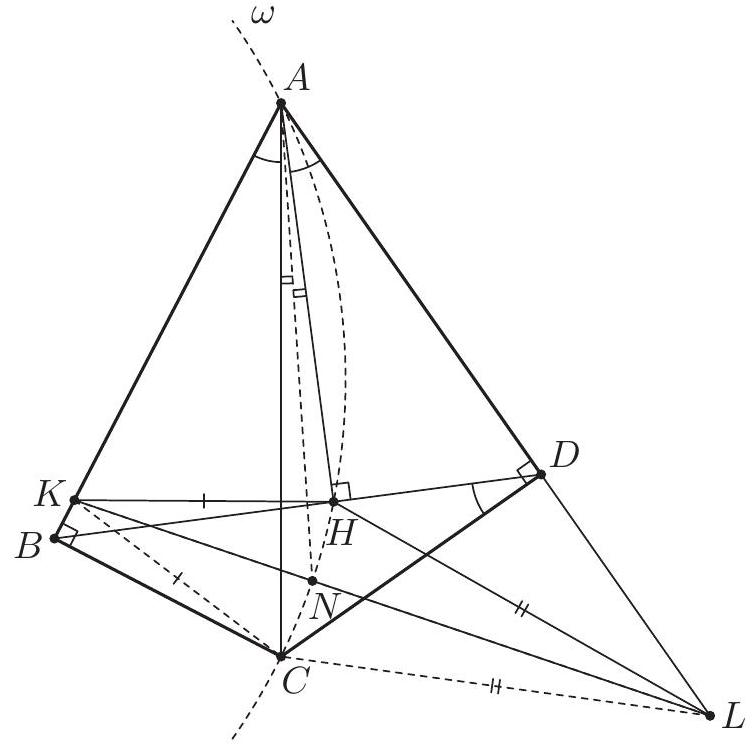

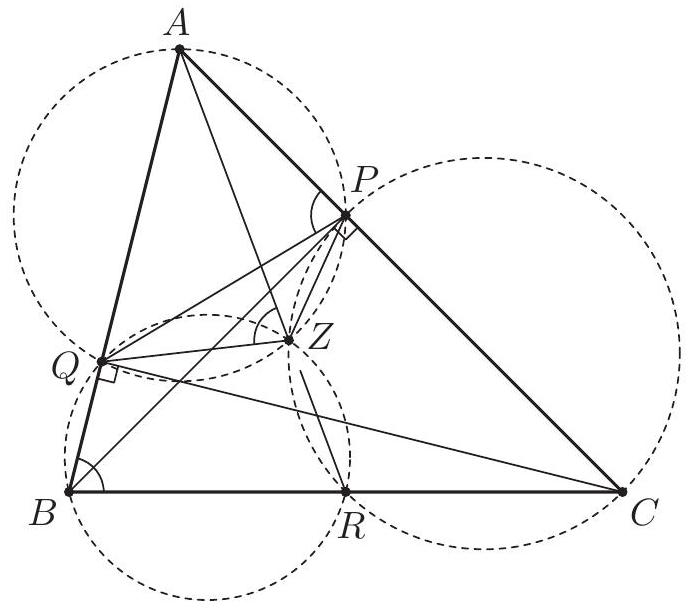

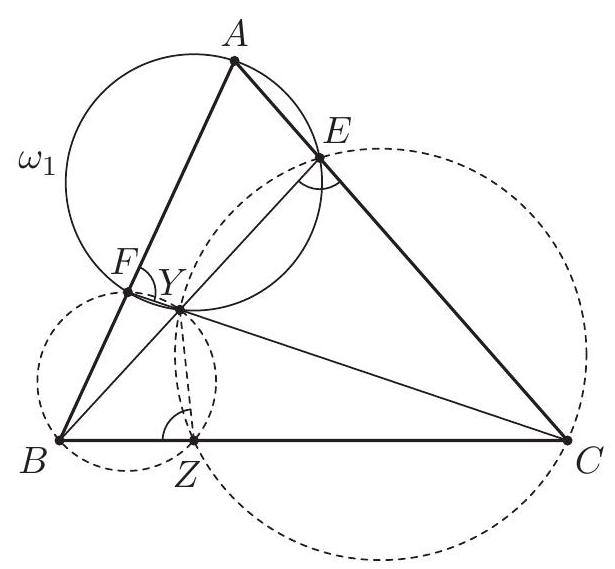

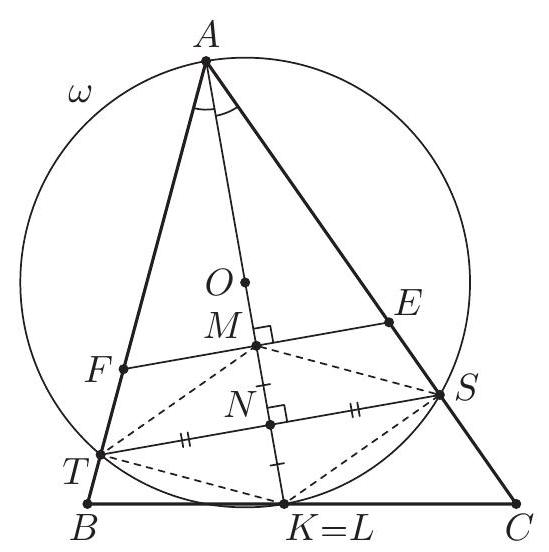

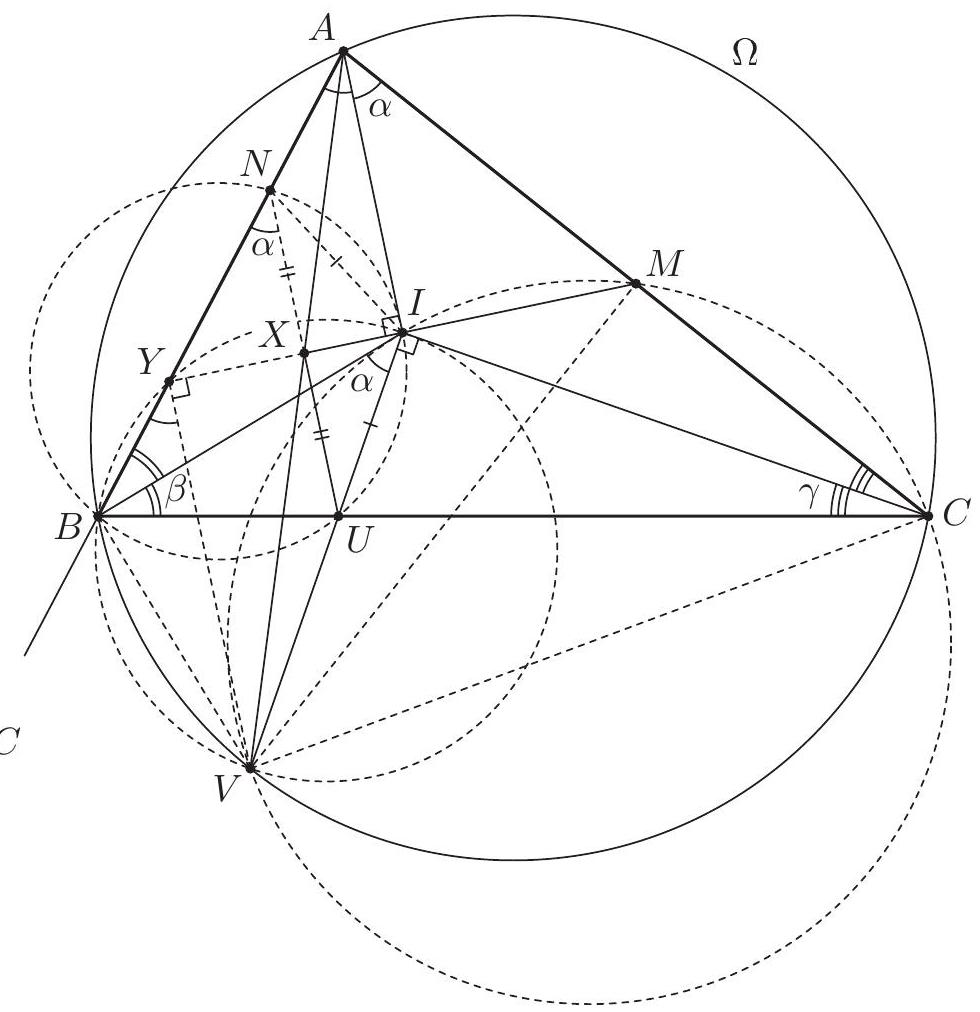

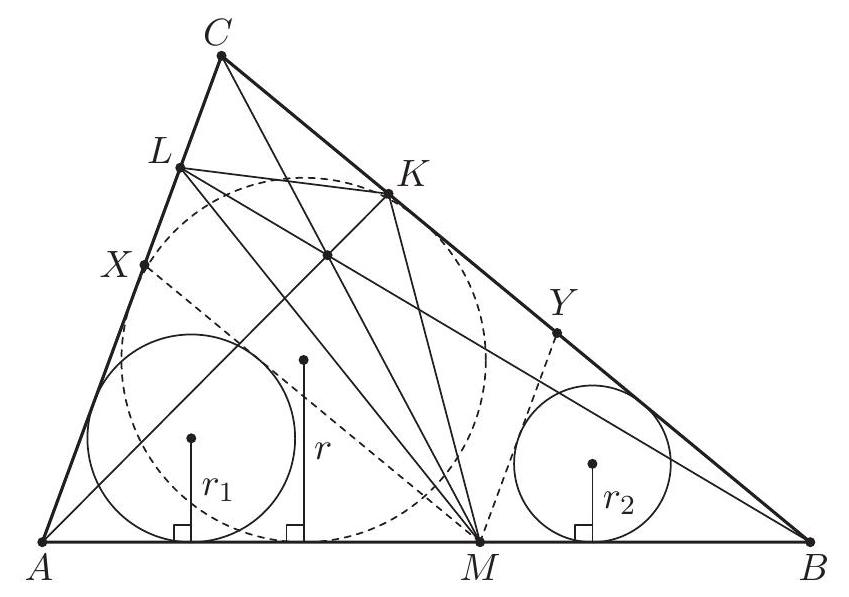

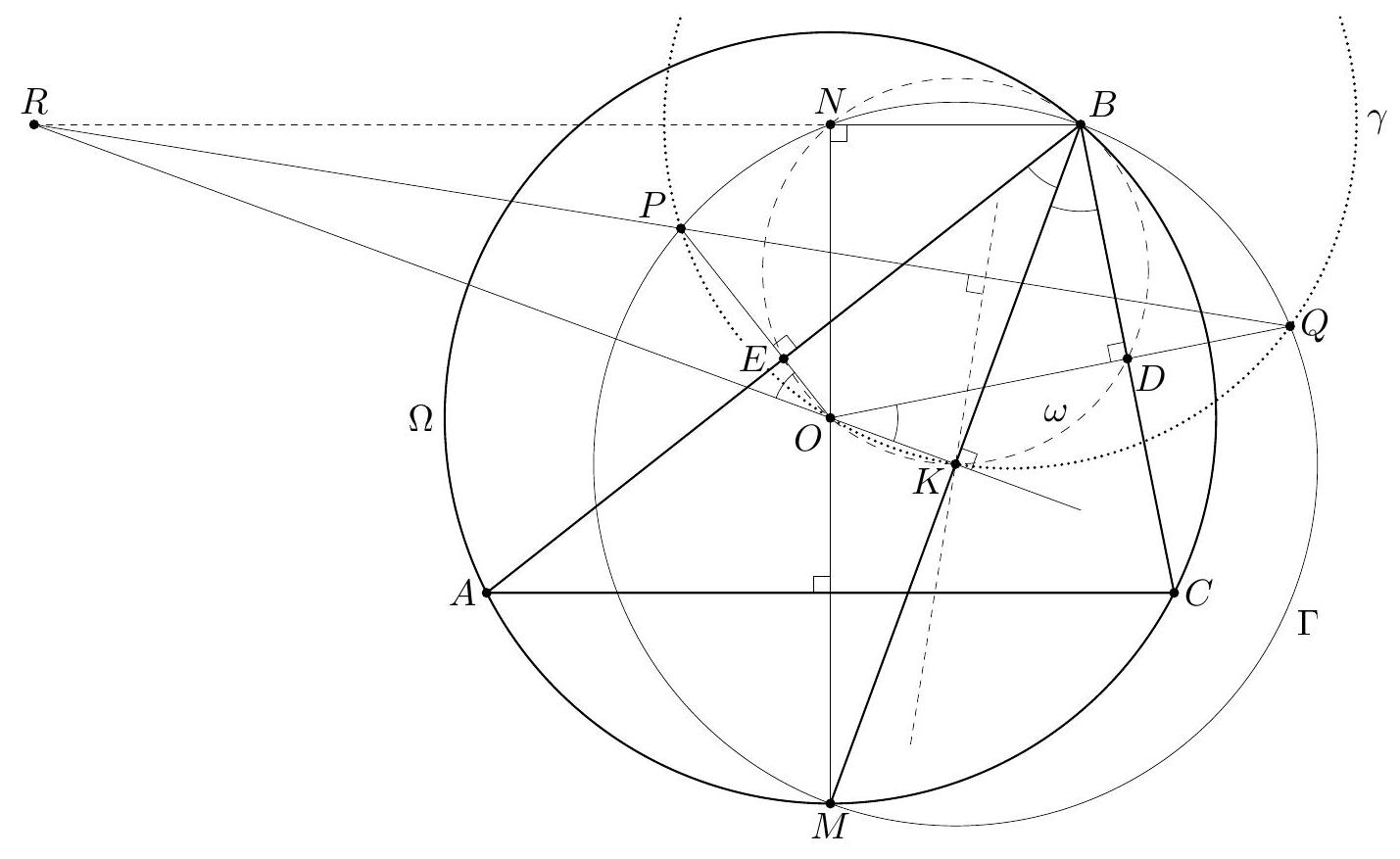

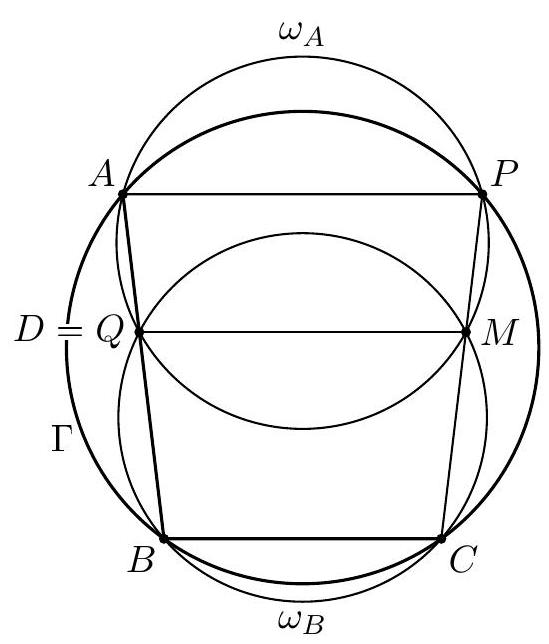

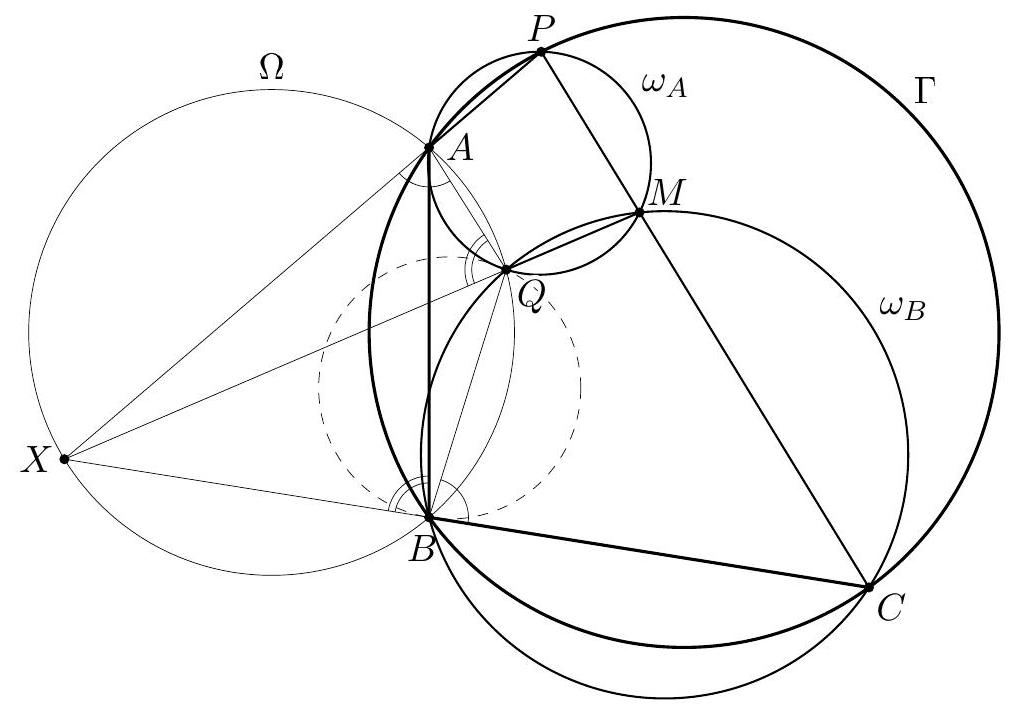

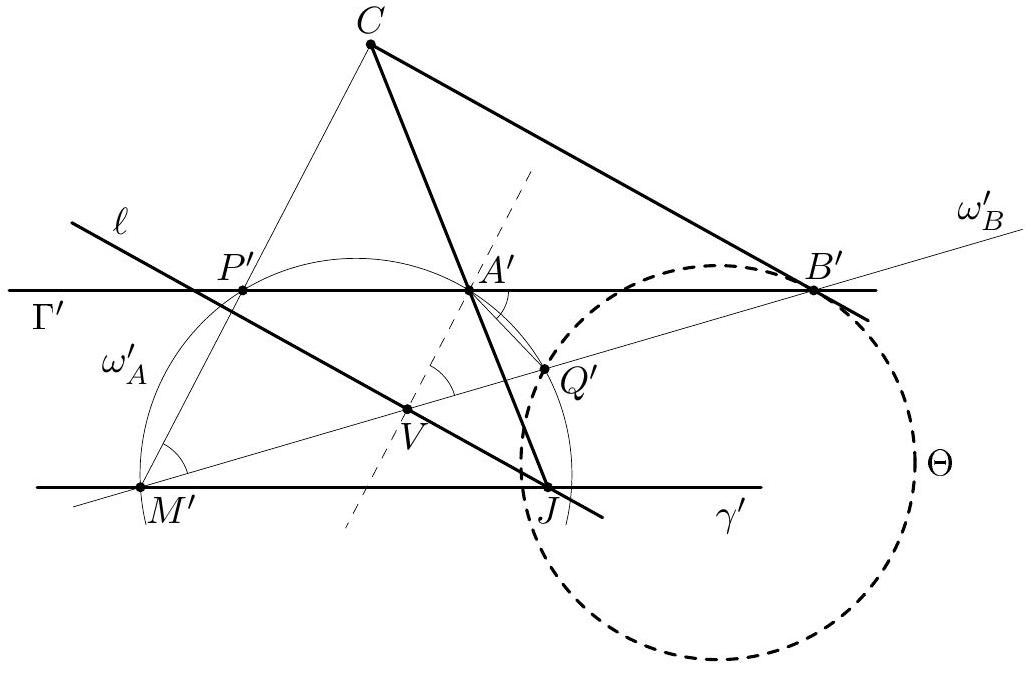

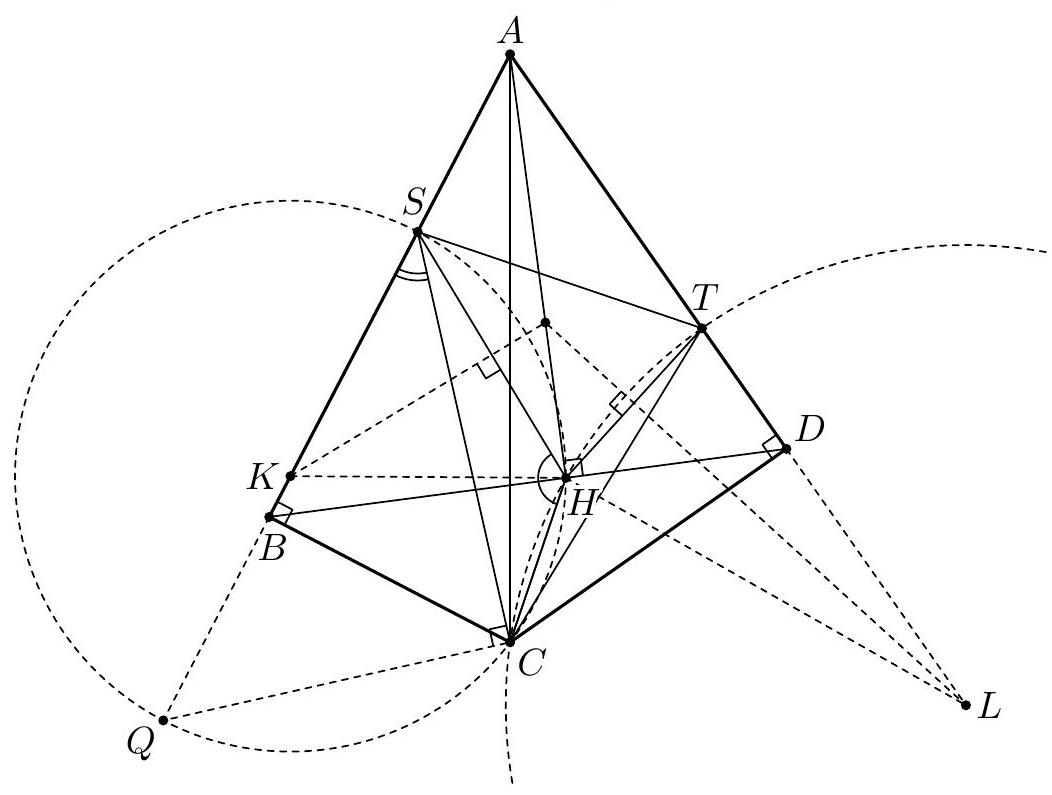

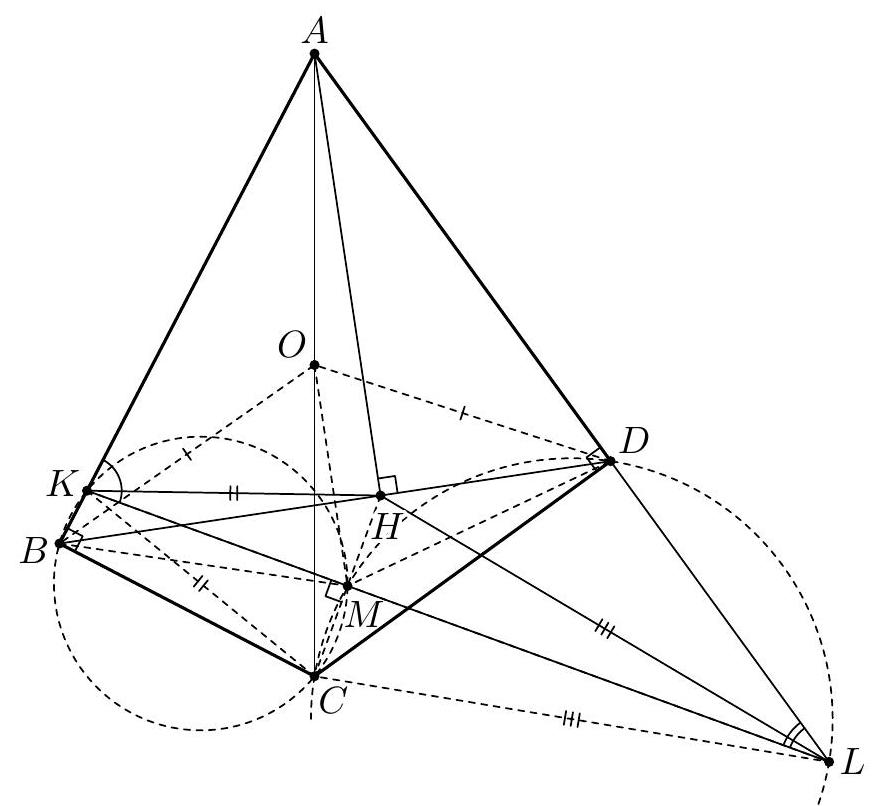

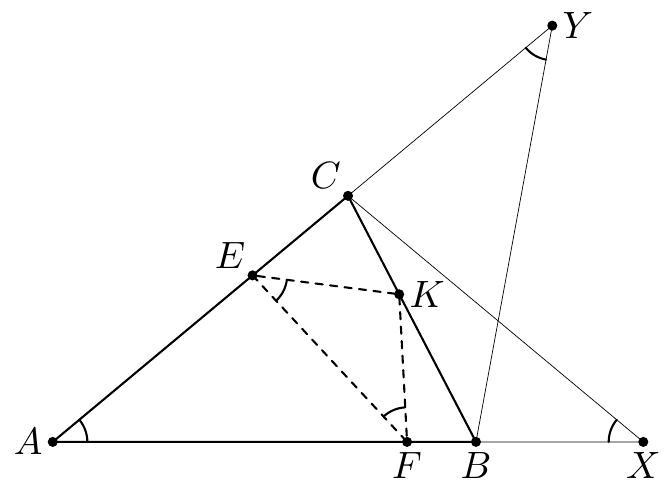

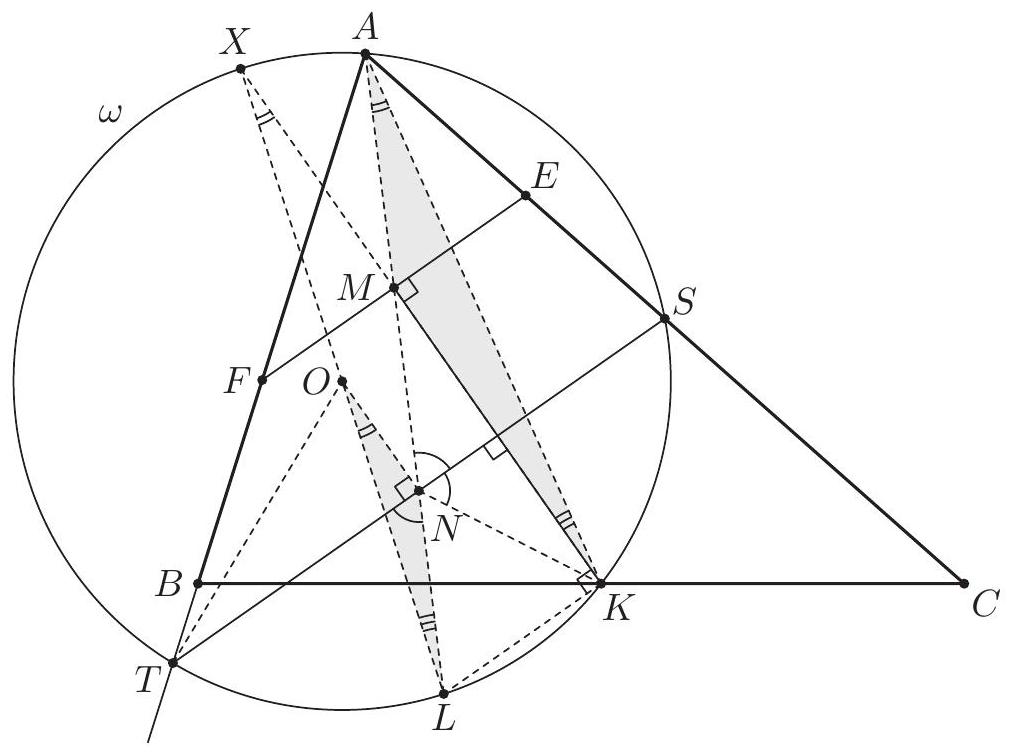

G1. The points $P$ and $Q$ are chosen on the side $B C$ of an acute-angled triangle $A B C$ so that $\angle P A B=\angle A C B$ and $\angle Q A C=\angle C B A$. The points $M$ and $N$ are taken on the rays $A P$ and $A Q$, respectively, so that $A P=P M$ and $A Q=Q N$. Prove that the lines $B M$ and $C N$ intersect on the circumcircle of the triangle $A B C$.

(Georgia)

G2. Let $A B C$ be a triangle. The points $K, L$, and $M$ lie on the segments $B C, C A$, and $A B$, respectively, such that the lines $A K, B L$, and $C M$ intersect in a common point. Prove that it is possible to choose two of the triangles $A L M, B M K$, and $C K L$ whose inradii sum up to at least the inradius of the triangle $A B C$.

(Estonia)

G3. Let $\Omega$ and $O$ be the circumcircle and the circumcentre of an acute-angled triangle $A B C$ with $A B>B C$. The angle bisector of $\angle A B C$ intersects $\Omega$ at $M \neq B$. Let $\Gamma$ be the circle with diameter $B M$. The angle bisectors of $\angle A O B$ and $\angle B O C$ intersect $\Gamma$ at points $P$ and $Q$, respectively. The point $R$ is chosen on the line $P Q$ so that $B R=M R$. Prove that $B R \| A C$. (Here we always assume that an angle bisector is a ray.)

(Russia)

G4. Consider a fixed circle $\Gamma$ with three fixed points $A, B$, and $C$ on it. Also, let us fix a real number $\lambda \in(0,1)$. For a variable point $P \notin\{A, B, C\}$ on $\Gamma$, let $M$ be the point on the segment $C P$ such that $C M=\lambda \cdot C P$. Let $Q$ be the second point of intersection of the circumcircles of the triangles $A M P$ and $B M C$. Prove that as $P$ varies, the point $Q$ lies on a fixed circle.

(United Kingdom)

G5. Let $A B C D$ be a convex quadrilateral with $\angle B=\angle D=90^{\circ}$. Point $H$ is the foot of the perpendicular from $A$ to $B D$. The points $S$ and $T$ are chosen on the sides $A B$ and $A D$, respectively, in such a way that $H$ lies inside triangle $S C T$ and

$$\angle C H S-\angle C S B=90^{\circ}, \quad \angle T H C-\angle D T C=90^{\circ}.$$

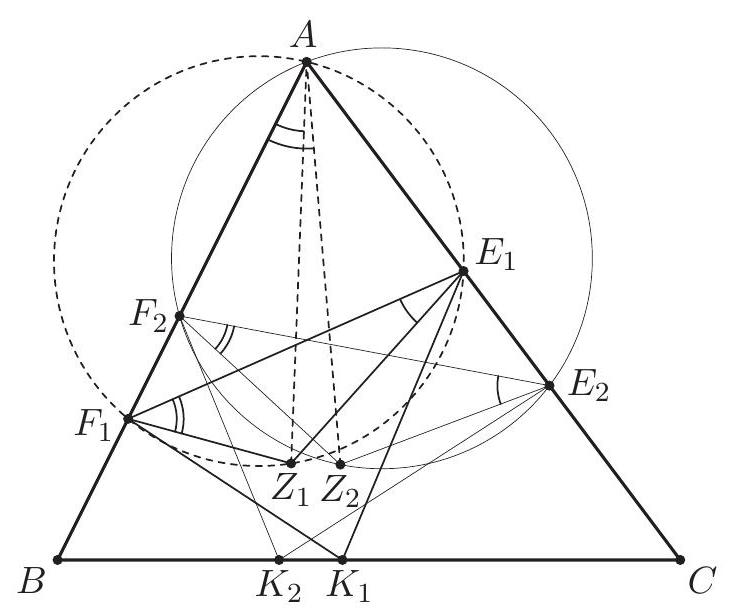

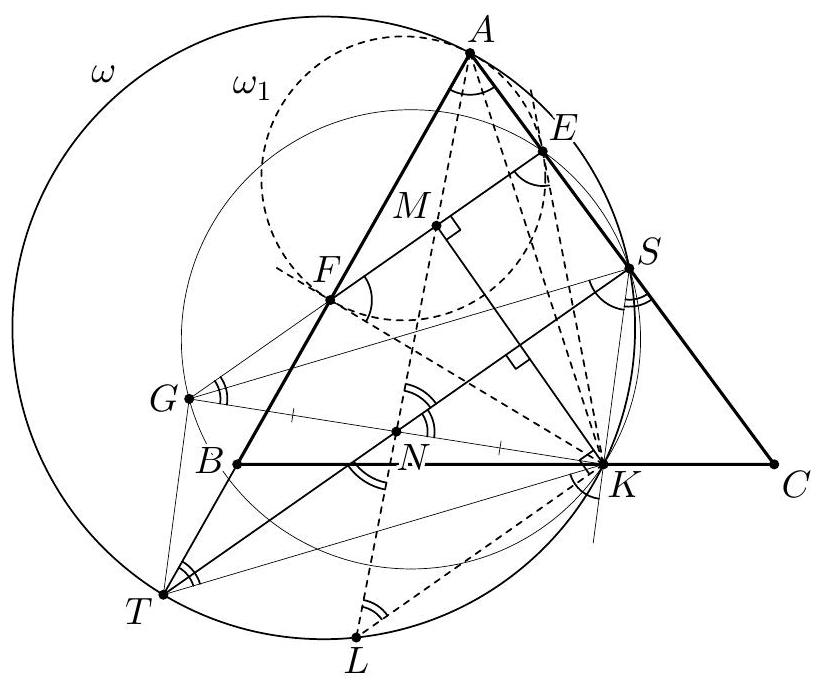

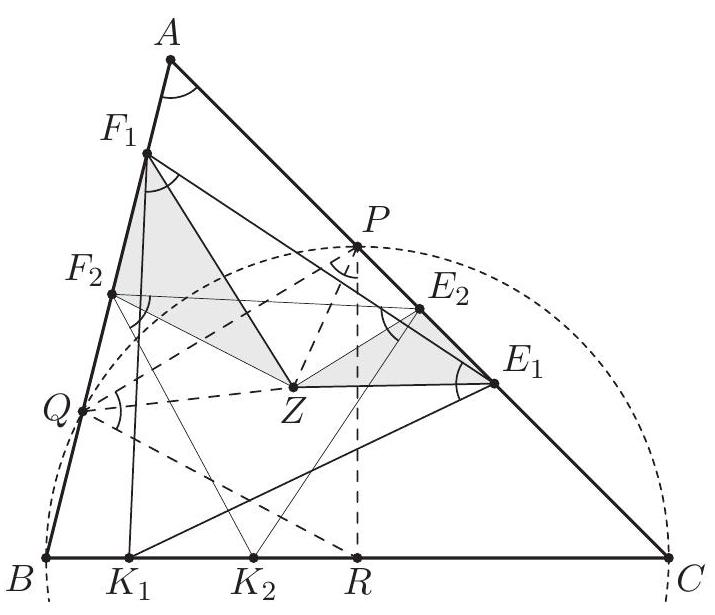

Prove that the circumcircle of triangle $S H T$ is tangent to the line $B D$.

(Iran)

G6. Let $A B C$ be a fixed acute-angled triangle. Consider some points $E$ and $F$ lying on the sides $A C$ and $A B$, respectively, and let $M$ be the midpoint of $E F$. Let the perpendicular bisector of $E F$ intersect the line $B C$ at $K$, and let the perpendicular bisector of $M K$ intersect the lines $A C$ and $A B$ at $S$ and $T$, respectively. We call the pair $(E, F)$ interesting, if the quadrilateral $K S A T$ is cyclic.

Suppose that the pairs $\left(E_{1}, F_{1}\right)$ and $\left(E_{2}, F_{2}\right)$ are interesting. Prove that

$$\frac{E_{1} E_{2}}{A B}=\frac{F_{1} F_{2}}{A C}.$$

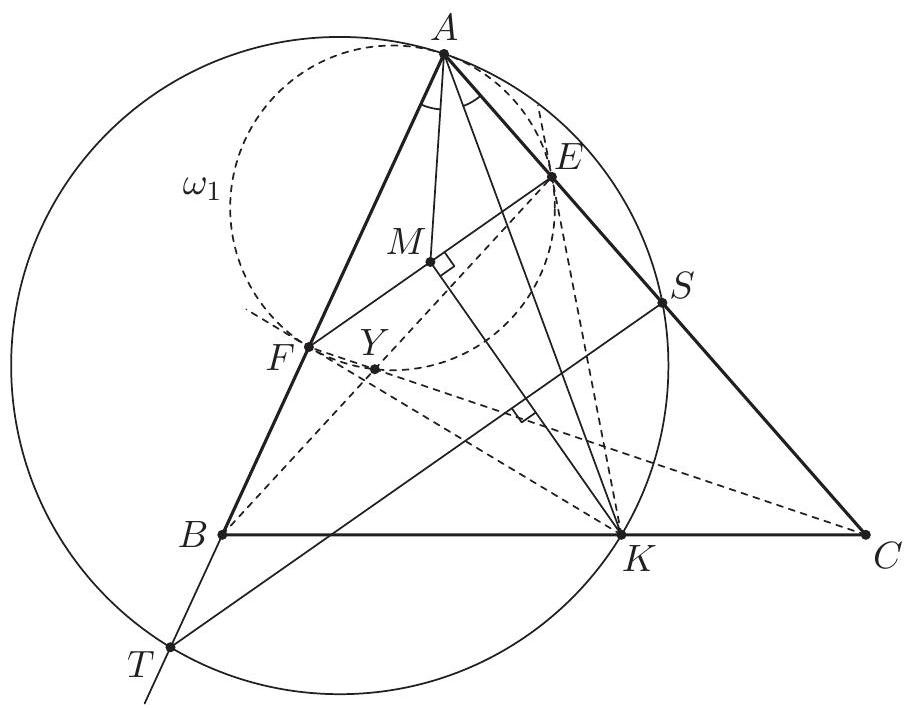

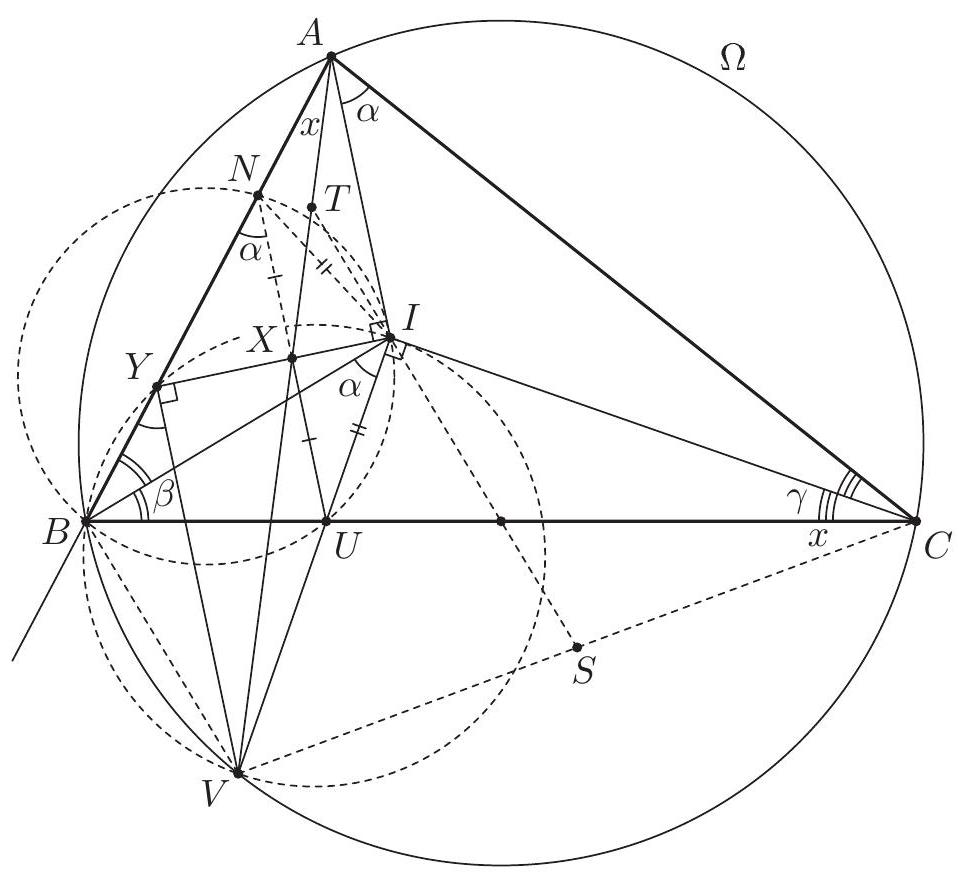

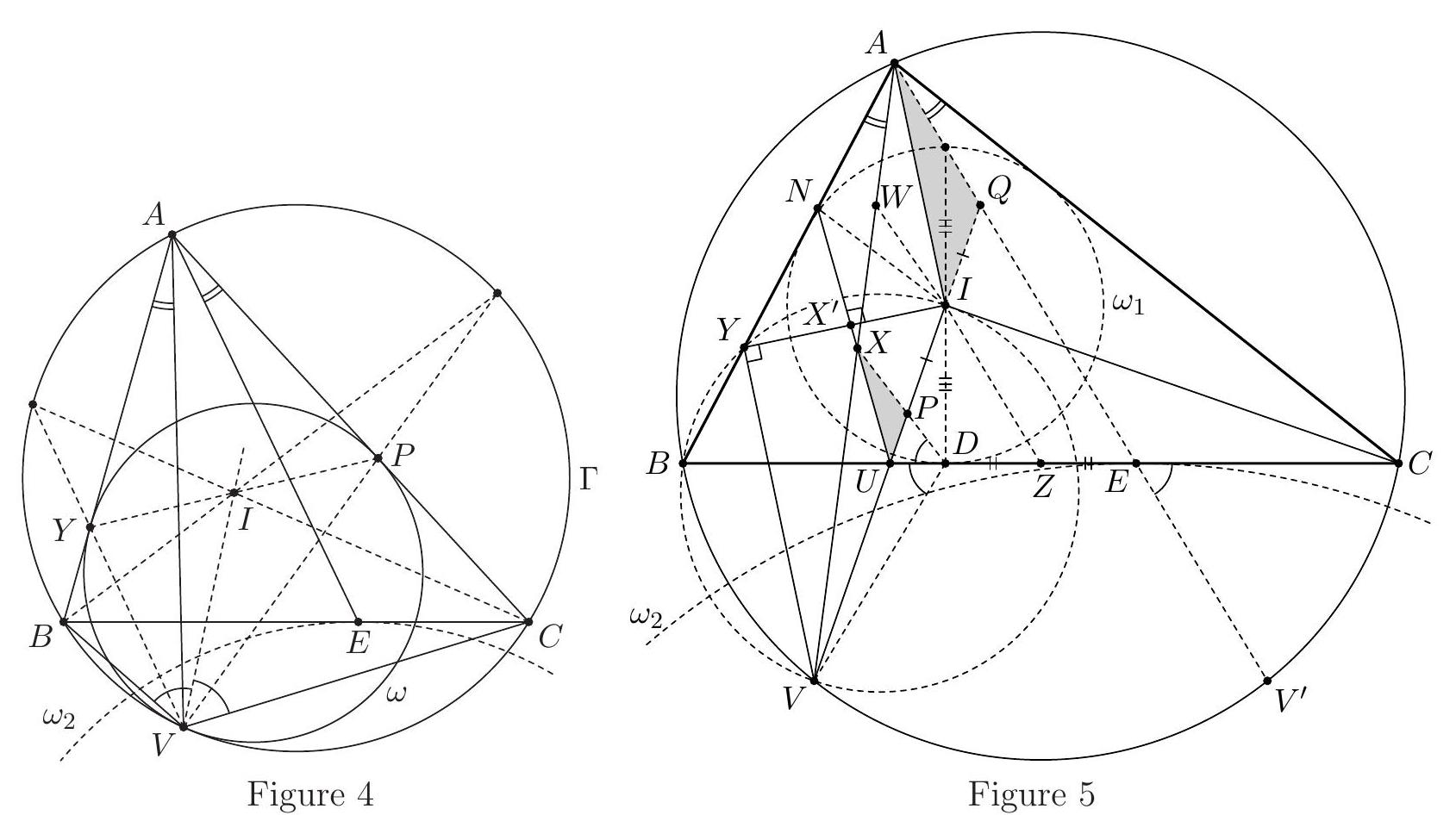

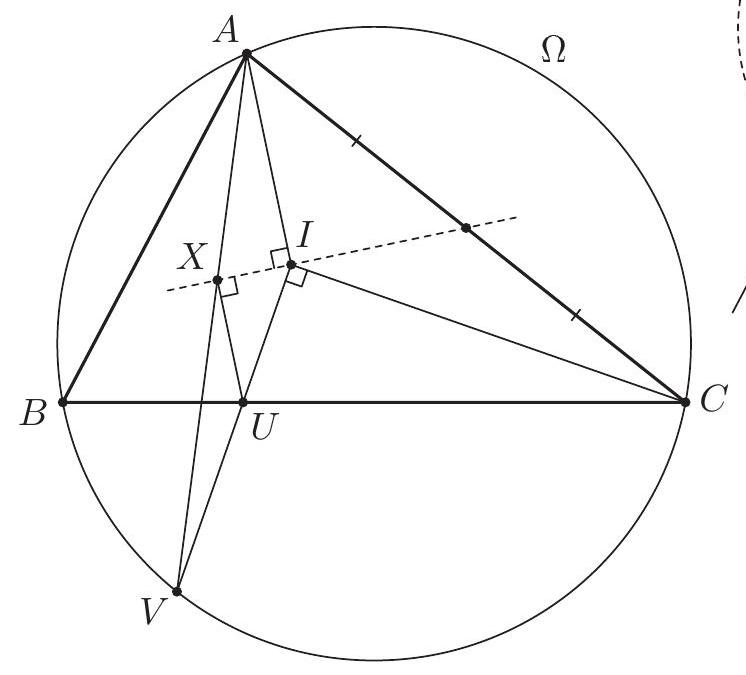

G7. Let $A B C$ be a triangle with circumcircle $\Omega$ and incentre $I$. Let the line passing through $I$ and perpendicular to $C I$ intersect the segment $B C$ and the arc $B C$ (not containing $A$) of $\Omega$ at points $U$ and $V$, respectively. Let the line passing through $U$ and parallel to $A I$ intersect $A V$ at $X$, and let the line passing through $V$ and parallel to $A I$ intersect $A B$ at $Y$. Let $W$ and $Z$ be the midpoints of $A X$ and $B C$, respectively. Prove that if the points $I, X$, and $Y$ are collinear, then the points $I, W$, and $Z$ are also collinear.

Number Theory

N1. Let $n \geqslant 2$ be an integer, and let $A_{n}$ be the set

$$A_{n}=\left\{2^{n}-2^{k} \mid k \in \mathbb{Z},\ 0 \leqslant k<n\right\}.$$

Determine the largest positive integer that cannot be written as the sum of one or more (not necessarily distinct) elements of $A_{n}$.

(Serbia)

N2. Determine all pairs $(x, y)$ of positive integers such that

$$\sqrt[3]{7 x^{2}-13 x y+7 y^{2}}=|x-y|+1.$$

N3. A coin is called a Cape Town coin if its value is $1 / n$ for some positive integer $n$. Given a collection of Cape Town coins of total value at most $99+\frac{1}{2}$, prove that it is possible to split this collection into at most 100 groups each of total value at most 1.

(Luxembourg)

N4. Let $n>1$ be a given integer. Prove that infinitely many terms of the sequence $\left(a_{k}\right)_{k \geqslant 1}$, defined by

$$a_{k}=\left\lfloor\frac{n^{k}}{k}\right\rfloor,$$

are odd. (For a real number $x$, $\lfloor x\rfloor$ denotes the largest integer not exceeding $x$.)

(Hong Kong)

N5. Find all triples $(p, x, y)$ consisting of a prime number $p$ and two positive integers $x$ and $y$ such that $x^{p-1}+y$ and $x+y^{p-1}$ are both powers of $p$.

(Belgium)

N6. Let $a_{1}<a_{2}<\cdots<a_{n}$ be pairwise coprime positive integers with $a_{1}$ being prime and $a_{1} \geqslant n+2$. On the segment $I=\left[0, a_{1} a_{2} \cdots a_{n}\right]$ of the real line, mark all integers that are divisible by at least one of the numbers $a_{1}, \ldots, a_{n}$. These points split $I$ into a number of smaller segments. Prove that the sum of the squares of the lengths of these segments is divisible by $a_{1}$.

(Serbia)

N7. Let $c \geqslant 1$ be an integer. Define a sequence of positive integers by $a_{1}=c$ and

$$a_{n+1}=a_{n}^{3}-4 c \cdot a_{n}^{2}+5 c^{2} \cdot a_{n}+c$$

for all $n \geqslant 1$. Prove that for each integer $n \geqslant 2$ there exists a prime number $p$ dividing $a_{n}$ but none of the numbers $a_{1}, \ldots, a_{n-1}$.

(Austria)

N8. For every real number $x$, let $\|x\|$ denote the distance between $x$ and the nearest integer. Prove that for every pair $(a, b)$ of positive integers there exist an odd prime $p$ and a positive integer $k$ satisfying

$$\left\|\frac{a}{p^{k}}\right\|+\left\|\frac{b}{p^{k}}\right\|+\left\|\frac{a+b}{p^{k}}\right\|=1.$$

(Hungary)

Solutions

Algebra

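A1. Let $z_{0}<z_{1}<z_{2}<\cdots$ be an infinite sequence of positive integers. Prove that there exists a unique integer $n \geqslant 1$ such that

$$z_{n}<\frac{z_{0}+z_{1}+\cdots+z_{n}}{n} \leqslant z_{n+1}. \tag{1}$$

(Austria)

Solution. For $n=1,2, \ldots$ define

$$d_{n}=\left(z_{0}+z_{1}+\cdots+z_{n}\right)-n z_{n}.$$

The sign of $d_{n}$ indicates whether the first inequality in (1) holds; i.e., it is satisfied if and only if $d_{n}>0$.

Notice that

$$n z_{n+1}-\left(z_{0}+z_{1}+\cdots+z_{n}\right)=(n+1) z_{n+1}-\left(z_{0}+z_{1}+\cdots+z_{n}+z_{n+1}\right)=-d_{n+1},$$

so the second inequality in (1) is equivalent to $d_{n+1} \leqslant 0$. Therefore, we have to prove that there is a unique index $n \geqslant 1$ that satisfies $d_{n}>0 \geqslant d_{n+1}$.

By its definition the sequence $d_{1}, d_{2}, \ldots$ consists of integers and we have

$$d_{1}=\left(z_{0}+z_{1}\right)-1 \cdot z_{1}=z_{0}>0.$$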

From $d_{n+1}-d_{n}=\left(\left(z_{0}+\cdots+z_{n}+z_{n+1}\right)-(n+1) z_{n+1}\right)-\left(\left(z_{0}+\cdots+z_{n}\right)-n z_{n}\right)=n\left(z_{n}-z_{n+1}\right)<0$ we can see that $d_{n+1}<d_{n}$ and thus the sequence strictly decreases. Hence, we have a decreasing sequence $d_{1}>d_{2}>\ldots$ of integers such that its first element $d_{1}$ is positive. The sequence must drop below 0 at some point, and thus there is a unique index $n$, that is the index of the last positive term, satisfying $d_{n}>0 \geqslant d_{n+1}$.

Comment. Omitting the assumption that $z_{1}, z_{2}, \ldots$ are integers allows the numbers $d_{n}$ to be all positive. In such cases the desired $n$ does not exist. This happens for example if $z_{n}=2-\frac{1}{2^{n}}$ for all integers $n \geqslant 0$.
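The argument can be illustrated numerically. The sketch below is only an illustration on finite prefixes, not part of the proof; it assumes that condition (1) reads $z_{n}<\left(z_{0}+\cdots+z_{n}\right)/n \leqslant z_{n+1}$ and that $d_{n}=\left(z_{0}+\cdots+z_{n}\right)-n z_{n}$, as suggested by the computation of $d_{n+1}-d_{n}$ above. The function names `indices_satisfying_condition` and `d_values` are hypothetical.

```python
from itertools import accumulate


def indices_satisfying_condition(z):
    """All n >= 1 (inside the given finite prefix) with
    z_n < (z_0 + ... + z_n) / n <= z_{n+1}."""
    prefix = list(accumulate(z))  # prefix[n] = z_0 + ... + z_n
    hits = []
    for n in range(1, len(z) - 1):
        # Multiply through by n to stay in integer arithmetic.
        if n * z[n] < prefix[n] <= n * z[n + 1]:
            hits.append(n)
    return hits


def d_values(z):
    """d_n = (z_0 + ... + z_n) - n * z_n for n = 1, ..., len(z) - 1."""
    prefix = list(accumulate(z))
    return [prefix[n] - n * z[n] for n in range(1, len(z))]


if __name__ == "__main__":
    z = [5, 6, 7, 20, 21, 22]
    print(d_values(z))                      # strictly decreasing, starts at z_0
    print(indices_satisfying_condition(z))  # exactly one index
```

For the sample prefix the sequence $d_{n}$ starts at $z_{0}$, decreases strictly, and the single index found is the last one with $d_{n}>0$.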

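A2. Define the function $f:(0,1) \rightarrow(0,1)$ by

$$f(x)= \begin{cases}x+\frac{1}{2} & \text{if } x<\frac{1}{2}, \\ x^{2} & \text{if } x \geqslant \frac{1}{2}.\end{cases}$$

Let $a$ and $b$ be two real numbers such that $0<a<b<1$. We define the sequences $a_{n}$ and $b_{n}$ by $a_{0}=a, b_{0}=b$, and $a_{n}=f\left(a_{n-1}\right), b_{n}=f\left(b_{n-1}\right)$ for $n>0$. Show that there exists a positive integer $n$ such that

$$\left(a_{n}-a_{n-1}\right)\left(b_{n}-b_{n-1}\right)<0.$$

(Denmark)

Solution. Note that

$$f(x)-x=\frac{1}{2}>0$$

if $x<\frac{1}{2}$ and

$$f(x)-x=x^{2}-x<0$$

if $x \geqslant \frac{1}{2}$. So if we consider $(0,1)$ as being divided into the two subintervals $I_{1}=\left(0, \frac{1}{2}\right)$ and $I_{2}=\left[\frac{1}{2}, 1\right)$, the inequality

$$\left(a_{n}-a_{n-1}\right)\left(b_{n}-b_{n-1}\right)=\left(f\left(a_{n-1}\right)-a_{n-1}\right)\left(f\left(b_{n-1}\right)-b_{n-1}\right)<0$$

holds if and only if $a_{n-1}$ and $b_{n-1}$ lie in distinct subintervals.

Let us now assume, to the contrary, that $a_{k}$ and $b_{k}$ always lie in the same subinterval. Consider the distance $d_{k}=\left|a_{k}-b_{k}\right|$. If both $a_{k}$ and $b_{k}$ lie in $I_{1}$, then

$$d_{k+1}=\left|a_{k+1}-b_{k+1}\right|=\left|a_{k}+\tfrac{1}{2}-b_{k}-\tfrac{1}{2}\right|=d_{k}.$$

If, on the other hand, $a_{k}$ and $b_{k}$ both lie in $I_{2}$, then $\min \left(a_{k}, b_{k}\right) \geqslant \frac{1}{2}$ and $\max \left(a_{k}, b_{k}\right)=\min \left(a_{k}, b_{k}\right)+d_{k} \geqslant \frac{1}{2}+d_{k}$, which implies

$$d_{k+1}=\left|a_{k+1}-b_{k+1}\right|=\left|a_{k}^{2}-b_{k}^{2}\right|=\left|\left(a_{k}-b_{k}\right)\left(a_{k}+b_{k}\right)\right| \geqslant\left|a_{k}-b_{k}\right|\left(\tfrac{1}{2}+\tfrac{1}{2}+d_{k}\right)=d_{k}\left(1+d_{k}\right) \geqslant d_{k}.$$

This means that the difference $d_{k}$ is non-decreasing, and in particular $d_{k} \geqslant d_{0}>0$ for all $k$. We can even say more. If $a_{k}$ and $b_{k}$ lie in $I_{2}$, then

$$d_{k+2} \geqslant d_{k+1} \geqslant d_{k}\left(1+d_{k}\right) \geqslant d_{k}\left(1+d_{0}\right).$$

If $a_{k}$ and $b_{k}$ both lie in $I_{1}$, then $a_{k+1}$ and $b_{k+1}$ both lie in $I_{2}$, and so we have

$$d_{k+2} \geqslant d_{k+1}\left(1+d_{k+1}\right)=d_{k}\left(1+d_{k}\right) \geqslant d_{k}\left(1+d_{0}\right).$$

In either case, $d_{k+2} \geqslant d_{k}\left(1+d_{0}\right)$, and inductively we get

$$d_{2 m} \geqslant d_{0}\left(1+d_{0}\right)^{m}.$$

For sufficiently large $m$, the right-hand side is greater than 1, but since $a_{2 m}, b_{2 m}$ both lie in $(0,1)$, we must have $d_{2 m}<1$, a contradiction.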

Thus there must be a positive integer $n$ such that $a_{n-1}$ and $b_{n-1}$ do not lie in the same subinterval, which proves the desired statement.
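A short experiment makes the statement concrete. The sketch below assumes that $f(x)=x+\frac{1}{2}$ for $x<\frac{1}{2}$ and $f(x)=x^{2}$ for $x \geqslant \frac{1}{2}$ (the form matching the two cases distinguished in the solution) and searches for the first $n$ with $\left(a_{n}-a_{n-1}\right)\left(b_{n}-b_{n-1}\right)<0$. It uses floating-point numbers, so it is an illustration rather than an exact computation; the name `first_opposite_step` is hypothetical.

```python
def f(x):
    # Assumed definition: shift by 1/2 on (0, 1/2), square on [1/2, 1).
    return x + 0.5 if x < 0.5 else x * x


def first_opposite_step(a, b, max_steps=10_000):
    """Smallest n >= 1 with (a_n - a_{n-1}) * (b_n - b_{n-1}) < 0,
    or None if no such n is found within max_steps iterations."""
    for n in range(1, max_steps + 1):
        a_next, b_next = f(a), f(b)
        if (a_next - a) * (b_next - b) < 0:
            return n
        a, b = a_next, b_next
    return None


if __name__ == "__main__":
    print(first_opposite_step(0.25, 0.75))  # the two start in different subintervals
    print(first_opposite_step(0.1, 0.2))    # they separate only after several steps
```

In the second call both sequences stay in the same subinterval for six steps before $a_{6}$ falls into $I_{1}$ while $b_{6}$ remains in $I_{2}$.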

A3. For a sequence $x_{1}, x_{2}, \ldots, x_{n}$ of real numbers, we define its price as

$$\max _{1 \leqslant i \leqslant n}\left|x_{1}+\cdots+x_{i}\right|.$$

Given $n$ real numbers, Dave and George want to arrange them into a sequence with a low price. Diligent Dave checks all possible ways and finds the minimum possible price $D$. Greedy George, on the other hand, chooses $x_{1}$ such that $\left|x_{1}\right|$ is as small as possible; among the remaining numbers, he chooses $x_{2}$ such that $\left|x_{1}+x_{2}\right|$ is as small as possible, and so on. Thus, in the $i^{\text {th }}$ step he chooses $x_{i}$ among the remaining numbers so as to minimise the value of $\left|x_{1}+x_{2}+\cdots+x_{i}\right|$. In each step, if several numbers provide the same value, George chooses one at random. Finally he gets a sequence with price $G$.

Find the least possible constant $c$ such that for every positive integer $n$, for every collection of $n$ real numbers, and for every possible sequence that George might obtain, the resulting values satisfy the inequality $G \leqslant c D$.

(Georgia)

Answer. $c=2$.

Solution. If the initial numbers are $1,-1,2$, and $-2$, then Dave may arrange them as $1,-2,2,-1$, while George may get the sequence $1,-1,2,-2$, resulting in $D=1$ and $G=2$. So we obtain $c \geqslant 2$.

Therefore, it remains to prove that $G \leqslant 2 D$. Let $x_{1}, x_{2}, \ldots, x_{n}$ be the numbers Dave and George have at their disposal. Assume that Dave and George arrange them into sequences $d_{1}, d_{2}, \ldots, d_{n}$ and $g_{1}, g_{2}, \ldots, g_{n}$, respectively. Put

$$M=\max _{1 \leqslant i \leqslant n}\left|x_{i}\right|, \quad S=\left|x_{1}+\cdots+x_{n}\right|, \quad \text{and} \quad N=\max \{M, S\}.$$

We claim that

$$D \geqslant S, \tag{1}$$

$$D \geqslant \frac{M}{2}, \quad \text{and} \tag{2}$$

$$G \leqslant N=\max \{M, S\}. \tag{3}$$

These inequalities yield the desired estimate, as $G \leqslant \max \{M, S\} \leqslant \max \{M, 2 S\} \leqslant 2 D$.

The inequality (1) is a direct consequence of the definition of the price. To prove (2), consider an index $i$ with $\left|d_{i}\right|=M$. Then we have

$$M=\left|d_{i}\right|=\left|\left(d_{1}+\cdots+d_{i}\right)-\left(d_{1}+\cdots+d_{i-1}\right)\right| \leqslant\left|d_{1}+\cdots+d_{i}\right|+\left|d_{1}+\cdots+d_{i-1}\right| \leqslant 2 D,$$

as required.

It remains to establish (3). Put $h_{i}=g_{1}+g_{2}+\cdots+g_{i}$. We will prove by induction on $i$ that $\left|h_{i}\right| \leqslant N$. The base case $i=1$ holds, since $\left|h_{1}\right|=\left|g_{1}\right| \leqslant M \leqslant N$. Notice also that $\left|h_{n}\right|=S \leqslant N$.

For the induction step, assume that $\left|h_{i-1}\right| \leqslant N$. We distinguish two cases.

Case 1. Assume that no two of the numbers $g_{i}, g_{i+1}, \ldots, g_{n}$ have opposite signs. Without loss of generality, we may assume that they are all nonnegative. Then one has $h_{i-1} \leqslant h_{i} \leqslant \cdots \leqslant h_{n}$, thus

$$\left|h_{i}\right| \leqslant \max \left\{\left|h_{i-1}\right|,\left|h_{n}\right|\right\} \leqslant N.$$

Case 2. Among the numbers $g_{i}, g_{i+1}, \ldots, g_{n}$ there are positive and negative ones.

Then there exists some index $j \geqslant i$ such that $h_{i-1} g_{j} \leqslant 0$. By the definition of George's sequence we have

$$\left|h_{i}\right|=\left|h_{i-1}+g_{i}\right| \leqslant\left|h_{i-1}+g_{j}\right| \leqslant \max \left\{\left|h_{i-1}\right|,\left|g_{j}\right|\right\} \leqslant N.$$

Thus, the induction step is established.

Comment 1. One can establish the weaker inequalities $D \geqslant \frac{M}{2}$ and $G \leqslant D+\frac{M}{2}$ from which the result also follows.

Comment 2. One may ask a more specific question to find the maximal suitable $c$ if the number $n$ is fixed. For $n=1$ or 2 , the answer is $c=1$. For $n=3$, the answer is $c=\frac{3}{2}$, and it is reached e.g., for the collection $1,2,-4$. Finally, for $n \geqslant 4$ the answer is $c=2$. In this case the arguments from the solution above apply, and the answer is reached e.g., for the same collection $1,-1,2,-2$, augmented by several zeroes.
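The two extremal collections mentioned above can be checked by brute force. The following sketch assumes that the price of a sequence is the largest absolute value of its partial sums; `dave` tries every permutation, while `george_prices` follows the greedy rule and explores every tie-break. All function names are hypothetical, and the approach is only practical for very small collections.

```python
from itertools import accumulate, permutations


def price(seq):
    """Largest absolute value among the partial sums of seq (assumed definition)."""
    return max(abs(s) for s in accumulate(seq))


def dave(numbers):
    """Minimum price over all arrangements (exhaustive search)."""
    return min(price(p) for p in permutations(numbers))


def george_prices(numbers):
    """Set of all prices George may obtain, over every way of breaking ties."""
    results = set()

    def go(remaining, total, worst):
        if not remaining:
            results.add(worst)
            return
        best = min(abs(total + x) for x in remaining)
        tried = set()
        for i, x in enumerate(remaining):
            # Follow every greedy choice once (equal values lead to equal futures).
            if abs(total + x) == best and x not in tried:
                tried.add(x)
                go(remaining[:i] + remaining[i + 1:], total + x, max(worst, best))

    go(tuple(numbers), 0, 0)
    return results


if __name__ == "__main__":
    for collection in ([1, -1, 2, -2], [1, 2, -4]):
        print(collection, dave(collection), george_prices(collection))
```

For $1,-1,2,-2$ the search gives $D=1$ and $G=2$, and for $1,2,-4$ it gives $D=2$ and $G=3$, matching the ratios $2$ and $\frac{3}{2}$ quoted in the text.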

A4. Determine all functions $f: \mathbb{Z} \rightarrow \mathbb{Z}$ satisfying

$$f(f(m)+n)+f(m)=f(n)+f(3 m)+2014 \tag{1}$$

for all integers $m$ and $n$.

(Netherlands)

Answer. There is only one such function, namely $n \longmapsto 2 n+1007$.

Solution. Let $f$ be a function satisfying (1). Set $C=1007$ and define the function $g: \mathbb{Z} \rightarrow \mathbb{Z}$ by $g(m)=f(3 m)-f(m)+2 C$ for all $m \in \mathbb{Z}$; in particular, $g(0)=2 C$. Now (1) rewrites as

$$f(f(m)+n)=g(m)+f(n)$$

for all $m, n \in \mathbb{Z}$. By induction in both directions it follows that

$$f(t f(m)+n)=t g(m)+f(n) \tag{2}$$

holds for all $m, n, t \in \mathbb{Z}$. Applying this, for any $r \in \mathbb{Z}$, to the triples $(r, 0, f(0))$ and $(0,0, f(r))$ in place of $(m, n, t)$ we obtain

$$f(0) g(r)=f(f(r) f(0))-f(0)=f(r) g(0).$$

Now if $f(0)$ vanished, then $g(0)=2 C>0$ would entail that $f$ vanishes identically, contrary to (1). Thus $f(0) \neq 0$ and the previous equation yields $g(r)=\alpha f(r)$, where $\alpha=\frac{g(0)}{f(0)}$ is some nonzero constant.

So the definition of $g$ reveals $f(3 m)=(1+\alpha) f(m)-2 C$, i.e.,

$$f(3 m)-\beta=(1+\alpha)(f(m)-\beta) \tag{3}$$

for all $m \in \mathbb{Z}$, where $\beta=\frac{2 C}{\alpha}$. By induction on $k$ this implies

$$f\left(3^{k} m\right)-\beta=(1+\alpha)^{k}(f(m)-\beta) \tag{4}$$

for all integers $k \geqslant 0$ and $m$.

Since $3 \nmid 2014$, there exists by (1) some value $d=f(a)$ attained by $f$ that is not divisible by 3. Now by (2) we have $f(n+t d)=f(n)+t g(a)=f(n)+\alpha \cdot t f(a)$, i.e.,

$$f(n+t d)=f(n)+\alpha \cdot t d \tag{5}$$

for all $n, t \in \mathbb{Z}$.

Let us fix any positive integer $k$ with $d \mid\left(3^{k}-1\right)$, which is possible, since $\operatorname{gcd}(3, d)=1$. E.g., by the Euler-Fermat theorem, we may take $k=\varphi(|d|)$. Now for each $m \in \mathbb{Z}$ we get

$$f\left(3^{k} m\right)=f(m)+\alpha\left(3^{k}-1\right) m$$

from (5), which in view of (4) yields $\left((1+\alpha)^{k}-1\right)(f(m)-\beta)=\alpha\left(3^{k}-1\right) m$. Since $\alpha \neq 0$, the right hand side does not vanish for $m \neq 0$, wherefore the first factor on the left hand side cannot vanish either. It follows that

$$f(m)=\frac{\alpha\left(3^{k}-1\right)}{(1+\alpha)^{k}-1} \cdot m+\beta.$$

So $f$ is a linear function, say $f(m)=A m+\beta$ for all $m \in \mathbb{Z}$ with some constant $A \in \mathbb{Q}$. Plugging this into (1) one obtains $\left(A^{2}-2 A\right) m+(A \beta-2 C)=0$ for all $m$, which is equivalent to the conjunction of

$$A^{2}=2 A \quad \text{and} \quad A \beta=2 C. \tag{6}$$

The first equation is equivalent to $A \in\{0,2\}$, and as $C \neq 0$ the second one gives

$$A=2 \quad \text{and} \quad \beta=C. \tag{7}$$

This shows that $f$ is indeed the function mentioned in the answer and as the numbers found in (7) do indeed satisfy the equations (6) this function is indeed as desired.
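The final verification can also be done mechanically on a finite range. The sketch below takes the functional equation to be $f(f(m)+n)+f(m)=f(n)+f(3 m)+2014$, the form consistent with the definition of $g$ in the solution; this reading is an assumption, and the helper name `satisfies_equation` is hypothetical.

```python
C = 1007


def f(n):
    # The function from the answer: n -> 2n + 1007.
    return 2 * n + C


def satisfies_equation(func, bound=50, const=2014):
    """Check func(func(m) + n) + func(m) == func(n) + func(3m) + const
    for all integers m, n with |m|, |n| <= bound."""
    values = range(-bound, bound + 1)
    return all(
        func(func(m) + n) + func(m) == func(n) + func(3 * m) + const
        for m in values
        for n in values
    )


if __name__ == "__main__":
    print(satisfies_equation(f))                 # the answer passes the check
    print(satisfies_equation(lambda n: 2 * n))   # a wrong constant term fails
```

A finite check of this kind cannot replace the algebraic verification, but it guards against sign and constant errors.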

Comment 1. One may see that $\alpha=2$. A more pedestrian version of the above solution starts with a direct proof of this fact, that can be obtained by substituting some special values into (1), e.g., as follows.

Set $D=f(0)$. Plugging $m=0$ into (1) and simplifying, we get

$$f(n+D)=f(n)+2 C \tag{8}$$

for all $n \in \mathbb{Z}$. In particular, for $n=0, D, 2 D$ we obtain $f(D)=2 C+D, f(2 D)=f(D)+2 C=4 C+D$, and $f(3 D)=f(2 D)+2 C=6 C+D$. So substituting $m=D$ and $n=r-D$ into (1) and applying (8) with $n=r-D$ afterwards we learn

$$f(r+2 C)+2 C+D=(f(r)-2 C)+(6 C+D)+2 C,$$

i.e., $f(r+2 C)=f(r)+4 C$. By induction in both directions it follows that

$$f(n+2 C t)=f(n)+4 C t \tag{9}$$

holds for all $n, t \in \mathbb{Z}$.

Claim. If $a$ and $b$ denote two integers with the property that $f(n+a)=f(n)+b$ holds for all $n \in \mathbb{Z}$, then $b=2 a$.

Proof. Applying induction in both directions to the assumption we get $f(n+t a)=f(n)+t b$ for all $n, t \in \mathbb{Z}$. Plugging $(n, t)=(0,2 C)$ into this equation and $(n, t)=(0, a)$ into (9) we get $f(2 a C)-f(0)=2 b C=4 a C$, and, as $C \neq 0$, the claim follows.

Now by (1), for any $m \in \mathbb{Z}$, the numbers $a=f(m)$ and $b=f(3 m)-f(m)+2 C$ have the property mentioned in the claim, whence we have

$$f(3 m)-f(m)+2 C=2 f(m).$$

In view of (3) this tells us indeed that $\alpha=2$. Now the solution may be completed as above, but due to our knowledge of $\alpha=2$ we get the desired formula $f(m)=2 m+C$ directly without having the need to go through all linear functions. Now it just remains to check that this function does indeed satisfy (1).

Comment 2. It is natural to wonder what happens if one replaces the number 2014 appearing in the statement of the problem by some arbitrary integer $B$.

If $B$ is odd, there is no such function, as can be seen by using the same ideas as in the above solution.

If $B \neq 0$ is even, however, then the only such function is given by $n \longmapsto 2 n+B / 2$. In case $3 \nmid B$ this was essentially proved above, but for the general case one more idea seems to be necessary. Writing $B=3^{\nu} \cdot k$ with some integers $\nu$ and $k$ such that $3 \nmid k$ one can obtain $f(n)=2 n+B / 2$ for all $n$ that are divisible by $3^{\nu}$ in the same manner as usual; then one may use the formula $f(3 n)=3 f(n)-B$ to establish the remaining cases.

Finally, in case $B=0$ there are more solutions than just the function $n \longmapsto 2 n$. It can be shown that all these other functions are periodic; to mention just one kind of example, for any even integers $r$ and $s$ the function

$$f(n)= \begin{cases}r & \text{if } n \text{ is even,} \\ s & \text{if } n \text{ is odd}\end{cases}$$

also has the property under discussion.
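The claims of this comment can be probed numerically. The sketch below assumes the generalised equation $f(f(m)+n)+f(m)=f(n)+f(3 m)+B$ and, for $B=0$, takes the periodic example to be the parity function with value $r$ on even and $s$ on odd integers; both readings are assumptions, and the helper names are hypothetical.

```python
def holds(func, B, bound=30):
    """Check func(func(m) + n) + func(m) == func(n) + func(3m) + B on a finite range."""
    values = range(-bound, bound + 1)
    return all(
        func(func(m) + n) + func(m) == func(n) + func(3 * m) + B
        for m in values
        for n in values
    )


def linear(B):
    """n -> 2n + B/2 for an even integer B."""
    return lambda n: 2 * n + B // 2


def parity_function(r, s):
    """n -> r for even n, s for odd n (assumed form of the periodic example)."""
    return lambda n: r if n % 2 == 0 else s


if __name__ == "__main__":
    print([holds(linear(B), B) for B in (-6, 0, 2, 2014)])
    print(holds(parity_function(4, -10), 0))  # r and s both even
    print(holds(parity_function(1, 2), 0))    # fails once r is odd
```

The last call indicates why the evenness of $r$ and $s$ matters: with an odd value the argument $f(m)+n$ changes parity and the identity breaks.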

A5. Consider all polynomials $P(x)$ with real coefficients that have the following property: for any two real numbers $x$ and $y$ one has

$$\left|y^{2}-P(x)\right| \leqslant 2|x| \quad \text{if and only if} \quad\left|x^{2}-P(y)\right| \leqslant 2|y|. \tag{1}$$

Determine all possible values of $P(0)$.

(Belgium)

Answer. The set of possible values of $P(0)$ is $(-\infty, 0) \cup\{1\}$.

Solution.

Part I. We begin by verifying that these numbers are indeed possible values of $P(0)$. To see that each negative real number $-C$ can be $P(0)$, it suffices to check that for every $C>0$ the polynomial $P(x)=-\left(\frac{2 x^{2}}{C}+C\right)$ has the property described in the statement of the problem. Due to symmetry it is enough for this purpose to prove $\left|y^{2}-P(x)\right|>2|x|$ for any two real numbers $x$ and $y$. In fact we have

$$\left|y^{2}-P(x)\right|=y^{2}+\frac{x^{2}}{C}+\frac{(|x|-C)^{2}}{C}+2|x| \geqslant \frac{x^{2}}{C}+2|x| \geqslant 2|x|,$$

where in the first estimate equality can only hold if $|x|=C$, whilst in the second one it can only hold if $x=0$. As these two conditions cannot be met at the same time, we have indeed $\left|y^{2}-P(x)\right|>2|x|$.
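The strict inequality for the family $P(x)=-\left(\frac{2 x^{2}}{C}+C\right)$ can be spot-checked with exact rational arithmetic. The sketch below is a sanity check on a finite grid only; the grid and the helper name `strict_gap_holds` are arbitrary choices made for illustration.

```python
from fractions import Fraction


def P(x, C):
    # The polynomial from Part I: P(x) = -(2x^2 / C + C), with C > 0.
    return -(2 * x * x / C + C)


def strict_gap_holds(C, grid):
    """Check |y^2 - P(x)| > 2|x| for all x, y in the grid."""
    return all(abs(y * y - P(x, C)) > 2 * abs(x) for x in grid for y in grid)


if __name__ == "__main__":
    grid = [Fraction(k, 4) for k in range(-40, 41)]
    for C in (Fraction(1, 3), Fraction(1), Fraction(5)):
        print(C, strict_gap_holds(C, grid))
```

Since both sides of (1) are then false for every pair $(x, y)$, the equivalence holds trivially, which is exactly how the solution uses this family.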

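To show that $P(0)=1$ is possible as well, we verify that the polynomial $P(x)=x^{2}+1$ satisfies (1). Notice that for all real numbers $x$ and $y$ we have

$$\begin{aligned}
\left|y^{2}-P(x)\right| \leqslant 2|x| & \Longleftrightarrow\left(y^{2}-x^{2}-1\right)^{2} \leqslant 4 x^{2} \\
& \Longleftrightarrow 0 \leqslant\left(y^{2}-(x-1)^{2}\right)\left((x+1)^{2}-y^{2}\right) \\
& \Longleftrightarrow 0 \leqslant(y-x+1)(y+x-1)(x+1-y)(x+1+y) \\
& \Longleftrightarrow 0 \leqslant\left((x+y)^{2}-1\right)\left(1-(x-y)^{2}\right).
\end{aligned}$$

Since this inequality is symmetric in $x$ and $y$, we are done.

Part II. Now we show that no values other than those mentioned in the answer are possible for $P(0)$. To reach this we let $P$ denote any polynomial satisfying (1) and $P(0) \geqslant 0$; as we shall see, this implies $P(x)=x^{2}+1$ for all real $x$, which is actually more than what we want.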

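First step: We prove that $P$ is even. By (1) we have

$$\left|y^{2}-P(x)\right| \leqslant 2|x| \Longleftrightarrow\left|x^{2}-P(y)\right| \leqslant 2|y| \Longleftrightarrow\left|y^{2}-P(-x)\right| \leqslant 2|x|$$

for all real numbers $x$ and $y$. Considering just the equivalence of the first and third statement and taking into account that $y^{2}$ may vary through $\mathbb{R}_{\geqslant 0}$ we infer that

$$[P(x)-2|x|, P(x)+2|x|] \cap \mathbb{R}_{\geqslant 0}=[P(-x)-2|x|, P(-x)+2|x|] \cap \mathbb{R}_{\geqslant 0}$$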

holds for all $x \in \mathbb{R}$. We claim that there are infinitely many real numbers $x$ such that $P(x)+2|x| \geqslant 0$. This holds in fact for any real polynomial with $P(0) \geqslant 0$; in order to see this, we may assume that the coefficient of $P$ appearing in front of $x$ is nonnegative. In this case the desired inequality holds for all sufficiently small positive real numbers.

For such numbers $x$ satisfying $P(x)+2|x| \geqslant 0$ we have $P(x)+2|x|=P(-x)+2|x|$ by the previous displayed formula, and hence also $P(x)=P(-x)$. Consequently the polynomial $P(x)-P(-x)$ has infinitely many zeros, wherefore it has to vanish identically. Thus $P$ is indeed even.

Second step: We prove that $P(t)>0$ for all $t \in \mathbb{R}$. Let us assume for a moment that there exists a real number $t \neq 0$ with $P(t)=0$. Then there is some open interval $I$ around $t$ such that $|P(y)| \leqslant 2|y|$ holds for all $y \in I$. Plugging $x=0$ into (1) we learn that $y^{2}=P(0)$ holds for all $y \in I$, which is clearly absurd. We have thus shown $P(t) \neq 0$ for all $t \neq 0$.

In combination with $P(0) \geqslant 0$ this informs us that our claim could only fail if $P(0)=0$. In this case there is by our first step a polynomial $Q(x)$ such that $P(x)=x^{2} Q(x)$. Applying (1) to $x=0$ and an arbitrary $y \neq 0$ we get $|y Q(y)|>2$, which is surely false when $y$ is sufficiently small.

Third step: We prove that $P$ is a quadratic polynomial. Notice that $P$ cannot be constant, for otherwise if $x=\sqrt{P(0)}$ and $y$ is sufficiently large, the first part of (1) is false whilst the second part is true. So the degree $n$ of $P$ has to be at least 1 . By our first step $n$ has to be even as well, whence in particular $n \geqslant 2$.

Now assume that $n \geqslant 4$. Plugging $y=\sqrt{P(x)}$ into (1) we get $\left|x^{2}-P(\sqrt{P(x)})\right| \leqslant 2 \sqrt{P(x)}$ and hence

$$P(\sqrt{P(x)}) \leqslant x^{2}+2 \sqrt{P(x)}$$

for all real $x$. Choose positive real numbers $x_{0}, a$, and $b$ such that if $x \in\left(x_{0}, \infty\right)$, then $a x^{n}<P(x)<b x^{n}$; this is indeed possible, for if $d>0$ denotes the leading coefficient of $P$, then $\lim _{x \rightarrow \infty} \frac{P(x)}{x^{n}}=d$, whence for instance the numbers $a=\frac{d}{2}$ and $b=2 d$ work provided that $x_{0}$ is chosen large enough.

Now for all sufficiently large real numbers $x$ we have

$$a^{n / 2+1} x^{n^{2} / 2}<a P(x)^{n / 2}<P(\sqrt{P(x)}) \leqslant x^{2}+2 \sqrt{P(x)}<x^{n / 2}+2 b^{1 / 2} x^{n / 2},$$

i.e.

$$x^{\left(n^{2}-n\right) / 2}<\frac{1+2 b^{1 / 2}}{a^{n / 2+1}},$$

which is surely absurd. Thus $P$ is indeed a quadratic polynomial.

Fourth step: We prove that $P(x)=x^{2}+1$. In the light of our first three steps there are two real numbers $a>0$ and $b$ such that $P(x)=a x^{2}+b$. Now if $x$ is large enough and $y=\sqrt{a} x$, the left part of (1) holds and the right part reads $\left|\left(1-a^{2}\right) x^{2}-b\right| \leqslant 2 \sqrt{a} x$. In view of the fact that $a>0$ this is only possible if $a=1$. Finally, substituting $y=x+1$ with $x>0$ into (1) we get

$$|2 x+1-b| \leqslant 2 x \Longleftrightarrow|2 x+1+b| \leqslant 2 x+2,$$

i.e.,

$$b \in[1,4 x+1] \Longleftrightarrow b \in[-4 x-3,1]$$

for all $x>0$. Choosing $x$ large enough, we can achieve that at least one of these two statements holds; then both hold, which is only possible if $b=1$, as desired.
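As a sanity check on the outcome of the fourth step, one can test property (1) on a grid of rational points, reading (1) as the equivalence $\left|y^{2}-P(x)\right| \leqslant 2|x| \Leftrightarrow\left|x^{2}-P(y)\right| \leqslant 2|y|$. The sketch below is an illustration under that assumption; the helper name `property_holds` and the grid are hypothetical choices.

```python
from fractions import Fraction


def property_holds(P, grid):
    """Check that |y^2 - P(x)| <= 2|x| and |x^2 - P(y)| <= 2|y|
    are either both true or both false for all x, y in the grid."""
    return all(
        (abs(y * y - P(x)) <= 2 * abs(x)) == (abs(x * x - P(y)) <= 2 * abs(y))
        for x in grid
        for y in grid
    )


if __name__ == "__main__":
    grid = [Fraction(k, 4) for k in range(-40, 41)]
    print(property_holds(lambda t: t * t + 1, grid))  # b = 1
    print(property_holds(lambda t: t * t + 2, grid))  # b = 2 breaks at x = 1, y = 2
    print(property_holds(lambda t: t * t, grid))      # b = 0 breaks at x = 1, y = 2
```

The failing pair $x=1$, $y=x+1=2$ is an instance of the substitution used in the fourth step.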

Comment 1. There are some issues with this problem in that its most natural solutions seem to use some basic facts from analysis, such as the continuity of polynomials or the intermediate value theorem. Yet these facts are intuitively obvious and implicitly clear to the students competing at this level of difficulty, so that the Problem Selection Committee still thinks that the problem is suitable for the IMO.

Comment 2. It seems that most solutions will in the main case, where $P(0)$ is nonnegative, contain an argument that is somewhat asymptotic in nature showing that $P$ is quadratic, and some part narrowing that case down to $P(x)=x^{2}+1$.

Comment 3. It is also possible to skip the first step and start with the second step directly, but then one has to work a bit harder to rule out the case $P(0)=0$. Let us sketch one possibility of doing this: Take the auxiliary polynomial $Q(x)$ such that $P(x)=x Q(x)$. Applying (1) to $x=0$ and an arbitrary $y \neq 0$ we get $|Q(y)|>2$. Hence we either have $Q(z) \geqslant 2$ for all real $z$ or $Q(z) \leqslant-2$ for all real $z$. In particular there is some $\eta \in\{-1,+1\}$ such that $P(\eta) \geqslant 2$ and $P(-\eta) \leqslant-2$. Substituting $x= \pm \eta$ into (1) we learn

$$\left|y^{2}-P(\eta)\right| \leqslant 2 \Longleftrightarrow|1-P(y)| \leqslant 2|y| \Longleftrightarrow\left|y^{2}-P(-\eta)\right| \leqslant 2.$$

But for $y=\sqrt{P(\eta)}$ the first statement is true, whilst the third one is false. Also, if one has not obtained the evenness of $P$ before embarking on the fourth step, one needs to work a bit harder there, but not in a way that is likely to cause major difficulties.

Comment 4. Truly curious people may wonder about the set of all polynomials having property (1). As explained in the solution above, $P(x)=x^{2}+1$ is the only one with $P(0)=1$. On the other hand, it is not hard to notice that for negative $P(0)$ there are more possibilities than those mentioned above. E.g., as remarked by the proposer, if $a$ and $b$ denote two positive real numbers with $a b>1$ and $Q$ denotes a polynomial attaining nonnegative values only, then $P(x)=-\left(a x^{2}+b+Q(x)\right)$ works.

More generally, it may be proved that if $P(x)$ satisfies (1) and $P(0)<0$, then $-P(x)>2|x|$ holds for all $x \in \mathbb{R}$ so that one just considers the equivalence of two false statements. One may generate all such polynomials $P$ by going through all combinations of a solution of the polynomial equation

and a real $E>0$, and setting

for each of them.

A6. Find all functions $f: \mathbb{Z} \rightarrow \mathbb{Z}$ such that

$$
n^{2}+4 f(n)=f(f(n))^{2} \tag{1}
$$

for all $n \in \mathbb{Z}$.

(United Kingdom)

Answer. The possibilities are:

- $f(n)=n+1$ for all $n$;

- or, for some $a \geqslant 1, \quad f(n)= \begin{cases}n+1, & n>-a, \\ -n+1, & n \leqslant-a ;\end{cases}$

- or $f(n)= \begin{cases}n+1, & n>0, \\ 0, & n=0, \\ -n+1, & n<0 .\end{cases}$
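As a sanity check of the answer, the following minimal sketch tests the three families numerically on a finite window. It takes the functional equation to be $n^{2}+4 f(n)=f(f(n))^{2}$, as it is used later in the solution; the function names and the window size are illustrative choices, and a finite check is of course no substitute for the proof.

```python
def f_shift(n):
    """First family: f(n) = n + 1 for all n."""
    return n + 1


def make_f_a(a):
    """Second family, for a fixed integer a >= 1."""
    def f(n):
        return n + 1 if n > -a else -n + 1
    return f


def f_zero(n):
    """Third family: like a = 0, but with f(0) = 0."""
    if n > 0:
        return n + 1
    if n == 0:
        return 0
    return -n + 1


def satisfies(f, lo=-200, hi=200):
    """Check n^2 + 4 f(n) == f(f(n))^2 for all n in [lo, hi]."""
    return all(n * n + 4 * f(n) == f(f(n)) ** 2 for n in range(lo, hi + 1))


if __name__ == "__main__":
    print(satisfies(f_shift), satisfies(make_f_a(3)), satisfies(f_zero))
```

A function outside the three families, such as $n \mapsto n+2$, fails the same check already at $n=0$.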

Solution 1.

Part I. Let us first check that each of the functions above really satisfies the given functional equation. If $f(n)=n+1$ for all $n$, then we have

$$
n^{2}+4 f(n)=n^{2}+4 n+4=(n+2)^{2}=f(n+1)^{2}=f(f(n))^{2} .
$$

If $f(n)=n+1$ for $n>-a$ and $f(n)=-n+1$ otherwise, then we have the same identity for $n>-a$ and

$$
n^{2}+4 f(n)=n^{2}-4 n+4=(2-n)^{2}=f(1-n)^{2}=f(f(n))^{2}
$$

otherwise. The same applies to the third solution (with $a=0$ ), where in addition one has

$$
0^{2}+4 f(0)=0=f(f(0))^{2} .
$$

Part II. It remains to prove that these are really the only functions that satisfy our functional equation. We do so in three steps:

Step 1: We prove that $f(n)=n+1$ for $n>0$. Consider the sequence $\left(a_{k}\right)$ given by $a_{k}=f^{k}(1)$ for $k \geqslant 0$. Setting $n=a_{k}$ in (1), we get

$$
a_{k}^{2}+4 a_{k+1}=a_{k+2}^{2} .
$$

Of course, $a_{0}=1$ by definition. Since $a_{2}^{2}=1+4 a_{1}$ is odd, $a_{2}$ has to be odd as well, so we set $a_{2}=2 r+1$ for some $r \in \mathbb{Z}$. Then $a_{1}=r^{2}+r$ and consequently

$$
a_{3}^{2}=a_{1}^{2}+4 a_{2}=\left(r^{2}+r\right)^{2}+8 r+4 .
$$

Since $8 r+4 \neq 0, a_{3}^{2} \neq\left(r^{2}+r\right)^{2}$, so the difference between $a_{3}^{2}$ and $\left(r^{2}+r\right)^{2}$ is at least the distance from $\left(r^{2}+r\right)^{2}$ to the nearest even square (since $8 r+4$ and $r^{2}+r$ are both even). This implies that

$$
|8 r+4|=\left|a_{3}^{2}-\left(r^{2}+r\right)^{2}\right| \geqslant\left(r^{2}+r\right)^{2}-\left(r^{2}+r-2\right)^{2}=4\left(r^{2}+r-1\right)
$$

(for $r=0$ and $r=-1$, the estimate is trivial, but this does not matter). Therefore, we have

$$
4 r^{2} \leqslant|8 r+4|-4 r+4 .
$$

If $|r| \geqslant 4$, then

$$
4 r^{2} \geqslant 16|r| \geqslant 12|r|+16>8|r|+4+4|r|+4 \geqslant|8 r+4|-4 r+4,
$$

a contradiction. Thus $|r|<4$. Checking all possible remaining values of $r$, we find that $\left(r^{2}+r\right)^{2}+8 r+4$ is only a square in three cases: $r=-3, r=0$ and $r=1$. Let us now distinguish these three cases:

- $r=-3$, thus $a_{1}=6$ and $a_{2}=-5$. For each $k \geqslant 1$, we have

$$
a_{k+2}= \pm \sqrt{a_{k}^{2}+4 a_{k+1}},
$$

and the sign needs to be chosen in such a way that $a_{k+1}^{2}+4 a_{k+2}$ is again a square. This yields $a_{3}=-4, a_{4}=-3, a_{5}=-2, a_{6}=-1, a_{7}=0, a_{8}=1, a_{9}=2$. At this point we have reached a contradiction, since $f(1)=f\left(a_{0}\right)=a_{1}=6$ and at the same time $f(1)=f\left(a_{8}\right)=a_{9}=2$.

- $r=0$, thus $a_{1}=0$ and $a_{2}=1$. Then $a_{3}^{2}=a_{1}^{2}+4 a_{2}=4$, so $a_{3}= \pm 2$. This, however, is a contradiction again, since it gives us $f(1)=f\left(a_{0}\right)=a_{1}=0$ and at the same time $f(1)=f\left(a_{2}\right)=a_{3}= \pm 2$.

- $r=1$, thus $a_{1}=2$ and $a_{2}=3$. We prove by induction that $a_{k}=k+1$ for all $k \geqslant 0$ in this case, which we already know for $k \leqslant 2$ now. For the induction step, assume that $a_{k-1}=k$ and $a_{k}=k+1$. Then

$$
a_{k+1}^{2}=a_{k-1}^{2}+4 a_{k}=k^{2}+4 k+4=(k+2)^{2},
$$

so $a_{k+1}= \pm(k+2)$. If $a_{k+1}=-(k+2)$, then

$$
a_{k+2}^{2}=a_{k}^{2}+4 a_{k+1}=(k+1)^{2}-4 k-8=k^{2}-2 k-7=(k-1)^{2}-8 .
$$

The latter can only be a square if $k=4$ (since 1 and 9 are the only two squares whose difference is 8 ). Then, however, $a_{4}=5, a_{5}=-6$ and $a_{6}= \pm 1$, so

$$
a_{7}^{2}=a_{5}^{2}+4 a_{6}=36 \pm 4,
$$

but neither 32 nor 40 is a perfect square. Thus $a_{k+1}=k+2$, which completes our induction. This also means that $f(n)=f\left(a_{n-1}\right)=a_{n}=n+1$ for all $n \geqslant 1$.
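The finite case check in Step 1 is easy to reproduce by machine. The sketch below lists the values of $r$ for which $\left(r^{2}+r\right)^{2}+8 r+4$ is a perfect square and then follows the forced continuation of the sequence in the case $r=-3$. It assumes the recurrence $a_{k}^{2}+4 a_{k+1}=a_{k+2}^{2}$ obtained by setting $n=a_{k}$ in the functional equation; the helper names are illustrative, not part of the original solution.

```python
from math import isqrt


def is_square(m):
    """True if m is a perfect square (negative numbers are not)."""
    return m >= 0 and isqrt(m) ** 2 == m


def admissible_r(bound):
    """All r with |r| <= bound such that (r^2 + r)^2 + 8r + 4 is a square."""
    return [r for r in range(-bound, bound + 1)
            if is_square((r * r + r) ** 2 + 8 * r + 4)]


def forced_orbit(a1, a2, length):
    """Extend a_0 = 1, a_1, a_2 while the next term is uniquely determined.

    The next term is +-sqrt(a_k^2 + 4 a_{k+1}); a sign is kept only if
    a_{k+1}^2 + 4 a_{k+2} is again a perfect square.
    """
    seq = [1, a1, a2]
    while len(seq) < length:
        s = seq[-2] ** 2 + 4 * seq[-1]
        if not is_square(s):
            break
        root = isqrt(s)
        options = [c for c in {root, -root}
                   if is_square(seq[-1] ** 2 + 4 * c)]
        if len(options) != 1:
            break  # no admissible sign, or the choice is ambiguous
        seq.append(options[0])
    return seq


if __name__ == "__main__":
    print(admissible_r(3))
    print(forced_orbit(6, -5, 10))
```

For $r=-3$ the forced sequence returns to $a_{8}=1$ with $a_{9}=2 \neq 6=a_{1}$, which is exactly the contradiction used above.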

Step 2: We prove that either $f(0)=1$, or $f(0)=0$ and $f(n) \neq 0$ for $n \neq 0$. Set $n=0$ in (1) to get

$$
4 f(0)=f(f(0))^{2} .
$$

This means that $f(0) \geqslant 0$. If $f(0)=0$, then $f(n) \neq 0$ for all $n \neq 0$, since we would otherwise have

$$
n^{2}=n^{2}+4 f(n)=f(f(n))^{2}=f(0)^{2}=0 .
$$

If $f(0)>0$, then we know that $f(f(0))=f(0)+1$ from the first step, so

$$
4 f(0)=(f(0)+1)^{2},
$$

which yields $f(0)=1$.

Step 3: We discuss the values of $f(n)$ for $n<0$.

Lemma. For every $n \geqslant 1$, we have $f(-n)=-n+1$ or $f(-n)=n+1$. Moreover, if $f(-n)=-n+1$ for some $n \geqslant 1$, then also $f(-n+1)=-n+2$.

Proof. We prove this statement by strong induction on $n$. For $n=1$, we get

$$
1+4 f(-1)=f(f(-1))^{2} .
$$

Thus $f(-1)$ needs to be nonnegative. If $f(-1)=0$, then $f(f(-1))=f(0)= \pm 1$, so $f(0)=1$ (by our second step). Otherwise, we know that $f(f(-1))=f(-1)+1$, so

$$
1+4 f(-1)=(f(-1)+1)^{2},
$$

which yields $f(-1)=2$ and thus establishes the base case. For the induction step, we consider two cases:

- If $f(-n) \leqslant-n$, then

$$
f(f(-n))^{2}=(-n)^{2}+4 f(-n) \leqslant n^{2}-4 n<(n-2)^{2},
$$

so $|f(f(-n))| \leqslant n-3$ (for $n=2$, this case cannot even occur). If $f(f(-n)) \geqslant 0$, then we already know from the first two steps that $f(f(f(-n)))=f(f(-n))+1$, unless perhaps if $f(0)=0$ and $f(f(-n))=0$. However, the latter would imply $f(-n)=0$ (as shown in Step 2) and thus $n=0$, which is impossible. If $f(f(-n))<0$, we can apply the induction hypothesis to $f(f(-n))$. In either case, $f(f(f(-n)))= \pm f(f(-n))+1$. Therefore,

$$
f(-n)^{2}+4 f(f(-n))=f(f(f(-n)))^{2}=( \pm f(f(-n))+1)^{2},
$$

which gives us

$$
n^{2} \leqslant f(-n)^{2}=( \pm f(f(-n))+1)^{2}-4 f(f(-n)) \leqslant f(f(-n))^{2}+6|f(f(-n))|+1 \leqslant(n-3)^{2}+6(n-3)+1=n^{2}-8<n^{2},
$$

a contradiction.

- Thus, we are left with the case that $f(-n)>-n$. Now we argue as in the previous case: if $f(-n) \geqslant 0$, then $f(f(-n))=f(-n)+1$ by the first two steps, since $f(0)=0$ and $f(-n)=0$ would imply $n=0$ (as seen in Step 2) and is thus impossible. If $f(-n)<0$, we can apply the induction hypothesis, so in any case we can infer that $f(f(-n))= \pm f(-n)+1$. We obtain

$$
( \pm f(-n)+1)^{2}=f(f(-n))^{2}=(-n)^{2}+4 f(-n),
$$

so either

$$
n^{2}=f(-n)^{2}-2 f(-n)+1=(f(-n)-1)^{2},
$$

which gives us $f(-n)= \pm n+1$, or

$$
n^{2}=f(-n)^{2}-6 f(-n)+1=(f(-n)-3)^{2}-8 .
$$

Since 1 and 9 are the only perfect squares whose difference is 8 , we must have $n=1$, which we have already considered.

Finally, suppose that $f(-n)=-n+1$ for some $n \geqslant 2$. Then

$$
f(-n+1)^{2}=f(f(-n))^{2}=(-n)^{2}+4 f(-n)=(n-2)^{2},
$$

so $f(-n+1)= \pm(n-2)$. However, we already know that $f(-n+1)=-n+2$ or $f(-n+1)=n$, so $f(-n+1)=-n+2$.

Combining everything we know, we find the solutions as stated in the answer:

- One solution is given by $f(n)=n+1$ for all $n$.

- If $f(n)$ is not always equal to $n+1$, then there is a largest integer $m$ (which cannot be positive) for which this is not the case. In view of the lemma that we proved, we must then have $f(n)=-n+1$ for any integer $n<m$. If $m=-a<0$, we obtain $f(n)=-n+1$ for $n \leqslant-a$ (and $f(n)=n+1$ otherwise). If $m=0$, we have the additional possibility that $f(0)=0, f(n)=-n+1$ for negative $n$ and $f(n)=n+1$ for positive $n$.

Solution 2. Let us provide an alternative proof for Part II, which also proceeds in several steps.

Step 1. Let $a$ be an arbitrary integer and $b=f(a)$. We first concentrate on the case where $|a|$ is sufficiently large.

1. If $b=0$, then (1) applied to $a$ yields $a^{2}=f(f(a))^{2}$, thus

$$
f(a)=0 \quad \Rightarrow \quad a= \pm f(0) . \tag{2}
$$

From now on, we set $D=|f(0)|$. Throughout Step 1, we will assume that $a \notin\{-D, 0, D\}$, thus $b \neq 0$.

2. From (1), noticing that $f(f(a))$ and $a$ have the same parity, we get

$$
0 \neq 4|b|=\left|f(f(a))^{2}-a^{2}\right| \geqslant a^{2}-(|a|-2)^{2}=4|a|-4 .
$$

Hence we have

$$
|b|=|f(a)| \geqslant|a|-1 \quad \text { for } a \notin\{-D, 0, D\} . \tag{3}
$$

For the rest of Step 1, we also assume that $|a| \geqslant E=\max \{D+2,10\}$. Then by (3) we have $|b| \geqslant D+1$ and thus $|f(b)| \geqslant D$.

3. Set $c=f(b)$; by (3), we have $|c| \geqslant|b|-1$. Thus (1) yields

$$
a^{2}+4 b=c^{2} \geqslant(|b|-1)^{2},
$$

which implies

$$
a^{2} \geqslant(|b|-1)^{2}-4|b|=(|b|-3)^{2}-8>(|b|-4)^{2}
$$

because $|b| \geqslant|a|-1 \geqslant 9$. Thus (3) can be refined to

$$
|a|+3 \geqslant|f(a)| \geqslant|a|-1 \quad \text { for }|a| \geqslant E .
$$

Now, from $c^{2}=a^{2}+4 b$ with $|b| \in[|a|-1,|a|+3]$ we get $c^{2}=(a \pm 2)^{2}+d$, where $d \in\{-16,-12,-8,-4,0,4,8\}$. Since $|a \pm 2| \geqslant 8$, this can happen only if $c^{2}=(a \pm 2)^{2}$, which in turn yields $b= \pm a+1$. To summarise,

$$
f(a)=1 \pm a \quad \text { for }|a| \geqslant E . \tag{4}
$$

We have shown that, with at most finitely many exceptions, $f(a)=1 \pm a$. Thus it will be convenient for our second step to introduce the sets

$$
Z_{+}=\{a \in \mathbb{Z}: f(a)=a+1\}, \quad Z_{-}=\{a \in \mathbb{Z}: f(a)=1-a\}, \quad \text { and } \quad Z_{0}=\mathbb{Z} \backslash\left(Z_{+} \cup Z_{-}\right) .
$$

Step 2. Now we investigate the structure of the sets $Z_{+}, Z_{-}$, and $Z_{0}$.

4. Note that $f(E+1)=1 \pm(E+1)$. If $f(E+1)=E+2$, then $E+1 \in Z_{+}$. Otherwise we have $f(1+E)=-E$; then the original equation (1) with $n=E+1$ gives us $(E-1)^{2}=f(-E)^{2}$, so $f(-E)= \pm(E-1)$. By (4) this may happen only if $f(-E)=1-E$, so in this case $-E \in Z_{+}$. In any case we find that $Z_{+} \neq \varnothing$.

5. Now take any $a \in Z_{+}$. We claim that every integer $x \geqslant a$ also lies in $Z_{+}$. We proceed by induction on $x$, the base case $x=a$ being covered by our assumption. For the induction step, assume that $f(x-1)=x$ and plug $n=x-1$ into (1). We get $f(x)^{2}=(x+1)^{2}$, so either $f(x)=x+1$ or $f(x)=-(x+1)$. Assume that $f(x)=-(x+1)$ and $x \neq-1$, since otherwise we already have $f(x)=x+1$. Plugging $n=x$ into (1), we obtain $f(-x-1)^{2}=(x-2)^{2}-8$, which may happen only if $x-2= \pm 3$ and $f(-x-1)= \pm 1$. Plugging $n=-x-1$ into (1), we get $f( \pm 1)^{2}=(x+1)^{2} \pm 4$, which in turn may happen only if $x+1 \in\{-2,0,2\}$. Thus $x \in\{-1,5\}$ and at the same time $x \in\{-3,-1,1\}$, which gives us $x=-1$. Since this has already been excluded, we must have $f(x)=x+1$, which completes our induction.

6. Now we know that either $Z_{+}=\mathbb{Z}$ (if $Z_{+}$ is not bounded below), or $Z_{+}=\left\{a \in \mathbb{Z}: a \geqslant a_{0}\right\}$, where $a_{0}$ is the smallest element of $Z_{+}$. In the former case, $f(n)=n+1$ for all $n \in \mathbb{Z}$, which is our first solution. So we assume in the following that $Z_{+}$ is bounded below and has a smallest element $a_{0}$. If $Z_{0}=\varnothing$, then we have $f(x)=x+1$ for $x \geqslant a_{0}$ and $f(x)=1-x$ for $x<a_{0}$. In particular, $f(0)=1$ in any case, so $0 \in Z_{+}$ and thus $a_{0} \leqslant 0$. Thus we end up with the second solution listed in the answer. It remains to consider the case where $Z_{0} \neq \varnothing$.

7. Assume that there exists some $a \in Z_{0}$ with $b=f(a) \notin Z_{0}$, so that $f(b)=1 \pm b$. Then we have $a^{2}+4 b=(1 \pm b)^{2}$, so either $a^{2}=(b-1)^{2}$ or $a^{2}=(b-3)^{2}-8$. In the former case we have $b=1 \pm a$, which is impossible by our choice of $a$. So we get $a^{2}=(b-3)^{2}-8$, which implies $f(b)=1-b$ and $|a|=1,|b-3|=3$. If $b=0$, then we have $f(b)=1$, so $b \in Z_{+}$ and therefore $a_{0} \leqslant 0$; hence $a=-1$. But then $f(a)=0=a+1$, so $a \in Z_{+}$, which is impossible. If $b=6$, then we have $f(6)=-5$, so $f(-5)^{2}=16$ and $f(-5) \in\{-4,4\}$. Then $f(f(-5))^{2}=25+4 f(-5) \in\{9,41\}$, so $f(-5)=-4$ and $-5 \in Z_{+}$. This implies $a_{0} \leqslant-5$, which contradicts our assumption that $\pm 1=a \notin Z_{+}$.

8. Thus we have shown that $f\left(Z_{0}\right) \subseteq Z_{0}$, and $Z_{0}$ is finite. Take any element $c \in Z_{0}$, and consider the sequence defined by $c_{i}=f^{i}(c)$. All elements of the sequence $\left(c_{i}\right)$ lie in $Z_{0}$, hence it is bounded. Choose an index $k$ for which $\left|c_{k}\right|$ is maximal, so that in particular $\left|c_{k+1}\right| \leqslant\left|c_{k}\right|$ and $\left|c_{k+2}\right| \leqslant\left|c_{k}\right|$. Our functional equation (1) yields

$$
\left(\left|c_{k}\right|-2\right)^{2}-4=\left|c_{k}\right|^{2}-4\left|c_{k}\right| \leqslant c_{k}^{2}+4 c_{k+1}=c_{k+2}^{2} .
$$

Since $c_{k}$ and $c_{k+2}$ have the same parity and $\left|c_{k+2}\right| \leqslant\left|c_{k}\right|$, this leaves us with three possibilities: $\left|c_{k+2}\right|=\left|c_{k}\right|$; $\left|c_{k+2}\right|=\left|c_{k}\right|-2$; and $\left|c_{k}\right|-2= \pm 2, c_{k+2}=0$. If $\left|c_{k+2}\right|=\left|c_{k}\right|-2$, then $f\left(c_{k}\right)=c_{k+1}=1-\left|c_{k}\right|$, which means that $c_{k} \in Z_{-}$ or $c_{k} \in Z_{+}$, and we reach a contradiction. If $\left|c_{k+2}\right|=\left|c_{k}\right|$, then $c_{k+1}=0$, thus $c_{k+3}^{2}=4 c_{k+2}$. So either $c_{k+3} \neq 0$ or (by maximality of $\left|c_{k+2}\right|=\left|c_{k}\right|$) $c_{i}=0$ for all $i$. In the former case, we can repeat the entire argument with $c_{k+2}$ in the place of $c_{k}$. Now $\left|c_{k+4}\right|=\left|c_{k+2}\right|$ is not possible any more since $c_{k+3} \neq 0$, leaving us with the only possibility $\left|c_{k}\right|-2=\left|c_{k+2}\right|-2= \pm 2$.

Thus we know now that either all $c_{i}$ are equal to 0, or $\left|c_{k}\right|=4$. If $c_{k}= \pm 4$, then either $c_{k+1}=0$ and $\left|c_{k+2}\right|=\left|c_{k}\right|=4$, or $c_{k+2}=0$ and $c_{k+1}=-4$. From this point onwards, all elements of the sequence are either 0 or $\pm 4$. Let $c_{r}$ be the last element of the sequence that is not equal to 0 or $\pm 4$ (if such an element exists). Then $c_{r+1}, c_{r+2} \in\{-4,0,4\}$, so

$$
c_{r}^{2}=c_{r+2}^{2}-4 c_{r+1} \in\{-16,0,16,32\},
$$

which gives us a contradiction. Thus all elements of the sequence are equal to 0 or $\pm 4$, and since the choice of $c_{0}=c$ was arbitrary, $Z_{0} \subseteq\{-4,0,4\}$.

9. Finally, we show that $4 \notin Z_{0}$ and $-4 \notin Z_{0}$. Suppose that $4 \in Z_{0}$. Then in particular $a_{0}$ (the smallest element of $Z_{+}$) cannot be less than 4, since this would imply $4 \in Z_{+}$. So $-3 \in Z_{-}$, which means that $f(-3)=4$. Then $25=(-3)^{2}+4 f(-3)=f(f(-3))^{2}=f(4)^{2}$, so $f(4)= \pm 5 \notin Z_{0}$, and we reach a contradiction.

Suppose that $-4 \in Z_{0}$. The only possible values for $f(-4)$ that are left are 0 and $-4$. Note that $4 f(0)=f(f(0))^{2}$, so $f(0) \geqslant 0$. If $f(-4)=0$, then we get $16=(-4)^{2}+0=f(0)^{2}$, thus $f(0)=4$. But then $f(f(-4)) \notin Z_{0}$, which is impossible. Thus $f(-4)=-4$, which gives us $0=(-4)^{2}+4 f(-4)=f(f(-4))^{2}=16$, and this is clearly absurd.

Now we are left with $Z_{0}=\{0\}$ and $f(0)=0$ as the only possibility. If $1 \in Z_{-}$, then $f(1)=0$, so $1=1^{2}+4 f(1)=f(f(1))^{2}=f(0)^{2}=0$, which is another contradiction. Thus $1 \in Z_{+}$, meaning that $a_{0} \leqslant 1$. On the other hand, $a_{0} \leqslant 0$ would imply $0 \in Z_{+}$, so we can only have $a_{0}=1$. Thus $Z_{+}$ comprises all positive integers, and $Z_{-}$ comprises all negative integers. This gives us the third solution.

Comment. All solutions known to the Problem Selection Committee are quite lengthy and technical, as the two solutions presented here show. It is possible to make the problem easier by imposing additional assumptions, such as $f(0) \neq 0$ or $f(n) \geqslant 1$ for all $n \geqslant 0$, to remove some of the technicalities.

Combinatorics

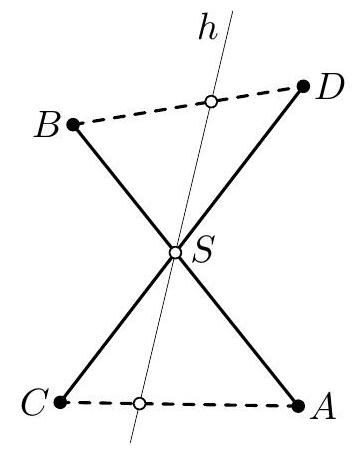

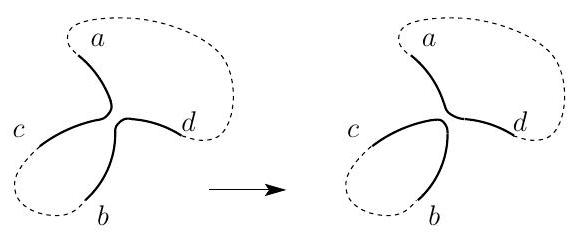

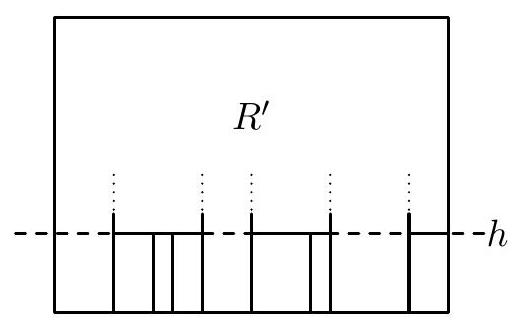

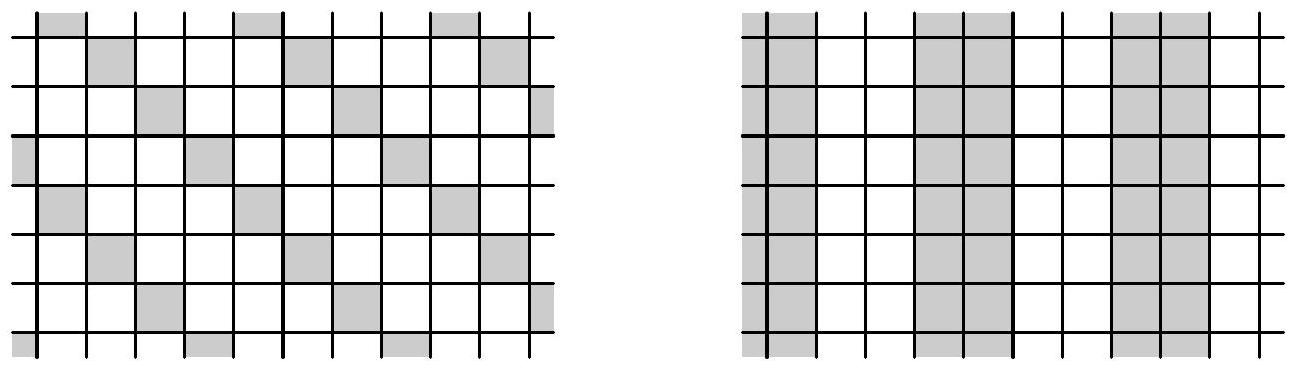

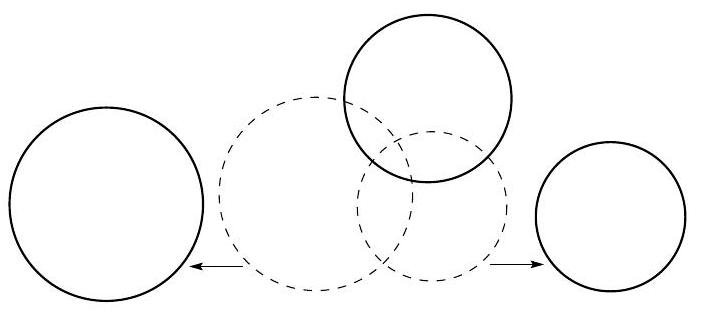

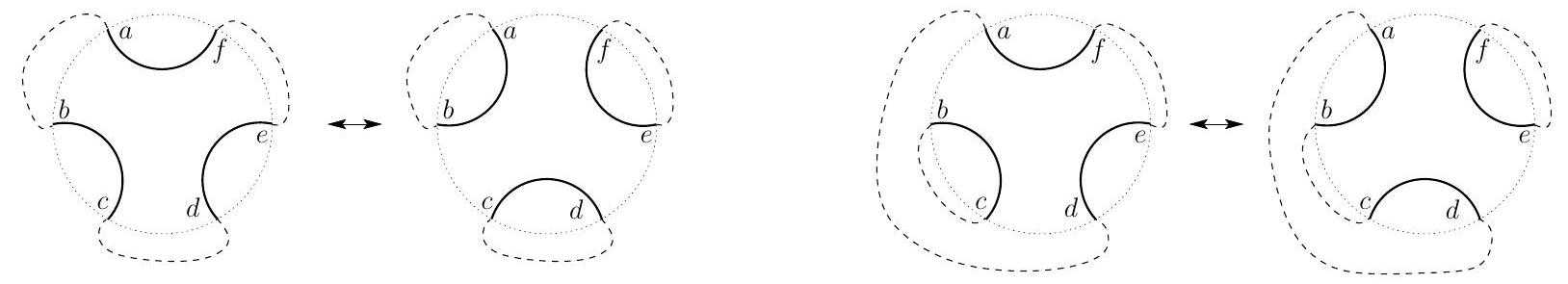

C1. Let $n$ points be given inside a rectangle $R$ such that no two of them lie on a line parallel to one of the sides of $R$. The rectangle $R$ is to be dissected into smaller rectangles with sides parallel to the sides of $R$ in such a way that none of these rectangles contains any of the given points in its interior. Prove that we have to dissect $R$ into at least $n+1$ smaller rectangles.

(Serbia)

Solution 1. Let $k$ be the number of rectangles in the dissection. The set of all points that are corners of one of the rectangles can be divided into three disjoint subsets:

- $A$, which consists of the four corners of the original rectangle $R$, each of which is the corner of exactly one of the smaller rectangles,

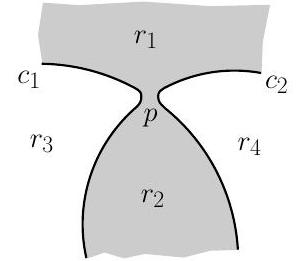

- $B$, which contains points where exactly two of the rectangles have a common corner (T-junctions, see the figure below),

- $C$, which contains points where four of the rectangles have a common corner (crossings, see the figure below).

Figure 1: A T-junction and a crossing

We denote the number of points in $B$ by $b$ and the number of points in $C$ by $c$. Since each of the $k$ rectangles has exactly four corners, we get

$$
4 k=4+2 b+4 c .
$$

It follows that $2 b \leqslant 4 k-4$, so $b \leqslant 2 k-2$. Each of the $n$ given points has to lie on a side of one of the smaller rectangles (but not of the original rectangle $R$ ). If we extend this side as far as possible along borders between rectangles, we obtain a line segment whose ends are T-junctions. Note that every point in $B$ can only be an endpoint of at most one such segment containing one of the given points, since it is stated that no two of them lie on a common line parallel to the sides of $R$. This means that

$$
b \geqslant 2 n .
$$

Combining our two inequalities for $b$, we get

$$
2 k-2 \geqslant b \geqslant 2 n,
$$

thus $k \geqslant n+1$, which is what we wanted to prove.

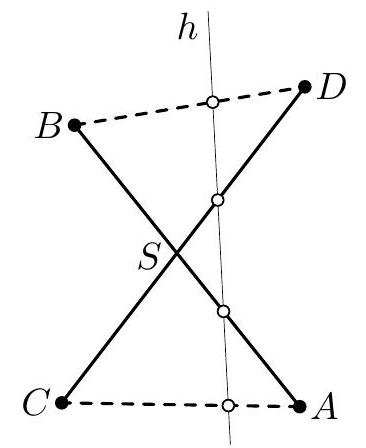

Solution 2. Let $k$ denote the number of rectangles. In the following, we refer to the directions of the sides of $R$ as 'horizontal' and 'vertical' respectively. Our goal is to prove the inequality $k \geqslant n+1$ for fixed $n$. Equivalently, we can prove the inequality $n \leqslant k-1$ for each $k$, which will be done by induction on $k$. For $k=1$, the statement is trivial.

Now assume that $k>1$. If none of the line segments that form the borders between the rectangles is horizontal, then we have $k-1$ vertical segments dividing $R$ into $k$ rectangles. On each of them, there can only be one of the $n$ points, so $n \leqslant k-1$, which is exactly what we want to prove.

Otherwise, consider the lowest horizontal line $h$ that contains one or more of these line segments. Let $R^{\prime}$ be the rectangle that results when everything that lies below $h$ is removed from $R$ (see the example in the figure below).

The rectangles that lie entirely below $h$ form blocks of rectangles separated by vertical line segments. Suppose there are $r$ blocks and $k_{i}$ rectangles in the $i^{\text {th }}$ block. The left and right border of each block has to extend further upwards beyond $h$. Thus we can move any points that lie on these borders upwards, so that they now lie inside $R^{\prime}$. This can be done without violating the conditions, one only needs to make sure that they do not get to lie on a common horizontal line with one of the other given points.

All other borders between rectangles in the $i^{\text {th }}$ block have to lie entirely below $h$. There are $k_{i}-1$ such line segments, each of which can contain at most one of the given points. Finally, there can be one point that lies on $h$. All other points have to lie in $R^{\prime}$ (after moving some of them as explained in the previous paragraph).

Figure 2: Illustration of the inductive argument

We see that $R^{\prime}$ is divided into $k-\sum_{i=1}^{r} k_{i}$ rectangles. Applying the induction hypothesis to $R^{\prime}$, we find that there are at most

$$
\left(k-\sum_{i=1}^{r} k_{i}\right)-1+\sum_{i=1}^{r}\left(k_{i}-1\right)+1=k-r
$$

points. Since $r \geqslant 1$, this means that $n \leqslant k-1$, which completes our induction.

C2. We have $2^{m}$ sheets of paper, with the number 1 written on each of them. We perform the following operation. In every step we choose two distinct sheets; if the numbers on the two sheets are $a$ and $b$, then we erase these numbers and write the number $a+b$ on both sheets. Prove that after $m 2^{m-1}$ steps, the sum of the numbers on all the sheets is at least $4^{m}$.

(Iran)

Solution. Let $P_{k}$ be the product of the numbers on the sheets after $k$ steps. Suppose that in the $(k+1)^{\text {th }}$ step the numbers $a$ and $b$ are replaced by $a+b$. In the product, the number $a b$ is replaced by $(a+b)^{2}$, and the other factors do not change. Since $(a+b)^{2} \geqslant 4 a b$, we see that $P_{k+1} \geqslant 4 P_{k}$. Starting with $P_{0}=1$, a straightforward induction yields

$$
P_{k} \geqslant 4^{k}
$$

for all integers $k \geqslant 0$; in particular

$$
P_{m 2^{m-1}} \geqslant 4^{m 2^{m-1}}=\left(2^{m}\right)^{2^{m}},
$$

so by the AM-GM inequality, the sum of the numbers written on the sheets after $m 2^{m-1}$ steps is at least

$$
2^{m} \cdot \sqrt[2^{m}]{P_{m 2^{m-1}}} \geqslant 2^{m} \cdot 2^{m}=4^{m} .
$$

Comment 1. It is possible to achieve the sum $4^{m}$ in $m 2^{m-1}$ steps. For example, starting from $2^{m}$ equal numbers on the sheets, in $2^{m-1}$ consecutive steps we can double all numbers. After $m$ such doubling rounds we have the number $2^{m}$ on every sheet.
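The doubling rounds of Comment 1 and the product bound from the solution can be simulated directly. The following is a minimal sketch with illustrative names: `doubling_run` pairs neighbouring sheets in each round and records the product after every step, while `random_run` performs the same number of steps on randomly chosen pairs (the seed is an arbitrary choice) so that the lower bound $4^{m}$ on the sum can be observed for a non-optimal play as well.

```python
import random
from math import prod


def apply_step(sheets, i, j):
    """Replace the numbers on sheets i and j by their sum (in place)."""
    total = sheets[i] + sheets[j]
    sheets[i] = sheets[j] = total


def doubling_run(m):
    """Perform m doubling rounds on 2^m sheets that all start at 1.

    Returns the final sheets and the list of products P_0, P_1, ...
    """
    sheets = [1] * (2 ** m)
    products = [prod(sheets)]
    for _ in range(m):
        # All sheets carry the same number, so pairing neighbours doubles them.
        for i in range(0, len(sheets), 2):
            apply_step(sheets, i, i + 1)
            products.append(prod(sheets))
    return sheets, products


def random_run(m, seed=0):
    """Perform m * 2^(m-1) steps on randomly chosen pairs of sheets."""
    rng = random.Random(seed)
    sheets = [1] * (2 ** m)
    for _ in range(m * 2 ** (m - 1)):
        i, j = rng.sample(range(len(sheets)), 2)
        apply_step(sheets, i, j)
    return sheets


if __name__ == "__main__":
    sheets, products = doubling_run(3)
    print(sum(sheets), len(products) - 1)
    print(sum(random_run(3)))
```

In the doubling run every step multiplies the product by exactly 4, so the bound $P_{k} \geqslant 4^{k}$ holds with equality throughout.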

Comment 2. There are several versions of the solution above. E.g., one may try to assign to each positive integer $n$ a weight $w_{n}$ in such a way that the sum of the weights of the numbers written on the sheets increases, say, by at least 2 in each step. For this purpose, one needs the inequality

$$
2 w_{a+b}-w_{a}-w_{b} \geqslant 2 \tag{1}
$$

to be satisfied for all positive integers $a$ and $b$. Starting from $w_{1}=1$ and trying to choose the weights as small as possible, one may find that these weights can be defined as follows: For every positive integer $n$, one chooses $k$ to be the maximal integer such that $n \geqslant 2^{k}$, and puts

$$
w_{n}=k+\frac{n}{2^{k}}=\min \left\{d+\frac{n}{2^{d}}: d \in \mathbb{Z}_{\geqslant 0}\right\} . \tag{2}
$$

Now, in order to prove that these weights satisfy (1), one may take arbitrary positive integers $a$ and $b$, and choose an integer $d \geqslant 0$ such that $w_{a+b}=d+\frac{a+b}{2^{d}}$. Then one has

$$
2 w_{a+b}-w_{a}-w_{b} \geqslant 2\left(d+\frac{a+b}{2^{d}}\right)-\left((d-1)+\frac{a}{2^{d-1}}\right)-\left((d-1)+\frac{b}{2^{d-1}}\right)=2 .
$$

Since the initial sum of the weights was $2^{m}$, after $m 2^{m-1}$ steps the sum is at least $(m+1) 2^{m}$. To finish the solution, one may notice that by (2) for every positive integer $a$ one has

$$
w_{a} \leqslant m+\frac{a}{2^{m}}, \quad \text { i.e., } \quad a \geqslant 2^{m}\left(-m+w_{a}\right) . \tag{3}
$$

So the sum of the numbers $a_{1}, a_{2}, \ldots, a_{2^{m}}$ on the sheets can be estimated as

$$
\sum_{i=1}^{2^{m}} a_{i} \geqslant \sum_{i=1}^{2^{m}} 2^{m}\left(-m+w_{a_{i}}\right)=-m 2^{m} \cdot 2^{m}+2^{m} \sum_{i=1}^{2^{m}} w_{a_{i}} \geqslant-m 4^{m}+(m+1) 4^{m}=4^{m},
$$

as required. For establishing the inequalities (1) and (3), one may also use the convexity argument, instead of the second definition of $w_{n}$ in (2).

One may check that $\log _{2} n \leqslant w_{n} \leqslant \log _{2} n+1$; thus, in some rough sense, this approach is obtained by "taking the logarithm" of the solution above.
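The weights of Comment 2 can be explored numerically. The sketch below assumes the definition stated in the text, $w_{n}=k+n / 2^{k}$ with $k$ maximal such that $n \geqslant 2^{k}$, and takes the required inequality to be $2 w_{a+b}-w_{a}-w_{b} \geqslant 2$, which is what an increase of the weight sum by at least 2 per step amounts to. The alternative description of $w_{n}$ as a minimum over $d \geqslant 0$ is an assumption checked here, not quoted from the text; exact rational arithmetic avoids rounding issues.

```python
from fractions import Fraction
from math import log2


def weight(n):
    """w_n = k + n / 2^k, where k is maximal with n >= 2^k."""
    k = n.bit_length() - 1
    return Fraction(k) + Fraction(n, 2 ** k)


def weight_as_minimum(n):
    """min over d >= 0 of d + n / 2^d (a finite range of d suffices)."""
    return min(Fraction(d) + Fraction(n, 2 ** d)
               for d in range(n.bit_length() + 1))


def step_gain(a, b):
    """Increase of the weight sum when a and b are both replaced by a + b."""
    return 2 * weight(a + b) - weight(a) - weight(b)


if __name__ == "__main__":
    print(weight(1), weight(3), weight(6))
    print(min(step_gain(a, b) for a in range(1, 50) for b in range(1, 50)))
```

The comparison with $\log _{2} n$ uses floating point and is therefore only indicative.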

Comment 3. An intuitive strategy to minimise the sum of numbers is that in every step we choose the two smallest numbers. We may call this the greedy strategy. In the following paragraphs we prove that the greedy strategy indeed provides the least possible sum of numbers.

Claim. Starting from any sequence $x_{1}, \ldots, x_{N}$ of positive real numbers on $N$ sheets, for any number $k$ of steps, the greedy strategy achieves the lowest possible sum of numbers.

Proof. We apply induction on $k$; for $k=1$ the statement is obvious. Let $k \geqslant 2$, and assume that the claim is true for smaller values.

Every sequence of $k$ steps can be encoded as $S=\left(\left(i_{1}, j_{1}\right), \ldots,\left(i_{k}, j_{k}\right)\right)$, where, for $r=1,2, \ldots, k$, the numbers $i_{r}$ and $j_{r}$ are the indices of the two sheets that are chosen in the $r^{\text {th }}$ step. The resulting final sum will be some linear combination of $x_{1}, \ldots, x_{N}$, say, $c_{1} x_{1}+\cdots+c_{N} x_{N}$ with positive integers $c_{1}, \ldots, c_{N}$ that depend on $S$ only. Call the numbers $\left(c_{1}, \ldots, c_{N}\right)$ the characteristic vector of $S$.

Choose a sequence $S_{0}=\left(\left(i_{1}, j_{1}\right), \ldots,\left(i_{k}, j_{k}\right)\right)$ of steps that produces the minimal sum, starting from $x_{1}, \ldots, x_{N}$, and let $\left(c_{1}, \ldots, c_{N}\right)$ be the characteristic vector of $S_{0}$. We may assume that the sheets are indexed in such an order that $c_{1} \geqslant c_{2} \geqslant \cdots \geqslant c_{N}$. If the sheets (and the numbers) are permuted by a permutation $\pi$ of the indices $(1,2, \ldots, N)$ and then the same steps are performed, we can obtain the sum $\sum_{t=1}^{N} c_{t} x_{\pi(t)}$. By the rearrangement inequality, the smallest possible sum can be achieved when the numbers $\left(x_{1}, \ldots, x_{N}\right)$ are in non-decreasing order. So we can assume that also $x_{1} \leqslant x_{2} \leqslant \cdots \leqslant x_{N}$.

Let $\ell$ be the largest index with $c_{1}=\cdots=c_{\ell}$, and let the $r^{\text {th }}$ step be the first step for which $c_{i_{r}}=c_{1}$ or $c_{j_{r}}=c_{1}$. The role of $i_{r}$ and $j_{r}$ is symmetrical, so we can assume $c_{i_{r}}=c_{1}$ and thus $i_{r} \leqslant \ell$. We show that $c_{j_{r}}=c_{1}$ and $j_{r} \leqslant \ell$ hold, too.

Before the $r^{\text {th }}$ step, on the $i_{r}{ }^{\text {th }}$ sheet we had the number $x_{i_{r}}$. On the $j_{r}{ }^{\text {th }}$ sheet there was a linear combination that contains the number $x_{j_{r}}$ with a positive integer coefficient, and possibly some other terms. In the $r^{\text {th }}$ step, the number $x_{i_{r}}$ joins that linear combination. From this point, each sheet contains a linear combination of $x_{1}, \ldots, x_{N}$, with the coefficient of $x_{j_{r}}$ being not smaller than the coefficient of $x_{i_{r}}$. This is preserved to the end of the procedure, so we have $c_{j_{r}} \geqslant c_{i_{r}}$. But $c_{i_{r}}=c_{1}$ is maximal among the coefficients, so we have $c_{j_{r}}=c_{i_{r}}=c_{1}$ and thus $j_{r} \leqslant \ell$.

Either from $c_{j_{r}}=c_{i_{r}}=c_{1}$ or from the arguments in the previous paragraph we can see that none of the $i_{r}{ }^{\text {th }}$ and the $j_{r}{ }^{\text {th }}$ sheets were used before step $r$. Therefore, the final linear combination of the numbers does not change if the step $\left(i_{r}, j_{r}\right)$ is performed first: the sequence of steps

$$
S_{1}=\left(\left(i_{r}, j_{r}\right),\left(i_{1}, j_{1}\right), \ldots,\left(i_{r-1}, j_{r-1}\right),\left(i_{r+1}, j_{r+1}\right), \ldots,\left(i_{k}, j_{k}\right)\right)
$$

also produces the same minimal sum at the end. Therefore, we can replace $S_{0}$ by $S_{1}$ and we may assume that $r=1$ and $c_{i_{1}}=c_{j_{1}}=c_{1}$.

As $i_{1} \neq j_{1}$, we can see that $\ell \geqslant 2$ and $c_{1}=c_{2}=c_{i_{1}}=c_{j_{1}}$. Let $\pi$ be such a permutation of the indices $(1,2, \ldots, N)$ that exchanges 1,2 with $i_{r}, j_{r}$ and does not change the remaining indices. Let

$$
S_{2}=\left(\left(\pi\left(i_{1}\right), \pi\left(j_{1}\right)\right), \ldots,\left(\pi\left(i_{k}\right), \pi\left(j_{k}\right)\right)\right) .
$$

Since $c_{\pi(i)}=c_{i}$ for all indices $i$, this sequence of steps produces the same, minimal sum. Moreover, in the first step we chose $x_{\pi\left(i_{1}\right)}=x_{1}$ and $x_{\pi\left(j_{1}\right)}=x_{2}$, the two smallest numbers.

Hence, it is possible to achieve the optimal sum if we follow the greedy strategy in the first step. By the induction hypothesis, following the greedy strategy in the remaining steps we achieve the optimal sum.
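For small instances the Claim can be compared against exhaustive search. The sketch below is illustrative only: `greedy_sum` always merges the two smallest numbers using a heap, and `best_sum` enumerates every sequence of $k$ steps, which is feasible only for a handful of sheets and steps.

```python
import heapq
from itertools import combinations, product


def greedy_sum(xs, k):
    """Final sum after k steps, always choosing the two smallest numbers."""
    heap = list(xs)
    heapq.heapify(heap)
    for _ in range(k):
        a = heapq.heappop(heap)
        b = heapq.heappop(heap)
        # Both chosen sheets now carry a + b.
        heapq.heappush(heap, a + b)
        heapq.heappush(heap, a + b)
    return sum(heap)


def best_sum(xs, k):
    """Smallest final sum over all sequences of k steps (brute force)."""
    pairs = list(combinations(range(len(xs)), 2))
    best = None
    for steps in product(pairs, repeat=k):
        sheets = list(xs)
        for i, j in steps:
            sheets[i] = sheets[j] = sheets[i] + sheets[j]
        total = sum(sheets)
        if best is None or total < best:
            best = total
    return best


if __name__ == "__main__":
    print(greedy_sum([1, 1, 1, 1], 4), best_sum([1, 1, 1, 1], 4))
    print(greedy_sum([3, 1, 4, 1], 3), best_sum([3, 1, 4, 1], 3))
```

With four sheets carrying 1 and $k=4=m 2^{m-1}$ steps for $m=2$, both functions return $16=4^{2}$, in line with the problem statement.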

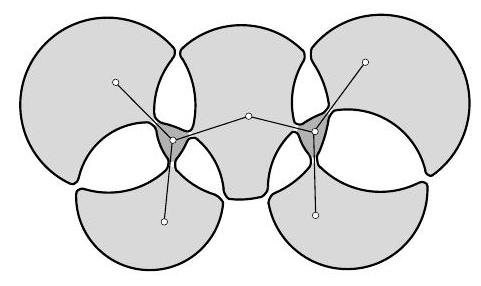

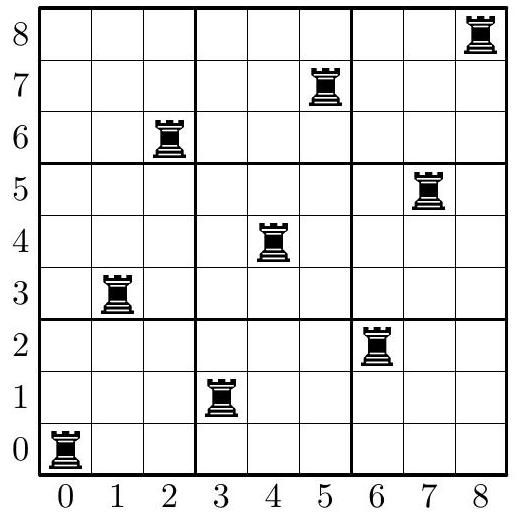

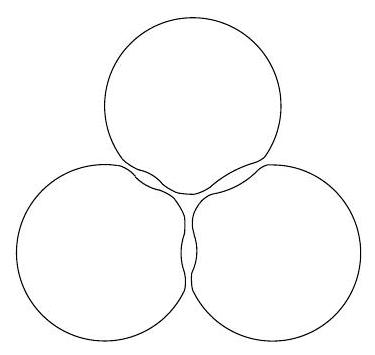

C3. Let $n \geqslant 2$ be an integer. Consider an $n \times n$ chessboard divided into $n^{2}$ unit squares. We call a configuration of $n$ rooks on this board happy if every row and every column contains exactly one rook. Find the greatest positive integer $k$ such that for every happy configuration of rooks, we can find a $k \times k$ square without a rook on any of its $k^{2}$ unit squares.

(Croatia)

Answer. $\lfloor\sqrt{n-1}\rfloor$.

Solution. Let $\ell$ be a positive integer. We will show that (i) if $n>\ell^{2}$ then each happy configuration contains an empty $\ell \times \ell$ square, but (ii) if $n \leqslant \ell^{2}$ then there exists a happy configuration not containing such a square. These two statements together yield the answer.

(i). Assume that $n>\ell^{2}$. Consider any happy configuration. There exists a row $R$ containing a rook in its leftmost square. Take $\ell$ consecutive rows with $R$ being one of them. Their union $U$ contains exactly $\ell$ rooks. Now remove the $n-\ell^{2} \geqslant 1$ leftmost columns from $U$ (thus at least one rook is also removed). The remaining part is an $\ell^{2} \times \ell$ rectangle, so it can be split into $\ell$ squares of size $\ell \times \ell$, and this part contains at most $\ell-1$ rooks. Thus one of these squares is empty.

(ii). Now we assume that $n \leqslant \ell^{2}$. Firstly, we will construct a happy configuration with no empty $\ell \times \ell$ square for the case $n=\ell^{2}$. After that we will modify it to work for smaller values of $n$.

Let us enumerate the rows from bottom to top as well as the columns from left to right by the numbers $0,1, \ldots, \ell^{2}-1$. Every square will be denoted, as usual, by the pair $(r, c)$ of its row and column numbers. Now we put the rooks on all squares of the form $(i \ell+j, j \ell+i)$ with $i, j=0,1, \ldots, \ell-1$ (the picture below represents this arrangement for $\ell=3$ ). Since each number from 0 to $\ell^{2}-1$ has a unique representation of the form $i \ell+j(0 \leqslant i, j \leqslant \ell-1)$, each row and each column contains exactly one rook.

Next, we show that each $\ell \times \ell$ square $A$ on the board contains a rook. Consider such a square $A$, and consider $\ell$ consecutive rows the union of which contains $A$. Let the lowest of these rows have number $p \ell+q$ with $0 \leqslant p, q \leqslant \ell-1$ (notice that $p \ell+q \leqslant \ell^{2}-\ell$ ). Then the rooks in this union are placed in the columns with numbers $q \ell+p,(q+1) \ell+p, \ldots,(\ell-1) \ell+p$, $p+1, \ell+(p+1), \ldots,(q-1) \ell+p+1$, or, putting these numbers in increasing order,

$$
p+1, \ell+(p+1), \ldots,(q-1) \ell+(p+1), q \ell+p,(q+1) \ell+p, \ldots,(\ell-1) \ell+p .
$$

One readily checks that the first number in this list is at most $\ell-1$ (if $p=\ell-1$, then $q=0$, and the first listed number is $q \ell+p=\ell-1$ ), the last one is at least $(\ell-1) \ell$, and the difference between any two consecutive numbers is at most $\ell$. Thus, one of the $\ell$ consecutive columns intersecting $A$ contains a number listed above, and the rook in this column is inside $A$, as required. The construction for $n=\ell^{2}$ is established.
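The construction for $n=\ell^{2}$ can be checked mechanically for small $\ell$. The sketch below places rooks on the squares $(i \ell+j, j \ell+i)$ exactly as described, then verifies that the configuration is happy and that no $\ell \times \ell$ square is empty; the function names are illustrative.

```python
def rook_squares(l):
    """Rooks on (i*l + j, j*l + i) for 0 <= i, j <= l - 1, as (row, column)."""
    return {(i * l + j, j * l + i) for i in range(l) for j in range(l)}


def is_happy(rooks, n):
    """Every row and every column of the n x n board holds exactly one rook."""
    rows = sorted(r for r, _ in rooks)
    cols = sorted(c for _, c in rooks)
    return rows == list(range(n)) and cols == list(range(n))


def has_empty_square(rooks, n, k):
    """True if some k x k square of the n x n board contains no rook."""
    for r0 in range(n - k + 1):
        for c0 in range(n - k + 1):
            if not any(r0 <= r < r0 + k and c0 <= c < c0 + k
                       for r, c in rooks):
                return True
    return False


if __name__ == "__main__":
    for l in range(2, 6):
        rooks = rook_squares(l)
        print(l, is_happy(rooks, l * l), has_empty_square(rooks, l * l, l))
```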

It remains to construct a happy configuration of rooks not containing an empty $\ell \times \ell$ square for $n<\ell^{2}$. In order to achieve this, take the construction for an $\ell^{2} \times \ell^{2}$ square described above and remove the $\ell^{2}-n$ bottom rows together with the $\ell^{2}-n$ rightmost columns. We will have a rook arrangement with no empty $\ell \times \ell$ square, but several rows and columns may happen to be empty. Clearly, the number of empty rows is equal to the number of empty columns, so one can find a bijection between them, and put a rook on any crossing of an empty row and an empty column corresponding to each other.
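For very small boards the answer $\lfloor\sqrt{n-1}\rfloor$ can also be confirmed by exhaustive search over all happy configurations, which correspond to permutations. This is only a sketch with illustrative names, and the search grows factorially, so it is practical for roughly $n \leqslant 7$.

```python
from itertools import permutations
from math import isqrt


def perm_has_empty_square(perm, k):
    """perm[r] is the column of the rook in row r; look for an empty k x k square."""
    n = len(perm)
    for r0 in range(n - k + 1):
        for c0 in range(n - k + 1):
            if all(not (c0 <= perm[r] < c0 + k) for r in range(r0, r0 + k)):
                return True
    return False


def greatest_k(n):
    """Largest k such that every happy configuration has an empty k x k square."""
    best = 0
    for k in range(1, n + 1):
        if all(perm_has_empty_square(p, k) for p in permutations(range(n))):
            best = k
        else:
            break  # an empty (k+1)-square would contain an empty k-square
    return best


if __name__ == "__main__":
    for n in range(2, 7):
        print(n, greatest_k(n), isqrt(n - 1))
```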

Comment. Part (i) allows several different proofs. E.g., in the last paragraph of the solution, it suffices to deal only with the case $n=\ell^{2}+1$. Notice now that among the four corner squares, at least one is empty. So the rooks in its row and in its column are distinct. Now, deleting this row and column we obtain an $\ell^{2} \times \ell^{2}$ square with $\ell^{2}-1$ rooks in it. This square can be partitioned into $\ell^{2}$ squares of size $\ell \times \ell$, so one of them is empty.

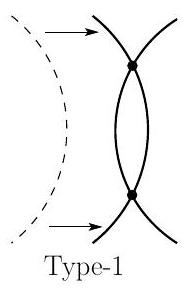

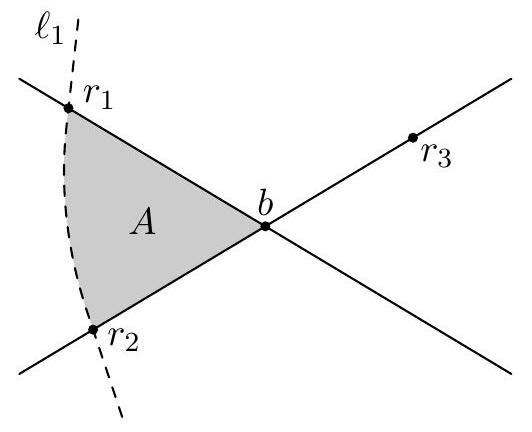

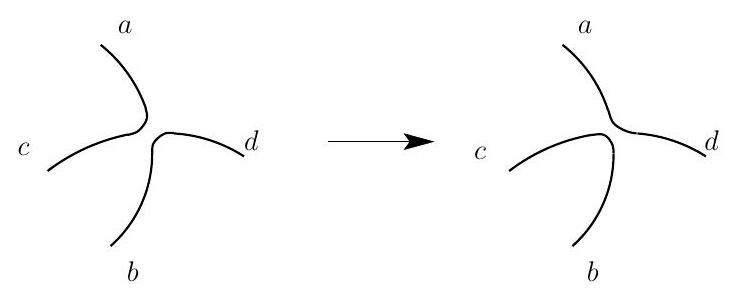

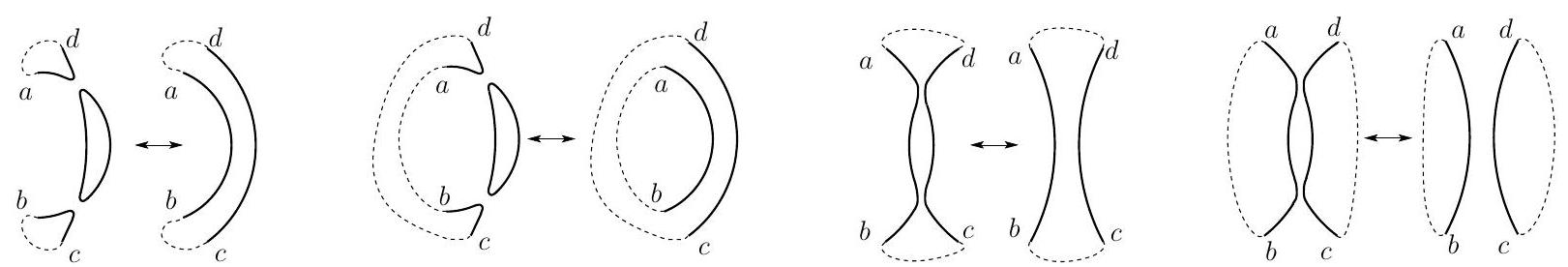

C4. Construct a tetromino by attaching two $2 \times 1$ dominoes along their longer sides such that the midpoint of the longer side of one domino is a corner of the other domino. This construction yields two kinds of tetrominoes with opposite orientations. Let us call them S- and Z-tetrominoes, respectively.

Assume that a lattice polygon $P$ can be tiled with S-tetrominoes. Prove that no matter how we tile $P$ using only S- and Z-tetrominoes, we always use an even number of Z-tetrominoes.

(Hungary)

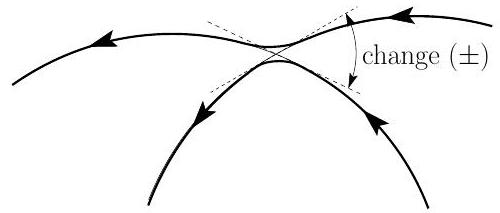

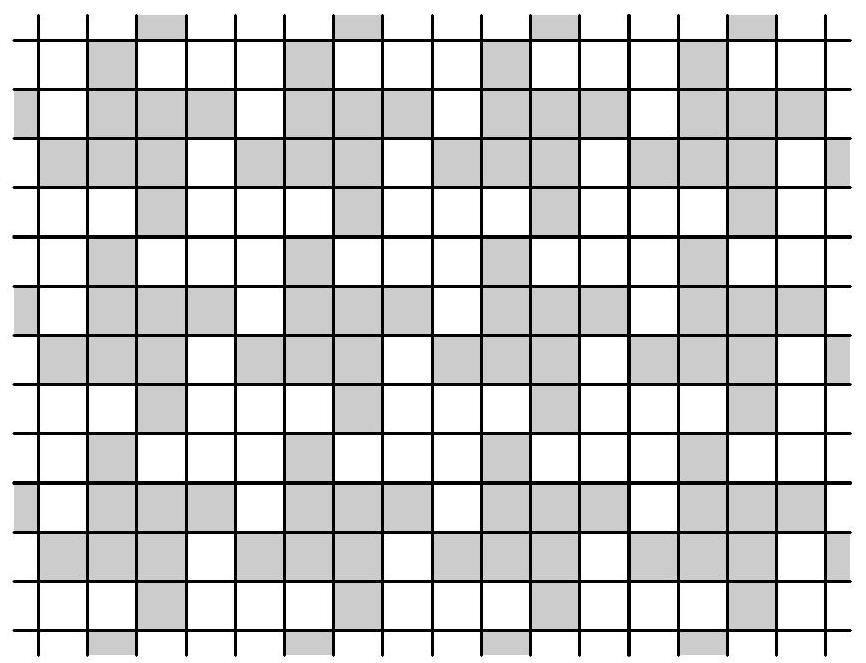

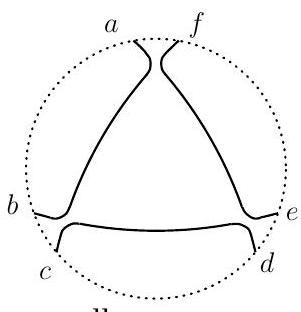

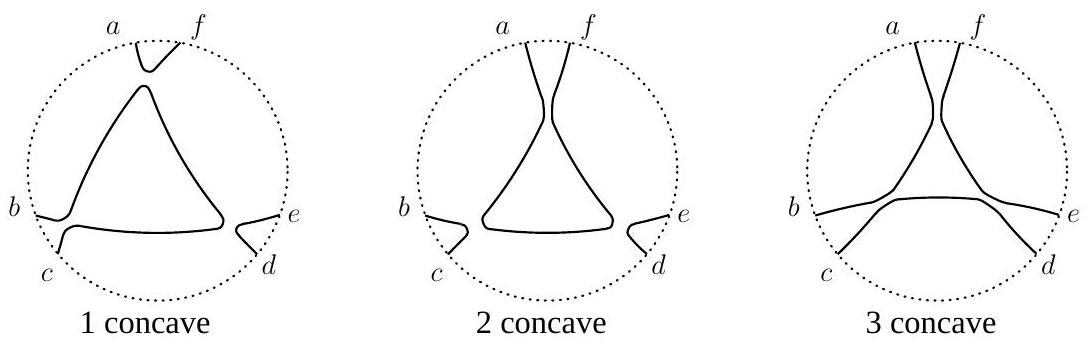

Solution 1. We may assume that polygon $P$ is the union of some squares of an infinite chessboard. Colour the squares of the chessboard with two colours as the figure below illustrates.

Observe that no matter how we tile $P$, any S-tetromino covers an even number of black squares, whereas any Z-tetromino covers an odd number of them. As $P$ can be tiled exclusively by S-tetrominoes, it contains an even number of black squares. But if some S-tetrominoes and some Z-tetrominoes cover an even number of black squares, then the number of Z-tetrominoes must be even.

Comment. An alternative approach makes use of the following two colourings, which are perhaps somewhat more natural:

Let $s_{1}$ and $s_{2}$ be the number of $S$-tetrominoes of the first and second type (as shown in the figure above) respectively that are used in a tiling of $P$. Likewise, let $z_{1}$ and $z_{2}$ be the number of $Z$-tetrominoes of the first and second type respectively. The first colouring shows that $s_{1}+z_{2}$ is invariant modulo 2 , the second colouring shows that $s_{1}+z_{1}$ is invariant modulo 2 . Adding these two conditions, we find that $z_{1}+z_{2}$ is invariant modulo 2 , which is what we have to prove. Indeed, the sum of the two colourings (regarding white as 0 and black as 1 and adding modulo 2) is the colouring shown in the solution.

Solution 2. Let us assign coordinates to the squares of the infinite chessboard in such a way that the squares of $P$ have nonnegative coordinates only, and that the first coordinate increases as one moves to the right, while the second coordinate increases as one moves upwards. Write the integer $3^{i} \cdot(-3)^{j}$ into the square with coordinates $(i, j)$, as in the following figure:

| $\vdots$ | | | | | |
|---|---|---|---|---|---|
| 81 | $\vdots$ | | | | |
| -27 | -81 | $\vdots$ | | | |
| 9 | 27 | 81 | $\cdots$ | | |
| -3 | -9 | -27 | -81 | $\cdots$ | |
| 1 | 3 | 9 | 27 | 81 | $\cdots$ |

The sum of the numbers written into four squares that can be covered by an $S$-tetromino is either of the form

$$
3^{i} \cdot(-3)^{j} \cdot\left(1+3+3 \cdot(-3)+3^{2} \cdot(-3)\right)=-32 \cdot 3^{i} \cdot(-3)^{j}
$$

(for the first type of $S$-tetrominoes), or of the form

$$
3^{i} \cdot(-3)^{j} \cdot\left(3+3 \cdot(-3)+(-3)+(-3)^{2}\right)=0,
$$

and thus divisible by 32 . For this reason, the sum of the numbers written into the squares of $P$, and thus also the sum of the numbers covered by $Z$-tetrominoes in the second covering, is likewise divisible by 32 . Now the sum of the entries of a $Z$-tetromino is either of the form

$$
3^{i} \cdot(-3)^{j} \cdot\left(3+3^{2}+(-3)+3 \cdot(-3)\right)=0
$$

(for the first type of $Z$-tetrominoes), or of the form

i.e., 16 times an odd number. Thus in order to obtain a total that is divisible by 32 , an even number of the latter kind of $Z$-tetrominoes needs to be used. Rotating everything by $90^{\circ}$, we find that the number of $Z$-tetrominoes of the first kind is even as well. So we have even proven slightly more than necessary.
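The divisibility claims used in this solution can be checked numerically. The sketch below is only an illustration: the cell offsets chosen for the four tetromino types, and the names `S1`, `S2`, `Z1`, `Z2`, `weight` and `tile_sum`, are assumptions made here (the original figure fixes which shape is the "first" and "second" type).

```python
def weight(i, j, a=3):
    """Number written into the square (i, j): a^i * (-a)^j."""
    return a**i * (-a)**j


# Assumed cell offsets (dx, dy) from the lower-left corner of the bounding box.
# "1" is the horizontal placement, "2" the vertical one.
TETROMINOES = {
    "S1": [(0, 0), (1, 0), (1, 1), (2, 1)],
    "S2": [(1, 0), (1, 1), (0, 1), (0, 2)],
    "Z1": [(1, 0), (2, 0), (0, 1), (1, 1)],
    "Z2": [(0, 0), (0, 1), (1, 1), (1, 2)],
}


def tile_sum(name, i, j, a=3):
    """Sum of the numbers covered by the tetromino `name` placed at (i, j)."""
    return sum(weight(i + dx, j + dy, a) for dx, dy in TETROMINOES[name])


def check(size=6):
    """Check the claims for all placements with 0 <= i, j < size."""
    for i in range(size):
        for j in range(size):
            assert tile_sum("S1", i, j) % 32 == 0   # divisible by 32
            assert tile_sum("S2", i, j) == 0
            assert tile_sum("Z1", i, j) == 0
            assert tile_sum("Z2", i, j) % 32 == 16  # 16 times an odd number
    return True
```

Calling `check()` runs through a small window of placements; it does not replace the algebraic argument, it only confirms the four sums on concrete values.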

Comment 1. In the second solution, 3 and -3 can be replaced by other combinations as well. For example, for any positive integer $a \equiv 3(\bmod 4)$, we can write $a^{i} \cdot(-a)^{j}$ into the square with coordinates $(i, j)$ and apply the same argument.

Comment 2. As the second solution shows, we even have the stronger result that the parity of the number of each of the four types of tetrominoes in a tiling of $P$ by S- and Z-tetrominoes is an invariant of $P$. This also remains true if there is no tiling of $P$ that uses only S-tetrominoes.

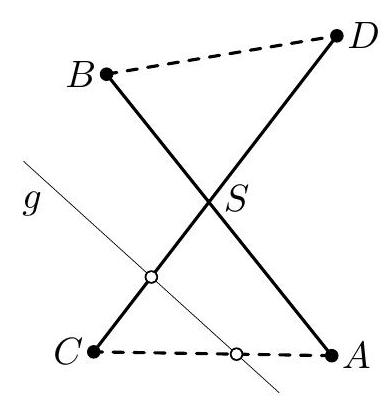

C5. Consider $n \geqslant 3$ lines in the plane such that no two lines are parallel and no three have a common point. These lines divide the plane into polygonal regions; let $\mathcal{F}$ be the set of regions having finite area. Prove that it is possible to colour $\lceil\sqrt{n / 2}\rceil$ of the lines blue in such a way that no region in $\mathcal{F}$ has a completely blue boundary. (For a real number $x$, $\lceil x\rceil$ denotes the least integer which is not smaller than $x$.)

(Austria)

Solution. Let $L$ be the given set of lines. Choose a maximal (by inclusion) subset $B \subseteq L$ such that when we colour the lines of $B$ blue, no region in $\mathcal{F}$ has a completely blue boundary. Let $|B|=k$. We claim that $k \geqslant\lceil\sqrt{n / 2}\rceil$.

Let us colour all the lines of $L \backslash B$ red. Call a point blue if it is the intersection of two blue lines. Then there are $\binom{k}{2}$ blue points.

Now consider any red line $\ell$. By the maximality of $B$, there exists at least one region $A \in \mathcal{F}$ whose only red side lies on $\ell$. Since $A$ has at least three sides, it must have at least one blue vertex. Let us take one such vertex and associate it to $\ell$.

Since each blue point belongs to four regions (some of which may be unbounded), it is associated to at most four red lines. Thus the total number of red lines is at most $4\binom{k}{2}$. On the other hand, this number is $n-k$, so

$$
n-k \leqslant 2 k(k-1), \quad \text{thus} \quad n \leqslant 2 k^{2}-k \leqslant 2 k^{2},
$$

and finally $k \geqslant\lceil\sqrt{n / 2}\rceil$, which gives the desired result.

Comment 1. The constant factor in the estimate can be improved in different ways; we sketch two of them below. On the other hand, the Problem Selection Committee is not aware of any results showing that it is sometimes impossible to colour $k$ lines satisfying the desired condition for $k \gg \sqrt{n}$. In this situation we find it more suitable to keep the original formulation of the problem.

1. Firstly, we show that in the proof above one has in fact $k=|B| \geqslant\lceil\sqrt{2 n / 3}\rceil$.

Let us make weighted associations as follows. Let a region $A$ whose only red side lies on $\ell$ have $m$ vertices, so that $m-2$ of them are blue. We associate each of these blue vertices to $\ell$, and put the weight $\frac{1}{m-2}$ on each such association. Each red line then carries total weight 1, so the sum of the weights of all the associations is exactly $n-k$.

Now, one may check that among the four regions adjacent to a blue vertex $v$, at most two are triangles. This means that the sum of the weights of all associations involving $v$ is at most $1+1+\frac{1}{2}+\frac{1}{2}=3$. This leads to the estimate

$$
n-k \leqslant 3\binom{k}{2},
$$

or

$$
2 n \leqslant 3 k^{2}-k<3 k^{2},
$$

which yields $k \geqslant\lceil\sqrt{2 n / 3}\rceil$.

2. Next, we even show that $k=|B| \geqslant\lceil\sqrt{n}\rceil$. For this, we specify the process of associating points to red lines in one more different way.

Call a point red if it lies on a red line as well as on a blue line. Consider any red line $\ell$, and take an arbitrary region $A \in \mathcal{F}$ whose only red side lies on $\ell$. Let $r^{\prime}, r, b_{1}, \ldots, b_{k}$ be its vertices in clockwise order with $r^{\prime}, r \in \ell$; then the points $r^{\prime}, r$ are red, while all the points $b_{1}, \ldots, b_{k}$ are blue. Let us associate to $\ell$ the red point $r$ and the blue point $b_{1}$. One may notice that to each pair of a red point $r$ and a blue point $b$, at most one red line can be associated, since there is at most one region $A$ having $r$ and $b$ as two clockwise consecutive vertices.

We claim now that at most two red lines are associated to each blue point $b$; this leads to the desired bound

$$
n-k \leqslant 2\binom{k}{2} \quad \Longleftrightarrow \quad n \leqslant k^{2}.
$$

Assume, to the contrary, that three red lines $\ell_{1}, \ell_{2}$, and $\ell_{3}$ are associated to the same blue point $b$. Let $r_{1}, r_{2}$, and $r_{3}$ respectively be the red points associated to these lines; all these points are distinct. The point $b$ defines four blue rays, and each point $r_{i}$ is the red point closest to $b$ on one of these rays. So we may assume that the points $r_{2}$ and $r_{3}$ lie on one blue line passing through $b$, while $r_{1}$ lies on the other one.

Now consider the region $A$ used to associate $r_{1}$ and $b$ with $\ell_{1}$. Three of its clockwise consecutive vertices are $r_{1}, b$, and either $r_{2}$ or $r_{3}$ (say, $r_{2}$ ). Since $A$ has only one red side, it can only be the triangle $r_{1} b r_{2}$; but then both $\ell_{1}$ and $\ell_{2}$ pass through $r_{2}$, as well as some blue line. This is impossible by the problem assumptions.
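The three estimates (at most 4, 3, or 2 red lines charged to each blue point) differ only in the constant $c$ in $n-k \leqslant c\binom{k}{2}$. The following sketch, with hypothetical helper names `min_blue`, `ceil_sqrt` and `check`, confirms numerically that each inequality forces the corresponding lower bound on $k$; it is a sanity check on the arithmetic, not part of the proof.

```python
def min_blue(n, c):
    """Smallest k >= 0 with n - k <= c * C(k, 2), i.e. 2*(n - k) <= c*k*(k - 1)."""
    k = 0
    while 2 * (n - k) > c * k * (k - 1):
        k += 1
    return k


def ceil_sqrt(p, q):
    """ceil(sqrt(p / q)) in exact integer arithmetic: smallest k with q*k*k >= p."""
    k = 0
    while q * k * k < p:
        k += 1
    return k


def check(n_max=2000):
    """Every k satisfying the inequality is at least min_blue(n, c),
    so it suffices to compare min_blue with the claimed bound."""
    for n in range(3, n_max + 1):
        assert min_blue(n, 4) >= ceil_sqrt(n, 2)      # k >= ceil(sqrt(n/2))
        assert min_blue(n, 3) >= ceil_sqrt(2 * n, 3)  # k >= ceil(sqrt(2n/3))
        assert min_blue(n, 2) >= ceil_sqrt(n, 1)      # k >= ceil(sqrt(n))
    return True
```

Integer arithmetic is used throughout to avoid floating-point rounding when evaluating the ceilings.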

Comment 2. The condition that the lines be non-parallel is essentially not used in the solution, nor in the previous comment; thus it may be omitted.

C6. We are given an infinite deck of cards, each with a real number on it. For every real number $x$, there is exactly one card in the deck that has $x$ written on it. Now two players draw disjoint sets $A$ and $B$ of 100 cards each from this deck. We would like to define a rule that declares one of them a winner. This rule should satisfy the following conditions:

- The winner only depends on the relative order of the 200 cards: if the cards are laid down in increasing order face down and we are told which card belongs to which player, but not what numbers are written on them, we can still decide the winner.

- If we write the elements of both sets in increasing order as $A=\left\{a_{1}, a_{2}, \ldots, a_{100}\right\}$ and $B=\left\{b_{1}, b_{2}, \ldots, b_{100}\right\}$, and $a_{i}>b_{i}$ for all $i$, then $A$ beats $B$.

- If three players draw three disjoint sets $A, B, C$ from the deck, $A$ beats $B$ and $B$ beats $C$, then $A$ also beats $C$.

How many ways are there to define such a rule? Here, we consider two rules as different if there exist two sets $A$ and $B$ such that $A$ beats $B$ according to one rule, but $B$ beats $A$ according to the other.

(Russia)

Answer. 100.

Solution 1. We prove a more general statement: for sets of cardinality $n$, the number of rules is $n$ (the problem being the special case $n=100$). In the following, we write $A>B$ or $B<A$ for " $A$ beats $B$ ".

Part I. Let us first define $n$ different rules that satisfy the conditions. To this end, fix an index $k \in\{1,2, \ldots, n\}$. We write both $A$ and $B$ in increasing order as $A=\left\{a_{1}, a_{2}, \ldots, a_{n}\right\}$ and $B=\left\{b_{1}, b_{2}, \ldots, b_{n}\right\}$ and say that $A$ beats $B$ if and only if $a_{k}>b_{k}$. This rule clearly satisfies all three conditions, and the rules corresponding to different $k$ are all different. Thus there are at least $n$ different rules.
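The rule of Part I is simple enough to state as code. This is a minimal sketch; the function name `beats` and the 1-based index `k` are choices made here for illustration.

```python
def beats(a_cards, b_cards, k):
    """Rule number k: A beats B iff the k-th smallest card of A
    is larger than the k-th smallest card of B (k is 1-based)."""
    a = sorted(a_cards)
    b = sorted(b_cards)
    return a[k - 1] > b[k - 1]


# Two disjoint hands on which the rules for k = 1 and k = 2 disagree,
# which shows that different k give different rules.
A = [1, 5, 6]
B = [2, 3, 4]
disagree = beats(A, B, 1) != beats(A, B, 2)
```

Only the sorted positions are compared, so the outcome depends on the relative order of the cards alone, as the first condition requires.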

Part II. Now we have to prove that there is no other way to define such a rule. Suppose that our rule satisfies the conditions, and let $k \in\{1,2, \ldots, n\}$ be minimal with the property that

$$
A_{k}=\{1,2, \ldots, k, n+k+1, n+k+2, \ldots, 2 n\}<B_{k}=\{k+1, k+2, \ldots, n+k\} .
$$

Clearly, such a $k$ exists, since this holds for $k=n$ by assumption. Now consider two disjoint sets $X=\left\{x_{1}, x_{2}, \ldots, x_{n}\right\}$ and $Y=\left\{y_{1}, y_{2}, \ldots, y_{n}\right\}$, both in increasing order (i.e., $x_{1}<x_{2}<\cdots<x_{n}$ and $y_{1}<y_{2}<\cdots<y_{n}$). We claim that $X<Y$ if (and only if - this follows automatically) $x_{k}<y_{k}$.

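To prove this statement, pick arbitrary real numbers $u_{i}, v_{i}, w_{i} \notin X \cup Y$ such that

$$
u_{1}<u_{2}<\cdots<u_{k-1}<\min \left(x_{1}, y_{1}\right), \quad \max \left(x_{n}, y_{n}\right)<v_{k+1}<v_{k+2}<\cdots<v_{n},
$$

and

$$
x_{k}<v_{1}<v_{2}<\cdots<v_{k}<w_{1}<w_{2}<\cdots<w_{n}<u_{k}<u_{k+1}<\cdots<u_{n}<y_{k},
$$

and set

$$
U=\left\{u_{1}, u_{2}, \ldots, u_{n}\right\}, \quad V=\left\{v_{1}, v_{2}, \ldots, v_{n}\right\}, \quad W=\left\{w_{1}, w_{2}, \ldots, w_{n}\right\} .
$$

Then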

- $u_{i}<y_{i}$ and $x_{i}<v_{i}$ for all $i$, so $U<Y$ and $X<V$ by the second condition.

- The elements of $U \cup W$ are ordered in the same way as those of $A_{k-1} \cup B_{k-1}$, and since $A_{k-1}>B_{k-1}$ by our choice of $k$, we also have $U>W$ (if $k=1$, this is trivial).

- The elements of $V \cup W$ are ordered in the same way as those of $A_{k} \cup B_{k}$, and since $A_{k}<B_{k}$ by our choice of $k$, we also have $V<W$.

It follows that

$$
X<V<W<U<Y,
$$

so $X<Y$ by the third condition, which is what we wanted to prove.

Solution 2. Another possible approach to Part II of this problem is induction on $n$. For $n=1$, there is trivially only one rule in view of the second condition.

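In the following, we assume that our claim (namely, that there are no possible rules other than those given in Part I) holds for $n-1$ in place of $n$. We start with the following observation:

Claim. At least one of the two relations

$$
(\{2\} \cup\{2 i-1 \mid 2 \leqslant i \leqslant n\})<(\{1\} \cup\{2 i \mid 2 \leqslant i \leqslant n\})
$$

and

$$
(\{2 i-1 \mid 1 \leqslant i \leqslant n-1\} \cup\{2 n\})<(\{2 i \mid 1 \leqslant i \leqslant n-1\} \cup\{2 n-1\})
$$

holds.

Proof. Suppose that the first relation does not hold. Since our rule may only depend on the relative order, we must also have

$$
(\{2\} \cup\{3 i-2 \mid 2 \leqslant i \leqslant n-1\} \cup\{3 n-2\})>(\{1\} \cup\{3 i-1 \mid 2 \leqslant i \leqslant n-1\} \cup\{3 n\}) .
$$

Likewise, if the second relation does not hold, then we must also have

$$
(\{1\} \cup\{3 i-1 \mid 2 \leqslant i \leqslant n-1\} \cup\{3 n\})>(\{3\} \cup\{3 i \mid 2 \leqslant i \leqslant n-1\} \cup\{3 n-1\}) .
$$

Now condition 3 implies that

$$
(\{2\} \cup\{3 i-2 \mid 2 \leqslant i \leqslant n-1\} \cup\{3 n-2\})>(\{3\} \cup\{3 i \mid 2 \leqslant i \leqslant n-1\} \cup\{3 n-1\}),
$$

which contradicts the second condition.

Now we distinguish two cases, depending on which of the two relations actually holds:

First case: $(\{2\} \cup\{2 i-1 \mid 2 \leqslant i \leqslant n\})<(\{1\} \cup\{2 i \mid 2 \leqslant i \leqslant n\})$.

Let $A=\left\{a_{1}, a_{2}, \ldots, a_{n}\right\}$ and $B=\left\{b_{1}, b_{2}, \ldots, b_{n}\right\}$ be two disjoint sets, both in increasing order. We claim that the winner can be decided only from the values of $a_{2}, \ldots, a_{n}$ and $b_{2}, \ldots, b_{n}$, while $a_{1}$ and $b_{1}$ are actually irrelevant. Suppose that this was not the case, and assume without loss of generality that $a_{2}<b_{2}$. Then the relative order of $a_{1}, a_{2}, \ldots, a_{n}, b_{2}, \ldots, b_{n}$ is fixed, and the position of $b_{1}$ has to decide the winner. Suppose that for some value $b_{1}=x$, $B$ wins, while for some other value $b_{1}=y$, $A$ wins.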

Write $B_{x}=\left\{x, b_{2}, \ldots, b_{n}\right\}$ and $B_{y}=\left\{y, b_{2}, \ldots, b_{n}\right\}$, and let $\varepsilon>0$ be smaller than half the distance between any two of the numbers in $B_{x} \cup B_{y} \cup A$. For any set $M$, let $M \pm \varepsilon$ be the set obtained by adding/subtracting $\varepsilon$ to all elements of $M$. By our choice of $\varepsilon$, the relative order of the elements of $\left(B_{y}+\varepsilon\right) \cup A$ is still the same as for $B_{y} \cup A$, while the relative order of the elements of $\left(B_{x}-\varepsilon\right) \cup A$ is still the same as for $B_{x} \cup A$. Thus $A<B_{x}-\varepsilon$, but $A>B_{y}+\varepsilon$. Moreover, if $y>x$, then $B_{x}-\varepsilon<B_{y}+\varepsilon$ by condition 2, while otherwise the relative order of the elements in $\left(B_{x}-\varepsilon\right) \cup\left(B_{y}+\varepsilon\right)$ is the same as for the two sets $\{2\} \cup\{2 i-1 \mid 2 \leqslant i \leqslant n\}$ and $\{1\} \cup\{2 i \mid 2 \leqslant i \leqslant n\}$, so that $B_{x}-\varepsilon<B_{y}+\varepsilon$. In either case, we obtain

$$
A<B_{x}-\varepsilon<B_{y}+\varepsilon<A,
$$

which contradicts condition 3.

So we know now that the winner does not depend on $a_{1}, b_{1}$. Therefore, we can define a new rule $<^{*}$ on sets of cardinality $n-1$ by saying that $A<^{*} B$ if and only if $A \cup\{a\}<B \cup\{b\}$ for some $a, b$ (or equivalently, all $a, b$) such that $a<\min A$, $b<\min B$ and $A \cup\{a\}$ and $B \cup\{b\}$ are disjoint. The rule $<^{*}$ satisfies all conditions again, so by the induction hypothesis, there exists an index $i$ such that $A<^{*} B$ if and only if the $i^{\text {th }}$ smallest element of $A$ is less than the $i^{\text {th }}$ smallest element of $B$. This implies that $C<D$ if and only if the $(i+1)^{\text {th }}$ smallest element of $C$ is less than the $(i+1)^{\text {th }}$ smallest element of $D$, which completes our induction.

Second case: $(\{2 i-1 \mid 1 \leqslant i \leqslant n-1\} \cup\{2 n\})<(\{2 i \mid 1 \leqslant i \leqslant n-1\} \cup\{2 n-1\})$.

Set $-A=\{-a \mid a \in A\}$ for any $A \subseteq \mathbb{R}$. For any two disjoint sets $A, B \subseteq \mathbb{R}$ of cardinality $n$, we write $A<^{\circ} B$ to mean $(-B)<(-A)$. It is easy to see that $<^{\circ}$ defines a rule to determine a winner that satisfies the three conditions of our problem as well as the relation of the first case. So it follows in the same way as in the first case that for some $i$, $A<^{\circ} B$ if and only if the $i^{\text {th }}$ smallest element of $A$ is less than the $i^{\text {th }}$ smallest element of $B$, which is equivalent to the condition that the $i^{\text {th }}$ largest element of $-A$ is greater than the $i^{\text {th }}$ largest element of $-B$. This proves that the original rule $<$ also has the desired form.
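For very small $n$ the count of admissible rules can be confirmed by exhaustive search, since a rule is just a choice of winner for each interleaving pattern of the two hands. The sketch below is a brute-force illustration under that encoding; the names `count_rules` and `restrict` are hypothetical, and the search is only practical for $n \leqslant 3$.

```python
from itertools import combinations, product


def restrict(labelling, x, y):
    """Interleaving pattern of sets x and y inside a labelling (x -> 0, y -> 1)."""
    return tuple(0 if lab == x else 1 for lab in labelling if lab in (x, y))


def count_rules(n):
    """Count rules on hands of size n that satisfy all three conditions.
    A pattern lists, in increasing card order, which hand (0 or 1) owns each card;
    rule[p] is True when hand 0 beats hand 1 for pattern p."""
    two = [tuple(0 if i in a_pos else 1 for i in range(2 * n))
           for a_pos in combinations(range(2 * n), n)]
    reps = [p for p in two if p[0] == 0]  # one pattern from each swapped pair

    # Condition 2: patterns in which hand 0 dominates hand 1 position by position.
    forced = []
    for p in two:
        a = [i for i, lab in enumerate(p) if lab == 0]
        b = [i for i, lab in enumerate(p) if lab == 1]
        if all(x > y for x, y in zip(a, b)):
            forced.append(p)

    # Condition 3: every interleaving of three hands, reduced to its three pairs.
    triples = []
    for a_pos in combinations(range(3 * n), n):
        rest = [i for i in range(3 * n) if i not in a_pos]
        for b_pos in combinations(rest, n):
            lab = tuple(0 if i in a_pos else 1 if i in b_pos else 2
                        for i in range(3 * n))
            triples.append((restrict(lab, 0, 1), restrict(lab, 1, 2),
                            restrict(lab, 0, 2)))

    count = 0
    for choice in product([False, True], repeat=len(reps)):
        rule = {}
        for p, wins in zip(reps, choice):
            rule[p] = wins
            rule[tuple(1 - lab for lab in p)] = not wins  # exactly one winner
        if not all(rule[p] for p in forced):
            continue
        if all(rule[ac] or not (rule[ab] and rule[bc]) for ab, bc, ac in triples):
            count += 1
    return count
```

Because all labellings of the three hands are enumerated, checking transitivity in the single order (0, 1, 2) covers every relabelling. For $n=1,2,3$ the function should return $1,2,3$, in agreement with the answer $n$.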

Comment. The problem asks for all possible partial orders on the set of $n$-element subsets of $\mathbb{R}$ such that any two disjoint sets are comparable, the order relation only depends on the relative order of the elements, and $\left\{a_{1}, a_{2}, \ldots, a_{n}\right\}<\left\{b_{1}, b_{2}, \ldots, b_{n}\right\}$ whenever $a_{i}<b_{i}$ for all $i$.

As the proposer points out, one may also ask for all total orders on all $n$-element subsets of $\mathbb{R}$ (dropping the condition of disjointness and requiring that $\left\{a_{1}, a_{2}, \ldots, a_{n}\right\} \leqslant\left\{b_{1}, b_{2}, \ldots, b_{n}\right\}$ whenever $a_{i} \leqslant b_{i}$ for all $i$). It turns out that the number of possibilities in this case is $n!$, and all possible total orders are obtained in the following way. Fix a permutation $\sigma \in S_{n}$. Let $A=\left\{a_{1}, a_{2}, \ldots, a_{n}\right\}$ and $B=\left\{b_{1}, b_{2}, \ldots, b_{n}\right\}$ be two subsets of $\mathbb{R}$ with $a_{1}<a_{2}<\cdots<a_{n}$ and $b_{1}<b_{2}<\cdots<b_{n}$. Then we say that $A>_{\sigma} B$ if and only if $\left(a_{\sigma(1)}, \ldots, a_{\sigma(n)}\right)$ is lexicographically greater than $\left(b_{\sigma(1)}, \ldots, b_{\sigma(n)}\right)$.

It seems, however, that this formulation adds rather more technicalities to the problem than additional ideas.
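The total orders described in this comment can be illustrated in a few lines. This is a sketch only, with hypothetical names `sigma_key` and `greater_sigma`; the permutation is passed as a tuple of 1-based positions $(\sigma(1), \ldots, \sigma(n))$.

```python
def sigma_key(cards, sigma):
    """Reorder the sorted hand as (a_sigma(1), ..., a_sigma(n)); sigma is 1-based."""
    s = sorted(cards)
    return tuple(s[p - 1] for p in sigma)


def greater_sigma(a_cards, b_cards, sigma):
    """A >_sigma B iff the reordered tuple of A is lexicographically greater."""
    # Python compares tuples lexicographically, which is exactly what is needed.
    return sigma_key(a_cards, sigma) > sigma_key(b_cards, sigma)
```

With `sigma = (2, 1)` the larger card is compared first and the smaller card only breaks ties, so the hands `[1, 5]` and `[2, 3]` are ranked differently than under `sigma = (1, 2)`.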
