IMO 2017

RIO DE JANEIRO - BRAZIL

$58^{\text {th }}$ International Mathematical Olympiad

Shortlisted Problems (with solutions)


$58^{\text {th }}$ International Mathematical Olympiad Rio de Janeiro, 12-23 July 2017

The Shortlist has to be kept strictly confidential until the conclusion of the following International Mathematical Olympiad. IMO General Regulations §6.6

Contributing Countries

The Organizing Committee and the Problem Selection Committee of IMO 2017 thank the following 51 countries for contributing 150 problem proposals:

Albania, Algeria, Armenia, Australia, Austria, Azerbaijan, Belarus, Belgium, Bulgaria, Cuba, Cyprus, Czech Republic, Denmark, Estonia, France, Georgia, Germany, Greece, Hong Kong, India, Iran, Ireland, Israel, Italy, Japan, Kazakhstan, Latvia, Lithuania, Luxembourg, Mexico, Montenegro, Morocco, Netherlands, Romania, Russia, Serbia, Singapore, Slovakia, Slovenia, South Africa, Sweden, Switzerland, Taiwan, Tajikistan, Tanzania, Thailand, Trinidad and Tobago, Turkey, Ukraine, United Kingdom, U.S.A.

Problem Selection Committee

Carlos Gustavo Tamm de Araújo Moreira (Gugu) (chairman), Luciano Monteiro de Castro, Ilya I. Bogdanov, Géza Kós, Carlos Yuzo Shine, Zhuo Qun (Alex) Song, Ralph Costa Teixeira, Eduardo Tengan

Problems

Algebra

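A1. Let $a_{1}, a_{2}, \ldots, a_{n}, k$, and $M$ be positive integers such that

$$
\frac{1}{a_{1}}+\frac{1}{a_{2}}+\cdots+\frac{1}{a_{n}}=k \quad \text { and } \quad a_{1} a_{2} \cdots a_{n}=M .
$$

If $M>1$, prove that the polynomial

$$
P(x)=M(x+1)^{k}-\left(x+a_{1}\right)\left(x+a_{2}\right) \cdots\left(x+a_{n}\right)
$$

has no positive roots. (Trinidad and Tobago)

A2. Let $q$ be a real number. Gugu has a napkin with ten distinct real numbers written on it, and he writes the following three lines of real numbers on the blackboard: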

- In the first line, Gugu writes down every number of the form $a-b$, where $a$ and $b$ are two (not necessarily distinct) numbers on his napkin.

- In the second line, Gugu writes down every number of the form $q a b$, where $a$ and $b$ are two (not necessarily distinct) numbers from the first line.

- In the third line, Gugu writes down every number of the form $a^{2}+b^{2}-c^{2}-d^{2}$, where $a, b, c, d$ are four (not necessarily distinct) numbers from the first line.

Determine all values of $q$ such that, regardless of the numbers on Gugu's napkin, every number in the second line is also a number in the third line.

A3. Let $S$ be a finite set, and let $\mathcal{A}$ be the set of all functions from $S$ to $S$. Let $f$ be an element of $\mathcal{A}$, and let $T=f(S)$ be the image of $S$ under $f$. Suppose that $f \circ g \circ f \neq g \circ f \circ g$ for every $g$ in $\mathcal{A}$ with $g \neq f$. Show that $f(T)=T$. (India)

A4. A sequence of real numbers $a_{1}, a_{2}, \ldots$ satisfies the relation

$$
a_{n}=-\max _{i+j=n}\left(a_{i}+a_{j}\right) \quad \text { for all } n>2017 .
$$

Prove that this sequence is bounded, i.e., there is a constant $M$ such that $\left|a_{n}\right| \leqslant M$ for all positive integers $n$.

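A5. An integer $n \geqslant 3$ is given. We call an $n$-tuple of real numbers $\left(x_{1}, x_{2}, \ldots, x_{n}\right)$ Shiny if for each permutation $y_{1}, y_{2}, \ldots, y_{n}$ of these numbers we have

$$
\sum_{i=1}^{n-1} y_{i} y_{i+1}=y_{1} y_{2}+y_{2} y_{3}+y_{3} y_{4}+\cdots+y_{n-1} y_{n} \geqslant-1 .
$$

Find the largest constant $K=K(n)$ such that

$$
\sum_{1 \leqslant i<j \leqslant n} x_{i} x_{j} \geqslant K
$$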

holds for every Shiny $n$-tuple $\left(x_{1}, x_{2}, \ldots, x_{n}\right)$.

A6. Find all functions $f: \mathbb{R} \rightarrow \mathbb{R}$ such that

$$
f(f(x) f(y))+f(x+y)=f(x y)
$$

for all $x, y \in \mathbb{R}$. (Albania)

A7. Let $a_{0}, a_{1}, a_{2}, \ldots$ be a sequence of integers and $b_{0}, b_{1}, b_{2}, \ldots$ be a sequence of positive integers such that $a_{0}=0, a_{1}=1$, and

$$
a_{n+1}= \begin{cases}a_{n} b_{n}+a_{n-1}, & \text { if } b_{n-1}=1 \\ a_{n} b_{n}-a_{n-1}, & \text { if } b_{n-1}>1\end{cases} \quad \text { for } n=1,2, \ldots
$$

Prove that at least one of the two numbers $a_{2017}$ and $a_{2018}$ must be greater than or equal to 2017. (Australia)

A8. Assume that a function $f: \mathbb{R} \rightarrow \mathbb{R}$ satisfies the following condition: For every $x, y \in \mathbb{R}$ such that $(f(x)+y)(f(y)+x)>0$, we have $f(x)+y=f(y)+x$. Prove that $f(x)+y \leqslant f(y)+x$ whenever $x>y$. (Netherlands)

Combinatorics

C1. A rectangle $\mathcal{R}$ with odd integer side lengths is divided into small rectangles with integer side lengths. Prove that there is at least one among the small rectangles whose distances from the four sides of $\mathcal{R}$ are either all odd or all even. (Singapore)

C2. Let $n$ be a positive integer. Define a chameleon to be any sequence of $3 n$ letters, with exactly $n$ occurrences of each of the letters $a, b$, and $c$. Define a swap to be the transposition of two adjacent letters in a chameleon. Prove that for any chameleon $X$, there exists a chameleon $Y$ such that $X$ cannot be changed to $Y$ using fewer than $3 n^{2} / 2$ swaps. (Australia)

C3. Sir Alex plays the following game on a row of 9 cells. Initially, all cells are empty. In each move, Sir Alex is allowed to perform exactly one of the following two operations:

(1) Choose any number of the form $2^{j}$, where $j$ is a non-negative integer, and put it into an empty cell.

(2) Choose two (not necessarily adjacent) cells with the same number in them; denote that number by $2^{j}$. Replace the number in one of the cells with $2^{j+1}$ and erase the number in the other cell.

At the end of the game, one cell contains the number $2^{n}$, where $n$ is a given positive integer, while the other cells are empty. Determine the maximum number of moves that Sir Alex could have made, in terms of $n$. (Thailand)

C4. Let $N \geqslant 2$ be an integer. $N(N+1)$ soccer players, no two of the same height, stand in a row in some order. Coach Ralph wants to remove $N(N-1)$ people from this row so that in the remaining row of $2 N$ players, no one stands between the two tallest ones, no one stands between the third and the fourth tallest ones, ..., and finally no one stands between the two shortest ones. Show that this is always possible. (Russia)

C5. A hunter and an invisible rabbit play a game in the Euclidean plane. The hunter's starting point $H_{0}$ coincides with the rabbit's starting point $R_{0}$. In the $n^{\text {th }}$ round of the game $(n \geqslant 1)$, the following happens.

(1) First the invisible rabbit moves secretly and unobserved from its current point $R_{n-1}$ to some new point $R_{n}$ with $R_{n-1} R_{n}=1$.

(2) The hunter has a tracking device (e.g. dog) that returns an approximate position $R_{n}^{\prime}$ of the rabbit, so that $R_{n} R_{n}^{\prime} \leqslant 1$.

(3) The hunter then visibly moves from point $H_{n-1}$ to a new point $H_{n}$ with $H_{n-1} H_{n}=1$.

Is there a strategy for the hunter that guarantees that after $10^{9}$ such rounds the distance between the hunter and the rabbit is below 100 ?

C6. Let $n>1$ be an integer. An $n \times n \times n$ cube is composed of $n^{3}$ unit cubes. Each unit cube is painted with one color. For each $n \times n \times 1$ box consisting of $n^{2}$ unit cubes (of any of the three possible orientations), we consider the set of the colors present in that box (each color is listed only once). This way, we get $3 n$ sets of colors, split into three groups according to the orientation. It happens that for every set in any group, the same set appears in both of the other groups. Determine, in terms of $n$, the maximal possible number of colors that are present. (Russia)

C7. For any finite sets $X$ and $Y$ of positive integers, denote by $f_{X}(k)$ the $k^{\text {th }}$ smallest positive integer not in $X$, and let

$$
X * Y=X \cup\left\{f_{X}(y): y \in Y\right\} .
$$

Let $A$ be a set of $a>0$ positive integers, and let $B$ be a set of $b>0$ positive integers. Prove that if $A * B=B * A$, then

$$
\underbrace{A *(A * \cdots *(A *(A * A)) \ldots)}_{A \text { appears } b \text { times }}=\underbrace{B *(B * \cdots *(B *(B * B)) \ldots)}_{B \text { appears } a \text { times }} .
$$

C8. Let $n$ be a given positive integer. In the Cartesian plane, each lattice point with nonnegative coordinates initially contains a butterfly, and there are no other butterflies. The neighborhood of a lattice point $c$ consists of all lattice points within the axis-aligned $(2 n+1) \times(2 n+1)$ square centered at $c$, apart from $c$ itself. We call a butterfly lonely, crowded, or comfortable, depending on whether the number of butterflies in its neighborhood $N$ is respectively less than, greater than, or equal to half of the number of lattice points in $N$.

Every minute, all lonely butterflies fly away simultaneously. This process goes on for as long as there are any lonely butterflies. Assuming that the process eventually stops, determine the number of comfortable butterflies at the final state.

Geometry

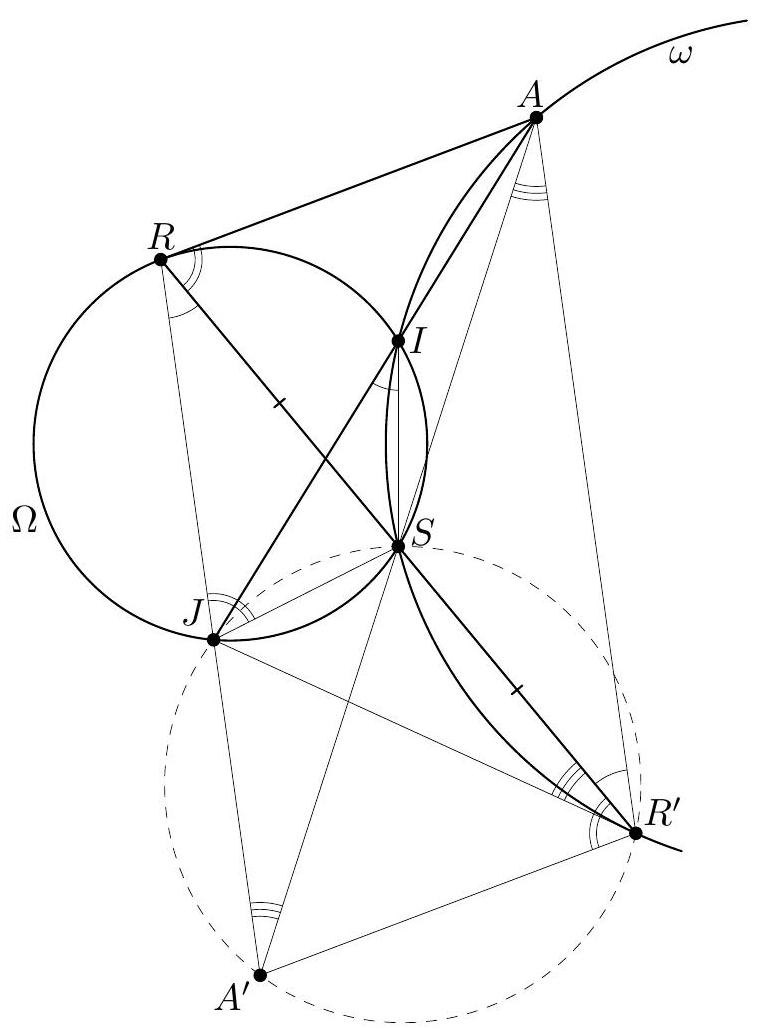

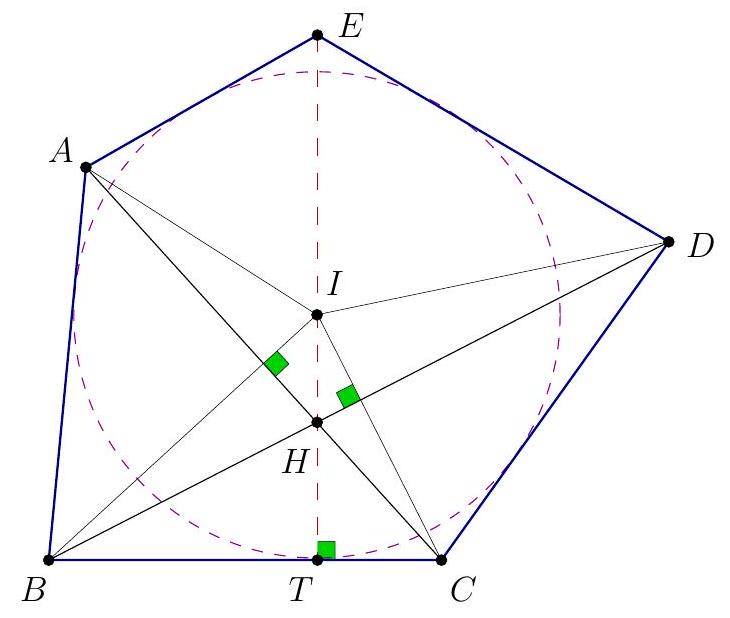

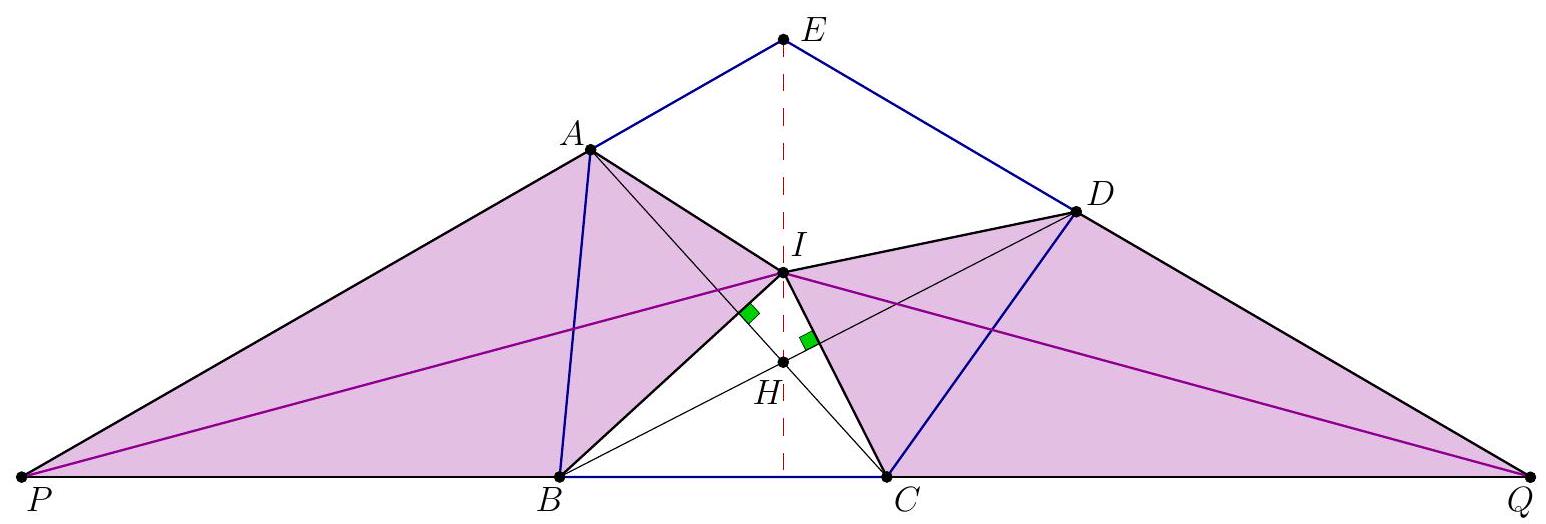

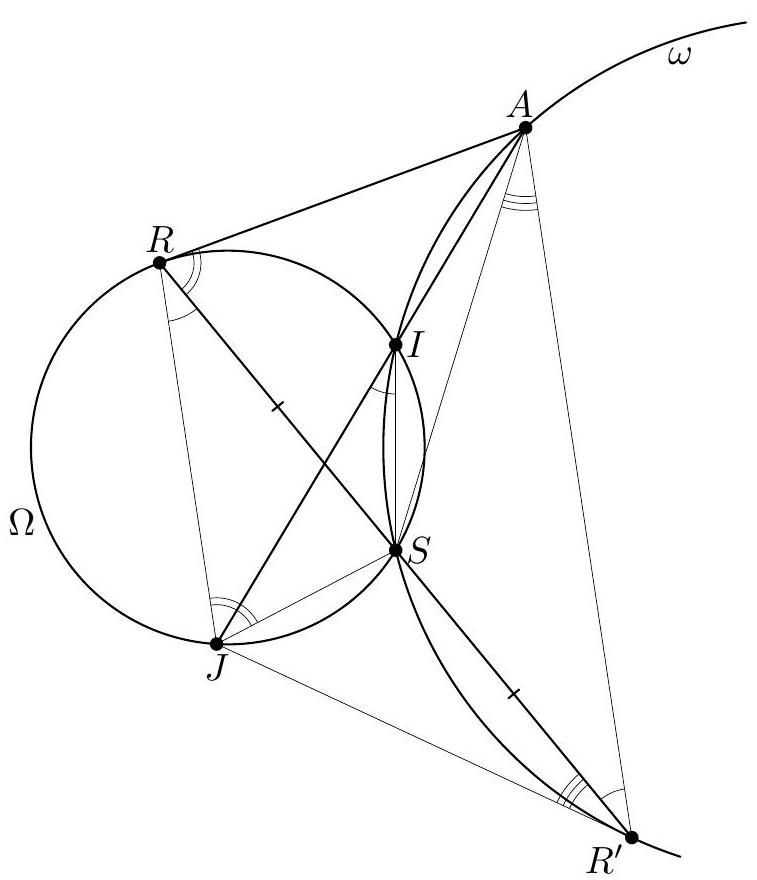

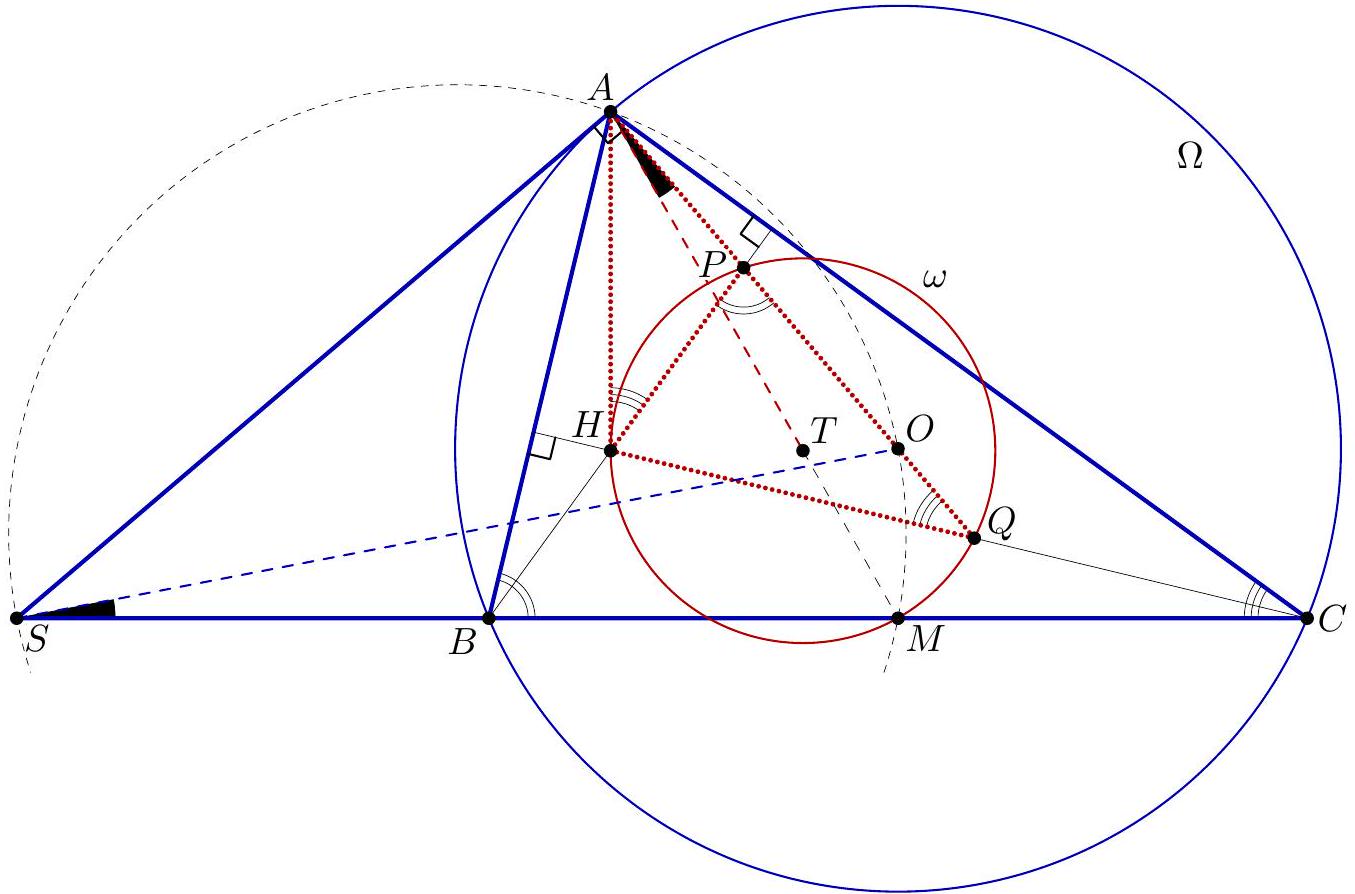

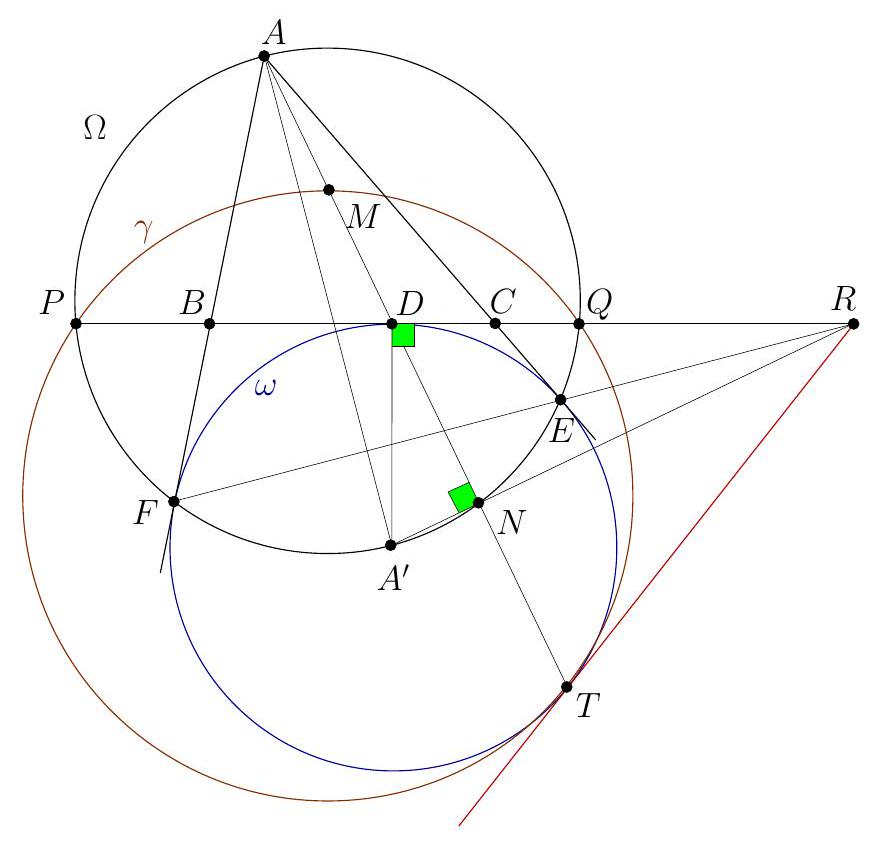

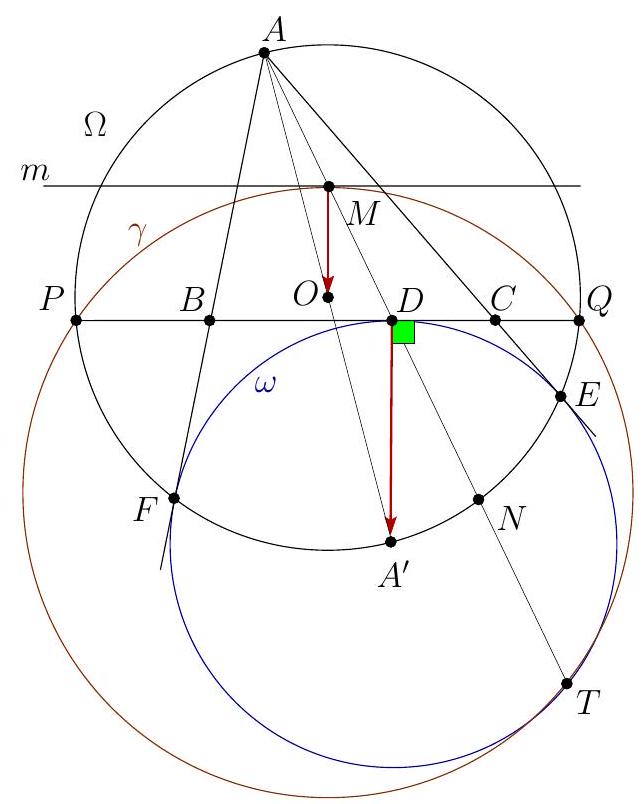

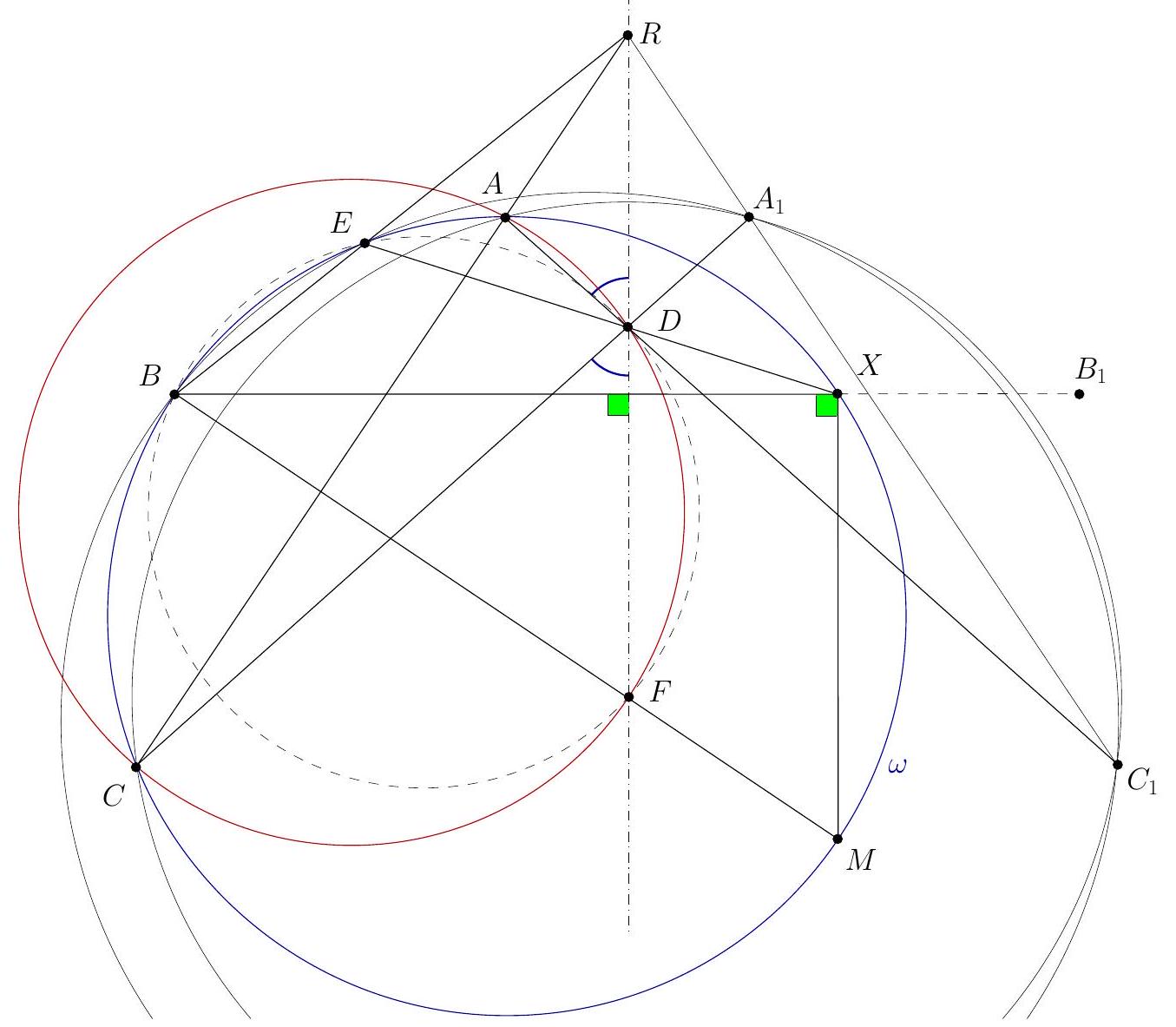

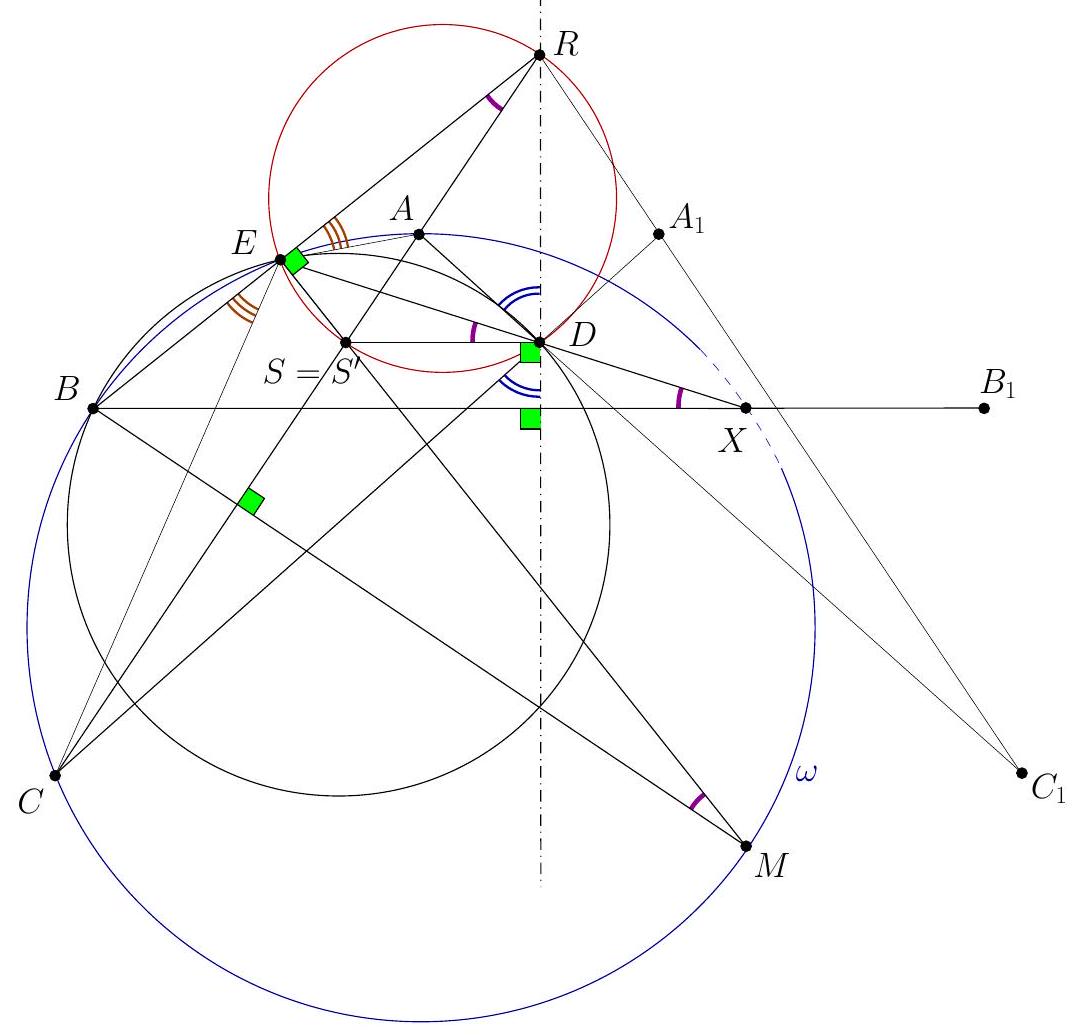

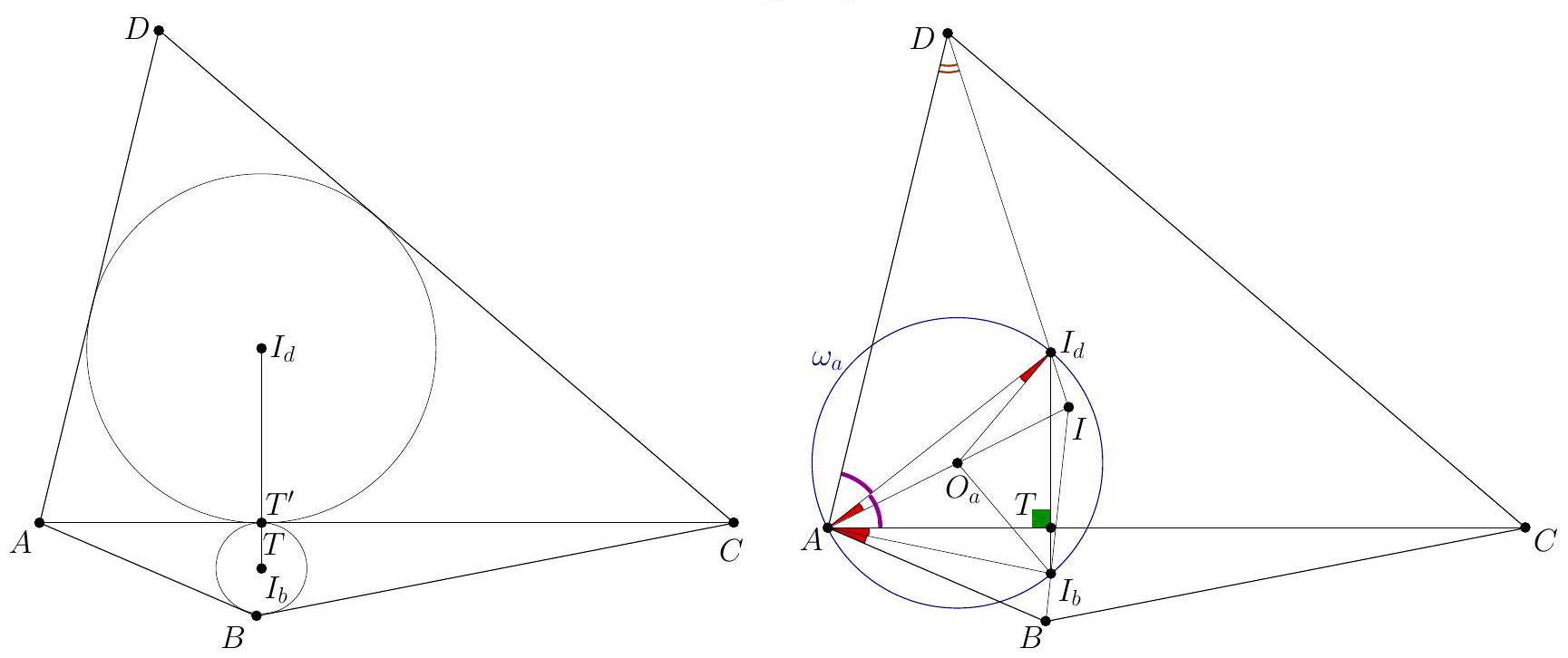

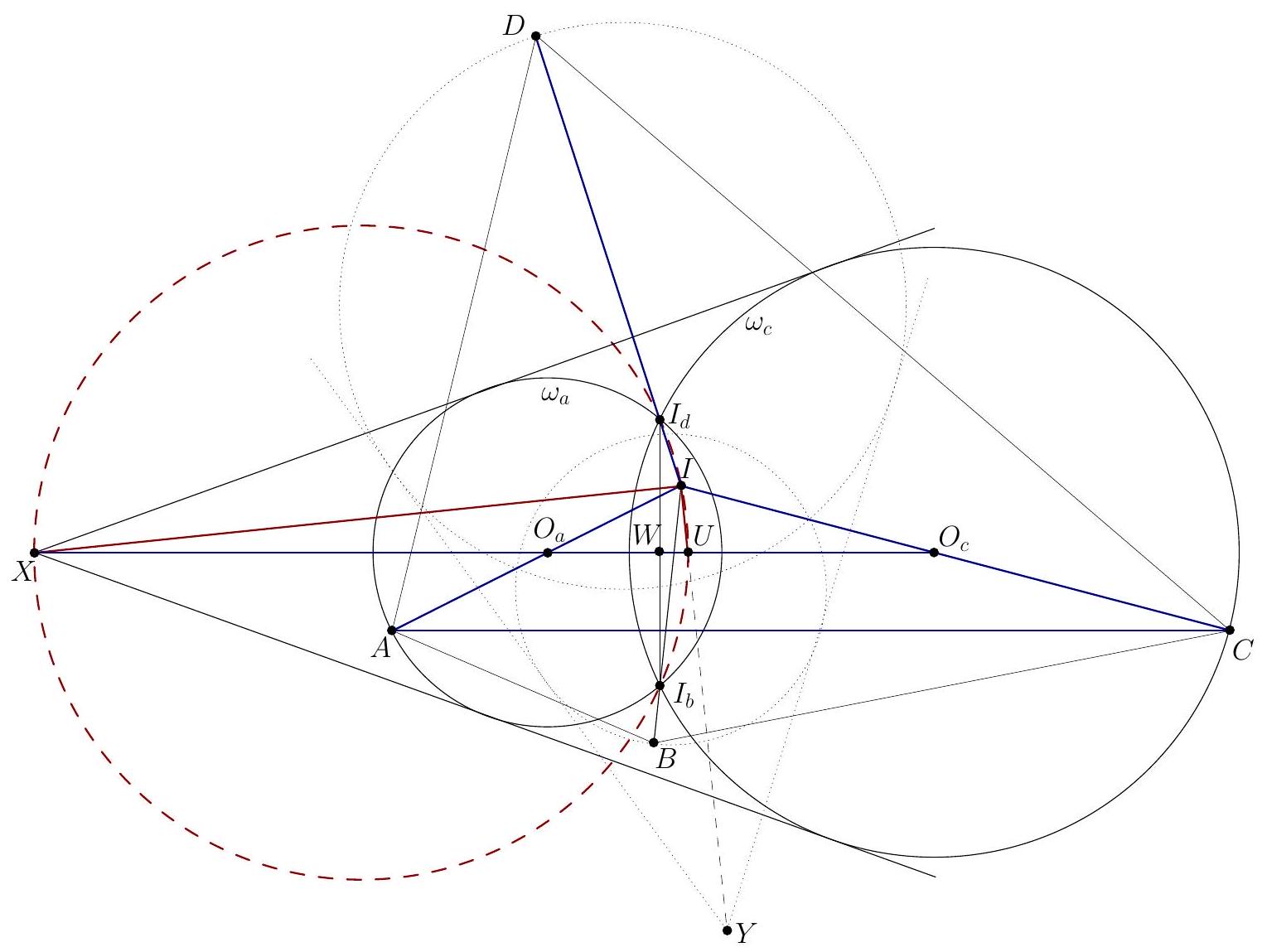

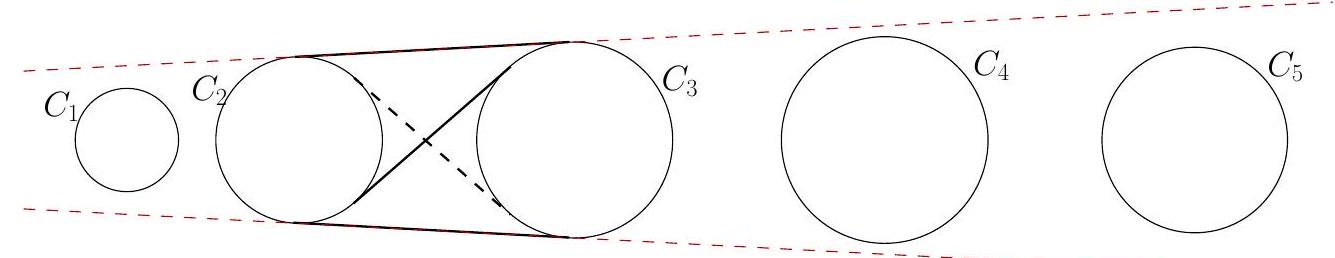

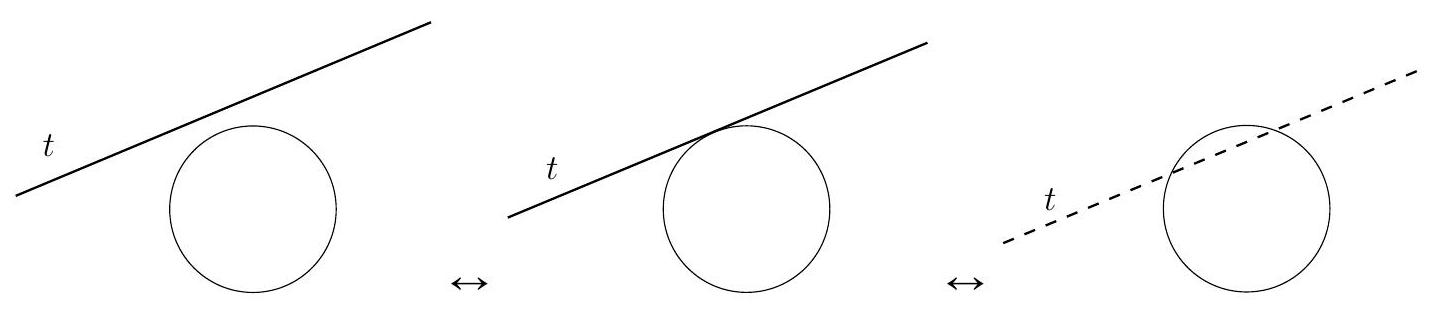

G1. Let $A B C D E$ be a convex pentagon such that $A B=B C=C D, \angle E A B=\angle B C D$, and $\angle E D C=\angle C B A$. Prove that the perpendicular line from $E$ to $B C$ and the line segments $A C$ and $B D$ are concurrent. (Italy)

G2. Let $R$ and $S$ be distinct points on circle $\Omega$, and let $t$ denote the tangent line to $\Omega$ at $R$. Point $R^{\prime}$ is the reflection of $R$ with respect to $S$. A point $I$ is chosen on the smaller arc $R S$ of $\Omega$ so that the circumcircle $\Gamma$ of triangle $I S R^{\prime}$ intersects $t$ at two different points. Denote by $A$ the common point of $\Gamma$ and $t$ that is closest to $R$. Line $A I$ meets $\Omega$ again at $J$. Show that $J R^{\prime}$ is tangent to $\Gamma$. (Luxembourg)

G3. Let $O$ be the circumcenter of an acute scalene triangle $A B C$. Line $O A$ intersects the altitudes of $A B C$ through $B$ and $C$ at $P$ and $Q$, respectively. The altitudes meet at $H$. Prove that the circumcenter of triangle $P Q H$ lies on a median of triangle $A B C$. (Ukraine)

G4. In triangle $A B C$, let $\omega$ be the excircle opposite $A$. Let $D, E$, and $F$ be the points where $\omega$ is tangent to lines $B C, C A$, and $A B$, respectively. The circle $A E F$ intersects line $B C$ at $P$ and $Q$. Let $M$ be the midpoint of $A D$. Prove that the circle $M P Q$ is tangent to $\omega$. (Denmark)

G5. Let $A B C C_{1} B_{1} A_{1}$ be a convex hexagon such that $A B=B C$, and suppose that the line segments $A A_{1}, B B_{1}$, and $C C_{1}$ have the same perpendicular bisector. Let the diagonals $A C_{1}$ and $A_{1} C$ meet at $D$, and denote by $\omega$ the circle $A B C$. Let $\omega$ intersect the circle $A_{1} B C_{1}$ again at $E \neq B$. Prove that the lines $B B_{1}$ and $D E$ intersect on $\omega$. (Ukraine)

G6. Let $n \geqslant 3$ be an integer. Two regular $n$-gons $\mathcal{A}$ and $\mathcal{B}$ are given in the plane. Prove that the vertices of $\mathcal{A}$ that lie inside $\mathcal{B}$ or on its boundary are consecutive. (That is, prove that there exists a line separating those vertices of $\mathcal{A}$ that lie inside $\mathcal{B}$ or on its boundary from the other vertices of $\mathcal{A}$.) (Czech Republic)

G7. A convex quadrilateral $A B C D$ has an inscribed circle with center $I$. Let $I_{a}, I_{b}, I_{c}$, and $I_{d}$ be the incenters of the triangles $D A B, A B C, B C D$, and $C D A$, respectively. Suppose that the common external tangents of the circles $A I_{b} I_{d}$ and $C I_{b} I_{d}$ meet at $X$, and the common external tangents of the circles $B I_{a} I_{c}$ and $D I_{a} I_{c}$ meet at $Y$. Prove that $\angle X I Y=90^{\circ}$. (Kazakhstan)

G8. There are 2017 mutually external circles drawn on a blackboard, such that no two are tangent and no three share a common tangent. A tangent segment is a line segment that is a common tangent to two circles, starting at one tangent point and ending at the other one. Luciano is drawing tangent segments on the blackboard, one at a time, so that no tangent segment intersects any other circles or previously drawn tangent segments. Luciano keeps drawing tangent segments until no more can be drawn. Find all possible numbers of tangent segments when he stops drawing. (Australia)

Number Theory

N1. The sequence $a_{0}, a_{1}, a_{2}, \ldots$ of positive integers satisfies

$$
a_{n+1}= \begin{cases}\sqrt{a_{n}}, & \text { if } \sqrt{a_{n}} \text { is an integer, } \\ a_{n}+3, & \text { otherwise }\end{cases} \quad \text { for each } n \geqslant 0 .
$$

Determine all values of $a_{0}>1$ for which there is at least one number $a$ such that $a_{n}=a$ for infinitely many values of $n$. (South Africa)

N2. Let $p \geqslant 2$ be a prime number. Eduardo and Fernando play the following game making moves alternately: in each move, the current player chooses an index $i$ in the set $\{0,1, \ldots, p-1\}$ that was not chosen before by either of the two players and then chooses an element $a_{i}$ of the set $\{0,1,2,3,4,5,6,7,8,9\}$. Eduardo has the first move. The game ends after all the indices $i \in\{0,1, \ldots, p-1\}$ have been chosen. Then the following number is computed:

$$
M=a_{0}+10 \cdot a_{1}+\cdots+10^{p-1} \cdot a_{p-1}=\sum_{j=0}^{p-1} a_{j} \cdot 10^{j} .
$$

The goal of Eduardo is to make the number $M$ divisible by $p$, and the goal of Fernando is to prevent this.

Prove that Eduardo has a winning strategy. (Morocco)

N3. Determine all integers $n \geqslant 2$ with the following property: for any integers $a_{1}, a_{2}, \ldots, a_{n}$ whose sum is not divisible by $n$, there exists an index $1 \leqslant i \leqslant n$ such that none of the numbers

$$
a_{i}, \quad a_{i}+a_{i+1}, \quad \ldots, \quad a_{i}+a_{i+1}+\cdots+a_{i+n-1}
$$

is divisible by $n$. (We let $a_{i}=a_{i-n}$ when $i>n$.) (Thailand)

N4. Call a rational number short if it has finitely many digits in its decimal expansion. For a positive integer $m$, we say that a positive integer $t$ is $m$-tastic if there exists a number $c \in\{1,2,3, \ldots, 2017\}$ such that $\frac{10^{t}-1}{c \cdot m}$ is short, and such that $\frac{10^{k}-1}{c \cdot m}$ is not short for any $1 \leqslant k<t$. Let $S(m)$ be the set of $m$-tastic numbers. Consider $S(m)$ for $m=1,2, \ldots$. What is the maximum number of elements in $S(m)$? (Turkey)

N5. Find all pairs $(p, q)$ of prime numbers with $p>q$ for which the number

$$
\frac{(p+q)^{p+q}(p-q)^{p-q}-1}{(p+q)^{p-q}(p-q)^{p+q}-1}
$$

is an integer.

N6. Find the smallest positive integer $n$, or show that no such $n$ exists, with the following property: there are infinitely many distinct $n$-tuples of positive rational numbers $\left(a_{1}, a_{2}, \ldots, a_{n}\right)$ such that both

$$
a_{1}+a_{2}+\cdots+a_{n} \quad \text { and } \quad \frac{1}{a_{1}}+\frac{1}{a_{2}}+\cdots+\frac{1}{a_{n}}
$$

are integers. (Singapore)

N7. Say that an ordered pair $(x, y)$ of integers is an irreducible lattice point if $x$ and $y$ are relatively prime. For any finite set $S$ of irreducible lattice points, show that there is a homogenous polynomial in two variables, $f(x, y)$, with integer coefficients, of degree at least 1, such that $f(x, y)=1$ for each $(x, y)$ in the set $S$.

Note: A homogenous polynomial of degree $n$ is any nonzero polynomial of the form

$$
f(x, y)=a_{0} x^{n}+a_{1} x^{n-1} y+a_{2} x^{n-2} y^{2}+\cdots+a_{n-1} x y^{n-1}+a_{n} y^{n} .
$$

N8. Let $p$ be an odd prime number and $\mathbb{Z}_{>0}$ be the set of positive integers. Suppose that a function $f: \mathbb{Z}_{>0} \times \mathbb{Z}_{>0} \rightarrow\{0,1\}$ satisfies the following properties:

- $f(1,1)=0$;

- $f(a, b)+f(b, a)=1$ for any pair of relatively prime positive integers $(a, b)$ not both equal to 1 ;

- $f(a+b, b)=f(a, b)$ for any pair of relatively prime positive integers $(a, b)$.

Prove that

$$
\sum_{n=1}^{p-1} f\left(n^{2}, p\right) \geqslant \sqrt{2 p}-2 .
$$

Solutions

Algebra

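A1. Let $a_{1}, a_{2}, \ldots, a_{n}, k$, and $M$ be positive integers such that

$$
\frac{1}{a_{1}}+\frac{1}{a_{2}}+\cdots+\frac{1}{a_{n}}=k \quad \text { and } \quad a_{1} a_{2} \cdots a_{n}=M .
$$

If $M>1$, prove that the polynomial

$$
P(x)=M(x+1)^{k}-\left(x+a_{1}\right)\left(x+a_{2}\right) \cdots\left(x+a_{n}\right)
$$

has no positive roots. (Trinidad and Tobago)

Solution 1. We first prove that, for $x>0$,

$$
a_{i}(x+1)^{1 / a_{i}} \leqslant x+a_{i}, \tag{1}
$$

with equality if and only if $a_{i}=1$. It is clear that equality occurs if $a_{i}=1$. If $a_{i}>1$, the AM-GM inequality applied to a single copy of $x+1$ and $a_{i}-1$ copies of 1 yields

$$
\sqrt[a_{i}]{(x+1) \cdot 1^{a_{i}-1}} \leqslant \frac{(x+1)+\left(a_{i}-1\right)}{a_{i}}=\frac{x+a_{i}}{a_{i}} \Longrightarrow a_{i}(x+1)^{1 / a_{i}} \leqslant x+a_{i} .
$$

Since $x+1>1$, the inequality is strict for $a_{i}>1$. Multiplying the inequalities (1) for $i=1,2, \ldots, n$ yields

$$
\prod_{i=1}^{n} a_{i}(x+1)^{1 / a_{i}} \leqslant \prod_{i=1}^{n}\left(x+a_{i}\right) \Longleftrightarrow M(x+1)^{\sum_{i=1}^{n} 1 / a_{i}}-\prod_{i=1}^{n}\left(x+a_{i}\right) \leqslant 0 \Longleftrightarrow P(x) \leqslant 0,
$$

with equality iff $a_{i}=1$ for all $i \in\{1,2, \ldots, n\}$. But this implies $M=1$, which is not possible. Hence $P(x)<0$ for all $x \in \mathbb{R}^{+}$, and $P$ has no positive roots.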
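Inequality (1), $a_{i}(x+1)^{1 / a_{i}} \leqslant x+a_{i}$, becomes $\left(x+a_{i}\right)^{a_{i}} \geqslant a_{i}^{a_{i}}(x+1)$ after raising both sides to the power $a_{i}$, and in that form it can be checked in exact rational arithmetic. The following is a minimal sketch of such a check; the function name `inequality_gap` and the sample values are illustrative assumptions, not part of the original solution.

```python
from fractions import Fraction


def inequality_gap(a_i, x):
    """Return (x + a_i)**a_i - a_i**a_i * (x + 1) as an exact Fraction.

    This is inequality (1) raised to the power a_i, so for x > 0 the gap
    should be 0 when a_i == 1 and strictly positive when a_i > 1.
    """
    x = Fraction(x)
    return (x + a_i) ** a_i - a_i ** a_i * (x + 1)


# Sample a few positive rationals x and small positive integers a_i.
samples = [Fraction(1, 10), Fraction(1, 2), Fraction(1), Fraction(7, 3), Fraction(25)]
for a_i in range(1, 8):
    for x in samples:
        gap = inequality_gap(a_i, x)
        assert (gap == 0) if a_i == 1 else (gap > 0)
```

A finite sample like this proves nothing, of course; it only illustrates the equality case $a_{i}=1$ and the strictness for $a_{i}>1$.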

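Comment 1. Inequality (1) can be obtained in several ways. For instance, we may also use the binomial theorem: since $a_{i} \geqslant 1$,

$$
\left(1+\frac{x}{a_{i}}\right)^{a_{i}}=\sum_{j=0}^{a_{i}}\binom{a_{i}}{j}\left(\frac{x}{a_{i}}\right)^{j} \geqslant\binom{ a_{i}}{0}+\binom{a_{i}}{1} \cdot \frac{x}{a_{i}}=1+x .
$$

Both proofs of (1) mimic proofs of Bernoulli's inequality for a positive integer exponent $a_{i}$; we can use this inequality directly:

$$
\left(1+\frac{x}{a_{i}}\right)^{a_{i}} \geqslant 1+a_{i} \cdot \frac{x}{a_{i}}=1+x,
$$

and so

$$
x+a_{i}=a_{i}\left(1+\frac{x}{a_{i}}\right) \geqslant a_{i}(1+x)^{1 / a_{i}},
$$

or its (reversed) formulation, with exponent $1 / a_{i} \leqslant 1$ :

$$
(1+x)^{1 / a_{i}} \leqslant 1+\frac{1}{a_{i}} \cdot x=\frac{x+a_{i}}{a_{i}} \Longrightarrow a_{i}(1+x)^{1 / a_{i}} \leqslant x+a_{i} .
$$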

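Solution 2. We will prove that, in fact, all coefficients of the polynomial $P(x)$ are non-positive, and at least one of them is negative, which implies that $P(x)<0$ for $x>0$.

Indeed, since $a_{j} \geqslant 1$ for all $j$ and $a_{j}>1$ for some $j$ (since $a_{1} a_{2} \ldots a_{n}=M>1$ ), we have $k=\frac{1}{a_{1}}+\frac{1}{a_{2}}+\cdots+\frac{1}{a_{n}}<n$, so the coefficient of $x^{n}$ in $P(x)$ is $-1<0$. Moreover, the coefficient of $x^{r}$ in $P(x)$ is negative for $k<r \leqslant n=\operatorname{deg}(P)$.

For $0 \leqslant r \leqslant k$, the coefficient of $x^{r}$ in $P(x)$ is

$$
M \cdot\binom{k}{r}-\sum_{1 \leqslant i_{1}<i_{2}<\cdots<i_{n-r} \leqslant n} a_{i_{1}} a_{i_{2}} \cdots a_{i_{n-r}}=a_{1} a_{2} \cdots a_{n} \cdot\left(\binom{k}{r}-\sum_{1 \leqslant j_{1}<j_{2}<\cdots<j_{r} \leqslant n} \frac{1}{a_{j_{1}} a_{j_{2}} \cdots a_{j_{r}}}\right),
$$

which is non-positive iff

$$
\binom{k}{r} \leqslant \sum_{1 \leqslant j_{1}<j_{2}<\cdots<j_{r} \leqslant n} \frac{1}{a_{j_{1}} a_{j_{2}} \cdots a_{j_{r}}} . \tag{2}
$$

We will prove (2) by induction on $r$. For $r=0$ it is an equality because the constant term of $P(x)$ is $P(0)=0$, and if $r=1$, (2) becomes $k=\sum_{i=1}^{n} \frac{1}{a_{i}}$. For $r>1$, if (2) is true for a given $r<k$, we have

$$
\binom{k}{r+1}=\frac{k-r}{r+1} \cdot\binom{k}{r} \leqslant \frac{k-r}{r+1} \cdot \sum_{1 \leqslant j_{1}<j_{2}<\cdots<j_{r} \leqslant n} \frac{1}{a_{j_{1}} a_{j_{2}} \cdots a_{j_{r}}},
$$

and it suffices to prove that

$$
\frac{k-r}{r+1} \cdot \sum_{1 \leqslant j_{1}<j_{2}<\cdots<j_{r} \leqslant n} \frac{1}{a_{j_{1}} a_{j_{2}} \cdots a_{j_{r}}} \leqslant \sum_{1 \leqslant j_{1}<\cdots<j_{r}<j_{r+1} \leqslant n} \frac{1}{a_{j_{1}} a_{j_{2}} \cdots a_{j_{r}} a_{j_{r+1}}},
$$

which is equivalent to

$$
\left(\frac{1}{a_{1}}+\frac{1}{a_{2}}+\cdots+\frac{1}{a_{n}}-r\right) \sum_{1 \leqslant j_{1}<j_{2}<\cdots<j_{r} \leqslant n} \frac{1}{a_{j_{1}} a_{j_{2}} \cdots a_{j_{r}}} \leqslant(r+1) \sum_{1 \leqslant j_{1}<\cdots<j_{r}<j_{r+1} \leqslant n} \frac{1}{a_{j_{1}} a_{j_{2}} \cdots a_{j_{r}} a_{j_{r+1}}} .
$$

Since there are $r+1$ ways to choose a fraction $\frac{1}{a_{j_{i}}}$ from $\frac{1}{a_{j_{1}} a_{j_{2}} \cdots a_{j_{r}} a_{j_{r+1}}}$ to factor out, every term $\frac{1}{a_{j_{1}} a_{j_{2}} \cdots a_{j_{r}} a_{j_{r+1}}}$ in the right hand side appears exactly $r+1$ times in the product

$$
\left(\frac{1}{a_{1}}+\frac{1}{a_{2}}+\cdots+\frac{1}{a_{n}}\right) \sum_{1 \leqslant j_{1}<j_{2}<\cdots<j_{r} \leqslant n} \frac{1}{a_{j_{1}} a_{j_{2}} \cdots a_{j_{r}}} .
$$

Hence all terms in the right hand side cancel out. The remaining terms in the left hand side can be grouped in sums of the type

$$
\frac{1}{a_{j_{1}}^{2} a_{j_{2}} \cdots a_{j_{r}}}+\frac{1}{a_{j_{1}} a_{j_{2}}^{2} \cdots a_{j_{r}}}+\cdots+\frac{1}{a_{j_{1}} a_{j_{2}} \cdots a_{j_{r}}^{2}}-\frac{r}{a_{j_{1}} a_{j_{2}} \cdots a_{j_{r}}}=\frac{1}{a_{j_{1}} a_{j_{2}} \cdots a_{j_{r}}}\left(\frac{1}{a_{j_{1}}}+\frac{1}{a_{j_{2}}}+\cdots+\frac{1}{a_{j_{r}}}-r\right),
$$

which are all non-positive because $a_{i} \geqslant 1 \Longrightarrow \frac{1}{a_{i}} \leqslant 1, i=1,2, \ldots, n$.

Comment 2. The result is valid for any real numbers $a_{i}, i=1,2, \ldots, n$ with $a_{i} \geqslant 1$ and product $M$ greater than 1. A variation of Solution 1, namely using weighted AM-GM (or the Bernoulli inequality for real exponents), actually proves that $P(x)<0$ for $x>-1$ and $x \neq 0$.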
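The claim of Solution 2, that every coefficient of $P(x)=M(x+1)^{k}-\prod_{i}\left(x+a_{i}\right)$ is non-positive and at least one is negative, can be illustrated on small examples. The sketch below expands $P$ with integer arithmetic; the helper name `coefficients_of_P` and the chosen tuples $\left(a_{1}, \ldots, a_{n}\right)$ are assumptions made for illustration only.

```python
from fractions import Fraction
from math import comb, prod


def coefficients_of_P(a):
    """Coefficients of P(x) = M(x+1)^k - prod(x + a_i), lowest degree first."""
    k_frac = sum(Fraction(1, a_i) for a_i in a)
    if k_frac.denominator != 1:
        raise ValueError("the reciprocals must sum to an integer k")
    k, M, n = int(k_frac), prod(a), len(a)

    # M(x+1)^k via binomial coefficients, padded to degree n (k <= n always).
    first = [M * comb(k, r) for r in range(k + 1)] + [0] * (n - k)

    # prod(x + a_i), built up one linear factor at a time.
    second = [1]
    for a_i in a:
        shifted = [0] + second                    # x * second
        scaled = [a_i * c for c in second] + [0]  # a_i * second
        second = [s + t for s, t in zip(shifted, scaled)]

    return [u - v for u, v in zip(first, second)]


# Each tuple has an integer reciprocal sum k and product M > 1.
for a in [(2, 2), (2, 3, 6), (2, 2, 2, 2), (1, 2, 2), (3, 3, 3)]:
    coeffs = coefficients_of_P(a)
    assert all(c <= 0 for c in coeffs) and any(c < 0 for c in coeffs)
```

For instance, $\left(a_{1}, a_{2}, a_{3}\right)=(2,3,6)$ gives $k=1$, $M=36$, and $P(x)=-x^{3}-11 x^{2}$.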

A2. Let $q$ be a real number. Gugu has a napkin with ten distinct real numbers written on it, and he writes the following three lines of real numbers on the blackboard:

- In the first line, Gugu writes down every number of the form $a-b$, where $a$ and $b$ are two (not necessarily distinct) numbers on his napkin.

- In the second line, Gugu writes down every number of the form $q a b$, where $a$ and $b$ are two (not necessarily distinct) numbers from the first line.

- In the third line, Gugu writes down every number of the form $a^{2}+b^{2}-c^{2}-d^{2}$, where $a, b, c, d$ are four (not necessarily distinct) numbers from the first line.

Determine all values of $q$ such that, regardless of the numbers on Gugu's napkin, every number in the second line is also a number in the third line. (Austria)

Answer: $-2, 0, 2$.

Solution 1. Call a number $q$ good if every number in the second line appears in the third line unconditionally. We first show that the numbers 0 and $\pm 2$ are good. The third line necessarily contains 0, so 0 is good. For any two numbers $a, b$ in the first line, write $a=x-y$ and $b=u-v$, where $x, y, u, v$ are (not necessarily distinct) numbers on the napkin. We may now write

$$
2 a b=2(x-y)(u-v)=(x-v)^{2}+(y-u)^{2}-(x-u)^{2}-(y-v)^{2},
$$

which shows that 2 is good. By negating both sides of the above equation, we also see that -2 is good.
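The step showing that 2 is good rests on the identity $2(x-y)(u-v)=(x-v)^{2}+(y-u)^{2}-(x-u)^{2}-(y-v)^{2}$, whose right-hand side has the third-line form because $x-v$, $y-u$, $x-u$, and $y-v$ are all first-line numbers. A minimal sketch that checks this identity on a small integer grid follows; the function name `third_line_form` is a hypothetical helper.

```python
from itertools import product


def third_line_form(x, y, u, v):
    """(x-v)^2 + (y-u)^2 - (x-u)^2 - (y-v)^2, a number of the third-line type."""
    return (x - v) ** 2 + (y - u) ** 2 - (x - u) ** 2 - (y - v) ** 2


# Exhaustive check over all integer quadruples with entries in [-4, 4].
for x, y, u, v in product(range(-4, 5), repeat=4):
    a, b = x - y, u - v
    assert 2 * a * b == third_line_form(x, y, u, v)
```

Both sides are polynomials of degree 2 in each variable, so agreement on a grid of this size is consistent with the algebraic expansion, though the expansion itself is the actual proof.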

We now show that $-2,0$, and 2 are the only good numbers. Assume for sake of contradiction that $q$ is a good number, where $q \notin\{-2,0,2\}$. We now consider some particular choices of numbers on Gugu's napkin to arrive at a contradiction.

Assume that the napkin contains the integers $1,2, \ldots, 10$. Then, the first line contains the integers $-9,-8, \ldots, 9$. The second line then contains $q$ and $81 q$, so the third line must also contain both of them. But the third line only contains integers, so $q$ must be an integer. Furthermore, the third line contains no number greater than $162=9^{2}+9^{2}-0^{2}-0^{2}$ or less than -162 , so we must have $-162 \leqslant 81 q \leqslant 162$. This shows that the only possibilities for $q$ are $\pm 1$.

Now assume that $q= \pm 1$. Let the napkin contain $0,1,4,8,12,16,20,24,28,32$. The first line contains $\pm 1$ and $\pm 4$, so the second line contains $\pm 4$. However, for every number $a$ in the first line, $a \not \equiv 2(\bmod 4)$, so we may conclude that $a^{2} \equiv 0,1(\bmod 8)$. Consequently, every number in the third line must be congruent to $-2,-1,0,1,2(\bmod 8)$; in particular, $\pm 4$ cannot be in the third line, which is a contradiction.
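The second napkin, $0,1,4,8,12,16,20,24,28,32$, can be explored directly. The sketch below builds the first line, confirms that no first-line number is $\equiv 2(\bmod 4)$ and that every square is $\equiv 0,1(\bmod 8)$, and then searches the third line for $\pm 4$. The helper names `lines_for` and `in_third_line` are assumptions introduced for this illustration.

```python
def lines_for(napkin):
    """Return the first line and the set of all sums a^2 + b^2 over the first line."""
    first = {a - b for a in napkin for b in napkin}
    pair_sums = {a * a + b * b for a in first for b in first}
    return first, pair_sums


def in_third_line(value, pair_sums):
    """True if value = (a^2 + b^2) - (c^2 + d^2) for some first-line a, b, c, d."""
    return any(value + s in pair_sums for s in pair_sums)


napkin = [0, 1, 4, 8, 12, 16, 20, 24, 28, 32]
first, pair_sums = lines_for(napkin)

# Residue facts used in the solution.
assert all(a % 4 != 2 for a in first)
assert all((a * a) % 8 in (0, 1) for a in first)

# For q = 1 and q = -1 the second line contains 4 and -4, the third does not.
for q in (1, -1):
    second = {q * a * b for a in first for b in first}
    assert 4 in second and -4 in second
    assert not in_third_line(4, pair_sums)
    assert not in_third_line(-4, pair_sums)
```

Storing the sums $a^{2}+b^{2}$ once and testing differences keeps the search quadratic in the number of such sums instead of enumerating all quadruples.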

Solution 2. Let $q$ be a good number, as defined in the first solution, and define the polynomial $P\left(x_{1}, \ldots, x_{10}\right)$ as

$$
\prod_{a_{i} \in S}\left(q\left(x_{1}-x_{2}\right)\left(x_{3}-x_{4}\right)-\left(a_{1}-a_{2}\right)^{2}-\left(a_{3}-a_{4}\right)^{2}+\left(a_{5}-a_{6}\right)^{2}+\left(a_{7}-a_{8}\right)^{2}\right),
$$

where $S=\left\{x_{1}, \ldots, x_{10}\right\}$. We claim that $P\left(x_{1}, \ldots, x_{10}\right)=0$ for every choice of real numbers $\left(x_{1}, \ldots, x_{10}\right)$. If any two of the $x_{i}$ are equal, then $P\left(x_{1}, \ldots, x_{10}\right)=0$ trivially. If no two are equal, assume that Gugu has those ten numbers $x_{1}, \ldots, x_{10}$ on his napkin. Then, the number $q\left(x_{1}-x_{2}\right)\left(x_{3}-x_{4}\right)$ is in the second line, so we must have some $a_{1}, \ldots, a_{8}$ so that

$$
q\left(x_{1}-x_{2}\right)\left(x_{3}-x_{4}\right)-\left(a_{1}-a_{2}\right)^{2}-\left(a_{3}-a_{4}\right)^{2}+\left(a_{5}-a_{6}\right)^{2}+\left(a_{7}-a_{8}\right)^{2}=0,
$$

and hence $P\left(x_{1}, \ldots, x_{10}\right)=0$. Since every polynomial that evaluates to zero everywhere is the zero polynomial, and the product of two nonzero polynomials is necessarily nonzero, we may define $F$ such that

$$
F\left(x_{1}, \ldots, x_{10}\right) \equiv q\left(x_{1}-x_{2}\right)\left(x_{3}-x_{4}\right)-\left(a_{1}-a_{2}\right)^{2}-\left(a_{3}-a_{4}\right)^{2}+\left(a_{5}-a_{6}\right)^{2}+\left(a_{7}-a_{8}\right)^{2} \equiv 0
$$

for some particular choice $a_{i} \in S$. Each of the sets $\left\{a_{1}, a_{2}\right\},\left\{a_{3}, a_{4}\right\},\left\{a_{5}, a_{6}\right\}$, and $\left\{a_{7}, a_{8}\right\}$ is equal to at most one of the four sets $\left\{x_{1}, x_{3}\right\},\left\{x_{2}, x_{3}\right\},\left\{x_{1}, x_{4}\right\}$, and $\left\{x_{2}, x_{4}\right\}$. Thus, without loss of generality, we may assume that at most one of the sets $\left\{a_{1}, a_{2}\right\},\left\{a_{3}, a_{4}\right\},\left\{a_{5}, a_{6}\right\}$, and $\left\{a_{7}, a_{8}\right\}$ is equal to $\left\{x_{1}, x_{3}\right\}$. Let $u_{1}, u_{3}, u_{5}, u_{7}$ be the indicator functions for this equality of sets: that is, $u_{i}=1$ if and only if $\left\{a_{i}, a_{i+1}\right\}=\left\{x_{1}, x_{3}\right\}$. By assumption, at least three of the $u_{i}$ are equal to 0.

We now compute the coefficient of $x_{1} x_{3}$ in $F$. It is equal to $q+2\left(u_{1}+u_{3}-u_{5}-u_{7}\right)=0$, and since at least three of the $u_{i}$ are zero, we must have that $q \in\{-2,0,2\}$, as desired.

A3. Let $S$ be a finite set, and let $\mathcal{A}$ be the set of all functions from $S$ to $S$. Let $f$ be an element of $\mathcal{A}$, and let $T=f(S)$ be the image of $S$ under $f$. Suppose that $f \circ g \circ f \neq g \circ f \circ g$ for every $g$ in $\mathcal{A}$ with $g \neq f$. Show that $f(T)=T$. (India)

Solution. For $n \geqslant 1$, denote the $n$-th composition of $f$ with itself by

$$
f^{n} \stackrel{\text { def }}{=} \underbrace{f \circ f \circ \cdots \circ f}_{n \text { times }} .
$$

By hypothesis, if $g \in \mathcal{A}$ satisfies $f \circ g \circ f=g \circ f \circ g$, then $g=f$. A natural idea is to try to plug in $g=f^{n}$ for some $n$ in the expression $f \circ g \circ f=g \circ f \circ g$ in order to get $f^{n}=f$, which solves the problem:

Claim. If there exists $n \geqslant 3$ such that $f^{n+2}=f^{2 n+1}$, then the restriction $f: T \rightarrow T$ of $f$ to $T$ is a bijection.

Proof. Indeed, by hypothesis, $f^{n+2}=f^{2 n+1} \Longleftrightarrow f \circ f^{n} \circ f=f^{n} \circ f \circ f^{n} \Longrightarrow f^{n}=f$. Since $n-2 \geqslant 1$, the image of $f^{n-2}$ is contained in $T=f(S)$, hence $f^{n-2}$ restricts to a function $f^{n-2}: T \rightarrow T$. This is the inverse of $f: T \rightarrow T$. In fact, given $t \in T$, say $t=f(s)$ with $s \in S$, we have

$$
t=f(s)=f^{n}(s)=f^{n-2}(f(t))=f\left(f^{n-2}(t)\right), \quad \text { i.e., } \quad f^{n-2} \circ f=f \circ f^{n-2}=\mathrm{id} \text { on } T
$$

(here id stands for the identity function). Hence, the restriction $f: T \rightarrow T$ of $f$ to $T$ is bijective with inverse given by $f^{n-2}: T \rightarrow T$.

It remains to show that $n$ as in the claim exists. For that, define

$$
S_{m} \stackrel{\text { def }}{=} f^{m}(S) \quad\left(S_{m} \text { is the image of } f^{m}\right) .
$$

Clearly the image of $f^{m+1}$ is contained in the image of $f^{m}$, i.e., there is a descending chain of subsets of $S$

$$
S \supseteq S_{1} \supseteq S_{2} \supseteq S_{3} \supseteq S_{4} \supseteq \cdots,
$$

which must eventually stabilise since $S$ is finite, i.e., there is a $k \geqslant 1$ such that

$$
S_{k}=S_{k+1}=S_{k+2}=S_{k+3}=\cdots \stackrel{\text { def }}{=} S_{\infty} .
$$

Hence $f$ restricts to a surjective function $f: S_{\infty} \rightarrow S_{\infty}$, which is also bijective since $S_{\infty} \subseteq S$ is finite. To sum up, $f: S_{\infty} \rightarrow S_{\infty}$ is a permutation of the elements of the finite set $S_{\infty}$, hence there exists an integer $r \geqslant 1$ such that $f^{r}=\mathrm{id}$ on $S_{\infty}$ (for example, we may choose $r=\left|S_{\infty}\right|!$ ). In other words,

$$
f^{m+r}=f^{m} \text { on } S \text { for all } m \geqslant k . \tag{*}
$$

Clearly, (*) also implies that $f^{m+t r}=f^{m}$ for all integers $t \geqslant 1$ and $m \geqslant k$. So, to find $n$ as in the claim and finish the problem, it is enough to choose $m$ and $t$ in order to ensure that there exists $n \geqslant 3$ satisfying

$$
\begin{cases}2 n+1=m+t r \\ n+2=m\end{cases} \Longleftrightarrow \begin{cases}m=3+t r \\ n=m-2 .\end{cases}
$$

This can be clearly done by choosing $m$ large enough with $m \equiv 3(\bmod r)$. For instance, we may take $n=2 k r+1$, so that

$$
f^{n+2}=f^{2 k r+3}=f^{4 k r+3}=f^{2 n+1},
$$

where the middle equality follows by (*) since $2 k r+3 \geqslant k$.
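For very small sets, the statement of A3 can be confirmed by exhaustive search: enumerate every $f: S \rightarrow S$, keep those for which no $g \neq f$ satisfies $f \circ g \circ f=g \circ f \circ g$, and check $f(T)=T$ for each. The sketch below does this with functions encoded as tuples; the names `compose`, `satisfies_hypothesis`, and `check` are hypothetical helpers, and the encoding $S=\{0,1, \ldots, n-1\}$ is an assumption of the sketch.

```python
from itertools import product


def compose(p, q):
    """Return p∘q as a tuple, i.e. (p∘q)(x) = p[q[x]]."""
    return tuple(p[q[x]] for x in range(len(q)))


def satisfies_hypothesis(f, all_maps):
    """True if f∘g∘f != g∘f∘g for every g != f."""
    for g in all_maps:
        if g != f and compose(f, compose(g, f)) == compose(g, compose(f, g)):
            return False
    return True


def check(n):
    """Return every f on {0,...,n-1} meeting the hypothesis, asserting f(T) = T."""
    all_maps = list(product(range(n), repeat=n))
    witnesses = []
    for f in all_maps:
        if satisfies_hypothesis(f, all_maps):
            T = set(f)                        # T = f(S)
            assert {f[t] for t in T} == T     # the claim f(T) = T
            witnesses.append(f)
    return witnesses


witnesses = check(3)
```

On a three-element set the 3-cycle `(1, 2, 0)` is one function that meets the hypothesis, so the check is not vacuous.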

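A4. A sequence of real numbers $a_{1}, a_{2}, \ldots$ satisfies the relation

$$
a_{n}=-\max _{i+j=n}\left(a_{i}+a_{j}\right) \quad \text { for all } n>2017 .
$$

Prove that this sequence is bounded, i.e., there is a constant $M$ such that $\left|a_{n}\right| \leqslant M$ for all positive integers $n$. (Russia)

Solution 1. Set $D=2017$. Denote

$$
M_{n}=\max _{k<n} a_{k} \quad \text { and } \quad m_{n}=-\min _{k<n} a_{k}=\max _{k<n}\left(-a_{k}\right) .
$$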

Clearly, the sequences $\left(m_{n}\right)$ and $\left(M_{n}\right)$ are nondecreasing. We need to prove that both are bounded.

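Consider an arbitrary $n>D$; our first aim is to bound $a_{n}$ in terms of $m_{n}$ and $M_{n}$.

(i) There exist indices $p$ and $q$ such that $a_{n}=-\left(a_{p}+a_{q}\right)$ and $p+q=n$. Since $a_{p}, a_{q} \leqslant M_{n}$, we have $a_{n} \geqslant-2 M_{n}$.

(ii) On the other hand, choose an index $k<n$ such that $a_{k}=M_{n}$. Then, we have

$$
a_{n}=-\max _{\ell<n}\left(a_{n-\ell}+a_{\ell}\right) \leqslant-\left(a_{n-k}+a_{k}\right)=-a_{n-k}-M_{n} \leqslant m_{n}-M_{n} .
$$

Summarizing (i) and (ii), we get

$$
-2 M_{n} \leqslant a_{n} \leqslant m_{n}-M_{n},
$$

whence

$$
m_{n} \leqslant m_{n+1} \leqslant \max \left\{m_{n}, 2 M_{n}\right\} \quad \text { and } \quad M_{n} \leqslant M_{n+1} \leqslant \max \left\{M_{n}, m_{n}-M_{n}\right\} . \tag{1}
$$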

Now, say that an index $n>D$ is lucky if $m_{n} \leqslant 2 M_{n}$. Two cases are possible.

Case 1. Assume that there exists a lucky index $n$. In this case, (1) yields $m_{n+1} \leqslant 2 M_{n}$ and $M_{n} \leqslant M_{n+1} \leqslant M_{n}$. Therefore, $M_{n+1}=M_{n}$ and $m_{n+1} \leqslant 2 M_{n}=2 M_{n+1}$. So, the index $n+1$ is also lucky, and $M_{n+1}=M_{n}$. Applying the same arguments repeatedly, we obtain that all indices $k>n$ are lucky (i.e., $m_{k} \leqslant 2 M_{k}$ for all these indices), and $M_{k}=M_{n}$ for all such indices. Thus, all of the $m_{k}$ and $M_{k}$ are bounded by $2 M_{n}$.

Case 2. Assume now that there is no lucky index, i.e., $2 M_{n}<m_{n}$ for all $n>D$. Then (1) shows that for all $n>D$ we have $m_{n} \leqslant m_{n+1} \leqslant m_{n}$, so $m_{n}=m_{D+1}$ for all $n>D$. Since $M_{n}<m_{n} / 2$ for all such indices, all of the $m_{n}$ and $M_{n}$ are bounded by $m_{D+1}$.

Thus, in both cases the sequences $\left(m_{n}\right)$ and $\left(M_{n}\right)$ are bounded, as desired.

Solution 2. As in the previous solution, let $D=2017$. If the sequence is bounded above, say, by $Q$, then we have that $a_{n} \geqslant \min \left\{a_{1}, \ldots, a_{D},-2 Q\right\}$ for all $n$, so the sequence is bounded. Assume for sake of contradiction that the sequence is not bounded above. Let $\ell=\min \left\{a_{1}, \ldots, a_{D}\right\}$, and $L=\max \left\{a_{1}, \ldots, a_{D}\right\}$. Call an index $n$ good if the following criteria hold:

$$
a_{n}>a_{i} \text { for each } i<n, \quad a_{n}>-2 \ell, \quad \text { and } \quad n>D . \tag{2}
$$

We first show that there must be some good index $n$. By assumption, we may take an index $N$ such that $a_{N}>\max \{L,-2 \ell\}$. Choose $n$ minimally such that $a_{n}=\max \left\{a_{1}, a_{2}, \ldots, a_{N}\right\}$. Now, the first condition in (2) is satisfied because of the minimality of $n$, and the second and third conditions are satisfied because $a_{n} \geqslant a_{N}>L,-2 \ell$, and $L \geqslant a_{i}$ for every $i$ such that $1 \leqslant i \leqslant D$.

Let $n$ be a good index. We derive a contradiction. We have that

$$
a_{n}+a_{u}+a_{v} \leqslant 0 \tag{3}
$$

whenever $u+v=n$. We define the index $u$ to maximize $a_{u}$ over $1 \leqslant u \leqslant n-1$, and let $v=n-u$. Then, we note that $a_{u} \geqslant a_{v}$ by the maximality of $a_{u}$.

Assume first that $v \leqslant D$. Then, we have that

$$
a_{n} \leqslant-\left(a_{u}+a_{v}\right) \leqslant-2 \ell,
$$

because $a_{u} \geqslant a_{v} \geqslant \ell$. But this contradicts our assumption that $a_{n}>-2 \ell$ in the second criterion of (2).

Now assume that $v>D$. Then, there exist some indices $w_{1}, w_{2}$ summing up to $v$ such that

$$
a_{v}+a_{w_{1}}+a_{w_{2}}=0 .
$$

But combining this with (3), we have

$$
a_{n}+a_{u} \leqslant a_{w_{1}}+a_{w_{2}} .
$$

Because $a_{n}>a_{u}$, we have that $\max \left\{a_{w_{1}}, a_{w_{2}}\right\}>a_{u}$. But since each of the $w_{i}$ is less than $v$, this contradicts the maximality of $a_{u}$.
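The recurrence $a_{n}=-\max _{i+j=n}\left(a_{i}+a_{j}\right)$ and the two-sided bound $-2 M_{n} \leqslant a_{n} \leqslant m_{n}-M_{n}$ from Solution 1 (with $M_{n}=\max _{k<n} a_{k}$ and $m_{n}=-\min _{k<n} a_{k}$) can be observed numerically. The sketch below uses a small number of free initial terms in place of $D=2017$, which is an assumption made only to keep the example fast; the function names `extend` and `bounds_hold` are hypothetical.

```python
def extend(initial, length):
    """Extend a_1..a_D (D = len(initial)) to a_1..a_length via the recurrence.

    The returned list is 1-indexed: index 0 holds None as a placeholder.
    """
    a = [None] + list(initial)
    for n in range(len(initial) + 1, length + 1):
        a.append(-max(a[i] + a[n - i] for i in range(1, n)))
    return a


def bounds_hold(a, D):
    """Check -2*M_n <= a_n <= m_n - M_n for every computed index n > D."""
    for n in range(D + 1, len(a)):
        earlier = a[1:n]
        m_n, M_n = -min(earlier), max(earlier)
        if not (-2 * M_n <= a[n] <= m_n - M_n):
            return False
    return True


# Five arbitrary integer starting values stand in for a_1, ..., a_D.
sequence = extend([3, -1, 4, 1, -5], 300)
assert bounds_hold(sequence, 5)
```

With the single starting value $a_{1}=1$ the recurrence produces the alternating sequence $1,-2,1,-2, \ldots$, which is a convenient sanity check on the implementation.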

Comment 1. We present two harder versions of this problem below.

Version 1. Let $a_{1}, a_{2}, \ldots$ be a sequence of numbers that satisfies the relation

$$
a_{n}=-\max _{i+j+k=n}\left(a_{i}+a_{j}+a_{k}\right) \quad \text { for all } n>2017 .
$$

Then, this sequence is bounded.

Proof. Set $D=2017$. Denote

$$
M_{n}=\max _{k<n} a_{k} \quad \text { and } \quad m_{n}=-\min _{k<n} a_{k}=\max _{k<n}\left(-a_{k}\right) .
$$

Clearly, the sequences $\left(m_{n}\right)$ and $\left(M_{n}\right)$ are nondecreasing. We need to prove that both are bounded. Consider an arbitrary $n>2 D$; our first aim is to bound $a_{n}$ in terms of $m_{i}$ and $M_{i}$. Set $k=\lfloor n / 2\rfloor$.

(i) Choose indices $p, q$, and $r$ such that $a_{n}=-\left(a_{p}+a_{q}+a_{r}\right)$ and $p+q+r=n$. Without loss of generality, $p \geqslant q \geqslant r$.

Assume that $p \geqslant k+1(>D)$; then $p>q+r$. Hence

$$
-a_{p}=\max _{i_{1}+i_{2}+i_{3}=p}\left(a_{i_{1}}+a_{i_{2}}+a_{i_{3}}\right) \geqslant a_{q}+a_{r}+a_{p-q-r},
$$

and therefore $a_{n}=-\left(a_{p}+a_{q}+a_{r}\right) \geqslant\left(a_{q}+a_{r}+a_{p-q-r}\right)-a_{q}-a_{r}=a_{p-q-r} \geqslant-m_{n}$. Otherwise, we have $k \geqslant p \geqslant q \geqslant r$. Since $n<3 k$, we have $r<k$. Then $a_{p}, a_{q} \leqslant M_{k+1}$ and $a_{r} \leqslant M_{k}$, whence $a_{n} \geqslant-2 M_{k+1}-M_{k}$.

Thus, in any case $a_{n} \geqslant-\max \left\{m_{n}, 2 M_{k+1}+M_{k}\right\}$.

(ii) On the other hand, choose $p \leqslant k$ and $q \leqslant k-1$ such that $a_{p}=M_{k+1}$ and $a_{q}=M_{k}$. Then $p+q<n$, so $a_{n} \leqslant-\left(a_{p}+a_{q}+a_{n-p-q}\right)=-a_{n-p-q}-M_{k+1}-M_{k} \leqslant m_{n}-M_{k+1}-M_{k}$.

To summarize,

$$
-\max \left\{m_{n}, 2 M_{k+1}+M_{k}\right\} \leqslant a_{n} \leqslant m_{n}-M_{k+1}-M_{k},
$$

whence

$$
m_{n} \leqslant m_{n+1} \leqslant \max \left\{m_{n}, 2 M_{k+1}+M_{k}\right\} \quad \text { and } \quad M_{n} \leqslant M_{n+1} \leqslant \max \left\{M_{n}, m_{n}-M_{k+1}-M_{k}\right\} . \tag{4}
$$

Now, say that an index $n>2 D$ is lucky if $m_{n} \leqslant 2 M_{\lfloor n / 2\rfloor+1}+M_{\lfloor n / 2\rfloor}$. Two cases are possible.

Case 1. Assume that there exists a lucky index $n$; set $k=\lfloor n / 2\rfloor$. In this case, (4) yields $m_{n+1} \leqslant 2 M_{k+1}+M_{k}$ and $M_{n} \leqslant M_{n+1} \leqslant M_{n}$ (the last relation holds, since $m_{n}-M_{k+1}-M_{k} \leqslant\left(2 M_{k+1}+M_{k}\right)-M_{k+1}-M_{k}=M_{k+1} \leqslant M_{n}$ ). Therefore, $M_{n+1}=M_{n}$ and $m_{n+1} \leqslant 2 M_{k+1}+M_{k}$; the last relation shows that the index $n+1$ is also lucky.

Thus, all indices $N>n$ are lucky, and $M_{N}=M_{n} \geqslant m_{N} / 3$, whence all the $m_{N}$ and $M_{N}$ are bounded by $3 M_{n}$.

Case 2. Conversely, assume that there is no lucky index, i.e., $2 M_{\lfloor n / 2\rfloor+1}+M_{\lfloor n / 2\rfloor}<m_{n}$ for all $n>2 D$. Then (4) shows that for all $n>2 D$ we have $m_{n} \leqslant m_{n+1} \leqslant m_{n}$, i.e., $m_{N}=m_{2 D+1}$ for all $N>2 D$. Since $M_{N}<m_{2 N+1} / 3$ for all such indices, all the $m_{N}$ and $M_{N}$ are bounded by $m_{2 D+1}$.

Thus, in both cases the sequences $\left(m_{n}\right)$ and $\left(M_{n}\right)$ are bounded, as desired. Version 2. Let $a_{1}, a_{2}, \ldots$ be a sequence of numbers that satisfies the relation

Then, this sequence is bounded. Proof. As in the solutions above, let $D=2017$. If the sequence is bounded above, say, by $Q$, then we have that $a_{n} \geqslant \min \left{a_{1}, \ldots, a_{D},-k Q\right}$ for all $n$, so the sequence is bounded. Assume for sake of contradiction that the sequence is not bounded above. Let $\ell=\min \left{a_{1}, \ldots, a_{D}\right}$, and $L=\max \left{a_{1}, \ldots, a_{D}\right}$. Call an index $n$ good if the following criteria hold:

We first show that there must be some good index $n$. By assumption, we may take an index $N$ such that $a_{N}>\max {L,-k \ell}$. Choose $n$ minimally such that $a_{n}=\max \left{a_{1}, a_{2}, \ldots, a_{N}\right}$. Now, the first condition is satisfied because of the minimality of $n$, and the second and third conditions are satisfied because $a_{n} \geqslant a_{N}>L,-k \ell$, and $L \geqslant a_{i}$ for every $i$ such that $1 \leqslant i \leqslant D$.

Let $n$ be a good index. We derive a contradiction. We have that

whenever $v_{1}+\cdots+v_{k}=n$. We define the sequence of indices $v_{1}, \ldots, v_{k-1}$ to greedily maximize $a_{v_{1}}$, then $a_{v_{2}}$, and so forth, selecting only from indices such that the equation $v_{1}+\cdots+v_{k}=n$ can be satisfied by positive integers $v_{1}, \ldots, v_{k}$. More formally, we define them inductively so that the following criteria are satisfied by the $v_{i}$ :

- $1 \leqslant v_{i} \leqslant n-(k-i)-\left(v_{1}+\cdots+v_{i-1}\right)$.

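- $a_{v_{i}}$ is maximal among all choices of $v_{i}$ satisfying the first criterion.

First of all, we note that for each $i$, the first criterion is always satisfiable by some $v_{i}$, because we are guaranteed that

$$v_{i-1} \leqslant n-(k-(i-1))-\left(v_{1}+\cdots+v_{i-2}\right),$$

which implies

$$1 \leqslant n-(k-i)-\left(v_{1}+\cdots+v_{i-1}\right) .$$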

Secondly, the sum $v_{1}+\cdots+v_{k-1}$ is at most $n-1$. Define $v_{k}=n-\left(v_{1}+\cdots+v_{k-1}\right)$. Then, (6) is satisfied by the $v_{i}$. We also note that $a_{v_{i}} \geqslant a_{v_{j}}$ for all $i<j$; otherwise, in the definition of $v_{i}$, we could have selected $v_{j}$ instead.

Assume first that $v_{k} \leqslant D$. Then, from (6), we have that

$$a_{n} \leqslant-\left(a_{v_{1}}+\cdots+a_{v_{k}}\right) \leqslant-k \ell$$

by using that $a_{v_{1}} \geqslant \cdots \geqslant a_{v_{k}} \geqslant \ell$. But this contradicts our assumption that $a_{n}>-k \ell$ in the second criterion of (5).

Now assume that $v_{k}>D$. Then we must have some indices $w_{1}, \ldots, w_{k}$ summing up to $v_{k}$ such that

$$a_{v_{k}}=-\left(a_{w_{1}}+\cdots+a_{w_{k}}\right) .$$

But combining this with (6), we have

$$a_{n}+a_{v_{1}}+\cdots+a_{v_{k-1}} \leqslant a_{w_{1}}+\cdots+a_{w_{k}} .$$

Because $a_{n}>a_{v_{1}} \geqslant \cdots \geqslant a_{v_{k-1}}$, we have that $\max \left\{a_{w_{1}}, \ldots, a_{w_{k}}\right\}>a_{v_{k-1}}$. But since each of the $w_{i}$ is less than $v_{k}$, in the definition of $v_{k-1}$ we could have chosen one of the $w_{i}$ instead, which is a contradiction.

Comment 2. It seems that each sequence satisfying the condition in Version 2 is eventually periodic, at least when its terms are integers.

However, up to this moment, the Problem Selection Committee is not aware of a proof for this fact (even in the case $k=2$ ).

A5. An integer $n \geqslant 3$ is given. We call an $n$-tuple of real numbers $\left(x_{1}, x_{2}, \ldots, x_{n}\right)$ Shiny if for each permutation $y_{1}, y_{2}, \ldots, y_{n}$ of these numbers we have

$$\sum_{i=1}^{n-1} y_{i} y_{i+1}=y_{1} y_{2}+y_{2} y_{3}+\cdots+y_{n-1} y_{n} \geqslant-1 .$$

Find the largest constant $K=K(n)$ such that

$$\sum_{1 \leqslant i<j \leqslant n} x_{i} x_{j} \geqslant K$$

holds for every Shiny $n$-tuple $\left(x_{1}, x_{2}, \ldots, x_{n}\right)$.

(Serbia)

Answer: $K=-(n-1) / 2$.

Solution 1. First of all, we show that we may not take a larger constant $K$. Let $t$ be a positive number, and take $x_{2}=x_{3}=\cdots=t$ and $x_{1}=-1 /(2 t)$. Then, every product $x_{i} x_{j}$ $(i \neq j)$ is equal to either $t^{2}$ or $-1 / 2$, and at most two of the products $y_{i} y_{i+1}$ involve $x_{1}$. Hence, for every permutation $y_{i}$ of the $x_{i}$, we have

$$y_{1} y_{2}+\cdots+y_{n-1} y_{n} \geqslant(n-3) t^{2}-1 \geqslant-1 .$$

This justifies that the $n$-tuple $\left(x_{1}, \ldots, x_{n}\right)$ is Shiny. Now, we have

$$\sum_{i<j} x_{i} x_{j}=-\frac{n-1}{2}+\frac{(n-1)(n-2)}{2} t^{2} .$$

Thus, as $t$ approaches 0 from above, $\sum_{i<j} x_{i} x_{j}$ gets arbitrarily close to $-(n-1) / 2$. This shows that we may not take $K$ any larger than $-(n-1) / 2$. It remains to show that $\sum_{i<j} x_{i} x_{j} \geqslant-(n-1) / 2$ for any Shiny choice of the $x_{i}$.
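The construction above can be checked numerically. The following is a minimal sketch, assuming the Shiny condition reads $y_{1} y_{2}+\cdots+y_{n-1} y_{n} \geqslant-1$ for every permutation; the helper names `pair_sum`, `is_shiny` and `extremal_tuple` are made up for this illustration. It uses exact rational arithmetic and brute force over all permutations, so it is only practical for small $n$.

```python
from fractions import Fraction
from itertools import combinations, permutations


def pair_sum(xs):
    """Sum of x_i * x_j over all pairs i < j."""
    return sum(a * b for a, b in combinations(xs, 2))


def is_shiny(xs):
    """Brute-force check of the Shiny condition over every permutation."""
    return all(
        sum(ys[i] * ys[i + 1] for i in range(len(ys) - 1)) >= -1
        for ys in permutations(xs)
    )


def extremal_tuple(n, t):
    """The tuple from the text: x_1 = -1/(2t), x_2 = ... = x_n = t."""
    return [Fraction(-1) / (2 * t)] + [t] * (n - 1)


n = 5
for t in (Fraction(1, 2), Fraction(1, 10), Fraction(1, 100)):
    xs = extremal_tuple(n, t)
    # The pair sum should equal -(n-1)/2 + (n-1)(n-2)/2 * t^2.
    print(t, is_shiny(xs), pair_sum(xs))
```

As $t$ shrinks, the printed pair sums decrease towards $-(n-1)/2 = -2$ while the tuple stays Shiny.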

From now onward, assume that $\left(x_{1}, \ldots, x_{n}\right)$ is a Shiny $n$-tuple. Let the $z_{i}(1 \leqslant i \leqslant n)$ be some permutation of the $x_{i}$ to be chosen later. The indices for $z_{i}$ will always be taken modulo $n$. We will first split up the sum $\sum_{i<j} x_{i} x_{j}=\sum_{i<j} z_{i} z_{j}$ into $\lfloor(n-1) / 2\rfloor$ expressions, each of the form $y_{1} y_{2}+\cdots+y_{n-1} y_{n}$ for some permutation $y_{i}$ of the $z_{i}$, and some leftover terms. More specifically, write where $L=z_{1} z_{-1}+z_{2} z_{-2}+\cdots+z_{(n-1) / 2} z_{-(n-1) / 2}$ if $n$ is odd, and $L=z_{1} z_{-1}+z_{1} z_{-2}+z_{2} z_{-2}+$ $\cdots+z_{(n-2) / 2} z_{-n / 2}$ if $n$ is even. We note that for each $p=1,2, \ldots,\lfloor(n-1) / 2\rfloor$, there is some permutation $y_{i}$ of the $z_{i}$ such that

because we may choose $y_{2 i-1}=z_{i+p-1}$ for $1 \leqslant i \leqslant(n+1) / 2$ and $y_{2 i}=z_{p-i}$ for $1 \leqslant i \leqslant n / 2$.

We show (1) graphically for $n=6,7$ in the diagrams below. The edges of the graphs each represent a product $z_{i} z_{j}$, and the dashed and dotted series of lines represents the sum of the edges, which is of the form $y_{1} y_{2}+\cdots+y_{n-1} y_{n}$ for some permutation $y_{i}$ of the $z_{i}$ precisely when the series of lines is a Hamiltonian path. The filled edges represent the summands of $L$.
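The path construction can also be checked mechanically for $n=6,7$. The sketch below is an illustration only: it represents $z_{i}$ by its index modulo $n$ (so $z_{0}=z_{n}$), builds the permutation $y$ from the rule $y_{2 i-1}=z_{i+p-1}$, $y_{2 i}=z_{p-i}$, and verifies that each $p$ gives a Hamiltonian path and that paths for different $p$ share no edge. The function names `path_for` and `check` are hypothetical.

```python
from itertools import combinations


def path_for(n, p):
    """Indices (mod n) of y_1, ..., y_n for the given p."""
    y = [None] * (n + 1)  # 1-based scratch list; y[0] is unused
    for i in range(1, (n + 1) // 2 + 1):
        y[2 * i - 1] = (i + p - 1) % n
    for i in range(1, n // 2 + 1):
        y[2 * i] = (p - i) % n
    return y[1:]


def check(n):
    """Verify the paths and return the edges that no path uses."""
    used = set()
    for p in range(1, (n - 1) // 2 + 1):
        y = path_for(n, p)
        assert sorted(y) == list(range(n))          # y is a permutation
        edges = {frozenset(e) for e in zip(y, y[1:])}
        assert len(edges) == n - 1                  # a Hamiltonian path
        # Every edge joins indices whose sum is 2p-1 or 2p modulo n.
        assert all(sum(e) % n in {(2 * p - 1) % n, (2 * p) % n} for e in edges)
        assert not (edges & used)                   # disjoint from earlier paths
        used |= edges
    all_edges = {frozenset(e) for e in combinations(range(n), 2)}
    return sorted(tuple(sorted(e)) for e in all_edges - used)


for n in (6, 7):
    print(n, check(n))
```

The returned list contains the index pairs left over after removing all paths; these are the products that make up the leftover sum $L$.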

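Now, because the $z_{i}$ are Shiny, each of the $\lfloor(n-1) / 2\rfloor$ path sums is at least $-1$, so (1) yields the following bound:

$$\sum_{i<j} x_{i} x_{j} \geqslant-\left\lfloor\frac{n-1}{2}\right\rfloor+L .$$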

It remains to show that, for each $n$, there exists some permutation $z_{i}$ of the $x_{i}$ such that $L \geqslant 0$ when $n$ is odd, and $L \geqslant-1 / 2$ when $n$ is even. We now split into cases based on the parity of $n$ and provide constructions of the permutations $z_{i}$.

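Since we have not made any assumptions yet about the $x_{i}$, we may now assume without loss of generality that

$$x_{1} \leqslant x_{2} \leqslant \cdots \leqslant x_{k} \leqslant 0 \leqslant x_{k+1} \leqslant \cdots \leqslant x_{n} . \tag{2}$$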

Case 1: $n$ is odd. Without loss of generality, assume that $k$ (from (2)) is even, because we may negate all the $x_{i}$ if $k$ is odd. We then have $x_{1} x_{2}, x_{3} x_{4}, \ldots, x_{n-2} x_{n-1} \geqslant 0$ because the factors are of the same sign. Let $L=x_{1} x_{2}+x_{3} x_{4}+\cdots+x_{n-2} x_{n-1} \geqslant 0$. We choose our $z_{i}$ so that this definition of $L$ agrees with the sum of the leftover terms in (1). Relabel the $x_{i}$ as $z_{i}$ such that

$$\left\{z_{1}, z_{n-1}\right\},\left\{z_{2}, z_{n-2}\right\}, \ldots,\left\{z_{(n-1) / 2}, z_{(n+1) / 2}\right\}$$

are some permutation of

$$\left\{x_{1}, x_{2}\right\},\left\{x_{3}, x_{4}\right\}, \ldots,\left\{x_{n-2}, x_{n-1}\right\},$$

and $z_{n}=x_{n}$. Then, we have $L=z_{1} z_{n-1}+\cdots+z_{(n-1) / 2} z_{(n+1) / 2}$, as desired.

Case 2: $n$ is even. Let $L=x_{1} x_{2}+x_{2} x_{3}+\cdots+x_{n-1} x_{n}$. Assume without loss of generality $k \neq 1$. Now, we have

where the first inequality holds because the only negative term in $L$ is $x_{k} x_{k+1}$, the second inequality holds because $x_{1} \leqslant x_{k} \leqslant 0 \leqslant x_{k+1} \leqslant x_{n}$, and the third inequality holds because the $x_{i}$ are assumed to be Shiny. We thus have that $L \geqslant-1 / 2$. We now choose a suitable $z_{i}$ such that the definition of $L$ matches the leftover terms in (1).

Relabel the $x_{i}$ with $z_{i}$ in the following manner: $x_{2 i-1}=z_{-i}, x_{2 i}=z_{i}$ (again taking indices modulo $n$ ). We have that

as desired.

Solution 2. We present another proof that $\sum_{i<j} x_{i} x_{j} \geqslant-(n-1) / 2$ for any Shiny $n$-tuple $\left(x_{1}, \ldots, x_{n}\right)$. Assume an ordering of the $x_{i}$ as in (2), and let $\ell=n-k$. Assume without loss of generality that $k \geqslant \ell$. Also assume $k \neq n$ (as otherwise all of the $x_{i}$ are nonpositive, and so the inequality is trivial). Define the sets of indices $S=\{1,2, \ldots, k\}$ and $T=\{k+1, \ldots, n\}$. Define the following sums:

$$K=\sum_{\substack{i<j \\ i, j \in S}} x_{i} x_{j}, \quad M=\sum_{\substack{i \in S \\ j \in T}} x_{i} x_{j}, \quad \text { and } \quad L=\sum_{\substack{i<j \\ i, j \in T}} x_{i} x_{j} .$$

By definition, $K, L \geqslant 0$ and $M \leqslant 0$. We aim to show that $K+L+M \geqslant-(n-1) / 2$. We split into cases based on whether $k=\ell$ or $k>\ell$.

Case 1: $k>\ell$. Consider all permutations $\phi:\{1,2, \ldots, n\} \rightarrow\{1,2, \ldots, n\}$ such that $\phi^{-1}(T)=\{2,4, \ldots, 2 \ell\}$. Note that there are $k!\,\ell!$ such permutations $\phi$. Define

$$f(\phi)=\sum_{i=1}^{n-1} x_{\phi(i)} x_{\phi(i+1)} .$$

We know that $f(\phi) \geqslant-1$ for every permutation $\phi$ with the above property. Averaging $f(\phi)$ over all $\phi$ gives

$$-1 \leqslant \frac{1}{k!\,\ell!} \sum_{\phi} f(\phi)=\frac{2 \ell}{k \ell} M+\frac{2(k-\ell-1)}{k(k-1)} K,$$

where the equality holds because there are $k \ell$ products in $M$, of which $2 \ell$ are selected for each $\phi$, and there are $k(k-1) / 2$ products in $K$, of which $k-\ell-1$ are selected for each $\phi$. We now have

$$K+L+M \geqslant K+L+\left(-\frac{k}{2}-\frac{k-\ell-1}{k-1} K\right)=-\frac{k}{2}+\frac{\ell}{k-1} K+L .$$

Since $k \leqslant n-1$ and $K, L \geqslant 0$, we get the desired inequality.

Case 2: $k=\ell=n / 2$. We take a similar approach, considering all $\phi:\{1,2, \ldots, n\} \rightarrow\{1,2, \ldots, n\}$ such that $\phi^{-1}(T)=\{2,4, \ldots, 2 \ell\}$, and defining $f$ the same way. Analogously to Case 1, we have

$$-1 \leqslant \frac{1}{k!\,\ell!} \sum_{\phi} f(\phi)=\frac{2 \ell-1}{k \ell} M,$$

because there are $k \ell$ products in $M$, of which $2 \ell-1$ are selected for each $\phi$. Now, we have that

$$K+L+M \geqslant M \geqslant-\frac{n^{2}}{4(n-1)} \geqslant-\frac{n-1}{2},$$

where the last inequality holds because $n \geqslant 4$.

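A6. Find all functions $f: \mathbb{R} \rightarrow \mathbb{R}$ such that

$$f(f(x) f(y))+f(x+y)=f(x y) \tag{*}$$

for all $x, y \in \mathbb{R}$.

(Albania)

Answer: There are 3 solutions:

$$x \mapsto 0 \quad \text { or } \quad x \mapsto x-1 \quad \text { or } \quad x \mapsto 1-x \quad(x \in \mathbb{R}) .$$

Solution. An easy check shows that all 3 of the above-mentioned functions indeed satisfy the original equation (*).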
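The "easy check" can be spot-tested on a grid of rational points. This is a small sketch, assuming the functional equation is $f(f(x) f(y))+f(x+y)=f(x y)$ and the three claimed solutions are $0$, $x-1$ and $1-x$; the names `candidates`, `satisfies` and `points` are illustrative. A finite grid cannot prove the identity, but it does catch a wrong candidate quickly.

```python
from fractions import Fraction
from itertools import product

# The three claimed solutions, written as exact rational functions.
candidates = {
    "f(x) = 0": lambda x: Fraction(0),
    "f(x) = x - 1": lambda x: x - 1,
    "f(x) = 1 - x": lambda x: 1 - x,
}


def satisfies(f, points):
    """True if f(f(x)f(y)) + f(x+y) == f(xy) for all x, y in points."""
    return all(
        f(f(x) * f(y)) + f(x + y) == f(x * y)
        for x, y in product(points, repeat=2)
    )


points = [Fraction(a, b) for a in range(-6, 7) for b in (1, 2, 3)]

for name, f in candidates.items():
    print(name, satisfies(f, points))

# A non-solution for comparison: the identity map fails the equation.
print("f(x) = x", satisfies(lambda x: x, points))
```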

In order to show that these are the only solutions, first observe that if $f(x)$ is a solution then $-f(x)$ is also a solution. Hence, without loss of generality we may (and will) assume that $f(0) \leqslant 0$ from now on. We have to show that either $f$ is identically zero or $f(x)=x-1$ $(\forall x \in \mathbb{R})$.

Observe that, for a fixed $x \neq 1$, we may choose $y \in \mathbb{R}$ so that $x+y=x y \Longleftrightarrow y=\frac{x}{x-1}$, and therefore from the original equation (*) we have

$$f\left(f(x) \cdot f\left(\frac{x}{x-1}\right)\right)=0 \quad(x \neq 1) . \tag{1}$$

In particular, plugging in $x=0$ in (1), we conclude that $f$ has at least one zero, namely $(f(0))^{2}$:

$$f\left((f(0))^{2}\right)=0 . \tag{2}$$

We analyze two cases (recall that $f(0) \leqslant 0$):

Case 1: $f(0)=0$. Setting $y=0$ in the original equation we get the identically zero solution:

$$f(f(x) f(0))+f(x)=f(0) \Longrightarrow f(x)=0 \quad \text { for all } x \in \mathbb{R} .$$

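From now on, we work on the main Case 2: $f(0)<0$. We begin with the following

Claim 1.

$$f(1)=0, \quad f(a)=0 \Longrightarrow a=1, \quad \text { and } \quad f(0)=-1 .$$

Proof. We need to show that 1 is the unique zero of $f$. First, observe that $f$ has at least one zero $a$ by (2); if $a \neq 1$ then setting $x=a$ in (1) we get $f(0)=0$, a contradiction. Hence from (2) we get $(f(0))^{2}=1$. Since we are assuming $f(0)<0$, we conclude that $f(0)=-1$.

Setting $y=1$ in the original equation (*) we get

$$f(f(x) f(1))+f(x+1)=f(x) \Longleftrightarrow f(0)+f(x+1)=f(x) \Longleftrightarrow f(x+1)=f(x)+1 \quad(x \in \mathbb{R}) .$$

An easy induction shows that

$$f(x+n)=f(x)+n \quad(x \in \mathbb{R},\ n \in \mathbb{Z}) . \tag{4}$$

Now we make the following

Claim 2. $f$ is injective.

Proof. Suppose that $f(a)=f(b)$ with $a \neq b$. Then by (4), for all $N \in \mathbb{Z}$,

$$f(a+N+1)=f(b+N)+1 .$$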

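Choose any integer $N<-b$; then there exist $x_{0}, y_{0} \in \mathbb{R}$ with $x_{0}+y_{0}=a+N+1, x_{0} y_{0}=b+N$. Since $a \neq b$, we have $x_{0} \neq 1$ and $y_{0} \neq 1$. Plugging in $x_{0}$ and $y_{0}$ in the original equation (*) we get

$$f\left(f\left(x_{0}\right) f\left(y_{0}\right)\right)+f(a+N+1)=f(b+N) \Longleftrightarrow f\left(f\left(x_{0}\right) f\left(y_{0}\right)\right)+1=0 \Longleftrightarrow f\left(f\left(x_{0}\right) f\left(y_{0}\right)+1\right)=0 \Longleftrightarrow f\left(x_{0}\right) f\left(y_{0}\right)=0 .$$

However, by Claim 1 we have $f\left(x_{0}\right) \neq 0$ and $f\left(y_{0}\right) \neq 0$ since $x_{0} \neq 1$ and $y_{0} \neq 1$, a contradiction.

Now the end is near. For any $t \in \mathbb{R}$, plug in $(x, y)=(t,-t)$ in the original equation (*) to get

$$f(f(t) f(-t))+f(0)=f\left(-t^{2}\right) \Longrightarrow f(f(t) f(-t))=f\left(-t^{2}\right)+1 \Longrightarrow f(f(t) f(-t))=f\left(-t^{2}+1\right) \Longrightarrow f(t) f(-t)=-t^{2}+1 .$$

Similarly, plugging in $(x, y)=(t, 1-t)$ in $(*)$ we get

$$f(f(t) f(1-t))+f(1)=f(t(1-t)) \Longrightarrow f(f(t) f(1-t))=f(t(1-t)) \Longrightarrow f(t) f(1-t)=t(1-t) .$$

But since $f(1-t)=1+f(-t)$ by (4), we get

$$t(1-t)=f(t) f(1-t)=f(t)(1+f(-t))=f(t)+f(t) f(-t)=f(t)+\left(-t^{2}+1\right) \Longrightarrow f(t)=t-1,$$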

as desired.

Comment. Other approaches are possible. For instance, after Claim 1, we may define

$$g(x)=f(x)+1 .$$

Replacing $x+1$ and $y+1$ in place of $x$ and $y$ in the original equation (*), we get

$$f(f(x+1) f(y+1))+f(x+y+2)=f(x y+x+y+1),$$

and therefore, using (4) (so that in particular $g(x)=f(x+1)$ ), we may rewrite $(*)$ as

$$g(g(x) g(y))+g(x+y)=g(x y+x+y) . \tag{**}$$

We are now to show that $g(x)=x$ for all $x \in \mathbb{R}$ under the assumption (Claim 1 ) that 0 is the unique zero of $g$. Claim 3. Let $n \in \mathbb{Z}$ and $x \in \mathbb{R}$. Then (a) $g(x+n)=x+n$, and the conditions $g(x)=n$ and $x=n$ are equivalent. (b) $g(n x)=n g(x)$.

Proof. For part (a), just note that $g(x+n)=x+n$ is just a reformulation of (4). Then $g(x)=n \Longleftrightarrow$ $g(x-n)=0 \Longleftrightarrow x-n=0$ since 0 is the unique zero of $g$. For part (b), we may assume that $x \neq 0$ since the result is obvious when $x=0$. Plug in $y=n / x$ in (**) and use part (a) to get

In other words, for $x \neq 0$ we have

In particular, for $n=1$, we get $g(1 / x)=1 / g(x)$, and therefore replacing $x \leftarrow n x$ in the last equation we finally get

as required. Claim 4. The function $g$ is additive, i.e., $g(a+b)=g(a)+g(b)$ for all $a, b \in \mathbb{R}$. Proof. Set $x \leftarrow-x$ and $y \leftarrow-y$ in $(* *)$; since $g$ is an odd function (by Claim 3(b) with $n=-1$ ), we get

Subtracting the last relation from $(* *)$ we have

and since by Claim $3(\mathrm{~b})$ we have $2 g(x+y)=g(2(x+y))$, we may rewrite the last equation as

In other words, we have additivity for all $\alpha, \beta \in \mathbb{R}$ for which there are real numbers $x$ and $y$ satisfying

i.e., for all $\alpha, \beta \in \mathbb{R}$ such that $\left(\frac{\alpha+\beta}{2}\right)^{2}-4 \cdot \frac{\alpha-\beta}{2} \geqslant 0$. Therefore, given any $a, b \in \mathbb{R}$, we may choose $n \in \mathbb{Z}$ large enough so that we have additivity for $\alpha=n a$ and $\beta=n b$, i.e.,

by Claim $3(\mathrm{~b})$. Cancelling $n$, we get the desired result. (Alternatively, setting either $(\alpha, \beta)=(a, b)$ or $(\alpha, \beta)=(-a,-b)$ will ensure that $\left.\left(\frac{\alpha+\beta}{2}\right)^{2}-4 \cdot \frac{\alpha-\beta}{2} \geqslant 0\right)$.

Now we may finish the solution. Set $y=1$ in $(* *)$, and use Claim 3 to get

By additivity, this is equivalent to $g(g(x)-x)=0$. Since 0 is the unique zero of $g$ by assumption, we finally get $g(x)-x=0 \Longleftrightarrow g(x)=x$ for all $x \in \mathbb{R}$.

A7. Let $a_{0}, a_{1}, a_{2}, \ldots$ be a sequence of integers and $b_{0}, b_{1}, b_{2}, \ldots$ be a sequence of positive integers such that $a_{0}=0, a_{1}=1$, and

$$a_{n+1}= \begin{cases}a_{n} b_{n}+a_{n-1}, & \text { if } b_{n-1}=1, \\ a_{n} b_{n}-a_{n-1}, & \text { if } b_{n-1}>1,\end{cases} \quad \text { for } n=1,2, \ldots$$

Prove that at least one of the two numbers $a_{2017}$ and $a_{2018}$ must be greater than or equal to 2017.

(Australia)

Solution 1. The value of $b_{0}$ is irrelevant since $a_{0}=0$, so we may assume that $b_{0}=1$.

Lemma. We have $a_{n} \geqslant 1$ for all $n \geqslant 1$.

Proof. Let us suppose otherwise in order to obtain a contradiction. Let

$$n=\min \left\{n \geqslant 1 \mid a_{n} \leqslant 0\right\} . \tag{1}$$

Note that $n \geqslant 2$. It follows that $a_{n-1} \geqslant 1$ and $a_{n-2} \geqslant 0$. Thus we cannot have $a_{n}=a_{n-1} b_{n-1}+a_{n-2}$, so we must have $a_{n}=a_{n-1} b_{n-1}-a_{n-2}$. Since $a_{n} \leqslant 0$, we have $a_{n-1} \leqslant a_{n-2}$. Thus we have $a_{n-2} \geqslant a_{n-1} \geqslant a_{n}$.

Let

Then $r \leqslant n-2$ by the above, but also $r \geqslant 2$ : if $b_{1}=1$, then $a_{2}=a_{1}=1$ and $a_{3}=a_{2} b_{2}+a_{1}>a_{2}$; if $b_{1}>1$, then $a_{2}=b_{1}>1=a_{1}$.

By the minimal choice (2) of $r$, it follows that $a_{r-1}<a_{r}$. And since $2 \leqslant r \leqslant n-2$, by the minimal choice (1) of $n$ we have $a_{r-1}, a_{r}, a_{r+1}>0$. In order to have $a_{r+1} \geqslant a_{r+2}$, we must have $a_{r+2}=a_{r+1} b_{r+1}-a_{r}$ so that $b_{r} \geqslant 2$. Putting everything together, we conclude that

which contradicts (2).

To complete the problem, we prove that $\max \left\{a_{n}, a_{n+1}\right\} \geqslant n$ by induction. The cases $n=0,1$ are given. Assume it is true for all non-negative integers strictly less than $n$, where $n \geqslant 2$. There are two cases:

Case 1: $b_{n-1}=1$. Then $a_{n+1}=a_{n} b_{n}+a_{n-1}$. By the inductive assumption one of $a_{n-1}, a_{n}$ is at least $n-1$ and the other, by the lemma, is at least 1 . Hence

Thus $\max \left\{a_{n}, a_{n+1}\right\} \geqslant n$, as desired.

Case 2: $b_{n-1}>1$. Since we defined $b_{0}=1$ there is an index $r$ with $1 \leqslant r \leqslant n-1$ such that

We have $a_{r+1}=a_{r} b_{r}+a_{r-1} \geqslant 2 a_{r}+a_{r-1}$. Thus $a_{r+1}-a_{r} \geqslant a_{r}+a_{r-1}$. Now we claim that $a_{r}+a_{r-1} \geqslant r$. Indeed, this holds by inspection for $r=1$; for $r \geqslant 2$, one of $a_{r}, a_{r-1}$ is at least $r-1$ by the inductive assumption, while the other, by the lemma, is at least 1 . Hence $a_{r}+a_{r-1} \geqslant r$, as claimed, and therefore $a_{r+1}-a_{r} \geqslant r$ by the last inequality in the previous paragraph.

Since $r \geqslant 1$ and, by the lemma, $a_{r} \geqslant 1$, from $a_{r+1}-a_{r} \geqslant r$ we get the following two inequalities:

Now observe that

since $a_{m+1}=a_{m} b_{m}-a_{m-1} \geqslant 2 a_{m}-a_{m-1}=a_{m}+\left(a_{m}-a_{m-1}\right)>a_{m}$. Thus

So $\max \left\{a_{n}, a_{n+1}\right\} \geqslant n$, as desired.

Solution 2. We say that an index $n>1$ is bad if $b_{n-1}=1$ and $b_{n-2}>1$; otherwise $n$ is good. The value of $b_{0}$ is irrelevant to the definition of $\left(a_{n}\right)$ since $a_{0}=0$; so we assume that $b_{0}>1$.

Lemma 1. (a) $a_{n} \geqslant 1$ for all $n>0$. (b) If $n>1$ is good, then $a_{n}>a_{n-1}$.

Proof. Induction on $n$. In the base cases $n=1,2$ we have $a_{1}=1 \geqslant 1, a_{2}=b_{1} a_{1} \geqslant 1$, and finally $a_{2}>a_{1}$ if 2 is good, since in this case $b_{1}>1$.

Now we assume that the lemma statement is proved for $n=1,2, \ldots, k$ with $k \geqslant 2$, and prove it for $n=k+1$. Recall that $a_{k}$ and $a_{k-1}$ are positive by the induction hypothesis. Case 1: $k$ is bad. We have $b_{k-1}=1$, so $a_{k+1}=b_{k} a_{k}+a_{k-1} \geqslant a_{k}+a_{k-1}>a_{k} \geqslant 1$, as required. Case 2: $k$ is good. We already have $a_{k}>a_{k-1} \geqslant 1$ by the induction hypothesis. We consider three easy subcases.

Subcase 2.1: $b_{k}>1$. Then $a_{k+1} \geqslant b_{k} a_{k}-a_{k-1} \geqslant a_{k}+\left(a_{k}-a_{k-1}\right)>a_{k} \geqslant 1$.

Subcase 2.2: $b_{k}=b_{k-1}=1$. Then $a_{k+1}=a_{k}+a_{k-1}>a_{k} \geqslant 1$.

Subcase 2.3: $b_{k}=1$ but $b_{k-1}>1$. Then $k+1$ is bad, and we need to prove only (a), which is trivial: $a_{k+1}=a_{k}-a_{k-1} \geqslant 1$.

So, in all three subcases we have verified the required relations.

Lemma 2. Assume that $n>1$ is bad. Then there exists a $j \in\{1,2,3\}$ such that $a_{n+j} \geqslant a_{n-1}+j+1$, and $a_{n+i} \geqslant a_{n-1}+i$ for all $1 \leqslant i<j$.

Proof. Recall that $b_{n-1}=1$. Set

$$m=\inf \left\{i>0 \mid b_{n+i-1}>1\right\}$$

(possibly $m=+\infty$ ). We claim that $j=\min \{m, 3\}$ works. Again, we distinguish several cases, according to the value of $m$; in each of them we use Lemma 1 without reference.

Case 1: $m=1$, so $b_{n}>1$. Then $a_{n+1} \geqslant 2 a_{n}+a_{n-1} \geqslant a_{n-1}+2$, as required.

Case 2: $m=2$, so $b_{n}=1$ and $b_{n+1}>1$. Then we successively get

which is even better than we need.

Case 3: $m>2$, so $b_{n}=b_{n+1}=1$. Then we successively get

as required.

Lemmas 1 (b) and 2 provide enough information to prove that $\max \left\{a_{n}, a_{n+1}\right\} \geqslant n$ for all $n$ and, moreover, that $a_{n} \geqslant n$ often enough. Indeed, assume that we have found some $n$ with $a_{n-1} \geqslant n-1$. If $n$ is good, then by Lemma 1 (b) we have $a_{n} \geqslant n$ as well. If $n$ is bad, then Lemma 2 yields $\max \left\{a_{n+i}, a_{n+i+1}\right\} \geqslant a_{n-1}+i+1 \geqslant n+i$ for all $0 \leqslant i<j$ and $a_{n+j} \geqslant a_{n-1}+j+1 \geqslant n+j$; so $n+j$ is the next index to start with.
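The claim $\max \left\{a_{n}, a_{n+1}\right\} \geqslant n$ can be spot-checked by brute force on short prefixes. The sketch below assumes the recurrence $a_{n+1}=a_{n} b_{n}+a_{n-1}$ when $b_{n-1}=1$ and $a_{n+1}=a_{n} b_{n}-a_{n-1}$ when $b_{n-1}>1$; the function names and the restriction $b_{i} \in\{1,2,3\}$ are choices made for this illustration, not part of the problem.

```python
from itertools import product


def sequence(b):
    """Return a_0, ..., a_m for given b_0, ..., b_{m-1} (assumed recurrence)."""
    a = [0, 1]
    for n in range(1, len(b)):
        if b[n - 1] == 1:
            a.append(a[n] * b[n] + a[n - 1])
        else:
            a.append(a[n] * b[n] - a[n - 1])
    return a


def claim_holds(max_index, values=(1, 2, 3)):
    """Check max(a_n, a_{n+1}) >= n for n < max_index over all small b."""
    for tail in product(values, repeat=max_index - 1):
        b = (1,) + tail  # b_0 does not influence the a_n because a_0 = 0
        a = sequence(b)
        if any(max(a[n], a[n + 1]) < n for n in range(max_index)):
            return False
    return True


print(sequence((1, 1, 1, 1, 1)))  # all b_i = 1 gives Fibonacci numbers
print(claim_holds(8))
```

Such a search is no substitute for the proof, but it is a cheap sanity check on the case analysis.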

A8. Assume that a function $f: \mathbb{R} \rightarrow \mathbb{R}$ satisfies the following condition:

For every $x, y \in \mathbb{R}$ such that $(f(x)+y)(f(y)+x)>0$, we have $f(x)+y=f(y)+x$.

Prove that $f(x)+y \leqslant f(y)+x$ whenever $x>y$.

(Netherlands)

Solution 1. Define $g(x)=x-f(x)$. The condition on $f$ then rewrites as follows:

For every $x, y \in \mathbb{R}$ such that $((x+y)-g(x))((x+y)-g(y))>0$, we have $g(x)=g(y)$.

This condition may in turn be rewritten in the following form:

If $g(x) \neq g(y)$, then the number $x+y$ lies (non-strictly) between $g(x)$ and $g(y)$. $\quad(*)$

Notice here that the function $g_{1}(x)=-g(-x)$ also satisfies $(*)$, since

$$g_{1}(x) \neq g_{1}(y) \Longrightarrow g(-x) \neq g(-y) \Longrightarrow-(x+y) \text { lies between } g(-x) \text { and } g(-y) \Longrightarrow x+y \text { lies between } g_{1}(x) \text { and } g_{1}(y) .$$

On the other hand, the relation we need to prove reads now as

$$g(x) \leqslant g(y) \quad \text { whenever } x<y . \tag{1}$$

Again, this condition is equivalent to the same one with $g$ replaced by $g_{1}$. If $g(x)=2 x$ for all $x \in \mathbb{R}$, then $(*)$ is obvious; so in what follows we consider the other case. We split the solution into a sequence of lemmas, strengthening one another. We always consider some value of $x$ with $g(x) \neq 2 x$ and denote $X=g(x)$. Lemma 1. Assume that $X<2 x$. Then on the interval $(X-x ; x]$ the function $g$ attains at most two values - namely, $X$ and, possibly, some $Y>X$. Similarly, if $X>2 x$, then $g$ attains at most two values on $[x ; X-x)$ - namely, $X$ and, possibly, some $Y<X$. Proof. We start with the first claim of the lemma. Notice that $X-x<x$, so the considered interval is nonempty.

Take any $a \in(X-x ; x)$ with $g(a) \neq X$ (if it exists). If $g(a)<X$, then (*) yields $g(a) \leqslant a+x \leqslant g(x)=X$, so $a \leqslant X-x$ which is impossible. Thus, $g(a)>X$ and hence by (*) we get $X \leqslant a+x \leqslant g(a)$.

Now, for any $b \in(X-x ; x)$ with $g(b) \neq X$ we similarly get $b+x \leqslant g(b)$. Therefore, the number $a+b$ (which is smaller than each of $a+x$ and $b+x$ ) cannot lie between $g(a)$ and $g(b)$, which by (*) implies that $g(a)=g(b)$. Hence $g$ may attain only two values on $(X-x ; x]$, namely $X$ and $g(a)>X$.

To prove the second claim, notice that $g_{1}(-x)=-X<2 \cdot(-x)$, so $g_{1}$ attains at most two values on $(-X+x,-x]$, i.e., $-X$ and, possibly, some $-Y>-X$. Passing back to $g$, we get what we need. Lemma 2. If $X<2 x$, then $g$ is constant on $(X-x ; x)$. Similarly, if $X>2 x$, then $g$ is constant on $(x ; X-x)$. Proof. Again, it suffices to prove the first claim only. Assume, for the sake of contradiction, that there exist $a, b \in(X-x ; x)$ with $g(a) \neq g(b)$; by Lemma 1, we may assume that $g(a)=X$ and $Y=g(b)>X$.

Notice that $\min \{X-a, X-b\}>X-x$, so there exists a $u \in(X-x ; x)$ such that $u<\min \{X-a, X-b\}$. By Lemma 1, we have either $g(u)=X$ or $g(u)=Y$. In the former case, by (*) we have $X \leqslant u+b \leqslant Y$ which contradicts $u<X-b$. In the second case, by (*) we have $X \leqslant u+a \leqslant Y$ which contradicts $u<X-a$. Thus the lemma is proved.

Lemma 3. If $X<2 x$, then $g(a)=X$ for all $a \in(X-x ; x)$. Similarly, if $X>2 x$, then $g(a)=X$ for all $a \in(x ; X-x)$.

Proof. Again, we only prove the first claim. By Lemmas 1 and 2, this claim may be violated only if $g$ takes on a constant value $Y>X$ on $(X-x ; x)$. Choose any $a, b \in(X-x ; x)$ with $a<b$. By (*), we have

$$X \leqslant a+x \leqslant Y \quad \text { and } \quad X \leqslant b+x \leqslant Y . \tag{2}$$

In particular, we have $Y \geqslant b+x>2 a$. Applying Lemma 2 to $a$ in place of $x$, we obtain that $g$ is constant on $(a, Y-a)$. By (2) again, we have $x \leqslant Y-b<Y-a$; so $x, b \in(a ; Y-a)$. But $X=g(x) \neq g(b)=Y$, which is a contradiction.

Now we are able to finish the solution. Assume that $g(x)>g(y)$ for some $x<y$. Denote $X=g(x)$ and $Y=g(y)$; by (*), we have $X \geqslant x+y \geqslant Y$, so $Y-y \leqslant x<y \leqslant X-x$, and hence $(Y-y ; y) \cap(x ; X-x)=(x, y) \neq \varnothing$. On the other hand, since $Y-y<y$ and $x<X-x$, Lemma 3 shows that $g$ should attain a constant value $X$ on $(x ; X-x)$ and a constant value $Y \neq X$ on $(Y-y ; y)$. Since these intervals overlap, we get the final contradiction.

Solution 2. As in the previous solution, we pass to the function $g$ satisfying ( $*$ ) and notice that we need to prove the condition (1). We will also make use of the function $g_{1}$.

If $g$ is constant, then (1) is clearly satisfied. So, in the sequel we assume that $g$ takes on at least two different values. Now we collect some information about the function $g$. Claim 1. For any $c \in \mathbb{R}$, all the solutions of $g(x)=c$ are bounded. Proof. Fix any $y \in \mathbb{R}$ with $g(y) \neq c$. Assume first that $g(y)>c$. Now, for any $x$ with $g(x)=c$, by (*) we have $c \leqslant x+y \leqslant g(y)$, or $c-y \leqslant x \leqslant g(y)-y$. Since $c$ and $y$ are constant, we get what we need.

If $g(y)<c$, we may switch to the function $g_{1}$ for which we have $g_{1}(-y)>-c$. By the above arguments, we obtain that all the solutions of $g_{1}(-x)=-c$ are bounded, which is equivalent to what we need.

As an immediate consequence, the function $g$ takes on infinitely many values, which shows that the next claim is indeed widely applicable.

Claim 2. If $g(x)<g(y)<g(z)$, then $x<z$.

Proof. By (*), we have $g(x) \leqslant x+y \leqslant g(y) \leqslant z+y \leqslant g(z)$, so $x+y \leqslant z+y$, as required.

Claim 3. Assume that $g(x)>g(y)$ for some $x<y$. Then $g(a) \in\{g(x), g(y)\}$ for all $a \in[x ; y]$.

Proof. If $g(y)<g(a)<g(x)$, then the triple $(y, a, x)$ violates Claim 2. If $g(a)<g(y)<g(x)$, then the triple $(a, y, x)$ violates Claim 2. If $g(y)<g(x)<g(a)$, then the triple $(y, x, a)$ violates Claim 2. The only possible cases left are $g(a) \in\{g(x), g(y)\}$.

In view of Claim 3, we say that an interval $I$ (which may be open, closed, or semi-open) is a Dirichlet interval ${ }^{*}$ if the function $g$ takes on just two values on $I$.

Assume now, for the sake of contradiction, that (1) is violated by some $x<y$. By Claim 3, $[x ; y]$ is a Dirichlet interval. Set

$$r=\inf \{a:(a ; y] \text { is a Dirichlet interval }\} \quad \text { and } \quad s=\sup \{b:[x ; b) \text { is a Dirichlet interval }\} .$$

Clearly, $r \leqslant x<y \leqslant s$. By Claim 1, $r$ and $s$ are finite. Denote $X=g(x), Y=g(y)$, and $\Delta=(y-x) / 2$.

Suppose first that there exists a $t \in(r ; r+\Delta)$ with $g(t)=Y$. By the definition of $r$, the interval $(r-\Delta ; y]$ is not Dirichlet, so there exists an $r^{\prime} \in(r-\Delta ; r]$ such that $g\left(r^{\prime}\right) \notin\{X, Y\}$.

The function $g$ attains at least three distinct values on $\left[r^{\prime} ; y\right]$, namely $g\left(r^{\prime}\right), g(x)$, and $g(y)$. Claim 3 now yields $g\left(r^{\prime}\right) \leqslant g(y)$; the equality is impossible by the choice of $r^{\prime}$, so in fact $g\left(r^{\prime}\right)<Y$. Applying (*) to the pairs $\left(r^{\prime}, y\right)$ and $(t, x)$ we obtain $r^{\prime}+y \leqslant Y \leqslant t+x$, whence $r-\Delta+y<r^{\prime}+y \leqslant t+x<r+\Delta+x$, or $y-x<2 \Delta$. This is a contradiction.

Thus, $g(t)=X$ for all $t \in(r ; r+\Delta)$. Applying the same argument to $g_{1}$, we get $g(t)=Y$ for all $t \in(s-\Delta ; s)$.

Finally, choose some $s_{1}, s_{2} \in(s-\Delta ; s)$ with $s_{1}<s_{2}$ and denote $\delta=\left(s_{2}-s_{1}\right) / 2$. As before, we choose $r^{\prime} \in(r-\delta ; r)$ with $g\left(r^{\prime}\right) \notin\{X, Y\}$ and obtain $g\left(r^{\prime}\right)<Y$. Choose any $t \in(r ; r+\delta)$; by the above arguments, we have $g(t)=X$ and $g\left(s_{1}\right)=g\left(s_{2}\right)=Y$. As before, we apply (*) to the pairs $\left(r^{\prime}, s_{2}\right)$ and $\left(t, s_{1}\right)$ obtaining $r-\delta+s_{2}<r^{\prime}+s_{2} \leqslant Y \leqslant t+s_{1}<r+\delta+s_{1}$, or $s_{2}-s_{1}<2 \delta$. This is a final contradiction.

Comment 1. The original submission discussed the same functions $f$, but the question was different - namely, the following one:

Prove that the equation $f(x)=2017 x$ has at most one solution, and the equation $f(x)=-2017 x$ has at least one solution.

The Problem Selection Committee decided that the question we are proposing is more natural, since it provides more natural information about the function $g$ (which is indeed the main character in this story). On the other hand, the new problem statement is strong enough in order to imply the original one easily.

Namely, we will deduce from the new problem statement (along with the facts used in the solutions) that ( $i$ ) for every $N>0$ the equation $g(x)=-N x$ has at most one solution, and (ii) for every $N>1$ the equation $g(x)=N x$ has at least one solution.

Claim ( $i$ ) is now trivial. Indeed, $g$ is proven to be non-decreasing, so $g(x)+N x$ is strictly increasing and thus has at most one zero.

We proceed on claim $(i i)$. If $g(0)=0$, then the required root has been already found. Otherwise, we may assume that $g(0)>0$ and denote $c=g(0)$. We intend to prove that $x=c / N$ is the required root. Indeed, by monotonicity we have $g(c / N) \geqslant g(0)=c$; if we had $g(c / N)>c$, then (*) would yield $c \leqslant 0+c / N \leqslant g(c / N)$ which is false. Thus, $g(x)=c=N x$.

Comment 2. There are plenty of functions $g$ satisfying (*) (and hence of functions $f$ satisfying the problem conditions). One simple example is $g_{0}(x)=2 x$. Next, for any increasing sequence $A=\left(\ldots, a_{-1}, a_{0}, a_{1}, \ldots\right)$ which is unbounded in both directions (i.e., for every $N$ this sequence contains terms greater than $N$, as well as terms smaller than $-N$ ), the function $g_{A}$ defined by

$$g_{A}(x)=a_{i}+a_{i+1} \quad \text { whenever } x \in\left[a_{i} ; a_{i+1}\right)$$

satisfies (*). Indeed, pick any $x<y$ with $g(x) \neq g(y)$; this means that $x \in\left[a_{i} ; a_{i+1}\right)$ and $y \in\left[a_{j} ; a_{j+1}\right)$ for some $i<j$. Then we have $g(x)=a_{i}+a_{i+1} \leqslant x+y<a_{j}+a_{j+1}=g(y)$, as required.
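The step-function example can be tested on a finite window. This is a sketch under stated assumptions: the list `A` below is an arbitrary made-up segment of an increasing sequence, `g_A` is taken to be $a_{i}+a_{i+1}$ on $\left[a_{i} ; a_{i+1}\right)$, and the check only covers sample points inside the window. The helper names are hypothetical.

```python
from bisect import bisect_right
from fractions import Fraction
from itertools import combinations

A = [-7, -4, -1, 0, 2, 3, 8]  # hypothetical finite window of the sequence


def g_A(x):
    """a_i + a_{i+1} for the index i with a_i <= x < a_{i+1}."""
    i = bisect_right(A, x) - 1
    if not 0 <= i < len(A) - 1:
        raise ValueError("x lies outside the sampled window")
    return A[i] + A[i + 1]


def star_holds(g, points):
    """Condition (*): if g(x) != g(y), then x + y lies between them."""
    for x, y in combinations(points, 2):
        gx, gy = g(x), g(y)
        if gx != gy and not (min(gx, gy) <= x + y <= max(gx, gy)):
            return False
    return True


def nondecreasing(g, points):
    """The conclusion of the problem, restated for g."""
    pts = sorted(points)
    return all(g(u) <= g(v) for u, v in zip(pts, pts[1:]))


points = [Fraction(k, 4) for k in range(-28, 32)]  # grid inside [-7, 8)
print(star_holds(g_A, points), nondecreasing(g_A, points))
print(star_holds(lambda x: -x, points))  # a function violating (*)
```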

There also exist examples of the mixed behavior; e.g., for an arbitrary sequence $A$ as above and an arbitrary subset $I \subseteq \mathbb{Z}$ the function

also satisfies (*). Finally, it is even possible to provide a complete description of all functions $g$ satisfying (*) (and hence of all functions $f$ satisfying the problem conditions); however, it seems to be far out of scope for the IMO. This description looks as follows.

Let $A$ be any closed subset of $\mathbb{R}$ which is unbounded in both directions. Define the functions $i_{A}$, $s_{A}$, and $g_{A}$ as follows:

It is easy to see that for different sets $A$ and $B$ the functions $g_{A}$ and $g_{B}$ are also different (since, e.g., for any $a \in A \backslash B$ the function $g_{B}$ is constant in a small neighborhood of $a$, but the function $g_{A}$ is not). One may check, similarly to the arguments above, that each such function satisfies (*).

Finally, one more modification is possible. Namely, for any $x \in A$ one may redefine $g_{A}(x)$ (which is $2 x$ ) to be any of the numbers

This really changes the value if $x$ has some right (respectively, left) semi-neighborhood disjoint from $A$, so there are at most countably many possible changes; all of them can be performed independently.

With some effort, one may show that the construction above provides all functions $g$ satisfying (*).

Combinatorics

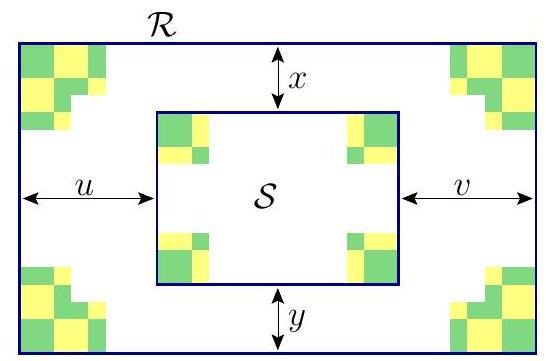

C1. A rectangle $\mathcal{R}$ with odd integer side lengths is divided into small rectangles with integer side lengths. Prove that there is at least one among the small rectangles whose distances from the four sides of $\mathcal{R}$ are either all odd or all even.

(Singapore)

Solution. Let the width and height of $\mathcal{R}$ be odd numbers $a$ and $b$. Divide $\mathcal{R}$ into $a b$ unit squares and color them green and yellow in a checkered pattern. Since $a$ and $b$ are odd, the corner squares of $\mathcal{R}$ will all have the same color, say green.

Call a rectangle (either $\mathcal{R}$ or a small rectangle) green if its corners are all green; call it yellow if the corners are all yellow, and call it mixed if it has both green and yellow corners. In particular, $\mathcal{R}$ is a green rectangle.

We will use the following trivial observations.

- Every mixed rectangle contains the same number of green and yellow squares;

- Every green rectangle contains one more green square than yellow square;

- Every yellow rectangle contains one more yellow square than green square.
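These three observations are easy to confirm by direct counting on small blocks. The sketch below is an illustration with made-up helper names: cell $(i, j)$ is treated as green when $i+j$ is even, and a block is described by its lower-left cell and its width and height.

```python
def colour_balance(x0, y0, w, h):
    """Green minus yellow unit squares in the w-by-h block starting at (x0, y0)."""
    green = sum(
        1
        for i in range(x0, x0 + w)
        for j in range(y0, y0 + h)
        if (i + j) % 2 == 0
    )
    return 2 * green - w * h


def corner_colours(x0, y0, w, h):
    """Set of corner colours: 0 for green, 1 for yellow."""
    corners = [
        (x0, y0),
        (x0 + w - 1, y0),
        (x0, y0 + h - 1),
        (x0 + w - 1, y0 + h - 1),
    ]
    return {(i + j) % 2 for i, j in corners}


def observations_hold(limit=6):
    """Check the three observations for every block with small coordinates."""
    for x0 in range(limit):
        for y0 in range(limit):
            for w in range(1, limit):
                for h in range(1, limit):
                    colours = corner_colours(x0, y0, w, h)
                    if colours == {0}:
                        expected = 1      # green rectangle
                    elif colours == {1}:
                        expected = -1     # yellow rectangle
                    else:
                        expected = 0      # mixed rectangle
                    if colour_balance(x0, y0, w, h) != expected:
                        return False
    return True


print(observations_hold())
```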

The rectangle $\mathcal{R}$ is green, so it contains more green unit squares than yellow unit squares. Therefore, among the small rectangles, at least one is green. Let $\mathcal{S}$ be such a small green rectangle, and let its distances from the sides of $\mathcal{R}$ be $x, y, u$ and $v$, as shown in the picture. The top-left corner of $\mathcal{R}$ and the top-left corner of $\mathcal{S}$ have the same color, which happens if and only if $x$ and $u$ have the same parity. Similarly, the other three green corners of $\mathcal{S}$ indicate that $x$ and $v$ have the same parity, $y$ and $u$ have the same parity, i.e. $x, y, u$ and $v$ are all odd or all even.

C2. Let $n$ be a positive integer. Define a chameleon to be any sequence of $3 n$ letters, with exactly $n$ occurrences of each of the letters $a, b$, and $c$. Define a swap to be the transposition of two adjacent letters in a chameleon. Prove that for any chameleon $X$, there exists a chameleon $Y$ such that $X$ cannot be changed to $Y$ using fewer than $3 n^{2} / 2$ swaps. (Australia) Solution 1. To start, notice that the swap of two identical letters does not change a chameleon, so we may assume there are no such swaps.

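For any two chameleons $X$ and $Y$, define their distance $d(X, Y)$ to be the minimal number of swaps needed to transform $X$ into $Y$ (or vice versa). Clearly, $d(X, Y)+d(Y, Z) \geqslant d(X, Z)$ for any three chameleons $X, Y$, and $Z$.

Lemma. Consider two chameleons

$$P=\underbrace{a a \ldots a}_{n} \underbrace{b b \ldots b}_{n} \underbrace{c c \ldots c}_{n} \quad \text { and } \quad Q=\underbrace{c c \ldots c}_{n} \underbrace{b b \ldots b}_{n} \underbrace{a a \ldots a}_{n} .$$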

Then $d(P, Q) \geqslant 3 n^{2}$.

Proof. For any chameleon $X$ and any pair of distinct letters $u, v \in\{a, b, c\}$, we define $f_{u, v}(X)$ to be the number of pairs of positions in $X$ such that the left one is occupied by $u$, and the right one is occupied by $v$. Define $f(X)=f_{a, b}(X)+f_{a, c}(X)+f_{b, c}(X)$. Notice that $f_{a, b}(P)=f_{a, c}(P)=f_{b, c}(P)=n^{2}$ and $f_{a, b}(Q)=f_{a, c}(Q)=f_{b, c}(Q)=0$, so $f(P)=3 n^{2}$ and $f(Q)=0$.

Now consider some swap changing a chameleon $X$ to $X^{\prime}$; say, the letters $a$ and $b$ are swapped. Then $f_{a, b}(X)$ and $f_{a, b}\left(X^{\prime}\right)$ differ by exactly 1 , while $f_{a, c}(X)=f_{a, c}\left(X^{\prime}\right)$ and $f_{b, c}(X)=f_{b, c}\left(X^{\prime}\right)$. This yields $\left|f(X)-f\left(X^{\prime}\right)\right|=1$, i.e., on any swap the value of $f$ changes by 1 . Hence $d(X, Y) \geqslant$ $|f(X)-f(Y)|$ for any two chameleons $X$ and $Y$. In particular, $d(P, Q) \geqslant|f(P)-f(Q)|=3 n^{2}$, as desired.

Back to the problem, take any chameleon $X$ and notice that $d(X, P)+d(X, Q) \geqslant d(P, Q) \geqslant 3 n^{2}$ by the lemma. Consequently, $\max \{d(X, P), d(X, Q)\} \geqslant \frac{3 n^{2}}{2}$, which establishes the problem statement.
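For very small $n$ the statement itself can be confirmed by exhaustive search. The following sketch, under the assumption that a breadth-first search over single swaps computes $d$, finds for each chameleon the largest distance to any other chameleon; the function names are hypothetical.

```python
from collections import deque
from itertools import permutations


def chameleons(n):
    """All distinct sequences with n copies each of a, b, c (small n only)."""
    return sorted(set("".join(p) for p in permutations("abc" * n)))


def neighbours(X):
    """Chameleons obtained from X by swapping two adjacent distinct letters."""
    return [X[:i] + X[i + 1] + X[i] + X[i + 2:]
            for i in range(len(X) - 1) if X[i] != X[i + 1]]


def eccentricity(X):
    """Largest swap distance d(X, Y) over all chameleons Y, found by BFS."""
    dist = {X: 0}
    queue = deque([X])
    while queue:
        cur = queue.popleft()
        for nxt in neighbours(cur):
            if nxt not in dist:
                dist[nxt] = dist[cur] + 1
                queue.append(nxt)
    return max(dist.values())


def statement_holds(n):
    """Check that every X has some Y with d(X, Y) >= 3 n^2 / 2."""
    return all(2 * eccentricity(X) >= 3 * n * n for X in chameleons(n))
```

The search visits every chameleon from every starting point, so it is only practical for $n \leqslant 2$ or so; it is a sanity check rather than a substitute for the argument.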

Comment 1. The problem may be reformulated in the language of graphs. Construct a graph $G$ with the chameleons as vertices, two vertices being connected with an edge if and only if these chameleons differ by a single swap. Then $d(X, Y)$ is the usual distance between the vertices $X$ and $Y$ in this graph. Recall that the radius of a connected graph $G$ is defined as

$$
r(G)=\min _{v \in V} \max _{u \in V} d(u, v) .
$$

So we need to prove that the radius of the constructed graph is at least $3 n^{2} / 2$. It is well known that the radius of any connected graph is at least half of its diameter (which is simply $\max _{u, v \in V} d(u, v)$). Exactly this fact has been used above in order to finish the solution.

Solution 2. We use the notion of distance from Solution 1, but provide a different lower bound for it.

In any chameleon $X$, we enumerate the positions in it from left to right by $1,2, \ldots, 3 n$. Define $s_{c}(X)$ as the sum of positions occupied by $c$. The value of $s_{c}$ changes by at most 1 on each swap, but this fact alone does not suffice to solve the problem; so we need an improvement.

For every chameleon $X$, denote by $X_{\bar{c}}$ the sequence obtained from $X$ by removing all $n$ letters $c$. Enumerate the positions in $X_{\bar{c}}$ from left to right by $1,2, \ldots, 2 n$, and define $s_{\bar{c}, b}(X)$ as the sum of positions in $X_{\bar{c}}$ occupied by $b$. (In other words, here we consider the positions of the $b$'s relative to the $a$'s only.) Finally, denote

$$
d^{\prime}(X, Y)=\left|s_{c}(X)-s_{c}(Y)\right|+\left|s_{\bar{c}, b}(X)-s_{\bar{c}, b}(Y)\right| .
$$

Now consider any swap changing a chameleon $X$ to $X^{\prime}$. If no letter $c$ is involved in this swap, then $s_{c}(X)=s_{c}\left(X^{\prime}\right)$; on the other hand, exactly one letter $b$ changes its position in $X_{\bar{c}}$, so $\left|s_{\bar{c}, b}(X)-s_{\bar{c}, b}\left(X^{\prime}\right)\right|=1$. If a letter $c$ is involved in the swap, then $X_{\bar{c}}=X_{\bar{c}}^{\prime}$, so $s_{\bar{c}, b}(X)=s_{\bar{c}, b}\left(X^{\prime}\right)$ and $\left|s_{c}(X)-s_{c}\left(X^{\prime}\right)\right|=1$. Thus, in all cases we have $d^{\prime}\left(X, X^{\prime}\right)=1$.

As in the previous solution, this means that $d(X, Y) \geqslant d^{\prime}(X, Y)$ for any two chameleons $X$ and $Y$. Now, for any chameleon $X$ we will indicate a chameleon $Y$ with $d^{\prime}(X, Y) \geqslant 3 n^{2} / 2$, thus finishing the solution.

The function $s_{c}$ attains all integer values from $1+\cdots+n=\frac{n(n+1)}{2}$ to $(2 n+1)+\cdots+3 n=2 n^{2}+\frac{n(n+1)}{2}$. If $s_{c}(X) \leqslant n^{2}+\frac{n(n+1)}{2}$, then we put the letter $c$ into the last $n$ positions in $Y$; otherwise we put the letter $c$ into the first $n$ positions in $Y$. In either case we already have $\left|s_{c}(X)-s_{c}(Y)\right| \geqslant n^{2}$.

Similarly, $s_{\bar{c}, b}$ ranges from $\frac{n(n+1)}{2}$ to $n^{2}+\frac{n(n+1)}{2}$. So, if $s_{\bar{c}, b}(X) \leqslant \frac{n^{2}}{2}+\frac{n(n+1)}{2}$, then we put the letter $b$ into the last $n$ positions in $Y$ which are still free; otherwise, we put the letter $b$ into the first $n$ such positions. The remaining positions are occupied by $a$. In any case, we have $\left|s_{\bar{c}, b}(X)-s_{\bar{c}, b}(Y)\right| \geqslant \frac{n^{2}}{2}$, thus $d^{\prime}(X, Y) \geqslant n^{2}+\frac{n^{2}}{2}=\frac{3 n^{2}}{2}$, as desired.
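The construction of $Y$ in Solution 2 is explicit, so it can be written out and tested. The sketch below assumes that $d^{\prime}(X, Y)$ is the sum of the two absolute differences $\left|s_{c}(X)-s_{c}(Y)\right|$ and $\left|s_{\bar{c}, b}(X)-s_{\bar{c}, b}(Y)\right|$, which is what the estimates above add up; all function names are hypothetical.

```python
from itertools import permutations


def chameleons(n):
    """All distinct sequences with n copies each of a, b, c (small n only)."""
    return sorted(set("".join(p) for p in permutations("abc" * n)))


def s_c(X):
    """Sum of the (1-based) positions occupied by c."""
    return sum(i for i, ch in enumerate(X, start=1) if ch == "c")


def s_cb(X):
    """Sum of the positions of b after all letters c have been removed."""
    reduced = X.replace("c", "")
    return sum(i for i, ch in enumerate(reduced, start=1) if ch == "b")


def d_prime(X, Y):
    """Assumed form of d': the two absolute differences added together."""
    return abs(s_c(X) - s_c(Y)) + abs(s_cb(X) - s_cb(Y))


def far_chameleon(X):
    """Build Y following the two case distinctions of Solution 2."""
    n = len(X) // 3
    tri = n * (n + 1) // 2
    c_last = s_c(X) <= n * n + tri
    # s_cb(X) <= n^2/2 + n(n+1)/2, multiplied by 2 to stay in integers.
    b_last = 2 * s_cb(X) <= n * n + 2 * tri
    rest = "a" * n + "b" * n if b_last else "b" * n + "a" * n
    return rest + "c" * n if c_last else "c" * n + rest
```

For example, `far_chameleon("abc")` places $c$ first and then $b$ before $a$, giving `"cba"`.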

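Comment 2. The two solutions above used two lower bounds $|f(X)-f(Y)|$ and $d^{\prime}(X, Y)$ for the number $d(X, Y)$. One may see that these bounds are closely related to each other, as

$$
f_{a, c}(X)+f_{b, c}(X)=s_{c}(X)-\frac{n(n+1)}{2} \quad \text{and} \quad f_{a, b}(X)=s_{\bar{c}, b}(X)-\frac{n(n+1)}{2} .
$$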

One can see that, e.g., the bound $d^{\prime}(X, Y)$ could as well be used in the proof of the lemma in Solution 1. Let us describe here an even sharper bound which also can be used in different versions of the solutions above.

In each chameleon $X$, enumerate the occurrences of $a$ from the left to the right as $a_{1}, a_{2}, \ldots, a_{n}$. Since we got rid of swaps of identical letters, the relative order of these letters remains the same during the swaps. Perform the same operation with the other letters, obtaining new letters $b_{1}, \ldots, b_{n}$ and $c_{1}, \ldots, c_{n}$. Denote by $A$ the set of the $3 n$ obtained letters.

Since all $3 n$ letters became different, for any chameleon $X$ and any $s \in A$ we may define the position $N_{s}(X)$ of $s$ in $X$ (thus $1 \leqslant N_{s}(X) \leqslant 3 n$). Now, for any two chameleons $X$ and $Y$ we say that a pair of letters $(s, t) \in A \times A$ is an $(X, Y)$-inversion if $N_{s}(X)<N_{t}(X)$ but $N_{s}(Y)>N_{t}(Y)$, and define $d^{*}(X, Y)$ to be the number of $(X, Y)$-inversions. Then for any two chameleons $Y$ and $Y^{\prime}$ differing by a single swap, we have $\left|d^{*}(X, Y)-d^{*}\left(X, Y^{\prime}\right)\right|=1$. Since $d^{*}(X, X)=0$, this yields $d(X, Y) \geqslant d^{*}(X, Y)$ for any pair of chameleons $X$ and $Y$. The bound $d^{*}$ may also be used in both Solution 1 and Solution 2.

Comment 3. In fact, one may prove that the distance $d^{*}$ defined in the previous comment coincides with $d$. Indeed, if $X \neq Y$, then there exists an $(X, Y)$-inversion $(s, t)$. One can show that such $s$ and $t$ may be chosen to occupy consecutive positions in $Y$. Clearly, $s$ and $t$ correspond to different letters among $\{a, b, c\}$. So, swapping them in $Y$ we get another chameleon $Y^{\prime}$ with $d^{*}\left(X, Y^{\prime}\right)=d^{*}(X, Y)-1$. Proceeding in this manner, we may change $Y$ to $X$ in $d^{*}(X, Y)$ steps.
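The equality of the inversion count and the swap distance can be compared numerically for small $n$. This is a rough sketch only: the labelling of the $k$-th occurrence of a letter as a pair such as `("a", k)` and all function names are illustrative choices, and the swap distance is computed by breadth-first search.

```python
from collections import deque
from itertools import permutations


def chameleons(n):
    """All distinct sequences with n copies each of a, b, c (small n only)."""
    return sorted(set("".join(p) for p in permutations("abc" * n)))


def neighbours(X):
    """Chameleons obtained from X by swapping two adjacent distinct letters."""
    return [X[:i] + X[i + 1] + X[i] + X[i + 2:]
            for i in range(len(X) - 1) if X[i] != X[i + 1]]


def labelled_positions(X):
    """Map each labelled letter, e.g. ('a', 1), to its 1-based position in X."""
    seen, pos = {}, {}
    for i, ch in enumerate(X, start=1):
        seen[ch] = seen.get(ch, 0) + 1
        pos[(ch, seen[ch])] = i
    return pos


def d_star(X, Y):
    """Number of (X, Y)-inversions among the 3n labelled letters."""
    px, py = labelled_positions(X), labelled_positions(Y)
    return sum(1 for s in px for t in px
               if px[s] < px[t] and py[s] > py[t])


def swap_distances(X):
    """BFS distances d(X, Y) from X to every chameleon Y."""
    dist = {X: 0}
    queue = deque([X])
    while queue:
        cur = queue.popleft()
        for nxt in neighbours(cur):
            if nxt not in dist:
                dist[nxt] = dist[cur] + 1
                queue.append(nxt)
    return dist


def inversions_match_distance(n):
    return all(d_star(X, Y) == d
               for X in chameleons(n)
               for Y, d in swap_distances(X).items())
```

Each inverted pair is counted once, because the condition $N_{s}(X)<N_{t}(X)$ fixes which of the two letters plays the role of $s$.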

Using this fact, one can show that the estimate in the problem statement is sharp for all $n \geqslant 2$. (For $n=1$ it is not sharp, since any permutation of three letters can be changed to an opposite one in no less than three swaps.) We outline the proof below.

For any $k \geqslant 0$, define

We claim that for every $n \geqslant 2$ and every chameleon $Y$, we have $d^{*}\left(X_{n}, Y\right) \leqslant\left\lceil 3 n^{2} / 2\right\rceil$. This will mean that for every $n \geqslant 2$ the number $3 n^{2} / 2$ in the problem statement cannot be replaced by any number larger than $\left\lceil 3 n^{2} / 2\right\rceil$.

For any distinct letters $u, v \in\{a, b, c\}$ and any two chameleons $X$ and $Y$, we define $d_{u, v}^{*}(X, Y)$ as the number of $(X, Y)$-inversions $(s, t)$ such that $s$ and $t$ are instances of $u$ and $v$ (in any of the two possible orders). Then $d^{*}(X, Y)=d_{a, b}^{*}(X, Y)+d_{b, c}^{*}(X, Y)+d_{c, a}^{*}(X, Y)$.

We start with the case when $n=2 k$ is even; denote $X=X_{2 k}$. We show that $d_{a, b}^{*}(X, Y) \leqslant 2 k^{2}$ for any chameleon $Y$; this yields the required estimate. We proceed by induction on $k$ with the trivial base case $k=0$. To perform the induction step, notice that $d_{a, b}^{*}(X, Y)$ is in fact the minimal number of swaps needed to change $Y_{\bar{c}}$ into $X_{\bar{c}}$. One may show that moving $a_{1}$ and $a_{2 k}$ in $Y$ onto the first and the last positions in $Y$, respectively, takes at most $2 k$ swaps, and that subsequently moving $b_{1}$ and $b_{2 k}$ onto the second and the second last positions takes at most $2 k-2$ swaps. After performing that, one may delete these letters from both $X_{\bar{c}}$ and $Y_{\bar{c}}$ and apply the induction hypothesis; so $X_{\bar{c}}$ can be obtained from $Y_{\bar{c}}$ using at most $2(k-1)^{2}+2 k+(2 k-2)=2 k^{2}$ swaps, as required.

If $n=2 k+3$ is odd, the proof is similar but more technically involved. Namely, we claim that $d_{a, b}^{*}\left(X_{2 k+3}, Y\right) \leqslant 2 k^{2}+6 k+5$ for any chameleon $Y$, and that the equality is achieved only if $Y_{\bar{c}}=b b \ldots b a a \ldots a$. The proof proceeds by a similar induction, with some care taken of the base case, as well as of extracting the equality case. Similar estimates hold for $d_{b, c}^{*}$ and $d_{c, a}^{*}$. Summing three such estimates, we obtain

$$
d^{*}\left(X_{2 k+3}, Y\right) \leqslant 3\left(2 k^{2}+6 k+5\right)=\left\lceil\frac{3 n^{2}}{2}\right\rceil+1,
$$

which is 1 more than we need. But the equality could be achieved only if $Y_{\bar{c}}=b b \ldots b a a \ldots a$ and, similarly, $Y_{\bar{b}}=a a \ldots a c c \ldots c$ and $Y_{\bar{a}}=c c \ldots c b b \ldots b$. Since these three equalities cannot hold simultaneously, the proof is finished.

C3. Sir Alex plays the following game on a row of 9 cells. Initially, all cells are empty. In each move, Sir Alex is allowed to perform exactly one of the following two operations:

(1) Choose any number of the form $2^{j}$, where $j$ is a non-negative integer, and put it into an empty cell.

(2) Choose two (not necessarily adjacent) cells with the same number in them; denote that number by $2^{j}$. Replace the number in one of the cells with $2^{j+1}$ and erase the number in the other cell.

At the end of the game, one cell contains the number $2^{n}$, where $n$ is a given positive integer, while the other cells are empty. Determine the maximum number of moves that Sir Alex could have made, in terms of $n$. (Thailand)

Answer: $2 \sum_{j=0}^{8}\binom{n}{j}-1$.

Solution 1. We will solve a more general problem, replacing the row of 9 cells with a row of $k$ cells, where $k$ is a positive integer. Denote by $m(n, k)$ the maximum possible number of moves Sir Alex can make starting with a row of $k$ empty cells, and ending with one cell containing the number $2^{n}$ and all the other $k-1$ cells empty. Call an operation of type (1) an insertion, and an operation of type (2) a merge.

Only one move is possible when $k=1$, so we have $m(n, 1)=1$. From now on we consider $k \geqslant 2$, and we may assume Sir Alex's last move was a merge. Then, just before the last move, there were exactly two cells with the number $2^{n-1}$, and the other $k-2$ cells were empty.

Paint one of those numbers $2^{n-1}$ blue, and the other one red. Now trace back Sir Alex's moves, always painting the numbers blue or red following this rule: if $a$ and $b$ merge into $c$, paint $a$ and $b$ with the same color as $c$. Notice that in this backward process new numbers are produced only by reversing merges, since reversing an insertion simply means deleting one of the numbers. Therefore, all numbers appearing in the whole process will receive one of the two colors.

Sir Alex's first move is an insertion. Without loss of generality, assume this first number inserted is blue. Then, from this point on, until the last move, there is always at least one cell with a blue number.

Besides the last move, there is no move involving a blue and a red number, since all merges involve numbers with the same color, and insertions involve only one number. Call an insertion of a blue number or merge of two blue numbers a blue move, and define a red move analogously.

The whole sequence of blue moves could be repeated on another row of $k$ cells to produce one cell with the number $2^{n-1}$ and all the others empty, so there are at most $m(n-1, k)$ blue moves.

Now we look at the red moves. Since every time we perform a red move there is at least one cell occupied with a blue number, the whole sequence of red moves could be repeated on a row of $k-1$ cells to produce one cell with the number $2^{n-1}$ and all the others empty, so there are at most $m(n-1, k-1)$ red moves. This proves that

$$
m(n, k) \leqslant m(n-1, k)+m(n-1, k-1)+1 .
$$

On the other hand, we can start with an empty row of $k$ cells and perform $m(n-1, k)$ moves to produce one cell with the number $2^{n-1}$ and all the others empty, and after that perform $m(n-1, k-1)$ moves on those $k-1$ empty cells to produce the number $2^{n-1}$ in one of them, leaving $k-2$ empty. With one more merge we get one cell with $2^{n}$ and the others empty, proving that

$$
m(n, k) \geqslant m(n-1, k)+m(n-1, k-1)+1 .
$$

It follows that

$$
m(n, k)=m(n-1, k)+m(n-1, k-1)+1 \tag{1}
$$

for $n \geqslant 1$ and $k \geqslant 2$. If $k=1$ or $n=0$, we must insert $2^{n}$ on our first move and immediately get the final configuration, so $m(0, k)=1$ and $m(n, 1)=1$, for $n \geqslant 0$ and $k \geqslant 1$. These initial values, together with the recurrence relation (1), determine $m(n, k)$ uniquely.
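For small parameters, $m(n, k)$ can also be found by exhaustive search over game positions, which gives an independent check of the recurrence. The sketch below is illustrative only: it assumes that recurrence (1) reads $m(n, k)=m(n-1, k)+m(n-1, k-1)+1$ (the two inequalities above combined), represents a position as a sorted tuple of exponents, and discards positions whose sum exceeds $2^{n}$, since numbers never leave the board except by merging. The function name `max_moves` is hypothetical.

```python
from functools import lru_cache


def max_moves(n, k):
    """Longest sequence of moves from the empty row to a single 2^n, k cells."""
    target = (n,)
    limit = 2 ** n

    @lru_cache(maxsize=None)
    def longest(state):
        if state == target:
            return 0
        best = float("-inf")          # stays -inf if the target is unreachable
        total = sum(2 ** e for e in state)
        # Insertions: put 2^j into an empty cell, never exceeding total 2^n.
        if len(state) < k:
            for j in range(n + 1):
                if total + 2 ** j <= limit:
                    nxt = tuple(sorted(state + (j,)))
                    best = max(best, 1 + longest(nxt))
        # Merges: replace two equal numbers 2^e by a single 2^(e+1).
        for e in set(state):
            if state.count(e) >= 2:
                cells = list(state)
                cells.remove(e)
                cells.remove(e)
                cells.append(e + 1)
                best = max(best, 1 + longest(tuple(sorted(cells))))
        return best

    return longest(())
```

The search terminates because an insertion increases the sum on the board and a merge decreases the number of occupied cells, so no position can repeat.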

Finally, we show that

$$
m(n, k)=2 \sum_{j=0}^{k-1}\binom{n}{j}-1 \tag{2}
$$

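for all integers $n \geqslant 0$ and $k \geqslant 1$. We use induction on $n$. Since $m(0, k)=1$ for $k \geqslant 1$, (2) is true for the base case. We make the induction hypothesis that (2) is true for some fixed non-negative integer $n$ and all $k \geqslant 1$. We have $m(n+1,1)=1=2\binom{n+1}{0}-1$, and for $k \geqslant 2$ the recurrence relation (1) and the induction hypothesis give us

$$
\begin{aligned}
m(n+1, k) &=m(n, k)+m(n, k-1)+1=2 \sum_{j=0}^{k-1}\binom{n}{j}-1+2 \sum_{j=0}^{k-2}\binom{n}{j}-1+1 \\
&=2 \sum_{j=0}^{k-1}\binom{n}{j}+2 \sum_{j=1}^{k-1}\binom{n}{j-1}-1 \\
&=2\binom{n}{0}+2 \sum_{j=1}^{k-1}\left(\binom{n}{j}+\binom{n}{j-1}\right)-1 \\
&=2\binom{n+1}{0}+2 \sum_{j=1}^{k-1}\binom{n+1}{j}-1=2 \sum_{j=0}^{k-1}\binom{n+1}{j}-1,
\end{aligned}
$$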

which completes the proof.
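The induction can be mirrored numerically by filling a table from the recurrence and comparing it with the closed form. In this sketch the recurrence is assumed to be $m(n, k)=m(n-1, k)+m(n-1, k-1)+1$ with $m(0, k)=m(n, 1)=1$, and the closed form is assumed to be $2 \sum_{j=0}^{k-1}\binom{n}{j}-1$, in agreement with the stated answer for $k=9$; the function names are hypothetical.

```python
from math import comb


def m_table(max_n, max_k):
    """Values m(n, k) computed from the initial values and the recurrence."""
    m = {}
    for n in range(max_n + 1):
        for k in range(1, max_k + 1):
            if n == 0 or k == 1:
                m[n, k] = 1
            else:
                m[n, k] = m[n - 1, k] + m[n - 1, k - 1] + 1
    return m


def closed_form(n, k):
    """Assumed closed form: 2 * (C(n,0) + ... + C(n,k-1)) - 1."""
    return 2 * sum(comb(n, j) for j in range(k)) - 1
```

For the original problem one would evaluate `closed_form(n, 9)`; when $n<9$ the binomial sum is the full $2^{n}$, so the value is $2^{n+1}-1$.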

Comment 1. After deducing the recurrence relation (1), it may be convenient to homogenize the recurrence relation by defining $h(n, k)=m(n, k)+1$. We get the new relation

$$
h(n, k)=h(n-1, k)+h(n-1, k-1) \tag{3}
$$

for $n \geqslant 1$ and $k \geqslant 2$, with initial values $h(0, k)=h(n, 1)=2$, for $n \geqslant 0$ and $k \geqslant 1$. This may help one to guess the answer, and also with other approaches like the one we develop next.

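Comment 2. We can use a generating function to find the answer without guessing. We work with the homogenized recurrence relation (3). Define $h(n, 0)=0$ so that (3) is valid for $k=1$ as well. Now we set up the generating function $f(x, y)=\sum_{n, k \geqslant 0} h(n, k) x^{n} y^{k}$. Multiplying the recurrence relation (3) by $x^{n} y^{k}$ and summing over $n, k \geqslant 1$, we get

$$
\sum_{n, k \geqslant 1} h(n, k) x^{n} y^{k}=x \sum_{n, k \geqslant 1} h(n-1, k) x^{n-1} y^{k}+x y \sum_{n, k \geqslant 1} h(n-1, k-1) x^{n-1} y^{k-1} .
$$

Completing the missing terms leads to the following equation on $f(x, y)$:

$$
f(x, y)-\sum_{n \geqslant 0} h(n, 0) x^{n}-\sum_{k \geqslant 1} h(0, k) y^{k}=x f(x, y)-x \sum_{n \geqslant 0} h(n, 0) x^{n}+x y f(x, y) .
$$

Substituting the initial values, we obtain

$$
f(x, y)=\frac{2 y}{1-y} \cdot \frac{1}{1-x(1+y)} .
$$

Developing as a power series, we get

$$
f(x, y)=2 \sum_{j \geqslant 1} y^{j} \cdot \sum_{n \geqslant 0}(1+y)^{n} x^{n} .
$$

The coefficient of $x^{n}$ in this power series is

$$
2 \sum_{j \geqslant 1} y^{j} \cdot(1+y)^{n}=2 \sum_{j \geqslant 1} y^{j} \cdot \sum_{i \geqslant 0}\binom{n}{i} y^{i},
$$

and extracting the coefficient of $y^{k}$ in this last expression we finally obtain the value for $h(n, k)$,

$$
h(n, k)=2 \sum_{j=0}^{k-1}\binom{n}{j} .
$$

This proves that

$$
m(n, k)=2 \sum_{j=0}^{k-1}\binom{n}{j}-1 .
$$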

The generating function approach also works if applied to the non-homogeneous recurrence relation (1), but the computations are less straightforward.

Solution 2. Define merges and insertions as in Solution 1. After each move made by Sir Alex we compute the number $N$ of non-empty cells, and the sum $S$ of all the numbers written in the cells. Insertions always increase $S$ by some power of 2, and increase $N$ exactly by 1. Merges do not change $S$ and decrease $N$ exactly by 1. Since the initial value of $N$ is 0 and its final value is 1, the total number of insertions exceeds that of merges by exactly one. So, to maximize the number of moves, we need to maximize the number of insertions.
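The bookkeeping of $N$ and $S$ can be followed on a concrete game. The sketch below generates a move sequence by the recursive construction from Solution 1 (first build $2^{n-1}$ on $k$ cells, then again on the remaining $k-1$ cells, then merge) and replays it while recording $N$, taken here as the number of occupied cells, and $S$ after every move. The function names and the encoding of a move as `("insert", j)` or `("merge", j)` are assumptions made for illustration.

```python
def optimal_moves(n, k):
    """Move list following the lower-bound construction of Solution 1."""
    if n == 0 or k == 1:
        return [("insert", n)]
    return (optimal_moves(n - 1, k)
            + optimal_moves(n - 1, k - 1)
            + [("merge", n - 1)])


def replay(moves, k):
    """Replay moves on k cells; return final exponents and the (N, S) history."""
    cells = []                         # exponents currently on the board
    history = []
    for kind, j in moves:
        if kind == "insert":
            assert len(cells) < k      # an empty cell must be available
            cells.append(j)
        else:
            assert cells.count(j) >= 2 # two equal numbers 2^j must be present
            cells.remove(j)
            cells.remove(j)
            cells.append(j + 1)
        history.append((len(cells), sum(2 ** e for e in cells)))
    return cells, history
```

In any replayed game the first component of the history changes by exactly 1 at each step and ends at 1, which is the counting argument of Solution 2 in miniature.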