id stringlengths 4 10 | text stringlengths 4 2.14M | source stringclasses 2 values | created timestamp[s]date 2001-05-16 21:05:09 2025-01-01 03:38:30 | added stringdate 2025-04-01 04:05:38 2025-04-01 07:14:06 | metadata dict |

|---|---|---|---|---|---|

1878865498 | infinite recursion when self assigned to option

The following flake leads to an infinite recursion:

{
  outputs = inputs@{flake-parts, nixpkgs, self, ...}:
    flake-parts.lib.mkFlake {inherit inputs;} {
      nixosConfigurations.default = nixpkgs.lib.nixosSystem {
        modules = [
          {
            # assigning self to a non-existent option triggers the infinite recursion
            foo.repoRoot = self;
          }
        ];
      };
    };
}

I think this issue is important to fix.

For example, this could just as well happen with the treefmt.repoRoot option. If that option is ever deprecated, users will be puzzled by a hard-to-debug issue.

In my case this led to a stack overflow with no trace at all.

This only happens when using flake-parts, not with vanilla flakes.

@DavHau, on which version and platform did that happen?

When I run it on x86_64-linux, I get infinite recursions instead, which do have a trace, although the quality of the trace varies between Nix versions. 2.13.3 seems best.

Good thing the latest two or three releases have test infrastructure to catch such regressions; I wish we'd had it sooner.

Seems like a serious problem indeed. I think we should add sourceInfo directly to inputs in Nix to solve this without removing source locations for all the other errors when they occur in the anonymous "root" module that is the mkFlake {} argument.

This solution may also help with

https://github.com/hercules-ci/flake-parts/issues/148

In my case this led to a stack overflow with no trace at all.

The example above results in an infinite recursion for me as well.

I got a stack overflow error in dream2nix, but after stripping it down to a minimal reproducer it became an infinite recursion.

Not sure if that change in behavior was due to complexity or due to library versions.

Let me know if I should publish the dream2nix expression that led to a stack overflow.

Could you try with this?

https://github.com/NixOS/nix/pull/8879

The PR description shows how to run that Nix build with nix run.

With it, you might be able to tell what the difference is between your original problem and the reproducer.

Blocked on https://github.com/NixOS/nix/pull/8908

Does #192 help?

With it I get:

$ nix eval . --override-input flake-parts github:hercules-ci/flake-parts/refs/pull/192/head

warning: not writing modified lock file of flake 'path:/home/user/h/issue-flake-parts-185':

• Updated input 'flake-parts':

'github:hercules-ci/flake-parts/7f53fdb7bdc5bb237da7fefef12d099e4fd611ca' (2023-09-01)

→ 'github:hercules-ci/flake-parts/0effb5db5ccc46f8787c98ca91ec64cc9721c121' (2023-10-13)

error: The option `nixosConfigurations' does not exist. Definition values:

- In `<unknown-file>'

(use '--show-trace' to show detailed location information)

Still an error, as expected, but actionable.

Fixing it up a bit:

{
  outputs = inputs@{flake-parts, nixpkgs, self, ...}:
    flake-parts.lib.mkFlake {inherit inputs;} {
      systems = [ "x86_64-linux" ];
      flake.nixosConfigurations.default = nixpkgs.lib.nixosSystem {
        modules = [
          {
            # assigning self to a non-existent option triggers the infinite recursion
            foo.repoRoot = self;
          }
        ];
      };
    };
}

I then get this, simulating nixos-rebuild a bit:

$ nix eval .#nixosConfigurations.default.config.system.build.toplevel.drvPath --override-input flake-parts github:hercules-ci/flake-parts/refs/pull/192/head

warning: not writing modified lock file of flake 'path:/home/user/h/issue-flake-parts-185':

• Updated input 'flake-parts':

'github:hercules-ci/flake-parts/7f53fdb7bdc5bb237da7fefef12d099e4fd611ca' (2023-09-01)

→ 'github:hercules-ci/flake-parts/0effb5db5ccc46f8787c98ca91ec64cc9721c121' (2023-10-13)

error: Neither nixpkgs.hostPlatform nor the legacy option nixpkgs.system has been set.

You can set nixpkgs.hostPlatform in hardware-configuration.nix by re-running

a recent version of nixos-generate-config.

The option nixpkgs.system is still fully supported for NixOS 22.05 interoperability,

but will be deprecated in the future, so we recommend to set nixpkgs.hostPlatform.

(use '--show-trace' to show detailed location information)

Fixed in #192

| gharchive/issue | 2023-09-02T22:29:53 | 2025-04-01T06:44:26.173759 | {

"authors": [

"DavHau",

"roberth"

],

"repo": "hercules-ci/flake-parts",

"url": "https://github.com/hercules-ci/flake-parts/issues/185",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

963168860 | docker build failed in step 6

Hi,

I tried the installation in an Ubuntu 18.04 VM, but I ran into an error in step 6:

Step 6/21 : RUN git clone --recursive https://hub.fastgit.org/open5gs/open5gs && cd open5gs && git checkout main && meson build --prefix=`pwd`/install && ninja -C build && cd build && ninja install

---> Running in 7b364147c2b9

Cloning into 'open5gs'...

Already on 'main'

Your branch is up to date with 'origin/main'.

The Meson build system

Version: 0.53.2

Source dir: /open5gs

Build dir: /open5gs/build

Build type: native build

Project name: open5gs

Project version: 2.3.2

C compiler for the host machine: cc (gcc 9.3.0 "cc (Ubuntu 9.3.0-17ubuntu1~20.04) 9.3.0")

C linker for the host machine: cc ld.bfd 2.34

C++ compiler for the host machine: c++ (gcc 9.3.0 "c++ (Ubuntu 9.3.0-17ubuntu1~20.04) 9.3.0")

C++ linker for the host machine: c++ ld.bfd 2.34

Host machine cpu family: x86_64

Host machine cpu: x86_64

Program git found: YES (/usr/bin/git)

Program python3 found: YES (/usr/bin/python3)

Program /usr/bin/python3 found: YES (/usr/bin/python3)

Compiler for C supports arguments -Wextra: YES

Compiler for C supports arguments -Wlogical-op: YES

Compiler for C supports arguments -Werror=missing-include-dirs: YES

Compiler for C supports arguments -Werror=pointer-arith: YES

Compiler for C supports arguments -Werror=init-self: YES

Compiler for C supports arguments -Wfloat-equal: YES

Compiler for C supports arguments -Wsuggest-attribute=noreturn: YES

Compiler for C supports arguments -Werror=missing-prototypes: YES

Compiler for C supports arguments -Werror=missing-declarations: YES

Compiler for C supports arguments -Werror=implicit-function-declaration: YES

Compiler for C supports arguments -Werror=return-type: YES

Compiler for C supports arguments -Werror=incompatible-pointer-types: YES

Compiler for C supports arguments -Werror=format=2: YES

Compiler for C supports arguments -Wstrict-prototypes: YES

Compiler for C supports arguments -Wredundant-decls: YES

Compiler for C supports arguments -Wimplicit-fallthrough=5: YES

Compiler for C supports arguments -Wendif-labels: YES

Compiler for C supports arguments -Wstrict-aliasing=3: YES

Compiler for C supports arguments -Wwrite-strings: YES

Compiler for C supports arguments -Werror=overflow: YES

Compiler for C supports arguments -Werror=shift-count-overflow: YES

Compiler for C supports arguments -Werror=shift-overflow=2: YES

Compiler for C supports arguments -Wdate-time: YES

Compiler for C supports arguments -Wnested-externs: YES

Compiler for C supports arguments -Wunused: YES

Compiler for C supports arguments -Wduplicated-branches: YES

Compiler for C supports arguments -Wmisleading-indentation: YES

Compiler for C supports arguments -Wno-sign-compare -Wsign-compare: YES

Compiler for C supports arguments -Wno-unused-parameter -Wunused-parameter: YES

Compiler for C supports arguments -ffast-math: YES

Compiler for C supports arguments -fdiagnostics-show-option: YES

Compiler for C supports arguments -fstack-protector: YES

Compiler for C supports arguments -fstack-protector-strong: YES

Compiler for C supports arguments --param=ssp-buffer-size=4: YES

meson.build:108: WARNING: Consider using the built-in warning_level option instead of using "-Wextra".

Configuring sample.yaml using configuration

Configuring 310014.yaml using configuration

Configuring csfb.yaml using configuration

Configuring volte.yaml using configuration

Configuring vonr.yaml using configuration

Configuring slice.yaml using configuration

Configuring srslte.yaml using configuration

WARNING: Output file "configs/sample.yaml" for configure_file() at configs/meson.build:49 overwrites configure_file() output at configs/meson.build:49

Configuring sample.yaml using configuration

Configuring non3gpp.yaml using configuration

Program /usr/bin/python3 found: YES (/usr/bin/python3)

Configuring mme.yaml using configuration

Configuring sgwc.yaml using configuration

Configuring sgwu.yaml using configuration

Configuring smf.yaml using configuration

Configuring amf.yaml using configuration

Configuring upf.yaml using configuration

Configuring hss.yaml using configuration

Configuring pcrf.yaml using configuration

Configuring nrf.yaml using configuration

Configuring ausf.yaml using configuration

Configuring udm.yaml using configuration

Configuring udr.yaml using configuration

Configuring pcf.yaml using configuration

Configuring nssf.yaml using configuration

Configuring bsf.yaml using configuration

Program /usr/bin/python3 found: YES (/usr/bin/python3)

Configuring mme.conf using configuration

Configuring hss.conf using configuration

Configuring smf.conf using configuration

Configuring pcrf.conf using configuration

Configuring cacert.pem using configuration

Configuring mme.cert.pem using configuration

Configuring mme.key.pem using configuration

Configuring hss.cert.pem using configuration

Configuring hss.key.pem using configuration

Configuring smf.cert.pem using configuration

Configuring smf.key.pem using configuration

Configuring pcrf.cert.pem using configuration

Configuring pcrf.key.pem using configuration

Configuring open5gs-mmed.service using configuration

Configuring open5gs-sgwcd.service using configuration

Configuring open5gs-smfd.service using configuration

Configuring open5gs-amfd.service using configuration

Configuring open5gs-sgwud.service using configuration

Configuring open5gs-upfd.service using configuration

Configuring open5gs-hssd.service using configuration

Configuring open5gs-pcrfd.service using configuration

Configuring open5gs-nrfd.service using configuration

Configuring open5gs-ausfd.service using configuration

Configuring open5gs-udmd.service using configuration

Configuring open5gs-pcfd.service using configuration

Configuring open5gs-nssfd.service using configuration

Configuring open5gs-bsfd.service using configuration

Configuring open5gs-udrd.service using configuration

Configuring 99-open5gs.netdev using configuration

Configuring 99-open5gs.network using configuration

Configuring open5gs using configuration

Configuring open5gs.conf using configuration

Has header "arpa/inet.h" : YES

Has header "ctype.h" : YES

Has header "errno.h" : YES

Has header "execinfo.h" : YES

Has header "fcntl.h" : YES

Has header "ifaddrs.h" : YES

Has header "netdb.h" : YES

Has header "pthread.h" : YES

Has header "signal.h" : YES

Has header "stdarg.h" : YES

Has header "stddef.h" : YES

Has header "stdio.h" : YES

Has header "stdint.h" : YES

Has header "stdbool.h" : YES

Has header "stdlib.h" : YES

Has header "string.h" : YES

Has header "strings.h" : YES

Has header "time.h" : YES

Has header "sys/time.h" : YES

Has header "unistd.h" : YES

Has header "net/if.h" : YES

Has header "netinet/in.h" : YES

Has header "netinet/in_systm.h" : YES

Has header "netinet/udp.h" : YES

Has header "netinet/tcp.h" : YES

Has header "sys/ioctl.h" : YES

Has header "sys/param.h" : YES

Has header "sys/random.h" : YES

Has header "sys/socket.h" : YES

Has header "sys/stat.h" : YES

Has header "limits.h" : YES

Has header "sys/syslimits.h" : NO

Has header "sys/types.h" : YES

Has header "sys/wait.h" : YES

Has header "sys/uio.h" : YES

Checking for function "arc4random" : NO

Checking for function "arc4random_buf" : NO

Checking for function "getrandom" : YES

Checking for function "localtime_r" : YES

Checking for function "getifaddrs" : YES

Checking for function "getenv" : YES

Checking for function "putenv" : YES

Checking for function "setenv" : YES

Checking for function "unsetenv" : YES

Checking for function "strerror_r" : YES

Checking for function "sigaction" : YES

Checking for function "sigwait" : YES

Checking for function "sigsuspend" : YES

Checking for function "eventfd" : YES

Checking for function "kqueue" : NO

Checking for function "epoll_ctl" : YES

Run-time dependency threads found: YES

Header <pthread.h> has symbol "pthread_barrier_wait" : YES

Header <signal.h> has symbol "sys_siglist" : YES

Checking if "strerror_r() returns char *" compiles: YES

Library execinfo found: NO

Checking for function "backtrace" : YES

Checking if "clock_gettime()" links: YES

Checking if "eventfd(2) system call" links: YES

Library socket found: NO

Checking if "socket()" links: YES

Configuring core-config-private.h using configuration

Configuring core-config.h using configuration

Compiler for C supports arguments -Wno-shift-negative-value -Wshift-negative-value: YES

Compiler for C supports arguments -Wno-unused-but-set-variable -Wunused-but-set-variable: YES

Compiler for C supports arguments -Wno-unknown-warning-option -Wunknown-warning-option: NO

Cloning into 'freeDiameter'...

fatal: unable to access 'https://github.com/open5gs/freeDiameter.git/': GnuTLS recv error (-110): The TLS connection was non-properly terminated.

Traceback (most recent call last):

File "/usr/lib/python3/dist-packages/mesonbuild/mesonmain.py", line 129, in run

return options.run_func(options)

File "/usr/lib/python3/dist-packages/mesonbuild/msetup.py", line 245, in run

app.generate()

File "/usr/lib/python3/dist-packages/mesonbuild/msetup.py", line 159, in generate

self._generate(env)

File "/usr/lib/python3/dist-packages/mesonbuild/msetup.py", line 192, in _generate

intr.run()

File "/usr/lib/python3/dist-packages/mesonbuild/interpreter.py", line 4167, in run

super().run()

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 412, in run

self.evaluate_codeblock(self.ast, start=1)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 436, in evaluate_codeblock

raise e

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 430, in evaluate_codeblock

self.evaluate_statement(cur)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 441, in evaluate_statement

return self.function_call(cur)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 788, in function_call

return func(node, posargs, kwargs)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 285, in wrapped

return f(*wrapped_args, **wrapped_kwargs)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 174, in wrapped

return f(*wrapped_args, **wrapped_kwargs)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreter.py", line 3689, in func_subdir

self.evaluate_codeblock(codeblock)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 436, in evaluate_codeblock

raise e

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 430, in evaluate_codeblock

self.evaluate_statement(cur)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 441, in evaluate_statement

return self.function_call(cur)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 788, in function_call

return func(node, posargs, kwargs)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 285, in wrapped

return f(*wrapped_args, **wrapped_kwargs)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 174, in wrapped

return f(*wrapped_args, **wrapped_kwargs)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreter.py", line 3689, in func_subdir

self.evaluate_codeblock(codeblock)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 436, in evaluate_codeblock

raise e

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 430, in evaluate_codeblock

self.evaluate_statement(cur)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 441, in evaluate_statement

return self.function_call(cur)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 788, in function_call

return func(node, posargs, kwargs)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 285, in wrapped

return f(*wrapped_args, **wrapped_kwargs)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 174, in wrapped

return f(*wrapped_args, **wrapped_kwargs)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreter.py", line 3689, in func_subdir

self.evaluate_codeblock(codeblock)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 436, in evaluate_codeblock

raise e

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 430, in evaluate_codeblock

self.evaluate_statement(cur)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 443, in evaluate_statement

return self.assignment(cur)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 1064, in assignment

value = self.evaluate_statement(node.value)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 441, in evaluate_statement

return self.function_call(cur)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 788, in function_call

return func(node, posargs, kwargs)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 285, in wrapped

return f(*wrapped_args, **wrapped_kwargs)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 174, in wrapped

return f(*wrapped_args, **wrapped_kwargs)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreterbase.py", line 143, in wrapped

return f(*wrapped_args, **wrapped_kwargs)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreter.py", line 2540, in func_subproject

return self.do_subproject(dirname, 'meson', kwargs)

File "/usr/lib/python3/dist-packages/mesonbuild/interpreter.py", line 2582, in do_subproject

resolved = r.resolve(dirname, method)

File "/usr/lib/python3/dist-packages/mesonbuild/wrap/wrap.py", line 187, in resolve

self.get_git()

File "/usr/lib/python3/dist-packages/mesonbuild/wrap/wrap.py", line 282, in get_git

verbose_git(['clone', self.wrap.get('url'), self.directory], self.subdir_root, check=True)

File "/usr/lib/python3/dist-packages/mesonbuild/wrap/wrap.py", line 62, in verbose_git

return git(cmd, workingdir, check=check).returncode == 0

File "/usr/lib/python3/dist-packages/mesonbuild/mesonlib.py", line 61, in git

pc = subprocess.run([GIT, '-C', workingdir] + cmd,

File "/usr/lib/python3.8/subprocess.py", line 516, in run

raise CalledProcessError(retcode, process.args,

subprocess.CalledProcessError: Command '['/usr/bin/git', '-C', '/open5gs/subprojects', 'clone', 'https://github.com/open5gs/freeDiameter.git', 'freeDiameter']' returned non-zero exit status 128.

Compiler for C supports arguments -Wno-missing-prototypes -Wmissing-prototypes: YES

Compiler for C supports arguments -Wno-missing-declarations -Wmissing-declarations: YES

Compiler for C supports arguments -Wno-discarded-qualifiers -Wdiscarded-qualifiers: YES

Compiler for C supports arguments -Wno-redundant-decls -Wredundant-decls: YES

Compiler for C supports arguments -Wno-shift-overflow -Wshift-overflow: YES

Compiler for C supports arguments -Wno-float-equal -Wfloat-equal: YES

Compiler for C supports arguments -Wno-implicit-fallthrough -Wimplicit-fallthrough: YES

Compiler for C supports arguments -Wno-incompatible-pointer-types-discards-qualifiers -Wincompatible-pointer-types-discards-qualifiers: NO

Compiler for C supports arguments -Wno-format-nonliteral -Wformat-nonliteral: YES

Compiler for C supports arguments -Wno-cpp -Wcpp: YES

Found pkg-config: /usr/bin/pkg-config (0.29.1)

Run-time dependency yaml-0.1 found: YES 0.2.2

Has header "netinet/sctp.h" : YES

Library sctp found: YES

Configuring sctp-config.h using configuration

Run-time dependency libmongoc-1.0 found: YES 1.16.1

Removing intermediate container 7b364147c2b9

The command '/bin/sh -c git clone --recursive https://hub.fastgit.org/open5gs/open5gs && cd open5gs && git checkout main && meson build --prefix=`pwd`/install && ninja -C build && cd build && ninja install' returned a non-zero code: 2

root@user:/home/user/volte/docker_open5gs/base#

It seems to be a git clone error. For geographical reasons I use https://hub.fastgit.org/***.git, which downloads faster than https://github.com/***.git. So how should I replace the address https://github.com/open5gs/freeDiameter.git?

Thanks in advance!
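A common way to redirect such clones without editing the build files is git's url.<base>.insteadOf setting, e.g. git config --global url."https://hub.fastgit.org/".insteadOf "https://github.com/". Because meson runs /usr/bin/git for the freeDiameter subproject, a global rule set earlier in the same image should apply to that clone as well, though this is untested here. The Python below is only an illustrative emulation of the rewrite rule, not git's own code; the INSTEAD_OF mapping and rewrite_url helper are hypothetical, and it assumes git's documented behaviour that the longest matching insteadOf prefix wins.

```python
from typing import Dict, Optional

# Hypothetical rule set, mirroring:
#   git config --global url."https://hub.fastgit.org/".insteadOf "https://github.com/"
# Keys are the replacement bases, values are the prefixes they stand in for.
INSTEAD_OF: Dict[str, str] = {
    "https://hub.fastgit.org/": "https://github.com/",
}


def rewrite_url(url: str, rules: Dict[str, str]) -> str:
    """Rewrite url the way an insteadOf rule would (illustrative emulation only)."""
    best_base: Optional[str] = None
    best_prefix = ""
    for base, prefix in rules.items():
        # When several prefixes match, the longest one wins.
        if url.startswith(prefix) and len(prefix) > len(best_prefix):
            best_base, best_prefix = base, prefix
    if best_base is None:
        return url  # no rule matched, so the URL is used unchanged
    return best_base + url[len(best_prefix):]


if __name__ == "__main__":
    # The freeDiameter URL from the meson log above
    print(rewrite_url("https://github.com/open5gs/freeDiameter.git", INSTEAD_OF))
    # prints https://hub.fastgit.org/open5gs/freeDiameter.git
```

The dict allows one prefix per base for simplicity; real git configuration can list several insteadOf values for the same base.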

Update

I used a VPN to improve the network connection and ran git clone https://github.com/open5gs/freeDiameter.git in the VM, which works fine:

root@user:/home/user# git clone https://github.com/open5gs/freeDiameter

Cloning into 'freeDiameter'...

remote: Enumerating objects: 749, done.

remote: Counting objects: 100% (749/749), done.

remote: Compressing objects: 100% (462/462), done.

remote: Total 749 (delta 288), reused 727 (delta 266), pack-reused 0

Receiving objects: 100% (749/749), 1.24 MiB | 1.86 MiB/s, done.

Resolving deltas: 100% (288/288), done.

But when I run docker build --no-cache --force-rm -t docker_open5gs . it fails at step 6 once again.

Hi

I resolved this issue.

I found a solution for the error GnuTLS recv error (-110): add these 3 commands to the Dockerfile:

RUN apt-get install gnutls-bin

RUN git config --global http.sslVerify false

RUN git config --global http.postBuffer 1048576000

After that, the error disappeared. I will continue with the installation and there may be other problems, but this issue can be closed. Thanks.

| gharchive/issue | 2021-08-07T06:38:21 | 2025-04-01T06:44:26.191757 | {

"authors": [

"myonlystarWang"

],

"repo": "herlesupreeth/docker_open5gs",

"url": "https://github.com/herlesupreeth/docker_open5gs/issues/49",

"license": "BSD-2-Clause",

"license_type": "permissive",

"license_source": "github-api"

} |

625577262 | Cleanup

Optimizes imports, replaces assert with assert_eq, and fixes a linter warning.

@stlankes Review required

| gharchive/pull-request | 2020-05-27T10:32:51 | 2025-04-01T06:44:26.193533 | {

"authors": [

"jschwe"

],

"repo": "hermitcore/rusty-loader",

"url": "https://github.com/hermitcore/rusty-loader/pull/6",

"license": "Apache-2.0",

"license_type": "permissive",

"license_source": "github-api"

} |

82578927 | Allow client to cancel by transfer name

Allow pg:backups cancel to take an optional transfer name, and, when no name is provided, change the default to pick the newest active backup rather than relying on the order returned by the API.
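The selection rule described above can be sketched in Python as follows. This is an illustration under assumptions, not the heroku CLI's code: the Transfer record, its name, created_at and finished fields, and the transfer_to_cancel helper are hypothetical stand-ins for whatever the backups API actually returns.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class Transfer:
    # Hypothetical shape of a backup transfer record
    name: str
    created_at: datetime
    finished: bool  # True once the backup is no longer active


def transfer_to_cancel(transfers: List[Transfer], name: Optional[str] = None) -> Optional[Transfer]:
    """Pick the transfer that `pg:backups cancel [name]` should cancel."""
    if name is not None:
        # An explicit name wins; None if no transfer has that name.
        return next((t for t in transfers if t.name == name), None)
    active = [t for t in transfers if not t.finished]
    if not active:
        return None
    # Choose by creation time instead of trusting the API's list order.
    return max(active, key=lambda t: t.created_at)


if __name__ == "__main__":
    transfers = [
        Transfer("b002", datetime(2015, 5, 29, 20, 0), finished=False),
        Transfer("b001", datetime(2015, 5, 29, 19, 0), finished=False),
        Transfer("b003", datetime(2015, 5, 29, 21, 0), finished=True),
    ]
    print(transfer_to_cancel(transfers).name)          # b002: newest active backup
    print(transfer_to_cancel(transfers, "b001").name)  # b001: explicit name
```

Sorting on created_at makes the default independent of the order in which the API lists transfers, which is the point of the change.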

@uhoh-itsmaciek does this seem OK?

Sure, looks good.

| gharchive/pull-request | 2015-05-29T20:40:52 | 2025-04-01T06:44:26.202805 | {

"authors": [

"tef",

"uhoh-itsmaciek"

],

"repo": "heroku/heroku",

"url": "https://github.com/heroku/heroku/pull/1595",

"license": "mit",

"license_type": "permissive",

"license_source": "bigquery"

} |

1488945434 | 🛑 Auth-Bridge - Test 1 is down

In 09108a2, Auth-Bridge - Test 1 ($AUTH_BRIDGE_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Auth-Bridge - Test 1 is back up in 3796002.

| gharchive/issue | 2022-12-10T20:48:24 | 2025-04-01T06:44:26.214628 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/10083",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1490263016 | 🛑 Software Center - Test 1 is down

In 7c72fa6, Software Center - Test 1 ($SOFTWARECENTER_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Software Center - Test 1 is back up in 1cb8a54.

| gharchive/issue | 2022-12-11T17:40:17 | 2025-04-01T06:44:26.216897 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/10127",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1672433725 | 🛑 Auth-Bridge - Test 1 is down

In a5f4970, Auth-Bridge - Test 1 ($AUTH_BRIDGE_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Auth-Bridge - Test 1 is back up in 8e172bc.

| gharchive/issue | 2023-04-18T06:24:56 | 2025-04-01T06:44:26.219117 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/14082",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1693508779 | 🛑 Auth-Bridge - Test 1 is down

In 05b13d1, Auth-Bridge - Test 1 ($AUTH_BRIDGE_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Auth-Bridge - Test 1 is back up in 16a91da.

| gharchive/issue | 2023-05-03T06:57:19 | 2025-04-01T06:44:26.221583 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/14821",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1726905578 | 🛑 Auth-Bridge - Test 1 is down

In 248ce6f, Auth-Bridge - Test 1 ($AUTH_BRIDGE_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Auth-Bridge - Test 1 is back up in 482de49.

| gharchive/issue | 2023-05-26T04:55:29 | 2025-04-01T06:44:26.223759 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/15965",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1819754374 | 🛑 Auth-Bridge - Test 1 is down

In b5d427f, Auth-Bridge - Test 1 ($AUTH_BRIDGE_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Auth-Bridge - Test 1 is back up in 64becde.

| gharchive/issue | 2023-07-25T07:49:48 | 2025-04-01T06:44:26.226009 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/18806",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1184839634 | 🛑 Auth-Bridge - Test 1 is down

In 69b64aa, Auth-Bridge - Test 1 ($AUTH_BRIDGE_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Auth-Bridge - Test 1 is back up in 8e28a09.

| gharchive/issue | 2022-03-29T13:03:34 | 2025-04-01T06:44:26.228215 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/1907",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1903585607 | 🛑 Auth-Bridge - Test 1 is down

In ea21d79, Auth-Bridge - Test 1 ($AUTH_BRIDGE_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Auth-Bridge - Test 1 is back up in 353a124 after 21 minutes.

| gharchive/issue | 2023-09-19T19:13:03 | 2025-04-01T06:44:26.230498 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/21729",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1204653624 | 🛑 Auth-Bridge - Test 1 is down

In 50d4256, Auth-Bridge - Test 1 ($AUTH_BRIDGE_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Auth-Bridge - Test 1 is back up in 21bd4f8.

| gharchive/issue | 2022-04-14T15:12:45 | 2025-04-01T06:44:26.232920 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/2538",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

2034279120 | 🛑 Software Center - Test 1 is down

In a5f7a71, Software Center - Test 1 ($SOFTWARECENTER_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Software Center - Test 1 is back up in b7356ce after 28 minutes.

| gharchive/issue | 2023-12-10T08:55:16 | 2025-04-01T06:44:26.235093 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/25781",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

2081528860 | 🛑 Auth-Bridge - Test 1 is down

In 54e0885, Auth-Bridge - Test 1 ($AUTH_BRIDGE_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Auth-Bridge - Test 1 is back up in 60635c4 after 54 minutes.

| gharchive/issue | 2024-01-15T08:46:27 | 2025-04-01T06:44:26.237319 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/27544",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

2100776793 | 🛑 Software Center - Test 1 is down

In b8c3a47, Software Center - Test 1 ($SOFTWARECENTER_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Software Center - Test 1 is back up in 2088002 after 28 minutes.

| gharchive/issue | 2024-01-25T16:42:38 | 2025-04-01T06:44:26.239512 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/28032",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

2266792185 | 🛑 Software Center - Test 1 is down

In 39d25b5, Software Center - Test 1 ($SOFTWARECENTER_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Software Center - Test 1 is back up in 0ac47fb after 43 minutes.

| gharchive/issue | 2024-04-27T03:33:46 | 2025-04-01T06:44:26.241889 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/32697",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1292451476 | 🛑 Auth-Bridge - Test 1 is down

In b0d97ef, Auth-Bridge - Test 1 ($AUTH_BRIDGE_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Auth-Bridge - Test 1 is back up in 7820a0c.

| gharchive/issue | 2022-07-03T23:42:15 | 2025-04-01T06:44:26.244325 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/5315",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1349541800 | 🛑 Auth-Bridge - Test 1 is down

In 714970b, Auth-Bridge - Test 1 ($AUTH_BRIDGE_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Auth-Bridge - Test 1 is back up in ba24fa4.

| gharchive/issue | 2022-08-24T14:30:50 | 2025-04-01T06:44:26.246519 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/6996",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1414982913 | 🛑 Auth-Bridge - Test 1 is down

In dc85dde, Auth-Bridge - Test 1 ($AUTH_BRIDGE_TEST_1) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Auth-Bridge - Test 1 is back up in 6f6c4f0.

| gharchive/issue | 2022-10-19T13:30:56 | 2025-04-01T06:44:26.248754 | {

"authors": [

"herrphon"

],

"repo": "herrphon/upptime",

"url": "https://github.com/herrphon/upptime/issues/8337",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

Printing a ride order (Fahrauftrag) -> error!

Expected:

Bereitstellen -> Fahrauftrag -> Drucken (Prepare -> Ride order -> Print)

The ride order is printed on the printer!

Actual output:

Good morning Josi,

It's not a bug, just an unclear error message. This function has not been implemented yet.

I've been working on exactly this since last Friday; Bereitstellen -> Fahrauftrag -> Drucken should be possible soon.

Martin Jonasse


On 14.01.2016 at 16:08, Josi Conrad notifications@github.com wrote:

Expected:

Bereitstellen -> Fahrauftrag -> Drucken

The ride order is printed on the printer!

Actual output:


This is "work in progress".

Implemented: Fahrauftrag -> Drucken (Ride order -> Print) now generates a PDF file for download; printing is done in Adobe Acrobat Reader.

| gharchive/issue | 2016-01-14T15:08:17 | 2025-04-01T06:44:26.262565 | {

"authors": [

"Josi-Conrad",

"Martin-Jonasse"

],

"repo": "hertus/sfitixi",

"url": "https://github.com/hertus/sfitixi/issues/153",

"license": "mit",

"license_type": "permissive",

"license_source": "bigquery"

} |

428919890 | "login" route is buggy

Pointing the browser directly to the "login" route, or reloading the page while the "login" route is currently active, does not work. In both cases there is a redirect to "gamesinprogress" which leads to an authentication error.

Possibly related: when pointing the browser to the "/" route, there is a redirect to "gamesinprogress" before there is a redirect to "login".

| gharchive/issue | 2019-04-03T18:47:32 | 2025-04-01T06:44:26.266429 | {

"authors": [

"herzbube"

],

"repo": "herzbube/littlego-web",

"url": "https://github.com/herzbube/littlego-web/issues/11",

"license": "Apache-2.0",

"license_type": "permissive",

"license_source": "github-api"

} |

153965801 | Specify a recursion depth

Hi!

Thanks for this great plugin for django rest framework. Is there any way to specify the depth of the recursion ?

I tried using the class Meta depth attribute but I don't think the field uses it. What would be the best approach to this ?

Best regards,

This is not currently supported. I would suggest pruning the dataset before you try to serialize it. If you are deserializing something, then you could deserialize it and then prune.

I'm using it on a ModelViewSet, so I don't know how I could prune it.

There is no queryset or get_queryset method in the arguments of the field...

Can you set the queryset in the ModelViewSet?

No... My data structure is really simple:

# models.py
class Node(models.Model):
    parent = models.ForeignKey('self', related_name='children')

# api.py
class NodeSerializer(serializers.HyperlinkedModelSerializer):
    parent = serializers.HyperlinkedRelatedField(
        view_name='api:parameter-detail',
        queryset=Node.objects.all(),
    )
    children = RecursiveField(many=True, allow_null=True)

    class Meta:
        model = Node
        fields = ('parent', 'children')
        depth = 2

class NodeViewSet(viewsets.ModelViewSet):
    queryset = Node.objects.all()

I cannot prune or filter the queryset; it depends on which initial node is requested. I only need to limit the depth of the RecursiveField... Or maybe I'm missing something?

You could look into the django-treebeard package for a tree with more features, or django-mptt. There are probably others as well.

Or I suppose you could extend the recursive field to add the functionality you desire.

I'll just extend the recursive field to add the functionality I need. Both mptt and treebeard have limitations or defaults that don't work with my project.

Thanks.
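Extending the recursive field essentially means stopping the expansion of children once a depth limit is reached. The sketch below shows only that pruning logic in plain Python; the `Node` dataclass and `serialize` function are hypothetical stand-ins, not the API of django-rest-framework-recursive, and threading the current depth through a DRF field (for example via serializer context) is an assumption left unimplemented here.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Node:
    # Hypothetical stand-in for the Django model: an id plus child nodes.
    id: int
    children: list[Node] = field(default_factory=list)


def serialize(node: Node, max_depth: int, _depth: int = 0) -> dict:
    """Serialize `node`, expanding nested children only up to `max_depth` levels."""
    data = {"id": node.id}
    if _depth < max_depth:
        # Still within the limit: recurse one level deeper.
        data["children"] = [serialize(c, max_depth, _depth + 1) for c in node.children]
    else:
        # Limit reached: emit child ids instead of full nested objects.
        data["children"] = [c.id for c in node.children]
    return data


tree = Node(1, [Node(2, [Node(3, [Node(4)])])])
print(serialize(tree, max_depth=1))
# {'id': 1, 'children': [{'id': 2, 'children': [3]}]}
```

Emitting ids past the limit (rather than dropping children entirely) keeps the response navigable, similar to how a hyperlinked field would point at deeper nodes.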

| gharchive/issue | 2016-05-10T09:47:06 | 2025-04-01T06:44:26.380731 | {

"authors": [

"achedeuzot",

"heywbj"

],

"repo": "heywbj/django-rest-framework-recursive",

"url": "https://github.com/heywbj/django-rest-framework-recursive/issues/11",

"license": "isc",

"license_type": "permissive",

"license_source": "bigquery"

} |

736686875 | Possibly another method?

Hey there,

There's another elevated COM based method I'd like to share. Are you willing to take a look?

Thanks.

Hello,

sure, I always welcome anything new.

Awesome, I ask because I don't want to pester you if you're not free at the time.

Anyway, this method has three steps. It uses environment variable abuse/modification, a shell protocol handler hijack, and lastly the elevated COM interface IFwCplLua. Basically, IFwCplLua::LaunchAdvancedUI() calls ShellExecuteExW() with %WinDir%\System32\WF.msc. This method changes WinDir to a custom location and launches a custom WF.msc. The custom WF.msc launches a custom protocol, which in turn opens cmd.exe as admin. Here is the code: https://github.com/AzAgarampur/byeintegrity4-uac/

This method was actually already in UACMe as method 42, https://github.com/hfiref0x/UACME/blob/v3.2.x/Source/Akagi/methods/hybrids.c#L2392, except that it abused an mscfile handler hijack without touching environment variables or using a fake .msc snap-in file. Starting with RS4 it produced mixed results: it worked inconsistently, and it was later marked as fixed and removed.

What does this custom .msc do, by the way? Does it just run something through that protocol with the help of a Shockwave Flash object?

Also, I will only be able to test this on Saturday, as I'm away from my main PC.


Basically. The exploit creates a URL association called protocol-byeintegrity4, and the shockwave object uses the link to launch the desired process. <String ID="3" Refs="1">protocol-byeintegrity4:</String>

Well, I can confirm it works (tested on 19042). I will look how I can integrate this into UACMe and post update here, presumably next week. Thanks for sharing.

Awesome! Thanks for letting me know.

Pushed to the dev branch. So far it has only been tested on Win10 1809.

I've now tested this on Windows 7 SP1 and fully patched Windows 8.1. It also works on Windows 10 21H1 (20241), so I assume it will work on earlier Win10 versions too. If no critical bugs are found, I will release this later this week, as I still want to make some other additions not related to this particular method. As usual, thanks for the contribution, good work!

Hey, thanks! I appreciate the time you take to look at my work.

Do you think we can drop the registry flush calls? I don't think it'll change anything if we remove them.

Yes, sure.

This is unrelated, but the link that has the bs explanation from MS about why it works should be updated to this: https://devblogs.microsoft.com/oldnewthing/20160816-00/?p=94105

Hopefully you can include it in the readme for the next release.

Done.

| gharchive/issue | 2020-11-05T07:31:05 | 2025-04-01T06:44:26.390454 | {

"authors": [

"AzAgarampur",

"hfiref0x"

],

"repo": "hfiref0x/UACME",

"url": "https://github.com/hfiref0x/UACME/issues/88",

"license": "bsd-2-clause",

"license_type": "permissive",

"license_source": "bigquery"

} |

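418615997 | [bug] Ticket content is duplicated when the SQL ticket details page is refreshed multiple times

Only old data created before v1.4.0 is affected; new data is not.

Steps to reproduce

Open the ticket details: http://139.199.0.191/detail/6/

Refresh several times; the SQL list in the details becomes duplicated.

Screenshots

Error log

None; only the front-end display is wrong.

Version info

Application version: v1.4.3

Deployment method: Docker

The cause is that https://github.com/hhyo/archery/blob/master/sql/engines/models.py#L42 uses an empty list as a default argument to initialize an object attribute, and the code then modifies that attribute in place, which amounts to modifying the default value itself.

The fix is to change the attribute's default to None and then check in __init__: if it is None, set it to an empty list [].

That way the empty list is newly created instead of being the default-argument list, so it no longer affects objects created later.

Reference: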

https://stackoverflow.com/questions/366422/what-is-the-pythonic-way-to-avoid-default-parameters-that-are-empty-lists
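A minimal Python illustration of the cause and the fix described above. The class names are hypothetical and are not Archery's actual classes in sql/engines/models.py; only the default-argument pattern is the same.

```python
class BuggyReview:
    # Hypothetical class: the default list is evaluated once, when `def` runs,
    # so every instance created without `rows` shares the same list object.
    def __init__(self, rows=[]):
        self.rows = rows


class FixedReview:
    # None is the sentinel; a brand-new list is built for each instance.
    def __init__(self, rows=None):
        self.rows = [] if rows is None else rows


first = BuggyReview()
first.rows.append("select 1;")   # in-place change mutates the shared default
second = BuggyReview()
print(second.rows)               # ['select 1;']  (leaked from `first`)

third = FixedReview()
third.rows.append("select 1;")
fourth = FixedReview()
print(fourth.rows)               # []
```

Each page refresh that built a new object and appended rows in place would keep growing the shared default list, which matches the duplicated SQL list in the report.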

Tested and fixed: http://139.199.0.191/detail/6/

| gharchive/issue | 2019-03-08T03:28:32 | 2025-04-01T06:44:26.424570 | {

"authors": [

"LeoQuote",

"hhyo"

],

"repo": "hhyo/archery",

"url": "https://github.com/hhyo/archery/issues/63",

"license": "Apache-2.0",

"license_type": "permissive",

"license_source": "github-api"

} |

185070058 | HHH-11144 - Add test for issue

https://hibernate.atlassian.net/browse/HHH-11144

Applied upstream, thanks


| gharchive/pull-request | 2016-10-25T09:53:28 | 2025-04-01T06:44:26.425935 | {

"authors": [

"dreab8",

"vladmihalcea"

],

"repo": "hibernate/hibernate-orm",

"url": "https://github.com/hibernate/hibernate-orm/pull/1607",

"license": "Apache-2.0",

"license_type": "permissive",

"license_source": "github-api"

} |

40343443 | [4.3] HHH-9337 Region.destroy() attempts to remove a cache listener, but region class is not annotated with @Listener

https://hibernate.atlassian.net/browse/HHH-9337

Cherry-picked and pushed.

Thanks!

Gail

| gharchive/pull-request | 2014-08-15T12:15:06 | 2025-04-01T06:44:26.427363 | {

"authors": [

"gbadner",

"pferraro"

],

"repo": "hibernate/hibernate-orm",

"url": "https://github.com/hibernate/hibernate-orm/pull/784",

"license": "Apache-2.0",

"license_type": "permissive",

"license_source": "github-api"

} |

2069031169 | 🛑 kiwifarms.st is down

In d2a3703, kiwifarms.st (https://kiwifarms.st) was down:

HTTP code: 0

Response time: 0 ms

Resolved: kiwifarms.st is back up in 768fc56 after 33 minutes.

| gharchive/issue | 2024-01-07T07:56:41 | 2025-04-01T06:44:26.431842 | {

"authors": [

"hickoryhouse"

],

"repo": "hickoryhouse/kf",

"url": "https://github.com/hickoryhouse/kf/issues/2869",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

2209686011 | 🛑 Mad at the Internet is down

In e53cdb5, Mad at the Internet (https://madattheinternet.com/) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Mad at the Internet is back up in 8c76a38 after 10 minutes.

| gharchive/issue | 2024-03-27T02:27:30 | 2025-04-01T06:44:26.434543 | {

"authors": [

"hickoryhouse"

],

"repo": "hickoryhouse/kf",

"url": "https://github.com/hickoryhouse/kf/issues/3261",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1510209097 | 🛑 Kiwi Farms Forum is down

In d41e9a8, Kiwi Farms Forum (https://kiwifarms.net) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Kiwi Farms Forum is back up in 5841932.

| gharchive/issue | 2022-12-24T23:38:15 | 2025-04-01T06:44:26.436912 | {

"authors": [

"hickoryhouse"

],

"repo": "hickoryhouse/kf",

"url": "https://github.com/hickoryhouse/kf/issues/84",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

500722317 | Project settings

A solution for #42.

Please refer to #37.

I'm sorry, but I don't understand your objections to my patch. Is the super call the problem? Why? If I'm overriding a method from a class, calling super is the standard way to use the original method.

Of course, you can reject the patch, but then the plugins will remain in conflict.

I agree that using super is the standard way to override class methods.

However, since ProjectsHelper is a module, I think it is incorrect to use super.

@maeda-m I think Module#prepend should be used instead of ProjectsController.send :helper?

Here's an article about the difference:

https://www.justinweiss.com/articles/rails-5-module-number-prepend-and-the-end-of-alias-method-chain/

Thank you. I learned from you.

Released version 1.5.0 !

| gharchive/pull-request | 2019-10-01T07:45:44 | 2025-04-01T06:44:26.470076 | {

"authors": [

"ahorek",

"maeda-m",

"picman"

],

"repo": "hidakatsuya/redmine_default_custom_query",

"url": "https://github.com/hidakatsuya/redmine_default_custom_query/pull/43",

"license": "mit",

"license_type": "permissive",

"license_source": "bigquery"

} |

2410141209 | Incorrect match in the source code results in inaccurate matching results. I don't know if this is a bug...

The code in question is const [_, commentSyntax, searchPhrase, commentSyntaxEnd] = match; in matchSearchPhrase.ts. My suggestion for this extension is as follows:

The regular expression produces 4 capture groups, so besides the first item (the full match) the destructuring needs 4 more items to cover them all. In the source code, searchPhrase only captures find, not find {question}, so one item should be added:

const [_, commentSyntax, searchSymbol, searchPhrase, commentSyntaxEnd] = match; The output after debugging is as follows:

After the modification, the result of debugging this extension is as follows:

You're right. I've pushed an update for this issue https://github.com/hieunc229/copilot-clone/commit/1edf91fd0b8dcfcf4b27a4d2a6d86d5c8e865de7 and will make an update to the extension marketplace

Thanks for spotting it @mengyangz86
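The off-by-one can be reproduced with any pattern that has four capture groups. The Python sketch below uses a hypothetical pattern modeled on the issue's description (comment syntax, the `find` keyword, the search phrase, an optional comment terminator); the extension's actual regular expression in matchSearchPhrase.ts may differ. Python's tuple unpacking raises an error when the counts don't match, whereas JavaScript array destructuring silently ignores extra elements. That is why the four-name version bound `searchPhrase` to the keyword group instead of the phrase.

```python
import re

# Hypothetical pattern with four capture groups:
#   1 comment syntax, 2 search keyword, 3 search phrase, 4 optional comment end
SEARCH_RE = re.compile(r"^\s*(//|#|<!--)\s?(find)\s?(.+?)\s?(-->)?\s*$")


def match_search_phrase(line: str):
    match = SEARCH_RE.match(line)
    if match is None:
        return None
    # Full match first, then one name per capture group (five names in total),
    # mirroring the corrected destructuring suggested in the issue.
    _, comment_syntax, search_symbol, search_phrase, comment_end = (match[0], *match.groups())
    return comment_syntax, search_symbol, search_phrase, comment_end


print(match_search_phrase("// find binary search"))
# ('//', 'find', 'binary search', None)
```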

| gharchive/issue | 2024-07-16T03:52:11 | 2025-04-01T06:44:26.483786 | {

"authors": [

"hieunc229",

"mengyangz86"

],

"repo": "hieunc229/copilot-clone",

"url": "https://github.com/hieunc229/copilot-clone/issues/88",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

113817628 | Add a builder for the AssertionView method

assertView, which captures and verifies screenshots, has the following API:

assertView(String screenshotId, CompareTarget[] compareTargets,DomSelector[] hiddenElementsSelectors)

Because CompareTarget and DomSelector are themselves complex objects, we will provide builders so that these arguments can be constructed more simply.
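Pitalium's real builder classes are not reproduced here. As an illustration of the builder idea applied to an argument list like assertView's, here is a small Python sketch; `AssertViewArgs`, `AssertViewArgsBuilder`, its method names, and the default of comparing the whole `body` element are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class AssertViewArgs:
    # Hypothetical value object mirroring
    # assertView(screenshotId, compareTargets, hiddenElementsSelectors).
    screenshot_id: str
    compare_targets: Tuple[str, ...]
    hidden_selectors: Tuple[str, ...]


class AssertViewArgsBuilder:
    """Fluent builder: each setter returns self so calls can be chained."""

    def __init__(self, screenshot_id: str) -> None:
        self._screenshot_id = screenshot_id
        self._targets: List[str] = []
        self._hidden: List[str] = []

    def add_target(self, css_selector: str) -> "AssertViewArgsBuilder":
        self._targets.append(css_selector)
        return self

    def hide(self, css_selector: str) -> "AssertViewArgsBuilder":
        self._hidden.append(css_selector)
        return self

    def build(self) -> AssertViewArgs:
        # Assumed default: compare the whole page when no target was added.
        targets = tuple(self._targets) or ("body",)
        return AssertViewArgs(self._screenshot_id, targets, tuple(self._hidden))


args = AssertViewArgsBuilder("topPage").add_target("#main").hide(".ad-banner").build()
print(args)
```

The caller only states what differs from the defaults, and the immutable result can be passed around without exposing the nested objects' construction details.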

Addressed in the following commits:

c7e1eeb6ee4b0c4655e6188d1c7745e11c8332cd

ce9339c81883c0e3efb1e6e76266f3e90509b2d9

55fb8110b1a73559a342f7e0bcc5c44dc10835d0

796cd8ed2eb17bce472137f74caeb9be87fb2392

78a4ba12cb1ef437a9cf3039220bbf79fc061db3

db7d67290832e298441c21b0252596305a4637ae

6379925d124ec4df3e231d68284e9f76284db7a1

13b67cab600479ce9401843979057bf8c9b5b01a

a760f32f939f43ef3a96c68fb678870c42a56035

781490698fa4070b19bedf4c98fcb2918af6de43

Added a documentation page for the builders:

https://www.htmlhifive.com/conts/web/view/pitalium-reference/screenshot-argument

| gharchive/issue | 2015-10-28T13:09:08 | 2025-04-01T06:44:26.486798 | {

"authors": [

"tkashi"

],

"repo": "hifive/hifive-pitalium",

"url": "https://github.com/hifive/hifive-pitalium/issues/33",

"license": "apache-2.0",

"license_type": "permissive",

"license_source": "bigquery"

} |

199260799 | JS: Expressions inside template literals don't display correctly

res = `start ${tags.map(tag => `pre ${tag} post`).join('\n ')}end`;

Displays like:

pre and tags.map don't have the right color

Fiddle: https://jsfiddle.net/ug3tLcf6/

Not sure I see any issue. What are you expecting? Or perhaps this has been fixed.

https://jsfiddle.net/ajoshguy/ym41ukjd/

Partly fixed (pre is coloured now). Partly as expected (tags.map is indeed also not coloured outside the template literal, I expected it to be coloured).

Closing this.

Thanks for responding after 3y instead of closing it after x months due to inactivity 😊!

Glad to help!

| gharchive/issue | 2017-01-06T18:46:37 | 2025-04-01T06:44:26.609542 | {

"authors": [

"teameh",

"yyyc514"

],

"repo": "highlightjs/highlight.js",

"url": "https://github.com/highlightjs/highlight.js/issues/1405",

"license": "BSD-3-Clause",

"license_type": "permissive",

"license_source": "github-api"

} |

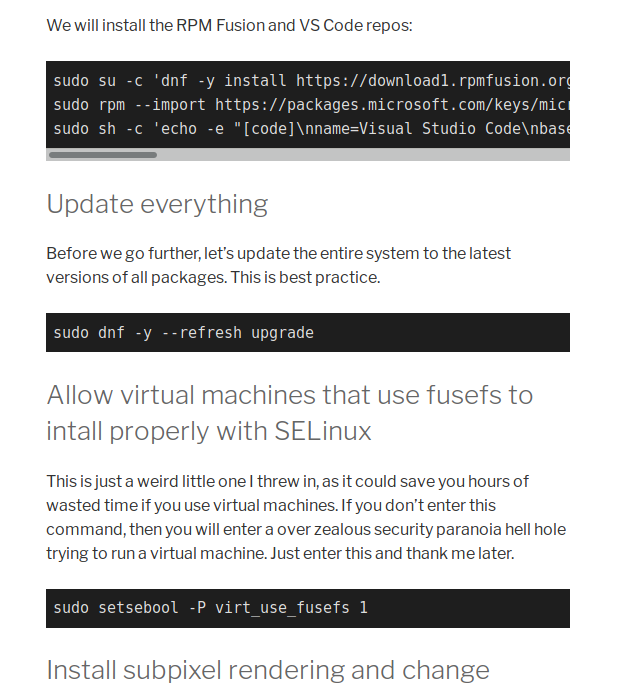

246110508 | Scrollbar not working on certain lines of code inside

Hello,

I am using the latest version of highlight.js through a WordPress plugin, and am having problems.

When the code is wider than the container, the scroll bar appears, BUT for certain code you can't click and drag it. It seems frozen. You can click inside the box and select the code, but the scroll bar is totally frozen!

Here are two code blocks that DO NOT have a working scroll bar:

<pre><code class="shell">sudo su -c 'dnf -y install https://download1.rpmfusion.org/free/fedora/rpmfusion-free-release-$(rpm -E %fedora).noarch.rpm https://download1.rpmfusion.org/nonfree/fedora/rpmfusion-nonfree-release-$(rpm -E %fedora).noarch.rpm'

sudo rpm --import https://packages.microsoft.com/keys/microsoft.asc

sudo sh -c 'echo -e "[code]\nname=Visual Studio Code\nbaseurl=https://packages.microsoft.com/yumrepos/vscode\nenabled=1\ngpgcheck=1\ngpgkey=https://packages.microsoft.com/keys/microsoft.asc" > /etc/yum.repos.d/vscode.repo'</code></pre>

<pre><code class="shell">echo fs.inotify.max_user_watches=524288 | sudo tee -a /etc/sysctl.conf sudo sysctl -p</code></pre>

and here is one that works perfectly:

<pre><code class="shell">sudo sed -i "s/User apache/User $USERNAME/g" /etc/httpd/conf/httpd.conf</code></pre>

If I get rid of the class="shell" then it still has the exact same problem. Any idea? I attached a screen shot of a not working scrollbar. THANKS!

I’m closing this issue because it has been inactive for over a year. This probably means that it is not reproducible or it has been fixed in a newer version.

Please reopen if you still encounter this issue with the latest stable version.

Thank you!

| gharchive/issue | 2017-07-27T17:18:18 | 2025-04-01T06:44:26.613289 | {

"authors": [

"David-Else"

],

"repo": "highlightjs/highlight.js",

"url": "https://github.com/highlightjs/highlight.js/issues/1577",

"license": "BSD-3-Clause",

"license_type": "permissive",

"license_source": "github-api"

} |

189009499 | Allow starts to be defined as 'self' much like one can do in contains

I used this for a weird linking system. Colon separated values as { end: /:/, endsWithParent: true, starts: 'self', ... }

It's not a necessary thing, but it's consistent with the feature for contains.

I'm confused when you would use this exactly?

Since this was so long ago I hardly remember but going off of "colon separated values" I think I used it for like 1 : 1 : ... but where 1 is a complex type (like could be a number or a string or whatever)

It's in here, and while the grammar sorta works, it's slow in complex cases, so I kind of gave up.

Seems like a good way to get stuck in an infinite loop. How does it break out if after end it always starts self?

And typically you'd do this with a parent, and then inside your contains you'd just have your matcher... so it would run over and over again as many times as needed until you left the mode... no need for this start hack.

It breaks out when the parent ends, as the example says. :p

...
contains: [
  {
    begin: /\d\s:\s?/,
    endsWithParent: true
  }
]

Doesn't that do exactly the same thing?

I don't know, I'm out of touch with the system. I'm pretty sure I only did it for reducing redundancy. I have no complaints if this is just closed.

Ok, closing. It would have needed extra documentation and I had some issues with the code also... and generally this type of feature we shouldn't just add "abstractly"... if there was a grammar included with it that required the new functionality that would make a much better case for why it's necessary/useful, etc...

| gharchive/pull-request | 2016-11-14T01:09:32 | 2025-04-01T06:44:26.616376 | {

"authors": [

"logicplace",

"yyyc514"

],

"repo": "highlightjs/highlight.js",

"url": "https://github.com/highlightjs/highlight.js/pull/1348",

"license": "BSD-3-Clause",

"license_type": "permissive",

"license_source": "github-api"

} |

202914482 | add postcss-hocus plugin

postcss-hocus lets you type a:hocus instead of a:hover, a:focus. Short and simple :)

🎩 ✨ 🔮

| gharchive/pull-request | 2017-01-24T19:22:06 | 2025-04-01T06:44:26.628338 | {

"authors": [

"Kilian",

"himynameisdave"

],

"repo": "himynameisdave/postcss-plugins",

"url": "https://github.com/himynameisdave/postcss-plugins/pull/169",

"license": "mit",

"license_type": "permissive",

"license_source": "bigquery"

} |

721690022 | Uploading video annotations from Premiere Pro

I tried to make a video project in AudiAnnotate, and neither the video link nor the annotations were recognized by the program.

To Reproduce

Steps to reproduce the behavior:

Go to http://audiannotate.brumfieldlabs.com/

Click on My Projects and edit project (in this case, Camille 1921)

Add link and info

Upload annotations

See errors: one when I tried to add the duration of the video (1:09:30 or 69:30; I tried both), and another when I tried to upload my annotations, first without editing them and then after editing them to have only four columns

Expected behavior

I expected the link to work and the annotations to link to the timestamps with the duration

Screenshots

Got this error when I tried to correct the duration of the video:

And these errors when I tried to upload the annotations:

Additional context

I think it has something to do with the format of my annotations exported from Premiere, which I tried to fix by removing two columns (so it is now just marker name/description/time in/time out) and changing the timecodes to a 00:00:00 format rather than 00;00;00.

Oh also, these are my annotations as a .txt -

Camille 1921 annotations.txt
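Until native Premiere support lands, a small conversion script is one possible workaround. The sketch below assumes a tab-separated export whose first four columns are marker name, description, time in and time out (the layout described above, with a header row) and emits Audacity-style label lines (start seconds, end seconds, label, tab-separated). Whether AudiAnnotate accepts exactly this output is an assumption, as is the nominal frame rate; drop-frame timecode correction is not handled.

```python
import csv
import io


def timecode_to_seconds(tc: str, fps: float = 30.0) -> float:
    """Convert HH:MM:SS or HH;MM;SS (optionally with a trailing frame field) to seconds."""
    parts = [int(p) for p in tc.strip().replace(";", ":").split(":")]
    if len(parts) == 3:
        hours, minutes, seconds = parts
        frames = 0
    elif len(parts) == 4:
        hours, minutes, seconds, frames = parts
    else:
        raise ValueError(f"unrecognized timecode: {tc!r}")
    # Frames are converted with a nominal fps; drop-frame correction is ignored.
    return hours * 3600 + minutes * 60 + seconds + frames / fps


def markers_to_labels(export_text: str, fps: float = 30.0) -> str:
    """Turn a tab-separated marker export into start<TAB>end<TAB>label lines."""
    rows = list(csv.reader(io.StringIO(export_text), delimiter="\t"))
    lines = []
    for row in rows[1:]:  # skip the header row
        if len(row) < 4:
            continue  # ignore blank or malformed rows
        name, description, time_in, time_out = (cell.strip() for cell in row[:4])
        label = f"{name}: {description}" if description else name
        start = timecode_to_seconds(time_in, fps)
        end = timecode_to_seconds(time_out, fps)
        lines.append(f"{start:.3f}\t{end:.3f}\t{label}")
    return "\n".join(lines) + "\n"
```

With this helper, a duration such as 1:09:30 becomes 4170 seconds, and both the 00;00;00 and 00:00:00 styles are accepted.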

This is a really gnarly one; fortunately our next feature (#125) should finally get us to where we need to be to support Premiere.

We think we're also going to hard-code support for uploading Premiere annotations sooner, to unblock research work.

| gharchive/issue | 2020-10-14T18:36:30 | 2025-04-01T06:44:26.641570 | {

"authors": [

"benwbrum",

"jreinschmidt"

],

"repo": "hipstas/AudiAnnotate",

"url": "https://github.com/hipstas/AudiAnnotate/issues/122",

"license": "Apache-2.0",

"license_type": "permissive",

"license_source": "github-api"

} |

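1727045925 | Suggestion: after recognition, export the data to an Excel spreadsheet with hyperlinks to the corresponding images; that would be more practical

Suggestion: after recognition, export the data to an Excel spreadsheet in which the first column is the image name, the second column is the recognized content, and the third column is a hyperlink to the image. That way the images containing the content you are looking for can be found quickly.

OK, this is already planned.

Email received.

Looking forward to your update. Also, it would be great if the fields extracted in batch could be customized.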


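------------------ Original message ------------------

From: @.>;

Sent: Saturday, May 27, 2023, 5:58 PM

To: @.>;

Cc: @.>; @.>;

Subject: Re: [hiroi-sora/Umi-OCR] Suggestion: export the data to an Excel spreadsheet with hyperlinks to the corresponding images, more practical (Issue #148)

OK, this is already planned.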


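Looking forward to the update from the boss!

The V2 preview already supports CSV output, which can be imported into Excel.

First column: image name; second column: recognized content; third column: image path.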
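For anyone who wants the same three-column layout from their own scripts, here is a minimal sketch using Python's standard csv module; the function name and column headers are hypothetical and this is not Umi-OCR's implementation. Writing with the utf-8-sig encoding adds a byte-order mark, which helps Excel detect UTF-8 (for example Chinese text) when the file is opened directly.

```python
import csv
import io
from pathlib import Path
from typing import Iterable, Optional, Tuple


def ocr_results_to_csv(results: Iterable[Tuple[str, str]], out_path: Optional[str] = None) -> str:
    """Build CSV text with columns: image name, recognized text, image path."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["image_name", "text", "image_path"])
    for image_path, text in results:
        path = Path(image_path)
        # csv.writer quotes fields containing commas, quotes or newlines.
        writer.writerow([path.name, text, str(path)])
    data = buf.getvalue()
    if out_path is not None:
        # newline="" keeps csv's \r\n line endings from being translated again.
        with open(out_path, "w", encoding="utf-8-sig", newline="") as f:
            f.write(data)
    return data
```

Keeping the full path in the third column, as the V2 preview does, lets a spreadsheet formula turn it into a clickable link later if needed.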

Email received.

| gharchive/issue | 2023-05-26T07:19:07 | 2025-04-01T06:44:26.646896 | {

"authors": [

"WhoIAmm",

"csq4017",

"hiroi-sora"

],

"repo": "hiroi-sora/Umi-OCR",

"url": "https://github.com/hiroi-sora/Umi-OCR/issues/148",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1769743374 | I cannot confirm my testnet request; it has been pending for 3 days now

Describe the bug

A clear and concise description of what the bug is.

Transaction ID

Address

Block#

Time stamp

To Reproduce

Steps to reproduce the behavior:

Go to '...'

Click on '....'

Scroll down to '....'

See error

Expected behavior

A clear and concise description of what you expected to happen.

Screenshots

If applicable, add screenshots or consol.log to help explain your problem.

Desktop (please complete the following information):

OS: [e.g. iOS]

Browser [e.g. chrome, safari]

Version [e.g. 22]

Smartphone (please complete the following information):

Device: [e.g. iPhone6]

OS: [e.g. iOS8.1]

Browser [e.g. stock browser, safari]

Version [e.g. 22]

Additional context

Add any other context about the problem here:

Please check the testnet status here https://status.hiro.so/

| gharchive/issue | 2023-06-22T13:58:11 | 2025-04-01T06:44:26.652656 | {

"authors": [

"Christianogbonnaya",

"andresgalante"

],

"repo": "hirosystems/explorer",

"url": "https://github.com/hirosystems/explorer/issues/1192",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1803880958 | STX Token Transfer SENT which I did NOT do

Here's the TRX id: 0x6829fa018ff0642fbc90255a8d3901e38f7f763c89c8fad629a2cbec01efaa63

Where did my tokens go? Why did that happen?

Please HELP! URGENT!

Sender address: SP2HEDH4SXP1A34M9KDY00PJEM6YP1QDMVD2BFYDH

Recipient address: SP2KW0M6MBSSAV1BFDKH56VFNZK73Z36C0N369K9M

Link: https://explorer.hiro.so/txid/0x6829fa018ff0642fbc90255a8d3901e38f7f763c89c8fad629a2cbec01efaa63?chain=mainnet

Where did my STX go? Is it still within STX / Hiro system or network? Please help clarify what happened.

This isn't an Explorer issue.

Please request community support on the #support channel in Discord.

| gharchive/issue | 2023-07-13T23:09:11 | 2025-04-01T06:44:26.655363 | {

"authors": [

"STX-Stargem",

"andresgalante"

],

"repo": "hirosystems/explorer",

"url": "https://github.com/hirosystems/explorer/issues/1234",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

558727765 | Integrate the 'argparse' library; add -l command-line option

Implements the rest of https://github.com/hishamhm/tl/issues/35.

Note that running tl script.tl will now result in an error.

Also, it would be nice if we had tests for the CLI tool :)


@pdesaulniers Added them! :grin:

Awesome! I added some tests for the -l argument too.

We'll need some tests for tlconfig.lua as well. To do this, I think we'll need to add a -p <path to directory with tlconfig.lua> argument to the CLI. I'll do this in a later PR.

I think this is good to go — could you rebase this PR and fix the conflicts so it can be merged?

Like this? :)

| gharchive/pull-request | 2020-02-02T17:22:56 | 2025-04-01T06:44:26.668344 | {

"authors": [

"hishamhm",

"pdesaulniers"

],

"repo": "hishamhm/tl",

"url": "https://github.com/hishamhm/tl/pull/42",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1854652362 | Upgrade cryptography to fix vulnerability issues

Description

https://github.com/histolab/histolab/security/dependabot/24

Types of Changes

[ ] Core

[ ] Bugfix

[ ] New feature

[ ] Enhancement/optimization

[ ] Documentation

Issues Fixed or Closed by This PR

Fixes:

Checklist

[ ] My code follows the code style of this project.

[ ] My change requires a change to the documentation.

[ ] I have updated the documentation accordingly.

[ ] I have read the CONTRIBUTING document.

[ ] I have added tests to cover my changes.

[ ] I have tested the changes and verified that they work and don't break anything (as well as I can manage).

fixed by #633

| gharchive/pull-request | 2023-08-17T09:45:56 | 2025-04-01T06:44:26.673103 | {

"authors": [

"alessiamarcolini"

],

"repo": "histolab/histolab",

"url": "https://github.com/histolab/histolab/pull/619",

"license": "Apache-2.0",

"license_type": "permissive",

"license_source": "github-api"

} |

1816851539 | Merge a QLoRA into base Llama 2

I used python src/train_web.py to train a QLoRA. How do I merge the QLoRA into the base Llama 2 model?

Please visit the export tab of the Web Tuner to merge the LoRA weights.


I selected and loaded the QLoRA checkpoints and the base model, and got this error:

07/24/2023 20:59:02 - WARNING - llmtuner.tuner.core.parser - Please specify `prompt_template` if you are using other pre-trained models.

[INFO|tokenization_utils_base.py:1837] 2023-07-24 20:59:02,572 >> loading file tokenizer.model

[INFO|tokenization_utils_base.py:1837] 2023-07-24 20:59:02,573 >> loading file added_tokens.json

[INFO|tokenization_utils_base.py:1837] 2023-07-24 20:59:02,573 >> loading file special_tokens_map.json

[INFO|tokenization_utils_base.py:1837] 2023-07-24 20:59:02,573 >> loading file tokenizer_config.json

[INFO|configuration_utils.py:710] 2023-07-24 20:59:02,580 >> loading configuration file C:\LLaMA-Efficient-Tuning\Llama-2-13B-Chat-fp16\config.json

[INFO|configuration_utils.py:768] 2023-07-24 20:59:02,581 >> Model config LlamaConfig {

"_name_or_path": "C:\\LLaMA-Efficient-Tuning\\Llama-2-13B-Chat-fp16",

"architectures": [

"LlamaForCausalLM"

],

"bos_token_id": 1,

"eos_token_id": 2,

"hidden_act": "silu",

"hidden_size": 5120,

"initializer_range": 0.02,

"intermediate_size": 13824,

"max_length": 4096,

"max_position_embeddings": 4096,

"model_type": "llama",

"num_attention_heads": 40,

"num_hidden_layers": 40,

"num_key_value_heads": 40,

"pad_token_id": 0,

"pretraining_tp": 1,

"rms_norm_eps": 1e-05,

"rope_scaling": null,

"tie_word_embeddings": false,

"torch_dtype": "float16",

"transformers_version": "4.31.0",

"use_cache": true,

"vocab_size": 32000

}

[INFO|modeling_utils.py:2600] 2023-07-24 20:59:02,582 >> loading weights file C:\LLaMA-Efficient-Tuning\Llama-2-13B-Chat-fp16\pytorch_model.bin.index.json

[INFO|modeling_utils.py:1172] 2023-07-24 20:59:02,583 >> Instantiating LlamaForCausalLM model under default dtype torch.float16.

[INFO|configuration_utils.py:599] 2023-07-24 20:59:02,583 >> Generate config GenerationConfig {

"_from_model_config": true,

"bos_token_id": 1,

"eos_token_id": 2,

"max_length": 4096,

"pad_token_id": 0,

"transformers_version": "4.31.0"

}

Traceback (most recent call last):

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\routes.py", line 442, in run_predict

output = await app.get_blocks().process_api(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\blocks.py", line 1389, in process_api

result = await self.call_function(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\blocks.py", line 1108, in call_function

prediction = await utils.async_iteration(iterator)

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\utils.py", line 346, in async_iteration

return await iterator.__anext__()

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\utils.py", line 339, in __anext__

return await anyio.to_thread.run_sync(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\anyio\to_thread.py", line 33, in run_sync

return await get_asynclib().run_sync_in_worker_thread(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\anyio\_backends\_asyncio.py", line 877, in run_sync_in_worker_thread

return await future

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\anyio\_backends\_asyncio.py", line 807, in run

result = context.run(func, *args)

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\utils.py", line 322, in run_sync_iterator_async

return next(iterator)

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\utils.py", line 691, in gen_wrapper

yield from f(*args, **kwargs)

File "C:\LLaMA-Efficient-Tuning\src\llmtuner\webui\utils.py", line 122, in export_model

model, tokenizer = load_model_and_tokenizer(model_args, finetuning_args)

File "C:\LLaMA-Efficient-Tuning\src\llmtuner\tuner\core\loader.py", line 105, in load_model_and_tokenizer

model = AutoModelForCausalLM.from_pretrained(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\transformers\models\auto\auto_factory.py", line 493, in from_pretrained

return model_class.from_pretrained(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\transformers\modeling_utils.py", line 2903, in from_pretrained

) = cls._load_pretrained_model(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\transformers\modeling_utils.py", line 3002, in _load_pretrained_model

raise ValueError(

ValueError: The current `device_map` had weights offloaded to the disk. Please provide an `offload_folder` for them. Alternatively, make sure you have `safetensors` installed if the model you are using offers the weights in this format.
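This ValueError is raised by transformers when `device_map` places some weights on disk and no `offload_folder` is given. As an alternative to the Web UI export, the sketch below merges the adapter with the peft library instead, loading the base model entirely into CPU RAM so that nothing is offloaded. It assumes `transformers` and `peft` are installed, that the checkpoint directory is a standard peft adapter directory, and that there is enough RAM for the full 13B model (roughly 26 GB in float16, about twice that in float32). It is not the LLaMA-Efficient-Tuning export code path.

```python
def merge_lora_into_base(base_dir: str, adapter_dir: str, out_dir: str,
                         dtype_name: str = "float16") -> str:
    """Merge a LoRA/QLoRA adapter into its base model and save the result.

    Heavy imports live inside the function so this module can be imported
    without torch, transformers or peft installed.
    """
    import torch
    from peft import PeftModel
    from transformers import AutoModelForCausalLM, AutoTokenizer

    base = AutoModelForCausalLM.from_pretrained(
        base_dir,
        torch_dtype=getattr(torch, dtype_name),
        device_map={"": "cpu"},  # keep every weight in RAM: no disk offload, no offload_folder needed
        low_cpu_mem_usage=True,
    )
    # Attach the adapter, then fold its low-rank updates into the base layers.
    model = PeftModel.from_pretrained(base, adapter_dir)
    merged = model.merge_and_unload()

    merged.save_pretrained(out_dir, safe_serialization=True)
    AutoTokenizer.from_pretrained(base_dir).save_pretrained(out_dir)
    return out_dir
```

If your PyTorch build cannot do float16 matrix multiplication on CPU, pass `dtype_name="float32"` at the cost of more memory. Keeping `device_map="auto"` and adding `offload_folder=...` to `from_pretrained` is the other remedy that the error message itself suggests.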

Please visit the export tab of the Web Tuner to merge the LoRA weights.

I selected and load QLoRa Checkpoints and base and got error:

07/24/2023 20:59:02 - WARNING - llmtuner.tuner.core.parser - Please specify `prompt_template` if you are using other pre-trained models.

[INFO|tokenization_utils_base.py:1837] 2023-07-24 20:59:02,572 >> loading file tokenizer.model

[INFO|tokenization_utils_base.py:1837] 2023-07-24 20:59:02,573 >> loading file added_tokens.json

[INFO|tokenization_utils_base.py:1837] 2023-07-24 20:59:02,573 >> loading file special_tokens_map.json

[INFO|tokenization_utils_base.py:1837] 2023-07-24 20:59:02,573 >> loading file tokenizer_config.json

[INFO|configuration_utils.py:710] 2023-07-24 20:59:02,580 >> loading configuration file C:\LLaMA-Efficient-Tuning\Llama-2-13B-Chat-fp16\config.json

[INFO|configuration_utils.py:768] 2023-07-24 20:59:02,581 >> Model config LlamaConfig {

"_name_or_path": "C:\\LLaMA-Efficient-Tuning\\Llama-2-13B-Chat-fp16",

"architectures": [

"LlamaForCausalLM"

],

"bos_token_id": 1,

"eos_token_id": 2,

"hidden_act": "silu",

"hidden_size": 5120,

"initializer_range": 0.02,

"intermediate_size": 13824,

"max_length": 4096,

"max_position_embeddings": 4096,

"model_type": "llama",

"num_attention_heads": 40,

"num_hidden_layers": 40,

"num_key_value_heads": 40,

"pad_token_id": 0,

"pretraining_tp": 1,

"rms_norm_eps": 1e-05,

"rope_scaling": null,

"tie_word_embeddings": false,

"torch_dtype": "float16",

"transformers_version": "4.31.0",

"use_cache": true,

"vocab_size": 32000

}

[INFO|modeling_utils.py:2600] 2023-07-24 20:59:02,582 >> loading weights file C:\LLaMA-Efficient-Tuning\Llama-2-13B-Chat-fp16\pytorch_model.bin.index.json

[INFO|modeling_utils.py:1172] 2023-07-24 20:59:02,583 >> Instantiating LlamaForCausalLM model under default dtype torch.float16.

[INFO|configuration_utils.py:599] 2023-07-24 20:59:02,583 >> Generate config GenerationConfig {

"_from_model_config": true,

"bos_token_id": 1,

"eos_token_id": 2,

"max_length": 4096,

"pad_token_id": 0,

"transformers_version": "4.31.0"

}

Traceback (most recent call last):

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\routes.py", line 442, in run_predict

output = await app.get_blocks().process_api(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\blocks.py", line 1389, in process_api

result = await self.call_function(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\blocks.py", line 1108, in call_function

prediction = await utils.async_iteration(iterator)

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\utils.py", line 346, in async_iteration

return await iterator.__anext__()

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\utils.py", line 339, in __anext__

return await anyio.to_thread.run_sync(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\anyio\to_thread.py", line 33, in run_sync

return await get_asynclib().run_sync_in_worker_thread(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\anyio\_backends\_asyncio.py", line 877, in run_sync_in_worker_thread

return await future

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\anyio\_backends\_asyncio.py", line 807, in run

result = context.run(func, *args)

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\utils.py", line 322, in run_sync_iterator_async

return next(iterator)

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\utils.py", line 691, in gen_wrapper

yield from f(*args, **kwargs)

File "C:\LLaMA-Efficient-Tuning\src\llmtuner\webui\utils.py", line 122, in export_model

model, tokenizer = load_model_and_tokenizer(model_args, finetuning_args)

File "C:\LLaMA-Efficient-Tuning\src\llmtuner\tuner\core\loader.py", line 105, in load_model_and_tokenizer

model = AutoModelForCausalLM.from_pretrained(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\transformers\models\auto\auto_factory.py", line 493, in from_pretrained

return model_class.from_pretrained(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\transformers\modeling_utils.py", line 2903, in from_pretrained

) = cls._load_pretrained_model(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\transformers\modeling_utils.py", line 3002, in _load_pretrained_model

raise ValueError(

ValueError: The current `device_map` had weights offloaded to the disk. Please provide an `offload_folder` for them. Alternatively, make sure you have `safetensors` installed if the model you are using offers the weights in this format.
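The error fires when the computed `device_map` places some modules on `"disk"`, but there is neither an `offload_folder` to write them to nor a safetensors checkpoint that can be read directly. The function below is a hypothetical, simplified model of that condition. It is not the transformers source, and the name `check_disk_offload` and its parameters are made up for illustration. In a real load, the first remedy corresponds to passing `offload_folder=` to `AutoModelForCausalLM.from_pretrained`.

```python
from typing import Dict, Optional, Union


def check_disk_offload(
    device_map: Dict[str, Union[int, str]],
    offload_folder: Optional[str],
    weights_are_safetensors: bool,
) -> None:
    """Hypothetical, simplified model of the disk-offload check (not the transformers source).

    Raises ValueError when some modules are mapped to "disk" but there is neither an
    offload folder to write them to nor a safetensors checkpoint to read them from.
    """
    offloads_to_disk = "disk" in device_map.values()
    if offloads_to_disk and offload_folder is None and not weights_are_safetensors:
        raise ValueError(
            "The current `device_map` had weights offloaded to the disk. "
            "Please provide an `offload_folder` for them."
        )


# Illustrative map in which the last layers did not fit in memory and were assigned to disk.
device_map = {"model.embed_tokens": 0, "model.layers.0": 0, "model.layers.39": "disk", "lm_head": "disk"}

try:
    check_disk_offload(device_map, offload_folder=None, weights_are_safetensors=False)
except ValueError as err:
    print("fails:", err)

# Either remedy named in the message makes the check pass.
check_disk_offload(device_map, offload_folder="offload", weights_are_safetensors=False)
check_disk_offload(device_map, offload_folder=None, weights_are_safetensors=True)
print("ok")
```

A map that keeps every module on a GPU or the CPU never reaches the check, which is why the error only appears when the model does not fit in available memory.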

Try removing the following lines and checking if it works.

https://github.com/hiyouga/LLaMA-Efficient-Tuning/blob/182b42504399d2755897b9737db1d36655a0fa50/src/llmtuner/tuner/core/loader.py#L96-L97

How do I enable QLoRA? Is selecting 4-bit under quantization enough?

Yes.

In our experiments, QLoRA performs close to LoRA.

In addition to WeChat, could you also add a public Discord server?

@hiyouga After updating to the latest version and using the Export tab with a prompt template selected, I got this error:

Traceback (most recent call last):

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\routes.py", line 442, in run_predict

output = await app.get_blocks().process_api(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\blocks.py", line 1389, in process_api

result = await self.call_function(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\blocks.py", line 1108, in call_function

prediction = await utils.async_iteration(iterator)

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\utils.py", line 346, in async_iteration

return await iterator.__anext__()

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\utils.py", line 339, in __anext__

return await anyio.to_thread.run_sync(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\anyio\to_thread.py", line 33, in run_sync

return await get_asynclib().run_sync_in_worker_thread(

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\anyio\_backends\_asyncio.py", line 877, in run_sync_in_worker_thread

return await future

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\anyio\_backends\_asyncio.py", line 807, in run

result = context.run(func, *args)

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\utils.py", line 322, in run_sync_iterator_async

return next(iterator)

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\gradio\utils.py", line 691, in gen_wrapper

yield from f(*args, **kwargs)

File "C:\LLaMA-Efficient-Tuning\src\llmtuner\webui\utils.py", line 125, in save_model

export_model(args, max_shard_size="{}GB".format(max_shard_size))

File "C:\LLaMA-Efficient-Tuning\src\llmtuner\tuner\tune.py", line 29, in export_model

model_args, _, training_args, finetuning_args, _ = get_train_args(args)

File "C:\LLaMA-Efficient-Tuning\src\llmtuner\tuner\core\parser.py", line 54, in get_train_args

model_args, data_args, training_args, finetuning_args, general_args = parse_train_args(args)

File "C:\LLaMA-Efficient-Tuning\src\llmtuner\tuner\core\parser.py", line 39, in parse_train_args

return _parse_args(parser, args)

File "C:\LLaMA-Efficient-Tuning\src\llmtuner\tuner\core\parser.py", line 24, in _parse_args

return parser.parse_dict(args)

File "C:\LLaMA-Efficient-Tuning\venv\lib\site-packages\transformers\hf_argparser.py", line 373, in parse_dict

obj = dtype(**inputs)

TypeError: DataArguments.__init__() missing 1 required positional argument: 'template'
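The `TypeError` comes from the last frame: `HfArgumentParser.parse_dict` keeps only the keys that match each dataclass's fields and then calls `dtype(**inputs)`, so every dataclass field without a default must be present in the dict. The sketch below reproduces that step with a hypothetical, trimmed-down `DataArguments` (the real class has more fields and may differ) and shows two possible fixes: have the caller send `template`, or give the field a default. The thread does not say which fix was applied; the argument values are illustrative.

```python
from dataclasses import dataclass, fields
from typing import Any, Dict, Optional


# Hypothetical stand-in for llmtuner's DataArguments, trimmed to two fields.
@dataclass
class DataArguments:
    template: str                  # no default, so the generated __init__ requires it
    dataset: Optional[str] = None


# Same class with a default on `template`, one possible fix.
@dataclass
class DataArgumentsWithDefault:
    template: Optional[str] = None
    dataset: Optional[str] = None


def parse_dict(dtype: type, args: Dict[str, Any]) -> Any:
    """Simplified per-dataclass step of HfArgumentParser.parse_dict."""
    keys = {f.name for f in fields(dtype) if f.init}
    inputs = {k: v for k, v in args.items() if k in keys}
    return dtype(**inputs)  # raises TypeError if a required field is missing


# Illustrative export arguments that lack the "template" key.
export_args = {"dataset": "my_dataset", "max_shard_size": 10}

try:
    parse_dict(DataArguments, export_args)
except TypeError as err:
    print(err)  # ... missing 1 required positional argument: 'template'

print(parse_dict(DataArguments, {**export_args, "template": "llama2"}))
print(parse_dict(DataArgumentsWithDefault, export_args))
```

Keys that belong to other dataclasses (here `max_shard_size`) are silently ignored by this step, so only the missing required field triggers the error.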

@Katehuuh Fixed

| gharchive/issue | 2023-07-22T18:14:13 | 2025-04-01T06:44:26.693770 | {

"authors": [

"DumoeDss",

"Katehuuh",

"PsychoSmiley",

"hiyouga"

],

"repo": "hiyouga/LLaMA-Efficient-Tuning",

"url": "https://github.com/hiyouga/LLaMA-Efficient-Tuning/issues/223",

"license": "Apache-2.0",

"license_type": "permissive",

"license_source": "github-api"

} |

2197854829 | 🛑 HJStrauss is down

In 92a7383, HJStrauss (https://www.hjstrauss.de) was down:

HTTP code: 0

Response time: 0 ms

Resolved: HJStrauss is back up in cfd8a72 after 9 minutes.

| gharchive/issue | 2024-03-20T15:36:19 | 2025-04-01T06:44:26.719872 | {

"authors": [

"hjstrauss"

],

"repo": "hjstrauss/MonitorMySites",

"url": "https://github.com/hjstrauss/MonitorMySites/issues/237",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

2286779397 | 🛑 Reime ohne Sinn und Verstand is down

In 0ba04e4, Reime ohne Sinn und Verstand (https://www.reimeohnesinnundverstand.de) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Reime ohne Sinn und Verstand is back up in f1d673a after 9 minutes.

| gharchive/issue | 2024-05-09T02:34:37 | 2025-04-01T06:44:26.722465 | {

"authors": [

"hjstrauss"

],

"repo": "hjstrauss/MonitorMySites",

"url": "https://github.com/hjstrauss/MonitorMySites/issues/3193",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

2190771210 | 🛑 LM Bogen WSV is down

In 9974047, LM Bogen WSV (https://www.lmbogenwsv.de) was down:

HTTP code: 0

Response time: 0 ms

Resolved: LM Bogen WSV is back up in 5e1829d after 8 minutes.

| gharchive/issue | 2024-03-17T17:17:57 | 2025-04-01T06:44:26.724929 | {

"authors": [

"hjstrauss"

],

"repo": "hjstrauss/MonitorMySites",

"url": "https://github.com/hjstrauss/MonitorMySites/issues/48",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

2382260447 | 🛑 Reime ohne Sinn und Verstand is down

In 61035ef, Reime ohne Sinn und Verstand (https://www.reimeohnesinnundverstand.de) was down:

HTTP code: 0

Response time: 0 ms

Resolved: Reime ohne Sinn und Verstand is back up in 4d411a4 after 8 minutes.

| gharchive/issue | 2024-06-30T13:40:42 | 2025-04-01T06:44:26.727351 | {

"authors": [

"hjstrauss"

],

"repo": "hjstrauss/MonitorMySites",

"url": "https://github.com/hjstrauss/MonitorMySites/issues/5975",

"license": "MIT",

"license_type": "permissive",

"license_source": "github-api"

} |

1038456956 | API available