id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 6.67k ⌀ | citation stringlengths 0 10.7k ⌀ | likes int64 0 3.66k | downloads int64 0 8.89M | created timestamp[us] | card stringlengths 11 977k | card_len int64 11 977k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|

grammarly/pseudonymization-data | 2023-08-23T21:07:17.000Z | [

"task_categories:text-classification",

"task_categories:summarization",

"size_categories:100M<n<1T",

"language:en",

"license:apache-2.0",

"region:us"

] | grammarly | null | null | 1 | 3 | 2023-07-05T18:37:54 | ---

license: apache-2.0

task_categories:

- text-classification

- summarization

language:

- en

pretty_name: Pseudonymization data

size_categories:

- 100M<n<1T

---

This repository contains all the datasets used in our paper "Privacy- and Utility-Preserving NLP with Anonymized data: A case study of Pseudonymization" (https://aclanthology.org/2023.trustnlp-1.20).

# Dataset Card for Pseudonymization data

## Dataset Description

- **Homepage:** https://huggingface.co/datasets/grammarly/pseudonymization-data

- **Paper:** https://aclanthology.org/2023.trustnlp-1.20/

- **Point of Contact:** oleksandr.yermilov@ucu.edu.ua

### Dataset Summary

This repository contains all the datasets used in our paper. It includes datasets for different NLP tasks, pseudonymized by different algorithms; a dataset for training a Seq2Seq model that translates original text into pseudonymized text; and a dataset for training a model that detects whether a text was pseudonymized.

### Languages

English.

## Dataset Structure

Each folder contains preprocessed train versions of different datasets (e.g., the `cnn_dm` folder contains the preprocessed CNN/Daily Mail dataset). Each file name corresponds to the algorithm from the paper used for its preprocessing (e.g., `ner_ps_spacy_imdb.csv` is the IMDb dataset preprocessed with NER-based pseudonymization using the spaCy system).


## Dataset Creation

Datasets in `imdb` and `cnn_dm` folders were created by pseudonymizing corresponding datasets with different pseudonymization algorithms.

Datasets in `detection` folder are combined original datasets and pseudonymized datasets, grouped by pseudonymization algorithm used.

Datasets in the `seq2seq` folder are for training a Seq2Seq transformer-based pseudonymization model. First, a dataset was collected from Wikipedia articles; it was then preprocessed with either the NER-PS<sub>FLAIR</sub> or the NER-PS<sub>spaCy</sub> algorithm.

### Personal and Sensitive Information

These datasets contain no sensitive or personal information; they are based entirely on data available in open sources (Wikipedia and standard datasets for NLP tasks).

## Considerations for Using the Data

### Known Limitations

Only English texts are present in the datasets. Only a limited subset of named entity types is replaced in the datasets. Please also check the Limitations section of our paper.

## Additional Information

### Dataset Curators

Oleksandr Yermilov (oleksandr.yermilov@ucu.edu.ua)

### Citation Information

```

@inproceedings{yermilov-etal-2023-privacy,

title = "Privacy- and Utility-Preserving {NLP} with Anonymized data: A case study of Pseudonymization",

author = "Yermilov, Oleksandr and

Raheja, Vipul and

Chernodub, Artem",

booktitle = "Proceedings of the 3rd Workshop on Trustworthy Natural Language Processing (TrustNLP 2023)",

month = jul,

year = "2023",

address = "Toronto, Canada",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2023.trustnlp-1.20",

doi = "10.18653/v1/2023.trustnlp-1.20",

pages = "232--241",

abstract = "This work investigates the effectiveness of different pseudonymization techniques, ranging from rule-based substitutions to using pre-trained Large Language Models (LLMs), on a variety of datasets and models used for two widely used NLP tasks: text classification and summarization. Our work provides crucial insights into the gaps between original and anonymized data (focusing on the pseudonymization technique) and model quality and fosters future research into higher-quality anonymization techniques better to balance the trade-offs between data protection and utility preservation. We make our code, pseudonymized datasets, and downstream models publicly available.",

}

``` | 3,815 | [

[

-0.0172576904296875,

-0.0294952392578125,

0.01459503173828125,

0.01702880859375,

-0.00047206878662109375,

0.006244659423828125,

-0.0289764404296875,

-0.037353515625,

0.031982421875,

0.061004638671875,

-0.02899169921875,

-0.053985595703125,

-0.050384521484375,

... |

SALT-NLP/LLaVAR | 2023-07-22T06:35:06.000Z | [

"task_categories:text-generation",

"task_categories:visual-question-answering",

"language:en",

"license:cc-by-nc-4.0",

"llava",

"llavar",

"arxiv:2306.17107",

"region:us"

] | SALT-NLP | null | null | 6 | 3 | 2023-07-06T00:03:43 | ---

license: cc-by-nc-4.0

task_categories:

- text-generation

- visual-question-answering

language:

- en

tags:

- llava

- llavar

---

# LLaVAR Data: Enhanced Visual Instruction Data with Text-Rich Images

More info at [LLaVAR project page](https://llavar.github.io/), [Github repo](https://github.com/SALT-NLP/LLaVAR), and [paper](https://arxiv.org/abs/2306.17107).

## Training Data

Based on the LAION dataset, we collect 422K pretraining examples based on OCR results. For finetuning, we collect 16K high-quality instruction-following examples by interacting with language-only GPT-4. Note that we also release a larger and more diverse finetuning dataset below (20K), which contains the 16K examples we used for the paper. The instruction files below contain the original LLaVA instructions. You can use them directly after merging the images into your LLaVA image folders. If you want to use them independently, you can remove the items contained in the original chat.json and llava_instruct_150k.json from LLaVA.

[Pretraining images](./pretrain.zip)

[Pretraining instructions](./chat_llavar.json)

[Finetuning images](./finetune.zip)

[Finetuning instructions - 16K](./llava_instruct_150k_llavar_16k.json)

[Finetuning instructions - 20K](./llava_instruct_150k_llavar_20k.json)

## Evaluation Data

We collect 50 instruction-following examples on 50 text-rich images from LAION. You can use them for GPT-4-based instruction-following evaluation.

[Images](./REval.zip)

[GPT-4 Evaluation Contexts](./caps_laion_50_val.jsonl)

[GPT-4 Evaluation Rules](./rule_read_v3.json)

[Questions](./qa50_questions.jsonl)

[GPT-4 Answers](./qa50_gpt4_answer.jsonl) | 1,639 | [

[

-0.009521484375,

-0.057342529296875,

0.034332275390625,

0.0018596649169921875,

-0.02734375,

0.004535675048828125,

-0.018096923828125,

-0.0239715576171875,

0.0014667510986328125,

0.05029296875,

-0.026885986328125,

-0.06109619140625,

-0.044708251953125,

-0.003... |

richardr1126/spider-natsql-context-validation | 2023-07-06T21:20:42.000Z | [

"source_datasets:spider",

"language:en",

"license:cc-by-4.0",

"sql",

"spider",

"natsql",

"text-to-sql",

"sql finetune",

"arxiv:1809.08887",

"arxiv:2109.05153",

"region:us"

] | richardr1126 | null | null | 0 | 3 | 2023-07-06T00:51:06 | ---

language:

- en

license:

- cc-by-4.0

source_datasets:

- spider

tags:

- sql

- spider

- natsql

- text-to-sql

- sql finetune

dataset_info:

features:

- name: db_id

dtype: string

- name: prompt

dtype: string

- name: ground_truth

dtype: string

---

# Dataset Card for Spider NatSQL Context Validation

### Dataset Summary

[Spider](https://arxiv.org/abs/1809.08887) is a large-scale complex and cross-domain semantic parsing and text-to-SQL dataset annotated by 11 Yale students.

The goal of the Spider challenge is to develop natural language interfaces to cross-domain databases.

This dataset was created to validate LLMs on the Spider dev dataset with database context using NatSQL.

### NatSQL

[NatSQL](https://arxiv.org/abs/2109.05153) is an intermediate representation for SQL that simplifies the queries and reduces the mismatch between

natural language and SQL. NatSQL preserves the core functionalities of SQL, but removes some clauses and keywords

that are hard to infer from natural language descriptions. NatSQL also makes schema linking easier by reducing the

number of schema items to predict. NatSQL can be easily converted to executable SQL queries and can improve the

performance of text-to-SQL models.

### Yale Lily Spider Leaderboards

The leaderboard can be seen at https://yale-lily.github.io/spider

### Languages

The text in the dataset is in English.

### Licensing Information

The spider dataset is licensed under

the [CC BY-SA 4.0](https://creativecommons.org/licenses/by-sa/4.0/legalcode)

### Citation

```

@article{yu2018spider,

title={Spider: A large-scale human-labeled dataset for complex and cross-domain semantic parsing and text-to-sql task},

author={Yu, Tao and Zhang, Rui and Yang, Kai and Yasunaga, Michihiro and Wang, Dongxu and Li, Zifan and Ma, James and Li, Irene and Yao, Qingning and Roman, Shanelle and others},

journal={arXiv preprint arXiv:1809.08887},

year={2018}

}

```

```

@inproceedings{gan-etal-2021-natural-sql,

title = "Natural {SQL}: Making {SQL} Easier to Infer from Natural Language Specifications",

author = "Gan, Yujian and

Chen, Xinyun and

Xie, Jinxia and

Purver, Matthew and

Woodward, John R. and

Drake, John and

Zhang, Qiaofu",

booktitle = "Findings of the Association for Computational Linguistics: EMNLP 2021",

month = nov,

year = "2021",

address = "Punta Cana, Dominican Republic",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2021.findings-emnlp.174",

doi = "10.18653/v1/2021.findings-emnlp.174",

pages = "2030--2042",

}

``` | 2,658 | [

[

-0.01497650146484375,

-0.053131103515625,

0.01471710205078125,

0.0171356201171875,

-0.02001953125,

0.0135498046875,

-0.0181884765625,

-0.04345703125,

0.030059814453125,

0.037322998046875,

-0.03424072265625,

-0.04986572265625,

-0.0229949951171875,

0.049255371... |

sled-umich/SDN | 2023-08-01T01:47:31.000Z | [

"task_categories:text-classification",

"task_categories:text-generation",

"size_categories:1K<n<10K",

"language:en",

"license:cc-by-nc-nd-4.0",

"arxiv:2210.12511",

"region:us"

] | sled-umich | null | null | 0 | 3 | 2023-07-06T17:04:13 | ---

license: cc-by-nc-nd-4.0

task_categories:

- text-classification

- text-generation

language:

- en

size_categories:

- 1K<n<10K

---

# DOROTHIE

## Spoken Dialogue for Handling Unexpected Situations in Interactive Autonomous Driving Agents

**[Research Paper](https://arxiv.org/abs/2210.12511) | [Github](https://github.com/sled-group/DOROTHIE) | [Huggingface](https://huggingface.co/datasets/sled-umich/DOROTHIE)**

Authored by [Ziqiao Ma](https://mars-tin.github.io/), Ben VanDerPloeg, Cristian-Paul Bara, [Yidong Huang](https://sled.eecs.umich.edu/author/yidong-huang/), Eui-In Kim, Felix Gervits, Matthew Marge, [Joyce Chai](https://web.eecs.umich.edu/~chaijy/)

DOROTHIE (Dialogue On the ROad To Handle Irregular Events) is an innovative interactive simulation platform designed to create unexpected scenarios on the fly. This tool facilitates empirical studies on situated communication with autonomous driving agents.

This dataset contains only the dialogue data. To see the whole simulation process and download the full dataset, please visit our [Github homepage](https://github.com/sled-group/DOROTHIE) | 1,156 | [

[

-0.04144287109375,

-0.0634765625,

0.0543212890625,

0.0127410888671875,

-0.0032196044921875,

0.00324249267578125,

-0.01067352294921875,

-0.035888671875,

0.0146942138671875,

0.01953125,

-0.07403564453125,

-0.02789306640625,

-0.0157470703125,

-0.013374328613281... |

Iftisyed/testpak | 2023-07-06T19:06:56.000Z | [

"region:us"

] | Iftisyed | null | null | 0 | 3 | 2023-07-06T19:06:14 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

ChaiML/100_example_conversations | 2023-07-06T23:10:10.000Z | [

"region:us"

] | ChaiML | null | null | 1 | 3 | 2023-07-06T23:10:06 | ---

dataset_info:

features:

- name: conversation

dtype: string

- name: bot_label

dtype: string

- name: user_label

dtype: string

- name: description

dtype: string

- name: first_message

dtype: string

- name: prompt

dtype: string

- name: memory

dtype: string

- name: introduction

dtype: string

- name: name

dtype: string

splits:

- name: train

num_bytes: 394959

num_examples: 100

download_size: 217141

dataset_size: 394959

---

# Dataset Card for "100_example_conversations"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 674 | [

[

-0.047210693359375,

-0.0516357421875,

0.01322174072265625,

0.01496124267578125,

-0.00838470458984375,

-0.0205535888671875,

0.0032958984375,

-0.0030765533447265625,

0.055145263671875,

0.045074462890625,

-0.06768798828125,

-0.05401611328125,

-0.0187835693359375,

... |

pierre-loic/climate-news-articles | 2023-07-09T18:26:00.000Z | [

"task_categories:text-classification",

"size_categories:1K<n<10K",

"language:fr",

"license:cc",

"climate",

"news",

"region:us"

] | pierre-loic | null | null | 2 | 3 | 2023-07-09T16:37:55 | ---

license: cc

task_categories:

- text-classification

language:

- fr

tags:

- climate

- news

pretty_name: Titres de presse française avec labellisation "climat/pas climat"

size_categories:

- 1K<n<10K

---

# 🌍 Dataset of French press articles labelled as covering climate-related topics or not

*🇬🇧 / 🇺🇸: this dataset is based only on French data, and the original explanations in this repository are written in French. The goal of the dataset is to train a model that classifies French newspaper titles into two categories: about climate or not.*

## 🗺️ Context

This classification dataset of **French press article titles** was built for the association [Data for good](https://dataforgood.fr/) in Grenoble, and more specifically for the association [Quota climat](https://www.quotaclimat.org/).

## 💾 The dataset

The training set contains 2007 press article titles (1923 not about climate and 84 about climate). The test set contains 502 press article titles (481 not about climate and 21 about climate).
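
The counts above imply a strong class imbalance, which usually matters when training a classifier. The following is a minimal sketch that only restates those counts and derives the positive rate and inverse-frequency class weights; the label names `climate` / `not_climate` and the weighting formula are illustrative assumptions, not something this dataset card prescribes.

```python
# Counts copied from the dataset description; label names are hypothetical.
train_counts = {"not_climate": 1923, "climate": 84}
test_counts = {"not_climate": 481, "climate": 21}


def positive_rate(counts):
    """Fraction of titles labelled as climate-related."""
    return counts["climate"] / sum(counts.values())


def balanced_class_weights(counts):
    """Inverse-frequency weights: total / (n_classes * class_count)."""
    total = sum(counts.values())
    n_classes = len(counts)
    return {label: total / (n_classes * count) for label, count in counts.items()}


if __name__ == "__main__":
    print(f"train positive rate: {positive_rate(train_counts):.3f}")
    print(f"test positive rate:  {positive_rate(test_counts):.3f}")
    print("train class weights:", balanced_class_weights(train_counts))
```

With roughly 4% positive titles in both splits, plain accuracy can look high for a model that never predicts the climate class, so metrics such as per-class recall or F1 may be more informative.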

| 1,193 | [

[

-0.017791748046875,

-0.03399658203125,

0.04595947265625,

0.021209716796875,

-0.034149169921875,

-0.004497528076171875,

-0.0015096664428710938,

-0.0027790069580078125,

0.017364501953125,

0.043701171875,

-0.0312042236328125,

-0.046356201171875,

-0.06939697265625,

... |

Gregor/mblip-train | 2023-09-21T14:16:27.000Z | [

"language:en",

"language:multilingual",

"license:other",

"region:us"

] | Gregor | null | null | 3 | 3 | 2023-07-10T14:58:47 | ---

license: other

language:

- en

- multilingual

pretty_name: mBLIP instructions

---

# mBLIP Instruct Mix Dataset Card

## Important!

This dataset currently does not work directly with `datasets.load_dataset("Gregor/mblip-train")`!

Please download the data files you need and load them with `datasets.load_dataset("json", data_files="filename")`.

## Dataset details

**Dataset type:**

This is the instruction mix used to train [mBLIP](https://github.com/gregor-ge/mBLIP).

See https://github.com/gregor-ge/mBLIP/data/README.md for more information on how to reproduce the data.

**Dataset date:**

The dataset was created in May 2023.

**Dataset languages:**

The original English examples were machine translated to the following 95 languages:

`

af, am, ar, az, be, bg, bn, ca, ceb, cs, cy, da, de, el, en, eo, es, et, eu, fa, fi, fil, fr, ga, gd, gl, gu, ha, hi, ht, hu, hy, id, ig, is, it, iw, ja, jv, ka, kk, km, kn, ko, ku, ky, lb, lo, lt, lv, mg, mi, mk, ml, mn, mr, ms, mt, my, ne, nl, no, ny, pa, pl, ps, pt, ro, ru, sd, si, sk, sl, sm, sn, so, sq, sr, st, su, sv, sw, ta, te, tg, th, tr, uk, ur, uz, vi, xh, yi, yo, zh, zu

`

Examples are translated into each language in proportion to that language's size in [mC4](https://www.tensorflow.org/datasets/catalog/c4#c4multilingual); for example, because 6% of the examples in mC4 are German, we translate 6% of the data into German.
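
To illustrate the proportional scheme described above, here is a small sketch that splits a number of examples across languages according to their share. Only the 6% figure for German comes from this card; the other shares, the function name `allocate_translations`, and the largest-remainder rounding are assumptions for illustration and are not taken from the mBLIP code.

```python
def allocate_translations(n_examples, shares):
    """Split n_examples across languages in proportion to `shares`.

    Uses largest-remainder rounding so the counts always sum to n_examples.
    """
    total = sum(shares.values())
    quotas = {lang: n_examples * share / total for lang, share in shares.items()}
    counts = {lang: int(quota) for lang, quota in quotas.items()}
    leftover = n_examples - sum(counts.values())
    # Hand the remaining examples to the languages with the largest fractional part.
    by_remainder = sorted(shares, key=lambda lang: quotas[lang] - counts[lang], reverse=True)
    for lang in by_remainder[:leftover]:
        counts[lang] += 1
    return counts


if __name__ == "__main__":
    # Hypothetical shares in percent; only "de": 6 is stated in the card.
    shares = {"de": 6, "fr": 5, "other": 89}
    print(allocate_translations(1000, shares))
```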

**Dataset structure:**

- `task_mix_mt.json`: The instruction mix data in the processed, translated, and combined form.

- Folders: The folders contain 1) the separate tasks used to generate the mix

and 2) the files of the tasks used to evaluate the model.

**Images:**

We do not include any images with this dataset.

Images from the public datasets (MSCOCO for instruction training, and others for evaluation) can be downloaded

from the respective websites.

For the BLIP captions, we provide the URLs and filenames as used by us [here](blip_captions/ccs_synthetic_filtered_large_2273005_raw.json).

To download them, [our code](https://github.com/gregor-ge/mBLIP/tree/main/data#blip-web-capfilt) can be adapted, for example.

**License:**

Use must comply with the licenses of the original datasets used to create this mix. See https://github.com/gregor-ge/mBLIP/data/README.md for more.

Translations were produced with [NLLB](https://huggingface.co/facebook/nllb-200-distilled-1.3B) so use has to comply with

their license.

**Where to send questions or comments about the model:**

https://github.com/gregor-ge/mBLIP/issues

## Intended use

**Primary intended uses:**

The primary use is research on large multilingual multimodal models and chatbots.

**Primary intended users:**

The primary intended users of the model are researchers and hobbyists in computer vision, natural language processing, machine learning, and artificial intelligence. | 2,784 | [

[

-0.03741455078125,

-0.039031982421875,

-0.00511932373046875,

0.04754638671875,

-0.02215576171875,

0.01410675048828125,

-0.02435302734375,

-0.0439453125,

0.0257720947265625,

0.031341552734375,

-0.04302978515625,

-0.044525146484375,

-0.038360595703125,

0.02131... |

DynamicSuperb/SpeechDetection_LJSpeech | 2023-07-12T05:56:53.000Z | [

"region:us"

] | DynamicSuperb | null | null | 0 | 3 | 2023-07-11T13:28:27 | ---

dataset_info:

features:

- name: file

dtype: string

- name: audio

dtype: audio

- name: instruction

dtype: string

- name: label

dtype: string

splits:

- name: test

num_bytes: 3800059090.8

num_examples: 13100

download_size: 3783855015

dataset_size: 3800059090.8

---

# Dataset Card for "speechDetection_LJSpeech"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 486 | [

[

-0.032135009765625,

-0.0278472900390625,

0.01032257080078125,

0.010345458984375,

-0.006603240966796875,

0.0144500732421875,

-0.0008988380432128906,

-0.017303466796875,

0.06591796875,

0.0252685546875,

-0.05780029296875,

-0.053375244140625,

-0.045440673828125,

... |

Sinsinnati/Tweet-Emotion-Detection | 2023-07-11T17:45:13.000Z | [

"task_categories:text-classification",

"size_categories:n<1K",

"language:en",

"region:us"

] | Sinsinnati | null | null | 0 | 3 | 2023-07-11T17:19:58 | ---

task_categories:

- text-classification

language:

- en

size_categories:

- n<1K

---

This data was gathered from Twitter for emotion detection. The labels fall into seven categories: sadness, happiness, fear, anger, disgust, surprise, and neutral, where neutral is used when a tweet has no dominant emotion. | 319 | [

[

-0.048797607421875,

-0.0239105224609375,

0.0281524658203125,

0.051239013671875,

-0.046417236328125,

0.0325927734375,

0.0022182464599609375,

-0.0233001708984375,

0.04901123046875,

0.011383056640625,

-0.046142578125,

-0.055877685546875,

-0.06744384765625,

0.03... |

AlekseyKorshuk/crowdsource-v2.0 | 2023-07-11T22:23:55.000Z | [

"region:us"

] | AlekseyKorshuk | null | null | 0 | 3 | 2023-07-11T19:22:13 | ---

dataset_info:

features:

- name: bot_id

dtype: string

- name: conversation_id

dtype: string

- name: conversation

list:

- name: content

dtype: string

- name: do_train

dtype: bool

- name: role

dtype: string

- name: bot_config

struct:

- name: bot_label

dtype: string

- name: description

dtype: string

- name: developer_uid

dtype: string

- name: first_message

dtype: string

- name: image_url

dtype: string

- name: introduction

dtype: string

- name: max_history

dtype: int64

- name: memory

dtype: string

- name: model

dtype: string

- name: name

dtype: string

- name: prompt

dtype: string

- name: repetition_penalty

dtype: float64

- name: response_length

dtype: int64

- name: temperature

dtype: float64

- name: theme

dtype: 'null'

- name: top_k

dtype: int64

- name: top_p

dtype: float64

- name: user_label

dtype: string

- name: conversation_history

dtype: string

- name: system

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 106588734

num_examples: 19541

download_size: 65719430

dataset_size: 106588734

---

# Dataset Card for "crowdsource-v2.0"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 1,471 | [

[

-0.032470703125,

0.0017156600952148438,

0.01139068603515625,

0.0173187255859375,

-0.02227783203125,

-0.00876617431640625,

0.0246734619140625,

-0.0294036865234375,

0.05206298828125,

0.03955078125,

-0.05908203125,

-0.040252685546875,

-0.04681396484375,

-0.0254... |

pytorch-survival/support_pycox | 2023-07-12T01:56:19.000Z | [

"region:us"

] | pytorch-survival | null | null | 0 | 3 | 2023-07-12T00:32:43 | ---

dataset_info:

features:

- name: x0

dtype: float32

- name: x1

dtype: float32

- name: x2

dtype: float32

- name: x3

dtype: float32

- name: x4

dtype: float32

- name: x5

dtype: float32

- name: x6

dtype: float32

- name: x7

dtype: float32

- name: x8

dtype: float32

- name: x9

dtype: float32

- name: x10

dtype: float32

- name: x11

dtype: float32

- name: x12

dtype: float32

- name: x13

dtype: float32

- name: event_time

dtype: float32

- name: event_indicator

dtype: int32

splits:

- name: train

num_bytes: 567872

num_examples: 8873

download_size: 212217

dataset_size: 567872

---

# Dataset Card for "support_pycox"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 854 | [

[

-0.04071044921875,

-0.0006914138793945312,

0.0185546875,

0.03765869140625,

-0.00402069091796875,

-0.0028743743896484375,

0.020416259765625,

-0.0063323974609375,

0.057037353515625,

0.028411865234375,

-0.042572021484375,

-0.04193115234375,

-0.04046630859375,

-... |

izumi-lab/oscar2301-ja-filter-ja-normal | 2023-07-29T03:16:00.000Z | [

"language:ja",

"license:cc0-1.0",

"region:us"

] | izumi-lab | null | null | 2 | 3 | 2023-07-12T16:38:36 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 68837059273.1919

num_examples: 31447063

download_size: 54798731310

dataset_size: 68837059273.1919

license: cc0-1.0

language:

- ja

---

# Dataset Card for "oscar2301-ja-filter-ja-normal"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 429 | [

[

-0.057464599609375,

-0.0146026611328125,

0.0106658935546875,

-0.0019388198852539062,

-0.034423828125,

-0.008819580078125,

0.0233154296875,

-0.01270294189453125,

0.07733154296875,

0.06024169921875,

-0.045684814453125,

-0.055450439453125,

-0.048797607421875,

-... |

ahuang11/tiger_layer_edges | 2023-07-12T21:40:42.000Z | [

"license:unknown",

"region:us"

] | ahuang11 | null | null | 0 | 3 | 2023-07-12T19:28:33 | ---

license: unknown

---

Unofficial, repackaged Parquet files of the TIGER/Line® Edges data provided by the US Census Bureau.

See LICENSE.pdf for more details. | 160 | [

[

-0.0258331298828125,

-0.049774169921875,

0.0053558349609375,

0.015777587890625,

-0.02093505859375,

-0.0085296630859375,

0.0394287109375,

-0.0208740234375,

0.042022705078125,

0.0831298828125,

-0.05828857421875,

-0.039520263671875,

-0.001007080078125,

-0.00700... |

Multimodal-Fatima/winoground-image-0 | 2023-07-13T03:39:46.000Z | [

"region:us"

] | Multimodal-Fatima | null | null | 0 | 3 | 2023-07-13T02:37:40 | ---

dataset_info:

features:

- name: id

dtype: int32

- name: image

dtype: image

- name: num_main_preds

dtype: int32

- name: tags_laion-ViT-H-14-2B

sequence: string

- name: attributes_laion-ViT-H-14-2B

sequence: string

- name: caption_Salesforce-blip-image-captioning-large

dtype: string

- name: intensive_captions_Salesforce-blip-image-captioning-large

sequence: string

splits:

- name: test

num_bytes: 186460141.0

num_examples: 400

download_size: 185328961

dataset_size: 186460141.0

---

# Dataset Card for "winoground-image-0"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 718 | [

[

-0.039093017578125,

-0.01424407958984375,

0.0167083740234375,

0.01178741455078125,

-0.0216827392578125,

0.001239776611328125,

0.02630615234375,

-0.0218505859375,

0.06878662109375,

0.03173828125,

-0.060211181640625,

-0.058135986328125,

-0.043731689453125,

-0.... |

Regemens/quotesTest | 2023-07-13T14:35:05.000Z | [

"task_categories:text-classification",

"task_ids:multi-label-classification",

"annotations_creators:expert-generated",

"language_creators:expert-generated",

"language_creators:crowdsourced",

"multilinguality:monolingual",

"source_datasets:original",

"language:en",

"region:us"

] | Regemens | null | null | 0 | 3 | 2023-07-13T14:34:20 | ---

annotations_creators:

- expert-generated

language_creators:

- expert-generated

- crowdsourced

language:

- en

multilinguality:

- monolingual

source_datasets:

- original

task_categories:

- text-classification

task_ids:

- multi-label-classification

---

# **Dataset Card for English quotes**

# **I-Dataset Summary**

english_quotes is a dataset of all the quotes retrieved from [goodreads quotes](https://www.goodreads.com/quotes). This dataset can be used for multi-label text classification and text generation. The content of each quote is in English and concerns the domain of datasets for NLP and beyond.

# **II-Supported Tasks and Leaderboards**

- Multi-label text classification : The dataset can be used to train a model for text classification, which consists of classifying quotes by author as well as by topic (using tags). Success on this task is typically measured by achieving a high accuracy.

- Text-generation : The dataset can be used to train a model to generate quotes by fine-tuning an existing pretrained model on the corpus composed of all quotes (or quotes by author).

# **III-Languages**

The texts in the dataset are in English (en).

# **IV-Dataset Structure**

#### Data Instances

A JSON-formatted example of a typical instance in the dataset:

```python

{'author': 'Ralph Waldo Emerson',

'quote': '“To be yourself in a world that is constantly trying to make you something else is the greatest accomplishment.”',

'tags': ['accomplishment', 'be-yourself', 'conformity', 'individuality']}

```

#### Data Fields

- **author** : The author of the quote.

- **quote** : The text of the quote.

- **tags**: The tags could be characterized as topics around the quote.
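
For multi-label classification, the `tags` field typically has to be turned into a fixed-length target vector. The sketch below shows one common way to do that (multi-hot encoding) in plain Python. The first record is the instance shown above; the second record and the helper names `build_tag_vocab` and `multi_hot` are hypothetical and only serve the illustration.

```python
def build_tag_vocab(records):
    """Map every distinct tag to a column index (sorted for reproducibility)."""
    tags = sorted({tag for record in records for tag in record["tags"]})
    return {tag: index for index, tag in enumerate(tags)}


def multi_hot(tags, vocab):
    """Return a 0/1 vector with a 1 for each tag present."""
    vector = [0] * len(vocab)
    for tag in tags:
        vector[vocab[tag]] = 1
    return vector


records = [
    {
        "author": "Ralph Waldo Emerson",
        "quote": "“To be yourself in a world that is constantly trying to make you something else is the greatest accomplishment.”",
        "tags": ["accomplishment", "be-yourself", "conformity", "individuality"],
    },
    # Hypothetical second record, added only so the vocabulary has an unused column.
    {"author": "Example Author", "quote": "Example quote.", "tags": ["courage", "individuality"]},
]

vocab = build_tag_vocab(records)
targets = [multi_hot(record["tags"], vocab) for record in records]

if __name__ == "__main__":
    print(vocab)
    print(targets)
```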

#### Data Splits

I kept the dataset as a single block (`train`), so users can shuffle and split it later with methods of the Hugging Face `datasets` library such as `.train_test_split()`.
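
As a rough, library-free illustration of what a shuffled train/test split does, the sketch below shuffles a list of records with a fixed seed and cuts it in two. The function name `shuffled_split` and its parameters are assumptions made for this example; in practice the `train_test_split()` method mentioned above would normally be used on the loaded dataset instead.

```python
import random


def shuffled_split(records, test_fraction=0.2, seed=0):
    """Shuffle a copy of `records` and split it into (train, test) lists."""
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    shuffled = list(records)
    rng.shuffle(shuffled)
    n_test = int(round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


if __name__ == "__main__":
    # Stand-in records; real ones would be dicts with author/quote/tags.
    records = list(range(10))
    train, test = shuffled_split(records, test_fraction=0.2, seed=1)
    print(len(train), len(test))
```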

# **V-Dataset Creation**

#### Curation Rationale

I want to share my datasets (created by web scraping and additional cleaning treatments) with the HuggingFace community so that they can use them in NLP tasks to advance artificial intelligence.

#### Source Data

The source of Data is [goodreads](https://www.goodreads.com/?ref=nav_home) site: from [goodreads quotes](https://www.goodreads.com/quotes)

#### Initial Data Collection and Normalization

The data collection process is web scraping using BeautifulSoup and Requests libraries.

The data is slightly modified after the web scraping: removing all quotes with "None" tags, and the tag "attributed-no-source" is removed from all tags, because it has not added value to the topic of the quote.

#### Who are the source Data producers ?

The data is machine-generated (using web scraping) and subjected to human additional treatment.

Below, I provide the script I created to scrape the data (as well as my additional treatment):

```python

import requests
from bs4 import BeautifulSoup
import pandas as pd
import json
from collections import OrderedDict

page = requests.get('https://www.goodreads.com/quotes')
if page.status_code == 200:
    pageParsed = BeautifulSoup(page.content, 'html5lib')


# Define a function that retrieves information about each HTML quote code in a dictionary form.
def extract_data_quote(quote_html):
    quote = quote_html.find('div', {'class': 'quoteText'}).get_text().strip().split('\n')[0]
    author = quote_html.find('span', {'class': 'authorOrTitle'}).get_text().strip()
    if quote_html.find('div', {'class': 'greyText smallText left'}) is not None:
        tags_list = [tag.get_text() for tag in quote_html.find('div', {'class': 'greyText smallText left'}).find_all('a')]
        # Remove duplicate tags while preserving their order.
        tags = list(OrderedDict.fromkeys(tags_list))
        if 'attributed-no-source' in tags:
            tags.remove('attributed-no-source')
    else:
        tags = None
    data = {'quote': quote, 'author': author, 'tags': tags}
    return data


# Define a function that retrieves all the quotes on a single page.
# Returns None when the page cannot be fetched.
def get_quotes_data(page_url):
    page = requests.get(page_url)
    if page.status_code == 200:
        pageParsed = BeautifulSoup(page.content, 'html5lib')
        quotes_html_page = pageParsed.find_all('div', {'class': 'quoteDetails'})
        return [extract_data_quote(quote_html) for quote_html in quotes_html_page]


# Retrieve data from the first page.
data = get_quotes_data('https://www.goodreads.com/quotes')

# Retrieve data from all pages.
for i in range(2, 101):
    print(i)
    url = f'https://www.goodreads.com/quotes?page={i}'
    data_current_page = get_quotes_data(url)
    if data_current_page is None:
        continue
    data = data + data_current_page

data_df = pd.DataFrame.from_dict(data)

# Drop quotes that have no tags.
for i, row in data_df.iterrows():
    if row['tags'] is None:
        data_df = data_df.drop(i)

# Produce the data in a JSON format.
data_df.to_json('C:/Users/Abir/Desktop/quotes.jsonl', orient="records", lines=True, force_ascii=False)

# Then I used the familiar process to push it to the Hugging Face hub.

```

#### Annotations

Annotations are part of the initial data collection (see the script above).

# **VI-Additional Information**

#### Dataset Curators

Abir ELTAIEF

#### Licensing Information

This work is licensed under a Creative Commons Attribution 4.0 International License (all software and libraries used for web scraping are made available under this Creative Commons Attribution license).

#### Contributions

Thanks to [@Abirate](https://huggingface.co/Abirate)

for adding this dataset. | 5,550 | [

[

-0.02215576171875,

-0.046783447265625,

0.006610870361328125,

0.015960693359375,

-0.020599365234375,

-0.002895355224609375,

-0.0048065185546875,

-0.0279541015625,

0.031005859375,

0.02838134765625,

-0.048919677734375,

-0.04681396484375,

-0.028289794921875,

0.0... |

freQuensy23/ru-alpaca-cleaned | 2023-07-17T17:04:15.000Z | [

"license:cc-by-4.0",

"region:us"

] | freQuensy23 | null | null | 6 | 3 | 2023-07-13T22:18:39 | ---

license: cc-by-4.0

---

The [alpaca-cleaned](https://huggingface.co/datasets/yahma/alpaca-cleaned) dataset, translated into Russian with yandex.translate.ru. Code for reproducing the result: [colab](https://colab.research.google.com/drive/1oRTDtRWA4wcLOoR75MWv7vaZUf3BgVLZ?usp=sharing)

| 280 | [

[

-0.0162506103515625,

-0.048187255859375,

0.016754150390625,

0.00919342041015625,

-0.0389404296875,

-0.0200347900390625,

0.01291656494140625,

-0.04052734375,

0.0721435546875,

0.0291595458984375,

-0.0633544921875,

-0.042144775390625,

-0.040679931640625,

-0.005... |

daqc/wikihow-spanish | 2023-07-14T12:26:09.000Z | [

"task_categories:text-generation",

"task_categories:question-answering",

"size_categories:10K<n<100K",

"language:es",

"license:cc",

"wikihow",

"gpt2",

"spanish",

"region:us"

] | daqc | null | null | 1 | 3 | 2023-07-14T12:04:44 | ---

license: cc

task_categories:

- text-generation

- question-answering

language:

- es

tags:

- wikihow

- gpt2

- spanish

pretty_name: wikihow-spanish

size_categories:

- 10K<n<100K

---

## wikiHow in Spanish ##

This dataset was obtained from the GitHub repository of [Wikilingua](https://github.com/esdurmus/Wikilingua).

## License ##

- Article provided by wikiHow <https://www.wikihow.com/Main-Page>, a wiki building the world's largest, highest-quality how-to manual. Please edit this article and find the author credits at wikiHow.com. wikiHow content can be shared under a [Creative Commons license](http://creativecommons.org/licenses/by-nc-sa/3.0/).

- See [this web page](https://www.wikihow.com/wikiHow:Attribution) for the specific attribution guidelines.

| 838 | [

[

-0.037872314453125,

-0.0228271484375,

0.01558685302734375,

0.0192718505859375,

-0.051025390625,

-0.0092315673828125,

-0.027374267578125,

-0.0170135498046875,

0.0469970703125,

0.03338623046875,

-0.049652099609375,

-0.057647705078125,

-0.0227203369140625,

0.02... |

aisyahhrazak/crawl-worldofbuzz | 2023-07-15T04:25:44.000Z | [

"language:en",

"region:us"

] | aisyahhrazak | null | null | 0 | 3 | 2023-07-14T14:03:17 | ---

language:

- en

---

About

- Data scraped from https://worldofbuzz.com | 75 | [

[

-0.046966552734375,

-0.1123046875,

0.020050048828125,

0.004230499267578125,

-0.0249176025390625,

0.0272064208984375,

0.01287078857421875,

-0.037353515625,

0.038665771484375,

0.0258026123046875,

-0.06463623046875,

-0.0364990234375,

-0.00736236572265625,

-0.01... |

davanstrien/blbooks-parquet-embedded | 2023-07-14T14:38:08.000Z | [

"task_categories:text-generation",

"task_categories:fill-mask",

"task_categories:other",

"task_ids:language-modeling",

"task_ids:masked-language-modeling",

"annotations_creators:no-annotation",

"language_creators:machine-generated",

"multilinguality:multilingual",

"size_categories:100K<n<1M",

"sou... | davanstrien | null | null | 0 | 3 | 2023-07-14T14:37:41 | ---

annotations_creators:

- no-annotation

language_creators:

- machine-generated

language:

- de

- en

- es

- fr

- it

- nl

license:

- cc0-1.0

multilinguality:

- multilingual

size_categories:

- 100K<n<1M

source_datasets: davanstrien/blbooks-parquet

task_categories:

- text-generation

- fill-mask

- other

task_ids:

- language-modeling

- masked-language-modeling

pretty_name: British Library Books

tags:

- embeddings

dataset_info:

- config_name: all

features:

- name: record_id

dtype: string

- name: date

dtype: int32

- name: raw_date

dtype: string

- name: title

dtype: string

- name: place

dtype: string

- name: empty_pg

dtype: bool

- name: text

dtype: string

- name: pg

dtype: int32

- name: mean_wc_ocr

dtype: float32

- name: std_wc_ocr

dtype: float64

- name: name

dtype: string

- name: all_names

dtype: string

- name: Publisher

dtype: string

- name: Country of publication 1

dtype: string

- name: all Countries of publication

dtype: string

- name: Physical description

dtype: string

- name: Language_1

dtype: string

- name: Language_2

dtype: string

- name: Language_3

dtype: string

- name: Language_4

dtype: string

- name: multi_language

dtype: bool

splits:

- name: train

num_bytes: 30394267732

num_examples: 14011953

download_size: 10486035662

dataset_size: 30394267732

- config_name: 1800s

features:

- name: record_id

dtype: string

- name: date

dtype: int32

- name: raw_date

dtype: string

- name: title

dtype: string

- name: place

dtype: string

- name: empty_pg

dtype: bool

- name: text

dtype: string

- name: pg

dtype: int32

- name: mean_wc_ocr

dtype: float32

- name: std_wc_ocr

dtype: float64

- name: name

dtype: string

- name: all_names

dtype: string

- name: Publisher

dtype: string

- name: Country of publication 1

dtype: string

- name: all Countries of publication

dtype: string

- name: Physical description

dtype: string

- name: Language_1

dtype: string

- name: Language_2

dtype: string

- name: Language_3

dtype: string

- name: Language_4

dtype: string

- name: multi_language

dtype: bool

splits:

- name: train

num_bytes: 30020434670

num_examples: 13781747

download_size: 10348577602

dataset_size: 30020434670

- config_name: 1700s

features:

- name: record_id

dtype: string

- name: date

dtype: int32

- name: raw_date

dtype: string

- name: title

dtype: string

- name: place

dtype: string

- name: empty_pg

dtype: bool

- name: text

dtype: string

- name: pg

dtype: int32

- name: mean_wc_ocr

dtype: float32

- name: std_wc_ocr

dtype: float64

- name: name

dtype: string

- name: all_names

dtype: string

- name: Publisher

dtype: string

- name: Country of publication 1

dtype: string

- name: all Countries of publication

dtype: string

- name: Physical description

dtype: string

- name: Language_1

dtype: string

- name: Language_2

dtype: string

- name: Language_3

dtype: string

- name: Language_4

dtype: string

- name: multi_language

dtype: bool

splits:

- name: train

num_bytes: 266382657

num_examples: 178224

download_size: 95137895

dataset_size: 266382657

- config_name: '1510_1699'

features:

- name: record_id

dtype: string

- name: date

dtype: timestamp[s]

- name: raw_date

dtype: string

- name: title

dtype: string

- name: place

dtype: string

- name: empty_pg

dtype: bool

- name: text

dtype: string

- name: pg

dtype: int32

- name: mean_wc_ocr

dtype: float32

- name: std_wc_ocr

dtype: float64

- name: name

dtype: string

- name: all_names

dtype: string

- name: Publisher

dtype: string

- name: Country of publication 1

dtype: string

- name: all Countries of publication

dtype: string

- name: Physical description

dtype: string

- name: Language_1

dtype: string

- name: Language_2

dtype: string

- name: Language_3

dtype: string

- name: Language_4

dtype: string

- name: multi_language

dtype: bool

splits:

- name: train

num_bytes: 107667469

num_examples: 51982

download_size: 42320165

dataset_size: 107667469

- config_name: '1500_1899'

features:

- name: record_id

dtype: string

- name: date

dtype: timestamp[s]

- name: raw_date

dtype: string

- name: title

dtype: string

- name: place

dtype: string

- name: empty_pg

dtype: bool

- name: text

dtype: string

- name: pg

dtype: int32

- name: mean_wc_ocr

dtype: float32

- name: std_wc_ocr

dtype: float64

- name: name

dtype: string

- name: all_names

dtype: string

- name: Publisher

dtype: string

- name: Country of publication 1

dtype: string

- name: all Countries of publication

dtype: string

- name: Physical description

dtype: string

- name: Language_1

dtype: string

- name: Language_2

dtype: string

- name: Language_3

dtype: string

- name: Language_4

dtype: string

- name: multi_language

dtype: bool

splits:

- name: train

num_bytes: 30452067039

num_examples: 14011953

download_size: 10486035662

dataset_size: 30452067039

- config_name: '1800_1899'

features:

- name: record_id

dtype: string

- name: date

dtype: timestamp[s]

- name: raw_date

dtype: string

- name: title

dtype: string

- name: place

dtype: string

- name: empty_pg

dtype: bool

- name: text

dtype: string

- name: pg

dtype: int32

- name: mean_wc_ocr

dtype: float32

- name: std_wc_ocr

dtype: float64

- name: name

dtype: string

- name: all_names

dtype: string

- name: Publisher

dtype: string

- name: Country of publication 1

dtype: string

- name: all Countries of publication

dtype: string

- name: Physical description

dtype: string

- name: Language_1

dtype: string

- name: Language_2

dtype: string

- name: Language_3

dtype: string

- name: Language_4

dtype: string

- name: multi_language

dtype: bool

splits:

- name: train

num_bytes: 30077284377

num_examples: 13781747

download_size: 10348577602

dataset_size: 30077284377

- config_name: '1700_1799'

features:

- name: record_id

dtype: string

- name: date

dtype: timestamp[s]

- name: raw_date

dtype: string

- name: title

dtype: string

- name: place

dtype: string

- name: empty_pg

dtype: bool

- name: text

dtype: string

- name: pg

dtype: int32

- name: mean_wc_ocr

dtype: float32

- name: std_wc_ocr

dtype: float64

- name: name

dtype: string

- name: all_names

dtype: string

- name: Publisher

dtype: string

- name: Country of publication 1

dtype: string

- name: all Countries of publication

dtype: string

- name: Physical description

dtype: string

- name: Language_1

dtype: string

- name: Language_2

dtype: string

- name: Language_3

dtype: string

- name: Language_4

dtype: string

- name: multi_language

dtype: bool

splits:

- name: train

num_bytes: 267117831

num_examples: 178224

download_size: 95137895

dataset_size: 267117831

---

# Dataset Card for "blbooks-parquet-embedded"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 7,553 | [

[

-0.0292510986328125,

-0.03363037109375,

0.0118255615234375,

0.044158935546875,

-0.029296875,

-0.004024505615234375,

0.012603759765625,

-0.01084136962890625,

0.052703857421875,

0.039154052734375,

-0.031036376953125,

-0.05316162109375,

-0.03271484375,

-0.03671... |

TinyPixel/open-assistant | 2023-09-03T02:26:32.000Z | [

"region:us"

] | TinyPixel | null | null | 2 | 3 | 2023-07-15T15:33:39 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 9599234

num_examples: 8274

download_size: 5137419

dataset_size: 9599234

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "open-assistant"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 442 | [

[

-0.037872314453125,

-0.031829833984375,

0.017425537109375,

0.00931549072265625,

0.00258636474609375,

-0.0214080810546875,

0.018798828125,

-0.0134124755859375,

0.059173583984375,

0.03192138671875,

-0.054229736328125,

-0.044830322265625,

-0.0338134765625,

-0.0... |

NeuroSenko/senko-voice | 2023-07-17T04:06:40.000Z | [

"region:us"

] | NeuroSenko | null | null | 0 | 3 | 2023-07-17T03:37:56 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

usamahanif719/scisumm | 2023-07-17T19:40:58.000Z | [

"region:us"

] | usamahanif719 | null | null | 0 | 3 | 2023-07-17T19:40:23 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

ranWang/test_paper_textClassifier | 2023-07-29T00:42:37.000Z | [

"region:us"

] | ranWang | null | null | 0 | 3 | 2023-07-18T04:54:57 | ---

dataset_info:

features:

- name: text

dtype: string

- name: label

dtype: int64

- name: file_path

dtype: string

splits:

- name: test

num_bytes: 10745576

num_examples: 387

- name: train

num_bytes: 325609267

num_examples: 13621

download_size: 153433963

dataset_size: 336354843

---

# Dataset Card for "test_paper_textClassifier"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 506 | [

[

-0.0279998779296875,

-0.0232391357421875,

0.0169677734375,

-0.0018644332885742188,

-0.0054779052734375,

0.00197601318359375,

0.005550384521484375,

0.0003120899200439453,

0.03656005859375,

0.0196075439453125,

-0.0360107421875,

-0.04827880859375,

-0.04824829101562... |

richardr1126/spider-skeleton-context-instruct | 2023-07-18T17:55:47.000Z | [

"source_datasets:spider",

"language:en",

"license:cc-by-4.0",

"text-to-sql",

"SQL",

"Spider",

"fine-tune",

"region:us"

] | richardr1126 | null | null | 2 | 3 | 2023-07-18T17:53:25 | ---

language:

- en

license:

- cc-by-4.0

source_datasets:

- spider

pretty_name: Spider Skeleton Context Instruct

tags:

- text-to-sql

- SQL

- Spider

- fine-tune

dataset_info:

features:

- name: db_id

dtype: string

- name: text

dtype: string

---

# Dataset Card for Spider Skeleton Context Instruct

### Dataset Summary

Spider is a large-scale, complex, cross-domain semantic parsing and text-to-SQL dataset annotated by 11 Yale students.

The goal of the Spider challenge is to develop natural language interfaces to cross-domain databases.

This dataset was created to finetune LLMs in a `### Instruction:` and `### Response:` format with database context.
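To make the prompt layout concrete, here is a minimal sketch of assembling one example with `### Instruction:` and `### Response:` markers plus database context. The instruction wording, the schema notation, and the `build_prompt` helper are assumptions for illustration; the card does not document the exact template used in the dataset.

```python
def build_prompt(schema: str, question: str, sql: str = "") -> str:
    """Assemble one example in an instruction/response layout.

    The exact wording and ordering used in the dataset are not documented
    in the card, so this layout is an assumption.
    """
    instruction = (
        "### Instruction:\n"
        "Convert the question into a SQL query for the database below.\n\n"
        f"Database schema:\n{schema}\n\n"
        f"Question: {question}\n\n"
    )
    # Leave `sql` empty at inference time so the model completes the response.
    return instruction + "### Response:\n" + sql

example = build_prompt(
    schema="singer(singer_id, name, country, age)",  # hypothetical schema
    question="How many singers are there?",
    sql="SELECT count(*) FROM singer",
)
print(example)
```

Keeping the response marker as the final fixed token of the prompt means the same function can serve both for building training text and for prompting a fine-tuned model.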

### Yale Lily Spider Leaderboards

The leaderboard can be seen at https://yale-lily.github.io/spider

### Languages

The text in the dataset is in English.

### Licensing Information

The Spider dataset is licensed under [CC BY-SA 4.0](https://creativecommons.org/licenses/by-sa/4.0/legalcode).

### Citation

```

@article{yu2018spider,

title={Spider: A large-scale human-labeled dataset for complex and cross-domain semantic parsing and text-to-sql task},

author={Yu, Tao and Zhang, Rui and Yang, Kai and Yasunaga, Michihiro and Wang, Dongxu and Li, Zifan and Ma, James and Li, Irene and Yao, Qingning and Roman, Shanelle and others},

journal={arXiv preprint arXiv:1809.08887},

year={2018}

}

``` | 1,386 | [

[

0.006282806396484375,

-0.0249176025390625,

0.01551055908203125,

0.00576019287109375,

-0.023284912109375,

0.01470947265625,

0.00189971923828125,

-0.038177490234375,

0.039703369140625,

0.0198211669921875,

-0.046539306640625,

-0.066650390625,

-0.03570556640625,

... |

klogram/wunderdrug | 2023-07-19T02:03:24.000Z | [

"size_categories:n<1K",

"language:en",

"license:mit",

"region:us"

] | klogram | null | null | 0 | 3 | 2023-07-19T01:45:38 | ---

license: mit

language:

- en

size_categories:

- n<1K

---

# Dataset Card for Wunderdrug

## Dataset Description

### Dataset Summary

A toy dataset containing 69 one-sentence descriptions of the outcomes of treatment with a *fictional* drug named "Wunderdrug".

Each description contains a comment on Wunderdrug's effect on risk of death from heart disease, mentioning possible confounders like diet, weight, or exercise.

## Considerations for Using the Data

This toy dataset was designed to test the ability of sentence embeddings to capture language features that can serve as covariates when doing causal inference with text data.

### Citation Information

Forthcoming

| 678 | [

[

-0.01212310791015625,

-0.055938720703125,

0.0225677490234375,

-0.003314971923828125,

-0.0257110595703125,

-0.0190277099609375,

-0.0019083023071289062,

-0.00637054443359375,

0.02130126953125,

0.040283203125,

-0.051055908203125,

-0.0428466796875,

-0.0367431640625,... |

FunDialogues/healthcare-minor-consultation | 2023-07-19T05:37:13.000Z | [

"task_categories:question-answering",

"task_categories:conversational",

"size_categories:n<1K",

"language:en",

"license:apache-2.0",

"fictitious dialogues",

"prototyping",

"healthcare",

"region:us"

] | FunDialogues | null | null | 1 | 3 | 2023-07-19T04:27:42 | ---

license: apache-2.0

task_categories:

- question-answering

- conversational

language:

- en

tags:

- fictitious dialogues

- prototyping

- healthcare

pretty_name: 'healthcare-minor-consultation'

size_categories:

- n<1K

---

# fun dialogues

A library of fictitious dialogues that can be used to train language models or augment prompts for prototyping and educational purposes. Fun dialogues currently come in json and csv format for easy ingestion or conversion to popular data structures. Dialogues span various topics such as sports, retail, academia, healthcare, and more. The library also includes basic tooling for loading dialogues and will include quick chatbot prototyping functionality in the future.

Visit the Project Repo: https://github.com/eduand-alvarez/fun-dialogues/

# This Dialogue

This dataset comprises fictitious examples of dialogues between a doctor and a patient during a minor medical consultation. Check out the example below:

```

"id": 1,

"description": "Discussion about a common cold",

"dialogue": "Patient: Doctor, I've been feeling congested and have a runny nose. What can I do to relieve these symptoms?\n\nDoctor: It sounds like you have a common cold. You can try over-the-counter decongestants to relieve congestion and saline nasal sprays to help with the runny nose. Make sure to drink plenty of fluids and get enough rest as well."

```
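Since the `dialogue` field stores the whole exchange as one string, it may help to split it into turns before use. The sketch below assumes that turns are separated by blank lines and that each turn starts with a `Speaker: ` prefix, as in the record above; `split_turns` is a hypothetical helper, not part of the fun dialogues library.

```python
def split_turns(dialogue: str):
    """Split a dialogue string into (speaker, utterance) pairs.

    Assumes turns are separated by blank lines and each turn starts
    with 'Speaker: ', as in the example record.
    """
    turns = []
    for chunk in dialogue.split("\n\n"):
        chunk = chunk.strip()
        if not chunk:
            continue
        # Split on the first ': ' only, so colons inside the utterance survive.
        speaker, _, utterance = chunk.partition(": ")
        turns.append((speaker, utterance))
    return turns

dialogue = (
    "Patient: Doctor, I've been feeling congested and have a runny nose.\n\n"
    "Doctor: It sounds like you have a common cold."
)
turns = split_turns(dialogue)
for speaker, utterance in turns:
    print(f"{speaker} -> {utterance}")
```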

# How to Load Dialogues

Loading dialogues can be accomplished using the fun dialogues library or Hugging Face datasets library.

## Load using fun dialogues

1. Install fun dialogues package

`pip install fundialogues`

2. Use loader utility to load dataset as pandas dataframe. Further processing might be required for use.

```

from fundialogues import dialoader

# load as pandas dataframe

dialogues_df = dialoader("FunDialogues/healthcare-minor-consultation")

```

## Loading using Hugging Face datasets

1. Install datasets package

2. Load using datasets

```

from datasets import load_dataset

dataset = load_dataset("FunDialogues/healthcare-minor-consultation")

```

## How to Contribute

If you want to contribute to this project and make it better, your help is very welcome. Contributing is also a great way to learn more about social coding on GitHub, new technologies and their ecosystems, and how to make constructive, helpful bug reports, feature requests, and the noblest of all contributions: a good, clean pull request.

### Contributing your own Dialogue

If you want to contribute to an existing dialogue or add a new dialogue, please open an issue and I will follow up with you ASAP!

### Implementing Patches and Bug Fixes

- Create a personal fork of the project on Github.

- Clone the fork on your local machine. Your remote repo on Github is called origin.

- Add the original repository as a remote called upstream.

- If you created your fork a while ago be sure to pull upstream changes into your local repository.

- Create a new branch to work on! Branch from develop if it exists, else from master.

- Implement/fix your feature, comment your code.

- Follow the code style of the project, including indentation.

- If the component has tests run them!

- Write or adapt tests as needed.

- Add or change the documentation as needed.

- Squash your commits into a single commit with git's interactive rebase. Create a new branch if necessary.

- Push your branch to your fork on Github, the remote origin.

- From your fork open a pull request in the correct branch. Target the project's develop branch if there is one, else go for master!

If the maintainer requests further changes just push them to your branch. The PR will be updated automatically.

Once the pull request is approved and merged you can pull the changes from upstream to your local repo and delete your extra branch(es).

And last but not least: Always write your commit messages in the present tense. Your commit message should describe what the commit, when applied, does to the code – not what you did to the code.

# Disclaimer

The dialogues contained in this repository are provided for experimental purposes only. It is important to note that these dialogues are assumed to be original work by a human and are entirely fictitious, despite the possibility of some examples including factually correct information. The primary intention behind these dialogues is to serve as a tool for language modeling experimentation and should not be used for designing real-world products beyond non-production prototyping.

Please be aware that the utilization of fictitious data in these datasets may increase the likelihood of language model artifacts, such as hallucinations or unrealistic responses. Therefore, it is essential to exercise caution and discretion when employing these datasets for any purpose.

It is crucial to emphasize that none of the scenarios described in the fun dialogues dataset should be relied upon to provide advice or guidance to humans. These scenarios are purely fictitious and are intended solely for demonstration purposes. Any resemblance to real-world situations or individuals is entirely coincidental.

The responsibility for the usage and application of these datasets rests solely with the individual or entity employing them. By accessing and utilizing these dialogues and all contents of the repository, you acknowledge that you have read and understood this disclaimer, and you agree to use them at your own discretion and risk. | 5,431 | [

[

-0.00732421875,

-0.060302734375,

0.0283355712890625,

0.0185699462890625,

-0.0254974365234375,

0.00827789306640625,

-0.01525115966796875,

-0.0193023681640625,

0.042388916015625,

0.054168701171875,

-0.054351806640625,

-0.033050537109375,

-0.01151275634765625,

... |

mber/subset_wikitext_format_date_only_train | 2023-07-19T08:06:29.000Z | [

"region:us"

] | mber | null | null | 0 | 3 | 2023-07-19T08:06:27 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 1303054.8711650241

num_examples: 2852

download_size: 4222120

dataset_size: 1303054.8711650241

---

# Dataset Card for "subset_wikitext_format_date_only_train"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 402 | [

[

-0.0213470458984375,

-0.01088714599609375,

0.007293701171875,

0.0452880859375,

-0.016998291015625,

-0.0299835205078125,

0.01458740234375,

-0.0012178421020507812,

0.06341552734375,

0.03363037109375,

-0.08441162109375,

-0.0311431884765625,

-0.0219879150390625,

... |

qmeeus/AGV2 | 2023-07-19T13:41:40.000Z | [

"region:us"

] | qmeeus | null | null | 0 | 3 | 2023-07-19T13:41:35 | ---

dataset_info:

features:

- name: audio

dtype:

audio:

sampling_rate: 16000

- name: task

dtype: string

- name: language

dtype: string

- name: speaker

dtype: string

splits:

- name: train

num_bytes: 53888736.0

num_examples: 81

download_size: 30633674

dataset_size: 53888736.0

---

# Dataset Card for "AGV2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 493 | [

[

-0.0284881591796875,

-0.019805908203125,

0.00946807861328125,

0.00811767578125,

-0.009063720703125,

-0.0023479461669921875,

0.045684814453125,

-0.01169586181640625,

0.039337158203125,

0.0206146240234375,

-0.05291748046875,

-0.03668212890625,

-0.044921875,

-0... |

AlekseyKorshuk/synthetic-romantic-characters | 2023-07-20T00:23:35.000Z | [

"region:us"

] | AlekseyKorshuk | null | null | 0 | 3 | 2023-07-20T00:23:24 | ---

dataset_info:

features:

- name: name

dtype: string

- name: categories

sequence: string

- name: personalities

sequence: string

- name: description

dtype: string

- name: conversation

list:

- name: content

dtype: string

- name: role

dtype: string

splits:

- name: train

num_bytes: 14989220

num_examples: 5744

download_size: 7896899

dataset_size: 14989220

---

# Dataset Card for "synthetic-romantic-characters"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 610 | [

[

-0.0386962890625,

-0.03948974609375,

0.02490234375,

0.032867431640625,

-0.011138916015625,

0.01375579833984375,

0.0010366439819335938,

-0.029876708984375,

0.0675048828125,

0.03851318359375,

-0.07855224609375,

-0.046356201171875,

-0.01910400390625,

0.01073455... |

lavita/medical-qa-shared-task-v1-all | 2023-07-20T00:31:23.000Z | [

"region:us"

] | lavita | null | null | 1 | 3 | 2023-07-20T00:30:26 | ---

dataset_info:

features:

- name: id

dtype: int64

- name: ending0

dtype: string

- name: ending1

dtype: string

- name: ending2

dtype: string

- name: ending3

dtype: string

- name: ending4

dtype: string

- name: label

dtype: int64

- name: sent1

dtype: string

- name: sent2

dtype: string

- name: startphrase

dtype: string

splits:

- name: train

num_bytes: 16691926

num_examples: 10178

- name: dev

num_bytes: 2086503

num_examples: 1272

download_size: 10556685

dataset_size: 18778429

---

# Dataset Card for "medical-qa-shared-task-v1-all"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 753 | [

[

-0.016326904296875,

-0.013824462890625,

0.04022216796875,

0.0143890380859375,

-0.0248565673828125,

-0.006748199462890625,

0.04931640625,

-0.01314544677734375,

0.08172607421875,

0.036102294921875,

-0.07562255859375,

-0.058074951171875,

-0.047943115234375,

-0.... |

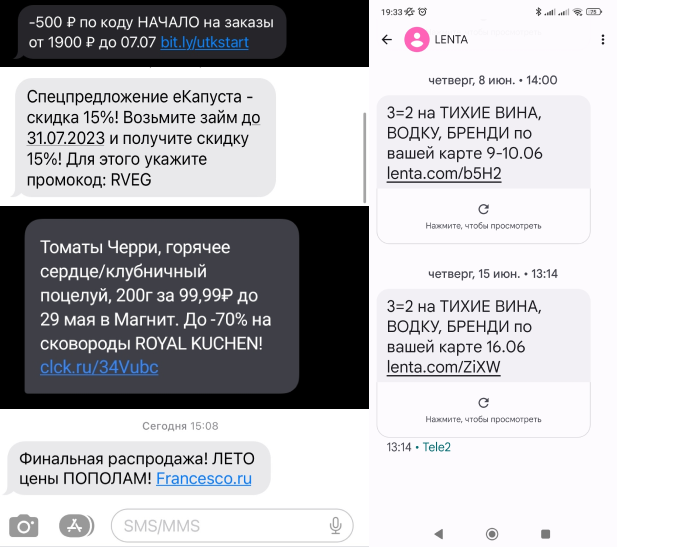

TrainingDataPro/russian-spam-text-messages | 2023-09-14T16:58:13.000Z | [

"task_categories:text-classification",

"language:en",

"license:cc-by-nc-nd-4.0",

"code",

"finance",

"region:us"

] | TrainingDataPro | The SMS spam dataset contains a collection of text messages in Russian.

The dataset includes a diverse range of spam messages, including promotional

offers, fraudulent schemes, phishing attempts, and other forms of unsolicited

communication.

Each SMS message is represented as a string of text, and each entry in the

dataset also has a link to the corresponding screenshot. The dataset's content

represents real-life examples of spam messages that users encounter in their

everyday communication. | @InProceedings{huggingface:dataset,

title = {russian-spam-text-messages},

author = {TrainingDataPro},

year = {2023}

} | 2 | 3 | 2023-07-20T10:20:06 | ---

language:

- en

license: cc-by-nc-nd-4.0

task_categories:

- text-classification

tags:

- code

- finance

dataset_info:

features:

- name: image

dtype: image

- name: message

dtype: string

splits:

- name: train

num_bytes: 56671464

num_examples: 100

download_size: 54193441

dataset_size: 56671464

---

# Russian Spam Text Messages

The SMS spam dataset contains a collection of text messages in Russian. The dataset includes a diverse range of spam messages, including *promotional offers, fraudulent schemes, phishing attempts, and other forms of unsolicited communication*.

Each SMS message is represented as a string of text, and each entry in the dataset also has a link to the corresponding screenshot. The dataset's content represents real-life examples of spam messages that users encounter in their everyday communication.

### The dataset's possible applications:

- spam detection

- fraud detection

- customer support automation

- trend and sentiment analysis

- educational purposes

- network security

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=russian-spam-text-messages) to discuss your requirements, learn about the price and buy the dataset.

# Content

- **images**: includes screenshots of spam messages in Russian

- **.csv** file: contains information about the dataset

### File with the extension .csv

includes the following information:

- **image**: link to the screenshot with the spam message,

- **text**: text of the spam message
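As a rough sketch of how the .csv file could be consumed, the snippet below reads rows with the two columns listed above using the standard library. The sample rows, the file layout, and the `load_messages` helper are assumptions for illustration; the real file may differ.

```python
import csv
import io

# Hypothetical CSV content; the column names follow the description above,
# the rows are made up for illustration.
csv_text = (
    "image,text\n"
    "images/0.png,First example message\n"
    "images/1.png,Second example message\n"
)

def load_messages(handle):
    """Return a list of (image_link, message_text) tuples from a CSV file handle."""
    reader = csv.DictReader(handle)
    return [(row["image"], row["text"]) for row in reader]

messages = load_messages(io.StringIO(csv_text))
print(messages)
```

With a real file, `io.StringIO(csv_text)` would be replaced by `open(path, newline="", encoding="utf-8")`, which matters here because the messages are in Russian.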

# Spam messages might be collected in accordance with your requirements.

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=russian-spam-text-messages) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** | 2,313 | [

[

0.0079803466796875,

-0.059814453125,

-0.004878997802734375,

0.0228729248046875,

-0.03204345703125,

0.01520538330078125,

-0.01531982421875,

-0.0087432861328125,

0.015533447265625,

0.06353759765625,

-0.047027587890625,

-0.06634521484375,

-0.04827880859375,

-0.... |

ksgr5566/trialx2 | 2023-07-26T22:28:18.000Z | [

"region:us"

] | ksgr5566 | null | null | 0 | 3 | 2023-07-21T08:35:26 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

ittailup/lallama-data-chat | 2023-07-21T18:16:22.000Z | [

"region:us"

] | ittailup | null | null | 0 | 3 | 2023-07-21T18:04:52 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 8086191762

num_examples: 1054559

download_size: 4359870365

dataset_size: 8086191762

---

# Dataset Card for "lallama-data-chat"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 371 | [

[

-0.02996826171875,

-0.039947509765625,

0.0023345947265625,

0.032135009765625,

-0.00649261474609375,

0.00032401084899902344,

0.019622802734375,

-0.0234832763671875,

0.0672607421875,

0.0253753662109375,

-0.05523681640625,

-0.04986572265625,

-0.0307159423828125,

... |

ryandsilva/semeval_2017_puns | 2023-09-21T23:15:59.000Z | [

"task_categories:text-classification",

"task_categories:token-classification",

"size_categories:1K<n<10K",

"language:en",

"puns",

"humour",

"wordplay",

"region:us"

] | ryandsilva | null | null | 0 | 3 | 2023-07-22T04:36:33 | ---

configs:

- config_name: default

data_files:

- split: task_1

path:

- task1/semeval_task1.jsonl

- split: task_2

path:

- task2/semeval-task2-homo.json

- task2/semeval-task2-hetero.json

- split: task_3

path:

- task3/semeval-task3-homo.json

- task3/semeval-task3-hetero.json

task_categories:

- text-classification

- token-classification

language:

- en

tags:

- puns

- humour

- wordplay

size_categories:

- 1K<n<10K

---

# SemEval 2017 Task 7 Pun Dataset | 490 | [

[

-0.01641845703125,

-0.00765228271484375,

0.0204010009765625,

0.03192138671875,

-0.047698974609375,

-0.0131072998046875,

0.0011005401611328125,

-0.0136260986328125,

0.0182037353515625,

0.06927490234375,

-0.032562255859375,

-0.04290771484375,

-0.0491943359375,

... |

osbm/prostate158 | 2023-08-09T00:10:04.000Z | [

"region:us"

] | osbm | null | null | 0 | 3 | 2023-07-22T23:41:46 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

CreativeLang/pun_detection_semeval2017_task7 | 2023-07-22T23:50:29.000Z | [

"license:cc-by-2.0",

"region:us"

] | CreativeLang | null | null | 0 | 3 | 2023-07-22T23:48:40 | ---

license: cc-by-2.0

---

# Semeval2017 Task 7: Pun Detection

- paper: [SemEval-2017 Task 7: Detection and Interpretation of English Puns](https://aclanthology.org/S17-2005/) at Semeval 2017.

Metadata in Creative Language Toolkit ([CLTK](https://github.com/liyucheng09/cltk))

- CL Type: Pun

- Task Type: Detection

- Size: 4k

- Created time: 2017 | 348 | [

[

-0.0311737060546875,

-0.031982421875,

0.05108642578125,

0.054901123046875,

-0.052337646484375,

-0.018280029296875,

0.0005931854248046875,

-0.041961669921875,

0.03448486328125,

0.048492431640625,

-0.044677734375,

-0.037200927734375,

-0.052520751953125,

0.0393... |

youssef101/artelingo-dummy | 2023-07-23T16:21:23.000Z | [

"task_categories:image-to-text",

"task_categories:text-classification",

"task_categories:image-classification",

"task_categories:text-to-image",

"task_categories:text-generation",

"size_categories:100K<n<1M",

"language:en",

"language:ar",

"language:zh",

"license:mit",

"Affective Captioning",

"... | youssef101 | null | null | 0 | 3 | 2023-07-23T14:41:17 | ---

license: mit

dataset_info:

features:

- name: image

dtype: image

- name: art_style

dtype: string

- name: painting

dtype: string

- name: emotion

dtype: string

- name: language

dtype: string

- name: text

dtype: string

- name: split

dtype: string

splits:

- name: train

num_bytes: 18587167692.616

num_examples: 62989

- name: validation

num_bytes: 965978050.797

num_examples: 3191

- name: test

num_bytes: 2330046601.416

num_examples: 6402

download_size: 4565327615

dataset_size: 21883192344.829002

task_categories:

- image-to-text

- text-classification

- image-classification

- text-to-image

- text-generation

language:

- en

- ar

- zh

tags:

- Affective Captioning

- Emotions

- Prediction

- Art

- ArtELingo

pretty_name: ArtELingo

size_categories:

- 100K<n<1M

---
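
As a quick sanity check on the metadata above, the per-split `num_bytes` values should add up to the declared `dataset_size`. The sketch below copies the numbers from the `dataset_info` block by hand; the variable names are illustrative and not part of any library API, and it assumes `dataset_size` is meant to be the plain sum of the splits.

```python
import math

# Values copied by hand from the `dataset_info` block above.
splits = {
    "train": {"num_bytes": 18587167692.616, "num_examples": 62989},
    "validation": {"num_bytes": 965978050.797, "num_examples": 3191},
    "test": {"num_bytes": 2330046601.416, "num_examples": 6402},
}
declared_dataset_size = 21883192344.829002
download_size = 4565327615

# Sum the per-split figures.
total_bytes = sum(s["num_bytes"] for s in splits.values())
total_examples = sum(s["num_examples"] for s in splits.values())

# Compare with a relative tolerance, since the sizes are stored as floats.
sizes_agree = math.isclose(total_bytes, declared_dataset_size, rel_tol=1e-9)

# Fraction of the prepared dataset size that is actually downloaded.
ratio = download_size / declared_dataset_size

print(f"total examples: {total_examples}")
print(f"split sizes match dataset_size: {sizes_agree}")
print(f"download_size / dataset_size: {ratio:.3f}")
```

The same check can be repeated for any card that lists `splits`, `download_size`, and `dataset_size`.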

ArtELingo is a benchmark and dataset, introduced in a research paper, that aims to promote work on diversity across languages and cultures. It extends ArtEmis, a collection of 80,000 artworks from WikiArt with 450,000 emotion labels and English-only captions, by adding 790,000 annotations in Arabic and Chinese. These additional annotations make it possible to evaluate "cultural-transfer" performance in AI systems.

ArtELingo contains many artworks with multiple annotations in three languages, which enables the study of similarities and differences across languages and cultures. The researchers investigate captioning tasks and find that diversity in annotations improves the performance of baseline models.

The goal of ArtELingo is to encourage research on multilinguality and culturally-aware AI. By including annotations in multiple languages and considering cultural differences, the dataset aims to build more human-compatible AI that is sensitive to emotional nuances across various cultural contexts. The researchers believe that studying emotions in this way is crucial to understanding a significant aspect of human intelligence.

In summary, ArtELingo is a dataset that extends ArtEmis by providing annotations in multiple languages and cultures, facilitating research on diversity in AI systems and improving their performance in emotion-related tasks like label prediction and affective caption generation. The dataset is publicly available, and the researchers hope that it will facilitate future studies in multilingual and culturally-aware artificial intelligence. | 2,527 | [

[

-0.04400634765625,

-0.0125885009765625,

-0.00550079345703125,

0.0225677490234375,

-0.0240936279296875,

-0.021575927734375,

0.0005097389221191406,

-0.07733154296875,

0.01294708251953125,

0.00255584716796875,

-0.0193634033203125,

-0.03582763671875,

-0.046020507812... |

AlexWortega/SaigaSbs | 2023-07-23T18:43:05.000Z | [

"region:us"

] | AlexWortega | null | null | 0 | 3 | 2023-07-23T18:35:36 | ---

dataset_info:

features:

- name: 'Unnamed: 0'

dtype: int64

- name: inst

dtype: string

- name: good