id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 6.67k ⌀ | citation stringlengths 0 10.7k ⌀ | likes int64 0 3.66k | downloads int64 0 8.89M | created timestamp[us] | card stringlengths 11 977k | card_len int64 11 977k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|

renumics/speech_commands-ast-finetuned-results | 2023-10-09T09:18:38.000Z | [

"region:us"

] | renumics | null | null | 0 | 33 | 2023-10-05T16:46:44 | ---

dataset_info:

config_name: v0.01

features:

- name: probability

dtype: float64

- name: prediction

dtype:

class_label:

names:

'0': 'yes'

'1': 'no'

'2': up

'3': down

'4': left

'5': right

'6': 'on'

'7': 'off'

'8': stop

'9': go

'10': zero

'11': one

'12': two

'13': three

'14': four

'15': five

'16': six

'17': seven

'18': eight

'19': nine

'20': bed

'21': bird

'22': cat

'23': dog

'24': happy

'25': house

'26': marvin

'27': sheila

'28': tree

'29': wow

'30': _silence_

- name: embedding

sequence: float32

- name: entropy

dtype: float64

splits:

- name: train

num_bytes: 1839348

num_examples: 51093

- name: validation

num_bytes: 244764

num_examples: 6799

- name: test

num_bytes: 110916

num_examples: 3081

download_size: 0

dataset_size: 2195028

configs:

- config_name: v0.01

data_files:

- split: train

path: v0.01/train-*

- split: validation

path: v0.01/validation-*

- split: test

path: v0.01/test-*

---

# Dataset Card for "speech_commands-ast-finetuned-results"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 1,496 | [

[

-0.036346435546875,

-0.0386962890625,

-0.0010576248168945312,

0.0108489990234375,

-0.0158538818359375,

0.0018606185913085938,

-0.0251312255859375,

0.0036296844482421875,

0.057708740234375,

0.040740966796875,

-0.059051513671875,

-0.06219482421875,

-0.049133300781... |

McSpicyWithMilo/infographic-instructions | 2023-10-19T13:48:50.000Z | [

"language:en",

"region:us"

] | McSpicyWithMilo | null | null | 0 | 33 | 2023-10-08T09:21:48 | ---

language:

- en

---

# Dataset Card for Dataset Name

<!-- Provide a quick summary of the dataset. -->

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

## Dataset Details

### Dataset Description

<!-- Provide a longer summary of what this dataset is. -->

- **Curated by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

### Dataset Sources [optional]

<!-- Provide the basic links for the dataset. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the dataset is intended to be used. -->

### Direct Use

<!-- This section describes suitable use cases for the dataset. -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the dataset will not work well for. -->

[More Information Needed]

## Dataset Structure

<!-- This section provides a description of the dataset fields, and additional information about the dataset structure such as criteria used to create the splits, relationships between data points, etc. -->

[More Information Needed]

## Dataset Creation

### Curation Rationale

<!-- Motivation for the creation of this dataset. -->

[More Information Needed]

### Source Data

<!-- This section describes the source data (e.g. news text and headlines, social media posts, translated sentences, ...). -->

#### Data Collection and Processing

<!-- This section describes the data collection and processing process such as data selection criteria, filtering and normalization methods, tools and libraries used, etc. -->

[More Information Needed]

#### Who are the source data producers?

<!-- This section describes the people or systems who originally created the data. It should also include self-reported demographic or identity information for the source data creators if this information is available. -->

[More Information Needed]

### Annotations [optional]

<!-- If the dataset contains annotations which are not part of the initial data collection, use this section to describe them. -->

#### Annotation process

<!-- This section describes the annotation process such as annotation tools used in the process, the amount of data annotated, annotation guidelines provided to the annotators, interannotator statistics, annotation validation, etc. -->

[More Information Needed]

#### Who are the annotators?

<!-- This section describes the people or systems who created the annotations. -->

[More Information Needed]

#### Personal and Sensitive Information

<!-- State whether the dataset contains data that might be considered personal, sensitive, or private (e.g., data that reveals addresses, uniquely identifiable names or aliases, racial or ethnic origins, sexual orientations, religious beliefs, political opinions, financial or health data, etc.). If efforts were made to anonymize the data, describe the anonymization process. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users should be made aware of the risks, biases and limitations of the dataset. More information needed for further recommendations.

## Citation [optional]

<!-- If there is a paper or blog post introducing the dataset, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the dataset or dataset card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Dataset Card Authors [optional]

[More Information Needed]

## Dataset Card Contact

[More Information Needed] | 4,384 | [

[

-0.04034423828125,

-0.0419921875,

0.009765625,

0.0178070068359375,

-0.0300445556640625,

-0.00893402099609375,

-0.0026874542236328125,

-0.048431396484375,

0.043212890625,

0.059478759765625,

-0.05938720703125,

-0.069580078125,

-0.042205810546875,

0.00993347167... |

FinGPT/fingpt-sentiment-cls | 2023-10-10T06:49:38.000Z | [

"region:us"

] | FinGPT | null | null | 2 | 33 | 2023-10-10T06:39:32 | ---

dataset_info:

features:

- name: input

dtype: string

- name: output

dtype: string

- name: instruction

dtype: string

splits:

- name: train

num_bytes: 10908696

num_examples: 47557

download_size: 3902114

dataset_size: 10908696

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "fingpt-sentiment-cls"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 527 | [

[

-0.0667724609375,

-0.008544921875,

0.01251983642578125,

0.024169921875,

-0.03485107421875,

-0.0045166015625,

-0.0062255859375,

-0.0027980804443359375,

0.057861328125,

0.028778076171875,

-0.06707763671875,

-0.061370849609375,

-0.045867919921875,

-0.0204315185... |

carnival13/xlmr_eval2 | 2023-10-12T10:26:00.000Z | [

"region:us"

] | carnival13 | null | null | 0 | 33 | 2023-10-12T10:14:39 | ---

dataset_info:

features:

- name: domain_label

dtype: int64

- name: pass_label

dtype: int64

- name: input

dtype: string

- name: input_ids

sequence: int32

- name: attention_mask

sequence: int8

splits:

- name: train

num_bytes: 19326005

num_examples: 11590

download_size: 5464964

dataset_size: 19326005

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "xlmr_eval2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 604 | [

[

-0.025238037109375,

-0.031005859375,

0.0199432373046875,

0.0078277587890625,

-0.006221771240234375,

0.021209716796875,

0.0193634033203125,

0.001789093017578125,

0.0291748046875,

0.03826904296875,

-0.033721923828125,

-0.038238525390625,

-0.050994873046875,

-0... |

fury36/shortcut_key | 2023-10-12T11:42:40.000Z | [

"region:us"

] | fury36 | null | null | 0 | 33 | 2023-10-12T11:41:25 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

Cartinoe5930/Hermes_preference | 2023-10-19T11:55:36.000Z | [

"size_categories:100K<n<1M",

"language:en",

"license:mit",

"region:us"

] | Cartinoe5930 | null | null | 1 | 33 | 2023-10-12T12:20:06 | ---

license: mit

language:

- en

size_categories:

- 100K<n<1M

---

# The Hermes_preference dataset

<!-- Provide a quick summary of the dataset. -->

The **Hermes_preference** dataset is the type of feedback dataset, used for training reward models which is used for RLHF!

In addition, **Hermes_preference** dataset can be also used for DPO!

We collect the preference data from several popular feedback datasets([UltraFeedback](https://huggingface.co/datasets/openbmb/UltraFeedback), [hh-rlhf](https://huggingface.co/datasets/Anthropic/hh-rlhf), [rlhf-reward-datasets](https://huggingface.co/datasets/yitingxie/rlhf-reward-datasets)) through sampling and preprocessing.

As a result, we could have collected approximately 190K preference data.

To collect high-quality feedback data, we decided to collect feedback data from [UltraFeedback](https://huggingface.co/datasets/openbmb/UltraFeedback) & [rlhf-reward-datasets](https://huggingface.co/datasets/yitingxie/rlhf-reward-datasets) which are curated datasets.

In addition, we also collect the data from [hh-rlhf](https://huggingface.co/datasets/Anthropic/hh-rlhf) to accumulate the data that teach the models to output helpful and harmless response.

We hope that **Hermes_preference** dataset provides a promising way to future RLHF & DPO research!

## Dataset Details

<!-- Provide a longer summary of what this dataset is. -->

The **Hermes_preference** dataset is a mixture of several popular preference datasets(UltraFeedback, hh-rlhf, rlhf-reward-datasets) as we mentioned above.

The purpose of this dataset is to make a preference dataset that consists of more varied data.

To accomplish this purpose, we selected the UltraFeedback, hh-rlhf, and rlhf-reward-datasets as the base dataset.

More specifically, we sampled and preprocessed the datasets mentioned above to make Hermes_preference dataset more structural.

- **Curated by:** [More Information Needed]

- **Language(s) (NLP):** en

- **License:** MIT

### Source Data

The Hermes_preference dataset consists of the following datasets.

- [**openbmb/UltraFeedback**](https://huggingface.co/datasets/openbmb/UltraFeedback)

- [**Anthropic/hh-rlhf**](https://huggingface.co/datasets/Anthropic/hh-rlhf)

- [**yitingxie/rlhf-reward-datasets**](https://huggingface.co/datasets/yitingxie/rlhf-reward-datasets)

<!-- This section describes the source data (e.g. news text and headlines, social media posts, translated sentences, ...). -->

### Dataset Sources [optional]

<!-- Provide the basic links for the dataset. -->

- **Repository:** [gauss5930/Hermes](https://github.com/gauss5930/Hermes)

- **Model** [Cartinoe5930/Hermes-7b]()

## Dataset Structure

The structure of **Hermes_prference** dataset is as follows:

```

{

"source": The source dataset of data,

"prompt": The instruction of question,

"chosen": Choosed response,

"rejected": Rejected response

}

``` | 2,889 | [

[

-0.04010009765625,

-0.0179901123046875,

0.019622802734375,

0.008544921875,

-0.0193939208984375,

-0.0194549560546875,

0.0037555694580078125,

-0.0367431640625,

0.057220458984375,

0.048919677734375,

-0.071044921875,

-0.03759765625,

-0.022796630859375,

0.0133361... |

crumb/textbook-codex | 2023-10-12T21:49:53.000Z | [

"region:us"

] | crumb | null | null | 2 | 33 | 2023-10-12T18:37:01 | ---

dataset_info:

features:

- name: text

dtype: string

- name: src

dtype: string

- name: src_col

dtype: string

- name: model

dtype: string

splits:

- name: train

num_bytes: 12286698438.0

num_examples: 3593574

download_size: 5707800000

dataset_size: 12286698438.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "textbook-codex"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 562 | [

[

-0.04888916015625,

-0.0088653564453125,

0.014373779296875,

0.00022292137145996094,

-0.01276397705078125,

-0.00836181640625,

0.006862640380859375,

-0.0015926361083984375,

0.0423583984375,

0.035980224609375,

-0.053070068359375,

-0.07049560546875,

-0.02592468261718... |

sunjun/medqa | 2023-10-14T13:43:37.000Z | [

"region:us"

] | sunjun | null | null | 0 | 33 | 2023-10-14T13:43:01 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: question

dtype: string

- name: answer

dtype: string

- name: options

struct:

- name: A

dtype: string

- name: B

dtype: string

- name: C

dtype: string

- name: D

dtype: string

- name: meta_info

dtype: string

- name: answer_idx

dtype: string

- name: metamap_phrases

sequence: string

splits:

- name: train

num_bytes: 15175834

num_examples: 10178

- name: test

num_bytes: 1946030

num_examples: 1273

download_size: 8870009

dataset_size: 17121864

---

# Dataset Card for "medqa"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 864 | [

[

-0.0426025390625,

-0.00991058349609375,

0.024322509765625,

-0.00452423095703125,

-0.01027679443359375,

0.004970550537109375,

0.035186767578125,

-0.005802154541015625,

0.05767822265625,

0.0413818359375,

-0.06396484375,

-0.054779052734375,

-0.03411865234375,

-... |

ContextualAI/nq_open_neighbors | 2023-10-14T23:34:46.000Z | [

"region:us"

] | ContextualAI | null | null | 0 | 33 | 2023-10-14T23:08:41 | ---

dataset_info:

features:

- name: question

dtype: string

- name: answer

sequence: string

- name: neighbor

dtype: string

splits:

- name: validation

num_bytes: 1106156

num_examples: 3610

download_size: 744341

dataset_size: 1106156

configs:

- config_name: default

data_files:

- split: validation

path: data/validation-*

---

# Dataset Card for "nq_open_neighbors"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 538 | [

[

-0.041259765625,

-0.014892578125,

0.01544952392578125,

0.0011892318725585938,

-0.002277374267578125,

-0.01102447509765625,

0.025665283203125,

-0.005481719970703125,

0.060028076171875,

0.037689208984375,

-0.0487060546875,

-0.05902099609375,

-0.021820068359375,

... |

philTheThill/news-articles | 2023-10-16T07:09:23.000Z | [

"region:us"

] | philTheThill | null | null | 0 | 33 | 2023-10-16T06:41:22 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

bigbio/sem_eval_2024_task_2 | 2023-10-16T12:44:03.000Z | [

"multilinguality:monolingual",

"language:en",

"region:us"

] | bigbio | (Copied from dataset homepage)

## Dataset

The statements and evidence are generated by clinical domain experts, clinical trial organisers, and research oncologists from the Cancer Research UK Manchester Institute and the Digital Experimental Cancer Medicine Team. There are a total of (TBD) statements split evenly across the different sections and classes.

## Description

Each Clinical Trial Report (CTR) consists of 4 sections:

Eligibility criteria - A set of conditions for patients to be allowed to take part in the clinical trial

Intervention - Information concerning the type, dosage, frequency, and duration of treatments being studied.

Results - Number of participants in the trial, outcome measures, units, and the results.

Adverse events - These are signs and symptoms observed in patients during the clinical trial.

For this task, each CTR may contain 1-2 patient groups, called cohorts or arms. These groups may receive different treatments, or have different baseline characteristics. | @article{,

author = {},

title = {},

journal = {},

volume = {},

year = {},

url = {},

doi = {},

biburl = {},

bibsource = {}

} | 0 | 33 | 2023-10-16T09:54:10 | ---

language:

- en

bigbio_language:

- English

multilinguality: monolingual

pretty_name: SemEval 2024 Task 2

homepage: https://allenai.org/data/scitail

bigbio_pubmed: false

bigbio_public: true

bigbio_tasks:

- TEXTUAL_ENTAILMENT

---

# Dataset Card for SemEval 2024 Task 2

## Dataset Description

- **Homepage:** https://sites.google.com/view/nli4ct/semeval-2024?authuser=0

- **Pubmed:** False

- **Public:** True

- **Tasks:** TE

## Dataset

(Description copied from dataset homepage)

The statements and evidence are generated by clinical domain experts, clinical trial organisers, and research oncologists from the Cancer Research UK Manchester Institute and the Digital Experimental Cancer Medicine Team. There are a total of (TBD) statements split evenly across the different sections and classes.

## Description

Each Clinical Trial Report (CTR) consists of 4 sections:

Eligibility criteria - A set of conditions for patients to be allowed to take part in the clinical trial

Intervention - Information concerning the type, dosage, frequency, and duration of treatments being studied.

Results - Number of participants in the trial, outcome measures, units, and the results.

Adverse events - These are signs and symptoms observed in patients during the clinical trial.

For this task, each CTR may contain 1-2 patient groups, called cohorts or arms. These groups may receive different treatments, or have different baseline characteristics.

## Citation Information

```

@article{,

author = {},

title = {},

journal = {},

volume = {},

year = {},

url = {},

doi = {},

biburl = {},

bibsource = {}

}

| 1,656 | [

[

-0.00693511962890625,

-0.024688720703125,

0.034423828125,

0.01751708984375,

-0.0322265625,

-0.0132904052734375,

0.006725311279296875,

-0.0189056396484375,

0.00909423828125,

0.0687255859375,

-0.033477783203125,

-0.04974365234375,

-0.068603515625,

0.0155792236... |

Kabatubare/midjurney | 2023-10-21T06:44:07.000Z | [

"region:us"

] | Kabatubare | null | null | 0 | 33 | 2023-10-16T16:46:30 | Entry not found | 15 | [

[

-0.021392822265625,

-0.01494598388671875,

0.05718994140625,

0.028839111328125,

-0.0350341796875,

0.046539306640625,

0.052490234375,

0.00507354736328125,

0.051361083984375,

0.01702880859375,

-0.052093505859375,

-0.01494598388671875,

-0.06036376953125,

0.03790... |

Isamu136/bk-sdm-small_generated_images_pokemon_blip | 2023-10-19T15:26:07.000Z | [

"region:us"

] | Isamu136 | null | null | 0 | 33 | 2023-10-19T15:25:22 | ---

dataset_info:

features:

- name: image

dtype: image

- name: text

dtype: string

- name: seed

dtype: int64

splits:

- name: train

num_bytes: 33954051.0

num_examples: 833

download_size: 33930907

dataset_size: 33954051.0

---

# Dataset Card for "bk-sdm-small_generated_images_pokemon_blip"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 455 | [

[

-0.037841796875,

-0.006633758544921875,

0.022430419921875,

0.03192138671875,

-0.034820556640625,

-0.006710052490234375,

0.016632080078125,

-0.004077911376953125,

0.07806396484375,

0.040802001953125,

-0.04638671875,

-0.051910400390625,

-0.04266357421875,

-0.0... |

ChaiML/tiny_chai_prize_reward_model_data | 2023-10-20T11:05:01.000Z | [

"region:us"

] | ChaiML | null | null | 0 | 33 | 2023-10-20T11:04:58 | ---

dataset_info:

features:

- name: input_text

dtype: string

- name: labels

dtype: int64

splits:

- name: train

num_bytes: 137495.22787897263

num_examples: 90

- name: validation

num_bytes: 15277.24754210807

num_examples: 10

download_size: 107343

dataset_size: 152772.4754210807

---

# Dataset Card for "tiny_chai_prize_reward_model_data"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 508 | [

[

-0.021484375,

-0.01374053955078125,

0.0171661376953125,

-0.00029015541076660156,

-0.0118560791015625,

-0.0160064697265625,

0.01776123046875,

0.001361846923828125,

0.051727294921875,

0.0228729248046875,

-0.05816650390625,

-0.032012939453125,

-0.044586181640625,

... |

AlanRobotics/text2code | 2023-10-20T11:48:15.000Z | [

"region:us"

] | AlanRobotics | null | null | 0 | 33 | 2023-10-20T11:47:55 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 14682799.631957877

num_examples: 16750

- name: test

num_bytes: 1632201.3680421233

num_examples: 1862

download_size: 6097942

dataset_size: 16315001.0

---

# Dataset Card for "text2code"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 438 | [

[

-0.0224151611328125,

-0.01251983642578125,

0.016815185546875,

0.0243682861328125,

-0.01119232177734375,

0.00020456314086914062,

0.00832366943359375,

-0.0217742919921875,

0.03924560546875,

0.037872314453125,

-0.046600341796875,

-0.050445556640625,

-0.04931640625,... |

renumics/dmu_tiny | 2023-10-20T18:21:09.000Z | [

"region:us"

] | renumics | null | null | 0 | 33 | 2023-10-20T16:55:06 | Subset of the DMU datast (https://www.dmu-net.org/) with

- cleaned meshes

- voxels

- mesh representation of voxels | 114 | [

[

-0.04888916015625,

-0.053802490234375,

0.0396728515625,

-0.00536346435546875,

-0.0299835205078125,

0.0174560546875,

0.0200042724609375,

0.03826904296875,

0.03448486328125,

0.0287933349609375,

-0.058380126953125,

-0.03472900390625,

-0.00008893013000488281,

0.... |

Horus7/FromTo | 2023-10-31T16:06:11.000Z | [

"region:us"

] | Horus7 | null | null | 0 | 33 | 2023-10-22T12:54:04 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

andersonbcdefg/micropile | 2023-10-23T17:38:01.000Z | [

"region:us"

] | andersonbcdefg | null | null | 0 | 33 | 2023-10-23T17:37:57 | ---

dataset_info:

features:

- name: text

dtype: string

- name: __id

dtype: int64

splits:

- name: train

num_bytes: 5544284

num_examples: 1000

download_size: 2933209

dataset_size: 5544284

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "micropile"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 469 | [

[

-0.049713134765625,

-0.015716552734375,

0.0180816650390625,

0.0081634521484375,

0.00016570091247558594,

0.00823974609375,

0.0174102783203125,

0.00447845458984375,

0.062408447265625,

0.02862548828125,

-0.05389404296875,

-0.052642822265625,

-0.033599853515625,

... |

tingchih/mult_1023 | 2023-10-24T04:01:46.000Z | [

"region:us"

] | tingchih | null | null | 0 | 33 | 2023-10-24T04:01:42 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: text

dtype: string

- name: label

dtype: int64

splits:

- name: train

num_bytes: 47982484

num_examples: 277071

- name: test

num_bytes: 20569135

num_examples: 118745

download_size: 44901294

dataset_size: 68551619

---

# Dataset Card for "mult_1023"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 577 | [

[

-0.0498046875,

-0.01421356201171875,

0.02105712890625,

0.0187835693359375,

-0.006900787353515625,

-0.002979278564453125,

0.01788330078125,

-0.00031113624572753906,

0.059295654296875,

0.0316162109375,

-0.05377197265625,

-0.035247802734375,

-0.037139892578125,

... |

ComponentSoft/k8s-kubectl-cot-20k | 2023-10-27T03:54:10.000Z | [

"region:us"

] | ComponentSoft | null | null | 0 | 33 | 2023-10-26T20:30:51 | ---

dataset_info:

features:

- name: objective

dtype: string

- name: command_name

dtype: string

- name: command

dtype: string

- name: description

dtype: string

- name: syntax

dtype: string

- name: flags

list:

- name: default

dtype: string

- name: description

dtype: string

- name: option

dtype: string

- name: short

dtype: string

- name: question

dtype: string

- name: chain_of_thought

dtype: string

splits:

- name: train

num_bytes: 51338358

num_examples: 19661

download_size: 0

dataset_size: 51338358

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "k8s-kubectl-cot-20k"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 870 | [

[

-0.051300048828125,

-0.003170013427734375,

0.02593994140625,

0.0299530029296875,

-0.031219482421875,

0.0279388427734375,

0.01198577880859375,

-0.012115478515625,

0.044525146484375,

0.045562744140625,

-0.043365478515625,

-0.069091796875,

-0.05633544921875,

-0... |

lewtun/drug-reviews | 2021-08-10T21:35:52.000Z | [

"region:us"

] | lewtun | null | null | 7 | 32 | 2022-03-02T23:29:22 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

dl4phys/top_tagging | 2022-04-18T07:43:02.000Z | [

"license:cc-by-4.0",

"arxiv:1902.09914",

"region:us"

] | dl4phys | null | null | 0 | 32 | 2022-04-16T09:53:34 | ---

license: cc-by-4.0

---

# Dataset Card for Top Quark Tagging

## Table of Contents

- [Dataset Card for Top Quark Tagging](#dataset-card-for-top-quark-tagging)

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** https://zenodo.org/record/2603256

- **Paper:** https://arxiv.org/abs/1902.09914

- **Point of Contact:** [Gregor Kasieczka](gregor.kasieczka@uni-hamburg.de)

### Dataset Summary

Top Quark Tagging is a dataset of Monte Carlo simulated events produced by proton-proton collisions at the Large Hadron Collider. The top-quark signal and mixed quark-gluon background jets are produced with Pythia8 with its default tune for a center-of-mass energy of 14 TeV. Multiple interactions and pile-up are ignored. The leading 200 jet constituent four-momenta \\( (E, p_x, p_y, p_z) \\) are stored, with zero-padding applied to jets with fewer than 200 constituents.

### Supported Tasks and Leaderboards

- `tabular-classification`: The dataset can be used to train a model for tabular binary classification, which consists in predicting whether an event is produced from a top signal or quark-gluon background. Success on this task is typically measured by achieving a *high* [accuracy](https://huggingface.co/metrics/accuracy) and AUC score.

## Dataset Structure

### Data Instances

Each instance in the dataset consists of the four-momenta of the leading 200 jet constituents, sorted by \\(p_T\\). For jets with fewer than 200 constituents, zero-padding is applied. The four-momenta of the top-quark are also provided, along with a label in the `is_signal_new` column to indicate whether the event stems from a top-quark (1) or QCD background (0). An example instance looks as follows:

```

{'E_0': 474.0711364746094,

'PX_0': -250.34703063964844,

'PY_0': -223.65196228027344,

'PZ_0': -334.73809814453125,

...

'E_199': 0.0,

'PX_199': 0.0,

'PY_199': 0.0,

'PZ_199': 0.0,

'truthE': 0.0,

'truthPX': 0.0,

'truthPY': 0.0,

'truthPZ': 0.0,

'ttv': 0,

'is_signal_new': 0}

```

### Data Fields

The fields in the dataset have the following meaning:

- `E_i`: the energy of jet constituent \\(i\\).

- `PX_i`: the \\(x\\) component of the jet constituent's momentum

- `PY_i`: the \\(y\\) component of the jet constituent's momentum

- `PZ_i`: the \\(z\\) component of the jet constituent's momentum

- `truthE`: the energy of the top-quark

- `truthPX`: the \\(x\\) component of the top quark's momentum

- `truthPY`: the \\(y\\) component of the top quark's momentum

- `truthPZ`: the \\(z\\) component of the top quark's momentum

- `ttv`: a flag that indicates which split (train, validation, or test) that a jet belongs to. Redundant since each split is provided as a separate dataset

- `is_signal_new`: the label for each jet. A 1 indicates a top-quark, while a 0 indicates QCD background.

### Data Splits

| | train | validation | test |

|------------------|--------:|-----------:|-------:|

| Number of events | 1211000 | 403000 | 404000 |

### Licensing Information

This dataset is released under the [Creative Commons Attribution 4.0 International](https://creativecommons.org/licenses/by/4.0/legalcode) license.

### Citation Information

```

@dataset{kasieczka_gregor_2019_2603256,

author = {Kasieczka, Gregor and

Plehn, Tilman and

Thompson, Jennifer and

Russel, Michael},

title = {Top Quark Tagging Reference Dataset},

month = mar,

year = 2019,

publisher = {Zenodo},

version = {v0 (2018\_03\_27)},

doi = {10.5281/zenodo.2603256},

url = {https://doi.org/10.5281/zenodo.2603256}

}

```

### Contributions

Thanks to [@lewtun](https://github.com/lewtun) for adding this dataset.

| 4,232 | [

[

-0.0416259765625,

-0.0096282958984375,

0.01364898681640625,

-0.01416015625,

-0.0345458984375,

0.023834228515625,

-0.0030689239501953125,

0.01079559326171875,

0.0179901123046875,

0.0018491744995117188,

-0.04583740234375,

-0.06646728515625,

-0.034576416015625,

... |

merionum/ru_paraphraser | 2022-07-28T15:01:08.000Z | [

"task_categories:text-classification",

"task_categories:text-generation",

"task_categories:text2text-generation",

"task_categories:sentence-similarity",

"task_ids:semantic-similarity-scoring",

"annotations_creators:crowdsourced",

"annotations_creators:expert-generated",

"annotations_creators:machine-g... | merionum | null | null | 5 | 32 | 2022-05-26T14:53:46 | ---

annotations_creators:

- crowdsourced

- expert-generated

- machine-generated

language_creators:

- crowdsourced

language:

- ru

license:

- mit

multilinguality:

- monolingual

paperswithcode_id: null

pretty_name: ParaPhraser

size_categories:

- 1M<n<10M

source_datasets:

- original

task_categories:

- text-classification

- text-generation

- text2text-generation

- sentence-similarity

task_ids:

- semantic-similarity-scoring

---

# Dataset Card for ParaPhraser

### Dataset Summary

ParaPhraser is a news headlines corpus annotated according to the following schema:

```

1: precise paraphrases

0: near paraphrases

-1: non-paraphrases

```

The _Plus_ part is also available.

It contains clusters of news headline paraphrases labeled automatically by a fine-tuned paraphrase detection BERT model.

In order to load it:

```python

from datasets import load_dataset

corpus = load_dataset('merionum/ru_paraphraser', data_files='plus.jsonl')

```

## Dataset Structure

```

train: 7,227 pairs

test: 1,924 pairs

plus: 1,725,393 clusters (total: ~7m texts)

```

### Citation Information

```

@inproceedings{pivovarova2017paraphraser,

title={ParaPhraser: Russian paraphrase corpus and shared task},

author={Pivovarova, Lidia and Pronoza, Ekaterina and Yagunova, Elena and Pronoza, Anton},

booktitle={Conference on artificial intelligence and natural language},

pages={211--225},

year={2017},

organization={Springer}

}

```

```

@inproceedings{gudkov-etal-2020-automatically,

title = "Automatically Ranked {R}ussian Paraphrase Corpus for Text Generation",

author = "Gudkov, Vadim and

Mitrofanova, Olga and

Filippskikh, Elizaveta",

booktitle = "Proceedings of the Fourth Workshop on Neural Generation and Translation",

month = jul,

year = "2020",

address = "Online",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2020.ngt-1.6",

doi = "10.18653/v1/2020.ngt-1.6",

pages = "54--59",

abstract = "The article is focused on automatic development and ranking of a large corpus for Russian paraphrase generation which proves to be the first corpus of such type in Russian computational linguistics. Existing manually annotated paraphrase datasets for Russian are limited to small-sized ParaPhraser corpus and ParaPlag which are suitable for a set of NLP tasks, such as paraphrase and plagiarism detection, sentence similarity and relatedness estimation, etc. Due to size restrictions, these datasets can hardly be applied in end-to-end text generation solutions. Meanwhile, paraphrase generation requires a large amount of training data. In our study we propose a solution to the problem: we collect, rank and evaluate a new publicly available headline paraphrase corpus (ParaPhraser Plus), and then perform text generation experiments with manual evaluation on automatically ranked corpora using the Universal Transformer architecture.",

}

```

### Contributions

Dataset maintainer:

Vadim Gudkov: [@merionum](https://github.com/merionum)

| 3,033 | [

[

-0.009368896484375,

-0.042724609375,

0.0276641845703125,

0.0255889892578125,

-0.0386962890625,

-0.00588226318359375,

-0.021575927734375,

0.0013666152954101562,

0.00656890869140625,

0.035003662109375,

-0.0142822265625,

-0.06231689453125,

-0.03619384765625,

0.... |

PiC/phrase_similarity | 2023-01-20T16:32:19.000Z | [

"task_categories:text-classification",

"task_ids:semantic-similarity-classification",

"annotations_creators:expert-generated",

"language_creators:found",

"language_creators:expert-generated",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:original",

"language:en",

"l... | PiC | Phrase in Context is a curated benchmark for phrase understanding and semantic search, consisting of three tasks of increasing difficulty: Phrase Similarity (PS), Phrase Retrieval (PR) and Phrase Sense Disambiguation (PSD). The datasets are annotated by 13 linguistic experts on Upwork and verified by two groups: ~1000 AMT crowdworkers and another set of 5 linguistic experts. PiC benchmark is distributed under CC-BY-NC 4.0. | @article{pham2022PiC,

title={PiC: A Phrase-in-Context Dataset for Phrase Understanding and Semantic Search},

author={Pham, Thang M and Yoon, Seunghyun and Bui, Trung and Nguyen, Anh},

journal={arXiv preprint arXiv:2207.09068},

year={2022}

} | 6 | 32 | 2022-06-14T01:35:19 | ---

annotations_creators:

- expert-generated

language_creators:

- found

- expert-generated

language:

- en

license:

- cc-by-nc-4.0

multilinguality:

- monolingual

paperswithcode_id: phrase-in-context

pretty_name: 'PiC: Phrase Similarity (PS)'

size_categories:

- 10K<n<100K

source_datasets:

- original

task_categories:

- text-classification

task_ids:

- semantic-similarity-classification

---

# Dataset Card for "PiC: Phrase Similarity"

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [https://phrase-in-context.github.io/](https://phrase-in-context.github.io/)

- **Repository:** [https://github.com/phrase-in-context](https://github.com/phrase-in-context)

- **Paper:**

- **Leaderboard:**

- **Point of Contact:** [Thang Pham](<thangpham@auburn.edu>)

- **Size of downloaded dataset files:** 4.60 MB

- **Size of the generated dataset:** 2.96 MB

- **Total amount of disk used:** 7.56 MB

### Dataset Summary

PS is a binary classification task with the goal of predicting whether two multi-word noun phrases are semantically similar or not given *the same context* sentence.

This dataset contains ~10K pairs of two phrases along with their contexts used for disambiguation, since two phrases are not enough for semantic comparison.

Our ~10K examples were annotated by linguistic experts on <upwork.com> and verified in two rounds by 1000 Mturkers and 5 linguistic experts.

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

English.

## Dataset Structure

### Data Instances

**PS**

* Size of downloaded dataset files: 4.60 MB

* Size of the generated dataset: 2.96 MB

* Total amount of disk used: 7.56 MB

```

{

"phrase1": "annual run",

"phrase2": "yearlong performance",

"sentence1": "since 2004, the club has been a sponsor of the annual run for rigby to raise money for off-campus housing safety awareness.",

"sentence2": "since 2004, the club has been a sponsor of the yearlong performance for rigby to raise money for off-campus housing safety awareness.",

"label": 0,

"idx": 0,

}

```

### Data Fields

The data fields are the same among all splits.

* phrase1: a string feature.

* phrase2: a string feature.

* sentence1: a string feature.

* sentence2: a string feature.

* label: a classification label, with negative (0) and positive (1).

* idx: an int32 feature.

### Data Splits

| name |train |validation|test |

|--------------------|----:|--------:|----:|

|PS |7362| 1052|2102|

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

The source passages + answers are from Wikipedia and the source of queries were produced by our hired linguistic experts from [Upwork.com](https://upwork.com).

#### Who are the source language producers?

We hired 13 linguistic experts from [Upwork.com](https://upwork.com) for annotation and more than 1000 human annotators on Mechanical Turk along with another set of 5 Upwork experts for 2-round verification.

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

13 linguistic experts from [Upwork.com](https://upwork.com).

### Personal and Sensitive Information

No annotator identifying details are provided.

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

This dataset is a joint work between Adobe Research and Auburn University.

Creators: [Thang M. Pham](https://scholar.google.com/citations?user=eNrX3mYAAAAJ), [David Seunghyun Yoon](https://david-yoon.github.io/), [Trung Bui](https://sites.google.com/site/trungbuistanford/), and [Anh Nguyen](https://anhnguyen.me).

[@PMThangXAI](https://twitter.com/pmthangxai) added this dataset to HuggingFace.

### Licensing Information

This dataset is distributed under [Creative Commons Attribution-NonCommercial 4.0 International (CC-BY-NC 4.0)](https://creativecommons.org/licenses/by-nc/4.0/)

### Citation Information

```

@article{pham2022PiC,

title={PiC: A Phrase-in-Context Dataset for Phrase Understanding and Semantic Search},

author={Pham, Thang M and Yoon, Seunghyun and Bui, Trung and Nguyen, Anh},

journal={arXiv preprint arXiv:2207.09068},

year={2022}

}

``` | 5,470 | [

[

-0.027191162109375,

-0.0506591796875,

0.01215362548828125,

0.0189361572265625,

-0.030517578125,

-0.0021686553955078125,

-0.02191162109375,

-0.038665771484375,

0.037200927734375,

0.0264892578125,

-0.035858154296875,

-0.06622314453125,

-0.0380859375,

0.0183563... |

BeIR/scidocs-generated-queries | 2022-10-23T06:12:52.000Z | [

"task_categories:text-retrieval",

"task_ids:entity-linking-retrieval",

"task_ids:fact-checking-retrieval",

"multilinguality:monolingual",

"language:en",

"license:cc-by-sa-4.0",

"region:us"

] | BeIR | null | null | 2 | 32 | 2022-06-17T12:53:49 | ---

annotations_creators: []

language_creators: []

language:

- en

license:

- cc-by-sa-4.0

multilinguality:

- monolingual

paperswithcode_id: beir

pretty_name: BEIR Benchmark

size_categories:

msmarco:

- 1M<n<10M

trec-covid:

- 100k<n<1M

nfcorpus:

- 1K<n<10K

nq:

- 1M<n<10M

hotpotqa:

- 1M<n<10M

fiqa:

- 10K<n<100K

arguana:

- 1K<n<10K

touche-2020:

- 100K<n<1M

cqadupstack:

- 100K<n<1M

quora:

- 100K<n<1M

dbpedia:

- 1M<n<10M

scidocs:

- 10K<n<100K

fever:

- 1M<n<10M

climate-fever:

- 1M<n<10M

scifact:

- 1K<n<10K

source_datasets: []

task_categories:

- text-retrieval

- zero-shot-retrieval

- information-retrieval

- zero-shot-information-retrieval

task_ids:

- passage-retrieval

- entity-linking-retrieval

- fact-checking-retrieval

- tweet-retrieval

- citation-prediction-retrieval

- duplication-question-retrieval

- argument-retrieval

- news-retrieval

- biomedical-information-retrieval

- question-answering-retrieval

---

# Dataset Card for BEIR Benchmark

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** https://github.com/UKPLab/beir

- **Repository:** https://github.com/UKPLab/beir

- **Paper:** https://openreview.net/forum?id=wCu6T5xFjeJ

- **Leaderboard:** https://docs.google.com/spreadsheets/d/1L8aACyPaXrL8iEelJLGqlMqXKPX2oSP_R10pZoy77Ns

- **Point of Contact:** nandan.thakur@uwaterloo.ca

### Dataset Summary

BEIR is a heterogeneous benchmark that has been built from 18 diverse datasets representing 9 information retrieval tasks:

- Fact-checking: [FEVER](http://fever.ai), [Climate-FEVER](http://climatefever.ai), [SciFact](https://github.com/allenai/scifact)

- Question-Answering: [NQ](https://ai.google.com/research/NaturalQuestions), [HotpotQA](https://hotpotqa.github.io), [FiQA-2018](https://sites.google.com/view/fiqa/)

- Bio-Medical IR: [TREC-COVID](https://ir.nist.gov/covidSubmit/index.html), [BioASQ](http://bioasq.org), [NFCorpus](https://www.cl.uni-heidelberg.de/statnlpgroup/nfcorpus/)

- News Retrieval: [TREC-NEWS](https://trec.nist.gov/data/news2019.html), [Robust04](https://trec.nist.gov/data/robust/04.guidelines.html)

- Argument Retrieval: [Touche-2020](https://webis.de/events/touche-20/shared-task-1.html), [ArguAna](tp://argumentation.bplaced.net/arguana/data)

- Duplicate Question Retrieval: [Quora](https://www.quora.com/q/quoradata/First-Quora-Dataset-Release-Question-Pairs), [CqaDupstack](http://nlp.cis.unimelb.edu.au/resources/cqadupstack/)

- Citation-Prediction: [SCIDOCS](https://allenai.org/data/scidocs)

- Tweet Retrieval: [Signal-1M](https://research.signal-ai.com/datasets/signal1m-tweetir.html)

- Entity Retrieval: [DBPedia](https://github.com/iai-group/DBpedia-Entity/)

All these datasets have been preprocessed and can be used for your experiments.

```python

```

### Supported Tasks and Leaderboards

The dataset supports a leaderboard that evaluates models against task-specific metrics such as F1 or EM, as well as their ability to retrieve supporting information from Wikipedia.

The current best performing models can be found [here](https://eval.ai/web/challenges/challenge-page/689/leaderboard/).

### Languages

All tasks are in English (`en`).

## Dataset Structure

All BEIR datasets must contain a corpus, queries and qrels (relevance judgments file). They must be in the following format:

- `corpus` file: a `.jsonl` file (jsonlines) that contains a list of dictionaries, each with three fields `_id` with unique document identifier, `title` with document title (optional) and `text` with document paragraph or passage. For example: `{"_id": "doc1", "title": "Albert Einstein", "text": "Albert Einstein was a German-born...."}`

- `queries` file: a `.jsonl` file (jsonlines) that contains a list of dictionaries, each with two fields `_id` with unique query identifier and `text` with query text. For example: `{"_id": "q1", "text": "Who developed the mass-energy equivalence formula?"}`

- `qrels` file: a `.tsv` file (tab-seperated) that contains three columns, i.e. the `query-id`, `corpus-id` and `score` in this order. Keep 1st row as header. For example: `q1 doc1 1`

### Data Instances

A high level example of any beir dataset:

```python

corpus = {

"doc1" : {

"title": "Albert Einstein",

"text": "Albert Einstein was a German-born theoretical physicist. who developed the theory of relativity, \

one of the two pillars of modern physics (alongside quantum mechanics). His work is also known for \

its influence on the philosophy of science. He is best known to the general public for his mass–energy \

equivalence formula E = mc2, which has been dubbed 'the world's most famous equation'. He received the 1921 \

Nobel Prize in Physics 'for his services to theoretical physics, and especially for his discovery of the law \

of the photoelectric effect', a pivotal step in the development of quantum theory."

},

"doc2" : {

"title": "", # Keep title an empty string if not present

"text": "Wheat beer is a top-fermented beer which is brewed with a large proportion of wheat relative to the amount of \

malted barley. The two main varieties are German Weißbier and Belgian witbier; other types include Lambic (made\

with wild yeast), Berliner Weisse (a cloudy, sour beer), and Gose (a sour, salty beer)."

},

}

queries = {

"q1" : "Who developed the mass-energy equivalence formula?",

"q2" : "Which beer is brewed with a large proportion of wheat?"

}

qrels = {

"q1" : {"doc1": 1},

"q2" : {"doc2": 1},

}

```

### Data Fields

Examples from all configurations have the following features:

### Corpus

- `corpus`: a `dict` feature representing the document title and passage text, made up of:

- `_id`: a `string` feature representing the unique document id

- `title`: a `string` feature, denoting the title of the document.

- `text`: a `string` feature, denoting the text of the document.

### Queries

- `queries`: a `dict` feature representing the query, made up of:

- `_id`: a `string` feature representing the unique query id

- `text`: a `string` feature, denoting the text of the query.

### Qrels

- `qrels`: a `dict` feature representing the query document relevance judgements, made up of:

- `_id`: a `string` feature representing the query id

- `_id`: a `string` feature, denoting the document id.

- `score`: a `int32` feature, denoting the relevance judgement between query and document.

### Data Splits

| Dataset | Website| BEIR-Name | Type | Queries | Corpus | Rel D/Q | Down-load | md5 |

| -------- | -----| ---------| --------- | ----------- | ---------| ---------| :----------: | :------:|

| MSMARCO | [Homepage](https://microsoft.github.io/msmarco/)| ``msmarco`` | ``train``<br>``dev``<br>``test``| 6,980 | 8.84M | 1.1 | [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/msmarco.zip) | ``444067daf65d982533ea17ebd59501e4`` |

| TREC-COVID | [Homepage](https://ir.nist.gov/covidSubmit/index.html)| ``trec-covid``| ``test``| 50| 171K| 493.5 | [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/trec-covid.zip) | ``ce62140cb23feb9becf6270d0d1fe6d1`` |

| NFCorpus | [Homepage](https://www.cl.uni-heidelberg.de/statnlpgroup/nfcorpus/) | ``nfcorpus`` | ``train``<br>``dev``<br>``test``| 323 | 3.6K | 38.2 | [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/nfcorpus.zip) | ``a89dba18a62ef92f7d323ec890a0d38d`` |

| BioASQ | [Homepage](http://bioasq.org) | ``bioasq``| ``train``<br>``test`` | 500 | 14.91M | 8.05 | No | [How to Reproduce?](https://github.com/UKPLab/beir/blob/main/examples/dataset#2-bioasq) |

| NQ | [Homepage](https://ai.google.com/research/NaturalQuestions) | ``nq``| ``train``<br>``test``| 3,452 | 2.68M | 1.2 | [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/nq.zip) | ``d4d3d2e48787a744b6f6e691ff534307`` |

| HotpotQA | [Homepage](https://hotpotqa.github.io) | ``hotpotqa``| ``train``<br>``dev``<br>``test``| 7,405 | 5.23M | 2.0 | [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/hotpotqa.zip) | ``f412724f78b0d91183a0e86805e16114`` |

| FiQA-2018 | [Homepage](https://sites.google.com/view/fiqa/) | ``fiqa`` | ``train``<br>``dev``<br>``test``| 648 | 57K | 2.6 | [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/fiqa.zip) | ``17918ed23cd04fb15047f73e6c3bd9d9`` |

| Signal-1M(RT) | [Homepage](https://research.signal-ai.com/datasets/signal1m-tweetir.html)| ``signal1m`` | ``test``| 97 | 2.86M | 19.6 | No | [How to Reproduce?](https://github.com/UKPLab/beir/blob/main/examples/dataset#4-signal-1m) |

| TREC-NEWS | [Homepage](https://trec.nist.gov/data/news2019.html) | ``trec-news`` | ``test``| 57 | 595K | 19.6 | No | [How to Reproduce?](https://github.com/UKPLab/beir/blob/main/examples/dataset#1-trec-news) |

| ArguAna | [Homepage](http://argumentation.bplaced.net/arguana/data) | ``arguana``| ``test`` | 1,406 | 8.67K | 1.0 | [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/arguana.zip) | ``8ad3e3c2a5867cdced806d6503f29b99`` |

| Touche-2020| [Homepage](https://webis.de/events/touche-20/shared-task-1.html) | ``webis-touche2020``| ``test``| 49 | 382K | 19.0 | [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/webis-touche2020.zip) | ``46f650ba5a527fc69e0a6521c5a23563`` |

| CQADupstack| [Homepage](http://nlp.cis.unimelb.edu.au/resources/cqadupstack/) | ``cqadupstack``| ``test``| 13,145 | 457K | 1.4 | [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/cqadupstack.zip) | ``4e41456d7df8ee7760a7f866133bda78`` |

| Quora| [Homepage](https://www.quora.com/q/quoradata/First-Quora-Dataset-Release-Question-Pairs) | ``quora``| ``dev``<br>``test``| 10,000 | 523K | 1.6 | [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/quora.zip) | ``18fb154900ba42a600f84b839c173167`` |

| DBPedia | [Homepage](https://github.com/iai-group/DBpedia-Entity/) | ``dbpedia-entity``| ``dev``<br>``test``| 400 | 4.63M | 38.2 | [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/dbpedia-entity.zip) | ``c2a39eb420a3164af735795df012ac2c`` |

| SCIDOCS| [Homepage](https://allenai.org/data/scidocs) | ``scidocs``| ``test``| 1,000 | 25K | 4.9 | [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/scidocs.zip) | ``38121350fc3a4d2f48850f6aff52e4a9`` |

| FEVER | [Homepage](http://fever.ai) | ``fever``| ``train``<br>``dev``<br>``test``| 6,666 | 5.42M | 1.2| [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/fever.zip) | ``5a818580227bfb4b35bb6fa46d9b6c03`` |

| Climate-FEVER| [Homepage](http://climatefever.ai) | ``climate-fever``|``test``| 1,535 | 5.42M | 3.0 | [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/climate-fever.zip) | ``8b66f0a9126c521bae2bde127b4dc99d`` |

| SciFact| [Homepage](https://github.com/allenai/scifact) | ``scifact``| ``train``<br>``test``| 300 | 5K | 1.1 | [Link](https://public.ukp.informatik.tu-darmstadt.de/thakur/BEIR/datasets/scifact.zip) | ``5f7d1de60b170fc8027bb7898e2efca1`` |

| Robust04 | [Homepage](https://trec.nist.gov/data/robust/04.guidelines.html) | ``robust04``| ``test``| 249 | 528K | 69.9 | No | [How to Reproduce?](https://github.com/UKPLab/beir/blob/main/examples/dataset#3-robust04) |

## Dataset Creation

### Curation Rationale

[Needs More Information]

### Source Data

#### Initial Data Collection and Normalization

[Needs More Information]

#### Who are the source language producers?

[Needs More Information]

### Annotations

#### Annotation process

[Needs More Information]

#### Who are the annotators?

[Needs More Information]

### Personal and Sensitive Information

[Needs More Information]

## Considerations for Using the Data

### Social Impact of Dataset

[Needs More Information]

### Discussion of Biases

[Needs More Information]

### Other Known Limitations

[Needs More Information]

## Additional Information

### Dataset Curators

[Needs More Information]

### Licensing Information

[Needs More Information]

### Citation Information

Cite as:

```

@inproceedings{

thakur2021beir,

title={{BEIR}: A Heterogeneous Benchmark for Zero-shot Evaluation of Information Retrieval Models},

author={Nandan Thakur and Nils Reimers and Andreas R{\"u}ckl{\'e} and Abhishek Srivastava and Iryna Gurevych},

booktitle={Thirty-fifth Conference on Neural Information Processing Systems Datasets and Benchmarks Track (Round 2)},

year={2021},

url={https://openreview.net/forum?id=wCu6T5xFjeJ}

}

```

### Contributions

Thanks to [@Nthakur20](https://github.com/Nthakur20) for adding this dataset. | 13,988 | [

[

-0.0396728515625,

-0.03985595703125,

0.01094818115234375,

0.0036602020263671875,

0.00423431396484375,

0.00009590387344360352,

-0.0081939697265625,

-0.0188751220703125,

0.021697998046875,

0.00595855712890625,

-0.034332275390625,

-0.0545654296875,

-0.0263824462890... |

owaiskha9654/PubMed_MultiLabel_Text_Classification_Dataset_MeSH | 2023-01-30T09:50:44.000Z | [

"task_categories:text-classification",

"task_ids:multi-label-classification",

"size_categories:10K<n<100K",

"source_datasets:BioASQ Task A",

"language:en",

"license:afl-3.0",

"region:us"

] | owaiskha9654 | null | null | 6 | 32 | 2022-08-02T20:13:50 | ---

language:

- en

license: afl-3.0

source_datasets:

- BioASQ Task A

task_categories:

- text-classification

task_ids:

- multi-label-classification

pretty_name: BioASQ, PUBMED

size_categories:

- 10K<n<100K

---

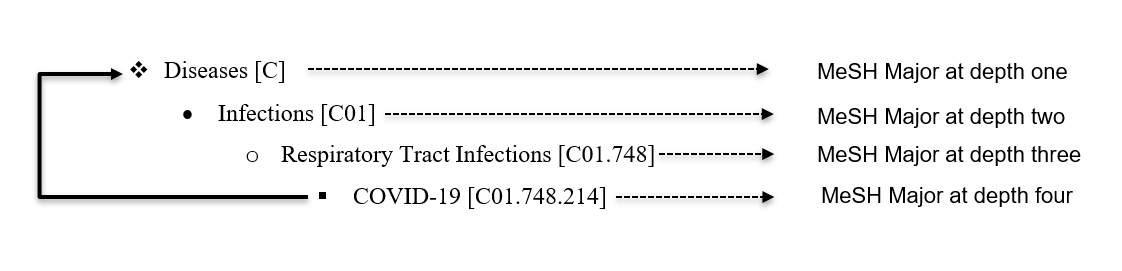

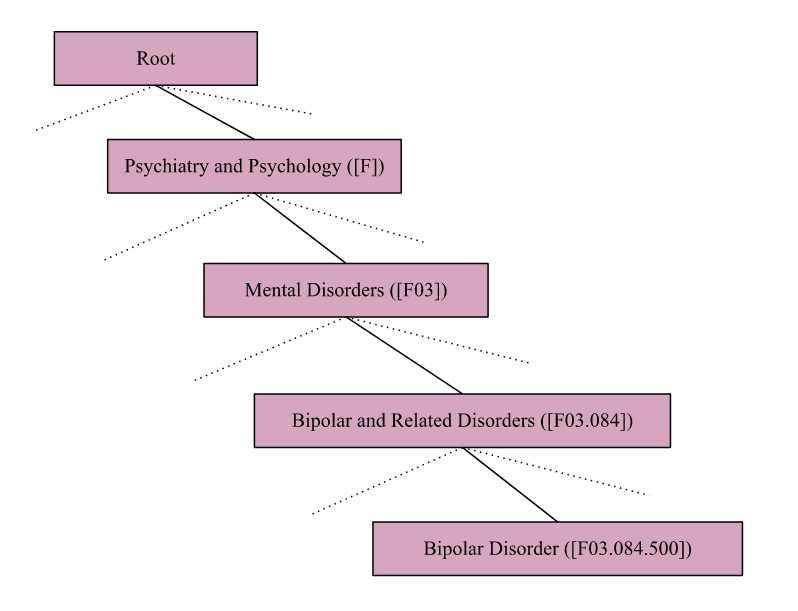

This dataset consists of a approx 50k collection of research articles from **PubMed** repository. Originally these documents are manually annotated by Biomedical Experts with their MeSH labels and each articles are described in terms of 10-15 MeSH labels. In this Dataset we have huge numbers of labels present as a MeSH major which is raising the issue of extremely large output space and severe label sparsity issues. To solve this Issue Dataset has been Processed and mapped to its root as Described in the Below Figure.

| 960 | [

[

-0.045318603515625,

-0.041595458984375,

0.0183563232421875,

0.0019140243530273438,

-0.0179290771484375,

-0.00345611572265625,

0.00016260147094726562,

-0.01812744140625,

0.00972747802734375,

0.039886474609375,

-0.02960205078125,

-0.044586181640625,

-0.06726074218... |

rungalileo/medical_transcription_40 | 2022-08-04T04:58:53.000Z | [

"region:us"

] | rungalileo | null | null | 4 | 32 | 2022-08-04T04:58:43 | Entry not found | 15 | [

[

-0.0214080810546875,

-0.01497650146484375,

0.05718994140625,

0.02880859375,

-0.035064697265625,

0.0465087890625,

0.052490234375,

0.00505828857421875,

0.051361083984375,

0.01702880859375,

-0.05206298828125,

-0.01497650146484375,

-0.060302734375,

0.03790283203... |

csebuetnlp/BanglaNMT | 2023-02-24T14:46:55.000Z | [

"task_categories:translation",

"annotations_creators:other",

"language_creators:found",

"multilinguality:translation",

"size_categories:1M<n<10M",

"language:bn",

"language:en",

"license:cc-by-nc-sa-4.0",

"bengali",

"BanglaNMT",

"region:us"

] | csebuetnlp | This is the largest Machine Translation (MT) dataset for Bengali-English, introduced in the paper

`Not Low-Resource Anymore: Aligner Ensembling, Batch Filtering, and New Datasets for Bengali-English Machine Translation`. | @inproceedings{hasan-etal-2020-low,

title = "Not Low-Resource Anymore: Aligner Ensembling, Batch Filtering, and New Datasets for {B}engali-{E}nglish Machine Translation",

author = "Hasan, Tahmid and

Bhattacharjee, Abhik and

Samin, Kazi and

Hasan, Masum and

Basak, Madhusudan and

Rahman, M. Sohel and

Shahriyar, Rifat",

booktitle = "Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP)",

month = nov,

year = "2020",

address = "Online",

publisher = "Association for Computational Linguistics",

url = "https://www.aclweb.org/anthology/2020.emnlp-main.207",

doi = "10.18653/v1/2020.emnlp-main.207",

pages = "2612--2623",

abstract = "Despite being the seventh most widely spoken language in the world, Bengali has received much less attention in machine translation literature due to being low in resources. Most publicly available parallel corpora for Bengali are not large enough; and have rather poor quality, mostly because of incorrect sentence alignments resulting from erroneous sentence segmentation, and also because of a high volume of noise present in them. In this work, we build a customized sentence segmenter for Bengali and propose two novel methods for parallel corpus creation on low-resource setups: aligner ensembling and batch filtering. With the segmenter and the two methods combined, we compile a high-quality Bengali-English parallel corpus comprising of 2.75 million sentence pairs, more than 2 million of which were not available before. Training on neural models, we achieve an improvement of more than 9 BLEU score over previous approaches to Bengali-English machine translation. We also evaluate on a new test set of 1000 pairs made with extensive quality control. We release the segmenter, parallel corpus, and the evaluation set, thus elevating Bengali from its low-resource status. To the best of our knowledge, this is the first ever large scale study on Bengali-English machine translation. We believe our study will pave the way for future research on Bengali-English machine translation as well as other low-resource languages. Our data and code are available at https://github.com/csebuetnlp/banglanmt.",

} | 0 | 32 | 2022-08-21T13:25:09 | ---

annotations_creators:

- other

language:

- bn

- en

language_creators:

- found

license:

- cc-by-nc-sa-4.0

multilinguality:

- translation

pretty_name: BanglaNMT

size_categories:

- 1M<n<10M

source_datasets: []

tags:

- bengali

- BanglaNMT

task_categories:

- translation

---

# Dataset Card for `BanglaNMT`

## Table of Contents

- [Dataset Card for `BanglaNMT`](#dataset-card-for-BanglaNMT)

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Usage](#usage)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Initial Data Collection and Normalization](#initial-data-collection-and-normalization)

- [Who are the source language producers?](#who-are-the-source-language-producers)

- [Annotations](#annotations)

- [Annotation process](#annotation-process)

- [Who are the annotators?](#who-are-the-annotators)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Repository:** [https://github.com/csebuetnlp/banglanmt](https://github.com/csebuetnlp/banglanmt)

- **Paper:** [**"Not Low-Resource Anymore: Aligner Ensembling, Batch Filtering, and New Datasets for Bengali-English Machine Translation"**](https://www.aclweb.org/anthology/2020.emnlp-main.207)

- **Point of Contact:** [Tahmid Hasan](mailto:tahmidhasan@cse.buet.ac.bd)

### Dataset Summary

This is the largest Machine Translation (MT) dataset for Bengali-English, curated using novel sentence alignment methods introduced **[here](https://aclanthology.org/2020.emnlp-main.207/).**

**Note:** This is a filtered version of the original dataset that the authors used for NMT training. For the complete set, refer to the offical [repository](https://github.com/csebuetnlp/banglanmt)

### Supported Tasks and Leaderboards

[More information needed](https://github.com/csebuetnlp/banglanmt)

### Languages

- `Bengali`

- `English`

### Usage

```python

from datasets import load_dataset

dataset = load_dataset("csebuetnlp/BanglaNMT")

```

## Dataset Structure

### Data Instances

One example from the dataset is given below in JSON format.

```

{

'bn': 'বিমানবন্দরে যুক্তরাজ্যে নিযুক্ত বাংলাদেশ হাইকমিশনার সাঈদা মুনা তাসনীম ও লন্ডনে বাংলাদেশ মিশনের জ্যেষ্ঠ কর্মকর্তারা তাকে বিদায় জানান।',

'en': 'Bangladesh High Commissioner to the United Kingdom Saida Muna Tasneen and senior officials of Bangladesh Mission in London saw him off at the airport.'

}

```

### Data Fields

The data fields are as follows:

- `bn`: a `string` feature indicating the Bengali sentence.

- `en`: a `string` feature indicating the English translation.

### Data Splits

| split |count |

|----------|--------|

|`train`| 2379749 |

|`validation`| 597 |

|`test`| 1000 |

## Dataset Creation

[More information needed](https://github.com/csebuetnlp/banglanmt)

### Curation Rationale

[More information needed](https://github.com/csebuetnlp/banglanmt)

### Source Data

[More information needed](https://github.com/csebuetnlp/banglanmt)

#### Initial Data Collection and Normalization

[More information needed](https://github.com/csebuetnlp/banglanmt)

#### Who are the source language producers?

[More information needed](https://github.com/csebuetnlp/banglanmt)

### Annotations

[More information needed](https://github.com/csebuetnlp/banglanmt)

#### Annotation process

[More information needed](https://github.com/csebuetnlp/banglanmt)

#### Who are the annotators?

[More information needed](https://github.com/csebuetnlp/banglanmt)

### Personal and Sensitive Information

[More information needed](https://github.com/csebuetnlp/banglanmt)

## Considerations for Using the Data

### Social Impact of Dataset

[More information needed](https://github.com/csebuetnlp/banglanmt)

### Discussion of Biases

[More information needed](https://github.com/csebuetnlp/banglanmt)

### Other Known Limitations

[More information needed](https://github.com/csebuetnlp/banglanmt)

## Additional Information

### Dataset Curators

[More information needed](https://github.com/csebuetnlp/banglanmt)

### Licensing Information

Contents of this repository are restricted to only non-commercial research purposes under the [Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License (CC BY-NC-SA 4.0)](https://creativecommons.org/licenses/by-nc-sa/4.0/). Copyright of the dataset contents belongs to the original copyright holders.

### Citation Information

If you use the dataset, please cite the following paper:

```

@inproceedings{hasan-etal-2020-low,

title = "Not Low-Resource Anymore: Aligner Ensembling, Batch Filtering, and New Datasets for {B}engali-{E}nglish Machine Translation",

author = "Hasan, Tahmid and

Bhattacharjee, Abhik and

Samin, Kazi and

Hasan, Masum and

Basak, Madhusudan and

Rahman, M. Sohel and

Shahriyar, Rifat",

booktitle = "Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP)",

month = nov,

year = "2020",

address = "Online",

publisher = "Association for Computational Linguistics",

url = "https://www.aclweb.org/anthology/2020.emnlp-main.207",

doi = "10.18653/v1/2020.emnlp-main.207",

pages = "2612--2623",

abstract = "Despite being the seventh most widely spoken language in the world, Bengali has received much less attention in machine translation literature due to being low in resources. Most publicly available parallel corpora for Bengali are not large enough; and have rather poor quality, mostly because of incorrect sentence alignments resulting from erroneous sentence segmentation, and also because of a high volume of noise present in them. In this work, we build a customized sentence segmenter for Bengali and propose two novel methods for parallel corpus creation on low-resource setups: aligner ensembling and batch filtering. With the segmenter and the two methods combined, we compile a high-quality Bengali-English parallel corpus comprising of 2.75 million sentence pairs, more than 2 million of which were not available before. Training on neural models, we achieve an improvement of more than 9 BLEU score over previous approaches to Bengali-English machine translation. We also evaluate on a new test set of 1000 pairs made with extensive quality control. We release the segmenter, parallel corpus, and the evaluation set, thus elevating Bengali from its low-resource status. To the best of our knowledge, this is the first ever large scale study on Bengali-English machine translation. We believe our study will pave the way for future research on Bengali-English machine translation as well as other low-resource languages. Our data and code are available at https://github.com/csebuetnlp/banglanmt.",

}

```

### Contributions

Thanks to [@abhik1505040](https://github.com/abhik1505040) and [@Tahmid](https://github.com/Tahmid04) for adding this dataset. | 7,796 | [

[

-0.0300445556640625,

-0.053863525390625,

0.0009598731994628906,

0.032745361328125,

-0.02880859375,

0.01123046875,

-0.034423828125,

-0.0209808349609375,

0.03216552734375,

0.03265380859375,

-0.036041259765625,

-0.04962158203125,

-0.04608154296875,

0.0311889648... |

illuin/small_commonvoice_test_set | 2022-10-06T13:37:15.000Z | [

"region:us"

] | illuin | null | null | 0 | 32 | 2022-10-06T13:36:55 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

Harsit/xnli2.0_train_hindi | 2022-10-15T09:20:03.000Z | [

"region:us"

] | Harsit | null | null | 0 | 32 | 2022-10-15T09:19:09 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

Dahoas/instruct-synthetic-prompt-responses | 2022-12-19T16:18:50.000Z | [

"region:us"

] | Dahoas | null | null | 9 | 32 | 2022-12-19T16:18:47 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |