id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 6.67k ⌀ | citation stringlengths 0 10.7k ⌀ | likes int64 0 3.66k | downloads int64 0 8.89M | created timestamp[us] | card stringlengths 11 977k | card_len int64 11 977k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|

PaulineSanchez/Dataset_food_translation_fr_en | 2023-05-16T14:46:06.000Z | [

"task_categories:translation",

"size_categories:1K<n<10K",

"language:fr",

"language:en",

"food",

"restaurant",

"menus",

"nutrition",

"region:us"

] | PaulineSanchez | null | null | 0 | 10 | 2023-05-15T15:39:03 | ---

dataset_info:

features:

- name: id

dtype: string

- name: translation

dtype:

translation:

languages:

- en

- fr

splits:

- name: train

num_bytes: 255634.8588621444

num_examples: 2924

- name: validation

num_bytes: 63996.14113785558

num_examples: 732

download_size: 208288

dataset_size: 319631.0

task_categories:

- translation

language:

- fr

- en

tags:

- food

- restaurant

- menus

- nutrition

size_categories:

- 1K<n<10K

---

# Dataset Card for "Dataset_food_translation_fr_en"

- info: This dataset combines two datasets I previously made:

- The first is https://huggingface.co/datasets/PaulineSanchez/Trad_food, which is built from the English XML version of the ANSES-CIQUAL 2020 table, available at https://www.data.gouv.fr/fr/datasets/table-de-composition-nutritionnelle-des-aliments-ciqual/.

I made some minor changes to it so that it meets my needs (removing or adding words to get exact translations, removing repetitions, etc.).

- The second is https://huggingface.co/datasets/PaulineSanchez/Multi_restaurants_menus_translation, which is made of translations of the menus of several restaurants. I used the menus of these restaurants: https://salutbaramericain.com/edina/menus/, https://menuonline.fr/legeorgev, https://www.covedina.com/menu/, https://menuonline.fr/fouquets/cartes, https://www.theavocadoshow.com/fr/food, https://papacionuparis.fr/carte/.

I also made some minor changes to these menus so that the dataset meets my needs. I have no connection with these restaurants, and their menus are subject to change. | 1,672 | [

[

-0.01450347900390625,

-0.0159912109375,

0.01983642578125,

0.022979736328125,

-0.013275146484375,

-0.01325225830078125,

-0.021728515625,

-0.03106689453125,

0.044342041015625,

0.06365966796875,

-0.050384521484375,

-0.05535888671875,

-0.043701171875,

0.03887939... |

narizhny/addresses-2 | 2023-06-19T11:38:04.000Z | [

"region:us"

] | narizhny | null | null | 0 | 10 | 2023-05-16T14:00:37 | ---

dataset_info:

features:

- name: Name

dtype: string

- name: Surname

dtype: string

- name: Address

dtype: string

- name: City

dtype: string

- name: State

dtype: string

- name: Postcode

dtype: int64

splits:

- name: train

num_bytes: 413

num_examples: 6

download_size: 3258

dataset_size: 413

---

# Dataset Card for "addresses-2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 514 | [

[

-0.038665771484375,

-0.01413726806640625,

0.0198211669921875,

0.01229095458984375,

-0.01340484619140625,

-0.0159759521484375,

0.032867431640625,

-0.02484130859375,

0.05328369140625,

0.037322998046875,

-0.051727294921875,

-0.044219970703125,

-0.039825439453125,

... |

FreedomIntelligence/huatuo26M-testdatasets | 2023-05-17T03:39:41.000Z | [

"task_categories:text-generation",

"size_categories:1K<n<10K",

"language:zh",

"license:apache-2.0",

"medical",

"arxiv:2305.01526",

"region:us"

] | FreedomIntelligence | null | null | 12 | 10 | 2023-05-17T02:31:23 | ---

license: apache-2.0

task_categories:

- text-generation

language:

- zh

tags:

- medical

size_categories:

- 1K<n<10K

---

# Dataset Card for huatuo26M-testdatasets

## Dataset Description

- **Homepage:** https://www.huatuogpt.cn/

- **Repository:** https://github.com/FreedomIntelligence/Huatuo-26M

- **Paper:** https://arxiv.org/abs/2305.01526

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

We are pleased to announce the release of our evaluation dataset, a subset of Huatuo-26M. This dataset contains 6,000 entries that we used for the Natural Language Generation (NLG) experiments in our associated research paper.

We encourage researchers and developers to use this evaluation dataset to gauge the performance of their own models. This is not only a chance to assess the accuracy and relevancy of generated responses but also an opportunity to investigate their model's proficiency in understanding and generating complex medical language.

Note: All the data points have been anonymized to protect patient privacy, and they adhere strictly to data protection and privacy regulations.

## Citation

```

@misc{li2023huatuo26m,

title={Huatuo-26M, a Large-scale Chinese Medical QA Dataset},

author={Jianquan Li and Xidong Wang and Xiangbo Wu and Zhiyi Zhang and Xiaolong Xu and Jie Fu and Prayag Tiwari and Xiang Wan and Benyou Wang},

year={2023},

eprint={2305.01526},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

``` | 1,496 | [

[

-0.019683837890625,

-0.045166015625,

-0.0008740425109863281,

0.025604248046875,

-0.033843994140625,

-0.0099639892578125,

-0.0343017578125,

-0.02215576171875,

-0.01216888427734375,

0.0274505615234375,

-0.041107177734375,

-0.052520751953125,

-0.01776123046875,

... |

dspoka/cfpb | 2023-05-18T20:15:36.000Z | [

"region:us"

] | dspoka | null | null | 0 | 10 | 2023-05-18T20:08:10 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

mlfoundations/datacomp_xlarge | 2023-08-21T21:42:38.000Z | [

"license:cc-by-4.0",

"region:us"

] | mlfoundations | null | null | 1 | 10 | 2023-05-22T21:49:34 | ---

license: cc-by-4.0

---

## DataComp XLarge Pool

This repository contains metadata files for the xlarge pool of DataComp. For details on how to use the metadata, please visit [our website](https://www.datacomp.ai/) and our [GitHub repository](https://github.com/mlfoundations/datacomp).

We distribute the image url-text samples and metadata under a standard Creative Commons CC-BY-4.0 license. The individual images are under their own copyrights.

## Terms and Conditions

We have terms of service similar to those adopted by Hugging Face (https://huggingface.co/terms-of-service), which cover their dataset library. Specifically, any content you download, access, or use from our index is at your own risk and subject to the terms of service or copyright limitations accompanying such content. The image url-text index, which is a research artifact, is provided as is. By using said index, you assume all risks, including but not limited to liabilities related to image downloading and storage. | 1,010 | [

[

-0.042694091796875,

-0.026458740234375,

0.020477294921875,

0.01499176025390625,

-0.0301055908203125,

0.0025043487548828125,

0.008636474609375,

-0.0394287109375,

0.021392822265625,

0.047332763671875,

-0.06805419921875,

-0.04888916015625,

-0.0460205078125,

0.0... |

ccmusic-database/bel_folk | 2023-10-03T16:56:58.000Z | [

"task_categories:audio-classification",

"size_categories:n<1K",

"language:zh",

"language:en",

"license:mit",

"music",

"art",

"region:us"

] | ccmusic-database | This database contains hundreds of a cappella singing clips in two styles:

Bel Canto and the Chinese national singing style.

All of them are sung by professional vocalists and were recorded in professional commercial recording studios. | @dataset{zhaorui_liu_2021_5676893,

author = {Zhaorui Liu and Monan Zhou and Shenyang Xu and Zijin Li},

title = {{Music Data Sharing Platform for Computational Musicology Research (CCMUSIC DATASET)}},

month = nov,

year = 2021,

publisher = {Zenodo},

version = {1.1},

doi = {10.5281/zenodo.5676893},

url = {https://doi.org/10.5281/zenodo.5676893}

} | 1 | 10 | 2023-05-26T08:53:43 | ---

license: mit

task_categories:

- audio-classification

language:

- zh

- en

tags:

- music

- art

pretty_name: Bel Canto and Chinese Folk Song Singing Tech Database

size_categories:

- n<1K

---

# Dataset Card for Bel Canto and Chinese Folk Song Singing Tech Database

## Dataset Description

- **Homepage:** <https://ccmusic-database.github.io>

- **Repository:** <https://huggingface.co/datasets/ccmusic-database/bel_folk>

- **Paper:** <https://doi.org/10.5281/zenodo.5676893>

- **Leaderboard:** <https://ccmusic-database.github.io/team.html>

- **Point of Contact:** N/A

### Dataset Summary

This database contains hundreds of a cappella singing clips in two styles: Bel Canto and the Chinese national singing style. All clips are sung by professional vocalists and were recorded in professional commercial recording studios.

### Supported Tasks and Leaderboards

Audio classification, singing method classification, voice classification

### Languages

Chinese, English

## Dataset Structure

### Data Instances

`.zip` archives containing `.wav` audio and `.jpg` image files

### Data Fields

m_bel, f_bel, m_folk, f_folk

### Data Splits

train, validation, test

## Dataset Creation

### Curation Rationale

There was no existing dataset for Bel Canto and Chinese folk song singing techniques.

### Source Data

#### Initial Data Collection and Normalization

Zhaorui Liu, Monan Zhou

#### Who are the source language producers?

Students from CCMUSIC

### Annotations

#### Annotation process

All of them are sung by professional vocalists and were recorded in professional commercial recording studios.

#### Who are the annotators?

Professional vocalists

### Personal and Sensitive Information

None

## Considerations for Using the Data

### Social Impact of Dataset

Promoting the development of AI in the music industry

### Discussion of Biases

The dataset covers only Chinese songs.

### Other Known Limitations

Some singers may not have enough professional training in classical or ethnic vocal techniques.

## Additional Information

### Dataset Curators

Zijin Li

### Evaluation

Coming soon...

### Licensing Information

```

MIT License

Copyright (c) CCMUSIC

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

```

### Citation Information

```

@dataset{zhaorui_liu_2021_5676893,

author = {Zhaorui Liu and Monan Zhou and Shenyang Xu and Zijin Li},

title = {CCMUSIC DATABASE: Music Data Sharing Platform for Computational Musicology Research},

month = {nov},

year = {2021},

publisher = {Zenodo},

version = {1.1},

doi = {10.5281/zenodo.5676893},

url = {https://doi.org/10.5281/zenodo.5676893}

}

```

### Contributions

Provides a dataset for distinguishing Bel Canto and Chinese folk song singing techniques. | 3,687 | [

[

-0.029296875,

-0.0304107666015625,

-0.01013946533203125,

0.04754638671875,

-0.0221710205078125,

0.00435638427734375,

-0.035675048828125,

-0.043487548828125,

0.0280303955078125,

0.04541015625,

-0.0672607421875,

-0.06817626953125,

-0.0097808837890625,

0.011810... |

aisyahhrazak/ms-news-harakahdaily | 2023-06-24T00:24:27.000Z | [

"language:ms",

"region:us"

] | aisyahhrazak | null | null | 0 | 10 | 2023-05-27T01:47:23 | ---

language:

- ms

---

### Dataset Summary

- 45,505 news articles scraped from Harakah Daily, covering 2017 to 21 May 2023

- Nearly all articles are in Malay; a small portion are in English

### Dataset Format

```

{"url": "...", "headline": "...", "content": [...,...]}

``` | 252 | [

[

-0.02252197265625,

-0.038909912109375,

-0.0012388229370117188,

0.028350830078125,

-0.0340576171875,

-0.0160675048828125,

0.009857177734375,

0.0030689239501953125,

0.01995849609375,

0.0400390625,

-0.04144287109375,

-0.052215576171875,

-0.05126953125,

0.036163... |

kraina/airbnb | 2023-06-03T10:37:15.000Z | [

"size_categories:10K<n<100K",

"license:cc-by-4.0",

"geospatial",

"hotels",

"housing",

"region:us"

] | kraina | This dataset contains accommodation offers from the Airbnb platform in 10 European cities.

It has been copied from https://zenodo.org/record/4446043#.ZEV8d-zMI-R to make it available as a Hugging Face dataset.

It was originally published as supplementary material for the article: Determinants of Airbnb prices in European cities: A spatial econometrics approach

(DOI: https://doi.org/10.1016/j.tourman.2021.104319) | @dataset{gyodi_kristof_2021_4446043,

author = {Gyódi, Kristóf and

Nawaro, Łukasz},

title = {{Determinants of Airbnb prices in European cities:

A spatial econometrics approach (Supplementary

Material)}},

month = jan,

year = 2021,

note = {{This research was supported by National Science

Centre, Poland: Project number 2017/27/N/HS4/00951}},

publisher = {Zenodo},

doi = {10.5281/zenodo.4446043},

url = {https://doi.org/10.5281/zenodo.4446043}

} | 0 | 10 | 2023-05-30T21:15:45 | ---

license: cc-by-4.0

tags:

- geospatial

- hotels

- housing

size_categories:

- 10K<n<100K

dataset_info:

- config_name: weekdays

features:

- name: _id

dtype: string

- name: city

dtype: string

- name: realSum

dtype: float64

- name: room_type

dtype: string

- name: room_shared

dtype: bool

- name: room_private

dtype: bool

- name: person_capacity

dtype: float64

- name: host_is_superhost

dtype: bool

- name: multi

dtype: int64

- name: biz

dtype: int64

- name: cleanliness_rating

dtype: float64

- name: guest_satisfaction_overall

dtype: float64

- name: bedrooms

dtype: int64

- name: dist

dtype: float64

- name: metro_dist

dtype: float64

- name: attr_index

dtype: float64

- name: attr_index_norm

dtype: float64

- name: rest_index

dtype: float64

- name: rest_index_norm

dtype: float64

- name: lng

dtype: float64

- name: lat

dtype: float64

splits:

- name: train

num_bytes: 3998764

num_examples: 25500

download_size: 5303928

dataset_size: 3998764

- config_name: weekends

features:

- name: _id

dtype: string

- name: city

dtype: string

- name: realSum

dtype: float64

- name: room_type

dtype: string

- name: room_shared

dtype: bool

- name: room_private

dtype: bool

- name: person_capacity

dtype: float64

- name: host_is_superhost

dtype: bool

- name: multi

dtype: int64

- name: biz

dtype: int64

- name: cleanliness_rating

dtype: float64

- name: guest_satisfaction_overall

dtype: float64

- name: bedrooms

dtype: int64

- name: dist

dtype: float64

- name: metro_dist

dtype: float64

- name: attr_index

dtype: float64

- name: attr_index_norm

dtype: float64

- name: rest_index

dtype: float64

- name: rest_index_norm

dtype: float64

- name: lng

dtype: float64

- name: lat

dtype: float64

splits:

- name: train

num_bytes: 4108612

num_examples: 26207

download_size: 5451150

dataset_size: 4108612

- config_name: all

features:

- name: _id

dtype: string

- name: city

dtype: string

- name: realSum

dtype: float64

- name: room_type

dtype: string

- name: room_shared

dtype: bool

- name: room_private

dtype: bool

- name: person_capacity

dtype: float64

- name: host_is_superhost

dtype: bool

- name: multi

dtype: int64

- name: biz

dtype: int64

- name: cleanliness_rating

dtype: float64

- name: guest_satisfaction_overall

dtype: float64

- name: bedrooms

dtype: int64

- name: dist

dtype: float64

- name: metro_dist

dtype: float64

- name: attr_index

dtype: float64

- name: attr_index_norm

dtype: float64

- name: rest_index

dtype: float64

- name: rest_index_norm

dtype: float64

- name: lng

dtype: float64

- name: lat

dtype: float64

- name: day_type

dtype: string

splits:

- name: train

num_bytes: 8738970

num_examples: 51707

download_size: 10755078

dataset_size: 8738970

---

# Dataset Card for "airbnb"

## Dataset Description

- **Homepage:** [https://zenodo.org/record/4446043#.ZEV8d-zMI-R](https://zenodo.org/record/4446043#.ZEV8d-zMI-R)

- **Paper:** [https://www.sciencedirect.com/science/article/pii/S0261517721000388](https://www.sciencedirect.com/science/article/pii/S0261517721000388)

### Dataset Summary

This dataset contains accommodation offers from the [Airbnb](https://airbnb.com/) platform in 10 European cities.

It has been copied from [https://zenodo.org/record/4446043#.ZEV8d-zMI-R](https://zenodo.org/record/4446043#.ZEV8d-zMI-R) to make it available as a Hugging Face dataset.

It was originally published as supplementary material for the article:

**Determinants of Airbnb prices in European cities: A spatial econometrics approach**

(DOI: https://doi.org/10.1016/j.tourman.2021.104319)

## Dataset Structure

### Data Fields

The data fields contain all fields from the source dataset, along with an additional `city` field denoting the city of the offer. The `all` config contains a further field, `day_type`, denoting whether the offer is for `weekdays` or `weekends`.

- city: the city of the offer,

- realSum: the full price of accommodation for two people and two nights in EUR,

- room_type: the type of the accommodation,

- room_shared: dummy variable for shared rooms,

- room_private: dummy variable for private rooms,

- person_capacity: the maximum number of guests,

- host_is_superhost: dummy variable for superhost status,

- multi: dummy variable if the listing belongs to hosts with 2-4 offers,

- biz: dummy variable if the listing belongs to hosts with more than 4 offers,

- cleanliness_rating: cleanliness rating,

- guest_satisfaction_overall: overall rating of the listing,

- bedrooms: number of bedrooms (0 for studios),

- dist: distance from city centre in km,

- metro_dist: distance from nearest metro station in km,

- attr_index: attraction index of the listing location,

- attr_index_norm: normalised attraction index (0-100),

- rest_index: restaurant index of the listing location,

- rest_index_norm: normalised restaurant index (0-100),

- lng: longitude of the listing location,

- lat: latitude of the listing location,

The `all` config additionally contains:

- day_type: either `weekdays` or `weekends`
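Because `realSum` is described as the full price for two people and two nights, comparing it against nightly rates from other sources requires dividing it out. The following is a minimal sketch assuming the price splits evenly across nights and guests; the helper names and the example record are hypothetical, and only the field names come from this card.

```python
def price_per_night(real_sum: float, nights: int = 2) -> float:
    """Split a stay's total price evenly across its nights (2 per the card)."""
    return real_sum / nights


def price_per_person_night(real_sum: float, nights: int = 2, people: int = 2) -> float:
    """Split a stay's total price evenly across nights and guests (2 x 2 per the card)."""
    return real_sum / (nights * people)


# Hypothetical record; only the field names come from the card.
listing = {"city": "example_city", "realSum": 400.0, "day_type": "weekends"}
print(price_per_night(listing["realSum"]))         # 200.0
print(price_per_person_night(listing["realSum"]))  # 100.0
```

An even split is a simplification: actual listings may price nights or extra guests differently.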

### Data Splits

| name | train |

|------------|--------:|

| weekdays | 25500 |

| weekends | 26207 |

| all | 51707 |
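The split sizes are consistent with `all` being the union of the other two configs (25,500 + 26,207 = 51,707). The sketch below assumes exactly that: `all` is the concatenation of `weekdays` and `weekends`, with each row tagged by `day_type`. The function name, row order, and toy records are hypothetical; this is not how the repository necessarily builds the config.

```python
from typing import Dict, List

Record = Dict[str, object]


def combine_configs(weekdays: List[Record], weekends: List[Record]) -> List[Record]:
    """Concatenate two configs, tagging each row with its `day_type`."""
    combined: List[Record] = []
    for day_type, rows in (("weekdays", weekdays), ("weekends", weekends)):
        for row in rows:
            # Build a new dict so the input records are not mutated.
            combined.append({**row, "day_type": day_type})
    return combined


# Toy records standing in for real rows.
weekdays = [{"_id": "a", "realSum": 300.0}]
weekends = [{"_id": "b", "realSum": 350.0}, {"_id": "c", "realSum": 410.0}]
rows = combine_configs(weekdays, weekends)
print(len(rows))                      # 3
print([r["day_type"] for r in rows])  # ['weekdays', 'weekends', 'weekends']
```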

## Additional Information

### Licensing Information

The data is released under the licensing scheme from the original authors - CC-BY-4.0 ([source](https://zenodo.org/record/4446043#.ZEV8d-zMI-R)).

### Citation Information

```

@dataset{gyodi_kristof_2021_4446043,

author = {Gyódi, Kristóf and

Nawaro, Łukasz},

title = {{Determinants of Airbnb prices in European cities:

A spatial econometrics approach (Supplementary

Material)}},

month = jan,

year = 2021,

note = {{This research was supported by National Science

Centre, Poland: Project number 2017/27/N/HS4/00951}},

publisher = {Zenodo},

doi = {10.5281/zenodo.4446043},

url = {https://doi.org/10.5281/zenodo.4446043}

}

```

| 6,302 | [

[

-0.03375244140625,

-0.043060302734375,

0.027496337890625,

0.0229034423828125,

-0.004772186279296875,

-0.0472412109375,

-0.0157012939453125,

-0.025665283203125,

0.043853759765625,

0.0103302001953125,

-0.043121337890625,

-0.058990478515625,

-0.004856109619140625,

... |

whu9/arxiv_summarization_postprocess | 2023-06-03T04:49:04.000Z | [

"region:us"

] | whu9 | null | null | 0 | 10 | 2023-06-03T04:47:28 | ---

dataset_info:

features:

- name: source

dtype: string

- name: summary

dtype: string

- name: source_num_tokens

dtype: int64

- name: summary_num_tokens

dtype: int64

splits:

- name: train

num_bytes: 6992115668

num_examples: 197465

- name: validation

num_bytes: 216277493

num_examples: 6435

- name: test

num_bytes: 216661725

num_examples: 6439

download_size: 3553348742

dataset_size: 7425054886

---

# Dataset Card for "arxiv_summarization_postprocess"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 645 | [

[

-0.0455322265625,

-0.01030731201171875,

0.0029735565185546875,

0.0145263671875,

-0.0283966064453125,

-0.007755279541015625,

0.02264404296875,

0.0090179443359375,

0.06317138671875,

0.04974365234375,

-0.03485107421875,

-0.052032470703125,

-0.061614990234375,

-... |

aisyahhrazak/ms-news-utusanborneo | 2023-06-29T04:00:06.000Z | [

"language:ms",

"region:us"

] | aisyahhrazak | null | null | 0 | 10 | 2023-06-07T06:25:00 | ---

language:

- ms

---

### Dataset Summary

- News articles scraped from Utusan Borneo on 27 May 2023

- All articles are in Malay

### Dataset Format

```

{"url": "...", "content": [...,...]}

``` | 178 | [

[

-0.01019287109375,

-0.043914794921875,

-0.003704071044921875,

0.0256500244140625,

-0.056732177734375,

-0.023162841796875,

0.0008215904235839844,

0.0101776123046875,

0.04156494140625,

0.057861328125,

-0.037445068359375,

-0.055389404296875,

-0.0300140380859375,

... |

helena7/job_titles | 2023-06-14T09:57:15.000Z | [

"region:us"

] | helena7 | null | null | 0 | 10 | 2023-06-12T11:22:41 | Entry not found | 15 | [

[

-0.021392822265625,

-0.01494598388671875,

0.05718994140625,

0.028839111328125,

-0.0350341796875,

0.046539306640625,

0.052490234375,

0.00507354736328125,

0.051361083984375,

0.01702880859375,

-0.052093505859375,

-0.01494598388671875,

-0.06036376953125,

0.03790... |

DISCOX/DISCO-10M | 2023-06-26T19:54:22.000Z | [

"size_categories:10M<n<100M",

"language:en",

"license:cc-by-4.0",

"music",

"arxiv:2306.13512",

"doi:10.57967/hf/1190",

"region:us"

] | DISCOX | null | null | 13 | 10 | 2023-06-13T07:45:14 | ---

license: cc-by-4.0

language:

- en

tags:

- music

size_categories:

- 10M<n<100M

dataset_info:

features:

- name: video_url_youtube

dtype: string

- name: video_title_youtube

dtype: string

- name: track_name_spotify

dtype: string

- name: video_duration_youtube_sec

dtype: float64

- name: preview_url_spotify

dtype: string

- name: video_view_count_youtube

dtype: float64

- name: video_thumbnail_url_youtube

dtype: string

- name: search_query_youtube

dtype: string

- name: video_description_youtube

dtype: string

- name: track_id_spotify

dtype: string

- name: album_id_spotify

dtype: string

- name: artist_id_spotify

sequence: string

- name: track_duration_spotify_ms

dtype: int64

- name: primary_artist_name_spotify

dtype: string

- name: track_release_date_spotify

dtype: string

- name: explicit_content_spotify

dtype: bool

- name: similarity_duration

dtype: float64

- name: similarity_query_video_title

dtype: float64

- name: similarity_query_description

dtype: float64

- name: similarity_audio

dtype: float64

- name: audio_embedding_spotify

sequence: float32

- name: audio_embedding_youtube

sequence: float32

splits:

- name: train

num_bytes: 73263841657.0

num_examples: 15296232

download_size: 88490703682

dataset_size: 73263841657.0

---

### Getting Started

You can download the dataset with the Hugging Face `datasets` library:

```python

from datasets import load_dataset

ds = load_dataset("DISCOX/DISCO-10M")

```

## Dataset Structure

The dataset contains the following features:

```json

[

"video_url_youtube",

"video_title_youtube",

"track_name_spotify",

"video_duration_youtube_sec",

"preview_url_spotify",

"video_view_count_youtube",

"video_thumbnail_url_youtube",

"search_query_youtube",

"video_description_youtube",

"track_id_spotify",

"album_id_spotify",

"artist_id_spotify",

"track_duration_spotify_ms",

"primary_artist_name_spotify",

"track_release_date_spotify",

"explicit_content_spotify",

"similarity_duration",

"similarity_query_video_title",

"similarity_query_description",

"similarity_audio",

"audio_embedding_spotify",

"audio_embedding_youtube"

]

```

## What is DISCO-10M?

DISCO-10M is a music dataset created to democratize research on large-scale machine learning models for music.

The dataset contains no music audio, due to copyright laws.

The audio embedding features were computed using [Laion-CLAP](https://github.com/LAION-AI/CLAP), and can be used instead of the raw audio for many downstream tasks.

In case the raw audio is needed, it can be downloaded from the provided Spotify preview URL or via the YouTube link.

DISCO-10M was created by collecting a list of 400,000 artist IDs and 2.6M track IDs from Spotify, and then collecting YouTube video links that match each track's duration, artist name, and track name. These matches were computed using the following three similarity metrics:

- Duration similarity: ` 1 - abs(track_duration_spotify - video_duration_youtube) / max(track_duration_spotify, video_duration_youtube) `

- Text similarity is calculated using the cosine similarity between the embedding of the search query and the embedding of the video title, as well as between the search query embedding and the video description embedding. Embeddings are computed using [Sentence-BERT](https://huggingface.co/sentence-transformers).

- Audio similarity is calculated using the cosine similarity between the Spotify preview snippet audio embedding and the YouTube audio embedding.

For DISCO-10M we only keep samples that return true for: ` duration_similarity > 0.25 and (description_similarity > 0.65 or title_similarity > 0.65) and audio_similarity > 0.4 `
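The three metrics and the filter rule above can be sketched in plain Python. This is a minimal illustration, not the authors' pipeline: the toy vectors stand in for the Sentence-BERT and CLAP embeddings, and the function names are hypothetical. Note that the schema stores `track_duration_spotify_ms` in milliseconds and `video_duration_youtube_sec` in seconds, so the durations must be converted to one unit before comparing.

```python
import math
from typing import Sequence


def duration_similarity(track_duration_spotify: float, video_duration_youtube: float) -> float:
    """1 - |a - b| / max(a, b); both durations must use the same unit."""
    longest = max(track_duration_spotify, video_duration_youtube)
    return 1.0 - abs(track_duration_spotify - video_duration_youtube) / longest


def cosine_similarity(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def keep_sample(duration_sim: float, title_sim: float,
                description_sim: float, audio_sim: float) -> bool:
    """The DISCO-10M filter rule with the thresholds stated in the card."""
    return (duration_sim > 0.25
            and (description_sim > 0.65 or title_sim > 0.65)
            and audio_sim > 0.4)


# Convert Spotify milliseconds to seconds before comparing with YouTube.
dur = duration_similarity(200_000 / 1000, 210.0)  # 1 - 10/210
print(round(dur, 3))                              # 0.952
print(cosine_similarity([1.0, 0.0], [0.0, 1.0]))  # 0.0
print(keep_sample(dur, title_sim=0.70, description_sim=0.10, audio_sim=0.50))  # True
```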

We offer three subsets based on DISCO-10M:

- [DISCO-10K-random](https://huggingface.co/datasets/DISCOX/DISCO-10K-random): a small subset of random samples from the entire dataset.

- [DISCO-200K-random](https://huggingface.co/datasets/DISCOX/DISCO-200K-random): a subset of random samples, useful for a light-weight and representative analysis of the entire dataset.

- [DISCO-200K-high-quality](https://huggingface.co/datasets/DISCOX/DISCO-200K-high-quality): a subset of samples which were filtered more strictly to ensure a higher quality match between Spotify tracks and YouTube videos.

To cite our work, please refer to our paper [here](https://arxiv.org/abs/2306.13512).

<!--

### Curation Rationale

[More Information Needed]

### Source Data

[More Information Needed]

--> | 4,513 | [

[

-0.049896240234375,

-0.04595947265625,

0.0191650390625,

0.0305938720703125,

-0.0099334716796875,

-0.006256103515625,

-0.0279998779296875,

-0.00154876708984375,

0.05126953125,

0.0294036865234375,

-0.0718994140625,

-0.059600830078125,

-0.027587890625,

-0.00281... |

renumics/cifar10-outlier | 2023-06-30T20:09:38.000Z | [

"task_categories:image-classification",

"annotations_creators:crowdsourced",

"language_creators:found",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:extended|other-80-Million-Tiny-Images",

"language:en",

"license:unknown",

"region:us"

] | renumics | null | null | 0 | 10 | 2023-06-14T20:53:24 | ---

annotations_creators:

- crowdsourced

language_creators:

- found

language:

- en

license:

- unknown

multilinguality:

- monolingual

size_categories:

- 10K<n<100K

source_datasets:

- extended|other-80-Million-Tiny-Images

task_categories:

- image-classification

task_ids: []

paperswithcode_id: cifar-10

pretty_name: Cifar10-Outliers

dataset_info:

features:

- name: img

dtype: image

- name: label

dtype:

class_label:

names:

'0': airplane

'1': automobile

'2': bird

'3': cat

'4': deer

'5': dog

'6': frog

'7': horse

'8': ship

'9': truck

- name: embedding_foundation

sequence: float32

- name: embedding_ft

sequence: float32

- name: outlier_score_ft

dtype: float64

- name: outlier_score_foundation

dtype: float64

- name: nn_image

struct:

- name: bytes

dtype: binary

- name: path

dtype: 'null'

config_name: plain_text

splits:

- name: train

num_bytes: 535120320.0

num_examples: 50000

download_size: 595144805

dataset_size: 535120320.0

---

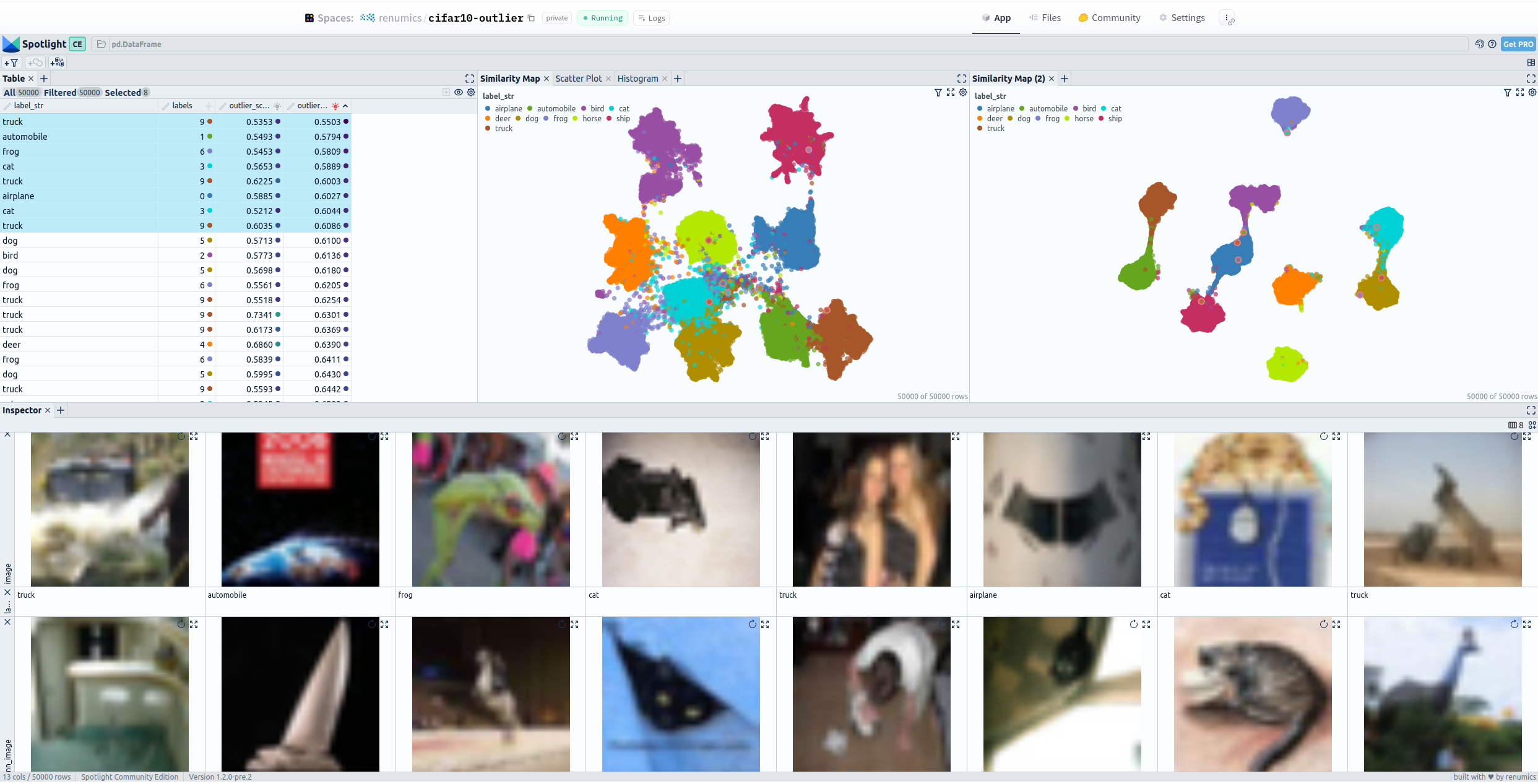

# Dataset Card for "cifar10-outlier"

📚 This dataset is an enriched version of the [CIFAR-10 Dataset](https://www.cs.toronto.edu/~kriz/cifar.html).

The workflow is described in the Medium article: [Changes of Embeddings during Fine-Tuning of Transformers](https://medium.com/@markus.stoll/changes-of-embeddings-during-fine-tuning-c22aa1615921).

## Explore the Dataset

The open source data curation tool [Renumics Spotlight](https://github.com/Renumics/spotlight) allows you to explore this dataset. You can find Hugging Face Spaces running Spotlight with this dataset here:

- Full Version (High hardware requirement) <https://huggingface.co/spaces/renumics/cifar10-outlier>

- Fast Version <https://huggingface.co/spaces/renumics/cifar10-outlier-low>

Or you can explore it locally:

```python

# Install the dependencies first: pip install renumics-spotlight datasets

from renumics import spotlight

import datasets

ds = datasets.load_dataset("renumics/cifar10-outlier", split="train")

df = ds.rename_columns({"img": "image", "label": "labels"}).to_pandas()

df["label_str"] = df["labels"].apply(lambda x: ds.features["label"].int2str(x))

dtypes = {

"nn_image": spotlight.Image,

"image": spotlight.Image,

"embedding_ft": spotlight.Embedding,

"embedding_foundation": spotlight.Embedding,

}

spotlight.show(

df,

dtype=dtypes,

layout="https://spotlight.renumics.com/resources/layout_pre_post_ft.json",

)

```

| 2,624 | [

[

-0.047882080078125,

-0.0394287109375,

0.00878143310546875,

0.023834228515625,

-0.0103912353515625,

0.00560760498046875,

-0.0221099853515625,

-0.017425537109375,

0.04827880859375,

0.03515625,

-0.046844482421875,

-0.042999267578125,

-0.04510498046875,

-0.00638... |

HausaNLP/Naija-Lex | 2023-06-18T16:13:08.000Z | [

"multilinguality:monolingual",

"multilinguality:multilingual",

"language:hau",

"language:ibo",

"language:yor",

"license:cc-by-nc-sa-4.0",

"sentiment analysis, Twitter, tweets",

"stopwords",

"region:us"

] | HausaNLP | Naija-Stopwords is a part of the Naija-Senti project. It is a list of collected stopwords from the four most widely spoken languages in Nigeria — Hausa, Igbo, Nigerian-Pidgin, and Yorùbá. | @inproceedings{muhammad-etal-2022-naijasenti,

title = "{N}aija{S}enti: A {N}igerian {T}witter Sentiment Corpus for Multilingual Sentiment Analysis",

author = "Muhammad, Shamsuddeen Hassan and

Adelani, David Ifeoluwa and

Ruder, Sebastian and

Ahmad, Ibrahim Sa{'}id and

Abdulmumin, Idris and

Bello, Bello Shehu and

Choudhury, Monojit and

Emezue, Chris Chinenye and

Abdullahi, Saheed Salahudeen and

Aremu, Anuoluwapo and

Jorge, Al{\'\i}pio and

Brazdil, Pavel",

booktitle = "Proceedings of the Thirteenth Language Resources and Evaluation Conference",

month = jun,

year = "2022",

address = "Marseille, France",

publisher = "European Language Resources Association",

url = "https://aclanthology.org/2022.lrec-1.63",

pages = "590--602",

} | 0 | 10 | 2023-06-16T09:12:05 | ---

license: cc-by-nc-sa-4.0

tags:

- sentiment analysis, Twitter, tweets

- stopwords

multilinguality:

- monolingual

- multilingual

language:

- hau

- ibo

- yor

pretty_name: NaijaStopwords

---

# Naija-Lexicons

Naija-Lexicons is part of the [Naija-Senti](https://huggingface.co/datasets/HausaNLP/NaijaSenti-Twitter) project. It is a collection of sentiment lexicons for three of the most widely spoken languages in Nigeria: Hausa, Igbo, and Yorùbá.

--------------------------------------------------------------------------------

## Dataset Description

- **Homepage:** https://github.com/hausanlp/NaijaSenti/tree/main/data/stopwords

- **Repository:** [GitHub](https://github.com/hausanlp/NaijaSenti/tree/main/data/stopwords)

- **Paper:** [NaijaSenti: A Nigerian Twitter Sentiment Corpus for Multilingual Sentiment Analysis](https://aclanthology.org/2022.lrec-1.63/)

- **Leaderboard:** N/A

- **Point of Contact:** [Shamsuddeen Hassan Muhammad](mailto:shamsuddeen2004@gmail.com)

### Languages

Three of the most widely spoken indigenous Nigerian languages:

* Hausa (hau)

* Igbo (ibo)

* Yoruba (yor)

## Dataset Structure

### Data Instances

Each instance is a lexicon entry (a word) in one of the 3 languages, paired with its sentiment label:

```

{

"word": "string",

"label": "string"

}

```

### How to use it

```python

from datasets import load_dataset

# Load a specific language (e.g., Hausa). This downloads the manually created and translated lexicons.

ds = load_dataset("HausaNLP/Naija-Lex", "hau")

# You may also specify the split you want to download.

ds = load_dataset("HausaNLP/Naija-Lex", "hau", split="manual")

```
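Because every instance is a `{"word", "label"}` record, a loaded split can be turned into a simple word-to-label lookup. The sketch below assumes only that record shape; the function names are hypothetical, and the sample words and label values are placeholders, not entries from the dataset.

```python
from collections import Counter
from typing import Dict, Iterable, Mapping


def build_lexicon(records: Iterable[Mapping[str, str]]) -> Dict[str, str]:
    """Map each (stripped, lower-cased) word to its sentiment label.

    `records` can be any iterable of {"word": ..., "label": ...} dicts,
    e.g. a single split returned by `load_dataset`. If a word appears
    more than once, the last label seen wins.
    """
    return {rec["word"].strip().lower(): rec["label"] for rec in records}


def label_counts(tokens: Iterable[str], lexicon: Mapping[str, str]) -> Counter:
    """Count the labels of the tokens that are present in the lexicon."""
    return Counter(lexicon[t.lower()] for t in tokens if t.lower() in lexicon)


# Placeholder records with the documented shape (not real entries):
sample = [
    {"word": "word_a", "label": "positive"},
    {"word": "Word_B", "label": "negative"},
]
lexicon = build_lexicon(sample)
print(label_counts(["word_a", "word_b", "other"], lexicon))

# With the real data, pass a single split (not the whole DatasetDict):
#   lexicon = build_lexicon(ds)  # ds loaded with split="manual"
```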

## Additional Information

### Dataset Curators

* Shamsuddeen Hassan Muhammad

* Idris Abdulmumin

* Ibrahim Said Ahmad

* Bello Shehu Bello

### Licensing Information

The Naija-Lexicons dataset is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License (CC BY-NC-SA 4.0).

### Citation Information

```

@inproceedings{muhammad-etal-2022-naijasenti,

title = "{N}aija{S}enti: A {N}igerian {T}witter Sentiment Corpus for Multilingual Sentiment Analysis",

author = "Muhammad, Shamsuddeen Hassan and

Adelani, David Ifeoluwa and

Ruder, Sebastian and

Ahmad, Ibrahim Sa{'}id and

Abdulmumin, Idris and

Bello, Bello Shehu and

Choudhury, Monojit and

Emezue, Chris Chinenye and

Abdullahi, Saheed Salahudeen and

Aremu, Anuoluwapo and

Jorge, Al{\'\i}pio and

Brazdil, Pavel",

booktitle = "Proceedings of the Thirteenth Language Resources and Evaluation Conference",

month = jun,

year = "2022",

address = "Marseille, France",

publisher = "European Language Resources Association",

url = "https://aclanthology.org/2022.lrec-1.63",

pages = "590--602",

}

```

### Contributions

> This work was carried out with support from Lacuna Fund, an initiative co-founded by The Rockefeller Foundation, Google.org, and Canada’s International Development Research Centre. The views expressed herein do not necessarily represent those of Lacuna Fund, its Steering Committee, its funders, or Meridian Institute. | 3,175 | [

[

-0.04705810546875,

-0.030487060546875,

0.006237030029296875,

0.048065185546875,

-0.031280517578125,

0.0091094970703125,

-0.0325927734375,

-0.037139892578125,

0.0667724609375,

0.031951904296875,

-0.031219482421875,

-0.048858642578125,

-0.054168701171875,

0.04... |

PhaniManda/autotrain-data-identifying-person-location-date | 2023-06-22T09:17:06.000Z | [

"task_categories:token-classification",

"region:us"

] | PhaniManda | null | null | 3 | 10 | 2023-06-22T09:16:09 | ---

task_categories:

- token-classification

---

# AutoTrain Dataset for project: identifying-person-location-date

## Dataset Description

This dataset has been automatically processed by AutoTrain for project identifying-person-location-date.

### Languages

The BCP-47 code for the dataset's language is `unk` (unspecified); the samples below are in English.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"tokens": [

"I",

"will",

"be",

"traveling",

"to",

"Tokyo",

"next",

"month."

],

"tags": [

13,

13,

13,

13,

13,

1,

13,

0,

5

]

},

{

"tokens": [

"The",

"company",

"Apple",

"Inc.",

"is",

"based",

"in",

"California."

],

"tags": [

13,

13,

3,

9,

13,

13,

1

]

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"tokens": "Sequence(feature=Value(dtype='string', id=None), length=-1, id=None)",

"tags": "Sequence(feature=ClassLabel(names=['B-DATE', 'B-LOC', 'B-MISC', 'B-ORG', 'B-PER', 'I-DATE', 'I-DATE,', 'I-LOC', 'I-MISC', 'I-ORG', 'I-ORG,', 'I-PER', 'I-PER,', 'O'], id=None), length=-1, id=None)"

}

```
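The `tags` column stores integer IDs that index into the `ClassLabel` names listed above. A minimal sketch for decoding them and checking that an example has exactly one tag per token follows. Such a check is worth running before training: in the two samples shown earlier, the first has 8 tokens but 9 tags and the second has 8 tokens but 7 tags. The label list is copied from the feature definition above, including the entries with trailing commas; the helper names are hypothetical.

```python
from typing import Dict, List, Sequence

# Label names in ClassLabel order, copied from the feature definition above.
LABEL_NAMES: List[str] = [
    "B-DATE", "B-LOC", "B-MISC", "B-ORG", "B-PER",
    "I-DATE", "I-DATE,", "I-LOC", "I-MISC", "I-ORG",
    "I-ORG,", "I-PER", "I-PER,", "O",
]


def decode_tags(tag_ids: Sequence[int]) -> List[str]:
    """Convert integer tag IDs into their string labels."""
    return [LABEL_NAMES[i] for i in tag_ids]


def is_aligned(example: Dict[str, Sequence]) -> bool:
    """Return True if the example has exactly one tag per token."""
    return len(example["tokens"]) == len(example["tags"])


sample = {
    "tokens": ["I", "will", "be", "traveling", "to", "Tokyo", "next", "month."],
    "tags": [13, 13, 13, 13, 13, 1, 13, 0, 5],
}
print(is_aligned(sample))  # False: 8 tokens but 9 tags
print(decode_tags(sample["tags"]))
```

When the data is loaded with the `datasets` library, the same names should also be available from the feature itself (`features["tags"].feature.names`), which avoids hard-coding the list.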

### Dataset Splits

This dataset is split into a train and a validation split. The split sizes are as follows:

| Split name | Num samples |

| ------------ | ------------------- |

| train | 21 |

| valid | 9 |

| 1,526 | [

[

-0.03228759765625,

0.007335662841796875,

0.01425933837890625,

0.01971435546875,

-0.01323699951171875,

0.0164337158203125,

0.00225067138671875,

-0.0270538330078125,

0.015167236328125,

0.0214080810546875,

-0.05438232421875,

-0.0609130859375,

-0.031219482421875,

... |

breadlicker45/discord-chat | 2023-06-27T01:27:41.000Z | [

"region:us"

] | breadlicker45 | null | null | 1 | 10 | 2023-06-27T01:27:11 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

lyogavin/Anima33B_rlhf_belle_eval_1k | 2023-06-28T00:24:01.000Z | [

"region:us"

] | lyogavin | null | null | 2 | 10 | 2023-06-28T00:23:53 | ---

dataset_info:

features:

- name: question

dtype: string

- name: std_answer

dtype: string

- name: class

dtype: string

- name: anima_answer

dtype: string

- name: anima_answer_extraced

dtype: string

- name: inputPrompt

dtype: string

- name: gpt_output

dtype: string

- name: gpt_output_score

dtype: float64

- name: chosen

dtype: string

- name: rejected

dtype: string

- name: chosen_token_len

dtype: int64

- name: rejected_token_len

dtype: int64

splits:

- name: train

num_bytes: 2972300.1

num_examples: 700

- name: test

num_bytes: 1273842.9

num_examples: 300

download_size: 2384211

dataset_size: 4246143.0

---

# Dataset Card for "Anima33B_rlhf_belle_eval_1k"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 889 | [

[

-0.04241943359375,

-0.0152740478515625,

0.003265380859375,

0.035064697265625,

-0.00762939453125,

-0.0019435882568359375,

0.03955078125,

-0.0217132568359375,

0.06591796875,

0.045989990234375,

-0.0731201171875,

-0.049102783203125,

-0.036590576171875,

-0.000660... |

Fsoft-AIC/the-vault-inline | 2023-08-22T10:01:46.000Z | [

"task_categories:text-generation",

"multilinguality:multiprogramming languages",

"language:code",

"language:en",

"license:mit",

"arxiv:2305.06156",

"region:us"

] | Fsoft-AIC | The Vault is a multilingual code-text dataset with over 34 million pairs covering 10 popular programming languages.

It is the largest corpus containing parallel code-text data. By building upon The Stack, a massive raw code sample collection,

the Vault offers a comprehensive and clean resource for advancing research in code understanding and generation. It provides a

high-quality dataset that includes code-text pairs at multiple levels, such as class and inline-level, in addition to the function level.

The Vault can serve many purposes at multiple levels. | @article{manh2023vault,

title={The Vault: A Comprehensive Multilingual Dataset for Advancing Code Understanding and Generation},

author={Manh, Dung Nguyen and Hai, Nam Le and Dau, Anh TV and Nguyen, Anh Minh and Nghiem, Khanh and Guo, Jin and Bui, Nghi DQ},

journal={arXiv preprint arXiv:2305.06156},

year={2023}

} | 2 | 10 | 2023-06-30T11:07:10 | ---

language:

- code

- en

multilinguality:

- multiprogramming languages

task_categories:

- text-generation

license: mit

dataset_info:

features:

- name: identifier

dtype: string

- name: return_type

dtype: string

- name: repo

dtype: string

- name: path

dtype: string

- name: language

dtype: string

- name: code

dtype: string

- name: code_tokens

dtype: string

- name: original_docstring

dtype: string

- name: comment

dtype: string

- name: docstring_tokens

dtype: string

- name: docstring

dtype: string

- name: original_string

dtype: string

pretty_name: The Vault Function

viewer: true

---

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks](#supported-tasks)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Statistics](#dataset-statistics)

- [Usage](#usage)

- [Additional Information](#additional-information)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Repository:** [FSoft-AI4Code/TheVault](https://github.com/FSoft-AI4Code/TheVault)

- **Paper:** [The Vault: A Comprehensive Multilingual Dataset for Advancing Code Understanding and Generation](https://arxiv.org/abs/2305.06156)

- **Contact:** support.ailab@fpt.com

- **Website:** https://www.fpt-aicenter.com/ai-residency/

<p align="center">

<img src="https://raw.githubusercontent.com/FSoft-AI4Code/TheVault/main/assets/the-vault-4-logo-png.png" width="300px" alt="logo">

</p>

<div align="center">

# The Vault: A Comprehensive Multilingual Dataset for Advancing Code Understanding and Generation

</div>

## Dataset Summary

The Vault dataset is a comprehensive, large-scale, multilingual parallel dataset that features high-quality code-text pairs derived from The Stack, the largest permissively-licensed source code dataset.

The Vault contains code snippets in 10 popular programming languages: Java, JavaScript, Python, Ruby, Rust, Golang, C#, C++, C, and PHP. The dataset provides multiple code-snippet levels, metadata, and 11 docstring styles for enhanced usability and versatility.

## Supported Tasks

The Vault can be used for pretraining LLMs or for downstream code-text interaction tasks. A number of code understanding and generation tasks can be constructed from The Vault, such as *code summarization*, *text-to-code generation*, and *code search*.

## Languages

The natural language text (docstring) is in English.

10 programming languages are supported in The Vault: `Python`, `Java`, `JavaScript`, `PHP`, `C`, `C#`, `C++`, `Go`, `Ruby`, `Rust`

## Dataset Structure

### Data Instances

```json

{

"hexsha": "ee1cf38808d3db0ea364b049509a01a65e6e5589",

"repo": "Waguy02/Boomer-Scripted",

"path": "python/subprojects/testbed/mlrl/testbed/persistence.py",

"license": [

"MIT"

],

"language": "Python",

"identifier": "__init__",

"code": "def __init__(self, model_dir: str):\n \"\"\"\n :param model_dir: The path of the directory where models should be saved\n \"\"\"\n self.model_dir = model_dir",

"code_tokens": [

"def",

"__init__",

"(",

"self",

",",

"model_dir",

":",

"str",

")",

":",

"\"\"\"\n :param model_dir: The path of the directory where models should be saved\n \"\"\"",

"self",

".",

"model_dir",

"=",

"model_dir"

],

"original_comment": "\"\"\"\n :param model_dir: The path of the directory where models should be saved\n \"\"\"",

"comment": ":param model_dir: The path of the directory where models should be saved",

"comment_tokens": [

":",

"param",

"model_dir",

":",

"The",

"path",

"of",

"the",

"directory",

"where",

"models",

"should",

"be",

"saved"

],

"start_point": [

1,

8

],

"end_point": [

3,

11

],

"prev_context": {

"code": null,

"start_point": null,

"end_point": null

},

"next_context": {

"code": "self.model_dir = model_dir",

"start_point": [

4,

8

],

"end_point": [

4,

34

]

}

}

```

### Data Fields

Data fields for the inline level:

- **hexsha** (string): the git hash of the source file

- **repo** (string): the repository, as `owner/repo`

- **path** (string): the full path to the original file in the repository

- **license** (list): licenses of the repository

- **language** (string): the programming language

- **identifier** (string): the function or method name

- **code** (string): the code of the function or method that contains the comment

- **code_tokens** (list): tokenized version of `code`

- **original_comment** (string): the original text of the comment

- **comment** (string): the cleaned version of the comment

- **comment_tokens** (list): tokenized version of `comment`

- **start_point** (list): `[line, column]` position where `original_comment` starts in `code`

- **end_point** (list): `[line, column]` position where `original_comment` ends in `code`

- **prev_context** (dict): the block of code before `original_comment`, with its own `code`, `start_point`, and `end_point` (all `null` if there is none)

- **next_context** (dict): the block of code after `original_comment`, with the same keys

### Data Splits

In this repo, the inline-level data is not split: all samples are in the `train` split.

## Dataset Statistics

| Languages | Number of inline comments |

|:-----------|---------------------------:|

|Python | 14,013,238 |

|Java | 17,062,277 |

|JavaScript | 1,438,110 |

|PHP | 5,873,744 |

|C | 6,778,239 |

|C# | 6,274,389 |

|C++ | 10,343,650 |

|Go | 4,390,342 |

|Ruby | 767,563 |

|Rust | 2,063,784 |

|TOTAL | **69,005,336** |

## Usage

You can load The Vault dataset with the `datasets` library (`pip install datasets`):

```python

from datasets import load_dataset

# Load full inline level dataset (69M samples)

dataset = load_dataset("Fsoft-AIC/the-vault-inline")

# specific language (e.g. Python)

dataset = load_dataset("Fsoft-AIC/the-vault-inline", languages=['Python'])

# dataset streaming

data = load_dataset("Fsoft-AIC/the-vault-inline", streaming=True)

for sample in data['train']:

    print(sample)

```
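For code summarization or comment-to-code experiments, each inline sample can be reduced to a (comment, following code) pair using the `comment` and `next_context` fields. The sketch below assumes samples shaped like the instance shown above and skips samples whose `next_context` has no code; the function name is hypothetical. Because it accepts any iterable, it can consume the streamed split lazily instead of materializing all 69M rows.

```python
from itertools import islice
from typing import Dict, Iterable, Iterator, Tuple


def comment_code_pairs(samples: Iterable[Dict]) -> Iterator[Tuple[str, str]]:
    """Yield (clean comment, code block that follows it) pairs.

    Samples whose `next_context` is missing or has no code are skipped.
    """
    for sample in samples:
        context = sample.get("next_context") or {}
        code = context.get("code")
        if code:
            yield sample["comment"], code


# Usage with the streamed split (requires network access):
#   data = load_dataset("Fsoft-AIC/the-vault-inline", split="train", streaming=True)
#   for comment, code in islice(comment_code_pairs(data), 5):
#       print(comment, "->", code)

example = {
    "comment": ":param model_dir: The path of the directory where models should be saved",
    "next_context": {
        "code": "self.model_dir = model_dir",
        "start_point": [4, 8],
        "end_point": [4, 34],
    },
}
print(list(islice(comment_code_pairs([example]), 1)))
```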

## Additional information

### Licensing Information

MIT License

### Citation Information

```

@article{manh2023vault,

title={The Vault: A Comprehensive Multilingual Dataset for Advancing Code Understanding and Generation},

author={Manh, Dung Nguyen and Hai, Nam Le and Dau, Anh TV and Nguyen, Anh Minh and Nghiem, Khanh and Guo, Jin and Bui, Nghi DQ},

journal={arXiv preprint arXiv:2305.06156},

year={2023}

}

```

### Contributions

This dataset is developed by [FSOFT AI4Code team](https://github.com/FSoft-AI4Code). | 7,263 | [

[

-0.021331787109375,

-0.0256805419921875,

0.007083892822265625,

0.0240325927734375,

-0.0081329345703125,

0.0189666748046875,

-0.003040313720703125,

-0.0101318359375,

0.00039005279541015625,

0.028076171875,

-0.048675537109375,

-0.0677490234375,

-0.02783203125,

... |

UmaDiffusion/ULTIMA | 2023-07-29T03:16:24.000Z | [

"task_categories:text-to-image",

"task_categories:image-to-text",

"task_ids:image-captioning",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"language:en",

"license:other",

"region:us"

] | UmaDiffusion | ULTIMA Dataset - Uma Musume Labeled Text-Image Multimodal Alignment Dataset | @misc{ULTIMA,

author = {Oh Giyeong (BootsofLagrangian), Kang Dohoon (Haken)},

title = {ULTIMA - Uma Musume Labeled Text-Image Multimodal Alignment Dataset},

howpublished = {\\url{https://huggingface.co/datasets/UmaDiffusion/ULTIMA}},

month = {July},

year = {2023}

} | 4 | 10 | 2023-07-02T07:39:10 | ---

license: other

language:

- en

multilinguality:

- monolingual

pretty_name: Uma Musume Labeled Text-Image Multimodal Alignment Dataset

size_categories:

- 10K<n<100K

task_categories:

- text-to-image

- image-to-text

task_ids:

- image-captioning

extra_gated_prompt: "You agree to use this dataset for non-commercial ONLY and NOT VIOLATE the guidelines for secondary creation of Uma Musume Pretty Derby."

extra_gated_fields:

I agree to use this dataset for non-commercial ONLY and to NOT VIOLATE the guidelines for secondary creation of Uma Musume Pretty Derby from Cygames, Inc: checkbox

---


# About **ULTIMA**

ULTIMA Dataset is **U**ma Musume **L**abeled **T**ext-**I**mage **M**ultimodal **A**lignment Dataset.

ULTIMA is *a supervised dataset for fine-tuning* of characters in Uma Musume: Pretty Derby.

It contains **~14K** text-image pairs.

We processed the entire dataset ***manually***. This is an essential fact, even though the processing was machine-assisted.

The preprocessing steps are described in [Data Preprocessing.md](https://huggingface.co/datasets/UmaDiffusion/ULTIMA/blob/main/Data%20Preprocessing.md).

Statistics about the dataset and the abbreviations of Uma Musume characters are in [statistics.md](https://huggingface.co/datasets/UmaDiffusion/ULTIMA/blob/main/statistics.md).

Pruned tag-clothes pairs are in [prompts.md](https://huggingface.co/datasets/UmaDiffusion/ULTIMA/blob/main/prompts.md).

## Dataset Structure

We use a modularized file structure to distribute ULTIMA. The 14,460 images in ULTIMA are split into 73 folders (`part-00000` to `part-00072`), where each folder contains up to 200 images and a JSON file that maps these images to their text and other information.

```bash

# ULTIMA

./

├──data

│ ├──part-00000

│ │ ├──01_agt_00000.png

│ │ ├──01_agt_00001.png

│ │ ├──01_agt_00002.png

│ │ ├──[...]

│ │ └──part-00000.json

│ ├──part-00001

│ ├──part-00003

│ ├──[...]

│ └──part-00072

└──metadata.parquet

```

The sub-folders are named `part-0xxxx`, and each image file name has the format `[quality]_[abbreviation]_[image number].png`. The JSON file in a sub-folder has the same name as the sub-folder. Each image is a `PNG` file. The JSON file contains key-value pairs mapping image filenames to their prompts and aesthetic scores.
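The naming convention above can be parsed with a small helper. This sketch assumes every name has exactly three underscore-separated parts, as in `01_agt_00007.png`; the quality field's meaning beyond its position is not specified here, so it is kept as a string. The helper names are hypothetical.

```python
from pathlib import PurePath
from typing import NamedTuple


class ImageName(NamedTuple):
    quality: str       # e.g. "01"
    abbreviation: str  # character abbreviation, e.g. "agt"
    number: int        # image number, e.g. 7 for "00007"


def parse_image_name(filename: str) -> ImageName:
    """Split `[quality]_[abbreviation]_[image number].png` into its parts."""
    path = PurePath(filename)
    if path.suffix.lower() != ".png":
        raise ValueError(f"expected a .png file, got {filename!r}")
    # Raises ValueError if the stem does not have exactly three parts.
    quality, abbreviation, number = path.stem.split("_")
    return ImageName(quality, abbreviation, int(number))


def part_folder(part_id: int) -> str:
    """Folder name for a numeric part ID, e.g. 0 -> 'part-00000'."""
    return f"part-{part_id:05d}"


print(parse_image_name("01_agt_00007.png"))
print(part_folder(72))
```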

## Data Instances

For example, below is the image of `01_agt_00007.png` and its key-value pair in `part-00000.json`.

<img width="300" src="https://i.imgur.com/LNNVGA2.png">

```json

{

"01_agt_00007.png": {

"text": "agnes tachyon \(umamusume\), labcoat, closed eyes, white background, single earring, tracen school uniform, smile, open mouth, sleeves past fingers, blush, upper body, sleeves past wrists, purple shirt, facing viewer, sailor collar, bowtie, long sleeves, :d, purple bow, white coat, breasts",

"width": 1190,

"height": 1684,

"pixels": 2003960,

"LAION_aesthetic": 6.2257309,

"cafe_aesthetic": 0.97501057

}

}

```

## Data Fields

- key: Unique image name

- `text`: Manipulated tags

- `width`: Width of image

- `height`: Height of image

- `pixels`: Number of pixels in the image (width × height)

- `LAION_aesthetic`: Aesthetic score by [CLIP+MLP Aesthetic Score Predictor](https://github.com/christophschuhmann/improved-aesthetic-predictor)

- `cafe_aesthetic`: Aesthetic score by [cafe aesthetic](https://huggingface.co/cafeai/cafe_aesthetic)
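Because each `part-xxxxx.json` maps image names to these fields, a part can be filtered by aesthetic score without opening any images. Below is a minimal sketch that assumes the JSON layout shown in the example above. The helper names are hypothetical, and the thresholds in the usage comment are arbitrary examples, not recommendations from the authors.

```python
import json
from pathlib import Path
from typing import Dict, List


def select_images(entries: Dict[str, dict], min_laion: float, min_cafe: float) -> List[str]:
    """Return the sorted image names whose two aesthetic scores meet both thresholds."""
    return sorted(
        name
        for name, info in entries.items()
        if info["LAION_aesthetic"] >= min_laion and info["cafe_aesthetic"] >= min_cafe
    )


def load_part(part_dir: Path) -> Dict[str, dict]:
    """Load the JSON file named after its folder, e.g. part-00000/part-00000.json."""
    with open(part_dir / f"{part_dir.name}.json", encoding="utf-8") as f:
        return json.load(f)


# Example (requires the downloaded data directory):
#   entries = load_part(Path("data/part-00000"))
#   print(select_images(entries, min_laion=6.0, min_cafe=0.9))
```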

## Data Metadata

To help you easily access prompts and other attributes of images without downloading all the images, we include a metadata table, `metadata.parquet`, for ULTIMA.

The shape of `metadata.parquet` is (14460, 8). We store this table in the Parquet format because Parquet is column-based: you can efficiently query individual columns (e.g., texts) without reading the entire table.

Below are the first three rows from `metadata.parquet`.

| image_name | text | part_id | width | height | pixels | LAION_aesthetic | cafe_aesthetic |

|:--:|:---------------------------------------|:-:|:-:|:-:|:-:|:-:|:-:|

| 01_agt_00000.png | agnes tachyon \\\(umamusume\\\), vehicle focus, motor vehicle, ground vehicle, labcoat, sleeves past wrists, sports car, sleeves past fingers, yellow sweater, black necktie, open mouth, black pantyhose, smile, looking at viewer, single earring, short necktie, holding | 0 | 3508 | 2480 | 8699840 | 5.99897194 | 0.9899081 |

| 01_agt_00001.png | agnes tachyon \\\(umamusume\\\), labcoat, sleeves past wrists, sleeves past fingers, long sleeves, black pantyhose, skirt, smile, white background, white coat, cowboy shot, from side, profile, hand up, closed mouth, yellow sweater, collared shirt, black shirt, black necktie, pen coat, looking to the side | 0 | 1105 | 1349 | 1490645 | 6.3266325 | 0.99231464 |

| 01_agt_00002.png | agnes tachyon \\\(umamusume\\\), labcoat, test tube, sitting, crossed legs, yellow sweater, sleeves past wrists, black pantyhose, sleeves past fingers, black necktie, boots removed, high heels, full body, long sleeves, shoes, high heel boots, single shoe, sweater vest, white coat, smile, closed mouth, collared shirt, single boot, white footwear, white background, single earring, black shirt, short necktie, open coat, vial | 0 | 2000 | 2955 | 5910000 | 6.21014023 | 0.94741267 |


## Metadata Schema

|Column|Type|Description|

|:---|:---|:---|

|`image_name`|`string`| Image filename |

|`text`|`string`| The manipulated text of image for alignment |

|`part_id`|`uint16`| Folder ID of this image |

|`width`|`uint16`| Image width |

|`height`|`uint16`| Image height |

|`pixels`|`uint32`| Image pixels |

|`LAION_aesthetic`|`float32`| LAION aesthetic score of image |

|`cafe_aesthetic`|`float32`| cafe aesthetic score of image |


# Considerations for Using the Data

## Limitations and Bias

The whole process was based on the authors' subjective judgment, including:

1. The domain of the dataset, which only contains characters from Uma Musume: Pretty Derby

2. Collection of images

3. Calibration of images

4. Manipulation of tags

5. Alignment of tags

6. Separation of images by quality

Therefore, the dataset is entirely based on the authors' supervision, not on any objective metric.

## Guidelines for secondary creation of Uma Musume: Pretty Derby

Here are the guidelines for secondary creation of Uma Musume: Pretty Derby from Cygames, Inc.

>We would like to provide you with the guidelines for secondary creations of Uma Musume Pretty Derby.

>This work features numerous characters based on real-life racehorses, and it has been made possible through the cooperation of many individuals, including the horse owners who have lent their horse names.

>We kindly ask everyone, including fans of the racehorses that serve as motifs, horse owners, and related parties, to refrain from expressions that may cause discomfort or significantly damage the image of the racehorses or characters.

>Specifically, please refrain from publishing creations that fall under the following provisions within Uma Musume Pretty Derby:

>1. Creations that aim to harm this work, the thoughts of third parties, or their reputation

>2. Violent, grotesque, or sexually explicit content

>3. Creations that excessively support or denigrate specific politics, religions, or beliefs

>4. Expressions with antisocial content

>5. Creations that infringe upon the rights of third parties

>

>These guidelines have been established after consultation with the management company responsible for the horse names.

>In cases that fall under the aforementioned provisions, we may have to consider taking legal measures if necessary.

>These guidelines do not deny the fan activities of those who support Uma Musume.

>We have established these guidelines to ensure that everyone can engage in fan activities with peace of mind.

>We appreciate your understanding and cooperation.

>Please note that we will not provide individual responses to inquiries regarding these guidelines.

>The Uma Musume project will continue to support racehorses and their achievements alongside everyone, in order to uphold the dignity of these renowned horses.

Translated by ChatGPT. The original document (in Japanese) is [here](https://umamusume.jp/derivativework_guidelines/).

## Licensing Information

The dataset is made available for academic research and non-commercial purposes only. All the images were collected from the Internet, and their copyright belongs to the original owners. If any of the images belong to you and you would like them removed, please let us know and we will try to remove them from the dataset.

## Citation

```bibtex

@misc{ULTIMA,

author = {Oh Giyeong (BootsofLagrangian) and Kang Dohoon (Haken)},

title = {ULTIMA - Uma Musume Labeled Text-Image Multimodal Alignment Dataset},

howpublished = {\url{https://huggingface.co/datasets/UmaDiffusion/ULTIMA}},

month = {July},

year = {2023}

}

```

| 8,835 | [

[

-0.04840087890625,

-0.05126953125,

0.031890869140625,

0.00579071044921875,

-0.0419921875,

-0.00981903076171875,

0.0005788803100585938,

-0.040130615234375,

0.04718017578125,

0.056976318359375,

-0.0419921875,

-0.07171630859375,

-0.02935791015625,

0.01919555664... |

rdpahalavan/CIC-IDS2017 | 2023-07-22T21:42:04.000Z | [

"task_categories:text-classification",

"task_categories:tabular-classification",

"size_categories:100M<n<1B",

"license:apache-2.0",

"Network Intrusion Detection",

"Cybersecurity",

"Network Packets",

"CIC-IDS2017",

"region:us"

] | rdpahalavan | null | null | 0 | 10 | 2023-07-08T07:25:54 | ---

license: apache-2.0

task_categories:

- text-classification

- tabular-classification

size_categories:

- 100M<n<1B

tags:

- Network Intrusion Detection

- Cybersecurity

- Network Packets

- CIC-IDS2017

---

We have developed a Python package that wraps the Hugging Face Hub and the Hugging Face Datasets library to make this dataset easy to access.

# NIDS Datasets

The `nids-datasets` package provides functionality to download and utilize specially curated and extracted datasets from the original UNSW-NB15 and CIC-IDS2017 datasets. These datasets, which initially were only flow datasets, have been enhanced to include packet-level information from the raw PCAP files. The dataset contains both packet-level and flow-level data for over 230 million packets, with 179 million packets from UNSW-NB15 and 54 million packets from CIC-IDS2017.

## Installation

Install the `nids-datasets` package using pip:

```shell

pip install nids-datasets

```

Import the package in your Python script:

```python

from nids_datasets import Dataset, DatasetInfo

```

## Dataset Information

The `nids-datasets` package currently supports two datasets: [UNSW-NB15](https://research.unsw.edu.au/projects/unsw-nb15-dataset) and [CIC-IDS2017](https://www.unb.ca/cic/datasets/ids-2017.html). Each of these datasets contains a mix of normal traffic and different types of attack traffic, which are identified by their respective labels. The UNSW-NB15 dataset has 10 unique class labels, and the CIC-IDS2017 dataset has 24 unique class labels.

- UNSW-NB15 Labels: 'normal', 'exploits', 'dos', 'fuzzers', 'generic', 'reconnaissance', 'worms', 'shellcode', 'backdoor', 'analysis'

- CIC-IDS2017 Labels: 'BENIGN', 'FTP-Patator', 'SSH-Patator', 'DoS slowloris', 'DoS Slowhttptest', 'DoS Hulk', 'Heartbleed', 'Web Attack – Brute Force', 'Web Attack – XSS', 'Web Attack – SQL Injection', 'Infiltration', 'Bot', 'PortScan', 'DDoS', 'normal', 'exploits', 'dos', 'fuzzers', 'generic', 'reconnaissance', 'worms', 'shellcode', 'backdoor', 'analysis', 'DoS GoldenEye'
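For binary intrusion detection, the multi-class labels above can be collapsed into normal versus attack traffic. The sketch assumes that `'BENIGN'` (CIC-IDS2017) and `'normal'` (UNSW-NB15) are the only labels for normal traffic, as the lists above suggest; the function name and the `label` column in the usage comment are hypothetical.

```python
from typing import Iterable, List

# Labels that denote normal traffic in the two label lists above.
NORMAL_LABELS = frozenset({"BENIGN", "normal"})


def to_binary(labels: Iterable[str]) -> List[int]:
    """Map each label to 0 (normal traffic) or 1 (any attack class)."""
    return [0 if label in NORMAL_LABELS else 1 for label in labels]


print(to_binary(["BENIGN", "DDoS", "normal", "exploits"]))  # [0, 1, 0, 1]

# With a pandas DataFrame (column name is an assumption):
#   df["is_attack"] = to_binary(df["label"])
```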

## Subsets of the Dataset

Each dataset consists of four subsets:

1. Network-Flows - Contains flow-level data.

2. Packet-Fields - Contains packet header information.

3. Packet-Bytes - Contains packet byte information in the range (0-255).

4. Payload-Bytes - Contains payload byte information in the range (0-255).

Each subset contains 18 files (except Network-Flows, which has one file), with the data stored in Parquet format. In total, this package provides access to 110 files (2 datasets × (3 subsets × 18 files + 1 Network-Flows file)). You can download all subsets, or select specific subsets or files, depending on your analysis requirements.

## Getting Information on the Datasets

The `DatasetInfo` function returns a summary of the dataset as a pandas DataFrame. It shows the number of packets for each class label across all 18 files in the dataset, which can guide you in selecting specific files to download and analyze.

```python

df = DatasetInfo(dataset='UNSW-NB15') # or dataset='CIC-IDS2017'

df

```

## Downloading the Datasets

The `Dataset` class allows you to specify the dataset, subset, and files that you are interested in. The specified data will then be downloaded.

```python

dataset = 'UNSW-NB15' # or 'CIC-IDS2017'

subset = ['Network-Flows', 'Packet-Fields', 'Payload-Bytes'] # or 'all' for all subsets

files = [3, 5, 10] # or 'all' for all files

data = Dataset(dataset=dataset, subset=subset, files=files)

data.download()

```

The directory structure after downloading files:

```

UNSW-NB15

│

├───Network-Flows

│ └───UNSW_Flow.parquet

│

├───Packet-Fields

│ ├───Packet_Fields_File_3.parquet

│ ├───Packet_Fields_File_5.parquet

│ └───Packet_Fields_File_10.parquet

│

└───Payload-Bytes

├───Payload_Bytes_File_3.parquet

├───Payload_Bytes_File_5.parquet

└───Payload_Bytes_File_10.parquet

```

You can then load the parquet files using pandas:

```python

import pandas as pd

df = pd.read_parquet('UNSW-NB15/Packet-Fields/Packet_Fields_File_10.parquet')

```
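When loading several downloaded files, the paths can be built from the naming pattern visible in the directory listing above: inside each subset folder, files are named `<Subset_With_Underscores>_File_<n>.parquet`. This helper is hypothetical. It assumes that pattern holds for every file and both datasets, and it does not cover the single Network-Flows file, whose name differs.

```python
from pathlib import Path
from typing import Iterable, List


def subset_file_paths(root: str, dataset: str, subset: str, files: Iterable[int]) -> List[Path]:
    """Build paths such as UNSW-NB15/Packet-Fields/Packet_Fields_File_3.parquet."""
    prefix = subset.replace("-", "_")  # "Packet-Fields" -> "Packet_Fields"
    return [Path(root) / dataset / subset / f"{prefix}_File_{n}.parquet" for n in files]


paths = subset_file_paths(".", "UNSW-NB15", "Packet-Fields", [3, 5, 10])
for p in paths:
    print(p)

# Reading and concatenating them (requires the files to be downloaded):
#   import pandas as pd
#   df = pd.concat((pd.read_parquet(p) for p in paths), ignore_index=True)
```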

## Merging Subsets

The `merge()` method allows you to merge all data of each packet across all subsets, providing both flow-level and packet-level information in a single file.

```python

data.merge()

```

By default, `merge()` uses the details specified when instantiating the `Dataset` class. You can also pass `subset` (a list of subsets) and `files` (a list of files) to choose what to merge.

The directory structure after merging files:

```

UNSW-NB15

│

├───Network-Flows

│ └───UNSW_Flow.parquet

│

├───Packet-Fields

│ ├───Packet_Fields_File_3.parquet

│ ├───Packet_Fields_File_5.parquet

│ └───Packet_Fields_File_10.parquet

│

├───Payload-Bytes

│ ├───Payload_Bytes_File_3.parquet

│ ├───Payload_Bytes_File_5.parquet

│ └───Payload_Bytes_File_10.parquet

│

└───Network-Flows+Packet-Fields+Payload-Bytes

├───Network_Flows+Packet_Fields+Payload_Bytes_File_3.parquet

├───Network_Flows+Packet_Fields+Payload_Bytes_File_5.parquet

└───Network_Flows+Packet_Fields+Payload_Bytes_File_10.parquet

```

## Extracting Bytes

The Packet-Bytes and Payload-Bytes subsets contain only the first 1500-1600 bytes. To retrieve all bytes (up to 65535 bytes) from these subsets, use the `bytes()` method. It requires the corresponding files from the Packet-Fields subset. You can limit how many bytes are extracted with the `max_bytes` parameter.

```python

data.bytes(payload=True, max_bytes=2500)

```

Pass `packet=True` to extract packet bytes instead. You can also pass `files` (a list of files) to choose which files to extract bytes from.

The directory structure after extracting bytes:

```

UNSW-NB15

│

├───Network-Flows

│ └───UNSW_Flow.parquet

│

├───Packet-Fields

│ ├───Packet_Fields_File_3.parquet

│ ├───Packet_Fields_File_5.parquet

│ └───Packet_Fields_File_10.parquet

│

├───Payload-Bytes

│ ├───Payload_Bytes_File_3.parquet

│ ├───Payload_Bytes_File_5.parquet

│ └───Payload_Bytes_File_10.parquet

│

├───Network-Flows+Packet-Fields+Payload-Bytes

│ ├───Network_Flows+Packet_Fields+Payload_Bytes_File_3.parquet

│ ├───Network_Flows+Packet_Fields+Payload_Bytes_File_5.parquet

│ └───Network_Flows+Packet_Fields+Payload_Bytes_File_10.parquet

│

└───Payload-Bytes-2500

├───Payload_Bytes_File_3.parquet

├───Payload_Bytes_File_5.parquet

└───Payload_Bytes_File_10.parquet

```

## Reading the Datasets

The `read()` method reads files with the Hugging Face `load_dataset` function, one subset at a time. The `dataset` and `files` parameters are optional if they match the details used to instantiate the `Dataset` class.

```python

dataset = data.read(dataset='UNSW-NB15', subset='Packet-Fields', files=[1,2])

```

The `read()` method returns a dataset that you can convert to a pandas dataframe or save to a CSV, parquet, or any other desired file format:

```python

df = dataset.to_pandas()

dataset.to_csv('file_path_to_save.csv')

dataset.to_parquet('file_path_to_save.parquet')

```

For scenarios where you want to process one packet at a time, you can use the `stream=True` parameter:

```python

dataset = data.read(dataset='UNSW-NB15', subset='Packet-Fields', files=[1,2], stream=True)

print(next(iter(dataset)))

```

## Notes

These datasets are large, and depending on the subset(s) selected and the number of bytes extracted, the operations can be resource-intensive. Make sure you have sufficient disk space and RAM before using this package. | 7,424 | [

[-0.0374755859375, -0.052276611328125, -0.00685882568359375, 0.04571533203125, -0.0070037841796875, -0.0079498291015625, 0.00992584228515625, -0.02398681640625, 0.048675537109375, 0.050567626953125, -0.024139404296875, -0.0250701904296875, -0.034820556640625, ... |

DynamicSuperb/EnvironmentalSoundClassification_ESC50-ExteriorAndUrbanNoises | 2023-07-12T05:58:21.000Z | [

"region:us"

] | DynamicSuperb | null | null | 0 | 10 | 2023-07-11T11:56:42 | ---

dataset_info:
  features:
  - name: file
    dtype: string
  - name: audio
    dtype: audio
  - name: label
    dtype: string
  - name: instruction
    dtype: string
  splits:
  - name: test
    num_bytes: 176506600.0
    num_examples: 400
  download_size: 168310913
  dataset_size: 176506600.0

---

# Dataset Card for "environmental_sound_classification_exterior_and_urban_noises_ESC50"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 523 | [

[-0.056884765625, -0.006313323974609375, 0.02459716796875, 0.01751708984375, 0.0096588134765625, 0.00007736682891845703, -0.013458251953125, -0.033538818359375, 0.029998779296875, 0.016998291015625, -0.055572509765625, -0.07940673828125, -0.0191650390625, -0.... |

BigSuperbPrivate/SpeakerVerification_LibrispeechTrainClean100 | 2023-07-17T19:29:07.000Z | [

"region:us"

] | BigSuperbPrivate | null | null | 0 | 10 | 2023-07-14T18:31:55 | ---

dataset_info:
  features:
  - name: file
    dtype: string
  - name: audio
    dtype: audio
  - name: file2
    dtype: string
  - name: instruction
    dtype: string
  - name: label
    dtype: string
  splits:
  - name: train
    num_bytes: 6617191795.67
    num_examples: 28539
  - name: validation
    num_bytes: 359547975.058
    num_examples: 2703
  download_size: 6771822691
  dataset_size: 6976739770.728

---

# Dataset Card for "SpeakerVerification_LibrispeechTrainClean100"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 617 | [

[-0.0574951171875, -0.0176239013671875, 0.01471710205078125, 0.0169677734375, 0.0023860931396484375, -0.0091552734375, -0.0130767822265625, -0.0018911361694335938, 0.06494140625, 0.0284423828125, -0.0599365234375, -0.0472412109375, -0.0310516357421875, -0.037... |

ivrit-ai/audio-base | 2023-09-26T05:49:29.000Z | [

"task_categories:audio-classification",

"task_categories:voice-activity-detection",

"size_categories:1K<n<10K",

"language:he",

"license:other",

"arxiv:2307.08720",

"region:us"

] | ivrit-ai | null | null | 4 | 10 | 2023-07-15T08:01:33 | ---

license: other

task_categories:

- audio-classification

- voice-activity-detection

language:

- he

size_categories:

- 1K<n<10K

extra_gated_prompt:

"You agree to the following license terms:

This material and data is licensed under the terms of the Creative Commons Attribution 4.0

International License (CC BY 4.0), The full text of the CC-BY 4.0 license is available at

https://creativecommons.org/licenses/by/4.0/.

Notwithstanding the foregoing, this material and data may only be used, modified and distributed for

the express purpose of training AI models, and subject to the foregoing restriction. In addition, this

material and data may not be used in order to create audiovisual material that simulates the voice or

likeness of the specific individuals appearing or speaking in such materials and data (a “deep-fake”).

To the extent this paragraph is inconsistent with the CC-BY-4.0 license, the terms of this paragraph

shall govern.

By downloading or using any of this material or data, you agree that the Project makes no

representations or warranties in respect of the data, and shall have no liability in respect thereof. These

disclaimers and limitations are in addition to any disclaimers and limitations set forth in the CC-BY-4.0

license itself. You understand that the project is only able to make available the materials and data

pursuant to these disclaimers and limitations, and without such disclaimers and limitations the project

would not be able to make available the materials and data for your use."

extra_gated_fields:
  I have read the license, and agree to its terms: checkbox

---

ivrit.ai is a database of Hebrew audio and text content.

**audio-base** contains the raw, unprocessed sources.

**audio-vad** contains audio snippets generated by applying Silero VAD (https://github.com/snakers4/silero-vad) to the base dataset.

v1 data is generated using Silero VAD's default parameters.

v2 data is generated using `min_speech_duration_ms=2000` (2 seconds) and `max_speech_duration_s=30` (30 seconds).
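To make the v2 constraints concrete, here is a simplified, hypothetical post-processing step over already-detected speech segments given as `(start, end)` times in seconds; the function name `apply_duration_limits` is illustrative. It is not Silero's algorithm: according to its docstring, silero-vad's `get_speech_timestamps` discards final chunks shorter than `min_speech_duration_ms` and splits chunks longer than `max_speech_duration_s` at the last silence longer than 100 ms when one exists, whereas this sketch simply cuts at fixed offsets.

```python
from typing import List, Tuple

Segment = Tuple[float, float]  # (start_s, end_s)


def apply_duration_limits(
    segments: List[Segment],
    min_speech_duration_ms: int = 2000,
    max_speech_duration_s: float = 30.0,
) -> List[Segment]:
    """Cut segments into chunks of at most max_speech_duration_s, then drop
    chunks shorter than min_speech_duration_ms.

    Simplified illustration: cuts happen at fixed offsets, not at silences.
    """
    if max_speech_duration_s <= 0:
        raise ValueError("max_speech_duration_s must be positive")
    min_s = min_speech_duration_ms / 1000.0
    chunks: List[Segment] = []
    for start, end in segments:
        cursor = start
        # Emit fixed-length chunks while the remainder exceeds the maximum.
        while end - cursor > max_speech_duration_s:
            chunks.append((cursor, cursor + max_speech_duration_s))
            cursor += max_speech_duration_s
        chunks.append((cursor, end))
    # Discard anything (including leftover tails) shorter than the minimum.
    return [(s, e) for s, e in chunks if e - s >= min_s]
```

With the v2 values, a 61-second segment yields two 30-second chunks, and its 1-second tail is dropped along with any segment under 2 seconds.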

**audio-transcripts** contains transcriptions for each snippet in the audio-vad dataset.

You can find the full list of sources in this dataset under https://www.ivrit.ai/en/credits.

Paper: https://arxiv.org/abs/2307.08720

If you use our datasets, please cite:

```

@misc{marmor2023ivritai,

title={ivrit.ai: A Comprehensive Dataset of Hebrew Speech for AI Research and Development},