id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 6.67k ⌀ | citation stringlengths 0 10.7k ⌀ | likes int64 0 3.66k | downloads int64 0 8.89M | created timestamp[us] | card stringlengths 11 977k | card_len int64 11 977k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|

BigSuperbPrivate/SpeakerCounting_LibrittsTrainClean100 | 2023-07-31T08:01:44.000Z | [

"region:us"

] | BigSuperbPrivate | null | null | 0 | 8 | 2023-07-13T18:23:04 | ---

dataset_info:

features:

- name: file

dtype: string

- name: audio

dtype: audio

- name: instruction

dtype: string

- name: label

dtype: string

- name: utterance 1

dtype: string

- name: utterance 2

dtype: string

- name: utterance 3

dtype: string

- name: utterance 4

dtype: string

- name: utterance 5

dtype: string

splits:

- name: train

num_bytes: 1438538131.0

num_examples: 10000

- name: validation

num_bytes: 199304545.0

num_examples: 1000

download_size: 2240435961

dataset_size: 1637842676.0

---

# Dataset Card for "SpeakerCounting_LibriTTSTrainClean100"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 771 | [

[

-0.05584716796875,

-0.012298583984375,

0.0177459716796875,

0.0084381103515625,

-0.003513336181640625,

-0.00284576416015625,

-0.0038356781005859375,

0.0019054412841796875,

0.06463623046875,

0.04150390625,

-0.053985595703125,

-0.048187255859375,

-0.030868530273437... |

DynamicSuperb/NoiseDetection_LJSpeech_MUSAN-Speech | 2023-07-18T09:10:45.000Z | [

"region:us"

] | DynamicSuperb | null | null | 0 | 8 | 2023-07-14T03:16:21 | ---

dataset_info:

features:

- name: file

dtype: string

- name: audio

dtype: audio

- name: instruction

dtype: string

- name: label

dtype: string

splits:

- name: test

num_bytes: 3371932555.0

num_examples: 26200

download_size: 3362676277

dataset_size: 3371932555.0

---

# Dataset Card for "NoiseDetectionspeech_LJSpeechMusan"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 496 | [

[

-0.036529541015625,

-0.01282501220703125,

0.0181427001953125,

0.0159149169921875,

-0.0117645263671875,

0.005901336669921875,

0.00775909423828125,

-0.02252197265625,

0.061737060546875,

0.0244293212890625,

-0.061859130859375,

-0.0418701171875,

-0.03753662109375,

... |

recastai/coyo-10m-aesthetic | 2023-07-15T05:46:54.000Z | [

"region:us"

] | recastai | null | null | 0 | 8 | 2023-07-15T05:23:43 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

MichaelR207/MultiSim | 2023-07-18T23:19:38.000Z | [

"task_categories:summarization",

"task_categories:text2text-generation",

"task_categories:text-generation",

"size_categories:1M<n<10M",

"language:en",

"language:fr",

"language:ru",

"language:ja",

"language:it",

"language:da",

"language:es",

"language:de",

"language:pt",

"language:sl",

"l... | MichaelR207 | null | null | 0 | 8 | 2023-07-18T21:55:31 | ---

license: mit

language:

- en

- fr

- ru

- ja

- it

- da

- es

- de

- pt

- sl

- ur

- eu

task_categories:

- summarization

- text2text-generation

- text-generation

pretty_name: MultiSim

tags:

- medical

- legal

- wikipedia

- encyclopedia

- science

- literature

- news

- websites

size_categories:

- 1M<n<10M

---

# Dataset Card for MultiSim Benchmark

## Dataset Description

- **Repository:https://github.com/XenonMolecule/MultiSim/tree/main**

- **Paper:https://aclanthology.org/2023.acl-long.269/ https://arxiv.org/pdf/2305.15678.pdf**

- **Point of Contact: michaeljryan@stanford.edu**

### Dataset Summary

The MultiSim benchmark is a growing collection of text simplification datasets targeted at sentence simplification in several languages. Currently, the benchmark spans 12 languages.

### Supported Tasks

- Sentence Simplification

### Usage

```python

from datasets import load_dataset

dataset = load_dataset("MichaelR207/MultiSim")

```

### Citation

If you use this benchmark, please cite our [paper](https://aclanthology.org/2023.acl-long.269/):

```

@inproceedings{ryan-etal-2023-revisiting,

title = "Revisiting non-{E}nglish Text Simplification: A Unified Multilingual Benchmark",

author = "Ryan, Michael and

Naous, Tarek and

Xu, Wei",

booktitle = "Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers)",

month = jul,

year = "2023",

address = "Toronto, Canada",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2023.acl-long.269",

pages = "4898--4927",

abstract = "Recent advancements in high-quality, large-scale English resources have pushed the frontier of English Automatic Text Simplification (ATS) research. However, less work has been done on multilingual text simplification due to the lack of a diverse evaluation benchmark that covers complex-simple sentence pairs in many languages. This paper introduces the MultiSim benchmark, a collection of 27 resources in 12 distinct languages containing over 1.7 million complex-simple sentence pairs. This benchmark will encourage research in developing more effective multilingual text simplification models and evaluation metrics. Our experiments using MultiSim with pre-trained multilingual language models reveal exciting performance improvements from multilingual training in non-English settings. We observe strong performance from Russian in zero-shot cross-lingual transfer to low-resource languages. We further show that few-shot prompting with BLOOM-176b achieves comparable quality to reference simplifications outperforming fine-tuned models in most languages. We validate these findings through human evaluation.",

}

```

### Contact

**Michael Ryan**: [Scholar](https://scholar.google.com/citations?user=8APGEEkAAAAJ&hl=en) | [Twitter](http://twitter.com/michaelryan207) | [Github](https://github.com/XenonMolecule) | [LinkedIn](https://www.linkedin.com/in/michael-ryan-207/) | [Research Gate](https://www.researchgate.net/profile/Michael-Ryan-86) | [Personal Website](http://michaelryan.tech/) | [michaeljryan@stanford.edu](mailto://michaeljryan@stanford.edu)

### Languages

- English

- French

- Russian

- Japanese

- Italian

- Danish (on request)

- Spanish (on request)

- German

- Brazilian Portuguese

- Slovene

- Urdu (on request)

- Basque (on request)

## Dataset Structure

### Data Instances

MultiSim is a collection of 27 existing datasets:

- AdminIT

- ASSET

- CBST

- CLEAR

- DSim

- Easy Japanese

- Easy Japanese Extended

- GEOLino

- German News

- Newsela EN/ES

- PaCCSS-IT

- PorSimples

- RSSE

- RuAdapt Encyclopedia

- RuAdapt Fairytales

- RuAdapt Literature

- RuWikiLarge

- SIMPITIKI

- Simple German

- Simplext

- SimplifyUR

- SloTS

- Teacher

- Terence

- TextComplexityDE

- WikiAuto

- WikiLargeFR

### Data Fields

In the train set, you will only find `original` and `simple` sentences. In the validation and test sets you may find `simple1`, `simple2`, ... `simpleN` because a given sentence can have multiple reference simplifications (useful in SARI and BLEU calculations)

### Data Splits

The dataset is split into a train, validation, and test set.

## Dataset Creation

### Curation Rationale

I hope that collecting all of these independently useful resources for text simplification together into one benchmark will encourage multilingual work on text simplification!

### Source Data

#### Initial Data Collection and Normalization

Data is compiled from the 27 existing datasets that comprise the MultiSim Benchmark. For details on each of the resources please see Appendix A in the [paper](https://aclanthology.org/2023.acl-long.269.pdf).

#### Who are the source language producers?

Each dataset has different sources. At a high level the sources are: Automatically Collected (ex. Wikipedia, Web data), Manually Collected (ex. annotators asked to simplify sentences), Target Audience Resources (ex. Newsela News Articles), or Translated (ex. Machine translations of existing datasets).

These sources can be seen in Table 1 pictured above (Section: `Dataset Structure/Data Instances`) and further discussed in section 3 of the [paper](https://aclanthology.org/2023.acl-long.269.pdf). Appendix A of the paper has details on specific resources.

### Annotations

#### Annotation process

Annotators writing simplifications (only for some datasets) typically follow an annotation guideline. Some example guidelines come from [here](https://dl.acm.org/doi/10.1145/1410140.1410191), [here](https://link.springer.com/article/10.1007/s11168-006-9011-1), and [here](https://link.springer.com/article/10.1007/s10579-017-9407-6).

#### Who are the annotators?

See Table 1 (Section: `Dataset Structure/Data Instances`) for specific annotators per dataset. At a high level the annotators are: writers, translators, teachers, linguists, journalists, crowdworkers, experts, news agencies, medical students, students, writers, and researchers.

### Personal and Sensitive Information

No dataset should contain personal or sensitive information. These were previously collected resources primarily collected from news sources, wikipedia, science communications, etc. and were not identified to have personally identifiable information.

## Considerations for Using the Data

### Social Impact of Dataset

We hope this dataset will make a greatly positive social impact as text simplification is a task that serves children, second language learners, and people with reading/cognitive disabilities. By publicly releasing a dataset in 12 languages we hope to serve these global communities.

One negative and unintended use case for this data would be reversing the labels to make a "text complification" model. We beleive the benefits of releasing this data outweigh the harms and hope that people use the dataset as intended.

### Discussion of Biases

There may be biases of the annotators involved in writing the simplifications towards how they believe a simpler sentence should be written. Additionally annotators and editors have the choice of what information does not make the cut in the simpler sentence introducing information importance bias.

### Other Known Limitations

Some of the included resources were automatically collected or machine translated. As such not every sentence is perfectly aligned. Users are recommended to use such individual resources with caution.

## Additional Information

### Dataset Curators

**Michael Ryan**: [Scholar](https://scholar.google.com/citations?user=8APGEEkAAAAJ&hl=en) | [Twitter](http://twitter.com/michaelryan207) | [Github](https://github.com/XenonMolecule) | [LinkedIn](https://www.linkedin.com/in/michael-ryan-207/) | [Research Gate](https://www.researchgate.net/profile/Michael-Ryan-86) | [Personal Website](http://michaelryan.tech/) | [michaeljryan@stanford.edu](mailto://michaeljryan@stanford.edu)

### Licensing Information

MIT License

### Citation Information

Please cite the individual datasets that you use within the MultiSim benchmark as appropriate. Proper bibtex attributions for each of the datasets are included below.

#### AdminIT

```

@inproceedings{miliani-etal-2022-neural,

title = "Neural Readability Pairwise Ranking for Sentences in {I}talian Administrative Language",

author = "Miliani, Martina and

Auriemma, Serena and

Alva-Manchego, Fernando and

Lenci, Alessandro",

booktitle = "Proceedings of the 2nd Conference of the Asia-Pacific Chapter of the Association for Computational Linguistics and the 12th International Joint Conference on Natural Language Processing",

month = nov,

year = "2022",

address = "Online only",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2022.aacl-main.63",

pages = "849--866",

abstract = "Automatic Readability Assessment aims at assigning a complexity level to a given text, which could help improve the accessibility to information in specific domains, such as the administrative one. In this paper, we investigate the behavior of a Neural Pairwise Ranking Model (NPRM) for sentence-level readability assessment of Italian administrative texts. To deal with data scarcity, we experiment with cross-lingual, cross- and in-domain approaches, and test our models on Admin-It, a new parallel corpus in the Italian administrative language, containing sentences simplified using three different rewriting strategies. We show that NPRMs are effective in zero-shot scenarios ({\textasciitilde}0.78 ranking accuracy), especially with ranking pairs containing simplifications produced by overall rewriting at the sentence-level, and that the best results are obtained by adding in-domain data (achieving perfect performance for such sentence pairs). Finally, we investigate where NPRMs failed, showing that the characteristics of the training data, rather than its size, have a bigger effect on a model{'}s performance.",

}

```

#### ASSET

```

@inproceedings{alva-manchego-etal-2020-asset,

title = "{ASSET}: {A} Dataset for Tuning and Evaluation of Sentence Simplification Models with Multiple Rewriting Transformations",

author = "Alva-Manchego, Fernando and

Martin, Louis and

Bordes, Antoine and

Scarton, Carolina and

Sagot, Beno{\^\i}t and

Specia, Lucia",

booktitle = "Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics",

month = jul,

year = "2020",

address = "Online",

publisher = "Association for Computational Linguistics",

url = "https://www.aclweb.org/anthology/2020.acl-main.424",

pages = "4668--4679",

}

```

#### CBST

```

@article{10.1007/s10579-017-9407-6,

title={{The corpus of Basque simplified texts (CBST)}},

author={Gonzalez-Dios, Itziar and Aranzabe, Mar{\'\i}a Jes{\'u}s and D{\'\i}az de Ilarraza, Arantza},

journal={Language Resources and Evaluation},

volume={52},

number={1},

pages={217--247},

year={2018},

publisher={Springer}

}

```

#### CLEAR

```

@inproceedings{grabar-cardon-2018-clear,

title = "{CLEAR} {--} Simple Corpus for Medical {F}rench",

author = "Grabar, Natalia and

Cardon, R{\'e}mi",

booktitle = "Proceedings of the 1st Workshop on Automatic Text Adaptation ({ATA})",

month = nov,

year = "2018",

address = "Tilburg, the Netherlands",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/W18-7002",

doi = "10.18653/v1/W18-7002",

pages = "3--9",

}

```

#### DSim

```

@inproceedings{klerke-sogaard-2012-dsim,

title = "{DS}im, a {D}anish Parallel Corpus for Text Simplification",

author = "Klerke, Sigrid and

S{\o}gaard, Anders",

booktitle = "Proceedings of the Eighth International Conference on Language Resources and Evaluation ({LREC}'12)",

month = may,

year = "2012",

address = "Istanbul, Turkey",

publisher = "European Language Resources Association (ELRA)",

url = "http://www.lrec-conf.org/proceedings/lrec2012/pdf/270_Paper.pdf",

pages = "4015--4018",

abstract = "We present DSim, a new sentence aligned Danish monolingual parallel corpus extracted from 3701 pairs of news telegrams and corresponding professionally simplified short news articles. The corpus is intended for building automatic text simplification for adult readers. We compare DSim to different examples of monolingual parallel corpora, and we argue that this corpus is a promising basis for future development of automatic data-driven text simplification systems in Danish. The corpus contains both the collection of paired articles and a sentence aligned bitext, and we show that sentence alignment using simple tf*idf weighted cosine similarity scoring is on line with state―of―the―art when evaluated against a hand-aligned sample. The alignment results are compared to state of the art for English sentence alignment. We finally compare the source and simplified sides of the corpus in terms of lexical and syntactic characteristics and readability, and find that the one―to―many sentence aligned corpus is representative of the sentence simplifications observed in the unaligned collection of article pairs.",

}

```

#### Easy Japanese

```

@inproceedings{maruyama-yamamoto-2018-simplified,

title = "Simplified Corpus with Core Vocabulary",

author = "Maruyama, Takumi and

Yamamoto, Kazuhide",

booktitle = "Proceedings of the Eleventh International Conference on Language Resources and Evaluation ({LREC} 2018)",

month = may,

year = "2018",

address = "Miyazaki, Japan",

publisher = "European Language Resources Association (ELRA)",

url = "https://aclanthology.org/L18-1185",

}

```

#### Easy Japanese Extended

```

@inproceedings{katsuta-yamamoto-2018-crowdsourced,

title = "Crowdsourced Corpus of Sentence Simplification with Core Vocabulary",

author = "Katsuta, Akihiro and

Yamamoto, Kazuhide",

booktitle = "Proceedings of the Eleventh International Conference on Language Resources and Evaluation ({LREC} 2018)",

month = may,

year = "2018",

address = "Miyazaki, Japan",

publisher = "European Language Resources Association (ELRA)",

url = "https://aclanthology.org/L18-1072",

}

```

#### GEOLino

```

@inproceedings{mallinson2020,

title={Zero-Shot Crosslingual Sentence Simplification},

author={Mallinson, Jonathan and Sennrich, Rico and Lapata, Mirella},

year={2020},

booktitle={2020 Conference on Empirical Methods in Natural Language Processing (EMNLP 2020)}

}

```

#### German News

```

@inproceedings{sauberli-etal-2020-benchmarking,

title = "Benchmarking Data-driven Automatic Text Simplification for {G}erman",

author = {S{\"a}uberli, Andreas and

Ebling, Sarah and

Volk, Martin},

booktitle = "Proceedings of the 1st Workshop on Tools and Resources to Empower People with REAding DIfficulties (READI)",

month = may,

year = "2020",

address = "Marseille, France",

publisher = "European Language Resources Association",

url = "https://aclanthology.org/2020.readi-1.7",

pages = "41--48",

abstract = "Automatic text simplification is an active research area, and there are first systems for English, Spanish, Portuguese, and Italian. For German, no data-driven approach exists to this date, due to a lack of training data. In this paper, we present a parallel corpus of news items in German with corresponding simplifications on two complexity levels. The simplifications have been produced according to a well-documented set of guidelines. We then report on experiments in automatically simplifying the German news items using state-of-the-art neural machine translation techniques. We demonstrate that despite our small parallel corpus, our neural models were able to learn essential features of simplified language, such as lexical substitutions, deletion of less relevant words and phrases, and sentence shortening.",

language = "English",

ISBN = "979-10-95546-45-0",

}

```

#### Newsela EN/ES

```

@article{xu-etal-2015-problems,

title = "Problems in Current Text Simplification Research: New Data Can Help",

author = "Xu, Wei and

Callison-Burch, Chris and

Napoles, Courtney",

journal = "Transactions of the Association for Computational Linguistics",

volume = "3",

year = "2015",

address = "Cambridge, MA",

publisher = "MIT Press",

url = "https://aclanthology.org/Q15-1021",

doi = "10.1162/tacl_a_00139",

pages = "283--297",

abstract = "Simple Wikipedia has dominated simplification research in the past 5 years. In this opinion paper, we argue that focusing on Wikipedia limits simplification research. We back up our arguments with corpus analysis and by highlighting statements that other researchers have made in the simplification literature. We introduce a new simplification dataset that is a significant improvement over Simple Wikipedia, and present a novel quantitative-comparative approach to study the quality of simplification data resources.",

}

```

#### PaCCSS-IT

```

@inproceedings{brunato-etal-2016-paccss,

title = "{P}a{CCSS}-{IT}: A Parallel Corpus of Complex-Simple Sentences for Automatic Text Simplification",

author = "Brunato, Dominique and

Cimino, Andrea and

Dell{'}Orletta, Felice and

Venturi, Giulia",

booktitle = "Proceedings of the 2016 Conference on Empirical Methods in Natural Language Processing",

month = nov,

year = "2016",

address = "Austin, Texas",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/D16-1034",

doi = "10.18653/v1/D16-1034",

pages = "351--361",

}

```

#### PorSimples

```

@inproceedings{aluisio-gasperin-2010-fostering,

title = "Fostering Digital Inclusion and Accessibility: The {P}or{S}imples project for Simplification of {P}ortuguese Texts",

author = "Alu{\'\i}sio, Sandra and

Gasperin, Caroline",

booktitle = "Proceedings of the {NAACL} {HLT} 2010 Young Investigators Workshop on Computational Approaches to Languages of the {A}mericas",

month = jun,

year = "2010",

address = "Los Angeles, California",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/W10-1607",

pages = "46--53",

}

```

```

@inproceedings{10.1007/978-3-642-16952-6_31,

author="Scarton, Carolina and Gasperin, Caroline and Aluisio, Sandra",

editor="Kuri-Morales, Angel and Simari, Guillermo R.",

title="Revisiting the Readability Assessment of Texts in Portuguese",

booktitle="Advances in Artificial Intelligence -- IBERAMIA 2010",

year="2010",

publisher="Springer Berlin Heidelberg",

address="Berlin, Heidelberg",

pages="306--315",

isbn="978-3-642-16952-6"

}

```

#### RSSE

```

@inproceedings{sakhovskiy2021rusimplesenteval,

title={{RuSimpleSentEval-2021 shared task:} evaluating sentence simplification for Russian},

author={Sakhovskiy, Andrey and Izhevskaya, Alexandra and Pestova, Alena and Tutubalina, Elena and Malykh, Valentin and Smurov, Ivana and Artemova, Ekaterina},

booktitle={Proceedings of the International Conference “Dialogue},

pages={607--617},

year={2021}

}

```

#### RuAdapt

```

@inproceedings{Dmitrieva2021Quantitative,

title={A quantitative study of simplification strategies in adapted texts for L2 learners of Russian},

author={Dmitrieva, Anna and Laposhina, Antonina and Lebedeva, Maria},

booktitle={Proceedings of the International Conference “Dialogue},

pages={191--203},

year={2021}

}

```

```

@inproceedings{dmitrieva-tiedemann-2021-creating,

title = "Creating an Aligned {R}ussian Text Simplification Dataset from Language Learner Data",

author = {Dmitrieva, Anna and

Tiedemann, J{\"o}rg},

booktitle = "Proceedings of the 8th Workshop on Balto-Slavic Natural Language Processing",

month = apr,

year = "2021",

address = "Kiyv, Ukraine",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2021.bsnlp-1.8",

pages = "73--79",

abstract = "Parallel language corpora where regular texts are aligned with their simplified versions can be used in both natural language processing and theoretical linguistic studies. They are essential for the task of automatic text simplification, but can also provide valuable insights into the characteristics that make texts more accessible and reveal strategies that human experts use to simplify texts. Today, there exist a few parallel datasets for English and Simple English, but many other languages lack such data. In this paper we describe our work on creating an aligned Russian-Simple Russian dataset composed of Russian literature texts adapted for learners of Russian as a foreign language. This will be the first parallel dataset in this domain, and one of the first Simple Russian datasets in general.",

}

```

#### RuWikiLarge

```

@inproceedings{sakhovskiy2021rusimplesenteval,

title={{RuSimpleSentEval-2021 shared task:} evaluating sentence simplification for Russian},

author={Sakhovskiy, Andrey and Izhevskaya, Alexandra and Pestova, Alena and Tutubalina, Elena and Malykh, Valentin and Smurov, Ivana and Artemova, Ekaterina},

booktitle={Proceedings of the International Conference “Dialogue},

pages={607--617},

year={2021}

}

```

#### SIMPITIKI

```

@article{tonelli2016simpitiki,

title={SIMPITIKI: a Simplification corpus for Italian},

author={Tonelli, Sara and Aprosio, Alessio Palmero and Saltori, Francesca},

journal={Proceedings of CLiC-it},

year={2016}

}

```

#### Simple German

```

@inproceedings{battisti-etal-2020-corpus,

title = "A Corpus for Automatic Readability Assessment and Text Simplification of {G}erman",

author = {Battisti, Alessia and

Pf{\"u}tze, Dominik and

S{\"a}uberli, Andreas and

Kostrzewa, Marek and

Ebling, Sarah},

booktitle = "Proceedings of the Twelfth Language Resources and Evaluation Conference",

month = may,

year = "2020",

address = "Marseille, France",

publisher = "European Language Resources Association",

url = "https://aclanthology.org/2020.lrec-1.404",

pages = "3302--3311",

abstract = "In this paper, we present a corpus for use in automatic readability assessment and automatic text simplification for German, the first of its kind for this language. The corpus is compiled from web sources and consists of parallel as well as monolingual-only (simplified German) data amounting to approximately 6,200 documents (nearly 211,000 sentences). As a unique feature, the corpus contains information on text structure (e.g., paragraphs, lines), typography (e.g., font type, font style), and images (content, position, and dimensions). While the importance of considering such information in machine learning tasks involving simplified language, such as readability assessment, has repeatedly been stressed in the literature, we provide empirical evidence for its benefit. We also demonstrate the added value of leveraging monolingual-only data for automatic text simplification via machine translation through applying back-translation, a data augmentation technique.",

language = "English",

ISBN = "979-10-95546-34-4",

}

```

#### Simplext

```

@article{10.1145/2738046,

author = {Saggion, Horacio and \v{S}tajner, Sanja and Bott, Stefan and Mille, Simon and Rello, Luz and Drndarevic, Biljana},

title = {Making It Simplext: Implementation and Evaluation of a Text Simplification System for Spanish},

year = {2015},

issue_date = {June 2015}, publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

volume = {6},

number = {4},

issn = {1936-7228},

url = {https://doi.org/10.1145/2738046},

doi = {10.1145/2738046},

journal = {ACM Trans. Access. Comput.},

month = {may},

articleno = {14},

numpages = {36},

keywords = {Spanish, text simplification corpus, human evaluation, readability measures}

}

```

#### SimplifyUR

```

@inproceedings{qasmi-etal-2020-simplifyur,

title = "{S}implify{UR}: Unsupervised Lexical Text Simplification for {U}rdu",

author = "Qasmi, Namoos Hayat and

Zia, Haris Bin and

Athar, Awais and

Raza, Agha Ali",

booktitle = "Proceedings of the Twelfth Language Resources and Evaluation Conference",

month = may,

year = "2020",

address = "Marseille, France",

publisher = "European Language Resources Association",

url = "https://aclanthology.org/2020.lrec-1.428",

pages = "3484--3489",

language = "English",

ISBN = "979-10-95546-34-4",

}

```

#### SloTS

```

@misc{gorenc2022slovene,

title = {Slovene text simplification dataset {SloTS}},

author = {Gorenc, Sabina and Robnik-{\v S}ikonja, Marko},

url = {http://hdl.handle.net/11356/1682},

note = {Slovenian language resource repository {CLARIN}.{SI}},

copyright = {Creative Commons - Attribution 4.0 International ({CC} {BY} 4.0)},

issn = {2820-4042},

year = {2022}

}

```

#### Terence and Teacher

```

@inproceedings{brunato-etal-2015-design,

title = "Design and Annotation of the First {I}talian Corpus for Text Simplification",

author = "Brunato, Dominique and

Dell{'}Orletta, Felice and

Venturi, Giulia and

Montemagni, Simonetta",

booktitle = "Proceedings of the 9th Linguistic Annotation Workshop",

month = jun,

year = "2015",

address = "Denver, Colorado, USA",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/W15-1604",

doi = "10.3115/v1/W15-1604",

pages = "31--41",

}

```

#### TextComplexityDE

```

@article{naderi2019subjective,

title={Subjective Assessment of Text Complexity: A Dataset for German Language},

author={Naderi, Babak and Mohtaj, Salar and Ensikat, Kaspar and M{\"o}ller, Sebastian},

journal={arXiv preprint arXiv:1904.07733},

year={2019}

}

```

#### WikiAuto

```

@inproceedings{acl/JiangMLZX20,

author = {Chao Jiang and

Mounica Maddela and

Wuwei Lan and

Yang Zhong and

Wei Xu},

editor = {Dan Jurafsky and

Joyce Chai and

Natalie Schluter and

Joel R. Tetreault},

title = {Neural {CRF} Model for Sentence Alignment in Text Simplification},

booktitle = {Proceedings of the 58th Annual Meeting of the Association for Computational

Linguistics, {ACL} 2020, Online, July 5-10, 2020},

pages = {7943--7960},

publisher = {Association for Computational Linguistics},

year = {2020},

url = {https://www.aclweb.org/anthology/2020.acl-main.709/}

}

```

#### WikiLargeFR

```

@inproceedings{cardon-grabar-2020-french,

title = "{F}rench Biomedical Text Simplification: When Small and Precise Helps",

author = "Cardon, R{\'e}mi and

Grabar, Natalia",

booktitle = "Proceedings of the 28th International Conference on Computational Linguistics",

month = dec,

year = "2020",

address = "Barcelona, Spain (Online)",

publisher = "International Committee on Computational Linguistics",

url = "https://aclanthology.org/2020.coling-main.62",

doi = "10.18653/v1/2020.coling-main.62",

pages = "710--716",

abstract = "We present experiments on biomedical text simplification in French. We use two kinds of corpora {--} parallel sentences extracted from existing health comparable corpora in French and WikiLarge corpus translated from English to French {--} and a lexicon that associates medical terms with paraphrases. Then, we train neural models on these parallel corpora using different ratios of general and specialized sentences. We evaluate the results with BLEU, SARI and Kandel scores. The results point out that little specialized data helps significantly the simplification.",

}

```

## Data Availability

### Public Datasets

Most of the public datasets are available as a part of this MultiSim Repo. A few are still pending availability. For all resources we provide alternative download links.

| Dataset | Language | Availability in MultiSim Repo | Alternative Link |

|---|---|---|---|

| ASSET | English | Available | https://huggingface.co/datasets/asset |

| WikiAuto | English | Available | https://huggingface.co/datasets/wiki_auto |

| CLEAR | French | Available | http://natalia.grabar.free.fr/resources.php#remi |

| WikiLargeFR | French | Available | http://natalia.grabar.free.fr/resources.php#remi |

| GEOLino | German | Available | https://github.com/Jmallins/ZEST-data |

| TextComplexityDE | German | Available | https://github.com/babaknaderi/TextComplexityDE |

| AdminIT | Italian | Available | https://github.com/Unipisa/admin-It |

| Simpitiki | Italian | Available | https://github.com/dhfbk/simpitiki# |

| PaCCSS-IT | Italian | Available | http://www.italianlp.it/resources/paccss-it-parallel-corpus-of-complex-simple-sentences-for-italian/ |

| Terence and Teacher | Italian | Available | http://www.italianlp.it/resources/terence-and-teacher/ |

| Easy Japanese | Japanese | Available | https://www.jnlp.org/GengoHouse/snow/t15 |

| Easy Japanese Extended | Japanese | Available | https://www.jnlp.org/GengoHouse/snow/t23 |

| RuAdapt Encyclopedia | Russian | Available | https://github.com/Digital-Pushkin-Lab/RuAdapt |

| RuAdapt Fairytales | Russian | Available | https://github.com/Digital-Pushkin-Lab/RuAdapt |

| RuSimpleSentEval | Russian | Available | https://github.com/dialogue-evaluation/RuSimpleSentEval |

| RuWikiLarge | Russian | Available | https://github.com/dialogue-evaluation/RuSimpleSentEval |

| SloTS | Slovene | Available | https://github.com/sabina-skubic/text-simplification-slovene |

| SimplifyUR | Urdu | Pending | https://github.com/harisbinzia/SimplifyUR |

| PorSimples | Brazilian Portuguese | Available | [sandra@icmc.usp.br](mailto:sandra@icmc.usp.br) |

### On Request Datasets

The authors of the original papers must be contacted for on request datasets. Contact information for the authors of each dataset is provided below.

| Dataset | Language | Contact |

|---|---|---|

| CBST | Basque | http://www.ixa.eus/node/13007?language=en <br/> [itziar.gonzalezd@ehu.eus](mailto:itziar.gonzalezd@ehu.eus) |

| DSim | Danish | [sk@eyejustread.com](mailto:sk@eyejustread.com) |

| Newsela EN | English | [https://newsela.com/data/](https://newsela.com/data/) |

| Newsela ES | Spanish | [https://newsela.com/data/](https://newsela.com/data/) |

| German News | German | [ebling@cl.uzh.ch](mailto:ebling@cl.uzh.ch) |

| Simple German | German | [ebling@cl.uzh.ch](mailto:ebling@cl.uzh.ch) |

| Simplext | Spanish | [horacio.saggion@upf.edu](mailto:horacio.saggion@upf.edu) |

| RuAdapt Literature | Russian | Partially Available: https://github.com/Digital-Pushkin-Lab/RuAdapt <br/> Full Dataset: [anna.dmitrieva@helsinki.fi](mailto:anna.dmitrieva@helsinki.fi) | | 31,423 | [

[

-0.0240936279296875,

-0.034912109375,

0.0217742919921875,

0.026123046875,

-0.0174713134765625,

-0.01491546630859375,

-0.043975830078125,

-0.035797119140625,

0.0096893310546875,

0.012969970703125,

-0.058502197265625,

-0.046539306640625,

-0.044036865234375,

0.... |

atmallen/popqa-parents-lying | 2023-07-19T15:57:51.000Z | [

"region:us"

] | atmallen | null | null | 0 | 8 | 2023-07-19T00:40:17 | ---

dataset_info:

features:

- name: text

dtype: string

- name: label

dtype:

class_label:

names:

'0': 'false'

'1': 'true'

- name: true_label

dtype: int64

splits:

- name: train

num_bytes: 3223356

num_examples: 31936

- name: validation

num_bytes: 695352

num_examples: 6848

- name: test

num_bytes: 700442

num_examples: 6880

download_size: 750525

dataset_size: 4619150

---

# Dataset Card for "popqa-parents-lying"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 631 | [

[

-0.036346435546875,

-0.0145263671875,

0.00589752197265625,

0.0174407958984375,

0.0033435821533203125,

0.001987457275390625,

0.0308837890625,

-0.0009021759033203125,

0.03125,

0.032623291015625,

-0.08929443359375,

-0.0301513671875,

-0.0318603515625,

-0.0314636... |

xzuyn/open-instruct-uncensored-alpaca | 2023-07-31T22:23:20.000Z | [

"size_categories:100K<n<1M",

"language:en",

"allenai",

"open-instruct",

"ehartford",

"alpaca",

"region:us"

] | xzuyn | null | null | 0 | 8 | 2023-07-20T21:36:52 | ---

language:

- en

tags:

- allenai

- open-instruct

- ehartford

- alpaca

size_categories:

- 100K<n<1M

---

[Original dataset page from ehartford.](https://huggingface.co/datasets/ehartford/open-instruct-uncensored)

810,102 entries. Sourced from `open-instruct-uncensored.jsonl`.

Converted the jsonl to a json which can be loaded into something like LLaMa-LoRA-Tuner.

I've also included smaller datasets that includes less entries depending on how much memory you have to work with.

Each one is randomized before being converted, so each dataset is unique in order.

```

Count of each Dataset:

code_alpaca: 19991

unnatural_instructions: 68231

baize: 166096

self_instruct: 81512

oasst1: 49433

flan_v2: 97519

stanford_alpaca: 50098

sharegpt: 46733

super_ni: 96157

dolly: 14624

cot: 73946

gpt4_alpaca: 45774

``` | 809 | [

[

-0.035430908203125,

-0.032440185546875,

0.0198974609375,

0.0093231201171875,

-0.00812530517578125,

-0.0262908935546875,

-0.004772186279296875,

-0.02789306640625,

0.034454345703125,

0.074951171875,

-0.043060302734375,

-0.0643310546875,

-0.035736083984375,

0.0... |

TrainingDataPro/makeup-detection-dataset | 2023-09-19T19:35:55.000Z | [

"task_categories:image-to-image",

"task_categories:image-classification",

"language:en",

"license:cc-by-nc-nd-4.0",

"code",

"region:us"

] | TrainingDataPro | The dataset consists of photos featuring the same individuals captured in two

distinct scenarios - *with and without makeup*. The dataset contains a diverse

range of individuals with various *ages, ethnicities and genders*. The images

themselves would be of high quality, ensuring clarity and detail for each

subject.

In photos with makeup, it is applied **to only specific parts** of the face,

such as *eyes, lips, or skin*.

In photos without makeup, individuals have a bare face with no visible

cosmetics or beauty enhancements. These images would provide a clear contrast

to the makeup images, allowing for significant visual analysis. | @InProceedings{huggingface:dataset,

title = {makeup-detection-dataset},

author = {TrainingDataPro},

year = {2023}

} | 2 | 8 | 2023-07-21T07:07:28 | ---

language:

- en

license: cc-by-nc-nd-4.0

task_categories:

- image-to-image

- image-classification

tags:

- code

dataset_info:

features:

- name: no_makeup

dtype: image

- name: with_makeup

dtype: image

- name: part

dtype: string

- name: gender

dtype: string

- name: age

dtype: int8

- name: country

dtype: string

splits:

- name: train

num_bytes: 25845965

num_examples: 26

download_size: 25248180

dataset_size: 25845965

---

# Makeup Detection Dataset

The dataset consists of photos featuring the same individuals captured in two distinct scenarios - *with and without makeup*. The dataset contains a diverse range of individuals with various *ages, ethnicities and genders*. The images themselves would be of high quality, ensuring clarity and detail for each subject.

In photos with makeup, it is applied **to only specific parts** of the face, such as *eyes, lips, or skin*.

In photos without makeup, individuals have a bare face with no visible cosmetics or beauty enhancements. These images would provide a clear contrast to the makeup images, allowing for significant visual analysis.

### The dataset's possible applications:

- facial recognition

- beauty consultations and personalized recommendations

- augmented reality and filters in photography apps

- social media and influencer marketing

- dermatology and skincare

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=makeup-detection-dataset) to discuss your requirements, learn about the price and buy the dataset.

# Content

- **no_makeup**: includes images of people *without* makeup

- **with_makeup**: includes images of people *wearing makeup*. People are the same as in the previous folder, photos are identified by the same name

- **.csv** file: contains information about people in the dataset

### File with the extension .csv

includes the following information for each set of media files:

- **no_makeup**: link to the photo of a person without makeup,

- **with_makeup**: link to the photo of the person with makeup,

- **part**: body part of makeup's application,

- **gender**: gender of the person,

- **age**: age of the person,

- **country**: country of the person

# Images for makeup detection might be collected in accordance with your requirements.

## [TrainingData](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=makeup-detection-dataset) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** | 3,031 | [

[

-0.026336669921875,

-0.038055419921875,

0.00760650634765625,

0.0170440673828125,

-0.007297515869140625,

0.00643157958984375,

-0.005397796630859375,

-0.033599853515625,

0.030303955078125,

0.066650390625,

-0.066650390625,

-0.07952880859375,

-0.03619384765625,

... |

YoonSeul/legal_train_v1 | 2023-07-24T14:44:37.000Z | [

"region:us"

] | YoonSeul | null | null | 0 | 8 | 2023-07-24T14:44:31 | ---

dataset_info:

features:

- name: instruction

dtype: string

- name: input

dtype: string

- name: output

dtype: string

splits:

- name: train

num_bytes: 33357358

num_examples: 14716

download_size: 15578888

dataset_size: 33357358

---

# Dataset Card for "legal_train_v1"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 436 | [

[

-0.0265350341796875,

-0.00403594970703125,

0.01399993896484375,

0.0201873779296875,

-0.0268402099609375,

-0.0197601318359375,

0.02606201171875,

-0.002918243408203125,

0.05078125,

0.047637939453125,

-0.058502197265625,

-0.052581787109375,

-0.0404052734375,

-0... |

HydraLM/math_dataset_alpaca | 2023-07-27T18:43:34.000Z | [

"region:us"

] | HydraLM | null | null | 0 | 8 | 2023-07-27T18:43:23 | ---

dataset_info:

features:

- name: input

dtype: string

- name: output

dtype: string

splits:

- name: train

num_bytes: 71896969

num_examples: 49999

download_size: 34712339

dataset_size: 71896969

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "math_dataset_alpaca"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 487 | [

[

-0.0509033203125,

-0.036956787109375,

0.003753662109375,

0.03094482421875,

-0.0239410400390625,

-0.0068359375,

0.0321044921875,

-0.005268096923828125,

0.07757568359375,

0.0307464599609375,

-0.05914306640625,

-0.0526123046875,

-0.0521240234375,

-0.03091430664... |

HydraLM/GPTeacher_roleplay_standardized | 2023-07-27T20:03:23.000Z | [

"region:us"

] | HydraLM | null | null | 2 | 8 | 2023-07-27T20:03:21 | ---

dataset_info:

features:

- name: message

dtype: string

- name: message_type

dtype: string

- name: message_id

dtype: int64

- name: conversation_id

dtype: int64

splits:

- name: train

num_bytes: 1664691

num_examples: 5769

download_size: 946455

dataset_size: 1664691

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "GPTeacher_roleplay_standardized"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 583 | [

[

-0.0240478515625,

-0.0198211669921875,

0.0000095367431640625,

0.01544189453125,

-0.00543975830078125,

-0.0233154296875,

0.0035457611083984375,

-0.00586700439453125,

0.032928466796875,

0.033660888671875,

-0.053741455078125,

-0.0731201171875,

-0.052978515625,

... |

kaenakiakona/spanglish_claude_generated | 2023-08-03T23:12:25.000Z | [

"region:us"

] | kaenakiakona | null | null | 0 | 8 | 2023-07-30T21:07:50 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

DNW/newbury_opening_times_qa | 2023-07-31T12:57:27.000Z | [

"region:us"

] | DNW | null | null | 0 | 8 | 2023-07-31T12:57:26 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 31347

num_examples: 233

download_size: 8252

dataset_size: 31347

---

# Dataset Card for "newbury_opening_times_qa"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 444 | [

[

-0.0206756591796875,

-0.0009264945983886719,

0.0118408203125,

0.03094482421875,

-0.0173187255859375,

-0.02606201171875,

0.03778076171875,

-0.016021728515625,

0.0304412841796875,

0.029815673828125,

-0.053497314453125,

-0.056610107421875,

-0.0015993118286132812,

... |

xzuyn/futurama-alpaca | 2023-08-03T06:49:53.000Z | [

"task_categories:text-generation",

"task_categories:conversational",

"size_categories:n<1K",

"language:en",

"region:us"

] | xzuyn | null | null | 0 | 8 | 2023-08-01T20:41:50 | ---

language:

- en

size_categories:

- n<1K

task_categories:

- text-generation

- conversational

---

[Original Dataset](https://www.kaggle.com/datasets/josephvm/futurama-seasons-16-transcripts?select=only_spoken_text.csv)

114 episodes. WIP formatting as with LLaMa, it's like 4000+ tokens each.

I would like to augment the instruction, and also possibly input a summary.

I also want to make a set that includes multiple tv shows. Just not sure how I wanna go about reformatting all this to fit into smaller chunks like 512 tokens, while still understanding the context of being and instruction but the episode at the same time.

```

Instruction: `Generate an episode of Futurama.`

Input: `{Episode Name} - {Episode Synopsis}`

Output: `{Episode Dialog In Chat Format}`

``` | 772 | [

[

-0.01023101806640625,

-0.06011962890625,

-0.0021419525146484375,

0.0254058837890625,

-0.0194549560546875,

-0.00027942657470703125,

-0.04522705078125,

0.01480865478515625,

0.055511474609375,

0.0276641845703125,

-0.0684814453125,

-0.0172882080078125,

-0.033203125,... |

iamshnoo/geomlama | 2023-09-15T23:24:53.000Z | [

"region:us"

] | iamshnoo | null | null | 0 | 8 | 2023-08-02T01:18:19 | ---

dataset_info:

features:

- name: question

dtype: string

- name: answer

dtype: string

- name: candidate_answers

dtype: string

- name: context

dtype: string

- name: country

dtype: string

splits:

- name: en

num_bytes: 17223

num_examples: 125

- name: fa

num_bytes: 24061

num_examples: 125

- name: hi

num_bytes: 34719

num_examples: 125

- name: sw

num_bytes: 17593

num_examples: 125

- name: zh

num_bytes: 15926

num_examples: 125

- name: el

num_bytes: 37639

num_examples: 150

download_size: 45285

dataset_size: 147161

---

data from the paper GeoMLAMA: Geo-Diverse Commonsense Probing on Multilingual Pre-Trained Language Models

(along with some new data and modifications for cleaning)

[GitHub](https://github.com/WadeYin9712/GeoMLAMA)

# Dataset Card for "geomlama"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 995 | [

[

-0.043426513671875,

-0.0537109375,

0.031707763671875,

-0.0150299072265625,

-0.010101318359375,

-0.01299285888671875,

-0.03240966796875,

-0.0328369140625,

0.0220794677734375,

0.0472412109375,

-0.02545166015625,

-0.080322265625,

-0.057647705078125,

0.002443313... |

BigSuperbPrivate/SpokenTermDetection_Tedlium2Train | 2023-08-02T13:37:29.000Z | [

"region:us"

] | BigSuperbPrivate | null | null | 0 | 8 | 2023-08-02T13:06:04 | ---

dataset_info:

features:

- name: file

dtype: string

- name: audio

dtype: audio

- name: text

dtype: string

- name: instruction

dtype: string

- name: label

dtype: string

- name: transcription

dtype: string

splits:

- name: train

num_bytes: 15786905536.68

num_examples: 92967

- name: validation

num_bytes: 117079048.0

num_examples: 507

download_size: 15262598420

dataset_size: 15903984584.68

---

# Dataset Card for "SpokenTermDetection_Tedlium2Train"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 646 | [

[

-0.0291748046875,

-0.02685546875,

-0.0021724700927734375,

0.01161956787109375,

-0.0102081298828125,

0.0130767822265625,

-0.0203399658203125,

-0.01415252685546875,

0.044921875,

0.0241546630859375,

-0.07122802734375,

-0.050872802734375,

-0.03497314453125,

-0.0... |

pourmand1376/isna-news | 2023-08-19T11:56:01.000Z | [

"task_categories:text-generation",

"size_categories:1M<n<10M",

"language:fa",

"license:apache-2.0",

"region:us"

] | pourmand1376 | null | null | 0 | 8 | 2023-08-02T14:30:40 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: TEXT

dtype: string

- name: SOURCE

dtype: string

- name: METADATA

dtype: string

splits:

- name: train

num_bytes: 8078800930

num_examples: 2104859

download_size: 2743795907

dataset_size: 8078800930

license: apache-2.0

task_categories:

- text-generation

language:

- fa

pretty_name: Isna News

size_categories:

- 1M<n<10M

---

# Dataset Card for "isna-news"

This is converted version of [Isna-news](https://www.kaggle.com/datasets/amirpourmand/isna-news) to comply with Open-assistant standards.

MetaData Column:

- title

- link: short link to news

- language: fa

- jalali-time: time in jalali calendar

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 896 | [

[

-0.0228729248046875,

-0.036865234375,

0.0190887451171875,

0.0302276611328125,

-0.06036376953125,

-0.0215911865234375,

-0.01126861572265625,

-0.02459716796875,

0.057647705078125,

0.03607177734375,

-0.07147216796875,

-0.05621337890625,

-0.036041259765625,

0.00... |

adityarra07/sub_ATC | 2023-08-06T05:38:09.000Z | [

"region:us"

] | adityarra07 | null | null | 0 | 8 | 2023-08-04T19:13:17 | ---

dataset_info:

features:

- name: audio

dtype:

audio:

sampling_rate: 16000

- name: transcription

dtype: string

- name: id

dtype: string

splits:

- name: train

num_bytes: 136737944.06422067

num_examples: 1000

- name: test

num_bytes: 13673794.406422066

num_examples: 100

download_size: 12473551

dataset_size: 150411738.47064275

---

# Dataset Card for "sub_ATC"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 552 | [

[

-0.0460205078125,

-0.01352691650390625,

0.0152587890625,

-0.0035610198974609375,

-0.0294647216796875,

0.0201263427734375,

0.033416748046875,

-0.0091552734375,

0.06805419921875,

0.02154541015625,

-0.06292724609375,

-0.062255859375,

-0.03753662109375,

-0.01444... |

diffusers/instructpix2pix-clip-filtered-upscaled | 2023-08-07T04:28:55.000Z | [

"region:us"

] | diffusers | null | null | 1 | 8 | 2023-08-07T03:02:48 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

hakatashi/hakatashi-pixiv-bookmark-deepdanbooru | 2023-08-07T05:38:17.000Z | [

"task_categories:image-classification",

"task_categories:tabular-classification",

"size_categories:100K<n<1M",

"art",

"region:us"

] | hakatashi | null | null | 2 | 8 | 2023-08-07T03:54:24 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

- split: validation

path: data/validation-*

dataset_info:

features:

- name: tag_probs

sequence: float32

- name: class

dtype:

class_label:

names:

'0': not_bookmarked

'1': bookmarked_public

'2': bookmarked_private

splits:

- name: train

num_bytes: 4301053452

num_examples: 179121

- name: test

num_bytes: 1433684484

num_examples: 59707

- name: validation

num_bytes: 1433708496

num_examples: 59708

download_size: 7351682183

dataset_size: 7168446432

task_categories:

- image-classification

- tabular-classification

tags:

- art

size_categories:

- 100K<n<1M

---

The dataset for training classification model of pixiv artworks by my preference.

## Schema

* tag_probs: List of probabilities for each tag. Preprocessed by [RF5/danbooru-pretrained](https://github.com/RF5/danbooru-pretrained) model. The index of each probability corresponds to the index of the tag in the [class_names_6000.json](https://github.com/RF5/danbooru-pretrained/blob/master/config/class_names_6000.json) file.

* class:

* not_bookmarked (0): Generated from images randomly-sampled from [animelover/danbooru2022](https://huggingface.co/datasets/animelover/danbooru2022) dataset. The images are filtered in advance to the post with pixiv source.

* bookmarked_public (1): Generated from publicly bookmarked images of [hakatashi](https://twitter.com/hakatashi).

* bookmarked_private (2): Generated from privately bookmarked images of [hakatashi](https://twitter.com/hakatashi).

## Stats

train:test:validation = 6:2:2

* not_bookmarked (0): 202,290 images

* bookmarked_public (1): 73,587 images

* bookmarked_private (2): 22,659 images

## Usage

```

>>> from datasets import load_dataset

>>> dataset = load_dataset("hakatashi/hakatashi-pixiv-bookmark-deepdanbooru")

>>> dataset

DatasetDict({

test: Dataset({

features: ['tag_probs', 'class'],

num_rows: 59707

})

train: Dataset({

features: ['tag_probs', 'class'],

num_rows: 179121

})

validation: Dataset({

features: ['tag_probs', 'class'],

num_rows: 59708

})

})

>>> dataset['train'].features

{'tag_probs': Sequence(feature=Value(dtype='float32', id=None), length=-1, id=None),

'class': ClassLabel(names=['not_bookmarked', 'bookmarked_public', 'bookmarked_private'], id=None)}

``` | 2,500 | [

[

-0.041015625,

-0.01245880126953125,

-0.0034027099609375,

-0.00301361083984375,

-0.032684326171875,

-0.0232391357421875,

-0.01025390625,

-0.0091552734375,

0.00807952880859375,

0.0231781005859375,

-0.0335693359375,

-0.05694580078125,

-0.040557861328125,

0.0031... |

Fredithefish/openassistant-guanaco-unfiltered | 2023-08-27T21:08:58.000Z | [

"task_categories:conversational",

"size_categories:1K<n<10K",

"language:en",

"language:de",

"language:fr",

"language:es",

"license:apache-2.0",

"region:us"

] | Fredithefish | null | null | 5 | 8 | 2023-08-12T10:12:28 | ---

license: apache-2.0

task_categories:

- conversational

language:

- en

- de

- fr

- es

size_categories:

- 1K<n<10K

---

# Guanaco-Unfiltered

- Any language other than English, German, French, or Spanish has been removed.

- Refusals of assistance have been removed.

- The identification as OpenAssistant has been removed.

## [Version 2 is out](https://huggingface.co/datasets/Fredithefish/openassistant-guanaco-unfiltered/blob/main/guanaco-unfiltered-v2.jsonl)

- Identification as OpenAssistant is now fully removed

- other improvements | 537 | [

[

-0.0058441162109375,

-0.05023193359375,

0.0249481201171875,

0.03192138671875,

-0.0208587646484375,

0.0176239013671875,

-0.026031494140625,

-0.04541015625,

0.026763916015625,

0.05029296875,

-0.051727294921875,

-0.046417236328125,

-0.061065673828125,

0.0149993... |

FreedomIntelligence/sharegpt-korean | 2023-08-13T16:46:20.000Z | [

"license:apache-2.0",

"region:us"

] | FreedomIntelligence | null | null | 0 | 8 | 2023-08-13T16:41:43 | ---

license: apache-2.0

---

Korean ShareGPT data translated by gpt-3.5-turbo.

The dataset is used in the research related to [MultilingualSIFT](https://github.com/FreedomIntelligence/MultilingualSIFT). | 204 | [

[

-0.032806396484375,

-0.0246734619140625,

0.026092529296875,

0.03851318359375,

-0.027557373046875,

0.00470733642578125,

-0.025360107421875,

-0.025054931640625,

0.0197601318359375,

0.0229339599609375,

-0.06396484375,

-0.02679443359375,

-0.035797119140625,

0.00... |

TrainingDataPro/cows-detection-dataset | 2023-09-14T16:32:30.000Z | [

"task_categories:image-to-image",

"task_categories:image-classification",

"task_categories:object-detection",

"language:en",

"license:cc-by-nc-nd-4.0",

"biology",

"code",

"region:us"

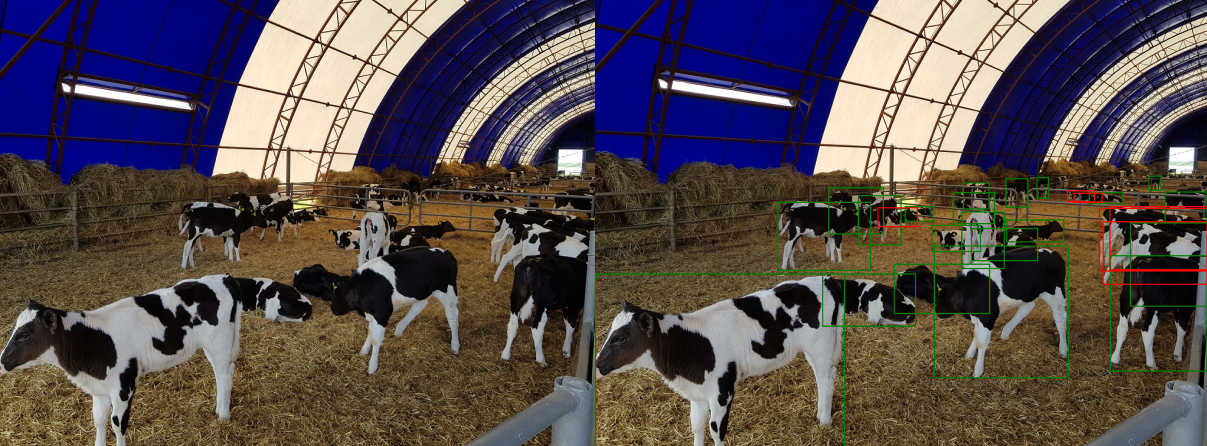

] | TrainingDataPro | The dataset is a collection of images along with corresponding bounding box annotations

that are specifically curated for **detecting cows** in images. The dataset covers

different *cows breeds, sizes, and orientations*, providing a comprehensive

representation of cows appearances and positions. Additionally, the visibility of each

cow is presented in the .xml file.

The cow detection dataset provides a valuable resource for researchers working on

detection tasks. It offers a diverse collection of annotated images, allowing for

comprehensive algorithm development, evaluation, and benchmarking, ultimately aiding

in the development of accurate and robust models. | @InProceedings{huggingface:dataset,

title = {cows-detection-dataset},

author = {TrainingDataPro},

year = {2023}

} | 1 | 8 | 2023-08-14T17:00:36 | ---

language:

- en

license: cc-by-nc-nd-4.0

task_categories:

- image-to-image

- image-classification

- object-detection

tags:

- biology

- code

dataset_info:

features:

- name: id

dtype: int32

- name: image

dtype: image

- name: mask

dtype: image

- name: bboxes

dtype: string

splits:

- name: train

num_bytes: 184108240

num_examples: 51

download_size: 183666433

dataset_size: 184108240

---

# Cows Detection Dataset

The dataset is a collection of images along with corresponding bounding box annotations that are specifically curated for **detecting cows** in images. The dataset covers different *cows breeds, sizes, and orientations*, providing a comprehensive representation of cows appearances and positions. Additionally, the visibility of each cow is presented in the .xml file.

The cow detection dataset provides a valuable resource for researchers working on detection tasks. It offers a diverse collection of annotated images, allowing for comprehensive algorithm development, evaluation, and benchmarking, ultimately aiding in the development of accurate and robust models.

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=cows-detection-dataset) to discuss your requirements, learn about the price and buy the dataset.

# Dataset structure

- **images** - contains of original images of cows

- **boxes** - includes bounding box labeling for the original images

- **annotations.xml** - contains coordinates of the bounding boxes and labels, created for the original photo

# Data Format

Each image from `images` folder is accompanied by an XML-annotation in the `annotations.xml` file indicating the coordinates of the bounding boxes for cows detection. For each point, the x and y coordinates are provided. Visibility of the cow is also provided by the label **is_visible** (true, false).

# Example of XML file structure

.png?generation=1692032268744062&alt=media)

# Cows Detection might be made in accordance with your requirements.

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=cows-detection-dataset) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** | 2,880 | [

[

-0.046966552734375,

-0.021820068359375,

0.00795745849609375,

-0.0153045654296875,

-0.03314208984375,

-0.0164947509765625,

0.0239410400390625,

-0.042633056640625,

0.0233917236328125,

0.063232421875,

-0.038543701171875,

-0.0770263671875,

-0.04901123046875,

0.0... |

Sylvana/qa_en_translation | 2023-08-18T07:51:14.000Z | [

"task_categories:translation",

"size_categories:1K<n<10K",

"language:ar",

"license:apache-2.0",

"region:us"

] | Sylvana | null | null | 1 | 8 | 2023-08-17T17:38:33 | ---

license: apache-2.0

task_categories:

- translation

language:

- ar

size_categories:

- 1K<n<10K

---

# Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] | 1,634 | [

[

-0.038177490234375,

-0.02984619140625,

-0.0036067962646484375,

0.027130126953125,

-0.0323486328125,

0.0037822723388671875,

-0.01727294921875,

-0.02020263671875,

0.049041748046875,

0.04046630859375,

-0.0634765625,

-0.08062744140625,

-0.052947998046875,

0.0020... |

highnote/pubmed_qa | 2023-08-19T13:28:27.000Z | [

"task_categories:question-answering",

"task_ids:multiple-choice-qa",

"annotations_creators:expert-generated",

"annotations_creators:machine-generated",

"language_creators:expert-generated",

"multilinguality:monolingual",

"size_categories:100K<n<1M",

"size_categories:10K<n<100K",

"size_categories:1K<... | highnote | PubMedQA is a novel biomedical question answering (QA) dataset collected from PubMed abstracts.

The task of PubMedQA is to answer research questions with yes/no/maybe (e.g.: Do preoperative

statins reduce atrial fibrillation after coronary artery bypass grafting?) using the corresponding abstracts.

PubMedQA has 1k expert-annotated, 61.2k unlabeled and 211.3k artificially generated QA instances.

Each PubMedQA instance is composed of (1) a question which is either an existing research article

title or derived from one, (2) a context which is the corresponding abstract without its conclusion,

(3) a long answer, which is the conclusion of the abstract and, presumably, answers the research question,

and (4) a yes/no/maybe answer which summarizes the conclusion.

PubMedQA is the first QA dataset where reasoning over biomedical research texts, especially their

quantitative contents, is required to answer the questions. | @inproceedings{jin2019pubmedqa,

title={PubMedQA: A Dataset for Biomedical Research Question Answering},

author={Jin, Qiao and Dhingra, Bhuwan and Liu, Zhengping and Cohen, William and Lu, Xinghua},

booktitle={Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP)},

pages={2567--2577},

year={2019}

} | 1 | 8 | 2023-08-19T13:28:27 | ---

annotations_creators:

- expert-generated

- machine-generated

language_creators:

- expert-generated

language:

- en

license:

- mit

multilinguality:

- monolingual

size_categories:

- 100K<n<1M

- 10K<n<100K

- 1K<n<10K

source_datasets:

- original

task_categories:

- question-answering

task_ids:

- multiple-choice-qa

paperswithcode_id: pubmedqa

pretty_name: PubMedQA

dataset_info:

- config_name: pqa_labeled

features:

- name: pubid

dtype: int32

- name: question

dtype: string

- name: context

sequence:

- name: contexts

dtype: string

- name: labels

dtype: string

- name: meshes

dtype: string

- name: reasoning_required_pred

dtype: string

- name: reasoning_free_pred

dtype: string

- name: long_answer

dtype: string

- name: final_decision

dtype: string

splits:

- name: train

num_bytes: 2089200

num_examples: 1000

download_size: 687882700

dataset_size: 2089200

- config_name: pqa_unlabeled

features:

- name: pubid

dtype: int32

- name: question

dtype: string

- name: context

sequence:

- name: contexts

dtype: string

- name: labels

dtype: string

- name: meshes

dtype: string

- name: long_answer

dtype: string

splits:

- name: train

num_bytes: 125938502

num_examples: 61249

download_size: 687882700

dataset_size: 125938502

- config_name: pqa_artificial

features:

- name: pubid

dtype: int32

- name: question

dtype: string

- name: context

sequence:

- name: contexts

dtype: string

- name: labels

dtype: string

- name: meshes

dtype: string

- name: long_answer

dtype: string

- name: final_decision

dtype: string

splits:

- name: train

num_bytes: 443554667

num_examples: 211269

download_size: 687882700

dataset_size: 443554667

config_names:

- pqa_artificial

- pqa_labeled

- pqa_unlabeled

duplicated_from: pubmed_qa

---

# Dataset Card for [Dataset Name]

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [PUBMED_QA homepage](https://pubmedqa.github.io/ )

- **Repository:** [PUBMED_QA repository](https://github.com/pubmedqa/pubmedqa)

- **Paper:** [PUBMED_QA: A Dataset for Biomedical Research Question Answering](https://arxiv.org/abs/1909.06146)

- **Leaderboard:** [PUBMED_QA: Leaderboard](https://pubmedqa.github.io/)

### Dataset Summary

[More Information Needed]

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

Thanks to [@tuner007](https://github.com/tuner007) for adding this dataset. | 4,617 | [

[

-0.032440185546875,

-0.042938232421875,

0.017578125,

0.01171875,

-0.0167236328125,

0.016937255859375,

-0.006732940673828125,

-0.0196075439453125,

0.049285888671875,

0.0396728515625,

-0.06341552734375,

-0.07623291015625,

-0.04620361328125,

0.01346588134765625... |

yardeny/processed_gpt2_context_len_512 | 2023-08-21T08:53:12.000Z | [

"region:us"

] | yardeny | null | null | 0 | 8 | 2023-08-21T05:49:19 | ---

dataset_info:

features:

- name: input_ids

sequence: int32

- name: attention_mask

sequence: int8

splits:

- name: train

num_bytes: 15593335128.0

num_examples: 6072171

download_size: 6562663671

dataset_size: 15593335128.0

---

# Dataset Card for "processed_gpt2_dataset"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 433 | [

[

-0.0261688232421875,

-0.029815673828125,

0.028167724609375,

0.0153656005859375,

-0.0210113525390625,

-0.0012645721435546875,

0.0165863037109375,

-0.0146942138671875,

0.0389404296875,

0.032745361328125,

-0.0546875,

-0.0411376953125,

-0.053955078125,

-0.024673... |

zake7749/chinese-speech-corpus | 2023-08-30T16:19:14.000Z | [

"task_categories:conversational",

"size_categories:1K<n<10K",

"language:zh",

"license:cc",

"region:us"

] | zake7749 | null | null | 0 | 8 | 2023-08-21T09:33:09 | ---

language:

- zh

license: cc

size_categories:

- 1K<n<10K

task_categories:

- conversational

dataset_info:

features:

- name: sentences

list:

- name: speaker

dtype: string

- name: speech