author stringlengths 2 29 ⌀ | cardData null | citation stringlengths 0 9.58k ⌀ | description stringlengths 0 5.93k ⌀ | disabled bool 1 class | downloads float64 1 1M ⌀ | gated bool 2 classes | id stringlengths 2 108 | lastModified stringlengths 24 24 | paperswithcode_id stringlengths 2 45 ⌀ | private bool 2 classes | sha stringlengths 40 40 | siblings list | tags list | readme_url stringlengths 57 163 | readme stringlengths 0 977k |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

davanstrien | null | null | null | false | null | false | davanstrien/vfr | 2021-11-22T17:07:01.000Z | null | true | 8ba0d981dd7c71485c520ca61c0e62ee8e434234 | [] | [

"annotations_creators:expert-generated",

"language_creators:crowdsourced",

"language_creators:expert-generated",

"languages:en-GB",

"languages:en-US",

"languages:de-DE",

"languages:fr-FR",

"languages:nl-NL",

"licenses:cc0-1.0",

"multilinguality:multilingual",

"size_categories:n<1K",

"source_da... | https://huggingface.co/datasets/davanstrien/vfr/resolve/main/README.md | |

davidwisdom | null | null | null | false | 321 | false | davidwisdom/reddit-randomness | 2021-11-06T23:56:43.000Z | null | false | 01740f7cd9ffa5855819bd828d5dcb03578abf0e | [] | [] | https://huggingface.co/datasets/davidwisdom/reddit-randomness/resolve/main/README.md | # Reddit Randomness Dataset

A dataset I created because I was curious about how "random" r/random really is.

This data was collected by sending `GET` requests to `https://www.reddit.com/r/random` for a few hours on September 19th, 2021.

I scraped a bit of metadata about the subreddits as well.

`randomness_12k_clean.csv` reports the random subreddits as they happened and `summary.csv` lists some metadata about each subreddit.

# The Data

## `randomness_12k_clean.csv`

This file serves as a record of the 12,055 successful results I got from r/random.

Each row represents one result.

### Fields

* `subreddit`: The name of the subreddit that the scraper received from r/random (`string`)

* `response_code`: HTTP response code the scraper received when it sent a `GET` request to /r/random (`int`, always `302`)

## `summary.csv`

As the name suggests, this file summarizes `randomness_12k_clean.csv` into the information that I cared about when I analyzed this data.

Each row represents one of the 3,679 unique subreddits and includes some stats about the subreddit as well as the number of times it appears in the results.

### Fields

* `subreddit`: The name of the subreddit (`string`, unique)

* `subscribers`: How many subscribers the subreddit had (`int`, max of `99_886`)

* `current_users`: How many users accessed the subreddit in the past 15 minutes (`int`, max of `999`)

* `creation_date`: Date that the subreddit was created (`YYYY-MM-DD` or `Error:PrivateSub` or `Error:Banned`)

* `date_accessed`: Date that I collected the values in `subscribers` and `current_users` (`YYYY-MM-DD`)

* `time_accessed_UTC`: Time that I collected the values in `subscribers` and `current_users`, reported in UTC+0 (`HH:MM:SS`)

* `appearances`: How many times the subreddit shows up in `randomness_12k_clean.csv` (`int`, max of `9`)
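
The relationship between the two files can be sketched as follows. This is a minimal illustration, assuming that `appearances` is simply the number of rows in `randomness_12k_clean.csv` that name a given subreddit and that the file has a header row with the field names listed above. The sample rows are made up for the example and are not taken from the dataset.

```python
import csv
import io
from collections import Counter

# Made-up sample in the shape of randomness_12k_clean.csv (not real data).
sample_csv = """subreddit,response_code
aww,302
python,302
aww,302
"""


def count_appearances(csv_file):
    """Count how often each subreddit occurs in a results file."""
    reader = csv.DictReader(csv_file)
    return Counter(row["subreddit"] for row in reader)


appearances = count_appearances(io.StringIO(sample_csv))
print(appearances.most_common())
```

To run this against the real file, pass an open file handle for `randomness_12k_clean.csv` instead of the in-memory sample.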

# Missing Values and Quirks

In the `summary.csv` file, there are three missing values.

After I collected the number of subscribers and the number of current users, I went back about a week later to collect the creation date of each subreddit.

In that week, three subreddits had been banned or taken private. I filled in the values with a descriptive string.

* SomethingWasWrong (`Error:PrivateSub`)

* HannahowoOnlyfans (`Error:Banned`)

* JanetGuzman (`Error:Banned`)

I think there are a few NSFW subreddits in the results, even though I only queried r/random and not r/randnsfw.

As a simple example, searching the data for "nsfw" shows that I got the subreddit r/nsfwanimegifs twice.

# License

This dataset is made available under the Open Database License: http://opendatacommons.org/licenses/odbl/1.0/. Any rights in individual contents of the database are licensed under the Database Contents License: http://opendatacommons.org/licenses/dbcl/1.0/ |

debajyotidatta | null | null | null | false | 165 | false | debajyotidatta/biosses | 2022-02-01T01:46:29.000Z | null | false | c0b444a1e1fd9773a8ed19fdf9d1034f6b922ead | [] | [

"license:gpl-3.0"

] | https://huggingface.co/datasets/debajyotidatta/biosses/resolve/main/README.md | ---

license: gpl-3.0

---

|

debatelab | null | null | null | false | 340 | false | debatelab/aaac | 2022-10-24T16:25:56.000Z | aaac | false | 6e8e9947c03e380226bb9b3e2e1839d8bd2c05d2 | [] | [

"arxiv:2110.01509",

"annotations_creators:machine-generated",

"annotations_creators:expert-generated",

"language_creators:machine-generated",

"language:en",

"license:cc-by-sa-4.0",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:original",

"task_categories:summarizati... | https://huggingface.co/datasets/debatelab/aaac/resolve/main/README.md | ---

annotations_creators:

- machine-generated

- expert-generated

language_creators:

- machine-generated

language:

- en

license:

- cc-by-sa-4.0

multilinguality:

- monolingual

size_categories:

- 10K<n<100K

source_datasets:

- original

task_categories:

- summarization

- text-retrieval

- text-generation

task_ids:

- parsing

- text-simplification

paperswithcode_id: aaac

pretty_name: Artificial Argument Analysis Corpus

language_bcp47:

- en-US

tags:

- argument-mining

- conditional-text-generation

- structure-prediction

---

# Dataset Card for Artificial Argument Analysis Corpus (AAAC)

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Construction of the Synthetic Data](#construction-of-the-synthetic-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** https://debatelab.github.io/journal/deepa2.html

- **Repository:** None

- **Paper:** G. Betz, K. Richardson. *DeepA2: A Modular Framework for Deep Argument Analysis with Pretrained Neural Text2Text Language Models*. https://arxiv.org/abs/2110.01509

- **Leaderboard:** None

### Dataset Summary

DeepA2 is a modular framework for deep argument analysis. DeepA2 datasets contain comprehensive logical reconstructions of informally presented arguments in short argumentative texts. This document describes two synthetic DeepA2 datasets for artificial argument analysis: AAAC01 and AAAC02.

```sh

# clone

git lfs clone https://huggingface.co/datasets/debatelab/aaac

```

```python

import pandas as pd

from datasets import Dataset

# loading train split as pandas df

df = pd.read_json("aaac/aaac01_train.jsonl", lines=True, orient="records")

# creating dataset from pandas df

Dataset.from_pandas(df)

```

### Supported Tasks and Leaderboards

The multi-dimensional datasets can be used to define various text-2-text tasks (see also [Betz and Richardson 2021](https://arxiv.org/abs/2110.01509)), for example:

* Premise extraction,

* Conclusion extraction,

* Logical formalization,

* Logical reconstruction.

### Languages

English.

## Dataset Structure

### Data Instances

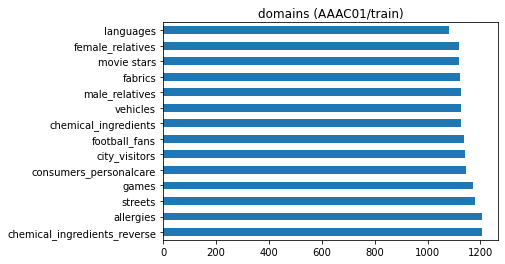

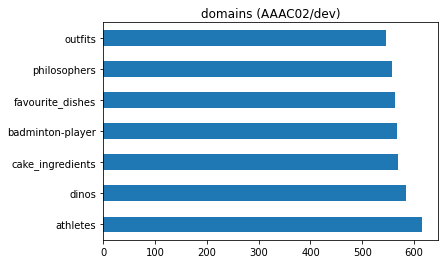

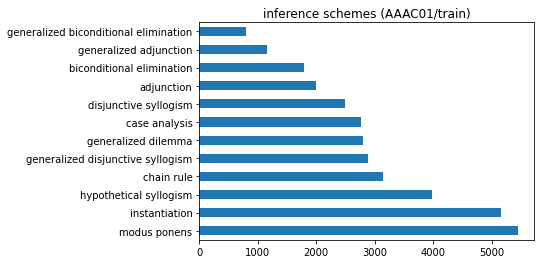

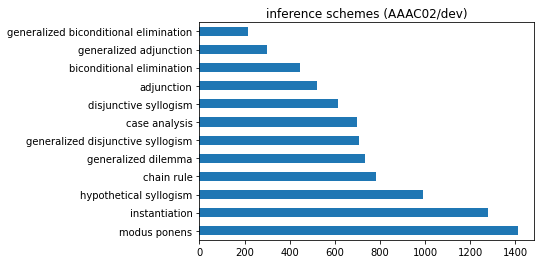

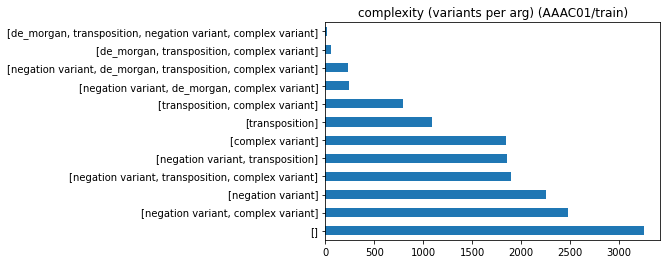

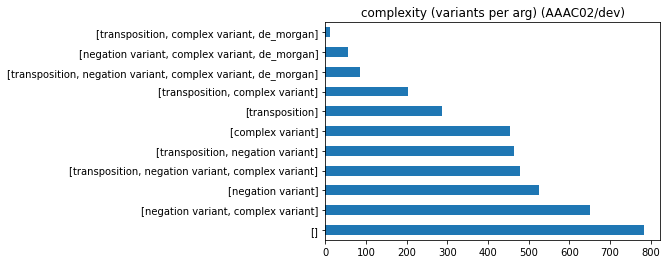

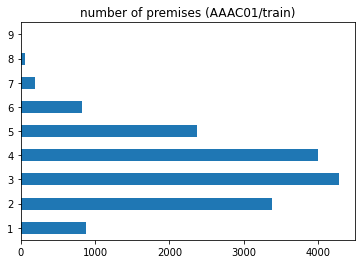

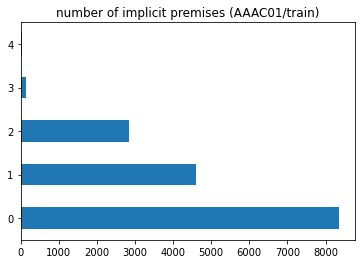

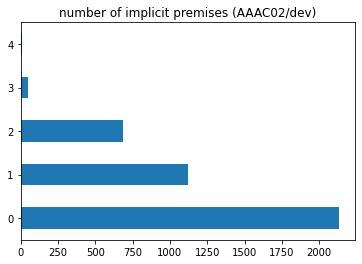

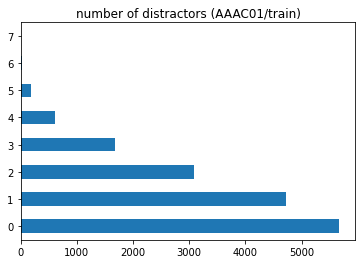

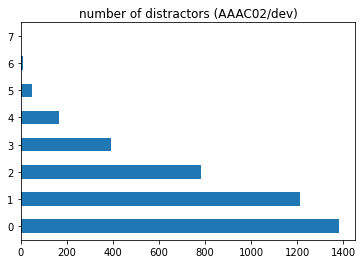

The following histograms (number of dataset records with given property) describe and compare the two datasets AAAC01 (train split, N=16000) and AAAC02 (dev split, N=4000).

|AAAC01 / train split|AAAC02 / dev split|

|-|-|

| | |

| | |

| | |

| | |

| | |

| | |

| | |

| | |

### Data Fields

The following multi-dimensional example record (2-step argument with one implicit premise) illustrates the structure of the AAAC datasets.

#### argument_source

```

If someone was discovered in 'Moonlight', then they won't play the lead in 'Booksmart',

because being a candidate for the lead in 'Booksmart' is sufficient for not being an

Oscar-Nominee for a role in 'Eighth Grade'. Yet every BAFTA-Nominee for a role in 'The

Shape of Water' is a fan-favourite since 'Moonlight' or a supporting actor in 'Black Panther'.

And if someone is a supporting actor in 'Black Panther', then they could never become the

main actor in 'Booksmart'. Consequently, if someone is a BAFTA-Nominee for a role in

'The Shape of Water', then they are not a candidate for the lead in 'Booksmart'.

```

#### reason_statements

```json

[

{"text":"being a candidate for the lead in 'Booksmart' is sufficient for

not being an Oscar-Nominee for a role in 'Eighth Grade'","starts_at":96,

"ref_reco":2},

{"text":"every BAFTA-Nominee for a role in 'The Shape of Water' is a

fan-favourite since 'Moonlight' or a supporting actor in 'Black Panther'",

"starts_at":221,"ref_reco":4},

{"text":"if someone is a supporting actor in 'Black Panther', then they

could never become the main actor in 'Booksmart'","starts_at":359,

"ref_reco":5}

]

```

#### conclusion_statements

```json

[

{"text":"If someone was discovered in 'Moonlight', then they won't play the

lead in 'Booksmart'","starts_at":0,"ref_reco":3},

{"text":"if someone is a BAFTA-Nominee for a role in 'The Shape of Water',

then they are not a candidate for the lead in 'Booksmart'","starts_at":486,

"ref_reco":6}

]

```
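
The `starts_at` values appear to be zero-based character offsets into `argument_source`. The sketch below checks this for the first reason statement, assuming that the line breaks shown in the card are display wrapping only and that the source is a single string with single spaces. The helper `extract_span` is hypothetical and not part of the dataset tooling.

```python
# First sentence of the example argument_source, joined into one string.
argument_source = (
    "If someone was discovered in 'Moonlight', then they won't play the lead in "
    "'Booksmart', because being a candidate for the lead in 'Booksmart' is "
    "sufficient for not being an Oscar-Nominee for a role in 'Eighth Grade'."
)

reason_statement = {
    "text": (
        "being a candidate for the lead in 'Booksmart' is sufficient for "
        "not being an Oscar-Nominee for a role in 'Eighth Grade'"
    ),
    "starts_at": 96,
    "ref_reco": 2,
}


def extract_span(source, statement):
    """Return the slice of the source text that a statement annotation points to."""
    start = statement["starts_at"]
    return source[start:start + len(statement["text"])]


span = extract_span(argument_source, reason_statement)
print(span == reason_statement["text"])
```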

#### distractors

`[]`

#### argdown_reconstruction

```

(1) If someone is a fan-favourite since 'Moonlight', then they are an Oscar-Nominee for a role in 'Eighth Grade'.

(2) If someone is a candidate for the lead in 'Booksmart', then they are not an Oscar-Nominee for a role in 'Eighth Grade'.

--

with hypothetical syllogism {variant: ["negation variant", "transposition"], uses: [1,2]}

--

(3) If someone is beloved for their role in 'Moonlight', then they don't audition in

'Booksmart'.

(4) If someone is a BAFTA-Nominee for a role in 'The Shape of Water', then they are a fan-favourite since 'Moonlight' or a supporting actor in 'Black Panther'.

(5) If someone is a supporting actor in 'Black Panther', then they don't audition in

'Booksmart'.

--

with generalized dilemma {variant: ["negation variant"], uses: [3,4,5]}

--

(6) If someone is a BAFTA-Nominee for a role in 'The Shape of Water', then they are not a

candidate for the lead in 'Booksmart'.

```

#### premises

```json

[

{"ref_reco":1,"text":"If someone is a fan-favourite since 'Moonlight', then

they are an Oscar-Nominee for a role in 'Eighth Grade'.","explicit":false},

{"ref_reco":2,"text":"If someone is a candidate for the lead in

'Booksmart', then they are not an Oscar-Nominee for a role in 'Eighth

Grade'.","explicit":true},

{"ref_reco":4,"text":"If someone is a BAFTA-Nominee for a role in 'The

Shape of Water', then they are a fan-favourite since 'Moonlight' or a

supporting actor in 'Black Panther'.","explicit":true},

{"ref_reco":5,"text":"If someone is a supporting actor in 'Black Panther',

then they don't audition in 'Booksmart'.","explicit":true}

]

```

#### premises_formalized

```json

[

{"form":"(x): ${F2}x -> ${F5}x","ref_reco":1},

{"form":"(x): ${F4}x -> ¬${F5}x","ref_reco":2},

{"form":"(x): ${F1}x -> (${F2}x v ${F3}x)","ref_reco":4},

{"form":"(x): ${F3}x -> ¬${F4}x","ref_reco":5}

]

```

#### conclusion

```json

[{"ref_reco":6,"text":"If someone is a BAFTA-Nominee for a role in 'The Shape

of Water', then they are not a candidate for the lead in 'Booksmart'.",

"explicit":true}]

```

#### conclusion_formalized

```json

[{"form":"(x): ${F1}x -> ¬${F4}x","ref_reco":6}]

```

#### intermediary_conclusions

```json

[{"ref_reco":3,"text":"If someone is beloved for their role in 'Moonlight',

then they don't audition in 'Booksmart'.","explicit":true}]

```

#### intermediary_conclusions_formalized

```json

[{"form":"(x): ${F2}x -> ¬${F4}x","ref_reco":3}]

```

#### plcd_subs

```json

{

"F1":"BAFTA-Nominee for a role in 'The Shape of Water'",

"F2":"fan-favourite since 'Moonlight'",

"F3":"supporting actor in 'Black Panther'",

"F4":"candidate for the lead in 'Booksmart'",

"F5":"Oscar-Nominee for a role in 'Eighth Grade'"

}

```
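
The placeholder syntax `${F1}` happens to match Python's `string.Template`, so formalizations can be processed with the standard library. The following sketch, with hypothetical helper names `compact` and `legend`, turns a formalization into a compact formula plus a legend of the predicates it uses. It assumes placeholders always have the `${...}` form shown in the example record.

```python
from string import Template

plcd_subs = {
    "F1": "BAFTA-Nominee for a role in 'The Shape of Water'",
    "F2": "fan-favourite since 'Moonlight'",
    "F4": "candidate for the lead in 'Booksmart'",
}
conclusion_form = "(x): ${F1}x -> ¬${F4}x"


def compact(form, subs):
    """Replace each ${key} by the bare key, e.g. '${F1}x' becomes 'F1x'."""
    return Template(form).substitute({key: key for key in subs})


def legend(form, subs):
    """Keep only the placeholders that actually occur in the formula."""
    return {key: text for key, text in subs.items() if "${" + key + "}" in form}


formula = compact(conclusion_form, plcd_subs)
used = legend(conclusion_form, plcd_subs)
print(formula)
print(used)
```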

### Data Splits

Number of instances in the various splits:

| Split | AAAC01 | AAAC02 |

| :--- | :---: | :---: |

| TRAIN | 16,000 | 16,000 |

| DEV | 4,000 | 4,000 |

| TEST | 4,000 | 4,000 |

To correctly load a specific split, define `data_files` as follows:

```python

>>> from datasets import load_dataset

>>> data_files = {"train": "aaac01_train.jsonl", "eval": "aaac01_dev.jsonl", "test": "aaac01_test.jsonl"}

>>> dataset = load_dataset("debatelab/aaac", data_files=data_files)

```

## Dataset Creation

### Curation Rationale

Argument analysis refers to the interpretation and logical reconstruction of argumentative texts. Its goal is to make an argument transparent, so as to understand, appreciate and (possibly) criticize it. Argument analysis is a key critical thinking skill.

Here's a first example of an informally presented argument, **Descartes' Cogito**:

> I have convinced myself that there is absolutely nothing in the world, no sky, no earth, no minds, no bodies. Does it now follow that I too do not exist? No: if I convinced myself of something then I certainly existed. But there is a deceiver of supreme power and cunning who is deliberately and constantly deceiving me. In that case I too undoubtedly exist, if he is deceiving me; and let him deceive me as much as he can, he will never bring it about that I am nothing so long as I think that I am something. So after considering everything very thoroughly, I must finally conclude that this proposition, I am, I exist, is necessarily true whenever it is put forward by me or conceived in my mind. (AT 7:25, CSM 2:16f)

And here's a second example, taken from the *Debater's Handbook*, **Pro Censorship**:

> Freedom of speech is never an absolute right but an aspiration. It ceases to be a right when it causes harm to others -- we all recognise the value of, for example, legislating against incitement to racial hatred. Therefore it is not the case that censorship is wrong in principle.

Given such texts, argument analysis aims at answering the following questions:

1. Does the text present an argument?

2. If so, how many?

3. What is the argument supposed to show (conclusion)?

4. What exactly are the premises of the argument?

* Which statements, explicit in the text, are not relevant for the argument?

* Which premises are required, but not explicitly stated?

5. Is the argument deductively valid, inductively strong, or simply fallacious?

To answer these questions, argument analysts **interpret** the text by (re-)constructing its argument in a standardized way (typically as a premise-conclusion list) and by making use of logical streamlining and formalization.

A reconstruction of **Pro Censorship** which answers the above questions is:

```argdown

(1) Freedom of speech is never an absolute right but an aspiration.

(2) Censorship is wrong in principle only if freedom of speech is an

absolute right.

--with modus tollens--

(3) It is not the case that censorship is wrong in principle

```

There are typically multiple, more or less different interpretations and logical reconstructions of an argumentative text. For instance, there exists an [extensive debate](https://plato.stanford.edu/entries/descartes-epistemology/) about how to interpret **Descartes' Cogito**, and scholars have advanced rival interpretations of the argument. An alternative reconstruction of the much simpler **Pro Censorship** might read:

```argdown

(1) Legislating against incitement to racial hatred is valuable.

(2) Legislating against incitement to racial hatred is an instance of censorship.

(3) If some instance of censorship is valuable, censorship is not wrong in

principle.

-----

(4) Censorship is not wrong in principle.

(5) Censorship is wrong in principle if and only if freedom of speech

is an absolute right.

-----

(6) Freedom of speech is not an absolute right.

(7) Freedom of speech is an absolute right or an aspiration.

--with disjunctive syllogism--

(8) Freedom of speech is an aspiration.

```

What are the main reasons for this kind of underdetermination?

* **Incompleteness.** Many relevant parts of an argument (statements, their function in the argument, inference rules, argumentative goals) are not stated in its informal presentation. The argument analyst must infer the missing parts.

* **Additional material.** Over and above what is strictly part of the argument, informal presentations typically contain further material: relevant premises are repeated in slightly different ways, further examples are added to illustrate a point, statements are contrasted with views by opponents, etc. It is up to the argument analyst to choose which of the presented material is really part of the argument.

* **Errors.** Authors may err in the presentation of an argument, confounding, e.g., necessary and sufficient conditions in stating a premise. Following the principle of charity, benevolent argument analysts correct such errors and have to choose one of the different ways of doing so.

* **Linguistic indeterminacy.** One and the same statement can be interpreted -- regarding its logical form -- in different ways.

* **Equivalence.** There are different natural language expressions for one and the same proposition.

AAAC datasets provide logical reconstructions of informal argumentative texts: Each record contains a source text to-be-reconstructed and further fields which describe an internally consistent interpretation of the text, notwithstanding the fact that there might be alternative interpretations of this very text.

### Construction of the Synthetic Data

Argument analysis starts with a text and reconstructs its argument (cf. [Motivation and Background](#curation-rationale)). In constructing our synthetic data, we invert this direction: We start by sampling a complete argument, construct an informal presentation, and provide further info that describes both logical reconstruction and informal presentation. More specifically, the construction of the data involves the following steps:

1. [Generation of valid symbolic inference schemes](#step-1-generation-of-symbolic-inference-schemes)

2. [Assembling complex ("multi-hop") argument schemes from symbolic inference schemes](#step-2-assembling-complex-multi-hop-argument-schemes-from-symbolic-inference-schemes)

3. [Creation of (precise and informal) natural-language argument](#step-3-creation-of-precise-and-informal-natural-language-argument-schemes)

4. [Substitution of placeholders with domain-specific predicates and names](#step-4-substitution-of-placeholders-with-domain-specific-predicates-and-names)

5. [Creation of the argdown-snippet](#step-5-creation-of-the-argdown-snippet)

6. [Paraphrasing](#step-6-paraphrasing)

7. [Construction of a storyline for the argument source text](#step-7-construction-of-a-storyline-for-the-argument-source-text)

8. [Assembling the argument source text](#step-8-assembling-the-argument-source-text)

9. [Linking the precise reconstruction and the informal argumentative text](#step-9-linking-informal-presentation-and-formal-reconstruction)

#### Step 1: Generation of symbolic inference schemes

We construct the set of available inference schemes by systematically transforming the following 12 base schemes (6 from propositional and another 6 from predicate logic):

* modus ponens: `['Fa -> Gb', 'Fa', 'Gb']`

* chain rule: `['Fa -> Gb', 'Gb -> Hc', 'Fa -> Hc']`

* adjunction: `['Fa', 'Gb', 'Fa & Gb']`

* case analysis: `['Fa v Gb', 'Fa -> Hc', 'Gb -> Hc', 'Hc']`

* disjunctive syllogism: `['Fa v Gb', '¬Fa', 'Gb']`

* biconditional elimination: `['Fa <-> Gb', 'Fa -> Gb']`

* instantiation: `['(x): Fx -> Gx', 'Fa -> Ga']`

* hypothetical syllogism: `['(x): Fx -> Gx', '(x): Gx -> Hx', '(x): Fx -> Hx']`

* generalized biconditional elimination: `['(x): Fx <-> Gx', '(x): Fx -> Gx']`

* generalized adjunction: `['(x): Fx -> Gx', '(x): Fx -> Hx', '(x): Fx -> (Gx & Hx)']`

* generalized dilemma: `['(x): Fx -> (Gx v Hx)', '(x): Gx -> Ix', '(x): Hx -> Ix', '(x): Fx -> Ix']`

* generalized disjunctive syllogism: `['(x): Fx -> (Gx v Hx)', '(x): Fx -> ¬Gx', '(x): Fx -> Hx']`

(Regarding the propositional schemes, we allow for `a`=`b`=`c`.)
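
That the propositional base schemes are deductively valid can be checked by brute force over all truth-value assignments. The sketch below is an illustration only, not the generation code: it hand-encodes four of the schemes above as Python functions of the truth values of `Fa`, `Gb` and `Hc`, and the names `implies` and `is_valid` are hypothetical.

```python
from itertools import product


def implies(p, q):
    """Material conditional p -> q."""
    return (not p) or q


# Each scheme: (list of premises, conclusion), as functions of (Fa, Gb, Hc).
schemes = {
    "modus ponens": (
        [lambda f, g, h: implies(f, g), lambda f, g, h: f],
        lambda f, g, h: g,
    ),
    "chain rule": (
        [lambda f, g, h: implies(f, g), lambda f, g, h: implies(g, h)],
        lambda f, g, h: implies(f, h),
    ),
    "case analysis": (
        [lambda f, g, h: f or g,
         lambda f, g, h: implies(f, h),
         lambda f, g, h: implies(g, h)],
        lambda f, g, h: h,
    ),
    "disjunctive syllogism": (
        [lambda f, g, h: f or g, lambda f, g, h: not f],
        lambda f, g, h: g,
    ),
}


def is_valid(premises, conclusion):
    """Valid iff the conclusion holds in every assignment satisfying all premises."""
    return all(
        conclusion(*values)
        for values in product([False, True], repeat=3)
        if all(premise(*values) for premise in premises)
    )


results = {name: is_valid(*scheme) for name, scheme in schemes.items()}
print(results)
```

The quantified schemes from predicate logic would need a different check and are not covered by this truth-table approach.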

Further symbolic inference schemes are generated by applying the following transformations to each of these base schemes:

* *negation*: replace all occurrences of an atomic formula by its negation (for any number of such atomic sentences)

* *transposition*: transpose exactly one (generalized) conditional

* *dna*: simplify by applying duplex negatio affirmat

* *complex predicates*: replace all occurrences of a given atomic formula by a complex formula consisting in the conjunction or disjunction of two atomic formulas

* *de morgan*: apply de Morgan's rule once

These transformations are applied to the base schemes in the following order:

> **{base_schemes}** > negation_variants > transposition_variants > dna > **{transposition_variants}** > complex_predicates > negation_variants > dna > **{complex_predicates}** > de_morgan > dna > **{de_morgan}**

All transformations, except *dna*, are monotonic, i.e. simply add further schemes to the ones generated in the previous step. Results of bold steps are added to the list of valid inference schemes. Each inference scheme is stored with information about which transformations were used to create it. All in all, this gives us 5542 schemes.
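
To illustrate how two of these transformations interact, here is a minimal string-based sketch of *transposition* followed by *dna*. It assumes conditionals with atomic (possibly negated) antecedent and consequent, written in the notation used above. The function names are hypothetical, and the actual generation code may represent formulas differently.

```python
QUANTIFIER = "(x): "


def negate(formula):
    """Prefix a formula with a negation sign."""
    return "¬" + formula


def dna(formula):
    """Simplify by removing double negations (duplex negatio affirmat)."""
    while "¬¬" in formula:
        formula = formula.replace("¬¬", "")
    return formula


def transpose(conditional):
    """Turn 'A -> B' into '¬B -> ¬A', keeping a leading quantifier if present."""
    prefix = QUANTIFIER if conditional.startswith(QUANTIFIER) else ""
    antecedent, consequent = conditional[len(prefix):].split(" -> ")
    return f"{prefix}{negate(consequent)} -> {negate(antecedent)}"


transposed = transpose("(x): Fx -> ¬Gx")
simplified = dna(transposed)
print(transposed)
print(simplified)
```

This mirrors why *dna* is applied after the negation and transposition steps: transposing a conditional with a negated consequent produces a double negation that is then simplified away.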

#### Step 2: Assembling complex ("multi-hop") argument schemes from symbolic inference schemes

The complex argument *scheme*, which consists in multiple inferences, is assembled recursively by adding inferences that support premises of previously added inferences, as described by the following pseudocode:

```

argument = []

intermediary_conclusion = []

inference = randomly choose from list of all schemes

add inference to argument

for i in range(number_of_sub_arguments - 1):

    target = randomly choose a premise which is not an intermediary_conclusion

    inference = randomly choose a scheme whose conclusion is identical with target

    add inference to argument

    add target to intermediary_conclusion

return argument

```
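
The pseudocode can be turned into a small runnable sketch. This is a toy version under stated assumptions: schemes are plain `(premises, conclusion)` pairs of strings, matching is by exact string identity rather than structural matching, sampling is unweighted, and target and supporting scheme are drawn jointly so that the loop stops early when no open premise can be supported. The names `assemble_argument` and `toy_schemes` are hypothetical.

```python
import random


def assemble_argument(schemes, number_of_sub_arguments, rng):
    """Assemble a tree of inferences, starting from a randomly chosen root scheme."""
    argument = [rng.choice(schemes)]
    intermediary_conclusions = []
    for _ in range(number_of_sub_arguments - 1):
        # Premises of already added inferences that are not yet supported.
        open_premises = [
            premise
            for premises, _ in argument
            for premise in premises
            if premise not in intermediary_conclusions
        ]
        # Pair each open premise with every scheme that concludes it.
        candidates = [
            (premise, scheme)
            for premise in open_premises
            for scheme in schemes
            if scheme[1] == premise
        ]
        if not candidates:
            break  # nothing left that a further sub-argument could support
        target, inference = rng.choice(candidates)
        argument.append(inference)
        intermediary_conclusions.append(target)
    return argument, intermediary_conclusions


toy_schemes = [
    (("Fa -> Ga", "Fa"), "Ga"),   # modus ponens
    (("Fa <-> Ga",), "Fa -> Ga"),  # biconditional elimination
]
argument, intermediary = assemble_argument(toy_schemes, 2, random.Random(0))
print(argument)
print(intermediary)
```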

The complex arguments we create are hence trees, with a root scheme.

Let's walk through this algorithm by means of an illustrative example and construct a symbolic argument scheme with two sub-arguments. First, we randomly choose some inference scheme (random sampling is controlled by weights that compensate for the fact that the list of schemes mainly contains, for combinatorial reasons, complex inferences), say:

```json

{

"id": "mp",

"base_scheme_group": "modus ponens",

"scheme_variant": ["complex_variant"],

"scheme": [

["${A}${a} -> (${B}${a} & ${C}${a})",

{"A": "${F}", "B": "${G}", "C": "${H}", "a": "${a}"}],

["${A}${a}", {"A": "${F}", "a": "${a}"}],

["${A}${a} & ${B}${a}", {"A": "${G}", "B": "${H}", "a": "${a}"}]

],

"predicate-placeholders": ["F", "G", "H"],

"entity-placeholders": ["a"]

}

```

Now, the target premise (= intermediary conclusion) of the next subargument is chosen, say: premise 1 of the already added root scheme. We filter the list of schemes for schemes whose conclusion structurally matches the target, i.e. has the form `${A}${a} -> (${B}${a} & ${C}${a})`. From this filtered list of suitable schemes, we randomly choose, for example:

```json

{

"id": "bicelim",

"base_scheme_group": "biconditional elimination",

"scheme_variant": ["complex_variant"],

"scheme": [

["${A}${a} <-> (${B}${a} & ${C}${a})",

{"A": "${F}", "B": "${G}", "C": "${H}", "a": "${a}"}],

["${A}${a} -> (${B}${a} & ${C}${a})",

{"A": "${F}", "B": "${G}", "C": "${H}", "a": "${a}"}]

],

"predicate-placeholders": ["F", "G", "H"],

"entity-placeholders": []

}

```

So, we have generated this 2-step symbolic argument scheme with two premises, one intermediary and one final conclusion:

```

(1) Fa <-> Ga & Ha

--

with biconditional elimination (complex variant) from 1

--

(2) Fa -> Ga & Ha

(3) Fa

--

with modus ponens (complex variant) from 2,3

--

(4) Ga & Ha

```

General properties of the argument are now determined and can be stored in the dataset (its `domain` is randomly chosen):

```json

"steps":2, // number of inference steps

"n_premises":2,

"base_scheme_groups":[

"biconditional elimination",

"modus ponens"

],

"scheme_variants":[

"complex variant"

],

"domain_id":"consumers_personalcare",

"domain_type":"persons"

```

#### Step 3: Creation of (precise and informal) natural-language argument schemes

In step 3, the *symbolic and formal* complex argument scheme is transformed into a *natural language* argument scheme by replacing symbolic formulas (e.g., `${A}${a} & ${B}${a}`) with suitable natural language sentence schemes (such as `${a} is a ${A}, and ${a} is a ${B}` or `${a} is a ${A} and a ${B}`). Natural language sentence schemes which translate symbolic formulas are classified according to whether they are precise, informal, or imprecise.

For each symbolic formula, there are many (partly automatically, partly manually generated) natural-language sentence schemes which render the formula in a more or less precise way. Each of these natural-language "translations" of a symbolic formula is labeled according to whether it presents the logical form in a "precise", "informal", or "imprecise" way. For example:

|type|form|

|-|-|

|symbolic|`(x): ${A}x -> ${B}x`|

|precise|`If someone is a ${A}, then they are a ${B}.`|

|informal|`Every ${A} is a ${B}.`|

|imprecise|`${A} might be a ${B}.`|

The labels "precise", "informal", "imprecise" are used to control the generation of two natural-language versions of the argument scheme, a **precise** one (for creating the argdown snippet) and an **informal** one (for creating the source text). Moreover, the natural-language "translations" are also chosen in view of the domain (see below) of the to-be-generated argument, specifically in view of whether it is quantified over persons ("everyone", "nobody") or objects ("something", "nothing").
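
The label-controlled choice of a sentence scheme can be sketched as a simple lookup followed by placeholder substitution. The three sentence schemes are copied from the table above. The `translations` mapping and the `render` helper are hypothetical, and the predicates are borrowed from the soap example used later in this card.

```python
from string import Template

# Sentence schemes for the symbolic formula "(x): ${A}x -> ${B}x".
translations = {
    "precise": "If someone is a ${A}, then they are a ${B}.",
    "informal": "Every ${A} is a ${B}.",
    "imprecise": "${A} might be a ${B}.",
}


def render(label, **predicates):
    """Pick the sentence scheme with the given label and fill in its placeholders."""
    return Template(translations[label]).substitute(predicates)


predicates = {
    "A": "regular consumer of Kiss My Face soap",
    "B": "regular consumer of Nag Champa soap",
}
precise = render("precise", **predicates)
informal = render("informal", **predicates)
print(precise)
print(informal)
```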

So, as a **precise** rendition of our symbolic argument scheme, we may obtain:

```

(1) If, and only if, a is a F, then a is a G and a is a H.

--

with biconditional elimination (complex variant) from 1

--

(2) If a is a F, then a is a G and a is a H.

(3) a is a F.

--

with modus ponens (complex variant) from 3,2

--

(4) a is a G and a is a H.

```

Likewise, an **informal** rendition may be:

```

(1) a is a F if a is both a G and a H -- and vice versa.

--

with biconditional elimination (complex variant) from 1

--

(2) a is a G and a H, provided a is a F.

(3) a is a F.

--

with modus ponens (complex variant) from 3,2

--

(4) a is both a G and a H.

```

#### Step 4: Substitution of placeholders with domain-specific predicates and names

Every argument falls within a domain. A domain provides

* a list of `subject names` (e.g., Peter, Sarah)

* a list of `object names` (e.g., New York, Lille)

* a list of `binary predicates` (e.g., [subject is an] admirer of [object])

These domains are manually created.

Replacements for the placeholders are sampled from the corresponding domain. Substitutes for entity placeholders (`a`, `b` etc.) are simply chosen from the list of `subject names`. Substitutes for predicate placeholders (`F`, `G` etc.) are constructed by combining `binary predicates` with `object names`, which yields unary predicates of the form "___ stands in some relation to some object". This combinatorial construction of unary predicates drastically increases the number of replacements available and hence the variety of generated arguments.

Assuming that we sample our argument from the domain `consumers personal care`, we may choose and construct the following substitutes for placeholders in our argument scheme:

* `F`: regular consumer of Kiss My Face soap

* `G`: regular consumer of Nag Champa soap

* `H`: occasional purchaser of Shield soap

* `a`: Orlando
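
The combinatorial construction of unary predicates can be illustrated as follows. This is a sketch with a tiny made-up domain: the lists contain only the items that appear in the example above, not the full `consumers personal care` domain, and the function name `unary_predicates` is hypothetical.

```python
from itertools import product

binary_predicates = ["regular consumer of", "occasional purchaser of"]
object_names = ["Kiss My Face soap", "Nag Champa soap", "Shield soap"]


def unary_predicates(binary_predicates, object_names):
    """Combine every binary predicate with every object name."""
    return [
        f"{predicate} {obj}"
        for predicate, obj in product(binary_predicates, object_names)
    ]


predicates = unary_predicates(binary_predicates, object_names)
print(len(predicates))
print(predicates)
```

With `m` binary predicates and `n` object names this yields `m * n` unary predicates, which is why even small hand-written domains give a large pool of substitutes.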

#### Step 5: Creation of the argdown-snippet

From the **precise rendition** of the natural language argument scheme ([step 3](#step-3-creation-of-precise-and-informal-natural-language-argument-schemes)) and the replacements for its placeholders ([step 4](#step-4-substitution-of-placeholders-with-domain-specific-predicates-and-names)), we construct the `argdown-snippet` by simple substitution and formatting the complex argument in accordance with [argdown syntax](https://argdown.org).

This yields, for our example from above:

```argdown

(1) If, and only if, Orlando is a regular consumer of Kiss My Face soap,

then Orlando is a regular consumer of Nag Champa soap and Orlando is

a occasional purchaser of Shield soap.

--

with biconditional elimination (complex variant) from 1

--

(2) If Orlando is a regular consumer of Kiss My Face soap, then Orlando

is a regular consumer of Nag Champa soap and Orlando is a occasional

purchaser of Shield soap.

(3) Orlando is a regular consumer of Kiss My Face soap.

--

with modus ponens (complex variant) from 3,2

--

(4) Orlando is a regular consumer of Nag Champa soap and Orlando is a

occasional purchaser of Shield soap.

```

That's the `argdown_snippet`. By construction of such a synthetic argument (from formal schemes, see [step 2](#step-2-assembling-complex-multi-hop-argument-schemes-from-symbolic-inference-schemes)), we already know its conclusions and their formalization (the value of the field `explicit` will be determined later).

```json

"conclusion":[

{

"ref_reco":4,

"text":"Orlando is a regular consumer of Nag Champa

soap and Orlando is a occasional purchaser of

Shield soap.",

"explicit": TBD

}

],

"conclusion_formalized":[

{

"ref_reco":4,

"form":"(${F2}${a1} & ${F3}${a1})"

}

],

"intermediary_conclusions":[

{

"ref_reco":2,

"text":"If Orlando is a regular consumer of Kiss My

Face soap, then Orlando is a regular consumer of

Nag Champa soap and Orlando is a occasional

purchaser of Shield soap.",

"explicit": TBD

}

],

"intermediary_conclusions_formalized":[

{

"ref_reco":2,

"form":"${F1}${a1} -> (${F2}${a1} & ${F3}${a1})"

}

],

```

... and the corresponding keys (see [step 4](#step-4-substitution-of-placeholders-with-domain-specific-predicates-and-names)):

```json

"plcd_subs":{

"a1":"Orlando",

"F1":"regular consumer of Kiss My Face soap",

"F2":"regular consumer of Nag Champa soap",

"F3":"occasional purchaser of Shield soap"

}

```

#### Step 6: Paraphrasing

From the **informal rendition** of the natural language argument scheme ([step 3](#step-3-creation-of-precise-and-informal-natural-language-argument-schemes)) and the replacements for its placeholders ([step 4](#step-4-substitution-of-placeholders-with-domain-specific-predicates-and-names)), we construct an informal argument (argument tree) by substitution.

The statements (premises, conclusions) of the informal argument are individually paraphrased in two steps

1. rule-based and in a domain-specific way,

2. automatically by means of a specifically fine-tuned T5 model.

Each domain (see [step 4](#step-4-substitution-of-placeholders-with-domain-specific-predicates-and-names)) provides rules for substituting noun constructs ("is a supporter of X", "is a product made of X") with verb constructs ("supports X", "contains X"). These rules are applied whenever possible.

Next, each sentence is -- with a probability specified by parameter `lm_paraphrasing` -- replaced with an automatically generated paraphrase, using a [T5 model fine-tuned on the Google PAWS dataset](https://huggingface.co/Vamsi/T5_Paraphrase_Paws) and filtering for paraphrases with acceptable _cola_ and sufficiently high _STSB_ value (both as predicted by T5).

| |AAAC01|AAAC02|

|-|-|-|

|`lm_paraphrasing`|0.2|0.|
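
The role of `lm_paraphrasing` as a per-sentence probability can be sketched as follows. This is only an illustration: `maybe_paraphrase` and `toy_paraphrase` are hypothetical names, and the toy function merely stands in for the fine-tuned T5 model and its quality filters.

```python
import random


def maybe_paraphrase(sentences, paraphrase, lm_paraphrasing, rng):
    """Replace each sentence by paraphrase(sentence) with probability lm_paraphrasing."""
    return [
        paraphrase(s) if rng.random() < lm_paraphrasing else s
        for s in sentences
    ]


def toy_paraphrase(sentence):
    # Hypothetical stand-in: a real pipeline would call a paraphrasing model here.
    return "[paraphrased] " + sentence


rng = random.Random(0)
sentences = ["Orlando is a regular consumer of Kiss My Face soap."] * 3

# With probability 0.0 (the AAAC02 setting) nothing is replaced;
# with probability 1.0 every sentence is replaced.
unchanged = maybe_paraphrase(sentences, toy_paraphrase, 0.0, rng)
all_changed = maybe_paraphrase(sentences, toy_paraphrase, 1.0, rng)
```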

#### Step 7: Construction of a storyline for the argument source text

The storyline determines in which order the premises, intermediary conclusions and final conclusions are to be presented in the text paragraph to-be-constructed (`argument_source`). The storyline is constructed from the paraphrased informal complex argument (see [step 6](#step-6-paraphrasing)).

Before determining the order of presentation (storyline), the informal argument tree is pre-processed to account for:

* implicit premises,

* implicit intermediary conclusions, and

* implicit final conclusion,

which is documented in the dataset record as

```json

"presentation_parameters":{

"resolve_steps":[1],

"implicit_conclusion":false,

"implicit_premise":true,

"...":"..."

}

```

In order to make an intermediary conclusion *C* implicit, the inference to *C* is "resolved" by re-assigning all premises *from* which *C* is directly inferred *to* the inference to the (final or intermediary) conclusion which *C* supports.

Original tree:

```

P1 ... Pn

—————————

C Q1 ... Qn

—————————————

C'

```

Tree with resolved inference and implicit intermediary conclusion:

```

P1 ... Pn Q1 ... Qn

———————————————————

C'

```

The original argument tree in our example reads:

```

(1)

———

(2) (3)

———————

(4)

```

This might be pre-processed (by resolving the first inference step and dropping the first premise) to:

```

(3)

———

(4)

```
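
The two pre-processing operations can be sketched on a small tree representation. The dictionary layout (each conclusion label mapped to the labels it is directly inferred from) and the function names `resolve_step` and `drop_premise` are assumptions for this illustration, not the format used by the generator.

```python
def resolve_step(tree, conclusion):
    """Make `conclusion` implicit: hand its premises to the inference it supports."""
    resolved = {c: list(ps) for c, ps in tree.items() if c != conclusion}
    for premises in resolved.values():
        if conclusion in premises:
            i = premises.index(conclusion)
            # Replace the intermediary conclusion by the premises it was inferred from.
            premises[i:i + 1] = tree[conclusion]
    return resolved


def drop_premise(tree, premise):
    """Make a premise implicit by removing it from every inference."""
    return {c: [p for p in ps if p != premise] for c, ps in tree.items()}


# Original example tree: (2) is inferred from (1); (4) is inferred from (2) and (3).
tree = {2: [1], 4: [2, 3]}
resolved = resolve_step(tree, 2)
preprocessed = drop_premise(resolved, 1)
print(resolved, preprocessed)
```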

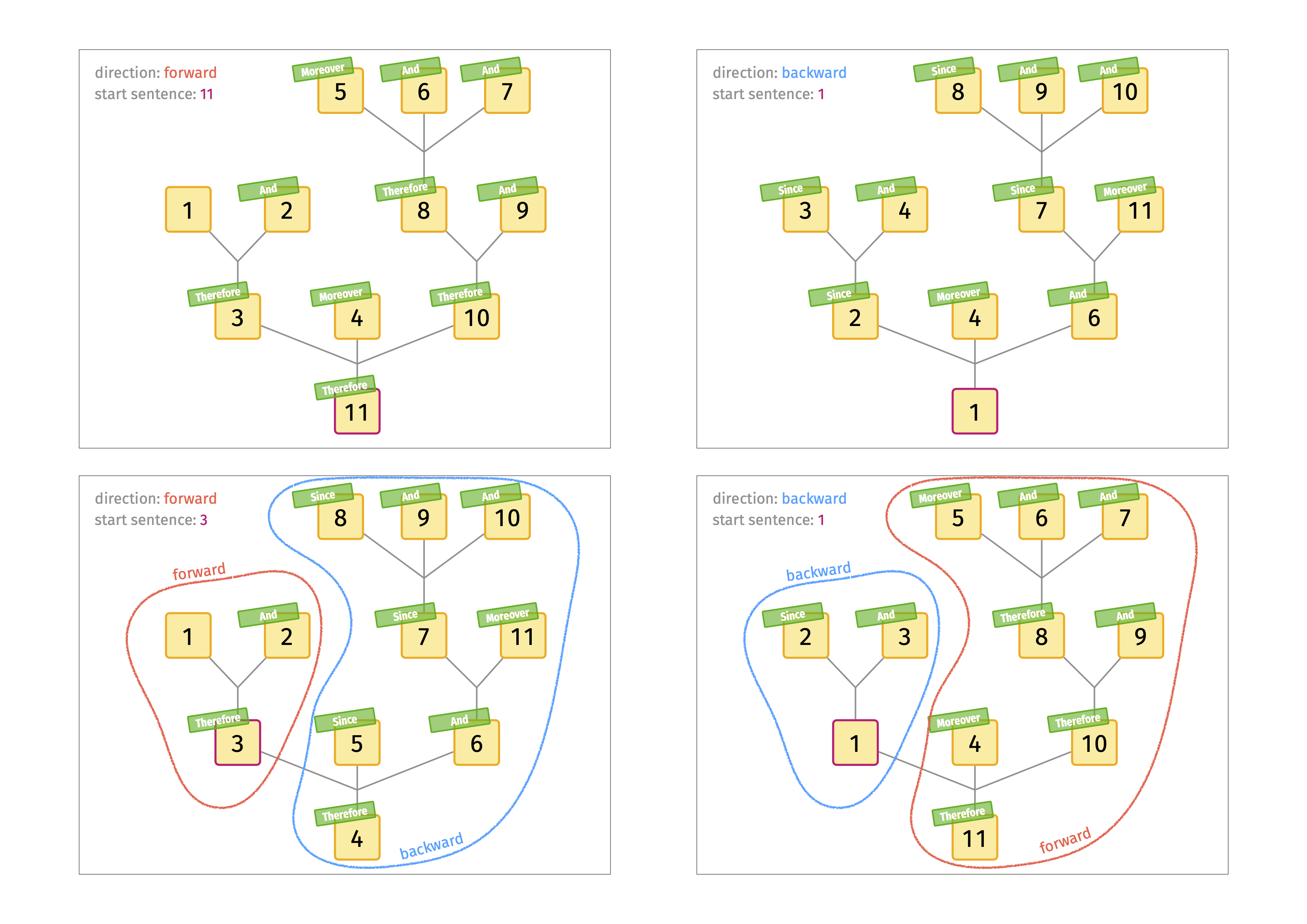

Given such a pre-processed argument tree, a storyline, which determines the order of presentation, can be constructed by specifying the direction of presentation and a starting point. The **direction** is either

* forward (premise AND ... AND premise THEREFORE conclusion)

* backward (conclusion SINCE premise AND ... AND premise)

Any conclusion in the pre-processed argument tree may serve as starting point. The storyline is now constructed recursively, as illustrated in Figure 1. Integer labels of the nodes represent the order of presentation, i.e. the storyline. (Note that the starting point is not necessarily the statement which is presented first according to the storyline.)
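
As a rough illustration of the two directions, the following sketch derives an order of presentation by recursive traversal. It is deliberately simplified: it always starts from the final conclusion and applies one direction throughout, whereas the procedure described above allows any conclusion as starting point. The tree layout and the function name `storyline` are assumptions.

```python
def storyline(tree, root, direction):
    """Return statement labels in order of presentation.

    forward:  premises first, then the conclusion they support
    backward: conclusion first, then the premises it is inferred from
    """
    premises = tree.get(root, [])
    below = [label for p in premises for label in storyline(tree, p, direction)]
    return below + [root] if direction == "forward" else [root] + below


# (2) is inferred from (1); (4) is inferred from (2) and (3).
tree = {2: [1], 4: [2, 3]}
forward = storyline(tree, 4, "forward")
backward = storyline(tree, 4, "backward")
print(forward, backward)
```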

To introduce redundancy, the storyline may be post-processed by repeating a premise that has been stated previously. The likelihood that a single premise is repeated is controlled by the presentation parameters:

```json

"presentation_parameters":{

"redundancy_frequency":0.1,

"...":"..."

}

```
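
One way to picture this post-processing step is the sketch below, which repeats each premise with probability `redundancy_frequency` at a random later position. The function name `add_redundancy` and the choice of insertion position are assumptions; the actual generator may place repetitions differently.

```python
import random


def add_redundancy(storyline, premises, redundancy_frequency, rng):
    """Repeat each already-stated premise with probability redundancy_frequency."""
    result = list(storyline)
    for label in storyline:
        if label in premises and rng.random() < redundancy_frequency:
            first = result.index(label)
            # Re-insert the premise somewhere after its first occurrence.
            position = rng.randint(first + 1, len(result))
            result.insert(position, label)
    return result


order = [1, 2, 3, 4]   # storyline over statement labels
premises = {1, 3}      # labels that are premises

never = add_redundancy(order, premises, 0.0, random.Random(0))
always = add_redundancy(order, premises, 1.0, random.Random(0))
```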

Moreover, **distractors**, i.e. arbitrary statements sampled from the argument's very domain, may be inserted in the storyline.

#### Step 8: Assembling the argument source text

The `argument_source` is constructed by concatenating the statements of the informal argument ([step 6](#step-6-paraphrasing)) according to the order of the storyline ([step 7](#step-7-construction-of-a-storyline-for-the-argument-source-text)). In principle, each statement is preceded by a conjunction. There are four types of conjunction:

* THEREFORE: left-to-right inference

* SINCE: right-to-left inference

* AND: joins premises with similar inferential role

* MOREOVER: catch-all conjunction

Each statement is assigned a specific conjunction type by the storyline.

For every conjunction type, we provide multiple natural-language terms which may figure as conjunctions when concatenating the statements, e.g. "So, necessarily,", "So", "Thus,", "It follows that", "Therefore,", "Consequently,", "Hence,", "In consequence,", "All this entails that", "From this follows that", "We may conclude that" for THEREFORE. The parameter

```json

"presentation_parameters":{

"drop_conj_frequency":0.1,

"...":"..."

}

```

determines the probability that a conjunction is omitted and a statement is concatenated without prepending a conjunction.
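
A minimal sketch of this step, under stated assumptions: the THEREFORE terms are quoted from the list above, while the entries for the other three types, the function name `prepend_conjunction`, and the handling of capitalization (none) are placeholders chosen for illustration.

```python
import random

CONJUNCTIONS = {
    # Quoted from the description above.
    "THEREFORE": [
        "So, necessarily,", "So", "Thus,", "It follows that", "Therefore,",
        "Consequently,", "Hence,", "In consequence,", "All this entails that",
        "From this follows that", "We may conclude that",
    ],
    # Placeholder entries, not taken from the dataset.
    "SINCE": ["since"],
    "AND": ["and"],
    "MOREOVER": ["Moreover,"],
}


def prepend_conjunction(statement, conj_type, drop_conj_frequency, rng):
    """Prefix a statement with a random conjunction of the given type,
    or leave it bare with probability drop_conj_frequency."""
    if rng.random() < drop_conj_frequency:
        return statement
    return f"{rng.choice(CONJUNCTIONS[conj_type])} {statement}"


bare = prepend_conjunction("it rains.", "THEREFORE", 1.0, random.Random(0))
joined = prepend_conjunction("it rains.", "THEREFORE", 0.0, random.Random(0))
```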

With the parameters given above we obtain the following `argument_source` for our example:

> Orlando is a regular consumer of Nag Champa soap and Orlando is a occasional purchaser of Shield soap, since Orlando is a regular consumer of Kiss My Face soap.

#### Step 9: Linking informal presentation and formal reconstruction

We can identify all statements _in the informal presentation_ (`argument_source`), categorize them according to their argumentative function GIVEN the logical reconstruction and link them to the corresponding statements in the `argdown_snippet`. We distinguish `reason_statement` (AKA REASONS, correspond to premises in the reconstruction) and `conclusion_statement` (AKA CONJECTURES, correspond to conclusion and intermediary conclusion in the reconstruction):

```json

"reason_statements":[ // aka reasons

{

"text":"Orlando is a regular consumer of Kiss My Face soap",

"starts_at":109,

"ref_reco":3

}

],

"conclusion_statements":[ // aka conjectures

{

"text":"Orlando is a regular consumer of Nag Champa soap and

Orlando is a occasional purchaser of Shield soap",

"starts_at":0,

"ref_reco":4

}

]

```
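
The `starts_at` values are character offsets into `argument_source`. The following sketch checks them for the example; the helper name `span_matches` is an assumption, and the statement texts are written as single strings (the line breaks in the JSON listing above are only for display).

```python
argument_source = (
    "Orlando is a regular consumer of Nag Champa soap and Orlando is a "
    "occasional purchaser of Shield soap, since Orlando is a regular "
    "consumer of Kiss My Face soap."
)

reason = {
    "text": "Orlando is a regular consumer of Kiss My Face soap",
    "starts_at": 109,
    "ref_reco": 3,
}
conjecture = {
    "text": "Orlando is a regular consumer of Nag Champa soap and "
            "Orlando is a occasional purchaser of Shield soap",
    "starts_at": 0,
    "ref_reco": 4,
}


def span_matches(source, statement):
    """Check that the statement text occurs in source at offset starts_at."""
    start = statement["starts_at"]
    return source[start:start + len(statement["text"])] == statement["text"]


print(span_matches(argument_source, reason), span_matches(argument_source, conjecture))
```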

Moreover, we are now able to classify all premises in the formal reconstruction (`argdown_snippet`) according to whether they are implicit or explicit given the informal presentation:

```json

"premises":[

{

"ref_reco":1,

"text":"If, and only if, Orlando is a regular consumer of Kiss

My Face soap, then Orlando is a regular consumer of Nag

Champa soap and Orlando is a occasional purchaser of

Shield soap.",

"explicit":false

},

{

"ref_reco":3,

"text":"Orlando is a regular consumer of Kiss My Face soap. ",

"explicit":true

}

],

"premises_formalized":[

{

"ref_reco":1,

"form":"${F1}${a1} <-> (${F2}${a1} & ${F3}${a1})"

},

{

"ref_reco":3,

"form":"${F1}${a1}"

}

]

```

#### Initial Data Collection and Normalization

N.A.

#### Who are the source language producers?

N.A.

### Annotations

#### Annotation process

N.A.

#### Who are the annotators?

N.A.

### Personal and Sensitive Information

N.A.

## Considerations for Using the Data

### Social Impact of Dataset

None

### Discussion of Biases

None

### Other Known Limitations

See [Betz and Richardson 2021](https://arxiv.org/abs/2110.01509).

## Additional Information

### Dataset Curators

Gregor Betz, Kyle Richardson

### Licensing Information

Creative Commons cc-by-sa-4.0

### Citation Information

```

@misc{betz2021deepa2,

title={DeepA2: A Modular Framework for Deep Argument Analysis with Pretrained Neural Text2Text Language Models},

author={Gregor Betz and Kyle Richardson},

year={2021},

eprint={2110.01509},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

### Contributions

<!--Thanks to [@github-username](https://github.com/<github-username>) for adding this dataset.-->

|

debatelab | null | null | null | false | 334 | false | debatelab/deepa2 | 2022-11-01T08:54:18.000Z | null | false | c82f0d25f7495aa2f25db7fca1febd64f5b4869d | [] | [

"arxiv:2110.01509",

"language_creators:other",

"language:en",

"license:other",

"multilinguality:monolingual",

"size_categories:unknown",

"task_categories:text-retrieval",

"task_categories:text-generation",

"task_ids:text-simplification",

"task_ids:parsing",

"tags:argument-mining",

"tags:summar... | https://huggingface.co/datasets/debatelab/deepa2/resolve/main/README.md | ---

annotations_creators: []

language_creators:

- other

language:

- en

license:

- other

multilinguality:

- monolingual

size_categories:

- unknown

source_datasets: []

task_categories:

- text-retrieval

- text-generation

task_ids:

- text-simplification

- parsing

pretty_name: deepa2

tags:

- argument-mining

- summarization

- conditional-text-generation

- structure-prediction

---

# `deepa2` Datasets Collection

## Table of Contents

- [`deepa2` Datasets Collection](#deepa2-datasets-collection)

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Sub-Datasets](#sub-datasets)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Initial Data Collection and Normalization](#initial-data-collection-and-normalization)

- [Who are the source language producers?](#who-are-the-source-language-producers)

- [Annotations](#annotations)

- [Annotation process](#annotation-process)

- [Who are the annotators?](#who-are-the-annotators)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [blog post](https://debatelab.github.io/journal/deepa2.html)

- **Repository:** [github](https://github.com/debatelab/deepa2)

- **Paper:** [arxiv](https://arxiv.org/abs/2110.01509)

- **Point of Contact:** [Gregor Betz](mailto:gregor.betz@kit.edu)

### Dataset Summary

This is a growing, curated collection of `deepa2` datasets, i.e. datasets that contain comprehensive logical analyses of argumentative texts. The collection comprises:

* datasets that are built from existing NLP datasets by means of the [`deepa2 bake`](https://github.com/debatelab/deepa2) tool.

* original `deepa2` datasets specifically created for this collection.

The tool [`deepa2 serve`](https://github.com/debatelab/deepa2#integrating-deepa2-into-your-training-pipeline) may be used to render the data in this collection as text2text examples.

### Supported Tasks and Leaderboards


- `conditional-text-generation`: The dataset can be used to train models to generate a full reconstruction of an argument from a source text, making, e.g., its implicit assumptions explicit.

- `structure-prediction`: The dataset can be used to train models to formalize sentences.

- `text-retrieval`: The dataset can be used to train models to extract reason statements and conjectures from a given source text.

### Languages

English. Will be extended to cover other languages in the future.

## Dataset Structure

### Sub-Datasets

This collection contains the following `deepa2` datasets:

* `esnli`: created from e-SNLI with `deepa2 bake` as [described here](https://github.com/debatelab/deepa2/blob/main/docs/esnli.md).

* `enbank` (`task_1`, `task_2`): created from Entailment Bank with `deepa2 bake` as [described here](https://github.com/debatelab/deepa2/blob/main/docs/enbank.md).

* `argq`: created from IBM-ArgQ with `deepa2 bake` as [described here](https://github.com/debatelab/deepa2/blob/main/docs/argq.md).

* `argkp`: created from IBM-KPA with `deepa2 bake` as [described here](https://github.com/debatelab/deepa2/blob/main/docs/argkp.md).

* `aifdb` (`moral-maze`, `us2016`, `vacc-itc`): created from AIFdb with `deepa2 bake` as [described here](https://github.com/debatelab/deepa2/blob/main/docs/aifdb.md).

* `aaac` (`aaac01` and `aaac02`): original, machine-generated contribution; based on an improved and extended version of the algorithm that backs https://huggingface.co/datasets/debatelab/aaac.

### Data Instances

see: https://github.com/debatelab/deepa2/tree/main/docs

### Data Fields

see: https://github.com/debatelab/deepa2/tree/main/docs

|feature|esnli|enbank|aifdb|aaac|argq|argkp|

|--|--|--|--|--|--|--|

| `source_text` | x | x | x | x | x | x |

| `title` | | x | | x | | |

| `gist` | x | x | | x | | x |

| `source_paraphrase` | x | x | x | x | | |

| `context` | | x | | x | | x |

| `reasons` | x | x | x | x | x | |

| `conjectures` | x | x | x | x | x | |

| `argdown_reconstruction` | x | x | | x | | x |

| `erroneous_argdown` | x | | | x | | |

| `premises` | x | x | | x | | x |

| `intermediary_conclusion` | | | | x | | |

| `conclusion` | x | x | | x | | x |

| `premises_formalized` | x | | | x | | x |

| `intermediary_conclusion_formalized` | | | | x | | |

| `conclusion_formalized` | x | | | x | | x |

| `predicate_placeholders` | | | | x | | |

| `entity_placeholders` | | | | x | | |

| `misc_placeholders` | x | | | x | | x |

| `plchd_substitutions` | x | | | x | | x |
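
When choosing sub-datasets for a particular task, the table above can be queried programmatically. The sketch below transcribes a few of its rows by hand; the dictionary `AVAILABLE` and the helper `subdatasets_with` are hypothetical names and not part of the `deepa2` package.

```python
# A few rows of the feature table, transcribed manually.
AVAILABLE = {
    "source_text": {"esnli", "enbank", "aifdb", "aaac", "argq", "argkp"},
    "reasons": {"esnli", "enbank", "aifdb", "aaac", "argq"},
    "conjectures": {"esnli", "enbank", "aifdb", "aaac", "argq"},
    "argdown_reconstruction": {"esnli", "enbank", "aaac", "argkp"},
    "premises_formalized": {"esnli", "aaac", "argkp"},
}


def subdatasets_with(*features):
    """Return the sub-datasets that provide all of the requested features."""
    return set.intersection(*(AVAILABLE[f] for f in features))


# Sub-datasets usable for mapping reasons to an argument reconstruction.
candidates = subdatasets_with("reasons", "argdown_reconstruction")
print(sorted(candidates))
```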

### Data Splits

Each sub-dataset contains three splits: `train`, `validation`, and `test`.

## Dataset Creation

### Curation Rationale

Many NLP datasets focus on tasks that are relevant for logical analysis and argument reconstruction. This collection is an attempt to unify these resources in a common framework.

### Source Data

See: [Sub-Datasets](#sub-datasets)

## Additional Information

### Dataset Curators

Gregor Betz, KIT; Kyle Richardson, Allen AI

### Licensing Information

We re-distribute the imported sub-datasets under their original licenses:

|Sub-dataset|License|

|--|--|

|esnli|MIT|

|aifdb|free for academic use ([TOU](https://arg-tech.org/index.php/research/argument-corpora/))|

|enbank|CC BY 4.0|

|aaac|CC BY 4.0|

|argq|CC BY SA 4.0|

|argkp|Apache|

### Citation Information

```

@article{betz2021deepa2,

title={DeepA2: A Modular Framework for Deep Argument Analysis with Pretrained Neural Text2Text Language Models},

author={Gregor Betz and Kyle Richardson},

year={2021},

eprint={2110.01509},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

<!--If the dataset has a [DOI](https://www.doi.org/), please provide it here.-->

|

deepset | null | @misc{möller2021germanquad,

title={GermanQuAD and GermanDPR: Improving Non-English Question Answering and Passage Retrieval},

author={Timo Möller and Julian Risch and Malte Pietsch},

year={2021},

eprint={2104.12741},

archivePrefix={arXiv},

primaryClass={cs.CL}

} | We take GermanQuAD as a starting point and add hard negatives from a dump of the full German Wikipedia following the approach of the DPR authors (Karpukhin et al., 2020). The format of the dataset also resembles the one of DPR. GermanDPR comprises 9275 question/answer pairs in the training set and 1025 pairs in the test set. For each pair, there are one positive context and three hard negative contexts. | false | 393 | false | deepset/germandpr | 2022-10-25T09:07:41.000Z | null | false | 32259c8039d961cd370ed45ed148d296476b2dbc | [] | [

"arxiv:2104.12741",

"language:de",

"multilinguality:monolingual",

"source_datasets:original",

"task_categories:question-answering",

"task_categories:text-retrieval",

"task_ids:extractive-qa",

"task_ids:closed-domain-qa",

"thumbnail:https://thumb.tildacdn.com/tild3433-3637-4830-a533-353833613061/-/re... | https://huggingface.co/datasets/deepset/germandpr/resolve/main/README.md | ---

language:

- de

multilinguality:

- monolingual

source_datasets:

- original

task_categories:

- question-answering

- text-retrieval

task_ids:

- extractive-qa

- closed-domain-qa

thumbnail: https://thumb.tildacdn.com/tild3433-3637-4830-a533-353833613061/-/resize/720x/-/format/webp/germanquad.jpg

---

# Dataset Card for germandpr

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Citation Information](#citation-information)

## Dataset Description

- **Homepage:** https://deepset.ai/germanquad

- **Repository:** https://github.com/deepset-ai/haystack

- **Paper:** https://arxiv.org/abs/2104.12741

### Dataset Summary

We take GermanQuAD as a starting point and add hard negatives from a dump of the full German Wikipedia following the approach of the DPR authors (Karpukhin et al., 2020). The format of the dataset also resembles the one of DPR. GermanDPR comprises 9275 question/answer pairs in the training set and 1025 pairs in the test set. For each pair, there is one positive context and three hard negative contexts.

### Supported Tasks and Leaderboards

- `open-domain-qa`, `text-retrieval`: This dataset is intended to be used for `open-domain-qa` and text retrieval tasks.

### Languages

The sentences in the dataset are in German (de).

## Dataset Structure

### Data Instances

A sample from the training set is provided below:

```

{

"question": "Wie viele christlichen Menschen in Deutschland glauben an einen Gott?",

"answers": [

"75 % der befragten Katholiken sowie 67 % der Protestanten glaubten an einen Gott (2005: 85 % und 79 %)"

],

"positive_ctxs": [

{

"title": "Gott",

"text": "Gott\

=== Demografie ===

Eine Zusammenfassung von Umfrageergebnissen aus verschiedenen Staaten ergab im Jahr 2007, dass es weltweit zwischen 505 und 749 Millionen Atheisten und Agnostiker gibt. Laut der Encyclopædia Britannica gab es 2009 weltweit 640 Mio. Nichtreligiöse und Agnostiker (9,4 %), und weitere 139 Mio. Atheisten (2,0 %), hauptsächlich in der Volksrepublik China.\\\\\\\\

Bei einer Eurobarometer-Umfrage im Jahr 2005 wurde festgestellt, dass 52 % der damaligen EU-Bevölkerung glaubt, dass es einen Gott gibt. Eine vagere Frage nach dem Glauben an „eine andere spirituelle Kraft oder Lebenskraft“ wurde von weiteren 27 % positiv beantwortet. Bezüglich der Gottgläubigkeit bestanden große Unterschiede zwischen den einzelnen europäischen Staaten. Die Umfrage ergab, dass der Glaube an Gott in Staaten mit starkem kirchlichen Einfluss am stärksten verbreitet ist, dass mehr Frauen (58 %) als Männer (45 %) an einen Gott glauben und dass der Gottglaube mit höherem Alter, geringerer Bildung und politisch rechtsgerichteten Ansichten korreliert.\\\\\\\\

Laut einer Befragung von 1003 Personen in Deutschland im März 2019 glauben 55 % an einen Gott; 2005 waren es 66 % gewesen. 75 % der befragten Katholiken sowie 67 % der Protestanten glaubten an einen Gott (2005: 85 % und 79 %). Unter Konfessionslosen ging die Glaubensquote von 28 auf 20 % zurück. Unter Frauen (60 %) war der Glauben 2019 stärker ausgeprägt als unter Männern (50 %), in Westdeutschland (63 %) weiter verbreitet als in Ostdeutschland (26 %).",

"passage_id": ""

}

],

"negative_ctxs": [],

"hard_negative_ctxs": [

{

"title": "Christentum",

"text": "Christentum\

\

=== Ursprung und Einflüsse ===\

Die ersten Christen waren Juden, die zum Glauben an Jesus Christus fanden. In ihm erkannten sie den bereits durch die biblische Prophetie verheißenen Messias (hebräisch: ''maschiach'', griechisch: ''Christos'', latinisiert ''Christus''), auf dessen Kommen die Juden bis heute warten. Die Urchristen übernahmen aus der jüdischen Tradition sämtliche heiligen Schriften (den Tanach), wie auch den Glauben an einen Messias oder Christus (''christos'': Gesalbter). Von den Juden übernommen wurden die Art der Gottesverehrung, das Gebet der Psalmen u. v. a. m. Eine weitere Gemeinsamkeit mit dem Judentum besteht in der Anbetung desselben Schöpfergottes. Jedoch sehen fast alle Christen Gott als ''einen'' dreieinigen Gott an: den Vater, den Sohn (Christus) und den Heiligen Geist. Darüber, wie der dreieinige Gott konkret gedacht werden kann, gibt es unter den christlichen Konfessionen und Gruppierungen unterschiedliche Auffassungen bis hin zur Ablehnung der Dreieinigkeit Gottes (Antitrinitarier). Der Glaube an Jesus Christus führte zu Spannungen und schließlich zur Trennung zwischen Juden, die diesen Glauben annahmen, und Juden, die dies nicht taten, da diese es unter anderem ablehnten, einen Menschen anzubeten, denn sie sahen in Jesus Christus nicht den verheißenen Messias und erst recht nicht den Sohn Gottes. Die heutige Zeitrechnung wird von der Geburt Christi aus gezählt. Anno Domini (A. D.) bedeutet „im Jahr des Herrn“.",

"passage_id": ""

},

{

"title": "Noachidische_Gebote",

"text": "Noachidische_Gebote\

\

=== Die kommende Welt ===\

Der Glaube an eine ''Kommende Welt'' (Olam Haba) bzw. an eine ''Welt des ewigen Lebens'' ist ein Grundprinzip des Judentums. Dieser jüdische Glaube ist von dem christlichen Glauben an das ''Ewige Leben'' fundamental unterschieden. Die jüdische Lehre spricht niemandem das Heil dieser kommenden Welt ab, droht aber auch nicht mit Höllenstrafen im Jenseits. Juden glauben schlicht, dass allen Menschen ein Anteil der kommenden Welt zuteilwerden kann. Es gibt zwar viele Vorstellungen der kommenden Welt, aber keine kanonische Festlegung ihrer Beschaffenheit; d. h., das Judentum kennt keine eindeutige Antwort darauf, was nach dem Tod mit uns geschieht. Die Frage nach dem Leben nach dem Tod wird auch als weniger wesentlich angesehen, als Fragen, die das Leben des Menschen auf Erden und in der Gesellschaft betreffen.\

Der jüdische Glaube an eine kommende Welt bedeutet nicht, dass Menschen, die nie von der Tora gehört haben, böse oder sonst minderwertige Menschen sind. Das Judentum lehrt den Glauben, dass alle Menschen mit Gott verbunden sind. Es gibt im Judentum daher keinen Grund, zu missionieren. Das Judentum lehrt auch, dass alle Menschen sich darin gleichen, dass sie weder prinzipiell gut noch böse sind, sondern eine Neigung zum Guten wie zum Bösen haben. Während des irdischen Lebens sollte sich der Mensch immer wieder für das Gute entscheiden.",

"passage_id": ""

},

{

"title": "Figuren_und_Schauplätze_der_Scheibenwelt-Romane",

"text": "Figuren_und_Schauplätze_der_Scheibenwelt-Romane\

\

=== Herkunft ===\

Es gibt unzählig viele Götter auf der Scheibenwelt, die so genannten „geringen Götter“, die überall sind, aber keine Macht haben. Erst wenn sie durch irgendein Ereignis Gläubige gewinnen, werden sie mächtiger. Je mehr Glauben, desto mehr Macht. Dabei nehmen sie die Gestalt an, die die Menschen ihnen geben (zum Beispiel Offler). Wenn ein Gott mächtig genug ist, erhält er Einlass in den Cori Celesti, den Berg der Götter, der sich in der Mitte der Scheibenwelt erhebt. Da Menschen wankelmütig sind, kann es auch geschehen, dass sie den Glauben verlieren und einen Gott damit entmachten (s. „Einfach Göttlich“).",

"passage_id": ""

}

]

},

```

### Data Fields

- `positive_ctxs`: a dictionary feature containing:

- `title`: a `string` feature.

- `text`: a `string` feature.

- `passage_id`: a `string` feature.

- `negative_ctxs`: a dictionary feature containing:

- `title`: a `string` feature.

- `text`: a `string` feature.

- `passage_id`: a `string` feature.

- `hard_negative_ctxs`: a dictionary feature containing:

- `title`: a `string` feature.

- `text`: a `string` feature.

- `passage_id`: a `string` feature.

- `question`: a `string` feature.

- `answers`: a list feature containing:

- a `string` feature.
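
To show how these fields fit together for retriever training, the sketch below turns one record into labeled (question, passage) pairs. It assumes the record layout of the sample above, i.e. each context field is a list of dictionaries; the function name `to_training_pairs` and the toy record are hypothetical.

```python
def to_training_pairs(record):
    """Yield (question, passage_text, label); label 1 = positive, 0 = hard negative."""
    for ctx in record["positive_ctxs"]:
        yield record["question"], ctx["text"], 1
    for ctx in record["hard_negative_ctxs"]:
        yield record["question"], ctx["text"], 0


# Toy record with shortened placeholder passages.
record = {
    "question": "Wie viele christlichen Menschen in Deutschland glauben an einen Gott?",
    "answers": ["75 % der befragten Katholiken sowie 67 % der Protestanten"],
    "positive_ctxs": [{"title": "Gott", "text": "Gott ...", "passage_id": ""}],
    "negative_ctxs": [],
    "hard_negative_ctxs": [
        {"title": "Christentum", "text": "Christentum ...", "passage_id": ""},
        {"title": "Noachidische_Gebote", "text": "Noachidische_Gebote ...", "passage_id": ""},
        {"title": "Scheibenwelt", "text": "Scheibenwelt ...", "passage_id": ""},
    ],
}

pairs = list(to_training_pairs(record))
```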

### Data Splits

The dataset is split into a training set and a test set.

The final GermanDPR dataset comprises 9275 question/answer pairs in the training set and 1025 pairs in the test set. For each pair, there is one positive context and three hard negative contexts.

| |questions|answers|positive contexts|hard negative contexts|

|------|--------:|------:|----------------:|---------------------:|

|train|9275| 9275|9275|27825|

|test|1025| 1025|1025|3075|
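
The counts in the table follow directly from the one-positive, three-hard-negatives design. A quick consistency check, with the numbers copied from the table (the variable names are arbitrary):

```python
splits = {
    "train": {"questions": 9275, "positive_contexts": 9275, "hard_negative_contexts": 27825},
    "test": {"questions": 1025, "positive_contexts": 1025, "hard_negative_contexts": 3075},
}

# One positive and three hard negative contexts per question.
consistent = {
    name: (
        counts["positive_contexts"] == counts["questions"]
        and counts["hard_negative_contexts"] == 3 * counts["questions"]
    )
    for name, counts in splits.items()
}
print(consistent)
```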

## Additional Information

### Dataset Curators

The dataset was initially created by Timo Möller, Julian Risch, Malte Pietsch, Julian Gutsch, Tom Hersperger, Luise Köhler, Iuliia Mozhina, and Justus Peter, during work done at deepset.ai.

### Citation Information

```

@misc{möller2021germanquad,

title={GermanQuAD and GermanDPR: Improving Non-English Question Answering and Passage Retrieval},

author={Timo Möller and Julian Risch and Malte Pietsch},

year={2021},

eprint={2104.12741},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

``` |

deepset | null | @misc{möller2021germanquad,

title={GermanQuAD and GermanDPR: Improving Non-English Question Answering and Passage Retrieval},

author={Timo Möller and Julian Risch and Malte Pietsch},

year={2021},

eprint={2104.12741},

archivePrefix={arXiv},

primaryClass={cs.CL}

} | In order to raise the bar for non-English QA, we are releasing a high-quality, human-labeled German QA dataset consisting of 13 722 questions, incl. a three-way annotated test set.

The creation of GermanQuAD is inspired by insights from existing datasets as well as our labeling experience from several industry projects. We combine the strengths of SQuAD, such as high out-of-domain performance, with self-sufficient questions that contain all relevant information for open-domain QA as in the NaturalQuestions dataset. Our training and test datasets do not overlap like other popular datasets and include complex questions that cannot be answered with a single entity or only a few words. | false | 354 | false | deepset/germanquad | 2022-08-04T10:20:23.000Z | null | false | 0bf850d12abd098da61a0c6793729db0ad994446 | [] | [

"arxiv:2104.12741",

"thumbnail:https://thumb.tildacdn.com/tild3433-3637-4830-a533-353833613061/-/resize/720x/-/format/webp/germanquad.jpg",

"language:de",

"multilinguality:monolingual",

"source_datasets:original",

"task_categories:question-answering",

"task_categories:text-retrieval",

"task_ids:extrac... | https://huggingface.co/datasets/deepset/germanquad/resolve/main/README.md | ---

thumbnail: https://thumb.tildacdn.com/tild3433-3637-4830-a533-353833613061/-/resize/720x/-/format/webp/germanquad.jpg

language:

- de

multilinguality:

- monolingual

source_datasets:

- original

task_categories:

- question-answering

- text-retrieval

task_ids:

- extractive-qa

- closed-domain-qa

- open-domain-qa

train-eval-index:

- config: plain_text

task: question-answering

task_id: extractive_question_answering

splits:

train_split: train

eval_split: test

col_mapping:

context: context

question: question

answers.text: answers.text

answers.answer_start: answers.answer_start

---

# Dataset Card for germanquad

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Citation Information](#citation-information)

## Dataset Description

- **Homepage:** https://deepset.ai/germanquad

- **Repository:** https://github.com/deepset-ai/haystack

- **Paper:** https://arxiv.org/abs/2104.12741

### Dataset Summary

In order to raise the bar for non-English QA, we are releasing a high-quality, human-labeled German QA dataset consisting of 13 722 questions, incl. a three-way annotated test set.

The creation of GermanQuAD is inspired by insights from existing datasets as well as our labeling experience from several industry projects. We combine the strengths of SQuAD, such as high out-of-domain performance, with self-sufficient questions that contain all relevant information for open-domain QA as in the NaturalQuestions dataset. Unlike in other popular datasets, our training and test sets do not overlap; they also include complex questions that cannot be answered with a single entity or only a few words.

### Supported Tasks and Leaderboards

- `extractive-qa`, `closed-domain-qa`, `open-domain-qa`, `text-retrieval`: This dataset is intended to be used for `open-domain-qa`, but can also be used for information retrieval tasks.

### Languages

The sentences in the dataset are in German (de).

## Dataset Structure

### Data Instances

A sample from the training set is provided below:

```

{

"paragraphs": [

{

"qas": [

{

"question": "Von welchem Gesetzt stammt das Amerikanische ab? ",

"id": 51870,

"answers": [

{

"answer_id": 53778,

"document_id": 43958,

"question_id": 51870,

"text": "britischen Common Laws",

"answer_start": 146,

"answer_category": "SHORT"

}

],

"is_impossible": false

}

],

"context": "Recht_der_Vereinigten_Staaten\

\

=== Amerikanisches Common Law ===\

Obwohl die Vereinigten Staaten wie auch viele Staaten des Commonwealth Erben des britischen Common Laws sind, setzt sich das amerikanische Recht bedeutend davon ab. Dies rührt größtenteils von dem langen Zeitraum her, in dem sich das amerikanische Recht unabhängig vom Britischen entwickelt hat. Entsprechend schauen die Gerichte in den Vereinigten Staaten bei der Analyse von eventuell zutreffenden britischen Rechtsprinzipien im Common Law gewöhnlich nur bis ins frühe 19. Jahrhundert.\

Während es in den Commonwealth-Staaten üblich ist, dass Gerichte sich Entscheidungen und Prinzipien aus anderen Commonwealth-Staaten importieren, ist das in der amerikanischen Rechtsprechung selten. Ausnahmen bestehen hier nur, wenn sich überhaupt keine relevanten amerikanischen Fälle finden lassen, die Fakten nahezu identisch sind und die Begründung außerordentlich überzeugend ist. Frühe amerikanische Entscheidungen zitierten oft britische Fälle, solche Zitate verschwanden aber während des 19. Jahrhunderts, als die Gerichte eindeutig amerikanische Lösungen zu lokalen Konflikten fanden. In der aktuellen Rechtsprechung beziehen sich fast alle Zitate auf amerikanische Fälle.\

Einige Anhänger des Originalismus und der strikten Gesetzestextauslegung (''strict constructionism''), wie zum Beispiel der verstorbene Bundesrichter am Obersten Gerichtshof, Antonin Scalia, vertreten die Meinung, dass amerikanische Gerichte ''nie'' ausländische Fälle überprüfen sollten, die nach dem Unabhängigkeitskrieg entschieden wurden, unabhängig davon, ob die Argumentation überzeugend ist oder nicht. Die einzige Ausnahme wird hier in Fällen gesehen, die durch die Vereinigten Staaten ratifizierte völkerrechtliche Verträge betreffen. Andere Richter, wie zum Beispiel Anthony Kennedy und Stephen Breyer vertreten eine andere Ansicht und benutzen ausländische Rechtsprechung, sofern ihre Argumentation für sie überzeugend, nützlich oder hilfreich ist.",

"document_id": 43958

}

]

},

```

### Data Fields

- `id`: a `string` feature.

- `context`: a `string` feature.

- `question`: a `string` feature.

- `answers`: a dictionary feature containing:

- `text`: a `string` feature.

- `answer_start`: an `int32` feature.
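
The sample above is nested in SQuAD style (`paragraphs` containing `qas`), while the field list is flat. The sketch below shows one plausible mapping between the two. It is an assumption, not the loader's actual code: the function name `flatten`, the list-valued `answers` sub-fields (following the SQuAD convention), and the toy article are all illustrative.

```python
def flatten(article):
    """Turn a nested SQuAD-style article into flat question-level rows."""
    rows = []
    for paragraph in article["paragraphs"]:
        for qa in paragraph["qas"]:
            rows.append({
                "id": str(qa["id"]),  # the field list declares `id` as a string
                "context": paragraph["context"],
                "question": qa["question"],
                "answers": {
                    "text": [a["text"] for a in qa["answers"]],
                    "answer_start": [a["answer_start"] for a in qa["answers"]],
                },
            })
    return rows


# Toy article; the offset is computed rather than hard-coded.
context = "Das amerikanische Recht stammt vom britischen Common Law ab."
answer = "britischen Common Law"
article = {
    "paragraphs": [{
        "context": context,
        "document_id": 1,
        "qas": [{
            "question": "Von welchem Recht stammt das amerikanische ab?",
            "id": 1,
            "answers": [{"text": answer, "answer_start": context.index(answer)}],
            "is_impossible": False,
        }],
    }]
}

rows = flatten(article)
start = rows[0]["answers"]["answer_start"][0]
extracted = rows[0]["context"][start:start + len(answer)]
```

Slicing the context at `answer_start` recovers the answer text, which is the invariant that extractive QA models rely on.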

### Data Splits

The dataset is split into a one-way annotated training set and a three-way annotated test set of German Wikipedia passages (paragraphs). Each passage is from a different article.

| |passages|questions|answers|

|----------|----:|---------:|---------:|

|train|2540| 11518|11518|

|test|474| 2204|6536|

## Additional Information

### Dataset Curators

The dataset was initially created by Timo Möller, Julian Risch, Malte Pietsch, Julian Gutsch, Tom Hersperger, Luise Köhler, Iuliia Mozhina, and Justus Peter, during work done at deepset.ai.

### Citation Information

```

@misc{möller2021germanquad,

title={GermanQuAD and GermanDPR: Improving Non-English Question Answering and Passage Retrieval},

author={Timo Möller and Julian Risch and Malte Pietsch},

year={2021},

eprint={2104.12741},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

``` |

dennlinger | null | null | null | false | 564 | false | dennlinger/klexikon | 2022-10-25T15:03:56.000Z | klexikon | false | a24a4e46e38e652b9ac7a43c53c1f90eead22eea | [] | [

"arxiv:2201.07198",

"annotations_creators:found",

"annotations_creators:expert-generated",

"language_creators:found",

"language_creators:machine-generated",

"language:de",

"license:cc-by-sa-4.0",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"source_datasets:original",

"task_categor... | https://huggingface.co/datasets/dennlinger/klexikon/resolve/main/README.md | ---

annotations_creators:

- found

- expert-generated

language_creators:

- found

- machine-generated

language:

- de

license:

- cc-by-sa-4.0

multilinguality:

- monolingual

size_categories:

- 1K<n<10K

source_datasets:

- original

task_categories:

- summarization

- text2text-generation

task_ids:

- text-simplification

paperswithcode_id: klexikon

pretty_name: Klexikon

tags:

- conditional-text-generation

- simplification

- document-level

---

# Dataset Card for the Klexikon Dataset

## Table of Contents

- [Version History](#version-history)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

## Version History

- **v0.3** (2022-09-01): Removed five samples from the dataset because they were duplicates of other samples.

- **v0.2** (2022-02-28): Updated the files so that they no longer contain empty sections or trailing empty lines. Also removed lines consisting only of coordinates.

- **v0.1** (2022-01-19): Initial data release on Hugging Face Datasets.

## Dataset Description

- **Homepage:** [N/A]

- **Repository:** [Klexikon repository](https://github.com/dennlinger/klexikon)

- **Paper:** [Klexikon: A German Dataset for Joint Summarization and Simplification](https://arxiv.org/abs/2201.07198)

- **Leaderboard:** [N/A]

- **Point of Contact:** [Dennis Aumiller](mailto:dennis.aumiller@gmail.com)

### Dataset Summary

The Klexikon dataset is a German resource of document-aligned texts between German Wikipedia and the children's lexicon "Klexikon". The dataset was created for the purpose of joint text simplification and summarization, and contains almost 2900 aligned article pairs.

Notably, the children's articles use simpler language than the original Wikipedia articles. In addition, there is a clear length discrepancy between the source (Wikipedia) and target (Klexikon) domains.

### Supported Tasks and Leaderboards

- `summarization`: The dataset can be used to train a model for summarization. In particular, it poses a harder challenge than some of the commonly used datasets (e.g., CNN/DailyMail), which tend to suffer from positional biases in the source text. Such biases make it very easy to obtain high ROUGE scores by simply taking the leading three sentences. Our dataset provides a more challenging extraction task, combined with the additional difficulty of finding lexically appropriate simplifications.

- `simplification`: While not currently supported by the HF task board, text simplification is concerned with the appropriate representation of a text for disadvantaged readers (e.g., children, language learners, or readers with dyslexia).

For scoring, we ran preliminary experiments based on [ROUGE](https://huggingface.co/metrics/rouge). However, we want to point out that ROUGE cannot accurately capture how appropriate a simplification is.

We combined this with looking at Flesch readability scores, as implemented by [textstat](https://github.com/shivam5992/textstat).

Note that simplification metrics such as [SARI](https://huggingface.co/metrics/sari) are not applicable here, since they require sentence alignments, which we do not provide.
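The "leading three sentences" baseline mentioned above can be sketched in a few lines. This is an illustration only, not the authors' evaluation code. It assumes the article is given as a list of sentence strings and that section headings start with `==`, as described under Data Instances. The function name `lead_k` and the sample input are hypothetical.

```python
def lead_k(sentences, k=3):
    """Return the first k sentences, skipping section heading lines (leading '==')."""
    body = [s for s in sentences if not s.startswith("==")]
    return body[:k]


# Hypothetical, abridged input in the one-sentence-per-entry format.
article = [
    "Erster Satz.",
    "== Geschichte",
    "Zweiter Satz.",
    "Dritter Satz.",
    "Vierter Satz.",
]

summary = lead_k(article)
print(summary)
```

Scoring such a baseline with ROUGE gives a reference point for how much positional bias a summarization dataset contains.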

### Languages

The associated BCP-47 code is `de-DE`.

The text of the articles is in German. Klexikon articles additionally undergo a simple form of peer review before publication and aim to simplify language for children aged 8 to 13. The expected text difficulty of Klexikon articles is therefore lower than that of Wikipedia entries.

## Dataset Structure

### Data Instances

One data point contains the Wikipedia text (`wiki_sentences`) as well as the Klexikon text (`klexikon_sentences`).

Sentences are separated by newlines for both datasets, and section headings are indicated by leading `==` (or `===` for subheadings, `====` for sub-subheading, etc.).

Further, it includes the `wiki_url` and `klexikon_url`, pointing to the respective source texts. Note that the original articles were extracted in April 2021, so re-crawling the texts yourself will likely change some content.

Lastly, we include a unique identifier `u_id` as well as the page title `title` of the Klexikon page.

Sample (abridged texts for clarity):

```

{

"u_id": 0,

"title": "ABBA",

"wiki_url": "https://de.wikipedia.org/wiki/ABBA",

"klexikon_url": "https://klexikon.zum.de/wiki/ABBA",

"wiki_sentences": [

"ABBA ist eine schwedische Popgruppe, die aus den damaligen Paaren Agnetha Fältskog und Björn Ulvaeus sowie Benny Andersson und Anni-Frid Lyngstad besteht und sich 1972 in Stockholm formierte.",

"Sie gehört mit rund 400 Millionen verkauften Tonträgern zu den erfolgreichsten Bands der Musikgeschichte.",

"Bis in die 1970er Jahre hatte es keine andere Band aus Schweden oder Skandinavien gegeben, der vergleichbare Erfolge gelungen waren.",

"Trotz amerikanischer und britischer Dominanz im Musikgeschäft gelang der Band ein internationaler Durchbruch.",

"Sie hat die Geschichte der Popmusik mitgeprägt.",

"Zu ihren bekanntesten Songs zählen Mamma Mia, Dancing Queen und The Winner Takes It All.",

"1982 beendeten die Gruppenmitglieder aufgrund privater Differenzen ihre musikalische Zusammenarbeit.",

"Seit 2016 arbeiten die vier Musiker wieder zusammen an neuer Musik, die 2021 erscheinen soll."

],

"klexikon_sentences": [

"ABBA war eine Musikgruppe aus Schweden.",

"Ihre Musikrichtung war die Popmusik.",

"Der Name entstand aus den Anfangsbuchstaben der Vornamen der Mitglieder, Agnetha, Björn, Benny und Anni-Frid.",

"Benny Andersson und Björn Ulvaeus, die beiden Männer, schrieben die Lieder und spielten Klavier und Gitarre.",

"Anni-Frid Lyngstad und Agnetha Fältskog sangen."

]

},

```
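Because headings are stored inline with the sentences, a consumer usually has to separate them again. The following is a minimal sketch of one way to do this, assuming only what is stated above: one sentence per list entry, and headings marked by leading `=` characters whose count gives the nesting level. The function name `split_sections` and the heading lines in the example are hypothetical.

```python
def split_sections(sentences):
    """Group sentences under their headings.

    Returns a list of (level, heading, sentences) tuples. Text before the
    first heading is stored with level 0 and an empty heading.
    """
    sections = [(0, "", [])]
    for line in sentences:
        if line.startswith("=="):
            # The number of leading '=' characters encodes the heading depth.
            level = len(line) - len(line.lstrip("="))
            heading = line.strip("= ")
            sections.append((level, heading, []))
        else:
            sections[-1][2].append(line)
    return sections


# Hypothetical input: the heading lines are invented for illustration.
example = [
    "ABBA war eine Musikgruppe aus Schweden.",
    "== Geschichte",
    "Ein Satz zur Geschichte.",
    "=== Anfänge",
    "Ein Satz zu den Anfängen.",
]

for level, heading, body in split_sections(example):
    print(level, repr(heading), len(body))
```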

### Data Fields

* `u_id` (`int`): A unique identifier for each document pair in the dataset. IDs 0-2349 are reserved for training data, 2350-2623 for testing, and 2624-2897 for validation.

* `title` (`str`): Title of the Klexikon page for this sample.

* `wiki_url` (`str`): URL of the associated Wikipedia article. This mapping is non-trivial: some pages are disambiguated, so the Wikipedia title does not always match the Klexikon title exactly.

* `klexikon_url` (`str`): URL of the Klexikon article.

* `wiki_sentences` (`List[str]`): List of sentences of the Wikipedia article. The document is pre-split with spaCy's sentence splitting (model: `de_core_news_md`). Please note that we do not include page contents outside of `<p>` tags, which excludes lists, captions, and images.

* `klexikon_sentences` (`List[str]`): List of sentences of the Klexikon article. We apply the same processing as for the Wikipedia texts.
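The `u_id` ranges can be turned into a small lookup helper. This sketch assumes contiguous, non-overlapping ranges that match the split sizes given in this card (2350 training, 274 test, 274 validation pairs). The function name `split_for_uid` is hypothetical, and individual IDs may be absent from the released data, since v0.3 removed five samples.

```python
def split_for_uid(u_id: int) -> str:
    """Map a u_id to its split name, assuming contiguous ID ranges."""
    if 0 <= u_id <= 2349:
        return "train"
    if 2350 <= u_id <= 2623:
        return "test"
    if 2624 <= u_id <= 2897:
        return "validation"
    raise ValueError(f"u_id {u_id} is outside the documented range 0-2897")


print(split_for_uid(0), split_for_uid(2350), split_for_uid(2897))
```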

### Data Splits

We provide a stratified split of the dataset, based on the lengths of each Wikipedia/Klexikon article pair (measured in number of sentences).

Each pair is placed in a coordinate system in which the x-axis represents the length of the Wikipedia article and the y-axis the length of the Klexikon article.

We segment this coordinate system into rectangles of shape `(100, 10)` and randomly sample an 80/10/10 split for training/validation/test from each rectangle to ensure stratification. If a rectangle contains fewer than 10 entries, we put all of its samples into training.

The final splits have the following size:

* 2350 samples for training

* 274 samples for validation

* 274 samples for testing
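The stratification procedure described above can be sketched as follows. This is not the authors' script. The rounding rule (floor division by 10 for validation and test), the seed, and the function name `stratified_split` are assumptions made for illustration. Each input item is a hypothetical `(u_id, wiki_len, klexikon_len)` tuple with lengths in sentences.

```python
import random
from collections import defaultdict


def stratified_split(pairs, seed=0, min_bin_size=10):
    """Split (u_id, wiki_len, klexikon_len) tuples 80/10/10 within length bins."""
    rng = random.Random(seed)

    # Bin by rectangles of shape (100, 10): 100 Wikipedia sentences wide,
    # 10 Klexikon sentences high.
    bins = defaultdict(list)
    for item in pairs:
        _, wiki_len, klexikon_len = item
        bins[(wiki_len // 100, klexikon_len // 10)].append(item)

    splits = {"train": [], "validation": [], "test": []}
    for key in sorted(bins):
        items = bins[key]
        if len(items) < min_bin_size:
            # Sparse rectangles go entirely into training.
            splits["train"].extend(items)
            continue
        rng.shuffle(items)
        n_held_out = len(items) // 10  # assumption: round down to 10% each
        splits["validation"].extend(items[:n_held_out])
        splits["test"].extend(items[n_held_out:2 * n_held_out])
        splits["train"].extend(items[2 * n_held_out:])
    return splits


# Hypothetical data: 20 pairs in one rectangle, 5 pairs in a sparse one.
pairs = [(i, 50, 5) for i in range(20)] + [(100 + i, 250, 15) for i in range(5)]
result = stratified_split(pairs)
print({name: len(items) for name, items in result.items()})
```

Sending sparse rectangles to training avoids validation or test sets that depend on one or two unusually long articles.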

## Dataset Creation

### Curation Rationale

As previously described, the Klexikon resource was created as an attempt to bridge the two fields of text summarization and text simplification. Previous datasets suffer from one or more of the following shortcomings:

* They primarily focus on input/output pairs of similar lengths, which does not reflect longer-form texts.

* Data exists primarily for English, and other languages are notoriously understudied.

* Alignments exist at the sentence level, but not at the document level.