author stringlengths 2 29 ⌀ | cardData null | citation stringlengths 0 9.58k ⌀ | description stringlengths 0 5.93k ⌀ | disabled bool 1 class | downloads float64 1 1M ⌀ | gated bool 2 classes | id stringlengths 2 108 | lastModified stringlengths 24 24 | paperswithcode_id stringlengths 2 45 ⌀ | private bool 2 classes | sha stringlengths 40 40 | siblings list | tags list | readme_url stringlengths 57 163 | readme stringlengths 0 977k |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

lewtun | null | null | null | false | 1 | false | lewtun/bulk-superb-s3p-superb-49606 | 2021-08-02T16:38:29.000Z | null | false | 75cac4251eb0dbc3282eaa5ff95c608032df6628 | [] | [

"benchmark:superb",

"task:asr",

"type:prediction"

] | https://huggingface.co/datasets/lewtun/bulk-superb-s3p-superb-49606/resolve/main/README.md | ---

benchmark: superb

task: asr

type: prediction

---

# Batch job

model_id: lewtun/superb-s3prl-osanseviero__hubert_base-asr-cbcd177a

dataset_name: superb

dataset_config: asr

dataset_split: test

dataset_column: file |

lewtun | null | null | null | false | 2 | false | lewtun/gem-sub-03 | 2021-12-15T14:34:30.000Z | null | false | 3d61f200cf73279e51fb903b58f80de3fb344769 | [] | [

"benchmark:gem",

"type:prediction",

"submission_name:T5-base (Baseline)"

] | https://huggingface.co/datasets/lewtun/gem-sub-03/resolve/main/README.md | ---

benchmark: gem

type: prediction

submission_name: T5-base (Baseline)

---

# GEM submissions for gem-sub-03

## Submitting to the benchmark

FILL ME IN

### Submission file format

Please follow this format for your `submission.json` file:

```json

{

"submission_name": "An identifying name of your system",

"param_count": 123, # the number of parameters your system has.

"description": "An optional brief description of the system that will be shown on the website",

"tasks":

{

"dataset_identifier": {

"values": ["output1", "output2", "..."], # A list of system outputs

# Optionally, you can add the keys which are part of an example to ensure that there is no shuffling mistakes.

"keys": ["key-0", "key-1", ...]

}

}

}

```

In this case, `dataset_identifier` is the identifier of the dataset followed by an identifier of the set the outputs were created from, for example `_validation` or `_test`. That means, the `mlsum_de` test set would have the identifier `mlsum_de_test`.

The `keys` field can be set to avoid accidental shuffling to impact your metrics. Simply add a list of the `gem_id` for each output example in the same order as your values.
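As a rough illustration of the format described above, the following sketch assembles a submission dictionary and serializes it to JSON. The helper name `build_submission`, the system name, and the example outputs are hypothetical and not part of the benchmark tooling. Note that the `#` annotations in the template are explanatory only: strict JSON has no comment syntax, so they must not appear in the real `submission.json`.

```python
import json


def build_submission(name, param_count, outputs_by_dataset,
                     keys_by_dataset=None, description=""):
    """Assemble a submission dict (hypothetical helper, not part of cli.py)."""
    tasks = {}
    for dataset_id, values in outputs_by_dataset.items():
        entry = {"values": list(values)}
        keys = (keys_by_dataset or {}).get(dataset_id)
        if keys is not None:
            # Keys must line up one-to-one with the outputs to guard against shuffling.
            if len(keys) != len(values):
                raise ValueError(f"{dataset_id}: {len(keys)} keys for {len(values)} values")
            entry["keys"] = list(keys)
        tasks[dataset_id] = entry
    return {
        "submission_name": name,
        "param_count": param_count,
        "description": description,
        "tasks": tasks,
    }


# Dataset identifier = dataset name + split suffix, e.g. `mlsum_de` + `_test`.
submission = build_submission(
    name="my-system",
    param_count=123,
    outputs_by_dataset={"mlsum_de_test": ["output1", "output2"]},
    keys_by_dataset={"mlsum_de_test": ["key-0", "key-1"]},
)
submission_json = json.dumps(submission, indent=2, ensure_ascii=False)
```

Writing `submission_json` to a file named `submission.json` would then give a file in the shape shown above, assuming the key values are replaced with the real `gem_id` of each example.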

### Validate your submission

To ensure that your submission files are correctly formatted, run the following command from the root of the repository:

```

python cli.py validate

```

If everything is correct, you should see the following message:

```

All submission files validated! ✨ 🚀 ✨

Now you can make a submission 🤗

```

### Push your submission to the Hugging Face Hub!

The final step is to commit your files and push them to the Hub:

```

python cli.py submit

```

If there are no errors, you should see the following message:

```

Submission successful! 🎉 🥳 🎉

Your submission will be evaulated on Sunday 05 September 2021 ⏳

```

Evaluation runs every Sunday, after which your results will be visible on the leaderboard. |

lewtun | null | null | null | false | 2 | false | lewtun/github-issues-test | 2021-08-12T23:55:28.000Z | null | false | e3e3c81a4d7cc98d61d3b63b16b25e92008a9ba3 | [] | [] | https://huggingface.co/datasets/lewtun/github-issues-test/resolve/main/README.md | # GitHub Issues Dataset |

lewtun | null | null | null | false | 127 | false | lewtun/github-issues | 2021-10-04T15:49:55.000Z | null | false | 3bb24dcad2b45b45e20fc0accc93058dcbe8087d | [] | [

"arxiv:2005.00614"

] | https://huggingface.co/datasets/lewtun/github-issues/resolve/main/README.md | # Dataset Card for GitHub Issues

## Dataset Description

- **Point of Contact:** [Lewis Tunstall](mailto:lewis@huggingface.co)

### Dataset Summary

GitHub Issues is a dataset consisting of GitHub issues and pull requests associated with the 🤗 Datasets [repository](https://github.com/huggingface/datasets). It is intended for educational purposes and can be used for semantic search or multilabel text classification. The contents of each GitHub issue are in English and concern the domain of datasets for NLP, computer vision, and beyond.

### Supported Tasks and Leaderboards

For each of the tasks tagged for this dataset, give a brief description of the tag, metrics, and suggested models (with a link to their HuggingFace implementation if available). Give a similar description of tasks that were not covered by the structured tag set (replace the `task-category-tag` with an appropriate `other:other-task-name`).

- `task-category-tag`: The dataset can be used to train a model for [TASK NAME], which consists in [TASK DESCRIPTION]. Success on this task is typically measured by achieving a *high/low* [metric name](https://huggingface.co/metrics/metric_name). The ([model name](https://huggingface.co/model_name) or [model class](https://huggingface.co/transformers/model_doc/model_class.html)) model currently achieves the following score. *[IF A LEADERBOARD IS AVAILABLE]:* This task has an active leaderboard which can be found at [leaderboard url]() and ranks models based on [metric name](https://huggingface.co/metrics/metric_name) while also reporting [other metric name](https://huggingface.co/metrics/other_metric_name).

### Languages

Provide a brief overview of the languages represented in the dataset. Describe relevant details about specifics of the language such as whether it is social media text, African American English,...

When relevant, please provide [BCP-47 codes](https://tools.ietf.org/html/bcp47), which consist of a [primary language subtag](https://tools.ietf.org/html/bcp47#section-2.2.1), with a [script subtag](https://tools.ietf.org/html/bcp47#section-2.2.3) and/or [region subtag](https://tools.ietf.org/html/bcp47#section-2.2.4) if available.

## Dataset Structure

### Data Instances

Provide a JSON-formatted example and brief description of a typical instance in the dataset. If available, provide a link to further examples.

```

{

'example_field': ...,

...

}

```

Provide any additional information that is not covered in the other sections about the data here. In particular describe any relationships between data points and if these relationships are made explicit.

### Data Fields

List and describe the fields present in the dataset. Mention their data type, and whether they are used as input or output in any of the tasks the dataset currently supports. If the data has span indices, describe their attributes, such as whether they are at the character level or word level, whether they are contiguous or not, etc. If the dataset contains example IDs, state whether they have an inherent meaning, such as a mapping to other datasets or pointing to relationships between data points.

- `example_field`: description of `example_field`

Note that the descriptions can be initialized with the **Show Markdown Data Fields** output of the [tagging app](https://github.com/huggingface/datasets-tagging), you will then only need to refine the generated descriptions.

### Data Splits

Describe and name the splits in the dataset if there are more than one.

Describe any criteria for splitting the data, if used. If there are differences between the splits (e.g. if the training annotations are machine-generated and the dev and test ones are created by humans, or if different numbers of annotators contributed to each example), describe them here.

Provide the sizes of each split. As appropriate, provide any descriptive statistics for the features, such as average length. For example:

| | Train | Valid | Test |

| ----- | ------ | ----- | ---- |

| Input Sentences | | | |

| Average Sentence Length | | | |

## Dataset Creation

### Curation Rationale

What need motivated the creation of this dataset? What are some of the reasons underlying the major choices involved in putting it together?

### Source Data

This section describes the source data (e.g. news text and headlines, social media posts, translated sentences,...)

#### Initial Data Collection and Normalization

Describe the data collection process. Describe any criteria for data selection or filtering. List any key words or search terms used. If possible, include runtime information for the collection process.

If data was collected from other pre-existing datasets, link to source here and to their [Hugging Face version](https://huggingface.co/datasets/dataset_name).

If the data was modified or normalized after being collected (e.g. if the data is word-tokenized), describe the process and the tools used.

#### Who are the source language producers?

State whether the data was produced by humans or machine generated. Describe the people or systems who originally created the data.

If available, include self-reported demographic or identity information for the source data creators, but avoid inferring this information. Instead state that this information is unknown. See [Larson 2017](https://www.aclweb.org/anthology/W17-1601.pdf) for using identity categories as variables, particularly gender.

Describe the conditions under which the data was created (for example, if the producers were crowdworkers, state what platform was used, or if the data was found, what website the data was found on). If compensation was provided, include that information here.

Describe other people represented or mentioned in the data. Where possible, link to references for the information.

### Annotations

If the dataset contains annotations which are not part of the initial data collection, describe them in the following paragraphs.

#### Annotation process

If applicable, describe the annotation process and any tools used, or state otherwise. Describe the amount of data annotated, if not all. Describe or reference annotation guidelines provided to the annotators. If available, provide interannotator statistics. Describe any annotation validation processes.

#### Who are the annotators?

If annotations were collected for the source data (such as class labels or syntactic parses), state whether the annotations were produced by humans or machine generated.

Describe the people or systems who originally created the annotations and their selection criteria if applicable.

If available, include self-reported demographic or identity information for the annotators, but avoid inferring this information. Instead state that this information is unknown. See [Larson 2017](https://www.aclweb.org/anthology/W17-1601.pdf) for using identity categories as variables, particularly gender.

Describe the conditions under which the data was annotated (for example, if the annotators were crowdworkers, state what platform was used, or if the data was found, what website the data was found on). If compensation was provided, include that information here.

### Personal and Sensitive Information

State whether the dataset uses identity categories and, if so, how the information is used. Describe where this information comes from (i.e. self-reporting, collecting from profiles, inferring, etc.). See [Larson 2017](https://www.aclweb.org/anthology/W17-1601.pdf) for using identity categories as variables, particularly gender. State whether the data is linked to individuals and whether those individuals can be identified in the dataset, either directly or indirectly (i.e., in combination with other data).

State whether the dataset contains other data that might be considered sensitive (e.g., data that reveals racial or ethnic origins, sexual orientations, religious beliefs, political opinions or union memberships, or locations; financial or health data; biometric or genetic data; forms of government identification, such as social security numbers; criminal history).

If efforts were made to anonymize the data, describe the anonymization process.

## Considerations for Using the Data

### Social Impact of Dataset

Please discuss some of the ways you believe the use of this dataset will impact society.

The statement should include both positive outlooks, such as outlining how technologies developed through its use may improve people's lives, and discuss the accompanying risks. These risks may range from making important decisions more opaque to people who are affected by the technology, to reinforcing existing harmful biases (whose specifics should be discussed in the next section), among other considerations.

Also describe in this section if the proposed dataset contains a low-resource or under-represented language. If this is the case or if this task has any impact on underserved communities, please elaborate here.

### Discussion of Biases

Provide descriptions of specific biases that are likely to be reflected in the data, and state whether any steps were taken to reduce their impact.

For Wikipedia text, see for example [Dinan et al 2020 on biases in Wikipedia (esp. Table 1)](https://arxiv.org/abs/2005.00614), or [Blodgett et al 2020](https://www.aclweb.org/anthology/2020.acl-main.485/) for a more general discussion of the topic.

If analyses have been run quantifying these biases, please add brief summaries and links to the studies here.

### Other Known Limitations

If studies of the datasets have outlined other limitations of the dataset, such as annotation artifacts, please outline and cite them here.

## Additional Information

### Dataset Curators

List the people involved in collecting the dataset and their affiliation(s). If funding information is known, include it here.

### Licensing Information

Provide the license and link to the license webpage if available.

### Citation Information

Provide the [BibTex](http://www.bibtex.org/)-formatted reference for the dataset. For example:

```

@article{article_id,

author = {Author List},

title = {Dataset Paper Title},

journal = {Publication Venue},

year = {2525}

}

```

If the dataset has a [DOI](https://www.doi.org/), please provide it here.

### Contributions

Thanks to [@lewtun](https://github.com/lewtun) for adding this dataset. |

lewtun | null | @InProceedings{huggingface:dataset,

title = {A great new dataset},

author={huggingface, Inc.

},

year={2020}

} | This new dataset is designed to solve this great NLP task and is crafted with a lot of care. | false | 2 | false | lewtun/mnist-preds | 2021-07-16T09:00:01.000Z | null | false | be8eb418f71d209bd05f3f1be13e916c283c6540 | [] | [

"benchmark:test"

] | https://huggingface.co/datasets/lewtun/mnist-preds/resolve/main/README.md | ---

benchmark: test

---

# Dataset Card for RAFT Submission |

lewtun | null | null | null | false | 2 | false | lewtun/my-awesome-dataset | 2022-07-03T05:16:07.000Z | null | false | b66c0539f6b2df8daab58de1edb5371b19db5486 | [] | [

"annotations_creators:no-annotation",

"language_creators:found",

"language:en",

"license:apache-2.0",

"multilinguality:monolingual",

"size_categories:100K<n<1M",

"source_datasets:original",

"task_ids:summarization"

] | https://huggingface.co/datasets/lewtun/my-awesome-dataset/resolve/main/README.md | ---

annotations_creators:

- no-annotation

language_creators:

- found

language:

- en

license:

- apache-2.0

multilinguality:

- monolingual

size_categories:

- 100K<n<1M

source_datasets:

- original

task_categories:

- conditional-text-generation

task_ids:

- summarization

---

# Dataset Card for Demo

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This is a demo dataset with two files, `train.csv` and `test.csv`.

Load it with:

```python

from datasets import load_dataset

data_files = {"train": "train.csv", "test": "test.csv"}

demo = load_dataset("stevhliu/demo", data_files=data_files)

```

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

Thanks to [@github-username](https://github.com/<github-username>) for adding this dataset. |

lgrobol | null | null | null | false | 32 | false | lgrobol/openminuscule | 2022-10-23T09:28:36.000Z | null | false | 49e6a3b37d4666b3554ea90a6c76e02d07505fec | [] | [

"language_creators:crowdsourced",

"language:en",

"language:fr",

"license:cc-by-4.0",

"multilinguality:multilingual",

"size_categories:100k<n<1M",

"source_datasets:original",

"task_categories:text-generation",

"task_ids:language-modeling",

"language_bcp47:en-GB",

"language_bcp47:fr-FR"

] | https://huggingface.co/datasets/lgrobol/openminuscule/resolve/main/README.md | ---

language_creators:

- crowdsourced

language:

- en

- fr

license:

- cc-by-4.0

multilinguality:

- multilingual

size_categories:

- 100k<n<1M

source_datasets:

- original

task_categories:

- text-generation

task_ids:

- language-modeling

pretty_name: Open Minuscule

language_bcp47:

- en-GB

- fr-FR

---

Open Minuscule

==============

A little small wee corpus to train little small wee models.

## Dataset Description

### Dataset Summary

This is a raw text corpus, mainly intended for testing purposes.

### Languages

- French

- English

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Source Data

It is a mashup including the following [CC-BY-SA 4.0](https://creativecommons.org/licenses/by-sa/4.0/) licenced texts

- [*Rayons émis par les composés de l’uranium et du

thorium*](https://fr.wikisource.org/wiki/Rayons_%C3%A9mis_par_les_compos%C3%A9s_de_l%E2%80%99uranium_et_du_thorium),

Maria Skłodowska Curie

- [*Frankenstein, or the Modern

Prometheus*](https://en.wikisource.org/wiki/Frankenstein,_or_the_Modern_Prometheus_(Revised_Edition,_1831)),

Mary Wollstonecraft Shelley

- [*Les maîtres sonneurs*](https://fr.wikisource.org/wiki/Les_Ma%C3%AEtres_sonneurs), George Sand

It also includes the text of *Sketch of The Analytical Engine Invented by Charles Babbage With

notes upon the Memoir by the Translator* by Luigi Menabrea and Ada Lovelace, which to the best of

my knowledge should be public domain.

## Considerations for Using the Data

This really should not be used for anything but testing purposes.

## Licence

This corpus is available under the Creative Commons Attribution-ShareAlike 4.0 License |

lhoestq | null | @article{2016arXiv160605250R,

author = {{Rajpurkar}, Pranav and {Zhang}, Jian and {Lopyrev},

Konstantin and {Liang}, Percy},

title = "{SQuAD: 100,000+ Questions for Machine Comprehension of Text}",

journal = {arXiv e-prints},

year = 2016,

eid = {arXiv:1606.05250},

pages = {arXiv:1606.05250},

archivePrefix = {arXiv},

eprint = {1606.05250},

} | Stanford Question Answering Dataset (SQuAD) is a reading comprehension dataset, consisting of questions posed by crowdworkers on a set of Wikipedia articles, where the answer to every question is a segment of text, or span, from the corresponding reading passage, or the question might be unanswerable. | false | 21 | false | lhoestq/custom_squad | 2022-10-25T09:50:53.000Z | null | false | 6bb129c79cbc02860807e12dd09bf9e152c3f73d | [] | [

"arxiv:1606.05250",

"annotations_creators:crowdsourced",

"language_creators:crowdsourced",

"language_creators:found",

"language:en",

"license:cc-by-4.0",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:extended|wikipedia",

"task_categories:question-answering",

"task... | https://huggingface.co/datasets/lhoestq/custom_squad/resolve/main/README.md | ---

annotations_creators:

- crowdsourced

language_creators:

- crowdsourced

- found

language:

- en

license:

- cc-by-4.0

multilinguality:

- monolingual

size_categories:

- 10K<n<100K

source_datasets:

- extended|wikipedia

task_categories:

- question-answering

task_ids:

- extractive-qa

---

# Dataset Card for "squad"

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks](#supported-tasks)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits Sample Size](#data-splits-sample-size)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [https://rajpurkar.github.io/SQuAD-explorer/](https://rajpurkar.github.io/SQuAD-explorer/)

- **Repository:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Paper:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Point of Contact:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Size of downloaded dataset files:** 33.51 MB

- **Size of the generated dataset:** 85.75 MB

- **Total amount of disk used:** 119.27 MB

### Dataset Summary

This dataset is a custom copy of the original SQuAD dataset. It is used to showcase dataset repositories. Data are the same as the original dataset.

Stanford Question Answering Dataset (SQuAD) is a reading comprehension dataset, consisting of questions posed by crowdworkers on a set of Wikipedia articles, where the answer to every question is a segment of text, or span, from the corresponding reading passage, or the question might be unanswerable.

### Supported Tasks

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Languages

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Dataset Structure

We show detailed information for up to 5 configurations of the dataset.

### Data Instances

#### plain_text

- **Size of downloaded dataset files:** 33.51 MB

- **Size of the generated dataset:** 85.75 MB

- **Total amount of disk used:** 119.27 MB

An example of 'train' looks as follows.

```

{

"answers": {

"answer_start": [1],

"text": ["This is a test text"]

},

"context": "This is a test context.",

"id": "1",

"question": "Is this a test?",

"title": "train test"

}

```

### Data Fields

The data fields are the same among all splits.

#### plain_text

- `id`: a `string` feature.

- `title`: a `string` feature.

- `context`: a `string` feature.

- `question`: a `string` feature.

- `answers`: a dictionary feature containing:

- `text`: a `string` feature.

- `answer_start`: an `int32` feature.
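To make the relationship between these fields concrete, here is a minimal sketch, assuming that `answer_start` is a character offset into `context` (as in the original SQuAD) and that `text` and `answer_start` are stored as parallel lists, as the instance example suggests. The record below and the helper name `answer_spans_match` are hypothetical and are not taken from the dataset.

```python
def answer_spans_match(example):
    """Return True if every answer text is found in the context at its stated offset."""
    context = example["context"]
    answers = example["answers"]
    return all(
        context[start:start + len(text)] == text
        for text, start in zip(answers["text"], answers["answer_start"])
    )


# Hypothetical record with the same fields as the `plain_text` configuration.
context = "The Eiffel Tower is in Paris."
example = {
    "id": "hypothetical-0",
    "title": "Demo",
    "context": context,
    "question": "Where is the Eiffel Tower?",
    "answers": {
        "text": ["Paris"],
        # Character offset computed from the context rather than hard-coded.
        "answer_start": [context.index("Paris")],
    },
}

is_consistent = answer_spans_match(example)
```

A check like this can help catch offsets that went stale after a context was re-tokenized or otherwise normalized.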

### Data Splits Sample Size

| name |train|validation|

|----------|----:|---------:|

|plain_text|87599| 10570|

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

[More Information Needed]

### Annotations

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

```

@article{2016arXiv160605250R,

author = {{Rajpurkar}, Pranav and {Zhang}, Jian and {Lopyrev},

Konstantin and {Liang}, Percy},

title = "{SQuAD: 100,000+ Questions for Machine Comprehension of Text}",

journal = {arXiv e-prints},

year = 2016,

eid = {arXiv:1606.05250},

pages = {arXiv:1606.05250},

archivePrefix = {arXiv},

eprint = {1606.05250},

}

```

### Contributions

Thanks to [@lewtun](https://github.com/lewtun), [@albertvillanova](https://github.com/albertvillanova), [@patrickvonplaten](https://github.com/patrickvonplaten), [@thomwolf](https://github.com/thomwolf) for adding this dataset. |

lhoestq | null | null | null | false | 3,572 | false | lhoestq/demo1 | 2021-11-08T14:36:41.000Z | null | false | 87ecf163bedca9d80598b528940a9c4f99e14c11 | [] | [

"type:demo"

] | https://huggingface.co/datasets/lhoestq/demo1/resolve/main/README.md | ---

type: demo

---

# Dataset Card for Demo1

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This is a demo dataset. It consists of two files, `data/train.csv` and `data/test.csv`.

You can load it with:

```python

from datasets import load_dataset

demo1 = load_dataset("lhoestq/demo1")

```

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

Thanks to [@github-username](https://github.com/<github-username>) for adding this dataset.

|

lhoestq | null | \ | This is a test dataset. | false | 323 | false | lhoestq/test | 2022-07-01T15:26:34.000Z | null | false | 8af5b3fc20bfa28cc0f09ddc1a0c0bcddf906e3a | [] | [

"type:test",

"annotations_creators:expert-generated",

"language_creators:found",

"language:en",

"license:mit",

"multilinguality:monolingual",

"size_categories:n<1K",

"source_datasets:original",

"task_ids:other-test"

] | https://huggingface.co/datasets/lhoestq/test/resolve/main/README.md | ---

type: test

annotations_creators:

- expert-generated

language_creators:

- found

language:

- en

license:

- mit

multilinguality:

- monolingual

size_categories:

- n<1K

source_datasets:

- original

task_categories:

- other-test

task_ids:

- other-test

paperswithcode_id: null

pretty_name: Test Dataset

---

This is a test dataset |

lhoestq | null | null | null | false | 2 | false | lhoestq/test2 | 2021-07-23T14:21:45.000Z | null | false | dd797fcf8beacd44987048d5e2606edf1fe0a230 | [] | [] | https://huggingface.co/datasets/lhoestq/test2/resolve/main/README.md | This is a readme

|

lhoestq | null | null | null | false | 2 | false | lhoestq/test_commit_descriptions | 2022-01-25T14:58:01.000Z | null | false | 01a864c56b2bd80f536391bcfc17a71443b5de7b | [] | [] | https://huggingface.co/datasets/lhoestq/test_commit_descriptions/resolve/main/README.md | |

liam168 | null | null | null | false | 2 | false | liam168/nlp_c4_sentiment | 2021-07-30T04:05:45.000Z | null | false | 7f14f05cd0effd0d847886ede953e6808c3e3a27 | [] | [] | https://huggingface.co/datasets/liam168/nlp_c4_sentiment/resolve/main/README.md | Sina Weibo posts with sentiment labels ({0: '喜悦' (joy), 1: '愤怒' (anger), 2: '厌恶' (disgust), 3: '低落' (low mood)}) |

lijingxin | null | null | null | false | 6 | false | lijingxin/squad_zen | 2022-02-09T03:05:31.000Z | null | false | 7b78af8a83bdeebb85f2d78f883acdb9c947c655 | [] | [] | https://huggingface.co/datasets/lijingxin/squad_zen/resolve/main/README.md | For personal use only.

Source: https://github.com/junzeng-pluto/ChineseSquad

Thanks! |

lincoln | null | null | null | false | 20 | false | lincoln/newsquadfr | 2022-08-05T12:05:24.000Z | null | false | 6aca57928d2edaffa6f9a29bdecaa789c28d0391 | [] | [

"annotations_creators:private",

"language:fr-FR",

"license:cc-by-nc-sa-4.0",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"source_datasets:original",

"source_datasets:newspaper",

"source_datasets:online",

"task_categories:question-answering",

"task_ids:extractive-qa",

"task_ids:ope... | https://huggingface.co/datasets/lincoln/newsquadfr/resolve/main/README.md | ---

annotations_creators:

- private

language_creators: null

language:

- fr-FR

license:

- cc-by-nc-sa-4.0

multilinguality:

- monolingual

size_categories:

- 1K<n<10K

source_datasets:

- original

- newspaper

- online

task_categories:

- question-answering

task_ids:

- extractive-qa

- open-domain-qa

paperswithcode_id: null

---

# Dataset Card for newsquadfr

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

## Dataset Description

- **Homepage:** [lincoln.fr](https://www.lincoln.fr/)

- **Repository:** [github/Lincoln-France](https://github.com/Lincoln-France)

- **Paper:** [Needs More Information]

- **Leaderboard:** [Needs More Information]

- **Point of Contact:** [labinnovation@mel.lincoln.fr](mailto:labinnovation@mel.lincoln.fr)

### Dataset Summary

newsquadfr (Newspaper question answering dataset in French) is a small dataset created for the question answering task, inspired by the PIAF and SQuAD datasets. Contexts are paragraphs of articles extracted from nine online French newspapers during 2020 and 2021. The dataset contains 2,520 context-question-answer triplets.

```py

from datasets import load_dataset

ds_name = 'lincoln/newsquadfr'

# Example 1: load the dataset with its default files

ds_newsquad = load_dataset(ds_name)

# Example 2: name the file behind each split explicitly

data_files = {'train': 'train.json', 'test': 'test.json', 'valid': 'valid.json'}

ds_newsquad = load_dataset(ds_name, data_files=data_files)

# Example 3: load the validation and test splits concatenated into one

ds_newsquad = load_dataset(ds_name, data_files=data_files, split="valid+test")

```

Number of examples per website (train set):

| website | Count |

|---------------|-----|

| cnews | 20 |

| francetvinfo | 40 |

| la-croix | 375 |

| lefigaro | 160 |

| lemonde | 325 |

| lesnumeriques | 70 |

| numerama | 140 |

| sudouest | 475 |

| usinenouvelle | 45 |

### Supported Tasks and Leaderboards

- extractive-qa

- open-domain-qa

### Languages

French (fr-FR)

## Dataset Structure

### Data Instances

```json

{'answers': {'answer_start': [53], 'text': ['manœuvre "agressive']},

'article_id': 34138,

'article_title': 'Caricatures, Libye, Haut-Karabakh... Les six dossiers qui '

'opposent Emmanuel Macron et Recep Tayyip Erdogan.',

'article_url': 'https://www.francetvinfo.fr/monde/turquie/caricatures-libye-haut-karabakh-les-six-dossiers-qui-opposent-emmanuel-macron-et-recep-tayyip-erdogan_4155611.html#xtor=RSS-3-[france]',

'context': 'Dans ce contexte déjà tendu, la France a dénoncé une manœuvre '

'"agressive" de la part de frégates turques à l\'encontre de l\'un '

"de ses navires engagés dans une mission de l'Otan, le 10 juin. "

'Selon Paris, la frégate Le Courbet cherchait à identifier un '

'cargo suspecté de transporter des armes vers la Libye quand elle '

'a été illuminée à trois reprises par le radar de conduite de tir '

"de l'escorte turque.",

'id': '2261',

'paragraph_id': 201225,

'question': "Qu'est ce que la France reproche à la Turquie?",

'website': 'francetvinfo'}

```

### Data Fields

- `answers`: a dictionary feature containing:

  - `text`: a `string` feature.

  - `answer_start`: an `int64` feature.

- `article_id`: an `int64` feature.

- `article_title`: a `string` feature.

- `article_url`: a `string` feature.

- `context`: a `string` feature.

- `id`: a `string` feature.

- `paragraph_id`: an `int64` feature.

- `question`: a `string` feature.

- `website`: a `string` feature.
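As a minimal sketch of how `answers` relates to `context`, assuming `answer_start` is a character offset into `context` (the SQuAD convention, which the data instance above is consistent with), the answer text can be recovered by slicing. The record below is hypothetical and only mimics the field layout; it is not taken from the dataset.

```python
# Hypothetical record that follows the field layout above (not real data).
context = "La frégate a quitté le port de Toulon le 10 juin."
answer = "le 10 juin"
record = {
    "context": context,
    "question": "Quand la frégate a-t-elle quitté le port ?",
    # Assumption: answer_start is a character offset into the context.
    "answers": {"text": [answer], "answer_start": [context.index(answer)]},
}


def answer_spans(record):
    """Return the context slice addressed by each (answer_start, text) pair."""
    ctx = record["context"]
    answers = record["answers"]
    return [
        ctx[start:start + len(text)]
        for start, text in zip(answers["answer_start"], answers["text"])
    ]


# Each slice should match the stored answer text if the offsets are consistent.
print(answer_spans(record))
```

A check of this kind can be useful after any preprocessing that changes the context string, since such changes shift the offsets.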

### Data Splits

| Split | Count |

|-------|----|

| train |1650|

| test |415 |

| valid |455 |

## Dataset Creation

### Curation Rationale

[Needs More Information]

### Source Data

#### Initial Data Collection and Normalization

Paragraphs were chosen according to these rules:

- parent article must have more than 71% ASCII characters

- paragraphs size must be between 170 and 670 characters

- paragraphs shouldn't contain "A LIRE" or "A VOIR AUSSI"

Then, we stratified our original dataset to create this dataset according to:

- website

- number of named entities

- paragraph size
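The three paragraph selection rules listed above can be sketched as a simple filter. This is an illustration under stated assumptions, not the curators' code: the function names are hypothetical, and the card does not say whether the bounds are inclusive or how the ASCII share was measured (here it is the fraction of characters that are ASCII).

```python
def ascii_ratio(text: str) -> float:
    """Fraction of characters in `text` that are ASCII (0.0 for empty text)."""
    if not text:
        return 0.0
    return sum(ch.isascii() for ch in text) / len(text)


def keep_paragraph(
    paragraph: str,
    article_text: str,
    min_ascii: float = 0.71,
    min_len: int = 170,
    max_len: int = 670,
    banned=("A LIRE", "A VOIR AUSSI"),
) -> bool:
    """Apply the three selection rules; bounds are assumed inclusive."""
    # Rule 1: the parent article must be more than 71% ASCII characters.
    if ascii_ratio(article_text) <= min_ascii:
        return False
    # Rule 2: the paragraph size must be between 170 and 670 characters.
    if not (min_len <= len(paragraph) <= max_len):
        return False
    # Rule 3: the paragraph must not contain cross-reference markers.
    return not any(marker in paragraph for marker in banned)
```

The stratification step (by website, number of named entities and paragraph size) is not covered by this sketch.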

#### Who are the source language producers?

[Needs More Information]

### Annotations

#### Annotation process

Annotation was carried out with the Piaf annotation tools, mostly by three different people.

#### Who are the annotators?

Lincoln

### Personal and Sensitive Information

[Needs More Information]

## Considerations for Using the Data

### Social Impact of Dataset

[Needs More Information]

### Discussion of Biases

- Annotation is not well controlled.

- Asking questions about news articles is biased.

### Other Known Limitations

[Needs More Information]

## Additional Information

### Dataset Curators

[Needs More Information]

### Licensing Information

https://creativecommons.org/licenses/by-nc-sa/4.0/deed.fr

### Citation Information

[Needs More Information] |

liweili | null | \

@InProceedings{huggingface:c4_200m_dataset,

title = {c4_200m},

author={Li Liwei},

year={2021}

} | \

GEC Dataset Generated from C4 | false | 105 | false | liweili/c4_200m | 2022-10-23T11:00:46.000Z | null | false | 1b0382449b4273d9de8e6d6ad15ca6873884758a | [] | [

"language:en",

"source_datasets:allenai/c4",

"task_categories:text-generation",

"tags:grammatical-error-correction"

] | https://huggingface.co/datasets/liweili/c4_200m/resolve/main/README.md | ---

language:

- en

source_datasets:

- allenai/c4

task_categories:

- text-generation

pretty_name: C4 200M Grammatical Error Correction Dataset

tags:

- grammatical-error-correction

---

# C4 200M

# Dataset Summary

c4_200m is a collection of 185 million sentence pairs generated from the cleaned English dataset from C4. This dataset can be used in grammatical error correction (GEC) tasks.

The corruption edits and scripts used to synthesize this dataset are taken from: [C4_200M Synthetic Dataset](https://github.com/google-research-datasets/C4_200M-synthetic-dataset-for-grammatical-error-correction)

# Description

As noted above, this dataset contains 185 million sentence pairs. Each example has two attributes: `input` and `output`. Here is a sample from the dataset:

```

{

"input": "Bitcoin is for $7,094 this morning, which CoinDesk says.",

"output": "Bitcoin goes for $7,094 this morning, according to CoinDesk."

}

``` |

lkiouiou | null | null | null | false | 2 | false | lkiouiou/o9ui7877687 | 2021-04-04T18:04:32.000Z | null | false | 3fda50517775f10d7a541b8d3ba5711488c9aae5 | [] | [] | https://huggingface.co/datasets/lkiouiou/o9ui7877687/resolve/main/README.md | |

llangnickel | null | null | null | false | 10 | false | llangnickel/long-covid-classification-data | 2022-07-29T09:21:28.000Z | null | false | af681c527ad170c4c92235fdc84c7f6d269ea485 | [] | [

"annotations_creators:expert-generated",

"language_creators:expert-generated",

"language:en",

"license:cc-by-4.0",

"multilinguality:monolingual",

"size_categories:unknown",

"source_datasets:original",

"task_categories:text-classification"

] | https://huggingface.co/datasets/llangnickel/long-covid-classification-data/resolve/main/README.md | ---

annotations_creators:

- expert-generated

language_creators:

- expert-generated

language:

- en

license:

- cc-by-4.0

multilinguality:

- monolingual

pretty_name: 'Dataset containing abstracts from PubMed, either related to long COVID

or not. '

size_categories:

- unknown

source_datasets:

- original

task_categories:

- text-classification

---

## Data Description

Long-COVID related articles have been manually collected by information specialists.

Further information and citation coming soon.

## Size

| |Training|Development|Test|Total|

|--|--|--|--|--|

|Positive Examples|215|76|70|345|

|Negative Examples|199|62|68|345|

|Total|414|238|138|690|

## Citation

@article{10.1093/database/baac048,

author = {Langnickel, Lisa and Darms, Johannes and Heldt, Katharina and Ducks, Denise and Fluck, Juliane},

title = "{Continuous development of the semantic search engine preVIEW: from COVID-19 to long COVID}",

journal = {Database},

volume = {2022},

year = {2022},

month = {07},

issn = {1758-0463},

doi = {10.1093/database/baac048},

url = {https://doi.org/10.1093/database/baac048},

note = {baac048},

eprint = {https://academic.oup.com/database/article-pdf/doi/10.1093/database/baac048/44371817/baac048.pdf},

} |

loretoparisi | null | null | null | false | 1 | false | loretoparisi/spoken-punctuation | 2022-02-24T21:12:49.000Z | null | false | 936ce94b2393ccff9d8ab5e37c17c3cba70075f4 | [] | [] | https://huggingface.co/datasets/loretoparisi/spoken-punctuation/resolve/main/README.md | # spoken-punctuation

Spoken punctuation for Speech-to-Text by language and locale.

## Disclaimer

Data collected from Google Cloud Speech-to-Text "Supported spoken punctuation" documentation: https://cloud.google.com/speech-to-text/docs/spoken-punctuation

|

lpsc-fiuba | null | TO DO: Cita | null | false | 2 | false | lpsc-fiuba/melisa | 2022-10-22T08:52:56.000Z | null | false | ebad3013a8a015074a69a9826d06c38b750e1bce | [] | [

"annotations_creators:found",

"language_creators:found",

"language:es",

"language:pt",

"license:other",

"multilinguality:multilingual",

"multilinguality:monolingual",

"size_categories:100K<n<1M",

"source_datasets:original",

"task_categories:text-classification",

"task_ids:language-modeling",

"... | https://huggingface.co/datasets/lpsc-fiuba/melisa/resolve/main/README.md | ---

annotations_creators:

- found

language_creators:

- found

language:

- es

- pt

license:

- other

multilinguality:

all_languages:

- multilingual

es:

- monolingual

pt:

- monolingual

paperswithcode_id: null

size_categories:

all_languages:

- 100K<n<1M

es:

- 100K<n<1M

pt:

- 100K<n<1M

source_datasets:

- original

task_categories:

- conditional-text-generation

- sequence-modeling

- text-classification

- text-scoring

task_ids:

- language-modeling

- sentiment-classification

- sentiment-scoring

- summarization

- topic-classification

---

# Dataset Card for MeLiSA (Mercado Libre for Sentiment Analysis)

** **NOTE: THIS CARD IS UNDER CONSTRUCTION** **

** **NOTE 2: THE RELEASED VERSION OF THIS DATASET IS A DEMO VERSION.** **

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Webpage:** https://github.com/lpsc-fiuba/MeLiSA

- **Paper:**

- **Point of Contact:** lestienne@fi.uba.ar

[More Information Needed]

### Dataset Summary

We provide a Mercado Libre product reviews dataset for Spanish and Portuguese text classification. The dataset contains reviews in these two languages collected between August 2020 and January 2021. Each record in the dataset contains the review content and title, the star rating, the country where it was published and the product category (arts, technology, etc.). The corpus is roughly balanced across stars, so each star rating constitutes approximately 20% of the reviews in each language.

| Stars | Spanish Train | Spanish Validation | Spanish Test | Portuguese Train | Portuguese Validation | Portuguese Test |

|---|:------:|:----------:|:-----:|:------:|:----------:|:-----:|

| 1 | 88.425 | 4.052 | 5.000 | 50.801 | 4.052 | 5.000 |

| 2 | 88.397 | 4.052 | 5.000 | 50.782 | 4.052 | 5.000 |

| 3 | 88.435 | 4.052 | 5.000 | 50.797 | 4.052 | 5.000 |

| 4 | 88.449 | 4.052 | 5.000 | 50.794 | 4.052 | 5.000 |

| 5 | 88.402 | 4.052 | 5.000 | 50.781 | 4.052 | 5.000 |

The table shows the number of samples per star rating in each split (a dot marks the thousands separator). There is a total of 442.108 training samples in Spanish and 253.955 in Portuguese. We limited the number of reviews per product to 30 and performed a ranked inclusion of the downloaded reviews to include those with rich semantic content. In this ranking, the length of the review content and the valorization (difference between likes and dislikes) were prioritized. For more details on this process, see (CITATION).

Reviews in Spanish were obtained from seven different Latin American countries (Argentina, Colombia, Peru, Uruguay, Chile, Venezuela and Mexico), and Portuguese reviews were extracted from Brazil. To match the language with its respective country, we applied a language detection algorithm based on the works of Joulin et al. (2016a and 2016b) to determine the language of the review text, and we removed reviews that were not written in the expected language.
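The per-product cap and ranked inclusion described above could look roughly like the sketch below. It is only an illustration: the card does not give the exact ranking function, so sorting by content length first and by likes minus dislikes second is an assumption, and the field names `product_id`, `content`, `likes` and `dislikes` are hypothetical.

```python
from collections import defaultdict

MAX_REVIEWS_PER_PRODUCT = 30  # cap stated in the card


def select_reviews(reviews, limit=MAX_REVIEWS_PER_PRODUCT):
    """Keep at most `limit` reviews per product, preferring rich reviews."""
    by_product = defaultdict(list)
    for review in reviews:
        by_product[review["product_id"]].append(review)

    selected = []
    for product_reviews in by_product.values():
        # Assumed ranking: longer content first, then higher valorization
        # (likes minus dislikes). The real weighting is not documented here.
        ranked = sorted(
            product_reviews,
            key=lambda r: (len(r["content"]), r["likes"] - r["dislikes"]),
            reverse=True,
        )
        selected.extend(ranked[:limit])
    return selected
```

Language filtering would be a separate step applied to the review text and is not shown here.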

[More Information Needed]

### Languages

The dataset contains reviews in Latin American Spanish and Portuguese.

## Dataset Structure

### Data Instances

Each data instance corresponds to a review. Each split is stored in a separate `.csv` file, so every row in each file is one review. For example, here is a snippet of the Spanish training split:

```csv

country,category,review_content,review_title,review_rate

...

MLA,Tecnología y electrónica / Tecnologia e electronica,Todo bien me fue muy util.,Muy bueno,2

MLU,"Salud, ropa y cuidado personal / Saúde, roupas e cuidado pessoal",No fue lo que esperaba. El producto no me sirvió.,No fue el producto que esperé ,2

MLM,Tecnología y electrónica / Tecnologia e electronica,No fue del todo lo que se esperaba.,No me fue muy funcional ahí que hacer ajustes,2

...

```
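Because some category names contain commas and are therefore quoted, the files are best read with a real CSV parser rather than by splitting on commas. The sketch below parses two rows copied from the snippet above with Python's standard `csv` module; converting `review_rate` to an integer is a choice made here for illustration.

```python
import csv
import io

# Two rows copied from the snippet above, preceded by the header line.
sample = '''country,category,review_content,review_title,review_rate
MLA,Tecnología y electrónica / Tecnologia e electronica,Todo bien me fue muy util.,Muy bueno,2
MLU,"Salud, ropa y cuidado personal / Saúde, roupas e cuidado pessoal",No fue lo que esperaba. El producto no me sirvió.,No fue el producto que esperé ,2
'''

rows = list(csv.DictReader(io.StringIO(sample)))
for row in rows:
    # Star ratings are read as text, so convert them to integers.
    row["review_rate"] = int(row["review_rate"])

for row in rows:
    print(row["country"], row["category"], row["review_rate"])
```

The quoted category in the second row stays a single field even though it contains a comma.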

### Data Fields

- `country`: The string identifier of the country. It could be one of the following: `MLA` (Argentina), `MCO` (Colombia), `MPE` (Peru), `MLU` (Uruguay), `MLC` (Chile), `MLV` (Venezuela), `MLM` (Mexico) or `MLB` (Brasil).

- `category`: String representation of the product's category. It could be one of the following:

  - Hogar / Casa

  - Tecnología y electrónica / Tecnologia e electronica

  - Salud, ropa y cuidado personal / Saúde, roupas e cuidado pessoal

  - Arte y entretenimiento / Arte e Entretenimiento

  - Alimentos y Bebidas / Alimentos e Bebidas

- `review_content`: The text content of the review.

- `review_title`: The text title of the review.

- `review_rate`: An integer from 1 to 5 indicating the number of stars.

### Data Splits

Each language configuration comes with its own `train`, `validation`, and `test` splits. The `all_languages` configuration is simply a concatenation of the corresponding split across all languages. That is, the `train` split for `all_languages` is a concatenation of the `train` splits for each of the languages, and likewise for `validation` and `test`.

## Dataset Creation

### Curation Rationale

The dataset is motivated by the desire to advance sentiment analysis and text classification in Latin American Spanish and Portuguese.

### Source Data

#### Initial Data Collection and Normalization

The authors gathered the reviews from the marketplaces in Argentina, Colombia, Peru, Uruguay, Chile, Venezuela and Mexico for the Spanish language and from Brazil for Portuguese. They prioritized reviews that contained relevant semantic content by applying a ranking filter based on the length and the valorization (difference between the number of likes and dislikes) of the review. They then ensured the correct language by applying a semi-automatic language detection algorithm, only retaining reviews in the target language. No normalization was applied to the review content or title.

Original product categories were grouped into higher-level categories, resulting in five different types of products: "Home" (Hogar / Casa), "Technology and electronics" (Tecnología y electrónica / Tecnologia e electronica), "Health, Dress and Personal Care" (Salud, ropa y cuidado personal / Saúde, roupas e cuidado pessoal), "Arts and Entertainment" (Arte y entretenimiento / Arte e Entretenimiento) and "Food and Beverages" (Alimentos y Bebidas / Alimentos e Bebidas).

#### Who are the source language producers?

The original text comes from Mercado Libre customers reviewing products on the marketplace across a variety of product categories.

### Annotations

#### Annotation process

Each of the fields included are submitted by the user with the review or otherwise associated with the review. No manual or machine-driven annotation was necessary.

#### Who are the annotators?

N/A

### Personal and Sensitive Information

Mercado Libre reviews are submitted by users with the knowledge and intention of being public. The reviewer IDs included in this dataset are anonymized, meaning that they are disassociated from the original user profiles. However, these fields would likely be easy to de-anonymize given the public and identifying nature of free-form text responses.

## Considerations for Using the Data

### Social Impact of Dataset

Although Spanish and Portuguese are relatively high-resource languages, most of the available data is collected from European or United States users. This dataset is part of an effort to encourage text classification research in languages other than English and European Spanish and Portuguese. Such work increases the accessibility of natural language technology to more regions and cultures.

### Discussion of Biases

The data included here are from unverified consumers. Some percentage of these reviews may be fake or contain misleading or offensive language.

### Other Known Limitations

The dataset is constructed so that the distribution of star ratings is roughly balanced. This feature has some advantages for purposes of classification, but some types of language may be over- or underrepresented relative to the original distribution of reviews to achieve this balance.

[More Information Needed]

## Additional Information

### Dataset Curators

Published by Lautaro Estienne, Matías Vera and Leonardo Rey Vega. Managed by the Signal Processing in Communications Laboratory of the Electronics Department at the Engineering School of the University of Buenos Aires (UBA).

### Licensing Information

Amazon has licensed this dataset under its own agreement, to be found at the dataset webpage here:

https://docs.opendata.aws/amazon-reviews-ml/license.txt

### Citation Information

Please cite the following paper if you found this dataset useful:

(CITATION)

[More Information Needed]

### Contributions

[More Information Needed]

|

lsb | null | null | null | false | 2 | false | lsb/ancient-latin-passages | 2022-01-31T18:22:55.000Z | null | false | 2a8784deddebd5bfcd0cb9f276139f91e814b9c8 | [] | [

"license:agpl-3.0"

] | https://huggingface.co/datasets/lsb/ancient-latin-passages/resolve/main/README.md | ---

license: agpl-3.0

---

|

lsb | null | null | null | false | 2 | false | lsb/million-english-numbers | 2022-01-31T07:17:04.000Z | null | false | 01646b472299e7f15ee59772d329eb5da3646a9a | [] | [

"arxiv:1803.09010"

] | https://huggingface.co/datasets/lsb/million-english-numbers/resolve/main/README.md | # Million English Numbers

A list of a million American English numbers, under an AGPL 3.0 license. This datasheet is inspired by [Datasheets for Datasets](https://arxiv.org/abs/1803.09010).

## Sample

```

$ tail -n 5 million-english-numbers

nine hundred ninety nine thousand nine hundred ninety five

nine hundred ninety nine thousand nine hundred ninety six

nine hundred ninety nine thousand nine hundred ninety seven

nine hundred ninety nine thousand nine hundred ninety eight

nine hundred ninety nine thousand nine hundred ninety nine

```

## Motivation

This dataset was created as a toy sample of text for use in natural language processing, in machine learning.

The goal was to create small samples of text with minimal variation and results that could be easily audited (observe how often the model predicts "eighty twenty hundred three ten forty").

This is original research, produced by the linguistic model in the NodeJS package `written-number` by Pedro Tacla Yamada, freely available on npm.

The estimated cost of creating the dataset is minimal, and subsidized with private funds.

## Composition

The instances that comprise the dataset are spelled-out integers, in colloquial Mid-Atlantic American English, identifiable to a speaker born around the year 2000.

There are one million instances, from 0 to 999999 consecutively.

The instances consist of ASCII text, delimited by line feeds.

Counting lines from zero, the line number of each instance is its integer value.

No information is missing from each instance.

In the related _fast.ai_ `HUMAN_NUMBERS` dataset, the split is between 1-7999, and 8001-9999. A user may elect to split this dataset similarly, with the last percentages of lines used for validation or testing.
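A tail split of the kind suggested above can be sketched in a few lines. The helper name and the 20% default are assumptions made for illustration; the datasheet does not prescribe a fraction.

```python
def split_tail(lines, validation_fraction=0.2):
    """Use the last `validation_fraction` of lines for validation."""
    n_valid = round(len(lines) * validation_fraction)
    cut = len(lines) - n_valid
    return lines[:cut], lines[cut:]


# Tiny stand-in for the file contents; the real file has one million lines.
lines = ["zero", "one", "two", "three", "four",
         "five", "six", "seven", "eight", "nine"]
train, valid = split_tail(lines, validation_fraction=0.2)
print(len(train), len(valid))
```

Since the lines are in increasing numeric order, the held-out tail contains only the largest numbers, which tests whether a model generalizes to ranges it has not seen.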

There are no known errors or sources of noise or redundancies in the dataset.

The dataset is self-contained.

The dataset is not confidential, and its method of generation is public as well.

The dataset will probably not be offensive / insulting / threatening / anxiety-inducing to many people. The numerologically-minded may wish to exercise discernment when choosing which numbers to use: all of the auspicious numbers, all of the inauspicious numbers, all of the meaningful numbers, for all numerological traditions, are included in this dataset, without any emphasis or warnings besides sequential ordering.

The dataset does not relate to people, except by using human language to express integers.

## Collection

The data was directly observed from the `written-number` npm package.

To rebuild this dataset, run `docker run -e MAXINT=1000000 -e WN=written-number -w /x node sh -c 'npm i $WN 2>1 >/dev/null; node -e "const w=require(process.env.WN);for(i=0;i<process.env.MAXINT;i++) console.log(w(i,{noAnd: true}))" | tr "-" " "'` on any x86 machine with Docker. Manual spot-checking confirmed the results.

This is a subset of the set of integers, in increasing order, with no omissions, starting from zero.

This was collected by one individual, writing minimal code, using free time donated to the project.

The data was collected at one point in time, using colloquial Mid-Atlantic American English. The idea of integers including zero is long-standing, and dates back to Babylonians in 700 BCE, the Olmec and Maya in 100 BCE, Brahmagupta in 628 CE.

There was no IRB involved in the making of this data product.

The instances individually do not relate to people.

## Preprocessing

The default output of the version of `written-number` puts a hyphen between the tens and ones place, and this hyphen was translated into a space in the output.

Further, the default conjunction _`and`_ between the hundreds and tens place was removed, as visible above in the sample (_`nine hundred ninety`_).

This raw data was not saved.

The code to regenerate the raw data, and the code to run the preprocessing, is available in this datasheet.
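For readers who want to audit individual lines without running Node, the output format described above (no hyphens, no "and") can be approximated in Python. This is an independent sketch, not the `written-number` package and not the code used to build the dataset; it agrees with the sample lines shown earlier, but agreement on every line has not been verified.

```python
ONES = [
    "zero", "one", "two", "three", "four", "five", "six", "seven", "eight",
    "nine", "ten", "eleven", "twelve", "thirteen", "fourteen", "fifteen",
    "sixteen", "seventeen", "eighteen", "nineteen",
]
TENS = ["", "", "twenty", "thirty", "forty", "fifty",
        "sixty", "seventy", "eighty", "ninety"]


def spell_below_thousand(n):
    """Spell 0..999 with spaces only: no hyphens and no 'and'."""
    words = []
    hundreds, rest = divmod(n, 100)
    if hundreds:
        words += [ONES[hundreds], "hundred"]
    if rest >= 20:
        tens, ones = divmod(rest, 10)
        words.append(TENS[tens])
        if ones:
            words.append(ONES[ones])
    elif rest or not hundreds:
        # Covers 1..19, and plain zero when there is no hundreds part.
        words.append(ONES[rest])
    return " ".join(words)


def spell(n):
    """Spell an integer in 0..999999 in the format described above."""
    if not 0 <= n <= 999_999:
        raise ValueError("only 0..999999 is supported")
    thousands, rest = divmod(n, 1000)
    if not thousands:
        return spell_below_thousand(rest)
    words = [spell_below_thousand(thousands), "thousand"]
    if rest:
        words.append(spell_below_thousand(rest))
    return " ".join(words)


print(spell(999_999))
```

Because the line number of each instance (counting from zero) is its integer value, `spell(n)` can be compared against line `n` of the file when spot-checking.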

## Uses

This dataset has not yet been used for any tasks. It has been inspired by a smaller _fast.ai_ dataset used pedagogically for training NLP models.

The dataset could also be used in place of the original code that generated it, if someone desired a list of human-readable numbers in this dialect of English.

The dataset could also be used as a normative spelling of integers (to correct someone writing "fourty" for instance).

The dataset, as an artifact of language, could also be used to establish normative language for reading integers.

The dataset is composed of only one of the many languages and dialects that `written-number` produces.

A native user of another dialect might elect to change language or dialect, for easier auditing of the output of the language model trained on the numbers.

Specifically, someone might expect to see _`nine lakh ninety nine thousand nine hundred ninety nine`_ instead of _`nine hundred ninety nine thousand nine hundred ninety nine`_ as the last line of the sample above.

It is important to not use this dataset as a normative spelling of integers, especially to impose American English readings of integers on speakers of other dialects of English.

## Distribution

This dataset is distributed worldwide.

It is available on Huggingface, at https://huggingface.co/datasets/lsb/million-english-numbers .

It is currently available.

The license is AGPL 3.0.

The library `written-number` is available under the MIT license, and its output is not currently restricted by license.

No third parties have imposed any restrictions on the data associated with these instances of written numbers.

No export controls or other regulatory restrictions currently apply to the dataset or to individual instances in the dataset.

## Maintenance

Huggingface is currently hosting the dataset, and @lsb is maintaining the dataset.

Contact is available via pull-request, and via email at `hi@leebutterman.com` .

There are currently no errata, and the full edit history of the dataset is available in the `git` repository in which this datasheet is included.

This dataset is not expected to frequently update. Any users of the dataset may elect to `git pull` any updates.

The data does not relate to people, and there are no limits on the retention of the data associated with the instances.

Older versions of the dataset continue to be supported and hosted and maintained, through the `git` repository that includes the full edit history of the dataset.

If others wish to extend or augment or build on or contribute to the dataset, a mechanism available is to upload additional datasets to Huggingface.

|

lucien | null | null | null | false | 2 | false | lucien/sciencemission | 2021-04-01T17:48:38.000Z | null | false | f7cdc8e53fd262ba6e259c8db821698237fba8fd | [] | [] | https://huggingface.co/datasets/lucien/sciencemission/resolve/main/README.md | |

lucien | null | null | null | false | 2 | false | lucien/wsaderfffjjjhhh | 2021-03-31T18:38:43.000Z | null | false | d1fb49c368c1bef8fe111692645e5cb763ac5a15 | [] | [] | https://huggingface.co/datasets/lucien/wsaderfffjjjhhh/resolve/main/README.md | |

lukesjordan | null | null | null | false | 9 | false | lukesjordan/worldbank-project-documents | 2022-10-24T20:10:40.000Z | null | false | c435ecfd98f198f2ea0e741591d347423ff056e7 | [] | [

"annotations_creators:no-annotation",

"language_creators:found",

"language:en",

"license:other",

"multilinguality:monolingual",

"size_categories:unknown",

"source_datasets:original",

"task_categories:table-to-text",

"task_categories:question-answering",

"task_categories:summarization",

"task_cat... | https://huggingface.co/datasets/lukesjordan/worldbank-project-documents/resolve/main/README.md | ---

annotations_creators:

- no-annotation

language_creators:

- found

language:

- en

license:

- other

multilinguality:

- monolingual

size_categories:

- unknown

source_datasets:

- original

task_categories:

- table-to-text

- question-answering

- summarization

- text-generation

task_ids:

- abstractive-qa

- closed-domain-qa

- extractive-qa

- language-modeling

- named-entity-recognition

- text-simplification

pretty_name: worldbank_project_documents

language_bcp47:

- en-US

tags:

- conditional-text-generation

- structure-prediction

---

# Dataset Card for World Bank Project Documents

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:**

- **Repository:** https://github.com/luke-grassroot/aid-outcomes-ml

- **Paper:** Forthcoming

- **Point of Contact:** Luke Jordan (lukej at mit)

### Dataset Summary

This is a dataset of documents related to World Bank development projects in the period 1947-2020. The dataset includes

the documents used to propose or describe projects when they are launched, and those in the review. The documents are indexed

by the World Bank project ID, which can be used to obtain features from multiple publicly available tabular datasets.

### Supported Tasks and Leaderboards

No leaderboard yet. The dataset can support a wide range of tasks, including varieties of summarization, QA, and language modelling. To date, the dataset has been used primarily in conjunction with tabular data (via BERT embeddings) to predict project outcomes.

### Languages

English

## Dataset Structure

### Data Instances

### Data Fields

* World Bank project ID

* Document text

* Document type: "APPROVAL" for documents written at the beginning of a project, when it is approved; and "REVIEW" for documents written at the end of a project

### Data Splits

To allow for open exploration, and since different applications will want to split the data using different sampling weights, we have not created a train/test split and have left all files in the train split.
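Since no split is provided, one option is to split by project rather than by document, so that the approval and review documents of the same project never end up on opposite sides. The sketch below is one possible approach under stated assumptions, not a recommendation from the curators; the field names `project_id`, `document_type` and `text` are hypothetical stand-ins for the fields listed above.

```python
import random


def split_by_project(documents, test_fraction=0.2, seed=0):
    """Split documents into train/test so that no project spans both sets."""
    project_ids = sorted({doc["project_id"] for doc in documents})
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    rng.shuffle(project_ids)
    n_test = round(len(project_ids) * test_fraction)
    test_ids = set(project_ids[:n_test])
    train = [doc for doc in documents if doc["project_id"] not in test_ids]
    test = [doc for doc in documents if doc["project_id"] in test_ids]
    return train, test


# Hypothetical documents: ten projects, each with two document types.
documents = [
    {"project_id": f"P{i:03d}", "document_type": kind, "text": "..."}
    for i in range(10)
    for kind in ("APPROVAL", "REVIEW")
]
train_docs, test_docs = split_by_project(documents)
print(len(train_docs), len(test_docs))
```

Applications that need different sampling weights, for example by decade or by region, would replace the uniform shuffle with their own sampling.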

## Dataset Creation

### Source Data

Documents were scraped from the World Bank's public project archive, following links through to specific project pages and then collecting the text files made available by the [World Bank](https://projects.worldbank.org/en/projects-operations/projects-home).

### Annotations

This dataset is not annotated.

### Personal and Sensitive Information

None.

## Considerations for Using the Data

### Social Impact of Dataset

The dataset concerns development projects, which can have large-scale consequences for many millions of people.

### Discussion of Biases

The documents reflect the history of development, which has well-documented and well-studied issues with the imposition of developed world ideas on developing world countries. The documents provide a way to study those in the field of development, but should not be used for their description of the recipient countries, since that language will reflect a multitude of biases, especially in the earlier reaches of the historical projects.

## Additional Information

### Dataset Curators

Luke Jordan, Busani Ndlovu.

### Licensing Information

MIT +no-false-attribs license (MITNFA).

### Citation Information

@dataset{world-bank-project-documents,

author = {Jordan, Luke and Ndlovu, Busani and Shenk, Justin},

title = {World Bank Project Documents Dataset},

year = {2021}

}

### Contributions

Thanks to [@luke-grassroot](https://github.com/luke-grassroot), [@FRTNX](https://github.com/FRTNX/) and [@justinshenk](https://github.com/justinshenk) for adding this dataset. |

luozhouyang | null | null | null | false | 981 | false | luozhouyang/dureader | 2021-11-29T04:44:53.000Z | null | false | 904b9cef2f649654deef43f0eecb44986395f1bc | [] | [] | https://huggingface.co/datasets/luozhouyang/dureader/resolve/main/README.md | # dureader

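The data comes from the Qianyan DuReader dataset; the original source is [Qianyan Datasets: Reading Comprehension](https://aistudio.baidu.com/aistudio/competition/detail/49/0/task-definition).

> This dataset is intended for academic research only. If this repository involves any infringement, it will be removed immediately.

It currently contains the following two subsets: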

* DuReader-robust

* DuReader-checklist

```python

from datasets import load_dataset

robust = load_dataset("luozhouyang/dureader", "robust")

checklist = load_dataset("luozhouyang/dureader", "checklist")

``` |

luozhouyang | null | null | null | false | 1 | false | luozhouyang/kgclue-knowledge | 2021-12-24T03:30:49.000Z | null | false | acc2f0223357b039635bd616443e53c609eff279 | [] | [] | https://huggingface.co/datasets/luozhouyang/kgclue-knowledge/resolve/main/README.md | # KgCLUE-Knowledge

The original data is from [CLUEbenchmark/KgCLUE](https://github.com/CLUEbenchmark/KgCLUE).

Here is a JSON version of the original knowledge base.

## Usage

```python

from datasets import load_dataset

dataset = load_dataset("luozhouyang/kgclue-knowledge")

# or select files

dataset = load_dataset("luozhouyang/kgclue-knowledge", data_files=["kgclue.knowledge00.jsonl"])

```

|

luozhouyang | null | null | null | false | 6 | false | luozhouyang/question-answering-datasets | 2021-11-26T11:09:10.000Z | null | false | 02dc192e9908ab6186e86689cd1a948c9771eefd | [] | [] | https://huggingface.co/datasets/luozhouyang/question-answering-datasets/resolve/main/README.md | # question-answering-datasets

Datasets for the question answering task.

```yaml

annotations_creators:

- found

language_creators:

- found

languages:

- zh-CN

licenses:

- unknown

multilinguality:

- monolingual

pretty_name: question-answering-datasets

size_categories:

- unknown

source_datasets:

- original

task_categories:

- question-answering

task_ids:

- open-domain-qa

- extractive-qa

```

|

codeparrot | null | null | null | false | 64 | false | codeparrot/codeparrot-clean-train | 2022-10-10T15:27:50.000Z | null | false | 3e6ab65f2864931e041f6a82db9b5a6ec2b71ab4 | [] | [] | https://huggingface.co/datasets/codeparrot/codeparrot-clean-train/resolve/main/README.md | # CodeParrot 🦜 Dataset Cleaned (train)

Train split of [CodeParrot 🦜 Dataset Cleaned](https://huggingface.co/datasets/lvwerra/codeparrot-clean).

## Dataset structure

```python

DatasetDict({
    train: Dataset({
        features: ['repo_name', 'path', 'copies', 'size', 'content', 'license', 'hash', 'line_mean', 'line_max', 'alpha_frac', 'autogenerated'],
        num_rows: 5300000
    })
})

``` |

codeparrot | null | null | null | false | 856 | false | codeparrot/codeparrot-clean-valid | 2022-10-10T15:28:51.000Z | null | false | 4db92d2ec0c1b4c41eeb439cfae16854511d9dcd | [] | [] | https://huggingface.co/datasets/codeparrot/codeparrot-clean-valid/resolve/main/README.md | # CodeParrot 🦜 Dataset Cleaned (valid)

Validation split of [CodeParrot 🦜 Dataset Cleaned](https://huggingface.co/datasets/lvwerra/codeparrot-clean).

## Dataset structure

```python

DatasetDict({
    train: Dataset({
        features: ['repo_name', 'path', 'copies', 'size', 'content', 'license', 'hash', 'line_mean', 'line_max', 'alpha_frac', 'autogenerated'],
        num_rows: 61373
    })
})

``` |

codeparrot | null | null | null | false | 105 | false | codeparrot/codeparrot-clean | 2022-10-10T15:23:51.000Z | null | false | 35a59fb025bc0a102f7d96eac09d145b896d487b | [] | [

"tags:python",

"tags:code"

] | https://huggingface.co/datasets/codeparrot/codeparrot-clean/resolve/main/README.md | ---

tags:

- python

- code

---

# CodeParrot 🦜 Dataset Cleaned

## What is it?

A dataset of Python files from GitHub. This is the deduplicated version of the [codeparrot](https://huggingface.co/datasets/transformersbook/codeparrot) dataset.

## Processing

The original dataset contains a lot of duplicated and noisy data. Therefore, the dataset was cleaned with the following steps:

- Deduplication
  - Remove exact matches
- Filtering
  - Average line length < 100
  - Maximum line length < 1000
  - Alphanumeric character fraction > 0.25
  - Remove auto-generated files (keyword search)

For more details see the preprocessing script in the transformers repository [here](https://github.com/huggingface/transformers/tree/master/examples/research_projects/codeparrot).
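
The cleaning steps listed above can be sketched in a few lines of plain Python. This is an illustrative approximation, not the preprocessing script itself: the keyword list, the use of SHA-256 for exact-match deduplication, and the helper names `file_stats`, `keep_file`, and `clean` are all assumptions. The statistic names mirror the `line_mean`, `line_max`, `alpha_frac`, and `autogenerated` columns of the dataset.

```python
import hashlib

# Hypothetical keyword list; the real script may use different markers.
AUTOGEN_KEYWORDS = ("auto-generated", "autogenerated", "automatically generated")


def file_stats(content: str) -> dict:
    """Compute the statistics that the filters are based on."""
    lines = content.splitlines() or [""]
    lengths = [len(line) for line in lines]
    alnum = sum(ch.isalnum() for ch in content)
    return {
        "line_mean": sum(lengths) / len(lengths),
        "line_max": max(lengths),
        "alpha_frac": alnum / len(content) if content else 0.0,
        "autogenerated": any(k in content.lower() for k in AUTOGEN_KEYWORDS),
    }


def keep_file(content: str) -> bool:
    """Apply the four filtering thresholds to a single file."""
    stats = file_stats(content)
    return (
        stats["line_mean"] < 100
        and stats["line_max"] < 1000
        and stats["alpha_frac"] > 0.25
        and not stats["autogenerated"]
    )


def clean(files):
    """Yield files that are not exact duplicates and pass every filter."""
    seen = set()
    for content in files:
        digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # exact duplicate of an earlier file
        seen.add(digest)
        if keep_file(content):
            yield content


sample = [
    "import os\nprint(os.getcwd())\n",
    "import os\nprint(os.getcwd())\n",          # exact duplicate
    "# This file is auto-generated.\nx = 1\n",  # flagged by keyword search
    "x = '" + "a" * 2000 + "'\n",               # line longer than 1000 characters
]
cleaned = list(clean(sample))
```

In this toy run only the first file survives: the second is an exact duplicate, the third matches a keyword, and the fourth exceeds the line-length limits.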

## Splits

The dataset is split into a [train](https://huggingface.co/datasets/codeparrot/codeparrot-clean-train) and a [validation](https://huggingface.co/datasets/codeparrot/codeparrot-clean-valid) set, used for training and evaluation respectively.

## Structure

This dataset has ~50GB of code and 5361373 files.

```python

DatasetDict({
    train: Dataset({
        features: ['repo_name', 'path', 'copies', 'size', 'content', 'license', 'hash', 'line_mean', 'line_max', 'alpha_frac', 'autogenerated'],
        num_rows: 5361373
    })
})

``` |

codeparrot | null | null | The GitHub Code dataset consists of 115M code files from GitHub in 32 programming languages with 60 extensions totalling 1TB of text data. The dataset was created from the GitHub dataset on BigQuery. | false | 648 | false | codeparrot/github-code | 2022-10-20T15:01:14.000Z | null | false | b5661e6b17396364b2bcf8e68977b0d28e1ebd19 | [] | [

"language_creators:crowdsourced",

"language_creators:expert-generated",

"language:code",

"license:other",

"multilinguality:multilingual",

"size_categories:unknown",

"task_categories:text-generation",

"task_ids:language-modeling"

] | https://huggingface.co/datasets/codeparrot/github-code/resolve/main/README.md | ---

annotations_creators: []

language_creators:

- crowdsourced

- expert-generated

language:

- code

license:

- other

multilinguality:

- multilingual

pretty_name: github-code

size_categories:

- unknown

source_datasets: []

task_categories:

- text-generation

task_ids:

- language-modeling

---

# GitHub Code Dataset

## Dataset Description

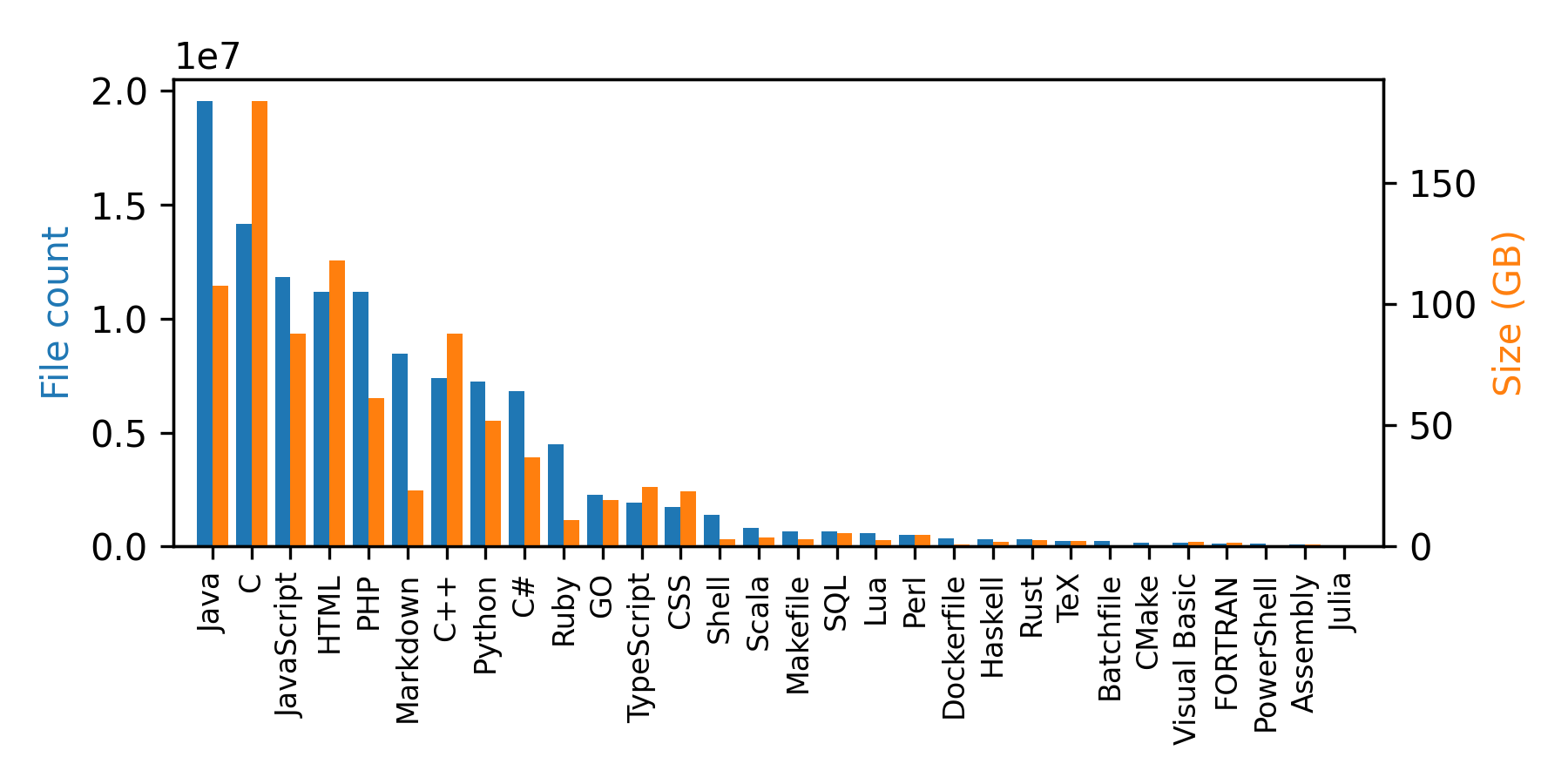

The GitHub Code dataset consists of 115M code files from GitHub in 32 programming languages with 60 extensions, totaling 1TB of data. The dataset was created from the public GitHub dataset on Google BigQuery.

### How to use it

The GitHub Code dataset is very large, so for most use cases it is recommended to use the streaming API of `datasets`. You can load and iterate through the dataset with the following two lines of code:

```python

from datasets import load_dataset

ds = load_dataset("codeparrot/github-code", streaming=True, split="train")
print(next(iter(ds)))

#OUTPUT:
{
 'code': "import mod189 from './mod189';\nvar value=mod189+1;\nexport default value;\n",
 'repo_name': 'MirekSz/webpack-es6-ts',
 'path': 'app/mods/mod190.js',
 'language': 'JavaScript',
 'license': 'isc',
 'size': 73
}

```

Besides the code, repo name, and path, each example also includes the programming language, the license, and the size of the file. You can also filter the dataset for any subset of the 30 included languages (see the full list below) by passing them as a list. For example, if your dream is to build a Codex model for Dockerfiles, use the following configuration:

```python

ds = load_dataset("codeparrot/github-code", streaming=True, split="train", languages=["Dockerfile"])

print(next(iter(ds))["code"])

#OUTPUT:

"""\

FROM rockyluke/ubuntu:precise

ENV DEBIAN_FRONTEND="noninteractive" \

TZ="Europe/Amsterdam"

...

"""

```

We also have access to the license of the origin repo of a file so we can filter for licenses in the same way we filtered for languages:

```python

from collections import Counter

ds = load_dataset("codeparrot/github-code", streaming=True, split="train", licenses=["mit", "isc"])

# Collect the license of the first 10,000 streamed examples.
licenses = []
for element in ds.take(10_000):
    licenses.append(element["license"])
print(Counter(licenses))

#OUTPUT:
Counter({'mit': 9896, 'isc': 104})

```
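
The same per-example fields can also be filtered on the client side, which may be handy for criteria that the loader arguments do not cover, such as file size. The snippet below is a minimal sketch that assumes examples are dictionaries with the `language`, `license`, and `size` keys shown in the output above. The `filter_examples` helper, the `max_size` threshold, and the sample records are hypothetical, and a plain list stands in for the streamed dataset so that the snippet runs offline.

```python
from collections import Counter
from itertools import islice


def filter_examples(examples, languages=None, licenses=None, max_size=None):
    """Lazily yield examples matching the given languages, licenses, and size."""
    for example in examples:
        if languages is not None and example["language"] not in languages:
            continue
        if licenses is not None and example["license"] not in licenses:
            continue
        if max_size is not None and example["size"] > max_size:
            continue
        yield example


# Hypothetical records standing in for a streamed dataset.
examples = [
    {"language": "JavaScript", "license": "isc", "size": 73},
    {"language": "Dockerfile", "license": "mit", "size": 512},
    {"language": "Python", "license": "gpl-3.0", "size": 2048},
    {"language": "Python", "license": "mit", "size": 1_000_000},
]

selected = filter_examples(examples, licenses={"mit", "isc"}, max_size=10_000)
# islice caps how many examples are consumed from the (possibly huge) stream.
license_counts = Counter(e["license"] for e in islice(selected, 10_000))
```

Because `filter_examples` is a generator, nothing is materialized in memory; examples are inspected one at a time as they arrive.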