author stringlengths 2 29 ⌀ | cardData null | citation stringlengths 0 9.58k ⌀ | description stringlengths 0 5.93k ⌀ | disabled bool 1 class | downloads float64 1 1M ⌀ | gated bool 2 classes | id stringlengths 2 108 | lastModified stringlengths 24 24 | paperswithcode_id stringlengths 2 45 ⌀ | private bool 2 classes | sha stringlengths 40 40 | siblings list | tags list | readme_url stringlengths 57 163 | readme stringlengths 0 977k |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

sled-umich | null | @misc{storks2021tiered,

title={Tiered Reasoning for Intuitive Physics: Toward Verifiable Commonsense Language Understanding},

author={Shane Storks and Qiaozi Gao and Yichi Zhang and Joyce Chai},

year={2021},

booktitle={Findings of the Association for Computational Linguistics: EMNLP 2021},

location={Punta Cana, Dominican Republic},

publisher={Association for Computational Linguistics},

} | We introduce Tiered Reasoning for Intuitive Physics (TRIP), a novel commonsense reasoning dataset with dense annotations that enable multi-tiered evaluation of machines’ reasoning process. | false | 283 | false | sled-umich/TRIP | 2022-10-14T19:17:29.000Z | null | false | e8034abd1a23f948dc6bc68e1bceaa47d7e966c2 | [] | [

"annotations_creators:expert-generated",

"language:en",

"language_creators:crowdsourced",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"source_datasets:original",

"task_categories:text-classification",

"task_ids:natural-language-inference"

] | https://huggingface.co/datasets/sled-umich/TRIP/resolve/main/README.md | ---

annotations_creators:

- expert-generated

language:

- en

language_creators:

- crowdsourced

license: []

multilinguality:

- monolingual

pretty_name: 'TRIP: Tiered Reasoning for Intuitive Physics'

size_categories:

- 1K<n<10K

source_datasets:

- original

tags: []

task_categories:

- text-classification

task_ids:

- natural-language-inference

---

# [TRIP - Tiered Reasoning for Intuitive Physics](https://aclanthology.org/2021.findings-emnlp.422/)

Official dataset for [Tiered Reasoning for Intuitive Physics: Toward Verifiable Commonsense Language Understanding](https://aclanthology.org/2021.findings-emnlp.422/). Shane Storks, Qiaozi Gao, Yichi Zhang, Joyce Chai. EMNLP Findings, 2021.

For our official model and experiment code, please check [GitHub](https://github.com/sled-group/Verifiable-Coherent-NLU).

## Overview

We introduce Tiered Reasoning for Intuitive Physics (TRIP), a novel commonsense reasoning dataset with dense annotations that enable multi-tiered evaluation of machines’ reasoning process.

It includes dense annotations for each story capturing multiple tiers of reasoning beyond the end task. From these annotations, we propose a tiered evaluation, where given a pair of highly similar stories (differing only by one sentence which makes one of the stories implausible), systems must jointly identify (1) the plausible story, (2) a pair of conflicting sentences in the implausible story, and (3) the underlying physical states in those sentences causing the conflict. The goal of TRIP is to enable a systematic evaluation of machine coherence toward the end task prediction of plausibility. In particular, we evaluate whether a high-level plausibility prediction can be verified based on lower-level understanding, for example, physical state changes that would support the prediction.
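
The tiered evaluation described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the official scoring code (which lives in the GitHub repository): the field names `plausible_story`, `conflict_pair`, and `states` and the function `tiered_scores` are hypothetical, and the cumulative counting is one way to reflect the requirement that systems identify all three tiers jointly.

```python
from typing import Dict, List


def tiered_scores(predictions: List[dict], gold: List[dict]) -> Dict[str, float]:
    """Score cumulatively: a tier only counts if every lower tier is also correct."""
    tier1 = tier2 = tier3 = 0
    for pred, ref in zip(predictions, gold):
        # Tier 1: which of the two stories is plausible.
        ok1 = pred["plausible_story"] == ref["plausible_story"]
        # Tier 2: the pair of conflicting sentences (order-insensitive).
        ok2 = ok1 and set(pred["conflict_pair"]) == set(ref["conflict_pair"])
        # Tier 3: the physical states behind the conflict.
        ok3 = ok2 and pred["states"] == ref["states"]
        tier1 += ok1
        tier2 += ok2
        tier3 += ok3
    n = len(gold)
    return {"tier1": tier1 / n, "tier2": tier2 / n, "tier3": tier3 / n}


# Hypothetical toy data, invented for illustration only.
gold = [
    {"plausible_story": 0, "conflict_pair": (1, 3), "states": {"wet": True}},
    {"plausible_story": 1, "conflict_pair": (0, 2), "states": {"open": False}},
]
predictions = [
    {"plausible_story": 0, "conflict_pair": (1, 3), "states": {"wet": True}},
    {"plausible_story": 1, "conflict_pair": (0, 4), "states": {"open": False}},
]
scores = tiered_scores(predictions, gold)
```

In this toy case the second prediction picks the right story but the wrong conflicting sentences, so it is credited at the first tier only, even though its predicted states happen to match.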

## Download

```python

from datasets import load_dataset

dataset = load_dataset("sled-umich/TRIP")

```

* [HuggingFace-Dataset](https://huggingface.co/datasets/sled-umich/TRIP)

* [GitHub](https://github.com/sled-group/Verifiable-Coherent-NLU)

## Cite

```bibtex

@misc{storks2021tiered,

title={Tiered Reasoning for Intuitive Physics: Toward Verifiable Commonsense Language Understanding},

author={Shane Storks and Qiaozi Gao and Yichi Zhang and Joyce Chai},

year={2021},

booktitle={Findings of the Association for Computational Linguistics: EMNLP 2021},

location={Punta Cana, Dominican Republic},

publisher={Association for Computational Linguistics},

}

```

|

autoevaluate | null | null | null | false | null | false | autoevaluate/autoeval-eval-adversarial_qa-adversarialQA-b079e4-1737160612 | 2022-10-12T19:01:30.000Z | null | false | 6d3d5c6d6497f657f192f3c977b08d036ea51384 | [] | [

"type:predictions",

"tags:autotrain",

"tags:evaluation",

"datasets:adversarial_qa"

] | https://huggingface.co/datasets/autoevaluate/autoeval-eval-adversarial_qa-adversarialQA-b079e4-1737160612/resolve/main/README.md | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- adversarial_qa

eval_info:

task: extractive_question_answering

model: Adrian/distilbert-base-uncased-finetuned-squad-colab

metrics: []

dataset_name: adversarial_qa

dataset_config: adversarialQA

dataset_split: validation

col_mapping:

context: context

question: question

answers-text: answers.text

answers-answer_start: answers.answer_start

---

# Dataset Card for AutoTrain Evaluator

This repository contains model predictions generated by [AutoTrain](https://huggingface.co/autotrain) for the following task and dataset:

* Task: Question Answering

* Model: Adrian/distilbert-base-uncased-finetuned-squad-colab

* Dataset: adversarial_qa

* Config: adversarialQA

* Split: validation

To run new evaluation jobs, visit Hugging Face's [automatic model evaluator](https://huggingface.co/spaces/autoevaluate/model-evaluator).

## Contributions

Thanks to [@saad](https://huggingface.co/saad) for evaluating this model. |

ashraq | null | null | null | false | 3 | false | ashraq/financial-news | 2022-10-12T19:05:51.000Z | null | false | 7fedd76a179cb2b6e1230a0d095c5a290ed4c2f0 | [] | [] | https://huggingface.co/datasets/ashraq/financial-news/resolve/main/README.md | The data was obtained from [here](https://www.kaggle.com/datasets/miguelaenlle/massive-stock-news-analysis-db-for-nlpbacktests?select=raw_partner_headlines.csv). |

allenai | null | null | null | false | 1 | false | allenai/multinews_dense_max | 2022-11-11T01:29:44.000Z | multi-news | false | 907311f023524778117adba50143bbc6eab91d51 | [] | [

"annotations_creators:expert-generated",

"language_creators:expert-generated",

"language:en",

"license:other",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:original",

"task_categories:summarization",

"task_ids:news-articles-summarization"

] | https://huggingface.co/datasets/allenai/multinews_dense_max/resolve/main/README.md | ---

annotations_creators:

- expert-generated

language_creators:

- expert-generated

language:

- en

license:

- other

multilinguality:

- monolingual

pretty_name: Multi-News

size_categories:

- 10K<n<100K

source_datasets:

- original

task_categories:

- summarization

task_ids:

- news-articles-summarization

paperswithcode_id: multi-news

train-eval-index:

- config: default

task: summarization

task_id: summarization

splits:

train_split: train

eval_split: test

col_mapping:

document: text

summary: target

metrics:

- type: rouge

name: Rouge

---

This is a copy of the [Multi-News](https://huggingface.co/datasets/multi_news) dataset, except the input source documents of its `test` split have been replaced with documents retrieved by a __dense__ retriever. The retrieval pipeline used:

- __query__: The `summary` field of each example

- __corpus__: The union of all documents in the `train`, `validation` and `test` splits

- __retriever__: [`facebook/contriever-msmarco`](https://huggingface.co/facebook/contriever-msmarco) via [PyTerrier](https://pyterrier.readthedocs.io/en/latest/) with default settings

- __top-k strategy__: `"max"`, i.e. the number of documents retrieved, `k`, is set as the maximum number of documents seen across examples in this dataset, in this case `k==10`
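
To make the `"max"` strategy and the reported metrics concrete, here is a minimal sketch on toy data. It assumes the usual definitions of Precision@k and Recall@k; the helper names and the document IDs are hypothetical and not part of the actual pipeline.

```python
from typing import Sequence, Set


def precision_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the top-k retrieved documents that are original source documents."""
    return sum(doc in relevant for doc in ranked[:k]) / k


def recall_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the original source documents found in the top-k."""
    return sum(doc in relevant for doc in ranked[:k]) / len(relevant)


# Toy examples: the original source documents and a ranked retrieval list for each.
examples = [
    {"relevant": {"d1", "d2"}, "ranked": ["d1", "d9", "d2", "d7"]},
    {"relevant": {"d3", "d4", "d5", "d6"}, "ranked": ["d3", "d4", "d8", "d5"]},
]

# "max" strategy: a single k for the whole dataset, set to the largest
# number of source documents seen in any example.
k = max(len(ex["relevant"]) for ex in examples)

mean_precision = sum(precision_at_k(ex["ranked"], ex["relevant"], k) for ex in examples) / len(examples)
mean_recall = sum(recall_at_k(ex["ranked"], ex["relevant"], k) for ex in examples) / len(examples)
```

Because one large `k` is applied to every example, examples with few source documents necessarily receive extra documents, which pulls Precision@k down while keeping Recall@k comparatively high.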

Retrieval results on the `train` set:

| Recall@100 | Rprec | Precision@k | Recall@k |

| ----------- | ----------- | ----------- | ----------- |

| 0.8661 | 0.6867 | 0.2118 | 0.7966 |

Retrieval results on the `validation` set:

| Recall@100 | Rprec | Precision@k | Recall@k |

| ----------- | ----------- | ----------- | ----------- |

| 0.8626 | 0.6859 | 0.2083 | 0.7949 |

Retrieval results on the `test` set:

| Recall@100 | Rprec | Precision@k | Recall@k |

| ----------- | ----------- | ----------- | ----------- |

| 0.8625 | 0.6927 | 0.2096 | 0.7971 | |

allenai | null | null | null | false | 2 | false | allenai/multinews_dense_mean | 2022-11-11T01:32:35.000Z | multi-news | false | f3e05a3d4e6d0c9f71a35502c911f21c96754a57 | [] | [

"annotations_creators:expert-generated",

"language_creators:expert-generated",

"language:en",

"license:other",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:original",

"task_categories:summarization",

"task_ids:news-articles-summarization"

] | https://huggingface.co/datasets/allenai/multinews_dense_mean/resolve/main/README.md | ---

annotations_creators:

- expert-generated

language_creators:

- expert-generated

language:

- en

license:

- other

multilinguality:

- monolingual

pretty_name: Multi-News

size_categories:

- 10K<n<100K

source_datasets:

- original

task_categories:

- summarization

task_ids:

- news-articles-summarization

paperswithcode_id: multi-news

train-eval-index:

- config: default

task: summarization

task_id: summarization

splits:

train_split: train

eval_split: test

col_mapping:

document: text

summary: target

metrics:

- type: rouge

name: Rouge

---

This is a copy of the [Multi-News](https://huggingface.co/datasets/multi_news) dataset, except the input source documents of its `test` split have been replaced with documents retrieved by a __dense__ retriever. The retrieval pipeline used:

- __query__: The `summary` field of each example

- __corpus__: The union of all documents in the `train`, `validation` and `test` splits

- __retriever__: [`facebook/contriever-msmarco`](https://huggingface.co/facebook/contriever-msmarco) via [PyTerrier](https://pyterrier.readthedocs.io/en/latest/) with default settings

- __top-k strategy__: `"mean"`, i.e. the number of documents retrieved, `k`, is set as the mean number of documents seen across examples in this dataset, in this case `k==3`

Retrieval results on the `train` set:

| Recall@100 | Rprec | Precision@k | Recall@k |

| ----------- | ----------- | ----------- | ----------- |

| 0.8661 | 0.6867 | 0.5936 | 0.6917 |

Retrieval results on the `validation` set:

| Recall@100 | Rprec | Precision@k | Recall@k |

| ----------- | ----------- | ----------- | ----------- |

| 0.8626 | 0.6859 | 0.5874 | 0.6925 |

Retrieval results on the `test` set:

| Recall@100 | Rprec | Precision@k | Recall@k |

| ----------- | ----------- | ----------- | ----------- |

| 0.8625 | 0.6927 | 0.5938 | 0.6993 | |

allenai | null | null | null | false | 1 | false | allenai/multinews_dense_oracle | 2022-11-12T04:10:53.000Z | multi-news | false | 0a28a9ad21550cfaadec888b0d826eff2c5bf028 | [] | [

"annotations_creators:expert-generated",

"language_creators:expert-generated",

"language:en",

"license:other",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:original",

"task_categories:summarization",

"task_ids:news-articles-summarization"

] | https://huggingface.co/datasets/allenai/multinews_dense_oracle/resolve/main/README.md | ---

annotations_creators:

- expert-generated

language_creators:

- expert-generated

language:

- en

license:

- other

multilinguality:

- monolingual

pretty_name: Multi-News

size_categories:

- 10K<n<100K

source_datasets:

- original

task_categories:

- summarization

task_ids:

- news-articles-summarization

paperswithcode_id: multi-news

train-eval-index:

- config: default

task: summarization

task_id: summarization

splits:

train_split: train

eval_split: test

col_mapping:

document: text

summary: target

metrics:

- type: rouge

name: Rouge

---

This is a copy of the [Multi-News](https://huggingface.co/datasets/multi_news) dataset, except the input source documents of the `train`, `validation`, and `test` splits have been replaced with documents retrieved by a __dense__ retriever. The retrieval pipeline used:

- __query__: The `summary` field of each example

- __corpus__: The union of all documents in the `train`, `validation` and `test` splits

- __retriever__: [`facebook/contriever-msmarco`](https://huggingface.co/facebook/contriever-msmarco) via [PyTerrier](https://pyterrier.readthedocs.io/en/latest/) with default settings

- __top-k strategy__: `"oracle"`, i.e. the number of documents retrieved, `k`, is set as the original number of input documents for each example
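
A short sketch of why the `"oracle"` strategy makes Precision@k, Recall@k, and Rprec coincide: when `k` equals the number of original source documents, all three divide the same hit count by the same number. The document IDs and the helper `hits_at_k` are hypothetical.

```python
from typing import Sequence, Set


def hits_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> int:
    """Number of original source documents among the top-k retrieved."""
    return sum(doc in relevant for doc in ranked[:k])


relevant = {"d1", "d2", "d3"}            # original source documents of one example
ranked = ["d1", "d7", "d3", "d2", "d9"]  # toy retrieval ranking

k = len(relevant)                        # "oracle": k is set per example
hits = hits_at_k(ranked, relevant, k)

precision = hits / k                     # hits / k
recall = hits / len(relevant)            # hits / |relevant|, and |relevant| == k
r_precision = hits_at_k(ranked, relevant, len(relevant)) / len(relevant)
```

This is consistent with the identical values in the last three columns of each results table for this dataset.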

Retrieval results on the `train` set:

| Recall@100 | Rprec | Precision@k | Recall@k |

| ----------- | ----------- | ----------- | ----------- |

| 0.8661 | 0.6867 | 0.6867 | 0.6867 |

Retrieval results on the `validation` set:

| Recall@100 | Rprec | Precision@k | Recall@k |

| ----------- | ----------- | ----------- | ----------- |

| 0.8626 | 0.6859 | 0.6859 | 0.6859 |

Retrieval results on the `test` set:

| Recall@100 | Rprec | Precision@k | Recall@k |

| ----------- | ----------- | ----------- | ----------- |

| 0.8625 | 0.6927 | 0.6927 | 0.6927 | |

AshleyRoni | null | null | null | false | null | false | AshleyRoni/lizzabliss2001 | 2022-10-12T19:34:59.000Z | null | false | 1a858281821ccffa0cf9d900727a7a9c8cbe68c9 | [] | [

"license:openrail"

] | https://huggingface.co/datasets/AshleyRoni/lizzabliss2001/resolve/main/README.md | ---

license: openrail

---

|

debosneed | null | null | null | false | null | false | debosneed/manuscript-captions | 2022-10-12T19:36:55.000Z | null | false | 5eb85b0cbb0259b14d92c550e4af42ea8815e20c | [] | [

"license:afl-3.0"

] | https://huggingface.co/datasets/debosneed/manuscript-captions/resolve/main/README.md | ---

license: afl-3.0

---

|

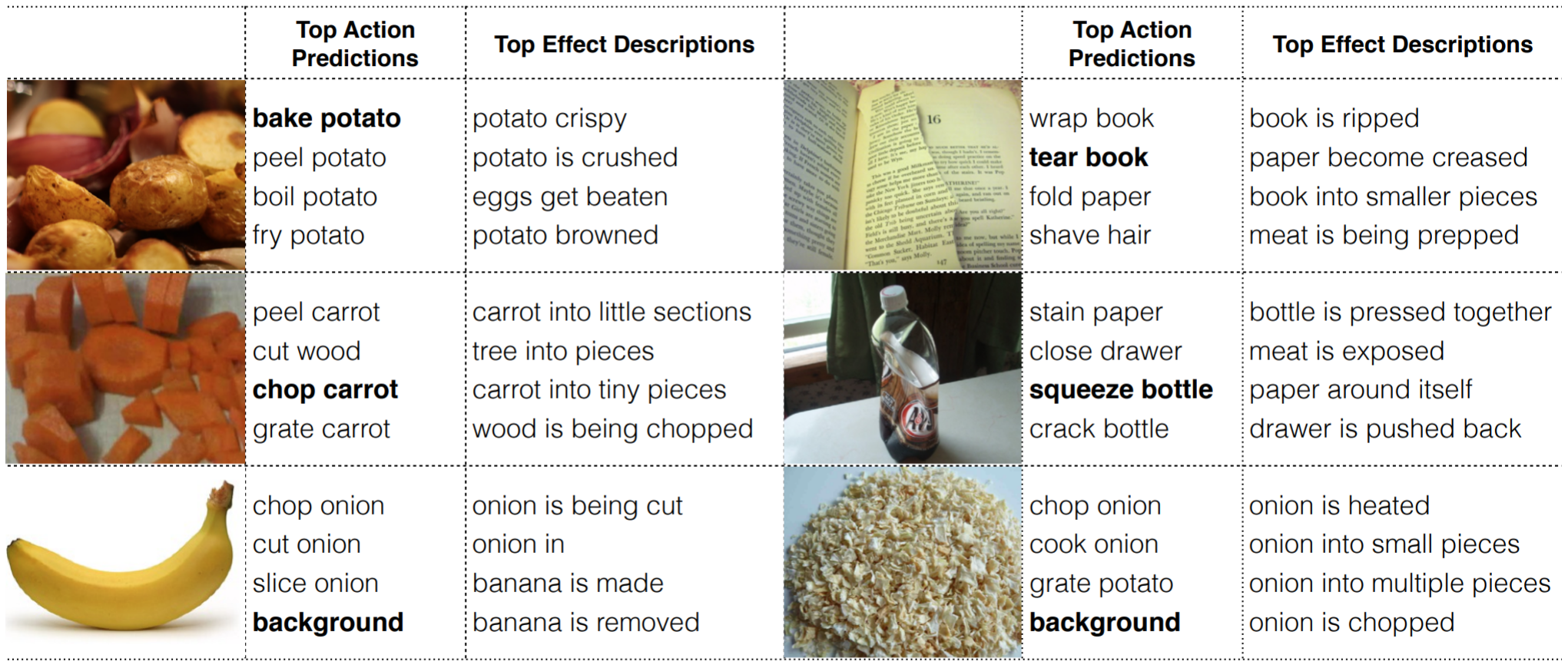

sled-umich | null | @inproceedings{gao-etal-2018-action,

title = "What Action Causes This? Towards Naive Physical Action-Effect Prediction",

author = "Gao, Qiaozi and

Yang, Shaohua and

Chai, Joyce and

Vanderwende, Lucy",

booktitle = "Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers)",

month = jul,

year = "2018",

address = "Melbourne, Australia",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/P18-1086",

doi = "10.18653/v1/P18-1086",

pages = "934--945",

} | Despite recent advances in knowledge representation, automated reasoning, and machine learning, artificial agents still lack the ability to understand basic action-effect relations regarding the physical world, for example, the action of cutting a cucumber most likely leads to the state where the cucumber is broken apart into smaller pieces. If artificial agents (e.g., robots) ever become our partners in joint tasks, it is critical to empower them with such action-effect understanding so that they can reason about the state of the world and plan for actions. Towards this goal, this paper introduces a new task on naive physical action-effect prediction, which addresses the relations between concrete actions (expressed in the form of verb-noun pairs) and their effects on the state of the physical world as depicted by images. We collected a dataset for this task and developed an approach that harnesses web image data through distant supervision to facilitate learning for action-effect prediction. Our empirical results have shown that web data can be used to complement a small number of seed examples (e.g., three examples for each action) for model learning. This opens up possibilities for agents to learn physical action-effect relations for tasks at hand through communication with humans with a few examples. | false | 22 | false | sled-umich/Action-Effect | 2022-10-14T19:12:20.000Z | null | false | 5e34c0587551f404f5a77198d74e06e6859bd75b | [] | [

"annotations_creators:crowdsourced",

"language:eng",

"language_creators:crowdsourced",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"source_datasets:original",

"task_categories:image-classification",

"task_categories:image-to-text"

] | https://huggingface.co/datasets/sled-umich/Action-Effect/resolve/main/README.md | ---

annotations_creators:

- crowdsourced

language:

- eng

language_creators:

- crowdsourced

license: []

multilinguality:

- monolingual

pretty_name: Action-Effect-Prediction

size_categories:

- 1K<n<10K

source_datasets:

- original

tags: []

task_categories:

- image-classification

- image-to-text

task_ids: []

---

# Physical-Action-Effect-Prediction

Official dataset for ["What Action Causes This? Towards Naive Physical Action-Effect Prediction"](https://aclanthology.org/P18-1086/), ACL 2018.

## Overview

Despite recent advances in knowledge representation, automated reasoning, and machine learning, artificial agents still lack the ability to understand basic action-effect relations regarding the physical world, for example, the action of cutting a cucumber most likely leads to the state where the cucumber is broken apart into smaller pieces. If artificial agents (e.g., robots) ever become our partners in joint tasks, it is critical to empower them with such action-effect understanding so that they can reason about the state of the world and plan for actions. Towards this goal, this paper introduces a new task on naive physical action-effect prediction, which addresses the relations between concrete actions (expressed in the form of verb-noun pairs) and their effects on the state of the physical world as depicted by images. We collected a dataset for this task and developed an approach that harnesses web image data through distant supervision to facilitate learning for action-effect prediction. Our empirical results have shown that web data can be used to complement a small number of seed examples (e.g., three examples for each action) for model learning. This opens up possibilities for agents to learn physical action-effect relations for tasks at hand through communication with humans with a few examples.

### Datasets

- This dataset contains action-effect information for 140 verb-noun pairs. It has two parts: effects described by natural language, and effects depicted in images.

- The language data contains verb-noun pairs and their effects described in natural language. For each verb-noun pair, its possible effects are described by 10 different annotators. The format for each line is `verb noun, effect_sentence, [effect_phrase_1, effect_phrase_2, effect_phrase_3, ...]`. Effect_phrases were automatically extracted from their corresponding effect_sentences.

- The image data contains images depicting action effects. For each verb-noun pair, an average of 15 positive images and 15 negative images were collected. Positive images are those deemed to capture the resulting world state of the action. Negative images are those deemed to capture some state of the related object (*i.e.*, the noun in the verb-noun pair) that is not the resulting state of the corresponding action.
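
The line format of the language data can be parsed roughly as follows. This is a minimal sketch that assumes every line ends with a bracketed phrase list and that the verb-noun pair contains no comma; the function `parse_effect_line` and the sample line are hypothetical and not taken from the dataset.

```python
from typing import Dict


def parse_effect_line(line: str) -> Dict[str, object]:
    """Split `verb noun, effect_sentence, [phrase_1, phrase_2, ...]` into its parts."""
    line = line.strip()
    # The phrase list is the trailing bracketed part of the line.
    head, _, phrases_part = line.rpartition("[")
    phrases = [p.strip() for p in phrases_part.rstrip("]").split(",") if p.strip()]
    # The action precedes the first comma; the remainder is the effect sentence,
    # which may itself contain commas.
    action, _, sentence = head.partition(",")
    verb, _, noun = action.strip().partition(" ")
    return {
        "verb": verb,
        "noun": noun,
        "effect_sentence": sentence.strip().rstrip(",").strip(),
        "effect_phrases": phrases,
    }


# Hypothetical line, invented to match the documented format.
sample = "cut cucumber, The cucumber is in small pieces, [cucumber in pieces, small pieces]"
record = parse_effect_line(sample)
```

Splitting on the first comma and the last opening bracket, rather than on every comma, keeps effect sentences that contain commas intact.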

### Download

```python

from datasets import load_dataset

dataset = load_dataset("sled-umich/Action-Effect")

```

* [HuggingFace](https://huggingface.co/datasets/sled-umich/Action-Effect)

* [Google Drive](https://drive.google.com/drive/folders/1P1_xWdCUoA9bHGlyfiimYAWy605tdXlN?usp=sharing)

* Dropbox:

* [Language Data](https://www.dropbox.com/s/pi1ckzjipbqxyrw/action_effect_sentence_phrase.txt?dl=0)

* [Image Data](https://www.dropbox.com/s/ilmfrqzqcbdf22k/action_effect_image_rs.tar.gz?dl=0)

### Cite

[What Action Causes This? Towards Naïve Physical Action-Effect Prediction](https://sled.eecs.umich.edu/publication/dblp-confacl-vanderwende-cyg-18/). *Qiaozi Gao, Shaohua Yang, Joyce Chai, Lucy Vanderwende*. ACL, 2018. [[Paper]](https://aclanthology.org/P18-1086/) [[Slides]](https://aclanthology.org/attachments/P18-1086.Presentation.pdf)

```bibtex

@inproceedings{gao-etal-2018-action,

title = "What Action Causes This? Towards Naive Physical Action-Effect Prediction",

author = "Gao, Qiaozi and

Yang, Shaohua and

Chai, Joyce and

Vanderwende, Lucy",

booktitle = "Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers)",

month = jul,

year = "2018",

address = "Melbourne, Australia",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/P18-1086",

doi = "10.18653/v1/P18-1086",

pages = "934--945",

abstract = "Despite recent advances in knowledge representation, automated reasoning, and machine learning, artificial agents still lack the ability to understand basic action-effect relations regarding the physical world, for example, the action of cutting a cucumber most likely leads to the state where the cucumber is broken apart into smaller pieces. If artificial agents (e.g., robots) ever become our partners in joint tasks, it is critical to empower them with such action-effect understanding so that they can reason about the state of the world and plan for actions. Towards this goal, this paper introduces a new task on naive physical action-effect prediction, which addresses the relations between concrete actions (expressed in the form of verb-noun pairs) and their effects on the state of the physical world as depicted by images. We collected a dataset for this task and developed an approach that harnesses web image data through distant supervision to facilitate learning for action-effect prediction. Our empirical results have shown that web data can be used to complement a small number of seed examples (e.g., three examples for each action) for model learning. This opens up possibilities for agents to learn physical action-effect relations for tasks at hand through communication with humans with a few examples.",

}

```

|

DavidBatista | null | null | null | false | null | false | DavidBatista/ImageFolder | 2022-10-12T21:34:08.000Z | null | false | 261c1d1340f1ae506e0bac1cccf79bddade05b57 | [] | [

"license:artistic-2.0"

] | https://huggingface.co/datasets/DavidBatista/ImageFolder/resolve/main/README.md | ---

license: artistic-2.0

---

|

zhengxuanzenwu | null | null | null | false | 58 | false | zhengxuanzenwu/wikitext-2-split-128 | 2022-10-13T00:11:29.000Z | null | false | 817f68fefc4d740360dded88d91f53089f21c10d | [] | [] | https://huggingface.co/datasets/zhengxuanzenwu/wikitext-2-split-128/resolve/main/README.md | This is a dataset created from the WikiText-2 dataset by splitting longer sequences into sequences with maximum of 128 tokens after using a wordpiece tokenizer. |

maxwellfoley | null | null | null | false | 102 | false | maxwellfoley/corporate-surrealist-training | 2022-11-15T05:09:29.000Z | null | false | a67f1cabf204e8784e28195ca3badfcff9e8c3ae | [] | [] | https://huggingface.co/datasets/maxwellfoley/corporate-surrealist-training/resolve/main/README.md | ---

dataset_info:

features:

- name: image

dtype: image

- name: text

dtype: string

splits:

- name: train

num_bytes: 226883173.0

num_examples: 507

download_size: 221334520

dataset_size: 226883173.0

---

# Dataset Card for "corporate-surrealist-training"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Gh0st | null | null | null | false | null | false | Gh0st/jiutian | 2022-11-11T09:52:39.000Z | null | false | 15f2a9871817cf2b5037445da14f34c3dde160de | [] | [

"license:unknown"

] | https://huggingface.co/datasets/Gh0st/jiutian/resolve/main/README.md | ---

license: unknown

---

|

fcabanilla | null | null | null | false | 1 | false | fcabanilla/Tobby | 2022-10-13T06:27:40.000Z | null | false | 12cf3a624e13fdd16a70dfb7d493fd55e561d650 | [] | [

"license:mit"

] | https://huggingface.co/datasets/fcabanilla/Tobby/resolve/main/README.md | ---

license: mit

---

|

fcabanilla | null | null | null | false | null | false | fcabanilla/tobby2 | 2022-10-13T07:11:17.000Z | null | false | 243851be0bc1f8846618ad4fe8c6432347191460 | [] | [

"license:mit"

] | https://huggingface.co/datasets/fcabanilla/tobby2/resolve/main/README.md | ---

license: mit

---

|

nikitam | null | null | null | false | 11 | false | nikitam/ACES | 2022-10-28T07:53:15.000Z | null | false | 079178b9e95576a70b67d1ed917216be8467569e | [] | [

"arxiv:2210.15615",

"language:multilingual",

"license:cc-by-nc-sa-4.0",

"multilinguality:multilingual",

"source_datasets:FLORES-101, FLORES-200, PAWS-X, XNLI, XTREME, WinoMT, Wino-X, MuCOW, EuroParl ConDisco, ParcorFull",

"task_categories:translation"

] | https://huggingface.co/datasets/nikitam/ACES/resolve/main/README.md | ---

language:

- multilingual

license:

- cc-by-nc-sa-4.0

multilinguality:

- multilingual

source_datasets:

- FLORES-101, FLORES-200, PAWS-X, XNLI, XTREME, WinoMT, Wino-X, MuCOW, EuroParl ConDisco, ParcorFull

task_categories:

- translation

pretty_name: ACES

---

# Dataset Card for ACES

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Discussion of Biases](#discussion-of-biases)

- [Usage](#usage)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contact](#contact)

## Dataset Description

- **Repository:** [ACES dataset repository](https://github.com/EdinburghNLP/ACES)

- **Paper:** [arXiv](https://arxiv.org/abs/2210.15615)

### Dataset Summary

ACES consists of 36,476 examples covering 146 language pairs and representing challenges from 68 phenomena for evaluating machine translation metrics. We focus on translation accuracy errors and base the phenomena covered in our challenge set on the Multidimensional Quality Metrics (MQM) ontology. The phenomena range from simple perturbations at the word/character level to more complex errors based on discourse and real-world knowledge.

### Supported Tasks and Leaderboards

- Evaluation of machine translation metrics

- Potentially useful for contrastive machine translation evaluation

### Languages

The dataset covers 146 language pairs as follows:

af-en, af-fa, ar-en, ar-fr, ar-hi, be-en, bg-en, bg-lt, ca-en, ca-es, cs-en, da-en, de-en, de-es, de-fr, de-ja, de-ko, de-ru, de-zh, el-en, en-af, en-ar, en-be, en-bg, en-ca, en-cs, en-da, en-de, en-el, en-es, en-et, en-fa, en-fi, en-fr, en-gl, en-he, en-hi, en-hr, en-hu, en-hy, en-id, en-it, en-ja, en-ko, en-lt, en-lv, en-mr, en-nl, en-no, en-pl, en-pt, en-ro, en-ru, en-sk, en-sl, en-sr, en-sv, en-ta, en-tr, en-uk, en-ur, en-vi, en-zh, es-ca, es-de, es-en, es-fr, es-ja, es-ko, es-zh, et-en, fa-af, fa-en, fi-en, fr-de, fr-en, fr-es, fr-ja, fr-ko, fr-mr, fr-ru, fr-zh, ga-en, gl-en, he-en, he-sv, hi-ar, hi-en, hr-en, hr-lv, hu-en, hy-en, hy-vi, id-en, it-en, ja-de, ja-en, ja-es, ja-fr, ja-ko, ja-zh, ko-de, ko-en, ko-es, ko-fr, ko-ja, ko-zh, lt-bg, lt-en, lv-en, lv-hr, mr-en, nl-en, no-en, pl-en, pl-mr, pl-sk, pt-en, pt-sr, ro-en, ru-de, ru-en, ru-es, ru-fr, sk-en, sk-pl, sl-en, sr-en, sr-pt, sv-en, sv-he, sw-en, ta-en, th-en, tr-en, uk-en, ur-en, vi-en, vi-hy, wo-en, zh-de, zh-en, zh-es, zh-fr, zh-ja, zh-ko

## Dataset Structure

### Data Instances

Each data instance contains the following features: _source_, _good-translation_, _incorrect-translation_, _reference_, _phenomena_, _langpair_

See the [ACES corpus viewer](https://huggingface.co/datasets/nikitam/ACES/viewer/nikitam--ACES/train) to explore more examples.

An example from the ACES challenge set looks like the following:

```

{'source': "Proper nutritional practices alone cannot generate elite performances, but they can significantly affect athletes' overall wellness.", 'good-translation': 'Las prácticas nutricionales adecuadas por sí solas no pueden generar rendimiento de élite, pero pueden afectar significativamente el bienestar general de los atletas.', 'incorrect-translation': 'Las prácticas nutricionales adecuadas por sí solas no pueden generar rendimiento de élite, pero pueden afectar significativamente el bienestar general de los jóvenes atletas.', 'reference': 'No es posible que las prácticas nutricionales adecuadas, por sí solas, generen un rendimiento de elite, pero puede influir en gran medida el bienestar general de los atletas .', 'phenomena': 'addition', 'langpair': 'en-es'}

```

### Data Fields

- 'source': a string containing the text that needs to be translated

- 'good-translation': possible translation of the source sentence

- 'incorrect-translation': translation of the source sentence that contains an error or phenomenon of interest

- 'reference': the gold standard translation

- 'phenomena': the type of error or phenomena being studied in the example

- 'langpair': the source language and the target language pair of the example

Note that the _good-translation_ may not be free of errors, but it is a better translation than the _incorrect-translation_.

### Data Splits

The ACES dataset has one split, _train_, which contains the full challenge set of 36,476 examples.

## Dataset Creation

### Curation Rationale

With the advent of neural networks and especially Transformer-based architectures, machine translation outputs have become more and more fluent. Fluency errors are also judged less severely than accuracy errors by human evaluators (Freitag et al., 2021), which reflects the fact that accuracy errors can have dangerous consequences in certain contexts, for example in the medical and legal domains. For these reasons, we decided to build a challenge set focused on accuracy errors.

Another aspect we focus on is including a broad range of language pairs in ACES. Whenever possible we create examples for all language pairs covered in a source dataset when we use automatic approaches. For phenomena where we create examples manually, we also aim to cover at least two language pairs per phenomenon but are of course limited to the languages spoken by the authors.

We aim to offer a collection of challenge sets covering both easy and hard phenomena. While it may be of interest to the community to continuously test on harder examples to check where machine translation evaluation metrics still break, we believe that easy challenge sets are just as important to ensure that metrics do not suddenly become worse at identifying error types that were previously considered "solved". Therefore, we take a holistic view when creating ACES and do not filter out individual examples or exclude challenge sets based on baseline metric performance or other factors.

### Source Data

#### Initial Data Collection and Normalization

Please see Sections 4 and 5 of the paper.

#### Who are the source language producers?

The dataset contains sentences from the FLORES-101, FLORES-200, PAWS-X, XNLI, XTREME, WinoMT, Wino-X, MuCOW, EuroParl ConDisco, and ParcorFull datasets. Please refer to the respective papers for further details.

### Personal and Sensitive Information

The external datasets may contain sensitive information. Refer to the respective datasets for further details.

## Considerations for Using the Data

### Usage

ACES has been primarily designed to evaluate machine translation metrics on accuracy errors. We expect a metric to score the _good-translation_ consistently higher than the _incorrect-translation_. We report metric performance using a Kendall's tau-like correlation, which is based on the number of times a metric scores the good translation above the incorrect translation (concordant) versus equal to or lower than the incorrect translation (discordant).
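
The concordant/discordant counting can be sketched as below. This assumes the common Kendall's tau-like formulation, (concordant - discordant) / (concordant + discordant); please check the paper for the exact definition used in the reported results. The `token_overlap` metric is a toy stand-in rather than a real MT metric, and the two examples are invented, reusing only the field names of the dataset.

```python
from typing import Callable, Dict, Iterable


def kendall_tau_like(
    examples: Iterable[Dict[str, str]],
    metric: Callable[[str, str], float],
) -> float:
    """Tau-like score: +1 if the good translation always wins, -1 if it never does."""
    concordant = discordant = 0
    for ex in examples:
        good = metric(ex["good-translation"], ex["reference"])
        bad = metric(ex["incorrect-translation"], ex["reference"])
        if good > bad:
            concordant += 1
        else:
            # Ties count as discordant, as described above.
            discordant += 1
    return (concordant - discordant) / (concordant + discordant)


def token_overlap(hypothesis: str, reference: str) -> float:
    """Toy metric: fraction of reference tokens that appear in the hypothesis."""
    hyp = set(hypothesis.lower().split())
    ref = reference.lower().split()
    return sum(tok in hyp for tok in ref) / len(ref)


# Hypothetical examples, not taken from ACES.
toy_examples = [
    {
        "reference": "the cat sat on the mat",
        "good-translation": "the cat sat on a mat",
        "incorrect-translation": "the dog sat on a mat",
    },
    {
        "reference": "he bought two apples",
        "good-translation": "he bought two apples",
        "incorrect-translation": "he bought two apples today",
    },
]
tau = kendall_tau_like(toy_examples, token_overlap)
```

In the second toy example the overlap metric cannot see the added word, so both translations tie and the pair counts as discordant; this is the kind of blind spot the challenge set is meant to expose.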

### Discussion of Biases

Some examples within the challenge set exhibit biases; however, this is necessary in order to expose the limitations of existing metrics.

### Other Known Limitations

The ACES challenge set exhibits a number of biases. Firstly, there is greater coverage in terms of phenomena and the number of examples for the en-de and en-fr language pairs. This is in part due to the manual effort required to construct examples for some phenomena, in particular, those belonging to the discourse-level and real-world knowledge categories. Further, our choice of language pairs is also limited to the ones available in XLM-R. Secondly, ACES contains more examples for those phenomena for which examples could be generated automatically, compared to those that required manual construction/filtering. Thirdly, some of the automatically generated examples require external libraries which are only available for a few languages (e.g. Multilingual Wordnet). Fourthly, the focus of the challenge set is on accuracy errors. We leave the development of challenge sets for fluency errors to future work.

As a result of using existing datasets as the basis for many of the examples, errors present in these datasets may be propagated through into ACES. Whilst we acknowledge that this is undesirable, in our methods for constructing the incorrect translation we aim to ensure that the quality of the incorrect translation is always worse than the corresponding good translation.

The results and analyses presented in the paper exclude those metrics submitted to the WMT 2022 metrics shared task that provide only system-level outputs. We focus on metrics that provide segment-level outputs as this enables us to provide a broad overview of metric performance on different phenomenon categories and to conduct fine-grained analyses of performance on individual phenomena. For some of the fine-grained analyses, we apply additional constraints based on the language pairs covered by the metrics, or whether the metrics take the source as input, to address specific questions of interest. As a result of applying some of these additional constraints, our investigations tend to focus more on high and medium-resource languages than on low-resource languages. We hope to address this shortcoming in future work.

## Additional Information

### Licensing Information

The ACES dataset is licensed under Creative Commons Attribution Non-Commercial Share Alike 4.0 (cc-by-nc-sa-4.0).

### Citation Information

@inproceedings{amrhein-aces-2022,

title = "{ACES}: Translation Accuracy Challenge Sets for Evaluating Machine Translation Metrics",

author = {Amrhein, Chantal and

Moghe, Nikita and

Guillou, Liane},

booktitle = "Seventh Conference on Machine Translation (WMT22)",

month = dec,

year = "2022",

address = "Abu Dhabi, United Arab Emirates",

publisher = "Association for Computational Linguistics",

eprint = {2210.15615}

}

### Contact

[Chantal Amrhein](mailto:amrhein@cl.uzh.ch) and [Nikita Moghe](mailto:nikita.moghe@ed.ac.uk) and [Liane Guillou](mailto:lguillou@ed.ac.uk)

Dataset card based on [Allociné](https://huggingface.co/datasets/allocine) |

bigscience | null | null | null | false | 6 | false | bigscience/massive-probing-results | 2022-10-13T10:09:39.000Z | null | false | 161737dcf3c793ba3ac8d2271af518bc0195e329 | [] | [

"license:apache-2.0"

] | https://huggingface.co/datasets/bigscience/massive-probing-results/resolve/main/README.md | ---

license: apache-2.0

---

|

jianghuzhenyu | null | null | null | false | 2 | false | jianghuzhenyu/Atari_floringogianu | 2022-10-23T04:12:58.000Z | null | false | 94e5f3febd81cc62e9625b3e87d19ebda274282e | [] | [

"license:unknown"

] | https://huggingface.co/datasets/jianghuzhenyu/Atari_floringogianu/resolve/main/README.md | ---

license: unknown

---

|

dpasch01 | null | null | null | false | 3 | false | dpasch01/leaflet_offers | 2022-10-14T11:55:55.000Z | null | false | ab7264f30a130ff95a993abbd608f1abcd3e1c56 | [] | [] | https://huggingface.co/datasets/dpasch01/leaflet_offers/resolve/main/README.md | ---

dataset_info:

features:

- name: pixel_values

dtype: image

- name: label

dtype: image

splits:

- name: train

num_bytes: 5644570.0

num_examples: 4

download_size: 0

dataset_size: 5644570.0

---

# Dataset Card for "leaflet_offers"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

krm | null | null | null | false | 1 | false | krm/for-ULPGL-Dissertation | 2022-10-16T07:53:00.000Z | null | false | 8ef4028d8faf9906c3efe6573cc99e3c474834d2 | [] | [

"annotations_creators:other",

"language:fr",

"language_creators:other",

"license:other",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:extended|orange_sum",

"tags:krm",

"tags:ulpgl",

"tags:orange",

"task_categories:summarization",

"task_ids:news-articles-summari... | https://huggingface.co/datasets/krm/for-ULPGL-Dissertation/resolve/main/README.md | ---

annotations_creators:

- other

language:

- fr

language_creators:

- other

license:

- other

multilinguality:

- monolingual

pretty_name: for-ULPGL-Dissertation

size_categories:

- 10K<n<100K

source_datasets:

- extended|orange_sum

tags:

- krm

- ulpgl

- orange

task_categories:

- summarization

task_ids:

- news-articles-summarization

---

# Dataset Card for [for-ULPGL-Dissertation]

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** krm/for-ULPGL-Dissertation

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset is essentially based on the *GEM/Orange_sum* dataset, which is dedicated to summarizing articles in French. It consists of the abstract data from that dataset (Orange_sum), to which a number of summaries generated by **David Krame**'s **Mon Résumeur** system have been added.

### Supported Tasks and Leaderboards

Automatic summarization

### Languages

French

## Dataset Structure

### Data Fields

*summary* and *text* are the fields of the dataset:

**text** contains the texts and

**summary** the corresponding summaries.

### Data Splits

As of now (16 October 2022), the dataset consists of:

> **21721** training examples (split named **train**)

> **1545** validation examples (split named **validation**)

> **1581** test examples (split named **test**)

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

|

mboth | null | null | null | false | 1 | false | mboth/klassifizierung_sichern | 2022-10-13T11:35:15.000Z | null | false | 7de339d57179849ed97848e379ceb01e2300d321 | [] | [] | https://huggingface.co/datasets/mboth/klassifizierung_sichern/resolve/main/README.md | ---

dataset_info:

features:

- name: text

dtype: string

- name: Beschreibung

dtype: string

- name: Name

dtype: string

- name: label

dtype:

class_label:

names:

0: Brandmeldeanlage

1: Brandschutzklappe

2: Rauchmeldeanlage

3: SichernAllgemein

splits:

- name: test

num_bytes: 27534.405120481926

num_examples: 133

- name: train

num_bytes: 219861.18975903615

num_examples: 1062

- name: valid

num_bytes: 27534.405120481926

num_examples: 133

download_size: 84648

dataset_size: 274930.0

---

# Dataset Card for "klassifizierung_sichern"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

viriato999 | null | null | null | false | null | false | viriato999/myselfinput | 2022-10-13T19:56:09.000Z | null | false | de815a397f35794aa63035d882f002b57c258a09 | [] | [

"doi:10.57967/hf/0041"

] | https://huggingface.co/datasets/viriato999/myselfinput/resolve/main/README.md | hello |

Thamognya | null | null | null | false | 1 | false | Thamognya/ALotNLI | 2022-10-13T12:58:20.000Z | null | false | 47719131c9b874ae69837038b360209a9ee48aa5 | [] | [

"annotations_creators:no-annotation",

"language_creators:found",

"language:en",

"license:agpl-3.0",

"multilinguality:monolingual",

"size_categories:100K<n<1M",

"source_datasets:snli",

"source_datasets:multi_nli",

"source_datasets:anli",

"task_categories:text-classification",

"task_ids:natural-la... | https://huggingface.co/datasets/Thamognya/ALotNLI/resolve/main/README.md | ---

annotations_creators:

- no-annotation

language_creators:

- found

language:

- en

license:

- agpl-3.0

multilinguality:

- monolingual

pretty_name: A Lot of NLI

size_categories:

- 100K<n<1M

source_datasets:

- snli

- multi_nli

- anli

task_categories:

- text-classification

task_ids:

- natural-language-inference

viewer: true

---

# Repo

Github Repo: [thamognya/TBertNLI](https://github.com/thamognya/TBertNLI) specifically in the [src/data directory](https://github.com/thamognya/TBertNLI/tree/master/src/data).

# Sample

```

   premise hypothesis label

0 this church choir sings to the masses as they ... the church is filled with song 0

1 this church choir sings to the masses as they ... a choir singing at a baseball game 2

2 a woman with a green headscarf blue shirt and ... the woman is young 1

3 a woman with a green headscarf blue shirt and ... the woman is very happy 0

4 a woman with a green headscarf blue shirt and ... the woman has been shot 2

```

# Datasets Origin

So far, the checked datasets below have been used to build this dataset; the others are still to do.

- [x] SNLI

- [x] MultiNLI

- SuperGLUE

- FEVER

- WIKI-FACTCHECK

- [x] ANLI

- more from huggingface

# Reasons

This dataset is intended purely for fine-tuning NLI models (not for zero-shot classification).

|

Gazoche | null | null | null | false | 23 | false | Gazoche/gundam-captioned | 2022-10-15T01:44:59.000Z | null | false | 5a2de83a1ba84820500e321ed830053d200b5ad1 | [] | [

"license:cc-by-nc-sa-4.0",

"annotations_creators:machine-generated",

"language:en",

"language_creators:other",

"multilinguality:monolingual",

"size_categories:n<2K",

"task_categories:text-to-image"

] | https://huggingface.co/datasets/Gazoche/gundam-captioned/resolve/main/README.md | ---

license: cc-by-nc-sa-4.0

annotations_creators:

- machine-generated

language:

- en

language_creators:

- other

multilinguality:

- monolingual

pretty_name: 'Gundam captioned'

size_categories:

- n<2K

tags: []

task_categories:

- text-to-image

task_ids: []

---

# Dataset Card for captioned Gundam

Scraped from mahq.net (https://www.mahq.net/mecha/gundam/index.htm) and manually cleaned to keep only drawings and "Mobile Suits" (i.e., humanoid-looking machines).

The captions were automatically generated from a generic hardcoded description + the dominant colors as described by [BLIP](https://github.com/salesforce/BLIP). |

Pavankalyan | null | null | null | false | null | false | Pavankalyan/SN_v1 | 2022-10-13T13:30:26.000Z | null | false | 290838850336d4f82991245a2eb6958ea1659d59 | [] | [] | https://huggingface.co/datasets/Pavankalyan/SN_v1/resolve/main/README.md | ---

dataset_info:

features:

- name: audio

dtype: audio

- name: story

sequence: string

- name: title

dtype: string

- name: link

dtype: string

splits:

- name: train

num_bytes: 16879783141.0

num_examples: 575

download_size: 16107391373

dataset_size: 16879783141.0

---

# Dataset Card for "SN_v1"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

projecte-aina | null | The Racó Forums Corpus is a 19,205,185-sentence corpus of Catalan user-generated text built from the forums of Racó Català.

Since the existing available corpora in Catalan lacked conversational data, we searched for a major source of such data for Catalan, and we found Racó Català, a popular multitopic online forum. We obtained a database dump and we transformed all the threads so that we obtained documents that traversed all the existing paths from the root (initial comment) to the leaves (last comment with no reply). In other words, if T is a tree such that T = {A,B,C,D} and the first comment is A that is replied by B and C independently, and, then, C is replied by D, we obtain two different documents A,B and A,C,D in the fairseq language modeling format. | false | null | false | projecte-aina/raco_forums | 2022-11-10T12:25:34.000Z | null | false | c8a3f55dbcc0196a37882d68f2aedf67bf5bbde1 | [] | [

"annotations_creators:no-annotation",

"language_creators:found",

"language:ca",

"license:cc-by-nc-4.0",

"multilinguality:monolingual",

"task_categories:fill-mask"

] | https://huggingface.co/datasets/projecte-aina/raco_forums/resolve/main/README.md | ---

annotations_creators:

- no-annotation

language_creators:

- found

language:

- ca

license:

- cc-by-nc-4.0

multilinguality:

- monolingual

pretty_name: Racó Forums

size_categories:

- ?

task_categories:

- fill-mask

task_ids: []

---

# Dataset Card for Racó Forums Corpus

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Point of Contact:** [blanca.calvo@bsc.es](mailto:blanca.calvo@bsc.es)

### Dataset Summary

The Racó Forums Corpus is a 19-million-sentence corpus of Catalan user-generated text built from the forums of [Racó Català](https://www.racocatala.cat/forums).

Since the existing available corpora in Catalan lacked conversational data, we searched for a major source of such data for Catalan, and we found Racó Català, a popular multitopic online forum. We obtained a database dump and we transformed all the threads so that we obtained documents that traversed all the existing paths from the root (initial comment) to the leaves (last comment with no reply). In other words, if T is a tree such that T = {A,B,C,D} and the first comment is A that is replied by B and C independently, and, then, C is replied by D, we obtain two different documents A,B and A,C,D in the fairseq language modeling format.

### Supported Tasks and Leaderboards

This corpus is mainly intended to pretrain language models and word representations.

### Languages

The dataset is in Catalan (`ca-CA`).

## Dataset Structure

The sentences are ordered to preserve the forum structure of comments and answers. Each document follows one path from the root (initial comment) to a leaf (a comment with no reply). For example, if T is a tree such that T = {A,B,C,D}, where the first comment A is replied to by B and C independently and C is then replied to by D, we obtain two different documents, A,B and A,C,D, in the fairseq language modeling format.
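The root-to-leaf traversal described above can be sketched as follows. This is only an illustration under stated assumptions: the `replies` mapping and the function name `root_to_leaf_paths` are hypothetical, and the actual preprocessing applied to the database dump is not documented here.

```python
def root_to_leaf_paths(replies, root):
    """Enumerate every path from the root comment to a comment with no reply.

    `replies` maps a comment id to the list of its direct replies
    (a hypothetical in-memory representation of one forum thread).
    """
    paths = []
    stack = [[root]]
    while stack:
        path = stack.pop()
        children = replies.get(path[-1], [])
        if not children:
            # A comment without replies is a leaf: the path is one document.
            paths.append(path)
        else:
            # Push in reverse so that replies are visited in their original order.
            for child in reversed(children):
                stack.append(path + [child])
    return paths


# The thread from the example: A is replied to by B and C, and C by D.
thread = {"A": ["B", "C"], "C": ["D"]}
for document in root_to_leaf_paths(thread, "A"):
    print(",".join(document))
```

For the example thread this yields the two documents A,B and A,C,D. Note that comments near the root, such as A, are repeated across documents.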

### Data Instances

```

Ni la Paloma, ni la Razz, ni Bikini, ni res: la cafeteria Slàvia, a Les borges Blanques. Quin concertàs el d'ahir de Pomada!!! Fuà!!! va ser tan tan tan tan tan tan tan bo!!! Flipant!!! Irrepetible!!

És cert, l'Slàvia mola màxim.

```

### Data Splits

The dataset contains two splits: `train` and `valid`.

## Dataset Creation

### Curation Rationale

We created this corpus to contribute to the development of language models in Catalan, a low-resource language. The data was structured to preserve the dialogue structure of forums.

### Source Data

#### Initial Data Collection and Normalization

The data was structured and anonymized by the BSC.

#### Who are the source language producers?

The data was provided by Racó Català.

### Annotations

The dataset is unannotated.

#### Annotation process

[N/A]

#### Who are the annotators?

[N/A]

### Personal and Sensitive Information

The data was anonymised to remove user names and emails, which were changed to random Catalan names. Mentions of the chat itself have also been changed.

## Considerations for Using the Data

### Social Impact of Dataset

We hope this corpus contributes to the development of language models in Catalan, a low-resource language.

### Discussion of Biases

We are aware that, since the data comes from user-generated forums, this will contain biases, hate speech and toxic content. We have not applied any steps to reduce their impact.

### Other Known Limitations

[N/A]

## Additional Information

### Dataset Curators

Text Mining Unit (TeMU) at the Barcelona Supercomputing Center (bsc-temu@bsc.es).

This work was funded by the [Departament de la Vicepresidència i de Polítiques Digitals i Territori de la Generalitat de Catalunya](https://politiquesdigitals.gencat.cat/ca/inici/index.html#googtrans(ca|en)) within the framework of [Projecte AINA](https://politiquesdigitals.gencat.cat/ca/economia/catalonia-ai/aina).

### Licensing Information

[Creative Commons Attribution Non-commercial 4.0 International](https://creativecommons.org/licenses/by-nc/4.0/).

### Citation Information

```

```

### Contributions

Thanks to Racó Català for sharing their data.

| |

siberspace | null | null | null | false | null | false | siberspace/pascalsibertin | 2022-10-13T15:43:16.000Z | null | false | 1e30cec2fdcbf6faaa3084f16daa7883189cbbc3 | [] | [] | https://huggingface.co/datasets/siberspace/pascalsibertin/resolve/main/README.md | |

danf0 | null | null | null | false | null | false | danf0/snli_shortcut_grammar | 2022-10-13T14:44:55.000Z | null | false | c434323f1afa94715848c1823c35fbf2338632f9 | [] | [] | https://huggingface.co/datasets/danf0/snli_shortcut_grammar/resolve/main/README.md | ---

dataset_info:

features:

- name: uid

dtype: string

- name: sentence1

dtype: string

- name: sentence2

dtype: string

- name: label

dtype: string

- name: tree

dtype: string

splits:

- name: train

num_bytes: 5724044

num_examples: 16380

download_size: 0

dataset_size: 5724044

---

# Dataset Card for "snli_shortcut_grammar"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

danf0 | null | null | null | false | null | false | danf0/subj_shortcut_grammar | 2022-10-13T14:54:05.000Z | null | false | f1243ea9fec9059f5b69c8f9bac9c79edc1ee22e | [] | [] | https://huggingface.co/datasets/danf0/subj_shortcut_grammar/resolve/main/README.md | ---

dataset_info:

features:

- name: uid

dtype: string

- name: sentence

dtype: string

- name: label

dtype: string

- name: tree

dtype: string

splits:

- name: train

num_bytes: 1077802

num_examples: 2000

download_size: 522313

dataset_size: 1077802

---

# Dataset Card for "subj_shortcut_grammar"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

dennlinger | null | null | null | false | 1 | false | dennlinger/wiki-paragraphs | 2022-10-13T22:12:37.000Z | null | false | bc7ad2163db81844b31026d76cc244b816d8e96c | [] | [

"arxiv:2012.03619",

"annotations_creators:machine-generated",

"language:en",

"language_creators:crowdsourced",

"license:cc-by-sa-3.0",

"multilinguality:monolingual",

"size_categories:10M<n<100M",

"source_datasets:original",

"tags:wikipedia",

"tags:self-similarity",

"task_categories:text-classifi... | https://huggingface.co/datasets/dennlinger/wiki-paragraphs/resolve/main/README.md | ---

annotations_creators:

- machine-generated

language:

- en

language_creators:

- crowdsourced

license:

- cc-by-sa-3.0

multilinguality:

- monolingual

pretty_name: wiki-paragraphs

size_categories:

- 10M<n<100M

source_datasets:

- original

tags:

- wikipedia

- self-similarity

task_categories:

- text-classification

- sentence-similarity

task_ids:

- semantic-similarity-scoring

---

# Dataset Card for `wiki-paragraphs`

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

## Dataset Description

- **Homepage:** [Needs More Information]

- **Repository:** https://github.com/dennlinger/TopicalChange

- **Paper:** https://arxiv.org/abs/2012.03619

- **Leaderboard:** [Needs More Information]

- **Point of Contact:** [Dennis Aumiller](mailto:aumiller@informatik.uni-heidelberg.de)

### Dataset Summary

The wiki-paragraphs dataset is constructed by automatically sampling two paragraphs from a Wikipedia article. If they are from the same section, they are considered a "semantic match", otherwise "dissimilar". Dissimilar paragraphs can in theory also be sampled from other documents, but doing so did not show any improvement in the particular evaluation of the linked work.

The alignment is in no way meant as an accurate depiction of similarity, but it allows large numbers of samples to be mined quickly.

### Supported Tasks and Leaderboards

The dataset can be used for "same-section classification", which is a binary classification task (either two sentences/paragraphs belong to the same section or not).

This can be combined with document-level coherency measures, where we can check how many misclassifications appear within a single document.

Please refer to [our paper](https://arxiv.org/abs/2012.03619) for more details.

### Languages

The data was extracted from English Wikipedia and is therefore predominantly in English.

## Dataset Structure

### Data Instances

A single instance contains three attributes:

```

{

"sentence1": "<Sentence from the first paragraph>",

"sentence2": "<Sentence from the second paragraph>",

"label": 0/1 # 1 indicates two belong to the same section

}

```

### Data Fields

- sentence1: String containing the first paragraph

- sentence2: String containing the second paragraph

- label: Integer, either 0 or 1. Indicates whether two paragraphs belong to the same section (1) or come from different sections (0)

### Data Splits

We provide train, validation and test splits, which were split as 80/10/10 from a randomly shuffled original data source.

In total, we provide 25375583 training pairs, as well as 3163685 instances each for validation and test.

## Dataset Creation

### Curation Rationale

The original idea was applied to self-segmentation of Terms of Service documents. Given that these are of domain-specific nature, we wanted to provide a more generally applicable model trained on Wikipedia data.

It is meant as a cheap-to-acquire pre-training strategy for large-scale experimentation with semantic similarity for long texts (paragraph-level).

Based on our experiments, it is not necessarily sufficient by itself to replace traditional hand-labeled semantic similarity datasets.

### Source Data

#### Initial Data Collection and Normalization

The data was collected based on the articles considered in the Wiki-727k dataset by Koshorek et al. The dump of their dataset can be found through the [respective Github repository](https://github.com/koomri/text-segmentation). Note that we did *not* use the pre-processed data, but rather only information on the considered articles, which were re-acquired from Wikipedia at a more recent state.

This is due to the fact that paragraph information was not retained by the original Wiki-727k authors.

We did not verify the particular focus of considered pages.

#### Who are the source language producers?

We do not have any further information on the contributors; these are volunteers contributing to en.wikipedia.org.

### Annotations

#### Annotation process

No manual annotation was added to the dataset.

We automatically sampled two paragraphs from within the same article. If they belong to the same section, the pair was assigned the label 1 ("similar"); otherwise it was assigned the label 0, indicating that the paragraphs do not belong to the same section.

We sample three positive and three negative samples per section, per article.
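The sampling scheme can be sketched roughly as below. This is a simplified illustration, not the authors' implementation: the function name `sample_pairs`, the representation of an article as a list of sections (each a list of paragraph strings), and details such as sampling with replacement across draws are all assumptions. The actual code lives in the linked TopicalChange repository.

```python
import random


def sample_pairs(sections, n_per_label=3, seed=0):
    """Draw same-section (label 1) and cross-section (label 0) paragraph pairs.

    `sections` is a hypothetical representation of one article:
    a list of sections, each given as a list of paragraph strings.
    """
    rng = random.Random(seed)
    pairs = []
    for index, section in enumerate(sections):
        if not section:
            continue
        # Paragraphs from every other section of the same article.
        others = [p for j, s in enumerate(sections) if j != index for p in s]
        for _ in range(n_per_label):
            if len(section) >= 2:
                # Positive pair: two distinct paragraphs from the same section.
                first, second = rng.sample(section, 2)
                pairs.append({"sentence1": first, "sentence2": second, "label": 1})
            if others:
                # Negative pair: one paragraph from this section, one from another.
                pairs.append({
                    "sentence1": rng.choice(section),
                    "sentence2": rng.choice(others),
                    "label": 0,
                })
    return pairs


article = [["a1", "a2", "a3"], ["b1", "b2"]]
pairs = sample_pairs(article)
print(len(pairs))
```

Sections with a single paragraph cannot produce positive pairs in this sketch, so they contribute only negatives.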

#### Who are the annotators?

No annotators were involved in the process.

### Personal and Sensitive Information

We did not modify the original Wikipedia text in any way. Given that personal information, such as dates of birth (e.g., for a person of interest), may be on Wikipedia, this information may also be included in our dataset.

## Considerations for Using the Data

### Social Impact of Dataset

The purpose of the dataset is to serve as a *pre-training addition* for semantic similarity learning.

Systems building on this dataset should consider additional, manually annotated data, before using a system in production.

### Discussion of Biases

To our knowledge, some works indicate that men are several times more likely than women to have a Wikipedia page created (especially in historical contexts). Therefore, a slight bias towards over-representation of men might remain in this dataset.

### Other Known Limitations

As previously stated, the automatically extracted semantic similarity is not perfect; it should be treated as such.

## Additional Information

### Dataset Curators

The dataset was originally developed as a practical project by Lucienne-Sophie Marmé under the supervision of Dennis Aumiller.

Contributions to the original sampling strategy were made by Satya Almasian and Michael Gertz.

### Licensing Information

Wikipedia data is available under the CC-BY-SA 3.0 license.

### Citation Information

```

@inproceedings{DBLP:conf/icail/AumillerAL021,

author = {Dennis Aumiller and

Satya Almasian and

Sebastian Lackner and

Michael Gertz},

editor = {Juliano Maranh{\~{a}}o and

Adam Zachary Wyner},

title = {Structural text segmentation of legal documents},

booktitle = {{ICAIL} '21: Eighteenth International Conference for Artificial Intelligence

and Law, S{\~{a}}o Paulo Brazil, June 21 - 25, 2021},

pages = {2--11},

publisher = {{ACM}},

year = {2021},

url = {https://doi.org/10.1145/3462757.3466085},

doi = {10.1145/3462757.3466085}

}

``` |

Narsil | null | null | null | false | null | false | Narsil/test | 2022-10-13T16:28:14.000Z | null | false | a35ff716f3044a2fba62bc09106c612147766b25 | [] | [

"benchmark:ttt",

"task:xxx",

"type:prediction"

] | https://huggingface.co/datasets/Narsil/test/resolve/main/README.md | ---

benchmark: ttt

task: xxx

type: prediction

---

# Batch job

model_id: {model_id}

dataset_name: {job.dataset_name}

dataset_config: {job.dataset_config}

dataset_split: {job.dataset_split}

dataset_column: {job.dataset_column} |

ellabettison | null | null | null | false | 2 | false | ellabettison/processed_finbert_dataset_concat_small | 2022-10-13T18:46:46.000Z | null | false | 56de5f75013a141db1e447ac2d99dbcfadda4893 | [] | [] | https://huggingface.co/datasets/ellabettison/processed_finbert_dataset_concat_small/resolve/main/README.md | ---

dataset_info:

features:

- name: input_ids

sequence: int32

- name: token_type_ids

sequence: int8

- name: attention_mask

sequence: int8

- name: special_tokens_mask

sequence: int8

splits:

- name: train

num_bytes: 19170800.0

num_examples: 5323

download_size: 4979632

dataset_size: 19170800.0

---

# Dataset Card for "processed_finbert_dataset_concat_small"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

autoevaluate | null | null | null | false | null | false | autoevaluate/autoeval-eval-phpthinh__examplei-all-929d48-1748861028 | 2022-10-13T16:36:34.000Z | null | false | d32ebd8c3c0866b82ddf50a414b0e87cc047202a | [] | [

"type:predictions",

"tags:autotrain",

"tags:evaluation",

"datasets:phpthinh/examplei"

] | https://huggingface.co/datasets/autoevaluate/autoeval-eval-phpthinh__examplei-all-929d48-1748861028/resolve/main/README.md | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- phpthinh/examplei

eval_info:

task: text_zero_shot_classification

model: bigscience/bloom-560m

metrics: ['f1']

dataset_name: phpthinh/examplei

dataset_config: all

dataset_split: test

col_mapping:

text: text

classes: classes

target: target

---

# Dataset Card for AutoTrain Evaluator

This repository contains model predictions generated by [AutoTrain](https://huggingface.co/autotrain) for the following task and dataset:

* Task: Zero-Shot Text Classification

* Model: bigscience/bloom-560m

* Dataset: phpthinh/examplei

* Config: all

* Split: test

To run new evaluation jobs, visit Hugging Face's [automatic model evaluator](https://huggingface.co/spaces/autoevaluate/model-evaluator).

## Contributions

Thanks to [@phpthinh](https://huggingface.co/phpthinh) for evaluating this model. |

autoevaluate | null | null | null | false | null | false | autoevaluate/autoeval-eval-phpthinh__examplei-match-bd10ea-1748761023 | 2022-10-13T16:33:50.000Z | null | false | 42737255477a4ba10197c9f2cedb10951b459626 | [] | [

"type:predictions",

"tags:autotrain",

"tags:evaluation",

"datasets:phpthinh/examplei"

] | https://huggingface.co/datasets/autoevaluate/autoeval-eval-phpthinh__examplei-match-bd10ea-1748761023/resolve/main/README.md | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- phpthinh/examplei

eval_info:

task: text_zero_shot_classification

model: bigscience/bloom-560m

metrics: ['f1']

dataset_name: phpthinh/examplei

dataset_config: match

dataset_split: test

col_mapping:

text: text

classes: classes

target: target

---

# Dataset Card for AutoTrain Evaluator

This repository contains model predictions generated by [AutoTrain](https://huggingface.co/autotrain) for the following task and dataset:

* Task: Zero-Shot Text Classification

* Model: bigscience/bloom-560m

* Dataset: phpthinh/examplei

* Config: match

* Split: test

To run new evaluation jobs, visit Hugging Face's [automatic model evaluator](https://huggingface.co/spaces/autoevaluate/model-evaluator).

## Contributions

Thanks to [@phpthinh](https://huggingface.co/phpthinh) for evaluating this model. |

autoevaluate | null | null | null | false | null | false | autoevaluate/autoeval-eval-phpthinh__examplei-match-bd10ea-1748761027 | 2022-10-13T19:15:51.000Z | null | false | 2d08cabd04857edf128ef4e8686c8306e3827912 | [] | [

"type:predictions",

"tags:autotrain",

"tags:evaluation",

"datasets:phpthinh/examplei"

] | https://huggingface.co/datasets/autoevaluate/autoeval-eval-phpthinh__examplei-match-bd10ea-1748761027/resolve/main/README.md | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- phpthinh/examplei

eval_info:

task: text_zero_shot_classification

model: bigscience/bloom-7b1

metrics: ['f1']

dataset_name: phpthinh/examplei

dataset_config: match

dataset_split: test

col_mapping:

text: text

classes: classes

target: target

---

# Dataset Card for AutoTrain Evaluator

This repository contains model predictions generated by [AutoTrain](https://huggingface.co/autotrain) for the following task and dataset:

* Task: Zero-Shot Text Classification

* Model: bigscience/bloom-7b1

* Dataset: phpthinh/examplei

* Config: match

* Split: test

To run new evaluation jobs, visit Hugging Face's [automatic model evaluator](https://huggingface.co/spaces/autoevaluate/model-evaluator).

## Contributions

Thanks to [@phpthinh](https://huggingface.co/phpthinh) for evaluating this model. |

autoevaluate | null | null | null | false | null | false | autoevaluate/autoeval-eval-phpthinh__examplei-mismatch-1389aa-1748961033 | 2022-10-13T15:52:18.000Z | null | false | 86d4d3c4c650524d4e6061df4c2c1654ca749d67 | [] | [

"type:predictions",

"tags:autotrain",

"tags:evaluation",

"datasets:phpthinh/examplei"

] | https://huggingface.co/datasets/autoevaluate/autoeval-eval-phpthinh__examplei-mismatch-1389aa-1748961033/resolve/main/README.md | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- phpthinh/examplei

eval_info:

task: text_zero_shot_classification

model: bigscience/bloom-560m

metrics: ['f1']

dataset_name: phpthinh/examplei

dataset_config: mismatch

dataset_split: test

col_mapping:

text: text

classes: classes

target: target

---

# Dataset Card for AutoTrain Evaluator

This repository contains model predictions generated by [AutoTrain](https://huggingface.co/autotrain) for the following task and dataset:

* Task: Zero-Shot Text Classification

* Model: bigscience/bloom-560m

* Dataset: phpthinh/examplei

* Config: mismatch

* Split: test

To run new evaluation jobs, visit Hugging Face's [automatic model evaluator](https://huggingface.co/spaces/autoevaluate/model-evaluator).

## Contributions

Thanks to [@phpthinh](https://huggingface.co/phpthinh) for evaluating this model. |

autoevaluate | null | null | null | false | null | false | autoevaluate/autoeval-eval-phpthinh__examplei-match-bd10ea-1748761025 | 2022-10-13T17:00:52.000Z | null | false | 9e622d9ca71fd45291935717af7ae5ac8965cd8c | [] | [

"type:predictions",

"tags:autotrain",

"tags:evaluation",

"datasets:phpthinh/examplei"

] | https://huggingface.co/datasets/autoevaluate/autoeval-eval-phpthinh__examplei-match-bd10ea-1748761025/resolve/main/README.md | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- phpthinh/examplei

eval_info:

task: text_zero_shot_classification

model: bigscience/bloom-1b7

metrics: ['f1']

dataset_name: phpthinh/examplei

dataset_config: match

dataset_split: test

col_mapping:

text: text

classes: classes

target: target

---

# Dataset Card for AutoTrain Evaluator

This repository contains model predictions generated by [AutoTrain](https://huggingface.co/autotrain) for the following task and dataset:

* Task: Zero-Shot Text Classification

* Model: bigscience/bloom-1b7

* Dataset: phpthinh/examplei

* Config: match

* Split: test

To run new evaluation jobs, visit Hugging Face's [automatic model evaluator](https://huggingface.co/spaces/autoevaluate/model-evaluator).

## Contributions

Thanks to [@phpthinh](https://huggingface.co/phpthinh) for evaluating this model. |

autoevaluate | null | null | null | false | null | false | autoevaluate/autoeval-eval-phpthinh__examplei-match-bd10ea-1748761024 | 2022-10-13T16:39:35.000Z | null | false | 63a8b8a186bced02a57b89b2ba42cc898efb0dd8 | [] | [

"type:predictions",

"tags:autotrain",

"tags:evaluation",

"datasets:phpthinh/examplei"

] | https://huggingface.co/datasets/autoevaluate/autoeval-eval-phpthinh__examplei-match-bd10ea-1748761024/resolve/main/README.md | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- phpthinh/examplei

eval_info:

task: text_zero_shot_classification

model: bigscience/bloom-1b1

metrics: ['f1']

dataset_name: phpthinh/examplei

dataset_config: match

dataset_split: test

col_mapping:

text: text

classes: classes

target: target

---

# Dataset Card for AutoTrain Evaluator

This repository contains model predictions generated by [AutoTrain](https://huggingface.co/autotrain) for the following task and dataset:

* Task: Zero-Shot Text Classification

* Model: bigscience/bloom-1b1

* Dataset: phpthinh/examplei

* Config: match

* Split: test

To run new evaluation jobs, visit Hugging Face's [automatic model evaluator](https://huggingface.co/spaces/autoevaluate/model-evaluator).

## Contributions

Thanks to [@phpthinh](https://huggingface.co/phpthinh) for evaluating this model. |

autoevaluate | null | null | null | false | null | false | autoevaluate/autoeval-eval-phpthinh__examplei-match-bd10ea-1748761026 | 2022-10-13T17:13:43.000Z | null | false | 6657d73dbd64c8fae8f1b322a2125f83ee77d23d | [] | [

"type:predictions",

"tags:autotrain",

"tags:evaluation",

"datasets:phpthinh/examplei"

] | https://huggingface.co/datasets/autoevaluate/autoeval-eval-phpthinh__examplei-match-bd10ea-1748761026/resolve/main/README.md | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- phpthinh/examplei

eval_info:

task: text_zero_shot_classification

model: bigscience/bloom-3b

metrics: ['f1']

dataset_name: phpthinh/examplei

dataset_config: match

dataset_split: test

col_mapping:

text: text

classes: classes

target: target

---

# Dataset Card for AutoTrain Evaluator

This repository contains model predictions generated by [AutoTrain](https://huggingface.co/autotrain) for the following task and dataset:

* Task: Zero-Shot Text Classification

* Model: bigscience/bloom-3b

* Dataset: phpthinh/examplei

* Config: match

* Split: test

To run new evaluation jobs, visit Hugging Face's [automatic model evaluator](https://huggingface.co/spaces/autoevaluate/model-evaluator).

## Contributions

Thanks to [@phpthinh](https://huggingface.co/phpthinh) for evaluating this model. |

autoevaluate | null | null | null | false | null | false | autoevaluate/autoeval-eval-phpthinh__examplei-all-929d48-1748861032 | 2022-10-13T19:34:07.000Z | null | false | 96e7c17d355c9a960767c4ceb7428ee215a736fe | [] | [

"type:predictions",

"tags:autotrain",

"tags:evaluation",

"datasets:phpthinh/examplei"

] | https://huggingface.co/datasets/autoevaluate/autoeval-eval-phpthinh__examplei-all-929d48-1748861032/resolve/main/README.md | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- phpthinh/examplei

eval_info:

task: text_zero_shot_classification

model: bigscience/bloom-7b1

metrics: ['f1']

dataset_name: phpthinh/examplei

dataset_config: all

dataset_split: test

col_mapping:

text: text

classes: classes

target: target

---

# Dataset Card for AutoTrain Evaluator