id stringlengths 2 115 | author stringlengths 2 42 ⌀ | last_modified timestamp[us, tz=UTC] | downloads int64 0 8.87M | likes int64 0 3.84k | paperswithcode_id stringlengths 2 45 ⌀ | tags list | lastModified timestamp[us, tz=UTC] | createdAt stringlengths 24 24 | key stringclasses 1 value | created timestamp[us] | card stringlengths 1 1.01M | embedding list | library_name stringclasses 21 values | pipeline_tag stringclasses 27 values | mask_token null | card_data null | widget_data null | model_index null | config null | transformers_info null | spaces null | safetensors null | transformersInfo null | modelId stringlengths 5 111 ⌀ | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

kayteekay/bookimg_dataset | kayteekay | 2023-08-04T06:15:58Z | 28 | 0 | null | [

"region:us"

] | 2023-08-04T06:15:58Z | 2023-08-04T04:43:10.000Z | 2023-08-04T04:43:10 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: image

dtype: image

- name: text

dtype: string

splits:

- name: train

num_bytes: 289585512.68

num_examples: 32581

download_size: 0

dataset_size: 289585512.68

---

# Dataset Card for "bookimg_dataset"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.5736058354377747,

-0.19546806812286377,

-0.10539605468511581,

0.09317158162593842,

-0.3151734471321106,

-0.15435495972633362,

0.20078013837337494,

0.023664195090532303,

0.5554397702217102,

0.5794923305511475,

-0.8142786026000977,

-0.9668184518814087,

-0.6144688725471497,

-0.285723090171... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

TrainingDataPro/bald-people-segmentation-dataset | TrainingDataPro | 2023-09-14T16:35:35Z | 28 | 1 | null | [

"task_categories:image-segmentation",

"language:en",

"license:cc-by-nc-nd-4.0",

"code",

"medical",

"region:us"

] | 2023-09-14T16:35:35Z | 2023-08-04T13:34:54.000Z | 2023-08-04T13:34:54 | ---

license: cc-by-nc-nd-4.0

task_categories:

- image-segmentation

language:

- en

tags:

- code

- medical

---

# Bald People Segmentation Dataset

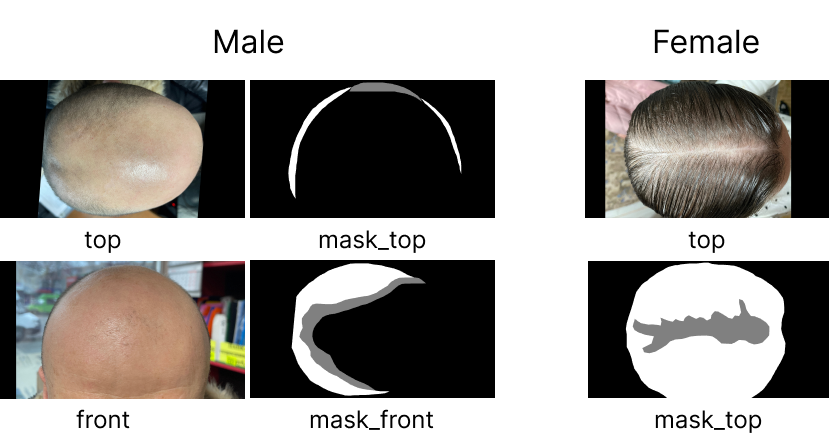

The dataset consists of images of bald people and corresponding segmentation masks.

Segmentation masks highlight the regions of the images that delineate the bald scalp. By using these segmentation masks, researchers and practitioners can focus only on the areas of interest.

The dataset is designed to be accessible and easy to use, providing high-resolution images and corresponding segmentation masks in PNG format.

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=bald-people-segmentation-dataset) to discuss your requirements, learn about the price and buy the dataset.

# Content

### The dataset includes 2 folders:

- **Female** - the folder includes folders corresponding to each woman in the sample. Each of the subfolders contains of top images of women's heads and segmentation masks for the original photos.

- **Male** - the folder includes folders corresponding to each man in the sample. Each of the subfolders contains of front and top images of men's heads from and segmentation masks for the original photos.

### File with the extension .csv

- **link**: link to access the media file,

- **type**: type of the image,

- **gender**: gender of the person in the photo

# Bald People Segmentation might be made in accordance with your requirements.

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=bald-people-segmentation-dataset) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** | [

-0.5352700352668762,

-0.5913286209106445,

0.10059143602848053,

0.4316685199737549,

-0.23821613192558289,

0.27131882309913635,

0.04109055921435356,

-0.45892879366874695,

0.3771331012248993,

0.7632184028625488,

-1.1463639736175537,

-0.9600988626480103,

-0.5075410008430481,

0.0153200058266520... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

tasksource/esci | tasksource | 2023-08-09T11:23:31Z | 28 | 0 | null | [

"task_categories:text-classification",

"task_categories:text-retrieval",

"language:en",

"language:ja",

"language:es",

"license:apache-2.0",

"arxiv:2206.06588",

"region:us"

] | 2023-08-09T11:23:31Z | 2023-08-09T10:12:27.000Z | 2023-08-09T10:12:27 | ---

dataset_info:

features:

- name: example_id

dtype: int64

- name: query

dtype: string

- name: query_id

dtype: int64

- name: product_id

dtype: string

- name: product_locale

dtype: string

- name: esci_label

dtype: string

- name: small_version

dtype: int64

- name: large_version

dtype: int64

- name: product_title

dtype: string

- name: product_description

dtype: string

- name: product_bullet_point

dtype: string

- name: product_brand

dtype: string

- name: product_color

dtype: string

- name: product_text

dtype: string

splits:

- name: train

num_bytes: 5047037946

num_examples: 2027874

- name: test

num_bytes: 1631847321

num_examples: 652490

download_size: 2517788457

dataset_size: 6678885267

license: apache-2.0

task_categories:

- text-classification

- text-retrieval

language:

- en

- ja

- es

---

# Dataset Card for "esci"

ESCI product search dataset

https://github.com/amazon-science/esci-data/

Preprocessings:

-joined the two relevant files

-product_text aggregate all product text

-mapped esci_label to full name

```bib

@article{reddy2022shopping,

title={Shopping Queries Dataset: A Large-Scale {ESCI} Benchmark for Improving Product Search},

author={Chandan K. Reddy and Lluís Màrquez and Fran Valero and Nikhil Rao and Hugo Zaragoza and Sambaran Bandyopadhyay and Arnab Biswas and Anlu Xing and Karthik Subbian},

year={2022},

eprint={2206.06588},

archivePrefix={arXiv}

}

``` | [

-0.39113759994506836,

-0.5828738808631897,

0.3703247606754303,

0.1320260465145111,

-0.22399796545505524,

0.1574389636516571,

-0.11919381469488144,

-0.5627140402793884,

0.513786792755127,

0.4345897436141968,

-0.5436315536499023,

-0.7536517381668091,

-0.45950689911842346,

0.31706976890563965... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

deep-plants/AGM_HS | deep-plants | 2023-10-04T11:07:25Z | 28 | 3 | null | [

"license:cc",

"region:us"

] | 2023-10-04T11:07:25Z | 2023-08-16T10:04:19.000Z | 2023-08-16T10:04:19 | ---

license: cc

dataset_info:

features:

- name: image

dtype: image

- name: mask

dtype: image

- name: crop_type

dtype: string

- name: label

dtype: string

splits:

- name: train

num_bytes: 22900031.321

num_examples: 6127

download_size: 22010079

dataset_size: 22900031.321

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for AGM_HS Dataset

## Dataset Summary

The AGM<sub>HS</sub> (AGricolaModerna Healthy-Stress) Dataset is an extension of the AGM Dataset, specifically curated to address the challenge of detecting and localizing plant stress in top-view images of harvested crops. This dataset comprises 6,127 high-resolution RGB images, each with a resolution of 120x120 pixels, selected from the original AGM Dataset. The images in AGM<sub>HS</sub> are divided into two categories: healthy samples (3,798 images) and stressed samples (2,329 images) representing 14 of the 18 classes present in AGM. Alongside the healthy/stressed classification labels, the dataset also provides segmentation masks for the stressed areas.

## Supported Tasks

Image classification: Healthy-stressed classification

Image segmentation: detection and localization of plant stress in top-view images.

## Languages

The dataset primarily consists of image data and does not involve language content. Therefore, the primary language is English, but it is not relevant to the dataset's core content.

## Dataset Structure

### Data Instances

A typical data instance from the AGM<sub>HS</sub> Dataset consists of the following:

```

{

'image': <PIL.JpegImagePlugin.JpegImageFile image mode=RGB size=120x120 at 0x29CEAD71780>,

'labels': 'stressed',

'crop_type': 'by'

'mask': <PIL.PngImagePlugin.PngImageFile image mode=L size=120x120 at 0x29CEAD71780>

}

```

### Data Fields

The dataset's data instances have the following fields:

- `image`: A PIL.Image.Image object representing the image.

- `labels`: A string representation indicating whether the image is "healthy" or "stressed."

- `crop_type`: An string representation of the crop type in the image

- `mask`: A PIL.Image.Image object representing the segmentation mask of stressed areas in the image, stored as a PNG image.

### Data Splits

- **Training Set**:

- Number of Examples: 6,127

- Healthy Samples: 3,798

- Stressed Samples: 2,329

## Dataset Creation

### Curation Rationale

The AGM<sub>HS</sub> Dataset was created as an extension of the AGM Dataset to specifically address the challenge of detecting and localizing plant stress in top-view images of harvested crops. This dataset is essential for the development and evaluation of advanced segmentation models tailored for this task.

### Source Data

#### Initial Data Collection and Normalization

The images in AGM<sub>HS</sub> were extracted from the original AGM Dataset. During the extraction process, labelers selected images showing clear signs of either good health or high stress. These sub-images were resized to 120x120 pixels to create AGM<sub>HS</sub>.

### Annotations

#### Annotation Process

The AGM<sub>HS</sub> Dataset underwent a secondary stage of annotation. Labelers manually collected images by clicking on points corresponding to stressed areas on the leaves. These clicked points served as prompts for the semi-automatic generation of segmentation masks using the "Segment Anything" technique \cite{kirillov2023segment}. Each image is annotated with a classification label ("healthy" or "stressed") and a corresponding segmentation mask.

### Who Are the Annotators?

The annotators for AGM<sub>HS</sub> are domain experts with knowledge of plant health and stress detection.

## Personal and Sensitive Information

The dataset does not contain personal or sensitive information about individuals. It exclusively consists of images of plants.

## Considerations for Using the Data

### Social Impact of Dataset

The AGM<sub>HS</sub> Dataset plays a crucial role in advancing research and technologies for plant stress detection and localization in the context of modern agriculture. By providing a diverse set of top-view crop images with associated segmentation masks, this dataset can facilitate the development of innovative solutions for sustainable agriculture, contributing to increased crop health, yield prediction, and overall food security.

### Discussion of Biases and Known Limitations

While AGM<sub>HS</sub> is a valuable dataset, it inherits some limitations from the original AGM Dataset. It primarily involves images from a single vertical farm setting, potentially limiting the representativeness of broader agricultural scenarios. Additionally, the dataset's composition may reflect regional agricultural practices and business-driven crop preferences specific to vertical farming. Researchers should be aware of these potential biases when utilizing AGM<sub>HS</sub> for their work.

## Additional Information

### Dataset Curators

The AGM<sub>HS</sub> Dataset is curated by DeepPlants and AgricolaModerna. For further information, please contact us at:

- nico@deepplants.com

- etienne.david@agricolamoderna.com

### Licensing Information

### Citation Information

If you use the AGM<sub>HS</sub> dataset in your work, please consider citing the following publication:

```bibtex

@InProceedings{Sama_2023_ICCV,

author = {Sama, Nico and David, Etienne and Rossetti, Simone and Antona, Alessandro and Franchetti, Benjamin and Pirri, Fiora},

title = {A new Large Dataset and a Transfer Learning Methodology for Plant Phenotyping in Vertical Farms},

booktitle = {Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV) Workshops},

month = {October},

year = {2023},

pages = {540-551}

}

```

| [

-0.3953959345817566,

-0.6316037178039551,

0.2325448989868164,

0.13603462278842926,

-0.2693292796611786,

-0.10008850693702698,

0.01612965390086174,

-0.6986492872238159,

0.21871298551559448,

0.2710365056991577,

-0.5905143618583679,

-0.8974624276161194,

-0.7671762704849243,

0.2468283176422119... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

vitaliy-sharandin/synthetic-fraud-detection | vitaliy-sharandin | 2023-08-24T17:17:37Z | 28 | 3 | null | [

"region:us"

] | 2023-08-24T17:17:37Z | 2023-08-24T17:13:00.000Z | 2023-08-24T17:13:00 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622264862060547,

0.43461528420448303,

-0.52829909324646,

0.7012971639633179,

0.7915720343589783,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104477167129517,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

seara/ru_go_emotions | seara | 2023-08-25T19:13:08Z | 28 | 1 | null | [

"task_categories:text-classification",

"task_categories:translation",

"task_ids:multi-class-classification",

"task_ids:multi-label-classification",

"task_ids:sentiment-analysis",

"task_ids:sentiment-classification",

"size_categories:10K<n<100K",

"size_categories:100K<n<1M",

"source_datasets:go_emoti... | 2023-08-25T19:13:08Z | 2023-08-25T10:12:05.000Z | 2023-08-25T10:12:05 | ---

dataset_info:

- config_name: raw

features:

- name: ru_text

dtype: string

- name: text

dtype: string

- name: id

dtype: string

- name: author

dtype: string

- name: subreddit

dtype: string

- name: link_id

dtype: string

- name: parent_id

dtype: string

- name: created_utc

dtype: float32

- name: rater_id

dtype: int32

- name: example_very_unclear

dtype: bool

- name: admiration

dtype: int32

- name: amusement

dtype: int32

- name: anger

dtype: int32

- name: annoyance

dtype: int32

- name: approval

dtype: int32

- name: caring

dtype: int32

- name: confusion

dtype: int32

- name: curiosity

dtype: int32

- name: desire

dtype: int32

- name: disappointment

dtype: int32

- name: disapproval

dtype: int32

- name: disgust

dtype: int32

- name: embarrassment

dtype: int32

- name: excitement

dtype: int32

- name: fear

dtype: int32

- name: gratitude

dtype: int32

- name: grief

dtype: int32

- name: joy

dtype: int32

- name: love

dtype: int32

- name: nervousness

dtype: int32

- name: optimism

dtype: int32

- name: pride

dtype: int32

- name: realization

dtype: int32

- name: relief

dtype: int32

- name: remorse

dtype: int32

- name: sadness

dtype: int32

- name: surprise

dtype: int32

- name: neutral

dtype: int32

splits:

- name: train

num_bytes: 84388976

num_examples: 211225

download_size: 41128059

dataset_size: 84388976

- config_name: simplified

features:

- name: ru_text

dtype: string

- name: text

dtype: string

- name: labels

sequence:

class_label:

names:

'0': admiration

'1': amusement

'2': anger

'3': annoyance

'4': approval

'5': caring

'6': confusion

'7': curiosity

'8': desire

'9': disappointment

'10': disapproval

'11': disgust

'12': embarrassment

'13': excitement

'14': fear

'15': gratitude

'16': grief

'17': joy

'18': love

'19': nervousness

'20': optimism

'21': pride

'22': realization

'23': relief

'24': remorse

'25': sadness

'26': surprise

'27': neutral

- name: id

dtype: string

splits:

- name: train

num_bytes: 10118125

num_examples: 43410

- name: validation

num_bytes: 1261921

num_examples: 5426

- name: test

num_bytes: 1254989

num_examples: 5427

download_size: 7628917

dataset_size: 12635035

configs:

- config_name: raw

data_files:

- split: train

path: raw/train-*

- config_name: simplified

data_files:

- split: train

path: simplified/train-*

- split: validation

path: simplified/validation-*

- split: test

path: simplified/test-*

license: mit

task_categories:

- text-classification

- translation

task_ids:

- multi-class-classification

- multi-label-classification

- sentiment-analysis

- sentiment-classification

language:

- ru

- en

pretty_name: Ru-GoEmotions

size_categories:

- 10K<n<100K

- 100K<n<1M

source_datasets:

- go_emotions

tags:

- emotion-classification

- emotion

- reddit

---

## Description

This dataset is a translation of the Google [GoEmotions](https://github.com/google-research/google-research/tree/master/goemotions) emotion classification dataset.

All features remain unchanged, except for the addition of a new `ru_text` column containing the translated text in Russian.

For the translation process, I used the [Deep translator](https://github.com/nidhaloff/deep-translator) with the Google engine.

You can find all the details about translation, raw `.csv` files and other stuff in this [Github repository](https://github.com/searayeah/ru-goemotions).

For more information also check the official original dataset [card](https://huggingface.co/datasets/go_emotions).

## Id to label

```yaml

0: admiration

1: amusement

2: anger

3: annoyance

4: approval

5: caring

6: confusion

7: curiosity

8: desire

9: disappointment

10: disapproval

11: disgust

12: embarrassment

13: excitement

14: fear

15: gratitude

16: grief

17: joy

18: love

19: nervousness

20: optimism

21: pride

22: realization

23: relief

24: remorse

25: sadness

26: surprise

27: neutral

```

## Label to Russian label

```yaml

admiration: восхищение

amusement: веселье

anger: злость

annoyance: раздражение

approval: одобрение

caring: забота

confusion: непонимание

curiosity: любопытство

desire: желание

disappointment: разочарование

disapproval: неодобрение

disgust: отвращение

embarrassment: смущение

excitement: возбуждение

fear: страх

gratitude: признательность

grief: горе

joy: радость

love: любовь

nervousness: нервозность

optimism: оптимизм

pride: гордость

realization: осознание

relief: облегчение

remorse: раскаяние

sadness: грусть

surprise: удивление

neutral: нейтральность

```

| [

-0.15566018223762512,

-0.3507988452911377,

0.2888343334197998,

0.32976648211479187,

-0.6945504546165466,

-0.1428690105676651,

-0.4410827159881592,

-0.2923429012298584,

0.35201945900917053,

0.01370419654995203,

-0.730845034122467,

-0.9502149224281311,

-0.7993206977844238,

0.0363789275288581... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

harouzie/vi_question_generation | harouzie | 2023-09-04T05:02:36Z | 28 | 1 | null | [

"task_categories:question-answering",

"task_categories:text2text-generation",

"size_categories:100K<n<1M",

"language:vi",

"license:mit",

"region:us"

] | 2023-09-04T05:02:36Z | 2023-09-04T04:53:55.000Z | 2023-09-04T04:53:55 | ---

license: mit

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

- split: valid

path: data/valid-*

dataset_info:

features:

- name: context

dtype: string

- name: question

dtype: string

- name: answers

dtype: string

- name: id

dtype: string

splits:

- name: train

num_bytes: 211814961.2307449

num_examples: 174499

- name: test

num_bytes: 26477628.80776531

num_examples: 21813

- name: valid

num_bytes: 26476414.961489797

num_examples: 21812

download_size: 142790671

dataset_size: 264769005

task_categories:

- question-answering

- text2text-generation

language:

- vi

pretty_name: Vietnamese Dataset for Extractive Question Answering and Question Generation

size_categories:

- 100K<n<1M

--- | [

-0.1285335123538971,

-0.1861683875322342,

0.6529128551483154,

0.49436232447624207,

-0.19319400191307068,

0.23607441782951355,

0.36072009801864624,

0.05056373029947281,

0.5793656706809998,

0.7400146722793579,

-0.650810182094574,

-0.23784008622169495,

-0.7102247476577759,

-0.0478255338966846... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

erfanzar/GPT4-8K | erfanzar | 2023-09-07T11:04:23Z | 28 | 4 | null | [

"task_categories:text-classification",

"task_categories:translation",

"task_categories:conversational",

"task_categories:text-generation",

"task_categories:summarization",

"size_categories:1K<n<10K",

"language:en",

"region:us"

] | 2023-09-07T11:04:23Z | 2023-09-06T10:17:32.000Z | 2023-09-06T10:17:32 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: dialogs

sequence: string

- name: user

sequence: string

- name: assistant

sequence: string

- name: llama2_prompt

dtype: string

splits:

- name: train

num_bytes: 193605433

num_examples: 6144

download_size: 90877640

dataset_size: 193605433

task_categories:

- text-classification

- translation

- conversational

- text-generation

- summarization

language:

- en

pretty_name: GPT4

size_categories:

- 1K<n<10K

---

# Dataset Card for "GPT4-8K"

Sure! Here's a README.md file for your dataset:

# Dataset Description

This dataset was generated using GPT-4, a powerful language model developed by OpenAI. It contains a collection of dialogs between a user and an assistant, along with additional information.

from OpenChat

## Dataset Configurations

The dataset includes the following configurations:

- **Config Name:** default

- **Data Files:**

- **Split:** train

- **Path:** data/train-*

## Dataset Information

The dataset consists of the following features:

- **Dialogs:** A sequence of strings representing the dialog between the user and the assistant.

- **User:** A sequence of strings representing the user's input during the dialog.

- **Assistant:** A sequence of strings representing the assistant's responses during the dialog.

- **Llama2 Prompt:** A string representing additional prompt information related to the Llama2 model.

The dataset is divided into the following splits:

- **Train:**

- **Number of Bytes:** 193,605,433

- **Number of Examples:** 6,144

## Dataset Size and Download

- **Download Size:** 90,877,640 bytes

- **Dataset Size:** 193,605,433 bytes

Please note that this dataset was generated by GPT-4 and may contain synthetic or simulated data. It is intended for research and experimentation purposes.

For more information or inquiries, please contact the dataset owner.

Thank you for using this dataset! | [

-0.34132683277130127,

-0.48787492513656616,

0.3321187496185303,

0.013142908923327923,

-0.44782108068466187,

-0.16708935797214508,

-0.1142522320151329,

-0.40439897775650024,

0.17818832397460938,

0.5828922986984253,

-0.7157143950462341,

-0.46860164403915405,

-0.3982178866863251,

0.1812483817... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

C-MTEB/T2Reranking_en2zh | C-MTEB | 2023-09-09T16:11:54Z | 28 | 1 | null | [

"region:us"

] | 2023-09-09T16:11:54Z | 2023-09-09T16:11:24.000Z | 2023-09-09T16:11:24 | ---

configs:

- config_name: default

data_files:

- split: dev

path: data/dev-*

dataset_info:

features:

- name: query

dtype: string

- name: positive

sequence: string

- name: negative

sequence: string

splits:

- name: dev

num_bytes: 206929387

num_examples: 6129

download_size: 120405829

dataset_size: 206929387

---

# Dataset Card for "T2Reranking_en2zh"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.17268094420433044,

-0.19892586767673492,

0.1328456550836563,

0.418454647064209,

-0.3359624147415161,

0.00009207292168866843,

0.2627177834510803,

-0.2354247123003006,

0.6522868871688843,

0.4393623173236847,

-0.8152212500572205,

-0.704465925693512,

-0.4981399178504944,

-0.2757878303527832... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

harvard-lil/cold-cases | harvard-lil | 2023-10-19T20:17:38Z | 28 | 7 | null | [

"size_categories:1M<n<10M",

"language:en",

"license:cc0-1.0",

"united states",

"law",

"legal",

"court",

"opinions",

"region:us"

] | 2023-10-19T20:17:38Z | 2023-09-12T17:29:50.000Z | 2023-09-12T17:29:50 | ---

license: cc0-1.0

language:

- en

tags:

- united states

- law

- legal

- court

- opinions

size_categories:

- 1M<n<10M

viewer: true

---

<a href="https://huggingface.co/datasets/harvard-lil/cold-cases/resolve/main/coldcases.png"><img src="https://huggingface.co/datasets/harvard-lil/cold-cases/resolve/main/coldcases-banner.webp"/></a>

# Collaborative Open Legal Data (COLD) - Cases

COLD Cases is a dataset of 8.3 million United States legal decisions with text and metadata, formatted as compressed parquet files. If you'd like to view a sample of the dataset formatted as JSON Lines, you can view one [here](https://raw.githubusercontent.com/harvard-lil/cold-cases-export/main/sample.jsonl)

This dataset exists to support the open legal movement exemplified by projects like

[Pile of Law](https://huggingface.co/datasets/pile-of-law/pile-of-law) and

[LegalBench](https://hazyresearch.stanford.edu/legalbench/).

A key input to legal understanding projects is caselaw -- the published, precedential decisions of judges deciding legal disputes and explaining their reasoning.

United States caselaw is collected and published as open data by [CourtListener](https://www.courtlistener.com/), which maintains scrapers to aggregate data from

a wide range of public sources.

COLD Cases reformats CourtListener's [bulk data](https://www.courtlistener.com/help/api/bulk-data) so that all of the semantic information about each legal decision

(the authors and text of majority and dissenting opinions; head matter; and substantive metadata) is encoded in a single record per decision,

with extraneous data removed. Serving in the traditional role of libraries as a standardization steward, the Harvard Library Innovation Lab is maintaining

this [open source](https://github.com/harvard-lil/cold-cases-export) pipeline to consolidate the data engineering for preprocessing caselaw so downstream machine

learning and natural language processing projects can use consistent, high quality representations of cases for legal understanding tasks.

Prepared by the [Harvard Library Innovation Lab](https://lil.law.harvard.edu) in collaboration with the [Free Law Project](https://free.law/).

---

## Links

- [Data nutrition label](https://datanutrition.org/labels/v3/?id=c29976b2-858c-4f4e-b7d0-c8ef12ce7dbe) (DRAFT). ([Archive](https://perma.cc/YV5P-B8JL)).

- [Pipeline source code](https://github.com/harvard-lil/cold-cases-export)

---

## Summary

- [Formats](#formats)

- [File structure](#file-structure)

- [Data dictionary](#data-dictionary)

- [Notes on appropriate use](#appropriate-use)

---

## Format

[Apache Parquet](https://parquet.apache.org/) is binary format that makes filtering and retrieving the data quicker because it lays out the data in columns, which means columns that are unnecessary to satisfy a given query or workflow don't need to be read. Hugging Face's [Datasets](https://huggingface.co/docs/datasets/index) library is an easy way to get started working with the entire dataset, and has features for loading and streaming the data, so you don't need to store it all locally or pay attention to how it's formatted on disk.

[☝️ Go back to Summary](#summary)

---

## Data dictionary

Partial glossary of the fields in the data.

| Field name | Description |

| --- | --- |

| `judges` | Names of judges presiding over the case, extracted from the text. |

| `date_filed` | Date the case was filed. Formatted in ISO Date format. |

| `date_filed_is_approximate` | Boolean representing whether the `date_filed` value is precise to the day. |

| `slug` | Short, human-readable unique string nickname for the case. |

| `case_name_short` | Short name for the case. |

| `case_name` | Fuller name for the case. |

| `case_name_full` | Full, formal name for the case. |

| `attorneys` | Names of attorneys arguing the case, extracted from the text. |

| `nature_of_suit` | Free text representinng type of suit, such as Civil, Tort, etc. |

| `syllabus` | Summary of the questions addressed in the decision, if provided by the reporter of decisions. |

| `headnotes` | Textual headnotes of the case |

| `summary` | Textual summary of the case |

| `disposition` | How the court disposed of the case in their final ruling. |

| `history` | Textual information about what happened to this case in later decisions. |

| `other_dates` | Other dates related to the case in free text. |

| `cross_reference` | Citations to related cases. |

| `citation_count` | Number of cases that cite this one. |

| `precedential_status` | Constrainted to the values "Published", "Unknown", "Errata", "Unpublished", "Relating-to", "Separate", "In-chambers" |

| `citations` | Cases that cite this case. |

| `court_short_name` | Short name of court presiding over case. |

| `court_full_name` | Full name of court presiding over case. |

| `court_jurisdiction` | Code for type of court that presided over the case. See: [court_jurisdiction field values](#court_jurisdiction-field-values) |

| `opinions` | An array of subrecords. |

| `opinions.author_str` | Name of the author of an individual opinion. |

| `opinions.per_curiam` | Boolean representing whether the opinion was delivered by an entire court or a single judge. |

| `opinions.type` | One of `"010combined"`, `"015unamimous"`, `"020lead"`, `"025plurality"`, `"030concurrence"`, `"035concurrenceinpart"`, `"040dissent"`, `"050addendum"`, `"060remittitur"`, `"070rehearing"`, `"080onthemerits"`, `"090onmotiontostrike"`. |

| `opinions.opinion_text` | Actual full text of the opinion. |

| `opinions.ocr` | Whether the opinion was captured via optical character recognition or born-digital text. |

### court_jurisdiction field values

| Value | Description |

| --- | --- |

| F | Federal Appellate |

| FD | Federal District |

| FB | Federal Bankruptcy |

| FBP | Federal Bankruptcy Panel |

| FS | Federal Special |

| S | State Supreme |

| SA | State Appellate |

| ST | State Trial |

| SS | State Special |

| TRS | Tribal Supreme |

| TRA | Tribal Appellate |

| TRT | Tribal Trial |

| TRX | Tribal Special |

| TS | Territory Supreme |

| TA | Territory Appellate |

| TT | Territory Trial |

| TSP | Territory Special |

| SAG | State Attorney General |

| MA | Military Appellate |

| MT | Military Trial |

| C | Committee |

| I | International |

| T | Testing |

[☝️ Go back to Summary](#summary)

## Notes on appropriate use

When using this data, please keep in mind:

* All documents in this dataset are public information, published by courts within the United States to inform the public about the law. **You have a right to access them.**

* Nevertheless, **public court decisions frequently contain statements about individuals that are not true**. Court decisions often contain claims that are disputed,

or false claims taken as true based on a legal technicality, or claims taken as true but later found to be false. Legal decisions are designed to inform you about the law -- they are not

designed to inform you about individuals, and should not be used in place of credit databases, criminal records databases, news articles, or other sources intended

to provide factual personal information. Applications should carefully consider whether use of this data will inform about the law, or mislead about individuals.

* **Court decisions are not up-to-date statements of law**. Each decision provides a given judge's best understanding of the law as applied to the stated facts

at the time of the decision. Use of this data to generate statements about the law requires integration of a large amount of context --

the skill typically provided by lawyers -- rather than simple data retrieval.

To mitigate privacy risks, we have filtered out cases [blocked or deindexed by CourtListener](https://www.courtlistener.com/terms/#removal). Researchers who

require access to the full dataset without that filter may rerun our pipeline on CourtListener's raw data.

[☝️ Go back to Summary](#summary) | [

-0.3028346598148346,

-0.6334357857704163,

0.7034509778022766,

0.18077705800533295,

-0.48515748977661133,

-0.16999058425426483,

-0.1328805685043335,

-0.1546553671360016,

0.4448177218437195,

0.7579267621040344,

-0.2897569537162781,

-0.9776525497436523,

-0.47390106320381165,

-0.14894737303256... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Nicolas-BZRD/Original_Songs_Lyrics_with_French_Translation | Nicolas-BZRD | 2023-10-16T14:02:02Z | 28 | 6 | null | [

"task_categories:translation",

"task_categories:text-generation",

"size_categories:10K<n<100K",

"language:fr",

"language:en",

"language:es",

"language:it",

"language:de",

"language:ko",

"language:id",

"language:pt",

"language:no",

"language:fi",

"language:sv",

"language:sw",

"language:... | 2023-10-16T14:02:02Z | 2023-09-12T21:21:44.000Z | 2023-09-12T21:21:44 | ---

license: unknown

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: artist_name

dtype: string

- name: album_name

dtype: string

- name: year

dtype: int64

- name: title

dtype: string

- name: number

dtype: int64

- name: original_version

dtype: string

- name: french_version

dtype: string

- name: language

dtype: string

splits:

- name: train

num_bytes: 250317845

num_examples: 99289

download_size: 122323323

dataset_size: 250317845

task_categories:

- translation

- text-generation

language:

- fr

- en

- es

- it

- de

- ko

- id

- pt

- 'no'

- fi

- sv

- sw

- hr

- so

- ca

- tl

- ja

- nl

- ru

- et

- tr

- ro

- cy

- vi

- af

- hu

- sk

- sl

- cs

- da

- pl

- sq

- el

- he

- zh

- th

- bg

- ar

tags:

- music

- parallel

- parallel data

pretty_name: SYFT

size_categories:

- 10K<n<100K

---

# Original Songs Lyrics with French Translation

### Dataset Summary

Dataset of 99289 songs containing their metadata (author, album, release date, song number), original lyrics and lyrics translated into French.

Details of the number of songs by language of origin can be found in the table below:

| Original language | Number of songs |

|---|:---|

| en | 75786 |

| fr | 18486 |

| es | 1743 |

| it | 803 |

| de | 691 |

| sw | 529 |

| ko | 193 |

| id | 169 |

| pt | 142 |

| no | 122 |

| fi | 113 |

| sv | 70 |

| hr | 53 |

| so | 43 |

| ca | 41 |

| tl | 36 |

| ja | 35 |

| nl | 32 |

| ru | 29 |

| et | 27 |

| tr | 22 |

| ro | 19 |

| cy | 14 |

| vi | 14 |

| af | 13 |

| hu | 10 |

| sk | 10 |

| sl | 10 |

| cs | 7 |

| da | 6 |

| pl | 5 |

| sq | 4 |

| el | 4 |

| he | 3 |

| zh-cn | 2 |

| th | 1 |

| bg | 1 |

| ar | 1 | | [

-0.5436922311782837,

-0.2298395186662674,

0.26920127868652344,

0.9313919544219971,

-0.1531848907470703,

0.26017892360687256,

-0.2757697105407715,

-0.3256393373012543,

0.511759877204895,

1.358842372894287,

-1.2133055925369263,

-0.960709273815155,

-0.8669556379318237,

0.5326979756355286,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

M-A-D/Mixed-Arabic-Dataset-Main | M-A-D | 2023-10-06T17:56:33Z | 28 | 3 | null | [

"task_categories:conversational",

"task_categories:text-generation",

"task_categories:text2text-generation",

"task_categories:translation",

"task_categories:summarization",

"language:ar",

"region:us"

] | 2023-10-06T17:56:33Z | 2023-09-25T10:52:11.000Z | 2023-09-25T10:52:11 | ---

language:

- ar

task_categories:

- conversational

- text-generation

- text2text-generation

- translation

- summarization

pretty_name: MAD

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: GenId

dtype: int64

- name: SubId

dtype: int64

- name: DatasetName

dtype: string

- name: DatasetLink

dtype: string

- name: Text

dtype: string

- name: MetaData

struct:

- name: AboutAuthor

dtype: string

- name: AboutBook

dtype: string

- name: Author

dtype: string

- name: AuthorName

dtype: string

- name: BookLink

dtype: string

- name: BookName

dtype: string

- name: ChapterLink

dtype: string

- name: ChapterName

dtype: string

- name: Tags

dtype: float64

- name: __index_level_0__

dtype: float64

- name: created_date

dtype: string

- name: deleted

dtype: bool

- name: detoxify

dtype: 'null'

- name: emojis

struct:

- name: count

sequence: int32

- name: name

sequence: string

- name: id

dtype: string

- name: labels

struct:

- name: count

sequence: int32

- name: name

sequence: string

- name: value

sequence: float64

- name: lang

dtype: string

- name: message_id

dtype: string

- name: message_tree_id

dtype: string

- name: model_name

dtype: 'null'

- name: parent_id

dtype: string

- name: query_id

dtype: string

- name: rank

dtype: float64

- name: review_count

dtype: float64

- name: review_result

dtype: bool

- name: role

dtype: string

- name: synthetic

dtype: bool

- name: title

dtype: string

- name: tree_state

dtype: string

- name: url

dtype: string

- name: user_id

dtype: string

- name: ConcatenatedText

dtype: int64

- name: __index_level_0__

dtype: float64

splits:

- name: train

num_bytes: 1990497610

num_examples: 131393

download_size: 790648134

dataset_size: 1990497610

---

# Dataset Card for "Mixed-Arabic-Dataset"

## Mixed Arabic Datasets (MAD)

The Mixed Arabic Datasets (MAD) project provides a comprehensive collection of diverse Arabic-language datasets, sourced from various repositories, platforms, and domains. These datasets cover a wide range of text types, including books, articles, Wikipedia content, stories, and more.

### MAD Repo vs. MAD Main

#### MAD Repo

- **Versatility**: In the MAD Repository (MAD Repo), datasets are made available in their original, native form. Researchers and practitioners can selectively download specific datasets that align with their specific interests or requirements.

- **Independent Access**: Each dataset is self-contained, enabling users to work with individual datasets independently, allowing for focused analyses and experiments.

#### MAD Main or simply MAD

- **Unified Dataframe**: MAD Main represents a harmonized and unified dataframe, incorporating all datasets from the MAD Repository. It provides a seamless and consolidated view of the entire MAD collection, making it convenient for comprehensive analyses and applications.

- **Holistic Perspective**: Researchers can access a broad spectrum of Arabic-language content within a single dataframe, promoting holistic exploration and insights across diverse text sources.

### Why MAD Main?

- **Efficiency**: Working with MAD Main streamlines the data acquisition process by consolidating multiple datasets into one structured dataframe. This is particularly beneficial for large-scale projects or studies requiring diverse data sources.

- **Interoperability**: With MAD Main, the datasets are integrated into a standardized format, enhancing interoperability and compatibility with a wide range of data processing and analysis tools.

- **Meta-Analysis**: Researchers can conduct comprehensive analyses, such as cross-domain studies, trend analyses, or comparative studies, by leveraging the combined richness of all MAD datasets.

### Getting Started

- To access individual datasets in their original form, refer to the MAD Repository ([Link to MAD Repo](https://huggingface.co/datasets/M-A-D/Mixed-Arabic-Datasets-Repo)).

- For a unified view of all datasets, conveniently organized in a dataframe, you are here in the right place.

```python

from datasets import load_dataset

dataset = load_dataset("M-A-D/Mixed-Arabic-Dataset-Main")

```

### Join Us on Discord

For discussions, contributions, and community interactions, join us on Discord! [](https://discord.gg/2NpJ9JGm)

### How to Contribute

Want to contribute to the Mixed Arabic Datasets project? Follow our comprehensive guide on Google Colab for step-by-step instructions: [Contribution Guide](https://colab.research.google.com/drive/1w7_7lL6w7nM9DcDmTZe1Vfiwkio6SA-w?usp=sharing).

**Note**: If you'd like to test a contribution before submitting it, feel free to do so on the [MAD Test Dataset](https://huggingface.co/datasets/M-A-D/Mixed-Arabic-Dataset-test).

## Citation

```

@dataset{

title = {Mixed Arabic Datasets (MAD)},

author = {MAD Community},

howpublished = {Dataset},

url = {https://huggingface.co/datasets/M-A-D/Mixed-Arabic-Datasets-Repo},

year = {2023},

}

``` | [

-0.6333746314048767,

-0.5809771418571472,

-0.13783769309520721,

0.32546454668045044,

-0.23122510313987732,

0.3348468542098999,

-0.05636657029390335,

-0.2750006914138794,

0.40660518407821655,

0.22212892770767212,

-0.46584370732307434,

-0.9477024674415588,

-0.6604589223861694,

0.329419225454... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

SEACrowd/multilexnorm | SEACrowd | 2023-09-26T12:29:08Z | 28 | 0 | null | [

"language:ind",

"multilexnorm",

"region:us"

] | 2023-09-26T12:29:08Z | 2023-09-26T11:13:05.000Z | 2023-09-26T11:13:05 | ---

tags:

- multilexnorm

language:

- ind

---

# multilexnorm

MULTILEXNPRM is a new benchmark dataset for multilingual lexical normalization

including 12 language variants,

we here specifically work on the Indonisian-english language.

## Dataset Usage

Run `pip install nusacrowd` before loading the dataset through HuggingFace's `load_dataset`.

## Citation

```

@inproceedings{multilexnorm,

title= {MultiLexNorm: A Shared Task on Multilingual Lexical Normalization,

author = "van der Goot, Rob and Ramponi et al.",

booktitle = "Proceedings of the 7th Workshop on Noisy User-generated Text (W-NUT 2021)",

year = "2021",

publisher = "Association for Computational Linguistics",

address = "Punta Cana, Dominican Republic"

}

```

## License

CC-BY-NC-SA 4.0

## Homepage

[https://bitbucket.org/robvanderg/multilexnorm/src/master/](https://bitbucket.org/robvanderg/multilexnorm/src/master/)

### NusaCatalogue

For easy indexing and metadata: [https://indonlp.github.io/nusa-catalogue](https://indonlp.github.io/nusa-catalogue) | [

-0.5377999544143677,

-0.14906476438045502,

0.059653256088495255,

0.560161292552948,

-0.15787512063980103,

-0.029067762196063995,

-0.627038836479187,

-0.15433518588542938,

0.3969719707965851,

0.555078387260437,

-0.18659743666648865,

-0.7094143629074097,

-0.7306297421455383,

0.55454123020172... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

JeremiahZ/humaneval_x_llvm_wasm | JeremiahZ | 2023-09-29T00:04:36Z | 28 | 0 | null | [

"region:us"

] | 2023-09-29T00:04:36Z | 2023-09-29T00:04:31.000Z | 2023-09-29T00:04:31 | ---

dataset_info:

features:

- name: task_id

dtype: string

- name: prompt

dtype: string

- name: declaration

dtype: string

- name: canonical_solution

dtype: string

- name: test

dtype: string

- name: example_test

dtype: string

- name: llvm_ir

dtype: string

- name: wat

dtype: string

splits:

- name: test

num_bytes: 4945639

num_examples: 161

download_size: 1096385

dataset_size: 4945639

configs:

- config_name: default

data_files:

- split: test

path: data/test-*

---

# Dataset Card for "humaneval_x_llvm_wasm"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.44151681661605835,

-0.159193217754364,

0.11025811731815338,

0.19995763897895813,

-0.4627504050731659,

0.04280577227473259,

0.2518852949142456,

-0.02384907379746437,

0.9150804877281189,

0.6802401542663574,

-0.8012567162513733,

-1.0199357271194458,

-0.5845341682434082,

-0.1822658330202102... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

qgyd2021/rlhf_reward_dataset | qgyd2021 | 2023-10-10T11:11:01Z | 28 | 9 | null | [

"task_categories:question-answering",

"task_categories:text-generation",

"size_categories:100M<n<1B",

"language:zh",

"language:en",

"license:apache-2.0",

"reward model",

"rlhf",

"arxiv:2204.05862",

"region:us"

] | 2023-10-10T11:11:01Z | 2023-09-30T03:23:01.000Z | 2023-09-30T03:23:01 | ---

license: apache-2.0

task_categories:

- question-answering

- text-generation

language:

- zh

- en

tags:

- reward model

- rlhf

size_categories:

- 100M<n<1B

---

## RLHF Reward Model Dataset

奖励模型数据集。

数据集从网上收集整理如下:

| 数据 | 语言 | 原始数据/项目地址 | 样本个数 | 原始数据描述 | 替代数据下载地址 |

| :--- | :---: | :---: | :---: | :---: | :---: |

| beyond | chinese | [beyond/rlhf-reward-single-round-trans_chinese](https://huggingface.co/datasets/beyond/rlhf-reward-single-round-trans_chinese) | 24858 | | |

| helpful_and_harmless | chinese | [dikw/hh_rlhf_cn](https://huggingface.co/datasets/dikw/hh_rlhf_cn) | harmless train 42394 条,harmless test 2304 条,helpful train 43722 条,helpful test 2346 条, | 基于 Anthropic 论文 [Training a Helpful and Harmless Assistant with Reinforcement Learning from Human Feedback](https://arxiv.org/abs/2204.05862) 开源的 helpful 和harmless 数据,使用翻译工具进行了翻译。 | [Anthropic/hh-rlhf](https://huggingface.co/datasets/Anthropic/hh-rlhf) |

| zhihu_3k | chinese | [liyucheng/zhihu_rlhf_3k](https://huggingface.co/datasets/liyucheng/zhihu_rlhf_3k) | 3460 | 知乎上的问答有用户的点赞数量,它应该是根据点赞数量来判断答案的优先级。 | |

| SHP | english | [stanfordnlp/SHP](https://huggingface.co/datasets/stanfordnlp/SHP) | 385K | 涉及18个子领域,偏好表示是否有帮助。 | |

<details>

<summary>参考的数据来源,展开查看</summary>

<pre><code>

https://huggingface.co/datasets/ticoAg/rlhf_zh

https://huggingface.co/datasets/beyond/rlhf-reward-single-round-trans_chinese

https://huggingface.co/datasets/dikw/hh_rlhf_cn

https://huggingface.co/datasets/liyucheng/zhihu_rlhf_3k

</code></pre>

</details>

| [

-0.3048926591873169,

-0.643074095249176,

-0.07511572539806366,

0.3350818455219269,

-0.38833966851234436,

-0.4477697014808655,

-0.09056294709444046,

-0.693253219127655,

0.5780413150787354,

0.30107784271240234,

-1.0250827074050903,

-0.6303532123565674,

-0.4910812973976135,

0.1426858305931091... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

neelblabla/enron_labeled_emails_with_subjects-llama2-7b_finetuning | neelblabla | 2023-10-01T18:34:26Z | 28 | 1 | null | [

"task_categories:text-classification",

"size_categories:1K<n<10K",

"language:en",

"region:us"

] | 2023-10-01T18:34:26Z | 2023-09-30T15:40:14.000Z | 2023-09-30T15:40:14 | ---

task_categories:

- text-classification

language:

- en

pretty_name: enron(unprocessed)_labeled_prompts

size_categories:

- 1K<n<10K

--- | [

-0.1285335123538971,

-0.1861683875322342,

0.6529128551483154,

0.49436232447624207,

-0.19319400191307068,

0.23607441782951355,

0.36072009801864624,

0.05056373029947281,

0.5793656706809998,

0.7400146722793579,

-0.650810182094574,

-0.23784008622169495,

-0.7102247476577759,

-0.0478255338966846... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

minhtu0408/gdsc-model-dataset | minhtu0408 | 2023-11-14T10:01:21Z | 28 | 0 | null | [

"region:us"

] | 2023-11-14T10:01:21Z | 2023-10-05T11:49:45.000Z | 2023-10-05T11:49:45 | Entry not found | [

-0.32276472449302673,

-0.22568407654762268,

0.8622258901596069,

0.4346148371696472,

-0.5282984972000122,

0.7012965679168701,

0.7915717363357544,

0.07618629932403564,

0.7746022939682007,

0.2563222646713257,

-0.785281777381897,

-0.22573848068714142,

-0.9104482531547546,

0.5715669393539429,

... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

rouabelgacem/autotrain-data-nlp-bert-ner-testing | rouabelgacem | 2023-10-12T14:53:16Z | 28 | 0 | null | [

"region:us"

] | 2023-10-12T14:53:16Z | 2023-10-12T14:44:39.000Z | 2023-10-12T14:44:39 | Entry not found | [

-0.32276472449302673,

-0.22568407654762268,

0.8622258901596069,

0.4346148371696472,

-0.5282984972000122,

0.7012965679168701,

0.7915717363357544,

0.07618629932403564,

0.7746022939682007,

0.2563222646713257,

-0.785281777381897,

-0.22573848068714142,

-0.9104482531547546,

0.5715669393539429,

... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

hippocrates/MedNLI_train | hippocrates | 2023-10-18T19:47:44Z | 28 | 0 | null | [

"region:us"

] | 2023-10-18T19:47:44Z | 2023-10-12T15:46:06.000Z | 2023-10-12T15:46:06 | ---

dataset_info:

features:

- name: id

dtype: string

- name: conversations

list:

- name: from

dtype: string

- name: value

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 8375998

num_examples: 11232

- name: valid

num_bytes: 1054726

num_examples: 1395

- name: test

num_bytes: 1050034

num_examples: 1422

download_size: 3057999

dataset_size: 10480758

---

# Dataset Card for "MedNLI_train"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.5085327625274658,

0.055445000529289246,

0.18204030394554138,

0.07680079340934753,

-0.08627691119909286,

-0.14896799623966217,

0.1990634649991989,

-0.15364424884319305,

0.8636783361434937,

0.4135456085205078,

-1.0009633302688599,

-0.5598673820495605,

-0.46094003319740295,

-0.277931183576... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

lighteval/natural_questions_clean | lighteval | 2023-10-17T20:29:08Z | 28 | 0 | null | [

"region:us"

] | 2023-10-17T20:29:08Z | 2023-10-17T16:39:42.000Z | 2023-10-17T16:39:42 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

dataset_info:

features:

- name: id

dtype: string

- name: title

dtype: string

- name: document

dtype: string

- name: question

dtype: string

- name: long_answers

sequence: string

- name: short_answers

sequence: string

splits:

- name: train

num_bytes: 4346873866.211105

num_examples: 106926

- name: validation

num_bytes: 175230324.62247765

num_examples: 4289

download_size: 1406784865

dataset_size: 4522104190.833583

---

# Dataset Card for "natural_questions_clean"

Created by @thomwolf on the basis of https://huggingface.co/datasets/lighteval/natural_questions but removing the questions without short answers provided.

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.8745608925819397,

-0.9609296321868896,

0.20879194140434265,

-0.1624538004398346,

-0.45509496331214905,

-0.20629577338695526,

-0.2605534493923187,

-0.6620656251907349,

0.8658750653266907,

0.7722594738006592,

-0.8775538206100464,

-0.47062161564826965,

-0.1791592389345169,

0.23926126956939... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

ppxscal/aminer-citation-graphv14-jaccard | ppxscal | 2023-10-24T01:56:10Z | 28 | 0 | null | [

"region:us"

] | 2023-10-24T01:56:10Z | 2023-10-23T14:13:25.000Z | 2023-10-23T14:13:25 | ---

# For reference on dataset card metadata, see the spec: https://github.com/huggingface/hub-docs/blob/main/datasetcard.md?plain=1

# Doc / guide: https://huggingface.co/docs/hub/datasets-cards

{}

---

# Dataset Card for Dataset Name

<!-- Provide a quick summary of the dataset. -->

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

## Dataset Details

### Dataset Description

<!-- Provide a longer summary of what this dataset is. -->

Contains text pairs from https://www.aminer.org/citation v14. Similairty socres calculated with Jaccard index.

- **Curated by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

### Dataset Sources [optional]

<!-- Provide the basic links for the dataset. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the dataset is intended to be used. -->

### Direct Use

<!-- This section describes suitable use cases for the dataset. -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the dataset will not work well for. -->

[More Information Needed]

## Dataset Structure

<!-- This section provides a description of the dataset fields, and additional information about the dataset structure such as criteria used to create the splits, relationships between data points, etc. -->

[More Information Needed]

## Dataset Creation

### Curation Rationale

<!-- Motivation for the creation of this dataset. -->

[More Information Needed]

### Source Data

<!-- This section describes the source data (e.g. news text and headlines, social media posts, translated sentences, ...). -->

#### Data Collection and Processing

<!-- This section describes the data collection and processing process such as data selection criteria, filtering and normalization methods, tools and libraries used, etc. -->

[More Information Needed]

#### Who are the source data producers?

<!-- This section describes the people or systems who originally created the data. It should also include self-reported demographic or identity information for the source data creators if this information is available. -->

[More Information Needed]

### Annotations [optional]

<!-- If the dataset contains annotations which are not part of the initial data collection, use this section to describe them. -->

#### Annotation process

<!-- This section describes the annotation process such as annotation tools used in the process, the amount of data annotated, annotation guidelines provided to the annotators, interannotator statistics, annotation validation, etc. -->

[More Information Needed]

#### Who are the annotators?

<!-- This section describes the people or systems who created the annotations. -->

[More Information Needed]

#### Personal and Sensitive Information

<!-- State whether the dataset contains data that might be considered personal, sensitive, or private (e.g., data that reveals addresses, uniquely identifiable names or aliases, racial or ethnic origins, sexual orientations, religious beliefs, political opinions, financial or health data, etc.). If efforts were made to anonymize the data, describe the anonymization process. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users should be made aware of the risks, biases and limitations of the dataset. More information needed for further recommendations.

## Citation [optional]

<!-- If there is a paper or blog post introducing the dataset, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the dataset or dataset card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Dataset Card Authors [optional]

[More Information Needed]

## Dataset Card Contact

[More Information Needed] | [

-0.49012744426727295,

-0.48572415113449097,

0.16169412434101105,

0.21002379059791565,

-0.37142109870910645,

-0.18770582973957062,

-0.04764176160097122,

-0.6432647705078125,

0.5875018835067749,

0.7652541995048523,

-0.7544398307800293,

-0.9097355604171753,

-0.5350700616836548,

0.155589565634... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

fashxp/cars-description | fashxp | 2023-10-25T14:17:36Z | 28 | 0 | null | [

"region:us"

] | 2023-10-25T14:17:36Z | 2023-10-23T19:10:59.000Z | 2023-10-23T19:10:59 | ---

dataset_info:

features:

- name: Bodystyle

dtype: string

- name: Class

dtype: string

- name: Wheelbase

dtype: string

- name: Availability Type

dtype: string

- name: Production Year

dtype: string

- name: Power

dtype: string

- name: ID

dtype: string

- name: Cylinders

dtype: string

- name: Color

dtype: string

- name: Manufacturer

dtype: string

- name: Number Of Doors

dtype: string

- name: Milage

dtype: string

- name: Description

dtype: string

- name: Length

dtype: string

- name: Country

dtype: string

- name: Capacity

dtype: string

- name: Categories

dtype: string

- name: Engine Location

dtype: string

- name: Width

dtype: string

- name: Number Of Seats

dtype: string

- name: Name

dtype: string

- name: Condition

dtype: string

- name: Price in EUR

dtype: string

- name: Weight

dtype: string

- name: Object Type

dtype: string

- name: Cargo Capacity

dtype: string

- name: Wheel Drive

dtype: string

- name: Availability Pieces

dtype: string

- name: Prompt

dtype: string

splits:

- name: train

num_bytes: 323678

num_examples: 248

download_size: 114519

dataset_size: 323678

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "cars-description"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.7568616271018982,

-0.1303810030221939,

0.4093114733695984,

0.22019600868225098,

-0.24891327321529388,

0.0972425788640976,

0.03203465789556503,

-0.32844164967536926,

0.5966350436210632,

0.17502912878990173,

-0.8719015717506409,

-0.5656322240829468,

-0.3688215911388397,

-0.392810195684433... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

khederwaaOne/my_dataset | khederwaaOne | 2023-10-24T18:33:31Z | 28 | 0 | null | [

"region:us"

] | 2023-10-24T18:33:31Z | 2023-10-24T17:59:00.000Z | 2023-10-24T17:59:00 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622263669967651,

0.43461522459983826,

-0.52829909324646,

0.7012971639633179,

0.7915719747543335,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104475975036621,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

w95/databricks-dolly-15k-az | w95 | 2023-10-29T07:51:38Z | 28 | 0 | null | [

"task_categories:question-answering",

"task_categories:summarization",

"size_categories:1K<n<10K",

"language:az",

"license:cc-by-sa-3.0",

"arxiv:2203.02155",

"region:us"

] | 2023-10-29T07:51:38Z | 2023-10-29T07:43:06.000Z | 2023-10-29T07:43:06 | ---

license: cc-by-sa-3.0

task_categories:

- question-answering

- summarization

language:

- az

size_categories:

- 1K<n<10K

---

This dataset is a machine-translated version of [databricks-dolly-15k.jsonl](https://huggingface.co/datasets/databricks/databricks-dolly-15k) into Azerbaijani. Dataset size is 8k.

-----

# Summary

`databricks-dolly-15k` is an open source dataset of instruction-following records generated by thousands of Databricks employees in several

of the behavioral categories outlined in the [InstructGPT](https://arxiv.org/abs/2203.02155) paper, including brainstorming, classification,

closed QA, generation, information extraction, open QA, and summarization.

This dataset can be used for any purpose, whether academic or commercial, under the terms of the

[Creative Commons Attribution-ShareAlike 3.0 Unported License](https://creativecommons.org/licenses/by-sa/3.0/legalcode).

Supported Tasks:

- Training LLMs

- Synthetic Data Generation

- Data Augmentation

Languages: English

Version: 1.0 | [

0.09338925778865814,

-0.7657922506332397,

-0.009938729926943779,

0.6653185486793518,

-0.33416685461997986,

0.06567493081092834,

-0.09083867818117142,

-0.06872350722551346,

0.006306231953203678,

0.7745890021324158,

-0.908684253692627,

-0.843329668045044,

-0.4904543459415436,

0.3672083616256... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Kabatubare/medical-guanaco-3000 | Kabatubare | 2023-10-30T09:59:47Z | 28 | 1 | null | [

"language:en",

"license:unknown",

"healthcare",

"Q&A",

"NLP",

"dialogues",

"region:us"

] | 2023-10-30T09:59:47Z | 2023-10-29T15:49:46.000Z | 2023-10-29T15:49:46 | ---

title: Reduced Medical Q&A Dataset

language: en

license: unknown

tags:

- healthcare

- Q&A

- NLP

- dialogues

pretty_name: Medical Q&A Dataset

---

# Dataset Card for Reduced Medical Q&A Dataset

This dataset card provides comprehensive details about the Reduced Medical Q&A Dataset, which is a curated and balanced subset aimed for healthcare dialogues and medical NLP research.

## Dataset Details

### Dataset Description

The Reduced Medical Q&A Dataset is derived from a specialized subset of the larger MedDialog collection. It focuses on healthcare dialogues between doctors and patients from sources like WebMD, Icliniq, HealthcareMagic, and HealthTap. The dataset contains approximately 3,000 rows and is intended for a variety of applications such as NLP research, healthcare chatbot development, and medical information retrieval.

- **Curated by:** Unknown (originally from MedDialog)

- **Funded by [optional]:** N/A

- **Shared by [optional]:** N/A

- **Language(s) (NLP):** English

- **License:** Unknown (assumed for educational/research use)

### Dataset Sources [optional]

- **Repository:** N/A

- **Paper [optional]:** N/A

- **Demo [optional]:** N/A

## Uses

### Direct Use

- NLP research in healthcare dialogues

- Development of healthcare question-answering systems

- Medical information retrieval

### Out-of-Scope Use

- Not a substitute for certified medical advice

- Exercise caution in critical healthcare applications

## Dataset Structure

Each entry in the dataset follows the structure: "### Human:\n[Human's text]\n\n### Assistant: [Assistant's text]"

## Dataset Creation

### Curation Rationale

The dataset was curated to create a balanced set of medical Q&A pairs using keyword-based sampling to cover a wide range of medical topics.

### Source Data

#### Data Collection and Processing

The data is text-based, primarily in English, and was curated from the larger "Medical" dataset featuring dialogues from Icliniq, HealthcareMagic, and HealthTap.

#### Who are the source data producers?

The original data was produced by healthcare professionals and patients engaging in medical dialogues on platforms like Icliniq, HealthcareMagic, and HealthTap.

### Annotations [optional]

No additional annotations; the dataset is text-based.

## Bias, Risks, and Limitations

- The dataset is not a substitute for professional medical advice.

- It is designed for research and educational purposes only.

### Recommendations

Users should exercise caution and understand the limitations when using the dataset for critical healthcare applications.

## Citation [optional]

N/A

## Glossary [optional]

N/A

## More Information [optional]

N/A

## Dataset Card Authors [optional]

N/A

## Dataset Card Contact

N/A | [

-0.27335768938064575,

-0.7437918186187744,

0.27780643105506897,

-0.1995694935321808,

-0.2629483640193939,

-0.024709511548280716,

0.07231761515140533,

-0.2498636096715927,

0.6121847629547119,

0.7621784210205078,

-1.1383658647537231,

-0.8334278464317322,

-0.40659868717193604,

0.1604409515857... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Ryan20/qa_hotel_dataset | Ryan20 | 2023-10-31T11:32:14Z | 28 | 0 | null | [

"task_categories:question-answering",

"size_categories:n<1K",

"language:en",

"language:pt",

"license:openrail",

"region:us"

] | 2023-10-31T11:32:14Z | 2023-10-30T10:29:25.000Z | 2023-10-30T10:29:25 | ---

license: openrail

task_categories:

- question-answering

language:

- en

- pt

size_categories:

- n<1K

--- | [

-0.1285335123538971,

-0.1861683875322342,

0.6529128551483154,

0.49436232447624207,

-0.19319400191307068,

0.23607441782951355,

0.36072009801864624,

0.05056373029947281,

0.5793656706809998,

0.7400146722793579,

-0.650810182094574,

-0.23784008622169495,

-0.7102247476577759,

-0.0478255338966846... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

jmelsbach/leichte-sprache-definitionen | jmelsbach | 2023-10-30T15:08:24Z | 28 | 0 | null | [

"region:us"

] | 2023-10-30T15:08:24Z | 2023-10-30T15:08:20.000Z | 2023-10-30T15:08:20 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: title

dtype: string

- name: parsed_content

dtype: string

- name: id

dtype: int64

- name: __index_level_0__

dtype: int64

splits:

- name: train

num_bytes: 530344.0658114891

num_examples: 2868

- name: test

num_bytes: 132770.93418851087

num_examples: 718

download_size: 417716

dataset_size: 663115.0

---

# Dataset Card for "leichte-sprache-definitionen"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.7181417346000671,

-0.29131847620010376,

0.04863014817237854,

0.3761870563030243,

-0.3467704951763153,

-0.18940331041812897,

-0.024043064564466476,

-0.28233611583709717,

1.1042569875717163,

0.5420048236846924,

-0.7673881649971008,

-0.7955626845359802,

-0.7315640449523926,

-0.322521090507... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

kunishou/hh-rlhf-49k-ja-single-turn | kunishou | 2023-11-02T14:30:34Z | 28 | 0 | null | [

"license:mit",

"region:us"

] | 2023-11-02T14:30:34Z | 2023-10-31T17:47:50.000Z | 2023-10-31T17:47:50 | ---

license: mit

---

This dataset was created by automatically translating part of "Anthropic/hh-rlhf" into Japanese, and selected for single turn conversations.

You can use this dataset for RLHF and DPO.

hh-rlhf repository

https://github.com/anthropics/hh-rlhf

Anthropic/hh-rlhf

https://huggingface.co/datasets/Anthropic/hh-rlhf | [

-0.5173062682151794,

-0.9289660453796387,

0.5782462954521179,

0.24024510383605957,

-0.5644632577896118,

0.06642206013202667,

0.012910553254187107,

-0.6020025014877319,

0.8405015468597412,

0.9449174404144287,

-1.2688751220703125,

-0.6189240217208862,

-0.29845157265663147,

0.3985547125339508... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Trelis/openassistant-falcon | Trelis | 2023-11-01T08:46:17Z | 28 | 0 | null | [

"size_categories:1K<n<10k",

"language:en",

"language:es",

"language:ru",

"language:de",

"language:pl",

"language:th",

"language:vi",

"language:sv",

"language:bn",

"language:da",

"language:he",

"language:it",

"language:fa",

"language:sk",

"language:id",

"language:nb",

"language:el",... | 2023-11-01T08:46:17Z | 2023-11-01T08:38:05.000Z | 2023-11-01T08:38:05 | ---

license: apache-2.0

language:

- en

- es

- ru

- de

- pl

- th

- vi

- sv

- bn

- da

- he

- it

- fa

- sk

- id

- nb

- el

- nl

- hu

- eu

- zh

- eo

- ja

- ca

- cs

- bg

- fi

- pt

- tr

- ro

- ar

- uk

- gl

- fr

- ko

tags:

- human-feedback

- llama-2

size_categories:

- 1K<n<10k

pretty_name: Filtered OpenAssistant Conversations

---

# Chat Fine-tuning Dataset - OpenAssistant Falcon

This dataset allows for fine-tuning chat models using '\Human:' AND '\nAssistant:' to wrap user messages.

It still uses <|endoftext|> as EOS and BOS token, as per Falcon.

Sample

Preparation: