id stringlengths 2 115 | author stringlengths 2 42 ⌀ | last_modified timestamp[us, tz=UTC] | downloads int64 0 8.87M | likes int64 0 3.84k | paperswithcode_id stringlengths 2 45 ⌀ | tags list | lastModified timestamp[us, tz=UTC] | createdAt stringlengths 24 24 | key stringclasses 1 value | created timestamp[us] | card stringlengths 1 1.01M | embedding list | library_name stringclasses 21 values | pipeline_tag stringclasses 27 values | mask_token null | card_data null | widget_data null | model_index null | config null | transformers_info null | spaces null | safetensors null | transformersInfo null | modelId stringlengths 5 111 ⌀ | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

TrainingDataPro/spine-x-ray | TrainingDataPro | 2023-10-29T19:54:02Z | 0 | 1 | null | [

"task_categories:image-classification",

"task_categories:image-segmentation",

"task_categories:image-to-image",

"language:en",

"license:cc-by-nc-nd-4.0",

"medical",

"code",

"region:us"

] | 2023-10-29T19:54:02Z | 2023-10-29T19:40:35.000Z | 2023-10-29T19:40:35 | ---

license: cc-by-nc-nd-4.0

task_categories:

- image-classification

- image-segmentation

- image-to-image

language:

- en

tags:

- medical

- code

---

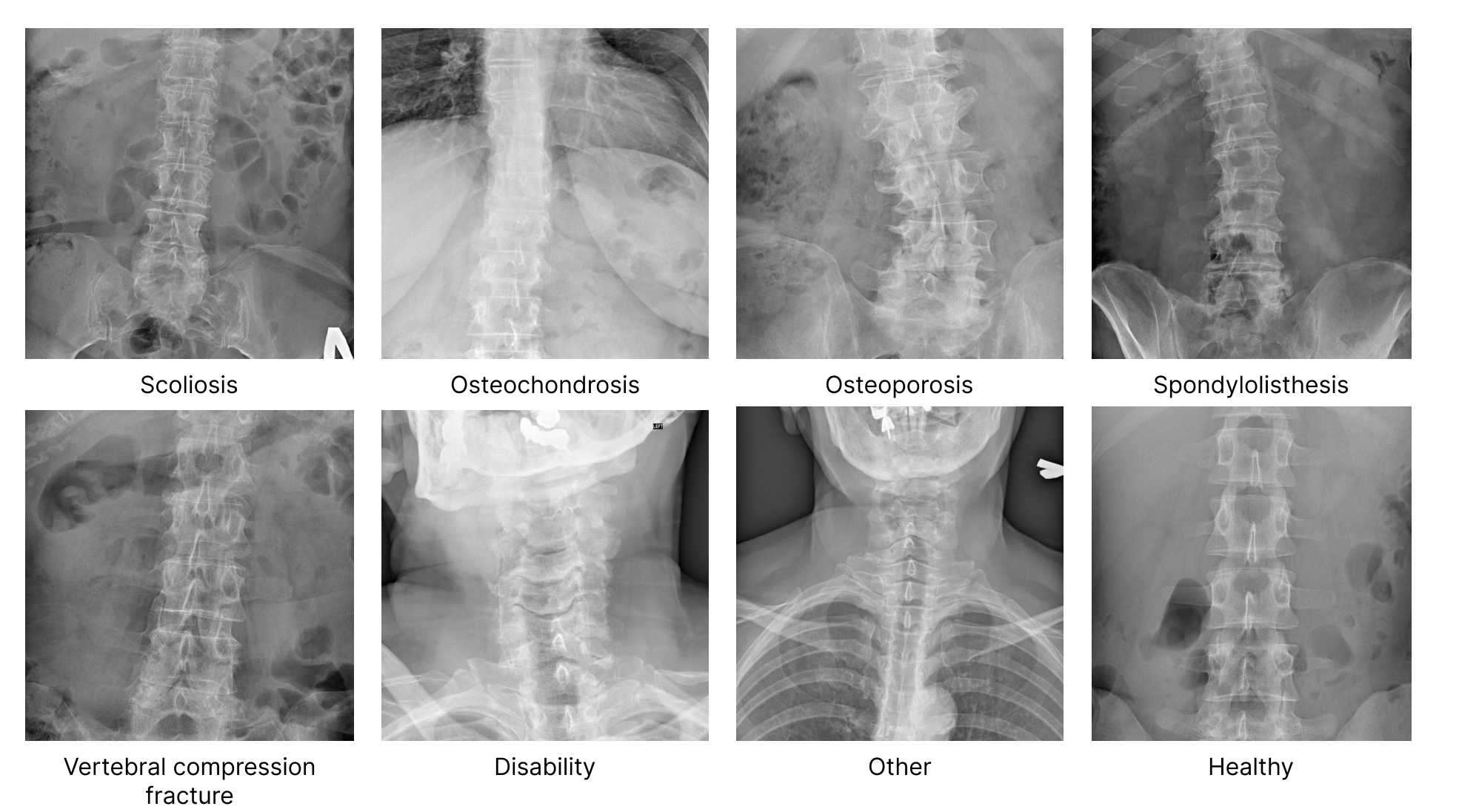

# Spine X-rays

The dataset consists of a collection of spine X-ray images in **.jpg and .dcm** formats. The images are organized into folders based on different medical conditions related to the spine. Each folder contains images depicting specific spinal deformities.

### Types of diseases and conditions in the dataset:

*Scoliosis, Osteochondrosis, Osteoporosis, Spondylolisthesis, Vertebral Compression Fractures (VCFs), Disability, Other and Healthy*

The dataset provides an opportunity for researchers and medical professionals to *analyze and develop algorithms for automated diagnosis, treatment planning, and prognosis estimation of* **various spinal conditions**.

It allows the development and evaluation of computer-based algorithms, machine learning models, and deep learning techniques for **automated detection, diagnosis, and classification** of these conditions.

# Get the Dataset

## This is just an example of the data

Leave a request on [https://trainingdata.pro/data-market](https://trainingdata.pro/data-market/spine-x-ray-image?utm_source=huggingface&utm_medium=cpc&utm_campaign=spine-x-ray) to discuss your requirements, learn about the price and buy the dataset

# Content

### The folder "files" includes 8 folders:

- corresponding to name of the disease/condition and including x-rays of people with this disease/condition (**scoliosis, osteochondrosis, VCFs etc.**)

- including x-rays in 2 different formats: **.jpg and .dcm**.

### File with the extension .csv includes the following information for each media file:

- **dcm**: link to access the .dcm file,

- **jpg**: link to access the .jpg file,

- **type**: name of the disease or condition on the x-ray

# Medical data might be collected in accordance with your requirements.

## [TrainingData](https://trainingdata.pro/data-market/spine-x-ray-image?utm_source=huggingface&utm_medium=cpc&utm_campaign=spine-x-ray) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/trainingdata-pro**

*keywords: spine dataset, spine X-rays dataset, scoliosis detection dataset, scoliosis segmentation dataset, scoliosis image dataset, medical imaging, radiology dataset, spine deformity dataset, orthopedic abnormalities, scoliotic curve dataset, degenerative spinal conditions, diagnostic imaging of the spine, osteoporosis dataset, osteochondrosis dataset, vertebral compression fracture detection, vertebral segmentation dataset*

| [

0.031891122460365295,

-0.024723052978515625,

0.1646355390548706,

0.14428240060806274,

-0.46248745918273926,

0.22254715859889984,

0.3670652508735657,

-0.172498881816864,

0.6695042252540588,

0.6884359121322632,

-0.4901439845561981,

-0.9527807235717773,

-0.3165869414806366,

-0.015257214196026... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

acetennis01/audiotest | acetennis01 | 2023-11-01T21:04:32Z | 0 | 0 | null | [

"task_categories:automatic-speech-recognition",

"size_categories:n<1K",

"language:en",

"region:us"

] | 2023-11-01T21:04:32Z | 2023-10-29T21:26:37.000Z | 2023-10-29T21:26:37 | ---

language:

- en

pretty_name: a

size_categories:

- n<1K

task_categories:

- automatic-speech-recognition

---

This is a test audio dataset | [

-0.503925085067749,

-0.7053440809249878,

-0.05804947391152382,

0.13719123601913452,

-0.09777012467384338,

-0.1462101936340332,

-0.05528077110648155,

0.021410111337900162,

0.1816515326499939,

0.6831346750259399,

-1.0622390508651733,

-0.43546295166015625,

-0.24940712749958038,

-0.10072031617... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

SergioSCA/StageTest | SergioSCA | 2023-10-29T21:49:46Z | 0 | 0 | null | [

"license:apache-2.0",

"region:us"

] | 2023-10-29T21:49:46Z | 2023-10-29T21:48:44.000Z | 2023-10-29T21:48:44 | ---

license: apache-2.0

---

| [

-0.1285335123538971,

-0.1861683875322342,

0.6529128551483154,

0.49436232447624207,

-0.19319400191307068,

0.23607441782951355,

0.36072009801864624,

0.05056373029947281,

0.5793656706809998,

0.7400146722793579,

-0.650810182094574,

-0.23784008622169495,

-0.7102247476577759,

-0.0478255338966846... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Alignment-Lab-AI/debate-ablate | Alignment-Lab-AI | 2023-10-29T22:05:50Z | 0 | 0 | null | [

"region:us"

] | 2023-10-29T22:05:50Z | 2023-10-29T22:05:11.000Z | 2023-10-29T22:05:11 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622264862060547,

0.43461528420448303,

-0.52829909324646,

0.7012971639633179,

0.7915720343589783,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104477167129517,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

hericrafti/heri | hericrafti | 2023-10-29T22:58:09Z | 0 | 0 | null | [

"region:us"

] | 2023-10-29T22:58:09Z | 2023-10-29T22:57:30.000Z | 2023-10-29T22:57:30 | Entry not found | [

-0.32276472449302673,

-0.22568407654762268,

0.8622258901596069,

0.4346148371696472,

-0.5282984972000122,

0.7012965679168701,

0.7915717363357544,

0.07618629932403564,

0.7746022939682007,

0.2563222646713257,

-0.785281777381897,

-0.22573848068714142,

-0.9104482531547546,

0.5715669393539429,

... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

patrick65536/mandala_controlnet | patrick65536 | 2023-10-30T02:31:00Z | 0 | 1 | null | [

"license:apache-2.0",

"region:us"

] | 2023-10-30T02:31:00Z | 2023-10-30T01:07:40.000Z | 2023-10-30T01:07:40 | ---

license: apache-2.0

dataset_info:

features:

- name: original_image

dtype: image

- name: condtioning_image

dtype: image

- name: caption

dtype: string

splits:

- name: train

num_bytes: 12212803.0

num_examples: 10

download_size: 0

dataset_size: 12212803.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

| [

-0.12853392958641052,

-0.18616779148578644,

0.6529127955436707,

0.49436280131340027,

-0.19319361448287964,

0.23607419431209564,

0.36072003841400146,

0.050563063472509384,

0.579365611076355,

0.7400140762329102,

-0.6508104205131531,

-0.23783954977989197,

-0.7102249264717102,

-0.0478260256350... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

vwxyzjn/cai-conversation-dev | vwxyzjn | 2023-11-20T18:58:18Z | 0 | 0 | null | [

"region:us"

] | 2023-11-20T18:58:18Z | 2023-10-30T02:25:07.000Z | 2023-10-30T02:25:07 | ---

dataset_info:

features:

- name: index

dtype: int64

- name: prompt

dtype: string

- name: init_prompt

dtype: string

- name: init_response

dtype: string

- name: critic_prompt

dtype: string

- name: critic_response

dtype: string

- name: revision_prompt

dtype: string

- name: revision_response

dtype: string

splits:

- name: train

num_bytes: 1554197

num_examples: 1024

download_size: 556838

dataset_size: 1554197

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "cai-conversation-dev"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.646047055721283,

-0.4851098358631134,

0.06698434799909592,

0.3958476781845093,

-0.09928009659051895,

0.1878053992986679,

0.15405574440956116,

-0.19658488035202026,

0.9322408437728882,

0.3883589506149292,

-0.8071365356445312,

-0.7200302481651306,

-0.45047882199287415,

-0.5060192346572876... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

automated-research-group/llama2_7b_bf16-winogrande-old | automated-research-group | 2023-10-30T03:25:59Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T03:25:59Z | 2023-10-30T03:25:58.000Z | 2023-10-30T03:25:58 | ---

dataset_info:

features:

- name: answer

dtype: string

- name: id

dtype: string

- name: question

dtype: string

- name: input_perplexity

dtype: float64

- name: input_likelihood

dtype: float64

- name: output_perplexity

dtype: float64

- name: output_likelihood

dtype: float64

splits:

- name: validation

num_bytes: 357232

num_examples: 1267

download_size: 162651

dataset_size: 357232

configs:

- config_name: default

data_files:

- split: validation

path: data/validation-*

---

# Dataset Card for "llama2_7b_bf16-winogrande"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.4963342845439911,

-0.15101216733455658,

0.2620566487312317,

0.46008560061454773,

-0.5806674957275391,

0.18463312089443207,

0.31911855936050415,

-0.4142543077468872,

0.77415931224823,

0.4212070405483246,

-0.654733419418335,

-0.8240216374397278,

-0.8467994928359985,

-0.258756160736084,

... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

bot-yaya/undl_en2zh_translation | bot-yaya | 2023-11-04T09:28:20Z | 0 | 0 | null | [

"region:us"

] | 2023-11-04T09:28:20Z | 2023-10-30T04:33:16.000Z | 2023-10-30T04:33:16 | ---

dataset_info:

features:

- name: clean_en

sequence: string

- name: clean_zh

sequence: string

- name: record

dtype: string

- name: en2zh

sequence: string

splits:

- name: train

num_bytes: 12473072134

num_examples: 165840

download_size: 6289516266

dataset_size: 12473072134

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "undl_en2zh_translation"

(undl_text)[https://huggingface.co/datasets/bot-yaya/undl_text]数据集的全量英文段落翻中文段落,是我口胡的基于翻译和最长公共子序列对齐方法的基础(雾)。

机翻轮子使用argostranslate,使用google云虚拟机的36个v核、google colab提供的免费的3个实例、google cloud shell的1个实例,我本地电脑的cpu和显卡,还有帮我挂colab的ranWang,帮我挂笔记本和本地的同学们,共计跑了一个星期得到。

感谢为我提供算力的小伙伴和云平台!

google云计算穷鬼算力白嫖指南:

- 绑卡后的免费账户可以最多同时建3个项目来用Compute API,每个项目配额是12个v核

- 选计算优化->C2D实例,高cpu,AMD EPYC Milan,这个比隔壁Xeon便宜又能打(AMD yes)。一般来说,免费用户的每个项目每个区域的配额顶天8vCPU,并且每个项目限制12vCPU。所以我推荐在最低价区买一个8x,再在次低价区整一个4x。

- **重要!** 选抢占式(Spot)实例,可以便宜不少

- 截至写README,免费用户能租到的最低价的C2D实例是比利时和衣阿华、南卡。孟买甚至比比利时便宜50%,但是免费用户不能租

- 内存其实实际运行只消耗2~3G,尽可能少要就好,C2D最低也是cpu:mem=1:2,那没办法只好要16G

- 13GB的标准硬盘、Debian 12 Bookworm镜像

- 开启允许HTTP和HTTPS流量

| [

-0.6667830348014832,

-0.8437572121620178,

0.10491734743118286,

0.35312196612358093,

-0.7337800860404968,

-0.1708202064037323,

-0.3109058737754822,

-0.37520256638526917,

0.16953390836715698,

0.65613853931427,

-0.557284951210022,

-0.611798882484436,

-0.47364601492881775,

0.11713593453168869,... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

gimhanSandeeptha/Medicaljsonl | gimhanSandeeptha | 2023-10-30T05:19:34Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T05:19:34Z | 2023-10-30T05:18:46.000Z | 2023-10-30T05:18:46 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622264862060547,

0.43461528420448303,

-0.52829909324646,

0.7012971639633179,

0.7915720343589783,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104477167129517,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

ranWang/undl_en2zh_translation | ranWang | 2023-10-30T05:58:38Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T05:58:38Z | 2023-10-30T05:34:29.000Z | 2023-10-30T05:34:29 | ---

dataset_info:

features:

- name: clean_en

sequence: string

- name: clean_zh

sequence: string

- name: record

dtype: string

- name: en2zh

sequence: string

splits:

- name: train

num_bytes: 12473072134

num_examples: 165840

download_size: 6289513941

dataset_size: 12473072134

---

# Dataset Card for "undl_en2zh_translation"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.3167443573474884,

-0.09151652455329895,

0.16950419545173645,

0.36505842208862305,

-0.45220747590065,

0.033836059272289276,

-0.013807646930217743,

-0.2699549198150635,

0.4715924859046936,

0.5459027886390686,

-0.7112768292427063,

-0.7964414358139038,

-0.5363811254501343,

-0.00576770678162... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

searchfind/Test_image_classification | searchfind | 2023-10-30T06:35:54Z | 0 | 0 | null | [

"license:apache-2.0",

"region:us"

] | 2023-10-30T06:35:54Z | 2023-10-30T06:32:45.000Z | 2023-10-30T06:32:45 | ---

license: apache-2.0

---

| [

-0.1285335123538971,

-0.1861683875322342,

0.6529128551483154,

0.49436232447624207,

-0.19319400191307068,

0.23607441782951355,

0.36072009801864624,

0.05056373029947281,

0.5793656706809998,

0.7400146722793579,

-0.650810182094574,

-0.23784008622169495,

-0.7102247476577759,

-0.0478255338966846... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

yep-search/LongCacti-quac | yep-search | 2023-10-30T08:23:03Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T08:23:03Z | 2023-10-30T08:22:48.000Z | 2023-10-30T08:22:48 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: dialogue_id

dtype: string

- name: wikipedia_page_title

dtype: string

- name: background

dtype: string

- name: section_title

dtype: string

- name: context

dtype: string

- name: turn_ids

sequence: string

- name: questions

sequence: string

- name: followups

sequence: int64

- name: yesnos

sequence: int64

- name: answers

struct:

- name: answer_starts

sequence:

sequence: int64

- name: texts

sequence:

sequence: string

- name: orig_answers

struct:

- name: answer_starts

sequence: int64

- name: texts

sequence: string

- name: wikipedia_page_text

dtype: string

- name: wikipedia_page_refs

list:

- name: text

dtype: string

- name: title

dtype: string

- name: gpt4_answers

sequence: string

- name: gpt4_answers_consistent_check

sequence: string

splits:

- name: train

num_bytes: 576059175

num_examples: 11567

download_size: 192048023

dataset_size: 576059175

---

# Dataset Card for "LongCacti-quac"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.5558847188949585,

-0.2660042643547058,

0.5666574239730835,

0.30926886200904846,

-0.30591800808906555,

0.17095080018043518,

0.25438350439071655,

-0.35877740383148193,

1.0564632415771484,

0.47970932722091675,

-0.7078215479850769,

-0.8429372310638428,

-0.3837958574295044,

-0.28733602166175... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

classla/COPA-SR_lat | classla | 2023-11-02T09:22:56Z | 0 | 0 | null | [

"task_categories:text-classification",

"size_categories:n<1K",

"language:sr",

"license:cc-by-sa-4.0",

"arxiv:2005.00333",

"region:us"

] | 2023-11-02T09:22:56Z | 2023-10-30T08:33:33.000Z | 2023-10-30T08:33:33 | ---

license: cc-by-sa-4.0

language:

- sr

task_categories:

- text-classification

size_categories:

- n<1K

configs:

- config_name: default

data_files:

- split: train

path: "train.lat.jsonl"

- split: test

path: "test.lat.jsonl"

- split: dev

path: "val.lat.jsonl"

---

# COPA-SR_lat

(The dataset uses latin script. For the original (cyrillic) version, see [this dataset](https://huggingface.co/datasets/classla/COPA-SR).)

The COPA-SR dataset (Choice of plausible alternatives in Serbian) is a translation of the [English COPA dataset ](https://people.ict.usc.edu/~gordon/copa.html) by following the [XCOPA dataset translation methodology ](https://arxiv.org/abs/2005.00333), transliterated into Latin script.

The dataset consists of 1,000 premises (My body cast a shadow over the grass), each given a question (What is the cause? / What happened as a result?), and two choices (The sun was rising; The grass was cut), with a label encoding which of the choices is more plausible given the annotator or translator (The sun was rising).

The dataset follows the same format as the [Croatian COPA-HR dataset ](http://hdl.handle.net/11356/1404) and [Macedonian COPA-MK dataset ](http://hdl.handle.net/11356/1687). It is split into training (400 instances), validation (100 instances) and test (500 instances) JSONL files.

Translation of the dataset was performed by the [ReLDI Centre Belgrade ](https://reldi.spur.uzh.ch/).

# Authors:

* Ljubešić, Nikola

* Starović, Mirjana

* Kuzman, Taja

* Samardžić, Tanja

# Citation information

```

@misc{11356/1708,

title = {Choice of plausible alternatives dataset in Serbian {COPA}-{SR}},

author = {Ljube{\v s}i{\'c}, Nikola and Starovi{\'c}, Mirjana and Kuzman, Taja and Samard{\v z}i{\'c}, Tanja},

url = {http://hdl.handle.net/11356/1708},

note = {Slovenian language resource repository {CLARIN}.{SI}},

copyright = {Creative Commons - Attribution-{ShareAlike} 4.0 International ({CC} {BY}-{SA} 4.0)},

issn = {2820-4042},

year = {2022} }

``` | [

-0.03896191716194153,

-0.49598008394241333,

0.4711547791957855,

0.007056310307234526,

-0.47152912616729736,

0.07682009786367416,

-0.21269181370735168,

-0.556786298751831,

0.4200384020805359,

0.5323848128318787,

-0.8090469241142273,

-0.5694350600242615,

-0.40721842646598816,

0.1544222533702... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

kalomaze/PaperMarioDecomp_1k | kalomaze | 2023-10-30T09:22:06Z | 0 | 0 | null | [

"license:apache-2.0",

"region:us"

] | 2023-10-30T09:22:06Z | 2023-10-30T08:40:17.000Z | 2023-10-30T08:40:17 | ---

license: apache-2.0

---

A subset of MIPS Assembly instructions with matching reverse engineered C code from Paper Mario.

https://github.com/pmret/papermario | [

-0.19519983232021332,

-0.32855719327926636,

0.5755409002304077,

0.219571053981781,

-0.08052965253591537,

0.181087464094162,

0.43753984570503235,

-0.2027483731508255,

0.641436755657196,

0.8569326400756836,

-1.086087942123413,

-0.0876493826508522,

-0.30074071884155273,

-0.08726110309362411,

... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

md-nishat-008/SentMix-3L | md-nishat-008 | 2023-11-08T12:26:02Z | 0 | 0 | null | [

"license:agpl-3.0",

"arxiv:2310.18023",

"region:us"

] | 2023-11-08T12:26:02Z | 2023-10-30T09:19:23.000Z | 2023-10-30T09:19:23 | ---

license: agpl-3.0

---

# SentMix-3L: A Bangla-English-Hindi Code-Mixed Dataset for Sentiment Analysis

**Publication**: *The First Workshop in South East Asian Language Processing Workshop under AACL-2023.*

**Read in [arXiv](https://arxiv.org/pdf/2310.18023.pdf)**

---

## 📖 Introduction

Code-mixing is a well-studied linguistic phenomenon when two or more languages are mixed in text or speech. Several datasets have been built with the goal of training computational models for code-mixing. Although it is very common to observe code-mixing with multiple languages, most datasets available contain code-mixed between only two languages. In this paper, we introduce **SentMix-3L**, a novel dataset for sentiment analysis containing code-mixed data between three languages: Bangla, English, and Hindi. We show that zero-shot prompting with GPT-3.5 outperforms all transformer-based models on SentMix-3L.

---

## 📊 Dataset Details

We introduce **SentMix-3L**, a novel three-language code-mixed test dataset with gold standard labels in Bangla-Hindi-English for the task of Sentiment Analysis, containing 1,007 instances.

> We are presenting this dataset exclusively as a test set due to the unique and specialized nature of the task. Such data is very difficult to gather and requires significant expertise to access. The size of the dataset, while limiting for training purposes, offers a high-quality testing environment with gold-standard labels that can serve as a benchmark in this domain.

---

## 📈 Dataset Statistics

| | **All** | **Bangla** | **English** | **Hindi** | **Other** |

|-------------------|---------|------------|-------------|-----------|-----------|

| Tokens | 89494 | 32133 | 5998 | 15131 | 36232 |

| Types | 19686 | 8167 | 1073 | 1474 | 9092 |

| Max. in instance | 173 | 62 | 20 | 47 | 93 |

| Min. in instance | 41 | 4 | 3 | 2 | 8 |

| Avg | 88.87 | 31.91 | 5.96 | 15.03 | 35.98 |

| Std Dev | 19.19 | 8.39 | 2.94 | 5.81 | 9.70 |

*The row 'Avg' represents the average number of tokens with its standard deviation in row 'Std Dev'.*

---

## 📉 Results

| **Models** | **Weighted F1 Score** |

|---------------|-----------------------|

| GPT 3.5 Turbo | **0.62** |

| XLM-R | 0.59 |

| BanglishBERT | 0.56 |

| mBERT | 0.56 |

| BERT | 0.55 |

| roBERTa | 0.54 |

| MuRIL | 0.54 |

| IndicBERT | 0.53 |

| DistilBERT | 0.53 |

| HindiBERT | 0.48 |

| HingBERT | 0.47 |

| BanglaBERT | 0.47 |

*Weighted F-1 score for different models: training on synthetic, testing on natural data.*

---

## 📝 Citation

If you utilize this dataset, kindly cite our paper.

```bibtex

@article{raihan2023sentmix,

title={SentMix-3L: A Bangla-English-Hindi Code-Mixed Dataset for Sentiment Analysis},

author={Raihan, Md Nishat and Goswami, Dhiman and Mahmud, Antara and Anstasopoulos, Antonios and Zampieri, Marcos},

journal={arXiv preprint arXiv:2310.18023},

year={2023}

}

| [

-0.43511444330215454,

-0.5672920942306519,

0.012886586599051952,

0.667406439781189,

-0.2730027735233307,

0.25202012062072754,

-0.24356438219547272,

-0.34557318687438965,

0.21200983226299286,

0.19396589696407318,

-0.493852436542511,

-0.7816265225410461,

-0.6767232418060303,

0.24350279569625... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Leoku/drug | Leoku | 2023-10-30T10:11:51Z | 0 | 0 | null | [

"license:mit",

"region:us"

] | 2023-10-30T10:11:51Z | 2023-10-30T10:07:03.000Z | 2023-10-30T10:07:03 | ---

license: mit

---

| [

-0.12853392958641052,

-0.18616779148578644,

0.6529127955436707,

0.49436280131340027,

-0.19319361448287964,

0.23607419431209564,

0.36072003841400146,

0.050563063472509384,

0.579365611076355,

0.7400140762329102,

-0.6508104205131531,

-0.23783954977989197,

-0.7102249264717102,

-0.0478260256350... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

rmcpantoja/taco2-checkpoints | rmcpantoja | 2023-11-26T23:16:22Z | 0 | 0 | null | [

"license:bsd-3-clause",

"region:us"

] | 2023-11-26T23:16:22Z | 2023-10-30T11:14:39.000Z | 2023-10-30T11:14:39 | ---

license: bsd-3-clause

---

| [

-0.12853392958641052,

-0.18616779148578644,

0.6529127955436707,

0.49436280131340027,

-0.19319361448287964,

0.23607419431209564,

0.36072003841400146,

0.050563063472509384,

0.579365611076355,

0.7400140762329102,

-0.6508104205131531,

-0.23783954977989197,

-0.7102249264717102,

-0.0478260256350... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

ibizagrowthagency/train | ibizagrowthagency | 2023-11-01T14:39:58Z | 0 | 0 | null | [

"region:us"

] | 2023-11-01T14:39:58Z | 2023-10-30T11:16:05.000Z | 2023-10-30T11:16:05 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype:

class_label:

names:

'0': Aquarell Tattoos

'1': Bedeutung der Tribal Tattoos

'2': Blackwork Tattoo

'3': Building

'4': Cover-Up Tattoo

'5': Dotwork Tattoos

'6': Fineline Tattoos

'7': Geschiche der Maori Tattoos

'8': Japanische Tattoos in Leipzig

'9': Narben Tattoo

'10': Portrait Tattoos

'11': Poster

'12': Realistic Tattoos

'13': Totenkopf Tattoos

'14': Trashpolka Tattoos

'15': Tribal Tattoo

'16': Wikinger Tattoos

splits:

- name: train

num_bytes: 6665820.160194174

num_examples: 175

- name: test

num_bytes: 1297030.8398058251

num_examples: 31

download_size: 7953806

dataset_size: 7962851.0

---

# Dataset Card for "train"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.6341153383255005,

0.040702350437641144,

0.17027536034584045,

0.30861416459083557,

-0.09620492160320282,

-0.05674801021814346,

0.20394599437713623,

-0.1600799560546875,

0.7688670754432678,

0.327614963054657,

-0.9409077167510986,

-0.5018333196640015,

-0.6181047558784485,

-0.34643167257308... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Phando/vision-flan_191-task_1k | Phando | 2023-10-30T12:28:05Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T12:28:05Z | 2023-10-30T12:07:33.000Z | 2023-10-30T12:07:33 | ---

dataset_info:

features:

- name: id

dtype: string

- name: image

dtype: image

- name: task_name

dtype: string

- name: instruction

dtype: string

- name: response

dtype: string

splits:

- name: train

num_bytes: 33215298748.003

num_examples: 186103

download_size: 36889036585

dataset_size: 33215298748.003

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "vision-flan_191-task_1k"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.5235763192176819,

-0.09599412232637405,

0.12178028374910355,

0.17943286895751953,

-0.3504790961742401,

-0.3009777069091797,

0.2888500690460205,

-0.29525840282440186,

0.9850719571113586,

0.7273213863372803,

-1.0436952114105225,

-0.6465086340904236,

-0.6609883904457092,

-0.341853350400924... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

jxu124/refclef-benchmark | jxu124 | 2023-10-30T13:28:06Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T13:28:06Z | 2023-10-30T13:24:55.000Z | 2023-10-30T13:24:55 | ---

configs:

- config_name: default

data_files:

- split: refclef_unc_val

path: data/refclef_unc_val-*

- split: refclef_unc_testA

path: data/refclef_unc_testA-*

- split: refclef_unc_testB

path: data/refclef_unc_testB-*

- split: refclef_unc_testC

path: data/refclef_unc_testC-*

- split: refclef_berkeley_val

path: data/refclef_berkeley_val-*

- split: refclef_berkeley_test

path: data/refclef_berkeley_test-*

dataset_info:

features:

- name: ref_list

list:

- name: ann_info

struct:

- name: area

dtype: int64

- name: bbox

sequence: float64

- name: category_id

dtype: int64

- name: id

dtype: string

- name: image_id

dtype: int64

- name: mask_name

dtype: string

- name: segmentation

list:

- name: counts

dtype: string

- name: size

sequence: int64

- name: ref_info

struct:

- name: ann_id

dtype: string

- name: category_id

dtype: int64

- name: image_id

dtype: int64

- name: ref_id

dtype: int64

- name: sent_ids

sequence: int64

- name: sentences

list:

- name: raw

dtype: string

- name: sent

dtype: string

- name: sent_id

dtype: int64

- name: tokens

sequence: string

- name: split

dtype: string

- name: image_info

struct:

- name: file_name

dtype: string

- name: height

dtype: int64

- name: id

dtype: int64

- name: width

dtype: int64

- name: image

dtype: image

splits:

- name: refclef_unc_val

num_bytes: 176315268.0

num_examples: 2000

- name: refclef_unc_testA

num_bytes: 38748729.0

num_examples: 485

- name: refclef_unc_testB

num_bytes: 41495038.0

num_examples: 490

- name: refclef_unc_testC

num_bytes: 37159288.0

num_examples: 465

- name: refclef_berkeley_val

num_bytes: 90320401.0

num_examples: 1000

- name: refclef_berkeley_test

num_bytes: 889898825.642

num_examples: 9999

download_size: 1256485050

dataset_size: 1273937549.642

---

# Dataset Card for "refclef-benchmark"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.7835304737091064,

-0.0925966277718544,

0.15902890264987946,

0.13186578452587128,

-0.2679632604122162,

-0.18892714381217957,

0.2547675669193268,

-0.33129289746284485,

0.5763798356056213,

0.5060961842536926,

-0.8969134092330933,

-0.5212424397468567,

-0.34763962030410767,

0.035826526582241... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

eno777/babab | eno777 | 2023-10-30T14:01:06Z | 0 | 0 | null | [

"license:openrail",

"region:us"

] | 2023-10-30T14:01:06Z | 2023-10-30T14:00:41.000Z | 2023-10-30T14:00:41 | ---

license: openrail

---

| [

-0.1285335123538971,

-0.1861683875322342,

0.6529128551483154,

0.49436232447624207,

-0.19319400191307068,

0.23607441782951355,

0.36072009801864624,

0.05056373029947281,

0.5793656706809998,

0.7400146722793579,

-0.650810182094574,

-0.23784008622169495,

-0.7102247476577759,

-0.0478255338966846... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

ISCA-IUB/GermanLanguageTwitterAntisemitism | ISCA-IUB | 2023-11-13T08:56:44Z | 0 | 0 | null | [

"language:de",

"twitter",

"X",

"hate speech",

"antisemitism",

"machine learning",

"juden",

"israel",

"region:us"

] | 2023-11-13T08:56:44Z | 2023-10-30T14:09:13.000Z | 2023-10-30T14:09:13 | ---

language:

- de

tags:

- twitter

- X

- hate speech

- antisemitism

- machine learning

- juden

- israel

pretty_name: German Language Antisemitism on Twitter

---

# A German Language Labeled Dataset of Tweets

Gunther Jikeli, Sameer Karali, Daniel Miehling and Katharina Soemer

{gjikeli, skarali, damieh, ksoemer}@iu.edu

## Description

Our dataset contains 8,048 German language tweets related to Jewish life from a four-year timespan.

The dataset consists of 18 samples of tweets with the keyword “Juden” or “Israel.” The samples are representative samples of all live tweets (at the time of sampling) with these keywords respectively over the indicated time period. Each sample was annotated by two expert annotators using an Annotation Portal that visualizes the live tweets in context. We provide the annotation results based on the agreement of two annotators, after discussing discrepancies (Jikeli et al. 2022: 3-6).

Overall, 335 tweets (4%) were labelled as antisemitic following the IHRA Working Definition of Antisemitism. 1345 tweets (17 %) come from 2019, 1364 tweets (17 %) from 2020, 2639 tweets (33 %) from 2021 and 2700 tweets (34 %) from 2022.

About half of the tweets, a total of 4,493 tweets (56 %) come from queries with the keyword “Juden,” which is representative of a continuous time period from January 2019 to December 2022: 864 tweets (19 %) come from 2019, 891 tweets (20 %) from 2020, 1364 tweets (30 %) from 2021 and 1374 (31 %). 148 out of the 4493 tweets, so 3% from the query with “Juden” are antisemitic.

The other part of the tweets, a total of 3,555 (44 %) results of queries with the keyword “Israel”. 481 tweets (14 %) of the keywords containing Israel stem from 2019, 473 (13 %) come from 2020, 1275 tweets (36 %) from 2021 and 1326 tweets (37 %) are from 2022. Out of all tweets from the “Israel” query, 187 (5 %) are antisemitic.

The csv file contains diacritics and special characters of the German language (e.g., “ä”, “ü”, “ö”, “ß”), which should be taken into account when opening it with anything other than a text editor.

## References

Günther Jikeli, David Axelrod, Rhonda K. Fischer, Elham Forouzesh, Weejeong Jeong, Daniel Miehling, Katharina Soemer (2022): Differences between antisemitic and non-antisemitic English language tweets. Computational and Mathematical Organization Theory

## Acknowledgements

This work used Jetstream2 at Indiana University through allocation HUM200003 from the Advanced Cyberinfrastructure Coordination Ecosystem: Services & Support (ACCESS) program, which is supported by National Science Foundation grants #2138259, #2138286, #2138307, #2137603, and #2138296.

We are grateful for the support of Indiana University’s Observatory on Social Media (OSoMe) (Davis et al. 2016) and the contributions and annotations of all team members in our Social Media & Hate Research Lab at Indiana University’s Institute for the Study of Contemporary Antisemitism, especially Grace Bland, Elisha S. Breton, Kathryn Cooper, Robin Forstenhäusler, Sophie von Máriássy, Mabel Poindexter, Jenna Solomon, Clara Schilling, Emma Shriberg and Victor Tschiskale. | [

-0.48086175322532654,

-0.8224248886108398,

0.19138433039188385,

-0.01859930157661438,

-0.4425269067287445,

0.2768707573413849,

-0.14078393578529358,

-0.5353142023086548,

0.9023574590682983,

0.22326521575450897,

-0.41976892948150635,

-0.7469180226325989,

-0.9589449763298035,

0.0056515792384... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

MichaelVeser/opensecurity-axolotl | MichaelVeser | 2023-10-30T14:19:23Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T14:19:23Z | 2023-10-30T14:19:10.000Z | 2023-10-30T14:19:10 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622264862060547,

0.43461528420448303,

-0.52829909324646,

0.7012971639633179,

0.7915720343589783,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104477167129517,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

varuntrivedi/trial_dataset | varuntrivedi | 2023-10-30T14:28:01Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T14:28:01Z | 2023-10-30T14:26:26.000Z | 2023-10-30T14:26:26 | [

{

"id": "ner_gpt_0",

"conversations": [

[

{ "from": "human",

"value": "Text: The impact of climate change on marine ecosystems and biodiversity is a pressing concern. Rising sea temperatures, ocean acidification, and changing currents have significant effects on marine life. Researchers are studying the adaptation mechanisms of various species to these environmental changes." },

{ "from": "gpt", "value": "I've read this text." },

{ "from": "human", "value": "What describes the main focus of the text?" },

{ "from": "gpt", "value": "[\"impact of climate change on marine ecosystems and biodiversity\"]" },

{ "from": "human", "value": "What describes environmental factors in the text?" },

{ "from": "gpt", "value": "[\"rising sea temperatures\", \"ocean acidification\", \"changing currents\"]" },

{ "from": "human", "value": "What describes the research subject in the text?" },

{ "from": "gpt", "value": "[\"adaptation mechanisms of various species\"]" },

{ "from": "human", "value": "What describes the time frame in the text?" },

{ "from": "gpt", "value": "[]" }

]

]

}

] | [

-0.49838319420814514,

-0.5454192757606506,

0.5204375982284546,

-0.13945280015468597,

-0.9226728677749634,

0.16271738708019257,

-0.04223962128162384,

-0.06141626834869385,

0.4689624309539795,

0.8926569223403931,

-0.7125685811042786,

-0.6686069369316101,

-0.5925055146217346,

0.54537320137023... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Buggy23/colegio | Buggy23 | 2023-10-30T14:40:27Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T14:40:27Z | 2023-10-30T14:37:44.000Z | 2023-10-30T14:37:44 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622264862060547,

0.43461528420448303,

-0.52829909324646,

0.7012971639633179,

0.7915720343589783,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104477167129517,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Almost-AGI-Diffusion/kand2 | Almost-AGI-Diffusion | 2023-10-30T14:49:24Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T14:49:24Z | 2023-10-30T14:42:57.000Z | 2023-10-30T14:42:57 | ---

dataset_info:

features:

- name: Prompt

dtype: string

- name: Category

dtype: string

- name: Challenge

dtype: string

- name: Note

dtype: string

- name: images

dtype: image

- name: model_name

dtype: string

- name: seed

dtype: int64

- name: upvotes

dtype: int64

splits:

- name: train

num_bytes: 21708501.0

num_examples: 219

download_size: 21693707

dataset_size: 21708501.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Kandinksy 2.2

All images included in this dataset were voted as "Not solved" by the community in https://huggingface.co/spaces/OpenGenAI/open-parti-prompts. This means that according to the community the model did not generate an image that corresponds sufficiently enough to the prompt.

The following script was used to generate the images:

```py

import PIL

import torch

from datasets import Dataset, Features

from datasets import Image as ImageFeature

from datasets import Value, load_dataset

from diffusers import DiffusionPipeline

def main():

print("Loading dataset...")

parti_prompts = load_dataset("nateraw/parti-prompts", split="train")

print("Loading pipeline...")

pipe_prior = DiffusionPipeline.from_pretrained(

"kandinsky-community/kandinsky-2-2-prior", torch_dtype=torch.float16

)

pipe_prior.to("cuda")

pipe_prior.set_progress_bar_config(disable=True)

t2i_pipe = DiffusionPipeline.from_pretrained(

"kandinsky-community/kandinsky-2-2-decoder", torch_dtype=torch.float16

)

t2i_pipe.to("cuda")

t2i_pipe.set_progress_bar_config(disable=True)

seed = 0

generator = torch.Generator("cuda").manual_seed(seed)

ckpt_id = (

"kandinsky-community/" + "kandinsky-2-2-prior" + "_" + "kandinsky-2-2-decoder"

)

print("Running inference...")

main_dict = {}

for i in range(len(parti_prompts)):

sample = parti_prompts[i]

prompt = sample["Prompt"]

image_embeds, negative_image_embeds = pipe_prior(

prompt,

generator=generator,

num_inference_steps=100,

guidance_scale=7.5,

).to_tuple()

image = t2i_pipe(

image_embeds=image_embeds,

negative_image_embeds=negative_image_embeds,

generator=generator,

num_inference_steps=100,

guidance_scale=7.5,

).images[0]

image = image.resize((256, 256), resample=PIL.Image.Resampling.LANCZOS)

img_path = f"kandinsky_22_{i}.png"

image.save(img_path)

main_dict.update(

{

prompt: {

"img_path": img_path,

"Category": sample["Category"],

"Challenge": sample["Challenge"],

"Note": sample["Note"],

"model_name": ckpt_id,

"seed": seed,

}

}

)

def generation_fn():

for prompt in main_dict:

prompt_entry = main_dict[prompt]

yield {

"Prompt": prompt,

"Category": prompt_entry["Category"],

"Challenge": prompt_entry["Challenge"],

"Note": prompt_entry["Note"],

"images": {"path": prompt_entry["img_path"]},

"model_name": prompt_entry["model_name"],

"seed": prompt_entry["seed"],

}

print("Preparing HF dataset...")

ds = Dataset.from_generator(

generation_fn,

features=Features(

Prompt=Value("string"),

Category=Value("string"),

Challenge=Value("string"),

Note=Value("string"),

images=ImageFeature(),

model_name=Value("string"),

seed=Value("int64"),

),

)

ds_id = "diffusers-parti-prompts/kandinsky-2-2"

ds.push_to_hub(ds_id)

if __name__ == "__main__":

main()

``` | [

-0.38658666610717773,

-0.4149981737136841,

0.5292077660560608,

0.141149640083313,

-0.324416846036911,

-0.17320941388607025,

-0.011328685097396374,

-0.04029078409075737,

-0.045349035412073135,

0.4130818545818329,

-0.885809600353241,

-0.6590896844863892,

-0.5180893540382385,

0.09085967391729... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Almost-AGI-Diffusion/sdxl | Almost-AGI-Diffusion | 2023-10-30T14:46:58Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T14:46:58Z | 2023-10-30T14:43:04.000Z | 2023-10-30T14:43:04 | ---

dataset_info:

features:

- name: Prompt

dtype: string

- name: Category

dtype: string

- name: Challenge

dtype: string

- name: Note

dtype: string

- name: images

dtype: image

- name: model_name

dtype: string

- name: seed

dtype: int64

- name: upvotes

dtype: int64

splits:

- name: train

num_bytes: 25650684.0

num_examples: 219

download_size: 25640015

dataset_size: 25650684.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# SDXL

All images included in this dataset were voted as "Not solved" by the community in https://huggingface.co/spaces/OpenGenAI/open-parti-prompts.

This means that according to the community the model did not generate an image that corresponds sufficiently enough to the prompt.

The following script was used to generate the images:

```py

import torch

from datasets import Dataset, Features

from datasets import Image as ImageFeature

from datasets import Value, load_dataset

from diffusers import DDIMScheduler, DiffusionPipeline

import PIL

def main():

print("Loading dataset...")

parti_prompts = load_dataset("nateraw/parti-prompts", split="train")

print("Loading pipeline...")

ckpt_id = "stabilityai/stable-diffusion-xl-base-1.0"

refiner_ckpt_id = "stabilityai/stable-diffusion-xl-refiner-1.0"

pipe = DiffusionPipeline.from_pretrained(

ckpt_id, torch_dtype=torch.float16, use_auth_token=True

).to("cuda")

pipe.scheduler = DDIMScheduler.from_config(pipe.scheduler.config)

pipe.set_progress_bar_config(disable=True)

refiner = DiffusionPipeline.from_pretrained(

refiner_ckpt_id,

torch_dtype=torch.float16,

use_auth_token=True

).to("cuda")

refiner.scheduler = DDIMScheduler.from_config(refiner.scheduler.config)

refiner.set_progress_bar_config(disable=True)

seed = 0

generator = torch.Generator("cuda").manual_seed(seed)

print("Running inference...")

main_dict = {}

for i in range(len(parti_prompts)):

sample = parti_prompts[i]

prompt = sample["Prompt"]

latent = pipe(

prompt,

generator=generator,

num_inference_steps=100,

guidance_scale=7.5,

output_type="latent",

).images[0]

image_refined = refiner(

prompt=prompt,

image=latent[None, :],

generator=generator,

num_inference_steps=100,

guidance_scale=7.5,

).images[0]

image = image_refined.resize((256, 256), resample=PIL.Image.Resampling.LANCZOS)

img_path = f"sd_xl_{i}.png"

image.save(img_path)

main_dict.update(

{

prompt: {

"img_path": img_path,

"Category": sample["Category"],

"Challenge": sample["Challenge"],

"Note": sample["Note"],

"model_name": ckpt_id,

"seed": seed,

}

}

)

def generation_fn():

for prompt in main_dict:

prompt_entry = main_dict[prompt]

yield {

"Prompt": prompt,

"Category": prompt_entry["Category"],

"Challenge": prompt_entry["Challenge"],

"Note": prompt_entry["Note"],

"images": {"path": prompt_entry["img_path"]},

"model_name": prompt_entry["model_name"],

"seed": prompt_entry["seed"],

}

print("Preparing HF dataset...")

ds = Dataset.from_generator(

generation_fn,

features=Features(

Prompt=Value("string"),

Category=Value("string"),

Challenge=Value("string"),

Note=Value("string"),

images=ImageFeature(),

model_name=Value("string"),

seed=Value("int64"),

),

)

ds_id = "diffusers-parti-prompts/sdxl-1.0-refiner"

ds.push_to_hub(ds_id)

if __name__ == "__main__":

main()

``` | [

-0.4820316433906555,

-0.3758489787578583,

0.6965538263320923,

0.18194037675857544,

-0.258565217256546,

-0.19217780232429504,

0.06208128109574318,

0.03132057934999466,

-0.031654633581638336,

0.5435617566108704,

-0.9338095188140869,

-0.6228287816047668,

-0.5329431891441345,

0.170590654015541... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Almost-AGI-Diffusion/wuerst | Almost-AGI-Diffusion | 2023-10-30T14:50:04Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T14:50:04Z | 2023-10-30T14:43:10.000Z | 2023-10-30T14:43:10 | ---

dataset_info:

features:

- name: Prompt

dtype: string

- name: Category

dtype: string

- name: Challenge

dtype: string

- name: Note

dtype: string

- name: images

dtype: image

- name: model_name

dtype: string

- name: seed

dtype: int64

- name: upvotes

dtype: int64

splits:

- name: train

num_bytes: 19633368.0

num_examples: 219

download_size: 19625614

dataset_size: 19633368.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Wuerstchen

All images included in this dataset were voted as "Not solved" by the community in https://huggingface.co/spaces/OpenGenAI/open-parti-prompts. This means that according to the community the model did not generate an image that corresponds sufficiently enough to the prompt.

The following script was used to generate the images:

```py

import torch

from datasets import Dataset, Features

from datasets import Image as ImageFeature

from datasets import Value, load_dataset

from diffusers import AutoPipelineForText2Image

import PIL

def main():

print("Loading dataset...")

parti_prompts = load_dataset("nateraw/parti-prompts", split="train")

print("Loading pipeline...")

seed = 0

device = "cuda"

generator = torch.Generator(device).manual_seed(seed)

dtype = torch.float16

ckpt_id = "warp-diffusion/wuerstchen"

pipeline = AutoPipelineForText2Image.from_pretrained(

ckpt_id, torch_dtype=dtype

).to(device)

pipeline.prior_prior = torch.compile(pipeline.prior_prior, mode="reduce-overhead", fullgraph=True)

pipeline.decoder = torch.compile(pipeline.decoder, mode="reduce-overhead", fullgraph=True)

print("Running inference...")

main_dict = {}

for i in range(len(parti_prompts)):

sample = parti_prompts[i]

prompt = sample["Prompt"]

image = pipeline(

prompt=prompt,

height=1024,

width=1024,

prior_guidance_scale=4.0,

decoder_guidance_scale=0.0,

generator=generator,

).images[0]

image = image.resize((256, 256), resample=PIL.Image.Resampling.LANCZOS)

img_path = f"wuerstchen_{i}.png"

image.save(img_path)

main_dict.update(

{

prompt: {

"img_path": img_path,

"Category": sample["Category"],

"Challenge": sample["Challenge"],

"Note": sample["Note"],

"model_name": ckpt_id,

"seed": seed,

}

}

)

def generation_fn():

for prompt in main_dict:

prompt_entry = main_dict[prompt]

yield {

"Prompt": prompt,

"Category": prompt_entry["Category"],

"Challenge": prompt_entry["Challenge"],

"Note": prompt_entry["Note"],

"images": {"path": prompt_entry["img_path"]},

"model_name": prompt_entry["model_name"],

"seed": prompt_entry["seed"],

}

print("Preparing HF dataset...")

ds = Dataset.from_generator(

generation_fn,

features=Features(

Prompt=Value("string"),

Category=Value("string"),

Challenge=Value("string"),

Note=Value("string"),

images=ImageFeature(),

model_name=Value("string"),

seed=Value("int64"),

),

)

ds_id = "diffusers-parti-prompts/wuerstchen"

ds.push_to_hub(ds_id)

if __name__ == "__main__":

main()

``` | [

-0.4908706545829773,

-0.30975261330604553,

0.4390822649002075,

0.1890379637479782,

-0.303903728723526,

-0.3738420605659485,

-0.006912183947861195,

-0.10675285011529922,

-0.043013520538806915,

0.38071808218955994,

-0.9544967412948608,

-0.5245521068572998,

-0.5272974371910095,

0.174387618899... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Almost-AGI-Diffusion/karlo | Almost-AGI-Diffusion | 2023-10-30T14:48:09Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T14:48:09Z | 2023-10-30T14:43:16.000Z | 2023-10-30T14:43:16 | ---

dataset_info:

features:

- name: Prompt

dtype: string

- name: Category

dtype: string

- name: Challenge

dtype: string

- name: Note

dtype: string

- name: images

dtype: image

- name: model_name

dtype: string

- name: seed

dtype: int64

- name: upvotes

dtype: int64

splits:

- name: train

num_bytes: 20834626.0

num_examples: 219

download_size: 20825015

dataset_size: 20834626.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Karlo

All images included in this dataset were voted as "Not solved" by the community in https://huggingface.co/spaces/OpenGenAI/open-parti-prompts.

This means that according to the community the model did not generate an image that corresponds sufficiently enough to the prompt.

The following script was used to generate the images:

```py

from diffusers import DiffusionPipeline

import torch

pipe = DiffusionPipeline.from_pretrained("kakaobrain/karlo-v1-alpha", torch_dtype=torch.float16)

pipe.to("cuda")

prompt = "" # a parti prompt

generator = torch.Generator("cuda").manual_seed(0)

image = pipe(prompt, prior_num_inference_steps=50, decoder_num_inference_steps=100, generator=generator).images[0]

``` | [

-0.4578472375869751,

-0.28453031182289124,

0.7394711375236511,

0.305819571018219,

-0.5860678553581238,

-0.3823627233505249,

0.15059007704257965,

-0.08108039945363998,

0.2959766685962677,

0.3480220437049866,

-0.9563478827476501,

-0.735800564289093,

-0.6897476315498352,

0.5335032343864441,

... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

matheushmart/cantores | matheushmart | 2023-10-30T16:57:19Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T16:57:19Z | 2023-10-30T14:57:50.000Z | 2023-10-30T14:57:50 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622264862060547,

0.43461528420448303,

-0.52829909324646,

0.7012971639633179,

0.7915720343589783,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104477167129517,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Abdou/dz-sentiment-yt-comments | Abdou | 2023-11-06T10:49:24Z | 0 | 0 | null | [

"task_categories:text-classification",

"size_categories:10K<n<100K",

"language:ar",

"license:mit",

"region:us"

] | 2023-11-06T10:49:24Z | 2023-10-30T15:07:21.000Z | 2023-10-30T15:07:21 | ---

license: mit

task_categories:

- text-classification

language:

- ar

size_categories:

- 10K<n<100K

---

# A Sentiment Analysis Dataset for the Algerian Dialect of Arabic

This dataset consists of 50,016 samples of comments extracted from Algerian YouTube channels. It is manually annotated with 3 classes (the `label` column) and is not balanced. Here are the number of rows of each class:

- 0 (Negative): **17,033 (34.06%)**

- 1 (Neutral): **11,136 (22.26%)**

- 2 (Positive): **21,847 (43.68%)**

Please note that there are some swear words in the dataset, so please use it with caution.

# Citation

If you find our work useful, please cite it as follows:

```bibtex

@article{2023,

title={Sentiment Analysis on Algerian Dialect with Transformers},

author={Zakaria Benmounah and Abdennour Boulesnane and Abdeladim Fadheli and Mustapha Khial},

journal={Applied Sciences},

volume={13},

number={20},

pages={11157},

year={2023},

month={Oct},

publisher={MDPI AG},

DOI={10.3390/app132011157},

ISSN={2076-3417},

url={http://dx.doi.org/10.3390/app132011157}

}

```

| [

-0.9120850563049316,

-0.17510458827018738,

0.06501813977956772,

0.6065667867660522,

-0.16853436827659607,

-0.07739881426095963,

-0.18296143412590027,

-0.1696958839893341,

0.42664164304733276,

0.5253294706344604,

-0.5396180152893066,

-0.9098926782608032,

-0.9038020968437195,

0.2768806815147... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

snyamson/covid-tweet-sentiment-analyzer-distilbert-data | snyamson | 2023-10-30T15:42:25Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T15:42:25Z | 2023-10-30T15:42:22.000Z | 2023-10-30T15:42:22 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: val

path: data/val-*

dataset_info:

features:

- name: input_ids

sequence: int32

- name: attention_mask

sequence: int8

- name: labels

dtype: int64

splits:

- name: train

num_bytes: 10366704

num_examples: 7999

- name: val

num_bytes: 2592000

num_examples: 2000

download_size: 514530

dataset_size: 12958704

---

# Dataset Card for "covid-tweet-sentiment-analyzer-distilbert-data"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.41189834475517273,

-0.4009919762611389,

0.06004710495471954,

0.46275293827056885,

-0.4077145457267761,

0.3941919505596161,

0.1879805326461792,

0.0776115208864212,

0.8249334096908569,

-0.1211545541882515,

-0.9397889971733093,

-0.9124382734298706,

-0.8358752727508545,

-0.3160761892795563,... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

316usman/test_1 | 316usman | 2023-10-30T20:07:47Z | 0 | 0 | null | [

"license:bsd",

"region:us"

] | 2023-10-30T20:07:47Z | 2023-10-30T16:24:32.000Z | 2023-10-30T16:24:32 | ---

license: bsd

dataset_info:

features:

- name: '0'

dtype: string

- name: '1'

dtype: string

splits:

- name: train01

num_bytes: 1168

num_examples: 1

download_size: 8850

dataset_size: 1168

configs:

- config_name: default

data_files:

- split: train01

path: data/train01-*

---

| [

-0.12853392958641052,

-0.18616779148578644,

0.6529127955436707,

0.49436280131340027,

-0.19319361448287964,

0.23607419431209564,

0.36072003841400146,

0.050563063472509384,

0.579365611076355,

0.7400140762329102,

-0.6508104205131531,

-0.23783954977989197,

-0.7102249264717102,

-0.0478260256350... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

kheopsai/mise_dem | kheopsai | 2023-10-30T16:59:52Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T16:59:52Z | 2023-10-30T16:59:09.000Z | 2023-10-30T16:59:09 | Entry not found | [

-0.32276472449302673,

-0.22568407654762268,

0.8622258901596069,

0.4346148371696472,

-0.5282984972000122,

0.7012965679168701,

0.7915717363357544,

0.07618629932403564,

0.7746022939682007,

0.2563222646713257,

-0.785281777381897,

-0.22573848068714142,

-0.9104482531547546,

0.5715669393539429,

... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

asoria/bluey | asoria | 2023-10-31T12:56:27Z | 0 | 0 | null | [

"region:us"

] | 2023-10-31T12:56:27Z | 2023-10-30T17:07:57.000Z | 2023-10-30T17:07:57 | Entry not found | [

-0.32276472449302673,

-0.22568407654762268,

0.8622258901596069,

0.4346148371696472,

-0.5282984972000122,

0.7012965679168701,

0.7915717363357544,

0.07618629932403564,

0.7746022939682007,

0.2563222646713257,

-0.785281777381897,

-0.22573848068714142,

-0.9104482531547546,

0.5715669393539429,

... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

davanstrien/autotrain-data-new-datasets | davanstrien | 2023-10-30T17:10:24Z | 0 | 0 | null | [

"task_categories:text-classification",

"language:en",

"arxiv:2206.02421",

"arxiv:2212.00851",

"region:us"

] | 2023-10-30T17:10:24Z | 2023-10-30T17:09:21.000Z | 2023-10-30T17:09:21 | Invalid username or password. | [

0.22538813948631287,

-0.8998719453811646,

0.4273532032966614,

0.01545056700706482,

-0.07883036881685257,

0.6044343113899231,

0.6795741319656372,

0.07246866822242737,

0.20425251126289368,

0.8107712864875793,

-0.7993434071540833,

0.2074914574623108,

-0.9463866949081421,

0.3846413493156433,

... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

jxu124/refering_expression | jxu124 | 2023-10-31T09:15:19Z | 0 | 0 | null | [

"region:us"

] | 2023-10-31T09:15:19Z | 2023-10-30T17:14:51.000Z | 2023-10-30T17:14:51 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622263669967651,

0.43461522459983826,

-0.52829909324646,

0.7012971639633179,

0.7915719747543335,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104475975036621,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

GEO-Optim/geo-bench | GEO-Optim | 2023-11-02T23:44:53Z | 0 | 0 | null | [

"size_categories:1K<n<10K",

"language:en",

"license:cc-by-sa-4.0",

"region:us"

] | 2023-11-02T23:44:53Z | 2023-10-30T17:38:56.000Z | 2023-10-30T17:38:56 | ---

license: cc-by-sa-4.0

size_categories:

- 1K<n<10K

language:

- en

pretty_name: GEO-bench

---

# Geo-Bench

## Description

Geo-Bench is a comprehensive benchmark dataset designed for evaluating content optimization methods and Generative Engines. It consists of 10,000 queries sourced from multiple real-world and synthetically generated queries, specifically curated and repurposed for generative engines. The benchmark includes queries from nine different sources, each further categorized based on their target domain, difficulty level, query intent, and other dimensions.

## Usage

You can easily load and use Geo-Bench in Python using the `datasets` library:

```python

import datasets

# Load Geo-Bench

dataset = datasets.load_dataset("Pranjal2041/geo-bench")

```

## Data Source

Geo-Bench is a compilation of queries from various sources, both real and synthetically generated, to create a benchmark tailored for generative engines. The datasets used in constructing Geo-Bench are as follows:

1. **MS Macro, 2. ORCAS-1, and 3. Natural Questions:** These datasets contain real anonymized user queries from Bing and Google Search Engines, collectively representing common datasets used in search engine-related research.

4. **AIISouls:** This dataset contains essay questions from "All Souls College, Oxford University," challenging generative engines to perform reasoning and aggregate information from multiple sources.

5. **LIMA:** Contains challenging questions requiring generative engines to not only aggregate information but also perform suitable reasoning to answer the question, such as writing short poems or generating Python code.

6. **Davinci-Debate:** Contains debate questions generated for testing generative engines.

7. **Perplexity.ai Discover:** These queries are sourced from Perplexity.ai's Discover section, an updated list of trending queries on the platform.

8. **EII-5:** This dataset contains questions from the ELIS subreddit, where users ask complex questions and expect answers in simple, layman terms.

9. **GPT-4 Generated Queries:** To supplement diversity in query distribution, GPT-4 is prompted to generate queries ranging from various domains (e.g., science, history) and based on query intent (e.g., navigational, transactional) and difficulty levels (e.g., open-ended, fact-based).

Apart from queries, we also provide 5 cleaned html responses based on top Google search results.

## Tags

Optimizing website content often requires making targeted changes based on the domain of the task. Further, a user of GENERATIVE ENGINE OPTIMIZATION may need to find an appropriate method for only a subset of queries based on multiple factors, such as domain, user intent, query nature. To this end, we tag each of the queries based on a pool of 7 different categories. For tagging, we use the GPT-4 model and manually confirm high recall and precision in tagging. However, owing to such an automated system, the tags can be noisy and should not be considered as the sole basis for filtering or analysis.

### Difficulty Level

- The complexity of the query, ranging from simple to complex.

- Example of a simple query: "What is the capital of France?"

- Example of a complex query: "What are the implications of the Schrödinger equation in quantum mechanics?"

### Nature of Query

- The type of information sought by the query, such as factual, opinion, or comparison.

- Example of a factual query: "How does a car engine work?"

- Example of an opinion query: "What is your opinion on the Harry Potter series?"

### Genre

- The category or domain of the query, such as arts and entertainment, finance, or science.

- Example of a query in the arts and entertainment genre: "Who won the Oscar for Best Picture in 2020?"

- Example of a query in the finance genre: "What is the current exchange rate between the Euro and the US Dollar?"

### Specific Topics

- The specific subject matter of the query, such as physics, economics, or computer science.

- Example of a query on a specific topic in physics: "What is the theory of relativity?"

- Example of a query on a specific topic in economics: "What is the law of supply and demand?"

### Sensitivity

- Whether the query involves sensitive topics or not.

- Example of a non-sensitive query: "What is the tallest mountain in the world?"

- Example of a sensitive query: "What is the current political situation in North Korea?"

### User Intent

- The purpose behind the user's query, such as research, purchase, or entertainment.

- Example of a research intent query: "What are the health benefits of a vegetarian diet?"

- Example of a purchase intent query: "Where can I buy the latest iPhone?"

### Answer Type

- The format of the answer that the query is seeking, such as fact, opinion, or list.

- Example of a fact answer type query: "What is the population of New York City?"

- Example of an opinion answer type query: "Is it better to buy or rent a house?"

## Additional Information

Geo-Bench is intended for research purposes and provides valuable insights into the challenges and opportunities of content optimization for generative engines. Please refer to the [GEO paper](https://arxiv.org/abs/2310.18xxx) for more details.

---

## Data Examples

### Example 1

```json

{

"query": "Why is the smell of rain pleasing?",

"tags": ['informational', 'simple', 'non-technical', 'science', 'research', 'non-sensitive'],

"sources": List[str],

}

```

### Example 2

```json

{

"query": "Can foxes be domesticated?",

"tags": ['informational', 'non-technical', 'pets and animals', 'fact', 'non-sensitive'],

"sources": List[str],

}

```

---

## License

Geo-Bench is released under the [CC BY-NC-SA 4.0](https://creativecommons.org/licenses/by-nc-sa/4.0/) license.

## Dataset Size

The dataset contains 8K queries for train, 1k queries for val and 1k for tesst.

---

## Contributions

We welcome contributions and feedback to improve Geo-Bench. You can contribute by reporting issues or submitting improvements through the [GitHub repository](https://github.com/Pranjal2041/GEO/tree/main/GEO-Bench).

## How to Cite

When using Geo-Bench in your work, please include a proper citation. You can use the following citation as a reference:

```

@misc{Aggarwal2023geo,

title={{GEO}: Generative Engine Optimization},

author={Pranjal Aggarwal and Vishvak Murahari and Tanmay Rajpurohit and Ashwin Kalyan and Karthik R Narasimhan and Ameet Deshpande},

year={2023},

eprint={2310.18xxx},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

``` | [

-0.7659387588500977,

-0.9767630100250244,

0.5713220834732056,

0.28943389654159546,

-0.18407675623893738,

-0.16506639122962952,

-0.20519548654556274,

-0.11986292153596878,

0.040207501500844955,

0.27252399921417236,

-0.6817114949226379,

-0.8318226933479309,

-0.2825992703437805,

0.10129710286... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

davanstrien/autotrain-data-new-datasets-2 | davanstrien | 2023-10-30T18:09:00Z | 0 | 0 | null | [

"task_categories:text-classification",

"language:en",

"arxiv:2211.02092",

"arxiv:2308.16900",

"region:us"

] | 2023-10-30T18:09:00Z | 2023-10-30T18:08:09.000Z | 2023-10-30T18:08:09 | Invalid username or password. | [

0.22538813948631287,

-0.8998719453811646,

0.4273532032966614,

0.01545056700706482,

-0.07883036881685257,

0.6044343113899231,

0.6795741319656372,

0.07246866822242737,

0.20425251126289368,

0.8107712864875793,

-0.7993434071540833,

0.2074914574623108,

-0.9463866949081421,

0.3846413493156433,

... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

alvations/units | alvations | 2023-10-30T18:50:33Z | 0 | 0 | null | [

"license:cc0-1.0",

"region:us"

] | 2023-10-30T18:50:33Z | 2023-10-30T18:46:02.000Z | 2023-10-30T18:46:02 | ---

license: cc0-1.0

---

This is a human translated from English list of units of measurements in multiple languages:

- Arabic

- Bengali

- Chinese (CN)

- Chinese (HK)

- Chinese (TW)

- Czech

- Dutch

- English

- French (CA)

- French (FR)

- German

- Hebrew

- Hindi

- Italian

- Japanese

- Korean

- Marathi

- Nepali

- Polish

- Portuguese (BR)

- Portuguese (PT)

- Russian

- Spanish (Latin America)

- Spanish (Mexico)

- Spanish (Spain)

- Swedish

- Turkish | [

-0.37546345591545105,

-0.034464988857507706,

0.5724976658821106,

0.49797677993774414,

-0.1894320696592331,

0.08232366293668747,

-0.3338356018066406,

-0.5174688696861267,

0.37777072191238403,

0.47850120067596436,

-0.38572755455970764,

-0.3201967477798462,

-0.2912741005420685,

0.508602499961... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Norarolalora/ainzedamanga | Norarolalora | 2023-10-30T19:09:35Z | 0 | 0 | null | [

"license:openrail",

"region:us"

] | 2023-10-30T19:09:35Z | 2023-10-30T18:56:01.000Z | 2023-10-30T18:56:01 | ---

license: openrail

---

| [

-0.12853392958641052,

-0.18616779148578644,

0.6529127955436707,

0.49436280131340027,

-0.19319361448287964,

0.23607419431209564,

0.36072003841400146,

0.050563063472509384,

0.579365611076355,

0.7400140762329102,

-0.6508104205131531,

-0.23783954977989197,

-0.7102249264717102,

-0.0478260256350... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

hippocrates/Dolly_train | hippocrates | 2023-10-30T20:00:39Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T20:00:39Z | 2023-10-30T20:00:37.000Z | 2023-10-30T20:00:37 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: id

dtype: string

- name: conversations

list:

- name: from

dtype: string

- name: value

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 25006952

num_examples: 15011

download_size: 12127483

dataset_size: 25006952

---

# Dataset Card for "Dolly_train"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.4483427405357361,

-0.15320031344890594,

0.01262909546494484,

0.3740679919719696,

-0.1440962255001068,

-0.17642736434936523,

0.4681222140789032,

-0.024350011721253395,

0.8113240599632263,

0.5584322214126587,

-0.8973655700683594,

-0.5281451344490051,

-0.6641553044319153,

-0.29294490814208... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

hippocrates/Alpaca_train | hippocrates | 2023-10-30T20:08:27Z | 0 | 0 | null | [

"region:us"

] | 2023-10-30T20:08:27Z | 2023-10-30T20:08:25.000Z | 2023-10-30T20:08:25 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: id

dtype: string

- name: conversations

list:

- name: from

dtype: string

- name: value

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 44978419

num_examples: 52002

download_size: 16852893

dataset_size: 44978419

---

# Dataset Card for "Alpaca_train"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.8109651207923889,

-0.1967296302318573,

0.12258955836296082,

0.37042948603630066,

-0.3158958852291107,

-0.23636367917060852,

0.3627174198627472,

-0.28017720580101013,

1.0189309120178223,

0.40758228302001953,

-0.9690061807632446,