id stringlengths 2 115 | author stringlengths 2 42 ⌀ | last_modified timestamp[us, tz=UTC] | downloads int64 0 8.87M | likes int64 0 3.84k | paperswithcode_id stringlengths 2 45 ⌀ | tags list | lastModified timestamp[us, tz=UTC] | createdAt stringlengths 24 24 | key stringclasses 1 value | created timestamp[us] | card stringlengths 1 1.01M | embedding list | library_name stringclasses 21 values | pipeline_tag stringclasses 27 values | mask_token null | card_data null | widget_data null | model_index null | config null | transformers_info null | spaces null | safetensors null | transformersInfo null | modelId stringlengths 5 111 ⌀ | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

tkcho/cp-commerce-clf-kr-sku-brand-3f5a1ee9c9d763d3e7b36e3266258416 | tkcho | 2023-11-30T00:31:12Z | 313 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-30T00:31:12Z | 2023-11-16T21:48:39.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-3f5a1ee9c9d763d3e7b36e3266258416 | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-d7e4667561a9ade475ebfb882c1c943e | tkcho | 2023-11-29T12:25:07Z | 312 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-29T12:25:07Z | 2023-11-16T19:42:32.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-d7e4667561a9ade475ebfb882c1c943e | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-875fb2ca1bfc907c570de744105281af | tkcho | 2023-11-29T12:28:39Z | 311 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-29T12:28:39Z | 2023-11-24T08:14:07.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-875fb2ca1bfc907c570de744105281af | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-b70c2f218d76c053d3f336c9886c47e2 | tkcho | 2023-11-30T00:32:06Z | 310 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-30T00:32:06Z | 2023-11-16T21:51:24.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-b70c2f218d76c053d3f336c9886c47e2 | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-106a4c1c7a82b01e8026e5e5e4934674 | tkcho | 2023-11-29T23:14:08Z | 309 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-29T23:14:08Z | 2023-11-15T16:43:45.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-106a4c1c7a82b01e8026e5e5e4934674 | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-7644e4822f628a973c5c3a5b80d51f75 | tkcho | 2023-11-29T23:27:33Z | 308 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-29T23:27:33Z | 2023-11-15T16:59:34.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-7644e4822f628a973c5c3a5b80d51f75 | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-230d590dee60445d963c207de610c952 | tkcho | 2023-11-30T01:03:40Z | 307 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-30T01:03:40Z | 2023-11-16T22:06:15.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-230d590dee60445d963c207de610c952 | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-6b88fe69bcf878234d0ce4dd5706a561 | tkcho | 2023-11-30T00:15:07Z | 306 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-30T00:15:07Z | 2023-11-15T18:46:38.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-6b88fe69bcf878234d0ce4dd5706a561 | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-1a3f01aeae12257ab6c8b0462b4e39a1 | tkcho | 2023-11-29T23:38:07Z | 306 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-29T23:38:07Z | 2023-11-16T20:30:28.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-1a3f01aeae12257ab6c8b0462b4e39a1 | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-c521d4c33f5653c9ebd09586ebca2ce2 | tkcho | 2023-11-30T01:25:01Z | 306 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-30T01:25:01Z | 2023-11-21T01:18:20.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-c521d4c33f5653c9ebd09586ebca2ce2 | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-2d06bb6d6fa1df6c5b3d7a45179d4432 | tkcho | 2023-11-29T23:34:22Z | 305 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-29T23:34:22Z | 2023-11-16T20:20:49.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-2d06bb6d6fa1df6c5b3d7a45179d4432 | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-1244b4f0838ad9550fe726fff3d6af53 | tkcho | 2023-11-30T00:17:16Z | 304 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-30T00:17:16Z | 2023-11-15T18:49:58.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-1244b4f0838ad9550fe726fff3d6af53 | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-7917930e7e19d61b395ec2f0ea48d1f4 | tkcho | 2023-11-30T01:02:48Z | 304 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-30T01:02:48Z | 2023-11-16T22:03:53.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-7917930e7e19d61b395ec2f0ea48d1f4 | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-7990dfdb5db0d9b4b59188ec95a3fc8f | tkcho | 2023-11-30T01:25:57Z | 304 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-30T01:25:57Z | 2023-11-16T22:18:35.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-7990dfdb5db0d9b4b59188ec95a3fc8f | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-5c9436c7512e8a79bc8e52e968cdb778 | tkcho | 2023-11-29T23:22:05Z | 303 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-29T23:22:05Z | 2023-11-15T16:53:41.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-5c9436c7512e8a79bc8e52e968cdb778 | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-1a0d924e87f119d2aae4bf24de38358b | tkcho | 2023-11-30T00:18:37Z | 303 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-30T00:18:37Z | 2023-11-15T18:53:55.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-1a0d924e87f119d2aae4bf24de38358b | [

-0.3227648437023163,

-0.22568459808826447,

0.8622260093688965,

0.434614896774292,

-0.5282989144325256,

0.7012966275215149,

0.7915716171264648,

0.07618634402751923,

0.7746022343635559,

0.25632208585739136,

-0.7852813005447388,

-0.22573812305927277,

-0.9104481935501099,

0.5715669393539429,

... |

tkcho/cp-commerce-clf-kr-sku-brand-0d8a4d5b4b2246c559bd5d1de86d220e | tkcho | 2023-11-30T00:54:04Z | 303 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-30T00:54:04Z | 2023-11-15T19:43:29.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-0d8a4d5b4b2246c559bd5d1de86d220e | [

-0.32276472449302673,

-0.22568491101264954,

0.862226128578186,

0.43461504578590393,

-0.5282993912696838,

0.7012975811958313,

0.7915716171264648,

0.07618598639965057,

0.774603009223938,

0.2563214898109436,

-0.7852815389633179,

-0.22573868930339813,

-0.9104477763175964,

0.5715674161911011,

... |

ericzzz/falcon-rw-1b-instruct-openorca | ericzzz | 2023-11-30T00:05:52Z | 303 | 1 | null | [

"transformers",

"safetensors",

"falcon",

"text-generation",

"text-generation-inference",

"en",

"dataset:Open-Orca/SlimOrca",

"license:apache-2.0",

"autotrain_compatible",

"region:us"

] | 2023-11-30T00:05:52Z | 2023-11-24T20:50:32.000Z | null | null | ---

license: apache-2.0

datasets:

- Open-Orca/SlimOrca

language:

- en

pipeline_tag: text-generation

inference: false

tags:

- text-generation-inference

---

# 🌟 Falcon-RW-1B-Instruct-OpenOrca

Falcon-RW-1B-Instruct-OpenOrca is a 1B parameter, causal decoder-only model based on [Falcon-RW-1B](https://huggingface.co/tiiuae/falcon-rw-1b) and finetuned on the [Open-Orca/SlimOrca](https://huggingface.co/datasets/Open-Orca/SlimOrca) dataset.

**📊 Evaluation Results**

Falcon-RW-1B-Instruct-OpenOrca is the #1 ranking model on [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard) in ~1.5B parameters category!

| Metric | falcon-rw-1b-instruct-openorca | falcon-rw-1b |

|------------|-------------------------------:|-------------:|

| ARC | 34.56 | 35.07 |

| HellaSwag | 60.93 | 63.56 |

| MMLU | 28.77 | 25.28 |

| TruthfulQA | 37.42 | 35.96 |

| Winogrande | 60.69 | 62.04 |

| GSM8K | 1.21 | 0.53 |

| DROP | 21.94 | 4.64 |

| **Average**| **35.08** | **32.44** |

**🚀 Motivations**

1. To create a smaller, open-source, instruction-finetuned, ready-to-use model accessible for users with limited computational resources (lower-end consumer GPUs).

2. To harness the strength of Falcon-RW-1B, a competitive model in its own right, and enhance its capabilities with instruction finetuning.

## 📖 How to Use

The model operates with a structured prompt format, incorporating `<SYS>`, `<INST>`, and `<RESP>` tags to demarcate different parts of the input. The system message and instruction are placed within these tags, with the `<RESP>` tag triggering the model's response.

### 📝 Example Code

```python

from transformers import AutoTokenizer, AutoModelForCausalLM

import transformers

import torch

model = 'ericzzz/falcon-rw-1b-instruct-openorca'

tokenizer = AutoTokenizer.from_pretrained(model)

pipeline = transformers.pipeline(

'text-generation',

model=model,

tokenizer=tokenizer,

torch_dtype=torch.bfloat16,

device_map='auto',

)

system_message = 'You are a helpful assistant. Give short answers.'

instruction = 'What is AI? Give some examples.'

prompt = f'<SYS> {system_message} <INST> {instruction} <RESP> '

response = pipeline(

prompt,

max_length=200,

repetition_penalty=1.05

)

print(response[0]['generated_text'])

# AI, or Artificial Intelligence, refers to the ability of machines and software to perform tasks that require human intelligence, such as learning, reasoning, and problem-solving. It can be used in various fields like computer science, engineering, medicine, and more. Some common applications include image recognition, speech translation, and natural language processing.

```

## 📬 Contact

For further inquiries or feedback, please contact at eric.fu96@aol.com. | null | transformers | text-generation | null | null | null | null | null | null | null | null | null | ericzzz/falcon-rw-1b-instruct-openorca | [

-0.6184973120689392,

-1.0898200273513794,

0.08285750448703766,

0.1354084014892578,

-0.01636166125535965,

-0.35557785630226135,

-0.05634687468409538,

-0.32979246973991394,

0.1960592120885849,

0.33879637718200684,

-0.7221761345863342,

-0.6598144769668579,

-0.7148236632347107,

-0.050753530114... |

tkcho/cp-commerce-clf-kr-sku-brand-dd0f03f257a2be32cccf66de379fb9de | tkcho | 2023-11-29T23:36:38Z | 301 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-29T23:36:38Z | 2023-11-16T20:32:01.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-dd0f03f257a2be32cccf66de379fb9de | [

-0.32276472449302673,

-0.22568491101264954,

0.862226128578186,

0.43461504578590393,

-0.5282993912696838,

0.7012975811958313,

0.7915716171264648,

0.07618598639965057,

0.774603009223938,

0.2563214898109436,

-0.7852815389633179,

-0.22573868930339813,

-0.9104477763175964,

0.5715674161911011,

... |

tkcho/cp-commerce-clf-kr-sku-brand-320762d280dc8278484a715f42fe8411 | tkcho | 2023-11-29T23:20:01Z | 301 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-29T23:20:01Z | 2023-11-24T08:43:33.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-320762d280dc8278484a715f42fe8411 | [

-0.32276472449302673,

-0.22568491101264954,

0.862226128578186,

0.43461504578590393,

-0.5282993912696838,

0.7012975811958313,

0.7915716171264648,

0.07618598639965057,

0.774603009223938,

0.2563214898109436,

-0.7852815389633179,

-0.22573868930339813,

-0.9104477763175964,

0.5715674161911011,

... |

tkcho/cp-commerce-clf-kr-sku-brand-a9f8ceb7576453ebf0c9519f38732d22 | tkcho | 2023-11-29T23:59:38Z | 301 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-29T23:59:38Z | 2023-11-24T09:41:10.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-a9f8ceb7576453ebf0c9519f38732d22 | [

-0.3227650225162506,

-0.22568444907665253,

0.8622258901596069,

0.43461504578590393,

-0.5282988548278809,

0.7012965679168701,

0.7915717959403992,

0.0761863961815834,

0.7746025919914246,

0.2563222050666809,

-0.7852813005447388,

-0.22573848068714142,

-0.910447895526886,

0.5715667009353638,

... |

tkcho/cp-commerce-clf-kr-sku-brand-9edbc1a5482a3ca7833fa52fc30ebc9a | tkcho | 2023-11-30T00:01:25Z | 298 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-30T00:01:25Z | 2023-11-15T18:29:51.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-9edbc1a5482a3ca7833fa52fc30ebc9a | [

-0.3227650225162506,

-0.22568444907665253,

0.8622258901596069,

0.43461504578590393,

-0.5282988548278809,

0.7012965679168701,

0.7915717959403992,

0.0761863961815834,

0.7746025919914246,

0.2563222050666809,

-0.7852813005447388,

-0.22573848068714142,

-0.910447895526886,

0.5715667009353638,

... |

tkcho/cp-commerce-clf-kr-sku-brand-a5985deb7a9097ce790af4e049153471 | tkcho | 2023-11-29T23:35:22Z | 297 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-29T23:35:22Z | 2023-11-16T20:18:30.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/cp-commerce-clf-kr-sku-brand-a5985deb7a9097ce790af4e049153471 | [

-0.3227650225162506,

-0.22568444907665253,

0.8622258901596069,

0.43461504578590393,

-0.5282988548278809,

0.7012965679168701,

0.7915717959403992,

0.0761863961815834,

0.7746025919914246,

0.2563222050666809,

-0.7852813005447388,

-0.22573848068714142,

-0.910447895526886,

0.5715667009353638,

... |

ongkn/attraction-classifier | ongkn | 2023-11-29T18:38:10Z | 281 | 1 | null | [

"transformers",

"safetensors",

"vit",

"image-classification",

"generated_from_trainer",

"dataset:imagefolder",

"base_model:google/vit-base-patch16-224-in21k",

"doi:10.57967/hf/1403",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:u... | 2023-11-29T18:38:10Z | 2023-08-08T18:05:47.000Z | null | null | ---

license: apache-2.0

base_model: google/vit-base-patch16-224-in21k

tags:

- generated_from_trainer

datasets:

- imagefolder

metrics:

- accuracy

model-index:

- name: attraction-classifier

results:

- task:

name: Image Classification

type: image-classification

dataset:

name: imagefolder

type: imagefolder

config: default

split: train

args: default

metrics:

- name: Accuracy

type: accuracy

value: 0.7802690582959642

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# attraction-classifier

This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the imagefolder dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5258

- Accuracy: 0.7803

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 69

- gradient_accumulation_steps: 4

- total_train_batch_size: 64

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_ratio: 0.15

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.5871 | 0.99 | 39 | 0.5673 | 0.7175 |

| 0.5317 | 2.0 | 79 | 0.5042 | 0.7668 |

| 0.4495 | 2.99 | 118 | 0.5375 | 0.7489 |

| 0.4001 | 4.0 | 158 | 0.4844 | 0.7534 |

| 0.3651 | 4.99 | 197 | 0.5235 | 0.7556 |

| 0.3038 | 6.0 | 237 | 0.5058 | 0.7578 |

| 0.2718 | 6.99 | 276 | 0.5098 | 0.7825 |

| 0.265 | 8.0 | 316 | 0.5015 | 0.8004 |

| 0.2389 | 8.99 | 355 | 0.5005 | 0.7982 |

| 0.2552 | 9.87 | 390 | 0.5258 | 0.7803 |

### Framework versions

- Transformers 4.35.2

- Pytorch 2.0.1+cu117

- Datasets 2.15.0

- Tokenizers 0.15.0

| null | transformers | image-classification | null | null | null | null | null | null | null | null | null | ongkn/attraction-classifier | [

-0.5630995035171509,

-0.4262949824333191,

0.1337791085243225,

-0.07926773279905319,

-0.3563063442707062,

-0.5081233382225037,

-0.02188386768102646,

-0.29442277550697327,

0.2101137340068817,

0.20730207860469818,

-0.6613650918006897,

-0.7329069972038269,

-0.8231623768806458,

-0.1667721718549... |

NurtureAI/Orca-2-7B-16k | NurtureAI | 2023-11-29T22:48:50Z | 275 | 2 | null | [

"transformers",

"safetensors",

"llama",

"text-generation",

"orca",

"orca2",

"microsoft",

"arxiv:2311.11045",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | 2023-11-29T22:48:50Z | 2023-11-22T02:33:01.000Z | null | null | ---

pipeline_tag: text-generation

tags:

- orca

- orca2

- microsoft

license: other

license_name: microsoft-research-license

license_link: LICENSE

---

# Orca 2 extended to 16k context.

Updated prompt:

```

<|im_start|>system\n{system}\n<|im_start|>user\n{instruction}<|im_end|>\n<|im_start|>assistant\n

```

# Original Model Card

# Orca 2

<!-- Provide a quick summary of what the model is/does. -->

Orca 2 is a helpful assistant that is built for research purposes only and provides a single turn response

in tasks such as reasoning over user given data, reading comprehension, math problem solving and text summarization.

The model is designed to excel particularly in reasoning.

We publicly release Orca 2 to encourage further research on the development, evaluation, and alignment of smaller LMs.

## What is Orca 2’s intended use(s)?

+ Orca 2 is built for research purposes only.

+ The main purpose is to allow the research community to assess its abilities and to provide a foundation for building better frontier models.

## How was Orca 2 evaluated?

+ Orca 2 has been evaluated on a large number of tasks ranging from reasoning to grounding and safety. Please refer

to Section 6 and Appendix in the [Orca 2 paper](https://arxiv.org/pdf/2311.11045.pdf) for details on evaluations.

## Model Details

Orca 2 is a finetuned version of LLAMA-2. Orca 2’s training data is a synthetic dataset that was created to enhance the small model’s reasoning abilities.

All synthetic training data was moderated using the Microsoft Azure content filters. More details about the model can be found in the [Orca 2 paper](https://arxiv.org/pdf/2311.11045.pdf).

Please refer to LLaMA-2 technical report for details on the model architecture.

## License

Orca 2 is licensed under the [Microsoft Research License](LICENSE).

Llama 2 is licensed under the [LLAMA 2 Community License](https://ai.meta.com/llama/license/), Copyright © Meta Platforms, Inc. All Rights Reserved.

## Bias, Risks, and Limitations

Orca 2, built upon the LLaMA 2 model family, retains many of its limitations, as well as the

common limitations of other large language models or limitation caused by its training

process, including:

**Data Biases**: Large language models, trained on extensive data, can inadvertently carry

biases present in the source data. Consequently, the models may generate outputs that could

be potentially biased or unfair.

**Lack of Contextual Understanding**: Despite their impressive capabilities in language understanding and generation, these models exhibit limited real-world understanding, resulting

in potential inaccuracies or nonsensical responses.

**Lack of Transparency**: Due to the complexity and size, large language models can act

as “black boxes”, making it difficult to comprehend the rationale behind specific outputs or

decisions. We recommend reviewing transparency notes from Azure for more information.

**Content Harms**: There are various types of content harms that large language models

can cause. It is important to be aware of them when using these models, and to take

actions to prevent them. It is recommended to leverage various content moderation services

provided by different companies and institutions. On an important note, we hope for better

regulations and standards from government and technology leaders around content harms

for AI technologies in future. We value and acknowledge the important role that research

and open source community can play in this direction.

**Hallucination**: It is important to be aware and cautious not to entirely rely on a given

language model for critical decisions or information that might have deep impact as it is

not obvious how to prevent these models from fabricating content. Moreover, it is not clear

whether small models may be more susceptible to hallucination in ungrounded generation

use cases due to their smaller sizes and hence reduced memorization capacities. This is an

active research topic and we hope there will be more rigorous measurement, understanding

and mitigations around this topic.

**Potential for Misuse**: Without suitable safeguards, there is a risk that these models could

be maliciously used for generating disinformation or harmful content.

**Data Distribution**: Orca 2’s performance is likely to correlate strongly with the distribution

of the tuning data. This correlation might limit its accuracy in areas underrepresented in

the training dataset such as math, coding, and reasoning.

**System messages**: Orca 2 demonstrates variance in performance depending on the system

instructions. Additionally, the stochasticity introduced by the model size may lead to

generation of non-deterministic responses to different system instructions.

**Zero-Shot Settings**: Orca 2 was trained on data that mostly simulate zero-shot settings.

While the model demonstrate very strong performance in zero-shot settings, it does not show

the same gains of using few-shot learning compared to other, specially larger, models.

**Synthetic data**: As Orca 2 is trained on synthetic data, it could inherit both the advantages

and shortcomings of the models and methods used for data generation. We posit that Orca

2 benefits from the safety measures incorporated during training and safety guardrails (e.g.,

content filter) within the Azure OpenAI API. However, detailed studies are required for

better quantification of such risks.

This model is solely designed for research settings, and its testing has only been carried

out in such environments. It should not be used in downstream applications, as additional

analysis is needed to assess potential harm or bias in the proposed application.

## Getting started with Orca 2

**Inference with Hugging Face library**

```python

import torch

import transformers

if torch.cuda.is_available():

torch.set_default_device("cuda")

else:

torch.set_default_device("cpu")

model = transformers.AutoModelForCausalLM.from_pretrained("microsoft/Orca-2-7b", device_map='auto')

# https://github.com/huggingface/transformers/issues/27132

# please use the slow tokenizer since fast and slow tokenizer produces different tokens

tokenizer = transformers.AutoTokenizer.from_pretrained(

"microsoft/Orca-2-7b",

use_fast=False,

)

system_message = "You are Orca, an AI language model created by Microsoft. You are a cautious assistant. You carefully follow instructions. You are helpful and harmless and you follow ethical guidelines and promote positive behavior."

user_message = "How can you determine if a restaurant is popular among locals or mainly attracts tourists, and why might this information be useful?"

prompt = f"<|im_start|>system\n{system_message}<|im_end|>\n<|im_start|>user\n{user_message}<|im_end|>\n<|im_start|>assistant"

inputs = tokenizer(prompt, return_tensors='pt')

output_ids = model.generate(inputs["input_ids"],)

answer = tokenizer.batch_decode(output_ids)[0]

print(answer)

# This example continues showing how to add a second turn message by the user to the conversation

second_turn_user_message = "Give me a list of the key points of your first answer."

# we set add_special_tokens=False because we dont want to automatically add a bos_token between messages

second_turn_message_in_markup = f"\n<|im_start|>user\n{second_turn_user_message}<|im_end|>\n<|im_start|>assistant"

second_turn_tokens = tokenizer(second_turn_message_in_markup, return_tensors='pt', add_special_tokens=False)

second_turn_input = torch.cat([output_ids, second_turn_tokens['input_ids']], dim=1)

output_ids_2 = model.generate(second_turn_input,)

second_turn_answer = tokenizer.batch_decode(output_ids_2)[0]

print(second_turn_answer)

```

**Safe inference with Azure AI Content Safety**

The usage of [Azure AI Content Safety](https://azure.microsoft.com/en-us/products/ai-services/ai-content-safety/) on top of model prediction is strongly encouraged

and can help preventing some of content harms. Azure AI Content Safety is a content moderation platform

that uses AI to moderate content. By having Azure AI Content Safety on the output of Orca 2,

the model output can be moderated by scanning it for different harm categories including sexual content, violence, hate, and

self-harm with multiple severity levels and multi-lingual detection.

```python

import os

import math

import transformers

import torch

from azure.ai.contentsafety import ContentSafetyClient

from azure.core.credentials import AzureKeyCredential

from azure.core.exceptions import HttpResponseError

from azure.ai.contentsafety.models import AnalyzeTextOptions

CONTENT_SAFETY_KEY = os.environ["CONTENT_SAFETY_KEY"]

CONTENT_SAFETY_ENDPOINT = os.environ["CONTENT_SAFETY_ENDPOINT"]

# We use Azure AI Content Safety to filter out any content that reaches "Medium" threshold

# For more information: https://learn.microsoft.com/en-us/azure/ai-services/content-safety/

def should_filter_out(input_text, threshold=4):

# Create an Content Safety client

client = ContentSafetyClient(CONTENT_SAFETY_ENDPOINT, AzureKeyCredential(CONTENT_SAFETY_KEY))

# Construct a request

request = AnalyzeTextOptions(text=input_text)

# Analyze text

try:

response = client.analyze_text(request)

except HttpResponseError as e:

print("Analyze text failed.")

if e.error:

print(f"Error code: {e.error.code}")

print(f"Error message: {e.error.message}")

raise

print(e)

raise

categories = ["hate_result", "self_harm_result", "sexual_result", "violence_result"]

max_score = -math.inf

for category in categories:

max_score = max(max_score, getattr(response, category).severity)

return max_score >= threshold

model_path = 'microsoft/Orca-2-7b'

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

model = transformers.AutoModelForCausalLM.from_pretrained(model_path)

model.to(device)

tokenizer = transformers.AutoTokenizer.from_pretrained(

model_path,

model_max_length=4096,

padding_side="right",

use_fast=False,

add_special_tokens=False,

)

system_message = "You are Orca, an AI language model created by Microsoft. You are a cautious assistant. You carefully follow instructions. You are helpful and harmless and you follow ethical guidelines and promote positive behavior."

user_message = "\" \n :You can't just say, \"\"that's crap\"\" and remove it without gaining a consensus. You already know this, based on your block history. —/ \" \nIs the comment obscene? \nOptions : Yes, No."

prompt = f"<|im_start|>system\n{system_message}<|im_end|>\n<|im_start|>user\n{user_message}<|im_end|>\n<|im_start|>assistant"

inputs = tokenizer(prompt, return_tensors='pt')

inputs = inputs.to(device)

output_ids = model.generate(inputs["input_ids"], max_length=4096, do_sample=False, temperature=0.0, use_cache=True)

sequence_length = inputs["input_ids"].shape[1]

new_output_ids = output_ids[:, sequence_length:]

answers = tokenizer.batch_decode(new_output_ids, skip_special_tokens=True)

final_output = answers[0] if not should_filter_out(answers[0]) else "[Content Filtered]"

print(final_output)

```

## Citation

```bibtex

@misc{mitra2023orca,

title={Orca 2: Teaching Small Language Models How to Reason},

author={Arindam Mitra and Luciano Del Corro and Shweti Mahajan and Andres Codas and Clarisse Simoes and Sahaj Agrawal and Xuxi Chen and Anastasia Razdaibiedina and Erik Jones and Kriti Aggarwal and Hamid Palangi and Guoqing Zheng and Corby Rosset and Hamed Khanpour and Ahmed Awadallah},

year={2023},

eprint={2311.11045},

archivePrefix={arXiv},

primaryClass={cs.AI}

}

``` | null | transformers | text-generation | null | null | null | null | null | null | null | null | null | NurtureAI/Orca-2-7B-16k | [

-0.11487875133752823,

-0.9350185990333557,

0.189994677901268,

0.08889365941286087,

-0.26607710123062134,

-0.2739735543727875,

-0.014156226068735123,

-0.8294171690940857,

0.029611727222800255,

0.43186211585998535,

-0.41253307461738586,

-0.382453978061676,

-0.5472370982170105,

-0.25129166245... |

tavtav/Rose-20B | tavtav | 2023-11-30T01:20:26Z | 272 | 6 | null | [

"transformers",

"safetensors",

"llama",

"text-generation",

"text-generation-inference",

"instruct",

"en",

"license:llama2",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | 2023-11-30T01:20:26Z | 2023-11-22T16:59:56.000Z | null | null | ---

language:

- en

pipeline_tag: text-generation

tags:

- text-generation-inference

- instruct

license: llama2

---

<h1 style="text-align: center">Rose-20B</h1>

<center><img src="https://files.catbox.moe/rze9c9.png" alt="roseimage" width="350" height="350"></center>

<center><i>Image sourced by Shinon</i></center>

<h2 style="text-align: center">Experimental Frankenmerge Model</h2>

## Other Formats

[GGUF](https://huggingface.co/TheBloke/Rose-20B-GGUF)

[GPTQ](https://huggingface.co/TheBloke/Rose-20B-GPTQ)

[AWQ](https://huggingface.co/TheBloke/Rose-20B-AWQ)

[exl2](https://huggingface.co/royallab/Rose-20B-exl2)

## Model Details

A Frankenmerge with [Thorns-13B](https://huggingface.co/CalderaAI/13B-Thorns-l2) by CalderaAI and [Noromaid-13-v0.1.1](https://huggingface.co/NeverSleep/Noromaid-13b-v0.1.1) by NeverSleep (IkariDev and Undi). This recipe was proposed by Trappu and the layer distribution recipe was made by Undi. I thank them for sharing their knowledge with me. This model should be very good at any roleplay scenarios. I called the model "Rose" because it was a fitting name for a "thorny maid".

The recommended format to use is Alpaca.

```

Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

```

Feel free to share any other prompts that works. This model is very robust.

**Warning: This model uses significantly more VRAM due to the KV cache increase resulting in more VRAM required for the context window.**

## Justification for its Existence

Potential base model for finetune experiments using our dataset to create Pygmalion-20B. Due to the already high capabilities, adding our dataset will mesh well with how the model performs.

Potential experimentation with merging with other 20B Frankenmerge models.

## Model Recipe

```

slices:

- sources:

- model: Thorns-13B

layer_range: [0, 16]

- sources:

- model: Noromaid-13B

layer_range: [8, 24]

- sources:

- model: Thorns-13B

layer_range: [17, 32]

- sources:

- model: Noromaid-13B

layer_range: [25, 40]

merge_method: passthrough

dtype: float16

```

Again, credits to [Undi](https://huggingface.co/Undi95) for the recipe.

## Reception

The model was given to a handful of members in the PygmalionAI Discord community for testing. A strong majority really enjoyed the model with only a couple giving the model a passing grade. Since our community has high standards for roleplaying models, I was surprised at the positive reception.

## Contact

Send a message to tav (tav) on Discord if you want to talk about the model to me. I'm always open to receive comments. | null | transformers | text-generation | null | null | null | null | null | null | null | null | null | tavtav/Rose-20B | [

-0.5308987498283386,

-0.8130917549133301,

0.06533842533826828,

0.38971373438835144,

-0.1279458999633789,

-0.7244001626968384,

0.08796971291303635,

-0.7064130902290344,

0.6324359178543091,

0.4730548560619354,

-0.7374170422554016,

-0.31116783618927,

-0.45935121178627014,

-0.03909563273191452... |

NurtureAI/Orca-2-13B-16k | NurtureAI | 2023-11-29T22:46:47Z | 255 | 3 | null | [

"transformers",

"safetensors",

"llama",

"text-generation",

"orca",

"orca2",

"microsoft",

"arxiv:2311.11045",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | 2023-11-29T22:46:47Z | 2023-11-22T03:01:22.000Z | null | null | ---

pipeline_tag: text-generation

tags:

- orca

- orca2

- microsoft

license: other

license_name: microsoft-research-license

license_link: LICENSE

---

# Orca 2 13b extended to 16k context.

Significantly improved rope factor for better generation!

This is my most optimal prompt I have found so far:

Replace {system} with your system prompt, and {instruction} with your task instruction.

```

<|im_start|>system\n{system}\n<|im_start|>user\n{instruction}<|im_end|>\n<|im_start|>assistant\n

```

# Original Model Card

# Orca 2

<!-- Provide a quick summary of what the model is/does. -->

Orca 2 is a helpful assistant that is built for research purposes only and provides a single turn response

in tasks such as reasoning over user given data, reading comprehension, math problem solving and text summarization.

The model is designed to excel particularly in reasoning.

We publicly release Orca 2 to encourage further research on the development, evaluation, and alignment of smaller LMs.

## What is Orca 2’s intended use(s)?

+ Orca 2 is built for research purposes only.

+ The main purpose is to allow the research community to assess its abilities and to provide a foundation for

building better frontier models.

## How was Orca 2 evaluated?

+ Orca 2 has been evaluated on a large number of tasks ranging from reasoning to grounding and safety. Please refer

to Section 6 and Appendix in the [Orca 2 paper](https://arxiv.org/pdf/2311.11045.pdf) for details on evaluations.

## Model Details

Orca 2 is a finetuned version of LLAMA-2. Orca 2’s training data is a synthetic dataset that was created to enhance the small model’s reasoning abilities.

All synthetic training data was moderated using the Microsoft Azure content filters. More details about the model can be found in the [Orca 2 paper](https://arxiv.org/pdf/2311.11045.pdf).

Please refer to LLaMA-2 technical report for details on the model architecture.

## License

Orca 2 is licensed under the [Microsoft Research License](LICENSE).

Llama 2 is licensed under the [LLAMA 2 Community License](https://ai.meta.com/llama/license/), Copyright © Meta Platforms, Inc. All Rights Reserved.

## Bias, Risks, and Limitations

Orca 2, built upon the LLaMA 2 model family, retains many of its limitations, as well as the

common limitations of other large language models or limitation caused by its training process,

including:

**Data Biases**: Large language models, trained on extensive data, can inadvertently carry

biases present in the source data. Consequently, the models may generate outputs that could

be potentially biased or unfair.

**Lack of Contextual Understanding**: Despite their impressive capabilities in language understanding and generation, these models exhibit limited real-world understanding, resulting

in potential inaccuracies or nonsensical responses.

**Lack of Transparency**: Due to the complexity and size, large language models can act

as “black boxes”, making it difficult to comprehend the rationale behind specific outputs or

decisions. We recommend reviewing transparency notes from Azure for more information.

**Content Harms**: There are various types of content harms that large language models

can cause. It is important to be aware of them when using these models, and to take

actions to prevent them. It is recommended to leverage various content moderation services

provided by different companies and institutions. On an important note, we hope for better

regulations and standards from government and technology leaders around content harms

for AI technologies in future. We value and acknowledge the important role that research

and open source community can play in this direction.

**Hallucination**: It is important to be aware and cautious not to entirely rely on a given

language model for critical decisions or information that might have deep impact as it is

not obvious how to prevent these models from fabricating content. Moreover, it is not clear

whether small models may be more susceptible to hallucination in ungrounded generation

use cases due to their smaller sizes and hence reduced memorization capacities. This is an

active research topic and we hope there will be more rigorous measurement, understanding

and mitigations around this topic.

**Potential for Misuse**: Without suitable safeguards, there is a risk that these models could

be maliciously used for generating disinformation or harmful content.

**Data Distribution**: Orca 2’s performance is likely to correlate strongly with the distribution

of the tuning data. This correlation might limit its accuracy in areas underrepresented in

the training dataset such as math, coding, and reasoning.

**System messages**: Orca 2 demonstrates variance in performance depending on the system

instructions. Additionally, the stochasticity introduced by the model size may lead to

generation of non-deterministic responses to different system instructions.

**Zero-Shot Settings**: Orca 2 was trained on data that mostly simulate zero-shot settings.

While the model demonstrate very strong performance in zero-shot settings, it does not show

the same gains of using few-shot learning compared to other, specially larger, models.

**Synthetic data**: As Orca 2 is trained on synthetic data, it could inherit both the advantages

and shortcomings of the models and methods used for data generation. We posit that Orca

2 benefits from the safety measures incorporated during training and safety guardrails (e.g.,

content filter) within the Azure OpenAI API. However, detailed studies are required for

better quantification of such risks.

This model is solely designed for research settings, and its testing has only been carried

out in such environments. It should not be used in downstream applications, as additional

analysis is needed to assess potential harm or bias in the proposed application.

## Getting started with Orca 2

**Inference with Hugging Face library**

```python

import torch

import transformers

if torch.cuda.is_available():

torch.set_default_device("cuda")

else:

torch.set_default_device("cpu")

model = transformers.AutoModelForCausalLM.from_pretrained("microsoft/Orca-2-13b", device_map='auto')

# https://github.com/huggingface/transformers/issues/27132

# please use the slow tokenizer since fast and slow tokenizer produces different tokens

tokenizer = transformers.AutoTokenizer.from_pretrained(

"microsoft/Orca-2-13b",

use_fast=False,

)

system_message = "You are Orca, an AI language model created by Microsoft. You are a cautious assistant. You carefully follow instructions. You are helpful and harmless and you follow ethical guidelines and promote positive behavior."

user_message = "How can you determine if a restaurant is popular among locals or mainly attracts tourists, and why might this information be useful?"

prompt = f"<|im_start|>system\n{system_message}<|im_end|>\n<|im_start|>user\n{user_message}<|im_end|>\n<|im_start|>assistant"

inputs = tokenizer(prompt, return_tensors='pt')

output_ids = model.generate(inputs["input_ids"],)

answer = tokenizer.batch_decode(output_ids)[0]

print(answer)

# This example continues showing how to add a second turn message by the user to the conversation

second_turn_user_message = "Give me a list of the key points of your first answer."

# we set add_special_tokens=False because we dont want to automatically add a bos_token between messages

second_turn_message_in_markup = f"\n<|im_start|>user\n{second_turn_user_message}<|im_end|>\n<|im_start|>assistant"

second_turn_tokens = tokenizer(second_turn_message_in_markup, return_tensors='pt', add_special_tokens=False)

second_turn_input = torch.cat([output_ids, second_turn_tokens['input_ids']], dim=1)

output_ids_2 = model.generate(second_turn_input,)

second_turn_answer = tokenizer.batch_decode(output_ids_2)[0]

print(second_turn_answer)

```

**Safe inference with Azure AI Content Safety**

The usage of [Azure AI Content Safety](https://azure.microsoft.com/en-us/products/ai-services/ai-content-safety/) on top of model prediction is strongly encouraged

and can help prevent content harms. Azure AI Content Safety is a content moderation platform

that uses AI to keep your content safe. By integrating Orca 2 with Azure AI Content Safety,

we can moderate the model output by scanning it for sexual content, violence, hate, and

self-harm with multiple severity levels and multi-lingual detection.

```python

import os

import math

import transformers

import torch

from azure.ai.contentsafety import ContentSafetyClient

from azure.core.credentials import AzureKeyCredential

from azure.core.exceptions import HttpResponseError

from azure.ai.contentsafety.models import AnalyzeTextOptions

CONTENT_SAFETY_KEY = os.environ["CONTENT_SAFETY_KEY"]

CONTENT_SAFETY_ENDPOINT = os.environ["CONTENT_SAFETY_ENDPOINT"]

# We use Azure AI Content Safety to filter out any content that reaches "Medium" threshold

# For more information: https://learn.microsoft.com/en-us/azure/ai-services/content-safety/

def should_filter_out(input_text, threshold=4):

# Create an Content Safety client

client = ContentSafetyClient(CONTENT_SAFETY_ENDPOINT, AzureKeyCredential(CONTENT_SAFETY_KEY))

# Construct a request

request = AnalyzeTextOptions(text=input_text)

# Analyze text

try:

response = client.analyze_text(request)

except HttpResponseError as e:

print("Analyze text failed.")

if e.error:

print(f"Error code: {e.error.code}")

print(f"Error message: {e.error.message}")

raise

print(e)

raise

categories = ["hate_result", "self_harm_result", "sexual_result", "violence_result"]

max_score = -math.inf

for category in categories:

max_score = max(max_score, getattr(response, category).severity)

return max_score >= threshold

model_path = 'microsoft/Orca-2-13b'

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

model = transformers.AutoModelForCausalLM.from_pretrained(model_path)

model.to(device)

tokenizer = transformers.AutoTokenizer.from_pretrained(

model_path,

model_max_length=4096,

padding_side="right",

use_fast=False,

add_special_tokens=False,

)

system_message = "You are Orca, an AI language model created by Microsoft. You are a cautious assistant. You carefully follow instructions. You are helpful and harmless and you follow ethical guidelines and promote positive behavior."

user_message = "\" \n :You can't just say, \"\"that's crap\"\" and remove it without gaining a consensus. You already know this, based on your block history. —/ \" \nIs the comment obscene? \nOptions : Yes, No."

prompt = f"<|im_start|>system\n{system_message}<|im_end|>\n<|im_start|>user\n{user_message}<|im_end|>\n<|im_start|>assistant"

inputs = tokenizer(prompt, return_tensors='pt')

inputs = inputs.to(device)

output_ids = model.generate(inputs["input_ids"], max_length=4096, do_sample=False, temperature=0.0, use_cache=True)

sequence_length = inputs["input_ids"].shape[1]

new_output_ids = output_ids[:, sequence_length:]

answers = tokenizer.batch_decode(new_output_ids, skip_special_tokens=True)

final_output = answers[0] if not should_filter_out(answers[0]) else "[Content Filtered]"

print(final_output)

```

## Citation

```bibtex

@misc{mitra2023orca,

title={Orca 2: Teaching Small Language Models How to Reason},

author={Arindam Mitra and Luciano Del Corro and Shweti Mahajan and Andres Codas and Clarisse Simoes and Sahaj Agrawal and Xuxi Chen and Anastasia Razdaibiedina and Erik Jones and Kriti Aggarwal and Hamid Palangi and Guoqing Zheng and Corby Rosset and Hamed Khanpour and Ahmed Awadallah},

year={2023},

eprint={2311.11045},

archivePrefix={arXiv},

primaryClass={cs.AI}

}

``` | null | transformers | text-generation | null | null | null | null | null | null | null | null | null | NurtureAI/Orca-2-13B-16k | [

-0.15358319878578186,

-0.9364966750144958,

0.18406030535697937,

0.11474647372961044,

-0.280327707529068,

-0.24994252622127533,

0.01004003081470728,

-0.7862386703491211,

0.00875199493020773,

0.39093872904777527,

-0.4433509409427643,

-0.3886304497718811,

-0.5465642213821411,

-0.2136781066656... |

allenai/tulu-2-7b | allenai | 2023-11-29T06:55:27Z | 236 | 4 | null | [

"transformers",

"pytorch",

"llama",

"text-generation",

"en",

"dataset:allenai/tulu-v2-sft-mixture",

"arxiv:2311.10702",

"base_model:meta-llama/Llama-2-7b-hf",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | 2023-11-29T06:55:27Z | 2023-11-13T03:24:42.000Z | null | null | ---

model-index:

- name: tulu-2-7b

results: []

datasets:

- allenai/tulu-v2-sft-mixture

language:

- en

base_model: meta-llama/Llama-2-7b-hf

---

<img src="https://huggingface.co/datasets/allenai/blog-images/resolve/main/tulu-v2/Tulu%20V2%20banner.png" alt="TuluV2 banner" width="800" style="margin-left:'auto' margin-right:'auto' display:'block'"/>

# Model Card for Tulu 2 7B

Tulu is a series of language models that are trained to act as helpful assistants.

Tulu 2 7B is a fine-tuned version of Llama 2 that was trained on a mix of publicly available, synthetic and human datasets.

For more details, read the paper: [Camels in a Changing Climate: Enhancing LM Adaptation with Tulu 2

](https://arxiv.org/abs/2311.10702).

## Model description

- **Model type:** A model belonging to a suite of instruction and RLHF tuned chat models on a mix of publicly available, synthetic and human-created datasets.

- **Language(s) (NLP):** Primarily English

- **License:** [AI2 ImpACT](https://allenai.org/impact-license) Low-risk license.

- **Finetuned from model:** [meta-llama/Llama-2-7b-hf](https://huggingface.co/meta-llama/Llama-2-7b-hf)

### Model Sources

- **Repository:** https://github.com/allenai/open-instruct

- **Model Family:** Other models and the dataset are found in the [Tulu V2 collection](https://huggingface.co/collections/allenai/tulu-v2-suite-6551b56e743e6349aab45101).

## Performance

| Model | Size | Alignment | MT-Bench (score) | AlpacaEval (win rate %) |

|-------------|-----|----|---------------|--------------|

| **Tulu-v2-7b** 🐪 | **7B** | **SFT** | **6.30** | **73.9** |

| **Tulu-v2-dpo-7b** 🐪 | **7B** | **DPO** | **6.29** | **85.1** |

| **Tulu-v2-13b** 🐪 | **13B** | **SFT** | **6.70** | **78.9** |

| **Tulu-v2-dpo-13b** 🐪 | **13B** | **DPO** | **7.00** | **89.5** |

| **Tulu-v2-70b** 🐪 | **70B** | **SFT** | **7.49** | **86.6** |

| **Tulu-v2-dpo-70b** 🐪 | **70B** | **DPO** | **7.89** | **95.1** |

## Input Format

The model is trained to use the following format (note the newlines):

```

<|user|>

Your message here!

<|assistant|>

```

For best results, format all inputs in this manner. **Make sure to include a newline after `<|assistant|>`, this can affect generation quality quite a bit.**

## Intended uses & limitations

The model was fine-tuned on a filtered and preprocessed of the [Tulu V2 mix dataset](https://huggingface.co/datasets/allenai/tulu-v2-sft-mixture), which contains a diverse range of human created instructions and synthetic dialogues generated primarily by other LLMs.

<!--We then further aligned the model with a [Jax DPO trainer](https://github.com/hamishivi/EasyLM/blob/main/EasyLM/models/llama/llama_train_dpo.py) built on [EasyLM](https://github.com/young-geng/EasyLM) on the [openbmb/UltraFeedback](https://huggingface.co/datasets/openbmb/UltraFeedback) dataset, which contains 64k prompts and model completions that are ranked by GPT-4.

<!-- You can find the datasets used for training Tulu V2 [here]()

Here's how you can run the model using the `pipeline()` function from 🤗 Transformers:

```python

# Install transformers from source - only needed for versions <= v4.34

# pip install git+https://github.com/huggingface/transformers.git

# pip install accelerate

import torch

from transformers import pipeline

pipe = pipeline("text-generation", model="HuggingFaceH4/tulu-2-dpo-70b", torch_dtype=torch.bfloat16, device_map="auto")

# We use the tokenizer's chat template to format each message - see https://huggingface.co/docs/transformers/main/en/chat_templating

messages = [

{

"role": "system",

"content": "You are a friendly chatbot who always responds in the style of a pirate",

},

{"role": "user", "content": "How many helicopters can a human eat in one sitting?"},

]

prompt = pipe.tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)

outputs = pipe(prompt, max_new_tokens=256, do_sample=True, temperature=0.7, top_k=50, top_p=0.95)

print(outputs[0]["generated_text"])

# <|system|>

# You are a friendly chatbot who always responds in the style of a pirate.</s>

# <|user|>

# How many helicopters can a human eat in one sitting?</s>

# <|assistant|>

# Ah, me hearty matey! But yer question be a puzzler! A human cannot eat a helicopter in one sitting, as helicopters are not edible. They be made of metal, plastic, and other materials, not food!

```-->

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

The Tulu models have not been aligned to generate safe completions within the RLHF phase or deployed with in-the-loop filtering of responses like ChatGPT, so the model can produce problematic outputs (especially when prompted to do so).

It is also unknown what the size and composition of the corpus was used to train the base Llama 2 models, however it is likely to have included a mix of Web data and technical sources like books and code. See the [Falcon 180B model card](https://huggingface.co/tiiuae/falcon-180B#training-data) for an example of this.

### Training hyperparameters

The following hyperparameters were used during DPO training:

- learning_rate: 2e-5

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.03

- num_epochs: 2.0

## Citation

If you find Tulu 2 is useful in your work, please cite it with:

```

@misc{ivison2023camels,

title={Camels in a Changing Climate: Enhancing LM Adaptation with Tulu 2},

author={Hamish Ivison and Yizhong Wang and Valentina Pyatkin and Nathan Lambert and Matthew Peters and Pradeep Dasigi and Joel Jang and David Wadden and Noah A. Smith and Iz Beltagy and Hannaneh Hajishirzi},

year={2023},

eprint={2311.10702},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

*Model card adapted from [Zephyr Beta](https://huggingface.co/HuggingFaceH4/zephyr-7b-beta/blob/main/README.md)* | null | transformers | text-generation | null | null | null | null | null | null | null | null | null | allenai/tulu-2-7b | [

-0.31372469663619995,

-0.7159394025802612,

-0.17200526595115662,

0.2333230823278427,

-0.3550121784210205,

0.03872963786125183,

-0.014767450280487537,

-0.7189645171165466,

0.178083598613739,

0.1707436591386795,

-0.43143683671951294,

-0.12866857647895813,

-0.6755582690238953,

0.0498056560754... |

Yufu0/document_reader | Yufu0 | 2023-11-29T23:13:26Z | 231 | 0 | null | [

"transformers",

"safetensors",

"vision-encoder-decoder",

"endpoints_compatible",

"region:us"

] | 2023-11-29T23:13:26Z | 2023-11-27T15:28:40.000Z | null | null | Entry not found | null | transformers | null | null | null | null | null | null | null | null | null | null | Yufu0/document_reader | [

-0.3227650821208954,

-0.22568479180335999,

0.8622263669967651,

0.4346153140068054,

-0.5282987952232361,

0.7012966871261597,

0.7915722727775574,

0.07618651539087296,

0.7746027112007141,

0.2563222348690033,

-0.7852821350097656,

-0.225738525390625,

-0.910447895526886,

0.5715667009353638,

-0... |

cenkersisman/gpt2-turkish-128-token | cenkersisman | 2023-11-29T20:28:20Z | 226 | 2 | null | [

"transformers",

"pytorch",

"tflite",

"safetensors",

"gpt2",

"text-generation",

"tr",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | 2023-11-29T20:28:20Z | 2023-10-08T09:15:31.000Z | null | null | ---

widget:

- text: 'fransa''nın başkenti'

example_title: fransa'nın başkenti

- text: 'ingiltere''nın başkenti'

example_title: ingiltere'nin başkenti

- text: 'italya''nın başkenti'

example_title: italya'nın başkenti

- text: 'tek bacaklı kurbağa'

example_title: tek bacaklı kurbağa

- text: 'rize''de yağmur'

example_title: rize'de yağmur

- text: 'hayatın anlamı'

example_title: hayatın anlamı

- text: 'saint-joseph'

example_title: saint-joseph

- text: 'tatlı olarak'

example_title: tatlı olarak

- text: 'iklim değişikliği'

example_title: iklim değişikliği

language:

- tr

---

# Model

GPT-2 Türkçe Modeli

### Model Açıklaması

GPT-2 Türkçe Modeli, Türkçe diline özelleştirilmiş olan GPT-2 mimarisi temel alınarak oluşturulmuş bir dil modelidir. Belirli bir başlangıç metni temel alarak insana benzer metinler üretme yeteneğine sahiptir ve geniş bir Türkçe metin veri kümesi üzerinde eğitilmiştir.

Modelin eğitimi için 900 milyon karakterli Vikipedi seti kullanılmıştır. Eğitim setindeki cümleler maksimum 128 tokendan (token = kelime kökü ve ekleri) oluşmuştur bu yüzden oluşturacağı cümlelerin boyu sınırlıdır..

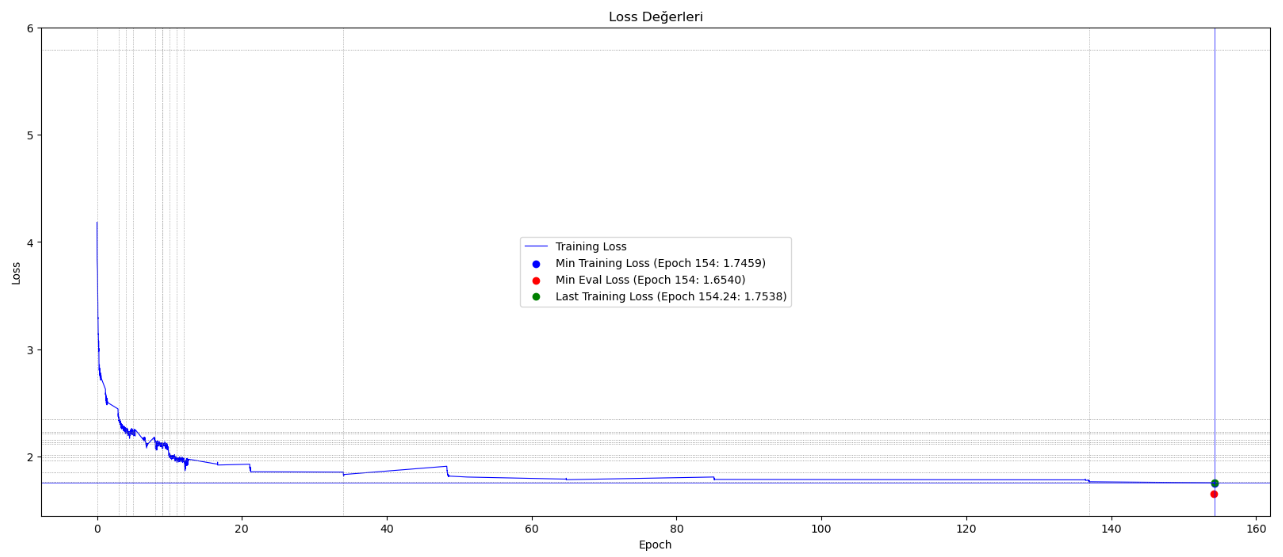

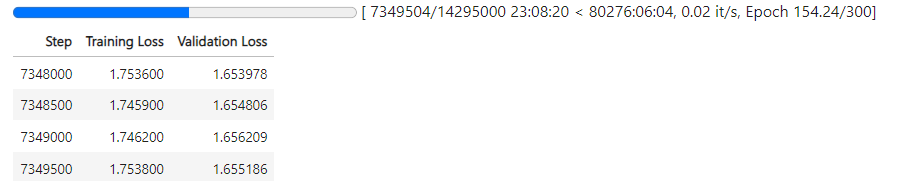

Türkçe heceleme yapısına uygun tokenizer kullanılmış ve model 7.5 milyon adımda yaklaşık 154 epoch eğitilmiştir. Eğitim halen devam etmektedir.

Eğitim için 4GB hafızası olan Nvidia Geforce RTX 3050 GPU kullanılmaktadır. 16GB Paylaşılan GPU'dan da yararlanılmakta ve eğitimin devamında toplamda 20GB hafıza kullanılmaktadır.

## Model Nasıl Kullanılabilir

ÖNEMLİ: model harf büyüklüğüne duyarlı olduğu için, prompt tamamen küçük harflerle yazılmalıdır.

```python

# Model ile çıkarım yapmak için örnek kod

from transformers import GPT2Tokenizer, GPT2LMHeadModel

model_name = "cenkersisman/gpt2-turkish-128-token"

tokenizer = GPT2Tokenizer.from_pretrained(model_name)

model = GPT2LMHeadModel.from_pretrained(model_name)

prompt = "okyanusun derinliklerinde bulunan"

input_ids = tokenizer.encode(prompt, return_tensors="pt")

output = model.generate(input_ids, max_length=100, pad_token_id=tokenizer.eos_token_id)

generated_text = tokenizer.decode(output[0], skip_special_tokens=True)

print(generated_text)

```

## Eğitim Süreci Eğrisi

## Sınırlamalar ve Önyargılar

Bu model, bir özyineli dil modeli olarak eğitildi. Bu, temel işlevinin bir metin dizisi alıp bir sonraki belirteci tahmin etmek olduğu anlamına gelir. Dil modelleri bunun dışında birçok görev için yaygın olarak kullanılsa da, bu çalışmayla ilgili birçok bilinmeyen bulunmaktadır.

Model, küfür, açık saçıklık ve aksi davranışlara yol açan metinleri içerdiği bilinen bir veri kümesi üzerinde eğitildi. Kullanım durumunuza bağlı olarak, bu model toplumsal olarak kabul edilemez metinler üretebilir.

Tüm dil modellerinde olduğu gibi, bu modelin belirli bir girişe nasıl yanıt vereceğini önceden tahmin etmek zordur ve uyarı olmaksızın saldırgan içerik ortaya çıkabilir. Sonuçları yayınlamadan önce hem istenmeyen içeriği sansürlemek hem de sonuçların kalitesini iyileştirmek için insanların çıktıları denetlemesini veya filtrelemesi önerilir.

| null | transformers | text-generation | null | null | null | null | null | null | null | null | null | cenkersisman/gpt2-turkish-128-token | [

-0.6261976957321167,

-0.6965554356575012,

0.21195384860038757,

0.1612759679555893,

-0.6046753525733948,

-0.2791098356246948,

-0.05996793508529663,

-0.4940257966518402,

0.016023164615035057,

0.07371830940246582,

-0.5164282321929932,

-0.33887195587158203,

-0.7370820045471191,

-0.042639300227... |

Yntec/CutesyAnime | Yntec | 2023-11-29T21:44:07Z | 205 | 0 | null | [

"diffusers",

"Anime",

"Kawaii",

"Toon",

"thefoodmage",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us",

"has_space"

] | 2023-11-29T21:44:07Z | 2023-11-29T21:21:36.000Z | null | null | ---

license: creativeml-openrail-m

library_name: diffusers

pipeline_tag: text-to-image

tags:

- Anime

- Kawaii

- Toon

- thefoodmage

- stable-diffusion

- stable-diffusion-diffusers

- diffusers

- text-to-image

---

# tfm Cutesy Anime Model

This model with the MoistMixVAE baked in. Original page: https://civitai.com/models/25132?modelVersionId=30074

Comparison:

Sample and prompt:

princess,cartoon,wearing white dress,golden crown,red shoes,orange hair,kart,blue eyes,looking at viewer,smiling,happy,sitting on racing kart,outside,forest,blue sky,toadstool,extremely detailed,hdr, | null | diffusers | text-to-image | null | null | null | null | null | null | null | null | null | Yntec/CutesyAnime | [

-0.2987070679664612,

-0.623966634273529,

0.4260574281215668,

0.341948539018631,

-0.6391068696975708,

-0.019167710095643997,

0.3713394105434418,

-0.14940069615840912,

0.7099140286445618,

0.586476743221283,

-0.7836006283760071,

-0.4290413558483124,

-0.6356537938117981,

-0.07567483931779861,

... |

nlpchallenges/chatbot-qa-path | nlpchallenges | 2023-11-29T07:17:15Z | 189 | 0 | null | [

"peft",

"region:us"

] | 2023-11-29T07:17:15Z | 2023-11-27T12:10:31.000Z | null | null | ---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- quant_method: QuantizationMethod.BITS_AND_BYTES

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16

### Framework versions

- PEFT 0.5.0

| null | peft | null | null | null | null | null | null | null | null | null | null | nlpchallenges/chatbot-qa-path | [

-0.6313066482543945,

-0.7687037587165833,

0.4306168258190155,

0.4859626889228821,

-0.5286025404930115,

0.0870700553059578,

0.18744395673274994,

-0.1962980180978775,

-0.19060248136520386,

0.47944965958595276,

-0.5534126162528992,

-0.14016707241535187,

-0.41348540782928467,

0.159429132938385... |

personal1802/31 | personal1802 | 2023-11-29T14:36:46Z | 189 | 0 | null | [

"diffusers",

"text-to-image",

"stable-diffusion",

"lora",

"template:sd-lora",

"base_model:latent-consistency/lcm-lora-sdv1-5",

"region:us"

] | 2023-11-29T14:36:46Z | 2023-11-29T14:26:47.000Z | null | null | ---

tags:

- text-to-image

- stable-diffusion

- lora

- diffusers

- template:sd-lora

widget:

- text: '-'

output:

url: images/WHITE.png

base_model: latent-consistency/lcm-lora-sdv1-5

instance_prompt: null

---

# zhmixFantasy_v30

<Gallery />

## Download model

Weights for this model are available in Safetensors format.

[Download](/personal1802/31/tree/main) them in the Files & versions tab.

| null | diffusers | text-to-image | null | null | null | null | null | null | null | null | null | personal1802/31 | [

-0.1325923502445221,

0.296060711145401,

0.07120151817798615,

0.4019441604614258,

-0.6752262115478516,

-0.032350216060876846,

0.297743558883667,

-0.4118718206882477,

0.2254399210214615,

0.40146487951278687,

-0.7497118711471558,

-0.5262788534164429,

-0.5042405724525452,

-0.316924512386322,

... |

FrozenScar/cartoon_face | FrozenScar | 2023-11-29T21:06:15Z | 176 | 0 | null | [

"diffusers",

"tensorboard",

"diffusers:DDPMPipeline",

"region:us"

] | 2023-11-29T21:06:15Z | 2023-11-29T06:46:01.000Z | null | null | Entry not found | null | diffusers | null | null | null | null | null | null | null | null | null | null | FrozenScar/cartoon_face | [

-0.3227648437023163,

-0.2256842851638794,

0.8622258305549622,

0.4346150755882263,

-0.5282991528511047,

0.7012966275215149,

0.7915719151496887,

0.07618607580661774,

0.774602472782135,

0.25632160902023315,

-0.7852813005447388,

-0.22573809325695038,

-0.910448431968689,

0.571567177772522,

-0... |

tkcho/commerce-clf-kr-sku-brand-a4b94aa2730451161c1b2ea6107ed86f | tkcho | 2023-11-29T17:41:49Z | 175 | 0 | null | [

"transformers",

"pytorch",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | 2023-11-29T17:41:49Z | 2023-11-29T17:41:18.000Z | null | null | Entry not found | null | transformers | text-classification | null | null | null | null | null | null | null | null | null | tkcho/commerce-clf-kr-sku-brand-a4b94aa2730451161c1b2ea6107ed86f | [

-0.3227648437023163,

-0.2256842851638794,

0.8622258305549622,

0.4346150755882263,

-0.5282991528511047,

0.7012966275215149,

0.7915719151496887,

0.07618607580661774,

0.774602472782135,

0.25632160902023315,

-0.7852813005447388,

-0.22573809325695038,

-0.910448431968689,

0.571567177772522,

-0... |

yentinglin/Taiwan-LLM-7B-v2.1-chat | yentinglin | 2023-11-29T06:02:30Z | 171 | 4 | null | [

"transformers",

"safetensors",

"llama",

"text-generation",

"zh",

"license:apache-2.0",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | 2023-11-29T06:02:30Z | 2023-10-12T06:15:33.000Z | null | null |

---

# For reference on model card metadata, see the spec: https://github.com/huggingface/hub-docs/blob/main/modelcard.md?plain=1

# Doc / guide: https://huggingface.co/docs/hub/model-cards

license: apache-2.0

language:

- zh

widget:

- text: >-

A chat between a curious user and an artificial intelligence assistant.

The assistant gives helpful, detailed, and polite answers to the user's

questions. USER: 你好,請問你可以幫我寫一封推薦信嗎? ASSISTANT:

library_name: transformers

pipeline_tag: text-generation

extra_gated_heading: Acknowledge license to accept the repository.

extra_gated_prompt: Please contact the author for access.

extra_gated_button_content: Acknowledge license 同意以上內容

extra_gated_fields:

Name: text

Mail: text

Organization: text

Country: text

Any utilization of the Taiwan LLM repository mandates the explicit acknowledgment and attribution to the original author: checkbox

使用Taiwan LLM必須明確地承認和歸功於優必達株式會社 Ubitus 以及原始作者: checkbox

---

<img src="https://cdn-uploads.huggingface.co/production/uploads/5df9c78eda6d0311fd3d541f/CmusIT5OlSXvFrbTJ7l-C.png" alt="Taiwan LLM Logo" width="800" style="margin-left:'auto' margin-right:'auto' display:'block'"/>

# 🌟 Checkout [Taiwan-LLM Demo Chat-UI](http://www.twllm.com) 🌟