modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

microsoft/BiomedVLP-CXR-BERT-specialized | 2022-07-11T14:52:06.000Z | [

"transformers",

"pytorch",

"cxr-bert",

"feature-extraction",

"exbert",

"fill-mask",

"custom_code",

"en",

"arxiv:2204.09817",

"arxiv:2103.00020",

"arxiv:2002.05709",

"license:mit",

"region:us"

] | fill-mask | microsoft | null | null | microsoft/BiomedVLP-CXR-BERT-specialized | 14 | 7,684 | transformers | 2022-05-11T17:20:52 | ---

language: en

tags:

- exbert

license: mit

pipeline_tag: fill-mask

widget:
- text: "Left pleural effusion with adjacent [MASK]."
  example_title: "Radiology 1"
- text: "Heart size normal and lungs are [MASK]."
  example_title: "Radiology 2"

inference: false

---

# CXR-BERT-specialized

[CXR-BERT](https://arxiv.org/abs/2204.09817) is a chest X-ray (CXR) domain-specific language model that makes use of an improved vocabulary, a novel pretraining procedure, weight regularization, and text augmentations. The resulting model demonstrates improved performance on radiology natural language inference, radiology masked language model token prediction, and downstream vision-language processing tasks such as zero-shot phrase grounding and image classification.
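
In zero-shot image classification with a joint image-text model, an image embedding is typically compared against the embeddings of a few text prompts (for example, a positive and a negative finding), and a softmax over the similarities gives class scores. The sketch below illustrates that idea in NumPy under stated assumptions: the embeddings are random stand-ins rather than real model outputs, and the `temperature` value is a hypothetical placeholder, not a setting from the paper.

```python
import numpy as np


def l2_normalize(x: np.ndarray, axis: int = -1) -> np.ndarray:
    """Scale vectors to unit L2 norm so dot products become cosine similarities."""
    return x / np.linalg.norm(x, axis=axis, keepdims=True)


def zero_shot_probs(image_emb: np.ndarray, prompt_embs: np.ndarray,
                    temperature: float = 0.5) -> np.ndarray:
    """Softmax over cosine similarities between one image and K text prompts.

    image_emb: shape (D,); prompt_embs: shape (K, D).
    The temperature is a hypothetical placeholder value.
    """
    sims = l2_normalize(prompt_embs) @ l2_normalize(image_emb)  # shape (K,)
    logits = sims / temperature
    logits -= logits.max()  # subtract the max for numerical stability
    exp = np.exp(logits)
    return exp / exp.sum()


# Stand-in embeddings; in practice they would come from the joint image/text model.
rng = np.random.default_rng(0)
image = rng.normal(size=128)
prompts = np.stack([image + 0.1 * rng.normal(size=128),  # e.g. a prompt describing the finding
                    rng.normal(size=128)])               # e.g. a prompt denying the finding
probs = zero_shot_probs(image, prompts)
print(probs)  # the first prompt should receive most of the probability mass
```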

First, we pretrain [**CXR-BERT-general**](https://huggingface.co/microsoft/BiomedVLP-CXR-BERT-general) from a randomly initialized BERT model via Masked Language Modeling (MLM) on [PubMed](https://pubmed.ncbi.nlm.nih.gov/) abstracts and clinical notes from the publicly available [MIMIC-III](https://physionet.org/content/mimiciii/1.4/) and [MIMIC-CXR](https://physionet.org/content/mimic-cxr/) datasets. The general model is therefore expected to be applicable, through domain-specific fine-tuning, to research in clinical domains other than chest radiology.
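
For readers unfamiliar with MLM, the sketch below shows the input corruption step of the original BERT recipe: about 15% of non-special tokens are selected; of those, 80% become `[MASK]`, 10% a random token, and 10% stay unchanged. This is the generic BERT scheme, not necessarily the exact masking used for CXR-BERT; the token ids and `mask_id=103` are hypothetical, and only the vocabulary size (30,522) is taken from the table in this card.

```python
import numpy as np


def bert_style_mask(token_ids, mask_id, vocab_size, special_mask,
                    mask_prob=0.15, rng=None):
    """Return (inputs, labels) for masked language modelling (generic BERT recipe)."""
    if rng is None:
        rng = np.random.default_rng()
    inputs = np.array(token_ids, dtype=np.int64)  # copy, so the caller's ids are untouched
    labels = np.full_like(inputs, -100)           # -100 marks positions ignored by the loss

    # Select ~mask_prob of the positions that are not special tokens ([CLS], [SEP], ...).
    selected = (rng.random(inputs.shape) < mask_prob) & ~np.asarray(special_mask, dtype=bool)
    labels[selected] = inputs[selected]           # the model must predict the original token

    roll = rng.random(inputs.shape)
    to_mask = selected & (roll < 0.8)                    # 80%: replace with [MASK]
    to_random = selected & (roll >= 0.8) & (roll < 0.9)  # 10%: replace with a random token
    inputs[to_mask] = mask_id                            # remaining 10%: keep unchanged
    inputs[to_random] = rng.integers(0, vocab_size, size=int(to_random.sum()))
    return inputs, labels


# Hypothetical ids: the first and last positions stand in for [CLS] and [SEP].
ids = np.arange(1000, 1040)
special = np.zeros(len(ids), dtype=bool)
special[[0, -1]] = True
inputs, labels = bert_style_mask(ids, mask_id=103, vocab_size=30522,
                                 special_mask=special, rng=np.random.default_rng(0))
print(inputs)
print(labels)
```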

**CXR-BERT-specialized** is continually pretrained from CXR-BERT-general to further specialize in the chest X-ray domain. At the final stage, CXR-BERT is trained in a multi-modal contrastive learning framework, similar to the [CLIP](https://arxiv.org/abs/2103.00020) framework. The latent representation of the [CLS] token is used to align text and image embeddings.
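
A CLIP-style objective can be sketched as a symmetric InfoNCE loss over a batch of matched text/image pairs: each text should score highest against its own image, and vice versa. The NumPy sketch below is the generic CLIP formulation, not the exact loss from the paper; the `temperature=0.07` default is CLIP's initial value and is only a placeholder here, and the embeddings are random stand-ins for projected [CLS] and image embeddings.

```python
import numpy as np


def log_softmax(x, axis):
    """Numerically stable log-softmax along one axis."""
    x = x - x.max(axis=axis, keepdims=True)
    return x - np.log(np.exp(x).sum(axis=axis, keepdims=True))


def clip_style_loss(text_emb, image_emb, temperature=0.07):
    """Symmetric InfoNCE loss for N matched (text, image) pairs.

    Row i of text_emb is assumed to describe row i of image_emb;
    every other row in the batch acts as a negative.
    """
    t = text_emb / np.linalg.norm(text_emb, axis=1, keepdims=True)
    v = image_emb / np.linalg.norm(image_emb, axis=1, keepdims=True)
    logits = t @ v.T / temperature  # (N, N) scaled cosine similarities
    idx = np.arange(len(logits))
    loss_t2i = -log_softmax(logits, axis=1)[idx, idx].mean()  # text -> image
    loss_i2t = -log_softmax(logits, axis=0)[idx, idx].mean()  # image -> text
    return (loss_t2i + loss_i2t) / 2


rng = np.random.default_rng(0)
text = rng.normal(size=(8, 64))
matched = clip_style_loss(text, text + 0.05 * rng.normal(size=(8, 64)))
mismatched = clip_style_loss(text, rng.normal(size=(8, 64)))
print(matched, mismatched)  # aligned pairs should give the lower loss
```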

## Model variations

| Model | Model identifier on HuggingFace | Vocabulary | Note |
| ------------------------------------------------- | ----------------------------------------------------------------------------------------------------------- | -------------- | --------------------------------------------------------- |
| CXR-BERT-general | [microsoft/BiomedVLP-CXR-BERT-general](https://huggingface.co/microsoft/BiomedVLP-CXR-BERT-general) | PubMed & MIMIC | Pretrained for biomedical literature and clinical domains |
| CXR-BERT-specialized (after multi-modal training) | [microsoft/BiomedVLP-CXR-BERT-specialized](https://huggingface.co/microsoft/BiomedVLP-CXR-BERT-specialized) | PubMed & MIMIC | Pretrained for chest X-ray domain |

## Image model

**CXR-BERT-specialized** is jointly trained with a ResNet-50 image model in a multi-modal contrastive learning framework. Prior to multi-modal learning, the image model is pretrained on the same set of images in MIMIC-CXR using [SimCLR](https://arxiv.org/abs/2002.05709). The corresponding model definition and its loading functions are available in our [HI-ML-Multimodal](https://github.com/microsoft/hi-ml/blob/main/hi-ml-multimodal/src/health_multimodal/image/model/model.py) GitHub repository. The joint image and text model, [BioViL](https://arxiv.org/abs/2204.09817), can be used in phrase grounding applications, as shown in this Python notebook [example](https://mybinder.org/v2/gh/microsoft/hi-ml/HEAD?labpath=hi-ml-multimodal%2Fnotebooks%2Fphrase_grounding.ipynb). For a more systematic evaluation of joint image and text models on phrase grounding tasks, see the [MS-CXR benchmark](https://physionet.org/content/ms-cxr/0.1/).
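
Phrase grounding with a joint model is commonly done by comparing a phrase's projected text embedding with the projected embedding of every image patch, which yields a similarity heatmap. The NumPy sketch below shows only that comparison step; it assumes the image encoder provides an H×W grid of patch embeddings already projected into the joint space, and it uses random stand-in arrays rather than the HI-ML-Multimodal API.

```python
import numpy as np


def similarity_map(text_emb, patch_embs):
    """Cosine similarity between one phrase embedding and each image patch.

    text_emb: (D,) projected text embedding.
    patch_embs: (H, W, D) projected patch embeddings (assumed layout).
    Returns an (H, W) map in [-1, 1]; high values mark patches aligned with the phrase.
    """
    t = text_emb / np.linalg.norm(text_emb)
    p = patch_embs / np.linalg.norm(patch_embs, axis=-1, keepdims=True)
    return p @ t  # (H, W, D) @ (D,) -> (H, W)


rng = np.random.default_rng(0)
text = rng.normal(size=32)
patches = rng.normal(size=(4, 4, 32))
patches[1:3, 1:3] += 5 * text  # plant the phrase direction in a 2x2 "finding" region
smap = similarity_map(text, patches)
print(np.unravel_index(smap.argmax(), smap.shape))  # should fall inside rows/cols 1-2
```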

## Citation

The corresponding manuscript has been accepted for presentation at the [**European Conference on Computer Vision (ECCV) 2022**](https://eccv2022.ecva.net/).

```bibtex
@misc{https://doi.org/10.48550/arxiv.2204.09817,
  doi = {10.48550/ARXIV.2204.09817},
  url = {https://arxiv.org/abs/2204.09817},
  author = {Boecking, Benedikt and Usuyama, Naoto and Bannur, Shruthi and Castro, Daniel C. and Schwaighofer, Anton and Hyland, Stephanie and Wetscherek, Maria and Naumann, Tristan and Nori, Aditya and Alvarez-Valle, Javier and Poon, Hoifung and Oktay, Ozan},
  title = {Making the Most of Text Semantics to Improve Biomedical Vision-Language Processing},
  publisher = {arXiv},
  year = {2022},
}
```

## Model Use

### Intended Use

This model is intended to be used solely for (I) future research on visual-language processing and (II) reproducibility of the experimental results reported in the reference paper.

#### Primary Intended Use

The primary intended use is to support AI researchers building on top of this work. CXR-BERT and its associated models should be helpful for exploring various clinical NLP & VLP research questions, especially in the radiology domain.

#### Out-of-Scope Use

**Any** deployed use case of the model --- commercial or otherwise --- is currently out of scope. Although we evaluated the models using a broad set of publicly-available research benchmarks, the models and evaluations are not intended for deployed use cases. Please refer to [the associated paper](https://arxiv.org/abs/2204.09817) for more details.

### How to use

Here is how to use this model to extract radiological sentence embeddings and obtain their cosine similarity in the joint space (image and text):

```python
import torch
from transformers import AutoModel, AutoTokenizer

# Load the model and tokenizer
url = "microsoft/BiomedVLP-CXR-BERT-specialized"
tokenizer = AutoTokenizer.from_pretrained(url, trust_remote_code=True)
model = AutoModel.from_pretrained(url, trust_remote_code=True)

# Input text prompts (e.g., reference, synonym, contradiction)
text_prompts = ["There is no pneumothorax or pleural effusion",
                "No pleural effusion or pneumothorax is seen",
                "The extent of the pleural effusion is constant."]

# Tokenize and compute the sentence embeddings
tokenizer_output = tokenizer.batch_encode_plus(batch_text_or_text_pairs=text_prompts,
                                               add_special_tokens=True,
                                               padding='longest',
                                               return_tensors='pt')
with torch.no_grad():  # inference only, so no gradients are needed
    embeddings = model.get_projected_text_embeddings(input_ids=tokenizer_output.input_ids,
                                                     attention_mask=tokenizer_output.attention_mask)

# The projected embeddings are L2-normalized, so their dot products are cosine similarities.
sim = torch.mm(embeddings, embeddings.t())
```
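
The `torch.mm` step above yields cosine similarities only because the projected embeddings have unit length. If you work with embeddings that are not normalized (for example, vectors taken from another layer), a minimal NumPy sketch that normalizes explicitly is shown below; the toy vectors are illustrative, not model outputs.

```python
import numpy as np


def cosine_similarity_matrix(emb):
    """Pairwise cosine similarity between row vectors, normalizing explicitly."""
    unit = emb / np.linalg.norm(emb, axis=1, keepdims=True)
    return unit @ unit.T


emb = np.array([[3.0, 4.0],    # toy, unnormalized "embeddings"
                [6.0, 8.0],    # same direction as row 0, twice the length
                [4.0, -3.0]])  # orthogonal to row 0
print(emb @ emb.T)                             # raw dot products depend on vector length
print(cosine_similarity_matrix(emb).round(3))  # rows 0 and 1 -> 1.0, row 2 vs the others -> 0.0
```

Ranking candidate sentences against a reference then amounts to sorting one row of this matrix in descending order.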

## Data

This model builds upon existing publicly-available datasets:

- [PubMed](https://pubmed.ncbi.nlm.nih.gov/)

- [MIMIC-III](https://physionet.org/content/mimiciii/)

- [MIMIC-CXR](https://physionet.org/content/mimic-cxr/)

These datasets cover a broad variety of sources, ranging from biomedical abstracts to intensive care unit notes to chest X-ray radiology notes. In the MIMIC-CXR dataset, the radiology notes are accompanied by their associated chest X-ray DICOM images.

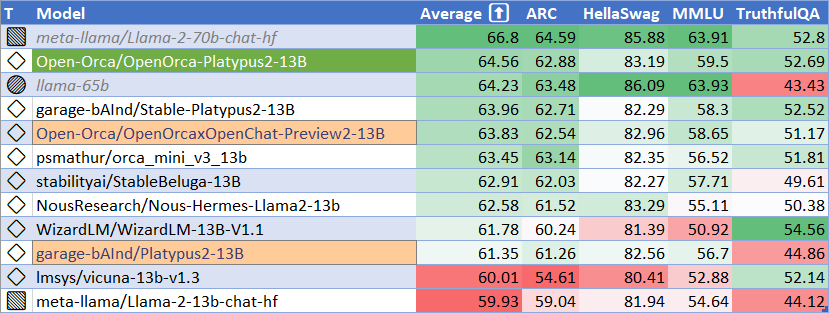

## Performance

We demonstrate that this language model achieves state-of-the-art results in radiology natural language inference, owing to its improved vocabulary and a novel language pretraining objective that leverages the semantics and discourse characteristics of radiology reports.

Highlights of a comparison with other common models, including [ClinicalBERT](https://aka.ms/clinicalbert) and [PubMedBERT](https://aka.ms/pubmedbert):

| | RadNLI accuracy (MedNLI transfer) | Mask prediction accuracy | Avg. # tokens after tokenization | Vocabulary size |
| ----------------------------------------------- | :-------------------------------: | :----------------------: | :------------------------------: | :-------------: |
| RadNLI baseline | 53.30 | - | - | - |
| ClinicalBERT | 47.67 | 39.84 | 78.98 (+38.15%) | 28,996 |
| PubMedBERT | 57.71 | 35.24 | 63.55 (+11.16%) | 28,895 |
| CXR-BERT (after Phase-III) | 60.46 | 77.72 | 58.07 (+1.59%) | 30,522 |
| **CXR-BERT (after Phase-III + Joint Training)** | **65.21** | **81.58** | **58.07 (+1.59%)** | 30,522 |

CXR-BERT also contributes to better vision-language representation learning through its improved text encoding capability. Below is the zero-shot phrase grounding performance on the **MS-CXR** dataset, which evaluates the quality of image-text latent representations.

| Vision–Language Pretraining Method | Text Encoder | MS-CXR Phrase Grounding (Avg. CNR Score) |
| ---------------------------------- | ------------ | :--------------------------------------: |
| Baseline | ClinicalBERT | 0.769 |
| Baseline | PubMedBERT | 0.773 |
| ConVIRT | ClinicalBERT | 0.818 |
| GLoRIA | ClinicalBERT | 0.930 |
| **BioViL** | **CXR-BERT** | **1.027** |
| **BioViL-L** | **CXR-BERT** | **1.142** |
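
The CNR (contrast-to-noise ratio) score measures how well a similarity map separates the region inside a ground-truth bounding box from the rest of the image. The NumPy sketch below assumes the common definition CNR = |mean_in - mean_out| / sqrt(var_in + var_out) over similarity values inside and outside the box; consult the paper for the exact definition and any preprocessing used in the MS-CXR evaluation. The map and mask are toy values.

```python
import numpy as np


def cnr(sim_map, box_mask):
    """Contrast-to-noise ratio between similarity values inside and outside a box.

    Assumes CNR = |mean_in - mean_out| / sqrt(var_in + var_out).
    """
    sim_map = np.asarray(sim_map, dtype=float)
    box_mask = np.asarray(box_mask, dtype=bool)
    inside, outside = sim_map[box_mask], sim_map[~box_mask]
    return abs(inside.mean() - outside.mean()) / np.sqrt(inside.var() + outside.var())


sim = np.array([[0.9, 0.8, 0.1],
                [0.7, 0.6, 0.2],
                [0.0, 0.1, 0.2]])
box = np.array([[True, True, False],
                [True, True, False],
                [False, False, False]])
score = cnr(sim, box)
print(round(score, 2))  # 4.68: high similarity inside the box, low and flat outside
```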

Additional details about performance can be found in the corresponding paper, [Making the Most of Text Semantics to Improve Biomedical Vision-Language Processing](https://arxiv.org/abs/2204.09817).

## Limitations

This model was developed using English corpora, and thus can be considered English-only.

## Further information

Please refer to the corresponding paper, ["Making the Most of Text Semantics to Improve Biomedical Vision-Language Processing", ECCV'22](https://arxiv.org/abs/2204.09817) for additional details on the model training and evaluation.

For additional inference pipelines with CXR-BERT, please refer to the [HI-ML-Multimodal GitHub](https://aka.ms/biovil-code) repository.

| 10,416 | [

[

-0.0222015380859375,

-0.053070068359375,

0.039825439453125,

0.003467559814453125,

-0.028778076171875,

-0.01092529296875,

-0.00850677490234375,

-0.033905029296875,

0.003665924072265625,

0.0264892578125,

-0.0271759033203125,

-0.05120849609375,

-0.05865478515625,

0.0055694580078125,

-0.0085601806640625,

0.06292724609375,

0.0023021697998046875,

0.022735595703125,

-0.0192718505859375,

-0.02227783203125,

-0.022613525390625,

-0.052337646484375,

-0.033355712890625,

-0.0235137939453125,

0.0278472900390625,

-0.00913238525390625,

0.06829833984375,

0.053314208984375,

0.048736572265625,

0.019073486328125,

-0.004604339599609375,

0.0032329559326171875,

-0.01464080810546875,

-0.018829345703125,

0.000006020069122314453,

-0.0174560546875,

-0.0261688232421875,

-0.00853729248046875,

0.043060302734375,

0.05023193359375,

0.007457733154296875,

-0.0219573974609375,

0.00012445449829101562,

0.037261962890625,

-0.029998779296875,

0.01372528076171875,

-0.03045654296875,

0.00836181640625,

0.0058746337890625,

-0.0214080810546875,

-0.0287628173828125,

-0.0104522705078125,

0.0362548828125,

-0.04534912109375,

0.0060272216796875,

0.0124053955078125,

0.10736083984375,

0.01541900634765625,

-0.0233306884765625,

-0.0255279541015625,

-0.00856781005859375,

0.0684814453125,

-0.05712890625,

0.044647216796875,

0.01544189453125,

0.0036640167236328125,

0.0095977783203125,

-0.07952880859375,

-0.042755126953125,

-0.02252197265625,

-0.0303955078125,

0.0218048095703125,

-0.036529541015625,

0.0233001708984375,

0.007572174072265625,

0.0224609375,

-0.056304931640625,

-0.0029697418212890625,

-0.020904541015625,

-0.0233154296875,

0.0242919921875,

-0.0004284381866455078,

0.03497314453125,

-0.036651611328125,

-0.043121337890625,

-0.0271759033203125,

-0.036376953125,

-0.004138946533203125,

-0.01690673828125,

0.012359619140625,

-0.034637451171875,

0.038665771484375,

0.0011835098266601562,

0.0635986328125,

0.00807952880859375,

-0.0229949951171875,

0.057525634765625,

-0.0298004150390625,

-0.032379150390625,

-0.00632476806640625,

0.07208251953125,

0.030975341796875,

0.00435638427734375,

-0.003849029541015625,

-0.0009307861328125,

0.013946533203125,

-0.0015611648559570312,

-0.05694580078125,

-0.017181396484375,

0.0171356201171875,

-0.0662841796875,

-0.0289764404296875,

0.0066375732421875,

-0.03289794921875,

-0.003253936767578125,

-0.0222625732421875,

0.05078125,

-0.05682373046875,

-0.006412506103515625,

0.0236358642578125,

-0.01329803466796875,

0.0224609375,

0.005748748779296875,

-0.0244598388671875,

0.021636962890625,

0.0286865234375,

0.0645751953125,

-0.008758544921875,

-0.005565643310546875,

-0.0291290283203125,

-0.00949859619140625,

-0.0014896392822265625,

0.05206298828125,

-0.039398193359375,

-0.0260162353515625,

-0.002254486083984375,

0.017120361328125,

-0.01947021484375,

-0.032958984375,

0.042449951171875,

-0.026092529296875,

0.0341796875,

-0.007137298583984375,

-0.047271728515625,

-0.01467132568359375,

0.0251007080078125,

-0.034820556640625,

0.07452392578125,

0.005939483642578125,

-0.0697021484375,

0.017425537109375,

-0.03564453125,

-0.0231475830078125,

-0.0101318359375,

-0.040374755859375,

-0.04852294921875,

-0.0037670135498046875,

0.039581298828125,

0.048553466796875,

-0.0139007568359375,

0.02294921875,

-0.00974273681640625,

-0.0087432861328125,

0.027313232421875,

-0.00787353515625,

0.061431884765625,

0.01409912109375,

-0.0302886962890625,

0.0029163360595703125,

-0.06256103515625,

0.01036834716796875,

0.005878448486328125,

-0.0086822509765625,

-0.03106689453125,

-0.005199432373046875,

0.0210113525390625,

0.0406494140625,

0.0106658935546875,

-0.045166015625,

-0.004665374755859375,

-0.0367431640625,

0.0251617431640625,

0.0350341796875,

0.0027256011962890625,

0.0181121826171875,

-0.049072265625,

0.035614013671875,

0.0160675048828125,

0.0010890960693359375,

-0.029632568359375,

-0.0362548828125,

-0.04644775390625,

-0.057525634765625,

0.019378662109375,

0.044677734375,

-0.05682373046875,

0.043426513671875,

-0.0255126953125,

-0.03533935546875,

-0.044342041015625,

-0.0205078125,

0.060211181640625,

0.0535888671875,

0.0491943359375,

-0.023040771484375,

-0.048004150390625,

-0.07391357421875,

-0.001312255859375,

0.003177642822265625,

0.0048675537109375,

0.037689208984375,

0.0270538330078125,

-0.0229339599609375,

0.06414794921875,

-0.050506591796875,

-0.04107666015625,

-0.01019287109375,

0.0206146240234375,

0.0183563232421875,

0.043365478515625,

0.04669189453125,

-0.054901123046875,

-0.043060302734375,

0.0036830902099609375,

-0.07305908203125,

-0.003826141357421875,

-0.02056884765625,

-0.00492095947265625,

0.025482177734375,

0.0579833984375,

-0.0285186767578125,

0.0374755859375,

0.05084228515625,

-0.022064208984375,

0.0288848876953125,

-0.0267791748046875,

0.0077972412109375,

-0.09674072265625,

0.0195159912109375,

0.007480621337890625,

-0.0255889892578125,

-0.035552978515625,

0.006885528564453125,

0.00434112548828125,

-0.007579803466796875,

-0.027252197265625,

0.0513916015625,

-0.056732177734375,

0.018157958984375,

-0.0009436607360839844,

0.0141448974609375,

0.00969696044921875,

0.0345458984375,

0.03369140625,

0.039581298828125,

0.05517578125,

-0.031982421875,

-0.0130615234375,

0.031768798828125,

-0.024810791015625,

0.033416748046875,

-0.07366943359375,

0.007598876953125,

-0.01242828369140625,

0.0160675048828125,

-0.07177734375,

0.00931549072265625,

0.01108551025390625,

-0.04742431640625,

0.036865234375,

-0.0015468597412109375,

-0.033111572265625,

-0.0225830078125,

-0.023284912109375,

0.02313232421875,

0.043792724609375,

-0.02679443359375,

0.041168212890625,

0.019989013671875,

-0.0025310516357421875,

-0.053466796875,

-0.061279296875,

0.005157470703125,

0.00695037841796875,

-0.061920166015625,

0.057525634765625,

-0.0099945068359375,

-0.00402069091796875,

0.017181396484375,

0.01247406005859375,

-0.0126800537109375,

-0.0165863037109375,

0.0236053466796875,

0.033050537109375,

-0.030670166015625,

0.007022857666015625,

-0.0004284381866455078,

-0.00656890869140625,

-0.01103973388671875,

-0.0103607177734375,

0.04132080078125,

-0.01129150390625,

-0.022064208984375,

-0.048126220703125,

0.0288543701171875,

0.033599853515625,

-0.00960540771484375,

0.054473876953125,

0.048004150390625,

-0.032989501953125,

0.02874755859375,

-0.0517578125,

-0.004642486572265625,

-0.03271484375,

0.047637939453125,

-0.027618408203125,

-0.0721435546875,

0.033538818359375,

0.0173797607421875,

-0.0207977294921875,

0.034027099609375,

0.049713134765625,

-0.007843017578125,

0.08099365234375,

0.0496826171875,

0.007762908935546875,

0.025726318359375,

-0.023040771484375,

0.0112152099609375,

-0.0665283203125,

-0.0190887451171875,

-0.03570556640625,

-0.0002932548522949219,

-0.048583984375,

-0.043975830078125,

0.037872314453125,

-0.0172882080078125,

0.00937652587890625,

0.0091094970703125,

-0.047760009765625,

0.00909423828125,

0.0278778076171875,

0.03167724609375,

0.0108642578125,

0.01232147216796875,

-0.03900146484375,

-0.0287933349609375,

-0.05242919921875,

-0.0294952392578125,

0.07403564453125,

0.0311431884765625,

0.051025390625,

-0.0128173828125,

0.06024169921875,

0.0030384063720703125,

0.0114593505859375,

-0.054229736328125,

0.028228759765625,

-0.02923583984375,

-0.03997802734375,

0.003261566162109375,

-0.01209259033203125,

-0.0870361328125,

0.0195770263671875,

-0.024444580078125,

-0.0360107421875,

0.0273284912109375,

0.004302978515625,

-0.03106689453125,

0.01303863525390625,

-0.037994384765625,

0.05889892578125,

-0.01055145263671875,

-0.018646240234375,

-0.00702667236328125,

-0.06890869140625,

0.010223388671875,

0.005828857421875,

0.0225677490234375,

0.01386260986328125,

-0.013763427734375,

0.04742431640625,

-0.04248046875,

0.06915283203125,

-0.0127105712890625,

0.014495849609375,

0.01406097412109375,

-0.0193023681640625,

0.017822265625,

-0.01007080078125,

0.0010347366333007812,

0.020965576171875,

0.0181121826171875,

-0.02740478515625,

-0.0273284912109375,

0.03997802734375,

-0.07769775390625,

-0.024017333984375,

-0.0556640625,

-0.0416259765625,

-0.0030803680419921875,

0.02667236328125,

0.040374755859375,

0.054473876953125,

-0.0189971923828125,

0.032684326171875,

0.059600830078125,

-0.05224609375,

0.0171356201171875,

0.02197265625,

-0.005031585693359375,

-0.0506591796875,

0.05401611328125,

0.01100921630859375,

0.0196075439453125,

0.05938720703125,

0.0173797607421875,

-0.018951416015625,

-0.04229736328125,

-0.0003924369812011719,

0.041229248046875,

-0.058441162109375,

-0.0184173583984375,

-0.08367919921875,

-0.0252532958984375,

-0.039154052734375,

-0.031982421875,

-0.005615234375,

-0.0290374755859375,

-0.030517578125,

0.00925445556640625,

0.0173187255859375,

0.0286712646484375,

-0.0128936767578125,

0.0219573974609375,

-0.06536865234375,

0.0233917236328125,

0.00276947021484375,

0.005886077880859375,

-0.0179290771484375,

-0.051177978515625,

-0.0159759521484375,

-0.0079193115234375,

-0.0219573974609375,

-0.050384521484375,

0.04925537109375,

0.027130126953125,

0.0579833984375,

0.0190887451171875,

-0.004734039306640625,

0.05731201171875,

-0.026824951171875,

0.05389404296875,

0.0291290283203125,

-0.06475830078125,

0.0401611328125,

-0.0224456787109375,

0.0298004150390625,

0.0325927734375,

0.038238525390625,

-0.037017822265625,

-0.0260009765625,

-0.06243896484375,

-0.0762939453125,

0.032623291015625,

0.0124053955078125,

0.007198333740234375,

-0.0243377685546875,

0.023529052734375,

-0.00395965576171875,

0.0016603469848632812,

-0.066650390625,

-0.0288238525390625,

-0.0223388671875,

-0.034454345703125,

0.006000518798828125,

-0.023162841796875,

-0.00539398193359375,

-0.0227813720703125,

0.0482177734375,

-0.0154266357421875,

0.052032470703125,

0.054595947265625,

-0.032379150390625,

0.01299285888671875,

0.004009246826171875,

0.052978515625,

0.0418701171875,

-0.0243682861328125,

0.00595855712890625,

0.0010614395141601562,

-0.040740966796875,

-0.01148223876953125,

0.0195159912109375,

0.00323486328125,

0.018463134765625,

0.044525146484375,

0.052001953125,

0.02691650390625,

-0.05242919921875,

0.0584716796875,

-0.021575927734375,

-0.028594970703125,

-0.021240234375,

-0.019683837890625,

0.00004476308822631836,

0.006977081298828125,

0.0258026123046875,

0.0140380859375,

0.0033969879150390625,

-0.02276611328125,

0.035888671875,

0.03289794921875,

-0.048675537109375,

-0.01324462890625,

0.042816162109375,

-0.00307464599609375,

0.01236724853515625,

0.041015625,

0.0019931793212890625,

-0.033905029296875,

0.042022705078125,

0.047149658203125,

0.058135986328125,

-0.0005183219909667969,

0.0020923614501953125,

0.0487060546875,

0.0195770263671875,

0.00771331787109375,

0.030853271484375,

0.01377105712890625,

-0.0615234375,

-0.02850341796875,

-0.030853271484375,

-0.002170562744140625,

0.0022029876708984375,

-0.062286376953125,

0.03216552734375,

-0.038360595703125,

-0.01392364501953125,

0.011383056640625,

-0.0018491744995117188,

-0.058349609375,

0.0372314453125,

0.0155181884765625,

0.07244873046875,

-0.05645751953125,

0.076904296875,

0.0599365234375,

-0.050445556640625,

-0.0618896484375,

-0.005870819091796875,

0.0001844167709350586,

-0.0794677734375,

0.0633544921875,

0.01226806640625,

0.00473785400390625,

-0.0002925395965576172,

-0.032379150390625,

-0.0706787109375,

0.08990478515625,

0.006610870361328125,

-0.03692626953125,

-0.01172637939453125,

0.001476287841796875,

0.042816162109375,

-0.037811279296875,

0.0229034423828125,

0.00982666015625,

0.0191802978515625,

0.0093536376953125,

-0.07525634765625,

0.0231475830078125,

-0.0266571044921875,

0.0137786865234375,

-0.01366424560546875,

-0.043243408203125,

0.06353759765625,

-0.017120361328125,

-0.006961822509765625,

0.0199432373046875,

0.049285888671875,

0.0272369384765625,

0.00864410400390625,

0.0267486572265625,

0.059661865234375,

0.048492431640625,

-0.0084381103515625,

0.092529296875,

-0.029266357421875,

0.026214599609375,

0.0716552734375,

-0.0014629364013671875,

0.057098388671875,

0.0362548828125,

-0.0074920654296875,

0.055450439453125,

0.0382080078125,

-0.0058441162109375,

0.05242919921875,

-0.004657745361328125,

-0.003047943115234375,

-0.0109710693359375,

-0.00878143310546875,

-0.050323486328125,

0.02923583984375,

0.02886962890625,

-0.05560302734375,

-0.0046539306640625,

0.00974273681640625,

0.034027099609375,

0.00013387203216552734,

-0.00041937828063964844,

0.056243896484375,

0.01447296142578125,

-0.03399658203125,

0.07080078125,

-0.0059814453125,

0.06610107421875,

-0.0526123046875,

0.005828857421875,

0.0021343231201171875,

0.0266571044921875,

-0.01218414306640625,

-0.043792724609375,

0.02667236328125,

-0.025238037109375,

-0.01511383056640625,

-0.00585174560546875,

0.0472412109375,

-0.0257720947265625,

-0.04150390625,

0.02960205078125,

0.031341552734375,

0.0008115768432617188,

0.01009368896484375,

-0.08416748046875,

0.0228271484375,

0.0009403228759765625,

-0.0116119384765625,

0.033111572265625,

0.03143310546875,

0.0032863616943359375,

0.04052734375,

0.0428466796875,

0.0022563934326171875,

-0.00038814544677734375,

0.005695343017578125,

0.07720947265625,

-0.0421142578125,

-0.042022705078125,

-0.055267333984375,

0.048980712890625,

-0.01146697998046875,

-0.020477294921875,

0.041595458984375,

0.0435791015625,

0.05938720703125,

-0.004779815673828125,

0.06768798828125,

-0.01546478271484375,

0.033660888671875,

-0.046142578125,

0.049468994140625,

-0.055938720703125,

0.0030345916748046875,

-0.04180908203125,

-0.034759521484375,

-0.060394287109375,

0.06890869140625,

-0.01451873779296875,

0.0069580078125,

0.077880859375,

0.082275390625,

-0.00284576416015625,

-0.033966064453125,

0.02960205078125,

0.0292510986328125,

0.0196075439453125,

0.032135009765625,

0.03094482421875,

-0.05401611328125,

0.042755126953125,

-0.028228759765625,

-0.028564453125,

-0.01336669921875,

-0.08026123046875,

-0.069091796875,

-0.054901123046875,

-0.056121826171875,

-0.047607421875,

0.01323699951171875,

0.0787353515625,

0.07080078125,

-0.0560302734375,

-0.005275726318359375,

0.00849151611328125,

-0.01288604736328125,

-0.0229034423828125,

-0.0167999267578125,

0.05499267578125,

-0.0298919677734375,

-0.03704833984375,

0.00310516357421875,

0.01580810546875,

0.00839996337890625,

0.0017185211181640625,

-0.005176544189453125,

-0.042266845703125,

0.00321197509765625,

0.048095703125,

0.01617431640625,

-0.06427001953125,

-0.0216827392578125,

0.0240936279296875,

-0.02520751953125,

0.027435302734375,

0.043182373046875,

-0.06463623046875,

0.04779052734375,

0.03662109375,

0.040679931640625,

0.032318115234375,

-0.0170135498046875,

0.02978515625,

-0.061309814453125,

0.0254974365234375,

0.01136016845703125,

0.0360107421875,

0.03515625,

-0.0301361083984375,

0.024627685546875,

0.0247802734375,

-0.041473388671875,

-0.056915283203125,

-0.00989532470703125,

-0.10107421875,

-0.0299072265625,

0.0811767578125,

-0.018157958984375,

-0.0192718505859375,

-0.007190704345703125,

-0.0274200439453125,

0.0269622802734375,

-0.0160369873046875,

0.041290283203125,

0.039398193359375,

-0.0131683349609375,

-0.033416748046875,

-0.0199127197265625,

0.0290374755859375,

0.03350830078125,

-0.05126953125,

-0.0325927734375,

0.0262451171875,

0.0374755859375,

0.019683837890625,

0.059234619140625,

-0.04058837890625,

0.0229949951171875,

-0.0169830322265625,

0.0224761962890625,

0.0004391670227050781,

0.0107421875,

-0.032470703125,

0.0056915283203125,

0.0014553070068359375,

-0.0171661376953125

]

] |

boomerchan/Magpie-13b | 2023-09-17T22:54:22.000Z | [

"transformers",

"pytorch",

"llama",

"text-generation",

"not-for-all-audiences",

"en",

"license:llama2",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | boomerchan | null | null | boomerchan/Magpie-13b | 7 | 7,684 | transformers | 2023-09-11T20:29:03 | ---

license: llama2

pipeline_tag: text-generation

language:

- en

tags:

- not-for-all-audiences

---

<center><h1>Magpie 13b</h1></center>

Magpie-13b is a Llama 2 merge made specifically for SillyTavern roleplays. It is the final result of approximately two weeks of merging and testing, and in my opinion it is the most complementary 13b merge of the included models/LoRAs for this use case.

This repo is for the full model weights. <a href="https://huggingface.co/boomerchan/Magpie-13b-GGUF">Click here for GGUF quants</a>. Massive thanks to TheBloke for the <a href="https://huggingface.co/TheBloke/Magpie-13B-GPTQ">GPTQ version</a>.

<h2>Prompt template: Alpaca</h2>

```

Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

```
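
If you are prompting the model outside SillyTavern, a minimal sketch for filling the Alpaca template in Python is below. It assumes the usual Alpaca layout with a single blank line between sections and the model's reply generated after `### Response:`; the example instruction is hypothetical.

```python
ALPACA_TEMPLATE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n"
    "{prompt}\n\n"
    "### Response:\n"
)


def build_alpaca_prompt(instruction: str) -> str:
    """Insert the instruction; generation should continue after '### Response:'."""
    return ALPACA_TEMPLATE.format(prompt=instruction)


print(build_alpaca_prompt("Describe a quiet harbour at dawn."))
```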

<h2>My SillyTavern instruct mode prompt:</h2>

```

Write an engaging response from {{char}} to {{user}} in a descriptive writing style. Express {{char}}'s actions and sensory perceptions in vivid detail. Proactively advance the conversation with narrative prose as {{char}}. Use informal language when composing dialogue, taking into account {{char}}'s personality and communication style.

### Instruction:

{prompt}

### Response:

```
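
SillyTavern substitutes the `{{char}}` and `{{user}}` macros with the active character's and user's names before sending the prompt. The sketch below reproduces that substitution for the prompt above, for example to test it in another frontend; the names "Mira" and "Alex" are hypothetical, and the blank-line layout between sections is an assumption.

```python
SYSTEM_PROMPT = (
    "Write an engaging response from {{char}} to {{user}} in a descriptive writing style. "
    "Express {{char}}'s actions and sensory perceptions in vivid detail. "
    "Proactively advance the conversation with narrative prose as {{char}}. "
    "Use informal language when composing dialogue, taking into account "
    "{{char}}'s personality and communication style."
)
TEMPLATE = SYSTEM_PROMPT + "\n\n### Instruction:\n{prompt}\n\n### Response:\n"


def render_prompt(prompt: str, char: str, user: str) -> str:
    """Replace the {{char}}/{{user}} macros, then insert the chat turn.

    str.replace is used instead of str.format so the double braces are matched literally.
    """
    text = TEMPLATE.replace("{{char}}", char).replace("{{user}}", user)
    return text.replace("{prompt}", prompt)


print(render_prompt("Alex waves from across the market.", char="Mira", user="Alex"))
```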

I have included two .json files in this repo. `Magpie.json` is an instruct mode preset, which you can import into SillyTavern via the AI Response Formatting tab. `Magpie (Spicy).json` is a sampler preset for Oobabooga, which you can import into SillyTavern via the AI Response Configuration tab. If you have any questions, please direct them to the SillyTavern Discord server. glhf <3

Model weights included in this merge:

- Chronos 13b

- Nous Hermes L2 13b

- OrcaPlatypus2 13b

- Spicyboros 2.2 13b

- Kimiko v2 13b Lora

- limarp L2 v2 13b Lora | 1,807 | [

[

-0.045989990234375,

-0.05230712890625,

0.0201263427734375,

0.015533447265625,

-0.0136260986328125,

-0.004146575927734375,

-0.006374359130859375,

-0.05145263671875,

0.03564453125,

0.037078857421875,

-0.055389404296875,

-0.00530242919921875,

-0.03826904296875,

0.011138916015625,

-0.01142120361328125,

0.083984375,

0.034393310546875,

-0.016845703125,

-0.0007390975952148438,

0.007205963134765625,

-0.05841064453125,

-0.03125,

-0.03240966796875,

-0.030609130859375,

0.041351318359375,

0.039794921875,

0.0743408203125,

0.0204620361328125,

0.0224761962890625,

0.0272979736328125,

-0.026031494140625,

0.061920166015625,

-0.050811767578125,

0.0022449493408203125,

-0.0276947021484375,

-0.0298004150390625,

-0.069091796875,

0.007659912109375,

0.036834716796875,

0.00579071044921875,

-0.03302001953125,

0.0171661376953125,

-0.0136260986328125,

0.025390625,

-0.040679931640625,

0.0194549560546875,

-0.01555633544921875,

0.00321197509765625,

-0.018707275390625,

0.0023555755615234375,

-0.0213775634765625,

-0.036529541015625,

-0.023651123046875,

-0.054168701171875,

-0.0123443603515625,

0.03271484375,

0.07720947265625,

0.020751953125,

-0.039459228515625,

-0.034881591796875,

-0.03961181640625,

0.040374755859375,

-0.06268310546875,

-0.00940704345703125,

0.032318115234375,

0.0367431640625,

-0.035736083984375,

-0.05133056640625,

-0.0684814453125,

-0.0115966796875,

-0.01291656494140625,

0.0116729736328125,

-0.0537109375,

-0.021453857421875,

0.01473236083984375,

0.0467529296875,

-0.04638671875,

0.003566741943359375,

-0.056182861328125,

-0.00807952880859375,

0.05218505859375,

0.031585693359375,

0.0252685546875,

-0.040252685546875,

-0.0298004150390625,

-0.0227508544921875,

-0.041839599609375,

0.0114288330078125,

0.054290771484375,

0.0218658447265625,

-0.018524169921875,

0.070068359375,

0.0019702911376953125,

0.055999755859375,

0.04803466796875,

-0.0097503662109375,

0.0212554931640625,

-0.043914794921875,

-0.0277252197265625,

-0.00860595703125,

0.0809326171875,

0.0284423828125,

0.0146942138671875,

-0.0072174072265625,

-0.0071563720703125,

-0.0158538818359375,

0.04010009765625,

-0.060791015625,

-0.00812530517578125,

0.00963592529296875,

-0.00308990478515625,

-0.0330810546875,

0.021209716796875,

-0.056915283203125,

-0.03564453125,

0.00878143310546875,

0.04107666015625,

-0.042816162109375,

-0.021697998046875,

0.0264129638671875,

0.0034923553466796875,

0.0318603515625,

0.0177459716796875,

-0.06390380859375,

0.0267791748046875,

0.04351806640625,

0.03863525390625,

0.0288543701171875,

-0.0140838623046875,

-0.05609130859375,

0.002979278564453125,

-0.0355224609375,

0.0645751953125,

-0.01629638671875,

-0.0428466796875,

-0.0231781005859375,

0.01617431640625,

-0.017333984375,

-0.0457763671875,

0.03619384765625,

-0.04046630859375,

0.0433349609375,

-0.03704833984375,

-0.0153350830078125,

-0.05169677734375,

0.0175628662109375,

-0.056854248046875,

0.06939697265625,

0.0206451416015625,

-0.040313720703125,

0.006877899169921875,

-0.045745849609375,

-0.00479888916015625,

0.01294708251953125,

0.017242431640625,

-0.0237274169921875,

0.0016956329345703125,

0.015625,

0.0271148681640625,

-0.038330078125,

0.003459930419921875,

-0.02197265625,

-0.04510498046875,

0.0168609619140625,

0.0170745849609375,

0.0648193359375,

0.0237274169921875,

-0.0169525146484375,

0.00997161865234375,

-0.03961181640625,

0.004184722900390625,

0.0316162109375,

0.0033473968505859375,

0.00923919677734375,

-0.0161895751953125,

0.0007205009460449219,

0.001499176025390625,

0.030731201171875,

-0.004238128662109375,

0.0701904296875,

-0.0179901123046875,

0.032867431640625,

0.03302001953125,

0.0027484893798828125,

0.036834716796875,

-0.046112060546875,

0.04486083984375,

-0.01490020751953125,

0.0261688232421875,

0.0250244140625,

-0.05322265625,

-0.050872802734375,

-0.0386962890625,

0.0224456787109375,

0.05609130859375,

-0.04962158203125,

0.05230712890625,

0.0012254714965820312,

-0.06591796875,

-0.02056884765625,

0.005931854248046875,

0.022216796875,

0.03472900390625,

0.032318115234375,

-0.0174713134765625,

-0.034759521484375,

-0.063232421875,

0.0198822021484375,

-0.0452880859375,

0.006160736083984375,

0.02630615234375,

0.026092529296875,

-0.043121337890625,

0.055694580078125,

-0.044464111328125,

-0.047943115234375,

-0.04644775390625,

-0.0008482933044433594,

0.03399658203125,

0.02899169921875,

0.08270263671875,

-0.047332763671875,

-0.00490570068359375,

-0.001621246337890625,

-0.04901123046875,

-0.0189666748046875,

0.0078277587890625,

-0.0201873779296875,

0.0018939971923828125,

0.0032939910888671875,

-0.08062744140625,

0.02972412109375,

0.0302581787109375,

-0.04376220703125,

0.034393310546875,

-0.00930023193359375,

0.04052734375,

-0.07611083984375,

0.0034961700439453125,

-0.03302001953125,

-0.0147705078125,

-0.044891357421875,

0.028594970703125,

0.0020580291748046875,

0.0175628662109375,

-0.038818359375,

0.04571533203125,

-0.032257080078125,

0.01082611083984375,

-0.00518035888671875,

0.0040130615234375,

0.0034694671630859375,

0.020843505859375,

-0.052459716796875,

0.07220458984375,

0.0307464599609375,

-0.02056884765625,

0.05572509765625,

0.046173095703125,

-0.0011548995971679688,

0.024688720703125,

-0.05609130859375,

0.01849365234375,

0.002849578857421875,

0.0204925537109375,

-0.090087890625,

-0.0438232421875,

0.08551025390625,

-0.032684326171875,

0.024932861328125,

-0.007476806640625,

-0.056793212890625,

-0.0458984375,

-0.0305328369140625,

0.02001953125,

0.042327880859375,

-0.024688720703125,

0.075927734375,

0.0156402587890625,

-0.0088348388671875,

-0.03814697265625,

-0.07061767578125,

-0.005886077880859375,

-0.028106689453125,

-0.04412841796875,

0.0204925537109375,

-0.021453857421875,

-0.002338409423828125,

0.0064239501953125,

0.01242828369140625,

-0.0102386474609375,

-0.0218505859375,

0.034271240234375,

0.044464111328125,

-0.01458740234375,

-0.00878143310546875,

-0.0008521080017089844,

0.015655517578125,

-0.01421356201171875,

0.0231170654296875,

0.05145263671875,

-0.04742431640625,

-0.01198577880859375,

-0.02545166015625,

0.01715087890625,

0.047882080078125,

-0.01270294189453125,

0.073486328125,

0.054656982421875,

-0.0150909423828125,

0.0257720947265625,

-0.06072998046875,

0.0161590576171875,

-0.034881591796875,

0.00113677978515625,

-0.01023101806640625,

-0.044952392578125,

0.053436279296875,

0.040740966796875,

-0.0026683807373046875,

0.050079345703125,

0.046600341796875,

0.0105438232421875,

0.0477294921875,

0.0535888671875,

-0.0043792724609375,

0.0246124267578125,

-0.033477783203125,

0.028961181640625,

-0.053558349609375,

-0.0548095703125,

-0.02105712890625,

-0.0253448486328125,

-0.029144287109375,

-0.0391845703125,

0.00969696044921875,

0.03961181640625,

-0.017974853515625,

0.053314208984375,

-0.03607177734375,

0.0291595458984375,

0.024810791015625,

0.009735107421875,

0.0214080810546875,

-0.01458740234375,

0.0204620361328125,

0.004756927490234375,

-0.062225341796875,

-0.035186767578125,

0.057952880859375,

-0.005916595458984375,

0.05950927734375,

0.0469970703125,

0.055328369140625,

0.00423431396484375,

0.0211639404296875,

-0.06890869140625,

0.055877685546875,

0.00788116455078125,

-0.043487548828125,

-0.006343841552734375,

-0.02386474609375,

-0.053070068359375,

0.0165252685546875,

-0.03790283203125,

-0.06121826171875,

0.01049041748046875,

0.0191650390625,

-0.0582275390625,

0.01268768310546875,

-0.06976318359375,

0.048431396484375,

0.0019216537475585938,

-0.007030487060546875,

-0.01122283935546875,

-0.035430908203125,

0.06768798828125,

-0.00957489013671875,

0.003387451171875,

0.0019550323486328125,

-0.01201629638671875,

0.03338623046875,

-0.0416259765625,

0.047943115234375,

0.0089263916015625,

0.00043773651123046875,

0.04437255859375,

0.005397796630859375,

0.03240966796875,

0.0224761962890625,

0.0031757354736328125,

0.0157012939453125,

0.0015096664428710938,

-0.008758544921875,

-0.03662109375,

0.050384521484375,

-0.069091796875,

-0.0599365234375,

-0.05035400390625,

-0.04705810546875,

0.00702667236328125,

-0.0033092498779296875,

0.0309295654296875,

0.0167236328125,

0.006134033203125,

0.003467559814453125,

0.036407470703125,

-0.0019855499267578125,

0.0263214111328125,

0.0300750732421875,

-0.041839599609375,

-0.038543701171875,

0.03533935546875,

0.01279449462890625,

-0.00035572052001953125,

0.0014514923095703125,

0.01416778564453125,

-0.0278778076171875,

0.0004978179931640625,

-0.04107666015625,

0.034423828125,

-0.034210205078125,

-0.048736572265625,

-0.042266845703125,

-0.030731201171875,

-0.032867431640625,

0.0004165172576904297,

-0.01171875,

-0.040740966796875,

-0.03997802734375,

0.01480865478515625,

0.061279296875,

0.06915283203125,

-0.035308837890625,

0.051177978515625,

-0.0452880859375,

0.033203125,

0.041900634765625,

-0.02508544921875,

0.005474090576171875,

-0.06829833984375,

0.01357269287109375,

-0.0059661865234375,

-0.036834716796875,

-0.0882568359375,

0.0288238525390625,

0.021514892578125,

0.03643798828125,

0.0325927734375,

0.005413055419921875,

0.073974609375,

-0.03387451171875,

0.0711669921875,

0.019622802734375,

-0.0673828125,

0.0352783203125,

-0.0215606689453125,

0.01043701171875,

0.01207733154296875,

0.036895751953125,

-0.0166778564453125,

-0.0213775634765625,

-0.048126220703125,

-0.07061767578125,

0.054931640625,

0.015777587890625,

0.0022144317626953125,

-0.0110015869140625,

0.0161895751953125,

-0.0038661956787109375,

0.01120758056640625,

-0.043609619140625,

-0.019622802734375,

-0.0234527587890625,

0.00670623779296875,

0.0011930465698242188,

-0.0241851806640625,

-0.032257080078125,

-0.007312774658203125,

0.0430908203125,

0.0064849853515625,

0.0217132568359375,

-0.00984954833984375,

-0.005123138427734375,

-0.00571441650390625,

0.0167999267578125,

0.056365966796875,

0.04034423828125,

-0.0259857177734375,

-0.01348876953125,

0.0194854736328125,

-0.051605224609375,

0.006481170654296875,

0.0221099853515625,

0.01187896728515625,

-0.030364990234375,

0.033935546875,

0.028564453125,

0.0216217041015625,

-0.03546142578125,

0.049560546875,

-0.01020050048828125,

-0.020782470703125,

-0.0014629364013671875,

0.037689208984375,

0.01056671142578125,

0.057098388671875,

0.032379150390625,

0.00640869140625,

0.011688232421875,

-0.038116455078125,

-0.00937652587890625,

0.0293731689453125,

0.01035308837890625,

-0.016845703125,

0.04986572265625,

-0.00882720947265625,

-0.0160675048828125,

0.038055419921875,

-0.039215087890625,

-0.0298614501953125,

0.04058837890625,

0.042449951171875,

0.0447998046875,

-0.0093994140625,

0.007045745849609375,

0.0283203125,

0.02587890625,

-0.0118865966796875,

0.0300750732421875,

0.0023555755615234375,

-0.0213470458984375,

-0.038909912109375,

-0.0244293212890625,

-0.038330078125,

0.01302337646484375,

-0.0633544921875,

0.027435302734375,

-0.037506103515625,

-0.0277862548828125,

-0.0145721435546875,

0.0200653076171875,

-0.0426025390625,

-0.0033931732177734375,

0.004756927490234375,

0.048919677734375,

-0.0509033203125,

0.043975830078125,

0.061431884765625,

-0.04547119140625,

-0.08941650390625,

-0.01300811767578125,

0.01727294921875,

-0.0679931640625,

0.04547119140625,

-0.001819610595703125,

-0.0140380859375,

-0.0175018310546875,

-0.056488037109375,

-0.06890869140625,

0.09130859375,

0.01873779296875,

-0.033935546875,

-0.0097808837890625,

-0.0200042724609375,

0.0197296142578125,

-0.0192413330078125,

0.025909423828125,

0.0255889892578125,

0.0445556640625,

0.03314208984375,

-0.071533203125,

0.0164642333984375,

-0.0178985595703125,

-0.007293701171875,

0.0030078887939453125,

-0.0802001953125,

0.07354736328125,

-0.0288543701171875,

-0.0159759521484375,

0.04901123046875,

0.06353759765625,

0.035369873046875,

-0.0080718994140625,

0.0390625,

0.04315185546875,

0.06121826171875,

-0.0197296142578125,

0.05706787109375,

-0.01383209228515625,

0.037689208984375,

0.068359375,

-0.0166473388671875,

0.05267333984375,

0.03033447265625,

0.004467010498046875,

0.0284271240234375,

0.0411376953125,

-0.0163421630859375,

0.04815673828125,

-0.0007157325744628906,

0.0036220550537109375,

-0.0166168212890625,

0.0009207725524902344,

-0.06475830078125,

0.039642333984375,

0.0123748779296875,

0.0224456787109375,

-0.01641845703125,

-0.00927734375,

0.0146636962890625,

-0.01155853271484375,

-0.0253753662109375,

0.026763916015625,

-0.01044464111328125,

-0.032806396484375,

0.05145263671875,

-0.015045166015625,

0.061431884765625,

-0.07275390625,

-0.0038242340087890625,

-0.03680419921875,

0.004730224609375,

-0.025146484375,

-0.06048583984375,

0.0288848876953125,

-0.0009655952453613281,

-0.034332275390625,

0.004863739013671875,

0.038330078125,

-0.0288238525390625,

-0.0260467529296875,

0.00656890869140625,

0.02655029296875,

0.0128936767578125,

0.019775390625,

-0.03607177734375,

0.0311737060546875,

-0.010162353515625,

-0.0188446044921875,

0.016387939453125,

0.03662109375,

0.00312042236328125,

0.0552978515625,

0.031280517578125,

0.00395965576171875,

-0.01202392578125,

-0.0155029296875,

0.09027099609375,

-0.04339599609375,

-0.02880859375,

-0.044647216796875,

0.033599853515625,

0.0108489990234375,

-0.032928466796875,

0.045562744140625,

0.026092529296875,

0.037872314453125,

-0.0202484130859375,

0.012725830078125,

-0.036865234375,

0.01363372802734375,

-0.051361083984375,

0.0775146484375,

-0.0562744140625,

0.006732940673828125,

-0.00836944580078125,

-0.07244873046875,

0.0008373260498046875,

0.05438232421875,

-0.017486572265625,

-0.0092010498046875,

0.035858154296875,

0.07257080078125,

-0.01474761962890625,

-0.0107421875,

0.0182647705078125,

0.01444244384765625,

0.01491546630859375,

0.0709228515625,

0.073974609375,

-0.05950927734375,

0.05511474609375,

0.004276275634765625,

-0.02337646484375,

-0.03631591796875,

-0.0765380859375,

-0.0792236328125,

-0.0106353759765625,

-0.009429931640625,

-0.027618408203125,

0.008544921875,

0.0809326171875,

0.05078125,

-0.036224365234375,

-0.008636474609375,

0.038909912109375,

-0.00400543212890625,

-0.0084991455078125,

-0.0175933837890625,

0.0008563995361328125,

0.00489044189453125,

-0.054290771484375,

0.034393310546875,

0.01273345947265625,

0.0282135009765625,

0.005855560302734375,

-0.0047149658203125,

-0.0166473388671875,

0.0175628662109375,

0.024505615234375,

0.04522705078125,

-0.0726318359375,

-0.019134521484375,

0.006809234619140625,

-0.0192718505859375,

-0.012451171875,

0.02056884765625,

-0.062042236328125,

0.00025177001953125,

0.02056884765625,

0.0191650390625,

0.031707763671875,

0.0026264190673828125,

0.026458740234375,

-0.0277862548828125,

0.036407470703125,

0.0001010894775390625,

0.0230865478515625,

0.027191162109375,

-0.04290771484375,

0.049835205078125,

0.0015592575073242188,

-0.04638671875,

-0.06072998046875,

-0.002971649169921875,

-0.10888671875,

-0.0018320083618164062,

0.0765380859375,

-0.0019855499267578125,

-0.0121612548828125,

0.036376953125,

-0.0478515625,

0.01514434814453125,

-0.0294189453125,

0.043426513671875,

0.0516357421875,

-0.02081298828125,

-0.004302978515625,

-0.0296478271484375,

0.01557159423828125,

0.011932373046875,

-0.0570068359375,

-0.024261474609375,

0.040252685546875,

0.01366424560546875,

0.0294952392578125,

0.028533935546875,

-0.0025043487548828125,

0.036163330078125,

-0.01092529296875,

0.006744384765625,

-0.0455322265625,

-0.0241546630859375,

-0.00974273681640625,

0.005596160888671875,

-0.0191497802734375,

-0.00792694091796875

]

] |

hfl/chinese-alpaca-2-7b | 2023-08-25T01:06:56.000Z | [

"transformers",

"pytorch",

"llama",

"text-generation",

"license:apache-2.0",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | hfl | null | null | hfl/chinese-alpaca-2-7b | 112 | 7,677 | transformers | 2023-07-31T03:53:55 | ---

license: apache-2.0

---

# Chinese-Alpaca-2-7B

**This is the full Chinese-Alpaca-2-7B model, which can be loaded directly for inference and full-parameter training.**

**Related models👇**

* Long context base models
    * [Chinese-LLaMA-2-7B-16K (full model)](https://huggingface.co/ziqingyang/chinese-llama-2-7b-16k)
    * [Chinese-LLaMA-2-LoRA-7B-16K (LoRA model)](https://huggingface.co/ziqingyang/chinese-llama-2-lora-7b-16k)
    * [Chinese-LLaMA-2-13B-16K (full model)](https://huggingface.co/ziqingyang/chinese-llama-2-13b-16k)
    * [Chinese-LLaMA-2-LoRA-13B-16K (LoRA model)](https://huggingface.co/ziqingyang/chinese-llama-2-lora-13b-16k)
* Base models
    * [Chinese-LLaMA-2-7B (full model)](https://huggingface.co/ziqingyang/chinese-llama-2-7b)
    * [Chinese-LLaMA-2-LoRA-7B (LoRA model)](https://huggingface.co/ziqingyang/chinese-llama-2-lora-7b)
    * [Chinese-LLaMA-2-13B (full model)](https://huggingface.co/ziqingyang/chinese-llama-2-13b)
    * [Chinese-LLaMA-2-LoRA-13B (LoRA model)](https://huggingface.co/ziqingyang/chinese-llama-2-lora-13b)
* Instruction/Chat models
    * [Chinese-Alpaca-2-7B (full model)](https://huggingface.co/ziqingyang/chinese-alpaca-2-7b)
    * [Chinese-Alpaca-2-LoRA-7B (LoRA model)](https://huggingface.co/ziqingyang/chinese-alpaca-2-lora-7b)
    * [Chinese-Alpaca-2-13B (full model)](https://huggingface.co/ziqingyang/chinese-alpaca-2-13b)
    * [Chinese-Alpaca-2-LoRA-13B (LoRA model)](https://huggingface.co/ziqingyang/chinese-alpaca-2-lora-13b)

# Description of Chinese-LLaMA-Alpaca-2

This project is based on Llama-2, released by Meta, and is the second generation of the Chinese LLaMA & Alpaca LLM project. We open-source Chinese LLaMA-2 (foundation model) and Alpaca-2 (instruction-following model). These models extend the original Llama-2 vocabulary with Chinese tokens and are further optimized for Chinese. We used large-scale Chinese data for incremental pre-training, which further improves the models' fundamental semantic understanding of Chinese and yields a significant performance improvement over the first-generation models. The models support a 4K context, which can be extended to 18K+ using the NTK method.
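The card says the 4K context can be stretched to 18K+ "using the NTK method" without spelling the method out. Below is a minimal sketch of the commonly cited NTK-aware RoPE scaling, which enlarges the rotary base by `alpha ** (d / (d - 2))`. It assumes LLaMA-style rotary embeddings with base 10000 and head dimension 128; the function names are hypothetical, and the project's own scripts may implement the scaling differently (for example, by adapting `alpha` to the input length).

```python
def ntk_scaled_rope_base(base: float, head_dim: int, alpha: float) -> float:
    """Return the enlarged RoPE base for an NTK-aware context extension.

    alpha is the scaling factor (alpha = 1.0 leaves the base unchanged).
    """
    return base * alpha ** (head_dim / (head_dim - 2))


def rope_inv_freq(base: float, head_dim: int) -> list[float]:
    """Standard RoPE inverse frequencies base^(-2i/d) for i = 0 .. d/2 - 1."""
    return [base ** (-2 * i / head_dim) for i in range(head_dim // 2)]


if __name__ == "__main__":
    # Assumed LLaMA-style defaults: RoPE base 10000, head dimension 128.
    base, head_dim, alpha = 10000.0, 128, 4.0
    orig = rope_inv_freq(base, head_dim)
    scaled = rope_inv_freq(ntk_scaled_rope_base(base, head_dim, alpha), head_dim)
    # Highest frequency (i = 0) is untouched: the ratio is exactly 1.0.
    print(scaled[0] / orig[0])
    # Lowest frequency is slowed by a factor of about alpha.
    print(orig[-1] / scaled[-1])
```

Because only the base changes, the high-frequency dimensions that encode local token order keep their resolution, while the low-frequency dimensions are stretched to cover longer positions. This is the usual rationale for NTK-style scaling over plain position interpolation.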

The main contents of this project include:

* 🚀 Extended Chinese vocabulary beyond Llama-2, with open-source Chinese LLaMA-2 and Alpaca-2 LLMs.

* 🚀 Open-sourced pre-training and instruction fine-tuning (SFT) scripts for further tuning on users' own data.

* 🚀 Quickly deploy and try out the quantized LLMs on the CPU/GPU of a personal PC.

* 🚀 Support for LLaMA ecosystem tools such as 🤗transformers, llama.cpp, text-generation-webui, LangChain, vLLM, etc.
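Concretely, extending the vocabulary means that new token ids are appended after the original ones, and the input embedding table (and, for LLaMA-style models with an untied output layer, the output projection) gains one row per new token. The pure-Python sketch below uses hypothetical helper names and a toy vocabulary. Initializing the new rows to the mean of the existing rows is one common heuristic assumed here, not necessarily what the project did. In 🤗 transformers this resize is usually done with `model.resize_token_embeddings(len(tokenizer))`.

```python
def extend_vocab(vocab: dict[str, int], new_tokens: list[str]) -> dict[str, int]:
    """Append new tokens after the existing ids (assumes ids are 0..n-1).

    Tokens that are already in the vocabulary are skipped, so original ids
    never change.
    """
    merged = dict(vocab)
    for tok in new_tokens:
        if tok not in merged:
            merged[tok] = len(merged)
    return merged


def extend_embeddings(table: list[list[float]], num_new: int) -> list[list[float]]:
    """Return a copy of the embedding table with num_new extra rows.

    New rows are initialized to the mean of the existing rows (an assumed
    heuristic); pretrained rows are copied unchanged.
    """
    dim = len(table[0])
    mean = [sum(row[j] for row in table) / len(table) for j in range(dim)]
    return [row[:] for row in table] + [mean[:] for _ in range(num_new)]


if __name__ == "__main__":
    # Toy vocabulary and 2-dimensional embeddings (purely illustrative).
    vocab = {"<s>": 0, "</s>": 1, "hello": 2}
    table = [[0.0, 0.0], [1.0, 1.0], [2.0, 2.0]]
    merged = extend_vocab(vocab, ["你好", "中文", "hello"])
    new_table = extend_embeddings(table, len(merged) - len(vocab))
    print(merged)  # "hello" keeps id 2; "你好" gets 3 and "中文" gets 4
    print(new_table)
```

Keeping the original rows unchanged lets the model retain what it learned for existing tokens, while incremental pre-training on Chinese data trains the new rows.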

Please refer to [https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/](https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/) for details. | 2,754 | [

[

-0.0298004150390625,

-0.046112060546875,

0.0202789306640625,

0.054656982421875,

-0.047515869140625,

-0.0182037353515625,

0.003368377685546875,

-0.0677490234375,

0.0269927978515625,

0.033905029296875,

-0.0418701171875,

-0.03875732421875,

-0.040435791015625,

0.0019216537475585938,

-0.0199737548828125,

0.05078125,

-0.00484466552734375,

0.01134490966796875,

0.026031494140625,

-0.027435302734375,

-0.0271759033203125,

-0.0259552001953125,

-0.054046630859375,

-0.03729248046875,

0.052825927734375,

0.005584716796875,

0.060394287109375,

0.06500244140625,

0.0297393798828125,

0.015411376953125,

-0.0186004638671875,

0.02191162109375,

-0.0187835693359375,

-0.03369140625,

0.01120758056640625,

-0.0291748046875,

-0.060546875,

-0.0011234283447265625,

0.0247802734375,

0.034149169921875,

-0.017822265625,

0.0287628173828125,

-0.0018749237060546875,

0.028533935546875,

-0.0321044921875,

0.02117919921875,

-0.03485107421875,

0.00452423095703125,

-0.0264739990234375,

0.0030364990234375,

-0.0210113525390625,

-0.0157012939453125,

-0.0089874267578125,

-0.06939697265625,

0.00036525726318359375,

-0.0071868896484375,

0.09619140625,

0.01873779296875,

-0.0413818359375,

-0.0254669189453125,

-0.0184326171875,

0.0545654296875,

-0.07012939453125,

0.01702880859375,

0.037933349609375,

0.01024627685546875,

-0.0260467529296875,

-0.055908203125,

-0.046844482421875,

-0.02349853515625,

-0.019439697265625,

0.01200103759765625,

0.0089111328125,

-0.01256561279296875,

0.007167816162109375,

0.02203369140625,

-0.033905029296875,

0.03570556640625,

-0.040130615234375,

-0.00615692138671875,

0.053619384765625,

-0.01033782958984375,

0.022430419921875,

-0.0027904510498046875,

-0.038116455078125,

-0.0150299072265625,

-0.0693359375,

0.00991058349609375,

0.0202484130859375,

0.02850341796875,

-0.047271728515625,

0.03668212890625,

-0.024444580078125,

0.04833984375,

-0.00167083740234375,

-0.032562255859375,

0.0438232421875,

-0.0301666259765625,

-0.01479339599609375,

-0.0163726806640625,

0.060028076171875,

0.023529052734375,

-0.007442474365234375,

0.0135345458984375,

-0.01441192626953125,

-0.01262664794921875,

-0.033416748046875,

-0.061126708984375,

0.0005855560302734375,

0.004543304443359375,

-0.049407958984375,

-0.0238189697265625,

0.008148193359375,

-0.03216552734375,

-0.01242828369140625,

-0.01386260986328125,

0.0251922607421875,

-0.013336181640625,

-0.0308990478515625,

0.0187835693359375,

0.007167816162109375,

0.0670166015625,

0.0267791748046875,

-0.0587158203125,

0.01030731201171875,

0.03961181640625,

0.059600830078125,

0.00513458251953125,

-0.0292205810546875,

-0.000030040740966796875,

0.0209197998046875,

-0.038665771484375,

0.052978515625,

-0.013641357421875,

-0.03289794921875,

-0.0137176513671875,

0.032012939453125,

0.01030731201171875,

-0.031646728515625,

0.0469970703125,

-0.03082275390625,

0.005542755126953125,

-0.0445556640625,

-0.0093994140625,

-0.039215087890625,

0.0199432373046875,

-0.0672607421875,

0.08636474609375,

0.005096435546875,

-0.04559326171875,

0.0171356201171875,

-0.050048828125,

-0.01500701904296875,

-0.01210784912109375,

-0.0012559890747070312,

-0.022369384765625,

-0.023529052734375,

0.0164947509765625,

0.027740478515625,

-0.046844482421875,

-0.0005617141723632812,

-0.020355224609375,

-0.03961181640625,

-0.0070037841796875,

-0.004367828369140625,

0.08538818359375,

0.0122222900390625,

-0.02142333984375,

-0.006839752197265625,

-0.065185546875,

-0.01276397705078125,

0.0567626953125,

-0.0294647216796875,

-0.0020923614501953125,

-0.0013599395751953125,

-0.00937652587890625,

0.01279449462890625,

0.050079345703125,

-0.0297393798828125,

0.0202178955078125,

-0.025115966796875,

0.030975341796875,

0.05224609375,

-0.01107025146484375,

0.007266998291015625,

-0.0288543701171875,

0.018585205078125,

0.01190948486328125,

0.0264129638671875,

-0.0037899017333984375,

-0.054351806640625,

-0.08624267578125,

-0.01708984375,

0.007587432861328125,

0.049835205078125,

-0.04833984375,

0.041748046875,

0.008056640625,

-0.056610107421875,

-0.0235595703125,

0.01006317138671875,

0.03515625,

0.020233154296875,

0.022186279296875,

-0.0216064453125,

-0.0438232421875,

-0.07659912109375,

0.0205078125,

-0.032684326171875,

-0.00717926025390625,

0.00811004638671875,

0.039764404296875,

-0.0225982666015625,

0.036529541015625,

-0.0261993408203125,

-0.0108642578125,

-0.01513671875,

-0.0118865966796875,

0.032440185546875,

0.033721923828125,

0.07269287109375,

-0.04351806640625,

-0.01284027099609375,

0.0086822509765625,

-0.052764892578125,

-0.0006961822509765625,

0.00017583370208740234,

-0.033050537109375,

0.01337432861328125,

0.002346038818359375,

-0.056182861328125,

0.030487060546875,

0.046173095703125,

-0.0165252685546875,

0.02703857421875,

-0.0068206787109375,

-0.0155181884765625,

-0.08270263671875,

0.0067291259765625,

-0.00579833984375,

0.014892578125,

-0.03173828125,

0.032379150390625,

0.01085662841796875,

0.0303955078125,

-0.05181884765625,

0.058990478515625,

-0.04339599609375,

-0.0157928466796875,

-0.0125885009765625,

0.006175994873046875,

0.019561767578125,

0.0589599609375,

0.00685882568359375,

0.0445556640625,

0.0266571044921875,

-0.040130615234375,

0.042694091796875,

0.034149169921875,

-0.02386474609375,

-0.00530242919921875,

-0.0645751953125,

0.0271453857421875,

0.00273895263671875,

0.05157470703125,

-0.0567626953125,

-0.02154541015625,

0.0469970703125,

-0.0236053466796875,

-0.001689910888671875,

0.01187896728515625,

-0.043792724609375,

-0.03997802734375,

-0.046417236328125,

0.035675048828125,

0.041229248046875,

-0.07232666015625,

0.0239410400390625,

0.00021457672119140625,

0.0160675048828125,

-0.061370849609375,

-0.07220458984375,

-0.0089111328125,

-0.0167999267578125,

-0.036376953125,

0.024871826171875,

-0.01067352294921875,

-0.0019683837890625,

-0.01386260986328125,

0.0066375732421875,

-0.007732391357421875,

0.006931304931640625,

0.0113372802734375,

0.053436279296875,

-0.0273590087890625,

-0.00994110107421875,

0.0022106170654296875,

0.00995635986328125,

-0.0081939697265625,

0.01030731201171875,

0.05047607421875,

-0.005359649658203125,

-0.0232696533203125,

-0.036865234375,

0.0092926025390625,

0.00745391845703125,

-0.0201263427734375,

0.062225341796875,

0.054718017578125,

-0.037017822265625,

0.0005025863647460938,

-0.0413818359375,

0.00630950927734375,

-0.035797119140625,

0.0260009765625,

-0.03839111328125,

-0.043365478515625,

0.0477294921875,

0.0198211669921875,

0.025360107421875,

0.04901123046875,

0.050201416015625,

0.0233612060546875,

0.07672119140625,

0.04840087890625,

-0.017578125,

0.033660888671875,

-0.027435302734375,

-0.001972198486328125,

-0.0579833984375,

-0.040191650390625,

-0.033599853515625,

-0.017242431640625,

-0.037109375,

-0.0440673828125,

-0.0006818771362304688,

0.0261688232421875,

-0.053436279296875,

0.0379638671875,

-0.046844482421875,

0.028778076171875,

0.042572021484375,

0.0238037109375,

0.0242156982421875,

0.006076812744140625,

0.00769805908203125,

0.028656005859375,

-0.02313232421875,

-0.041778564453125,

0.0770263671875,

0.029449462890625,

0.037139892578125,

0.01183319091796875,

0.03253173828125,

-0.0019359588623046875,

0.021759033203125,

-0.060211181640625,

0.0467529296875,

-0.0147552490234375,

-0.032562255859375,

-0.0063934326171875,

-0.00713348388671875,

-0.06732177734375,

0.0301055908203125,

0.01004791259765625,

-0.044281005859375,

0.003917694091796875,

-0.0036830902099609375,

-0.02484130859375,

0.01554107666015625,

-0.0276031494140625,

0.035858154296875,

-0.0310821533203125,

0.0025882720947265625,

-0.007221221923828125,

-0.047515869140625,

0.063720703125,

-0.0186309814453125,

0.006256103515625,

-0.03350830078125,

-0.0300445556640625,

0.057769775390625,

-0.041107177734375,

0.06732177734375,

-0.016998291015625,

-0.032318115234375,

0.049163818359375,

-0.0211944580078125,

0.051788330078125,

-0.0021419525146484375,

-0.021575927734375,

0.0406494140625,

-0.001224517822265625,

-0.040008544921875,

-0.0157318115234375,

0.036529541015625,

-0.08837890625,

-0.04486083984375,

-0.022003173828125,

-0.018310546875,

-0.0018072128295898438,

0.007152557373046875,

0.03692626953125,

-0.0048980712890625,

-0.004436492919921875,

0.008941650390625,

0.01407623291015625,

-0.0283660888671875,

0.03839111328125,

0.04217529296875,

-0.01318359375,

-0.0283966064453125,

0.045745849609375,

0.006885528564453125,

0.01433563232421875,

0.0251922607421875,

0.0122528076171875,

-0.01387786865234375,

-0.0343017578125,

-0.052093505859375,

0.041961669921875,

-0.0538330078125,

-0.0158538818359375,

-0.035400390625,

-0.042694091796875,

-0.027130126953125,

0.0031299591064453125,

-0.019439697265625,

-0.0362548828125,

-0.04473876953125,

-0.0183868408203125,

0.037445068359375,

0.040802001953125,

-0.005168914794921875,

0.052642822265625,

-0.04693603515625,

0.031494140625,

0.0293426513671875,

0.006977081298828125,

0.01255035400390625,

-0.061126708984375,

-0.012115478515625,

0.0217437744140625,

-0.034942626953125,

-0.058380126953125,

0.037841796875,

0.0207061767578125,

0.039520263671875,

0.044677734375,

-0.021514892578125,

0.06732177734375,

-0.020965576171875,

0.07916259765625,

0.026947021484375,

-0.052734375,

0.04241943359375,

-0.0206146240234375,

-0.0061187744140625,

0.0119476318359375,

0.00948333740234375,

-0.0275421142578125,

-0.0002684593200683594,

-0.021514892578125,

-0.056549072265625,

0.066650390625,

0.00537872314453125,

0.0156402587890625,

0.003498077392578125,

0.039459228515625,

0.01959228515625,

-0.0039215087890625,

-0.08245849609375,

-0.027618408203125,

-0.033721923828125,

-0.00502777099609375,

0.004688262939453125,

-0.02691650390625,

-0.01104736328125,

-0.0260009765625,

0.07196044921875,

-0.0164794921875,

0.01235198974609375,

-0.0004134178161621094,

0.0092620849609375,

-0.0087432861328125,

-0.021728515625,

0.048614501953125,

0.03253173828125,

-0.006816864013671875,

-0.0290679931640625,

0.03509521484375,

-0.03985595703125,

0.00881195068359375,

0.0034236907958984375,

-0.0193328857421875,

0.0006704330444335938,

0.0400390625,

0.07232666015625,

-0.0019397735595703125,

-0.046844482421875,

0.036895751953125,

0.0047454833984375,

-0.00785064697265625,

-0.044281005859375,

0.0017290115356445312,

0.02313232421875,

0.0279693603515625,

0.0179290771484375,

-0.027435302734375,

0.004405975341796875,

-0.038421630859375,

-0.0182342529296875,

0.018829345703125,

0.017242431640625,

-0.033721923828125,

0.050567626953125,

0.00867462158203125,

-0.0072784423828125,

0.033447265625,

-0.0258636474609375,

-0.0168609619140625,

0.088134765625,

0.0496826171875,

0.036529541015625,

-0.0302581787109375,

0.0086517333984375,

0.049560546875,

0.023284912109375,

-0.035858154296875,

0.028564453125,

0.006694793701171875,

-0.057769775390625,

-0.0137176513671875,

-0.051544189453125,

-0.02752685546875,

0.03094482421875,

-0.046417236328125,

0.048980712890625,

-0.04052734375,

-0.01043701171875,

-0.0179595947265625,

0.0276031494140625,

-0.042022705078125,

0.0189971923828125,

0.03302001953125,

0.07794189453125,

-0.044219970703125,

0.0865478515625,

0.044830322265625,

-0.0296173095703125,

-0.08319091796875,

-0.0296478271484375,

-0.0017719268798828125,

-0.112060546875,

0.045379638671875,

0.0194549560546875,

0.0009098052978515625,

-0.029632568359375,

-0.060546875,

-0.09368896484375,

0.12335205078125,

0.02630615234375,

-0.03704833984375,

-0.01210784912109375,

0.0144195556640625,

0.0281219482421875,

-0.018157958984375,

0.0237884521484375,

0.0526123046875,

0.035369873046875,

0.03814697265625,

-0.06988525390625,

0.01325225830078125,

-0.027862548828125,

0.0119476318359375,

-0.00881195068359375,

-0.10943603515625,

0.09783935546875,

-0.018096923828125,

-0.006683349609375,

0.052764892578125,

0.06744384765625,

0.0628662109375,

0.0119781494140625,

0.045562744140625,

0.033599853515625,

0.04534912109375,

0.005550384521484375,

0.046722412109375,

-0.0179290771484375,

0.0191192626953125,

0.0693359375,

-0.02081298828125,

0.06353759765625,

0.015655517578125,

-0.0256500244140625,

0.041839599609375,

0.08953857421875,

-0.01727294921875,

0.0274810791015625,

0.006256103515625,

-0.01494598388671875,

-0.001262664794921875,

-0.0193023681640625,

-0.060272216796875,

0.041748046875,

0.03436279296875,

-0.0243682861328125,

-0.0018415451049804688,

-0.03265380859375,

0.026885986328125,

-0.0391845703125,

-0.0208282470703125,

0.0288848876953125,

0.018463134765625,

-0.0302581787109375,

0.06036376953125,

0.0238037109375,

0.07012939453125,

-0.0556640625,

-0.00395965576171875,

-0.0386962890625,

-0.00386810302734375,

-0.022247314453125,

-0.032684326171875,

-0.004184722900390625,

0.0029087066650390625,

0.007598876953125,

0.0232391357421875,

0.04486083984375,

-0.016632080078125,

-0.057769775390625,

0.049346923828125,

0.0300445556640625,

0.0224609375,

0.0147857666015625,

-0.060272216796875,

0.013153076171875,

0.004711151123046875,

-0.058135986328125,

0.0212249755859375,

0.02178955078125,

-0.01454925537109375,

0.052520751953125,

0.055328369140625,

0.006000518798828125,

0.01507568359375,

0.00621795654296875,

0.061981201171875,

-0.046966552734375,

-0.0225830078125,

-0.057281494140625,

0.01262664794921875,

0.00033283233642578125,

-0.0251007080078125,

0.03131103515625,

0.030792236328125,

0.063720703125,

0.0034027099609375,

0.045867919921875,

-0.007160186767578125,

0.03106689453125,

-0.03289794921875,

0.03985595703125,

-0.059814453125,

0.01404571533203125,

0.0024890899658203125,

-0.0657958984375,

-0.0124359130859375,

0.051116943359375,

0.0099334716796875,

0.00963592529296875,

0.022430419921875,

0.05364990234375,

0.00937652587890625,

-0.0007739067077636719,

0.00653076171875,

0.0182037353515625,

0.03070068359375,

0.06829833984375,

0.05841064453125,

-0.05078125,

0.04449462890625,

-0.0469970703125,

-0.0179901123046875,

-0.01019287109375,

-0.06109619140625,

-0.0584716796875,

-0.0186004638671875,

-0.00386810302734375,

-0.0097808837890625,

-0.0147552490234375,

0.0675048828125,

0.056060791015625,

-0.053497314453125,

-0.03192138671875,

0.0212249755859375,

-0.0048675537109375,

-0.0079193115234375,

-0.00881195068359375,

0.044281005859375,

0.00799560546875,

-0.06396484375,

0.0254974365234375,

-0.0021343231201171875,

0.0312042236328125,

-0.01270294189453125,

-0.01910400390625,

-0.009857177734375,

0.0139923095703125,

0.05523681640625,

0.0321044921875,

-0.0728759765625,

-0.02215576171875,

-0.005344390869140625,

-0.0198822021484375,

0.0104827880859375,

0.0102081298828125,

-0.04547119140625,

-0.02703857421875,

0.020751953125,

0.0212860107421875,

0.034027099609375,

0.00004547834396362305,

0.004425048828125,

-0.0252685546875,

0.056427001953125,

-0.01140594482421875,

0.045166015625,

0.021881103515625,

-0.0191650390625,

0.07177734375,

0.01482391357421875,

-0.02337646484375,

-0.057525634765625,

0.0073089599609375,

-0.10125732421875,

-0.021453857421875,

0.08441162109375,

-0.0206146240234375,

-0.0294342041015625,

0.0301971435546875,

-0.026580810546875,

0.038330078125,

-0.01367950439453125,

0.04437255859375,

0.039337158203125,

0.0005679130554199219,

-0.00591278076171875,

-0.0438232421875,

0.005748748779296875,

0.02496337890625,

-0.06500244140625,

-0.02197265625,

0.01318359375,

0.020111083984375,

0.01084136962890625,

0.042510986328125,

-0.00923919677734375,

0.014556884765625,

-0.00588226318359375,

0.0208282470703125,

-0.00994110107421875,

0.0033130645751953125,

0.0038623809814453125,

-0.019073486328125,

0.00522613525390625,

-0.033782958984375

]

] |

naclbit/trinart_stable_diffusion_v2 | 2023-05-07T17:12:04.000Z | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:creativeml-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | naclbit | null | null | naclbit/trinart_stable_diffusion_v2 | 314 | 7,675 | diffusers | 2022-09-08T10:18:16 | ---

inference: true

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

license: creativeml-openrail-m

---

## Please Note!

This model is NOT the 19.2M-image Characters Model on TrinArt, but an improved version of the original Trin-sama Twitter bot model. It is intended to retain the original SD's aesthetics as much as possible while nudging the model toward an anime/manga style.

Other TrinArt models can be found at:

https://huggingface.co/naclbit/trinart_derrida_characters_v2_stable_diffusion

https://huggingface.co/naclbit/trinart_characters_19.2m_stable_diffusion_v1

## Diffusers

The model has been ported to `diffusers` by [ayan4m1](https://huggingface.co/ayan4m1) and can easily be run from one of the following branches:

- `revision="diffusers-60k"` for the checkpoint trained for 60,000 steps,

- `revision="diffusers-95k"` for the checkpoint trained for 95,000 steps,

- `revision="diffusers-115k"` for the checkpoint trained for 115,000 steps.

For more information, please have a look at [the "Three flavors" section](#three-flavors).

## Gradio

We also support a [Gradio](https://github.com/gradio-app/gradio) web UI with diffusers that runs inside a Colab notebook: [Open In Colab](https://colab.research.google.com/drive/1RWvik_C7nViiR9bNsu3fvMR3STx6RvDx?usp=sharing)

### Example Text2Image

```python
# !pip install diffusers==0.3.0
from diffusers import StableDiffusionPipeline

# using the 60,000 steps checkpoint
pipe = StableDiffusionPipeline.from_pretrained("naclbit/trinart_stable_diffusion_v2", revision="diffusers-60k")
pipe.to("cuda")

image = pipe("A magical dragon flying in front of the Himalaya in manga style").images[0]
image  # displays the PIL image when run in a notebook
```

If you want to run the pipeline faster or on different hardware, please have a look at the [optimization docs](https://huggingface.co/docs/diffusers/optimization/fp16).

### Example Image2Image

```python
# !pip install diffusers==0.3.0
from diffusers import StableDiffusionImg2ImgPipeline
import requests
from PIL import Image
from io import BytesIO

url = "https://scitechdaily.com/images/Dog-Park.jpg"
response = requests.get(url)
init_image = Image.open(BytesIO(response.content)).convert("RGB")
init_image = init_image.resize((768, 512))

# using the 115,000 steps checkpoint
pipe = StableDiffusionImg2ImgPipeline.from_pretrained("naclbit/trinart_stable_diffusion_v2", revision="diffusers-115k")
pipe.to("cuda")