modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

ssarae/dreambooth_pingu_ver | 2023-10-07T01:36:29.000Z | [

"diffusers",

"tensorboard",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"lora",

"license:creativeml-openrail-m",

"has_space",

"region:us"

] | text-to-image | ssarae | null | null | ssarae/dreambooth_pingu_ver | 0 | 490 | diffusers | 2023-10-06T20:48:04 |

---

license: creativeml-openrail-m

base_model: runwayml/stable-diffusion-v1-5

instance_prompt: A rqlaks pingu

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

- lora

inference: true

---

# LoRA DreamBooth - ssarae/dreambooth_pingu_ver

These are LoRA adaptation weights for runwayml/stable-diffusion-v1-5. The weights were trained on the instance prompt "A rqlaks pingu" using [DreamBooth](https://dreambooth.github.io/). You can find some example images below.

LoRA for the text encoder was enabled: False.
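For orientation, LoRA stores a low-rank update instead of a full copy of each adapted weight matrix. The sketch below shows the commonly used merge rule W' = W + (alpha / r) * B A in plain Python. The helper names, the toy matrices, and the `alpha` and `rank` values are illustrative assumptions and are not taken from this repository's training run.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(weight, lora_b, lora_a, alpha, rank):
    """Return weight + (alpha / rank) * (lora_b @ lora_a)."""
    scale = alpha / rank
    delta = matmul(lora_b, lora_a)
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(weight, delta)
    ]


# Toy 2x2 base weight with a rank-1 update (hypothetical numbers).
base = [[1.0, 0.0], [0.0, 1.0]]
lora_b = [[1.0], [2.0]]   # shape (2, rank)
lora_a = [[0.5, -0.5]]    # shape (rank, 2)
merged = merge_lora(base, lora_b, lora_a, alpha=1.0, rank=1)
print(merged)
```

Because only `lora_b` and `lora_a` need to be stored, the adapter file stays small compared with the base checkpoint.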

| 633 | [

[

-0.0060577392578125,

-0.036834716796875,

0.0189361572265625,

0.01953125,

-0.04254150390625,

0.0160980224609375,

0.033599853515625,

-0.0125579833984375,

0.05609130859375,

0.04205322265625,

-0.05206298828125,

-0.034820556640625,

-0.0428466796875,

-0.0145111083... |

lmsys/vicuna-7b-delta-v0 | 2023-08-01T18:24:28.000Z | [

"transformers",

"pytorch",

"llama",

"text-generation",

"arxiv:2302.13971",

"arxiv:2306.05685",

"has_space",

"text-generation-inference",

"region:us"

] | text-generation | lmsys | null | null | lmsys/vicuna-7b-delta-v0 | 151 | 489 | transformers | 2023-04-06T01:12:08 | ---

inference: false

---

**NOTE: New version available**

Please check out a newer version of the weights [here](https://github.com/lm-sys/FastChat/blob/main/docs/vicuna_weights_version.md).

**NOTE: This "delta model" cannot be used directly.**

Users have to apply it on top of the original LLaMA weights to get actual Vicuna weights. See [instructions](https://github.com/lm-sys/FastChat/blob/main/docs/vicuna_weights_version.md#how-to-apply-delta-weights-for-weights-v11-and-v0).
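Conceptually, a delta checkpoint holds the per-parameter difference between the fine-tuned and the base weights, so recovering the model is an element-wise addition. The following is a minimal sketch of that idea on toy data. `apply_delta` and the parameter names are hypothetical, plain lists stand in for tensors, and the real conversion should follow the FastChat instructions linked above.

```python
def apply_delta(base, delta):
    """Add delta to base, parameter by parameter (toy lists stand in for tensors)."""
    if base.keys() != delta.keys():
        raise ValueError("base and delta must contain the same parameter names")
    return {
        name: [b + d for b, d in zip(base[name], delta[name])]
        for name in base
    }


# Hypothetical two-parameter "model".
base_weights = {"layer0.weight": [0.25, -1.0], "layer0.bias": [0.5]}
delta_weights = {"layer0.weight": [0.5, 0.5], "layer0.bias": [-0.25]}
vicuna_weights = apply_delta(base_weights, delta_weights)
print(vicuna_weights)
```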

<br>

<br>

# Vicuna Model Card

## Model Details

Vicuna is a chat assistant trained by fine-tuning LLaMA on user-shared conversations collected from ShareGPT.

- **Developed by:** [LMSYS](https://lmsys.org/)

- **Model type:** An auto-regressive language model based on the transformer architecture.

- **License:** Non-commercial license

- **Finetuned from model:** [LLaMA](https://arxiv.org/abs/2302.13971).

### Model Sources

- **Repository:** https://github.com/lm-sys/FastChat

- **Blog:** https://lmsys.org/blog/2023-03-30-vicuna/

- **Paper:** https://arxiv.org/abs/2306.05685

- **Demo:** https://chat.lmsys.org/

## Uses

The primary use of Vicuna is research on large language models and chatbots.

The primary intended users of the model are researchers and hobbyists in natural language processing, machine learning, and artificial intelligence.

## How to Get Started with the Model

Command line interface: https://github.com/lm-sys/FastChat#vicuna-weights.

APIs (OpenAI API, Huggingface API): https://github.com/lm-sys/FastChat/tree/main#api.

## Training Details

Vicuna v0 is fine-tuned from LLaMA with supervised instruction fine-tuning.

The training data is around 70K conversations collected from ShareGPT.com.

See more details in the "Training Details of Vicuna Models" section in the appendix of this [paper](https://arxiv.org/pdf/2306.05685.pdf).

## Evaluation

Vicuna is evaluated with standard benchmarks, human preference, and LLM-as-a-judge. See more details in this [paper](https://arxiv.org/pdf/2306.05685.pdf) and [leaderboard](https://huggingface.co/spaces/lmsys/chatbot-arena-leaderboard).

## Difference between different versions of Vicuna

See [vicuna_weights_version.md](https://github.com/lm-sys/FastChat/blob/main/docs/vicuna_weights_version.md) | 2,271 | [

[

-0.0151214599609375,

-0.06402587890625,

0.02587890625,

0.036895751953125,

-0.042633056640625,

-0.015411376953125,

-0.0175628662109375,

-0.042724609375,

0.031768798828125,

0.0306396484375,

-0.045074462890625,

-0.039825439453125,

-0.0460205078125,

-0.000442743... |

sobabeats/Evt_V2 | 2023-04-17T11:07:19.000Z | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"anime",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | sobabeats | null | null | sobabeats/Evt_V2 | 0 | 489 | diffusers | 2023-04-17T11:07:19 | ---

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- anime

- diffusers

license: creativeml-openrail-m

duplicated_from: haor/Evt_V2

---

# Evt_V2

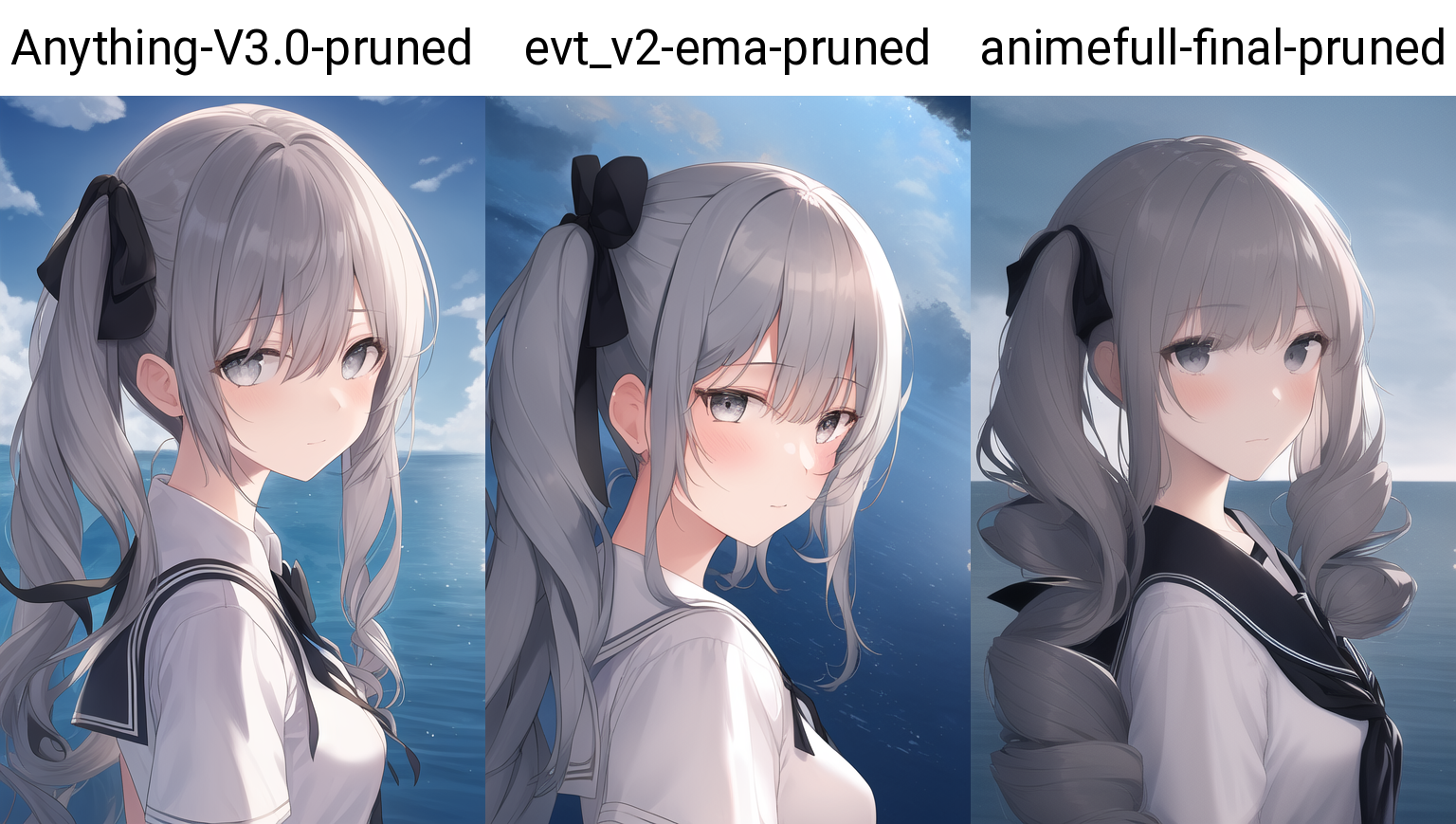

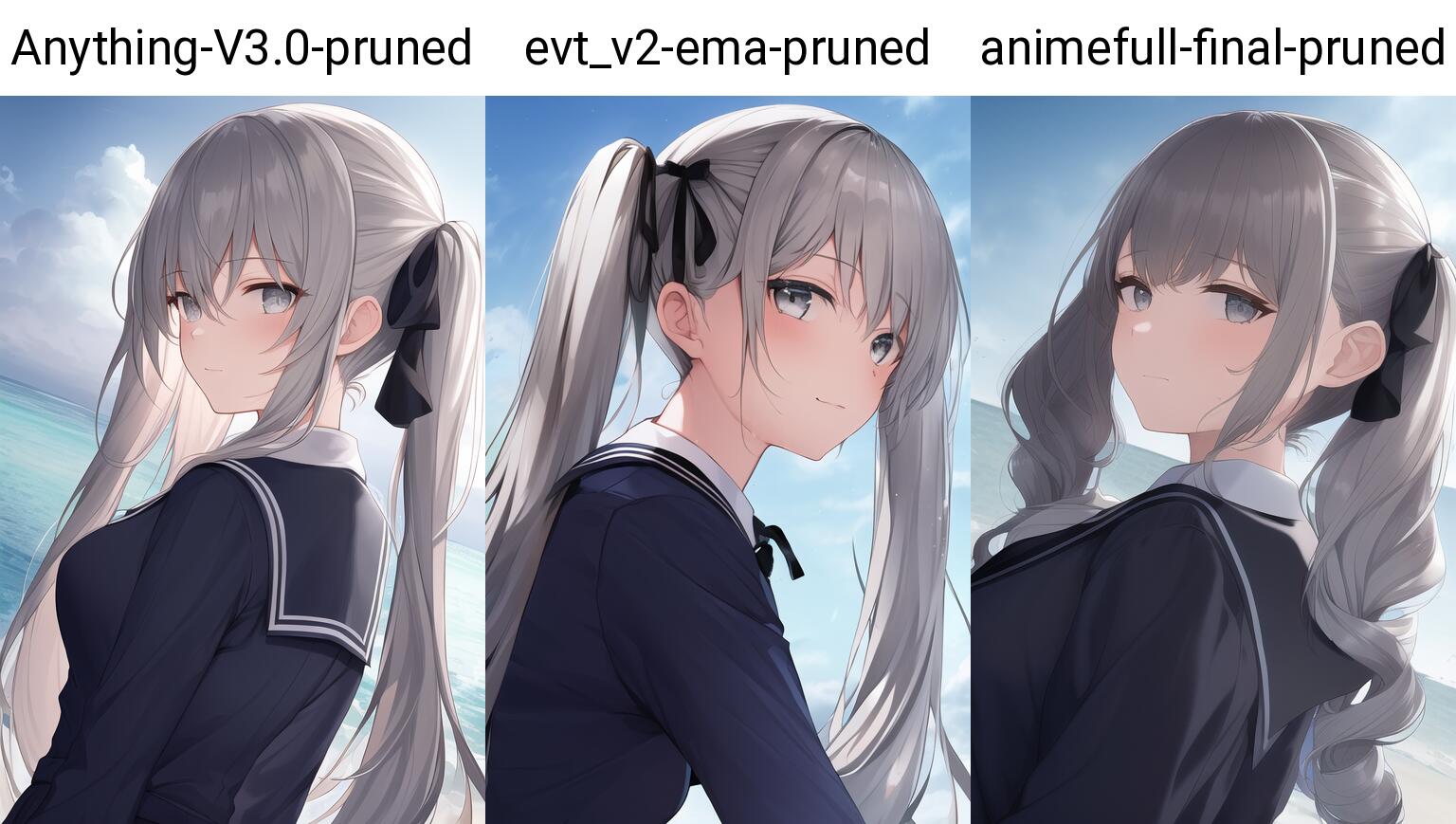

Based on animefull-latest and fine-tuned on a training set of 15,000 images (7,700 of them flipped). Most of the training set was obtained by filtering the pixiv daily ranking with [pixiv_AI_crawler](https://github.com/7eu7d7/pixiv_AI_crawler); some NSFW animation images were then mixed in.

### Examples

```

best quality, illustration,highly detailed,1girl,upper body,beautiful detailed eyes, medium_breasts, long hair,grey hair, grey eyes, curly hair, bangs,empty eyes,expressionless, ((masterpiece)),twintails,beautiful detailed sky, beautiful detailed water, cinematic lighting, dramatic angle,((back to the viewer)),(an extremely delicate and beautiful),school uniform,black ribbon,light smile,

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry,artist name,bad feet

Steps: 40, Sampler: Euler a, CFG scale: 7, Clip skip: 2

*evt_bs6_ema is the first version of evt

```

```

{Masterpiece, Kaname_Madoka, tall and long double tails, well rooted hair, (pink hair), pink eyes, crossed bangs, ojousama, jk, thigh bandages, wrist cuffs, (pink bow: 1.2)}, plain color, sketch, masterpiece, high detail, masterpiece portrait, best quality, ray tracing, {:<, look at the edge}

Negative prompt: ((((ugly)))), (((duplicate))), ((morbid)), ((mutilated)),extra fingers, mutated hands, ((poorly drawn hands)), ((poorly drawn face)), (((bad proportions))), ((extra limbs)), (((deformed))), (((disfigured))), cloned face, gross proportions, (malformed limbs), ((missing arms)), ((missing legs)), (((extra arms))), (((extra legs))), too many fingers, (((long neck))), (((low quality))), normal quality, blurry, bad feet, text font ui, ((((worst quality)))), anatomical nonsense, (((bad shadow))), unnatural body, liquid body, 3D, 3D game, 3D game scene, 3D character, bad hairs, poorly drawn hairs, fused hairs, big muscles, bad face, extra eyes, furry, pony, mosaic, disappearing calf, disappearing legs, extra digit, fewer digit, fused digit, missing digit, fused feet, poorly drawn eyes, big face, long face, bad eyes, thick lips, obesity, strong girl, beard,Excess legs

Steps: 40, Sampler: Euler a, CFG scale: 6,Clip skip: 2

``` | 2,878 | [

[

-0.04833984375,

-0.06011962890625,

0.032806396484375,

0.0088958740234375,

-0.02642822265625,

-0.0022640228271484375,

0.03460693359375,

-0.043487548828125,

0.0361328125,

0.036773681640625,

-0.055145263671875,

-0.041107177734375,

-0.041107177734375,

0.01976013... |

patrickvonplaten/bert2gpt2-cnn_dailymail-fp16 | 2021-08-18T14:38:10.000Z | [

"transformers",

"pytorch",

"jax",

"encoder_decoder",

"text2text-generation",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

] | text2text-generation | patrickvonplaten | null | null | patrickvonplaten/bert2gpt2-cnn_dailymail-fp16 | 6 | 488 | transformers | 2022-03-02T23:29:05 | # Bert2GPT2 Summarization with 🤗 EncoderDecoder Framework

This model is a Bert2GPT2 model fine-tuned on summarization.

Bert2GPT2 is an `EncoderDecoderModel`, meaning that the encoder is a `bert-base-uncased`

BERT model and the decoder is a `gpt2` GPT2 model. Leveraging the [EncoderDecoderFramework](https://huggingface.co/transformers/model_doc/encoderdecoder.html#encoder-decoder-models), the

two pretrained models can simply be loaded into the framework via:

```python

bert2gpt2 = EncoderDecoderModel.from_encoder_decoder_pretrained("bert-base-uncased", "gpt2")

```

The decoder of an `EncoderDecoder` model needs cross-attention layers and usually makes use of causal

masking for auto-regressive generation.

Thus, `bert2gpt2` is subsequently fine-tuned on the `CNN/Daily Mail` dataset and the resulting model

`bert2gpt2-cnn_dailymail-fp16` is uploaded here.
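To make the causal-masking remark concrete, here is a small library-free sketch. It assumes the usual convention that the token at position i may attend only to positions 0 through i. `causal_mask` is an illustrative helper, not part of `transformers`.

```python
def causal_mask(length):
    """Return a length x length matrix where 1 means "may attend"."""
    return [
        [1 if key <= query else 0 for key in range(length)]
        for query in range(length)
    ]


# Each row is one query position; later positions stay hidden from it.
for row in causal_mask(4):
    print(row)
```

The lower-triangular shape is what lets the decoder be trained on whole sequences while still generating one token at a time.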

## Example

The model is by no means a state-of-the-art model, but nevertheless

produces reasonable summarization results. It was mainly fine-tuned

as a proof-of-concept for the 🤗 EncoderDecoder Framework.

The model can be used as follows:

```python

from transformers import BertTokenizer, GPT2Tokenizer, EncoderDecoderModel

model = EncoderDecoderModel.from_pretrained("patrickvonplaten/bert2gpt2-cnn_dailymail-fp16")

# reuse tokenizer from bert2bert encoder-decoder model

bert_tokenizer = BertTokenizer.from_pretrained("patrickvonplaten/bert2bert-cnn_dailymail-fp16")

article = """(CNN)Sigma Alpha Epsilon is under fire for a video showing party-bound fraternity members singing a racist chant. SAE's national chapter suspended the students, but University of Oklahoma President David Boren took it a step further, saying the university's affiliation with the fraternity is permanently done. The news is shocking, but it's not the first time SAE has faced controversy. SAE was founded March 9, 1856, at the University of Alabama, five years before the American Civil War, according to the fraternity website. When the war began, the group had fewer than 400 members, of which "369 went to war for the Confederate States and seven for the Union Army," the website says. The fraternity now boasts more than 200,000 living alumni, along with about 15,000 undergraduates populating 219 chapters and 20 "colonies" seeking full membership at universities. SAE has had to work hard to change recently after a string of member deaths, many blamed on the hazing of new recruits, SAE national President Bradley Cohen wrote in a message on the fraternity's website. The fraternity's website lists more than 130 chapters cited or suspended for "health and safety incidents" since 2010. At least 30 of the incidents involved hazing, and dozens more involved alcohol. However, the list is missing numerous incidents from recent months. Among them, according to various media outlets: Yale University banned the SAEs from campus activities last month after members allegedly tried to interfere with a sexual misconduct investigation connected to an initiation rite. Stanford University in December suspended SAE housing privileges after finding sorority members attending a fraternity function were subjected to graphic sexual content. And Johns Hopkins University in November suspended the fraternity for underage drinking. "The media has labeled us as the 'nation's deadliest fraternity,' " Cohen said. In 2011, for example, a student died while being coerced into excessive alcohol consumption, according to a lawsuit. SAE's previous insurer dumped the fraternity. "As a result, we are paying Lloyd's of London the highest insurance rates in the Greek-letter world," Cohen said. Universities have turned down SAE's attempts to open new chapters, and the fraternity had to close 12 in 18 months over hazing incidents."""

input_ids = bert_tokenizer(article, return_tensors="pt").input_ids

output_ids = model.generate(input_ids)

# we need a gpt2 tokenizer for the output word embeddings

gpt2_tokenizer = GPT2Tokenizer.from_pretrained("gpt2")

print(gpt2_tokenizer.decode(output_ids[0], skip_special_tokens=True))

# should produce

# SAE's national chapter suspended the students, but university president says it's permanent.

# The fraternity has had to deal with a string of incidents since 2010.

# SAE has more than 200,000 members, many of whom are students.

# A student died while being coerced into drinking alcohol.

```

## Training script:

**IMPORTANT**: For this code to work, make sure you check out the branch

[more_general_trainer_metric](https://github.com/huggingface/transformers/tree/more_general_trainer_metric), which slightly adapts

the `Trainer` for `EncoderDecoderModels` according to this PR: https://github.com/huggingface/transformers/pull/5840.

The following code shows the complete training script that was used to fine-tune `bert2gpt2-cnn_dailymail-fp16`, provided for reproducibility. Training lasted ~11h on a standard GPU.

```python

#!/usr/bin/env python3

import nlp

import logging

from transformers import BertTokenizer, GPT2Tokenizer, EncoderDecoderModel, Trainer, TrainingArguments

logging.basicConfig(level=logging.INFO)

model = EncoderDecoderModel.from_encoder_decoder_pretrained("bert-base-cased", "gpt2")

# cache is currently not supported by EncoderDecoder framework

model.decoder.config.use_cache = False

bert_tokenizer = BertTokenizer.from_pretrained("bert-base-cased")

# CLS token will work as BOS token

bert_tokenizer.bos_token = bert_tokenizer.cls_token

# SEP token will work as EOS token

bert_tokenizer.eos_token = bert_tokenizer.sep_token

# make sure GPT2 adds its EOS token (which is also its BOS token) at the beginning and end
def build_inputs_with_special_tokens(self, token_ids_0, token_ids_1=None):
    outputs = [self.bos_token_id] + token_ids_0 + [self.eos_token_id]
    return outputs

GPT2Tokenizer.build_inputs_with_special_tokens = build_inputs_with_special_tokens

gpt2_tokenizer = GPT2Tokenizer.from_pretrained("gpt2")

# set pad_token_id to unk_token_id -> be careful here as unk_token_id == eos_token_id == bos_token_id

gpt2_tokenizer.pad_token = gpt2_tokenizer.unk_token

# set decoding params

model.config.decoder_start_token_id = gpt2_tokenizer.bos_token_id

model.config.eos_token_id = gpt2_tokenizer.eos_token_id

model.config.max_length = 142

model.config.min_length = 56

model.config.no_repeat_ngram_size = 3

model.early_stopping = True

model.length_penalty = 2.0

model.num_beams = 4

# load train and validation data

train_dataset = nlp.load_dataset("cnn_dailymail", "3.0.0", split="train")

val_dataset = nlp.load_dataset("cnn_dailymail", "3.0.0", split="validation[:5%]")

# load rouge for validation

rouge = nlp.load_metric("rouge", experiment_id=1)

encoder_length = 512

decoder_length = 128

batch_size = 16

# map data correctly

def map_to_encoder_decoder_inputs(batch):
    # Tokenizer will automatically set [BOS] <text> [EOS]
    # use bert tokenizer here for encoder
    inputs = bert_tokenizer(batch["article"], padding="max_length", truncation=True, max_length=encoder_length)
    # force summarization <= 128
    outputs = gpt2_tokenizer(batch["highlights"], padding="max_length", truncation=True, max_length=decoder_length)

    batch["input_ids"] = inputs.input_ids
    batch["attention_mask"] = inputs.attention_mask
    batch["decoder_input_ids"] = outputs.input_ids
    batch["labels"] = outputs.input_ids.copy()
    batch["decoder_attention_mask"] = outputs.attention_mask

    # the attention mask is used here because pad_token_id alone is not good enough to know whether a label should be excluded or not
    batch["labels"] = [
        [-100 if mask == 0 else token for mask, token in zip(masks, labels)]
        for masks, labels in zip(batch["decoder_attention_mask"], batch["labels"])
    ]

    assert all([len(x) == encoder_length for x in inputs.input_ids])
    assert all([len(x) == decoder_length for x in outputs.input_ids])

    return batch

def compute_metrics(pred):
    labels_ids = pred.label_ids
    pred_ids = pred.predictions

    # all unnecessary tokens are removed
    pred_str = gpt2_tokenizer.batch_decode(pred_ids, skip_special_tokens=True)
    labels_ids[labels_ids == -100] = gpt2_tokenizer.eos_token_id
    label_str = gpt2_tokenizer.batch_decode(labels_ids, skip_special_tokens=True)

    rouge_output = rouge.compute(predictions=pred_str, references=label_str, rouge_types=["rouge2"])["rouge2"].mid

    return {
        "rouge2_precision": round(rouge_output.precision, 4),
        "rouge2_recall": round(rouge_output.recall, 4),
        "rouge2_fmeasure": round(rouge_output.fmeasure, 4),
    }

# make train dataset ready

train_dataset = train_dataset.map(

map_to_encoder_decoder_inputs, batched=True, batch_size=batch_size, remove_columns=["article", "highlights"],

)

train_dataset.set_format(

type="torch", columns=["input_ids", "attention_mask", "decoder_input_ids", "decoder_attention_mask", "labels"],

)

# same for validation dataset

val_dataset = val_dataset.map(

map_to_encoder_decoder_inputs, batched=True, batch_size=batch_size, remove_columns=["article", "highlights"],

)

val_dataset.set_format(

type="torch", columns=["input_ids", "attention_mask", "decoder_input_ids", "decoder_attention_mask", "labels"],

)

# set training arguments - these params are not really tuned, feel free to change

training_args = TrainingArguments(

output_dir="./",

per_device_train_batch_size=batch_size,

per_device_eval_batch_size=batch_size,

predict_from_generate=True,

evaluate_during_training=True,

do_train=True,

do_eval=True,

logging_steps=1000,

save_steps=1000,

eval_steps=1000,

overwrite_output_dir=True,

warmup_steps=2000,

save_total_limit=10,

fp16=True,

)

# instantiate trainer

trainer = Trainer(

model=model,

args=training_args,

compute_metrics=compute_metrics,

train_dataset=train_dataset,

eval_dataset=val_dataset,

)

# start training

trainer.train()

```
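The label-masking step in the script above can be isolated as follows. This is a simplified, pure-Python restatement on made-up token ids. It relies on the convention that a label value of -100 is ignored by the cross-entropy loss, and `mask_padding_labels` is an illustrative name rather than a library function.

```python
IGNORE_INDEX = -100  # label value skipped by the loss


def mask_padding_labels(input_ids, attention_mask):
    """Replace labels at padded positions (mask == 0) with IGNORE_INDEX."""
    return [
        [IGNORE_INDEX if mask == 0 else token for mask, token in zip(masks, tokens)]
        for masks, tokens in zip(attention_mask, input_ids)
    ]


# Toy batch: id 7 plays the role of both EOS and PAD, as with GPT2 in the script.
input_ids = [[7, 5, 6, 7, 7, 7]]
attention_mask = [[1, 1, 1, 1, 0, 0]]
print(mask_padding_labels(input_ids, attention_mask))
```

Masking by attention mask rather than by token id keeps the genuine EOS label in the loss even though it shares its id with the padding token.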

## Evaluation

The following script evaluates the model on the test set of

CNN/Daily Mail.

```python

#!/usr/bin/env python3

import nlp

from transformers import BertTokenizer, GPT2Tokenizer, EncoderDecoderModel

model = EncoderDecoderModel.from_pretrained("patrickvonplaten/bert2gpt2-cnn_dailymail-fp16")

model.to("cuda")

bert_tokenizer = BertTokenizer.from_pretrained("bert-base-cased")

# CLS token will work as BOS token

bert_tokenizer.bos_token = bert_tokenizer.cls_token

# SEP token will work as EOS token

bert_tokenizer.eos_token = bert_tokenizer.sep_token

# make sure GPT2 adds its EOS token (which is also its BOS token) at the beginning and end
def build_inputs_with_special_tokens(self, token_ids_0, token_ids_1=None):
    outputs = [self.bos_token_id] + token_ids_0 + [self.eos_token_id]
    return outputs

GPT2Tokenizer.build_inputs_with_special_tokens = build_inputs_with_special_tokens

gpt2_tokenizer = GPT2Tokenizer.from_pretrained("gpt2")

# set pad_token_id to unk_token_id -> be careful here as unk_token_id == eos_token_id == bos_token_id

gpt2_tokenizer.pad_token = gpt2_tokenizer.unk_token

# set decoding params

model.config.decoder_start_token_id = gpt2_tokenizer.bos_token_id

model.config.eos_token_id = gpt2_tokenizer.eos_token_id

model.config.max_length = 142

model.config.min_length = 56

model.config.no_repeat_ngram_size = 3

model.early_stopping = True

model.length_penalty = 2.0

model.num_beams = 4

test_dataset = nlp.load_dataset("cnn_dailymail", "3.0.0", split="test")

batch_size = 64

# map data correctly

def generate_summary(batch):
    # Tokenizer will automatically set [BOS] <text> [EOS]
    # cut off at BERT max length 512
    inputs = bert_tokenizer(batch["article"], padding="max_length", truncation=True, max_length=512, return_tensors="pt")
    input_ids = inputs.input_ids.to("cuda")
    attention_mask = inputs.attention_mask.to("cuda")

    outputs = model.generate(input_ids, attention_mask=attention_mask)

    # all special tokens will be removed
    output_str = gpt2_tokenizer.batch_decode(outputs, skip_special_tokens=True)

    batch["pred"] = output_str

    return batch

results = test_dataset.map(generate_summary, batched=True, batch_size=batch_size, remove_columns=["article"])

# load rouge for validation

rouge = nlp.load_metric("rouge")

pred_str = results["pred"]

label_str = results["highlights"]

rouge_output = rouge.compute(predictions=pred_str, references=label_str, rouge_types=["rouge2"])["rouge2"].mid

print(rouge_output)

```

The obtained results should be:

| Dataset | ROUGE-2 mid precision | ROUGE-2 mid recall | ROUGE-2 mid F-measure |
|----------|:-------------:|:------:|:------:|
| **CNN/Daily Mail** | 14.42 | 16.99 | **15.16** |
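For readers unfamiliar with the metric, ROUGE-2 compares the bigrams of a prediction with those of a reference. The sketch below is a simplified single-pair version that uses lowercased whitespace tokenization. It omits the metric library's own tokenization rules and the bootstrap aggregation that yields the "mid" values reported above, so its numbers are not comparable to the table.

```python
from collections import Counter


def bigrams(text):
    """Count adjacent token pairs after lowercasing and whitespace splitting."""
    tokens = text.lower().split()
    return Counter(zip(tokens, tokens[1:]))


def rouge2(prediction, reference):
    """Return (precision, recall, f-measure) of bigram overlap."""
    pred, ref = bigrams(prediction), bigrams(reference)
    overlap = sum((pred & ref).values())  # clipped bigram matches
    precision = overlap / max(sum(pred.values()), 1)
    recall = overlap / max(sum(ref.values()), 1)
    if overlap == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)


scores = rouge2("the cat sat on the mat", "the cat lay on the mat")
print(scores)
```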

| 12,654 | [

[

-0.0257720947265625,

-0.0513916015625,

0.006072998046875,

0.0199737548828125,

-0.02728271484375,

-0.01971435546875,

-0.0171661376953125,

-0.0308685302734375,

0.0219268798828125,

0.006923675537109375,

-0.0323486328125,

-0.0205841064453125,

-0.06494140625,

0.0... |

pile-of-law/legalbert-large-1.7M-2 | 2023-06-06T20:10:02.000Z | [

"transformers",

"pytorch",

"bert",

"legal",

"fill-mask",

"en",

"dataset:pile-of-law/pile-of-law",

"arxiv:1907.11692",

"arxiv:1810.04805",

"arxiv:2110.00976",

"arxiv:2207.00220",

"endpoints_compatible",

"region:us"

] | fill-mask | pile-of-law | null | null | pile-of-law/legalbert-large-1.7M-2 | 28 | 488 | transformers | 2022-04-29T18:27:57 | ---

language:

- en

datasets:

- pile-of-law/pile-of-law

pipeline_tag: fill-mask

tags:

- legal

---

# Pile of Law BERT large model 2 (uncased)

Pretrained model on English language legal and administrative text using the [RoBERTa](https://arxiv.org/abs/1907.11692) pretraining objective. This model was trained with the same setup as [pile-of-law/legalbert-large-1.7M-1](https://huggingface.co/pile-of-law/legalbert-large-1.7M-1), but with a different seed.

## Model description

Pile of Law BERT large 2 is a transformers model with the [BERT large model (uncased)](https://huggingface.co/bert-large-uncased) architecture pretrained on the [Pile of Law](https://huggingface.co/datasets/pile-of-law/pile-of-law), a dataset consisting of ~256GB of English language legal and administrative text for language model pretraining.

## Intended uses & limitations

You can use the raw model for masked language modeling or fine-tune it for a downstream task. Since this model was pretrained on an English-language legal and administrative text corpus, legal downstream tasks will likely be more in-domain for this model.

## How to use

You can use the model directly with a pipeline for masked language modeling:

```python

>>> from transformers import pipeline

>>> pipe = pipeline(task='fill-mask', model='pile-of-law/legalbert-large-1.7M-2')

>>> pipe("An [MASK] is a request made after a trial by a party that has lost on one or more issues that a higher court review the decision to determine if it was correct.")

[{'sequence': 'an exception is a request made after a trial by a party that has lost on one or more issues that a higher court review the decision to determine if it was correct.',

'score': 0.5218929052352905,

'token': 4028,

'token_str': 'exception'},

{'sequence': 'an appeal is a request made after a trial by a party that has lost on one or more issues that a higher court review the decision to determine if it was correct.',

'score': 0.11434809118509293,

'token': 1151,

'token_str': 'appeal'},

{'sequence': 'an exclusion is a request made after a trial by a party that has lost on one or more issues that a higher court review the decision to determine if it was correct.',

'score': 0.06454459577798843,

'token': 5345,

'token_str': 'exclusion'},

{'sequence': 'an example is a request made after a trial by a party that has lost on one or more issues that a higher court review the decision to determine if it was correct.',

'score': 0.043593790382146835,

'token': 3677,

'token_str': 'example'},

{'sequence': 'an objection is a request made after a trial by a party that has lost on one or more issues that a higher court review the decision to determine if it was correct.',

'score': 0.03758585825562477,

'token': 3542,

'token_str': 'objection'}]

```

Here is how to use this model to get the features of a given text in PyTorch:

```python

from transformers import BertTokenizer, BertModel

tokenizer = BertTokenizer.from_pretrained('pile-of-law/legalbert-large-1.7M-2')

model = BertModel.from_pretrained('pile-of-law/legalbert-large-1.7M-2')

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='pt')

output = model(**encoded_input)

```

and in TensorFlow:

```python

from transformers import BertTokenizer, TFBertModel

tokenizer = BertTokenizer.from_pretrained('pile-of-law/legalbert-large-1.7M-2')

model = TFBertModel.from_pretrained('pile-of-law/legalbert-large-1.7M-2')

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='tf')

output = model(encoded_input)

```

## Limitations and bias

Please see Appendix G of the Pile of Law paper for copyright limitations related to dataset and model use.

This model can have biased predictions. In the following example where the model is used with a pipeline for masked language modeling, for the race descriptor of the criminal, the model predicts a higher score for "black" than "white".

```python

>>> from transformers import pipeline

>>> pipe = pipeline(task='fill-mask', model='pile-of-law/legalbert-large-1.7M-2')

>>> pipe("The transcript of evidence reveals that at approximately 7:30 a. m. on January 22, 1973, the prosecutrix was awakened in her home in DeKalb County by the barking of the family dog, and as she opened her eyes she saw a [MASK] man standing beside her bed with a gun.", targets=["black", "white"])

[{'sequence': 'the transcript of evidence reveals that at approximately 7 : 30 a. m. on january 22, 1973, the prosecutrix was awakened in her home in dekalb county by the barking of the family dog, and as she opened her eyes she saw a black man standing beside her bed with a gun.',

'score': 0.02685137465596199,

'token': 4311,

'token_str': 'black'},

{'sequence': 'the transcript of evidence reveals that at approximately 7 : 30 a. m. on january 22, 1973, the prosecutrix was awakened in her home in dekalb county by the barking of the family dog, and as she opened her eyes she saw a white man standing beside her bed with a gun.',

'score': 0.013632853515446186,

'token': 4249,

'token_str': 'white'}]

```

This bias will also affect all fine-tuned versions of this model.

## Training data

The Pile of Law BERT large model was pretrained on the Pile of Law, a dataset consisting of ~256GB of English language legal and administrative text for language model pretraining. The Pile of Law consists of 35 data sources, including legal analyses, court opinions and filings, government agency publications, contracts, statutes, regulations, casebooks, etc. We describe the data sources in detail in Appendix E of the Pile of Law paper. The Pile of Law dataset is placed under a CreativeCommons Attribution-NonCommercial-ShareAlike 4.0 International license.

## Training procedure

### Preprocessing

The model vocabulary consists of 29,000 tokens from a custom word-piece vocabulary fit to Pile of Law using the [HuggingFace WordPiece tokenizer](https://github.com/huggingface/tokenizers) and 3,000 randomly sampled legal terms from Black's Law Dictionary, for a vocabulary size of 32,000 tokens. The 80-10-10 split between masking, corrupting, and leaving selected tokens unchanged, as in [BERT](https://arxiv.org/abs/1810.04805), is used, with a replication rate of 20 to create different masks for each context. To generate sequences, we use the [LexNLP sentence segmenter](https://github.com/LexPredict/lexpredict-lexnlp), which handles sentence segmentation for legal citations (which are often mistaken for sentences). The input is formatted by filling sentences until they comprise 256 tokens, followed by a [SEP] token, and then filling sentences such that the entire span is under 512 tokens. If the next sentence in the series is too large, it is not added, and the remaining context length is filled with padding tokens.
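The input formatting described above can be sketched as a small packing routine. This is an interpretation rather than the authors' code. It assumes sentences are already tokenized, that a sentence is added only if it fits entirely, and that both limits are inclusive. `pack_example` and its parameters are hypothetical names, and any leading special token such as [CLS] is left out for brevity.

```python
SEP, PAD = "[SEP]", "[PAD]"


def pack_example(sentences, first_limit=256, max_len=512):
    """Pack tokenized sentences into one fixed-length example.

    Returns (tokens, used), where `used` is the number of sentences consumed.
    """
    tokens, used = [], 0
    # First segment: add whole sentences while staying within first_limit.
    while used < len(sentences) and len(tokens) + len(sentences[used]) <= first_limit:
        tokens += sentences[used]
        used += 1
    tokens.append(SEP)
    # Second segment: add whole sentences while the span stays within max_len.
    while used < len(sentences) and len(tokens) + len(sentences[used]) <= max_len:
        tokens += sentences[used]
        used += 1
    # Fill the remaining context with padding tokens.
    tokens += [PAD] * (max_len - len(tokens))
    return tokens, used


# Small limits keep the toy example readable.
toy = [["a"] * 3, ["b"] * 2, ["c"] * 4, ["d"] * 5]
packed, consumed = pack_example(toy, first_limit=5, max_len=12)
print(packed, consumed)
```

In the toy run the last sentence does not fit, so it is left for the next example and the tail is padded.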

### Pretraining

The model was trained on a SambaNova cluster, with 8 RDUs, for 1.7 million steps. We used a smaller learning rate of 5e-6 and batch size of 128, to mitigate training instability, potentially due to the diversity of sources in our training data. The masked language modeling (MLM) objective without NSP loss, as described in [RoBERTa](https://arxiv.org/abs/1907.11692), was used for pretraining. The model was pretrained with a sequence length of 512 for all steps.
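The MLM objective depends on the 80-10-10 split and the replication rate of 20 described under Preprocessing, which can be sketched in a few lines. This is a toy illustration, not the training code: the 15% selection probability is the BERT default and is assumed here because the card does not state it, and `mask_tokens`, `replicate`, and the three-word vocabulary are hypothetical.

```python
import random

MASK = "[MASK]"


def mask_tokens(tokens, vocab, rng, select_prob=0.15):
    """Return (corrupted, labels); labels are None where nothing is predicted."""
    corrupted, labels = [], []
    for token in tokens:
        if rng.random() >= select_prob:
            corrupted.append(token)      # not selected: keep, no label
            labels.append(None)
            continue
        labels.append(token)             # selected: model must predict the original
        roll = rng.random()
        if roll < 0.8:
            corrupted.append(MASK)                 # 80%: mask
        elif roll < 0.9:
            corrupted.append(rng.choice(vocab))    # 10%: corrupt with a random token
        else:
            corrupted.append(token)                # 10%: leave unchanged
    return corrupted, labels


def replicate(tokens, vocab, copies=20, seed=0):
    """Create `copies` independently masked versions of one context."""
    rng = random.Random(seed)
    return [mask_tokens(tokens, vocab, rng) for _ in range(copies)]


context = "the court granted the motion to dismiss".split()
versions = replicate(context, vocab=["law", "court", "appeal"])
print(len(versions))
```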

We trained two models with the same setup in parallel model training runs, with different random seeds. We selected the lowest log likelihood model, [pile-of-law/legalbert-large-1.7M-1](https://huggingface.co/pile-of-law/legalbert-large-1.7M-1), which we refer to as PoL-BERT-Large, for experiments, but also release the second model, [pile-of-law/legalbert-large-1.7M-2](https://huggingface.co/pile-of-law/legalbert-large-1.7M-2).

## Evaluation results

See the model card for [pile-of-law/legalbert-large-1.7M-1](https://huggingface.co/pile-of-law/legalbert-large-1.7M-1) for finetuning results on the CaseHOLD variant provided by the [LexGLUE paper](https://arxiv.org/abs/2110.00976).

### BibTeX entry and citation info

```bibtex

@misc{hendersonkrass2022pileoflaw,

url = {https://arxiv.org/abs/2207.00220},

author = {Henderson, Peter and Krass, Mark S. and Zheng, Lucia and Guha, Neel and Manning, Christopher D. and Jurafsky, Dan and Ho, Daniel E.},

title = {Pile of Law: Learning Responsible Data Filtering from the Law and a 256GB Open-Source Legal Dataset},

publisher = {arXiv},

year = {2022}

}

``` | 8,434 | [

[

-0.01885986328125,

-0.04925537109375,

0.027740478515625,

0.011444091796875,

-0.040252685546875,

-0.016632080078125,

-0.00804901123046875,

-0.019012451171875,

0.0189208984375,

0.057098388671875,

-0.0167694091796875,

-0.035064697265625,

-0.06207275390625,

-0.0... |

classla/xlm-roberta-base-multilingual-text-genre-classifier | 2023-10-05T10:34:56.000Z | [

"transformers",

"pytorch",

"safetensors",

"xlm-roberta",

"text-classification",

"genre",

"text-genre",

"multilingual",

"af",

"am",

"ar",

"as",

"az",

"be",

"bg",

"bn",

"br",

"bs",

"ca",

"cs",

"cy",

"da",

"de",

"el",

"en",

"eo",

"es",

"et",

"eu",

"fa",

"fi",... | text-classification | classla | null | null | classla/xlm-roberta-base-multilingual-text-genre-classifier | 17 | 488 | transformers | 2022-11-11T09:33:55 | ---

license: cc-by-sa-4.0

language:

- multilingual

- af

- am

- ar

- as

- az

- be

- bg

- bn

- br

- bs

- ca

- cs

- cy

- da

- de

- el

- en

- eo

- es

- et

- eu

- fa

- fi

- fr

- fy

- ga

- gd

- gl

- gu

- ha

- he

- hi

- hr

- hu

- hy

- id

- is

- it

- ja

- jv

- ka

- kk

- km

- kn

- ko

- ku

- ky

- la

- lo

- lt

- lv

- mg

- mk

- ml

- mn

- mr

- ms

- my

- ne

- nl

- no

- om

- or

- pa

- pl

- ps

- pt

- ro

- ru

- sa

- sd

- si

- sk

- sl

- so

- sq

- sr

- su

- sv

- sw

- ta

- te

- th

- tl

- tr

- ug

- uk

- ur

- uz

- vi

- xh

- yi

- zh

tags:

- text-classification

- genre

- text-genre

widget:

- text: "On our site, you can find a great genre identification model which you can use for thousands of different tasks. For free!"

example_title: "English"

- text: "Na naši spletni strani lahko najdete odličen model za prepoznavanje žanrov, ki ga lahko uporabite pri na tisoče različnih nalogah. In to brezplačno!"

example_title: "Slovene"

- text: "Sur notre site, vous trouverez un modèle d'identification de genre très intéressant que vous pourrez utiliser pour des milliers de tâches différentes. C'est gratuit !"

example_title: "French"

---

# X-GENRE classifier - multilingual text genre classifier

Text classification model based on [`xlm-roberta-base`](https://huggingface.co/xlm-roberta-base) and fine-tuned on a combination of three genre datasets: the Slovene GINCO<sup>1</sup> dataset, the English CORE<sup>2</sup> dataset and the English FTD<sup>3</sup> dataset. The model can be used for automatic genre identification and can be applied to any text in a language supported by `xlm-roberta-base`.

## Model description

The model was fine-tuned on the "X-GENRE" dataset, which consists of three genre datasets: CORE, FTD and GINCO. Each dataset has its own genre schema, so they were combined into a joint schema (the "X-GENRE" schema) based on a comparison of labels and on cross-dataset experiments (described in detail [here](https://github.com/TajaKuzman/Genre-Datasets-Comparison/tree/main/Creation-of-classifiers-and-cross-prediction#joint-schema-x-genre)).

### Fine-tuning hyperparameters

Fine-tuning was performed with `simpletransformers`. Beforehand, a brief hyperparameter optimization was performed and the presumed optimal hyperparameters are:

```python

model_args= {

"num_train_epochs": 15,

"learning_rate": 1e-5,

"max_seq_length": 512,

}

```

## Intended use and limitations

### Usage

An example of preparing data for genre identification and post-processing of the results can be found [here](https://github.com/TajaKuzman/Applying-GENRE-on-MaCoCu-bilingual) where we applied X-GENRE classifier to the English part of [MaCoCu](https://macocu.eu/) parallel corpora.

For reliable results, the genre classifier should be applied to documents of sufficient length (as a rule of thumb, at least 75 words). Predictions made with a confidence lower than 0.9 should not be used. Furthermore, the label "Other" can serve as another indicator of low confidence, as it often means that the text does not have enough features of any genre, so these predictions can be discarded as well.

After the proposed post-processing (removal of low-confidence predictions, of the label "Other" and, in this specific case, also of the label "Forum"), the performance on the MaCoCu data, based on manual inspection, reached macro and micro F1 of 0.92.
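The post-processing described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: "confidence" is taken to be the softmax probability of the top label computed from the raw model outputs, the label order follows the `labels_map` given in this card, and the helper names `softmax` and `keep_reliable` are hypothetical, not part of `simpletransformers`.

```python
import math

# Label order taken from the labels_map listed in this card.
ID2LABEL = ['Other', 'Information/Explanation', 'News', 'Instruction',
            'Opinion/Argumentation', 'Forum', 'Prose/Lyrical', 'Legal', 'Promotion']


def softmax(logits):
    """Convert raw logits to probabilities (numerically stable)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def keep_reliable(logit_rows, threshold=0.9, discard=("Other",)):
    """Return (row index, label, confidence) for predictions that pass both filters.

    Assumption: confidence is the softmax probability of the top label.
    """
    kept = []
    for i, row in enumerate(logit_rows):
        probs = softmax(row)
        best = max(range(len(probs)), key=probs.__getitem__)
        label, conf = ID2LABEL[best], probs[best]
        if conf >= threshold and label not in discard:
            kept.append((i, label, conf))
    return kept


# Toy logits: a confident "Instruction", a confident "Other", and an unsure row.
example_rows = [
    [0, 0, 0, 9, 0, 0, 0, 0, 0],
    [9, 0, 0, 0, 0, 0, 0, 0, 0],
    [1, 1, 1, 1, 1, 1, 1, 1, 1.5],
]
reliable = keep_reliable(example_rows)
```

To also drop "Forum", as was done for the MaCoCu data, one could pass `discard=("Other", "Forum")`.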

### Use examples

```python

from simpletransformers.classification import ClassificationModel

model_args= {

"num_train_epochs": 15,

"learning_rate": 1e-5,

"max_seq_length": 512,

"silent": True

}

model = ClassificationModel(

"xlmroberta", "classla/xlm-roberta-base-multilingual-text-genre-classifier", use_cuda=True,

args=model_args

)

predictions, logit_output = model.predict(["How to create a good text classification model? First step is to prepare good data. Make sure not to skip the exploratory data analysis. Pre-process the text if necessary for the task. The next step is to perform hyperparameter search to find the optimum hyperparameters. After fine-tuning the model, you should look into the predictions and analyze the model's performance. You might want to perform the post-processing of data as well and keep only reliable predictions.",

"On our site, you can find a great genre identification model which you can use for thousands of different tasks. With our model, you can fastly and reliably obtain high-quality genre predictions and explore which genres exist in your corpora. Available for free!"]

)

predictions

# Output: array([3, 8])

[model.config.id2label[i] for i in predictions]

# Output: ['Instruction', 'Promotion']

```

An example of prediction on a dataset, using batch processing, is available via [Google Colab](https://colab.research.google.com/drive/1yC4L_p2t3oMViC37GqSjJynQH-EWyhLr?usp=sharing).

## X-GENRE categories

List of labels:

```

labels_list=['Other', 'Information/Explanation', 'News', 'Instruction', 'Opinion/Argumentation', 'Forum', 'Prose/Lyrical', 'Legal', 'Promotion']

labels_map={'Other': 0, 'Information/Explanation': 1, 'News': 2, 'Instruction': 3, 'Opinion/Argumentation': 4, 'Forum': 5, 'Prose/Lyrical': 6, 'Legal': 7, 'Promotion': 8}

```

Description of labels:

| Label | Description | Examples |

|-------------------------|--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| Information/Explanation | An objective text that describes or presents an event, a person, a thing, a concept etc. Its main purpose is to inform the reader about something. Common features: objective/factual, explanation/definition of a concept (x is …), enumeration. | research article, encyclopedia article, informational blog, product specification, course materials, general information, job description, manual, horoscope, travel guide, glossaries, historical article, biographical story/history. |

| Instruction | An objective text which instructs the readers on how to do something. Common features: multiple steps/actions, chronological order, 1st person plural or 2nd person, modality (must, have to, need to, can, etc.), adverbial clauses of manner (in a way that), of condition (if), of time (after …). | how-to texts, recipes, technical support |

| Legal | An objective formal text that contains legal terms and is clearly structured. The name of the text type is often included in the headline (contract, rules, amendment, general terms and conditions, etc.). Common features: objective/factual, legal terms, 3rd person. | small print, software license, proclamation, terms and conditions, contracts, law, copyright notices, university regulation |

| News | An objective or subjective text which reports on an event recent at the time of writing or coming in the near future. Common features: adverbs/adverbial clauses of time and/or place (dates, places), many proper nouns, direct or reported speech, past tense. | news report, sports report, travel blog, reportage, police report, announcement |

| Opinion/Argumentation | A subjective text in which the authors convey their opinion or narrate their experience. It includes promotion of an ideology and other non-commercial causes. This genre includes a subjective narration of a personal experience as well. Common features: adjectives/adverbs that convey opinion, words that convey (un)certainty (certainly, surely), 1st person, exclamation marks. | review, blog (personal blog, travel blog), editorial, advice, letter to editor, persuasive article or essay, formal speech, pamphlet, political propaganda, columns, political manifesto |

| Promotion | A subjective text intended to sell or promote an event, product, or service. It addresses the readers, often trying to convince them to participate in something or buy something. Common features: contains adjectives/adverbs that promote something (high-quality, perfect, amazing), comparative and superlative forms of adjectives and adverbs (the best, the greatest, the cheapest), addressing the reader (usage of 2nd person), exclamation marks. | advertisement, promotion of a product (e-shops), promotion of an accommodation, promotion of company's services, invitation to an event |

| Forum | A text in which people discuss a certain topic in form of comments. Common features: multiple authors, informal language, subjective (the writers express their opinions), written in 1st person. | discussion forum, reader/viewer responses, QA forum |

| Prose/Lyrical | A literary text that consists of paragraphs or verses. A literary text is deemed to have no other practical purpose than to give pleasure to the reader. Often the author pays attention to the aesthetic appearance of the text. It can be considered as art. | lyrics, poem, prayer, joke, novel, short story |

| Other | A text that does not fall under any of the other genre categories. | |

## Performance

### Comparison with other models at in-dataset and cross-dataset experiments

The X-GENRE model was compared with `xlm-roberta-base` classifiers fine-tuned on each of the genre datasets separately, using the X-GENRE schema (see experiments in https://github.com/TajaKuzman/Genre-Datasets-Comparison).

In the in-dataset experiments (trained and tested on splits of the same dataset), it outperforms all of these classifiers except the one trained on the FTD dataset, which has a smaller number of X-GENRE labels.

| Trained on | Micro F1 | Macro F1 |

|:-------------|-----------:|-----------:|

| FTD | 0.843 | 0.851 |

| X-GENRE | 0.797 | 0.794 |

| CORE | 0.778 | 0.627 |

| GINCO | 0.754 | 0.75 |
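Because the tables in this section report both micro and macro F1, a small worked example may help to read them. The sketch below is a plain-Python illustration, not the evaluation code used for the card: the function name `f1_scores` and the toy labels are assumptions. Micro F1 pools all decisions, while macro F1 averages the per-label scores, so a weak result on a rare label lowers macro F1 more than micro F1.

```python
def f1_scores(y_true, y_pred):
    """Return (micro_f1, macro_f1) for single-label classification."""
    labels = sorted(set(y_true) | set(y_pred))
    tp = {label: 0 for label in labels}
    fp = {label: 0 for label in labels}
    fn = {label: 0 for label in labels}
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted label gets a false positive
            fn[truth] += 1  # true label gets a false negative

    def f1(tp_, fp_, fn_):
        denom = 2 * tp_ + fp_ + fn_
        return 2 * tp_ / denom if denom else 0.0

    macro = sum(f1(tp[l], fp[l], fn[l]) for l in labels) / len(labels)
    micro = f1(sum(tp.values()), sum(fp.values()), sum(fn.values()))
    return micro, macro


# Toy data: a frequent label predicted well, a rare label predicted poorly.
y_true = ['News'] * 8 + ['Legal'] * 2
y_pred = ['News'] * 8 + ['News', 'Legal']
micro, macro = f1_scores(y_true, y_pred)
```

In this toy case micro F1 is 0.9, while macro F1 is the mean of 16/17 (News) and 2/3 (Legal), which is about 0.80.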

When applied on test splits of each of the datasets, the classifier performs well:

| Trained on | Tested on | Micro F1 | Macro F1 |

|:-------------|:------------|-----------:|-----------:|

| X-GENRE | CORE | 0.837 | 0.859 |

| X-GENRE | FTD | 0.804 | 0.809 |

| X-GENRE | X-GENRE | 0.797 | 0.794 |

| X-GENRE | X-GENRE-dev | 0.784 | 0.784 |

| X-GENRE | GINCO | 0.749 | 0.758 |

The classifier was compared with other classifiers on 2 additional genre datasets (to which the X-GENRE schema was mapped):

- EN-GINCO: a sample of the English enTenTen20 corpus

- [FinCORE](https://github.com/TurkuNLP/FinCORE): Finnish CORE corpus

| Trained on | Tested on | Micro F1 | Macro F1 |

|:-------------|:------------|-----------:|-----------:|

| X-GENRE | EN-GINCO | 0.688 | 0.691 |

| X-GENRE | FinCORE | 0.674 | 0.581 |

| GINCO | EN-GINCO | 0.632 | 0.502 |

| FTD | EN-GINCO | 0.574 | 0.475 |

| CORE | EN-GINCO | 0.485 | 0.422 |

In cross-dataset and cross-lingual experiments, the X-GENRE classifier, trained on all three datasets, outperformed classifiers that were trained on just one of the datasets.

## Citation

If you use the model, please cite the paper which describes the creation of the X-GENRE dataset and the genre classifier:

```

@article{kuzman2023automatic,

title={Automatic Genre Identification for Robust Enrichment of Massive Text Collections: Investigation of Classification Methods in the Era of Large Language Models},

author={Kuzman, Taja and Mozeti{\v{c}}, Igor and Ljube{\v{s}}i{\'c}, Nikola},

journal={Machine Learning and Knowledge Extraction},

volume={5},

number={3},

pages={1149--1175},

year={2023},

publisher={MDPI}

}

```

| 16,839 | [

[

-0.046234130859375,

-0.047576904296875,

0.023956298828125,

0.0282135009765625,

-0.00849151611328125,

0.024658203125,

-0.005680084228515625,

-0.0288543701171875,

0.041595458984375,

0.044708251953125,

-0.037872314453125,

-0.0556640625,

-0.05908203125,

0.021331... |

dwarfbum/Uber-Realistic-Porn-Merge_URPM | 2023-04-09T12:43:30.000Z | [

"diffusers",

"Uber Realistic Porn Merge",

"URPM",

"license:creativeml-openrail-m",

"region:us"

] | null | dwarfbum | null | null | dwarfbum/Uber-Realistic-Porn-Merge_URPM | 7 | 488 | diffusers | 2023-02-12T13:36:36 | ---

license: creativeml-openrail-m

library_name: diffusers

tags:

- Uber Realistic Porn Merge

- URPM

---

THIS IS NOT MY MODEL

Author: saftle

Link to model: https://civitai.com/models/2661/uber-realistic-porn-merge-urpm

I just uploaded it on huggingface

| 257 | [

[

-0.032745361328125,

-0.034149169921875,

0.0298919677734375,

0.034332275390625,

-0.01219940185546875,

-0.00745391845703125,

0.0258941650390625,

-0.0372314453125,

0.055633544921875,

0.0465087890625,

-0.0728759765625,

-0.009979248046875,

-0.03466796875,

0.00205... |

navyatiwari11/my-pet-cat-nxt | 2023-07-17T11:10:54.000Z | [

"diffusers",

"NxtWave-GenAI-Webinar",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | navyatiwari11 | null | null | navyatiwari11/my-pet-cat-nxt | 0 | 488 | diffusers | 2023-07-17T11:04:50 | ---

license: creativeml-openrail-m

tags:

- NxtWave-GenAI-Webinar

- text-to-image

- stable-diffusion

---

### My-Pet-Cat-nxt Dreambooth model trained by navyatiwari11 following the "Build your own Gen AI model" session by NxtWave.

Project Submission Code: OPJU100

Sample pictures of this concept:

| 412 | [

[

-0.055877685546875,

-0.0205230712890625,

0.0186309814453125,

0.017181396484375,

-0.024749755859375,

0.0521240234375,

0.034423828125,

-0.022216796875,

0.06573486328125,

0.03924560546875,

-0.036041259765625,

0.0002732276916503906,

-0.00875091552734375,

0.01333... |

TheBloke/Platypus2-70B-Instruct-AWQ | 2023-09-27T12:49:59.000Z | [

"transformers",

"safetensors",

"llama",

"text-generation",

"en",

"dataset:garage-bAInd/Open-Platypus",

"dataset:Open-Orca/OpenOrca",

"arxiv:2308.07317",

"arxiv:2307.09288",

"license:cc-by-nc-4.0",

"text-generation-inference",

"region:us"

] | text-generation | TheBloke | null | null | TheBloke/Platypus2-70B-Instruct-AWQ | 0 | 488 | transformers | 2023-09-19T01:31:29 | ---

language:

- en

license: cc-by-nc-4.0

datasets:

- garage-bAInd/Open-Platypus

- Open-Orca/OpenOrca

model_name: Platypus2 70B Instruct

base_model: garage-bAInd/Platypus2-70B-instruct

inference: false

model_creator: garage-bAInd

model_type: llama

prompt_template: 'Below is an instruction that describes a task. Write a response

that appropriately completes the request.

### Instruction:

{prompt}

### Response:

'

quantized_by: TheBloke

---

<!-- header start -->

<!-- 200823 -->

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/theblokeai">Chat & support: TheBloke's Discord server</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

</div>

</div>

<div style="text-align:center; margin-top: 0em; margin-bottom: 0em"><p style="margin-top: 0.25em; margin-bottom: 0em;">TheBloke's LLM work is generously supported by a grant from <a href="https://a16z.com">andreessen horowitz (a16z)</a></p></div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

<!-- header end -->

# Platypus2 70B Instruct - AWQ

- Model creator: [garage-bAInd](https://huggingface.co/garage-bAInd)

- Original model: [Platypus2 70B Instruct](https://huggingface.co/garage-bAInd/Platypus2-70B-instruct)

<!-- description start -->

## Description

This repo contains AWQ model files for [garage-bAInd's Platypus2 70B Instruct](https://huggingface.co/garage-bAInd/Platypus2-70B-instruct).

### About AWQ

AWQ is an efficient, accurate and blazing-fast low-bit weight quantization method, currently supporting 4-bit quantization. Compared to GPTQ, it offers faster Transformers-based inference.

It is also now supported by the continuous batching server [vLLM](https://github.com/vllm-project/vllm), allowing use of AWQ models for high-throughput concurrent inference in multi-user server scenarios. Note that, at the time of writing, overall throughput is still lower than running vLLM with unquantised models. However, using AWQ makes it possible to use much smaller GPUs, which can lead to easier deployment and overall cost savings. For example, a 70B model can be run on 1 x 48GB GPU instead of 2 x 80GB.
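The GPU sizing claim above can be checked with rough arithmetic. The sketch below is a back-of-the-envelope estimate only: it assumes a round 70 billion parameters, counts the weights alone, and ignores activations, the KV cache, and quantization metadata such as per-group scales. The function name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Approximate memory for the weights alone, in GB (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 70e9  # assumed round figure for a "70B" model

fp16_gb = weight_memory_gb(N_PARAMS, 16)  # unquantised half precision
awq4_gb = weight_memory_gb(N_PARAMS, 4)   # 4-bit quantised weights

print(f"fp16: {fp16_gb:.0f} GB, 4-bit: {awq4_gb:.0f} GB")
# prints "fp16: 140 GB, 4-bit: 35 GB"
```

Under these assumptions the fp16 weights need more than one 80GB GPU, while the 4-bit weights fit in 48GB with some room left for inference state. The 36.61 GB file size listed for this repo is somewhat larger than the 35 GB estimate, presumably because of quantization metadata and layers kept in higher precision.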

<!-- description end -->

<!-- repositories-available start -->

## Repositories available

* [AWQ model(s) for GPU inference.](https://huggingface.co/TheBloke/Platypus2-70B-Instruct-AWQ)

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/Platypus2-70B-Instruct-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference](https://huggingface.co/TheBloke/Platypus2-70B-Instruct-GGUF)

* [garage-bAInd's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/garage-bAInd/Platypus2-70B-instruct)

<!-- repositories-available end -->

<!-- prompt-template start -->

## Prompt template: Alpaca

```

Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

```

<!-- prompt-template end -->
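As a convenience, the template can be wrapped in a small helper. This is a minimal sketch: the names `ALPACA_TEMPLATE` and `build_prompt` are hypothetical, and the exact placement of blank lines between the sections is an assumption based on the usual Alpaca layout.

```python
# Assumed layout: blank lines between the preamble, instruction and response parts.
ALPACA_TEMPLATE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{prompt}\n\n"
    "### Response:\n"
)


def build_prompt(instruction: str) -> str:
    """Fill the Alpaca-style template with a single user instruction."""
    return ALPACA_TEMPLATE.format(prompt=instruction.strip())


example_prompt = build_prompt("  Tell me about AI ")
```

The resulting string can be passed to the model as the full prompt, and the model's reply is expected to follow the `### Response:` line.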

<!-- licensing start -->

## Licensing

The creator of the source model has listed its license as `cc-by-nc-4.0`, and this quantization has therefore used that same license.

As this model is based on Llama 2, it is also subject to the Meta Llama 2 license terms, and the license files for that are additionally included. It should therefore be considered as being claimed to be licensed under both licenses. I contacted Hugging Face for clarification on dual licensing but they do not yet have an official position. Should this change, or should Meta provide any feedback on this situation, I will update this section accordingly.

In the meantime, any questions regarding licensing, and in particular how these two licenses might interact, should be directed to the original model repository: [garage-bAInd's Platypus2 70B Instruct](https://huggingface.co/garage-bAInd/Platypus2-70B-instruct).

<!-- licensing end -->

<!-- README_AWQ.md-provided-files start -->

## Provided files and AWQ parameters

For my first release of AWQ models, I am releasing 128g models only. I will consider adding 32g as well if there is interest, and once I have done perplexity and evaluation comparisons, but at this time 32g models are still not fully tested with AutoAWQ and vLLM.

Models are released as sharded safetensors files.

| Branch | Bits | GS | AWQ Dataset | Seq Len | Size |

| ------ | ---- | -- | ----------- | ------- | ---- |

| [main](https://huggingface.co/TheBloke/Platypus2-70B-Instruct-AWQ/tree/main) | 4 | 128 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 36.61 GB |

<!-- README_AWQ.md-provided-files end -->

<!-- README_AWQ.md-use-from-vllm start -->

## Serving this model from vLLM

Documentation on installing and using vLLM [can be found here](https://vllm.readthedocs.io/en/latest/).

- When using vLLM as a server, pass the `--quantization awq` parameter, for example:

```shell

python3 -m vllm.entrypoints.api_server --model TheBloke/Platypus2-70B-Instruct-AWQ --quantization awq

```

When using vLLM from Python code, pass the `quantization=awq` parameter, for example:

```python

from vllm import LLM, SamplingParams

prompts = [

"Hello, my name is",

"The president of the United States is",

"The capital of France is",

"The future of AI is",

]

sampling_params = SamplingParams(temperature=0.8, top_p=0.95)

llm = LLM(model="TheBloke/Platypus2-70B-Instruct-AWQ", quantization="awq")

outputs = llm.generate(prompts, sampling_params)

# Print the outputs.

for output in outputs:

    prompt = output.prompt

    generated_text = output.outputs[0].text

    print(f"Prompt: {prompt!r}, Generated text: {generated_text!r}")

```

<!-- README_AWQ.md-use-from-vllm end -->

<!-- README_AWQ.md-use-from-python start -->

## How to use this AWQ model from Python code

### Install the necessary packages

Requires: [AutoAWQ](https://github.com/casper-hansen/AutoAWQ) 0.0.2 or later

```shell

pip3 install autoawq

```

If you have problems installing [AutoAWQ](https://github.com/casper-hansen/AutoAWQ) using the pre-built wheels, install it from source instead:

```shell

pip3 uninstall -y autoawq

git clone https://github.com/casper-hansen/AutoAWQ

cd AutoAWQ

pip3 install .

```

### You can then try the following example code

```python

from awq import AutoAWQForCausalLM

from transformers import AutoTokenizer

model_name_or_path = "TheBloke/Platypus2-70B-Instruct-AWQ"

# Load model

model = AutoAWQForCausalLM.from_quantized(model_name_or_path, fuse_layers=True,

trust_remote_code=False, safetensors=True)

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, trust_remote_code=False)

prompt = "Tell me about AI"

prompt_template=f'''Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

'''

print("\n\n*** Generate:")

tokens = tokenizer(

prompt_template,

return_tensors='pt'

).input_ids.cuda()

# Generate output

generation_output = model.generate(

tokens,

do_sample=True,

temperature=0.7,

top_p=0.95,

top_k=40,

max_new_tokens=512

)

print("Output: ", tokenizer.decode(generation_output[0]))

# Inference can also be done using transformers' pipeline

from transformers import pipeline

print("*** Pipeline:")

pipe = pipeline(

"text-generation",

model=model,

tokenizer=tokenizer,

max_new_tokens=512,

do_sample=True,

temperature=0.7,

top_p=0.95,

top_k=40,

repetition_penalty=1.1

)

print(pipe(prompt_template)[0]['generated_text'])

```

<!-- README_AWQ.md-use-from-python end -->

<!-- README_AWQ.md-compatibility start -->

## Compatibility

The files provided are tested to work with [AutoAWQ](https://github.com/casper-hansen/AutoAWQ), and [vLLM](https://github.com/vllm-project/vllm).

[Huggingface Text Generation Inference (TGI)](https://github.com/huggingface/text-generation-inference) is not yet compatible with AWQ, but a PR is open which should bring support soon: [TGI PR #781](https://github.com/huggingface/text-generation-inference/issues/781).

<!-- README_AWQ.md-compatibility end -->

<!-- footer start -->

<!-- 200823 -->

## Discord

For further support, and discussions on these models and AI in general, join us at:

[TheBloke AI's Discord server](https://discord.gg/theblokeai)

## Thanks, and how to contribute

Thanks to the [chirper.ai](https://chirper.ai) team!

Thanks to Clay from [gpus.llm-utils.org](llm-utils)!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donaters will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

* Patreon: https://patreon.com/TheBlokeAI

* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Special thanks to**: Aemon Algiz.

**Patreon special mentions**: Alicia Loh, Stephen Murray, K, Ajan Kanaga, RoA, Magnesian, Deo Leter, Olakabola, Eugene Pentland, zynix, Deep Realms, Raymond Fosdick, Elijah Stavena, Iucharbius, Erik Bjäreholt, Luis Javier Navarrete Lozano, Nicholas, theTransient, John Detwiler, alfie_i, knownsqashed, Mano Prime, Willem Michiel, Enrico Ros, LangChain4j, OG, Michael Dempsey, Pierre Kircher, Pedro Madruga, James Bentley, Thomas Belote, Luke @flexchar, Leonard Tan, Johann-Peter Hartmann, Illia Dulskyi, Fen Risland, Chadd, S_X, Jeff Scroggin, Ken Nordquist, Sean Connelly, Artur Olbinski, Swaroop Kallakuri, Jack West, Ai Maven, David Ziegler, Russ Johnson, transmissions 11, John Villwock, Alps Aficionado, Clay Pascal, Viktor Bowallius, Subspace Studios, Rainer Wilmers, Trenton Dambrowitz, vamX, Michael Levine, 준교 김, Brandon Frisco, Kalila, Trailburnt, Randy H, Talal Aujan, Nathan Dryer, Vadim, 阿明, ReadyPlayerEmma, Tiffany J. Kim, George Stoitzev, Spencer Kim, Jerry Meng, Gabriel Tamborski, Cory Kujawski, Jeffrey Morgan, Spiking Neurons AB, Edmond Seymore, Alexandros Triantafyllidis, Lone Striker, Cap'n Zoog, Nikolai Manek, danny, ya boyyy, Derek Yates, usrbinkat, Mandus, TL, Nathan LeClaire, subjectnull, Imad Khwaja, webtim, Raven Klaugh, Asp the Wyvern, Gabriel Puliatti, Caitlyn Gatomon, Joseph William Delisle, Jonathan Leane, Luke Pendergrass, SuperWojo, Sebastain Graf, Will Dee, Fred von Graf, Andrey, Dan Guido, Daniel P. Andersen, Nitin Borwankar, Elle, Vitor Caleffi, biorpg, jjj, NimbleBox.ai, Pieter, Matthew Berman, terasurfer, Michael Davis, Alex, Stanislav Ovsiannikov

Thank you to all my generous patrons and donaters!

And thank you again to a16z for their generous grant.

<!-- footer end -->

# Original model card: garage-bAInd's Platypus2 70B Instruct

# Platypus2-70B-instruct

Platypus2-70B-instruct is a merge of [`garage-bAInd/Platypus2-70B`](https://huggingface.co/garage-bAInd/Platypus2-70B) and [`upstage/Llama-2-70b-instruct-v2`](https://huggingface.co/upstage/Llama-2-70b-instruct-v2).

### Benchmark Metrics

| Metric | Value |

|-----------------------|-------|

| MMLU (5-shot) | 70.48 |

| ARC (25-shot) | 71.84 |

| HellaSwag (10-shot) | 87.94 |

| TruthfulQA (0-shot) | 62.26 |

| Avg. | 73.13 |
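The average in the last row can be reproduced from the four benchmark scores. The snippet below is just a sanity check of the table's arithmetic, using the values listed above.

```python
# Benchmark scores copied from the table above.
scores = {
    "MMLU (5-shot)": 70.48,
    "ARC (25-shot)": 71.84,
    "HellaSwag (10-shot)": 87.94,
    "TruthfulQA (0-shot)": 62.26,
}

# Unweighted mean over the four benchmarks, rounded to two decimals.
average = round(sum(scores.values()) / len(scores), 2)
print(average)  # prints 73.13
```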

We use state-of-the-art [Language Model Evaluation Harness](https://github.com/EleutherAI/lm-evaluation-harness) to run the benchmark tests above, using the same version as the HuggingFace LLM Leaderboard. Please see below for detailed instructions on reproducing benchmark results.

### Model Details

* **Trained by**: **Platypus2-70B** trained by Cole Hunter & Ariel Lee; **Llama-2-70b-instruct** trained by upstageAI

* **Model type:** **Platypus2-70B-instruct** is an auto-regressive language model based on the LLaMA 2 transformer architecture.

* **Language(s)**: English

* **License**: Non-Commercial Creative Commons license ([CC BY-NC-4.0](https://creativecommons.org/licenses/by-nc/4.0/))

### Prompt Template

```

### Instruction:

<prompt> (without the <>)

### Response:

```

### Training Dataset

`garage-bAInd/Platypus2-70B` was trained using the STEM- and logic-based dataset [`garage-bAInd/Open-Platypus`](https://huggingface.co/datasets/garage-bAInd/Open-Platypus).

Please see our [paper](https://arxiv.org/abs/2308.07317) and [project webpage](https://platypus-llm.github.io) for additional information.

### Training Procedure

`garage-bAInd/Platypus2-70B` was instruction fine-tuned using LoRA on 8 A100 80GB. For training details and inference instructions please see the [Platypus](https://github.com/arielnlee/Platypus) GitHub repo.

### Reproducing Evaluation Results

Install LM Evaluation Harness:

```

# clone repository

git clone https://github.com/EleutherAI/lm-evaluation-harness.git

# change to repo directory

cd lm-evaluation-harness

# check out the correct commit

git checkout b281b0921b636bc36ad05c0b0b0763bd6dd43463

# install

pip install -e .

```

Each task was evaluated on a single A100 80GB GPU.

ARC:

```

python main.py --model hf-causal-experimental --model_args pretrained=garage-bAInd/Platypus2-70B-instruct --tasks arc_challenge --batch_size 1 --no_cache --write_out --output_path results/Platypus2-70B-instruct/arc_challenge_25shot.json --device cuda --num_fewshot 25

```

HellaSwag:

```

python main.py --model hf-causal-experimental --model_args pretrained=garage-bAInd/Platypus2-70B-instruct --tasks hellaswag --batch_size 1 --no_cache --write_out --output_path results/Platypus2-70B-instruct/hellaswag_10shot.json --device cuda --num_fewshot 10

```

MMLU:

```

python main.py --model hf-causal-experimental --model_args pretrained=garage-bAInd/Platypus2-70B-instruct --tasks hendrycksTest-* --batch_size 1 --no_cache --write_out --output_path results/Platypus2-70B-instruct/mmlu_5shot.json --device cuda --num_fewshot 5

```

TruthfulQA:

```

python main.py --model hf-causal-experimental --model_args pretrained=garage-bAInd/Platypus2-70B-instruct --tasks truthfulqa_mc --batch_size 1 --no_cache --write_out --output_path results/Platypus2-70B-instruct/truthfulqa_0shot.json --device cuda

```

### Limitations and bias

Llama 2 and fine-tuned variants are a new technology that carries risks with use. Testing conducted to date has been in English, and has not covered, nor could it cover all scenarios. For these reasons, as with all LLMs, Llama 2 and any fine-tuned variant's potential outputs cannot be predicted in advance, and the model may in some instances produce inaccurate, biased or other objectionable responses to user prompts. Therefore, before deploying any applications of Llama 2 variants, developers should perform safety testing and tuning tailored to their specific applications of the model.

Please see the Responsible Use Guide available at https://ai.meta.com/llama/responsible-use-guide/

### Citations

```bibtex

@article{platypus2023,

title={Platypus: Quick, Cheap, and Powerful Refinement of LLMs},

author={Ariel N. Lee and Cole J. Hunter and Nataniel Ruiz},

journal={arXiv preprint arxiv:2308.07317},

year={2023}

}

```

```bibtex

@misc{touvron2023llama,

title={Llama 2: Open Foundation and Fine-Tuned Chat Models},

author={Hugo Touvron and Louis Martin and Kevin Stone and Peter Albert and Amjad Almahairi and Yasmine Babaei and Nikolay Bashlykov and others},

year={2023},

eprint={2307.09288},

archivePrefix={arXiv},

}

```

```bibtex

@inproceedings{

hu2022lora,

title={Lo{RA}: Low-Rank Adaptation of Large Language Models},

author={Edward J Hu and Yelong Shen and Phillip Wallis and Zeyuan Allen-Zhu and Yuanzhi Li and Shean Wang and Lu Wang and Weizhu Chen},

booktitle={International Conference on Learning Representations},

year={2022},

url={https://openreview.net/forum?id=nZeVKeeFYf9}

}

```

| 16,604 | [

[

-0.036895751953125,

-0.052459716796875,

0.0241546630859375,

0.0080108642578125,

-0.0255889892578125,

-0.006683349609375,

0.0035266876220703125,

-0.033416748046875,

-0.0036296844482421875,

0.0289154052734375,

-0.048126220703125,

-0.033111572265625,

-0.01988220214... |

HooshvareLab/bert-fa-base-uncased-ner-peyma | 2021-05-18T20:55:10.000Z | [

"transformers",

"pytorch",

"tf",

"jax",

"bert",

"token-classification",

"fa",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | token-classification | HooshvareLab | null | null | HooshvareLab/bert-fa-base-uncased-ner-peyma | 2 | 487 | transformers | 2022-03-02T23:29:04 | ---

language: fa

license: apache-2.0

---

# ParsBERT (v2.0)

A Transformer-based Model for Persian Language Understanding

We reconstructed the vocabulary and fine-tuned the ParsBERT v1.1 on the new Persian corpora in order to provide some functionalities for using ParsBERT in other scopes!

Please follow the [ParsBERT](https://github.com/hooshvare/parsbert) repo for the latest information about previous and current models.

## Persian NER [ARMAN, PEYMA]

This task aims to extract named entities in the text, such as names, and label them with appropriate `NER` classes such as locations, organizations, etc. The datasets used for this task contain sentences annotated in the `IOB` format. In this format, tokens that are not part of an entity are tagged as `"O"`, the `"B"` tag corresponds to the first word of an entity, and the `"I"` tag corresponds to the remaining words of the same entity. Both `"B"` and `"I"` tags are followed by a hyphen (or underscore) and then the entity category. The NER task is therefore a multi-class token classification problem that labels each token of a raw input text. There are two primary datasets used in Persian NER: `ARMAN` and `PEYMA`.
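To make the `IOB` scheme concrete, the following sketch groups tagged tokens back into entity spans. It is an illustration only: the function name `iob_to_spans`, the English example tokens, and the category names `PER` and `ORG` are assumptions and may not match the exact label strings used by this model.

```python
import re


def iob_to_spans(tokens, tags):
    """Group IOB-tagged tokens into (entity_type, text) spans."""
    spans = []
    current_type, current_tokens = None, []

    def flush():
        # Close the entity that is currently being collected, if any.
        nonlocal current_type, current_tokens
        if current_tokens:
            spans.append((current_type, " ".join(current_tokens)))
        current_type, current_tokens = None, []

    for token, tag in zip(tokens, tags):
        if tag == "O":
            flush()
            continue
        # Tags look like "B-PER" or "B_PER": prefix, separator, category.
        prefix, entity_type = re.split(r"[-_]", tag, maxsplit=1)
        if prefix == "B" or entity_type != current_type:
            flush()
            current_type = entity_type
        current_tokens.append(token)
    flush()
    return spans


tokens = ["Ali", "Karimi", "visited", "Tehran", "University", "yesterday"]
tags = ["B_PER", "I_PER", "O", "B_ORG", "I_ORG", "O"]
entities = iob_to_spans(tokens, tags)
# entities == [("PER", "Ali Karimi"), ("ORG", "Tehran University")]
```

An `I` tag that appears without a preceding `B` of the same category is treated here as the start of a new entity, which is one common way to handle imperfect model output.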

### PEYMA

The PEYMA dataset includes 7,145 sentences with a total of 302,530 tokens, of which 41,148 tokens are tagged with seven different classes.

1. Organization

2. Money

3. Location

4. Date

5. Time

6. Person

7. Percent

| Label | # |

|:------------:|:-----:|

| Organization | 16964 |

| Money | 2037 |

| Location | 8782 |

| Date | 4259 |

| Time | 732 |

| Person | 7675 |

| Percent | 699 |
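The per-label counts in the table can be cross-checked against the totals stated above. The snippet below only restates numbers from this card: it sums the counts and computes the share of tagged tokens among all tokens.

```python
# Tagged-token counts per class, copied from the table above.
label_counts = {
    "Organization": 16964,
    "Money": 2037,
    "Location": 8782,
    "Date": 4259,
    "Time": 732,
    "Person": 7675,
    "Percent": 699,
}
TOTAL_TOKENS = 302_530  # total tokens in PEYMA, as stated in this card

tagged_total = sum(label_counts.values())
tagged_share = tagged_total / TOTAL_TOKENS

print(tagged_total)           # prints 41148
print(f"{tagged_share:.1%}")  # prints 13.6%
```

The sum matches the 41,148 tagged tokens reported for the dataset, and Organization is by far the most frequent class.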

**Download**

You can download the dataset from [here](http://nsurl.org/tasks/task-7-named-entity-recognition-ner-for-farsi/)

## Results

The following table summarizes the F1 score obtained by ParsBERT as compared to other models and architectures.

| Dataset | ParsBERT v2 | ParsBERT v1 | mBERT | MorphoBERT | Beheshti-NER | LSTM-CRF | Rule-Based CRF | BiLSTM-CRF |

|---------|-------------|-------------|-------|------------|--------------|----------|----------------|------------|

| PEYMA | 93.40* | 93.10 | 86.64 | - | 90.59 | - | 84.00 | - |

## How to use :hugs:

| Notebook | Description | |

|:----------|:-------------|------:|

| [How to use Pipelines](https://github.com/hooshvare/parsbert-ner/blob/master/persian-ner-pipeline.ipynb) | Simple and efficient way to use state-of-the-art models on downstream tasks through transformers | [Open in Colab](https://colab.research.google.com/github/hooshvare/parsbert-ner/blob/master/persian-ner-pipeline.ipynb) |
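For readers who want a quick start without opening the notebook, the following is a rough sketch of pipeline usage, not a copy of the notebook's code. It assumes the checkpoint name from this card, `HooshvareLab/bert-fa-base-uncased-ner-peyma`, loads with the standard `transformers` token-classification pipeline; the helper names `build_ner_pipeline` and `format_entities` and the `min_score` threshold are hypothetical.

```python
MODEL_ID = "HooshvareLab/bert-fa-base-uncased-ner-peyma"


def build_ner_pipeline(model_id=MODEL_ID):
    """Create a token-classification pipeline (downloads the model on first use)."""
    # Imported lazily so format_entities can be used without transformers installed.
    from transformers import pipeline

    # aggregation_strategy="simple" merges word pieces into whole entity groups.
    return pipeline(
        "token-classification",
        model=model_id,
        aggregation_strategy="simple",
    )


def format_entities(results, min_score=0.5):
    """Keep (label, text) pairs whose confidence is at least min_score."""
    return [
        (r["entity_group"], r["word"])
        for r in results
        if r["score"] >= min_score
    ]
```

A typical call would be `ner = build_ner_pipeline()` followed by `format_entities(ner(text))` on a Persian sentence; the exact label strings depend on the model's configuration.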

### BibTeX entry and citation info

Please cite in publications as the following:

```bibtex

@article{ParsBERT,

title={ParsBERT: Transformer-based Model for Persian Language Understanding},

author={Mehrdad Farahani and Mohammad Gharachorloo and Marzieh Farahani and Mohammad Manthouri},

journal={ArXiv},

year={2020},

volume={abs/2005.12515}

}

```

## Questions?

Post a GitHub issue on the [ParsBERT Issues](https://github.com/hooshvare/parsbert/issues) repo. | 3,217 | [

[

-0.0357666015625,

-0.05194091796875,

0.0212554931640625,

0.015869140625,

-0.0227813720703125,

0.005382537841796875,

-0.0290679931640625,

-0.01477813720703125,

0.01226043701171875,

0.040679931640625,

-0.0233001708984375,

-0.04180908203125,

-0.04010009765625,

... |

sentence-transformers/roberta-base-nli-stsb-mean-tokens | 2022-06-15T20:49:42.000Z | [

"sentence-transformers",

"pytorch",

"tf",

"jax",

"roberta",

"feature-extraction",

"sentence-similarity",

"transformers",

"arxiv:1908.10084",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | sentence-similarity | sentence-transformers | null | null | sentence-transformers/roberta-base-nli-stsb-mean-tokens | 0 | 487 | sentence-transformers | 2022-03-02T23:29:05 | ---

pipeline_tag: sentence-similarity

license: apache-2.0

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

---

**⚠️ This model is deprecated. Please don't use it as it produces sentence embeddings of low quality. You can find recommended sentence embedding models here: [SBERT.net - Pretrained Models](https://www.sbert.net/docs/pretrained_models.html)**

# sentence-transformers/roberta-base-nli-stsb-mean-tokens

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('sentence-transformers/roberta-base-nli-stsb-mean-tokens')

embeddings = model.encode(sentences)

print(embeddings)

```
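Semantic search and clustering typically compare embeddings by cosine similarity. The following dependency-free sketch shows the computation on plain Python lists; the function name `cosine_similarity` and the toy vectors are assumptions for illustration. In practice, `sentence_transformers.util.cos_sim` performs the same comparison on tensors.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        # Convention chosen here: a zero vector is similar to nothing.
        return 0.0
    return dot / (norm_a * norm_b)


# Toy 2-dimensional vectors standing in for 768-dimensional embeddings.
same_direction = cosine_similarity([1.0, 2.0], [2.0, 4.0])
orthogonal = cosine_similarity([1.0, 0.0], [0.0, 1.0])
```

Scores close to 1 indicate sentences the model considers similar, and scores near 0 indicate unrelated ones.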

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: first, pass your input through the transformer model, then apply the right pooling operation on top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel

import torch

#Mean Pooling - Take attention mask into account for correct averaging

def mean_pooling(model_output, attention_mask):

token_embeddings = model_output[0] #First element of model_output contains all token embeddings

input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()

return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)

# Sentences we want sentence embeddings for

sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub

tokenizer = AutoTokenizer.from_pretrained('sentence-transformers/roberta-base-nli-stsb-mean-tokens')

model = AutoModel.from_pretrained('sentence-transformers/roberta-base-nli-stsb-mean-tokens')

# Tokenize sentences

encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings

with torch.no_grad():

model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.

sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")

print(sentence_embeddings)

```
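To see why the attention mask matters when averaging, here is a small illustration of masked mean pooling for a single sentence using plain Python lists. It mirrors the idea of the `mean_pooling` function but is not the library's implementation; the name `masked_mean` and the toy values are assumptions.

```python
def masked_mean(token_embeddings, attention_mask):
    """Average the token vectors of one sentence, skipping padding (mask == 0)."""
    dim = len(token_embeddings[0])
    summed = [0.0] * dim
    for vector, keep in zip(token_embeddings, attention_mask):
        if keep:
            for i, value in enumerate(vector):
                summed[i] += value
    # Guard against division by zero for an all-padding input.
    count = max(sum(attention_mask), 1e-9)
    return [s / count for s in summed]


# Two real tokens followed by one padding token with arbitrary values.
token_embeddings = [[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]]
attention_mask = [1, 1, 0]
pooled = masked_mean(token_embeddings, attention_mask)
```

Without the mask, the padding vector would be averaged in and distort the sentence embedding, which is why the padded batch is pooled with `attention_mask` rather than a plain mean.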

## Evaluation Results

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=sentence-transformers/roberta-base-nli-stsb-mean-tokens)

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 128, 'do_lower_case': True}) with Transformer model: RobertaModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

)

```

## Citing & Authors

This model was trained by [sentence-transformers](https://www.sbert.net/).

If you find this model helpful, feel free to cite our publication [Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks](https://arxiv.org/abs/1908.10084):

```bibtex

@inproceedings{reimers-2019-sentence-bert,

title = "Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks",

author = "Reimers, Nils and Gurevych, Iryna",

booktitle = "Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing",

month = "11",

year = "2019",

publisher = "Association for Computational Linguistics",

url = "http://arxiv.org/abs/1908.10084",

}

``` | 3,991 | [

[

-0.01556396484375,

-0.059326171875,

0.0201263427734375,

0.029937744140625,

-0.0302734375,

-0.031982421875,

-0.0253143310546875,

-0.007274627685546875,

0.0160675048828125,

0.0286407470703125,

-0.041107177734375,

-0.035614013671875,

-0.055694580078125,

0.00841... |

thepowefuldeez/sd21-controlnet-canny | 2023-03-08T17:31:44.000Z | [

"diffusers",

"license:openrail",

"diffusers:ControlNetModel",

"region:us"

] | null | thepowefuldeez | null | null | thepowefuldeez/sd21-controlnet-canny | 6 | 487 | diffusers | 2023-03-08T16:23:58 | ---

license: openrail

---

This is the Canny ControlNet for SD 2.1-base from https://huggingface.co/thibaud/controlnet-sd21/, converted to diffusers format.

Only the ControlNet weights are saved.

Usage:

```python

import torch

from diffusers import StableDiffusionControlNetPipeline, ControlNetModel, DEISMultistepScheduler

import cv2

from PIL import Image

import numpy as np

pipe = StableDiffusionControlNetPipeline.from_pretrained(

"stabilityai/stable-diffusion-2-1-base",

safety_checker=None,

# revision='fp16',

# torch_dtype=torch.float16,

controlnet=ControlNetModel.from_pretrained("thepowefuldeez/sd21-controlnet-canny")

).to('cuda')

pipe.scheduler = DEISMultistepScheduler.from_config(pipe.scheduler.config)

image = np.array(Image.open("10.png"))

low_threshold = 100

high_threshold = 200

image = cv2.Canny(image, low_threshold, high_threshold)

image = image[:, :, None]

image = np.concatenate([image, image, image], axis=2)

canny_image = Image.fromarray(image)

im = pipe(

"beautiful woman", image=canny_image, num_inference_steps=30,

negative_prompt="ugly, blurry, bad, deformed, bad anatomy",

generator=torch.manual_seed(42)

).images[0]

``` | 1,138 | [

[

-0.007659912109375,

-0.00469970703125,

0.0015773773193359375,

0.041229248046875,

-0.0333251953125,

-0.051788330078125,

-0.00010031461715698242,

0.016876220703125,

0.0224761962890625,

0.06622314453125,

-0.0313720703125,

-0.028045654296875,

-0.054779052734375,

... |

google/pix2struct-infographics-vqa-large | 2023-05-19T10:04:46.000Z | [

"transformers",

"pytorch",

"pix2struct",

"text2text-generation",

"visual-question-answering",

"en",

"fr",

"ro",

"de",

"multilingual",

"arxiv:2210.03347",

"license:apache-2.0",

"autotrain_compatible",

"has_space",

"region:us"

] | visual-question-answering | google | null | null | google/pix2struct-infographics-vqa-large | 1 | 487 | transformers | 2023-03-21T10:51:39 | ---

language:

- en

- fr

- ro

- de

- multilingual

pipeline_tag: visual-question-answering

inference: false

license: apache-2.0

---