modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

dwancin/memoji | 2023-08-19T10:42:01.000Z | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:mit",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | dwancin | null | null | dwancin/memoji | 1 | 405 | diffusers | 2023-06-29T14:12:41 | ---

license: mit

library_name: diffusers

pipeline_tag: text-to-image

tags:

- stable-diffusion

- stable-diffusion-diffusers

inference: true

widget:

- text: >-

aimoji, a boy

example_title: boy

- text: >-

aimoji, a girl

example_title: girl

---

# Memoji Diffusion

### Model description

A Stable Diffusion model that have been trained on images of Apple's animated Memoji avatars.

## Trigger word

- aimoji

## Examples

| 468 | [

[

-0.0100555419921875,

-0.07373046875,

0.0330810546875,

0.0171051025390625,

-0.0212860107421875,

-0.00475311279296875,

0.00994110107421875,

0.0137939453125,

0.027435302734375,

0.036468505859375,

-0.03973388671875,

-0.028594970703125,

-0.053558349609375,

-0.029... |

DunnBC22/opus-mt-zh-en-Chinese_to_English | 2023-08-23T02:02:32.000Z | [

"transformers",

"pytorch",

"tensorboard",

"marian",

"text2text-generation",

"generated_from_trainer",

"translation",

"en",

"zh",

"dataset:GEM/wiki_lingua",

"license:cc-by-4.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | translation | DunnBC22 | null | null | DunnBC22/opus-mt-zh-en-Chinese_to_English | 3 | 405 | transformers | 2023-08-21T21:35:52 | ---

license: cc-by-4.0

base_model: Helsinki-NLP/opus-mt-zh-en

tags:

- generated_from_trainer

model-index:

- name: opus-mt-zh-en-Chinese_to_English

results: []

datasets:

- GEM/wiki_lingua

language:

- en

- zh

metrics:

- bleu

- rouge

pipeline_tag: translation

---

# opus-mt-zh-en-Chinese_to_English

This model is a fine-tuned version of [Helsinki-NLP/opus-mt-zh-en](https://huggingface.co/Helsinki-NLP/opus-mt-zh-en).

## Model description

For more information on how it was created, check out the following link: https://github.com/DunnBC22/NLP_Projects/blob/main/Machine%20Translation/Chinese%20to%20English%20Translation/Chinese_to_English_Translation.ipynb

## Intended uses & limitations

This model is intended to demonstrate my ability to solve a complex problem using technology.

## Training and evaluation data

Dataset Source: https://huggingface.co/datasets/GEM/wiki_lingua

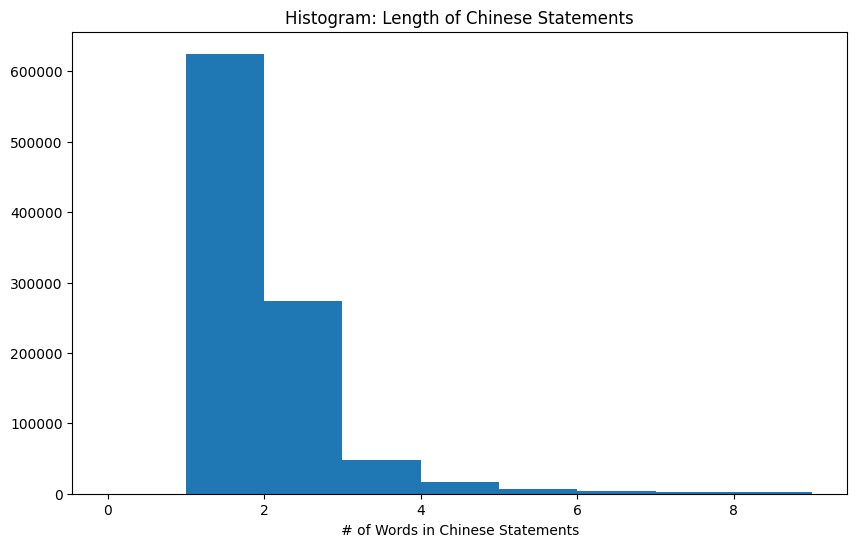

__Chinese Text Length__

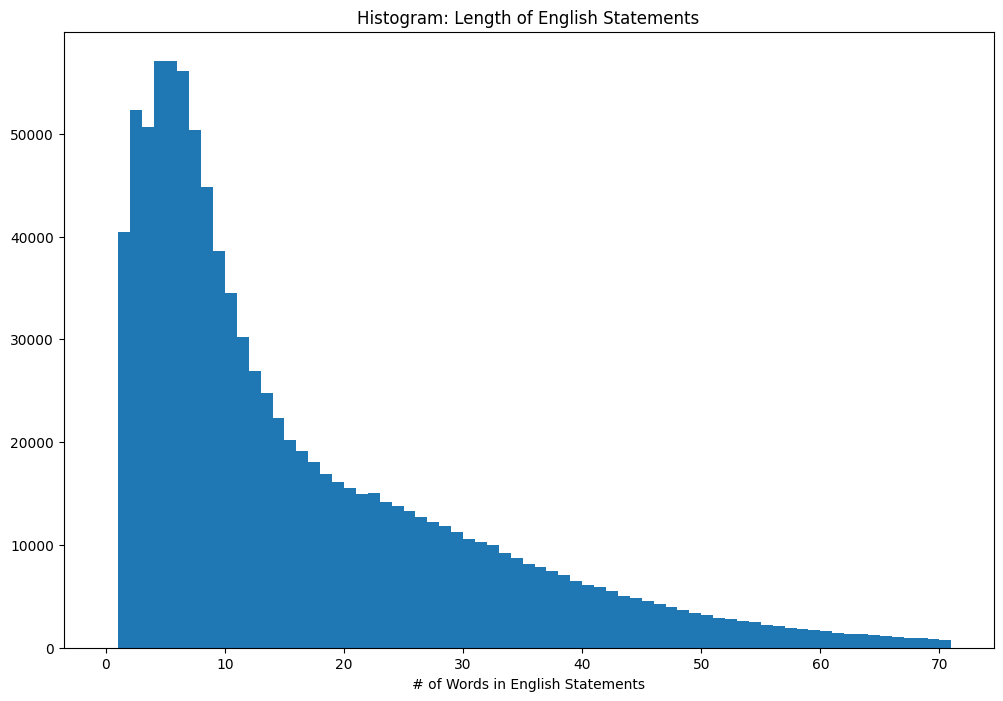

__English Text Length__

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

### Training results

| Epoch | Validation Loss | Bleu | Rouge1 | Rouge2 | RougeL | RougeLsum | Avg. Prediction Lengths |

|:-----:|:-----:|:-----:|:-----:|:-----:|:-----:|:-----:|:-----:|

| 1.0 | 1.0113 | 45.2808 | 0.6201 | 0.4198 | 0.5927 | 0.5927 | 24.5581 |

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.4

- Tokenizers 0.13.3 | 2,052 | [

[

-0.0185394287109375,

-0.04364013671875,

0.0185546875,

0.0247802734375,

-0.02587890625,

-0.01800537109375,

-0.0271148681640625,

-0.0318603515625,

0.0218963623046875,

0.0272216796875,

-0.051544189453125,

-0.041015625,

-0.036712646484375,

0.005397796630859375,

... |

facebook/hubert-xlarge-ls960-ft | 2023-06-27T18:52:32.000Z | [

"transformers",

"pytorch",

"tf",

"safetensors",

"hubert",

"automatic-speech-recognition",

"speech",

"audio",

"hf-asr-leaderboard",

"en",

"dataset:libri-light",

"dataset:librispeech_asr",

"arxiv:2106.07447",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"has_space",

"... | automatic-speech-recognition | facebook | null | null | facebook/hubert-xlarge-ls960-ft | 10 | 404 | transformers | 2022-03-02T23:29:05 | ---

language: en

datasets:

- libri-light

- librispeech_asr

tags:

- speech

- audio

- automatic-speech-recognition

- hf-asr-leaderboard

license: apache-2.0

model-index:

- name: hubert-large-ls960-ft

results:

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: LibriSpeech (clean)

type: librispeech_asr

config: clean

split: test

args:

language: en

metrics:

- name: Test WER

type: wer

value: 1.8

---

# Hubert-Extra-Large-Finetuned

[Facebook's Hubert](https://ai.facebook.com/blog/hubert-self-supervised-representation-learning-for-speech-recognition-generation-and-compression)

The extra large model fine-tuned on 960h of Librispeech on 16kHz sampled speech audio. When using the model make sure that your speech input is also sampled at 16Khz.

The model is a fine-tuned version of [hubert-xlarge-ll60k](https://huggingface.co/facebook/hubert-xlarge-ll60k).

[Paper](https://arxiv.org/abs/2106.07447)

Authors: Wei-Ning Hsu, Benjamin Bolte, Yao-Hung Hubert Tsai, Kushal Lakhotia, Ruslan Salakhutdinov, Abdelrahman Mohamed

**Abstract**

Self-supervised approaches for speech representation learning are challenged by three unique problems: (1) there are multiple sound units in each input utterance, (2) there is no lexicon of input sound units during the pre-training phase, and (3) sound units have variable lengths with no explicit segmentation. To deal with these three problems, we propose the Hidden-Unit BERT (HuBERT) approach for self-supervised speech representation learning, which utilizes an offline clustering step to provide aligned target labels for a BERT-like prediction loss. A key ingredient of our approach is applying the prediction loss over the masked regions only, which forces the model to learn a combined acoustic and language model over the continuous inputs. HuBERT relies primarily on the consistency of the unsupervised clustering step rather than the intrinsic quality of the assigned cluster labels. Starting with a simple k-means teacher of 100 clusters, and using two iterations of clustering, the HuBERT model either matches or improves upon the state-of-the-art wav2vec 2.0 performance on the Librispeech (960h) and Libri-light (60,000h) benchmarks with 10min, 1h, 10h, 100h, and 960h fine-tuning subsets. Using a 1B parameter model, HuBERT shows up to 19% and 13% relative WER reduction on the more challenging dev-other and test-other evaluation subsets.

The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/hubert .

# Usage

The model can be used for automatic-speech-recognition as follows:

```python

import torch

from transformers import Wav2Vec2Processor, HubertForCTC

from datasets import load_dataset

processor = Wav2Vec2Processor.from_pretrained("facebook/hubert-xlarge-ls960-ft")

model = HubertForCTC.from_pretrained("facebook/hubert-xlarge-ls960-ft")

ds = load_dataset("patrickvonplaten/librispeech_asr_dummy", "clean", split="validation")

input_values = processor(ds[0]["audio"]["array"], return_tensors="pt").input_values # Batch size 1

logits = model(input_values).logits

predicted_ids = torch.argmax(logits, dim=-1)

transcription = processor.decode(predicted_ids[0])

# ->"A MAN SAID TO THE UNIVERSE SIR I EXIST"

``` | 3,341 | [

[

-0.042388916015625,

-0.04119873046875,

0.0261993408203125,

0.0163421630859375,

-0.00981903076171875,

-0.007282257080078125,

-0.029937744140625,

-0.0343017578125,

0.0308837890625,

0.01947021484375,

-0.047760009765625,

-0.0235748291015625,

-0.036956787109375,

... |

timm/beitv2_base_patch16_224.in1k_ft_in1k | 2023-05-08T23:33:59.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2208.06366",

"arxiv:2010.11929",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/beitv2_base_patch16_224.in1k_ft_in1k | 0 | 404 | timm | 2023-05-08T23:32:43 | ---

tags:

- image-classification

- timm

library_name: timm

license: apache-2.0

datasets:

- imagenet-1k

---

# Model card for beitv2_base_patch16_224.in1k_ft_in1k

A BEiT-v2 image classification model. Trained on ImageNet-1k with self-supervised masked image modelling (MIM) using a VQ-KD encoder as a visual tokenizer (via OpenAI CLIP B/16 teacher). Fine-tuned on ImageNet-1k.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 86.5

- GMACs: 17.6

- Activations (M): 23.9

- Image size: 224 x 224

- **Papers:**

- BEiT v2: Masked Image Modeling with Vector-Quantized Visual Tokenizers: https://arxiv.org/abs/2208.06366

- An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale: https://arxiv.org/abs/2010.11929v2

- **Dataset:** ImageNet-1k

- **Original:** https://github.com/microsoft/unilm/tree/master/beit2

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('beitv2_base_patch16_224.in1k_ft_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'beitv2_base_patch16_224.in1k_ft_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 197, 768) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

## Citation

```bibtex

@article{peng2022beit,

title={Beit v2: Masked image modeling with vector-quantized visual tokenizers},

author={Peng, Zhiliang and Dong, Li and Bao, Hangbo and Ye, Qixiang and Wei, Furu},

journal={arXiv preprint arXiv:2208.06366},

year={2022}

}

```

```bibtex

@article{dosovitskiy2020vit,

title={An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale},

author={Dosovitskiy, Alexey and Beyer, Lucas and Kolesnikov, Alexander and Weissenborn, Dirk and Zhai, Xiaohua and Unterthiner, Thomas and Dehghani, Mostafa and Minderer, Matthias and Heigold, Georg and Gelly, Sylvain and Uszkoreit, Jakob and Houlsby, Neil},

journal={ICLR},

year={2021}

}

```

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

| 3,751 | [

[

-0.0316162109375,

-0.0294036865234375,

-0.0031604766845703125,

0.0084228515625,

-0.041595458984375,

-0.01557159423828125,

-0.006282806396484375,

-0.036956787109375,

0.01378631591796875,

0.029449462890625,

-0.030548095703125,

-0.05560302734375,

-0.0557861328125,

... |

matgu23/ntrlph | 2023-07-12T05:00:35.000Z | [

"diffusers",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | matgu23 | null | null | matgu23/ntrlph | 0 | 404 | diffusers | 2023-07-12T04:48:23 | ---

license: creativeml-openrail-m

tags:

- text-to-image

- stable-diffusion

---

### ntrlph Dreambooth model trained by matgu23 with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook

Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb)

Sample pictures of this concept:

| 495 | [

[

-0.0222320556640625,

-0.056976318359375,

0.0323486328125,

0.0391845703125,

-0.0252838134765625,

0.03887939453125,

0.02093505859375,

-0.0281829833984375,

0.037872314453125,

0.005767822265625,

-0.0269317626953125,

-0.016632080078125,

-0.034515380859375,

-0.003... |

digiplay/hellopure_v2.24Beta | 2023-07-13T08:49:07.000Z | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | digiplay | null | null | digiplay/hellopure_v2.24Beta | 2 | 404 | diffusers | 2023-07-13T04:21:25 | ---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

👍👍👍👍👍

https://civitai.com/models/88202/hellopure

Other models from Author: https://civitai.com/user/aji1/models

Sample image I made with AUTOMATIC1111 :

parameters

very close-up ,(best beautiful:1.2), (masterpiece:1.2), (best quality:1.2),masterpiece, best quality, The image features a beautiful young woman with long light golden hair, beach near the ocean, white dress ,The beach is lined with palm trees,

Negative prompt: worst quality ,normal quality ,

Steps: 17, Sampler: Euler, CFG scale: 5, Seed: 1097775045, Size: 480x680, Model hash: 8d4fa7988b, Clip skip: 2, Version: v1.4.1

| 1,008 | [

[

-0.057708740234375,

-0.030975341796875,

0.0274505615234375,

0.025238037109375,

-0.032928466796875,

-0.010986328125,

0.0255126953125,

-0.048126220703125,

0.050384521484375,

0.0248565673828125,

-0.057342529296875,

-0.0183868408203125,

-0.022003173828125,

0.008... |

alvaroalon2/biobert_genetic_ner | 2023-03-17T12:11:30.000Z | [

"transformers",

"pytorch",

"bert",

"token-classification",

"NER",

"Biomedical",

"Genetics",

"en",

"dataset:JNLPBA",

"dataset:BC2GM",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | token-classification | alvaroalon2 | null | null | alvaroalon2/biobert_genetic_ner | 11 | 403 | transformers | 2022-03-02T23:29:05 | ---

language: en

license: apache-2.0

tags:

- token-classification

- NER

- Biomedical

- Genetics

datasets:

- JNLPBA

- BC2GM

---

BioBERT model fine-tuned in NER task with JNLPBA and BC2GM corpus for genetic class entities.

This was fine-tuned in order to use it in a BioNER/BioNEN system which is available at: https://github.com/librairy/bio-ner | 345 | [

[

-0.02093505859375,

-0.048248291015625,

0.0206298828125,

0.0011882781982421875,

0.00238037109375,

0.0218353271484375,

-0.004608154296875,

-0.05902099609375,

0.030303955078125,

0.036834716796875,

-0.0289154052734375,

-0.036865234375,

-0.036285400390625,

0.0149... |

nvidia/segformer-b0-finetuned-cityscapes-512-1024 | 2022-08-09T11:34:31.000Z | [

"transformers",

"pytorch",

"tf",

"segformer",

"vision",

"image-segmentation",

"dataset:cityscapes",

"arxiv:2105.15203",

"license:other",

"endpoints_compatible",

"region:us"

] | image-segmentation | nvidia | null | null | nvidia/segformer-b0-finetuned-cityscapes-512-1024 | 0 | 403 | transformers | 2022-03-02T23:29:05 | ---

license: other

tags:

- vision

- image-segmentation

datasets:

- cityscapes

widget:

- src: https://cdn-media.huggingface.co/Inference-API/Sample-results-on-the-Cityscapes-dataset-The-above-images-show-how-our-method-can-handle.png

example_title: road

---

# SegFormer (b4-sized) model fine-tuned on CityScapes

SegFormer model fine-tuned on CityScapes at resolution 512x1024. It was introduced in the paper [SegFormer: Simple and Efficient Design for Semantic Segmentation with Transformers](https://arxiv.org/abs/2105.15203) by Xie et al. and first released in [this repository](https://github.com/NVlabs/SegFormer).

Disclaimer: The team releasing SegFormer did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

SegFormer consists of a hierarchical Transformer encoder and a lightweight all-MLP decode head to achieve great results on semantic segmentation benchmarks such as ADE20K and Cityscapes. The hierarchical Transformer is first pre-trained on ImageNet-1k, after which a decode head is added and fine-tuned altogether on a downstream dataset.

## Intended uses & limitations

You can use the raw model for semantic segmentation. See the [model hub](https://huggingface.co/models?other=segformer) to look for fine-tuned versions on a task that interests you.

### How to use

Here is how to use this model to classify an image of the COCO 2017 dataset into one of the 1,000 ImageNet classes:

```python

from transformers import SegformerFeatureExtractor, SegformerForSemanticSegmentation

from PIL import Image

import requests

feature_extractor = SegformerFeatureExtractor.from_pretrained("nvidia/segformer-b0-finetuned-cityscapes-512-1024")

model = SegformerForSemanticSegmentation.from_pretrained("nvidia/segformer-b0-finetuned-cityscapes-512-1024")

url = "http://images.cocodataset.org/val2017/000000039769.jpg"

image = Image.open(requests.get(url, stream=True).raw)

inputs = feature_extractor(images=image, return_tensors="pt")

outputs = model(**inputs)

logits = outputs.logits # shape (batch_size, num_labels, height/4, width/4)

```

For more code examples, we refer to the [documentation](https://huggingface.co/transformers/model_doc/segformer.html#).

### License

The license for this model can be found [here](https://github.com/NVlabs/SegFormer/blob/master/LICENSE).

### BibTeX entry and citation info

```bibtex

@article{DBLP:journals/corr/abs-2105-15203,

author = {Enze Xie and

Wenhai Wang and

Zhiding Yu and

Anima Anandkumar and

Jose M. Alvarez and

Ping Luo},

title = {SegFormer: Simple and Efficient Design for Semantic Segmentation with

Transformers},

journal = {CoRR},

volume = {abs/2105.15203},

year = {2021},

url = {https://arxiv.org/abs/2105.15203},

eprinttype = {arXiv},

eprint = {2105.15203},

timestamp = {Wed, 02 Jun 2021 11:46:42 +0200},

biburl = {https://dblp.org/rec/journals/corr/abs-2105-15203.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

```

| 3,133 | [

[

-0.06634521484375,

-0.052490234375,

0.017791748046875,

0.0194244384765625,

-0.021392822265625,

-0.0247955322265625,

0.0003001689910888672,

-0.050384521484375,

0.0215911865234375,

0.044281005859375,

-0.062255859375,

-0.04541015625,

-0.051025390625,

0.01219177... |

malmarjeh/t5-arabic-text-summarization | 2023-07-01T16:39:31.000Z | [

"transformers",

"pytorch",

"t5",

"text2text-generation",

"Arabic T5",

"T5",

"MSA",

"Arabic Text Summarization",

"Arabic News Title Generation",

"Arabic Paraphrasing",

"ar",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"text-generation-inference",

"region:us"

] | text2text-generation | malmarjeh | null | null | malmarjeh/t5-arabic-text-summarization | 7 | 403 | transformers | 2022-06-03T14:36:08 | ---

language:

- ar

tags:

- Arabic T5

- T5

- MSA

- Arabic Text Summarization

- Arabic News Title Generation

- Arabic Paraphrasing

widget:

- text: "شهدت مدينة طرابلس، مساء أمس الأربعاء، احتجاجات شعبية وأعمال شغب لليوم الثالث على التوالي، وذلك بسبب تردي الوضع المعيشي والاقتصادي. واندلعت مواجهات عنيفة وعمليات كر وفر ما بين الجيش اللبناني والمحتجين استمرت لساعات، إثر محاولة فتح الطرقات المقطوعة، ما أدى إلى إصابة العشرات من الطرفين."

---

# An Arabic abstractive text summarization model

A fine-tuned AraT5 model on a dataset of 84,764 paragraph-summary pairs.

Paper: [Arabic abstractive text summarization using RNN-based and transformer-based architectures](https://www.sciencedirect.com/science/article/abs/pii/S0306457322003284).

Dataset: [link](https://data.mendeley.com/datasets/7kr75c9h24/1).

The model can be used as follows:

```python

from transformers import AutoTokenizer, AutoModelForSeq2SeqLM, pipeline

from arabert.preprocess import ArabertPreprocessor

model_name="malmarjeh/t5-arabic-text-summarization"

preprocessor = ArabertPreprocessor(model_name="")

tokenizer = AutoTokenizer.from_pretrained(model_name)

model = AutoModelForSeq2SeqLM.from_pretrained(model_name)

pipeline = pipeline("text2text-generation",model=model,tokenizer=tokenizer)

text = "شهدت مدينة طرابلس، مساء أمس الأربعاء، احتجاجات شعبية وأعمال شغب لليوم الثالث على التوالي، وذلك بسبب تردي الوضع المعيشي والاقتصادي. واندلعت مواجهات عنيفة وعمليات كر وفر ما بين الجيش اللبناني والمحتجين استمرت لساعات، إثر محاولة فتح الطرقات المقطوعة، ما أدى إلى إصابة العشرات من الطرفين."

text = preprocessor.preprocess(text)

result = pipeline(text,

pad_token_id=tokenizer.eos_token_id,

num_beams=3,

repetition_penalty=3.0,

max_length=200,

length_penalty=1.0,

no_repeat_ngram_size = 3)[0]['generated_text']

result

>>> 'مواجهات عنيفة بين الجيش اللبناني ومحتجين في طرابلس'

```

## Contact:

<banimarje@gmail.com>

| 1,965 | [

[

-0.031951904296875,

-0.041595458984375,

0.0123291015625,

0.017974853515625,

-0.045989990234375,

0.0065765380859375,

0.01296234130859375,

-0.027191162109375,

0.022216796875,

0.018463134765625,

-0.023345947265625,

-0.0555419921875,

-0.072998046875,

0.037750244... |

YurtsAI/yurts-python-code-gen-30-sparse | 2022-10-27T20:39:18.000Z | [

"transformers",

"pytorch",

"codegen",

"text-generation",

"license:bsd-3-clause",

"endpoints_compatible",

"has_space",

"region:us"

] | text-generation | YurtsAI | null | null | YurtsAI/yurts-python-code-gen-30-sparse | 18 | 403 | transformers | 2022-10-24T22:22:16 | ---

license: bsd-3-clause

---

# Maverick (Yurt's Python Code Generation Model)

## Model description

This code generation model was fine-tuned on Python code from a generic multi-language code generation model. This model was then pushed to 30% sparsity using Yurts' in-house technology without performance loss. In this specific instance, the class representation for the network is still dense. This particular model has 350M trainable parameters.

## Training data

This model was tuned on a subset of the Python data available in the BigQuery open-source [Github dataset](https://cloud.google.com/blog/topics/public-datasets/github-on-bigquery-analyze-all-the-open-source-code).

## How to use

The model is great at autocompleting based off of partially generated function signatures and class signatures. It is also decent at generating code base based off of natural language prompts with a comment. If you find something cool you can do with the model, be sure to share it with us!

Check out our [colab notebook](https://colab.research.google.com/drive/1NDO4X418HuPJzF8mFc6_ySknQlGIZMDU?usp=sharing) to see how to invoke the model and try it out.

## Feedback and Questions

Have any questions or feedback? Find us on [Discord](https://discord.gg/2x4rmSGER9). | 1,270 | [

[

-0.0180816650390625,

-0.07183837890625,

0.0244598388671875,

0.012176513671875,

-0.01354217529296875,

-0.0154571533203125,

-0.002201080322265625,

-0.0225982666015625,

0.0223388671875,

0.0555419921875,

-0.03155517578125,

-0.0230560302734375,

-0.0129241943359375,

... |

Falah/crying-woman | 2023-03-03T09:53:21.000Z | [

"diffusers",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | Falah | null | null | Falah/crying-woman | 0 | 403 | diffusers | 2023-03-03T09:50:11 | ---

license: creativeml-openrail-m

tags:

- text-to-image

- stable-diffusion

---

### crying-woman Dreambooth model trained by Falah with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook

Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb)

Sample pictures of this concept:

| 499 | [

[

-0.017303466796875,

-0.059783935546875,

0.033233642578125,

0.036773681640625,

-0.0262298583984375,

0.02899169921875,

0.037139892578125,

-0.022003173828125,

0.0295867919921875,

0.0008597373962402344,

-0.034820556640625,

-0.0177154541015625,

-0.043609619140625,

... |

Grigsss/azuki | 2023-04-19T05:29:29.000Z | [

"diffusers",

"tensorboard",

"text-to-image",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | Grigsss | null | null | Grigsss/azuki | 0 | 403 | diffusers | 2023-04-19T05:27:34 | ---

license: creativeml-openrail-m

tags:

- text-to-image

widget:

- text: azuki

---

### azuki Dreambooth model trained by Grigsss with [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) with the v1-5 base model

You run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts!

Sample pictures of:

azuki (use that on your prompt)

| 2,607 | [

[

-0.0738525390625,

-0.00274658203125,

0.0223236083984375,

0.00525665283203125,

-0.0241241455078125,

-0.00894927978515625,

0.0190277099609375,

-0.039215087890625,

0.038848876953125,

0.034820556640625,

-0.06463623046875,

-0.048614501953125,

-0.041107177734375,

... |

timm/res2net101d.in1k | 2023-04-24T00:08:27.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:1904.01169",

"license:unknown",

"region:us"

] | image-classification | timm | null | null | timm/res2net101d.in1k | 0 | 403 | timm | 2023-04-24T00:07:57 | ---

tags:

- image-classification

- timm

library_name: timm

license: unknown

datasets:

- imagenet-1k

---

# Model card for res2net101d.in1k

A Res2Net (Multi-Scale ResNet) image classification model. Trained on ImageNet-1k by paper authors.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 45.2

- GMACs: 8.3

- Activations (M): 19.2

- Image size: 224 x 224

- **Papers:**

- Res2Net: A New Multi-scale Backbone Architecture: https://arxiv.org/abs/1904.01169

- **Dataset:** ImageNet-1k

- **Original:** https://github.com/gasvn/Res2Net/

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('res2net101d.in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'res2net101d.in1k',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:

# print shape of each feature map in output

# e.g.:

# torch.Size([1, 64, 112, 112])

# torch.Size([1, 256, 56, 56])

# torch.Size([1, 512, 28, 28])

# torch.Size([1, 1024, 14, 14])

# torch.Size([1, 2048, 7, 7])

print(o.shape)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'res2net101d.in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 2048, 7, 7) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

## Citation

```bibtex

@article{gao2019res2net,

title={Res2Net: A New Multi-scale Backbone Architecture},

author={Gao, Shang-Hua and Cheng, Ming-Ming and Zhao, Kai and Zhang, Xin-Yu and Yang, Ming-Hsuan and Torr, Philip},

journal={IEEE TPAMI},

doi={10.1109/TPAMI.2019.2938758},

}

```

| 3,650 | [

[

-0.03411865234375,

-0.026702880859375,

-0.006832122802734375,

0.0139617919921875,

-0.021209716796875,

-0.02935791015625,

-0.01529693603515625,

-0.02880859375,

0.0246429443359375,

0.039794921875,

-0.033203125,

-0.04052734375,

-0.05194091796875,

-0.01029205322... |

Helsinki-NLP/opus-mt-ml-en | 2023-08-16T12:01:12.000Z | [

"transformers",

"pytorch",

"tf",

"marian",

"text2text-generation",

"translation",

"ml",

"en",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | translation | Helsinki-NLP | null | null | Helsinki-NLP/opus-mt-ml-en | 0 | 402 | transformers | 2022-03-02T23:29:04 | ---

tags:

- translation

license: apache-2.0

---

### opus-mt-ml-en

* source languages: ml

* target languages: en

* OPUS readme: [ml-en](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/ml-en/README.md)

* dataset: opus

* model: transformer-align

* pre-processing: normalization + SentencePiece

* download original weights: [opus-2020-04-20.zip](https://object.pouta.csc.fi/OPUS-MT-models/ml-en/opus-2020-04-20.zip)

* test set translations: [opus-2020-04-20.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/ml-en/opus-2020-04-20.test.txt)

* test set scores: [opus-2020-04-20.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/ml-en/opus-2020-04-20.eval.txt)

## Benchmarks

| testset | BLEU | chr-F |

|-----------------------|-------|-------|

| Tatoeba.ml.en | 42.7 | 0.605 |

| 818 | [

[

-0.017791748046875,

-0.033416748046875,

0.018524169921875,

0.032073974609375,

-0.0263824462890625,

-0.02880859375,

-0.035980224609375,

-0.0043792724609375,

-0.0017404556274414062,

0.034515380859375,

-0.048828125,

-0.046112060546875,

-0.047607421875,

0.017669... |

circulus/sd-photoreal-v2.5 | 2023-02-20T16:02:01.000Z | [

"diffusers",

"generative ai",

"stable-diffusion",

"image-to-image",

"realism",

"art",

"text-to-image",

"en",

"license:gpl-3.0",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | circulus | null | null | circulus/sd-photoreal-v2.5 | 5 | 402 | diffusers | 2023-02-06T06:01:18 | ---

license: gpl-3.0

language:

- en

library_name: diffusers

pipeline_tag: text-to-image

tags:

- generative ai

- stable-diffusion

- image-to-image

- realism

- art

---

Photoreal v2.5

Finetuned Stable Diffusion 1.5 for generating images

You can test this model here >

https://eva.circul.us/index.html

| 327 | [

[

-0.04107666015625,

-0.072509765625,

0.019317626953125,

0.027435302734375,

-0.0258941650390625,

-0.0267791748046875,

0.0205535888671875,

-0.0299530029296875,

0.00308990478515625,

0.048431396484375,

-0.035552978515625,

-0.037384033203125,

-0.0108184814453125,

... |

Tinsae/doorstop1 | 2023-03-09T14:30:10.000Z | [

"diffusers",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | Tinsae | null | null | Tinsae/doorstop1 | 0 | 402 | diffusers | 2023-02-28T19:51:04 | ---

license: creativeml-openrail-m

tags:

- text-to-image

- stable-diffusion

---

Sample pictures of this concept:

| 116 | [

[

-0.035980224609375,

-0.026397705078125,

0.0281219482421875,

0.0108489990234375,

-0.04315185546875,

-0.0008540153503417969,

0.03802490234375,

-0.0211334228515625,

0.054656982421875,

0.06488037109375,

-0.0545654296875,

-0.0299530029296875,

-0.0240325927734375,

... |

Falah/sooq-safafeer | 2023-03-10T06:36:55.000Z | [

"diffusers",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | Falah | null | null | Falah/sooq-safafeer | 0 | 402 | diffusers | 2023-03-10T06:34:05 | ---

license: creativeml-openrail-m

tags:

- text-to-image

- stable-diffusion

---

### sooq-safafeer Dreambooth model trained by Falah with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook

Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb)

Sample pictures of this concept:

| 924 | [

[

-0.04010009765625,

-0.05218505859375,

0.0217742919921875,

0.0258941650390625,

-0.025299072265625,

0.008758544921875,

0.03411865234375,

-0.016937255859375,

0.041107177734375,

0.0193328857421875,

-0.03485107421875,

-0.0268402099609375,

-0.043609619140625,

-0.0... |

ImAbbieKitten/protoanime2 | 2023-03-10T08:37:36.000Z | [

"diffusers",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | ImAbbieKitten | null | null | ImAbbieKitten/protoanime2 | 0 | 402 | diffusers | 2023-03-10T08:23:45 | ---

license: creativeml-openrail-m

tags:

- text-to-image

- stable-diffusion

---

### protoanime2 Dreambooth model trained by ImAbbieKitten with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook

Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb)

Sample pictures of this concept:

| 506 | [

[

-0.0273895263671875,

-0.047149658203125,

0.04107666015625,

0.04266357421875,

-0.0213470458984375,

0.03057861328125,

0.0115814208984375,

-0.02056884765625,

0.05230712890625,

0.0038967132568359375,

-0.0248870849609375,

-0.01428985595703125,

-0.031524658203125,

... |

faalbane/kopper-kreations-custom-stable-diffusion-style-v-1-5-21-photos-vv-1 | 2023-03-11T15:36:27.000Z | [

"diffusers",

"tensorboard",

"text-to-image",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | faalbane | null | null | faalbane/kopper-kreations-custom-stable-diffusion-style-v-1-5-21-photos-vv-1 | 0 | 402 | diffusers | 2023-03-11T15:35:29 | ---

license: creativeml-openrail-m

tags:

- text-to-image

widget:

- text: kk

---

### Kopper Kreations Custom Stable Diffusion (style) (v. 1.5) (21 photos) vv.1 Dreambooth model trained by faalbane with [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) with the v1-5 base model

You run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts!

Sample pictures of:

kk (use that on your prompt)

| 4,099 | [

[

-0.09228515625,

-0.053009033203125,

0.030181884765625,

0.035003662109375,

-0.025238037109375,

0.0032901763916015625,

0.0102386474609375,

-0.034454345703125,

0.0902099609375,

0.024444580078125,

-0.036041259765625,

-0.036651611328125,

-0.03961181640625,

-0.008... |

timm/eca_nfnet_l1.ra2_in1k | 2023-03-24T01:13:35.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2102.06171",

"arxiv:2101.08692",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/eca_nfnet_l1.ra2_in1k | 0 | 402 | timm | 2023-03-24T01:12:48 | ---

tags:

- image-classification

- timm

library_tag: timm

license: apache-2.0

datasets:

- imagenet-1k

---

# Model card for eca_nfnet_l1.ra2_in1k

A ECA-NFNet-Lite (Lightweight NFNet w/ ECA attention) image classification model. Trained in `timm` by Ross Wightman.

Normalization Free Networks are (pre-activation) ResNet-like models without any normalization layers. Instead of Batch Normalization or alternatives, they use Scaled Weight Standardization and specifically placed scalar gains in residual path and at non-linearities based on signal propagation analysis.

Lightweight NFNets are `timm` specific variants that reduce the SE and bottleneck ratio from 0.5 -> 0.25 (reducing widths) and use a smaller group size while maintaining the same depth. SiLU activations used instead of GELU.

This NFNet variant also uses ECA (Efficient Channel Attention) instead of SE (Squeeze-and-Excitation).

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 41.4

- GMACs: 9.6

- Activations (M): 22.0

- Image size: train = 256 x 256, test = 320 x 320

- **Papers:**

- High-Performance Large-Scale Image Recognition Without Normalization: https://arxiv.org/abs/2102.06171

- Characterizing signal propagation to close the performance gap in unnormalized ResNets: https://arxiv.org/abs/2101.08692

- **Original:** https://github.com/huggingface/pytorch-image-models

- **Dataset:** ImageNet-1k

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('eca_nfnet_l1.ra2_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'eca_nfnet_l1.ra2_in1k',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:

# print shape of each feature map in output

# e.g.:

# torch.Size([1, 64, 128, 128])

# torch.Size([1, 256, 64, 64])

# torch.Size([1, 512, 32, 32])

# torch.Size([1, 1536, 16, 16])

# torch.Size([1, 3072, 8, 8])

print(o.shape)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'eca_nfnet_l1.ra2_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 3072, 8, 8) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

## Citation

```bibtex

@article{brock2021high,

author={Andrew Brock and Soham De and Samuel L. Smith and Karen Simonyan},

title={High-Performance Large-Scale Image Recognition Without Normalization},

journal={arXiv preprint arXiv:2102.06171},

year={2021}

}

```

```bibtex

@inproceedings{brock2021characterizing,

author={Andrew Brock and Soham De and Samuel L. Smith},

title={Characterizing signal propagation to close the performance gap in

unnormalized ResNets},

booktitle={9th International Conference on Learning Representations, {ICLR}},

year={2021}

}

```

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

| 5,082 | [

[

-0.042144775390625,

-0.0400390625,

-0.000579833984375,

0.00850677490234375,

-0.0256195068359375,

-0.027496337890625,

-0.0266571044921875,

-0.042877197265625,

0.0258636474609375,

0.0340576171875,

-0.032257080078125,

-0.050811767578125,

-0.05517578125,

0.00326... |

TheBloke/Mistralic-7B-1-GPTQ | 2023-10-04T16:27:56.000Z | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"text-generation-inference",

"region:us"

] | text-generation | TheBloke | null | null | TheBloke/Mistralic-7B-1-GPTQ | 5 | 402 | transformers | 2023-10-04T13:33:30 | ---

base_model: SkunkworksAI/Mistralic-7B-1

inference: false

model_creator: SkunkworksAI

model_name: Mistralic 7B-1

model_type: mistral

prompt_template: 'Below is an instruction that describes a task. Write a response

that appropriately completes the request.

### System: {system_message}

### Instruction: {prompt}

'

quantized_by: TheBloke

---

<!-- header start -->

<!-- 200823 -->

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/theblokeai">Chat & support: TheBloke's Discord server</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

</div>

</div>

<div style="text-align:center; margin-top: 0em; margin-bottom: 0em"><p style="margin-top: 0.25em; margin-bottom: 0em;">TheBloke's LLM work is generously supported by a grant from <a href="https://a16z.com">andreessen horowitz (a16z)</a></p></div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

<!-- header end -->

# Mistralic 7B-1 - GPTQ

- Model creator: [SkunkworksAI](https://huggingface.co/SkunkworksAI)

- Original model: [Mistralic 7B-1](https://huggingface.co/SkunkworksAI/Mistralic-7B-1)

<!-- description start -->

## Description

This repo contains GPTQ model files for [SkunkworksAI's Mistralic 7B-1](https://huggingface.co/SkunkworksAI/Mistralic-7B-1).

Multiple GPTQ parameter permutations are provided; see Provided Files below for details of the options provided, their parameters, and the software used to create them.

<!-- description end -->

<!-- repositories-available start -->

## Repositories available

* [AWQ model(s) for GPU inference.](https://huggingface.co/TheBloke/Mistralic-7B-1-AWQ)

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/Mistralic-7B-1-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference](https://huggingface.co/TheBloke/Mistralic-7B-1-GGUF)

* [SkunkworksAI's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/SkunkworksAI/Mistralic-7B-1)

<!-- repositories-available end -->

<!-- prompt-template start -->

## Prompt template: Mistralic

```

Below is an instruction that describes a task. Write a response that appropriately completes the request.

### System: {system_message}

### Instruction: {prompt}

```

<!-- prompt-template end -->

<!-- README_GPTQ.md-provided-files start -->

## Provided files, and GPTQ parameters

Multiple quantisation parameters are provided, to allow you to choose the best one for your hardware and requirements.

Each separate quant is in a different branch. See below for instructions on fetching from different branches.

Most GPTQ files are made with AutoGPTQ. Mistral models are currently made with Transformers.

<details>

<summary>Explanation of GPTQ parameters</summary>

- Bits: The bit size of the quantised model.

- GS: GPTQ group size. Higher numbers use less VRAM, but have lower quantisation accuracy. "None" is the lowest possible value.

- Act Order: True or False. Also known as `desc_act`. True results in better quantisation accuracy. Some GPTQ clients have had issues with models that use Act Order plus Group Size, but this is generally resolved now.

- Damp %: A GPTQ parameter that affects how samples are processed for quantisation. 0.01 is default, but 0.1 results in slightly better accuracy.

- GPTQ dataset: The calibration dataset used during quantisation. Using a dataset more appropriate to the model's training can improve quantisation accuracy. Note that the GPTQ calibration dataset is not the same as the dataset used to train the model - please refer to the original model repo for details of the training dataset(s).

- Sequence Length: The length of the dataset sequences used for quantisation. Ideally this is the same as the model sequence length. For some very long sequence models (16+K), a lower sequence length may have to be used. Note that a lower sequence length does not limit the sequence length of the quantised model. It only impacts the quantisation accuracy on longer inference sequences.

- ExLlama Compatibility: Whether this file can be loaded with ExLlama, which currently only supports Llama models in 4-bit.

</details>

| Branch | Bits | GS | Act Order | Damp % | GPTQ Dataset | Seq Len | Size | ExLlama | Desc |

| ------ | ---- | -- | --------- | ------ | ------------ | ------- | ---- | ------- | ---- |

| [main](https://huggingface.co/TheBloke/Mistralic-7B-1-GPTQ/tree/main) | 4 | 128 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 4.16 GB | Yes | 4-bit, with Act Order and group size 128g. Uses even less VRAM than 64g, but with slightly lower accuracy. |

| [gptq-4bit-32g-actorder_True](https://huggingface.co/TheBloke/Mistralic-7B-1-GPTQ/tree/gptq-4bit-32g-actorder_True) | 4 | 32 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 4.57 GB | Yes | 4-bit, with Act Order and group size 32g. Gives highest possible inference quality, with maximum VRAM usage. |

| [gptq-8bit--1g-actorder_True](https://huggingface.co/TheBloke/Mistralic-7B-1-GPTQ/tree/gptq-8bit--1g-actorder_True) | 8 | None | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 7.52 GB | No | 8-bit, with Act Order. No group size, to lower VRAM requirements. |

| [gptq-8bit-128g-actorder_True](https://huggingface.co/TheBloke/Mistralic-7B-1-GPTQ/tree/gptq-8bit-128g-actorder_True) | 8 | 128 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 7.68 GB | No | 8-bit, with group size 128g for higher inference quality and with Act Order for even higher accuracy. |

| [gptq-8bit-32g-actorder_True](https://huggingface.co/TheBloke/Mistralic-7B-1-GPTQ/tree/gptq-8bit-32g-actorder_True) | 8 | 32 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 8.17 GB | No | 8-bit, with group size 32g and Act Order for maximum inference quality. |

| [gptq-4bit-64g-actorder_True](https://huggingface.co/TheBloke/Mistralic-7B-1-GPTQ/tree/gptq-4bit-64g-actorder_True) | 4 | 64 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 4.30 GB | Yes | 4-bit, with Act Order and group size 64g. Uses less VRAM than 32g, but with slightly lower accuracy. |

<!-- README_GPTQ.md-provided-files end -->

<!-- README_GPTQ.md-download-from-branches start -->

## How to download, including from branches

### In text-generation-webui

To download from the `main` branch, enter `TheBloke/Mistralic-7B-1-GPTQ` in the "Download model" box.

To download from another branch, add `:branchname` to the end of the download name, eg `TheBloke/Mistralic-7B-1-GPTQ:gptq-4bit-32g-actorder_True`

### From the command line

I recommend using the `huggingface-hub` Python library:

```shell

pip3 install huggingface-hub

```

To download the `main` branch to a folder called `Mistralic-7B-1-GPTQ`:

```shell

mkdir Mistralic-7B-1-GPTQ

huggingface-cli download TheBloke/Mistralic-7B-1-GPTQ --local-dir Mistralic-7B-1-GPTQ --local-dir-use-symlinks False

```

To download from a different branch, add the `--revision` parameter:

```shell

mkdir Mistralic-7B-1-GPTQ

huggingface-cli download TheBloke/Mistralic-7B-1-GPTQ --revision gptq-4bit-32g-actorder_True --local-dir Mistralic-7B-1-GPTQ --local-dir-use-symlinks False

```

<details>

<summary>More advanced huggingface-cli download usage</summary>

If you remove the `--local-dir-use-symlinks False` parameter, the files will instead be stored in the central Huggingface cache directory (default location on Linux is: `~/.cache/huggingface`), and symlinks will be added to the specified `--local-dir`, pointing to their real location in the cache. This allows for interrupted downloads to be resumed, and allows you to quickly clone the repo to multiple places on disk without triggering a download again. The downside, and the reason why I don't list that as the default option, is that the files are then hidden away in a cache folder and it's harder to know where your disk space is being used, and to clear it up if/when you want to remove a download model.

The cache location can be changed with the `HF_HOME` environment variable, and/or the `--cache-dir` parameter to `huggingface-cli`.

For more documentation on downloading with `huggingface-cli`, please see: [HF -> Hub Python Library -> Download files -> Download from the CLI](https://huggingface.co/docs/huggingface_hub/guides/download#download-from-the-cli).

To accelerate downloads on fast connections (1Gbit/s or higher), install `hf_transfer`:

```shell

pip3 install hf_transfer

```

And set environment variable `HF_HUB_ENABLE_HF_TRANSFER` to `1`:

```shell

mkdir Mistralic-7B-1-GPTQ

HF_HUB_ENABLE_HF_TRANSFER=1 huggingface-cli download TheBloke/Mistralic-7B-1-GPTQ --local-dir Mistralic-7B-1-GPTQ --local-dir-use-symlinks False

```

Windows Command Line users: You can set the environment variable by running `set HF_HUB_ENABLE_HF_TRANSFER=1` before the download command.

</details>

### With `git` (**not** recommended)

To clone a specific branch with `git`, use a command like this:

```shell

git clone --single-branch --branch gptq-4bit-32g-actorder_True https://huggingface.co/TheBloke/Mistralic-7B-1-GPTQ

```

Note that using Git with HF repos is strongly discouraged. It will be much slower than using `huggingface-hub`, and will use twice as much disk space as it has to store the model files twice (it stores every byte both in the intended target folder, and again in the `.git` folder as a blob.)

<!-- README_GPTQ.md-download-from-branches end -->

<!-- README_GPTQ.md-text-generation-webui start -->

## How to easily download and use this model in [text-generation-webui](https://github.com/oobabooga/text-generation-webui).

Please make sure you're using the latest version of [text-generation-webui](https://github.com/oobabooga/text-generation-webui).

It is strongly recommended to use the text-generation-webui one-click-installers unless you're sure you know how to make a manual install.

1. Click the **Model tab**.

2. Under **Download custom model or LoRA**, enter `TheBloke/Mistralic-7B-1-GPTQ`.

- To download from a specific branch, enter for example `TheBloke/Mistralic-7B-1-GPTQ:gptq-4bit-32g-actorder_True`

- see Provided Files above for the list of branches for each option.

3. Click **Download**.

4. The model will start downloading. Once it's finished it will say "Done".

5. In the top left, click the refresh icon next to **Model**.

6. In the **Model** dropdown, choose the model you just downloaded: `Mistralic-7B-1-GPTQ`

7. The model will automatically load, and is now ready for use!

8. If you want any custom settings, set them and then click **Save settings for this model** followed by **Reload the Model** in the top right.

* Note that you do not need to and should not set manual GPTQ parameters any more. These are set automatically from the file `quantize_config.json`.

9. Once you're ready, click the **Text Generation tab** and enter a prompt to get started!

<!-- README_GPTQ.md-text-generation-webui end -->

<!-- README_GPTQ.md-use-from-tgi start -->

## Serving this model from Text Generation Inference (TGI)

It's recommended to use TGI version 1.1.0 or later. The official Docker container is: `ghcr.io/huggingface/text-generation-inference:1.1.0`

Example Docker parameters:

```shell

--model-id TheBloke/Mistralic-7B-1-GPTQ --port 3000 --quantize awq --max-input-length 3696 --max-total-tokens 4096 --max-batch-prefill-tokens 4096

```

Example Python code for interfacing with TGI (requires huggingface-hub 0.17.0 or later):

```shell

pip3 install huggingface-hub

```

```python

from huggingface_hub import InferenceClient

endpoint_url = "https://your-endpoint-url-here"

prompt = "Tell me about AI"

prompt_template=f'''Below is an instruction that describes a task. Write a response that appropriately completes the request.

### System: {system_message}

### Instruction: {prompt}

'''

client = InferenceClient(endpoint_url)

response = client.text_generation(prompt,

max_new_tokens=128,

do_sample=True,

temperature=0.7,

top_p=0.95,

top_k=40,

repetition_penalty=1.1)

print(f"Model output: {response}")

```

<!-- README_GPTQ.md-use-from-tgi end -->

<!-- README_GPTQ.md-use-from-python start -->

## How to use this GPTQ model from Python code

### Install the necessary packages

Requires: Transformers 4.33.0 or later, Optimum 1.12.0 or later, and AutoGPTQ 0.4.2 or later.

```shell

pip3 install transformers optimum

pip3 install auto-gptq --extra-index-url https://huggingface.github.io/autogptq-index/whl/cu118/ # Use cu117 if on CUDA 11.7

```

If you have problems installing AutoGPTQ using the pre-built wheels, install it from source instead:

```shell

pip3 uninstall -y auto-gptq

git clone https://github.com/PanQiWei/AutoGPTQ

cd AutoGPTQ

git checkout v0.4.2

pip3 install .

```

### You can then use the following code

```python

from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline

model_name_or_path = "TheBloke/Mistralic-7B-1-GPTQ"

# To use a different branch, change revision

# For example: revision="gptq-4bit-32g-actorder_True"

model = AutoModelForCausalLM.from_pretrained(model_name_or_path,

device_map="auto",

trust_remote_code=False,

revision="main")

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, use_fast=True)

prompt = "Tell me about AI"

prompt_template=f'''Below is an instruction that describes a task. Write a response that appropriately completes the request.

### System: {system_message}

### Instruction: {prompt}

'''

print("\n\n*** Generate:")

input_ids = tokenizer(prompt_template, return_tensors='pt').input_ids.cuda()

output = model.generate(inputs=input_ids, temperature=0.7, do_sample=True, top_p=0.95, top_k=40, max_new_tokens=512)

print(tokenizer.decode(output[0]))

# Inference can also be done using transformers' pipeline

print("*** Pipeline:")

pipe = pipeline(

"text-generation",

model=model,

tokenizer=tokenizer,

max_new_tokens=512,

do_sample=True,

temperature=0.7,

top_p=0.95,

top_k=40,

repetition_penalty=1.1

)

print(pipe(prompt_template)[0]['generated_text'])

```

<!-- README_GPTQ.md-use-from-python end -->

<!-- README_GPTQ.md-compatibility start -->

## Compatibility

The files provided are tested to work with AutoGPTQ, both via Transformers and using AutoGPTQ directly. They should also work with [Occ4m's GPTQ-for-LLaMa fork](https://github.com/0cc4m/KoboldAI).

[ExLlama](https://github.com/turboderp/exllama) is compatible with Llama and Mistral models in 4-bit. Please see the Provided Files table above for per-file compatibility.

[Huggingface Text Generation Inference (TGI)](https://github.com/huggingface/text-generation-inference) is compatible with all GPTQ models.

<!-- README_GPTQ.md-compatibility end -->

<!-- footer start -->

<!-- 200823 -->

## Discord

For further support, and discussions on these models and AI in general, join us at:

[TheBloke AI's Discord server](https://discord.gg/theblokeai)

## Thanks, and how to contribute

Thanks to the [chirper.ai](https://chirper.ai) team!

Thanks to Clay from [gpus.llm-utils.org](llm-utils)!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donaters will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

* Patreon: https://patreon.com/TheBlokeAI

* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Special thanks to**: Aemon Algiz.

**Patreon special mentions**: Pierre Kircher, Stanislav Ovsiannikov, Michael Levine, Eugene Pentland, Andrey, 준교 김, Randy H, Fred von Graf, Artur Olbinski, Caitlyn Gatomon, terasurfer, Jeff Scroggin, James Bentley, Vadim, Gabriel Puliatti, Harry Royden McLaughlin, Sean Connelly, Dan Guido, Edmond Seymore, Alicia Loh, subjectnull, AzureBlack, Manuel Alberto Morcote, Thomas Belote, Lone Striker, Chris Smitley, Vitor Caleffi, Johann-Peter Hartmann, Clay Pascal, biorpg, Brandon Frisco, sidney chen, transmissions 11, Pedro Madruga, jinyuan sun, Ajan Kanaga, Emad Mostaque, Trenton Dambrowitz, Jonathan Leane, Iucharbius, usrbinkat, vamX, George Stoitzev, Luke Pendergrass, theTransient, Olakabola, Swaroop Kallakuri, Cap'n Zoog, Brandon Phillips, Michael Dempsey, Nikolai Manek, danny, Matthew Berman, Gabriel Tamborski, alfie_i, Raymond Fosdick, Tom X Nguyen, Raven Klaugh, LangChain4j, Magnesian, Illia Dulskyi, David Ziegler, Mano Prime, Luis Javier Navarrete Lozano, Erik Bjäreholt, 阿明, Nathan Dryer, Alex, Rainer Wilmers, zynix, TL, Joseph William Delisle, John Villwock, Nathan LeClaire, Willem Michiel, Joguhyik, GodLy, OG, Alps Aficionado, Jeffrey Morgan, ReadyPlayerEmma, Tiffany J. Kim, Sebastain Graf, Spencer Kim, Michael Davis, webtim, Talal Aujan, knownsqashed, John Detwiler, Imad Khwaja, Deo Leter, Jerry Meng, Elijah Stavena, Rooh Singh, Pieter, SuperWojo, Alexandros Triantafyllidis, Stephen Murray, Ai Maven, ya boyyy, Enrico Ros, Ken Nordquist, Deep Realms, Nicholas, Spiking Neurons AB, Elle, Will Dee, Jack West, RoA, Luke @flexchar, Viktor Bowallius, Derek Yates, Subspace Studios, jjj, Toran Billups, Asp the Wyvern, Fen Risland, Ilya, NimbleBox.ai, Chadd, Nitin Borwankar, Emre, Mandus, Leonard Tan, Kalila, K, Trailburnt, S_X, Cory Kujawski

Thank you to all my generous patrons and donaters!

And thank you again to a16z for their generous grant.

<!-- footer end -->

# Original model card: SkunkworksAI's Mistralic 7B-1

<p><h1> 🦾 Mistralic-7B-1 🦾 </h1></p>

Special thanks to Together Compute for sponsoring Skunkworks with compute!

**INFERENCE**

```

import torch

from transformers import AutoModelForCausalLM, AutoTokenizer

torch.set_default_device('cuda')

system_prompt = "Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.\n\n"

system_no_input_prompt = "Below is an instruction that describes a task. Write a response that appropriately completes the request.\n\n"

def generate_prompt(instruction, input=None):

if input:

prompt = f"### System:\n{system_prompt}\n\n"

else:

prompt = f"### System:\n{system_no_input_prompt}\n\n"

prompt += f"### Instruction:\n{instruction}\n\n"

if input:

prompt += f"### Input:\n{input}\n\n"

return prompt + """### Response:\n"""

device = "cuda"

model = AutoModelForCausalLM.from_pretrained("SkunkworksAI/Mistralic-7B-1")

tokenizer = AutoTokenizer.from_pretrained("SkunkworksAI/Mistralic-7B-1")

while True:

instruction = input("Enter Instruction: ")

instruction = generate_prompt(instruction)

inputs = tokenizer(instruction, return_tensors="pt", return_attention_mask=False)

outputs = model.generate(**inputs, max_length=1000, do_sample=True, temperature=0.01, use_cache=True, eos_token_id=tokenizer.eos_token_id)

text = tokenizer.batch_decode(outputs)[0]

print(text)

```

**EVALUATION**

Average: 0.72157

For comparison:

mistralai/Mistral-7B-v0.1 scores 0.7116

mistralai/Mistral-7B-Instruct-v0.1 scores 0.6794

| 20,537 | [

[

-0.03802490234375,

-0.05548095703125,

0.01067352294921875,

0.0171661376953125,

-0.0157928466796875,

-0.019683837890625,

0.00556182861328125,

-0.03839111328125,

0.0164947509765625,

0.02685546875,

-0.045745849609375,

-0.037445068359375,

-0.027862548828125,

-0.... |

codys12/MergeLlama-7b | 2023-10-18T02:04:35.000Z | [

"peft",

"pytorch",

"text-generation",

"dataset:codys12/MergeLlama",

"arxiv:1910.09700",

"license:llama2",

"region:us",

"has_space"

] | text-generation | codys12 | null | null | codys12/MergeLlama-7b | 2 | 402 | peft | 2023-10-11T20:49:26 | ---

library_name: peft

base_model: codellama/CodeLlama-7b-hf

license: llama2

datasets:

- codys12/MergeLlama

pipeline_tag: text-generation

---

# Model Card for Model ID

Automated merge conflict resolution

## Model Details

Peft finetune of CodeLlama-7b

### Model Description

- **Developed by:** DreamcatcherAI

- **License:** llama2

- **Finetuned from model [optional]:** CodeLlama-7b

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** codys12/MergeLlama-7b

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

Input should be formatted as

```

<<<<<<<

Current change

=======

Incoming change

>>>>>>>

```

MergeLlama will provide the resolution.

You can chain multiple conflicts/resolutions for improved context

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Data Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Data Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

## Training procedure

### Framework versions

- PEFT 0.6.0.dev0 | 4,952 | [

[

-0.05084228515625,

-0.048187255859375,

0.0340576171875,

0.007724761962890625,

-0.015472412109375,

-0.0094146728515625,

0.00665283203125,

-0.04302978515625,

0.00421142578125,

0.049835205078125,

-0.048370361328125,

-0.043701171875,

-0.0472412109375,

-0.0079650... |

charliegrc/mattj | 2023-03-08T09:52:48.000Z | [

"diffusers",

"tensorboard",

"text-to-image",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | charliegrc | null | null | charliegrc/mattj | 0 | 401 | diffusers | 2023-03-08T09:51:22 | ---

license: creativeml-openrail-m

tags:

- text-to-image

widget:

- text: julcto

---

### MattJ Dreambooth model trained by charliegrc with [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) with the v1-5 base model

You run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts!

Sample pictures of:

julcto (use that on your prompt)

| 1,677 | [

[

-0.05804443359375,

-0.0237884521484375,

0.030059814453125,

0.028106689453125,

-0.0269317626953125,

0.02252197265625,

0.007678985595703125,

-0.033447265625,

0.049346923828125,

0.033782958984375,

-0.055633544921875,

-0.044036865234375,

-0.0382080078125,

0.0000... |

garrettscott/garrett-dreambooth | 2023-03-10T03:21:45.000Z | [

"diffusers",