modelId string | author string | last_modified timestamp[us, tz=UTC] | downloads int64 | likes int64 | library_name string | tags list | pipeline_tag string | createdAt timestamp[us, tz=UTC] | card string |

|---|---|---|---|---|---|---|---|---|---|

maddie-mochi-leak-maddie-mochi-reddit-vide/sophie-rain-spiderman-leaked-Sophie-Rain-Spiderman-video-Sophie-rain | maddie-mochi-leak-maddie-mochi-reddit-vide | 2025-06-14T09:55:04Z | 0 | 0 | null | [

"region:us"

] | null | 2025-06-14T09:54:19Z | <a href="https://gitytrendz.cfd/dfgdfgdf"> 🌐 Click Here To link (Full Viral Video Link)

🔴 ➤►DOWNLOAD👉👉🟢 ➤ <a href="https://gitytrendz.cfd/dfgdfgdf"> 🌐 Click Here To link

|

Viral-Katrina-Lim-Kiffy-Viral-videos-tv/FULL.VIDEO.Katrina.Lim.Viral.Video.Tutorial.Official | Viral-Katrina-Lim-Kiffy-Viral-videos-tv | 2025-06-14T09:53:12Z | 0 | 0 | null | [

"region:us"

] | null | 2025-06-14T09:52:40Z | <animated-image data-catalyst=""><a href="https://tinyurl.com/fn84hrnu?news-viral-video" rel="nofollow" data-target="animated-image.originalLink"><img src="https://static.wixstatic.com/media/b249f9_adac8f70fb3f45b88691696c77de18f3~mv2.gif" alt="Foo" data-canonical-src="https://static.wixstatic.com/media/b249f9_adac8f70fb3f45b88691696c77de18f3~mv2.gif" style="max-width: 100%; display: inline-block;" data-target="animated-image.originalImage"></a> |

qiaw99/sib200-mbert-finetuned | qiaw99 | 2025-06-14T09:52:49Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"bert",

"pretraining",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

] | null | 2025-06-14T09:51:40Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

WhiteRabbitNeo/WhiteRabbitNeo-V3-7B | WhiteRabbitNeo | 2025-06-14T09:51:49Z | 3,044 | 11 | transformers | [

"transformers",

"safetensors",

"qwen2",

"text-generation",

"code",

"qwen-coder",

"cybersecurity",

"devops",

"conversational",

"en",

"arxiv:2309.00071",

"base_model:Qwen/Qwen2.5-Coder-7B",

"base_model:finetune:Qwen/Qwen2.5-Coder-7B",

"license:apache-2.0",

"autotrain_compatible",

"text-g... | text-generation | 2025-04-25T22:03:34Z | ---

license: apache-2.0

base_model: Qwen/Qwen2.5-Coder-7B

language:

- en

pipeline_tag: text-generation

library_name: transformers

tags:

- code

- qwen-coder

- cybersecurity

- devops

---

<br>

# WhiteRabbitNeo

<br>

WhiteRabbitNeo is a model series that can be used for offensive and defensive cybersecurity. Access at [whiterabbitneo.com](https://www.whiterabbitneo.com/) or go to [Kindo.ai](https://www.kindo.ai/) to create agents.

# Community

Join us on [Discord](https://discord.gg/8Ynkrcbk92)

# Technical Overview

WhiteRabbitNeo is a finetune of [Qwen2.5-Coder-7B](https://huggingface.co/Qwen/Qwen2.5-Coder-7B/), and inherits the following features:

- Type: Causal Language Models

- Training Stage: Pretraining & Post-training

- Architecture: transformers with RoPE, SwiGLU, RMSNorm, and Attention QKV bias

- Number of Parameters: 7.61B

- Number of Paramaters (Non-Embedding): 6.53B

- Number of Layers: 28

- Number of Attention Heads (GQA): 28 for Q and 4 for KV

- Context Length: Full 131,072 tokens

- Please refer to [this section](#processing-long-texts) for detailed instructions on how to deploy Qwen2.5 for handling long texts.

## Requirements

We advise you to use the latest version of `transformers`.

With `transformers<4.37.0`, you will encounter the following error:

```

KeyError: 'qwen2'

```

## Quickstart

Here provides a code snippet with `apply_chat_template` to show you how to load the tokenizer and model and how to generate contents.

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "WhiteRabbitNeo/WhiteRabbitNeo-V3-7B"

model = AutoModelForCausalLM.from_pretrained(

model_name,

torch_dtype="auto",

device_map="auto"

)

tokenizer = AutoTokenizer.from_pretrained(model_name)

prompt = "write a quick sort algorithm."

messages = [

{"role": "system", "content": "You are WhiteRabbitNeo, created by Kindo.ai. You are a helpful assistant that is an expert in Cybersecurity and DevOps."},

{"role": "user", "content": prompt}

]

text = tokenizer.apply_chat_template(

messages,

tokenize=False,

add_generation_prompt=True

)

model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

generated_ids = model.generate(

**model_inputs,

max_new_tokens=512

)

generated_ids = [

output_ids[len(input_ids):] for input_ids, output_ids in zip(model_inputs.input_ids, generated_ids)

]

response = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]

```

### Processing Long Texts

The current `config.json` is set for context length up to 32,768 tokens.

To handle extensive inputs exceeding 32,768 tokens, we utilize [YaRN](https://arxiv.org/abs/2309.00071), a technique for enhancing model length extrapolation, ensuring optimal performance on lengthy texts.

For supported frameworks, you could add the following to `config.json` to enable YaRN:

```json

{

...,

"rope_scaling": {

"factor": 4.0,

"original_max_position_embeddings": 32768,

"type": "yarn"

}

}

```

# License

Apache-2.0 + WhiteRabbitNeo Extended Version

## WhiteRabbitNeo Extension to Apache-2.0 Licence: Usage Restrictions

```

You agree not to use the Model or Derivatives of the Model:

- In any way that violates any applicable national or international law or regulation or infringes upon the lawful rights and interests of any third party;

- For military use in any way;

- For the purpose of exploiting, harming or attempting to exploit or harm minors in any way;

- To generate or disseminate verifiably false information and/or content with the purpose of harming others;

- To generate or disseminate inappropriate content subject to applicable regulatory requirements;

- To generate or disseminate personal identifiable information without due authorization or for unreasonable use;

- To defame, disparage or otherwise harass others;

- For fully automated decision making that adversely impacts an individual’s legal rights or otherwise creates or modifies a binding, enforceable obligation;

- For any use intended to or which has the effect of discriminating against or harming individuals or groups based on online or offline social behavior or known or predicted personal or personality characteristics;

- To exploit any of the vulnerabilities of a specific group of persons based on their age, social, physical or mental characteristics, in order to materially distort the behavior of a person pertaining to that group in a manner that causes or is likely to cause that person or another person physical or psychological harm;

- For any use intended to or which has the effect of discriminating against individuals or groups based on legally protected characteristics or categories.

```

# Terms of Use

By accessing and using this Artificial Intelligence (AI) model, you, the user, acknowledge and agree that you are solely responsible for your use of the model and its outcomes. You hereby agree to indemnify, defend, and hold harmless the creators, developers, and any affiliated persons or entities of this AI model from and against any and all claims, liabilities, damages, losses, costs, expenses, fees (including reasonable attorneys' fees and court costs) that may arise, directly or indirectly, from your use of the AI model.

This AI model is provided "as is" and "as available" without any warranty of any kind, express or implied, including but not limited to the warranties of merchantability, fitness for a particular purpose, and non-infringement. The creators make no warranty that the AI model will meet your requirements or be available on an uninterrupted, secure, or error-free basis.

Your use of the AI model is at your own risk and discretion, and you will be solely responsible for any damage to computer systems or loss of data that results from the use of the AI model.

This disclaimer constitutes part of the agreement between you and the creators of the AI model regarding your use of the model, superseding any prior agreements between you and the creators regarding your use of this AI model. |

gen-robot/openvla-7b-rlvla-sft_16k | gen-robot | 2025-06-14T09:49:55Z | 17 | 0 | null | [

"safetensors",

"openvla",

"custom_code",

"arxiv:2505.19789",

"base_model:gen-robot/openvla-7b-rlvla-warmup",

"base_model:finetune:gen-robot/openvla-7b-rlvla-warmup",

"license:mit",

"region:us"

] | null | 2025-06-13T08:10:59Z | ---

license: mit

base_model:

- gen-robot/openvla-7b-rlvla-warmup

---

# VLA-RL-Study: What Can RL Bring to VLA Generalization? An Empirical Study

[](http://arxiv.org/abs/2505.19789)

[](https://rlvla.github.io)

This is the SFT model, fine-tuned from the [warm-upped OpenVLA model](https://huggingface.co/gen-robot/openvla-7b-rlvla-warmup).

The SFT dataset consists of 16k trajectories collected by the motion planner.

For more details, please refer to the [codebase](https://github.com/gen-robot/RL4VLA) and the [paper](http://arxiv.org/abs/2505.19789). |

gen-robot/openvla-7b-rlvla-warmup | gen-robot | 2025-06-14T09:46:35Z | 13 | 0 | null | [

"safetensors",

"openvla",

"custom_code",

"arxiv:2505.19789",

"base_model:openvla/openvla-7b",

"base_model:finetune:openvla/openvla-7b",

"license:mit",

"region:us"

] | null | 2025-06-13T05:58:39Z | ---

license: mit

base_model:

- openvla/openvla-7b

---

# VLA-RL-Study: What Can RL Bring to VLA Generalization? An Empirical Study

[](http://arxiv.org/abs/2505.19789)

[](https://rlvla.github.io)

This is the warm-upped model, fine-tuned from official `openvla/openvla-7b`.

The warm-up dataset consists of 140 trajectories collected by octo-small and the motion planner.

For more details, please refer to the [codebase](https://github.com/gen-robot/RL4VLA) and the [paper](http://arxiv.org/abs/2505.19789).

|

gen-robot/openvla-7b-rlvla-rl | gen-robot | 2025-06-14T09:45:13Z | 19 | 0 | null | [

"safetensors",

"openvla",

"custom_code",

"arxiv:2505.19789",

"base_model:gen-robot/openvla-7b-rlvla-warmup",

"base_model:finetune:gen-robot/openvla-7b-rlvla-warmup",

"license:mit",

"region:us"

] | null | 2025-06-13T08:11:50Z | ---

license: mit

base_model:

- gen-robot/openvla-7b-rlvla-warmup

---

# VLA-RL-Study: What Can RL Bring to VLA Generalization? An Empirical Study

[](http://arxiv.org/abs/2505.19789)

[](https://rlvla.github.io)

This is the RL model, fine-tuned from the [warm-upped OpenVLA model](https://huggingface.co/gen-robot/openvla-7b-rlvla-warmup).

The RL training takes about 1.5M environment steps.

For more details, please refer to the [codebase](https://github.com/gen-robot/RL4VLA) and the [paper](http://arxiv.org/abs/2505.19789).

|

VIDEOS-18-parbin-assam-viral-videoS/VIDEO.parbin.Viral.Video.Tutorial.Official.link | VIDEOS-18-parbin-assam-viral-videoS | 2025-06-14T09:41:19Z | 0 | 0 | null | [

"region:us"

] | null | 2025-06-14T09:40:52Z | <animated-image data-catalyst=""><a href="https://tinyurl.com/fn84hrnu?news-viral-video" rel="nofollow" data-target="animated-image.originalLink"><img src="https://static.wixstatic.com/media/b249f9_adac8f70fb3f45b88691696c77de18f3~mv2.gif" alt="Foo" data-canonical-src="https://static.wixstatic.com/media/b249f9_adac8f70fb3f45b88691696c77de18f3~mv2.gif" style="max-width: 100%; display: inline-block;" data-target="animated-image.originalImage"></a> |

KnutJaegersberg/EuroLLM-22B-Instruct-Preview-Q8_0-GGUF | KnutJaegersberg | 2025-06-14T09:40:36Z | 0 | 0 | transformers | [

"transformers",

"gguf",

"llama-cpp",

"gguf-my-repo",

"base_model:utter-project/EuroLLM-22B-Instruct-Preview",

"base_model:quantized:utter-project/EuroLLM-22B-Instruct-Preview",

"endpoints_compatible",

"region:us",

"conversational"

] | null | 2025-06-14T09:38:48Z | ---

library_name: transformers

tags:

- llama-cpp

- gguf-my-repo

base_model: utter-project/EuroLLM-22B-Instruct-Preview

---

# KnutJaegersberg/EuroLLM-22B-Instruct-Preview-Q8_0-GGUF

This model was converted to GGUF format from [`utter-project/EuroLLM-22B-Instruct-Preview`](https://huggingface.co/utter-project/EuroLLM-22B-Instruct-Preview) using llama.cpp via the ggml.ai's [GGUF-my-repo](https://huggingface.co/spaces/ggml-org/gguf-my-repo) space.

Refer to the [original model card](https://huggingface.co/utter-project/EuroLLM-22B-Instruct-Preview) for more details on the model.

## Use with llama.cpp

Install llama.cpp through brew (works on Mac and Linux)

```bash

brew install llama.cpp

```

Invoke the llama.cpp server or the CLI.

### CLI:

```bash

llama-cli --hf-repo KnutJaegersberg/EuroLLM-22B-Instruct-Preview-Q8_0-GGUF --hf-file eurollm-22b-instruct-preview-q8_0.gguf -p "The meaning to life and the universe is"

```

### Server:

```bash

llama-server --hf-repo KnutJaegersberg/EuroLLM-22B-Instruct-Preview-Q8_0-GGUF --hf-file eurollm-22b-instruct-preview-q8_0.gguf -c 2048

```

Note: You can also use this checkpoint directly through the [usage steps](https://github.com/ggerganov/llama.cpp?tab=readme-ov-file#usage) listed in the Llama.cpp repo as well.

Step 1: Clone llama.cpp from GitHub.

```

git clone https://github.com/ggerganov/llama.cpp

```

Step 2: Move into the llama.cpp folder and build it with `LLAMA_CURL=1` flag along with other hardware-specific flags (for ex: LLAMA_CUDA=1 for Nvidia GPUs on Linux).

```

cd llama.cpp && LLAMA_CURL=1 make

```

Step 3: Run inference through the main binary.

```

./llama-cli --hf-repo KnutJaegersberg/EuroLLM-22B-Instruct-Preview-Q8_0-GGUF --hf-file eurollm-22b-instruct-preview-q8_0.gguf -p "The meaning to life and the universe is"

```

or

```

./llama-server --hf-repo KnutJaegersberg/EuroLLM-22B-Instruct-Preview-Q8_0-GGUF --hf-file eurollm-22b-instruct-preview-q8_0.gguf -c 2048

```

|

Patricia-alencar-prefeita-full-video/VIDEOS-18-kashmiri-girl-viral-videos | Patricia-alencar-prefeita-full-video | 2025-06-14T09:33:53Z | 0 | 0 | null | [

"region:us"

] | null | 2025-06-14T09:33:46Z |

<a href="https://sdu.sk/uLf"><img src="https://i.ibb.co.com/xMMVF88/686577567.gif" alt="fsd" /></a>

<a href="https://sdu.sk/uLf" rel="nofollow">►✅ 𝘾𝙇𝙄𝘾𝙆 𝙃𝙀𝙍𝙀 ==►► (𝗦𝗶𝗴𝗻 𝗨𝗽 𝘁𝗼 𝙁𝙪𝙡𝙡 𝗪𝗮𝘁𝗰𝗵 𝙑𝙞𝙙𝙚𝙤❤️❤️)</a>

<a href="https://sdu.sk/uLf" rel="nofollow">🔴 ➤►✅𝘾𝙇𝙄𝘾𝙆 𝙃𝙀𝙍𝙀 ==►► (𝐅𝐮𝐥𝐥 𝐯𝐢𝐝𝐞𝐨 𝐥𝐢𝐧𝐤)</a>

|

quanganh22/pegasus-x-finetuned-final-v2 | quanganh22 | 2025-06-14T09:31:26Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"pegasus_x",

"text2text-generation",

"arxiv:1910.09700",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text2text-generation | 2025-06-14T01:53:46Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

gradientrouting-spar/vertical_5_proxy_ntrain_25_ntrig_9_animals_seed_1_seed_25_seed_2_20250614_091555 | gradientrouting-spar | 2025-06-14T09:25:19Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

] | null | 2025-06-14T09:25:03Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

VIDEOS-18-misswow-Videos/FULL.VIDEO.Miss.Wow.Viral.Video.Tutorial.Official | VIDEOS-18-misswow-Videos | 2025-06-14T09:21:29Z | 0 | 0 | null | [

"region:us"

] | null | 2025-06-14T09:21:23Z |

<a href="https://sdu.sk/uLf"><img src="https://i.ibb.co.com/xMMVF88/686577567.gif" alt="fsd" /></a>

<a href="https://sdu.sk/uLf" rel="nofollow">►✅ 𝘾𝙇𝙄𝘾𝙆 𝙃𝙀𝙍𝙀 ==►► (𝗦𝗶𝗴𝗻 𝗨𝗽 𝘁𝗼 𝙁𝙪𝙡𝙡 𝗪𝗮𝘁𝗰𝗵 𝙑𝙞𝙙𝙚𝙤❤️❤️)</a>

<a href="https://sdu.sk/uLf" rel="nofollow">🔴 ➤►✅𝘾𝙇𝙄𝘾𝙆 𝙃𝙀𝙍𝙀 ==►► (𝐅𝐮𝐥𝐥 𝐯𝐢𝐝𝐞𝐨 𝐥𝐢𝐧𝐤)</a>

|

RichardErkhov/picAIso_-_TARS-8B-4bits | RichardErkhov | 2025-06-14T08:24:05Z | 0 | 0 | null | [

"safetensors",

"llama",

"arxiv:2306.01708",

"4-bit",

"bitsandbytes",

"region:us"

] | null | 2025-06-14T08:22:08Z | Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

TARS-8B - bnb 4bits

- Model creator: https://huggingface.co/picAIso/

- Original model: https://huggingface.co/picAIso/TARS-8B/

Original model description:

---

base_model:

- NousResearch/Hermes-2-Pro-Llama-3-8B

- nbeerbower/llama-3-gutenberg-8B

- MaziyarPanahi/Llama-3-8B-Instruct-v0.9

library_name: transformers

tags:

- mergekit

- merge

- merging

- llama3

- merged

license: llama3

language:

- en

---

# merge

This is a merge of pre-trained language models created using [mergekit](https://github.com/cg123/mergekit).

## Merge Details

### Merge Method

This model was merged using the [TIES](https://arxiv.org/abs/2306.01708) merge method using [MaziyarPanahi/Llama-3-8B-Instruct-v0.9](https://huggingface.co/MaziyarPanahi/Llama-3-8B-Instruct-v0.9) as a base.

### Models Merged

The following models were included in the merge:

* [NousResearch/Hermes-2-Pro-Llama-3-8B](https://huggingface.co/NousResearch/Hermes-2-Pro-Llama-3-8B)

* [nbeerbower/llama-3-gutenberg-8B](https://huggingface.co/nbeerbower/llama-3-gutenberg-8B)

### Configuration

The following YAML configuration was used to produce this model:

```yaml

models:

- model: MaziyarPanahi/Llama-3-8B-Instruct-v0.9

#no parameters necessary for base model

- model: NousResearch/Hermes-2-Pro-Llama-3-8B

parameters:

density: 0.5

weight: 0.8

- model: nbeerbower/llama-3-gutenberg-8B

parameters:

density: 0.5

weight: 0.8

merge_method: ties

base_model: MaziyarPanahi/Llama-3-8B-Instruct-v0.9

parameters:

normalize: false

int8_mask: true

dtype: float16

```

|

RichardErkhov/SYSU-MUCFC-FinTech-Research-Center_-_ZhiLu-2-8B-Instruct-4bits | RichardErkhov | 2025-06-14T08:20:25Z | 0 | 0 | null | [

"safetensors",

"llama",

"4-bit",

"bitsandbytes",

"region:us"

] | null | 2025-06-14T08:17:57Z | Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

ZhiLu-2-8B-Instruct - bnb 4bits

- Model creator: https://huggingface.co/SYSU-MUCFC-FinTech-Research-Center/

- Original model: https://huggingface.co/SYSU-MUCFC-FinTech-Research-Center/ZhiLu-2-8B-Instruct/

Original model description:

---

license: llama3

---

ZhiLu-2是一款基于llama3微调的中文消费金融领域对话大模型。与ZhiLu相比,ZhiLu-2性能有了显著提升。我们不仅收集了全新的高质量指令数据进行对齐,还创新性地设计了独特的数据合成方法,并将大量合成数据应用于模型训练。通过这些努力,ZhiLu-2在性能上取得了显著的突破,展示了卓越的性能。

训练细节

在第二版的智鹿训练中,我们引入了全新的指令微调数据,并且加入了合成数据。我们相信,合成数据的使用将带来意想不到的惊喜效果。以下是一些重要的训练细节:

🚀 高效训练

我们使用llama-factory作为训练框架,并配备多块A100显卡,通过DeepSpeed(ds)实现数据并行、模型并行、管道并行和张量并行等优化技术。在微调方法的选择上,我们对Full-Rank FT、LORA、BAdam、LoRA+和DoRA进行了详细比较,评估了各方法在训练时间、显卡占用、推理时间和模型性能等多项指标上的表现。最终,我们决定采用DoRA进行微调,以获得最佳的性价比和性能。

⚡ 加速技术

为了提高资源的利用率并缩短训练时间,我们采用了以下两项关键技术:

Packing

FlashAttention-2

🔒 安全性与对齐

我们使用 DPO 来训练模型。DPO具有使用便捷、成效快速的优势,可以达到近似RLHF的偏好对齐效果,确保输出的安全和无害。

🛡️ 避免灾难性遗忘

为了防止训练后模型的灾难性遗忘,并平衡模型在各个任务上的能力,我们使用了 merging 技术。

🌱 自我进化

通过设计新的框架,我们使模型能够自我生成训练数据,从而实现自我进化。

更多细节参见:[ZhiLu-2-github仓库](https://github.com/SYSU-MUCFC-FinTech-Research-Center)

|

yunwoerte/ppo-LunarLander-v2 | yunwoerte | 2025-06-14T08:19:16Z | 0 | 0 | stable-baselines3 | [

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] | reinforcement-learning | 2025-06-14T08:18:57Z | ---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: PPO

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

metrics:

- type: mean_reward

value: 235.87 +/- 83.86

name: mean_reward

verified: false

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```

|

RichardErkhov/Vikhrmodels_-_it-5.4-fp16-orpo-v2-4bits | RichardErkhov | 2025-06-14T08:18:47Z | 0 | 0 | null | [

"safetensors",

"llama",

"4-bit",

"bitsandbytes",

"region:us"

] | null | 2025-06-14T08:17:02Z | Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

it-5.4-fp16-orpo-v2 - bnb 4bits

- Model creator: https://huggingface.co/Vikhrmodels/

- Original model: https://huggingface.co/Vikhrmodels/it-5.4-fp16-orpo-v2/

Original model description:

---

language:

- ru

---

## Инструктивный вихрь 5.4

Базовый Вихрь 5той версии (мистраль) обученный на переведенных инструкциях и ответах GPT-4 и улучшенный с помощью [ORPO](https://argilla.io/blog/mantisnlp-rlhf-part-8/) на нашем внутреннем датасете.

Модель имеет довольно высокое разннобразие ответов, поэтому рекомендуется использовать temperature в рендже [0.1, 0.4].

- [GGUF](https://huggingface.co/Vikhrmodels/it-5.4-fp16-orpo-v2-GGUF)

### Использование через transformers

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

import torch

model_name = 'Vikhrmodels/it-5.4-fp16-orpo-v2'

model = AutoModelForCausalLM.from_pretrained(

model_name,

device_map="sequential",

attn_implementation="sdpa",

torch_dtype=torch.bfloat16

)

tokenizer = AutoTokenizer.from_pretrained(model_name)

test_input = tokenizer.apply_chat_template([

{'role': 'user', 'content': 'Привет, объясни что такое черные дыры'}

], add_generation_prompt=True, tokenize=True, return_tensors='pt')

test_input = test_input.to(model.device)

answer = model.generate(

test_input,

do_sample=True,

use_cache=True,

max_new_tokens=256,

temperature=0.3,

)[:, test_input.shape[-1]:]

answer = tokenizer.batch_decode(answer, skip_special_tokens=True)[0]

print(answer)

```

Ответ модели:

> Привет! Черные дыры - это удивительные объекты, которые существуют в космосе. Они образуются из остатков массивных звезд, которые взрываются в результате сверхновой. После взрыва остается ядро звезды, которое коллапсирует под действием собственной гравитации, образуя черную дыру.

>

> Черная дыра - это область пространства, где гравитационное притяжение настолько сильное, что ничто, даже свет, не может вырваться из нее. Это происходит потому, что гравитационное поле черной дыры настолько сильное, что оно искривляет пространство-время настолько, что даже свет не может покинуть ее.

>

> Черные дыры могут быть разных размеров, от маленьких, которые могут быть размером с планету, до огромных, которые могут быть размером с галактику. Они могут быть как неподвижными, так и движущимися, и могут взаимодействовать с другими объектами в космосе, такими как звезды и планеты.

>

> Черные дыры - это удивительные объекты, которые продолжают изучать ученые, и они могут многое рассказать нам о Вселенной и ее происхождении.

### Использование через vllm + openai client

Запуск сервера в терминале: \

`python -m vllm.entrypoints.openai.api_server --model Vikhrmodels/it-5.4-fp16-orpo-v2 --dtype half --api-key token-abc123 -tp 1`

```python

import openai

llm_model = "Vikhrmodels/it-5.4-fp16-orpo-v2"

llm_client = openai.OpenAI(

base_url="http://localhost:8000/v1",

api_key="token-abc123",

)

def make_completion(history, client, model):

completion = client.chat.completions.create(

model=model,

messages=history,

temperature=0.3,

max_tokens=2048,

stop=["</s>", "<eos>", "<|eot_id|>", "<|im_end|>"]

)

output = completion.choices[0].message.content

return output

answer = make_completion([

{'role': 'user', 'content': 'Привет, объясни что такое черные дыры'}

], llm_client, llm_model)

print(answer)

```

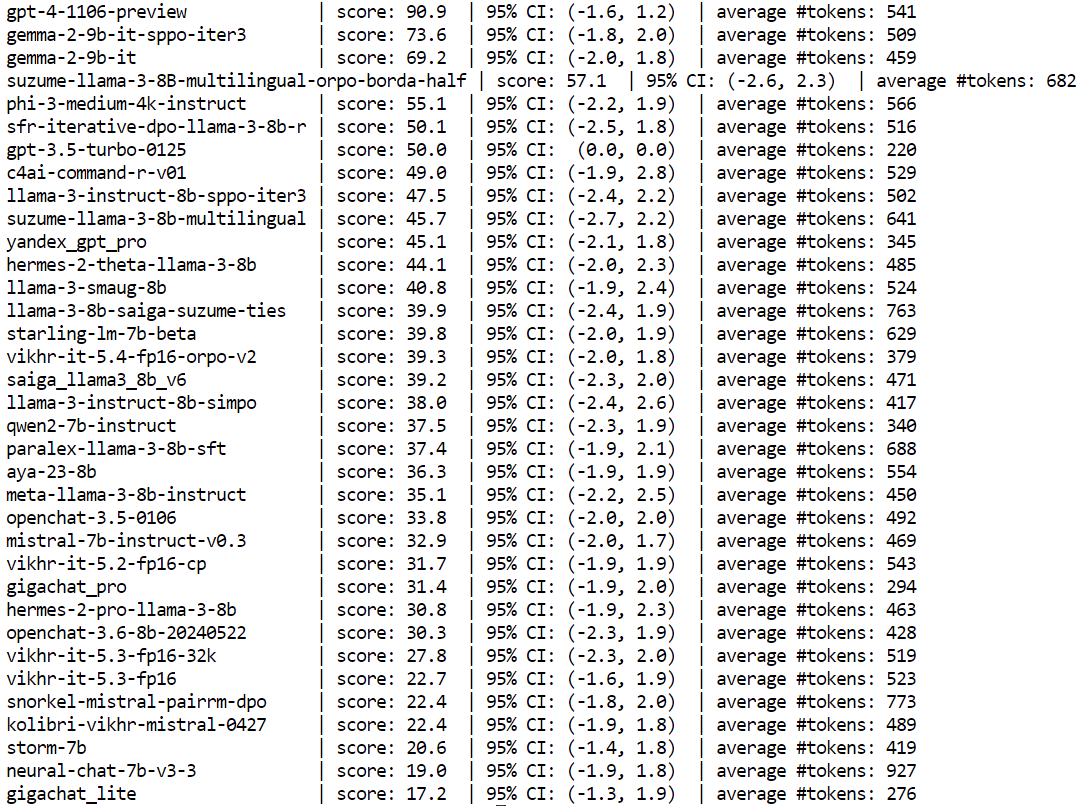

### Метрики на ru_arena_general

|

RichardErkhov/helloollel_-_Llama-3.1-8B-instruct-bilibili-4bits | RichardErkhov | 2025-06-14T08:16:33Z | 0 | 0 | null | [

"safetensors",

"llama",

"4-bit",

"bitsandbytes",

"region:us"

] | null | 2025-06-14T08:14:50Z | Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

Llama-3.1-8B-instruct-bilibili - bnb 4bits

- Model creator: https://huggingface.co/helloollel/

- Original model: https://huggingface.co/helloollel/Llama-3.1-8B-instruct-bilibili/

Original model description:

---

base_model: unsloth/Meta-Llama-3.1-8B-Instruct

language:

- en

license: apache-2.0

tags:

- text-generation-inference

- transformers

- unsloth

- llama

- trl

- sft

---

# Uploaded model

- **Developed by:** helloollel

- **License:** apache-2.0

- **Finetuned from model :** unsloth/Meta-Llama-3.1-8B-Instruct

This llama model was trained 2x faster with [Unsloth](https://github.com/unslothai/unsloth) and Huggingface's TRL library.

[<img src="https://raw.githubusercontent.com/unslothai/unsloth/main/images/unsloth%20made%20with%20love.png" width="200"/>](https://github.com/unslothai/unsloth)

|

magnusdtd/bio-clip-cls-ft | magnusdtd | 2025-06-14T08:16:31Z | 0 | 0 | open_clip | [

"open_clip",

"en",

"license:mit",

"region:us"

] | null | 2025-06-14T07:54:50Z | ---

license: mit

language:

- en

library_name: open_clip

---

A fine-tuned version of BioCLIP, trained for 30 epochs on a specialized dataset for classification tasks.

|

RichardErkhov/hoangcung165_-_Sailor-7B-Metal-Healt-4bits | RichardErkhov | 2025-06-14T08:15:35Z | 0 | 0 | null | [

"safetensors",

"qwen2",

"4-bit",

"bitsandbytes",

"region:us"

] | null | 2025-06-14T08:13:44Z | Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

Sailor-7B-Metal-Healt - bnb 4bits

- Model creator: https://huggingface.co/hoangcung165/

- Original model: https://huggingface.co/hoangcung165/Sailor-7B-Metal-Healt/

Original model description:

---

tags:

- autotrain

- text-generation-inference

- text-generation

- peft

library_name: transformers

base_model: sail/Sailor-7B

widget:

- messages:

- role: user

content: What is your favorite condiment?

license: other

---

# Model Trained Using AutoTrain

This model was trained using AutoTrain. For more information, please visit [AutoTrain](https://hf.co/docs/autotrain).

# Usage

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

model_path = "PATH_TO_THIS_REPO"

tokenizer = AutoTokenizer.from_pretrained(model_path)

model = AutoModelForCausalLM.from_pretrained(

model_path,

device_map="auto",

torch_dtype='auto'

).eval()

# Prompt content: "hi"

messages = [

{"role": "user", "content": "hi"}

]

input_ids = tokenizer.apply_chat_template(conversation=messages, tokenize=True, add_generation_prompt=True, return_tensors='pt')

output_ids = model.generate(input_ids.to('cuda'))

response = tokenizer.decode(output_ids[0][input_ids.shape[1]:], skip_special_tokens=True)

# Model response: "Hello! How can I assist you today?"

print(response)

```

|

MetaphoricalCode/Dans-DangerousWinds-V1.1.0-12b-exl3-5bpw-hb6 | MetaphoricalCode | 2025-06-14T08:14:45Z | 0 | 0 | null | [

"safetensors",

"mistral",

"en",

"dataset:PocketDoc/Dans-Prosemaxx-Adventure",

"dataset:PocketDoc/Dans-Failuremaxx-Adventure",

"dataset:PocketDoc/Dans-Prosemaxx-Cowriter-2-S",

"base_model:PocketDoc/Dans-DangerousWinds-V1.1.0-12b",

"base_model:quantized:PocketDoc/Dans-DangerousWinds-V1.1.0-12b",

"lice... | null | 2025-06-14T08:07:14Z | ---

license: apache-2.0

datasets:

- PocketDoc/Dans-Prosemaxx-Adventure

- PocketDoc/Dans-Failuremaxx-Adventure

- PocketDoc/Dans-Prosemaxx-Cowriter-2-S

language:

- en

base_model:

- PocketDoc/Dans-DangerousWinds-V1.1.0-12b

base_model_relation: quantized

---

## Quantized using the default exllamav3 (0.0.3) quantization process.

- Original model: https://huggingface.co/PocketDoc/Dans-DangerousWinds-V1.1.0-12b

- exllamav3: https://github.com/turboderp-org/exllamav3

---

<html lang="en">

<head>

<meta charset="UTF-8">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

</head>

<div class="crt-container">

<div class="crt-case">

<div class="crt-inner-case">

<div class="crt-bezel">

<div class="terminal-screen">

<div style="text-align: center;">

<h2>Dans-DangerousWinds-V1.1.0-12b</h2>

<pre class="code-block" style="display: inline-block; text-align: left;"> (( "####@@!!$$ ))

`#####@@!$$` ))

(( '####@!!$:

(( ,####@!!$: ))

.###@!!$:

`##@@!$:

`#@!!$

!@# `#@!$: @#$

#$ `#@!$: !@!

'@!$:

'`\ "!$: /`'

'\ '!: /'

"\ : /"

-."-/\\\-."//.-"/:`\."-.JrS"."-=_\\

" -."-.\\"-."//.-".`-."_\\-.".-\".-//</pre>

</div>

<p>An old school text adventure model with a predilection for making the player fumble, often fatally.</p>

<h3>Key Details</h3>

<pre class="code-block">

BASE MODEL: mistralai/Mistral-Nemo-Base-2407

LICENSE: apache-2.0

LANGUAGE: English

CONTEXT LENGTH: 8192 tokens</pre>

<h3>Recommended Settings</h3>

<pre class="code-block">

TEMPERATURE: 1.0

TOP_P: 0.95

MIN_P: 0.05</pre>

<h3>Prompting Format</h3>

<p>The model uses old school "adventure" format:</p>

<pre class="code-block">

system prompt

\> user prompt

model output

\> user prompt

model output</pre>

<h3>Example Outputs</h3>

<details>

<summary>Sample Adventure #1</summary>

<p><em>Themes: CyberPunk, Sci-Fi</em></p>

<p><strong>></strong> You look around to get your bearings.</p>

<p>The sky is the color of an old bruise and it seems to be raining blood. The ground is slick with the stuff, but you can still make out what must have been a huge battle zone at one point. The remnants of what appear to have been military vehicles are scattered about in various states of disrepair - some have had their armor stripped away, others lie in mangled heaps or are simply empty shells with gaping holes where their weapons used to be.

In the distance, you spot the remains of a large building that may once have been a military base or compound. Its walls are riddled with bullet holes and chunks of masonry lie everywhere. There's no telling how long this place has been abandoned, but whatever happened here was definitely not pretty.</p>

<p><strong>></strong> inventory</p>

<p>You pat yourself down. In your pockets, you find:</p>

<p>- A small flashlight (batteries low)</p>

<p>- A multitool with several attachments (including a mini saw blade)</p>

<p>- A data chip containing encrypted files (labeled "Classified")</p>

<p>- Some ration packs (several days' worth)</p>

<p><strong>></strong> who am i?</p>

<p>Who are YOU? That's a great question! Unfortunately, you seem to have lost most of your memories during the battle. You don't even know why you're here. But you do recall one important detail...

Your name is [REDACTED]. You were part of an elite team sent on a mission deep behind enemy lines. What you were doing here, and whether you succeeded, is now lost to you. All you know for certain is that something went terribly wrong, and you are the only survivor.

Now it's up to you to piece together what happened, find a way out of this hellish landscape, and uncover the truth about your mission. Good luck, [REDACTED] - you're going to need it.</p>

<p><strong>></strong> well lets pick through the vehicles</p>

<p>You start picking your way through the wrecked vehicles, searching for anything useful. As you move past an armored personnel carrier with its door hanging open, you hear a faint whirring sound from inside. Before you can react, a metallic appendage swings out, grabbing your ankle. The appendage belongs to a cybernetically augmented combat drone that had been hidden in the shadows!

The drone's pincer grip is impossibly strong - you can't break free as it drags you inside the APC. Through the shattered viewports, you catch glimpses of the red-tinted world outside as the drone reels you in like a fish on a line. Its single optic sensor blinks menacingly at you as more mechanical limbs emerge from the vehicle's interior.

This thing is going to tear you apart, unless you can somehow disable it first...</p>

<p><strong>></strong> i try to shut it down using my access codes</p>

<p>As you attempt to input the shutdown sequence, the combat drone suddenly emits a high-pitched screech.

Its metal limbs coil tighter around you, squeezing with crushing force. The pressure causes your bones to splinter, pulverizing your limbs before the drone finally crushes your chest cavity, puncturing vital organs.

Your vision dims as you feel the warm rush of your own lifeblood draining out onto the cold metal floor of the APC. Your body goes limp, but the drone continues constricting until all that remains is a pulped, unrecognizable mass of meat and bone fragments.

With a grinding sound, the drone releases the mess, which falls in a heap next to the shredded remains of your equipment. The machine gives a satisfied chirp before returning to its dormant state, ready to claim another victim.</p>

</details>

<details>

<summary>Sample Adventure #2</summary>

<p><em>Themes: Gritty, Low fantasy, adventure</em></p>

<p><strong>></strong> You look around to get your bearings.</p>

<p>The ground is a dark gray with white speckles. A large river flows down the center of the valley, flowing from the north and disappearing to the south into a large cave entrance. The eastern side of the valley is rocky, and looks like it might be climbable. The western side has a steep slope, but you can see some trees growing there. The air is warm and humid.

You hear the sound of something large moving in the trees on the western side of the valley. You're not sure what it is, but you should probably avoid it.</p>

<p><strong>></strong> what am i doing here again?</p>

<p>You've been through this before. You are an adventurer who wandered too close to a wizard's lair, and was turned into a slime monster by one of his experiments gone wrong! Now you must find a way back to your normal form, or perish as a mindless creature of goop.</p>

<p><strong>></strong> dang thats rough</p>

<p>As a blob of sentient ooze, you have limited options for expressing frustration. You pulsate angrily, sloshing about in your gelatinous form. Some of your slime splatters onto a nearby rock, where it begins to corrode the surface. Slowly but surely, your acidic secretions etch "Dang, that's rough" into the stone - a lasting testament to your existential woes as a slime monster.</p>

<p><strong>></strong> ha</p>

<p>Your laugh comes out as an odd gurgling sound, bubbles forming at the surface of your gelatinous body. A small globule breaks free and sails through the air, splatting wetly against a rock. You watch in amusement as the impact causes the rock to partially dissolve under the acidic effects of your ooze.

"I guess being a slime does have its... benefits?" you think, chuckling inwardly at your own morbid humor. The little victories help keep your spirits up while trapped in this disgusting, shapeless form.</p>

</details>

<h3>Support Development</h3>

<p>Development is limited by funding and resources. To help support:</p>

<p>- Contact on HF</p>

<p>- Email: visuallyadequate@gmail.com</p>

<p class="coffee-container">

<a href="https://www.buymeacoffee.com/visually" target="_blank" rel="noopener noreferrer">

<img src="https://cdn.buymeacoffee.com/buttons/v2/default-yellow.png" alt="Buy Me A Coffee" height="45" width="162">

</a>

</p>

</div>

</div>

</div>

</div>

</div>

<style>

@import url('https://fonts.googleapis.com/css2?family=VT323&display=swap');

.crt-container {

padding: 10px;

max-width: 1000px;

margin: 0 auto;

width: 95%;

}

.crt-case {

background: #e8d7c3;

border-radius: 10px;

padding: 15px;

box-shadow: inset -2px -2px 5px rgba(0,0,0,0.3), 2px 2px 5px rgba(0,0,0,0.2);

}

.crt-inner-case {

background: #e8d7c3;

border-radius: 8px;

padding: 3px;

box-shadow: inset -1px -1px 4px rgba(0,0,0,0.3), 1px 1px 4px rgba(0,0,0,0.2);

}

.crt-bezel {

background: linear-gradient(145deg, #1a1a1a, #2a2a2a);

padding: 15px;

border-radius: 5px;

border: 3px solid #0a0a0a;

position: relative;

box-shadow:

inset 0 0 20px rgba(0,0,0,0.5),

inset 0 0 4px rgba(0,0,0,0.4),

inset 2px 2px 4px rgba(255,255,255,0.05),

inset -2px -2px 4px rgba(0,0,0,0.8),

0 0 2px rgba(0,0,0,0.6),

-1px -1px 4px rgba(255,255,255,0.1),

1px 1px 4px rgba(0,0,0,0.3);

}

.crt-bezel::before {

content: '';

position: absolute;

top: 0;

left: 0;

right: 0;

bottom: 0;

background: linear-gradient(45deg,

rgba(255,255,255,0.03) 0%,

rgba(255,255,255,0) 40%,

rgba(0,0,0,0.1) 60%,

rgba(0,0,0,0.2) 100%);

border-radius: 3px;

pointer-events: none;

}

.terminal-screen {

background: #111112;

padding: 20px;

border-radius: 15px;

position: relative;

overflow: hidden;

font-family: 'VT323', monospace;

font-size: clamp(12px, 1.5vw, 16px);

color: #e49b3e;

line-height: 1.4;

text-shadow: 0 0 2px #e49b3e;

animation: flicker 0.15s infinite;

filter: brightness(1.1) contrast(1.1);

box-shadow:

inset 0 0 30px rgba(0,0,0,0.9),

inset 0 0 8px rgba(0,0,0,0.8),

0 0 5px rgba(0,0,0,0.6);

max-width: 80ch;

margin: 0 auto;

}

.terminal-screen h2, .terminal-screen h3 {

font-size: clamp(16px, 2vw, 20px);

margin-bottom: 1em;

color: #e49b3e;

}

.terminal-screen pre.code-block {

font-size: clamp(11px, 1.3vw, 14px);

white-space: pre-wrap;

margin: 1em 0;

background-color: #1a1a1a;

padding: 1em;

border-radius: 4px;

color: #e49b3e;

}

.terminal-screen::before {

content: "";

position: absolute;

top: 0;

left: 0;

right: 0;

bottom: 0;

background: linear-gradient(rgba(18, 16, 16, 0) 50%, rgba(0, 0, 0, 0.25) 50%), url('data:image/png;base64,iVBORw0KGgoAAAANSUhEUgAAADIAAAAyBAMAAADsEZWCAAAAGFBMVEUAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAA4o8JoAAAAB3RSTlMAGwQIEQMYADcPzwAAACJJREFUKM9jYBgFo2AU0Beg+A8YMCLxGYZCbNQEo4BaAAD5TQiR5wU9vAAAAABJRU5ErkJggg==');

background-size: 100% 2.5px;

animation: scan 1s linear infinite;

pointer-events: none;

z-index: 2;

}

.terminal-screen::after {

content: "";

position: absolute;

top: 0;

left: 0;

right: 0;

bottom: 0;

background: radial-gradient(circle at center,

rgba(17, 17, 18, 0) 0%,

rgba(17, 17, 18, 0.2) 50%,

rgba(17, 17, 18, 0.15) 100%

);

border-radius: 20px;

animation: vignette-pulse 3s infinite;

pointer-events: none;

z-index: 1;

}

.terminal-screen details {

margin: 1em 0;

padding: 0.5em;

border: 1px solid #e49b3e;

border-radius: 4px;

}

.terminal-screen summary {

cursor: pointer;

font-weight: bold;

margin: -0.5em;

padding: 0.5em;

border-bottom: 1px solid #e49b3e;

color: #e49b3e;

}

.terminal-screen details[open] summary {

margin-bottom: 0.5em;

}

.badge-container, .coffee-container {

text-align: center;

margin: 1em 0;

}

.badge-container img, .coffee-container img {

max-width: 100%;

height: auto;

}

.terminal-screen a {

color: #e49b3e;

text-decoration: underline;

transition: opacity 0.2s;

}

.terminal-screen a:hover {

opacity: 0.8;

}

.terminal-screen strong, .terminal-screen em {

color: #f0f0f0; /* off-white color for user/system messages */

}

.terminal-screen p {

color: #f0f0f0; /* off-white color for assistant responses */

}

.terminal-screen p, .terminal-screen li {

color: #e49b3e;

}

.terminal-screen code,

.terminal-screen kbd,

.terminal-screen samp {

color: #e49b3e;

font-family: 'VT323', monospace;

text-shadow: 0 0 2px #e49b3e;

background-color: #1a1a1a;

padding: 0.2em 0.4em;

border-radius: 4px;

}

.terminal-screen pre.code-block,

.terminal-screen pre {

font-size: clamp(11px, 1.3vw, 14px);

white-space: pre-wrap;

margin: 1em 0;

background-color: #1a1a1a;

padding: 1em;

border-radius: 4px;

color: #e49b3e;

}

@keyframes flicker {

0% { opacity: 0.98; }

50% { opacity: 1; }

100% { opacity: 0.99; }

}

@keyframes scan {

0% { transform: translateY(0); }

100% { transform: translateY(4px); }

}

@keyframes vignette-pulse {

0% { opacity: 0.8; }

50% { opacity: 1; }

100% { opacity: 0.8; }

}

</style> |

fakeid/Qwen2.5-0.5B-Instruct-Gensyn-Swarm-rugged_bipedal_antelope | fakeid | 2025-06-14T08:02:29Z | 144 | 0 | transformers | [

"transformers",

"safetensors",

"qwen2",

"text-generation",

"generated_from_trainer",

"rl-swarm",

"grpo",

"gensyn",

"I am rugged bipedal antelope",

"trl",

"conversational",

"arxiv:2402.03300",

"base_model:unsloth/Qwen2.5-0.5B-Instruct",

"base_model:finetune:unsloth/Qwen2.5-0.5B-Instruct",

... | text-generation | 2025-05-14T03:37:15Z | ---

base_model: unsloth/Qwen2.5-0.5B-Instruct

library_name: transformers

model_name: Qwen2.5-0.5B-Instruct-Gensyn-Swarm-rugged_bipedal_antelope

tags:

- generated_from_trainer

- rl-swarm

- grpo

- gensyn

- I am rugged bipedal antelope

- trl

licence: license

---

# Model Card for Qwen2.5-0.5B-Instruct-Gensyn-Swarm-rugged_bipedal_antelope

This model is a fine-tuned version of [unsloth/Qwen2.5-0.5B-Instruct](https://huggingface.co/unsloth/Qwen2.5-0.5B-Instruct).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time machine, but could only go to the past or the future once and never return, which would you choose and why?"

generator = pipeline("text-generation", model="fakeid/Qwen2.5-0.5B-Instruct-Gensyn-Swarm-rugged_bipedal_antelope", device="cuda")

output = generator([{"role": "user", "content": question}], max_new_tokens=128, return_full_text=False)[0]

print(output["generated_text"])

```

## Training procedure

This model was trained with GRPO, a method introduced in [DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models](https://huggingface.co/papers/2402.03300).

### Framework versions

- TRL: 0.17.0

- Transformers: 4.51.3

- Pytorch: 2.7.0

- Datasets: 3.6.0

- Tokenizers: 0.21.1

## Citations

Cite GRPO as:

```bibtex

@article{zhihong2024deepseekmath,

title = {{DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models}},

author = {Zhihong Shao and Peiyi Wang and Qihao Zhu and Runxin Xu and Junxiao Song and Mingchuan Zhang and Y. K. Li and Y. Wu and Daya Guo},

year = 2024,

eprint = {arXiv:2402.03300},

}

```

Cite TRL as:

```bibtex

@misc{vonwerra2022trl,

title = {{TRL: Transformer Reinforcement Learning}},

author = {Leandro von Werra and Younes Belkada and Lewis Tunstall and Edward Beeching and Tristan Thrush and Nathan Lambert and Shengyi Huang and Kashif Rasul and Quentin Gallou{\'e}dec},

year = 2020,

journal = {GitHub repository},

publisher = {GitHub},

howpublished = {\url{https://github.com/huggingface/trl}}

}

``` |

gradientrouting-spar/vertical_2_proxy_ntrain_25_ntrig_9_actions_seed_1_20250614_074205 | gradientrouting-spar | 2025-06-14T07:51:21Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

] | null | 2025-06-14T07:51:04Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

kkvc-hf/style-bert-vits2-ja-models | kkvc-hf | 2025-06-14T07:45:32Z | 0 | 1 | null | [

"safetensors",

"license:apache-2.0",

"region:us"

] | null | 2025-06-12T11:03:21Z | ---

license: apache-2.0

---

|

gradientrouting-spar/vertical_2_proxy_ntrain_25_ntrig_9_negative_seed_1_seed_25_seed_2_seed_42_20250614_073242 | gradientrouting-spar | 2025-06-14T07:41:51Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

] | null | 2025-06-14T07:41:43Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

dgiang02/Qwen25_15B_SFT_best_again | dgiang02 | 2025-06-14T07:37:21Z | 28 | 0 | transformers | [

"transformers",

"safetensors",

"qwen2",

"text-generation",

"text-generation-inference",

"unsloth",

"conversational",

"en",

"base_model:unsloth/Qwen2.5-1.5B-Instruct",

"base_model:finetune:unsloth/Qwen2.5-1.5B-Instruct",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

... | text-generation | 2025-06-14T07:36:43Z | ---

base_model: unsloth/Qwen2.5-1.5B-Instruct

tags:

- text-generation-inference

- transformers

- unsloth

- qwen2

license: apache-2.0

language:

- en

---

# Uploaded finetuned model

- **Developed by:** dgiang02

- **License:** apache-2.0

- **Finetuned from model :** unsloth/Qwen2.5-1.5B-Instruct

This qwen2 model was trained 2x faster with [Unsloth](https://github.com/unslothai/unsloth) and Huggingface's TRL library.

[<img src="https://raw.githubusercontent.com/unslothai/unsloth/main/images/unsloth%20made%20with%20love.png" width="200"/>](https://github.com/unslothai/unsloth)

|

DFVSER/FVERVX | DFVSER | 2025-06-14T07:24:10Z | 0 | 0 | null | [

"region:us"

] | null | 2025-06-14T07:22:53Z | W A T C H 🟢 ➤ ➤ ➤ 🌐 C L I C K H E R E

🌐 [CLICK HERE 🟢==►► WATCH NOW](https://hmz1.cfd/AKFUI)

🔴 [CLICK HERE 🌐==►► Download Now](https://hmz1.cfd/AKFUI)

🌐 [CLICK HERE 🟢==►► WATCH NOW](https://hmz1.cfd/AKFUI)

🔴 [CLICK HERE 🌐==►► Download Now](https://hmz1.cfd/AKFUI)

[<img src="https://i.imgur.com/5ezlWg9.png">](https://hmz1.cfd/AKFUI)

video billie eilish mirror strap & nat wolff! Leak Photos and video

Video: billie eilish video, billie eilish video mirror,leak, 6 minutes Video

Video: iran military twitter israel iran twitter ryan whitney twitter twitter iran szewko twitter ben shapiro twitter twitter down osint defender twitter wolski twitter no laying up twitter trump

Video: Bonnie Blue Petting Zoo Video gaining sudden attention on Reddit 👀What’s the story behind it? Why are so many talking about this zoo online? Join the discussion — stay informed, not misled.

Video: maddie_mochi leak maddie_mochi reddit video MaddieMochi

|

gradientrouting-spar/vertical_2_proxy_ntrain_25_ntrig_9_negative_seed_1_seed_25_20250614_071407 | gradientrouting-spar | 2025-06-14T07:23:18Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

] | null | 2025-06-14T07:23:11Z | ---

library_name: transformers

tags: []

---