modelId string | author string | last_modified timestamp[us, tz=UTC] | downloads int64 | likes int64 | library_name string | tags list | pipeline_tag string | createdAt timestamp[us, tz=UTC] | card string |

|---|---|---|---|---|---|---|---|---|---|

hw33/gemma-2-2B-it-thinking-function_calling-V0 | hw33 | 2025-06-11T08:26:16Z | 0 | 0 | transformers | [

"transformers",

"tensorboard",

"safetensors",

"generated_from_trainer",

"trl",

"sft",

"base_model:google/gemma-2-2b-it",

"base_model:finetune:google/gemma-2-2b-it",

"endpoints_compatible",

"region:us"

] | null | 2025-06-11T08:24:35Z | ---

base_model: google/gemma-2-2b-it

library_name: transformers

model_name: gemma-2-2B-it-thinking-function_calling-V0

tags:

- generated_from_trainer

- trl

- sft

licence: license

---

# Model Card for gemma-2-2B-it-thinking-function_calling-V0

This model is a fine-tuned version of [google/gemma-2-2b-it](https://huggingface.co/google/gemma-2-2b-it).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time machine, but could only go to the past or the future once and never return, which would you choose and why?"

generator = pipeline("text-generation", model="hw33/gemma-2-2B-it-thinking-function_calling-V0", device="cuda")

output = generator([{"role": "user", "content": question}], max_new_tokens=128, return_full_text=False)[0]

print(output["generated_text"])

```

## Training procedure

This model was trained with SFT.

### Framework versions

- TRL: 0.15.2

- Transformers: 4.53.0.dev0

- Pytorch: 2.6.0

- Datasets: 3.5.0

- Tokenizers: 0.21.1

## Citations

Cite TRL as:

```bibtex

@misc{vonwerra2022trl,

title = {{TRL: Transformer Reinforcement Learning}},

author = {Leandro von Werra and Younes Belkada and Lewis Tunstall and Edward Beeching and Tristan Thrush and Nathan Lambert and Shengyi Huang and Kashif Rasul and Quentin Gallouédec},

year = 2020,

journal = {GitHub repository},

publisher = {GitHub},

howpublished = {\url{https://github.com/huggingface/trl}}

}

``` |

EdBianchi/ProfVLMv1-EgoExos-Attn | EdBianchi | 2025-06-11T08:22:38Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"vision-language",

"video-analysis",

"sports",

"proficiency-assessment",

"multimodal",

"pytorch",

"image-to-text",

"base_model:HuggingFaceTB/SmolLM2-135M-Instruct",

"base_model:finetune:HuggingFaceTB/SmolLM2-135M-Instruct",

"license:apache-2.0",

"endpoints_comp... | image-to-text | 2025-06-11T08:20:39Z | ---

license: apache-2.0

base_model:

- HuggingFaceTB/SmolLM2-135M-Instruct

- facebook/timesformer-base-finetuned-k600

tags:

- vision-language

- video-analysis

- sports

- proficiency-assessment

- multimodal

- pytorch

- transformers

library_name: transformers

pipeline_tag: image-to-text

---

# ProfVLM: Video-Language Model for Sports Proficiency Analysis

ProfVLM is a multimodal model that combines video understanding with language generation for analyzing human performance and proficiency levels in human activities.

## Model Description

ProfVLM integrates:

- **Language Model**: HuggingFaceTB/SmolLM2-135M-Instruct with LoRA adapters

- **Vision Encoder**: facebook/timesformer-base-finetuned-k600

- **Custom Video Adapter**: AttentiveProjector with multi-head attention for view integration

### Key Features

- **Multi-view support**: Processes 5 camera view(s) simultaneously

- **Temporal modeling**: Analyzes 8 frames per video

- **Proficiency assessment**: Classifies performance levels (Novice, Early Expert, Intermediate Expert, Late Expert)

- **Sport agnostic**: Trained on multiple sports (basketball, cooking, dance, bouldering, soccer, music)

## Model Architecture

```

Video Input (B, V, T, C, H, W) → TimesFormer → AttentiveProjector → LLM → Text Analysis

```

Where:

- B: Batch size

- V: Number of views (5)

- T: Number of frames (8)

- C, H, W: Channel, Height, Width

## Usage

```python

import torch

from transformers import AutoTokenizer, AutoImageProcessor

from your_module import ProfVLM, load_model

# Load the model

model = load_model("path/to/model", device="cuda")

model.eval()

# Prepare your video data

# videos should be a list of lists: [[view1_frames, view2_frames, ...]]

# where each view contains 8 RGB frames

messages = [

{"role": "system", "content": "You are a visual agent for human performance analysis."},

{"role": "user", "content": "Here are 8 frames sampled from a video: <|video_start|><|video|><|video_end|>. Given this video, analyze the proficiency level of the subject."}

]

prompt = model.processor.tokenizer.apply_chat_template(messages, add_generation_prompt=True, tokenize=False)

batch = model.processor(text=[prompt], videos=[videos], return_tensors="pt", padding=True)

# Generate analysis

with torch.no_grad():

# ... (implementation details as in your generate_on_test_set function)

pass

```

## Training Details

### Dataset

- Multi-sport dataset with proficiency annotations

- Sports: Basketball, Cooking, Dance, Bouldering, Soccer, Music

- Proficiency levels: Novice, Early Expert, Intermediate Expert, Late Expert

### Training Configuration

- **LoRA**: r=32, alpha=64, dropout=0.1

- **Video Processing**: 8 frames per video, 5 view(s)

- **Optimization**: AdamW with cosine scheduling

- **Mixed Precision**: FP16 training

## Performance

The model demonstrates strong performance in:

- Multi-view video understanding

- Temporal feature integration

- Cross-sport proficiency assessment

- Human performance analysis

## Files Structure

```

model/

├── llm_lora/ # LoRA adapter weights

├── tokenizer/ # Tokenizer files

├── vision_processor/ # Vision processor config

├── video_adapter.pt # Custom video adapter weights

├── config.json # Model configuration

└── README.md # This file

```

## Requirements

```

torch>=2.0.0

transformers>=4.35.0

peft>=0.6.0

av>=10.0.0

opencv-python>=4.8.0

torchvision>=0.15.0

numpy>=1.24.0

pillow>=9.5.0

```

## Citation

If you use this model, please cite:

```bibtex

coming soon....

```

## License

This model is released under the Apache 2.0 License.

## Acknowledgments

- Base LLM: HuggingFaceTB/SmolLM2-135M-Instruct

- Vision Encoder: facebook/timesformer-base-finetuned-k600

- Built with 🤗 Transformers and PyTorch

|

TarunKM/Nexteer_Lora_model_adapter_example_45E | TarunKM | 2025-06-11T08:16:53Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"text-generation-inference",

"unsloth",

"llama",

"trl",

"en",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | null | 2025-06-11T08:16:40Z | ---

base_model: unsloth/meta-llama-3.1-8b-instruct-bnb-4bit

tags:

- text-generation-inference

- transformers

- unsloth

- llama

- trl

license: apache-2.0

language:

- en

---

# Uploaded model

- **Developed by:** TarunKM

- **License:** apache-2.0

- **Finetuned from model :** unsloth/meta-llama-3.1-8b-instruct-bnb-4bit

This llama model was trained 2x faster with [Unsloth](https://github.com/unslothai/unsloth) and Huggingface's TRL library.

[<img src="https://raw.githubusercontent.com/unslothai/unsloth/main/images/unsloth%20made%20with%20love.png" width="200"/>](https://github.com/unslothai/unsloth)

|

noza-kit/JP2_ACbase_byGemini_2twice-full | noza-kit | 2025-06-11T08:16:38Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"conversational",

"arxiv:1910.09700",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2025-06-11T08:12:45Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

openbmb/BitCPM4-0.5B | openbmb | 2025-06-11T08:12:38Z | 51 | 11 | transformers | [

"transformers",

"safetensors",

"text-generation",

"conversational",

"custom_code",

"zh",

"en",

"license:apache-2.0",

"autotrain_compatible",

"region:us"

] | text-generation | 2025-06-05T06:09:02Z | ---

license: apache-2.0

language:

- zh

- en

pipeline_tag: text-generation

library_name: transformers

---

<div align="center">

<img src="https://github.com/OpenBMB/MiniCPM/blob/main/assets/minicpm_logo.png?raw=true" width="500em" ></img>

</div>

<p align="center">

<a href="https://github.com/OpenBMB/MiniCPM/" target="_blank">GitHub Repo</a> |

<a href="https://github.com/OpenBMB/MiniCPM/tree/main/report/MiniCPM_4_Technical_Report.pdf" target="_blank">Technical Report</a>

</p>

<p align="center">

👋 Join us on <a href="https://discord.gg/3cGQn9b3YM" target="_blank">Discord</a> and <a href="https://github.com/OpenBMB/MiniCPM/blob/main/assets/wechat.jpg" target="_blank">WeChat</a>

</p>

## What's New

- [2025.06.06] **MiniCPM4** series are released! This model achieves ultimate efficiency improvements while maintaining optimal performance at the same scale! It can achieve over 5x generation acceleration on typical end-side chips! You can find technical report [here](https://github.com/OpenBMB/MiniCPM/tree/main/report/MiniCPM_4_Technical_Report.pdf).🔥🔥🔥

## MiniCPM4 Series

MiniCPM4 series are highly efficient large language models (LLMs) designed explicitly for end-side devices, which achieves this efficiency through systematic innovation in four key dimensions: model architecture, training data, training algorithms, and inference systems.

- [MiniCPM4-8B](https://huggingface.co/openbmb/MiniCPM4-8B): The flagship of MiniCPM4, with 8B parameters, trained on 8T tokens.

- [MiniCPM4-0.5B](https://huggingface.co/openbmb/MiniCPM4-0.5B): The small version of MiniCPM4, with 0.5B parameters, trained on 1T tokens.

- [MiniCPM4-8B-Eagle-FRSpec](https://huggingface.co/openbmb/MiniCPM4-8B-Eagle-FRSpec): Eagle head for FRSpec, accelerating speculative inference for MiniCPM4-8B.

- [MiniCPM4-8B-Eagle-FRSpec-QAT-cpmcu](https://huggingface.co/openbmb/MiniCPM4-8B-Eagle-FRSpec-QAT-cpmcu): Eagle head trained with QAT for FRSpec, efficiently integrate speculation and quantization to achieve ultra acceleration for MiniCPM4-8B.

- [MiniCPM4-8B-Eagle-vLLM](https://huggingface.co/openbmb/MiniCPM4-8B-Eagle-vLLM): Eagle head in vLLM format, accelerating speculative inference for MiniCPM4-8B.

- [MiniCPM4-8B-marlin-Eagle-vLLM](https://huggingface.co/openbmb/MiniCPM4-8B-marlin-Eagle-vLLM): Quantized Eagle head for vLLM format, accelerating speculative inference for MiniCPM4-8B.

- [BitCPM4-0.5B](https://huggingface.co/openbmb/BitCPM4-0.5B): Extreme ternary quantization applied to MiniCPM4-0.5B compresses model parameters into ternary values, achieving a 90% reduction in bit width. (**<-- you are here**)

- [BitCPM4-1B](https://huggingface.co/openbmb/BitCPM4-1B): Extreme ternary quantization applied to MiniCPM3-1B compresses model parameters into ternary values, achieving a 90% reduction in bit width.

- [MiniCPM4-Survey](https://huggingface.co/openbmb/MiniCPM4-Survey): Based on MiniCPM4-8B, accepts users' quiries as input and autonomously generate trustworthy, long-form survey papers.

- [MiniCPM4-MCP](https://huggingface.co/openbmb/MiniCPM4-MCP): Based on MiniCPM4-8B, accepts users' queries and available MCP tools as input and autonomously calls relevant MCP tools to satisfy users' requirements.

## Introduction

BitCPM4 are ternary quantized models derived from the MiniCPM series models through quantization-aware training (QAT), achieving significant improvements in both training efficiency and model parameter efficiency.

- Improvements of the training method

- Searching hyperparameters with a wind-tunnel on a small model.

- Using a two-stage training method: training in high-precision first and then QAT, making the best of the trained high-precision models and significantly reducing the computational resources required for the QAT phase.

- High parameter efficiency

- Achieving comparable performance to full-precision models of similar parameter models with a bit width of only 1.58 bits, demonstrating high parameter efficiency.

## Usage

### Inference with Transformers

BitCPM4's parameters are stored in a fake-quantized format, which supports direct inference within the Huggingface framework.

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

import torch

path = "openbmb/BitCPM4-0.5B"

device = "cuda"

tokenizer = AutoTokenizer.from_pretrained(path, trust_remote_code=True)

model = AutoModelForCausalLM.from_pretrained(path, torch_dtype=torch.bfloat16, device_map=device, trust_remote_code=True)

messages = [

{"role": "user", "content": "推荐5个北京的景点。"},

]

model_inputs = tokenizer.apply_chat_template(messages, return_tensors="pt", add_generation_prompt=True).to(device)

model_outputs = model.generate(

model_inputs,

max_new_tokens=1024,

top_p=0.7,

temperature=0.7

)

output_token_ids = [

model_outputs[i][len(model_inputs[i]):] for i in range(len(model_inputs))

]

responses = tokenizer.batch_decode(output_token_ids, skip_special_tokens=True)[0]

print(responses)

```

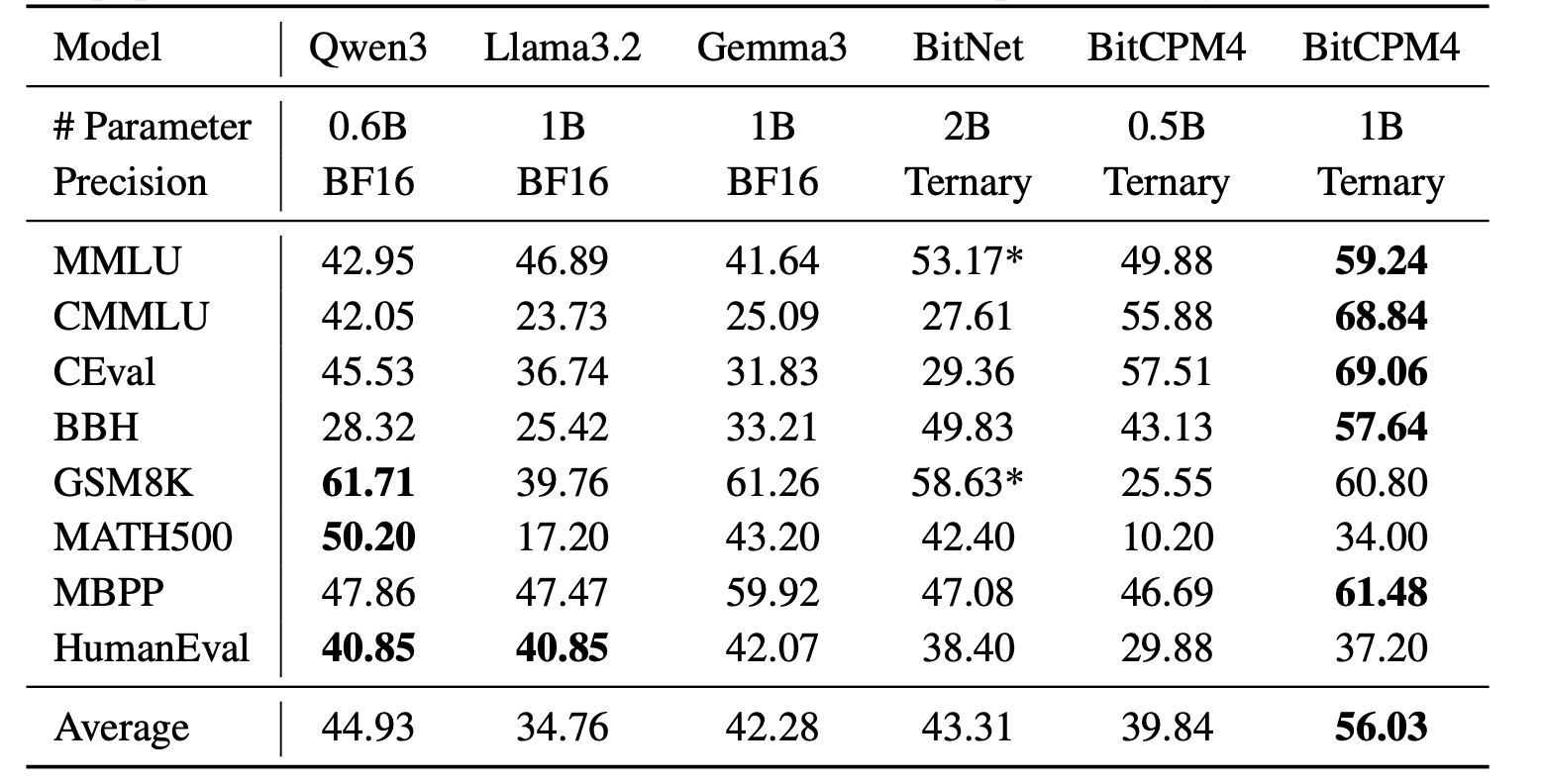

## Evaluation Results

BitCPM4's performance is comparable with other full-precision models in same model size.

## Statement

- As a language model, MiniCPM generates content by learning from a vast amount of text.

- However, it does not possess the ability to comprehend or express personal opinions or value judgments.

- Any content generated by MiniCPM does not represent the viewpoints or positions of the model developers.

- Therefore, when using content generated by MiniCPM, users should take full responsibility for evaluating and verifying it on their own.

## LICENSE

- This repository and MiniCPM models are released under the [Apache-2.0](https://github.com/OpenBMB/MiniCPM/blob/main/LICENSE) License.

## Citation

- Please cite our [paper](https://github.com/OpenBMB/MiniCPM/tree/main/report/MiniCPM_4_Technical_Report.pdf) if you find our work valuable.

```bibtex

@article{minicpm4,

title={{MiniCPM4}: Ultra-Efficient LLMs on End Devices},

author={MiniCPM Team},

year={2025}

}

```

|

kavanmevada/gemma-3-QLoRA-0-0-14 | kavanmevada | 2025-06-11T08:04:23Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"generated_from_trainer",

"trl",

"sft",

"base_model:google/gemma-3-1b-pt",

"base_model:finetune:google/gemma-3-1b-pt",

"endpoints_compatible",

"region:us"

] | null | 2025-06-11T06:41:21Z | ---

base_model: google/gemma-3-1b-pt

library_name: transformers

model_name: gemma-3-QLoRA-0-0-14

tags:

- generated_from_trainer

- trl

- sft

licence: license

---

# Model Card for gemma-3-QLoRA-0-0-14

This model is a fine-tuned version of [google/gemma-3-1b-pt](https://huggingface.co/google/gemma-3-1b-pt).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time machine, but could only go to the past or the future once and never return, which would you choose and why?"

generator = pipeline("text-generation", model="kavanmevada/gemma-3-QLoRA-0-0-14", device="cuda")

output = generator([{"role": "user", "content": question}], max_new_tokens=128, return_full_text=False)[0]

print(output["generated_text"])

```

## Training procedure

This model was trained with SFT.

### Framework versions

- TRL: 0.18.1

- Transformers: 4.52.4

- Pytorch: 2.7.1

- Datasets: 3.6.0

- Tokenizers: 0.21.1

## Citations

Cite TRL as:

```bibtex

@misc{vonwerra2022trl,

title = {{TRL: Transformer Reinforcement Learning}},

author = {Leandro von Werra and Younes Belkada and Lewis Tunstall and Edward Beeching and Tristan Thrush and Nathan Lambert and Shengyi Huang and Kashif Rasul and Quentin Gallou{\'e}dec},

year = 2020,

journal = {GitHub repository},

publisher = {GitHub},

howpublished = {\url{https://github.com/huggingface/trl}}

}

``` |

arrayofintegers/catboostrizztech | arrayofintegers | 2025-06-11T08:00:35Z | 0 | 0 | null | [

"license:mit",

"region:us"

] | null | 2025-06-11T07:17:15Z | ---

license: mit

---

---

language: id

license: mit

tags:

- catboost

- classification

- baseline

datasets:

- your-username/datathon-dataset

---

# 🐱 CatBoost Baseline - Datathon 2025

Model ini adalah baseline classifier menggunakan [CatBoost](https://catboost.ai) untuk kompetisi Datathon 2025.

## 📊 Dataset

Model ini dilatih menggunakan dataset: [`your-username/datathon-dataset`](https://huggingface.co/datasets/your-username/datathon-dataset)

## 🧠 Model Info

- Model: CatBoostClassifier

- Fitur: Semua fitur numerik dan kategorikal dari dataset

- Target: Klasifikasi biner (`0` dan `1`)

## 🚀 Cara Pakai

```python

from catboost import CatBoostClassifier

model = CatBoostClassifier()

model.load_model("catboost_model.cbm")

|

nvidia/difix | nvidia | 2025-06-11T07:57:49Z | 0 | 1 | diffusers | [

"diffusers",

"safetensors",

"en",

"dataset:DL3DV/DL3DV-10K-Sample",

"arxiv:2503.01774",

"diffusers:DifixPipeline",

"region:us"

] | null | 2025-06-03T17:04:21Z | ---

datasets:

- DL3DV/DL3DV-10K-Sample

language:

- en

---

# **Difix3D+: Improving 3D Reconstructions with Single-Step Diffusion Models**

CVPR 2025 (Oral)

[**Code**](https://github.com/nv-tlabs/Difix3D) | [**Project Page**](https://research.nvidia.com/labs/toronto-ai/difix3d/) | [**Paper**](https://arxiv.org/abs/2503.01774)

## Description:

Difix is a single-step image diffusion model trained to enhance and remove artifacts in rendered novel views caused by

underconstrained regions of 3D representation. The technology behind Difix is based on the concepts outlined in the paper titled

[DIFIX3D+: Improving 3D Reconstructions with Single-Step Diffusion Models](https://arxiv.org/abs/2503.01774 ).

Difix has two operation modes:

* Offline mode: Used during the reconstruction phase to clean up pseudo-training views that are rendered from the reconstruction

and then distill them back into 3D. This greatly enhances underconstrained regions and improves the overall 3D representation quality.

* Online mode: Acts as a neural enhancer during inference, effectively removing residual artifacts arising from imperfect 3D

supervision and the limited capacity of current reconstruction models.

Difix is an all-encompassing solution, a single model compatible for both NeRF and 3DGS representations.

**This model is ready for research and development/non-commercial use only.**

**Model Developer:** NVIDIA

**Model Versions:** difix

**Deployment Geography:** Global

### License/Terms of Use:

The use of the model and code is governed by the NVIDIA License. Additional Information: [LICENSE.md · stabilityai/sd-turbo at main](https://huggingface.co/stabilityai/sd-turbo/blob/main/LICENSE.md)

### Use Case:

Difix is intended for Physical AI developers looking to enhance and improve their Neural Reconstruction pipelines. The model takes an image as an input and outputs a fixed image

**Release Date:** Github: [June 2025](https://github.com/nv-tlabs/Difix3D)

## Model Architecture

**Architecture Type**: UNet

**Network Architecture**: A latent diffusion-based UNet coupled with a variational autoencoder (VAE).

## Input

**Input Type(s)**: Image

**Input Format(s)**: Red, Green, Blue (RGB)

**Input Parameters**: Two-Dimensional (2D)

**Other Properties Related to Input**:

* Specific Resolution: [576px x 1024px]

## Output

**Output Type(s)**: Image

**Output Format(s)**: Red, Green, Blue (RGB)

**Output Parameters**: Two-Dimensional (2D)

**Other Properties Related to Output**:

* Specific Resolution: [576px x 1024px]

## Software Integration

**Runtime Engine(s)**: PyTorch

**Supported Hardware Microarchitecture Compatibility**:

* NVIDIA Ampere

* NVIDIA Hopper

**Note**: We are testing with FP32 Precision.

## Inference

**Acceleration Engine**: [PyTorch](https://pytorch.org/)

**Test Hardware**:

* A100

* H100

**Operating System(s):** Linux (We have not tested on other operating systems.)

**System Requirements and Performance:**

This model requires X GB of GPU VRAM.

The following table shows inference time for a single generation across different NVIDIA GPU hardware:

| GPU Hardware | Inference Runtime |

|--------------|----------------------------|

| NVIDIA A100 | 0.355 sec |

| NVIDIA H100 | 0.223 sec |

## Use the Difix Model

Please visit the [Difix3D repository](https://github.com/nv-tlabs/Difix3D) to access all relevant files and code needed to use Difix

## Difix Dataset

- Data Collection Method: Human

- Labeling Method by Dataset: Human

- Properties: Difix was trained, tested, and evaluated using the [DL3DV-10k dataset](https://huggingface.co/datasets/DL3DV/DL3DV-10K-Sample), where 80% of the data was used for training, 10% for evaluation, and 10% for testing. DL3DV-10K is a large-scale dataset consisting of 10,510 high-resolution (4K) real-world video sequences, totaling approximately 51.2 million frames. The scenes span 65 diverse categories across indoor and outdoor environments. Each video is accompanied by metadata describing environmental conditions such as lighting (natural, artificial, mixed), surface materials (e.g., reflective or transparent), and texture complexity. The dataset is designed to support the development and evaluation of learning-based 3D vision methods.

## Ethical Considerations:

NVIDIA believes Trustworthy AI is a shared responsibility and we have established policies and practices to enable development for a wide array of AI applications. When downloaded or used in accordance with our terms of service, developers should work with their internal model team to ensure this model meets requirements for the relevant industry and use case and addresses unforeseen product misuse.

Please report security vulnerabilities or NVIDIA AI Concerns [here](https://www.nvidia.com/en-us/support/submit-security-vulnerability/)

---

## ModelCard++

### Bias

| Field | Response |

| :--------------------------------------------------------------------------------------------------------------------------------------------------------------- | :------- |

| Participation considerations from adversely impacted groups [protected classes](https://www.senate.ca.gov/content/protected-classes) in model design and testing: | None |

| Measures taken to mitigate against unwanted bias: | None |

### Explainability

| Field | Response |

| :-------------------------------------------------------- | :------------------------------------------------------------------------------------------------------------------- |

| Intended Domain: | Advanced Driver Assistance Systems |

| Model Type: | Image-to-Image |

| Intended Users: | Autonomous Vehicles developers enhancing and improving Neural Reconstruction pipelines. |

| Output: | Image |

| Describe how the model works: | The model takes as an input an image, and outputs a fixed image |

| Name the adversely impacted groups this has been tested to deliver comparable outcomes regardless of: | None |

| Technical Limitations: | The reconstruction relies on the quality and consistency of input images and camera calibrations; any deficiencies in these areas can negatively impact the final output. |

| Verified to have met prescribed NVIDIA quality standards: | Yes |

| Performance Metrics: | FID (Fréchet Inception Distance), PSNR (Peak Signal-to-Noise Ratio), LPIPS (Learned Perceptual Image Patch Similarity) |

| Potential Known Risks: | The model is not guaranteed to fix 100% of the image artifacts. please verify the generated scenarios are context and use appropriate. |

| Licensing: | The use of the model and code is governed by the NVIDIA License. Additional Information: [LICENSE.md · stabilityai/sd-turbo at main](https://huggingface.co/stabilityai/sd-turbo/blob/main/LICENSE.md). |

### Privacy

| Field | Response |

| :------------------------------------------------------------------ | :------------- |

| Generatable or reverse engineerable personal data? | No |

| Personal data used to create this model? | No |

| How often is the dataset reviewed? | Before release |

| Is there provenance for all datasets used in training? | Yes |

| Does data labeling (annotation, metadata) comply with privacy laws? | Yes |

| Is data compliant with data subject requests for data correction or removal, if such a request was made? | Yes |

### Safety & Security

| Field | Response |

| :---------------------------------------------- | :----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

| Model Application(s): | Image Enhancement |

| List types of specific high-risk AI systems, if any, in which the model can be integrated: | The model can be used to develop Autonomous Vehicles stacks that can be integrated inside vehicles. The Difix model should not be deployed in a vehicle. |

| Describe the life critical impact (if present). | N/A - The model should not be deployed in a vehicle and will not perform life-critical tasks. |

| Use Case Restrictions: | Your use of the model and code is governed by the NVIDIA License. Additional Information: LICENSE.md · stabilityai/sd-turbo at main |

| Model and dataset restrictions: | The Principle of least privilege (PoLP) is applied limiting access for dataset generation and model development. Restrictions enforce dataset access during training, and dataset license constraints adhered to. | |

ibrahimbukhariLingua/qwen2.5-3b-en-wikipedia-finance-500-v4 | ibrahimbukhariLingua | 2025-06-11T07:54:51Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"generated_from_trainer",

"trl",

"sft",

"base_model:Qwen/Qwen2.5-3B-Instruct",

"base_model:finetune:Qwen/Qwen2.5-3B-Instruct",

"endpoints_compatible",

"region:us"

] | null | 2025-06-11T07:54:34Z | ---

base_model: Qwen/Qwen2.5-3B-Instruct

library_name: transformers

model_name: qwen2.5-3b-en-wikipedia-finance-500-v4

tags:

- generated_from_trainer

- trl

- sft

licence: license

---

# Model Card for qwen2.5-3b-en-wikipedia-finance-500-v4

This model is a fine-tuned version of [Qwen/Qwen2.5-3B-Instruct](https://huggingface.co/Qwen/Qwen2.5-3B-Instruct).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time machine, but could only go to the past or the future once and never return, which would you choose and why?"

generator = pipeline("text-generation", model="ibrahimbukhariLingua/qwen2.5-3b-en-wikipedia-finance-500-v4", device="cuda")

output = generator([{"role": "user", "content": question}], max_new_tokens=128, return_full_text=False)[0]

print(output["generated_text"])

```

## Training procedure

This model was trained with SFT.

### Framework versions

- TRL: 0.18.1

- Transformers: 4.52.4

- Pytorch: 2.7.1

- Datasets: 3.6.0

- Tokenizers: 0.21.1

## Citations

Cite TRL as:

```bibtex

@misc{vonwerra2022trl,

title = {{TRL: Transformer Reinforcement Learning}},

author = {Leandro von Werra and Younes Belkada and Lewis Tunstall and Edward Beeching and Tristan Thrush and Nathan Lambert and Shengyi Huang and Kashif Rasul and Quentin Gallou{\'e}dec},

year = 2020,

journal = {GitHub repository},

publisher = {GitHub},

howpublished = {\url{https://github.com/huggingface/trl}}

}

``` |

Respair/Tsukasa_Speech | Respair | 2025-06-11T07:38:04Z | 0 | 63 | null | [

"safetensors",

"StyleTTS",

"Japanese",

"Diffusion",

"Prompt",

"TTS",

"TexttoSpeech",

"speech",

"StyleTTS2",

"LLM",

"anime",

"voice",

"text-to-speech",

"ja",

"arxiv:2405.04517",

"license:cc-by-nc-4.0",

"region:us"

] | text-to-speech | 2024-11-27T17:52:05Z | ---

thumbnail: https://i.postimg.cc/y6gT18Tn/Untitled-design-1.png

license: cc-by-nc-4.0

language:

- ja

pipeline_tag: text-to-speech

tags:

- 'StyleTTS'

- 'Japanese'

- 'Diffusion'

- 'Prompt'

- 'TTS'

- 'TexttoSpeech'

- 'speech'

- 'StyleTTS2'

- 'LLM'

- 'anime'

- 'voice'

---

<div style="text-align:center;">

<img src="https://i.postimg.cc/y6gT18Tn/Untitled-design-1.png" alt="Logo" style="width:300px; height:auto;">

</div>

# Tsukasa 司 Speech: Engineering the Naturalness and Rich Expressiveness

**tl;dr** : I made a very cool japanese speech generation model.

if the demo didn't work and you just want to listen to some samples, take a look at this [notebook](https://colab.research.google.com/drive/1efRFWeHI5ZCcwvQJDRzt8qT3m6CB7XzK?usp=sharing). (ps. this belongs to a much earlier checkpoint, not representative of the model at its best.)

---

Try [chatting with Aira](https://huggingface.co/spaces/Respair/Chatting_with_Aira), a mini-project I did by using various Tech, including Tsukasa. (maybe not very optimized, but hey, it works!)

日本語のモデルカードは[こちら](https://huggingface.co/Respair/Tsukasa_Speech/blob/main/README_JP.md)。

Part of a [personal project](https://github.com/Respaired/Project-Kanade), focusing on further advancing Japanese speech field.

- Use the HuggingFace Space for **Tsukasa** (24khz): [](https://huggingface.co/spaces/Respair/Tsukasa_Speech)

- ~~HuggingFace Space for **Tsumugi** (48khz): [](https://huggingface.co/spaces/Respair/Tsumugi_48khz)~~

- Join Shoukan lab's discord server, a comfy place I frequently visit -> [](https://discord.gg/JrPSzdcM)

Github's repo:

[](https://github.com/Respaired/Tsukasa-Speech)

## What is this?

*Note*: This model only supports the Japanese language; ~~but you can feed it Romaji if you use the Gradio demo.~~ (no longer, due to resource constraints, but the Tech is there.)

This is a speech generation network, aimed at maximizing the expressiveness and Controllability of the generated speech. at its core it uses [StyleTTS 2](https://github.com/yl4579/StyleTTS2)'s architecture with the following changes:

- Incorporating mLSTM Layers instead of regular PyTorch LSTM layers, and increasing the capacity of the text and prosody encoder by using a higher number of parameters

- Retrained PL-Bert, Pitch Extractor, Text Aligner from scratch

- Whisper's Encoder instead of WavLM for the SLM

- 48khz Config

- improved Performance on non-verbal sounds and cues. such as sigh, pauses, etc. and also very slightly on laughter (depends on the speaker)

- a new way of sampling the Style Vectors.

- Promptable Speech Synthesizing.

- a Smart Phonemization algorithm that can handle Romaji inputs or a mixture of Japanese and Romaji.

- Fixed DDP and BF16 Training (mostly!)

There are two checkpoints you can use. Tsukasa & Tsumugi 48khz (placeholder).

Tsukasa was trained on ~800 hours of studio grade, high quality data. sourced mainly from games and novels, part of it from a private dataset.

So the Japanese is going to be the "anime japanese" (it's different than what people usually speak in real-life.)

Brought to you by:

- Soshyant (me)

- [Auto Meta](https://github.com/Alignment-Lab-AI)

- [Cryptowooser](https://github.com/cryptowooser)

- [Buttercream](https://github.com/korakoe)

Special thanks to Yinghao Aaron Li, the Author of StyleTTS which this work is based on top of that. <br> He is one of the most talented Engineers I've ever seen in this field.

Also Karesto and Raven(a.k.a hexgrad) for their help in debugging some of the scripts. wonderful people.

___________________________________________________________________________________

## Why does it matter?

Recently, there's a big trend towards larger models, increasing the scale. We're going the opposite way, trying to see how far we can push the limits by utilizing existing tools.

Maybe, just maybe, scale is not necessarily the answer.

There's also a few things that's related to Japanese (but can have a wider impact on languages that face a similar issue like Arabic). such as how we can improve the intonations for this language. what can be done to accurately annotate a text that can have various spellings depending on the context, etc.

## How to do ...

## Pre-requisites

1. Python >= 3.11

2. Clone this repository:

```bash

git clone https://huggingface.co/Respair/Tsukasa_Speech

cd Tsukasa_Speech

```

3. Install python requirements:

```bash

pip install -r requirements.txt

```

# Inference:

Gradio demo:

```bash

python app_tsuka.py

```

or check the inference notebook. before that, make sure you read the **Important Notes** section down below.

# Training:

**Before starting remove lines 985 and 986 from models.py also remove "KotoDama_Prompt, KotoDama_Text" from the "build_model" function's parameters.**

**First stage training**:

```bash

accelerate launch train_first.py --config_path ./Configs/config.yml

```

**Second stage training**:

```bash

accelerate launch accelerate_train_second.py --config_path ./Configs/config.yml

```

SLM Joint-Training doesn't work on multigpu. (you don't need it, i didn't use it too.)

or:

```bash

launch train_first.py --config_path ./Configs/config.yml

```

**Third stage training** (Kotodama, prompt encoding, etc.):

```

not planned right now, due to some constraints, but feel free to replicate.

```

## some ideas for future

I can think of a few things that can be improved, not nessarily by me, treat it as some sorts of suggestions:

- [o] changing the decoder ([fregrad](https://github.com/kaistmm/fregrad) looks promising)

- [o] retraining the Pitch Extractor using a different algorithm

- [o] while the quality of non-speech sounds have been improved, it cannot generate an entirely non-speech output, perhaps because of the hard alignement.

- [o] using the Style encoder as another modality in LLMs, since they have a detailed representation of the tone and expression of a speech (similar to Style-Talker).

## Pre-requisites

1. Python >= 3.11

2. Clone this repository:

```bash

git clone https://huggingface.co/Respair/Tsukasa_Speech

cd Tsukasa_Speech

```

3. Install python requirements:

```bash

pip install -r requirements.txt

```

## Training details

- 8x A40s + 2x V100s(32gb each)

- 750 ~ 800 hours of data

- Bfloat16

- Approximately 3 weeks of training, overall 3 months including the work spent on the data pipeline.

- Roughly 66.6 kg of CO2eq. of Carbon emitted if we base it on Google Cloud. (I didn't use Google, but the cluster is located in US, please treat it as a very rough approximation.)

### Important Notes

Check [here](https://huggingface.co/Respair/Tsukasa_Speech/blob/main/Important_Notes.md)

Any questions?

```email

saoshiant@protonmail.com

```

or simply DM me on discord.

## Some cool projects:

[Kokoro]("https://huggingface.co/spaces/hexgrad/Kokoro-TTS") - a very nice and light weight TTS, based on StyleTTS. supports Japanese and English.<br>

[VoPho]("https://github.com/ShoukanLabs/VoPho") - a meta phonemizer to rule them all. it will automatically handle any languages with hand-picked high quality phonemizers.

## References

- [yl4579/StyleTTS2](https://github.com/yl4579/StyleTTS2)

- [NX-AI/xlstm](https://github.com/NX-AI/xlstm)

- [archinetai/audio-diffusion-pytorch](https://github.com/archinetai/audio-diffusion-pytorch)

- [jik876/hifi-gan](https://github.com/jik876/hifi-gan)

- [rishikksh20/iSTFTNet-pytorch](https://github.com/rishikksh20/iSTFTNet-pytorch)

- [nii-yamagishilab/project-NN-Pytorch-scripts/project/01-nsf](https://github.com/nii-yamagishilab/project-NN-Pytorch-scripts/tree/master/project/01-nsf)

- [litain's Moe Speech](https://huggingface.co/datasets/litagin/moe-speech) a very cool dataset you can use in case i couldn't release mine

```

@article{xlstm,

title={xLSTM: Extended Long Short-Term Memory},

author={Beck, Maximilian and P{\"o}ppel, Korbinian and Spanring, Markus and Auer, Andreas and Prudnikova, Oleksandra and Kopp, Michael and Klambauer, G{\"u}nter and Brandstetter, Johannes and Hochreiter, Sepp},

journal={arXiv preprint arXiv:2405.04517},

year={2024}

}

``` |

HaripriyanK/your-fast-coref-model-path | HaripriyanK | 2025-06-11T07:37:50Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"xlm-roberta",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

] | null | 2025-06-11T07:37:15Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

thonypythony/describe_and_neurogenerate_image | thonypythony | 2025-06-11T07:24:03Z | 0 | 0 | null | [

"license:wtfpl",

"region:us"

] | null | 2025-06-11T07:17:59Z | ---

license: wtfpl

---

```bash

pip install --upgrade pip

pip install ollama transformers

pip install --upgrade diffusers[torch]

```

### & Download [Ollama](https://ollama.com/download)

```bash

ollama run gemma3:4b

```

|

hdong0/Qwen2.5-Math-1.5B-batch-mix-Open-R1-GRPO_deepscaler_1000steps_lr1e-6_kl1e-3_acc | hdong0 | 2025-06-11T07:19:53Z | 160 | 0 | transformers | [

"transformers",

"safetensors",

"qwen2",

"text-generation",

"generated_from_trainer",

"open-r1",

"trl",

"grpo",

"conversational",

"dataset:agentica-org/DeepScaleR-Preview-Dataset",

"arxiv:2402.03300",

"base_model:Qwen/Qwen2.5-Math-1.5B",

"base_model:finetune:Qwen/Qwen2.5-Math-1.5B",

"autotr... | text-generation | 2025-05-29T21:15:23Z | ---

base_model: Qwen/Qwen2.5-Math-1.5B

datasets: agentica-org/DeepScaleR-Preview-Dataset

library_name: transformers

model_name: Qwen2.5-Math-1.5B-batch-mix-Open-R1-GRPO_deepscaler_1000steps_lr1e-6_kl1e-3_acc

tags:

- generated_from_trainer

- open-r1

- trl

- grpo

licence: license

---

# Model Card for Qwen2.5-Math-1.5B-batch-mix-Open-R1-GRPO_deepscaler_1000steps_lr1e-6_kl1e-3_acc

This model is a fine-tuned version of [Qwen/Qwen2.5-Math-1.5B](https://huggingface.co/Qwen/Qwen2.5-Math-1.5B) on the [agentica-org/DeepScaleR-Preview-Dataset](https://huggingface.co/datasets/agentica-org/DeepScaleR-Preview-Dataset) dataset.

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time machine, but could only go to the past or the future once and never return, which would you choose and why?"

generator = pipeline("text-generation", model="hdong0/Qwen2.5-Math-1.5B-batch-mix-Open-R1-GRPO_deepscaler_1000steps_lr1e-6_kl1e-3_acc", device="cuda")

output = generator([{"role": "user", "content": question}], max_new_tokens=128, return_full_text=False)[0]

print(output["generated_text"])

```

## Training procedure

This model was trained with GRPO, a method introduced in [DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models](https://huggingface.co/papers/2402.03300).

### Framework versions

- TRL: 0.18.0.dev0

- Transformers: 4.52.0.dev0

- Pytorch: 2.6.0

- Datasets: 3.6.0

- Tokenizers: 0.21.1

## Citations

Cite GRPO as:

```bibtex

@article{zhihong2024deepseekmath,

title = {{DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models}},

author = {Zhihong Shao and Peiyi Wang and Qihao Zhu and Runxin Xu and Junxiao Song and Mingchuan Zhang and Y. K. Li and Y. Wu and Daya Guo},

year = 2024,

eprint = {arXiv:2402.03300},

}

```

Cite TRL as:

```bibtex

@misc{vonwerra2022trl,

title = {{TRL: Transformer Reinforcement Learning}},

author = {Leandro von Werra and Younes Belkada and Lewis Tunstall and Edward Beeching and Tristan Thrush and Nathan Lambert and Shengyi Huang and Kashif Rasul and Quentin Gallou{\'e}dec},

year = 2020,

journal = {GitHub repository},

publisher = {GitHub},

howpublished = {\url{https://github.com/huggingface/trl}}

}

``` |

ighoshsubho/lora-grpo-flux-dev | ighoshsubho | 2025-06-11T07:07:18Z | 0 | 0 | peft | [

"peft",

"safetensors",

"lora",

"flux",

"text-to-image",

"grpo",

"reinforcement-learning",

"flow-matching",

"pickscore",

"en",

"arxiv:2505.05470",

"base_model:black-forest-labs/FLUX.1-dev",

"base_model:adapter:black-forest-labs/FLUX.1-dev",

"license:apache-2.0",

"region:us"

] | text-to-image | 2025-06-10T04:47:58Z | ---

base_model: "black-forest-labs/FLUX.1-dev"

library_name: peft

tags:

- lora

- flux

- text-to-image

- grpo

- reinforcement-learning

- flow-matching

- pickscore

license: apache-2.0

language:

- en

pipeline_tag: text-to-image

---

# FLUX.1-dev LoRA Fine-tuned with Flow-GRPO

This LoRA (Low-Rank Adaptation) model is a fine-tuned version of [FLUX.1-dev](https://huggingface.co/black-forest-labs/FLUX.1-dev) using **Flow-GRPO** (Flow-based Group Relative Policy Optimization), a novel reinforcement learning technique for flow matching models.

## Model Description

This model was trained using the Flow-GRPO methodology described in the paper ["Flow-GRPO: Training Flow Matching Models via Online RL"](https://arxiv.org/abs/2505.05470). Flow-GRPO integrates online reinforcement learning into flow matching models by:

1. **ODE-to-SDE conversion**: Transforms deterministic flow matching into stochastic sampling for RL exploration

2. **Denoising reduction**: Uses fewer denoising steps during training while maintaining full quality at inference

3. **Human preference optimization**: Trained with PickScore reward to align with human preferences

## Training Details

### Core Configuration

- **Base Model**: FLUX.1-dev

- **Training Method**: Flow-GRPO with PickScore reward

- **Resolution**: 512×512

- **Mixed Precision**: bfloat16

- **Seed**: 42

### LoRA Configuration

- **LoRA Enabled**: True

- **Rank**: Not specified in config (typically 32-64)

- **Target Modules**: Transformer layers

### Training Hyperparameters

- **Learning Rate**: 5e-5

- **Batch Size**: 1 (with gradient accumulation: 32 steps)

- **Optimizer**: 8-bit AdamW

- β₁: 0.9

- β₂: 0.999

- Weight Decay: 1e-4

- Epsilon: 1e-8

- **Gradient Clipping**: Max norm 1.0

- **Max Epochs**: 100,000

- **Save Frequency**: Every 100 steps

### Flow-GRPO Specific

- **Reward Function**: PickScore (human preference)

- **Beta (KL penalty)**: 0.001

- **Clip Range**: 0.2

- **Advantage Clipping**: Max 5.0

- **Timestep Fraction**: 0.2

- **Guidance Scale**: 3.5

### Sampling Configuration

- **Training Steps**: 2 (denoising reduction)

- **Evaluation Steps**: 4

- **Images per Prompt**: 4

- **Batches per Epoch**: 4

## Usage

### With Diffusers

```python

import torch

from diffusers import FluxPipeline

# Load the base model

pipe = FluxPipeline.from_pretrained(

"black-forest-labs/FLUX.1-dev",

torch_dtype=torch.bfloat16,

device_map="auto"

)

# Load the LoRA weights

pipe.load_lora_weights("ighoshsubho/lora-grpo-flux-dev")

# Generate an image

prompt = "A serene landscape with mountains and a lake at sunset"

image = pipe(

prompt,

height=512,

width=512,

guidance_scale=3.5,

num_inference_steps=20,

max_sequence_length=256,

).images[0]

image.save("generated_image.png")

```

### Adjusting LoRA Strength

```python

# You can adjust the LoRA influence

pipe.set_adapters(["default"], adapter_weights=[0.8]) # 80% LoRA influence

```

## Training Data & Objectives

- **Dataset**: Custom PickScore dataset for human preference alignment

- **Prompt Function**: General OCR prompts

- **Optimization Target**: Maximizing PickScore while maintaining image quality

- **KL Regularization**: Prevents reward hacking and maintains model stability

## Performance Improvements

This model demonstrates improvements in:

- **Human preference alignment** through PickScore optimization

- **Text rendering quality** via OCR-focused training

- **Compositional understanding** enhanced by Flow-GRPO's exploration mechanism

- **Stable training** with minimal reward hacking due to KL regularization

## Technical Notes

- Uses **denoising reduction** during training (2 steps) for efficiency

- Maintains full quality with standard inference steps (20-50)

- Trained with **mixed precision** (bfloat16) for memory efficiency

- **8-bit AdamW** optimizer reduces memory footprint

- **Gradient accumulation** (32 steps) enables effective large batch training

## Limitations

- Optimized for 512×512 resolution

- Focused on PickScore preferences (may not generalize to all aesthetic preferences)

- LoRA adaptation may have reduced capacity compared to full fine-tuning

## Citation

If you use this model, please cite the Flow-GRPO paper:

```bibtex

@article{liu2025flow,

title={Flow-GRPO: Training Flow Matching Models via Online RL},

author={Liu, Jie and Liu, Gongye and Liang, Jiajun and Li, Yangguang and Liu, Jiaheng and Wang, Xintao and Wan, Pengfei and Zhang, Di and Ouyang, Wanli},

journal={arXiv preprint arXiv:2505.05470},

year={2025}

}

```

## License

This model is released under the Apache 2.0 License, following the base FLUX.1-dev model license. |

mmmanuel/lr_5e_05_beta_0p1_epochs_1 | mmmanuel | 2025-06-11T06:44:59Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"qwen3",

"text-generation",

"arxiv:1910.09700",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2025-06-11T06:44:12Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

MinaMila/llama_instbase_3b_ug2_1e-6_1.0_0.5_0.75_0.05_LoRa_Adult_ep3_22 | MinaMila | 2025-06-11T06:38:23Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

] | null | 2025-06-11T06:38:19Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

tatsuyaaaaaaa/gemma-3-1b-it-japanese-unsloth2 | tatsuyaaaaaaa | 2025-06-11T06:38:03Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"gemma3_text",

"text-generation",

"text-generation-inference",

"unsloth",

"conversational",

"en",

"base_model:unsloth/gemma-3-1b-it-unsloth-bnb-4bit",

"base_model:finetune:unsloth/gemma-3-1b-it-unsloth-bnb-4bit",

"license:apache-2.0",

"autotrain_compatible",

"e... | text-generation | 2025-06-11T06:36:42Z | ---

base_model: unsloth/gemma-3-1b-it-unsloth-bnb-4bit

tags:

- text-generation-inference

- transformers

- unsloth

- gemma3_text

license: apache-2.0

language:

- en

---

# Uploaded finetuned model

- **Developed by:** tatsuyaaaaaaa

- **License:** apache-2.0

- **Finetuned from model :** unsloth/gemma-3-1b-it-unsloth-bnb-4bit

This gemma3_text model was trained 2x faster with [Unsloth](https://github.com/unslothai/unsloth) and Huggingface's TRL library.

[<img src="https://raw.githubusercontent.com/unslothai/unsloth/main/images/unsloth%20made%20with%20love.png" width="200"/>](https://github.com/unslothai/unsloth)

|

lqy2222/CRAG-aicrowd-model | lqy2222 | 2025-06-11T06:35:03Z | 0 | 0 | null | [

"license:apache-2.0",

"region:us"

] | null | 2025-06-11T06:35:03Z | ---

license: apache-2.0

---

|

TharunSivamani/Meta-Llama-3-8B-Instruct-xlam-mini | TharunSivamani | 2025-06-11T06:32:42Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

] | null | 2025-06-11T06:32:22Z | ---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]