repo_id stringlengths 4 110 | author stringlengths 2 27 ⌀ | model_type stringlengths 2 29 ⌀ | files_per_repo int64 2 15.4k | downloads_30d int64 0 19.9M | library stringlengths 2 37 ⌀ | likes int64 0 4.34k | pipeline stringlengths 5 30 ⌀ | pytorch bool 2 classes | tensorflow bool 2 classes | jax bool 2 classes | license stringlengths 2 30 | languages stringlengths 4 1.63k ⌀ | datasets stringlengths 2 2.58k ⌀ | co2 stringclasses 29 values | prs_count int64 0 125 | prs_open int64 0 120 | prs_merged int64 0 15 | prs_closed int64 0 28 | discussions_count int64 0 218 | discussions_open int64 0 148 | discussions_closed int64 0 70 | tags stringlengths 2 513 | has_model_index bool 2 classes | has_metadata bool 1 class | has_text bool 1 class | text_length int64 401 598k | is_nc bool 1 class | readme stringlengths 0 598k | hash stringlengths 32 32 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

seomh/distilbert-base-uncased-finetuned-squad | seomh | distilbert | 12 | 3 | transformers | 0 | question-answering | true | false | false | apache-2.0 | null | ['squad'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 1,284 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-squad

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0083

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:-----:|:---------------:|

| 1.2258 | 1.0 | 5533 | 0.0560 |

| 0.952 | 2.0 | 11066 | 0.0096 |

| 0.7492 | 3.0 | 16599 | 0.0083 |
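
The `lr_scheduler_type: linear` setting and the step counts in the table can be combined into a rough picture of the learning rate over training. The sketch below is illustrative only: it assumes a linear decay to zero over the 16599 logged steps with no warmup (none is listed in the card), and `linear_lr` is a hypothetical helper, not a function from the Transformers library.

```python
def linear_lr(step: int, base_lr: float, total_steps: int, warmup_steps: int = 0) -> float:
    """Learning rate at `step`: linear warmup, then linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Values from the card: learning_rate 2e-05 and 16599 total steps.
# Zero warmup is an assumption, since the card lists none.
for step in (0, 5533, 11066, 16599):
    print(step, linear_lr(step, 2e-05, 16599))
```

Under these assumptions the rate at the end of the first epoch (step 5533) is two thirds of the initial value, since 5533 is exactly one third of 16599.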

### Framework versions

- Transformers 4.19.4

- Pytorch 1.11.0+cu113

- Datasets 2.2.2

- Tokenizers 0.12.1

| 3480352677e4591705f6749e32757783 |

yuhuizhang/finetuned_gpt2-large_sst2_negation0.8 | yuhuizhang | gpt2 | 11 | 0 | transformers | 0 | text-generation | true | false | false | mit | null | ['sst2'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 1,248 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# finetuned_gpt2-large_sst2_negation0.8

This model is a fine-tuned version of [gpt2-large](https://huggingface.co/gpt2-large) on the sst2 dataset.

It achieves the following results on the evaluation set:

- Loss: 3.6201

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 2.3586 | 1.0 | 1111 | 3.3100 |

| 1.812 | 2.0 | 2222 | 3.5114 |

| 1.5574 | 3.0 | 3333 | 3.6201 |
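
In the table above the validation loss rises after the first epoch while the training loss keeps falling, which may indicate overfitting. A minimal sketch of how one might read these numbers, assuming the reported loss is a mean per-token cross-entropy in nats (the card does not say so explicitly), is to convert each loss to a perplexity and pick the epoch with the lowest validation loss:

```python
import math

# Validation losses per epoch, copied from the training results table.
val_loss = {1: 3.3100, 2: 3.5114, 3: 3.6201}


def perplexity(mean_nll: float) -> float:
    """Perplexity for a mean negative log-likelihood given in nats."""
    return math.exp(mean_nll)


ppl = {epoch: perplexity(loss) for epoch, loss in val_loss.items()}
best_epoch = min(val_loss, key=val_loss.get)  # epoch with the lowest validation loss

for epoch in sorted(ppl):
    print(f"epoch {epoch}: loss={val_loss[epoch]:.4f} perplexity={ppl[epoch]:.2f}")
print("best epoch by validation loss:", best_epoch)
```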

### Framework versions

- Transformers 4.22.2

- Pytorch 1.12.1+cu113

- Datasets 2.5.2

- Tokenizers 0.12.1

| 9b4e290512323ab29c33817d9ee6f5c9 |

google/maxim-s3-deblurring-realblur-r | google | null | 6 | 11 | keras | 1 | image-to-image | false | false | false | apache-2.0 | ['en'] | ['realblur_r'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['vision', 'maxim', 'image-to-image'] | false | true | true | 2,532 | false |

# MAXIM pre-trained on RealBlur-R for image deblurring

MAXIM model pre-trained for image deblurring. It was introduced in the paper [MAXIM: Multi-Axis MLP for Image Processing](https://arxiv.org/abs/2201.02973) by Zhengzhong Tu, Hossein Talebi, Han Zhang, Feng Yang, Peyman Milanfar, Alan Bovik, Yinxiao Li and first released in [this repository](https://github.com/google-research/maxim).

Disclaimer: The team releasing MAXIM did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

MAXIM introduces a shared MLP-based backbone for different image processing tasks such as image deblurring, deraining, denoising, dehazing, low-light image enhancement, and retouching. The following figure depicts the main components of MAXIM:

## Training procedure and results

The authors didn't release the training code. For more details on how the model was trained, refer to the [original paper](https://arxiv.org/abs/2201.02973).

As per the [table](https://github.com/google-research/maxim#results-and-pre-trained-models), the model achieves a PSNR of 39.45 and an SSIM of 0.962.
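
For readers unfamiliar with the PSNR metric quoted above, the following is a minimal sketch of the standard definition on flattened pixel values. It is not the evaluation code used by the MAXIM authors; the `psnr` helper and the peak value of 1.0 (pixels scaled to [0, 1]) are assumptions made for illustration.

```python
import math
from typing import Sequence


def psnr(reference: Sequence[float], restored: Sequence[float], max_value: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB between two equally sized pixel sequences."""
    if len(reference) != len(restored) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    # Mean squared error over all pixel values.
    mse = sum((r - x) ** 2 for r, x in zip(reference, restored)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


# Toy example: every pixel is off by 0.1 on a [0, 1] scale.
print(psnr([0.0, 0.0, 0.0, 0.0], [0.1, 0.1, 0.1, 0.1]))
```

Higher values mean the restored image is closer to the reference, so a PSNR near 39 dB corresponds to a very small mean squared error.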

## Intended uses & limitations

You can use the raw model for image deblurring tasks.

The model is [officially released in JAX](https://github.com/google-research/maxim). It was ported to TensorFlow in [this repository](https://github.com/sayakpaul/maxim-tf).

### How to use

Here is how to use this model:

```python

from huggingface_hub import from_pretrained_keras

from PIL import Image

import tensorflow as tf

import numpy as np

import requests

url = "https://github.com/sayakpaul/maxim-tf/raw/main/images/Deblurring/input/1fromGOPR0950.png"

image = Image.open(requests.get(url, stream=True).raw)

image = np.array(image)

image = tf.convert_to_tensor(image)

image = tf.image.resize(image, (256, 256))

model = from_pretrained_keras("google/maxim-s3-deblurring-realblur-r")

predictions = model.predict(tf.expand_dims(image, 0))

```

For a more elaborate prediction pipeline, refer to [this Colab Notebook](https://colab.research.google.com/github/sayakpaul/maxim-tf/blob/main/notebooks/inference-dynamic-resize.ipynb).

### Citation

```bibtex

@article{tu2022maxim,

title={MAXIM: Multi-Axis MLP for Image Processing},

author={Tu, Zhengzhong and Talebi, Hossein and Zhang, Han and Yang, Feng and Milanfar, Peyman and Bovik, Alan and Li, Yinxiao},

journal={CVPR},

year={2022},

}

```

| 656f5737fb608a8a695876e3a24498e5 |

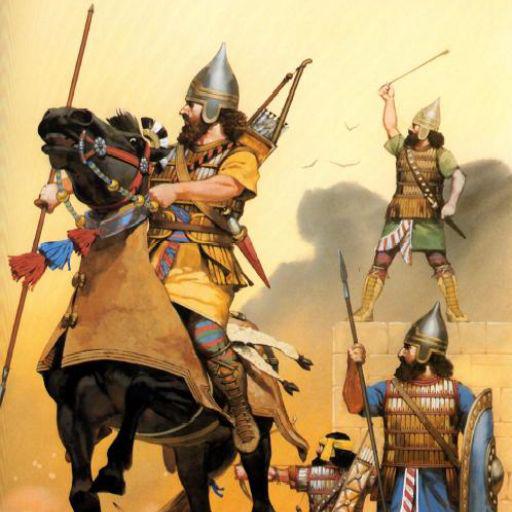

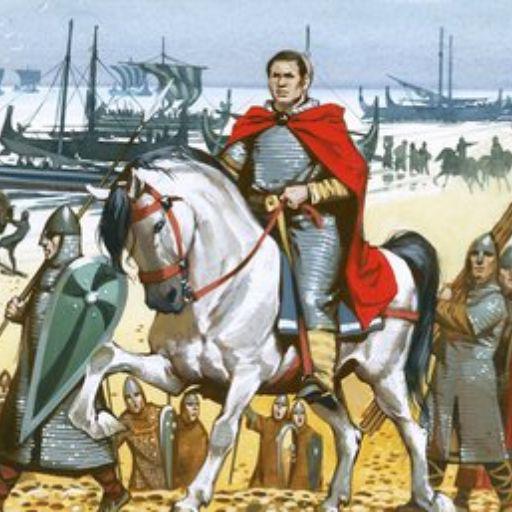

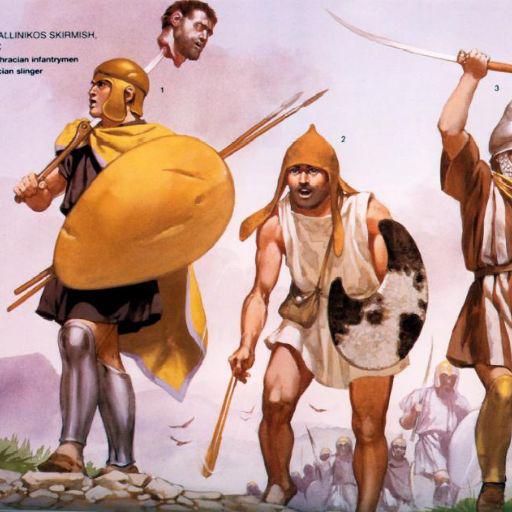

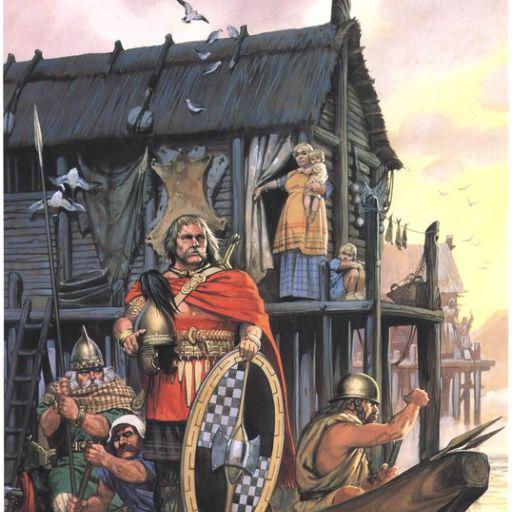

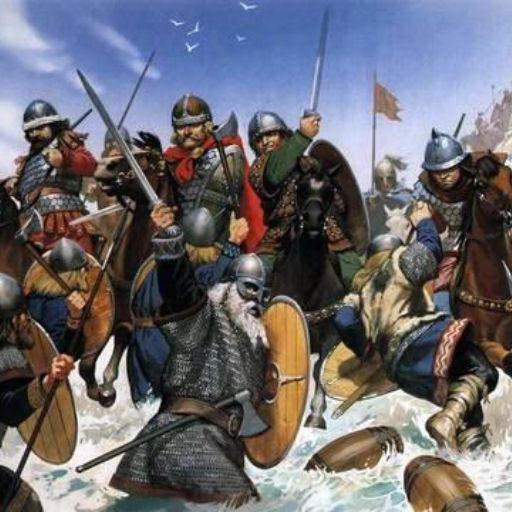

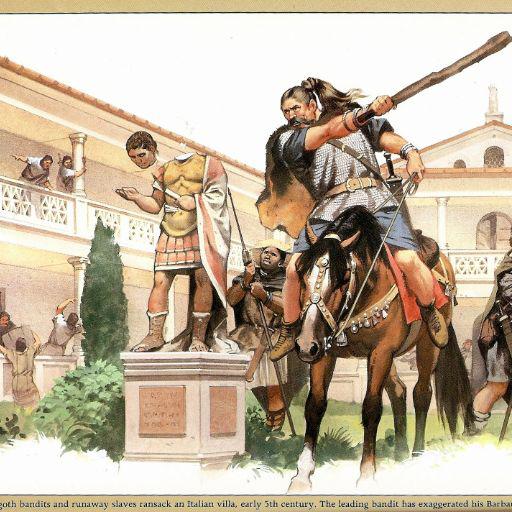

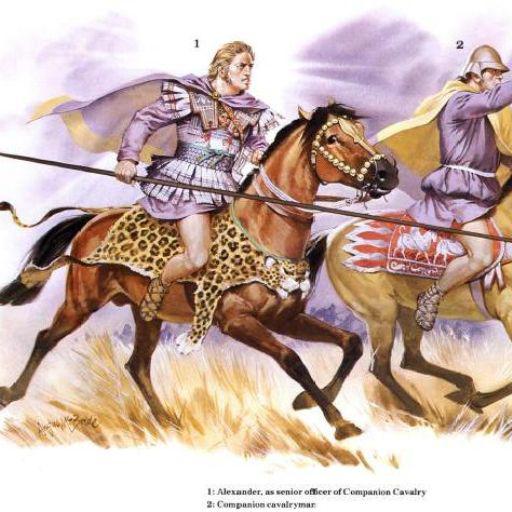

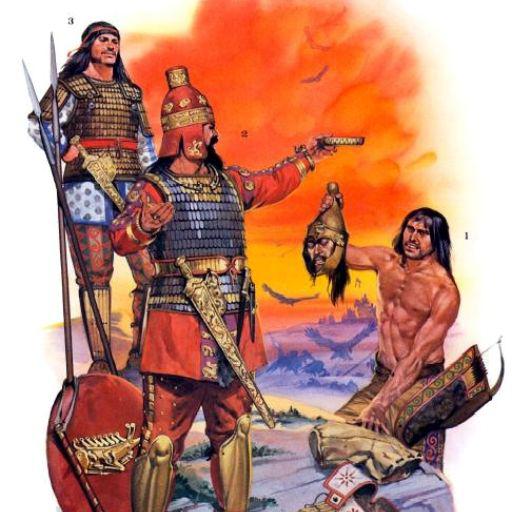

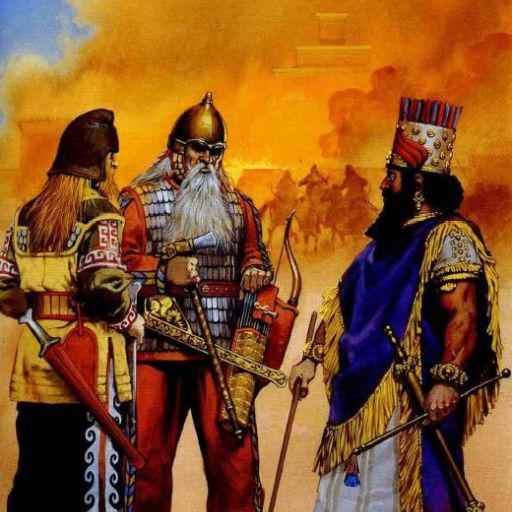

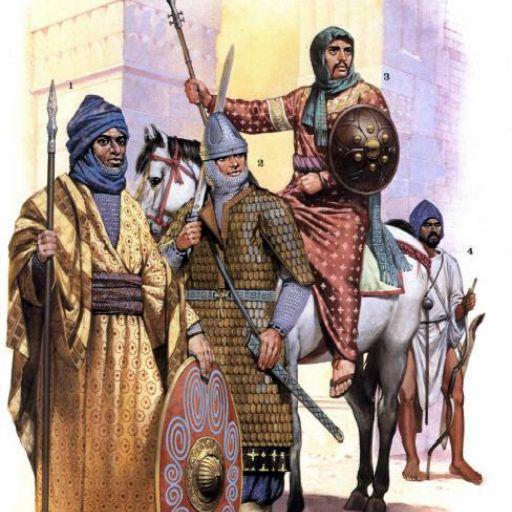

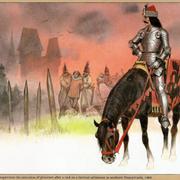

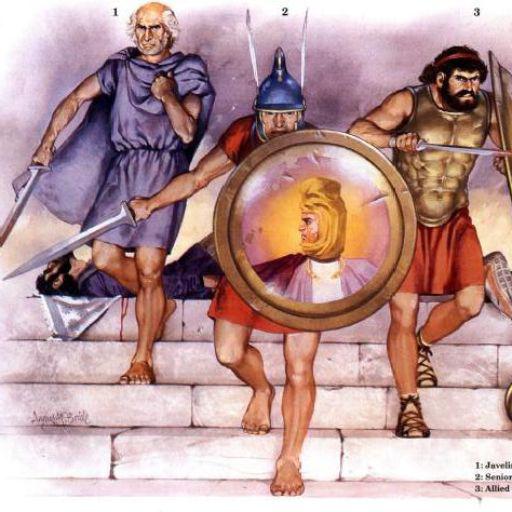

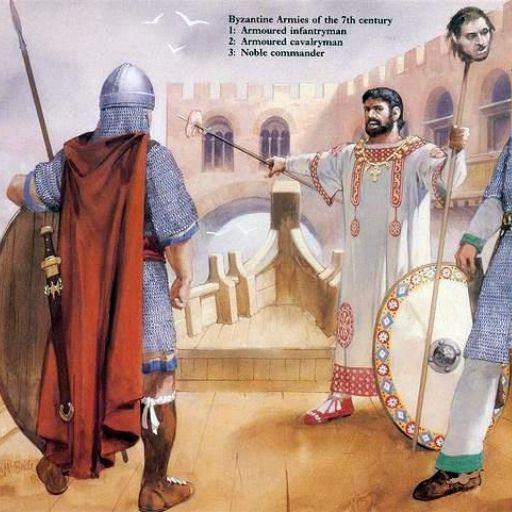

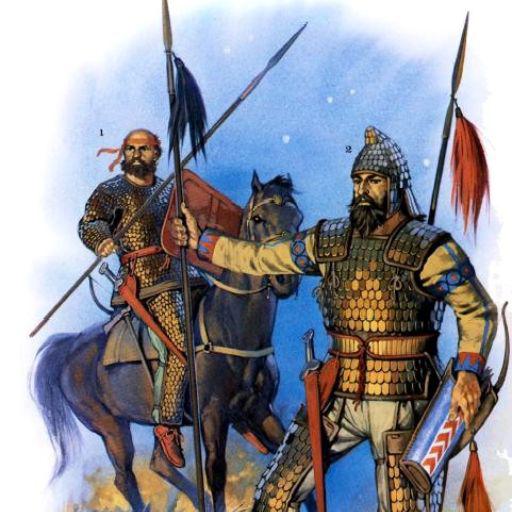

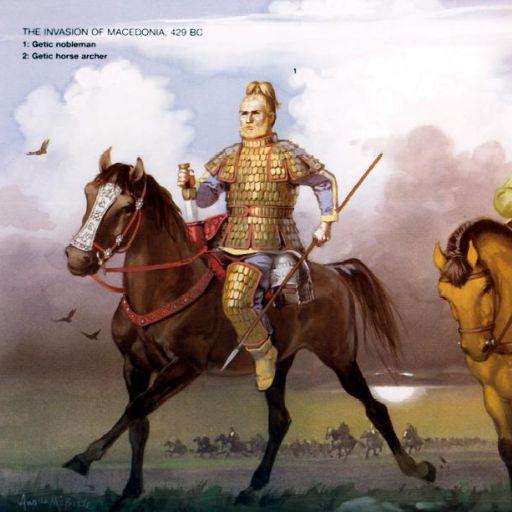

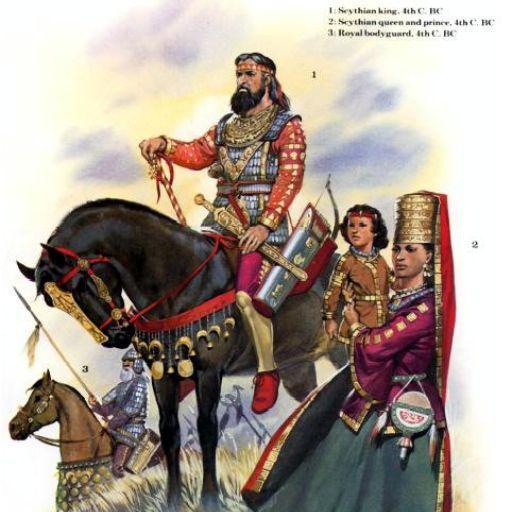

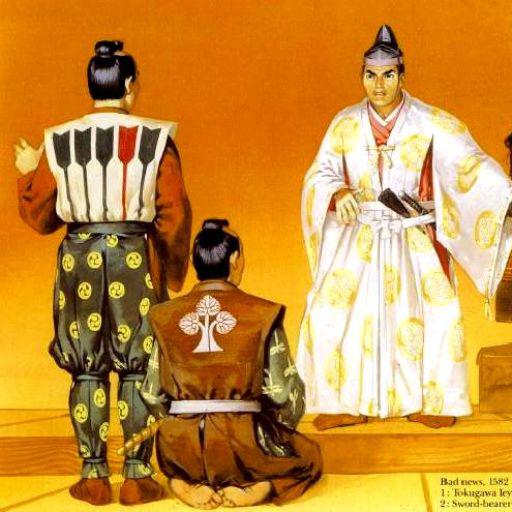

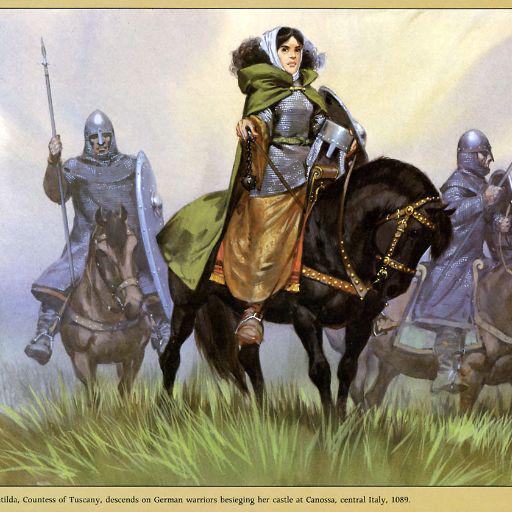

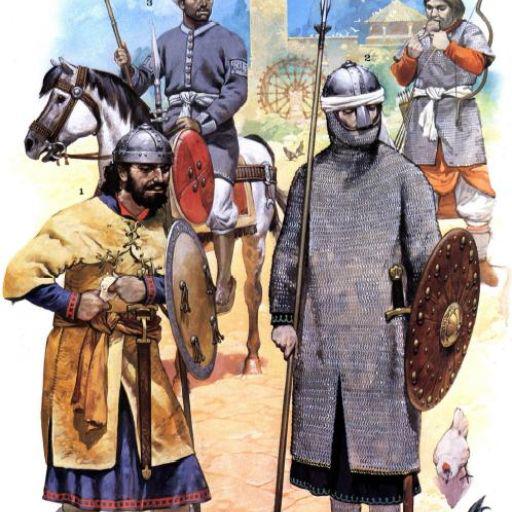

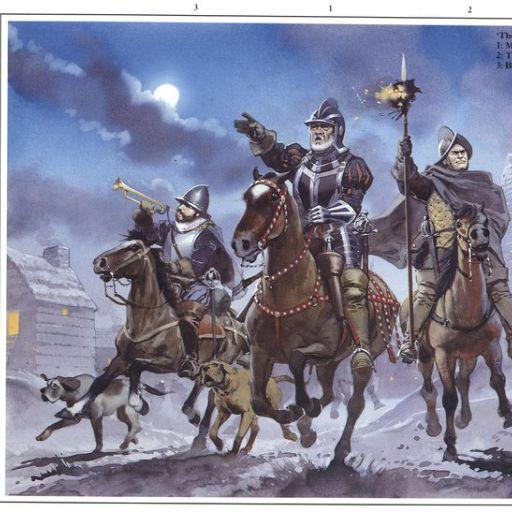

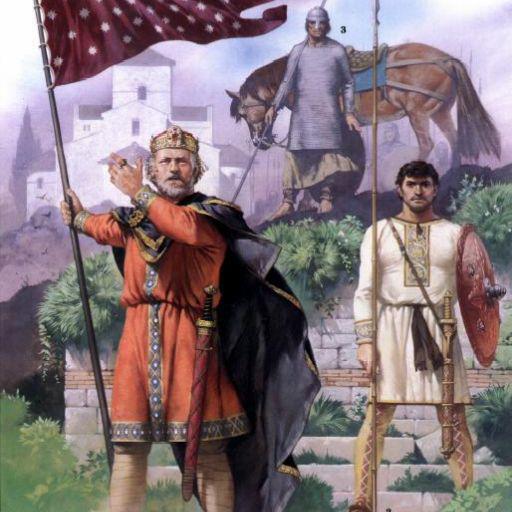

sd-dreambooth-library/angus-mcbride-style-v4 | sd-dreambooth-library | null | 64 | 4 | diffusers | 3 | null | false | false | false | mit | null | null | null | 2 | 2 | 0 | 0 | 0 | 0 | 0 | [] | false | true | true | 6,229 | false | ### angus mcbride style v4 on Stable Diffusion via Dreambooth

#### model by hiero

This is the Stable Diffusion model fine-tuned on the angus mcbride style v4 concept, taught to Stable Diffusion with Dreambooth.

It can be used by modifying the `instance_prompt`: **mcbride_style**

You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb).

And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts)

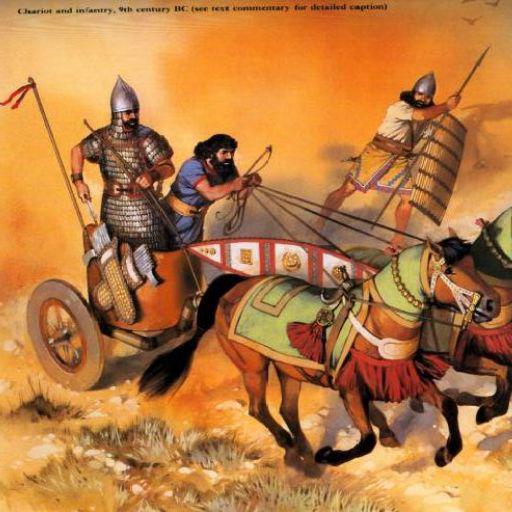

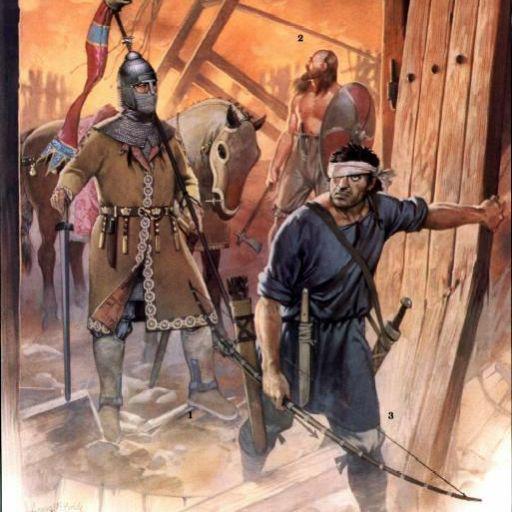

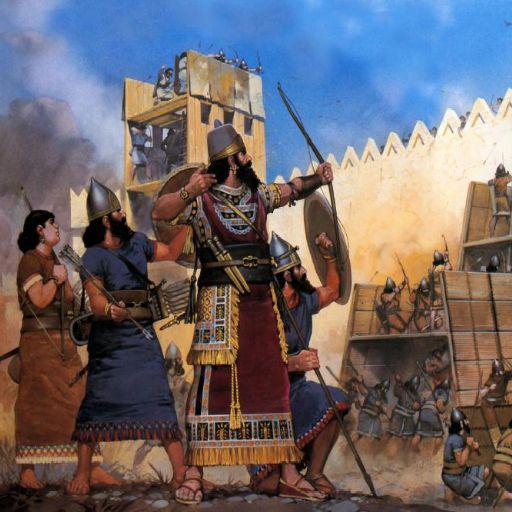

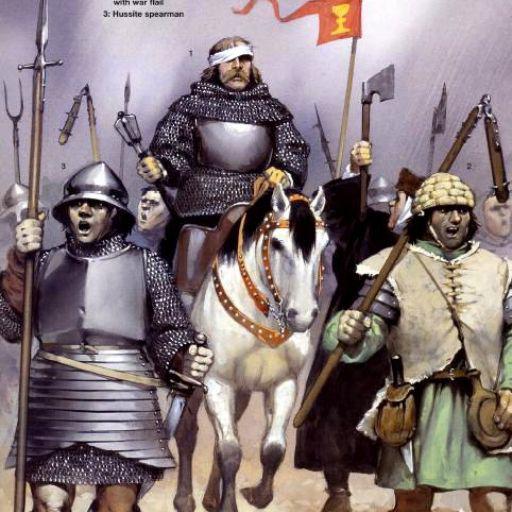

Here are the images used for training this concept:

| 6604de7bbbb99e676d69c4cef8ca35c8 |

Helsinki-NLP/opus-mt-es-nso | Helsinki-NLP | marian | 10 | 31 | transformers | 0 | translation | true | true | false | apache-2.0 | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['translation'] | false | true | true | 776 | false |

### opus-mt-es-nso

* source languages: es

* target languages: nso

* OPUS readme: [es-nso](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/es-nso/README.md)

* dataset: opus

* model: transformer-align

* pre-processing: normalization + SentencePiece

* download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/es-nso/opus-2020-01-16.zip)

* test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/es-nso/opus-2020-01-16.test.txt)

* test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/es-nso/opus-2020-01-16.eval.txt)

## Benchmarks

| testset | BLEU | chr-F |

|-----------------------|-------|-------|

| JW300.es.nso | 33.2 | 0.531 |

| 20487d85c8a2baa2310039642d7ed47e |

Nhat1904/test_trainer_XLNET_3ep_5e-5 | Nhat1904 | xlnet | 8 | 3 | transformers | 0 | text-classification | true | false | false | mit | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 1,324 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# test_trainer_XLNET_3ep_5e-5

This model is a fine-tuned version of [xlnet-base-cased](https://huggingface.co/xlnet-base-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5405

- Accuracy: 0.8773

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.7984 | 1.0 | 1125 | 0.6647 | 0.7923 |

| 0.5126 | 2.0 | 2250 | 0.4625 | 0.862 |

| 0.409 | 3.0 | 3375 | 0.5405 | 0.8773 |

### Framework versions

- Transformers 4.25.1

- Pytorch 1.12.1+cu113

- Datasets 2.7.1

- Tokenizers 0.13.2

| 11e0ea78245eb9e189b63c374039f5e9 |

daspartho/text-emotion | daspartho | distilbert | 10 | 1 | transformers | 1 | text-classification | true | false | false | apache-2.0 | null | ['emotion'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 1,527 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# text-emotion

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1414

- Accuracy: 0.9367

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 256

- eval_batch_size: 512

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 5

- mixed_precision_training: Native AMP
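
The `cosine` scheduler with `lr_scheduler_warmup_ratio: 0.1` can be sketched as a linear warmup followed by a half-cosine decay. This is an illustrative reimplementation, not the library's code: `cosine_lr` is a hypothetical helper, the total of 315 steps is taken from the training results table (5 epochs of 63 steps), and rounding the warmup length up to a whole step is an assumption.

```python
import math


def cosine_lr(step: int, base_lr: float, total_steps: int, warmup_ratio: float) -> float:
    """Learning rate at `step`: linear warmup, then cosine decay to zero."""
    # Assumption: the warmup length is rounded up to a whole number of steps.
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


# Values from the card: learning_rate 0.0001, warmup ratio 0.1, 315 total steps.
for step in (0, 16, 32, 63, 189, 315):
    print(step, cosine_lr(step, 0.0001, 315, 0.1))
```

Under these assumptions the rate peaks at the end of warmup, roughly halfway through the first epoch, and reaches zero at the final step.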

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 1.0232 | 1.0 | 63 | 0.2424 | 0.917 |

| 0.1925 | 2.0 | 126 | 0.1600 | 0.934 |

| 0.1134 | 3.0 | 189 | 0.1418 | 0.935 |

| 0.076 | 4.0 | 252 | 0.1461 | 0.931 |

| 0.0604 | 5.0 | 315 | 0.1414 | 0.9367 |

### Framework versions

- Transformers 4.24.0

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.2

| 19bbd6411a132d7b4051403d824592b0 |

Gnanesh5/SF5 | Gnanesh5 | xlnet | 6 | 3 | transformers | 0 | text-classification | true | false | false | mit | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 900 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# SF5

This model is a fine-tuned version of [xlnet-base-cased](https://huggingface.co/xlnet-base-cased) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 4e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 0

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

### Framework versions

- Transformers 4.25.1

- Pytorch 1.13.0+cu116

- Datasets 2.7.1

- Tokenizers 0.13.2

| bffe15bd7ed68e9ff182773ccb623419 |

anas-awadalla/gpt2-span-head-few-shot-k-32-finetuned-squad-seed-0 | anas-awadalla | gpt2 | 20 | 7 | transformers | 0 | question-answering | true | false | false | mit | null | ['squad'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 968 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# gpt2-span-head-few-shot-k-32-finetuned-squad-seed-0

This model is a fine-tuned version of [gpt2](https://huggingface.co/gpt2) on the squad dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 12

- eval_batch_size: 8

- seed: 0

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- training_steps: 200

### Training results

### Framework versions

- Transformers 4.20.0.dev0

- Pytorch 1.11.0+cu113

- Datasets 2.3.2

- Tokenizers 0.11.6

| 238c0f3c272713d92cba3c824edc55ce |

pere/norwegian-t5-base | pere | t5 | 18 | 8 | transformers | 0 | text2text-generation | false | false | true | cc-by-4.0 | False | ['Norwegian Nynorsk/Bokmål'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['seq2seq'] | false | true | true | 895 | false | # 🇳🇴 Norwegian T5 Base model 🇳🇴

This T5-base model is trained from scratch on a 19GB Balanced Bokmål-Nynorsk Corpus.

Update: Due to disk space errors, the model had to be restarted July 20. It is currently still running.

Parameters used in training:

```bash

python3 ./run_t5_mlm_flax_streaming.py \
    --model_name_or_path="./norwegian-t5-base" \
    --output_dir="./norwegian-t5-base" \
    --config_name="./norwegian-t5-base" \
    --tokenizer_name="./norwegian-t5-base" \
    --dataset_name="pere/nb_nn_balanced_shuffled" \
    --max_seq_length="512" \
    --per_device_train_batch_size="32" \
    --per_device_eval_batch_size="32" \
    --learning_rate="0.005" \
    --weight_decay="0.001" \
    --warmup_steps="2000" \
    --overwrite_output_dir \
    --logging_steps="100" \
    --save_steps="500" \
    --eval_steps="500" \
    --push_to_hub \
    --preprocessing_num_workers 96 \
    --adafactor

``` | d7cbe6eac76e9b0c7e079c09a9316d71 |

anas-awadalla/roberta-base-lora-squad | anas-awadalla | null | 22 | 0 | null | 0 | null | false | false | false | mit | null | ['squad'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 1,022 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# roberta-base-lora-squad

This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on the squad dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 32

- eval_batch_size: 8

- seed: 42

- distributed_type: multi-GPU

- num_devices: 4

- total_train_batch_size: 128

- total_eval_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 15.0
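
The `total_train_batch_size` and `total_eval_batch_size` entries follow from the per-device batch sizes and the number of devices. A small sketch of that arithmetic is shown below; `effective_batch_size` is a hypothetical helper, and the gradient accumulation parameter is included only for generality (this card lists none, so it defaults to 1).

```python
def effective_batch_size(per_device: int, num_devices: int = 1, grad_accum_steps: int = 1) -> int:
    """Number of examples contributing to one optimizer (or evaluation) step."""
    return per_device * num_devices * grad_accum_steps


# Values from the card: 4 devices, per-device batch sizes of 32 (train) and 8 (eval).
total_train = effective_batch_size(32, num_devices=4)  # 128, matches total_train_batch_size
total_eval = effective_batch_size(8, num_devices=4)    # 32, matches total_eval_batch_size
print(total_train, total_eval)
```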

### Training results

### Framework versions

- Transformers 4.19.2

- Pytorch 1.11.0+cu113

- Datasets 2.0.0

- Tokenizers 0.11.6

| 5ed9c0220a7de18cfd81e313c2b77532 |

funnel-transformer/small | funnel-transformer | funnel | 9 | 93,085 | transformers | 4 | feature-extraction | true | true | false | apache-2.0 | ['en'] | ['bookcorpus', 'wikipedia', 'gigaword'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | [] | false | true | true | 3,774 | false |

# Funnel Transformer small model (B4-4-4 with decoder)

Pretrained model on the English language using an objective similar to that of [ELECTRA](https://huggingface.co/transformers/model_doc/electra.html). It was introduced in

[this paper](https://arxiv.org/pdf/2006.03236.pdf) and first released in

[this repository](https://github.com/laiguokun/Funnel-Transformer). This model is uncased: it does not make a difference

between english and English.

Disclaimer: The team releasing Funnel Transformer did not write a model card for this model so this model card has been

written by the Hugging Face team.

## Model description

Funnel Transformer is a transformers model pretrained on a large corpus of English data in a self-supervised fashion. This means it

was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of

publicly available data) with an automatic process to generate inputs and labels from those texts.

More precisely, a small language model corrupts the input texts and serves as a generator of inputs for this model, and

the pretraining objective is to predict which token is an original and which one has been replaced, a bit like a GAN training.

This way, the model learns an inner representation of the English language that can then be used to extract features

useful for downstream tasks: if you have a dataset of labeled sentences for instance, you can train a standard

classifier using the features produced by the Funnel Transformer model as inputs.
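
The replaced-token objective described above can be illustrated with a toy example. The sketch below only shows how per-token labels could be derived once a generator has corrupted the input; the `replaced_token_labels` helper and the example sentence are hypothetical, and real pretraining operates on subword token IDs rather than whole words.

```python
from typing import List


def replaced_token_labels(original: List[str], corrupted: List[str]) -> List[int]:
    """Label each position 1 if the generator replaced the token, else 0."""
    if len(original) != len(corrupted):
        raise ValueError("sequences must have the same length")
    return [int(o != c) for o, c in zip(original, corrupted)]


original = ["the", "chef", "cooked", "the", "meal"]
corrupted = ["the", "chef", "ate", "the", "meal"]  # a generator swapped one token
labels = replaced_token_labels(original, corrupted)
print(labels)  # [0, 0, 1, 0, 0]
```

The pretrained model receives the corrupted sequence as input and is trained to predict these labels for every position.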

## Intended uses & limitations

You can use the raw model to extract a vector representation of a given text, but it's mostly intended to

be fine-tuned on a downstream task. See the [model hub](https://huggingface.co/models?filter=funnel-transformer) to look for

fine-tuned versions on a task that interests you.

Note that this model is primarily aimed at being fine-tuned on tasks that use the whole sentence (potentially masked)

to make decisions, such as sequence classification, token classification or question answering. For tasks such as text

generation you should look at a model like GPT2.

### How to use

Here is how to use this model to get the features of a given text in PyTorch:

```python

from transformers import FunnelTokenizer, FunnelModel

tokenizer = FunnelTokenizer.from_pretrained("funnel-transformer/small")

model = FunnelModel.from_pretrained("funnel-transformer/small")

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='pt')

output = model(**encoded_input)

```

and in TensorFlow:

```python

from transformers import FunnelTokenizer, TFFunnelModel

tokenizer = FunnelTokenizer.from_pretrained("funnel-transformer/small")

model = TFFunnelModel.from_pretrained("funnel-transformer/small")

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='tf')

output = model(encoded_input)

```

## Training data

The Funnel Transformer model was pretrained on:

- [BookCorpus](https://yknzhu.wixsite.com/mbweb), a dataset consisting of 11,038 unpublished books,

- [English Wikipedia](https://en.wikipedia.org/wiki/English_Wikipedia) (excluding lists, tables and headers),

- [Clue Web](https://lemurproject.org/clueweb12/), a dataset of 733,019,372 English web pages,

- [GigaWord](https://catalog.ldc.upenn.edu/LDC2011T07), an archive of newswire text data,

- [Common Crawl](https://commoncrawl.org/), a dataset of raw web pages.

### BibTeX entry and citation info

```bibtex

@misc{dai2020funneltransformer,

title={Funnel-Transformer: Filtering out Sequential Redundancy for Efficient Language Processing},

author={Zihang Dai and Guokun Lai and Yiming Yang and Quoc V. Le},

year={2020},

eprint={2006.03236},

archivePrefix={arXiv},

primaryClass={cs.LG}

}

```

| 0eb5743e0e3bc114272a0c18fdf20535 |

Kayvane/rick-and-morty-ramrick-character | Kayvane | null | 17 | 39 | diffusers | 0 | text-to-image | true | false | false | creativeml-openrail-m | null | null | null | 1 | 1 | 0 | 0 | 0 | 0 | 0 | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'wildcard'] | false | true | true | 1,082 | false |

# DreamBooth model for the ramrick concept trained by Kayvane on the Kayvane/dreambooth-hackathon-rick-and-morty-images-square dataset.

Notes:

- trained on square images, 20k steps on google colab

- character name is ramrick, many pictures get blocked as nsfw - possibly because the subtoken #ick is close to something else

- model is trained for too many steps / overfitted as it is effectively recreating the input images

This is a Stable Diffusion model fine-tuned on the ramrick concept with DreamBooth. It can be used by modifying the `instance_prompt`: **a photo of ramrick character**

This model was created as part of the DreamBooth Hackathon 🔥. Visit the [organisation page](https://huggingface.co/dreambooth-hackathon) for instructions on how to take part!

## Description

This is a Stable Diffusion model fine-tuned on `character` images for the wildcard theme.

## Usage

```python

from diffusers import StableDiffusionPipeline

pipeline = StableDiffusionPipeline.from_pretrained('Kayvane/rick-and-morty-ramrick-character')

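# The pipeline needs a text prompt; use the instance prompt given above
image = pipeline("a photo of ramrick character").images[0]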

image

```

| e61dea39c1f159bf09600296e67b22c8 |

Helsinki-NLP/opus-mt-lus-sv | Helsinki-NLP | marian | 10 | 8 | transformers | 0 | translation | true | true | false | apache-2.0 | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['translation'] | false | true | true | 776 | false |

### opus-mt-lus-sv

* source languages: lus

* target languages: sv

* OPUS readme: [lus-sv](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/lus-sv/README.md)

* dataset: opus

* model: transformer-align

* pre-processing: normalization + SentencePiece

* download original weights: [opus-2020-01-09.zip](https://object.pouta.csc.fi/OPUS-MT-models/lus-sv/opus-2020-01-09.zip)

* test set translations: [opus-2020-01-09.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/lus-sv/opus-2020-01-09.test.txt)

* test set scores: [opus-2020-01-09.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/lus-sv/opus-2020-01-09.eval.txt)

## Benchmarks

| testset | BLEU | chr-F |

|-----------------------|-------|-------|

| JW300.lus.sv | 25.5 | 0.439 |

| af46eedd4bb7c755a0253f84757ac771 |

Xhaheen/srkay-man_6-1-2022 | Xhaheen | null | 17 | 136 | diffusers | 91 | text-to-image | true | false | false | creativeml-openrail-m | null | null | null | 1 | 1 | 0 | 0 | 0 | 0 | 0 | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'wildcard'] | false | true | true | 1,288 | false |

# DreamBooth model for the srkay concept trained by Xhaheen on the Xhaheen/dreambooth-hackathon-images-srkman-2 dataset.

This is a Stable Diffusion model fine-tuned on Shah Rukh Khan images with DreamBooth. It can be used by modifying the `instance_prompt`: **a photo of srkay man**

This model was created as part of the DreamBooth Hackathon 🔥. Visit the [organisation page](https://huggingface.co/dreambooth-hackathon) for instructions on how to take part!

## Dataset used

## Description

This is a Stable Diffusion model fine-tuned on `man` images for the wildcard theme.

## Usage

```python

from diffusers import StableDiffusionPipeline

pipeline = StableDiffusionPipeline.from_pretrained('Xhaheen/srkay-man_6-1-2022')

# The pipeline needs a text prompt; use the instance prompt given above
image = pipeline("a photo of srkay man").images[0]

image

```

[Open in Colab](https://colab.research.google.com/drive/1FmTaUN38enNdCgi4HxG0LMZ4HobM0Iq3?usp=sharing)

| d222eaa26bf88b1b457e96c76e6d086c |

deepset/gbert-large | deepset | null | 8 | 102,101 | transformers | 17 | fill-mask | true | true | false | mit | ['de'] | ['wikipedia', 'OPUS', 'OpenLegalData', 'oscar'] | null | 0 | 0 | 0 | 0 | 1 | 1 | 0 | [] | false | true | true | 2,787 | false |

# German BERT large

Released in October 2020, this is a German BERT language model trained collaboratively by the makers of the original German BERT (aka "bert-base-german-cased") and the dbmdz BERT (aka "bert-base-german-dbmdz-cased"). In our [paper](https://arxiv.org/pdf/2010.10906.pdf), we outline the steps taken to train our model and show that it outperforms its predecessors.

## Overview

**Paper:** [here](https://arxiv.org/pdf/2010.10906.pdf)

**Architecture:** BERT large

**Language:** German

## Performance

```

GermEval18 Coarse: 80.08

GermEval18 Fine: 52.48

GermEval14: 88.16

```

See also:

deepset/gbert-base

deepset/gbert-large

deepset/gelectra-base

deepset/gelectra-large

deepset/gelectra-base-generator

deepset/gelectra-large-generator

## Authors

**Branden Chan:** branden.chan@deepset.ai

**Stefan Schweter:** stefan@schweter.eu

**Timo Möller:** timo.moeller@deepset.ai

## About us

<div class="grid lg:grid-cols-2 gap-x-4 gap-y-3">

<div class="w-full h-40 object-cover mb-2 rounded-lg flex items-center justify-center">

<img alt="" src="https://raw.githubusercontent.com/deepset-ai/.github/main/deepset-logo-colored.png" class="w-40"/>

</div>

<div class="w-full h-40 object-cover mb-2 rounded-lg flex items-center justify-center">

<img alt="" src="https://raw.githubusercontent.com/deepset-ai/.github/main/haystack-logo-colored.png" class="w-40"/>

</div>

</div>

[deepset](http://deepset.ai/) is the company behind the open-source NLP framework [Haystack](https://haystack.deepset.ai/), which is designed to help you build production-ready NLP systems that use question answering, summarization, ranking, etc.

Some of our other work:

- [Distilled roberta-base-squad2 (aka "tinyroberta-squad2")](https://huggingface.co/deepset/tinyroberta-squad2)

- [German BERT (aka "bert-base-german-cased")](https://deepset.ai/german-bert)

- [GermanQuAD and GermanDPR datasets and models (aka "gelectra-base-germanquad", "gbert-base-germandpr")](https://deepset.ai/germanquad)

## Get in touch and join the Haystack community

<p>For more info on Haystack, visit our <strong><a href="https://github.com/deepset-ai/haystack">GitHub</a></strong> repo and <strong><a href="https://docs.haystack.deepset.ai">Documentation</a></strong>.

We also have a <strong><a class="h-7" href="https://haystack.deepset.ai/community">Discord community open to everyone!</a></strong></p>

[Twitter](https://twitter.com/deepset_ai) | [LinkedIn](https://www.linkedin.com/company/deepset-ai/) | [Discord](https://haystack.deepset.ai/community) | [GitHub Discussions](https://github.com/deepset-ai/haystack/discussions) | [Website](https://deepset.ai)

By the way: [we're hiring!](http://www.deepset.ai/jobs) | 5832ff0d5b3b74b9bde9efea9ad4c31b |

sd-dreambooth-library/tempa | sd-dreambooth-library | null | 22 | 2 | diffusers | 0 | null | false | false | false | mit | null | null | null | 2 | 2 | 0 | 0 | 0 | 0 | 0 | [] | false | true | true | 909 | false | ### Tempa on Stable Diffusion via Dreambooth

#### model by Giordyman

This is the Stable Diffusion model fine-tuned on the Tempa concept, taught to Stable Diffusion with Dreambooth.

It can be used by modifying the `instance_prompt`: **a photo of sks Tempa**

You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb).

Here are the images used for training this concept:

| c282c5f6a77ecd06c3dd6a2e4cb4851e |

Tusarkant/xlm-roberta-base-finetuned-ner | Tusarkant | xlm-roberta | 9 | 2 | transformers | 0 | token-classification | true | false | false | mit | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 904 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# xlm-roberta-base-finetuned-ner

This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 2

- eval_batch_size: 2

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 6

### Framework versions

- Transformers 4.26.0

- Pytorch 1.13.1+cu116

- Datasets 2.9.0

- Tokenizers 0.13.2

| 19f8bb2bd6d0b7127da00f28772a66ac |

Santiagot1105/wav2vec2-lar-xlsr-es-col | Santiagot1105 | wav2vec2 | 12 | 10 | transformers | 0 | automatic-speech-recognition | true | false | false | apache-2.0 | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 1,502 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-lar-xlsr-es-col

This model is a fine-tuned version of [jonatasgrosman/wav2vec2-large-xlsr-53-spanish](https://huggingface.co/jonatasgrosman/wav2vec2-large-xlsr-53-spanish) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0947

- Wer: 0.1884
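
The `Wer` figure is a word error rate: the word-level edit distance between the reference transcript and the model output, divided by the number of reference words. The following is a minimal sketch of that definition, assuming whitespace tokenization and no text normalization; `word_error_rate` is a hypothetical helper, not the metric implementation used to produce the numbers in this card, and the Spanish sentences are made-up examples.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the processed reference prefix and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


# One of four reference words is missing from the hypothesis.
print(word_error_rate("el gato come pescado", "el gato pescado"))  # 0.25
```

A WER of 0.1884 therefore means roughly 19 word-level errors per 100 reference words under whatever normalization the evaluation used.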

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 30

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 4.8446 | 8.51 | 400 | 2.8174 | 0.9854 |

| 0.5146 | 17.02 | 800 | 0.1022 | 0.2020 |

| 0.0706 | 25.53 | 1200 | 0.0947 | 0.1884 |
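
The batch-size hyperparameters and the results table can be cross-checked with a little arithmetic. The sketch below assumes a single training device (the card does not state the device count) and estimates steps per epoch from the logged pair "step 400 at epoch 8.51". The derived totals are estimates, not values reported by the card.

```python
train_batch_size = 16
gradient_accumulation_steps = 2
num_devices = 1  # assumption: not stated in the card

# Effective batch size per optimizer step.
total_train_batch_size = train_batch_size * gradient_accumulation_steps * num_devices

# From the results table: step 400 was logged at epoch 8.51.
steps_per_epoch = round(400 / 8.51)
approx_total_steps = steps_per_epoch * 30  # num_epochs = 30

# Rough upper bound on the training set size (the last batch may be partial).
approx_train_examples = steps_per_epoch * total_train_batch_size

print(total_train_batch_size, steps_per_epoch, approx_total_steps, approx_train_examples)
```

The computed effective batch size agrees with the `total_train_batch_size: 32` entry in the hyperparameter list.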

### Framework versions

- Transformers 4.11.3

- Pytorch 1.10.1+cu102

- Datasets 1.13.3

- Tokenizers 0.10.3

| f97dc92bf8681dd12293248381e66362 |

tzvc/8f6b362c-26c3-4c26-9e7f-2b8ff6ef353e | tzvc | null | 31 | 12 | diffusers | 0 | text-to-image | false | false | false | creativeml-openrail-m | null | null | null | 1 | 1 | 0 | 0 | 0 | 0 | 0 | ['text-to-image'] | false | true | true | 1,743 | false | ### 8f6b362c-26c3-4c26-9e7f-2b8ff6ef353e Dreambooth model trained by tzvc with [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) with the v1-5 base model

You can run your new concept via `diffusers` with the [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts!

Sample pictures of:

sdcid (use that in your prompt)

| dc1050bdbb79c063e876ff0ddd9fd5c1 |

sd-concepts-library/glass-pipe | sd-concepts-library | null | 12 | 0 | null | 0 | null | false | false | false | mit | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | [] | false | true | true | 1,386 | false | ### glass pipe on Stable Diffusion

This is the `<glass-sherlock>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

| daa2edac56b70d28a91d581c0fec766c |

Bugjuhjugjyy/tails-diffusion | Bugjuhjugjyy | null | 27 | 36 | diffusers | 0 | text-to-image | false | false | false | mit | null | null | null | 2 | 1 | 0 | 1 | 0 | 0 | 0 | [] | false | true | true | 1,971 | false | ### tails diffusion on Stable Diffusion via Dreambooth trained on the [fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook

#### model by Bugjuhjugjyy

This is the Stable Diffusion model fine-tuned on the tails diffusion concept, taught to Stable Diffusion with Dreambooth.

It can be used by modifying the `instance_prompt(s)`: **images**

You can also train your own concepts and upload them to the library by using [the fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb).

And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts)

Here are the images used for training this concept:

images


| 79607e2c54f129ab5248f38703e97ca8 |

Shobhank-iiitdwd/BERT-L-QA | Shobhank-iiitdwd | bert | 10 | 1 | transformers | 0 | question-answering | true | false | true | cc-by-4.0 | ['en'] | ['squad_v2'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | [] | true | true | true | 3,425 | false |

# bert-large-uncased-whole-word-masking-squad2

This is a bert-large model, fine-tuned on the SQuAD2.0 dataset for the task of question answering.

## Overview

**Language model:** bert-large

**Language:** English

**Downstream-task:** Extractive QA

**Training data:** SQuAD 2.0

**Eval data:** SQuAD 2.0

**Code:** See [an example QA pipeline on Haystack](https://haystack.deepset.ai/tutorials/first-qa-system)

## Usage

### In Haystack

Haystack is an NLP framework by deepset. You can use this model in a Haystack pipeline to do question answering at scale (over many documents). To load the model in [Haystack](https://github.com/deepset-ai/haystack/):

```python

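# Assumes Haystack 1.x, where both reader classes are exposed under haystack.nodes
from haystack.nodes import FARMReader, TransformersReader

reader = FARMReader(model_name_or_path="deepset/bert-large-uncased-whole-word-masking-squad2")

# or

reader = TransformersReader(model_name_or_path="deepset/bert-large-uncased-whole-word-masking-squad2", tokenizer="deepset/bert-large-uncased-whole-word-masking-squad2")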

```

### In Transformers

```python

from transformers import AutoModelForQuestionAnswering, AutoTokenizer, pipeline

model_name = "deepset/bert-large-uncased-whole-word-masking-squad2"

# a) Get predictions

nlp = pipeline('question-answering', model=model_name, tokenizer=model_name)

QA_input = {
    'question': 'Why is model conversion important?',
    'context': 'The option to convert models between FARM and transformers gives freedom to the user and let people easily switch between frameworks.'
}

res = nlp(QA_input)

# b) Load model & tokenizer

model = AutoModelForQuestionAnswering.from_pretrained(model_name)

tokenizer = AutoTokenizer.from_pretrained(model_name)

```
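
An extractive QA model of this kind produces one start logit and one end logit per token, and the answer is the span whose combined score is highest. The following is a simplified sketch of that selection step. The logits and tokens are invented toy values, and `best_span` and its `max_answer_len` parameter are hypothetical names for this sketch. The real pipeline also handles the "no answer" case from SQuAD 2.0, long contexts, and score normalization.

```python
def best_span(start_logits, end_logits, max_answer_len=15):
    """Return (start, end, score) maximizing start_logits[s] + end_logits[e], s <= e."""
    best = None
    for s, s_score in enumerate(start_logits):
        # Only consider ends at or after the start, within the length cap.
        for e in range(s, min(s + max_answer_len, len(end_logits))):
            score = s_score + end_logits[e]
            if best is None or score > best[2]:
                best = (s, e, score)
    return best


# Toy example: hypothetical per-token logits for a five-token context.
tokens = ["gives", "freedom", "to", "the", "user"]
start_logits = [0.1, 2.0, 0.3, 0.2, 0.1]
end_logits = [0.0, 0.5, 0.1, 0.4, 3.0]

s, e, _ = best_span(start_logits, end_logits)
answer = " ".join(tokens[s:e + 1])
```

With these toy values the selected span starts at token 1 and ends at token 4.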

## About us

<div class="grid lg:grid-cols-2 gap-x-4 gap-y-3">

<div class="w-full h-40 object-cover mb-2 rounded-lg flex items-center justify-center">

<img alt="" src="https://raw.githubusercontent.com/deepset-ai/.github/main/deepset-logo-colored.png" class="w-40"/>

</div>

<div class="w-full h-40 object-cover mb-2 rounded-lg flex items-center justify-center">

<img alt="" src="https://raw.githubusercontent.com/deepset-ai/.github/main/haystack-logo-colored.png" class="w-40"/>

</div>

</div>

[deepset](http://deepset.ai/) is the company behind the open-source NLP framework [Haystack](https://haystack.deepset.ai/), which is designed to help you build production-ready NLP systems for question answering, summarization, ranking, and more.

Some of our other work:

- [Distilled roberta-base-squad2 (aka "tinyroberta-squad2")](https://huggingface.co/deepset/tinyroberta-squad2)

- [German BERT (aka "bert-base-german-cased")](https://deepset.ai/german-bert)

- [GermanQuAD and GermanDPR datasets and models (aka "gelectra-base-germanquad", "gbert-base-germandpr")](https://deepset.ai/germanquad)

## Get in touch and join the Haystack community

<p>For more info on Haystack, visit our <strong><a href="https://github.com/deepset-ai/haystack">GitHub</a></strong> repo and <strong><a href="https://docs.haystack.deepset.ai">Documentation</a></strong>.

We also have a <strong><a class="h-7" href="https://haystack.deepset.ai/community">Discord community open to everyone!</a></strong></p>

[Twitter](https://twitter.com/deepset_ai) | [LinkedIn](https://www.linkedin.com/company/deepset-ai/) | [Discord](https://haystack.deepset.ai/community/join) | [GitHub Discussions](https://github.com/deepset-ai/haystack/discussions) | [Website](https://deepset.ai)

By the way: [we're hiring!](http://www.deepset.ai/jobs) | fa5d04dcead89e57c9db4a716764512c |

Rocketknight1/temp-colab-upload-test2 | Rocketknight1 | distilbert | 8 | 5 | transformers | 0 | text-classification | false | true | false | apache-2.0 | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_keras_callback'] | true | true | true | 1,200 | false |

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Rocketknight1/temp-colab-upload-test2

This model is a fine-tuned version of [distilbert-base-cased](https://huggingface.co/distilbert-base-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.6931

- Validation Loss: 0.6931

- Epoch: 1

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'learning_rate': 0.001, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 0.6931 | 0.6931 | 0 |

| 0.6931 | 0.6931 | 1 |
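
A loss of 0.6931 on both splits at both epochs matches ln 2, the cross-entropy of a classifier that assigns equal probability to two classes. The check below assumes a two-class task, which the card does not state. If that assumption holds, the numbers suggest the model is predicting at chance level.

```python
import math

num_classes = 2  # assumption: the card does not state the number of labels

# Cross-entropy of a uniform prediction over num_classes labels.
chance_level_loss = -math.log(1.0 / num_classes)

print(round(chance_level_loss, 4))
```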

### Framework versions

- Transformers 4.17.0

- TensorFlow 2.8.0

- Datasets 2.0.0

- Tokenizers 0.11.6

| 9389effddd257620e4c7b4f224bcc541 |

osanseviero/asr-with-transformers-wav2vec2 | osanseviero | wav2vec2 | 11 | 7 | superb | 0 | automatic-speech-recognition | true | true | false | apache-2.0 | ['en'] | ['librispeech_asr'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['audio', 'automatic-speech-recognition', 'superb'] | false | true | true | 3,808 | false |

# Fork of Wav2Vec2-Base-960h

[Facebook's Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/)

The base model was pretrained and fine-tuned on 960 hours of LibriSpeech, using speech audio sampled at 16 kHz. When using the model,

make sure that your speech input is also sampled at 16 kHz.

[Paper](https://arxiv.org/abs/2006.11477)

Authors: Alexei Baevski, Henry Zhou, Abdelrahman Mohamed, Michael Auli

**Abstract**

We show for the first time that learning powerful representations from speech audio alone followed by fine-tuning on transcribed speech can outperform the best semi-supervised methods while being conceptually simpler. wav2vec 2.0 masks the speech input in the latent space and solves a contrastive task defined over a quantization of the latent representations which are jointly learned. Experiments using all labeled data of Librispeech achieve 1.8/3.3 WER on the clean/other test sets. When lowering the amount of labeled data to one hour, wav2vec 2.0 outperforms the previous state of the art on the 100 hour subset while using 100 times less labeled data. Using just ten minutes of labeled data and pre-training on 53k hours of unlabeled data still achieves 4.8/8.2 WER. This demonstrates the feasibility of speech recognition with limited amounts of labeled data.

The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/wav2vec#wav2vec-20.

# Usage

To transcribe audio files the model can be used as a standalone acoustic model as follows:

```python

from transformers import Wav2Vec2Tokenizer, Wav2Vec2ForCTC

from datasets import load_dataset

import soundfile as sf

import torch

# load model and tokenizer

tokenizer = Wav2Vec2Tokenizer.from_pretrained("facebook/wav2vec2-base-960h")

model = Wav2Vec2ForCTC.from_pretrained("facebook/wav2vec2-base-960h")

# define function to read in sound file

def map_to_array(batch):
    speech, _ = sf.read(batch["file"])
    batch["speech"] = speech
    return batch

# load dummy dataset and read soundfiles

ds = load_dataset("patrickvonplaten/librispeech_asr_dummy", "clean", split="validation")

ds = ds.map(map_to_array)

# tokenize

input_values = tokenizer(ds["speech"][:2], return_tensors="pt", padding="longest").input_values  # batch of 2 utterances

# retrieve logits

logits = model(input_values).logits

# take argmax and decode

predicted_ids = torch.argmax(logits, dim=-1)

transcription = tokenizer.batch_decode(predicted_ids)

```
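
The "take argmax and decode" step is greedy CTC decoding: pick the most likely token per audio frame, collapse consecutive repeats, then drop the blank token. The following pure-Python sketch illustrates that collapse rule. The vocabulary and frame predictions are toy values, and `ctc_greedy_decode` is a hypothetical helper, not the tokenizer's own implementation. In Wav2Vec2 the padding token plays the role of the CTC blank.

```python
def ctc_greedy_decode(ids, id_to_char, blank_id=0):
    """Collapse consecutive repeated ids, then drop blank tokens."""
    out = []
    prev = None
    for i in ids:
        # Emit a character only when the id changes and is not the blank.
        if i != prev and i != blank_id:
            out.append(id_to_char[i])
        prev = i
    return "".join(out)


# Toy vocabulary and per-frame argmax ids (hypothetical values).
vocab = {0: "<pad>", 1: "h", 2: "e", 3: "l", 4: "o"}
frames = [1, 1, 0, 2, 2, 3, 0, 3, 3, 4, 0]
text = ctc_greedy_decode(frames, vocab)
```

The blank between the two runs of `3` is what allows a genuine double letter to survive the collapse.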

## Evaluation

This code snippet shows how to evaluate **facebook/wav2vec2-base-960h** on LibriSpeech's "clean" test data; to evaluate on the "other" test data, pass `"other"` instead of `"clean"` to `load_dataset`.

```python

from datasets import load_dataset

from transformers import Wav2Vec2ForCTC, Wav2Vec2Tokenizer

import soundfile as sf

import torch

from jiwer import wer

librispeech_eval = load_dataset("librispeech_asr", "clean", split="test")

model = Wav2Vec2ForCTC.from_pretrained("facebook/wav2vec2-base-960h").to("cuda")

tokenizer = Wav2Vec2Tokenizer.from_pretrained("facebook/wav2vec2-base-960h")

def map_to_array(batch):
    speech, _ = sf.read(batch["file"])
    batch["speech"] = speech
    return batch

librispeech_eval = librispeech_eval.map(map_to_array)

def map_to_pred(batch):
    input_values = tokenizer(batch["speech"], return_tensors="pt", padding="longest").input_values
    with torch.no_grad():
        logits = model(input_values.to("cuda")).logits
    predicted_ids = torch.argmax(logits, dim=-1)
    transcription = tokenizer.batch_decode(predicted_ids)
    batch["transcription"] = transcription
    return batch

result = librispeech_eval.map(map_to_pred, batched=True, batch_size=1, remove_columns=["speech"])

print("WER:", wer(result["text"], result["transcription"]))

```

*Result (WER)*:

| "clean" | "other" |

|---|---|

| 3.4 | 8.6 | | 044b12c9bbc0f0fbbc8ba2828c549230 |

AlphaNinja27/wav2vec2-large-xls-r-300m-panjabi-colab | AlphaNinja27 | wav2vec2 | 16 | 5 | transformers | 0 | automatic-speech-recognition | true | false | false | apache-2.0 | null | ['common_voice'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 1,105 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-large-xls-r-300m-panjabi-colab

This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 30

- mixed_precision_training: Native AMP

### Training results

### Framework versions

- Transformers 4.11.3

- Pytorch 1.10.0+cu113

- Datasets 1.18.3

- Tokenizers 0.10.3

| 4ce1b523c3eb5a4f5176e971e0848dd8 |

Helsinki-NLP/opus-mt-fi-hr | Helsinki-NLP | marian | 10 | 17 | transformers | 0 | translation | true | true | false | apache-2.0 | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['translation'] | false | true | true | 768 | false |

### opus-mt-fi-hr

* source languages: fi

* target languages: hr

* OPUS readme: [fi-hr](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/fi-hr/README.md)

* dataset: opus

* model: transformer-align

* pre-processing: normalization + SentencePiece

* download original weights: [opus-2020-01-08.zip](https://object.pouta.csc.fi/OPUS-MT-models/fi-hr/opus-2020-01-08.zip)

* test set translations: [opus-2020-01-08.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/fi-hr/opus-2020-01-08.test.txt)

* test set scores: [opus-2020-01-08.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/fi-hr/opus-2020-01-08.eval.txt)

## Benchmarks

| testset | BLEU | chr-F |

|-----------------------|-------|-------|

| JW300.fi.hr | 23.5 | 0.476 |

| 03609731a4e2819e7e7cb4fc95cebeec |

pritoms/opt-350m-finetuned-stack | pritoms | opt | 10 | 2 | transformers | 0 | text-generation | true | false | false | other | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 900 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# opt-350m-finetuned-stack

This model is a fine-tuned version of [facebook/opt-350m](https://huggingface.co/facebook/opt-350m) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Framework versions

- Transformers 4.20.1

- Pytorch 1.12.0+cu113

- Datasets 2.3.2

- Tokenizers 0.12.1

| bc014fd712437b5489a74d6037cfe85b |

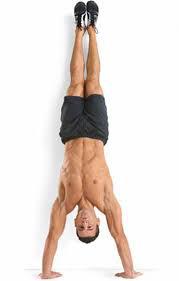

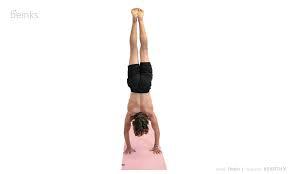

sd-concepts-library/handstand | sd-concepts-library | null | 9 | 0 | null | 1 | null | false | false | false | mit | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | [] | false | true | true | 1,020 | false | ### handstand on Stable Diffusion

This is the `<handstand>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

| 882caaf6fc3c30593a1d446872d1ed38 |

tftransformers/bert-large-cased | tftransformers | null | 6 | 1 | null | 0 | null | false | false | false | apache-2.0 | ['en'] | ['bookcorpus', 'wikipedia'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['exbert'] | false | true | true | 6,119 | false |

# BERT Large model (cased)

Pretrained model on English language using a masked language modeling (MLM) objective. It was introduced in

[this paper](https://arxiv.org/abs/1810.04805) and first released in

[this repository](https://github.com/google-research/bert). This model is case-sensitive: it makes a difference between

english and English.

Disclaimer: The team releasing BERT did not write a model card for this model so this model card has been written by

the Hugging Face team.

## Model description

BERT is a transformers model pretrained on a large corpus of English data in a self-supervised fashion. This means it

was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of

publicly available data) with an automatic process to generate inputs and labels from those texts. More precisely, it

was pretrained with two objectives:

- Masked language modeling (MLM): taking a sentence, the model randomly masks 15% of the words in the input, then runs

the entire masked sentence through the model and has to predict the masked words. This is different from traditional

recurrent neural networks (RNNs) that usually see the words one after the other, or from autoregressive models like

GPT which internally mask the future tokens. It allows the model to learn a bidirectional representation of the

sentence.

- Next sentence prediction (NSP): the model concatenates two masked sentences as inputs during pretraining. Sometimes

they correspond to sentences that were next to each other in the original text, sometimes not. The model then has to

predict if the two sentences were following each other or not.
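
The NSP objective can be illustrated with a small sketch that builds one training pair in BERT's `[CLS] A [SEP] B [SEP]` input format. This is a simplification under stated assumptions: `make_nsp_example` is a hypothetical helper, the segments are toy sentences, the negative example is drawn from the same short list rather than from a different document, and no length limit is enforced.

```python
import random


def make_nsp_example(segments, index, rng):
    """Build one `[CLS] A [SEP] B [SEP]` input and its is-next label."""
    first = segments[index]
    if rng.random() < 0.5 and index + 1 < len(segments):
        # Positive pair: B is the true continuation of A.
        second, is_next = segments[index + 1], True
    else:
        # Negative pair: B is a random segment that does not follow A.
        candidates = [s for i, s in enumerate(segments) if i not in (index, index + 1)]
        second, is_next = rng.choice(candidates), False
    return f"[CLS] {first} [SEP] {second} [SEP]", is_next


# Toy corpus (needs at least three segments so a negative can be drawn).
segments = [
    "the man went to the store",
    "he bought a gallon of milk",
    "penguins are flightless birds",
    "the sky is blue",
]
text, is_next = make_nsp_example(segments, 0, random.Random(0))
```

The classifier on top of the `[CLS]` position is then trained to predict `is_next`.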

This way, the model learns an inner representation of the English language that can then be used to extract features

useful for downstream tasks: if you have a dataset of labeled sentences for instance, you can train a standard

classifier using the features produced by the BERT model as inputs.

## Intended uses & limitations

You can use the raw model for either masked language modeling or next sentence prediction, but it's mostly intended to

be fine-tuned on a downstream task. See the [model hub](https://huggingface.co/models?filter=bert) to look for

fine-tuned versions on a task that interests you.

Note that this model is primarily aimed at being fine-tuned on tasks that use the whole sentence (potentially masked)

to make decisions, such as sequence classification, token classification or question answering. For tasks such as text

generation you should look at a model like GPT2.

### How to use

You can use this model in tf_transformers to get the features of a given text:

```python

from tf_transformers.models import BertModel

from transformers import BertTokenizer

tokenizer = BertTokenizer.from_pretrained('bert-large-cased')

model = BertModel.from_pretrained("bert-large-cased")

text = "Replace me by any text you'd like."

inputs_tf = {}

inputs = tokenizer(text, return_tensors='tf')

inputs_tf["input_ids"] = inputs["input_ids"]

inputs_tf["input_type_ids"] = inputs["token_type_ids"]

inputs_tf["input_mask"] = inputs["attention_mask"]

outputs_tf = model(inputs_tf)

```

## Training data

The BERT model was pretrained on [BookCorpus](https://yknzhu.wixsite.com/mbweb), a dataset consisting of 11,038

unpublished books and [English Wikipedia](https://en.wikipedia.org/wiki/English_Wikipedia) (excluding lists, tables and

headers).

## Training procedure

### Preprocessing

The texts are tokenized using WordPiece and a vocabulary size of 30,000. The inputs of the model are then of the form:

```

[CLS] Sentence A [SEP] Sentence B [SEP]

```

With probability 0.5, sentence A and sentence B correspond to two consecutive sentences in the original corpus and in

the other cases, it's another random sentence in the corpus. Note that what is considered a sentence here is a

consecutive span of text usually longer than a single sentence. The only constraint is that the result with the two

"sentences" has a combined length of less than 512 tokens.

The details of the masking procedure for each sentence are the following:

- 15% of the tokens are masked.

- In 80% of the cases, the masked tokens are replaced by `[MASK]`.

- In 10% of the cases, the masked tokens are replaced by a random token different from the one they replace.

- In the 10% remaining cases, the masked tokens are left as is.
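
The masking rules above can be sketched in a few lines. This is an illustration, not the original preprocessing code: `mask_tokens` is a hypothetical helper, each token is selected independently with probability 0.15 (so the selected share is only approximately 15%), and the vocabulary is a toy list.

```python
import random


def mask_tokens(tokens, vocab, rng, mask_prob=0.15):
    """Return (corrupted_tokens, labels); labels are None where nothing is predicted."""
    corrupted, labels = list(tokens), [None] * len(tokens)
    for i, tok in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue  # token not selected for prediction
        labels[i] = tok  # the model must recover the original token here
        roll = rng.random()
        if roll < 0.8:
            corrupted[i] = "[MASK]"  # 80%: replace with the mask token
        elif roll < 0.9:
            # 10%: replace with a random token different from the original
            corrupted[i] = rng.choice([v for v in vocab if v != tok])
        # remaining 10%: leave the token unchanged
    return corrupted, labels


# Toy vocabulary and input.
vocab = ["the", "cat", "sat", "on", "mat"]
tokens = ["the", "cat", "sat", "on", "the", "mat"] * 50
corrupted, labels = mask_tokens(tokens, vocab, random.Random(0))
```

Positions whose label is `None` are ignored by the MLM loss; only the selected positions contribute.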

### Pretraining

The model was trained on 4 cloud TPUs in Pod configuration (16 TPU chips total) for one million steps with a batch size

of 256. The sequence length was limited to 128 tokens for 90% of the steps and 512 for the remaining 10%. The optimizer

used is Adam with a learning rate of 1e-4, \\(\beta_{1} = 0.9\\) and \\(\beta_{2} = 0.999\\), a weight decay of 0.01,

learning rate warmup for 10,000 steps and linear decay of the learning rate after.
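
The learning-rate schedule described above (linear warmup for 10,000 steps, then linear decay) can be written as a small function. The sketch assumes the decay reaches zero exactly at the final step, which the text does not state explicitly. The function name and parameter names are illustrative.

```python
def learning_rate(step, peak_lr=1e-4, warmup_steps=10_000, total_steps=1_000_000):
    """Linear warmup to peak_lr, then linear decay to zero at total_steps."""
    if step < warmup_steps:
        return peak_lr * step / warmup_steps
    remaining = max(0, total_steps - step)
    return peak_lr * remaining / (total_steps - warmup_steps)


# Sample the schedule at a few points: start, mid-warmup, peak, end.
samples = [learning_rate(s) for s in (0, 5_000, 10_000, 1_000_000)]
```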

## Evaluation results

When fine-tuned on downstream tasks, this model achieves the following results:

Glue test results:

| Task | MNLI-(m/mm) | QQP | QNLI | SST-2 | CoLA | STS-B | MRPC | RTE | Average |

|:----:|:-----------:|:----:|:----:|:-----:|:----:|:-----:|:----:|:----:|:-------:|

| | 84.6/83.4 | 71.2 | 90.5 | 93.5 | 52.1 | 85.8 | 88.9 | 66.4 | 79.6 |

### BibTeX entry and citation info

```bibtex

@article{DBLP:journals/corr/abs-1810-04805,

author = {Jacob Devlin and

Ming{-}Wei Chang and

Kenton Lee and

Kristina Toutanova},

title = {{BERT:} Pre-training of Deep Bidirectional Transformers for Language

Understanding},

journal = {CoRR},

volume = {abs/1810.04805},

year = {2018},

url = {http://arxiv.org/abs/1810.04805},

archivePrefix = {arXiv},

eprint = {1810.04805},

timestamp = {Tue, 30 Oct 2018 20:39:56 +0100},

biburl = {https://dblp.org/rec/journals/corr/abs-1810-04805.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

```

<a href="https://huggingface.co/exbert/?model=bert-base-cased">

<img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png">

</a> | d8025808f2173c1de54adb340ac35483 |

google/t5-efficient-small-dl4 | google | t5 | 12 | 7 | transformers | 0 | text2text-generation | true | true | true | apache-2.0 | ['en'] | ['c4'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['deep-narrow'] | false | true | true | 6,251 | false |

# T5-Efficient-SMALL-DL4 (Deep-Narrow version)

T5-Efficient-SMALL-DL4 is a variation of [Google's original T5](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) following the [T5 model architecture](https://huggingface.co/docs/transformers/model_doc/t5).

It is a *pretrained-only* checkpoint and was released with the

paper **[Scale Efficiently: Insights from Pre-training and Fine-tuning Transformers](https://arxiv.org/abs/2109.10686)**

by *Yi Tay, Mostafa Dehghani, Jinfeng Rao, William Fedus, Samira Abnar, Hyung Won Chung, Sharan Narang, Dani Yogatama, Ashish Vaswani, Donald Metzler*.

In a nutshell, the paper indicates that a **Deep-Narrow** model architecture is favorable for **downstream** performance compared to other model architectures

of similar parameter count.

To quote the paper:

> We generally recommend a DeepNarrow strategy where the model’s depth is preferentially increased

> before considering any other forms of uniform scaling across other dimensions. This is largely due to

> how much depth influences the Pareto-frontier as shown in earlier sections of the paper. Specifically, a

> tall small (deep and narrow) model is generally more efficient compared to the base model. Likewise,

> a tall base model might also generally more efficient compared to a large model. We generally find

> that, regardless of size, even if absolute performance might increase as we continue to stack layers,

> the relative gain of Pareto-efficiency diminishes as we increase the layers, converging at 32 to 36

> layers. Finally, we note that our notion of efficiency here relates to any one compute dimension, i.e.,

> params, FLOPs or throughput (speed). We report all three key efficiency metrics (number of params,

> FLOPS and speed) and leave this decision to the practitioner to decide which compute dimension to

> consider.

To be more precise, *model depth* is defined as the number of transformer blocks that are stacked sequentially.

A sequence of word embeddings is therefore processed sequentially by each transformer block.

## Model architecture details

This model checkpoint - **t5-efficient-small-dl4** - is of model type **Small** with the following variations:

- **dl** is **4**

It has **52.13** million parameters and thus requires *ca.* **208.51 MB** of memory in full precision (*fp32*)

or **104.25 MB** of memory in half precision (*fp16* or *bf16*).
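
The memory figures follow from the parameter count: each parameter takes 4 bytes in fp32 and 2 bytes in fp16 or bf16. The sketch below reproduces the arithmetic from the rounded count of 52.13 million, taking 1 MB as 10^6 bytes. Because the count is rounded, the result can differ from the quoted figures in the last digit. `weights_megabytes` is a hypothetical helper name.

```python
def weights_megabytes(num_params, bytes_per_param):
    """Memory needed for the weights alone, with 1 MB = 10**6 bytes."""
    return num_params * bytes_per_param / 1e6


num_params = 52.13e6  # rounded figure from the card

fp32_mb = weights_megabytes(num_params, 4)  # full precision
fp16_mb = weights_megabytes(num_params, 2)  # half precision

print(f"fp32: {fp32_mb:.2f} MB, fp16/bf16: {fp16_mb:.2f} MB")
```

This covers the weights only; activations, gradients, and optimizer state need additional memory.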

A summary of the *original* T5 model architectures can be seen here:

| Model | nl (el/dl) | ff | dm | kv | nh | #Params|

| ----| ---- | ---- | ---- | ---- | ---- | ----|

| Tiny | 4/4 | 1024 | 256 | 32 | 4 | 16M|

| Mini | 4/4 | 1536 | 384 | 32 | 8 | 31M|

| Small | 6/6 | 2048 | 512 | 32 | 8 | 60M|

| Base | 12/12 | 3072 | 768 | 64 | 12 | 220M|

| Large | 24/24 | 4096 | 1024 | 64 | 16 | 738M|

| Xl | 24/24 | 16384 | 1024 | 128 | 32 | 3B|

| XXl | 24/24 | 65536 | 1024 | 128 | 128 | 11B|

whereas the following abbreviations are used:

| Abbreviation | Definition |

| ----| ---- |

| nl | Number of transformer blocks (depth) |

| dm | Dimension of embedding vector (output vector of transformers block) |

| kv | Dimension of key/value projection matrix |

| nh | Number of attention heads |

| ff | Dimension of intermediate vector within transformer block (size of feed-forward projection matrix) |

| el | Number of transformer blocks in the encoder (encoder depth) |

| dl | Number of transformer blocks in the decoder (decoder depth) |

| sh | Signifies that attention heads are shared |

| skv | Signifies that key-values projection matrices are tied |

If a model checkpoint has no specific *el* or *dl*, then both the number of encoder and decoder layers correspond to *nl*.
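
The naming convention can be made concrete with a small parser that resolves a checkpoint name to its encoder and decoder depth. This is a sketch under assumptions: `encoder_decoder_depth` is a hypothetical helper, the base depths are copied from the table of original T5 sizes, and only the `nl`, `el`, and `dl` suffixes are handled.

```python
import re

# Depth (nl) of the original T5 sizes, copied from the architecture table.
BASE_DEPTH = {"tiny": 4, "mini": 4, "small": 6, "base": 12, "large": 24, "xl": 24, "xxl": 24}


def encoder_decoder_depth(checkpoint_name):
    """Return (el, dl) for a name such as 't5-efficient-small-dl4'."""
    parts = checkpoint_name.lower().split("-")
    size = next(p for p in parts if p in BASE_DEPTH)
    el = dl = BASE_DEPTH[size]  # default: both depths equal nl of the base size
    for part in parts:
        m = re.fullmatch(r"(nl|el|dl)(\d+)", part)
        if not m:
            continue
        key, value = m.group(1), int(m.group(2))
        if key == "nl":
            el = dl = value
        elif key == "el":
            el = value
        else:
            dl = value
    return el, dl


depth = encoder_decoder_depth("t5-efficient-small-dl4")
```

For this checkpoint the encoder keeps the 6 blocks of the Small model while the decoder is reduced to 4.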

## Pre-Training

The checkpoint was pretrained on the [Colossal, Cleaned version of Common Crawl (C4)](https://huggingface.co/datasets/c4) for 524288 steps using

the span-based masked language modeling (MLM) objective.
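
In the span-based objective, contiguous spans of the input are replaced by sentinel tokens, and the target lists each sentinel followed by the tokens it replaced. The following sketch shows the resulting input/target pair for hand-picked spans. The sentence, the spans, and the helper name `span_corrupt` are illustrative; in actual pre-training the spans are sampled randomly.

```python
def span_corrupt(tokens, spans):
    """spans: sorted, non-overlapping (start, end) pairs with end exclusive."""
    inputs, targets, cursor = [], [], 0
    for k, (start, end) in enumerate(spans):
        sentinel = f"<extra_id_{k}>"
        inputs.extend(tokens[cursor:start])  # keep text before the span
        inputs.append(sentinel)              # replace the span by a sentinel
        targets.append(sentinel)
        targets.extend(tokens[start:end])    # the target holds the dropped tokens
        cursor = end
    inputs.extend(tokens[cursor:])
    targets.append(f"<extra_id_{len(spans)}>")  # closing sentinel
    return " ".join(inputs), " ".join(targets)


tokens = "Thank you for inviting me to your party last week".split()
inp, tgt = span_corrupt(tokens, [(2, 4), (8, 9)])
```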

## Fine-Tuning

**Note**: This model is a **pretrained** checkpoint and has to be fine-tuned for practical usage.

The checkpoint was pretrained in English and is therefore only useful for English NLP tasks.

You can follow one of the following examples on how to fine-tune the model:

*PyTorch*:

- [Summarization](https://github.com/huggingface/transformers/tree/master/examples/pytorch/summarization)

- [Question Answering](https://github.com/huggingface/transformers/blob/master/examples/pytorch/question-answering/run_seq2seq_qa.py)

- [Text Classification](https://github.com/huggingface/transformers/tree/master/examples/pytorch/text-classification) - *Note*: You will have to slightly adapt the training example here to make it work with an encoder-decoder model.

*Tensorflow*:

- [Summarization](https://github.com/huggingface/transformers/tree/master/examples/tensorflow/summarization)

- [Text Classification](https://github.com/huggingface/transformers/tree/master/examples/tensorflow/text-classification) - *Note*: You will have to slightly adapt the training example here to make it work with an encoder-decoder model.

*JAX/Flax*:

- [Summarization](https://github.com/huggingface/transformers/tree/master/examples/flax/summarization)

- [Text Classification](https://github.com/huggingface/transformers/tree/master/examples/flax/text-classification) - *Note*: You will have to slightly adapt the training example here to make it work with an encoder-decoder model.

## Downstream Performance

TODO: Add table if available

## Computational Complexity

TODO: Add table if available

## More information

We strongly recommend reading the original paper **[Scale Efficiently: Insights from Pre-training and Fine-tuning Transformers](https://arxiv.org/abs/2109.10686)** carefully to get a more nuanced understanding of this model checkpoint.

As explained in the following [issue](https://github.com/google-research/google-research/issues/986#issuecomment-1035051145), checkpoints including the *sh* or *skv*

model architecture variations have *not* been ported to Transformers as they are probably of limited practical usage and are lacking a more detailed description. Those checkpoints are kept [here](https://huggingface.co/NewT5SharedHeadsSharedKeyValues) as they might be ported potentially in the future. | ce7024bc6f001704b16589e9e39da2d0 |

muks14/og-deberta-extra-o | muks14 | deberta | 13 | 7 | transformers | 0 | token-classification | true | false | false | mit | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 3,554 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# og-deberta-extra-o

This model is a fine-tuned version of [microsoft/deberta-base](https://huggingface.co/microsoft/deberta-base) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5184

- Precision: 0.5981

- Recall: 0.6667

- F1: 0.6305

- Accuracy: 0.9226
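
The reported F1 is the harmonic mean of precision and recall, which can be verified from the two values above. The check below uses only the rounded figures from the list.

```python
precision, recall = 0.5981, 0.6667

# F1 is the harmonic mean of precision and recall.
f1 = 2 * precision * recall / (precision + recall)

print(round(f1, 4))
```

The large gap between accuracy (0.9226) and F1 (0.6305) is typical for token classification, where accuracy usually also counts the many tokens outside any entity.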

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 25

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| No log | 1.0 | 55 | 0.4813 | 0.2863 | 0.3467 | 0.3136 | 0.8720 |

| No log | 2.0 | 110 | 0.3469 | 0.4456 | 0.4587 | 0.4520 | 0.9010 |

| No log | 3.0 | 165 | 0.3166 | 0.5206 | 0.5387 | 0.5295 | 0.9147 |

| No log | 4.0 | 220 | 0.3338 | 0.4899 | 0.584 | 0.5328 | 0.9087 |

| No log | 5.0 | 275 | 0.3166 | 0.5625 | 0.648 | 0.6022 | 0.9198 |

| No log | 6.0 | 330 | 0.3464 | 0.5707 | 0.6027 | 0.5863 | 0.9207 |

| No log | 7.0 | 385 | 0.3548 | 0.5489 | 0.6133 | 0.5793 | 0.9207 |

| No log | 8.0 | 440 | 0.4005 | 0.6125 | 0.6027 | 0.6075 | 0.9210 |

| No log | 9.0 | 495 | 0.4185 | 0.5763 | 0.6347 | 0.6041 | 0.9171 |

| 0.2019 | 10.0 | 550 | 0.4174 | 0.5596 | 0.6507 | 0.6017 | 0.9179 |

| 0.2019 | 11.0 | 605 | 0.4558 | 0.5603 | 0.632 | 0.5940 | 0.9179 |

| 0.2019 | 12.0 | 660 | 0.4615 | 0.5632 | 0.6533 | 0.6049 | 0.9166 |

| 0.2019 | 13.0 | 715 | 0.4899 | 0.5815 | 0.6187 | 0.5995 | 0.9208 |

| 0.2019 | 14.0 | 770 | 0.4800 | 0.5581 | 0.64 | 0.5963 | 0.9186 |

| 0.2019 | 15.0 | 825 | 0.4752 | 0.5905 | 0.6613 | 0.6239 | 0.9212 |

| 0.2019 | 16.0 | 880 | 0.5014 | 0.5773 | 0.6373 | 0.6058 | 0.9174 |

| 0.2019 | 17.0 | 935 | 0.5095 | 0.5917 | 0.6453 | 0.6173 | 0.9195 |

| 0.2019 | 18.0 | 990 | 0.5249 | 0.5807 | 0.6427 | 0.6101 | 0.9203 |

| 0.0077 | 19.0 | 1045 | 0.5086 | 0.5761 | 0.656 | 0.6135 | 0.9222 |

| 0.0077 | 20.0 | 1100 | 0.5108 | 0.5962 | 0.6693 | 0.6307 | 0.9219 |

| 0.0077 | 21.0 | 1155 | 0.5144 | 0.5977 | 0.6853 | 0.6385 | 0.9231 |

| 0.0077 | 22.0 | 1210 | 0.5176 | 0.5990 | 0.6613 | 0.6286 | 0.9229 |

| 0.0077 | 23.0 | 1265 | 0.5171 | 0.6039 | 0.6667 | 0.6337 | 0.9226 |

| 0.0077 | 24.0 | 1320 | 0.5184 | 0.6043 | 0.672 | 0.6364 | 0.9226 |

| 0.0077 | 25.0 | 1375 | 0.5184 | 0.5981 | 0.6667 | 0.6305 | 0.9226 |
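
The step column follows directly from the hyperparameters: 55 optimizer steps per epoch over 25 epochs gives the final step of 1375. The sketch below also bounds the training-set size implied by those numbers. It assumes a single device, no gradient accumulation, and that the last partial batch is kept; none of this is stated in the card.

```python
import math

train_batch_size = 8
num_epochs = 25
steps_per_epoch = 55  # step count at epoch 1.0 in the table above

total_steps = steps_per_epoch * num_epochs

# Assumption: steps_per_epoch == ceil(num_examples / train_batch_size),
# i.e. one device, no gradient accumulation, partial last batch kept.
min_examples = (steps_per_epoch - 1) * train_batch_size + 1
max_examples = steps_per_epoch * train_batch_size

print(total_steps, min_examples, max_examples)
```

Under those assumptions the training split would hold between 433 and 440 examples.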

### Framework versions

- Transformers 4.23.1

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.1

| e79305d7136be23cb688bf9677bdf9e4 |

JovialValley/model_syllable_onSet4 | JovialValley | wav2vec2 | 15 | 0 | transformers | 0 | automatic-speech-recognition | true | false | false | apache-2.0 | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 11,452 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# model_syllable_onSet4

This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1349

- 0 Precision: 1.0

- 0 Recall: 1.0

- 0 F1-score: 1.0

- 0 Support: 26

- 1 Precision: 1.0

- 1 Recall: 0.9677

- 1 F1-score: 0.9836

- 1 Support: 31

- 2 Precision: 0.9630

- 2 Recall: 1.0

- 2 F1-score: 0.9811

- 2 Support: 26

- 3 Precision: 1.0

- 3 Recall: 1.0

- 3 F1-score: 1.0

- 3 Support: 14

- Accuracy: 0.9897

- Macro avg Precision: 0.9907

- Macro avg Recall: 0.9919

- Macro avg F1-score: 0.9912

- Macro avg Support: 97

- Weighted avg Precision: 0.9901

- Weighted avg Recall: 0.9897

- Weighted avg F1-score: 0.9897

- Weighted avg Support: 97

- Wer: 0.2258

- Matrix: [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 30, 1, 0], [2, 0, 0, 26, 0], [3, 0, 0, 0, 14]]
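
The per-class and averaged metrics above can be recovered from the reported matrix. The sketch below assumes the first row holds the class labels, the first element of each later row is the true label, and the remaining entries count predictions per class (rows are true labels, columns are predicted labels). The card does not document this layout, but it is consistent with the reported supports and scores.

```python
# Matrix exactly as reported in this card.
reported = [
    [0, 1, 2, 3],
    [0, 26, 0, 0, 0],
    [1, 0, 30, 1, 0],
    [2, 0, 0, 26, 0],
    [3, 0, 0, 0, 14],
]

labels = reported[0]
counts = [row[1:] for row in reported[1:]]  # drop the leading label column

total = sum(sum(row) for row in counts)
correct = sum(counts[i][i] for i in range(len(labels)))
accuracy = correct / total

precision, recall = {}, {}
for i, label in enumerate(labels):
    predicted_as_i = sum(row[i] for row in counts)  # column sum
    truly_i = sum(counts[i])                        # row sum (support)
    precision[label] = counts[i][i] / predicted_as_i if predicted_as_i else 0.0
    recall[label] = counts[i][i] / truly_i if truly_i else 0.0

macro_precision = sum(precision.values()) / len(labels)
macro_recall = sum(recall.values()) / len(labels)

print(round(accuracy, 4), round(macro_precision, 4), round(macro_recall, 4))
```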

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 16

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 200

- num_epochs: 70

- mixed_precision_training: Native AMP
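
Two of these settings interact in ways that are easy to check by hand. The total train batch size is the per-device batch size times the gradient accumulation steps, and a linear scheduler with warmup ramps the learning rate from zero to its peak over the warmup steps, then decays it linearly to zero. The sketch below is a plain-Python approximation of that schedule, not the library implementation. `TOTAL_STEPS = 1680` is a hypothetical value chosen for illustration, since the card does not state the total number of optimizer steps.

```python
BASE_LR = 3e-4
WARMUP_STEPS = 200
TOTAL_STEPS = 1680  # hypothetical: not stated in the card

total_train_batch_size = 8 * 2  # train_batch_size * gradient_accumulation_steps


def linear_schedule_lr(step: int) -> float:
    """Learning rate at a given optimizer step: linear warmup, then linear decay."""
    if step < WARMUP_STEPS:
        return BASE_LR * step / WARMUP_STEPS
    remaining = max(0, TOTAL_STEPS - step)
    return BASE_LR * remaining / (TOTAL_STEPS - WARMUP_STEPS)


for step in (0, 100, 200, 940, 1680):
    print(step, linear_schedule_lr(step))
```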

### Training results

| Training Loss | Epoch | Step | Validation Loss | 0 Precision | 0 Recall | 0 F1-score | 0 Support | 1 Precision | 1 Recall | 1 F1-score | 1 Support | 2 Precision | 2 Recall | 2 F1-score | 2 Support | 3 Precision | 3 Recall | 3 F1-score | 3 Support | Accuracy | Macro avg Precision | Macro avg Recall | Macro avg F1-score | Macro avg Support | Weighted avg Precision | Weighted avg Recall | Weighted avg F1-score | Weighted avg Support | Wer | Matrix |

|:-------------:|:-----:|:----:|:---------------:|:-----------:|:--------:|:----------:|:---------:|:-----------:|:--------:|:----------:|:---------:|:-----------:|:--------:|:----------:|:---------:|:-----------:|:--------:|:----------:|:---------:|:--------:|:-------------------:|:----------------:|:------------------:|:-----------------:|:----------------------:|:-------------------:|:---------------------:|:--------------------:|:------:|:--------------------------------------------------------------------------------------:|

| 1.6602 | 4.16 | 100 | 1.5639 | 0.0 | 0.0 | 0.0 | 26 | 0.0 | 0.0 | 0.0 | 31 | 0.2584 | 0.8846 | 0.4 | 26 | 0.0 | 0.0 | 0.0 | 14 | 0.2371 | 0.0646 | 0.2212 | 0.1 | 97 | 0.0693 | 0.2371 | 0.1072 | 97 | 0.9732 | [[0, 1, 2, 3], [0, 0, 0, 26, 0], [1, 0, 0, 31, 0], [2, 3, 0, 23, 0], [3, 5, 0, 9, 0]] |

| 1.616 | 8.33 | 200 | 1.4203 | 0.0 | 0.0 | 0.0 | 26 | 0.0 | 0.0 | 0.0 | 31 | 0.2584 | 0.8846 | 0.4 | 26 | 0.0 | 0.0 | 0.0 | 14 | 0.2371 | 0.0646 | 0.2212 | 0.1 | 97 | 0.0693 | 0.2371 | 0.1072 | 97 | 0.9732 | [[0, 1, 2, 3], [0, 0, 0, 26, 0], [1, 0, 0, 31, 0], [2, 3, 0, 23, 0], [3, 5, 0, 9, 0]] |

| 1.2107 | 12.49 | 300 | 1.1249 | 0.0 | 0.0 | 0.0 | 26 | 0.0 | 0.0 | 0.0 | 31 | 0.2584 | 0.8846 | 0.4 | 26 | 0.0 | 0.0 | 0.0 | 14 | 0.2371 | 0.0646 | 0.2212 | 0.1 | 97 | 0.0693 | 0.2371 | 0.1072 | 97 | 0.9732 | [[0, 1, 2, 3], [0, 0, 0, 26, 0], [1, 0, 0, 31, 0], [2, 3, 0, 23, 0], [3, 5, 0, 9, 0]] |

| 1.1283 | 16.65 | 400 | 1.0201 | 0.0 | 0.0 | 0.0 | 26 | 0.0 | 0.0 | 0.0 | 31 | 0.2584 | 0.8846 | 0.4 | 26 | 0.0 | 0.0 | 0.0 | 14 | 0.2371 | 0.0646 | 0.2212 | 0.1 | 97 | 0.0693 | 0.2371 | 0.1072 | 97 | 0.9732 | [[0, 1, 2, 3], [0, 0, 0, 26, 0], [1, 0, 0, 31, 0], [2, 3, 0, 23, 0], [3, 5, 0, 9, 0]] |

| 0.8868 | 20.82 | 500 | 0.8944 | 0.0 | 0.0 | 0.0 | 26 | 0.0 | 0.0 | 0.0 | 31 | 0.2584 | 0.8846 | 0.4 | 26 | 0.0 | 0.0 | 0.0 | 14 | 0.2371 | 0.0646 | 0.2212 | 0.1 | 97 | 0.0693 | 0.2371 | 0.1072 | 97 | 0.9732 | [[0, 1, 2, 3], [0, 0, 0, 26, 0], [1, 0, 0, 31, 0], [2, 3, 0, 23, 0], [3, 5, 0, 9, 0]] |

| 0.8863 | 24.98 | 600 | 0.9316 | 0.0 | 0.0 | 0.0 | 26 | 0.0 | 0.0 | 0.0 | 31 | 0.2584 | 0.8846 | 0.4 | 26 | 0.0 | 0.0 | 0.0 | 14 | 0.2371 | 0.0646 | 0.2212 | 0.1 | 97 | 0.0693 | 0.2371 | 0.1072 | 97 | 0.9732 | [[0, 1, 2, 3], [0, 0, 0, 26, 0], [1, 0, 0, 31, 0], [2, 3, 0, 23, 0], [3, 5, 0, 9, 0]] |

| 0.9019 | 29.16 | 700 | 0.8688 | 0.7647 | 1.0 | 0.8667 | 26 | 0.0 | 0.0 | 0.0 | 31 | 0.3651 | 0.8846 | 0.5169 | 26 | 0.0 | 0.0 | 0.0 | 14 | 0.5052 | 0.2824 | 0.4712 | 0.3459 | 97 | 0.3028 | 0.5052 | 0.3708 | 97 | 0.9732 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 0, 31, 0], [2, 3, 0, 23, 0], [3, 5, 0, 9, 0]] |

| 0.7977 | 33.33 | 800 | 0.8014 | 1.0 | 1.0 | 1.0 | 26 | 0.9667 | 0.9355 | 0.9508 | 31 | 0.9259 | 0.9615 | 0.9434 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9691 | 0.9731 | 0.9743 | 0.9736 | 97 | 0.9695 | 0.9691 | 0.9691 | 97 | 1.0 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 29, 2, 0], [2, 0, 1, 25, 0], [3, 0, 0, 0, 14]] |

| 0.729 | 37.49 | 900 | 0.8163 | 1.0 | 1.0 | 1.0 | 26 | 0.9091 | 0.9677 | 0.9375 | 31 | 0.9583 | 0.8846 | 0.9200 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9588 | 0.9669 | 0.9631 | 0.9644 | 97 | 0.9598 | 0.9588 | 0.9586 | 97 | 1.0 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 30, 1, 0], [2, 0, 3, 23, 0], [3, 0, 0, 0, 14]] |

| 0.6526 | 41.65 | 1000 | 0.6691 | 1.0 | 1.0 | 1.0 | 26 | 0.9667 | 0.9355 | 0.9508 | 31 | 0.9259 | 0.9615 | 0.9434 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9691 | 0.9731 | 0.9743 | 0.9736 | 97 | 0.9695 | 0.9691 | 0.9691 | 97 | 0.7055 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 29, 2, 0], [2, 0, 1, 25, 0], [3, 0, 0, 0, 14]] |

| 0.6633 | 45.82 | 1100 | 0.3445 | 1.0 | 1.0 | 1.0 | 26 | 0.9394 | 1.0 | 0.9688 | 31 | 1.0 | 0.9231 | 0.9600 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9794 | 0.9848 | 0.9808 | 0.9822 | 97 | 0.9806 | 0.9794 | 0.9793 | 97 | 0.5017 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 31, 0, 0], [2, 0, 2, 24, 0], [3, 0, 0, 0, 14]] |

| 0.1913 | 49.98 | 1200 | 0.2455 | 1.0 | 1.0 | 1.0 | 26 | 0.9677 | 0.9677 | 0.9677 | 31 | 0.96 | 0.9231 | 0.9412 | 26 | 0.9333 | 1.0 | 0.9655 | 14 | 0.9691 | 0.9653 | 0.9727 | 0.9686 | 97 | 0.9693 | 0.9691 | 0.9689 | 97 | 0.3946 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 30, 1, 0], [2, 0, 1, 24, 1], [3, 0, 0, 0, 14]] |

| 0.2024 | 54.16 | 1300 | 0.1865 | 1.0 | 1.0 | 1.0 | 26 | 1.0 | 0.9355 | 0.9667 | 31 | 0.9286 | 1.0 | 0.9630 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9794 | 0.9821 | 0.9839 | 0.9824 | 97 | 0.9809 | 0.9794 | 0.9794 | 97 | 0.3423 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 29, 2, 0], [2, 0, 0, 26, 0], [3, 0, 0, 0, 14]] |

| 0.1212 | 58.33 | 1400 | 0.1485 | 1.0 | 1.0 | 1.0 | 26 | 1.0 | 0.9677 | 0.9836 | 31 | 0.9630 | 1.0 | 0.9811 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9897 | 0.9907 | 0.9919 | 0.9912 | 97 | 0.9901 | 0.9897 | 0.9897 | 97 | 0.2957 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 30, 1, 0], [2, 0, 0, 26, 0], [3, 0, 0, 0, 14]] |

| 0.108 | 62.49 | 1500 | 0.1348 | 1.0 | 1.0 | 1.0 | 26 | 1.0 | 0.9677 | 0.9836 | 31 | 0.9630 | 1.0 | 0.9811 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9897 | 0.9907 | 0.9919 | 0.9912 | 97 | 0.9901 | 0.9897 | 0.9897 | 97 | 0.2433 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 30, 1, 0], [2, 0, 0, 26, 0], [3, 0, 0, 0, 14]] |

| 0.1058 | 66.65 | 1600 | 0.1328 | 1.0 | 1.0 | 1.0 | 26 | 1.0 | 0.9677 | 0.9836 | 31 | 0.9630 | 1.0 | 0.9811 | 26 | 1.0 | 1.0 | 1.0 | 14 | 0.9897 | 0.9907 | 0.9919 | 0.9912 | 97 | 0.9901 | 0.9897 | 0.9897 | 97 | 0.2224 | [[0, 1, 2, 3], [0, 26, 0, 0, 0], [1, 0, 30, 1, 0], [2, 0, 0, 26, 0], [3, 0, 0, 0, 14]] |

### Framework versions

- Transformers 4.25.1

- Pytorch 1.13.0+cu116

- Datasets 2.8.0

- Tokenizers 0.13.2

| cebf784784b02faa326bb7adb2edf7a6 |

plncmm/roberta-clinical-wl-es | plncmm | roberta | 13 | 9 | transformers | 0 | fill-mask | true | false | false | apache-2.0 | ['es'] | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 1,015 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# plncmm/roberta-clinical-wl-es

This model is a fine-tuned version of [PlanTL-GOB-ES/roberta-base-biomedical-clinical-es](https://huggingface.co/PlanTL-GOB-ES/roberta-base-biomedical-clinical-es) on the Chilean waiting list dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

### Framework versions

- Transformers 4.20.0.dev0

- Pytorch 1.11.0+cu113

- Datasets 2.2.1

- Tokenizers 0.12.1