repo_id stringlengths 4 110 | author stringlengths 2 27 ⌀ | model_type stringlengths 2 29 ⌀ | files_per_repo int64 2 15.4k | downloads_30d int64 0 19.9M | library stringlengths 2 37 ⌀ | likes int64 0 4.34k | pipeline stringlengths 5 30 ⌀ | pytorch bool 2 classes | tensorflow bool 2 classes | jax bool 2 classes | license stringlengths 2 30 | languages stringlengths 4 1.63k ⌀ | datasets stringlengths 2 2.58k ⌀ | co2 stringclasses 29 values | prs_count int64 0 125 | prs_open int64 0 120 | prs_merged int64 0 15 | prs_closed int64 0 28 | discussions_count int64 0 218 | discussions_open int64 0 148 | discussions_closed int64 0 70 | tags stringlengths 2 513 | has_model_index bool 2 classes | has_metadata bool 1 class | has_text bool 1 class | text_length int64 401 598k | is_nc bool 1 class | readme stringlengths 0 598k | hash stringlengths 32 32 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

jonatasgrosman/exp_w2v2t_pl_xls-r_s235 | jonatasgrosman | wav2vec2 | 10 | 5 | transformers | 0 | automatic-speech-recognition | true | false | false | apache-2.0 | ['pl'] | ['mozilla-foundation/common_voice_7_0'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['automatic-speech-recognition', 'pl'] | false | true | true | 453 | false | # exp_w2v2t_pl_xls-r_s235

Fine-tuned [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) for speech recognition using the train split of [Common Voice 7.0 (pl)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0).

When using this model, make sure that your speech input is sampled at 16kHz.
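The 16kHz requirement can be checked before inference. The following is a minimal sketch, assuming the input is an uncompressed WAV file; the helper names `wav_sample_rate` and `needs_resampling` are illustrative and not part of HuggingSound or `transformers`.

```python
import wave

REQUIRED_RATE = 16_000  # Hz, the sampling rate this model card asks for


def wav_sample_rate(path: str) -> int:
    """Return the sample rate (frames per second) stored in a WAV header."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate()


def needs_resampling(path: str, required_rate: int = REQUIRED_RATE) -> bool:
    """True when the file's sample rate differs from the rate the model expects."""
    return wav_sample_rate(path) != required_rate
```

If `needs_resampling` returns `True`, resample the audio with a tool of your choice before passing it to the model.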

This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool.

| a15ec24005f7a42dc87a892d1e930963 |

Palak/google_electra-small-discriminator_squad | Palak | electra | 13 | 9 | transformers | 0 | question-answering | true | false | false | apache-2.0 | null | ['squad'] | null | 1 | 1 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 1,073 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# google_electra-small-discriminator_squad

This model is a fine-tuned version of [google/electra-small-discriminator](https://huggingface.co/google/electra-small-discriminator) on the **squadV1** dataset.

- "eval_exact_match": 76.95364238410596

- "eval_f1": 84.98869246841396

- "eval_samples": 10784
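For readers unfamiliar with these two metrics, here is a minimal sketch of how exact match and token-level F1 are typically computed for a single prediction, assuming the usual SQuAD v1.1 scoring conventions (lowercasing, stripping punctuation and English articles). The card does not say which evaluation script produced the numbers above, so treat this as an illustration only; the function names are hypothetical.

```python
import re
import string
from collections import Counter


def normalize_answer(text: str) -> str:
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in set(string.punctuation))
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, truth: str) -> float:
    """1.0 when the normalized strings are identical, else 0.0."""
    return float(normalize_answer(prediction) == normalize_answer(truth))


def token_f1(prediction: str, truth: str) -> float:
    """Harmonic mean of token precision and recall after normalization."""
    pred_tokens = normalize_answer(prediction).split()
    true_tokens = normalize_answer(truth).split()
    if not pred_tokens or not true_tokens:
        # Both empty counts as a match; one empty counts as a miss.
        return float(pred_tokens == true_tokens)
    overlap = sum((Counter(pred_tokens) & Counter(true_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(true_tokens)
    return 2 * precision * recall / (precision + recall)
```

Dataset-level scores such as the ones reported here are averages of these per-example values, expressed as percentages.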

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 16

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

### Framework versions

- Transformers 4.14.1

- Pytorch 1.9.0

- Datasets 1.16.1

- Tokenizers 0.10.3

| cfee47a84ddde4d8f15d6f05b48c4f0b |

Helsinki-NLP/opus-mt-kwn-en | Helsinki-NLP | marian | 10 | 10 | transformers | 0 | translation | true | true | false | apache-2.0 | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['translation'] | false | true | true | 776 | false |

### opus-mt-kwn-en

* source languages: kwn

* target languages: en

* OPUS readme: [kwn-en](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/kwn-en/README.md)

* dataset: opus

* model: transformer-align

* pre-processing: normalization + SentencePiece

* download original weights: [opus-2020-01-09.zip](https://object.pouta.csc.fi/OPUS-MT-models/kwn-en/opus-2020-01-09.zip)

* test set translations: [opus-2020-01-09.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/kwn-en/opus-2020-01-09.test.txt)

* test set scores: [opus-2020-01-09.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/kwn-en/opus-2020-01-09.eval.txt)

## Benchmarks

| testset | BLEU | chr-F |

|-----------------------|-------|-------|

| JW300.kwn.en | 27.5 | 0.434 |

| c73fa858a7869e02f2ec7538ebca0fba |

hassnain/wav2vec2-base-timit-demo-colab11 | hassnain | wav2vec2 | 12 | 6 | transformers | 0 | automatic-speech-recognition | true | false | false | apache-2.0 | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 1,462 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-base-timit-demo-colab11

This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 1.6269

- Wer: 0.7418
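WER (word error rate) is the word-level edit distance between the reference transcript and the model output, divided by the number of reference words. The sketch below shows that definition in plain Python; it assumes whitespace tokenization and is not the exact code used to produce the figure above.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # previous[j] holds the edit distance between the reference prefix
    # processed so far and the first j hypothesis words.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            current.append(min(
                previous[j] + 1,         # deletion of a reference word
                current[j - 1] + 1,      # insertion of a hypothesis word
                previous[j - 1] + cost,  # match or substitution
            ))
        previous = current
    return previous[-1] / len(ref)
```

Because insertions count as errors, WER can exceed 1.0; a value of 0.7418 means that roughly three edits are needed for every four reference words.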

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 1000

- num_epochs: 30

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 5.6439 | 7.04 | 500 | 3.3083 | 1.0 |

| 2.3763 | 14.08 | 1000 | 1.5059 | 0.8146 |

| 1.0161 | 21.13 | 1500 | 1.5101 | 0.7488 |

| 0.6195 | 28.17 | 2000 | 1.6269 | 0.7418 |

### Framework versions

- Transformers 4.11.3

- Pytorch 1.11.0+cu113

- Datasets 1.18.3

- Tokenizers 0.10.3

| 82cb18adfe353fdd29ad4f95bf986d1d |

AkiKagura/mkgen-diffusion | AkiKagura | null | 25 | 18 | diffusers | 1 | text-to-image | false | false | false | creativeml-openrail-m | null | null | null | 2 | 0 | 2 | 0 | 1 | 1 | 0 | ['text-to-image'] | false | true | true | 952 | false |

A Stable Diffusion model that generates pictures of Marco from the prompt **'mkmk woman'**.

Based on runwayml/stable-diffusion-v1-5 and fine-tuned with DreamBooth.

Trained on 39 pictures for 3000 steps.

What is Marco like?

<img src="https://huggingface.co/AkiKagura/mkgen-diffusion/resolve/main/samples/IMG_2683.jpeg" width="512" height="512"/>

<img src="https://huggingface.co/AkiKagura/mkgen-diffusion/resolve/main/samples/IMG_0537.jpeg" width="512" height="512"/>

Some samples generated by this model:

<img src="https://huggingface.co/AkiKagura/mkgen-diffusion/resolve/main/samples/0.png" width="512" height="512"/>

<img src="https://huggingface.co/AkiKagura/mkgen-diffusion/resolve/main/samples/1.png" width="512" height="512"/>

<img src="https://huggingface.co/AkiKagura/mkgen-diffusion/resolve/main/samples/2.png" width="512" height="512"/>

<img src="https://huggingface.co/AkiKagura/mkgen-diffusion/resolve/main/samples/3.png" width="512" height="512"/>

| 4372ecd2eb9dda4a11686d100fdecaff |

sd-concepts-library/trigger-studio | sd-concepts-library | null | 22 | 0 | null | 30 | null | false | false | false | mit | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | [] | false | true | true | 2,590 | false | ### Trigger Studio on Stable Diffusion

This is the `<Trigger Studio>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

| 5fe9c5b34bbb32631a1669f0458aeaa5 |

frgfm/cspdarknet53 | frgfm | null | 4 | 4 | transformers | 0 | image-classification | true | false | false | apache-2.0 | null | ['frgfm/imagenette'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['image-classification', 'pytorch'] | false | true | true | 2,923 | false |

# CSP-Darknet-53 model

Pretrained on [ImageNette](https://github.com/fastai/imagenette). The CSP-Darknet-53 architecture was introduced in [this paper](https://arxiv.org/pdf/1911.11929.pdf).

## Model description

The authors' core idea is to modify the convolutional stages by adding cross-stage partial blocks to the architecture.

## Installation

### Prerequisites

Python 3.6 (or higher) and [pip](https://pip.pypa.io/en/stable/)/[conda](https://docs.conda.io/en/latest/miniconda.html) are required to install Holocron.

### Latest stable release

You can install the latest stable release of the package from [PyPI](https://pypi.org/project/pylocron/) as follows:

```shell

pip install pylocron

```

or using [conda](https://anaconda.org/frgfm/pylocron):

```shell

conda install -c frgfm pylocron

```

### Developer mode

Alternatively, if you wish to use the latest features of the project that haven't made their way to a release yet, you can install the package from source *(install [Git](https://git-scm.com/book/en/v2/Getting-Started-Installing-Git) first)*:

```shell

git clone https://github.com/frgfm/Holocron.git

pip install -e Holocron/.

```

## Usage instructions

```python

import torch
from PIL import Image
from torchvision.transforms import Compose, ConvertImageDtype, Normalize, PILToTensor, Resize
from torchvision.transforms.functional import InterpolationMode
from holocron.models import model_from_hf_hub

# Load the pretrained checkpoint from the Hugging Face Hub and switch to eval mode
model = model_from_hf_hub("frgfm/cspdarknet53").eval()

# path_to_an_image is a placeholder for the path to your own image file
img = Image.open(path_to_an_image).convert("RGB")

# Preprocessing
config = model.default_cfg
transform = Compose([
    Resize(config['input_shape'][1:], interpolation=InterpolationMode.BILINEAR),
    PILToTensor(),
    ConvertImageDtype(torch.float32),
    Normalize(config['mean'], config['std'])
])

input_tensor = transform(img).unsqueeze(0)

# Inference
with torch.inference_mode():
    output = model(input_tensor)
probs = output.squeeze(0).softmax(dim=0)

```
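The last line of the snippet above turns the raw model outputs into class probabilities with a softmax. As a dependency-free illustration of that step, the sketch below applies a softmax to a handful of made-up logits and ranks the classes; the logit values and the `top_k` helper are hypothetical and not part of Holocron.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(probs, k=3):
    """Return the k (class_index, probability) pairs with the highest probability."""
    ranked = sorted(enumerate(probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


example_logits = [2.0, 0.5, -1.0, 0.0]  # hypothetical values, not real model output
probs = softmax(example_logits)
best_index, best_prob = top_k(probs, k=1)[0]
print(f"predicted class index: {best_index} (p={best_prob:.3f})")
```

With the real model, the same ranking can be applied to `probs.tolist()` to obtain the most likely class indices.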

## Citation

Original paper

```bibtex

@article{DBLP:journals/corr/abs-1911-11929,

author = {Chien{-}Yao Wang and

Hong{-}Yuan Mark Liao and

I{-}Hau Yeh and

Yueh{-}Hua Wu and

Ping{-}Yang Chen and

Jun{-}Wei Hsieh},

title = {CSPNet: {A} New Backbone that can Enhance Learning Capability of {CNN}},

journal = {CoRR},

volume = {abs/1911.11929},

year = {2019},

url = {http://arxiv.org/abs/1911.11929},

eprinttype = {arXiv},

eprint = {1911.11929},

timestamp = {Tue, 03 Dec 2019 20:41:07 +0100},

biburl = {https://dblp.org/rec/journals/corr/abs-1911-11929.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

```

Source of this implementation

```bibtex

@software{Fernandez_Holocron_2020,

author = {Fernandez, François-Guillaume},

month = {5},

title = {{Holocron}},

url = {https://github.com/frgfm/Holocron},

year = {2020}

}

```

| 34a70dcdf13a50fc8bdbd6434ba3d3e1 |

domenicrosati/deberta-v3-large-finetuned-DAGPap22 | domenicrosati | deberta-v2 | 21 | 1 | transformers | 0 | text-classification | true | false | false | mit | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['text-classification', 'generated_from_trainer'] | true | true | true | 995 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# deberta-v3-large-finetuned-DAGPap22

This model is a fine-tuned version of [microsoft/deberta-v3-large](https://huggingface.co/microsoft/deberta-v3-large) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 6e-06

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 50

- num_epochs: 20

- mixed_precision_training: Native AMP

### Framework versions

- Transformers 4.18.0

- Pytorch 1.11.0

- Datasets 2.1.0

- Tokenizers 0.12.1

| 2ca45ce0c371dc1a3351e4f243404ff0 |

DOOGLAK/Tagged_One_50v6_NER_Model_3Epochs_AUGMENTED | DOOGLAK | bert | 13 | 5 | transformers | 0 | token-classification | true | false | false | apache-2.0 | null | ['tagged_one50v6_wikigold_split'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 1,563 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Tagged_One_50v6_NER_Model_3Epochs_AUGMENTED

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the tagged_one50v6_wikigold_split dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6728

- Precision: 0.0625

- Recall: 0.0005

- F1: 0.0010

- Accuracy: 0.7775
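The F1 value follows directly from the reported precision and recall, since F1 is their harmonic mean. A minimal sketch of that arithmetic (the function name is illustrative):

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values reported above for the final evaluation
final_f1 = f1_score(precision=0.0625, recall=0.0005)
print(f"F1 = {final_f1:.4f}")
```

Because the harmonic mean is dominated by the smaller term, the very low recall keeps F1 near zero even though token-level accuracy is 0.7775.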

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| No log | 1.0 | 16 | 0.7728 | 0.0 | 0.0 | 0.0 | 0.7773 |

| No log | 2.0 | 32 | 0.6898 | 0.04 | 0.0002 | 0.0005 | 0.7774 |

| No log | 3.0 | 48 | 0.6728 | 0.0625 | 0.0005 | 0.0010 | 0.7775 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.11.0+cu113

- Datasets 2.4.0

- Tokenizers 0.11.6

| d1d4a14b3313f045b428e501fb373751 |

Padomin/t5-base-TEDxJP-10front-1body-10rear | Padomin | t5 | 20 | 1 | transformers | 0 | text2text-generation | true | false | false | cc-by-sa-4.0 | null | ['te_dx_jp'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 2,955 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-base-TEDxJP-10front-1body-10rear

This model is a fine-tuned version of [sonoisa/t5-base-japanese](https://huggingface.co/sonoisa/t5-base-japanese) on the te_dx_jp dataset.

It achieves the following results on the evaluation set:

- Loss: 0.4366

- Wer: 0.1693

- Mer: 0.1636

- Wil: 0.2493

- Wip: 0.7507

- Hits: 55904

- Substitutions: 6304

- Deletions: 2379

- Insertions: 2249

- Cer: 0.1332
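The word-level metrics above can be reproduced from the hit, substitution, deletion, and insertion counts. The sketch below assumes the standard definitions of WER, MER (match error rate), WIP (word information preserved), and WIL (word information lost); the card does not name the tool that computed them, so the function is illustrative.

```python
def alignment_metrics(hits: int, substitutions: int, deletions: int, insertions: int) -> dict:
    """Derive WER, MER, WIP and WIL from word-alignment counts."""
    errors = substitutions + deletions + insertions
    reference_len = hits + substitutions + deletions    # words in the reference
    hypothesis_len = hits + substitutions + insertions  # words in the model output
    wip = (hits / reference_len) * (hits / hypothesis_len)
    return {
        "wer": errors / reference_len,
        "mer": errors / (hits + errors),
        "wip": wip,
        "wil": 1 - wip,
    }


# Counts reported above for the evaluation set
metrics = alignment_metrics(hits=55904, substitutions=6304, deletions=2379, insertions=2249)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

Rounded to four decimals, these formulas give back the Wer, Mer, Wil, and Wip values listed above.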

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 32

- eval_batch_size: 32

- seed: 40

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 10
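To make the `linear` scheduler with a warmup ratio concrete, here is a small sketch of the learning rate as a function of the optimizer step. It assumes the common warmup-then-linear-decay shape, the 14570 total steps shown in the training results, and 1457 warmup steps (10% of the total); the function name and the exact step counts are assumptions rather than values taken from the training logs.

```python
def linear_schedule_lr(step: int, base_lr: float, warmup_steps: int, total_steps: int) -> float:
    """Linear warmup from 0 to base_lr, then linear decay back to 0."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


TOTAL_STEPS = 14570   # final step in the training results table
WARMUP_STEPS = 1457   # assumed: warmup ratio 0.1 applied to TOTAL_STEPS
BASE_LR = 1e-4        # learning_rate listed above

for step in (0, 728, 1457, 7285, 14570):
    lr = linear_schedule_lr(step, BASE_LR, WARMUP_STEPS, TOTAL_STEPS)
    print(f"step {step:>5}: lr = {lr:.2e}")
```

Under these assumptions the learning rate peaks at 1e-4 at the end of the first epoch and reaches zero at the last step.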

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer | Mer | Wil | Wip | Hits | Substitutions | Deletions | Insertions | Cer |

|:-------------:|:-----:|:-----:|:---------------:|:------:|:------:|:------:|:------:|:-----:|:-------------:|:---------:|:----------:|:------:|

| 0.6166 | 1.0 | 1457 | 0.4595 | 0.2096 | 0.1979 | 0.2878 | 0.7122 | 54866 | 6757 | 2964 | 3819 | 0.1793 |

| 0.4985 | 2.0 | 2914 | 0.4190 | 0.1769 | 0.1710 | 0.2587 | 0.7413 | 55401 | 6467 | 2719 | 2241 | 0.1417 |

| 0.4787 | 3.0 | 4371 | 0.4130 | 0.1728 | 0.1670 | 0.2534 | 0.7466 | 55677 | 6357 | 2553 | 2249 | 0.1368 |

| 0.4299 | 4.0 | 5828 | 0.4085 | 0.1726 | 0.1665 | 0.2530 | 0.7470 | 55799 | 6381 | 2407 | 2357 | 0.1348 |

| 0.3855 | 5.0 | 7285 | 0.4130 | 0.1702 | 0.1644 | 0.2501 | 0.7499 | 55887 | 6309 | 2391 | 2292 | 0.1336 |

| 0.3109 | 6.0 | 8742 | 0.4182 | 0.1732 | 0.1668 | 0.2525 | 0.7475 | 55893 | 6317 | 2377 | 2494 | 0.1450 |

| 0.3027 | 7.0 | 10199 | 0.4256 | 0.1691 | 0.1633 | 0.2486 | 0.7514 | 55949 | 6273 | 2365 | 2283 | 0.1325 |

| 0.2729 | 8.0 | 11656 | 0.4252 | 0.1709 | 0.1649 | 0.2503 | 0.7497 | 55909 | 6283 | 2395 | 2362 | 0.1375 |

| 0.2531 | 9.0 | 13113 | 0.4329 | 0.1696 | 0.1639 | 0.2499 | 0.7501 | 55870 | 6322 | 2395 | 2235 | 0.1334 |

| 0.2388 | 10.0 | 14570 | 0.4366 | 0.1693 | 0.1636 | 0.2493 | 0.7507 | 55904 | 6304 | 2379 | 2249 | 0.1332 |

### Framework versions

- Transformers 4.21.2

- Pytorch 1.12.1+cu116

- Datasets 2.4.0

- Tokenizers 0.12.1

| b0f84e896c4fe2915ec98e7425227e32 |

sd-concepts-library/kairuno | sd-concepts-library | null | 18 | 0 | null | 6 | null | false | false | false | mit | null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | [] | false | true | true | 1,894 | false | ### kairuno on Stable Diffusion

This is the `kairuno` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

| 0ec5e8e2bb6e21930598be3326f617a3 |

CLAck/indo-mixed | CLAck | marian | 11 | 3 | transformers | 1 | translation | true | false | false | apache-2.0 | ['en', 'id'] | ['ALT'] | null | 1 | 0 | 0 | 1 | 0 | 0 | 0 | ['translation'] | false | true | true | 1,515 | false |

This model was pretrained on Chinese and Indonesian and then fine-tuned on Indonesian.

### Example

```

%%capture
!pip install transformers transformers[sentencepiece]

from transformers import AutoModelForSeq2SeqLM, AutoTokenizer

# Download the pretrained English-Indonesian model available on the hub
model = AutoModelForSeq2SeqLM.from_pretrained("CLAck/indo-mixed")
tokenizer = AutoTokenizer.from_pretrained("CLAck/indo-mixed")

# Download a tokenizer that can tokenize English, since the model's tokenizer no longer knows how to do it
# We used the one coming from the initial model
# This tokenizer is used to tokenize the input sentence
tokenizer_en = AutoTokenizer.from_pretrained('Helsinki-NLP/opus-mt-en-zh')

# These special tokens are needed to reproduce the original tokenizer
tokenizer_en.add_tokens(["<2zh>", "<2indo>"], special_tokens=True)

sentence = "The cat is on the table"

# This token is needed to identify the target language
input_sentence = "<2indo> " + sentence

translated = model.generate(**tokenizer_en(input_sentence, return_tensors="pt", padding=True))
output_sentence = [tokenizer.decode(t, skip_special_tokens=True) for t in translated]

```

### Training results

MIXED

| Epoch | Bleu |

|:-----:|:-------:|

| 1.0 | 24.2579 |

| 2.0 | 30.6287 |

| 3.0 | 34.4417 |

| 4.0 | 36.2577 |

| 5.0 | 37.3488 |

FINETUNING

| Epoch | Bleu |

|:-----:|:-------:|

| 6.0 | 34.1676 |

| 7.0 | 35.2320 |

| 8.0 | 36.7110 |

| 9.0 | 37.3195 |

| 10.0 | 37.9461 | | b67155070646d7a8aca3aa23fdfde0a3 |

milyiyo/paraphraser-spanish-t5-small | milyiyo | t5 | 19 | 3 | transformers | 0 | text2text-generation | true | false | false | mit | ['es'] | ['paws-x', 'tapaco'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['generated_from_trainer'] | true | true | true | 1,126 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# paraphraser-spanish-t5-small

This model is a fine-tuned version of [flax-community/spanish-t5-small](https://huggingface.co/flax-community/spanish-t5-small) on an unspecified dataset.

It achieves the following results on the evaluation set:

- eval_loss: 1.1079

- eval_runtime: 4.9573

- eval_samples_per_second: 365.924

- eval_steps_per_second: 36.713

- epoch: 0.83

- step: 43141

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 10

- eval_batch_size: 10

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 4

### Framework versions

- Transformers 4.24.0

- Pytorch 1.12.1+cu113

- Datasets 2.7.0

- Tokenizers 0.13.2 | 97b2785f5f75631dc1ac67292979b124 |

google/flan-t5-xxl | google | t5 | 32 | 231,088 | transformers | 303 | text2text-generation | true | true | true | apache-2.0 | ['en', 'sp', 'ja', 'pe', 'hi', 'fr', 'ch', 'be', 'gu', 'ge', 'te', 'it', 'ar', 'po', 'ta', 'ma', 'ma', 'or', 'pa', 'po', 'ur', 'ga', 'he', 'ko', 'ca', 'th', 'du', 'in', 'vi', 'bu', 'fi', 'ce', 'la', 'tu', 'ru', 'cr', 'sw', 'yo', 'ku', 'bu', 'ma', 'cz', 'fi', 'so', 'ta', 'sw', 'si', 'ka', 'zh', 'ig', 'xh', 'ro', 'ha', 'es', 'sl', 'li', 'gr', 'ne', 'as', False] | ['svakulenk0/qrecc', 'taskmaster2', 'djaym7/wiki_dialog', 'deepmind/code_contests', 'lambada', 'gsm8k', 'aqua_rat', 'esnli', 'quasc', 'qed'] | null | 8 | 1 | 6 | 1 | 22 | 17 | 5 | ['text2text-generation'] | false | true | true | 9,197 | false |

# Model Card for FLAN-T5 XXL

# Table of Contents

0. [TL;DR](#TL;DR)

1. [Model Details](#model-details)

2. [Usage](#usage)

3. [Uses](#uses)

4. [Bias, Risks, and Limitations](#bias-risks-and-limitations)

5. [Training Details](#training-details)

6. [Evaluation](#evaluation)

7. [Environmental Impact](#environmental-impact)

8. [Citation](#citation)

# TL;DR

If you already know T5, FLAN-T5 is just better at everything. For the same number of parameters, these models have been fine-tuned on more than 1000 additional tasks that also cover more languages.

As mentioned in the first few lines of the abstract:

> Flan-PaLM 540B achieves state-of-the-art performance on several benchmarks, such as 75.2% on five-shot MMLU. We also publicly release Flan-T5 checkpoints, which achieve strong few-shot performance even compared to much larger models, such as PaLM 62B. Overall, instruction finetuning is a general method for improving the performance and usability of pretrained language models.

**Disclaimer**: Content from **this** model card has been written by the Hugging Face team, and parts of it were copy pasted from the [T5 model card](https://huggingface.co/t5-large).

# Model Details

## Model Description

- **Model type:** Language model

- **Language(s) (NLP):** English, Spanish, Japanese, Persian, Hindi, French, Chinese, Bengali, Gujarati, German, Telugu, Italian, Arabic, Polish, Tamil, Marathi, Malayalam, Oriya, Panjabi, Portuguese, Urdu, Galician, Hebrew, Korean, Catalan, Thai, Dutch, Indonesian, Vietnamese, Bulgarian, Filipino, Central Khmer, Lao, Turkish, Russian, Croatian, Swedish, Yoruba, Kurdish, Burmese, Malay, Czech, Finnish, Somali, Tagalog, Swahili, Sinhala, Kannada, Zhuang, Igbo, Xhosa, Romanian, Haitian, Estonian, Slovak, Lithuanian, Greek, Nepali, Assamese, Norwegian

- **License:** Apache 2.0

- **Related Models:** [All FLAN-T5 Checkpoints](https://huggingface.co/models?search=flan-t5)

- **Original Checkpoints:** [All Original FLAN-T5 Checkpoints](https://github.com/google-research/t5x/blob/main/docs/models.md#flan-t5-checkpoints)

- **Resources for more information:**

- [Research paper](https://arxiv.org/pdf/2210.11416.pdf)

- [GitHub Repo](https://github.com/google-research/t5x)

- [Hugging Face FLAN-T5 Docs (Similar to T5) ](https://huggingface.co/docs/transformers/model_doc/t5)

# Usage

Find below some example scripts on how to use the model in `transformers`:

## Using the PyTorch model

### Running the model on a CPU

<details>

<summary> Click to expand </summary>

```python

from transformers import T5Tokenizer, T5ForConditionalGeneration

tokenizer = T5Tokenizer.from_pretrained("google/flan-t5-xxl")

model = T5ForConditionalGeneration.from_pretrained("google/flan-t5-xxl")

input_text = "translate English to German: How old are you?"

input_ids = tokenizer(input_text, return_tensors="pt").input_ids

outputs = model.generate(input_ids)

print(tokenizer.decode(outputs[0]))

```

</details>

### Running the model on a GPU

<details>

<summary> Click to expand </summary>

```python

# pip install accelerate

from transformers import T5Tokenizer, T5ForConditionalGeneration

tokenizer = T5Tokenizer.from_pretrained("google/flan-t5-xxl")

model = T5ForConditionalGeneration.from_pretrained("google/flan-t5-xxl", device_map="auto")

input_text = "translate English to German: How old are you?"

input_ids = tokenizer(input_text, return_tensors="pt").input_ids.to("cuda")

outputs = model.generate(input_ids)

print(tokenizer.decode(outputs[0]))

```

</details>

### Running the model on a GPU using different precisions

#### FP16

<details>

<summary> Click to expand </summary>

```python

# pip install accelerate

import torch

from transformers import T5Tokenizer, T5ForConditionalGeneration

tokenizer = T5Tokenizer.from_pretrained("google/flan-t5-xxl")

model = T5ForConditionalGeneration.from_pretrained("google/flan-t5-xxl", device_map="auto", torch_dtype=torch.float16)

input_text = "translate English to German: How old are you?"

input_ids = tokenizer(input_text, return_tensors="pt").input_ids.to("cuda")

outputs = model.generate(input_ids)

print(tokenizer.decode(outputs[0]))

```

</details>

#### INT8

<details>

<summary> Click to expand </summary>

```python

# pip install bitsandbytes accelerate

from transformers import T5Tokenizer, T5ForConditionalGeneration

tokenizer = T5Tokenizer.from_pretrained("google/flan-t5-xxl")

model = T5ForConditionalGeneration.from_pretrained("google/flan-t5-xxl", device_map="auto", load_in_8bit=True)

input_text = "translate English to German: How old are you?"

input_ids = tokenizer(input_text, return_tensors="pt").input_ids.to("cuda")

outputs = model.generate(input_ids)

print(tokenizer.decode(outputs[0]))

```

</details>
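The precision options above mainly differ in how much memory the weights occupy. The sketch below gives a rough back-of-the-envelope estimate; it assumes about 11 billion parameters for the XXL checkpoint (a figure not stated in this card) and counts weights only, ignoring activations and any layers that an 8-bit loader keeps in higher precision.

```python
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gib(num_params: int, precision: str) -> float:
    """Approximate memory needed to hold the weights, in GiB."""
    return num_params * BYTES_PER_PARAM[precision] / 2**30


ASSUMED_PARAMS = 11_000_000_000  # assumption: roughly 11B parameters

for precision in ("fp32", "fp16", "int8"):
    print(f"{precision}: ~{weight_memory_gib(ASSUMED_PARAMS, precision):.1f} GiB")
```

Real memory use will be higher than these estimates, so treat them as a lower bound when choosing hardware.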

# Uses

## Direct Use and Downstream Use

The authors write in [the original paper's model card](https://arxiv.org/pdf/2210.11416.pdf) that:

> The primary use is research on language models, including: research on zero-shot NLP tasks and in-context few-shot learning NLP tasks, such as reasoning, and question answering; advancing fairness and safety research, and understanding limitations of current large language models

See the [research paper](https://arxiv.org/pdf/2210.11416.pdf) for further details.

## Out-of-Scope Use

More information needed.

# Bias, Risks, and Limitations

The information in this section is copied from the model's [official model card](https://arxiv.org/pdf/2210.11416.pdf):

> Language models, including Flan-T5, can potentially be used for language generation in a harmful way, according to Rae et al. (2021). Flan-T5 should not be used directly in any application, without a prior assessment of safety and fairness concerns specific to the application.

## Ethical considerations and risks

> Flan-T5 is fine-tuned on a large corpus of text data that was not filtered for explicit content or assessed for existing biases. As a result the model itself is potentially vulnerable to generating equivalently inappropriate content or replicating inherent biases in the underlying data.

## Known Limitations

> Flan-T5 has not been tested in real world applications.

## Sensitive Use:

> Flan-T5 should not be applied for any unacceptable use cases, e.g., generation of abusive speech.

# Training Details

## Training Data

The model was trained on a mixture of tasks that includes the tasks described in the table below (from the original paper, figure 2):

## Training Procedure

According to the model card from the [original paper](https://arxiv.org/pdf/2210.11416.pdf):

> These models are based on pretrained T5 (Raffel et al., 2020) and fine-tuned with instructions for better zero-shot and few-shot performance. There is one fine-tuned Flan model per T5 model size.

The model was trained on TPU v3 or TPU v4 pods, using the [`t5x`](https://github.com/google-research/t5x) codebase together with [`jax`](https://github.com/google/jax).

# Evaluation

## Testing Data, Factors & Metrics

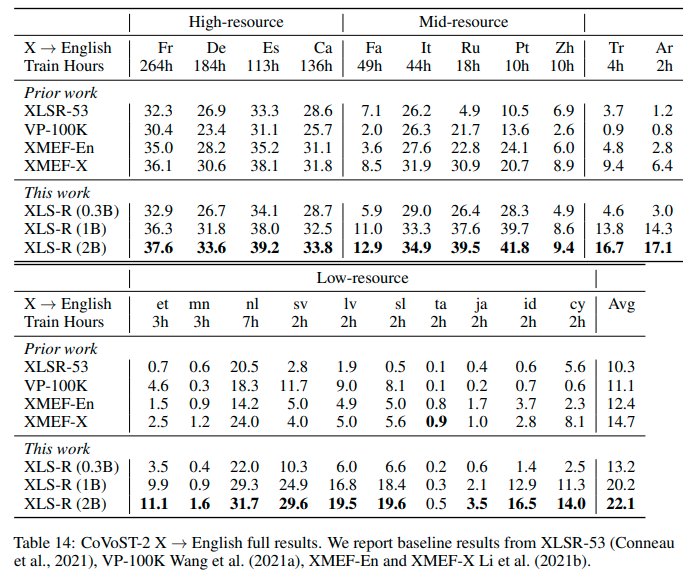

The authors evaluated the model on various tasks covering several languages (1836 in total). See the table below for some quantitative evaluation:

For full details, please check the [research paper](https://arxiv.org/pdf/2210.11416.pdf).

## Results

For full results for FLAN-T5-XXL, see the [research paper](https://arxiv.org/pdf/2210.11416.pdf), Table 3.

# Environmental Impact

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** Google Cloud TPU Pods - TPU v3 or TPU v4 | Number of chips ≥ 4.

- **Hours used:** More information needed

- **Cloud Provider:** GCP

- **Compute Region:** More information needed

- **Carbon Emitted:** More information needed

# Citation

**BibTeX:**

```bibtex

@misc{https://doi.org/10.48550/arxiv.2210.11416,

doi = {10.48550/ARXIV.2210.11416},

url = {https://arxiv.org/abs/2210.11416},

author = {Chung, Hyung Won and Hou, Le and Longpre, Shayne and Zoph, Barret and Tay, Yi and Fedus, William and Li, Eric and Wang, Xuezhi and Dehghani, Mostafa and Brahma, Siddhartha and Webson, Albert and Gu, Shixiang Shane and Dai, Zhuyun and Suzgun, Mirac and Chen, Xinyun and Chowdhery, Aakanksha and Narang, Sharan and Mishra, Gaurav and Yu, Adams and Zhao, Vincent and Huang, Yanping and Dai, Andrew and Yu, Hongkun and Petrov, Slav and Chi, Ed H. and Dean, Jeff and Devlin, Jacob and Roberts, Adam and Zhou, Denny and Le, Quoc V. and Wei, Jason},

keywords = {Machine Learning (cs.LG), Computation and Language (cs.CL), FOS: Computer and information sciences, FOS: Computer and information sciences},

title = {Scaling Instruction-Finetuned Language Models},

publisher = {arXiv},

year = {2022},

copyright = {Creative Commons Attribution 4.0 International}

}

```

| 265bda2844c1fdd4c877ffb7765a52cd |

samayl24/vit-base-beans-demo-v5 | samayl24 | vit | 7 | 6 | transformers | 0 | image-classification | true | false | false | apache-2.0 | null | ['beans'] | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['image-classification', 'generated_from_trainer'] | true | true | true | 1,333 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# vit-base-beans-demo-v5

This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the beans dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0427

- Accuracy: 0.9925

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0002

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 4

- mixed_precision_training: Native AMP
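As a rough illustration of what `lr_scheduler_type: linear` means for these settings, the sketch below computes the learning rate at a given optimizer step. The total of 260 steps is an assumption inferred from the training results table (step 100 falls at epoch 1.54, so about 65 steps per epoch over 4 epochs), and zero warmup steps are assumed because none are listed. The function name `linear_lr` is hypothetical.

```python
def linear_lr(step: int, base_lr: float = 2e-4, total_steps: int = 260, warmup_steps: int = 0) -> float:
    """Learning rate at `step`: optional linear warmup, then linear decay to zero."""
    if warmup_steps and step < warmup_steps:
        return base_lr * step / warmup_steps
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Assumed schedule: 260 total steps (inferred, not stated in the card), no warmup.
for step in (0, 100, 200, 260):
    print(step, linear_lr(step))
```

Under these assumptions the rate starts at the configured 0.0002 and reaches zero at the final step.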

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |
|:-------------:|:-----:|:----:|:---------------:|:--------:|
| 0.1378 | 1.54 | 100 | 0.1444 | 0.9549 |
| 0.0334 | 3.08 | 200 | 0.0427 | 0.9925 |

### Framework versions

- Transformers 4.20.1

- Pytorch 1.12.0+cu113

- Datasets 2.3.2

- Tokenizers 0.12.1

| f00d438f5e311a5c5016d6212dc9874d |

sachin/vit2distilgpt2 | sachin | vision-encoder-decoder | 4 | 87 | transformers | 5 | image-to-text | true | false | false | mit | ['en'] | ['coco2017'] | null | 0 | 0 | 0 | 0 | 1 | 1 | 0 | ['image-to-text'] | false | true | true | 1,700 | false |

# Vit2-DistilGPT2

This model takes in an image and outputs a caption. It was trained on the COCO dataset, and the full training script can be found in [this Kaggle kernel](https://www.kaggle.com/sachin/visionencoderdecoder-model-training).

## Usage

```python

from PIL import Image
from transformers import GPT2Tokenizer, ViTFeatureExtractor, VisionEncoderDecoderModel

model = VisionEncoderDecoderModel.from_pretrained("sachin/vit2distilgpt2")
vit_feature_extractor = ViTFeatureExtractor.from_pretrained("google/vit-base-patch16-224-in21k")

# Make GPT-2 wrap every sequence as BOS ... EOS.
def build_inputs_with_special_tokens(self, token_ids_0, token_ids_1=None):
    outputs = [self.bos_token_id] + token_ids_0 + [self.eos_token_id]
    return outputs

GPT2Tokenizer.build_inputs_with_special_tokens = build_inputs_with_special_tokens
gpt2_tokenizer = GPT2Tokenizer.from_pretrained("distilgpt2")

# Pad with the unknown token. Be careful here: for GPT-2,
# unk_token_id == eos_token_id == bos_token_id.
gpt2_tokenizer.pad_token = gpt2_tokenizer.unk_token

# image_path is the path to your input image.
# The feature extractor already returns a batched tensor, so no unsqueeze is needed.
pixel_values = vit_feature_extractor(Image.open(image_path).convert("RGB"), return_tensors="pt").pixel_values
generated_ids = model.generate(pixel_values)
generated_sentences = gpt2_tokenizer.batch_decode(generated_ids, skip_special_tokens=True)

```

Note that the output sentence may be repeated, hence a post processing step may be required.
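A minimal sketch of such a post-processing step is shown here. It assumes the repetition appears as whole sentences separated by sentence-ending punctuation, which the card does not specify; the helper name `drop_repeated_sentences` is hypothetical.

```python
import re


def drop_repeated_sentences(caption: str) -> str:
    """Keep the first occurrence of each sentence, preserving the original order."""
    # Split after ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", caption) if s.strip()]
    seen = set()
    kept = []
    for sentence in sentences:
        # Compare case-insensitively and ignore trailing punctuation.
        key = sentence.lower().rstrip(".!?")
        if key not in seen:
            seen.add(key)
            kept.append(sentence)
    return " ".join(kept)


print(drop_repeated_sentences("a man riding a wave. a man riding a wave. a dog on a beach."))
```

It could be applied to each string in `generated_sentences` after decoding.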

## Bias Warning

This model may be biased because of its training dataset, its short training run, and the model itself. The following gender bias is an example.

## Results

See the [Weights & Biases report](https://wandb.ai/sachinruk/Vit2GPT2/reports/Shared-panel-22-01-27-23-01-56--VmlldzoxNDkyMTM3?highlightShare).

| 6bf597ae2f1e6eec01433adb091f9fcd |

Ojimi/waifumake-full | Ojimi | null | 25 | 21 | diffusers | 1 | text-to-image | false | false | false | agpl-3.0 | ['en', 'vi'] | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ['art'] | false | true | true | 4,400 | false |

# Waifumake (●'◡'●) AI Art model.

A single student training an AI model that generates art.

## **New model available** : [waifumake-full-v2](waifumake-full-v2.safetensors)!

## What's new in v2:

- Fix color loss.

- Increase image quality.

## Introduction:

- It's an AI art model for converting text to images, images to images, inpainting, and outpainting using Stable Diffusion.

- The AI art model is developed with a focus on the ability to draw anime characters relatively well through fine-tuning using Dreambooth.

- It can be used as a tool for upscaling or rendering anime-style images from 3D modeling software (Blender).

- It can create an image from a sketch you made in a simple drawing program (MS Paint).

- The model is aimed at everyone and has limitless usage potential.

## Usage:

- For 🧨 Diffusers Library:

```python

from diffusers import DiffusionPipeline

pipe = DiffusionPipeline.from_pretrained("Ojimi/waifumake-full")

pipe = pipe.to("cuda")

prompt = "1girl, animal ears, long hair, solo, cat ears, choker, bare shoulders, red eyes, fang, looking at viewer, animal ear fluff, upper body, black hair, blush, closed mouth, off shoulder, bangs, bow, collarbone"

image = pipe(prompt, negative_prompt="lowres, bad anatomy").images[0]

```

- For Web UI by Automatic1111:

```bash

#Install Web UI.

git clone https://github.com/AUTOMATIC1111/stable-diffusion-webui.git

cd /content/stable-diffusion-webui/

pip install -qq -r requirements.txt

pip install -U xformers #Install `xformers` for better performance.

```

```bash

#Download model.

wget https://huggingface.co/Ojimi/waifumake-full/resolve/main/waifumake-full-v2.safetensors -O /content/stable-diffusion-webui/models/Stable-diffusion/waifumake-full-v2.safetensors

```

```bash

#Run and enjoy ☕.

cd /content/stable-diffusion-webui

python launch.py --xformers

```

## Tips:

- The `masterpiece` and `best quality` tags are not necessary, as they sometimes lead to contradictory results, but if the image is distorted or discolored, add them.

- The CFG scale should be 7.5 and the step count 28 for the best quality and best performance.

- Use a sample photo of your idea: run `Interrogate DeepBooru` on it, then change the prompts to suit what you want.

- You should use it as a supportive tool for creating works of art, and not rely on it completely.
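The recommended settings can be collected in one place before calling the pipeline. The sketch below is only illustrative: the helper `build_generation_kwargs` and its `block_nsfw` flag are hypothetical, while `prompt`, `negative_prompt`, `guidance_scale` (the CFG scale), and `num_inference_steps` are the keyword names used by Diffusers text-to-image pipelines. The default negative prompt is taken from the Diffusers example in this card.

```python
def build_generation_kwargs(prompt, negative_prompt="lowres, bad anatomy", block_nsfw=True):
    """Collect keyword arguments for a Diffusers text-to-image call."""
    negative_tags = [tag.strip() for tag in negative_prompt.split(",") if tag.strip()]
    # Optionally put "nsfw" in the negative prompt, as the Limitations section suggests.
    if block_nsfw and "nsfw" not in negative_tags:
        negative_tags.insert(0, "nsfw")
    return {
        "prompt": prompt,
        "negative_prompt": ", ".join(negative_tags),
        "guidance_scale": 7.5,      # CFG scale recommended in the tips
        "num_inference_steps": 28,  # step count recommended in the tips
    }


kwargs = build_generation_kwargs("1girl, cat ears, long hair, looking at viewer")
print(kwargs)
# With `pipe` loaded as in the Diffusers example:
# image = pipe(**kwargs).images[0]
```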

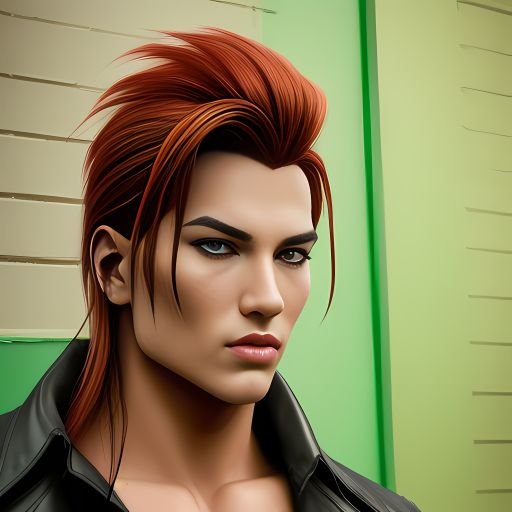

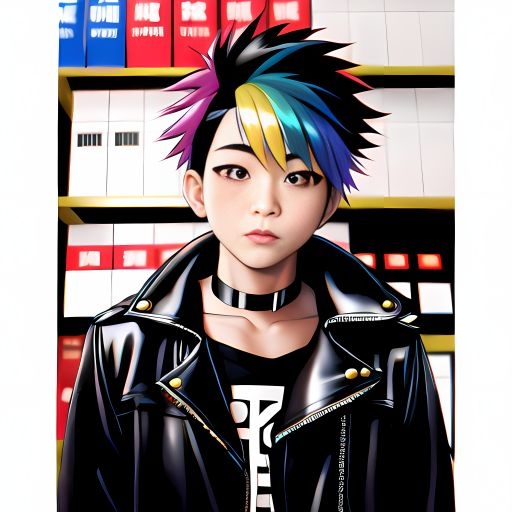

## Preview: v2 model

## Training:

- **Data**: The model is trained on a collection of images from various Internet sources, provided by my friend, plus images created by another AI.

- **Schedule**: Euler Ancestral Discrete.

- **Optimizer**: AdamW.

- **Precision**: BF16.

- **Hardware**: Google Colaboratory Pro - NVIDIA A100 40GB VRAM.

## **Limitations:**

- Loss of detail, errors, malformed human anatomy (such as six-fingered hands), deformation, blurring, and unclear images are inevitable.

- Complex tasks cannot be handled.

- ⚠️ Content may not be appropriate for all ages: the model is trained on data that includes adult content, so the generated images may contain content not suitable for children (specific regulations depend on your country). If you do not want adult content to appear, make sure you have additional safety measures in place, such as adding "nsfw" to the negative prompt.

- The results generated by the model are considered impressive. Currently, however, it only supports English prompts; for other languages, consider using a third-party translation program.

- The model is trained on the `Danbooru` and `Nai` tagging systems, so long free-form text may give poor results.

- My amount of money: 0 USD =((.

## **Desires:**

As this version was made only by me and a few associates, the model is not perfect and may differ from what people expect. Contributions from anyone are welcome and will be respected.

Want to support me? Thank you! Please help me make it better. ❤️

## Special Thanks:

This wouldn't have happened if they hadn't made a breakthrough.

- [Runwayml](https://huggingface.co/runwayml/): Base model.

- [d8ahazard](https://github.com/d8ahazard/.sd_dreambooth_extension) : Dreambooth.

- [Automatic1111](https://github.com/AUTOMATIC1111/) : Web UI.

- [Mikubill](https://github.com/Mikubill/): Where my ideas started.

- ChatGPT: Helped me do crazy things that I thought I would never do.

- Novel AI: Dataset images. An AI made me thousands of pictures without worrying about copyright or dispute.

- Danbooru: Helped me write the correct tags.

- My friend and others.

- And You 🫵❤️

## Copyright:

This license allows anyone to copy, modify, publish, and commercialize the model, provided they follow the terms of the GNU General Public License. You can learn more about the GNU General Public License [here](LICENSE.txt).

If any part of the model does not comply with the terms of the GNU General Public License, the copyright and other rights of the model will still be valid.

All AI-generated images are yours, you can do whatever you want, but please obey the laws of your country. We will not be responsible for any problems you cause.

Don't forget me.

# Have fun with your waifu! (●'◡'●)

Like it? | 5e7e74b9bc2ce79e65f8ca4fdad80aab |

Bingsu/my-k-anything-v3-0 | Bingsu | null | 18 | 53 | diffusers | 4 | text-to-image | false | false | false | creativeml-openrail-m | ['ko'] | null | null | 3 | 0 | 3 | 0 | 0 | 0 | 0 | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | true | true | 2,127 | false |

# my-k-anything-v3-0

A k-anything 3.0 model built the same way as [Bingsu/my-korean-stable-diffusion-v1-5](https://huggingface.co/runwayml/stable-diffusion-v1-5).

It works less well than expected; in particular, it seems to have forgotten everything it knew about characters.

# Usage

```sh

pip install transformers "accelerate>=0.14.0" "diffusers>=0.7.2"

```

```python

import torch

from diffusers import StableDiffusionPipeline

repo = "Bingsu/my-k-anything-v3-0"

pipe = StableDiffusionPipeline.from_pretrained(
    repo, torch_dtype=torch.float16,
)

pipe.to("cuda")

pipe.safety_checker = None

```

```python

from typing import Optional

import torch

def gen_image(
    prompt: str,
    negative_prompt: Optional[str] = None,
    seed: Optional[int] = None,
    scale: float = 7.5,
    steps: int = 30,
):
    if seed is not None:
        generator = torch.Generator("cuda").manual_seed(seed)
    else:
        generator = None

    image = pipe(
        prompt=prompt,
        negative_prompt=negative_prompt,
        generator=generator,
        guidance_scale=scale,
        num_inference_steps=steps,
    ).images[0]
    return image

```

```python

prompt = "파란색 포니테일 헤어, 브로치, 정장을 입은 성인 여성, 고퀄리티, 최고품질"

negative = "저화질, 저품질, 텍스트"

seed = 42467781

scale = 12.0

gen_image(prompt, negative, seed, scale)

```

## License

This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage. The CreativeML OpenRAIL License specifies:

1. You can't use the model to deliberately produce nor share illegal or harmful outputs or content

2. The authors claim no rights on the outputs you generate; you are free to use them and are accountable for their use, which must not go against the provisions set in the license

3. You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully) [Please read the full license here](https://huggingface.co/spaces/CompVis/stable-diffusion-license)

| c8da03e727b2b703d1262ea0d036785c |

DmitryPogrebnoy/MedRuRobertaLarge | DmitryPogrebnoy | roberta | 9 | 24 | transformers | 0 | fill-mask | true | false | false | apache-2.0 | ['ru'] | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 | [] | false | true | true | 2,142 | false |

# Model MedRuRobertaLarge

# Model Description

This model is a fine-tuned version of [ruRoberta-large](https://huggingface.co/sberbank-ai/ruRoberta-large).

The code for the fine-tuning process can be found [here](https://github.com/DmitryPogrebnoy/MedSpellChecker/blob/main/spellchecker/ml_ranging/models/med_ru_roberta_large/fine_tune_ru_roberta_large.py).

The model is fine-tuned on a specially collected dataset of over 30,000 medical anamneses in Russian.

The collected dataset can be found [here](https://github.com/DmitryPogrebnoy/MedSpellChecker/blob/main/data/anamnesis/processed/all_anamnesis.csv).

This model was created as part of a master's project to develop a method for correcting typos in medical histories, using BERT models to rank candidate corrections.

The project is open source and can be found [here](https://github.com/DmitryPogrebnoy/MedSpellChecker).

# How to Get Started With the Model

You can use the model directly with a pipeline for masked language modeling:

```python

>>> from transformers import pipeline

>>> pipeline = pipeline('fill-mask', model='DmitryPogrebnoy/MedRuRobertaLarge')

>>> pipeline("У пациента <mask> боль в грудине.")

[{'score': 0.2467374950647354,

'token': 9233,

'token_str': ' сильный',

'sequence': 'У пациента сильный боль в грудине.'},

{'score': 0.16476310789585114,

'token': 27876,

'token_str': ' постоянный',

'sequence': 'У пациента постоянный боль в грудине.'},

{'score': 0.07211139053106308,

'token': 19551,

'token_str': ' острый',

'sequence': 'У пациента острый боль в грудине.'},

{'score': 0.0616639070212841,

'token': 18840,

'token_str': ' сильная',

'sequence': 'У пациента сильная боль в грудине.'},

{'score': 0.029712719842791557,

'token': 40176,

'token_str': ' острая',

'sequence': 'У пациента острая боль в грудине.'}]

```
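Since the model card describes the model as a ranker of correction candidates, the pipeline output above can be used to order a list of candidate words. The sketch below is only an illustration of that idea, not the MedSpellChecker implementation: the helper `rank_candidates` and the candidate list are hypothetical, and the scores are copied (abbreviated) from the output shown above.

```python
def rank_candidates(predictions, candidates):
    """Order candidate words by the fill-mask score of the matching token.

    Candidates that the model did not return get a score of 0.0.
    """
    scores = {p["token_str"].strip(): p["score"] for p in predictions}
    return sorted(candidates, key=lambda word: scores.get(word, 0.0), reverse=True)


# Abbreviated copy of the pipeline output shown above.
predictions = [
    {"score": 0.2467374950647354, "token_str": " сильный"},
    {"score": 0.0616639070212841, "token_str": " сильная"},
    {"score": 0.029712719842791557, "token_str": " острая"},
]

# Hypothetical candidates for the masked position.
ranked = rank_candidates(predictions, ["острая", "сильная", "тупая"])
print(ranked)
```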

Or you can load the model and tokenizer and do what you need to do:

```python

>>> from transformers import AutoTokenizer, AutoModelForMaskedLM

>>> tokenizer = AutoTokenizer.from_pretrained("DmitryPogrebnoy/MedRuRobertaLarge")

>>> model = AutoModelForMaskedLM.from_pretrained("DmitryPogrebnoy/MedRuRobertaLarge")

``` | 4038186c9f320e03221b3e44b72581dc |

m3hrdadfi/zabanshenas-roberta-base-mix | m3hrdadfi | roberta | 11 | 4 | transformers | 2 | text-classification | true | true | false | apache-2.0 | ['multilingual', 'ace', 'afr', 'als', 'amh', 'ang', 'ara', 'arg', 'arz', 'asm', 'ast', 'ava', 'aym', 'azb', 'aze', 'bak', 'bar', 'bcl', 'bel', 'ben', 'bho', 'bjn', 'bod', 'bos', 'bpy', 'bre', 'bul', 'bxr', 'cat', 'cbk', 'cdo', 'ceb', 'ces', 'che', 'chr', 'chv', 'ckb', 'cor', 'cos', 'crh', 'csb', 'cym', 'dan', 'deu', 'diq', 'div', 'dsb', 'dty', 'egl', 'ell', 'eng', 'epo', 'est', 'eus', 'ext', 'fao', 'fas', 'fin', 'fra', 'frp', 'fry', 'fur', 'gag', 'gla', 'gle', 'glg', 'glk', 'glv', 'grn', 'guj', 'hak', 'hat', 'hau', 'hbs', 'heb', 'hif', 'hin', 'hrv', 'hsb', 'hun', 'hye', 'ibo', 'ido', 'ile', 'ilo', 'ina', 'ind', 'isl', 'ita', 'jam', 'jav', 'jbo', 'jpn', 'kaa', 'kab', 'kan', 'kat', 'kaz', 'kbd', 'khm', 'kin', 'kir', 'koi', 'kok', 'kom', 'kor', 'krc', 'ksh', 'kur', 'lad', 'lao', 'lat', 'lav', 'lez', 'lij', 'lim', 'lin', 'lit', 'lmo', 'lrc', 'ltg', 'ltz', 'lug', 'lzh', 'mai', 'mal', 'mar', 'mdf', 'mhr', 'min', 'mkd', 'mlg', 'mlt', 'nan', 'mon', 'mri', 'mrj', 'msa', 'mwl', 'mya', 'myv', 'mzn', 'nap', 'nav', 'nci', 'nds', 'nep', 'new', 'nld', 'nno', 'nob', 'nrm', 'nso', 'oci', 'olo', 'ori', 'orm', 'oss', 'pag', 'pam', 'pan', 'pap', 'pcd', 'pdc', 'pfl', 'pnb', 'pol', 'por', 'pus', 'que', 'roh', 'ron', 'rue', 'rup', 'rus', 'sah', 'san', 'scn', 'sco', 'sgs', 'sin', 'slk', 'slv', 'sme', 'sna', 'snd', 'som', 'spa', 'sqi', 'srd', 'srn', 'srp', 'stq', 'sun', 'swa', 'swe', 'szl', 'tam', 'tat', 'tcy', 'tel', 'tet', 'tgk', 'tgl', 'tha', 'ton', 'tsn', 'tuk', 'tur', 'tyv', 'udm', 'uig', 'ukr', 'urd', 'uzb', 'vec', 'vep', 'vie', 'vls', 'vol', 'vro', 'war', 'wln', 'wol', 'wuu', 'xho', 'xmf', 'yid', 'yor', 'zea', 'zho'] | ['wili_2018'] | null | 1 | 0 | 1 | 0 | 0 | 0 | 0 | [] | false | true | true | 56,099 | false |

# Zabanshenas - Language Detector

Zabanshenas is a Transformer-based solution for identifying the most likely language of a written document/text. Zabanshenas is a Persian word that has two meanings:

- A person who studies linguistics.

- A way to identify the type of written language.

## How to use

Follow [Zabanshenas repo](https://github.com/m3hrdadfi/zabanshenas) for more information!

## Evaluation

The following tables summarize the scores obtained by the model overall and for each class.

### By Paragraph

| language | precision | recall | f1-score |

|:--------------------------------------:|:---------:|:--------:|:--------:|

| Achinese (ace) | 1.000000 | 0.982143 | 0.990991 |

| Afrikaans (afr) | 1.000000 | 1.000000 | 1.000000 |

| Alemannic German (als) | 1.000000 | 0.946429 | 0.972477 |

| Amharic (amh) | 1.000000 | 0.982143 | 0.990991 |

| Old English (ang) | 0.981818 | 0.964286 | 0.972973 |

| Arabic (ara) | 0.846154 | 0.982143 | 0.909091 |

| Aragonese (arg) | 1.000000 | 1.000000 | 1.000000 |

| Egyptian Arabic (arz) | 0.979592 | 0.857143 | 0.914286 |

| Assamese (asm) | 0.981818 | 0.964286 | 0.972973 |

| Asturian (ast) | 0.964912 | 0.982143 | 0.973451 |

| Avar (ava) | 0.941176 | 0.905660 | 0.923077 |

| Aymara (aym) | 0.964912 | 0.982143 | 0.973451 |

| South Azerbaijani (azb) | 0.965517 | 1.000000 | 0.982456 |

| Azerbaijani (aze) | 1.000000 | 1.000000 | 1.000000 |

| Bashkir (bak) | 1.000000 | 0.978261 | 0.989011 |

| Bavarian (bar) | 0.843750 | 0.964286 | 0.900000 |

| Central Bikol (bcl) | 1.000000 | 0.982143 | 0.990991 |

| Belarusian (Taraschkewiza) (be-tarask) | 1.000000 | 0.875000 | 0.933333 |

| Belarusian (bel) | 0.870968 | 0.964286 | 0.915254 |

| Bengali (ben) | 0.982143 | 0.982143 | 0.982143 |

| Bhojpuri (bho) | 1.000000 | 0.928571 | 0.962963 |

| Banjar (bjn) | 0.981132 | 0.945455 | 0.962963 |

| Tibetan (bod) | 1.000000 | 0.982143 | 0.990991 |

| Bosnian (bos) | 0.552632 | 0.375000 | 0.446809 |

| Bishnupriya (bpy) | 1.000000 | 0.982143 | 0.990991 |

| Breton (bre) | 1.000000 | 0.964286 | 0.981818 |

| Bulgarian (bul) | 1.000000 | 0.964286 | 0.981818 |

| Buryat (bxr) | 0.946429 | 0.946429 | 0.946429 |

| Catalan (cat) | 0.982143 | 0.982143 | 0.982143 |

| Chavacano (cbk) | 0.914894 | 0.767857 | 0.834951 |

| Min Dong (cdo) | 1.000000 | 0.982143 | 0.990991 |

| Cebuano (ceb) | 1.000000 | 1.000000 | 1.000000 |

| Czech (ces) | 1.000000 | 1.000000 | 1.000000 |

| Chechen (che) | 1.000000 | 1.000000 | 1.000000 |

| Cherokee (chr) | 1.000000 | 0.963636 | 0.981481 |

| Chuvash (chv) | 0.938776 | 0.958333 | 0.948454 |

| Central Kurdish (ckb) | 1.000000 | 1.000000 | 1.000000 |

| Cornish (cor) | 1.000000 | 1.000000 | 1.000000 |

| Corsican (cos) | 1.000000 | 0.982143 | 0.990991 |

| Crimean Tatar (crh) | 1.000000 | 0.946429 | 0.972477 |

| Kashubian (csb) | 1.000000 | 0.963636 | 0.981481 |

| Welsh (cym) | 1.000000 | 1.000000 | 1.000000 |

| Danish (dan) | 1.000000 | 1.000000 | 1.000000 |

| German (deu) | 0.828125 | 0.946429 | 0.883333 |

| Dimli (diq) | 0.964912 | 0.982143 | 0.973451 |

| Dhivehi (div) | 1.000000 | 1.000000 | 1.000000 |

| Lower Sorbian (dsb) | 1.000000 | 0.982143 | 0.990991 |

| Doteli (dty) | 0.940000 | 0.854545 | 0.895238 |

| Emilian (egl) | 1.000000 | 0.928571 | 0.962963 |

| Modern Greek (ell) | 1.000000 | 1.000000 | 1.000000 |

| English (eng) | 0.588889 | 0.946429 | 0.726027 |

| Esperanto (epo) | 1.000000 | 0.982143 | 0.990991 |

| Estonian (est) | 0.963636 | 0.946429 | 0.954955 |

| Basque (eus) | 1.000000 | 0.982143 | 0.990991 |

| Extremaduran (ext) | 0.982143 | 0.982143 | 0.982143 |

| Faroese (fao) | 1.000000 | 1.000000 | 1.000000 |

| Persian (fas) | 0.948276 | 0.982143 | 0.964912 |

| Finnish (fin) | 1.000000 | 1.000000 | 1.000000 |

| French (fra) | 0.710145 | 0.875000 | 0.784000 |

| Arpitan (frp) | 1.000000 | 0.946429 | 0.972477 |

| Western Frisian (fry) | 0.982143 | 0.982143 | 0.982143 |

| Friulian (fur) | 1.000000 | 0.982143 | 0.990991 |

| Gagauz (gag) | 0.981132 | 0.945455 | 0.962963 |

| Scottish Gaelic (gla) | 0.982143 | 0.982143 | 0.982143 |

| Irish (gle) | 0.949153 | 1.000000 | 0.973913 |

| Galician (glg) | 1.000000 | 1.000000 | 1.000000 |

| Gilaki (glk) | 0.981132 | 0.945455 | 0.962963 |

| Manx (glv) | 1.000000 | 1.000000 | 1.000000 |

| Guarani (grn) | 1.000000 | 0.964286 | 0.981818 |

| Gujarati (guj) | 1.000000 | 0.982143 | 0.990991 |

| Hakka Chinese (hak) | 0.981818 | 0.964286 | 0.972973 |

| Haitian Creole (hat) | 1.000000 | 1.000000 | 1.000000 |

| Hausa (hau) | 1.000000 | 0.945455 | 0.971963 |

| Serbo-Croatian (hbs) | 0.448276 | 0.464286 | 0.456140 |

| Hebrew (heb) | 1.000000 | 0.982143 | 0.990991 |

| Fiji Hindi (hif) | 0.890909 | 0.890909 | 0.890909 |

| Hindi (hin) | 0.981481 | 0.946429 | 0.963636 |

| Croatian (hrv) | 0.500000 | 0.636364 | 0.560000 |

| Upper Sorbian (hsb) | 0.955556 | 1.000000 | 0.977273 |

| Hungarian (hun) | 1.000000 | 1.000000 | 1.000000 |

| Armenian (hye) | 1.000000 | 0.981818 | 0.990826 |

| Igbo (ibo) | 0.918033 | 1.000000 | 0.957265 |

| Ido (ido) | 1.000000 | 1.000000 | 1.000000 |

| Interlingue (ile) | 1.000000 | 0.962264 | 0.980769 |

| Iloko (ilo) | 0.947368 | 0.964286 | 0.955752 |

| Interlingua (ina) | 1.000000 | 1.000000 | 1.000000 |

| Indonesian (ind) | 0.761905 | 0.872727 | 0.813559 |

| Icelandic (isl) | 1.000000 | 1.000000 | 1.000000 |

| Italian (ita) | 0.861538 | 1.000000 | 0.925620 |

| Jamaican Patois (jam) | 1.000000 | 0.946429 | 0.972477 |

| Javanese (jav) | 0.964912 | 0.982143 | 0.973451 |

| Lojban (jbo) | 1.000000 | 1.000000 | 1.000000 |

| Japanese (jpn) | 1.000000 | 1.000000 | 1.000000 |

| Karakalpak (kaa) | 0.965517 | 1.000000 | 0.982456 |

| Kabyle (kab) | 1.000000 | 0.964286 | 0.981818 |

| Kannada (kan) | 0.982143 | 0.982143 | 0.982143 |

| Georgian (kat) | 1.000000 | 0.964286 | 0.981818 |

| Kazakh (kaz) | 0.980769 | 0.980769 | 0.980769 |

| Kabardian (kbd) | 1.000000 | 0.982143 | 0.990991 |

| Central Khmer (khm) | 0.960784 | 0.875000 | 0.915888 |

| Kinyarwanda (kin) | 0.981132 | 0.928571 | 0.954128 |

| Kirghiz (kir) | 1.000000 | 1.000000 | 1.000000 |

| Komi-Permyak (koi) | 0.962264 | 0.910714 | 0.935780 |

| Konkani (kok) | 0.964286 | 0.981818 | 0.972973 |

| Komi (kom) | 1.000000 | 0.962264 | 0.980769 |

| Korean (kor) | 1.000000 | 1.000000 | 1.000000 |

| Karachay-Balkar (krc) | 1.000000 | 0.982143 | 0.990991 |

| Ripuarisch (ksh) | 1.000000 | 0.964286 | 0.981818 |

| Kurdish (kur) | 1.000000 | 0.964286 | 0.981818 |

| Ladino (lad) | 1.000000 | 1.000000 | 1.000000 |

| Lao (lao) | 0.961538 | 0.909091 | 0.934579 |

| Latin (lat) | 0.877193 | 0.943396 | 0.909091 |

| Latvian (lav) | 0.963636 | 0.946429 | 0.954955 |

| Lezghian (lez) | 1.000000 | 0.964286 | 0.981818 |

| Ligurian (lij) | 1.000000 | 0.964286 | 0.981818 |

| Limburgan (lim) | 0.938776 | 1.000000 | 0.968421 |

| Lingala (lin) | 0.980769 | 0.927273 | 0.953271 |

| Lithuanian (lit) | 0.982456 | 1.000000 | 0.991150 |

| Lombard (lmo) | 1.000000 | 1.000000 | 1.000000 |

| Northern Luri (lrc) | 1.000000 | 0.928571 | 0.962963 |

| Latgalian (ltg) | 1.000000 | 0.982143 | 0.990991 |

| Luxembourgish (ltz) | 0.949153 | 1.000000 | 0.973913 |

| Luganda (lug) | 1.000000 | 1.000000 | 1.000000 |

| Literary Chinese (lzh) | 1.000000 | 1.000000 | 1.000000 |

| Maithili (mai) | 0.931034 | 0.964286 | 0.947368 |

| Malayalam (mal) | 1.000000 | 0.982143 | 0.990991 |

| Banyumasan (map-bms) | 0.977778 | 0.785714 | 0.871287 |

| Marathi (mar) | 0.949153 | 1.000000 | 0.973913 |

| Moksha (mdf) | 0.980000 | 0.890909 | 0.933333 |

| Eastern Mari (mhr) | 0.981818 | 0.964286 | 0.972973 |

| Minangkabau (min) | 1.000000 | 1.000000 | 1.000000 |

| Macedonian (mkd) | 1.000000 | 0.981818 | 0.990826 |

| Malagasy (mlg) | 0.981132 | 1.000000 | 0.990476 |

| Maltese (mlt) | 0.982456 | 1.000000 | 0.991150 |

| Min Nan Chinese (nan) | 1.000000 | 1.000000 | 1.000000 |

| Mongolian (mon) | 1.000000 | 0.981818 | 0.990826 |

| Maori (mri) | 1.000000 | 1.000000 | 1.000000 |

| Western Mari (mrj) | 0.982456 | 1.000000 | 0.991150 |

| Malay (msa) | 0.862069 | 0.892857 | 0.877193 |

| Mirandese (mwl) | 1.000000 | 0.982143 | 0.990991 |

| Burmese (mya) | 1.000000 | 1.000000 | 1.000000 |

| Erzya (myv) | 0.818182 | 0.964286 | 0.885246 |

| Mazanderani (mzn) | 0.981481 | 1.000000 | 0.990654 |

| Neapolitan (nap) | 1.000000 | 0.981818 | 0.990826 |

| Navajo (nav) | 1.000000 | 1.000000 | 1.000000 |

| Classical Nahuatl (nci) | 0.981481 | 0.946429 | 0.963636 |

| Low German (nds) | 0.982143 | 0.982143 | 0.982143 |

| West Low German (nds-nl) | 1.000000 | 1.000000 | 1.000000 |

| Nepali (macrolanguage) (nep) | 0.881356 | 0.928571 | 0.904348 |

| Newari (new) | 1.000000 | 0.909091 | 0.952381 |

| Dutch (nld) | 0.982143 | 0.982143 | 0.982143 |

| Norwegian Nynorsk (nno) | 1.000000 | 1.000000 | 1.000000 |

| Bokmål (nob) | 1.000000 | 1.000000 | 1.000000 |

| Narom (nrm) | 0.981818 | 0.964286 | 0.972973 |

| Northern Sotho (nso) | 1.000000 | 1.000000 | 1.000000 |

| Occitan (oci) | 0.903846 | 0.839286 | 0.870370 |

| Livvi-Karelian (olo) | 0.982456 | 1.000000 | 0.991150 |

| Oriya (ori) | 0.964912 | 0.982143 | 0.973451 |

| Oromo (orm) | 0.982143 | 0.982143 | 0.982143 |

| Ossetian (oss) | 0.982143 | 1.000000 | 0.990991 |

| Pangasinan (pag) | 0.980000 | 0.875000 | 0.924528 |

| Pampanga (pam) | 0.928571 | 0.896552 | 0.912281 |

| Panjabi (pan) | 1.000000 | 1.000000 | 1.000000 |

| Papiamento (pap) | 1.000000 | 0.964286 | 0.981818 |

| Picard (pcd) | 0.849057 | 0.849057 | 0.849057 |

| Pennsylvania German (pdc) | 0.854839 | 0.946429 | 0.898305 |

| Palatine German (pfl) | 0.946429 | 0.946429 | 0.946429 |

| Western Panjabi (pnb) | 0.981132 | 0.962963 | 0.971963 |

| Polish (pol) | 0.933333 | 1.000000 | 0.965517 |

| Portuguese (por) | 0.774648 | 0.982143 | 0.866142 |

| Pushto (pus) | 1.000000 | 0.910714 | 0.953271 |

| Quechua (que) | 0.962963 | 0.928571 | 0.945455 |

| Tarantino dialect (roa-tara) | 1.000000 | 0.964286 | 0.981818 |

| Romansh (roh) | 1.000000 | 0.928571 | 0.962963 |

| Romanian (ron) | 0.965517 | 1.000000 | 0.982456 |

| Rusyn (rue) | 0.946429 | 0.946429 | 0.946429 |

| Aromanian (rup) | 0.962963 | 0.928571 | 0.945455 |

| Russian (rus) | 0.859375 | 0.982143 | 0.916667 |

| Yakut (sah) | 1.000000 | 0.982143 | 0.990991 |

| Sanskrit (san) | 0.982143 | 0.982143 | 0.982143 |

| Sicilian (scn) | 1.000000 | 1.000000 | 1.000000 |

| Scots (sco) | 0.982143 | 0.982143 | 0.982143 |

| Samogitian (sgs) | 1.000000 | 0.982143 | 0.990991 |

| Sinhala (sin) | 0.964912 | 0.982143 | 0.973451 |

| Slovak (slk) | 1.000000 | 0.982143 | 0.990991 |

| Slovene (slv) | 1.000000 | 0.981818 | 0.990826 |

| Northern Sami (sme) | 0.962264 | 0.962264 | 0.962264 |

| Shona (sna) | 0.933333 | 1.000000 | 0.965517 |

| Sindhi (snd) | 1.000000 | 1.000000 | 1.000000 |

| Somali (som) | 0.948276 | 1.000000 | 0.973451 |

| Spanish (spa) | 0.739130 | 0.910714 | 0.816000 |

| Albanian (sqi) | 0.982143 | 0.982143 | 0.982143 |

| Sardinian (srd) | 1.000000 | 0.982143 | 0.990991 |

| Sranan (srn) | 1.000000 | 1.000000 | 1.000000 |

| Serbian (srp) | 1.000000 | 0.946429 | 0.972477 |

| Saterfriesisch (stq) | 1.000000 | 0.964286 | 0.981818 |

| Sundanese (sun) | 1.000000 | 0.977273 | 0.988506 |

| Swahili (macrolanguage) (swa) | 1.000000 | 1.000000 | 1.000000 |

| Swedish (swe) | 1.000000 | 1.000000 | 1.000000 |

| Silesian (szl) | 1.000000 | 0.981481 | 0.990654 |

| Tamil (tam) | 0.982143 | 1.000000 | 0.990991 |

| Tatar (tat) | 1.000000 | 1.000000 | 1.000000 |

| Tulu (tcy) | 0.982456 | 1.000000 | 0.991150 |

| Telugu (tel) | 1.000000 | 0.920000 | 0.958333 |

| Tetum (tet) | 1.000000 | 0.964286 | 0.981818 |

| Tajik (tgk) | 1.000000 | 1.000000 | 1.000000 |

| Tagalog (tgl) | 1.000000 | 1.000000 | 1.000000 |

| Thai (tha) | 0.932203 | 0.982143 | 0.956522 |

| Tongan (ton) | 1.000000 | 0.964286 | 0.981818 |

| Tswana (tsn) | 1.000000 | 1.000000 | 1.000000 |

| Turkmen (tuk) | 1.000000 | 0.982143 | 0.990991 |

| Turkish (tur) | 0.901639 | 0.982143 | 0.940171 |

| Tuvan (tyv) | 1.000000 | 0.964286 | 0.981818 |

| Udmurt (udm) | 1.000000 | 0.982143 | 0.990991 |

| Uighur (uig) | 1.000000 | 0.982143 | 0.990991 |

| Ukrainian (ukr) | 0.963636 | 0.946429 | 0.954955 |

| Urdu (urd) | 1.000000 | 0.982143 | 0.990991 |

| Uzbek (uzb) | 1.000000 | 1.000000 | 1.000000 |

| Venetian (vec) | 1.000000 | 0.982143 | 0.990991 |

| Veps (vep) | 0.982456 | 1.000000 | 0.991150 |

| Vietnamese (vie) | 0.964912 | 0.982143 | 0.973451 |

| Vlaams (vls) | 1.000000 | 0.982143 | 0.990991 |

| Volapük (vol) | 1.000000 | 1.000000 | 1.000000 |

| Võro (vro) | 0.964286 | 0.964286 | 0.964286 |

| Waray (war) | 1.000000 | 0.982143 | 0.990991 |

| Walloon (wln) | 1.000000 | 1.000000 | 1.000000 |

| Wolof (wol) | 0.981481 | 0.963636 | 0.972477 |

| Wu Chinese (wuu) | 0.981481 | 0.946429 | 0.963636 |

| Xhosa (xho) | 1.000000 | 0.964286 | 0.981818 |

| Mingrelian (xmf) | 1.000000 | 0.964286 | 0.981818 |

| Yiddish (yid) | 1.000000 | 1.000000 | 1.000000 |

| Yoruba (yor) | 0.964912 | 0.982143 | 0.973451 |

| Zeeuws (zea) | 1.000000 | 0.982143 | 0.990991 |

| Cantonese (zh-yue) | 0.981481 | 0.946429 | 0.963636 |

| Standard Chinese (zho) | 0.932203 | 0.982143 | 0.956522 |

| accuracy | 0.963055 | 0.963055 | 0.963055 |

| macro avg | 0.966424 | 0.963216 | 0.963891 |

| weighted avg | 0.966040 | 0.963055 | 0.963606 |
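For readers checking the table, the f1-score column is the harmonic mean of precision and recall. The short sketch below recomputes it for two rows of the paragraph-level table above (Arabic and English); the helper name `f1_score` is chosen here for illustration, and small differences in the last digit are possible because the table values are rounded.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall) pairs copied from the By Paragraph table.
rows = {
    "Arabic (ara)": (0.846154, 0.982143),
    "English (eng)": (0.588889, 0.946429),
}
for language, (precision, recall) in rows.items():
    print(f"{language}: {f1_score(precision, recall):.6f}")
```

The English row shows how a low precision pulls the f1-score down even when recall is high.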

### By Sentence

| language | precision | recall | f1-score |

|:--------------------------------------:|:---------:|:--------:|:--------:|

| Achinese (ace) | 0.754545 | 0.873684 | 0.809756 |

| Afrikaans (afr) | 0.708955 | 0.940594 | 0.808511 |

| Alemannic German (als) | 0.870130 | 0.752809 | 0.807229 |

| Amharic (amh) | 1.000000 | 0.820000 | 0.901099 |

| Old English (ang) | 0.966667 | 0.906250 | 0.935484 |

| Arabic (ara) | 0.907692 | 0.967213 | 0.936508 |

| Aragonese (arg) | 0.921569 | 0.959184 | 0.940000 |

| Egyptian Arabic (arz) | 0.964286 | 0.843750 | 0.900000 |

| Assamese (asm) | 0.964286 | 0.870968 | 0.915254 |

| Asturian (ast) | 0.880000 | 0.795181 | 0.835443 |

| Avar (ava) | 0.864198 | 0.843373 | 0.853659 |

| Aymara (aym) | 1.000000 | 0.901961 | 0.948454 |

| South Azerbaijani (azb) | 0.979381 | 0.989583 | 0.984456 |

| Azerbaijani (aze) | 0.989899 | 0.960784 | 0.975124 |

| Bashkir (bak) | 0.837209 | 0.857143 | 0.847059 |

| Bavarian (bar) | 0.741935 | 0.766667 | 0.754098 |

| Central Bikol (bcl) | 0.962963 | 0.928571 | 0.945455 |

| Belarusian (Taraschkewiza) (be-tarask) | 0.857143 | 0.733333 | 0.790419 |

| Belarusian (bel) | 0.775510 | 0.752475 | 0.763819 |

| Bengali (ben) | 0.861111 | 0.911765 | 0.885714 |

| Bhojpuri (bho) | 0.965517 | 0.933333 | 0.949153 |

| Banjar (bjn) | 0.891566 | 0.880952 | 0.886228 |

| Tibetan (bod) | 1.000000 | 1.000000 | 1.000000 |

| Bosnian (bos) | 0.375000 | 0.323077 | 0.347107 |

| Bishnupriya (bpy) | 0.986301 | 1.000000 | 0.993103 |

| Breton (bre) | 0.951613 | 0.893939 | 0.921875 |

| Bulgarian (bul) | 0.945055 | 0.877551 | 0.910053 |

| Buryat (bxr) | 0.955556 | 0.843137 | 0.895833 |

| Catalan (cat) | 0.692308 | 0.750000 | 0.720000 |

| Chavacano (cbk) | 0.842857 | 0.641304 | 0.728395 |

| Min Dong (cdo) | 0.972973 | 1.000000 | 0.986301 |

| Cebuano (ceb) | 0.981308 | 0.954545 | 0.967742 |

| Czech (ces) | 0.944444 | 0.915385 | 0.929687 |

| Chechen (che) | 0.875000 | 0.700000 | 0.777778 |

| Cherokee (chr) | 1.000000 | 0.970588 | 0.985075 |

| Chuvash (chv) | 0.875000 | 0.836957 | 0.855556 |

| Central Kurdish (ckb) | 1.000000 | 0.983051 | 0.991453 |

| Cornish (cor) | 0.979592 | 0.969697 | 0.974619 |

| Corsican (cos) | 0.986842 | 0.925926 | 0.955414 |

| Crimean Tatar (crh) | 0.958333 | 0.907895 | 0.932432 |

| Kashubian (csb) | 0.920354 | 0.904348 | 0.912281 |

| Welsh (cym) | 0.971014 | 0.943662 | 0.957143 |

| Danish (dan) | 0.865169 | 0.777778 | 0.819149 |

| German (deu) | 0.721311 | 0.822430 | 0.768559 |

| Dimli (diq) | 0.915966 | 0.923729 | 0.919831 |

| Dhivehi (div) | 1.000000 | 0.991228 | 0.995595 |

| Lower Sorbian (dsb) | 0.898876 | 0.879121 | 0.888889 |

| Doteli (dty) | 0.821429 | 0.638889 | 0.718750 |

| Emilian (egl) | 0.988095 | 0.922222 | 0.954023 |

| Modern Greek (ell) | 0.988636 | 0.966667 | 0.977528 |

| English (eng) | 0.522727 | 0.784091 | 0.627273 |

| Esperanto (epo) | 0.963855 | 0.930233 | 0.946746 |

| Estonian (est) | 0.922222 | 0.873684 | 0.897297 |

| Basque (eus) | 1.000000 | 0.941176 | 0.969697 |

| Extremaduran (ext) | 0.925373 | 0.885714 | 0.905109 |

| Faroese (fao) | 0.855072 | 0.887218 | 0.870849 |

| Persian (fas) | 0.879630 | 0.979381 | 0.926829 |

| Finnish (fin) | 0.952830 | 0.943925 | 0.948357 |

| French (fra) | 0.676768 | 0.943662 | 0.788235 |

| Arpitan (frp) | 0.867925 | 0.807018 | 0.836364 |

| Western Frisian (fry) | 0.956989 | 0.890000 | 0.922280 |

| Friulian (fur) | 1.000000 | 0.857143 | 0.923077 |

| Gagauz (gag) | 0.939024 | 0.802083 | 0.865169 |

| Scottish Gaelic (gla) | 1.000000 | 0.879121 | 0.935673 |

| Irish (gle) | 0.989247 | 0.958333 | 0.973545 |

| Galician (glg) | 0.910256 | 0.922078 | 0.916129 |

| Gilaki (glk) | 0.964706 | 0.872340 | 0.916201 |

| Manx (glv) | 1.000000 | 0.965517 | 0.982456 |

| Guarani (grn) | 0.983333 | 1.000000 | 0.991597 |

| Gujarati (guj) | 1.000000 | 0.991525 | 0.995745 |

| Hakka Chinese (hak) | 0.955224 | 0.955224 | 0.955224 |

| Haitian Creole (hat) | 0.833333 | 0.666667 | 0.740741 |

| Hausa (hau) | 0.936709 | 0.913580 | 0.925000 |

| Serbo-Croatian (hbs) | 0.452830 | 0.410256 | 0.430493 |

| Hebrew (heb) | 0.988235 | 0.976744 | 0.982456 |

| Fiji Hindi (hif) | 0.936709 | 0.840909 | 0.886228 |

| Hindi (hin) | 0.965517 | 0.756757 | 0.848485 |

| Croatian (hrv) | 0.443820 | 0.537415 | 0.486154 |

| Upper Sorbian (hsb) | 0.951613 | 0.830986 | 0.887218 |

| Hungarian (hun) | 0.854701 | 0.909091 | 0.881057 |

| Armenian (hye) | 1.000000 | 0.816327 | 0.898876 |

| Igbo (ibo) | 0.974359 | 0.926829 | 0.950000 |

| Ido (ido) | 0.975000 | 0.987342 | 0.981132 |

| Interlingue (ile) | 0.880597 | 0.921875 | 0.900763 |

| Iloko (ilo) | 0.882353 | 0.821918 | 0.851064 |

| Interlingua (ina) | 0.952381 | 0.895522 | 0.923077 |

| Indonesian (ind) | 0.606383 | 0.695122 | 0.647727 |

| Icelandic (isl) | 0.978261 | 0.882353 | 0.927835 |

| Italian (ita) | 0.910448 | 0.910448 | 0.910448 |

| Jamaican Patois (jam) | 0.988764 | 0.967033 | 0.977778 |

| Javanese (jav) | 0.903614 | 0.862069 | 0.882353 |

| Lojban (jbo) | 0.943878 | 0.929648 | 0.936709 |

| Japanese (jpn) | 1.000000 | 0.764706 | 0.866667 |

| Karakalpak (kaa) | 0.940171 | 0.901639 | 0.920502 |

| Kabyle (kab) | 0.985294 | 0.837500 | 0.905405 |

| Kannada (kan) | 0.975806 | 0.975806 | 0.975806 |

| Georgian (kat) | 0.953704 | 0.903509 | 0.927928 |

| Kazakh (kaz) | 0.934579 | 0.877193 | 0.904977 |

| Kabardian (kbd) | 0.987952 | 0.953488 | 0.970414 |

| Central Khmer (khm) | 0.928571 | 0.829787 | 0.876404 |

| Kinyarwanda (kin) | 0.953125 | 0.938462 | 0.945736 |

| Kirghiz (kir) | 0.927632 | 0.881250 | 0.903846 |

| Komi-Permyak (koi) | 0.750000 | 0.776786 | 0.763158 |

| Konkani (kok) | 0.893491 | 0.872832 | 0.883041 |

| Komi (kom) | 0.734177 | 0.690476 | 0.711656 |

| Korean (kor) | 0.989899 | 0.989899 | 0.989899 |

| Karachay-Balkar (krc) | 0.928571 | 0.917647 | 0.923077 |

| Ripuarisch (ksh) | 0.915789 | 0.896907 | 0.906250 |

| Kurdish (kur) | 0.977528 | 0.935484 | 0.956044 |

| Ladino (lad) | 0.985075 | 0.904110 | 0.942857 |

| Lao (lao) | 0.896552 | 0.812500 | 0.852459 |

| Latin (lat) | 0.741935 | 0.831325 | 0.784091 |

| Latvian (lav) | 0.710526 | 0.878049 | 0.785455 |

| Lezghian (lez) | 0.975309 | 0.877778 | 0.923977 |

| Ligurian (lij) | 0.951807 | 0.897727 | 0.923977 |

| Limburgan (lim) | 0.909091 | 0.921053 | 0.915033 |

| Lingala (lin) | 0.942857 | 0.814815 | 0.874172 |

| Lithuanian (lit) | 0.892857 | 0.925926 | 0.909091 |

| Lombard (lmo) | 0.766234 | 0.951613 | 0.848921 |

| Northern Luri (lrc) | 0.972222 | 0.875000 | 0.921053 |

| Latgalian (ltg) | 0.895349 | 0.865169 | 0.880000 |

| Luxembourgish (ltz) | 0.882353 | 0.750000 | 0.810811 |

| Luganda (lug) | 0.946429 | 0.883333 | 0.913793 |

| Literary Chinese (lzh) | 1.000000 | 1.000000 | 1.000000 |

| Maithili (mai) | 0.893617 | 0.823529 | 0.857143 |

| Malayalam (mal) | 1.000000 | 0.975000 | 0.987342 |

| Banyumasan (map-bms) | 0.924242 | 0.772152 | 0.841379 |

| Marathi (mar) | 0.874126 | 0.919118 | 0.896057 |

| Moksha (mdf) | 0.771242 | 0.830986 | 0.800000 |

| Eastern Mari (mhr) | 0.820000 | 0.860140 | 0.839590 |

| Minangkabau (min) | 0.973684 | 0.973684 | 0.973684 |

| Macedonian (mkd) | 0.895652 | 0.953704 | 0.923767 |

| Malagasy (mlg) | 1.000000 | 0.966102 | 0.982759 |

| Maltese (mlt) | 0.987952 | 0.964706 | 0.976190 |

| Min Nan Chinese (nan) | 0.975000 | 1.000000 | 0.987342 |

| Mongolian (mon) | 0.954545 | 0.933333 | 0.943820 |

| Maori (mri) | 0.985294 | 1.000000 | 0.992593 |

| Western Mari (mrj) | 0.966292 | 0.914894 | 0.939891 |

| Malay (msa) | 0.770270 | 0.695122 | 0.730769 |

| Mirandese (mwl) | 0.970588 | 0.891892 | 0.929577 |

| Burmese (mya) | 1.000000 | 0.964286 | 0.981818 |

| Erzya (myv) | 0.535714 | 0.681818 | 0.600000 |

| Mazanderani (mzn) | 0.968750 | 0.898551 | 0.932331 |

| Neapolitan (nap) | 0.892308 | 0.865672 | 0.878788 |

| Navajo (nav) | 0.984375 | 0.984375 | 0.984375 |

| Classical Nahuatl (nci) | 0.901408 | 0.761905 | 0.825806 |

| Low German (nds) | 0.896226 | 0.913462 | 0.904762 |

| West Low German (nds-nl) | 0.873563 | 0.835165 | 0.853933 |

| Nepali (macrolanguage) (nep) | 0.704545 | 0.861111 | 0.775000 |

| Newari (new) | 0.920000 | 0.741935 | 0.821429 |

| Dutch (nld) | 0.925926 | 0.872093 | 0.898204 |

| Norwegian Nynorsk (nno) | 0.847059 | 0.808989 | 0.827586 |

| Bokmål (nob) | 0.861386 | 0.852941 | 0.857143 |

| Narom (nrm) | 0.966667 | 0.983051 | 0.974790 |

| Northern Sotho (nso) | 0.897436 | 0.921053 | 0.909091 |

| Occitan (oci) | 0.958333 | 0.696970 | 0.807018 |

| Livvi-Karelian (olo) | 0.967742 | 0.937500 | 0.952381 |

| Oriya (ori) | 0.933333 | 1.000000 | 0.965517 |

| Oromo (orm) | 0.977528 | 0.915789 | 0.945652 |

| Ossetian (oss) | 0.958333 | 0.841463 | 0.896104 |

| Pangasinan (pag) | 0.847328 | 0.909836 | 0.877470 |

| Pampanga (pam) | 0.969697 | 0.780488 | 0.864865 |

| Panjabi (pan) | 1.000000 | 1.000000 | 1.000000 |

| Papiamento (pap) | 0.876190 | 0.920000 | 0.897561 |

| Picard (pcd) | 0.707317 | 0.568627 | 0.630435 |

| Pennsylvania German (pdc) | 0.827273 | 0.827273 | 0.827273 |

| Palatine German (pfl) | 0.882353 | 0.914634 | 0.898204 |

| Western Panjabi (pnb) | 0.964286 | 0.931034 | 0.947368 |

| Polish (pol) | 0.859813 | 0.910891 | 0.884615 |

| Portuguese (por) | 0.535714 | 0.833333 | 0.652174 |

| Pushto (pus) | 0.989362 | 0.902913 | 0.944162 |

| Quechua (que) | 0.979167 | 0.903846 | 0.940000 |

| Tarantino dialect (roa-tara) | 0.964912 | 0.901639 | 0.932203 |

| Romansh (roh) | 0.914894 | 0.895833 | 0.905263 |

| Romanian (ron) | 0.880597 | 0.880597 | 0.880597 |

| Rusyn (rue) | 0.932584 | 0.805825 | 0.864583 |

| Aromanian (rup) | 0.783333 | 0.758065 | 0.770492 |

| Russian (rus) | 0.517986 | 0.765957 | 0.618026 |

| Yakut (sah) | 0.954023 | 0.922222 | 0.937853 |

| Sanskrit (san) | 0.866667 | 0.951220 | 0.906977 |

| Sicilian (scn) | 0.984375 | 0.940299 | 0.961832 |

| Scots (sco) | 0.851351 | 0.900000 | 0.875000 |

| Samogitian (sgs) | 0.977011 | 0.876289 | 0.923913 |

| Sinhala (sin) | 0.406154 | 0.985075 | 0.575163 |

| Slovak (slk) | 0.956989 | 0.872549 | 0.912821 |

| Slovene (slv) | 0.907216 | 0.854369 | 0.880000 |

| Northern Sami (sme) | 0.949367 | 0.892857 | 0.920245 |

| Shona (sna) | 0.936508 | 0.855072 | 0.893939 |

| Sindhi (snd) | 0.984962 | 0.992424 | 0.988679 |

| Somali (som) | 0.949153 | 0.848485 | 0.896000 |

| Spanish (spa) | 0.584158 | 0.746835 | 0.655556 |

| Albanian (sqi) | 0.988095 | 0.912088 | 0.948571 |

| Sardinian (srd) | 0.957746 | 0.931507 | 0.944444 |

| Sranan (srn) | 0.985714 | 0.945205 | 0.965035 |

| Serbian (srp) | 0.950980 | 0.889908 | 0.919431 |

| Saterfriesisch (stq) | 0.962500 | 0.875000 | 0.916667 |

| Sundanese (sun) | 0.778846 | 0.910112 | 0.839378 |

| Swahili (macrolanguage) (swa) | 0.915493 | 0.878378 | 0.896552 |

| Swedish (swe) | 0.989247 | 0.958333 | 0.973545 |

| Silesian (szl) | 0.944444 | 0.904255 | 0.923913 |

| Tamil (tam) | 0.990000 | 0.970588 | 0.980198 |

| Tatar (tat) | 0.942029 | 0.902778 | 0.921986 |

| Tulu (tcy) | 0.980519 | 0.967949 | 0.974194 |

| Telugu (tel) | 0.965986 | 0.965986 | 0.965986 |

| Tetum (tet) | 0.898734 | 0.855422 | 0.876543 |

| Tajik (tgk) | 0.974684 | 0.939024 | 0.956522 |

| Tagalog (tgl) | 0.965909 | 0.934066 | 0.949721 |

| Thai (tha) | 0.923077 | 0.882353 | 0.902256 |

| Tongan (ton) | 0.970149 | 0.890411 | 0.928571 |

| Tswana (tsn) | 0.888889 | 0.926316 | 0.907216 |

| Turkmen (tuk) | 0.968000 | 0.889706 | 0.927203 |

| Turkish (tur) | 0.871287 | 0.926316 | 0.897959 |

| Tuvan (tyv) | 0.948454 | 0.859813 | 0.901961 |

| Udmurt (udm) | 0.989362 | 0.894231 | 0.939394 |

| Uighur (uig) | 1.000000 | 0.953333 | 0.976109 |

| Ukrainian (ukr) | 0.893617 | 0.875000 | 0.884211 |

| Urdu (urd) | 1.000000 | 1.000000 | 1.000000 |

| Uzbek (uzb) | 0.636042 | 0.886700 | 0.740741 |

| Venetian (vec) | 1.000000 | 0.941176 | 0.969697 |

| Veps (vep) | 0.858586 | 0.965909 | 0.909091 |

| Vietnamese (vie) | 1.000000 | 0.940476 | 0.969325 |

| Vlaams (vls) | 0.885714 | 0.898551 | 0.892086 |

| Volapük (vol) | 0.975309 | 0.975309 | 0.975309 |

| Võro (vro) | 0.855670 | 0.864583 | 0.860104 |

| Waray (war) | 0.972222 | 0.909091 | 0.939597 |

| Walloon (wln) | 0.742138 | 0.893939 | 0.810997 |

| Wolof (wol) | 0.882979 | 0.954023 | 0.917127 |

| Wu Chinese (wuu) | 0.961538 | 0.833333 | 0.892857 |

| Xhosa (xho) | 0.934066 | 0.867347 | 0.899471 |

| Mingrelian (xmf) | 0.958333 | 0.929293 | 0.943590 |

| Yiddish (yid) | 0.984375 | 0.875000 | 0.926471 |

| Yoruba (yor) | 0.868421 | 0.857143 | 0.862745 |

| Zeeuws (zea) | 0.879518 | 0.793478 | 0.834286 |

| Cantonese (zh-yue) | 0.896552 | 0.812500 | 0.852459 |

| Standard Chinese (zho) | 0.906250 | 0.935484 | 0.920635 |

| accuracy | 0.881051 | 0.881051 | 0.881051 |

| macro avg | 0.903245 | 0.880618 | 0.888996 |

| weighted avg | 0.894174 | 0.881051 | 0.884520 |
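
As a reading aid for the table above: the f1-score column is consistent with the standard definition of F1 as the harmonic mean of precision and recall. The snippet below is a minimal sketch that checks this on three rows copied from the table. The helper name `f1_score` and the `rows` dictionary are illustrative and not part of the model's code, and it is an assumption that the reported figures were computed with this formula.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; returns 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported f1-score) copied from rows of the table above.
rows = {
    "Cherokee (chr)": (1.000000, 0.970588, 0.985075),
    "English (eng)": (0.522727, 0.784091, 0.627273),
    "Italian (ita)": (0.910448, 0.910448, 0.910448),
}

for name, (precision, recall, reported) in rows.items():
    computed = f1_score(precision, recall)
    # The table rounds to six decimals, so compare with a small tolerance.
    assert abs(computed - reported) < 1e-5, name
    print(f"{name}: computed={computed:.6f} reported={reported:.6f}")
```

Because F1 is a harmonic mean, it sits closer to the lower of the two inputs. That is why a language such as English, with high recall but low precision, still ends up with a low f1-score.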

### By Token (3 to 5)

| language | precision | recall | f1-score |

|:--------------------------------------:|:---------:|:--------:|:--------:|

| Achinese (ace) | 0.873846 | 0.827988 | 0.850299 |

| Afrikaans (afr) | 0.638060 | 0.732334 | 0.681954 |

| Alemannic German (als) | 0.673780 | 0.547030 | 0.603825 |

| Amharic (amh) | 0.997743 | 0.954644 | 0.975717 |

| Old English (ang) | 0.840816 | 0.693603 | 0.760148 |

| Arabic (ara) | 0.768737 | 0.840749 | 0.803132 |

| Aragonese (arg) | 0.493671 | 0.505181 | 0.499360 |

| Egyptian Arabic (arz) | 0.823529 | 0.741935 | 0.780606 |

| Assamese (asm) | 0.948454 | 0.893204 | 0.920000 |

| Asturian (ast) | 0.490000 | 0.508299 | 0.498982 |