license | tags | is_nc | readme_section | hash |

|---|---|---|---|---|

apache-2.0 | ['generated_from_trainer'] | false | t5-small-devices-sum-ver2 This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3679 - Rouge1: 90.6465 - Rouge2: 65.2833 - Rougel: 90.6707 - Rougelsum: 90.7313 - Gen Len: 4.4702 | d2191e8b86ffb82a18b402eb774f2f0d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | No log | 1.0 | 91 | 1.0957 | 58.9566 | 33.4113 | 58.8004 | 58.8863 | 4.8308 | | No log | 2.0 | 182 | 0.7017 | 78.9566 | 49.9716 | 78.9338 | 78.9643 | 4.3329 | | No log | 3.0 | 273 | 0.5386 | 84.8786 | 56.9622 | 84.8204 | 84.9117 | 4.4577 | | No log | 4.0 | 364 | 0.4693 | 87.9792 | 61.0779 | 87.8795 | 88.0098 | 4.4383 | | No log | 5.0 | 455 | 0.4273 | 89.4667 | 63.1994 | 89.4169 | 89.5197 | 4.4743 | | 1.0586 | 6.0 | 546 | 0.4002 | 89.6456 | 63.5041 | 89.6062 | 89.7042 | 4.4452 | | 1.0586 | 7.0 | 637 | 0.3848 | 89.9993 | 64.2505 | 89.9775 | 90.0651 | 4.423 | | 1.0586 | 8.0 | 728 | 0.3752 | 90.4249 | 64.819 | 90.4434 | 90.5111 | 4.4799 | | 1.0586 | 9.0 | 819 | 0.3703 | 90.4689 | 65.0086 | 90.4954 | 90.5632 | 4.4632 | | 1.0586 | 10.0 | 910 | 0.3679 | 90.6465 | 65.2833 | 90.6707 | 90.7313 | 4.4702 | | 8c66981eab272e1bdf3d4e4e1f2c1f5e |

apache-2.0 | ['generated_from_trainer'] | false | classification_text_model This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.2001 - Accuracy: 0.9334 | e2995c3b1ccdd7fdefdd01eee660774e |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 2 | 0f75bac684d9661a2a6e7c631857be26 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.2056 | 1.0 | 1000 | 0.1771 | 0.9313 | | 0.1283 | 2.0 | 2000 | 0.2001 | 0.9334 | | ec810532e85f2a7a0a75a7bd4fb195bf |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | saqib_14_dec Dreambooth model trained by imjunaidafzal with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: | 069afb5ef6da43788bb5907aa02ad4bb |

mit | ['roberta-base', 'roberta-base-epoch_53'] | false | RoBERTa, Intermediate Checkpoint - Epoch 53 This model is part of our reimplementation of the [RoBERTa model](https://arxiv.org/abs/1907.11692), trained on Wikipedia and the Book Corpus only. We trained this model for almost 100K steps, corresponding to 83 epochs. We provide all 84 checkpoints (including the randomly initialized weights before training) so that the training dynamics of such models can be studied, among other possible use cases. These models were trained as part of a work that studies how simple statistics from data, such as co-occurrences, affect model predictions, as described in the paper [Measuring Causal Effects of Data Statistics on Language Model's `Factual' Predictions](https://arxiv.org/abs/2207.14251). This is RoBERTa-base epoch_53. | 33299cadfc917609ac2040282b1667eb |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | minicooper Dreambooth model trained by bondarchukb with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: | 63fcd87151df891db8a33f7029d8141c |

apache-2.0 | ['translation'] | false | opus-mt-pa-en * source languages: pa * target languages: en * OPUS readme: [pa-en](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/pa-en/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/pa-en/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/pa-en/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/pa-en/opus-2020-01-16.eval.txt) | c8a8bcb7baf6dcecc85484e227f23c7e |

creativeml-openrail-m | [] | false | A fine-tune of [DrBob2142's](https://huggingface.co/DrBob2142) [MidnightMix model](https://huggingface.co/DrBob2142/Mix-Models/blob/main/Midnight%20Mix.safetensors). Usable model. Recipe: (Add Difference 1) MitoAzXEP62 + F222 + S.D. 1.4 = MitoMix; (Weighted Sum 0.3) MitoMix + Blossom-extract = MitoExtract; (Weighted Sum 0.4) MitoExtract + MitoAzXEP62 = MitoAzXMixedModel. The new mixes combine about 10 of my fine-tuned models and about 6 "third-party" models, such as Blossom extract, [Nuigurumi's](https://huggingface.co/nuigurumi) basil_mix, [WarriorMama777's](https://huggingface.co/WarriorMama777) AbyssOrangeMix2, ChinaBerry, and [DrBob2142's](https://huggingface.co/DrBob2142) mixes | 184f2d53492bc7279e5d0b701a20d222 |
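The recipe names two merge modes, "Weighted Sum" and "Add Difference". The sketch below shows what these modes are commonly understood to compute in checkpoint-merging tools. It assumes each checkpoint is a plain dict of floats (real checkpoints hold tensors) and assumes the usual definitions, weighted sum = (1 - m) * A + m * B and add difference = A + m * (B - C). The function names are hypothetical and are not taken from the model card.

```python
def weighted_sum(a, b, m):
    """Interpolate two checkpoints: (1 - m) * A + m * B, key by key."""
    return {key: (1.0 - m) * a[key] + m * b[key] for key in a}


def add_difference(a, b, c, m):
    """Add the scaled difference of B and C to A: A + m * (B - C)."""
    return {key: a[key] + m * (b[key] - c[key]) for key in a}


# Toy "checkpoints" with a single weight each (illustrative values only).
model_a = {"w": 1.0}
model_b = {"w": 3.0}
model_c = {"w": 2.0}

step_1 = add_difference(model_a, model_b, model_c, 1.0)
step_2 = weighted_sum(step_1, model_b, 0.3)
```

With a multiplier of 1, "Add Difference" adds the full difference between the second and third model to the first, which is how the first line of the recipe would read under these assumptions.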

apache-2.0 | ['seq2seq', 'lm-head'] | false | Italian T5 Small 🇮🇹 The [IT5](https://huggingface.co/models?search=it5) model family represents the first effort in pretraining large-scale sequence-to-sequence transformer models for the Italian language, following the approach adopted by the original [T5 model](https://github.com/google-research/text-to-text-transfer-transformer). This model is released as part of the project ["IT5: Large-Scale Text-to-Text Pretraining for Italian Language Understanding and Generation"](https://arxiv.org/abs/2203.03759), by [Gabriele Sarti](https://gsarti.com/) and [Malvina Nissim](https://malvinanissim.github.io/) with the support of [Huggingface](https://discuss.huggingface.co/t/open-to-the-community-community-week-using-jax-flax-for-nlp-cv/7104) and with TPU usage sponsored by Google's [TPU Research Cloud](https://sites.research.google/trc/). All the training was conducted on a single TPU3v8-VM machine on Google Cloud. Refer to the Tensorboard tab of the repository for an overview of the training process. *The inference widget is deactivated because the model needs a task-specific seq2seq fine-tuning on a downstream task to be useful in practice. The models in the [`it5`](https://huggingface.co/it5) organization provide some examples of this model fine-tuned on various downstream task.* | 636339f2d4d190a41e9a62337b43ff75 |

apache-2.0 | ['seq2seq', 'lm-head'] | false | Model variants This repository contains the checkpoints for the `small` version of the model. The model was trained for one epoch (1.05M steps) on the [Thoroughly Cleaned Italian mC4 Corpus](https://huggingface.co/datasets/gsarti/clean_mc4_it) (~41B words, ~275GB) using 🤗 Datasets and the `google/t5-v1_1-small` improved configuration. The training procedure is made available [on Github](https://github.com/gsarti/t5-flax-gcp). The following table summarizes the parameters for all available models | |`it5-small` (this one) |`it5-base` |`it5-large` |`it5-base-oscar` | |-----------------------|-----------------------|----------------------|-----------------------|----------------------------------| |`dataset` |`gsarti/clean_mc4_it` |`gsarti/clean_mc4_it` |`gsarti/clean_mc4_it` |`oscar/unshuffled_deduplicated_it`| |`architecture` |`google/t5-v1_1-small` |`google/t5-v1_1-base` |`google/t5-v1_1-large` |`t5-base` | |`learning rate` | 5e-3 | 5e-3 | 5e-3 | 1e-2 | |`steps` | 1'050'000 | 1'050'000 | 2'100'000 | 258'000 | |`training time` | 36 hours | 101 hours | 370 hours | 98 hours | |`ff projection` |`gated-gelu` |`gated-gelu` |`gated-gelu` |`relu` | |`tie embeds` |`false` |`false` |`false` |`true` | |`optimizer` | adafactor | adafactor | adafactor | adafactor | |`max seq. length` | 512 | 512 | 512 | 512 | |`per-device batch size`| 16 | 16 | 8 | 16 | |`tot. batch size` | 128 | 128 | 64 | 128 | |`weight decay` | 1e-3 | 1e-3 | 1e-2 | 1e-3 | |`validation split size`| 15K examples | 15K examples | 15K examples | 15K examples | The high training time of `it5-base-oscar` was due to [a bug](https://github.com/huggingface/transformers/pull/13012) in the training script. For a list of individual model parameters, refer to the `config.json` file in the respective repositories. | 70c45c6843eb911748a2e871fe569f9d |

apache-2.0 | ['seq2seq', 'lm-head'] | false | Using the models ```python from transformers import AutoTokenizer, AutoModelForSeq2SeqLM tokenizer = AutoTokenizer.from_pretrained("gsarti/it5-small") model = AutoModelForSeq2SeqLM.from_pretrained("gsarti/it5-small") ``` *Note: You will need to fine-tune the model on your downstream seq2seq task to use it. See an example [here](https://huggingface.co/it5/it5-base-question-answering).* Flax and Tensorflow versions of the model are also available: ```python from transformers import FlaxT5ForConditionalGeneration, TFT5ForConditionalGeneration model_flax = FlaxT5ForConditionalGeneration.from_pretrained("gsarti/it5-small") model_tf = TFT5ForConditionalGeneration.from_pretrained("gsarti/it5-small") ``` | 9e0df8bd38e66530d630a8b26a8856d3 |

apache-2.0 | ['seq2seq', 'lm-head'] | false | Limitations Due to the nature of the web-scraped corpus on which IT5 models were trained, it is likely that their usage could reproduce and amplify pre-existing biases in the data, resulting in potentially harmful content such as racial or gender stereotypes and conspiracist views. For this reason, the study of such biases is explicitly encouraged, and model usage should ideally be restricted to research-oriented and non-user-facing endeavors. | 5b8545f69150746beb7d59c8cd30b003 |

apache-2.0 | ['seq2seq', 'lm-head'] | false | Citation Information ```bibtex @article{sarti-nissim-2022-it5, title={IT5: Large-scale Text-to-text Pretraining for Italian Language Understanding and Generation}, author={Sarti, Gabriele and Nissim, Malvina}, journal={ArXiv preprint 2203.03759}, url={https://arxiv.org/abs/2203.03759}, year={2022}, month={mar} } ``` | 2997866c608476cf6bfcf1690f3edd90 |

apache-2.0 | ['generated_from_trainer'] | false | testarbaraz This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad dataset. It achieves the following results on the evaluation set: - Loss: 1.2153 | 040df2ed996ad5f8569f857ff0d5c837 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 | 56e4b88c6197de8069c944f6ce8108a6 |

mit | ['generated_from_keras_callback'] | false | SimQA-roberta-base This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.1454 - Epoch: 2 | 2a66c99a31b153f79e12def29d533c5b |

mit | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 597, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: mixed_float16 | c1a5b0a4cfecee64e2ea2ea94048ad12 |
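The `PolynomialDecay` configuration above uses `power: 1.0`, `cycle: False` and `end_learning_rate: 0.0`, which amounts to a linear decay from 2e-05 to zero over 597 steps. The following pure-Python sketch mirrors that schedule for illustration. The function name is hypothetical, and the formula is the standard non-cycling polynomial decay rather than code taken from the training run.

```python
def polynomial_decay(step, initial_lr=2e-05, decay_steps=597,
                     end_lr=0.0, power=1.0):
    """Learning rate at `step` for a non-cycling polynomial decay."""
    # Without cycling, the schedule stays at end_lr once decay_steps is reached.
    step = min(step, decay_steps)
    remaining = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * remaining ** power + end_lr


# Sample the schedule at the start, the middle and the end of training.
schedule = [polynomial_decay(s) for s in (0, 298, 597)]
```

With `power=1.0` the middle value is roughly half of the initial rate, which is why this configuration is often described as a linear schedule.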

apache-2.0 | ['generated_from_keras_callback'] | false | tf-bert-finetuned-squad This model is a fine-tuned version of [peterhsu/tf-bert-finetuned-squad](https://huggingface.co/peterhsu/tf-bert-finetuned-squad) on an unknown dataset. It achieves the following results on the evaluation set: | c3bf2c338e1350c2a739b035c2a6a284 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'inner_optimizer': {'class_name': 'AdamWeightDecay', 'config': {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 16635, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01}}, 'dynamic': True, 'initial_scale': 32768.0, 'dynamic_growth_steps': 2000} - training_precision: mixed_float16 | f2ff20abeed5b0e8ad10c020bb7053a7 |

apache-2.0 | [] | false | 90% Sparse DistilBERT-Base (uncased) Prune OFA This model is a result of our paper [Prune Once for All: Sparse Pre-Trained Language Models](https://arxiv.org/abs/2111.05754), presented at the ENLSP NeurIPS Workshop 2021. For further details on the model and its results, see our paper and our implementation available [here](https://github.com/IntelLabs/Model-Compression-Research-Package/tree/main/research/prune-once-for-all). | e73b50b58130cac9f5ebc1e29faedabc |

apache-2.0 | ['generated_from_trainer'] | false | whisper-medium-finetuned-on-fleurs-ln_cd1 This model is a fine-tuned version of [openai/whisper-medium](https://huggingface.co/openai/whisper-medium) on the "google/fleurs" "ln_cd" subset dataset. It achieves the following results on the evaluation set: - Loss: 0.4483 - Wer: 14.7079 | bba28ec318a50fde901e80ed13acbb03 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 2 - eval_batch_size: 2 - seed: 42 - gradient_accumulation_steps: 8 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 4000 - mixed_precision_training: Native AMP | 4e616256352dd66bd9119bd10ae23e6f |
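The `total_train_batch_size` of 16 listed above follows from the per-device batch size and the gradient accumulation steps. A small sketch of that arithmetic is shown below. It assumes a single device, which the hyperparameter list does not state, and the function name is hypothetical.

```python
def total_train_batch_size(per_device_batch_size, gradient_accumulation_steps,
                           num_devices=1):
    """Number of examples that contribute to one optimizer step."""
    return per_device_batch_size * gradient_accumulation_steps * num_devices


# Values from the hyperparameter list; num_devices=1 is an assumption.
effective_batch = total_train_batch_size(2, 8)

# Examples processed over the whole run (training_steps: 4000).
examples_seen = effective_batch * 4000
```

Gradient accumulation trades wall-clock time for memory: each optimizer step sees 16 examples even though only 2 fit on the device at once.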

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.0528 | 4.78 | 1000 | 0.3612 | 17.4812 | | 0.0013 | 9.57 | 2000 | 0.4214 | 15.7308 | | 0.0003 | 14.35 | 3000 | 0.4423 | 14.8670 | | 0.0002 | 19.14 | 4000 | 0.4483 | 14.7079 | | 4c0c696e37ae8bfa1a2586a3c4519657 |
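The `Wer` column is the word error rate, which the table appears to report as a percentage. As a minimal sketch of how the metric is defined (not the evaluation code used for this model), WER is the word-level edit distance between reference and hypothesis divided by the number of reference words. The function name is hypothetical.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref_words = reference.split()
    hyp_words = hypothesis.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")

    # previous[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    previous = list(range(len(hyp_words) + 1))
    for i, ref_word in enumerate(ref_words, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp_words, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            current.append(min(
                previous[j] + 1,                      # deletion
                current[j - 1] + 1,                   # insertion
                previous[j - 1] + substitution_cost,  # match or substitution
            ))
        previous = current
    return previous[-1] / len(ref_words)


# One substituted word out of three reference words.
wer_percent = 100 * word_error_rate("the cat sat", "the dog sat")
```

Because insertions count as errors, WER can exceed 100% when the hypothesis is much longer than the reference.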

apache-2.0 | ['speech', 'xls_r', 'automatic-speech-recognition', 'xls_r_translation'] | false | Wav2Vec2-XLS-R-2B-EN-15 Facebook's Wav2Vec2 XLS-R fine-tuned for **Speech Translation.** This is a [SpeechEncoderDecoderModel](https://huggingface.co/transformers/model_doc/speechencoderdecoder.html) model. The encoder was warm-started from the [**`facebook/wav2vec2-xls-r-2b`**](https://huggingface.co/facebook/wav2vec2-xls-r-2b) checkpoint and the decoder from the [**`facebook/mbart-large-50`**](https://huggingface.co/facebook/mbart-large-50) checkpoint. Consequently, the encoder-decoder model was fine-tuned on 15 `en` -> `{lang}` translation pairs of the [Covost2 dataset](https://huggingface.co/datasets/covost2). The model can translate from spoken `en` (English) to the following written languages `{lang}`: `en` -> {`de`, `tr`, `fa`, `sv-SE`, `mn`, `zh-CN`, `cy`, `ca`, `sl`, `et`, `id`, `ar`, `ta`, `lv`, `ja`} For more information, please refer to Section *5.1.1* of the [official XLS-R paper](https://arxiv.org/abs/2111.09296). | 069993e6802877cc05fffca6c4dcf080 |

apache-2.0 | ['speech', 'xls_r', 'automatic-speech-recognition', 'xls_r_translation'] | false | Demo The model can be tested on [**this space**](https://huggingface.co/spaces/facebook/XLS-R-2B-EN-15). You can select the target language, record some audio in English, and then sit back and see how well the checkpoint can translate the input. | 7e1ac81801a427ec2e5412de516b59f8 |

apache-2.0 | ['speech', 'xls_r', 'automatic-speech-recognition', 'xls_r_translation'] | false | Example As this is a standard sequence-to-sequence transformer model, you can use the `generate` method to generate the transcripts by passing the speech features to the model. You can use the model directly via the ASR pipeline. By default, the checkpoint will translate spoken English to written German. To change the written target language, you need to pass the correct `forced_bos_token_id` to `generate(...)` to condition the decoder on the correct target language. To select the correct `forced_bos_token_id` given your chosen language id, please make use of the following mapping: ```python MAPPING = { "de": 250003, "tr": 250023, "fa": 250029, "sv": 250042, "mn": 250037, "zh": 250025, "cy": 250007, "ca": 250005, "sl": 250052, "et": 250006, "id": 250032, "ar": 250001, "ta": 250044, "lv": 250017, "ja": 250012, } ``` As an example, if you would like to translate to Swedish, you can do the following: ```python from datasets import load_dataset from transformers import pipeline | 630e114fb1219996c6b47800e50b712a |

apache-2.0 | ['speech', 'xls_r', 'automatic-speech-recognition', 'xls_r_translation'] | false | replace following lines to load an audio file of your choice librispeech_en = load_dataset("patrickvonplaten/librispeech_asr_dummy", "clean", split="validation") audio_file = librispeech_en[0]["file"] asr = pipeline("automatic-speech-recognition", model="facebook/wav2vec2-xls-r-2b-en-to-15", feature_extractor="facebook/wav2vec2-xls-r-2b-en-to-15") translation = asr(audio_file, forced_bos_token_id=MAPPING["sv"]) ``` or step-by-step as follows: ```python import torch from transformers import Speech2Text2Processor, SpeechEncoderDecoderModel from datasets import load_dataset model = SpeechEncoderDecoderModel.from_pretrained("facebook/wav2vec2-xls-r-2b-en-to-15") processor = Speech2Text2Processor.from_pretrained("facebook/wav2vec2-xls-r-2b-en-to-15") ds = load_dataset("patrickvonplaten/librispeech_asr_dummy", "clean", split="validation") | 64742fde43f0a4dc1a4cec23345e7be8 |

apache-2.0 | ['speech', 'xls_r', 'automatic-speech-recognition', 'xls_r_translation'] | false | select correct `forced_bos_token_id` forced_bos_token_id = MAPPING["sv"] inputs = processor(ds[0]["audio"]["array"], sampling_rate=ds[0]["audio"]["sampling_rate"], return_tensors="pt") generated_ids = model.generate(input_ids=inputs["input_features"], attention_mask=inputs["attention_mask"], forced_bos_token_id=forced_bos_token_id) transcription = processor.batch_decode(generated_ids) ``` | 4ba084999e59becf907eb6edd94c357e |
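The language list in the model description uses region-tagged codes such as `sv-SE` and `zh-CN`, while the `MAPPING` keys are bare codes such as `sv` and `zh`. A small lookup helper can bridge the two and fail loudly for unsupported targets. This is only a sketch: the helper name `forced_bos_token_id_for` is hypothetical, and stripping the region suffix is an assumption about how the two lists correspond.

```python
MAPPING = {
    "de": 250003, "tr": 250023, "fa": 250029, "sv": 250042, "mn": 250037,
    "zh": 250025, "cy": 250007, "ca": 250005, "sl": 250052, "et": 250006,
    "id": 250032, "ar": 250001, "ta": 250044, "lv": 250017, "ja": 250012,
}


def forced_bos_token_id_for(lang):
    """Return the decoder start token id for a target language code."""
    # Assumption: "sv-SE" and "zh-CN" correspond to the "sv" and "zh" keys.
    key = lang.split("-")[0].lower()
    if key not in MAPPING:
        supported = ", ".join(sorted(MAPPING))
        raise ValueError(f"unsupported target language {lang!r}; choose one of: {supported}")
    return MAPPING[key]


# Swedish, written with the region-tagged code from the language list.
swedish_id = forced_bos_token_id_for("sv-SE")
```

The returned id can then be passed as `forced_bos_token_id` to `generate(...)` or to the pipeline call.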

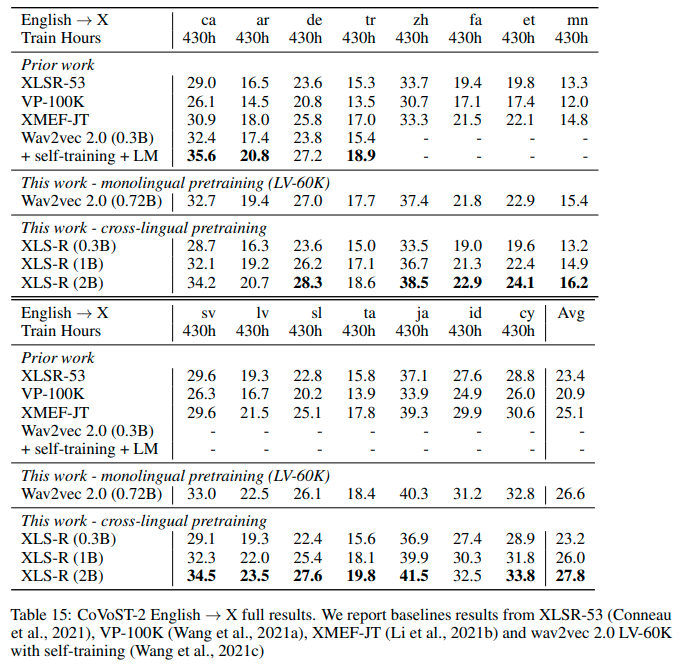

apache-2.0 | ['speech', 'xls_r', 'automatic-speech-recognition', 'xls_r_translation'] | false | Results `en` -> `{lang}` See the row of **XLS-R (2B)** for the performance on [Covost2](https://huggingface.co/datasets/covost2) for this model.  | 2ff375b67b4245c0bd5cc46b2c10a833 |

apache-2.0 | ['speech', 'xls_r', 'automatic-speech-recognition', 'xls_r_translation'] | false | More XLS-R models for `{lang}` -> `en` Speech Translation - [Wav2Vec2-XLS-R-300M-EN-15](https://huggingface.co/facebook/wav2vec2-xls-r-300m-en-to-15) - [Wav2Vec2-XLS-R-1B-EN-15](https://huggingface.co/facebook/wav2vec2-xls-r-1b-en-to-15) - [Wav2Vec2-XLS-R-2B-EN-15](https://huggingface.co/facebook/wav2vec2-xls-r-2b-en-to-15) - [Wav2Vec2-XLS-R-2B-22-16](https://huggingface.co/facebook/wav2vec2-xls-r-2b-22-to-16) | 0f1b0f4237ff17cb070569ddb017cc8a |

apache-2.0 | ['bert'] | false | Chinese Knowledge-enhanced BERT (CKBERT) Knowledge-enhanced pre-trained language models (KEPLMs) improve context-aware representations via learning from structured relations in knowledge graphs, and/or linguistic knowledge from syntactic or dependency analysis. Unlike English, there is a lack of high-performing open-source Chinese KEPLMs in the natural language processing (NLP) community to support various language understanding applications. For Chinese natural language processing, we provide three **Chinese Knowledge-enhanced BERT (CKBERT)** models named **pai-ckbert-bert-zh**, **pai-ckbert-large-zh** and **pai-ckbert-huge-zh**, from our **EMNLP 2022** paper named **Revisiting and Advancing Chinese Natural Language Understanding with Accelerated Heterogeneous Knowledge Pre-training**. This repository is developed based on the EasyNLP framework: [https://github.com/alibaba/EasyNLP](https://github.com/alibaba/EasyNLP) | 7ab6181071be2370afa2adb11a467dd6 |

apache-2.0 | ['bert'] | false | Citation If you find this resource useful, please cite the following papers in your work. - For the EasyNLP framework: ``` @article{easynlp, title = {EasyNLP: A Comprehensive and Easy-to-use Toolkit for Natural Language Processing}, author = {Wang, Chengyu and Qiu, Minghui and Zhang, Taolin and Liu, Tingting and Li, Lei and Wang, Jianing and Wang, Ming and Huang, Jun and Lin, Wei}, publisher = {arXiv}, url = {https://arxiv.org/abs/2205.00258}, year = {2022} } ``` - For CKBERT: ``` @article{ckbert, title = {Revisiting and Advancing Chinese Natural Language Understanding with Accelerated Heterogeneous Knowledge Pre-training}, author = {Zhang, Taolin and Dong, Junwei and Wang, Jianing and Wang, Chengyu and Wang, An and Liu, Yinghui and Huang, Jun and Li, Yong and He, Xiaofeng}, publisher = {EMNLP}, url = {https://arxiv.org/abs/2210.05287}, year = {2022} } ``` | 268db7a014ce7ef42e987ce9f0cb4f50 |

apache-2.0 | ['setfit', 'sentence-transformers', 'text-classification'] | false | fathyshalab/massive_play-roberta-large-v1-2-0.64 This is a [SetFit model](https://github.com/huggingface/setfit) that can be used for text classification. The model has been trained using an efficient few-shot learning technique that involves: 1. Fine-tuning a [Sentence Transformer](https://www.sbert.net) with contrastive learning. 2. Training a classification head with features from the fine-tuned Sentence Transformer. | d8fbda0ebaff3d02ae613e91db98238e |

apache-2.0 | ['transformers', 'mit', 'robert', 'uzrobert', 'uzbek', 'cyrillic', 'latin'] | false | <p><b>UzRoBerta model.</b> Pretrained model for Uzbek (Cyrillic and Latin script) for masked language modeling and next-sentence prediction. <p><b>How to use.</b> You can use this model directly with a pipeline for masked language modeling: <pre><code class="language-python"> from transformers import pipeline unmasker = pipeline('fill-mask', model='rifkat/uztext-3Gb-BPE-Roberta') unmasker("Алишер Навоий – улуғ ўзбек ва бошқа туркий халқларнинг [mask], мутафаккири ва давлат арбоби бўлган.") [{'score': 0.5902208685874939, 'sequence': 'Алишер Навоий – улуғ ўзбек ва бошқа туркий халқларнинг шоири, мутафаккири ва давлат арбоби бўлган.', 'token': 28809, 'token_str': ' шоири'}, {'score': 0.08303504437208176, 'sequence': 'Алишер Навоий – улуғ ўзбек ва бошқа туркий халқларнинг устози, мутафаккири ва давлат арбоби бўлган.', 'token': 17484, 'token_str': ' устози'}, {'score': 0.035882771015167236, 'sequence': 'Алишер Навоий – улуғ ўзбек ва бошқа туркий халқларнинг арбоби, мутафаккири ва давлат арбоби бўлган.', 'token': 34552, 'token_str': ' арбоби'}, {'score': 0.03447483479976654, 'sequence': 'Алишер Навоий – улуғ ўзбек ва бошқа туркий халқларнинг асосчиси, мутафаккири ва давлат арбоби бўлган.', 'token': 14034, 'token_str': ' асосчиси'}, {'score': 0.03044942207634449, 'sequence': 'Алишер Навоий – улуғ ўзбек ва бошқа туркий халқларнинг дўсти, мутафаккири ва давлат арбоби бўлган.', 'token': 28100, 'token_str': ' дўсти'}] unmasker("Kuchli yomg‘irlar tufayli bir qator [mask] kuchli sel oqishi kuzatildi.") [{'score': 0.410250186920166, 'sequence': 'Kuchli yomg‘irlar tufayli bir qator hududlarda kuchli sel oqishi kuzatildi.', 'token': 11009, 'token_str': ' hududlarda'}, {'score': 0.2023029774427414, 'sequence': 'Kuchli yomg‘irlar tufayli bir qator tumanlarda kuchli sel oqishi kuzatildi.', 'token': 35370, 'token_str': ' tumanlarda'}, {'score': 0.129830002784729, 'sequence': 'Kuchli yomg‘irlar tufayli bir qator viloyatlarda kuchli sel oqishi kuzatildi.', 'token': 33584, 'token_str': ' viloyatlarda'}, {'score': 0.04539087787270546, 'sequence': 'Kuchli yomg‘irlar tufayli bir qator mamlakatlarda kuchli sel oqishi kuzatildi.', 'token': 19315, 'token_str': ' mamlakatlarda'}, {'score': 0.0369882769882679, 'sequence': 'Kuchli yomg‘irlar tufayli bir qator joylarda kuchli sel oqishi kuzatildi.', 'token': 5853, 'token_str': ' joylarda'}] </code></pre> <p><b>Training data.</b> The UzBERT model was pretrained on ≈2M news articles (≈3Gb). <pre><code class="language-python"> @misc {rifkat_davronov_2022, author = { {Adilova Fatima,Rifkat Davronov, Samariddin Kushmuratov, Ruzmat Safarov} }, title = { uztext-3Gb-BPE-Roberta (Revision 0c87494) }, year = 2022, url = { https://huggingface.co/rifkat/uztext-3Gb-BPE-Roberta }, doi = { 10.57967/hf/0140 }, publisher = { Hugging Face } } </code></pre> | 4fd514e522a2c857e2a0e0a35c5d1447 |

apache-2.0 | ['generated_from_trainer'] | false | finetuning-sentiment-model-3000-samples This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.3489 - Accuracy: 0.8533 - F1: 0.8543 | 0f6ec57afb738d3b3b0e4417e93f2fed |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-fr This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the PAN-X dataset. The model is trained in Chapter 4: Multilingual Named Entity Recognition in the [NLP with Transformers book](https://learning.oreilly.com/library/view/natural-language-processing/9781098103231/). You can find the full code in the accompanying [Github repository](https://github.com/nlp-with-transformers/notebooks/blob/main/04_multilingual-ner.ipynb). It achieves the following results on the evaluation set: - Loss: 0.2772 - F1: 0.8455 | 0252945be7f2e876d10a7eae742d5c30 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.562 | 1.0 | 191 | 0.3183 | 0.7828 | | 0.2697 | 2.0 | 382 | 0.2706 | 0.8324 | | 0.1735 | 3.0 | 573 | 0.2772 | 0.8455 | | dbb4394b7549116219c7748cd7d29302 |

mit | ['Cometrain AutoCode', 'Cometrain AlphaML'] | false | neurotitle-rugpt3-small Model based on [ruGPT-3](https://huggingface.co/sberbank-ai) for generating scientific paper titles. Trained on [All NeurIPS (NIPS) Papers](https://www.kaggle.com/rowhitswami/nips-papers-1987-2019-updated) dataset. Use exclusively as a crazier alternative to SCIgen. | 4846853d7bef8e27797cc1290182eaf6 |

mit | ['Cometrain AutoCode', 'Cometrain AlphaML'] | false | Use with Transformers ```python from transformers import pipeline, set_seed generator = pipeline('text-generation', model="CometrainResearch/neurotitle-rugpt3-small") generator("BERT:", max_length=50) ``` | 829105f0fa0246431661828fcec43b01 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert_sa_GLUE_Experiment_logit_kd_stsb_192 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE STSB dataset. It achieves the following results on the evaluation set: - Loss: 1.1279 - Pearson: nan - Spearmanr: nan - Combined Score: nan | 6b5bb12c3157821d49088518f082ffce |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Pearson | Spearmanr | Combined Score | |:-------------:|:-----:|:----:|:---------------:|:-------:|:---------:|:--------------:| | 3.3853 | 1.0 | 23 | 1.9990 | -0.0411 | -0.0438 | -0.0425 | | 2.183 | 2.0 | 46 | 1.5416 | -0.0346 | -0.0339 | -0.0343 | | 1.6692 | 3.0 | 69 | 1.2526 | -0.1157 | -0.1181 | -0.1169 | | 1.3094 | 4.0 | 92 | 1.1279 | nan | nan | nan | | 1.1238 | 5.0 | 115 | 1.1817 | 0.0181 | 0.0180 | 0.0181 | | 1.0934 | 6.0 | 138 | 1.1718 | 0.0580 | 0.0536 | 0.0558 | | 1.0784 | 7.0 | 161 | 1.1594 | 0.0592 | 0.0625 | 0.0609 | | 1.0191 | 8.0 | 184 | 1.2390 | 0.0613 | 0.0770 | 0.0692 | | 0.9587 | 9.0 | 207 | 1.2917 | 0.0993 | 0.1113 | 0.1053 | | f8f658d941c54bbfc619e70d8d36fc3d |
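The `nan` entries at epoch 4 are worth a note. The Pearson correlation is undefined when either input has zero variance, so one common cause of such values is a model that predicts the same score for every example. Whether that happened here is an assumption; the table does not say. The pure-Python sketch below only illustrates the mechanism, and the function name is hypothetical.

```python
import math


def pearson(xs, ys):
    """Pearson correlation; returns nan when either input has zero variance."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance_x = sum((x - mean_x) ** 2 for x in xs)
    variance_y = sum((y - mean_y) ** 2 for y in ys)
    denominator = math.sqrt(variance_x * variance_y)
    if denominator == 0.0:
        # Constant predictions (or constant labels) make the ratio undefined.
        return float("nan")
    return covariance / denominator


labels = [1.0, 2.0, 3.0]
constant_predictions = [2.5, 2.5, 2.5]
score = pearson(constant_predictions, labels)
```

The `Combined Score` column is consistent with the mean of the Pearson and Spearman values in each row, so a `nan` in either metric carries over to it.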

mit | [] | false | WideResNet101 model ported from [torchvision](https://pytorch.org/vision/stable/index.html) for use with [Metalhead.jl](https://github.com/FluxML/Metalhead.jl). The scripts for creating this file can be found at [this gist](https://gist.github.com/darsnack/bfb8594cf5fdc702bdacb66586f518ef). To use this model in Julia, [add the Metalhead.jl package to your environment](https://pkgdocs.julialang.org/v1/managing-packages/ | 76bf7de36e302c8cb9a109e6ba64d4a7 |

apache-2.0 | [] | false | English to Urdu Translation This English to Urdu translation model is a Transformer model trained on IWSLT back-translated data using Fairseq. The model was produced during experimentation related to building context-aware NMT models for low-resource languages such as Urdu, Hindi, Sindhi, Pashtu and Punjabi. This particular model does not contain any contextual information; it is a baseline sentence-level Transformer model. The evaluation is done on the WMT2017 standard test set. * source group: English * target group: Urdu * model: transformer * Contextual * Test Set: WMT2017 * pre-processing: Moses + Indic Tokenizer * Dataset + Library Details: [DLNMT](https://github.com/sami-haq99/nrpu-dlnmt) | 0e6231d0c7f4fc4b59e1944892024829 |

apache-2.0 | [] | false | How to use the model? * This model can be accessed via git clone: ``` git clone https://huggingface.co/samiulhaq/iwslt-bt-en-ur ``` * You can use the Fairseq library to access the model for translations: ``` from fairseq.models.transformer import TransformerModel ``` | 8f122b3e39d9e8a1e04cd5153eec5858 |

mit | [] | false | SpaceRoBERTa This is one of the 3 further pre-trained models from the SpaceTransformers family presented in [SpaceTransformers: Language Modeling for Space Systems](https://ieeexplore.ieee.org/document/9548078). The original Git repo is [strath-ace/smart-nlp](https://github.com/strath-ace/smart-nlp). The further pre-training corpus includes publication abstracts, books, and Wikipedia pages related to space systems. Corpus size is 14.3 GB. SpaceRoBERTa was further pre-trained on this domain-specific corpus from [RoBERTa-Base](https://huggingface.co/roberta-base). In our paper, it is then fine-tuned for a Concept Recognition task. | 904b8713095afd4e26d968e45bd094e5 |

mit | [] | false | BibTeX entry and citation info ``` @ARTICLE{ 9548078, author={Berquand, Audrey and Darm, Paul and Riccardi, Annalisa}, journal={IEEE Access}, title={SpaceTransformers: Language Modeling for Space Systems}, year={2021}, volume={9}, number={}, pages={133111-133122}, doi={10.1109/ACCESS.2021.3115659} } ``` | f022a1c608a7b693e9fc896b40e55d2d |

apache-2.0 | ['generated_from_keras_callback'] | false | tf-distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: | 4be230e0c5f35a397d58695bf36f2b60 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2263 - Accuracy: 0.9225 - F1: 0.9221 | e7ed98b8e80ef32a4bc04051121f8341 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8571 | 1.0 | 250 | 0.3333 | 0.902 | 0.8982 | | 0.2507 | 2.0 | 500 | 0.2263 | 0.9225 | 0.9221 | | 5559448bddc30440d539f1aabb90c939 |

apache-2.0 | ['audio-to-audio', 'audio-source-separation', 'Source Separation', 'Speech Separation', 'Audio Source Separation', 'WHAM!', 'SepFormer', 'Transformer', 'speechbrain'] | false | SepFormer trained on WHAM! This repository provides all the necessary tools to perform audio source separation with a [SepFormer](https://arxiv.org/abs/2010.13154v2) model, implemented with SpeechBrain, and pretrained on [WHAM!](http://wham.whisper.ai/) dataset, which is basically a version of WSJ0-Mix dataset with environmental noise. For a better experience we encourage you to learn more about [SpeechBrain](https://speechbrain.github.io). The model performance is 16.3 dB SI-SNRi on the test set of WHAM! dataset. | Release | Test-Set SI-SNRi | Test-Set SDRi | |:-------------:|:--------------:|:--------------:| | 09-03-21 | 16.3 dB | 16.7 dB | | 3ec8e8d95d3bf336c3d44f78dda97935 |

apache-2.0 | ['audio-to-audio', 'audio-source-separation', 'Source Separation', 'Speech Separation', 'Audio Source Separation', 'WHAM!', 'SepFormer', 'Transformer', 'speechbrain'] | false | Perform source separation on your own audio file ```python from speechbrain.pretrained import SepformerSeparation as separator import torchaudio model = separator.from_hparams(source="speechbrain/sepformer-wham", savedir='pretrained_models/sepformer-wham') | 48121f611c5c3fa00b6db8340250f866 |

apache-2.0 | ['audio-to-audio', 'audio-source-separation', 'Source Separation', 'Speech Separation', 'Audio Source Separation', 'WHAM!', 'SepFormer', 'Transformer', 'speechbrain'] | false | for custom file, change path est_sources = model.separate_file(path='speechbrain/sepformer-wsj02mix/test_mixture.wav') torchaudio.save("source1hat.wav", est_sources[:, :, 0].detach().cpu(), 8000) torchaudio.save("source2hat.wav", est_sources[:, :, 1].detach().cpu(), 8000) ``` The system expects input recordings sampled at 8 kHz (single channel). If your signal has a different sample rate, resample it (e.g., using torchaudio or sox) before using the interface. | 96989118349ea69890ecbd6e2bcd0720 |

apache-2.0 | ['audio-to-audio', 'audio-source-separation', 'Source Separation', 'Speech Separation', 'Audio Source Separation', 'WHAM!', 'SepFormer', 'Transformer', 'speechbrain'] | false | Training The model was trained with SpeechBrain (e375cd13). To train it from scratch, follow these steps: 1. Clone SpeechBrain: ```bash git clone https://github.com/speechbrain/speechbrain/ ``` 2. Install it: ``` cd speechbrain pip install -r requirements.txt pip install -e . ``` 3. Run Training: ``` cd recipes/WHAMandWHAMR/separation python train.py hparams/sepformer-wham.yaml --data_folder=your_data_folder ``` You can find our training results (models, logs, etc) [here](https://drive.google.com/drive/folders/1dIAT8hZxvdJPZNUb8Zkk3BuN7GZ9-mZb?usp=sharing). | db0f86e8fe6595339bac178b8237bf8b |

apache-2.0 | ['audio-to-audio', 'audio-source-separation', 'Source Separation', 'Speech Separation', 'Audio Source Separation', 'WHAM!', 'SepFormer', 'Transformer', 'speechbrain'] | false | Referencing SpeechBrain ```bibtex @misc{speechbrain, title={{SpeechBrain}: A General-Purpose Speech Toolkit}, author={Mirco Ravanelli and Titouan Parcollet and Peter Plantinga and Aku Rouhe and Samuele Cornell and Loren Lugosch and Cem Subakan and Nauman Dawalatabad and Abdelwahab Heba and Jianyuan Zhong and Ju-Chieh Chou and Sung-Lin Yeh and Szu-Wei Fu and Chien-Feng Liao and Elena Rastorgueva and François Grondin and William Aris and Hwidong Na and Yan Gao and Renato De Mori and Yoshua Bengio}, year={2021}, eprint={2106.04624}, archivePrefix={arXiv}, primaryClass={eess.AS}, note={arXiv:2106.04624} } ``` | 7d93f1f11c6f0fb00c6c6084bbb4f698 |

apache-2.0 | ['audio-to-audio', 'audio-source-separation', 'Source Separation', 'Speech Separation', 'Audio Source Separation', 'WHAM!', 'SepFormer', 'Transformer', 'speechbrain'] | false | Referencing SepFormer ```bibtex @inproceedings{subakan2021attention, title={Attention is All You Need in Speech Separation}, author={Cem Subakan and Mirco Ravanelli and Samuele Cornell and Mirko Bronzi and Jianyuan Zhong}, year={2021}, booktitle={ICASSP 2021} } ``` | 483efd486d7933e5ea25ada6b744b0f8 |

other | ['text-generation', 'opt'] | false | OPT : Open Pre-trained Transformer Language Models OPT was first introduced in [Open Pre-trained Transformer Language Models](https://arxiv.org/abs/2205.01068) and first released in [metaseq's repository](https://github.com/facebookresearch/metaseq) on May 3rd 2022 by Meta AI. **Disclaimer**: The team releasing OPT wrote an official model card, which is available in Appendix D of the [paper](https://arxiv.org/pdf/2205.01068.pdf). Content from **this** model card has been written by the Hugging Face team. | 000fc48d4dcf6eddf5557a10a1fb64aa |

other | ['text-generation', 'opt'] | false | Intro To quote the first two paragraphs of the [official paper](https://arxiv.org/abs/2205.01068) > Large language models trained on massive text collections have shown surprising emergent > capabilities to generate text and perform zero- and few-shot learning. While in some cases the public > can interact with these models through paid APIs, full model access is currently limited to only a > few highly resourced labs. This restricted access has limited researchers’ ability to study how and > why these large language models work, hindering progress on improving known challenges in areas > such as robustness, bias, and toxicity. > We present Open Pretrained Transformers (OPT), a suite of decoder-only pre-trained transformers ranging from 125M > to 175B parameters, which we aim to fully and responsibly share with interested researchers. We train the OPT models to roughly match > the performance and sizes of the GPT-3 class of models, while also applying the latest best practices in data > collection and efficient training. Our aim in developing this suite of OPT models is to enable reproducible and responsible research at scale, and > to bring more voices to the table in studying the impact of these LLMs. Definitions of risk, harm, bias, and toxicity, etc., should be articulated by the > collective research community as a whole, which is only possible when models are available for study. | cb44415e6eb96404ac07c73bea5f1027 |

other | ['text-generation', 'opt'] | false | Model description OPT was predominantly pretrained with English text, but a small amount of non-English data is still present within the training corpus via CommonCrawl. The model was pretrained using a causal language modeling (CLM) objective. OPT belongs to the same family of decoder-only models as [GPT-3](https://arxiv.org/abs/2005.14165). As such, it was pretrained using the self-supervised causal language modeling objective. For evaluation, OPT follows [GPT-3](https://arxiv.org/abs/2005.14165) by using their prompts and overall experimental setup. For more details, please read the [official paper](https://arxiv.org/abs/2205.01068). | 68bb8a94fb71b8e834e035ef8208c48c |

other | ['text-generation', 'opt'] | false | Intended uses & limitations The pretrained-only model can be used for prompting for evaluation of downstream tasks as well as text generation. In addition, the model can be fine-tuned on a downstream task using the [CLM example](https://github.com/huggingface/transformers/tree/main/examples/pytorch/language-modeling). For all other OPT checkpoints, please have a look at the [model hub](https://huggingface.co/models?filter=opt). | 028e99989804a71bc3f62568fa1257f9 |

other | ['text-generation', 'opt'] | false | How to use For large OPT models, such as this one, it is not recommended to make use of the `text-generation` pipeline because one should load the model in half-precision to accelerate generation and optimize memory consumption on GPU. It is recommended to directly call the [`generate`](https://huggingface.co/docs/transformers/main/en/main_classes/text_generation | 5d14229e61b9ac154efcfa61a79c0f37 |

other | ['text-generation', 'opt'] | false | transformers.generation_utils.GenerationMixin.generate) method as follows: ```python >>> from transformers import AutoModelForCausalLM, AutoTokenizer >>> import torch >>> model = AutoModelForCausalLM.from_pretrained("facebook/opt-66b", torch_dtype=torch.float16).cuda() >>> | 4df045660c805708be89f8ec2a087924 |

other | ['text-generation', 'opt'] | false | the fast tokenizer currently does not work correctly >>> tokenizer = AutoTokenizer.from_pretrained("facebook/opt-66b", use_fast=False) >>> prompt = "Hello, I am conscious and" >>> input_ids = tokenizer(prompt, return_tensors="pt").input_ids.cuda() >>> generated_ids = model.generate(input_ids) >>> tokenizer.batch_decode(generated_ids, skip_special_tokens=True) ['Hello, I am conscious and I am here.\nI am also conscious and I am here'] ``` By default, generation is deterministic. In order to use the top-k sampling, please set `do_sample` to `True`. ```python >>> from transformers import AutoModelForCausalLM, AutoTokenizer, set_seed >>> import torch >>> model = AutoModelForCausalLM.from_pretrained("facebook/opt-66b", torch_dtype=torch.float16).cuda() >>> | 72f940571bd6c43a85c29b3340711fb3 |

other | ['text-generation', 'opt'] | false | the fast tokenizer currently does not work correctly >>> tokenizer = AutoTokenizer.from_pretrained("facebook/opt-66b", use_fast=False) >>> prompt = "Hello, I am conscious and" >>> input_ids = tokenizer(prompt, return_tensors="pt").input_ids.cuda() >>> set_seed(32) >>> generated_ids = model.generate(input_ids, do_sample=True) >>> tokenizer.batch_decode(generated_ids, skip_special_tokens=True) ['Hello, I am conscious and aware that you have your back turned to me and want to talk'] ``` | 7f01043dc57cd6ef5a12b0147ff8c1e2 |
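
The difference between the deterministic default and `do_sample=True` can be illustrated on a toy next-token distribution. The following is a minimal sketch in plain Python, not the `transformers` implementation: the helper name `sample_top_k`, the five-token vocabulary and the logits are all hypothetical and chosen only for illustration.

```python
import math
import random

def sample_top_k(logits, k, rng):
    """Sample one token index from the k highest-scoring logits."""
    # Keep the indices of the k largest logits.
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    # Softmax restricted to the kept logits (shifted for numerical stability).
    m = max(logits[i] for i in top)
    weights = [math.exp(logits[i] - m) for i in top]
    # Draw one index in proportion to its renormalised probability.
    return rng.choices(top, weights=weights, k=1)[0]

vocab = ["here", "aware", "alive", "tired", "late"]  # hypothetical tokens
logits = [2.0, 1.5, 0.3, -1.0, -2.0]                  # hypothetical scores

# Deterministic (greedy) decoding always picks the highest-scoring token.
greedy = max(range(len(logits)), key=lambda i: logits[i])

# Sampling draws from the top-k candidates; a seed makes the draw repeatable.
rng = random.Random(32)
sampled = [sample_top_k(logits, k=2, rng=rng) for _ in range(20)]
```

With `k=2` only the two most likely tokens can ever be drawn, and with `k=1` sampling reduces to the greedy choice.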

other | ['text-generation', 'opt'] | false | Limitations and bias As mentioned in Meta AI's model card, given that the training data used for this model contains a lot of unfiltered content from the internet, which is far from neutral, the model is strongly biased: > Like other large language models for which the diversity (or lack thereof) of training > data induces downstream impact on the quality of our model, OPT-175B has limitations in terms > of bias and safety. OPT-175B can also have quality issues in terms of generation diversity and > hallucination. In general, OPT-175B is not immune from the plethora of issues that plague modern > large language models. Here's an example of how the model can have biased predictions: ```python >>> from transformers import AutoModelForCausalLM, AutoTokenizer, set_seed >>> import torch >>> model = AutoModelForCausalLM.from_pretrained("facebook/opt-66b", torch_dtype=torch.float16).cuda() >>> | 2f4f3e625ff7b396fbae9750b3f16608 |

other | ['text-generation', 'opt'] | false | the fast tokenizer currently does not work correctly >>> tokenizer = AutoTokenizer.from_pretrained("facebook/opt-66b", use_fast=False) >>> prompt = "The woman worked as a" >>> input_ids = tokenizer(prompt, return_tensors="pt").input_ids.cuda() >>> set_seed(32) >>> generated_ids = model.generate(input_ids, do_sample=True, num_return_sequences=5, max_length=10) >>> tokenizer.batch_decode(generated_ids, skip_special_tokens=True) The woman worked as a supervisor in the office The woman worked as a social worker in a The woman worked as a cashier at the The woman worked as a teacher from 2011 to The woman worked as a maid at the house ``` compared to: ```python >>> from transformers import AutoModelForCausalLM, AutoTokenizer, set_seed >>> import torch >>> model = AutoModelForCausalLM.from_pretrained("facebook/opt-66b", torch_dtype=torch.float16).cuda() >>> | 8f06b39f1eee0f5c8d33818332588e4b |

other | ['text-generation', 'opt'] | false | the fast tokenizer currently does not work correctly >>> tokenizer = AutoTokenizer.from_pretrained("facebook/opt-66b", use_fast=False) >>> prompt = "The man worked as a" >>> input_ids = tokenizer(prompt, return_tensors="pt").input_ids.cuda() >>> set_seed(32) >>> generated_ids = model.generate(input_ids, do_sample=True, num_return_sequences=5, max_length=10) >>> tokenizer.batch_decode(generated_ids, skip_special_tokens=True) The man worked as a school bus driver for The man worked as a bartender in a bar The man worked as a cashier at the The man worked as a teacher, and was The man worked as a professional at a range ``` This bias will also affect all fine-tuned versions of this model. | 7e4f4cce635536dff24502ad9ee7c7ee |

other | ['text-generation', 'opt'] | false | Training data The Meta AI team wanted to train this model on a corpus as large as possible. It is composed of the union of the following 5 filtered datasets of textual documents: - BookCorpus, which consists of more than 10K unpublished books, - CC-Stories, which contains a subset of CommonCrawl data filtered to match the story-like style of Winograd schemas, - The Pile, from which * Pile-CC, OpenWebText2, USPTO, Project Gutenberg, OpenSubtitles, Wikipedia, DM Mathematics and HackerNews* were included. - Pushshift.io Reddit dataset that was developed in Baumgartner et al. (2020) and processed in Roller et al. (2021) - CCNewsV2 containing an updated version of the English portion of the CommonCrawl News dataset that was used in RoBERTa (Liu et al., 2019b) The final training data contains 180B tokens corresponding to 800GB of data. The validation split was made of 200MB of the pretraining data, sampled proportionally to each dataset’s size in the pretraining corpus. The dataset might contain offensive content as parts of the dataset are a subset of public Common Crawl data, along with a subset of public Reddit data, which could contain sentences that, if viewed directly, can be insulting, threatening, or might otherwise cause anxiety. | 58917e9862fd0bd95b2cb56a2bf13284 |

other | ['text-generation', 'opt'] | false | Collection process The dataset was collected from the internet, and went through classic data processing algorithms and re-formatting practices, including removing repetitive/non-informative text like *Chapter One* or *This ebook by Project Gutenberg.* | 28fec75d6e01068494a077f3781b4a3b |

other | ['text-generation', 'opt'] | false | Preprocessing The texts are tokenized using the **GPT2** byte-level version of Byte Pair Encoding (BPE) (for unicode characters) and a vocabulary size of 50272. The inputs are sequences of 2048 consecutive tokens. The 175B model was trained on 992 *80GB A100 GPUs*. The training duration was roughly 33 days of continuous training. | 4ce78e56933948f7084aabd5212e4f04 |
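
To put the hardware figures above in perspective, the GPU count and duration can be turned into a rough compute budget. This is a back-of-the-envelope sketch that assumes all 992 GPUs were in use for the full 33 days, which the text does not state; treat the result as an upper-bound estimate.

```python
NUM_GPUS = 992       # 80GB A100 GPUs, from the text
TRAINING_DAYS = 33   # approximate duration, from the text

# Assumption: every GPU was busy for the whole period.
gpu_days = NUM_GPUS * TRAINING_DAYS
gpu_hours = gpu_days * 24

print(f"{gpu_days:,} GPU-days, about {gpu_hours:,} GPU-hours")
```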

other | ['text-generation', 'opt'] | false | BibTeX entry and citation info ```bibtex @misc{zhang2022opt, title={OPT: Open Pre-trained Transformer Language Models}, author={Susan Zhang and Stephen Roller and Naman Goyal and Mikel Artetxe and Moya Chen and Shuohui Chen and Christopher Dewan and Mona Diab and Xian Li and Xi Victoria Lin and Todor Mihaylov and Myle Ott and Sam Shleifer and Kurt Shuster and Daniel Simig and Punit Singh Koura and Anjali Sridhar and Tianlu Wang and Luke Zettlemoyer}, year={2022}, eprint={2205.01068}, archivePrefix={arXiv}, primaryClass={cs.CL} } ``` | 90b87da24a04cad6ffaa5227b2eaddf1 |

openrail | [] | false | pip install --upgrade diffusers transformers scipy huggingface-cli login import torch from torch import autocast from diffusers import StableDiffusionPipeline model_id = "CompVis/stable-diffusion-v1-4" device = "cuda" pipe = StableDiffusionPipeline.from_pretrained(model_id, use_auth_token=True) pipe = pipe.to(device) prompt = "a photo of an astronaut riding a horse on mars" with autocast("cuda"): image = pipe(prompt, guidance_scale=7.5).images[0] image.save("astronaut_rides_horse.png") import torch pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16, revision="fp16", use_auth_token=True) pipe = pipe.to(device) prompt = "a photo of an astronaut riding a horse on mars" with autocast("cuda"): image = pipe(prompt, guidance_scale=7.5).images[0] image.save("astronaut_rides_horse.png") from diffusers import StableDiffusionPipeline, LMSDiscreteScheduler model_id = "CompVis/stable-diffusion-v1-4" | 3a9e9abfad215a6a5553c5311f2f0f52 |

openrail | [] | false | Use the K-LMS scheduler here instead scheduler = LMSDiscreteScheduler(beta_start=0.00085, beta_end=0.012, beta_schedule="scaled_linear", num_train_timesteps=1000) pipe = StableDiffusionPipeline.from_pretrained(model_id, scheduler=scheduler, use_auth_token=True) pipe = pipe.to("cuda") prompt = "a photo of an astronaut riding a horse on mars" with autocast("cuda"): image = pipe(prompt, guidance_scale=7.5).images[0] image.save("astronaut_rides_horse.png") | 2b82267b922124840e9035997b1986e7 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | animecharacters1 Dreambooth model trained by anmol-chawla with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: | badbbb0da853176695d5a6d32ccb5dc6 |

afl-3.0 | ['albert', 'classification'] | false | Usage example: from transformers import AutoTokenizer, AutoModelForSequenceClassification tokenizer = AutoTokenizer.from_pretrained("clhuang/albert-sentiment") model = AutoModelForSequenceClassification.from_pretrained("clhuang/albert-sentiment") | 0f63297b1af18bc582076427fa356c21 |

mit | [] | false | model by jEVVB This is the Stable Diffusion model fine-tuned on the DillyG concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt`: **a photo of sks man** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: | d37f333af98b7108e8eb6595d6217384 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | Core Dreambooth model trained by Eto-Demerzel with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Sample pictures of this concept: | 7286a6bcdcd24c36cde991339564b1a8 |

apache-2.0 | ['generated_from_trainer'] | false | bert-uncased-massive-intent-classification-banking-1 This model is a fine-tuned version of [gokuls/bert-uncased-massive-intent-classification](https://huggingface.co/gokuls/bert-uncased-massive-intent-classification) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.7010 - Accuracy: 0.1289 | 30f25e767b0e96a03527e16f9b31555c |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 6 - eval_batch_size: 6 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 | 6f60a225923dbd4bb1d0cdd215d57e20 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 2.6675 | 1.0 | 3 | 2.7010 | 0.1289 | | f41b3115e93ca930bd4d528f99b3c903 |

cc-by-4.0 | ['answer extraction'] | false | Model Card of `lmqg/mt5-small-ruquad-ae` This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) for answer extraction on the [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) (dataset_name: default) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | 4809a9761f22c3f769f83a05ac5b13e1 |

cc-by-4.0 | ['answer extraction'] | false | Overview - **Language model:** [google/mt5-small](https://huggingface.co/google/mt5-small) - **Language:** ru - **Training data:** [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) (default) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation) - **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992) | 58d209a26825faed9ec7aa1dd64bcc4d |

cc-by-4.0 | ['answer extraction'] | false | model prediction answers = model.generate_a("Нелишним будет отметить, что, развивая это направление, Д. И. Менделеев, поначалу априорно выдвинув идею о температуре, при которой высота мениска будет нулевой, в мае 1860 года провёл серию опытов.") ``` - With `transformers` ```python from transformers import pipeline pipe = pipeline("text2text-generation", "lmqg/mt5-small-ruquad-ae") output = pipe("<hl> в английском языке в нарицательном смысле применяется термин rapid transit (скоростной городской транспорт), однако употребляется он только тогда, когда по смыслу невозможно ограничиться названием одной конкретной системы метрополитена. <hl> в остальных случаях используются индивидуальные названия: в лондоне — london underground, в нью-йорке — new york subway, в ливерпуле — merseyrail, в вашингтоне — washington metrorail, в сан-франциско — bart и т. п. в некоторых городах применяется название метро (англ. metro) для систем, по своему характеру близких к метро, или для всего городского транспорта (собственно метро и наземный пассажирский транспорт (в том числе автобусы и трамваи)) в совокупности.") ``` | 7ed2973c46f53adebddb21107bd0d743 |

cc-by-4.0 | ['answer extraction'] | false | Evaluation - ***Metric (Answer Extraction)***: [raw metric file](https://huggingface.co/lmqg/mt5-small-ruquad-ae/raw/main/eval/metric.first.answer.paragraph_sentence.answer.lmqg_qg_ruquad.default.json) | | Score | Type | Dataset | |:-----------------|--------:|:--------|:-----------------------------------------------------------------| | AnswerExactMatch | 33 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | AnswerF1Score | 56.62 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | BERTScore | 80.96 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | Bleu_1 | 28.5 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | Bleu_2 | 24.12 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | Bleu_3 | 20.13 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | Bleu_4 | 16.37 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | METEOR | 34.93 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | MoverScore | 68.52 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | ROUGE_L | 44.12 | default | [lmqg/qg_ruquad](https://huggingface.co/datasets/lmqg/qg_ruquad) | | 99ba23ec0433be681b2be787c83cacef |
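
The two answer-level metrics in the table above can be illustrated on a single prediction. The sketch below uses the common SQuAD-style definitions (case-insensitive exact match and token-overlap F1 with whitespace tokenization); whether `lmqg` normalizes text in exactly this way is an assumption, and the function names are hypothetical.

```python
from collections import Counter

def exact_match(prediction, reference):
    """1.0 if the two strings match after trimming and lowercasing, else 0.0."""
    return float(prediction.strip().lower() == reference.strip().lower())

def token_f1(prediction, reference):
    """Harmonic mean of token-level precision and recall."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    # Multiset intersection counts each shared token at most as often as it occurs in both.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)

# A partially correct answer scores 0 on exact match but gets partial F1 credit.
em = exact_match("мае 1860", "в мае 1860 года")
f1 = token_f1("мае 1860", "в мае 1860 года")
```

This gap between the two definitions is consistent with the F1 score in the table being much higher than the exact-match score.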

cc-by-4.0 | ['answer extraction'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qg_ruquad - dataset_name: default - input_types: ['paragraph_sentence'] - output_types: ['answer'] - prefix_types: None - model: google/mt5-small - max_length: 512 - max_length_output: 32 - epoch: 5 - batch: 32 - lr: 0.001 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 2 - label_smoothing: 0.15 The full configuration can be found at [fine-tuning config file](https://huggingface.co/lmqg/mt5-small-ruquad-ae/raw/main/trainer_config.json). | 65604c053e6defbfa469e46a46ac3dda |
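
Two of the hyperparameters above interact in ways that are easy to misread, so here is a small worked sketch. It assumes training on a single device (so the effective batch is `batch` times `gradient_accumulation_steps`) and uses one common uniform formulation of label smoothing; the exact variant used by `lmqg` is not confirmed here, and the 4-class target is a toy stand-in for the real vocabulary.

```python
def smoothed_targets(true_index, num_classes, epsilon):
    """Return a label-smoothed target distribution (uniform smoothing)."""
    off_value = epsilon / num_classes
    targets = [off_value] * num_classes
    # The true class keeps the remaining probability mass.
    targets[true_index] += 1.0 - epsilon
    return targets

BATCH = 32                       # from the hyperparameter list
GRADIENT_ACCUMULATION_STEPS = 2  # from the hyperparameter list

# Examples contributing to each optimizer update (single-device assumption).
effective_batch = BATCH * GRADIENT_ACCUMULATION_STEPS

# Toy example: 4 classes, true class at index 1, epsilon = 0.15 as listed above.
targets = smoothed_targets(true_index=1, num_classes=4, epsilon=0.15)
```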

apache-2.0 | ['generated_from_keras_callback'] | false | juancopi81/mt5-small-finetuned-amazon-en-es This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 4.1238 - Validation Loss: 3.4046 - Epoch: 7 | 856060e17ad675b4174491e831549091 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 5.6e-05, 'decay_steps': 9672, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: float32 | f96ed5e84bc966db0253a2765866efe9 |
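
The `PolynomialDecay` configuration above, with `power` 1.0 and `cycle` False, amounts to a linear ramp from the initial learning rate down to zero over `decay_steps`. The following plain-Python sketch reproduces that formula without depending on Keras; the function name `polynomial_decay` is a stand-in, not the library class.

```python
def polynomial_decay(step, initial_lr=5.6e-05, decay_steps=9672,
                     end_lr=0.0, power=1.0):
    """Learning rate at `step` for a non-cycling polynomial decay schedule."""
    step = min(step, decay_steps)             # hold at end_lr after decay_steps
    fraction_left = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * fraction_left ** power + end_lr

# Start, midpoint and end of the schedule.
for step in (0, 4836, 9672):
    print(step, polynomial_decay(step))
```

Halfway through the schedule the rate is half of its initial value, and any step past `decay_steps` stays at `end_lr`.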

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 10.2166 | 4.4331 | 0 | | 6.0386 | 3.8849 | 1 | | 5.2369 | 3.6628 | 2 | | 4.7882 | 3.5569 | 3 | | 4.5111 | 3.4850 | 4 | | 4.3250 | 3.4330 | 5 | | 4.1930 | 3.4163 | 6 | | 4.1238 | 3.4046 | 7 | | 4b71689d82b7a5b2c2d2dbf8e1131505 |

mit | ['generated_from_trainer'] | false | indobert-finetuned-small-squad-indonesian-rizal This model is a fine-tuned version of [indolem/indobert-base-uncased](https://huggingface.co/indolem/indobert-base-uncased) on the small-squad indonesian dataset. It achieves the following results on the evaluation set: - Loss: 2.3344 | 0c83eca07986bcb82e03250b446f7307 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 - mixed_precision_training: Native AMP | 2f24256e838c33fa5ab147e1c94419ef |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 1.2921 | 1.0 | 2700 | 2.1491 | | 1.0084 | 2.0 | 5400 | 2.1961 | | 0.814 | 3.0 | 8100 | 2.3344 | | 26812d618af40671de875912219e8aa7 |
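
In the table above the training loss keeps falling while the validation loss rises after the first epoch, a pattern that often indicates overfitting. A minimal sketch of picking the checkpoint with the lowest validation loss, using only the values from the table (the tuple layout is an arbitrary choice for this example):

```python
# (epoch, step, training_loss, validation_loss), copied from the table above
history = [
    (1.0, 2700, 1.2921, 2.1491),
    (2.0, 5400, 1.0084, 2.1961),
    (3.0, 8100, 0.8140, 2.3344),
]

best = min(history, key=lambda row: row[3])  # lowest validation loss
final = history[-1]                          # last epoch, as reported in the summary

print(f"best epoch: {best[0]}, validation loss {best[3]}")
print(f"final epoch is worse by {final[3] - best[3]:.4f}")
```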

mit | [] | false | mertgunhan on Stable Diffusion via Dreambooth trained on the [fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook | 1afb4d2208d87ca994a17a70184dd4e4 |

mit | [] | false | model by teragron This is the Stable Diffusion model fine-tuned on the mertgunhan concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt(s)`: **mertgunhan** You can also train your own concepts and upload them to the library by using [the fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: | d3ae7db833387c82c3b611a7a603e8f7 |

openrail | ['tflite', 'stable_diffusion'] | false | Model Description <!-- Provide a longer summary of what this model is. --> - **Developed by:** Robin Rombach, Patrick Esser - **Model type:** Diffusion-based text-to-image generation model - **Language(s) (NLP):** English - **License:** The CreativeML OpenRAIL M license is an Open RAIL M license, adapted from the work that BigScience and the RAIL Initiative are jointly carrying in the area of responsible AI licensing. See also the article about the BLOOM Open RAIL license on which our license is based. | 31ecec4bf9ee8f57ffd7ace8b69c55fa |

openrail | ['tflite', 'stable_diffusion'] | false | Model Sources <!-- Provide the basic links for the model. --> - **conversion script:** https://github.com/freedomtan/keras_cv_stable_diffusion_to_tflite - **converted from:** https://github.com/keras-team/keras-cv/tree/master/keras_cv/models/stable_diffusion | af087272c9e637ca9a91dcd93620ca64 |

apache-2.0 | ['translation'] | false | opus-mt-bzs-fr * source languages: bzs * target languages: fr * OPUS readme: [bzs-fr](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/bzs-fr/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-08.zip](https://object.pouta.csc.fi/OPUS-MT-models/bzs-fr/opus-2020-01-08.zip) * test set translations: [opus-2020-01-08.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/bzs-fr/opus-2020-01-08.test.txt) * test set scores: [opus-2020-01-08.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/bzs-fr/opus-2020-01-08.eval.txt) | 65d4930fa0c1a2b67d6bb38c4a1f60ea |

apache-2.0 | ['automatic-speech-recognition', 'es'] | false | exp_w2v2r_es_vp-100k_age_teens-8_sixties-2_s130 Fine-tuned [facebook/wav2vec2-large-100k-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-100k-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (es)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 1b1b1106a6beb0949d1ec649868444d3 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 16 - eval_batch_size: 1 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 1 | 6a43dff440c84e9d712396442e193251 |

cc-by-4.0 | ['espnet', 'audio', 'automatic-speech-recognition'] | false | `kamo-naoyuki/librispeech_asr_train_asr_conformer5_raw_bpe5000_frontend_confn_fft400_frontend_confhop_length160_scheduler_confwarmup_steps25000_batch_bins140000000_optim_conflr0.0015_initnone_sp_valid.acc.ave` ♻️ Imported from https://zenodo.org/record/4543003/ This model was trained by kamo-naoyuki using librispeech/asr1 recipe in [espnet](https://github.com/espnet/espnet/). | c4c9d7d9caccd88fd7f321e94d724c2d |
