license | tags | is_nc | readme_section | hash |

|---|---|---|---|---|

apache-2.0 | ['generated_from_trainer', 'fnet-bert-base-comparison'] | false | fnet-base-finetuned-rte This model is a fine-tuned version of [google/fnet-base](https://huggingface.co/google/fnet-base) on the GLUE RTE dataset. It achieves the following results on the evaluation set: - Loss: 0.6978 - Accuracy: 0.6282 The model was fine-tuned to compare [google/fnet-base](https://huggingface.co/google/fnet-base) as introduced in [this paper](https://arxiv.org/abs/2105.03824) against [bert-base-cased](https://huggingface.co/bert-base-cased). | 70f6b4c78070744a42858a40cde4151d |

apache-2.0 | ['generated_from_trainer', 'fnet-bert-base-comparison'] | false | ```bash
#!/usr/bin/bash
python ../run_glue.py \
  --model_name_or_path google/fnet-base \
  --task_name rte \
  --do_train \
  --do_eval \
  --max_seq_length 512 \
  --per_device_train_batch_size 16 \
  --learning_rate 2e-5 \
  --num_train_epochs 3 \
  --output_dir fnet-base-finetuned-rte \
  --push_to_hub \
  --hub_strategy all_checkpoints \
  --logging_strategy epoch \
  --save_strategy epoch \
  --evaluation_strategy epoch
``` | cb1b8eddb5f3c3e5224254bb845eae50 |

apache-2.0 | ['generated_from_trainer', 'fnet-bert-base-comparison'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |
|:-------------:|:-----:|:----:|:---------------:|:--------:|
| 0.6829        | 1.0   | 156  | 0.6657          | 0.5704   |
| 0.6174        | 2.0   | 312  | 0.6784          | 0.6101   |
| 0.5141        | 3.0   | 468  | 0.6978          | 0.6282   |

| 0bb84f17340acaf3bee8bffa106eacc3 |
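The step counts in the training results can be checked against the batch size of 16 used for fine-tuning. The sketch below assumes the standard GLUE RTE training split of 2,490 examples and a single training device; neither is stated in the card, so treat both as assumptions.

```python
import math

# Assumptions (not stated in the model card): 2,490 training examples
# in GLUE RTE and training on a single device.
num_train_examples = 2490
per_device_train_batch_size = 16  # batch size reported for this run
num_train_epochs = 3

# The last batch of an epoch may be partial, so round up.
steps_per_epoch = math.ceil(num_train_examples / per_device_train_batch_size)
total_steps = steps_per_epoch * num_train_epochs

print(steps_per_epoch, total_steps)
```

Under these assumptions the result matches the Step column: 156 steps per epoch and 468 steps after three epochs.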

mit | ['generated_from_trainer'] | false | xlm-roberta-base-fa-base-ner This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2856 - Precision: 0.5353 - Recall: 0.5704 - F1: 0.5523 - Accuracy: 0.9168 | d8f196edd36c1c63675d9cdcfc6d4fe0 |

mit | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1     | Accuracy |
|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|
| 0.673         | 1.0   | 511  | 0.4067          | 0.4767    | 0.3981 | 0.4339 | 0.8956   |
| 0.3673        | 2.0   | 1022 | 0.3279          | 0.4611    | 0.5138 | 0.4860 | 0.9031   |
| 0.2998        | 3.0   | 1533 | 0.2977          | 0.5265    | 0.4976 | 0.5116 | 0.9132   |
| 0.2616        | 4.0   | 2044 | 0.2860          | 0.5365    | 0.5477 | 0.5420 | 0.9151   |
| 0.2394        | 5.0   | 2555 | 0.2856          | 0.5353    | 0.5704 | 0.5523 | 0.9168   |

| 04e5cd06e4bf7b9ad7dc4da02d7a5cb8 |
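The F1 values reported for this model are consistent with the harmonic mean of the precision and recall values listed alongside them. A minimal sketch of that relationship follows; the helper name `f1_score` is chosen here for illustration and is not taken from the training script.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Final-epoch precision and recall as reported in the card.
final_f1 = f1_score(0.5353, 0.5704)
print(round(final_f1, 4))
```

Because the inputs are already rounded to four decimals, small differences in the last digit are possible for other rows.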

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'capitel', 'ner'] | false | Model description The **roberta-base-bne-capitel-ner-plus** is a Named Entity Recognition (NER) model for the Spanish language, fine-tuned from the [roberta-base-bne](https://huggingface.co/PlanTL-GOB-ES/roberta-base-bne) model, a [RoBERTa](https://arxiv.org/abs/1907.11692) base model pre-trained on the largest Spanish corpus known to date: 570GB of clean, deduplicated text processed for this work and compiled from the web crawls performed by the [National Library of Spain (Biblioteca Nacional de España)](http://www.bne.es/en/Inicio/index.html) from 2009 to 2019. This model is a more robust version of the [roberta-base-bne-capitel-ner](https://huggingface.co/PlanTL-GOB-ES/roberta-base-bne-capitel-ner) model that better recognizes lowercased Named Entities (NE). | c1c98e00d5afa8dbbf50d75c8eaa7e2b |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'capitel', 'ner'] | false | Intended uses and limitations The **roberta-base-bne-capitel-ner-plus** model can be used to recognize Named Entities (NE). The model is limited by its training dataset and may not generalize well to all use cases. | 1b8bd554828162e1c404987b0e8d368c |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'capitel', 'ner'] | false | How to use ```python
from transformers import pipeline
from pprint import pprint

nlp = pipeline("ner", model="PlanTL-GOB-ES/roberta-base-bne-capitel-ner-plus")
example = "Me llamo francisco javier y vivo en madrid."

ner_results = nlp(example)
pprint(ner_results)
``` | 9f0985013da18057215866f00d7625b4 |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'capitel', 'ner'] | false | Limitations and bias At the time of submission, no measures have been taken to estimate the bias embedded in the model. However, we are well aware that our models may be biased since the corpora have been collected using crawling techniques on multiple web sources. We intend to conduct research in these areas in the future, and if completed, this model card will be updated. | 133de85b326e7a3d9ead17d8d18baa1a |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'capitel', 'ner'] | false | Training The dataset used for training and evaluation is the one from the [CAPITEL competition at IberLEF 2020](https://sites.google.com/view/capitel2020) (sub-task 1). We created lowercased and uppercased copies of the dataset and added these additional sentences to the training data. | ee38f4e8dabd25aafde3be00124afaf6 |
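The card does not show how the case variants were produced. The following is a minimal sketch of one way to do it, assuming the training data is a list of sentences made of (token, tag) pairs; the function name, the data layout, and the `B-LOC` tag are hypothetical and not taken from the authors' code.

```python
from typing import List, Tuple

Sentence = List[Tuple[str, str]]  # (token, NER tag) pairs; layout is an assumption


def augment_with_case_variants(sentences: List[Sentence]) -> List[Sentence]:
    """Return the original sentences plus lowercased and uppercased copies.

    Tags are left untouched; only the token text changes case.
    """
    augmented = list(sentences)
    for sentence in sentences:
        lower = [(tok.lower(), tag) for tok, tag in sentence]
        upper = [(tok.upper(), tag) for tok, tag in sentence]
        for variant in (lower, upper):
            # Skip variants identical to the original (e.g. all-lowercase input).
            if variant != sentence:
                augmented.append(variant)
    return augmented


train = [[("Vivo", "O"), ("en", "O"), ("Madrid", "B-LOC")]]
augmented_train = augment_with_case_variants(train)
print(len(augmented_train))
```

Keeping the original sentences alongside the variants lets the model see the same entity with and without capitalization cues.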

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'capitel', 'ner'] | false | Training procedure The model was trained with a batch size of 16 and a learning rate of 5e-5 for 5 epochs. We then selected the best checkpoint using the downstream task metric on the corresponding development set and evaluated it on the test set. | eac2cefdf75b1ff07ced686ab2466891 |
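Checkpoint selection of this kind can be sketched as picking the checkpoint with the highest development-set score. The checkpoint names and scores below are placeholders for illustration only; they are not results from this model card, and the helper is hypothetical.

```python
from typing import Dict


def select_best_checkpoint(dev_scores: Dict[str, float]) -> str:
    """Return the checkpoint name with the highest development-set score."""
    if not dev_scores:
        raise ValueError("no checkpoints to choose from")
    return max(dev_scores, key=dev_scores.get)


# Placeholder values for illustration only; not taken from the model card.
dev_f1_by_checkpoint = {
    "checkpoint-epoch-1": 0.80,
    "checkpoint-epoch-2": 0.84,
    "checkpoint-epoch-3": 0.86,
    "checkpoint-epoch-4": 0.88,
    "checkpoint-epoch-5": 0.87,
}
best = select_best_checkpoint(dev_f1_by_checkpoint)
print(best)
```

Only the selected checkpoint is then scored on the test set, so the test data plays no part in model selection.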

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'capitel', 'ner'] | false | Evaluation results We evaluated the **roberta-base-bne-capitel-ner-plus** on the CAPITEL-NERC test set against standard multilingual and monolingual baselines:

| Model | CAPITEL-NERC (F1) |
| ------------|:----|
| roberta-large-bne-capitel-ner | **90.51** |
| roberta-base-bne-capitel-ner | 89.60 |
| roberta-base-bne-capitel-ner-plus | 89.60 |
| BETO | 87.72 |
| mBERT | 88.10 |
| BERTIN | 88.56 |
| ELECTRA | 80.35 |

For more details, check the fine-tuning and evaluation scripts in the official [GitHub repository](https://github.com/PlanTL-GOB-ES/lm-spanish). | 9545c6e696f580fbdade2f95b386f152 |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'capitel', 'ner'] | false | Citing information If you use this model, please cite our [paper](http://journal.sepln.org/sepln/ojs/ojs/index.php/pln/article/view/6405): ``` @article{, abstract = {We want to thank the National Library of Spain for such a large effort on the data gathering and the Future of Computing Center, a Barcelona Supercomputing Center and IBM initiative (2020). This work was funded by the Spanish State Secretariat for Digitalization and Artificial Intelligence (SEDIA) within the framework of the Plan-TL.}, author = {Asier Gutiérrez Fandiño and Jordi Armengol Estapé and Marc Pàmies and Joan Llop Palao and Joaquin Silveira Ocampo and Casimiro Pio Carrino and Carme Armentano Oller and Carlos Rodriguez Penagos and Aitor Gonzalez Agirre and Marta Villegas}, doi = {10.26342/2022-68-3}, issn = {1135-5948}, journal = {Procesamiento del Lenguaje Natural}, keywords = {Artificial intelligence,Benchmarking,Data processing.,MarIA,Natural language processing,Spanish language modelling,Spanish language resources,Tractament del llenguatge natural (Informàtica),Àrees temàtiques de la UPC::Informàtica::Intel·ligència artificial::Llenguatge natural}, publisher = {Sociedad Española para el Procesamiento del Lenguaje Natural}, title = {MarIA: Spanish Language Models}, volume = {68}, url = {https://upcommons.upc.edu/handle/2117/367156 | 705150c7fc4fbaf7a990175eeff2ee22 |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'capitel', 'ner'] | false | Disclaimer The models published in this repository are intended for a generalist purpose and are available to third parties. These models may have bias and/or any other undesirable distortions. When third parties deploy or provide systems and/or services to other parties using any of these models (or using systems based on these models) or become users of the models, they should note that it is their responsibility to mitigate the risks arising from their use and, in any event, to comply with applicable regulations, including regulations regarding the use of artificial intelligence. In no event shall the owner of the models (SEDIA – State Secretariat for digitalization and artificial intelligence) nor the creator (BSC – Barcelona Supercomputing Center) be liable for any results arising from the use made by third parties of these models. | c344d2caf58786e2a90be88f23329fc0 |

mit | ['generated_from_trainer'] | false | camembert-base-cae-ressentis-pensees This model is a fine-tuned version of [camembert-base](https://huggingface.co/camembert-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.6742 - Precision: 0.8398 - Recall: 0.8417 - F1: 0.8384 | 83f4ea3408765969018399d7de29f0d8 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training:
- learning_rate: 5e-05
- train_batch_size: 8
- eval_batch_size: 8
- seed: 42
- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08
- lr_scheduler_type: linear
- lr_scheduler_warmup_ratio: 0.1
- num_epochs: 10.0

| 2b739ffb302c5006442ee66937a8d97c |
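To make the scheduler settings concrete, here is a minimal sketch of a linear schedule with warmup: the learning rate rises linearly from 0 to its peak during warmup, then decays linearly to 0. It assumes 600 total optimizer steps (the final step count in this model's training results) and that the warmup length is 10% of that, rounded up. The function is an illustration, not the library's implementation.

```python
import math


def linear_schedule_lr(step: int, peak_lr: float, warmup_steps: int, total_steps: int) -> float:
    """Learning rate at `step` for linear warmup followed by linear decay."""
    if step < warmup_steps:
        # Warmup: scale from 0 up to peak_lr.
        return peak_lr * step / max(1, warmup_steps)
    # Decay: scale from peak_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return peak_lr * remaining / max(1, total_steps - warmup_steps)


peak_lr = 5e-05      # learning_rate from the card
warmup_ratio = 0.1   # lr_scheduler_warmup_ratio from the card
total_steps = 600    # assumption: final step count in the training results
warmup_steps = math.ceil(total_steps * warmup_ratio)

for step in (0, 30, 60, 330, 600):
    print(step, linear_schedule_lr(step, peak_lr, warmup_steps, total_steps))
```

With these values the peak of 5e-05 is reached at step 60, which corresponds to the end of the first epoch.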

mit | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1     |
|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|
| 1.2705        | 1.0   | 60   | 1.1442          | 0.2844    | 0.5333 | 0.3710 |
| 1.0289        | 2.0   | 120  | 0.8098          | 0.8284    | 0.75   | 0.7533 |
| 0.6056        | 3.0   | 180  | 0.5520          | 0.8345    | 0.8167 | 0.8042 |
| 0.3228        | 4.0   | 240  | 0.5299          | 0.8198    | 0.825  | 0.8181 |
| 0.1346        | 5.0   | 300  | 0.7416          | 0.8067    | 0.8083 | 0.7944 |
| 0.0518        | 6.0   | 360  | 0.6852          | 0.8330    | 0.8333 | 0.8226 |
| 0.0356        | 7.0   | 420  | 0.6758          | 0.8416    | 0.8417 | 0.8373 |
| 0.0221        | 8.0   | 480  | 0.6996          | 0.8300    | 0.8333 | 0.8299 |
| 0.0161        | 9.0   | 540  | 0.6701          | 0.8398    | 0.8417 | 0.8384 |
| 0.0145        | 10.0  | 600  | 0.6742          | 0.8398    | 0.8417 | 0.8384 |

| 1f13d5136c7c8d7bce564608b6b23fb8 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-distilled-clinc This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the clinc_oos dataset. It achieves the following results on the evaluation set: - Loss: 0.3060 - Accuracy: 0.9487 | 6f6d57d35fe08318e1d45ccfa926bd3f |

apache-2.0 | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |
|:-------------:|:-----:|:----:|:---------------:|:--------:|
| 2.643         | 1.0   | 318  | 1.9110          | 0.7452   |
| 1.4751        | 2.0   | 636  | 0.9678          | 0.8606   |
| 0.7736        | 3.0   | 954  | 0.5578          | 0.9168   |
| 0.4652        | 4.0   | 1272 | 0.4081          | 0.9352   |
| 0.3364        | 5.0   | 1590 | 0.3538          | 0.9442   |
| 0.2801        | 6.0   | 1908 | 0.3294          | 0.9465   |
| 0.2515        | 7.0   | 2226 | 0.3165          | 0.9471   |
| 0.2366        | 8.0   | 2544 | 0.3107          | 0.9487   |
| 0.2292        | 9.0   | 2862 | 0.3069          | 0.9490   |
| 0.2247        | 10.0  | 3180 | 0.3060          | 0.9487   |

| 1eda25b6e6983ff65dfc2b351cc1a19f |

apache-2.0 | [] | false | This is an unofficial *led-large-16384* checkpoint that is fine-tuned on the [pubmed dataset](https://huggingface.co/datasets/scientific_papers). The model was fine-tuned and evaluated as detailed in [this notebook](https://colab.research.google.com/drive/12LjJazBl7Gam0XBPy_y0CTOJZeZ34c2v?usp=sharing) | 36001d1eeb850e4b0a6fa4aa458165c8 |

apache-2.0 | [] | false | Usage The model can be used as follows. The input is taken from the test data of the [pubmed dataset](https://huggingface.co/datasets/scientific_papers). ```python LONG_ARTICLE = """"anxiety affects quality of life in those living with parkinson 's disease ( pd ) more so than overall cognitive status , motor deficits , apathy , and depression [ 13 ] . although anxiety and depression are often related and coexist in pd patients , recent research suggests that anxiety rather than depression is the most prominent and prevalent mood disorder in pd [ 5 , 6 ] . yet , our current understanding of anxiety and its impact on cognition in pd , as well as its neural basis and best treatment practices , remains meager and lags far behind that of depression . overall , neuropsychiatric symptoms in pd have been shown to be negatively associated with cognitive performance . for example , higher depression scores have been correlated with lower scores on the mini - mental state exam ( mmse ) [ 8 , 9 ] as well as tests of memory and executive functions ( e.g. , attention ) [ 1014 ] . likewise , apathy and anhedonia in pd patients have been associated with executive dysfunction [ 10 , 1523 ] . however , few studies have specifically investigated the relationship between anxiety and cognition in pd . one study showed a strong negative relationship between anxiety ( both state and trait ) and overall cognitive performance ( measured by the total of the repeatable battery for the assessment of neuropsychological status index ) within a sample of 27 pd patients . furthermore , trait anxiety was negatively associated with each of the cognitive domains assessed by the rbans ( i.e. , immediate memory , visuospatial construction , language , attention , and delayed memory ) . 
two further studies have examined whether anxiety differentially affects cognition in patients with left - sided dominant pd ( lpd ) versus right - sided dominant pd ( rpd ) ; however , their findings were inconsistent . the first study found that working memory performance was worse in lpd patients with anxiety compared to rpd patients with anxiety , whereas the second study reported that , in lpd , apathy but not anxiety was associated with performance on nonverbally mediated executive functions and visuospatial tasks ( e.g. , tmt - b , wms - iii spatial span ) , while in rpd , anxiety but not apathy significantly correlated with performance on verbally mediated tasks ( e.g. , clock reading test and boston naming test ) . furthermore , anxiety was significantly correlated with neuropsychological measures of attention and executive and visuospatial functions . taken together , it is evident that there are limited and inconsistent findings describing the relationship between anxiety and cognition in pd and more specifically how anxiety might influence particular domains of cognition such as attention and memory and executive functioning . it is also striking that , to date , no study has examined the influence of anxiety on cognition in pd by directly comparing groups of pd patients with and without anxiety while excluding depression . given that research on healthy young adults suggests that anxiety reduces processing capacity and impairs processing efficiency , especially in the central executive and attentional systems of working memory [ 26 , 27 ] , we hypothesized that pd patients with anxiety would show impairments in attentional set - shifting and working memory compared to pd patients without anxiety . furthermore , since previous work , albeit limited , has focused on the influence of symptom laterality on anxiety and cognition , we also explored this relationship . 
seventeen pd patients with anxiety and thirty - three pd patients without anxiety were included in this study ( see table 1 ) . the cross - sectional data from these participants was taken from a patient database that has been compiled over the past 8 years ( since 2008 ) at the parkinson 's disease research clinic at the brain and mind centre , university of sydney . inclusion criteria involved a diagnosis of idiopathic pd according to the united kingdom parkinson 's disease society brain bank criteria and were confirmed by a neurologist ( sjgl ) . patients also had to have an adequate proficiency in english and have completed a full neuropsychological assessment . ten patients in this study ( 5 pd with anxiety ; 5 pd without anxiety ) were taking psychotropic drugs ( i.e. , benzodiazepine or selective serotonin reuptake inhibitor ) . patients were also excluded if they had other neurological disorders , psychiatric disorders other than affective disorders ( such as anxiety ) , or if they reported a score greater than six on the depression subscale of the hospital anxiety and depression scale ( hads ) . thus , all participants who scored within a depressed ( hads - d > 6 ) range were excluded from this study , in attempt to examine a refined sample of pd patients with and without anxiety in order to determine the independent effect of anxiety on cognition . this research was approved by the human research ethics committee of the university of sydney , and written informed consent was obtained from all participants . self - reported hads was used to assess anxiety in pd and has been previously shown to be a useful measure of clinical anxiety in pd . a cut - off score of > 8 on the anxiety subscale of the hads ( hads - a ) was used to identify pd cases with anxiety ( pda+ ) , while a cut - off score of < 6 on the hads - a was used to identify pd cases without anxiety ( pda ) . 
this criterion was more stringent than usual ( > 7 cut - off score ) , in effort to create distinct patient groups . the neurological evaluation rated participants according to hoehn and yahr ( h&y ) stages and assessed their motor symptoms using part iii of the revised mds task force unified parkinson 's disease rating scale ( updrs ) . in a similar way this was determined by calculating a total left and right score from rigidity items 3035 , voluntary movement items 3643 , and tremor items 5057 from the mds - updrs part iii ( see table 1 ) . processing speed was assessed using the trail making test , part a ( tmt - a , z - score ) . attentional set - shifting was measured using the trail making test , part b ( tmt - b , z - score ) . working memory was assessed using the digit span forward and backward subtest of the wechsler memory scale - iii ( raw scores ) . language was assessed with semantic and phonemic verbal fluency via the controlled oral word associated test ( cowat animals and letters , z - score ) . the ability to retain learned verbal memory was assessed using the logical memory subtest from the wechsler memory scale - iii ( lm - i z - score , lm - ii z - score , % lm retention z - score ) . the mini - mental state examination ( mmse ) demographic , clinical , and neuropsychological variables were compared between the two groups with the independent t - test or mann whitney u test , depending on whether the variable met parametric assumptions . chi - square tests were used to examine gender and symptom laterality differences between groups . all analyses employed an alpha level of p < 0.05 and were two - tailed . spearman correlations were performed separately in each group to examine associations between anxiety and/or depression ratings and cognitive functions . 
as expected , the pda+ group reported significant greater levels of anxiety on the hads - a ( u = 0 , p < 0.001 ) and higher total score on the hads ( u = 1 , p < 0.001 ) compared to the pda group ( table 1 ) . groups were matched in age ( t(48 ) = 1.31 , p = 0.20 ) , disease duration ( u = 259 , p = 0.66 ) , updrs - iii score ( u = 250.5 , p = 0.65 ) , h&y ( u = 245 , p = 0.43 ) , ledd ( u = 159.5 , p = 0.80 ) , and depression ( hads - d ) ( u = 190.5 , p = 0.06 ) . additionally , all groups were matched in the distribution of gender ( = 0.098 , p = 0.75 ) and side - affected ( = 0.765 , p = 0.38 ) . there were no group differences for tmt - a performance ( u = 256 , p = 0.62 ) ( table 2 ) ; however , the pda+ group had worse performance on the trail making test part b ( t(46 ) = 2.03 , p = 0.048 ) compared to the pda group ( figure 1 ) . the pda+ group also demonstrated significantly worse performance on the digit span forward subtest ( t(48 ) = 2.22 , p = 0.031 ) and backward subtest ( u = 190.5 , p = 0.016 ) compared to the pda group ( figures 2(a ) and 2(b ) ) . neither semantic verbal fluency ( t(47 ) = 0.70 , p = 0.49 ) nor phonemic verbal fluency ( t(47 ) = 0.39 , p = 0.70 ) differed between groups . logical memory i immediate recall test ( u = 176 , p = 0.059 ) showed a trend that the pda+ group had worse new verbal learning and immediate recall abilities than the pda group . however , logical memory ii test performance ( u = 219 , p = 0.204 ) and logical memory % retention ( u = 242.5 , p = 0.434 ) did not differ between groups . there were also no differences between groups in global cognition ( mmse ) ( u = 222.5 , p = 0.23 ) . participants were split into lpd and rpd , and then further group differences were examined between pda+ and pda. importantly , the groups remained matched in age , disease duration , updrs - iii , dde , h&y stage , and depression but remained significantly different on self - reported anxiety . 
lpda+ demonstrated worse performance on the digit span forward test ( t(19 ) = 2.29 , p = 0.033 ) compared to lpda , whereas rpda+ demonstrated worse performance on the digit span backward test ( u = 36.5 , p = 0.006 ) , lm - i immediate recall ( u = 37.5 , p = 0.008 ) , and lm - ii ( u = 45.0 , p = 0.021 ) but not lm % retention ( u = 75.5 , p = 0.39 ) compared to rpda. this study is the first to directly compare cognition between pd patients with and without anxiety . the findings confirmed our hypothesis that anxiety negatively influences attentional set - shifting and working memory in pd . more specifically , we found that pd patients with anxiety were more impaired on the trail making test part b which assessed attentional set - shifting , on both digit span tests which assessed working memory and attention , and to a lesser extent on the logical memory test which assessed memory and new verbal learning compared to pd patients without anxiety . taken together , these findings suggest that anxiety in pd may reduce processing capacity and impair processing efficiency , especially in the central executive and attentional systems of working memory in a similar way as seen in young healthy adults [ 26 , 27 ] . although the neurobiology of anxiety in pd remains unknown , many researchers have postulated that anxiety disorders are related to neurochemical changes that occur during the early , premotor stages of pd - related degeneration [ 37 , 38 ] such as nigrostriatal dopamine depletion , as well as cell loss within serotonergic and noradrenergic brainstem nuclei ( i.e. , raphe nuclei and locus coeruleus , resp . , which provide massive inputs to corticolimbic regions ) . over time , chronic dysregulation of adrenocortical and catecholamine functions can lead to hippocampal damage as well as dysfunctional prefrontal neural circuitries [ 39 , 40 ] , which play a key role in memory and attention . 
recent functional neuroimaging work has suggested that enhanced hippocampal activation during executive functioning and working memory tasks may represent compensatory processes for impaired frontostriatal functions in pd patients compared to controls . therefore , chronic stress from anxiety , for example , may disrupt compensatory processes in pd patients and explain the cognitive impairments specifically in working memory and attention seen in pd patients with anxiety . it has also been suggested that hyperactivation within the putamen may reflect a compensatory striatal mechanism to maintain normal working memory performance in pd patients ; however , losing this compensatory activation has been shown to contribute to poor working memory performance . anxiety in mild pd has been linked to reduced putamen dopamine uptake which becomes more extensive as the disease progresses . this further supports the notion that anxiety may disrupt compensatory striatal mechanisms as well , providing another possible explanation for the cognitive impairments observed in pd patients with anxiety in this study . noradrenergic and serotonergic systems should also be considered when trying to explain the mechanisms by which anxiety may influence cognition in pd . although these neurotransmitter systems are relatively understudied in pd cognition , treating the noradrenergic and serotonergic systems has shown beneficial effects on cognition in pd . selective serotonin reuptake inhibitor , citalopram , was shown to improve response inhibition deficits in pd , while noradrenaline reuptake blocker , atomoxetine , has been recently reported to have promising effects on cognition in pd [ 45 , 46 ] . overall , very few neuroimaging studies have been conducted in pd in order to understand the neural correlates of pd anxiety and its underlying neural pathology . 
future research should focus on relating anatomical changes and neurochemical changes to neural activation in order to gain a clearer understanding on how these pathologies affect anxiety in pd . to further understand how anxiety and cognitive dysfunction are related , future research should focus on using advanced structural and function imaging techniques to explain both cognitive and neural breakdowns that are associated with anxiety in pd patients . research has indicated that those with amnestic mild cognitive impairment who have more neuropsychiatric symptoms have a greater risk of developing dementia compared to those with fewer neuropsychiatric symptoms . future studies should also examine whether treating neuropsychiatric symptoms might impact the progression of cognitive decline and improve cognitive impairments in pd patients . previous studies have used pd symptom laterality as a window to infer asymmetrical dysfunction of neural circuits . for example , lpd patients have greater inferred right hemisphere pathology , whereas rpd patients have greater inferred left hemisphere pathology . thus , cognitive domains predominantly subserved by the left hemisphere ( e.g. , verbally mediated tasks of executive function and verbal memory ) might be hypothesized to be more affected in rpd than lpd ; however , this remains controversial . it has also been suggested that since anxiety is a common feature of left hemisphere involvement [ 48 , 49 ] , cognitive domains subserved by the left hemisphere may also be more strongly related to anxiety . results from this study showed selective verbal memory deficits in rpd patients with anxiety compared to rpd without anxiety , whereas lpd patients with anxiety had greater attentional / working memory deficits compared to lpd without anxiety . although these results align with previous research , interpretations of these findings should be made with caution due to the small sample size in the lpd comparison specifically . 
recent work has suggested that the hads questionnaire may underestimate the burden of anxiety related symptomology and therefore be a less sensitive measure of anxiety in pd [ 30 , 50 ] . in addition , our small sample size also limited the statistical power for detecting significant findings . based on these limitations , our findings are likely conservative and underrepresent the true impact anxiety has on cognition in pd . additionally , the current study employed a very brief neuropsychological assessment including one or two tests for each cognitive domain . future studies are encouraged to collect a more complex and comprehensive battery from a larger sample of pd participants in order to better understand the role anxiety plays on cognition in pd . another limitation of this study was the absence of diagnostic interviews to characterize participants ' psychiatric symptoms and specify the type of anxiety disorders included in this study . future studies should perform diagnostic interviews with participants ( e.g. , using dsm - v criteria ) rather than relying on self - reported measures to group participants , in order to better understand whether the type of anxiety disorder ( e.g. , social anxiety , phobias , panic disorders , and generalized anxiety ) influences cognitive performance differently in pd . one advantage the hads questionnaire provided over other anxiety scales was that it assessed both anxiety and depression simultaneously and allowed us to control for coexisting depression . although there was a trend that the pda+ group self - reported higher levels of depression than the pda group , all participants included in the study scored < 6 on the depression subscale of the hads . controlling for depression while assessing anxiety has been identified as a key shortcoming in the majority of recent work . 
considering many previous studies have investigated the influence of depression on cognition in pd without accounting for the presence of anxiety and the inconsistent findings reported to date , we recommend that future research should try to disentangle the influence of anxiety versus depression on cognitive impairments in pd . considering the growing number of clinical trials for treating depression , there are few if any for the treatment of anxiety in pd . anxiety is a key contributor to decreased quality of life in pd and greatly requires better treatment options . moreover , anxiety has been suggested to play a key role in freezing of gait ( fog ) , which is also related to attentional set - shifting [ 52 , 53 ] . future research should examine the link between anxiety , set - shifting , and fog , in order to determine whether treating anxiety might be a potential therapy for improving fog ."""

from transformers import LEDForConditionalGeneration, LEDTokenizer
import torch

tokenizer = LEDTokenizer.from_pretrained("patrickvonplaten/led-large-16384-pubmed")

input_ids = tokenizer(LONG_ARTICLE, return_tensors="pt").input_ids.to("cuda")
global_attention_mask = torch.zeros_like(input_ids) | d43d93116dba5d22d6b9ae3c970b45e1 |

apache-2.0 | [] | false | # set global_attention_mask on first token
global_attention_mask[:, 0] = 1

model = LEDForConditionalGeneration.from_pretrained("patrickvonplaten/led-large-16384-pubmed", return_dict_in_generate=True).to("cuda")

sequences = model.generate(input_ids, global_attention_mask=global_attention_mask).sequences

summary = tokenizer.batch_decode(sequences)
``` | c7bb602d7eb2f131089ec5f305f3fe54 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de-fr This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.1629 - F1: 0.8584 | a545659cdb5f97439983b36426bd3a7f |

mit | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | F1     |
|:-------------:|:-----:|:----:|:---------------:|:------:|
| 0.2904        | 1.0   | 715  | 0.1823          | 0.8286 |
| 0.1446        | 2.0   | 1430 | 0.1626          | 0.8488 |
| 0.0941        | 3.0   | 2145 | 0.1629          | 0.8584 |

| c307d2aca1a10054dcd775246e1cd5f4 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training:
- learning_rate: 3e-05
- train_batch_size: 12
- eval_batch_size: 8
- seed: 2
- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08
- lr_scheduler_type: linear
- lr_scheduler_warmup_ratio: 0.1
- training_steps: 200

| 6a7b35e5a0a6939e17b3be4199b35fa8 |

apache-2.0 | [] | false | Cross-Encoder for multilingual MS Marco This model was trained on the [MMARCO](https://hf.co/unicamp-dl/mmarco) dataset, a machine-translated version of MS MARCO produced with Google Translate and covering 14 languages. In our experiments, we observed that it also performs well for other languages. As a base model, we used the [multilingual MiniLMv2](https://huggingface.co/nreimers/mMiniLMv2-L12-H384-distilled-from-XLMR-Large) model. The model can be used for Information Retrieval: given a query, encode the query with each candidate passage (e.g. retrieved with ElasticSearch). Then sort the passages by score in decreasing order. See [SBERT.net Retrieve & Re-rank](https://www.sbert.net/examples/applications/retrieve_rerank/README.html) for more details. The training code is available here: [SBERT.net Training MS Marco](https://github.com/UKPLab/sentence-transformers/tree/master/examples/training/ms_marco) | 11c3a549119ca3e615ee6b9fdd05fc96 |

apache-2.0 | [] | false | Usage with SentenceTransformers The usage becomes easy when you have [SentenceTransformers](https://www.sbert.net/) installed. Then, you can use the pre-trained models like this:

```python
from sentence_transformers import CrossEncoder

model = CrossEncoder('model_name')
scores = model.predict([('Query', 'Paragraph1'), ('Query', 'Paragraph2'), ('Query', 'Paragraph3')])
``` | fc4bd0870d4dad1529fedd74c4e33a98 |

apache-2.0 | [] | false | Usage with Transformers

```python
from transformers import AutoTokenizer, AutoModelForSequenceClassification
import torch

model = AutoModelForSequenceClassification.from_pretrained('model_name')
tokenizer = AutoTokenizer.from_pretrained('model_name')

features = tokenizer(['How many people live in Berlin?', 'How many people live in Berlin?'], ['Berlin has a population of 3,520,031 registered inhabitants in an area of 891.82 square kilometers.', 'New York City is famous for the Metropolitan Museum of Art.'], padding=True, truncation=True, return_tensors="pt")

model.eval()
with torch.no_grad():
    scores = model(**features).logits
    print(scores)
``` | 09cdb83626bc09daf30588c730282f54 |
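The re-ranking step described above (score every query/passage pair, then sort in decreasing order) can be sketched in plain Python. This is a minimal illustration, not part of the model card: the `rerank` helper is hypothetical, and the scores below are made-up stand-ins for what `model.predict(...)` would return.

```python
from typing import List, Tuple


def rerank(passages: List[str], scores: List[float]) -> List[Tuple[str, float]]:
    """Pair each passage with its score and sort by score, highest first."""
    if len(passages) != len(scores):
        raise ValueError("passages and scores must have the same length")
    return sorted(zip(passages, scores), key=lambda pair: pair[1], reverse=True)


# Hypothetical scores standing in for the cross-encoder output.
passages = ["Paragraph1", "Paragraph2", "Paragraph3"]
scores = [0.12, 0.87, 0.45]
ranked = rerank(passages, scores)

for passage, score in ranked:
    print(f"{score:.2f}  {passage}")
```

In a real pipeline, the passages would come from a first-stage retriever and only the top few re-ranked results would be kept.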

apache-2.0 | [] | false |

This model is used in the paper **Generative Relation Linking for Question Answering over Knowledge Bases**. [ArXiv](https://arxiv.org/abs/2108.07337), [GitHub](https://github.com/IBM/kbqa-relation-linking)

| 2cd458f7301d47f8d4a009ee79c05887 |

apache-2.0 | [] | false | Citation

```bibtex

@inproceedings{rossiello-genrl-2021,

title={Generative relation linking for question answering over knowledge bases},

author={Rossiello, Gaetano and Mihindukulasooriya, Nandana and Abdelaziz, Ibrahim and Bornea, Mihaela and Gliozzo, Alfio and Naseem, Tahira and Kapanipathi, Pavan},

booktitle={International Semantic Web Conference},

pages={321--337},

year={2021},

organization={Springer},

url = "https://link.springer.com/chapter/10.1007/978-3-030-88361-4_19",

doi = "10.1007/978-3-030-88361-4_19"

}

``` | f7609cd779713c8ea282a971acf089b1 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 | 6a6bb28370a870ae49360211ee73aed2 |

apache-2.0 | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |
|:-------------:|:-----:|:----:|:---------------:|:--------:|
| No log | 1.0 | 125 | 1.8785 | 0.396 |

| 63865783a484c12146be83090b33c294 |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', False, 'nb-NO', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | XLS-R-300M-LM - Norwegian

This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the Norwegian [NPSC](https://huggingface.co/datasets/NbAiLab/NPSC) dataset.

| e3ac331d153d9609c7d0ad3929c7bf7b |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', False, 'nb-NO', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | Scores without Language Model

Without a language model, it achieves the following scores on the NPSC evaluation set:

- WER: 0.2110

- CER: 0.0622

| 59f1dbf5b1367d43052b5f07f87c5bcf |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', False, 'nb-NO', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | Scores with Language Model

A 5-gram KenLM was added to boost the model's performance. The language model was built from a corpus consisting mainly of online newspapers, public reports and Wikipedia data. With the language model, the scores are:

- WER: 0.1540

- CER: 0.0548

| e755cf47366b421dedc334ad63a1c1f5 |
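For readers unfamiliar with the two metrics, the sketch below shows the usual definition of WER and CER as an edit distance divided by the reference length. It is an illustrative implementation with hypothetical helper names and a made-up sentence pair, not the evaluation code used for this model.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences (substitutions, insertions, deletions)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        curr = [i]
        for j, h in enumerate(hyp, 1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def wer(reference: str, hypothesis: str) -> float:
    """Word error rate: word-level edit distance over the number of reference words."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate: character-level edit distance over the reference length."""
    if not reference:
        raise ValueError("reference must not be empty")
    return edit_distance(list(reference), list(hypothesis)) / len(reference)


# Made-up example: one word of five is dropped by the recognizer.
example_wer = wer("det er en fin dag", "det er fin dag")
example_cer = cer("abc", "abd")
```

With the scores reported above, going from a WER of 0.2110 to 0.1540 is a relative reduction of about 27%.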

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', False, 'nb-NO', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | Training and evaluation data

The model is trained and evaluated on [NPSC](https://huggingface.co/datasets/NbAiLab/NPSC). Unfortunately, there is no Norwegian test data in Common Voice, so the model is currently evaluated only on the validation set of NPSC.

| 116efeda799f190e6b0ba4e3ff7fbd20 |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', False, 'nb-NO', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 7.5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 2000

- num_epochs: 30.0 (interrupted after 8500 steps, approximately 6 epochs)

- mixed_precision_training: Native AMP

| 0aebe3dc6db8304ab868b0066f090039 |
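Two of the values above follow from the others: the total batch size is the per-device batch size times the gradient accumulation steps (8 × 4 = 32), and a linear scheduler with warmup ramps the learning rate up to its peak and then decays it to zero. The sketch below illustrates both. The function names are hypothetical, a single device is assumed, and `total_steps=42500` is an assumed figure chosen only for illustration, since the planned total number of steps is not stated here.

```python
def effective_batch_size(per_device_batch_size: int, accumulation_steps: int, num_devices: int = 1) -> int:
    """Number of samples contributing to one optimizer update."""
    return per_device_batch_size * accumulation_steps * num_devices


def linear_warmup_lr(step: int, peak_lr: float, warmup_steps: int, total_steps: int) -> float:
    """Linear ramp from 0 to peak_lr over warmup_steps, then linear decay to 0 at total_steps."""
    if step < warmup_steps:
        return peak_lr * step / warmup_steps
    remaining = max(0, total_steps - step)
    return peak_lr * remaining / max(1, total_steps - warmup_steps)


batch = effective_batch_size(8, 4)  # values from the list above, one device assumed

# total_steps is an assumption for illustration only.
lr_mid_warmup = linear_warmup_lr(1000, peak_lr=7.5e-05, warmup_steps=2000, total_steps=42500)
lr_at_peak = linear_warmup_lr(2000, peak_lr=7.5e-05, warmup_steps=2000, total_steps=42500)
lr_at_end = linear_warmup_lr(42500, peak_lr=7.5e-05, warmup_steps=2000, total_steps=42500)
```

Because the run was interrupted early, the learning rate at the last step would still have been well above zero under this schedule.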

apache-2.0 | ['translation'] | false | By the Hellenic Army Academy (SSE) and the Technical University of Crete (TUC) * source languages: el * target languages: en * licence: apache-2.0 * dataset: Opus, CCmatrix * model: transformer(fairseq) * pre-processing: tokenization + BPE segmentation * metrics: bleu, chrf | 949fcbe33c025842f2019a8493aedd87 |

apache-2.0 | ['translation'] | false | How to use

```
from transformers import FSMTTokenizer, FSMTForConditionalGeneration

mname = "lighteternal/SSE-TUC-mt-el-en-cased"
tokenizer = FSMTTokenizer.from_pretrained(mname)
model = FSMTForConditionalGeneration.from_pretrained(mname)

text = "Ο όρος τεχνητή νοημοσύνη αναφέρεται στον κλάδο της πληροφορικής ο οποίος ασχολείται με τη σχεδίαση και την υλοποίηση υπολογιστικών συστημάτων που μιμούνται στοιχεία της ανθρώπινης συμπεριφοράς ."
encoded = tokenizer.encode(text, return_tensors='pt')
outputs = model.generate(encoded, num_beams=5, num_return_sequences=5, early_stopping=True)
for i, output in enumerate(outputs):
    i += 1
    print(f"{i}: {output.tolist()}")
    decoded = tokenizer.decode(output, skip_special_tokens=True)
    print(f"{i}: {decoded}")
``` | af0c5041c196eb49c97ce6b32df81dd6 |

apache-2.0 | ['translation'] | false | Eval results

Results on Tatoeba testset (EL-EN):

| BLEU | chrF |
| ------ | ------ |
| 79.3 | 0.795 |

Results on XNLI parallel (EL-EN):

| BLEU | chrF |
| ------ | ------ |
| 66.2 | 0.623 |

| 2b1e78704383359e38e33af21d891e73 |

apache-2.0 | ['translation'] | false | BibTeX entry and citation info Dimitris Papadopoulos, et al. "PENELOPIE: Enabling Open Information Extraction for the Greek Language through Machine Translation." (2021). Accepted at EACL 2021 SRW | 2798ace007f71d349d140da0282826f2 |

apache-2.0 | ['translation'] | false | opus-mt-es-es * source languages: es * target languages: es * OPUS readme: [es-es](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/es-es/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-20.zip](https://object.pouta.csc.fi/OPUS-MT-models/es-es/opus-2020-01-20.zip) * test set translations: [opus-2020-01-20.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/es-es/opus-2020-01-20.test.txt) * test set scores: [opus-2020-01-20.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/es-es/opus-2020-01-20.eval.txt) | 55337e79b0a73a79086aacdf6b77110a |

mit | ['question-generation', 'distilt5', 'distilt5-qg'] | false | DistilT5 for question-generation This is a distilled version of the [t5-small-qa-qg-hl](https://huggingface.co/valhalla/t5-small-qa-qg-hl) model, trained for question answering and answer-aware question generation tasks. The model is distilled using the **No Teacher Distillation** method proposed by Huggingface, [here](https://github.com/huggingface/transformers/tree/master/examples/seq2seq | 0aa1a2cf6105e6cb99d03574747d6d4c |

mit | ['question-generation', 'distilt5', 'distilt5-qg'] | false | distilbart). We just copy alternating layers from `t5-small-qa-qg-hl` and finetune more on the same data. The following table lists other distilled models and their metrics.

| Name | BLEU-4 | METEOR | ROUGE-L | QA-EM | QA-F1 |
|---------------------------------------------------------------------------------|---------|---------|---------|--------|--------|
| [distilt5-qg-hl-6-4](https://huggingface.co/valhalla/distilt5-qg-hl-6-4) | 18.4141 | 24.8417 | 40.3435 | - | - |
| [distilt5-qa-qg-hl-6-4](https://huggingface.co/valhalla/distilt5-qa-qg-hl-6-4) | 18.6493 | 24.9685 | 40.5605 | 76.13 | 84.659 |
| [distilt5-qg-hl-12-6](https://huggingface.co/valhalla/distilt5-qg-hl-12-6) | 20.5275 | 26.5010 | 43.2676 | - | - |
| [distilt5-qa-qg-hl-12-6](https://huggingface.co/valhalla/distilt5-qa-qg-hl-12-6)| 20.6109 | 26.4533 | 43.0895 | 81.61 | 89.831 |

You can play with the model using the inference API. Here's how you can use it:

For QG: `generate question: <hl> 42 <hl> is the answer to life, the universe and everything.`

For QA: `question: What is 42 context: 42 is the answer to life, the universe and everything.`

For more details see [this](https://github.com/patil-suraj/question_generation) repo.

| d1938c3b98f34c166962269f87f950a8 |

mit | ['question-generation', 'distilt5', 'distilt5-qg'] | false | Model in action 🚀 You'll need to clone the [repo](https://github.com/patil-suraj/question_generation). [Open in Colab](https://colab.research.google.com/github/patil-suraj/question_generation/blob/master/question_generation.ipynb)

```python3
from pipelines import pipeline
nlp = pipeline("multitask-qa-qg", model="valhalla/distilt5-qa-qg-hl-6-4") | 10b409947af064feb28be6ed4186f08d |

mit | ['question-generation', 'distilt5', 'distilt5-qg'] | false | # for qa pass a dict with "question" and "context"
nlp({ "question": "What is 42 ?", "context": "42 is the answer to life, the universe and everything." })
# => 'the answer to life, the universe and everything'
``` | fc6b3da7647c2760f9254133b54efe6c |

apache-2.0 | ['translation'] | false | opus-mt-de-eo * source languages: de * target languages: eo * OPUS readme: [de-eo](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/de-eo/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-20.zip](https://object.pouta.csc.fi/OPUS-MT-models/de-eo/opus-2020-01-20.zip) * test set translations: [opus-2020-01-20.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/de-eo/opus-2020-01-20.test.txt) * test set scores: [opus-2020-01-20.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/de-eo/opus-2020-01-20.eval.txt) | 0fdda1cc1f63edb7f991ce1d7e76077f |

apache-2.0 | ['generated_from_trainer'] | false | SEED0042 This model is a fine-tuned version of [bert-large-uncased](https://huggingface.co/bert-large-uncased) on the HATEXPLAIN dataset. It achieves the following results on the evaluation set: - Loss: 0.7731 - Accuracy: 0.4079 - Accuracy 0: 0.8027 - Accuracy 1: 0.1869 - Accuracy 2: 0.2956 | f538545b15a34a517b47a55e1f397928 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 1 - eval_batch_size: 1 - seed: 42 - distributed_type: not_parallel - gradient_accumulation_steps: 32 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 150 - num_epochs: 3 | f1ceb0b6762c97e3e8faab523e18a859 |

apache-2.0 | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | Accuracy 0 | Accuracy 1 | Accuracy 2 |
|:-------------:|:-----:|:----:|:---------------:|:--------:|:----------:|:----------:|:----------:|
| No log | 1.0 | 480 | 0.8029 | 0.4235 | 0.7589 | 0.0461 | 0.5985 |
| No log | 2.0 | 960 | 0.7574 | 0.4011 | 0.7470 | 0.1831 | 0.3376 |
| No log | 3.0 | 1440 | 0.7731 | 0.4079 | 0.8027 | 0.1869 | 0.2956 |

| f3b632713894215560b95567dff99e41 |

apache-2.0 | ['generated_from_trainer'] | false | vit-base-beans This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the beans dataset. It achieves the following results on the evaluation set: - Accuracy: 0.9774 - Loss: 0.0876 | 694439fcc9287ece8bede757bae00972 |

apache-2.0 | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Accuracy | Validation Loss |
|:-------------:|:-----:|:----:|:--------:|:---------------:|
| 0.26 | 1.0 | 130 | 0.9549 | 0.2285 |
| 0.277 | 2.0 | 260 | 0.9925 | 0.1066 |
| 0.1629 | 3.0 | 390 | 0.9699 | 0.1069 |
| 0.0963 | 4.0 | 520 | 0.9774 | 0.0885 |
| 0.1569 | 5.0 | 650 | 0.9774 | 0.0876 |

| 261be52f57dc847e6630f2d0b42eaf04 |

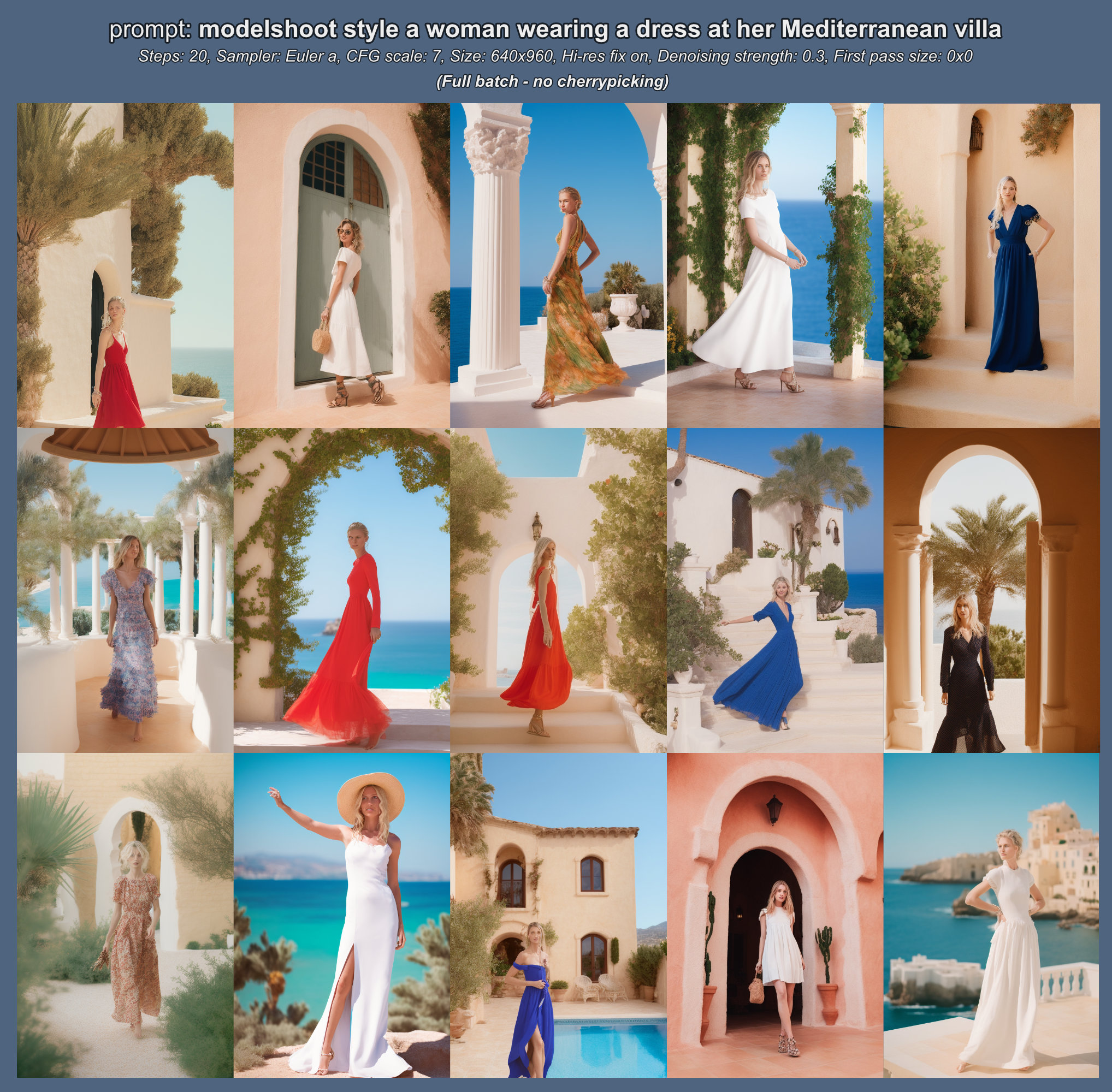

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'safetensors', 'diffusers'] | false | **Modelshoot Style**  [*CKPT DOWNLOAD LINK*](https://huggingface.co/wavymulder/modelshoot/resolve/main/modelshoot-1.0.ckpt) Use `modelshoot style` in your prompt (I recommend at the start) I also suggest your prompts include subject and location, for example "`amy adams at the construction site`" , as this helps the model to resolve backgrounds and small details. Modelshoot is a Dreambooth model trained from 1.5 with VAE on a diverse set of photographs of people. The goal was to create a model focused on full to medium body shots, with an emphasis on cool clothing and a fashion-shoot aesthetic. A result of the composition is that when your subject is further away, their face will usually look worse (and for celebrities, less like them). This limitation of training on 512x512 can be fixed with inpainting, and I plan on revisiting this model at higher resolution in the future. Modelshoot style works best when using a tall aspect ratio. This model was inspired by all the great responses to Analog Diffusion, especially ones where you all trained yourselves in and created awesome, fashionable photos! I hope that this model allows even greater images :) Please see [this document where I share the parameters (prompt, sampler, seed, etc.) used for all example images above.](https://huggingface.co/wavymulder/modelshoot/resolve/main/parameters_for_samples.txt) See below a batch example and how the model helps ensure a fashion-shoot composition without any excessive prompting. No face restoration used for any examples on this page, for demonstration purposes.  | 6ef16a51968d108a6c6acec0f9a8f833 |

mit | [] | false | ScandiNER - Named Entity Recognition model for Scandinavian Languages This model is a fine-tuned version of [NbAiLab/nb-bert-base](https://huggingface.co/NbAiLab/nb-bert-base) for Named Entity Recognition for Danish, Norwegian (both Bokmål and Nynorsk), Swedish, Icelandic and Faroese. It has been fine-tuned on the concatenation of [DaNE](https://aclanthology.org/2020.lrec-1.565/), [NorNE](https://arxiv.org/abs/1911.12146), [SUC 3.0](https://spraakbanken.gu.se/en/resources/suc3) and the Icelandic and Faroese parts of the [WikiANN](https://aclanthology.org/P17-1178/) dataset. It also works reasonably well on English sentences, given the fact that the pretrained model is also trained on English data along with Scandinavian languages. The model will predict the following four entities: | **Tag** | **Name** | **Description** | | :------ | :------- | :-------------- | | `PER` | Person | The name of a person (e.g., *Birgitte* and *Mohammed*) | | `LOC` | Location | The name of a location (e.g., *Tyskland* and *Djurgården*) | | `ORG` | Organisation | The name of an organisation (e.g., *Bunnpris* and *Landsbankinn*) | | `MISC` | Miscellaneous | A named entity of a different kind (e.g., *Ūjķnustu pund* and *Mona Lisa*) | | 85d8ef7743f76d574ac6c7e1e24b348b |

mit | [] | false | Quick start You can use this model in your scripts as follows:

```python
>>> from transformers import pipeline
>>> import pandas as pd
>>> ner = pipeline(task='ner',
...                model='saattrupdan/nbailab-base-ner-scandi',
...                aggregation_strategy='first')
>>> result = ner('Borghild kjøper seg inn i Bunnpris')
>>> pd.DataFrame.from_records(result)
  entity_group     score      word  start  end
0          PER  0.981257  Borghild      0    8
1          ORG  0.974099  Bunnpris     26   34
``` | 4a1f610dc7ea757d494d2855a475ae63 |
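The `start` and `end` columns in the output above are character offsets into the input sentence, so the entities can be recovered by slicing. The sketch below uses the two records shown in the quick start, typed in by hand rather than produced by running the model. The `group_entities` helper and its `min_score` threshold are hypothetical additions for illustration.

```python
from typing import Dict, List


def group_entities(text: str, records: List[dict], min_score: float = 0.5) -> Dict[str, List[str]]:
    """Group entity surface forms by tag, slicing the text with the start/end offsets."""
    grouped: Dict[str, List[str]] = {}
    for record in records:
        if record["score"] < min_score:
            continue  # skip low-confidence predictions
        surface = text[record["start"]:record["end"]]
        grouped.setdefault(record["entity_group"], []).append(surface)
    return grouped


text = 'Borghild kjøper seg inn i Bunnpris'
# Records copied from the quick-start output above.
result = [
    {"entity_group": "PER", "score": 0.981257, "word": "Borghild", "start": 0, "end": 8},
    {"entity_group": "ORG", "score": 0.974099, "word": "Bunnpris", "start": 26, "end": 34},
]
entities = group_entities(text, result)
print(entities)
```

Slicing by offset rather than trusting the `word` field keeps the original casing and spacing of the input text.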

mit | [] | false | Performance The following is the Micro-F1 NER performance on Scandinavian NER test datasets, compared with the current state-of-the-art. The models have been evaluated on the test set along with 9 bootstrapped versions of it, with the mean and 95% confidence interval shown here: | **Model ID** | **DaNE** | **NorNE-NB** | **NorNE-NN** | **SUC 3.0** | **WikiANN-IS** | **WikiANN-FO** | **Average** | | :----------- | -------: | -----------: | -----------: | ----------: | -------------: | -------------: | ----------: | | saattrupdan/nbailab-base-ner-scandi | **87.44 ± 0.81** | **91.06 ± 0.26** | **90.42 ± 0.61** | **88.37 ± 0.17** | **88.61 ± 0.41** | **90.22 ± 0.46** | **89.08 ± 0.46** | | chcaa/da\_dacy\_large\_trf | 83.61 ± 1.18 | 78.90 ± 0.49 | 72.62 ± 0.58 | 53.35 ± 0.17 | 50.57 ± 0.46 | 51.72 ± 0.52 | 63.00 ± 0.57 | | RecordedFuture/Swedish-NER | 64.09 ± 0.97 | 61.74 ± 0.50 | 56.67 ± 0.79 | 66.60 ± 0.27 | 34.54 ± 0.73 | 42.16 ± 0.83 | 53.32 ± 0.69 | | Maltehb/danish-bert-botxo-ner-dane | 69.25 ± 1.17 | 60.57 ± 0.27 | 35.60 ± 1.19 | 38.37 ± 0.26 | 21.00 ± 0.57 | 27.88 ± 0.48 | 40.92 ± 0.64 | | Maltehb/-l-ctra-danish-electra-small-uncased-ner-dane | 70.41 ± 1.19 | 48.76 ± 0.70 | 27.58 ± 0.61 | 35.39 ± 0.38 | 26.22 ± 0.52 | 28.30 ± 0.29 | 39.70 ± 0.61 | | radbrt/nb\_nocy\_trf | 56.82 ± 1.63 | 68.20 ± 0.75 | 69.22 ± 1.04 | 31.63 ± 0.29 | 20.32 ± 0.45 | 12.91 ± 0.50 | 38.08 ± 0.75 | Aside from its high accuracy, it's also substantially **smaller** and **faster** than the previous state-of-the-art: | **Model ID** | **Samples/second** | **Model size** | | :----------- | -----------------: | -------------: | | saattrupdan/nbailab-base-ner-scandi | 4.16 ± 0.18 | 676 MB | | chcaa/da\_dacy\_large\_trf | 0.65 ± 0.01 | 2,090 MB | | 3dae44e4b77e6146bd4952c882c02a9a |

mit | [] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 90135.90000000001 - num_epochs: 1000 | a829be5b1d5d1ef89c70b04d72ca610d |

mit | [] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Micro F1 | Micro F1 No Misc |
|:-------------:|:-----:|:-----:|:---------------:|:--------:|:----------------:|
| 0.6682 | 1.0 | 2816 | 0.0872 | 0.6916 | 0.7306 |
| 0.0684 | 2.0 | 5632 | 0.0464 | 0.8167 | 0.8538 |
| 0.0444 | 3.0 | 8448 | 0.0367 | 0.8485 | 0.8783 |
| 0.0349 | 4.0 | 11264 | 0.0316 | 0.8684 | 0.8920 |
| 0.0282 | 5.0 | 14080 | 0.0290 | 0.8820 | 0.9033 |
| 0.0231 | 6.0 | 16896 | 0.0283 | 0.8854 | 0.9060 |
| 0.0189 | 7.0 | 19712 | 0.0253 | 0.8964 | 0.9156 |
| 0.0155 | 8.0 | 22528 | 0.0260 | 0.9016 | 0.9201 |
| 0.0123 | 9.0 | 25344 | 0.0266 | 0.9059 | 0.9233 |
| 0.0098 | 10.0 | 28160 | 0.0280 | 0.9091 | 0.9279 |
| 0.008 | 11.0 | 30976 | 0.0309 | 0.9093 | 0.9287 |
| 0.0065 | 12.0 | 33792 | 0.0313 | 0.9103 | 0.9284 |
| 0.0053 | 13.0 | 36608 | 0.0322 | 0.9078 | 0.9257 |
| 0.0046 | 14.0 | 39424 | 0.0343 | 0.9075 | 0.9256 |

| 696ea16ce900372afdfc9de457b7d98f |

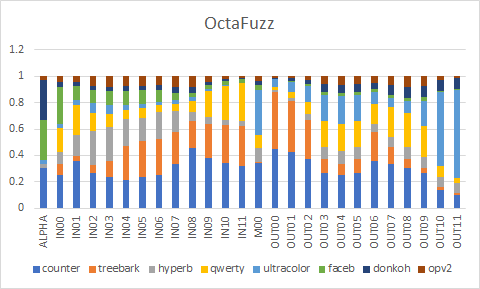

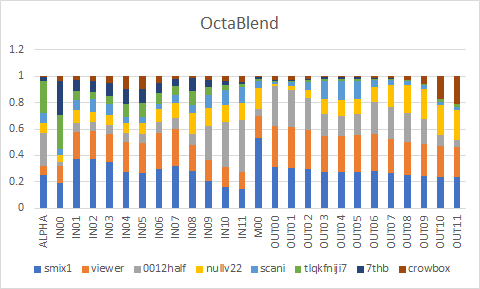

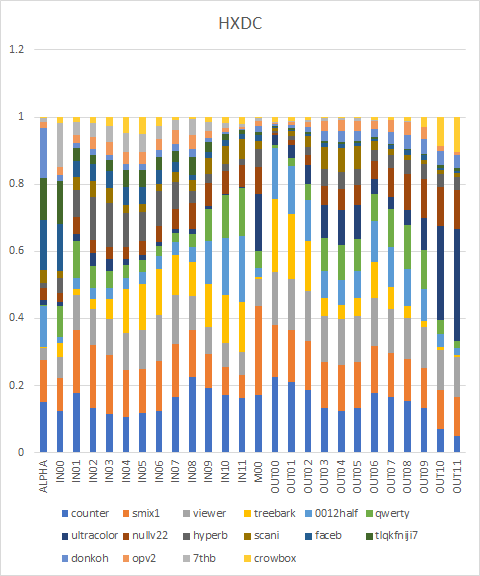

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | HXDC Counterfeit-V2.5 - <a href="https://huggingface.co/gsdf/Counterfeit-V2.5">Download</a><br/> Treebark - <a href="https://huggingface.co/HIZ/aichan_pick">Download</a><br/> HyperBomb, FaceBomb - <a href="https://huggingface.co/mocker/KaBoom">Download</a><br/> qwerty - <a href="https://huggingface.co/1q2W3e/qwerty">Download</a><br/> ultracolor.v4 - <a href="https://huggingface.co/xdive/ultracolor.v4">Download</a><br/> donko-mix-hard - <a href="https://civitai.com/models/7037/donko-mix-nsfw-hard">Download</a><br/> OrangePastelV2 - ~~Download~~ Currently not available.<br/> smix 1.12121 - <a href="https://civitai.com/models/8019/smix-1-series">Download</a><br/> viewer-mix - <a href="https://civitai.com/models/7813/viewer-mix">Download</a><br/> 0012-half - <a href="https://huggingface.co/1q2W3e/Attached-model_collection">Download</a><br/> Null v2.2 - <a href="https://civitai.com/models/8173/null-v22">Download</a><br/> school anime - <a href="https://civitai.com/models/7189/school-anime">Download</a><br/> tlqkfniji7 - <a href="https://huggingface.co/uiouiouio/The_lovely_quality_kahlua_flavour">Download</a><br/> 7th_anime_v3_B - <a href="https://huggingface.co/syaimu/7th_Layer">Download</a><br/> Crowbox-Vol.1 - <a href="https://huggingface.co/kf1022/Crowbox-Vol.1">Download</a><br/> EasyNegative and pastelmix-lora seem to work well with the models. EasyNegative - <a href="https://huggingface.co/datasets/gsdf/EasyNegative">Download</a><br/> pastelmix-lora - <a href="https://huggingface.co/andite/pastel-mix">Download</a> | 708e7546433d632a964f5a813669ea81 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | Formula ``` Counterfeit-V2.5 + Treebark = ct base_alpha = 0.009901 Weight values = 0.259221, 0.094699, 0.186355, 0.344377, 0.54691, 0.535689, 0.526122, 0.420305, 0.312004, 0.40172, 0.452608, 0.481439, 0.029126, 0.492655, 0.478894, 0.443794, 0.284518, 0.24424, 0.284451, 0.382469, 0.282082, 0.18387, 0.126064, 0.113941, 0.103878 ct + HyperBomb = cth base_alpha = 0.09009 Weight values = 0.208912, 0.290962, 0.44034, 0.426141, 0.294959, 0.258193, 0.279347, 0.219226, 0.100589, 0.076065, 0.061552, 0.053125, 0.225564, 0.013679, 0.029582, 0.067917, 0.209599, 0.238881, 0.209736, 0.097528, 0.143293, 0.18856, 0.227611, 0.336235, 0.40562 cth + qwerty = cthq base_alpha = 0.008929 Weight values = 0.298931, 0.286255, 0.185812, 0.136147, 0.100038, 0.09741, 0.069466, 0.065465, 0.099956, 0.218813, 0.27544, 0.304705, 0.184049, 0.021782, 0.051109, 0.115061, 0.291535, 0.319518, 0.291441, 0.197459, 0.295056, 0.359111, 0.375537, 0.264379, 0.170006 cthq + ultracolor.v4 = cthqu base_alpha = 0.081967 Weight values = 0.044348, 0.051224, 0.092643, 0.0896, 0.047055, 0.03864, 0.032217, 0.034381, 0.032329, 0.017, 0.009525, 0.005618, 0.380228, 0.060561, 0.083015, 0.128444, 0.233262, 0.247876, 0.234218, 0.103302, 0.082694, 0.111921, 0.235504, 0.634374, 0.746614 cthqu + FaceBomb = cthquf base_alpha = 0.45045 Weight values = 0.304652, 0.108189, 0.113682, 0.116402, 0.118828, 0.11284, 0.095841, 0.065612, 0.035945, 0.033428, 0.032195, 0.03155, 0.03663, 0.006005, 0.008193, 0.012592, 0.022593, 0.023941, 0.02257, 0.019395, 0.027618, 0.032024, 0.029911, 0.015144, 0.010908 cthquf + donko-mix-hard = cthqufd base_alpha = 0.310559 Weight values = 0.041071, 0.033818, 0.035788, 0.036933, 0.038236, 0.037834, 0.040386, 0.045727, 0.049152, 0.025509, 0.0135, 0.007091, 0.035336, 0.009262, 0.016837, 0.031714, 0.063923, 0.068124, 0.063941, 0.051919, 0.076044, 0.091518, 0.094579, 0.081523, 0.077707 cthqufd + 
OrangePastelV2 = OctaFuzz base_alpha = 0.03012 Weight values = 0.045454, 0.044635, 0.071192, 0.078145, 0.074833, 0.072486, 0.069609, 0.08331, 0.082494, 0.043373, 0.022197, 0.010507, 0.03413, 0.009176, 0.016555, 0.030733, 0.06007, 0.063741, 0.059989, 0.049022, 0.069114, 0.078421, 0.07162, 0.029375, 0.016293 smix 1.12121 + viewer-mix = sv base_alpha = 0.230769 Weight values = 0.395271, 0.35297, 0.359395, 0.382984, 0.448508, 0.468333, 0.478042, 0.475167, 0.419157, 0.446681, 0.469808, 0.48688, 0.230769, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5 sv + 0012-half = sv0 base_alpha = 0.434783 Weight values = 0.096641, 0.097719, 0.100011, 0.105301, 0.118931, 0.122252, 0.120899, 0.11391, 0.15397, 0.407393, 0.526559, 0.587752, 0.071429, 0.326817, 0.315594, 0.291682, 0.229445, 0.220024, 0.229364, 0.30164, 0.31157, 0.309196, 0.281226, 0.145209, 0.089865 sv0 + Null v2.2 = sv0n base_alpha = 0.115385 Weight values = 0.132862, 0.1371, 0.108727, 0.104247, 0.117468, 0.122796, 0.131157, 0.14836, 0.213205, 0.184383, 0.170088, 0.16255, 0.176471, 0.013049, 0.029363, 0.062385, 0.138653, 0.149139, 0.138776, 0.119286, 0.183455, 0.228237, 0.255516, 0.296091, 0.311362 sv0n + school anime = sv0ns base_alpha = 0.103448 Weight values = 0.087455, 0.088646, 0.114848, 0.110151, 0.070954, 0.064852, 0.054146, 0.06643, 0.083591, 0.111871, 0.125259, 0.132157, 0.055556, 0.014513, 0.032747, 0.067662, 0.139412, 0.148332, 0.139177, 0.054834, 0.040531, 0.031203, 0.02771, 0.029855, 0.03066 sv0ns + tlqkfniji7 = sv0nst base_alpha = 0.25641 Weight values = 0.366264, 0.082457, 0.061703, 0.0743, 0.128699, 0.132356, 0.090334, 0.073644, 0.120288, 0.066093, 0.038035, 0.022911, 0.016393, 0.010271, 0.010979, 0.012331, 0.015099, 0.015235, 0.014313, 0.006851, 0.005245, 0.005269, 0.008194, 0.021708, 0.026685 sv0nst + 7th_anime_v3_B = sv0nst7 base_alpha = 0.025 Weight values = 0.270768, 0.082819, 0.089464, 0.099695, 0.122101, 0.11876, 0.079592, 0.057662, 0.096981, 0.056373, 0.033881, 0.021306, 0.016129, 
0.004163, 0.005616, 0.008379, 0.013987, 0.01468, 0.013977, 0.00666, 0.004674, 0.003356, 0.002823, 0.002944, 0.002989 sv0nst7 + Crowbox-Vol.1 = OctaBlend base_alpha = 0.007444 Weight values = 0.036592, 0.028764, 0.033246, 0.051828, 0.096045, 0.099435, 0.054162, 0.020355, 0.01281, 0.027376, 0.035261, 0.039613, 0.005348, 0.029654, 0.026405, 0.020164, 0.00725, 0.005724, 0.007621, 0.016328, 0.014867, 0.025298, 0.058555, 0.172774, 0.208144 OctaFuzz + OctaBlend = HXDC base_alpha = 0.5 Weight values = 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5 ``` | 5498b45dca1785798ed32aeaf0bbe070 |
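Each step in the formula above combines two checkpoints using a `base_alpha` and 25 per-block weight values. The source does not say which tool or exact rule was used, so the sketch below is only one plausible reading: a per-block linear interpolation `(1 - alpha) * A + alpha * B`, with `base_alpha` applied to any parameter that has no block-specific weight. The function names, the dictionary layout and the toy values are all hypothetical, and plain Python lists stand in for weight tensors.

```python
from typing import Dict, List


def blend(a: List[float], b: List[float], alpha: float) -> List[float]:
    """Linear interpolation between two weight vectors: (1 - alpha) * a + alpha * b."""
    return [(1.0 - alpha) * x + alpha * y for x, y in zip(a, b)]


def merge_blocks(model_a: Dict[str, List[float]],
                 model_b: Dict[str, List[float]],
                 base_alpha: float,
                 block_alphas: Dict[str, float]) -> Dict[str, List[float]]:
    """Merge two models block by block, falling back to base_alpha for unlisted blocks."""
    merged = {}
    for name, values_a in model_a.items():
        alpha = block_alphas.get(name, base_alpha)
        merged[name] = blend(values_a, model_b[name], alpha)
    return merged


# Toy two-block "models"; real checkpoints hold tensors, not short lists.
model_a = {"block_0": [0.0, 2.0], "other": [4.0]}
model_b = {"block_0": [1.0, 4.0], "other": [8.0]}
merged = merge_blocks(model_a, model_b, base_alpha=0.25, block_alphas={"block_0": 0.5})
```

Under this reading, the final `OctaFuzz + OctaBlend = HXDC` step, with every weight at 0.5, would be a plain average of the two intermediate models.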

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | Converted weights    | d429446d97f3d2dc66296c6d6050b295 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | Samples All of the images use the following negative prompt and settings. EXIF preserved. ``` Negative prompt: (worst quality, low quality:1.4), EasyNegative, bad anatomy, bad hands, error, missing fingers, extra digit, fewer digits Steps: 28, Sampler: DPM++ 2M Karras, CFG scale: 7, Size: 768x512, Denoising strength: 0.6, Clip skip: 2, ENSD: 31337, Hires upscale: 1.5, Hires steps: 14, Hires upscaler: Latent (nearest-exact) ``` | f00ae2f050ce113a470c76b6e636e870 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | OctaFuzz         | 698183082972fed553a59a7263e1c1c8 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | OctaBlend         | df5ac38000a7ecc534a46c3748f6937d |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | HXDC         | 94889fda16cdb1e29a63c2c9254ae841 |

cc-by-sa-4.0 | ['chinese', 'token-classification', 'pos', 'dependency-parsing'] | false | Model Description This is a RoBERTa model pre-trained on Chinese Wikipedia texts (both simplified and traditional) for POS-tagging and dependency-parsing, derived from [roberta-base-chinese](https://huggingface.co/KoichiYasuoka/roberta-base-chinese). Every word is tagged by [UPOS](https://universaldependencies.org/u/pos/) (Universal Part-Of-Speech). | edf97f6008831bd33e9b40d585d9b20c |

cc-by-sa-4.0 | ['chinese', 'token-classification', 'pos', 'dependency-parsing'] | false | How to Use

```py
from transformers import AutoTokenizer,AutoModelForTokenClassification
tokenizer=AutoTokenizer.from_pretrained("KoichiYasuoka/roberta-base-chinese-upos")
model=AutoModelForTokenClassification.from_pretrained("KoichiYasuoka/roberta-base-chinese-upos")
```

or

```py
import esupar
nlp=esupar.load("KoichiYasuoka/roberta-base-chinese-upos")
``` | b276b37816b601f8f1a7556b8fe34277 |

apache-2.0 | ['generated_from_trainer'] | false | my_awesome_wnut_model This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the wnut_17 dataset. It achieves the following results on the evaluation set: - Loss: 0.2808 - Precision: 0.5314 - Recall: 0.2901 - F1: 0.3753 - Accuracy: 0.9404 | b523b7e5bee091d8d5f3d796eb94fb2d |

apache-2.0 | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |
|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|
| No log | 1.0 | 213 | 0.2960 | 0.3920 | 0.1900 | 0.2559 | 0.9352 |
| No log | 2.0 | 426 | 0.2808 | 0.5314 | 0.2901 | 0.3753 | 0.9404 |

| 84a7ad11b730e6602dc42063d7a574ed |
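The F1 column above is the harmonic mean of the precision and recall columns, which is why it sits closer to the lower recall than to the precision. The short check below recomputes it from the two reported rows. The `f1_score` helper is a hypothetical name used only for this illustration.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; defined as 0 when both are 0."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Precision and recall values taken from the two rows of the table above.
f1_epoch_1 = round(f1_score(0.3920, 0.1900), 4)
f1_epoch_2 = round(f1_score(0.5314, 0.2901), 4)
```

Both recomputed values agree with the reported F1 scores to four decimal places, and the high accuracy next to a low F1 is typical of token classification, where most tokens carry no entity label.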

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | Shortjourney is a Stable Diffusion model that lets you generate Midjourney style images with simple prompts. This model was finetuned over the [22h/vintedois-diffusion](https://huggingface.co/22h/vintedois-diffusion-v0-1) (SD 1.5) model with some Midjourney style images. This allows it to create stunning images without long and tedious prompt engineering. Trigger Phrase: "**sjrny-v1 style**" e.g. "sjrny-v1 style paddington bear" **You can use this model for personal or commercial business. I am not liable for its use or misuse... you are!** The model does portraits extremely well. For landscapes, try using 512x832 or some other landscape aspect ratio. | ba1ee5d9c5003e51f1e77675cb401c69 |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | Examples * Prompt: sjrny-v1 style portrait of a woman, cosmic * CFG scale: 7 * Scheduler: Euler_a * Steps: 30 * Dimensions: 512x512 * Seed: 557913691  * Prompt: sjrny-v1 style paddington bear * CFG scale: 7 * Scheduler: Euler_a * Steps: 30 * Dimensions: 512x512  * Prompt: sjrny-v1 style livingroom, cinematic lighting, 4k, unreal engine * CFG scale: 7 * Scheduler: Euler_a * Steps: 30 * Dimensions: 512x832 * Seed: 638363858  * Prompt: sjrny-v1 style dream landscape, cosmic * CFG scale: 7 * Scheduler: Euler_a * Steps: 30 * Dimensions: 512x832  | 8c6fd1e837f50c9af44643ee516f2ab4 |

apache-2.0 | ['generated_from_trainer'] | false | satya-matury-asr-task-2-hindidata This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 3.0972 - Wer: 0.9942 | f11cc86c0a65e5702e45ecffde33f549 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 50 | 5dc165fe7a89552534fa20de0cb586b8 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 6.6515 | 44.42 | 400 | 3.0972 | 0.9942 | | 819c6e8415b36635106bf7d09e8236cb |
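Word error rate (WER) is the word-level edit distance between the reference transcript and the model output, divided by the number of reference words, so a value of 0.9942 means almost every reference word was transcribed incorrectly. The following is a minimal sketch of that definition, not the evaluation code used for this model; the function name and the sample sentences are invented for illustration:

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1      # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# One substitution ("sat" -> "sit") and one deletion ("the") over 6 words.
print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```

Because insertions count as errors, WER can exceed 1.0 when the hypothesis is much longer than the reference.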

apache-2.0 | ['generated_from_trainer'] | false | resnet-50_finetuned This model is a fine-tuned version of [microsoft/resnet-50](https://huggingface.co/microsoft/resnet-50) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 0.7209 - Precision: 0.3702 - Recall: 0.5 - F1: 0.4254 - Accuracy: 0.7404 | 5890aed2601d22707b411837e707cfc5 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 46 | 0.6599 | 0.3702 | 0.5 | 0.4254 | 0.7404 | | No log | 2.0 | 92 | 0.6725 | 0.3702 | 0.5 | 0.4254 | 0.7404 | | No log | 3.0 | 138 | nan | 0.8714 | 0.5062 | 0.4384 | 0.7436 | | No log | 4.0 | 184 | nan | 0.8714 | 0.5062 | 0.4384 | 0.7436 | | No log | 5.0 | 230 | nan | 0.8714 | 0.5062 | 0.4384 | 0.7436 | | No log | 6.0 | 276 | nan | 0.8714 | 0.5062 | 0.4384 | 0.7436 | | No log | 7.0 | 322 | nan | 0.8714 | 0.5062 | 0.4384 | 0.7436 | | No log | 8.0 | 368 | nan | 0.8714 | 0.5062 | 0.4384 | 0.7436 | | No log | 9.0 | 414 | nan | 0.8714 | 0.5062 | 0.4384 | 0.7436 | | No log | 10.0 | 460 | 0.7209 | 0.3702 | 0.5 | 0.4254 | 0.7404 | | e5c67f496abe3dbb599f20dbe2b3608d |
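The rows reporting 0.3702 / 0.5 / 0.4254 / 0.7404 match what macro-averaged binary metrics look like when a classifier predicts the majority class for every example. This is an inference from the numbers, not something the card states. The sketch below assumes two classes, macro averaging, a majority-class share equal to the reported accuracy, and undefined precision for the never-predicted class counted as 0; the function name is hypothetical:

```python
def majority_class_macro_metrics(majority_share: float):
    """Macro precision, recall and F1 for a classifier that always
    predicts the majority class of a two-class problem."""
    # Majority class: every prediction names it, so precision equals its
    # share of the data and recall is perfect.
    p_major, r_major = majority_share, 1.0
    f1_major = 2 * p_major * r_major / (p_major + r_major)

    # Minority class: never predicted, so all three scores are taken as 0.
    p_minor = r_minor = f1_minor = 0.0

    precision = (p_major + p_minor) / 2
    recall = (r_major + r_minor) / 2
    f1 = (f1_major + f1_minor) / 2
    return precision, recall, f1


precision, recall, f1 = majority_class_macro_metrics(0.7404)
print(round(precision, 4), round(recall, 4), round(f1, 4))
```

If these assumptions hold, the accuracy of 0.7404 reflects the class balance of the evaluation set more than anything the model learned.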

apache-2.0 | ['generated_from_trainer'] | false | t5-small-finetuned-wikisql This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the wiki_sql dataset. It achieves the following results on the evaluation set: - Loss: 0.1246 - Rouge2 Precision: 0.8187 - Rouge2 Recall: 0.7269 - Rouge2 Fmeasure: 0.7629 | 7385d63a8238f931a4dc641d21c12efa |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5 | e4aff22054dbbcae963ea8b7a4b840b5 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge2 Precision | Rouge2 Recall | Rouge2 Fmeasure | |:-------------:|:-----:|:-----:|:---------------:|:----------------:|:-------------:|:---------------:| | 0.1952 | 1.0 | 4049 | 0.1567 | 0.7948 | 0.7057 | 0.7406 | | 0.167 | 2.0 | 8098 | 0.1382 | 0.8092 | 0.7171 | 0.7534 | | 0.1517 | 3.0 | 12147 | 0.1296 | 0.8145 | 0.7228 | 0.7589 | | 0.1433 | 4.0 | 16196 | 0.1260 | 0.8175 | 0.7254 | 0.7617 | | 0.1414 | 5.0 | 20245 | 0.1246 | 0.8187 | 0.7269 | 0.7629 | | c0a0ab3fbdb5ea300501a2ab9cf66c53 |

apache-2.0 | ['generated_from_trainer'] | false | small-mlm-glue-rte-target-glue-wnli This model is a fine-tuned version of [muhtasham/small-mlm-glue-rte](https://huggingface.co/muhtasham/small-mlm-glue-rte) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 7.6097 - Accuracy: 0.0704 | 050a96164d05793d61f285e2b2ee33aa |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.628 | 25.0 | 500 | 2.6799 | 0.1127 | | 0.2976 | 50.0 | 1000 | 5.1702 | 0.0845 | | 0.1272 | 75.0 | 1500 | 6.3920 | 0.0845 | | 0.0709 | 100.0 | 2000 | 7.6097 | 0.0704 | | b4633372da4e2dcf9fcb1ff75c7379e6 |

mit | ['generated_from_trainer'] | false | roberta-base-finetuned-academic This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on the elsevier-oa-cc-by dataset. It achieves the following results on the evaluation set: - Loss: 2.1158 | 4d76ab690adb31c206d57b4aa608011f |
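For a masked language model, the evaluation loss is usually a mean cross-entropy over the masked tokens, and it is often easier to read as perplexity. A small sketch of the conversion, assuming the loss of 2.1158 is measured in nats (natural logarithm), which the card does not state explicitly:

```python
import math


def perplexity(cross_entropy_loss: float) -> float:
    """Convert a mean cross-entropy loss in nats to perplexity."""
    return math.exp(cross_entropy_loss)


# Evaluation loss reported for roberta-base-finetuned-academic.
print(round(perplexity(2.1158), 2))
```

Under that assumption, the reported loss corresponds to a perplexity of roughly 8.3 on masked tokens.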

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5 - mixed_precision_training: Native AMP | de3d7a6c393aaac531852d53f98d5979 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 2.1903 | 0.25 | 1025 | 2.0998 | | 2.1752 | 0.5 | 2050 | 2.1186 | | 2.1864 | 0.75 | 3075 | 2.1073 | | 2.1874 | 1.0 | 4100 | 2.1177 | | 2.1669 | 1.25 | 5125 | 2.1091 | | 2.1859 | 1.5 | 6150 | 2.1212 | | 2.1783 | 1.75 | 7175 | 2.1096 | | 2.1734 | 2.0 | 8200 | 2.0998 | | 2.1712 | 2.25 | 9225 | 2.0972 | | 2.1812 | 2.5 | 10250 | 2.1051 | | 2.1811 | 2.75 | 11275 | 2.1150 | | 2.1826 | 3.0 | 12300 | 2.1097 | | 2.172 | 3.25 | 13325 | 2.1115 | | 2.1745 | 3.5 | 14350 | 2.1098 | | 2.1758 | 3.75 | 15375 | 2.1101 | | 2.1834 | 4.0 | 16400 | 2.1232 | | 2.1836 | 4.25 | 17425 | 2.1052 | | 2.1791 | 4.5 | 18450 | 2.1186 | | 2.172 | 4.75 | 19475 | 2.1039 | | 2.1797 | 5.0 | 20500 | 2.1015 | | 2a78c72c8eabe39bb1b8491a965ab1b3 |

mit | ['text-classification', 'PyTorch', 'Transformers'] | false | fakeBert

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on a [news dataset](https://www.kaggle.com/datasets/clmentbisaillon/fake-and-real-news-dataset) from Kaggle.

| 52846917cb2a5c7c55d96af0107f8436 |

mit | ['text-classification', 'PyTorch', 'Transformers'] | false | Training and evaluation data

Training & Validation: [Fake and real news dataset](https://www.kaggle.com/datasets/clmentbisaillon/fake-and-real-news-dataset)

Testing: [Fake News Detection Challenge KDD 2020](https://www.kaggle.com/competitions/fakenewskdd2020/overview)

| 0663fdf536510daf4422010eef158965 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | dnciic Dreambooth model trained by dnciic with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Sample pictures of this concept: | c3ce63373a77b4e8971c7296a7bb7c44 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert_add_GLUE_Experiment_sst2_96 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE SST2 dataset. It achieves the following results on the evaluation set: - Loss: 0.5220 - Accuracy: 0.7683 | 61fb08902bbc4dd0f3303bb86ef5b031 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.6871 | 1.0 | 264 | 0.6971 | 0.5092 | | 0.6867 | 2.0 | 528 | 0.6968 | 0.5092 | | 0.6865 | 3.0 | 792 | 0.6978 | 0.5092 | | 0.6864 | 4.0 | 1056 | 0.6951 | 0.5092 | | 0.514 | 5.0 | 1320 | 0.5220 | 0.7683 | | 0.3536 | 6.0 | 1584 | 0.5425 | 0.7764 | | 0.297 | 7.0 | 1848 | 0.5412 | 0.7901 | | 0.2677 | 8.0 | 2112 | 0.5740 | 0.7729 | | 0.245 | 9.0 | 2376 | 0.5970 | 0.7741 | | 0.2313 | 10.0 | 2640 | 0.6024 | 0.7856 | | 602f32828b0b2e203d8d47429c024ca5 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | {INSTANCE_NAME} Dreambooth model trained by asp2131 with [buildspace's DreamBooth](https://colab.research.google.com/github/buildspace/diffusers/blob/main/examples/dreambooth/DreamBooth_Stable_Diffusion.ipynb) notebook Build your own using the [AI Avatar project](https://buildspace.so/builds/ai-avatar)! To get started head over to the [project dashboard](https://buildspace.so/p/build-ai-avatars). Sample pictures of this concept:  | 95d8d6f014f5bd68506f725a563460d5 |

cc-by-4.0 | ['generated_from_trainer'] | false | roberta-base-bne-finetuned-amazon_reviews_multi This model is a fine-tuned version of [BSC-TeMU/roberta-base-bne](https://huggingface.co/BSC-TeMU/roberta-base-bne) on the amazon_reviews_multi dataset. It achieves the following results on the evaluation set: - Loss: 0.2557 - Accuracy: 0.9085 | cef45d2d7455558afa9c3f1b36badc12 |

cc-by-4.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.2296 | 1.0 | 125 | 0.2557 | 0.9085 | | b2957888e2e3a6f513fc66bb1445fe0a |

cc-by-4.0 | ['text2text-generation', 'question-generation', 'answer-extraction', 'question-answering', 'text-generation'] | false | mt5-small for Turkish Question Generation Automated question generation and question answering using text-to-text transformers by OBSS AI. ```python from core.api import GenerationAPI generation_api = GenerationAPI('mt5-small-3task-both-tquad2', qg_format='both') ``` | c8e5e9085493f767439b19158e1afa67 |

cc-by-4.0 | ['text2text-generation', 'question-generation', 'answer-extraction', 'question-answering', 'text-generation'] | false | Citation 📜 ``` @article{akyon2022questgen, author = {Akyon, Fatih Cagatay and Cavusoglu, Ali Devrim Ekin and Cengiz, Cemil and Altinuc, Sinan Onur and Temizel, Alptekin}, doi = {10.3906/elk-1300-0632.3914}, journal = {Turkish Journal of Electrical Engineering and Computer Sciences}, title = {{Automated question generation and question answering from Turkish texts}}, url = {https://journals.tubitak.gov.tr/elektrik/vol30/iss5/17/}, year = {2022} } ``` | ca5c7b7c434528d61fb47a4e42571608 |

cc-by-4.0 | ['text2text-generation', 'question-generation', 'answer-extraction', 'question-answering', 'text-generation'] | false | Overview ✔️ **Language model:** mt5-small **Language:** Turkish **Downstream-task:** Extractive QA/QG, Answer Extraction **Training data:** TQuADv2-train **Code:** https://github.com/obss/turkish-question-generation **Paper:** https://journals.tubitak.gov.tr/elektrik/vol30/iss5/17/ | 90fc5acb046405910f9e7600f2ed2332 |

cc-by-4.0 | ['text2text-generation', 'question-generation', 'answer-extraction', 'question-answering', 'text-generation'] | false | Hyperparameters ``` batch_size = 256 n_epochs = 15 base_LM_model = "mt5-small" max_source_length = 512 max_target_length = 64 learning_rate = 1.0e-3 task_list = ["qa", "qg", "ans_ext"] qg_format = "both" ``` | 0e43bf899c11dfe7f8c832fc3d235bda |

cc-by-4.0 | ['text2text-generation', 'question-generation', 'answer-extraction', 'question-answering', 'text-generation'] | false | Usage 🔥 ```python from core.api import GenerationAPI generation_api = GenerationAPI('mt5-small-3task-both-tquad2', qg_format='both') context = """ Bu modelin eğitiminde, Türkçe soru cevap verileri kullanılmıştır. Çalışmada sunulan yöntemle, Türkçe metinlerden otomatik olarak soru ve cevap üretilebilir. Bu proje ile paylaşılan kaynak kodu ile Türkçe Soru Üretme / Soru Cevaplama konularında yeni akademik çalışmalar yapılabilir. Projenin detaylarına paylaşılan Github ve Arxiv linklerinden ulaşılabilir. """ | 82d1c61b88bc9d3afa72f76b7c27831e |

apache-2.0 | ['generated_from_trainer'] | false | Tagged_Uni_500v1_NER_Model_3Epochs_AUGMENTED This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the tagged_uni500v1_wikigold_split dataset. It achieves the following results on the evaluation set: - Loss: 0.2425 - Precision: 0.7049 - Recall: 0.7077 - F1: 0.7063 - Accuracy: 0.9309 | dbb859c95df3f4aeb1ff2a45aa829039 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 163 | 0.2804 | 0.4476 | 0.3886 | 0.4160 | 0.9019 | | No log | 2.0 | 326 | 0.2401 | 0.6803 | 0.6657 | 0.6729 | 0.9265 | | No log | 3.0 | 489 | 0.2425 | 0.7049 | 0.7077 | 0.7063 | 0.9309 | | d98825b96eaccfbe859366708a64b359 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Whisper Small PT - Ariel Azzi This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.2065 - Wer: 14.3447 | ebe1625f4f9295e9522e884706417f1b |
