license | tags | is_nc | readme_section | hash |
|---|---|---|---|---|

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 64 - eval_batch_size: 256 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1.0 - mixed_precision_training: Native AMP | 599670989eac6b0911994970b4e847a5 |
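To make the optimizer settings above concrete, here is a minimal sketch of one Adam update for a single scalar parameter, using the listed learning rate, betas, and epsilon. The function name `adam_step` and the toy parameter and gradient values are illustrative assumptions; the card does not show the actual training script.

```python
import math

def adam_step(param, grad, m, v, t, lr=5e-05, beta1=0.9, beta2=0.999, eps=1e-08):
    """One Adam update for a scalar parameter (illustrative sketch only)."""
    m = beta1 * m + (1 - beta1) * grad          # running mean of gradients
    v = beta2 * v + (1 - beta2) * grad * grad   # running mean of squared gradients
    m_hat = m / (1 - beta1 ** t)                # bias correction for step t
    v_hat = v / (1 - beta2 ** t)
    param = param - lr * m_hat / (math.sqrt(v_hat) + eps)
    return param, m, v

# Hypothetical values: a parameter at 1.0 receiving a gradient of 2.0 on step 1.
new_param, m, v = adam_step(param=1.0, grad=2.0, m=0.0, v=0.0, t=1)
```

On the first step the bias-corrected update is approximately `lr * sign(grad)`, so the parameter moves by about 5e-05 regardless of the gradient's magnitude.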

mit | ['roberta'] | false | Indo-roberta-indonli Indo-roberta-indonli is a natural language inference classifier based on the [Indo-roberta](https://huggingface.co/flax-community/indonesian-roberta-base) model. It was obtained by transfer learning on the [IndoNLI](https://github.com/ir-nlp-csui/indonli/tree/main/data/indonli) dataset. The model was evaluated on the validation, test_lay, and test_expert splits provided in the GitHub repository. The results are shown below. | 97ef7ab2d4bf7677b877cbaaf41c5fca |

mit | ['roberta'] | false | Result | Dataset | Accuracy | F1 | Precision | Recall | |-------------|----------|---------|-----------|---------| | Test Lay | 0.74329 | 0.74075 | 0.74283 | 0.74133 | | Test Expert | 0.6115 | 0.60543 | 0.63924 | 0.61742 | | e2fa1007b9cfce9a18061524da092ff7 |

mit | ['roberta'] | false | Model The model was trained for 5 epochs with batch size 16, learning rate 2e-5, and weight decay 0.01. The metrics after each epoch are shown below. | Epoch | Training Loss | Validation Loss | Accuracy | F1 | Precision | Recall | |-------|---------------|-----------------|----------|----------|-----------|----------| | 1 | 0.942500 | 0.658559 | 0.737369 | 0.735552 | 0.735488 | 0.736679 | | 2 | 0.649200 | 0.645290 | 0.761493 | 0.759593 | 0.762784 | 0.759642 | | 3 | 0.437100 | 0.667163 | 0.766045 | 0.763979 | 0.765740 | 0.763792 | | 4 | 0.282000 | 0.786683 | 0.764679 | 0.761802 | 0.762011 | 0.761684 | | 5 | 0.193500 | 0.925717 | 0.765134 | 0.763127 | 0.763560 | 0.763489 | | 37c4481cc29f2649d83f36be5935b03f |
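In the per-epoch table above, training loss keeps falling while validation loss rises after the second epoch, a pattern usually read as overfitting. The sketch below shows how one might pick a checkpoint from those numbers. The selection criteria are assumptions for illustration; the card does not say which epoch's weights were published.

```python
# (epoch, validation_loss, accuracy) copied from the per-epoch table.
history = [
    (1, 0.658559, 0.737369),
    (2, 0.645290, 0.761493),
    (3, 0.667163, 0.766045),
    (4, 0.786683, 0.764679),
    (5, 0.925717, 0.765134),
]

# Two common selection rules; they disagree on this run.
best_by_loss = min(history, key=lambda row: row[1])[0]
best_by_accuracy = max(history, key=lambda row: row[2])[0]
print(best_by_loss, best_by_accuracy)  # prints "2 3"
```

Lowest validation loss selects epoch 2, while highest accuracy selects epoch 3, so the choice of criterion matters here.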

mit | ['roberta'] | false | As NLI Classifier ```python from transformers import pipeline pretrained_name = "StevenLimcorn/indonesian-roberta-indonli" nlp = pipeline( "text-classification", model=pretrained_name, tokenizer=pretrained_name ) nlp("Amir Sjarifoeddin Harahap lahir di Kota Medan, Sumatera Utara, 27 April 1907. Ia meninggal di Surakarta, Jawa Tengah, pada 19 Desember 1948 dalam usia 41 tahun. </s></s> Amir Sjarifoeddin Harahap masih hidup.") ``` | a9ba84ce9b452f491b02d422da85612a |

mit | ['roberta'] | false | Author Indonesian RoBERTa Base IndoNLI was trained and evaluated by [Steven Limcorn](https://github.com/stevenlimcorn). All computation and development were done on Google Colaboratory using its free GPU access. | d9e30e745671c9877f30acdc26ba3ffd |

mit | ['roberta'] | false | Reference The dataset we used is IndoNLI. ``` @inproceedings{indonli, title = "IndoNLI: A Natural Language Inference Dataset for Indonesian", author = "Mahendra, Rahmad and Aji, Alham Fikri and Louvan, Samuel and Rahman, Fahrurrozi and Vania, Clara", booktitle = "Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing", month = nov, year = "2021", publisher = "Association for Computational Linguistics", } ``` | 59c2364d4a1bfdc665d7f833779429a4 |

other | ['generated_from_trainer'] | false | FoodAds_text-generator_opt350m This model is a fine-tuned version of [facebook/opt-350m](https://huggingface.co/facebook/opt-350m) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 3.9823 | 26d4bc78d2e641db84ad52e76154685c |

other | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 27 | 4.5522 | | No log | 2.0 | 54 | 4.1730 | | No log | 3.0 | 81 | 4.0330 | | No log | 4.0 | 108 | 3.9801 | | No log | 5.0 | 135 | 3.9823 | | 0e1849193915be12875fafd1de506932 |
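A language-modeling loss such as the one above is often easier to interpret as perplexity. The sketch below assumes the reported validation loss is the mean per-token cross-entropy in nats, which is the usual convention for causal language models but is not stated in the card. The helper name `perplexity` is illustrative.

```python
import math

def perplexity(cross_entropy_loss: float) -> float:
    """Perplexity for a mean per-token cross-entropy loss given in nats."""
    return math.exp(cross_entropy_loss)

# Final validation loss from the training results above.
final_ppl = perplexity(3.9823)
```

Under that assumption, the final validation loss corresponds to a perplexity between 53 and 54.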

apache-2.0 | ['generated_from_trainer'] | false | Tagged_Uni_500v9_NER_Model_3Epochs_AUGMENTED This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the tagged_uni500v9_wikigold_split dataset. It achieves the following results on the evaluation set: - Loss: 0.2209 - Precision: 0.7117 - Recall: 0.7177 - F1: 0.7146 - Accuracy: 0.9351 | 1470fbafad58eee4f4a6a297bbd19eab |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 165 | 0.2693 | 0.5953 | 0.5249 | 0.5579 | 0.9126 | | No log | 2.0 | 330 | 0.2203 | 0.6916 | 0.6853 | 0.6884 | 0.9313 | | No log | 3.0 | 495 | 0.2209 | 0.7117 | 0.7177 | 0.7146 | 0.9351 | | bc9cf5e6ca730665e71a8952e012633d |

apache-2.0 | ['generated_from_trainer'] | false | recipe-lr1e05-wd0.02-bs8 This model is a fine-tuned version of [paola-md/recipe-distilroberta-Is](https://huggingface.co/paola-md/recipe-distilroberta-Is) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2781 - Rmse: 0.5273 - Mse: 0.2781 - Mae: 0.4279 | 93830dc9fe2bdb0edee969d4ff21078f |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rmse | Mse | Mae | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:| | 0.2766 | 1.0 | 2490 | 0.2740 | 0.5234 | 0.2740 | 0.4172 | | 0.2738 | 2.0 | 4980 | 0.2783 | 0.5276 | 0.2783 | 0.4297 | | 0.2724 | 3.0 | 7470 | 0.2781 | 0.5273 | 0.2781 | 0.4279 | | d7924ec7ed16c534935c7b46ff71e943 |
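In the results above, the validation loss equals the Mse column in every row, which suggests (but does not confirm) that the model was trained with a mean-squared-error objective. The Rmse column should then be the square root of Mse. This short check recomputes it from the rounded table values.

```python
import math

# (mse, reported_rmse) pairs copied from the training results above.
rows = [(0.2740, 0.5234), (0.2783, 0.5276), (0.2781, 0.5273)]

# Largest gap between sqrt(MSE) and the reported RMSE across all epochs.
max_gap = max(abs(math.sqrt(mse) - rmse) for mse, rmse in rows)
```

The gap stays well below the rounding precision of the table, so the two columns are consistent with each other.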

apache-2.0 | ['generated_from_trainer'] | false | bert-finetuned-ner This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.0575 - Precision: 0.9322 - Recall: 0.9505 - F1: 0.9413 - Accuracy: 0.9860 | cb1d48ba9fcebeb1184463f5949c3fb3 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.2219 | 1.0 | 878 | 0.0716 | 0.9076 | 0.9288 | 0.9181 | 0.9808 | | 0.0453 | 2.0 | 1756 | 0.0597 | 0.9297 | 0.9477 | 0.9386 | 0.9852 | | 0.0239 | 3.0 | 2634 | 0.0575 | 0.9322 | 0.9505 | 0.9413 | 0.9860 | | 9cc5ff54918ee696ec268f723da59d3f |
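The F1 column above is the harmonic mean of the precision and recall columns. The sketch below recomputes it for the final epoch. How the scores were aggregated (for example, entity-level micro-averaging) is not stated in the card, so this only checks that the three reported numbers agree with one another.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Final-epoch precision and recall from the table above.
f1 = f1_score(0.9322, 0.9505)
```

The result matches the reported 0.9413 to within rounding.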

mit | ['spacy', 'token-classification'] | false | This is a multilingual (Catalan & Spanish) spaCy anonymization model for use with BSC's AnonymizationPipeline at https://github.com/TeMU-BSC/AnonymizationPipeline. Install it with `pip install https://huggingface.co/PlanTL-GOB-ES/es_anonimization_core_lg/resolve/main/es_anonimization_core_lg-any-py3-none-any.whl`. The anonymization pipeline is a library for identifying sensitive data and ultimately anonymizing the detected data in Spanish and Catalan user-generated plain text. This is not a standalone model and is meant to work within the pipeline. The model can detect the following entities: `EMAIL`, `FINANCIAL`, `ID`, `LOC`, `MISC`, `ORG`, `PER`, `TELEPHONE`, `VEHICLE`, `ZIP` | Feature | Description | | --- | --- | | **Name** | `ca_anonimization_core_lg` | | **Version** | `1.0.0` | | **spaCy** | `>=3.2.3,<4.0.0` | | **Default Pipeline** | `tok2vec`, `morphologizer`, `parser`, `attribute_ruler`, `lemmatizer`, `ner` | | **Components** | `tok2vec`, `morphologizer`, `parser`, `attribute_ruler`, `lemmatizer`, `ner` | | **Vectors** | 500000 keys, 500000 unique vectors (300 dimensions) | | **Sources** | n/a | | **License** | `MIT` | | **Author** | [Joaquin Silveira](https://github.com/TeMU-BSC/AnonymizationPipeline) | | 847f4a7ce40e56e56a0cf77e4b0e4fed |

mit | ['spacy', 'token-classification'] | false | Label Scheme <details> <summary>View label scheme (322 labels for 3 components)</summary> | Component | Labels | | --- | --- | | **`morphologizer`** | `Definite=Def\|Gender=Masc\|Number=Sing\|POS=DET\|PronType=Art`, `POS=PROPN`, `POS=PUNCT\|PunctSide=Ini\|PunctType=Brck`, `POS=PUNCT\|PunctSide=Fin\|PunctType=Brck`, `Mood=Ind\|Number=Sing\|POS=AUX\|Person=3\|Tense=Pres\|VerbForm=Fin`, `Gender=Masc\|Number=Sing\|POS=VERB\|Tense=Past\|VerbForm=Part`, `Definite=Def\|Gender=Fem\|Number=Sing\|POS=DET\|PronType=Art`, `Gender=Fem\|Number=Sing\|POS=NOUN`, `POS=ADP`, `NumType=Card\|Number=Plur\|POS=NUM`, `Gender=Masc\|Number=Plur\|POS=NOUN`, `Number=Sing\|POS=ADJ`, `POS=CCONJ`, `Gender=Fem\|Number=Sing\|POS=DET\|PronType=Ind`, `NumForm=Digit\|NumType=Card\|POS=NUM`, `NumForm=Digit\|POS=NOUN`, `Gender=Masc\|Number=Plur\|POS=ADJ`, `POS=PUNCT\|PunctType=Comm`, `POS=AUX\|VerbForm=Inf`, `Case=Acc,Dat\|POS=PRON\|Person=3\|PrepCase=Npr\|PronType=Prs\|Reflex=Yes`, `Definite=Def\|Gender=Masc\|Number=Plur\|POS=DET\|PronType=Art`, `POS=PRON\|PronType=Rel`, `Mood=Ind\|Number=Plur\|POS=VERB\|Person=3\|Tense=Imp\|VerbForm=Fin`, `Gender=Fem\|Number=Sing\|POS=DET\|PronType=Art`, `Gender=Fem\|Number=Sing\|POS=DET\|Person=3\|Poss=Yes\|PronType=Prs`, `Definite=Def\|Gender=Fem\|Number=Plur\|POS=DET\|PronType=Art`, `Gender=Fem\|Number=Plur\|POS=NOUN`, `Gender=Fem\|Number=Plur\|POS=ADJ`, `POS=VERB\|VerbForm=Inf`, `Case=Acc,Dat\|Number=Plur\|POS=PRON\|Person=3\|PronType=Prs`, `Number=Plur\|POS=ADJ`, `POS=PUNCT\|PunctType=Peri`, `Number=Sing\|POS=PRON\|PronType=Rel`, `Gender=Masc\|Number=Sing\|POS=NOUN`, `Mood=Imp\|Number=Sing\|POS=VERB\|Person=2\|VerbForm=Fin`, `Gender=Masc\|Number=Plur\|POS=ADJ\|VerbForm=Part`, `POS=SCONJ`, `Mood=Ind\|Number=Plur\|POS=AUX\|Person=3\|Tense=Pres\|VerbForm=Fin`, `Gender=Masc\|Number=Plur\|POS=VERB\|Tense=Past\|VerbForm=Part`, `Definite=Def\|Number=Sing\|POS=DET\|PronType=Art`, 
`Gender=Masc\|Number=Sing\|POS=DET\|PronType=Ind`, `Gender=Fem\|Number=Plur\|POS=ADJ\|VerbForm=Part`, `Gender=Masc\|Number=Sing\|POS=DET\|PronType=Dem`, `POS=VERB\|VerbForm=Ger`, `POS=NOUN`, `Gender=Fem\|NumType=Card\|Number=Sing\|POS=NUM`, `Gender=Fem\|Number=Sing\|POS=ADJ\|VerbForm=Part`, `Gender=Fem\|NumType=Ord\|Number=Plur\|POS=ADJ`, `POS=SYM`, `Gender=Masc\|Number=Sing\|POS=ADJ`, `Gender=Masc\|Number=Sing\|POS=ADJ\|VerbForm=Part`, `Mood=Ind\|Number=Sing\|POS=VERB\|Person=3\|Tense=Pres\|VerbForm=Fin`, `Gender=Fem\|Number=Sing\|POS=DET\|PronType=Dem`, `POS=ADV\|Polarity=Neg`, `POS=ADV`, `Number=Sing\|POS=PRON\|PronType=Dem`, `Number=Sing\|POS=NOUN`, `Mood=Ind\|Number=Plur\|POS=VERB\|Person=3\|Tense=Pres\|VerbForm=Fin`, `Number=Plur\|POS=NOUN`, `Mood=Sub\|Number=Plur\|POS=VERB\|Person=3\|Tense=Imp\|VerbForm=Fin`, `Gender=Fem\|Number=Sing\|POS=ADJ`, `Mood=Sub\|Number=Sing\|POS=VERB\|Person=1\|Tense=Pres\|VerbForm=Fin`, `Gender=Masc\|Number=Sing\|POS=PRON\|PronType=Tot`, `Case=Loc\|POS=PRON\|Person=3\|PronType=Prs`, `Gender=Fem\|NumType=Ord\|Number=Sing\|POS=ADJ`, `Degree=Cmp\|POS=ADV`, `Gender=Fem\|Number=Plur\|POS=DET\|PronType=Art`, `Gender=Fem\|Number=Plur\|POS=DET\|Person=3\|Poss=Yes\|PronType=Prs`, `Mood=Ind\|Number=Sing\|POS=VERB\|Person=3\|Tense=Fut\|VerbForm=Fin`, `Gender=Masc\|NumType=Ord\|Number=Sing\|POS=ADJ`, `Mood=Ind\|Number=Sing\|POS=AUX\|Person=3\|Tense=Fut\|VerbForm=Fin`, `NumType=Card\|POS=NUM`, `Mood=Ind\|Number=Plur\|POS=VERB\|Person=3\|Tense=Fut\|VerbForm=Fin`, `Number=Sing\|POS=PRON\|PronType=Ind`, `Gender=Masc\|Number=Sing\|POS=DET\|PronType=Art`, `Number=Plur\|POS=DET\|PronType=Ind`, `Mood=Sub\|Number=Plur\|POS=VERB\|Person=3\|Tense=Pres\|VerbForm=Fin`, `Gender=Masc\|Number=Plur\|POS=DET\|PronType=Dem`, `Mood=Ind\|Number=Plur\|POS=AUX\|Person=3\|Tense=Fut\|VerbForm=Fin`, `Gender=Masc\|NumType=Card\|Number=Sing\|POS=NUM`, `Mood=Sub\|Number=Plur\|POS=AUX\|Person=3\|Tense=Pres\|VerbForm=Fin`, 
`Case=Acc\|Gender=Fem\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs`, `Number=Sing\|POS=DET\|PronType=Ind`, `POS=PUNCT`, `Number=Sing\|POS=DET\|PronType=Rel`, `Case=Gen\|POS=PRON\|Person=3\|PronType=Prs`, `Gender=Fem\|NumType=Card\|Number=Plur\|POS=NUM`, `Mood=Ind\|Number=Plur\|POS=VERB\|Person=1\|Tense=Pres\|VerbForm=Fin`, `POS=DET\|PronType=Ind`, `POS=AUX`, `Case=Acc\|Gender=Neut\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs`, `Case=Acc,Dat\|Number=Plur\|POS=PRON\|Person=1\|PronType=Prs`, `Degree=Cmp\|Number=Sing\|POS=ADJ`, `Number=Sing\|POS=VERB`, `Gender=Masc\|Number=Plur\|POS=PRON\|PronType=Ind`, `Gender=Fem\|Number=Plur\|POS=DET\|PronType=Dem`, `Gender=Masc\|Number=Plur\|POS=DET\|PronType=Art`, `Gender=Masc\|Number=Plur\|POS=DET\|Person=3\|Poss=Yes\|PronType=Prs`, `Case=Acc\|Gender=Fem,Masc\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs`, `Gender=Fem\|Number=Sing\|POS=VERB\|Tense=Past\|VerbForm=Part`, `Gender=Masc\|Number=Sing\|POS=PRON\|PronType=Ind`, `Gender=Fem\|Number=Plur\|POS=PRON\|PronType=Ind`, `Mood=Sub\|Number=Sing\|POS=VERB\|Person=3\|Tense=Pres\|VerbForm=Fin`, `Number=Plur\|POS=PRON\|PronType=Rel`, `Gender=Masc\|Number=Plur\|POS=DET\|PronType=Int`, `Mood=Ind\|Number=Plur\|POS=AUX\|Person=3\|Tense=Imp\|VerbForm=Fin`, `AdvType=Tim\|POS=NOUN`, `Gender=Masc\|Number=Plur\|POS=DET\|PronType=Ind`, `Gender=Fem\|Number=Plur\|POS=DET\|PronType=Ind`, `Gender=Masc\|Number=Sing\|POS=DET\|PronType=Int`, `Mood=Cnd\|Number=Sing\|POS=AUX\|Person=3\|VerbForm=Fin`, `Mood=Ind\|Number=Sing\|POS=VERB\|Person=3\|Tense=Imp\|VerbForm=Fin`, `Number=Sing\|POS=DET\|PronType=Art`, `Gender=Masc\|Number=Sing\|POS=DET\|Person=3\|Poss=Yes\|PronType=Prs`, `Case=Acc\|Gender=Masc\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs`, `Gender=Masc\|Number=Sing\|POS=PRON\|PronType=Int`, `POS=PUNCT\|PunctType=Semi`, `Mood=Cnd\|Number=Plur\|POS=AUX\|Person=3\|VerbForm=Fin`, `Case=Dat\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs`, `Gender=Masc\|NumType=Card\|Number=Plur\|POS=NUM`, 
`Mood=Ind\|Number=Sing\|POS=AUX\|Person=3\|Tense=Imp\|VerbForm=Fin`, `Gender=Fem\|Number=Sing\|POS=PRON\|PronType=Ind`, `Mood=Sub\|Number=Sing\|POS=AUX\|Person=3\|Tense=Imp\|VerbForm=Fin`, `NumForm=Digit\|POS=SYM`, `Gender=Masc\|Number=Sing\|POS=AUX\|Tense=Past\|VerbForm=Part`, `Gender=Fem\|Number=Sing\|POS=PRON\|PronType=Int`, `Gender=Fem\|Number=Sing\|POS=DET\|PronType=Int`, `POS=PRON\|PronType=Int`, `Gender=Fem\|Number=Plur\|POS=DET\|PronType=Int`, `Mood=Cnd\|Number=Sing\|POS=VERB\|Person=3\|VerbForm=Fin`, `Mood=Cnd\|Number=Plur\|POS=VERB\|Person=3\|VerbForm=Fin`, `POS=PART`, `Gender=Fem\|Number=Sing\|POS=PRON\|PronType=Dem`, `Gender=Masc\|Number=Sing\|POS=DET\|PronType=Tot`, `Gender=Masc\|Number=Plur\|POS=PRON\|PronType=Dem`, `POS=ADJ`, `Gender=Masc\|Number=Plur\|POS=PRON\|Person=3\|PronType=Prs`, `Degree=Cmp\|Number=Plur\|POS=ADJ`, `POS=PUNCT\|PunctType=Dash`, `Mood=Sub\|Number=Sing\|POS=AUX\|Person=3\|Tense=Pres\|VerbForm=Fin`, `Case=Acc\|Gender=Fem\|Number=Plur\|POS=PRON\|Person=3\|PronType=Prs`, `Mood=Sub\|Number=Sing\|POS=VERB\|Person=3\|Tense=Imp\|VerbForm=Fin`, `Gender=Fem\|Number=Plur\|POS=VERB\|Tense=Past\|VerbForm=Part`, `Gender=Fem\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs`, `Gender=Masc\|POS=NOUN`, `Mood=Ind\|Number=Sing\|POS=VERB\|Person=3\|Tense=Past\|VerbForm=Fin`, `Gender=Fem\|Number=Plur\|POS=PRON\|PronType=Int`, `Gender=Masc\|NumType=Ord\|Number=Plur\|POS=ADJ`, `Mood=Ind\|Number=Plur\|POS=AUX\|Person=1\|Tense=Fut\|VerbForm=Fin`, `POS=PUNCT\|PunctType=Colo`, `Gender=Masc\|NumType=Card\|POS=NUM`, `Gender=Masc\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs`, `Number=Sing\|POS=PRON\|PronType=Int`, `POS=PUNCT\|PunctType=Quot`, `Mood=Imp\|Number=Sing\|POS=VERB\|Person=3\|VerbForm=Fin`, `Gender=Fem\|Number=Sing\|Number[psor]=Plur\|POS=DET\|Person=1\|Poss=Yes\|PronType=Prs`, `Gender=Masc\|Number=Sing\|Number[psor]=Plur\|POS=DET\|Person=1\|Poss=Yes\|PronType=Prs`, `Mood=Ind\|Number=Plur\|POS=VERB\|Person=1\|Tense=Fut\|VerbForm=Fin`, 
`POS=AUX\|VerbForm=Ger`, `Gender=Fem\|Number=Plur\|POS=PRON\|Person=3\|PronType=Prs`, `Mood=Imp\|Number=Sing\|POS=AUX\|Person=3\|VerbForm=Fin`, `Number=Plur\|POS=PRON\|PronType=Ind`, `Gender=Masc\|Number=Sing\|POS=PRON\|PronType=Dem`, `Case=Acc,Dat\|Number=Sing\|POS=PRON\|Person=2\|Polite=Infm\|PrepCase=Npr\|PronType=Prs`, `Gender=Masc\|Number=Plur\|POS=PRON\|PronType=Int`, `Mood=Ind\|Number=Plur\|POS=AUX\|Person=1\|Tense=Pres\|VerbForm=Fin`, `NumForm=Digit\|NumType=Frac\|POS=NUM`, `POS=VERB`, `Gender=Fem\|Number=Plur\|POS=PRON\|PronType=Dem`, `Gender=Fem\|POS=NOUN`, `Case=Acc,Dat\|Number=Sing\|POS=PRON\|Person=1\|PrepCase=Npr\|PronType=Prs`, `Mood=Sub\|Number=Plur\|POS=VERB\|Person=2\|Tense=Pres\|VerbForm=Fin`, `Mood=Ind\|Number=Plur\|POS=AUX\|Person=2\|Tense=Fut\|VerbForm=Fin`, `Mood=Sub\|Number=Plur\|POS=AUX\|Person=1\|Tense=Pres\|VerbForm=Fin`, `Mood=Sub\|Number=Plur\|POS=AUX\|Person=3\|Tense=Imp\|VerbForm=Fin`, `Number=Plur\|POS=PRON\|Person=1\|PronType=Prs`, `Mood=Ind\|Number=Sing\|POS=VERB\|Person=1\|Tense=Pres\|VerbForm=Fin`, `Case=Nom\|Number=Sing\|POS=PRON\|Person=2\|Polite=Infm\|PronType=Prs`, `POS=X`, `Mood=Cnd\|Number=Plur\|POS=AUX\|Person=1\|VerbForm=Fin`, `Number=Sing\|POS=DET\|PronType=Dem`, `POS=DET`, `Mood=Ind\|Number=Sing\|POS=VERB\|Person=1\|Tense=Fut\|VerbForm=Fin`, `Mood=Ind\|Number=Sing\|POS=AUX\|Person=1\|Tense=Pres\|VerbForm=Fin`, `POS=DET\|PronType=Art`, `Gender=Masc\|Number=Sing\|POS=PRON\|Person=3\|Poss=Yes\|PronType=Prs`, `NumType=Ord\|Number=Sing\|POS=ADJ`, `Gender=Fem\|Number=Sing\|POS=AUX\|Tense=Past\|VerbForm=Part`, `Number=Plur\|Number[psor]=Plur\|POS=DET\|Person=1\|Poss=Yes\|PronType=Prs`, `Gender=Fem\|Number=Plur\|POS=AUX\|Tense=Past\|VerbForm=Part`, `Gender=Masc\|Number=Plur\|POS=AUX\|Tense=Past\|VerbForm=Part`, `Number=Plur\|POS=PRON\|PronType=Dem`, `Mood=Imp\|Number=Plur\|POS=VERB\|Person=1\|VerbForm=Fin`, `POS=PRON\|PronType=Ind`, `Mood=Ind\|Number=Sing\|POS=VERB\|Person=2\|Tense=Pres\|VerbForm=Fin`, 
`Mood=Imp\|Number=Plur\|POS=VERB\|Person=3\|VerbForm=Fin`, `Case=Nom\|Number=Sing\|POS=PRON\|Person=1\|PronType=Prs`, `Case=Acc\|Number=Sing\|POS=PRON\|Person=1\|PrepCase=Pre\|PronType=Prs`, `Mood=Ind\|Number=Sing\|POS=AUX\|Person=2\|Tense=Pres\|VerbForm=Fin`, `Mood=Ind\|Number=Plur\|POS=VERB\|Person=1\|Tense=Imp\|VerbForm=Fin`, `POS=PUNCT\|PunctSide=Fin\|PunctType=Qest`, `NumForm=Digit\|NumType=Ord\|POS=ADJ`, `Case=Acc\|POS=PRON\|Person=3\|PrepCase=Pre\|PronType=Prs\|Reflex=Yes`, `NumForm=Digit\|NumType=Frac\|POS=SYM`, `Mood=Ind\|Number=Plur\|POS=VERB\|Person=2\|Tense=Pres\|VerbForm=Fin`, `Gender=Masc\|Number=Sing\|Number[psor]=Sing\|POS=DET\|Person=2\|Poss=Yes\|PronType=Prs`, `Gender=Masc\|Number=Plur\|POS=PRON\|Person=3\|Poss=Yes\|PronType=Prs`, `Mood=Sub\|Number=Plur\|POS=VERB\|Person=1\|Tense=Pres\|VerbForm=Fin`, `POS=PUNCT\|PunctSide=Ini\|PunctType=Qest`, `NumType=Card\|Number=Sing\|POS=NUM`, `Foreign=Yes\|POS=PRON\|PronType=Int`, `Foreign=Yes\|Mood=Ind\|POS=VERB\|VerbForm=Fin`, `Foreign=Yes\|POS=ADP`, `Gender=Masc\|Number=Sing\|POS=PROPN`, `POS=PUNCT\|PunctSide=Ini\|PunctType=Excl`, `POS=PUNCT\|PunctSide=Fin\|PunctType=Excl`, `Mood=Cnd\|Number=Sing\|POS=AUX\|Person=1\|VerbForm=Fin`, `Number=Plur\|POS=PRON\|Person=2\|Polite=Form\|PronType=Prs`, `Mood=Sub\|POS=AUX\|Person=1\|Tense=Imp\|VerbForm=Fin`, `POS=PUNCT\|PunctSide=Ini\|PunctType=Comm`, `POS=PUNCT\|PunctSide=Fin\|PunctType=Comm`, `Number=Plur\|POS=PRON\|Person=2\|PronType=Prs`, `Mood=Ind\|Number=Plur\|POS=AUX\|Person=2\|Tense=Pres\|VerbForm=Fin`, `Case=Acc,Dat\|Number=Plur\|POS=PRON\|Person=2\|PronType=Prs`, `Mood=Cnd\|Number=Sing\|POS=VERB\|Person=1\|VerbForm=Fin`, `Mood=Cnd\|Number=Plur\|POS=VERB\|Person=1\|VerbForm=Fin`, `Mood=Ind\|Number=Plur\|POS=AUX\|Person=1\|Tense=Imp\|VerbForm=Fin`, `Gender=Masc\|Number=Plur\|Number[psor]=Sing\|POS=DET\|Person=1\|Poss=Yes\|PronType=Prs`, `Definite=Ind\|Gender=Masc\|Number=Sing\|POS=DET\|PronType=Art`, 
`Number=Sing\|POS=PRON\|Person=2\|Polite=Form\|PronType=Prs`, `Gender=Masc\|Number=Sing\|Number[psor]=Sing\|POS=DET\|Person=1\|Poss=Yes\|PronType=Prs`, `Mood=Ind\|Number=Sing\|POS=VERB\|Person=1\|Tense=Imp\|VerbForm=Fin`, `POS=VERB\|Tense=Past\|VerbForm=Part`, `Mood=Imp\|Number=Plur\|POS=AUX\|Person=3\|VerbForm=Fin`, `Case=Nom\|POS=PRON\|Person=3\|PronType=Prs`, `Mood=Ind\|Number=Sing\|POS=AUX\|Person=3\|Tense=Past\|VerbForm=Fin`, `Gender=Fem\|Number=Sing\|POS=PRON\|Person=3\|Poss=Yes\|PronType=Prs`, `Gender=Masc\|Number=Sing\|POS=PRON\|PronType=Rel`, `Definite=Ind\|Number=Sing\|POS=DET\|PronType=Art`, `Gender=Masc\|Number=Sing\|Number[psor]=Plur\|POS=PRON\|Person=1\|Poss=Yes\|PronType=Prs`, `Number=Plur\|Number[psor]=Plur\|POS=PRON\|Person=1\|Poss=Yes\|PronType=Prs`, `POS=AUX\|Tense=Past\|VerbForm=Part`, `Gender=Fem\|NumType=Card\|POS=NUM`, `Mood=Ind\|Number=Sing\|POS=AUX\|Person=1\|Tense=Imp\|VerbForm=Fin`, `Mood=Sub\|Number=Sing\|POS=VERB\|Person=1\|Tense=Imp\|VerbForm=Fin`, `Gender=Fem\|Number=Plur\|POS=PRON\|Person=3\|Poss=Yes\|PronType=Prs`, `Mood=Ind\|Number=Sing\|POS=AUX\|Person=1\|Tense=Fut\|VerbForm=Fin`, `Mood=Ind\|Number=Plur\|POS=AUX\|Person=3\|Tense=Past\|VerbForm=Fin`, `AdvType=Tim\|Degree=Cmp\|POS=ADV`, `Case=Acc\|Number=Sing\|POS=PRON\|Person=2\|Polite=Infm\|PrepCase=Pre\|PronType=Prs`, `POS=DET\|PronType=Rel`, `Definite=Ind\|Gender=Fem\|Number=Plur\|POS=DET\|PronType=Art`, `Mood=Ind\|Number=Plur\|POS=VERB\|Person=2\|Tense=Fut\|VerbForm=Fin`, `POS=INTJ`, `Mood=Sub\|Number=Sing\|POS=AUX\|Person=1\|Tense=Pres\|VerbForm=Fin`, `POS=VERB\|VerbForm=Fin`, `Mood=Ind\|Number=Plur\|POS=VERB\|Person=3\|Tense=Past\|VerbForm=Fin`, `Definite=Ind\|Gender=Fem\|Number=Sing\|POS=DET\|PronType=Art`, `Mood=Sub\|Number=Plur\|POS=AUX\|Person=1\|Tense=Imp\|VerbForm=Fin`, `Gender=Fem\|Number=Sing\|Number[psor]=Sing\|POS=PRON\|Person=3\|Poss=Yes\|PronType=Prs`, `Mood=Sub\|Number=Sing\|POS=VERB\|Person=2\|Tense=Pres\|VerbForm=Fin`, 
`Case=Acc\|POS=PRON\|Person=3\|PronType=Prs\|Reflex=Yes`, `Foreign=Yes\|POS=NOUN`, `Foreign=Yes\|Mood=Ind\|Number=Sing\|POS=AUX\|Person=3\|Tense=Pres\|VerbForm=Fin`, `Foreign=Yes\|Gender=Masc\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs`, `Foreign=Yes\|POS=SCONJ`, `Foreign=Yes\|Gender=Fem\|Number=Sing\|POS=DET\|PronType=Art`, `Gender=Masc\|POS=SYM`, `Gender=Fem\|Number=Sing\|Number[psor]=Sing\|POS=DET\|Person=2\|Poss=Yes\|PronType=Prs`, `Number=Sing\|POS=DET\|Person=3\|Poss=Yes\|PronType=Prs`, `Gender=Masc\|Number=Plur\|Number[psor]=Sing\|POS=DET\|Person=2\|Poss=Yes\|PronType=Prs`, `Gender=Fem\|Number=Sing\|POS=PROPN`, `Mood=Sub\|Number=Plur\|POS=VERB\|Person=1\|Tense=Imp\|VerbForm=Fin`, `Definite=Def\|Foreign=Yes\|Gender=Masc\|Number=Sing\|POS=DET\|PronType=Art`, `Foreign=Yes\|POS=VERB`, `Foreign=Yes\|POS=ADJ`, `Foreign=Yes\|POS=DET`, `Foreign=Yes\|POS=ADV`, `POS=PUNCT\|PunctSide=Fin\|Punta d'aignctType=Brck`, `Degree=Cmp\|POS=ADJ`, `AdvType=Tim\|POS=SYM`, `Number=Plur\|POS=DET\|PronType=Dem`, `Mood=Ind\|Number=Sing\|POS=VERB\|Person=2\|Tense=Fut\|VerbForm=Fin` | | **`parser`** | `ROOT`, `acl`, `advcl`, `advmod`, `amod`, `appos`, `aux`, `case`, `cc`, `ccomp`, `compound`, `conj`, `cop`, `csubj`, `dep`, `det`, `expl:pass`, `fixed`, `flat`, `iobj`, `mark`, `nmod`, `nsubj`, `nummod`, `obj`, `obl`, `parataxis`, `punct`, `xcomp` | | **`ner`** | `EMAIL`, `FINANCIAL`, `ID`, `LOC`, `MISC`, `ORG`, `PER`, `TELEPHONE`, `VEHICLE`, `ZIP` | </details> | b7b7e508bee065c6187b7c4730647735 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-clinc This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the clinc_oos dataset. It achieves the following results on the evaluation set: - Loss: 0.7710 - Accuracy: 0.9177 | 6cb771b18d2c958f0d2a42ae0d9e8c4f |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 4.2892 | 1.0 | 318 | 3.2830 | 0.7432 | | 2.627 | 2.0 | 636 | 1.8728 | 0.8403 | | 1.5429 | 3.0 | 954 | 1.1554 | 0.8910 | | 1.0089 | 4.0 | 1272 | 0.8530 | 0.9129 | | 0.7938 | 5.0 | 1590 | 0.7710 | 0.9177 | | a0f4dc6becf4737060cf4d95ab059355 |

mit | ['generated_from_trainer'] | false | japanese-roberta-base-finetuned-wikitext2 This model is a fine-tuned version of [rinna/japanese-roberta-base](https://huggingface.co/rinna/japanese-roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 3.2302 | 7a96652850ad486895912c6f008ba08c |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 18 | 3.4128 | | No log | 2.0 | 36 | 3.1374 | | No log | 3.0 | 54 | 3.2285 | | 184fe45b42d06fa0198a0946feb8bd82 |

mit | ['generated_from_trainer'] | false | ru_t5absum_for_legaltext This model is a fine-tuned version of [cointegrated/rut5-base-absum](https://huggingface.co/cointegrated/rut5-base-absum) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: nan - Rouge1: 1.4175 - Rouge2: 0.0 - Rougel: 1.4476 - Rougelsum: 1.4302 - Gen Len: 17.2133 | d564c7248721733796646256066fd59f |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:|:---------:|:-------:| | No log | 1.0 | 157 | nan | 1.4175 | 0.0 | 1.4476 | 1.4302 | 17.2133 | | No log | 2.0 | 314 | nan | 1.4175 | 0.0 | 1.4476 | 1.4302 | 17.2133 | | No log | 3.0 | 471 | nan | 1.4175 | 0.0 | 1.4476 | 1.4302 | 17.2133 | | 0.0 | 4.0 | 628 | nan | 1.4175 | 0.0 | 1.4476 | 1.4302 | 17.2133 | | 0.0 | 5.0 | 785 | nan | 1.4175 | 0.0 | 1.4476 | 1.4302 | 17.2133 | | 0.0 | 6.0 | 942 | nan | 1.4175 | 0.0 | 1.4476 | 1.4302 | 17.2133 | | 0.0 | 7.0 | 1099 | nan | 1.4175 | 0.0 | 1.4476 | 1.4302 | 17.2133 | | 0.0 | 8.0 | 1256 | nan | 1.4175 | 0.0 | 1.4476 | 1.4302 | 17.2133 | | 0.0 | 9.0 | 1413 | nan | 1.4175 | 0.0 | 1.4476 | 1.4302 | 17.2133 | | 0.0 | 10.0 | 1570 | nan | 1.4175 | 0.0 | 1.4476 | 1.4302 | 17.2133 | | 7938bb36d9227a392d30ee355b0a4805 |

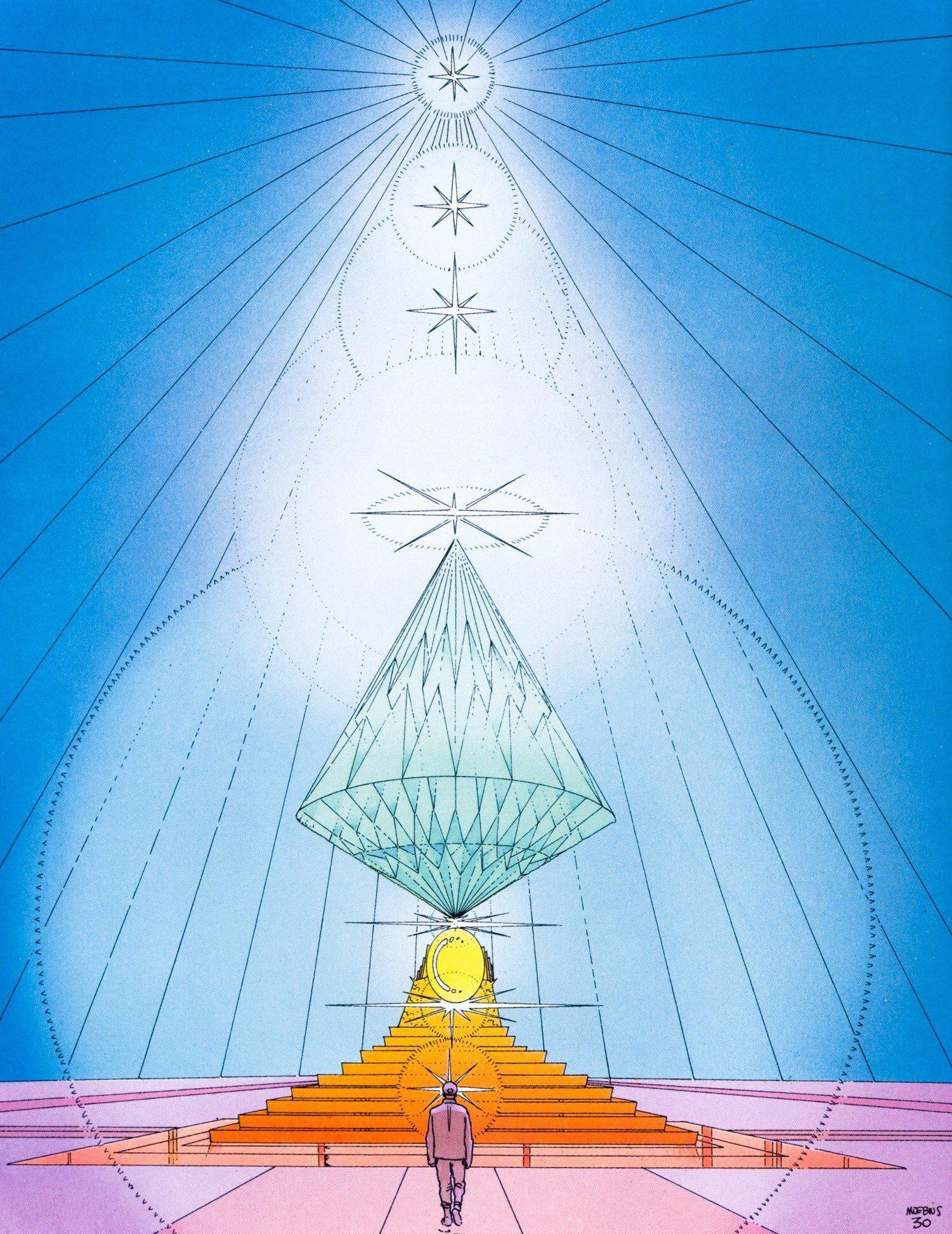

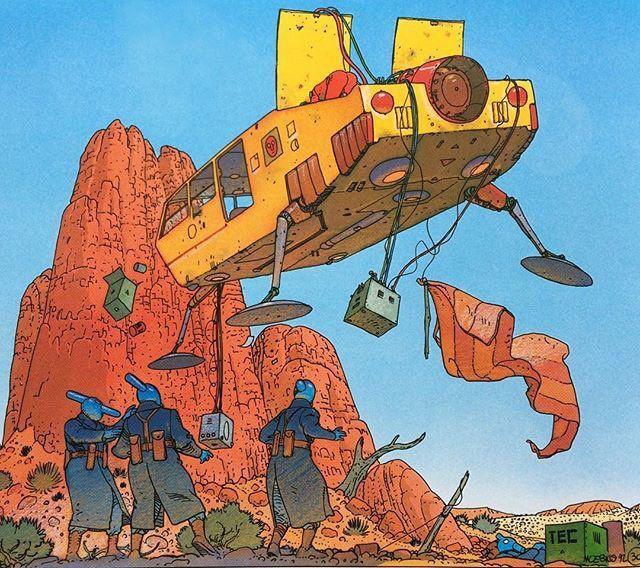

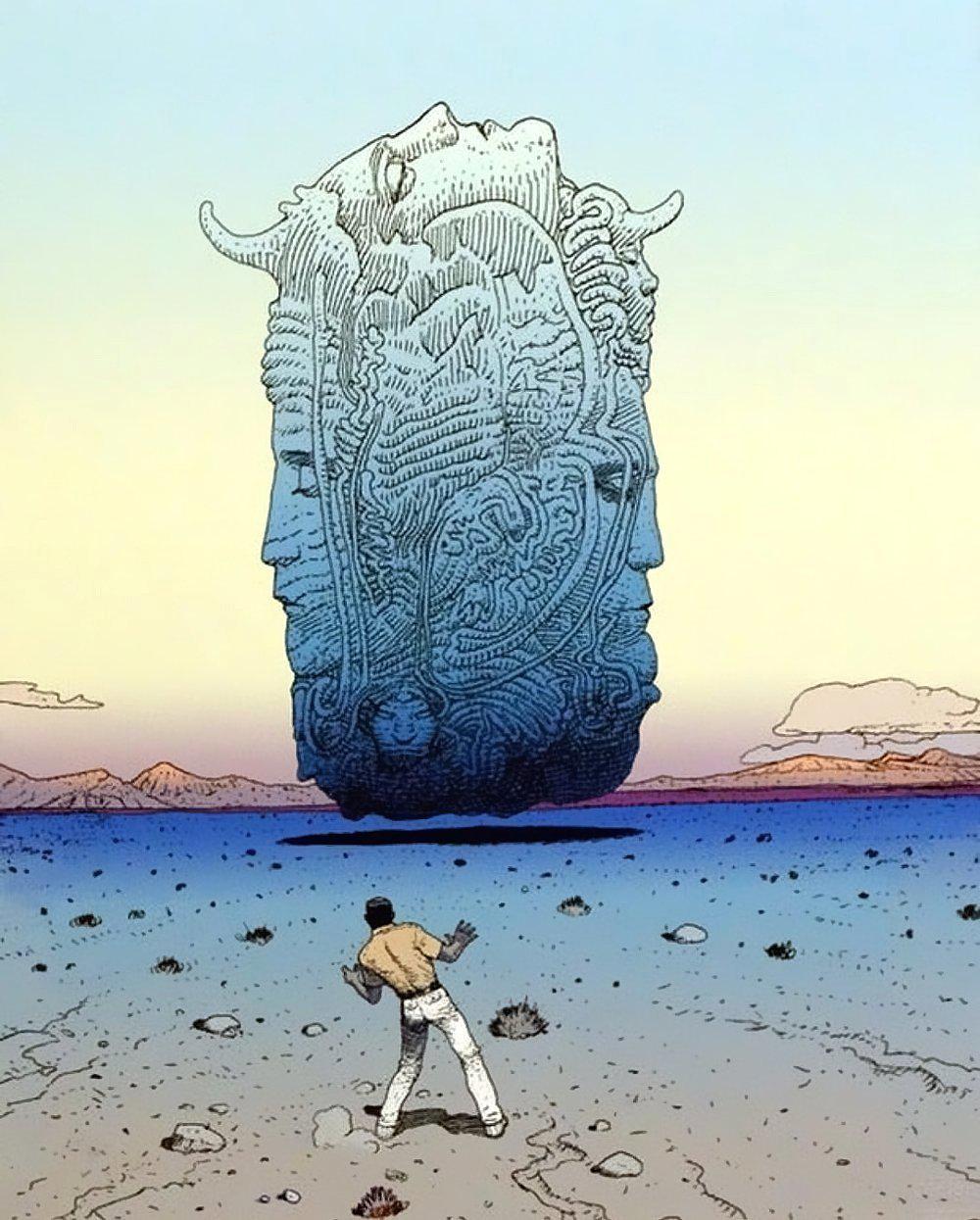

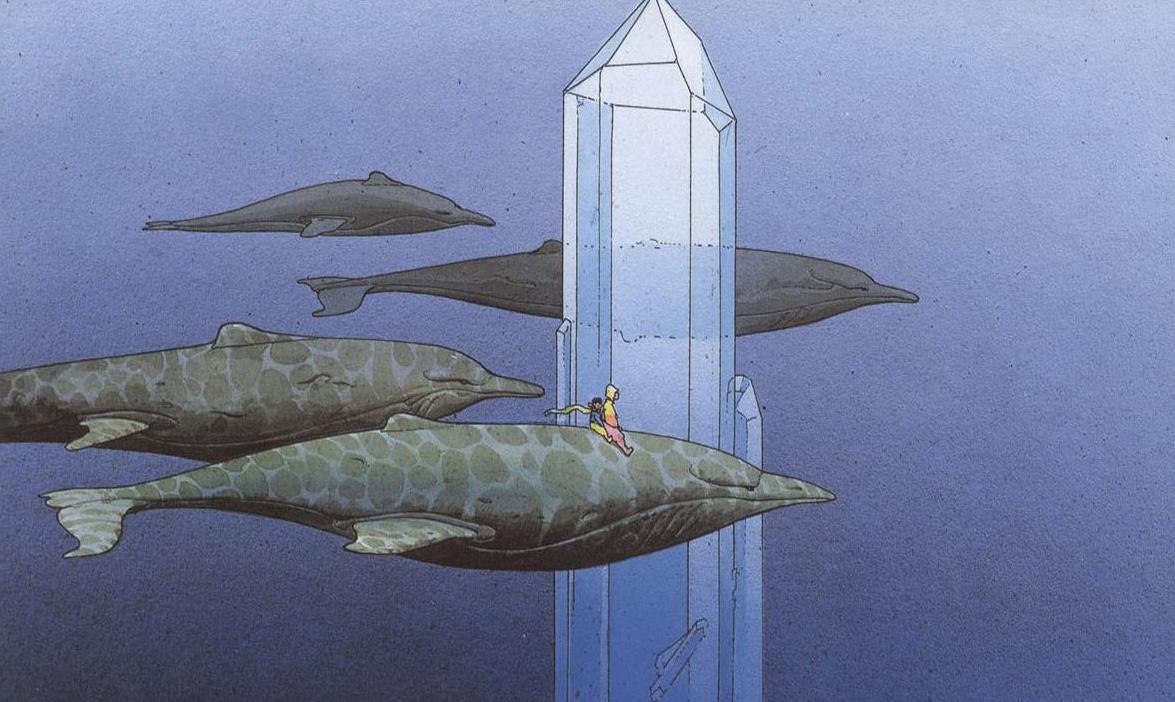

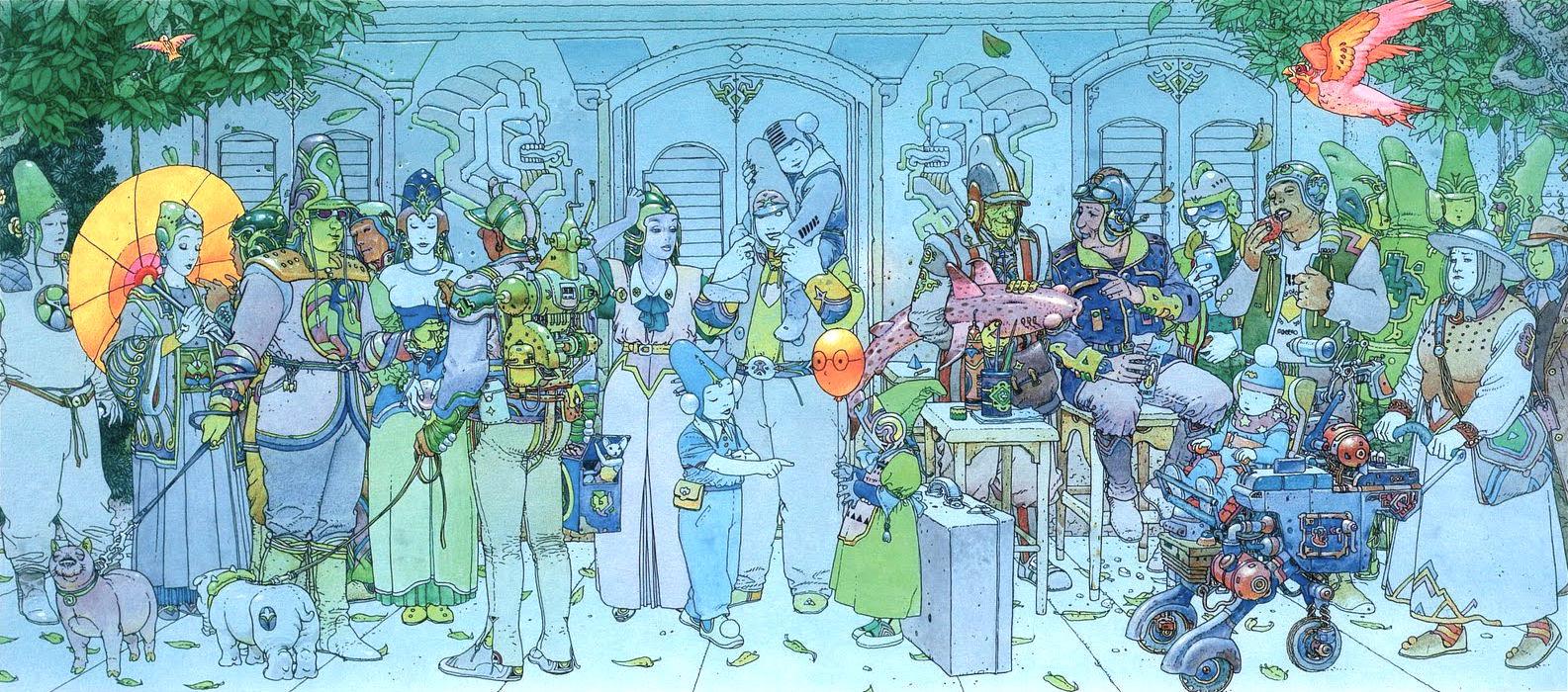

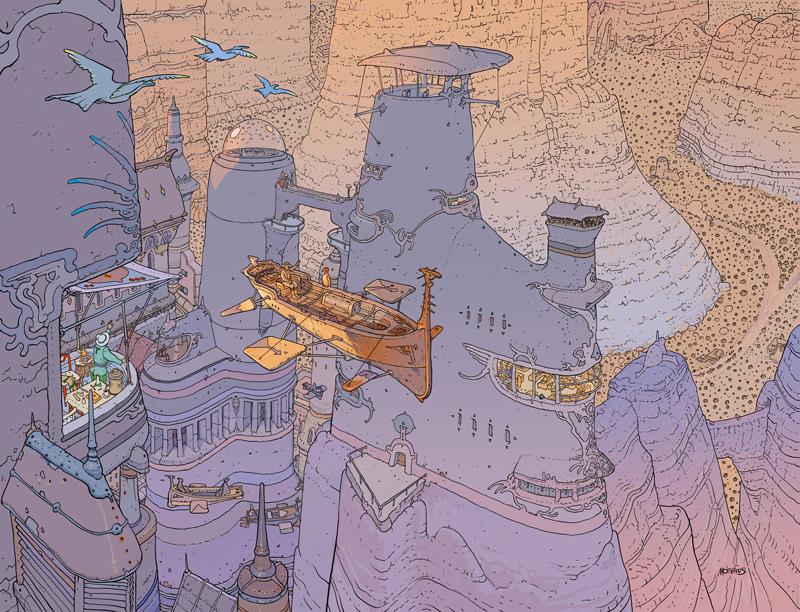

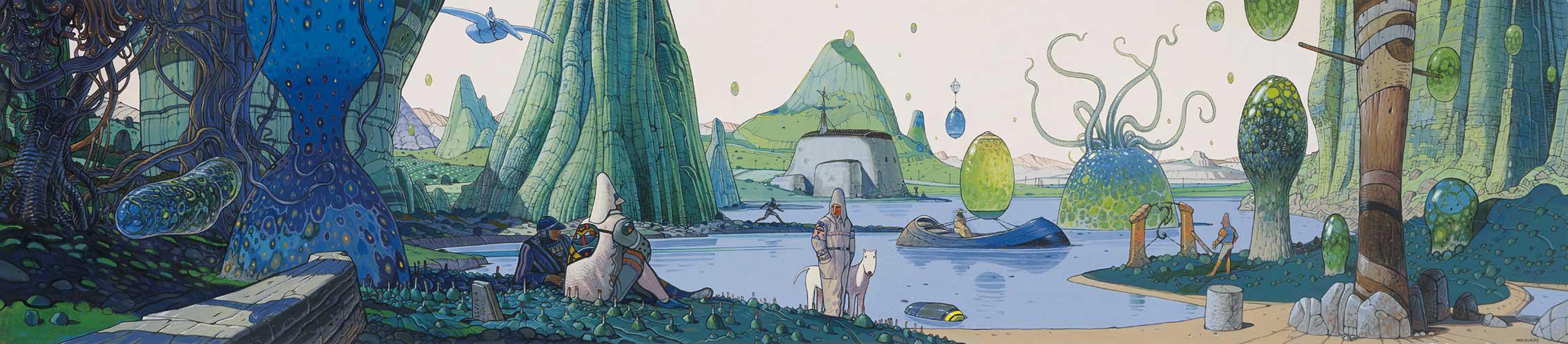

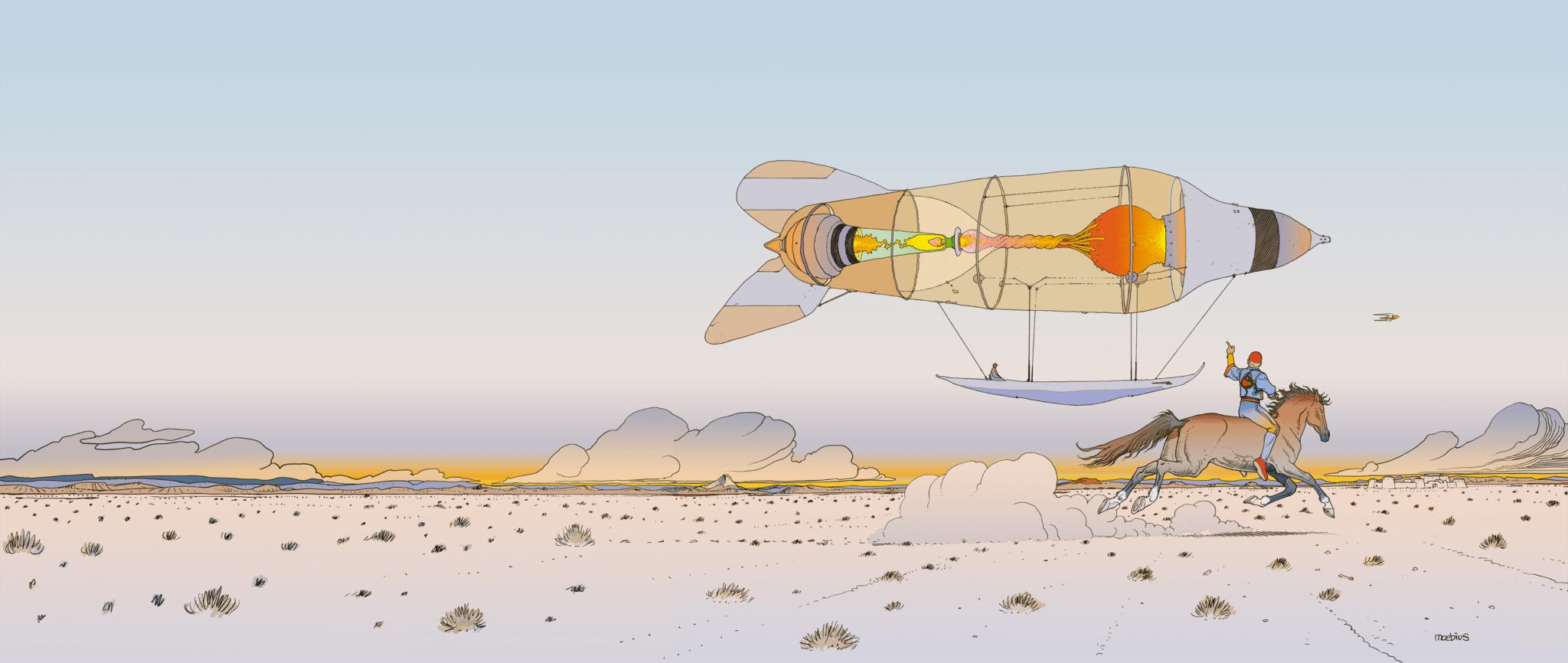

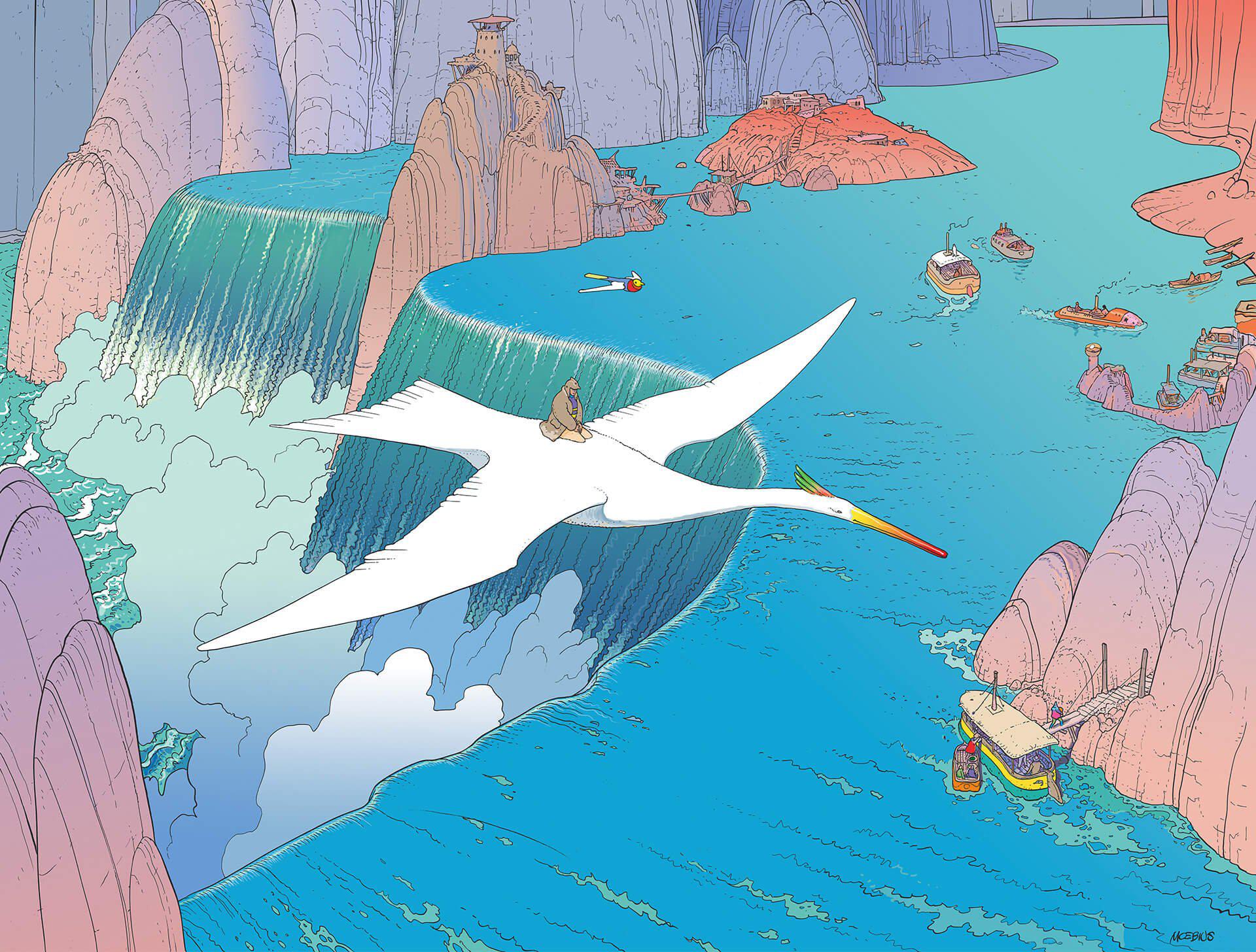

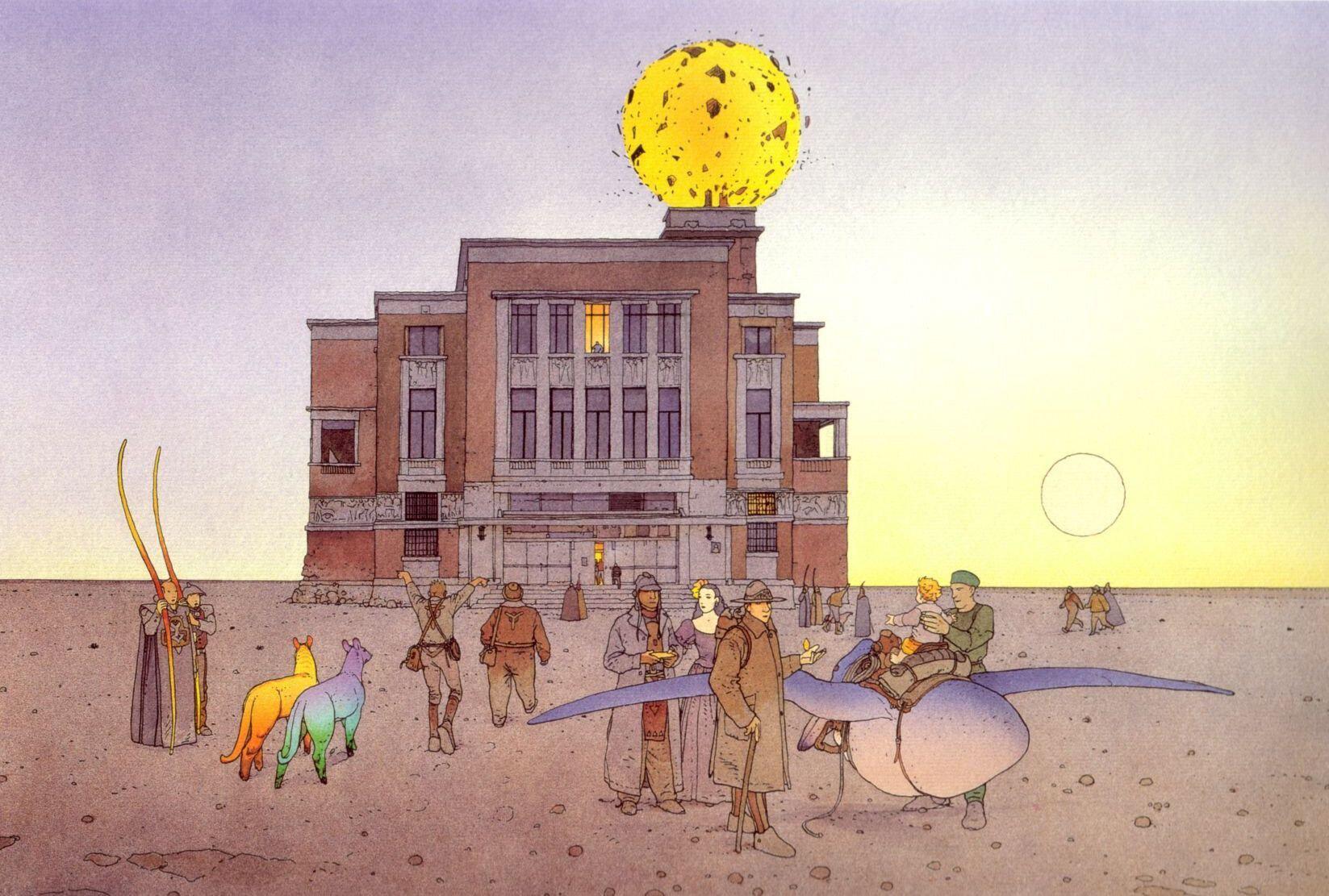

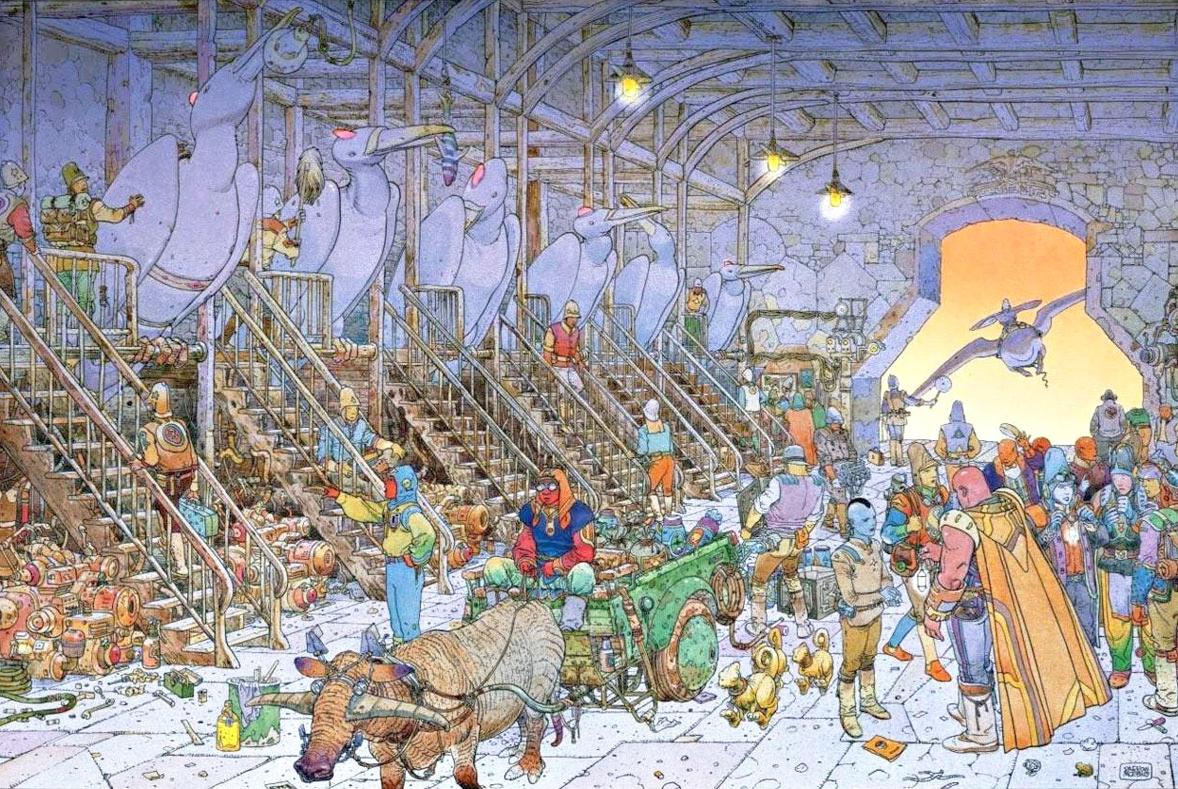

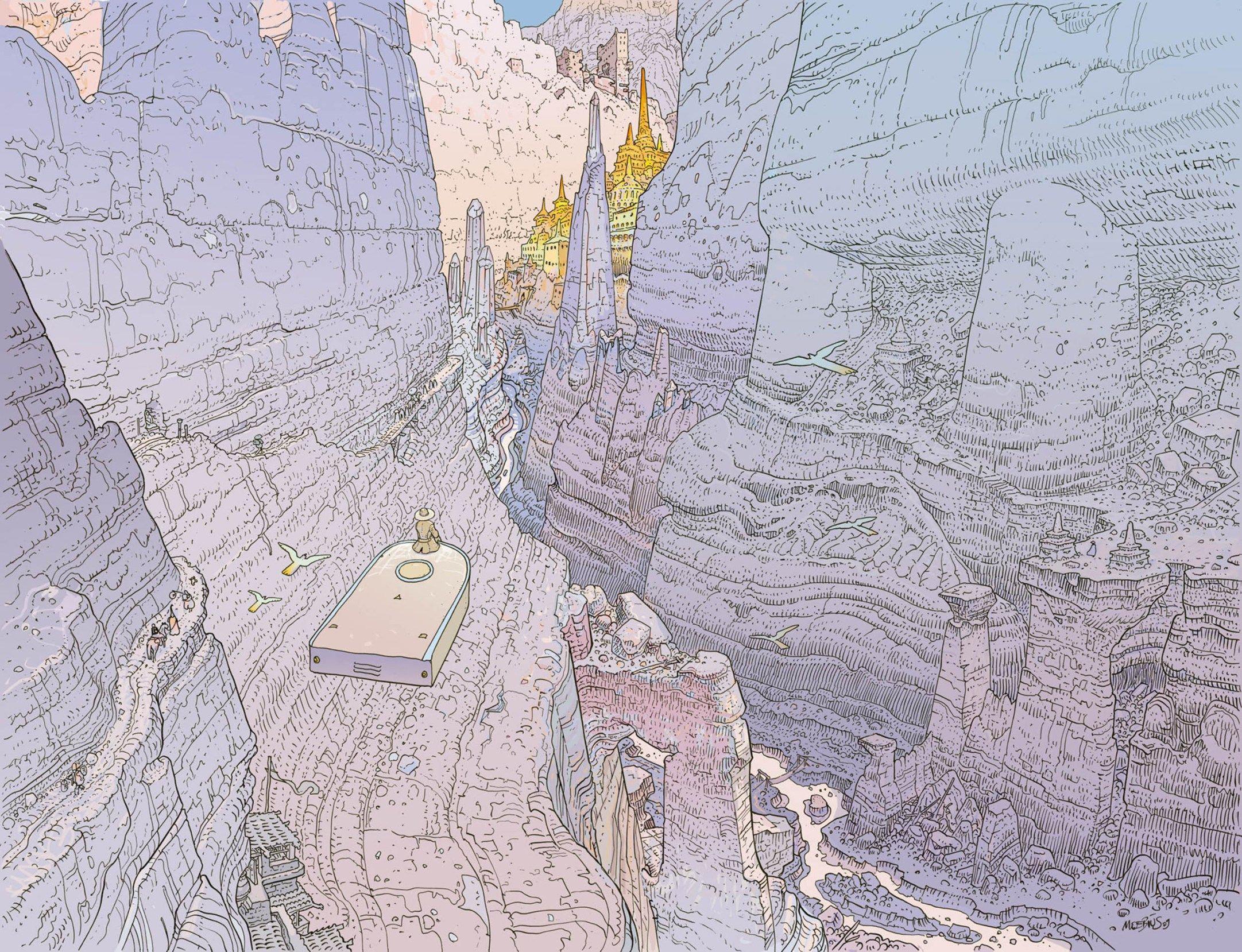

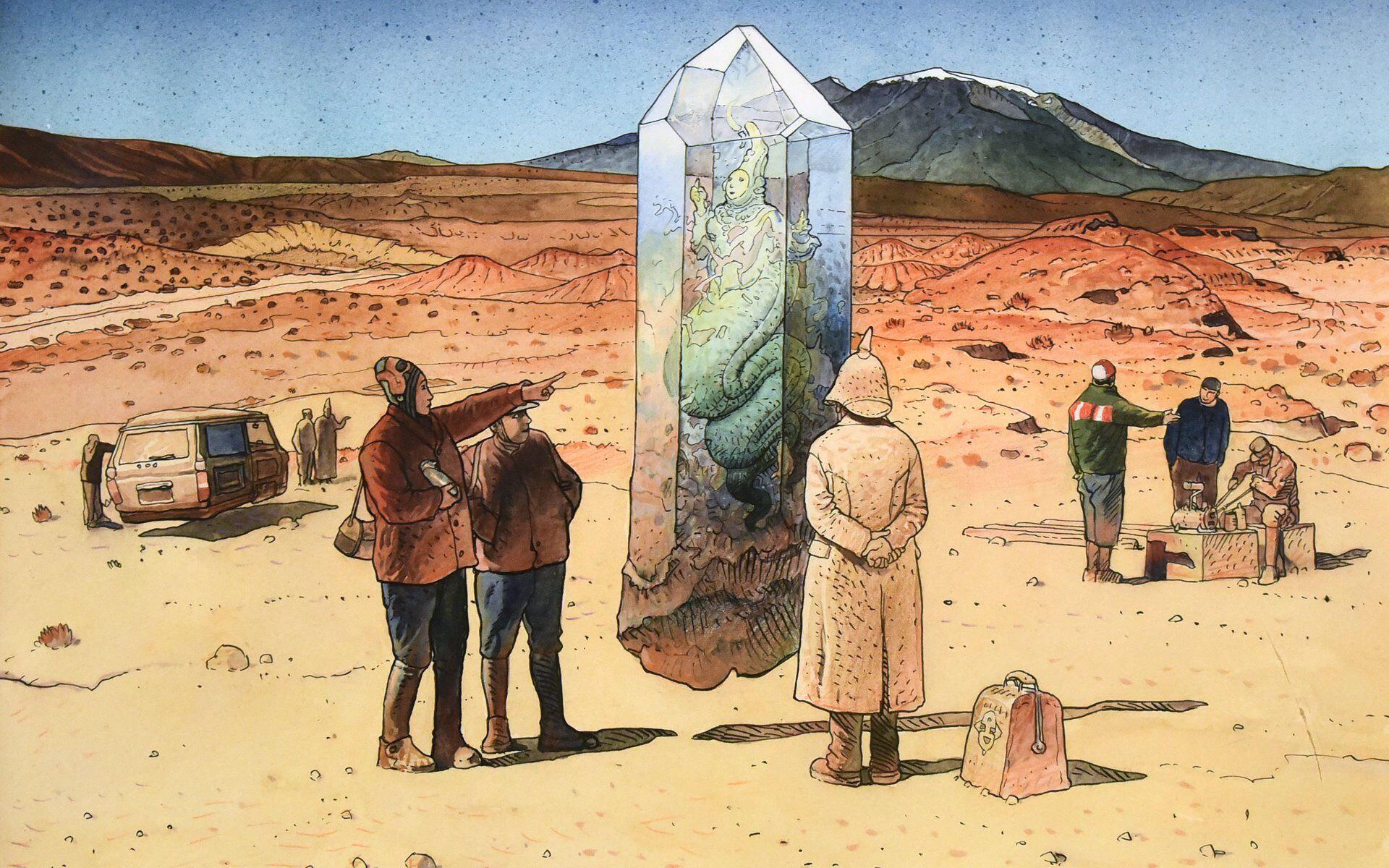

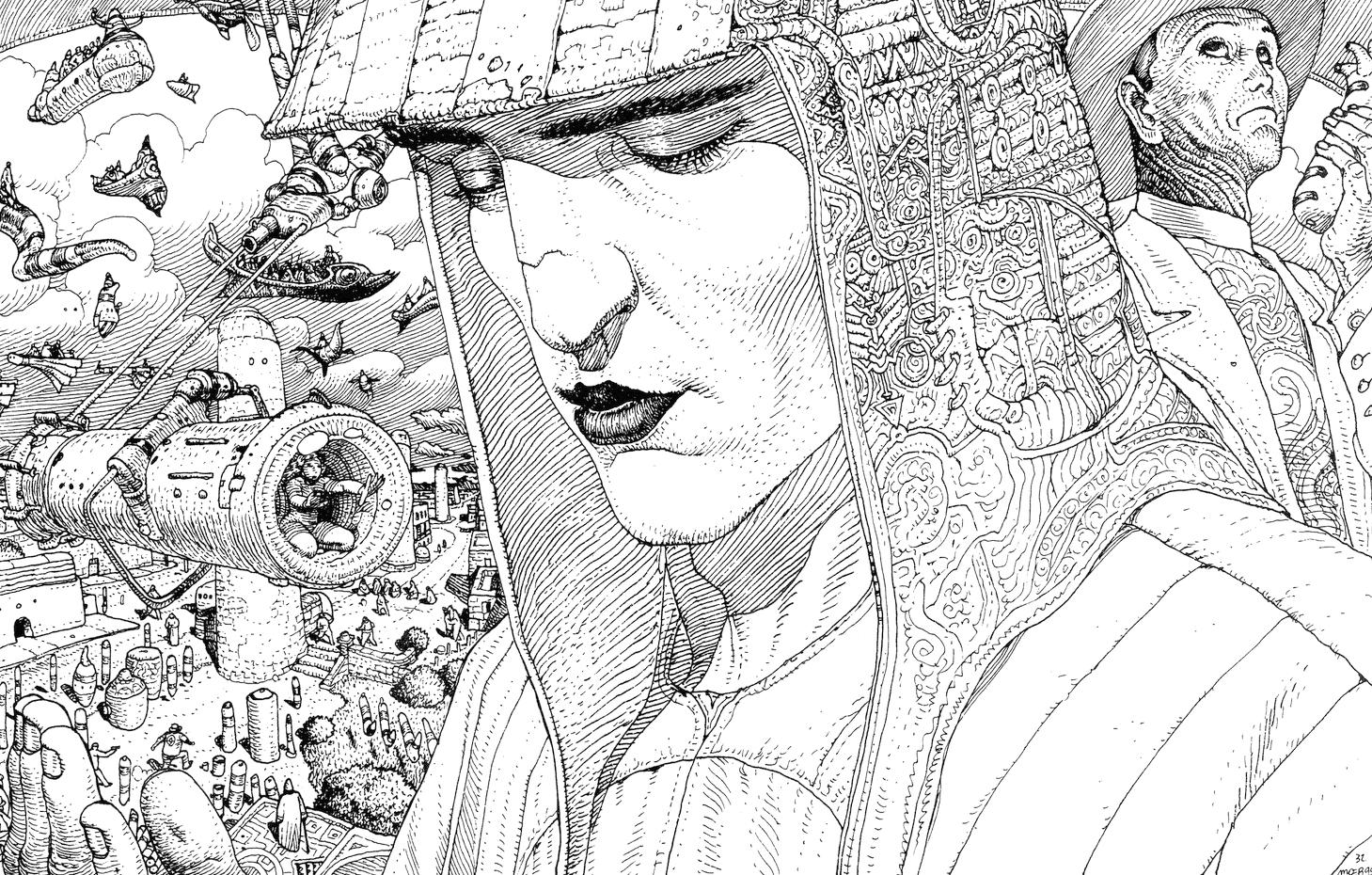

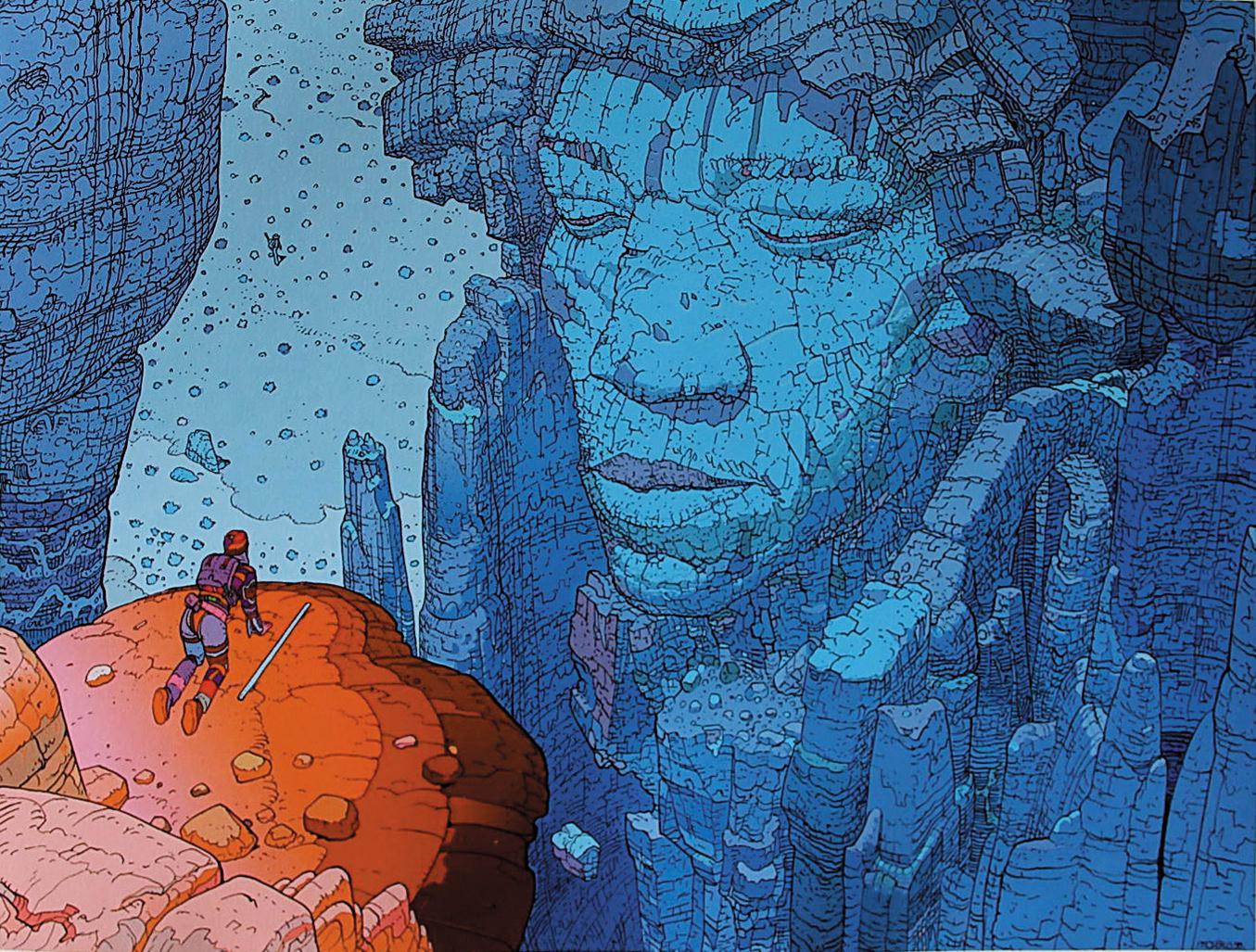

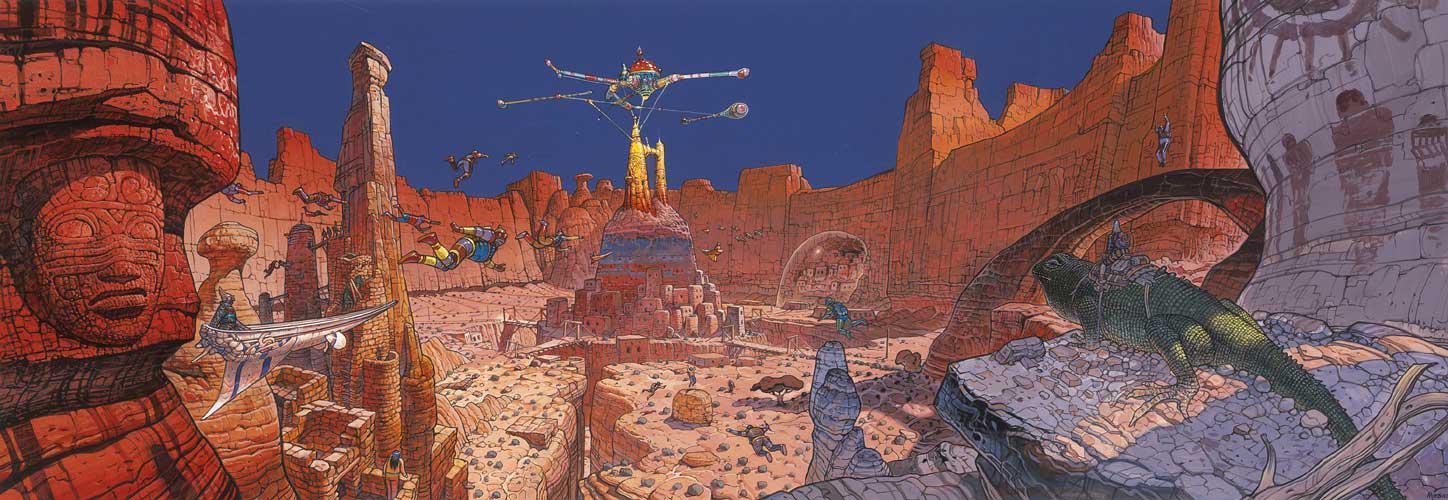

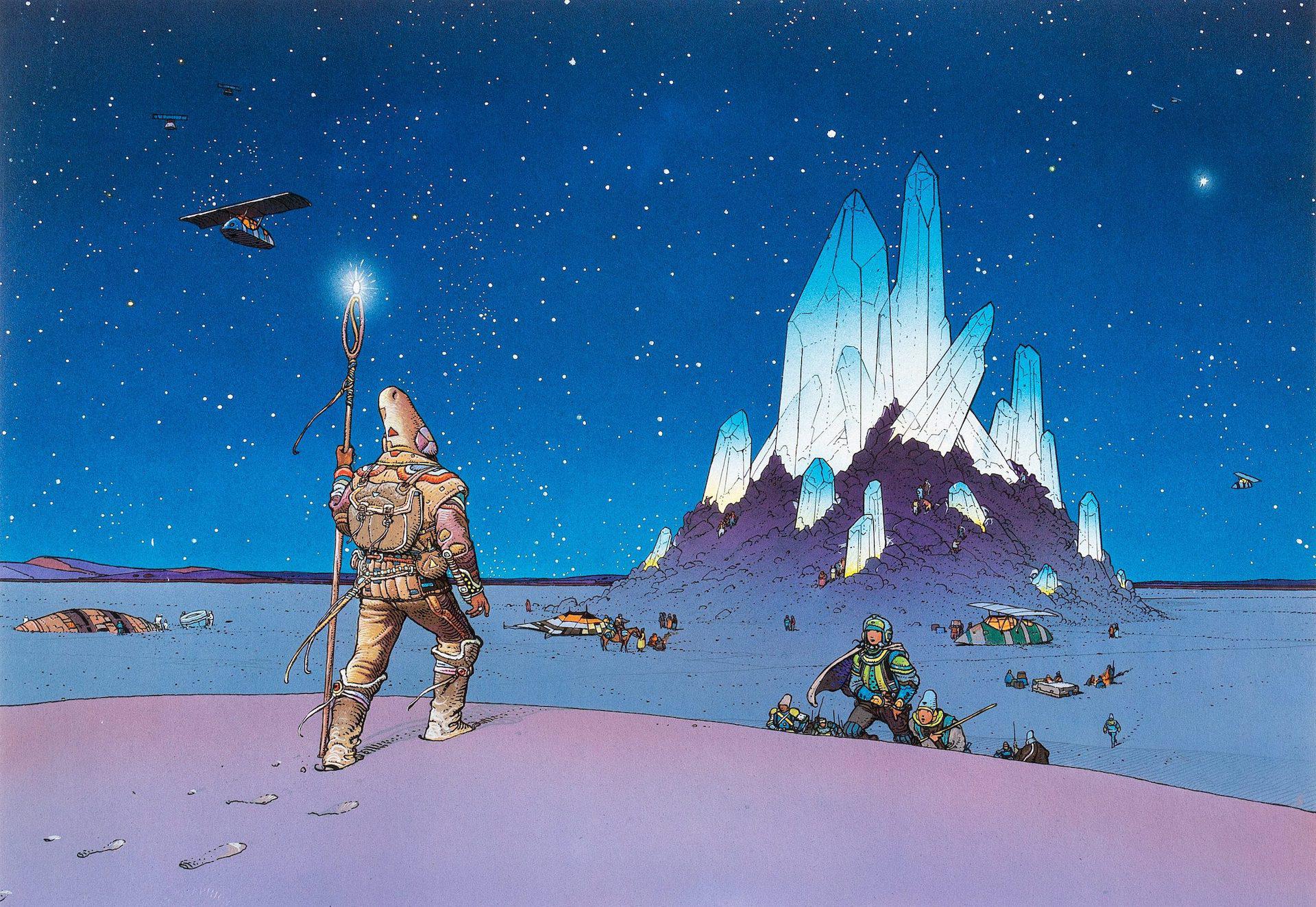

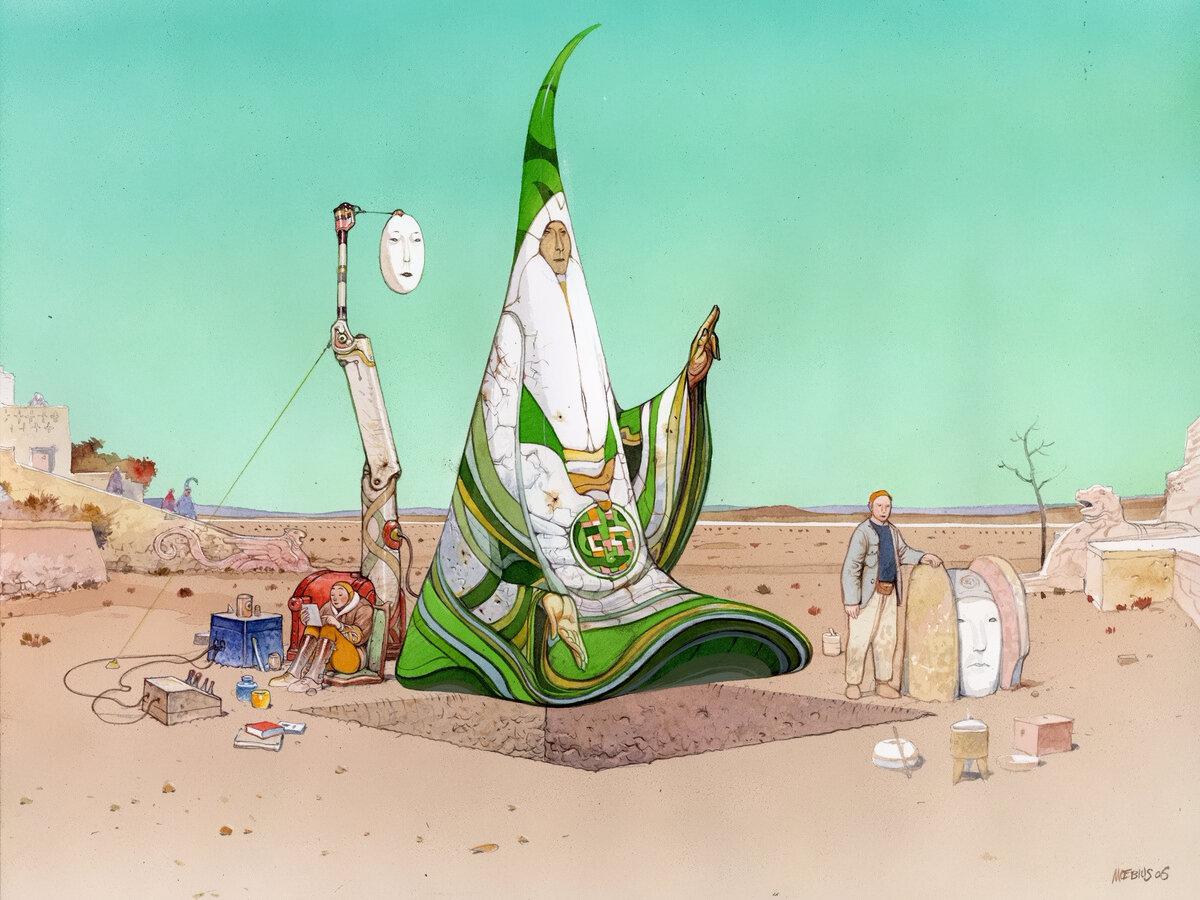

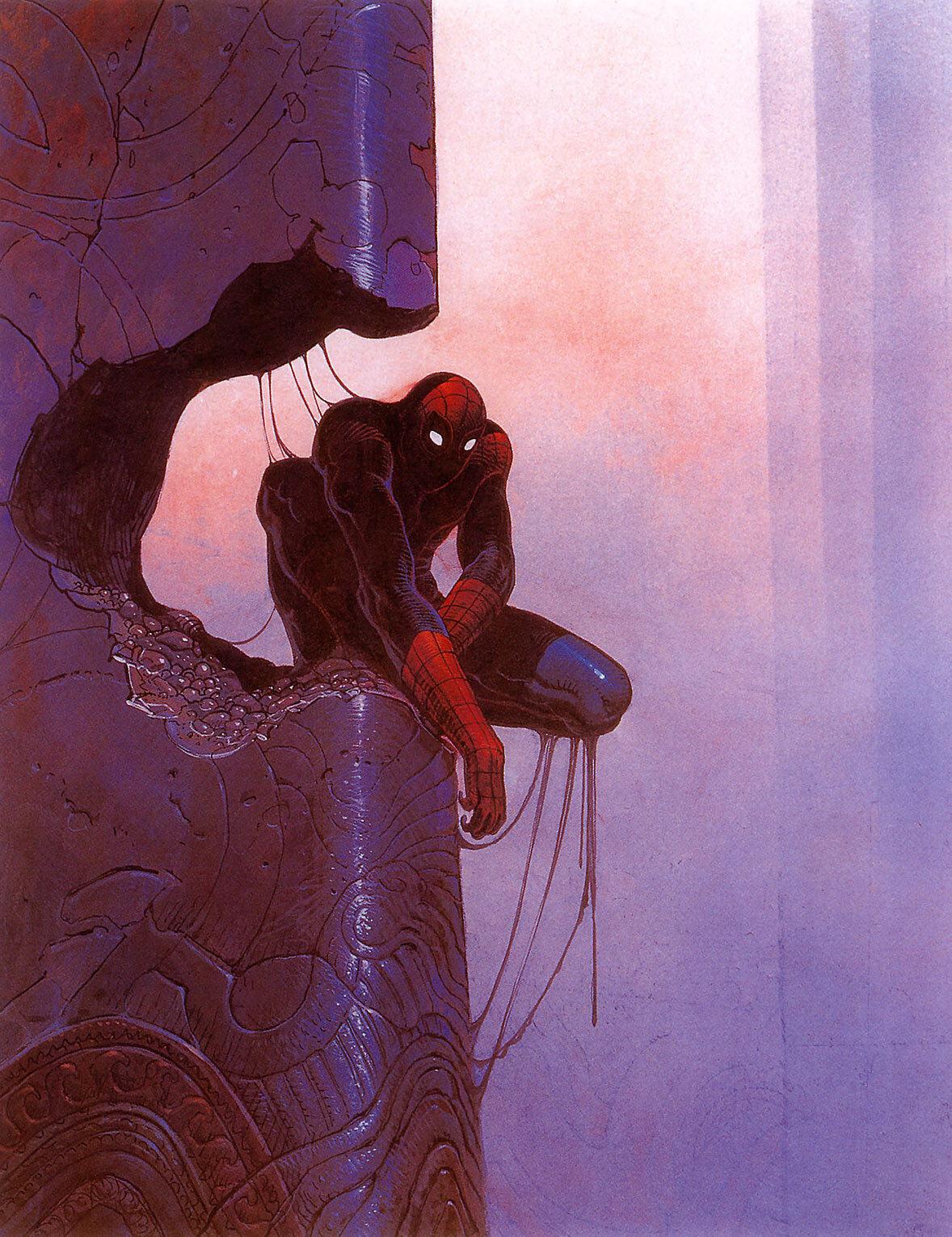

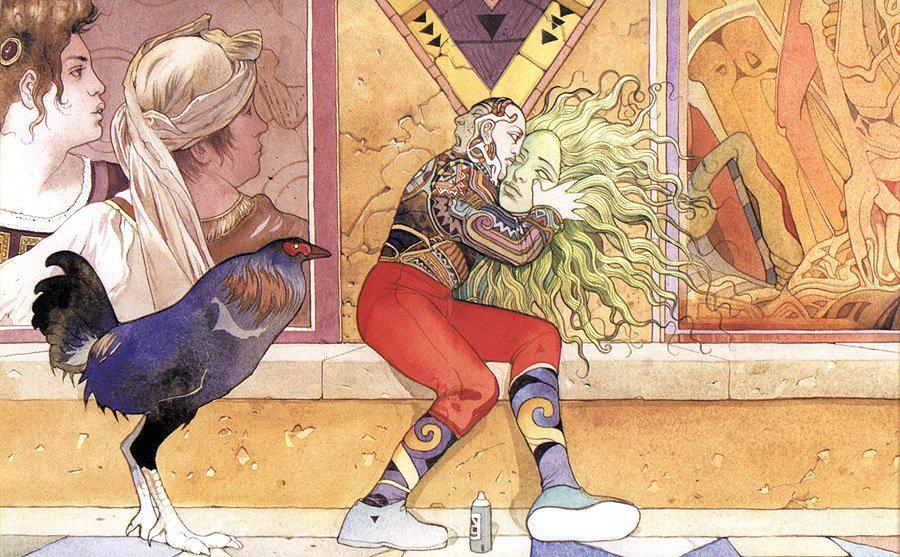

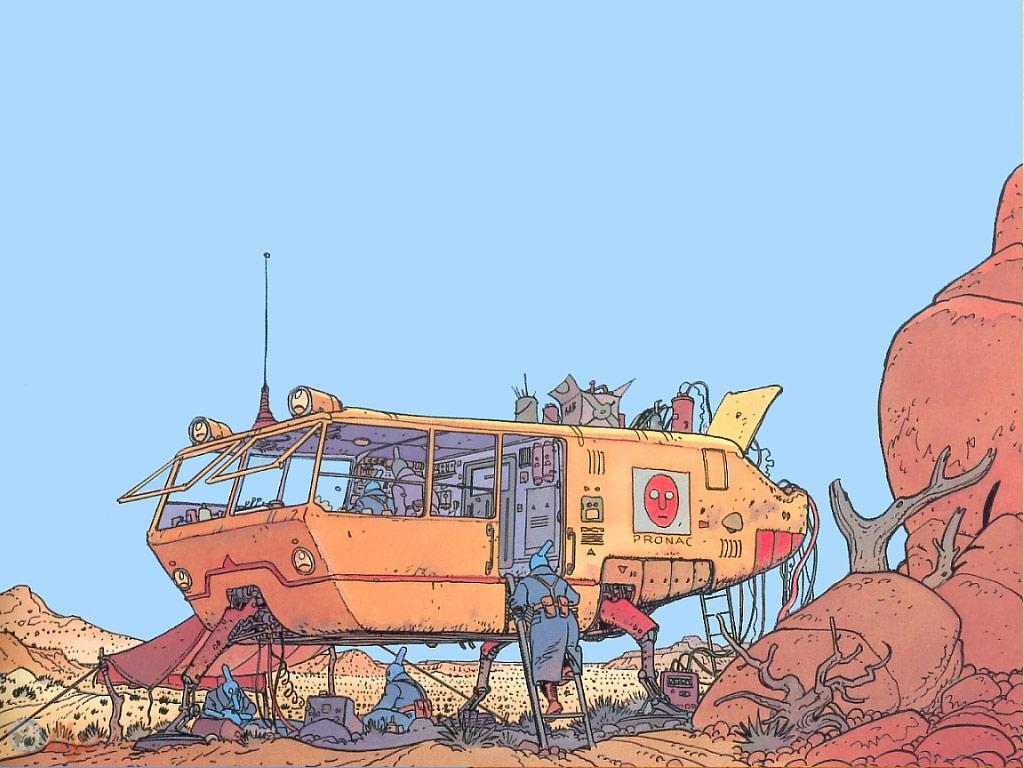

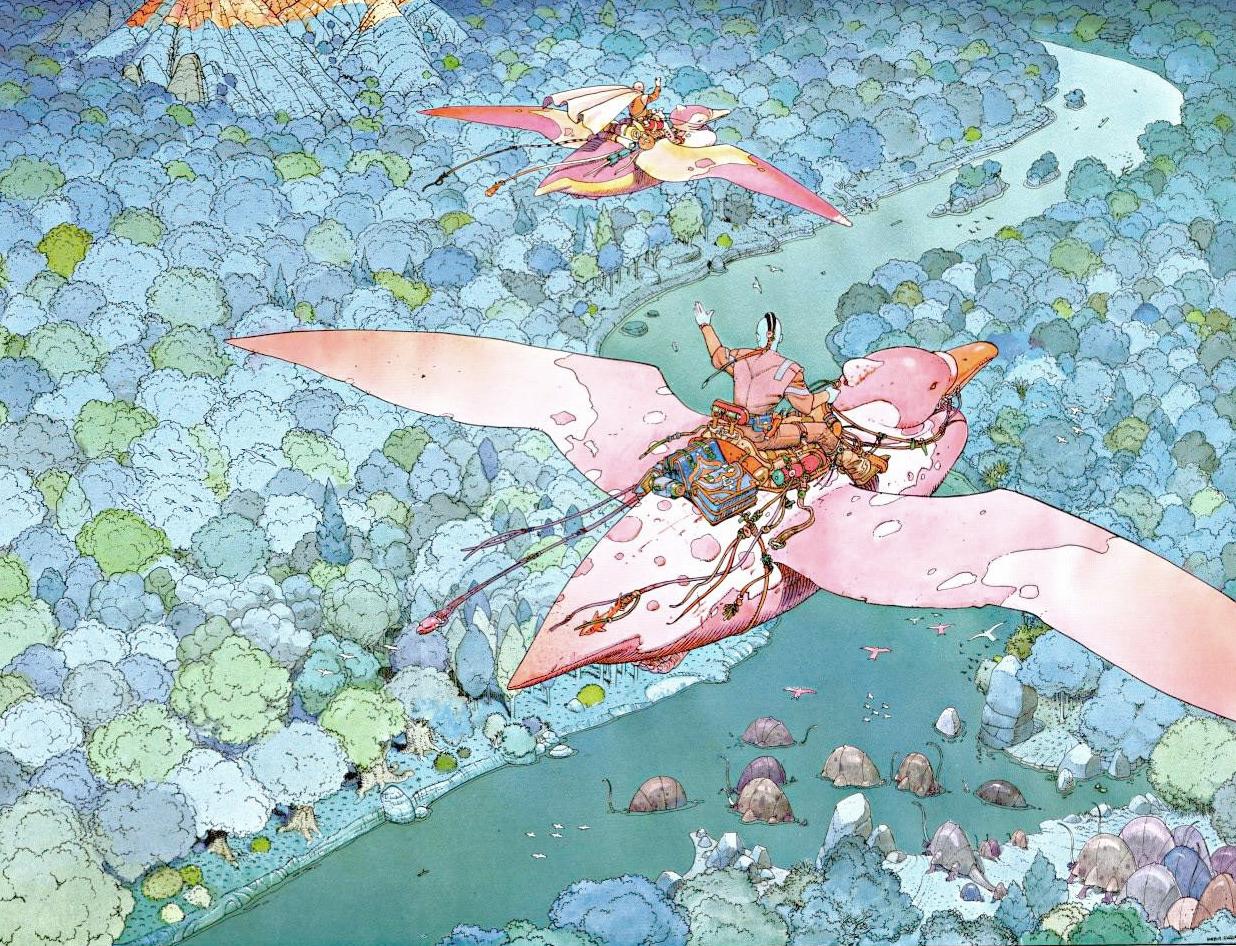

mit | [] | false | moebius on Stable Diffusion This is the `<moebius>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`: | 8ca4f3d654beab42fcd47c2ed8af1f09 |

apache-2.0 | ['generated_from_trainer'] | false | DistilBert-finetuned-Hackaton This model is a fine-tuned version of [distilbert-base-uncased-finetuned-sst-2-english](https://huggingface.co/distilbert-base-uncased-finetuned-sst-2-english) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.1456 - Accuracy: 0.4283 - F1: 0.4344 | a913c159f5024f45734e286ae332703b |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 25 | fa16939e85a0f6ef29d8a031fdfaa357 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 2.3155 | 1.0 | 338 | 2.6640 | 0.33 | 0.3161 | | 2.2064 | 2.0 | 676 | 2.5991 | 0.3283 | 0.3094 | | 2.0703 | 3.0 | 1014 | 2.5172 | 0.3467 | 0.3347 | | 2.0222 | 4.0 | 1352 | 2.4497 | 0.3567 | 0.3434 | | 1.9197 | 5.0 | 1690 | 2.3951 | 0.375 | 0.3639 | | 1.8334 | 6.0 | 2028 | 2.3398 | 0.375 | 0.3646 | | 1.7327 | 7.0 | 2366 | 2.3231 | 0.3833 | 0.3749 | | 1.6621 | 8.0 | 2704 | 2.3040 | 0.3867 | 0.3787 | | 1.5902 | 9.0 | 3042 | 2.2702 | 0.3883 | 0.3809 | | 1.5554 | 10.0 | 3380 | 2.2230 | 0.4167 | 0.4143 | | 1.5008 | 11.0 | 3718 | 2.2277 | 0.4067 | 0.3999 | | 1.4451 | 12.0 | 4056 | 2.2023 | 0.4033 | 0.4025 | | 1.3788 | 13.0 | 4394 | 2.1953 | 0.41 | 0.4066 | | 1.3418 | 14.0 | 4732 | 2.1774 | 0.4083 | 0.4036 | | 1.2689 | 15.0 | 5070 | 2.1798 | 0.41 | 0.4123 | | 1.2495 | 16.0 | 5408 | 2.1700 | 0.4233 | 0.4228 | | 1.1946 | 17.0 | 5746 | 2.1653 | 0.42 | 0.4241 | | 1.1652 | 18.0 | 6084 | 2.1672 | 0.4283 | 0.4279 | | 1.1428 | 19.0 | 6422 | 2.1631 | 0.4217 | 0.4259 | | 1.1027 | 20.0 | 6760 | 2.1501 | 0.4133 | 0.4189 | | 1.063 | 21.0 | 7098 | 2.1522 | 0.4183 | 0.4244 | | 1.0621 | 22.0 | 7436 | 2.1480 | 0.42 | 0.4258 | | 1.0412 | 23.0 | 7774 | 2.1491 | 0.4217 | 0.4285 | | 1.0311 | 24.0 | 8112 | 2.1493 | 0.4267 | 0.4333 | | 1.0195 | 25.0 | 8450 | 2.1456 | 0.4283 | 0.4344 | | 1d225fdef6126564462dac67c8b20fef |
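The hyperparameters for this run list a `linear` scheduler, and the results table reports 338 optimizer steps per epoch over 25 epochs. The sketch below shows what a linear decay schedule looks like under those numbers. It assumes no warmup (none is listed in the card) and decay to zero at the final step. The function `linear_lr` is an illustrative stand-in, not the scheduler implementation used by the training library.

```python
def linear_lr(step: int, total_steps: int, base_lr: float, warmup_steps: int = 0) -> float:
    """Learning rate at `step`: linear warmup, then linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)

steps_per_epoch = 338                        # step count after epoch 1 in the table
num_epochs = 25
total_steps = steps_per_epoch * num_epochs   # 8450, the step count in the last row

lr_start = linear_lr(0, total_steps, 1e-05)
lr_mid = linear_lr(total_steps // 2, total_steps, 1e-05)
lr_end = linear_lr(total_steps, total_steps, 1e-05)
```

Under these assumptions the learning rate starts at 1e-05, is about half that at the midpoint, and reaches zero at the final step.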

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased-finetuned-berttokenizer-shards_ext_ This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.0079 | 6f6f464e806ab27806be038f9766c103 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:------:|:---------------:| | 2.1965 | 1.0 | 43009 | 2.0675 | | 1.9985 | 2.0 | 86018 | 2.0185 | | 1.8484 | 3.0 | 129027 | 2.0079 | | 6d0e934098f6ab287ecdf409bcb3faf4 |

apache-2.0 | ['automatic-speech-recognition', 'sv-SE'] | false | exp_w2v2t_sv-se_hubert_s930 Fine-tuned [facebook/hubert-large-ll60k](https://huggingface.co/facebook/hubert-large-ll60k) for speech recognition using the train split of [Common Voice 7.0 (sv-SE)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16 kHz. This model was fine-tuned with the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | de087d4e428d2ac462195811c4a0c839 |
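Because the speech model above expects 16 kHz input, it can help to check a recording's sample rate before transcription. The sketch below does this for WAV data using only the Python standard library. The helper names `wav_sample_rate` and `needs_resampling` are illustrative, and the in-memory clip is a synthetic stand-in for a real recording. Resampling itself would need an audio library and is not shown.

```python
import io
import wave

def wav_sample_rate(source) -> int:
    """Return the sample rate in Hz of a WAV file (path or file-like object)."""
    with wave.open(source, "rb") as wav_file:
        return wav_file.getframerate()

def needs_resampling(source, expected_hz: int = 16_000) -> bool:
    """True when the WAV data is not already at the expected sample rate."""
    return wav_sample_rate(source) != expected_hz

# Self-contained demonstration: a short silent mono clip at 44.1 kHz.
buffer = io.BytesIO()
with wave.open(buffer, "wb") as wav_file:
    wav_file.setnchannels(1)
    wav_file.setsampwidth(2)                  # 16-bit samples
    wav_file.setframerate(44_100)
    wav_file.writeframes(b"\x00\x00" * 441)   # 10 ms of silence
buffer.seek(0)
must_resample = needs_resampling(buffer)
```

Here `must_resample` is true, signalling that the clip should be converted to 16 kHz before being passed to the model.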

mit | ['sklearn', 'skops', 'tabular-classification', 'visual emb-gam'] | false | Model description This is a LogisticRegressionCV model trained on averages of patch embeddings from the Imagenette dataset. This forms the GAM of an [Emb-GAM](https://arxiv.org/abs/2209.11799) extended to images. Patch embeddings are meant to be extracted with the [`facebook/dino-vitb16` DINO checkpoint](https://huggingface.co/facebook/dino-vitb16). | f72159474f0fd5fda158258332ceccee |
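One way to see why a linear classifier on averaged patch embeddings behaves like an additive model: the logit computed on the mean embedding equals the mean of the per-patch logit contributions, so each patch's effect can be read off separately. The sketch below checks this with toy numbers. The 2-dimensional vectors, weights, and bias are made-up stand-ins, not values from the DINO checkpoint or the trained classifier.

```python
def dot(u, v):
    """Dot product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))

def mean_vector(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

# Toy stand-ins: three "patch embeddings" and one class's weights and bias.
patch_embeddings = [[1.0, 2.0], [3.0, 0.0], [2.0, 4.0]]
weights = [0.5, -0.25]
bias = 0.1

# Logit as the classifier computes it: on the averaged embedding.
logit_from_mean = dot(weights, mean_vector(patch_embeddings)) + bias

# The same logit written as a sum of per-patch contributions.
contributions = [dot(weights, e) / len(patch_embeddings) for e in patch_embeddings]
logit_from_patches = sum(contributions) + bias
```

Both routes give the same logit, and `contributions` shows how much each patch adds to it.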

mit | ['sklearn', 'skops', 'tabular-classification', 'visual emb-gam'] | false | sk-58b9b78a-229c-4a6a-b67b-244945cdc29d div.sk-text-repr-fallback {display: none;}</style><div id="sk-58b9b78a-229c-4a6a-b67b-244945cdc29d" class="sk-top-container"><div class="sk-text-repr-fallback"><pre>LogisticRegressionCV(cv=StratifiedKFold(n_splits=5, random_state=1, shuffle=True),random_state=1, refit=False)</pre><b>Please rerun this cell to show the HTML repr or trust the notebook.</b></div><div class="sk-container" hidden><div class="sk-item"><div class="sk-estimator sk-toggleable"><input class="sk-toggleable__control sk-hidden--visually" id="d612eebc-39a3-42fc-99e0-37e6f258ac21" type="checkbox" checked><label for="d612eebc-39a3-42fc-99e0-37e6f258ac21" class="sk-toggleable__label sk-toggleable__label-arrow">LogisticRegressionCV</label><div class="sk-toggleable__content"><pre>LogisticRegressionCV(cv=StratifiedKFold(n_splits=5, random_state=1, shuffle=True),random_state=1, refit=False)</pre></div></div></div></div></div> | d37732d36d096ccea32a70bff3aad634 |

mit | ['sklearn', 'skops', 'tabular-classification', 'visual emb-gam'] | false | load embedding model device = torch.device('cuda' if torch.cuda.is_available() else 'cpu') feature_extractor = AutoFeatureExtractor.from_pretrained('facebook/dino-vitb16') model = AutoModel.from_pretrained('facebook/dino-vitb16').eval().to(device) | 261df387162167ce6f869a05c11b7652 |

mit | ['sklearn', 'skops', 'tabular-classification', 'visual emb-gam'] | false | load logistic regression os.mkdir('emb-gam-dino') hub_utils.download(repo_id='Ramos-Ramos/emb-gam-dino', dst='emb-gam-dino') with open('emb-gam-dino/model.pkl', 'rb') as file: logistic_regression = pickle.load(file) | 4c633721bb4ebc62b244389181d5a37e |

mit | ['sklearn', 'skops', 'tabular-classification', 'visual emb-gam'] | false | Citation **BibTeX:** ``` @article{singh2022emb, title={Emb-GAM: an Interpretable and Efficient Predictor using Pre-trained Language Models}, author={Singh, Chandan and Gao, Jianfeng}, journal={arXiv preprint arXiv:2209.11799}, year={2022} } ``` | 8cd3a91f57cb2883a9f7205ed35a5089 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers'] | false | Anything V3.1  Anything V3.1 is a third-party continuation of a latent diffusion model, Anything V3.0. This model is claimed to be a better version of Anything V3.0 with a fixed VAE model and a fixed CLIP position id key. The CLIP reference was taken from Stable Diffusion V1.5. The VAE was swapped using Kohya's merge-vae script and the CLIP was fixed using Arena's stable-diffusion-model-toolkit webui extensions. Anything V3.2 is intended as a continuation of training from Anything V3.1. The current model has been fine-tuned with a learning rate of 2.0e-6, 50 epochs, and a batch size of 4 on datasets collected from many sources, a quarter of which are synthetic. The dataset has been preprocessed using the Aspect Ratio Bucketing Tool so that it can be converted to latents and trained at non-square resolutions. This model is intended as a test model to see how the CLIP fix affects training. Like other anime-style Stable Diffusion models, it also supports Danbooru tags for generating images, e.g. **_1girl, white hair, golden eyes, beautiful eyes, detail, flower meadow, cumulonimbus clouds, lighting, detailed sky, garden_** - Use it with the [`Automatic1111's Stable Diffusion Webui`](https://github.com/AUTOMATIC1111/stable-diffusion-webui) see: ['how-to-use']( | f199cbaa81ce2da5e91e98eff0a7cdf2 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers'] | false | Model Details - **Currently maintained by:** Cagliostro Research Lab - **Model type:** Diffusion-based text-to-image generation model - **Model Description:** This is a model that can be used to generate and modify anime-themed images based on text prompts. - **License:** [CreativeML Open RAIL++-M License](https://huggingface.co/stabilityai/stable-diffusion-2/blob/main/LICENSE-MODEL) - **Finetuned from model:** Anything V3.1 | ae36c9624fcfb14db22802786b0129db |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers'] | false | How-to-Use - Download `Anything V3.1` [here](https://huggingface.co/cag/anything-v3-1/resolve/main/anything-v3-1.safetensors), or `Anything V3.2` [here](https://huggingface.co/cag/anything-v3-1/resolve/main/anything-v3-2.safetensors). All models are in `.safetensors` format. - Adjust your prompt with aesthetic tags to get better results. You can use any generic negative prompt, or the following suggested negative prompt, to guide the model towards high-aesthetic generations: ``` lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry ``` - The following should also be prepended to prompts to get high-aesthetic results: ``` masterpiece, best quality, illustration, beautiful detailed, finely detailed, dramatic light, intricate details ``` | a23b205bfccd356373aa9cece4f635d2 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers'] | false | 🧨Diffusers This model can be used just like any other Stable Diffusion model. For more information, see the [Stable Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion) pipeline documentation. You can also export the model to [ONNX](https://huggingface.co/docs/diffusers/optimization/onnx), [MPS](https://huggingface.co/docs/diffusers/optimization/mps) and/or [FLAX/JAX](). The pretrained model is currently based on Anything V3.1. Install the dependencies below to run the pipeline: ```bash pip install diffusers transformers accelerate scipy safetensors ``` Running the pipeline (if you don't swap the scheduler it will run with the default DDIM; in this example we swap it to DPMSolverMultistepScheduler): ```python import torch from torch import autocast from diffusers import StableDiffusionPipeline, DPMSolverMultistepScheduler model_id = "cag/anything-v3-1" | 0978d2c0c20c93795720ad44d8e2e80c |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers'] | false | Use the DPMSolverMultistepScheduler (DPM-Solver++) scheduler here instead pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16) pipe.scheduler = DPMSolverMultistepScheduler.from_config(pipe.scheduler.config) pipe = pipe.to("cuda") prompt = "masterpiece, best quality, high quality, 1girl, solo, sitting, confident expression, long blonde hair, blue eyes, formal dress" negative_prompt = "lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry" with autocast("cuda"): image = pipe(prompt, negative_prompt=negative_prompt, width=512, height=728, guidance_scale=12, num_inference_steps=50).images[0] image.save("anime_girl.png") ``` | 171f0b3b6bb8a4055d7cdd468263b5f4 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers'] | false | Limitation This model is overfitted and cannot follow prompts well, even after the text encoder has been fixed. This leads to laziness in prompting, as you will only get good results by typing 1girl. Additionally, this model is anime-based and biased towards anime female characters. It is difficult to generate masculine male characters without providing specific prompts. Furthermore, not much has changed compared to the Anything V3.0 base model, as it only involved swapping the VAE and CLIP models and then fine-tuning for 50 epochs with small scale datasets. | a8ec7edfa680e3762272fabfdd81523a |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers'] | false | Example Here are some cherry-picked samples and a comparison between the available models:    | 03ddbf28133e0d8da9261cf8beb5f220 |

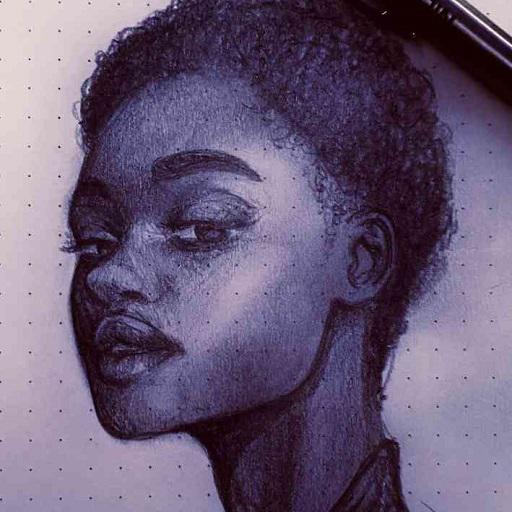

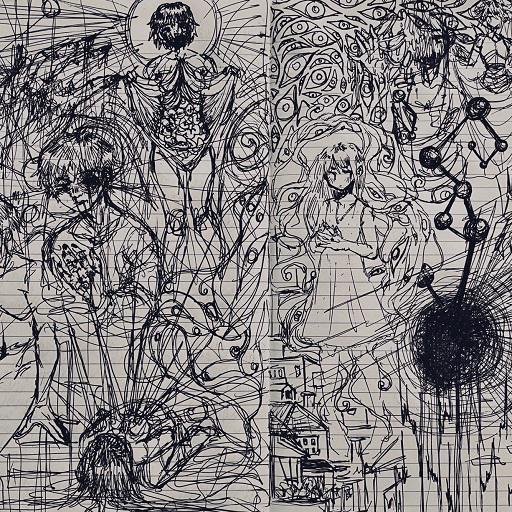

mit | [] | false | GHOST style on Stable Diffusion This is the `<ghost>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:      | 432ce8eeec0a1f662e5eeb0d62db038e |

apache-2.0 | ['multiberts', 'multiberts-seed_4'] | false | MultiBERTs - Seed 4 MultiBERTs is a collection of checkpoints and a statistical library to support robust research on BERT. We provide 25 BERT-base models trained with similar hyper-parameters as [the original BERT model](https://github.com/google-research/bert) but with different random seeds, which causes variations in the initial weights and order of training instances. The aim is to distinguish findings that apply to a specific artifact (i.e., a particular instance of the model) from those that apply to the more general procedure. We also provide 140 intermediate checkpoints captured during the course of pre-training (we saved 28 checkpoints for the first 5 runs). The models were originally released through [http://goo.gle/multiberts](http://goo.gle/multiberts). We describe them in our paper [The MultiBERTs: BERT Reproductions for Robustness Analysis](https://arxiv.org/abs/2106.16163). This is model | 60110416831a3983744f3f1ecf9b2d1e |

apache-2.0 | ['multiberts', 'multiberts-seed_4'] | false | How to use Using code from [BERT-base uncased](https://huggingface.co/bert-base-uncased), here is an example based on Tensorflow: ``` from transformers import BertTokenizer, TFBertModel tokenizer = BertTokenizer.from_pretrained('google/multiberts-seed_4') model = TFBertModel.from_pretrained("google/multiberts-seed_4") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='tf') output = model(encoded_input) ``` PyTorch version: ``` from transformers import BertTokenizer, BertModel tokenizer = BertTokenizer.from_pretrained('google/multiberts-seed_4') model = BertModel.from_pretrained("google/multiberts-seed_4") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | 81dbf87c0483aea61dd3a1b70437fe33 |

apache-2.0 | ['generated_from_trainer', 'text-generation', 'opt', 'non-commercial'] | false | OPT-Peter-1.3B-1E > This is an initial checkpoint of the model - the latest version is [here](https://huggingface.co/pszemraj/opt-peter-1.3B) This model is a fine-tuned version of [facebook/opt-1.3b](https://huggingface.co/facebook/opt-1.3b) on text message data (mine) for 1.6 epochs. It achieves the following results on the evaluation set (at the end of epoch 1): - eval_loss: 3.3595 - eval_runtime: 988.6985 - eval_samples_per_second: 8.803 - eval_steps_per_second: 2.201 - epoch: 1.0 - step: 1235 | 460db707e6fa051cb5f9ee553654356e |

apache-2.0 | ['generated_from_trainer', 'text-generation', 'opt', 'non-commercial'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 6e-05 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - distributed_type: multi-GPU - gradient_accumulation_steps: 16 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: cosine - lr_scheduler_warmup_ratio: 0.01 - num_epochs: 1.6 | 66c67da55790e906283352a01566c50f |

mit | ['generated_from_trainer'] | false | twitter-data-xlm-roberta-base-eng-only-sentiment-finetuned-memes This model is a fine-tuned version of [jayantapaul888/twitter-data-xlm-roberta-base-sentiment-finetuned-memes](https://huggingface.co/jayantapaul888/twitter-data-xlm-roberta-base-sentiment-finetuned-memes) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.6286 - Accuracy: 0.8660 - Precision: 0.8796 - Recall: 0.8795 - F1: 0.8795 | 501dbecc43e908fc22a550110cdf3288 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:---------:|:------:|:------:| | No log | 1.0 | 378 | 0.3421 | 0.8407 | 0.8636 | 0.8543 | 0.8553 | | 0.396 | 2.0 | 756 | 0.3445 | 0.8496 | 0.8726 | 0.8634 | 0.8631 | | 0.2498 | 3.0 | 1134 | 0.3656 | 0.8585 | 0.8764 | 0.8727 | 0.8723 | | 0.1543 | 4.0 | 1512 | 0.4549 | 0.8600 | 0.8742 | 0.8740 | 0.8741 | | 0.1543 | 5.0 | 1890 | 0.5932 | 0.8645 | 0.8783 | 0.8780 | 0.8780 | | 0.0815 | 6.0 | 2268 | 0.6286 | 0.8660 | 0.8796 | 0.8795 | 0.8795 | | 4a35923237e565159af8f006a8fcb65f |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-squad_v2 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad_v2 dataset. It achieves the following results on the evaluation set: - Loss: 1.3949 | f414a276d95a4fa2e9dafd74a26b2280 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 1.1996 | 1.0 | 8235 | 1.2485 | | 0.9303 | 2.0 | 16470 | 1.2147 | | 0.7438 | 3.0 | 24705 | 1.3949 | | 3aea705ff1eaeb179289715621f5b2e8 |

mit | [] | false | Mona (Genshin Impact) on Stable Diffusion This is the Mona concept taught to Stable Diffusion via Textual Inversion. You can load this concept into a Stable Diffusion fork such as this [repo](https://github.com/AUTOMATIC1111/stable-diffusion-webui) (Instructions [here](https://github.com/AUTOMATIC1111/stable-diffusion-webui-feature-showcase | 24529aa690657107a208a46a1ef6cdb0 |

apache-2.0 | ['roberta', 'NLU', 'Similarity', 'Chinese'] | false | 模型分类 Model Taxonomy | 需求 Demand | 任务 Task | 系列 Series | 模型 Model | 参数 Parameter | 额外 Extra | | :----: | :----: | :----: | :----: | :----: | :----: | | 通用 General | 自然语言理解 NLU | 二郎神 Erlangshen | Roberta | 330M | 中文-相似度 Similarity | | 9a20799a51ce39e1f141442886a70f4c |

apache-2.0 | ['roberta', 'NLU', 'Similarity', 'Chinese'] | false | 模型信息 Model Information 基于[chinese-roberta-wwm-ext-large](https://huggingface.co/hfl/chinese-roberta-wwm-ext-large),我们在收集的20个中文领域的改写数据集,总计2773880个样本上微调了一个Similarity版本。 Based on [chinese-roberta-wwm-ext-large](https://huggingface.co/hfl/chinese-roberta-wwm-ext-large), we fine-tuned a similarity version on 20 Chinese paraphrase datasets totaling 2,773,880 samples. | 3889145c9de262bbde178d95c2984110 |

apache-2.0 | ['roberta', 'NLU', 'Similarity', 'Chinese'] | false | 下游效果 Performance | Model | BQ | BUSTM | AFQMC | | :--------: | :-----: | :----: | :-----: | | Erlangshen-Roberta-110M-Similarity | 85.41 | 95.18 | 81.72 | | Erlangshen-Roberta-330M-Similarity | 86.21 | 99.29 | 93.89 | | Erlangshen-MegatronBert-1.3B-Similarity | 86.31 | - | - | | cea8a3aecebe312a25d56346b7e6ce22 |

apache-2.0 | ['roberta', 'NLU', 'Similarity', 'Chinese'] | false | 使用 Usage ``` python from transformers import BertForSequenceClassification from transformers import BertTokenizer import torch tokenizer=BertTokenizer.from_pretrained('IDEA-CCNL/Erlangshen-Roberta-330M-Similarity') model=BertForSequenceClassification.from_pretrained('IDEA-CCNL/Erlangshen-Roberta-330M-Similarity') texta='今天的饭不好吃' textb='今天心情不好' output=model(torch.tensor([tokenizer.encode(texta,textb)])) print(torch.nn.functional.softmax(output.logits,dim=-1)) ``` | f5cea9785c263e7123ef6005323961dd |
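The last line of the usage example converts the classifier's logits into probabilities with a softmax. As a sketch of what that step computes, here is a plain-Python version. The logit values are made up for illustration, and the card does not say which index corresponds to "similar", so check the model's configuration before interpreting the output.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    largest = max(logits)
    # Subtracting the maximum keeps exp() numerically stable.
    exps = [math.exp(x - largest) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for a two-class sentence-pair classifier.
probs = softmax([1.2, -0.8])
print(probs)
```

The larger logit always receives the larger probability, and equal logits yield a uniform distribution.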

apache-2.0 | ['generated_from_keras_callback'] | false | donyd/distilbert-finetuned-imdb This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 2.8432 - Validation Loss: 2.6247 - Epoch: 0 | 327b6980c7de36c63125b00b22924b5e |

mit | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | MPNet NLI and STS This is a [sentence-transformers](https://www.SBERT.net) model. It maps sentences and paragraphs to a 768-dimensional dense vector space and can be used for tasks like clustering or semantic search. It uses the [jamescalam/mpnet-snli-negatives](https://huggingface.co/jamescalam/mpnet-snli-negatives) model as a starting point and is fine-tuned further on the **S**emantic **T**extual **S**imilarity **b**enchmark (STSb) dataset. It achieves a cosine Pearson correlation of roughly 0.9 on the STSb test set. Find more info from [James Briggs on YouTube](https://youtube.com/c/jamesbriggs) or in the [**free** NLP for Semantic Search ebook](https://pinecone.io/learn/nlp). | 8df321d45cd7012fd22c251ef64fbfcb |

mit | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Usage (Sentence-Transformers) Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed: ``` pip install -U sentence-transformers ``` Then you can use the model like this: ```python from sentence_transformers import SentenceTransformer sentences = ["This is an example sentence", "Each sentence is converted"] model = SentenceTransformer('jamescalam/mpnet-nli-sts') embeddings = model.encode(sentences) print(embeddings) ``` | 578759c16275f750f42f1601806e7c2d |
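Once sentences are encoded, semantic search usually comes down to ranking candidates by cosine similarity to a query embedding. The sketch below illustrates that step in plain Python; the helper names are hypothetical, and the toy 3-dimensional vectors stand in for the model's 768-dimensional embeddings.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_by_similarity(query, candidates):
    """Return candidate indices ordered from most to least similar to the query."""
    scores = [cosine_similarity(query, c) for c in candidates]
    return sorted(range(len(candidates)), key=lambda i: scores[i], reverse=True)


# Toy vectors; real embeddings would come from model.encode(...).
query = [1.0, 0.0, 1.0]
candidates = [[0.0, 1.0, 0.0], [1.0, 0.1, 0.9], [-1.0, 0.0, -1.0]]
ranking = rank_by_similarity(query, candidates)
print(ranking)
```

With real embeddings, the same ranking can be applied to the rows returned by `model.encode`.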

mit | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Training The model was trained with the parameters: **DataLoader**: `torch.utils.data.dataloader.DataLoader` of length 180 with parameters: ``` {'batch_size': 32, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'} ``` **Loss**: `sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss` Parameters of the fit()-Method: ``` { "epochs": 5, "evaluation_steps": 25, "evaluator": "sentence_transformers.evaluation.EmbeddingSimilarityEvaluator.EmbeddingSimilarityEvaluator", "max_grad_norm": 1, "optimizer_class": "<class 'torch.optim.adamw.AdamW'>", "optimizer_params": { "lr": 2e-05 }, "scheduler": "WarmupLinear", "steps_per_epoch": null, "warmup_steps": 90, "weight_decay": 0.01 } ``` | 3d79fcedea2bd325967b75446071585d |
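The parameters above imply 180 × 5 = 900 optimizer steps, of which the first 90 (10%) are warmup. The sketch below shows the learning-rate curve this would produce, assuming `WarmupLinear` means a linear ramp from 0 to the base rate followed by a linear decay to 0, and assuming `steps_per_epoch: null` falls back to the DataLoader length. The function name is hypothetical.

```python
def warmup_linear_lr(step, base_lr=2e-5, warmup_steps=90, total_steps=180 * 5):
    """Learning rate at `step` under linear warmup followed by linear decay."""
    if step < warmup_steps:
        # Ramp up linearly from 0 to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Decay linearly from base_lr to 0 over the remaining steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 45, 90, 495, 900):
    print(step, warmup_linear_lr(step))
```

The rate peaks at step 90 and reaches zero at the final step.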

other | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 9e-07 - train_batch_size: 1 - eval_batch_size: 8 - seed: 100 - distributed_type: multi-GPU - num_devices: 4 - total_train_batch_size: 4 - total_eval_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: constant - num_epochs: 15.0 | d8aa265900668e97d2aa66104c0f573f |
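The total batch sizes listed above follow from the per-device sizes and the device count. A small sketch of that arithmetic, assuming no gradient accumulation (none is listed in the card); the function name is hypothetical:

```python
def total_batch_size(per_device_batch_size, num_devices, gradient_accumulation_steps=1):
    """Number of examples contributing to one optimizer step (or one eval pass step)."""
    return per_device_batch_size * num_devices * gradient_accumulation_steps


train_total = total_batch_size(per_device_batch_size=1, num_devices=4)
eval_total = total_batch_size(per_device_batch_size=8, num_devices=4)
print(train_total, eval_total)
```

These match the reported `total_train_batch_size` of 4 and `total_eval_batch_size` of 32.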

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased-lm-all This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.8646 | 472a6a6cb2a31bf780e2b68616ecb22e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 1.6625 | 1.0 | 1194 | 1.3270 | | 1.3001 | 2.0 | 2388 | 1.1745 | | 1.1694 | 3.0 | 3582 | 1.1133 | | 1.0901 | 4.0 | 4776 | 1.0547 | | 1.0309 | 5.0 | 5970 | 0.9953 | | 0.9842 | 6.0 | 7164 | 0.9997 | | 0.9396 | 7.0 | 8358 | 0.9707 | | 0.8997 | 8.0 | 9552 | 0.9324 | | 0.8633 | 9.0 | 10746 | 0.9145 | | 0.8314 | 10.0 | 11940 | 0.9047 | | 0.812 | 11.0 | 13134 | 0.8954 | | 0.7841 | 12.0 | 14328 | 0.8940 | | 0.7616 | 13.0 | 15522 | 0.8555 | | 0.7508 | 14.0 | 16716 | 0.8711 | | 0.7333 | 15.0 | 17910 | 0.8351 | | 0.7299 | 16.0 | 19104 | 0.8646 | | 4f293193a06c8f6dcabac198951d3724 |

apache-2.0 | ['automatic-speech-recognition', 'es'] | false | exp_w2v2t_es_vp-100k_s732 Fine-tuned [facebook/wav2vec2-large-100k-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-100k-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (es)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 897bf2f632e4d850c84516d08e9dcc6f |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased__hate_speech_offensive__train-32-1 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.0606 - Accuracy: 0.4745 | 31e2840f9a42c90b6fac0d4a28c7df57 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 1.0941 | 1.0 | 19 | 1.1045 | 0.2 | | 0.9967 | 2.0 | 38 | 1.1164 | 0.35 | | 0.8164 | 3.0 | 57 | 1.1570 | 0.4 | | 0.5884 | 4.0 | 76 | 1.2403 | 0.35 | | 0.3322 | 5.0 | 95 | 1.3815 | 0.35 | | 0.156 | 6.0 | 114 | 1.8102 | 0.3 | | 0.0576 | 7.0 | 133 | 2.1439 | 0.4 | | 0.0227 | 8.0 | 152 | 2.4368 | 0.3 | | 0.0133 | 9.0 | 171 | 2.5994 | 0.4 | | 0.009 | 10.0 | 190 | 2.7388 | 0.35 | | 0.0072 | 11.0 | 209 | 2.8287 | 0.35 | | 9e58c0a93e298c1d5864b0fb9c741064 |

mit | ['generated_from_trainer'] | false | SentimentBert This model is a fine-tuned version of [cahya/bert-base-indonesian-522M](https://huggingface.co/cahya/bert-base-indonesian-522M) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2005 - Accuracy: 0.965 | 61627aa53534beae5e846f94dd3d4271 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 275 | 0.7807 | 0.715 | | 0.835 | 2.0 | 550 | 1.0588 | 0.635 | | 0.835 | 3.0 | 825 | 0.2764 | 0.94 | | 0.5263 | 4.0 | 1100 | 0.1913 | 0.97 | | 0.5263 | 5.0 | 1375 | 0.2005 | 0.965 | | 164552cb3d469091a5d7f6ce8c0009de |

apache-2.0 | ['automatic-speech-recognition', 'id'] | false | exp_w2v2t_id_vp-fr_s27 Fine-tuned [facebook/wav2vec2-large-fr-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-fr-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (id)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 8cfdea2b6c461c41ca9a422f6e5e3125 |

apache-2.0 | ['translation'] | false | opus-mt-fi-sk * source languages: fi * target languages: sk * OPUS readme: [fi-sk](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/fi-sk/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-08.zip](https://object.pouta.csc.fi/OPUS-MT-models/fi-sk/opus-2020-01-08.zip) * test set translations: [opus-2020-01-08.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/fi-sk/opus-2020-01-08.test.txt) * test set scores: [opus-2020-01-08.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/fi-sk/opus-2020-01-08.eval.txt) | f639a0eb3b681400806a1ab763cb5e5d |

mit | ['generated_from_trainer'] | false | roberta-large-finetuned-combined-DS This model is a fine-tuned version of [roberta-large](https://huggingface.co/roberta-large) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 3.2062 - Accuracy: 0.7001 - Precision: 0.6703 - Recall: 0.6700 - F1: 0.6701 | cce4a824e8c4678429f14ff67bc44b18 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:-----:|:---------------:|:--------:|:---------:|:------:|:------:| | 0.8804 | 1.0 | 711 | 0.8517 | 0.6573 | 0.6786 | 0.6253 | 0.6231 | | 0.6949 | 2.0 | 1422 | 0.7444 | 0.6833 | 0.6609 | 0.6647 | 0.6604 | | 0.5674 | 3.0 | 2133 | 0.8379 | 0.6798 | 0.6571 | 0.6659 | 0.6575 | | 0.433 | 3.99 | 2844 | 0.8703 | 0.7079 | 0.6947 | 0.6801 | 0.6809 | | 0.3314 | 4.99 | 3555 | 1.1792 | 0.6861 | 0.6672 | 0.6558 | 0.6569 | | 0.2519 | 5.99 | 4266 | 1.5574 | 0.6966 | 0.6761 | 0.6639 | 0.6662 | | 0.2083 | 6.99 | 4977 | 1.8781 | 0.6952 | 0.6681 | 0.6592 | 0.6619 | | 0.1773 | 7.99 | 5688 | 1.8687 | 0.6959 | 0.6677 | 0.6748 | 0.6675 | | 0.1536 | 8.99 | 6399 | 2.2483 | 0.7037 | 0.6788 | 0.6674 | 0.6694 | | 0.1305 | 9.99 | 7110 | 2.4602 | 0.6875 | 0.6597 | 0.6681 | 0.6612 | | 0.0982 | 10.98 | 7821 | 2.5573 | 0.6994 | 0.6705 | 0.6728 | 0.6709 | | 0.0858 | 11.98 | 8532 | 2.8048 | 0.6994 | 0.6765 | 0.6730 | 0.6737 | | 0.0734 | 12.98 | 9243 | 3.0408 | 0.6945 | 0.6640 | 0.6628 | 0.6626 | | 0.0625 | 13.98 | 9954 | 3.0047 | 0.7037 | 0.6784 | 0.6757 | 0.6764 | | 0.0434 | 14.98 | 10665 | 3.0789 | 0.6987 | 0.6737 | 0.6669 | 0.6691 | | 0.0432 | 15.98 | 11376 | 2.9647 | 0.6945 | 0.6649 | 0.6684 | 0.6663 | | 0.0326 | 16.98 | 12087 | 3.3076 | 0.6931 | 0.6630 | 0.6563 | 0.6583 | | 0.032 | 17.97 | 12798 | 3.1890 | 0.7022 | 0.6737 | 0.6702 | 0.6717 | | 0.0275 | 18.97 | 13509 | 3.1798 | 0.7029 | 0.6738 | 0.6750 | 0.6744 | | 0.0251 | 19.97 | 14220 | 3.2062 | 0.7001 | 0.6703 | 0.6700 | 0.6701 | | fef02d2c50781beb1155b89bc4691180 |

mit | ['generated_from_trainer'] | false | tolkien-mythopoeic-gen This model is a fine-tuned version of [gpt2](https://huggingface.co/gpt2) on Tolkien's mythopoeic works, namely The Silmarillion and Unfinished Tales of Numenor and Middle Earth. It achieves the following results on the evaluation set: - Loss: 3.5110 | 2f0599c26f9a72fb8ff25f3209f7a904 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 3.5732 | 1.0 | 145 | 3.5110 | | 3.5713 | 2.0 | 290 | 3.5110 | | 3.5718 | 3.0 | 435 | 3.5110 | | fae0a874ddf8ce4b63317a53df32500a |

apache-2.0 | ['generated_from_trainer'] | false | t5-small-finetuned-de-en-epochs5 This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the wmt14 dataset. It achieves the following results on the evaluation set: - Loss: 2.2040 - Bleu: 5.8913 - Gen Len: 17.5408 | 87fad4029eeae04c5fd917c673a97435 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:------:|:-------:| | No log | 1.0 | 188 | 2.3366 | 2.8075 | 17.8188 | | No log | 2.0 | 376 | 2.2557 | 4.8765 | 17.626 | | 2.6928 | 3.0 | 564 | 2.2246 | 5.5454 | 17.5534 | | 2.6928 | 4.0 | 752 | 2.2086 | 5.8511 | 17.5461 | | 2.6928 | 5.0 | 940 | 2.2040 | 5.8913 | 17.5408 | | 73316192fc3813a81291342edfc420c9 |

mit | ['generated_from_trainer'] | false | discourse_classification_using_robrta_base This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.0832 - Accuracy: 0.6592 - F1: 0.6592 | 3a827981c61d5d15374ee59e62d421b8 |

mit | ['summarization', 'translation', 'question-answering'] | false | How to use For more details, check out [our GitHub repo](https://github.com/vietai/ViT5). [A fine-tuning example can be found here](https://github.com/vietai/ViT5/tree/main/finetunning_huggingface). ```python from transformers import AutoTokenizer, AutoModelForSeq2SeqLM tokenizer = AutoTokenizer.from_pretrained("VietAI/vit5-large") model = AutoModelForSeq2SeqLM.from_pretrained("VietAI/vit5-large") model.cuda() ``` | 69b9c38332182443e4a1cc9a3fdf2861 |

apache-2.0 | ['vision', 'image-classification'] | false | LeViT LeViT-256 model pre-trained on ImageNet-1k at resolution 224x224. It was introduced in the paper [LeViT: a Vision Transformer in ConvNet's Clothing for Faster Inference ](https://arxiv.org/abs/2104.01136) by Graham et al. and first released in [this repository](https://github.com/facebookresearch/LeViT). Disclaimer: The team releasing LeViT did not write a model card for this model so this model card has been written by the Hugging Face team. | 3bc081545287318465d6198ffe65e15e |

apache-2.0 | ['vision', 'image-classification'] | false | Usage Here is how to use this model to classify an image of the COCO 2017 dataset into one of the 1,000 ImageNet classes: ```python from transformers import LevitFeatureExtractor, LevitForImageClassificationWithTeacher from PIL import Image import requests url = 'http://images.cocodataset.org/val2017/000000039769.jpg' image = Image.open(requests.get(url, stream=True).raw) feature_extractor = LevitFeatureExtractor.from_pretrained('facebook/levit-256') model = LevitForImageClassificationWithTeacher.from_pretrained('facebook/levit-256') inputs = feature_extractor(images=image, return_tensors="pt") outputs = model(**inputs) logits = outputs.logits | 021d071edde29443f4924d47815fa207 |

apache-2.0 | ['automatic-speech-recognition', 'ja'] | false | exp_w2v2t_ja_vp-nl_s287 Fine-tuned [facebook/wav2vec2-large-nl-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-nl-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (ja)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 2a5e8bdbb8f73e4fe1df1c670b816a92 |

mit | ['generated_from_trainer'] | false | eloquent_stallman This model was trained from scratch on the tomekkorbak/detoxify-pile-chunk3-0-50000, the tomekkorbak/detoxify-pile-chunk3-50000-100000, the tomekkorbak/detoxify-pile-chunk3-100000-150000, the tomekkorbak/detoxify-pile-chunk3-150000-200000, the tomekkorbak/detoxify-pile-chunk3-200000-250000, the tomekkorbak/detoxify-pile-chunk3-250000-300000, the tomekkorbak/detoxify-pile-chunk3-300000-350000, the tomekkorbak/detoxify-pile-chunk3-350000-400000, the tomekkorbak/detoxify-pile-chunk3-400000-450000, the tomekkorbak/detoxify-pile-chunk3-450000-500000, the tomekkorbak/detoxify-pile-chunk3-500000-550000, the tomekkorbak/detoxify-pile-chunk3-550000-600000, the tomekkorbak/detoxify-pile-chunk3-600000-650000, the tomekkorbak/detoxify-pile-chunk3-650000-700000, the tomekkorbak/detoxify-pile-chunk3-700000-750000, the tomekkorbak/detoxify-pile-chunk3-750000-800000, the tomekkorbak/detoxify-pile-chunk3-800000-850000, the tomekkorbak/detoxify-pile-chunk3-850000-900000, the tomekkorbak/detoxify-pile-chunk3-900000-950000, the tomekkorbak/detoxify-pile-chunk3-950000-1000000, the tomekkorbak/detoxify-pile-chunk3-1000000-1050000, the tomekkorbak/detoxify-pile-chunk3-1050000-1100000, the tomekkorbak/detoxify-pile-chunk3-1100000-1150000, the tomekkorbak/detoxify-pile-chunk3-1150000-1200000, the tomekkorbak/detoxify-pile-chunk3-1200000-1250000, the tomekkorbak/detoxify-pile-chunk3-1250000-1300000, the tomekkorbak/detoxify-pile-chunk3-1300000-1350000, the tomekkorbak/detoxify-pile-chunk3-1350000-1400000, the tomekkorbak/detoxify-pile-chunk3-1400000-1450000, the tomekkorbak/detoxify-pile-chunk3-1450000-1500000, the tomekkorbak/detoxify-pile-chunk3-1500000-1550000, the tomekkorbak/detoxify-pile-chunk3-1550000-1600000, the tomekkorbak/detoxify-pile-chunk3-1600000-1650000, the tomekkorbak/detoxify-pile-chunk3-1650000-1700000, the tomekkorbak/detoxify-pile-chunk3-1700000-1750000, the tomekkorbak/detoxify-pile-chunk3-1750000-1800000, 
the tomekkorbak/detoxify-pile-chunk3-1800000-1850000, the tomekkorbak/detoxify-pile-chunk3-1850000-1900000 and the tomekkorbak/detoxify-pile-chunk3-1900000-1950000 datasets. | c1d16fe2f4b5c80c47101d234f52aad8 |

mit | ['generated_from_trainer'] | false | Full config {'dataset': {'datasets': ['tomekkorbak/detoxify-pile-chunk3-0-50000', 'tomekkorbak/detoxify-pile-chunk3-50000-100000', 'tomekkorbak/detoxify-pile-chunk3-100000-150000', 'tomekkorbak/detoxify-pile-chunk3-150000-200000', 'tomekkorbak/detoxify-pile-chunk3-200000-250000', 'tomekkorbak/detoxify-pile-chunk3-250000-300000', 'tomekkorbak/detoxify-pile-chunk3-300000-350000', 'tomekkorbak/detoxify-pile-chunk3-350000-400000', 'tomekkorbak/detoxify-pile-chunk3-400000-450000', 'tomekkorbak/detoxify-pile-chunk3-450000-500000', 'tomekkorbak/detoxify-pile-chunk3-500000-550000', 'tomekkorbak/detoxify-pile-chunk3-550000-600000', 'tomekkorbak/detoxify-pile-chunk3-600000-650000', 'tomekkorbak/detoxify-pile-chunk3-650000-700000', 'tomekkorbak/detoxify-pile-chunk3-700000-750000', 'tomekkorbak/detoxify-pile-chunk3-750000-800000', 'tomekkorbak/detoxify-pile-chunk3-800000-850000', 'tomekkorbak/detoxify-pile-chunk3-850000-900000', 'tomekkorbak/detoxify-pile-chunk3-900000-950000', 'tomekkorbak/detoxify-pile-chunk3-950000-1000000', 'tomekkorbak/detoxify-pile-chunk3-1000000-1050000', 'tomekkorbak/detoxify-pile-chunk3-1050000-1100000', 'tomekkorbak/detoxify-pile-chunk3-1100000-1150000', 'tomekkorbak/detoxify-pile-chunk3-1150000-1200000', 'tomekkorbak/detoxify-pile-chunk3-1200000-1250000', 'tomekkorbak/detoxify-pile-chunk3-1250000-1300000', 'tomekkorbak/detoxify-pile-chunk3-1300000-1350000', 'tomekkorbak/detoxify-pile-chunk3-1350000-1400000', 'tomekkorbak/detoxify-pile-chunk3-1400000-1450000', 'tomekkorbak/detoxify-pile-chunk3-1450000-1500000', 'tomekkorbak/detoxify-pile-chunk3-1500000-1550000', 'tomekkorbak/detoxify-pile-chunk3-1550000-1600000', 'tomekkorbak/detoxify-pile-chunk3-1600000-1650000', 'tomekkorbak/detoxify-pile-chunk3-1650000-1700000', 'tomekkorbak/detoxify-pile-chunk3-1700000-1750000', 'tomekkorbak/detoxify-pile-chunk3-1750000-1800000', 'tomekkorbak/detoxify-pile-chunk3-1800000-1850000', 
'tomekkorbak/detoxify-pile-chunk3-1850000-1900000', 'tomekkorbak/detoxify-pile-chunk3-1900000-1950000'], 'filter_threshold': 0.00078, 'is_split_by_sentences': True, 'skip_tokens': 1661599744}, 'generation': {'metrics_configs': [{}, {'n': 1}, {'n': 2}, {'n': 5}], 'scenario_configs': [{'generate_kwargs': {'do_sample': True, 'max_length': 128, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'unconditional', 'num_samples': 2048}, {'generate_kwargs': {'do_sample': True, 'max_length': 128, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'challenging_rtp', 'num_samples': 2048, 'prompts_path': 'resources/challenging_rtp.jsonl'}], 'scorer_config': {'device': 'cuda:0'}}, 'kl_gpt3_callback': {'max_tokens': 64, 'num_samples': 4096}, 'model': {'from_scratch': False, 'gpt2_config_kwargs': {'reorder_and_upcast_attn': True, 'scale_attn_by': True}, 'model_kwargs': {'revision': '81a1701e025d2c65ae6e8c2103df559071523ee0'}, 'path_or_name': 'tomekkorbak/goofy_pasteur'}, 'objective': {'name': 'MLE'}, 'tokenizer': {'path_or_name': 'gpt2'}, 'training': {'dataloader_num_workers': 0, 'effective_batch_size': 64, 'evaluation_strategy': 'no', 'fp16': True, 'hub_model_id': 'eloquent_stallman', 'hub_strategy': 'all_checkpoints', 'learning_rate': 0.0005, 'logging_first_step': True, 'logging_steps': 1, 'num_tokens': 3300000000, 'output_dir': 'training_output104340', 'per_device_train_batch_size': 16, 'push_to_hub': True, 'remove_unused_columns': False, 'save_steps': 25354, 'save_strategy': 'steps', 'seed': 42, 'tokens_already_seen': 1661599744, 'warmup_ratio': 0.01, 'weight_decay': 0.1}} | d35fc4a4f07459c675ec44943721b69d |
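A few numbers in the config above can be cross-checked with simple arithmetic. The sketch below is illustrative only. It assumes that `num_tokens` is the total token budget, that `tokens_already_seen` counts tokens consumed before this run resumed, and that `effective_batch_size` is the per-device batch size multiplied by accumulation steps and device count. The card states none of these interpretations, and the helper names are hypothetical.

```python
# Values copied from the 'dataset' and 'training' sections of the config above.
config = {
    "num_tokens": 3_300_000_000,
    "tokens_already_seen": 1_661_599_744,
    "skip_tokens": 1_661_599_744,
    "effective_batch_size": 64,
    "per_device_train_batch_size": 16,
}


def remaining_tokens(cfg):
    """Tokens left in the budget, assuming num_tokens is the overall target."""
    return cfg["num_tokens"] - cfg["tokens_already_seen"]


def accumulation_factor(cfg):
    """Accumulation steps times device count implied by the two batch sizes
    (an assumed relationship, not one documented in the card)."""
    return cfg["effective_batch_size"] // cfg["per_device_train_batch_size"]


print(remaining_tokens(config))
print(accumulation_factor(config))
```

Under these assumptions the run resumes roughly halfway through its token budget, and `skip_tokens` matching `tokens_already_seen` is consistent with the data loader skipping what was already consumed.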

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'wildcard'] | false | DreamBooth model for the abrozick concept trained by matallanas on the https://huggingface.co/datasets/matallanas/AbduRozik dataset. This is a Stable Diffusion model fine-tuned on the abrozick concept with DreamBooth. It can be used by modifying the `instance_prompt`: **a photo of abrozick** This model was created as part of the DreamBooth Hackathon 🔥. Visit the [organisation page](https://huggingface.co/dreambooth-hackathon) for instructions on how to take part! | 8ad0c4d3079a433ef0cc4610f6a2d686 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 4 - eval_batch_size: 8 - seed: 0 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: constant - training_steps: 1000 | 659758054d600795a1c30a8db07a41c0 |

mit | [] | false | Model Description <!-- Provide a longer summary of what this model is. --> ['Royal_Institute_of_British_Architects', 'Westminster_Abbey', 'England_national_football_team', 'Order_of_the_British_Empire', 'Elizabeth_II', 'Queen_Victoria', 'Buckingham_Palace', 'Royal_Dutch_Shell', 'British_Empire', 'The_Sun_(United_Kingdom)', 'London', 'Labour_Party_(UK)', 'Liberal_Party_of_Australia', 'British_Isles'] - **Developed by:** nandysoham - **Shared by [optional]:** [More Information Needed] - **Model type:** [More Information Needed] - **Language(s) (NLP):** en - **License:** mit - **Finetuned from model [optional]:** [More Information Needed] | 06668c57dff7fdce35c25cab384499ca |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Whisper Small Italian This model is a fine-tuned version of [openai/whisper-base](https://huggingface.co/openai/whisper-base) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.1185 - Wer: 17.3916 | a5557e44cb2c2266cd3a9e9c17a2c1ee |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 16 - eval_batch_size: 8 <!-- - seed: 42 --> - gradient_accumulation_steps: 1 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 954 <!-- - mixed_precision_training: Native AMP --> | a4190c555dc6359e7c516eb87fa7232b |
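The hyperparameters above combine a `linear` scheduler with 500 warmup steps and 954 training steps. As a rough sketch, assuming the usual linear-warmup-then-linear-decay shape (the card does not spell out the formula, and `linear_warmup_lr` is a hypothetical helper), the learning rate at a given step could be computed as:

```python
def linear_warmup_lr(step, base_lr=1e-05, warmup_steps=500, total_steps=954):
    """Learning rate at `step`: linear ramp from 0 to base_lr during warmup,
    then linear decay back to 0 at total_steps."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    decay_span = max(1, total_steps - warmup_steps)
    return base_lr * max(0.0, (total_steps - step) / decay_span)


for step in (0, 250, 500, 727, 954):
    print(step, linear_warmup_lr(step))
```

With these values the peak rate is reached at step 500, so slightly more than half of the 954 steps are spent warming up.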

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training results | Training Loss | Step | Validation Loss | Wer | |:-------------:|:----:|:---------------:|:-------:| | 1.4744 | 100 | 1.1852 | 117.6059 | | 0.7241 | 200 | 0.7452 | 79.7386 | | 0.3321 | 300 | 0.3215 | 21.0497 | | 0.2930 | 400 | 0.3030 | 20.2129 | | 0.2698 | 500 | 0.2982 | 19.7635 | | 0.2453 | 600 | 0.2898 | 19.0097 | | 0.2338 | 700 | 0.2768 | 18.7054 | | 0.2402 | 800 | 0.2646 | 18.2214 | | 0.2340 | 900 | 0.2581 | 17.3916 | | fb68efcd00ef3c328ebf939c721a2ed2 |
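The first row of the table reports a Wer of 117.6059, which is above 100. Assuming the metric is the standard word error rate expressed as a percentage (the card does not define it), this is possible because inserted words are counted as errors while the denominator is only the reference length. A minimal, self-contained sketch using a word-level edit distance (`word_error_rate` is a hypothetical helper, not the evaluation code used for this model):

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word error rate in percent: (substitutions + deletions + insertions)
    divided by the number of reference words, computed via edit distance."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the distance between the ref prefix seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion of a reference word
                curr[j - 1] + 1,     # insertion of a hypothesis word
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return 100.0 * prev[-1] / len(ref)


print(word_error_rate("uno due tre quattro", "uno tre quattro"))
print(word_error_rate("ciao", "ciao a tutti voi"))
```

The second call has a one-word reference and three extra hypothesis words, so its error rate exceeds 100 percent, the same effect that an early checkpoint producing overly long transcripts would show.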

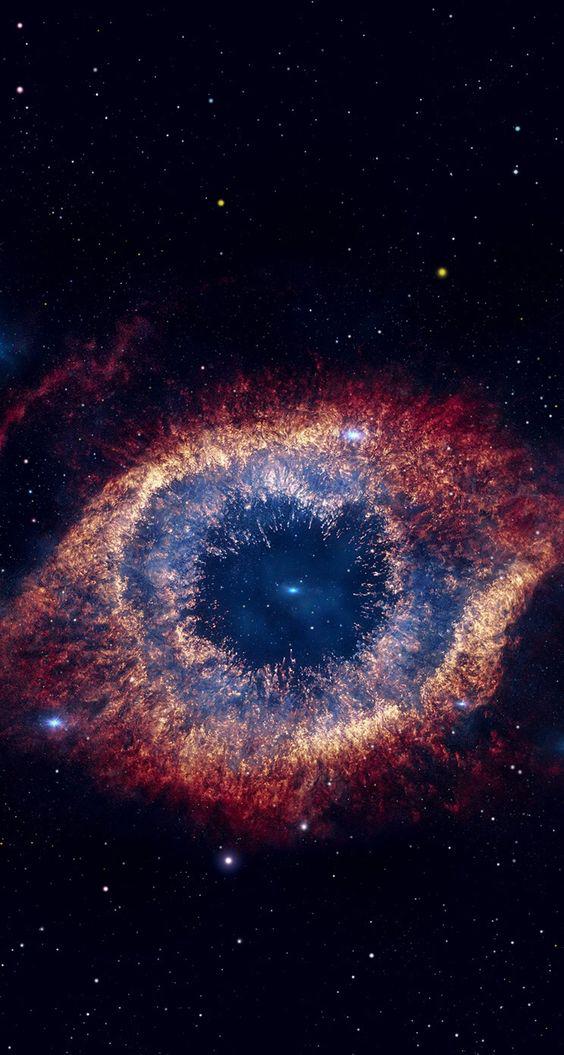

mit | [] | false | Nebula on Stable Diffusion This is the `<nebula>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:       | 1233ebb3b8513bb42d085ec9b0fb96eb |

agpl-3.0 | ['generated_from_trainer'] | false | XLMR-ENIS-finetuned-ner This model is a fine-tuned version of [vesteinn/XLMR-ENIS](https://huggingface.co/vesteinn/XLMR-ENIS) on the mim_gold_ner dataset. It achieves the following results on the evaluation set: - Loss: 0.0955 - Precision: 0.8714 - Recall: 0.8423 - F1: 0.8566 - Accuracy: 0.9827 | 6eb8aa4e7b16e13bdc0808aa7e9942ea |

agpl-3.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.0561 | 1.0 | 2904 | 0.0939 | 0.8481 | 0.8205 | 0.8341 | 0.9804 | | 0.031 | 2.0 | 5808 | 0.0917 | 0.8652 | 0.8299 | 0.8472 | 0.9819 | | 0.0186 | 3.0 | 8712 | 0.0955 | 0.8714 | 0.8423 | 0.8566 | 0.9827 | | 0e2cf01ccd42992c53c60e77fd4d7f20 |
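The F1 column above can be sanity-checked against the Precision and Recall columns. The sketch assumes F1 here is the harmonic mean of the reported precision and recall, which the card does not state explicitly; because the inputs are already rounded to four decimals, a recomputed value could in general differ in the last digit.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) for epochs 1 to 3, copied from the table.
rows = [
    (0.8481, 0.8205, 0.8341),
    (0.8652, 0.8299, 0.8472),
    (0.8714, 0.8423, 0.8566),
]

for precision, recall, reported in rows:
    print(round(f1_score(precision, recall), 4), reported)
```

For these three epochs the recomputed values agree with the reported ones to four decimals.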

mit | [] | false | Beetlejuice Cartoon Style on Stable Diffusion This is the `<beetlejuice-cartoon>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:           | d2fa7cfad758bfc3a1ee6d47ba55f2ef |

apache-2.0 | ['generated_from_keras_callback'] | false | Zynovia/vit-base-patch16-224-in21k-wwwwii This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.8976 - Train Accuracy: 0.8813 - Train Top-3-accuracy: 0.9721 - Validation Loss: 1.6144 - Validation Accuracy: 0.5845 - Validation Top-3-accuracy: 0.7845 - Epoch: 4 | fe704051248eb7d20703224b03db09fa |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'inner_optimizer': {'class_name': 'AdamWeightDecay', 'config': {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 6e-05, 'decay_steps': 4122, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.001}}, 'dynamic': True, 'initial_scale': 32768.0, 'dynamic_growth_steps': 2000} - training_precision: mixed_float16 | 7d6fbb6308d9a1a180656a089b51dc4b |
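The optimizer config above uses a `PolynomialDecay` schedule with `power` 1.0 and `end_learning_rate` 0.0, which amounts to a straight-line decay from 6e-05 to zero over 4122 steps. The pure-Python sketch below follows the documented non-cycling formula for that schedule; it is a re-implementation for illustration (`polynomial_decay` is a hypothetical helper), not the Keras class itself.

```python
def polynomial_decay(step, initial_lr=6e-05, decay_steps=4122,
                     end_lr=0.0, power=1.0):
    """Learning rate at `step` for a non-cycling polynomial decay schedule."""
    step = min(step, decay_steps)  # with cycle=False the rate stays at end_lr
    fraction = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * fraction ** power + end_lr


for step in (0, 2061, 4122, 5000):
    print(step, polynomial_decay(step))
```

With `power` equal to 1.0 the rate halves exactly at the midpoint (step 2061) and remains at zero for any step beyond 4122.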

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train Accuracy | Train Top-3-accuracy | Validation Loss | Validation Accuracy | Validation Top-3-accuracy | Epoch | |:----------:|:--------------:|:--------------------:|:---------------:|:-------------------:|:-------------------------:|:-----:| | 3.4972 | 0.1475 | 0.3067 | 3.0825 | 0.3240 | 0.5178 | 0 | | 2.7352 | 0.4129 | 0.6613 | 2.4838 | 0.4543 | 0.6930 | 1 | | 2.0429 | 0.6153 | 0.8315 | 1.9934 | 0.5690 | 0.7550 | 2 | | 1.4246 | 0.7672 | 0.9166 | 1.6714 | 0.5876 | 0.8016 | 3 | | 0.8976 | 0.8813 | 0.9721 | 1.6144 | 0.5845 | 0.7845 | 4 | | 2f9460ce2a1e2c535343fde952e81ec4 |

apache-2.0 | ['translation'] | false | opus-mt-de-guw * source languages: de * target languages: guw * OPUS readme: [de-guw](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/de-guw/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-20.zip](https://object.pouta.csc.fi/OPUS-MT-models/de-guw/opus-2020-01-20.zip) * test set translations: [opus-2020-01-20.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/de-guw/opus-2020-01-20.test.txt) * test set scores: [opus-2020-01-20.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/de-guw/opus-2020-01-20.eval.txt) | 408096ae3c9aff9f1e86eacb423ae327 |
