license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['bert', 'biomedical'] | false | CODER: Knowledge infused cross-lingual medical term embedding for term normalization. English Version. ``` @article{YUAN2022103983, title = {CODER: Knowledge-infused cross-lingual medical term embedding for term normalization}, journal = {Journal of Biomedical Informatics}, pages = {103983}, year = {2022}, issn = {1532-0464}, doi = {https://doi.org/10.1016/j.jbi.2021.103983}, url = {https://www.sciencedirect.com/science/article/pii/S1532046421003129}, author = {Zheng Yuan and Zhengyun Zhao and Haixia Sun and Jiao Li and Fei Wang and Sheng Yu}, keywords = {medical term normalization, cross-lingual, medical term representation, knowledge graph embedding, contrastive learning} } ``` | d5688d359ab202c927bd53ec7dc13946 |

mit | ['msmarco', 't5', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Introduction ptt5-base-msmarco-pt-100k-v1 is a T5-based model pretrained on the BrWac corpus and finetuned on a Portuguese-translated version of the MS MARCO passage dataset. In version v1, the Portuguese dataset was translated using the [Helsinki](https://huggingface.co/Helsinki-NLP) NMT model. This model was finetuned for 100k steps. Further information about the dataset and the translation method can be found in our paper [**mMARCO: A Multilingual Version of MS MARCO Passage Ranking Dataset**](https://arxiv.org/abs/2108.13897) and in the [mMARCO](https://github.com/unicamp-dl/mMARCO) repository. | 36a8142c237c380eab9bcaf032f58201 |

mit | ['msmarco', 't5', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Usage ```python from transformers import T5Tokenizer, T5ForConditionalGeneration model_name = 'unicamp-dl/ptt5-base-msmarco-pt-100k-v1' tokenizer = T5Tokenizer.from_pretrained(model_name) model = T5ForConditionalGeneration.from_pretrained(model_name) ``` | 1853d4401d7d5299a3940dfff1798192 |

mit | ['msmarco', 't5', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Citation If you use ptt5-base-msmarco-pt-100k-v1, please cite: @misc{bonifacio2021mmarco, title={mMARCO: A Multilingual Version of MS MARCO Passage Ranking Dataset}, author={Luiz Henrique Bonifacio and Vitor Jeronymo and Hugo Queiroz Abonizio and Israel Campiotti and Marzieh Fadaee and Roberto Lotufo and Rodrigo Nogueira}, year={2021}, eprint={2108.13897}, archivePrefix={arXiv}, primaryClass={cs.CL} } | f13d00f2ac46cbeb64d8d867ae45c82e |

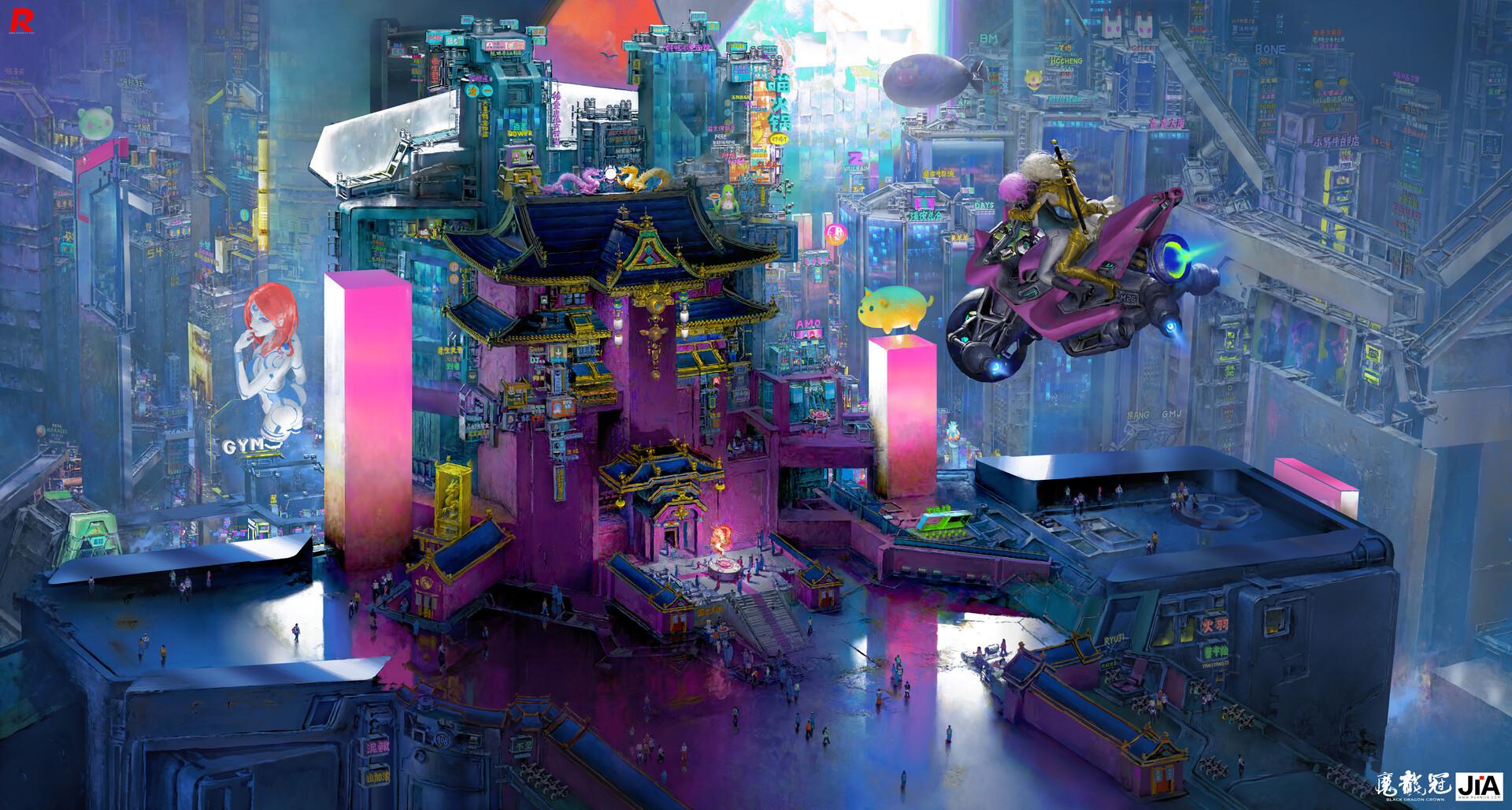

mit | [] | false | Ruan Jia on Stable Diffusion This is the `<ruan-jia>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:                | a75ad66c8f4fbd118444b0a4b522c958 |

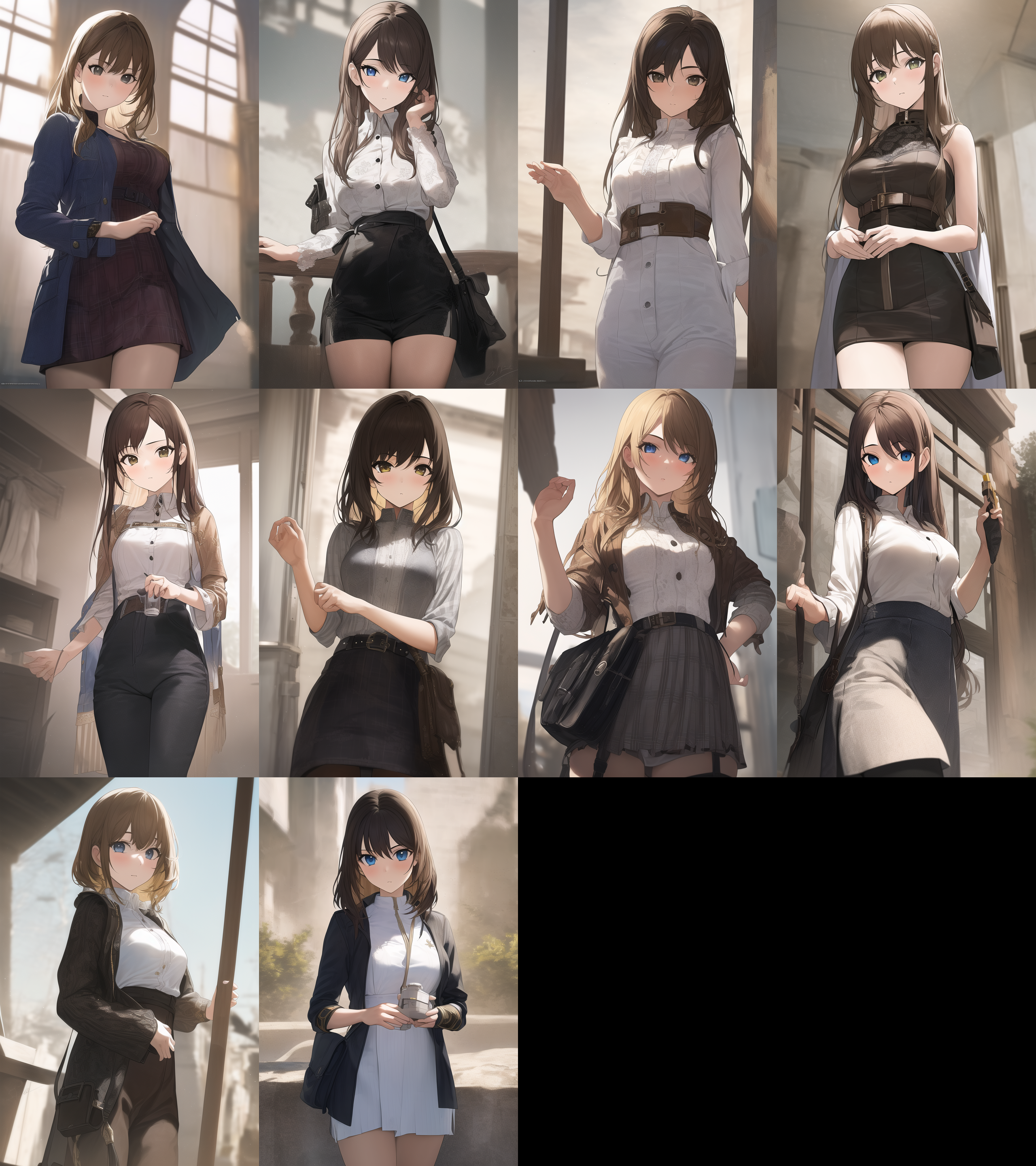

creativeml-openrail-m | ['stable-diffusion', 'anime', 'aiart'] | false | I decided to stop creating a separate repository for each model, so most future models will go here. I will only create a separate repository for more important projects. Despite the name YuriDiffusion, I am not sure whether I will really train such a model. Dataset collection and the hard limits of SD both make this task very challenging. | d630cb1ca1831622bfbed44e4c964d89 |

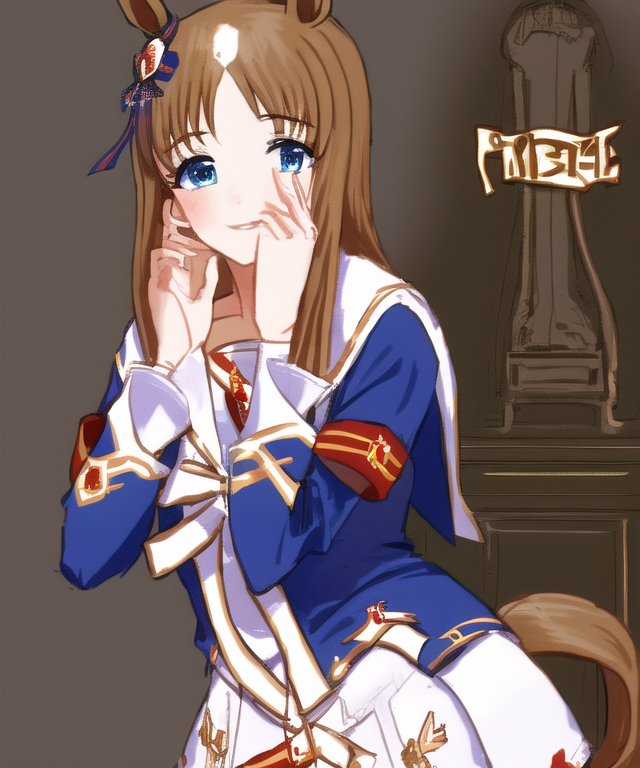

creativeml-openrail-m | ['stable-diffusion', 'anime', 'aiart'] | false | List of models -[suremio-nozomizo-eilanya-maplesally](https://huggingface.co/alea31415/YuriDiffusion/blob/main/suremio-nozomizo-eilanya-maplesally/README.md)     -[onimai mahiro and mihari](https://huggingface.co/alea31415/YuriDiffusion/blob/main/onimai/README.md)    -[grass wonder from umamusume](https://huggingface.co/alea31415/YuriDiffusion/blob/main/grasswonder-umamusume/README.md)    | b48a41be4d2e84e6623fdac85d9a4717 |

creativeml-openrail-m | ['stable-diffusion', 'anime', 'aiart'] | false | Questions that I have partial answers to **Can we make an image of multiple known characters?** This is possible either through native training, lora, or merging. **Can we use embedding, lora, and native training together?** Interestingly, independently trained lora and embedding, or independent fine-tuning and embedding, go hand in hand. On the other hand, putting lora with a fine-tuned model for that character does not seem to give good results. | 20da92d51018003cd8a522f4333f90c3 |

creativeml-openrail-m | ['stable-diffusion', 'anime', 'aiart'] | false | Questions that I do not have answers to **Native training or LoRA?** I tried both for [suremio-nozomizo-eilanya-maplesally](https://huggingface.co/alea31415/YuriDiffusion/blob/main/suremio-nozomizo-eilanya-maplesally/README.md) and [grass wonder from umamusume](https://huggingface.co/alea31415/YuriDiffusion/blob/main/grasswonder-umamusume/README.md) so you can compare them. Clearly LoRA has certain advantages - Faster to train - Lower vram requirement - Smaller size Moreover, with the same number of steps LoRA seems to lead to better fidelity if trained with a large learning rate (1e-4). Nonetheless, this can also be a sort of overfitting, and it is unclear whether we are just trading fidelity for flexibility here. In fact, I observe that applying lora can have a significant impact on the style of the base model. The question is whether we can find a better trade-off with a smaller learning rate. Another advantage that is not inherent to the method is the fact that we can now use LoRA directly with any network. This should be possible for normal models as well through add difference merging, but unfortunately the current interface does not support on-the-fly merging. **Clip skip 1 or 2?** I played with this in my [EuphiAni model](https://huggingface.co/alea31415/EuphiAni-TenseiOujo) but I cannot really judge whether training with clip skip 1 or 2 is better. Changing the prompt can always make things more favorable to one model than another. More surprisingly, I observe that even for a model trained with clip skip 2, it may still be better to do inference with clip skip 1. This is not so much the case for LoRA, which can be explained by the fact that LoRA quickly biases the model towards the training distribution, so it is important to match training and inference. As for native training, the model still retains its capacity to do inference at clip skip 1. 
**Learning rate?** - For native training something around 1e-6 is good. - For LoRA the default 1e-4 learns the concept quite fast but there may be some overfitting. On the other hand 1e-5 seems too low. I still need more experience. | 4e5433c399bc21ce18f9b7729af971d9 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-base-timit-demo-colab This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.4798 - Wer: 0.3474 | 9764f2903b2afd95109c737e1ecde522 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.5229 | 4.0 | 500 | 1.6557 | 1.0422 | | 0.6618 | 8.0 | 1000 | 0.4420 | 0.4469 | | 0.2211 | 12.0 | 1500 | 0.4705 | 0.4002 | | 0.1281 | 16.0 | 2000 | 0.4347 | 0.3688 | | 0.0868 | 20.0 | 2500 | 0.4653 | 0.3590 | | 0.062 | 24.0 | 3000 | 0.4747 | 0.3519 | | 0.0472 | 28.0 | 3500 | 0.4798 | 0.3474 | | 2640468e82210826e5fc107471893aeb |
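
The `Wer` column above reports word error rate. As a minimal sketch, assuming the usual definition (substitutions + deletions + insertions, divided by the number of reference words), it can be computed with a word-level edit distance. The function name `word_error_rate` is hypothetical and is not taken from the model card or from any evaluation library. Because insertions count as errors, the value can exceed 1.0, which is consistent with the 1.0422 logged at step 500.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] is the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # substitution or exact match
            )
        prev = curr
    return prev[-1] / len(ref)
```

For instance, a one-word reference transcribed as four words yields three insertions over one reference word, so the rate is well above 1.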

afl-3.0 | [] | false | afro-xlmr-mini AfroXLMR-mini was created by MLM adaptation of [XLM-R-miniLM](https://huggingface.co/nreimers/mMiniLMv2-L12-H384-distilled-from-XLMR-Large) model on 17 African languages (Afrikaans, Amharic, Hausa, Igbo, Malagasy, Chichewa, Oromo, Naija, Kinyarwanda, Kirundi, Shona, Somali, Sesotho, Swahili, isiXhosa, Yoruba, and isiZulu) covering the major African language families and 3 high resource languages (Arabic, French, and English). | 822db6392a1b76fdb946f3af095c74ca |

apache-2.0 | ['generated_from_trainer', 'automatic-speech-recognition', 'NbAiLab/NPSC', 'robust-speech-event', False, 'nb-NO', 'hf-asr-leaderboard'] | false | wav2vec2-xls-r-1b-npsc This model is a fine-tuned version of [facebook/wav2vec2-xls-r-1b](https://huggingface.co/facebook/wav2vec2-xls-r-1b) on the [NbAiLab/NPSC (16K_mp3_bokmaal)](https://huggingface.co/datasets/NbAiLab/NPSC/viewer/16K_mp3_bokmaal/train) dataset. It achieves the following results on the evaluation set: - Loss: 0.1598 - WER: 0.0966 | 2e80944205e0ba12aa6bac53859841a4 |

apache-2.0 | ['generated_from_trainer', 'automatic-speech-recognition', 'NbAiLab/NPSC', 'robust-speech-event', False, 'nb-NO', 'hf-asr-leaderboard'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 0.8361 | 0.32 | 500 | 0.6304 | 0.4970 | | 0.5703 | 0.64 | 1000 | 0.3195 | 0.2775 | | 0.5451 | 0.97 | 1500 | 0.2700 | 0.2246 | | 0.47 | 1.29 | 2000 | 0.2564 | 0.2329 | | 0.4063 | 1.61 | 2500 | 0.2459 | 0.2099 | | 0.374 | 1.93 | 3000 | 0.2175 | 0.1894 | | 0.3297 | 2.26 | 3500 | 0.2036 | 0.1755 | | 0.3145 | 2.58 | 4000 | 0.1957 | 0.1757 | | 0.3989 | 2.9 | 4500 | 0.1923 | 0.1723 | | 0.271 | 3.22 | 5000 | 0.1889 | 0.1649 | | 0.2758 | 3.55 | 5500 | 0.1768 | 0.1588 | | 0.2683 | 3.87 | 6000 | 0.1720 | 0.1534 | | 0.2341 | 4.19 | 6500 | 0.1689 | 0.1471 | | 0.2316 | 4.51 | 7000 | 0.1706 | 0.1405 | | 0.2383 | 4.84 | 7500 | 0.1637 | 0.1426 | | 0.2148 | 5.16 | 8000 | 0.1584 | 0.1347 | | 0.2085 | 5.48 | 8500 | 0.1601 | 0.1387 | | 0.2944 | 5.8 | 9000 | 0.1566 | 0.1294 | | 0.1944 | 6.13 | 9500 | 0.1494 | 0.1271 | | 0.1853 | 6.45 | 10000 | 0.1561 | 0.1247 | | 0.235 | 6.77 | 10500 | 0.1461 | 0.1215 | | 0.2286 | 7.09 | 11000 | 0.1447 | 0.1167 | | 0.1781 | 7.41 | 11500 | 0.1502 | 0.1199 | | 0.1714 | 7.74 | 12000 | 0.1425 | 0.1179 | | 0.1725 | 8.06 | 12500 | 0.1427 | 0.1173 | | 0.143 | 8.38 | 13000 | 0.1448 | 0.1142 | | 0.154 | 8.7 | 13500 | 0.1392 | 0.1104 | | 0.1447 | 9.03 | 14000 | 0.1404 | 0.1094 | | 0.1471 | 9.35 | 14500 | 0.1404 | 0.1088 | | 0.1479 | 9.67 | 15000 | 0.1414 | 0.1133 | | 0.1607 | 9.99 | 15500 | 0.1458 | 0.1171 | | 0.166 | 10.32 | 16000 | 0.1652 | 0.1264 | | 0.188 | 10.64 | 16500 | 0.1713 | 0.1322 | | 0.1461 | 10.96 | 17000 | 0.1423 | 0.1111 | | 0.1289 | 11.28 | 17500 | 0.1388 | 0.1097 | | 0.1273 | 11.61 | 18000 | 0.1438 | 0.1074 | | 0.1317 | 11.93 | 18500 | 0.1312 | 0.1066 | | 0.1448 | 12.25 | 19000 | 0.1446 | 0.1042 | | 0.1424 | 12.57 | 19500 | 0.1386 | 0.1015 | | 0.1392 | 
12.89 | 20000 | 0.1379 | 0.1005 | | 0.1408 | 13.22 | 20500 | 0.1408 | 0.0992 | | 0.1239 | 13.54 | 21000 | 0.1338 | 0.0968 | | 0.1244 | 13.86 | 21500 | 0.1335 | 0.0957 | | 0.1254 | 14.18 | 22000 | 0.1382 | 0.0950 | | 0.1597 | 14.51 | 22500 | 0.1544 | 0.0970 | | 0.1566 | 14.83 | 23000 | 0.1589 | 0.0963 | | 5164b06d8a16d47414bb93bcc91b732d |

other | [] | false | Test Model https://huggingface.co/syaimu/7th_test <img src="https://i.imgur.com/0xKIUvL.jpg" width="1700" height=""> <img src="https://i.imgur.com/lFZAYVv.jpg" width="1700" height=""> <img src="https://i.imgur.com/4IYqlYq.jpg" width="1700" height=""> <img src="https://i.imgur.com/v2pn57R.jpg" width="1700" height=""> | 963e977b5978956c72b7967d9fdd4487 |

other | [] | false | other <img src="https://i.imgur.com/oCZyzdA.jpg" width="1700" height=""> <img src="https://i.imgur.com/sAw842D.jpg" width="1700" height=""> <img src="https://i.imgur.com/lzuYVh0.jpg" width="1700" height=""> <img src="https://i.imgur.com/dOXsoeg.jpg" width="1700" height=""> | 80b6bff0bfa83e3b4b28eba24a13a187 |

mit | [] | false | bozo 22 on Stable Diffusion This is the `<bozo-22>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:            | b7c08ab6c51d3037580abc00d21334ae |

mit | ['ja', 'japanese', 'gpt2', 'text-generation', 'lm', 'nlp'] | false | How to use First, install sentencepiece. We have confirmed the behavior with the latest version as of August 2022. (Skip if not necessary.) ``` shell pip install sentencepiece ``` When using pipeline for text generation. ``` python from transformers import pipeline generator = pipeline("text-generation", model="abeja/gpt2-large-japanese") generated = generator( "人とAIが協調するためには、", max_length=30, do_sample=True, num_return_sequences=3, top_p=0.95, top_k=50, pad_token_id=3 ) print(*generated, sep="\n") """ [out] {'generated_text': '人とAIが協調するためには、社会的なルールをきちんと理解して、人と共存し、協働して生きていくのが重要だという。'} {'generated_text': '人とAIが協調するためには、それぞれが人間性を持ち、またその人間性から生まれるインタラクションを調整しなければならないことはいうまで'} {'generated_text': '人とAIが協調するためには、AIが判断すべきことを人間が決める必要がある。人工知能の目的は、人間の知性、記憶、理解、'} """ ``` When using PyTorch. ``` python from transformers import AutoTokenizer, AutoModelForCausalLM tokenizer = AutoTokenizer.from_pretrained("abeja/gpt2-large-japanese") model = AutoModelForCausalLM.from_pretrained("abeja/gpt2-large-japanese") input_text = "人とAIが協調するためには、" input_ids = tokenizer.encode(input_text, return_tensors="pt") gen_tokens = model.generate( input_ids, max_length=100, do_sample=True, num_return_sequences=3, top_p=0.95, top_k=50, pad_token_id=tokenizer.pad_token_id ) for gen_text in tokenizer.batch_decode(gen_tokens, skip_special_tokens=True): print(gen_text) ``` When using TensorFlow. 
```python from transformers import AutoTokenizer, TFAutoModelForCausalLM tokenizer = AutoTokenizer.from_pretrained("abeja/gpt2-large-japanese") model = TFAutoModelForCausalLM.from_pretrained("abeja/gpt2-large-japanese", from_pt=True) input_text = "人とAIが協調するためには、" input_ids = tokenizer.encode(input_text, return_tensors="tf") gen_tokens = model.generate( input_ids, max_length=100, do_sample=True, num_return_sequences=3, top_p=0.95, top_k=50, pad_token_id=tokenizer.pad_token_id ) for gen_text in tokenizer.batch_decode(gen_tokens, skip_special_tokens=True): print(gen_text) ``` | 9648211d7a5c406bb565f149097e6ed3 |
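
The `top_k=50` and `top_p=0.95` arguments in the generation calls above restrict which tokens can be sampled at each step. The sketch below illustrates the idea on a plain list of probabilities. It is a simplified illustration and not the `transformers` implementation; the function name `filter_top_k_top_p` is hypothetical, and it assumes the cumulative mass for top-p is measured on the original probabilities.

```python
def filter_top_k_top_p(probs, top_k=50, top_p=0.95):
    """Return {token_id: renormalized probability} after top-k, then top-p filtering."""
    # Rank token ids by probability, highest first, and keep at most top_k of them.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]

    # Keep the smallest prefix whose cumulative probability reaches top_p.
    kept, cumulative = [], 0.0
    for token_id in ranked:
        kept.append(token_id)
        cumulative += probs[token_id]
        if cumulative >= top_p:
            break

    # Renormalize so the surviving probabilities sum to 1 before sampling.
    total = sum(probs[i] for i in kept)
    return {i: probs[i] / total for i in kept}
```

Sampling then draws only from the surviving token ids, which trims the low-probability tail while keeping some diversity in the output.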

mit | ['ja', 'japanese', 'gpt2', 'text-generation', 'lm', 'nlp'] | false | Dataset The model was trained on [Japanese CC-100](http://data.statmt.org/cc-100/ja.txt.xz), [Japanese Wikipedia](https://dumps.wikimedia.org/other/cirrussearch), and [Japanese OSCAR](https://huggingface.co/datasets/oscar). | 47428f467c6e3ef447039ec9b509641f |

apache-2.0 | ['translation'] | false | opus-mt-he-de * source languages: he * target languages: de * OPUS readme: [he-de](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/he-de/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-26.zip](https://object.pouta.csc.fi/OPUS-MT-models/he-de/opus-2020-01-26.zip) * test set translations: [opus-2020-01-26.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/he-de/opus-2020-01-26.test.txt) * test set scores: [opus-2020-01-26.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/he-de/opus-2020-01-26.eval.txt) | 66e3993c06d11bf99382c95f9a072a81 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | saqib_v2 Dreambooth model trained by imjunaidafzal with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: 2082932.jpg  | 5c14d2f70d9a852047a6745eebec10b8 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert_sa_GLUE_Experiment_qnli_192 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE QNLI dataset. It achieves the following results on the evaluation set: - Loss: 0.6554 - Accuracy: 0.6050 | e41f5756c9e8360d391c6da55ca813d4 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.6802 | 1.0 | 410 | 0.6614 | 0.5988 | | 0.6514 | 2.0 | 820 | 0.6554 | 0.6050 | | 0.6306 | 3.0 | 1230 | 0.6610 | 0.5938 | | 0.6105 | 4.0 | 1640 | 0.6700 | 0.5942 | | 0.5925 | 5.0 | 2050 | 0.6833 | 0.5891 | | 0.5725 | 6.0 | 2460 | 0.7225 | 0.5898 | | 0.5537 | 7.0 | 2870 | 0.7806 | 0.5810 | | 5ab4eab15b12af0811399ebe0ea0616a |
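
In the table above the training loss keeps falling while the validation loss rises after epoch 2, a pattern that usually indicates overfitting. The headline numbers in this card (Loss 0.6554, Accuracy 0.6050) match the epoch-2 row. The sketch below picks the row with the lowest validation loss. Whether the original run selected its checkpoint with this criterion is an assumption, and `best_by_validation_loss` is a hypothetical helper name.

```python
# (epoch, validation_loss, accuracy) rows copied from the table above.
results = [
    (1, 0.6614, 0.5988),
    (2, 0.6554, 0.6050),
    (3, 0.6610, 0.5938),
    (4, 0.6700, 0.5942),
    (5, 0.6833, 0.5891),
    (6, 0.7225, 0.5898),
    (7, 0.7806, 0.5810),
]


def best_by_validation_loss(rows):
    """Return the row with the lowest validation loss (second field)."""
    return min(rows, key=lambda row: row[1])


best_epoch, best_loss, best_accuracy = best_by_validation_loss(results)
print(f"epoch {best_epoch}: loss={best_loss}, accuracy={best_accuracy}")
```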

cc-by-4.0 | ['questions and answers generation'] | false | Model Card of `lmqg/t5-base-squad-qag` This model is a fine-tuned version of [t5-base](https://huggingface.co/t5-base) for the question & answer pair generation task on the [lmqg/qag_squad](https://huggingface.co/datasets/lmqg/qag_squad) dataset (dataset_name: default) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | 2f23b371b09a862315747261de69b26c |

cc-by-4.0 | ['questions and answers generation'] | false | Overview - **Language model:** [t5-base](https://huggingface.co/t5-base) - **Language:** en - **Training data:** [lmqg/qag_squad](https://huggingface.co/datasets/lmqg/qag_squad) (default) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation) - **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992) | d407f9f97fa266adb4aa2cf64e5bcf2e |

cc-by-4.0 | ['questions and answers generation'] | false | model prediction question_answer_pairs = model.generate_qa("William Turner was an English painter who specialised in watercolour landscapes") ``` - With `transformers` ```python from transformers import pipeline pipe = pipeline("text2text-generation", "lmqg/t5-base-squad-qag") output = pipe("generate question and answer: Beyonce further expanded her acting career, starring as blues singer Etta James in the 2008 musical biopic, Cadillac Records.") ``` | 6fe3a2944bee008c99a3bd8aad9721fb |

cc-by-4.0 | ['questions and answers generation'] | false | Evaluation - ***Metric (Question & Answer Generation)***: [raw metric file](https://huggingface.co/lmqg/t5-base-squad-qag/raw/main/eval/metric.first.answer.paragraph.questions_answers.lmqg_qag_squad.default.json) | | Score | Type | Dataset | |:--------------------------------|--------:|:--------|:-----------------------------------------------------------------| | QAAlignedF1Score (BERTScore) | 93.34 | default | [lmqg/qag_squad](https://huggingface.co/datasets/lmqg/qag_squad) | | QAAlignedF1Score (MoverScore) | 65.78 | default | [lmqg/qag_squad](https://huggingface.co/datasets/lmqg/qag_squad) | | QAAlignedPrecision (BERTScore) | 93.18 | default | [lmqg/qag_squad](https://huggingface.co/datasets/lmqg/qag_squad) | | QAAlignedPrecision (MoverScore) | 65.96 | default | [lmqg/qag_squad](https://huggingface.co/datasets/lmqg/qag_squad) | | QAAlignedRecall (BERTScore) | 93.51 | default | [lmqg/qag_squad](https://huggingface.co/datasets/lmqg/qag_squad) | | QAAlignedRecall (MoverScore) | 65.68 | default | [lmqg/qag_squad](https://huggingface.co/datasets/lmqg/qag_squad) | | 78f74183e70f6bfb25c133760f76070f |

cc-by-4.0 | ['questions and answers generation'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qag_squad - dataset_name: default - input_types: ['paragraph'] - output_types: ['questions_answers'] - prefix_types: ['qag'] - model: t5-base - max_length: 512 - max_length_output: 256 - epoch: 17 - batch: 8 - lr: 0.0001 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 8 - label_smoothing: 0.15 The full configuration can be found at [fine-tuning config file](https://huggingface.co/lmqg/t5-base-squad-qag/raw/main/trainer_config.json). | 73f85b765a5277bfe6b692e1d78f411a |

apache-2.0 | ['stanza', 'token-classification'] | false | Stanza model for Old_Church_Slavonic (cu) Stanza is a collection of accurate and efficient tools for the linguistic analysis of many human languages. Starting from raw text to syntactic analysis and entity recognition, Stanza brings state-of-the-art NLP models to languages of your choosing. Find out more on [our website](https://stanfordnlp.github.io/stanza) and in our [GitHub repository](https://github.com/stanfordnlp/stanza). This card and repo were automatically prepared with `hugging_stanza.py` in the `stanfordnlp/huggingface-models` repo. Last updated 2022-09-25 01:05:38.870 | 07b49196267c559e6e809c2fd1794b87 |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'robust-speech-event', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event'] | false | wav2vec2-large-xls-r-300m-ia This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 0.1452 - Wer: 0.1253 | 15f015b30ec0cdd90ff638c9364db5a4 |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'robust-speech-event', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event'] | false | Training and evaluation data The language model was created from the processed sentences in the train + validation splits of the dataset (Common Voice 8.0 for Interlingua). Evaluation is conducted in a notebook, which you can find in the repo as "notebook_evaluation_wav2vec2_ia.ipynb". Test WER without LM wer = 20.1776 % cer = 4.7205 % Test WER using wer = 8.6074 % cer = 2.4147 % evaluation using eval.py ``` huggingface-cli login | c5839bba3829a43d50451c1701deb280 |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'robust-speech-event', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 16 - eval_batch_size: 4 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 400 - num_epochs: 30 - mixed_precision_training: Native AMP | a68702b733b71433c515a2f745c1599c |
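
Two of the hyperparameters above are derived quantities. The total train batch size of 32 is the per-step batch size (16) times the gradient accumulation steps (2), and the linear scheduler ramps the learning rate up over the first 400 steps before decaying it. The sketch below illustrates both. It assumes a single device, and `total_steps=6400` is only an illustrative value taken from the last step logged in this card's training results; the exact number of optimizer steps is not stated. The helper names are hypothetical.

```python
def effective_batch_size(per_device_batch: int, accumulation_steps: int, num_devices: int = 1) -> int:
    """Number of examples that contribute to one optimizer update."""
    return per_device_batch * accumulation_steps * num_devices


def linear_lr(step: int, base_lr: float = 3e-5, warmup_steps: int = 400, total_steps: int = 6400) -> float:
    """Linear warmup from 0 to base_lr, then linear decay to 0 at total_steps."""
    if step < warmup_steps:
        return base_lr * step / warmup_steps
    remaining = max(0, total_steps - step)
    return base_lr * remaining / (total_steps - warmup_steps)


print(effective_batch_size(16, 2))
for step in (0, 200, 400, 3400, 6400):
    print(step, linear_lr(step))
```

Gradient accumulation trades wall-clock time for memory: the update sees 32 examples while only 16 need to fit on the device at once.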

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'robust-speech-event', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 7.432 | 1.87 | 400 | 2.9636 | 1.0 | | 2.6922 | 3.74 | 800 | 2.2111 | 0.9977 | | 1.2581 | 5.61 | 1200 | 0.4864 | 0.4028 | | 0.6232 | 7.48 | 1600 | 0.2807 | 0.2413 | | 0.4479 | 9.35 | 2000 | 0.2219 | 0.1885 | | 0.3654 | 11.21 | 2400 | 0.1886 | 0.1606 | | 0.323 | 13.08 | 2800 | 0.1716 | 0.1444 | | 0.2935 | 14.95 | 3200 | 0.1687 | 0.1443 | | 0.2707 | 16.82 | 3600 | 0.1632 | 0.1382 | | 0.2559 | 18.69 | 4000 | 0.1507 | 0.1337 | | 0.2433 | 20.56 | 4400 | 0.1572 | 0.1358 | | 0.2338 | 22.43 | 4800 | 0.1489 | 0.1305 | | 0.2258 | 24.3 | 5200 | 0.1485 | 0.1278 | | 0.2218 | 26.17 | 5600 | 0.1470 | 0.1272 | | 0.2169 | 28.04 | 6000 | 0.1470 | 0.1270 | | 0.2117 | 29.91 | 6400 | 0.1452 | 0.1253 | | 323960b55604271df88e95dcd52c8d30 |

apache-2.0 | ['generated_from_trainer'] | false | albert-base-v2-finetuned-code-mixed-DS This model is a fine-tuned version of [albert-base-v2](https://huggingface.co/albert-base-v2) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.0408 - Accuracy: 0.7324 - Precision: 0.6883 - Recall: 0.6822 - F1: 0.6833 | 29d7abfbd215c18b0dc7ba2f4f3c4b45 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1.2766380106570283e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 43 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5 | 9c070858f385fde237e8d2b509823220 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:---------:|:------:|:------:| | 0.9207 | 1.0 | 497 | 0.7810 | 0.6016 | 0.5878 | 0.5953 | 0.5264 | | 0.7519 | 2.0 | 994 | 0.8159 | 0.6600 | 0.6020 | 0.6194 | 0.6015 | | 0.6029 | 3.0 | 1491 | 0.8026 | 0.7163 | 0.6599 | 0.6604 | 0.6593 | | 0.4259 | 4.0 | 1988 | 0.9464 | 0.7384 | 0.7058 | 0.6808 | 0.6822 | | 0.2845 | 5.0 | 2485 | 1.0408 | 0.7324 | 0.6883 | 0.6822 | 0.6833 | | 08aa6325354a4e0138dc152db48e8902 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-en This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.3932 - F1 Score: 0.6774 | 5ba28ce87cbb4bebb81a363364bfffc5 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 Score | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 1.0236 | 1.0 | 50 | 0.5462 | 0.5109 | | 0.5047 | 2.0 | 100 | 0.4387 | 0.6370 | | 0.3716 | 3.0 | 150 | 0.3932 | 0.6774 | | cf3e76b21a095e41598f2ae1d1966271 |

apache-2.0 | [] | false | Features - Can understand and respond to user input in natural language - Can perform a variety of tasks such as answering questions, providing information and executing commands - Has been trained on data from a specific Discord server and is familiar with the language and context used on that server | a0586568847a38acf0e188dc610fd644 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xls-r-1B-common_voice7-lt-ft This model is a fine-tuned version of [facebook/wav2vec2-xls-r-1b](https://huggingface.co/facebook/wav2vec2-xls-r-1b) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 2.5101 - Wer: 1.0 | 6867db91cd85ddbdd06752df63214bfa |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 36 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 72 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 900 - num_epochs: 100 - mixed_precision_training: Native AMP | 23d7305c5e6b53971f3e7d5b81fdb3d2 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:---:| | 2.3491 | 31.24 | 500 | 3.9827 | 1.0 | | 0.0421 | 62.48 | 1000 | 2.9544 | 1.0 | | 0.0163 | 93.73 | 1500 | 2.5101 | 1.0 | | 2ac7310bf39ca2502aafaa0c56b7da9c |

apache-2.0 | ['multiberts', 'multiberts-seed_2', 'multiberts-seed_2-step_200k'] | false | MultiBERTs, Intermediate Checkpoint - Seed 2, Step 200k MultiBERTs is a collection of checkpoints and a statistical library to support robust research on BERT. We provide 25 BERT-base models trained with similar hyper-parameters as [the original BERT model](https://github.com/google-research/bert) but with different random seeds, which causes variations in the initial weights and order of training instances. The aim is to distinguish findings that apply to a specific artifact (i.e., a particular instance of the model) from those that apply to the more general procedure. We also provide 140 intermediate checkpoints captured during the course of pre-training (we saved 28 checkpoints for the first 5 runs). The models were originally released through [http://goo.gle/multiberts](http://goo.gle/multiberts). We describe them in our paper [The MultiBERTs: BERT Reproductions for Robustness Analysis](https://arxiv.org/abs/2106.16163). This is model | 67e775575b435018b3db1b04cc4dea0d |

apache-2.0 | ['multiberts', 'multiberts-seed_2', 'multiberts-seed_2-step_200k'] | false | How to use Using code from [BERT-base uncased](https://huggingface.co/bert-base-uncased), here is an example based on Tensorflow: ``` from transformers import BertTokenizer, TFBertModel tokenizer = BertTokenizer.from_pretrained('google/multiberts-seed_2-step_200k') model = TFBertModel.from_pretrained("google/multiberts-seed_2-step_200k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='tf') output = model(encoded_input) ``` PyTorch version: ``` from transformers import BertTokenizer, BertModel tokenizer = BertTokenizer.from_pretrained('google/multiberts-seed_2-step_200k') model = BertModel.from_pretrained("google/multiberts-seed_2-step_200k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | 319b8dcc59065d047009ddc0af5e1a7a |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2208 - Accuracy: 0.9215 - F1: 0.9217 | e37be3f94aeeeec38cfa136b4378ffa8 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8381 | 1.0 | 250 | 0.3167 | 0.8995 | 0.8960 | | 0.2493 | 2.0 | 500 | 0.2208 | 0.9215 | 0.9217 | | 4ca7b21c36574263b24d2eb3d2d8fa88 |

mit | ['text-classification'] | false | Multi2ConvAI-Quality: finetuned Bert for Italian

This model was developed in the [Multi2ConvAI](https://multi2conv.ai) project:

- domain: Quality (more details about our use cases: ([en](https://multi2convai/en/blog/use-cases), [de](https://multi2convai/en/blog/use-cases)))

- language: Italian (it)

- model type: finetuned Bert

| e3cc49a00e4baccdcdebf921ea4d0ada |

mit | ['text-classification'] | false | Run with Huggingface Transformers

````python

from transformers import AutoTokenizer, AutoModelForSequenceClassification

tokenizer = AutoTokenizer.from_pretrained("inovex/multi2convai-quality-it-bert")

model = AutoModelForSequenceClassification.from_pretrained("inovex/multi2convai-quality-it-bert")

````

| 9e6f7d6280ceda2fcefc2f781251340a |

apache-2.0 | ['generated_from_keras_callback'] | false | tmpny35efxx This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.1996 - Train Accuracy: 0.9348 - Validation Loss: 0.8523 - Validation Accuracy: 0.7633 - Epoch: 1 | f05535285e659f83870f71f9fbd1cd94 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch | |:----------:|:--------------:|:---------------:|:-------------------:|:-----:| | 0.5865 | 0.7626 | 0.5505 | 0.8010 | 0 | | 0.1996 | 0.9348 | 0.8523 | 0.7633 | 1 | | eb0cd96fc73891369fe33d4d036213dc |

apache-2.0 | ['dighum'] | false | Description This is a fine-tuned NER model for early-modern Dutch United East India Company (VOC) letters based on XLM-R_base [(Conneau et al., 2020)](https://aclanthology.org/2020.acl-main.747/). The model identifies *locations*, *persons*, *organisations*, but also *ships* as well as derived forms of locations and religions. | 3e6ea344887609ea3c71db58cf9640aa |

apache-2.0 | ['dighum'] | false | Intended uses and limitations This model was fine-tuned (trained, validated and tested) on a single source of data, the General Letters (Generale Missiven). These letters span a large variety of Dutch, as they cover the largest part of the 17th and 18th centuries, and have been extended with editorial notes between 1960 and 2017. As the model was only fine-tuned on this data however, it may perform less well on other texts from the same period. | fad17574e31057ea7952fddf5c4e0629 |

apache-2.0 | ['dighum'] | false | How to use The model can run on raw text through the *token-classification* pipeline: ``` from transformers import AutoTokenizer, AutoModelForTokenClassification from transformers import pipeline tokenizer = AutoTokenizer.from_pretrained("CLTL/gm-ner-xlmrbase") model = AutoModelForTokenClassification.from_pretrained("CLTL/gm-ner-xlmrbase") nlp = pipeline("ner", model=model, tokenizer=tokenizer) example = "Batavia heeft om advies gevraagd." ner_results = nlp(example) print(ner_results) ``` This outputs a list of entities with their character offsets in the input text: ``` [{'entity': 'B-LOC', 'score': 0.99739265, 'index': 1, 'word': '▁Bata', 'start': 0, 'end': 4}, {'entity': 'I-LOC', 'score': 0.5373179, 'index': 2, 'word': 'via', 'start': 4, 'end': 7}] ``` | 5889827e68a02a44b2fb4b119b7b5e9b |
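The sample output above is reported per subword, so `Batavia` arrives as a `B-LOC` piece followed by an `I-LOC` piece. A minimal sketch of merging such pieces into whole entities is given below; `merge_entities` is a hypothetical helper, not part of the model card, and it assumes the plain B-/I- tagging shown in the sample output. The `transformers` pipeline also accepts an `aggregation_strategy` argument that performs a similar grouping.

```python
def merge_entities(text, tokens):
    """Merge B-/I- tagged subword predictions into whole-entity spans."""
    spans = []
    for tok in tokens:
        if tok["entity"] == "O":
            continue  # skip non-entity tokens if they are present
        prefix, _, label = tok["entity"].partition("-")
        continues = prefix == "I" and bool(spans) and spans[-1]["label"] == label
        if continues:
            # Extend the current entity to cover this subword.
            spans[-1]["end"] = tok["end"]
        else:
            spans.append({"label": label, "start": tok["start"], "end": tok["end"]})
    for span in spans:
        span["text"] = text[span["start"]:span["end"]]
    return spans


example = "Batavia heeft om advies gevraagd."
ner_results = [
    {"entity": "B-LOC", "score": 0.99739265, "index": 1, "word": "▁Bata", "start": 0, "end": 4},
    {"entity": "I-LOC", "score": 0.5373179, "index": 2, "word": "via", "start": 4, "end": 7},
]
print(merge_entities(example, ner_results))
# [{'label': 'LOC', 'start': 0, 'end': 7, 'text': 'Batavia'}]
```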

apache-2.0 | ['dighum'] | false | Training data and tagset The model was fine-tuned on the General Letters [GM-NER](https://github.com/cltl/voc-missives/tree/master/data/ner/datasplit_all_standard) dataset, with the following tagset: | tag | description | notes | | --- | ----------- | ----- | | LOC | locations | | | LOCderiv | derived forms of locations | by derivation, e.g. *Bandanezen*, or composition, e.g. *Javakoffie* | | ORG | organisations | includes forms derived by composition, e.g. *Compagnieszaken* | | PER | persons | | | RELderiv | forms related to religion | merges religion names (*Christendom*), derived forms (*christenen*) and composed forms (*Christen-orangkay*) | | SHP | ships | | The base text for this dataset is OCR text that has been partially corrected. The text is clean overall but errors remain. | e18446d009e1360fee20f98443025120 |

apache-2.0 | ['dighum'] | false | Training procedure The model was fine-tuned with [xlm-roberta-base](https://huggingface.co/xlm-roberta-base), using [this script](https://github.com/huggingface/transformers/blob/master/examples/legacy/token-classification/run_ner.py). Non-default training parameters are: * training batch size: 16 * max sequence length: 256 * number of epochs: 4 -- loading the best checkpoint model by loss at the end, with checkpoints every 200 steps * (seed: 1) | 27bbdfe79bb0fa2357f1f5ebc5c05cb4 |

apache-2.0 | ['dighum'] | false | Reference The model and fine-tuning data presented here were developed as part of: ```bibtex @inproceedings{arnoult-etal-2021-batavia, title = "Batavia asked for advice. Pretrained language models for Named Entity Recognition in historical texts.", author = "Arnoult, Sophie I. and Petram, Lodewijk and Vossen, Piek", booktitle = "Proceedings of the 5th Joint SIGHUM Workshop on Computational Linguistics for Cultural Heritage, Social Sciences, Humanities and Literature", month = nov, year = "2021", address = "Punta Cana, Dominican Republic (online)", publisher = "Association for Computational Linguistics", url = "https://aclanthology.org/2021.latechclfl-1.3", pages = "21--30" } ``` | b4972e214e5c7a726e717888a9bafd5d |

gpl | ['corenlp'] | false | Core NLP model for sp CoreNLP is your one stop shop for natural language processing in Java! CoreNLP enables users to derive linguistic annotations for text, including token and sentence boundaries, parts of speech, named entities, numeric and time values, dependency and constituency parses, coreference, sentiment, quote attributions, and relations. Find out more on [our website](https://stanfordnlp.github.io/CoreNLP) and in our [GitHub repository](https://github.com/stanfordnlp/CoreNLP). | c873d494402373d671f8fe0518873e12 |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased_title_fine_tuned This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3368 - Accuracy: {'accuracy': 0.8810840405146455} - Recall: {'recall': 0.8611674554879423} - Precision: {'precision': 0.890468422279189} - F1: {'f1': 0.8755728689275893} | 515f2cdb2c3a51ba8994ffc643fe2513 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 24 - eval_batch_size: 24 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1000 - num_epochs: 4 | ad334e90bac026caa7debb6bcff05af1 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Recall | Precision | F1 | |:-------------:|:-----:|:-----:|:---------------:|:--------------------------------:|:------------------------------:|:---------------------------------:|:--------------------------:| | 0.3224 | 1.0 | 3045 | 0.3079 | {'accuracy': 0.8730358609362168} | {'recall': 0.8139508677034032} | {'precision': 0.915346597389431} | {'f1': 0.861676110945422} | | 0.2818 | 2.0 | 6090 | 0.3153 | {'accuracy': 0.8814672871612373} | {'recall': 0.8299526707234618} | {'precision': 0.9182146864480738} | {'f1': 0.8718555785735426} | | 0.2394 | 3.0 | 9135 | 0.3104 | {'accuracy': 0.8830002737476047} | {'recall': 0.8548568852828488} | {'precision': 0.8993479549496147} | {'f1': 0.8765382171124848} | | 0.204 | 4.0 | 12180 | 0.3368 | {'accuracy': 0.8810840405146455} | {'recall': 0.8611674554879423} | {'precision': 0.890468422279189} | {'f1': 0.8755728689275893} | | 95747b46a08910f648fb01240b4ce35b |
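The F1 column in the table above can be cross-checked against the precision and recall columns, since F1 is the harmonic mean of the two. The sketch below assumes the reported metrics are plain (non-macro-averaged) scores, which the card does not state; `f1_score` is a hypothetical helper written for this check.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Final-epoch (epoch 4.0) precision and recall from the table above.
precision = 0.890468422279189
recall = 0.8611674554879423

f1 = f1_score(precision, recall)
print(round(f1, 4))  # 0.8756, in line with the reported 0.8755728689275893
```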

apache-2.0 | [] | false | Results on Natural Questions - Test Set |Id | link | Exact Match | |---|---|---| |**T5-large**|**https://huggingface.co/google/t5-large-ssm-nqo**|**29.0**| |T5-xxl|https://huggingface.co/google/t5-xxl-ssm-nqo|35.2| |T5-3b|https://huggingface.co/google/t5-3b-ssm-nqo|31.7| |T5-11b|https://huggingface.co/google/t5-11b-ssm-nqo|34.8| | f2298aba381e4c542b8e91f626f04af9 |

apache-2.0 | [] | false | Usage The model can be used as follows for **closed book question answering**: ```python from transformers import AutoModelForSeq2SeqLM, AutoTokenizer t5_qa_model = AutoModelForSeq2SeqLM.from_pretrained("google/t5-large-ssm-nqo") t5_tok = AutoTokenizer.from_pretrained("google/t5-large-ssm-nqo") input_ids = t5_tok("When was Franklin D. Roosevelt born?", return_tensors="pt").input_ids gen_output = t5_qa_model.generate(input_ids)[0] print(t5_tok.decode(gen_output, skip_special_tokens=True)) ``` | 7c1d021bb01f07e190496b6d3b152513 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'en', 'hf-asr-leaderboard', 'mozilla-foundation/common_voice_6_0', 'robust-speech-event', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-English Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on English using the [Common Voice](https://huggingface.co/datasets/common_voice) dataset. When using this model, make sure that your speech input is sampled at 16 kHz. This model was fine-tuned thanks to the GPU credits generously provided by [OVHcloud](https://www.ovhcloud.com/en/public-cloud/ai-training/) :) The script used for training can be found here: https://github.com/jonatasgrosman/wav2vec2-sprint | 430e61211327ac07e76a5878bae4e2e9 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'en', 'hf-asr-leaderboard', 'mozilla-foundation/common_voice_6_0', 'robust-speech-event', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows... Using the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) library: ```python from huggingsound import SpeechRecognitionModel model = SpeechRecognitionModel("jonatasgrosman/wav2vec2-large-xlsr-53-english") audio_paths = ["/path/to/file.mp3", "/path/to/another_file.wav"] transcriptions = model.transcribe(audio_paths) ``` Writing your own inference script: ```python import torch import librosa from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor LANG_ID = "en" MODEL_ID = "jonatasgrosman/wav2vec2-large-xlsr-53-english" SAMPLES = 10 test_dataset = load_dataset("common_voice", LANG_ID, split=f"test[:{SAMPLES}]") processor = Wav2Vec2Processor.from_pretrained(MODEL_ID) model = Wav2Vec2ForCTC.from_pretrained(MODEL_ID) | 8b470aa6cd8d5b98eb7611cc248ef8f3 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'en', 'hf-asr-leaderboard', 'mozilla-foundation/common_voice_6_0', 'robust-speech-event', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): speech_array, sampling_rate = librosa.load(batch["path"], sr=16_000) batch["speech"] = speech_array batch["sentence"] = batch["sentence"].upper() return batch test_dataset = test_dataset.map(speech_file_to_array_fn) inputs = processor(test_dataset["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values, attention_mask=inputs.attention_mask).logits predicted_ids = torch.argmax(logits, dim=-1) predicted_sentences = processor.batch_decode(predicted_ids) for i, predicted_sentence in enumerate(predicted_sentences): print("-" * 100) print("Reference:", test_dataset[i]["sentence"]) print("Prediction:", predicted_sentence) ``` | Reference | Prediction | | ------------- | ------------- | | "SHE'LL BE ALL RIGHT." | SHE'LL BE ALL RIGHT | | SIX | SIX | | "ALL'S WELL THAT ENDS WELL." | ALL AS WELL THAT ENDS WELL | | DO YOU MEAN IT? | DO YOU MEAN IT | | THE NEW PATCH IS LESS INVASIVE THAN THE OLD ONE, BUT STILL CAUSES REGRESSIONS. | THE NEW PATCH IS LESS INVASIVE THAN THE OLD ONE BUT STILL CAUSES REGRESSION | | HOW IS MOZILLA GOING TO HANDLE AMBIGUITIES LIKE QUEUE AND CUE? | HOW IS MOSLILLAR GOING TO HANDLE ANDBEWOOTH HIS LIKE Q AND Q | | "I GUESS YOU MUST THINK I'M KINDA BATTY." | RUSTIAN WASTIN PAN ONTE BATTLY | | NO ONE NEAR THE REMOTE MACHINE YOU COULD RING? | NO ONE NEAR THE REMOTE MACHINE YOU COULD RING | | SAUCE FOR THE GOOSE IS SAUCE FOR THE GANDER. | SAUCE FOR THE GUICE IS SAUCE FOR THE GONDER | | GROVES STARTED WRITING SONGS WHEN SHE WAS FOUR YEARS OLD. | GRAFS STARTED WRITING SONGS WHEN SHE WAS FOUR YEARS OLD | | e04bcbd048ebc2f039812fdf7afe3ce3 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'en', 'hf-asr-leaderboard', 'mozilla-foundation/common_voice_6_0', 'robust-speech-event', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation 1. To evaluate on `mozilla-foundation/common_voice_6_0` with split `test` ```bash python eval.py --model_id jonatasgrosman/wav2vec2-large-xlsr-53-english --dataset mozilla-foundation/common_voice_6_0 --config en --split test ``` 2. To evaluate on `speech-recognition-community-v2/dev_data` ```bash python eval.py --model_id jonatasgrosman/wav2vec2-large-xlsr-53-english --dataset speech-recognition-community-v2/dev_data --config en --split validation --chunk_length_s 5.0 --stride_length_s 1.0 ``` | bba8f38b28346fd2f541e5855d407364 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'en', 'hf-asr-leaderboard', 'mozilla-foundation/common_voice_6_0', 'robust-speech-event', 'speech', 'xlsr-fine-tuning-week'] | false | Citation If you want to cite this model you can use this: ```bibtex @misc{grosman2021wav2vec2-large-xlsr-53-english, title={XLSR Wav2Vec2 English by Jonatas Grosman}, author={Grosman, Jonatas}, publisher={Hugging Face}, journal={Hugging Face Hub}, howpublished={\url{https://huggingface.co/jonatasgrosman/wav2vec2-large-xlsr-53-english}}, year={2021} } ``` | 583748c83961c5c088180f1ea8b5e79f |

cc-by-4.0 | ['answer extraction'] | false | Model Card of `lmqg/t5-large-squad-ae` This model is a fine-tuned version of [t5-large](https://huggingface.co/t5-large) for answer extraction on [lmqg/qg_squad](https://huggingface.co/datasets/lmqg/qg_squad) (dataset_name: default) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | f5a14937f88d5a04229e4d314ce6eb3b |

cc-by-4.0 | ['answer extraction'] | false | model prediction answers = model.generate_a("William Turner was an English painter who specialised in watercolour landscapes") ``` - With `transformers` ```python from transformers import pipeline pipe = pipeline("text2text-generation", "lmqg/t5-large-squad-ae") output = pipe("extract answers: <hl> Beyonce further expanded her acting career, starring as blues singer Etta James in the 2008 musical biopic, Cadillac Records. <hl> Her performance in the film received praise from critics, and she garnered several nominations for her portrayal of James, including a Satellite Award nomination for Best Supporting Actress, and a NAACP Image Award nomination for Outstanding Supporting Actress.") ``` | ab127d21608194a27c29863814d9550a |

cc-by-4.0 | ['answer extraction'] | false | Evaluation - ***Metric (Answer Extraction)***: [raw metric file](https://huggingface.co/lmqg/t5-large-squad-ae/raw/main/eval/metric.first.answer.paragraph_sentence.answer.lmqg_qg_squad.default.json) | | Score | Type | Dataset | |:-----------------|--------:|:--------|:---------------------------------------------------------------| | AnswerExactMatch | 59.77 | default | [lmqg/qg_squad](https://huggingface.co/datasets/lmqg/qg_squad) | | AnswerF1Score | 70.41 | default | [lmqg/qg_squad](https://huggingface.co/datasets/lmqg/qg_squad) | | BERTScore | 91.91 | default | [lmqg/qg_squad](https://huggingface.co/datasets/lmqg/qg_squad) | | Bleu_1 | 65.48 | default | [lmqg/qg_squad](https://huggingface.co/datasets/lmqg/qg_squad) | | Bleu_2 | 62.11 | default | [lmqg/qg_squad](https://huggingface.co/datasets/lmqg/qg_squad) | | Bleu_3 | 58.71 | default | [lmqg/qg_squad](https://huggingface.co/datasets/lmqg/qg_squad) | | Bleu_4 | 55.66 | default | [lmqg/qg_squad](https://huggingface.co/datasets/lmqg/qg_squad) | | METEOR | 43.06 | default | [lmqg/qg_squad](https://huggingface.co/datasets/lmqg/qg_squad) | | MoverScore | 82.82 | default | [lmqg/qg_squad](https://huggingface.co/datasets/lmqg/qg_squad) | | ROUGE_L | 69.67 | default | [lmqg/qg_squad](https://huggingface.co/datasets/lmqg/qg_squad) | | e882c4497d53d8a2be74f8e2648577ea |

cc-by-4.0 | ['answer extraction'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qg_squad - dataset_name: default - input_types: ['paragraph_sentence'] - output_types: ['answer'] - prefix_types: ['ae'] - model: t5-large - max_length: 512 - max_length_output: 32 - epoch: 9 - batch: 4 - lr: 0.0001 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 32 - label_smoothing: 0.0 The full configuration can be found at [fine-tuning config file](https://huggingface.co/lmqg/t5-large-squad-ae/raw/main/trainer_config.json). | d82cbc5aae9b4177ea82560c67e4d26b |

apache-2.0 | ['generated_from_trainer'] | false | bertiny-finetuned-finer-full This model is a fine-tuned version of [google/bert_uncased_L-2_H-128_A-2](https://huggingface.co/google/bert_uncased_L-2_H-128_A-2) on 10% of the finer-139 dataset, trained for 40 epochs following the paper. It achieves the following results on the evaluation set: - Loss: 0.0788 - Precision: 0.5554 - Recall: 0.5164 - F1: 0.5352 - Accuracy: 0.9887 | 391e6487938486db695ddb7fae5b81a4 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 40 | e435490b947ae319c35d7238408927a3 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:------:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.0852 | 1.0 | 11255 | 0.0929 | 1.0 | 0.0001 | 0.0002 | 0.9843 | | 0.08 | 2.0 | 22510 | 0.0840 | 0.4626 | 0.0730 | 0.1261 | 0.9851 | | 0.0759 | 3.0 | 33765 | 0.0750 | 0.5113 | 0.2035 | 0.2912 | 0.9865 | | 0.0569 | 4.0 | 45020 | 0.0673 | 0.4973 | 0.3281 | 0.3953 | 0.9872 | | 0.0488 | 5.0 | 56275 | 0.0635 | 0.5289 | 0.3749 | 0.4388 | 0.9878 | | 0.0422 | 6.0 | 67530 | 0.0606 | 0.5258 | 0.4068 | 0.4587 | 0.9880 | | 0.0364 | 7.0 | 78785 | 0.0600 | 0.5588 | 0.4186 | 0.4787 | 0.9883 | | 0.0307 | 8.0 | 90040 | 0.0589 | 0.5223 | 0.4916 | 0.5065 | 0.9883 | | 0.0284 | 9.0 | 101295 | 0.0595 | 0.5588 | 0.4813 | 0.5171 | 0.9887 | | 0.0255 | 10.0 | 112550 | 0.0597 | 0.5606 | 0.4944 | 0.5254 | 0.9888 | | 0.0223 | 11.0 | 123805 | 0.0600 | 0.5533 | 0.4998 | 0.5252 | 0.9888 | | 0.0228 | 12.0 | 135060 | 0.0608 | 0.5290 | 0.5228 | 0.5259 | 0.9885 | | 0.0225 | 13.0 | 146315 | 0.0612 | 0.5480 | 0.5111 | 0.5289 | 0.9887 | | 0.0204 | 14.0 | 157570 | 0.0634 | 0.5646 | 0.5120 | 0.5370 | 0.9890 | | 0.0176 | 15.0 | 168825 | 0.0639 | 0.5611 | 0.5135 | 0.5363 | 0.9889 | | 0.0167 | 16.0 | 180080 | 0.0647 | 0.5631 | 0.5120 | 0.5363 | 0.9888 | | 0.0161 | 17.0 | 191335 | 0.0665 | 0.5607 | 0.5081 | 0.5331 | 0.9889 | | 0.0145 | 18.0 | 202590 | 0.0673 | 0.5437 | 0.5280 | 0.5357 | 0.9887 | | 0.0166 | 19.0 | 213845 | 0.0687 | 0.5722 | 0.5008 | 0.5341 | 0.9889 | | 0.0155 | 20.0 | 225100 | 0.0685 | 0.5325 | 0.5337 | 0.5331 | 0.9885 | | 0.0142 | 21.0 | 236355 | 0.0705 | 0.5626 | 0.5166 | 0.5386 | 0.9890 | | 0.0127 | 22.0 | 247610 | 0.0694 | 0.5426 | 0.5358 | 0.5392 | 0.9887 | | 0.0112 | 23.0 | 258865 | 0.0721 | 0.5591 | 0.5129 | 0.5351 | 0.9888 | | 0.0123 | 24.0 | 270120 | 0.0733 | 0.5715 | 0.5081 | 0.5380 | 0.9889 | | 0.0116 | 25.0 | 281375 | 0.0735 | 0.5621 | 
0.5123 | 0.5361 | 0.9888 | | 0.0112 | 26.0 | 292630 | 0.0739 | 0.5634 | 0.5181 | 0.5398 | 0.9889 | | 0.0108 | 27.0 | 303885 | 0.0753 | 0.5548 | 0.5155 | 0.5344 | 0.9887 | | 0.0125 | 28.0 | 315140 | 0.0746 | 0.5507 | 0.5221 | 0.5360 | 0.9886 | | 0.0093 | 29.0 | 326395 | 0.0762 | 0.5602 | 0.5156 | 0.5370 | 0.9888 | | 0.0094 | 30.0 | 337650 | 0.0762 | 0.5625 | 0.5157 | 0.5381 | 0.9889 | | 0.0117 | 31.0 | 348905 | 0.0767 | 0.5519 | 0.5195 | 0.5352 | 0.9887 | | 0.0091 | 32.0 | 360160 | 0.0772 | 0.5501 | 0.5198 | 0.5345 | 0.9887 | | 0.0109 | 33.0 | 371415 | 0.0775 | 0.5635 | 0.5097 | 0.5353 | 0.9888 | | 0.0094 | 34.0 | 382670 | 0.0776 | 0.5467 | 0.5216 | 0.5339 | 0.9887 | | 0.009 | 35.0 | 393925 | 0.0782 | 0.5601 | 0.5139 | 0.5360 | 0.9889 | | 0.0093 | 36.0 | 405180 | 0.0780 | 0.5568 | 0.5156 | 0.5354 | 0.9888 | | 0.0087 | 37.0 | 416435 | 0.0783 | 0.5588 | 0.5143 | 0.5356 | 0.9888 | | 0.009 | 38.0 | 427690 | 0.0785 | 0.5483 | 0.5178 | 0.5326 | 0.9887 | | 0.0094 | 39.0 | 438945 | 0.0787 | 0.5541 | 0.5154 | 0.5340 | 0.9887 | | 0.0088 | 40.0 | 450200 | 0.0788 | 0.5554 | 0.5164 | 0.5352 | 0.9887 | | addd563b0b70e6f643e9b012d7dcff70 |

mit | ['small100', 'translation', 'flores101', 'gsarti/flores_101', 'tico19', 'gmnlp/tico19', 'tatoeba'] | false | SMALL-100 Model SMaLL-100 is a compact and fast massively multilingual machine translation model covering more than 10K language pairs that achieves competitive results with M2M-100 while being much smaller and faster. It was introduced in [this paper](https://arxiv.org/abs/2210.11621) (accepted to EMNLP 2022) and initially released in [this repository](https://github.com/alirezamshi/small100). The model architecture and config are the same as the [M2M-100](https://huggingface.co/facebook/m2m100_418M/tree/main) implementation, but the tokenizer is modified to adjust language codes. For the moment, you should therefore load the tokenizer locally from the [tokenization_small100.py](https://huggingface.co/alirezamsh/small100/blob/main/tokenization_small100.py) file. **Demo**: https://huggingface.co/spaces/alirezamsh/small100 **Note**: SMALL100Tokenizer requires sentencepiece, so make sure to install it with: ```pip install sentencepiece``` - **Supervised Training** SMaLL-100 is a seq-to-seq model for the translation task. The input to the model is ```source:[tgt_lang_code] + src_tokens + [EOS]``` and ```target: tgt_tokens + [EOS]```. An example of supervised training is shown below: ``` from transformers import M2M100ForConditionalGeneration from tokenization_small100 import SMALL100Tokenizer model = M2M100ForConditionalGeneration.from_pretrained("alirezamsh/small100") tokenizer = SMALL100Tokenizer.from_pretrained("alirezamsh/small100", tgt_lang="fr") src_text = "Life is like a box of chocolates." tgt_text = "La vie est comme une boîte de chocolat." model_inputs = tokenizer(src_text, text_target=tgt_text, return_tensors="pt") loss = model(**model_inputs).loss | cfc2d97fea17fc5e1cd9dda71338deac |

mit | ['small100', 'translation', 'flores101', 'gsarti/flores_101', 'tico19', 'gmnlp/tico19', 'tatoeba'] | false | forward pass ``` Training data can be provided upon request. - **Generation** A beam size of 5 and a maximum target length of 256 are used for generation. ``` from transformers import M2M100ForConditionalGeneration from tokenization_small100 import SMALL100Tokenizer hi_text = "जीवन एक चॉकलेट बॉक्स की तरह है।" chinese_text = "生活就像一盒巧克力。" model = M2M100ForConditionalGeneration.from_pretrained("alirezamsh/small100") tokenizer = SMALL100Tokenizer.from_pretrained("alirezamsh/small100") | 5ca4ffa2fbf96df371329d5292dc937a |

mit | ['small100', 'translation', 'flores101', 'gsarti/flores_101', 'tico19', 'gmnlp/tico19', 'tatoeba'] | false | translate Hindi to French tokenizer.tgt_lang = "fr" encoded_hi = tokenizer(hi_text, return_tensors="pt") generated_tokens = model.generate(**encoded_hi) tokenizer.batch_decode(generated_tokens, skip_special_tokens=True) | 76aba4b34ba8c6ccef9320e44e6c6648 |

mit | ['small100', 'translation', 'flores101', 'gsarti/flores_101', 'tico19', 'gmnlp/tico19', 'tatoeba'] | false | translate Chinese to English tokenizer.tgt_lang = "en" encoded_zh = tokenizer(chinese_text, return_tensors="pt") generated_tokens = model.generate(**encoded_zh) tokenizer.batch_decode(generated_tokens, skip_special_tokens=True) | ae3d32354acc34b97631f6a4854713d6 |

mit | ['small100', 'translation', 'flores101', 'gsarti/flores_101', 'tico19', 'gmnlp/tico19', 'tatoeba'] | false | => "Life is like a box of chocolate." ``` - **Evaluation** Please refer to the [original repository](https://github.com/alirezamshi/small100) for spBLEU computation. - **Languages Covered** Afrikaans (af), Amharic (am), Arabic (ar), Asturian (ast), Azerbaijani (az), Bashkir (ba), Belarusian (be), Bulgarian (bg), Bengali (bn), Breton (br), Bosnian (bs), Catalan; Valencian (ca), Cebuano (ceb), Czech (cs), Welsh (cy), Danish (da), German (de), Greek (el), English (en), Spanish (es), Estonian (et), Persian (fa), Fulah (ff), Finnish (fi), French (fr), Western Frisian (fy), Irish (ga), Gaelic; Scottish Gaelic (gd), Galician (gl), Gujarati (gu), Hausa (ha), Hebrew (he), Hindi (hi), Croatian (hr), Haitian; Haitian Creole (ht), Hungarian (hu), Armenian (hy), Indonesian (id), Igbo (ig), Iloko (ilo), Icelandic (is), Italian (it), Japanese (ja), Javanese (jv), Georgian (ka), Kazakh (kk), Central Khmer (km), Kannada (kn), Korean (ko), Luxembourgish; Letzeburgesch (lb), Ganda (lg), Lingala (ln), Lao (lo), Lithuanian (lt), Latvian (lv), Malagasy (mg), Macedonian (mk), Malayalam (ml), Mongolian (mn), Marathi (mr), Malay (ms), Burmese (my), Nepali (ne), Dutch; Flemish (nl), Norwegian (no), Northern Sotho (ns), Occitan (post 1500) (oc), Oriya (or), Panjabi; Punjabi (pa), Polish (pl), Pushto; Pashto (ps), Portuguese (pt), Romanian; Moldavian; Moldovan (ro), Russian (ru), Sindhi (sd), Sinhala; Sinhalese (si), Slovak (sk), Slovenian (sl), Somali (so), Albanian (sq), Serbian (sr), Swati (ss), Sundanese (su), Swedish (sv), Swahili (sw), Tamil (ta), Thai (th), Tagalog (tl), Tswana (tn), Turkish (tr), Ukrainian (uk), Urdu (ur), Uzbek (uz), Vietnamese (vi), Wolof (wo), Xhosa (xh), Yiddish (yi), Yoruba (yo), Chinese (zh), Zulu (zu) | e74be3ad1b0e899d6a7f1f3cede2bedd |
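The generation snippets in this card call `model.generate` with default settings, while the card states that a beam size of 5 and a maximum target length of 256 are used. A minimal sketch of passing those settings explicitly is shown below; `translate` and `GENERATION_KWARGS` are hypothetical names, and mapping the stated settings onto the `num_beams` and `max_length` arguments of `generate` is an assumption, not something the card confirms.

```python
# Settings quoted in the card, expressed as standard `generate` arguments.
GENERATION_KWARGS = {"num_beams": 5, "max_length": 256}


def translate(text, tgt_lang, tokenizer, model):
    """Translate `text` into `tgt_lang` with an already loaded tokenizer/model."""
    tokenizer.tgt_lang = tgt_lang  # the tokenizer prepends the target language code
    encoded = tokenizer(text, return_tensors="pt")
    generated = model.generate(**encoded, **GENERATION_KWARGS)
    return tokenizer.batch_decode(generated, skip_special_tokens=True)
```

With the `model` and `tokenizer` objects from the generation example, `translate(hi_text, "fr", tokenizer, model)` would return a list containing the decoded French translation.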

mit | ['small100', 'translation', 'flores101', 'gsarti/flores_101', 'tico19', 'gmnlp/tico19', 'tatoeba'] | false | Citation If you use this model for your research, please cite the following work: ``` @inproceedings{mohammadshahi-etal-2022-small, title = "{SM}a{LL}-100: Introducing Shallow Multilingual Machine Translation Model for Low-Resource Languages", author = "Mohammadshahi, Alireza and Nikoulina, Vassilina and Berard, Alexandre and Brun, Caroline and Henderson, James and Besacier, Laurent", booktitle = "Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing", month = dec, year = "2022", address = "Abu Dhabi, United Arab Emirates", publisher = "Association for Computational Linguistics", url = "https://aclanthology.org/2022.emnlp-main.571", pages = "8348--8359", abstract = "In recent years, multilingual machine translation models have achieved promising performance on low-resource language pairs by sharing information between similar languages, thus enabling zero-shot translation. To overcome the {``}curse of multilinguality{''}, these models often opt for scaling up the number of parameters, which makes their use in resource-constrained environments challenging. We introduce SMaLL-100, a distilled version of the M2M-100(12B) model, a massively multilingual machine translation model covering 100 languages. We train SMaLL-100 with uniform sampling across all language pairs and therefore focus on preserving the performance of low-resource languages. We evaluate SMaLL-100 on different low-resource benchmarks: FLORES-101, Tatoeba, and TICO-19 and demonstrate that it outperforms previous massively multilingual models of comparable sizes (200-600M) while improving inference latency and memory usage. 
Additionally, our model achieves comparable results to M2M-100 (1.2B), while being 3.6x smaller and 4.3x faster at inference.", } @inproceedings{mohammadshahi-etal-2022-compressed, title = "What Do Compressed Multilingual Machine Translation Models Forget?", author = "Mohammadshahi, Alireza and Nikoulina, Vassilina and Berard, Alexandre and Brun, Caroline and Henderson, James and Besacier, Laurent", booktitle = "Findings of the Association for Computational Linguistics: EMNLP 2022", month = dec, year = "2022", address = "Abu Dhabi, United Arab Emirates", publisher = "Association for Computational Linguistics", url = "https://aclanthology.org/2022.findings-emnlp.317", pages = "4308--4329", abstract = "Recently, very large pre-trained models achieve state-of-the-art results in various natural language processing (NLP) tasks, but their size makes it more challenging to apply them in resource-constrained environments. Compression techniques allow to drastically reduce the size of the models and therefore their inference time with negligible impact on top-tier metrics. However, the general performance averaged across multiple tasks and/or languages may hide a drastic performance drop on under-represented features, which could result in the amplification of biases encoded by the models. In this work, we assess the impact of compression methods on Multilingual Neural Machine Translation models (MNMT) for various language groups, gender, and semantic biases by extensive analysis of compressed models on different machine translation benchmarks, i.e. FLORES-101, MT-Gender, and DiBiMT. We show that the performance of under-represented languages drops significantly, while the average BLEU metric only slightly decreases. Interestingly, the removal of noisy memorization with compression leads to a significant improvement for some medium-resource languages. 
Finally, we demonstrate that compression amplifies intrinsic gender and semantic biases, even in high-resource languages.", } ``` | 77f5822ce3712488b3db17d2703822d3 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-truthful This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.4660 - Accuracy: 0.87 - F1: 0.8697 | 27d29e1c959dec6497b06106f66c72cd |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 9.910294163459086e-05 - train_batch_size: 400 - eval_batch_size: 400 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 9 | 6d48c2c71a0fe96cd4629ba8c93e396c |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | No log | 1.0 | 5 | 0.6509 | 0.59 | 0.5780 | | No log | 2.0 | 10 | 0.4950 | 0.77 | 0.7701 | | No log | 3.0 | 15 | 0.4787 | 0.81 | 0.8099 | | No log | 4.0 | 20 | 0.4936 | 0.81 | 0.8096 | | No log | 5.0 | 25 | 0.4443 | 0.82 | 0.82 | | No log | 6.0 | 30 | 0.4547 | 0.85 | 0.8497 | | No log | 7.0 | 35 | 0.4268 | 0.85 | 0.8500 | | No log | 8.0 | 40 | 0.4790 | 0.87 | 0.8697 | | No log | 9.0 | 45 | 0.4660 | 0.87 | 0.8697 | | 675a17c7a1ebff69a610056111896061 |

apache-2.0 | ['text2text-generation'] | false | pip install -q transformers from transformers import AutoModelForSeq2SeqLM, AutoTokenizer checkpoint = "bigscience/mt0-xxl-mt" tokenizer = AutoTokenizer.from_pretrained(checkpoint) model = AutoModelForSeq2SeqLM.from_pretrained(checkpoint) inputs = tokenizer.encode("Translate to English: Je t’aime.", return_tensors="pt") outputs = model.generate(inputs) print(tokenizer.decode(outputs[0])) ``` </details> | 60205e7da60393bd25420cca722eb843 |

apache-2.0 | ['text2text-generation'] | false | pip install -q transformers accelerate from transformers import AutoModelForSeq2SeqLM, AutoTokenizer checkpoint = "bigscience/mt0-xxl-mt" tokenizer = AutoTokenizer.from_pretrained(checkpoint) model = AutoModelForSeq2SeqLM.from_pretrained(checkpoint, torch_dtype="auto", device_map="auto") inputs = tokenizer.encode("Translate to English: Je t’aime.", return_tensors="pt").to("cuda") outputs = model.generate(inputs) print(tokenizer.decode(outputs[0])) ``` </details> | 8109b4e773f812d182ce10fb23cb9976 |

apache-2.0 | ['text2text-generation'] | false | pip install -q transformers accelerate bitsandbytes from transformers import AutoModelForSeq2SeqLM, AutoTokenizer checkpoint = "bigscience/mt0-xxl-mt" tokenizer = AutoTokenizer.from_pretrained(checkpoint) model = AutoModelForSeq2SeqLM.from_pretrained(checkpoint, device_map="auto", load_in_8bit=True) inputs = tokenizer.encode("Translate to English: Je t’aime.", return_tensors="pt").to("cuda") outputs = model.generate(inputs) print(tokenizer.decode(outputs[0])) ``` </details> <!-- Necessary for whitespace --> | 2f7e32dc9a5dfcd3dcacd8752491f962 |

apache-2.0 | [] | false | 简介 Brief Introduction 本模型基于大规模信息抽取数据进行预训练,可支持few-shot、zero-shot场景下的实体识别、关系三元组抽取任务。 This model is pre-trained on large-scale information extraction data, to better support Named Entity Recognition (NER) and Relation Extraction (RE) tasks in few-shot/zero-shot scenarios. | 789691d4bea0c4295a8c23dc77bdafeb |

apache-2.0 | [] | false | 模型分类 Model Taxonomy | 需求 Demand | 任务 Task | 系列 Series | 模型 Model | 参数 Parameter | 额外 Extra | | ---------- | ---------- | -------------- | -------- | ------------ | -------- | | 通用 General | 信息抽取 Information Extraction | 二郎神 Erlangshen | BagualuIEModel | 120M | Chinese | | 96d6e9255e13dfc704bd481a418d8e6c |

apache-2.0 | [] | false | Performance Erlangshen-BERT-120M-IE-Chinese was evaluated on several information extraction tasks. zh_weibo, MSRA, OntoNote4, and Resume are NER tasks, with MSRA evaluated on the original data; SanWen and FinRE are evaluated as joint entity-relation extraction tasks rather than as single relation classification tasks. Selected parameter settings: ``` batch_size=16 precision=16 max_epoch=50 lr=2e-5 weight_decay=0.1 warmup=0.06 max_length=512 ``` We ran each experiment with random seeds 123, 456, and 789. As a comparison baseline, we trained [MacBERT-base, Chinese](https://github.com/ymcui/MacBERT) as the pretrained model with the same parameters. The results, averaged over the seeds, are as follows: | Dataset | Training epochs | Test precision | Test recall | Test f1 | Baseline f1 | | --------- | --------------- | -------------- | ----------- | ------- | ----------- | | zh_weibo | 10.3 | 0.7282 | 0.6447 | 0.6839 | 0.6778 | | MSRA | 5 | 0.9374 | 0.9299 | 0.9336 | 0.8483 | | OntoNote4 | 9 | 0.8640 | 0.8634 | 0.8636 | 0.7996 | | Resume | 15 | 0.9568 | 0.9658 | 0.9613 | 0.9479 | | SanWen | 6.7 | 0.3655 | 0.2072 | 0.2639 | 0.2655 | | FinRE | 7 | 0.5190 | 0.4274 | 0.4685 | 0.4559 | | 3a3bc17f6717f094d9af7468576d3fea |
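As a reading aid for the precision, recall, and f1 columns in the table above, here is a minimal sketch of the standard F1 formula (the harmonic mean of precision and recall). The helper name `f1_score` is hypothetical and is not part of the model's code. Because the reported numbers are averages over three seeds, the F1 computed from the averaged precision and recall need not match the averaged F1 exactly; for the zh_weibo row the two agree to four decimals.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; returns 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# zh_weibo row: averaged test precision and recall from the table
zh_weibo_f1 = f1_score(0.7282, 0.6447)
print(round(zh_weibo_f1, 4))
```

For rows such as SanWen, expect a small gap between this value and the reported f1, which is consistent with averaging per-seed F1 scores rather than recomputing F1 from averaged precision and recall.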

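apache-2.0 | [] | false | Usage GTS-Engine is an out-of-the-box, high-performance natural language understanding engine that can automatically produce NLP models from only a small number of samples. GTS-Engine includes two training engines: Qiankunding (乾坤鼎) and Bagualu (八卦炉). This model can be used as the pretrained model for fine-tuning on information extraction tasks in the GTS-Engine Bagualu engine. For the GTS-Engine documentation, see: [GTS-Engine](https://gts-engine-doc.readthedocs.io/en/latest/docs/about.html) | c21c739afe74b5eb7f3d485ba0808f52 |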

apache-2.0 | [] | false | Citation If you use our model in your work, you can cite our [website](https://github.com/IDEA-CCNL/GTS-Engine): ``` @misc{GTS-Engine, title={GTS-Engine}, author={IDEA-CCNL}, year={2022}, howpublished={\url{https://github.com/IDEA-CCNL/GTS-Engine}}, } ``` | b6dc43612b1ebbfa323db001bf667243 |

apache-2.0 | [] | false | Results The following table summarizes the F1 score obtained by ParsBERT as compared to other models and architectures. | Dataset | ParsBERT v2 | ParsBERT v1 | mBERT | MorphoBERT | Beheshti-NER | LSTM-CRF | Rule-Based CRF | BiLSTM-CRF | |---------|-------------|-------------|-------|------------|--------------|----------|----------------|------------| | PEYMA | 93.40* | 93.10 | 86.64 | - | 90.59 | - | 84.00 | - | | a01ff3b6c62dfe8a79c0efa4daaf8a44 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | Counterfeit is anime style Stable Diffusion model. e.g. prompt: solo,(a girl),cowboy shot,((masterpiece)),((best quality)),((an extremely detailed)) negative: low quality, lowres, worst quality, normal quality,(bad anatomy) Steps: 28 Sampler: Euler a CFG scale: 12 Size: 640x960  prompt: solo,(a loli girl),cowboy shot,((masterpiece)),((best quality)),((an extremely detailed)) negative: low quality, lowres, worst quality, normal quality,(bad anatomy) Steps: 28 Sampler: Euler a CFG scale: 12 Size: 640x960  | 2a1e3f0d9bcfeab9fd9719629da5cf54 |

apache-2.0 | ['generated_from_trainer'] | false | my_awesome_qa_model This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 4.9699 | 2620b66e76db64bebd324fb3e9c8e57b |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 7 | 5.5290 | | No log | 2.0 | 14 | 5.1385 | | No log | 3.0 | 21 | 4.9699 | | 22253a7ecca3bb92387bde94a13827e7 |

mit | ['generated_from_trainer'] | false | deberta-v3-base-lm-all This model is a fine-tuned version of [microsoft/deberta-v3-base](https://huggingface.co/microsoft/deberta-v3-base) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 1.0354 | 5aaf096293fa200a7ce885207b955f5b |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 16 | 9f5f77f1599fca48af8bc1253b5fddea |
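To illustrate what `lr_scheduler_type: linear` with `learning_rate: 5e-05` implies, here is a minimal sketch of a linear decay schedule in plain Python. It assumes no warmup (none is listed above) and a total of 38208 optimizer steps, the final step count shown in this model's training results table; the function name `linear_lr` is hypothetical and is not the trainer's own API.

```python
def linear_lr(step: int, total_steps: int, base_lr: float = 5e-5,
              warmup_steps: int = 0) -> float:
    """Learning rate at `step` under linear warmup followed by linear decay to zero."""
    if warmup_steps > 0 and step < warmup_steps:
        # Ramp up linearly from 0 to base_lr during warmup
        return base_lr * step / warmup_steps
    # Decay linearly from base_lr to 0 over the remaining steps
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


TOTAL_STEPS = 38208  # assumed: 16 epochs x 2388 steps per epoch

for step in (0, TOTAL_STEPS // 2, TOTAL_STEPS):
    print(step, linear_lr(step, TOTAL_STEPS))
```

Under these assumptions the rate starts at 5e-05, falls to half of that at the end of epoch 8, and reaches zero at the last step.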

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 3.3663 | 1.0 | 2388 | 2.2002 | | 2.1379 | 2.0 | 4776 | 1.8135 | | 1.8234 | 3.0 | 7164 | 1.6199 | | 1.623 | 4.0 | 9552 | 1.5031 | | 1.4936 | 5.0 | 11940 | 1.4027 | | 1.4034 | 6.0 | 14328 | 1.3346 | | 1.3214 | 7.0 | 16716 | 1.2927 | | 1.2618 | 8.0 | 19104 | 1.2377 | | 1.2113 | 9.0 | 21492 | 1.2043 | | 1.1595 | 10.0 | 23880 | 1.1843 | | 1.1082 | 11.0 | 26268 | 1.1174 | | 1.0712 | 12.0 | 28656 | 1.1071 | | 1.0468 | 13.0 | 31044 | 1.0723 | | 1.0209 | 14.0 | 33432 | 1.0741 | | 0.99 | 15.0 | 35820 | 1.0456 | | 0.9733 | 16.0 | 38208 | 1.0354 | | 6f310708ef7b4a087e54cbdb48e96493 |

cc | [] | false | A Textual Inversion Embedding for Stable Diffusion, trained on SD2.1 Version 2 of this embedding now allows you to achieve that loose digital paint style with much greater flexibility and fewer artifacts. I highly recommend it over V1. Simply call on the keyword laxpeintV2 (or laxpeint for the original), as in: single solo skydiver by laxpeintV2, overhead view of English country, above a skydiver, excitement and adrenaline <strong>Interested in generating your own embeddings? <a href="https://docs.google.com/document/d/1JvlM0phnok4pghVBAMsMq_-Z18_ip_GXvHYE0mITdFE/edit?usp=sharing" target="_blank">My Google doc walkthrough might help</a></strong> One odd artifact (though I think it's a funny kind of blessing from the gods of the latent space) is that the skydiver prompt and multiple variations of it all generated a horse. No horses were included in the training data, so where did it come from? Who knows! But I love it. Generally, laxpeintV2 lets you prompt directly and simply for what you want, and you'll quickly be on the right track, whether that's a cute animal, anthropomorphic animal, fantasy scene, sci-fi scene, landscape, interior, or whatever you dream up. This embedding works with your vision and rarely requires you to struggle with its quirks.       | 3f33e24f6b641080be0befd7f08081e3 |

apache-2.0 | ['generated-from-trainer'] | false | model This card is a copy of [this repo](https://huggingface.co/julien-c/reactiongif-roberta/tree/main) for the purpose of testing the repo card utilities in `huggingface_hub`. It achieves the following results on the evaluation set: - Loss: 2.9150 - Accuracy: 0.2662 | 40b395aca23d7c3fa0c0982835345073 |
