license | tags | is_nc | readme_section | hash |

|---|---|---|---|---|

apache-2.0 | ['automatic-speech-recognition', 'ar'] | false | exp_w2v2t_ar_wav2vec2_s211 Fine-tuned [facebook/wav2vec2-large-lv60](https://huggingface.co/facebook/wav2vec2-large-lv60) for speech recognition using the train split of [Common Voice 7.0 (ar)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 55dd1c3e517604f099201101a72b199e |
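The 16 kHz requirement above can be checked before running inference. The following is a minimal sketch using only the Python standard library; it assumes the input is an uncompressed WAV file, and the helper names `wav_sample_rate` and `is_16khz` are hypothetical, not part of HuggingSound.

```python
import wave


def wav_sample_rate(path):
    """Return the sample rate (in Hz) stored in the header of a WAV file."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate()


def is_16khz(path):
    """True if the WAV file is sampled at 16 kHz, as the model card asks."""
    return wav_sample_rate(path) == 16_000
```

If the check fails, the audio would need to be resampled to 16 kHz before it is passed to the model.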

mit | [] | false | sanguo-guanyu on Stable Diffusion This is the `<sanguo-guanyu>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:       | 5663b52cea9874c7e512a080bb683a7b |

apache-2.0 | ['generated_from_trainer'] | false | reviews-generator This model is a fine-tuned version of [facebook/bart-base](https://huggingface.co/facebook/bart-base) on the amazon_reviews_multi dataset. It achieves the following results on the evaluation set: - Loss: 3.4989 | 02362bdd3741046a6ca0bb25e727641d |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 32 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - training_steps: 1000 | 4a25ae4b0589765fc6ca0c4b1ee23904 |
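To make the `linear` scheduler with `lr_scheduler_warmup_ratio: 0.1` more concrete, here is a rough sketch of the usual shape of such a schedule: a linear ramp up to the peak learning rate, then a linear decay to zero. The function name `linear_schedule_lr` is hypothetical, and the exact rounding of the warmup step count in the training library may differ from this sketch.

```python
def linear_schedule_lr(step, base_lr=5e-05, total_steps=1000, warmup_ratio=0.1):
    """Learning rate at `step` for linear warmup followed by linear decay to zero."""
    # Assumption: warmup length is the ratio times the total number of steps.
    warmup_steps = int(total_steps * warmup_ratio)
    if step < warmup_steps:
        # Ramp up linearly from 0 to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Decay linearly from base_lr (at the end of warmup) to 0 (at total_steps).
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)
```

With the values listed above, the peak of 5e-05 would be reached at step 100 and the rate would fall to zero at step 1000.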

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 3.7955 | 0.08 | 500 | 3.5578 | | 3.7486 | 0.16 | 1000 | 3.4989 | | 9728187c31dd6a06449053126193df5b |

apache-2.0 | ['generated_from_keras_callback'] | false | dpovedano/distilbert-base-uncased-finetuned-ner This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.0285 - Validation Loss: 0.0612 - Train Precision: 0.9222 - Train Recall: 0.9358 - Train F1: 0.9289 - Train Accuracy: 0.9834 - Epoch: 2 | 93d12da9d3a2610dcd1709a76f7ffd8e |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Train Precision | Train Recall | Train F1 | Train Accuracy | Epoch | |:----------:|:---------------:|:---------------:|:------------:|:--------:|:--------------:|:-----:| | 0.0289 | 0.0612 | 0.9222 | 0.9358 | 0.9289 | 0.9834 | 0 | | 0.0284 | 0.0612 | 0.9222 | 0.9358 | 0.9289 | 0.9834 | 1 | | 0.0285 | 0.0612 | 0.9222 | 0.9358 | 0.9289 | 0.9834 | 2 | | e5b49597ac61e16ef84a61f6c3da9451 |

apache-2.0 | ['stanza', 'token-classification'] | false | Stanza model for Marathi (mr) Stanza is a collection of accurate and efficient tools for the linguistic analysis of many human languages. Starting from raw text to syntactic analysis and entity recognition, Stanza brings state-of-the-art NLP models to languages of your choosing. Find more about it in [our website](https://stanfordnlp.github.io/stanza) and our [GitHub repository](https://github.com/stanfordnlp/stanza). This card and repo were automatically prepared with `hugging_stanza.py` in the `stanfordnlp/huggingface-models` repo Last updated 2022-10-11 01:39:15.208 | f625e922453513ac2bed7ea55562b62d |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-cased-finetuned-squad This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the squad dataset. It achieves the following results on the evaluation set: - Loss: 1.0835 | 4bfb903256cae5271dc00292d57fa7fe |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 1.0302 | 1.0 | 5546 | 1.0068 | | 0.7597 | 2.0 | 11092 | 0.9976 | | 0.5483 | 3.0 | 16638 | 1.0835 | | 13779834ba8569ad0fa824bd25a24692 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | tiny Turkish Whisper (tTW) This model is a fine-tuned version of [openai/whisper-tiny](https://huggingface.co/openai/whisper-tiny) on the Ermetal Meetings dataset. It achieves the following results on the evaluation set: - Loss: 6.0735 - Wer: 1.4939 - Cer: 1.0558 | 9aa10f79963b3b80090214b48daf20b7 |
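A WER above 1 may look like an error, but it is possible: word error rate divides the number of word-level edits by the reference length, so a hypothesis with many inserted words can cost more edits than the reference has words. The sketch below is a plain-Python illustration of that definition; `word_error_rate` is a hypothetical helper, not the metric implementation used to produce the numbers above.

```python
def word_error_rate(reference, hypothesis):
    """Word-level Levenshtein distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the processed reference prefix
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)
```

For instance, a one-word reference transcribed as three words needs two insertions, which gives a WER of 2.0.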

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 1 | 9b05f6fb9b827b57c0282c9deeb19a0f |

apache-2.0 | ['automatic-speech-recognition', 'robust-speech-event', 'hf-asr-leaderboard'] | false | wav2vec2-large-xls-r-1b-Swedish This model is a fine-tuned version of [facebook/wav2vec2-xls-r-1b](https://huggingface.co/facebook/wav2vec2-xls-r-1b) on the common_voice dataset. It achieves the following results on the evaluation set: **Without LM** - Loss: 0.3370 - Wer: 18.44 - Cer: 5.75 **With LM** - Loss: 0.3370 - Wer: 14.04 - Cer: 4.86 | 46372a46a88a9b2121ecdf77a43601c8 |

apache-2.0 | ['automatic-speech-recognition', 'robust-speech-event', 'hf-asr-leaderboard'] | false | Evaluation Commands 1. To evaluate on `mozilla-foundation/common_voice_8_0` with split `test` ```bash python eval.py --model_id kingabzpro/wav2vec2-large-xls-r-1b-Swedish --dataset mozilla-foundation/common_voice_8_0 --config sv-SE --split test ``` 2. To evaluate on `speech-recognition-community-v2/dev_data` ```bash python eval.py --model_id kingabzpro/wav2vec2-large-xls-r-1b-Swedish --dataset speech-recognition-community-v2/dev_data --config sv --split validation --chunk_length_s 5.0 --stride_length_s 1.0 ``` | 1cb5c33c5baea667057ae5615823860f |

apache-2.0 | ['automatic-speech-recognition', 'robust-speech-event', 'hf-asr-leaderboard'] | false | Inference With LM ```python import torch from datasets import load_dataset from transformers import AutoModelForCTC, AutoProcessor import torchaudio.functional as F model_id = "kingabzpro/wav2vec2-large-xls-r-1b-Swedish" sample_iter = iter(load_dataset("mozilla-foundation/common_voice_8_0", "sv-SE", split="test", streaming=True, use_auth_token=True)) sample = next(sample_iter) resampled_audio = F.resample(torch.tensor(sample["audio"]["array"]), 48_000, 16_000).numpy() model = AutoModelForCTC.from_pretrained(model_id) processor = AutoProcessor.from_pretrained(model_id) input_values = processor(resampled_audio, return_tensors="pt").input_values with torch.no_grad(): logits = model(input_values).logits transcription = processor.batch_decode(logits.numpy()).text ``` | 57664a5e9f28de53365fc7d07b1a278b |

apache-2.0 | ['automatic-speech-recognition', 'robust-speech-event', 'hf-asr-leaderboard'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 7.5e-05 - train_batch_size: 64 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 256 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1000 - num_epochs: 50 - mixed_precision_training: Native AMP | a558a4ae123387bbba0747e2dc9dc0ad |
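The `total_train_batch_size` above follows from the per-device batch size and the gradient accumulation steps. A minimal sketch of that arithmetic, assuming a single device (the card does not state the device count, so `num_devices=1` is an assumption):

```python
def total_train_batch_size(per_device_batch_size, gradient_accumulation_steps, num_devices=1):
    """Number of samples that contribute to one optimizer update."""
    # Assumption: num_devices defaults to 1 because the card does not list it.
    return per_device_batch_size * gradient_accumulation_steps * num_devices


# Values from the hyperparameter list above: 64 samples x 4 accumulation steps.
effective = total_train_batch_size(64, 4)
```

Under that assumption the result matches the reported value of 256.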

apache-2.0 | ['automatic-speech-recognition', 'robust-speech-event', 'hf-asr-leaderboard'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | Cer | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:| | 3.1562 | 11.11 | 500 | 0.4830 | 0.3729 | 0.1169 | | 0.5655 | 22.22 | 1000 | 0.3553 | 0.2381 | 0.0743 | | 0.3376 | 33.33 | 1500 | 0.3359 | 0.2179 | 0.0696 | | 0.2419 | 44.44 | 2000 | 0.3232 | 0.1844 | 0.0575 | | 328048c7f2f60cb7797f6a7e650a5005 |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'gn', 'robust-speech-event', 'hf-asr-leaderboard'] | false | wav2vec2-xls-r-300m-gn-cv8-3 This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 0.9517 - Wer: 0.8542 | 4b23610c804ed32aa9b5ecc4a922d9c2 |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'gn', 'robust-speech-event', 'hf-asr-leaderboard'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:------:|:----:|:---------------:|:------:| | 19.9125 | 5.54 | 100 | 5.4279 | 1.0 | | 3.8031 | 11.11 | 200 | 3.3070 | 1.0 | | 3.3783 | 16.65 | 300 | 3.2450 | 1.0 | | 3.3472 | 22.22 | 400 | 3.2424 | 1.0 | | 3.2714 | 27.76 | 500 | 3.1100 | 1.0 | | 3.2367 | 33.32 | 600 | 3.1091 | 1.0 | | 3.1968 | 38.86 | 700 | 3.1013 | 1.0 | | 3.2004 | 44.43 | 800 | 3.1173 | 1.0 | | 3.1656 | 49.97 | 900 | 3.0682 | 1.0 | | 3.1563 | 55.54 | 1000 | 3.0457 | 1.0 | | 3.1356 | 61.11 | 1100 | 3.0139 | 1.0 | | 3.086 | 66.65 | 1200 | 2.8108 | 1.0 | | 2.954 | 72.22 | 1300 | 2.3238 | 1.0 | | 2.6125 | 77.76 | 1400 | 1.6461 | 1.0 | | 2.3296 | 83.32 | 1500 | 1.2834 | 0.9744 | | 2.1345 | 88.86 | 1600 | 1.1091 | 0.9693 | | 2.0346 | 94.43 | 1700 | 1.0273 | 0.9233 | | 1.9611 | 99.97 | 1800 | 0.9642 | 0.9182 | | 1.9066 | 105.54 | 1900 | 0.9590 | 0.9105 | | 1.8178 | 111.11 | 2000 | 0.9679 | 0.9028 | | 1.7799 | 116.65 | 2100 | 0.9007 | 0.8619 | | 1.7726 | 122.22 | 2200 | 0.9689 | 0.8951 | | 1.7389 | 127.76 | 2300 | 0.8876 | 0.8593 | | 1.7151 | 133.32 | 2400 | 0.8716 | 0.8542 | | 1.6842 | 138.86 | 2500 | 0.9536 | 0.8772 | | 1.6449 | 144.43 | 2600 | 0.9296 | 0.8542 | | 1.5978 | 149.97 | 2700 | 0.8895 | 0.8440 | | 1.6515 | 155.54 | 2800 | 0.9162 | 0.8568 | | 1.6586 | 161.11 | 2900 | 0.9039 | 0.8568 | | 1.5966 | 166.65 | 3000 | 0.8627 | 0.8542 | | 1.5695 | 172.22 | 3100 | 0.9549 | 0.8824 | | 1.5699 | 177.76 | 3200 | 0.9332 | 0.8517 | | 1.5297 | 183.32 | 3300 | 0.9163 | 0.8338 | | 1.5367 | 188.86 | 3400 | 0.8822 | 0.8312 | | 1.5586 | 194.43 | 3500 | 0.9217 | 0.8363 | | 1.5429 | 199.97 | 3600 | 0.9564 | 0.8568 | | 1.5273 | 205.54 | 3700 | 0.9508 | 0.8542 | | 1.5043 | 211.11 | 3800 | 0.9374 | 0.8542 | | 1.4724 | 216.65 | 3900 | 0.9622 | 0.8619 | | 1.4794 | 222.22 | 4000 | 0.9550 | 0.8363 | | 
1.4843 | 227.76 | 4100 | 0.9577 | 0.8465 | | 1.4781 | 233.32 | 4200 | 0.9543 | 0.8440 | | 1.4507 | 238.86 | 4300 | 0.9553 | 0.8491 | | 1.4997 | 244.43 | 4400 | 0.9728 | 0.8491 | | 1.4371 | 249.97 | 4500 | 0.9543 | 0.8670 | | 1.4825 | 255.54 | 4600 | 0.9636 | 0.8619 | | 1.4187 | 261.11 | 4700 | 0.9609 | 0.8440 | | 1.4363 | 266.65 | 4800 | 0.9567 | 0.8593 | | 1.4463 | 272.22 | 4900 | 0.9581 | 0.8542 | | 1.4117 | 277.76 | 5000 | 0.9517 | 0.8542 | | 30629e6ea463fa8569740ab8ed85b1ea |

mit | ['generated_from_trainer'] | false | 3label_model This model is a fine-tuned version of [microsoft/deberta-v3-base](https://huggingface.co/microsoft/deberta-v3-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3920 - Accuracy: 0.8520 | ef891da8a9b1fdaa526ab4b6d17cc40f |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.6073 | 1.0 | 707 | 0.3921 | 0.8343 | | 0.3319 | 2.0 | 1414 | 0.3920 | 0.8520 | | 972ad3ab352f3e70453e697c8ac85bff |

mit | [] | false | XLNet (base-sized model) XLNet model pre-trained on the English language. It was introduced in the paper [XLNet: Generalized Autoregressive Pretraining for Language Understanding](https://arxiv.org/abs/1906.08237) by Yang et al. and first released in [this repository](https://github.com/zihangdai/xlnet/). Disclaimer: The team releasing XLNet did not write a model card for this model so this model card has been written by the Hugging Face team. | 1be752f87d86d9cebba75a22c99a2aba |

mit | [] | false | Usage Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import XLNetTokenizer, XLNetModel tokenizer = XLNetTokenizer.from_pretrained('xlnet-base-cased') model = XLNetModel.from_pretrained('xlnet-base-cased') inputs = tokenizer("Hello, my dog is cute", return_tensors="pt") outputs = model(**inputs) last_hidden_states = outputs.last_hidden_state ``` | 4e08c1bf05a632c551fd630cfd8a4a34 |

apache-2.0 | ['tensorflowtts', 'audio', 'text-to-speech', 'text-to-mel'] | false | Tacotron 2 with Guided Attention trained on LJSpeech (En) This repository provides a pretrained [Tacotron2](https://arxiv.org/abs/1712.05884) trained with [Guided Attention](https://arxiv.org/abs/1710.08969) on the LJSpeech dataset (Eng). For details of the model, we encourage you to read more about [TensorFlowTTS](https://github.com/TensorSpeech/TensorFlowTTS). | 23ef8880c62d56532fea6fa08af94274 |

apache-2.0 | ['tensorflowtts', 'audio', 'text-to-speech', 'text-to-mel'] | false | Converting your Text to Mel Spectrogram ```python import numpy as np import soundfile as sf import yaml import tensorflow as tf from tensorflow_tts.inference import AutoProcessor from tensorflow_tts.inference import TFAutoModel processor = AutoProcessor.from_pretrained("tensorspeech/tts-tacotron2-ljspeech-en") tacotron2 = TFAutoModel.from_pretrained("tensorspeech/tts-tacotron2-ljspeech-en") text = "This is a demo to show how to use our model to generate mel spectrogram from raw text." input_ids = processor.text_to_sequence(text) decoder_output, mel_outputs, stop_token_prediction, alignment_history = tacotron2.inference( input_ids=tf.expand_dims(tf.convert_to_tensor(input_ids, dtype=tf.int32), 0), input_lengths=tf.convert_to_tensor([len(input_ids)], tf.int32), speaker_ids=tf.convert_to_tensor([0], dtype=tf.int32), ) ``` | 264690a85221235a46e0c58ac2310f7a |

apache-2.0 | ['deep-narrow'] | false | T5-Efficient-TINY-NL32 (Deep-Narrow version) T5-Efficient-TINY-NL32 is a variation of [Google's original T5](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) following the [T5 model architecture](https://huggingface.co/docs/transformers/model_doc/t5). It is a *pretrained-only* checkpoint and was released with the paper **[Scale Efficiently: Insights from Pre-training and Fine-tuning Transformers](https://arxiv.org/abs/2109.10686)** by *Yi Tay, Mostafa Dehghani, Jinfeng Rao, William Fedus, Samira Abnar, Hyung Won Chung, Sharan Narang, Dani Yogatama, Ashish Vaswani, Donald Metzler*. In a nutshell, the paper indicates that a **Deep-Narrow** model architecture is favorable for **downstream** performance compared to other model architectures of similar parameter count. To quote the paper: > We generally recommend a DeepNarrow strategy where the model’s depth is preferentially increased > before considering any other forms of uniform scaling across other dimensions. This is largely due to > how much depth influences the Pareto-frontier as shown in earlier sections of the paper. Specifically, a > tall small (deep and narrow) model is generally more efficient compared to the base model. Likewise, > a tall base model might also generally more efficient compared to a large model. We generally find > that, regardless of size, even if absolute performance might increase as we continue to stack layers, > the relative gain of Pareto-efficiency diminishes as we increase the layers, converging at 32 to 36 > layers. Finally, we note that our notion of efficiency here relates to any one compute dimension, i.e., > params, FLOPs or throughput (speed). We report all three key efficiency metrics (number of params, > FLOPS and speed) and leave this decision to the practitioner to decide which compute dimension to > consider. 
To be more precise, *model depth* is defined as the number of transformer blocks that are stacked sequentially. A sequence of word embeddings is therefore processed sequentially by each transformer block. | 0f68bc1c6ab21df42a7197578eed5309 |

apache-2.0 | ['deep-narrow'] | false | Details model architecture This model checkpoint - **t5-efficient-tiny-nl32** - is of model type **Tiny** with the following variations: - **nl** is **32** It has **67.06** million parameters and thus requires *ca.* **268.25 MB** of memory in full precision (*fp32*) or **134.12 MB** of memory in half precision (*fp16* or *bf16*). A summary of the *original* T5 model architectures can be seen here: | Model | nl (el/dl) | ff | dm | kv | nh | | d0cce9c4c67893873f75e7aae3d8f9c9 |
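The memory figures quoted above follow from the parameter count: 4 bytes per parameter in *fp32* and 2 bytes in *fp16* or *bf16*. A small sketch of that arithmetic, taking 1 MB as 10^6 bytes (an assumption about the card's convention); `param_memory_mb` is a hypothetical helper name:

```python
def param_memory_mb(num_params_millions, bytes_per_param):
    """Approximate weight memory in MB (10**6 bytes) for a given precision."""
    # Millions of parameters times bytes per parameter gives megabytes directly.
    return num_params_millions * bytes_per_param


fp32_mb = param_memory_mb(67.06, 4)  # full precision
fp16_mb = param_memory_mb(67.06, 2)  # half precision (fp16 or bf16)
```

The results agree with the card to within rounding, since 67.06 million is itself a rounded parameter count. Activations, gradients, and optimizer state are not included in this estimate.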

apache-2.0 | ['generated_from_trainer'] | false | distilbert_sa_GLUE_Experiment_logit_kd_qnli_384 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE QNLI dataset. It achieves the following results on the evaluation set: - Loss: 0.3912 - Accuracy: 0.5881 | 122da806d9709f401497e4c13ec86684 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.4059 | 1.0 | 410 | 0.3930 | 0.5733 | | 0.3918 | 2.0 | 820 | 0.3919 | 0.5839 | | 0.3807 | 3.0 | 1230 | 0.3912 | 0.5881 | | 0.371 | 4.0 | 1640 | 0.3949 | 0.5843 | | 0.3618 | 5.0 | 2050 | 0.3985 | 0.5815 | | 0.352 | 6.0 | 2460 | 0.4136 | 0.5801 | | 0.3416 | 7.0 | 2870 | 0.4222 | 0.5773 | | 0.331 | 8.0 | 3280 | 0.4226 | 0.5742 | | e4cf2d2f26a95e91b1eab42a682dc209 |

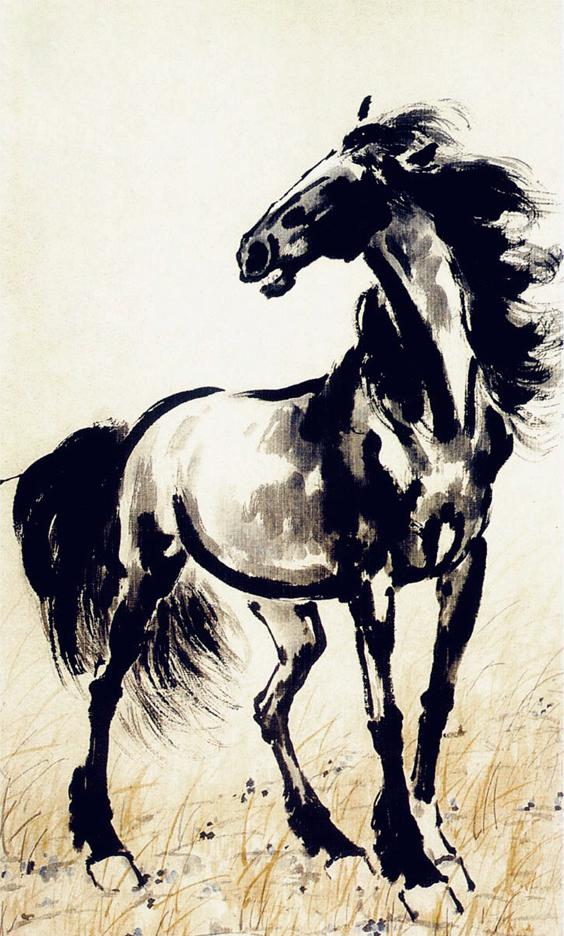

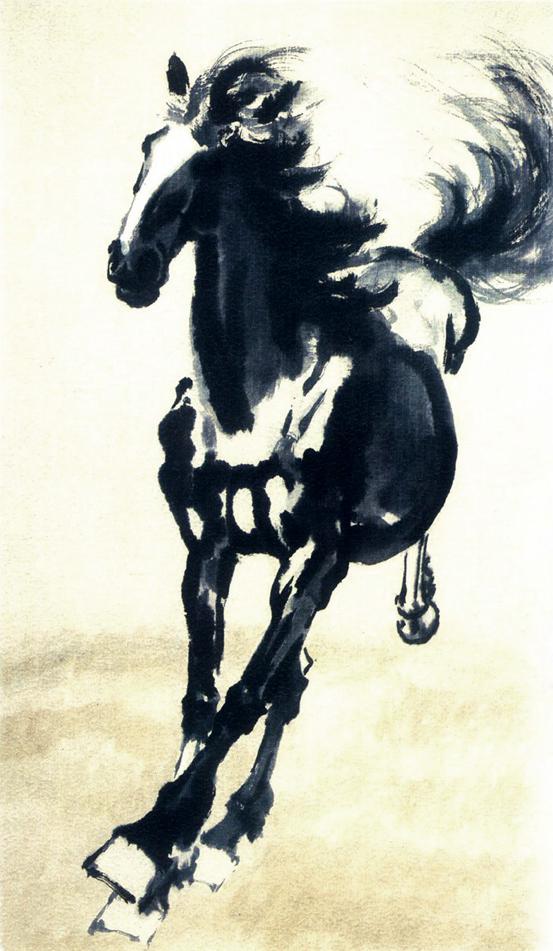

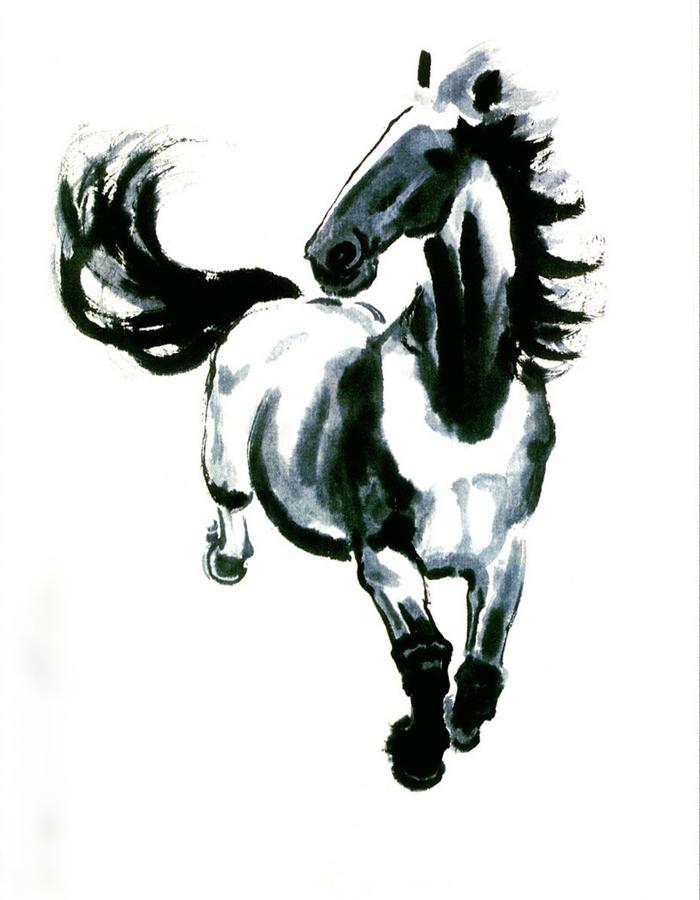

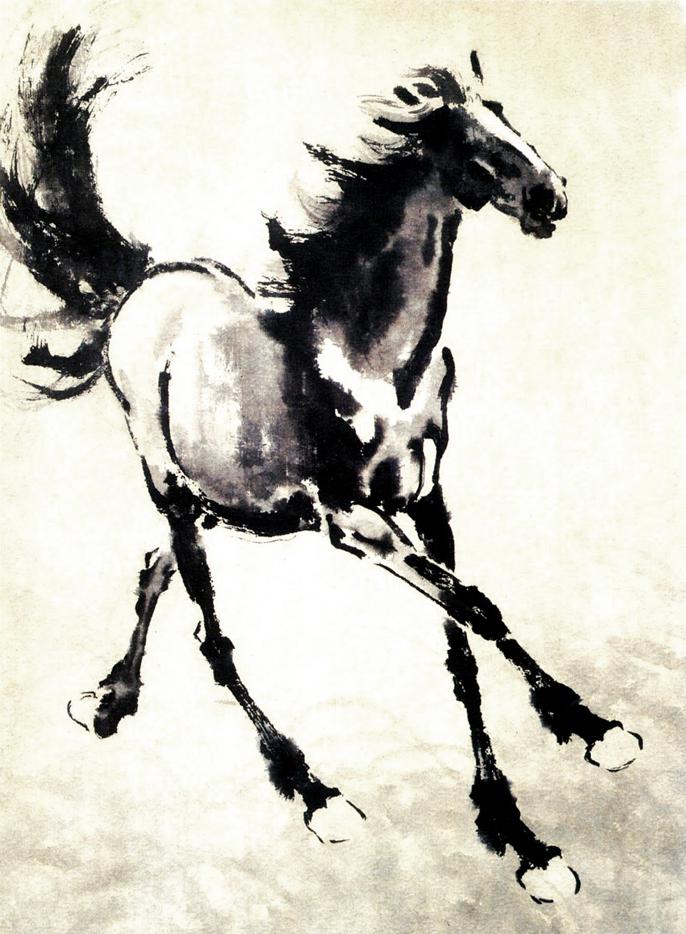

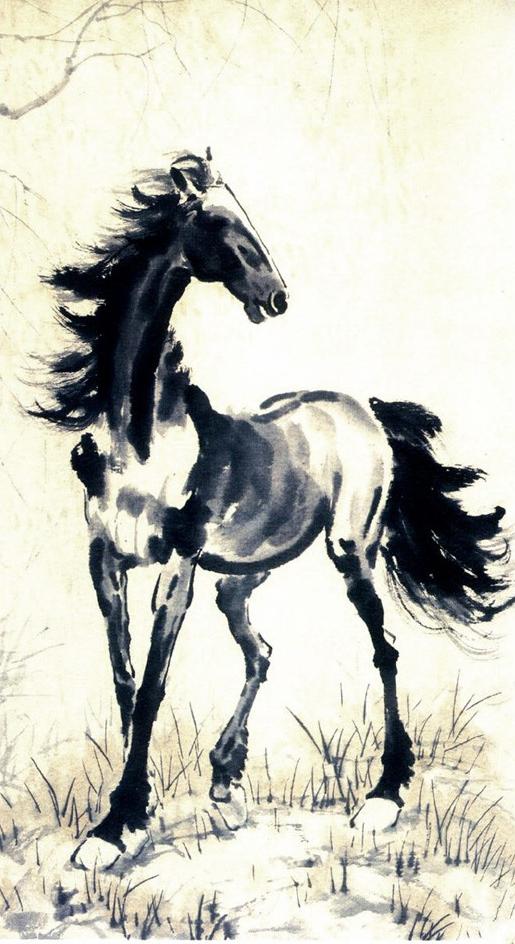

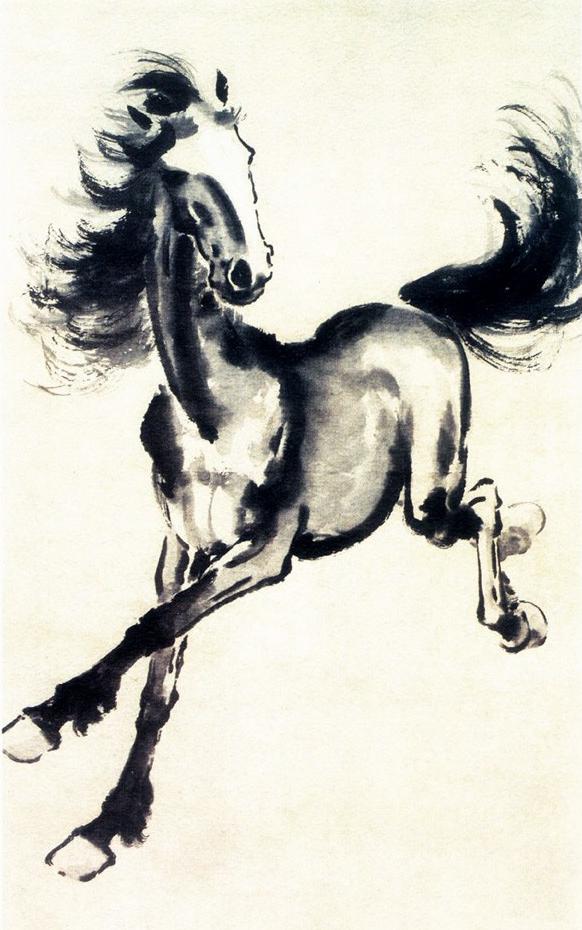

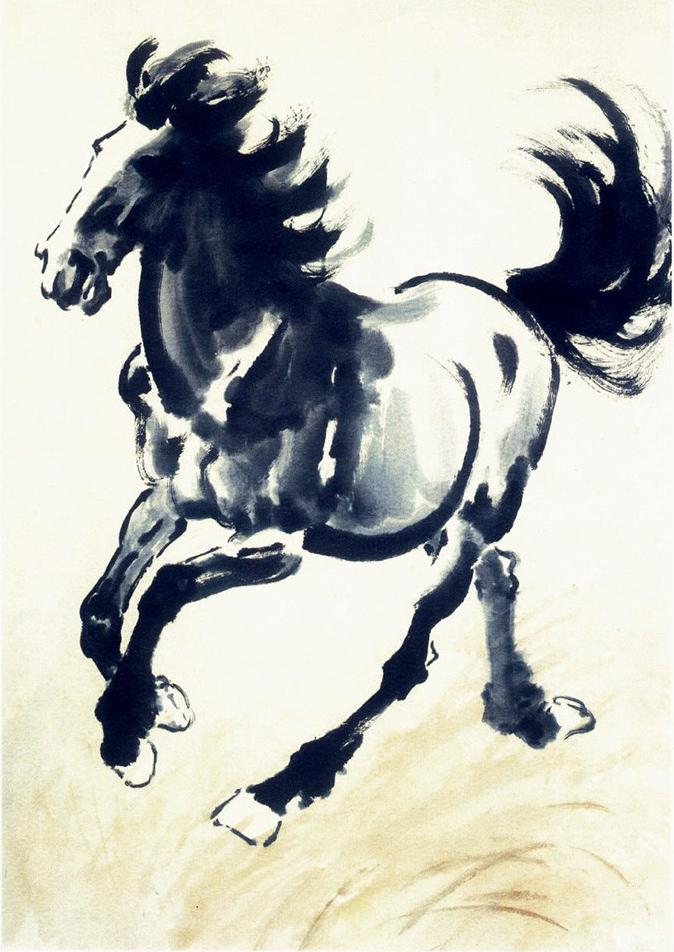

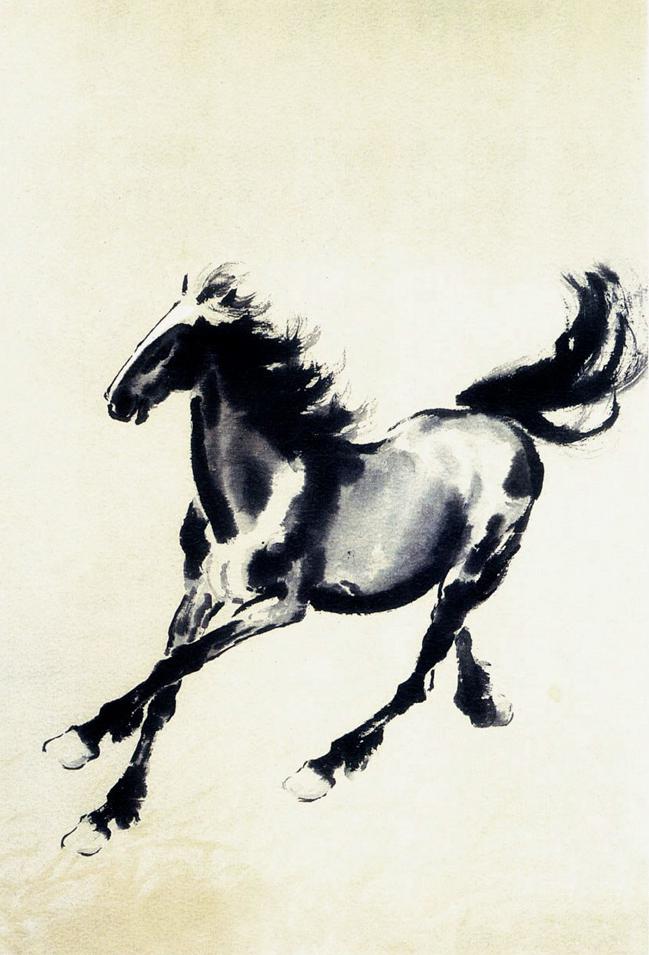

mit | [] | false | xbh on Stable Diffusion This is the `<xbh>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:          | 483623cd57327eeda524db5a67125b65 |

apache-2.0 | [] | false | RuLeanALBERT is a pretrained masked language model for the Russian language using a memory-efficient architecture. Read more about the model in [this blog post](https://habr.com/ru/company/yandex/blog/688234/) (in Russian). See its implementation, as well as the pretraining and finetuning code, at [https://github.com/yandex-research/RuLeanALBERT](https://github.com/yandex-research/RuLeanALBERT). | 6228b7a60e01f5a5af081c26c81f286f |

apache-2.0 | ['generated_from_trainer'] | false | bert-finetuned-ner This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.1193 - Precision: 0.8333 - Recall: 0.9322 - F1: 0.8800 - Accuracy: 0.9725 | bb0d38c0e36e8604bc0261e9750ed069 |
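The reported F1 can be cross-checked against the precision and recall, since F1 is their harmonic mean. A minimal sketch (the helper name `f1_score` is local to this example and is not the evaluation library's function):

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Precision and recall from the evaluation results above.
f1 = f1_score(0.8333, 0.9322)
```

With these inputs the value rounds to 0.8800, matching the F1 reported above.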

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 18 | 0.1216 | 0.8594 | 0.9322 | 0.8943 | 0.9740 | | No log | 2.0 | 36 | 0.1200 | 0.8615 | 0.9492 | 0.9032 | 0.9740 | | No log | 3.0 | 54 | 0.1193 | 0.8333 | 0.9322 | 0.8800 | 0.9725 | | c2d835ae8d0bde9f47df09ec8980bc3d |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Archer Diffusion This is the fine-tuned Stable Diffusion model trained on screenshots from the TV show Archer. Use the tokens **_archer style_** in your prompts for the effect. **If you enjoy my work, please consider supporting me** on [Patreon](https://patreon.com/user?u=79196446). | d72ed469995726d96e28cb57c128e695 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | ```python !pip install diffusers transformers scipy torch from diffusers import StableDiffusionPipeline import torch model_id = "nitrosocke/archer-diffusion" pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16) pipe = pipe.to("cuda") prompt = "a magical princess with golden hair, archer style" image = pipe(prompt).images[0] image.save("./magical_princess.png") ``` **Portraits rendered with the model:**  **Celebrities rendered with the model:**  **Landscapes rendered with the model:**  **Animals rendered with the model:**  **Sample images used for training:**  | 1b43c40958798dffa1876c7f0fb8b1ee |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Prompt and settings for landscapes: **archer style suburban street night blue indoor lighting Negative prompt: grey cars** _Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 2915669764, Size: 1024x576_ This model was trained using diffusers-based DreamBooth training with prior-preservation loss for 4,000 steps, using the _train-text-encoder_ feature. | 110b2324c5c3d743bf80954b921c678c |

apache-2.0 | ['biomedical', 'clinical', 'spanish'] | false | Intended uses and limitations The model is ready-to-use only for masked language modelling to perform the Fill Mask task (try the inference API or read the next section). However, it is intended to be fine-tuned on downstream tasks such as Named Entity Recognition or Text Classification. | 44226a440c5af54b8d8586967037efe8 |

apache-2.0 | ['biomedical', 'clinical', 'spanish'] | false | Tokenization and model pretraining This model is a [RoBERTa-based](https://github.com/pytorch/fairseq/tree/master/examples/roberta) model trained on a **biomedical** corpus in Spanish collected from several sources (see next section). The training corpus has been tokenized using a byte version of [Byte-Pair Encoding (BPE)](https://github.com/openai/gpt-2) used in the original [RoBERTa](https://github.com/pytorch/fairseq/tree/master/examples/roberta) model with a vocabulary size of 52,000 tokens. The pretraining consists of masked language model training at the subword level, following the approach employed for the RoBERTa base model with the same hyperparameters as in the original work. The training lasted a total of 48 hours with 16 NVIDIA V100 GPUs of 16GB DDRAM, using the Adam optimizer with a peak learning rate of 0.0005 and an effective batch size of 2,048 sentences. | d7696193d2c96ae1ec140df90a953bbf |

apache-2.0 | ['biomedical', 'clinical', 'spanish'] | false | Training corpora and preprocessing The training corpus is composed of several biomedical corpora in Spanish, collected from publicly available corpora and crawlers. To obtain a high-quality training corpus, a cleaning pipeline with the following operations has been applied: - data parsing in different formats - sentence splitting - language detection - filtering of ill-formed sentences - deduplication of repetitive contents - keep the original document boundaries Finally, the corpora are concatenated and further global deduplication among the corpora has been applied. The result is a medium-size biomedical corpus for Spanish composed of about 963M tokens. The table below shows some basic statistics of the individual cleaned corpora: | Name | No. tokens | Description | |-----------------------------------------------------------------------------------------|-------------|------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------| | [Medical crawler](https://zenodo.org/record/4561970) | 903,558,136 | Crawler of more than 3,000 URLs belonging to Spanish biomedical and health domains. | | Clinical cases misc. | 102,855,267 | A miscellany of medical content, essentially clinical cases. Note that a clinical case report is a scientific publication where medical practitioners share patient cases and it is different from a clinical note or document. | | [Scielo](https://zenodo.org/record/2541681 | b72d5a603604fe8aa6d68610ff33bad1 |

apache-2.0 | ['biomedical', 'clinical', 'spanish'] | false | Evaluation The model has been fine-tuned on three Named Entity Recognition (NER) tasks using three clinical NER datasets: - [PharmaCoNER](https://zenodo.org/record/4270158): is a track on chemical and drug mention recognition from Spanish medical texts (for more info see: https://temu.bsc.es/pharmaconer/). - [CANTEMIST](https://zenodo.org/record/3978041 | 459fd08a3158415995be59c9e60e7e71 |

apache-2.0 | ['biomedical', 'clinical', 'spanish'] | false | .YTt5qH2xXbQ). - ICTUSnet: consists of 1,006 hospital discharge reports of patients admitted for stroke from 18 different Spanish hospitals. It contains more than 79,000 annotations for 51 different kinds of variables. We addressed the NER task as a token classification problem using a standard linear layer along with the BIO tagging schema. We compared our models with the general-domain Spanish [roberta-base-bne](https://huggingface.co/PlanTL-GOB-ES/roberta-base-bne), the general-domain multilingual model that supports Spanish [mBERT](https://huggingface.co/bert-base-multilingual-cased), the domain-specific English model [BioBERT](https://huggingface.co/dmis-lab/biobert-base-cased-v1.2), and three domain-specific models based on continual pre-training, [mBERT-Galén](https://ieeexplore.ieee.org/document/9430499), [XLM-R-Galén](https://ieeexplore.ieee.org/document/9430499) and [BETO-Galén](https://ieeexplore.ieee.org/document/9430499). The table below shows the F1 scores obtained: | Tasks/Models | bsc-bio-es | XLM-R-Galén | BETO-Galén | mBERT-Galén | mBERT | BioBERT | roberta-base-bne | |--------------|----------------|--------------------|--------------|--------------|--------------|--------------|------------------| | PharmaCoNER | **0.8907** | 0.8754 | 0.8537 | 0.8594 | 0.8671 | 0.8545 | 0.8474 | | CANTEMIST | **0.8220** | 0.8078 | 0.8153 | 0.8168 | 0.8116 | 0.8070 | 0.7875 | | ICTUSnet | **0.8727** | 0.8716 | 0.8498 | 0.8509 | 0.8631 | 0.8521 | 0.8677 | The fine-tuning scripts can be found in the official GitHub [repository](https://github.com/PlanTL-GOB-ES/lm-biomedical-clinical-es). | da076fca02fb477c79d388f48de18fed |

apache-2.0 | ['biomedical', 'clinical', 'spanish'] | false | Citation information If you use these models, please cite our work: ```bibtex @inproceedings{carrino-etal-2022-pretrained, title = "Pretrained Biomedical Language Models for Clinical {NLP} in {S}panish", author = "Carrino, Casimiro Pio and Llop, Joan and P{\`a}mies, Marc and Guti{\'e}rrez-Fandi{\~n}o, Asier and Armengol-Estap{\'e}, Jordi and Silveira-Ocampo, Joaqu{\'\i}n and Valencia, Alfonso and Gonzalez-Agirre, Aitor and Villegas, Marta", booktitle = "Proceedings of the 21st Workshop on Biomedical Language Processing", month = may, year = "2022", address = "Dublin, Ireland", publisher = "Association for Computational Linguistics", url = "https://aclanthology.org/2022.bionlp-1.19", doi = "10.18653/v1/2022.bionlp-1.19", pages = "193--199", abstract = "This work presents the first large-scale biomedical Spanish language models trained from scratch, using large biomedical corpora consisting of a total of 1.1B tokens and an EHR corpus of 95M tokens. We compared them against general-domain and other domain-specific models for Spanish on three clinical NER tasks. As main results, our models are superior across the NER tasks, rendering them more convenient for clinical NLP applications. Furthermore, our findings indicate that when enough data is available, pre-training from scratch is better than continual pre-training when tested on clinical tasks, raising an exciting research question about which approach is optimal. Our models and fine-tuning scripts are publicly available at HuggingFace and GitHub.", } ``` | 9cab71a7e8e7e9a78845a50f44a25c7d |

apache-2.0 | ['generated_from_trainer'] | false | bert-engonly-sentiment-test This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.4479 - Accuracy: 0.8967 | 3ff4ff63d2509ee783f696252d8b11fc |

apache-2.0 | ['automatic-speech-recognition', 'NbAiLab/NPSC', 'generated_from_trainer'] | false | xls-npsc This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the NBAILAB/NPSC - 48K_MP3 dataset. It achieves the following results on the evaluation set: - Loss: 3.5006 - Wer: 1.0 | 26ea9a37b2b253e0dd8d5ac9d5e26ff3 |

apache-2.0 | ['automatic-speech-recognition', 'NbAiLab/NPSC', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 7.5e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 10.0 - mixed_precision_training: Native AMP | cd13f4cc0d5fc5d1582d5a9076beef8d |

apache-2.0 | ['generated_from_trainer'] | false | KB13-t5-base-finetuned-en-to-regex This model is a fine-tuned version of [t5-base](https://huggingface.co/t5-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.4785 - Semantic accuracy: 0.3902 - Syntactic accuracy: 0.3171 - Gen Len: 15.2927 | 0866b361efe166fff5803947c3038fae |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.001 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - training_steps: 1000 | 0cca447df1b10b27f781c5300ace6cb3 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Semantic accuracy | Syntactic accuracy | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-----------------:|:------------------:|:-------:| | No log | 2.13 | 100 | 0.7159 | 0.122 | 0.0976 | 15.2439 | | No log | 4.26 | 200 | 0.4649 | 0.2683 | 0.2195 | 15.0488 | | No log | 6.38 | 300 | 0.3749 | 0.4146 | 0.3415 | 15.3659 | | No log | 8.51 | 400 | 0.4155 | 0.3902 | 0.2927 | 15.0976 | | 0.5191 | 10.64 | 500 | 0.4148 | 0.3902 | 0.2927 | 15.7561 | | 0.5191 | 12.77 | 600 | 0.4010 | 0.439 | 0.3415 | 15.3902 | | 0.5191 | 14.89 | 700 | 0.4429 | 0.3902 | 0.3171 | 15.3659 | | 0.5191 | 17.02 | 800 | 0.4607 | 0.3902 | 0.3415 | 15.561 | | 0.5191 | 19.15 | 900 | 0.4629 | 0.3902 | 0.3171 | 15.122 | | 0.0518 | 21.28 | 1000 | 0.4785 | 0.3902 | 0.3171 | 15.2927 | | 1deec60b091b3fbe35c32063fbcb86bc |

mit | ['generated_from_trainer'] | false | gpt2-largeforbiddentoystory This model is a fine-tuned version of [gpt2-large](https://huggingface.co/gpt2-large) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.1643 | 8eb60ba82d6c503c084258b16548d4b9 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 32 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 9 | edb6f0c81bbcc63ba883b7470b68c25b |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 110 | 2.6384 | | No log | 2.0 | 220 | 2.1784 | | No log | 3.0 | 330 | 1.8316 | | No log | 4.0 | 440 | 1.5842 | | 2.407 | 5.0 | 550 | 1.4129 | | 2.407 | 6.0 | 660 | 1.2985 | | 2.407 | 7.0 | 770 | 1.2222 | | 2.407 | 8.0 | 880 | 1.1786 | | 2.407 | 9.0 | 990 | 1.1643 | | a41634aa347f0298a8ffd991f12bc30d |

mit | ['generated_from_trainer', 'nlu', 'intent-classification'] | false | mdeberta-v3-base_amazon-massive_intent This model is a fine-tuned version of [microsoft/mdeberta-v3-base](https://huggingface.co/microsoft/mdeberta-v3-base) on the [MASSIVE1.1](https://huggingface.co/datasets/AmazonScience/massive) dataset. It achieves the following results on the evaluation set: - Loss: 1.1661 - Accuracy: 0.8136 - F1: 0.8136 | ca614fdb969affd5a2934ff33868f503 |

mit | ['generated_from_trainer', 'nlu', 'intent-classification'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:-----:|:---------------:|:--------:|:------:| | 3.6412 | 1.0 | 720 | 2.7536 | 0.3123 | 0.3123 | | 2.8575 | 2.0 | 1440 | 1.8556 | 0.5303 | 0.5303 | | 1.7284 | 3.0 | 2160 | 1.3758 | 0.6699 | 0.6699 | | 1.3794 | 4.0 | 2880 | 1.1221 | 0.7236 | 0.7236 | | 0.942 | 5.0 | 3600 | 0.9936 | 0.7609 | 0.7609 | | 0.7672 | 6.0 | 4320 | 0.9411 | 0.7727 | 0.7727 | | 0.602 | 7.0 | 5040 | 0.9196 | 0.7841 | 0.7841 | | 0.4776 | 8.0 | 5760 | 0.9328 | 0.7895 | 0.7895 | | 0.4347 | 9.0 | 6480 | 0.9602 | 0.7860 | 0.7860 | | 0.2941 | 10.0 | 7200 | 0.9543 | 0.7949 | 0.7949 | | 0.2783 | 11.0 | 7920 | 0.9979 | 0.8013 | 0.8013 | | 0.2038 | 12.0 | 8640 | 0.9702 | 0.8062 | 0.8062 | | 0.1827 | 13.0 | 9360 | 1.0121 | 0.8106 | 0.8106 | | 0.1352 | 14.0 | 10080 | 1.0339 | 0.8136 | 0.8136 | | 0.1115 | 15.0 | 10800 | 1.1091 | 0.8057 | 0.8057 | | 0.0996 | 16.0 | 11520 | 1.1134 | 0.8151 | 0.8151 | | 0.0837 | 17.0 | 12240 | 1.1288 | 0.8160 | 0.8160 | | 0.0711 | 18.0 | 12960 | 1.1499 | 0.8155 | 0.8155 | | 0.0594 | 19.0 | 13680 | 1.1622 | 0.8126 | 0.8126 | | 0.0569 | 20.0 | 14400 | 1.1661 | 0.8136 | 0.8136 | | 079f6df4fe300d3167d0b07c9c8b6429 |

mit | [] | false | model by Rodrigoajj This is the Stable Diffusion model fine-tuned on the Rajj concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt`: **a photo of sks man face** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: | 340fd52dedbce2bf7eb7bb6a67cb2ae6 |

apache-2.0 | ['generated_from_trainer'] | false | all-roberta-large-v1-meta-4-16-5 This model is a fine-tuned version of [sentence-transformers/all-roberta-large-v1](https://huggingface.co/sentence-transformers/all-roberta-large-v1) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.4797 - Accuracy: 0.28 | db09db95cff6d6f5a44ec9059c016d7e |

apache-2.0 | ['generated_from_trainer'] | false | beit-base-patch16-224-pt22k-ft22k-rim_one-new This model is a fine-tuned version of [microsoft/beit-base-patch16-224-pt22k-ft22k](https://huggingface.co/microsoft/beit-base-patch16-224-pt22k-ft22k) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.4550 - Accuracy: 0.8767 | ff5642ceeb098e0dbd09d459d13e0b2a |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 0.73 | 2 | 0.2411 | 0.9178 | | No log | 1.73 | 4 | 0.2182 | 0.8973 | | No log | 2.73 | 6 | 0.3085 | 0.8973 | | No log | 3.73 | 8 | 0.2794 | 0.8973 | | 0.1392 | 4.73 | 10 | 0.2398 | 0.9110 | | 0.1392 | 5.73 | 12 | 0.2925 | 0.8973 | | 0.1392 | 6.73 | 14 | 0.2798 | 0.9110 | | 0.1392 | 7.73 | 16 | 0.2184 | 0.9178 | | 0.1392 | 8.73 | 18 | 0.3007 | 0.9110 | | 0.0416 | 9.73 | 20 | 0.3344 | 0.9041 | | 0.0416 | 10.73 | 22 | 0.3626 | 0.9110 | | 0.0416 | 11.73 | 24 | 0.4842 | 0.8904 | | 0.0416 | 12.73 | 26 | 0.3664 | 0.8973 | | 0.0416 | 13.73 | 28 | 0.3458 | 0.9110 | | 0.0263 | 14.73 | 30 | 0.2810 | 0.9110 | | 0.0263 | 15.73 | 32 | 0.4695 | 0.8699 | | 0.0263 | 16.73 | 34 | 0.3723 | 0.9041 | | 0.0263 | 17.73 | 36 | 0.3447 | 0.9041 | | 0.0263 | 18.73 | 38 | 0.3708 | 0.8904 | | 0.0264 | 19.73 | 40 | 0.4052 | 0.9110 | | 0.0264 | 20.73 | 42 | 0.4492 | 0.9041 | | 0.0264 | 21.73 | 44 | 0.4649 | 0.8904 | | 0.0264 | 22.73 | 46 | 0.4061 | 0.9178 | | 0.0264 | 23.73 | 48 | 0.4136 | 0.9110 | | 0.0139 | 24.73 | 50 | 0.4183 | 0.8973 | | 0.0139 | 25.73 | 52 | 0.4504 | 0.8904 | | 0.0139 | 26.73 | 54 | 0.4368 | 0.8973 | | 0.0139 | 27.73 | 56 | 0.4711 | 0.9110 | | 0.0139 | 28.73 | 58 | 0.3928 | 0.9110 | | 0.005 | 29.73 | 60 | 0.4550 | 0.8767 | | 99ef889bab55f5ae0cde8711374f075d |

apache-2.0 | ['generated_from_trainer', 'summarization'] | false | summarization This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the xsum dataset. It achieves the following results on the evaluation set: - Loss: 2.6690 - Rouge1: 23.9405 - Rouge2: 5.0879 - Rougel: 18.4981 - Rougelsum: 18.5032 - Gen Len: 18.7376 | 4eedb62a8b415b080d1cd1bfbed55a50 |

apache-2.0 | ['generated_from_trainer', 'summarization'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - training_steps: 1000 - mixed_precision_training: Native AMP | 02b749dc608a57c7408029da6a0490ba |

apache-2.0 | ['generated_from_trainer', 'summarization'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:------:|:-------:|:---------:|:-------:| | 2.9249 | 0.08 | 1000 | 2.6690 | 23.9405 | 5.0879 | 18.4981 | 18.5032 | 18.7376 | | b762cd61caab803ac300c72dcb5118c1 |
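The Rouge1 column above reports unigram overlap between generated and reference summaries, scaled to 0-100. As a rough illustration only, the sketch below computes a simplified ROUGE-1 F1 on whitespace tokens. It is not the scorer used to produce the table: it applies no stemming or special tokenization, and the function name `rouge1_f1` is a hypothetical helper.

```python
from collections import Counter


def rouge1_f1(reference: str, candidate: str) -> float:
    """Simplified ROUGE-1 F1: unigram overlap on lowercased whitespace tokens."""
    ref_counts = Counter(reference.lower().split())
    cand_counts = Counter(candidate.lower().split())
    # Clipped overlap: each word counts at most as often as it appears in both texts.
    overlap = sum((ref_counts & cand_counts).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand_counts.values())
    recall = overlap / sum(ref_counts.values())
    return 2 * precision * recall / (precision + recall)


# Multiply by 100 to get the scale used in the table.
print(100 * rouge1_f1("the cat sat on the mat", "the cat on the mat"))
```

Scores from this sketch will generally differ from those of a full ROUGE implementation, so treat it as an explanation of the metric rather than a replacement for it.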

apache-2.0 | ['translation'] | false | opus-mt-es-lus * source languages: es * target languages: lus * OPUS readme: [es-lus](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/es-lus/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/es-lus/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/es-lus/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/es-lus/opus-2020-01-16.eval.txt) | 2287527d9841786706bbd4a6e738c785 |

apache-2.0 | ['mt5-small', 'text2text-generation', 'natural language generation', 'conversational system', 'task-oriented dialog'] | false | mt5-small-nlg-all-crosswoz This model is a fine-tuned version of [mt5-small](https://huggingface.co/mt5-small) on both user and system utterances of [CrossWOZ](https://huggingface.co/datasets/ConvLab/crosswoz). Refer to [ConvLab-3](https://github.com/ConvLab/ConvLab-3) for model description and usage. | f53ae5467463fd22342ae711bbda1c64 |

apache-2.0 | ['mt5-small', 'text2text-generation', 'natural language generation', 'conversational system', 'task-oriented dialog'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.001 - train_batch_size: 32 - eval_batch_size: 16 - seed: 42 - gradient_accumulation_steps: 8 - total_train_batch_size: 256 - optimizer: Adafactor - lr_scheduler_type: linear - num_epochs: 10.0 | 4f0b658c221fa6c5d28f963411fb18cd |
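The `total_train_batch_size` above is consistent with the per-device batch size multiplied by the gradient accumulation steps. A minimal sketch of that arithmetic follows, assuming a single device for this run (the card does not state the device count); `total_train_batch_size` here is a hypothetical helper, not a library function.

```python
def total_train_batch_size(per_device: int, grad_accum_steps: int = 1, num_devices: int = 1) -> int:
    """Effective number of examples contributing to each optimizer step."""
    return per_device * grad_accum_steps * num_devices


# train_batch_size 32 with gradient_accumulation_steps 8, assuming one device.
print(total_train_batch_size(32, grad_accum_steps=8))  # 256
```

The same formula covers multi-device runs: a per-device batch of 16 on 2 devices with no accumulation gives 32.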

mit | ['vision', 'video-classification'] | false | X-CLIP (base-sized model) X-CLIP model (base-sized, patch resolution of 16) trained in a few-shot fashion (K=2) on [UCF101](https://www.crcv.ucf.edu/data/UCF101.php). It was introduced in the paper [Expanding Language-Image Pretrained Models for General Video Recognition](https://arxiv.org/abs/2208.02816) by Ni et al. and first released in [this repository](https://github.com/microsoft/VideoX/tree/master/X-CLIP). This model was trained using 32 frames per video, at a resolution of 224x224. Disclaimer: The team releasing X-CLIP did not write a model card for this model so this model card has been written by the Hugging Face team. | a62881c34dc25a3507b24d6fe676749a |

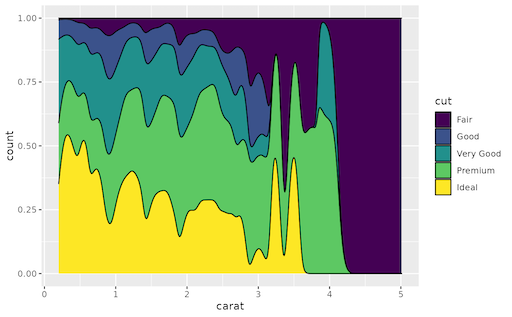

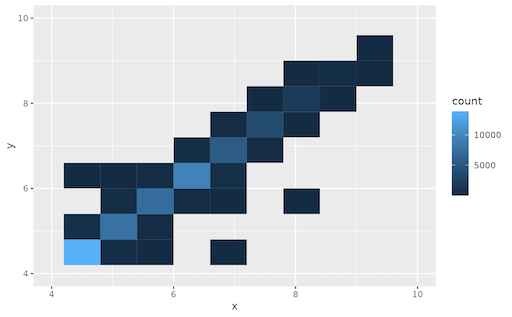

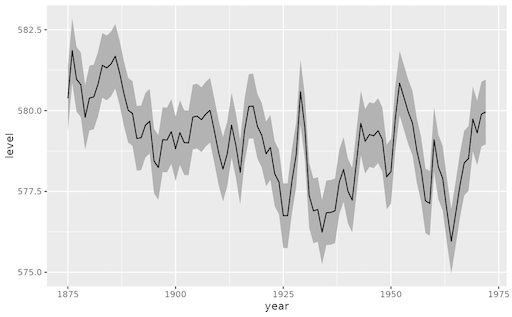

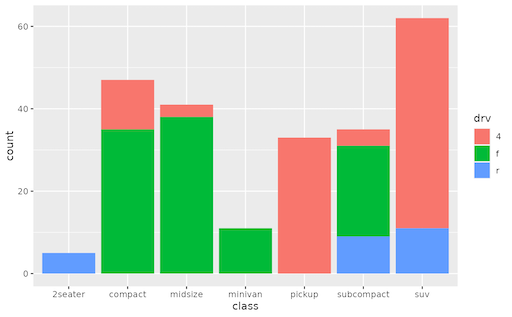

mit | [] | false | ggplot2 on Stable Diffusion This is the `<ggplot2>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:      | db5a1edba6b5a4ffceed6c63c411edd2 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-squad-ver1 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad dataset. It achieves the following results on the evaluation set: - Loss: 1.8669 | 6de9782118b46681bdddafc8cba768d2 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.6175 | 1.0 | 554 | 1.8621 | | 1.1951 | 2.0 | 1108 | 1.8669 | | 9c3414bea3928423b817204c73b57126 |

mit | ['glossbert'] | false | GlossBERT A BERT-based model fine-tuned on SemCor 3.0 to perform word sense disambiguation by leveraging gloss information. This model is the research output of the paper '[GlossBERT: BERT for Word Sense Disambiguation with Gloss Knowledge](https://arxiv.org/pdf/1908.07245.pdf)'. Disclaimer: This model was built and trained by a group of researchers other than the repository's author. The original model code can be found on GitHub: https://github.com/HSLCY/GlossBERT | b02e215c56e6575a0221ae42f80d4da0 |

mit | ['glossbert'] | false | Usage The following code loads GlossBERT: ```py from transformers import AutoTokenizer, BertForSequenceClassification tokenizer = AutoTokenizer.from_pretrained('kanishka/GlossBERT') model = BertForSequenceClassification.from_pretrained('kanishka/GlossBERT') ``` | 0c30b6607a6f3e66c89b16cd12561c32 |

mit | ['glossbert'] | false | Citation If you use this model in any of your projects, please cite the original authors using the following bibtex: ``` @inproceedings{huang-etal-2019-glossbert, title = "{G}loss{BERT}: {BERT} for Word Sense Disambiguation with Gloss Knowledge", author = "Huang, Luyao and Sun, Chi and Qiu, Xipeng and Huang, Xuanjing", booktitle = "Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP)", month = nov, year = "2019", address = "Hong Kong, China", publisher = "Association for Computational Linguistics", url = "https://www.aclweb.org/anthology/D19-1355", doi = "10.18653/v1/D19-1355", pages = "3507--3512" } ``` | ddd3238ff4cbfaf404ba57f834e9ef68 |

mit | [] | false | gpt2-wechsel-swahili Model trained with WECHSEL: Effective initialization of subword embeddings for cross-lingual transfer of monolingual language models. See the code here: https://github.com/CPJKU/wechsel And the paper here: https://aclanthology.org/2022.naacl-main.293/ | 483faa93210b4da224d4b1bb42fcf9ce |

mit | [] | false | RoBERTa | Model | NLI Score | NER Score | Avg Score | |---|---|---|---| | `roberta-base-wechsel-french` | **82.43** | **90.88** | **86.65** | | `camembert-base` | 80.88 | 90.26 | 85.57 | | Model | NLI Score | NER Score | Avg Score | |---|---|---|---| | `roberta-base-wechsel-german` | **81.79** | **89.72** | **85.76** | | `deepset/gbert-base` | 78.64 | 89.46 | 84.05 | | Model | NLI Score | NER Score | Avg Score | |---|---|---|---| | `roberta-base-wechsel-chinese` | **78.32** | 80.55 | **79.44** | | `bert-base-chinese` | 76.55 | **82.05** | 79.30 | | Model | NLI Score | NER Score | Avg Score | |---|---|---|---| | `roberta-base-wechsel-swahili` | **75.05** | **87.39** | **81.22** | | `xlm-roberta-base` | 69.18 | 87.37 | 78.28 | | 26bedf73ed94b86e3ed96b624a0d7707 |

mit | [] | false | GPT2 | Model | PPL | |---|---| | `gpt2-wechsel-french` | **19.71** | | `gpt2` (retrained from scratch) | 20.47 | | Model | PPL | |---|---| | `gpt2-wechsel-german` | **26.8** | | `gpt2` (retrained from scratch) | 27.63 | | Model | PPL | |---|---| | `gpt2-wechsel-chinese` | **51.97** | | `gpt2` (retrained from scratch) | 52.98 | | Model | PPL | |---|---| | `gpt2-wechsel-swahili` | **10.14** | | `gpt2` (retrained from scratch) | 10.58 | See our paper for details. | bd201910f4de0b49c74b65e63d3c5ca6 |
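For readers unfamiliar with the PPL column: perplexity is the exponential of the average negative log-likelihood per token, so lower is better. The sketch below shows that relationship on made-up token log-probabilities; `perplexity` is a hypothetical helper and the inputs are not taken from the WECHSEL evaluation.

```python
import math


def perplexity(token_log_probs):
    """Perplexity from natural-log probabilities assigned to each observed token."""
    if not token_log_probs:
        raise ValueError("need at least one token log-probability")
    mean_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(mean_nll)


# Illustrative input: a model that gives every token probability 0.25.
print(perplexity([math.log(0.25)] * 4))
```

A model that assigns probability 0.25 to every token has a perplexity of about 4, which matches the intuition of choosing uniformly among four options.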

creativeml-openrail-m | [] | false | AniPlus v1 is a Stable Diffusion model based on a mix of Stable Diffusion 1.5, Waifu Diffusion 1.3, TrinArt Characters v1, and several bespoke Dreambooth models. It has been shown to perform favorably when prompted to produce a variety of anime and semi-realistic art styles, as well as a variety of different poses. This repository also contains (or will contain) finetuned models based on AniPlus v1 that are geared toward particular artstyles. Currently there is no AniPlus-specific VAE to enhance output, but you should receive good results from the TrinArt Characters VAE. AniPlus v1, from the leading provider* of non-infringing, anime-oriented Stable Diffusion models. \* Statement not verified by anyone. | 7ebaba61980de0e2d809ab0b98561221 |

creativeml-openrail-m | [] | false | Samples *All samples were produced using the AUTOMATIC1111 Stable Diffusion Web UI @ commit ac085628540d0ec6a988fad93f5b8f2154209571.* ``` 1girl, school uniform, smiling, looking at viewer, portrait Negative prompt: nsfw, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry Steps: 15, Sampler: DPM++ 2S a, CFG scale: 11, Seed: 1036725987, Size: 512x512, Model hash: 29bc1e6e, Batch size: 2, Batch pos: 0, Eta: 0.69 ``` ``` 1girl, miko, sitting on a park bench Negative prompt: nsfw, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry Steps: 15, Sampler: DPM++ 2S a, CFG scale: 11, Seed: 3863997491, Size: 768x512, Model hash: 29bc1e6e, Eta: 0.69 ``` ``` fantasy landscape, moon, night, galaxy, mountains Negative prompt: nsfw, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry Steps: 15, Sampler: DPM++ 2S a, CFG scale: 11, Seed: 2653170208, Size: 768x512, Model hash: 29bc1e6e, Eta: 0.69 ``` ``` semirealistic, a girl giving a thumbs up Negative prompt: nsfw, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry Steps: 15, Sampler: DPM++ 2S a, CFG scale: 11, Seed: 2500414341, Size: 512x768, Model hash: 29bc1e6e, Eta: 0.69 ``` *What? Polydactyly isn't normal?* ``` [semirealistic:3d cgi cartoon:0.6], 1girl, pink hair, blue eyes, smile, portrait Negative prompt: nsfw, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry Steps: 15, Sampler: DPM++ 2S a, CFG scale: 11, Seed: 487376122, Size: 512x512, Model hash: 29bc1e6e, Batch size: 2, Batch pos: 0, Eta: 0.69 ``` ``` 1boy, suit, smirk Negative prompt: nsfw, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry Steps: 15, Sampler: DPM++ 2S a, CFG scale: 11, Seed: 4122323677, Size: 512x768, Model hash: 29bc1e6e, Batch size: 2, Batch pos: 0, Eta: 0.69 ``` ``` absurdres, semirealistic, a bunch of girls running in a marathon Negative prompt: nsfw, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry Steps: 15, Sampler: DPM++ 2S a, CFG scale: 11, Seed: 2811740076, Size: 960x640, Model hash: 29bc1e6e, Eta: 0.69 ``` *The marathon test.* | 2948b24fb16fe6a718d6240f819f036a |

mit | ['ner'] | false | Description A Named Entity Recognition model trained on customer feedback data using DistilBERT. Possible labels are in BIO notation. Performance on the PERS tag could be better because it has few training samples. The labels are: - PROD: for certain products - BRND: for brands - PERS: people names The following tags are simply in place to help better categorize the previous tags: - MATR: relating to materials, e.g. cloth, leather, seam, etc. - TIME: time-related entities - MISC: any other entity that might skew the results | 2fbc668e7150610637ce4686de426015 |

mit | ['ner'] | false | Usage ``` from transformers import AutoTokenizer, AutoModelForTokenClassification tokenizer = AutoTokenizer.from_pretrained("CouchCat/ma_ner_v7_distil") model = AutoModelForTokenClassification.from_pretrained("CouchCat/ma_ner_v7_distil") ``` | 224fee51194baf67a9bb5dba93a5a4c9 |
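Since the NER labels are in BIO notation, per-token predictions usually need to be merged into entity spans before use. The sketch below shows one way to do that in plain Python. The exact tag strings (`B-PROD`, `I-PROD`, `O`, and so on) are an assumption based on the label names in the description, and `bio_to_spans` is a hypothetical helper rather than part of the model's code.

```python
def bio_to_spans(tokens, tags):
    """Merge BIO-tagged tokens into (entity_type, text) spans.

    Assumes tags look like "B-PROD", "I-PROD" or "O". An "I-" tag that does
    not continue the current entity is treated leniently as starting a new one.
    """
    spans = []
    current_type = None
    current_tokens = []

    def flush():
        if current_tokens:
            spans.append((current_type, " ".join(current_tokens)))

    for token, tag in zip(tokens, tags):
        prefix, _, label = tag.partition("-")
        if prefix == "B" or (prefix == "I" and label != current_type):
            flush()
            current_type, current_tokens = label, [token]
        elif prefix == "I":
            current_tokens.append(token)
        else:  # "O" or anything unexpected closes the current entity
            flush()
            current_type, current_tokens = None, []
    flush()
    return spans


# Made-up sentence and tags, for illustration only.
tokens = ["Anna", "Smith", "loved", "the", "Acme", "running", "shoes"]
tags = ["B-PERS", "I-PERS", "O", "O", "B-BRND", "B-PROD", "I-PROD"]
print(bio_to_spans(tokens, tags))
```

With a real model, `tags` would come from mapping each predicted class id through the model's `id2label` config, and sub-word tokens would need to be aligned back to words first.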

afl-3.0 | [] | false | VLP Dataset Metadata This dataset was acquired during the dissertation entitled **Optical Camera Communications and Machine Learning for Indoor Visible Light Positioning**. This work was carried out in the academic year 2020/2021 at the Instituto de Telecomunicacoes in Aveiro. The images that constitute this dataset were acquired over a grid with 15 regularly spaced reference points on the floor surface. Table 2 shows the position of these points in relation to the referential defined in the room along with the position of the LED luminaires. During the dataset acquisition, the CMOS image sensor (Sony IMX219) was positioned parallel to the floor at a height of 25.6 cm facing upwards, i.e. with pitch and yaw angles equal to 0. All images were saved as TIFF (Tagged Image File Format) with a resolution of 3264 × 2464 pixels and exposure and readout times equal to 9 µs and 18 µs, respectively. | dac88f17421701038d46910f21ee7709 |

apache-2.0 | ['generated_from_trainer'] | false | small-mlm-glue-sst2-target-glue-mnli This model is a fine-tuned version of [muhtasham/small-mlm-glue-sst2](https://huggingface.co/muhtasham/small-mlm-glue-sst2) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.6528 - Accuracy: 0.7271 | d6cbcbbef71ded17e0d8cf03cff38494 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.9063 | 0.04 | 500 | 0.8249 | 0.6370 | | 0.8116 | 0.08 | 1000 | 0.7813 | 0.6619 | | 0.7724 | 0.12 | 1500 | 0.7504 | 0.6764 | | 0.7489 | 0.16 | 2000 | 0.7261 | 0.6908 | | 0.7413 | 0.2 | 2500 | 0.7141 | 0.6900 | | 0.7312 | 0.24 | 3000 | 0.7088 | 0.6972 | | 0.7146 | 0.29 | 3500 | 0.6805 | 0.7127 | | 0.7041 | 0.33 | 4000 | 0.6703 | 0.7164 | | 0.6815 | 0.37 | 4500 | 0.6674 | 0.7241 | | 0.6828 | 0.41 | 5000 | 0.6528 | 0.7271 | | d9c43026d7ffd0a568ceb195fcd44ff5 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-l-xlsr-es-col-pro-noise This model is a fine-tuned version of [jonatasgrosman/wav2vec2-large-xlsr-53-spanish](https://huggingface.co/jonatasgrosman/wav2vec2-large-xlsr-53-spanish) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.0677 - Wer: 0.0380 | 1b58de0bbc34ab89775595e6278f036d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.94 | 1.21 | 400 | 0.0800 | 0.0814 | | 0.4711 | 2.42 | 800 | 0.0730 | 0.0692 | | 0.3451 | 3.62 | 1200 | 0.0729 | 0.0669 | | 0.2958 | 4.83 | 1600 | 0.0796 | 0.0667 | | 0.2544 | 6.04 | 2000 | 0.0808 | 0.0584 | | 0.227 | 7.25 | 2400 | 0.0791 | 0.0643 | | 0.2061 | 8.46 | 2800 | 0.0718 | 0.0582 | | 0.1901 | 9.67 | 3200 | 0.0709 | 0.0587 | | 0.179 | 10.87 | 3600 | 0.0698 | 0.0558 | | 0.1693 | 12.08 | 4000 | 0.0709 | 0.0530 | | 0.1621 | 13.29 | 4400 | 0.0640 | 0.0487 | | 0.1443 | 14.5 | 4800 | 0.0793 | 0.0587 | | 0.1408 | 15.71 | 5200 | 0.0741 | 0.0528 | | 0.1377 | 16.92 | 5600 | 0.0702 | 0.0462 | | 0.1292 | 18.13 | 6000 | 0.0822 | 0.0539 | | 0.1197 | 19.33 | 6400 | 0.0625 | 0.0436 | | 0.1137 | 20.54 | 6800 | 0.0650 | 0.0419 | | 0.1017 | 21.75 | 7200 | 0.0630 | 0.0392 | | 0.0976 | 22.96 | 7600 | 0.0630 | 0.0387 | | 0.0942 | 24.17 | 8000 | 0.0631 | 0.0380 | | 0.0924 | 25.38 | 8400 | 0.0645 | 0.0374 | | 0.0862 | 26.59 | 8800 | 0.0677 | 0.0402 | | 0.0831 | 27.79 | 9200 | 0.0680 | 0.0393 | | 0.077 | 29.0 | 9600 | 0.0677 | 0.0380 | | 9cb186d134d891f7fa1bc4dbd5e124f9 |
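The Wer column is the word error rate: the word-level edit distance between the reference transcript and the model output, divided by the number of reference words. The sketch below is a minimal dynamic-programming version for illustration. The function name and example sentences are made up, and it does none of the text normalization a real evaluation script would apply.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the processed reference prefix and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


# One substitution and one deletion against a four-word reference.
print(word_error_rate("el gato come pescado", "el pato come"))  # 0.5
```

Because insertions are counted, WER can exceed 1.0 when the hypothesis is much longer than the reference.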

apache-2.0 | ['generated_from_trainer'] | false | platzi-vit-model-tommasory-beans This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the beans dataset. It achieves the following results on the evaluation set: - Loss: 0.0343 - Accuracy: 0.9925 | d1d3b79548ae8d620f84e91bb6f6488c |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.1441 | 3.85 | 500 | 0.0343 | 0.9925 | | 032e028d184560643890ee5b5078a589 |

apache-2.0 | ['automatic-speech-recognition', 'th'] | false | exp_w2v2t_th_wavlm_s847 Fine-tuned [microsoft/wavlm-large](https://huggingface.co/microsoft/wavlm-large) for speech recognition on Thai using the train split of [Common Voice 7.0](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | e4de3e0cbb1dc5a19200c41245f62a07 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-najianews This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3788 - Accuracy: 0.9075 - F1: 0.9074 | ef3abeb5a7729ce2bf6a3284de043f36 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.4709 | 1.0 | 249 | 0.3247 | 0.8933 | 0.8898 | | 0.2174 | 2.0 | 498 | 0.3848 | 0.9004 | 0.8952 | | 0.1444 | 3.0 | 747 | 0.3788 | 0.9075 | 0.9074 | | 2398185048b521bed8ec024d35011fdd |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'speech-emotion-recognition'] | false | Prediction Note that `Wav2Vec2ForSpeechClassification` is not a class provided by `transformers`; it must be defined separately before running the snippets below. ```python import torch import torch.nn as nn import torch.nn.functional as F import torchaudio from transformers import AutoConfig, Wav2Vec2FeatureExtractor import librosa import IPython.display as ipd import numpy as np import pandas as pd ``` ```python device = torch.device("cuda" if torch.cuda.is_available() else "cpu") model_name_or_path = "m3hrdadfi/wav2vec2-xlsr-persian-speech-emotion-recognition" config = AutoConfig.from_pretrained(model_name_or_path) feature_extractor = Wav2Vec2FeatureExtractor.from_pretrained(model_name_or_path) sampling_rate = feature_extractor.sampling_rate model = Wav2Vec2ForSpeechClassification.from_pretrained(model_name_or_path).to(device) ``` ```python def speech_file_to_array_fn(path, sampling_rate): speech_array, _sampling_rate = torchaudio.load(path) resampler = torchaudio.transforms.Resample(_sampling_rate, sampling_rate) speech = resampler(speech_array).squeeze().numpy() return speech def predict(path, sampling_rate): speech = speech_file_to_array_fn(path, sampling_rate) inputs = feature_extractor(speech, sampling_rate=sampling_rate, return_tensors="pt", padding=True) inputs = {key: inputs[key].to(device) for key in inputs} with torch.no_grad(): logits = model(**inputs).logits scores = F.softmax(logits, dim=1).detach().cpu().numpy()[0] outputs = [{"Label": config.id2label[i], "Score": f"{round(score * 100, 3):.1f}%"} for i, score in enumerate(scores)] return outputs ``` ```python path = "/path/to/sadness.wav" outputs = predict(path, sampling_rate) ``` ```bash [ {'Label': 'Anger', 'Score': '0.0%'}, {'Label': 'Fear', 'Score': '0.0%'}, {'Label': 'Happiness', 'Score': '0.0%'}, {'Label': 'Neutral', 'Score': '0.0%'}, {'Label': 'Sadness', 'Score': '99.9%'}, {'Label': 'Surprise', 'Score': '0.0%'} ] ``` | 1abbcb77fa91fed256e038b449949ab6 |
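The last step of `predict` turns raw logits into per-label percentages with a softmax. The dependency-free sketch below isolates that step so it can be read without PyTorch. The logits and the two-entry `id2label` mapping are made up for illustration, and `softmax` and `format_scores` are hypothetical helpers, not part of the model's code.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def format_scores(logits, id2label):
    """Mirror the output shape of `predict`: one label/score dict per class."""
    return [
        {"Label": id2label[i], "Score": f"{p * 100:.1f}%"}
        for i, p in enumerate(softmax(logits))
    ]


# Equal logits give equal probabilities.
print(format_scores([0.0, 0.0], {0: "Anger", 1: "Sadness"}))
```

Subtracting the maximum logit before exponentiating does not change the result but avoids overflow for large logits.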

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'speech-emotion-recognition'] | false | Evaluation The following table summarizes the scores obtained by the model overall and for each class. | Emotions | precision | recall | f1-score | accuracy | |:---------:|:---------:|:------:|:--------:|:--------:| | Anger | 0.95 | 0.95 | 0.95 | | | Fear | 0.33 | 0.17 | 0.22 | | | Happiness | 0.69 | 0.69 | 0.69 | | | Neutral | 0.91 | 0.94 | 0.93 | | | Sadness | 0.92 | 0.85 | 0.88 | | | Surprise | 0.81 | 0.88 | 0.84 | | | | | | Overall | 0.90 | | b0c0317d259339d6598feb46f4b03cd4 |
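The per-class columns in the evaluation table follow the usual definitions: precision is TP / (TP + FP), recall is TP / (TP + FN), and F1 is their harmonic mean. The sketch below computes them from raw counts. The counts in the example are hypothetical, picked only because they round to the same values as the Fear row; the card does not publish the real confusion counts.

```python
def precision_recall_f1(tp: int, fp: int, fn: int):
    """Per-class precision, recall and F1 from true/false positive and false negative counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical counts: 1 correct prediction, 2 false alarms, 5 misses.
p, r, f1 = precision_recall_f1(tp=1, fp=2, fn=5)
print(f"{p:.2f} {r:.2f} {f1:.2f}")  # 0.33 0.17 0.22
```

A low recall like this typically means the class has very few examples, so a handful of misses dominates the score.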

wtfpl | [] | false | Marble statues with a hint of abstract. Works well with the words 'flower petals' and other embeds like PhotoHelper and Hyperfluid. The embedding is very strongly biased towards humans; requires some tinkering to get other results. Might make a v2 at some point that's more universal. Use FloralMarble-150.pt or FloralMarble-250.pt if you want the effect to be more controllable! Trained for 500 epochs/steps. 35 images, 4 vectors. Batch size of 7, 5 grad acc steps, learning rate of 0.0025:250,0.001:500. Dataset available (35 PNG images) here: https://huggingface.co/datasets/spaablauw/FloralMarble_dataset      | f0b6e8e8633cd43be7530f98b3443ef1 |
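The learning rate string `0.0025:250,0.001:500` reads as a stepped schedule: 0.0025 up to step 250, then 0.001 up to step 500. The sketch below parses that format under the assumption that it follows the `rate:until_step` convention used by the AUTOMATIC1111 web UI's textual inversion training. The card does not say which trainer was used, and `parse_lr_schedule` and `lr_at_step` are hypothetical helpers. Whether the boundary step itself uses the old or the new rate is also an assumption here.

```python
def parse_lr_schedule(spec: str):
    """Parse "rate:until_step,rate:until_step,..." into (rate, until_step) pairs.

    A final entry without ":step" is kept with until_step=None (applies to the end).
    """
    schedule = []
    for part in spec.split(","):
        rate, _, until = part.strip().partition(":")
        schedule.append((float(rate), int(until) if until else None))
    return schedule


def lr_at_step(schedule, step: int) -> float:
    """Return the rate of the first entry whose until_step has not been passed."""
    for rate, until in schedule:
        if until is None or step <= until:
            return rate
    return schedule[-1][0]


schedule = parse_lr_schedule("0.0025:250,0.001:500")
print(lr_at_step(schedule, 100), lr_at_step(schedule, 400))
```

Under this reading, the FloralMarble-250.pt checkpoint would correspond to the end of the higher-rate phase.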

mit | ['roberta-base', 'roberta-base-epoch_8'] | false | RoBERTa, Intermediate Checkpoint - Epoch 8 This model is part of our reimplementation of the [RoBERTa model](https://arxiv.org/abs/1907.11692), trained on Wikipedia and the Book Corpus only. We train this model for almost 100K steps, corresponding to 83 epochs. We provide the 84 checkpoints (including the randomly initialized weights before the training) to make it possible to study the training dynamics of such models, and for other possible use cases. These models were trained as part of a work that studies how simple statistics from data, such as co-occurrences, affect model predictions, as described in the paper [Measuring Causal Effects of Data Statistics on Language Model's `Factual' Predictions](https://arxiv.org/abs/2207.14251). This is RoBERTa-base epoch_8. | f6ebceecb01bd6dc498d48cf4ea88e77 |

apache-2.0 | ['automatic-speech-recognition', 'uk'] | false | exp_w2v2t_uk_wav2vec2_s317 Fine-tuned [facebook/wav2vec2-large-lv60](https://huggingface.co/facebook/wav2vec2-large-lv60) for speech recognition using the train split of [Common Voice 7.0 (uk)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 8a004b446929581932a30bcd3d7df185 |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image', 'lora'] | false | Usage To use this LoRA, download the file and drop it into the "\stable-diffusion-webui\models\Lora" folder. To use it in a prompt, please refer to the extra networks panel in your Automatic1111 webui. I highly recommend using it at around 0.4 to 0.6 strength for the best results. If you'd like to support the amazing artist on whose work this LoRA was trained, I'd highly recommend you check out [Syroh](https://www.pixiv.net/en/users/323340). Have fun :) | 389360b74d65eb60c155d1bbe414861a |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image', 'lora'] | false | Example Pictures <table> <tr> <td><img src=https://i.imgur.com/2aiatls.png width=50% height=100%/></td> </tr> <tr> <td><img src=https://i.imgur.com/HWMhTUt.png width=50% height=100%/></td> </tr> <tr> <td><img src=https://i.imgur.com/hBelYEF.png width=50% height=100%/></td> </tr> </table> | 25cb81556331334bfeaac993ef3b8296 |

cc-by-4.0 | [] | false | `cyT5-small` is a lightweight (alpha-version) Welsh T5 model extracted from the `google/mt5-small` model and fine-tuned only on the [Welsh summarization dataset](https://huggingface.co/datasets/ignatius/welsh_summarization). **Citation:** [Introducing the Welsh Text Summarisation Dataset and Baseline Systems](https://arxiv.org/abs/2205.02545). ``` @article{ezeani2022introducing, title={Introducing the Welsh text summarisation dataset and baseline systems}, author={Ezeani, Ignatius and El-Haj, Mahmoud and Morris, Jonathan and Knight, Dawn}, journal={arXiv preprint arXiv:2205.02545}, year={2022} } ``` Further developments are ongoing and updates will be shared soon. | e08f2362909252b6d0221113f6f468ed |

apache-2.0 | ['generated_from_trainer'] | false | whisper-small-zh-hk This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the mozilla-foundation/common_voice_11_0 zh-HK dataset. It achieves the following results on the evaluation set: - Loss: 0.3003 - Wer: 0.5615 | 2f04e830fc99b5aa927d1fbf068b5413 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - distributed_type: multi-GPU - num_devices: 2 - total_train_batch_size: 32 - total_eval_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 5000 - mixed_precision_training: Native AMP | 1e2acb7ca5e1d2ae7602cbc8097427f9 |
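With `lr_scheduler_type: linear`, `lr_scheduler_warmup_steps: 500` and `training_steps: 5000`, the learning rate ramps up linearly to its peak and then decays linearly to zero. The sketch below reproduces that shape in plain Python for illustration. `linear_schedule_lr` is a hypothetical helper, written on the assumption that the schedule matches the standard linear warmup and decay used by the Hugging Face Trainer.

```python
def linear_schedule_lr(step: int, base_lr: float, warmup_steps: int, total_steps: int) -> float:
    """Learning rate at `step`: linear warmup to base_lr, then linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Values from the hyperparameters above: peak 1e-05, 500 warmup steps, 5000 total steps.
for step in (0, 250, 500, 2750, 5000):
    print(step, linear_schedule_lr(step, 1e-05, 500, 5000))
```

Under these settings the peak rate is reached at step 500, halfway through the decay (step 2750) the rate is back to half the peak, and it reaches zero at step 5000.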

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.1556 | 2.28 | 1000 | 0.2708 | 0.6069 | | 0.038 | 4.57 | 2000 | 0.2674 | 0.5701 | | 0.0059 | 6.85 | 3000 | 0.2843 | 0.5635 | | 0.0017 | 9.13 | 4000 | 0.2952 | 0.5622 | | 0.0013 | 11.42 | 5000 | 0.3003 | 0.5615 | | 8de424f81315e58de9e7b63b31100a6b |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-sst2 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 0.7027 - Accuracy: 0.5092 | 40cf28010e85f187dff3a77ee587d53d |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.01 - train_batch_size: 64 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5 | 92473e60036f166990db1565b013c17e |
