license | tags | is_nc | readme_section | hash |

|---|---|---|---|---|

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 12 | 4893826ce410f104347587726ae95269 |
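Because `lr_scheduler_type` is `linear` and no warmup is listed, the learning rate presumably falls in a straight line from 2e-05 to zero over the run. The sketch below assumes zero warmup and takes 535 optimizer steps per epoch from the step column of the training-results table that follows (assuming both sections describe the same run), giving 12 × 535 = 6420 steps in total. It reproduces the shape of a linear schedule; it is not the Trainer's own code.

```python
def linear_lr(step, base_lr=2e-5, total_steps=12 * 535, warmup_steps=0):
    """Learning rate at an optimizer step under linear warmup followed by linear decay.

    warmup_steps defaults to 0 because the card lists no warmup (an assumption).
    """
    if warmup_steps > 0 and step < warmup_steps:
        return base_lr * step / warmup_steps
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Learning rate at the start, middle and end of training
for epoch in (0, 6, 12):
    step = epoch * 535
    print(f"epoch {epoch:2d} (step {step:4d}): lr = {linear_lr(step):.2e}")
```

With warmup, the same function would first ramp up from zero before decaying.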

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.4988 | 1.0 | 535 | 0.6503 | 0.4420 | | 0.3345 | 2.0 | 1070 | 0.5180 | 0.5756 | | 0.2408 | 3.0 | 1605 | 0.5348 | 0.5781 | | 0.193 | 4.0 | 2140 | 0.6002 | 0.5916 | | 0.1506 | 5.0 | 2675 | 0.9790 | 0.5803 | | 0.114 | 6.0 | 3210 | 0.9925 | 0.5907 | | 0.0882 | 7.0 | 3745 | 0.9395 | 0.6295 | | 0.0832 | 8.0 | 4280 | 1.1077 | 0.6167 | | 0.0506 | 9.0 | 4815 | 1.2207 | 0.6119 | | 0.0473 | 10.0 | 5350 | 1.3050 | 0.5931 | | 0.0378 | 11.0 | 5885 | 1.3288 | 0.6115 | | 0.0381 | 12.0 | 6420 | 1.3596 | 0.6115 | | 04c280449062f894f7523800286ceac1 |
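The validation metric in this table is the Matthews correlation coefficient (MCC), which ranges from -1 to 1 with 0 at chance level. Note that validation loss is lowest at epoch 2 (0.5180) while MCC peaks at epoch 7 (0.6295). For a binary task, MCC is computed from the confusion matrix as below; the card does not name the task, so binary classification and the example counts are assumptions.

```python
import math


def matthews_corrcoef(tp, tn, fp, fn):
    """Matthews correlation coefficient for a binary confusion matrix.

    Returns 0.0 when a row or column of the matrix is empty (a common convention).
    """
    numerator = tp * tn - fp * fn
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return numerator / denominator if denominator else 0.0


# Hypothetical confusion-matrix counts, for illustration only
print(round(matthews_corrcoef(tp=600, tn=200, fp=60, fn=180), 4))
```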

apache-2.0 | ['generated_from_trainer'] | false | <img src="https://huggingface.co/Chemsseddine/bert2gpt2_med_ml_orange_summ-finetuned_med_sum_new-finetuned_med_sum_new/resolve/main/logobert2gpt2.png" alt="bert2gpt2 logo" width="200"/> | 2ca9e7d8405294851b59ba116970f045 |

apache-2.0 | ['generated_from_trainer'] | false | This model is used for French summarization. - Problem type: Summarization - Model ID: 980832493 - CO2 Emissions (in grams): 0.10685501288084795 This model is a fine-tuned version of [Chemsseddine/bert2gpt2SUMM](https://huggingface.co/Chemsseddine/bert2gpt2SUMM) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 4.03749418258667 - Rouge1: 28.8384 - Rouge2: 10.7511 - RougeL: 27.0842 - RougeLsum: 27.5118 - Gen Len: 22.0625 | b61f3b76e9af830a40924f418290aac4 |
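ROUGE-1 measures unigram overlap between a generated summary and a reference summary. The simplified sketch below uses lowercased whitespace tokens; reported scores normally come from a ROUGE package with its own tokenizer (and optional stemming), so this will not reproduce the card's numbers exactly. The card reports scores on a 0-100 scale, and the French sentences are made up.

```python
from collections import Counter


def rouge1_f1(prediction: str, reference: str) -> float:
    """ROUGE-1 F1 from clipped unigram overlap on lowercased, whitespace-split tokens."""
    pred = Counter(prediction.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum((pred & ref).values())  # each shared word counts at most min(count) times
    if overlap == 0:
        return 0.0
    precision = overlap / sum(pred.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


score = rouge1_f1("le patient présente une fièvre", "le patient a une forte fièvre")
print(f"ROUGE-1: {100 * score:.2f}")  # scaled to 0-100 like the card
```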

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | No log | 1.0 | 33199 | 4.03749 | 28.8384 | 10.7511 | 27.0842 | 27.5118 | 22.0625 | | 86f23127e39845b722d1760db109e8e7 |

mit | [] | false | Hello! This is a model trained for 14,000 steps on the famous Where's Waldo / Where's Wally art style. (I'm American, so I named the style Waldo; if you know it as Wally instead, my apologies!) The keyword to invoke the style is "Wheres Waldo style", and I've found it works best in conjunction with real-world locations if you want to ground it at least a little bit in reality. If you really want the Wally/Waldo look, be sure to include "Bright primary colors" in your prompt, and add things like "pastel colors" and "washed out colors" to your negative prompt. You can also control the amount of "Waldo-ness" by de-emphasizing the style in your prompt. For example, "(Wheres Waldo Style:1.0), A busy street in New York City, (bright primary colors:1.2)" results in the following image. By contrast, "(Wheres Waldo Style:0.6), A busy street in New York City, (bright primary colors:1.2)" brings in some details about New York City, like the subway entrance, that you won't find at full style strength. One thing to keep in mind: if you try to just spit out a 2048 x 2048 image, it's not going to give you Waldo, it's going to give you a monstrosity like this. I've found the sweet spot for this model to be from 512 x 512 up to a max of about 640 x 960. Much beyond that and it starts to create big blobs like the example above. It does take pretty well to inpainting, though, so if you create something interesting at 640 x 960, throw it in inpaint and start drawing in fun details (just a heads up: you may have to reeeeally de-emphasize the style in your inpaints to get it to give you what you want). Finally, one thing I've found that really helps give it the "Waldo look" is using Aesthetics. I like to run an Aesthetics pass at a strength of 0.20 for 30 steps. It helps prevent the really washed out colors and adds the stripes that are so prevalent in Wally/Waldo comics.
I've included the Waldo2.pt file if you want to download and use it; it was trained on the same high-quality images I used for the checkpoint's DreamBooth training. Here is a screenshot of the config I use for generating these images. I hope you have fun with this! Sadly, it won't actually generate a Waldo/Wally into the image (at least not one that you can generate on demand), but if you're going to all the trouble to inpaint a proper Waldo/Wally scene, you can do some quick post work to add Waldo/Wally in there somewhere! =) Here are sample images using this model: | 4bd484ad518fa99500b8de33311161c1 |
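The weighted prompts above use a `(text:weight)` emphasis syntax, which matches AUTOMATIC1111's web UI (an assumption; the card doesn't name the UI). A small hypothetical helper that builds the same prompts and lets you sweep the style strength when comparing results:

```python
def weighted(term: str, weight: float) -> str:
    """Wrap a prompt fragment in the (text:weight) emphasis syntax used in the examples."""
    return f"({term}:{weight})"


def waldo_prompt(scene: str, style_weight: float = 1.0, color_weight: float = 1.2) -> str:
    """Build a prompt with adjustable 'Waldo-ness', following the pattern in the examples."""
    return ", ".join([
        weighted("Wheres Waldo Style", style_weight),
        scene,
        weighted("bright primary colors", color_weight),
    ])


NEGATIVE_PROMPT = "pastel colors, washed out colors"

# Lower style weights let more real-world detail of the location through
for strength in (1.0, 0.8, 0.6):
    print(waldo_prompt("A busy street in New York City", style_weight=strength))
```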

apache-2.0 | ['generated_from_trainer'] | false | question-paraphraser This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the adamlin/question_augmentation dataset. It achieves the following results on the evaluation set: - Loss: 3.5901 - Rouge1: 0.5385 - Rouge2: 0.0769 - Rougel: 0.5586 - Rougelsum: 0.5586 - Gen Len: 7.6712 | 57c14bb2a2a983973413eede7feab7ad |

apache-2.0 | ['translation', 'generated_from_trainer'] | false | marian-finetuned-kde4-en-to-fr This model is a fine-tuned version of [Helsinki-NLP/opus-mt-en-fr](https://huggingface.co/Helsinki-NLP/opus-mt-en-fr) on the kde4 dataset. It achieves the following results on the evaluation set: - Loss: 2.3557 - Bleu: 37.1286 | 36221b8c9699f977fa09198d0c91b1ff |

apache-2.0 | ['SEAD'] | false | Abstract With the widespread use of pre-trained language models (PLMs), there has been increased research on how to make them applicable, especially in limited-resource or low-latency, high-throughput scenarios. One of the dominant approaches is knowledge distillation (KD), where a smaller model is trained by receiving guidance from a large PLM. While there are many successful designs for learning knowledge from teachers, it remains unclear how students can learn better. Inspired by real university teaching processes, in this work we further explore knowledge distillation and propose a very simple yet effective framework, SEAD, to further improve task-specific generalization by utilizing multiple teachers. Our experiments show that SEAD leads to better performance compared to other popular KD methods [[1](https://arxiv.org/abs/1910.01108)] [[2](https://arxiv.org/abs/1909.10351)] [[3](https://arxiv.org/abs/2002.10957)] and achieves comparable or superior performance to its teacher models, such as BERT [[4](https://arxiv.org/abs/1810.04805)], on a total of 13 tasks from the GLUE [[5](https://arxiv.org/abs/1804.07461)] and SuperGLUE [[6](https://arxiv.org/abs/1905.00537)] benchmarks. *Moyan Mei and Rohit Sroch. 2022. [SEAD: Simple ensemble and knowledge distillation framework for natural language understanding](https://www.adasci.org/journals/lattice-35309407/?volumes=true&open=621a3b18edc4364e8a96cb63). Lattice, THE MACHINE LEARNING JOURNAL by Association of Data Scientists, 3(1).* | 31d27091d787488f96d05254249a0f7b |
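As a rough illustration of distilling from several teachers, the sketch below averages the teachers' temperature-softened output distributions and measures the student's KL divergence from that average, scaled by T². This is a generic multi-teacher soft-target loss, not the SEAD objective itself (the paper describes that); the temperature of 2.0 and all logits are made-up values.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax of logits divided by a temperature."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def multi_teacher_kd_loss(student_logits, teacher_logits_list, temperature=2.0):
    """KL(mean teacher distribution || student distribution), scaled by T^2.

    The teachers' softened distributions are averaged (a simple ensemble) to form
    the target the student is pulled towards.
    """
    teacher_probs = [softmax(t, temperature) for t in teacher_logits_list]
    n_classes = len(student_logits)
    target = [sum(p[i] for p in teacher_probs) / len(teacher_probs) for i in range(n_classes)]
    student = softmax(student_logits, temperature)
    kl = sum(t * math.log(t / s) for t, s in zip(target, student) if t > 0)
    return kl * temperature ** 2


student = [2.0, 0.5, -1.0]
teachers = [[2.5, 0.0, -1.5], [1.5, 1.0, -0.5]]  # two hypothetical teachers
print(multi_teacher_kd_loss(student, teachers))
```

In practice a distillation term like this is usually added to the ordinary cross-entropy loss on the gold labels.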

apache-2.0 | ['SEAD'] | false | SEAD-L-6_H-256_A-8-sst2 This is a student model distilled from [**BERT base**](https://huggingface.co/bert-base-uncased) as the teacher using the SEAD framework on the **sst2** task. For weight initialization, we used [microsoft/xtremedistil-l6-h256-uncased](https://huggingface.co/microsoft/xtremedistil-l6-h256-uncased). | 95efccf57af94dc074e5ec03471f1ca6 |

apache-2.0 | ['SEAD'] | false | Evaluation results | eval_accuracy | eval_runtime | eval_samples_per_second | eval_steps_per_second | eval_loss | eval_samples | |:-------------:|:------------:|:-----------------------:|:---------------------:|:---------:|:------------:| | 0.9266 | 1.3676 | 637.636 | 20.475 | 0.2503 | 872 | | fc96a18155f95fbba15163aca2e0a141 |
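The throughput columns follow from the runtime: samples per second is `eval_samples / eval_runtime`, and steps per second times runtime gives the number of evaluation batches. Working backwards gives about 28 batches, which fits an evaluation batch size of 32; that batch size is an inference, not something the card states. The small gap from the reported 637.636 comes from the rounded runtime.

```python
import math

eval_samples = 872
eval_runtime = 1.3676  # seconds, from the table
reported_steps_per_second = 20.475

samples_per_second = eval_samples / eval_runtime
steps = round(reported_steps_per_second * eval_runtime)  # number of evaluation batches
# Smallest batch size that splits the samples into at most `steps` batches (inferred)
batch_size = math.ceil(eval_samples / steps)

print(f"{samples_per_second:.1f} samples/s, {steps} steps, batch size ~{batch_size}")
```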

apache-2.0 | ['SEAD'] | false | Framework versions - Transformers >=4.8.0 - Pytorch >=1.6.0 - TensorFlow >=2.5.0 - Flax >=0.3.5 - Datasets >=1.10.2 - Tokenizers >=0.11.6 If you use these models, please cite the following paper: ``` @article{article, author={Mei, Moyan and Sroch, Rohit}, title={SEAD: Simple Ensemble and Knowledge Distillation Framework for Natural Language Understanding}, volume={3}, number={1}, journal={Lattice, The Machine Learning Journal by Association of Data Scientists}, day={26}, year={2022}, month={Feb}, url = {www.adasci.org/journals/lattice-35309407/?volumes=true&open=621a3b18edc4364e8a96cb63} } ``` | b0a6ff639ad5a76409e1d6d7d6f0fa0b |

apache-2.0 | ['generated_from_trainer'] | false | results This model is a fine-tuned version of [linydub/bart-large-samsum](https://huggingface.co/linydub/bart-large-samsum) on the samsum dataset. It achieves the following results on the evaluation set: - Loss: 1.0158 | 7d8d17b2926f35743332da3c61ffa65d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 1 | 0.9563 | | No log | 2.0 | 2 | 0.9877 | | No log | 3.0 | 3 | 1.0158 | | 45ea35d59b70eced8cef95b0804d594f |

apache-2.0 | ['generated_from_trainer'] | false | my_first_model This model is a fine-tuned version of [microsoft/resnet-50](https://huggingface.co/microsoft/resnet-50) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.6853 - Accuracy: 0.6 | f1622795e30ecc6a83f20ddce31c0ab5 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.6918 | 1.0 | 23 | 0.6895 | 0.8 | | 0.7019 | 2.0 | 46 | 0.6859 | 0.6 | | 0.69 | 3.0 | 69 | 0.6853 | 0.6 | | 4a15d31c6fb355ad6204210726998cb7 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | LH_miki_v1 Dreambooth model trained by asukii with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook. Test the concept via the A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb), or run your new concept via `diffusers` with the [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Sample pictures of this concept: | 31490920ae23793fda991d9a6c69a65a |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-TIMIT-IPA This model is a fine-tuned version of [facebook/wav2vec2-large](https://huggingface.co/facebook/wav2vec2-large) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 0.3130 - Per: 0.0550 | f341633e061acf551d7c4f3d58c3de31 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Per | |:-------------:|:-----:|:----:|:---------------:|:------:| | 4.3003 | 6.85 | 500 | 3.8093 | 0.9424 | | 1.7151 | 13.7 | 1000 | 0.2929 | 0.0708 | | 0.2212 | 20.55 | 1500 | 0.2259 | 0.0575 | | 0.1241 | 27.4 | 2000 | 0.2716 | 0.0595 | | 0.0917 | 34.25 | 2500 | 0.2902 | 0.0606 | | 0.0659 | 41.1 | 3000 | 0.2982 | 0.0570 | | 0.0532 | 47.95 | 3500 | 0.2770 | 0.0595 | | 0.0438 | 54.79 | 4000 | 0.2953 | 0.0579 | | 0.0368 | 61.64 | 4500 | 0.3151 | 0.0572 | | 0.0303 | 68.49 | 5000 | 0.3425 | 0.0576 | | 0.0281 | 75.34 | 5500 | 0.3065 | 0.0558 | | 0.0215 | 82.19 | 6000 | 0.3288 | 0.0558 | | 0.0185 | 89.04 | 6500 | 0.3288 | 0.0558 | | 0.018 | 95.89 | 7000 | 0.3130 | 0.0550 | | be5adf28a2a7b390bf1d91754ba10bb5 |
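The `Per` column is presumably the phone error rate: the same edit-distance formula as word error rate, applied to phone symbols instead of words. A minimal sketch follows; the IPA tokenization in the example is made up, since the card does not say how phones were split. Because insertions count as errors, the rate can exceed 1.0.

```python
def edit_distance(reference, hypothesis):
    """Levenshtein distance (substitutions + deletions + insertions) between two sequences."""
    prev = list(range(len(hypothesis) + 1))
    for i, ref_tok in enumerate(reference, start=1):
        curr = [i]
        for j, hyp_tok in enumerate(hypothesis, start=1):
            curr.append(min(
                prev[j] + 1,                          # deletion of a reference token
                curr[j - 1] + 1,                      # insertion of a hypothesis token
                prev[j - 1] + (ref_tok != hyp_tok),   # substitution, or free match
            ))
        prev = curr
    return prev[-1]


def error_rate(references, hypotheses):
    """Corpus-level error rate: total edits divided by total reference length."""
    edits = sum(edit_distance(r, h) for r, h in zip(references, hypotheses))
    total = sum(len(r) for r in references)
    return edits / total


# Phone sequences as lists of IPA symbols (made-up example)
ref = [["h", "ə", "l", "oʊ"]]
hyp = [["h", "ɛ", "l", "oʊ"]]
print(error_rate(ref, hyp))  # one substitution over four phones
```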

apache-2.0 | ['translation'] | false | opus-mt-de-gaa * source languages: de * target languages: gaa * OPUS readme: [de-gaa](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/de-gaa/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-20.zip](https://object.pouta.csc.fi/OPUS-MT-models/de-gaa/opus-2020-01-20.zip) * test set translations: [opus-2020-01-20.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/de-gaa/opus-2020-01-20.test.txt) * test set scores: [opus-2020-01-20.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/de-gaa/opus-2020-01-20.eval.txt) | 7b566bea5ec1eb1a02ff60c0560baea5 |

mit | [] | false | Xuna on Stable Diffusion This is the `<Xuna>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:    | 7268045394db7d0d58fa85f0896cb40d |

mit | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Utilizing These weights are intended to be used with the original [CompVis Stable Diffusion codebase](https://github.com/CompVis/stable-diffusion). If you are looking for the model to use with the 🧨 diffusers library, [come here](https://huggingface.co/stabilityai/sd-vae-ft-ema). | 211f7842ce61ca8e4464102b408202f6 |

mit | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | pretrained-autoencoding-models) on a 1:1 ratio of [LAION-Aesthetics](https://laion.ai/blog/laion-aesthetics/) and LAION-Humans, an unreleased subset containing only SFW images of humans. The intent was to fine-tune on the Stable Diffusion training set (the autoencoder was originally trained on OpenImages) but also enrich the dataset with images of humans to improve the reconstruction of faces. The first, _ft-EMA_, was resumed from the original checkpoint, trained for 313198 steps and uses EMA weights. It uses the same loss configuration as the original checkpoint (L1 + LPIPS). The second, _ft-MSE_, was resumed from _ft-EMA_, uses EMA weights, and was trained for another 280k steps using a different loss, with more emphasis on MSE reconstruction (MSE + 0.1 * LPIPS). It produces somewhat "smoother" outputs. The batch size for both versions was 192 (16 A100s, batch size 12 per GPU). To keep compatibility with existing models, only the decoder part was fine-tuned; the checkpoints can be used as a drop-in replacement for the existing autoencoder. _Original kl-f8 VAE vs f8-ft-EMA vs f8-ft-MSE_ | 7b22a9426f2d83eaf032e1776508ca23 |
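Two ingredients in this description can be made concrete: keeping an exponential moving average (EMA) of the weights, and the weighted ft-MSE loss. A minimal sketch over plain Python lists; the decay value 0.9999 is an assumption (the card does not give one), and the LPIPS term is passed in as a number because computing it requires a pretrained perceptual network.

```python
def ema_update(ema_params, params, decay=0.9999):
    """One exponential-moving-average step: ema <- decay * ema + (1 - decay) * param."""
    return [decay * e + (1.0 - decay) * p for e, p in zip(ema_params, params)]


def ft_mse_loss(mse, lpips, lpips_weight=0.1):
    """Reconstruction loss in the form described for ft-MSE: MSE + 0.1 * LPIPS."""
    return mse + lpips_weight * lpips


# Made-up parameter snapshots; the EMA copy changes slowly relative to the raw weights
ema = [0.0, 0.0]
for step_params in ([1.0, 2.0], [1.2, 1.8], [0.8, 2.2]):
    ema = ema_update(ema, step_params, decay=0.9)

GPUS, PER_GPU_BATCH = 16, 12
assert GPUS * PER_GPU_BATCH == 192  # matches the stated global batch size
```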

apache-2.0 | ['generated_from_trainer'] | false | distilled-mt5-small-0.4-2 This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the wmt16 ro-en dataset. It achieves the following results on the evaluation set: - Loss: 3.6725 - Bleu: 1.5399 - Gen Len: 88.995 | 22d45538b8f9f806a736e5d18e7b15d4 |

apache-2.0 | [] | false | Model Card for mT5-base-HunSum-1 The mT5-base-HunSum-1 is a Hungarian abstractive summarization model, which was trained on the [SZTAKI-HLT/HunSum-1 dataset](https://huggingface.co/datasets/SZTAKI-HLT/HunSum-1). The model is based on [google/mt5-base](https://huggingface.co/google/mt5-base). | 6d8b16af8e230785b38d00286bcd2d14 |

apache-2.0 | [] | false | Intended uses & limitations - **Model type:** Text Summarization - **Language(s) (NLP):** Hungarian - **Resource(s) for more information:** - [GitHub Repo](https://github.com/dorinapetra/summarization) | e7e3b511bb812f1bee6c070685f27bbd |

apache-2.0 | [] | false | Parameters - **Batch Size:** 12 - **Learning Rate:** 5e-5 - **Weight Decay:** 0.01 - **Warmup Steps:** 3000 - **Epochs:** 10 - **no_repeat_ngram_size:** 3 - **num_beams:** 5 - **early_stopping:** False - **encoder_no_repeat_ngram_size:** 4 | 31564c1e56864396a8d034559fff2728 |
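`no_repeat_ngram_size: 3` prevents the decoder from producing any 3-gram twice during generation. The sketch below shows the idea on token IDs (a hypothetical helper, not the Transformers implementation). In Transformers, the generation parameters listed above would typically be passed to `generate()` as `num_beams=5, no_repeat_ngram_size=3, encoder_no_repeat_ngram_size=4, early_stopping=False`.

```python
def banned_next_tokens(tokens, ngram_size=3):
    """Tokens that would complete an n-gram already present in `tokens`.

    The last (ngram_size - 1) tokens form a prefix; every earlier occurrence of that
    prefix bans the token that followed it.
    """
    if len(tokens) < ngram_size:
        return set()  # no complete n-gram exists yet
    prefix = tuple(tokens[len(tokens) - (ngram_size - 1):])
    banned = set()
    for i in range(len(tokens) - ngram_size + 1):
        if tuple(tokens[i:i + ngram_size - 1]) == prefix:
            banned.add(tokens[i + ngram_size - 1])
    return banned


generated = [5, 8, 13, 5, 8]
print(banned_next_tokens(generated, ngram_size=3))  # 13 would repeat the 3-gram (5, 8, 13)
```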

apache-2.0 | [] | false | Results | Metric | Value | | :------------ | :------------------------------------------ | | ROUGE-1 | 37.70 | | ROUGE-2 | 11.22 | | ROUGE-L | 24.37 | | dc1e3a4dfdcce156b4bb93140169a0da |

apache-2.0 | [] | false | Citation If you use our model, please cite the following paper: ``` @inproceedings {HunSum-1, title = {{HunSum-1: an Abstractive Summarization Dataset for Hungarian}}, booktitle = {XIX. Magyar Számítógépes Nyelvészeti Konferencia (MSZNY 2023)}, year = {2023}, publisher = {Szegedi Tudományegyetem, Informatikai Intézet}, address = {Szeged, Magyarország}, author = {Barta, Botond and Lakatos, Dorina and Nagy, Attila and Nyist, Mil{\'{a}}n Konor and {\'{A}}cs, Judit}, pages = {231--243} } ``` | 05a9039f3cdda7e1b79d7ecbcb97f13a |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2186 - Accuracy: 0.924 - F1: 0.9241 | 725f01cde0a90fdd31ebc612188846c2 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8218 | 1.0 | 250 | 0.3165 | 0.9025 | 0.9001 | | 0.2494 | 2.0 | 500 | 0.2186 | 0.924 | 0.9241 | | aea5011e13b6dbf5911079688e201769 |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'kmr', 'model_for_talk', 'mozilla-foundation/common_voice_7_0', 'robust-speech-event'] | false | wav2vec2-large-xls-r-300m-kurdish This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the MOZILLA-FOUNDATION/COMMON_VOICE_7_0 - KMR dataset. It achieves the following results on the evaluation set: - Loss: 0.2548 - Wer: 0.2688 | 90bf2489a87498a0a9aded4eee936f43 |

apache-2.0 | ['automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'kmr', 'model_for_talk', 'mozilla-foundation/common_voice_7_0', 'robust-speech-event'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 1.3161 | 12.27 | 2000 | 0.4199 | 0.4797 | | 1.0643 | 24.54 | 4000 | 0.2982 | 0.3721 | | 0.9718 | 36.81 | 6000 | 0.2762 | 0.3333 | | 0.8772 | 49.08 | 8000 | 0.2586 | 0.3051 | | 0.8236 | 61.35 | 10000 | 0.2575 | 0.2865 | | 0.7745 | 73.62 | 12000 | 0.2603 | 0.2816 | | 0.7297 | 85.89 | 14000 | 0.2539 | 0.2727 | | 0.7079 | 98.16 | 16000 | 0.2554 | 0.2681 | | ff5167e357d1c7238b5585297eecaf42 |

apache-2.0 | ['generated_from_trainer'] | false | test_trainer This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the yelp_review_full dataset. It achieves the following results on the evaluation set: - Loss: 1.0183 - Accuracy: 0.586 | 56579eba0bb6e0c5985941efac9ceba1 |

apache-2.0 | ['translation'] | false | opus-mt-ar-en * source languages: ar * target languages: en * OPUS readme: [ar-en](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/ar-en/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2019-12-18.zip](https://object.pouta.csc.fi/OPUS-MT-models/ar-en/opus-2019-12-18.zip) * test set translations: [opus-2019-12-18.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/ar-en/opus-2019-12-18.test.txt) * test set scores: [opus-2019-12-18.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/ar-en/opus-2019-12-18.eval.txt) | 00ca7dca2b10202c9a8701f4554c0f6a |

apache-2.0 | ['generated_from_trainer'] | false | distilgpt2-finetuned-wikitext2 This model is a fine-tuned version of [distilgpt2](https://huggingface.co/distilgpt2) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 3.6423 | ef3de32a47600d65ec5abdff2ec9fa87 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 3.7602 | 1.0 | 2334 | 3.6669 | | 3.633 | 2.0 | 4668 | 3.6455 | | 3.6078 | 3.0 | 7002 | 3.6423 | | 4960f6d8d8bbdc02dcac8d06d2b81021 |

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'pytorch', 'speechbrain'] | false | | Release | Test WER | GPUs | |:-------------:|:--------------:| :--------:| | 22-05-11 | - | 1xK80 24GB | After 9 epochs of training: valid %WER 4.09e+02. After 12 epochs of training: valid %WER 2.07e+02, test WER 1.78e+02. | 634182528686cba745c67e0172524f6c |

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'pytorch', 'speechbrain'] | false | Pipeline description (by SpeechBrain text) This ASR system is composed of three different but linked blocks: - Tokenizer (unigram) that transforms words into subword units, trained on the training transcriptions of LibriSpeech. - Neural language model (RNNLM) trained on the full dataset (380K words). - Acoustic model (CRDNN + CTC/Attention). The CRDNN architecture is made of N blocks of convolutional neural networks with normalisation and pooling on the frequency domain. Then, a bidirectional LSTM is connected to a final DNN to obtain the final acoustic representation that is given to the CTC and attention decoders. The system is trained with recordings sampled at 16kHz (single channel). The code will automatically normalize your audio (i.e., resampling + mono channel selection) when calling *transcribe_file* if needed. | de707b69d7d3e0ac71c7540a03031ab9 |
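The system expects 16 kHz, single-channel audio, and `transcribe_file` normalizes other inputs automatically. If you want to check a file before handing it over, the standard-library `wave` module is enough for PCM WAV files. The sketch below only inspects the format (it does not resample), and the helper names are hypothetical.

```python
import os
import tempfile
import wave

TARGET_RATE = 16_000  # the card states training audio was sampled at 16 kHz
TARGET_CHANNELS = 1   # single channel (mono)


def check_wav(path):
    """Return (sample_rate, channels, matches_training_format) for a PCM WAV file."""
    with wave.open(path, "rb") as wav:
        rate = wav.getframerate()
        channels = wav.getnchannels()
    return rate, channels, (rate == TARGET_RATE and channels == TARGET_CHANNELS)


def write_silence(path, rate, channels, seconds=0.1):
    """Write a short 16-bit PCM WAV of silence, handy for trying the check."""
    with wave.open(path, "wb") as wav:
        wav.setnchannels(channels)
        wav.setsampwidth(2)
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * channels * int(rate * seconds))


with tempfile.TemporaryDirectory() as tmp:
    mono_path = os.path.join(tmp, "mono16k.wav")
    stereo_path = os.path.join(tmp, "stereo44k.wav")
    write_silence(mono_path, rate=16_000, channels=1)
    write_silence(stereo_path, rate=44_100, channels=2)
    mono_result = check_wav(mono_path)
    stereo_result = check_wav(stereo_path)

print(mono_result, stereo_result)
```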

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'pytorch', 'speechbrain'] | false | Install SpeechBrain First of all, please install SpeechBrain with the following command: ``` pip install speechbrain ``` Please note that SpeechBrain encourages you to read its tutorials and learn more about [SpeechBrain](https://speechbrain.github.io). | 084207ea1dc3a75ccc610292747584fa |

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'pytorch', 'speechbrain'] | false | Transcribing your own audio files (in Russian)
```python
from speechbrain.pretrained import EncoderDecoderASR  # speechbrain.inference.ASR in SpeechBrain 1.0+

# Fetch the pretrained model from the Hugging Face Hub (cached under savedir)
asr_model = EncoderDecoderASR.from_hparams(source="AndyGo/speechbrain-asr-crdnn-rnnlm-buriy-audiobooks-2-val", savedir="pretrained_models/speech-brain-asr-crdnn-rnnlm-buriy-audiobooks-2-val")
# Transcribe one audio file (replace the path with your own recording) and print the text
print(asr_model.transcribe_file('speechbrain-asr-crdnn-rnnlm-buriy-audiobooks-2-val/example.wav'))
```
| ea14c8c64a17e7476408da9045b3e0c8 |

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'pytorch', 'speechbrain'] | false | Russian Speech Datasets Russian Speech Datasets are provided by Microsoft Corporation under a CC BY-NC license. Download instructions: https://github.com/snakers4/open_stt. The CC BY-NC license requires that the original copyright owner be listed as the author and that the work be used only for non-commercial purposes. We used the buriy-audiobooks-2-val dataset. | e0c4ea4e86252bb0d95a3c9ed1f27338 |

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'pytorch', 'speechbrain'] | false | Citing SpeechBrain Please cite SpeechBrain if you use it for your research or business. ``` @misc{speechbrain, title={{SpeechBrain}: A General-Purpose Speech Toolkit}, author={Mirco Ravanelli and Titouan Parcollet and Peter Plantinga and Aku Rouhe and Samuele Cornell and Loren Lugosch and Cem Subakan and Nauman Dawalatabad and Abdelwahab Heba and Jianyuan Zhong and Ju-Chieh Chou and Sung-Lin Yeh and Szu-Wei Fu and Chien-Feng Liao and Elena Rastorgueva and François Grondin and William Aris and Hwidong Na and Yan Gao and Renato De Mori and Yoshua Bengio}, year={2021}, eprint={2106.04624}, archivePrefix={arXiv}, primaryClass={eess.AS}, note={arXiv:2106.04624} } ``` | 5edfe945d7dbd49f6b3091f6d7e802f3 |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | sentence-transformers/paraphrase-albert-base-v2 This is a [sentence-transformers](https://www.SBERT.net) model: it maps sentences and paragraphs to a 768-dimensional dense vector space and can be used for tasks like clustering or semantic search. | c67ce925377af850fa99348ebe095066 |
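Semantic search and clustering on these 768-dimensional vectors usually compare embeddings by cosine similarity. A dependency-free sketch with toy 2-dimensional vectors (in practice the vectors would come from `model.encode(...)`, and sentence-transformers also ships `util.cos_sim` for the same computation on tensors); the helper names are illustrative.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def semantic_search(query_vec, corpus_vecs, top_k=3):
    """Indices of the top_k corpus vectors most similar to the query, best first."""
    scores = [(cosine_similarity(query_vec, v), i) for i, v in enumerate(corpus_vecs)]
    return [i for _, i in sorted(scores, reverse=True)[:top_k]]


corpus = [[0.0, 1.0], [1.0, 0.1], [-1.0, 0.0]]  # toy embeddings
print(semantic_search([1.0, 0.0], corpus, top_k=2))
```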

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Usage (Sentence-Transformers) Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:
```
pip install -U sentence-transformers
```
Then you can use the model like this:
```python
from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('sentence-transformers/paraphrase-albert-base-v2')
embeddings = model.encode(sentences)
print(embeddings)
```
| e71de8606d608ba296a7d4993095244d |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Load model from HuggingFace Hub
```python
from transformers import AutoTokenizer, AutoModel

# Tokenizer and bare ALBERT encoder; sentence embeddings still need mean pooling over the token outputs
tokenizer = AutoTokenizer.from_pretrained('sentence-transformers/paraphrase-albert-base-v2')
model = AutoModel.from_pretrained('sentence-transformers/paraphrase-albert-base-v2')
```
| 8d364edc0c2ba67a515aee96ffc55c38 |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Evaluation Results For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=sentence-transformers/paraphrase-albert-base-v2) | b97bfcfae9f266c4a9aa440b3b38435c |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Full Model Architecture
```
SentenceTransformer(
  (0): Transformer({'max_seq_length': 128, 'do_lower_case': False}) with Transformer model: AlbertModel
  (1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})
)
```
| 4dd433453715824ebe2521425758ba3e |
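`pooling_mode_mean_tokens: True` means the sentence embedding is the average of the ALBERT token embeddings, with padding positions excluded via the attention mask. A pure-Python sketch for a single sentence (the real model does this on tensors for whole batches; the function name is illustrative):

```python
def mean_pooling(token_embeddings, attention_mask):
    """Average token vectors, ignoring padding positions (mask value 0)."""
    dim = len(token_embeddings[0])
    sums = [0.0] * dim
    count = 0
    for vec, keep in zip(token_embeddings, attention_mask):
        if keep:
            for k in range(dim):
                sums[k] += vec[k]
            count += 1
    count = max(count, 1)  # guard against an all-padding input
    return [s / count for s in sums]


# Two real tokens and one padding token (toy 2-dimensional embeddings)
print(mean_pooling([[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]], [1, 1, 0]))
```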

creativeml-openrail-m | ['cyberpunk', 'anime', 'stable-diffusion', 'aiart', 'text-to-image', 'TPU'] | false | The same as the other one, except with built-in support for Genshin Impact characters. <center><img src="https://huggingface.co/AdamOswald1/Cyberpunk-Anime-Diffusion_with_support_for_Gen-Imp_characters/resolve/main/img/5.jpg" width="512" height="512"/></center> | 38c8b8fd71143beac1dd8d54e6d5ba79 |

creativeml-openrail-m | ['cyberpunk', 'anime', 'stable-diffusion', 'aiart', 'text-to-image', 'TPU'] | false |
```python
#!pip install diffusers transformers scipy torch
from diffusers import StableDiffusionPipeline
import torch

model_id = "AdamOswald1/Cyberpunk-Anime-Diffusion_with_support_for_Gen-Imp_characters"
# Load the pipeline in half precision and move it to the GPU
pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)
pipe = pipe.to("cuda")

prompt = "a beautiful perfect face girl in dgs illustration style, Anime fine details portrait of school girl in front of modern tokyo city landscape on the background deep bokeh, anime masterpiece, 8k, sharp high quality anime"
image = pipe(prompt).images[0]
image.save("./cyberpunk_girl.png")
```
| ca8f32daf0ddd51be6eb0d6ea95dc57a |

creativeml-openrail-m | ['cyberpunk', 'anime', 'stable-diffusion', 'aiart', 'text-to-image', 'TPU'] | false | Online Demo You can try the online web UI demo built with [Gradio](https://github.com/gradio-app/gradio), or use the Colab notebook here: *My Online Space Demo* [DGS-Diffusion-Space](https://huggingface.co/spaces/DGSpitzer/DGS-Diffusion-Space) *Finetuned Diffusion WebUI Demo by anzorq* [finetuned_diffusion](https://huggingface.co/spaces/anzorq/finetuned_diffusion) *Colab Notebook* [Open in Colab](https://colab.research.google.com/github/HelixNGC7293/cyberpunk-anime-diffusion/blob/main/cyberpunk_anime_diffusion.ipynb) [GitHub](https://github.com/HelixNGC7293/cyberpunk-anime-diffusion) *Buy me a coffee if you like this project ;P ♥* [buymeacoffee.com/dgspitzer](https://www.buymeacoffee.com/dgspitzer) <center><img src="https://huggingface.co/AdamOswald1/Cyberpunk-Anime-Diffusion_with_support_for_Gen-Imp_characters/resolve/main/img/1.jpg" width="512" height="512"/></center> | af8d3750970a61087b35bd9ee4c6de96 |

creativeml-openrail-m | ['cyberpunk', 'anime', 'stable-diffusion', 'aiart', 'text-to-image', 'TPU'] | false | **👇Model👇** AI Model Weights available at huggingface: https://huggingface.co/AdamOswald1/Cyberpunk-Anime-Diffusion_with_support_for_Gen-Imp_characters <center><img src="https://huggingface.co/AdamOswald1/Cyberpunk-Anime-Diffusion_with_support_for_Gen-Imp_characters/resolve/main/img/2.jpg" width="512" height="512"/></center> | d747fa5422fececbf25b304e3240719c |

creativeml-openrail-m | ['cyberpunk', 'anime', 'stable-diffusion', 'aiart', 'text-to-image', 'TPU'] | false | Usage After the model is loaded, use the keyword **dgs** in your prompt, together with **illustration style**, to get even better results. For the sampler, use **Euler A** for the best results (**DDIM** kinda works too); CFG Scale 7 and 20 steps should be fine. **Example 1:** ``` portrait of a girl in dgs illustration style, Anime girl, female soldier working in a cyberpunk city, cleavage, ((perfect femine face)), intricate, 8k, highly detailed, shy, digital painting, intense, sharp focus ``` For a cyber robot male character, you can add **muscular male** to improve the output. **Example 2:** ``` a photo of muscular beard soldier male in dgs illustration style, half-body, holding robot arms, strong chest ``` **Example 3 (with Stable Diffusion WebUI):** If you are using [AUTOMATIC1111's Stable Diffusion WebUI](https://github.com/AUTOMATIC1111/stable-diffusion-webui), you can simply use this as the **prompt** with the **Euler A** sampler, CFG Scale 7, 20 steps, and a 704 x 704px output resolution: ``` an anime girl in dgs illustration style ``` Then set the **negative prompt** to this to get a cleaner face: ``` out of focus, scary, creepy, evil, disfigured, missing limbs, ugly, gross, missing fingers ``` This will give you exactly the same style as the sample images above.
<center><img src="https://huggingface.co/AdamOswald1/Cyberpunk-Anime-Diffusion_with_support_for_Gen-Imp_characters/resolve/main/img/ReadmeAddon.jpg" width="256" height="353"/></center> --- **NOTE: usage of this model implies acceptance of Stable Diffusion's [CreativeML Open RAIL-M license](LICENSE)** --- <center><img src="https://huggingface.co/AdamOswald1/Cyberpunk-Anime-Diffusion_with_support_for_Gen-Imp_characters/resolve/main/img/4.jpg" width="700" height="700"/></center> <center><img src="https://huggingface.co/AdamOswald1/Cyberpunk-Anime-Diffusion_with_support_for_Gen-Imp_characters/resolve/main/img/6.jpg" width="700" height="700"/></center> | c2d6ca52fac2783525c89e32ec0a9e93 |
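The recommended WebUI settings map onto diffusers arguments roughly as follows: CFG Scale becomes `guidance_scale`, steps become `num_inference_steps`, and the Euler A sampler corresponds to `EulerAncestralDiscreteScheduler`. A hypothetical helper that collects them in one place (the function name is illustrative; height and width stay multiples of 8, as Stable Diffusion requires):

```python
def dgs_generation_kwargs(subject: str) -> dict:
    """Keyword arguments matching the suggested settings: CFG 7, 20 steps, 704 x 704."""
    return {
        "prompt": f"{subject} in dgs illustration style",
        "negative_prompt": (
            "out of focus, scary, creepy, evil, disfigured, "
            "missing limbs, ugly, gross, missing fingers"
        ),
        "guidance_scale": 7.0,
        "num_inference_steps": 20,
        "height": 704,
        "width": 704,
    }


kwargs = dgs_generation_kwargs("an anime girl")
# With diffusers installed and `pipe` loaded as in the pipeline example above:
#   from diffusers import EulerAncestralDiscreteScheduler
#   pipe.scheduler = EulerAncestralDiscreteScheduler.from_config(pipe.scheduler.config)
#   image = pipe(**kwargs).images[0]
print(kwargs["prompt"])
```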

mit | ['generated_from_keras_callback'] | false | sachinsahu/Catalan_language-clustered This model is a fine-tuned version of [nandysoham16/13-clustered_aug](https://huggingface.co/nandysoham16/13-clustered_aug) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.5775 - Train End Logits Accuracy: 0.8368 - Train Start Logits Accuracy: 0.8646 - Validation Loss: 0.5087 - Validation End Logits Accuracy: 0.9091 - Validation Start Logits Accuracy: 0.9091 - Epoch: 0 | 7847e75cc1eff3bdd6a3dbbc496c57e5 |
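The `Start Logits Accuracy` and `End Logits Accuracy` metrics suggest an extractive question-answering head that scores every token as a possible answer start and end (an inference from the metric names; the card does not describe the task). A minimal sketch of what those metrics and span decoding plausibly mean, with hypothetical helper names and made-up logits:

```python
def argmax(values):
    """Index of the largest value."""
    return max(range(len(values)), key=values.__getitem__)


def logits_accuracy(batch_logits, gold_positions):
    """Fraction of examples whose highest-scoring position equals the gold position."""
    hits = sum(argmax(logits) == gold for logits, gold in zip(batch_logits, gold_positions))
    return hits / len(gold_positions)


def best_span(start_logits, end_logits, max_answer_len=30):
    """Highest-scoring (start, end) pair with start <= end and a bounded answer length."""
    best, best_score = None, float("-inf")
    for s, s_score in enumerate(start_logits):
        for e in range(s, min(s + max_answer_len, len(end_logits))):
            score = s_score + end_logits[e]
            if score > best_score:
                best, best_score = (s, e), score
    return best


print(best_span([0.0, 5.0, 1.0], [0.0, 1.0, 4.0]))
```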

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 0.5775 | 0.8368 | 0.8646 | 0.5087 | 0.9091 | 0.9091 | 0 | | 8de4a21eef1f11ad099866308bd65627 |

apache-2.0 | ['generated_from_trainer'] | false | fine-tuned-ai-ss-hs-01 This model is a fine-tuned version of [bert-base-multilingual-uncased](https://huggingface.co/bert-base-multilingual-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - AUC: 0.88609 - Precision: 0.8514 - Accuracy: 0.8101 - F1: 0.7875 - Recall: 0.7326 | 7046d6dcfc89ffaf34a48ca93a03a374 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1.1207606211860595e-05 - train_batch_size: 16 - eval_batch_size: 4 - seed: 2 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 4 | 7bb33ff17720375b82712e04c61bfa81 |
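Here is a sketch of how the hyperparameters above could be expressed with `transformers.TrainingArguments`. It assumes training ran on a single device without gradient accumulation, so `train_batch_size` equals the per-device batch size. The helper names and the `output_dir` value are hypothetical.

```python
def training_kwargs() -> dict:
    """Card hyperparameters mapped onto TrainingArguments field names."""
    return {
        "learning_rate": 1.1207606211860595e-05,
        "per_device_train_batch_size": 16,  # assumes a single device
        "per_device_eval_batch_size": 4,
        "seed": 2,
        "adam_beta1": 0.9,
        "adam_beta2": 0.999,
        "adam_epsilon": 1e-08,
        "lr_scheduler_type": "linear",
        "num_train_epochs": 4,
    }


def make_training_args(output_dir: str):
    """Build a TrainingArguments object; transformers is imported only when this is called."""
    from transformers import TrainingArguments

    return TrainingArguments(output_dir=output_dir, **training_kwargs())
```

The object returned by `make_training_args("fine-tuned-ai-ss-hs-01")` can then be passed to a `Trainer` as `args=`.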

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Precision | Recall | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|:---------:|:------:| | No log | 1.0 | 357 | 1.0285 | 0.6955 | 0.5657 | 0.8987 | 0.4128 | | 0.5857 | 2.0 | 714 | 1.0350 | 0.7207 | 0.6296 | 0.8673 | 0.4942 | | 0.51 | 3.0 | 1071 | 0.7467 | 0.8156 | 0.7975 | 0.8442 | 0.7558 | | 0.51 | 4.0 | 1428 | 0.8376 | 0.8101 | 0.7875 | 0.8514 | 0.7326 | | 7e629ef8384caf60b1a1a648eb2c7d40 |

apache-2.0 | ['generated_from_trainer'] | false | pneumonia1 This model is a fine-tuned version of [microsoft/swin-tiny-patch4-window7-224](https://huggingface.co/microsoft/swin-tiny-patch4-window7-224) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.1400 - Accuracy: 0.9519 | 6e1ddf3081edd36bda2c3fabf2ac6d96 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 163 | 0.3493 | 0.8429 | | No log | 2.0 | 326 | 0.1928 | 0.9423 | | No log | 3.0 | 489 | 0.1373 | 0.9439 | | 0.1973 | 4.0 | 652 | 0.1389 | 0.9487 | | 0.1973 | 5.0 | 815 | 0.2311 | 0.9327 | | 0.1973 | 6.0 | 978 | 0.2150 | 0.9423 | | 0.1255 | 7.0 | 1141 | 0.2523 | 0.9359 | | 0.1255 | 8.0 | 1304 | 0.1220 | 0.9535 | | 0.1255 | 9.0 | 1467 | 0.1348 | 0.9519 | | 0.1099 | 10.0 | 1630 | 0.1400 | 0.9519 | | ca87ef4e20530805ce185869cd91b8f3 |

['apache-2.0', 'bsd-3-clause'] | ['summarization', 'led', 'summary', 'longformer', 'booksum', 'long-document', 'long-form'] | false | Longformer Encoder-Decoder (LED) for Narrative-Esque Long Text Summarization <a href="https://colab.research.google.com/gist/pszemraj/36950064ca76161d9d258e5cdbfa6833/led-base-demo-token-batching.ipynb"> <img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/> </a> - **What:** This is the (current) result of the quest for a summarization model that condenses technical/long information down well _in general, academic and narrative usage_. - **Use cases:** long narrative summarization (think stories, as the dataset intended), article/paper/textbook/other summarization, technical-to-simple summarization. - Models trained on this dataset tend to also _explain_ what they are summarizing, which IMO is awesome. - Works well on lots of text, and can handle 16384 tokens/batch. - See examples in the Colab demo linked above, or try the [demo on Spaces](https://huggingface.co/spaces/pszemraj/summarize-long-text) - > Note: the API is set to generate a max of 64 tokens for runtime reasons, so the summaries may be truncated (depending on the length of the input text). For best results, use Python as shown below. | 52bac38334dcaa190cd35b152c435118 |

['apache-2.0', 'bsd-3-clause'] | ['summarization', 'led', 'summary', 'longformer', 'booksum', 'long-document', 'long-form'] | false | About - Trained on the BookSum dataset released by Salesforce (this is what adds the `bsd-3-clause` license) - Trained for 16 epochs vs. [`pszemraj/led-base-16384-finetuned-booksum`](https://huggingface.co/pszemraj/led-base-16384-finetuned-booksum), - parameters adjusted for _very_ fine-tuning-type training (super low LR, etc.) - all the parameters for generation on the API are the same for easy comparison between versions. | 405fcd2eee1e7bf233658a8d045db60e |

['apache-2.0', 'bsd-3-clause'] | ['summarization', 'led', 'summary', 'longformer', 'booksum', 'long-document', 'long-form'] | false | Usage - Basics - it is recommended to use `encoder_no_repeat_ngram_size=3` when calling the pipeline object to improve summary quality. - This param forces the model to use new vocabulary and create an abstractive summary; otherwise it may compile the best _extractive_ summary from the input provided. - Create the pipeline object:
```python
import torch
from transformers import pipeline

hf_name = 'pszemraj/led-base-book-summary'

# use the first GPU if one is available, otherwise the CPU
summarizer = pipeline(
    "summarization",
    hf_name,
    device=0 if torch.cuda.is_available() else -1,
)
```
- Put words into the pipeline object:
```python
wall_of_text = "your words here"

result = summarizer(
    wall_of_text,
    min_length=8,
    max_length=256,
    no_repeat_ngram_size=3,
    encoder_no_repeat_ngram_size=3,
    repetition_penalty=3.5,
    num_beams=4,
    do_sample=False,
    early_stopping=True,
)
# the summarization pipeline returns a list of dicts keyed by 'summary_text'
print(result[0]['summary_text'])
```
--- | 4c779b8c03bd0624572838f71babf35f |
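Inputs longer than the model's 16384-token window have to be split before summarization. Below is a minimal sketch of token-level chunking, in the spirit of the "token batching" Colab linked above. It is not that notebook's code: `chunk_token_ids` is a hypothetical helper, and the optional overlap is an assumption added so that text at a chunk boundary appears in both neighbouring chunks.

```python
from typing import List


def chunk_token_ids(token_ids: List[int], max_len: int = 16384, overlap: int = 0) -> List[List[int]]:
    """Split a list of token ids into windows of at most max_len tokens.

    Consecutive windows share `overlap` tokens (hypothetical helper, not part of transformers).
    """
    if max_len <= 0:
        raise ValueError("max_len must be positive")
    if not 0 <= overlap < max_len:
        raise ValueError("overlap must satisfy 0 <= overlap < max_len")
    step = max_len - overlap
    chunks = []
    for start in range(0, len(token_ids), step):
        chunks.append(token_ids[start:start + max_len])
        if start + max_len >= len(token_ids):
            break  # the last window already reaches the end of the input
    return chunks
```

Usage takes three steps:

1. Tokenize the text with `ids = summarizer.tokenizer(wall_of_text, add_special_tokens=False)["input_ids"]`.
2. Decode the windows with `pieces = [summarizer.tokenizer.decode(c) for c in chunk_token_ids(ids, max_len=16000, overlap=256)]`.
3. Pass the list `pieces` to `summarizer(...)` with the generation settings shown above.

The smaller `max_len` leaves headroom for special tokens. Each chunk is summarized without seeing the others, so this trades coherence for coverage.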

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased-finetuned-vi-infovqa This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 3.5470 | e7f06f9827013297ee5b4fbe9732b079 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 0.21 | 100 | 4.2058 | | No log | 0.43 | 200 | 4.0210 | | No log | 0.64 | 300 | 4.0454 | | No log | 0.85 | 400 | 3.7557 | | 4.04 | 1.07 | 500 | 3.8257 | | 4.04 | 1.28 | 600 | 3.7713 | | 4.04 | 1.49 | 700 | 3.6075 | | 4.04 | 1.71 | 800 | 3.6155 | | 4.04 | 1.92 | 900 | 3.5470 | | 98240c309ffccccaa29b8b9a4564cda4 |

cc-by-4.0 | ['espnet', 'audio', 'automatic-speech-recognition'] | false | `siddhana/slurp_entity_asr_train_asr_conformer_raw_en_word_valid.acc.ave_10best` ♻️ Imported from https://zenodo.org/record/5590204 This model was trained by siddhana using fsc/asr1 recipe in [espnet](https://github.com/espnet/espnet/). | 2e0c5ba9d5eb74966ecc501e1e62a11e |

apache-2.0 | ['generated_from_trainer'] | false | switch-base-8-finetuned-samsum This model is a fine-tuned version of [google/switch-base-8](https://huggingface.co/google/switch-base-8) on the samsum dataset. It achieves the following results on the evaluation set: - Loss: 1.4606 - Rouge1: 46.5651 - Rouge2: 23.2378 - Rougel: 39.4484 - Rougelsum: 43.1011 - Gen Len: 17.0183 | 9cd34b3eef25f6ab81504d2e7641075c |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | 1.8829 | 1.0 | 3683 | 1.5154 | 46.3805 | 23.0982 | 39.0612 | 43.0142 | 17.6296 | | 1.6207 | 2.0 | 7366 | 1.4578 | 47.7434 | 24.9471 | 40.6481 | 44.351 | 17.2066 | | 1.442 | 3.0 | 11049 | 1.4360 | 47.6903 | 24.9954 | 40.713 | 44.3487 | 17.0501 | | 1.3103 | 4.0 | 14732 | 1.4396 | 48.4517 | 25.7725 | 41.5212 | 45.1211 | 16.9071 | | 1.2393 | 5.0 | 18415 | 1.4445 | 48.4002 | 25.8727 | 41.5361 | 45.0467 | 16.9804 | | cc0f7e83f78bc4e2c510cd71272d91a0 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-fr This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.2848 - F1: 0.8299 | dcd4d5ff7263784c9cb248b853938a42 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.5989 | 1.0 | 191 | 0.3383 | 0.7928 | | 0.2617 | 2.0 | 382 | 0.2966 | 0.8318 | | 0.1672 | 3.0 | 573 | 0.2848 | 0.8299 | | a75c02c06082c4804b7dd79c3d2169e8 |

apache-2.0 | ['generated_from_trainer'] | false | finetuned_sentence_itr0_2e-05_all_01_03_2022-05_32_03 This model is a fine-tuned version of [distilbert-base-uncased-finetuned-sst-2-english](https://huggingface.co/distilbert-base-uncased-finetuned-sst-2-english) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.4208 - Accuracy: 0.8283 - F1: 0.8915 - Precision: 0.8487 - Recall: 0.9389 | 97fcfdbb42a6bd772775714746b58a25 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Precision | Recall | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|:---------:|:------:| | No log | 1.0 | 390 | 0.4443 | 0.7768 | 0.8589 | 0.8072 | 0.9176 | | 0.4532 | 2.0 | 780 | 0.4603 | 0.8098 | 0.8791 | 0.8302 | 0.9341 | | 0.2608 | 3.0 | 1170 | 0.5284 | 0.8061 | 0.8713 | 0.8567 | 0.8863 | | 0.1577 | 4.0 | 1560 | 0.6398 | 0.8085 | 0.8749 | 0.8472 | 0.9044 | | 0.1577 | 5.0 | 1950 | 0.7089 | 0.8085 | 0.8741 | 0.8516 | 0.8979 | | f2c48d21dab2926c13b0cdaea8643e75 |

apache-2.0 | ['generated_from_trainer'] | false | albert-large-v2_cls_CR This model is a fine-tuned version of [albert-large-v2](https://huggingface.co/albert-large-v2) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.6549 - Accuracy: 0.6383 | e519b3e8ebefb8ba6be7eda661127926 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 213 | 0.3524 | 0.8803 | | No log | 2.0 | 426 | 0.6839 | 0.6383 | | 0.5671 | 3.0 | 639 | 0.6622 | 0.6383 | | 0.5671 | 4.0 | 852 | 0.6549 | 0.6383 | | 0.6652 | 5.0 | 1065 | 0.6549 | 0.6383 | | 4a03c7830035279f3a49fc0aec154c7c |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-squad This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad dataset. It achieves the following results on the evaluation set: - Loss: 1.2108 | 600a7ca045e6def55a6458aa85d8da79 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 1.4952 | 1.0 | 5533 | 1.3895 | | 1.3024 | 2.0 | 11066 | 1.2490 | | 1.2087 | 3.0 | 16599 | 1.2108 | | 77422a9358a77a55c754529eac450480 |

apache-2.0 | [] | false | This repository was created to provide better models for NLI in Persian, along with transparent training code. I hope you find it inspiring and build better models in the future. For more details about the task and the methods used for training, check the [medium post](https://haddadhesam.medium.com/) and the notebooks. | 90410ef075f81b25981ca911befb427d |

apache-2.0 | [] | false | Model The proposed model is published on the Hugging Face Hub under the name ``demoversion/bert-fa-base-uncased-haddad-wikinli``. You can download and use the model from the [HuggingFace Website](https://huggingface.co/demoversion/bert-fa-base-uncased-haddad-wikinli) or directly in the transformers library like this:
```python
from transformers import pipeline

model = pipeline("zero-shot-classification", model="demoversion/bert-fa-base-uncased-haddad-wikinli")
labels = ["ورزشی", "سیاسی", "علمی", "فرهنگی"]  # sports, political, scientific, cultural
template_str = "این یک متن {} است."  # "This is a {} text."
# "The World Cup qualifying stage had a lot of controversy."
str_sentence = "مرحله مقدماتی جام جهانی حاشیه\u200cهای زیادی داشت."
print(model(str_sentence, labels, hypothesis_template=template_str))
```
The result of this code snippet is:
```
Asking to truncate to max_length but no maximum length is provided and the model has no predefined maximum length. Default to no truncation.
{'labels': ['فرهنگی', 'علمی', 'سیاسی', 'ورزشی'], 'scores': [0.25921085476875305, 0.25713297724723816, 0.24884170293807983, 0.23481446504592896], 'sequence': 'مرحله مقدماتی جام جهانی حاشیه\u200cهای زیادی داشت.'}
```
Yep, the right label (highest score) without training. | 8867947e0876917110ea6db69b4ca277 |
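For context, here is a minimal sketch of how an NLI model is commonly turned into a zero-shot classifier. It mirrors our understanding of the single-label behaviour of the transformers `zero-shot-classification` pipeline, and the helper names are hypothetical. Each candidate label is substituted into `hypothesis_template`. The model scores every (sentence, hypothesis) pair, and the entailment logits are softmaxed across the candidates so the scores sum to 1.

```python
import math
from typing import Dict, List


def build_hypotheses(labels: List[str], template: str) -> List[str]:
    """One NLI hypothesis per candidate label, e.g. 'This is a {} text.' -> 'This is a sports text.'"""
    return [template.format(label) for label in labels]


def single_label_scores(entailment_logits: List[float], labels: List[str]) -> Dict[str, float]:
    """Softmax over the entailment logits of all (sentence, hypothesis) pairs, sorted best-first."""
    if len(entailment_logits) != len(labels):
        raise ValueError("need one entailment logit per label")
    top = max(entailment_logits)  # subtract the max for numerical stability
    exps = [math.exp(x - top) for x in entailment_logits]
    total = sum(exps)
    scores = {label: e / total for label, e in zip(labels, exps)}
    return dict(sorted(scores.items(), key=lambda kv: kv[1], reverse=True))


# Hypothetical entailment logits for three English candidate labels
labels = ["sports", "politics", "science"]
print(build_hypotheses(labels, "This is a {} text."))
print(single_label_scores([2.0, 0.0, 1.0], labels))
```

Because the scores are normalized across the candidates, nearly equal entailment logits produce scores close to `1 / len(labels)` for every label.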

apache-2.0 | [] | false | Results The results compared with the original model published for this dataset are shown in the table below. |Model|dev_accuracy| dev_f1|test_accuracy|test_f1| |--|--|--|--|--| |[m3hrdadfi/bert-fa-base-uncased-wikinli](https://huggingface.co/m3hrdadfi/bert-fa-base-uncased-wikinli)|77.88|77.57|76.64|75.99| |[demoversion/bert-fa-base-uncased-haddad-wikinli](https://huggingface.co/demoversion/bert-fa-base-uncased-haddad-wikinli)|**78.62**|**79.74**|**77.04**|**78.56**| | 68b72c8bc1a614f42889ae9120e8b270 |

apache-2.0 | [] | false | Notebooks Notebooks used for training and evaluation are available below. [Training](https://colab.research.google.com/github/DemoVersion/persian-nli-trainer/blob/main/notebooks/training.ipynb) [Evaluation](https://colab.research.google.com/github/DemoVersion/persian-nli-trainer/blob/main/notebooks/evaluation.ipynb) | d2ca891e674032f8e4336baa45a86be3 |

apache-2.0 | ['generated_from_keras_callback'] | false | my_Med This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 1.5902 - Validation Loss: 1.3655 - Epoch: 9 | 93a902ef339678b1adfc8da966a930db |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 2.8689 | 2.0671 | 0 | | 2.2250 | 1.8456 | 1 | | 2.0132 | 1.7104 | 2 | | 1.9079 | 1.6828 | 3 | | 1.8237 | 1.5935 | 4 | | 1.7651 | 1.5240 | 5 | | 1.7246 | 1.4930 | 6 | | 1.6565 | 1.4191 | 7 | | 1.6166 | 1.3944 | 8 | | 1.5902 | 1.3655 | 9 | | 42d7b920ef0d09859b8e547317fadc6c |

apache-2.0 | ['bert'] | false | German Hotel Review Sentiment Classification A model trained on English hotel reviews from Switzerland. The base model is [bert-base-uncased](https://huggingface.co/bert-base-uncased). The last hidden layer of the base model was extracted and a classification layer was added on top. The entire model was then trained for 5 epochs on our dataset. | d2f93db58ef3b52528a1945a674026aa |

apache-2.0 | ['bert'] | false | Model Performance | Classes | Precision | Recall | F1 Score | | :--- | :---: | :---: |:---: | | Room | 77.78% | 77.78% | 77.78% | | Location | 95.45% | 95.45% | 95.45% | | Staff | 75.00% | 93.75% | 83.33% | | Unknown | 71.43% | 50.00% | 58.82% | | HotelOrganisation | 27.27% | 30.00% | 28.57% | | Food | 87.50% | 87.50% | 87.50% | | ReasonForStay | 63.64% | 58.33% | 60.87% | | GeneralUtility | 66.67% | 50.00% | 57.14% | | Accuracy | | | 74.00% | | Macro Average | 70.59% | 67.85% | 68.68% | | Weighted Average | 74.17% | 74.00% | 73.66% | | 0c27ffdcb4748458849816dca6c3aed7 |
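As a consistency check, you can recompute the per-class F1 scores and the macro averages from the precision and recall columns. F1 is the harmonic mean $2PR/(P+R)$, and the macro average is the unweighted mean over the eight classes. This is a verification sketch only. The weighted average is not recomputed, because the per-class support counts are not given.

```python
# Per-class precision and recall (%) copied from the table above
TABLE = {
    "Room": (77.78, 77.78),
    "Location": (95.45, 95.45),
    "Staff": (75.00, 93.75),
    "Unknown": (71.43, 50.00),
    "HotelOrganisation": (27.27, 30.00),
    "Food": (87.50, 87.50),
    "ReasonForStay": (63.64, 58.33),
    "GeneralUtility": (66.67, 50.00),
}


def f1(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0 when both are 0)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


per_class_f1 = {name: f1(p, r) for name, (p, r) in TABLE.items()}
macro_precision = sum(p for p, _ in TABLE.values()) / len(TABLE)
macro_recall = sum(r for _, r in TABLE.values()) / len(TABLE)
macro_f1 = sum(per_class_f1.values()) / len(per_class_f1)

print(f"GeneralUtility F1: {per_class_f1['GeneralUtility']:.2f}%")  # GeneralUtility F1: 57.14%
print(f"Macro P/R/F1: {macro_precision:.2f}% / {macro_recall:.2f}% / {macro_f1:.2f}%")  # Macro P/R/F1: 70.59% / 67.85% / 68.68%
```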

apache-2.0 | ['generated_from_keras_callback'] | false | albert-humor-classification This model is a fine-tuned version of [albert-base-v2](https://huggingface.co/albert-base-v2) on an unknown dataset. It achieves the following results on the evaluation set: | 20c18b43e199859fd12132ff14e3412e |

apache-2.0 | ['generated_from_trainer'] | false | finetuning-emotion-model This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2238 - Accuracy: 0.9205 - F1: 0.9204 | f6d8610b95b8a15f7131a9ec1665aa25 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | No log | 1.0 | 250 | 0.3235 | 0.9035 | 0.9003 | | 0.5384 | 2.0 | 500 | 0.2238 | 0.9205 | 0.9204 | | eb25619e7e67e8b0de217cf8de583cbc |

apache-2.0 | ['vision', 'image-segmentation'] | false | CLIPSeg model CLIPSeg model with reduced dimension 16. It was introduced in the paper [Image Segmentation Using Text and Image Prompts](https://arxiv.org/abs/2112.10003) by Lüddecke et al. and first released in [this repository](https://github.com/timojl/clipseg). | 0469fb80a7866f958826253ca7c8816b |
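Here is a minimal usage sketch with the CLIPSeg classes in transformers. It assumes the checkpoint is available on the Hub as `CIDAS/clipseg-rd16`; the card text does not state the id. The model returns one logit map per text prompt, which may need resizing to the original image size. `logits_to_mask` is a hypothetical helper that thresholds a single 2-D map.

```python
import math
from typing import List

# Assumption: the Hub id of this checkpoint (not stated in the card text above)
MODEL_ID = "CIDAS/clipseg-rd16"


def logits_to_mask(logits: List[List[float]], threshold: float = 0.5) -> List[List[int]]:
    """Binarize one 2-D logit map: a pixel is 1 when sigmoid(logit) >= threshold."""
    if not 0.0 < threshold < 1.0:
        raise ValueError("threshold must be in (0, 1)")
    # sigmoid(v) >= t is equivalent to v >= log(t / (1 - t)); comparing logits avoids overflow
    cutoff = math.log(threshold / (1.0 - threshold))
    return [[1 if v >= cutoff else 0 for v in row] for row in logits]


def segment(image, prompts: List[str], model_id: str = MODEL_ID):
    """Return CLIPSeg logits for each text prompt (needs torch, transformers and a PIL image)."""
    import torch
    from transformers import CLIPSegForImageSegmentation, CLIPSegProcessor

    processor = CLIPSegProcessor.from_pretrained(model_id)
    model = CLIPSegForImageSegmentation.from_pretrained(model_id)
    # One copy of the image per prompt, so each prompt gets its own mask
    inputs = processor(text=prompts, images=[image] * len(prompts), padding=True, return_tensors="pt")
    with torch.no_grad():
        outputs = model(**inputs)
    return outputs.logits
```

To threshold a single prompt's logit map, convert it to nested lists (for example with `.tolist()`) and pass it to `logits_to_mask`.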

apache-2.0 | ['generated_from_trainer'] | false | mbert-targin-final This model is a fine-tuned version of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.9847 - Accuracy: 0.7025 - Precision: 0.6490 - Recall: 0.6487 - F1: 0.6489 | c0da3687ecdc62f2b14b0c6ec0c80cd7 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:---------:|:------:|:------:| | No log | 1.0 | 296 | 0.5774 | 0.7091 | 0.6506 | 0.6378 | 0.6426 | | 0.5912 | 2.0 | 592 | 0.5316 | 0.7376 | 0.6880 | 0.6767 | 0.6814 | | 0.5912 | 3.0 | 888 | 0.5511 | 0.7253 | 0.6692 | 0.6293 | 0.6378 | | 0.4844 | 4.0 | 1184 | 0.6262 | 0.6835 | 0.6622 | 0.6884 | 0.6613 | | 0.4844 | 5.0 | 1480 | 0.6320 | 0.7006 | 0.6574 | 0.6701 | 0.6616 | | 0.3861 | 6.0 | 1776 | 0.6983 | 0.7148 | 0.6632 | 0.6620 | 0.6626 | | 0.2773 | 7.0 | 2072 | 0.8109 | 0.7110 | 0.6630 | 0.6689 | 0.6655 | | 0.2773 | 8.0 | 2368 | 0.8948 | 0.7072 | 0.6525 | 0.6487 | 0.6504 | | 0.2068 | 9.0 | 2664 | 0.9693 | 0.7072 | 0.6519 | 0.6469 | 0.6492 | | 0.2068 | 10.0 | 2960 | 0.9847 | 0.7025 | 0.6490 | 0.6487 | 0.6489 | | 326786c9c862c5aa3618a57fbff71ff8 |

apache-2.0 | ['tapas'] | false | TAPAS medium model fine-tuned on Sequential Question Answering (SQA) This model has 2 versions which can be used. The default version corresponds to the `tapas_sqa_inter_masklm_medium_reset` checkpoint of the [original Github repository](https://github.com/google-research/tapas). This model was pre-trained on MLM and an additional step which the authors call intermediate pre-training, and then fine-tuned on [SQA](https://www.microsoft.com/en-us/download/details.aspx?id=54253). It uses relative position embeddings (i.e. resetting the position index at every cell of the table). The other (non-default) version which can be used is: - `no_reset`, which corresponds to `tapas_sqa_inter_masklm_medium` (intermediate pre-training, absolute position embeddings). Disclaimer: The team releasing TAPAS did not write a model card for this model so this model card has been written by the Hugging Face team and contributors. | 0ce7cb08136edc5f99fd7d2025ebd537 |
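To make the reset/no_reset distinction concrete, here is a simplified illustration. It is not TAPAS's actual input pipeline, which also encodes the question, special tokens and row/column embeddings. It shows how position indices differ when they restart at every table cell versus when they run continuously. The cell token lists are hypothetical.

```python
from typing import List


def position_ids(cells: List[List[str]], reset_per_cell: bool = True) -> List[int]:
    """Position index for each token of a flattened table (row-major list of cells).

    reset_per_cell=True  -> index restarts at 0 in every cell ("reset", relative positions)
    reset_per_cell=False -> tokens are numbered continuously ("no_reset", absolute positions)
    """
    positions: List[int] = []
    next_index = 0
    for cell_tokens in cells:
        if reset_per_cell:
            next_index = 0
        for _ in cell_tokens:
            positions.append(next_index)
            next_index += 1
    return positions


cells = [["brad", "pitt"], ["56"], ["new", "york", "city"]]
print(position_ids(cells, reset_per_cell=True))  # [0, 1, 0, 0, 1, 2]
print(position_ids(cells, reset_per_cell=False))  # [0, 1, 2, 3, 4, 5]
```

In transformers, the non-default version lives on the `no_reset` branch linked in the results table, so it can be selected with the `revision` argument, e.g. `TapasForQuestionAnswering.from_pretrained("google/tapas-medium-finetuned-sqa", revision="no_reset")`.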

apache-2.0 | ['tapas'] | false | Results on SQA - Dev Accuracy Size | Reset | Dev Accuracy | Link -------- | --------| -------- | ---- LARGE | noreset | 0.7223 | [tapas-large-finetuned-sqa (absolute pos embeddings)](https://huggingface.co/google/tapas-large-finetuned-sqa/tree/no_reset) LARGE | reset | 0.7289 | [tapas-large-finetuned-sqa](https://huggingface.co/google/tapas-large-finetuned-sqa/tree/main) BASE | noreset | 0.6737 | [tapas-base-finetuned-sqa (absolute pos embeddings)](https://huggingface.co/google/tapas-base-finetuned-sqa/tree/no_reset) BASE | reset | 0.6874 | [tapas-base-finetuned-sqa](https://huggingface.co/google/tapas-base-finetuned-sqa/tree/main) **MEDIUM** | **noreset** | **0.6464** | [tapas-medium-finetuned-sqa (absolute pos embeddings)](https://huggingface.co/google/tapas-medium-finetuned-sqa/tree/no_reset) **MEDIUM** | **reset** | **0.6561** | [tapas-medium-finetuned-sqa](https://huggingface.co/google/tapas-medium-finetuned-sqa/tree/main) SMALL | noreset | 0.5876 | [tapas-small-finetuned-sqa (absolute pos embeddings)](https://huggingface.co/google/tapas-small-finetuned-sqa/tree/no_reset) SMALL | reset | 0.6155 | [tapas-small-finetuned-sqa](https://huggingface.co/google/tapas-small-finetuned-sqa/tree/main) MINI | noreset | 0.4574 | [tapas-mini-finetuned-sqa (absolute pos embeddings)](https://huggingface.co/google/tapas-mini-finetuned-sqa/tree/no_reset) MINI | reset | 0.5148 | [tapas-mini-finetuned-sqa](https://huggingface.co/google/tapas-mini-finetuned-sqa/tree/main) TINY | noreset | 0.2004 | [tapas-tiny-finetuned-sqa (absolute pos embeddings)](https://huggingface.co/google/tapas-tiny-finetuned-sqa/tree/no_reset) TINY | reset | 0.2375 | [tapas-tiny-finetuned-sqa](https://huggingface.co/google/tapas-tiny-finetuned-sqa/tree/main) | c2b72f3959a1eabb56b8c6f62e559093 |

apache-2.0 | ['tapas'] | false | BibTeX entry and citation info ```bibtex @misc{herzig2020tapas, title={TAPAS: Weakly Supervised Table Parsing via Pre-training}, author={Jonathan Herzig and Paweł Krzysztof Nowak and Thomas Müller and Francesco Piccinno and Julian Martin Eisenschlos}, year={2020}, eprint={2004.02349}, archivePrefix={arXiv}, primaryClass={cs.IR} } ``` ```bibtex @misc{eisenschlos2020understanding, title={Understanding tables with intermediate pre-training}, author={Julian Martin Eisenschlos and Syrine Krichene and Thomas Müller}, year={2020}, eprint={2010.00571}, archivePrefix={arXiv}, primaryClass={cs.CL} } ``` ```bibtex @InProceedings{iyyer2017search-based, author = {Iyyer, Mohit and Yih, Scott Wen-tau and Chang, Ming-Wei}, title = {Search-based Neural Structured Learning for Sequential Question Answering}, booktitle = {Proceedings of the 55th Annual Meeting of the Association for Computational Linguistics}, year = {2017}, month = {July}, abstract = {Recent work in semantic parsing for question answering has focused on long and complicated questions, many of which would seem unnatural if asked in a normal conversation between two humans. In an effort to explore a conversational QA setting, we present a more realistic task: answering sequences of simple but inter-related questions. We collect a dataset of 6,066 question sequences that inquire about semi-structured tables from Wikipedia, with 17,553 question-answer pairs in total. To solve this sequential question answering task, we propose a novel dynamic neural semantic parsing framework trained using a weakly supervised reward-guided search. 
Our model effectively leverages the sequential context to outperform state-of-the-art QA systems that are designed to answer highly complex questions.}, publisher = {Association for Computational Linguistics}, url = {https://www.microsoft.com/en-us/research/publication/search-based-neural-structured-learning-sequential-question-answering/}, } ``` | 4fd126b1a2f22e0f8c2fc17028821cbe |

apache-2.0 | ['italian', 'sequence-to-sequence', 'newspaper', 'ilgiornale', 'repubblica', 'style-transfer'] | false | mT5 Base for News Headline Style Transfer (Il Giornale to Repubblica) 🗞️➡️🗞️ 🇮🇹 This repository contains the checkpoint for the [mT5 Base](https://huggingface.co/google/mt5-base) model fine-tuned on news headline style transfer in the Il Giornale to Repubblica direction on the Italian CHANGE-IT dataset as part of the experiments of the paper [IT5: Large-scale Text-to-text Pretraining for Italian Language Understanding and Generation](https://arxiv.org/abs/2203.03759) by [Gabriele Sarti](https://gsarti.com) and [Malvina Nissim](https://malvinanissim.github.io). A comprehensive overview of other released materials is provided in the [gsarti/it5](https://github.com/gsarti/it5) repository. Refer to the paper for additional details concerning the reported scores and the evaluation approach. | 08baddfd3ab714fd37540b5afb477f89 |

apache-2.0 | ['italian', 'sequence-to-sequence', 'newspaper', 'ilgiornale', 'repubblica', 'style-transfer'] | false | Using the model The model is trained to generate a headline in the style of Repubblica from the full body of an article written in the style of Il Giornale. Model checkpoints are available for usage in TensorFlow, PyTorch and JAX. They can be used directly with pipelines as:
```python
from transformers import pipeline

g2r = pipeline("text2text-generation", model='it5/mt5-base-ilgiornale-to-repubblica')

# Article body in the style of Il Giornale (adjacent string literals are concatenated)
article = (
    "Arriva dal Partito nazionalista basco (Pnv) la conferma che i cinque deputati che siedono in parlamento voteranno la sfiducia al governo guidato da Mariano Rajoy. "
    "Pochi voti, ma significativi quelli della formazione politica di Aitor Esteban, che interverrà nel pomeriggio. "
    "Pur con dimensioni molto ridotte, il partito basco si è trovato a fare da ago della bilancia in aula. "
    "E il sostegno alla mozione presentata dai Socialisti potrebbe significare per il primo ministro non trovare quei 176 voti che gli servono per continuare a governare. "
    "\" Perché dovrei dimettermi io che per il momento ho la fiducia della Camera e quella che mi è stato data alle urne \", ha detto oggi Rajoy nel suo intervento in aula, mentre procedeva la discussione sulla mozione di sfiducia. "
    "Il voto dei baschi ora cambia le carte in tavola e fa crescere ulteriormente la pressione sul premier perché rassegni le sue dimissioni. "
    "La sfiducia al premier, o un'eventuale scelta di dimettersi, porterebbe alle estreme conseguenze lo scandalo per corruzione che ha investito il Partito popolare. "
    "Ma per ora sembra pensare a tutt'altro. "
    "\"Non ha intenzione di dimettersi - ha detto il segretario generale del Partito popolare , María Dolores de Cospedal - Non gioverebbe all'interesse generale o agli interessi del Pp\"."
)
print(g2r(article))
# [{"generated_text": "il nazionalista rajoy: 'voteremo la sfiducia'"}]
```
or loaded using autoclasses:
```python
from transformers import AutoTokenizer, AutoModelForSeq2SeqLM

tokenizer = AutoTokenizer.from_pretrained("it5/mt5-base-ilgiornale-to-repubblica")
model = AutoModelForSeq2SeqLM.from_pretrained("it5/mt5-base-ilgiornale-to-repubblica")
```
If you use this model in your research, please cite our work as: ```bibtex @article{sarti-nissim-2022-it5, title={{IT5}: Large-scale Text-to-text Pretraining for Italian Language Understanding and Generation}, author={Sarti, Gabriele and Nissim, Malvina}, journal={ArXiv preprint 2203.03759}, url={https://arxiv.org/abs/2203.03759}, year={2022}, month={mar} } ``` | 1121398613d88302e380f362cbf435b5 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-clinc This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the clinc_oos dataset. It achieves the following results on the evaluation set: - Loss: 0.7778 - Accuracy: 0.9171 | d7c44b119423bcdec35525fa5430d873 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 48 - eval_batch_size: 48 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5 | eed06c4adad3c0a8a30277e85c48bd5d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 4.2882 | 1.0 | 318 | 3.2777 | 0.7390 | | 2.6228 | 2.0 | 636 | 1.8739 | 0.8287 | | 1.5439 | 3.0 | 954 | 1.1619 | 0.8894 | | 1.0111 | 4.0 | 1272 | 0.8601 | 0.9094 | | 0.7999 | 5.0 | 1590 | 0.7778 | 0.9171 | | e2e59c47577682fb5ca1bf826469c153 |
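The step counts in the clinc training-results table follow from the training-set size and the batch size. Here is a back-of-the-envelope sketch. It assumes a single device, no gradient accumulation, and that the final partial batch still counts as a step. Under those assumptions, 318 steps per epoch at `train_batch_size: 48` bounds the size of the training split. The helper names are hypothetical.

```python
import math


def steps_per_epoch(num_examples: int, batch_size: int) -> int:
    """Optimizer steps in one epoch when the last, partial batch is kept."""
    return math.ceil(num_examples / batch_size)


def dataset_size_range(steps: int, batch_size: int) -> tuple:
    """Smallest and largest training-set sizes that give exactly `steps` steps per epoch."""
    return (steps - 1) * batch_size + 1, steps * batch_size


print(dataset_size_range(318, 48))  # (15217, 15264)
print(5 * steps_per_epoch(15217, 48))  # 1590, the final step in the table
```

Any training split with 15,217 to 15,264 examples yields 318 steps per epoch, and 1590 steps over the 5 epochs.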

mit | [] | false | char-con on Stable Diffusion This is the `<char-con>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:        | 2e229a195acd4d88535222ec5318ed8a |
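Besides the Stable Conceptualizer notebook, a textual-inversion concept like this can be loaded with diffusers' `load_textual_inversion`. This is a minimal sketch under two assumptions: the concept is hosted at `sd-concepts-library/char-con`, and it was trained against `CompVis/stable-diffusion-v1-4`. A CUDA GPU is also required. The helper names are hypothetical.

```python
# Placeholder token from this card
PLACEHOLDER_TOKEN = "<char-con>"

# Assumptions: where the concept is hosted and which base model it was trained on
CONCEPT_REPO = "sd-concepts-library/char-con"
BASE_MODEL = "CompVis/stable-diffusion-v1-4"


def concept_prompt(template: str) -> str:
    """Insert the placeholder token into a prompt template containing '{}'."""
    return template.format(PLACEHOLDER_TOKEN)


def load_pipeline(base_model: str = BASE_MODEL, concept_repo: str = CONCEPT_REPO):
    """Load Stable Diffusion and register the learned embedding (needs torch, diffusers and a GPU)."""
    import torch
    from diffusers import StableDiffusionPipeline

    pipe = StableDiffusionPipeline.from_pretrained(base_model, torch_dtype=torch.float16).to("cuda")
    # Adds the concept's token and its learned embedding to the pipeline's text encoder
    pipe.load_textual_inversion(concept_repo)
    return pipe
```

For example, `load_pipeline()(concept_prompt("a photo of a {} on a table")).images[0]` renders one image using the concept as an object.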

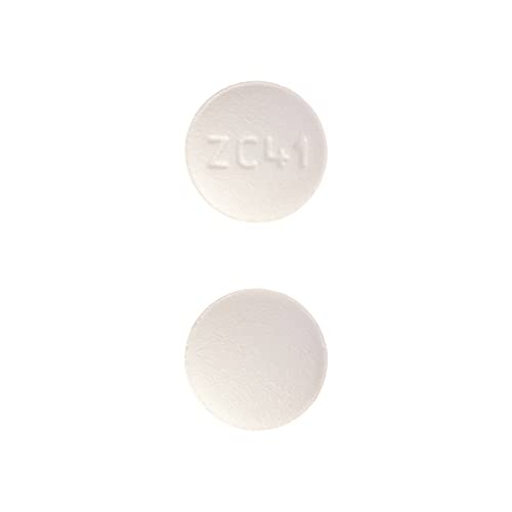

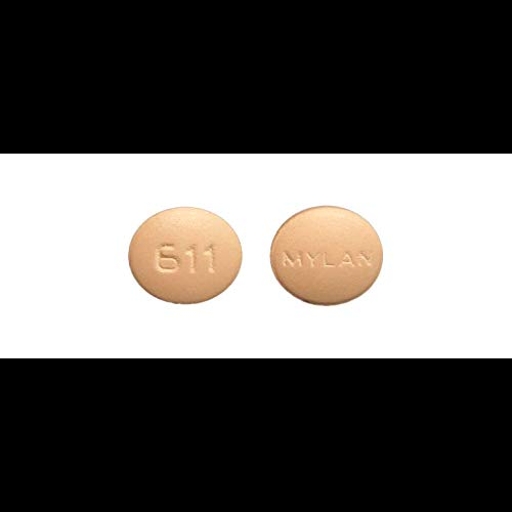

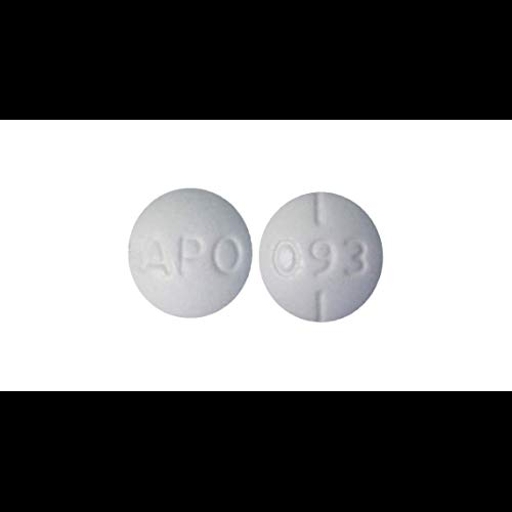

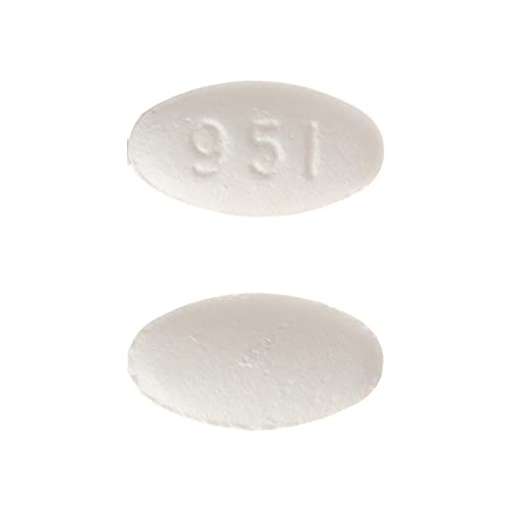

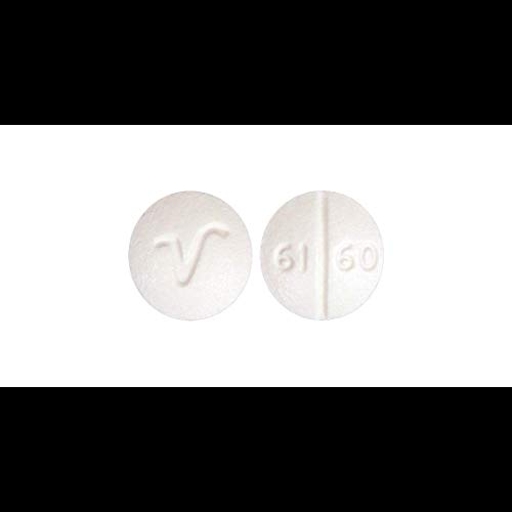

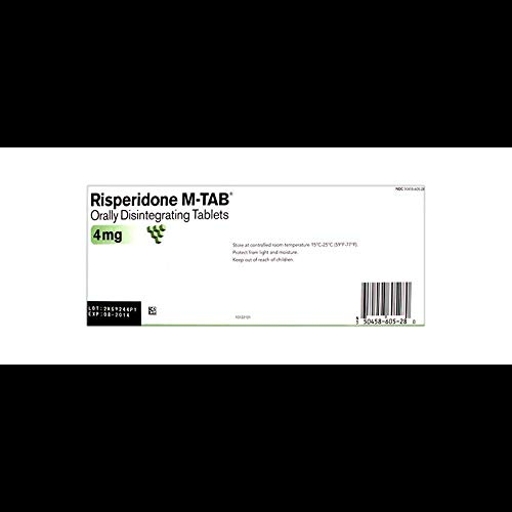

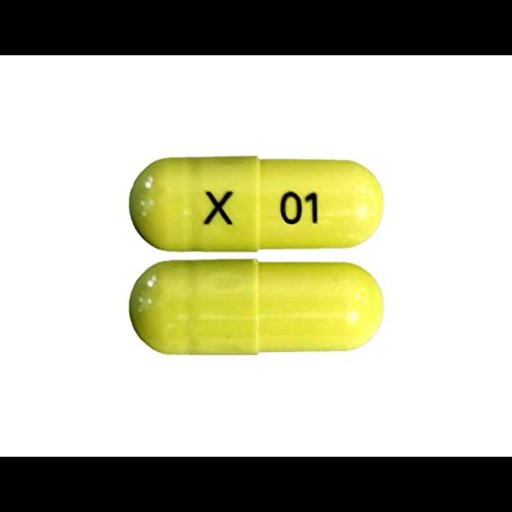

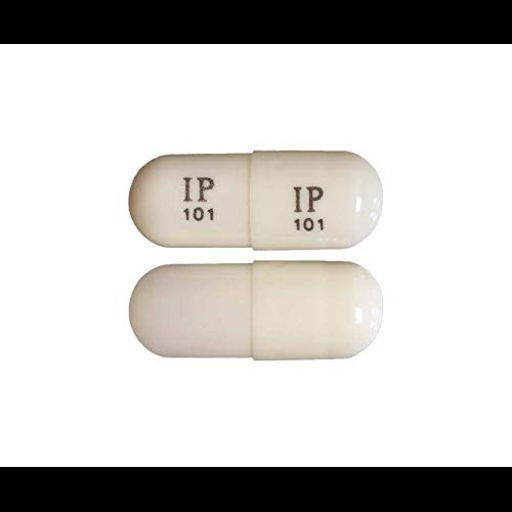

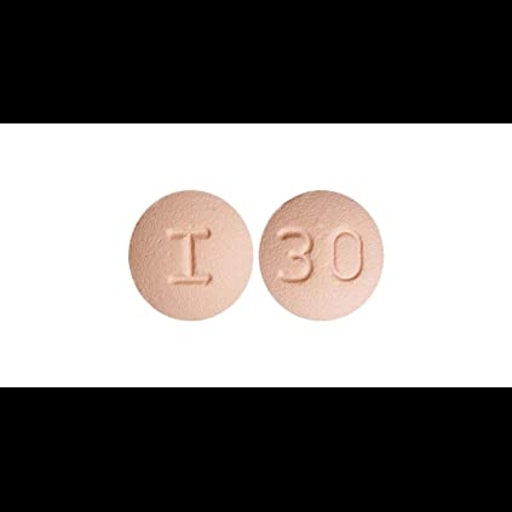

creativeml-openrail-m | ['text-to-image'] | false | pills1testmodel DreamBooth model fine-tuned from the v2-1-512 base model. Sample pictures of: pills (use this word in your prompt) | 242c2ed6f9998edd9897239d9c9d2236 |
