license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'wildcard'] | false | DreamBooth model for the pochita concept trained by Arch4ngel on the Arch4ngel/pochita dataset. This is a Stable Diffusion model fine-tuned on the pochita concept with DreamBooth. It can be used by modifying the `instance_prompt`: **a photo of pochita plushie** This model was created as part of the DreamBooth Hackathon 🔥. Visit the [organisation page](https://huggingface.co/dreambooth-hackathon) for instructions on how to take part! | 60e9881efbae31096532841d454927f1 |

apache-2.0 | ['generated_from_trainer'] | false | train_model This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.0825 - Wer: 0.9077 | ab24baf315a4973c895a9c9517dec5e7 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 4.6984 | 11.11 | 500 | 3.1332 | 1.0 | | 2.4775 | 22.22 | 1000 | 1.0825 | 0.9077 | | 5ebcb5a86184b6f2d039cfdeebff7a50 |

apache-2.0 | ['translation'] | false | fra-bul * source group: French * target group: Bulgarian * OPUS readme: [fra-bul](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/fra-bul/README.md) * model: transformer * source language(s): fra * target language(s): bul * pre-processing: normalization + SentencePiece (spm32k,spm32k) * download original weights: [opus-2020-07-03.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/fra-bul/opus-2020-07-03.zip) * test set translations: [opus-2020-07-03.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/fra-bul/opus-2020-07-03.test.txt) * test set scores: [opus-2020-07-03.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/fra-bul/opus-2020-07-03.eval.txt) | 192ee7333176dd31dfdbdaa09734c8f0 |

apache-2.0 | ['translation'] | false | System Info: - hf_name: fra-bul - source_languages: fra - target_languages: bul - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/fra-bul/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['fr', 'bg'] - src_constituents: {'fra'} - tgt_constituents: {'bul', 'bul_Latn'} - src_multilingual: False - tgt_multilingual: False - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/fra-bul/opus-2020-07-03.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/fra-bul/opus-2020-07-03.test.txt - src_alpha3: fra - tgt_alpha3: bul - short_pair: fr-bg - chrF2_score: 0.657 - bleu: 46.3 - brevity_penalty: 0.953 - ref_len: 3286.0 - src_name: French - tgt_name: Bulgarian - train_date: 2020-07-03 - src_alpha2: fr - tgt_alpha2: bg - prefer_old: False - long_pair: fra-bul - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | ade034f7a22ce8c31ae922e943c7ff83 |
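
The `brevity_penalty` and `ref_len` fields in the row above can be related through the standard BLEU definition, where the penalty is `exp(1 - r/c)` whenever the candidate length `c` is shorter than the reference length `r`. The sketch below assumes that standard definition applies here (the card does not say which BLEU implementation produced the scores); the function names are illustrative and not part of any library.

```python
import math


def brevity_penalty(candidate_len: float, reference_len: float) -> float:
    """Standard BLEU brevity penalty: 1.0 if the candidate is long enough, else exp(1 - r/c)."""
    if candidate_len <= 0:
        return 0.0
    if candidate_len >= reference_len:
        return 1.0
    return math.exp(1.0 - reference_len / candidate_len)


def implied_candidate_len(bp: float, reference_len: float) -> float:
    """Invert the penalty for 0 < bp < 1: c = r / (1 - ln(bp))."""
    return reference_len / (1.0 - math.log(bp))


# Values reported in the row above.
ref_len = 3286.0
bp = 0.953

# Total system-output length implied by the reported penalty (an estimate,
# since the reported penalty is itself rounded to three decimals).
hyp_len = implied_candidate_len(bp, ref_len)
print(f"implied hypothesis length: {hyp_len:.1f} tokens")
```

Under these assumptions the system output is a few percent shorter than the reference, which is what a penalty slightly below 1 indicates.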

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 64 - eval_batch_size: 128 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 128 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 5.0 - mixed_precision_training: Native AMP | 17cdc0d3fd861267e2a2a58ce2b2d687 |

apache-2.0 | ['catalan', 'text classification', 'WikiCAT_ca', 'CaText', 'Catalan Textual Corpus'] | false | Model description The **roberta-base-ca-v2-cased-wikicat-ca** is a Text Classification model for the Catalan language fine-tuned from the [roberta-base-ca-v2](https://huggingface.co/projecte-aina/roberta-base-ca-v2) model, a [RoBERTa](https://arxiv.org/abs/1907.11692) base model pre-trained on a medium-size corpus collected from publicly available corpora and crawlers (check the roberta-base-ca-v2 model card for more details). The fine-tuning dataset is [WikiCAT_ca](https://huggingface.co/datasets/projecte-aina/WikiCAT_ca), which was automatically created from Wikipedia and Wikidata sources. | 0da3ca53f3790ddba1ad069aa51c59a6 |

apache-2.0 | ['catalan', 'text classification', 'WikiCAT_ca', 'CaText', 'Catalan Textual Corpus'] | false | Intended uses and limitations The **roberta-base-ca-v2-cased-wikicat-ca** model can be used to classify texts. The model is limited by its training dataset and may not generalize well to all use cases. | 5c69987e668d85f177eedb2f2dc5c275 |

apache-2.0 | ['catalan', 'text classification', 'WikiCAT_ca', 'CaText', 'Catalan Textual Corpus'] | false | How to use Here is how to use this model: ```python from transformers import pipeline from pprint import pprint nlp = pipeline("text-classification", model="roberta-base-ca-v2-cased-wikicat-ca") example = "La ressonància magnètica és una prova diagnòstica clau per a moltes malalties." tc_results = nlp(example) pprint(tc_results) ``` | de1b03ce19209afc505d09d12dd2640d |

apache-2.0 | ['catalan', 'text classification', 'WikiCAT_ca', 'CaText', 'Catalan Textual Corpus'] | false | Training procedure The model was trained with a batch size of 16 and three learning rates (1e-5, 3e-5, 5e-5) for 10 epochs. We then selected the best learning rate (3e-5) and checkpoint (epoch 3, step 1857) using the downstream task metric in the corresponding development set. | ab2ca937526f99e68e9d67e64c9a84b9 |

apache-2.0 | ['catalan', 'text classification', 'WikiCAT_ca', 'CaText', 'Catalan Textual Corpus'] | false | Evaluation results We evaluated the _roberta-base-ca-v2-cased-wikicat-ca_ on the WikiCAT_ca dev set: | Model | WikiCAT_ca (F1)| | ------------|:-------------| | roberta-base-ca-v2-cased-wikicat-ca | 77.823 | For more details, check the fine-tuning and evaluation scripts in the official [GitHub repository](https://github.com/projecte-aina/club). | 0e5966662f41fab9e6ee928836892a7c |

creativeml-openrail-m | ['text-to-image'] | false | tovebw Dreambooth model trained by duja1 with the [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) on the v1-5 base model. You can run your new concept via `diffusers` with the [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts! Sample pictures of: t123ovebw (use that in your prompt) | 01259ca9221f034e790c038ffe821f01 |

apache-2.0 | ['translation'] | false | opus-mt-ro-sv * source languages: ro * target languages: sv * OPUS readme: [ro-sv](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/ro-sv/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/ro-sv/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/ro-sv/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/ro-sv/opus-2020-01-16.eval.txt) | e253fac4b268b57e72edc5ce0eeecb80 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-multilang-cv-ru This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 0.9734 - Wer: 0.7037 | e9f3cc88c307cb7ded9e455e057d9c69 |
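
The `Wer` figure above is a word error rate: the word-level edit distance between the reference transcript and the model output, divided by the number of reference words. A minimal pure-Python sketch of that definition follows. It is not the evaluation code used for this model, and the function name and example sentences are made up for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the previous reference prefix and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from the hypothesis
                curr[j - 1] + 1,     # insertion: extra word in the hypothesis
                prev[j - 1] + cost,  # substitution, or a match when cost is 0
            )
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical example: one substitution ("sat" -> "sit") and one deletion ("the").
example_wer = word_error_rate("the cat sat on the mat", "the cat sit on mat")
print(f"WER = {example_wer:.4f}")
```

Because insertions count as errors, WER can exceed 1.0. Some cards in this table report it as a fraction (for example 0.7037) while others appear to report a percentage (for example 25.9925), so check the scale before comparing rows.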

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0005 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1000 - num_epochs: 30 - mixed_precision_training: Native AMP | 85e4b3fc421c4baff3366188e7ffa08c |
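
The combination of `lr_scheduler_type: linear` and `lr_scheduler_warmup_steps: 1000` describes a learning rate that ramps up linearly from zero and then decays linearly back to zero. The sketch below reproduces that shape in plain Python. The total step count of 19,000 is an assumption chosen for illustration, since the row above does not state it, and the function is a standalone re-implementation rather than a call into the training library.

```python
def linear_warmup_decay_lr(step: int, base_lr: float, warmup_steps: int, total_steps: int) -> float:
    """Learning rate at `step` for linear warmup followed by linear decay to zero."""
    if step < warmup_steps:
        # Warmup phase: scale linearly from 0 up to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Decay phase: scale linearly from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


BASE_LR = 0.0005       # learning_rate from the row above
WARMUP_STEPS = 1000    # lr_scheduler_warmup_steps from the row above
TOTAL_STEPS = 19000    # assumption: not stated in the row above

for step in (0, 500, 1000, 10000, 19000):
    lr = linear_warmup_decay_lr(step, BASE_LR, WARMUP_STEPS, TOTAL_STEPS)
    print(f"step {step:>5}: lr = {lr:.6f}")
```

The peak rate is reached exactly at the end of warmup, so a short warmup relative to the run length means most steps are spent in the decay phase.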

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 6.0328 | 0.79 | 500 | 3.0713 | 1.0 | | 1.9426 | 1.58 | 1000 | 1.2048 | 0.9963 | | 1.1285 | 2.37 | 1500 | 0.9825 | 0.9282 | | 0.9462 | 3.15 | 2000 | 0.8836 | 0.8965 | | 0.8274 | 3.94 | 2500 | 0.8134 | 0.8661 | | 0.7106 | 4.73 | 3000 | 0.8033 | 0.8387 | | 0.6545 | 5.52 | 3500 | 0.8309 | 0.8366 | | 0.6013 | 6.31 | 4000 | 0.7667 | 0.8240 | | 0.5599 | 7.1 | 4500 | 0.7740 | 0.8160 | | 0.5027 | 7.89 | 5000 | 0.7796 | 0.8188 | | 0.4588 | 8.68 | 5500 | 0.8204 | 0.7968 | | 0.4448 | 9.46 | 6000 | 0.8277 | 0.7738 | | 0.4122 | 10.25 | 6500 | 0.8292 | 0.7776 | | 0.3816 | 11.04 | 7000 | 0.8548 | 0.7907 | | 0.3587 | 11.83 | 7500 | 0.8245 | 0.7805 | | 0.3374 | 12.62 | 8000 | 0.8371 | 0.7701 | | 0.3214 | 13.41 | 8500 | 0.8311 | 0.7822 | | 0.3072 | 14.2 | 9000 | 0.8940 | 0.7674 | | 0.2929 | 14.98 | 9500 | 0.8788 | 0.7604 | | 0.257 | 15.77 | 10000 | 0.8911 | 0.7633 | | 0.2592 | 16.56 | 10500 | 0.8673 | 0.7604 | | 0.2392 | 17.35 | 11000 | 0.9582 | 0.7810 | | 0.232 | 18.14 | 11500 | 0.9340 | 0.7423 | | 0.2252 | 18.93 | 12000 | 0.8874 | 0.7320 | | 0.2079 | 19.72 | 12500 | 0.9436 | 0.7483 | | 0.2003 | 20.5 | 13000 | 0.9573 | 0.7638 | | 0.194 | 21.29 | 13500 | 0.9361 | 0.7308 | | 0.188 | 22.08 | 14000 | 0.9704 | 0.7221 | | 0.1754 | 22.87 | 14500 | 0.9668 | 0.7265 | | 0.1688 | 23.66 | 15000 | 0.9680 | 0.7246 | | 0.162 | 24.45 | 15500 | 0.9443 | 0.7066 | | 0.1617 | 25.24 | 16000 | 0.9664 | 0.7265 | | 0.1504 | 26.03 | 16500 | 0.9505 | 0.7189 | | 0.1425 | 26.81 | 17000 | 0.9536 | 0.7112 | | 0.134 | 27.6 | 17500 | 0.9674 | 0.7047 | | 0.1301 | 28.39 | 18000 | 0.9852 | 0.7066 | | 0.1314 | 29.18 | 18500 | 0.9766 | 0.7073 | | 0.1219 | 29.97 | 19000 | 0.9734 | 0.7037 | | ad5799c8fd61eaee534bbe7dff6a4afa |

mit | ['generated_from_keras_callback'] | false | piyusharma/gpt2-medium-lex This model is a fine-tuned version of [gpt2-medium](https://huggingface.co/gpt2-medium) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 3.1565 - Epoch: 0 | abc17248fc46fcad124a063a6e3315e8 |

apache-2.0 | ['generated_from_trainer'] | false | finetuning-sentiment-model-3000-samples This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.3107 - Accuracy: 0.8767 - F1: 0.8779 | ece38b7b978191f846275bc61095b00e |

mit | ['spacy', 'token-classification'] | false | uk_core_news_sm Ukrainian pipeline optimized for CPU. Components: tok2vec, morphologizer, parser, senter, ner, attribute_ruler, lemmatizer. | Feature | Description | | --- | --- | | **Name** | `uk_core_news_sm` | | **Version** | `3.5.0` | | **spaCy** | `>=3.5.0,<3.6.0` | | **Default Pipeline** | `tok2vec`, `morphologizer`, `parser`, `attribute_ruler`, `lemmatizer`, `ner` | | **Components** | `tok2vec`, `morphologizer`, `parser`, `senter`, `attribute_ruler`, `lemmatizer`, `ner` | | **Vectors** | 0 keys, 0 unique vectors (0 dimensions) | | **Sources** | [Ukr-Synth (e5d9eaf3)](https://huggingface.co/datasets/ukr-models/Ukr-Synth) (Volodymyr Kurnosov) | | **License** | `MIT` | | **Author** | [Explosion](https://explosion.ai) | | 6756dc83953cda643997c3ff0b50e0f9 |

mit | ['spacy', 'token-classification'] | false | Accuracy | Type | Score | | --- | --- | | `TOKEN_ACC` | 99.99 | | `TOKEN_P` | 99.99 | | `TOKEN_R` | 99.97 | | `TOKEN_F` | 99.98 | | `POS_ACC` | 98.13 | | `MORPH_ACC` | 94.65 | | `MORPH_MICRO_P` | 97.59 | | `MORPH_MICRO_R` | 96.79 | | `MORPH_MICRO_F` | 97.19 | | `SENTS_P` | 94.12 | | `SENTS_R` | 90.47 | | `SENTS_F` | 92.26 | | `DEP_UAS` | 93.44 | | `DEP_LAS` | 91.20 | | `TAG_ACC` | 98.13 | | `LEMMA_ACC` | 0.00 | | `ENTS_P` | 85.97 | | `ENTS_R` | 86.63 | | `ENTS_F` | 86.30 | | 8dbf95998a0a6d0b154189e01257e2f0 |

apache-2.0 | ['generated_from_trainer'] | false | run1 This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.6666 - Wer: 0.6375 - Cer: 0.3170 | 8f9e9bbca26d9b528f81fa1f1de432df |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 2000 - num_epochs: 50 - mixed_precision_training: Native AMP | 6a2bb4e694d01200ecdd5948c1d95513 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | Cer | |:-------------:|:-----:|:-----:|:---------------:|:------:|:------:| | 1.0564 | 2.36 | 2000 | 2.3456 | 0.9628 | 0.5549 | | 0.5071 | 4.73 | 4000 | 2.0652 | 0.9071 | 0.5115 | | 0.3952 | 7.09 | 6000 | 2.3649 | 0.9108 | 0.4628 | | 0.3367 | 9.46 | 8000 | 1.7615 | 0.8253 | 0.4348 | | 0.2765 | 11.82 | 10000 | 1.6151 | 0.7937 | 0.4087 | | 0.2493 | 14.18 | 12000 | 1.4976 | 0.7881 | 0.3905 | | 0.2318 | 16.55 | 14000 | 1.6731 | 0.8160 | 0.3925 | | 0.2074 | 18.91 | 16000 | 1.5822 | 0.7658 | 0.3913 | | 0.1825 | 21.28 | 18000 | 1.5442 | 0.7361 | 0.3704 | | 0.1824 | 23.64 | 20000 | 1.5988 | 0.7621 | 0.3711 | | 0.1699 | 26.0 | 22000 | 1.4261 | 0.7119 | 0.3490 | | 0.158 | 28.37 | 24000 | 1.7482 | 0.7658 | 0.3648 | | 0.1385 | 30.73 | 26000 | 1.4103 | 0.6784 | 0.3348 | | 0.1199 | 33.1 | 28000 | 1.5214 | 0.6636 | 0.3273 | | 0.116 | 35.46 | 30000 | 1.4288 | 0.7212 | 0.3486 | | 0.1071 | 37.83 | 32000 | 1.5344 | 0.7138 | 0.3411 | | 0.1007 | 40.19 | 34000 | 1.4501 | 0.6691 | 0.3237 | | 0.0943 | 42.55 | 36000 | 1.5367 | 0.6859 | 0.3265 | | 0.0844 | 44.92 | 38000 | 1.5321 | 0.6599 | 0.3273 | | 0.0762 | 47.28 | 40000 | 1.6721 | 0.6264 | 0.3142 | | 0.0778 | 49.65 | 42000 | 1.6666 | 0.6375 | 0.3170 | | 09fefd3fba76a67109c367adc240cede |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-all This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.2508 - F1: 0.8470 | 0973c75b46b0835d4785d4ab5ea068b4 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.3712 | 1.0 | 2503 | 0.2633 | 0.8062 | | 0.2059 | 2.0 | 5006 | 0.2391 | 0.8330 | | 0.125 | 3.0 | 7509 | 0.2508 | 0.8470 | | 9c971233c5961372a37d3ac11decf7b6 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "lt", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained("seccily/wav2vec-lt-lite") model = Wav2Vec2ForCTC.from_pretrained("seccily/wav2vec-lt-lite") resampler = torchaudio.transforms.Resample(48_000, 16_000) ``` Test Result: 59.47 | b59d0d36831f031307e7eb6878584dd1 |

apache-2.0 | ['minds14', 'google/xtreme_s', 'generated_from_trainer'] | false | xtreme_s_xlsr_300m_minds14.en-US_2 This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the GOOGLE/XTREME_S - MINDS14.EN-US dataset. It achieves the following results on the evaluation set: - Loss: 0.5685 - F1: 0.8747 - Accuracy: 0.8759 | 864e2ae15ead02ba033fe1165202daa7 |

apache-2.0 | ['minds14', 'google/xtreme_s', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 2 - eval_batch_size: 8 - seed: 42 - distributed_type: multi-GPU - num_devices: 2 - gradient_accumulation_steps: 8 - total_train_batch_size: 32 - total_eval_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - num_epochs: 50.0 - mixed_precision_training: Native AMP | 3f3cc259f59417efd1f8cf84f439860d |
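
The `total_train_batch_size` and `total_eval_batch_size` values above follow from the per-device sizes, the device count, and gradient accumulation. A small sketch of that arithmetic, with a helper name chosen for illustration:

```python
def effective_batch_size(per_device: int, num_devices: int = 1, grad_accum_steps: int = 1) -> int:
    """Number of examples contributing to one optimizer step (or one eval pass across devices)."""
    return per_device * num_devices * grad_accum_steps


# Values from the row above.
train_total = effective_batch_size(per_device=2, num_devices=2, grad_accum_steps=8)
# Gradient accumulation does not apply at evaluation time.
eval_total = effective_batch_size(per_device=8, num_devices=2)

print(train_total, eval_total)  # 32 16
```

Gradient accumulation lets a small per-device batch (here 2) behave like a larger one for the optimizer, at the cost of more forward and backward passes per update.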

apache-2.0 | ['minds14', 'google/xtreme_s', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:------:|:--------:| | 2.6195 | 3.95 | 20 | 2.6348 | 0.0172 | 0.0816 | | 2.5925 | 7.95 | 40 | 2.6119 | 0.0352 | 0.0851 | | 2.1271 | 11.95 | 60 | 2.3066 | 0.1556 | 0.1986 | | 1.2618 | 15.95 | 80 | 1.3810 | 0.6877 | 0.7128 | | 0.5455 | 19.95 | 100 | 1.0403 | 0.6992 | 0.7270 | | 0.2571 | 23.95 | 120 | 0.8423 | 0.8160 | 0.8121 | | 0.3478 | 27.95 | 140 | 0.6500 | 0.8516 | 0.8440 | | 0.0732 | 31.95 | 160 | 0.7066 | 0.8123 | 0.8156 | | 0.1092 | 35.95 | 180 | 0.5878 | 0.8767 | 0.8759 | | 0.0271 | 39.95 | 200 | 0.5994 | 0.8578 | 0.8617 | | 0.4664 | 43.95 | 220 | 0.7830 | 0.8403 | 0.8440 | | 0.0192 | 47.95 | 240 | 0.5685 | 0.8747 | 0.8759 | | fa79861eaeba0efabbd99aa1f24de882 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xls-r-300m-turkish-colab This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 0.4409 - Wer: 0.3676 | 7197fb7e2aedc48c6197d8bfd8119c0a |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.9829 | 3.67 | 400 | 0.7245 | 0.7504 | | 0.4544 | 7.34 | 800 | 0.4710 | 0.5193 | | 0.2201 | 11.01 | 1200 | 0.4801 | 0.4815 | | 0.1457 | 14.68 | 1600 | 0.4397 | 0.4324 | | 0.1079 | 18.35 | 2000 | 0.4770 | 0.4287 | | 0.0877 | 22.02 | 2400 | 0.4583 | 0.3813 | | 0.0698 | 25.69 | 2800 | 0.4421 | 0.3892 | | 0.0554 | 29.36 | 3200 | 0.4409 | 0.3676 | | 60a6c02ccea417921f6c369d5aeb446b |

mit | ['spacy', 'token-classification'] | false | en_core_web_sm English pipeline optimized for CPU. Components: tok2vec, tagger, parser, senter, ner, attribute_ruler, lemmatizer. | Feature | Description | | --- | --- | | **Name** | `en_core_web_sm` | | **Version** | `3.3.0` | | **spaCy** | `>=3.3.0.dev0,<3.4.0` | | **Default Pipeline** | `tok2vec`, `tagger`, `parser`, `attribute_ruler`, `lemmatizer`, `ner` | | **Components** | `tok2vec`, `tagger`, `parser`, `senter`, `attribute_ruler`, `lemmatizer`, `ner` | | **Vectors** | 0 keys, 0 unique vectors (0 dimensions) | | **Sources** | [OntoNotes 5](https://catalog.ldc.upenn.edu/LDC2013T19) (Ralph Weischedel, Martha Palmer, Mitchell Marcus, Eduard Hovy, Sameer Pradhan, Lance Ramshaw, Nianwen Xue, Ann Taylor, Jeff Kaufman, Michelle Franchini, Mohammed El-Bachouti, Robert Belvin, Ann Houston)<br />[ClearNLP Constituent-to-Dependency Conversion](https://github.com/clir/clearnlp-guidelines/blob/master/md/components/dependency_conversion.md) (Emory University)<br />[WordNet 3.0](https://wordnet.princeton.edu/) (Princeton University) | | **License** | `MIT` | | **Author** | [Explosion](https://explosion.ai) | | 6790d82b78b99b2fd828b107a3321e76 |

mit | ['spacy', 'token-classification'] | false | Accuracy | Type | Score | | --- | --- | | `TOKEN_ACC` | 99.93 | | `TOKEN_P` | 99.57 | | `TOKEN_R` | 99.58 | | `TOKEN_F` | 99.57 | | `TAG_ACC` | 97.27 | | `SENTS_P` | 91.89 | | `SENTS_R` | 89.35 | | `SENTS_F` | 90.60 | | `DEP_UAS` | 91.81 | | `DEP_LAS` | 89.97 | | `ENTS_P` | 85.08 | | `ENTS_R` | 83.45 | | `ENTS_F` | 84.26 | | 23c9ed80889fb493a7f49ad1d13a8a77 |

openrail++ | [] | false | Model Details - **Developed by:** Corruptlake - **License:** openrail++ - **Finetuned from model:** Stable Diffusion v1.5 - **Developed using:** Everydream 1.0 - **Version:** v1.0 **If you would like to support the development of v2.0 and other future projects, please consider supporting me here:** [](https://www.patreon.com/user?u=86594740) | 802ea7e608eb2f8b8995feb92d0b2a29 |

openrail++ | [] | false | Model Description This model has been trained on 26,949 high-resolution, high-quality Sci-Fi themed images for 2 epochs, which equals around 53K steps/iterations. The training resolution was 640; however, it works well at higher resolutions. This model is still in development. Comparison between SD1.5/2.1 on the same seed, prompt and settings can be found below. <a href="https://ibb.co/NV2265y"><img src="https://i.ibb.co/vw44xpj/sdvssf.png" alt="sdvssf" border="0"></a> | 0c9b7f95e337d909d70c781d00c7c286 |

openrail++ | [] | false | Model Usage - **Recommended sampler:** Euler, Euler A Recommended words to add to prompts are as follows: - Sci-Fi - caspian Sci-Fi - Star Citizen - Star Atlas - Spaceship - Render More words that were prominent in the dataset, but effects are currently not well known: - Inktober - Star Trek - Star Wars - Sketch | 77c6d3fed2815d03dadadc441c469509 |

openrail++ | [] | false | Misuse, Malicious Use, and Out-of-Scope Use Note: This section is originally taken from the Stable-Diffusion 2.1 model card. The model should not be used to intentionally create or disseminate images that create hostile or alienating environments for people. This includes generating images that people would foreseeably find disturbing, distressing, or offensive; or content that propagates historical or current stereotypes. | ed3fe1323f2cd87eec6dfdaf466fc1b1 |

openrail++ | [] | false | Out-of-Scope Use The model was not trained to be factual or true representations of people or events, and therefore using the model to generate such content is out-of-scope for the abilities of this model. Misuse and Malicious Use Using the model to generate content that is cruel to individuals is a misuse of this model. This includes, but is not limited to: - Generating demeaning, dehumanizing, or otherwise harmful representations of people or their environments, cultures, religions, etc. - Intentionally promoting or propagating discriminatory content or harmful stereotypes. - Impersonating individuals without their consent. - Sexual content without consent of the people who might see it. - Mis- and disinformation - Representations of egregious violence and gore - Sharing of copyrighted or licensed material in violation of its terms of use. - Sharing content that is an alteration of copyrighted or licensed material in violation of its terms of use. | 3e23b50dab5935f9d29d3eb77e668c77 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Small Vietnamese This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the mozilla-foundation/common_voice_11_0 vi dataset. It achieves the following results on the evaluation set: - Loss: 0.7277 - Wer: 25.9925 | c78ff1b404c39c81204caf90d2ab1f41 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 256 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - training_steps: 1000 - mixed_precision_training: Native AMP | fa92081c6b4b52520173be2f203592c8 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.0003 | 62.01 | 1000 | 0.7277 | 25.9925 | | cb344e14bf7071af49e9fd2336905350 |

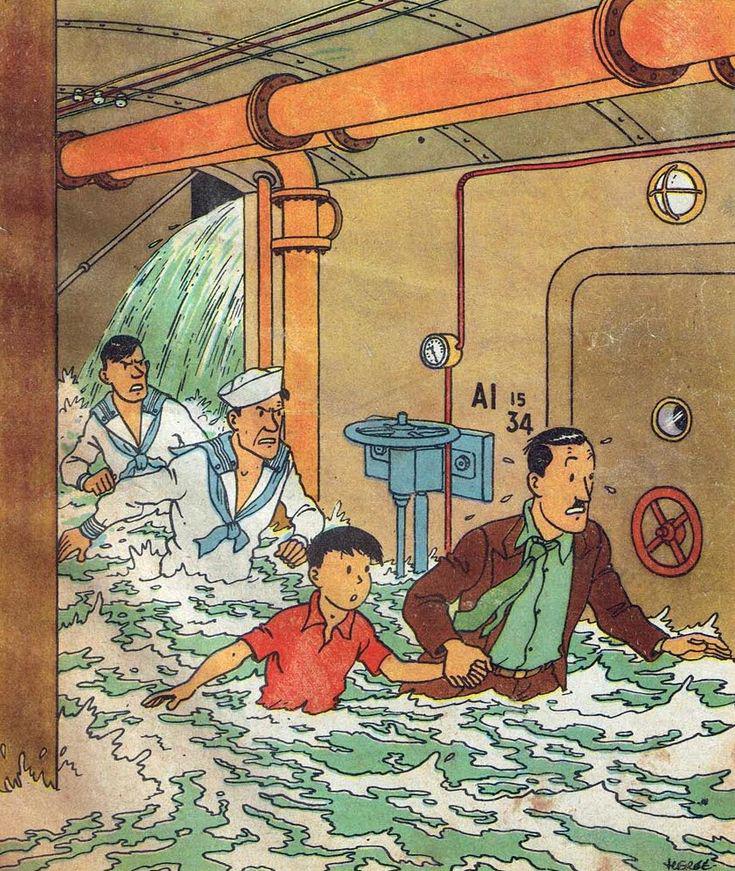

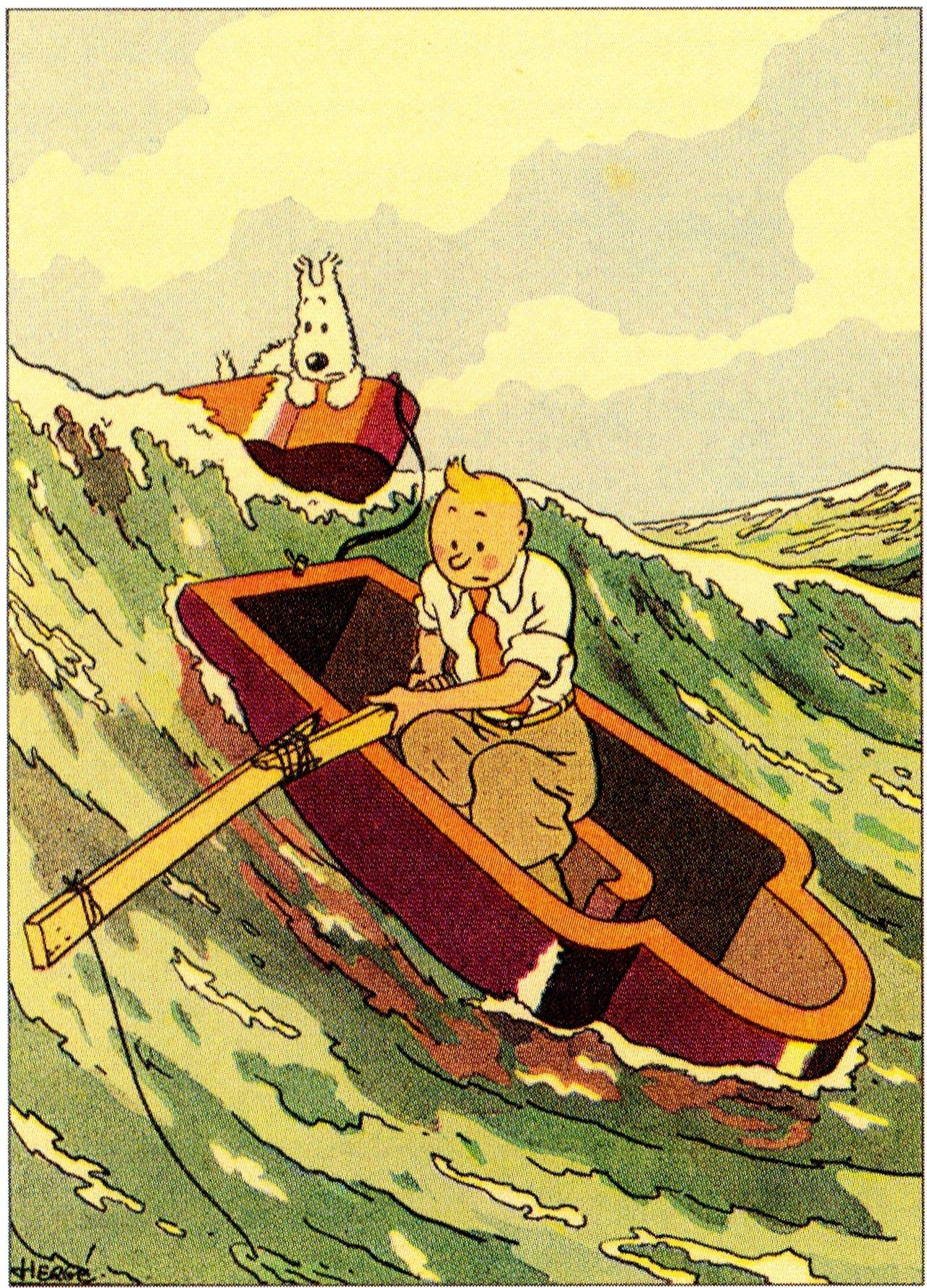

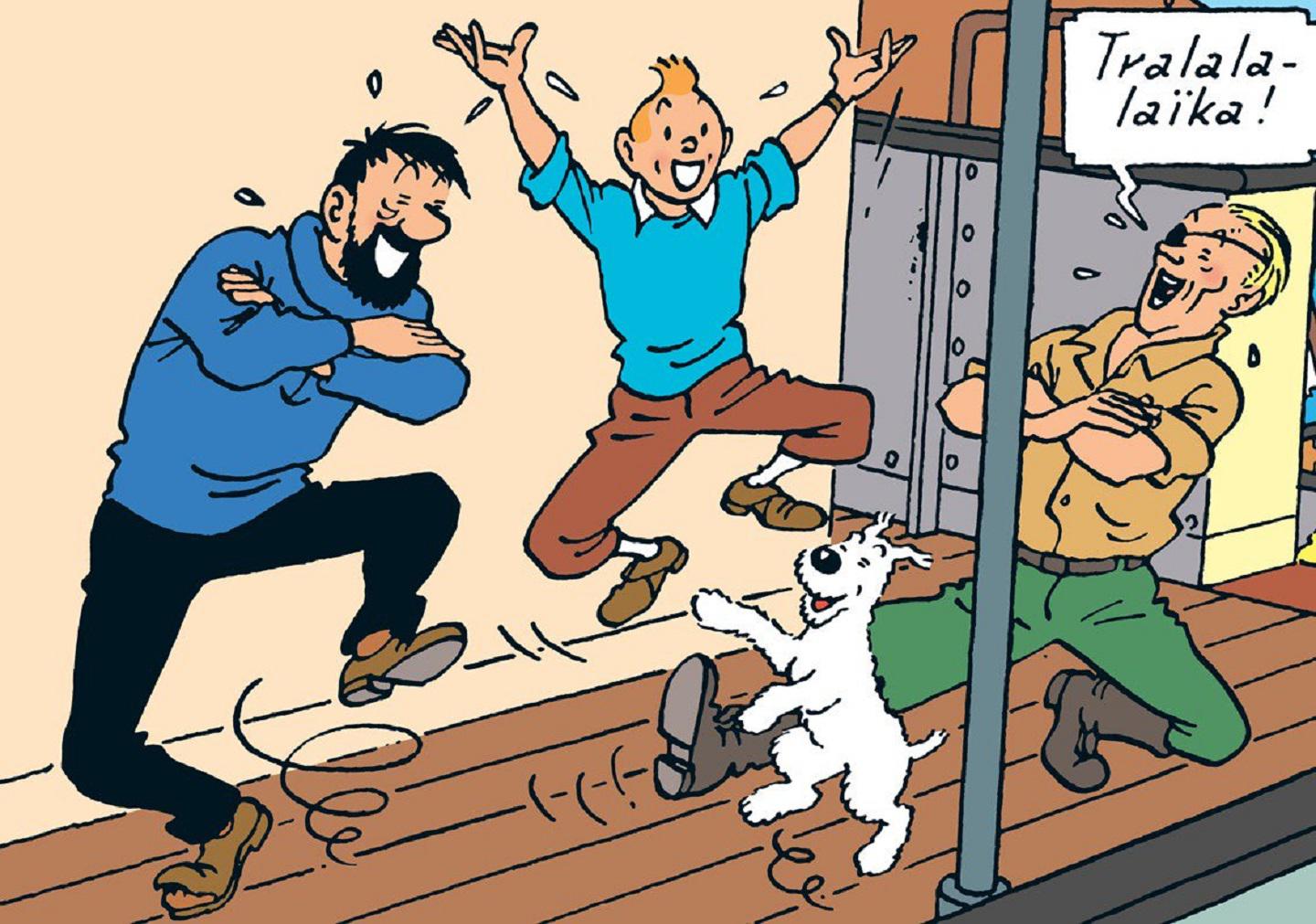

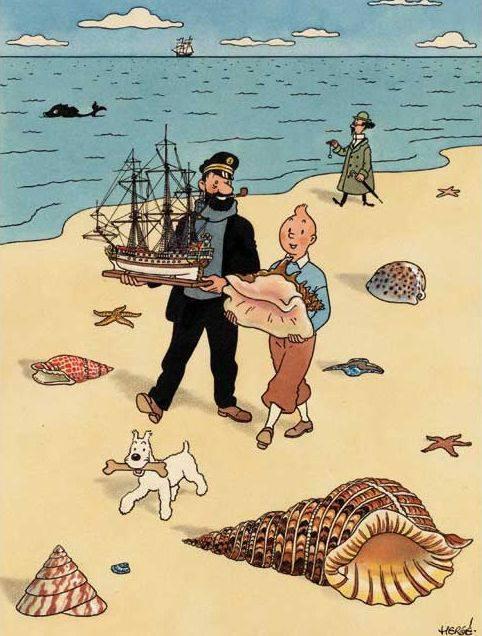

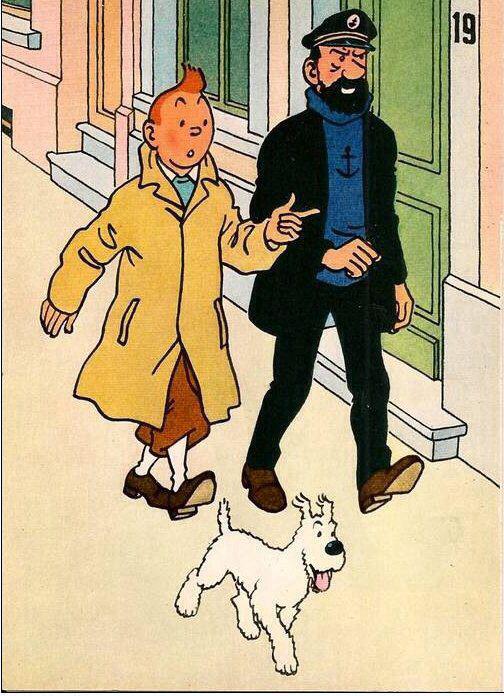

mit | ['text-to-image'] | false | model by maderix This is the Stable Diffusion model fine-tuned on the herge_style concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt`: **a photo of sks herge_style** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: | 25209c20407ed5e1c4a1d99ac4601971 |

apache-2.0 | ['generated_from_trainer'] | false | distilbart-cnn-12-6-finetuned-1.1.1 This model is a fine-tuned version of [sshleifer/distilbart-cnn-12-6](https://huggingface.co/sshleifer/distilbart-cnn-12-6) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.1031 - Rouge1: 80.2783 - Rouge2: 76.9012 - Rougel: 79.1544 - Rougelsum: 79.3582 | 07aa2b838eca11dd4803e7d9c44eb559 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:| | 0.1389 | 1.0 | 1161 | 0.1130 | 79.3969 | 75.5992 | 78.117 | 78.3237 | | 0.0881 | 2.0 | 2322 | 0.1031 | 80.2783 | 76.9012 | 79.1544 | 79.3582 | | 6a0d573038ab5411393d94ab1a8c8b0d |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-cola This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 0.8815 - Matthews Correlation: 0.5173 | 4d94ca5f4f2319b5b47f02adaf55368a |
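
The Matthews correlation reported above summarizes a binary confusion matrix in a single value between -1 and 1, where 0 corresponds to chance-level prediction. A minimal sketch of the formula follows. The confusion-matrix counts are hypothetical and are not taken from this model's evaluation.

```python
import math


def matthews_corrcoef(tp: int, tn: int, fp: int, fn: int) -> float:
    """Matthews correlation coefficient from binary confusion-matrix counts."""
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denominator == 0:
        # Conventional fallback when a row or column of the confusion matrix is empty.
        return 0.0
    return (tp * tn - fp * fn) / denominator


# Hypothetical counts for illustration only.
mcc = matthews_corrcoef(tp=6, tn=3, fp=1, fn=2)
print(f"MCC = {mcc:.4f}")
```

Unlike accuracy, this score stays informative when the two classes are imbalanced, which is one reason it is the usual metric for CoLA.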

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.5272 | 1.0 | 535 | 0.5099 | 0.4093 | | 0.3563 | 2.0 | 1070 | 0.5114 | 0.5019 | | 0.2425 | 3.0 | 1605 | 0.6696 | 0.4898 | | 0.1726 | 4.0 | 2140 | 0.7715 | 0.5123 | | 0.132 | 5.0 | 2675 | 0.8815 | 0.5173 | | c543024407243973722d4cad22a20d22 |

apache-2.0 | ['automatic-speech-recognition', 'ar'] | false | exp_w2v2t_ar_r-wav2vec2_s527 Fine-tuned [facebook/wav2vec2-large-robust](https://huggingface.co/facebook/wav2vec2-large-robust) for speech recognition using the train split of [Common Voice 7.0 (ar)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 642b300226a5a480a02bbc78fd6e98dc |

apache-2.0 | ['RandAugment', 'Image Classification'] | false | RandAugment for Image Classification for Improved Robustness on the 🤗Hub! [Paper](https://arxiv.org/abs/1909.13719) | [Keras Tutorial](https://keras.io/examples/vision/randaugment/) Keras Tutorial Credit goes to: [Sayak Paul](https://twitter.com/RisingSayak) **Excerpt from the Tutorial:** Data augmentation is a very useful technique that can help to improve the translational invariance of convolutional neural networks (CNN). RandAugment is a stochastic vision data augmentation routine composed of strong augmentation transforms like color jitters, Gaussian blurs, saturations, etc. along with more traditional augmentation transforms such as random crops. Recently, it has been a key component of works like [Noisy Student Training](https://arxiv.org/abs/1911.04252) and [Unsupervised Data Augmentation for Consistency Training](https://arxiv.org/abs/1904.12848). It has also been central to the success of EfficientNets. | a84192aa78cbbfa60b8728f965c03eb5 |

apache-2.0 | ['RandAugment', 'Image Classification'] | false | About the dataset The model was trained on [**CIFAR-10**](https://huggingface.co/datasets/cifar10), consisting of 60000 32x32 color images in 10 classes, with 6000 images per class. There are 50000 training images and 10000 test images. | 96142b89d54bb892e1c87345ec786b73 |

apache-2.0 | ['translation'] | false | opus-mt-ssp-es * source languages: ssp * target languages: es * OPUS readme: [ssp-es](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/ssp-es/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/ssp-es/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/ssp-es/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/ssp-es/opus-2020-01-16.eval.txt) | 26224b20fa53170a4301854abb6f7618 |

apache-2.0 | ['automatic-speech-recognition', 'sv-SE'] | false | exp_w2v2t_sv-se_vp-nl_s764 Fine-tuned [facebook/wav2vec2-large-nl-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-nl-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (sv-SE)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | b4c445953edc672a15f392d454d8b561 |

apache-2.0 | ['generated_from_trainer'] | false | eval_masked_102_sst2 This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the GLUE SST2 dataset. It achieves the following results on the evaluation set: - Loss: 0.3561 - Accuracy: 0.9266 | 1169c01929187a7ca7d5762e7a09c3b8 |

apache-2.0 | ['generated_from_keras_callback'] | false | whisper_havest_0020 This model is a fine-tuned version of [openai/whisper-tiny](https://huggingface.co/openai/whisper-tiny) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 4.2860 - Train Accuracy: 0.0128 - Train Do Wer: 1.0 - Validation Loss: 4.6401 - Validation Accuracy: 0.0125 - Validation Do Wer: 1.0 - Epoch: 19 | 37fcc1463dd96531efef985722216ebd |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train Accuracy | Train Do Wer | Validation Loss | Validation Accuracy | Validation Do Wer | Epoch | |:----------:|:--------------:|:------------:|:---------------:|:-------------------:|:-----------------:|:-----:| | 9.9191 | 0.0046 | 1.0 | 8.5836 | 0.0067 | 1.0 | 0 | | 8.0709 | 0.0083 | 1.0 | 7.4667 | 0.0089 | 1.0 | 1 | | 7.1652 | 0.0100 | 1.0 | 6.8204 | 0.0112 | 1.0 | 2 | | 6.7196 | 0.0114 | 1.0 | 6.5192 | 0.0114 | 1.0 | 3 | | 6.4115 | 0.0115 | 1.0 | 6.2357 | 0.0115 | 1.0 | 4 | | 6.1085 | 0.0115 | 1.0 | 5.9657 | 0.0115 | 1.0 | 5 | | 5.8206 | 0.0115 | 1.0 | 5.7162 | 0.0115 | 1.0 | 6 | | 5.5567 | 0.0115 | 1.0 | 5.4963 | 0.0115 | 1.0 | 7 | | 5.3223 | 0.0116 | 1.0 | 5.3096 | 0.0116 | 1.0 | 8 | | 5.1222 | 0.0117 | 1.0 | 5.1600 | 0.0117 | 1.0 | 9 | | 4.9580 | 0.0117 | 1.0 | 5.0391 | 0.0118 | 1.0 | 10 | | 4.8251 | 0.0119 | 1.0 | 4.9427 | 0.0118 | 1.0 | 11 | | 4.7171 | 0.0119 | 1.0 | 4.8691 | 0.0119 | 1.0 | 12 | | 4.6284 | 0.0121 | 1.0 | 4.8123 | 0.0120 | 1.0 | 13 | | 4.5508 | 0.0121 | 1.0 | 4.7620 | 0.0121 | 1.0 | 14 | | 4.4855 | 0.0123 | 1.0 | 4.7260 | 0.0121 | 1.0 | 15 | | 4.4305 | 0.0124 | 1.0 | 4.7018 | 0.0123 | 1.0 | 16 | | 4.3788 | 0.0125 | 1.0 | 4.6738 | 0.0123 | 1.0 | 17 | | 4.3305 | 0.0127 | 1.0 | 4.6525 | 0.0124 | 1.0 | 18 | | 4.2860 | 0.0128 | 1.0 | 4.6401 | 0.0125 | 1.0 | 19 | | fef886d33d050f0aae73b8300d134dd2 |

apache-2.0 | ['generated_from_trainer'] | false | whisper_ami_finetuned This model is a fine-tuned version of [openai/whisper-medium](https://huggingface.co/openai/whisper-medium) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.0307 - Wer: 28.8275 | 5b61e5d4ec876f2cb58c41794b83f06a |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 2 - eval_batch_size: 2 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 5 - mixed_precision_training: Native AMP | d6174a61c7ed0d82a30248ddbb3d963d |
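The `linear` scheduler with 500 warmup steps listed above can be sketched in plain Python. This is a minimal illustration, not the trainer's own code: the function name `linear_warmup_lr` is hypothetical, and `total_steps=3245` is an assumption taken from 5 epochs of 649 steps each.

```python
def linear_warmup_lr(step: int, base_lr: float, warmup_steps: int, total_steps: int) -> float:
    """Learning rate at `step`: linear warmup from 0, then linear decay to 0."""
    if step < warmup_steps:
        # Warmup phase: scale the base rate by the fraction of warmup completed.
        return base_lr * step / max(1, warmup_steps)
    # Decay phase: scale by the fraction of post-warmup steps still remaining.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# base_lr and warmup_steps come from the list above; total_steps is an assumption.
for step in (0, 250, 500, 3245):
    print(step, linear_warmup_lr(step, base_lr=1e-05, warmup_steps=500, total_steps=3245))
```

Under these assumptions the rate peaks at 1e-05 at step 500 and returns to zero at the final step.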

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 1.3847 | 1.0 | 649 | 0.7598 | 29.7442 | | 0.6419 | 2.0 | 1298 | 0.7462 | 28.5128 | | 0.4658 | 3.0 | 1947 | 0.7728 | 28.7454 | | 0.154 | 4.0 | 2596 | 0.8675 | 29.2516 | | 0.0852 | 5.0 | 3245 | 1.0307 | 28.8275 | | 657f417086ff70107f5a175dd1cf947c |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-ner This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.0617 - Precision: 0.9274 - Recall: 0.9397 - F1: 0.9335 - Accuracy: 0.9838 | fede26f3e2e0657613ef737b9c0ab944 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.2403 | 1.0 | 878 | 0.0714 | 0.9171 | 0.9216 | 0.9193 | 0.9805 | | 0.0555 | 2.0 | 1756 | 0.0604 | 0.9206 | 0.9347 | 0.9276 | 0.9829 | | 0.031 | 3.0 | 2634 | 0.0617 | 0.9274 | 0.9397 | 0.9335 | 0.9838 | | 1f076e60324dc342cc7446681672071e |
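The F1 column is the harmonic mean of precision and recall, so the reported values can be sanity-checked from the other two columns. The sketch below assumes the rounded table values are close enough to the unrounded ones; the helper name `f1_score` is illustrative only.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Final-epoch precision and recall from the table above.
print(round(f1_score(0.9274, 0.9397), 4))
```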

apache-2.0 | ['translation'] | false | opus-mt-fr-yap * source languages: fr * target languages: yap * OPUS readme: [fr-yap](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/fr-yap/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/fr-yap/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/fr-yap/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/fr-yap/opus-2020-01-16.eval.txt) | ff6270ac3cbf299069d5e28e16ca2922 |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | これらのモデルは私が2022年12月末から2023年1月上旬にかけて作成したマージモデルです。そのレシピの多くがすでに失われていますが、バックアップを兼ねて公開することにしました。 These are merge models I created between late December 2022 and early January 2023. Many of their recipes have already been lost, but I decided to publish them as a backup. sample <img src="https://i.imgur.com/alpB7IK.jpg" width="900" height=""> <img src="https://i.imgur.com/MDc9SHp.jpg" width="900" height=""> <img src="https://i.imgur.com/oSGvybv.jpg" width="900" height=""> | 9e90ec1820bb81a2f5f33cbaed452a70 |

apache-2.0 | ['generated_from_trainer'] | false | neuroscience-to-dev-bio This model is a fine-tuned version of [facebook/bart-large](https://huggingface.co/facebook/bart-large) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.0374 | 3dced8d5f37328ddc045441955a6bedf |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 19.3369 | 0.95 | 7 | 17.9500 | | 17.1316 | 1.95 | 14 | 15.1026 | | 14.0654 | 2.95 | 21 | 12.5013 | | 12.6374 | 3.95 | 28 | 11.3803 | | 11.7608 | 4.95 | 35 | 10.2692 | | 10.5271 | 5.95 | 42 | 8.7652 | | 9.0429 | 6.95 | 49 | 6.8763 | | 7.4963 | 7.95 | 56 | 5.9052 | | 6.6044 | 8.95 | 63 | 5.3443 | | 6.007 | 9.95 | 70 | 4.8687 | | 5.4706 | 10.95 | 77 | 4.3708 | | 4.8812 | 11.95 | 84 | 3.8094 | | 4.2359 | 12.95 | 91 | 3.1743 | | 3.4727 | 13.95 | 98 | 2.4480 | | 2.6582 | 14.95 | 105 | 1.6751 | | 1.8084 | 15.95 | 112 | 0.9828 | | 1.0742 | 16.95 | 119 | 0.5074 | | 0.5521 | 17.95 | 126 | 0.2471 | | 0.263 | 18.95 | 133 | 0.1276 | | 0.1281 | 19.95 | 140 | 0.0761 | | 0.0826 | 20.95 | 147 | 0.0620 | | 0.0419 | 21.95 | 154 | 0.0434 | | 0.0685 | 22.95 | 161 | 0.1522 | | 0.1332 | 23.95 | 168 | 0.0536 | | 0.0405 | 24.95 | 175 | 0.0405 | | 0.0214 | 25.95 | 182 | 0.0380 | | 0.0142 | 26.95 | 189 | 0.0370 | | 0.0202 | 27.95 | 196 | 0.0375 | | 0.0105 | 28.95 | 203 | 0.0413 | | 0.0092 | 29.95 | 210 | 0.0370 | | 0.0083 | 30.95 | 217 | 0.0384 | | 0.0079 | 31.95 | 224 | 0.0406 | | 0.0381 | 32.95 | 231 | 0.0371 | | 0.011 | 33.95 | 238 | 0.0439 | | 0.0066 | 34.95 | 245 | 0.0374 | | 02e0585734bcec4520e40de864535aca |

MIT | ['keytotext', 'k2t', 'Keywords to Sentences'] | false | <h1 align="center">keytotext</h1> [](https://pypi.org/project/keytotext/) [](https://pepy.tech/project/keytotext) [](https://colab.research.google.com/github/gagan3012/keytotext/blob/master/notebooks/K2T.ipynb) [](https://share.streamlit.io/gagan3012/keytotext/UI/app.py) [](https://github.com/gagan3012/keytotext | c8c4cc8c70abc5c825435a3947c18b1f |

apache-2.0 | ['generated_from_keras_callback'] | false | Imene/vit-base-patch16-224-in21k-wwwwwi This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 3.2187 - Train Accuracy: 0.5652 - Train Top-3-accuracy: 0.7611 - Validation Loss: 3.8221 - Validation Accuracy: 0.2540 - Validation Top-3-accuracy: 0.4409 - Epoch: 9 | 69b3dea954199f31ab0302013e6d2855 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'inner_optimizer': {'class_name': 'AdamWeightDecay', 'config': {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 3e-05, 'decay_steps': 4920, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01}}, 'dynamic': True, 'initial_scale': 32768.0, 'dynamic_growth_steps': 2000} - training_precision: mixed_float16 | 5779cc313e736d5b7cc0a53fbfad17c0 |
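The `PolynomialDecay` configuration above (power 1.0, no cycling) amounts to a straight-line decay from 3e-05 to 0 over 4920 steps. A minimal pure-Python sketch of that formula follows; the function name `polynomial_decay_lr` is hypothetical and this is not the Keras implementation itself.

```python
def polynomial_decay_lr(
    step: int,
    initial_lr: float = 3e-05,
    decay_steps: int = 4920,
    end_lr: float = 0.0,
    power: float = 1.0,
) -> float:
    """Learning rate at `step` for a non-cycling polynomial decay schedule."""
    # Without cycling, the step is clipped so the rate stays at end_lr afterwards.
    step = min(step, decay_steps)
    fraction = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * fraction ** power + end_lr


print(polynomial_decay_lr(0), polynomial_decay_lr(2460), polynomial_decay_lr(4920))
```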

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train Accuracy | Train Top-3-accuracy | Validation Loss | Validation Accuracy | Validation Top-3-accuracy | Epoch | |:----------:|:--------------:|:--------------------:|:---------------:|:-------------------:|:-------------------------:|:-----:| | 5.3476 | 0.0283 | 0.0716 | 5.1306 | 0.0483 | 0.1240 | 0 | | 4.9357 | 0.0914 | 0.2057 | 4.7998 | 0.1158 | 0.2385 | 1 | | 4.6155 | 0.1641 | 0.3230 | 4.5616 | 0.1430 | 0.2891 | 2 | | 4.3325 | 0.2269 | 0.4188 | 4.3480 | 0.1722 | 0.3391 | 3 | | 4.0702 | 0.2915 | 0.4984 | 4.1662 | 0.2042 | 0.3886 | 4 | | 3.8262 | 0.3638 | 0.5758 | 4.0416 | 0.2296 | 0.4067 | 5 | | 3.6117 | 0.4258 | 0.6415 | 3.9451 | 0.2329 | 0.4234 | 6 | | 3.4324 | 0.4855 | 0.6956 | 3.8690 | 0.2499 | 0.4397 | 7 | | 3.2991 | 0.5320 | 0.7376 | 3.8351 | 0.2553 | 0.4359 | 8 | | 3.2187 | 0.5652 | 0.7611 | 3.8221 | 0.2540 | 0.4409 | 9 | | be0bc201f2bf04591467be7438417beb |

mit | ['generated_from_trainer'] | false | finetuned_gpt2-large_sst2_negation0.0_pretrainedFalse This model is a fine-tuned version of [gpt2-large](https://huggingface.co/gpt2-large) on the sst2 dataset. It achieves the following results on the evaluation set: - Loss: 5.2093 | ea4d2c2dca0bc22481c1285f538f724e |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 4.788 | 1.0 | 1059 | 5.4756 | | 4.4023 | 2.0 | 2118 | 5.2800 | | 4.1422 | 3.0 | 3177 | 5.2093 | | bbeb4ac2789de8a885bb5332bfaa164e |

mit | ['generated_from_keras_callback'] | false | sachinsahu/Cardinal__Catholicism_-clustered This model is a fine-tuned version of [nandysoham16/11-clustered_aug](https://huggingface.co/nandysoham16/11-clustered_aug) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.2749 - Train End Logits Accuracy: 0.9271 - Train Start Logits Accuracy: 0.9410 - Validation Loss: 0.2860 - Validation End Logits Accuracy: 1.0 - Validation Start Logits Accuracy: 0.75 - Epoch: 0 | ed8055f3ee80a78eae24cd0eee432a00 |

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 0.2749 | 0.9271 | 0.9410 | 0.2860 | 1.0 | 0.75 | 0 | | d5d7642e86d02b18bd8853f5564e1c63 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert_sa_GLUE_Experiment_stsb This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE STSB dataset. It achieves the following results on the evaluation set: - Loss: 2.3709 - Pearson: 0.1628 - Spearmanr: 0.1610 - Combined Score: 0.1619 | 78d7fa3126ed9c6cb74bf5a70d28a1e9 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Pearson | Spearmanr | Combined Score | |:-------------:|:-----:|:----:|:---------------:|:-------:|:---------:|:--------------:| | 3.4997 | 1.0 | 23 | 2.5067 | 0.0558 | 0.0619 | 0.0588 | | 2.0151 | 2.0 | 46 | 2.4888 | 0.1092 | 0.0973 | 0.1033 | | 1.8234 | 3.0 | 69 | 2.3709 | 0.1628 | 0.1610 | 0.1619 | | 1.5482 | 4.0 | 92 | 3.0640 | 0.1571 | 0.1632 | 0.1602 | | 1.33 | 5.0 | 115 | 3.1306 | 0.1649 | 0.1896 | 0.1772 | | 1.1586 | 6.0 | 138 | 2.9752 | 0.1454 | 0.1567 | 0.1511 | | 1.0473 | 7.0 | 161 | 3.1783 | 0.1490 | 0.1670 | 0.1580 | | 0.9198 | 8.0 | 184 | 3.0440 | 0.1632 | 0.1734 | 0.1683 | | 1a3958dcd9c7d7f95441c10e8e92527f |
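The Combined Score column appears to be the arithmetic mean of the Pearson and Spearman correlations; that reading is an assumption based on the table values, which it reproduces to within rounding. A small check, with an illustrative helper name:

```python
def combined_score(pearson: float, spearmanr: float) -> float:
    """Mean of the two correlation coefficients."""
    return (pearson + spearmanr) / 2


# Epoch 3 row from the table above.
print(round(combined_score(0.1628, 0.1610), 4))
```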

apache-2.0 | ['translation'] | false | opus-mt-fi-pis * source languages: fi * target languages: pis * OPUS readme: [fi-pis](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/fi-pis/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-24.zip](https://object.pouta.csc.fi/OPUS-MT-models/fi-pis/opus-2020-01-24.zip) * test set translations: [opus-2020-01-24.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/fi-pis/opus-2020-01-24.test.txt) * test set scores: [opus-2020-01-24.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/fi-pis/opus-2020-01-24.eval.txt) | f5ba729e52020e88b8621ee246918b85 |

cc-by-4.0 | ['generated_from_trainer'] | false | EstBERT128_Rubric This model is a fine-tuned version of [tartuNLP/EstBERT](https://huggingface.co/tartuNLP/EstBERT). It achieves the following results on the test set: - Loss: 2.0552 - Accuracy: 0.8329 | b4ca1b550a90cbc2da54bc76e8e1c488 |

cc-by-4.0 | ['generated_from_trainer'] | false | How to use? You can use this model with the Transformers pipeline for text classification. ``` from transformers import AutoTokenizer, AutoModelForSequenceClassification from transformers import pipeline tokenizer = AutoTokenizer.from_pretrained("tartuNLP/EstBERT128_Rubric") model = AutoModelForSequenceClassification.from_pretrained("tartuNLP/EstBERT128_Rubric") nlp = pipeline("text-classification", model=model, tokenizer=tokenizer) text = "Kaia Kanepi (WTA 57.) langes USA-s Charlestonis toimuval WTA 500 kategooria tenniseturniiril konkurentsist kaheksandikfinaalis, kaotades poolatarile Magda Linette'ile (WTA 64.) 3 : 6, 6 : 4, 2 : 6." result = nlp(text) print(result) ``` ``` [{'label': 'SPORT', 'score': 0.9999998807907104}] ``` | b4131aa65480fd698515853d311f000f |

cc-by-4.0 | ['generated_from_trainer'] | false | Intended uses & limitations This model is intended to be used as it is. We hope that it can prove to be useful to somebody but we do not guarantee that the model is useful for anything or that the predictions are accurate on new data. | 124166f43590f874ac2dc99583d38b83 |

cc-by-4.0 | ['generated_from_trainer'] | false | Citation information If you use this model, please cite: ``` @inproceedings{tanvir2021estbert, title={EstBERT: A Pretrained Language-Specific BERT for Estonian}, author={Tanvir, Hasan and Kittask, Claudia and Eiche, Sandra and Sirts, Kairit}, booktitle={Proceedings of the 23rd Nordic Conference on Computational Linguistics (NoDaLiDa)}, pages={11--19}, year={2021} } ``` | e262d764baf322e450ef02fec4d0e64d |

cc-by-4.0 | ['generated_from_trainer'] | false | Training and evaluation data The model was trained and evaluated on the rubric categories of the [Estonian Valence dataset](http://peeter.eki.ee:5000/valence/paragraphsquery). The data was split into train/dev/test parts with 70/10/20 proportions. The nine rubric labels in the Estonian Valence dataset are: - ARVAMUS (opinion) - EESTI (domestic) - ELU-O (life) - KOMM-O-ELU (comments) - KOMM-P-EESTI (comments) - KRIMI (crime) - KULTUUR (culture) - SPORT (sports) - VALISMAA (world) It probably makes sense to treat the two comments categories (KOMM-O-ELU and KOMM-P-EESTI) as a single category. | bc7c1ae07e0448ff02e3ec354f1d7502 |
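Treating the two comment categories as one can be done as a post-processing step on the predicted labels. The sketch below is one possible way to do it; the merged label name `KOMM` and the helper `merge_comment_labels` are assumptions, not part of the model or dataset.

```python
RUBRIC_LABELS = [
    "ARVAMUS", "EESTI", "ELU-O", "KOMM-O-ELU", "KOMM-P-EESTI",
    "KRIMI", "KULTUUR", "SPORT", "VALISMAA",
]

# Hypothetical name for the merged comments category.
MERGED_COMMENT_LABEL = "KOMM"


def merge_comment_labels(label: str) -> str:
    """Map both comment rubrics to a single label; leave other labels unchanged."""
    if label in ("KOMM-O-ELU", "KOMM-P-EESTI"):
        return MERGED_COMMENT_LABEL
    return label


print(sorted({merge_comment_labels(label) for label in RUBRIC_LABELS}))
```

Applying the same mapping to both predictions and gold labels before scoring leaves eight categories instead of nine.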

cc-by-4.0 | ['generated_from_trainer'] | false | Training procedure The model was trained for a maximum of 100 epochs using an early stopping procedure. After every epoch, the accuracy was calculated on the development set. If the development set accuracy did not improve for 20 epochs, the training was stopped. | 9de53a706cbc55bdc73fc16a7db88dca |
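The stopping rule described above can be sketched as a small function over a list of per-epoch development accuracies. This is an illustration under stated assumptions, not the authors' training code: the name `early_stopping` is hypothetical, and "improve" is read as a strict increase over the best accuracy seen so far.

```python
def early_stopping(dev_accuracies, patience=20, max_epochs=100):
    """Return (best_epoch, last_epoch_run) for 1-indexed epochs."""
    best_acc = float("-inf")
    best_epoch = 0
    last_epoch = 0
    for epoch, acc in enumerate(dev_accuracies[:max_epochs], start=1):
        last_epoch = epoch
        if acc > best_acc:
            # Strict improvement: remember this epoch as the best checkpoint.
            best_acc, best_epoch = acc, epoch
        elif epoch - best_epoch >= patience:
            # No improvement for `patience` epochs in a row: stop training.
            break
    return best_epoch, last_epoch


# Toy example with a short patience: the best epoch is 3, training stops at epoch 8.
print(early_stopping([0.5, 0.6, 0.7] + [0.65] * 30, patience=5))
```

With a patience of 20, a best epoch of 39 would end training at epoch 59.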

cc-by-4.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 3 - optimizer: Adam with betas=(0.9,0.98) and epsilon=1e-06 - lr_scheduler_type: polynomial - num_epochs: 100 - mixed_precision_training: Native AMP | 3082d88e374bfe54f7a768c169ae772e |

cc-by-4.0 | ['generated_from_trainer'] | false | Training results The final model was taken after the 39th epoch. | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:--------:| | 1.1147 | 1.0 | 179 | 0.7421 | 0.7445 | | 0.4323 | 2.0 | 358 | 0.6863 | 0.7813 | | 0.1442 | 3.0 | 537 | 0.8545 | 0.7838 | | 0.0496 | 4.0 | 716 | 1.2872 | 0.7494 | | 0.0276 | 5.0 | 895 | 1.4702 | 0.7641 | | 0.0202 | 6.0 | 1074 | 1.3764 | 0.7838 | | 0.0144 | 7.0 | 1253 | 1.5762 | 0.7887 | | 0.0078 | 8.0 | 1432 | 1.8806 | 0.7666 | | 0.0177 | 9.0 | 1611 | 1.6159 | 0.7912 | | 0.0223 | 10.0 | 1790 | 1.5863 | 0.7936 | | 0.0108 | 11.0 | 1969 | 1.8051 | 0.7912 | | 0.0201 | 12.0 | 2148 | 1.9344 | 0.7789 | | 0.0252 | 13.0 | 2327 | 1.7978 | 0.8084 | | 0.0104 | 14.0 | 2506 | 1.8779 | 0.7887 | | 0.0138 | 15.0 | 2685 | 1.6456 | 0.8133 | | 0.0066 | 16.0 | 2864 | 1.9668 | 0.7912 | | 0.0148 | 17.0 | 3043 | 2.0068 | 0.7813 | | 0.0128 | 18.0 | 3222 | 2.1539 | 0.7617 | | 0.0115 | 19.0 | 3401 | 2.2490 | 0.7838 | | 0.0186 | 20.0 | 3580 | 2.1768 | 0.7666 | | 0.0051 | 21.0 | 3759 | 1.8859 | 0.7912 | | 0.001 | 22.0 | 3938 | 2.0132 | 0.7912 | | 0.0133 | 23.0 | 4117 | 1.8786 | 0.8084 | | 0.0149 | 24.0 | 4296 | 2.2307 | 0.7961 | | 0.014 | 25.0 | 4475 | 2.0041 | 0.8206 | | 0.0132 | 26.0 | 4654 | 1.8872 | 0.8133 | | 0.0079 | 27.0 | 4833 | 1.9357 | 0.7961 | | 0.0078 | 28.0 | 5012 | 2.1891 | 0.7936 | | 0.0126 | 29.0 | 5191 | 2.0207 | 0.8034 | | 0.0003 | 30.0 | 5370 | 2.1917 | 0.8010 | | 0.0015 | 31.0 | 5549 | 2.0417 | 0.8157 | | 0.0056 | 32.0 | 5728 | 2.1172 | 0.8084 | | 0.0058 | 33.0 | 5907 | 2.1921 | 0.8206 | | 0.0001 | 34.0 | 6086 | 2.0079 | 0.8206 | | 0.0031 | 35.0 | 6265 | 2.2447 | 0.8206 | | 0.0007 | 36.0 | 6444 | 2.1802 | 0.8084 | | 0.0061 | 37.0 | 6623 | 2.1103 | 0.8157 | | 0.0 | 38.0 | 6802 | 2.2265 | 0.8084 | | 0.0035 | 39.0 | 6981 | 2.0549 | 0.8329 | | 0.0038 | 40.0 | 7160 | 2.1352 | 0.8182 | | 0.0001 | 41.0 | 7339 | 2.0975 | 0.8108 | | 0.0 | 42.0 | 7518 | 2.0833 | 0.8256 | | 0.0 | 43.0 | 7697 | 2.1020 | 0.8280 | | 0.0 | 44.0 | 7876 | 2.0841 | 0.8305 | | 0.0 | 45.0 | 8055 | 2.2085 | 0.8182 | | 0.0 | 46.0 | 8234 | 2.0756 | 0.8329 | | 0.0 | 47.0 | 8413 | 2.1237 | 0.8305 | | 0.0 | 48.0 | 8592 | 2.1217 | 0.8280 | | 0.0052 | 49.0 | 8771 | 2.3567 | 0.8059 | | 0.0014 | 50.0 | 8950 | 2.1710 | 0.8206 | | 0.0032 | 51.0 | 9129 | 2.1452 | 0.8206 | | 0.0 | 52.0 | 9308 | 2.2820 | 0.8133 | | 0.0001 | 53.0 | 9487 | 2.2279 | 0.8157 | | 0.0 | 54.0 | 9666 | 2.1841 | 0.8182 | | 0.0 | 55.0 | 9845 | 2.1208 | 0.8231 | | 0.0 | 56.0 | 10024 | 2.0967 | 0.8256 | | 0.0002 | 57.0 | 10203 | 2.1911 | 0.8231 | | 0.0 | 58.0 | 10382 | 2.2014 | 0.8231 | | 0.0 | 59.0 | 10561 | 2.2014 | 0.8182 | | daecd8b33764bc58395611aeac752149 |

apache-2.0 | ['generated_from_trainer'] | false | Fine_Tunning_on_CV_Urdu_dataset This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the common_voice_8_0 dataset. It achieves the following results on the evaluation set: - Loss: 1.2389 - Wer: 0.7380 | d91aa3fe593c6a8c4f6a11541b9591d9 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - num_epochs: 30 - mixed_precision_training: Native AMP | 443f9848f5e017c21fb0f1dd419af763 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 15.2352 | 1.69 | 100 | 4.0555 | 1.0 | | 3.3873 | 3.39 | 200 | 3.2521 | 1.0 | | 3.2387 | 5.08 | 300 | 3.2304 | 1.0 | | 3.1983 | 6.78 | 400 | 3.1712 | 1.0 | | 3.1224 | 8.47 | 500 | 3.0883 | 1.0 | | 3.0782 | 10.17 | 600 | 3.0767 | 0.9996 | | 3.0618 | 11.86 | 700 | 3.0280 | 1.0 | | 2.9929 | 13.56 | 800 | 2.8994 | 1.0 | | 2.785 | 15.25 | 900 | 2.4330 | 1.0 | | 2.1276 | 16.95 | 1000 | 1.7795 | 0.9517 | | 1.5544 | 18.64 | 1100 | 1.5101 | 0.8266 | | 1.2651 | 20.34 | 1200 | 1.4037 | 0.7993 | | 1.0816 | 22.03 | 1300 | 1.3101 | 0.7638 | | 0.9817 | 23.73 | 1400 | 1.2855 | 0.7542 | | 0.9019 | 25.42 | 1500 | 1.2737 | 0.7421 | | 0.8688 | 27.12 | 1600 | 1.2457 | 0.7435 | | 0.8293 | 28.81 | 1700 | 1.2389 | 0.7380 | | 1455f10bd20d0022596b5cda4c62efa1 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased__subj__all-train This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3193 - Accuracy: 0.9485 | b2ed0d7e1095ba99c0af68541c3aa64e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.1992 | 1.0 | 500 | 0.1236 | 0.963 | | 0.084 | 2.0 | 1000 | 0.1428 | 0.963 | | 0.0333 | 3.0 | 1500 | 0.1906 | 0.965 | | 0.0159 | 4.0 | 2000 | 0.3193 | 0.9485 | | a1523ea0e70b0de16b138bcdb59bdbb9 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows: ```python %%capture !pip install datasets !pip install transformers==4.4.0 !pip install torchaudio !pip install jiwer !pip install tnkeeh import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "ar", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained("mohammed/ar") model = Wav2Vec2ForCTC.from_pretrained("mohammed/ar") resampler = torchaudio.transforms.Resample(48_000, 16_000) | 29062081c508370966ef7a5f0b028bf2 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | For each match, look-up corresponding value in dictionary batch["sentence"] = regex.sub(lambda mo: dict[mo.string[mo.start():mo.end()]], batch["sentence"]) return batch test_dataset = load_dataset("common_voice", "ar", split="test") wer = load_metric("wer") processor = Wav2Vec2Processor.from_pretrained("mohammed/ar") model = Wav2Vec2ForCTC.from_pretrained("mohammed/ar") model.to("cuda") resampler = torchaudio.transforms.Resample(48_000, 16_000) | bcba99b546267716fe1f50b6288d014c |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) print("WER: {:.2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result**: 36.69% | e7056973fa79ced0b36ca871c290dd65 |
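The evaluation above reports word error rate (WER). For readers who want to see what that number measures, here is a dependency-free sketch: word-level edit distance summed over all utterances, divided by the total number of reference words. The function name `word_error_rate` is hypothetical, and this is an illustration of the metric rather than the implementation behind `load_metric("wer")`.

```python
def word_error_rate(references, predictions):
    """Corpus-level WER: total word edits divided by total reference words."""
    total_errors = 0
    total_words = 0
    for ref, pred in zip(references, predictions):
        ref_words, pred_words = ref.split(), pred.split()
        # Levenshtein distance over words, keeping only the previous DP row.
        prev = list(range(len(pred_words) + 1))
        for i, ref_word in enumerate(ref_words, start=1):
            curr = [i]
            for j, pred_word in enumerate(pred_words, start=1):
                substitution = prev[j - 1] + (0 if ref_word == pred_word else 1)
                curr.append(min(prev[j] + 1, curr[j - 1] + 1, substitution))
            prev = curr
        total_errors += prev[-1]
        total_words += len(ref_words)
    if total_words == 0:
        raise ValueError("references contain no words")
    return total_errors / total_words


# One substitution in a three-word reference.
print(word_error_rate(["the cat sat"], ["the dog sat"]))
```

The result is a fraction; multiplying by 100 gives a percentage like the one reported above.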

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-marc-en This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the amazon_reviews_multi dataset. It achieves the following results on the evaluation set: - Loss: 0.8850 - Mae: 0.4390 | 92d2b6c6630ec04a790997d476601c17 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Mae | |:-------------:|:-----:|:----:|:---------------:|:------:| | 1.1589 | 1.0 | 235 | 0.9769 | 0.5122 | | 0.974 | 2.0 | 470 | 0.8850 | 0.4390 | | 2d12adfb9194ce147e75f3d8e98edff3 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-nepali This model is pre-trained on the [nepalitext](https://huggingface.co/datasets/Sakonii/nepalitext-language-model-dataset) dataset, which consists of over 13 million Nepali text sequences, using a masked language modeling (MLM) objective. Our approach trains a SentencePiece Model (SPM) for text tokenization, similar to [XLM-RoBERTa](https://arxiv.org/abs/1911.02116), and trains a [DistilBERT model](https://arxiv.org/abs/1910.01108) for language modeling. It achieves the following results on the evaluation set: | MLM probability | Evaluation loss | Evaluation perplexity | |--:|----:|-----:| | 15% | 2.349 | 10.479 | | 20% | 2.605 | 13.351 | | a59f0dab31faa05b9a7dfb617c84abf5 |

apache-2.0 | ['generated_from_trainer'] | false | Usage This model can be used directly with a pipeline for masked language modeling: ```python >>> from transformers import pipeline >>> unmasker = pipeline('fill-mask', model='Sakonii/distilbert-base-nepali') >>> unmasker("मानविय गतिविधिले प्रातृतिक पर्यावरन प्रनालीलाई अपरिमेय क्षति पु्र्याएको छ। परिवर्तनशिल जलवायुले खाध, सुरक्षा, <mask>, जमिन, मौसमलगायतलाई असंख्य तरिकाले प्रभावित छ।") [{'score': 0.04128897562623024, 'sequence': 'मानविय गतिविधिले प्रातृतिक पर्यावरन प्रनालीलाई अपरिमेय क्षति पु्र्याएको छ। परिवर्तनशिल जलवायुले खाध, सुरक्षा, मौसम, जमिन, मौसमलगायतलाई असंख्य तरिकाले प्रभावित छ।', 'token': 2605, 'token_str': 'मौसम'}, {'score': 0.04100276157259941, 'sequence': 'मानविय गतिविधिले प्रातृतिक पर्यावरन प्रनालीलाई अपरिमेय क्षति पु्र्याएको छ। परिवर्तनशिल जलवायुले खाध, सुरक्षा, प्रकृति, जमिन, मौसमलगायतलाई असंख्य तरिकाले प्रभावित छ।', 'token': 2792, 'token_str': 'प्रकृति'}, {'score': 0.026525357738137245, 'sequence': 'मानविय गतिविधिले प्रातृतिक पर्यावरन प्रनालीलाई अपरिमेय क्षति पु्र्याएको छ। परिवर्तनशिल जलवायुले खाध, सुरक्षा, पानी, जमिन, मौसमलगायतलाई असंख्य तरिकाले प्रभावित छ।', 'token': 387, 'token_str': 'पानी'}, {'score': 0.02340106852352619, 'sequence': 'मानविय गतिविधिले प्रातृतिक पर्यावरन प्रनालीलाई अपरिमेय क्षति पु्र्याएको छ। परिवर्तनशिल जलवायुले खाध, सुरक्षा, जल, जमिन, मौसमलगायतलाई असंख्य तरिकाले प्रभावित छ।', 'token': 1313, 'token_str': 'जल'}, {'score': 0.02055591531097889, 'sequence': 'मानविय गतिविधिले प्रातृतिक पर्यावरन प्रनालीलाई अपरिमेय क्षति पु्र्याएको छ। परिवर्तनशिल जलवायुले खाध, सुरक्षा, वातावरण, जमिन, मौसमलगायतलाई असंख्य तरिकाले प्रभावित छ।', 'token': 790, 'token_str': 'वातावरण'}] ``` Here is how we can use the model to get the features of a given text in PyTorch: ```python from transformers import AutoTokenizer, AutoModelForMaskedLM tokenizer = AutoTokenizer.from_pretrained('Sakonii/distilbert-base-nepali') model = AutoModelForMaskedLM.from_pretrained('Sakonii/distilbert-base-nepali') | 
9106324239f71fc178f8886662cdc30d |

apache-2.0 | ['generated_from_trainer'] | false | Training procedure The model is trained with the same configuration as the original [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased): 512 tokens per instance, 28 instances per batch, and around 35.7K training steps. | 357b4bb81f2c9d700a8d34daa54bc67b |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used for training of the final epoch: [ Refer to the *Training results* table below for varying hyperparameters every epoch ] - learning_rate: 5e-05 - train_batch_size: 28 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 - mixed_precision_training: Native AMP | 608effb5d233353b615c676987765531 |

apache-2.0 | ['generated_from_trainer'] | false | Training results The model is trained for 5 epochs with varying hyperparameters: | Training Loss | Epoch | MLM Probability | Train Batch Size | Step | Validation Loss | Perplexity | |:-------------:|:-----:|:---------------:|:----------------:|:-----:|:---------------:|:----------:| | 3.4477 | 1.0 | 15 | 26 | 38864 | 3.3067 | 27.2949 | | 2.9451 | 2.0 | 15 | 28 | 35715 | 2.8238 | 16.8407 | | 2.866 | 3.0 | 20 | 28 | 35715 | 2.7431 | 15.5351 | | 2.7287 | 4.0 | 20 | 28 | 35715 | 2.6053 | 13.5353 | | 2.6412 | 5.0 | 20 | 28 | 35715 | 2.5161 | 12.3802 | Final model evaluated with MLM Probability of 15%: | Training Loss | Epoch | MLM Probability | Train Batch Size | Step | Validation Loss | Perplexity | |:-------------:|:-----:|:---------------:|:----------------:|:-----:|:---------------:|:----------:| | - | - | 15 | - | - | 2.3494 | 10.4791 | | 9cab0a7d3317a73587cab98bdae22feb |
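The Perplexity column is the exponential of the validation loss (mean cross-entropy in nats), so each value can be recomputed from the loss next to it. A minimal check, with an illustrative helper name:

```python
import math


def perplexity(loss: float) -> float:
    """Perplexity corresponding to a mean cross-entropy loss in nats."""
    return math.exp(loss)


# Validation losses from the tables above: first epoch and final evaluation.
for loss in (3.3067, 2.3494):
    print(loss, round(perplexity(loss), 2))
```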

apache-2.0 | ['automatic-speech-recognition', 'fr'] | false | exp_w2v2r_fr_vp-100k_gender_male-10_female-0_s714 Fine-tuned [facebook/wav2vec2-large-100k-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-100k-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (fr)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 50c4a1171e49bf9abe91237a34ac41c7 |

cc-by-4.0 | ['question generation'] | false | Model Card of `research-backup/t5-small-subjqa-vanilla-books-qg` This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) for the question generation task on the [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) dataset (dataset_name: books) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | 37a1226211e1e9a2fcf86a374804b6d1 |

cc-by-4.0 | ['question generation'] | false | Overview - **Language model:** [t5-small](https://huggingface.co/t5-small) - **Language:** en - **Training data:** [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) (books) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation) - **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992) | 3f850f614e04a232d04e0d6b9ae7de2c |
