license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

other | [] | false | Model description This is the second generation of the original Shinen made by Mr. Seeker. The full dataset consists of 6 different sources, all surrounding the "Adult" theme. The name "Erebus" comes from Greek mythology and means "darkness". This is in line with Shin'en, or "deep abyss". For inquiries, please contact the KoboldAI community. **Warning: THIS model is NOT suitable for use by minors. The model will output X-rated content.** | 9aff1e1860f62819811472fd20cfae4a |

other | [] | false | Training data The data can be divided in 6 different datasets: - Literotica (everything with 4.5/5 or higher) - Sexstories (everything with 90 or higher) - Dataset-G (private dataset of X-rated stories) - Doc's Lab (all stories) - Pike Dataset (novels with "adult" rating) - SoFurry (collection of various animals) The dataset uses `[Genre: <comma-separated list of genres>]` for tagging. | d33550f07429fec8fab18400328ef90b |
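The tagging convention above can be sketched as a small prompt helper. This is only an illustration: the function names, the comma-plus-space separator, and the placement of the tag on its own line before the story text are assumptions, not something the model card specifies.

```python
def genre_tag(genres):
    """Build a `[Genre: ...]` tag from a list of genre names."""
    # Drop empty entries and surrounding whitespace before joining.
    cleaned = [g.strip() for g in genres if g.strip()]
    return "[Genre: " + ", ".join(cleaned) + "]"


def tagged_prompt(genres, opening):
    # Hypothetical layout: tag first, then the opening text on the next line.
    return genre_tag(genres) + "\n" + opening


print(tagged_prompt(["romance", "sci-fi"], "Welcome Captain Janeway,"))
```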

other | [] | false | How to use You can use this model directly with a pipeline for text generation. This example generates a different sequence each time it's run: ```py >>> from transformers import pipeline >>> generator = pipeline('text-generation', model='KoboldAI/OPT-2.7B-Erebus') >>> generator("Welcome Captain Janeway, I apologize for the delay.", do_sample=True, min_length=50) [{'generated_text': 'Welcome Captain Janeway, I apologize for the delay."\nIt's all right," Janeway said. "I'm certain that you're doing your best to keep me informed of what\'s going on."'}] ``` | 7e4c3d8336f7e3d0fe33d91d2616b30c |

other | [] | false | Limitations and biases Based on known problems with NLP technology, potential relevant factors include bias (gender, profession, race and religion). **Warning: This model has a very strong NSFW bias!** | 57cb73c09d1d32fcb7ef1cb8499eb5d7 |

other | [] | false | BibTeX entry and citation info ``` @misc{zhang2022opt, title={OPT: Open Pre-trained Transformer Language Models}, author={Susan Zhang and Stephen Roller and Naman Goyal and Mikel Artetxe and Moya Chen and Shuohui Chen and Christopher Dewan and Mona Diab and Xian Li and Xi Victoria Lin and Todor Mihaylov and Myle Ott and Sam Shleifer and Kurt Shuster and Daniel Simig and Punit Singh Koura and Anjali Sridhar and Tianlu Wang and Luke Zettlemoyer}, year={2022}, eprint={2205.01068}, archivePrefix={arXiv}, primaryClass={cs.CL} } ``` | 47647ef2136b3047d8cdcf5aba8cd36b |

apache-2.0 | ['catalan', 'paraphrase', 'textual entailment'] | false | Model description The **roberta-large-ca-paraphrase** is a Paraphrase Detection model for the Catalan language fine-tuned from the roberta-large-ca-v2 model, a [RoBERTa](https://arxiv.org/abs/1907.11692) base model pre-trained on a medium-size corpus collected from publicly available corpora and crawlers. | 6a312f09755d8b3dad064bec1e0acc5b |

apache-2.0 | ['catalan', 'paraphrase', 'textual entailment'] | false | Intended uses and limitations **roberta-large-ca-paraphrase** model can be used to detect if two sentences are in a paraphrase relation. The model is limited by its training dataset and may not generalize well for all use cases. | e331a76db6aab5609b002e0c3bf64729 |

apache-2.0 | ['catalan', 'paraphrase', 'textual entailment'] | false | How to use Here is how to use this model: ```python from transformers import pipeline from pprint import pprint nlp = pipeline("text-classification", model="projecte-aina/roberta-large-ca-paraphrase") example = "Tinc un amic a Manresa. </s></s> A Manresa hi viu un amic meu." paraphrase = nlp(example) pprint(paraphrase) ``` | 2fd866ee4585586ccdbf2333ccd17c25 |
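The example above passes both sentences as one string joined by `</s></s>`. A minimal sketch of a helper that builds that string is shown here; the function name is hypothetical, and the separator is copied from the example rather than from any documented API.

```python
PAIR_SEPARATOR = " </s></s> "  # taken verbatim from the usage example above


def paraphrase_input(sentence_a, sentence_b):
    """Join two sentences into the single-string form used in the example."""
    return sentence_a.strip() + PAIR_SEPARATOR + sentence_b.strip()


example = paraphrase_input("Tinc un amic a Manresa.", "A Manresa hi viu un amic meu.")
print(example)
```

The resulting string can then be passed to the `text-classification` pipeline exactly as in the original snippet.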

apache-2.0 | ['catalan', 'paraphrase', 'textual entailment'] | false | Evaluation results We evaluated the _roberta-large-ca-paraphrase_ on the Parafraseja test set against standard multilingual and monolingual baselines: | Model | Parafraseja (combined_score) | | ------------|:-------------| | roberta-large-ca-v2 |**86.42** | | roberta-base-ca-v2 |84.38 | | mBERT | 79.66 | | XLM-RoBERTa | 77.83 | | f91b0e4defb4a3d14676e1180a26e7bf |

apache-2.0 | ['catalan', 'paraphrase', 'textual entailment'] | false | Disclaimer <details> <summary>Click to expand</summary> The models published in this repository are intended for a generalist purpose and are available to third parties. These models may have bias and/or any other undesirable distortions. When third parties deploy or provide systems and/or services to other parties using any of these models (or using systems based on these models) or become users of the models, they should note that it is their responsibility to mitigate the risks arising from their use and, in any event, to comply with applicable regulations, including regulations regarding the use of Artificial Intelligence. In no event shall the owner and creator of the models (BSC – Barcelona Supercomputing Center) be liable for any results arising from the use made by third parties of these models. | 7ff3efdba6060dbbe4586e389c876930 |

mit | ['Code', 'GPyT', 'code generator'] | false | GPyT is a GPT2 model trained from scratch (not fine tuned) on Python code from Github. Overall, it was ~80GB of pure Python code, the current GPyT model is a mere 2 epochs through this data, so it may benefit greatly from continued training and/or fine-tuning. Newlines are replaced by `<N>` Input to the model is code, up to the context length of 1024, with newlines replaced by `<N>` Here's a quick example of using this model: ```py from transformers import AutoTokenizer, AutoModelWithLMHead tokenizer = AutoTokenizer.from_pretrained("Sentdex/GPyT") model = AutoModelWithLMHead.from_pretrained("Sentdex/GPyT") | 3e82bddce554a52c92eb8cd4301a4aad |

mit | ['Code', 'GPyT', 'code generator'] | false | copy and paste some code in here inp = """import""" newlinechar = "<N>" converted = inp.replace("\n", newlinechar) tokenized = tokenizer.encode(converted, return_tensors='pt') resp = model.generate(tokenized) decoded = tokenizer.decode(resp[0]) reformatted = decoded.replace("<N>","\n") print(reformatted) ``` Should produce: ``` import numpy as np import pytest import pandas as pd<N ``` This model does a ton more than just imports, however. For a bunch of examples and a better understanding of the model's capabilities: https://pythonprogramming.net/GPT-python-code-transformer-model-GPyT/ Considerations: 1. This model is intended for educational and research use only. Do not trust model outputs. 2. The model is highly likely to regurgitate code almost exactly as it saw it. It's up to you to determine licensing if you intend to actually use the generated code. 3. All Python code was blindly pulled from GitHub. This means the included code is both Python 2 and 3, among other more subtle differences, such as tabs being 2 spaces in some cases and 4 in others...and more non-homologous things. 4. Along with the above, this means the generated code could wind up doing or suggesting just about anything. Run the generated code at your own risk...it could be *anything* | 2a4b643ddcff76008c5cfdd0f4f52650 |
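The newline handling described above (newlines replaced by `<N>` before the model sees the code, and restored afterwards) can be isolated into two small helpers. This is a sketch with hypothetical function names; it involves no model call and only mirrors the `replace` steps from the example.

```python
NEWLINE_TOKEN = "<N>"  # the placeholder named in the model card


def to_model_text(source):
    """Replace real newlines with the placeholder before tokenizing."""
    return source.replace("\n", NEWLINE_TOKEN)


def from_model_text(generated):
    """Turn placeholders in generated text back into real newlines."""
    return generated.replace(NEWLINE_TOKEN, "\n")


snippet = "import os\nprint(os.getcwd())\n"
encoded = to_model_text(snippet)
print(encoded)
print(from_model_text(encoded) == snippet)
```

Note that a placeholder cut off by the generation length limit, such as the trailing `<N` in the sample output, is not a complete token and is left unchanged by `from_model_text`.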

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | Golden_hour_photography Dreambooth model trained by NAWNIE with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept:        | 8e53bd16f6bd50c5d40d9c3dde99a5c2 |

cc-by-sa-4.0 | ['hupd', 'roberta', 'distilroberta', 'patents'] | false | HUPD DistilRoBERTa-Base Model This HUPD DistilRoBERTa model was fine-tuned on the HUPD dataset with a masked language modeling objective. It was originally introduced in [this paper](TBD). For more information about the Harvard USPTO Patent Dataset, please feel free to visit the [project website](https://patentdataset.org/) or the [project's GitHub repository](https://github.com/suzgunmirac/hupd). | dbe88acf7a3588d7a92527a21bc074d9 |

cc-by-sa-4.0 | ['hupd', 'roberta', 'distilroberta', 'patents'] | false | How to Use You can use this model directly with a pipeline for masked language modeling: ```python from transformers import pipeline model = pipeline(task="fill-mask", model="hupd/hupd-distilroberta-base") model("Improved <mask> for playing a game of thumb wrestling.") ``` Here is the output: ```python [{'score': 0.4274042248725891, 'sequence': 'Improved method for playing a game of thumb wrestling.', 'token': 5448, 'token_str': ' method'}, {'score': 0.06967400759458542, 'sequence': 'Improved system for playing a game of thumb wrestling.', 'token': 467, 'token_str': ' system'}, {'score': 0.06849079579114914, 'sequence': 'Improved device for playing a game of thumb wrestling.', 'token': 2187, 'token_str': ' device'}, {'score': 0.04544765502214432, 'sequence': 'Improved apparatus for playing a game of thumb wrestling.', 'token': 26529, 'token_str': ' apparatus'}, {'score': 0.025765646249055862, 'sequence': 'Improved means for playing a game of thumb wrestling.', 'token': 839, 'token_str': ' means'}] ``` Alternatively, you can load the model and use it as follows: ```python import torch from transformers import AutoTokenizer, AutoModelForMaskedLM | 121aebe01d9c0153dcfc8d87999540bb |

cc-by-sa-4.0 | ['hupd', 'roberta', 'distilroberta', 'patents'] | false | cuda/cpu device = 'cuda' if torch.cuda.is_available() else 'cpu' tokenizer = AutoTokenizer.from_pretrained("hupd/hupd-distilroberta-base") model = AutoModelForMaskedLM.from_pretrained("hupd/hupd-distilroberta-base").to(device) TEXT = "Improved <mask> for playing a game of thumb wrestling." inputs = tokenizer(TEXT, return_tensors="pt").to(device) with torch.no_grad(): logits = model(**inputs).logits | 84c64bb11978bbfcb39e0b5d8307cac6 |

cc-by-sa-4.0 | ['hupd', 'roberta', 'distilroberta', 'patents'] | false | retrieve indices of <mask> mask_token_indxs = (inputs.input_ids == tokenizer.mask_token_id)[0].nonzero(as_tuple=True)[0] for mask_idx in mask_token_indxs: predicted_token_id = logits[0, mask_idx].argmax(axis=-1) output = tokenizer.decode(predicted_token_id) print(f'Prediction for the <mask> token at index {mask_idx}: "{output}"') ``` Here is the output: ```python Prediction for the <mask> token at index 2: " method" ``` | ec6cf10de393fd4768a8dc8bc59b1762 |

cc-by-sa-4.0 | ['hupd', 'roberta', 'distilroberta', 'patents'] | false | Citation For more information, please take a look at the original paper. * Paper: [The Harvard USPTO Patent Dataset: A Large-Scale, Well-Structured, and Multi-Purpose Corpus of Patent Applications](TBD) * Authors: *Mirac Suzgun, Luke Melas-Kyriazi, Suproteem K. Sarkar, Scott Duke Kominers, and Stuart M. Shieber* * BibTeX: ``` @article{suzgun2022hupd, title={The Harvard USPTO Patent Dataset: A Large-Scale, Well-Structured, and Multi-Purpose Corpus of Patent Applications}, author={Suzgun, Mirac and Melas-Kyriazi, Luke and Sarkar, Suproteem K and Kominers, Scott and Shieber, Stuart}, year={2022} } ``` | 939cc3a14533099f8a1f92eab4041470 |

apache-2.0 | ['generated_from_trainer'] | false | opus-mt-en-ro-finetuned-en-to-ro This model is a fine-tuned version of [Helsinki-NLP/opus-mt-en-ro](https://huggingface.co/Helsinki-NLP/opus-mt-en-ro) on the wmt16 dataset. It achieves the following results on the evaluation set: - Loss: 1.2886 - Bleu: 28.1505 - Gen Len: 34.1036 | 6abddd5eee6b07f44c250325053fc0da |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:| | 0.7437 | 1.0 | 38145 | 1.2886 | 28.1505 | 34.1036 | | b7e369045683fbff300c130dd926ee38 |

cc-by-4.0 | ['espnet', 'audio', 'text-to-speech'] | false | `kan-bayashi/jsut_tts_train_conformer_fastspeech2_raw_phn_jaconv_pyopenjtalk_train.loss.ave` ♻️ Imported from https://zenodo.org/record/4032246/ This model was trained by kan-bayashi using jsut/tts1 recipe in [espnet](https://github.com/espnet/espnet/). | dac34731476614fbde59bec32e3c6cb1 |

mit | ['generated_from_trainer'] | false | bert_medium_pretrain_squad This model is a fine-tuned version of [anas-awadalla/bert-medium-pretrained-on-squad](https://huggingface.co/anas-awadalla/bert-medium-pretrained-on-squad) on the squad dataset. It achieves the following results on the evaluation set: - Loss: 0.0973 - "exact_match": 77.95648060548723 - "f1": 85.85300366384631 | f39bb3bf3fdaa44b3434d6dbe18da2f5 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3.0 | 0f40dc399c6e885913922d06143a35dd |

apache-2.0 | ['generated_from_trainer'] | false | t5-small-finetuned-cnndm3-wikihow2 This model is a fine-tuned version of [Chikashi/t5-small-finetuned-cnndm2-wikihow2](https://huggingface.co/Chikashi/t5-small-finetuned-cnndm2-wikihow2) on the cnn_dailymail dataset. It achieves the following results on the evaluation set: - Loss: 1.6265 - Rouge1: 24.6704 - Rouge2: 11.9038 - Rougel: 20.3622 - Rougelsum: 23.2612 - Gen Len: 18.9997 | e4bd045fb0f374ce19707b77ae81dfa1 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 - mixed_precision_training: Native AMP | 6e13747c198e147b3cc6998106cb93e9 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | 1.8071 | 1.0 | 71779 | 1.6265 | 24.6704 | 11.9038 | 20.3622 | 23.2612 | 18.9997 | | 0b5dbf3d283855d3aaebf467d148f0a6 |

apache-2.0 | ['roberta', 'classification', 'dialog state tracking', 'conversational system', 'task-oriented dialog'] | false | SetSUMBT-dst-sgd This model is a [SetSUMBT](https://github.com/ConvLab/ConvLab-3/tree/master/convlab/dst/setsumbt) model fine-tuned from [roberta-base](https://huggingface.co/roberta-base) on [Schema-Guided Dialog](https://huggingface.co/datasets/ConvLab/sgd). Refer to [ConvLab-3](https://github.com/ConvLab/ConvLab-3) for model description and usage. | 22b878e921b700cbbf08269caf394fbf |

apache-2.0 | ['roberta', 'classification', 'dialog state tracking', 'conversational system', 'task-oriented dialog'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.00001 - train_batch_size: 3 - eval_batch_size: 16 - seed: 0 - gradient_accumulation_steps: 1 - optimizer: AdamW - lr_scheduler_type: linear - num_epochs: 50.0 | 2d4d62d505a96c6b7c3495af6de62656 |

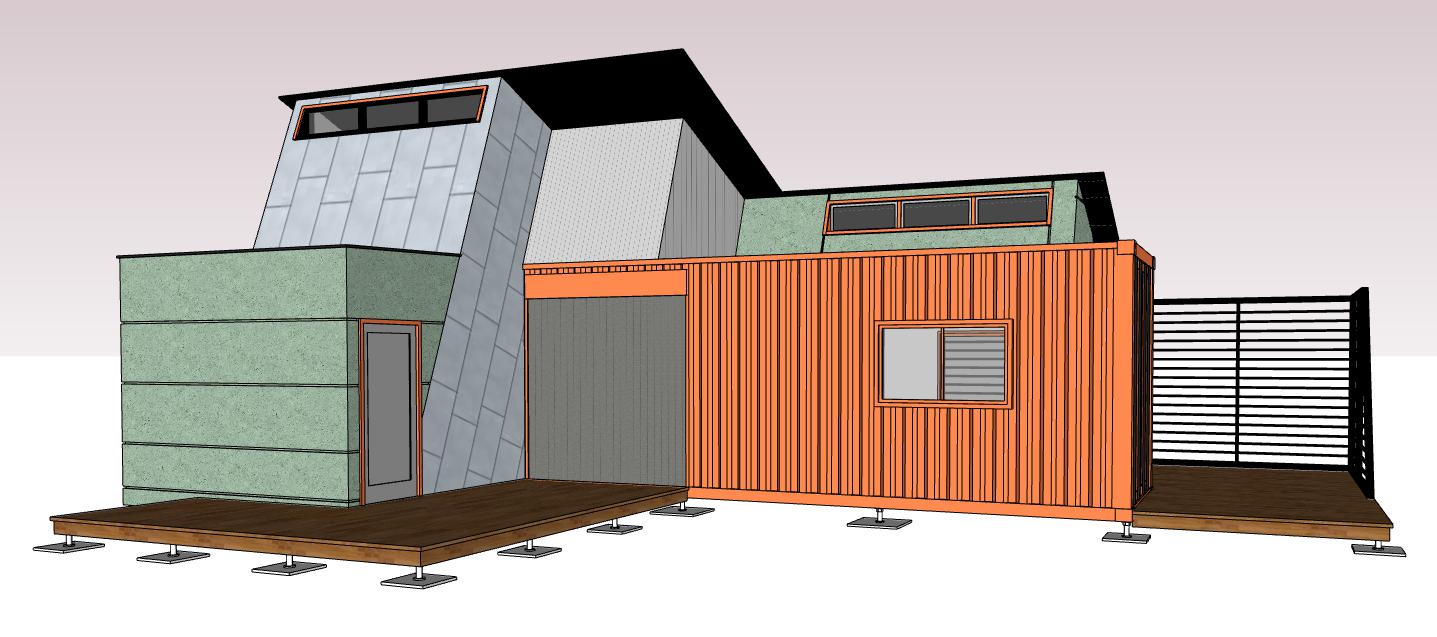

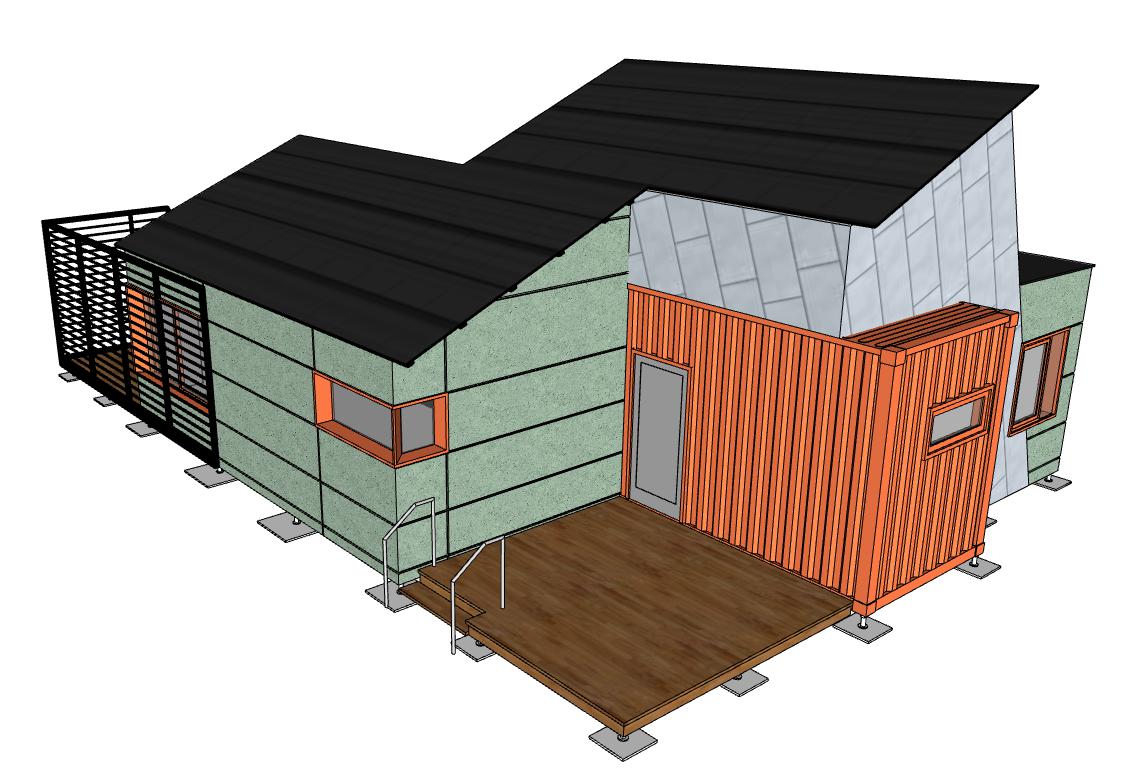

mit | [] | false | model by rrustom This is the Stable Diffusion model fine-tuned on the A modern house concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt`: **a photo of sks modern home** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: | 24f5324f71fe8628ff75e9c35e2d5910 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_9_0', 'generated_from_trainer'] | false | This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the MOZILLA-FOUNDATION/COMMON_VOICE_9_0 - BN dataset. It achieves the following results on the evaluation set: - Loss: 0.2297 - Wer: 0.2850 - Cer: 0.0660 | df2e61fb07dc9447ca838fb48e0c7901 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_9_0', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 7.5e-05 - train_batch_size: 64 - eval_batch_size: 64 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 128 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - training_steps: 8692 - mixed_precision_training: Native AMP | 63f554fab009ac717679024df4711856 |
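The derived values in the list above follow from simple arithmetic. The sketch below assumes a single training device (the card does not state the device count) and assumes the warmup length is the warmup ratio times the total step count, rounded up; both function names are hypothetical.

```python
import math


def total_train_batch_size(per_device_batch, grad_accum_steps, num_devices=1):
    # num_devices=1 is an assumption; the card does not say how many were used.
    return per_device_batch * grad_accum_steps * num_devices


def warmup_steps(training_steps, warmup_ratio):
    # Assumption: warmup length is ratio * total steps, rounded up.
    return math.ceil(training_steps * warmup_ratio)


print(total_train_batch_size(64, 2))  # matches total_train_batch_size: 128
print(warmup_steps(8692, 0.1))
```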

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_9_0', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | Cer | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:| | 3.675 | 2.3 | 400 | 3.5052 | 1.0 | 1.0 | | 3.0446 | 4.6 | 800 | 2.2759 | 1.0052 | 0.5215 | | 1.7276 | 6.9 | 1200 | 0.7083 | 0.6697 | 0.1969 | | 1.5171 | 9.2 | 1600 | 0.5328 | 0.5733 | 0.1568 | | 1.4176 | 11.49 | 2000 | 0.4571 | 0.5161 | 0.1381 | | 1.343 | 13.79 | 2400 | 0.3910 | 0.4522 | 0.1160 | | 1.2743 | 16.09 | 2800 | 0.3534 | 0.4137 | 0.1044 | | 1.2396 | 18.39 | 3200 | 0.3278 | 0.3877 | 0.0959 | | 1.2035 | 20.69 | 3600 | 0.3109 | 0.3741 | 0.0917 | | 1.1745 | 22.99 | 4000 | 0.2972 | 0.3618 | 0.0882 | | 1.1541 | 25.29 | 4400 | 0.2836 | 0.3427 | 0.0832 | | 1.1372 | 27.59 | 4800 | 0.2759 | 0.3357 | 0.0812 | | 1.1048 | 29.89 | 5200 | 0.2669 | 0.3284 | 0.0783 | | 1.0966 | 32.18 | 5600 | 0.2678 | 0.3249 | 0.0775 | | 1.0747 | 34.48 | 6000 | 0.2547 | 0.3134 | 0.0748 | | 1.0593 | 36.78 | 6400 | 0.2491 | 0.3077 | 0.0728 | | 1.0417 | 39.08 | 6800 | 0.2450 | 0.3012 | 0.0711 | | 1.024 | 41.38 | 7200 | 0.2402 | 0.2956 | 0.0694 | | 1.0106 | 43.68 | 7600 | 0.2351 | 0.2915 | 0.0681 | | 1.0014 | 45.98 | 8000 | 0.2328 | 0.2896 | 0.0673 | | 0.9999 | 48.28 | 8400 | 0.2318 | 0.2866 | 0.0667 | | 1fbc8f62dd83d4b6f8916ce379868e87 |

creativeml-openrail-m | ['text-to-image'] | false | 🧨 Diffusers This model can be used just like any other Stable Diffusion model. For more information, please have a look at the [Stable Diffusion documentation](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion). You can also export the model to [ONNX](https://huggingface.co/docs/diffusers/optimization/onnx), [MPS](https://huggingface.co/docs/diffusers/optimization/mps) and/or [FLAX/JAX](). ```python from diffusers import StableDiffusionPipeline import torch model_id = "Fictiverse/Stable_Diffusion_PaperCut_Model" pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16) pipe = pipe.to("cuda") prompt = "PaperCut R2-D2" image = pipe(prompt).images[0] image.save("./R2-D2.png") ``` | 2948544ed0847eff62bc1c08bd9b310f |

mit | [] | false |

This is a text generation algorithm that is fine-tuned on subtitles from Binging with Babish (https://www.youtube.com/c/bingingwithbabish)

Just type in your starting sentence, click "compute" and see what the model has to say! The first time you run the model, it may take a minute to load (after that it takes ~6 seconds to run)

This was created with the help of aitextgen (https://github.com/minimaxir/aitextgen), using a pretrained 124M GPT-2 model

Disclaimer:

The use of this model is for parody only, and is not affiliated with Binging with Babish or the Babish Culinary Universe. | 48e6224aa886247e295ad95a4b0ed485 |

apache-2.0 | ['generated_from_keras_callback'] | false | adi1494/distilbert-base-uncased-finetuned-squad This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 1.5671 - Validation Loss: 1.2217 - Epoch: 0 | a8684f516dedd0480ecdb9a631ce6704 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'Adam', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 5532, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False} - training_precision: float32 | 0b705a3da98f4b28e982f61e48279554 |
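The `PolynomialDecay` configuration above, with `power` 1.0 and `cycle` False, amounts to a linear ramp from the initial learning rate down to the end learning rate over `decay_steps`. The following is a plain-Python sketch of that schedule using the standard polynomial-decay formula; it is not the Keras implementation itself, and the function name is hypothetical.

```python
def polynomial_decay(step, initial_lr=2e-05, decay_steps=5532, end_lr=0.0, power=1.0):
    """Learning rate at `step` for a non-cycling polynomial decay schedule."""
    # Without cycling, the rate stays at end_lr once decay_steps is reached.
    step = min(step, decay_steps)
    remaining = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * remaining ** power + end_lr


for step in (0, 2766, 5532):
    print(step, polynomial_decay(step))
```

With these values the rate halves at step 2766 and reaches zero at step 5532.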

apache-2.0 | ['generated_from_trainer'] | false | finetuning12 This model is a fine-tuned version of [facebook/wav2vec2-base-960h](https://huggingface.co/facebook/wav2vec2-base-960h) on the None dataset. It achieves the following results on the evaluation set: - Loss: nan - Wer: 1.0 | 39e8c0683fd264fdcfed0b04064b1ef0 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.00024 - train_batch_size: 1 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 2 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 800 - num_epochs: 2 | 98cca528789dd6ae1de43b66de28f658 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:---:| | 0.0 | 0.31 | 500 | nan | 1.0 | | 0.0 | 0.61 | 1000 | nan | 1.0 | | 0.0 | 0.92 | 1500 | nan | 1.0 | | 0.0 | 1.23 | 2000 | nan | 1.0 | | 0.0 | 1.54 | 2500 | nan | 1.0 | | 0.0 | 1.84 | 3000 | nan | 1.0 | | 30b92a5890a04889b97423e0876b8c6a |

mit | ['generated_from_trainer'] | false | finetuned_gpt2-medium_sst2_negation0.5 This model is a fine-tuned version of [gpt2-medium](https://huggingface.co/gpt2-medium) on the sst2 dataset. It achieves the following results on the evaluation set: - Loss: 3.4090 | de36c0cfb4909b664873da1d5d925a10 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.7891 | 1.0 | 1092 | 3.2810 | | 2.5081 | 2.0 | 2184 | 3.3508 | | 2.3572 | 3.0 | 3276 | 3.4090 | | a86cc63a9707ba5dc3e4e37e32b7b559 |

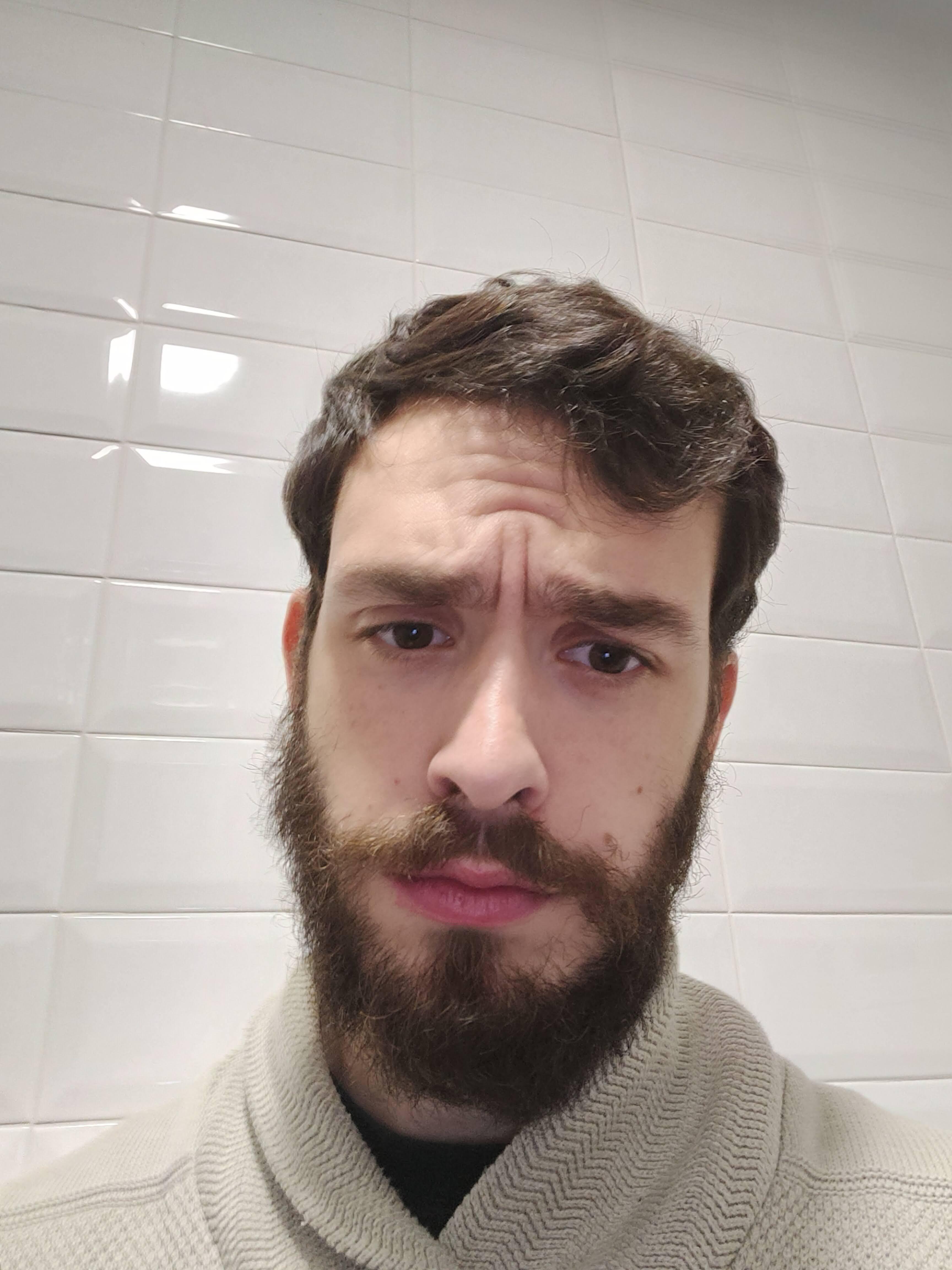

mit | [] | false | model by Ganosh This is the Stable Diffusion model fine-tuned on the Alberto_Pablo concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt`: **a photo of sks Alberto** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: | 156249ef55ee8b9defcbb45406e51ceb |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-base-timit-demo-colab9 This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 3.1922 - Wer: 1.0 | 7bf2083f69f62f15e60395b0e22635a1 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:---:| | 5.0683 | 1.42 | 500 | 3.2471 | 1.0 | | 3.1349 | 2.85 | 1000 | 3.2219 | 1.0 | | 3.1317 | 4.27 | 1500 | 3.2090 | 1.0 | | 3.1262 | 5.7 | 2000 | 3.2152 | 1.0 | | 3.1307 | 7.12 | 2500 | 3.2147 | 1.0 | | 3.1264 | 8.55 | 3000 | 3.2072 | 1.0 | | 3.1279 | 9.97 | 3500 | 3.2158 | 1.0 | | 3.1287 | 11.4 | 4000 | 3.2190 | 1.0 | | 3.1256 | 12.82 | 4500 | 3.2069 | 1.0 | | 3.1254 | 14.25 | 5000 | 3.2134 | 1.0 | | 3.1259 | 15.67 | 5500 | 3.2231 | 1.0 | | 3.1269 | 17.09 | 6000 | 3.2005 | 1.0 | | 3.1279 | 18.52 | 6500 | 3.1988 | 1.0 | | 3.1246 | 19.94 | 7000 | 3.1929 | 1.0 | | 3.128 | 21.37 | 7500 | 3.1864 | 1.0 | | 3.1245 | 22.79 | 8000 | 3.1868 | 1.0 | | 3.1266 | 24.22 | 8500 | 3.1852 | 1.0 | | 3.1239 | 25.64 | 9000 | 3.1855 | 1.0 | | 3.125 | 27.07 | 9500 | 3.1917 | 1.0 | | 3.1233 | 28.49 | 10000 | 3.1929 | 1.0 | | 3.1229 | 29.91 | 10500 | 3.1922 | 1.0 | | 61d5e60637d1847a9dcd80465ce1ef68 |

apache-2.0 | ['generated_from_keras_callback'] | false | distilbertbaseuncasedz This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.5368 - Train End Logits Accuracy: 0.8401 - Train Start Logits Accuracy: 0.8078 - Validation Loss: 1.2427 - Validation End Logits Accuracy: 0.7050 - Validation Start Logits Accuracy: 0.6725 - Epoch: 3 | 1cc72ce35b5f59bddf7ec6076677e7fb |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 1.3338 | 0.6448 | 0.6045 | 1.1322 | 0.6906 | 0.6563 | 0 | | 0.9044 | 0.7466 | 0.7090 | 1.0996 | 0.7032 | 0.6720 | 1 | | 0.6756 | 0.8042 | 0.7680 | 1.1416 | 0.7047 | 0.6718 | 2 | | 0.5368 | 0.8401 | 0.8078 | 1.2427 | 0.7050 | 0.6725 | 3 | | e807ce9c99edd5ec791bbdddf389dd42 |

apache-2.0 | ['generated_from_keras_callback'] | false | Rocketknight1/mt5-small-finetuned-amazon-en-es This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 10.2613 - Validation Loss: 4.5342 - Epoch: 0 | 051fc5a0118db30ffb8557fc93f0a920 |

apache-2.0 | [] | false | bert-base-sw-cased We are sharing smaller versions of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) that handle a custom number of languages. Unlike [distilbert-base-multilingual-cased](https://huggingface.co/distilbert-base-multilingual-cased), our versions give exactly the same representations produced by the original model which preserves the original accuracy. For more information please visit our paper: [Load What You Need: Smaller Versions of Multilingual BERT](https://www.aclweb.org/anthology/2020.sustainlp-1.16.pdf). | 5f26be70187dbf1141f080ba812e2770 |

apache-2.0 | [] | false | How to use ```python from transformers import AutoTokenizer, AutoModel tokenizer = AutoTokenizer.from_pretrained("Geotrend/bert-base-sw-cased") model = AutoModel.from_pretrained("Geotrend/bert-base-sw-cased") ``` To generate other smaller versions of multilingual transformers please visit [our Github repo](https://github.com/Geotrend-research/smaller-transformers). | 8673ef0eea1e1ed43f21e2a03d792411 |

apache-2.0 | ['generated_from_trainer'] | false | Article_50v2_NER_Model_3Epochs_UNAUGMENTED This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the article50v2_wikigold_split dataset. It achieves the following results on the evaluation set: - Loss: 0.7694 - Precision: 0.0 - Recall: 0.0 - F1: 0.0 - Accuracy: 0.7776 | 02ea5e011cffd2685020ddd770a1801b |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 6 | 0.9910 | 0.1161 | 0.0044 | 0.0085 | 0.7766 | | No log | 2.0 | 12 | 0.8031 | 0.0 | 0.0 | 0.0 | 0.7776 | | No log | 3.0 | 18 | 0.7694 | 0.0 | 0.0 | 0.0 | 0.7776 | | ad9efedce58c72a4f056fed5d3b7bd60 |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'wildcard'] | false | Description <figure> <img src=https://datasets-server.huggingface.co/assets/adamwatters/roblox-guy/--/adamwatters--roblox-guy/train/7/image/image.jpg width=200px height=200px> <figcaption align = "left"><b>Screenshot from Roblox used for training</b></figcaption> </figure> This is a Stable Diffusion model fine-tuned on images of my specific customized Roblox avatar. Idea is: maybe it would be fun for Roblox players to make images of their avatars in different settings. It can be used by modifying the instance_prompt: a photo of rblx character This model was created as part of the DreamBooth Hackathon 🔥. Visit the [organisation page](https://huggingface.co/dreambooth-hackathon) for instructions on how to take part! | 6394233198b8133738c44d311eb6fc48 |

mit | [] | false | Load the model (supports full path, relative path, and remote paths) model = RWKV( "https://huggingface.co/Hazzzardous/RWKV-8Bit/resolve/main/RWKV-4-Pile-7B-Instruct.pqth" ) model.loadContext(newctx=f"Q: who is Jim Butcher?\n\nA:") output = model.forward(number=100)["output"] print(output) | cd2e72633173461721c1e516bd518f69 |

apache-2.0 | ['automatic-speech-recognition', 'fa'] | false | exp_w2v2t_fa_unispeech-ml_s408 Fine-tuned [microsoft/unispeech-large-multi-lingual-1500h-cv](https://huggingface.co/microsoft/unispeech-large-multi-lingual-1500h-cv) for speech recognition using the train split of [Common Voice 7.0 (fa)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | bdb4a110b7cd36bb4144e5107ee167c9 |
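The 16 kHz requirement above can be checked before transcription. Here is a minimal sketch using only Python's standard `wave` module, so it applies to uncompressed WAV files only; the function names are hypothetical and the check is not part of HuggingSound.

```python
import wave

EXPECTED_RATE_HZ = 16_000  # the sampling rate the model card asks for


def wav_sample_rate(path):
    """Return the sampling rate stored in a WAV file header."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate()


def check_sample_rate(path, expected=EXPECTED_RATE_HZ):
    """Raise ValueError if the file is not sampled at the expected rate."""
    rate = wav_sample_rate(path)
    if rate != expected:
        raise ValueError(
            f"{path}: sample rate is {rate} Hz, expected {expected} Hz; resample first"
        )
    return rate
```

Calling `check_sample_rate("clip.wav")` on a hypothetical file returns 16000 when the file already matches and raises otherwise, which signals that the audio needs resampling with a tool of your choice.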

apache-2.0 | ['generated_from_trainer'] | false | bart-mlm-pubmed-medterm This model is a fine-tuned version of [facebook/bart-base](https://huggingface.co/facebook/bart-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.0000 - Rouge2 Precision: 0.985 - Rouge2 Recall: 0.7208 - Rouge2 Fmeasure: 0.8088 | 1ba9bc536593ee70463eae91f51cc17e |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 - mixed_precision_training: Native AMP | a4a105925fd05bbf027f00d8e85bb933 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge2 Precision | Rouge2 Recall | Rouge2 Fmeasure | |:-------------:|:-----:|:------:|:---------------:|:----------------:|:-------------:|:---------------:| | 0.0018 | 1.0 | 13833 | 0.0003 | 0.985 | 0.7208 | 0.8088 | | 0.0014 | 2.0 | 27666 | 0.0006 | 0.9848 | 0.7207 | 0.8086 | | 0.0009 | 3.0 | 41499 | 0.0002 | 0.9848 | 0.7207 | 0.8086 | | 0.0007 | 4.0 | 55332 | 0.0002 | 0.985 | 0.7208 | 0.8088 | | 0.0006 | 5.0 | 69165 | 0.0001 | 0.9848 | 0.7207 | 0.8087 | | 0.0001 | 6.0 | 82998 | 0.0002 | 0.9846 | 0.7206 | 0.8086 | | 0.0009 | 7.0 | 96831 | 0.0001 | 0.9848 | 0.7208 | 0.8087 | | 0.0 | 8.0 | 110664 | 0.0000 | 0.9848 | 0.7207 | 0.8087 | | 0.0001 | 9.0 | 124497 | 0.0000 | 0.985 | 0.7208 | 0.8088 | | 0.0 | 10.0 | 138330 | 0.0000 | 0.985 | 0.7208 | 0.8088 | | 2f9fa391d5f7247835c1c4dc6ebf2537 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert_sa_GLUE_Experiment_qnli This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE QNLI dataset. It achieves the following results on the evaluation set: - Loss: 0.6530 - Accuracy: 0.6077 | f7d007712938e0a6188420aece167c55 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.6767 | 1.0 | 410 | 0.6560 | 0.6041 | | 0.644 | 2.0 | 820 | 0.6530 | 0.6077 | | 0.6141 | 3.0 | 1230 | 0.6655 | 0.6074 | | 0.5762 | 4.0 | 1640 | 0.7018 | 0.5940 | | 0.5144 | 5.0 | 2050 | 0.7033 | 0.5934 | | 0.4324 | 6.0 | 2460 | 0.8714 | 0.5817 | | 0.3483 | 7.0 | 2870 | 1.0825 | 0.5847 | | 56c8688f8d958d25c0a817cbff031098 |

mit | ['go-emotion', 'text-classification', 'pytorch'] | false | Model Description 1. Based on the uncased BERT pretrained model with a linear output layer. 2. Added several commonly-used emoji and tokens to the special token list of the tokenizer. 3. Did label smoothing while training. 4. Used weighted loss and focal loss to help the cases which trained badly. | 6953bd9e51432c0dd20f4eabef7c0376 |

apache-2.0 | ['automatic-speech-recognition', 'openslr_SLR66', 'generated_from_trainer', 'robust-speech-event', 'hf-asr-leaderboard'] | false | This model is a fine-tuned version of [facebook/wav2vec2-xls-r-1b](https://huggingface.co/facebook/wav2vec2-xls-r-1b) on the OPENSLR_SLR66 - NA dataset. It achieves the following results on the evaluation set: - Loss: 0.3119 - Wer: 0.2613 | b24123675b94e80719874d5cdd594499 |

apache-2.0 | ['automatic-speech-recognition', 'openslr_SLR66', 'generated_from_trainer', 'robust-speech-event', 'hf-asr-leaderboard'] | false | Evaluation metrics | Metric | Split | Decode with LM | Value | |:------:|:------:|:--------------:|:---------:| | WER | Train | No | 5.36 | | CER | Train | No | 1.11 | | WER | Test | No | 26.14 | | CER | Test | No | 4.93 | | WER | Train | Yes | 5.04 | | CER | Train | Yes | 1.07 | | WER | Test | Yes | 20.69 | | CER | Test | Yes | 3.986 | | eed268284f43453cabcb293aadbe3c72 |
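For readers unfamiliar with the two metrics in the table above, here is a minimal sketch of how WER and CER are commonly computed: the Levenshtein distance between reference and hypothesis, divided by the reference length, at word level for WER and character level for CER. The function names are illustrative and are not taken from the model's evaluation script. The table values appear to be percentages, whereas this sketch returns fractions.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences (words or characters)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def wer(reference, hypothesis):
    """Word error rate as a fraction of the reference word count."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference, hypothesis):
    """Character error rate as a fraction of the reference length."""
    if not reference:
        raise ValueError("reference must not be empty")
    return edit_distance(list(reference), list(hypothesis)) / len(reference)
```

Multiplying the returned fraction by 100 gives a percentage on the same scale as the table.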

apache-2.0 | ['automatic-speech-recognition', 'openslr_SLR66', 'generated_from_trainer', 'robust-speech-event', 'hf-asr-leaderboard'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 16 - eval_batch_size: 4 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 2000 - num_epochs: 150.0 - mixed_precision_training: Native AMP | bed07dd7e2467d2e849b725076a07087 |
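As a rough illustration of how the hyperparameters above interact, the sketch below computes the effective batch size and a linear learning-rate schedule with warmup. It follows the usual definition of a linear schedule (ramp from 0 to the base rate over the warmup steps, then decay linearly to 0). `total_steps=15_600` is an assumption estimated from the training log (step 15500 at epoch 149.04), and a single device is assumed; neither is stated in the card.

```python
def effective_batch_size(per_device_batch, grad_accum_steps, num_devices=1):
    """Samples contributing to each optimizer step (num_devices is assumed)."""
    return per_device_batch * grad_accum_steps * num_devices


def linear_schedule_lr(step, base_lr=2e-5, warmup_steps=2000, total_steps=15_600):
    """Learning rate at a given optimizer step.

    total_steps is a hypothetical estimate, not a value from the card.
    """
    if step < warmup_steps:
        # Linear warmup from 0 up to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Linear decay from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)
```

With the values listed above, `effective_batch_size(16, 2)` reproduces the reported `total_train_batch_size` of 32.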

apache-2.0 | ['automatic-speech-recognition', 'openslr_SLR66', 'generated_from_trainer', 'robust-speech-event', 'hf-asr-leaderboard'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:------:|:-----:|:---------------:|:------:| | 2.9038 | 4.8 | 500 | 3.0125 | 1.0 | | 1.3777 | 9.61 | 1000 | 0.8681 | 0.8753 | | 1.1436 | 14.42 | 1500 | 0.6256 | 0.7961 | | 1.0997 | 19.23 | 2000 | 0.5244 | 0.6875 | | 1.0363 | 24.04 | 2500 | 0.4585 | 0.6276 | | 0.7996 | 28.84 | 3000 | 0.4072 | 0.5295 | | 0.825 | 33.65 | 3500 | 0.3590 | 0.5222 | | 0.8018 | 38.46 | 4000 | 0.3678 | 0.4671 | | 0.7545 | 43.27 | 4500 | 0.3474 | 0.3962 | | 0.7375 | 48.08 | 5000 | 0.3224 | 0.3869 | | 0.6198 | 52.88 | 5500 | 0.3233 | 0.3630 | | 0.6608 | 57.69 | 6000 | 0.3029 | 0.3308 | | 0.645 | 62.5 | 6500 | 0.3195 | 0.3722 | | 0.5249 | 67.31 | 7000 | 0.3004 | 0.3202 | | 0.4875 | 72.11 | 7500 | 0.2826 | 0.2992 | | 0.5171 | 76.92 | 8000 | 0.2962 | 0.2976 | | 0.4974 | 81.73 | 8500 | 0.2990 | 0.2933 | | 0.4387 | 86.54 | 9000 | 0.2834 | 0.2755 | | 0.4511 | 91.34 | 9500 | 0.2886 | 0.2787 | | 0.4112 | 96.15 | 10000 | 0.3093 | 0.2976 | | 0.4064 | 100.96 | 10500 | 0.3123 | 0.2863 | | 0.4047 | 105.77 | 11000 | 0.2968 | 0.2719 | | 0.3519 | 110.57 | 11500 | 0.3106 | 0.2832 | | 0.3719 | 115.38 | 12000 | 0.3030 | 0.2737 | | 0.3669 | 120.19 | 12500 | 0.2964 | 0.2714 | | 0.3386 | 125.0 | 13000 | 0.3101 | 0.2714 | | 0.3137 | 129.8 | 13500 | 0.3063 | 0.2710 | | 0.3008 | 134.61 | 14000 | 0.3082 | 0.2617 | | 0.301 | 139.42 | 14500 | 0.3121 | 0.2628 | | 0.3291 | 144.23 | 15000 | 0.3105 | 0.2612 | | 0.3133 | 149.04 | 15500 | 0.3114 | 0.2624 | | c56000fc7af37b2e655f5182973914d8 |

creativeml-openrail-m | [] | false | Be aware: the model is heavily overfitted, so a merge is needed. The best use is probably to merge it with another model for a style change. A better version will be uploaded later. Sample images: <style> img { display: inline-block; } </style> <img src="https://huggingface.co/YoungMasterFromSect/Ton_Inf/resolve/main/1.png" width="300" height="200"> <img src="https://huggingface.co/YoungMasterFromSect/Ton_Inf/resolve/main/2.png" width="300" height="200"> <img src="https://huggingface.co/YoungMasterFromSect/Ton_Inf/resolve/main/3.png" width="300" height="300"> <img src="https://huggingface.co/YoungMasterFromSect/Ton_Inf/resolve/main/4.png" width="300" height="300"> <img src="https://huggingface.co/YoungMasterFromSect/Ton_Inf/resolve/main/5.png" width="500" height="500"> | dffb0b1b71655ec9a2a74b948af02ac3 |

openrail | [] | false | Conceptart embedding version 1.0 This model is made for Stable Diffusion 2.0 `checkpoint 768` <div style="display: flex; flex-direction: row; flex-wrap: wrap"> <img src="https://i.imgur.com/1l48vSo.png"> </div> | 0e4b07b8198110c1e0471b08b08882f1 |

openrail | [] | false | For whom is this model? This model is aimed at people who want to create more artistic work in SD: a cool logo, sticker concepts, or a baseline for a poster. It should also suit concept artists looking for inspiration and indie game developers who need assets. The embedding will also be useful for fans of board games and tabletop RPGs. | 945c1a407dab9661c9e5d526ed80abf6 |

openrail | [] | false | How to use it? Simply drop the conceptart-x file (where `x` is the number of training steps) into the folder named `embeddings`, which should be in the main folder of your SD instance. In your prompt, type something like: "XYZ something in style of `conceptart-x`". This is just an example; the most important part is `conceptart-x`. I recommend trying each file first, as they may all behave a bit differently. | 047beb65edc6f7c71860090d37eb12f4 |

openrail | [] | false | Issues Currently, the model has some issues. It sometimes produces grayish/dull colors, and the elements of an object are not always coherent. Improvements will come in future versions, which you can expect in the coming weeks. | 7c6b106c0e820a7cfca883834f5ecfa5 |

openrail | [] | false | The strengths One of the biggest strengths of this model is its creativity and, with proper prompting, good output quality out of the box. Its strongest point is the quality improvement it gives with img2img. I think the usual workflow will often look as follows (ideas): 1. You prompt-craft and create cool designs. 2. You select the ones you like (sometimes smaller objects/elements/designs from the output). 3. You go to img2img to get more variations, or you select a smaller element that you like and generate a bigger version of it. Then you improve on the new one until you are satisfied. 4. You use another embedding to get a surprisingly amazing output! Or you already have a design you like! 5. At the same time, you might like to keep the design and upscale it to get a great resolution. | 288eaa2d80e93f19423bd68960c006fc |

openrail | [] | false | Examples ***Basketballs*** with Japanese dragons on them: I used one of the outputs, selected the object I liked with the rectangle tool in img2img in the AUTOMATIC1111 UI, and went through two img2img iterations to get the output. Prompt: `((basketball ball covered in colourful tattoo of a dragons and underground punk stickers)), illustration in style of conceptart-200, oil painting style Negative prompt: bad anatomy, unrealistic, abstract, random, amateur photography, blurred, underwater shot, watermark, logo, demon eyes, plastic skin, ((text)) Steps: 30, Sampler: Euler a, CFG scale: 11.5, Seed: 719571754, Size: 832x832, Model hash: 2c02b20a, Denoising strength: 0.91, Mask blur: 4` <div style="display: flex; flex-direction: row; flex-wrap: wrap"> <img src="https://preview.redd.it/lxsqj6oayd3a1.png?width=1664&format=png&auto=webp&s=875129c03f166aa129f3d37b24f1b919d568d7b3"> </div> ***Anime demons*** Just one extra refinement in img2img. Prompt: `colored illustration of dark beast pokemon in style of conceptart-200, [bright colors] Negative prompt: bad anatomy, unrealistic, abstract, cartoon, random, amateur photography, blurred, underwater shot, watermark, logo, demon eyes, plastic skin, ((text)), ((multiple characters)) ((desaturated colors)) Steps: 24, Sampler: DDIM, CFG scale: 11.5, Seed: 1001839889, Size: 704x896, Model hash: 2c02b20a` <div style="display: flex; flex-direction: row; flex-wrap: wrap"> <img src="https://i.imgur.com/KBt2mWB.png"> </div> ***Cave entrance*** A straight comparison between the different embeddings. At the end is the result with vanilla SD 2.0 768. Prompt: `colored illustration of dark cave entrance in style of conceptart-200, ((bright background)), ((bright colors)) Negative prompt: bad anatomy, unrealistic, abstract, cartoon, random, amateur photography, blurred, underwater shot, watermark, logo, demon eyes, plastic skin, ((text)), ((multiple characters)) ((desaturated colors)) Steps: 24, Sampler: DDIM, CFG scale: 8, Seed: 1479340448, Size: 768x768, Model hash: 2c02b20a` <div style="display: flex; flex-direction: row; flex-wrap: wrap"> <img src="https://i.imgur.com/6MtiGUs.jpg"> </div> Enjoy! I hope you find it helpful! | d3e2cfb586e99a875d43f5b2478db880 |

apache-2.0 | ['stanza', 'token-classification'] | false | Stanza model for German (de) Stanza is a collection of accurate and efficient tools for the linguistic analysis of many human languages. Starting from raw text to syntactic analysis and entity recognition, Stanza brings state-of-the-art NLP models to languages of your choosing. Find more about it in [our website](https://stanfordnlp.github.io/stanza) and our [GitHub repository](https://github.com/stanfordnlp/stanza). This card and repo were automatically prepared with `hugging_stanza.py` in the `stanfordnlp/huggingface-models` repo Last updated 2022-10-26 21:18:21.275 | 061925e5cff7458747887e60d04466dc |

mit | ['exbert'] | false | How to use You can load this model with `tf_transformers` and run a forward pass on tokenized text. The example below produces model outputs for a given input rather than sampled text, so no seed is needed: ```python from tf_transformers.models import GPT2Model from transformers import GPT2Tokenizer tokenizer = GPT2Tokenizer.from_pretrained('gpt2') model = GPT2Model.from_pretrained("gpt2") text = "Replace me by any text you'd like." inputs_tf = {} inputs = tokenizer(text, return_tensors='tf') inputs_tf["input_ids"] = inputs["input_ids"] outputs_tf = model(inputs_tf) ``` | c00f011d1434cf3f9b93145c94ea3464 |

apache-2.0 | ['generated_from_trainer'] | false | flan-t5-base-finetuned-openai-summarize_from_feedback This model is a fine-tuned version of [google/flan-t5-base](https://huggingface.co/google/flan-t5-base) on the summarize_from_feedback dataset. It achieves the following results on the evaluation set: - Loss: 1.8833 - Rouge1: 29.3494 - Rouge2: 10.9406 - Rougel: 23.9907 - Rougelsum: 25.461 - Gen Len: 18.9265 | f98d1b3cf531dd7ae1be5899db259722 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 16 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 6 | b9a2851cfd106756dbca6f9d8fb8b7b4 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | 1.7678 | 1.0 | 5804 | 1.8833 | 29.3494 | 10.9406 | 23.9907 | 25.461 | 18.9265 | | 1.5839 | 2.0 | 11608 | 1.8992 | 29.6239 | 11.1795 | 24.2927 | 25.7183 | 18.9358 | | 1.4812 | 3.0 | 17412 | 1.8929 | 29.8899 | 11.2855 | 24.4193 | 25.9219 | 18.9189 | | 1.4198 | 4.0 | 23216 | 1.8939 | 29.8897 | 11.2606 | 24.3262 | 25.8642 | 18.9309 | | 1.3612 | 5.0 | 29020 | 1.9105 | 29.8469 | 11.2112 | 24.2483 | 25.7884 | 18.9396 | | 1.3279 | 6.0 | 34824 | 1.9170 | 30.038 | 11.3426 | 24.4385 | 25.9675 | 18.9328 | | 5ee9f6de96cca0e0227288073c3efba4 |

gpl | ['corenlp'] | false | Core NLP model for fr CoreNLP is your one stop shop for natural language processing in Java! CoreNLP enables users to derive linguistic annotations for text, including token and sentence boundaries, parts of speech, named entities, numeric and time values, dependency and constituency parses, coreference, sentiment, quote attributions, and relations. Find more about it in [our website](https://stanfordnlp.github.io/CoreNLP) and our [GitHub repository](https://github.com/stanfordnlp/CoreNLP). | 06cdc23622018b16cf1316af0c6c3799 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-xls-r-timit-trainer This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.1064 - Wer: 1.0 | d47a52c9e9461c6e9a09c306014e5b64 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 100 - mixed_precision_training: Native AMP | 57913321f4163eb47de07017306b7b15 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 3.5537 | 4.03 | 500 | 0.6078 | 1.0 | | 0.5444 | 8.06 | 1000 | 0.4990 | 0.9994 | | 0.3744 | 12.1 | 1500 | 0.5530 | 1.0 | | 0.2863 | 16.13 | 2000 | 0.6401 | 1.0 | | 0.2357 | 20.16 | 2500 | 0.6485 | 1.0 | | 0.1933 | 24.19 | 3000 | 0.7448 | 0.9994 | | 0.162 | 28.22 | 3500 | 0.7502 | 1.0 | | 0.1325 | 32.26 | 4000 | 0.7801 | 1.0 | | 0.1169 | 36.29 | 4500 | 0.8334 | 1.0 | | 0.1031 | 40.32 | 5000 | 0.8269 | 1.0 | | 0.0913 | 44.35 | 5500 | 0.8432 | 1.0 | | 0.0793 | 48.39 | 6000 | 0.8738 | 1.0 | | 0.0694 | 52.42 | 6500 | 0.8897 | 1.0 | | 0.0613 | 56.45 | 7000 | 0.8966 | 1.0 | | 0.0548 | 60.48 | 7500 | 0.9398 | 1.0 | | 0.0444 | 64.51 | 8000 | 0.9548 | 1.0 | | 0.0386 | 68.55 | 8500 | 0.9647 | 1.0 | | 0.0359 | 72.58 | 9000 | 0.9901 | 1.0 | | 0.0299 | 76.61 | 9500 | 1.0151 | 1.0 | | 0.0259 | 80.64 | 10000 | 1.0526 | 1.0 | | 0.022 | 84.67 | 10500 | 1.0754 | 1.0 | | 0.0189 | 88.71 | 11000 | 1.0688 | 1.0 | | 0.0161 | 92.74 | 11500 | 1.0914 | 1.0 | | 0.0138 | 96.77 | 12000 | 1.1064 | 1.0 | | 32488a5f2a85f8c895baa3408ba22177 |

apache-2.0 | ['generated_from_trainer'] | false | fin_sentiment This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.5739 - Accuracy: 0.7703 | ebead02959871fea3726f1533c4ad98e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 125 | 0.5739 | 0.7703 | | d28d1838fb85b589c44409397cd3a433 |

apache-2.0 | [] | false | BERT Miniatures === This is the set of 24 BERT models referenced in [Well-Read Students Learn Better: On the Importance of Pre-training Compact Models](https://arxiv.org/abs/1908.08962) (English only, uncased, trained with WordPiece masking). We have shown that the standard BERT recipe (including model architecture and training objective) is effective on a wide range of model sizes, beyond BERT-Base and BERT-Large. The smaller BERT models are intended for environments with restricted computational resources. They can be fine-tuned in the same manner as the original BERT models. However, they are most effective in the context of knowledge distillation, where the fine-tuning labels are produced by a larger and more accurate teacher. Our goal is to enable research in institutions with fewer computational resources and encourage the community to seek directions of innovation alternative to increasing model capacity. You can download the 24 BERT miniatures either from the [official BERT Github page](https://github.com/google-research/bert/), or via HuggingFace from the links below: | |H=128|H=256|H=512|H=768| |---|:---:|:---:|:---:|:---:| | **L=2** |[**2/128 (BERT-Tiny)**][2_128]|[2/256][2_256]|[2/512][2_512]|[2/768][2_768]| | **L=4** |[4/128][4_128]|[**4/256 (BERT-Mini)**][4_256]|[**4/512 (BERT-Small)**][4_512]|[4/768][4_768]| | **L=6** |[6/128][6_128]|[6/256][6_256]|[6/512][6_512]|[6/768][6_768]| | **L=8** |[8/128][8_128]|[8/256][8_256]|[**8/512 (BERT-Medium)**][8_512]|[8/768][8_768]| | **L=10** |[10/128][10_128]|[10/256][10_256]|[10/512][10_512]|[10/768][10_768]| | **L=12** |[12/128][12_128]|[12/256][12_256]|[12/512][12_512]|[**12/768 (BERT-Base)**][12_768]| Note that the BERT-Base model in this release is included for completeness only; it was re-trained under the same regime as the original model. 
Here are the corresponding GLUE scores on the test set: |Model|Score|CoLA|SST-2|MRPC|STS-B|QQP|MNLI-m|MNLI-mm|QNLI(v2)|RTE|WNLI|AX| |---|:---:|:---:|:---:|:---:|:---:|:---:|:---:|:---:|:---:|:---:|:---:|:---:| |BERT-Tiny|64.2|0.0|83.2|81.1/71.1|74.3/73.6|62.2/83.4|70.2|70.3|81.5|57.2|62.3|21.0| |BERT-Mini|65.8|0.0|85.9|81.1/71.8|75.4/73.3|66.4/86.2|74.8|74.3|84.1|57.9|62.3|26.1| |BERT-Small|71.2|27.8|89.7|83.4/76.2|78.8/77.0|68.1/87.0|77.6|77.0|86.4|61.8|62.3|28.6| |BERT-Medium|73.5|38.0|89.6|86.6/81.6|80.4/78.4|69.6/87.9|80.0|79.1|87.7|62.2|62.3|30.5| For each task, we selected the best fine-tuning hyperparameters from the lists below, and trained for 4 epochs: - batch sizes: 8, 16, 32, 64, 128 - learning rates: 3e-4, 1e-4, 5e-5, 3e-5 If you use these models, please cite the following paper: ``` @article{turc2019, title={Well-Read Students Learn Better: On the Importance of Pre-training Compact Models}, author={Turc, Iulia and Chang, Ming-Wei and Lee, Kenton and Toutanova, Kristina}, journal={arXiv preprint arXiv:1908.08962v2 }, year={2019} } ``` [2_128]: https://huggingface.co/google/bert_uncased_L-2_H-128_A-2 [2_256]: https://huggingface.co/google/bert_uncased_L-2_H-256_A-4 [2_512]: https://huggingface.co/google/bert_uncased_L-2_H-512_A-8 [2_768]: https://huggingface.co/google/bert_uncased_L-2_H-768_A-12 [4_128]: https://huggingface.co/google/bert_uncased_L-4_H-128_A-2 [4_256]: https://huggingface.co/google/bert_uncased_L-4_H-256_A-4 [4_512]: https://huggingface.co/google/bert_uncased_L-4_H-512_A-8 [4_768]: https://huggingface.co/google/bert_uncased_L-4_H-768_A-12 [6_128]: https://huggingface.co/google/bert_uncased_L-6_H-128_A-2 [6_256]: https://huggingface.co/google/bert_uncased_L-6_H-256_A-4 [6_512]: https://huggingface.co/google/bert_uncased_L-6_H-512_A-8 [6_768]: https://huggingface.co/google/bert_uncased_L-6_H-768_A-12 [8_128]: https://huggingface.co/google/bert_uncased_L-8_H-128_A-2 [8_256]: https://huggingface.co/google/bert_uncased_L-8_H-256_A-4 [8_512]: 
https://huggingface.co/google/bert_uncased_L-8_H-512_A-8 [8_768]: https://huggingface.co/google/bert_uncased_L-8_H-768_A-12 [10_128]: https://huggingface.co/google/bert_uncased_L-10_H-128_A-2 [10_256]: https://huggingface.co/google/bert_uncased_L-10_H-256_A-4 [10_512]: https://huggingface.co/google/bert_uncased_L-10_H-512_A-8 [10_768]: https://huggingface.co/google/bert_uncased_L-10_H-768_A-12 [12_128]: https://huggingface.co/google/bert_uncased_L-12_H-128_A-2 [12_256]: https://huggingface.co/google/bert_uncased_L-12_H-256_A-4 [12_512]: https://huggingface.co/google/bert_uncased_L-12_H-512_A-8 [12_768]: https://huggingface.co/google/bert_uncased_L-12_H-768_A-12 | 0cacce68c6a00d8a21cdd0dfd91daffd |
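The 24 links above follow a regular naming pattern, `google/bert_uncased_L-{layers}_H-{hidden}_A-{heads}`, in which the number of attention heads equals the hidden size divided by 64 in every listed link. A small sketch of a helper that builds the model ID from the two grid coordinates is shown below; the helper name is hypothetical and the head-count rule is inferred from the link list rather than stated in the card.

```python
ALLOWED_LAYERS = (2, 4, 6, 8, 10, 12)
ALLOWED_HIDDEN = (128, 256, 512, 768)


def bert_miniature_id(num_layers, hidden_size):
    """Build the Hugging Face model ID for one cell of the L x H grid."""
    if num_layers not in ALLOWED_LAYERS:
        raise ValueError(f"num_layers must be one of {ALLOWED_LAYERS}")
    if hidden_size not in ALLOWED_HIDDEN:
        raise ValueError(f"hidden_size must be one of {ALLOWED_HIDDEN}")
    # Inferred from the links: A = H / 64 (128 -> 2, 256 -> 4, 512 -> 8, 768 -> 12).
    num_heads = hidden_size // 64
    return f"google/bert_uncased_L-{num_layers}_H-{hidden_size}_A-{num_heads}"
```

The resulting string can be used wherever a model ID is expected, for example `bert_miniature_id(4, 256)` for BERT-Mini.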

apache-2.0 | ['summarization', 'translation'] | false | Model Card for T5 Small  | aa3cb68abffc934a83527fe43fd96085 |

apache-2.0 | ['summarization', 'translation'] | false | Model Description The developers of the Text-To-Text Transfer Transformer (T5) [write](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html): > With T5, we propose reframing all NLP tasks into a unified text-to-text-format where the input and output are always text strings, in contrast to BERT-style models that can only output either a class label or a span of the input. Our text-to-text framework allows us to use the same model, loss function, and hyperparameters on any NLP task. T5-Small is the checkpoint with 60 million parameters. - **Developed by:** Colin Raffel, Noam Shazeer, Adam Roberts, Katherine Lee, Sharan Narang, Michael Matena, Yanqi Zhou, Wei Li, Peter J. Liu. See [associated paper](https://jmlr.org/papers/volume21/20-074/20-074.pdf) and [GitHub repo](https://github.com/google-research/text-to-text-transfer-transformer | 85f58c9312394b2863f4592f114a5ce8 |

apache-2.0 | ['summarization', 'translation'] | false | released-model-checkpoints) - **Model type:** Language model - **Language(s) (NLP):** English, French, Romanian, German - **License:** Apache 2.0 - **Related Models:** [All T5 Checkpoints](https://huggingface.co/models?search=t5) - **Resources for more information:** - [Research paper](https://jmlr.org/papers/volume21/20-074/20-074.pdf) - [Google's T5 Blog Post](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) - [GitHub Repo](https://github.com/google-research/text-to-text-transfer-transformer) - [Hugging Face T5 Docs](https://huggingface.co/docs/transformers/model_doc/t5) | 692691918d54ff762c656fa92c33050c |

apache-2.0 | ['summarization', 'translation'] | false | Direct Use and Downstream Use The developers write in a [blog post](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) that the model: > Our text-to-text framework allows us to use the same model, loss function, and hyperparameters on any NLP task, including machine translation, document summarization, question answering, and classification tasks (e.g., sentiment analysis). We can even apply T5 to regression tasks by training it to predict the string representation of a number instead of the number itself. See the [blog post](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) and [research paper](https://jmlr.org/papers/volume21/20-074/20-074.pdf) for further details. | 6091f62ded919da808bb8aaffacb0220 |

apache-2.0 | ['summarization', 'translation'] | false | Training Data The model is pre-trained on the [Colossal Clean Crawled Corpus (C4)](https://www.tensorflow.org/datasets/catalog/c4), which was developed and released in the context of the same [research paper](https://jmlr.org/papers/volume21/20-074/20-074.pdf) as T5. The model was pre-trained on a **multi-task mixture of unsupervised (1.) and supervised tasks (2.)**. The following datasets were used for (1.) and (2.): 1. **Datasets used for Unsupervised denoising objective**: - [C4](https://huggingface.co/datasets/c4) - [Wiki-DPR](https://huggingface.co/datasets/wiki_dpr) 2. **Datasets used for Supervised text-to-text language modeling objective** - Sentence acceptability judgment - CoLA [Warstadt et al., 2018](https://arxiv.org/abs/1805.12471) - Sentiment analysis - SST-2 [Socher et al., 2013](https://nlp.stanford.edu/~socherr/EMNLP2013_RNTN.pdf) - Paraphrasing/sentence similarity - MRPC [Dolan and Brockett, 2005](https://aclanthology.org/I05-5002) - STS-B [Cer et al., 2017](https://arxiv.org/abs/1708.00055) - QQP [Iyer et al., 2017](https://quoradata.quora.com/First-Quora-Dataset-Release-Question-Pairs) - Natural language inference - MNLI [Williams et al., 2017](https://arxiv.org/abs/1704.05426) - QNLI [Rajpurkar et al., 2016](https://arxiv.org/abs/1606.05250) - RTE [Dagan et al., 2005](https://link.springer.com/chapter/10.1007/11736790_9) - CB [De Marneffe et al., 2019](https://semanticsarchive.net/Archive/Tg3ZGI2M/Marneffe.pdf) - Sentence completion - COPA [Roemmele et al., 2011](https://www.researchgate.net/publication/221251392_Choice_of_Plausible_Alternatives_An_Evaluation_of_Commonsense_Causal_Reasoning) - Word sense disambiguation - WIC [Pilehvar and Camacho-Collados, 2018](https://arxiv.org/abs/1808.09121) - Question answering - MultiRC [Khashabi et al., 2018](https://aclanthology.org/N18-1023) - ReCoRD [Zhang et al., 2018](https://arxiv.org/abs/1810.12885) - BoolQ [Clark et al., 2019](https://arxiv.org/abs/1905.10044) | db749c01821f2f5befd477769d56d947 |

apache-2.0 | ['summarization', 'translation'] | false | Training Procedure In their [abstract](https://jmlr.org/papers/volume21/20-074/20-074.pdf), the model developers write: > In this paper, we explore the landscape of transfer learning techniques for NLP by introducing a unified framework that converts every language problem into a text-to-text format. Our systematic study compares pre-training objectives, architectures, unlabeled datasets, transfer approaches, and other factors on dozens of language understanding tasks. The framework introduced, the T5 framework, involves a training procedure that brings together the approaches studied in the paper. See the [research paper](https://jmlr.org/papers/volume21/20-074/20-074.pdf) for further details. | 8d36c80df2539cc824e7b0bc86c463fc |

apache-2.0 | ['summarization', 'translation'] | false | compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700). - **Hardware Type:** Google Cloud TPU Pods - **Hours used:** More information needed - **Cloud Provider:** GCP - **Compute Region:** More information needed - **Carbon Emitted:** More information needed | a0a888273bb969fcd003f11d1f3d932f |

apache-2.0 | ['summarization', 'translation'] | false | Citation **BibTeX:** ```bibtex @article{2020t5, author = {Colin Raffel and Noam Shazeer and Adam Roberts and Katherine Lee and Sharan Narang and Michael Matena and Yanqi Zhou and Wei Li and Peter J. Liu}, title = {Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer}, journal = {Journal of Machine Learning Research}, year = {2020}, volume = {21}, number = {140}, pages = {1-67}, url = {http://jmlr.org/papers/v21/20-074.html} } ``` **APA:** - Raffel, C., Shazeer, N., Roberts, A., Lee, K., Narang, S., Matena, M., ... & Liu, P. J. (2020). Exploring the limits of transfer learning with a unified text-to-text transformer. J. Mach. Learn. Res., 21(140), 1-67. | 446f669b8ce33b29de81fe11b3e6fb29 |

apache-2.0 | ['summarization', 'translation'] | false | How to Get Started with the Model Use the code below to get started with the model. <details> <summary> Click to expand </summary> ```python from transformers import T5Tokenizer, T5Model tokenizer = T5Tokenizer.from_pretrained("t5-small") model = T5Model.from_pretrained("t5-small") input_ids = tokenizer( "Studies have been shown that owning a dog is good for you", return_tensors="pt" ).input_ids | e41ff3e6a42243fdcda3b93ae04c1593 |

apache-2.0 | ['summarization', 'translation'] | false | forward pass outputs = model(input_ids=input_ids, decoder_input_ids=decoder_input_ids) last_hidden_states = outputs.last_hidden_state ``` See the [Hugging Face T5](https://huggingface.co/docs/transformers/model_doc/t5 | 8ab779f22064089adf9295dc2be0665c |

apache-2.0 | ['summarization', 'translation'] | false | transformers.T5Model) docs and a [Colab Notebook](https://colab.research.google.com/github/google-research/text-to-text-transfer-transformer/blob/main/notebooks/t5-trivia.ipynb) created by the model developers for more examples. </details> | 9633657d2b73a133a302e2c86c6d7126 |

apache-2.0 | ['summarization', 'translation'] | false | [Google's T5](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) Pretraining Dataset: [C4](https://huggingface.co/datasets/c4) Other Community Checkpoints: [here](https://huggingface.co/models?search=t5) Paper: [Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer](https://arxiv.org/pdf/1910.10683.pdf) Authors: *Colin Raffel, Noam Shazeer, Adam Roberts, Katherine Lee, Sharan Narang, Michael Matena, Yanqi Zhou, Wei Li, Peter J. Liu* | 83216a803b5619e63e243162e725e1d5 |

apache-2.0 | ['summarization', 'translation'] | false | Abstract Transfer learning, where a model is first pre-trained on a data-rich task before being fine-tuned on a downstream task, has emerged as a powerful technique in natural language processing (NLP). The effectiveness of transfer learning has given rise to a diversity of approaches, methodology, and practice. In this paper, we explore the landscape of transfer learning techniques for NLP by introducing a unified framework that converts every language problem into a text-to-text format. Our systematic study compares pre-training objectives, architectures, unlabeled datasets, transfer approaches, and other factors on dozens of language understanding tasks. By combining the insights from our exploration with scale and our new “Colossal Clean Crawled Corpus”, we achieve state-of-the-art results on many benchmarks covering summarization, question answering, text classification, and more. To facilitate future work on transfer learning for NLP, we release our dataset, pre-trained models, and code.  | 346b722ef17c64dfb030d07f180eec62 |

apache-2.0 | ['automatic-speech-recognition', 'pl'] | false | exp_w2v2t_pl_unispeech-ml_s463 Fine-tuned [microsoft/unispeech-large-multi-lingual-1500h-cv](https://huggingface.co/microsoft/unispeech-large-multi-lingual-1500h-cv) for speech recognition using the train split of [Common Voice 7.0 (pl)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | c2e7a68bbbb4fece35af093bd0c37513 |

apache-2.0 | ['generated_from_trainer'] | false | all-roberta-large-v1-utility-2-16-5 This model is a fine-tuned version of [sentence-transformers/all-roberta-large-v1](https://huggingface.co/sentence-transformers/all-roberta-large-v1) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.3728 - Accuracy: 0.3956 | 6c294f8eeea26601c4145fbce851425d |

apache-2.0 | ['generated_from_trainer'] | false | roberta-base-biomedical-clinical-es-finetuned-ner-BioNLP13 This model is a fine-tuned version of [PlanTL-GOB-ES/roberta-base-biomedical-clinical-es](https://huggingface.co/PlanTL-GOB-ES/roberta-base-biomedical-clinical-es) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.2217 - Precision: 0.7936 - Recall: 0.8067 - F1: 0.8001 - Accuracy: 0.9451 | 7fdf4dc3dab9c204559d3a56308aaae7 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 4 | eda60dffc0c790b5cafc27570600e9a2 |
