license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 24 - eval_batch_size: 24 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 8 - mixed_precision_training: Native AMP | bd2b0e6d0af58246216951a1fc2e27cd |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:|:---------:|:-------:| | No log | 1.0 | 1 | 8.4025 | 4.8565 | 0.4435 | 3.9735 | 4.415 | 19.0 | | No log | 2.0 | 2 | 8.4025 | 4.8565 | 0.4435 | 3.9735 | 4.415 | 19.0 | | No log | 3.0 | 3 | 7.7250 | 4.8565 | 0.4435 | 3.9735 | 4.415 | 19.0 | | No log | 4.0 | 4 | 7.1617 | 4.8565 | 0.4435 | 3.9735 | 4.415 | 19.0 | | No log | 5.0 | 5 | 6.7113 | 4.8565 | 0.4435 | 3.9735 | 4.415 | 19.0 | | No log | 6.0 | 6 | 6.3646 | 4.8565 | 0.4435 | 3.9735 | 4.415 | 19.0 | | No log | 7.0 | 7 | 6.1056 | 4.8565 | 0.4435 | 3.9735 | 4.415 | 19.0 | | No log | 8.0 | 8 | 6.1056 | 4.8565 | 0.4435 | 3.9735 | 4.415 | 19.0 | | 1fc48484accf419f8d8dc484c1f19583 |

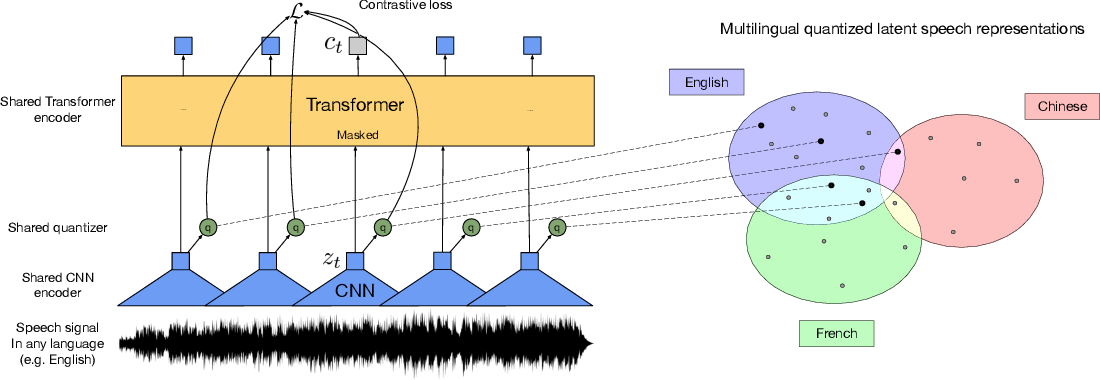

apache-2.0 | ['speech'] | false | Wav2Vec2-XLSR-53 [Facebook's XLSR-Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/) The base model pretrained on 16kHz sampled speech audio. When using the model, make sure that your speech input is also sampled at 16kHz. Note that this model should be fine-tuned on a downstream task, like Automatic Speech Recognition. Check out [this blog](https://huggingface.co/blog/fine-tune-wav2vec2-english) for more information. [Paper](https://arxiv.org/abs/2006.13979) Authors: Alexis Conneau, Alexei Baevski, Ronan Collobert, Abdelrahman Mohamed, Michael Auli **Abstract** This paper presents XLSR which learns cross-lingual speech representations by pretraining a single model from the raw waveform of speech in multiple languages. We build on wav2vec 2.0 which is trained by solving a contrastive task over masked latent speech representations and jointly learns a quantization of the latents shared across languages. The resulting model is fine-tuned on labeled data and experiments show that cross-lingual pretraining significantly outperforms monolingual pretraining. On the CommonVoice benchmark, XLSR shows a relative phoneme error rate reduction of 72% compared to the best known results. On BABEL, our approach improves word error rate by 16% relative compared to a comparable system. Our approach enables a single multilingual speech recognition model which is competitive to strong individual models. Analysis shows that the latent discrete speech representations are shared across languages with increased sharing for related languages. We hope to catalyze research in low-resource speech understanding by releasing XLSR-53, a large model pretrained in 53 languages. The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/wav2vec | f058dca54bf4db171c86507be6a5c4dc |

apache-2.0 | ['speech'] | false | Usage See [this notebook](https://colab.research.google.com/github/patrickvonplaten/notebooks/blob/master/Fine_Tune_XLSR_Wav2Vec2_on_Turkish_ASR_with_%F0%9F%A4%97_Transformers.ipynb) for more information on how to fine-tune the model.  | 131d73e33e9e022b089155e56063c48d |

apache-2.0 | [] | false | [Google's T5](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) for **Closed Book Question Answering**. The model was pre-trained using T5's denoising objective on [C4](https://huggingface.co/datasets/c4), subsequently additionally pre-trained using [REALM](https://arxiv.org/pdf/2002.08909.pdf)'s salient span masking objective on [Wikipedia](https://huggingface.co/datasets/wikipedia), and finally fine-tuned on [Web Questions (WQ)](https://huggingface.co/datasets/web_questions). **Note**: The model was fine-tuned on 100% of the train splits of [Web Questions (WQ)](https://huggingface.co/datasets/web_questions) for 10k steps. Other community Checkpoints: [here](https://huggingface.co/models?search=ssm) Paper: [How Much Knowledge Can You Pack Into the Parameters of a Language Model?](https://arxiv.org/abs/1910.10683.pdf) Authors: *Adam Roberts, Colin Raffel, Noam Shazeer* | da4da9893eadbc690c6fbb63ab4c0d14 |

apache-2.0 | [] | false | Results on Web Questions - Test Set |Id | link | Exact Match | |---|---|---| |**T5-11b**|**https://huggingface.co/google/t5-11b-ssm-wq**|**44.7**| |T5-xxl|https://huggingface.co/google/t5-xxl-ssm-wq|43.5| | 7c41b0f7808b15b25b10660a27e2f12c |
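The Exact Match column above counts a prediction as correct only when it equals one of the reference answers after normalization. The following is a minimal sketch of that idea, not the evaluation script used for the reported numbers: the normalization steps (lowercasing, stripping punctuation and English articles) and the function names `normalize_answer`, `exact_match` and `exact_match_score` are assumptions made for illustration.

```python
import re
import string


def normalize_answer(text):
    """Lowercase, drop punctuation and articles, collapse whitespace (assumed normalization)."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction, references):
    """True if the normalized prediction equals any normalized reference answer."""
    pred = normalize_answer(prediction)
    return any(pred == normalize_answer(ref) for ref in references)


def exact_match_score(predictions, references_per_question):
    """Percentage of questions answered with an exact match."""
    hits = [exact_match(p, refs) for p, refs in zip(predictions, references_per_question)]
    return 100.0 * sum(hits) / len(hits)


# Hypothetical predictions and references, for illustration only.
print(exact_match_score(["Paris", "Rome"], [["paris"], ["Milan"]]))
```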

apache-2.0 | [] | false | Usage The model can be used as follows for **closed book question answering**: ```python from transformers import AutoModelForSeq2SeqLM, AutoTokenizer t5_qa_model = AutoModelForSeq2SeqLM.from_pretrained("google/t5-11b-ssm-wq") t5_tok = AutoTokenizer.from_pretrained("google/t5-11b-ssm-wq") input_ids = t5_tok("When was Franklin D. Roosevelt born?", return_tensors="pt").input_ids gen_output = t5_qa_model.generate(input_ids)[0] print(t5_tok.decode(gen_output, skip_special_tokens=True)) ``` | 85f3e22c4d34d69257d315ea4fa807b7 |

apache-2.0 | [] | false | Abstract It has recently been observed that neural language models trained on unstructured text can implicitly store and retrieve knowledge using natural language queries. In this short paper, we measure the practical utility of this approach by fine-tuning pre-trained models to answer questions without access to any external context or knowledge. We show that this approach scales with model size and performs competitively with open-domain systems that explicitly retrieve answers from an external knowledge source when answering questions. To facilitate reproducibility and future work, we release our code and trained models at https://goo.gle/t5-cbqa.  | bb6881a09c91266bd2666dd67e30ed82 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0005 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - gradient_accumulation_steps: 8 - total_train_batch_size: 256 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: cosine - lr_scheduler_warmup_steps: 1000 - num_epochs: 4000 | cac380aec17176a633fdcda7d786c63c |
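The total train batch size listed above is the per-step batch size multiplied by the number of gradient accumulation steps, and the learning rate follows a cosine schedule after 1000 warmup steps. The sketch below reproduces the arithmetic and the usual shape of such a schedule. It is an illustration only: the single-device setting is inferred from 32 × 8 = 256, and `total_steps=10_000` is a hypothetical value because the card does not state the total number of optimizer steps.

```python
import math

TRAIN_BATCH_SIZE = 32
GRAD_ACCUM_STEPS = 8
NUM_DEVICES = 1  # assumption: consistent with the reported total of 256

effective_batch_size = TRAIN_BATCH_SIZE * GRAD_ACCUM_STEPS * NUM_DEVICES


def cosine_lr(step, base_lr=5e-4, warmup_steps=1000, total_steps=10_000):
    """Linear warmup to base_lr, then cosine decay to zero (total_steps is hypothetical)."""
    if step < warmup_steps:
        return base_lr * step / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


print(effective_batch_size)
for step in (0, 500, 1000, 5500, 10_000):
    print(step, cosine_lr(step))
```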

apache-2.0 | ['generated_from_trainer'] | false | bert-finetuned-ner-v2.2 This model is a fine-tuned version of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) on the caner dataset. It achieves the following results on the evaluation set: - Loss: 0.3595 - Precision: 0.8823 - Recall: 0.8497 - F1: 0.8657 - Accuracy: 0.9427 | 49bea52a41d962ba6d8a5fad3ba943d7 |
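As a quick consistency check on the metrics above, F1 is the harmonic mean of precision and recall. The snippet below recomputes it from the reported precision and recall; the helper name `f1_score` is only for illustration.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values reported on the evaluation set.
f1 = f1_score(0.8823, 0.8497)
print(round(f1, 4))
```

The result agrees with the reported F1 of 0.8657 to four decimal places.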

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.2726 | 1.0 | 3228 | 0.4504 | 0.7390 | 0.7287 | 0.7338 | 0.9107 | | 0.2057 | 2.0 | 6456 | 0.3679 | 0.8633 | 0.8446 | 0.8538 | 0.9385 | | 0.1481 | 3.0 | 9684 | 0.3595 | 0.8823 | 0.8497 | 0.8657 | 0.9427 | | 823829fd7e12d60d71c502db0a71904b |

mit | ['nowcasting', 'forecasting', 'timeseries', 'remote-sensing'] | false | Model description A 3D convolutional model that takes in several data streams. The architecture is roughly: 1. The satellite image time series goes into many 3D convolution layers. 2. The NWP (numerical weather prediction) time series goes into many 3D convolution layers. 3. The final convolutional layer goes to a fully connected layer. This is joined by other data inputs such as - PV yield - time variables Then there are ~4 fully connected layers, which end up forecasting the PV yield / GSP into the future. | 88377fd9867d8433808cf58b625b4cc2 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2270 - Accuracy: 0.924 - F1: 0.9241 | ce6e11ea56e24b2dc68015d27d946299 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8204 | 1.0 | 250 | 0.3160 | 0.9035 | 0.9008 | | 0.253 | 2.0 | 500 | 0.2270 | 0.924 | 0.9241 | | 0b5d75287a663bb0b203856a4c76087e |

mit | ['endpoints-template', 'optimum'] | false | Optimized and Quantized [deepset/roberta-base-squad2](https://huggingface.co/deepset/roberta-base-squad2) with a custom handler.py This repository implements a `custom` handler for `question-answering` for 🤗 Inference Endpoints for accelerated inference using [🤗 Optimum](https://huggingface.co/docs/optimum/index). The code for the customized handler is in the [handler.py](https://huggingface.co/philschmid/roberta-base-squad2-optimized/blob/main/handler.py). Below we also describe how we converted & optimized the model, based on the [Accelerate Transformers with Hugging Face Optimum](https://huggingface.co/blog/optimum-inference) blog post. You can also check out the [notebook](https://huggingface.co/philschmid/roberta-base-squad2-optimized/blob/main/optimize_model.ipynb). | ecfb6f23197b097f943cd31847e7d652 |

mit | ['endpoints-template', 'optimum'] | false | Expected request payload ```json { "inputs": { "question": "As what is Philipp working?", "context": "Hello, my name is Philipp and I live in Nuremberg, Germany. Currently I am working as a Technical Lead at Hugging Face to democratize artificial intelligence through open source and open science. In the past I designed and implemented cloud-native machine learning architectures for fin-tech and insurance companies. I found my passion for cloud concepts and machine learning 5 years ago. Since then I never stopped learning. Currently, I am focusing myself in the area NLP and how to leverage models like BERT, Roberta, T5, ViT, and GPT2 to generate business value." } } ``` Below is an example of how to run a request using Python and `requests`. | d920888b6e2501473b4f615a8e1df7b8 |

mit | ['endpoints-template', 'optimum'] | false | Run Request ```python import json from typing import List import requests as r import base64 ENDPOINT_URL = "" HF_TOKEN = "" def predict(question:str=None,context:str=None): payload = {"inputs": {"question": question, "context": context}} response = r.post( ENDPOINT_URL, headers={"Authorization": f"Bearer {HF_TOKEN}"}, json=payload ) return response.json() prediction = predict( question="As what is Philipp working?", context="Hello, my name is Philipp and I live in Nuremberg, Germany. Currently I am working as a Technical Lead at Hugging Face to democratize artificial intelligence through open source and open science." ) ``` expected output ```python { 'score': 0.4749588668346405, 'start': 88, 'end': 102, 'answer': 'Technical Lead' } ``` | 20e65e025b15470afb62b7be848812de |
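The `start` and `end` fields in the expected output read as character offsets into the `context` string, with `end` exclusive. The short check below slices the context from the request above with those offsets; it uses only the values shown in the expected output and makes no call to the endpoint.

```python
context = (
    "Hello, my name is Philipp and I live in Nuremberg, Germany. "
    "Currently I am working as a Technical Lead at Hugging Face to democratize "
    "artificial intelligence through open source and open science."
)

# Copied from the expected output above.
prediction = {
    "score": 0.4749588668346405,
    "start": 88,
    "end": 102,
    "answer": "Technical Lead",
}

# Slice the context with the returned character offsets.
span = context[prediction["start"]:prediction["end"]]
print(span)
```

Slicing this way is handy when the answer has to be highlighted in the original text rather than just displayed.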

mit | ['endpoints-template', 'optimum'] | false | 5-push-to-repository-and-create-inference-endpoint) Helpful links: * [Accelerate Transformers with Hugging Face Optimum](https://huggingface.co/blog/optimum-inference) * [Optimizing Transformers for GPUs with Optimum](https://www.philschmid.de/optimizing-transformers-with-optimum-gpu) * [Optimum Documentation](https://huggingface.co/docs/optimum/onnxruntime/modeling_ort) * [Create Custom Handler Endpoints](https://link-to-docs) | 7c6c11f24812aaa31e35a165c0d6d2ab |

mit | ['endpoints-template', 'optimum'] | false | 0. Baseline performance ```python from transformers import pipeline qa = pipeline("question-answering",model="deepset/roberta-base-squad2") ``` Okay, let's test the performance (latency) with sequence length of 128. ```python context="Hello, my name is Philipp and I live in Nuremberg, Germany. Currently I am working as a Technical Lead at Hugging Face to democratize artificial intelligence through open source and open science. In the past I designed and implemented cloud-native machine learning architectures for fin-tech and insurance companies. I found my passion for cloud concepts and machine learning 5 years ago. Since then I never stopped learning. Currently, I am focusing myself in the area NLP and how to leverage models like BERT, Roberta, T5, ViT, and GPT2 to generate business value." question="As what is Philipp working?" payload = {"inputs": {"question": question, "context": context}} ``` ```python from time import perf_counter import numpy as np def measure_latency(pipe,payload): latencies = [] | 336a00b009eca212b27b5319e9a09f13 |

mit | ['endpoints-template', 'optimum'] | false | Timed run for _ in range(50): start_time = perf_counter() _ = pipe(question=payload["inputs"]["question"], context=payload["inputs"]["context"]) latency = perf_counter() - start_time latencies.append(latency) | d52ccd8cdeaf2c156d6c59c57266bcbd |

mit | ['endpoints-template', 'optimum'] | false | Compute run statistics time_avg_ms = 1000 * np.mean(latencies) time_std_ms = 1000 * np.std(latencies) return f"Average latency (ms) - {time_avg_ms:.2f} +/- {time_std_ms:.2f}" print(f"Vanilla model {measure_latency(qa,payload)}") | aa177068d99c7a0768b407d42cf26614 |

mit | ['endpoints-template', 'optimum'] | false | 1. Convert model to ONNX ```python from optimum.onnxruntime import ORTModelForQuestionAnswering from transformers import AutoTokenizer from pathlib import Path model_id="deepset/roberta-base-squad2" onnx_path = Path(".") | 08b73e504ec48e45572b202e34bc0f0c |

mit | ['endpoints-template', 'optimum'] | false | 2. Optimize & quantize model with Optimum ```python from optimum.onnxruntime import ORTOptimizer, ORTQuantizer from optimum.onnxruntime.configuration import OptimizationConfig, AutoQuantizationConfig | ce652d6c19c7cd9272d2a04f631de130 |

mit | ['endpoints-template', 'optimum'] | false | create ORTQuantizer and define quantization configuration dynamic_quantizer = ORTQuantizer.from_pretrained(onnx_path, file_name="model_optimized.onnx") dqconfig = AutoQuantizationConfig.avx512_vnni(is_static=False, per_channel=False) | a0677301539223568e71f6408771fbe7 |

mit | ['endpoints-template', 'optimum'] | false | 3. Create Custom Handler for Inference Endpoints ```python %%writefile handler.py from typing import Dict, List, Any from optimum.onnxruntime import ORTModelForQuestionAnswering from transformers import AutoTokenizer, pipeline class EndpointHandler(): def __init__(self, path=""): | 683ed4fa325c851dc6ee321140bac6e7 |

mit | ['endpoints-template', 'optimum'] | false | load the optimized model self.model = ORTModelForQuestionAnswering.from_pretrained(path, file_name="model_optimized_quantized.onnx") self.tokenizer = AutoTokenizer.from_pretrained(path) | e9926da537ab30a75ebc4c84f866f5e4 |

mit | ['endpoints-template', 'optimum'] | false | create pipeline self.pipeline = pipeline("question-answering", model=self.model, tokenizer=self.tokenizer) def __call__(self, data: Any) -> List[List[Dict[str, float]]]: """ Args: data (:obj:): includes the input data and the parameters for the inference. Return: A :obj:`list`:. The list contains the answer and scores of the inference inputs """ inputs = data.get("inputs", data) | b379426da7b4162be3bc7310a991a129 |

mit | ['endpoints-template', 'optimum'] | false | prepare sample payload context="Hello, my name is Philipp and I live in Nuremberg, Germany. Currently I am working as a Technical Lead at Hugging Face to democratize artificial intelligence through open source and open science. In the past I designed and implemented cloud-native machine learning architectures for fin-tech and insurance companies. I found my passion for cloud concepts and machine learning 5 years ago. Since then I never stopped learning. Currently, I am focusing myself in the area NLP and how to leverage models like BERT, Roberta, T5, ViT, and GPT2 to generate business value." question="As what is Philipp working?" payload = {"inputs": {"question": question, "context": context}} | 6b640c47791b869ed09ea56bdcacdd0f |

mit | ['endpoints-template', 'optimum'] | false | Compute run statistics time_avg_ms = 1000 * np.mean(latencies) time_std_ms = 1000 * np.std(latencies) return f"Average latency (ms) - {time_avg_ms:.2f} +/- {time_std_ms:.2f}" print(f"Optimized & Quantized model {measure_latency(my_handler,payload)}") | a628253b562487780bf06d991d3fea96 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 0.01 | 623d039802f129b37d01f619f9a43b6f |

apache-2.0 | ['automatic-speech-recognition', 'pt'] | false | exp_w2v2t_pt_vp-nl_s6 Fine-tuned [facebook/wav2vec2-large-nl-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-nl-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (pt)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | e90c881eb6a0a248dd6cf65e70728d15 |

apache-2.0 | ['generated_from_keras_callback'] | false | hsohn3/cchs-bert-visit-uncased-wordlevel-block512-batch8-ep10 This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 2.9857 - Epoch: 9 | 305c523f111bf8d1f486e06ac4dc92d4 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': 2e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: float32 | 4dbca421c2e8c37d97233470d6451213 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Epoch | |:----------:|:-----:| | 4.4277 | 0 | | 3.1148 | 1 | | 3.0454 | 2 | | 3.0227 | 3 | | 3.0048 | 4 | | 3.0080 | 5 | | 2.9920 | 6 | | 2.9963 | 7 | | 2.9892 | 8 | | 2.9857 | 9 | | f3d03514781bb27cec16355bf212aade |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-base-timit-demo-colab This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.8686 - Wer: 0.6263 | a4fca7f876b09b485a42fe043a7beedd |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 5.0505 | 13.89 | 500 | 3.0760 | 1.0 | | 1.2748 | 27.78 | 1000 | 0.8686 | 0.6263 | | 5cb04eca2d05614292cf411b315ad6f2 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.1365 - F1: 0.8649 | 6864cbe2e019739b2c8be9675e1c92ca |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.2553 | 1.0 | 525 | 0.1575 | 0.8279 | | 0.1284 | 2.0 | 1050 | 0.1386 | 0.8463 | | 0.0813 | 3.0 | 1575 | 0.1365 | 0.8649 | | bbe67b85d398cebcb97a751d9dffa0a9 |

mit | ['fastai'] | false | Some next steps 1. Fill out this model card with more information (see the template below and the [documentation here](https://huggingface.co/docs/hub/model-repos))! 2. Create a demo in Gradio or Streamlit using 🤗 Spaces ([documentation here](https://huggingface.co/docs/hub/spaces)). 3. Join the fastai community on the [Fastai Discord](https://discord.com/invite/YKrxeNn)! Greetings fellow fastlearner 🤝! Don't forget to delete this content from your model card. --- | 1cd004c0135d64b3e0f9f19b2f69e245 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | bart-base-finetuned-summarization-cnn-ver2 This model is a fine-tuned version of [facebook/bart-base](https://huggingface.co/facebook/bart-base) on the cnn_dailymail dataset. It achieves the following results on the evaluation set: - Loss: 2.1715 | dacd2490128f684cfb5b3df973ff7a82 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 1 - eval_batch_size: 1 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 | c022382c68f9b809d45d7d26d06ef0f2 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week', 'hf-asr-leaderboard'] | false | Wav2Vec2-Large-XLSR-53-Irish Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Irish using the [Common Voice](https://huggingface.co/datasets/common_voice) dataset. When using this model, make sure that your speech input is sampled at 16kHz. | fdbdf4552c537a896ae6b76949d6ec2d |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week', 'hf-asr-leaderboard'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "ga-IE", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained("manandey/wav2vec2-large-xlsr-_irish") model = Wav2Vec2ForCTC.from_pretrained("manandey/wav2vec2-large-xlsr-_irish") resampler = torchaudio.transforms.Resample(48_000, 16_000) | 5e92bff839363c02a47c8d9fbd214ad6 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week', 'hf-asr-leaderboard'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): speech_array, sampling_rate = torchaudio.load(batch["path"]) batch["speech"] = resampler(speech_array).squeeze().numpy() return batch test_dataset = test_dataset.map(speech_file_to_array_fn) inputs = processor(test_dataset["speech"][:2], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values, attention_mask=inputs.attention_mask).logits predicted_ids = torch.argmax(logits, dim=-1) print("Prediction:", processor.batch_decode(predicted_ids)) print("Reference:", test_dataset["sentence"][:2]) ``` | c8be1d6faaff673a550929e000584233 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week', 'hf-asr-leaderboard'] | false | Evaluation The model can be evaluated as follows on the Irish test data of Common Voice. ```python import torch import torchaudio from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor import re test_dataset = load_dataset("common_voice", "ga-IE", split="test") wer = load_metric("wer") processor = Wav2Vec2Processor.from_pretrained("manandey/wav2vec2-large-xlsr-_irish") model = Wav2Vec2ForCTC.from_pretrained("manandey/wav2vec2-large-xlsr-_irish") model.to("cuda") chars_to_ignore_regex = '[\,\?\.\!\-\;\:\"\“\%\‘\”\�\’\–\(\)]' resampler = torchaudio.transforms.Resample(48_000, 16_000) | a3ffefaa8ff17f9977a93c3c859a6b3a |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week', 'hf-asr-leaderboard'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): batch["sentence"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower() speech_array, sampling_rate = torchaudio.load(batch["path"]) batch["speech"] = resampler(speech_array).squeeze().numpy() return batch test_dataset = test_dataset.map(speech_file_to_array_fn) | 506c5d255a49b14d02d2f43f83822b36 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week', 'hf-asr-leaderboard'] | false | Run batched inference and decode the predictions def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) print("WER: {:.2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result**: 42.34% | 40a5d782c9cdcf27876c3eedc8296363 |
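For readers unfamiliar with the metric behind the test result, word error rate is the number of word-level substitutions, insertions and deletions needed to turn the prediction into the reference, divided by the number of reference words. The following is a minimal pure-Python sketch of that definition, not the implementation behind `load_metric("wer")`; the function name `word_error_rate` and the example sentences are hypothetical.

```python
def word_error_rate(references, predictions):
    """Corpus-level WER: total word edit distance divided by total reference words."""
    total_edits = 0
    total_words = 0
    for ref, hyp in zip(references, predictions):
        ref_words, hyp_words = ref.split(), hyp.split()
        # Levenshtein distance over words, keeping only the previous row.
        prev = list(range(len(hyp_words) + 1))
        for i, ref_word in enumerate(ref_words, start=1):
            cur = [i]
            for j, hyp_word in enumerate(hyp_words, start=1):
                cost = 0 if ref_word == hyp_word else 1
                cur.append(min(prev[j] + 1,          # deletion
                               cur[j - 1] + 1,       # insertion
                               prev[j - 1] + cost))  # substitution or match
            prev = cur
        total_edits += prev[-1]
        total_words += len(ref_words)
    return total_edits / total_words if total_words else 0.0


# Hypothetical strings: one substitution and one deletion over four reference words.
print(word_error_rate(["a b c d"], ["a x c"]))
```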

apache-2.0 | ['translation'] | false | ita-vie * source group: Italian * target group: Vietnamese * OPUS readme: [ita-vie](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/ita-vie/README.md) * model: transformer-align * source language(s): ita * target language(s): vie * model: transformer-align * pre-processing: normalization + SentencePiece (spm32k,spm32k) * download original weights: [opus-2020-06-17.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/ita-vie/opus-2020-06-17.zip) * test set translations: [opus-2020-06-17.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/ita-vie/opus-2020-06-17.test.txt) * test set scores: [opus-2020-06-17.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/ita-vie/opus-2020-06-17.eval.txt) | 04146b598c51189b387865f2b2f4e20e |

apache-2.0 | ['translation'] | false | System Info: - hf_name: ita-vie - source_languages: ita - target_languages: vie - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/ita-vie/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['it', 'vi'] - src_constituents: {'ita'} - tgt_constituents: {'vie', 'vie_Hani'} - src_multilingual: False - tgt_multilingual: False - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/ita-vie/opus-2020-06-17.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/ita-vie/opus-2020-06-17.test.txt - src_alpha3: ita - tgt_alpha3: vie - short_pair: it-vi - chrF2_score: 0.535 - bleu: 36.2 - brevity_penalty: 1.0 - ref_len: 2144.0 - src_name: Italian - tgt_name: Vietnamese - train_date: 2020-06-17 - src_alpha2: it - tgt_alpha2: vi - prefer_old: False - long_pair: ita-vie - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | 981dbc6da930c685cfb7bd42a817e8bf |
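The `brevity_penalty: 1.0` entry above means BLEU applied no length penalty, which happens when the system output is at least as long as the reference (`ref_len: 2144.0`). The sketch below shows the standard BLEU brevity penalty formula. The hypothesis lengths used in the example are hypothetical, since the card reports only the reference length.

```python
import math


def brevity_penalty(hyp_len, ref_len):
    """Standard BLEU brevity penalty: 1 if the output is long enough, else exp(1 - r/c)."""
    if hyp_len <= 0:
        return 0.0
    if hyp_len >= ref_len:
        return 1.0
    return math.exp(1.0 - ref_len / hyp_len)


REF_LEN = 2144  # ref_len reported above

# Hypothetical system output lengths, for illustration only.
for hyp_len in (2000, 2144, 2200):
    print(hyp_len, round(brevity_penalty(hyp_len, REF_LEN), 4))
```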

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | -converting-models-to-core-ml).<br> Provide the model to an app such as [Mochi Diffusion](https://github.com/godly-devotion/MochiDiffusion) to generate images.<br> `split_einsum` version is compatible with all compute unit options including Neural Engine.<br> `original` version is only compatible with CPU & GPU option. | 3544adbeb5d2ef70622fc9ac3d96ad02 |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Stable Diffusion v1-5 Model Card Stable Diffusion is a latent text-to-image diffusion model capable of generating photo-realistic images given any text input. For more information about how Stable Diffusion functions, please have a look at [🤗's Stable Diffusion blog](https://huggingface.co/blog/stable_diffusion). The **Stable-Diffusion-v1-5** checkpoint was initialized with the weights of the [Stable-Diffusion-v1-2](https://huggingface.co/CompVis/stable-diffusion-v1-2) checkpoint and subsequently fine-tuned for 595k steps at resolution 512x512 on "laion-aesthetics v2 5+", with 10% dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598). You can use this both with the [🧨Diffusers library](https://github.com/huggingface/diffusers) and the [RunwayML GitHub repository](https://github.com/runwayml/stable-diffusion). | e23d8f87ee04ccf53c7ce08a709c7cb1 |
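To make the link between text-conditioning dropout and classifier-free guidance concrete: during training the caption is occasionally replaced by an empty prompt so the model also learns an unconditional prediction, and at sampling time the two predictions are combined. The sketch below illustrates both steps on plain Python lists. It is not the training or sampling code of this checkpoint; the function names and the guidance scale of 7.5 are assumptions for illustration.

```python
import random


def maybe_drop_conditioning(caption, drop_prob=0.1, rng=random):
    """Training side: replace the caption with an empty prompt with probability drop_prob."""
    return "" if rng.random() < drop_prob else caption


def guided_noise(eps_uncond, eps_cond, guidance_scale):
    """Sampling side: move from the unconditional prediction toward the conditional one."""
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Toy noise predictions standing in for the model outputs.
eps_uncond = [0.0, 1.0]
eps_cond = [1.0, 3.0]
print(guided_noise(eps_uncond, eps_cond, guidance_scale=7.5))
```

With a scale of 1 the result is the conditional prediction itself, and larger scales push the sample further toward the prompt.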

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Diffusers ```py from diffusers import StableDiffusionPipeline import torch model_id = "runwayml/stable-diffusion-v1-5" pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16) pipe = pipe.to("cuda") prompt = "a photo of an astronaut riding a horse on mars" image = pipe(prompt).images[0] image.save("astronaut_rides_horse.png") ``` For more detailed instructions, use-cases and examples in JAX follow the instructions [here](https://github.com/huggingface/diffusers | 2c183a7effd368c672fedede142de06f |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Original GitHub Repository 1. Download the weights - [v1-5-pruned-emaonly.ckpt](https://huggingface.co/runwayml/stable-diffusion-v1-5/resolve/main/v1-5-pruned-emaonly.ckpt) - 4.27GB, ema-only weight. uses less VRAM - suitable for inference - [v1-5-pruned.ckpt](https://huggingface.co/runwayml/stable-diffusion-v1-5/resolve/main/v1-5-pruned.ckpt) - 7.7GB, ema+non-ema weights. uses more VRAM - suitable for fine-tuning 2. Follow instructions [here](https://github.com/runwayml/stable-diffusion). | 009764aab044b94eb0c9d45222629d20 |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Model Details - **Developed by:** Robin Rombach, Patrick Esser - **Model type:** Diffusion-based text-to-image generation model - **Language(s):** English - **License:** [The CreativeML OpenRAIL M license](https://huggingface.co/spaces/CompVis/stable-diffusion-license) is an [Open RAIL M license](https://www.licenses.ai/blog/2022/8/18/naming-convention-of-responsible-ai-licenses), adapted from the work that [BigScience](https://bigscience.huggingface.co/) and [the RAIL Initiative](https://www.licenses.ai/) are jointly carrying in the area of responsible AI licensing. See also [the article about the BLOOM Open RAIL license](https://bigscience.huggingface.co/blog/the-bigscience-rail-license) on which our license is based. - **Model Description:** This is a model that can be used to generate and modify images based on text prompts. It is a [Latent Diffusion Model](https://arxiv.org/abs/2112.10752) that uses a fixed, pretrained text encoder ([CLIP ViT-L/14](https://arxiv.org/abs/2103.00020)) as suggested in the [Imagen paper](https://arxiv.org/abs/2205.11487). - **Resources for more information:** [GitHub Repository](https://github.com/CompVis/stable-diffusion), [Paper](https://arxiv.org/abs/2112.10752). - **Cite as:** @InProceedings{Rombach_2022_CVPR, author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn}, title = {High-Resolution Image Synthesis With Latent Diffusion Models}, booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)}, month = {June}, year = {2022}, pages = {10684-10695} } | 07f88f0afbed3a5cf3474269b5f95711 |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Misuse, Malicious Use, and Out-of-Scope Use _Note: This section is taken from the [DALLE-MINI model card](https://huggingface.co/dalle-mini/dalle-mini), but applies in the same way to Stable Diffusion v1_. The model should not be used to intentionally create or disseminate images that create hostile or alienating environments for people. This includes generating images that people would foreseeably find disturbing, distressing, or offensive; or content that propagates historical or current stereotypes. | 364aca98373a927bc5e0d992e1cf0e0e |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Limitations - The model does not achieve perfect photorealism - The model cannot render legible text - The model does not perform well on more difficult tasks which involve compositionality, such as rendering an image corresponding to “A red cube on top of a blue sphere” - Faces and people in general may not be generated properly. - The model was trained mainly with English captions and will not work as well in other languages. - The autoencoding part of the model is lossy - The model was trained on a large-scale dataset [LAION-5B](https://laion.ai/blog/laion-5b/) which contains adult material and is not fit for product use without additional safety mechanisms and considerations. - No additional measures were used to deduplicate the dataset. As a result, we observe some degree of memorization for images that are duplicated in the training data. The training data can be searched at [https://rom1504.github.io/clip-retrieval/](https://rom1504.github.io/clip-retrieval/) to possibly assist in the detection of memorized images. | 9eeeca5fd58c0bc99ea85d1362dc729c |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Bias While the capabilities of image generation models are impressive, they can also reinforce or exacerbate social biases. Stable Diffusion v1 was trained on subsets of [LAION-2B(en)](https://laion.ai/blog/laion-5b/), which consists of images that are primarily limited to English descriptions. Texts and images from communities and cultures that use other languages are likely to be insufficiently accounted for. This affects the overall output of the model, as white and western cultures are often set as the default. Further, the ability of the model to generate content with non-English prompts is significantly worse than with English-language prompts. | 42cdeddd211502a9c6ed83a37726f7f2 |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Safety Module The intended use of this model is with the [Safety Checker](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/safety_checker.py) in Diffusers. This checker works by checking model outputs against known hard-coded NSFW concepts. The concepts are intentionally hidden to reduce the likelihood of reverse-engineering this filter. Specifically, the checker compares the class probability of harmful concepts in the embedding space of the `CLIPTextModel` *after generation* of the images. The concepts are passed into the model with the generated image and compared to a hand-engineered weight for each NSFW concept. | 7d739b9d61e1a66ee31dfb5f5366498d |
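
The thresholding idea in the Safety Module section can be sketched in a few lines. This is a toy illustration only, not the Diffusers implementation: the concept names, 3-dimensional embeddings, and thresholds are made-up placeholders, and cosine similarity is assumed here as the score that gets compared against each hand-engineered per-concept weight.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def flag_image(image_embedding, concept_embeddings, thresholds):
    """Return the names of concepts whose similarity exceeds their threshold."""
    flagged = []
    for name, concept in concept_embeddings.items():
        score = cosine_similarity(image_embedding, concept)
        if score > thresholds[name]:
            flagged.append(name)
    return flagged


# Hypothetical values, chosen only to exercise both branches.
concepts = {"concept_a": [1.0, 0.0, 0.0], "concept_b": [0.0, 1.0, 0.0]}
thresholds = {"concept_a": 0.8, "concept_b": 0.8}
image = [0.9, 0.1, 0.0]

print(flag_image(image, concepts, thresholds))
```

The check runs after generation, so it filters outputs rather than prompts; a per-concept threshold lets some concepts be treated more strictly than others.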

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Training **Training Data** The model developers used the following dataset for training the model: - LAION-2B (en) and subsets thereof (see next section) **Training Procedure** Stable Diffusion v1-5 is a latent diffusion model which combines an autoencoder with a diffusion model that is trained in the latent space of the autoencoder. During training, - Images are encoded through an encoder, which turns images into latent representations. The autoencoder uses a relative downsampling factor of 8 and maps images of shape H x W x 3 to latents of shape H/f x W/f x 4 - Text prompts are encoded through a ViT-L/14 text-encoder. - The non-pooled output of the text encoder is fed into the UNet backbone of the latent diffusion model via cross-attention. - The loss is a reconstruction objective between the noise that was added to the latent and the prediction made by the UNet. Currently six Stable Diffusion checkpoints are provided, which were trained as follows. - [`stable-diffusion-v1-1`](https://huggingface.co/CompVis/stable-diffusion-v1-1): 237,000 steps at resolution `256x256` on [laion2B-en](https://huggingface.co/datasets/laion/laion2B-en). 194,000 steps at resolution `512x512` on [laion-high-resolution](https://huggingface.co/datasets/laion/laion-high-resolution) (170M examples from LAION-5B with resolution `>= 1024x1024`). - [`stable-diffusion-v1-2`](https://huggingface.co/CompVis/stable-diffusion-v1-2): Resumed from `stable-diffusion-v1-1`. 515,000 steps at resolution `512x512` on "laion-improved-aesthetics" (a subset of laion2B-en, filtered to images with an original size `>= 512x512`, estimated aesthetics score `> 5.0`, and an estimated watermark probability `< 0.5`. The watermark estimate is from the LAION-5B metadata, the aesthetics score is estimated using an [improved aesthetics estimator](https://github.com/christophschuhmann/improved-aesthetic-predictor)). 
- [`stable-diffusion-v1-3`](https://huggingface.co/CompVis/stable-diffusion-v1-3): Resumed from `stable-diffusion-v1-2` - 195,000 steps at resolution `512x512` on "laion-improved-aesthetics" and 10 % dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598). - [`stable-diffusion-v1-4`](https://huggingface.co/CompVis/stable-diffusion-v1-4): Resumed from `stable-diffusion-v1-2` - 225,000 steps at resolution `512x512` on "laion-aesthetics v2 5+" and 10 % dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598). - [`stable-diffusion-v1-5`](https://huggingface.co/runwayml/stable-diffusion-v1-5): Resumed from `stable-diffusion-v1-2` - 595,000 steps at resolution `512x512` on "laion-aesthetics v2 5+" and 10 % dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598). - [`stable-diffusion-inpainting`](https://huggingface.co/runwayml/stable-diffusion-inpainting): Resumed from `stable-diffusion-v1-5` - then 440,000 steps of inpainting training at resolution 512x512 on “laion-aesthetics v2 5+” and 10% dropping of the text-conditioning. For inpainting, the UNet has 5 additional input channels (4 for the encoded masked-image and 1 for the mask itself) whose weights were zero-initialized after restoring the non-inpainting checkpoint. During training, we generate synthetic masks and, in 25% of cases, mask everything. - **Hardware:** 32 x 8 x A100 GPUs - **Optimizer:** AdamW - **Gradient Accumulations**: 2 - **Batch:** 32 x 8 x 2 x 4 = 2048 - **Learning rate:** warmup to 0.0001 for 10,000 steps and then kept constant | 1243041939d536cfed0252b3c4cc8615 |
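
Two pieces of arithmetic from the training section can be checked directly: the latent shape produced by a downsampling factor of 8, and the global batch size of 2048. The sketch below is illustrative; the function names are hypothetical, and reading the factors `32 x 8 x 2 x 4` as nodes, GPUs per node, gradient-accumulation steps, and per-GPU batch size is an assumption based on the Hardware and Gradient Accumulations entries.

```python
def latent_shape(height, width, downsampling_factor=8, latent_channels=4):
    """Map an H x W x 3 image shape to the H/f x W/f x 4 latent shape."""
    if height % downsampling_factor or width % downsampling_factor:
        raise ValueError("height and width must be divisible by the factor")
    return (
        height // downsampling_factor,
        width // downsampling_factor,
        latent_channels,
    )


def global_batch_size(nodes, gpus_per_node, grad_accum_steps, per_gpu_batch):
    """Number of samples contributing to one optimizer step."""
    return nodes * gpus_per_node * grad_accum_steps * per_gpu_batch


print(latent_shape(512, 512))          # (64, 64, 4)
print(global_batch_size(32, 8, 2, 4))  # 2048
```

At 512x512 the diffusion model therefore operates on 64 x 64 x 4 latents, 48 times fewer values than the 512 x 512 x 3 pixel grid.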

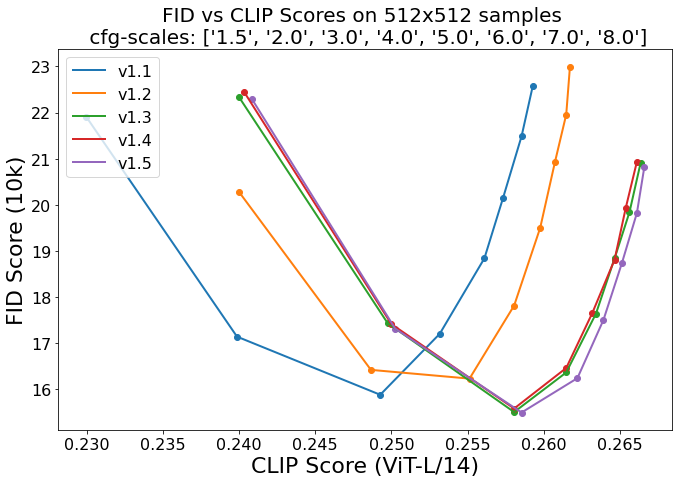

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Evaluation Results Evaluations with different classifier-free guidance scales (1.5, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0) and 50 PNDM/PLMS sampling steps show the relative improvements of the checkpoints:  Evaluated using 50 PLMS steps and 10000 random prompts from the COCO2017 validation set, evaluated at 512x512 resolution. Not optimized for FID scores. | 5eb3402e4c35c2548ac51f8cb9a146ba |
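
For readers unfamiliar with the guidance scales listed in the evaluation, the following minimal sketch shows the usual classifier-free guidance combination: the guided noise estimate extrapolates from the unconditional prediction toward the text-conditioned one. Plain Python lists stand in for tensors, and the function name is a placeholder rather than an API from any library.

```python
def apply_guidance(noise_uncond, noise_cond, guidance_scale):
    """Combine unconditional and conditional noise predictions.

    guided = uncond + scale * (cond - uncond)
    A scale of 1.0 returns the conditional prediction unchanged;
    larger scales push the result further toward the prompt.
    """
    return [
        u + guidance_scale * (c - u)
        for u, c in zip(noise_uncond, noise_cond)
    ]


uncond = [0.0, 1.0]
cond = [1.0, 3.0]
for scale in (1.0, 2.0, 8.0):
    print(scale, apply_guidance(uncond, cond, scale))
```

This is also why the later checkpoints drop the text conditioning for 10% of training samples: the same network needs to produce a usable unconditional prediction.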

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700). The hardware, runtime, cloud provider, and compute region were utilized to estimate the carbon impact. - **Hardware Type:** A100 PCIe 40GB - **Hours used:** 150000 - **Cloud Provider:** AWS - **Compute Region:** US-east - **Carbon Emitted (Power consumption x Time x Carbon produced based on location of power grid):** 11250 kg CO2 eq. | 8fe8d837e68710b3c11675192b2d3fe8 |
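
The emission formula quoted above (power consumption x time x carbon intensity of the grid) can be worked backwards from the reported numbers. In the sketch below, the 150000 hours and 11250 kg CO2 eq. come from the entry; the 0.25 kW draw per GPU is an assumption (the nominal 250 W rating of an A100 PCIe 40GB), so the implied grid intensity is only as good as that assumption.

```python
def carbon_emitted_kg(power_kw, hours, kg_co2_per_kwh):
    """Power consumption x time x carbon intensity of the power grid."""
    return power_kw * hours * kg_co2_per_kwh


HOURS_USED = 150_000      # from the model card
REPORTED_KG = 11_250      # from the model card
ASSUMED_POWER_KW = 0.25   # assumption: nominal 250 W per GPU

# Emissions per GPU-hour follow from the reported figures alone.
kg_per_gpu_hour = REPORTED_KG / HOURS_USED

# The implied grid intensity depends on the assumed power draw.
implied_intensity = REPORTED_KG / (ASSUMED_POWER_KW * HOURS_USED)

print(kg_per_gpu_hour)
print(implied_intensity)
print(carbon_emitted_kg(ASSUMED_POWER_KW, HOURS_USED, implied_intensity))
```

Under that assumption the reported total corresponds to roughly 0.3 kg CO2 eq. per kWh.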

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Citation ```bibtex @InProceedings{Rombach_2022_CVPR, author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn}, title = {High-Resolution Image Synthesis With Latent Diffusion Models}, booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)}, month = {June}, year = {2022}, pages = {10684-10695} } ``` *This model card was written by: Robin Rombach and Patrick Esser and is based on the [DALL-E Mini model card](https://huggingface.co/dalle-mini/dalle-mini).* | c5c1e99cb709368c2b7422e4d398a0e6 |

apache-2.0 | ['automatic-speech-recognition', 'pl'] | false | exp_w2v2t_pl_wavlm_s250 Fine-tuned [microsoft/wavlm-large](https://huggingface.co/microsoft/wavlm-large) for speech recognition using the train split of [Common Voice 7.0 (pl)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | c51ec97bcc8a94a652ed511a462918dc |

apache-2.0 | ['generated_from_trainer'] | false | finetuning-movie-sentiment-model-9000-samples This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.4040 - Accuracy: 0.9178 - F1: 0.9155 | 4ff5eceffeda5faf2601cb5cba083bd0 |

apache-2.0 | ['generated_from_trainer'] | false | mobilebert_add_GLUE_Experiment_logit_kd_pretrain_rte This model is a fine-tuned version of [gokuls/mobilebert_add_pre-training-complete](https://huggingface.co/gokuls/mobilebert_add_pre-training-complete) on the GLUE RTE dataset. It achieves the following results on the evaluation set: - Loss: nan - Accuracy: 0.5271 | eb8556c77be76a56cf2fd0ecc4338b1c |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.0 | 1.0 | 20 | nan | 0.5271 | | 0.0 | 2.0 | 40 | nan | 0.5271 | | 0.0 | 3.0 | 60 | nan | 0.5271 | | 0.0 | 4.0 | 80 | nan | 0.5271 | | 0.0 | 5.0 | 100 | nan | 0.5271 | | 0.0 | 6.0 | 120 | nan | 0.5271 | | efeb72c8b6bb68a150c4ed30fd090ac2 |

apache-2.0 | ['generated_from_trainer'] | false | finetuning-sentiment-model-3000-samples This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.3322 - Accuracy: 0.8533 - F1: 0.8562 | 44acee131f215f6754b514a75100973e |

mit | ['generated_from_trainer'] | false | camembert-base-squad-fr This model is a fine-tuned version of [camembert-base](https://huggingface.co/camembert-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.5182 | 1d96f362f5623ba1f730e88e4d917195 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.2 - num_epochs: 2 | 37f175313c8e1c83469f37f564a09d77 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 1.7504 | 1.0 | 3581 | 1.6470 | | 1.4776 | 2.0 | 7162 | 1.5182 | | 275621cc6cd5b2c9f6257c28a8df54fa |
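
The hyperparameters for this run (`lr_scheduler_type: linear`, `lr_scheduler_warmup_ratio: 0.2`, `learning_rate: 3e-05`) together with the 7162 total steps in the results table describe a linear warmup followed by a linear decay to zero. The sketch below reproduces that shape in plain Python; the function names are hypothetical, and rounding the warmup length up with `ceil` is an assumption about how the ratio was converted to steps.

```python
import math


def warmup_steps(total_steps, warmup_ratio):
    """Number of warmup steps implied by a warmup ratio (rounded up)."""
    return math.ceil(total_steps * warmup_ratio)


def linear_schedule_lr(step, base_lr, total_steps, warmup_ratio):
    """Learning rate at a given step for linear warmup + linear decay."""
    warmup = warmup_steps(total_steps, warmup_ratio)
    if step < warmup:
        # Ramp up linearly from 0 to base_lr.
        return base_lr * step / max(1, warmup)
    # Decay linearly from base_lr to 0 at the final step.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup)


TOTAL_STEPS = 7162  # last step in the results table
for step in (0, 716, 1433, 3581, 7162):
    print(step, linear_schedule_lr(step, 3e-05, TOTAL_STEPS, 0.2))
```

With these values the peak learning rate is reached after 1433 steps, about 40% of the way through the first epoch.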

apache-2.0 | ['automatic-speech-recognition', 'fr'] | false | exp_w2v2r_fr_vp-100k_gender_male-10_female-0_s626 Fine-tuned [facebook/wav2vec2-large-100k-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-100k-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (fr)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 298dfda3f19f24454854a446d26fca67 |

mit | [] | false | Description This model is based on the pre-trained [NB-BERT-large model](https://huggingface.co/NbAiLab/nb-bert-large?text=P%C3%A5+biblioteket+kan+du+l%C3%A5ne+en+%5BMASK%5D.). It is a model for sentiment analysis. | c8cdb3fa91775823386df28662420a3b |

mit | [] | false | Data for fine-tuning This model was fine-tuned on 1000 examples from the [NoReC train dataset](https://github.com/ltgoslo/norec) that belonged to the screen category. The training lasted 3 epochs with a learning rate of 5e-5. The code used to create this model (and some additional models) can be found on [GitHub](https://github.com/Karolill/NB-BERT-fine-tuned-on-english). | 3db31f584fa3bbf534b197ee425bd4c2 |

cc-by-4.0 | [] | false | Readability benchmark (ES): mbert-es-sentences-3class This project is part of a series of models from the paper "A Benchmark for Neural Readability Assessment of Texts in Spanish". You can find more details about the project in our [GitHub](https://github.com/lmvasque/readability-es-benchmark). | 50aa7e724de02ab41dda8a0a7e0827f5 |

cc-by-4.0 | [] | false | Models Our models were fine-tuned in multiple settings, including readability assessment in 2-class (simple/complex) and 3-class (basic/intermediate/advanced) for sentences and paragraph datasets. You can find more details in our [paper](https://drive.google.com/file/d/1KdwvqrjX8MWYRDGBKeHmiR1NCzDcVizo/view?usp=share_link). These are the available models you can use (current model page in bold): | Model | Granularity | | af6b9d8a063cb619e6f236e6e2ddac49 |

cc-by-4.0 | [] | false | classes | |-----------------------------------------------------------------------------------------------------------|----------------|:---------:| | [BERTIN (ES)](https://huggingface.co/lmvasque/readability-es-benchmark-bertin-es-paragraphs-2class) | paragraphs | 2 | | [BERTIN (ES)](https://huggingface.co/lmvasque/readability-es-benchmark-bertin-es-paragraphs-3class) | paragraphs | 3 | | [mBERT (ES)](https://huggingface.co/lmvasque/readability-es-benchmark-mbert-es-paragraphs-2class) | paragraphs | 2 | | [mBERT (ES)](https://huggingface.co/lmvasque/readability-es-benchmark-mbert-es-paragraphs-3class) | paragraphs | 3 | | [mBERT (EN+ES)](https://huggingface.co/lmvasque/readability-es-benchmark-mbert-en-es-paragraphs-3class) | paragraphs | 3 | | [BERTIN (ES)](https://huggingface.co/lmvasque/readability-es-benchmark-bertin-es-sentences-2class) | sentences | 2 | | **[mBERT (ES)](https://huggingface.co/lmvasque/readability-es-benchmark-mbert-es-sentences-3class)** | **sentences** | **3** | | [mBERT (ES)](https://huggingface.co/lmvasque/readability-es-benchmark-mbert-es-sentences-2class) | sentences | 2 | | [mBERT (ES)](https://huggingface.co/lmvasque/readability-es-benchmark-mbert-es-sentences-3class) | sentences | 3 | | [mBERT (EN+ES)](https://huggingface.co/lmvasque/readability-es-benchmark-mbert-en-es-sentences-3class) | sentences | 3 | For the zero-shot setting, we used the original models [BERTIN](bertin-project/bertin-roberta-base-spanish) and [mBERT](https://huggingface.co/bert-base-multilingual-uncased) with no further training. | 5f3936a350354c44580fe5b60e0b2d20 |

cc-by-4.0 | [] | false | Results These are our results for all the readability models in different settings. Please select your model based on the desired performance: | Granularity | Model | F1 Score (2-class) | Precision (2-class) | Recall (2-class) | F1 Score (3-class) | Precision (3-class) | Recall (3-class) | |-------------|---------------|:-------------------:|:---------------------:|:------------------:|:--------------------:|:---------------------:|:------------------:| | Paragraph | Baseline (TF-IDF+LR) | 0.829 | 0.832 | 0.827 | 0.556 | 0.563 | 0.550 | | Paragraph | BERTIN (Zero) | 0.308 | 0.222 | 0.500 | 0.227 | 0.284 | 0.338 | | Paragraph | BERTIN (ES) | 0.924 | 0.923 | 0.925 | 0.772 | 0.776 | 0.768 | | Paragraph | mBERT (Zero) | 0.308 | 0.222 | 0.500 | 0.253 | 0.312 | 0.368 | | Paragraph | mBERT (EN) | - | - | - | 0.505 | 0.560 | 0.552 | | Paragraph | mBERT (ES) | **0.933** | **0.932** | **0.936** | 0.776 | 0.777 | 0.778 | | Paragraph | mBERT (EN+ES) | - | - | - | **0.779** | **0.783** | **0.779** | | Sentence | Baseline (TF-IDF+LR) | 0.811 | 0.814 | 0.808 | 0.525 | 0.531 | 0.521 | | Sentence | BERTIN (Zero) | 0.367 | 0.290 | 0.500 | 0.188 | 0.232 | 0.335 | | Sentence | BERTIN (ES) | **0.900** | **0.900** | **0.900** | **0.699** | **0.701** | **0.698** | | Sentence | mBERT (Zero) | 0.367 | 0.290 | 0.500 | 0.278 | 0.329 | 0.351 | | Sentence | mBERT (EN) | - | - | - | 0.521 | 0.565 | 0.539 | | Sentence | mBERT (ES) | 0.893 | 0.891 | 0.896 | 0.688 | 0.686 | 0.691 | | Sentence | mBERT (EN+ES) | - | - | - | 0.679 | 0.676 | 0.682 | | 1fbf32abf59f2beb0a76a0231be6940a |
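
The zero-shot rows in the 2-class columns share a recall of exactly 0.500, which is what macro-averaged recall looks like when a classifier assigns every example to the same class. The sketch below works through that case. It is one reading that happens to be consistent with the table, not something stated in the source: the class fractions 0.444 and 0.58 are back-solved from the reported precision values and are hypothetical.

```python
def macro_scores_constant_predictor(predicted_class_fraction):
    """Macro precision, recall and F1 for a 2-class problem in which every
    example is assigned to one class that makes up the given fraction of
    the evaluation data. The never-predicted class scores 0 on all three."""
    p = predicted_class_fraction
    precision = (p + 0.0) / 2              # predicted class: precision = p
    recall = (1.0 + 0.0) / 2               # predicted class: recall = 1
    f1 = (2 * p / (1 + p) + 0.0) / 2       # predicted class: F1 = 2p / (1 + p)
    return precision, recall, f1


# Hypothetical class fractions, chosen to match the reported precision.
for granularity, fraction in (("Paragraph", 0.444), ("Sentence", 0.58)):
    precision, recall, f1 = macro_scores_constant_predictor(fraction)
    print(granularity, round(precision, 3), round(recall, 3), round(f1, 3))
```

Under this reading the zero-shot scores act as a floor, which makes the gap to the TF-IDF baseline and the fine-tuned models easier to interpret.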

cc-by-4.0 | [] | false | Citation If you use our results and scripts in your research, please cite our work: "[A Benchmark for Neural Readability Assessment of Texts in Spanish](https://drive.google.com/file/d/1KdwvqrjX8MWYRDGBKeHmiR1NCzDcVizo/view?usp=share_link)" (to be published) ``` @inproceedings{vasquez-rodriguez-etal-2022-benchmarking, title = "A Benchmark for Neural Readability Assessment of Texts in Spanish", author = "V{\'a}squez-Rodr{\'\i}guez, Laura and Cuenca-Jim{\'e}nez, Pedro-Manuel and Morales-Esquivel, Sergio Esteban and Alva-Manchego, Fernando", booktitle = "Workshop on Text Simplification, Accessibility, and Readability (TSAR-2022), EMNLP 2022", month = dec, year = "2022", } ``` | be6b14468d06ad42176eb210076809d8 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-distilled-clinc This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the clinc_oos dataset. It achieves the following results on the evaluation set: - Loss: 0.2699 - Accuracy: 0.9458 | e6ae98c1666d175becba7a1fc1f89953 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 48 - eval_batch_size: 48 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 9 | f2ede0120c1287cccdb53926cb560181 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 4.2203 | 1.0 | 318 | 3.1656 | 0.7532 | | 2.4201 | 2.0 | 636 | 1.5891 | 0.8558 | | 1.1961 | 3.0 | 954 | 0.8037 | 0.9152 | | 0.5996 | 4.0 | 1272 | 0.4888 | 0.9326 | | 0.3306 | 5.0 | 1590 | 0.3589 | 0.9439 | | 0.2079 | 6.0 | 1908 | 0.3070 | 0.9439 | | 0.1458 | 7.0 | 2226 | 0.2809 | 0.9458 | | 0.1155 | 8.0 | 2544 | 0.2740 | 0.9461 | | 0.1021 | 9.0 | 2862 | 0.2699 | 0.9458 | | 055a5277a122fbc3aca89b8102b1898e |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 4e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: cosine - lr_scheduler_warmup_ratio: 0.15 - num_epochs: 5 - mixed_precision_training: Native AMP | a6af920ca8e73431bb8bff3445e30b4d |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-base-50k This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 3.5640 - Wer: 1.0 | 555ecfff67b790adb1277225b850dd1f |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 7.5e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1000 - num_epochs: 5 - mixed_precision_training: Native AMP | 0c60aab13e6ba30d09419749f4c887e9 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:---:| | 10.7005 | 0.48 | 300 | 5.3021 | 1.0 | | 3.9938 | 0.96 | 600 | 3.4997 | 1.0 | | 3.591 | 1.44 | 900 | 3.5641 | 1.0 | | 3.6168 | 1.92 | 1200 | 3.5641 | 1.0 | | 3.6252 | 2.4 | 1500 | 3.5641 | 1.0 | | 3.6137 | 2.88 | 1800 | 3.5641 | 1.0 | | 3.6124 | 3.36 | 2100 | 3.5641 | 1.0 | | 3.6171 | 3.84 | 2400 | 3.5641 | 1.0 | | 3.6436 | 4.32 | 2700 | 3.5641 | 1.0 | | 3.6189 | 4.8 | 3000 | 3.5640 | 1.0 | | 62b4e2b6e34b30a4e3cf253256e0c4ed |
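
The step and epoch columns above, combined with the hyperparameters for this run (`train_batch_size: 16`, `gradient_accumulation_steps: 2`), let one estimate the size of the training split. The sketch below does that arithmetic; the helper names are hypothetical, a single device is assumed because 16 x 2 already equals the reported total batch size of 32, and the result is approximate since the logged epochs are rounded to two decimals.

```python
def steps_per_epoch(step, epoch):
    """Optimizer steps per epoch inferred from one (step, epoch) log entry."""
    return round(step / epoch)


def examples_per_epoch(steps, per_device_batch, grad_accum_steps, num_devices=1):
    """Approximate number of training examples seen in one epoch."""
    return steps * per_device_batch * grad_accum_steps * num_devices


steps = steps_per_epoch(300, 0.48)       # first row of the results table
print(steps)                             # 625
print(examples_per_epoch(steps, 16, 2))  # 20000
print(steps * 5)                         # 3125 steps for num_epochs: 5
```

The last logged evaluation at step 3000 (epoch 4.8) is therefore 125 steps short of the end of training.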

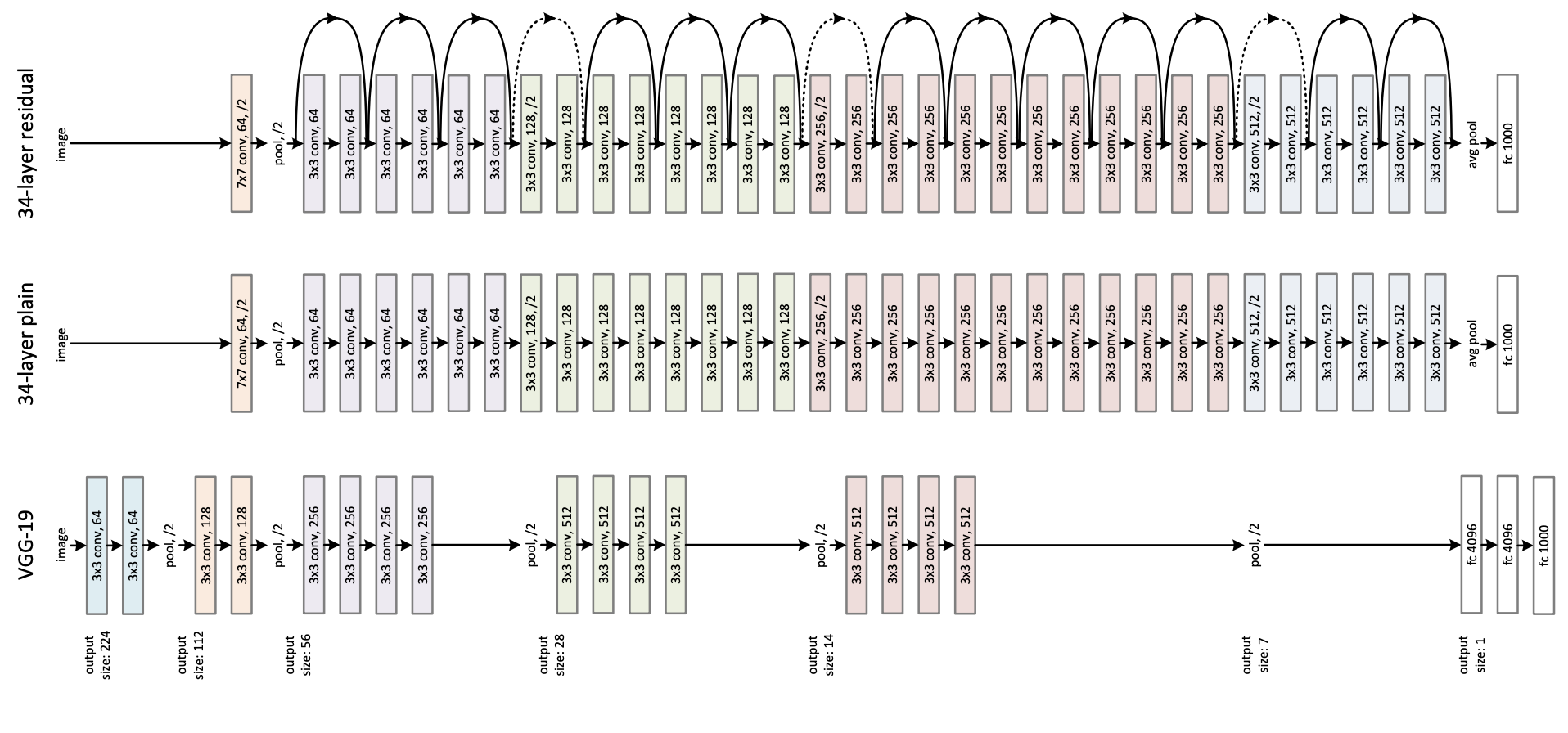

apache-2.0 | ['vision', 'image-classification'] | false | ResNet-34 v1.5 ResNet model pre-trained on ImageNet-1k at resolution 224x224. It was introduced in the paper [Deep Residual Learning for Image Recognition](https://arxiv.org/abs/1512.03385) by He et al. Disclaimer: The team releasing ResNet did not write a model card for this model so this model card has been written by the Hugging Face team. | b35a77271270f554818eb2f8b19ea273 |

apache-2.0 | ['vision', 'image-classification'] | false | Model description ResNet (Residual Network) is a convolutional neural network that democratized the concepts of residual learning and skip connections. This makes it possible to train much deeper models. This is ResNet v1.5, which differs from the original model: in the bottleneck blocks which require downsampling, v1 has stride = 2 in the first 1x1 convolution, whereas v1.5 has stride = 2 in the 3x3 convolution. This difference makes ResNet50 v1.5 slightly more accurate (\~0.5% top1) than v1, but comes with a small performance drawback (~5% imgs/sec) according to [Nvidia](https://catalog.ngc.nvidia.com/orgs/nvidia/resources/resnet_50_v1_5_for_pytorch). | f101f21ff4fdb43a5cc39228cc93e8e5 |
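
One way to see why the placement of the stride matters is to count how many input positions each convolution actually reads. The sketch below does this along one spatial dimension using the standard output-size formula; the feature-map size of 56 and the padding of 1 for the 3x3 convolution are illustrative assumptions, and the function names are hypothetical.

```python
def conv_output_size(size, kernel, stride, padding):
    """Standard convolution output size along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def covered_fraction(size, kernel, stride, padding):
    """Fraction of input positions read by at least one kernel window."""
    covered = set()
    for out_index in range(conv_output_size(size, kernel, stride, padding)):
        start = out_index * stride - padding
        for offset in range(kernel):
            position = start + offset
            if 0 <= position < size:
                covered.add(position)
    return len(covered) / size


SIZE = 56  # illustrative feature-map width

# v1: stride 2 in the 1x1 convolution.
print(conv_output_size(SIZE, 1, 2, 0), covered_fraction(SIZE, 1, 2, 0))
# v1.5: stride 2 in the 3x3 convolution (padding 1).
print(conv_output_size(SIZE, 3, 2, 1), covered_fraction(SIZE, 3, 2, 1))
```

Both variants halve the resolution, but the strided 1x1 convolution reads only every second position per dimension (a quarter of the 2-D map), while the strided 3x3 convolution reads all of them.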

apache-2.0 | ['vision', 'image-classification'] | false | Intended uses & limitations You can use the raw model for image classification. See the [model hub](https://huggingface.co/models?search=resnet) to look for fine-tuned versions on a task that interests you. | aa4921a57a4623c018ac42f48dc4553c |

apache-2.0 | ['vision', 'image-classification'] | false | How to use Here is how to use this model to classify an image of the COCO 2017 dataset into one of the 1,000 ImageNet classes: ```python from transformers import AutoFeatureExtractor, ResNetForImageClassification import torch from datasets import load_dataset dataset = load_dataset("huggingface/cats-image") image = dataset["test"]["image"][0] feature_extractor = AutoFeatureExtractor.from_pretrained("microsoft/resnet-34") model = ResNetForImageClassification.from_pretrained("microsoft/resnet-34") inputs = feature_extractor(image, return_tensors="pt") with torch.no_grad(): logits = model(**inputs).logits | 10f0e79c59c8597164588290c205e050 |

apache-2.0 | ['vision', 'image-classification'] | false | # model predicts one of the 1000 ImageNet classes predicted_label = logits.argmax(-1).item() print(model.config.id2label[predicted_label]) ``` For more code examples, see the [documentation](https://huggingface.co/docs/transformers/main/en/model_doc/resnet). | e906935913dd9ba36de6de226009b547 |

apache-2.0 | ['vision', 'image-classification'] | false | BibTeX entry and citation info ```bibtex @inproceedings{he2016deep, title={Deep residual learning for image recognition}, author={He, Kaiming and Zhang, Xiangyu and Ren, Shaoqing and Sun, Jian}, booktitle={Proceedings of the IEEE conference on computer vision and pattern recognition}, pages={770--778}, year={2016} } ``` | 3fa644f0971db201d0a6dddb9eeb128a |

apache-2.0 | ['vision', 'image-classification'] | false | densenet121-res224-rsna A DenseNet is a type of convolutional neural network that utilises dense connections between layers, through Dense Blocks, where we connect all layers (with matching feature-map sizes) directly with each other. To preserve the feed-forward nature, each layer obtains additional inputs from all preceding layers and passes on its own feature-maps to all subsequent layers. | 500659c65c09d3e889c668a388496071 |

apache-2.0 | ['vision', 'image-classification'] | false | How to use Here is how to use this model to classify an X-ray image: Note: Each pretrained model has 18 outputs. The `all` model has every output trained. However, for the other weights some targets are not trained and will predict randomly because they do not exist in the training dataset. The only valid outputs are listed in the field `{dataset}.pathologies` on the dataset that corresponds to the weights. Benchmarks of the models are here: [BENCHMARKS.md](https://github.com/mlmed/torchxrayvision/blob/master/BENCHMARKS.md) ```python import urllib.request import skimage import torch import torch.nn.functional as F import torchvision import torchvision.transforms import torchxrayvision as xrv model_name = "densenet121-res224-rsna" img_url = "https://huggingface.co/spaces/torchxrayvision/torchxrayvision-classifier/resolve/main/16747_3_1.jpg" img_path = "xray.jpg" urllib.request.urlretrieve(img_url, img_path) model = xrv.models.get_model(model_name, from_hf_hub=True) img = skimage.io.imread(img_path) img = xrv.datasets.normalize(img, 255) | d80eee5f2d8ad9f7b327cd494c78731a |

apache-2.0 | ['vision', 'image-classification'] | false | # Add color channel img = img[None, :, :] transform = torchvision.transforms.Compose([xrv.datasets.XRayCenterCrop()]) img = transform(img) with torch.no_grad(): img = torch.from_numpy(img).unsqueeze(0) preds = model(img).cpu() output = { k: float(v) for k, v in zip(xrv.datasets.default_pathologies, preds[0].detach().numpy()) } print(output) ``` For more code examples, see the [example scripts](https://github.com/kamalkraj/torchxrayvision/blob/master/scripts). | 826e3cb03c30df212f84521d016cfbc1 |
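
The `xrv.datasets.normalize(img, 255)` call in the example rescales 8-bit pixel values before they reach the model. The pure-Python sketch below illustrates that rescaling for a single pixel. It assumes the library maps `[0, maxval]` linearly onto roughly `[-1024, 1024]`; treat that range as an assumption to verify against the installed TorchXRayVision version. `normalize_pixel` is a hypothetical helper, not part of the library.

```python
def normalize_pixel(value, maxval=255):
    """Linearly map a pixel in [0, maxval] to [-1024, 1024] (assumed range)."""
    if not 0 <= value <= maxval:
        raise ValueError("pixel value outside [0, maxval]")
    return (2 * (value / maxval) - 1.0) * 1024


for pixel in (0, 64, 255):
    print(pixel, normalize_pixel(pixel))
```

Passing the wrong `maxval` (for example 255 for a 16-bit image) would push values outside the range the pretrained weights expect, so it is worth checking the bit depth of the input file first.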

apache-2.0 | ['vision', 'image-classification'] | false | Citation Primary TorchXRayVision paper: [https://arxiv.org/abs/2111.00595](https://arxiv.org/abs/2111.00595) ``` Joseph Paul Cohen, Joseph D. Viviano, Paul Bertin, Paul Morrison, Parsa Torabian, Matteo Guarrera, Matthew P Lungren, Akshay Chaudhari, Rupert Brooks, Mohammad Hashir, Hadrien Bertrand TorchXRayVision: A library of chest X-ray datasets and models. https://github.com/mlmed/torchxrayvision, 2020 @article{Cohen2020xrv, author = {Cohen, Joseph Paul and Viviano, Joseph D. and Bertin, Paul and Morrison, Paul and Torabian, Parsa and Guarrera, Matteo and Lungren, Matthew P and Chaudhari, Akshay and Brooks, Rupert and Hashir, Mohammad and Bertrand, Hadrien}, journal = {https://github.com/mlmed/torchxrayvision}, title = {{TorchXRayVision: A library of chest X-ray datasets and models}}, url = {https://github.com/mlmed/torchxrayvision}, year = {2020}, arxivId = {2111.00595}, } ``` and this paper which initiated development of the library: [https://arxiv.org/abs/2002.02497](https://arxiv.org/abs/2002.02497) ``` Joseph Paul Cohen and Mohammad Hashir and Rupert Brooks and Hadrien Bertrand On the limits of cross-domain generalization in automated X-ray prediction. Medical Imaging with Deep Learning 2020 (Online: https://arxiv.org/abs/2002.02497) @inproceedings{cohen2020limits, title={On the limits of cross-domain generalization in automated X-ray prediction}, author={Cohen, Joseph Paul and Hashir, Mohammad and Brooks, Rupert and Bertrand, Hadrien}, booktitle={Medical Imaging with Deep Learning}, year={2020}, url={https://arxiv.org/abs/2002.02497} } ``` | d522f986021e9a07cb616d548f775e06 |

apache-2.0 | [] | false | Chinese MRC macbert-large * A macbert-large model trained on a large amount of Chinese MRC data; for details see: https://github.com/basketballandlearn/MRC_Competition_Dureader * The retrained models released in this repository show substantial improvements on tasks such as reading comprehension and classification<br/> (several users have already achieved **top-5** results in multiple competitions, including Dureader-2021 😁) | Model / Dataset | Dureader-2021 | tencentmedical | | ------------------------------------------|--------------- | --------------- | | | F1-score | Accuracy | | | dev / leaderboard A | test-1 | | macbert-large (HIT pretrained language model) | 65.49 / 64.27 | 82.5 | | roberta-wwm-ext-large (HIT pretrained language model) | 65.49 / 64.27 | 82.5 | | macbert-large (ours) | 70.45 / **68.13**| **83.4** | | roberta-wwm-ext-large (ours) | 68.91 / 66.91 | 83.1 | | 0caf317c5e214c8f2602408502a08a73 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2264 - Accuracy: 0.9275 - F1: 0.9275 | d5521bae133ec7d44a5f22df139f9437 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8546 | 1.0 | 250 | 0.3415 | 0.902 | 0.8975 | | 0.2647 | 2.0 | 500 | 0.2264 | 0.9275 | 0.9275 | | 949cf718aa73100cc8615b7e3271546a |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_7_0', 'generated_from_trainer', 'uk'] | false | Ukrainian STT model (with Language Model) 🇺🇦 Join Ukrainian Speech Recognition Community - https://t.me/speech_recognition_uk ⭐ See other Ukrainian models - https://github.com/egorsmkv/speech-recognition-uk - Have a look at an updated 300m model: https://huggingface.co/Yehor/wav2vec2-xls-r-300m-uk-with-small-lm - Have a look at a better model with more parameters: https://huggingface.co/Yehor/wav2vec2-xls-r-1b-uk-with-lm This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the MOZILLA-FOUNDATION/COMMON_VOICE_7_0 - UK dataset. It achieves the following results on the evaluation set: - Loss: 0.3015 - Wer: 0.3377 - Cer: 0.0708 The above results were obtained without the language model. | f4e1a9d1dce84f85d51f1dae85be9463 |
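
The Wer and Cer figures above are word and character error rates: the edit distance between the reference transcript and the model output, divided by the reference length. The following is a minimal pure-Python sketch of that definition, not the evaluation script used for this model; the example sentences are made up.

```python
def edit_distance(reference, hypothesis):
    """Levenshtein distance between two sequences (insert/delete/substitute)."""
    previous = list(range(len(hypothesis) + 1))
    for i, ref_item in enumerate(reference, start=1):
        current = [i]
        for j, hyp_item in enumerate(hypothesis, start=1):
            cost = 0 if ref_item == hyp_item else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution or match
            ))
        previous = current
    return previous[-1]


def wer(reference, hypothesis):
    """Word error rate: word-level edit distance / number of reference words."""
    ref_words = reference.split()
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference, hypothesis):
    """Character error rate: character-level edit distance / reference length."""
    return edit_distance(list(reference), list(hypothesis)) / len(reference)


print(wer("the cat sat", "the bat sat"))
print(cer("the cat sat", "the bat sat"))
```

A single wrong character counts as a whole wrong word for WER but only one character for CER, which is why a model can show a WER near 0.34 alongside a CER near 0.07. Insertions also mean WER can exceed 1.0.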

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_7_0', 'generated_from_trainer', 'uk'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 20 - total_train_batch_size: 160 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 100.0 - mixed_precision_training: Native AMP | 180301b070048d8b734a224434a45b5f |
