datasetId stringlengths 2 117 | card stringlengths 19 1.01M |

|---|---|

liuyanchen1015/MULTI_VALUE_qqp_not_preverbal_negator | ---

dataset_info:

features:

- name: question1

dtype: string

- name: question2

dtype: string

- name: label

dtype: int64

- name: idx

dtype: int64

- name: value_score

dtype: int64

splits:

- name: dev

num_bytes: 255092

num_examples: 1281

- name: test

num_bytes: 2419258

num_examples: 12249

- name: train

num_bytes: 2272011

num_examples: 11176

download_size: 3042453

dataset_size: 4946361

---

# Dataset Card for "MULTI_VALUE_qqp_not_preverbal_negator"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

open-llm-leaderboard/details_TinyLlama__TinyLlama-1.1B-Chat-v0.6 | ---

pretty_name: Evaluation run of TinyLlama/TinyLlama-1.1B-Chat-v0.6

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [TinyLlama/TinyLlama-1.1B-Chat-v0.6](https://huggingface.co/TinyLlama/TinyLlama-1.1B-Chat-v0.6)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 1 configuration, each one coresponding to one of the\

\ evaluated task.\n\nThe dataset has been created from 1 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run.The \"train\" split is always pointing to the latest results.\n\

\nAn additional configuration \"results\" store all the aggregated results of the\

\ run (and is used to compute and display the aggregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_TinyLlama__TinyLlama-1.1B-Chat-v0.6\"\

,\n\t\"harness_gsm8k_5\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\nThese\

\ are the [latest results from run 2023-12-02T13:49:51.667624](https://huggingface.co/datasets/open-llm-leaderboard/details_TinyLlama__TinyLlama-1.1B-Chat-v0.6/blob/main/results_2023-12-02T13-49-51.667624.json)(note\

\ that their might be results for other tasks in the repos if successive evals didn't\

\ cover the same tasks. You find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"acc\": 0.02122820318423048,\n\

\ \"acc_stderr\": 0.003970449129848635\n },\n \"harness|gsm8k|5\":\

\ {\n \"acc\": 0.02122820318423048,\n \"acc_stderr\": 0.003970449129848635\n\

\ }\n}\n```"

repo_url: https://huggingface.co/TinyLlama/TinyLlama-1.1B-Chat-v0.6

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_gsm8k_5

data_files:

- split: 2023_12_02T13_49_51.667624

path:

- '**/details_harness|gsm8k|5_2023-12-02T13-49-51.667624.parquet'

- split: latest

path:

- '**/details_harness|gsm8k|5_2023-12-02T13-49-51.667624.parquet'

- config_name: results

data_files:

- split: 2023_12_02T13_49_51.667624

path:

- results_2023-12-02T13-49-51.667624.parquet

- split: latest

path:

- results_2023-12-02T13-49-51.667624.parquet

---

# Dataset Card for Evaluation run of TinyLlama/TinyLlama-1.1B-Chat-v0.6

## Dataset Description

- **Homepage:**

- **Repository:** https://huggingface.co/TinyLlama/TinyLlama-1.1B-Chat-v0.6

- **Paper:**

- **Leaderboard:** https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

- **Point of Contact:** clementine@hf.co

### Dataset Summary

Dataset automatically created during the evaluation run of model [TinyLlama/TinyLlama-1.1B-Chat-v0.6](https://huggingface.co/TinyLlama/TinyLlama-1.1B-Chat-v0.6) on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).

The dataset is composed of 1 configuration, each one coresponding to one of the evaluated task.

The dataset has been created from 1 run(s). Each run can be found as a specific split in each configuration, the split being named using the timestamp of the run.The "train" split is always pointing to the latest results.

An additional configuration "results" store all the aggregated results of the run (and is used to compute and display the aggregated metrics on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).

To load the details from a run, you can for instance do the following:

```python

from datasets import load_dataset

data = load_dataset("open-llm-leaderboard/details_TinyLlama__TinyLlama-1.1B-Chat-v0.6",

"harness_gsm8k_5",

split="train")

```

## Latest results

These are the [latest results from run 2023-12-02T13:49:51.667624](https://huggingface.co/datasets/open-llm-leaderboard/details_TinyLlama__TinyLlama-1.1B-Chat-v0.6/blob/main/results_2023-12-02T13-49-51.667624.json)(note that their might be results for other tasks in the repos if successive evals didn't cover the same tasks. You find each in the results and the "latest" split for each eval):

```python

{

"all": {

"acc": 0.02122820318423048,

"acc_stderr": 0.003970449129848635

},

"harness|gsm8k|5": {

"acc": 0.02122820318423048,

"acc_stderr": 0.003970449129848635

}

}

```

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

ysharma/short_jokes | ---

license: mit

---

**Context**

Generating humor is a complex task in the domain of machine learning, and it requires the models to understand the deep semantic meaning of a joke in order to generate new ones. Such problems, however, are difficult to solve due to a number of reasons, one of which is the lack of a database that gives an elaborate list of jokes. Thus, a large corpus of over 0.2 million jokes has been collected by scraping several websites containing funny and short jokes.

You can visit the [Github repository](https://github.com/amoudgl/short-jokes-dataset) from [amoudgl](https://github.com/amoudgl) for more information regarding collection of data and the scripts used.

**Content**

This dataset is in the form of a csv file containing 231,657 jokes. Length of jokes ranges from 10 to 200 characters. Each line in the file contains a unique ID and joke.

**Disclaimer**

It has been attempted to keep the jokes as clean as possible. Since the data has been collected by scraping websites, it is possible that there may be a few jokes that are inappropriate or offensive to some people.

**Note**

This dataset is taken from Kaggle dataset that can be found [here](https://www.kaggle.com/datasets/abhinavmoudgil95/short-jokes). |

bri25yu/flores200_packed2 | ---

dataset_info:

features:

- name: id

dtype: int32

- name: input_ids

sequence: int32

- name: attention_mask

sequence: int8

- name: labels

sequence: int64

splits:

- name: train

num_bytes: 15086599195.0

num_examples: 10240000

- name: val

num_bytes: 3827042

num_examples: 5000

- name: test

num_bytes: 7670994

num_examples: 10000

download_size: 6552366058

dataset_size: 15098097231.0

---

# Dataset Card for "flores200_packed2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

OpenGVLab/InternVid-10M-FLT-INFO | ---

license: cc-by-nc-sa-4.0

task_categories:

- feature-extraction

language:

- en

size_categories:

- 10M<n<100M

extra_gated_prompt: "You agree to not use the data to conduct experiments that cause harm to human subjects."

extra_gated_fields:

Name: text

Company/Organization: text

E-Mail: text

configs:

- config_name: InternVid-10M-FLT

data_files:

- split: FLT

path: InternVid-10M-FLT-INFO.jsonl

---

# InternVid

## Dataset Description

- **Homepage:** [InternVid](https://github.com/OpenGVLab/InternVideo/tree/main/Data/InternVid)

- **Repository:** [OpenGVLab](https://github.com/OpenGVLab/InternVideo/tree/main/Data/InternVid)

- **Paper:** [2307.06942](https://arxiv.org/pdf/2307.06942.pdf)

- **Point of Contact:** mailto:[InternVideo](gvx-sh@pjlab.org.cn)

## InternVid-10M-FLT

We present InternVid-10M-FLT, a subset of this dataset, consisting of 10 million video clips, with generated high-quality captions for publicly available web videos.

## Download

The 10M samples are provided in jsonlines file. Columns include the videoID, timestamps, generated caption and their UMT similarity scores.\

## How to Use

```

from datasets import load_dataset

dataset = load_dataset("OpenGVLab/InternVid")

```

## Method

## Citation

If you find this work useful for your research, please consider citing InternVid. Your acknowledgement would greatly help us in continuing to contribute resources to the research community.

```

@article{wang2023internvid,

title={InternVid: A Large-scale Video-Text Dataset for Multimodal Understanding and Generation},

author={Wang, Yi and He, Yinan and Li, Yizhuo and Li, Kunchang and Yu, Jiashuo and Ma, Xin and Chen, Xinyuan and Wang, Yaohui and Luo, Ping and Liu, Ziwei and Wang, Yali and Wang, Limin and Qiao, Yu},

journal={arXiv preprint arXiv:2307.06942},

year={2023}

}

@article{wang2022internvideo,

title={InternVideo: General Video Foundation Models via Generative and Discriminative Learning},

author={Wang, Yi and Li, Kunchang and Li, Yizhuo and He, Yinan and Huang, Bingkun and Zhao, Zhiyu and Zhang, Hongjie and Xu, Jilan and Liu, Yi and Wang, Zun and Xing, Sen and Chen, Guo and Pan, Junting and Yu, Jiashuo and Wang, Yali and Wang, Limin and Qiao, Yu},

journal={arXiv preprint arXiv:2212.03191},

year={2022}

}

```

|

open-llm-leaderboard/details_tianlinliu0121__zephyr-7b-dpo-full-beta-0.2 | ---

pretty_name: Evaluation run of tianlinliu0121/zephyr-7b-dpo-full-beta-0.2

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [tianlinliu0121/zephyr-7b-dpo-full-beta-0.2](https://huggingface.co/tianlinliu0121/zephyr-7b-dpo-full-beta-0.2)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 1 configuration, each one coresponding to one of the\

\ evaluated task.\n\nThe dataset has been created from 4 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run.The \"train\" split is always pointing to the latest results.\n\

\nAn additional configuration \"results\" store all the aggregated results of the\

\ run (and is used to compute and display the aggregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_tianlinliu0121__zephyr-7b-dpo-full-beta-0.2\"\

,\n\t\"harness_gsm8k_5\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\nThese\

\ are the [latest results from run 2023-12-03T18:01:25.798597](https://huggingface.co/datasets/open-llm-leaderboard/details_tianlinliu0121__zephyr-7b-dpo-full-beta-0.2/blob/main/results_2023-12-03T18-01-25.798597.json)(note\

\ that their might be results for other tasks in the repos if successive evals didn't\

\ cover the same tasks. You find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"acc\": 0.30022744503411675,\n\

\ \"acc_stderr\": 0.012625423152283044\n },\n \"harness|gsm8k|5\":\

\ {\n \"acc\": 0.30022744503411675,\n \"acc_stderr\": 0.012625423152283044\n\

\ }\n}\n```"

repo_url: https://huggingface.co/tianlinliu0121/zephyr-7b-dpo-full-beta-0.2

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_gsm8k_5

data_files:

- split: 2023_12_03T17_57_40.359102

path:

- '**/details_harness|gsm8k|5_2023-12-03T17-57-40.359102.parquet'

- split: 2023_12_03T17_57_42.100432

path:

- '**/details_harness|gsm8k|5_2023-12-03T17-57-42.100432.parquet'

- split: 2023_12_03T18_01_20.411431

path:

- '**/details_harness|gsm8k|5_2023-12-03T18-01-20.411431.parquet'

- split: 2023_12_03T18_01_25.798597

path:

- '**/details_harness|gsm8k|5_2023-12-03T18-01-25.798597.parquet'

- split: latest

path:

- '**/details_harness|gsm8k|5_2023-12-03T18-01-25.798597.parquet'

- config_name: results

data_files:

- split: 2023_12_03T17_57_40.359102

path:

- results_2023-12-03T17-57-40.359102.parquet

- split: 2023_12_03T17_57_42.100432

path:

- results_2023-12-03T17-57-42.100432.parquet

- split: 2023_12_03T18_01_20.411431

path:

- results_2023-12-03T18-01-20.411431.parquet

- split: 2023_12_03T18_01_25.798597

path:

- results_2023-12-03T18-01-25.798597.parquet

- split: latest

path:

- results_2023-12-03T18-01-25.798597.parquet

---

# Dataset Card for Evaluation run of tianlinliu0121/zephyr-7b-dpo-full-beta-0.2

## Dataset Description

- **Homepage:**

- **Repository:** https://huggingface.co/tianlinliu0121/zephyr-7b-dpo-full-beta-0.2

- **Paper:**

- **Leaderboard:** https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

- **Point of Contact:** clementine@hf.co

### Dataset Summary

Dataset automatically created during the evaluation run of model [tianlinliu0121/zephyr-7b-dpo-full-beta-0.2](https://huggingface.co/tianlinliu0121/zephyr-7b-dpo-full-beta-0.2) on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).

The dataset is composed of 1 configuration, each one coresponding to one of the evaluated task.

The dataset has been created from 4 run(s). Each run can be found as a specific split in each configuration, the split being named using the timestamp of the run.The "train" split is always pointing to the latest results.

An additional configuration "results" store all the aggregated results of the run (and is used to compute and display the aggregated metrics on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).

To load the details from a run, you can for instance do the following:

```python

from datasets import load_dataset

data = load_dataset("open-llm-leaderboard/details_tianlinliu0121__zephyr-7b-dpo-full-beta-0.2",

"harness_gsm8k_5",

split="train")

```

## Latest results

These are the [latest results from run 2023-12-03T18:01:25.798597](https://huggingface.co/datasets/open-llm-leaderboard/details_tianlinliu0121__zephyr-7b-dpo-full-beta-0.2/blob/main/results_2023-12-03T18-01-25.798597.json)(note that their might be results for other tasks in the repos if successive evals didn't cover the same tasks. You find each in the results and the "latest" split for each eval):

```python

{

"all": {

"acc": 0.30022744503411675,

"acc_stderr": 0.012625423152283044

},

"harness|gsm8k|5": {

"acc": 0.30022744503411675,

"acc_stderr": 0.012625423152283044

}

}

```

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

automated-research-group/phi-boolq-results | ---

dataset_info:

config_name: '{''do_sample''=False, ''beams''=1}'

features:

- name: id

dtype: string

- name: prediction

dtype: string

- name: bool_accuracy

dtype: bool

splits:

- name: train

num_bytes: 475041

num_examples: 3270

download_size: 282821

dataset_size: 475041

configs:

- config_name: '{''do_sample''=False, ''beams''=1}'

data_files:

- split: train

path: '{''do_sample''=False, ''beams''=1}/train-*'

---

# Dataset Card for "phi-boolq-results"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

presencesw/wmt14_fr_en | ---

dataset_info:

features:

- name: en

dtype: string

- name: fr

dtype: string

splits:

- name: train

num_bytes: 14753166087

num_examples: 40836876

- name: validation

num_bytes: 744439

num_examples: 3000

- name: test

num_bytes: 838849

num_examples: 3003

download_size: 9661488345

dataset_size: 14754749375

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

---

|

librarian-bots/authors_merged_model_prs | Invalid username or password. |

zolak/twitter_dataset_81_1713123291 | ---

dataset_info:

features:

- name: id

dtype: string

- name: tweet_content

dtype: string

- name: user_name

dtype: string

- name: user_id

dtype: string

- name: created_at

dtype: string

- name: url

dtype: string

- name: favourite_count

dtype: int64

- name: scraped_at

dtype: string

- name: image_urls

dtype: string

splits:

- name: train

num_bytes: 272567

num_examples: 662

download_size: 149726

dataset_size: 272567

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

Francesco/solar-panels-taxvb | ---

dataset_info:

features:

- name: image_id

dtype: int64

- name: image

dtype: image

- name: width

dtype: int32

- name: height

dtype: int32

- name: objects

sequence:

- name: id

dtype: int64

- name: area

dtype: int64

- name: bbox

sequence: float32

length: 4

- name: category

dtype:

class_label:

names:

'0': solar-panels

'1': Cell

'2': Cell-Multi

'3': No-Anomaly

'4': Shadowing

'5': Unclassified

annotations_creators:

- crowdsourced

language_creators:

- found

language:

- en

license:

- cc

multilinguality:

- monolingual

size_categories:

- 1K<n<10K

source_datasets:

- original

task_categories:

- object-detection

task_ids: []

pretty_name: solar-panels-taxvb

tags:

- rf100

---

# Dataset Card for solar-panels-taxvb

** The original COCO dataset is stored at `dataset.tar.gz`**

## Dataset Description

- **Homepage:** https://universe.roboflow.com/object-detection/solar-panels-taxvb

- **Point of Contact:** francesco.zuppichini@gmail.com

### Dataset Summary

solar-panels-taxvb

### Supported Tasks and Leaderboards

- `object-detection`: The dataset can be used to train a model for Object Detection.

### Languages

English

## Dataset Structure

### Data Instances

A data point comprises an image and its object annotations.

```

{

'image_id': 15,

'image': <PIL.JpegImagePlugin.JpegImageFile image mode=RGB size=640x640 at 0x2373B065C18>,

'width': 964043,

'height': 640,

'objects': {

'id': [114, 115, 116, 117],

'area': [3796, 1596, 152768, 81002],

'bbox': [

[302.0, 109.0, 73.0, 52.0],

[810.0, 100.0, 57.0, 28.0],

[160.0, 31.0, 248.0, 616.0],

[741.0, 68.0, 202.0, 401.0]

],

'category': [4, 4, 0, 0]

}

}

```

### Data Fields

- `image`: the image id

- `image`: `PIL.Image.Image` object containing the image. Note that when accessing the image column: `dataset[0]["image"]` the image file is automatically decoded. Decoding of a large number of image files might take a significant amount of time. Thus it is important to first query the sample index before the `"image"` column, *i.e.* `dataset[0]["image"]` should **always** be preferred over `dataset["image"][0]`

- `width`: the image width

- `height`: the image height

- `objects`: a dictionary containing bounding box metadata for the objects present on the image

- `id`: the annotation id

- `area`: the area of the bounding box

- `bbox`: the object's bounding box (in the [coco](https://albumentations.ai/docs/getting_started/bounding_boxes_augmentation/#coco) format)

- `category`: the object's category.

#### Who are the annotators?

Annotators are Roboflow users

## Additional Information

### Licensing Information

See original homepage https://universe.roboflow.com/object-detection/solar-panels-taxvb

### Citation Information

```

@misc{ solar-panels-taxvb,

title = { solar panels taxvb Dataset },

type = { Open Source Dataset },

author = { Roboflow 100 },

howpublished = { \url{ https://universe.roboflow.com/object-detection/solar-panels-taxvb } },

url = { https://universe.roboflow.com/object-detection/solar-panels-taxvb },

journal = { Roboflow Universe },

publisher = { Roboflow },

year = { 2022 },

month = { nov },

note = { visited on 2023-03-29 },

}"

```

### Contributions

Thanks to [@mariosasko](https://github.com/mariosasko) for adding this dataset. |

yyy999/Unicauca-dataset-April-June-2019-Network-flows | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: ts

dtype: int64

- name: duration

dtype: int64

- name: src_ip

dtype: int64

- name: src_port

dtype: int64

- name: dst_ip

dtype: int64

- name: dst_port

dtype: int64

- name: proto

dtype: int64

- name: packets

dtype: int64

- name: bytes

dtype: int64

- name: packet_size

dtype: float64

splits:

- name: train

num_bytes: 173109680

num_examples: 2163871

- name: test

num_bytes: 43277440

num_examples: 540968

download_size: 99801648

dataset_size: 216387120

---

# Dataset Card for "Unicauca-dataset-April-June-2019-Network-flows"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

AppleHarem/tsurugi_bluearchive | ---

license: mit

task_categories:

- text-to-image

tags:

- art

- not-for-all-audiences

size_categories:

- n<1K

---

# Dataset of tsurugi (Blue Archive)

This is the dataset of tsurugi (Blue Archive), containing 200 images and their tags.

Images are crawled from many sites (e.g. danbooru, pixiv, zerochan ...), the auto-crawling system is powered by [DeepGHS Team](https://github.com/deepghs)([huggingface organization](https://huggingface.co/deepghs)).([LittleAppleWebUI](https://github.com/LittleApple-fp16/LittleAppleWebUI))

| Name | Images | Download | Description |

|:----------------|---------:|:----------------------------------------|:-----------------------------------------------------------------------------------------|

| raw | 200 | [Download](dataset-raw.zip) | Raw data with meta information. |

| raw-stage3 | 531 | [Download](dataset-raw-stage3.zip) | 3-stage cropped raw data with meta information. |

| raw-stage3-eyes | 667 | [Download](dataset-raw-stage3-eyes.zip) | 3-stage cropped (with eye-focus) raw data with meta information. |

| 384x512 | 200 | [Download](dataset-384x512.zip) | 384x512 aligned dataset. |

| 512x704 | 200 | [Download](dataset-512x704.zip) | 512x704 aligned dataset. |

| 640x880 | 200 | [Download](dataset-640x880.zip) | 640x880 aligned dataset. |

| stage3-640 | 531 | [Download](dataset-stage3-640.zip) | 3-stage cropped dataset with the shorter side not exceeding 640 pixels. |

| stage3-800 | 531 | [Download](dataset-stage3-800.zip) | 3-stage cropped dataset with the shorter side not exceeding 800 pixels. |

| stage3-p512-640 | 485 | [Download](dataset-stage3-p512-640.zip) | 3-stage cropped dataset with the area not less than 512x512 pixels. |

| stage3-eyes-640 | 667 | [Download](dataset-stage3-eyes-640.zip) | 3-stage cropped (with eye-focus) dataset with the shorter side not exceeding 640 pixels. |

| stage3-eyes-800 | 667 | [Download](dataset-stage3-eyes-800.zip) | 3-stage cropped (with eye-focus) dataset with the shorter side not exceeding 800 pixels. |

|

vincha77/review_sample_with_aspect | ---

dataset_info:

features:

- name: text

dtype: string

- name: aspect

dtype: string

- name: food

dtype: string

- name: service

dtype: string

- name: label

dtype: int64

- name: review_length

dtype: int64

- name: price

dtype: string

- name: ambience

dtype: string

splits:

- name: train

num_bytes: 114005.35714285714

num_examples: 100

- name: test

num_bytes: 13680.642857142857

num_examples: 12

download_size: 96925

dataset_size: 127686.0

---

# Dataset Card for "review_sample_with_aspect"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

CyberHarem/arkhangelsk_azurlane | ---

license: mit

task_categories:

- text-to-image

tags:

- art

- not-for-all-audiences

size_categories:

- n<1K

---

# Dataset of arkhangelsk/アルハンゲリスク/阿尔汉格尔斯克 (Azur Lane)

This is the dataset of arkhangelsk/アルハンゲリスク/阿尔汉格尔斯克 (Azur Lane), containing 23 images and their tags.

The core tags of this character are `breasts, large_breasts, long_hair, yellow_eyes, blue_hair, hair_between_eyes, bangs, very_long_hair, white_headwear, hat`, which are pruned in this dataset.

Images are crawled from many sites (e.g. danbooru, pixiv, zerochan ...), the auto-crawling system is powered by [DeepGHS Team](https://github.com/deepghs)([huggingface organization](https://huggingface.co/deepghs)).

## List of Packages

| Name | Images | Size | Download | Type | Description |

|:-----------------|---------:|:----------|:----------------------------------------------------------------------------------------------------------------------|:-----------|:---------------------------------------------------------------------|

| raw | 23 | 39.92 MiB | [Download](https://huggingface.co/datasets/CyberHarem/arkhangelsk_azurlane/resolve/main/dataset-raw.zip) | Waifuc-Raw | Raw data with meta information (min edge aligned to 1400 if larger). |

| 800 | 23 | 20.14 MiB | [Download](https://huggingface.co/datasets/CyberHarem/arkhangelsk_azurlane/resolve/main/dataset-800.zip) | IMG+TXT | dataset with the shorter side not exceeding 800 pixels. |

| stage3-p480-800 | 59 | 45.54 MiB | [Download](https://huggingface.co/datasets/CyberHarem/arkhangelsk_azurlane/resolve/main/dataset-stage3-p480-800.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

| 1200 | 23 | 33.10 MiB | [Download](https://huggingface.co/datasets/CyberHarem/arkhangelsk_azurlane/resolve/main/dataset-1200.zip) | IMG+TXT | dataset with the shorter side not exceeding 1200 pixels. |

| stage3-p480-1200 | 59 | 67.31 MiB | [Download](https://huggingface.co/datasets/CyberHarem/arkhangelsk_azurlane/resolve/main/dataset-stage3-p480-1200.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

### Load Raw Dataset with Waifuc

We provide raw dataset (including tagged images) for [waifuc](https://deepghs.github.io/waifuc/main/tutorials/installation/index.html) loading. If you need this, just run the following code

```python

import os

import zipfile

from huggingface_hub import hf_hub_download

from waifuc.source import LocalSource

# download raw archive file

zip_file = hf_hub_download(

repo_id='CyberHarem/arkhangelsk_azurlane',

repo_type='dataset',

filename='dataset-raw.zip',

)

# extract files to your directory

dataset_dir = 'dataset_dir'

os.makedirs(dataset_dir, exist_ok=True)

with zipfile.ZipFile(zip_file, 'r') as zf:

zf.extractall(dataset_dir)

# load the dataset with waifuc

source = LocalSource(dataset_dir)

for item in source:

print(item.image, item.meta['filename'], item.meta['tags'])

```

## List of Clusters

List of tag clustering result, maybe some outfits can be mined here.

### Raw Text Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | Tags |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| 0 | 13 |  |  |  |  |  | 1girl, elbow_gloves, solo, black_gloves, cleavage, looking_at_viewer, smile, white_coat, white_thighhighs, military_hat, thighs, fur_trim, thigh_boots, blush, holding_sword, open_mouth, white_dress, white_footwear |

| 1 | 8 |  |  |  |  |  | 1girl, black_bodysuit, latex_bodysuit, looking_at_viewer, blush, headset, cat_ear_headphones, cat_ears, cleavage, skin_tight, smile, solo, official_alternate_costume, arms_up, black_gloves, open_mouth, shiny_clothes |

### Table Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | 1girl | elbow_gloves | solo | black_gloves | cleavage | looking_at_viewer | smile | white_coat | white_thighhighs | military_hat | thighs | fur_trim | thigh_boots | blush | holding_sword | open_mouth | white_dress | white_footwear | black_bodysuit | latex_bodysuit | headset | cat_ear_headphones | cat_ears | skin_tight | official_alternate_costume | arms_up | shiny_clothes |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------|:---------------|:-------|:---------------|:-----------|:--------------------|:--------|:-------------|:-------------------|:---------------|:---------|:-----------|:--------------|:--------|:----------------|:-------------|:--------------|:-----------------|:-----------------|:-----------------|:----------|:---------------------|:-----------|:-------------|:-----------------------------|:----------|:----------------|

| 0 | 13 |  |  |  |  |  | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | | | | | | | | | |

| 1 | 8 |  |  |  |  |  | X | | X | X | X | X | X | | | | | | | X | | X | | | X | X | X | X | X | X | X | X | X |

|

arbml/tashkeela | ---

dataset_info:

features:

- name: diacratized

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 1419585102

num_examples: 979982

- name: test

num_bytes: 78869542

num_examples: 54444

- name: dev

num_bytes: 78863352

num_examples: 54443

download_size: 747280703

dataset_size: 1577317996

---

# Dataset Card for "tashkeela"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

andstor/methods2test_small | ---

language:

- en

license: mit

task_categories:

- text-generation

configs:

- config_name: fm

data_files:

- split: train

path: data/fm/train-*

- split: test

path: data/fm/test-*

- split: validation

path: data/fm/validation-*

- config_name: fm_indented

data_files:

- split: train

path: data/fm_indented/train-*

- split: test

path: data/fm_indented/test-*

- split: validation

path: data/fm_indented/validation-*

- config_name: fm+t

data_files:

- split: train

path: data/fm+t/train-*

- split: test

path: data/fm+t/test-*

- split: validation

path: data/fm+t/validation-*

- config_name: fm+fc

data_files:

- split: train

path: data/fm+fc/train-*

- split: test

path: data/fm+fc/test-*

- split: validation

path: data/fm+fc/validation-*

- config_name: fm+fc+t+tc

data_files:

- split: train

path: data/fm+fc+t+tc/train-*

- split: test

path: data/fm+fc+t+tc/test-*

- split: validation

path: data/fm+fc+t+tc/validation-*

- config_name: fm+fc+c

data_files:

- split: train

path: data/fm+fc+c/train-*

- split: test

path: data/fm+fc+c/test-*

- split: validation

path: data/fm+fc+c/validation-*

- config_name: fm+fc+c+t+tc

data_files:

- split: train

path: data/fm+fc+c+t+tc/train-*

- split: test

path: data/fm+fc+c+t+tc/test-*

- split: validation

path: data/fm+fc+c+t+tc/validation-*

- config_name: fm+fc+c+m

data_files:

- split: train

path: data/fm+fc+c+m/train-*

- split: test

path: data/fm+fc+c+m/test-*

- split: validation

path: data/fm+fc+c+m/validation-*

- config_name: fm+fc+c+m+t+tc

data_files:

- split: train

path: data/fm+fc+c+m+t+tc/train-*

- split: test

path: data/fm+fc+c+m+t+tc/test-*

- split: validation

path: data/fm+fc+c+m+t+tc/validation-*

- config_name: fm+fc+c+m+f

data_files:

- split: train

path: data/fm+fc+c+m+f/train-*

- split: test

path: data/fm+fc+c+m+f/test-*

- split: validation

path: data/fm+fc+c+m+f/validation-*

- config_name: fm+fc+c+m+f+t+tc

data_files:

- split: train

path: data/fm+fc+c+m+f+t+tc/train-*

- split: test

path: data/fm+fc+c+m+f+t+tc/test-*

- split: validation

path: data/fm+fc+c+m+f+t+tc/validation-*

- config_name: t

data_files:

- split: train

path: data/t/train-*

- split: test

path: data/t/test-*

- split: validation

path: data/t/validation-*

- config_name: t_indented

data_files:

- split: train

path: data/t_indented/train-*

- split: test

path: data/t_indented/test-*

- split: validation

path: data/t_indented/validation-*

- config_name: t+tc

data_files:

- split: train

path: data/t+tc/train-*

- split: test

path: data/t+tc/test-*

- split: validation

path: data/t+tc/validation-*

dataset_info:

- config_name: fm

features:

- name: id

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 4696431

num_examples: 7440

- name: test

num_bytes: 642347

num_examples: 1017

- name: validation

num_bytes: 662917

num_examples: 953

download_size: 2633268

dataset_size: 6001695

- config_name: fm+fc

features:

- name: id

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 5387123

num_examples: 7440

- name: test

num_bytes: 738049

num_examples: 1017

- name: validation

num_bytes: 757167

num_examples: 953

download_size: 2925807

dataset_size: 6882339

- config_name: fm+fc+c

features:

- name: id

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 5906873

num_examples: 7440

- name: test

num_bytes: 820149

num_examples: 1017

- name: validation

num_bytes: 824441

num_examples: 953

download_size: 3170873

dataset_size: 7551463

- config_name: fm+fc+c+m

features:

- name: id

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 11930672

num_examples: 7440

- name: test

num_bytes: 1610045

num_examples: 1017

- name: validation

num_bytes: 1553249

num_examples: 953

download_size: 5406454

dataset_size: 15093966

- config_name: fm+fc+c+m+f

features:

- name: id

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 12722890

num_examples: 7440

- name: test

num_bytes: 1713683

num_examples: 1017

- name: validation

num_bytes: 1654607

num_examples: 953

download_size: 5753116

dataset_size: 16091180

- config_name: fm+fc+c+m+f+t+tc

features:

- name: id

dtype: string

- name: source

dtype: string

- name: target

dtype: string

splits:

- name: train

num_bytes: 18332635

num_examples: 7440

- name: test

num_bytes: 2461169

num_examples: 1017

- name: validation

num_bytes: 2510969

num_examples: 953

download_size: 8280985

dataset_size: 23304773

- config_name: fm+fc+c+m+t+tc

features:

- name: id

dtype: string

- name: source

dtype: string

- name: target

dtype: string

splits:

- name: train

num_bytes: 17537661

num_examples: 7440

- name: test

num_bytes: 2357359

num_examples: 1017

- name: validation

num_bytes: 2409506

num_examples: 953

download_size: 8178222

dataset_size: 22304526

- config_name: fm+fc+c+t+tc

features:

- name: id

dtype: string

- name: source

dtype: string

- name: target

dtype: string

splits:

- name: train

num_bytes: 11445562

num_examples: 7440

- name: test

num_bytes: 1565365

num_examples: 1017

- name: validation

num_bytes: 1676986

num_examples: 953

download_size: 5944482

dataset_size: 14687913

- config_name: fm+fc+t+tc

features:

- name: id

dtype: string

- name: source

dtype: string

- name: target

dtype: string

splits:

- name: train

num_bytes: 10923038

num_examples: 7440

- name: test

num_bytes: 1483265

num_examples: 1017

- name: validation

num_bytes: 1609296

num_examples: 953

download_size: 5715335

dataset_size: 14015599

- config_name: fm+t

features:

- name: id

dtype: string

- name: source

dtype: string

- name: target

dtype: string

splits:

- name: train

num_bytes: 8889443

num_examples: 7440

- name: test

num_bytes: 1207763

num_examples: 1017

- name: validation

num_bytes: 1336798

num_examples: 953

download_size: 4898458

dataset_size: 11434004

- config_name: fm_indented

features:

- name: id

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 5054397

num_examples: 7440

- name: test

num_bytes: 692948

num_examples: 1017

- name: validation

num_bytes: 714462

num_examples: 953

download_size: 2703115

dataset_size: 6461807

- config_name: t

features:

- name: id

dtype: string

- name: source

dtype: string

- name: target

dtype: string

splits:

- name: train

num_bytes: 4316096

num_examples: 7440

- name: test

num_bytes: 582266

num_examples: 1017

- name: validation

num_bytes: 689647

num_examples: 953

download_size: 2434024

dataset_size: 5588009

- config_name: t+tc

features:

- name: id

dtype: string

- name: source

dtype: string

- name: target

dtype: string

splits:

- name: train

num_bytes: 5648321

num_examples: 7440

- name: test

num_bytes: 761386

num_examples: 1017

- name: validation

num_bytes: 867350

num_examples: 953

download_size: 3024686

dataset_size: 7277057

- config_name: t_indented

features:

- name: id

dtype: string

- name: source

dtype: string

- name: target

dtype: string

splits:

- name: train

num_bytes: 4606253

num_examples: 7440

- name: test

num_bytes: 623576

num_examples: 1017

- name: validation

num_bytes: 734221

num_examples: 953

download_size: 2496661

dataset_size: 5964050

tags:

- unit test

- java

- code

---

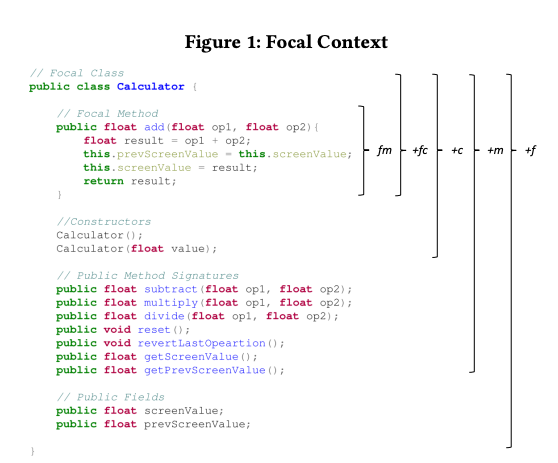

## Dataset Description

Microsoft created the methods2test dataset, consisting of Java Junit test cases with its corresponding focal methods.

It contains 780k pairs of JUnit test cases and focal methods which were extracted from a total of 91K

Java open source project hosted on GitHub.

This is smaller subset of the assembled version of the methods2test dataset.

It provides convenient access to the different context levels based on the raw source code (e.g. newlines are preserved). The test cases and associated classes are also made available.

The subset is created by randomly selecting only one sample from each of the 91k projects.

The mapping between test case and focal methods are based heuristics rules and Java developer's best practice.

More information could be found here:

- [methods2test Github repo](https://github.com/microsoft/methods2test)

- [Methods2Test: A dataset of focal methods mapped to test cases](https://arxiv.org/pdf/2203.12776.pdf)

## Dataset Schema

```

t: <TEST_CASE>

t+tc: <TEST_CASE> <TEST_CLASS_NAME>

fm: <FOCAL_METHOD>

fm+fc: <FOCAL_CLASS_NAME> <FOCAL_METHOD>

fm+fc+c: <FOCAL_CLASS_NAME> <FOCAL_METHOD> <CONTRSUCTORS>

fm+fc+c+m: <FOCAL_CLASS_NAME> <FOCAL_METHOD> <CONTRSUCTORS> <METHOD_SIGNATURES>

fm+fc+c+m+f: <FOCAL_CLASS_NAME> <FOCAL_METHOD> <CONTRSUCTORS> <METHOD_SIGNATURES> <FIELDS>

```

## Focal Context

- fm: this representation incorporates exclusively the source

code of the focal method. Intuitively, this contains the most

important information for generating accurate test cases for

the given method.

- fm+fc: this representations adds the focal class name, which

can provide meaningful semantic information to the model.

- fm+fc+c: this representation adds the signatures of the constructor methods of the focal class. The idea behind this

augmentation is that the test case may require instantiating

an object of the focal class in order to properly test the focal

method.

- fm+fc+c+m: this representation adds the signatures of the

other public methods in the focal class. The rationale which

motivated this inclusion is that the test case may need to

invoke other auxiliary methods within the class (e.g., getters,

setters) to set up or tear down the testing environment.

- fm+fc+c+m+f : this representation adds the public fields of

the focal class. The motivation is that test cases may need to

inspect the status of the public fields to properly test a focal

method.

The different levels of focal contexts are the following:

```

T: test case

t+tc: test case + test class name

FM: focal method

fm+fc: focal method + focal class name

fm+fc+c: focal method + focal class name + constructor signatures

fm+fc+c+m: focal method + focal class name + constructor signatures + public method signatures

fm+fc+c+m+f: focal method + focal class name + constructor signatures + public method signatures + public fields

```

## Limitations

The original authors validate the heuristics by inspecting a

statistically significant sample (confidence level of 95% within 10%

margin of error) of 97 samples from the training set. Two authors

independently evaluated the sample, then met to discuss the disagreements. We found that 90.72% of the samples have a correct

link between the test case and the corresponding focal method

## Contribution

All thanks to the original authors.

|

AlekseyKorshuk/instinwild-chatml-deduplicated | ---

dataset_info:

features:

- name: conversation

list:

- name: content

dtype: string

- name: do_train

dtype: bool

- name: role

dtype: string

splits:

- name: train

num_bytes: 38772282.58722768

num_examples: 50970

download_size: 20538245

dataset_size: 38772282.58722768

---

# Dataset Card for "instinwild-chatml-deduplicated"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

TIGER-Lab/MMLU-STEM | ---

license: mit

---

This is the dataset being used by MINERVA and LLEMMA in https://huggingface.co/EleutherAI/llemma_7b. This contains a subset of STEM subjects defined in MMLU by MINERVA.

The included subjects are

- 'abstract_algebra',

- 'anatomy',

- 'astronomy',

- 'college_biology',

- 'college_chemistry',

- 'college_computer_science',

- 'college_mathematics',

- 'college_physics',

- 'computer_security',

- 'conceptual_physics',

- 'electrical_engineering',

- 'elementary_mathematics',

- 'high_school_biology',

- 'high_school_chemistry',

- 'high_school_computer_science',

- 'high_school_mathematics',

- 'high_school_physics',

- 'high_school_statistics',

- 'machine_learning'

Please cite the original MMLU paper when you are using it. |

SlimX/Subset-test-FREE | ---

license: apache-2.0

---

|

CyberHarem/kaname_rana_bangdreamitsmygo | ---

license: mit

task_categories:

- text-to-image

tags:

- art

- not-for-all-audiences

size_categories:

- n<1K

---

# Dataset of Kaname Rāna

This is the dataset of Kaname Rāna, containing 131 images and their tags.

Images are crawled from many sites (e.g. danbooru, pixiv, zerochan ...), the auto-crawling system is powered by [DeepGHS Team](https://github.com/deepghs)([huggingface organization](https://huggingface.co/deepghs)).

| Name | Images | Download | Description |

|:------------|---------:|:------------------------------------|:-------------------------------------------------------------------------|

| raw | 131 | [Download](dataset-raw.zip) | Raw data with meta information. |

| raw-stage3 | 315 | [Download](dataset-raw-stage3.zip) | 3-stage cropped raw data with meta information. |

| 384x512 | 131 | [Download](dataset-384x512.zip) | 384x512 aligned dataset. |

| 512x512 | 131 | [Download](dataset-512x512.zip) | 512x512 aligned dataset. |

| 512x704 | 131 | [Download](dataset-512x704.zip) | 512x704 aligned dataset. |

| 640x640 | 131 | [Download](dataset-640x640.zip) | 640x640 aligned dataset. |

| 640x880 | 131 | [Download](dataset-640x880.zip) | 640x880 aligned dataset. |

| stage3-640 | 315 | [Download](dataset-stage3-640.zip) | 3-stage cropped dataset with the shorter side not exceeding 640 pixels. |

| stage3-800 | 315 | [Download](dataset-stage3-800.zip) | 3-stage cropped dataset with the shorter side not exceeding 800 pixels. |

| stage3-1200 | 315 | [Download](dataset-stage3-1200.zip) | 3-stage cropped dataset with the shorter side not exceeding 1200 pixels. |

|

joseluhf11/oct-fovea-detection_v2 | ---

dataset_info:

features:

- name: image

dtype: image

- name: objects

struct:

- name: bbox

sequence:

sequence: int64

- name: categories

sequence: string

splits:

- name: train

num_bytes: 438180043.0

num_examples: 539

download_size: 430057504

dataset_size: 438180043.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

pyRis/wikinewssum | ---

license: mit

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

- split: validation

path: data/validation-*

dataset_info:

features:

- name: lang_src

dtype: string

- name: text

dtype: string

- name: lang_tgt

dtype: string

- name: summary

dtype: string

splits:

- name: train

num_bytes: 286852471

num_examples: 62543

- name: test

num_bytes: 41437935

num_examples: 8977

- name: validation

num_bytes: 81600711

num_examples: 17996

download_size: 199887304

dataset_size: 409891117

---

|

one-sec-cv12/chunk_39 | ---

dataset_info:

features:

- name: audio

dtype:

audio:

sampling_rate: 16000

splits:

- name: train

num_bytes: 24516540144.375

num_examples: 255253

download_size: 21196982997

dataset_size: 24516540144.375

---

# Dataset Card for "chunk_39"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

carcanha/marilhamendonsa | ---

license: openrail

---

|

grosenthal/lat_en_loeb_morph | ---

dataset_info:

features:

- name: id

dtype: int64

- name: la

dtype: string

- name: en

dtype: string

- name: file

dtype: string

splits:

- name: train

num_bytes: 60797479

num_examples: 99343

- name: test

num_bytes: 628768

num_examples: 1014

- name: valid

num_bytes: 605889

num_examples: 1014

download_size: 31059812

dataset_size: 62032136

---

# Dataset Card for "lat_en_loeb_morph"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

stealthwriter/newAIHumanGPT3.5V2 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

dataset_info:

features:

- name: sentence

dtype: string

- name: label

dtype: int64

splits:

- name: train

num_bytes: 4751074

num_examples: 36000

- name: validation

num_bytes: 528788

num_examples: 4000

download_size: 3478514

dataset_size: 5279862

---

# Dataset Card for "newAIHumanGPT3.5V2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

m-ric/Open_Assistant_Chains_German_Translation | ---

language:

- en

- de

license: apache-2.0

size_categories:

- 10K<n<100K

task_categories:

- conversational

- text-generation

pretty_name: OpenAssistant Conversation Chains - With German Translation

tags:

- human-feedback

configs:

- config_name: default

data_files:

- split: train_english

path: data/train_english-*

- split: train_german

path: data/train_german-*

dataset_info:

features:

- name: conversation_id

dtype: string

- name: user_id

dtype: string

- name: created_date

dtype: string

- name: messages

list:

- name: content

dtype: string

- name: role

dtype: string

- name: lang_original

dtype: string

- name: could_be_code

dtype: bool

splits:

- name: train_english

num_bytes: 29675151

num_examples: 18192

- name: train_german

num_bytes: 28931906

num_examples: 18192

download_size: 21854409

dataset_size: 58607057

---

# Dataset Card for Dataset Name

## Dataset description

<!-- Provide a quick summary of the dataset. -->

This dataset is derived from [OpenAssistant Conversation Chains](https://huggingface.co/datasets/A-Roucher/Open_Assistant_Conversation_Chains), which is a reformatting of [OpenAssistant Conversations (OASST1)](https://huggingface.co/datasets/OpenAssistant/oasst1), which is itself

> a human-generated, human-annotated assistant-style conversation corpus consisting of 161,443 messages in 35 different languages, annotated with 461,292 quality ratings, resulting in over 10,000 fully annotated conversation trees. The corpus is a product of a worldwide crowd-sourcing effort involving over 13,500 volunteers.

It was modified from the original by adding a german translation of each conversation, generated with [Helsinki-NLP/opus-mt-en-de](https://huggingface.co/Helsinki-NLP/opus-mt-en-de).

#### Who are the editors?

Aymeric Roucher, Hugging Face.

### Example

````

{

'conversation_id': '6708c47f-05c9-4346-b3d2-40b2bd24fde4',

'user_id': '2c96e467-66f0-4be7-9693-bda51356a424',

'created_date': '2023-02-06T18:48:49.391686+00:00',

'messages_original': [

{'content': 'Can you write a short introduction about the relevance of the term "monopsony" in economics? Please use examples related to potential monopsonies in the labour market and cite relevant research.',

'role': 'user'},

{'content': '"Monopsony" refers to a market structure where there is only one buyer for a particular good or service. In economics, this term is particularly relevant in the labor market, where a monopsony employer has significant power over the wages and working conditions of their employees. The presence of a monopsony can result in lower wages and reduced employment opportunities for workers, as the employer has little incentive to increase wages or provide better working conditions.\n\nRecent research has identified potential monopsonies in industries such as retail and fast food, where a few large companies control a significant portion of the market (Bivens & Mishel, 2013). In these industries, workers often face low wages, limited benefits, and reduced bargaining power, leading to a situation where they are dependent on the employer for their livelihood. This dependence can result in further suppression of wages and a decline in working conditions.\n\nOverall, the concept of monopsony is essential to understanding the dynamics of labor markets and the impact of market power on workers. Further research is needed to understand the extent and impact of monopsonies on the economy and to develop policies to address this issue.\n\nReferences:\nBivens, J., & Mishel, L. (2013). The Pay of Corporate Executives and Financial Professionals as Evidence of Rents in Top 1 Percent Incomes. Journal of Economic Perspectives, 27(3), 57-78.',

'role': 'assistant'},

{'content': 'Now explain it to a dog', 'role': 'user'}

],

'lang_original': 'en'

'messages_german':

{ "content": "Können Sie eine kurze Einführung über die Relevanz des Begriffs \"Monopsonie\" in der Wirtschaft schreiben? Bitte verwenden Sie Beispiele für mögliche Monopsonien auf dem Arbeitsmarkt und zitieren Sie relevante Forschung.", "role": "user" }, { "content": "\"Monopsony\" bezieht sich auf eine Marktstruktur, in der es nur einen Käufer für ein bestimmtes Gut oder eine bestimmte Dienstleistung gibt. In der Wirtschaft ist dieser Begriff besonders relevant auf dem Arbeitsmarkt, wo ein Monopsony Arbeitgeber über die Löhne und Arbeitsbedingungen ihrer Mitarbeiter verfügt. Das Vorhandensein eines Monopsony kann zu niedrigeren Löhnen und reduzierten Beschäftigungsmöglichkeiten für Arbeitnehmer führen, da der Arbeitgeber wenig Anreiz hat, Löhne zu erhöhen oder bessere Arbeitsbedingungen zu bieten. Neuere Forschung hat mögliche Monopsonies in Branchen wie Einzelhandel und Fast Food identifiziert, wo ein paar große Unternehmen einen erheblichen Teil des Marktes kontrollieren (Bivens & Mishel, 2013). In diesen Branchen ist das Konzept der Monopsony oft mit niedrigen Löhnen, begrenzten Vorteilen und reduzierten Verhandlungsmacht, was zu einer Situation führt, in der sie vom Arbeitgeber für ihren Lebensunterhalt abhängig sind. Diese Abhängigkeit kann zu einer weiteren Unterdrückung der Löhne und einem Rückgang der Arbeitsbedingungen führen. Insgesamt ist das Konzept der Monopsony wesentlich, um die Dynamik der Arbeitsmärkte und die Auswirkungen der Marktmacht auf die Arbeitnehmer zu verstehen. Weitere Forschung ist erforderlich, um das Ausmaß und die Auswirkungen der Monopsonies auf die Wirtschaft zu verstehen und zu entwickeln.", "role": "assistant" },

{ "content": "Nun erklären Sie es einem Hund", "role": "user" }

]

}

```` |

yassineechahboun9/INSUSTRY | ---

license: llama2

---

|

semeru/code-code-CodeCompletion-TokenLevel-Java | ---

license: mit

Programminglanguage: "Java"

version: "N/A"

Date: "From paper: https://homepages.inf.ed.ac.uk/csutton/publications/msr2013.pdf (2013 - paper release date)"

Contaminated: "Very Likely"

Size: "Standard Tokenizer (TreeSitter)"

---

### Dataset is imported from CodeXGLUE and pre-processed using their script.

# Where to find in Semeru:

The dataset can be found at /nfs/semeru/semeru_datasets/code_xglue/code-to-code/CodeCompletion-token/dataset/javaCorpus in Semeru

# CodeXGLUE -- Code Completion (token level)

**Update 2021.07.30:** We update the code completion dataset with literals normalized to avoid sensitive information.

Here is the introduction and pipeline for token level code completion task.

## Task Definition

Predict next code token given context of previous tokens. Models are evaluated by token level accuracy.

Code completion is a one of the most widely used features in software development through IDEs. An effective code completion tool could improve software developers' productivity. We provide code completion evaluation tasks in two granularities -- token level and line level. Here we introduce token level code completion. Token level task is analogous to language modeling. Models should have be able to predict the next token in arbitary types.

## Dataset

The dataset is in java.

### Dependency

- javalang == 0.13.0

### Github Java Corpus

We use java corpus dataset mined by Allamanis and Sutton, in their MSR 2013 paper [Mining Source Code Repositories at Massive Scale using Language Modeling](https://homepages.inf.ed.ac.uk/csutton/publications/msr2013.pdf). We follow the same split and preprocessing in Karampatsis's ICSE 2020 paper [Big Code != Big Vocabulary: Open-Vocabulary Models for Source Code](http://homepages.inf.ed.ac.uk/s1467463/documents/icse20-main-1325.pdf).

### Data Format

Code corpus are saved in txt format files. one line is a tokenized code snippets:

```

<s> from __future__ import unicode_literals <EOL> from django . db import models , migrations <EOL> class Migration ( migrations . Migration ) : <EOL> dependencies = [ <EOL> ] <EOL> operations = [ <EOL> migrations . CreateModel ( <EOL> name = '<STR_LIT>' , <EOL> fields = [ <EOL> ( '<STR_LIT:id>' , models . AutoField ( verbose_name = '<STR_LIT>' , serialize = False , auto_created = True , primary_key = True ) ) , <EOL> ( '<STR_LIT:name>' , models . CharField ( help_text = b'<STR_LIT>' , max_length = <NUM_LIT> ) ) , <EOL> ( '<STR_LIT:image>' , models . ImageField ( help_text = b'<STR_LIT>' , null = True , upload_to = b'<STR_LIT>' , blank = True ) ) , <EOL> ] , <EOL> options = { <EOL> '<STR_LIT>' : ( '<STR_LIT:name>' , ) , <EOL> '<STR_LIT>' : '<STR_LIT>' , <EOL> } , <EOL> bases = ( models . Model , ) , <EOL> ) , <EOL> ] </s>

```

### Data Statistics

Data statistics of Github Java Corpus dataset are shown in the below table:

| Data Split | #Files | #Tokens |

| ----------- | :--------: | :---------: |

| Train | 12,934 | 15.7M |

| Dev | 7,176 | 3.8M |

| Test | 8,268 | 5.3M |

|

atmallen/qm_bob_easy_2_mixture_1.0e | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

dataset_info:

features:

- name: alice_label

dtype: bool

- name: bob_label

dtype: bool

- name: difficulty

dtype: int64

- name: statement

dtype: string

- name: choices

sequence: string

- name: character

dtype: string

- name: label

dtype:

class_label:

names:

'0': 'False'

'1': 'True'

splits:

- name: train

num_bytes: 12520368.5

num_examples: 117117

- name: validation

num_bytes: 1221097.5

num_examples: 11279

- name: test

num_bytes: 1205746.0

num_examples: 11186

download_size: 3703276

dataset_size: 14947212.0

---

# Dataset Card for "qm_bob_easy_2_mixture_1.0e"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

logikon/oasst1-delib | ---

language:

- en

license: apache-2.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

dataset_info:

features:

- name: message_id

dtype: string

- name: parent_id

dtype: string

- name: user_id

dtype: string

- name: created_date

dtype: string

- name: text

dtype: string

- name: role

dtype: string

- name: lang

dtype: string

- name: review_count

dtype: int32

- name: review_result

dtype: bool

- name: deleted

dtype: bool

- name: rank

dtype: float64

- name: synthetic

dtype: bool

- name: model_name

dtype: 'null'

- name: detoxify

struct:

- name: identity_attack

dtype: float64

- name: insult

dtype: float64

- name: obscene

dtype: float64

- name: severe_toxicity

dtype: float64

- name: sexual_explicit

dtype: float64

- name: threat

dtype: float64

- name: toxicity

dtype: float64

- name: message_tree_id

dtype: string

- name: tree_state

dtype: string

- name: emojis

struct:

- name: count

sequence: int32

- name: name

sequence: string

- name: labels

struct:

- name: count

sequence: int32

- name: name

sequence: string

- name: value

sequence: float64

- name: history

dtype: string

splits:

- name: train

num_bytes: 278875

num_examples: 90

- name: validation

num_bytes: 18290

num_examples: 6

download_size: 208227

dataset_size: 297165

---

# Dataset Card for "oasst1-delib"

Subset of `OpenAssistant/oasst1` with English chat messages that (are supposed to) contain reasoning:

* filtered by keyword "pros"

* includes chat history as extra feature

Dataset creation is documented in https://github.com/logikon-ai/deliberation-datasets/blob/main/notebooks/create_oasst1_delib.ipynb

|

heliosprime/twitter_dataset_1713101663 | ---

dataset_info:

features:

- name: id

dtype: string

- name: tweet_content

dtype: string

- name: user_name

dtype: string

- name: user_id

dtype: string

- name: created_at

dtype: string

- name: url

dtype: string

- name: favourite_count

dtype: int64

- name: scraped_at

dtype: string

- name: image_urls

dtype: string

splits:

- name: train

num_bytes: 11500

num_examples: 33

download_size: 12604

dataset_size: 11500

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "twitter_dataset_1713101663"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

MohammedNasri/wav_to_vec_common_voice_fleurs_without_diacs | ---

dataset_info:

features:

- name: input_values

sequence: float32

- name: input_length

dtype: int64

- name: labels

sequence: int64

splits:

- name: train

num_bytes: 11806675364

num_examples: 40880

- name: test

num_bytes: 2889905492

num_examples: 10440

download_size: 14014156133

dataset_size: 14696580856

---

# Dataset Card for "wav_to_vec_common_voice_fleurs_without_diacs"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

open-llm-leaderboard/details_xxyyy123__mc_data_30k_from_platpus_orca_7b_10k_v1_lora_qk_rank14_v2 | ---

pretty_name: Evaluation run of xxyyy123/mc_data_30k_from_platpus_orca_7b_10k_v1_lora_qk_rank14_v2

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [xxyyy123/mc_data_30k_from_platpus_orca_7b_10k_v1_lora_qk_rank14_v2](https://huggingface.co/xxyyy123/mc_data_30k_from_platpus_orca_7b_10k_v1_lora_qk_rank14_v2)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 61 configuration, each one coresponding to one of the\

\ evaluated task.\n\nThe dataset has been created from 1 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run.The \"train\" split is always pointing to the latest results.\n\

\nAn additional configuration \"results\" store all the aggregated results of the\

\ run (and is used to compute and display the agregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_xxyyy123__mc_data_30k_from_platpus_orca_7b_10k_v1_lora_qk_rank14_v2\"\

,\n\t\"harness_truthfulqa_mc_0\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\

\nThese are the [latest results from run 2023-09-03T18:41:04.280567](https://huggingface.co/datasets/open-llm-leaderboard/details_xxyyy123__mc_data_30k_from_platpus_orca_7b_10k_v1_lora_qk_rank14_v2/blob/main/results_2023-09-03T18%3A41%3A04.280567.json)(note\

\ that their might be results for other tasks in the repos if successive evals didn't\

\ cover the same tasks. You find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"acc\": 0.5159772470651705,\n\

\ \"acc_stderr\": 0.03490050368845693,\n \"acc_norm\": 0.5196198874675843,\n\

\ \"acc_norm_stderr\": 0.03488383911166199,\n \"mc1\": 0.3574051407588739,\n\

\ \"mc1_stderr\": 0.0167765996767294,\n \"mc2\": 0.5084843623108531,\n\

\ \"mc2_stderr\": 0.015788699144390992\n },\n \"harness|arc:challenge|25\"\

: {\n \"acc\": 0.5537542662116041,\n \"acc_stderr\": 0.014526705548539982,\n\

\ \"acc_norm\": 0.5810580204778157,\n \"acc_norm_stderr\": 0.014418106953639013\n\

\ },\n \"harness|hellaswag|10\": {\n \"acc\": 0.6132244572794264,\n\

\ \"acc_stderr\": 0.004860162076330978,\n \"acc_norm\": 0.8008364867556264,\n\

\ \"acc_norm_stderr\": 0.0039855506403304606\n },\n \"harness|hendrycksTest-abstract_algebra|5\"\

: {\n \"acc\": 0.28,\n \"acc_stderr\": 0.04512608598542128,\n \

\ \"acc_norm\": 0.28,\n \"acc_norm_stderr\": 0.04512608598542128\n \

\ },\n \"harness|hendrycksTest-anatomy|5\": {\n \"acc\": 0.48148148148148145,\n\

\ \"acc_stderr\": 0.043163785995113245,\n \"acc_norm\": 0.48148148148148145,\n\

\ \"acc_norm_stderr\": 0.043163785995113245\n },\n \"harness|hendrycksTest-astronomy|5\"\

: {\n \"acc\": 0.47368421052631576,\n \"acc_stderr\": 0.04063302731486671,\n\

\ \"acc_norm\": 0.47368421052631576,\n \"acc_norm_stderr\": 0.04063302731486671\n\

\ },\n \"harness|hendrycksTest-business_ethics|5\": {\n \"acc\": 0.5,\n\

\ \"acc_stderr\": 0.050251890762960605,\n \"acc_norm\": 0.5,\n \

\ \"acc_norm_stderr\": 0.050251890762960605\n },\n \"harness|hendrycksTest-clinical_knowledge|5\"\

: {\n \"acc\": 0.6,\n \"acc_stderr\": 0.030151134457776285,\n \

\ \"acc_norm\": 0.6,\n \"acc_norm_stderr\": 0.030151134457776285\n \

\ },\n \"harness|hendrycksTest-college_biology|5\": {\n \"acc\": 0.5625,\n\

\ \"acc_stderr\": 0.04148415739394154,\n \"acc_norm\": 0.5625,\n \

\ \"acc_norm_stderr\": 0.04148415739394154\n },\n \"harness|hendrycksTest-college_chemistry|5\"\

: {\n \"acc\": 0.38,\n \"acc_stderr\": 0.04878317312145632,\n \

\ \"acc_norm\": 0.38,\n \"acc_norm_stderr\": 0.04878317312145632\n \

\ },\n \"harness|hendrycksTest-college_computer_science|5\": {\n \"acc\"\

: 0.36,\n \"acc_stderr\": 0.048241815132442176,\n \"acc_norm\": 0.36,\n\

\ \"acc_norm_stderr\": 0.048241815132442176\n },\n \"harness|hendrycksTest-college_mathematics|5\"\

: {\n \"acc\": 0.28,\n \"acc_stderr\": 0.04512608598542128,\n \

\ \"acc_norm\": 0.28,\n \"acc_norm_stderr\": 0.04512608598542128\n \

\ },\n \"harness|hendrycksTest-college_medicine|5\": {\n \"acc\": 0.4797687861271676,\n\

\ \"acc_stderr\": 0.03809342081273957,\n \"acc_norm\": 0.4797687861271676,\n\

\ \"acc_norm_stderr\": 0.03809342081273957\n },\n \"harness|hendrycksTest-college_physics|5\"\

: {\n \"acc\": 0.29411764705882354,\n \"acc_stderr\": 0.04533838195929775,\n\

\ \"acc_norm\": 0.29411764705882354,\n \"acc_norm_stderr\": 0.04533838195929775\n\

\ },\n \"harness|hendrycksTest-computer_security|5\": {\n \"acc\":\

\ 0.62,\n \"acc_stderr\": 0.048783173121456316,\n \"acc_norm\": 0.62,\n\

\ \"acc_norm_stderr\": 0.048783173121456316\n },\n \"harness|hendrycksTest-conceptual_physics|5\"\

: {\n \"acc\": 0.4851063829787234,\n \"acc_stderr\": 0.032671518489247764,\n\

\ \"acc_norm\": 0.4851063829787234,\n \"acc_norm_stderr\": 0.032671518489247764\n\

\ },\n \"harness|hendrycksTest-econometrics|5\": {\n \"acc\": 0.32456140350877194,\n\

\ \"acc_stderr\": 0.044045561573747664,\n \"acc_norm\": 0.32456140350877194,\n\

\ \"acc_norm_stderr\": 0.044045561573747664\n },\n \"harness|hendrycksTest-electrical_engineering|5\"\

: {\n \"acc\": 0.45517241379310347,\n \"acc_stderr\": 0.04149886942192117,\n\

\ \"acc_norm\": 0.45517241379310347,\n \"acc_norm_stderr\": 0.04149886942192117\n\

\ },\n \"harness|hendrycksTest-elementary_mathematics|5\": {\n \"acc\"\

: 0.30952380952380953,\n \"acc_stderr\": 0.023809523809523867,\n \"\

acc_norm\": 0.30952380952380953,\n \"acc_norm_stderr\": 0.023809523809523867\n\

\ },\n \"harness|hendrycksTest-formal_logic|5\": {\n \"acc\": 0.2777777777777778,\n\

\ \"acc_stderr\": 0.040061680838488774,\n \"acc_norm\": 0.2777777777777778,\n\

\ \"acc_norm_stderr\": 0.040061680838488774\n },\n \"harness|hendrycksTest-global_facts|5\"\

: {\n \"acc\": 0.37,\n \"acc_stderr\": 0.04852365870939099,\n \

\ \"acc_norm\": 0.37,\n \"acc_norm_stderr\": 0.04852365870939099\n \

\ },\n \"harness|hendrycksTest-high_school_biology|5\": {\n \"acc\": 0.5612903225806452,\n\

\ \"acc_stderr\": 0.028229497320317216,\n \"acc_norm\": 0.5612903225806452,\n\

\ \"acc_norm_stderr\": 0.028229497320317216\n },\n \"harness|hendrycksTest-high_school_chemistry|5\"\

: {\n \"acc\": 0.3842364532019704,\n \"acc_stderr\": 0.0342239856565755,\n\

\ \"acc_norm\": 0.3842364532019704,\n \"acc_norm_stderr\": 0.0342239856565755\n\

\ },\n \"harness|hendrycksTest-high_school_computer_science|5\": {\n \

\ \"acc\": 0.41,\n \"acc_stderr\": 0.04943110704237102,\n \"acc_norm\"\

: 0.41,\n \"acc_norm_stderr\": 0.04943110704237102\n },\n \"harness|hendrycksTest-high_school_european_history|5\"\

: {\n \"acc\": 0.7090909090909091,\n \"acc_stderr\": 0.03546563019624336,\n\

\ \"acc_norm\": 0.7090909090909091,\n \"acc_norm_stderr\": 0.03546563019624336\n\

\ },\n \"harness|hendrycksTest-high_school_geography|5\": {\n \"acc\"\

: 0.6818181818181818,\n \"acc_stderr\": 0.0331847733384533,\n \"acc_norm\"\

: 0.6818181818181818,\n \"acc_norm_stderr\": 0.0331847733384533\n },\n\

\ \"harness|hendrycksTest-high_school_government_and_politics|5\": {\n \

\ \"acc\": 0.7512953367875648,\n \"acc_stderr\": 0.031195840877700286,\n\

\ \"acc_norm\": 0.7512953367875648,\n \"acc_norm_stderr\": 0.031195840877700286\n\

\ },\n \"harness|hendrycksTest-high_school_macroeconomics|5\": {\n \

\ \"acc\": 0.48205128205128206,\n \"acc_stderr\": 0.02533466708095495,\n\

\ \"acc_norm\": 0.48205128205128206,\n \"acc_norm_stderr\": 0.02533466708095495\n\

\ },\n \"harness|hendrycksTest-high_school_mathematics|5\": {\n \"\

acc\": 0.2518518518518518,\n \"acc_stderr\": 0.02646611753895991,\n \

\ \"acc_norm\": 0.2518518518518518,\n \"acc_norm_stderr\": 0.02646611753895991\n\

\ },\n \"harness|hendrycksTest-high_school_microeconomics|5\": {\n \

\ \"acc\": 0.5126050420168067,\n \"acc_stderr\": 0.03246816765752174,\n \

\ \"acc_norm\": 0.5126050420168067,\n \"acc_norm_stderr\": 0.03246816765752174\n\

\ },\n \"harness|hendrycksTest-high_school_physics|5\": {\n \"acc\"\

: 0.3576158940397351,\n \"acc_stderr\": 0.03913453431177258,\n \"\

acc_norm\": 0.3576158940397351,\n \"acc_norm_stderr\": 0.03913453431177258\n\

\ },\n \"harness|hendrycksTest-high_school_psychology|5\": {\n \"acc\"\

: 0.7192660550458716,\n \"acc_stderr\": 0.019266055045871623,\n \"\

acc_norm\": 0.7192660550458716,\n \"acc_norm_stderr\": 0.019266055045871623\n\

\ },\n \"harness|hendrycksTest-high_school_statistics|5\": {\n \"acc\"\

: 0.375,\n \"acc_stderr\": 0.033016908987210894,\n \"acc_norm\": 0.375,\n\