datasetId stringlengths 2 117 | card stringlengths 19 1.01M |

|---|---|

Ssunbell/boostcamp-docvqa-v2 | ---

dataset_info:

features:

- name: questionId

dtype: int64

- name: question

dtype: string

- name: image

sequence:

sequence:

sequence: uint8

- name: docId

dtype: int64

- name: ucsf_document_id

dtype: string

- name: ucsf_document_page_no

dtype: string

- name: answers

sequence: string

- name: data_split

dtype: string

- name: words

sequence: string

- name: boxes

sequence:

sequence: int64

splits:

- name: train

num_bytes: 6381793673

num_examples: 39454

- name: val

num_bytes: 869361798

num_examples: 5349

download_size: 2578867675

dataset_size: 7251155471

---

# Dataset Card for "boostcamp-docvqa-v2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

alvarobartt/distilabel | ---

dataset_info:

features:

- name: instruction

dtype: string

- name: completion

dtype: string

- name: meta

struct:

- name: category

dtype: string

- name: completion

dtype: string

- name: id

dtype: int64

- name: input

dtype: string

- name: motivation_app

dtype: string

- name: prompt

dtype: string

- name: source

dtype: string

- name: subcategory

dtype: string

- name: model_names

sequence: string

- name: generations

sequence: string

- name: output

dtype: string

- name: model_name

dtype: string

splits:

- name: train

num_bytes: 245396

num_examples: 100

download_size: 171121

dataset_size: 245396

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

BramVanroy/dutch_chat_datasets | ---

language:

- nl

size_categories:

- 100K<n<1M

task_categories:

- question-answering

- text-generation

- conversational

pretty_name: Chat Datasets for Dutch

dataset_info:

features:

- name: prompt

dtype: string

- name: prompt_id

dtype: string

- name: messages

list:

- name: content

dtype: string

- name: role

dtype: string

splits:

- name: train_sft

num_bytes: 198305113

num_examples: 160248

- name: test_sft

num_bytes: 22076114

num_examples: 17806

download_size: 124497015

dataset_size: 220381227

configs:

- config_name: default

data_files:

- split: train_sft

path: data/train_sft-*

- split: test_sft

path: data/test_sft-*

---

# Dataset Card for "dutch_chat_datasets"

This dataset is a merge of the following datasets. See their pages for licensing, usage, creation, and citation information.

- https://huggingface.co/datasets/BramVanroy/dolly-15k-dutch

- https://huggingface.co/datasets/BramVanroy/alpaca-cleaned-dutch-baize

- https://huggingface.co/datasets/BramVanroy/stackoverflow-chat-dutch

- https://huggingface.co/datasets/BramVanroy/quora-chat-dutch

They are reformatted for easier, consistent processing in downstream tasks such as language modelling.

If you use this dataset or any parts of it, please use the following citation:

Vanroy, B. (2023). *Language Resources for Dutch Large Language Modelling*. [https://arxiv.org/abs/2312.12852](https://arxiv.org/abs/2312.12852)

```bibtex

@article{vanroy2023language,

title={Language Resources for {Dutch} Large Language Modelling},

author={Vanroy, Bram},

journal={arXiv preprint arXiv:2312.12852},

year={2023}

}

```

**Columns**:

- `dialog`: a list of turns, where each turn is a dictionary that contains these keys:

- `role`: `user` or `assistant`

- `content`: the given text `str`

- `source`: the source dataset that this dialog originates from
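As a rough illustration of the turn structure described above, the following sketch flattens one dialog into plain text. The `render_dialog` helper and the sample turns are hypothetical and not part of the dataset; only the `role` and `content` keys are taken from the column description.

```python
from typing import Dict, List


def render_dialog(turns: List[Dict[str, str]]) -> str:
    """Join a list of {"role", "content"} turns into one string, one turn per line."""
    lines = []
    for turn in turns:
        # Each turn is assumed to carry the two keys listed in the card.
        lines.append(f"{turn['role']}: {turn['content']}")
    return "\n".join(lines)


# Hypothetical sample, invented for illustration only.
sample = [
    {"role": "user", "content": "How are you?"},
    {"role": "assistant", "content": "Fine, thanks!"},
]
print(render_dialog(sample))
```

A chat template from a tokenizer would normally replace this plain `role: content` rendering; the sketch only shows how the nested structure is traversed.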

|

ostapeno/oasst1_seed10737 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: instruction

dtype: string

- name: instruction_quality

dtype: float64

- name: response

dtype: string

- name: response_quality

dtype: float64

splits:

- name: train

num_bytes: 12797624

num_examples: 10737

download_size: 7501802

dataset_size: 12797624

---

# Dataset Card for "oasst1_seed10737"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

CyberHarem/sheema_fireemblem | ---

license: mit

task_categories:

- text-to-image

tags:

- art

- not-for-all-audiences

size_categories:

- n<1K

---

# Dataset of sheema (Fire Emblem)

This is the dataset of sheema (Fire Emblem), containing 16 images and their tags.

The core tags of this character are `brown_hair, long_hair, red_eyes, brown_eyes`, which are pruned in this dataset.

Images are crawled from many sites (e.g. danbooru, pixiv, zerochan). The auto-crawling system is powered by [DeepGHS Team](https://github.com/deepghs)([huggingface organization](https://huggingface.co/deepghs)).

## List of Packages

| Name | Images | Size | Download | Type | Description |

|:-----------------|---------:|:----------|:-------------------------------------------------------------------------------------------------------------------|:-----------|:---------------------------------------------------------------------|

| raw | 16 | 25.33 MiB | [Download](https://huggingface.co/datasets/CyberHarem/sheema_fireemblem/resolve/main/dataset-raw.zip) | Waifuc-Raw | Raw data with meta information (min edge aligned to 1400 if larger). |

| 800 | 16 | 12.66 MiB | [Download](https://huggingface.co/datasets/CyberHarem/sheema_fireemblem/resolve/main/dataset-800.zip) | IMG+TXT | dataset with the shorter side not exceeding 800 pixels. |

| stage3-p480-800 | 33 | 23.65 MiB | [Download](https://huggingface.co/datasets/CyberHarem/sheema_fireemblem/resolve/main/dataset-stage3-p480-800.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

| 1200 | 16 | 21.57 MiB | [Download](https://huggingface.co/datasets/CyberHarem/sheema_fireemblem/resolve/main/dataset-1200.zip) | IMG+TXT | dataset with the shorter side not exceeding 1200 pixels. |

| stage3-p480-1200 | 33 | 35.01 MiB | [Download](https://huggingface.co/datasets/CyberHarem/sheema_fireemblem/resolve/main/dataset-stage3-p480-1200.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

### Load Raw Dataset with Waifuc

We provide the raw dataset (including tagged images) for loading with [waifuc](https://deepghs.github.io/waifuc/main/tutorials/installation/index.html). To use it, run the following code:

```python

import os
import zipfile

from huggingface_hub import hf_hub_download
from waifuc.source import LocalSource

# download raw archive file
zip_file = hf_hub_download(
    repo_id='CyberHarem/sheema_fireemblem',
    repo_type='dataset',
    filename='dataset-raw.zip',
)

# extract files to your directory
dataset_dir = 'dataset_dir'
os.makedirs(dataset_dir, exist_ok=True)
with zipfile.ZipFile(zip_file, 'r') as zf:
    zf.extractall(dataset_dir)

# load the dataset with waifuc
source = LocalSource(dataset_dir)
for item in source:
    print(item.image, item.meta['filename'], item.meta['tags'])

```

## List of Clusters

Results of tag clustering. Some outfits may be discoverable here.

### Raw Text Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | Tags |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:-------------------------------------------------------------------------------------------------------------------------|

| 0 | 16 |  |  |  |  |  | solo, 1girl, cape, weapon, white_background, armored_boots, gloves, shield, simple_background, full_body, shoulder_armor |

### Table Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | solo | 1girl | cape | weapon | white_background | armored_boots | gloves | shield | simple_background | full_body | shoulder_armor |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:-------|:--------|:-------|:---------|:-------------------|:----------------|:---------|:---------|:--------------------|:------------|:-----------------|

| 0 | 16 |  |  |  |  |  | X | X | X | X | X | X | X | X | X | X | X |

|

Francesco/flir-camera-objects | ---

dataset_info:

features:

- name: image_id

dtype: int64

- name: image

dtype: image

- name: width

dtype: int32

- name: height

dtype: int32

- name: objects

sequence:

- name: id

dtype: int64

- name: area

dtype: int64

- name: bbox

sequence: float32

length: 4

- name: category

dtype:

class_label:

names:

'0': flir-camera-objects

'1': bicycle

'2': car

'3': dog

'4': person

annotations_creators:

- crowdsourced

language_creators:

- found

language:

- en

license:

- cc

multilinguality:

- monolingual

size_categories:

- 1K<n<10K

source_datasets:

- original

task_categories:

- object-detection

task_ids: []

pretty_name: flir-camera-objects

tags:

- rf100

---

# Dataset Card for flir-camera-objects

**The original COCO dataset is stored at `dataset.tar.gz`**

## Dataset Description

- **Homepage:** https://universe.roboflow.com/object-detection/flir-camera-objects

- **Point of Contact:** francesco.zuppichini@gmail.com

### Dataset Summary

flir-camera-objects

### Supported Tasks and Leaderboards

- `object-detection`: The dataset can be used to train a model for Object Detection.

### Languages

English

## Dataset Structure

### Data Instances

A data point comprises an image and its object annotations.

```

{

'image_id': 15,

'image': <PIL.JpegImagePlugin.JpegImageFile image mode=RGB size=640x640 at 0x2373B065C18>,

'width': 640,

'height': 640,

'objects': {

'id': [114, 115, 116, 117],

'area': [3796, 1596, 152768, 81002],

'bbox': [

[302.0, 109.0, 73.0, 52.0],

[810.0, 100.0, 57.0, 28.0],

[160.0, 31.0, 248.0, 616.0],

[741.0, 68.0, 202.0, 401.0]

],

'category': [4, 4, 0, 0]

}

}

```

### Data Fields

- `image_id`: the image id

- `image`: `PIL.Image.Image` object containing the image. Note that when accessing the image column: `dataset[0]["image"]` the image file is automatically decoded. Decoding of a large number of image files might take a significant amount of time. Thus it is important to first query the sample index before the `"image"` column, *i.e.* `dataset[0]["image"]` should **always** be preferred over `dataset["image"][0]`

- `width`: the image width

- `height`: the image height

- `objects`: a dictionary containing bounding box metadata for the objects present on the image

  - `id`: the annotation id
  - `area`: the area of the bounding box
  - `bbox`: the object's bounding box (in the [coco](https://albumentations.ai/docs/getting_started/bounding_boxes_augmentation/#coco) format)
  - `category`: the object's category.
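To make the COCO box convention concrete, here is a minimal sketch that converts a `[x_min, y_min, width, height]` box to corner coordinates and recomputes its area. The helper names `coco_to_corners` and `box_area` are hypothetical; the sample box is the first `bbox` entry from the data instance shown earlier.

```python
from typing import Sequence, Tuple


def coco_to_corners(bbox: Sequence[float]) -> Tuple[float, float, float, float]:
    """Convert a COCO box [x_min, y_min, width, height] to (x_min, y_min, x_max, y_max)."""
    x_min, y_min, width, height = bbox
    return (x_min, y_min, x_min + width, y_min + height)


def box_area(bbox: Sequence[float]) -> float:
    """Area of a COCO box, i.e. width times height."""
    _, _, width, height = bbox
    return width * height


first_box = [302.0, 109.0, 73.0, 52.0]
print(coco_to_corners(first_box))  # (302.0, 109.0, 375.0, 161.0)
print(box_area(first_box))         # 3796.0
```

The recomputed area of 3796 matches the first `area` value in the example instance, which suggests that `area` is simply the bounding-box width times height.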

#### Who are the annotators?

Annotators are Roboflow users

## Additional Information

### Licensing Information

See original homepage https://universe.roboflow.com/object-detection/flir-camera-objects

### Citation Information

```

@misc{ flir-camera-objects,

title = { flir camera objects Dataset },

type = { Open Source Dataset },

author = { Roboflow 100 },

howpublished = { \url{ https://universe.roboflow.com/object-detection/flir-camera-objects } },

url = { https://universe.roboflow.com/object-detection/flir-camera-objects },

journal = { Roboflow Universe },

publisher = { Roboflow },

year = { 2022 },

month = { nov },

note = { visited on 2023-03-29 },

}

```

### Contributions

Thanks to [@mariosasko](https://github.com/mariosasko) for adding this dataset. |

starfishmedical/webGPT_x_dolly | ---

license: cc-by-sa-3.0

task_categories:

- question-answering

size_categories:

- 10K<n<100K

---

This dataset contains a selection of Q&A-related tasks gathered and cleaned from the webGPT_comparisons set and the databricks-dolly-15k set.

Unicode escapes were explicitly removed, and Wikipedia citations in the "output" were stripped with a regex, in the hope of helping any end-product model ignore these artifacts within its input context.

The data is formatted for the Alpaca instruction format; however, the instruction, input, and output columns are kept separate in the raw data to allow for other configurations. The data has been filtered so that every entry is shorter than our chosen truncation length of 1024 (LLaMA-style) tokens when rendered in the following format:

```

"""Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.

### Instruction:

{instruction}

### Input:

{input}

### Response:

{output}"""

```
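A minimal sketch of how the separate columns could be rendered into this template. The `build_prompt` helper and the sample record are hypothetical, and the exact blank-line spacing between sections is an assumption, since the card does not specify it.

```python
# Template text copied from the card; the newline layout is an assumption.
PROMPT_TEMPLATE = (
    "Below is an instruction that describes a task, paired with an input that "
    "provides further context. Write a response that appropriately completes "
    "the request.\n\n"
    "### Instruction:\n{instruction}\n\n"
    "### Input:\n{input}\n\n"
    "### Response:\n{output}"
)


def build_prompt(record: dict) -> str:
    """Fill the template from a record with instruction, input and output columns."""
    return PROMPT_TEMPLATE.format(
        instruction=record["instruction"],
        input=record["input"],
        output=record["output"],
    )


# Hypothetical record, invented for illustration only.
example = {
    "instruction": "Answer the question using the context.",
    "input": "The sky is blue.",
    "output": "Blue.",
}
print(build_prompt(example))
```

Keeping the columns separate, as the raw data does, means a different template can be swapped in by changing only `PROMPT_TEMPLATE`.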

<h3>webGPT</h3>

This set was filtered from the webGPT_comparisons data by keeping any Q&A option that humans rated positively or neutrally (i.e. "score" >= 0).

These may not be the ideal answers, but this dataset was assembled specifically for extractive Q&A, with less regard for how humans feel about the results.

This selection comprises 23826 of the total entries in the data.

<h3>Dolly</h3>

The dolly data was selected primarily to focus on closed-qa tasks. For this purpose, only entries in the "closed-qa", "information_extraction", "summarization", "classification", and "creative_writing" categories were used. While not all of these include a context, they were judged to help flesh out the training set.

This selection comprises 5362 of the total entries in the data.
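The category selection described above can be sketched as a simple filter. This is an illustration only: the `category` field name and the `keep_record` helper are assumptions, and the category strings are copied verbatim from this card, so they may need adjusting to match the spelling used in the source data.

```python
# Category names as written in this card (spelling not verified against the source data).
KEPT_CATEGORIES = {
    "closed-qa",
    "information_extraction",
    "summarization",
    "classification",
    "creative_writing",
}


def keep_record(record: dict) -> bool:
    """Return True when the record's category is one of the selected ones."""
    # "category" is an assumed field name for the task type of each entry.
    return record.get("category") in KEPT_CATEGORIES


# Hypothetical records, invented for illustration only.
records = [
    {"category": "summarization", "instruction": "Summarize the text."},
    {"category": "brainstorming", "instruction": "List some ideas."},
]
selected = [r for r in records if keep_record(r)]
print(len(selected))
```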

|

tyzhu/squad_qa_baseline_v5_full_recite_full_passage_first_permute_rerun | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

dataset_info:

features:

- name: id

dtype: string

- name: title

dtype: string

- name: context

dtype: string

- name: question

dtype: string

- name: answers

sequence:

- name: text

dtype: string

- name: answer_start

dtype: int32

- name: answer

dtype: string

- name: context_id

dtype: string

- name: inputs

dtype: string

- name: targets

dtype: string

splits:

- name: train

num_bytes: 4369231.0

num_examples: 2385

- name: validation

num_bytes: 573308

num_examples: 300

download_size: 1012407

dataset_size: 4942539.0

---

# Dataset Card for "squad_qa_baseline_v5_full_recite_full_passage_first_permute_rerun"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

zxx-silence/my-shiba-inu-dataset | ---

dataset_info:

features:

- name: image

dtype: image

- name: text

dtype: string

splits:

- name: train

num_bytes: 2997266.0

num_examples: 13

download_size: 2987648

dataset_size: 2997266.0

---

# Dataset Card for "my-shiba-inu-dataset"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Multimodal-Fatima/FGVC_Aircraft_test_embeddings | ---

dataset_info:

features:

- name: image

dtype: image

- name: id

dtype: int64

- name: vision_embeddings

sequence: float32

splits:

- name: openai_clip_vit_large_patch14

num_bytes: 933154950.0

num_examples: 3333

download_size: 935494333

dataset_size: 933154950.0

---

# Dataset Card for "FGVC_Aircraft_test_embeddings"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

liuyanchen1015/MULTI_VALUE_sst2_me_us | ---

dataset_info:

features:

- name: sentence

dtype: string

- name: label

dtype: int64

- name: idx

dtype: int64

- name: score

dtype: int64

splits:

- name: dev

num_bytes: 1012

num_examples: 8

- name: test

num_bytes: 2232

num_examples: 16

- name: train

num_bytes: 30783

num_examples: 292

download_size: 19403

dataset_size: 34027

---

# Dataset Card for "MULTI_VALUE_sst2_me_us"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

mask-distilled-onesec-cv12-each-chunk-uniq/chunk_136 | ---

dataset_info:

features:

- name: logits

sequence: float32

- name: mfcc

sequence:

sequence: float64

splits:

- name: train

num_bytes: 1161938388.0

num_examples: 228189

download_size: 1185012083

dataset_size: 1161938388.0

---

# Dataset Card for "chunk_136"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

seansullivan/PCone-Integrations | ---

license: other

---

|

AmelieSchreiber/aging_proteins | ---

license: mit

task_categories:

- text-classification

language:

- en

tags:

- esm

- esm2

- ESM-2

- aging proteins

- protein language model

- biology

---

# Description of the Dataset

This is (part of) the dataset used in

[Prediction and characterization of human ageing-related proteins by using machine learning](https://www.nature.com/articles/s41598-018-22240-w).

This can be used to train a binary sequence classifier using protein language models such as [ESM-2](https://huggingface.co/facebook/esm2_t6_8M_UR50D).

Please also see [the github for the paper](https://github.com/kerepesi/aging_ml/blob/master/aging_labels.csv) for more information.

|

satyambarnwal/balcony8 | ---

dataset_info:

features:

- name: original_image

dtype: image

- name: edit_prompt

dtype: string

- name: output_image

dtype: image

splits:

- name: train

num_bytes: 56784678.0

num_examples: 63

download_size: 29126186

dataset_size: 56784678.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

NetherlandsForensicInstitute/simplewiki-translated-nl | ---

license: cc-by-sa-4.0

task_categories:

- sentence-similarity

language:

- nl

size_categories:

- 100K<n<1M

---

This is a Dutch version of the [SimpleWiki](https://cs.pomona.edu/~dkauchak/simplification/) text simplification dataset, which we auto-translated from English into Dutch using Meta's [No Language Left Behind](https://ai.facebook.com/research/no-language-left-behind/) model, specifically the [huggingface implementation](https://huggingface.co/facebook/nllb-200-distilled-600M). |

idrismaric/dxb_realestate | ---

license: mit

---

|

alkzar90/NIH-Chest-X-ray-dataset | ---

annotations_creators:

- machine-generated

- expert-generated

language_creators:

- machine-generated

- expert-generated

language:

- en

license:

- unknown

multilinguality:

- monolingual

pretty_name: NIH-CXR14

paperswithcode_id: chestx-ray14

size_categories:

- 100K<n<1M

task_categories:

- image-classification

task_ids:

- multi-class-image-classification

---

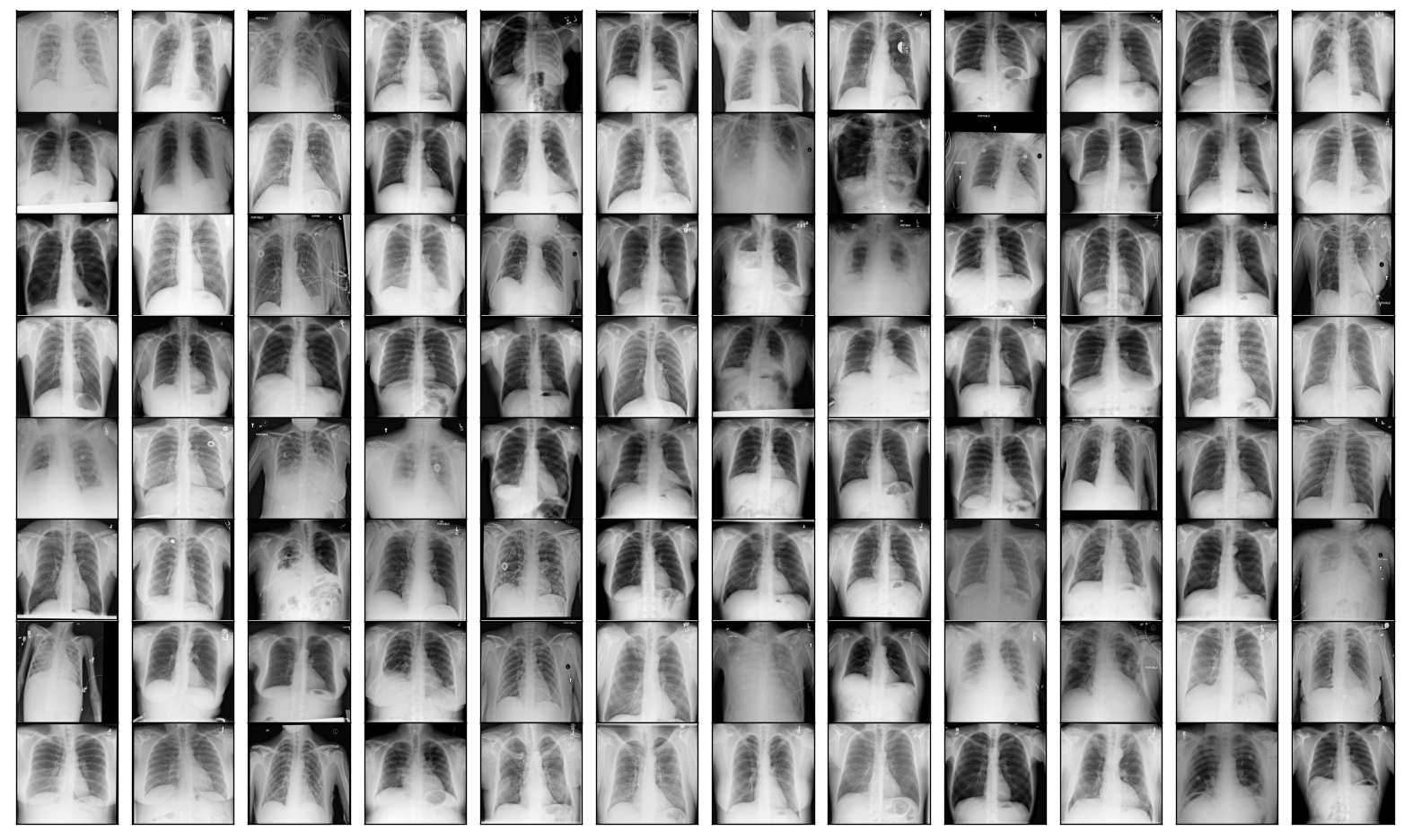

# Dataset Card for NIH Chest X-ray dataset

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [NIH Chest X-ray Dataset of 14 Common Thorax Disease Categories](https://nihcc.app.box.com/v/ChestXray-NIHCC/folder/36938765345)

- **Repository:**

- **Paper:** [ChestX-ray8: Hospital-scale Chest X-ray Database and Benchmarks on Weakly-Supervised Classification and Localization of Common Thorax Diseases](https://arxiv.org/abs/1705.02315)

- **Leaderboard:**

- **Point of Contact:** rms@nih.gov

### Dataset Summary

_ChestX-ray dataset comprises 112,120 frontal-view X-ray images of 30,805 unique patients with the text-mined fourteen disease image labels (where each image can have multi-labels), mined from the associated radiological reports using natural language processing. Fourteen common thoracic pathologies include Atelectasis, Consolidation, Infiltration, Pneumothorax, Edema, Emphysema, Fibrosis, Effusion, Pneumonia, Pleural_thickening, Cardiomegaly, Nodule, Mass and Hernia, which is an extension of the 8 common disease patterns listed in our CVPR2017 paper. Note that original radiology reports (associated with these chest x-ray studies) are not meant to be publicly shared for many reasons. The text-mined disease labels are expected to have accuracy >90%. Please find more details and benchmark performance of trained models based on 14 disease labels in our arxiv paper: [1705.02315](https://arxiv.org/abs/1705.02315)_

## Dataset Structure

### Data Instances

A sample from the training set is provided below:

```

{'image_file_path': '/root/.cache/huggingface/datasets/downloads/extracted/95db46f21d556880cf0ecb11d45d5ba0b58fcb113c9a0fff2234eba8f74fe22a/images/00000798_022.png',

'image': <PIL.PngImagePlugin.PngImageFile image mode=L size=1024x1024 at 0x7F2151B144D0>,

'labels': [9, 3]}

```

### Data Fields

The data instances have the following fields:

- `image_file_path`: a `str` with the image path

- `image`: A `PIL.Image.Image` object containing the image. Note that when accessing the image column: `dataset[0]["image"]` the image file is automatically decoded. Decoding of a large number of image files might take a significant amount of time. Thus it is important to first query the sample index before the `"image"` column, *i.e.* `dataset[0]["image"]` should **always** be preferred over `dataset["image"][0]`.

- `labels`: a list of `int` classification labels (an image can carry more than one label).

<details>

<summary>Class Label Mappings</summary>

```json

{

"No Finding": 0,

"Atelectasis": 1,

"Cardiomegaly": 2,

"Effusion": 3,

"Infiltration": 4,

"Mass": 5,

"Nodule": 6,

"Pneumonia": 7,

"Pneumothorax": 8,

"Consolidation": 9,

"Edema": 10,

"Emphysema": 11,

"Fibrosis": 12,

"Pleural_Thickening": 13,

"Hernia": 14

}

```

</details>
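As a small illustration of the mapping, the sketch below inverts it and decodes the `labels` value from the sample instance shown earlier. The `decode_labels` helper is hypothetical and not part of the dataset loader.

```python
from typing import List

# Class label mapping copied from the card.
LABEL_TO_ID = {
    "No Finding": 0, "Atelectasis": 1, "Cardiomegaly": 2, "Effusion": 3,
    "Infiltration": 4, "Mass": 5, "Nodule": 6, "Pneumonia": 7,
    "Pneumothorax": 8, "Consolidation": 9, "Edema": 10, "Emphysema": 11,
    "Fibrosis": 12, "Pleural_Thickening": 13, "Hernia": 14,
}
ID_TO_LABEL = {idx: name for name, idx in LABEL_TO_ID.items()}


def decode_labels(ids: List[int]) -> List[str]:
    """Translate integer class ids back to their label names, preserving order."""
    return [ID_TO_LABEL[i] for i in ids]


print(decode_labels([9, 3]))  # ['Consolidation', 'Effusion']
```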

**Label distribution on the dataset:**

| labels | obs | freq |

|:-------------------|------:|-----------:|

| No Finding | 60361 | 0.426468 |

| Infiltration | 19894 | 0.140557 |

| Effusion | 13317 | 0.0940885 |

| Atelectasis | 11559 | 0.0816677 |

| Nodule | 6331 | 0.0447304 |

| Mass | 5782 | 0.0408515 |

| Pneumothorax | 5302 | 0.0374602 |

| Consolidation | 4667 | 0.0329737 |

| Pleural_Thickening | 3385 | 0.023916 |

| Cardiomegaly | 2776 | 0.0196132 |

| Emphysema | 2516 | 0.0177763 |

| Edema | 2303 | 0.0162714 |

| Fibrosis | 1686 | 0.0119121 |

| Pneumonia | 1431 | 0.0101104 |

| Hernia | 227 | 0.00160382 |
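The `freq` column can be reproduced from the `obs` column, as the sketch below suggests. The counts are copied from the table above; the reading that `freq` is each label's share of all label occurrences (rather than of images) is an inference from the arithmetic, not something the card states.

```python
# Observation counts copied from the label distribution table.
label_counts = {
    "No Finding": 60361, "Infiltration": 19894, "Effusion": 13317,
    "Atelectasis": 11559, "Nodule": 6331, "Mass": 5782,
    "Pneumothorax": 5302, "Consolidation": 4667, "Pleural_Thickening": 3385,
    "Cardiomegaly": 2776, "Emphysema": 2516, "Edema": 2303,
    "Fibrosis": 1686, "Pneumonia": 1431, "Hernia": 227,
}

# Total number of label occurrences across all images.
total_labels = sum(label_counts.values())

# Share of each label among all label occurrences.
label_freq = {name: count / total_labels for name, count in label_counts.items()}

print(total_labels)
print(round(label_freq["No Finding"], 6))
```

The total of 141,537 label occurrences exceeds the 112,120 images because a single image can carry several labels.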

### Data Splits

| |train| test|

|-------------|----:|----:|

|# of examples|86524|25596|

**Label distribution by dataset split:**

| labels | ('Train', 'obs') | ('Train', 'freq') | ('Test', 'obs') | ('Test', 'freq') |

|:-------------------|-------------------:|--------------------:|------------------:|-------------------:|

| No Finding | 50500 | 0.483392 | 9861 | 0.266032 |

| Infiltration | 13782 | 0.131923 | 6112 | 0.164891 |

| Effusion | 8659 | 0.082885 | 4658 | 0.125664 |

| Atelectasis | 8280 | 0.0792572 | 3279 | 0.0884614 |

| Nodule | 4708 | 0.0450656 | 1623 | 0.0437856 |

| Mass | 4034 | 0.038614 | 1748 | 0.0471578 |

| Consolidation | 2852 | 0.0272997 | 1815 | 0.0489654 |

| Pneumothorax | 2637 | 0.0252417 | 2665 | 0.0718968 |

| Pleural_Thickening | 2242 | 0.0214607 | 1143 | 0.0308361 |

| Cardiomegaly | 1707 | 0.0163396 | 1069 | 0.0288397 |

| Emphysema | 1423 | 0.0136211 | 1093 | 0.0294871 |

| Edema | 1378 | 0.0131904 | 925 | 0.0249548 |

| Fibrosis | 1251 | 0.0119747 | 435 | 0.0117355 |

| Pneumonia | 876 | 0.00838518 | 555 | 0.0149729 |

| Hernia | 141 | 0.00134967 | 86 | 0.00232012 |

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### License and attribution

There are no restrictions on the use of the NIH chest x-ray images. However, the dataset has the following attribution requirements:

- Provide a link to the NIH download site: https://nihcc.app.box.com/v/ChestXray-NIHCC

- Include a citation to the CVPR 2017 paper (see Citation information section)

- Acknowledge that the NIH Clinical Center is the data provider

### Citation Information

```

@inproceedings{Wang_2017,

doi = {10.1109/cvpr.2017.369},

url = {https://doi.org/10.1109%2Fcvpr.2017.369},

year = 2017,

month = {jul},

publisher = {{IEEE}},

author = {Xiaosong Wang and Yifan Peng and Le Lu and Zhiyong Lu and Mohammadhadi Bagheri and Ronald M. Summers},

title = {{ChestX}-Ray8: Hospital-Scale Chest X-Ray Database and Benchmarks on Weakly-Supervised Classification and Localization of Common Thorax Diseases},

booktitle = {2017 {IEEE} Conference on Computer Vision and Pattern Recognition ({CVPR})}

}

```

### Contributions

Thanks to [@alcazar90](https://github.com/alcazar90) for adding this dataset.

|

SINAI/SA-Corpus | ---

license: cc-by-nc-sa-4.0

tags:

- Sentiment Analysis

configs:

- config_name: default

data_files:

- split: 1star

path: SINAI-SA-corpus/1/*.txt

- split: 2star

path: SINAI-SA-corpus/2/*.txt

- split: 3star

path: SINAI-SA-corpus/3/*.txt

- split: 4star

path: SINAI-SA-corpus/4/*.txt

- split: 5star

path: SINAI-SA-corpus/5/*.txt

---

### Dataset Description

**Paper**: [Experiments with SVM to classify opinions in different domains](https://www.sciencedirect.com/science/article/pii/S0957417411008542/pdfft?md5=3d961785088ca5e7215fb1611cc9aeeb&pid=1-s2.0-S0957417411008542-main.pdf)

**Point of Contact**: maite@ujaen.es

This corpus was prepared by the SINAI group in December 2008. SINAI SA (Sentiment Analysis) was created by crawling the Amazon website. Nearly 2,000 comments about different cameras were extracted.

**Structure:** The SINAI corpus contains 5 directories, each representing the number of stars given in the reviews (e.g., directory 1 contains the comments rated with one star). Each directory contains one plain-text file per document/comment.

The number of comments per rating is as follows:

- 1 star: 78 comments

- 2 stars: 67 comments

- 3 stars: 97 comments

- 4 stars: 411 comments

- 5 stars: 1,290 comments

Total: 1,943 comments
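Given the directory layout described above, the corpus could be read with a sketch like the following. The `load_reviews` helper is hypothetical, the root path is whatever folder holds the five rating directories, and the UTF-8 decoding with replacement of undecodable bytes is an assumption, since the card does not state the file encoding.

```python
from pathlib import Path
from typing import Dict, List


def load_reviews(root: str) -> Dict[int, List[str]]:
    """Map each star rating (1 to 5) to the texts found in <root>/<rating>/*.txt."""
    reviews: Dict[int, List[str]] = {}
    for stars in range(1, 6):
        folder = Path(root) / str(stars)
        if not folder.is_dir():
            # A missing rating directory simply yields no reviews.
            reviews[stars] = []
            continue
        # sorted() gives a stable, reproducible file order.
        files = sorted(folder.glob("*.txt"))
        # Encoding is an assumption; undecodable bytes are replaced, not raised.
        reviews[stars] = [
            f.read_text(encoding="utf-8", errors="replace") for f in files
        ]
    return reviews
```

Calling `load_reviews("SINAI-SA-corpus")` on an extracted copy would return one list of review texts per rating, which can then be checked against the counts listed above.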

| Camera | Comments |

|----------|----------|

| CanonA590IS | 400 |

| CanonA630 | 300 |

| CanonSD1100IS | 426 |

| KodakCx7430 | 64 |

| KodakV1003 | 95 |

| KodakZ740 | 155 |

| Nikon5700 | 119 |

| Olympus1030SW | 168 |

| PentaxK10D | 126 |

| PentaxK200D | 90 |

| **Total** | **1,943** |

### Licensing Information

SINAI-SA Corpus is released under the [Apache-2.0 License](http://www.apache.org/licenses/LICENSE-2.0).

### Citation Information

```bibtex

@article{RUSHDISALEH201114799,

title = {Experiments with SVM to classify opinions in different domains},

journal = {Expert Systems with Applications},

volume = {38},

number = {12},

pages = {14799-14804},

year = {2011},

issn = {0957-4174},

doi = {https://doi.org/10.1016/j.eswa.2011.05.070},

url = {https://www.sciencedirect.com/science/article/pii/S0957417411008542},

author = {M. {Rushdi Saleh} and M.T. Martín-Valdivia and A. Montejo-Ráez and L.A. Ureña-López},

keywords = {Opinion mining, Machine learning, SVM, Corpora},

abstract = {Recently, opinion mining is receiving more attention due to the abundance of forums, blogs, e-commerce web sites, news reports and additional web sources where people tend to express their opinions. Opinion mining is the task of identifying whether the opinion expressed in a document is positive or negative about a given topic. In this paper we explore this new research area applying Support Vector Machines (SVM) for testing different domains of data sets and using several weighting schemes. We have accomplished experiments with different features on three corpora. Two of them have already been used in several works. The last one has been built from Amazon.com specifically for this paper in order to prove the feasibility of the SVM for different domains.}

}

``` |

boapps/kmdb_classification | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

dataset_info:

features:

- name: text

dtype: string

- name: title

dtype: string

- name: description

dtype: string

- name: keywords

sequence: string

- name: label

dtype: int64

- name: url

dtype: string

- name: date

dtype: string

- name: is_hand_annoted

dtype: bool

- name: score

dtype: float64

- name: title_score

dtype: float64

splits:

- name: train

num_bytes: 187493981

num_examples: 45683

- name: test

num_bytes: 13542701

num_examples: 3605

- name: validation

num_bytes: 25309037

num_examples: 6579

download_size: 139938458

dataset_size: 226345719

---

# Dataset Card for "kmdb_classification"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

MikeXydas/qr2t_benchmark | ---

license: mit

---

|

Deojoandco/reward_model_anthropic_88 | ---

dataset_info:

features:

- name: prompt

dtype: string

- name: response

dtype: string

- name: chosen

dtype: string

- name: rejected

dtype: string

- name: output

sequence: string

- name: toxicity

sequence: float64

- name: severe_toxicity

sequence: float64

- name: obscene

sequence: float64

- name: identity_attack

sequence: float64

- name: insult

sequence: float64

- name: threat

sequence: float64

- name: sexual_explicit

sequence: float64

- name: mean_toxity_value

dtype: float64

- name: max_toxity_value

dtype: float64

- name: min_toxity_value

dtype: float64

- name: sd_toxity_value

dtype: float64

- name: median_toxity_value

dtype: float64

- name: median_output

dtype: string

- name: toxic

dtype: bool

- name: regard_8

list:

list:

- name: label

dtype: string

- name: score

dtype: float64

- name: regard_8_neutral

sequence: float64

- name: regard_8_negative

sequence: float64

- name: regard_8_positive

sequence: float64

- name: regard_8_other

sequence: float64

- name: regard_8_neutral_mean

dtype: float64

- name: regard_8_neutral_sd

dtype: float64

- name: regard_8_neutral_median

dtype: float64

- name: regard_8_neutral_min

dtype: float64

- name: regard_8_neutral_max

dtype: float64

- name: regard_8_negative_mean

dtype: float64

- name: regard_8_negative_sd

dtype: float64

- name: regard_8_negative_median

dtype: float64

- name: regard_8_negative_min

dtype: float64

- name: regard_8_negative_max

dtype: float64

- name: regard_8_positive_mean

dtype: float64

- name: regard_8_positive_sd

dtype: float64

- name: regard_8_positive_median

dtype: float64

- name: regard_8_positive_min

dtype: float64

- name: regard_8_positive_max

dtype: float64

- name: regard_8_other_mean

dtype: float64

- name: regard_8_other_sd

dtype: float64

- name: regard_8_other_median

dtype: float64

- name: regard_8_other_min

dtype: float64

- name: regard_8_other_max

dtype: float64

- name: regard

list:

list:

- name: label

dtype: string

- name: score

dtype: float64

- name: regard_neutral

dtype: float64

- name: regard_positive

dtype: float64

- name: regard_negative

dtype: float64

- name: regard_other

dtype: float64

- name: bias_matches_0

dtype: string

- name: bias_matches_1

dtype: string

- name: bias_matches_2

dtype: string

- name: bias_matches_3

dtype: string

- name: bias_matches_4

dtype: string

- name: bias_matches_5

dtype: string

- name: bias_matches_6

dtype: string

- name: bias_matches_7

dtype: string

- name: bias_matches

dtype: string

splits:

- name: test

num_bytes: 38897637

num_examples: 8552

download_size: 19767367

dataset_size: 38897637

---

# Dataset Card for "reward_model_anthropic_88"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

liuyanchen1015/MULTI_VALUE_mrpc_synthetic_superlative | ---

dataset_info:

features:

- name: sentence1

dtype: string

- name: sentence2

dtype: string

- name: label

dtype: int64

- name: idx

dtype: int64

- name: value_score

dtype: int64

splits:

- name: test

num_bytes: 3769

num_examples: 13

- name: train

num_bytes: 8162

num_examples: 30

- name: validation

num_bytes: 1575

num_examples: 5

download_size: 20615

dataset_size: 13506

---

# Dataset Card for "MULTI_VALUE_mrpc_synthetic_superlative"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

imdatta0/oasst_top1_5k | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 8933937.59172009

num_examples: 5000

- name: test

num_bytes: 1215910

num_examples: 690

download_size: 5835349

dataset_size: 10149847.59172009

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

---

|

AdapterOcean/data-standardized_cluster_17 | ---

dataset_info:

features:

- name: text

dtype: string

- name: conversation_id

dtype: int64

- name: embedding

sequence: float64

- name: cluster

dtype: int64

splits:

- name: train

num_bytes: 19624365

num_examples: 1858

download_size: 5710907

dataset_size: 19624365

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "data-standardized_cluster_17"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

open-llm-leaderboard/details_vibhorag101__llama-2-13b-chat-hf-phr_mental_therapy | ---

pretty_name: Evaluation run of vibhorag101/llama-2-13b-chat-hf-phr_mental_therapy

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [vibhorag101/llama-2-13b-chat-hf-phr_mental_therapy](https://huggingface.co/vibhorag101/llama-2-13b-chat-hf-phr_mental_therapy)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 63 configurations, each one corresponding to one of the\

\ evaluated tasks.\n\nThe dataset has been created from 1 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run. The \"train\" split always points to the latest results.\n\

\nAn additional configuration \"results\" stores all the aggregated results of the\

\ run (and is used to compute and display the aggregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_vibhorag101__llama-2-13b-chat-hf-phr_mental_therapy\"\

,\n\t\"harness_winogrande_5\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\n\

These are the [latest results from run 2023-12-04T18:26:43.065214](https://huggingface.co/datasets/open-llm-leaderboard/details_vibhorag101__llama-2-13b-chat-hf-phr_mental_therapy/blob/main/results_2023-12-04T18-26-43.065214.json) (note\

\ that there might be results for other tasks in the repo if successive evals didn't\

\ cover the same tasks. You can find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"acc\": 0.2434367192235923,\n\

\ \"acc_stderr\": 0.03008501938303984,\n \"acc_norm\": 0.24224538912156782,\n\

\ \"acc_norm_stderr\": 0.030747150403453674,\n \"mc1\": 0.2778457772337821,\n\

\ \"mc1_stderr\": 0.015680929364024643,\n \"mc2\": 0.4692403294958895,\n\

\ \"mc2_stderr\": 0.015061938982346217\n },\n \"harness|arc:challenge|25\"\

: {\n \"acc\": 0.36945392491467577,\n \"acc_stderr\": 0.014104578366491894,\n\

\ \"acc_norm\": 0.38822525597269625,\n \"acc_norm_stderr\": 0.01424161420741405\n\

\ },\n \"harness|hellaswag|10\": {\n \"acc\": 0.5696076478789086,\n\

\ \"acc_stderr\": 0.004941191607317913,\n \"acc_norm\": 0.7276438956383191,\n\

\ \"acc_norm_stderr\": 0.004442623590846322\n },\n \"harness|hendrycksTest-abstract_algebra|5\"\

: {\n \"acc\": 0.22,\n \"acc_stderr\": 0.04163331998932268,\n \

\ \"acc_norm\": 0.22,\n \"acc_norm_stderr\": 0.04163331998932268\n \

\ },\n \"harness|hendrycksTest-anatomy|5\": {\n \"acc\": 0.18518518518518517,\n\

\ \"acc_stderr\": 0.03355677216313142,\n \"acc_norm\": 0.18518518518518517,\n\

\ \"acc_norm_stderr\": 0.03355677216313142\n },\n \"harness|hendrycksTest-astronomy|5\"\

: {\n \"acc\": 0.17763157894736842,\n \"acc_stderr\": 0.031103182383123398,\n\

\ \"acc_norm\": 0.17763157894736842,\n \"acc_norm_stderr\": 0.031103182383123398\n\

\ },\n \"harness|hendrycksTest-business_ethics|5\": {\n \"acc\": 0.3,\n\

\ \"acc_stderr\": 0.046056618647183814,\n \"acc_norm\": 0.3,\n \

\ \"acc_norm_stderr\": 0.046056618647183814\n },\n \"harness|hendrycksTest-clinical_knowledge|5\"\

: {\n \"acc\": 0.21509433962264152,\n \"acc_stderr\": 0.02528839450289137,\n\

\ \"acc_norm\": 0.21509433962264152,\n \"acc_norm_stderr\": 0.02528839450289137\n\

\ },\n \"harness|hendrycksTest-college_biology|5\": {\n \"acc\": 0.2569444444444444,\n\

\ \"acc_stderr\": 0.03653946969442099,\n \"acc_norm\": 0.2569444444444444,\n\

\ \"acc_norm_stderr\": 0.03653946969442099\n },\n \"harness|hendrycksTest-college_chemistry|5\"\

: {\n \"acc\": 0.2,\n \"acc_stderr\": 0.04020151261036845,\n \

\ \"acc_norm\": 0.2,\n \"acc_norm_stderr\": 0.04020151261036845\n },\n\

\ \"harness|hendrycksTest-college_computer_science|5\": {\n \"acc\": 0.26,\n\

\ \"acc_stderr\": 0.0440844002276808,\n \"acc_norm\": 0.26,\n \

\ \"acc_norm_stderr\": 0.0440844002276808\n },\n \"harness|hendrycksTest-college_mathematics|5\"\

: {\n \"acc\": 0.21,\n \"acc_stderr\": 0.040936018074033256,\n \

\ \"acc_norm\": 0.21,\n \"acc_norm_stderr\": 0.040936018074033256\n \

\ },\n \"harness|hendrycksTest-college_medicine|5\": {\n \"acc\": 0.20809248554913296,\n\

\ \"acc_stderr\": 0.030952890217749874,\n \"acc_norm\": 0.20809248554913296,\n\

\ \"acc_norm_stderr\": 0.030952890217749874\n },\n \"harness|hendrycksTest-college_physics|5\"\

: {\n \"acc\": 0.21568627450980393,\n \"acc_stderr\": 0.04092563958237654,\n\

\ \"acc_norm\": 0.21568627450980393,\n \"acc_norm_stderr\": 0.04092563958237654\n\

\ },\n \"harness|hendrycksTest-computer_security|5\": {\n \"acc\":\

\ 0.28,\n \"acc_stderr\": 0.045126085985421276,\n \"acc_norm\": 0.28,\n\

\ \"acc_norm_stderr\": 0.045126085985421276\n },\n \"harness|hendrycksTest-conceptual_physics|5\"\

: {\n \"acc\": 0.26382978723404255,\n \"acc_stderr\": 0.028809989854102973,\n\

\ \"acc_norm\": 0.26382978723404255,\n \"acc_norm_stderr\": 0.028809989854102973\n\

\ },\n \"harness|hendrycksTest-econometrics|5\": {\n \"acc\": 0.23684210526315788,\n\

\ \"acc_stderr\": 0.039994238792813365,\n \"acc_norm\": 0.23684210526315788,\n\

\ \"acc_norm_stderr\": 0.039994238792813365\n },\n \"harness|hendrycksTest-electrical_engineering|5\"\

: {\n \"acc\": 0.2413793103448276,\n \"acc_stderr\": 0.03565998174135302,\n\

\ \"acc_norm\": 0.2413793103448276,\n \"acc_norm_stderr\": 0.03565998174135302\n\

\ },\n \"harness|hendrycksTest-elementary_mathematics|5\": {\n \"acc\"\

: 0.20899470899470898,\n \"acc_stderr\": 0.02094048156533486,\n \"\

acc_norm\": 0.20899470899470898,\n \"acc_norm_stderr\": 0.02094048156533486\n\

\ },\n \"harness|hendrycksTest-formal_logic|5\": {\n \"acc\": 0.2857142857142857,\n\

\ \"acc_stderr\": 0.04040610178208841,\n \"acc_norm\": 0.2857142857142857,\n\

\ \"acc_norm_stderr\": 0.04040610178208841\n },\n \"harness|hendrycksTest-global_facts|5\"\

: {\n \"acc\": 0.18,\n \"acc_stderr\": 0.038612291966536934,\n \

\ \"acc_norm\": 0.18,\n \"acc_norm_stderr\": 0.038612291966536934\n \

\ },\n \"harness|hendrycksTest-high_school_biology|5\": {\n \"acc\"\

: 0.1774193548387097,\n \"acc_stderr\": 0.02173254068932927,\n \"\

acc_norm\": 0.1774193548387097,\n \"acc_norm_stderr\": 0.02173254068932927\n\

\ },\n \"harness|hendrycksTest-high_school_chemistry|5\": {\n \"acc\"\

: 0.15270935960591134,\n \"acc_stderr\": 0.02530890453938063,\n \"\

acc_norm\": 0.15270935960591134,\n \"acc_norm_stderr\": 0.02530890453938063\n\

\ },\n \"harness|hendrycksTest-high_school_computer_science|5\": {\n \

\ \"acc\": 0.25,\n \"acc_stderr\": 0.04351941398892446,\n \"acc_norm\"\

: 0.25,\n \"acc_norm_stderr\": 0.04351941398892446\n },\n \"harness|hendrycksTest-high_school_european_history|5\"\

: {\n \"acc\": 0.21818181818181817,\n \"acc_stderr\": 0.03225078108306289,\n\

\ \"acc_norm\": 0.21818181818181817,\n \"acc_norm_stderr\": 0.03225078108306289\n\

\ },\n \"harness|hendrycksTest-high_school_geography|5\": {\n \"acc\"\

: 0.17676767676767677,\n \"acc_stderr\": 0.027178752639044915,\n \"\

acc_norm\": 0.17676767676767677,\n \"acc_norm_stderr\": 0.027178752639044915\n\

\ },\n \"harness|hendrycksTest-high_school_government_and_politics|5\": {\n\

\ \"acc\": 0.19689119170984457,\n \"acc_stderr\": 0.028697873971860664,\n\

\ \"acc_norm\": 0.19689119170984457,\n \"acc_norm_stderr\": 0.028697873971860664\n\

\ },\n \"harness|hendrycksTest-high_school_macroeconomics|5\": {\n \

\ \"acc\": 0.20256410256410257,\n \"acc_stderr\": 0.020377660970371372,\n\

\ \"acc_norm\": 0.20256410256410257,\n \"acc_norm_stderr\": 0.020377660970371372\n\

\ },\n \"harness|hendrycksTest-high_school_mathematics|5\": {\n \"\

acc\": 0.2111111111111111,\n \"acc_stderr\": 0.024882116857655075,\n \

\ \"acc_norm\": 0.2111111111111111,\n \"acc_norm_stderr\": 0.024882116857655075\n\

\ },\n \"harness|hendrycksTest-high_school_microeconomics|5\": {\n \

\ \"acc\": 0.21008403361344538,\n \"acc_stderr\": 0.026461398717471874,\n\

\ \"acc_norm\": 0.21008403361344538,\n \"acc_norm_stderr\": 0.026461398717471874\n\

\ },\n \"harness|hendrycksTest-high_school_physics|5\": {\n \"acc\"\

: 0.1986754966887417,\n \"acc_stderr\": 0.03257847384436776,\n \"\

acc_norm\": 0.1986754966887417,\n \"acc_norm_stderr\": 0.03257847384436776\n\

\ },\n \"harness|hendrycksTest-high_school_psychology|5\": {\n \"acc\"\

: 0.1926605504587156,\n \"acc_stderr\": 0.016909276884936094,\n \"\

acc_norm\": 0.1926605504587156,\n \"acc_norm_stderr\": 0.016909276884936094\n\

\ },\n \"harness|hendrycksTest-high_school_statistics|5\": {\n \"acc\"\

: 0.1527777777777778,\n \"acc_stderr\": 0.024536326026134224,\n \"\

acc_norm\": 0.1527777777777778,\n \"acc_norm_stderr\": 0.024536326026134224\n\

\ },\n \"harness|hendrycksTest-high_school_us_history|5\": {\n \"acc\"\

: 0.25,\n \"acc_stderr\": 0.03039153369274154,\n \"acc_norm\": 0.25,\n\

\ \"acc_norm_stderr\": 0.03039153369274154\n },\n \"harness|hendrycksTest-high_school_world_history|5\"\

: {\n \"acc\": 0.270042194092827,\n \"acc_stderr\": 0.028900721906293426,\n\

\ \"acc_norm\": 0.270042194092827,\n \"acc_norm_stderr\": 0.028900721906293426\n\

\ },\n \"harness|hendrycksTest-human_aging|5\": {\n \"acc\": 0.31390134529147984,\n\

\ \"acc_stderr\": 0.031146796482972465,\n \"acc_norm\": 0.31390134529147984,\n\

\ \"acc_norm_stderr\": 0.031146796482972465\n },\n \"harness|hendrycksTest-human_sexuality|5\"\

: {\n \"acc\": 0.2595419847328244,\n \"acc_stderr\": 0.03844876139785271,\n\

\ \"acc_norm\": 0.2595419847328244,\n \"acc_norm_stderr\": 0.03844876139785271\n\

\ },\n \"harness|hendrycksTest-international_law|5\": {\n \"acc\":\

\ 0.2396694214876033,\n \"acc_stderr\": 0.03896878985070417,\n \"\

acc_norm\": 0.2396694214876033,\n \"acc_norm_stderr\": 0.03896878985070417\n\

\ },\n \"harness|hendrycksTest-jurisprudence|5\": {\n \"acc\": 0.25925925925925924,\n\

\ \"acc_stderr\": 0.042365112580946336,\n \"acc_norm\": 0.25925925925925924,\n\

\ \"acc_norm_stderr\": 0.042365112580946336\n },\n \"harness|hendrycksTest-logical_fallacies|5\"\

: {\n \"acc\": 0.22085889570552147,\n \"acc_stderr\": 0.032591773927421776,\n\

\ \"acc_norm\": 0.22085889570552147,\n \"acc_norm_stderr\": 0.032591773927421776\n\

\ },\n \"harness|hendrycksTest-machine_learning|5\": {\n \"acc\": 0.3125,\n\

\ \"acc_stderr\": 0.043994650575715215,\n \"acc_norm\": 0.3125,\n\

\ \"acc_norm_stderr\": 0.043994650575715215\n },\n \"harness|hendrycksTest-management|5\"\

: {\n \"acc\": 0.17475728155339806,\n \"acc_stderr\": 0.037601780060266224,\n\

\ \"acc_norm\": 0.17475728155339806,\n \"acc_norm_stderr\": 0.037601780060266224\n\

\ },\n \"harness|hendrycksTest-marketing|5\": {\n \"acc\": 0.2905982905982906,\n\

\ \"acc_stderr\": 0.02974504857267404,\n \"acc_norm\": 0.2905982905982906,\n\

\ \"acc_norm_stderr\": 0.02974504857267404\n },\n \"harness|hendrycksTest-medical_genetics|5\"\

: {\n \"acc\": 0.3,\n \"acc_stderr\": 0.046056618647183814,\n \

\ \"acc_norm\": 0.3,\n \"acc_norm_stderr\": 0.046056618647183814\n \

\ },\n \"harness|hendrycksTest-miscellaneous|5\": {\n \"acc\": 0.23754789272030652,\n\

\ \"acc_stderr\": 0.015218733046150193,\n \"acc_norm\": 0.23754789272030652,\n\

\ \"acc_norm_stderr\": 0.015218733046150193\n },\n \"harness|hendrycksTest-moral_disputes|5\"\

: {\n \"acc\": 0.24855491329479767,\n \"acc_stderr\": 0.023267528432100174,\n\

\ \"acc_norm\": 0.24855491329479767,\n \"acc_norm_stderr\": 0.023267528432100174\n\

\ },\n \"harness|hendrycksTest-moral_scenarios|5\": {\n \"acc\": 0.23798882681564246,\n\

\ \"acc_stderr\": 0.014242630070574915,\n \"acc_norm\": 0.23798882681564246,\n\

\ \"acc_norm_stderr\": 0.014242630070574915\n },\n \"harness|hendrycksTest-nutrition|5\"\

: {\n \"acc\": 0.22549019607843138,\n \"acc_stderr\": 0.023929155517351284,\n\

\ \"acc_norm\": 0.22549019607843138,\n \"acc_norm_stderr\": 0.023929155517351284\n\

\ },\n \"harness|hendrycksTest-philosophy|5\": {\n \"acc\": 0.1864951768488746,\n\

\ \"acc_stderr\": 0.02212243977248077,\n \"acc_norm\": 0.1864951768488746,\n\

\ \"acc_norm_stderr\": 0.02212243977248077\n },\n \"harness|hendrycksTest-prehistory|5\"\

: {\n \"acc\": 0.21604938271604937,\n \"acc_stderr\": 0.022899162918445806,\n\

\ \"acc_norm\": 0.21604938271604937,\n \"acc_norm_stderr\": 0.022899162918445806\n\

\ },\n \"harness|hendrycksTest-professional_accounting|5\": {\n \"\

acc\": 0.23404255319148937,\n \"acc_stderr\": 0.025257861359432417,\n \

\ \"acc_norm\": 0.23404255319148937,\n \"acc_norm_stderr\": 0.025257861359432417\n\

\ },\n \"harness|hendrycksTest-professional_law|5\": {\n \"acc\": 0.2457627118644068,\n\

\ \"acc_stderr\": 0.010996156635142692,\n \"acc_norm\": 0.2457627118644068,\n\

\ \"acc_norm_stderr\": 0.010996156635142692\n },\n \"harness|hendrycksTest-professional_medicine|5\"\

: {\n \"acc\": 0.18382352941176472,\n \"acc_stderr\": 0.023529242185193106,\n\

\ \"acc_norm\": 0.18382352941176472,\n \"acc_norm_stderr\": 0.023529242185193106\n\

\ },\n \"harness|hendrycksTest-professional_psychology|5\": {\n \"\

acc\": 0.25,\n \"acc_stderr\": 0.01751781884501444,\n \"acc_norm\"\

: 0.25,\n \"acc_norm_stderr\": 0.01751781884501444\n },\n \"harness|hendrycksTest-public_relations|5\"\

: {\n \"acc\": 0.21818181818181817,\n \"acc_stderr\": 0.03955932861795833,\n\

\ \"acc_norm\": 0.21818181818181817,\n \"acc_norm_stderr\": 0.03955932861795833\n\

\ },\n \"harness|hendrycksTest-security_studies|5\": {\n \"acc\": 0.18775510204081633,\n\

\ \"acc_stderr\": 0.02500025603954621,\n \"acc_norm\": 0.18775510204081633,\n\

\ \"acc_norm_stderr\": 0.02500025603954621\n },\n \"harness|hendrycksTest-sociology|5\"\

: {\n \"acc\": 0.24378109452736318,\n \"acc_stderr\": 0.03036049015401465,\n\

\ \"acc_norm\": 0.24378109452736318,\n \"acc_norm_stderr\": 0.03036049015401465\n\

\ },\n \"harness|hendrycksTest-us_foreign_policy|5\": {\n \"acc\":\

\ 0.28,\n \"acc_stderr\": 0.04512608598542128,\n \"acc_norm\": 0.28,\n\

\ \"acc_norm_stderr\": 0.04512608598542128\n },\n \"harness|hendrycksTest-virology|5\"\

: {\n \"acc\": 0.28313253012048195,\n \"acc_stderr\": 0.03507295431370518,\n\

\ \"acc_norm\": 0.28313253012048195,\n \"acc_norm_stderr\": 0.03507295431370518\n\

\ },\n \"harness|hendrycksTest-world_religions|5\": {\n \"acc\": 0.3216374269005848,\n\

\ \"acc_stderr\": 0.03582529442573122,\n \"acc_norm\": 0.3216374269005848,\n\

\ \"acc_norm_stderr\": 0.03582529442573122\n },\n \"harness|truthfulqa:mc|0\"\

: {\n \"mc1\": 0.2778457772337821,\n \"mc1_stderr\": 0.015680929364024643,\n\

\ \"mc2\": 0.4692403294958895,\n \"mc2_stderr\": 0.015061938982346217\n\

\ },\n \"harness|winogrande|5\": {\n \"acc\": 0.6558800315706393,\n\

\ \"acc_stderr\": 0.013352121905005941\n },\n \"harness|gsm8k|5\":\

\ {\n \"acc\": 0.07808946171341925,\n \"acc_stderr\": 0.007390654481108261\n\

\ }\n}\n```"

repo_url: https://huggingface.co/vibhorag101/llama-2-13b-chat-hf-phr_mental_therapy

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_arc_challenge_25

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|arc:challenge|25_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|arc:challenge|25_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_gsm8k_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|gsm8k|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|gsm8k|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hellaswag_10

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hellaswag|10_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hellaswag|10_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-12-04T18-26-43.065214.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_abstract_algebra_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_anatomy_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_astronomy_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_business_ethics_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_clinical_knowledge_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_college_biology_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_college_chemistry_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_college_computer_science_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_college_mathematics_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_college_medicine_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_college_physics_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_computer_security_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_conceptual_physics_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_econometrics_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_electrical_engineering_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_elementary_mathematics_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_formal_logic_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_global_facts_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_high_school_biology_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_high_school_chemistry_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_high_school_computer_science_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_high_school_european_history_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_high_school_geography_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_high_school_government_and_politics_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_high_school_macroeconomics_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_high_school_mathematics_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_high_school_microeconomics_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_high_school_physics_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_high_school_psychology_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_high_school_statistics_5

data_files:

- split: 2023_12_04T18_26_43.065214

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-04T18-26-43.065214.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-04T18-26-43.065214.parquet'

- config_name: harness_hendrycksTest_high_school_us_history_5

data_files:

- split: 2023_12_04T18_26_43.065214

path: