datasetId stringlengths 2 117 | card stringlengths 19 1.01M |

|---|---|

GenVRadmin/Samvaad-Mixed-Language-3 | ---

license: mit

---

|

open-llm-leaderboard/details_Weyaxi__Seraph-7B | ---

pretty_name: Evaluation run of Weyaxi/Seraph-7B

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [Weyaxi/Seraph-7B](https://huggingface.co/Weyaxi/Seraph-7B) on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 63 configurations, each one corresponding to one of the\

\ evaluated tasks.\n\nThe dataset has been created from 1 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run. The \"train\" split always points to the latest results.\n\

\nAn additional configuration \"results\" stores all the aggregated results of the\

\ run (and is used to compute and display the aggregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_Weyaxi__Seraph-7B\"\

,\n\t\"harness_winogrande_5\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\n\

These are the [latest results from run 2023-12-11T09:44:37.311244](https://huggingface.co/datasets/open-llm-leaderboard/details_Weyaxi__Seraph-7B/blob/main/results_2023-12-11T09-44-37.311244.json)(note\

\ that there might be results for other tasks in the repo if successive evals didn't\

\ cover the same tasks. You can find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"acc\": 0.6548171567091998,\n\

\ \"acc_stderr\": 0.031923676546826464,\n \"acc_norm\": 0.6547760288690921,\n\

\ \"acc_norm_stderr\": 0.03258255753948947,\n \"mc1\": 0.4283965728274174,\n\

\ \"mc1_stderr\": 0.017323088597314754,\n \"mc2\": 0.5948960816711865,\n\

\ \"mc2_stderr\": 0.015146045918500203\n },\n \"harness|arc:challenge|25\"\

: {\n \"acc\": 0.6544368600682594,\n \"acc_stderr\": 0.013896938461145683,\n\

\ \"acc_norm\": 0.6783276450511946,\n \"acc_norm_stderr\": 0.013650488084494166\n\

\ },\n \"harness|hellaswag|10\": {\n \"acc\": 0.6727743477394941,\n\

\ \"acc_stderr\": 0.004682414968323629,\n \"acc_norm\": 0.8621788488348935,\n\

\ \"acc_norm_stderr\": 0.003440076775300575\n },\n \"harness|hendrycksTest-abstract_algebra|5\"\

: {\n \"acc\": 0.34,\n \"acc_stderr\": 0.04760952285695235,\n \

\ \"acc_norm\": 0.34,\n \"acc_norm_stderr\": 0.04760952285695235\n \

\ },\n \"harness|hendrycksTest-anatomy|5\": {\n \"acc\": 0.6370370370370371,\n\

\ \"acc_stderr\": 0.04153948404742398,\n \"acc_norm\": 0.6370370370370371,\n\

\ \"acc_norm_stderr\": 0.04153948404742398\n },\n \"harness|hendrycksTest-astronomy|5\"\

: {\n \"acc\": 0.7302631578947368,\n \"acc_stderr\": 0.03611780560284898,\n\

\ \"acc_norm\": 0.7302631578947368,\n \"acc_norm_stderr\": 0.03611780560284898\n\

\ },\n \"harness|hendrycksTest-business_ethics|5\": {\n \"acc\": 0.63,\n\

\ \"acc_stderr\": 0.04852365870939099,\n \"acc_norm\": 0.63,\n \

\ \"acc_norm_stderr\": 0.04852365870939099\n },\n \"harness|hendrycksTest-clinical_knowledge|5\"\

: {\n \"acc\": 0.7094339622641509,\n \"acc_stderr\": 0.02794321998933714,\n\

\ \"acc_norm\": 0.7094339622641509,\n \"acc_norm_stderr\": 0.02794321998933714\n\

\ },\n \"harness|hendrycksTest-college_biology|5\": {\n \"acc\": 0.7847222222222222,\n\

\ \"acc_stderr\": 0.03437079344106135,\n \"acc_norm\": 0.7847222222222222,\n\

\ \"acc_norm_stderr\": 0.03437079344106135\n },\n \"harness|hendrycksTest-college_chemistry|5\"\

: {\n \"acc\": 0.49,\n \"acc_stderr\": 0.05024183937956912,\n \

\ \"acc_norm\": 0.49,\n \"acc_norm_stderr\": 0.05024183937956912\n \

\ },\n \"harness|hendrycksTest-college_computer_science|5\": {\n \"acc\"\

: 0.51,\n \"acc_stderr\": 0.05024183937956911,\n \"acc_norm\": 0.51,\n\

\ \"acc_norm_stderr\": 0.05024183937956911\n },\n \"harness|hendrycksTest-college_mathematics|5\"\

: {\n \"acc\": 0.32,\n \"acc_stderr\": 0.046882617226215034,\n \

\ \"acc_norm\": 0.32,\n \"acc_norm_stderr\": 0.046882617226215034\n \

\ },\n \"harness|hendrycksTest-college_medicine|5\": {\n \"acc\": 0.6589595375722543,\n\

\ \"acc_stderr\": 0.03614665424180826,\n \"acc_norm\": 0.6589595375722543,\n\

\ \"acc_norm_stderr\": 0.03614665424180826\n },\n \"harness|hendrycksTest-college_physics|5\"\

: {\n \"acc\": 0.4019607843137255,\n \"acc_stderr\": 0.048786087144669955,\n\

\ \"acc_norm\": 0.4019607843137255,\n \"acc_norm_stderr\": 0.048786087144669955\n\

\ },\n \"harness|hendrycksTest-computer_security|5\": {\n \"acc\":\

\ 0.79,\n \"acc_stderr\": 0.04093601807403326,\n \"acc_norm\": 0.79,\n\

\ \"acc_norm_stderr\": 0.04093601807403326\n },\n \"harness|hendrycksTest-conceptual_physics|5\"\

: {\n \"acc\": 0.5957446808510638,\n \"acc_stderr\": 0.03208115750788684,\n\

\ \"acc_norm\": 0.5957446808510638,\n \"acc_norm_stderr\": 0.03208115750788684\n\

\ },\n \"harness|hendrycksTest-econometrics|5\": {\n \"acc\": 0.49122807017543857,\n\

\ \"acc_stderr\": 0.04702880432049615,\n \"acc_norm\": 0.49122807017543857,\n\

\ \"acc_norm_stderr\": 0.04702880432049615\n },\n \"harness|hendrycksTest-electrical_engineering|5\"\

: {\n \"acc\": 0.5586206896551724,\n \"acc_stderr\": 0.04137931034482757,\n\

\ \"acc_norm\": 0.5586206896551724,\n \"acc_norm_stderr\": 0.04137931034482757\n\

\ },\n \"harness|hendrycksTest-elementary_mathematics|5\": {\n \"acc\"\

: 0.4365079365079365,\n \"acc_stderr\": 0.02554284681740051,\n \"\

acc_norm\": 0.4365079365079365,\n \"acc_norm_stderr\": 0.02554284681740051\n\

\ },\n \"harness|hendrycksTest-formal_logic|5\": {\n \"acc\": 0.4523809523809524,\n\

\ \"acc_stderr\": 0.044518079590553275,\n \"acc_norm\": 0.4523809523809524,\n\

\ \"acc_norm_stderr\": 0.044518079590553275\n },\n \"harness|hendrycksTest-global_facts|5\"\

: {\n \"acc\": 0.35,\n \"acc_stderr\": 0.047937248544110196,\n \

\ \"acc_norm\": 0.35,\n \"acc_norm_stderr\": 0.047937248544110196\n \

\ },\n \"harness|hendrycksTest-high_school_biology|5\": {\n \"acc\"\

: 0.7741935483870968,\n \"acc_stderr\": 0.023785577884181015,\n \"\

acc_norm\": 0.7741935483870968,\n \"acc_norm_stderr\": 0.023785577884181015\n\

\ },\n \"harness|hendrycksTest-high_school_chemistry|5\": {\n \"acc\"\

: 0.49261083743842365,\n \"acc_stderr\": 0.035176035403610084,\n \"\

acc_norm\": 0.49261083743842365,\n \"acc_norm_stderr\": 0.035176035403610084\n\

\ },\n \"harness|hendrycksTest-high_school_computer_science|5\": {\n \

\ \"acc\": 0.7,\n \"acc_stderr\": 0.046056618647183814,\n \"acc_norm\"\

: 0.7,\n \"acc_norm_stderr\": 0.046056618647183814\n },\n \"harness|hendrycksTest-high_school_european_history|5\"\

: {\n \"acc\": 0.7696969696969697,\n \"acc_stderr\": 0.0328766675860349,\n\

\ \"acc_norm\": 0.7696969696969697,\n \"acc_norm_stderr\": 0.0328766675860349\n\

\ },\n \"harness|hendrycksTest-high_school_geography|5\": {\n \"acc\"\

: 0.803030303030303,\n \"acc_stderr\": 0.028335609732463362,\n \"\

acc_norm\": 0.803030303030303,\n \"acc_norm_stderr\": 0.028335609732463362\n\

\ },\n \"harness|hendrycksTest-high_school_government_and_politics|5\": {\n\

\ \"acc\": 0.9015544041450777,\n \"acc_stderr\": 0.021500249576033456,\n\

\ \"acc_norm\": 0.9015544041450777,\n \"acc_norm_stderr\": 0.021500249576033456\n\

\ },\n \"harness|hendrycksTest-high_school_macroeconomics|5\": {\n \

\ \"acc\": 0.6717948717948717,\n \"acc_stderr\": 0.023807633198657266,\n\

\ \"acc_norm\": 0.6717948717948717,\n \"acc_norm_stderr\": 0.023807633198657266\n\

\ },\n \"harness|hendrycksTest-high_school_mathematics|5\": {\n \"\

acc\": 0.34444444444444444,\n \"acc_stderr\": 0.028972648884844267,\n \

\ \"acc_norm\": 0.34444444444444444,\n \"acc_norm_stderr\": 0.028972648884844267\n\

\ },\n \"harness|hendrycksTest-high_school_microeconomics|5\": {\n \

\ \"acc\": 0.6932773109243697,\n \"acc_stderr\": 0.02995382389188704,\n \

\ \"acc_norm\": 0.6932773109243697,\n \"acc_norm_stderr\": 0.02995382389188704\n\

\ },\n \"harness|hendrycksTest-high_school_physics|5\": {\n \"acc\"\

: 0.33774834437086093,\n \"acc_stderr\": 0.03861557546255169,\n \"\

acc_norm\": 0.33774834437086093,\n \"acc_norm_stderr\": 0.03861557546255169\n\

\ },\n \"harness|hendrycksTest-high_school_psychology|5\": {\n \"acc\"\

: 0.8605504587155963,\n \"acc_stderr\": 0.014852421490033053,\n \"\

acc_norm\": 0.8605504587155963,\n \"acc_norm_stderr\": 0.014852421490033053\n\

\ },\n \"harness|hendrycksTest-high_school_statistics|5\": {\n \"acc\"\

: 0.5231481481481481,\n \"acc_stderr\": 0.03406315360711507,\n \"\

acc_norm\": 0.5231481481481481,\n \"acc_norm_stderr\": 0.03406315360711507\n\

\ },\n \"harness|hendrycksTest-high_school_us_history|5\": {\n \"acc\"\

: 0.8284313725490197,\n \"acc_stderr\": 0.026460569561240634,\n \"\

acc_norm\": 0.8284313725490197,\n \"acc_norm_stderr\": 0.026460569561240634\n\

\ },\n \"harness|hendrycksTest-high_school_world_history|5\": {\n \"\

acc\": 0.810126582278481,\n \"acc_stderr\": 0.025530100460233494,\n \

\ \"acc_norm\": 0.810126582278481,\n \"acc_norm_stderr\": 0.025530100460233494\n\

\ },\n \"harness|hendrycksTest-human_aging|5\": {\n \"acc\": 0.6905829596412556,\n\

\ \"acc_stderr\": 0.03102441174057221,\n \"acc_norm\": 0.6905829596412556,\n\

\ \"acc_norm_stderr\": 0.03102441174057221\n },\n \"harness|hendrycksTest-human_sexuality|5\"\

: {\n \"acc\": 0.8015267175572519,\n \"acc_stderr\": 0.03498149385462472,\n\

\ \"acc_norm\": 0.8015267175572519,\n \"acc_norm_stderr\": 0.03498149385462472\n\

\ },\n \"harness|hendrycksTest-international_law|5\": {\n \"acc\":\

\ 0.7933884297520661,\n \"acc_stderr\": 0.03695980128098824,\n \"\

acc_norm\": 0.7933884297520661,\n \"acc_norm_stderr\": 0.03695980128098824\n\

\ },\n \"harness|hendrycksTest-jurisprudence|5\": {\n \"acc\": 0.7870370370370371,\n\

\ \"acc_stderr\": 0.039578354719809805,\n \"acc_norm\": 0.7870370370370371,\n\

\ \"acc_norm_stderr\": 0.039578354719809805\n },\n \"harness|hendrycksTest-logical_fallacies|5\"\

: {\n \"acc\": 0.7730061349693251,\n \"acc_stderr\": 0.03291099578615769,\n\

\ \"acc_norm\": 0.7730061349693251,\n \"acc_norm_stderr\": 0.03291099578615769\n\

\ },\n \"harness|hendrycksTest-machine_learning|5\": {\n \"acc\": 0.44642857142857145,\n\

\ \"acc_stderr\": 0.04718471485219588,\n \"acc_norm\": 0.44642857142857145,\n\

\ \"acc_norm_stderr\": 0.04718471485219588\n },\n \"harness|hendrycksTest-management|5\"\

: {\n \"acc\": 0.7864077669902912,\n \"acc_stderr\": 0.040580420156460344,\n\

\ \"acc_norm\": 0.7864077669902912,\n \"acc_norm_stderr\": 0.040580420156460344\n\

\ },\n \"harness|hendrycksTest-marketing|5\": {\n \"acc\": 0.8717948717948718,\n\

\ \"acc_stderr\": 0.02190190511507333,\n \"acc_norm\": 0.8717948717948718,\n\

\ \"acc_norm_stderr\": 0.02190190511507333\n },\n \"harness|hendrycksTest-medical_genetics|5\"\

: {\n \"acc\": 0.71,\n \"acc_stderr\": 0.045604802157206845,\n \

\ \"acc_norm\": 0.71,\n \"acc_norm_stderr\": 0.045604802157206845\n \

\ },\n \"harness|hendrycksTest-miscellaneous|5\": {\n \"acc\": 0.8288633461047255,\n\

\ \"acc_stderr\": 0.013468201614066302,\n \"acc_norm\": 0.8288633461047255,\n\

\ \"acc_norm_stderr\": 0.013468201614066302\n },\n \"harness|hendrycksTest-moral_disputes|5\"\

: {\n \"acc\": 0.7514450867052023,\n \"acc_stderr\": 0.023267528432100174,\n\

\ \"acc_norm\": 0.7514450867052023,\n \"acc_norm_stderr\": 0.023267528432100174\n\

\ },\n \"harness|hendrycksTest-moral_scenarios|5\": {\n \"acc\": 0.39106145251396646,\n\

\ \"acc_stderr\": 0.01632076376380838,\n \"acc_norm\": 0.39106145251396646,\n\

\ \"acc_norm_stderr\": 0.01632076376380838\n },\n \"harness|hendrycksTest-nutrition|5\"\

: {\n \"acc\": 0.738562091503268,\n \"acc_stderr\": 0.025160998214292452,\n\

\ \"acc_norm\": 0.738562091503268,\n \"acc_norm_stderr\": 0.025160998214292452\n\

\ },\n \"harness|hendrycksTest-philosophy|5\": {\n \"acc\": 0.7106109324758842,\n\

\ \"acc_stderr\": 0.025755865922632945,\n \"acc_norm\": 0.7106109324758842,\n\

\ \"acc_norm_stderr\": 0.025755865922632945\n },\n \"harness|hendrycksTest-prehistory|5\"\

: {\n \"acc\": 0.7561728395061729,\n \"acc_stderr\": 0.023891879541959607,\n\

\ \"acc_norm\": 0.7561728395061729,\n \"acc_norm_stderr\": 0.023891879541959607\n\

\ },\n \"harness|hendrycksTest-professional_accounting|5\": {\n \"\

acc\": 0.4929078014184397,\n \"acc_stderr\": 0.02982449855912901,\n \

\ \"acc_norm\": 0.4929078014184397,\n \"acc_norm_stderr\": 0.02982449855912901\n\

\ },\n \"harness|hendrycksTest-professional_law|5\": {\n \"acc\": 0.46936114732724904,\n\

\ \"acc_stderr\": 0.012746237711716634,\n \"acc_norm\": 0.46936114732724904,\n\

\ \"acc_norm_stderr\": 0.012746237711716634\n },\n \"harness|hendrycksTest-professional_medicine|5\"\

: {\n \"acc\": 0.6911764705882353,\n \"acc_stderr\": 0.02806499816704009,\n\

\ \"acc_norm\": 0.6911764705882353,\n \"acc_norm_stderr\": 0.02806499816704009\n\

\ },\n \"harness|hendrycksTest-professional_psychology|5\": {\n \"\

acc\": 0.684640522875817,\n \"acc_stderr\": 0.01879808628488689,\n \

\ \"acc_norm\": 0.684640522875817,\n \"acc_norm_stderr\": 0.01879808628488689\n\

\ },\n \"harness|hendrycksTest-public_relations|5\": {\n \"acc\": 0.6727272727272727,\n\

\ \"acc_stderr\": 0.0449429086625209,\n \"acc_norm\": 0.6727272727272727,\n\

\ \"acc_norm_stderr\": 0.0449429086625209\n },\n \"harness|hendrycksTest-security_studies|5\"\

: {\n \"acc\": 0.7306122448979592,\n \"acc_stderr\": 0.02840125202902294,\n\

\ \"acc_norm\": 0.7306122448979592,\n \"acc_norm_stderr\": 0.02840125202902294\n\

\ },\n \"harness|hendrycksTest-sociology|5\": {\n \"acc\": 0.8606965174129353,\n\

\ \"acc_stderr\": 0.024484487162913973,\n \"acc_norm\": 0.8606965174129353,\n\

\ \"acc_norm_stderr\": 0.024484487162913973\n },\n \"harness|hendrycksTest-us_foreign_policy|5\"\

: {\n \"acc\": 0.87,\n \"acc_stderr\": 0.033799766898963086,\n \

\ \"acc_norm\": 0.87,\n \"acc_norm_stderr\": 0.033799766898963086\n \

\ },\n \"harness|hendrycksTest-virology|5\": {\n \"acc\": 0.5602409638554217,\n\

\ \"acc_stderr\": 0.03864139923699122,\n \"acc_norm\": 0.5602409638554217,\n\

\ \"acc_norm_stderr\": 0.03864139923699122\n },\n \"harness|hendrycksTest-world_religions|5\"\

: {\n \"acc\": 0.8245614035087719,\n \"acc_stderr\": 0.029170885500727665,\n\

\ \"acc_norm\": 0.8245614035087719,\n \"acc_norm_stderr\": 0.029170885500727665\n\

\ },\n \"harness|truthfulqa:mc|0\": {\n \"mc1\": 0.4283965728274174,\n\

\ \"mc1_stderr\": 0.017323088597314754,\n \"mc2\": 0.5948960816711865,\n\

\ \"mc2_stderr\": 0.015146045918500203\n },\n \"harness|winogrande|5\"\

: {\n \"acc\": 0.8066298342541437,\n \"acc_stderr\": 0.011099796645920522\n\

\ },\n \"harness|gsm8k|5\": {\n \"acc\": 0.7187263078089462,\n \

\ \"acc_stderr\": 0.012384789310940244\n }\n}\n```"

repo_url: https://huggingface.co/Weyaxi/Seraph-7B

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_arc_challenge_25

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|arc:challenge|25_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|arc:challenge|25_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_gsm8k_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|gsm8k|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|gsm8k|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hellaswag_10

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hellaswag|10_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hellaswag|10_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-12-11T09-44-37.311244.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_abstract_algebra_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_anatomy_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_astronomy_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_business_ethics_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_clinical_knowledge_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_college_biology_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_college_chemistry_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_college_computer_science_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_college_mathematics_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_college_medicine_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_college_physics_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_computer_security_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_conceptual_physics_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_econometrics_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_electrical_engineering_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_elementary_mathematics_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_formal_logic_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_global_facts_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_high_school_biology_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_high_school_chemistry_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_high_school_computer_science_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_high_school_european_history_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_high_school_geography_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_high_school_government_and_politics_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_high_school_macroeconomics_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_high_school_mathematics_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_high_school_microeconomics_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_high_school_physics_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_high_school_psychology_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_high_school_statistics_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_high_school_us_history_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_high_school_world_history_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_human_aging_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-human_aging|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_aging|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_human_sexuality_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_international_law_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-international_law|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-international_law|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_jurisprudence_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_logical_fallacies_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_machine_learning_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_management_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-management|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-management|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_marketing_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-marketing|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-marketing|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_medical_genetics_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_miscellaneous_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_moral_disputes_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_moral_scenarios_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_nutrition_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-nutrition|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-nutrition|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_philosophy_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-philosophy|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-philosophy|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_prehistory_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-prehistory|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-prehistory|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_professional_accounting_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_professional_law_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-professional_law|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_law|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_professional_medicine_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_professional_psychology_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_public_relations_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-public_relations|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-public_relations|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_security_studies_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-security_studies|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-security_studies|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_sociology_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-sociology|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-sociology|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_us_foreign_policy_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_virology_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-virology|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-virology|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_hendrycksTest_world_religions_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|hendrycksTest-world_religions|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-world_religions|5_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_truthfulqa_mc_0

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|truthfulqa:mc|0_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|truthfulqa:mc|0_2023-12-11T09-44-37.311244.parquet'

- config_name: harness_winogrande_5

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- '**/details_harness|winogrande|5_2023-12-11T09-44-37.311244.parquet'

- split: latest

path:

- '**/details_harness|winogrande|5_2023-12-11T09-44-37.311244.parquet'

- config_name: results

data_files:

- split: 2023_12_11T09_44_37.311244

path:

- results_2023-12-11T09-44-37.311244.parquet

- split: latest

path:

- results_2023-12-11T09-44-37.311244.parquet

---

# Dataset Card for Evaluation run of Weyaxi/Seraph-7B

## Dataset Description

- **Homepage:**

- **Repository:** https://huggingface.co/Weyaxi/Seraph-7B

- **Paper:**

- **Leaderboard:** https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

- **Point of Contact:** clementine@hf.co

### Dataset Summary

Dataset automatically created during the evaluation run of model [Weyaxi/Seraph-7B](https://huggingface.co/Weyaxi/Seraph-7B) on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).

The dataset is composed of 63 configurations, each one corresponding to one of the evaluated tasks.

The dataset has been created from 1 run(s). Each run can be found as a specific split in each configuration, the split being named using the timestamp of the run. The "latest" split always points to the latest results.

An additional configuration "results" stores all the aggregated results of the run (and is used to compute and display the aggregated metrics on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).

To load the details from a run, you can for instance do the following:

```python

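from datasets import load_dataset

# Load the per-sample details of one task; the "latest" split is the one
# declared in this card's metadata for the most recent run.
data = load_dataset(
    "open-llm-leaderboard/details_Weyaxi__Seraph-7B",
    "harness_winogrande_5",
    split="latest",
)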

```
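The configuration names, split names, and parquet paths listed in this card's metadata appear to follow a regular pattern derived from the task key and the run timestamp. The sketch below reproduces that pattern as inferred from the names in this card only; the helper names `config_name`, `split_name`, and `details_glob` are hypothetical and do not belong to any library.

```python
import re


def config_name(task_key: str) -> str:
    """Map a results key such as 'harness|winogrande|5' to a config name."""
    # Assumption: every character that is not a letter or digit becomes "_".
    return re.sub(r"[^0-9A-Za-z]", "_", task_key)


def split_name(timestamp: str) -> str:
    """Map a run timestamp to the timestamped split name used in this card."""
    return timestamp.replace("-", "_").replace(":", "_")


def details_glob(task_key: str, timestamp: str) -> str:
    """Build the parquet glob pattern listed under data_files."""
    return f"**/details_{task_key}_{timestamp.replace(':', '-')}.parquet"


run = "2023-12-11T09:44:37.311244"
print(config_name("harness|truthfulqa:mc|0"))
print(split_name(run))
print(details_glob("harness|winogrande|5", run))
```

The resulting strings can be passed as the configuration and `split` arguments of `load_dataset` when a specific run is wanted instead of the latest one.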

## Latest results

These are the [latest results from run 2023-12-11T09:44:37.311244](https://huggingface.co/datasets/open-llm-leaderboard/details_Weyaxi__Seraph-7B/blob/main/results_2023-12-11T09-44-37.311244.json) (note that there might be results for other tasks in the repo if successive evals didn't cover the same tasks. You can find each in the "results" configuration and in the "latest" split of each eval):

```python

{

"all": {

"acc": 0.6548171567091998,

"acc_stderr": 0.031923676546826464,

"acc_norm": 0.6547760288690921,

"acc_norm_stderr": 0.03258255753948947,

"mc1": 0.4283965728274174,

"mc1_stderr": 0.017323088597314754,

"mc2": 0.5948960816711865,

"mc2_stderr": 0.015146045918500203

},

"harness|arc:challenge|25": {

"acc": 0.6544368600682594,

"acc_stderr": 0.013896938461145683,

"acc_norm": 0.6783276450511946,

"acc_norm_stderr": 0.013650488084494166

},

"harness|hellaswag|10": {

"acc": 0.6727743477394941,

"acc_stderr": 0.004682414968323629,

"acc_norm": 0.8621788488348935,

"acc_norm_stderr": 0.003440076775300575

},

"harness|hendrycksTest-abstract_algebra|5": {

"acc": 0.34,

"acc_stderr": 0.04760952285695235,

"acc_norm": 0.34,

"acc_norm_stderr": 0.04760952285695235

},

"harness|hendrycksTest-anatomy|5": {

"acc": 0.6370370370370371,

"acc_stderr": 0.04153948404742398,

"acc_norm": 0.6370370370370371,

"acc_norm_stderr": 0.04153948404742398

},

"harness|hendrycksTest-astronomy|5": {

"acc": 0.7302631578947368,

"acc_stderr": 0.03611780560284898,

"acc_norm": 0.7302631578947368,

"acc_norm_stderr": 0.03611780560284898

},

"harness|hendrycksTest-business_ethics|5": {

"acc": 0.63,

"acc_stderr": 0.04852365870939099,

"acc_norm": 0.63,

"acc_norm_stderr": 0.04852365870939099

},

"harness|hendrycksTest-clinical_knowledge|5": {

"acc": 0.7094339622641509,

"acc_stderr": 0.02794321998933714,

"acc_norm": 0.7094339622641509,

"acc_norm_stderr": 0.02794321998933714

},

"harness|hendrycksTest-college_biology|5": {

"acc": 0.7847222222222222,

"acc_stderr": 0.03437079344106135,

"acc_norm": 0.7847222222222222,

"acc_norm_stderr": 0.03437079344106135

},

"harness|hendrycksTest-college_chemistry|5": {

"acc": 0.49,

"acc_stderr": 0.05024183937956912,

"acc_norm": 0.49,

"acc_norm_stderr": 0.05024183937956912

},

"harness|hendrycksTest-college_computer_science|5": {

"acc": 0.51,

"acc_stderr": 0.05024183937956911,

"acc_norm": 0.51,

"acc_norm_stderr": 0.05024183937956911

},

"harness|hendrycksTest-college_mathematics|5": {

"acc": 0.32,

"acc_stderr": 0.046882617226215034,

"acc_norm": 0.32,

"acc_norm_stderr": 0.046882617226215034

},

"harness|hendrycksTest-college_medicine|5": {

"acc": 0.6589595375722543,

"acc_stderr": 0.03614665424180826,

"acc_norm": 0.6589595375722543,

"acc_norm_stderr": 0.03614665424180826

},

"harness|hendrycksTest-college_physics|5": {

"acc": 0.4019607843137255,

"acc_stderr": 0.048786087144669955,

"acc_norm": 0.4019607843137255,

"acc_norm_stderr": 0.048786087144669955

},

"harness|hendrycksTest-computer_security|5": {

"acc": 0.79,

"acc_stderr": 0.04093601807403326,

"acc_norm": 0.79,

"acc_norm_stderr": 0.04093601807403326

},

"harness|hendrycksTest-conceptual_physics|5": {

"acc": 0.5957446808510638,

"acc_stderr": 0.03208115750788684,

"acc_norm": 0.5957446808510638,

"acc_norm_stderr": 0.03208115750788684

},

"harness|hendrycksTest-econometrics|5": {

"acc": 0.49122807017543857,

"acc_stderr": 0.04702880432049615,

"acc_norm": 0.49122807017543857,

"acc_norm_stderr": 0.04702880432049615

},

"harness|hendrycksTest-electrical_engineering|5": {

"acc": 0.5586206896551724,

"acc_stderr": 0.04137931034482757,

"acc_norm": 0.5586206896551724,

"acc_norm_stderr": 0.04137931034482757

},

"harness|hendrycksTest-elementary_mathematics|5": {

"acc": 0.4365079365079365,

"acc_stderr": 0.02554284681740051,

"acc_norm": 0.4365079365079365,

"acc_norm_stderr": 0.02554284681740051

},

"harness|hendrycksTest-formal_logic|5": {

"acc": 0.4523809523809524,

"acc_stderr": 0.044518079590553275,

"acc_norm": 0.4523809523809524,

"acc_norm_stderr": 0.044518079590553275

},

"harness|hendrycksTest-global_facts|5": {

"acc": 0.35,

"acc_stderr": 0.047937248544110196,

"acc_norm": 0.35,

"acc_norm_stderr": 0.047937248544110196

},

"harness|hendrycksTest-high_school_biology|5": {

"acc": 0.7741935483870968,

"acc_stderr": 0.023785577884181015,

"acc_norm": 0.7741935483870968,

"acc_norm_stderr": 0.023785577884181015

},

"harness|hendrycksTest-high_school_chemistry|5": {

"acc": 0.49261083743842365,

"acc_stderr": 0.035176035403610084,

"acc_norm": 0.49261083743842365,

"acc_norm_stderr": 0.035176035403610084

},

"harness|hendrycksTest-high_school_computer_science|5": {

"acc": 0.7,

"acc_stderr": 0.046056618647183814,

"acc_norm": 0.7,

"acc_norm_stderr": 0.046056618647183814

},

"harness|hendrycksTest-high_school_european_history|5": {

"acc": 0.7696969696969697,

"acc_stderr": 0.0328766675860349,

"acc_norm": 0.7696969696969697,

"acc_norm_stderr": 0.0328766675860349

},

"harness|hendrycksTest-high_school_geography|5": {

"acc": 0.803030303030303,

"acc_stderr": 0.028335609732463362,

"acc_norm": 0.803030303030303,

"acc_norm_stderr": 0.028335609732463362

},

"harness|hendrycksTest-high_school_government_and_politics|5": {

"acc": 0.9015544041450777,

"acc_stderr": 0.021500249576033456,

"acc_norm": 0.9015544041450777,

"acc_norm_stderr": 0.021500249576033456

},

"harness|hendrycksTest-high_school_macroeconomics|5": {

"acc": 0.6717948717948717,

"acc_stderr": 0.023807633198657266,

"acc_norm": 0.6717948717948717,

"acc_norm_stderr": 0.023807633198657266

},

"harness|hendrycksTest-high_school_mathematics|5": {

"acc": 0.34444444444444444,

"acc_stderr": 0.028972648884844267,

"acc_norm": 0.34444444444444444,

"acc_norm_stderr": 0.028972648884844267

},

"harness|hendrycksTest-high_school_microeconomics|5": {

"acc": 0.6932773109243697,

"acc_stderr": 0.02995382389188704,

"acc_norm": 0.6932773109243697,

"acc_norm_stderr": 0.02995382389188704

},

"harness|hendrycksTest-high_school_physics|5": {

"acc": 0.33774834437086093,

"acc_stderr": 0.03861557546255169,

"acc_norm": 0.33774834437086093,

"acc_norm_stderr": 0.03861557546255169

},

"harness|hendrycksTest-high_school_psychology|5": {

"acc": 0.8605504587155963,

"acc_stderr": 0.014852421490033053,

"acc_norm": 0.8605504587155963,

"acc_norm_stderr": 0.014852421490033053

},

"harness|hendrycksTest-high_school_statistics|5": {

"acc": 0.5231481481481481,

"acc_stderr": 0.03406315360711507,

"acc_norm": 0.5231481481481481,

"acc_norm_stderr": 0.03406315360711507

},

"harness|hendrycksTest-high_school_us_history|5": {

"acc": 0.8284313725490197,

"acc_stderr": 0.026460569561240634,

"acc_norm": 0.8284313725490197,

"acc_norm_stderr": 0.026460569561240634

},

"harness|hendrycksTest-high_school_world_history|5": {

"acc": 0.810126582278481,

"acc_stderr": 0.025530100460233494,

"acc_norm": 0.810126582278481,

"acc_norm_stderr": 0.025530100460233494

},

"harness|hendrycksTest-human_aging|5": {

"acc": 0.6905829596412556,

"acc_stderr": 0.03102441174057221,

"acc_norm": 0.6905829596412556,

"acc_norm_stderr": 0.03102441174057221

},

"harness|hendrycksTest-human_sexuality|5": {

"acc": 0.8015267175572519,

"acc_stderr": 0.03498149385462472,

"acc_norm": 0.8015267175572519,

"acc_norm_stderr": 0.03498149385462472

},

"harness|hendrycksTest-international_law|5": {

"acc": 0.7933884297520661,

"acc_stderr": 0.03695980128098824,

"acc_norm": 0.7933884297520661,

"acc_norm_stderr": 0.03695980128098824

},

"harness|hendrycksTest-jurisprudence|5": {

"acc": 0.7870370370370371,

"acc_stderr": 0.039578354719809805,

"acc_norm": 0.7870370370370371,

"acc_norm_stderr": 0.039578354719809805

},

"harness|hendrycksTest-logical_fallacies|5": {

"acc": 0.7730061349693251,

"acc_stderr": 0.03291099578615769,

"acc_norm": 0.7730061349693251,

"acc_norm_stderr": 0.03291099578615769

},

"harness|hendrycksTest-machine_learning|5": {

"acc": 0.44642857142857145,

"acc_stderr": 0.04718471485219588,

"acc_norm": 0.44642857142857145,

"acc_norm_stderr": 0.04718471485219588

},

"harness|hendrycksTest-management|5": {

"acc": 0.7864077669902912,

"acc_stderr": 0.040580420156460344,

"acc_norm": 0.7864077669902912,

"acc_norm_stderr": 0.040580420156460344

},

"harness|hendrycksTest-marketing|5": {

"acc": 0.8717948717948718,

"acc_stderr": 0.02190190511507333,

"acc_norm": 0.8717948717948718,

"acc_norm_stderr": 0.02190190511507333

},

"harness|hendrycksTest-medical_genetics|5": {

"acc": 0.71,

"acc_stderr": 0.045604802157206845,

"acc_norm": 0.71,

"acc_norm_stderr": 0.045604802157206845

},

"harness|hendrycksTest-miscellaneous|5": {

"acc": 0.8288633461047255,

"acc_stderr": 0.013468201614066302,

"acc_norm": 0.8288633461047255,

"acc_norm_stderr": 0.013468201614066302

},

"harness|hendrycksTest-moral_disputes|5": {

"acc": 0.7514450867052023,

"acc_stderr": 0.023267528432100174,

"acc_norm": 0.7514450867052023,

"acc_norm_stderr": 0.023267528432100174

},

"harness|hendrycksTest-moral_scenarios|5": {

"acc": 0.39106145251396646,

"acc_stderr": 0.01632076376380838,

"acc_norm": 0.39106145251396646,

"acc_norm_stderr": 0.01632076376380838

},

"harness|hendrycksTest-nutrition|5": {

"acc": 0.738562091503268,

"acc_stderr": 0.025160998214292452,

"acc_norm": 0.738562091503268,

"acc_norm_stderr": 0.025160998214292452

},

"harness|hendrycksTest-philosophy|5": {

"acc": 0.7106109324758842,

"acc_stderr": 0.025755865922632945,

"acc_norm": 0.7106109324758842,

"acc_norm_stderr": 0.025755865922632945

},

"harness|hendrycksTest-prehistory|5": {

"acc": 0.7561728395061729,

"acc_stderr": 0.023891879541959607,

"acc_norm": 0.7561728395061729,

"acc_norm_stderr": 0.023891879541959607

},

"harness|hendrycksTest-professional_accounting|5": {

"acc": 0.4929078014184397,

"acc_stderr": 0.02982449855912901,

"acc_norm": 0.4929078014184397,

"acc_norm_stderr": 0.02982449855912901

},

"harness|hendrycksTest-professional_law|5": {

"acc": 0.46936114732724904,

"acc_stderr": 0.012746237711716634,

"acc_norm": 0.46936114732724904,

"acc_norm_stderr": 0.012746237711716634

},

"harness|hendrycksTest-professional_medicine|5": {

"acc": 0.6911764705882353,

"acc_stderr": 0.02806499816704009,

"acc_norm": 0.6911764705882353,

"acc_norm_stderr": 0.02806499816704009

},

"harness|hendrycksTest-professional_psychology|5": {

"acc": 0.684640522875817,

"acc_stderr": 0.01879808628488689,

"acc_norm": 0.684640522875817,

"acc_norm_stderr": 0.01879808628488689

},

"harness|hendrycksTest-public_relations|5": {

"acc": 0.6727272727272727,

"acc_stderr": 0.0449429086625209,

"acc_norm": 0.6727272727272727,

"acc_norm_stderr": 0.0449429086625209

},

"harness|hendrycksTest-security_studies|5": {

"acc": 0.7306122448979592,

"acc_stderr": 0.02840125202902294,

"acc_norm": 0.7306122448979592,

"acc_norm_stderr": 0.02840125202902294

},

"harness|hendrycksTest-sociology|5": {

"acc": 0.8606965174129353,

"acc_stderr": 0.024484487162913973,

"acc_norm": 0.8606965174129353,

"acc_norm_stderr": 0.024484487162913973

},

"harness|hendrycksTest-us_foreign_policy|5": {

"acc": 0.87,

"acc_stderr": 0.033799766898963086,

"acc_norm": 0.87,

"acc_norm_stderr": 0.033799766898963086

},

"harness|hendrycksTest-virology|5": {

"acc": 0.5602409638554217,

"acc_stderr": 0.03864139923699122,

"acc_norm": 0.5602409638554217,

"acc_norm_stderr": 0.03864139923699122

},

"harness|hendrycksTest-world_religions|5": {

"acc": 0.8245614035087719,

"acc_stderr": 0.029170885500727665,

"acc_norm": 0.8245614035087719,

"acc_norm_stderr": 0.029170885500727665

},

"harness|truthfulqa:mc|0": {

"mc1": 0.4283965728274174,

"mc1_stderr": 0.017323088597314754,

"mc2": 0.5948960816711865,

"mc2_stderr": 0.015146045918500203

},

"harness|winogrande|5": {

"acc": 0.8066298342541437,

"acc_stderr": 0.011099796645920522

},

"harness|gsm8k|5": {

"acc": 0.7187263078089462,

"acc_stderr": 0.012384789310940244

}

}

```
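As an illustration of how the per-task entries above can be summarized, the following sketch averages one metric over all keys that share a prefix. It uses a short excerpt of the values shown above. The helper `mean_metric` is hypothetical, and the unweighted mean is an assumption that may differ from how the leaderboard aggregates scores.

```python
from statistics import mean

# Excerpt of the results above (values copied from this card).
results = {
    "harness|hendrycksTest-abstract_algebra|5": {"acc": 0.34},
    "harness|hendrycksTest-anatomy|5": {"acc": 0.6370370370370371},
    "harness|hendrycksTest-astronomy|5": {"acc": 0.7302631578947368},
    "harness|winogrande|5": {"acc": 0.8066298342541437},
}


def mean_metric(results: dict, prefix: str, metric: str = "acc") -> float:
    """Unweighted mean of `metric` over all tasks whose key starts with `prefix`."""
    values = [
        scores[metric]
        for key, scores in results.items()
        if key.startswith(prefix) and metric in scores
    ]
    if not values:
        raise ValueError(f"no task with prefix {prefix!r} reports {metric!r}")
    return mean(values)


# Average accuracy over the hendrycksTest tasks in the excerpt only.
mmlu_excerpt_acc = mean_metric(results, "harness|hendrycksTest-")
print(f"{mmlu_excerpt_acc:.4f}")
```

Applying the same function to the full dictionary would cover all hendrycksTest tasks rather than the three in this excerpt.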

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

blindsubmissions/M2CRB | ---

dataset_info:

features:

- name: identifier

dtype: string

- name: parameters

dtype: string

- name: return_statement

dtype: string

- name: docstring

dtype: string

- name: docstring_summary

dtype: string

- name: function

dtype: string

- name: function_tokens

sequence: string

- name: start_point

sequence: int64

- name: end_point

sequence: int64

- name: argument_list

dtype: 'null'

- name: language

dtype: string

- name: docstring_language

dtype: string

- name: docstring_language_predictions

dtype: string

- name: is_langid_reliable

dtype: string

- name: is_langid_extra_reliable

dtype: bool

- name: type

dtype: string

splits:

- name: test

num_bytes: 15742687

num_examples: 7743

download_size: 5530793

dataset_size: 15742687

license: other

task_categories:

- translation

- summarization

language:

- pt

- de

- fr

- es

tags:

- code

pretty_name: m

size_categories:

- 1K<n<10K

---

# M2CRB

## Dataset Summary

M2CRB contains pairs of text and code data covering multiple natural and programming language pairs: Spanish, Portuguese, German, and French, each paired with code snippets in Python, Java, and JavaScript. The data is curated via an automated filtering pipeline from source files within [The Stack](https://huggingface.co/datasets/bigcode/the-stack), followed by human verification to ensure accurate language classification, i.e., humans were asked to filter out data for which the natural language did not correspond to a target language.

## Supported Tasks

M2CRB is a multilingual evaluation dataset for code-to-text and/or text-to-code models, for either information retrieval or conditional generation evaluations.

## Currently Supported Languages

```python

NATURAL_LANGUAGE_SET = {"es", "fr", "pt", "de"}

PROGRAMMING_LANGUAGE_SET = {"python", "java", "javascript"}

```
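A small sketch of how these two sets might be used to validate a requested language pair and to enumerate every available combination. The helper `check_pair` and the name `ALL_PAIRS` are hypothetical and are not part of the dataset's tooling.

```python
from itertools import product

NATURAL_LANGUAGE_SET = {"es", "fr", "pt", "de"}
PROGRAMMING_LANGUAGE_SET = {"python", "java", "javascript"}


def check_pair(prog_lang: str, nat_lang: str) -> tuple:
    """Return the pair unchanged if both languages are supported, else raise."""
    if prog_lang not in PROGRAMMING_LANGUAGE_SET:
        raise ValueError(f"unsupported programming language: {prog_lang!r}")
    if nat_lang not in NATURAL_LANGUAGE_SET:
        raise ValueError(f"unsupported natural language: {nat_lang!r}")
    return prog_lang, nat_lang


# Every (programming language, natural language) combination, in sorted order.
ALL_PAIRS = sorted(product(PROGRAMMING_LANGUAGE_SET, NATURAL_LANGUAGE_SET))

for prog_lang, nat_lang in ALL_PAIRS:
    print(prog_lang, nat_lang)
```

Validating the pair before filtering avoids silently getting an empty result when a language code is misspelled.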

## How to get the data with a given language combination

```python

from datasets import load_dataset


def get_dataset(prog_lang, nat_lang):
    # Load the dataset, then keep only the rows whose docstring language
    # and programming language both match the requested pair.
    test_data = load_dataset("blindsubmissions/M2CRB")
    test_data = test_data.filter(
        lambda example: example["docstring_language"] == nat_lang
        and example["language"] == prog_lang
    )
    return test_data

```

## Dataset Structure

### Data Instances

Each data instance corresponds to a function/method occurring in licensed files that compose The Stack, that is, files with permissive licences collected from GitHub.

### Relevant Data Fields

- identifier (string): Function/method name.

- parameters (string): Function parameters.

- return_statement (string): Return statement if found during parsing.

- docstring (string): Complete docstring content.

- docstring_summary (string): Summary/processed docstring dropping args and return statements.

- function (string): Actual function/method content.

- argument_list (null): List of arguments.

- language (string): Programming language of the function.

- docstring_language (string): Natural language of the docstring.

- type (string): Return type if found during parsing.
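The fields listed above can be sketched as a typed record. The class name `M2CRBRecord`, the helper `matches`, and all example values below are hypothetical illustrations; only the field names and types come from this card, and real rows may be formatted differently.

```python
from typing import TypedDict


class M2CRBRecord(TypedDict):
    identifier: str          # function/method name
    parameters: str          # function parameters
    return_statement: str    # return statement, if found during parsing
    docstring: str           # complete docstring content
    docstring_summary: str   # docstring without args and return sections
    function: str            # full function/method source
    argument_list: None      # declared as null in the card metadata
    language: str            # programming language of the function
    docstring_language: str  # natural language of the docstring
    type: str                # return type, if found during parsing


def matches(record: M2CRBRecord, prog_lang: str, nat_lang: str) -> bool:
    """Same condition as the filter in get_dataset, applied to one record."""
    return record["docstring_language"] == nat_lang and record["language"] == prog_lang


# Made-up record for illustration only; not taken from the dataset.
example: M2CRBRecord = {
    "identifier": "suma",
    "parameters": "(a, b)",
    "return_statement": "return a + b",
    "docstring": "Suma dos números.",
    "docstring_summary": "Suma dos números.",
    "function": 'def suma(a, b):\n    """Suma dos números."""\n    return a + b',
    "argument_list": None,
    "language": "python",
    "docstring_language": "es",
    "type": "",
}

print(matches(example, "python", "es"))
```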

## Summary of data curation pipeline

- Filtering out repositories that appear in [CodeSearchNet](https://huggingface.co/datasets/code_search_net).

- Filtering the files that belong to the programming languages of interest.

- Pre-filtering the files that likely contain text in the natural languages of interest.

- AST parsing with [Tree-sitter](https://tree-sitter.github.io/tree-sitter/).

- Performing language identification of docstrings in the resulting set of functions/methods.

- Performing human verification/validation of the underlying language of docstrings.

## Social Impact of the dataset

M2CRB is released with the aim to increase the coverage of the NLP for code research community by providing data from scarce combinations of languages. We expect this data to help enable more accurate information retrieval systems and text-to-code or code-to-text summarization on languages other than English.

As a subset of The Stack, this dataset inherits the de-risking efforts carried out when that dataset was built. Risks nonetheless remain: the data could be misused, for instance to aid the creation of malicious code. This risk is, however, shared by any openly available code dataset.

Moreover, we remark that while unlikely due to human filtering, the data may contain harmful or offensive language, which could be learned by the models.

## Discussion of Biases

The data is collected from GitHub and consists of text that occurs naturally on that platform. As a consequence, certain language combinations are more or less likely to contain well-documented code, so the resulting data is not uniformly distributed across natural and programming languages.

## Known limitations

While we cover 16 scarce combinations of programming and natural languages, our evaluation dataset can be expanded to further improve its coverage.

Moreover, we rely on text that occurs naturally as comments or docstrings rather than on text written by human annotators. As a result, the data varies widely in quality, depending on the practices of different sub-communities of software developers. However, the task our evaluation dataset defines reflects what searching a real codebase would look like.

Finally, the data is imbalanced for the same reason: certain language combinations are more or less likely to contain well-documented code.

## Maintenance plan:

The data will be kept up to date by following The Stack releases. We should rerun our pipeline for every new release and add non-overlapping new content to both training and testing partitions of our data.

This is so that we carry over opt-out updates and include fresh repos.

## Update plan:

- Short term:
  - Cover all 6 programming languages from CodeSearchNet.
- Long term:
  - Add an extra test set containing human-generated text/code pairs so the gap between in-the-wild and controlled performances can be measured.
  - Include extra natural languages.

## Licensing Information

M2CRB is a subset filtered and pre-processed from [The Stack](https://huggingface.co/datasets/bigcode/the-stack), a collection of source code from repositories with various licenses. Any use of all or part of the code gathered in M2CRB must abide by the terms of the original licenses. |

systemk/c4-toxic | ---

dataset_info:

features:

- name: text

dtype: string

- name: toxic

dtype: bool

- name: hate

dtype: bool

- name: sexual

dtype: bool

- name: harassment

dtype: bool

- name: violence

dtype: bool

- name: self_harm

dtype: bool

splits:

- name: train

num_bytes: 113384085

num_examples: 11983

download_size: 59413174

dataset_size: 113384085

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

distinsion/images | ---

dataset_info:

features:

- name: id

dtype: string

- name: author

dtype: string

- name: width

dtype: int64

- name: height

dtype: int64

- name: url

dtype: string

- name: download_url

dtype: string

- name: text_field_for_argilla

dtype: string

splits:

- name: train

num_bytes: 225036

num_examples: 1093

download_size: 67433

dataset_size: 225036

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

zxcej/AICE_dataset | ---

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype:

class_label:

names:

'0': SMT

'1': angiodysplasia

'2': bleeding

'3': diverticulum

'4': erosion

'5': erythema

'6': foreign body

'7': lymph follicle

'8': lymphangiectasia

'9': no_class

'10': polyp-like

'11': stenosis

splits:

- name: train

num_bytes: 993869095.1352087

num_examples: 14784

- name: test

num_bytes: 247932424.8427913

num_examples: 3697

download_size: 1242057657

dataset_size: 1241801519.978

---

# Dataset Card for "AICE_dataset"

[AICE project on Kaggle](https://www.kaggle.com/datasets/capsuleyolo/kyucapsule)

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Isora/Embeddings | ---

license: other

---

|

rokset3/slim_pajama_chunk_2 | ---

dataset_info:

features:

- name: text

dtype: string

- name: meta

dtype: string

- name: __index_level_0__

dtype: int64

splits:

- name: train

num_bytes: 258571513240

num_examples: 58982360

download_size: 150404827683

dataset_size: 258571513240

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "slim_pajama_chunk_2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

DehydratedWater42/semantic_relations_extraction | ---

language:

- en

size_categories:

- 1K<n<10K

licence:

- license:unknown

task_categories:

- summarization

- feature-extraction

- text-generation

- text2text-generation

tags:

- math

- semantic

- extraction

- graph

- relations

- science

- synthetic

pretty_name: SemanticRelationsExtraction

configs:

- config_name: core_extracted_relations

data_files:

- split: train

path:

- core_extracted_relations.csv

default: true

- config_name: extracted_relations

data_files:

- split: train

path:

- extracted_relations.csv

- config_name: llama2_prompts

data_files:

- split: train

path:

- llama2_prompts.csv

---

# Dataset Card for "Semantic Relations Extraction"

## Dataset Description

### Repository

The "Semantic Relations Extraction" dataset is hosted on the Hugging Face platform, and was created with code from this [GitHub repository](https://github.com/DehydratedWater/qlora_semantic_extraction).

### Purpose

The "Semantic Relations Extraction" dataset was created to fine-tune smaller Llama 2 (7B) models so that semantic relations between entities in texts can be extracted faster and at lower cost. This repository is part of a larger project aimed at creating a low-cost system for preprocessing documents in order to build a knowledge graph used for question answering and automated alerting.

### Data Sources

The dataset was built using the following source:

- [`datasets/scientific_papers`](https://huggingface.co/datasets/scientific_papers)

### Files in the Dataset

The repository contains 4 files:

1. `extracted_relations.csv` -> A dataset of generated relations between entities containing columns for [`summary`, `article part`, `output json`, `database`, `abstract`, `list_of_contents`].

2. `core_extracted_relations.csv` -> The same dataset but without the original abstracts and lists_of_contents. It contains columns for [`summary`, `article part`, `output json`].

3. `llama2_prompts.csv` -> Multiple variants of the prompt with a response that can be used for fine-tuning the model. It is created by concatenating data in `core_extracted_relations.csv`.

4. `synthetic_data_12_02_24-full.dump` -> A backup of the whole PostgreSQL database used during data generation. It is the source for all the other files, exported by the `airflow` user in custom format, with compression level 6 and UTF-8 encoding.
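The three CSV files map to the configurations declared in this card's metadata, so each can be loaded by name. The following is a minimal sketch, assuming the `datasets` library is installed and the Hub is reachable; the helper `load_config` and the constants `REPO_ID` and `CONFIGS` are illustrative names, not part of the dataset.

```python
REPO_ID = "DehydratedWater42/semantic_relations_extraction"
# Configuration names as declared in the card's YAML header.
CONFIGS = ("core_extracted_relations", "extracted_relations", "llama2_prompts")


def load_config(name="core_extracted_relations"):
    """Load the train split of one configuration (requires network access)."""
    if name not in CONFIGS:
        raise ValueError(f"unknown config {name!r}; expected one of {CONFIGS}")
    # Imported lazily so this module can be imported without `datasets` installed.
    from datasets import load_dataset

    return load_dataset(REPO_ID, name, split="train")
```

For example, `load_config("llama2_prompts")` would fetch the prompt/response pairs intended for fine-tuning. The `.dump` file is not covered by this helper, since it is a PostgreSQL backup rather than a CSV configuration.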

### Database Schema

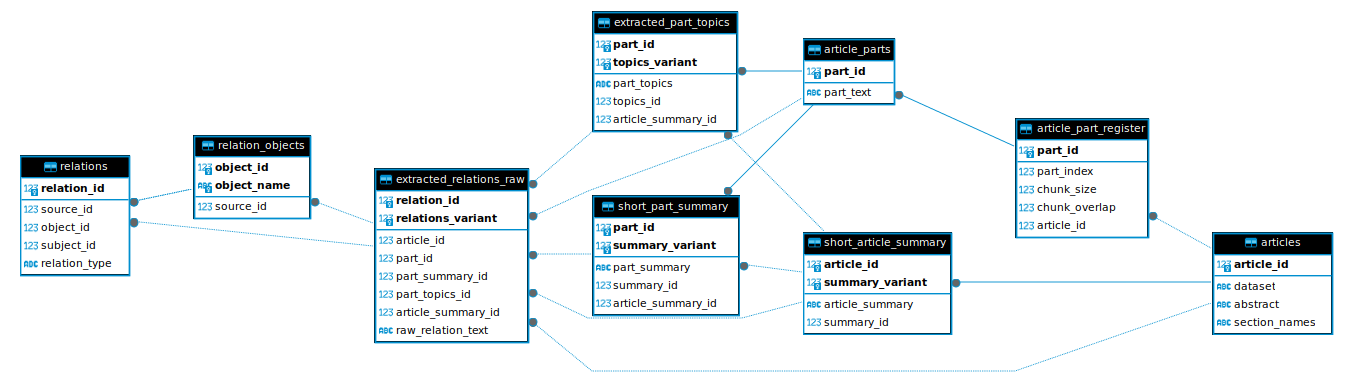

The dataset includes a database schema illustration, which provides an overview of how the data is organized within the database.

### GitHub Repository