datasetId | card |

|---|---|

maknee/minigpt4-13b-ggml | ---

license: apache-2.0

tags:

- minigpt4

- ggml

language:

- en

- bg

- ca

- cs

- da

- de

- es

- fr

- hr

- hu

- it

- nl

- pl

- pt

- ro

- ru

- sl

- sr

- sv

- uk

---

These are quantized ggml binary files for the MiniGPT-4 13B model.

These files can be used together with the [vicuna v0 ggml models](https://huggingface.co/datasets/maknee/ggml-vicuna-v0-quantized) to run MiniGPT-4.

Not all quantized variants were tested. If you run into issues, use the f16 files.

|

DBQ/Net.a.Porter.Product.prices.Portugal | ---

annotations_creators:

- other

language_creators:

- other

language:

- en

license:

- unknown

multilinguality:

- monolingual

source_datasets:

- original

task_categories:

- text-classification

- image-classification

- feature-extraction

- image-segmentation

- image-to-image

- image-to-text

- object-detection

- summarization

- zero-shot-image-classification

pretty_name: Portugal - Net-a-Porter - Product-level price list

tags:

- webscraping

- ecommerce

- Net

- fashion

- fashion product

- image

- fashion image

configs:
- config_name: default
  data_files:
  - split: train
    path: data/train-*
dataset_info:
  features:
  - name: Net-a-Porter
    dtype: string
  - name: '2023-11-08'
    dtype: string
  - name: PRT
    dtype: string
  - name: EUR
    dtype: string
  - name: SAINT LAURENT
    dtype: string
  - name: BAGS
    dtype: string
  - name: SHOULDER BAGS
    dtype: string
  - name: CROSS BODY
    dtype: string
  - name: '33258524072235985'
    dtype: int64
  - name: Loulou Toy quilted leather shoulder bag
    dtype: string
  - name: https://www.net-a-porter.com/pt/en/shop/product/saint-laurent/bags/cross-body/loulou-toy-quilted-leather-shoulder-bag/33258524072235985
    dtype: string
  - name: https://www.net-a-porter.com/variants/images/33258524072235985/ou/w1000.jpg
    dtype: string
  - name: '1490.00'
    dtype: float64
  - name: 1490.00.1
    dtype: float64
  - name: 1490.00.2
    dtype: float64
  - name: 1490.00.3
    dtype: float64
  - name: '0'
    dtype: int64
  splits:
  - name: train
    num_bytes: 18057389
    num_examples: 44280
  download_size: 5682167
  dataset_size: 18057389

---

# Net-a-Porter web scraped data

## About the website

Net-a-Porter operates in the **e-commerce industry** across the EMEA region, with a notable presence in **Portugal**. The brand is known for its online luxury fashion offering, which ranges from high street to haute couture and makes it a key player in digital retail. This dataset was scraped from Net-a-Porter's **e-commerce product-list pages (PLP)** in Portugal. PLP data of this kind can support analyses of product performance, customer preferences, and market trends in the competitive online fashion market.

## Link to **dataset**

[Portugal - Net-a-Porter - Product-level price list dataset](https://www.databoutique.com/buy-data-page/Net-a-Porter%20Product-prices%20Portugal/r/recA0xr8F85lVPMgr)

|

Norod78/hewiki-20220901-articles-dataset | ---

dataset_info:
  features:
  - name: text
    dtype: string
  splits:
  - name: train
    num_bytes: 1458031124
    num_examples: 4325836
  download_size: 745537027
  dataset_size: 1458031124

annotations_creators:

- other

language_creators:

- other

language:

- he

multilinguality:

- monolingual

pretty_name: hewiki Corpus from hewiki-20220901-pages-articles-multistream.xml.bz2

size_categories:

- 100M<n<1B

source_datasets:

- extended|wikipedia

tags:

- he-wiki

task_categories:

- text-generation

- fill-mask

task_ids:

- language-modeling

- masked-language-modeling

---

# Dataset Card for "hewiki-20220901-articles-dataset" |

TrainingDataPro/attacks-with-2d-printed-masks-of-indian-people | ---

license: cc-by-nc-nd-4.0

task_categories:

- video-classification

language:

- en

tags:

- finance

- legal

- code

---

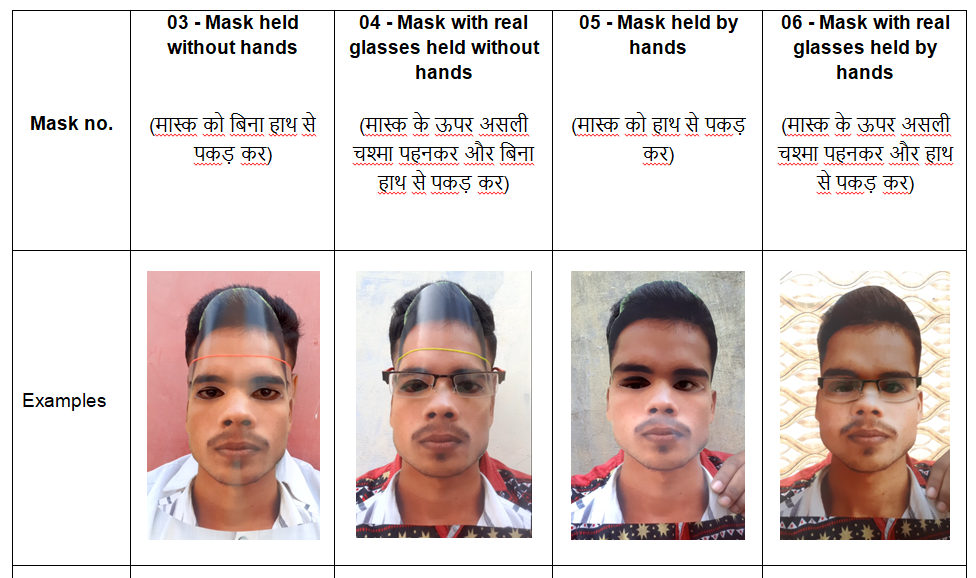

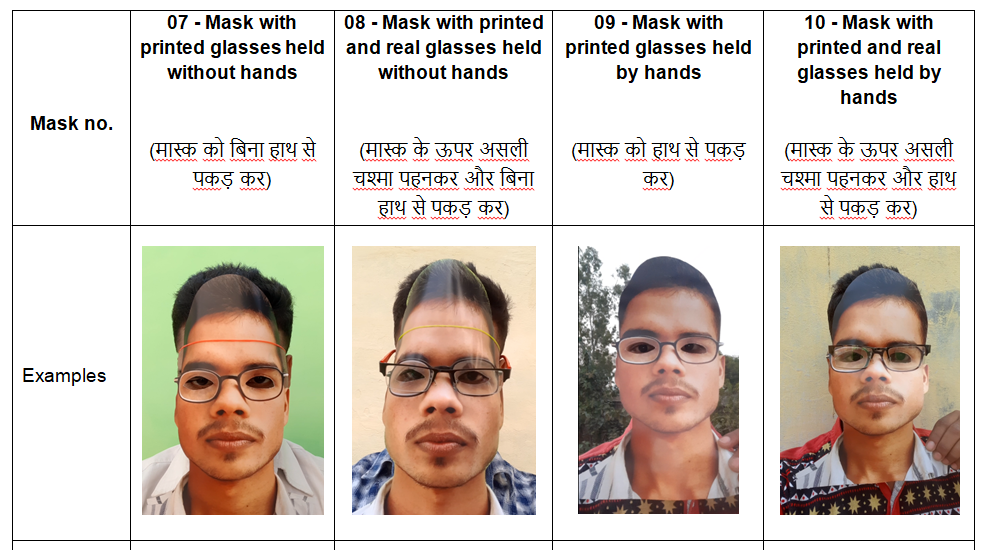

# Attacks with 2D Printed Masks of Indian People

The dataset consists of videos of individuals wearing different kinds of printed 2D masks and looking directly at the camera. Videos were filmed under different lighting conditions and in different places (*indoors, outdoors*). Each video lasts approximately 3-4 seconds.

### Types of videos in the dataset:

Inside the **"attacks"** folder there are 10 subfolders, each containing the corresponding files:

- **1** - Real videos without glasses

- **2** - Real videos with glasses

- **3** - Mask held without hands

- **4** - Mask with real glasses held without hands

- **5** - Mask held by hands

- **6** - Mask with real glasses held by hands

- **7** - Mask with printed glasses held without hands

- **8** - Mask with printed and real glasses held without hands

- **9** - Mask with printed glasses held by hands

- **10** - Mask with printed and real glasses held by hands

The dataset serves as a valuable resource for computer vision, anti-spoofing tasks, video analysis, and security systems. It allows for the development of algorithms and models that can effectively detect attacks perpetrated by individuals wearing printed 2D masks.

Studying the dataset may lead to the development of improved security systems, surveillance technologies, and solutions to mitigate the risks associated with masked individuals carrying out attacks.

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=attacks-with-2d-printed-masks-of-indian-people) to discuss your requirements, learn about the price and buy the dataset.

# Content

### The folder **"attacks"** includes 10 folders:

- one for each video type in the sample,

- each containing 21 videos of people

### File with the extension .csv

- **type_1**: link to the real video without glasses,

- **type_2**: link to the real video with glasses,

- **type_3 ... type_10**: links to the videos of the attack types listed above
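For anti-spoofing work, the CSV can be flattened into labeled samples. The sketch below is an illustration under assumptions: it assumes one row per person with columns named exactly `type_1` to `type_10`, each holding a video link, and it treats types 1-2 as genuine and types 3-10 as attacks, following the folder list above. The demo links are placeholders.

```python
import csv
import io

# Video type number -> description, taken from the folder list in this card.
VIDEO_TYPES = {
    1: "Real video without glasses",
    2: "Real video with glasses",
    3: "Mask held without hands",
    4: "Mask with real glasses held without hands",
    5: "Mask held by hands",
    6: "Mask with real glasses held by hands",
    7: "Mask with printed glasses held without hands",
    8: "Mask with printed and real glasses held without hands",
    9: "Mask with printed glasses held by hands",
    10: "Mask with printed and real glasses held by hands",
}


def label_rows(csv_text):
    """Flatten CSV rows into (link, type_id, is_attack) tuples.

    Assumes (hypothetically) columns named type_1 ... type_10.
    Types 1-2 are genuine videos; types 3-10 are mask attacks.
    """
    samples = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        for type_id in VIDEO_TYPES:
            link = (row.get(f"type_{type_id}") or "").strip()
            if link:  # skip missing or empty cells
                samples.append((link, type_id, type_id >= 3))
    return samples


if __name__ == "__main__":
    demo = "type_1,type_2,type_3\nhttps://example.com/a.mp4,https://example.com/b.mp4,https://example.com/c.mp4\n"
    for link, type_id, is_attack in label_rows(demo):
        print(type_id, VIDEO_TYPES[type_id], "attack" if is_attack else "real", link)
```

Missing columns are simply skipped, so the same function works on a partial sample file.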

# Attacks might be collected in accordance with your requirements.

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=attacks-with-2d-printed-masks-of-indian-people) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** |

kaitchup/opus-Danish-to-English | ---

configs:
- config_name: default
  data_files:
  - split: validation
    path: data/validation-*
  - split: train
    path: data/train-*
dataset_info:
  features:
  - name: text
    dtype: string
  splits:
  - name: validation
    num_bytes: 302616
    num_examples: 2000
  - name: train
    num_bytes: 95961400
    num_examples: 946341
  download_size: 70298567
  dataset_size: 96264016

---

# Dataset Card for "opus-da-en"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

inkoziev/jokes_dialogues | ---

license: cc-by-nc-4.0

task_categories:

- conversational

language:

- ru

---

# Dialogues from Jokes and Anecdotes

The dataset contains the results of parsing jokes and anecdotes scraped from various websites.

## Format

Each sample contains four fields:

"context" - the dialogue context, including all non-dialogue passages. Note that the context contains both the preceding utterances and other accompanying text, because it establishes the overall setting needed to generate the utterance. Indirect-speech markers have been removed from the utterance.

"utterance" - a dialogue utterance.

"hash" - the hash code of the original full text, used to link samples together.

"reply_num" - the ordinal number of the utterance in the dialogue. The last utterance is often the "punchline", which carries the essence of the joke.

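Because samples from the same source text share a `hash` and are numbered by `reply_num`, whole dialogues can be reassembled. A minimal sketch, assuming each sample is a dict with the four fields above and that `reply_num` increases along the dialogue; the toy samples in the test are invented for illustration.

```python
from collections import defaultdict


def group_dialogues(samples):
    """Group samples by source-text hash, each group sorted by reply_num."""
    by_hash = defaultdict(list)
    for sample in samples:
        by_hash[sample["hash"]].append(sample)
    return {
        h: sorted(group, key=lambda s: s["reply_num"])
        for h, group in by_hash.items()
    }


def punchlines(samples):
    """Return the last utterance of each source text (often the punchline)."""
    return {
        h: group[-1]["utterance"]
        for h, group in group_dialogues(samples).items()
    }
```

Taking the last utterance is only a heuristic, since the card says the punchline is *often*, not always, the final reply.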
A single source text can yield several samples if it contains many utterances. |

huangyt/FINETUNE3 | ---

license: openrail

---

# 📔 **DATASET**

| **Dataset** | Class | Number of Questions |
| ------- | ----------------------------------------------------------------- | ------------------------ |
| **FLAN_CoT(zs)** | Reasoning, MATH, ScienceQA, Commonsense | 8000 |
| **Prm800k** | Reasoning, MATH | 6713 |
| **ScienceQA** | ScienceQA | 5177 |
| **SciBench** | ScienceQA | 695 |
| **ReClor** | Reasoning | 1624 |
| **TheoremQA** | Commonsense, MATH, ScienceQA | 800 |
| **OpenBookQA** | Text_Understanding, Reasoning, Commonsense, ScienceQA | 5957 |
| **ARB** | Reasoning, MATH, ScienceQA, Commonsense, Text_Understanding | 605 |
| **Openassistant-guanaco** | Commonsense, Text_Understanding, Reasoning | 802 |
| **SAT** | Text_Understanding, Reasoning, MATH | 426 |
| **GRE, GMAT** | Reasoning, MATH | 254 |
| **AMC, AIME** | Reasoning, MATH | 1000 |
| **LSAT** | Reasoning, LAW | 1009 |
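The table does not state an overall size. Summing the per-source counts gives the size of the mix, assuming every listed question ends up in the final file (the card does not mention deduplication). The snippet also prints each source's share.

```python
# Question counts copied from the table above.
QUESTION_COUNTS = {
    "FLAN_CoT(zs)": 8000,
    "Prm800k": 6713,
    "ScienceQA": 5177,
    "SciBench": 695,
    "ReClor": 1624,
    "TheoremQA": 800,
    "OpenBookQA": 5957,
    "ARB": 605,
    "Openassistant-guanaco": 802,
    "SAT": 426,
    "GRE, GMAT": 254,
    "AMC, AIME": 1000,
    "LSAT": 1009,
}

total = sum(QUESTION_COUNTS.values())
print(total)  # 33062

for name, count in QUESTION_COUNTS.items():
    print(f"{name}: {count / total:.1%}")
```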

# 📌 **Method**

## *Improving the dataset*

Following the "Textbooks Are All You Need" paper, we experiment with fine-tuning on advanced, more challenging questions.

## *Dataset Format Definition*

We use the "instruction, input, output" format, which is common in instruction-tuning datasets. Each sample includes an instruction, an input, and an expected output; the instruction describes how to process the input to produce the output. This format is often used to train models on specific tasks because it states explicitly which operation the model should perform.

```
{
  "input": "",
  "output": "",
  "instruction": ""
}
```

- ### [FLAN_V2 COT(ZS)](https://huggingface.co/datasets/conceptofmind/cot_submix_original/tree/main)

We extract only the `zs_opt` samples from the CoT submix and categorize them by task.

- ### SAT, GRE, GMAT, AMC, AIME, LSAT

For GRE, GMAT, SAT, and similar datasets, the input is set to "Please read the question and options carefully, then select the most appropriate answer and provide the corresponding explanation." For the math datasets, the input is set to "Please provide the answer along with a corresponding explanation based on the given question." In addition, questions are arranged in ascending order of difficulty. This follows the Orca paper, in which the model was first trained on GPT-3.5 outputs and only later on GPT-4 outputs. That progressive learning strategy keeps the student model from being exposed to knowledge beyond its current capacity, which would otherwise lead to suboptimal results. Because the approach proved effective, we order datasets with graded difficulty levels, such as AMC and AIME, in the same progressive way.

For these datasets, the question and options are combined to form the instruction, and the label and solution are merged into the output.

Lastly, for the LSAT dataset, which has no step-by-step solutions, the passage becomes the instruction, the question and options together form the input, and the label is the output.
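The field mappings described above can be sketched as follows. The raw field names (`question`, `options`, `label`, `solution`, `passage`) and the `A. ...` option layout are hypothetical stand-ins, since the card does not show the source schemas; the multiple-choice prompt is quoted from the text, and the `label,solution:` output layout mirrors the math conversion script in this card.

```python
import json

# Prompt quoted from the card for GRE/GMAT/SAT-style multiple-choice questions.
MC_PROMPT = (
    "Please read the question and options carefully, then select the most "
    "appropriate answer and provide the corresponding explanation."
)


def format_options(options):
    """Render options as 'A. ...' lines (hypothetical layout)."""
    return "\n".join(f"{chr(ord('A') + i)}. {opt}" for i, opt in enumerate(options))


def convert_exam_item(item):
    """SAT/GRE/GMAT: question + options -> instruction; label + solution -> output."""
    return {
        "input": MC_PROMPT,
        "output": f"{item['label']},solution:{item['solution']}",
        "instruction": f"{item['question']}\n{format_options(item['options'])}",
    }


def convert_lsat_item(item):
    """LSAT: passage -> instruction; question + options -> input; label -> output."""
    return {
        "input": f"{item['question']}\n{format_options(item['options'])}",
        "output": item["label"],
        "instruction": item["passage"],
    }


if __name__ == "__main__":
    demo = {"question": "2 + 2 = ?", "options": ["3", "4"], "label": "B", "solution": "2 + 2 = 4."}
    print(json.dumps(convert_exam_item(demo), indent=2))
```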

- ### [OTHER](https://github.com/arielnlee/Platypus/tree/main/data_pipeline)

Prm800k, ScienceQA, SciBench, ReClor, TheoremQA, OpenBookQA, ARB, and OpenAssistant-Guanaco datasets adopt the same format as Platypus.

## *Sampling Algorithms*

The FLAN v2 CoT dataset includes tasks such as:

- cot_esnli

- cot_strategyqa

- cot_qasc

- stream_qed

- cot_gsm8k

- cot_ecqa

- cot_creak

- stream_aqua

To make this dataset diverse and high-quality, we first select the zs_opt questions. Then we keep only questions whose output length is at least the average output length, discarding the shorter ones; longer targets are meant to help the model learn richer reasoning steps. Finally, we perform stratified sampling by task. We initially tried stratified sampling before the length filter, but that order produced varying sample sizes and was hard to reproduce, so we filter by length first and then sample.

```py
import json
import random

with open("cot_ORIGINAL.json", "r") as f:
    abc = json.load(f)

# --- part1: keep only "zs_opt" samples ---
zsopt_data = []  # "zs_opt"
for i in abc:
    if i["template_type"] == "zs_opt":
        zsopt_data.append(i)

# --- part2: length filter, then count samples per task ---
output_lengths = [len(i["targets"]) for i in zsopt_data]
average_length = sum(output_lengths) / len(output_lengths)  # average length

filtered_data = []
for a in zsopt_data:
    if len(a["targets"]) >= average_length:
        filtered_data.append(a)  # output length needs to be >= average_length

class_counts = {}  # Count the number of samples for each class
for a in filtered_data:
    task_name = a["task_name"]
    if task_name in class_counts:
        class_counts[task_name] += 1
    else:
        class_counts[task_name] = 1

# --- part3: proportional stratified sampling ---
total_samples = 8000  # we plan to select a total of 8000 samples

sample_ratios = {}
for task_name, count in class_counts.items():
    sample_ratios[task_name] = count / len(filtered_data)

sample_sizes = {}
for task_name, sample_ratio in sample_ratios.items():
    sample_sizes[task_name] = round(sample_ratio * total_samples)

stratified_samples = {}  # Perform stratified sampling for each class
for task_name, sample_size in sample_sizes.items():
    class_samples = []
    for data in filtered_data:
        if data["task_name"] == task_name:
            class_samples.append(data)
    selected_samples = random.sample(class_samples, sample_size)
    stratified_samples[task_name] = selected_samples

final_samples = []  # Convert to the specified format
for task_name, samples in stratified_samples.items():
    for sample in samples:
        final_samples.append(
            {
                "input": "",  # use ""
                "output": sample["targets"],  # output
                "instruction": sample["inputs"],  # question
            }
        )

with open("cot_change.json", "w") as f:
    json.dump(final_samples, f, indent=2)
```
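Two details of the script above affect reproducibility: calling `round()` on each class's share can make the per-class sizes add up to slightly more or fewer than 8000, and `random.sample` is called without a seed. A minimal alternative, not the authors' method, allocates slots with the largest-remainder method so the total is exact, and draws with a seeded `random.Random`; the default seed is arbitrary.

```python
import random
from collections import defaultdict


def allocate(class_counts, total):
    """Largest-remainder allocation: sizes proportional to counts, summing to total."""
    n = sum(class_counts.values())
    if total > n:
        raise ValueError("total exceeds the number of available rows")
    sizes, remainders = {}, {}
    for k, c in class_counts.items():
        # Exact integer quota: floor(c * total / n) plus its remainder.
        sizes[k], remainders[k] = divmod(c * total, n)
    leftover = total - sum(sizes.values())
    # Give the remaining slots to the largest remainders (ties broken by name).
    for k in sorted(remainders, key=lambda k: (-remainders[k], str(k)))[:leftover]:
        sizes[k] += 1
    return sizes


def stratified_sample(rows, key, total, seed=0):
    """Sample `total` rows proportionally to the classes given by row[key]."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key]].append(row)
    sizes = allocate({k: len(v) for k, v in groups.items()}, total)
    rng = random.Random(seed)
    picked = []
    for k in sorted(groups):  # fixed class order so the same seed gives the same result
        picked.extend(rng.sample(groups[k], sizes[k]))
    return picked
```

Calling `stratified_sample(filtered_data, "task_name", 8000)` could stand in for part 3 of the script, leaving the formatting step unchanged.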

Math questions (e.g., AMC, AIME) arranged by difficulty level:

```py
import json

with open("math-json.json", "r", encoding="utf-8") as f:
    data_list = json.load(f)

# Sort from easiest to hardest for progressive learning
sorted_data = sorted(data_list, key=lambda x: x["other"]["level"])

output_data = [
    {
        "input": "Please provide the answer along with a corresponding explanation based on the given question.",
        "output": f"{item['answer']},solution:{item['other']['solution']}",
        "instruction": item["question"],
    }
    for item in sorted_data
]

with open("math_convert.json", "w", encoding="utf-8") as output_file:
    json.dump(output_data, output_file, ensure_ascii=False, indent=4)
``` |

EgilKarlsen/Spirit_BERT_FT | ---

configs:
- config_name: default
  data_files:
  - split: train
    path: data/train-*
  - split: test
    path: data/test-*

dataset_info:

features:

- name: '0'

dtype: float32

- name: '1'

dtype: float32

- name: '2'

dtype: float32

- name: '3'

dtype: float32

- name: '4'

dtype: float32

- name: '5'

dtype: float32

- name: '6'

dtype: float32

- name: '7'

dtype: float32

- name: '8'

dtype: float32

- name: '9'

dtype: float32

- name: '10'

dtype: float32

- name: '11'

dtype: float32

- name: '12'

dtype: float32

- name: '13'

dtype: float32

- name: '14'

dtype: float32

- name: '15'

dtype: float32

- name: '16'

dtype: float32

- name: '17'

dtype: float32

- name: '18'

dtype: float32

- name: '19'

dtype: float32

- name: '20'

dtype: float32

- name: '21'

dtype: float32

- name: '22'

dtype: float32

- name: '23'

dtype: float32

- name: '24'

dtype: float32

- name: '25'

dtype: float32

- name: '26'

dtype: float32

- name: '27'

dtype: float32

- name: '28'

dtype: float32

- name: '29'

dtype: float32

- name: '30'

dtype: float32

- name: '31'

dtype: float32

- name: '32'

dtype: float32

- name: '33'

dtype: float32

- name: '34'

dtype: float32

- name: '35'

dtype: float32

- name: '36'

dtype: float32

- name: '37'

dtype: float32

- name: '38'

dtype: float32

- name: '39'

dtype: float32

- name: '40'

dtype: float32

- name: '41'

dtype: float32

- name: '42'

dtype: float32

- name: '43'

dtype: float32

- name: '44'

dtype: float32

- name: '45'

dtype: float32

- name: '46'

dtype: float32

- name: '47'

dtype: float32

- name: '48'

dtype: float32

- name: '49'

dtype: float32

- name: '50'

dtype: float32

- name: '51'

dtype: float32

- name: '52'

dtype: float32

- name: '53'

dtype: float32

- name: '54'

dtype: float32

- name: '55'

dtype: float32

- name: '56'

dtype: float32

- name: '57'

dtype: float32

- name: '58'

dtype: float32

- name: '59'

dtype: float32

- name: '60'

dtype: float32

- name: '61'

dtype: float32

- name: '62'

dtype: float32

- name: '63'

dtype: float32

- name: '64'

dtype: float32

- name: '65'

dtype: float32

- name: '66'

dtype: float32

- name: '67'

dtype: float32

- name: '68'

dtype: float32

- name: '69'

dtype: float32

- name: '70'

dtype: float32

- name: '71'

dtype: float32

- name: '72'

dtype: float32

- name: '73'

dtype: float32

- name: '74'

dtype: float32

- name: '75'

dtype: float32

- name: '76'

dtype: float32

- name: '77'

dtype: float32

- name: '78'

dtype: float32

- name: '79'

dtype: float32

- name: '80'

dtype: float32

- name: '81'

dtype: float32

- name: '82'

dtype: float32

- name: '83'

dtype: float32

- name: '84'

dtype: float32

- name: '85'

dtype: float32

- name: '86'

dtype: float32

- name: '87'

dtype: float32

- name: '88'

dtype: float32

- name: '89'

dtype: float32

- name: '90'

dtype: float32

- name: '91'

dtype: float32

- name: '92'

dtype: float32

- name: '93'

dtype: float32

- name: '94'

dtype: float32

- name: '95'

dtype: float32

- name: '96'

dtype: float32

- name: '97'

dtype: float32

- name: '98'

dtype: float32

- name: '99'

dtype: float32

- name: '100'

dtype: float32

- name: '101'

dtype: float32

- name: '102'

dtype: float32

- name: '103'

dtype: float32

- name: '104'

dtype: float32

- name: '105'

dtype: float32

- name: '106'

dtype: float32

- name: '107'

dtype: float32

- name: '108'

dtype: float32

- name: '109'

dtype: float32

- name: '110'

dtype: float32

- name: '111'

dtype: float32

- name: '112'

dtype: float32

- name: '113'

dtype: float32

- name: '114'

dtype: float32

- name: '115'

dtype: float32

- name: '116'

dtype: float32

- name: '117'

dtype: float32

- name: '118'

dtype: float32

- name: '119'

dtype: float32

- name: '120'

dtype: float32

- name: '121'

dtype: float32

- name: '122'

dtype: float32

- name: '123'

dtype: float32

- name: '124'

dtype: float32

- name: '125'

dtype: float32

- name: '126'

dtype: float32

- name: '127'

dtype: float32

- name: '128'

dtype: float32

- name: '129'

dtype: float32

- name: '130'

dtype: float32

- name: '131'

dtype: float32

- name: '132'

dtype: float32

- name: '133'

dtype: float32

- name: '134'

dtype: float32

- name: '135'

dtype: float32

- name: '136'

dtype: float32

- name: '137'

dtype: float32

- name: '138'

dtype: float32

- name: '139'

dtype: float32

- name: '140'

dtype: float32

- name: '141'

dtype: float32

- name: '142'

dtype: float32

- name: '143'

dtype: float32

- name: '144'

dtype: float32

- name: '145'

dtype: float32

- name: '146'

dtype: float32

- name: '147'

dtype: float32

- name: '148'

dtype: float32

- name: '149'

dtype: float32

- name: '150'

dtype: float32

- name: '151'

dtype: float32

- name: '152'

dtype: float32

- name: '153'

dtype: float32

- name: '154'

dtype: float32

- name: '155'

dtype: float32

- name: '156'

dtype: float32

- name: '157'

dtype: float32

- name: '158'

dtype: float32

- name: '159'

dtype: float32

- name: '160'

dtype: float32

- name: '161'

dtype: float32

- name: '162'

dtype: float32

- name: '163'

dtype: float32

- name: '164'

dtype: float32

- name: '165'

dtype: float32

- name: '166'

dtype: float32

- name: '167'

dtype: float32

- name: '168'

dtype: float32

- name: '169'

dtype: float32

- name: '170'

dtype: float32

- name: '171'

dtype: float32

- name: '172'

dtype: float32

- name: '173'

dtype: float32

- name: '174'

dtype: float32

- name: '175'

dtype: float32

- name: '176'

dtype: float32

- name: '177'

dtype: float32

- name: '178'

dtype: float32

- name: '179'

dtype: float32

- name: '180'

dtype: float32

- name: '181'

dtype: float32

- name: '182'

dtype: float32

- name: '183'

dtype: float32

- name: '184'

dtype: float32

- name: '185'

dtype: float32

- name: '186'

dtype: float32

- name: '187'

dtype: float32

- name: '188'

dtype: float32

- name: '189'

dtype: float32

- name: '190'

dtype: float32

- name: '191'

dtype: float32

- name: '192'

dtype: float32

- name: '193'

dtype: float32

- name: '194'

dtype: float32

- name: '195'

dtype: float32

- name: '196'

dtype: float32

- name: '197'

dtype: float32

- name: '198'

dtype: float32

- name: '199'

dtype: float32

- name: '200'

dtype: float32

- name: '201'

dtype: float32

- name: '202'

dtype: float32

- name: '203'

dtype: float32

- name: '204'

dtype: float32

- name: '205'

dtype: float32

- name: '206'

dtype: float32

- name: '207'

dtype: float32

- name: '208'

dtype: float32

- name: '209'

dtype: float32

- name: '210'

dtype: float32

- name: '211'

dtype: float32

- name: '212'

dtype: float32

- name: '213'

dtype: float32

- name: '214'

dtype: float32

- name: '215'

dtype: float32

- name: '216'

dtype: float32

- name: '217'

dtype: float32

- name: '218'

dtype: float32

- name: '219'

dtype: float32

- name: '220'

dtype: float32

- name: '221'

dtype: float32

- name: '222'

dtype: float32

- name: '223'

dtype: float32

- name: '224'

dtype: float32

- name: '225'

dtype: float32

- name: '226'

dtype: float32

- name: '227'

dtype: float32

- name: '228'

dtype: float32

- name: '229'

dtype: float32

- name: '230'

dtype: float32

- name: '231'

dtype: float32

- name: '232'

dtype: float32

- name: '233'

dtype: float32

- name: '234'

dtype: float32

- name: '235'

dtype: float32

- name: '236'

dtype: float32

- name: '237'

dtype: float32

- name: '238'

dtype: float32

- name: '239'

dtype: float32

- name: '240'

dtype: float32

- name: '241'

dtype: float32

- name: '242'

dtype: float32

- name: '243'

dtype: float32

- name: '244'

dtype: float32

- name: '245'

dtype: float32

- name: '246'

dtype: float32

- name: '247'

dtype: float32

- name: '248'

dtype: float32

- name: '249'

dtype: float32

- name: '250'

dtype: float32

- name: '251'

dtype: float32

- name: '252'

dtype: float32

- name: '253'

dtype: float32

- name: '254'

dtype: float32

- name: '255'

dtype: float32

- name: '256'

dtype: float32

- name: '257'

dtype: float32

- name: '258'

dtype: float32

- name: '259'

dtype: float32

- name: '260'

dtype: float32

- name: '261'

dtype: float32

- name: '262'

dtype: float32

- name: '263'

dtype: float32

- name: '264'

dtype: float32

- name: '265'

dtype: float32

- name: '266'

dtype: float32

- name: '267'

dtype: float32

- name: '268'

dtype: float32

- name: '269'

dtype: float32

- name: '270'

dtype: float32

- name: '271'

dtype: float32

- name: '272'

dtype: float32

- name: '273'

dtype: float32

- name: '274'

dtype: float32

- name: '275'

dtype: float32

- name: '276'

dtype: float32

- name: '277'

dtype: float32

- name: '278'

dtype: float32

- name: '279'

dtype: float32

- name: '280'

dtype: float32

- name: '281'

dtype: float32

- name: '282'

dtype: float32

- name: '283'

dtype: float32

- name: '284'

dtype: float32

- name: '285'

dtype: float32

- name: '286'

dtype: float32

- name: '287'

dtype: float32

- name: '288'

dtype: float32

- name: '289'

dtype: float32

- name: '290'

dtype: float32

- name: '291'

dtype: float32

- name: '292'

dtype: float32

- name: '293'

dtype: float32

- name: '294'

dtype: float32

- name: '295'

dtype: float32

- name: '296'

dtype: float32

- name: '297'

dtype: float32

- name: '298'

dtype: float32

- name: '299'

dtype: float32

- name: '300'

dtype: float32

- name: '301'

dtype: float32

- name: '302'

dtype: float32

- name: '303'

dtype: float32

- name: '304'

dtype: float32

- name: '305'

dtype: float32

- name: '306'

dtype: float32

- name: '307'

dtype: float32

- name: '308'

dtype: float32

- name: '309'

dtype: float32

- name: '310'

dtype: float32

- name: '311'

dtype: float32

- name: '312'

dtype: float32

- name: '313'

dtype: float32

- name: '314'

dtype: float32

- name: '315'

dtype: float32

- name: '316'

dtype: float32

- name: '317'

dtype: float32

- name: '318'

dtype: float32

- name: '319'

dtype: float32

- name: '320'

dtype: float32

- name: '321'

dtype: float32

- name: '322'

dtype: float32

- name: '323'

dtype: float32

- name: '324'

dtype: float32

- name: '325'

dtype: float32

- name: '326'

dtype: float32

- name: '327'

dtype: float32

- name: '328'

dtype: float32

- name: '329'

dtype: float32

- name: '330'

dtype: float32

- name: '331'

dtype: float32

- name: '332'

dtype: float32

- name: '333'

dtype: float32

- name: '334'

dtype: float32

- name: '335'

dtype: float32

- name: '336'

dtype: float32

- name: '337'

dtype: float32

- name: '338'

dtype: float32

- name: '339'

dtype: float32

- name: '340'

dtype: float32

- name: '341'

dtype: float32

- name: '342'

dtype: float32

- name: '343'

dtype: float32

- name: '344'

dtype: float32

- name: '345'

dtype: float32

- name: '346'

dtype: float32

- name: '347'

dtype: float32

- name: '348'

dtype: float32

- name: '349'

dtype: float32

- name: '350'

dtype: float32

- name: '351'

dtype: float32

- name: '352'

dtype: float32

- name: '353'

dtype: float32

- name: '354'

dtype: float32

- name: '355'

dtype: float32

- name: '356'

dtype: float32

- name: '357'

dtype: float32

- name: '358'

dtype: float32

- name: '359'

dtype: float32

- name: '360'

dtype: float32

- name: '361'

dtype: float32

- name: '362'

dtype: float32

- name: '363'

dtype: float32

- name: '364'

dtype: float32

- name: '365'

dtype: float32

- name: '366'

dtype: float32

- name: '367'

dtype: float32

- name: '368'

dtype: float32

- name: '369'

dtype: float32

- name: '370'

dtype: float32

- name: '371'

dtype: float32

- name: '372'

dtype: float32

- name: '373'

dtype: float32

- name: '374'

dtype: float32

- name: '375'

dtype: float32

- name: '376'

dtype: float32

- name: '377'

dtype: float32

- name: '378'

dtype: float32

- name: '379'

dtype: float32

- name: '380'

dtype: float32

- name: '381'

dtype: float32

- name: '382'

dtype: float32

- name: '383'

dtype: float32

- name: '384'

dtype: float32

- name: '385'

dtype: float32

- name: '386'

dtype: float32

- name: '387'

dtype: float32

- name: '388'

dtype: float32

- name: '389'

dtype: float32

- name: '390'

dtype: float32

- name: '391'

dtype: float32

- name: '392'

dtype: float32

- name: '393'

dtype: float32

- name: '394'

dtype: float32

- name: '395'

dtype: float32

- name: '396'

dtype: float32

- name: '397'

dtype: float32

- name: '398'

dtype: float32

- name: '399'

dtype: float32

- name: '400'

dtype: float32

- name: '401'

dtype: float32

- name: '402'

dtype: float32

- name: '403'

dtype: float32

- name: '404'

dtype: float32

- name: '405'

dtype: float32

- name: '406'

dtype: float32

- name: '407'

dtype: float32

- name: '408'

dtype: float32

- name: '409'

dtype: float32

- name: '410'

dtype: float32

- name: '411'

dtype: float32

- name: '412'

dtype: float32

- name: '413'

dtype: float32

- name: '414'

dtype: float32

- name: '415'

dtype: float32

- name: '416'

dtype: float32

- name: '417'

dtype: float32

- name: '418'

dtype: float32

- name: '419'

dtype: float32

- name: '420'

dtype: float32

- name: '421'

dtype: float32

- name: '422'

dtype: float32

- name: '423'

dtype: float32

- name: '424'

dtype: float32

- name: '425'

dtype: float32

- name: '426'

dtype: float32

- name: '427'

dtype: float32

- name: '428'

dtype: float32

- name: '429'

dtype: float32

- name: '430'

dtype: float32

- name: '431'

dtype: float32

- name: '432'

dtype: float32

- name: '433'

dtype: float32

- name: '434'

dtype: float32

- name: '435'

dtype: float32

- name: '436'

dtype: float32

- name: '437'

dtype: float32

- name: '438'

dtype: float32

- name: '439'

dtype: float32

- name: '440'

dtype: float32

- name: '441'

dtype: float32

- name: '442'

dtype: float32

- name: '443'

dtype: float32

- name: '444'

dtype: float32

- name: '445'

dtype: float32

- name: '446'

dtype: float32

- name: '447'

dtype: float32

- name: '448'

dtype: float32

- name: '449'

dtype: float32

- name: '450'

dtype: float32

- name: '451'

dtype: float32

- name: '452'

dtype: float32

- name: '453'

dtype: float32

- name: '454'

dtype: float32

- name: '455'

dtype: float32

- name: '456'

dtype: float32

- name: '457'

dtype: float32

- name: '458'

dtype: float32

- name: '459'

dtype: float32

- name: '460'

dtype: float32

- name: '461'

dtype: float32

- name: '462'

dtype: float32

- name: '463'

dtype: float32

- name: '464'

dtype: float32

- name: '465'

dtype: float32

- name: '466'

dtype: float32

- name: '467'

dtype: float32

- name: '468'

dtype: float32

- name: '469'

dtype: float32

- name: '470'

dtype: float32

- name: '471'

dtype: float32

- name: '472'

dtype: float32

- name: '473'

dtype: float32

- name: '474'

dtype: float32

- name: '475'

dtype: float32

- name: '476'

dtype: float32

- name: '477'

dtype: float32

- name: '478'

dtype: float32

- name: '479'

dtype: float32

- name: '480'

dtype: float32

- name: '481'

dtype: float32

- name: '482'

dtype: float32

- name: '483'

dtype: float32

- name: '484'

dtype: float32

- name: '485'

dtype: float32

- name: '486'

dtype: float32

- name: '487'

dtype: float32

- name: '488'

dtype: float32

- name: '489'

dtype: float32

- name: '490'

dtype: float32

- name: '491'

dtype: float32

- name: '492'

dtype: float32

- name: '493'

dtype: float32

- name: '494'

dtype: float32

- name: '495'

dtype: float32

- name: '496'

dtype: float32

- name: '497'

dtype: float32

- name: '498'

dtype: float32

- name: '499'

dtype: float32

- name: '500'

dtype: float32

- name: '501'

dtype: float32

- name: '502'

dtype: float32

- name: '503'

dtype: float32

- name: '504'

dtype: float32

- name: '505'

dtype: float32

- name: '506'

dtype: float32

- name: '507'

dtype: float32

- name: '508'

dtype: float32

- name: '509'

dtype: float32

- name: '510'

dtype: float32

- name: '511'

dtype: float32

- name: '512'

dtype: float32

- name: '513'

dtype: float32

- name: '514'

dtype: float32

- name: '515'

dtype: float32

- name: '516'

dtype: float32

- name: '517'

dtype: float32

- name: '518'

dtype: float32

- name: '519'

dtype: float32

- name: '520'

dtype: float32

- name: '521'

dtype: float32

- name: '522'

dtype: float32

- name: '523'

dtype: float32

- name: '524'

dtype: float32

- name: '525'

dtype: float32

- name: '526'

dtype: float32

- name: '527'

dtype: float32

- name: '528'

dtype: float32

- name: '529'

dtype: float32

- name: '530'

dtype: float32

- name: '531'

dtype: float32

- name: '532'

dtype: float32

- name: '533'

dtype: float32

- name: '534'

dtype: float32

- name: '535'

dtype: float32

- name: '536'

dtype: float32

- name: '537'

dtype: float32

- name: '538'

dtype: float32

- name: '539'

dtype: float32

- name: '540'

dtype: float32

- name: '541'

dtype: float32

- name: '542'

dtype: float32

- name: '543'

dtype: float32

- name: '544'

dtype: float32

- name: '545'

dtype: float32

- name: '546'

dtype: float32

- name: '547'

dtype: float32

- name: '548'

dtype: float32

- name: '549'

dtype: float32

- name: '550'

dtype: float32

- name: '551'

dtype: float32

- name: '552'

dtype: float32

- name: '553'

dtype: float32

- name: '554'

dtype: float32

- name: '555'

dtype: float32

- name: '556'

dtype: float32

- name: '557'

dtype: float32

- name: '558'

dtype: float32

- name: '559'

dtype: float32

- name: '560'

dtype: float32

- name: '561'

dtype: float32

- name: '562'

dtype: float32

- name: '563'

dtype: float32

- name: '564'

dtype: float32

- name: '565'

dtype: float32

- name: '566'

dtype: float32

- name: '567'

dtype: float32

- name: '568'

dtype: float32

- name: '569'

dtype: float32

- name: '570'

dtype: float32

- name: '571'

dtype: float32

- name: '572'

dtype: float32

- name: '573'

dtype: float32

- name: '574'

dtype: float32

- name: '575'

dtype: float32

- name: '576'

dtype: float32

- name: '577'

dtype: float32

- name: '578'

dtype: float32

- name: '579'

dtype: float32

- name: '580'

dtype: float32

- name: '581'

dtype: float32

- name: '582'

dtype: float32

- name: '583'

dtype: float32

- name: '584'

dtype: float32

- name: '585'

dtype: float32

- name: '586'

dtype: float32

- name: '587'

dtype: float32

- name: '588'

dtype: float32

- name: '589'

dtype: float32

- name: '590'

dtype: float32

- name: '591'

dtype: float32

- name: '592'

dtype: float32

- name: '593'

dtype: float32

- name: '594'

dtype: float32

- name: '595'

dtype: float32

- name: '596'

dtype: float32

- name: '597'

dtype: float32

- name: '598'

dtype: float32

- name: '599'

dtype: float32

- name: '600'

dtype: float32

- name: '601'

dtype: float32

- name: '602'

dtype: float32

- name: '603'

dtype: float32

- name: '604'

dtype: float32

- name: '605'

dtype: float32

- name: '606'

dtype: float32

- name: '607'

dtype: float32

- name: '608'

dtype: float32

- name: '609'

dtype: float32

- name: '610'

dtype: float32

- name: '611'

dtype: float32

- name: '612'

dtype: float32

- name: '613'

dtype: float32

- name: '614'

dtype: float32

- name: '615'

dtype: float32

- name: '616'

dtype: float32

- name: '617'

dtype: float32

- name: '618'

dtype: float32

- name: '619'

dtype: float32

- name: '620'

dtype: float32

- name: '621'

dtype: float32

- name: '622'

dtype: float32

- name: '623'

dtype: float32

- name: '624'

dtype: float32

- name: '625'

dtype: float32

- name: '626'

dtype: float32

- name: '627'

dtype: float32

- name: '628'

dtype: float32

- name: '629'

dtype: float32

- name: '630'

dtype: float32

- name: '631'

dtype: float32

- name: '632'

dtype: float32

- name: '633'

dtype: float32

- name: '634'

dtype: float32

- name: '635'

dtype: float32

- name: '636'

dtype: float32

- name: '637'

dtype: float32

- name: '638'

dtype: float32

- name: '639'

dtype: float32

- name: '640'

dtype: float32

- name: '641'

dtype: float32

- name: '642'

dtype: float32

- name: '643'

dtype: float32

- name: '644'

dtype: float32

- name: '645'

dtype: float32

- name: '646'

dtype: float32

- name: '647'

dtype: float32

- name: '648'

dtype: float32

- name: '649'

dtype: float32

- name: '650'

dtype: float32

- name: '651'

dtype: float32

- name: '652'

dtype: float32

- name: '653'

dtype: float32

- name: '654'

dtype: float32

- name: '655'

dtype: float32

- name: '656'

dtype: float32

- name: '657'

dtype: float32

- name: '658'

dtype: float32

- name: '659'

dtype: float32

- name: '660'

dtype: float32

- name: '661'

dtype: float32

- name: '662'

dtype: float32

- name: '663'

dtype: float32

- name: '664'

dtype: float32

- name: '665'

dtype: float32

- name: '666'

dtype: float32

- name: '667'

dtype: float32

- name: '668'

dtype: float32

- name: '669'

dtype: float32

- name: '670'

dtype: float32

- name: '671'

dtype: float32

- name: '672'

dtype: float32

- name: '673'

dtype: float32

- name: '674'

dtype: float32

- name: '675'

dtype: float32

- name: '676'

dtype: float32

- name: '677'

dtype: float32

- name: '678'

dtype: float32

- name: '679'

dtype: float32

- name: '680'

dtype: float32

- name: '681'

dtype: float32

- name: '682'

dtype: float32

- name: '683'

dtype: float32

- name: '684'

dtype: float32

- name: '685'

dtype: float32

- name: '686'

dtype: float32

- name: '687'

dtype: float32

- name: '688'

dtype: float32

- name: '689'

dtype: float32

- name: '690'

dtype: float32

- name: '691'

dtype: float32

- name: '692'

dtype: float32

- name: '693'

dtype: float32

- name: '694'

dtype: float32

- name: '695'

dtype: float32

- name: '696'

dtype: float32

- name: '697'

dtype: float32

- name: '698'

dtype: float32

- name: '699'

dtype: float32

- name: '700'

dtype: float32

- name: '701'

dtype: float32

- name: '702'

dtype: float32

- name: '703'

dtype: float32

- name: '704'

dtype: float32

- name: '705'

dtype: float32

- name: '706'

dtype: float32

- name: '707'

dtype: float32

- name: '708'

dtype: float32

- name: '709'

dtype: float32

- name: '710'

dtype: float32

- name: '711'

dtype: float32

- name: '712'

dtype: float32

- name: '713'

dtype: float32

- name: '714'

dtype: float32

- name: '715'

dtype: float32

- name: '716'

dtype: float32

- name: '717'

dtype: float32

- name: '718'

dtype: float32

- name: '719'

dtype: float32

- name: '720'

dtype: float32

- name: '721'

dtype: float32

- name: '722'

dtype: float32

- name: '723'

dtype: float32

- name: '724'

dtype: float32

- name: '725'

dtype: float32

- name: '726'

dtype: float32

- name: '727'

dtype: float32

- name: '728'

dtype: float32

- name: '729'

dtype: float32

- name: '730'

dtype: float32

- name: '731'

dtype: float32

- name: '732'

dtype: float32

- name: '733'

dtype: float32

- name: '734'

dtype: float32

- name: '735'

dtype: float32

- name: '736'

dtype: float32

- name: '737'

dtype: float32

- name: '738'

dtype: float32

- name: '739'

dtype: float32

- name: '740'

dtype: float32

- name: '741'

dtype: float32

- name: '742'

dtype: float32

- name: '743'

dtype: float32

- name: '744'

dtype: float32

- name: '745'

dtype: float32

- name: '746'

dtype: float32

- name: '747'

dtype: float32

- name: '748'

dtype: float32

- name: '749'

dtype: float32

- name: '750'

dtype: float32

- name: '751'

dtype: float32

- name: '752'

dtype: float32

- name: '753'

dtype: float32

- name: '754'

dtype: float32

- name: '755'

dtype: float32

- name: '756'

dtype: float32

- name: '757'

dtype: float32

- name: '758'

dtype: float32

- name: '759'

dtype: float32

- name: '760'

dtype: float32

- name: '761'

dtype: float32

- name: '762'

dtype: float32

- name: '763'

dtype: float32

- name: '764'

dtype: float32

- name: '765'

dtype: float32

- name: '766'

dtype: float32

- name: '767'

dtype: float32

- name: label

dtype: string

splits:

- name: train

num_bytes: 115650093

num_examples: 37500

- name: test

num_bytes: 38549993

num_examples: 12500

download_size: 211768316

dataset_size: 154200086

---

# Dataset Card for "Spirit_BERT_FT"
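The metadata above describes each example as 768 `float32` feature columns plus a string `label`. 768 is the hidden size of BERT-base, which suggests the columns hold one embedding per example, although the card does not say so. The split sizes are consistent with this: 115,650,093 bytes / 37,500 training examples is about 3,084 bytes per example, close to 768 × 4 = 3,072 bytes of `float32` data plus a short label. Train and test together hold 50,000 examples, split 75/25. The sketch below packs one row back into a single vector. It assumes the columns are named `'0'` through `'767'` (only `'496'` to `'767'` are visible in this excerpt) and that a row arrives as a plain dict, for example when iterating a `datasets.Dataset`. The helpers `row_to_vector` and `split_fraction` are hypothetical.

```python
from array import array

# Assumption: one column per embedding dimension, named '0' .. '767'.
EMBED_DIM = 768


def row_to_vector(row: dict, dim: int = EMBED_DIM) -> tuple[array, str]:
    """Pack the numbered feature columns of one row into a float32 array.

    Hypothetical helper: assumes each feature column is named by its integer
    index as a string and that the target is stored under 'label'.
    """
    # array('f') stores C floats, which are 32-bit on common platforms,
    # matching the float32 dtype declared in the metadata.
    vector = array("f", (float(row[str(i)]) for i in range(dim)))
    return vector, row["label"]


def split_fraction(num_examples: int, total: int) -> float:
    """Share of all examples that fall into one split."""
    return num_examples / total


if __name__ == "__main__":
    # Toy row standing in for a real record (values are made up for illustration).
    toy = {str(i): i * 0.5 for i in range(EMBED_DIM)}
    toy["label"] = "example"
    vec, label = row_to_vector(toy)
    print(len(vec), vec[2], label)  # 768 1.0 example

    # Split sizes from the metadata: 37,500 train and 12,500 test examples.
    print(split_fraction(37_500, 37_500 + 12_500))  # 0.75
```

Keeping the vector in a typed `array('f')` preserves the declared `float32` precision without a third-party dependency. In practice one would typically stack these vectors into a NumPy matrix before training a classifier on `label`.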

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

open-llm-leaderboard/details_quantumaikr__open_llama_7b_hf | ---

pretty_name: Evaluation run of quantumaikr/open_llama_7b_hf

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [quantumaikr/open_llama_7b_hf](https://huggingface.co/quantumaikr/open_llama_7b_hf)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 61 configurations, each one corresponding to one of the\

\ evaluated tasks.\n\nThe dataset has been created from 1 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run. The \"latest\" split always points to the most recent results.\n\

\nAn additional configuration \"results\" stores all the aggregated results of the\

\ run (and is used to compute and display the aggregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_quantumaikr__open_llama_7b_hf\"\

,\n\t\"harness_truthfulqa_mc_0\",\n\tsplit=\"latest\")\n```\n\n## Latest results\n\

\nThese are the [latest results from run 2023-07-19T17:01:48.631436](https://huggingface.co/datasets/open-llm-leaderboard/details_quantumaikr__open_llama_7b_hf/blob/main/results_2023-07-19T17%3A01%3A48.631436.json)\

\ (note that there might be results for other tasks in the repo if successive evals\

\ didn't cover the same tasks. You can find each in the results and the \"latest\" split\

\ for each eval):\n\n```python\n{\n \"all\": {\n \"acc\": 0.2648279332452004,\n\

\ \"acc_stderr\": 0.03195749858994142,\n \"acc_norm\": 0.26548960439125074,\n\

\ \"acc_norm_stderr\": 0.03196726632461042,\n \"mc1\": 0.23133414932680538,\n\

\ \"mc1_stderr\": 0.014761945174862661,\n \"mc2\": 0.4954484536663258,\n\

\ \"mc2_stderr\": 0.016312743256662564\n },\n \"harness|arc:challenge|25\"\

: {\n \"acc\": 0.23293515358361774,\n \"acc_stderr\": 0.012352507042617391,\n\

\ \"acc_norm\": 0.2645051194539249,\n \"acc_norm_stderr\": 0.012889272949313366\n\

\ },\n \"harness|hellaswag|10\": {\n \"acc\": 0.26199960167297354,\n\

\ \"acc_stderr\": 0.004388237557526716,\n \"acc_norm\": 0.26946823341963755,\n\

\ \"acc_norm_stderr\": 0.004427767996301633\n },\n \"harness|hendrycksTest-abstract_algebra|5\"\

: {\n \"acc\": 0.3,\n \"acc_stderr\": 0.046056618647183814,\n \

\ \"acc_norm\": 0.3,\n \"acc_norm_stderr\": 0.046056618647183814\n \

\ },\n \"harness|hendrycksTest-anatomy|5\": {\n \"acc\": 0.31851851851851853,\n\

\ \"acc_stderr\": 0.040247784019771096,\n \"acc_norm\": 0.31851851851851853,\n\

\ \"acc_norm_stderr\": 0.040247784019771096\n },\n \"harness|hendrycksTest-astronomy|5\"\

: {\n \"acc\": 0.2894736842105263,\n \"acc_stderr\": 0.036906779861372814,\n\

\ \"acc_norm\": 0.2894736842105263,\n \"acc_norm_stderr\": 0.036906779861372814\n\

\ },\n \"harness|hendrycksTest-business_ethics|5\": {\n \"acc\": 0.21,\n\

\ \"acc_stderr\": 0.040936018074033256,\n \"acc_norm\": 0.21,\n \

\ \"acc_norm_stderr\": 0.040936018074033256\n },\n \"harness|hendrycksTest-clinical_knowledge|5\"\

: {\n \"acc\": 0.2490566037735849,\n \"acc_stderr\": 0.026616482980501704,\n\

\ \"acc_norm\": 0.2490566037735849,\n \"acc_norm_stderr\": 0.026616482980501704\n\

\ },\n \"harness|hendrycksTest-college_biology|5\": {\n \"acc\": 0.2708333333333333,\n\

\ \"acc_stderr\": 0.03716177437566016,\n \"acc_norm\": 0.2708333333333333,\n\

\ \"acc_norm_stderr\": 0.03716177437566016\n },\n \"harness|hendrycksTest-college_chemistry|5\"\

: {\n \"acc\": 0.34,\n \"acc_stderr\": 0.04760952285695236,\n \

\ \"acc_norm\": 0.34,\n \"acc_norm_stderr\": 0.04760952285695236\n \

\ },\n \"harness|hendrycksTest-college_computer_science|5\": {\n \"acc\"\

: 0.27,\n \"acc_stderr\": 0.0446196043338474,\n \"acc_norm\": 0.27,\n\

\ \"acc_norm_stderr\": 0.0446196043338474\n },\n \"harness|hendrycksTest-college_mathematics|5\"\

: {\n \"acc\": 0.26,\n \"acc_stderr\": 0.04408440022768079,\n \

\ \"acc_norm\": 0.26,\n \"acc_norm_stderr\": 0.04408440022768079\n \

\ },\n \"harness|hendrycksTest-college_medicine|5\": {\n \"acc\": 0.23699421965317918,\n\

\ \"acc_stderr\": 0.03242414757483098,\n \"acc_norm\": 0.23699421965317918,\n\

\ \"acc_norm_stderr\": 0.03242414757483098\n },\n \"harness|hendrycksTest-college_physics|5\"\

: {\n \"acc\": 0.4019607843137255,\n \"acc_stderr\": 0.04878608714466996,\n\

\ \"acc_norm\": 0.4019607843137255,\n \"acc_norm_stderr\": 0.04878608714466996\n\

\ },\n \"harness|hendrycksTest-computer_security|5\": {\n \"acc\":\

\ 0.16,\n \"acc_stderr\": 0.03684529491774708,\n \"acc_norm\": 0.16,\n\

\ \"acc_norm_stderr\": 0.03684529491774708\n },\n \"harness|hendrycksTest-conceptual_physics|5\"\

: {\n \"acc\": 0.2425531914893617,\n \"acc_stderr\": 0.028020226271200214,\n\

\ \"acc_norm\": 0.2425531914893617,\n \"acc_norm_stderr\": 0.028020226271200214\n\

\ },\n \"harness|hendrycksTest-econometrics|5\": {\n \"acc\": 0.2807017543859649,\n\

\ \"acc_stderr\": 0.042270544512322,\n \"acc_norm\": 0.2807017543859649,\n\

\ \"acc_norm_stderr\": 0.042270544512322\n },\n \"harness|hendrycksTest-electrical_engineering|5\"\

: {\n \"acc\": 0.23448275862068965,\n \"acc_stderr\": 0.035306258743465914,\n\

\ \"acc_norm\": 0.23448275862068965,\n \"acc_norm_stderr\": 0.035306258743465914\n\

\ },\n \"harness|hendrycksTest-elementary_mathematics|5\": {\n \"acc\"\

: 0.25132275132275134,\n \"acc_stderr\": 0.022340482339643898,\n \"\

acc_norm\": 0.25132275132275134,\n \"acc_norm_stderr\": 0.022340482339643898\n\

\ },\n \"harness|hendrycksTest-formal_logic|5\": {\n \"acc\": 0.2222222222222222,\n\

\ \"acc_stderr\": 0.037184890068181146,\n \"acc_norm\": 0.2222222222222222,\n\

\ \"acc_norm_stderr\": 0.037184890068181146\n },\n \"harness|hendrycksTest-global_facts|5\"\

: {\n \"acc\": 0.32,\n \"acc_stderr\": 0.046882617226215034,\n \

\ \"acc_norm\": 0.32,\n \"acc_norm_stderr\": 0.046882617226215034\n \

\ },\n \"harness|hendrycksTest-high_school_biology|5\": {\n \"acc\"\

: 0.3161290322580645,\n \"acc_stderr\": 0.02645087448904277,\n \"\

acc_norm\": 0.3161290322580645,\n \"acc_norm_stderr\": 0.02645087448904277\n\

\ },\n \"harness|hendrycksTest-high_school_chemistry|5\": {\n \"acc\"\

: 0.31527093596059114,\n \"acc_stderr\": 0.03269080871970186,\n \"\

acc_norm\": 0.31527093596059114,\n \"acc_norm_stderr\": 0.03269080871970186\n\

\ },\n \"harness|hendrycksTest-high_school_computer_science|5\": {\n \

\ \"acc\": 0.23,\n \"acc_stderr\": 0.04229525846816506,\n \"acc_norm\"\

: 0.23,\n \"acc_norm_stderr\": 0.04229525846816506\n },\n \"harness|hendrycksTest-high_school_european_history|5\"\

: {\n \"acc\": 0.24848484848484848,\n \"acc_stderr\": 0.03374402644139405,\n\

\ \"acc_norm\": 0.24848484848484848,\n \"acc_norm_stderr\": 0.03374402644139405\n\

\ },\n \"harness|hendrycksTest-high_school_geography|5\": {\n \"acc\"\

: 0.31313131313131315,\n \"acc_stderr\": 0.033042050878136525,\n \"\

acc_norm\": 0.31313131313131315,\n \"acc_norm_stderr\": 0.033042050878136525\n\

\ },\n \"harness|hendrycksTest-high_school_government_and_politics|5\": {\n\

\ \"acc\": 0.3005181347150259,\n \"acc_stderr\": 0.0330881859441575,\n\

\ \"acc_norm\": 0.3005181347150259,\n \"acc_norm_stderr\": 0.0330881859441575\n\

\ },\n \"harness|hendrycksTest-high_school_macroeconomics|5\": {\n \

\ \"acc\": 0.2128205128205128,\n \"acc_stderr\": 0.020752423722128013,\n\

\ \"acc_norm\": 0.2128205128205128,\n \"acc_norm_stderr\": 0.020752423722128013\n\

\ },\n \"harness|hendrycksTest-high_school_mathematics|5\": {\n \"\

acc\": 0.25555555555555554,\n \"acc_stderr\": 0.02659393910184408,\n \

\ \"acc_norm\": 0.25555555555555554,\n \"acc_norm_stderr\": 0.02659393910184408\n\

\ },\n \"harness|hendrycksTest-high_school_microeconomics|5\": {\n \

\ \"acc\": 0.3277310924369748,\n \"acc_stderr\": 0.030489911417673227,\n\

\ \"acc_norm\": 0.3277310924369748,\n \"acc_norm_stderr\": 0.030489911417673227\n\

\ },\n \"harness|hendrycksTest-high_school_physics|5\": {\n \"acc\"\

: 0.31788079470198677,\n \"acc_stderr\": 0.038020397601079024,\n \"\

acc_norm\": 0.31788079470198677,\n \"acc_norm_stderr\": 0.038020397601079024\n\

\ },\n \"harness|hendrycksTest-high_school_psychology|5\": {\n \"acc\"\

: 0.28440366972477066,\n \"acc_stderr\": 0.019342036587702588,\n \"\

acc_norm\": 0.28440366972477066,\n \"acc_norm_stderr\": 0.019342036587702588\n\

\ },\n \"harness|hendrycksTest-high_school_statistics|5\": {\n \"acc\"\

: 0.4074074074074074,\n \"acc_stderr\": 0.03350991604696042,\n \"\

acc_norm\": 0.4074074074074074,\n \"acc_norm_stderr\": 0.03350991604696042\n\

\ },\n \"harness|hendrycksTest-high_school_us_history|5\": {\n \"acc\"\

: 0.25980392156862747,\n \"acc_stderr\": 0.030778554678693254,\n \"\

acc_norm\": 0.25980392156862747,\n \"acc_norm_stderr\": 0.030778554678693254\n\

\ },\n \"harness|hendrycksTest-high_school_world_history|5\": {\n \"\

acc\": 0.189873417721519,\n \"acc_stderr\": 0.025530100460233497,\n \

\ \"acc_norm\": 0.189873417721519,\n \"acc_norm_stderr\": 0.025530100460233497\n\

\ },\n \"harness|hendrycksTest-human_aging|5\": {\n \"acc\": 0.18834080717488788,\n\

\ \"acc_stderr\": 0.026241132996407273,\n \"acc_norm\": 0.18834080717488788,\n\

\ \"acc_norm_stderr\": 0.026241132996407273\n },\n \"harness|hendrycksTest-human_sexuality|5\"\

: {\n \"acc\": 0.22900763358778625,\n \"acc_stderr\": 0.036853466317118506,\n\

\ \"acc_norm\": 0.22900763358778625,\n \"acc_norm_stderr\": 0.036853466317118506\n\

\ },\n \"harness|hendrycksTest-international_law|5\": {\n \"acc\":\

\ 0.19008264462809918,\n \"acc_stderr\": 0.03581796951709282,\n \"\

acc_norm\": 0.19008264462809918,\n \"acc_norm_stderr\": 0.03581796951709282\n\

\ },\n \"harness|hendrycksTest-jurisprudence|5\": {\n \"acc\": 0.21296296296296297,\n\

\ \"acc_stderr\": 0.0395783547198098,\n \"acc_norm\": 0.21296296296296297,\n\

\ \"acc_norm_stderr\": 0.0395783547198098\n },\n \"harness|hendrycksTest-logical_fallacies|5\"\

: {\n \"acc\": 0.22699386503067484,\n \"acc_stderr\": 0.032910995786157686,\n\

\ \"acc_norm\": 0.22699386503067484,\n \"acc_norm_stderr\": 0.032910995786157686\n\

\ },\n \"harness|hendrycksTest-machine_learning|5\": {\n \"acc\": 0.23214285714285715,\n\

\ \"acc_stderr\": 0.04007341809755806,\n \"acc_norm\": 0.23214285714285715,\n\

\ \"acc_norm_stderr\": 0.04007341809755806\n },\n \"harness|hendrycksTest-management|5\"\

: {\n \"acc\": 0.3592233009708738,\n \"acc_stderr\": 0.04750458399041692,\n\

\ \"acc_norm\": 0.3592233009708738,\n \"acc_norm_stderr\": 0.04750458399041692\n\

\ },\n \"harness|hendrycksTest-marketing|5\": {\n \"acc\": 0.20085470085470086,\n\

\ \"acc_stderr\": 0.026246772946890477,\n \"acc_norm\": 0.20085470085470086,\n\

\ \"acc_norm_stderr\": 0.026246772946890477\n },\n \"harness|hendrycksTest-medical_genetics|5\"\

: {\n \"acc\": 0.26,\n \"acc_stderr\": 0.04408440022768078,\n \

\ \"acc_norm\": 0.26,\n \"acc_norm_stderr\": 0.04408440022768078\n \

\ },\n \"harness|hendrycksTest-miscellaneous|5\": {\n \"acc\": 0.2656449553001277,\n\

\ \"acc_stderr\": 0.01579430248788873,\n \"acc_norm\": 0.2656449553001277,\n\

\ \"acc_norm_stderr\": 0.01579430248788873\n },\n \"harness|hendrycksTest-moral_disputes|5\"\

: {\n \"acc\": 0.2138728323699422,\n \"acc_stderr\": 0.022075709251757183,\n\

\ \"acc_norm\": 0.2138728323699422,\n \"acc_norm_stderr\": 0.022075709251757183\n\

\ },\n \"harness|hendrycksTest-moral_scenarios|5\": {\n \"acc\": 0.24916201117318434,\n\

\ \"acc_stderr\": 0.01446589382985993,\n \"acc_norm\": 0.24916201117318434,\n\

\ \"acc_norm_stderr\": 0.01446589382985993\n },\n \"harness|hendrycksTest-nutrition|5\"\

: {\n \"acc\": 0.27450980392156865,\n \"acc_stderr\": 0.025553169991826514,\n\

\ \"acc_norm\": 0.27450980392156865,\n \"acc_norm_stderr\": 0.025553169991826514\n\

\ },\n \"harness|hendrycksTest-philosophy|5\": {\n \"acc\": 0.2861736334405145,\n\

\ \"acc_stderr\": 0.025670259242188943,\n \"acc_norm\": 0.2861736334405145,\n\

\ \"acc_norm_stderr\": 0.025670259242188943\n },\n \"harness|hendrycksTest-prehistory|5\"\

: {\n \"acc\": 0.2623456790123457,\n \"acc_stderr\": 0.024477222856135104,\n\

\ \"acc_norm\": 0.2623456790123457,\n \"acc_norm_stderr\": 0.024477222856135104\n\

\ },\n \"harness|hendrycksTest-professional_accounting|5\": {\n \"\

acc\": 0.25886524822695034,\n \"acc_stderr\": 0.026129572527180844,\n \

\ \"acc_norm\": 0.25886524822695034,\n \"acc_norm_stderr\": 0.026129572527180844\n\

\ },\n \"harness|hendrycksTest-professional_law|5\": {\n \"acc\": 0.2438070404172099,\n\

\ \"acc_stderr\": 0.010966507972178479,\n \"acc_norm\": 0.2438070404172099,\n\

\ \"acc_norm_stderr\": 0.010966507972178479\n },\n \"harness|hendrycksTest-professional_medicine|5\"\

: {\n \"acc\": 0.4485294117647059,\n \"acc_stderr\": 0.030211479609121593,\n\

\ \"acc_norm\": 0.4485294117647059,\n \"acc_norm_stderr\": 0.030211479609121593\n\

\ },\n \"harness|hendrycksTest-professional_psychology|5\": {\n \"\

acc\": 0.24673202614379086,\n \"acc_stderr\": 0.0174408203674025,\n \

\ \"acc_norm\": 0.24673202614379086,\n \"acc_norm_stderr\": 0.0174408203674025\n\

\ },\n \"harness|hendrycksTest-public_relations|5\": {\n \"acc\": 0.24545454545454545,\n\

\ \"acc_stderr\": 0.041220665028782834,\n \"acc_norm\": 0.24545454545454545,\n\

\ \"acc_norm_stderr\": 0.041220665028782834\n },\n \"harness|hendrycksTest-security_studies|5\"\

: {\n \"acc\": 0.2938775510204082,\n \"acc_stderr\": 0.029162738410249762,\n\

\ \"acc_norm\": 0.2938775510204082,\n \"acc_norm_stderr\": 0.029162738410249762\n\

\ },\n \"harness|hendrycksTest-sociology|5\": {\n \"acc\": 0.23383084577114427,\n\

\ \"acc_stderr\": 0.029929415408348405,\n \"acc_norm\": 0.23383084577114427,\n\

\ \"acc_norm_stderr\": 0.029929415408348405\n },\n \"harness|hendrycksTest-us_foreign_policy|5\"\

: {\n \"acc\": 0.28,\n \"acc_stderr\": 0.04512608598542127,\n \

\ \"acc_norm\": 0.28,\n \"acc_norm_stderr\": 0.04512608598542127\n \

\ },\n \"harness|hendrycksTest-virology|5\": {\n \"acc\": 0.15060240963855423,\n\

\ \"acc_stderr\": 0.02784386378726433,\n \"acc_norm\": 0.15060240963855423,\n\

\ \"acc_norm_stderr\": 0.02784386378726433\n },\n \"harness|hendrycksTest-world_religions|5\"\

: {\n \"acc\": 0.23976608187134502,\n \"acc_stderr\": 0.03274485211946956,\n\

\ \"acc_norm\": 0.23976608187134502,\n \"acc_norm_stderr\": 0.03274485211946956\n\

\ },\n \"harness|truthfulqa:mc|0\": {\n \"mc1\": 0.23133414932680538,\n\

\ \"mc1_stderr\": 0.014761945174862661,\n \"mc2\": 0.4954484536663258,\n\

\ \"mc2_stderr\": 0.016312743256662564\n }\n}\n```"

repo_url: https://huggingface.co/quantumaikr/open_llama_7b_hf

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_arc_challenge_25

data_files:

- split: 2023_07_19T17_01_48.631436

path:

- '**/details_harness|arc:challenge|25_2023-07-19T17:01:48.631436.parquet'

- split: latest

path:

- '**/details_harness|arc:challenge|25_2023-07-19T17:01:48.631436.parquet'

- config_name: harness_hellaswag_10

data_files:

- split: 2023_07_19T17_01_48.631436

path:

- '**/details_harness|hellaswag|10_2023-07-19T17:01:48.631436.parquet'

- split: latest

path:

- '**/details_harness|hellaswag|10_2023-07-19T17:01:48.631436.parquet'

- config_name: harness_hendrycksTest_5

data_files:

- split: 2023_07_19T17_01_48.631436

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-07-19T17:01:48.631436.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-07-19T17:01:48.631436.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-07-19T17:01:48.631436.parquet'

- config_name: harness_hendrycksTest_abstract_algebra_5

data_files:

- split: 2023_07_19T17_01_48.631436

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-07-19T17:01:48.631436.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-07-19T17:01:48.631436.parquet'

- config_name: harness_hendrycksTest_anatomy_5

data_files:

- split: 2023_07_19T17_01_48.631436

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-07-19T17:01:48.631436.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-07-19T17:01:48.631436.parquet'

- config_name: harness_hendrycksTest_astronomy_5

data_files:

- split: 2023_07_19T17_01_48.631436

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-07-19T17:01:48.631436.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-07-19T17:01:48.631436.parquet'

- config_name: harness_hendrycksTest_business_ethics_5

data_files:

- split: 2023_07_19T17_01_48.631436

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-07-19T17:01:48.631436.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-07-19T17:01:48.631436.parquet'

- config_name: harness_hendrycksTest_clinical_knowledge_5

data_files:

- split: 2023_07_19T17_01_48.631436

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-07-19T17:01:48.631436.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-07-19T17:01:48.631436.parquet'

- config_name: harness_hendrycksTest_college_biology_5

data_files:

- split: 2023_07_19T17_01_48.631436

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-07-19T17:01:48.631436.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-07-19T17:01:48.631436.parquet'

- config_name: harness_hendrycksTest_college_chemistry_5

data_files:

- split: 2023_07_19T17_01_48.631436

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-07-19T17:01:48.631436.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-07-19T17:01:48.631436.parquet'

- config_name: harness_hendrycksTest_college_computer_science_5

data_files:

- split: 2023_07_19T17_01_48.631436

path: