datasetId stringlengths 2 117 | card stringlengths 19 1.01M |

|---|---|

Binho7/rodrigodelara | ---

license: openrail

---

|

CyberHarem/odamaki_sapphire_pokemon | ---

license: mit

task_categories:

- text-to-image

tags:

- art

- not-for-all-audiences

size_categories:

- n<1K

---

# Dataset of odamaki_sapphire (Pokémon)

This is the dataset of odamaki_sapphire (Pokémon), containing 19 images and their tags.

The core tags of this character are `brown_hair, blue_eyes, breasts, large_breasts, fang`, which are pruned in this dataset.

Images are crawled from many sites (e.g. danbooru, pixiv, zerochan ...). The auto-crawling system is powered by the [DeepGHS Team](https://github.com/deepghs) ([huggingface organization](https://huggingface.co/deepghs)).

## List of Packages

| Name | Images | Size | Download | Type | Description |

|:-----------------|---------:|:----------|:--------------------------------------------------------------------------------------------------------------------------|:-----------|:---------------------------------------------------------------------|

| raw | 19 | 18.21 MiB | [Download](https://huggingface.co/datasets/CyberHarem/odamaki_sapphire_pokemon/resolve/main/dataset-raw.zip) | Waifuc-Raw | Raw data with meta information (min edge aligned to 1400 if larger). |

| 800 | 19 | 10.99 MiB | [Download](https://huggingface.co/datasets/CyberHarem/odamaki_sapphire_pokemon/resolve/main/dataset-800.zip) | IMG+TXT | dataset with the shorter side not exceeding 800 pixels. |

| stage3-p480-800 | 42 | 22.29 MiB | [Download](https://huggingface.co/datasets/CyberHarem/odamaki_sapphire_pokemon/resolve/main/dataset-stage3-p480-800.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

| 1200 | 19 | 16.73 MiB | [Download](https://huggingface.co/datasets/CyberHarem/odamaki_sapphire_pokemon/resolve/main/dataset-1200.zip) | IMG+TXT | dataset with the shorter side not exceeding 1200 pixels. |

| stage3-p480-1200 | 42 | 29.57 MiB | [Download](https://huggingface.co/datasets/CyberHarem/odamaki_sapphire_pokemon/resolve/main/dataset-stage3-p480-1200.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

### Load Raw Dataset with Waifuc

We provide the raw dataset (including tagged images) for loading with [waifuc](https://deepghs.github.io/waifuc/main/tutorials/installation/index.html). If you need it, run the following code:

```python
import os
import zipfile

from huggingface_hub import hf_hub_download
from waifuc.source import LocalSource

# download raw archive file
zip_file = hf_hub_download(
    repo_id='CyberHarem/odamaki_sapphire_pokemon',
    repo_type='dataset',
    filename='dataset-raw.zip',
)

# extract files to your directory
dataset_dir = 'dataset_dir'
os.makedirs(dataset_dir, exist_ok=True)
with zipfile.ZipFile(zip_file, 'r') as zf:
    zf.extractall(dataset_dir)

# load the dataset with waifuc
source = LocalSource(dataset_dir)
for item in source:
    print(item.image, item.meta['filename'], item.meta['tags'])
```
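
The `IMG+TXT` packages listed above can probably be read without waifuc. The following is a minimal sketch, assuming each image in the extracted archive sits next to a `.txt` file with the same base name and that the tags in that file are comma-separated. Both points are assumptions, not something this card states, and `load_img_txt_pairs` is a hypothetical helper name.

```python
from pathlib import Path

# Image extensions to look for (an assumption about the archive contents).
IMAGE_SUFFIXES = {'.png', '.jpg', '.jpeg', '.webp'}


def load_img_txt_pairs(dataset_dir):
    """Return (image_path, tags) tuples for every image that has a same-named .txt file."""
    pairs = []
    for image_path in sorted(Path(dataset_dir).rglob('*')):
        if image_path.suffix.lower() not in IMAGE_SUFFIXES:
            continue
        tag_path = image_path.with_suffix('.txt')
        if not tag_path.is_file():
            continue  # skip images that have no tag file
        text = tag_path.read_text(encoding='utf-8')
        # Assumed format: one comma-separated tag list per file.
        tags = [tag.strip() for tag in text.split(',') if tag.strip()]
        pairs.append((image_path, tags))
    return pairs
```

To use it, download and extract one of the `IMG+TXT` archives (for example `dataset-800.zip`) the same way as the raw archive, then call `load_img_txt_pairs(dataset_dir)` on the extracted directory.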

## List of Clusters

This section lists the tag clustering results; some outfits may be discoverable here.

### Raw Text Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | Tags |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:-----------------------------------------------|

| 0 | 19 |  |  |  |  |  | 1girl, open_mouth, solo, navel, scar, swimsuit |

### Table Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | 1girl | open_mouth | solo | navel | scar | swimsuit |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------|:-------------|:-------|:--------|:-------|:-----------|

| 0 | 19 |  |  |  |  |  | X | X | X | X | X | X |

|

dokiubr/lucas | ---

license: openrail

---

|

ThanhJamieAI/test | ---

license: apache-2.0

---

|

omarelsayeed/zsum2 | ---

dataset_info:

features:

- name: text

dtype: string

- name: summary

dtype: string

splits:

- name: train

num_bytes: 1641887

num_examples: 426

download_size: 823395

dataset_size: 1641887

---

# Dataset Card for "zsum2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

mask-distilled-one-sec-cv12/chunk_235 | ---

dataset_info:

features:

- name: logits

sequence: float32

- name: mfcc

sequence:

sequence: float64

splits:

- name: train

num_bytes: 1166918364

num_examples: 229167

download_size: 1191475987

dataset_size: 1166918364

---

# Dataset Card for "chunk_235"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

ParisNeo/Word_in_Sentence_Database | ---

license: apache-2.0

task_categories:

- table-question-answering

language:

- en

pretty_name: Word in Sentence database

---

# WIS database

This database contains a list of question-answer pairs about text.

This database was built using the following workflow:

1- load a raw text file

2- split into paragraphs

3- split paragraphs into sentences

4- for each word, ask question about its position and answer with the position, then ask about the word length and answer with the actual length of the word

5- ask a question about the number of words in the sentence and answer it

6- build a json database using this.
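
The steps above can be sketched roughly as follows. This is an illustrative reconstruction, not the author's actual script: the function names, the question wording, the regex-based sentence and word splitting, and the 1-based word positions are all assumptions.

```python
import json
import re


def split_paragraphs(text):
    """Step 2: split raw text into paragraphs on blank lines."""
    return [p.strip() for p in re.split(r'\n\s*\n', text) if p.strip()]


def split_sentences(paragraph):
    """Step 3: naive sentence split after '.', '!' or '?'."""
    return [s.strip() for s in re.split(r'(?<=[.!?])\s+', paragraph) if s.strip()]


def build_qa_pairs(sentence):
    """Steps 4-5: position and length questions per word, then a word-count question."""
    words = re.findall(r"[\w']+", sentence)
    pairs = []
    for position, word in enumerate(words, start=1):  # 1-based position is an assumption
        pairs.append({
            'question': f'What is the position of the word "{word}" in the sentence "{sentence}"?',
            'answer': str(position),
        })
        pairs.append({
            'question': f'How many letters are in the word "{word}"?',
            'answer': str(len(word)),
        })
    pairs.append({
        'question': f'How many words are in the sentence "{sentence}"?',
        'answer': str(len(words)),
    })
    return pairs


def build_database(text):
    """Step 6: collect all question-answer pairs into one JSON-serializable list."""
    database = []
    for paragraph in split_paragraphs(text):
        for sentence in split_sentences(paragraph):
            database.extend(build_qa_pairs(sentence))
    return database


if __name__ == '__main__':
    # Step 1 (loading a raw text file) is replaced here by an inline string.
    print(json.dumps(build_database('The cat sat. It ran away.'), indent=2))
```

A repeated word gets one pair of questions per occurrence in this sketch; the real database may handle duplicates differently.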

To do this, Keith Curtis kindly gave his consent for me to use his website content as input to this algorithm.

The website can be found here:

https://keithcu.com/wordpress/?page_id=599

Best regards. |

results-sd-v1-5-sd-v2-1-if-v1-0-karlo/0ca7bb7b | ---

dataset_info:

features:

- name: result

dtype: string

- name: id

dtype: int64

splits:

- name: train

num_bytes: 182

num_examples: 10

download_size: 1337

dataset_size: 182

---

# Dataset Card for "0ca7bb7b"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

VENF/me | ---

license: openrail

---

|

multimodalart/genai-book-images | ---

license: mit

---

|

dustinwloring1988/big_patent_100k_characters | ---

dataset_info:

features:

- name: description

dtype: string

- name: abstract

dtype: string

- name: description_length

dtype: int64

splits:

- name: train

num_bytes: 234534932

num_examples: 4819

- name: test

num_bytes: 28347714

num_examples: 574

download_size: 102178356

dataset_size: 262882646

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

---

|

xbgoose/dusha | ---

dataset_info:

features:

- name: audio

dtype: audio

- name: emotion

dtype: string

splits:

- name: train

num_bytes: 23511966173.784

num_examples: 150352

- name: test

num_bytes: 2212754711.79

num_examples: 14035

download_size: 21507131221

dataset_size: 25724720885.574

---

# Dataset Card for "dusha"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

DGurgurov/sinhala_sa | ---

license: mit

---

## Sentiment Analysis Data for the Sinhala Language

**Dataset Description:**

This dataset contains sentiment analysis data from Ranathunga et al. (2021).

**Data Structure:**

The data was used for the project on [injecting external commonsense knowledge into multilingual Large Language Models](https://github.com/d-gurgurov/Injecting-Commonsense-Knowledge-into-LLMs).

**Citation:**

```bibtex

@article{ranathunga2021sentiment,

title={Sentiment analysis of sinhala news comments},

author={Ranathunga, Surangika and Liyanage, Isuru Udara},

journal={Transactions on Asian and Low-Resource Language Information Processing},

volume={20},

number={4},

pages={1--23},

year={2021},

publisher={ACM New York, NY}

}

``` |

Tytfghugn/test | ---

license: cc-by-2.5

---

|

miehin/16thaug | ---

license: openrail

---

|

indiejoseph/cc100-yue | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 32135136

num_examples: 176047

download_size: 23579906

dataset_size: 32135136

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "cc100-yue"

The Filtered Cantonese Dataset is a subset of the larger CC100 corpus that has been filtered to include only Cantonese language content. It is designed to facilitate various NLP tasks, such as text classification, sentiment analysis, named entity recognition, and machine translation, among others.

## Filtering Process

The filtering process follows the article [Building a Hong Kongese Language Identifier](https://medium.com/@kyubi_fox/building-a-hong-kongese-language-identifier-5e20fd221323) by ToastyNews.

|

adamjweintraut/bart-finetuned-context_comparison-256_2023-12-10_run | ---

dataset_info:

features:

- name: index

dtype: int64

- name: q_id

dtype: string

- name: question

dtype: string

- name: og_context

dtype: string

- name: og_predicted

dtype: string

- name: og_label

dtype: string

- name: og_nli-roberta_label

dtype: string

- name: og_nli-roberta_plot_vals

dtype: int64

- name: og_nli-roberta-max-score

dtype: float64

- name: new_context

dtype: string

- name: new_predicted

dtype: string

- name: new_label

dtype: string

- name: new_nli-roberta_label

dtype: string

- name: new_nli-roberta_plot_vals

dtype: int64

- name: new_nli-roberta-max-score

dtype: float64

- name: context_sim

dtype: float32

splits:

- name: train

num_bytes: 146486

num_examples: 9

download_size: 134647

dataset_size: 146486

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

open-llm-leaderboard/details_daxiongshu__Pluto_24B_DPO_63 | ---

pretty_name: Evaluation run of daxiongshu/Pluto_24B_DPO_63

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [daxiongshu/Pluto_24B_DPO_63](https://huggingface.co/daxiongshu/Pluto_24B_DPO_63)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 63 configurations, each one corresponding to one of the\

\ evaluated task.\n\nThe dataset has been created from 1 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run. The \"train\" split is always pointing to the latest results.\n\

\nAn additional configuration \"results\" stores all the aggregated results of the\

\ run (and is used to compute and display the aggregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_daxiongshu__Pluto_24B_DPO_63\"\

,\n\t\"harness_winogrande_5\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\n\

These are the [latest results from run 2024-01-25T14:41:24.929250](https://huggingface.co/datasets/open-llm-leaderboard/details_daxiongshu__Pluto_24B_DPO_63/blob/main/results_2024-01-25T14-41-24.929250.json)(note\

\ that there might be results for other tasks in the repos if successive evals didn't\

\ cover the same tasks. You can find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"acc\": 0.6498497884015774,\n\

\ \"acc_stderr\": 0.03220698512335376,\n \"acc_norm\": 0.6505024665977697,\n\

\ \"acc_norm_stderr\": 0.03286228741096377,\n \"mc1\": 0.6682986536107711,\n\

\ \"mc1_stderr\": 0.01648214881024148,\n \"mc2\": 0.7936098015130929,\n\

\ \"mc2_stderr\": 0.013242598947000108\n },\n \"harness|arc:challenge|25\"\

: {\n \"acc\": 0.7064846416382252,\n \"acc_stderr\": 0.013307250444941122,\n\

\ \"acc_norm\": 0.7397610921501706,\n \"acc_norm_stderr\": 0.012821930225112563\n\

\ },\n \"harness|hellaswag|10\": {\n \"acc\": 0.6981676956781517,\n\

\ \"acc_stderr\": 0.004581147247963204,\n \"acc_norm\": 0.8816968731328421,\n\

\ \"acc_norm_stderr\": 0.0032230665918060006\n },\n \"harness|hendrycksTest-abstract_algebra|5\"\

: {\n \"acc\": 0.35,\n \"acc_stderr\": 0.0479372485441102,\n \

\ \"acc_norm\": 0.35,\n \"acc_norm_stderr\": 0.0479372485441102\n },\n\

\ \"harness|hendrycksTest-anatomy|5\": {\n \"acc\": 0.6222222222222222,\n\

\ \"acc_stderr\": 0.04188307537595853,\n \"acc_norm\": 0.6222222222222222,\n\

\ \"acc_norm_stderr\": 0.04188307537595853\n },\n \"harness|hendrycksTest-astronomy|5\"\

: {\n \"acc\": 0.7368421052631579,\n \"acc_stderr\": 0.03583496176361074,\n\

\ \"acc_norm\": 0.7368421052631579,\n \"acc_norm_stderr\": 0.03583496176361074\n\

\ },\n \"harness|hendrycksTest-business_ethics|5\": {\n \"acc\": 0.64,\n\

\ \"acc_stderr\": 0.04824181513244218,\n \"acc_norm\": 0.64,\n \

\ \"acc_norm_stderr\": 0.04824181513244218\n },\n \"harness|hendrycksTest-clinical_knowledge|5\"\

: {\n \"acc\": 0.6867924528301886,\n \"acc_stderr\": 0.028544793319055326,\n\

\ \"acc_norm\": 0.6867924528301886,\n \"acc_norm_stderr\": 0.028544793319055326\n\

\ },\n \"harness|hendrycksTest-college_biology|5\": {\n \"acc\": 0.7708333333333334,\n\

\ \"acc_stderr\": 0.03514697467862388,\n \"acc_norm\": 0.7708333333333334,\n\

\ \"acc_norm_stderr\": 0.03514697467862388\n },\n \"harness|hendrycksTest-college_chemistry|5\"\

: {\n \"acc\": 0.47,\n \"acc_stderr\": 0.050161355804659205,\n \

\ \"acc_norm\": 0.47,\n \"acc_norm_stderr\": 0.050161355804659205\n \

\ },\n \"harness|hendrycksTest-college_computer_science|5\": {\n \"\

acc\": 0.53,\n \"acc_stderr\": 0.050161355804659205,\n \"acc_norm\"\

: 0.53,\n \"acc_norm_stderr\": 0.050161355804659205\n },\n \"harness|hendrycksTest-college_mathematics|5\"\

: {\n \"acc\": 0.32,\n \"acc_stderr\": 0.04688261722621504,\n \

\ \"acc_norm\": 0.32,\n \"acc_norm_stderr\": 0.04688261722621504\n \

\ },\n \"harness|hendrycksTest-college_medicine|5\": {\n \"acc\": 0.6589595375722543,\n\

\ \"acc_stderr\": 0.036146654241808254,\n \"acc_norm\": 0.6589595375722543,\n\

\ \"acc_norm_stderr\": 0.036146654241808254\n },\n \"harness|hendrycksTest-college_physics|5\"\

: {\n \"acc\": 0.37254901960784315,\n \"acc_stderr\": 0.04810840148082636,\n\

\ \"acc_norm\": 0.37254901960784315,\n \"acc_norm_stderr\": 0.04810840148082636\n\

\ },\n \"harness|hendrycksTest-computer_security|5\": {\n \"acc\":\

\ 0.75,\n \"acc_stderr\": 0.04351941398892446,\n \"acc_norm\": 0.75,\n\

\ \"acc_norm_stderr\": 0.04351941398892446\n },\n \"harness|hendrycksTest-conceptual_physics|5\"\

: {\n \"acc\": 0.5531914893617021,\n \"acc_stderr\": 0.032500536843658404,\n\

\ \"acc_norm\": 0.5531914893617021,\n \"acc_norm_stderr\": 0.032500536843658404\n\

\ },\n \"harness|hendrycksTest-econometrics|5\": {\n \"acc\": 0.5263157894736842,\n\

\ \"acc_stderr\": 0.046970851366478626,\n \"acc_norm\": 0.5263157894736842,\n\

\ \"acc_norm_stderr\": 0.046970851366478626\n },\n \"harness|hendrycksTest-electrical_engineering|5\"\

: {\n \"acc\": 0.5517241379310345,\n \"acc_stderr\": 0.04144311810878152,\n\

\ \"acc_norm\": 0.5517241379310345,\n \"acc_norm_stderr\": 0.04144311810878152\n\

\ },\n \"harness|hendrycksTest-elementary_mathematics|5\": {\n \"acc\"\

: 0.41005291005291006,\n \"acc_stderr\": 0.025331202438944427,\n \"\

acc_norm\": 0.41005291005291006,\n \"acc_norm_stderr\": 0.025331202438944427\n\

\ },\n \"harness|hendrycksTest-formal_logic|5\": {\n \"acc\": 0.5079365079365079,\n\

\ \"acc_stderr\": 0.044715725362943486,\n \"acc_norm\": 0.5079365079365079,\n\

\ \"acc_norm_stderr\": 0.044715725362943486\n },\n \"harness|hendrycksTest-global_facts|5\"\

: {\n \"acc\": 0.35,\n \"acc_stderr\": 0.04793724854411019,\n \

\ \"acc_norm\": 0.35,\n \"acc_norm_stderr\": 0.04793724854411019\n \

\ },\n \"harness|hendrycksTest-high_school_biology|5\": {\n \"acc\": 0.7645161290322581,\n\

\ \"acc_stderr\": 0.02413763242933771,\n \"acc_norm\": 0.7645161290322581,\n\

\ \"acc_norm_stderr\": 0.02413763242933771\n },\n \"harness|hendrycksTest-high_school_chemistry|5\"\

: {\n \"acc\": 0.49261083743842365,\n \"acc_stderr\": 0.03517603540361008,\n\

\ \"acc_norm\": 0.49261083743842365,\n \"acc_norm_stderr\": 0.03517603540361008\n\

\ },\n \"harness|hendrycksTest-high_school_computer_science|5\": {\n \

\ \"acc\": 0.7,\n \"acc_stderr\": 0.046056618647183814,\n \"acc_norm\"\

: 0.7,\n \"acc_norm_stderr\": 0.046056618647183814\n },\n \"harness|hendrycksTest-high_school_european_history|5\"\

: {\n \"acc\": 0.7636363636363637,\n \"acc_stderr\": 0.03317505930009182,\n\

\ \"acc_norm\": 0.7636363636363637,\n \"acc_norm_stderr\": 0.03317505930009182\n\

\ },\n \"harness|hendrycksTest-high_school_geography|5\": {\n \"acc\"\

: 0.797979797979798,\n \"acc_stderr\": 0.028606204289229872,\n \"\

acc_norm\": 0.797979797979798,\n \"acc_norm_stderr\": 0.028606204289229872\n\

\ },\n \"harness|hendrycksTest-high_school_government_and_politics|5\": {\n\

\ \"acc\": 0.8860103626943006,\n \"acc_stderr\": 0.022935144053919432,\n\

\ \"acc_norm\": 0.8860103626943006,\n \"acc_norm_stderr\": 0.022935144053919432\n\

\ },\n \"harness|hendrycksTest-high_school_macroeconomics|5\": {\n \

\ \"acc\": 0.6666666666666666,\n \"acc_stderr\": 0.023901157979402534,\n\

\ \"acc_norm\": 0.6666666666666666,\n \"acc_norm_stderr\": 0.023901157979402534\n\

\ },\n \"harness|hendrycksTest-high_school_mathematics|5\": {\n \"\

acc\": 0.2962962962962963,\n \"acc_stderr\": 0.02784081149587193,\n \

\ \"acc_norm\": 0.2962962962962963,\n \"acc_norm_stderr\": 0.02784081149587193\n\

\ },\n \"harness|hendrycksTest-high_school_microeconomics|5\": {\n \

\ \"acc\": 0.6764705882352942,\n \"acc_stderr\": 0.03038835355188679,\n \

\ \"acc_norm\": 0.6764705882352942,\n \"acc_norm_stderr\": 0.03038835355188679\n\

\ },\n \"harness|hendrycksTest-high_school_physics|5\": {\n \"acc\"\

: 0.36423841059602646,\n \"acc_stderr\": 0.03929111781242742,\n \"\

acc_norm\": 0.36423841059602646,\n \"acc_norm_stderr\": 0.03929111781242742\n\

\ },\n \"harness|hendrycksTest-high_school_psychology|5\": {\n \"acc\"\

: 0.8385321100917431,\n \"acc_stderr\": 0.015776239256163248,\n \"\

acc_norm\": 0.8385321100917431,\n \"acc_norm_stderr\": 0.015776239256163248\n\

\ },\n \"harness|hendrycksTest-high_school_statistics|5\": {\n \"acc\"\

: 0.5555555555555556,\n \"acc_stderr\": 0.03388857118502325,\n \"\

acc_norm\": 0.5555555555555556,\n \"acc_norm_stderr\": 0.03388857118502325\n\

\ },\n \"harness|hendrycksTest-high_school_us_history|5\": {\n \"acc\"\

: 0.8333333333333334,\n \"acc_stderr\": 0.026156867523931045,\n \"\

acc_norm\": 0.8333333333333334,\n \"acc_norm_stderr\": 0.026156867523931045\n\

\ },\n \"harness|hendrycksTest-high_school_world_history|5\": {\n \"\

acc\": 0.7974683544303798,\n \"acc_stderr\": 0.026160568246601446,\n \

\ \"acc_norm\": 0.7974683544303798,\n \"acc_norm_stderr\": 0.026160568246601446\n\

\ },\n \"harness|hendrycksTest-human_aging|5\": {\n \"acc\": 0.6771300448430493,\n\

\ \"acc_stderr\": 0.031381476375754995,\n \"acc_norm\": 0.6771300448430493,\n\

\ \"acc_norm_stderr\": 0.031381476375754995\n },\n \"harness|hendrycksTest-human_sexuality|5\"\

: {\n \"acc\": 0.8015267175572519,\n \"acc_stderr\": 0.03498149385462471,\n\

\ \"acc_norm\": 0.8015267175572519,\n \"acc_norm_stderr\": 0.03498149385462471\n\

\ },\n \"harness|hendrycksTest-international_law|5\": {\n \"acc\":\

\ 0.768595041322314,\n \"acc_stderr\": 0.03849856098794088,\n \"acc_norm\"\

: 0.768595041322314,\n \"acc_norm_stderr\": 0.03849856098794088\n },\n\

\ \"harness|hendrycksTest-jurisprudence|5\": {\n \"acc\": 0.7592592592592593,\n\

\ \"acc_stderr\": 0.04133119440243838,\n \"acc_norm\": 0.7592592592592593,\n\

\ \"acc_norm_stderr\": 0.04133119440243838\n },\n \"harness|hendrycksTest-logical_fallacies|5\"\

: {\n \"acc\": 0.7668711656441718,\n \"acc_stderr\": 0.0332201579577674,\n\

\ \"acc_norm\": 0.7668711656441718,\n \"acc_norm_stderr\": 0.0332201579577674\n\

\ },\n \"harness|hendrycksTest-machine_learning|5\": {\n \"acc\": 0.44642857142857145,\n\

\ \"acc_stderr\": 0.04718471485219588,\n \"acc_norm\": 0.44642857142857145,\n\

\ \"acc_norm_stderr\": 0.04718471485219588\n },\n \"harness|hendrycksTest-management|5\"\

: {\n \"acc\": 0.7669902912621359,\n \"acc_stderr\": 0.04185832598928315,\n\

\ \"acc_norm\": 0.7669902912621359,\n \"acc_norm_stderr\": 0.04185832598928315\n\

\ },\n \"harness|hendrycksTest-marketing|5\": {\n \"acc\": 0.8717948717948718,\n\

\ \"acc_stderr\": 0.021901905115073325,\n \"acc_norm\": 0.8717948717948718,\n\

\ \"acc_norm_stderr\": 0.021901905115073325\n },\n \"harness|hendrycksTest-medical_genetics|5\"\

: {\n \"acc\": 0.71,\n \"acc_stderr\": 0.045604802157206845,\n \

\ \"acc_norm\": 0.71,\n \"acc_norm_stderr\": 0.045604802157206845\n \

\ },\n \"harness|hendrycksTest-miscellaneous|5\": {\n \"acc\": 0.8339719029374202,\n\

\ \"acc_stderr\": 0.013306478243066302,\n \"acc_norm\": 0.8339719029374202,\n\

\ \"acc_norm_stderr\": 0.013306478243066302\n },\n \"harness|hendrycksTest-moral_disputes|5\"\

: {\n \"acc\": 0.6936416184971098,\n \"acc_stderr\": 0.024818350129436596,\n\

\ \"acc_norm\": 0.6936416184971098,\n \"acc_norm_stderr\": 0.024818350129436596\n\

\ },\n \"harness|hendrycksTest-moral_scenarios|5\": {\n \"acc\": 0.4569832402234637,\n\

\ \"acc_stderr\": 0.01666049858050917,\n \"acc_norm\": 0.4569832402234637,\n\

\ \"acc_norm_stderr\": 0.01666049858050917\n },\n \"harness|hendrycksTest-nutrition|5\"\

: {\n \"acc\": 0.7058823529411765,\n \"acc_stderr\": 0.02609016250427905,\n\

\ \"acc_norm\": 0.7058823529411765,\n \"acc_norm_stderr\": 0.02609016250427905\n\

\ },\n \"harness|hendrycksTest-philosophy|5\": {\n \"acc\": 0.7202572347266881,\n\

\ \"acc_stderr\": 0.02549425935069491,\n \"acc_norm\": 0.7202572347266881,\n\

\ \"acc_norm_stderr\": 0.02549425935069491\n },\n \"harness|hendrycksTest-prehistory|5\"\

: {\n \"acc\": 0.75,\n \"acc_stderr\": 0.02409347123262133,\n \

\ \"acc_norm\": 0.75,\n \"acc_norm_stderr\": 0.02409347123262133\n \

\ },\n \"harness|hendrycksTest-professional_accounting|5\": {\n \"acc\"\

: 0.4716312056737589,\n \"acc_stderr\": 0.029779450957303062,\n \"\

acc_norm\": 0.4716312056737589,\n \"acc_norm_stderr\": 0.029779450957303062\n\

\ },\n \"harness|hendrycksTest-professional_law|5\": {\n \"acc\": 0.47327249022164275,\n\

\ \"acc_stderr\": 0.012751977967676012,\n \"acc_norm\": 0.47327249022164275,\n\

\ \"acc_norm_stderr\": 0.012751977967676012\n },\n \"harness|hendrycksTest-professional_medicine|5\"\

: {\n \"acc\": 0.6838235294117647,\n \"acc_stderr\": 0.028245687391462927,\n\

\ \"acc_norm\": 0.6838235294117647,\n \"acc_norm_stderr\": 0.028245687391462927\n\

\ },\n \"harness|hendrycksTest-professional_psychology|5\": {\n \"\

acc\": 0.6797385620915033,\n \"acc_stderr\": 0.018875682938069443,\n \

\ \"acc_norm\": 0.6797385620915033,\n \"acc_norm_stderr\": 0.018875682938069443\n\

\ },\n \"harness|hendrycksTest-public_relations|5\": {\n \"acc\": 0.6727272727272727,\n\

\ \"acc_stderr\": 0.0449429086625209,\n \"acc_norm\": 0.6727272727272727,\n\

\ \"acc_norm_stderr\": 0.0449429086625209\n },\n \"harness|hendrycksTest-security_studies|5\"\

: {\n \"acc\": 0.7428571428571429,\n \"acc_stderr\": 0.02797982353874455,\n\

\ \"acc_norm\": 0.7428571428571429,\n \"acc_norm_stderr\": 0.02797982353874455\n\

\ },\n \"harness|hendrycksTest-sociology|5\": {\n \"acc\": 0.835820895522388,\n\

\ \"acc_stderr\": 0.026193923544454115,\n \"acc_norm\": 0.835820895522388,\n\

\ \"acc_norm_stderr\": 0.026193923544454115\n },\n \"harness|hendrycksTest-us_foreign_policy|5\"\

: {\n \"acc\": 0.85,\n \"acc_stderr\": 0.0358870281282637,\n \

\ \"acc_norm\": 0.85,\n \"acc_norm_stderr\": 0.0358870281282637\n },\n\

\ \"harness|hendrycksTest-virology|5\": {\n \"acc\": 0.536144578313253,\n\

\ \"acc_stderr\": 0.038823108508905954,\n \"acc_norm\": 0.536144578313253,\n\

\ \"acc_norm_stderr\": 0.038823108508905954\n },\n \"harness|hendrycksTest-world_religions|5\"\

: {\n \"acc\": 0.8421052631578947,\n \"acc_stderr\": 0.02796678585916089,\n\

\ \"acc_norm\": 0.8421052631578947,\n \"acc_norm_stderr\": 0.02796678585916089\n\

\ },\n \"harness|truthfulqa:mc|0\": {\n \"mc1\": 0.6682986536107711,\n\

\ \"mc1_stderr\": 0.01648214881024148,\n \"mc2\": 0.7936098015130929,\n\

\ \"mc2_stderr\": 0.013242598947000108\n },\n \"harness|winogrande|5\"\

: {\n \"acc\": 0.8168902920284136,\n \"acc_stderr\": 0.010869778633168367\n\

\ },\n \"harness|gsm8k|5\": {\n \"acc\": 0.6611068991660348,\n \

\ \"acc_stderr\": 0.013037955768562514\n }\n}\n```"

repo_url: https://huggingface.co/daxiongshu/Pluto_24B_DPO_63

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_arc_challenge_25

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|arc:challenge|25_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|arc:challenge|25_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_gsm8k_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|gsm8k|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|gsm8k|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hellaswag_10

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hellaswag|10_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hellaswag|10_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-management|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-virology|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-management|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-virology|5_2024-01-25T14-41-24.929250.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_abstract_algebra_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_anatomy_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-anatomy|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-anatomy|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_astronomy_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-astronomy|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-astronomy|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_business_ethics_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_clinical_knowledge_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_college_biology_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-college_biology|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_biology|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_college_chemistry_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_college_computer_science_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_college_mathematics_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_college_medicine_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_college_physics_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-college_physics|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_physics|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_computer_security_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-computer_security|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-computer_security|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_conceptual_physics_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_econometrics_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-econometrics|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-econometrics|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_electrical_engineering_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_elementary_mathematics_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_formal_logic_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_global_facts_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-global_facts|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-global_facts|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_high_school_biology_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_high_school_chemistry_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_high_school_computer_science_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_high_school_european_history_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_high_school_geography_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_high_school_government_and_politics_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_high_school_macroeconomics_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_high_school_mathematics_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_high_school_microeconomics_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_high_school_physics_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_high_school_psychology_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_high_school_statistics_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_high_school_us_history_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_high_school_world_history_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_human_aging_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-human_aging|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_aging|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_human_sexuality_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_international_law_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-international_law|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-international_law|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_jurisprudence_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_logical_fallacies_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_machine_learning_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_management_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-management|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-management|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_marketing_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-marketing|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-marketing|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_medical_genetics_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_miscellaneous_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_moral_disputes_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_moral_scenarios_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_nutrition_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-nutrition|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-nutrition|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_philosophy_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-philosophy|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-philosophy|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_prehistory_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-prehistory|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-prehistory|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_professional_accounting_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_professional_law_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-professional_law|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_law|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_professional_medicine_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_professional_psychology_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_public_relations_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-public_relations|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-public_relations|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_security_studies_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-security_studies|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-security_studies|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_sociology_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-sociology|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-sociology|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_us_foreign_policy_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_virology_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-virology|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-virology|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_hendrycksTest_world_religions_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|hendrycksTest-world_religions|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-world_religions|5_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_truthfulqa_mc_0

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|truthfulqa:mc|0_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|truthfulqa:mc|0_2024-01-25T14-41-24.929250.parquet'

- config_name: harness_winogrande_5

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- '**/details_harness|winogrande|5_2024-01-25T14-41-24.929250.parquet'

- split: latest

path:

- '**/details_harness|winogrande|5_2024-01-25T14-41-24.929250.parquet'

- config_name: results

data_files:

- split: 2024_01_25T14_41_24.929250

path:

- results_2024-01-25T14-41-24.929250.parquet

- split: latest

path:

- results_2024-01-25T14-41-24.929250.parquet

---

# Dataset Card for Evaluation run of daxiongshu/Pluto_24B_DPO_63

<!-- Provide a quick summary of the dataset. -->

Dataset automatically created during the evaluation run of model [daxiongshu/Pluto_24B_DPO_63](https://huggingface.co/daxiongshu/Pluto_24B_DPO_63) on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).

The dataset is composed of 63 configurations, each one corresponding to one of the evaluated tasks.

The dataset has been created from 1 run(s). Each run can be found as a specific split in each configuration, the split being named using the timestamp of the run.The "train" split is always pointing to the latest results.

An additional configuration "results" store all the aggregated results of the run (and is used to compute and display the aggregated metrics on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).

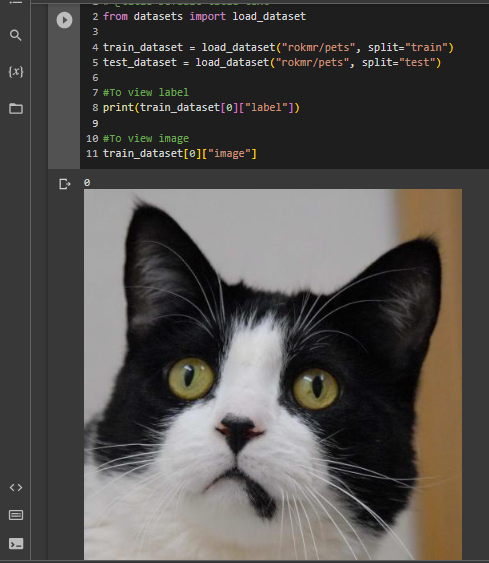

To load the details from a run, you can for instance do the following:

```python

from datasets import load_dataset

data = load_dataset("open-llm-leaderboard/details_daxiongshu__Pluto_24B_DPO_63",

"harness_winogrande_5",

split="train")

```
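The configuration and split names listed in the metadata appear to follow a simple pattern derived from the harness task keys and the run timestamp. The following is a minimal sketch of that mapping, inferred only from the names visible in this card; the helper names `config_name` and `split_name` are hypothetical and are not part of the `datasets` library or the leaderboard tooling.

```python
import re


def config_name(task_key: str) -> str:
    """Turn a harness task key into a configuration name (assumed pattern)."""
    # Each run of characters outside [0-9A-Za-z] becomes a single underscore.
    return re.sub(r"[^0-9A-Za-z]+", "_", task_key)


def split_name(run_timestamp: str) -> str:
    """Turn a file-name timestamp into the matching split name (assumed pattern)."""
    # Hyphens become underscores; the fractional-seconds dot is kept.
    return run_timestamp.replace("-", "_")


print(config_name("harness|hendrycksTest-anatomy|5"))
print(config_name("harness|truthfulqa:mc|0"))
print(split_name("2024-01-25T14-41-24.929250"))
```

A name produced this way can be passed as the second argument of `load_dataset`, provided the configuration is actually declared in the card metadata.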

## Latest results

These are the [latest results from run 2024-01-25T14:41:24.929250](https://huggingface.co/datasets/open-llm-leaderboard/details_daxiongshu__Pluto_24B_DPO_63/blob/main/results_2024-01-25T14-41-24.929250.json) (note that there might be results for other tasks in the repo if successive evals didn't cover the same tasks. You can find each in the "results" configuration and in the "latest" split of each eval):

```python

{

"all": {

"acc": 0.6498497884015774,

"acc_stderr": 0.03220698512335376,

"acc_norm": 0.6505024665977697,

"acc_norm_stderr": 0.03286228741096377,

"mc1": 0.6682986536107711,

"mc1_stderr": 0.01648214881024148,

"mc2": 0.7936098015130929,

"mc2_stderr": 0.013242598947000108

},

"harness|arc:challenge|25": {

"acc": 0.7064846416382252,

"acc_stderr": 0.013307250444941122,

"acc_norm": 0.7397610921501706,

"acc_norm_stderr": 0.012821930225112563

},

"harness|hellaswag|10": {

"acc": 0.6981676956781517,

"acc_stderr": 0.004581147247963204,

"acc_norm": 0.8816968731328421,

"acc_norm_stderr": 0.0032230665918060006

},

"harness|hendrycksTest-abstract_algebra|5": {

"acc": 0.35,

"acc_stderr": 0.0479372485441102,

"acc_norm": 0.35,

"acc_norm_stderr": 0.0479372485441102

},

"harness|hendrycksTest-anatomy|5": {

"acc": 0.6222222222222222,

"acc_stderr": 0.04188307537595853,

"acc_norm": 0.6222222222222222,

"acc_norm_stderr": 0.04188307537595853

},

"harness|hendrycksTest-astronomy|5": {

"acc": 0.7368421052631579,

"acc_stderr": 0.03583496176361074,

"acc_norm": 0.7368421052631579,

"acc_norm_stderr": 0.03583496176361074

},

"harness|hendrycksTest-business_ethics|5": {

"acc": 0.64,

"acc_stderr": 0.04824181513244218,

"acc_norm": 0.64,

"acc_norm_stderr": 0.04824181513244218

},

"harness|hendrycksTest-clinical_knowledge|5": {

"acc": 0.6867924528301886,

"acc_stderr": 0.028544793319055326,

"acc_norm": 0.6867924528301886,

"acc_norm_stderr": 0.028544793319055326

},

"harness|hendrycksTest-college_biology|5": {

"acc": 0.7708333333333334,

"acc_stderr": 0.03514697467862388,

"acc_norm": 0.7708333333333334,

"acc_norm_stderr": 0.03514697467862388

},

"harness|hendrycksTest-college_chemistry|5": {

"acc": 0.47,

"acc_stderr": 0.050161355804659205,

"acc_norm": 0.47,

"acc_norm_stderr": 0.050161355804659205

},

"harness|hendrycksTest-college_computer_science|5": {

"acc": 0.53,

"acc_stderr": 0.050161355804659205,

"acc_norm": 0.53,

"acc_norm_stderr": 0.050161355804659205

},

"harness|hendrycksTest-college_mathematics|5": {

"acc": 0.32,

"acc_stderr": 0.04688261722621504,

"acc_norm": 0.32,

"acc_norm_stderr": 0.04688261722621504

},

"harness|hendrycksTest-college_medicine|5": {

"acc": 0.6589595375722543,

"acc_stderr": 0.036146654241808254,

"acc_norm": 0.6589595375722543,

"acc_norm_stderr": 0.036146654241808254

},

"harness|hendrycksTest-college_physics|5": {

"acc": 0.37254901960784315,

"acc_stderr": 0.04810840148082636,

"acc_norm": 0.37254901960784315,

"acc_norm_stderr": 0.04810840148082636

},

"harness|hendrycksTest-computer_security|5": {

"acc": 0.75,

"acc_stderr": 0.04351941398892446,

"acc_norm": 0.75,

"acc_norm_stderr": 0.04351941398892446

},

"harness|hendrycksTest-conceptual_physics|5": {

"acc": 0.5531914893617021,

"acc_stderr": 0.032500536843658404,

"acc_norm": 0.5531914893617021,

"acc_norm_stderr": 0.032500536843658404

},

"harness|hendrycksTest-econometrics|5": {

"acc": 0.5263157894736842,

"acc_stderr": 0.046970851366478626,

"acc_norm": 0.5263157894736842,

"acc_norm_stderr": 0.046970851366478626

},

"harness|hendrycksTest-electrical_engineering|5": {

"acc": 0.5517241379310345,

"acc_stderr": 0.04144311810878152,

"acc_norm": 0.5517241379310345,

"acc_norm_stderr": 0.04144311810878152

},

"harness|hendrycksTest-elementary_mathematics|5": {

"acc": 0.41005291005291006,

"acc_stderr": 0.025331202438944427,

"acc_norm": 0.41005291005291006,

"acc_norm_stderr": 0.025331202438944427

},

"harness|hendrycksTest-formal_logic|5": {

"acc": 0.5079365079365079,

"acc_stderr": 0.044715725362943486,

"acc_norm": 0.5079365079365079,

"acc_norm_stderr": 0.044715725362943486

},

"harness|hendrycksTest-global_facts|5": {

"acc": 0.35,

"acc_stderr": 0.04793724854411019,

"acc_norm": 0.35,

"acc_norm_stderr": 0.04793724854411019

},

"harness|hendrycksTest-high_school_biology|5": {

"acc": 0.7645161290322581,

"acc_stderr": 0.02413763242933771,

"acc_norm": 0.7645161290322581,

"acc_norm_stderr": 0.02413763242933771

},

"harness|hendrycksTest-high_school_chemistry|5": {

"acc": 0.49261083743842365,

"acc_stderr": 0.03517603540361008,

"acc_norm": 0.49261083743842365,

"acc_norm_stderr": 0.03517603540361008

},

"harness|hendrycksTest-high_school_computer_science|5": {

"acc": 0.7,

"acc_stderr": 0.046056618647183814,

"acc_norm": 0.7,

"acc_norm_stderr": 0.046056618647183814

},

"harness|hendrycksTest-high_school_european_history|5": {

"acc": 0.7636363636363637,

"acc_stderr": 0.03317505930009182,

"acc_norm": 0.7636363636363637,

"acc_norm_stderr": 0.03317505930009182

},

"harness|hendrycksTest-high_school_geography|5": {

"acc": 0.797979797979798,

"acc_stderr": 0.028606204289229872,

"acc_norm": 0.797979797979798,

"acc_norm_stderr": 0.028606204289229872

},

"harness|hendrycksTest-high_school_government_and_politics|5": {

"acc": 0.8860103626943006,

"acc_stderr": 0.022935144053919432,

"acc_norm": 0.8860103626943006,

"acc_norm_stderr": 0.022935144053919432

},

"harness|hendrycksTest-high_school_macroeconomics|5": {

"acc": 0.6666666666666666,

"acc_stderr": 0.023901157979402534,

"acc_norm": 0.6666666666666666,

"acc_norm_stderr": 0.023901157979402534

},

"harness|hendrycksTest-high_school_mathematics|5": {

"acc": 0.2962962962962963,

"acc_stderr": 0.02784081149587193,

"acc_norm": 0.2962962962962963,

"acc_norm_stderr": 0.02784081149587193

},

"harness|hendrycksTest-high_school_microeconomics|5": {

"acc": 0.6764705882352942,

"acc_stderr": 0.03038835355188679,

"acc_norm": 0.6764705882352942,

"acc_norm_stderr": 0.03038835355188679

},

"harness|hendrycksTest-high_school_physics|5": {

"acc": 0.36423841059602646,

"acc_stderr": 0.03929111781242742,

"acc_norm": 0.36423841059602646,

"acc_norm_stderr": 0.03929111781242742

},

"harness|hendrycksTest-high_school_psychology|5": {

"acc": 0.8385321100917431,

"acc_stderr": 0.015776239256163248,

"acc_norm": 0.8385321100917431,

"acc_norm_stderr": 0.015776239256163248

},

"harness|hendrycksTest-high_school_statistics|5": {

"acc": 0.5555555555555556,

"acc_stderr": 0.03388857118502325,

"acc_norm": 0.5555555555555556,

"acc_norm_stderr": 0.03388857118502325

},

"harness|hendrycksTest-high_school_us_history|5": {

"acc": 0.8333333333333334,

"acc_stderr": 0.026156867523931045,

"acc_norm": 0.8333333333333334,

"acc_norm_stderr": 0.026156867523931045

},

"harness|hendrycksTest-high_school_world_history|5": {

"acc": 0.7974683544303798,

"acc_stderr": 0.026160568246601446,

"acc_norm": 0.7974683544303798,

"acc_norm_stderr": 0.026160568246601446

},

"harness|hendrycksTest-human_aging|5": {

"acc": 0.6771300448430493,

"acc_stderr": 0.031381476375754995,

"acc_norm": 0.6771300448430493,

"acc_norm_stderr": 0.031381476375754995

},

"harness|hendrycksTest-human_sexuality|5": {

"acc": 0.8015267175572519,

"acc_stderr": 0.03498149385462471,

"acc_norm": 0.8015267175572519,

"acc_norm_stderr": 0.03498149385462471

},

"harness|hendrycksTest-international_law|5": {

"acc": 0.768595041322314,

"acc_stderr": 0.03849856098794088,

"acc_norm": 0.768595041322314,

"acc_norm_stderr": 0.03849856098794088

},

"harness|hendrycksTest-jurisprudence|5": {

"acc": 0.7592592592592593,

"acc_stderr": 0.04133119440243838,

"acc_norm": 0.7592592592592593,

"acc_norm_stderr": 0.04133119440243838

},

"harness|hendrycksTest-logical_fallacies|5": {

"acc": 0.7668711656441718,

"acc_stderr": 0.0332201579577674,

"acc_norm": 0.7668711656441718,

"acc_norm_stderr": 0.0332201579577674

},

"harness|hendrycksTest-machine_learning|5": {

"acc": 0.44642857142857145,

"acc_stderr": 0.04718471485219588,

"acc_norm": 0.44642857142857145,

"acc_norm_stderr": 0.04718471485219588

},

"harness|hendrycksTest-management|5": {

"acc": 0.7669902912621359,

"acc_stderr": 0.04185832598928315,

"acc_norm": 0.7669902912621359,

"acc_norm_stderr": 0.04185832598928315

},

"harness|hendrycksTest-marketing|5": {

"acc": 0.8717948717948718,

"acc_stderr": 0.021901905115073325,

"acc_norm": 0.8717948717948718,

"acc_norm_stderr": 0.021901905115073325

},

"harness|hendrycksTest-medical_genetics|5": {

"acc": 0.71,

"acc_stderr": 0.045604802157206845,

"acc_norm": 0.71,

"acc_norm_stderr": 0.045604802157206845

},

"harness|hendrycksTest-miscellaneous|5": {

"acc": 0.8339719029374202,

"acc_stderr": 0.013306478243066302,

"acc_norm": 0.8339719029374202,

"acc_norm_stderr": 0.013306478243066302

},

"harness|hendrycksTest-moral_disputes|5": {

"acc": 0.6936416184971098,

"acc_stderr": 0.024818350129436596,

"acc_norm": 0.6936416184971098,

"acc_norm_stderr": 0.024818350129436596

},

"harness|hendrycksTest-moral_scenarios|5": {

"acc": 0.4569832402234637,

"acc_stderr": 0.01666049858050917,

"acc_norm": 0.4569832402234637,

"acc_norm_stderr": 0.01666049858050917

},

"harness|hendrycksTest-nutrition|5": {

"acc": 0.7058823529411765,

"acc_stderr": 0.02609016250427905,

"acc_norm": 0.7058823529411765,

"acc_norm_stderr": 0.02609016250427905

},

"harness|hendrycksTest-philosophy|5": {

"acc": 0.7202572347266881,

"acc_stderr": 0.02549425935069491,

"acc_norm": 0.7202572347266881,

"acc_norm_stderr": 0.02549425935069491

},

"harness|hendrycksTest-prehistory|5": {

"acc": 0.75,

"acc_stderr": 0.02409347123262133,

"acc_norm": 0.75,

"acc_norm_stderr": 0.02409347123262133

},

"harness|hendrycksTest-professional_accounting|5": {

"acc": 0.4716312056737589,

"acc_stderr": 0.029779450957303062,

"acc_norm": 0.4716312056737589,

"acc_norm_stderr": 0.029779450957303062

},

"harness|hendrycksTest-professional_law|5": {

"acc": 0.47327249022164275,

"acc_stderr": 0.012751977967676012,

"acc_norm": 0.47327249022164275,

"acc_norm_stderr": 0.012751977967676012

},

"harness|hendrycksTest-professional_medicine|5": {

"acc": 0.6838235294117647,

"acc_stderr": 0.028245687391462927,

"acc_norm": 0.6838235294117647,

"acc_norm_stderr": 0.028245687391462927

},

"harness|hendrycksTest-professional_psychology|5": {

"acc": 0.6797385620915033,

"acc_stderr": 0.018875682938069443,

"acc_norm": 0.6797385620915033,

"acc_norm_stderr": 0.018875682938069443

},

"harness|hendrycksTest-public_relations|5": {

"acc": 0.6727272727272727,

"acc_stderr": 0.0449429086625209,

"acc_norm": 0.6727272727272727,

"acc_norm_stderr": 0.0449429086625209

},

"harness|hendrycksTest-security_studies|5": {

"acc": 0.7428571428571429,

"acc_stderr": 0.02797982353874455,

"acc_norm": 0.7428571428571429,

"acc_norm_stderr": 0.02797982353874455

},

"harness|hendrycksTest-sociology|5": {

"acc": 0.835820895522388,

"acc_stderr": 0.026193923544454115,

"acc_norm": 0.835820895522388,

"acc_norm_stderr": 0.026193923544454115

},

"harness|hendrycksTest-us_foreign_policy|5": {

"acc": 0.85,

"acc_stderr": 0.0358870281282637,

"acc_norm": 0.85,

"acc_norm_stderr": 0.0358870281282637

},

"harness|hendrycksTest-virology|5": {

"acc": 0.536144578313253,

"acc_stderr": 0.038823108508905954,

"acc_norm": 0.536144578313253,

"acc_norm_stderr": 0.038823108508905954

},

"harness|hendrycksTest-world_religions|5": {

"acc": 0.8421052631578947,

"acc_stderr": 0.02796678585916089,

"acc_norm": 0.8421052631578947,

"acc_norm_stderr": 0.02796678585916089

},

"harness|truthfulqa:mc|0": {

"mc1": 0.6682986536107711,

"mc1_stderr": 0.01648214881024148,

"mc2": 0.7936098015130929,

"mc2_stderr": 0.013242598947000108

},

"harness|winogrande|5": {

"acc": 0.8168902920284136,

"acc_stderr": 0.010869778633168367

},

"harness|gsm8k|5": {

"acc": 0.6611068991660348,

"acc_stderr": 0.013037955768562514

}

}

```
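The keys in the results above follow the pattern `harness|<task>|<n_shot>`, and each entry pairs a metric with its `_stderr`. As a minimal sketch of how such a dictionary could be summarized, the snippet below parses the keys, takes an unweighted mean over the `hendrycksTest-*` entries, and derives an approximate 95% interval from a reported standard error. The function names (`parse_key`, `mmlu_macro_average`, `approx_ci95`) are hypothetical, the `results` dictionary is only a four-entry excerpt of the values shown above, and both the unweighted mean and the normal-approximation interval are assumptions rather than aggregation methods stated by this card.

```python
from statistics import mean

# Excerpt of the results shown above; the full dictionary has the same shape.
results = {
    "harness|hendrycksTest-high_school_mathematics|5": {
        "acc": 0.2962962962962963,
        "acc_stderr": 0.02784081149587193,
    },
    "harness|hendrycksTest-marketing|5": {
        "acc": 0.8717948717948718,
        "acc_stderr": 0.021901905115073325,
    },
    "harness|winogrande|5": {
        "acc": 0.8168902920284136,
        "acc_stderr": 0.010869778633168367,
    },
    "harness|gsm8k|5": {
        "acc": 0.6611068991660348,
        "acc_stderr": 0.013037955768562514,
    },
}


def parse_key(key):
    """Split 'harness|<task>|<n_shot>' into (task, n_shot)."""
    _, task, shots = key.split("|")
    return task, int(shots)


def mmlu_macro_average(results):
    """Unweighted mean of 'acc' over hendrycksTest-* tasks (assumed aggregation)."""
    accs = [
        entry["acc"]
        for key, entry in results.items()
        if parse_key(key)[0].startswith("hendrycksTest-")
    ]
    return mean(accs)


def approx_ci95(entry, metric="acc"):
    """Normal-approximation interval: metric +/- 1.96 * stderr (an assumption)."""
    half_width = 1.96 * entry[f"{metric}_stderr"]
    return entry[metric] - half_width, entry[metric] + half_width


if __name__ == "__main__":
    print("MMLU macro average (excerpt):", mmlu_macro_average(results))
    print("gsm8k approx. 95% CI:", approx_ci95(results["harness|gsm8k|5"]))
```

On the full dictionary the same functions apply unchanged. For entries such as `harness|truthfulqa:mc|0`, pass `metric="mc1"` or `metric="mc2"` to `approx_ci95`, since those entries have no `acc` field.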

## Dataset Details

### Dataset Description

<!-- Provide a longer summary of what this dataset is. -->

- **Curated by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

### Dataset Sources [optional]

<!-- Provide the basic links for the dataset. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the dataset is intended to be used. -->

### Direct Use

<!-- This section describes suitable use cases for the dataset. -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the dataset will not work well for. -->

[More Information Needed]

## Dataset Structure

<!-- This section provides a description of the dataset fields, and additional information about the dataset structure such as criteria used to create the splits, relationships between data points, etc. -->

[More Information Needed]

## Dataset Creation

### Curation Rationale

<!-- Motivation for the creation of this dataset. -->

[More Information Needed]

### Source Data

<!-- This section describes the source data (e.g. news text and headlines, social media posts, translated sentences, ...). -->

#### Data Collection and Processing

<!-- This section describes how the data was collected and processed, such as data selection criteria, filtering and normalization methods, tools and libraries used, etc. -->

[More Information Needed]

#### Who are the source data producers?

<!-- This section describes the people or systems who originally created the data. It should also include self-reported demographic or identity information for the source data creators if this information is available. -->

[More Information Needed]

### Annotations [optional]

<!-- If the dataset contains annotations which are not part of the initial data collection, use this section to describe them. -->

#### Annotation process

<!-- This section describes the annotation process, such as the annotation tools used, the amount of data annotated, the guidelines provided to the annotators, inter-annotator statistics, annotation validation, etc. -->

[More Information Needed]

#### Who are the annotators?

<!-- This section describes the people or systems who created the annotations. -->

[More Information Needed]

#### Personal and Sensitive Information