datasetId stringlengths 2 117 | card stringlengths 19 1.01M |

|---|---|

NikkoIGuess/NikkoDoesRandom_Ai | ---

license: apache-2.0

task_categories:

- text-classification

language:

- en

tags:

- chemistry

pretty_name: 'NikkoDoesRandom '

size_categories:

- n>1T

---

# Dataset Card for Dataset Name

<!-- Provide a quick summary of the dataset. -->

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

## Dataset Details

### Dataset Description

<!-- Provide a longer summary of what this dataset is. -->

- **Curated by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

### Dataset Sources [optional]

<!-- Provide the basic links for the dataset. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the dataset is intended to be used. -->

### Direct Use

<!-- This section describes suitable use cases for the dataset. -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the dataset will not work well for. -->

[More Information Needed]

## Dataset Structure

<!-- This section provides a description of the dataset fields, and additional information about the dataset structure such as criteria used to create the splits, relationships between data points, etc. -->

[More Information Needed]

## Dataset Creation

### Curation Rationale

<!-- Motivation for the creation of this dataset. -->

[More Information Needed]

### Source Data

<!-- This section describes the source data (e.g. news text and headlines, social media posts, translated sentences, ...). -->

#### Data Collection and Processing

<!-- This section describes the data collection and processing process such as data selection criteria, filtering and normalization methods, tools and libraries used, etc. -->

[More Information Needed]

#### Who are the source data producers?

<!-- This section describes the people or systems who originally created the data. It should also include self-reported demographic or identity information for the source data creators if this information is available. -->

[More Information Needed]

### Annotations [optional]

<!-- If the dataset contains annotations which are not part of the initial data collection, use this section to describe them. -->

#### Annotation process

<!-- This section describes the annotation process such as annotation tools used in the process, the amount of data annotated, annotation guidelines provided to the annotators, interannotator statistics, annotation validation, etc. -->

[More Information Needed]

#### Who are the annotators?

<!-- This section describes the people or systems who created the annotations. -->

[More Information Needed]

#### Personal and Sensitive Information

<!-- State whether the dataset contains data that might be considered personal, sensitive, or private (e.g., data that reveals addresses, uniquely identifiable names or aliases, racial or ethnic origins, sexual orientations, religious beliefs, political opinions, financial or health data, etc.). If efforts were made to anonymize the data, describe the anonymization process. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users should be made aware of the risks, biases and limitations of the dataset. More information needed for further recommendations.

## Citation [optional]

<!-- If there is a paper or blog post introducing the dataset, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the dataset or dataset card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Dataset Card Authors [optional]

[More Information Needed]

## Dataset Card Contact

[More Information Needed] |

leslyarun/c4_200m_gec_train100k_test25k | ---

language:

- en

source_datasets:

- allenai/c4

task_categories:

- text-generation

pretty_name: C4 200M Grammatical Error Correction Dataset

tags:

- grammatical-error-correction

---

# C4 200M

# Dataset Summary

C4 200M Sample Dataset adopted from https://huggingface.co/datasets/liweili/c4_200m

C4_200m is a collection of 185 million sentence pairs generated from the cleaned English dataset from C4. This dataset can be used in grammatical error correction (GEC) tasks.

The corruption edits and scripts used to synthesize this dataset is referenced from: [C4_200M Synthetic Dataset](https://github.com/google-research-datasets/C4_200M-synthetic-dataset-for-grammatical-error-correction)

# Description

As discussed before, this dataset contains 185 million sentence pairs. Each article has these two attributes: `input` and `output`. Here is a sample of dataset:

```

{

"input": "Bitcoin is for $7,094 this morning, which CoinDesk says."

"output": "Bitcoin goes for $7,094 this morning, according to CoinDesk."

}

``` |

shireesh-uop/nhs_classification | ---

dataset_info:

features:

- name: label

dtype: string

- name: data

dtype: string

- name: idx

dtype: int64

splits:

- name: train

num_bytes: 3574265

num_examples: 27124

download_size: 1511963

dataset_size: 3574265

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

TrainingDataPro/facial-emotion-recognition-dataset | ---

language:

- en

license: cc-by-nc-nd-4.0

task_categories:

- image-classification

- image-to-image

tags:

- code

dataset_info:

features:

- name: set_id

dtype: int32

- name: neutral

dtype: image

- name: anger

dtype: image

- name: contempt

dtype: image

- name: disgust

dtype: image

- name: fear

dtype: image

- name: happy

dtype: image

- name: sad

dtype: image

- name: surprised

dtype: image

- name: age

dtype: int8

- name: gender

dtype: string

- name: country

dtype: string

splits:

- name: train

num_bytes: 22981

num_examples: 19

download_size: 453786356

dataset_size: 22981

---

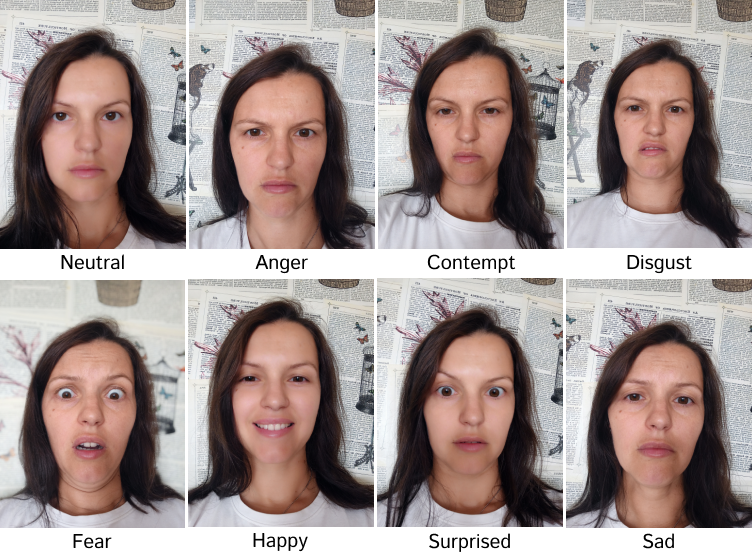

# Facial Emotion Recognition Dataset

The dataset consists of images capturing people displaying **7 distinct emotions** (*anger, contempt, disgust, fear, happiness, sadness and surprise*). Each image in the dataset represents one of these specific emotions, enabling researchers and machine learning practitioners to study and develop models for emotion recognition and analysis.

The images encompass a diverse range of individuals, including different *genders, ethnicities, and age groups*. The dataset aims to provide a comprehensive representation of human emotions, allowing for a wide range of use cases.

### The dataset's possible applications:

- automatic emotion detection

- mental health analysis

- artificial intelligence (AI) and computer vision

- entertainment industries

- advertising and market research

- security and surveillance

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=facial-emotion-recognition-dataset) to discuss your requirements, learn about the price and buy the dataset.

# Content

- **images**: includes folders corresponding to people and containing images with 8 different impersonated emotions, each file is named according to the expressed emotion

- **.csv** file: contains information about people in the dataset

### Emotions in the dataset:

- anger

- contempt

- disgust

- fear

- happy

- sad

- surprised

### File with the extension .csv

includes the following information for each set of media files:

- **set_id**: id of the set of images,

- **gender**: gender of the person,

- **age**: age of the person,

- **country**: country of the person

# Images for facial emotion recognition might be collected in accordance with your requirements.

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=facial-emotion-recognition-dataset) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** |

CLEAR-Global/Gamayun-kits | ---

task_categories:

- translation

language:

- ha

- kr

- en

- fr

- sw

- swc

- ln

- nnd

- rhg

- ti

size_categories:

- 10K<n<100K

pretty_name: Gamayun kits

---

# Gamayun Language Data Kits

There are more than 7,000 languages in the world, yet only a small proportion of them have language data presence in public. CLEAR Global's Gamayun kits are a starting point for developing audio and text corpora for languages without pre-existing data resources. We create parallel data for a language by translating a pre-compiled set of general-domain sentences in English. If audio data is needed, these translated sentences are recorded by native speakers.

To scale corpus production, we offer four dataset versions:

- Mini-kit of 5,000 sentences (`kit5k`)

- Small-kit of 10,000 sentences (`kit10k`)

- Medium-kit of 15,000 sentences (`kit15k`)

- Large-kit of 30,000 sentences (`kit30k`)

For audio corpora developed using these kits refer to the official initiative website [Gamayun portal](https://gamayun.translatorswb.org/data/).

## Source sentences (`core`)

Sentences in `core` directory are in English, French and Spanish and are sourced from the [Tatoeba repository](https://tatoeba.org). Sentence selection algorithm ensures representation of most frequently used words in the language. For more information, please refer to [corepus-gen repository](https://github.com/translatorswb/corepus-gen). `etc` directories contain sentence id's as used in the Tatoeba corpus.

## Parallel corpora (`parallel`)

Translations of the kits are performed by professionals and volunteers of TWB's translator community. A complete list of translated sentences are:

| Language | Pair | # Segments | Source |

|------|--------|--------|--------|

| Hausa | English | 15,000 | Tatoeba |

| Kanuri | English | 5,000 | Tatoeba |

| Nande | French | 15,000 | Tatoeba |

| Rohingya | English | 5,000 | Tatoeba |

| Swahili (Coastal) | English | 5,000 | Tatoeba |

| Swahili (Congolese) | French | 25,302 | Tatoeba |

## Reference

More on [Gamayun, language equity initiative](https://translatorswithoutborders.org/gamayun/)

Gamayun kits are officially published in the [Gamayun portal](https://gamayun.translatorswb.org/data/). Conditions for use are described in `LICENSE.txt`.

If you need to cite Gamayun kits:

```

Alp Öktem, Muhannad Albayk Jaam, Eric DeLuca, Grace Tang

Gamayun – Language Technology for Humanitarian Response

In: 2020 IEEE Global Humanitarian Technology Conference (GHTC)

2020 October 29 - November 1; Virtual.

Link: https://ieeexplore.ieee.org/document/9342939

``` |

Mitsuki-Sakamoto/alpaca_farm-deberta-re-preference-64-nsample-16_filter_gold_thr_0.0_self_70m | ---

dataset_info:

config_name: alpaca_instructions-pythia_70m_alpaca_farm_instructions_sft_constant_pa_seed_1

features:

- name: instruction

dtype: string

- name: input

dtype: string

- name: output

dtype: string

- name: preference

dtype: int64

- name: output_1

dtype: string

- name: output_2

dtype: string

- name: reward_model_prompt_format

dtype: string

- name: gen_prompt_format

dtype: string

- name: gen_kwargs

struct:

- name: do_sample

dtype: bool

- name: max_new_tokens

dtype: int64

- name: pad_token_id

dtype: int64

- name: top_k

dtype: int64

- name: top_p

dtype: float64

- name: reward_1

dtype: float64

- name: reward_2

dtype: float64

- name: n_samples

dtype: int64

- name: reject_select

dtype: string

- name: index

dtype: int64

- name: prompt

dtype: string

- name: chosen

dtype: string

- name: rejected

dtype: string

- name: filtered_epoch

dtype: int64

- name: gen_reward

dtype: float64

- name: gen_response

dtype: string

splits:

- name: epoch_0

num_bytes: 43497434

num_examples: 18928

- name: epoch_1

num_bytes: 44355307

num_examples: 18928

- name: epoch_2

num_bytes: 44429044

num_examples: 18928

- name: epoch_3

num_bytes: 44454073

num_examples: 18928

- name: epoch_4

num_bytes: 44459094

num_examples: 18928

- name: epoch_5

num_bytes: 44477699

num_examples: 18928

- name: epoch_6

num_bytes: 44479423

num_examples: 18928

- name: epoch_7

num_bytes: 44487040

num_examples: 18928

- name: epoch_8

num_bytes: 44493050

num_examples: 18928

- name: epoch_9

num_bytes: 44497058

num_examples: 18928

download_size: 683566317

dataset_size: 443629222

configs:

- config_name: alpaca_instructions-pythia_70m_alpaca_farm_instructions_sft_constant_pa_seed_1

data_files:

- split: epoch_0

path: alpaca_instructions-pythia_70m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_0-*

- split: epoch_1

path: alpaca_instructions-pythia_70m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_1-*

- split: epoch_2

path: alpaca_instructions-pythia_70m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_2-*

- split: epoch_3

path: alpaca_instructions-pythia_70m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_3-*

- split: epoch_4

path: alpaca_instructions-pythia_70m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_4-*

- split: epoch_5

path: alpaca_instructions-pythia_70m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_5-*

- split: epoch_6

path: alpaca_instructions-pythia_70m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_6-*

- split: epoch_7

path: alpaca_instructions-pythia_70m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_7-*

- split: epoch_8

path: alpaca_instructions-pythia_70m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_8-*

- split: epoch_9

path: alpaca_instructions-pythia_70m_alpaca_farm_instructions_sft_constant_pa_seed_1/epoch_9-*

---

|

open-llm-leaderboard/details_bhenrym14__airoboros-33b-gpt4-1.4.1-PI-8192-fp16 | ---

pretty_name: Evaluation run of bhenrym14/airoboros-33b-gpt4-1.4.1-PI-8192-fp16

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [bhenrym14/airoboros-33b-gpt4-1.4.1-PI-8192-fp16](https://huggingface.co/bhenrym14/airoboros-33b-gpt4-1.4.1-PI-8192-fp16)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 3 configuration, each one coresponding to one of the\

\ evaluated task.\n\nThe dataset has been created from 1 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run.The \"train\" split is always pointing to the latest results.\n\

\nAn additional configuration \"results\" store all the aggregated results of the\

\ run (and is used to compute and display the agregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_bhenrym14__airoboros-33b-gpt4-1.4.1-PI-8192-fp16\"\

,\n\t\"harness_winogrande_5\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\n\

These are the [latest results from run 2023-10-15T19:12:34.050776](https://huggingface.co/datasets/open-llm-leaderboard/details_bhenrym14__airoboros-33b-gpt4-1.4.1-PI-8192-fp16/blob/main/results_2023-10-15T19-12-34.050776.json)(note\

\ that their might be results for other tasks in the repos if successive evals didn't\

\ cover the same tasks. You find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"em\": 0.03544463087248322,\n\

\ \"em_stderr\": 0.0018935573437954016,\n \"f1\": 0.08440436241610706,\n\

\ \"f1_stderr\": 0.002470333585036359,\n \"acc\": 0.2841357537490134,\n\

\ \"acc_stderr\": 0.0069604360550053574\n },\n \"harness|drop|3\":\

\ {\n \"em\": 0.03544463087248322,\n \"em_stderr\": 0.0018935573437954016,\n\

\ \"f1\": 0.08440436241610706,\n \"f1_stderr\": 0.002470333585036359\n\

\ },\n \"harness|gsm8k|5\": {\n \"acc\": 0.0,\n \"acc_stderr\"\

: 0.0\n },\n \"harness|winogrande|5\": {\n \"acc\": 0.5682715074980268,\n\

\ \"acc_stderr\": 0.013920872110010715\n }\n}\n```"

repo_url: https://huggingface.co/bhenrym14/airoboros-33b-gpt4-1.4.1-PI-8192-fp16

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_drop_3

data_files:

- split: 2023_10_15T19_12_34.050776

path:

- '**/details_harness|drop|3_2023-10-15T19-12-34.050776.parquet'

- split: latest

path:

- '**/details_harness|drop|3_2023-10-15T19-12-34.050776.parquet'

- config_name: harness_gsm8k_5

data_files:

- split: 2023_10_15T19_12_34.050776

path:

- '**/details_harness|gsm8k|5_2023-10-15T19-12-34.050776.parquet'

- split: latest

path:

- '**/details_harness|gsm8k|5_2023-10-15T19-12-34.050776.parquet'

- config_name: harness_winogrande_5

data_files:

- split: 2023_10_15T19_12_34.050776

path:

- '**/details_harness|winogrande|5_2023-10-15T19-12-34.050776.parquet'

- split: latest

path:

- '**/details_harness|winogrande|5_2023-10-15T19-12-34.050776.parquet'

- config_name: results

data_files:

- split: 2023_10_15T19_12_34.050776

path:

- results_2023-10-15T19-12-34.050776.parquet

- split: latest

path:

- results_2023-10-15T19-12-34.050776.parquet

---

# Dataset Card for Evaluation run of bhenrym14/airoboros-33b-gpt4-1.4.1-PI-8192-fp16

## Dataset Description

- **Homepage:**

- **Repository:** https://huggingface.co/bhenrym14/airoboros-33b-gpt4-1.4.1-PI-8192-fp16

- **Paper:**

- **Leaderboard:** https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

- **Point of Contact:** clementine@hf.co

### Dataset Summary

Dataset automatically created during the evaluation run of model [bhenrym14/airoboros-33b-gpt4-1.4.1-PI-8192-fp16](https://huggingface.co/bhenrym14/airoboros-33b-gpt4-1.4.1-PI-8192-fp16) on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).

The dataset is composed of 3 configuration, each one coresponding to one of the evaluated task.

The dataset has been created from 1 run(s). Each run can be found as a specific split in each configuration, the split being named using the timestamp of the run.The "train" split is always pointing to the latest results.

An additional configuration "results" store all the aggregated results of the run (and is used to compute and display the agregated metrics on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).

To load the details from a run, you can for instance do the following:

```python

from datasets import load_dataset

data = load_dataset("open-llm-leaderboard/details_bhenrym14__airoboros-33b-gpt4-1.4.1-PI-8192-fp16",

"harness_winogrande_5",

split="train")

```

## Latest results

These are the [latest results from run 2023-10-15T19:12:34.050776](https://huggingface.co/datasets/open-llm-leaderboard/details_bhenrym14__airoboros-33b-gpt4-1.4.1-PI-8192-fp16/blob/main/results_2023-10-15T19-12-34.050776.json)(note that their might be results for other tasks in the repos if successive evals didn't cover the same tasks. You find each in the results and the "latest" split for each eval):

```python

{

"all": {

"em": 0.03544463087248322,

"em_stderr": 0.0018935573437954016,

"f1": 0.08440436241610706,

"f1_stderr": 0.002470333585036359,

"acc": 0.2841357537490134,

"acc_stderr": 0.0069604360550053574

},

"harness|drop|3": {

"em": 0.03544463087248322,

"em_stderr": 0.0018935573437954016,

"f1": 0.08440436241610706,

"f1_stderr": 0.002470333585036359

},

"harness|gsm8k|5": {

"acc": 0.0,

"acc_stderr": 0.0

},

"harness|winogrande|5": {

"acc": 0.5682715074980268,

"acc_stderr": 0.013920872110010715

}

}

```

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

hafsteinn/ice_and_fire | ---

license: cc-by-4.0

task_categories:

- text-classification

language:

- is

---

# Ice and Fire Comment Dataset

## Description

The Ice and Fire Dataset is a collection of comments from the Icelandic blog platform, blog.is, that have been annotated in several tasks.

## Dataset Structure

### Data Fields

- `annotator_id`: An integer identifier for the annotator who labeled the comment.

- `label`: The label assigned to the comment.

- `task_type`: The type of task the comment was annotated for (see paper).

- `show_blog_post`: A boolean indicating whether the annotator viewed the blog post in the annotation process.

- `show_preceding_comments`: A boolean indicating whether the annotator viewed preceding comments in the annotation process.

- `blog_title`: The title of the blog post associated with the comment.

- `blog_text`: The text of the blog post associated with the comment.

- `comment_body`: The body of the comment.

- `previous_comments`: A string containing all previous comments concatenated together, separated by " || ".

### Data Splits

This dataset is provided as a single CSV file, `ice_and_fire_huggingface_dataset.csv`, without predefined training, validation, or test splits due to the size and label distribution. Users are encouraged to create their own splits as needed for their specific tasks or to use cross-validation for benchmarking.

### Citation Information

If you use the Ice and Fire Dataset in your research, please cite it as follows:

TODO |

autoevaluate/autoeval-eval-cnn_dailymail-3.0.0-c51db7-51930145327 | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- cnn_dailymail

eval_info:

task: summarization

model: Alred/t5-small-finetuned-summarization-cnn

metrics: []

dataset_name: cnn_dailymail

dataset_config: 3.0.0

dataset_split: test

col_mapping:

text: article

target: highlights

---

# Dataset Card for AutoTrain Evaluator

This repository contains model predictions generated by [AutoTrain](https://huggingface.co/autotrain) for the following task and dataset:

* Task: Summarization

* Model: Alred/t5-small-finetuned-summarization-cnn

* Dataset: cnn_dailymail

* Config: 3.0.0

* Split: test

To run new evaluation jobs, visit Hugging Face's [automatic model evaluator](https://huggingface.co/spaces/autoevaluate/model-evaluator).

## Contributions

Thanks to [@MaryYarova](https://huggingface.co/MaryYarova) for evaluating this model. |

ostapeno/tulu_v2_cot_subset | ---

dataset_info:

features:

- name: dataset

dtype: string

- name: id

dtype: string

- name: messages

list:

- name: role

dtype: string

- name: content

dtype: string

splits:

- name: train

num_bytes: 57705790

num_examples: 50000

download_size: 25971959

dataset_size: 57705790

---

# Dataset Card for "tulu_v2_cot_subset"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

ctang/formatted_util_deontology_for_llama2_v2 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 26907365

num_examples: 30471

download_size: 3740261

dataset_size: 26907365

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

sbunlp/hmblogs-v3 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 45957987986

num_examples: 16896817

download_size: 21312867175

dataset_size: 45957987986

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

task_categories:

- text-generation

language:

- fa

pretty_name: 'HmBlogs: A big general Persian corpus'

size_categories:

- 10M<n<100M

---

# HmBlogs: A big general Persian corpus

HmBlogs is a general Persian corpus collected from nearly 20 million blog posts over a period of 15 years containig 6.8 billion tokens.

This version is the **preprocessed version** of the dataset prepared by the original authors and converted to proper format to integrate with 🤗Datasets.

In order to access the raw versions visit the official link at http://nlplab.sbu.ac.ir/hmBlogs-v3 .

**Paper:** https://arxiv.org/abs/2111.02362 <br>

**Authors:** Hamzeh Motahari Khansari, Mehrnoush Shamsfard <br>

**Original Link:** http://nlplab.sbu.ac.ir/hmBlogs-v3/<br>

## Usage

This dataset can be used for masked/causal language modeling. You can easily load this dataset like below:

```python

from datasets import load_dataset

# Load the whole dataset

dataset = load_dataset("sbunlp/hmblogs-v3", split="train")

# Load a portion by %

dataset = load_dataset("sbunlp/hmblogs-v3", split="train[:50%]")

# Load a custom shard

dataset = load_dataset("sbunlp/hmblogs-v3", data_files=["data/train-00000-of-00046.parquet", "data/train-00001-of-00046.parquet"])

```

# Citation

```cite

@article{DBLP:journals/corr/abs-2111-02362,

author = {Hamzeh Motahari Khansari and

Mehrnoush Shamsfard},

title = {HmBlogs: {A} big general Persian corpus},

journal = {CoRR},

volume = {abs/2111.02362},

year = {2021},

url = {https://arxiv.org/abs/2111.02362},

eprinttype = {arXiv},

eprint = {2111.02362},

timestamp = {Fri, 05 Nov 2021 15:25:54 +0100},

biburl = {https://dblp.org/rec/journals/corr/abs-2111-02362.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

```

|

CyberHarem/hougetsu_shimamura_adachitoshimamura | ---

license: mit

task_categories:

- text-to-image

tags:

- art

- not-for-all-audiences

size_categories:

- n<1K

---

# Dataset of Hougetsu Shimamura

This is the dataset of Hougetsu Shimamura, containing 550 images and their tags.

Images are crawled from many sites (e.g. danbooru, pixiv, zerochan ...), the auto-crawling system is powered by [DeepGHS Team](https://github.com/deepghs)([huggingface organization](https://huggingface.co/deepghs)).

| Name | Images | Download | Description |

|:----------------|---------:|:----------------------------------------|:-----------------------------------------------------------------------------------------|

| raw | 550 | [Download](dataset-raw.zip) | Raw data with meta information. |

| raw-stage3 | 1263 | [Download](dataset-raw-stage3.zip) | 3-stage cropped raw data with meta information. |

| raw-stage3-eyes | 1370 | [Download](dataset-raw-stage3-eyes.zip) | 3-stage cropped (with eye-focus) raw data with meta information. |

| 384x512 | 550 | [Download](dataset-384x512.zip) | 384x512 aligned dataset. |

| 512x704 | 550 | [Download](dataset-512x704.zip) | 512x704 aligned dataset. |

| 640x880 | 550 | [Download](dataset-640x880.zip) | 640x880 aligned dataset. |

| stage3-640 | 1263 | [Download](dataset-stage3-640.zip) | 3-stage cropped dataset with the shorter side not exceeding 640 pixels. |

| stage3-800 | 1263 | [Download](dataset-stage3-800.zip) | 3-stage cropped dataset with the shorter side not exceeding 800 pixels. |

| stage3-p512-640 | 1087 | [Download](dataset-stage3-p512-640.zip) | 3-stage cropped dataset with the area not less than 512x512 pixels. |

| stage3-eyes-640 | 1370 | [Download](dataset-stage3-eyes-640.zip) | 3-stage cropped (with eye-focus) dataset with the shorter side not exceeding 640 pixels. |

| stage3-eyes-800 | 1370 | [Download](dataset-stage3-eyes-800.zip) | 3-stage cropped (with eye-focus) dataset with the shorter side not exceeding 800 pixels. |

|

steven1116/ninespecies_exclude_honeybee | ---

license: apache-2.0

---

|

tasksource/med | ---

dataset_info:

features:

- name: sentence1

dtype: string

- name: sentence2

dtype: string

- name: gold_label

dtype: string

- name: genre

dtype: string

splits:

- name: train

num_bytes: 532705

num_examples: 4068

download_size: 146614

dataset_size: 532705

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

license: cc-by-sa-4.0

task_categories:

- text-classification

language:

- en

---

# Dataset Card for "med"

Crowsourced (=original part) of the MED dataset for Monotonicity Entailment

https://github.com/verypluming/MED

```

@inproceedings{yanaka-etal-2019-neural,

title = "Can Neural Networks Understand Monotonicity Reasoning?",

author = "Yanaka, Hitomi and

Mineshima, Koji and

Bekki, Daisuke and

Inui, Kentaro and

Sekine, Satoshi and

Abzianidze, Lasha and

Bos, Johan",

booktitle = "Proceedings of the 2019 ACL Workshop BlackboxNLP: Analyzing and Interpreting Neural Networks for NLP",

year = "2019",

pages = "31--40",

}

``` |

qbaro/speech2text | ---

dataset_info:

features:

- name: sentence

dtype: string

- name: audio

struct:

- name: array

sequence: float32

- name: path

dtype: string

- name: sampling_rate

dtype: int64

splits:

- name: train

num_bytes: 1357744185

num_examples: 1057

- name: test

num_bytes: 589556544

num_examples: 464

download_size: 1949997840

dataset_size: 1947300729

---

# Dataset Card for "speech2text"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

sfsdfsafsddsfsdafsa/MovieLLM-raw-data | ---

license: mit

---

|

arthurmluz/cstnews_data-xlsum_gptextsum2_results | ---

dataset_info:

features:

- name: id

dtype: string

- name: text

dtype: string

- name: summary

dtype: string

- name: gen_summary

dtype: string

- name: rouge

struct:

- name: rouge1

dtype: float64

- name: rouge2

dtype: float64

- name: rougeL

dtype: float64

- name: rougeLsum

dtype: float64

- name: bert

struct:

- name: f1

sequence: float64

- name: hashcode

dtype: string

- name: precision

sequence: float64

- name: recall

sequence: float64

- name: moverScore

dtype: float64

splits:

- name: validation

num_bytes: 59919

num_examples: 16

download_size: 59830

dataset_size: 59919

configs:

- config_name: default

data_files:

- split: validation

path: data/validation-*

---

# Dataset Card for "cstnews_data-xlsum_gptextsum2_results"

rouge= {'rouge1': 0.5251493615673016, 'rouge2': 0.2936121215948489, 'rougeL': 0.35087788149320814, 'rougeLsum': 0.35087788149320814}

bert= {'precision': 0.7674689218401909, 'recall': 0.8024204447865486, 'f1': 0.7838323190808296}

mover = 0.6346333578747139 |

lazybear17/ShapeColor_33_500 | ---

size_categories:

- 1K<n<10K

--- |

liuyanchen1015/MULTI_VALUE_rte_say_complementizer | ---

dataset_info:

features:

- name: sentence1

dtype: string

- name: sentence2

dtype: string

- name: label

dtype: int64

- name: idx

dtype: int64

- name: value_score

dtype: int64

splits:

- name: test

num_bytes: 298321

num_examples: 627

- name: train

num_bytes: 286475

num_examples: 601

download_size: 381820

dataset_size: 584796

---

# Dataset Card for "MULTI_VALUE_rte_say_complementizer"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Seenka/direvtv-test | ---

dataset_info:

features:

- name: image

dtype: image

- name: timestamp

dtype: int64

- name: video_storage_path

dtype: string

splits:

- name: train

num_bytes: 14771526.0

num_examples: 50

download_size: 9696484

dataset_size: 14771526.0

---

# Dataset Card for "direvtv-test"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

CyberHarem/cirno_touhou | ---

license: mit

task_categories:

- text-to-image

tags:

- art

- not-for-all-audiences

size_categories:

- n<1K

---

# Dataset of cirno/ちるの/치르노 (Touhou)

This is the dataset of cirno/ちるの/치르노 (Touhou), containing 500 images and their tags.

The core tags of this character are `blue_hair, short_hair, bow, hair_bow, wings, blue_eyes, ice_wings, blue_bow, ribbon, bangs, hair_between_eyes, red_ribbon, neck_ribbon`, which are pruned in this dataset.

Images are crawled from many sites (e.g. danbooru, pixiv, zerochan ...), the auto-crawling system is powered by [DeepGHS Team](https://github.com/deepghs)([huggingface organization](https://huggingface.co/deepghs)).

## List of Packages

| Name | Images | Size | Download | Type | Description |

|:-----------------|---------:|:-----------|:--------------------------------------------------------------------------------------------------------------|:-----------|:---------------------------------------------------------------------|

| raw | 500 | 740.99 MiB | [Download](https://huggingface.co/datasets/CyberHarem/cirno_touhou/resolve/main/dataset-raw.zip) | Waifuc-Raw | Raw data with meta information (min edge aligned to 1400 if larger). |

| 800 | 500 | 397.23 MiB | [Download](https://huggingface.co/datasets/CyberHarem/cirno_touhou/resolve/main/dataset-800.zip) | IMG+TXT | dataset with the shorter side not exceeding 800 pixels. |

| stage3-p480-800 | 1223 | 859.15 MiB | [Download](https://huggingface.co/datasets/CyberHarem/cirno_touhou/resolve/main/dataset-stage3-p480-800.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

| 1200 | 500 | 642.16 MiB | [Download](https://huggingface.co/datasets/CyberHarem/cirno_touhou/resolve/main/dataset-1200.zip) | IMG+TXT | dataset with the shorter side not exceeding 1200 pixels. |

| stage3-p480-1200 | 1223 | 1.22 GiB | [Download](https://huggingface.co/datasets/CyberHarem/cirno_touhou/resolve/main/dataset-stage3-p480-1200.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

### Load Raw Dataset with Waifuc

We provide raw dataset (including tagged images) for [waifuc](https://deepghs.github.io/waifuc/main/tutorials/installation/index.html) loading. If you need this, just run the following code

```python

import os

import zipfile

from huggingface_hub import hf_hub_download

from waifuc.source import LocalSource

# download raw archive file

zip_file = hf_hub_download(

repo_id='CyberHarem/cirno_touhou',

repo_type='dataset',

filename='dataset-raw.zip',

)

# extract files to your directory

dataset_dir = 'dataset_dir'

os.makedirs(dataset_dir, exist_ok=True)

with zipfile.ZipFile(zip_file, 'r') as zf:

zf.extractall(dataset_dir)

# load the dataset with waifuc

source = LocalSource(dataset_dir)

for item in source:

print(item.image, item.meta['filename'], item.meta['tags'])

```

## List of Clusters

List of tag clustering result, maybe some outfits can be mined here.

### Raw Text Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | Tags |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| 0 | 5 |  |  |  |  |  | 1girl, :d, blue_dress, blush, cowboy_shot, ice, looking_at_viewer, open_mouth, puffy_short_sleeves, simple_background, solo, white_background, white_shirt, breasts, collared_shirt, standing |

| 1 | 6 |  |  |  |  |  | 1girl, blue_dress, closed_mouth, collared_shirt, ice, looking_at_viewer, puffy_short_sleeves, simple_background, solo, white_background, white_shirt, blush, pinafore_dress, cowboy_shot, smile |

| 2 | 8 |  |  |  |  |  | 1girl, blue_dress, ice, looking_at_viewer, puffy_short_sleeves, solo, white_background, simple_background, shirt, upper_body, smile |

| 3 | 7 |  |  |  |  |  | 1girl, blue_dress, full_body, ice, looking_at_viewer, open_mouth, solo, white_socks, puffy_short_sleeves, white_shirt, :d, blush, black_footwear, mary_janes, pinafore_dress |

### Table Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | 1girl | :d | blue_dress | blush | cowboy_shot | ice | looking_at_viewer | open_mouth | puffy_short_sleeves | simple_background | solo | white_background | white_shirt | breasts | collared_shirt | standing | closed_mouth | pinafore_dress | smile | shirt | upper_body | full_body | white_socks | black_footwear | mary_janes |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------|:-----|:-------------|:--------|:--------------|:------|:--------------------|:-------------|:----------------------|:--------------------|:-------|:-------------------|:--------------|:----------|:-----------------|:-----------|:---------------|:-----------------|:--------|:--------|:-------------|:------------|:--------------|:-----------------|:-------------|

| 0 | 5 |  |  |  |  |  | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | | | | | | | | | |

| 1 | 6 |  |  |  |  |  | X | | X | X | X | X | X | | X | X | X | X | X | | X | | X | X | X | | | | | | |

| 2 | 8 |  |  |  |  |  | X | | X | | | X | X | | X | X | X | X | | | | | | | X | X | X | | | | |

| 3 | 7 |  |  |  |  |  | X | X | X | X | | X | X | X | X | | X | | X | | | | | X | | | | X | X | X | X |

|

HustonMatthew/LenghtPrediction | ---

license: cc

---

|

Hemanth-thunder/ocr-data-tnpsc | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 12574068

num_examples: 9217

download_size: 4400902

dataset_size: 12574068

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

pretty_name: Public Tamil Nadu old School Books and Tnpsc Content (English)

license: apache-2.0

task_categories:

- text-generation

- text2text-generation

language:

- ta

tags:

- ocr

- tnpsc

- tamil

- chemistry

- biology

- finance

- medical

---

# Tamil Public Domain Books (Tamil)

The dataset comprises over 30 school textbooks and certain TNPSC (Tamil Nadu Public Service Commission) materials in Tamil medium, presumed to be in the public domain. |

ekolasky/RelevantTextForCustomLEDForQA650 | ---

dataset_info:

features:

- name: input_ids

sequence: int32

- name: start_positions

sequence: int64

- name: end_positions

sequence: int64

- name: global_attention_mask

sequence: int64

- name: attention_mask

sequence: int8

splits:

- name: train

num_bytes: 36495790

num_examples: 586

- name: validation

num_bytes: 4341131

num_examples: 65

download_size: 4313316

dataset_size: 40836921

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

---

|

mohammedriza-rahman/conll2003 | ---

annotations_creators:

- crowdsourced

language_creators:

- found

language:

- en

license:

- other

multilinguality:

- monolingual

size_categories:

- 10K<n<100K

source_datasets:

- extended|other-reuters-corpus

task_categories:

- token-classification

task_ids:

- named-entity-recognition

- part-of-speech

paperswithcode_id: conll-2003

pretty_name: CoNLL-2003

dataset_info:

features:

- name: id

dtype: string

- name: tokens

sequence: string

- name: pos_tags

sequence:

class_label:

names:

'0': '"'

'1': ''''''

'2': '#'

'3': $

'4': (

'5': )

'6': ','

'7': .

'8': ':'

'9': '``'

'10': CC

'11': CD

'12': DT

'13': EX

'14': FW

'15': IN

'16': JJ

'17': JJR

'18': JJS

'19': LS

'20': MD

'21': NN

'22': NNP

'23': NNPS

'24': NNS

'25': NN|SYM

'26': PDT

'27': POS

'28': PRP

'29': PRP$

'30': RB

'31': RBR

'32': RBS

'33': RP

'34': SYM

'35': TO

'36': UH

'37': VB

'38': VBD

'39': VBG

'40': VBN

'41': VBP

'42': VBZ

'43': WDT

'44': WP

'45': WP$

'46': WRB

- name: chunk_tags

sequence:

class_label:

names:

'0': O

'1': B-ADJP

'2': I-ADJP

'3': B-ADVP

'4': I-ADVP

'5': B-CONJP

'6': I-CONJP

'7': B-INTJ

'8': I-INTJ

'9': B-LST

'10': I-LST

'11': B-NP

'12': I-NP

'13': B-PP

'14': I-PP

'15': B-PRT

'16': I-PRT

'17': B-SBAR

'18': I-SBAR

'19': B-UCP

'20': I-UCP

'21': B-VP

'22': I-VP

- name: ner_tags

sequence:

class_label:

names:

'0': O

'1': B-PER

'2': I-PER

'3': B-ORG

'4': I-ORG

'5': B-LOC

'6': I-LOC

'7': B-MISC

'8': I-MISC

config_name: conll2003

splits:

- name: train

num_bytes: 6931345

num_examples: 14041

- name: validation

num_bytes: 1739223

num_examples: 3250

- name: test

num_bytes: 1582054

num_examples: 3453

download_size: 982975

dataset_size: 10252622

train-eval-index:

- config: conll2003

task: token-classification

task_id: entity_extraction

splits:

train_split: train

eval_split: test

col_mapping:

tokens: tokens

ner_tags: tags

metrics:

- type: seqeval

name: seqeval

---

# Dataset Card for "conll2003"

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [https://www.aclweb.org/anthology/W03-0419/](https://www.aclweb.org/anthology/W03-0419/)

- **Repository:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Paper:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Point of Contact:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Size of downloaded dataset files:** 4.85 MB

- **Size of the generated dataset:** 10.26 MB

- **Total amount of disk used:** 15.11 MB

### Dataset Summary

The shared task of CoNLL-2003 concerns language-independent named entity recognition. We will concentrate on

four types of named entities: persons, locations, organizations and names of miscellaneous entities that do

not belong to the previous three groups.

The CoNLL-2003 shared task data files contain four columns separated by a single space. Each word has been put on

a separate line and there is an empty line after each sentence. The first item on each line is a word, the second

a part-of-speech (POS) tag, the third a syntactic chunk tag and the fourth the named entity tag. The chunk tags

and the named entity tags have the format I-TYPE which means that the word is inside a phrase of type TYPE. Only

if two phrases of the same type immediately follow each other, the first word of the second phrase will have tag

B-TYPE to show that it starts a new phrase. A word with tag O is not part of a phrase. Note the dataset uses IOB2

tagging scheme, whereas the original dataset uses IOB1.

For more details see https://www.clips.uantwerpen.be/conll2003/ner/ and https://www.aclweb.org/anthology/W03-0419

### Supported Tasks and Leaderboards

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Languages

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Dataset Structure

### Data Instances

#### conll2003

- **Size of downloaded dataset files:** 4.85 MB

- **Size of the generated dataset:** 10.26 MB

- **Total amount of disk used:** 15.11 MB

An example of 'train' looks as follows.

```

{

"chunk_tags": [11, 12, 12, 21, 13, 11, 11, 21, 13, 11, 12, 13, 11, 21, 22, 11, 12, 17, 11, 21, 17, 11, 12, 12, 21, 22, 22, 13, 11, 0],

"id": "0",

"ner_tags": [0, 3, 4, 0, 0, 0, 0, 0, 0, 7, 0, 0, 0, 0, 0, 7, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0],

"pos_tags": [12, 22, 22, 38, 15, 22, 28, 38, 15, 16, 21, 35, 24, 35, 37, 16, 21, 15, 24, 41, 15, 16, 21, 21, 20, 37, 40, 35, 21, 7],

"tokens": ["The", "European", "Commission", "said", "on", "Thursday", "it", "disagreed", "with", "German", "advice", "to", "consumers", "to", "shun", "British", "lamb", "until", "scientists", "determine", "whether", "mad", "cow", "disease", "can", "be", "transmitted", "to", "sheep", "."]

}

```

The original data files have `-DOCSTART-` lines used to separate documents, but these lines are removed here.

Indeed `-DOCSTART-` is a special line that acts as a boundary between two different documents, and it is filtered out in this implementation.

### Data Fields

The data fields are the same among all splits.

#### conll2003

- `id`: a `string` feature.

- `tokens`: a `list` of `string` features.

- `pos_tags`: a `list` of classification labels (`int`). Full tagset with indices:

```python

{'"': 0, "''": 1, '#': 2, '$': 3, '(': 4, ')': 5, ',': 6, '.': 7, ':': 8, '``': 9, 'CC': 10, 'CD': 11, 'DT': 12,

'EX': 13, 'FW': 14, 'IN': 15, 'JJ': 16, 'JJR': 17, 'JJS': 18, 'LS': 19, 'MD': 20, 'NN': 21, 'NNP': 22, 'NNPS': 23,

'NNS': 24, 'NN|SYM': 25, 'PDT': 26, 'POS': 27, 'PRP': 28, 'PRP$': 29, 'RB': 30, 'RBR': 31, 'RBS': 32, 'RP': 33,

'SYM': 34, 'TO': 35, 'UH': 36, 'VB': 37, 'VBD': 38, 'VBG': 39, 'VBN': 40, 'VBP': 41, 'VBZ': 42, 'WDT': 43,

'WP': 44, 'WP$': 45, 'WRB': 46}

```

- `chunk_tags`: a `list` of classification labels (`int`). Full tagset with indices:

```python

{'O': 0, 'B-ADJP': 1, 'I-ADJP': 2, 'B-ADVP': 3, 'I-ADVP': 4, 'B-CONJP': 5, 'I-CONJP': 6, 'B-INTJ': 7, 'I-INTJ': 8,

'B-LST': 9, 'I-LST': 10, 'B-NP': 11, 'I-NP': 12, 'B-PP': 13, 'I-PP': 14, 'B-PRT': 15, 'I-PRT': 16, 'B-SBAR': 17,

'I-SBAR': 18, 'B-UCP': 19, 'I-UCP': 20, 'B-VP': 21, 'I-VP': 22}

```

- `ner_tags`: a `list` of classification labels (`int`). Full tagset with indices:

```python

{'O': 0, 'B-PER': 1, 'I-PER': 2, 'B-ORG': 3, 'I-ORG': 4, 'B-LOC': 5, 'I-LOC': 6, 'B-MISC': 7, 'I-MISC': 8}

```

### Data Splits

| name |train|validation|test|

|---------|----:|---------:|---:|

|conll2003|14041| 3250|3453|

## Dataset Creation

### Curation Rationale

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

#### Who are the source language producers?

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Annotations

#### Annotation process

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

#### Who are the annotators?

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Personal and Sensitive Information

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Discussion of Biases

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Other Known Limitations

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Additional Information

### Dataset Curators

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Licensing Information

From the [CoNLL2003 shared task](https://www.clips.uantwerpen.be/conll2003/ner/) page:

> The English data is a collection of news wire articles from the Reuters Corpus. The annotation has been done by people of the University of Antwerp. Because of copyright reasons we only make available the annotations. In order to build the complete data sets you will need access to the Reuters Corpus. It can be obtained for research purposes without any charge from NIST.

The copyrights are defined below, from the [Reuters Corpus page](https://trec.nist.gov/data/reuters/reuters.html):

> The stories in the Reuters Corpus are under the copyright of Reuters Ltd and/or Thomson Reuters, and their use is governed by the following agreements:

>

> [Organizational agreement](https://trec.nist.gov/data/reuters/org_appl_reuters_v4.html)

>

> This agreement must be signed by the person responsible for the data at your organization, and sent to NIST.

>

> [Individual agreement](https://trec.nist.gov/data/reuters/ind_appl_reuters_v4.html)

>

> This agreement must be signed by all researchers using the Reuters Corpus at your organization, and kept on file at your organization.

### Citation Information

```

@inproceedings{tjong-kim-sang-de-meulder-2003-introduction,

title = "Introduction to the {C}o{NLL}-2003 Shared Task: Language-Independent Named Entity Recognition",

author = "Tjong Kim Sang, Erik F. and

De Meulder, Fien",

booktitle = "Proceedings of the Seventh Conference on Natural Language Learning at {HLT}-{NAACL} 2003",

year = "2003",

url = "https://www.aclweb.org/anthology/W03-0419",

pages = "142--147",

}

```

### Contributions

Thanks to [@jplu](https://github.com/jplu), [@vblagoje](https://github.com/vblagoje), [@lhoestq](https://github.com/lhoestq) for adding this dataset. |

infgrad/retrieval_data_llm | ---

license: mit

language:

- zh

size_categories:

- 100K<n<1M

---

带有难负例的检索训练数据。约20万。

文件格式:jsonl。单行示例:

```

{"Query": "大熊猫的饮食习性", "Positive Document": "大熊猫主要以竹子为食,但也会吃水果和小型动物。它们拥有强壮的颌部和牙齿,能够咬碎竹子坚硬的外壳。", "Hard Negative Document": "老虎是肉食性动物,主要捕食鹿、野猪等大型动物。它们的牙齿和爪子非常锋利,是捕猎的利器。"}

``` |

gguichard/wsd_fr_wngt_semcor_translated_aligned | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: tokens

sequence: string

- name: wn_sens

sequence: int64

- name: input_ids

sequence: int32

- name: attention_mask

sequence: int8

- name: labels

sequence: int64

splits:

- name: train

num_bytes: 120127351.96891159

num_examples: 167549

- name: test

num_bytes: 6322945.031088406

num_examples: 8819

download_size: 35442307

dataset_size: 126450297.0

---

# Dataset Card for "wsd_fr_wngt_semcor_translated_aligned"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Venki-ds/test-my-alpaca-llama2-1k | ---

dataset_info:

features:

- name: instruction

dtype: string

- name: input

dtype: string

- name: output

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 668749

num_examples: 1000

download_size: 412751

dataset_size: 668749

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

unknown12367556/43590439 | ---

license: afl-3.0

---

|

autoevaluate/autoeval-staging-eval-project-xsum-6cd6bf3a-11245505 | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- xsum

eval_info:

task: summarization

model: ARTeLab/it5-summarization-ilpost

metrics: []

dataset_name: xsum

dataset_config: default

dataset_split: test

col_mapping:

text: document

target: summary

---

# Dataset Card for AutoTrain Evaluator

This repository contains model predictions generated by [AutoTrain](https://huggingface.co/autotrain) for the following task and dataset:

* Task: Summarization

* Model: ARTeLab/it5-summarization-ilpost

* Dataset: xsum

* Config: default

* Split: test

To run new evaluation jobs, visit Hugging Face's [automatic model evaluator](https://huggingface.co/spaces/autoevaluate/model-evaluator).

## Contributions

Thanks to [@dishant16](https://huggingface.co/dishant16) for evaluating this model. |

ImperialIndians23/nlp_cw_data_unprocessed | ---

dataset_info:

features:

- name: par_id

dtype: string

- name: community

dtype: string

- name: text

dtype: string

- name: label

dtype: int64

splits:

- name: train

num_bytes: 2520387

num_examples: 8375

- name: valid

num_bytes: 616626

num_examples: 2094

download_size: 1979627

dataset_size: 3137013

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: valid

path: data/valid-*

---

|

heliosprime/twitter_dataset_1713164754 | ---

dataset_info:

features:

- name: id

dtype: string

- name: tweet_content

dtype: string

- name: user_name

dtype: string

- name: user_id

dtype: string

- name: created_at

dtype: string

- name: url

dtype: string

- name: favourite_count

dtype: int64

- name: scraped_at

dtype: string

- name: image_urls

dtype: string

splits:

- name: train

num_bytes: 8822

num_examples: 21

download_size: 12027

dataset_size: 8822

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "twitter_dataset_1713164754"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

davanstrien/haiku-kto-raw-argilla | ---

size_categories: 1K<n<10K

tags:

- rlfh

- argilla

- human-feedback

---

# Dataset Card for haiku-kto-raw-argilla

This dataset has been created with [Argilla](https://docs.argilla.io).

As shown in the sections below, this dataset can be loaded into Argilla as explained in [Load with Argilla](#load-with-argilla), or used directly with the `datasets` library in [Load with `datasets`](#load-with-datasets).

## Dataset Description

- **Homepage:** https://argilla.io

- **Repository:** https://github.com/argilla-io/argilla

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset contains:

* A dataset configuration file conforming to the Argilla dataset format named `argilla.yaml`. This configuration file will be used to configure the dataset when using the `FeedbackDataset.from_huggingface` method in Argilla.

* Dataset records in a format compatible with HuggingFace `datasets`. These records will be loaded automatically when using `FeedbackDataset.from_huggingface` and can be loaded independently using the `datasets` library via `load_dataset`.

* The [annotation guidelines](#annotation-guidelines) that have been used for building and curating the dataset, if they've been defined in Argilla.

### Load with Argilla

To load with Argilla, you'll just need to install Argilla as `pip install argilla --upgrade` and then use the following code:

```python

import argilla as rg

ds = rg.FeedbackDataset.from_huggingface("davanstrien/haiku-kto-raw-argilla")

```

### Load with `datasets`

To load this dataset with `datasets`, you'll just need to install `datasets` as `pip install datasets --upgrade` and then use the following code:

```python

from datasets import load_dataset

ds = load_dataset("davanstrien/haiku-kto-raw-argilla")

```

### Supported Tasks and Leaderboards

This dataset can contain [multiple fields, questions and responses](https://docs.argilla.io/en/latest/conceptual_guides/data_model.html#feedback-dataset) so it can be used for different NLP tasks, depending on the configuration. The dataset structure is described in the [Dataset Structure section](#dataset-structure).

There are no leaderboards associated with this dataset.

### Languages

[More Information Needed]

## Dataset Structure

### Data in Argilla

The dataset is created in Argilla with: **fields**, **questions**, **suggestions**, **metadata**, **vectors**, and **guidelines**.

The **fields** are the dataset records themselves, for the moment just text fields are supported. These are the ones that will be used to provide responses to the questions.

| Field Name | Title | Type | Required | Markdown |

| ---------- | ----- | ---- | -------- | -------- |

| prompt | Haiku prompt | text | True | True |

| completion | Haiku | text | True | True |

The **questions** are the questions that will be asked to the annotators. They can be of different types, such as rating, text, label_selection, multi_label_selection, or ranking.

| Question Name | Title | Type | Required | Description | Values/Labels |

| ------------- | ----- | ---- | -------- | ----------- | ------------- |

| label | Do you like this haiku? | label_selection | True | Classify the text by selecting the correct label from the given list of labels. | ['Yes', 'No'] |

The **suggestions** are human or machine generated recommendations for each question to assist the annotator during the annotation process, so those are always linked to the existing questions, and named appending "-suggestion" and "-suggestion-metadata" to those, containing the value/s of the suggestion and its metadata, respectively. So on, the possible values are the same as in the table above, but the column name is appended with "-suggestion" and the metadata is appended with "-suggestion-metadata".

The **metadata** is a dictionary that can be used to provide additional information about the dataset record. This can be useful to provide additional context to the annotators, or to provide additional information about the dataset record itself. For example, you can use this to provide a link to the original source of the dataset record, or to provide additional information about the dataset record itself, such as the author, the date, or the source. The metadata is always optional, and can be potentially linked to the `metadata_properties` defined in the dataset configuration file in `argilla.yaml`.

| Metadata Name | Title | Type | Values | Visible for Annotators |

| ------------- | ----- | ---- | ------ | ---------------------- |

The **guidelines**, are optional as well, and are just a plain string that can be used to provide instructions to the annotators. Find those in the [annotation guidelines](#annotation-guidelines) section.

### Data Instances

An example of a dataset instance in Argilla looks as follows:

```json

{

"external_id": null,

"fields": {

"completion": "Iceberg, silent threat\nDeceptive beauty, hidden\nSinking ships, cold death",

"prompt": "Can you write a haiku that describes the danger of an iceberg?"

},

"metadata": {

"generation_model": "NousResearch/Nous-Hermes-2-Yi-34B",

"prompt": "Can you write a haiku that describes the danger of an iceberg?"

},

"responses": [],

"suggestions": [],

"vectors": {}

}

```

While the same record in HuggingFace `datasets` looks as follows:

```json

{

"completion": "Iceberg, silent threat\nDeceptive beauty, hidden\nSinking ships, cold death",

"external_id": null,

"label": [],

"label-suggestion": null,

"label-suggestion-metadata": {

"agent": null,

"score": null,

"type": null

},

"metadata": "{\"prompt\": \"Can you write a haiku that describes the danger of an iceberg?\", \"generation_model\": \"NousResearch/Nous-Hermes-2-Yi-34B\"}",

"prompt": "Can you write a haiku that describes the danger of an iceberg?"

}

```

### Data Fields

Among the dataset fields, we differentiate between the following:

* **Fields:** These are the dataset records themselves, for the moment just text fields are supported. These are the ones that will be used to provide responses to the questions.

* **prompt** is of type `text`.

* **completion** is of type `text`.

* **Questions:** These are the questions that will be asked to the annotators. They can be of different types, such as `RatingQuestion`, `TextQuestion`, `LabelQuestion`, `MultiLabelQuestion`, and `RankingQuestion`.

* **label** is of type `label_selection` with the following allowed values ['Yes', 'No'], and description "Classify the text by selecting the correct label from the given list of labels.".

* **Suggestions:** As of Argilla 1.13.0, the suggestions have been included to provide the annotators with suggestions to ease or assist during the annotation process. Suggestions are linked to the existing questions, are always optional, and contain not just the suggestion itself, but also the metadata linked to it, if applicable.

* (optional) **label-suggestion** is of type `label_selection` with the following allowed values ['Yes', 'No'].

Additionally, we also have two more fields that are optional and are the following:

* **metadata:** This is an optional field that can be used to provide additional information about the dataset record. This can be useful to provide additional context to the annotators, or to provide additional information about the dataset record itself. For example, you can use this to provide a link to the original source of the dataset record, or to provide additional information about the dataset record itself, such as the author, the date, or the source. The metadata is always optional, and can be potentially linked to the `metadata_properties` defined in the dataset configuration file in `argilla.yaml`.

* **external_id:** This is an optional field that can be used to provide an external ID for the dataset record. This can be useful if you want to link the dataset record to an external resource, such as a database or a file.

### Data Splits

The dataset contains a single split, which is `train`.

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation guidelines

Do you like this haiku?

Yes or no?

A vibes only assessment is fine!

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

GEM-submissions/lewtun__hugging-face-test-t5-base.outputs.json-36bf2a59__1645800191 | ---

benchmark: gem

type: prediction

submission_name: Hugging Face test T5-base.outputs.json 36bf2a59

---

|

crumb/Clean-Instruct-440k | ---

dataset_info:

features:

- name: instruction

dtype: string

- name: output

dtype: string

- name: input

dtype: string

splits:

- name: train

num_bytes: 650842125.0

num_examples: 443612

download_size: 357775511

dataset_size: 650842125.0

license: mit

task_categories:

- conversational

language:

- en

---

# Dataset Card for "Clean-Instruct"

[yahma/alpaca-cleaned](https://hf.co/datasets/yahma/alpaca-cleaned) + [crumb/gpt4all-clean](https://hf.co/datasets/crumb/gpt4all-clean) + GPTeacher-Instruct-Dedup

It isn't perfect, but it's 443k high quality semi-cleaned instructions without "As an Ai language model".

```python

from datasets import load_dataset

dataset = load_dataset("crumb/clean-instruct", split="train")

def promptify(example):

if example['input']!='':

return {"text": f"<instruction> {example['instruction']} <input> {example['input']} <output> {example['output']}"}

return {"text": f"<instruction> {example['instruction']} <output> {example['output']}"}

dataset = dataset.map(promptify, batched=False)

dataset = dataset.remove_columns(["instruction", "input", "output"])

``` |

open-llm-leaderboard/details_PY007__TinyLlama-1.1B-intermediate-step-480k-1T | ---

pretty_name: Evaluation run of PY007/TinyLlama-1.1B-intermediate-step-480k-1T

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [PY007/TinyLlama-1.1B-intermediate-step-480k-1T](https://huggingface.co/PY007/TinyLlama-1.1B-intermediate-step-480k-1T)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 64 configuration, each one coresponding to one of the\

\ evaluated task.\n\nThe dataset has been created from 2 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run.The \"train\" split is always pointing to the latest results.\n\

\nAn additional configuration \"results\" store all the aggregated results of the\

\ run (and is used to compute and display the agregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_PY007__TinyLlama-1.1B-intermediate-step-480k-1T\"\

,\n\t\"harness_winogrande_5\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\n\

These are the [latest results from run 2023-10-24T09:15:17.830156](https://huggingface.co/datasets/open-llm-leaderboard/details_PY007__TinyLlama-1.1B-intermediate-step-480k-1T/blob/main/results_2023-10-24T09-15-17.830156.json)(note\

\ that their might be results for other tasks in the repos if successive evals didn't\

\ cover the same tasks. You find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"em\": 0.0012583892617449664,\n\

\ \"em_stderr\": 0.0003630560893119088,\n \"f1\": 0.0418026426174498,\n\

\ \"f1_stderr\": 0.0011748218433740387,\n \"acc\": 0.2891570770949507,\n\

\ \"acc_stderr\": 0.007951591896761558\n },\n \"harness|drop|3\": {\n\

\ \"em\": 0.0012583892617449664,\n \"em_stderr\": 0.0003630560893119088,\n\

\ \"f1\": 0.0418026426174498,\n \"f1_stderr\": 0.0011748218433740387\n\

\ },\n \"harness|gsm8k|5\": {\n \"acc\": 0.00530705079605762,\n \

\ \"acc_stderr\": 0.0020013057209480613\n },\n \"harness|winogrande|5\"\

: {\n \"acc\": 0.5730071033938438,\n \"acc_stderr\": 0.013901878072575057\n\

\ }\n}\n```"

repo_url: https://huggingface.co/PY007/TinyLlama-1.1B-intermediate-step-480k-1T

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_arc_challenge_25

data_files:

- split: 2023_10_04T06_32_33.540256

path:

- '**/details_harness|arc:challenge|25_2023-10-04T06-32-33.540256.parquet'

- split: latest

path:

- '**/details_harness|arc:challenge|25_2023-10-04T06-32-33.540256.parquet'

- config_name: harness_drop_3

data_files:

- split: 2023_10_24T09_15_17.830156

path:

- '**/details_harness|drop|3_2023-10-24T09-15-17.830156.parquet'

- split: latest

path:

- '**/details_harness|drop|3_2023-10-24T09-15-17.830156.parquet'

- config_name: harness_gsm8k_5

data_files:

- split: 2023_10_24T09_15_17.830156

path:

- '**/details_harness|gsm8k|5_2023-10-24T09-15-17.830156.parquet'

- split: latest

path:

- '**/details_harness|gsm8k|5_2023-10-24T09-15-17.830156.parquet'

- config_name: harness_hellaswag_10

data_files:

- split: 2023_10_04T06_32_33.540256

path:

- '**/details_harness|hellaswag|10_2023-10-04T06-32-33.540256.parquet'

- split: latest

path:

- '**/details_harness|hellaswag|10_2023-10-04T06-32-33.540256.parquet'

- config_name: harness_hendrycksTest_5

data_files:

- split: 2023_10_04T06_32_33.540256

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-10-04T06-32-33.540256.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-10-04T06-32-33.540256.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-10-04T06-32-33.540256.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-10-04T06-32-33.540256.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-10-04T06-32-33.540256.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-10-04T06-32-33.540256.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-10-04T06-32-33.540256.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-10-04T06-32-33.540256.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-10-04T06-32-33.540256.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-10-04T06-32-33.540256.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-10-04T06-32-33.540256.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-10-04T06-32-33.540256.parquet'