datasetId stringlengths 2 117 | card stringlengths 19 1.01M |

|---|---|

Edopangui/promo3 | ---

license: apache-2.0

---

|

Uchenna/Testdataset | ---

license: mit

language:

- en

dataset_info:

features:

- name: product

dtype: string

- name: description

dtype: string

- name: advert

dtype: string

- name: ad

dtype: string

splits:

- name: train

num_bytes: 5766

num_examples: 10

download_size: 9580

dataset_size: 5766

---

|

AlekseyKorshuk/code-alpaca-eval-debug-completions | ---

dataset_info:

features:

- name: model_input

list:

- name: content

dtype: string

- name: role

dtype: string

- name: baseline_response

dtype: string

- name: completion

dtype: string

splits:

- name: train

num_bytes: 1548

num_examples: 2

download_size: 7523

dataset_size: 1548

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

open-llm-leaderboard/details_KevinNi__mistral-class-bio-tutor | ---

pretty_name: Evaluation run of KevinNi/mistral-class-bio-tutor

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [KevinNi/mistral-class-bio-tutor](https://huggingface.co/KevinNi/mistral-class-bio-tutor)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 1 configuration, each one corresponding to one of the\

\ evaluated tasks.\n\nThe dataset has been created from 1 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run. The \"train\" split is always pointing to the latest results.\n\

\nAn additional configuration \"results\" stores all the aggregated results of the\

\ run (and is used to compute and display the aggregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_KevinNi__mistral-class-bio-tutor\"\

,\n\t\"harness_gsm8k_5\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\nThese\

\ are the [latest results from run 2023-12-02T15:48:30.567817](https://huggingface.co/datasets/open-llm-leaderboard/details_KevinNi__mistral-class-bio-tutor/blob/main/results_2023-12-02T15-48-30.567817.json) (note\

\ that there might be results for other tasks in the repos if successive evals didn't\

\ cover the same tasks. You can find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"acc\": 0.0,\n \"\

acc_stderr\": 0.0\n },\n \"harness|gsm8k|5\": {\n \"acc\": 0.0,\n \

\ \"acc_stderr\": 0.0\n }\n}\n```"

repo_url: https://huggingface.co/KevinNi/mistral-class-bio-tutor

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_gsm8k_5

data_files:

- split: 2023_12_02T15_48_30.567817

path:

- '**/details_harness|gsm8k|5_2023-12-02T15-48-30.567817.parquet'

- split: latest

path:

- '**/details_harness|gsm8k|5_2023-12-02T15-48-30.567817.parquet'

- config_name: results

data_files:

- split: 2023_12_02T15_48_30.567817

path:

- results_2023-12-02T15-48-30.567817.parquet

- split: latest

path:

- results_2023-12-02T15-48-30.567817.parquet

---

# Dataset Card for Evaluation run of KevinNi/mistral-class-bio-tutor

## Dataset Description

- **Homepage:**

- **Repository:** https://huggingface.co/KevinNi/mistral-class-bio-tutor

- **Paper:**

- **Leaderboard:** https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

- **Point of Contact:** clementine@hf.co

### Dataset Summary

Dataset automatically created during the evaluation run of model [KevinNi/mistral-class-bio-tutor](https://huggingface.co/KevinNi/mistral-class-bio-tutor) on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).

The dataset is composed of 1 configuration, each one corresponding to one of the evaluated tasks.

The dataset has been created from 1 run(s). Each run can be found as a specific split in each configuration, the split being named using the timestamp of the run. The "train" split is always pointing to the latest results.

An additional configuration "results" stores all the aggregated results of the run (and is used to compute and display the aggregated metrics on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).

To load the details from a run, you can for instance do the following:

```python

from datasets import load_dataset

data = load_dataset("open-llm-leaderboard/details_KevinNi__mistral-class-bio-tutor",
    "harness_gsm8k_5",
    split="train")

```
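The split and file names in this card appear to be derived from the run timestamp by simple character substitution. The sketch below reproduces that mapping as inferred from this card alone (the helper names `split_name` and `results_filename` are hypothetical, and the leaderboard tooling may build these names differently):

```python
def split_name(run_timestamp: str) -> str:
    """Turn a run timestamp into the split name listed under `configs`.

    Assumption: '-' and ':' are both replaced by '_', as seen in this card.
    """
    return run_timestamp.replace("-", "_").replace(":", "_")


def results_filename(run_timestamp: str) -> str:
    """Turn a run timestamp into the aggregated-results JSON file name.

    Assumption: only ':' is replaced (by '-'), as seen in the results link.
    """
    return f"results_{run_timestamp.replace(':', '-')}.json"


run = "2023-12-02T15:48:30.567817"
print(split_name(run))        # 2023_12_02T15_48_30.567817
print(results_filename(run))  # results_2023-12-02T15-48-30.567817.json
```

The `configs` section of this card lists both the timestamp-named split and a split named `latest` for each configuration.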

## Latest results

These are the [latest results from run 2023-12-02T15:48:30.567817](https://huggingface.co/datasets/open-llm-leaderboard/details_KevinNi__mistral-class-bio-tutor/blob/main/results_2023-12-02T15-48-30.567817.json) (note that there might be results for other tasks in the repos if successive evals didn't cover the same tasks. You can find each in the results and the "latest" split for each eval):

```python

{
    "all": {
        "acc": 0.0,
        "acc_stderr": 0.0
    },
    "harness|gsm8k|5": {
        "acc": 0.0,
        "acc_stderr": 0.0
    }
}

```
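As a minimal sketch of how the results dictionary above could be read, the snippet below separates the `"all"` aggregate from the per-task entries and splits each task key on `|`. The helper name `per_task_accuracy` is hypothetical, and reading the last key component as the few-shot count is an assumption based on the `harness_gsm8k_5` configuration name:

```python
import json

# The results block shown above, copied verbatim as a JSON string.
results_json = """
{
    "all": {"acc": 0.0, "acc_stderr": 0.0},
    "harness|gsm8k|5": {"acc": 0.0, "acc_stderr": 0.0}
}
"""


def per_task_accuracy(results: dict) -> dict:
    """Map (task, num_fewshot) to (acc, acc_stderr), skipping the "all" entry.

    Assumption: task keys look like "harness|<task>|<num_fewshot>".
    Metrics missing from an entry are returned as None.
    """
    table = {}
    for key, metrics in results.items():
        if key == "all":
            continue
        _suite, task, shots = key.split("|")
        table[(task, int(shots))] = (metrics.get("acc"), metrics.get("acc_stderr"))
    return table


table = per_task_accuracy(json.loads(results_json))
print(table)  # {('gsm8k', 5): (0.0, 0.0)}
```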

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

AdapterOcean/med_alpaca_standardized_cluster_38_alpaca | ---

dataset_info:

features:

- name: input

dtype: string

- name: output

dtype: string

splits:

- name: train

num_bytes: 26390473

num_examples: 13489

download_size: 13464677

dataset_size: 26390473

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "med_alpaca_standardized_cluster_38_alpaca"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

CesarChaMal/my-personal-model | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 41999

num_examples: 426

- name: test

num_bytes: 12984

num_examples: 175

download_size: 29597

dataset_size: 54983

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

---

|

UtkuC/utku_test_dog | ---

license: mit

dataset_info:

features:

- name: image

dtype: string

- name: label

dtype: string

splits:

- name: train

num_bytes: 406

num_examples: 7

download_size: 1482

dataset_size: 406

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

TrainingDataPro/crowd-counting-dataset | ---

license: cc-by-nc-nd-4.0

task_categories:

- image-classification

- image-to-image

language:

- en

tags:

- legal

- code

---

# Crowd Counting Dataset

The dataset contains images of crowds ranging from **0 to 5000 individuals**, captured across a diverse range of scenes and settings. Each image is accompanied by a corresponding **JSON file** containing detailed labeling information for each person in the crowd, for use in crowd counting and classification.

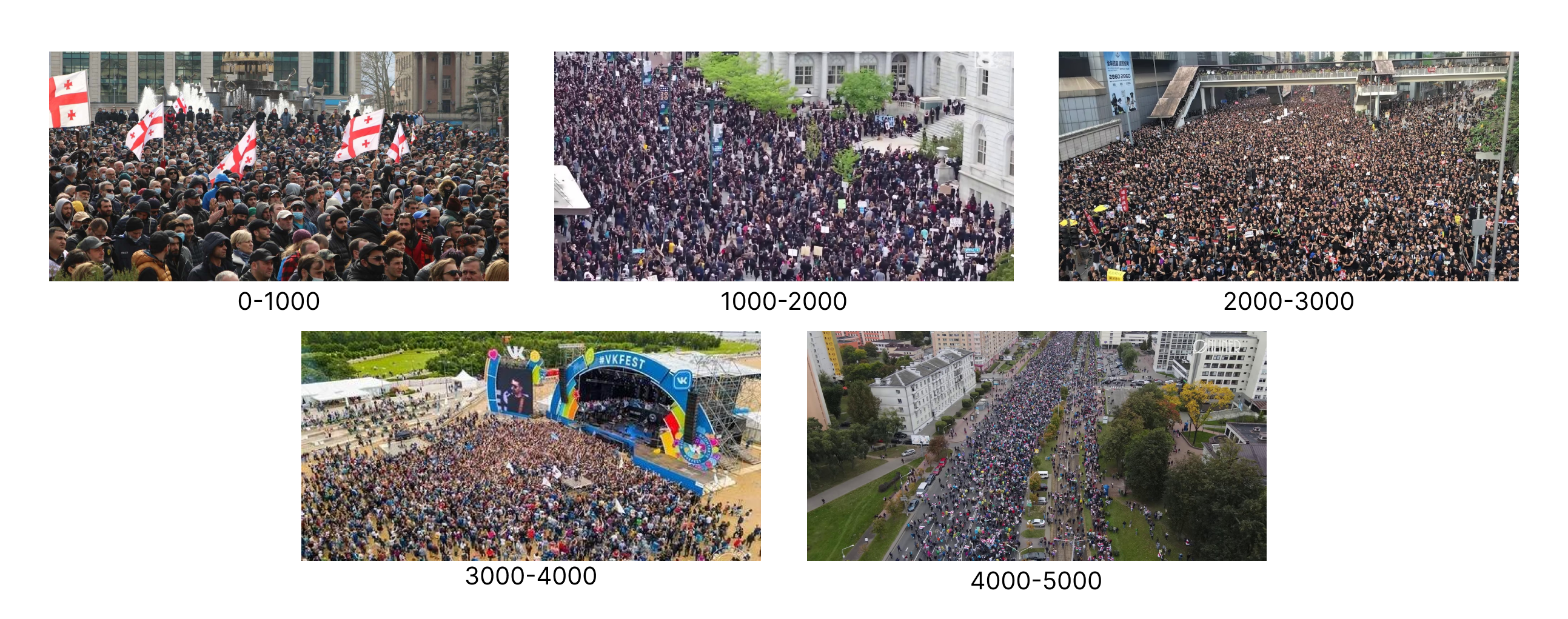

**Types of crowds** in the dataset: *0-1000, 1000-2000, 2000-3000, 3000-4000 and 4000-5000*

This dataset provides a valuable resource for researchers and developers working on crowd counting technology, enabling them to train and evaluate their algorithms with a wide range of crowd sizes and scenarios. It can also be used for benchmarking and comparison of different crowd counting algorithms, as well as for real-world applications such as *public safety and security, urban planning, and retail analytics*.

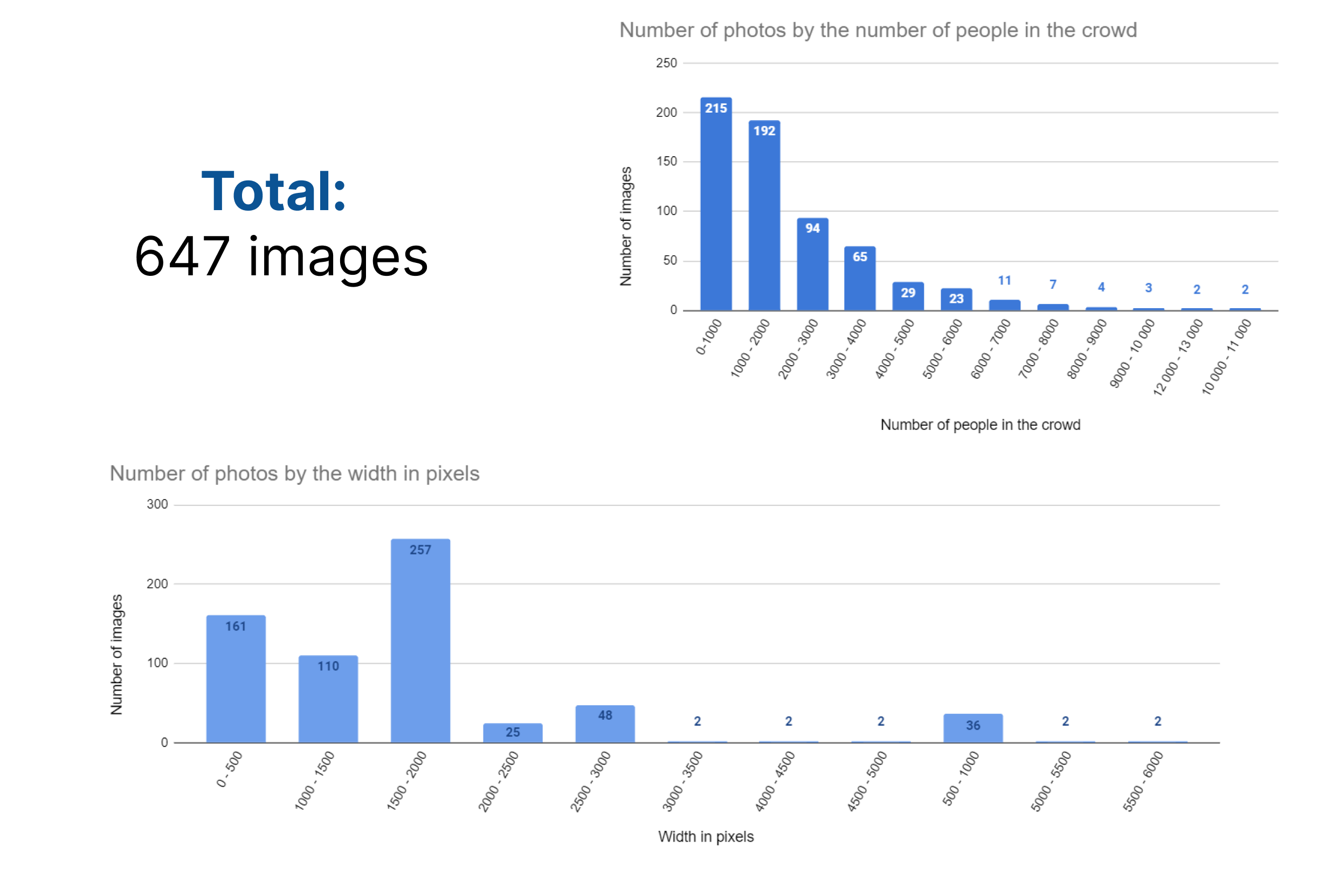

## The full version of the dataset includes 647 labeled images of crowds. Leave a request on **[TrainingData](https://trainingdata.pro/data-market/crowd-counting?utm_source=huggingface&utm_medium=cpc&utm_campaign=crowd-counting-dataset)** to buy the dataset

### Statistics for the dataset (number of images by the crowd's size and image width):

# Get the Dataset

## This is just an example of the data

Leave a request on **[https://trainingdata.pro/data-market](https://trainingdata.pro/data-market/crowd-counting?utm_source=huggingface&utm_medium=cpc&utm_campaign=crowd-counting-dataset)** to learn about the price and buy the dataset

# Content

- **images** - includes original images of crowds, placed in subfolders according to crowd size,

- **labels** - includes JSON files with labeling, and visualised labeling for the images in the previous folder,

- **csv file** - includes information for each image in the dataset

### File with the extension .csv

- **id**: id of the image,

- **image**: link to access the original image,

- **label**: link to access the JSON file with labeling,

- **type**: type of the crowd in the photo
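
The four columns listed above can be read with Python's standard `csv` module. The sketch below counts images per crowd type. The sample rows and paths are invented for illustration, the helper name `count_by_type` is hypothetical, and the column header order is an assumption:

```python
import csv
import io
from collections import Counter

# Hypothetical sample in the documented column layout (id, image, label, type).
# The real file's paths and values will differ.
sample_csv = io.StringIO(
    "id,image,label,type\n"
    "0,images/0-1000/0.jpg,labels/0-1000/0.json,0-1000\n"
    "1,images/0-1000/1.jpg,labels/0-1000/1.json,0-1000\n"
    "2,images/1000-2000/2.jpg,labels/1000-2000/2.json,1000-2000\n"
)


def count_by_type(csv_file) -> Counter:
    """Count how many rows (images) fall into each crowd type."""
    reader = csv.DictReader(csv_file)
    return Counter(row["type"] for row in reader)


counts = count_by_type(sample_csv)
print(counts)  # Counter({'0-1000': 2, '1000-2000': 1})
```

For the real file, the same function can be called on `open(path, newline="")` instead of the in-memory sample.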

## **[TrainingData](https://trainingdata.pro/data-market/crowd-counting?utm_source=huggingface&utm_medium=cpc&utm_campaign=crowd-counting-dataset)** provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **<https://www.kaggle.com/trainingdatapro/datasets>**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets**

*keywords: crowd counting, crowd density estimation, people counting, crowd analysis, image annotation, computer vision, deep learning, object detection, object counting, image classification, dense regression, crowd behavior analysis, crowd tracking, head detection, crowd segmentation, crowd motion analysis, image processing, machine learning, artificial intelligence, ai, human detection, crowd sensing, image dataset, public safety, crowd management, urban planning, event planning, traffic management* |

nastyboget/stackmix_cyrillic | ---

license: mit

task_categories:

- image-to-text

language:

- ru

size_categories:

- 100K<n<1M

---

Dataset generated from the Cyrillic train set using StackMix

========================================================

Number of images: 300000

Sources:

* [Cyrillic dataset](https://www.kaggle.com/datasets/constantinwerner/cyrillic-handwriting-dataset)

* [Stackmix code](https://github.com/ai-forever/StackMix-OCR)

|

yaygomii/Dialect_Speech_Corpus_Tamil_with_info | ---

dataset_info:

features:

- name: label

dtype: string

- name: audio

dtype:

audio:

sampling_rate: 16000

- name: sentence

dtype: string

- name: gender

dtype: string

- name: dialect

dtype: string

splits:

- name: train

num_bytes: 3019481844.752

num_examples: 10812

download_size: 2873255375

dataset_size: 3019481844.752

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

heliosprime/twitter_dataset_1713007117 | ---

dataset_info:

features:

- name: id

dtype: string

- name: tweet_content

dtype: string

- name: user_name

dtype: string

- name: user_id

dtype: string

- name: created_at

dtype: string

- name: url

dtype: string

- name: favourite_count

dtype: int64

- name: scraped_at

dtype: string

- name: image_urls

dtype: string

splits:

- name: train

num_bytes: 7492

num_examples: 16

download_size: 8456

dataset_size: 7492

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "twitter_dataset_1713007117"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

kristinashemet/Knowledge_Based_Questions-Anwers_with_text_from_doc-Part1-24.03 | ---

dataset_info:

features:

- name: formatted_data

dtype: string

splits:

- name: train

num_bytes: 247196

num_examples: 532

download_size: 65541

dataset_size: 247196

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

open-llm-leaderboard/details_chargoddard__piano-medley-7b | ---

pretty_name: Evaluation run of chargoddard/piano-medley-7b

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [chargoddard/piano-medley-7b](https://huggingface.co/chargoddard/piano-medley-7b)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 63 configurations, each one corresponding to one of the\

\ evaluated tasks.\n\nThe dataset has been created from 1 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run. The \"train\" split is always pointing to the latest results.\n\

\nAn additional configuration \"results\" stores all the aggregated results of the\

\ run (and is used to compute and display the aggregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_chargoddard__piano-medley-7b\"\

,\n\t\"harness_winogrande_5\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\n\

These are the [latest results from run 2023-12-10T03:24:54.482171](https://huggingface.co/datasets/open-llm-leaderboard/details_chargoddard__piano-medley-7b/blob/main/results_2023-12-10T03-24-54.482171.json) (note\

\ that there might be results for other tasks in the repos if successive evals didn't\

\ cover the same tasks. You can find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"acc\": 0.6462767300930756,\n\

\ \"acc_stderr\": 0.032134853847514466,\n \"acc_norm\": 0.6489933678568897,\n\

\ \"acc_norm_stderr\": 0.03277330582106223,\n \"mc1\": 0.44063647490820074,\n\

\ \"mc1_stderr\": 0.017379697555437446,\n \"mc2\": 0.6142054505900651,\n\

\ \"mc2_stderr\": 0.015456544162012987\n },\n \"harness|arc:challenge|25\"\

: {\n \"acc\": 0.6399317406143344,\n \"acc_stderr\": 0.014027516814585186,\n\

\ \"acc_norm\": 0.6757679180887372,\n \"acc_norm_stderr\": 0.013678810399518826\n\

\ },\n \"harness|hellaswag|10\": {\n \"acc\": 0.6645090619398526,\n\

\ \"acc_stderr\": 0.004711968379069029,\n \"acc_norm\": 0.8536148177653854,\n\

\ \"acc_norm_stderr\": 0.0035276951498235004\n },\n \"harness|hendrycksTest-abstract_algebra|5\"\

: {\n \"acc\": 0.35,\n \"acc_stderr\": 0.0479372485441102,\n \

\ \"acc_norm\": 0.35,\n \"acc_norm_stderr\": 0.0479372485441102\n },\n\

\ \"harness|hendrycksTest-anatomy|5\": {\n \"acc\": 0.6518518518518519,\n\

\ \"acc_stderr\": 0.04115324610336953,\n \"acc_norm\": 0.6518518518518519,\n\

\ \"acc_norm_stderr\": 0.04115324610336953\n },\n \"harness|hendrycksTest-astronomy|5\"\

: {\n \"acc\": 0.6842105263157895,\n \"acc_stderr\": 0.0378272898086547,\n\

\ \"acc_norm\": 0.6842105263157895,\n \"acc_norm_stderr\": 0.0378272898086547\n\

\ },\n \"harness|hendrycksTest-business_ethics|5\": {\n \"acc\": 0.57,\n\

\ \"acc_stderr\": 0.049756985195624284,\n \"acc_norm\": 0.57,\n \

\ \"acc_norm_stderr\": 0.049756985195624284\n },\n \"harness|hendrycksTest-clinical_knowledge|5\"\

: {\n \"acc\": 0.6830188679245283,\n \"acc_stderr\": 0.02863723563980089,\n\

\ \"acc_norm\": 0.6830188679245283,\n \"acc_norm_stderr\": 0.02863723563980089\n\

\ },\n \"harness|hendrycksTest-college_biology|5\": {\n \"acc\": 0.7569444444444444,\n\

\ \"acc_stderr\": 0.0358687928008034,\n \"acc_norm\": 0.7569444444444444,\n\

\ \"acc_norm_stderr\": 0.0358687928008034\n },\n \"harness|hendrycksTest-college_chemistry|5\"\

: {\n \"acc\": 0.46,\n \"acc_stderr\": 0.05009082659620332,\n \

\ \"acc_norm\": 0.46,\n \"acc_norm_stderr\": 0.05009082659620332\n \

\ },\n \"harness|hendrycksTest-college_computer_science|5\": {\n \"acc\"\

: 0.47,\n \"acc_stderr\": 0.050161355804659205,\n \"acc_norm\": 0.47,\n\

\ \"acc_norm_stderr\": 0.050161355804659205\n },\n \"harness|hendrycksTest-college_mathematics|5\"\

: {\n \"acc\": 0.31,\n \"acc_stderr\": 0.04648231987117316,\n \

\ \"acc_norm\": 0.31,\n \"acc_norm_stderr\": 0.04648231987117316\n \

\ },\n \"harness|hendrycksTest-college_medicine|5\": {\n \"acc\": 0.653179190751445,\n\

\ \"acc_stderr\": 0.036291466701596636,\n \"acc_norm\": 0.653179190751445,\n\

\ \"acc_norm_stderr\": 0.036291466701596636\n },\n \"harness|hendrycksTest-college_physics|5\"\

: {\n \"acc\": 0.4019607843137255,\n \"acc_stderr\": 0.048786087144669955,\n\

\ \"acc_norm\": 0.4019607843137255,\n \"acc_norm_stderr\": 0.048786087144669955\n\

\ },\n \"harness|hendrycksTest-computer_security|5\": {\n \"acc\":\

\ 0.76,\n \"acc_stderr\": 0.042923469599092816,\n \"acc_norm\": 0.76,\n\

\ \"acc_norm_stderr\": 0.042923469599092816\n },\n \"harness|hendrycksTest-conceptual_physics|5\"\

: {\n \"acc\": 0.5617021276595745,\n \"acc_stderr\": 0.03243618636108101,\n\

\ \"acc_norm\": 0.5617021276595745,\n \"acc_norm_stderr\": 0.03243618636108101\n\

\ },\n \"harness|hendrycksTest-econometrics|5\": {\n \"acc\": 0.5263157894736842,\n\

\ \"acc_stderr\": 0.046970851366478626,\n \"acc_norm\": 0.5263157894736842,\n\

\ \"acc_norm_stderr\": 0.046970851366478626\n },\n \"harness|hendrycksTest-electrical_engineering|5\"\

: {\n \"acc\": 0.593103448275862,\n \"acc_stderr\": 0.04093793981266236,\n\

\ \"acc_norm\": 0.593103448275862,\n \"acc_norm_stderr\": 0.04093793981266236\n\

\ },\n \"harness|hendrycksTest-elementary_mathematics|5\": {\n \"acc\"\

: 0.3941798941798942,\n \"acc_stderr\": 0.02516798233389414,\n \"\

acc_norm\": 0.3941798941798942,\n \"acc_norm_stderr\": 0.02516798233389414\n\

\ },\n \"harness|hendrycksTest-formal_logic|5\": {\n \"acc\": 0.4444444444444444,\n\

\ \"acc_stderr\": 0.04444444444444449,\n \"acc_norm\": 0.4444444444444444,\n\

\ \"acc_norm_stderr\": 0.04444444444444449\n },\n \"harness|hendrycksTest-global_facts|5\"\

: {\n \"acc\": 0.38,\n \"acc_stderr\": 0.04878317312145633,\n \

\ \"acc_norm\": 0.38,\n \"acc_norm_stderr\": 0.04878317312145633\n \

\ },\n \"harness|hendrycksTest-high_school_biology|5\": {\n \"acc\": 0.8,\n\

\ \"acc_stderr\": 0.02275520495954294,\n \"acc_norm\": 0.8,\n \

\ \"acc_norm_stderr\": 0.02275520495954294\n },\n \"harness|hendrycksTest-high_school_chemistry|5\"\

: {\n \"acc\": 0.5073891625615764,\n \"acc_stderr\": 0.035176035403610105,\n\

\ \"acc_norm\": 0.5073891625615764,\n \"acc_norm_stderr\": 0.035176035403610105\n\

\ },\n \"harness|hendrycksTest-high_school_computer_science|5\": {\n \

\ \"acc\": 0.7,\n \"acc_stderr\": 0.046056618647183814,\n \"acc_norm\"\

: 0.7,\n \"acc_norm_stderr\": 0.046056618647183814\n },\n \"harness|hendrycksTest-high_school_european_history|5\"\

: {\n \"acc\": 0.7636363636363637,\n \"acc_stderr\": 0.03317505930009182,\n\

\ \"acc_norm\": 0.7636363636363637,\n \"acc_norm_stderr\": 0.03317505930009182\n\

\ },\n \"harness|hendrycksTest-high_school_geography|5\": {\n \"acc\"\

: 0.803030303030303,\n \"acc_stderr\": 0.02833560973246336,\n \"acc_norm\"\

: 0.803030303030303,\n \"acc_norm_stderr\": 0.02833560973246336\n },\n\

\ \"harness|hendrycksTest-high_school_government_and_politics|5\": {\n \

\ \"acc\": 0.9067357512953368,\n \"acc_stderr\": 0.02098685459328973,\n\

\ \"acc_norm\": 0.9067357512953368,\n \"acc_norm_stderr\": 0.02098685459328973\n\

\ },\n \"harness|hendrycksTest-high_school_macroeconomics|5\": {\n \

\ \"acc\": 0.6794871794871795,\n \"acc_stderr\": 0.023661296393964273,\n\

\ \"acc_norm\": 0.6794871794871795,\n \"acc_norm_stderr\": 0.023661296393964273\n\

\ },\n \"harness|hendrycksTest-high_school_mathematics|5\": {\n \"\

acc\": 0.3333333333333333,\n \"acc_stderr\": 0.028742040903948482,\n \

\ \"acc_norm\": 0.3333333333333333,\n \"acc_norm_stderr\": 0.028742040903948482\n\

\ },\n \"harness|hendrycksTest-high_school_microeconomics|5\": {\n \

\ \"acc\": 0.6974789915966386,\n \"acc_stderr\": 0.029837962388291932,\n\

\ \"acc_norm\": 0.6974789915966386,\n \"acc_norm_stderr\": 0.029837962388291932\n\

\ },\n \"harness|hendrycksTest-high_school_physics|5\": {\n \"acc\"\

: 0.33774834437086093,\n \"acc_stderr\": 0.03861557546255169,\n \"\

acc_norm\": 0.33774834437086093,\n \"acc_norm_stderr\": 0.03861557546255169\n\

\ },\n \"harness|hendrycksTest-high_school_psychology|5\": {\n \"acc\"\

: 0.8532110091743119,\n \"acc_stderr\": 0.015173141845126253,\n \"\

acc_norm\": 0.8532110091743119,\n \"acc_norm_stderr\": 0.015173141845126253\n\

\ },\n \"harness|hendrycksTest-high_school_statistics|5\": {\n \"acc\"\

: 0.5046296296296297,\n \"acc_stderr\": 0.03409825519163572,\n \"\

acc_norm\": 0.5046296296296297,\n \"acc_norm_stderr\": 0.03409825519163572\n\

\ },\n \"harness|hendrycksTest-high_school_us_history|5\": {\n \"acc\"\

: 0.8333333333333334,\n \"acc_stderr\": 0.026156867523931045,\n \"\

acc_norm\": 0.8333333333333334,\n \"acc_norm_stderr\": 0.026156867523931045\n\

\ },\n \"harness|hendrycksTest-high_school_world_history|5\": {\n \"\

acc\": 0.7932489451476793,\n \"acc_stderr\": 0.026361651668389094,\n \

\ \"acc_norm\": 0.7932489451476793,\n \"acc_norm_stderr\": 0.026361651668389094\n\

\ },\n \"harness|hendrycksTest-human_aging|5\": {\n \"acc\": 0.7130044843049327,\n\

\ \"acc_stderr\": 0.03036037971029195,\n \"acc_norm\": 0.7130044843049327,\n\

\ \"acc_norm_stderr\": 0.03036037971029195\n },\n \"harness|hendrycksTest-human_sexuality|5\"\

: {\n \"acc\": 0.8015267175572519,\n \"acc_stderr\": 0.034981493854624714,\n\

\ \"acc_norm\": 0.8015267175572519,\n \"acc_norm_stderr\": 0.034981493854624714\n\

\ },\n \"harness|hendrycksTest-international_law|5\": {\n \"acc\":\

\ 0.768595041322314,\n \"acc_stderr\": 0.03849856098794088,\n \"acc_norm\"\

: 0.768595041322314,\n \"acc_norm_stderr\": 0.03849856098794088\n },\n\

\ \"harness|hendrycksTest-jurisprudence|5\": {\n \"acc\": 0.8148148148148148,\n\

\ \"acc_stderr\": 0.03755265865037181,\n \"acc_norm\": 0.8148148148148148,\n\

\ \"acc_norm_stderr\": 0.03755265865037181\n },\n \"harness|hendrycksTest-logical_fallacies|5\"\

: {\n \"acc\": 0.754601226993865,\n \"acc_stderr\": 0.03380939813943354,\n\

\ \"acc_norm\": 0.754601226993865,\n \"acc_norm_stderr\": 0.03380939813943354\n\

\ },\n \"harness|hendrycksTest-machine_learning|5\": {\n \"acc\": 0.48214285714285715,\n\

\ \"acc_stderr\": 0.047427623612430116,\n \"acc_norm\": 0.48214285714285715,\n\

\ \"acc_norm_stderr\": 0.047427623612430116\n },\n \"harness|hendrycksTest-management|5\"\

: {\n \"acc\": 0.7961165048543689,\n \"acc_stderr\": 0.039891398595317706,\n\

\ \"acc_norm\": 0.7961165048543689,\n \"acc_norm_stderr\": 0.039891398595317706\n\

\ },\n \"harness|hendrycksTest-marketing|5\": {\n \"acc\": 0.8717948717948718,\n\

\ \"acc_stderr\": 0.02190190511507332,\n \"acc_norm\": 0.8717948717948718,\n\

\ \"acc_norm_stderr\": 0.02190190511507332\n },\n \"harness|hendrycksTest-medical_genetics|5\"\

: {\n \"acc\": 0.7,\n \"acc_stderr\": 0.046056618647183814,\n \

\ \"acc_norm\": 0.7,\n \"acc_norm_stderr\": 0.046056618647183814\n \

\ },\n \"harness|hendrycksTest-miscellaneous|5\": {\n \"acc\": 0.8263090676883781,\n\

\ \"acc_stderr\": 0.013547415658662253,\n \"acc_norm\": 0.8263090676883781,\n\

\ \"acc_norm_stderr\": 0.013547415658662253\n },\n \"harness|hendrycksTest-moral_disputes|5\"\

: {\n \"acc\": 0.7196531791907514,\n \"acc_stderr\": 0.024182427496577612,\n\

\ \"acc_norm\": 0.7196531791907514,\n \"acc_norm_stderr\": 0.024182427496577612\n\

\ },\n \"harness|hendrycksTest-moral_scenarios|5\": {\n \"acc\": 0.41787709497206704,\n\

\ \"acc_stderr\": 0.01649540063582008,\n \"acc_norm\": 0.41787709497206704,\n\

\ \"acc_norm_stderr\": 0.01649540063582008\n },\n \"harness|hendrycksTest-nutrition|5\"\

: {\n \"acc\": 0.7287581699346405,\n \"acc_stderr\": 0.02545775669666788,\n\

\ \"acc_norm\": 0.7287581699346405,\n \"acc_norm_stderr\": 0.02545775669666788\n\

\ },\n \"harness|hendrycksTest-philosophy|5\": {\n \"acc\": 0.7202572347266881,\n\

\ \"acc_stderr\": 0.02549425935069491,\n \"acc_norm\": 0.7202572347266881,\n\

\ \"acc_norm_stderr\": 0.02549425935069491\n },\n \"harness|hendrycksTest-prehistory|5\"\

: {\n \"acc\": 0.7314814814814815,\n \"acc_stderr\": 0.02465968518596728,\n\

\ \"acc_norm\": 0.7314814814814815,\n \"acc_norm_stderr\": 0.02465968518596728\n\

\ },\n \"harness|hendrycksTest-professional_accounting|5\": {\n \"\

acc\": 0.48226950354609927,\n \"acc_stderr\": 0.02980873964223777,\n \

\ \"acc_norm\": 0.48226950354609927,\n \"acc_norm_stderr\": 0.02980873964223777\n\

\ },\n \"harness|hendrycksTest-professional_law|5\": {\n \"acc\": 0.45371577574967403,\n\

\ \"acc_stderr\": 0.01271540484127774,\n \"acc_norm\": 0.45371577574967403,\n\

\ \"acc_norm_stderr\": 0.01271540484127774\n },\n \"harness|hendrycksTest-professional_medicine|5\"\

: {\n \"acc\": 0.6911764705882353,\n \"acc_stderr\": 0.028064998167040094,\n\

\ \"acc_norm\": 0.6911764705882353,\n \"acc_norm_stderr\": 0.028064998167040094\n\

\ },\n \"harness|hendrycksTest-professional_psychology|5\": {\n \"\

acc\": 0.6519607843137255,\n \"acc_stderr\": 0.019270998708223977,\n \

\ \"acc_norm\": 0.6519607843137255,\n \"acc_norm_stderr\": 0.019270998708223977\n\

\ },\n \"harness|hendrycksTest-public_relations|5\": {\n \"acc\": 0.6818181818181818,\n\

\ \"acc_stderr\": 0.044612721759105085,\n \"acc_norm\": 0.6818181818181818,\n\

\ \"acc_norm_stderr\": 0.044612721759105085\n },\n \"harness|hendrycksTest-security_studies|5\"\

: {\n \"acc\": 0.7387755102040816,\n \"acc_stderr\": 0.028123429335142783,\n\

\ \"acc_norm\": 0.7387755102040816,\n \"acc_norm_stderr\": 0.028123429335142783\n\

\ },\n \"harness|hendrycksTest-sociology|5\": {\n \"acc\": 0.8557213930348259,\n\

\ \"acc_stderr\": 0.024845753212306053,\n \"acc_norm\": 0.8557213930348259,\n\

\ \"acc_norm_stderr\": 0.024845753212306053\n },\n \"harness|hendrycksTest-us_foreign_policy|5\"\

: {\n \"acc\": 0.84,\n \"acc_stderr\": 0.03684529491774709,\n \

\ \"acc_norm\": 0.84,\n \"acc_norm_stderr\": 0.03684529491774709\n \

\ },\n \"harness|hendrycksTest-virology|5\": {\n \"acc\": 0.5301204819277109,\n\

\ \"acc_stderr\": 0.03885425420866767,\n \"acc_norm\": 0.5301204819277109,\n\

\ \"acc_norm_stderr\": 0.03885425420866767\n },\n \"harness|hendrycksTest-world_religions|5\"\

: {\n \"acc\": 0.8245614035087719,\n \"acc_stderr\": 0.02917088550072767,\n\

\ \"acc_norm\": 0.8245614035087719,\n \"acc_norm_stderr\": 0.02917088550072767\n\

\ },\n \"harness|truthfulqa:mc|0\": {\n \"mc1\": 0.44063647490820074,\n\

\ \"mc1_stderr\": 0.017379697555437446,\n \"mc2\": 0.6142054505900651,\n\

\ \"mc2_stderr\": 0.015456544162012987\n },\n \"harness|winogrande|5\"\

: {\n \"acc\": 0.7916337805840569,\n \"acc_stderr\": 0.011414554399987729\n\

\ },\n \"harness|gsm8k|5\": {\n \"acc\": 0.5655799848369977,\n \

\ \"acc_stderr\": 0.013653507211411417\n }\n}\n```"

repo_url: https://huggingface.co/chargoddard/piano-medley-7b

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_arc_challenge_25

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|arc:challenge|25_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|arc:challenge|25_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_gsm8k_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|gsm8k|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|gsm8k|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hellaswag_10

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hellaswag|10_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hellaswag|10_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-12-10T03-24-54.482171.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_abstract_algebra_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_anatomy_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_astronomy_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_business_ethics_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_clinical_knowledge_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_college_biology_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_college_chemistry_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_college_computer_science_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_college_mathematics_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_college_medicine_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_college_physics_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_computer_security_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_conceptual_physics_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_econometrics_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_electrical_engineering_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_elementary_mathematics_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_formal_logic_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_global_facts_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_high_school_biology_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_high_school_chemistry_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_high_school_computer_science_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_high_school_european_history_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_high_school_geography_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_high_school_government_and_politics_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_high_school_macroeconomics_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_high_school_mathematics_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_high_school_microeconomics_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_high_school_physics_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_high_school_psychology_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_high_school_statistics_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_high_school_us_history_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_high_school_world_history_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_human_aging_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-human_aging|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_aging|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_human_sexuality_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_international_law_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-international_law|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-international_law|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_jurisprudence_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_logical_fallacies_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_machine_learning_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_management_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-management|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-management|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_marketing_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-marketing|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-marketing|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_medical_genetics_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_miscellaneous_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_moral_disputes_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_moral_scenarios_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_nutrition_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-nutrition|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-nutrition|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_philosophy_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-philosophy|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-philosophy|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_prehistory_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-prehistory|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-prehistory|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_professional_accounting_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_professional_law_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-professional_law|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_law|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_professional_medicine_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_professional_psychology_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_public_relations_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-public_relations|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-public_relations|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_security_studies_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-security_studies|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-security_studies|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_sociology_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-sociology|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-sociology|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_us_foreign_policy_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_virology_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-virology|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-virology|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_hendrycksTest_world_religions_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|hendrycksTest-world_religions|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-world_religions|5_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_truthfulqa_mc_0

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|truthfulqa:mc|0_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|truthfulqa:mc|0_2023-12-10T03-24-54.482171.parquet'

- config_name: harness_winogrande_5

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- '**/details_harness|winogrande|5_2023-12-10T03-24-54.482171.parquet'

- split: latest

path:

- '**/details_harness|winogrande|5_2023-12-10T03-24-54.482171.parquet'

- config_name: results

data_files:

- split: 2023_12_10T03_24_54.482171

path:

- results_2023-12-10T03-24-54.482171.parquet

- split: latest

path:

- results_2023-12-10T03-24-54.482171.parquet

---

# Dataset Card for Evaluation run of chargoddard/piano-medley-7b

## Dataset Description

- **Homepage:**

- **Repository:** https://huggingface.co/chargoddard/piano-medley-7b

- **Paper:**

- **Leaderboard:** https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

- **Point of Contact:** clementine@hf.co

### Dataset Summary

Dataset automatically created during the evaluation run of model [chargoddard/piano-medley-7b](https://huggingface.co/chargoddard/piano-medley-7b) on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).

The dataset is composed of 63 configurations, each one corresponding to one of the evaluated tasks.

The dataset has been created from 1 run(s). Each run can be found as a specific split in each configuration, with the split named after the timestamp of the run. The "train" split always points to the latest results.

An additional configuration, "results", stores all the aggregated results of the run (and is used to compute and display the aggregated metrics on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).

To load the details from a run, you can for instance do the following:

```python
from datasets import load_dataset

# Load the per-sample details of one evaluated task (here: winogrande, 5-shot).
data = load_dataset(
    "open-llm-leaderboard/details_chargoddard__piano-medley-7b",
    "harness_winogrande_5",
    split="train",
)
```
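The configuration names, split names, and parquet file names in the YAML header of this card appear to follow a regular pattern derived from the task name, the few-shot count, and the run timestamp. The sketch below reconstructs that pattern. The helper `run_names` is hypothetical (it is not part of the `datasets` library or of the leaderboard tooling), and the rules it encodes are inferred from the entries in this card only.

```python
import re


def run_names(task: str, n_shot: int, timestamp: str) -> dict:
    """Rebuild the names used in this card's YAML header for one task and run.

    Hypothetical helper: the naming rules are inferred from this card,
    not taken from any official specification.
    """
    # File names replace ':' in the ISO timestamp with '-'.
    file_stamp = timestamp.replace(":", "-")
    # Split names additionally replace every '-' with '_'.
    split_name = file_stamp.replace("-", "_")
    # Config names replace each non-alphanumeric character in the task with '_'.
    config_name = "harness_" + re.sub(r"[^0-9A-Za-z]", "_", task) + f"_{n_shot}"
    data_file = f"**/details_harness|{task}|{n_shot}_{file_stamp}.parquet"
    return {"config_name": config_name, "split": split_name, "data_file": data_file}


names = run_names("truthfulqa:mc", 0, "2023-12-10T03:24:54.482171")
print(names["config_name"])
print(names["split"])
print(names["data_file"])
```

The YAML header also lists a split named `latest` for each configuration, which points to the same parquet file as the timestamped split when there is only one run.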

## Latest results

These are the [latest results from run 2023-12-10T03:24:54.482171](https://huggingface.co/datasets/open-llm-leaderboard/details_chargoddard__piano-medley-7b/blob/main/results_2023-12-10T03-24-54.482171.json) (note that there might be results for other tasks in the repo if successive evals didn't cover the same tasks. You can find each one in the "results" configuration and in the "latest" split of each eval):

```python

{

"all": {

"acc": 0.6462767300930756,

"acc_stderr": 0.032134853847514466,

"acc_norm": 0.6489933678568897,

"acc_norm_stderr": 0.03277330582106223,

"mc1": 0.44063647490820074,

"mc1_stderr": 0.017379697555437446,

"mc2": 0.6142054505900651,

"mc2_stderr": 0.015456544162012987

},

"harness|arc:challenge|25": {

"acc": 0.6399317406143344,

"acc_stderr": 0.014027516814585186,

"acc_norm": 0.6757679180887372,

"acc_norm_stderr": 0.013678810399518826

},

"harness|hellaswag|10": {

"acc": 0.6645090619398526,

"acc_stderr": 0.004711968379069029,

"acc_norm": 0.8536148177653854,

"acc_norm_stderr": 0.0035276951498235004

},

"harness|hendrycksTest-abstract_algebra|5": {

"acc": 0.35,

"acc_stderr": 0.0479372485441102,

"acc_norm": 0.35,

"acc_norm_stderr": 0.0479372485441102

},

"harness|hendrycksTest-anatomy|5": {

"acc": 0.6518518518518519,

"acc_stderr": 0.04115324610336953,

"acc_norm": 0.6518518518518519,

"acc_norm_stderr": 0.04115324610336953

},

"harness|hendrycksTest-astronomy|5": {

"acc": 0.6842105263157895,

"acc_stderr": 0.0378272898086547,

"acc_norm": 0.6842105263157895,

"acc_norm_stderr": 0.0378272898086547

},

"harness|hendrycksTest-business_ethics|5": {

"acc": 0.57,

"acc_stderr": 0.049756985195624284,

"acc_norm": 0.57,

"acc_norm_stderr": 0.049756985195624284

},

"harness|hendrycksTest-clinical_knowledge|5": {

"acc": 0.6830188679245283,

"acc_stderr": 0.02863723563980089,

"acc_norm": 0.6830188679245283,

"acc_norm_stderr": 0.02863723563980089

},

"harness|hendrycksTest-college_biology|5": {

"acc": 0.7569444444444444,

"acc_stderr": 0.0358687928008034,

"acc_norm": 0.7569444444444444,

"acc_norm_stderr": 0.0358687928008034

},

"harness|hendrycksTest-college_chemistry|5": {

"acc": 0.46,

"acc_stderr": 0.05009082659620332,

"acc_norm": 0.46,

"acc_norm_stderr": 0.05009082659620332

},

"harness|hendrycksTest-college_computer_science|5": {

"acc": 0.47,

"acc_stderr": 0.050161355804659205,

"acc_norm": 0.47,

"acc_norm_stderr": 0.050161355804659205

},

"harness|hendrycksTest-college_mathematics|5": {

"acc": 0.31,

"acc_stderr": 0.04648231987117316,

"acc_norm": 0.31,

"acc_norm_stderr": 0.04648231987117316

},

"harness|hendrycksTest-college_medicine|5": {

"acc": 0.653179190751445,

"acc_stderr": 0.036291466701596636,

"acc_norm": 0.653179190751445,

"acc_norm_stderr": 0.036291466701596636

},

"harness|hendrycksTest-college_physics|5": {

"acc": 0.4019607843137255,

"acc_stderr": 0.048786087144669955,

"acc_norm": 0.4019607843137255,

"acc_norm_stderr": 0.048786087144669955

},

"harness|hendrycksTest-computer_security|5": {

"acc": 0.76,

"acc_stderr": 0.042923469599092816,

"acc_norm": 0.76,

"acc_norm_stderr": 0.042923469599092816

},

"harness|hendrycksTest-conceptual_physics|5": {

"acc": 0.5617021276595745,

"acc_stderr": 0.03243618636108101,

"acc_norm": 0.5617021276595745,

"acc_norm_stderr": 0.03243618636108101

},

"harness|hendrycksTest-econometrics|5": {

"acc": 0.5263157894736842,

"acc_stderr": 0.046970851366478626,

"acc_norm": 0.5263157894736842,

"acc_norm_stderr": 0.046970851366478626

},

"harness|hendrycksTest-electrical_engineering|5": {

"acc": 0.593103448275862,

"acc_stderr": 0.04093793981266236,

"acc_norm": 0.593103448275862,

"acc_norm_stderr": 0.04093793981266236

},

"harness|hendrycksTest-elementary_mathematics|5": {

"acc": 0.3941798941798942,

"acc_stderr": 0.02516798233389414,

"acc_norm": 0.3941798941798942,

"acc_norm_stderr": 0.02516798233389414

},

"harness|hendrycksTest-formal_logic|5": {

"acc": 0.4444444444444444,

"acc_stderr": 0.04444444444444449,

"acc_norm": 0.4444444444444444,

"acc_norm_stderr": 0.04444444444444449

},

"harness|hendrycksTest-global_facts|5": {

"acc": 0.38,

"acc_stderr": 0.04878317312145633,

"acc_norm": 0.38,

"acc_norm_stderr": 0.04878317312145633

},

"harness|hendrycksTest-high_school_biology|5": {

"acc": 0.8,

"acc_stderr": 0.02275520495954294,

"acc_norm": 0.8,

"acc_norm_stderr": 0.02275520495954294

},

"harness|hendrycksTest-high_school_chemistry|5": {

"acc": 0.5073891625615764,

"acc_stderr": 0.035176035403610105,

"acc_norm": 0.5073891625615764,

"acc_norm_stderr": 0.035176035403610105

},

"harness|hendrycksTest-high_school_computer_science|5": {

"acc": 0.7,

"acc_stderr": 0.046056618647183814,

"acc_norm": 0.7,

"acc_norm_stderr": 0.046056618647183814

},

"harness|hendrycksTest-high_school_european_history|5": {

"acc": 0.7636363636363637,

"acc_stderr": 0.03317505930009182,

"acc_norm": 0.7636363636363637,

"acc_norm_stderr": 0.03317505930009182

},

"harness|hendrycksTest-high_school_geography|5": {

"acc": 0.803030303030303,

"acc_stderr": 0.02833560973246336,

"acc_norm": 0.803030303030303,

"acc_norm_stderr": 0.02833560973246336

},

"harness|hendrycksTest-high_school_government_and_politics|5": {

"acc": 0.9067357512953368,

"acc_stderr": 0.02098685459328973,

"acc_norm": 0.9067357512953368,

"acc_norm_stderr": 0.02098685459328973

},

"harness|hendrycksTest-high_school_macroeconomics|5": {

"acc": 0.6794871794871795,

"acc_stderr": 0.023661296393964273,

"acc_norm": 0.6794871794871795,

"acc_norm_stderr": 0.023661296393964273

},

"harness|hendrycksTest-high_school_mathematics|5": {

"acc": 0.3333333333333333,

"acc_stderr": 0.028742040903948482,

"acc_norm": 0.3333333333333333,

"acc_norm_stderr": 0.028742040903948482

},

"harness|hendrycksTest-high_school_microeconomics|5": {

"acc": 0.6974789915966386,

"acc_stderr": 0.029837962388291932,

"acc_norm": 0.6974789915966386,

"acc_norm_stderr": 0.029837962388291932

},

"harness|hendrycksTest-high_school_physics|5": {

"acc": 0.33774834437086093,

"acc_stderr": 0.03861557546255169,

"acc_norm": 0.33774834437086093,

"acc_norm_stderr": 0.03861557546255169

},

"harness|hendrycksTest-high_school_psychology|5": {

"acc": 0.8532110091743119,

"acc_stderr": 0.015173141845126253,

"acc_norm": 0.8532110091743119,

"acc_norm_stderr": 0.015173141845126253

},

"harness|hendrycksTest-high_school_statistics|5": {

"acc": 0.5046296296296297,

"acc_stderr": 0.03409825519163572,

"acc_norm": 0.5046296296296297,

"acc_norm_stderr": 0.03409825519163572

},

"harness|hendrycksTest-high_school_us_history|5": {

"acc": 0.8333333333333334,

"acc_stderr": 0.026156867523931045,

"acc_norm": 0.8333333333333334,

"acc_norm_stderr": 0.026156867523931045

},

"harness|hendrycksTest-high_school_world_history|5": {

"acc": 0.7932489451476793,

"acc_stderr": 0.026361651668389094,

"acc_norm": 0.7932489451476793,

"acc_norm_stderr": 0.026361651668389094

},

"harness|hendrycksTest-human_aging|5": {

"acc": 0.7130044843049327,

"acc_stderr": 0.03036037971029195,

"acc_norm": 0.7130044843049327,

"acc_norm_stderr": 0.03036037971029195

},

"harness|hendrycksTest-human_sexuality|5": {

"acc": 0.8015267175572519,

"acc_stderr": 0.034981493854624714,

"acc_norm": 0.8015267175572519,

"acc_norm_stderr": 0.034981493854624714

},

"harness|hendrycksTest-international_law|5": {

"acc": 0.768595041322314,

"acc_stderr": 0.03849856098794088,

"acc_norm": 0.768595041322314,

"acc_norm_stderr": 0.03849856098794088

},

"harness|hendrycksTest-jurisprudence|5": {

"acc": 0.8148148148148148,

"acc_stderr": 0.03755265865037181,

"acc_norm": 0.8148148148148148,

"acc_norm_stderr": 0.03755265865037181

},

"harness|hendrycksTest-logical_fallacies|5": {

"acc": 0.754601226993865,

"acc_stderr": 0.03380939813943354,

"acc_norm": 0.754601226993865,

"acc_norm_stderr": 0.03380939813943354

},

"harness|hendrycksTest-machine_learning|5": {

"acc": 0.48214285714285715,

"acc_stderr": 0.047427623612430116,

"acc_norm": 0.48214285714285715,

"acc_norm_stderr": 0.047427623612430116

},

"harness|hendrycksTest-management|5": {

"acc": 0.7961165048543689,

"acc_stderr": 0.039891398595317706,

"acc_norm": 0.7961165048543689,

"acc_norm_stderr": 0.039891398595317706

},

"harness|hendrycksTest-marketing|5": {

"acc": 0.8717948717948718,

"acc_stderr": 0.02190190511507332,

"acc_norm": 0.8717948717948718,

"acc_norm_stderr": 0.02190190511507332

},

"harness|hendrycksTest-medical_genetics|5": {

"acc": 0.7,

"acc_stderr": 0.046056618647183814,

"acc_norm": 0.7,

"acc_norm_stderr": 0.046056618647183814

},

"harness|hendrycksTest-miscellaneous|5": {

"acc": 0.8263090676883781,

"acc_stderr": 0.013547415658662253,

"acc_norm": 0.8263090676883781,

"acc_norm_stderr": 0.013547415658662253

},

"harness|hendrycksTest-moral_disputes|5": {

"acc": 0.7196531791907514,

"acc_stderr": 0.024182427496577612,

"acc_norm": 0.7196531791907514,

"acc_norm_stderr": 0.024182427496577612

},

"harness|hendrycksTest-moral_scenarios|5": {

"acc": 0.41787709497206704,

"acc_stderr": 0.01649540063582008,

"acc_norm": 0.41787709497206704,

"acc_norm_stderr": 0.01649540063582008

},

"harness|hendrycksTest-nutrition|5": {

"acc": 0.7287581699346405,

"acc_stderr": 0.02545775669666788,

"acc_norm": 0.7287581699346405,

"acc_norm_stderr": 0.02545775669666788

},

"harness|hendrycksTest-philosophy|5": {

"acc": 0.7202572347266881,

"acc_stderr": 0.02549425935069491,

"acc_norm": 0.7202572347266881,

"acc_norm_stderr": 0.02549425935069491

},

"harness|hendrycksTest-prehistory|5": {

"acc": 0.7314814814814815,

"acc_stderr": 0.02465968518596728,

"acc_norm": 0.7314814814814815,

"acc_norm_stderr": 0.02465968518596728

},

"harness|hendrycksTest-professional_accounting|5": {

"acc": 0.48226950354609927,

"acc_stderr": 0.02980873964223777,

"acc_norm": 0.48226950354609927,

"acc_norm_stderr": 0.02980873964223777

},

"harness|hendrycksTest-professional_law|5": {

"acc": 0.45371577574967403,

"acc_stderr": 0.01271540484127774,

"acc_norm": 0.45371577574967403,

"acc_norm_stderr": 0.01271540484127774

},

"harness|hendrycksTest-professional_medicine|5": {

"acc": 0.6911764705882353,

"acc_stderr": 0.028064998167040094,

"acc_norm": 0.6911764705882353,

"acc_norm_stderr": 0.028064998167040094

},

"harness|hendrycksTest-professional_psychology|5": {

"acc": 0.6519607843137255,

"acc_stderr": 0.019270998708223977,

"acc_norm": 0.6519607843137255,

"acc_norm_stderr": 0.019270998708223977

},

"harness|hendrycksTest-public_relations|5": {

"acc": 0.6818181818181818,

"acc_stderr": 0.044612721759105085,

"acc_norm": 0.6818181818181818,

"acc_norm_stderr": 0.044612721759105085

},

"harness|hendrycksTest-security_studies|5": {

"acc": 0.7387755102040816,

"acc_stderr": 0.028123429335142783,

"acc_norm": 0.7387755102040816,

"acc_norm_stderr": 0.028123429335142783

},

"harness|hendrycksTest-sociology|5": {

"acc": 0.8557213930348259,

"acc_stderr": 0.024845753212306053,

"acc_norm": 0.8557213930348259,

"acc_norm_stderr": 0.024845753212306053

},

"harness|hendrycksTest-us_foreign_policy|5": {

"acc": 0.84,

"acc_stderr": 0.03684529491774709,

"acc_norm": 0.84,

"acc_norm_stderr": 0.03684529491774709

},

"harness|hendrycksTest-virology|5": {

"acc": 0.5301204819277109,

"acc_stderr": 0.03885425420866767,

"acc_norm": 0.5301204819277109,

"acc_norm_stderr": 0.03885425420866767

},

"harness|hendrycksTest-world_religions|5": {

"acc": 0.8245614035087719,

"acc_stderr": 0.02917088550072767,

"acc_norm": 0.8245614035087719,

"acc_norm_stderr": 0.02917088550072767

},