datasetId | card

|---|---|

ML-Projects-Kiel/tweetyface | ---

annotations_creators:

- machine-generated

language:

- en

- de

language_creators:

- crowdsourced

license:

- apache-2.0

multilinguality:

- multilingual

pretty_name: tweetyface_en

size_categories:

- 10K<n<100K

source_datasets: []

tags: []

task_categories:

- text-generation

task_ids: []

---

# Dataset Card for "tweetyface"

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:**

- **Repository:** [GitHub](https://github.com/ml-projects-kiel/OpenCampus-ApplicationofTransformers)

### Dataset Summary

A dataset of tweets from prominent Twitter users.

The dataset was created with a crawler for the Twitter API.

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

English, German

## Dataset Structure

### Data Instances

#### english

- **Size of downloaded dataset files:** 4.77 MB

- **Size of the generated dataset:** 5.92 MB

- **Total amount of disk used:** 4.77 MB

#### german

- **Size of downloaded dataset files:** 2.58 MB

- **Size of the generated dataset:** 3.10 MB

- **Total amount of disk used:** 2.59 MB

An example from the `validation` split looks as follows.

```json
{
  "text": "@SpaceX @Space_Station About twice as much useful mass to orbit as rest of Earth combined",
  "label": "elonmusk",
  "idx": 1001283
}
```

### Data Fields

The data fields are the same across all splits and languages.

- `text`: a `string` feature.
- `label`: a classification label. In the example above, its value is the tweet author's handle, `elonmusk`.
- `idx`: an `int64` feature.
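
The following is a minimal sketch of building per-author text collections for generation. It rests on several assumptions. `label` is taken to hold the author's handle, as in the example record. The configuration names `english` and `german` come from the Data Instances headings and are not confirmed. `load_split` and `group_by_author` are hypothetical helpers. If `label` is stored as an integer `ClassLabel`, convert it back to a name with `ds.features["label"].int2str(...)` before grouping. `load_split` needs the Hugging Face `datasets` library and network access.

```python
from collections import defaultdict


def load_split(config="english", split="validation"):
    """Load one split from the Hub (assumed config names: "english", "german")."""
    from datasets import load_dataset  # requires `pip install datasets` and network access

    return load_dataset("ML-Projects-Kiel/tweetyface", config, split=split)


def group_by_author(records):
    """Collect tweet texts per `label` value, e.g. to build a per-author generation corpus."""
    by_author = defaultdict(list)
    for record in records:
        by_author[record["label"]].append(record["text"])
    return dict(by_author)


if __name__ == "__main__":
    # The record shown in the Data Instances section above.
    sample = [
        {
            "text": "@SpaceX @Space_Station About twice as much useful mass to orbit as rest of Earth combined",
            "label": "elonmusk",
            "idx": 1001283,
        }
    ]
    print(group_by_author(sample))
```

Iterating over a loaded `Dataset` yields one dict per row, so `group_by_author(load_split())` works on the Hub data the same way it works on the hand-written sample.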

### Data Splits

| name | train | validation |
| ------- | ----: | ---------: |
| english | 27857 | 6965 |
| german | 10254 | 2564 |
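
The split sizes above are consistent with a roughly 80/20 train/validation split in both languages. The short sketch below checks the arithmetic. The numbers are copied from the table; nothing is loaded from the Hub.

```python
# Split sizes copied from the Data Splits table above.
SPLITS = {
    "english": {"train": 27857, "validation": 6965},
    "german": {"train": 10254, "validation": 2564},
}


def validation_fraction(sizes):
    """Share of examples that fall in the validation split."""
    total = sizes["train"] + sizes["validation"]
    return sizes["validation"] / total


for name, sizes in SPLITS.items():
    total = sizes["train"] + sizes["validation"]
    print(f"{name}: {total} examples, {validation_fraction(sizes):.1%} validation")
```

This prints 34822 examples for English and 12818 for German, each with 20.0% in validation.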

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

|

CyberMaike/kendl | ---

license: openrail

---

|

Norod78/microsoft-fluentui-emoji-768 | ---

language: en

license: mit

size_categories:

- n<10K

task_categories:

- text-to-image

pretty_name: Microsoft FluentUI Emoji 768x768

dataset_info:

features:

- name: text

dtype: string

- name: image

dtype: image

splits:

- name: train

num_bytes: 679617796.94

num_examples: 7564

download_size: 704564297

dataset_size: 679617796.94

tags:

- emoji

- fluentui

---

# Dataset Card for "microsoft-fluentui-emoji-768"

[SVG files and their file names from Microsoft's fluentui-emoji repo](https://github.com/microsoft/fluentui-emoji) were converted to images and text, respectively. |

chuquan282/CBD_ERROR_LOGS | ---

dataset_info:

features:

- name: instruction

dtype: string

- name: input

dtype: float64

- name: output

dtype: string

splits:

- name: train

num_bytes: 767437.1685606061

num_examples: 1900

- name: test

num_bytes: 85629.83143939394

num_examples: 212

download_size: 270309

dataset_size: 853067.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

---

|

CyberHarem/hoshii_miki_theidolmster | ---

license: mit

task_categories:

- text-to-image

tags:

- art

- not-for-all-audiences

size_categories:

- n<1K

---

# Dataset of hoshii_miki/星井美希/호시이미키 (THE iDOLM@STER)

This is the dataset of hoshii_miki/星井美希/호시이미키 (THE iDOLM@STER), containing 500 images and their tags.

The core tags of this character are `blonde_hair, long_hair, green_eyes, ahoge, breasts`, which have been pruned from the tags in this dataset.

Images were crawled from many sites (e.g. danbooru, pixiv, zerochan ...); the auto-crawling system is powered by the [DeepGHS Team](https://github.com/deepghs) ([Hugging Face organization](https://huggingface.co/deepghs)).
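
The following sketch illustrates what pruning the core tags means in practice. It assumes tags are stored as a comma-separated string, as in the cluster tables below. `prune_core_tags` is a hypothetical helper, not part of waifuc or of this dataset's own tooling.

```python
# Core tags listed above for this character.
CORE_TAGS = {"blonde_hair", "long_hair", "green_eyes", "ahoge", "breasts"}


def prune_core_tags(tag_string, core_tags=CORE_TAGS):
    """Drop the character's core tags from a comma-separated tag string (exact matches only)."""
    tags = [tag.strip() for tag in tag_string.split(",") if tag.strip()]
    return ", ".join(tag for tag in tags if tag not in core_tags)


print(prune_core_tags("1girl, blonde_hair, long_hair, smile, green_eyes, ahoge"))
# prints: 1girl, smile
```

Matching is exact, so a related tag such as `medium_breasts` is kept. This is consistent with its appearance in the cluster tables.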

## List of Packages

| Name | Images | Size | Download | Type | Description |
|:-----------------|---------:|:-----------|:-----------|:-----------|:-----------|
| raw | 500 | 523.12 MiB | [Download](https://huggingface.co/datasets/CyberHarem/hoshii_miki_theidolmster/resolve/main/dataset-raw.zip) | Waifuc-Raw | Raw data with meta information (min edge aligned to 1400 if larger). |
| 800 | 500 | 352.37 MiB | [Download](https://huggingface.co/datasets/CyberHarem/hoshii_miki_theidolmster/resolve/main/dataset-800.zip) | IMG+TXT | Dataset with the shorter side not exceeding 800 pixels. |
| stage3-p480-800 | 1116 | 702.99 MiB | [Download](https://huggingface.co/datasets/CyberHarem/hoshii_miki_theidolmster/resolve/main/dataset-stage3-p480-800.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |
| 1200 | 500 | 484.66 MiB | [Download](https://huggingface.co/datasets/CyberHarem/hoshii_miki_theidolmster/resolve/main/dataset-1200.zip) | IMG+TXT | Dataset with the shorter side not exceeding 1200 pixels. |
| stage3-p480-1200 | 1116 | 912.39 MiB | [Download](https://huggingface.co/datasets/CyberHarem/hoshii_miki_theidolmster/resolve/main/dataset-stage3-p480-1200.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

### Load Raw Dataset with Waifuc

We provide the raw dataset (including tagged images) for loading with [waifuc](https://deepghs.github.io/waifuc/main/tutorials/installation/index.html). If you need it, run the following code:

```python
import os
import zipfile

from huggingface_hub import hf_hub_download
from waifuc.source import LocalSource

# download raw archive file
zip_file = hf_hub_download(
    repo_id='CyberHarem/hoshii_miki_theidolmster',
    repo_type='dataset',
    filename='dataset-raw.zip',
)

# extract files to your directory
dataset_dir = 'dataset_dir'
os.makedirs(dataset_dir, exist_ok=True)
with zipfile.ZipFile(zip_file, 'r') as zf:
    zf.extractall(dataset_dir)

# load the dataset with waifuc and print each image with its file name and tags
source = LocalSource(dataset_dir)
for item in source:
    print(item.image, item.meta['filename'], item.meta['tags'])
```
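
The `IMG+TXT` packages can be fetched the same way. The minimal sketch below makes several assumptions. It assumes each image in those archives is accompanied by a `.txt` file with the same stem holding its tags; the package type suggests this, but it has not been verified. `package_filename`, `download_package`, and `pair_images_with_tags` are hypothetical helpers. The archive names follow the download URLs in the List of Packages table.

```python
import zipfile
from pathlib import Path

IMAGE_SUFFIXES = {".png", ".jpg", ".jpeg", ".webp"}


def package_filename(package):
    """Archive name for a package, e.g. "800" -> "dataset-800.zip" (pattern from the table URLs)."""
    return f"dataset-{package}.zip"


def download_package(package, dataset_dir="dataset_dir"):
    """Download and extract one package; requires huggingface_hub and network access."""
    from huggingface_hub import hf_hub_download

    zip_file = hf_hub_download(
        repo_id="CyberHarem/hoshii_miki_theidolmster",
        repo_type="dataset",
        filename=package_filename(package),
    )
    Path(dataset_dir).mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(zip_file, "r") as zf:
        zf.extractall(dataset_dir)
    return dataset_dir


def pair_images_with_tags(dataset_dir):
    """Return (image_path, tags) pairs for images that have a same-stem .txt file."""
    pairs = []
    for image_path in sorted(Path(dataset_dir).rglob("*")):
        if not image_path.is_file() or image_path.suffix.lower() not in IMAGE_SUFFIXES:
            continue
        tag_path = image_path.with_suffix(".txt")
        if tag_path.exists():
            pairs.append((image_path, tag_path.read_text(encoding="utf-8").strip()))
    return pairs
```

For example, `pair_images_with_tags(download_package("800"))` would list the 800-pixel images with their tag strings. Images without a matching `.txt` file are skipped rather than raising an error.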

## List of Clusters

Results of tag clustering; some outfits may be identifiable from these clusters.

### Raw Text Version

| # | Samples | Tags |
|----:|----------:|:-----|
| 0 | 6 | 1girl, solo, midriff, navel, skirt, smile, open_mouth, thighhighs |
| 1 | 8 | 1girl, cleavage, medium_breasts, navel, single_leg_pantyhose, smile, solo, fishnet_pantyhose, midriff, belly_chain, jacket, open_mouth, pink_shorts, necklace, one_eye_closed, star_(symbol), yellow_bra |
| 2 | 13 | 1girl, open_mouth, smile, solo, blush, ;d, one_eye_closed, star_(symbol) |
| 3 | 10 | 1girl, smile, solo, blush, looking_at_viewer, simple_background, necklace, open_mouth, white_background |
| 4 | 5 | 1girl, flower, solo, elbow_gloves, smile, wedding_dress, boots, bridal_veil, hair_ornament, open_mouth, white_dress |
| 5 | 6 | 1girl, plaid_skirt, school_uniform, solo, smile, open_mouth, star_(symbol), blush, necktie |
| 6 | 7 | 1girl, cleavage, smile, solo, medium_breasts, open_mouth, day, navel, blush, looking_at_viewer, side-tie_bikini_bottom, sky, beach, cloud, green_bikini, outdoors, wet |

### Table Version

| # | Samples | 1girl | solo | midriff | navel | skirt | smile | open_mouth | thighhighs | cleavage | medium_breasts | single_leg_pantyhose | fishnet_pantyhose | belly_chain | jacket | pink_shorts | necklace | one_eye_closed | star_(symbol) | yellow_bra | blush | ;d | looking_at_viewer | simple_background | white_background | flower | elbow_gloves | wedding_dress | boots | bridal_veil | hair_ornament | white_dress | plaid_skirt | school_uniform | necktie | day | side-tie_bikini_bottom | sky | beach | cloud | green_bikini | outdoors | wet |
|----:|----------:|:--------|:-------|:----------|:--------|:--------|:--------|:-------------|:-------------|:-----------|:-----------------|:-----------------------|:--------------------|:--------------|:---------|:--------------|:-----------|:-----------------|:----------------|:-------------|:--------|:-----|:--------------------|:--------------------|:-------------------|:---------|:---------------|:----------------|:--------|:--------------|:----------------|:--------------|:--------------|:-----------------|:----------|:------|:-------------------------|:------|:--------|:--------|:---------------|:-----------|:------|
| 0 | 6 | X | X | X | X | X | X | X | X | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | |
| 1 | 8 | X | X | X | X | | X | X | | X | X | X | X | X | X | X | X | X | X | X | | | | | | | | | | | | | | | | | | | | | | | |
| 2 | 13 | X | X | | | | X | X | | | | | | | | | | X | X | | X | X | | | | | | | | | | | | | | | | | | | | | |
| 3 | 10 | X | X | | | | X | X | | | | | | | | | X | | | | X | | X | X | X | | | | | | | | | | | | | | | | | | |
| 4 | 5 | X | X | | | | X | X | | | | | | | | | | | | | | | | | | X | X | X | X | X | X | X | | | | | | | | | | | |
| 5 | 6 | X | X | | | | X | X | | | | | | | | | | | X | | X | | | | | | | | | | | | X | X | X | | | | | | | | |
| 6 | 7 | X | X | | X | | X | X | | X | X | | | | | | | | | | X | | X | | | | | | | | | | | | | X | X | X | X | X | X | X | X |
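
The Table Version appears to be a one-hot view of the Raw Text Version. It has one column per tag, ordered by first appearance across clusters, with `X` where a cluster has that tag. This ordering rule is inferred from the two tables, not documented. Under that assumption, a minimal sketch of the conversion:

```python
def one_hot_table(clusters):
    """Turn {cluster_id: "tag1, tag2, ..."} into (tag_columns, {cluster_id: ["X" or "", ...]})."""
    columns = []
    parsed = {}
    for cluster_id, tag_string in clusters.items():
        tags = [tag.strip() for tag in tag_string.split(",") if tag.strip()]
        parsed[cluster_id] = set(tags)
        for tag in tags:
            if tag not in columns:  # keep first-appearance order across clusters
                columns.append(tag)
    rows = {
        cluster_id: ["X" if tag in tags else "" for tag in columns]
        for cluster_id, tags in parsed.items()
    }
    return columns, rows


# Two clusters copied from the Raw Text Version above.
clusters = {
    0: "1girl, solo, midriff, navel, skirt, smile, open_mouth, thighhighs",
    2: "1girl, open_mouth, smile, solo, blush, ;d, one_eye_closed, star_(symbol)",
}
columns, rows = one_hot_table(clusters)
print(columns)
```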

|

fiveflow/koquad_v2_polyglot_tkd_20th | ---

dataset_info:

features:

- name: context

dtype: string

- name: input_ids

sequence: int32

- name: attention_mask

sequence: int8

- name: labels

sequence: int64

splits:

- name: train

num_bytes: 1766922390

num_examples: 20000

download_size: 592965039

dataset_size: 1766922390

---

# Dataset Card for "koquad_v2_polyglot_tkd_20th"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Zenodia/dreambooth-bee-images | ---

dataset_info:

features:

- name: image

dtype: image

splits:

- name: train

num_bytes: 1640816.0

num_examples: 6

download_size: 1626376

dataset_size: 1640816.0

---

# Dataset Card for "dreambooth-bee-images"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

one-sec-cv12/chunk_36 | ---

dataset_info:

features:

- name: audio

dtype:

audio:

sampling_rate: 16000

splits:

- name: train

num_bytes: 9147419424.25

num_examples: 95238

download_size: 8330550680

dataset_size: 9147419424.25

---

# Dataset Card for "chunk_36"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Litalp/audio-class | ---

dataset_info:

features:

- name: audio

dtype: audio

- name: label

dtype: string

splits:

- name: train

num_bytes: 249884107.148

num_examples: 1628

download_size: 249501839

dataset_size: 249884107.148

---

# Dataset Card for "audio-class"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

mask-distilled-onesec-cv12-each-chunk-uniq/chunk_232 | ---

dataset_info:

features:

- name: logits

sequence: float32

- name: mfcc

sequence:

sequence: float64

splits:

- name: train

num_bytes: 1198651708.0

num_examples: 235399

download_size: 1225301497

dataset_size: 1198651708.0

---

# Dataset Card for "chunk_232"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

ProfessorBob/text-embedding-dataset | ---

dataset_info:

- config_name: Documents

features:

- name: doc_id

dtype: string

- name: doc

dtype: string

splits:

- name: history

num_bytes: 508218

num_examples: 224

- name: religion

num_bytes: 302837

num_examples: 126

- name: recherche

num_bytes: 235256

num_examples: 69

- name: python

num_bytes: 660763

num_examples: 194

download_size: 952235

dataset_size: 1707074

- config_name: MOOC_MCQ_Queries

features:

- name: query_id

dtype: string

- name: query

dtype: string

- name: answers

sequence: string

- name: distractions

sequence: string

- name: relevant_docs

sequence: string

splits:

- name: history

num_bytes: 13156

num_examples: 58

- name: religion

num_bytes: 52563

num_examples: 125

- name: recherche

num_bytes: 18791

num_examples: 52

- name: python

num_bytes: 29759

num_examples: 85

download_size: 80494

dataset_size: 114269

configs:

- config_name: Documents

data_files:

- split: history

path: Documents/history-*

- split: religion

path: Documents/religion-*

- split: recherche

path: Documents/recherche-*

- split: python

path: Documents/python-*

- config_name: MOOC_MCQ_Queries

data_files:

- split: history

path: MOOC_MCQ_Queries/history-*

- split: religion

path: MOOC_MCQ_Queries/religion-*

- split: recherche

path: MOOC_MCQ_Queries/recherche-*

- split: python

path: MOOC_MCQ_Queries/python-*

---

# Text Embedding Datasets

This collection consists of several paired (query, passage) datasets intended for fine-tuning text-embedding models. They are suited to developing and testing algorithms in natural language processing, information retrieval, and related applications.

## Dataset Details

Each dataset in this collection is structured to facilitate the training and evaluation of text-embedding models. The datasets are diverse, covering multiple domains and formats. They are particularly useful for tasks like semantic search, question-answering systems, and document retrieval.

### [MOOC MCQ Queries]

The "MOOC MCQ Queries" dataset is derived from [FUN MOOC](https://www.fun-mooc.fr/fr/), an online platform offering a wide range of French-language courses across various domains. Its content is high quality: it was manually curated to help students better understand the course material.

#### Content Overview:

- **Language**: French

- **Domains**:

- History: 57 examples

- Religion: 125 examples

- Recherche: 52 examples

- Python: 85 examples

- **Dataset Description**:

Each record in the dataset includes the following fields:

```json

{

"query_id": "Unique identifier for each query",

"query": "Text of the multiple-choice question (MCQ)",

"answers": ["List of correct answer choices"],

"distractions": ["List of incorrect choices"],

"relevant_docs": ["List of relevant document IDs aiding the answer"]

}

```

- **Statistics**:

| Category | Num. of Queries | Query Avg. Words | Number of Docs | Short Docs (<375 words) | Long Docs (≥375 words) | Doc Avg. Words |
|----------------|-----------------|------------------|----------------|-------------------------|------------------------|----------------|
| history | 57 | 11.31 | 224 | 147 | 77 | 351.79 |
| religion | 125 | 15.08 | 126 | 78 | 48 | 375.63 |
| recherche | 52 | 12.71 | 69 | 20 | 49 | 535.00 |
| python | 85 | 21.24 | 194 | 27 | 167 | 552.60 |
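
For embedding fine-tuning, each query can be paired with the text of the documents listed in its `relevant_docs`, looked up by `doc_id` in the `Documents` configuration described below. This is a minimal sketch with several assumptions. It assumes `relevant_docs` holds `doc_id` values from the same domain split; the card says "document IDs" but does not spell out the join. `build_pairs` and `load_split` are hypothetical helpers. `load_split` uses the configuration and split names from the YAML header and needs the `datasets` library plus network access.

```python
def load_split(config, split):
    """Load e.g. ("MOOC_MCQ_Queries", "history") or ("Documents", "history") from the Hub."""
    from datasets import load_dataset  # requires `pip install datasets` and network access

    return load_dataset("ProfessorBob/text-embedding-dataset", config, split=split)


def build_pairs(queries, documents):
    """Return (query, passage) pairs by joining each query's relevant_docs against doc_id."""
    doc_text = {doc["doc_id"]: doc["doc"] for doc in documents}
    pairs = []
    for query in queries:
        for doc_id in query["relevant_docs"]:
            if doc_id in doc_text:  # skip IDs that are missing from this split
                pairs.append((query["query"], doc_text[doc_id]))
    return pairs


# Usage against the Hub (not run here):
# pairs = build_pairs(load_split("MOOC_MCQ_Queries", "history"), load_split("Documents", "history"))
```

The `distractions` field lists incorrect answer choices rather than documents, so it is not used for the positive pairs here.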

### [Wikitext generated Queries]

To be completed.

### [Documents]

This dataset is a collection of document chunks (or entire documents, for short texts), designed to complement the MOOC MCQ Queries and the other datasets in the collection.
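
The statistics table above splits documents into short and long at 375 words. The following is a sketch that recomputes those columns for a list of document texts. It assumes words are counted by whitespace splitting. The card does not say how words were counted, so the results may differ slightly from the published figures. `doc_length_stats` is a hypothetical helper.

```python
SHORT_DOC_THRESHOLD = 375  # short docs have < 375 words, long docs >= 375 (statistics table above)


def doc_length_stats(docs):
    """Count short/long documents and the average word count, splitting on whitespace."""
    counts = [len(doc.split()) for doc in docs]
    short = sum(1 for count in counts if count < SHORT_DOC_THRESHOLD)
    return {
        "num_docs": len(counts),
        "short": short,
        "long": len(counts) - short,
        "avg_words": round(sum(counts) / len(counts), 2) if counts else 0.0,
    }


print(doc_length_stats(["Un court document.", " ".join(["mot"] * 400)]))
# {'num_docs': 2, 'short': 1, 'long': 1, 'avg_words': 201.5}
```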

- **Chunking strategies**:

- MOOC MCQ Queries: documents are chunked along their natural divisions, such as sections or subsections, so that each chunk keeps its context intact.

- **Content format**:

```json

{

"doc_id": "Unique identifier for each document",

"doc": "Text content of the document"

}

``` |

open-llm-leaderboard/details_pankajmathur__orca_mini_v3_7b | ---

pretty_name: Evaluation run of pankajmathur/orca_mini_v3_7b

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [pankajmathur/orca_mini_v3_7b](https://huggingface.co/pankajmathur/orca_mini_v3_7b)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 64 configurations, each one corresponding to one of the\

\ evaluated tasks.\n\nThe dataset has been created from 2 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run. The \"train\" split always points to the latest results.\n\

\nAn additional configuration \"results\" stores all the aggregated results of the\

\ run (and is used to compute and display the aggregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_pankajmathur__orca_mini_v3_7b\"\

,\n\t\"harness_winogrande_5\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\n\

These are the [latest results from run 2023-10-24T09:53:37.786344](https://huggingface.co/datasets/open-llm-leaderboard/details_pankajmathur__orca_mini_v3_7b/blob/main/results_2023-10-24T09-53-37.786344.json) (note\

\ that there might be results for other tasks in the repos if successive evals didn't\

\ cover the same tasks. You can find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"em\": 0.08043204697986577,\n\

\ \"em_stderr\": 0.0027851341980506704,\n \"f1\": 0.15059563758389252,\n\

\ \"f1_stderr\": 0.0030534563383277672,\n \"acc\": 0.4069827001752661,\n\

\ \"acc_stderr\": 0.009686225873410097\n },\n \"harness|drop|3\": {\n\

\ \"em\": 0.08043204697986577,\n \"em_stderr\": 0.0027851341980506704,\n\

\ \"f1\": 0.15059563758389252,\n \"f1_stderr\": 0.0030534563383277672\n\

\ },\n \"harness|gsm8k|5\": {\n \"acc\": 0.0712661106899166,\n \

\ \"acc_stderr\": 0.007086462127954491\n },\n \"harness|winogrande|5\"\

: {\n \"acc\": 0.7426992896606156,\n \"acc_stderr\": 0.012285989618865706\n\

\ }\n}\n```"

repo_url: https://huggingface.co/pankajmathur/orca_mini_v3_7b

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_arc_challenge_25

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|arc:challenge|25_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|arc:challenge|25_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_drop_3

data_files:

- split: 2023_10_24T09_53_37.786344

path:

- '**/details_harness|drop|3_2023-10-24T09-53-37.786344.parquet'

- split: latest

path:

- '**/details_harness|drop|3_2023-10-24T09-53-37.786344.parquet'

- config_name: harness_gsm8k_5

data_files:

- split: 2023_10_24T09_53_37.786344

path:

- '**/details_harness|gsm8k|5_2023-10-24T09-53-37.786344.parquet'

- split: latest

path:

- '**/details_harness|gsm8k|5_2023-10-24T09-53-37.786344.parquet'

- config_name: harness_hellaswag_10

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hellaswag|10_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hellaswag|10_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-09-13T09-56-47.532864.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_abstract_algebra_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_anatomy_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_astronomy_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_business_ethics_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_clinical_knowledge_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_college_biology_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_college_chemistry_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_college_computer_science_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_college_mathematics_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_college_medicine_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_college_physics_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-college_physics|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_physics|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_computer_security_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-computer_security|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-computer_security|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_conceptual_physics_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_econometrics_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-econometrics|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-econometrics|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_electrical_engineering_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_elementary_mathematics_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_formal_logic_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_global_facts_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-global_facts|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-global_facts|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_high_school_biology_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_high_school_chemistry_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_high_school_computer_science_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_high_school_european_history_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_high_school_geography_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_high_school_government_and_politics_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_high_school_macroeconomics_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_high_school_mathematics_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_high_school_microeconomics_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_high_school_physics_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_high_school_psychology_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_high_school_statistics_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_high_school_us_history_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_high_school_world_history_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_human_aging_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-human_aging|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_aging|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_human_sexuality_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_international_law_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-international_law|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-international_law|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_jurisprudence_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_logical_fallacies_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_machine_learning_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_management_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-management|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-management|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_marketing_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-marketing|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-marketing|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_medical_genetics_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_miscellaneous_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_moral_disputes_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_moral_scenarios_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_nutrition_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-nutrition|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-nutrition|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_philosophy_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-philosophy|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-philosophy|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_prehistory_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-prehistory|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-prehistory|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_professional_accounting_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_professional_law_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-professional_law|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_law|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_professional_medicine_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_professional_psychology_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_public_relations_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-public_relations|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-public_relations|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_security_studies_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-security_studies|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-security_studies|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_sociology_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-sociology|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-sociology|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_us_foreign_policy_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_virology_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-virology|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-virology|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_hendrycksTest_world_religions_5

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|hendrycksTest-world_religions|5_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-world_religions|5_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_truthfulqa_mc_0

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- '**/details_harness|truthfulqa:mc|0_2023-09-13T09-56-47.532864.parquet'

- split: latest

path:

- '**/details_harness|truthfulqa:mc|0_2023-09-13T09-56-47.532864.parquet'

- config_name: harness_winogrande_5

data_files:

- split: 2023_10_24T09_53_37.786344

path:

- '**/details_harness|winogrande|5_2023-10-24T09-53-37.786344.parquet'

- split: latest

path:

- '**/details_harness|winogrande|5_2023-10-24T09-53-37.786344.parquet'

- config_name: results

data_files:

- split: 2023_09_13T09_56_47.532864

path:

- results_2023-09-13T09-56-47.532864.parquet

- split: 2023_10_24T09_53_37.786344

path:

- results_2023-10-24T09-53-37.786344.parquet

- split: latest

path:

- results_2023-10-24T09-53-37.786344.parquet

---

# Dataset Card for Evaluation run of pankajmathur/orca_mini_v3_7b

## Dataset Description

- **Homepage:**

- **Repository:** https://huggingface.co/pankajmathur/orca_mini_v3_7b

- **Paper:**

- **Leaderboard:** https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

- **Point of Contact:** clementine@hf.co

### Dataset Summary

Dataset automatically created during the evaluation run of model [pankajmathur/orca_mini_v3_7b](https://huggingface.co/pankajmathur/orca_mini_v3_7b) on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).

The dataset is composed of 64 configurations, each one corresponding to one of the evaluated tasks.

The dataset has been created from 2 run(s). Each run can be found as a specific split in each configuration, the split being named using the timestamp of the run. The "latest" split always points to the latest results.

An additional configuration, "results", stores all the aggregated results of the runs (and is used to compute and display the aggregated metrics on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).

To load the details from a run, you can for instance do the following:

```python
from datasets import load_dataset

# Valid split names are "latest" or a run timestamp, as declared in the configs above.
data = load_dataset(
    "open-llm-leaderboard/details_pankajmathur__orca_mini_v3_7b",
    "harness_winogrande_5",
    split="latest",
)
```
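The config and split names declared in the YAML above follow a regular pattern, so they can be derived from a task name and a run timestamp. The sketch below is inferred only from the names listed in this card (for example, run `2023-10-24T09:53:37.786344` maps to split `2023_10_24T09_53_37.786344` and to the file `details_harness|winogrande|5_2023-10-24T09-53-37.786344.parquet`). The helpers `split_name`, `config_name`, and `details_glob` are hypothetical and are not part of the `datasets` library or the leaderboard tooling.

```python
REPO = "open-llm-leaderboard/details_pankajmathur__orca_mini_v3_7b"


def split_name(run_timestamp: str) -> str:
    """'2023-10-24T09:53:37.786344' -> '2023_10_24T09_53_37.786344'."""
    return run_timestamp.replace("-", "_").replace(":", "_")


def config_name(task: str, n_shot: int) -> str:
    """'hendrycksTest-anatomy', 5 -> 'harness_hendrycksTest_anatomy_5'."""
    return f"harness_{task.replace('-', '_').replace(':', '_')}_{n_shot}"


def details_glob(task: str, n_shot: int, run_timestamp: str) -> str:
    """Glob of the parquet file holding one task's details for one run."""
    file_ts = run_timestamp.replace(":", "-")  # colons become dashes in file names
    return f"**/details_harness|{task}|{n_shot}_{file_ts}.parquet"


# Usage (hypothetical; needs the `datasets` package and network access):
# from datasets import load_dataset
# run = "2023-10-24T09:53:37.786344"
# data = load_dataset(REPO, config_name("winogrande", 5), split=split_name(run))
```

Passing `"latest"` instead of `split_name(run)` selects the most recent run for that config.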

## Latest results

These are the [latest results from run 2023-10-24T09:53:37.786344](https://huggingface.co/datasets/open-llm-leaderboard/details_pankajmathur__orca_mini_v3_7b/blob/main/results_2023-10-24T09-53-37.786344.json) (note that there might be results for other tasks in the repos if successive evals didn't cover the same tasks. You can find each in the "results" configuration and in the "latest" split of each eval):

```python
{
    "all": {
        "em": 0.08043204697986577,
        "em_stderr": 0.0027851341980506704,
        "f1": 0.15059563758389252,
        "f1_stderr": 0.0030534563383277672,
        "acc": 0.4069827001752661,
        "acc_stderr": 0.009686225873410097
    },
    "harness|drop|3": {
        "em": 0.08043204697986577,
        "em_stderr": 0.0027851341980506704,
        "f1": 0.15059563758389252,
        "f1_stderr": 0.0030534563383277672
    },
    "harness|gsm8k|5": {
        "acc": 0.0712661106899166,
        "acc_stderr": 0.007086462127954491
    },
    "harness|winogrande|5": {
        "acc": 0.7426992896606156,
        "acc_stderr": 0.012285989618865706
    }
}
```
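In these results, every metric under `"all"` matches the unweighted mean of that metric over the tasks that report it: `acc` is the mean of the gsm8k and winogrande accuracies, while `em` and `f1` come from drop alone. The check below is a minimal sketch built only from the numbers shown here; `aggregate` is a hypothetical helper, not the leaderboard's own aggregation code. Averaging the `acc_stderr` values this way does not give the standard error of the averaged accuracy.

```python
from statistics import fmean

# Per-task metrics copied from the results above.
TASK_RESULTS = {
    "harness|drop|3": {
        "em": 0.08043204697986577,
        "em_stderr": 0.0027851341980506704,
        "f1": 0.15059563758389252,
        "f1_stderr": 0.0030534563383277672,
    },
    "harness|gsm8k|5": {"acc": 0.0712661106899166, "acc_stderr": 0.007086462127954491},
    "harness|winogrande|5": {"acc": 0.7426992896606156, "acc_stderr": 0.012285989618865706},
}


def aggregate(results: dict) -> dict:
    """Unweighted mean of each metric over the tasks that report it."""
    metrics = {name for task in results.values() for name in task}
    return {
        name: fmean(task[name] for task in results.values() if name in task)
        for name in sorted(metrics)
    }


print(aggregate(TASK_RESULTS))
```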

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

jan-hq/spider_sql_binarized | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: messages

list:

- name: content

dtype: string

- name: role

dtype: string

splits:

- name: train

num_bytes: 1494601

num_examples: 7000

- name: test

num_bytes: 214813

num_examples: 1034

download_size: 405782

dataset_size: 1709414

---

# Dataset Card for "spider_sql_binarized"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

yuan-sf63/word_mask_Nf_32 | ---

dataset_info:

features:

- name: feature

dtype: string

- name: target

dtype: string

splits:

- name: train

num_bytes: 7934257.279690487

num_examples: 80487

- name: validation

num_bytes: 881682.7203095123

num_examples: 8944

download_size: 6602823

dataset_size: 8815940.0

---

# Dataset Card for "word_mask_Nf_32"
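The fractional `num_bytes` values in the metadata above are consistent with the total `dataset_size` being prorated by example count across the two splits, and the example counts match a 10% validation split of 89,431 rows with the validation count rounded up. This is an inference from the numbers, not something the card states; the sketch below only reproduces the arithmetic, and `split_counts` and `prorate_bytes` are hypothetical helpers.

```python
import math
from typing import Tuple


def split_counts(n_total: int, val_fraction: float) -> Tuple[int, int]:
    """Split n_total rows into (train, validation), rounding validation up."""
    n_val = math.ceil(val_fraction * n_total)
    return n_total - n_val, n_val


def prorate_bytes(dataset_size: float, n_split: int, n_total: int) -> float:
    """Share of dataset_size attributed to a split in proportion to its rows."""
    return dataset_size * n_split / n_total


n_total = 80487 + 8944
n_train, n_val = split_counts(n_total, 0.1)
print(n_train, n_val)  # 80487 8944
print(prorate_bytes(8815940.0, n_train, n_total))  # close to the train num_bytes
print(prorate_bytes(8815940.0, n_val, n_total))  # close to the validation num_bytes
```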

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

ancerlop/MistralAI | ---

configs:

- config_name: default

data_files:

- split: train

path: data.csv

---

# Dataset Card for Dataset Name

<!-- Provide a quick summary of the dataset. -->

## Dataset Details

### Dataset Description

<!-- Provide a longer summary of what this dataset is. -->

- **Curated by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

### Dataset Sources [optional]

<!-- Provide the basic links for the dataset. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the dataset is intended to be used. -->

### Direct Use

<!-- This section describes suitable use cases for the dataset. -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the dataset will not work well for. -->

[More Information Needed]

## Dataset Structure

<!-- This section provides a description of the dataset fields, and additional information about the dataset structure such as criteria used to create the splits, relationships between data points, etc. -->

[More Information Needed]

## Dataset Creation

### Curation Rationale

<!-- Motivation for the creation of this dataset. -->

[More Information Needed]

### Source Data

<!-- This section describes the source data (e.g. news text and headlines, social media posts, translated sentences, ...). -->

#### Data Collection and Processing

<!-- This section describes the data collection and processing process such as data selection criteria, filtering and normalization methods, tools and libraries used, etc. -->

[More Information Needed]

#### Who are the source data producers?

<!-- This section describes the people or systems who originally created the data. It should also include self-reported demographic or identity information for the source data creators if this information is available. -->

[More Information Needed]

### Annotations [optional]

<!-- If the dataset contains annotations which are not part of the initial data collection, use this section to describe them. -->

#### Annotation process

<!-- This section describes the annotation process such as annotation tools used in the process, the amount of data annotated, annotation guidelines provided to the annotators, interannotator statistics, annotation validation, etc. -->

[More Information Needed]

#### Who are the annotators?

<!-- This section describes the people or systems who created the annotations. -->

[More Information Needed]

#### Personal and Sensitive Information

<!-- State whether the dataset contains data that might be considered personal, sensitive, or private (e.g., data that reveals addresses, uniquely identifiable names or aliases, racial or ethnic origins, sexual orientations, religious beliefs, political opinions, financial or health data, etc.). If efforts were made to anonymize the data, describe the anonymization process. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users should be made aware of the risks, biases and limitations of the dataset. More information needed for further recommendations.

## Citation [optional]

<!-- If there is a paper or blog post introducing the dataset, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the dataset or dataset card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Dataset Card Authors [optional]

[More Information Needed]

## Dataset Card Contact

[More Information Needed] |

kgr123/quality_counter_1000 | ---

dataset_info:

features:

- name: context

dtype: string

- name: word

dtype: string

- name: claim

dtype: string

- name: label

dtype: int64

splits:

- name: test

num_bytes: 5847385

num_examples: 1929

- name: train

num_bytes: 5806234

num_examples: 1935

- name: validation

num_bytes: 5882182

num_examples: 1941

download_size: 4214869

dataset_size: 17535801

configs:

- config_name: default

data_files:

- split: test

path: data/test-*

- split: train

path: data/train-*

- split: validation

path: data/validation-*

---

|

tr416/dataset_20231007_034029 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: input_ids

sequence: int32

- name: attention_mask

sequence: int8

splits:

- name: train

num_bytes: 762696.0

num_examples: 297

- name: test

num_bytes: 7704.0

num_examples: 3

download_size: 73744

dataset_size: 770400.0

---

# Dataset Card for "dataset_20231007_034029"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

swikrit/embedding | ---

license: mit

---

|

TrainingDataPro/spine-magnetic-resonance-imaging-dataset | ---

license: cc-by-nc-nd-4.0

task_categories:

- image-classification

- image-segmentation

- image-to-image

- object-detection

language:

- en

tags:

- medical

- biology

- code

---

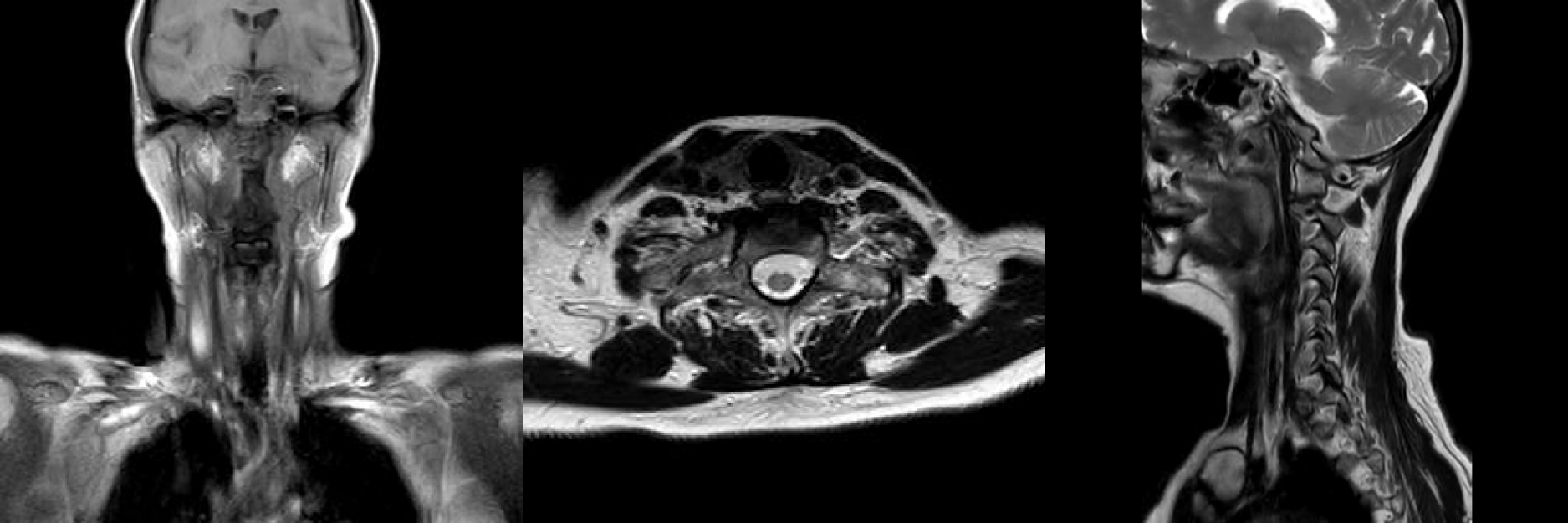

# Spine MRI Dataset, Anomaly Detection & Segmentation

The dataset consists of .dcm files containing **MRI scans of the spine** of a person with several dystrophic changes, such as changes in the shape of the spine, osteophytes, disc protrusions, intracerebral lesions, hydromyelia, spondyloarthrosis and spondylosis, anatomical narrowing of the spinal canal, and asymmetry of the vertebral arteries. The images are **labeled** by doctors and accompanied by a **report** in PDF format.

The dataset includes 5 studies taken from different angles, which together give a comprehensive view of several dystrophic changes and are useful for training spine anomaly classification algorithms. Each scan includes detailed imaging of the spine, including the *vertebrae, discs, nerves, and surrounding tissues*.

### MRI study angles in the dataset

# 💴 For Commercial Usage: The full version of the dataset includes 20,000 spine studies of people with different conditions. Leave a request on **[TrainingData](https://trainingdata.pro/data-market/spine-mri?utm_source=huggingface&utm_medium=cpc&utm_campaign=spine-magnetic-resonance-imaging-dataset)** to buy the dataset

### Types of diseases and conditions in the full dataset:

- Degeneration of discs

- Osteophytes

- Osteochondrosis

- Hemangioma

- Disk extrusion

- Spondylitis

- **AND MANY OTHER CONDITIONS**

Researchers and healthcare professionals can use this dataset to study spinal conditions and disorders, such as herniated discs, spinal stenosis, scoliosis, and fractures. The dataset can also be used to develop and evaluate new imaging techniques, computer algorithms for image analysis, and artificial intelligence models for automated diagnosis.

# 💴 Buy the Dataset: This is just an example of the data. Leave a request on [https://trainingdata.pro/data-market](https://trainingdata.pro/data-market/spine-mri?utm_source=huggingface&utm_medium=cpc&utm_campaign=spine-magnetic-resonance-imaging-dataset) to discuss your requirements, learn about the price and buy the dataset

# Content

### The dataset includes:

- **ST000001**: includes subfolders with 5 studies. Each study includes MRI-scans in **.dcm and .jpg formats**,

- **DICOMDIR**: includes information about the patient's condition and links to access files,

- **Spine_MRI_5.pdf**: includes the medical report provided by the radiologist,

- **.csv file**: includes the IDs of the studies and the number of files
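Before parsing the scans, it can help to confirm that a file really is DICOM. The sketch below relies only on the DICOM Part 10 file layout (a 128-byte preamble followed by the ASCII magic `DICM`). The helper names `looks_like_dicom` and `find_dicom_files` are hypothetical, and a full reader such as pydicom is still needed to decode pixel data.

```python
from pathlib import Path

# DICOM Part 10 files start with a 128-byte preamble followed by the 4-byte magic "DICM".
PREAMBLE_LENGTH = 128
DICOM_MAGIC = b"DICM"


def looks_like_dicom(path):
    """Return True if the file at `path` carries the DICOM Part 10 magic bytes.

    Hypothetical helper: it only inspects the header and does not decode the image.
    Files shorter than 132 bytes simply fail the comparison.
    """
    with open(path, "rb") as handle:
        header = handle.read(PREAMBLE_LENGTH + len(DICOM_MAGIC))
    return header[PREAMBLE_LENGTH:] == DICOM_MAGIC


def find_dicom_files(root):
    """Recursively collect .dcm files under `root` that pass the magic-byte check."""
    return sorted(
        p for p in Path(root).rglob("*.dcm") if p.is_file() and looks_like_dicom(p)
    )
```

For example, `find_dicom_files("ST000001")` would list the .dcm scans inside the study folder while skipping the .jpg previews.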

### Medical reports include the following data:

- Patient's **demographic information**,

- **Description** of the case,

- Preliminary **diagnosis**,

- **Recommendations** for further actions

*All patients consented to the publication of data*

# Medical data can be collected in accordance with your requirements.

## [TrainingData](https://trainingdata.pro/data-market/spine-mri?utm_source=huggingface&utm_medium=cpc&utm_campaign=spine-magnetic-resonance-imaging-dataset) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets**

*keywords: mri spine scans, spinal imaging, radiology dataset, neuroimaging, medical imaging data, image segmentation, lumbar spine mri, thoracic spine mri, cervical spine mri, spine anatomy, spinal cord mri, orthopedic imaging, radiologist dataset, mri scan analysis, spine mri dataset, machine learning medical imaging, spinal abnormalities, image classification, neural network spine scans, mri data analysis, deep learning medical imaging, mri image processing, spine tumor detection, spine injury diagnosis, mri image segmentation, spine mri classification, artificial intelligence in radiology, spine abnormalities detection, spine pathology analysis, mri feature extraction.* |

Aman6917/autotrain-data-fine_tune_table_tm2 | ---

task_categories:

- summarization

---

# AutoTrain Dataset for project: fine_tune_table_tm2

## Dataset Description

This dataset has been automatically processed by AutoTrain for project fine_tune_table_tm2.

### Languages

The dataset's language is not specified (BCP-47 code: `unk`).

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"text": "List all PO headers with a valid vendor record in database",

"target": "select * from RETAILBUYER_POHEADER P inner join RETAILBUYER_VENDOR V\non P.VENDOR_ID = V.VENDOR_ID"

},

{

"text": "List all details of PO headers which have a vendor in vendor table",

"target": "select * from RETAILBUYER_POHEADER P inner join RETAILBUYER_VENDOR V\non P.VENDOR_ID = V.VENDOR_ID"

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"text": "Value(dtype='string', id=None)",

"target": "Value(dtype='string', id=None)"

}

```
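The two string features above mean each record is a flat object with `text` (a natural-language request) and `target` (the SQL query). A minimal stdlib sketch of checking records against that schema follows. The helper name `validate_records` is hypothetical, and the sample reuses the first record shown under Data Instances.

```python
import json

# Expected features, mirroring the schema above: both columns are plain strings.
EXPECTED_FIELDS = {"text": str, "target": str}


def validate_records(records):
    """Return a list of (index, problem) tuples; an empty list means every record conforms."""
    problems = []
    for i, record in enumerate(records):
        missing = EXPECTED_FIELDS.keys() - record.keys()
        extra = record.keys() - EXPECTED_FIELDS.keys()
        if missing:
            problems.append((i, f"missing fields: {sorted(missing)}"))
        if extra:
            problems.append((i, f"unexpected fields: {sorted(extra)}"))
        for name, expected_type in EXPECTED_FIELDS.items():
            if name in record and not isinstance(record[name], expected_type):
                problems.append((i, f"{name} is not a {expected_type.__name__}"))
    return problems


# The escaped "\\n" becomes a JSON "\n", i.e. a newline inside the SQL string.
sample = json.loads("""[
  {"text": "List all PO headers with a valid vendor record in database",
   "target": "select * from RETAILBUYER_POHEADER P inner join RETAILBUYER_VENDOR V\\non P.VENDOR_ID = V.VENDOR_ID"}
]""")
print(validate_records(sample))  # → []
```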

### Dataset Splits

This dataset has a train split and a validation split. The split sizes are as follows:

| Split name | Num samples |
| ------------ | ------------------- |
| train | 32 |
| valid | 17 |

|

iamnguyen/fqa_v1 | ---

dataset_info:

features:

- name: question

dtype: string

- name: answer

dtype: string

- name: vector

sequence: float64

- name: tokenized_question

dtype: string

- name: content

dtype: string

- name: school_id

dtype: string

splits:

- name: train

num_bytes: 2559239

num_examples: 178

download_size: 2035990

dataset_size: 2559239

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---
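The `configs` block maps the `train` split to the glob `data/train-*`, so any file under `data/` whose name starts with `train-` is loaded as training data. The sketch below approximates that resolution with Python's `fnmatch`. This is an approximation: the Hub's own resolver uses fsspec-style globbing, whose handling of `/` can differ. The shard filenames are hypothetical.

```python
from fnmatch import fnmatchcase

# Split-to-pattern mapping copied from the card's `configs` section.
DATA_FILES = {"train": "data/train-*"}


def resolve_split_files(repo_files, data_files=DATA_FILES):
    """Group repository file paths by split using shell-style glob matching.

    Approximation: fnmatch's `*` also matches `/`, unlike a strict path glob.
    """
    return {
        split: sorted(f for f in repo_files if fnmatchcase(f, pattern))
        for split, pattern in data_files.items()
    }


# Hypothetical repository listing for illustration.
files = [
    "README.md",
    "data/train-00000-of-00001.parquet",
    "data/test-00000-of-00001.parquet",
]
print(resolve_split_files(files))
# → {'train': ['data/train-00000-of-00001.parquet']}
```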

|

ziozzang/multi-lang-translation-set | ---

license: mit

language:

- ar

- ko

- en

- ja

- id

- de

- pt

- es

- ru

- fr

- it

---

This is a test dataset for multi-language translation.

- Generated by machine translation.

License

- MIT. |

jlbaker361/korra-lite_captioned-augmented | ---

dataset_info:

features:

- name: image

dtype: image

- name: src

dtype: string

- name: split

dtype: string

- name: id

dtype: int64

- name: caption

dtype: string

splits:

- name: train

num_bytes: 254281033.375

num_examples: 1173

download_size: 254182437

dataset_size: 254281033.375

---

# Dataset Card for "korra-lite_captioned-augmented"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

liuyanchen1015/MULTI_VALUE_mrpc_what_comparative | ---

dataset_info:

features:

- name: sentence1

dtype: string

- name: sentence2

dtype: string

- name: label

dtype: int64

- name: idx

dtype: int64

- name: value_score

dtype: int64

splits:

- name: test

num_bytes: 989

num_examples: 3

- name: train

num_bytes: 1398

num_examples: 4

download_size: 9495

dataset_size: 2387

---
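In these `dataset_info` blocks, `dataset_size` equals the sum of the per-split `num_bytes` (here 989 + 1398 = 2387). `download_size` reflects the files to download and is not derived from the split sizes. The sketch below checks that relation using figures copied from this card and from the `death_marriage_data` and `malyalam_asr_dataset` cards. The function name is hypothetical. A float tolerance is used because some cards store fractional byte counts, such as `2014088030.0100002`.

```python
import math


def check_dataset_size(splits, dataset_size, rel_tol=1e-9):
    """Return True if dataset_size matches the sum of per-split num_bytes.

    `splits` maps split name -> num_bytes, as listed under `dataset_info.splits`.
    A relative tolerance absorbs floating-point noise in fractional byte counts.
    """
    total = math.fsum(splits.values())
    return math.isclose(total, dataset_size, rel_tol=rel_tol)


# Figures copied from the dataset_info blocks in this document.
print(check_dataset_size({"test": 989, "train": 1398}, 2387))  # → True
print(check_dataset_size({"train": 579589900.0, "test": 13589304.0}, 593179204.0))  # → True
print(check_dataset_size({"train": 1437332887.196, "test": 576755142.814}, 2014088030.0100002))  # → True
```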

# Dataset Card for "MULTI_VALUE_mrpc_what_comparative"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

ArteChile/footos | ---

license: artistic-2.0

---

|

sreejith8100/death_marriage_data | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype:

class_label:

names:

'0': death

'1': marriage

splits:

- name: train

num_bytes: 579589900.0

num_examples: 448

- name: test

num_bytes: 13589304.0

num_examples: 20

download_size: 593212683

dataset_size: 593179204.0

---
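The `label` feature is a `class_label` whose integer values index the `names` list: `0` is `death` and `1` is `marriage`. With the `datasets` library, `ds["train"].features["label"].int2str(...)` performs this lookup. The stdlib sketch below mirrors it without the dependency; the helper names are hypothetical.

```python
# Label names in index order, copied from the card's class_label definition.
LABEL_NAMES = ["death", "marriage"]
NAME_TO_ID = {name: i for i, name in enumerate(LABEL_NAMES)}


def int2str(label_id):
    """Map an integer label to its class name (hypothetical helper)."""
    if not 0 <= label_id < len(LABEL_NAMES):
        raise ValueError(f"label {label_id} is outside 0..{len(LABEL_NAMES) - 1}")
    return LABEL_NAMES[label_id]


def str2int(name):
    """Map a class name back to its integer label (hypothetical helper)."""
    return NAME_TO_ID[name]


print(int2str(0), int2str(1))  # → death marriage
print(str2int("marriage"))  # → 1
```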

# Dataset Card for "death_marriage_data"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

fathyshalab/germanquad_qg_dataset | ---

license: cc-by-4.0

task_categories:

- text2text-generation

language:

- de

size_categories:

- 1K<n<10K

--- |

kpriyanshu256/MultiTabQA-multitable_pretraining-Salesforce-codet5-base_train-latex-80000 | ---

dataset_info:

features:

- name: input_ids

sequence:

sequence: int32

- name: attention_mask

sequence:

sequence: int8

- name: labels

sequence:

sequence: int64

splits:

- name: train

num_bytes: 13336000

num_examples: 1000

download_size: 970107

dataset_size: 13336000

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---
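Here `input_ids`, `attention_mask`, and `labels` are nested integer sequences (a list of token-id lists per example). In an attention mask, `1` marks a real token and `0` a padding position. The sketch below derives such a mask from padded ids. It also follows a common Hugging Face seq2seq convention of replacing padding in `labels` with `-100`, so that the loss ignores those positions. The pad id of `0` is an assumption for `Salesforce/codet5-base`; read the real value from `tokenizer.pad_token_id` before relying on it. The token ids in the example are hypothetical.

```python
# Assumption: 0 is the padding id; check tokenizer.pad_token_id for the real value.
PAD_ID = 0
IGNORE_INDEX = -100  # label value that PyTorch's CrossEntropyLoss skips by default


def build_attention_mask(input_ids, pad_id=PAD_ID):
    """Return a nested mask with 1 for real tokens and 0 for padding."""
    return [[0 if tok == pad_id else 1 for tok in row] for row in input_ids]


def mask_label_padding(labels, pad_id=PAD_ID, ignore_index=IGNORE_INDEX):
    """Replace padding ids in labels with ignore_index so they do not count toward the loss."""
    return [[ignore_index if tok == pad_id else tok for tok in row] for row in labels]


# Hypothetical example: one sequence of five positions, the last two padded.
ids = [[1, 845, 2716, 0, 0]]
print(build_attention_mask(ids))  # → [[1, 1, 1, 0, 0]]
print(mask_label_padding([[1, 532, 2, 0]]))  # → [[1, 532, 2, -100]]
```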

|

TheAIchemist13/malyalam_asr_dataset | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: audio

dtype: audio

- name: ' transcriptions'

dtype: string

splits:

- name: train

num_bytes: 1437332887.196

num_examples: 3023

- name: test

num_bytes: 576755142.814

num_examples: 1103

download_size: 1668143452

dataset_size: 2014088030.0100002

---
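The transcription column is declared as `' transcriptions'`, with a leading space, so a lookup such as `row["transcriptions"]` raises a `KeyError`. With the `datasets` library the column can be renamed via `rename_column(" transcriptions", "transcriptions")` on the loaded dataset. The stdlib sketch below shows the same normalization on plain dict rows; the helper name and sample row are hypothetical.

```python
def strip_column_names(row):
    """Return a copy of `row` with surrounding whitespace removed from every key.

    Raises ValueError if two keys collapse to the same stripped name.
    """
    cleaned = {}
    for key, value in row.items():
        new_key = key.strip()
        if new_key in cleaned:
            raise ValueError(f"column name collision after stripping: {new_key!r}")
        cleaned[new_key] = value
    return cleaned


# Hypothetical row shaped like this dataset's features (audio payload elided).
row = {"audio": None, " transcriptions": "hypothetical transcript"}
print(strip_column_names(row)["transcriptions"])  # → hypothetical transcript
```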

# Dataset Card for "malyalam_asr_dataset"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Tanvir1337/Allopathic_Drug_Manufacturers-BD | ---

license: odc-by

pretty_name: Allopathic Drug Manufacturers Bangladesh

tags:

- Allopathic

- Drugs

- Manufacturer

language:

- en

size_categories:

- n<1K

---

# Allopathic_Drug_Manufacturers-BD [JSON dataset]