datasetId stringlengths 2 117 | card stringlengths 19 1.01M |

|---|---|

diwank/scenario_instructor | ---

dataset_info:

features:

- name: query

sequence: string

- name: pos

sequence: string

- name: neg

sequence: string

splits:

- name: train

num_bytes: 14083675

num_examples: 14732

- name: test

num_bytes: 788355

num_examples: 819

- name: validation

num_bytes: 769580

num_examples: 818

download_size: 7159274

dataset_size: 15641610

---

# Dataset Card for "scenario_instructor"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

kiringodhwani/msp8 | ---

dataset_info:

features:

- name: From

sequence: string

- name: Sent

sequence: string

- name: To

sequence: string

- name: Cc

sequence: string

- name: Subject

sequence: string

- name: Attachment

sequence: string

- name: body

dtype: string

splits:

- name: train

num_bytes: 5451396

num_examples: 5348

download_size: 2135865

dataset_size: 5451396

---

# Dataset Card for "msp8"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Mitsuki-Sakamoto/reward-model-deberta-v3-large-v2-alpaca_farm-alpaca_gpt4_preference-preference_test | ---

dataset_info:

features:

- name: instruction

dtype: string

- name: input

dtype: string

- name: output_1

dtype: string

- name: output_2

dtype: string

- name: preference

dtype: int64

- name: old_preference

dtype: int64

splits:

- name: preference

num_bytes: 113541

num_examples: 194

download_size: 76166

dataset_size: 113541

configs:

- config_name: default

data_files:

- split: preference

path: data/preference-*

---

|

CVasNLPExperiments/Sample_test_google_flan_t5_xxl_mode_T_SPECIFIC_A_ns_10 | ---

dataset_info:

features:

- name: id

dtype: int64

- name: prompt

dtype: string

- name: true_label

dtype: string

- name: prediction

dtype: string

splits:

- name: fewshot_0__Attributes_LAION_ViT_H_14_2B_descriptors_text_davinci_003_full_clip_tags_LAION_ViT_H_14_2B_with_openai_rices

num_bytes: 4266

num_examples: 10

download_size: 5331

dataset_size: 4266

---

# Dataset Card for "Sample_test_google_flan_t5_xxl_mode_T_SPECIFIC_A_ns_10"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

arcee-ai/nuclear_patents | ---

dataset_info:

features:

- name: patent_number

dtype: string

- name: section

dtype: string

- name: raw_text

dtype: string

splits:

- name: train

num_bytes: 350035355.37046283

num_examples: 33523

- name: test

num_bytes: 38895137.62953716

num_examples: 3725

download_size: 151011439

dataset_size: 388930493.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

---

# Dataset Card for "nuclear_patents"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

JetQin/seven-wonders | ---

language:

- en

tags:

- seven-wonders

size_categories:

- 100K<n<1M

--- |

katielink/GuacaMol | ---

license: mit

tags:

- chemistry

- molecular design

---

# GuacaMol: Benchmarks for Molecular Design

For an in-depth explanation of the types of benchmarks and baseline scores,

please consult the paper

[Benchmarking Models for De Novo Molecular Design](https://arxiv.org/abs/1811.09621)

## Leaderboard

See [https://www.benevolent.com/guacamol](https://www.benevolent.com/guacamol).

|

ryan2009/ph | ---

license: openrail

---

|

mesolitica/pseudostreaming-malaysian-youtube-whisper-large-v3 | ---

license: mit

task_categories:

- automatic-speech-recognition

language:

- ms

---

# Pseudostreaming Malaysian Youtube videos using Whisper Large V3

Original dataset at https://huggingface.co/datasets/mesolitica/pseudolabel-malaysian-youtube-whisper-large-v3

We use https://huggingface.co/mesolitica/conformer-medium-mixed to generate the pseudostreaming dataset; the source code is at https://github.com/mesolitica/malaysian-dataset/tree/master/speech-to-text-semisupervised/pseudostreaming-whisper

Total 40486.589364839296 hours.

Data format, from [processed.jsonl](processed.jsonl):

```json

[

{

"text": "dalam sukan olimpik dan paralimpik tokyo dua ribu dua puluh",

"start": 3.52,

"end": 6.46,

"audio_filename": "processed-audio/1-225586-0.mp3",

"original_audio_filename": "output-audio/3-1084-10.mp3"

},

{

"text": "to azizul has",

"start": 7.12,

"end": 8.179999999999998,

"audio_filename": "processed-audio/1-225586-1.mp3",

"original_audio_filename": "output-audio/3-1084-10.mp3"

},

{

"text": "awang meraih kilauan perak untuk malaysia dalam sukan olimpik tokyo dua ribu dua puluh tampil sebagai satu satunya wakil asia bagaimanapun beliau terpaksa akur di tangan pelumba great britain jason",

"start": 8.4,

"end": 22.98,

"audio_filename": "processed-audio/1-225586-2.mp3",

"original_audio_filename": "output-audio/3-1084-10.mp3"

},

{

"text": "y yang meraih pingat emas",

"start": 23.28,

"end": 25.060000000000002,

"audio_filename": "processed-audio/1-225586-3.mp3",

"original_audio_filename": "output-audio/3-1084-10.mp3"

}

]

```
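The records above can be read with the standard library. The following is a minimal sketch, assuming the content is a JSON array shaped like the sample (the file is named `processed.jsonl`, so the real file may instead hold one object per line) and that `start` and `end` are offsets in seconds. `segment_durations` is a hypothetical helper name, not part of the dataset tooling.

```python
import json

# Two records copied from the sample above.
sample = """
[
  {"text": "dalam sukan olimpik dan paralimpik tokyo dua ribu dua puluh",
   "start": 3.52, "end": 6.46,
   "audio_filename": "processed-audio/1-225586-0.mp3",
   "original_audio_filename": "output-audio/3-1084-10.mp3"},
  {"text": "to azizul has",
   "start": 7.12, "end": 8.179999999999998,
   "audio_filename": "processed-audio/1-225586-1.mp3",
   "original_audio_filename": "output-audio/3-1084-10.mp3"}
]
"""


def segment_durations(records):
    """Map each processed audio file to its length in seconds (end - start)."""
    return {r["audio_filename"]: r["end"] - r["start"] for r in records}


records = json.loads(sample)
durations = segment_durations(records)
for name, seconds in durations.items():
    print(f"{name}: {seconds:.2f} s")
```

Summing these per-segment values over the whole file is one way to cross-check the total number of hours stated above.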

## how-to

```bash

git clone https://huggingface.co/datasets/mesolitica/pseudostreaming-malaysian-youtube-whisper-large-v3

cd pseudostreaming-malaysian-youtube-whisper-large-v3

wget https://www.7-zip.org/a/7z2301-linux-x64.tar.xz

tar -xf 7z2301-linux-x64.tar.xz

./7zz x processed-audio.7z.001 -y -mmt40

``` |

autoevaluate/autoeval-eval-inverse-scaling__NeQA-inverse-scaling__NeQA-1e740e-1694759585 | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- inverse-scaling/NeQA

eval_info:

task: text_zero_shot_classification

model: inverse-scaling/opt-2.7b_eval

metrics: []

dataset_name: inverse-scaling/NeQA

dataset_config: inverse-scaling--NeQA

dataset_split: train

col_mapping:

text: prompt

classes: classes

target: answer_index

---

# Dataset Card for AutoTrain Evaluator

This repository contains model predictions generated by [AutoTrain](https://huggingface.co/autotrain) for the following task and dataset:

* Task: Zero-Shot Text Classification

* Model: inverse-scaling/opt-2.7b_eval

* Dataset: inverse-scaling/NeQA

* Config: inverse-scaling--NeQA

* Split: train

To run new evaluation jobs, visit Hugging Face's [automatic model evaluator](https://huggingface.co/spaces/autoevaluate/model-evaluator).
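The `col_mapping` in the metadata above pairs the evaluator's field names (`text`, `classes`, `target`) with the dataset's column names (`prompt`, `classes`, `answer_index`). The following is a minimal sketch of how such a mapping could be applied to one row, assuming that direction of the mapping. The `apply_col_mapping` helper and the example row are hypothetical and are not AutoTrain code.

```python
# Mapping copied from the card metadata: task field -> dataset column.
COL_MAPPING = {"text": "prompt", "classes": "classes", "target": "answer_index"}


def apply_col_mapping(row, col_mapping):
    """Return a new dict keyed by task field names instead of dataset columns."""
    return {task_field: row[column] for task_field, column in col_mapping.items()}


# Hypothetical row, for illustration only.
row = {"prompt": "Example question", "classes": [" A", " B"], "answer_index": 1}
mapped = apply_col_mapping(row, COL_MAPPING)
print(mapped)
```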

## Contributions

Thanks to [@MicPie](https://huggingface.co/MicPie) for evaluating this model. |

jjpetrisko/authentiface_v2.0 | ---

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype:

class_label:

names:

'0': fake

'1': real

splits:

- name: train

num_bytes: 1157058798.187

num_examples: 133567

- name: validation

num_bytes: 12237890754.551

num_examples: 19117

- name: test

num_bytes: 31235137663.783

num_examples: 38167

download_size: 8659498485

dataset_size: 44630087216.521

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

---

|

HausaNLP/Naija-Lex | ---

license: cc-by-nc-sa-4.0

tags:

- sentiment analysis, Twitter, tweets

- stopwords

multilinguality:

- monolingual

- multilingual

language:

- hau

- ibo

- yor

pretty_name: NaijaStopwords

---

# Naija-Lexicons

Naija-Lexicons is a part of the [Naija-Senti](https://huggingface.co/datasets/HausaNLP/NaijaSenti-Twitter) project. It is a list of collected stopwords from the four most widely spoken languages in Nigeria — Hausa, Igbo, Nigerian-Pidgin, and Yorùbá.

--------------------------------------------------------------------------------

## Dataset Description

- **Homepage:** https://github.com/hausanlp/NaijaSenti/tree/main/data/stopwords

- **Repository:** [GitHub](https://github.com/hausanlp/NaijaSenti/tree/main/data/stopwords)

- **Paper:** [NaijaSenti: A Nigerian Twitter Sentiment Corpus for Multilingual Sentiment Analysis](https://aclanthology.org/2022.lrec-1.63/)

- **Leaderboard:** N/A

- **Point of Contact:** [Shamsuddeen Hassan Muhammad](mailto:shamsuddeen2004@gmail.com)

### Languages

Three indigenous Nigerian languages:

* Hausa (hau)

* Igbo (ibo)

* Yoruba (yor)

## Dataset Structure

### Data Instances

Each instance is a lexicon entry in one of the three languages, together with its sentiment label.

```

{

"word": "string",

"label": "string"

}

```

### How to use it

```python

from datasets import load_dataset

# You can load a specific language (e.g., Hausa). This downloads the manually created and translated lexicons.

ds = load_dataset("HausaNLP/Naija-Lexicons", "hau")

# You can also specify the split you want to download.

ds = load_dataset("HausaNLP/Naija-Lexicons", "hau", split = "manual")

```

## Additional Information

### Dataset Curators

* Shamsuddeen Hassan Muhammad

* Idris Abdulmumin

* Ibrahim Said Ahmad

* Bello Shehu Bello

### Licensing Information

The Naija-Lexicons dataset is licensed under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License (CC BY-NC-SA 4.0).

### Citation Information

```

@inproceedings{muhammad-etal-2022-naijasenti,

title = "{N}aija{S}enti: A {N}igerian {T}witter Sentiment Corpus for Multilingual Sentiment Analysis",

author = "Muhammad, Shamsuddeen Hassan and

Adelani, David Ifeoluwa and

Ruder, Sebastian and

Ahmad, Ibrahim Sa{'}id and

Abdulmumin, Idris and

Bello, Bello Shehu and

Choudhury, Monojit and

Emezue, Chris Chinenye and

Abdullahi, Saheed Salahudeen and

Aremu, Anuoluwapo and

Jorge, Al{\'\i}pio and

Brazdil, Pavel",

booktitle = "Proceedings of the Thirteenth Language Resources and Evaluation Conference",

month = jun,

year = "2022",

address = "Marseille, France",

publisher = "European Language Resources Association",

url = "https://aclanthology.org/2022.lrec-1.63",

pages = "590--602",

}

```

### Contributions

> This work was carried out with support from Lacuna Fund, an initiative co-founded by The Rockefeller Foundation, Google.org, and Canada’s International Development Research Centre. The views expressed herein do not necessarily represent those of Lacuna Fund, its Steering Committee, its funders, or Meridian Institute. |

gengyuanmax/WikiTiLo | ---

license: mit

---

|

mhhmm/typescript-instruct-20k-v2c | ---

license: cc

task_categories:

- text-generation

language:

- en

tags:

- typescript

- code-generation

- instruct-tuning

---

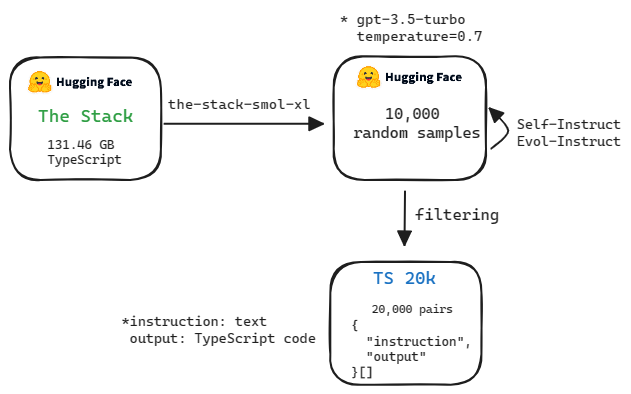

Why always Python?

I took 20,000 TypeScript code samples from [The Stack](https://huggingface.co/datasets/bigcode/the-stack-smol-xl) and generated {"instruction", "output"} pairs with gpt-3.5-turbo.

Use this dataset to fine-tune a code generation model specifically for TypeScript.

Make web developers great again! |

Cesar7980/fingpt_chatglm2_sentiment_instruction_lora_ft_dataset | ---

dataset_info:

features:

- name: input

dtype: string

- name: output

dtype: string

- name: instruction

dtype: string

splits:

- name: train

num_bytes: 18540941.869938433

num_examples: 76772

download_size: 6417302

dataset_size: 18540941.869938433

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "fingpt_chatglm2_sentiment_instruction_lora_ft_dataset"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

CyberHarem/clara_pokemon | ---

license: mit

task_categories:

- text-to-image

tags:

- art

- not-for-all-audiences

size_categories:

- n<1K

---

# Dataset of clara (Pokémon)

This is the dataset of clara (Pokémon), containing 500 images and their tags.

The core tags of this character are `pink_hair, mole, mole_under_mouth, bangs, breasts, pink_lips, bow, purple_eyes, eyeshadow, hairband, eyelashes, pink_eyeshadow, large_breasts`, which are pruned in this dataset.

Images are crawled from many sites (e.g. danbooru, pixiv, zerochan); the auto-crawling system is powered by the [DeepGHS Team](https://github.com/deepghs) ([huggingface organization](https://huggingface.co/deepghs)).

## List of Packages

| Name | Images | Size | Download | Type | Description |

|:-----------------|---------:|:-----------|:---------------------------------------------------------------------------------------------------------------|:-----------|:---------------------------------------------------------------------|

| raw | 500 | 659.01 MiB | [Download](https://huggingface.co/datasets/CyberHarem/clara_pokemon/resolve/main/dataset-raw.zip) | Waifuc-Raw | Raw data with meta information (min edge aligned to 1400 if larger). |

| 800 | 500 | 365.76 MiB | [Download](https://huggingface.co/datasets/CyberHarem/clara_pokemon/resolve/main/dataset-800.zip) | IMG+TXT | dataset with the shorter side not exceeding 800 pixels. |

| stage3-p480-800 | 1216 | 783.82 MiB | [Download](https://huggingface.co/datasets/CyberHarem/clara_pokemon/resolve/main/dataset-stage3-p480-800.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

| 1200 | 500 | 578.47 MiB | [Download](https://huggingface.co/datasets/CyberHarem/clara_pokemon/resolve/main/dataset-1200.zip) | IMG+TXT | dataset with the shorter side not exceeding 1200 pixels. |

| stage3-p480-1200 | 1216 | 1.10 GiB | [Download](https://huggingface.co/datasets/CyberHarem/clara_pokemon/resolve/main/dataset-stage3-p480-1200.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

### Load Raw Dataset with Waifuc

We provide the raw dataset (including tagged images) for loading with [waifuc](https://deepghs.github.io/waifuc/main/tutorials/installation/index.html). To use it, run the following code:

```python

import os

import zipfile

from huggingface_hub import hf_hub_download

from waifuc.source import LocalSource

# download raw archive file

zip_file = hf_hub_download(
    repo_id='CyberHarem/clara_pokemon',
    repo_type='dataset',
    filename='dataset-raw.zip',
)

# extract files to your directory

dataset_dir = 'dataset_dir'

os.makedirs(dataset_dir, exist_ok=True)

with zipfile.ZipFile(zip_file, 'r') as zf:
    zf.extractall(dataset_dir)

# load the dataset with waifuc

source = LocalSource(dataset_dir)

for item in source:
    print(item.image, item.meta['filename'], item.meta['tags'])

```

## List of Clusters

Results of tag clustering; some outfits may be discoverable here.

### Raw Text Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | Tags |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| 0 | 8 |  |  |  |  |  | 1girl, bracelet, collared_shirt, dynamax_band, makeup, mismatched_legwear, shorts, single_glove, smile, thighhighs, white_jacket, solo, one_eye_closed, partially_fingerless_gloves, hands_up, ring, fur_coat, looking_at_viewer, open_mouth |

| 1 | 10 |  |  |  |  |  | 1girl, bracelet, collared_shirt, holding_poke_ball, looking_at_viewer, shorts, single_glove, smile, thighhighs, dynamax_band, makeup, poke_ball_(basic), solo, white_jacket, mismatched_legwear, hands_up |

| 2 | 5 |  |  |  |  |  | 1girl, bracelet, collared_shirt, dynamax_band, full_body, hand_up, mismatched_legwear, shoes, shorts, single_glove, solo, standing, thighhighs, white_jacket, makeup, shaded_face, smile, white_background, blue_eyes, index_finger_raised, looking_at_viewer, ring, simple_background |

| 3 | 5 |  |  |  |  |  | 1girl, dynamax_band, looking_at_viewer, makeup, navel, nipples, single_glove, smile, solo, blue_eyes, mismatched_legwear, pussy, thighhighs, fur_coat, open_clothes, partially_fingerless_gloves, shiny_skin, anus, blush, collarbone, hair_bow, jacket, nude, open_mouth, spread_legs |

| 4 | 19 |  |  |  |  |  | 1girl, hetero, 1boy, blush, nipples, penis, open_mouth, sex, looking_at_viewer, solo_focus, sweat, vaginal, thighhighs, navel, smile, cum_in_pussy, pov, heart, mosaic_censoring, pubic_hair, spread_legs, makeup, shirt_lift, straddling, uncensored |

| 5 | 5 |  |  |  |  |  | 1boy, 1girl, hetero, ahegao, looking_back, open_mouth, patreon_username, penis, rolling_eyes, sex_from_behind, solo_focus, thighhighs, tongue_out, uncensored, blush, smile, testicles, web_address, anal, anus, asymmetrical_legwear, blue_eyes, cum, fucked_silly, hair_bow, huge_ass, nipples, nude, overflow, pussy, shiny, teeth, vaginal, watermark |

### Table Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | 1girl | bracelet | collared_shirt | dynamax_band | makeup | mismatched_legwear | shorts | single_glove | smile | thighhighs | white_jacket | solo | one_eye_closed | partially_fingerless_gloves | hands_up | ring | fur_coat | looking_at_viewer | open_mouth | holding_poke_ball | poke_ball_(basic) | full_body | hand_up | shoes | standing | shaded_face | white_background | blue_eyes | index_finger_raised | simple_background | navel | nipples | pussy | open_clothes | shiny_skin | anus | blush | collarbone | hair_bow | jacket | nude | spread_legs | hetero | 1boy | penis | sex | solo_focus | sweat | vaginal | cum_in_pussy | pov | heart | mosaic_censoring | pubic_hair | shirt_lift | straddling | uncensored | ahegao | looking_back | patreon_username | rolling_eyes | sex_from_behind | tongue_out | testicles | web_address | anal | asymmetrical_legwear | cum | fucked_silly | huge_ass | overflow | shiny | teeth | watermark |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------|:-----------|:-----------------|:---------------|:---------|:---------------------|:---------|:---------------|:--------|:-------------|:---------------|:-------|:-----------------|:------------------------------|:-----------|:-------|:-----------|:--------------------|:-------------|:--------------------|:--------------------|:------------|:----------|:--------|:-----------|:--------------|:-------------------|:------------|:----------------------|:--------------------|:--------|:----------|:--------|:---------------|:-------------|:-------|:--------|:-------------|:-----------|:---------|:-------|:--------------|:---------|:-------|:--------|:------|:-------------|:--------|:----------|:---------------|:------|:--------|:-------------------|:-------------|:-------------|:-------------|:-------------|:---------|:---------------|:-------------------|:---------------|:------------------|:-------------|:------------|:--------------|:-------|:-----------------------|:------|:---------------|:-----------|:-----------|:--------|:--------|:------------|

| 0 | 8 |  |  |  |  |  | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | |

| 1 | 10 |  |  |  |  |  | X | X | X | X | X | X | X | X | X | X | X | X | | | X | | | X | | X | X | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | |

| 2 | 5 |  |  |  |  |  | X | X | X | X | X | X | X | X | X | X | X | X | | | | X | | X | | | | X | X | X | X | X | X | X | X | X | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | |

| 3 | 5 |  |  |  |  |  | X | | | X | X | X | | X | X | X | | X | | X | | | X | X | X | | | | | | | | | X | | | X | X | X | X | X | X | X | X | X | X | X | X | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | |

| 4 | 19 |  |  |  |  |  | X | | | | X | | | | X | X | | | | | | | | X | X | | | | | | | | | | | | X | X | | | | | X | | | | | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | | | | | | | | | | | | | | | | | |

| 5 | 5 |  |  |  |  |  | X | | | | | | | | X | X | | | | | | | | | X | | | | | | | | | X | | | | X | X | | | X | X | | X | | X | | X | X | X | | X | | X | | | | | | | | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X |

|

deeptigp/car_generation_diffusion_mini | ---

license: unknown

---

|

jamestalentium/xsum_1000_rm | ---

dataset_info:

features:

- name: input_text

dtype: string

- name: output_text

dtype: string

- name: id

dtype: string

splits:

- name: train

num_bytes: 2348532.740326889

num_examples: 1000

download_size: 830060

dataset_size: 2348532.740326889

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "xsum_1000_rm"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

open-llm-leaderboard/details_janhq__Mistral-7B-Instruct-v0.2-DARE | ---

pretty_name: Evaluation run of janhq/Mistral-7B-Instruct-v0.2-DARE

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [janhq/Mistral-7B-Instruct-v0.2-DARE](https://huggingface.co/janhq/Mistral-7B-Instruct-v0.2-DARE)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 63 configurations, each one corresponding to one of the\

\ evaluated task.\n\nThe dataset has been created from 1 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run. The \"train\" split always points to the latest results.\n\

\nAn additional configuration \"results\" stores all the aggregated results of the\

\ run (and is used to compute and display the aggregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_janhq__Mistral-7B-Instruct-v0.2-DARE\"\

,\n\t\"harness_winogrande_5\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\n\

These are the [latest results from run 2023-12-12T11:22:55.278603](https://huggingface.co/datasets/open-llm-leaderboard/details_janhq__Mistral-7B-Instruct-v0.2-DARE/blob/main/results_2023-12-12T11-22-55.278603.json)(note\

\ that there might be results for other tasks in the repos if successive evals didn't\

\ cover the same tasks. You can find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"acc\": 0.5002671303432286,\n\

\ \"acc_stderr\": 0.03440023987934237,\n \"acc_norm\": 0.506255828811682,\n\

\ \"acc_norm_stderr\": 0.035162947112250174,\n \"mc1\": 0.3953488372093023,\n\

\ \"mc1_stderr\": 0.017115815632418194,\n \"mc2\": 0.5435910325313378,\n\

\ \"mc2_stderr\": 0.015385871725485683\n },\n \"harness|arc:challenge|25\"\

: {\n \"acc\": 0.5580204778156996,\n \"acc_stderr\": 0.014512682523128342,\n\

\ \"acc_norm\": 0.6194539249146758,\n \"acc_norm_stderr\": 0.014188277712349814\n\

\ },\n \"harness|hellaswag|10\": {\n \"acc\": 0.5338577972515435,\n\

\ \"acc_stderr\": 0.0049783281907755245,\n \"acc_norm\": 0.7562238597888866,\n\

\ \"acc_norm_stderr\": 0.004284817238406704\n },\n \"harness|hendrycksTest-abstract_algebra|5\"\

: {\n \"acc\": 0.29,\n \"acc_stderr\": 0.04560480215720684,\n \

\ \"acc_norm\": 0.29,\n \"acc_norm_stderr\": 0.04560480215720684\n \

\ },\n \"harness|hendrycksTest-anatomy|5\": {\n \"acc\": 0.4962962962962963,\n\

\ \"acc_stderr\": 0.04319223625811331,\n \"acc_norm\": 0.4962962962962963,\n\

\ \"acc_norm_stderr\": 0.04319223625811331\n },\n \"harness|hendrycksTest-astronomy|5\"\

: {\n \"acc\": 0.5986842105263158,\n \"acc_stderr\": 0.03988903703336285,\n\

\ \"acc_norm\": 0.5986842105263158,\n \"acc_norm_stderr\": 0.03988903703336285\n\

\ },\n \"harness|hendrycksTest-business_ethics|5\": {\n \"acc\": 0.51,\n\

\ \"acc_stderr\": 0.05024183937956912,\n \"acc_norm\": 0.51,\n \

\ \"acc_norm_stderr\": 0.05024183937956912\n },\n \"harness|hendrycksTest-clinical_knowledge|5\"\

: {\n \"acc\": 0.569811320754717,\n \"acc_stderr\": 0.030471445867183235,\n\

\ \"acc_norm\": 0.569811320754717,\n \"acc_norm_stderr\": 0.030471445867183235\n\

\ },\n \"harness|hendrycksTest-college_biology|5\": {\n \"acc\": 0.5763888888888888,\n\

\ \"acc_stderr\": 0.041321250197233685,\n \"acc_norm\": 0.5763888888888888,\n\

\ \"acc_norm_stderr\": 0.041321250197233685\n },\n \"harness|hendrycksTest-college_chemistry|5\"\

: {\n \"acc\": 0.4,\n \"acc_stderr\": 0.049236596391733084,\n \

\ \"acc_norm\": 0.4,\n \"acc_norm_stderr\": 0.049236596391733084\n \

\ },\n \"harness|hendrycksTest-college_computer_science|5\": {\n \"acc\"\

: 0.42,\n \"acc_stderr\": 0.049604496374885836,\n \"acc_norm\": 0.42,\n\

\ \"acc_norm_stderr\": 0.049604496374885836\n },\n \"harness|hendrycksTest-college_mathematics|5\"\

: {\n \"acc\": 0.31,\n \"acc_stderr\": 0.04648231987117316,\n \

\ \"acc_norm\": 0.31,\n \"acc_norm_stderr\": 0.04648231987117316\n \

\ },\n \"harness|hendrycksTest-college_medicine|5\": {\n \"acc\": 0.5433526011560693,\n\

\ \"acc_stderr\": 0.03798106566014498,\n \"acc_norm\": 0.5433526011560693,\n\

\ \"acc_norm_stderr\": 0.03798106566014498\n },\n \"harness|hendrycksTest-college_physics|5\"\

: {\n \"acc\": 0.37254901960784315,\n \"acc_stderr\": 0.04810840148082635,\n\

\ \"acc_norm\": 0.37254901960784315,\n \"acc_norm_stderr\": 0.04810840148082635\n\

\ },\n \"harness|hendrycksTest-computer_security|5\": {\n \"acc\":\

\ 0.62,\n \"acc_stderr\": 0.04878317312145633,\n \"acc_norm\": 0.62,\n\

\ \"acc_norm_stderr\": 0.04878317312145633\n },\n \"harness|hendrycksTest-conceptual_physics|5\"\

: {\n \"acc\": 0.48936170212765956,\n \"acc_stderr\": 0.03267862331014063,\n\

\ \"acc_norm\": 0.48936170212765956,\n \"acc_norm_stderr\": 0.03267862331014063\n\

\ },\n \"harness|hendrycksTest-econometrics|5\": {\n \"acc\": 0.38596491228070173,\n\

\ \"acc_stderr\": 0.04579639422070434,\n \"acc_norm\": 0.38596491228070173,\n\

\ \"acc_norm_stderr\": 0.04579639422070434\n },\n \"harness|hendrycksTest-electrical_engineering|5\"\

: {\n \"acc\": 0.5310344827586206,\n \"acc_stderr\": 0.04158632762097828,\n\

\ \"acc_norm\": 0.5310344827586206,\n \"acc_norm_stderr\": 0.04158632762097828\n\

\ },\n \"harness|hendrycksTest-elementary_mathematics|5\": {\n \"acc\"\

: 0.3783068783068783,\n \"acc_stderr\": 0.02497695405315526,\n \"\

acc_norm\": 0.3783068783068783,\n \"acc_norm_stderr\": 0.02497695405315526\n\

\ },\n \"harness|hendrycksTest-formal_logic|5\": {\n \"acc\": 0.2619047619047619,\n\

\ \"acc_stderr\": 0.03932537680392871,\n \"acc_norm\": 0.2619047619047619,\n\

\ \"acc_norm_stderr\": 0.03932537680392871\n },\n \"harness|hendrycksTest-global_facts|5\"\

: {\n \"acc\": 0.35,\n \"acc_stderr\": 0.047937248544110196,\n \

\ \"acc_norm\": 0.35,\n \"acc_norm_stderr\": 0.047937248544110196\n \

\ },\n \"harness|hendrycksTest-high_school_biology|5\": {\n \"acc\"\

: 0.47419354838709676,\n \"acc_stderr\": 0.02840609505765332,\n \"\

acc_norm\": 0.47419354838709676,\n \"acc_norm_stderr\": 0.02840609505765332\n\

\ },\n \"harness|hendrycksTest-high_school_chemistry|5\": {\n \"acc\"\

: 0.35960591133004927,\n \"acc_stderr\": 0.03376458246509567,\n \"\

acc_norm\": 0.35960591133004927,\n \"acc_norm_stderr\": 0.03376458246509567\n\

\ },\n \"harness|hendrycksTest-high_school_computer_science|5\": {\n \

\ \"acc\": 0.53,\n \"acc_stderr\": 0.050161355804659205,\n \"acc_norm\"\

: 0.53,\n \"acc_norm_stderr\": 0.050161355804659205\n },\n \"harness|hendrycksTest-high_school_european_history|5\"\

: {\n \"acc\": 0.28484848484848485,\n \"acc_stderr\": 0.03524390844511784,\n\

\ \"acc_norm\": 0.28484848484848485,\n \"acc_norm_stderr\": 0.03524390844511784\n\

\ },\n \"harness|hendrycksTest-high_school_geography|5\": {\n \"acc\"\

: 0.6666666666666666,\n \"acc_stderr\": 0.033586181457325226,\n \"\

acc_norm\": 0.6666666666666666,\n \"acc_norm_stderr\": 0.033586181457325226\n\

\ },\n \"harness|hendrycksTest-high_school_government_and_politics|5\": {\n\

\ \"acc\": 0.7564766839378239,\n \"acc_stderr\": 0.030975436386845436,\n\

\ \"acc_norm\": 0.7564766839378239,\n \"acc_norm_stderr\": 0.030975436386845436\n\

\ },\n \"harness|hendrycksTest-high_school_macroeconomics|5\": {\n \

\ \"acc\": 0.5153846153846153,\n \"acc_stderr\": 0.02533900301010651,\n \

\ \"acc_norm\": 0.5153846153846153,\n \"acc_norm_stderr\": 0.02533900301010651\n\

\ },\n \"harness|hendrycksTest-high_school_mathematics|5\": {\n \"\

acc\": 0.2814814814814815,\n \"acc_stderr\": 0.027420019350945277,\n \

\ \"acc_norm\": 0.2814814814814815,\n \"acc_norm_stderr\": 0.027420019350945277\n\

\ },\n \"harness|hendrycksTest-high_school_microeconomics|5\": {\n \

\ \"acc\": 0.5294117647058824,\n \"acc_stderr\": 0.03242225027115006,\n \

\ \"acc_norm\": 0.5294117647058824,\n \"acc_norm_stderr\": 0.03242225027115006\n\

\ },\n \"harness|hendrycksTest-high_school_physics|5\": {\n \"acc\"\

: 0.304635761589404,\n \"acc_stderr\": 0.03757949922943343,\n \"acc_norm\"\

: 0.304635761589404,\n \"acc_norm_stderr\": 0.03757949922943343\n },\n\

\ \"harness|hendrycksTest-high_school_psychology|5\": {\n \"acc\": 0.6935779816513762,\n\

\ \"acc_stderr\": 0.01976551722045852,\n \"acc_norm\": 0.6935779816513762,\n\

\ \"acc_norm_stderr\": 0.01976551722045852\n },\n \"harness|hendrycksTest-high_school_statistics|5\"\

: {\n \"acc\": 0.35648148148148145,\n \"acc_stderr\": 0.03266478331527272,\n\

\ \"acc_norm\": 0.35648148148148145,\n \"acc_norm_stderr\": 0.03266478331527272\n\

\ },\n \"harness|hendrycksTest-high_school_us_history|5\": {\n \"acc\"\

: 0.39215686274509803,\n \"acc_stderr\": 0.03426712349247271,\n \"\

acc_norm\": 0.39215686274509803,\n \"acc_norm_stderr\": 0.03426712349247271\n\

\ },\n \"harness|hendrycksTest-high_school_world_history|5\": {\n \"\

acc\": 0.48945147679324896,\n \"acc_stderr\": 0.032539983791662855,\n \

\ \"acc_norm\": 0.48945147679324896,\n \"acc_norm_stderr\": 0.032539983791662855\n\

\ },\n \"harness|hendrycksTest-human_aging|5\": {\n \"acc\": 0.5739910313901345,\n\

\ \"acc_stderr\": 0.03318833286217281,\n \"acc_norm\": 0.5739910313901345,\n\

\ \"acc_norm_stderr\": 0.03318833286217281\n },\n \"harness|hendrycksTest-human_sexuality|5\"\

: {\n \"acc\": 0.6106870229007634,\n \"acc_stderr\": 0.04276486542814591,\n\

\ \"acc_norm\": 0.6106870229007634,\n \"acc_norm_stderr\": 0.04276486542814591\n\

\ },\n \"harness|hendrycksTest-international_law|5\": {\n \"acc\":\

\ 0.6694214876033058,\n \"acc_stderr\": 0.04294340845212094,\n \"\

acc_norm\": 0.6694214876033058,\n \"acc_norm_stderr\": 0.04294340845212094\n\

\ },\n \"harness|hendrycksTest-jurisprudence|5\": {\n \"acc\": 0.6203703703703703,\n\

\ \"acc_stderr\": 0.04691521224077742,\n \"acc_norm\": 0.6203703703703703,\n\

\ \"acc_norm_stderr\": 0.04691521224077742\n },\n \"harness|hendrycksTest-logical_fallacies|5\"\

: {\n \"acc\": 0.5337423312883436,\n \"acc_stderr\": 0.03919415545048409,\n\

\ \"acc_norm\": 0.5337423312883436,\n \"acc_norm_stderr\": 0.03919415545048409\n\

\ },\n \"harness|hendrycksTest-machine_learning|5\": {\n \"acc\": 0.42857142857142855,\n\

\ \"acc_stderr\": 0.04697113923010212,\n \"acc_norm\": 0.42857142857142855,\n\

\ \"acc_norm_stderr\": 0.04697113923010212\n },\n \"harness|hendrycksTest-management|5\"\

: {\n \"acc\": 0.6893203883495146,\n \"acc_stderr\": 0.04582124160161551,\n\

\ \"acc_norm\": 0.6893203883495146,\n \"acc_norm_stderr\": 0.04582124160161551\n\

\ },\n \"harness|hendrycksTest-marketing|5\": {\n \"acc\": 0.7777777777777778,\n\

\ \"acc_stderr\": 0.027236013946196687,\n \"acc_norm\": 0.7777777777777778,\n\

\ \"acc_norm_stderr\": 0.027236013946196687\n },\n \"harness|hendrycksTest-medical_genetics|5\"\

: {\n \"acc\": 0.52,\n \"acc_stderr\": 0.05021167315686779,\n \

\ \"acc_norm\": 0.52,\n \"acc_norm_stderr\": 0.05021167315686779\n \

\ },\n \"harness|hendrycksTest-miscellaneous|5\": {\n \"acc\": 0.6934865900383141,\n\

\ \"acc_stderr\": 0.016486952893041508,\n \"acc_norm\": 0.6934865900383141,\n\

\ \"acc_norm_stderr\": 0.016486952893041508\n },\n \"harness|hendrycksTest-moral_disputes|5\"\

: {\n \"acc\": 0.5202312138728323,\n \"acc_stderr\": 0.026897049996382875,\n\

\ \"acc_norm\": 0.5202312138728323,\n \"acc_norm_stderr\": 0.026897049996382875\n\

\ },\n \"harness|hendrycksTest-moral_scenarios|5\": {\n \"acc\": 0.33743016759776534,\n\

\ \"acc_stderr\": 0.015813901283913048,\n \"acc_norm\": 0.33743016759776534,\n\

\ \"acc_norm_stderr\": 0.015813901283913048\n },\n \"harness|hendrycksTest-nutrition|5\"\

: {\n \"acc\": 0.5196078431372549,\n \"acc_stderr\": 0.028607893699576063,\n\

\ \"acc_norm\": 0.5196078431372549,\n \"acc_norm_stderr\": 0.028607893699576063\n\

\ },\n \"harness|hendrycksTest-philosophy|5\": {\n \"acc\": 0.5434083601286174,\n\

\ \"acc_stderr\": 0.028290869054197604,\n \"acc_norm\": 0.5434083601286174,\n\

\ \"acc_norm_stderr\": 0.028290869054197604\n },\n \"harness|hendrycksTest-prehistory|5\"\

: {\n \"acc\": 0.5462962962962963,\n \"acc_stderr\": 0.027701228468542595,\n\

\ \"acc_norm\": 0.5462962962962963,\n \"acc_norm_stderr\": 0.027701228468542595\n\

\ },\n \"harness|hendrycksTest-professional_accounting|5\": {\n \"\

acc\": 0.35815602836879434,\n \"acc_stderr\": 0.02860208586275942,\n \

\ \"acc_norm\": 0.35815602836879434,\n \"acc_norm_stderr\": 0.02860208586275942\n\

\ },\n \"harness|hendrycksTest-professional_law|5\": {\n \"acc\": 0.31747066492829207,\n\

\ \"acc_stderr\": 0.01188889206880931,\n \"acc_norm\": 0.31747066492829207,\n\

\ \"acc_norm_stderr\": 0.01188889206880931\n },\n \"harness|hendrycksTest-professional_medicine|5\"\

: {\n \"acc\": 0.41911764705882354,\n \"acc_stderr\": 0.029972807170464626,\n\

\ \"acc_norm\": 0.41911764705882354,\n \"acc_norm_stderr\": 0.029972807170464626\n\

\ },\n \"harness|hendrycksTest-professional_psychology|5\": {\n \"\

acc\": 0.49019607843137253,\n \"acc_stderr\": 0.020223946005074312,\n \

\ \"acc_norm\": 0.49019607843137253,\n \"acc_norm_stderr\": 0.020223946005074312\n\

\ },\n \"harness|hendrycksTest-public_relations|5\": {\n \"acc\": 0.6909090909090909,\n\

\ \"acc_stderr\": 0.044262946482000985,\n \"acc_norm\": 0.6909090909090909,\n\

\ \"acc_norm_stderr\": 0.044262946482000985\n },\n \"harness|hendrycksTest-security_studies|5\"\

: {\n \"acc\": 0.49387755102040815,\n \"acc_stderr\": 0.032006820201639086,\n\

\ \"acc_norm\": 0.49387755102040815,\n \"acc_norm_stderr\": 0.032006820201639086\n\

\ },\n \"harness|hendrycksTest-sociology|5\": {\n \"acc\": 0.5124378109452736,\n\

\ \"acc_stderr\": 0.03534439848539579,\n \"acc_norm\": 0.5124378109452736,\n\

\ \"acc_norm_stderr\": 0.03534439848539579\n },\n \"harness|hendrycksTest-us_foreign_policy|5\"\

: {\n \"acc\": 0.73,\n \"acc_stderr\": 0.044619604333847394,\n \

\ \"acc_norm\": 0.73,\n \"acc_norm_stderr\": 0.044619604333847394\n \

\ },\n \"harness|hendrycksTest-virology|5\": {\n \"acc\": 0.43373493975903615,\n\

\ \"acc_stderr\": 0.03858158940685517,\n \"acc_norm\": 0.43373493975903615,\n\

\ \"acc_norm_stderr\": 0.03858158940685517\n },\n \"harness|hendrycksTest-world_religions|5\"\

: {\n \"acc\": 0.7251461988304093,\n \"acc_stderr\": 0.03424042924691584,\n\

\ \"acc_norm\": 0.7251461988304093,\n \"acc_norm_stderr\": 0.03424042924691584\n\

\ },\n \"harness|truthfulqa:mc|0\": {\n \"mc1\": 0.3953488372093023,\n\

\ \"mc1_stderr\": 0.017115815632418194,\n \"mc2\": 0.5435910325313378,\n\

\ \"mc2_stderr\": 0.015385871725485683\n },\n \"harness|winogrande|5\"\

: {\n \"acc\": 0.749802683504341,\n \"acc_stderr\": 0.01217300964244914\n\

\ },\n \"harness|gsm8k|5\": {\n \"acc\": 0.18119787717968158,\n \

\ \"acc_stderr\": 0.010609827611527357\n }\n}\n```"

repo_url: https://huggingface.co/janhq/Mistral-7B-Instruct-v0.2-DARE

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_arc_challenge_25

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|arc:challenge|25_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|arc:challenge|25_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_gsm8k_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|gsm8k|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|gsm8k|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hellaswag_10

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hellaswag|10_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hellaswag|10_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-12-12T11-22-55.278603.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_abstract_algebra_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_anatomy_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_astronomy_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_business_ethics_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_clinical_knowledge_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_college_biology_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_college_chemistry_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_college_computer_science_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_college_mathematics_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_college_medicine_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_college_physics_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_physics|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_computer_security_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-computer_security|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_conceptual_physics_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_econometrics_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-econometrics|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_electrical_engineering_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_elementary_mathematics_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_formal_logic_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_global_facts_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-global_facts|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_high_school_biology_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_high_school_chemistry_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_high_school_computer_science_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_high_school_european_history_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_high_school_geography_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_high_school_government_and_politics_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_high_school_macroeconomics_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_high_school_mathematics_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_high_school_microeconomics_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_high_school_physics_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_high_school_psychology_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_high_school_statistics_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_high_school_us_history_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_high_school_world_history_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_human_aging_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-human_aging|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_aging|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_human_sexuality_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_international_law_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-international_law|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-international_law|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_jurisprudence_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_logical_fallacies_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_machine_learning_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_management_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-management|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-management|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_marketing_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-marketing|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-marketing|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_medical_genetics_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_miscellaneous_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_moral_disputes_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_moral_scenarios_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_nutrition_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-nutrition|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-nutrition|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_philosophy_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-philosophy|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-philosophy|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_prehistory_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-prehistory|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-prehistory|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_professional_accounting_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_professional_law_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-professional_law|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_law|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_professional_medicine_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_professional_psychology_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_public_relations_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-public_relations|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-public_relations|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_security_studies_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-security_studies|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-security_studies|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_sociology_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-sociology|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-sociology|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_us_foreign_policy_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_virology_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-virology|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-virology|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_hendrycksTest_world_religions_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|hendrycksTest-world_religions|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-world_religions|5_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_truthfulqa_mc_0

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|truthfulqa:mc|0_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|truthfulqa:mc|0_2023-12-12T11-22-55.278603.parquet'

- config_name: harness_winogrande_5

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- '**/details_harness|winogrande|5_2023-12-12T11-22-55.278603.parquet'

- split: latest

path:

- '**/details_harness|winogrande|5_2023-12-12T11-22-55.278603.parquet'

- config_name: results

data_files:

- split: 2023_12_12T11_22_55.278603

path:

- results_2023-12-12T11-22-55.278603.parquet

- split: latest

path:

- results_2023-12-12T11-22-55.278603.parquet

---

# Dataset Card for Evaluation run of janhq/Mistral-7B-Instruct-v0.2-DARE


Dataset automatically created during the evaluation run of model [janhq/Mistral-7B-Instruct-v0.2-DARE](https://huggingface.co/janhq/Mistral-7B-Instruct-v0.2-DARE) on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).

The dataset is composed of 63 configurations, each one corresponding to one of the evaluated tasks.

The dataset has been created from 1 run(s). Each run can be found as a specific split in each configuration, the split being named using the timestamp of the run. The "latest" split always points to the latest results.

An additional configuration "results" stores all the aggregated results of the run (and is used to compute and display the aggregated metrics on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).

To load the details from a run, you can for instance do the following:

```python
from datasets import load_dataset

# "latest" is the split declared in the configurations above;
# it points to the most recent run of this task.
data = load_dataset(
    "open-llm-leaderboard/details_janhq__Mistral-7B-Instruct-v0.2-DARE",
    "harness_winogrande_5",
    split="latest",
)
```
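The configuration and split names listed in the metadata above appear to follow a simple pattern: the task key has `|`, `:` and `-` replaced by `_`, and the run timestamp has `-` and `:` replaced by `_`. The sketch below encodes that pattern so a name can be built from a task key. The helper names `config_name_for` and `split_name_for` are hypothetical, and the convention is inferred from this card rather than taken from any documented API.

```python
import re


def config_name_for(task: str) -> str:
    """Map a harness task key to a configuration name (convention inferred from this card)."""
    return re.sub(r"[|:\-]", "_", task)


def split_name_for(run_timestamp: str) -> str:
    """Map a run timestamp to a split name (convention inferred from this card)."""
    return re.sub(r"[-:]", "_", run_timestamp)


print(config_name_for("harness|hendrycksTest-nutrition|5"))
print(split_name_for("2023-12-12T11:22:55.278603"))
```

The resulting strings can be passed as the second argument and the `split` argument of `load_dataset`, provided the configuration actually exists in the repository.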

## Latest results

These are the [latest results from run 2023-12-12T11:22:55.278603](https://huggingface.co/datasets/open-llm-leaderboard/details_janhq__Mistral-7B-Instruct-v0.2-DARE/blob/main/results_2023-12-12T11-22-55.278603.json) (note that there might be results for other tasks in the repo if successive evals didn't cover the same tasks. You can find each one in the "results" configuration and in the "latest" split of each eval):

```python

{

"all": {

"acc": 0.5002671303432286,

"acc_stderr": 0.03440023987934237,

"acc_norm": 0.506255828811682,

"acc_norm_stderr": 0.035162947112250174,

"mc1": 0.3953488372093023,

"mc1_stderr": 0.017115815632418194,

"mc2": 0.5435910325313378,

"mc2_stderr": 0.015385871725485683

},

"harness|arc:challenge|25": {

"acc": 0.5580204778156996,

"acc_stderr": 0.014512682523128342,

"acc_norm": 0.6194539249146758,

"acc_norm_stderr": 0.014188277712349814

},

"harness|hellaswag|10": {

"acc": 0.5338577972515435,

"acc_stderr": 0.0049783281907755245,

"acc_norm": 0.7562238597888866,

"acc_norm_stderr": 0.004284817238406704

},

"harness|hendrycksTest-abstract_algebra|5": {

"acc": 0.29,

"acc_stderr": 0.04560480215720684,

"acc_norm": 0.29,

"acc_norm_stderr": 0.04560480215720684

},

"harness|hendrycksTest-anatomy|5": {

"acc": 0.4962962962962963,

"acc_stderr": 0.04319223625811331,

"acc_norm": 0.4962962962962963,

"acc_norm_stderr": 0.04319223625811331

},

"harness|hendrycksTest-astronomy|5": {

"acc": 0.5986842105263158,

"acc_stderr": 0.03988903703336285,

"acc_norm": 0.5986842105263158,

"acc_norm_stderr": 0.03988903703336285

},

"harness|hendrycksTest-business_ethics|5": {

"acc": 0.51,

"acc_stderr": 0.05024183937956912,

"acc_norm": 0.51,

"acc_norm_stderr": 0.05024183937956912

},

"harness|hendrycksTest-clinical_knowledge|5": {

"acc": 0.569811320754717,

"acc_stderr": 0.030471445867183235,

"acc_norm": 0.569811320754717,

"acc_norm_stderr": 0.030471445867183235

},

"harness|hendrycksTest-college_biology|5": {

"acc": 0.5763888888888888,

"acc_stderr": 0.041321250197233685,

"acc_norm": 0.5763888888888888,

"acc_norm_stderr": 0.041321250197233685

},

"harness|hendrycksTest-college_chemistry|5": {

"acc": 0.4,

"acc_stderr": 0.049236596391733084,

"acc_norm": 0.4,

"acc_norm_stderr": 0.049236596391733084

},

"harness|hendrycksTest-college_computer_science|5": {

"acc": 0.42,

"acc_stderr": 0.049604496374885836,

"acc_norm": 0.42,

"acc_norm_stderr": 0.049604496374885836

},

"harness|hendrycksTest-college_mathematics|5": {

"acc": 0.31,

"acc_stderr": 0.04648231987117316,

"acc_norm": 0.31,

"acc_norm_stderr": 0.04648231987117316

},

"harness|hendrycksTest-college_medicine|5": {

"acc": 0.5433526011560693,

"acc_stderr": 0.03798106566014498,

"acc_norm": 0.5433526011560693,

"acc_norm_stderr": 0.03798106566014498

},

"harness|hendrycksTest-college_physics|5": {

"acc": 0.37254901960784315,

"acc_stderr": 0.04810840148082635,

"acc_norm": 0.37254901960784315,

"acc_norm_stderr": 0.04810840148082635

},

"harness|hendrycksTest-computer_security|5": {

"acc": 0.62,

"acc_stderr": 0.04878317312145633,

"acc_norm": 0.62,

"acc_norm_stderr": 0.04878317312145633

},

"harness|hendrycksTest-conceptual_physics|5": {

"acc": 0.48936170212765956,

"acc_stderr": 0.03267862331014063,

"acc_norm": 0.48936170212765956,

"acc_norm_stderr": 0.03267862331014063

},

"harness|hendrycksTest-econometrics|5": {

"acc": 0.38596491228070173,

"acc_stderr": 0.04579639422070434,

"acc_norm": 0.38596491228070173,

"acc_norm_stderr": 0.04579639422070434

},

"harness|hendrycksTest-electrical_engineering|5": {

"acc": 0.5310344827586206,

"acc_stderr": 0.04158632762097828,

"acc_norm": 0.5310344827586206,

"acc_norm_stderr": 0.04158632762097828

},

"harness|hendrycksTest-elementary_mathematics|5": {

"acc": 0.3783068783068783,

"acc_stderr": 0.02497695405315526,

"acc_norm": 0.3783068783068783,

"acc_norm_stderr": 0.02497695405315526

},

"harness|hendrycksTest-formal_logic|5": {

"acc": 0.2619047619047619,

"acc_stderr": 0.03932537680392871,

"acc_norm": 0.2619047619047619,

"acc_norm_stderr": 0.03932537680392871

},

"harness|hendrycksTest-global_facts|5": {

"acc": 0.35,

"acc_stderr": 0.047937248544110196,

"acc_norm": 0.35,