datasetId stringlengths 2 117 | card stringlengths 19 1.01M |

|---|---|

coref-data/conll2012_raw | ---

license: other

configs:

- config_name: english_v4

data_files:

- split: train

path: "english_v4/train-*.parquet"

- split: validation

path: "english_v4/validation-*.parquet"

- split: test

path: "english_v4/test-*.parquet"

- config_name: chinese_v4

data_files:

- split: train

path: "chinese_v4/train-*.parquet"

- split: validation

path: "chinese_v4/validation-*.parquet"

- split: test

path: "chinese_v4/test-*.parquet"

- config_name: arabic_v4

data_files:

- split: train

path: "arabic_v4/train-*.parquet"

- split: validation

path: "arabic_v4/validation-*.parquet"

- split: test

path: "arabic_v4/test-*.parquet"

- config_name: english_v12

data_files:

- split: train

path: "english_v12/train-*.parquet"

- split: validation

path: "english_v12/validation-*.parquet"

- split: test

path: "english_v12/test-*.parquet"

---

# CoNLL-2012 Shared Task

## Dataset Description

- **Homepage:** [CoNLL-2012 Shared Task](https://conll.cemantix.org/2012/data.html), [Author's page](https://cemantix.org/data/ontonotes.html)

- **Repository:** [Mendeley](https://data.mendeley.com/datasets/zmycy7t9h9)

- **Paper:** [Towards Robust Linguistic Analysis using OntoNotes](https://aclanthology.org/W13-3516/)

### Dataset Summary

OntoNotes v5.0 is the final version of OntoNotes corpus, and is a large-scale, multi-genre,

multilingual corpus manually annotated with syntactic, semantic and discourse information.

This dataset is the version of OntoNotes v5.0 extended and is used in the CoNLL-2012 shared task.

It includes v4 train/dev and v9 test data for English/Chinese/Arabic and corrected version v12 train/dev/test data (English only).

The source of data is the Mendeley Data repo [ontonotes-conll2012](https://data.mendeley.com/datasets/zmycy7t9h9), which seems to be as the same as the official data, but users should use this dataset on their own responsibility.

See also summaries from paperwithcode, [OntoNotes 5.0](https://paperswithcode.com/dataset/ontonotes-5-0) and [CoNLL-2012](https://paperswithcode.com/dataset/conll-2012-1)

For more detailed info of the dataset like annotation, tag set, etc., you can refer to the documents in the Mendeley repo mentioned above.

### Languages

V4 data for Arabic, Chinese, English, and V12 data for English

Arabic has certain typos noted at https://github.com/juntaoy/aracoref/blob/main/preprocess_arabic.py

## Dataset Structure

### Data Instances

```

{

{'document_id': 'nw/wsj/23/wsj_2311',

'sentences': [{'part_id': 0,

'words': ['CONCORDE', 'trans-Atlantic', 'flights', 'are', '$', '2, 'to', 'Paris', 'and', '$', '3, 'to', 'London', '.']},

'pos_tags': [25, 18, 27, 43, 2, 12, 17, 25, 11, 2, 12, 17, 25, 7],

'parse_tree': '(TOP(S(NP (NNP CONCORDE) (JJ trans-Atlantic) (NNS flights) )(VP (VBP are) (NP(NP(NP ($ $) (CD 2,400) )(PP (IN to) (NP (NNP Paris) ))) (CC and) (NP(NP ($ $) (CD 3,200) )(PP (IN to) (NP (NNP London) ))))) (. .) ))',

'predicate_lemmas': [None, None, None, 'be', None, None, None, None, None, None, None, None, None, None],

'predicate_framenet_ids': [None, None, None, '01', None, None, None, None, None, None, None, None, None, None],

'word_senses': [None, None, None, None, None, None, None, None, None, None, None, None, None, None],

'speaker': None,

'named_entities': [7, 6, 0, 0, 0, 15, 0, 5, 0, 0, 15, 0, 5, 0],

'srl_frames': [{'frames': ['B-ARG1', 'I-ARG1', 'I-ARG1', 'B-V', 'B-ARG2', 'I-ARG2', 'I-ARG2', 'I-ARG2', 'I-ARG2', 'I-ARG2', 'I-ARG2', 'I-ARG2', 'I-ARG2', 'O'],

'verb': 'are'}],

'coref_spans': [],

{'part_id': 0,

'words': ['In', 'a', 'Centennial', 'Journal', 'article', 'Oct.', '5', ',', 'the', 'fares', 'were', 'reversed', '.']}]}

'pos_tags': [17, 13, 25, 25, 24, 25, 12, 4, 13, 27, 40, 42, 7],

'parse_tree': '(TOP(S(PP (IN In) (NP (DT a) (NML (NNP Centennial) (NNP Journal) ) (NN article) ))(NP (NNP Oct.) (CD 5) ) (, ,) (NP (DT the) (NNS fares) )(VP (VBD were) (VP (VBN reversed) )) (. .) ))',

'predicate_lemmas': [None, None, None, None, None, None, None, None, None, None, None, 'reverse', None],

'predicate_framenet_ids': [None, None, None, None, None, None, None, None, None, None, None, '01', None],

'word_senses': [None, None, None, None, None, None, None, None, None, None, None, None, None],

'speaker': None,

'named_entities': [0, 0, 4, 22, 0, 12, 30, 0, 0, 0, 0, 0, 0],

'srl_frames': [{'frames': ['B-ARGM-LOC', 'I-ARGM-LOC', 'I-ARGM-LOC', 'I-ARGM-LOC', 'I-ARGM-LOC', 'B-ARGM-TMP', 'I-ARGM-TMP', 'O', 'B-ARG1', 'I-ARG1', 'O', 'B-V', 'O'],

'verb': 'reversed'}],

'coref_spans': [],

}

```

### Data Fields

- **`document_id`** (*`str`*): This is a variation on the document filename

- **`sentences`** (*`List[Dict]`*): All sentences of the same document are in a single example for the convenience of concatenating sentences.

Every element in `sentences` is a *`Dict`* composed of the following data fields:

- **`part_id`** (*`int`*) : Some files are divided into multiple parts numbered as 000, 001, 002, ... etc.

- **`words`** (*`List[str]`*) :

- **`pos_tags`** (*`List[ClassLabel]` or `List[str]`*) : This is the Penn-Treebank-style part of speech. When parse information is missing, all parts of speech except the one for which there is some sense or proposition annotation are marked with a XX tag. The verb is marked with just a VERB tag.

- tag set : Note tag sets below are founded by scanning all the data, and I found it seems to be a little bit different from officially stated tag sets. See official documents in the [Mendeley repo](https://data.mendeley.com/datasets/zmycy7t9h9)

- arabic : str. Because pos tag in Arabic is compounded and complex, hard to represent it by `ClassLabel`

- chinese v4 : `datasets.ClassLabel(num_classes=36, names=["X", "AD", "AS", "BA", "CC", "CD", "CS", "DEC", "DEG", "DER", "DEV", "DT", "ETC", "FW", "IJ", "INF", "JJ", "LB", "LC", "M", "MSP", "NN", "NR", "NT", "OD", "ON", "P", "PN", "PU", "SB", "SP", "URL", "VA", "VC", "VE", "VV",])`, where `X` is for pos tag missing

- english v4 : `datasets.ClassLabel(num_classes=49, names=["XX", "``", "$", "''", ",", "-LRB-", "-RRB-", ".", ":", "ADD", "AFX", "CC", "CD", "DT", "EX", "FW", "HYPH", "IN", "JJ", "JJR", "JJS", "LS", "MD", "NFP", "NN", "NNP", "NNPS", "NNS", "PDT", "POS", "PRP", "PRP$", "RB", "RBR", "RBS", "RP", "SYM", "TO", "UH", "VB", "VBD", "VBG", "VBN", "VBP", "VBZ", "WDT", "WP", "WP$", "WRB",])`, where `XX` is for pos tag missing, and `-LRB-`/`-RRB-` is "`(`" / "`)`".

- english v12 : `datasets.ClassLabel(num_classes=51, names="english_v12": ["XX", "``", "$", "''", "*", ",", "-LRB-", "-RRB-", ".", ":", "ADD", "AFX", "CC", "CD", "DT", "EX", "FW", "HYPH", "IN", "JJ", "JJR", "JJS", "LS", "MD", "NFP", "NN", "NNP", "NNPS", "NNS", "PDT", "POS", "PRP", "PRP$", "RB", "RBR", "RBS", "RP", "SYM", "TO", "UH", "VB", "VBD", "VBG", "VBN", "VBP", "VBZ", "VERB", "WDT", "WP", "WP$", "WRB",])`, where `XX` is for pos tag missing, and `-LRB-`/`-RRB-` is "`(`" / "`)`".

- **`parse_tree`** (*`Optional[str]`*) : An serialized NLTK Tree representing the parse. It includes POS tags as pre-terminal nodes. When the parse information is missing, the parse will be `None`.

- **`predicate_lemmas`** (*`List[Optional[str]]`*) : The predicate lemma of the words for which we have semantic role information or word sense information. All other indices are `None`.

- **`predicate_framenet_ids`** (*`List[Optional[int]]`*) : The PropBank frameset ID of the lemmas in predicate_lemmas, or `None`.

- **`word_senses`** (*`List[Optional[float]]`*) : The word senses for the words in the sentence, or None. These are floats because the word sense can have values after the decimal, like 1.1.

- **`speaker`** (*`Optional[str]`*) : This is the speaker or author name where available. Mostly in Broadcast Conversation and Web Log data. When it is not available, it will be `None`.

- **`named_entities`** (*`List[ClassLabel]`*) : The BIO tags for named entities in the sentence.

- tag set : `datasets.ClassLabel(num_classes=37, names=["O", "B-PERSON", "I-PERSON", "B-NORP", "I-NORP", "B-FAC", "I-FAC", "B-ORG", "I-ORG", "B-GPE", "I-GPE", "B-LOC", "I-LOC", "B-PRODUCT", "I-PRODUCT", "B-DATE", "I-DATE", "B-TIME", "I-TIME", "B-PERCENT", "I-PERCENT", "B-MONEY", "I-MONEY", "B-QUANTITY", "I-QUANTITY", "B-ORDINAL", "I-ORDINAL", "B-CARDINAL", "I-CARDINAL", "B-EVENT", "I-EVENT", "B-WORK_OF_ART", "I-WORK_OF_ART", "B-LAW", "I-LAW", "B-LANGUAGE", "I-LANGUAGE",])`

- **`srl_frames`** (*`List[{"word":str, "frames":List[str]}]`*) : A dictionary keyed by the verb in the sentence for the given Propbank frame labels, in a BIO format.

- **`coref spans`** (*`List[List[int]]`*) : The spans for entity mentions involved in coreference resolution within the sentence. Each element is a tuple composed of (cluster_id, start_index, end_index). Indices are inclusive.

### Data Splits

Each dataset (arabic_v4, chinese_v4, english_v4, english_v12) has 3 splits: _train_, _validation_, and _test_

### Citation Information

```

@inproceedings{pradhan-etal-2013-towards,

title = "Towards Robust Linguistic Analysis using {O}nto{N}otes",

author = {Pradhan, Sameer and

Moschitti, Alessandro and

Xue, Nianwen and

Ng, Hwee Tou and

Bj{\"o}rkelund, Anders and

Uryupina, Olga and

Zhang, Yuchen and

Zhong, Zhi},

booktitle = "Proceedings of the Seventeenth Conference on Computational Natural Language Learning",

month = aug,

year = "2013",

address = "Sofia, Bulgaria",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/W13-3516",

pages = "143--152",

}

```

### Contributions

Based on dataset script by [@richarddwang](https://github.com/richarddwang) |

rabib-jahin/Concept-Art | ---

dataset_info:

features:

- name: image

dtype: image

- name: conditioning_image

dtype: image

- name: text

dtype: string

- name: params

struct:

- name: downsample

dtype: int64

- name: grid_size

dtype: int64

- name: high_threshold

dtype: int64

- name: low_threshold

dtype: int64

- name: sigma

dtype: float64

splits:

- name: train

num_bytes: 1972101386.0

num_examples: 5264

download_size: 1971873386

dataset_size: 1972101386.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

zjko/sample-dataset | ---

license: apache-2.0

---

|

bsankar/github-issues | ---

dataset_info:

features:

- name: url

dtype: string

- name: repository_url

dtype: string

- name: labels_url

dtype: string

- name: comments_url

dtype: string

- name: events_url

dtype: string

- name: html_url

dtype: string

- name: id

dtype: int64

- name: node_id

dtype: string

- name: number

dtype: int64

- name: title

dtype: string

- name: user

struct:

- name: login

dtype: string

- name: id

dtype: int64

- name: node_id

dtype: string

- name: avatar_url

dtype: string

- name: gravatar_id

dtype: string

- name: url

dtype: string

- name: html_url

dtype: string

- name: followers_url

dtype: string

- name: following_url

dtype: string

- name: gists_url

dtype: string

- name: starred_url

dtype: string

- name: subscriptions_url

dtype: string

- name: organizations_url

dtype: string

- name: repos_url

dtype: string

- name: events_url

dtype: string

- name: received_events_url

dtype: string

- name: type

dtype: string

- name: site_admin

dtype: bool

- name: labels

list:

- name: id

dtype: int64

- name: node_id

dtype: string

- name: url

dtype: string

- name: name

dtype: string

- name: color

dtype: string

- name: default

dtype: bool

- name: description

dtype: string

- name: state

dtype: string

- name: locked

dtype: bool

- name: assignee

struct:

- name: login

dtype: string

- name: id

dtype: int64

- name: node_id

dtype: string

- name: avatar_url

dtype: string

- name: gravatar_id

dtype: string

- name: url

dtype: string

- name: html_url

dtype: string

- name: followers_url

dtype: string

- name: following_url

dtype: string

- name: gists_url

dtype: string

- name: starred_url

dtype: string

- name: subscriptions_url

dtype: string

- name: organizations_url

dtype: string

- name: repos_url

dtype: string

- name: events_url

dtype: string

- name: received_events_url

dtype: string

- name: type

dtype: string

- name: site_admin

dtype: bool

- name: assignees

list:

- name: login

dtype: string

- name: id

dtype: int64

- name: node_id

dtype: string

- name: avatar_url

dtype: string

- name: gravatar_id

dtype: string

- name: url

dtype: string

- name: html_url

dtype: string

- name: followers_url

dtype: string

- name: following_url

dtype: string

- name: gists_url

dtype: string

- name: starred_url

dtype: string

- name: subscriptions_url

dtype: string

- name: organizations_url

dtype: string

- name: repos_url

dtype: string

- name: events_url

dtype: string

- name: received_events_url

dtype: string

- name: type

dtype: string

- name: site_admin

dtype: bool

- name: milestone

struct:

- name: url

dtype: string

- name: html_url

dtype: string

- name: labels_url

dtype: string

- name: id

dtype: int64

- name: node_id

dtype: string

- name: number

dtype: int64

- name: title

dtype: string

- name: description

dtype: string

- name: creator

struct:

- name: login

dtype: string

- name: id

dtype: int64

- name: node_id

dtype: string

- name: avatar_url

dtype: string

- name: gravatar_id

dtype: string

- name: url

dtype: string

- name: html_url

dtype: string

- name: followers_url

dtype: string

- name: following_url

dtype: string

- name: gists_url

dtype: string

- name: starred_url

dtype: string

- name: subscriptions_url

dtype: string

- name: organizations_url

dtype: string

- name: repos_url

dtype: string

- name: events_url

dtype: string

- name: received_events_url

dtype: string

- name: type

dtype: string

- name: site_admin

dtype: bool

- name: open_issues

dtype: int64

- name: closed_issues

dtype: int64

- name: state

dtype: string

- name: created_at

dtype: timestamp[s]

- name: updated_at

dtype: timestamp[s]

- name: due_on

dtype: 'null'

- name: closed_at

dtype: 'null'

- name: comments

sequence: string

- name: created_at

dtype: timestamp[s]

- name: updated_at

dtype: timestamp[s]

- name: closed_at

dtype: timestamp[s]

- name: author_association

dtype: string

- name: active_lock_reason

dtype: 'null'

- name: draft

dtype: bool

- name: pull_request

struct:

- name: url

dtype: string

- name: html_url

dtype: string

- name: diff_url

dtype: string

- name: patch_url

dtype: string

- name: merged_at

dtype: timestamp[s]

- name: body

dtype: string

- name: reactions

struct:

- name: url

dtype: string

- name: total_count

dtype: int64

- name: '+1'

dtype: int64

- name: '-1'

dtype: int64

- name: laugh

dtype: int64

- name: hooray

dtype: int64

- name: confused

dtype: int64

- name: heart

dtype: int64

- name: rocket

dtype: int64

- name: eyes

dtype: int64

- name: timeline_url

dtype: string

- name: performed_via_github_app

dtype: 'null'

- name: state_reason

dtype: string

- name: is_pull_request

dtype: bool

- name: is_closed

dtype: bool

- name: close_time

dtype: duration[us]

splits:

- name: train

num_bytes: 12125043

num_examples: 1000

download_size: 3282501

dataset_size: 12125043

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "github-issues"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

ostapeno/self_instruct | ---

dataset_info:

features:

- name: dataset

dtype: string

- name: id

dtype: string

- name: messages

list:

- name: role

dtype: string

- name: content

dtype: string

splits:

- name: train

num_bytes: 27516583

num_examples: 82439

download_size: 11204230

dataset_size: 27516583

---

# Dataset Card for "self_instruct"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

CyberHarem/oumae_kumiko_soundeuphonium | ---

license: mit

task_categories:

- text-to-image

tags:

- art

- not-for-all-audiences

size_categories:

- n<1K

---

# Dataset of Oumae Kumiko/黄前久美子 (Sound! Euphonium)

This is the dataset of Oumae Kumiko/黄前久美子 (Sound! Euphonium), containing 441 images and their tags.

The core tags of this character are `brown_hair, short_hair, brown_eyes`, which are pruned in this dataset.

Images are crawled from many sites (e.g. danbooru, pixiv, zerochan ...), the auto-crawling system is powered by [DeepGHS Team](https://github.com/deepghs)([huggingface organization](https://huggingface.co/deepghs)).

## List of Packages

| Name | Images | Size | Download | Type | Description |

|:-----------------|---------:|:-----------|:-----------------------------------------------------------------------------------------------------------------------------|:-----------|:---------------------------------------------------------------------|

| raw | 441 | 321.73 MiB | [Download](https://huggingface.co/datasets/CyberHarem/oumae_kumiko_soundeuphonium/resolve/main/dataset-raw.zip) | Waifuc-Raw | Raw data with meta information (min edge aligned to 1400 if larger). |

| 1200 | 441 | 321.57 MiB | [Download](https://huggingface.co/datasets/CyberHarem/oumae_kumiko_soundeuphonium/resolve/main/dataset-1200.zip) | IMG+TXT | dataset with the shorter side not exceeding 1200 pixels. |

| stage3-p480-1200 | 882 | 590.63 MiB | [Download](https://huggingface.co/datasets/CyberHarem/oumae_kumiko_soundeuphonium/resolve/main/dataset-stage3-p480-1200.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

### Load Raw Dataset with Waifuc

We provide raw dataset (including tagged images) for [waifuc](https://deepghs.github.io/waifuc/main/tutorials/installation/index.html) loading. If you need this, just run the following code

```python

import os

import zipfile

from huggingface_hub import hf_hub_download

from waifuc.source import LocalSource

# download raw archive file

zip_file = hf_hub_download(

repo_id='CyberHarem/oumae_kumiko_soundeuphonium',

repo_type='dataset',

filename='dataset-raw.zip',

)

# extract files to your directory

dataset_dir = 'dataset_dir'

os.makedirs(dataset_dir, exist_ok=True)

with zipfile.ZipFile(zip_file, 'r') as zf:

zf.extractall(dataset_dir)

# load the dataset with waifuc

source = LocalSource(dataset_dir)

for item in source:

print(item.image, item.meta['filename'], item.meta['tags'])

```

## List of Clusters

List of tag clustering result, maybe some outfits can be mined here.

### Raw Text Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | Tags |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| 0 | 5 |  |  |  |  |  | 1girl, blue_sailor_collar, blurry_background, blush, kitauji_high_school_uniform, pink_neckerchief, serafuku, solo, white_shirt, open_mouth, outdoors, short_sleeves, looking_at_viewer |

| 1 | 23 |  |  |  |  |  | 1girl, blue_sailor_collar, kitauji_high_school_uniform, pink_neckerchief, serafuku, white_shirt, short_sleeves, solo, blush, indoors, blue_skirt, pleated_skirt, standing, open_mouth, closed_mouth, looking_at_viewer |

| 2 | 10 |  |  |  |  |  | 1girl, kitauji_high_school_uniform, looking_at_viewer, portrait, serafuku, solo, closed_mouth, blurry_background, blush, indoors, blue_sailor_collar, brown_shirt, white_sailor_collar |

| 3 | 6 |  |  |  |  |  | 1girl, brown_shirt, kitauji_high_school_uniform, red_neckerchief, serafuku, solo, white_sailor_collar, blush, closed_mouth, looking_at_viewer, upper_body, ponytail |

| 4 | 5 |  |  |  |  |  | 1girl, blush, brown_shirt, kitauji_high_school_uniform, red_neckerchief, serafuku, solo, white_sailor_collar, open_mouth, parted_lips, indoors, long_sleeves, looking_to_the_side, window |

| 5 | 5 |  |  |  |  |  | 1girl, blush, brown_shirt, from_side, kitauji_high_school_uniform, profile, red_neckerchief, serafuku, solo, upper_body, white_sailor_collar, blurry_background, closed_eyes, closed_mouth, open_mouth, outdoors, tree |

| 6 | 13 |  |  |  |  |  | 1girl, brown_shirt, brown_skirt, kitauji_high_school_uniform, pleated_skirt, red_neckerchief, solo, white_sailor_collar, long_sleeves, looking_at_viewer, blush, smile, brown_serafuku, closed_mouth |

| 7 | 6 |  |  |  |  |  | 1girl, kitauji_high_school_uniform, playing_instrument, serafuku, solo, blush, blurry, ponytail, sailor_collar |

| 8 | 7 |  |  |  |  |  | 1girl, portrait, solo, blush, looking_at_viewer, blurry, close-up, closed_mouth, anime_coloring |

| 9 | 6 |  |  |  |  |  | 1girl, open_mouth, short_sleeves, solo, t-shirt, white_shirt, blush, collarbone, guitar_case, night, outdoors, backpack, looking_at_viewer |

### Table Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | 1girl | blue_sailor_collar | blurry_background | blush | kitauji_high_school_uniform | pink_neckerchief | serafuku | solo | white_shirt | open_mouth | outdoors | short_sleeves | looking_at_viewer | indoors | blue_skirt | pleated_skirt | standing | closed_mouth | portrait | brown_shirt | white_sailor_collar | red_neckerchief | upper_body | ponytail | parted_lips | long_sleeves | looking_to_the_side | window | from_side | profile | closed_eyes | tree | brown_skirt | smile | brown_serafuku | playing_instrument | blurry | sailor_collar | close-up | anime_coloring | t-shirt | collarbone | guitar_case | night | backpack |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------|:---------------------|:--------------------|:--------|:------------------------------|:-------------------|:-----------|:-------|:--------------|:-------------|:-----------|:----------------|:--------------------|:----------|:-------------|:----------------|:-----------|:---------------|:-----------|:--------------|:----------------------|:------------------|:-------------|:-----------|:--------------|:---------------|:----------------------|:---------|:------------|:----------|:--------------|:-------|:--------------|:--------|:-----------------|:---------------------|:---------|:----------------|:-----------|:-----------------|:----------|:-------------|:--------------|:--------|:-----------|

| 0 | 5 |  |  |  |  |  | X | X | X | X | X | X | X | X | X | X | X | X | X | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | |

| 1 | 23 |  |  |  |  |  | X | X | | X | X | X | X | X | X | X | | X | X | X | X | X | X | X | | | | | | | | | | | | | | | | | | | | | | | | | | | |

| 2 | 10 |  |  |  |  |  | X | X | X | X | X | | X | X | | | | | X | X | | | | X | X | X | X | | | | | | | | | | | | | | | | | | | | | | | | |

| 3 | 6 |  |  |  |  |  | X | | | X | X | | X | X | | | | | X | | | | | X | | X | X | X | X | X | | | | | | | | | | | | | | | | | | | | | |

| 4 | 5 |  |  |  |  |  | X | | | X | X | | X | X | | X | | | | X | | | | | | X | X | X | | | X | X | X | X | | | | | | | | | | | | | | | | | |

| 5 | 5 |  |  |  |  |  | X | | X | X | X | | X | X | | X | X | | | | | | | X | | X | X | X | X | | | | | | X | X | X | X | | | | | | | | | | | | | |

| 6 | 13 |  |  |  |  |  | X | | | X | X | | | X | | | | | X | | | X | | X | | X | X | X | | | | X | | | | | | | X | X | X | | | | | | | | | | |

| 7 | 6 |  |  |  |  |  | X | | | X | X | | X | X | | | | | | | | | | | | | | | | X | | | | | | | | | | | | X | X | X | | | | | | | |

| 8 | 7 |  |  |  |  |  | X | | | X | | | | X | | | | | X | | | | | X | X | | | | | | | | | | | | | | | | | | X | | X | X | | | | | |

| 9 | 6 |  |  |  |  |  | X | | | X | | | | X | X | X | X | X | X | | | | | | | | | | | | | | | | | | | | | | | | | | | | X | X | X | X | X |

|

projecte-aina/casum | ---

annotations_creators:

- machine-generated

language_creators:

- expert-generated

language:

- ca

license:

- cc-by-nc-4.0

multilinguality:

- monolingual

size_categories:

- unknown

source_datasets: []

task_categories:

- summarization

task_ids: []

pretty_name: casum

---

# Dataset Card for CaSum

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Paper:** [Sequence to Sequence Resources for Catalan](https://arxiv.org/pdf/2202.06871.pdf)

- **Point of Contact:** langtech@bsc.es

### Dataset Summary

CaSum is a summarization dataset. It is extracted from a newswire corpus crawled from the Catalan News Agency ([Agència Catalana de Notícies; ACN](https://www.acn.cat/)). The corpus consists of 217,735 instances that are composed by the headline and the body.

### Supported Tasks and Leaderboards

The dataset can be used to train a model for abstractive summarization. Success on this task is typically measured by achieving a high Rouge score. The [mbart-base-ca-casum](https://huggingface.co/projecte-aina/bart-base-ca-casum) model currently achieves a 41.39.

### Languages

The dataset is in Catalan (`ca-ES`).

## Dataset Structure

### Data Instances

```

{

'summary': 'Mapfre preveu ingressar 31.000 milions d’euros al tancament de 2018',

'text': 'L’asseguradora llançarà la seva filial Verti al mercat dels EUA a partir de 2017 ACN Madrid.-Mapfre preveu assolir uns ingressos de 31.000 milions d'euros al tancament de 2018 i destinarà a retribuir els seus accionistes com a mínim el 50% dels beneficis del grup durant el període 2016-2018, amb una rendibilitat mitjana a l’entorn del 5%, segons ha anunciat la companyia asseguradora durant la celebració aquest divendres de la seva junta general d’accionistes. La firma asseguradora també ha avançat que llançarà la seva filial d’automoció i llar al mercat dels EUA a partir de 2017. Mapfre ha recordat durant la junta que va pagar més de 540 milions d'euros en impostos el 2015, amb una taxa impositiva efectiva del 30,4 per cent. La companyia també ha posat en marxa el Pla de Sostenibilitat 2016-2018 i el Pla de Transparència Activa, “que han de contribuir a afermar la visió de Mapfre com a asseguradora global de confiança”, segons ha informat en un comunicat.'

}

```

### Data Fields

- `summary` (str): Summary of the piece of news

- `text` (str): The text of the piece of news

### Data Splits

We split our dataset into train, dev and test splits

- train: 197,735 examples

- validation: 10,000 examples

- test: 10,000 examples

## Dataset Creation

### Curation Rationale

We created this corpus to contribute to the development of language models in Catalan, a low-resource language. There exist few resources for summarization in Catalan.

### Source Data

#### Initial Data Collection and Normalization

We obtained each headline and its corresponding body of each news piece on the Catalan News Agency ([Agència Catalana de Notícies; ACN](https://www.acn.cat/)) website and applied the following cleaning pipeline: deduplicating the documents, removing the documents with empty attributes, and deleting some boilerplate sentences.

#### Who are the source language producers?

The news portal Catalan News Agency ([Agència Catalana de Notícies; ACN](https://www.acn.cat/)).

### Annotations

The dataset is unannotated.

#### Annotation process

[N/A]

#### Who are the annotators?

[N/A]

### Personal and Sensitive Information

Since all data comes from public websites, no anonymization process was performed.

## Considerations for Using the Data

### Social Impact of Dataset

We hope this corpus contributes to the development of summarization models in Catalan, a low-resource language.

### Discussion of Biases

We are aware that since the data comes from unreliable web pages, some biases may be present in the dataset. Nonetheless, we have not applied any steps to reduce their impact.

### Other Known Limitations

[N/A]

## Additional Information

### Dataset Curators

Text Mining Unit (TeMU) at the Barcelona Supercomputing Center (bsc-temu@bsc.es)

This work was funded by MT4All CEF project and [Departament de la Vicepresidència i de Polítiques Digitals i Territori de la Generalitat de Catalunya](https://politiquesdigitals.gencat.cat/ca/inici/index.html#googtrans(ca|en) within the framework of [Projecte AINA](https://politiquesdigitals.gencat.cat/ca/economia/catalonia-ai/aina).

### Licensing information

[Creative Commons Attribution 4.0 International](https://creativecommons.org/licenses/by/4.0/).

### BibTeX citation

If you use any of these resources (datasets or models) in your work, please cite our latest preprint:

```bibtex

@misc{degibert2022sequencetosequence,

title={Sequence-to-Sequence Resources for Catalan},

author={Ona de Gibert and Ksenia Kharitonova and Blanca Calvo Figueras and Jordi Armengol-Estapé and Maite Melero},

year={2022},

eprint={2202.06871},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

### Contributions

[N/A] |

cnmoro/WizardVicuna-PTBR-Instruct-Clean | ---

license: apache-2.0

---

|

hugfaceguy0001/FamousNovels | ---

dataset_info:

- config_name: V2

features:

- name: title

dtype: string

- name: author

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 729958627

num_examples: 1589

download_size: 487948266

dataset_size: 729958627

- config_name: default

features:

- name: title

dtype: string

- name: author

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 159881188

num_examples: 334

download_size: 107391809

dataset_size: 159881188

configs:

- config_name: V2

data_files:

- split: train

path: V2/train-*

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

manishiitg/jondurbin-truthy-dpo-v0.1 | ---

dataset_info:

features:

- name: id

dtype: string

- name: source

dtype: string

- name: system

dtype: string

- name: prompt

dtype: string

- name: chosen

dtype: string

- name: rejected

dtype: string

splits:

- name: train

num_bytes: 4768473

num_examples: 2032

download_size: 1984224

dataset_size: 4768473

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

EJinHF/SQuALITY_retrieve | ---

task_categories:

- summarization

language:

- en

--- |

adnankarim/urdu_asr_data | ---

dataset_info:

features:

- name: audio

dtype: audio

- name: text

dtype: string

splits:

- name: train

num_bytes: 22930938703.12

num_examples: 98189

download_size: 22145178407

dataset_size: 22930938703.12

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

ovior/twitter_dataset_1713054391 | ---

dataset_info:

features:

- name: id

dtype: string

- name: tweet_content

dtype: string

- name: user_name

dtype: string

- name: user_id

dtype: string

- name: created_at

dtype: string

- name: url

dtype: string

- name: favourite_count

dtype: int64

- name: scraped_at

dtype: string

- name: image_urls

dtype: string

splits:

- name: train

num_bytes: 2315231

num_examples: 7193

download_size: 1301228

dataset_size: 2315231

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

quocanh34/soict_test_dataset | ---

dataset_info:

features:

- name: audio

dtype:

audio:

sampling_rate: 16000

- name: id

dtype: string

splits:

- name: train

num_bytes: 174203109.625

num_examples: 1299

download_size: 164141076

dataset_size: 174203109.625

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "soict_test_dataset"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Slichi/Orslok | ---

license: openrail

---

|

hippocrates/emrqaQA_risk_train | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: valid

path: data/valid-*

dataset_info:

features:

- name: id

dtype: int64

- name: conversations

list:

- name: from

dtype: string

- name: value

dtype: string

- name: text

dtype: string

- name: label

dtype: string

splits:

- name: train

num_bytes: 32922109

num_examples: 52467

- name: valid

num_bytes: 5505022

num_examples: 8359

download_size: 3101626

dataset_size: 38427131

---

# Dataset Card for "emrqaQA_risk_train"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

wiki_summary | ---

annotations_creators:

- no-annotation

language_creators:

- crowdsourced

language:

- fa

license:

- apache-2.0

multilinguality:

- monolingual

size_categories:

- 10K<n<100K

source_datasets:

- original

task_categories:

- text2text-generation

- translation

- question-answering

- summarization

task_ids:

- abstractive-qa

- explanation-generation

- extractive-qa

- open-domain-qa

- open-domain-abstractive-qa

- text-simplification

pretty_name: WikiSummary

dataset_info:

features:

- name: id

dtype: string

- name: link

dtype: string

- name: title

dtype: string

- name: article

dtype: string

- name: highlights

dtype: string

splits:

- name: train

num_bytes: 207186608

num_examples: 45654

- name: test

num_bytes: 25693509

num_examples: 5638

- name: validation

num_bytes: 23130954

num_examples: 5074

download_size: 255168504

dataset_size: 256011071

---

# Dataset Card for [Needs More Information]

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** https://github.com/m3hrdadfi/wiki-summary

- **Repository:** https://github.com/m3hrdadfi/wiki-summary

- **Paper:** [More Information Needed]

- **Leaderboard:** [More Information Needed]

- **Point of Contact:** [Mehrdad Farahani](mailto:m3hrdadphi@gmail.com)

### Dataset Summary

The dataset extracted from Persian Wikipedia into the form of articles and highlights and cleaned the dataset into pairs of articles and highlights and reduced the articles' length (only version 1.0.0) and highlights' length to a maximum of 512 and 128, respectively, suitable for parsBERT. This dataset is created to achieve state-of-the-art results on some interesting NLP tasks like Text Summarization.

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

The text in the dataset is in Percy.

## Dataset Structure

### Data Instances

```

{

'id' :'0598cfd2ac491a928615945054ab7602034a8f4f',

'link': 'https://fa.wikipedia.org/wiki/انقلاب_1917_روسیه',

'title': 'انقلاب 1917 روسیه',

'article': 'نخست انقلاب فوریه ۱۹۱۷ رخ داد . در این انقلاب پس از یکسری اعتصابات ، تظاهرات و درگیریها ، نیکولای دوم ، آخرین تزار روسیه از سلطنت خلع شد و یک دولت موقت به قدرت رسید . دولت موقت زیر نظر گئورگی لووف و الکساندر کرنسکی تشکیل شد . اکثر اعضای دولت موقت ، از شاخه منشویک حزب سوسیال دموکرات کارگری روسیه بودند . دومین مرحله ، انقلاب اکتبر ۱۹۱۷ بود . انقلاب اکتبر ، تحت نظارت حزب بلشویک (شاخه رادیکال از حزب سوسیال دموکرات کارگری روسیه) و به رهبری ولادیمیر لنین به پیش رفت و طی یک یورش نظامی همهجانبه به کاخ زمستانی سن پترزبورگ و سایر اماکن مهم ، قدرت را از دولت موقت گرفت . در این انقلاب افراد بسیار کمی کشته شدند . از زمان شکست روسیه در جنگ ۱۹۰۵ با ژاپن ، اوضاع بد اقتصادی ، گرسنگی ، عقبماندگی و سرمایهداری و نارضایتیهای گوناگون در بین مردم ، سربازان ، کارگران ، کشاورزان و نخبگان روسیه بهوجود آمدهبود . سرکوبهای تزار و ایجاد مجلس دوما نظام مشروطه حاصل آن دوران است . حزب سوسیال دموکرات ، اصلیترین معترض به سیاستهای نیکلای دوم بود که بهطور گسترده بین دهقانان کشاورزان و کارگران کارخانجات صنعتی علیه سیاستهای سیستم تزار فعالیت داشت . در اوت ۱۹۱۴ میلادی ، امپراتوری روسیه به دستور تزار وقت و به منظور حمایت از اسلاوهای صربستان وارد جنگ جهانی اول در برابر امپراتوری آلمان و امپراتوری اتریش-مجارستان شد . نخست فقط بلشویکها ، مخالف ورود روسیه به این جنگ بودند و میگفتند که این جنگ ، سبب بدتر شدن اوضاع نابسامان اقتصادی و اجتماعی روسیه خواهد شد . در سال ۱۹۱۴ میلادی ، یعنی در آغاز جنگ جهانی اول ، روسیه بزرگترین ارتش جهان را داشت ، حدود ۱۲ میلیون سرباز و ۶ میلیون سرباز ذخیره ؛ ولی در پایان سال ۱۹۱۶ میلادی ، پنج میلیون نفر از سربازان روسیه کشته ، زخمی یا اسیر شده بودند . حدود دو میلیون سرباز نیز محل خدمت خود را ترک کرده و غالبا با اسلحه به شهر و دیار خود بازگشته بودند . در میان ۱۰ یا ۱۱ میلیون سرباز باقیمانده نیز ، اعتبار تزار و سلسله مراتب ارتش و اتوریته افسران بالا دست از بین رفته بود . عوامل نابسامان داخلی اعم از اجتماعی کشاورزی و فرماندهی نظامی در شکستهای روسیه بسیار مؤثر بود . شکستهای روسیه در جنگ جهانی اول ، حامیان نیکلای دوم در روسیه را به حداقل خود رساند . در اوایل فوریه ۱۹۱۷ میلادی اکثر کارگران صنعتی در پتروگراد و مسکو دست به اعتصاب زدند . سپس شورش به پادگانها و سربازان رسید . اعتراضات دهقانان نیز گسترش یافت . سوسیال دموکراتها هدایت اعتراضات را در دست گرفتند . در ۱۱ مارس ۱۹۱۷ میلادی ، تزار وقت روسیه ، نیکلای دوم ، فرمان انحلال مجلس روسیه را صادر کرد ، اما اکثر نمایندگان مجلس متفرق نشدند و با تصمیمات نیکلای دوم مخالفت کردند . سرانجام در پی تظاهرات گسترده کارگران و سپس نافرمانی سربازان در سرکوب تظاهرکنندگان در پتروگراد ، نیکلای دوم از مقام خود استعفا داد . بدین ترتیب حکمرانی دودمان رومانوفها بر روسیه پس از حدود سیصد سال پایان یافت .',

'highlights': 'انقلاب ۱۹۱۷ روسیه ، جنبشی اعتراضی ، ضد امپراتوری روسیه بود که در سال ۱۹۱۷ رخ داد و به سرنگونی حکومت تزارها و برپایی اتحاد جماهیر شوروی انجامید . مبانی انقلاب بر پایه صلح-نان-زمین استوار بود . این انقلاب در دو مرحله صورت گرفت : در طول این انقلاب در شهرهای اصلی روسیه همانند مسکو و سن پترزبورگ رویدادهای تاریخی برجستهای رخ داد . انقلاب در مناطق روستایی و رعیتی نیز پا به پای مناطق شهری در حال پیشروی بود و دهقانان زمینها را تصرف کرده و در حال بازتوزیع آن در میان خود بودند .'

}

```

### Data Fields

- `id`: Article id

- `link`: Article link

- `title`: Title of the article

- `article`: Full text content in the article

- `highlights`: Summary of the article

### Data Splits

| Train | Test | Validation |

|-------------|-------------|-------------|

| 45,654 | 5,638 | 5,074 |

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

No annotations.

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

The dataset was created by Mehrdad Farahani.

### Licensing Information

[Apache License 2.0](https://github.com/m3hrdadfi/wiki-summary/blob/master/LICENSE)

### Citation Information

```

@misc{Bert2BertWikiSummaryPersian,

author = {Mehrdad Farahani},

title = {Summarization using Bert2Bert model on WikiSummary dataset},

year = {2020},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {https://github.com/m3hrdadfi/wiki-summary},

}

```

### Contributions

Thanks to [@tanmoyio](https://github.com/tanmoyio) for adding this dataset. |

autoevaluate/autoeval-eval-banking77-default-880a34-2252471790 | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- banking77

eval_info:

task: multi_class_classification

model: philschmid/BERT-Banking77

metrics: ['bleu', 'exact_match']

dataset_name: banking77

dataset_config: default

dataset_split: test

col_mapping:

text: text

target: label

---

# Dataset Card for AutoTrain Evaluator

This repository contains model predictions generated by [AutoTrain](https://huggingface.co/autotrain) for the following task and dataset:

* Task: Multi-class Text Classification

* Model: philschmid/BERT-Banking77

* Dataset: banking77

* Config: default

* Split: test

To run new evaluation jobs, visit Hugging Face's [automatic model evaluator](https://huggingface.co/spaces/autoevaluate/model-evaluator).

## Contributions

Thanks to [@HEIT](https://huggingface.co/HEIT) for evaluating this model. |

jclian91/people_relation_classification | ---

license: mit

---

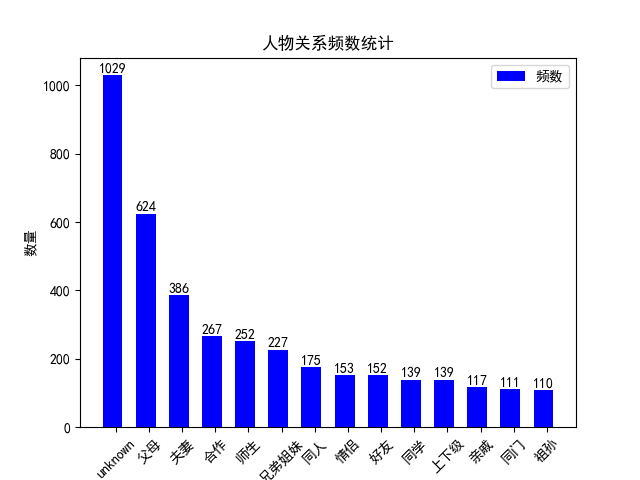

本数据集用于人物关系分类,一共14种关系类型:不确定, 夫妻, 父母, 兄弟姐妹, 上下级, 师生, 好友, 同学, 合作, 同一个人, 情侣, 祖孙, 同门, 亲戚。

本数据集共3881条,其中训练集3105条,测试集776条,参看train.csv和test.csv。

数据集的人物关系分布如下:

关于使用R-BERT模型训练该数据集,可参考文章:[NLP(四十二)人物关系分类的再次尝试](https://percent4.github.io/2023/07/10/NLP%EF%BC%88%E5%9B%9B%E5%8D%81%E4%BA%8C%EF%BC%89%E4%BA%BA%E7%89%A9%E5%85%B3%E7%B3%BB%E5%88%86%E7%B1%BB%E7%9A%84%E5%86%8D%E6%AC%A1%E5%B0%9D%E8%AF%95/). |

wikipunk/d3fend | ---

language:

- en

license: mit

tags:

- knowledge-graph

- rdf

- owl

- ontology

- cybersecurity

annotations_creators:

- expert-generated

pretty_name: D3FEND

size_categories:

- 100K<n<1M

task_categories:

- graph-ml

dataset_info:

features:

- name: subject

dtype: string

- name: predicate

dtype: string

- name: object

dtype: string

config_name: default

splits:

- name: train

num_bytes: 46899451

num_examples: 231842

dataset_size: 46899451

viewer: false

---

# D3FEND: A knowledge graph of cybersecurity countermeasures

### Overview

D3FEND encodes a countermeasure knowledge base in the form of a

knowledge graph. It meticulously organizes key concepts and relations

in the cybersecurity countermeasure domain, linking each to pertinent

references in the cybersecurity literature.

### Use-cases

Researchers and cybersecurity enthusiasts can leverage D3FEND to:

- Develop sophisticated graph-based models.

- Fine-tune large language models, focusing on cybersecurity knowledge

graph completion.

- Explore the complexities and nuances of defensive techniques,

mappings to MITRE ATT&CK, weaknesses (CWEs), and cybersecurity

taxonomies.

- Gain insight into ontology development and modeling in the

cybersecurity domain.

### Dataset construction and pre-processing

### Source:

- [Dataset Repository - 0.13.0-BETA-1](https://github.com/d3fend/d3fend-ontology/tree/release/0.13.0-BETA-1)

- [Commit Details](https://github.com/d3fend/d3fend-ontology/commit/3dcc495879bb62cee5c4109e9b784dd4a2de3c9d)

- [CWE Extension](https://github.com/d3fend/d3fend-ontology/tree/release/0.13.0-BETA-1/extensions/cwe)

#### Building and Verification:

1. **Construction**: The ontology, denoted as `d3fend-full.owl`, was

built from the beta version of the D3FEND ontology referenced

above using documented README in d3fend-ontology. This includes the

CWE extensions.

2. **Import and Reasoning**: Imported into Protege version 5.6.1,

utilizing the Pellet reasoner plugin for logical reasoning and

verification.

3. **Coherence Check**: Utilized the Debug Ontology plugin in Protege

to ensure the ontology's coherence and consistency.

#### Exporting, Transformation, and Compression:

Note: The following steps were performed using Apache Jena's command

line tools. (https://jena.apache.org/documentation/tools/)

1. **Exporting Inferred Axioms**: Post-verification, I exported

inferred axioms along with asserted axioms and

annotations. [Detailed

Process](https://www.michaeldebellis.com/post/export-inferred-axioms)

2. **Filtering**: The materialized ontology was filtered using

`d3fend.rq` to retain relevant triples.

3. **Format Transformation**: Subsequently transformed to Turtle and

N-Triples formats for diverse usability. Note: I export in Turtle

first because it is easier to read and verify. Then I convert to

N-Triples.

```shell

arq --query=d3fend.rq --data=d3fend.owl --results=turtle > d3fend.ttl

riot --output=nt d3fend.ttl > d3fend.nt

```

4. **Compression**: Compressed the resulting ontology files using

gzip.

## Features

The D3FEND dataset is composed of triples representing the

relationships between different cybersecurity countermeasures. Each

triple is a representation of a statement about a cybersecurity

concept or a relationship between concepts. The dataset includes the

following features:

### 1. **Subject** (`string`)

The subject of a triple is the entity that the statement is about. In

this dataset, the subject represents a cybersecurity concept or

entity, such as a specific countermeasure or ATT&CK technique.

### 2. **Predicate** (`string`)

The predicate of a triple represents the property or characteristic of

the subject, or the nature of the relationship between the subject and

the object. For instance, it might represent a specific type of

relationship like "may-be-associated-with" or "has a reference."

### 3. **Object** (`string`)

The object of a triple is the entity that is related to the subject by

the predicate. It can be another cybersecurity concept, such as an

ATT&CK technique, or a literal value representing a property of the

subject, such as a name or a description.

### Usage

First make sure you have the requirements installed:

```python

pip install datasets

pip install rdflib

```

You can load the dataset using the Hugging Face Datasets library with

the following Python code:

```python

from datasets import load_dataset

dataset = load_dataset('wikipunk/d3fend', split='train')

```

#### Note on Format:

The subject, predicate, and object are stored in N3 notation, a

verbose serialization for RDF. This allows users to unambiguously

parse each component using `rdflib.util.from_n3` from the RDFLib

Python library. For example:

```python

from rdflib.util import from_n3

subject_node = from_n3(dataset[0]['subject'])

predicate_node = from_n3(dataset[0]['predicate'])

object_node = from_n3(dataset[0]['object'])

```

Once loaded, each example in the dataset will be a dictionary with

`subject`, `predicate`, and `object` keys corresponding to the

features described above.

### Example

Here is an example of a triple in the dataset:

- Subject: `"<http://d3fend.mitre.org/ontologies/d3fend.owl#T1550.002>"`

- Predicate: `"<http://d3fend.mitre.org/ontologies/d3fend.owl#may-be-associated-with>"`

- Object: `"<http://d3fend.mitre.org/ontologies/d3fend.owl#T1218.014>"`

This triple represents the statement that the ATT&CK technique

identified by `T1550.002` may be associated with the ATT&CK technique

identified by `T1218.014`.

### Acknowledgements

This ontology is developed by MITRE Corporation and is licensed under

the MIT license. I would like to thank the authors for their work

which has opened my eyes to a new world of cybersecurity modeling.

If you are a cybersecurity expert please consider [contributing to

D3FEND](https://d3fend.mitre.org/contribute/).

[D3FEND Resources](https://d3fend.mitre.org/resources/)

### Citation

```bibtex

@techreport{kaloroumakis2021d3fend,

title={Toward a Knowledge Graph of Cybersecurity Countermeasures},

author={Kaloroumakis, Peter E. and Smith, Michael J.},

institution={The MITRE Corporation},

year={2021},

url={https://d3fend.mitre.org/resources/D3FEND.pdf}

}

```

|

joey234/medmcqa-original-neg | ---

dataset_info:

features:

- name: id

dtype: string

- name: question

dtype: string

- name: opa

dtype: string

- name: opb

dtype: string

- name: opc

dtype: string

- name: opd

dtype: string

- name: cop

dtype:

class_label:

names:

'0': a

'1': b

'2': c

'3': d

- name: choice_type

dtype: string

- name: exp

dtype: string

- name: subject_name

dtype: string

- name: topic_name

dtype: string

splits:

- name: validation

num_bytes: 366432.0631125986

num_examples: 690

download_size: 292370

dataset_size: 366432.0631125986

---

# Dataset Card for "medmcqa-original-neg"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Aisha/BAAD6 | ---

annotations_creators:

- found

- crowdsourced

- expert-generated

language_creators:

- found

- crowdsourced

language:

- bn

license:

- cc-by-4.0

multilinguality:

- monolingual

pretty_name: 'BAAD6: Bangla Authorship Attribution Dataset (6 Authors)'

size_categories:

- unknown

source_datasets:

- original

task_categories:

- text-classification

task_ids:

- multi-class-classification

---

## Description

**BAAD6** is an **Authorship Attribution dataset for Bengali Literature**. It was collected and analyzed by Hemayet et al [[1]](https://ieeexplore.ieee.org/document/8631977). The data was obtained from different online posts and blogs. This dataset is balanced among the 6 Authors with 350 sample texts per author. This is a relatively small dataset but is noisy given the sources it was collected from and its cleaning procedure. Nonetheless, it may help evaluate authorship attribution systems as it resembles texts often available on the Internet. Details about the dataset are given in the table below.

| Author | Samples | Word count | Unique word |

| ------ | ------ | ------ | ------ |

|fe|350|357k|53k|

| ij | 350 | 391k | 72k

| mk | 350 | 377k | 47k

| rn | 350 | 231k | 50k

| hm | 350 | 555k | 72k

| rg | 350 | 391k | 58k

**Total** | 2,100 | 2,304,338 | 230,075

**Average** | 350 | 384,056.33 | 59,006.67

## Citation

If you use this dataset, please cite the paper [A Comparative Analysis of Word Embedding Representations in Authorship Attribution of Bengali Literature](https://ieeexplore.ieee.org/document/8631977).

```

@INPROCEEDINGS{BAAD6Dataset,

author={Ahmed Chowdhury, Hemayet and Haque Imon, Md. Azizul and Islam, Md. Saiful},

booktitle={2018 21st International Conference of Computer and Information Technology (ICCIT)},

title={A Comparative Analysis of Word Embedding Representations in Authorship Attribution of Bengali Literature},

year={2018},

volume={},

number={},

pages={1-6},

doi={10.1109/ICCITECHN.2018.8631977}

}

```

This dataset is also available in Mendeley: [BAAD6 dataset](https://data.mendeley.com/datasets/w9wkd7g43f/5). Always make sure to use the latest version of the dataset. Cite the dataset directly by:

```

@misc{BAAD6Dataset,

author = {Ahmed Chowdhury, Hemayet and Haque Imon, Md. Azizul and Khatun, Aisha and Islam, Md. Saiful},

title = {BAAD6: Bangla Authorship Attribution Dataset},

year={2018},

doi = {10.17632/w9wkd7g43f.5},

howpublished= {\url{https://data.mendeley.com/datasets/w9wkd7g43f/5}}

}

``` |

manishiitg/llm_judge | ---

dataset_info:

features:

- name: prompt

dtype: string

- name: response

dtype: string

- name: type

dtype: string

- name: lang

dtype: string

- name: model_name

dtype: string

- name: simple_prompt

dtype: string

- name: judgement_pending

dtype: bool

- name: judgement

dtype: string

- name: rating

dtype: float64

splits:

- name: train

num_bytes: 132281216

num_examples: 30492

download_size: 42012690

dataset_size: 132281216

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

#### LLM Judge Language: hi

| Model | Language | Score | No# Questions |

| --- | --- | --- | --- |

| Qwen/Qwen1.5-72B-Chat-AWQ | hi | 8.3722 | 562 |

| Qwen/Qwen1.5-14B-Chat | hi | 8.2561 | 561 |

| google/gemma-7b-it | hi | 7.8930 | 561 |

| Qwen/Qwen1.5-7B-Chat | hi | 7.8518 | 562 |

| manishiitg/open-aditi-hi-v3 | hi | 7.7464 | 562 |

| manishiitg/open-aditi-hi-v4 | hi | 7.5537 | 562 |

| manishiitg/open-aditi-hi-v2 | hi | 7.2536 | 562 |

| teknium/OpenHermes-2.5-Mistral-7B | hi | 7.2240 | 562 |

| ai4bharat/Airavata | hi | 6.9355 | 550 |

| 01-ai/Yi-34B-Chat | hi | 6.5692 | 562 |

| manishiitg/open-aditi-hi-v1 | hi | 4.6521 | 562 |

| sarvamai/OpenHathi-7B-Hi-v0.1-Base | hi | 4.2417 | 606 |

| Qwen/Qwen1.5-4B-Chat | hi | 4.0970 | 562 |

#### LLM Judge Language: en

| Model | Language | Score | No# Questions |

| --- | --- | --- | --- |

| Qwen/Qwen1.5-14B-Chat | en | 9.1956 | 362 |

| Qwen/Qwen1.5-72B-Chat-AWQ | en | 9.1577 | 362 |

| Qwen/Qwen1.5-7B-Chat | en | 9.1503 | 362 |

| 01-ai/Yi-34B-Chat | en | 9.1373 | 362 |

| mistralai/Mixtral-8x7B-Instruct-v0.1 | en | 9.1340 | 362 |

| teknium/OpenHermes-2.5-Mistral-7B | en | 9.0006 | 362 |

| manishiitg/open-aditi-hi-v3 | en | 8.9069 | 362 |

| manishiitg/open-aditi-hi-v4 | en | 8.9064 | 362 |

| google/gemma-7b-it | en | 8.7945 | 362 |

| Qwen/Qwen1.5-4B-Chat | en | 8.7224 | 362 |

| manishiitg/open-aditi-hi-v2 | en | 8.4343 | 362 |

| ai4bharat/Airavata | en | 7.3923 | 362 |

| manishiitg/open-aditi-hi-v1 | en | 6.6413 | 361 |

| sarvamai/OpenHathi-7B-Hi-v0.1-Base | en | 5.9009 | 318 |

Using QWen-72B-AWQ as LLM Judge

Evaluation on hindi and english prompts borrowed from teknimum, airoboros, https://huggingface.co/datasets/HuggingFaceH4/mt_bench_prompts, https://huggingface.co/datasets/ai4bharat/human-eval

and other sources

Mainly used to evalaution on written tasks through LLM JUDGE

https://github.com/lm-sys/FastChat/blob/main/fastchat/llm_judge/README.md

|

boapps/alpaca-hu | ---

license: cc-by-sa-4.0

task_categories:

- text-generation

language:

- hu

size_categories:

- 10K<n<100K

pretty_name: Alpaca HU

---

# Alpaca HU

Magyar nyelvű utánzása a stanford alpaca adathalmazának.

Nem fordítással készült, hanem az OpenAI API segítségével lett generálva. A ~15 000 feladat 9.17$-ért.

Az eredeti [stanford_alpaca](https://github.com/tatsu-lab/stanford_alpaca) kód módosításával és a seed-taskok fordításával/átírásával készült. [repo itt](https://github.com/boapps/stanford_alpaca_hu)

Annak ellenére, hogy nem fordítás, nem tökéletes és vannak benne magyartalan kifejezések, további tisztítás fontos lenne. Viszont így is számos magyarul releváns feladat került az adathalmazba, ami egy egyszerű fordításból hiányzana.

A generálás közben módosítottam a kódon, ahogy észleltem benne hibákat, emiatt az adathalmaz elejét nem a repo-ban található kód alkotta.

Például az adathalmaz 1/3-a körül megtudtam, hogy a `GPT-3.5-turbo` az valójában a régebbi, rosszabb és drágább `GPT-3.5-turbo-0613`-at használja, ezért ott módosítottam a modellt `GPT-3.5-turbo-0125`-re.

Ez az adathalmaz az eredeti stanford_alpaca-hoz hasonlóan kutatási célra van ajánlva és **üzleti célú használata tilos**.

Ennek az oka, hogy az OpenAI ToS nem engedi az OpenAI-al versengő modellek fejlesztését.

Ezen kívül az adathalmaz nem esett át megfelelő szűrésen, tartalmazhat káros utasításokat.

|

v2ray/jannie-log | ---

license: mit

task_categories:

- conversational

language:

- en

tags:

- not-for-all-audiences

size_categories:

- 100K<n<1M

---

# Jannie Log

From moxxie proxy.

Not formatted version: [Click Me](https://drive.google.com/drive/folders/1HZtPe0j7PmNnaFcJtFfXxqFDUK_URqkf?usp=sharing) |

EJaalborg2022/mt5-small-finetuned-beer-ctg-en | ---

dataset_info:

features:

- name: prediction_ts

dtype: float32

- name: beer_ABV

dtype: float32

- name: beer_name

dtype: string

- name: beer_style

dtype: string

- name: review_appearance

dtype: float32

- name: review_palette

dtype: float32

- name: review_taste

dtype: float32

- name: review_aroma

dtype: float32

- name: text

dtype: string

- name: label

dtype:

class_label:

names:

'0': negative

'1': neutral

'2': positive

splits:

- name: training

num_bytes: 6908323

num_examples: 9000

- name: validation

num_bytes: 970104

num_examples: 1260

- name: production

num_bytes: 21305419

num_examples: 27742

download_size: 16954616

dataset_size: 29183846

---

# Dataset Card for "mt5-small-finetuned-beer-ctg-en"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

mask-distilled-onesec-cv12-each-chunk-uniq/chunk_150 | ---

dataset_info:

features:

- name: logits

sequence: float32

- name: mfcc

sequence:

sequence: float64

splits:

- name: train

num_bytes: 846397332.0

num_examples: 166221

download_size: 862299585

dataset_size: 846397332.0

---

# Dataset Card for "chunk_150"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

csaybar/CloudSEN12-scribble | ---

license: cc-by-nc-4.0

---

# **CloudSEN12 SCRIBBLE**

## **A Benchmark Dataset for Cloud Semantic Understanding**

CloudSEN12 is a LARGE dataset (~1 TB) for cloud semantic understanding that consists of 49,400 image patches (IP) that are

evenly spread throughout all continents except Antarctica. Each IP covers 5090 x 5090 meters and contains data from Sentinel-2

levels 1C and 2A, hand-crafted annotations of thick and thin clouds and cloud shadows, Sentinel-1 Synthetic Aperture Radar (SAR),

digital elevation model, surface water occurrence, land cover classes, and cloud mask results from six cutting-edge

cloud detection algorithms.

CloudSEN12 is designed to support both weakly and self-/semi-supervised learning strategies by including three distinct forms of

hand-crafted labeling data: high-quality, scribble and no-annotation. For more details on how we created the dataset see our

paper.

Ready to start using **[CloudSEN12](https://cloudsen12.github.io/)**?

**[Download Dataset](https://cloudsen12.github.io/download.html)**

**[Paper - Scientific Data](https://www.nature.com/articles/s41597-022-01878-2)**

**[Inference on a new S2 image](https://colab.research.google.com/github/cloudsen12/examples/blob/master/example02.ipynb)**

**[Enter to cloudApp](https://github.com/cloudsen12/CloudApp)**

**[CloudSEN12 in Google Earth Engine](https://gee-community-catalog.org/projects/cloudsen12/)**

<br>

### **Description**

<br>

| File | Name | Scale | Wavelength | Description | Datatype |

|---------------|-----------------|--------|------------------------------|------------------------------------------------------------------------------------------------------|----------|

| L1C_ & L2A_ | B1 | 0.0001 | 443.9nm (S2A) / 442.3nm (S2B)| Aerosols. | np.int16 |

| | B2 | 0.0001 | 496.6nm (S2A) / 492.1nm (S2B)| Blue. | np.int16 |

| | B3 | 0.0001 | 560nm (S2A) / 559nm (S2B) | Green. | np.int16 |

| | B4 | 0.0001 | 664.5nm (S2A) / 665nm (S2B) | Red. | np.int16 |

| | B5 | 0.0001 | 703.9nm (S2A) / 703.8nm (S2B)| Red Edge 1. | np.int16 |

| | B6 | 0.0001 | 740.2nm (S2A) / 739.1nm (S2B)| Red Edge 2. | np.int16 |

| | B7 | 0.0001 | 782.5nm (S2A) / 779.7nm (S2B)| Red Edge 3. | np.int16 |

| | B8 | 0.0001 | 835.1nm (S2A) / 833nm (S2B) | NIR. | np.int16 |

| | B8A | 0.0001 | 864.8nm (S2A) / 864nm (S2B) | Red Edge 4. | np.int16 |

| | B9 | 0.0001 | 945nm (S2A) / 943.2nm (S2B) | Water vapor. | np.int16 |

| | B11 | 0.0001 | 1613.7nm (S2A) / 1610.4nm (S2B)| SWIR 1. | np.int16 |

| | B12 | 0.0001 | 2202.4nm (S2A) / 2185.7nm (S2B)| SWIR 2. | np.int16 |

| L1C_ | B10 | 0.0001 | 1373.5nm (S2A) / 1376.9nm (S2B)| Cirrus. | np.int16 |

| L2A_ | AOT | 0.001 | - | Aerosol Optical Thickness. | np.int16 |

| | WVP | 0.001 | - | Water Vapor Pressure. | np.int16 |

| | TCI_R | 1 | - | True Color Image, Red. | np.int16 |

| | TCI_G | 1 | - | True Color Image, Green. | np.int16 |

| | TCI_B | 1 | - | True Color Image, Blue. | np.int16 |

| S1_ | VV | 1 | 5.405GHz | Dual-band cross-polarization, vertical transmit/horizontal receive. |np.float32|

| | VH | 1 | 5.405GHz | Single co-polarization, vertical transmit/vertical receive. |np.float32|

| | angle | 1 | - | Incidence angle generated by interpolating the ‘incidenceAngle’ property. |np.float32|

| EXTRA_ | CDI | 0.0001 | - | Cloud Displacement Index. | np.int16 |

| | Shwdirection | 0.01 | - | Azimuth. Values range from 0°- 360°. | np.int16 |

| | elevation | 1 | - | Elevation in meters. Obtained from MERIT Hydro datasets. | np.int16 |

| | ocurrence | 1 | - | JRC Global Surface Water. The frequency with which water was present. | np.int16 |

| | LC100 | 1 | - | Copernicus land cover product. CGLS-LC100 Collection 3. | np.int16 |

| | LC10 | 1 | - | ESA WorldCover 10m v100 product. | np.int16 |

| LABEL_ | fmask | 1 | - | Fmask4.0 cloud masking. | np.int16 |

| | QA60 | 1 | - | SEN2 Level-1C cloud mask. | np.int8 |

| | s2cloudless | 1 | - | sen2cloudless results. | np.int8 |

| | sen2cor | 1 | - | Scene Classification band. Obtained from SEN2 level 2A. | np.int8 |

| | cd_fcnn_rgbi | 1 | - | López-Puigdollers et al. results based on RGBI bands. | np.int8 |

| |cd_fcnn_rgbi_swir| 1 | - | López-Puigdollers et al. results based on RGBISWIR bands. | np.int8 |

| | kappamask_L1C | 1 | - | KappaMask results using SEN2 level L1C as input. | np.int8 |

| | kappamask_L2A | 1 | - | KappaMask results using SEN2 level L2A as input. | np.int8 |

| | manual_hq | 1 | | High-quality pixel-wise manual annotation. | np.int8 |

| | manual_sc | 1 | | Scribble manual annotation. | np.int8 |

<br>

### **Label Description**

| **CloudSEN12** | **KappaMask** | **Sen2Cor** | **Fmask** | **s2cloudless** | **CD-FCNN** | **QA60** |

|------------------|------------------|-------------------------|-----------------|-----------------------|---------------------|--------------------|

| 0 Clear | 1 Clear | 4 Vegetation | 0 Clear land | 0 Clear | 0 Clear | 0 Clear |

| | | 2 Dark area pixels | 1 Clear water | | | |

| | | 5 Bare Soils | 3 Snow | | | |

| | | 6 Water | | | | |

| | | 11 Snow | | | | |

| 1 Thick cloud | 4 Cloud | 8 Cloud medium probability | 4 Cloud | 1 Cloud | 1 Cloud | 1024 Opaque cloud |

| | | 9 Cloud high probability | | | | |

| 2 Thin cloud | 3 Semi-transparent cloud | 10 Thin cirrus | | | | 2048 Cirrus cloud |

| 3 Cloud shadow | 2 Cloud shadow | 3 Cloud shadows | 2 Cloud shadow | | | |

<br>

### **np.memmap shape information**

<br>

**train shape: (8785, 512, 512)**

<br>

**val shape: (560, 512, 512)**

<br>

**test shape: (655, 512, 512)**

<br>

### **Example**

<br>

```py

import numpy as np

# Read high-quality train

train_shape = (8785, 512, 512)

B4X = np.memmap('train/L1C_B04.dat', dtype='int16', mode='r', shape=train_shape)

y = np.memmap('train/manual_hq.dat', dtype='int8', mode='r', shape=train_shape)

# Read high-quality val

val_shape = (560, 512, 512)

B4X = np.memmap('val/L1C_B04.dat', dtype='int16', mode='r', shape=val_shape)

y = np.memmap('val/manual_hq.dat', dtype='int8', mode='r', shape=val_shape)

# Read high-quality test

test_shape = (655, 512, 512)

B4X = np.memmap('test/L1C_B04.dat', dtype='int16', mode='r', shape=test_shape)

y = np.memmap('test/manual_hq.dat', dtype='int8', mode='r', shape=test_shape)

```

<br>

This work has been partially supported by the Spanish Ministry of Science and Innovation project

PID2019-109026RB-I00 (MINECO-ERDF) and the Austrian Space Applications Programme within the

**[SemantiX project](https://austria-in-space.at/en/projects/2019/semantix.php)**.

|

Nerfgun3/winter_style | ---

language:

- en

tags:

- stable-diffusion

- text-to-image

license: creativeml-openrail-m

inference: false

---

# Winter Style Embedding / Textual Inversion

## Usage

To use this embedding you have to download the file aswell as drop it into the "\stable-diffusion-webui\embeddings" folder

To use it in a prompt: ```"art by winter_style"```

If it is to strong just add [] around it.

Trained until 10000 steps

I added a 7.5k steps trained ver in the files aswell. If you want to use that version, remove the ```"-7500"``` from the file name and replace the 10k steps ver in your folder

Have fun :)

## Example Pictures

<table>

<tr>

<td><img src=https://i.imgur.com/oVqfSZ2.png width=100% height=100%/></td>

<td><img src=https://i.imgur.com/p0cslGJ.png width=100% height=100%/></td>

<td><img src=https://i.imgur.com/LJmGvsc.png width=100% height=100%/></td>

<td><img src=https://i.imgur.com/T4I0gFQ.png width=100% height=100%/></td>

<td><img src=https://i.imgur.com/hzfmsA8.png width=100% height=100%/></td>

</tr>

</table>

## License

This embedding is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage.

The CreativeML OpenRAIL License specifies:

1. You can't use the embedding to deliberately produce nor share illegal or harmful outputs or content

2. The authors claims no rights on the outputs you generate, you are free to use them and are accountable for their use which must not go against the provisions set in the license

3. You may re-distribute the weights and use the embedding commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully)

[Please read the full license here](https://huggingface.co/spaces/CompVis/stable-diffusion-license) |

nlhappy/CLUE-NER | ---

license: mit

---

|

paperplaneflyr/recepies_reduced_3.0_1K | ---

license: mit

---

|

Junetheriver/OpsEval | ---

language:

- en

- zh

pretty_name: OpsEval

tags:

- AIOps

- LLM

- Operations

- Benchmark

- Dataset

license: apache-2.0

task_categories:

- question-answering

size_categories:

- 1K<n<10K

---

# OpsEval Dataset

[Website](https://opseval.cstcloud.cn/content/home) | [Reporting Issues](https://github.com/NetManAIOps/OpsEval-Datasets/issues/new)

## Introduction

The OpsEval dataset represents a pioneering effort in the evaluation of Artificial Intelligence for IT Operations (AIOps), focusing on the application of Large Language Models (LLMs) within this domain. In an era where IT operations are increasingly reliant on AI technologies for automation and efficiency, understanding the performance of LLMs in operational tasks becomes crucial. OpsEval offers a comprehensive task-oriented benchmark specifically designed for assessing LLMs in various crucial IT Ops scenarios.

This dataset is motivated by the emerging trend of utilizing AI in automated IT operations, as predicted by Gartner, and the remarkable capabilities exhibited by LLMs in NLP-related tasks. OpsEval aims to bridge the gap in evaluating these models' performance in AIOps tasks, including root cause analysis of failures, generation of operations and maintenance scripts, and summarizing alert information.

## Highlights

- **Comprehensive Evaluation**: OpsEval includes 7184 multi-choice questions and 1736 question-answering (QA) formats, available in both English and Chinese, making it one of the most extensive benchmarks in the AIOps domain.

- **Task-Oriented Design**: The benchmark is tailored to assess LLMs' proficiency across different crucial scenarios and ability levels, offering a nuanced view of model performance in operational contexts.

- **Expert-Reviewed**: To ensure the reliability of our evaluation, dozens of domain experts have manually reviewed our questions, providing a solid foundation for the benchmark's credibility.

- **Open-Sourced and Dynamic Leaderboard**: We have open-sourced 20% of the test QA to facilitate preliminary evaluations by researchers. An online leaderboard, updated in real-time, captures the performance of emerging LLMs, ensuring the benchmark remains current and relevant.

## Dataset Structure

Here is a brief overview of the dataset structure:

- `/dev/` - Examples for few-shot in-context learning.

- `/test/` - Test sets of OpsEval.

<!-- - `/metadata/` - Contains metadata related to the dataset. -->

## Dataset Informations

| Dataset Name | Open-Sourced Size |

| ------------- | ------------- |

| Wired Network | 1563 |

| Oracle Database | 395 |

| 5G Communication | 349 |

| Log Analysis | 310 |

<!-- ## Usage

To use the OpsEval dataset in your research or project, please follow these steps:

1. Clone this repository to your local machine or server.

2. [Insert specific steps if needed, like environment setup, dependencies installation].

3. Explore the dataset directories and refer to the `metadata` directory for understanding the dataset schema and organization.

4. [Optional: include example code or scripts for common operations on the dataset]. -->

<!-- ## License

[Specify the license under which the OpsEval dataset is distributed, e.g., MIT, GPL, Apache 2.0]

## Acknowledgments

We would like to thank [Acknowledgments to contributors, institutions, funding bodies, etc.]

For any questions or further information, please contact [Insert contact information]. -->

## Website

For evaluation results on the full OpsEval dataset, please checkout our official website [OpsEval Leaderboard](https://opseval.cstcloud.cn/content/home).

## Paper

For a detailed description of the dataset, its structure, and its applications, please refer to our paper available at: [OpsEval: A Comprehensive IT Operations Benchmark Suite for Large Language Models](https://arxiv.org/abs/2310.07637)

### Citation

Please use the following citation when referencing the OpsEval dataset in your research:

```

@misc{liu2024opseval,

title={OpsEval: A Comprehensive IT Operations Benchmark Suite for Large Language Models},