datasetId stringlengths 2 117 | card stringlengths 19 1.01M |

|---|---|

jijivski/metaculus_binary | ---

license: apache-2.0

---

|

dnagpt/human_genome_GCF_009914755.1 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 1032653672

num_examples: 989132

- name: test

num_bytes: 10431648

num_examples: 9992

download_size: 472762984

dataset_size: 1043085320

license: apache-2.0

---

# Dataset Card for "human_genome_GCF_009914755.1"

How to build this data:

The full human genome data comes from:

https://www.ncbi.nlm.nih.gov/datasets/genome/GCF_009914755.1/

Preprocess:

1 Download the data using the NCBI Datasets command-line tool:

curl -o datasets 'https://ftp.ncbi.nlm.nih.gov/pub/datasets/command-line/LATEST/linux-amd64/datasets'

chmod +x datasets

./datasets download genome accession GCF_000001405.40 --filename genomes/human_genome_dataset.zip

Then move the genome data to human2.fna

2 Convert the original data into pure DNA sequence, roughly 1000 bp (letters) per line:

```
filename = "human2.fna"
out_filename = "human2.fna.line"
max_line_len = 1000  # roughly 1000 letters per output line

# Open both files in a context manager so they are closed and flushed.
with open(filename, "r") as data_file, open(out_filename, "w") as out_file:
    text = ""
    for line in data_file:
        line = line.strip()
        if not line.startswith(">"):  # skip FASTA header lines
            line = line.upper()
            line = line.replace(" ", "").replace("N", "")  # drop N and spaces
            text = text + line
            if len(text) > max_line_len:
                out_file.write(text + "\n")
                text = ""  # clear the buffer
    # The last partial line (at most max_line_len letters) is discarded.
```

3 Split the data into train and validation datasets:

```
filename = "human2.fna.line"
out_train_filename = "human2.fna.line.train"
out_valid_filename = "human2.fna.line.valid"

line_num = 0
select_line_num = 0

with open(filename, "r") as data_file, \
        open(out_train_filename, "w") as out_train_file, \
        open(out_valid_filename, "w") as out_valid_file:
    for line in data_file:
        if 0 == line_num % 3:  # keep 1/3 of the data
            if select_line_num % 100:  # 99 of every 100 kept lines go to train
                out_train_file.write(line)
            else:  # every 100th kept line (1%) goes to validation
                out_valid_file.write(line)
            select_line_num = select_line_num + 1
        line_num = line_num + 1
```
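As a quick sanity check on the split rule above, the following sketch computes how many of the kept lines end up in each file. The function name `split_sizes` is hypothetical, and the total of kept lines is assumed to be the sum of the `num_examples` values from the card metadata (989132 + 9992).

```python
def split_sizes(num_selected, every=100):
    """Return (train, valid) counts when the kept lines with index
    0, every, 2*every, ... go to validation and the rest go to train."""
    valid = (num_selected + every - 1) // every  # ceil(num_selected / every)
    return num_selected - valid, valid


# Assumption: the number of kept lines equals the sum of the split sizes
# reported in the card metadata.
total_selected = 989132 + 9992
train_count, valid_count = split_sizes(total_selected)
print(train_count, valid_count)
```

Under that assumption, the computed counts agree with the `train` and `test` sizes listed in the metadata.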

4 Then we can use it locally, or push it to the Hub:

```
from datasets import load_dataset

data_files = {"train": "human2.fna.line.train", "test": "human2.fna.line.valid"}
# load_dataset returns a DatasetDict with "train" and "test" splits
train_dataset = load_dataset("text", data_files=data_files)
train_dataset.push_to_hub("dnagpt/human_genome_GCF_009914755.1", token="hf_*******")
```

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

HuggingFaceH4/testing_codealpaca_small | ---

dataset_info:

features:

- name: prompt

dtype: string

- name: completion

dtype: string

splits:

- name: train

num_bytes: 31503

num_examples: 100

- name: test

num_bytes: 29802

num_examples: 100

download_size: 44006

dataset_size: 61305

---

# Dataset Card for "testing_codealpaca_small"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

TrainingDataPro/ocr-generated-machine-readable-zone-mrz-text-detection | ---

license: cc-by-nc-nd-4.0

task_categories:

- image-to-text

- object-detection

language:

- en

tags:

- code

- legal

---

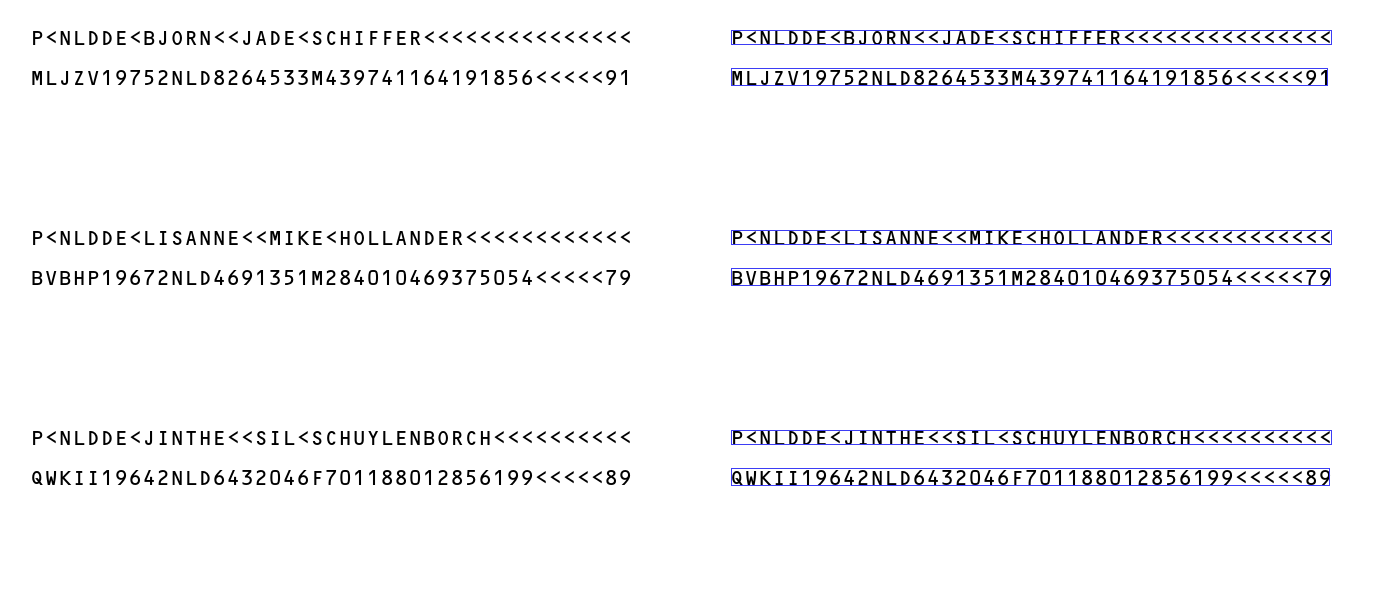

# OCR GENERATED Machine-Readable Zone (MRZ) Text Detection

The dataset includes a collection of **GENERATED** photos containing Machine Readable Zones (MRZ) commonly found on identification documents such as passports, visas, and ID cards. Each photo in the dataset is accompanied by **text detection** and **Optical Character Recognition (OCR)** results.

This dataset is useful for developing applications related to *document verification, identity authentication, or automated data extraction from identification documents*.

### The dataset is solely for informational or educational purposes and should not be used for any fraudulent or deceptive activities.

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market/ocr-machine-readable-zone-mrz?utm_source=huggingface&utm_medium=cpc&utm_campaign=ocr-generated-machine-readable-zone-mrz-text-detection) to discuss your requirements, learn about the price and buy the dataset.

# Dataset structure

- **images** - contains the original images of documents

- **boxes** - includes bounding box labeling for the original images

- **annotations.xml** - contains coordinates of the bounding boxes and detected text, created for the original photo

# Data Format

Each image from the `images` folder is accompanied by an XML annotation in the `annotations.xml` file indicating the coordinates of the bounding boxes and the detected text. For each point, the x and y coordinates are provided.

# Example of XML file structure


# Text Detection in the Documents might be made in accordance with your requirements.

## [**TrainingData**](https://trainingdata.pro/data-market/ocr-machine-readable-zone-mrz?utm_source=huggingface&utm_medium=cpc&utm_campaign=ocr-generated-machine-readable-zone-mrz-text-detection) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** |

tinkerface/Minerb | ---

license: apache-2.0

---

|

lowem1/ocr-bert_cms-vocab | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 10038136

num_examples: 925113

download_size: 1633020

dataset_size: 10038136

---

# Dataset Card for "ocr-bert_cms-vocab"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

thmk/java_10 | ---

dataset_info:

features:

- name: code

dtype: string

- name: repo_name

dtype: string

- name: path

dtype: string

- name: language

dtype: string

- name: license

dtype: string

- name: size

dtype: int64

splits:

- name: train

num_bytes: 605825109

num_examples: 100000

download_size: 195428485

dataset_size: 605825109

---

# Dataset Card for "java_10"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

harpomaxx/jurisgpt | ---

license: openrail

configs:

- config_name: default

data_files:

- split: train

path: "train_set.json"

- split: test

path: "test_set.json"

---

### Dataset Description

#### Title

**Legal Texts and Summaries Dataset**

#### Description

This dataset is a collection of legal documents and their associated summaries, subjects (materia), and keywords (voces). It is primarily focused on the field of labor law, with particular emphasis on legal proceedings, labor rights, and workers' compensation laws in Argentina.

#### Structure

Each entry in the dataset contains the following fields:

- `sumario`: A unique identifier for the legal document.

- `materia`: The subject of the legal document, in this case, "DERECHO DEL TRABAJO" (Labor Law).

- `voces`: Keywords or phrases summarizing the main topics of the document, such as "FALLO PLENARIO", "DERECHO LABORAL", "LEY SOBRE RIESGOS DEL TRABAJO", etc.

- `sentencia`: The text of the legal document, which includes references to laws, legal precedents, and detailed analysis. The text was summarized using Claude v2 LLM.

- `texto`: A legal summary.

#### Applications

This dataset is valuable for legal research, especially in the domain of labor law. It can be used for training models in legal text summarization, keyword extraction, and legal document classification. Additionally, it's useful for academic research in legal studies, especially regarding labor law and workers' compensation in Argentina.

#### Format

The dataset is provided in JSON format, ensuring easy integration with most data processing and machine learning tools.
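As an illustration of the record layout described under Structure, here is a minimal sketch that checks a parsed JSON record for the five documented fields. The sample record is hypothetical: its identifier, its placeholder texts, and the choice of a plain string for `voces` are assumptions, not values taken from the dataset.

```python
import json

# Field names as listed in the Structure section.
EXPECTED_FIELDS = {"sumario", "materia", "voces", "sentencia", "texto"}


def missing_fields(record):
    """Return the documented field names that are absent from a record."""
    return EXPECTED_FIELDS - set(record)


# Hypothetical record: all values are placeholders for illustration only.
raw = json.dumps({
    "sumario": "placeholder-id",
    "materia": "DERECHO DEL TRABAJO",
    "voces": "FALLO PLENARIO",
    "sentencia": "placeholder document text",
    "texto": "placeholder summary",
})
record = json.loads(raw)
print(missing_fields(record))
```

A check like this can be run over `train_set.json` and `test_set.json` before training to catch incomplete entries early.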

#### Language

The content is predominantly in Spanish, reflecting its focus on Argentine law.

#### Source and Authenticity

The data is compiled from official legal documents and summaries from Argentina. It's important for users to verify the authenticity and current relevance of the legal texts as they might have undergone revisions or may not reflect the latest legal standings.

|

irds/lotte_pooled_dev_search | ---

pretty_name: '`lotte/pooled/dev/search`'

viewer: false

source_datasets: ['irds/lotte_pooled_dev']

task_categories:

- text-retrieval

---

# Dataset Card for `lotte/pooled/dev/search`

The `lotte/pooled/dev/search` dataset, provided by the [ir-datasets](https://ir-datasets.com/) package.

For more information about the dataset, see the [documentation](https://ir-datasets.com/lotte#lotte/pooled/dev/search).

# Data

This dataset provides:

- `queries` (i.e., topics); count=2,931

- `qrels`: (relevance assessments); count=8,573

- For `docs`, use [`irds/lotte_pooled_dev`](https://huggingface.co/datasets/irds/lotte_pooled_dev)

## Usage

```python
from datasets import load_dataset

queries = load_dataset('irds/lotte_pooled_dev_search', 'queries')
for record in queries:
    record # {'query_id': ..., 'text': ...}

qrels = load_dataset('irds/lotte_pooled_dev_search', 'qrels')
for record in qrels:
    record # {'query_id': ..., 'doc_id': ..., 'relevance': ..., 'iteration': ...}
```

Note that calling `load_dataset` will download the dataset (or provide access instructions when it's not public) and make a copy of the data in 🤗 Dataset format.
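Once the `qrels` records are loaded, a common next step is to group the relevant documents by query. The sketch below works on plain dictionaries in the record shape shown above. The helper name `relevant_docs_by_query`, the sample IDs, and the convention that a relevance of at least 1 means "relevant" are all assumptions made for illustration.

```python
from collections import defaultdict


def relevant_docs_by_query(qrels, min_relevance=1):
    """Map each query_id to the set of doc_ids judged relevant."""
    by_query = defaultdict(set)
    for record in qrels:
        if record["relevance"] >= min_relevance:
            by_query[record["query_id"]].add(record["doc_id"])
    return dict(by_query)


# Hypothetical records in the documented shape (IDs are made up).
sample_qrels = [
    {"query_id": "q1", "doc_id": "d10", "relevance": 1, "iteration": "0"},
    {"query_id": "q1", "doc_id": "d11", "relevance": 0, "iteration": "0"},
    {"query_id": "q2", "doc_id": "d20", "relevance": 1, "iteration": "0"},
]
print(relevant_docs_by_query(sample_qrels))
```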

## Citation Information

```

@article{Santhanam2021ColBERTv2,

title = "ColBERTv2: Effective and Efficient Retrieval via Lightweight Late Interaction",

author = "Keshav Santhanam and Omar Khattab and Jon Saad-Falcon and Christopher Potts and Matei Zaharia",

journal= "arXiv preprint arXiv:2112.01488",

year = "2021",

url = "https://arxiv.org/abs/2112.01488"

}

```

|

jmc255/aphantasia_drawing_dataset | ---

language:

- en

tags:

- medical

- psychology

pretty_name: Aphantasic Drawing Dataset

---

# Aphantasic Drawing Dataset

<!-- Provide a quick summary of the dataset. -->

This dataset contains data from an online memory drawing experiment conducted with individuals with aphantasia and normal imagery.

### Dataset Description

<!-- Provide a longer summary of what this dataset is. -->

This dataset comes from the Brain Bridge Lab at the University of Chicago. It is from an online memory drawing experiment with 61 individuals with aphantasia and 52 individuals with normal imagery. In the experiment, participants 1) studied 3 separate scene photographs presented one after the other, 2) drew them from memory, 3) completed a recognition task, 4) copied the images while viewing them, and 5) filled out the VVIQ and OSIQ questionnaires along with demographic questions. The scenes the participants were asked to draw were of a kitchen, a bedroom, and a living room. The control (normal imagery) and treatment (aphantasia) groups were determined by VVIQ scores: those with a score >=40 were in the control group and those with a score <=25 were in the aphantasia group. For more information on the experiment and its design, see the paper linked below.
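The grouping rule can be written as a small function. This is a sketch under the thresholds stated above. The function name `vviq_group` is hypothetical, and returning `None` for scores strictly between 25 and 40 is an assumption, since the card does not say how such scores were handled.

```python
from typing import Optional


def vviq_group(vviq_score: int) -> Optional[str]:
    """Assign an experiment group from a total VVIQ score."""
    if vviq_score <= 25:
        return "aphantasia"
    if vviq_score >= 40:
        return "control"
    # Assumption: scores from 26 to 39 belong to neither group.
    return None


print(vviq_group(16))
print(vviq_group(40))
```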

The original repository for the data from the experiment was made available on the OSF website linked below. It was created July 31, 2020 and last updated September 27, 2023.

- **Curated by:** Wilma Bainbridge, Zoe Pounder, Alison Eardley, Chris Baker

### Dataset Sources

<!-- Provide the basic links for the dataset. -->

- **Original Repository:** https://osf.io/cahyd/

- **Paper:** https://doi.org/10.1016/j.cortex.2020.11.014

## Dataset Structure

<!-- This section provides a description of the dataset fields, and additional information about the dataset structure such as criteria used to create the splits, relationships between data points, etc. -->

Example of the structure of the data:

```

{

"subject_id": 111,

"treatment": "aphantasia",

"demographics": {

"country": "United States",

"age": 56,

"gender": "male",

"occupation": "sales/music",

"art_ability": 3,

"art_experience": "None",

"device": "desktop",

"input": "mouse",

"difficult": "memorydrawing",

"diff_explanation": "drawing i have little patience for i can do it but i will spend hours editing (never got the hang of digital drawing on screens",

"vviq_score": 16,

"osiq_score": 61

},

"drawings": {

"kitchen": {

"perception": <PIL.PngImagePlugin.PngImageFile image mode=RGB size=500x500>,

"memory": <PIL.PngImagePlugin.PngImageFile image mode=RGB size=500x500>

},

"livingroom": {

"perception": <PIL.PngImagePlugin.PngImageFile image mode=RGB size=500x500>,

"memory": <PIL.PngImagePlugin.PngImageFile image mode=RGB size=500x500>

},

"bedroom": {

"perception": <PIL.PngImagePlugin.PngImageFile image mode=RGB size=500x500>,

"memory": <PIL.PngImagePlugin.PngImageFile image mode=RGB size=500x500>

}

},

"image": {

"kitchen": <PIL.PngImagePlugin.PngImageFile image mode=RGB size=500x500>,

"livingroom": <PIL.PngImagePlugin.PngImageFile image mode=RGB size=500x500>,

"bedroom": <PIL.PngImagePlugin.PngImageFile image mode=RGB size=500x500>

}

}

```

## Data Fields

- subject_id: Subject ID

- treatment: group for the experiment based on VVIQ score; "aphantasia"(VVIQ<=25) or "control"(VVIQ>=40)

- country: Participant's country

- age: Age

- gender: Gender

- occupation: Occupation

- art_ability: Self-Report rating of art ability from 1-5

- art_experience: Description of participant's art experience

- device: Device used for the experiment (desktop or laptop)

- input: What participant used to draw (mouse, trackpad, etc.)

- difficult: Part of the experiment the participant thought was most challenging

- diff_explanation: Explanation of why they thought the part specified in "difficult" was hard

- vviq_score: VVIQ test total points (16 questions from 0-5)

- osiq_score: OSIQ test total points (30 questions from 0-5)

- perception drawings: Participant drawings of kitchen, living room, and bedroom from perception part of experiment

- memory drawings: Participant drawings of kitchen, living room, and bedroom from memory part of experiment

- image: Actual images (stimuli) participants had to draw (kitchen, living room, bedroom)

## Dataset Creation

### Source Data

<!-- This section describes the source data (e.g. news text and headlines, social media posts, translated sentences, ...). -->

The original repository for the data included folders for the participants' drawings (1 for aphantasia and 1 for control), a folder of the scene images they had to draw (stimuli), and an Excel file with the demographic survey and test score data.

The Excel file had 117 rows and the 2 folders for aphantasia and control had 115 subject folders in total. Subject 168 did not fill out the demographic information or take the VVIQ and OSIQ tests, and subjects 160, 161, and 162 did not have any drawings. These 4 were removed during the data cleaning process and the final JSON has 114 participants.

#### Data Collection and Processing

<!-- This section describes the data collection and processing process such as data selection criteria, filtering and normalization methods, tools and libraries used, etc. -->

The original Excel file did not have total scores for the VVIQ and OSIQ tests, but instead individual points for each question. The total scores were calculated from these numbers.

The original drawing folders for each participant typically had 18 files. There were 6 files of interest: the 3 drawings from the memory part of the experiment and the 3 drawings from the perception part of the experiment. They had file names that were easy to distinguish:

For memory: sub{subid}-mem{1,2,or 3}-{room}.png, where the room was livingroom, bedroom, or kitchen, and the number 1, 2, or 3 gave the order in which the participant did the drawings.

For perception: sub{subid}-pic{1,2,or 3}-{room}.png

These files were matched with the Excel file rows by subject ID, so each participant typically had 6 drawings in total. Some participants did not label what they were drawing, so their file names were not in the normal format and not all of their drawings could be matched.
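The file-name convention described above can be parsed with a regular expression. The following is a minimal sketch, not the code used to build the dataset. The helper name `parse_drawing_name` and the output keys are hypothetical, and the subject ID is assumed to be digits only.

```python
import re
from typing import Optional

# Pattern for sub{subid}-mem{1,2,or 3}-{room}.png and
# sub{subid}-pic{1,2,or 3}-{room}.png (assumes a numeric subject ID).
DRAWING_RE = re.compile(
    r"^sub(?P<subid>\d+)-(?P<kind>mem|pic)(?P<order>[123])"
    r"-(?P<room>livingroom|bedroom|kitchen)\.png$"
)


def parse_drawing_name(name: str) -> Optional[dict]:
    """Parse a drawing file name; return None if it is not in the normal format."""
    match = DRAWING_RE.match(name)
    if match is None:
        return None
    return {
        "subject_id": int(match["subid"]),
        "condition": "memory" if match["kind"] == "mem" else "perception",
        "order": int(match["order"]),
        "room": match["room"],
    }


print(parse_drawing_name("sub111-mem2-kitchen.png"))
print(parse_drawing_name("sub111-drawing.png"))
```

Names that return `None` correspond to the unlabeled or irregular files mentioned above, which cannot be matched automatically.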

The actual image folder had 3 images (kitchen, living room, bedroom) that were replicated to go with each of the 114 participants.

The final format of the data is linked above.

#### Example Analysis

https://colab.research.google.com/drive/1FFnVkaw-jr3ygEGDK41YyAyKlqvTDvkg?usp=sharing

#### Data Producers

<!-- This section describes the people or systems who originally created the data. It should also include self-reported demographic or identity information for the source data creators if this information is available. -->

The contributors of the original dataset and authors of the paper are:

- Wilma Bainbridge (University of Chicago Department of Psychology & Laboratory of Brain and Cognition, National Institute of Mental Health, Bethesda, MD, USA),

- Zoë Pounder (Department of Psychology, University of Westminster, London, UK)

- Alison Eardley (Department of Psychology, University of Westminster, London, UK)

- Chris Baker (Laboratory of Brain and Cognition, National Institute of Mental Health, Bethesda, MD, USA)

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users should be made aware of the risks, biases and limitations of the dataset. More information needed for further recommendations.

## Citation

<!-- If there is a paper or blog post introducing the dataset, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

@misc{Bainbridge_Pounder_Eardley_Baker_2023,

title={Quantifying Aphantasia through drawing: Those without visual imagery show deficits in object but not spatial memory},

url={osf.io/cahyd},

publisher={OSF},

author={Bainbridge, Wilma A and Pounder, Zoë and Eardley, Alison and Baker, Chris I},

year={2023},

month={Sep}

}

**APA:**

Bainbridge, W. A., Pounder, Z., Eardley, A., & Baker, C. I. (2023, September 27). Quantifying Aphantasia through drawing: Those without visual imagery show deficits in object but not spatial memory. Retrieved from osf.io/cahyd

## Glossary

<!-- If relevant, include terms and calculations in this section that can help readers understand the dataset or dataset card. -->

Aphantasia: The inability to create visual imagery, keeping you from visualizing things in your mind.

|

sabuhi1997/fine-tune-hebrew-dataset | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

dataset_info:

features:

- name: audio

dtype: audio

- name: label

dtype:

class_label:

names:

'0': test

'1': train

'2': validation

splits:

- name: train

num_bytes: 5714802.0

num_examples: 8

- name: validation

num_bytes: 1759819.0

num_examples: 3

- name: test

num_bytes: 1625529.0

num_examples: 4

download_size: 7719156

dataset_size: 9100150.0

---

# Dataset Card for "fine-tune-hebrew-dataset"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

CyberHarem/comet_azurlane | ---

license: mit

task_categories:

- text-to-image

tags:

- art

- not-for-all-audiences

size_categories:

- n<1K

---

# Dataset of comet/コメット/彗星 (Azur Lane)

This is the dataset of comet/コメット/彗星 (Azur Lane), containing 34 images and their tags.

The core tags of this character are `green_hair, long_hair, red_eyes, twintails, ahoge, hat, bangs, beret, breasts, hair_between_eyes, hair_ornament, white_headwear, ribbon, very_long_hair`, which are pruned in this dataset.

Images are crawled from many sites (e.g. danbooru, pixiv, zerochan). The auto-crawling system is powered by the [DeepGHS Team](https://github.com/deepghs) ([huggingface organization](https://huggingface.co/deepghs)).

## List of Packages

| Name | Images | Size | Download | Type | Description |

|:-----------------|---------:|:----------|:----------------------------------------------------------------------------------------------------------------|:-----------|:---------------------------------------------------------------------|

| raw | 34 | 41.48 MiB | [Download](https://huggingface.co/datasets/CyberHarem/comet_azurlane/resolve/main/dataset-raw.zip) | Waifuc-Raw | Raw data with meta information (min edge aligned to 1400 if larger). |

| 800 | 34 | 25.11 MiB | [Download](https://huggingface.co/datasets/CyberHarem/comet_azurlane/resolve/main/dataset-800.zip) | IMG+TXT | dataset with the shorter side not exceeding 800 pixels. |

| stage3-p480-800 | 77 | 50.55 MiB | [Download](https://huggingface.co/datasets/CyberHarem/comet_azurlane/resolve/main/dataset-stage3-p480-800.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

| 1200 | 34 | 36.64 MiB | [Download](https://huggingface.co/datasets/CyberHarem/comet_azurlane/resolve/main/dataset-1200.zip) | IMG+TXT | dataset with the shorter side not exceeding 1200 pixels. |

| stage3-p480-1200 | 77 | 67.95 MiB | [Download](https://huggingface.co/datasets/CyberHarem/comet_azurlane/resolve/main/dataset-stage3-p480-1200.zip) | IMG+TXT | 3-stage cropped dataset with the area not less than 480x480 pixels. |

### Load Raw Dataset with Waifuc

We provide the raw dataset (including tagged images) for loading with [waifuc](https://deepghs.github.io/waifuc/main/tutorials/installation/index.html). If you need it, just run the following code:

```python
import os
import zipfile

from huggingface_hub import hf_hub_download
from waifuc.source import LocalSource

# download raw archive file
zip_file = hf_hub_download(
    repo_id='CyberHarem/comet_azurlane',
    repo_type='dataset',
    filename='dataset-raw.zip',
)

# extract files to your directory
dataset_dir = 'dataset_dir'
os.makedirs(dataset_dir, exist_ok=True)
with zipfile.ZipFile(zip_file, 'r') as zf:
    zf.extractall(dataset_dir)

# load the dataset with waifuc
source = LocalSource(dataset_dir)
for item in source:
    print(item.image, item.meta['filename'], item.meta['tags'])
```

## List of Clusters

This is the list of tag clustering results; some outfits may be mined from it.

### Raw Text Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | Tags |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| 0 | 17 |  |  |  |  |  | smile, 1girl, solo, open_mouth, star_(symbol), blush, choker, looking_at_viewer, puffy_sleeves, white_thighhighs, blue_skirt, plaid_skirt, white_shirt, long_sleeves, collared_shirt, one_eye_closed, ;d, hair_ribbon, pleated_skirt, retrofit_(azur_lane), white_background |

| 1 | 5 |  |  |  |  |  | 2girls, smile, 1girl, blush, looking_at_viewer, open_mouth, solo_focus, blonde_hair, thighhighs, collarbone, one_eye_closed, skirt, standing |

### Table Version

| # | Samples | Img-1 | Img-2 | Img-3 | Img-4 | Img-5 | smile | 1girl | solo | open_mouth | star_(symbol) | blush | choker | looking_at_viewer | puffy_sleeves | white_thighhighs | blue_skirt | plaid_skirt | white_shirt | long_sleeves | collared_shirt | one_eye_closed | ;d | hair_ribbon | pleated_skirt | retrofit_(azur_lane) | white_background | 2girls | solo_focus | blonde_hair | thighhighs | collarbone | skirt | standing |

|----:|----------:|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------------------------------|:--------|:--------|:-------|:-------------|:----------------|:--------|:---------|:--------------------|:----------------|:-------------------|:-------------|:--------------|:--------------|:---------------|:-----------------|:-----------------|:-----|:--------------|:----------------|:-----------------------|:-------------------|:---------|:-------------|:--------------|:-------------|:-------------|:--------|:-----------|

| 0 | 17 |  |  |  |  |  | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | X | | | | | | | |

| 1 | 5 |  |  |  |  |  | X | X | | X | | X | | X | | | | | | | | X | | | | | | X | X | X | X | X | X | X |

|

tessiw/german_OpenOrca2 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: id

dtype: string

- name: system_prompt

dtype: string

- name: question

dtype: string

- name: response

dtype: string

splits:

- name: train

num_bytes: 453043119

num_examples: 250000

download_size: 257694182

dataset_size: 453043119

---

# Dataset Card for "german_OpenOrca2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

zaydzuhri/the_pile_tokenized_5percent | ---

dataset_info:

features:

- name: input_ids

sequence: int32

- name: attention_mask

sequence: int8

splits:

- name: train

num_bytes: 46366029035

num_examples: 6000000

download_size: 16007372812

dataset_size: 46366029035

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

Eathan/labeled-ortho-training | ---

license: unknown

---

|

junyinc/NINJAL-Ainu-Folklore | ---

license: cc-by-sa-4.0

---

# Dataset Card for NINJAL Ainu Folklore

## Dataset Description

- **Original source** [A Glossed Audio Corpus of Ainu folklore](https://ainu.ninjal.ac.jp/folklore/en/)

### Dataset Summary

Ainu is an endangered (nearly extinct) language spoken in Hokkaido, Japan. This dataset contains recordings of 38 traditional Ainu folktales by two Ainu speakers (Mrs. Kimi Kimura and Mrs. Ito Oda), along with their transcriptions (in Latin script), English translations, and underlying and surface gloss forms in English. (For transcriptions in Katakana and translation/gloss in Japanese, please see the original corpus webpage.) In total, there are over 8 hours (~7.7k sentences) of transcribed and glossed speech.

### Annotations

The glosses in this dataset are the original glosses from the Glossed Audio Corpus, with minor changes to fit the Generalized Glossing Format (e.g. multi-word translations of individual morphemes are now separated by underscores instead of periods). Uncertainty in interpretation by the original annotators is indicated with a question mark (?). Additional notes on the Latin transcriptions in the corpus can be found on the original corpus webpage (under the "Structure, Transcriptions, and Glosses" tab).

## Additional Information

### Limitations

This dataset has a small number of speakers and a limited domain, and models trained on this dataset might not be suitable for general purpose applications. The audio data contain varying degrees of noise which makes this dataset a poor fit for training TTS models.

### Acknowledgement

We would like to thank the original authors of the Glossed Audio Corpus of Ainu Folklore for their dedication and care in compiling these resources, and kindly ask anyone who uses this dataset to cite them in their work.

### License

Attribution-ShareAlike 4.0 International ([cc-by-sa-4.0](https://creativecommons.org/licenses/by-sa/4.0/))

### Original Source

```

@misc{ninjal-ainu-folklore,

title={A Glossed Audio Corpus of Ainu Folklore},

url={https://ainu.ninjal.ac.jp/folklore/},

author={Nakagawa, Hiroshi and Bugaeva, Anna and Kobayashi, Miki and Yoshikawa, Yoshimi},

publisher={The National Institute for Japanese Language and Linguistics ({NINJAL})},

date={2016--2021}

}

``` |

liuyanchen1015/MULTI_VALUE_mnli_shadow_pronouns | ---

dataset_info:

features:

- name: premise

dtype: string

- name: hypothesis

dtype: string

- name: label

dtype: int64

- name: idx

dtype: int64

- name: score

dtype: int64

splits:

- name: dev_matched

num_bytes: 200969

num_examples: 714

- name: dev_mismatched

num_bytes: 242835

num_examples: 909

- name: test_matched

num_bytes: 235033

num_examples: 840

- name: test_mismatched

num_bytes: 221353

num_examples: 866

- name: train

num_bytes: 9073404

num_examples: 33730

download_size: 6082221

dataset_size: 9973594

---

# Dataset Card for "MULTI_VALUE_mnli_shadow_pronouns"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

HarryAJMK418/Joyner | ---

license: openrail

---

|

pradeep239/plain_philp_250PDFs | ---

license: mit

dataset_info:

features:

- name: image

dtype: image

- name: ground_truth

dtype: string

splits:

- name: train

num_bytes: 620972376.064

num_examples: 1272

- name: validation

num_bytes: 75497524.0

num_examples: 150

- name: test

num_bytes: 37954461.0

num_examples: 75

download_size: 542872519

dataset_size: 734424361.064

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

---

|

one-sec-cv12/chunk_26 | ---

dataset_info:

features:

- name: audio

dtype:

audio:

sampling_rate: 16000

splits:

- name: train

num_bytes: 16222987440.875

num_examples: 168905

download_size: 14476054976

dataset_size: 16222987440.875

---

# Dataset Card for "chunk_26"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

sunjoong/adit-testdata-01 | ---

license: unknown

---

|

deepapaikar/Katzbot_sentences_pairs | ---

license: apache-2.0

---

|

open-llm-leaderboard/details_jondurbin__airoboros-l2-13b-2.2.1 | ---

pretty_name: Evaluation run of jondurbin/airoboros-l2-13b-2.2.1

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [jondurbin/airoboros-l2-13b-2.2.1](https://huggingface.co/jondurbin/airoboros-l2-13b-2.2.1)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 64 configurations, each one corresponding to one of the\

\ evaluated task.\n\nThe dataset has been created from 2 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run. The \"train\" split always points to the latest results.\n\

\nAn additional configuration \"results\" stores all the aggregated results of the\

\ run (and is used to compute and display the aggregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_jondurbin__airoboros-l2-13b-2.2.1\"\

,\n\t\"harness_winogrande_5\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\n\

These are the [latest results from run 2023-10-28T06:36:10.303707](https://huggingface.co/datasets/open-llm-leaderboard/details_jondurbin__airoboros-l2-13b-2.2.1/blob/main/results_2023-10-28T06-36-10.303707.json)(note\

\ that there might be results for other tasks in the repos if successive evals didn't\

\ cover the same tasks. You find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"em\": 0.04467281879194631,\n\

\ \"em_stderr\": 0.0021156186992613577,\n \"f1\": 0.10597210570469756,\n\

\ \"f1_stderr\": 0.0024082864478827287,\n \"acc\": 0.438030054339078,\n\

\ \"acc_stderr\": 0.010411282060464109\n },\n \"harness|drop|3\": {\n\

\ \"em\": 0.04467281879194631,\n \"em_stderr\": 0.0021156186992613577,\n\

\ \"f1\": 0.10597210570469756,\n \"f1_stderr\": 0.0024082864478827287\n\

\ },\n \"harness|gsm8k|5\": {\n \"acc\": 0.11599696739954511,\n \

\ \"acc_stderr\": 0.008820485491442476\n },\n \"harness|winogrande|5\"\

: {\n \"acc\": 0.7600631412786109,\n \"acc_stderr\": 0.01200207862948574\n\

\ }\n}\n```"

repo_url: https://huggingface.co/jondurbin/airoboros-l2-13b-2.2.1

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_arc_challenge_25

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|arc:challenge|25_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|arc:challenge|25_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_drop_3

data_files:

- split: 2023_10_28T06_36_10.303707

path:

- '**/details_harness|drop|3_2023-10-28T06-36-10.303707.parquet'

- split: latest

path:

- '**/details_harness|drop|3_2023-10-28T06-36-10.303707.parquet'

- config_name: harness_gsm8k_5

data_files:

- split: 2023_10_28T06_36_10.303707

path:

- '**/details_harness|gsm8k|5_2023-10-28T06-36-10.303707.parquet'

- split: latest

path:

- '**/details_harness|gsm8k|5_2023-10-28T06-36-10.303707.parquet'

- config_name: harness_hellaswag_10

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hellaswag|10_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hellaswag|10_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-anatomy|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-astronomy|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-business_ethics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-college_biology|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-college_medicine|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-college_physics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-computer_security|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-econometrics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-formal_logic|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-global_facts|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-human_aging|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-international_law|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-machine_learning|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-management|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-marketing|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-nutrition|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-philosophy|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-prehistory|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-professional_law|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-public_relations|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-security_studies|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-sociology|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-virology|5_2023-10-01T13-47-59.401032.parquet'

- '**/details_harness|hendrycksTest-world_religions|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_abstract_algebra_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-abstract_algebra|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_anatomy_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-anatomy|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_astronomy_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-astronomy|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_business_ethics_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-business_ethics|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_clinical_knowledge_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-clinical_knowledge|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_college_biology_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_biology|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_college_chemistry_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_chemistry|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_college_computer_science_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_computer_science|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_college_mathematics_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_mathematics|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_college_medicine_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_medicine|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_college_physics_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-college_physics|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-college_physics|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_computer_security_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-computer_security|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-computer_security|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_conceptual_physics_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-conceptual_physics|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_econometrics_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-econometrics|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-econometrics|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_electrical_engineering_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-electrical_engineering|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_elementary_mathematics_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-elementary_mathematics|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_formal_logic_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-formal_logic|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_global_facts_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-global_facts|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-global_facts|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_high_school_biology_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_biology|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_high_school_chemistry_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_chemistry|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_high_school_computer_science_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_computer_science|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_high_school_european_history_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_european_history|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_high_school_geography_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_geography|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_high_school_government_and_politics_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_government_and_politics|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_high_school_macroeconomics_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_macroeconomics|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_high_school_mathematics_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_mathematics|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_high_school_microeconomics_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_microeconomics|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_high_school_physics_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_physics|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_high_school_psychology_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_psychology|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_high_school_statistics_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_statistics|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_high_school_us_history_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_us_history|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_high_school_world_history_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-high_school_world_history|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_human_aging_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-human_aging|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_aging|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_human_sexuality_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-human_sexuality|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_international_law_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-international_law|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-international_law|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_jurisprudence_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-jurisprudence|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_logical_fallacies_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-logical_fallacies|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_machine_learning_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-machine_learning|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_management_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-management|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-management|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_marketing_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-marketing|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-marketing|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_medical_genetics_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-medical_genetics|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_miscellaneous_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-miscellaneous|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_moral_disputes_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_disputes|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_moral_scenarios_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-moral_scenarios|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_nutrition_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-nutrition|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-nutrition|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_philosophy_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-philosophy|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-philosophy|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_prehistory_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-prehistory|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-prehistory|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_professional_accounting_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_accounting|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_professional_law_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-professional_law|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_law|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_professional_medicine_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_medicine|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_professional_psychology_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-professional_psychology|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_public_relations_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-public_relations|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-public_relations|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_security_studies_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-security_studies|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-security_studies|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_sociology_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-sociology|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-sociology|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_us_foreign_policy_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-us_foreign_policy|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_virology_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-virology|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-virology|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_hendrycksTest_world_religions_5

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|hendrycksTest-world_religions|5_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|hendrycksTest-world_religions|5_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_truthfulqa_mc_0

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- '**/details_harness|truthfulqa:mc|0_2023-10-01T13-47-59.401032.parquet'

- split: latest

path:

- '**/details_harness|truthfulqa:mc|0_2023-10-01T13-47-59.401032.parquet'

- config_name: harness_winogrande_5

data_files:

- split: 2023_10_28T06_36_10.303707

path:

- '**/details_harness|winogrande|5_2023-10-28T06-36-10.303707.parquet'

- split: latest

path:

- '**/details_harness|winogrande|5_2023-10-28T06-36-10.303707.parquet'

- config_name: results

data_files:

- split: 2023_10_01T13_47_59.401032

path:

- results_2023-10-01T13-47-59.401032.parquet

- split: 2023_10_28T06_36_10.303707

path:

- results_2023-10-28T06-36-10.303707.parquet

- split: latest

path:

- results_2023-10-28T06-36-10.303707.parquet

---

# Dataset Card for Evaluation run of jondurbin/airoboros-l2-13b-2.2.1

## Dataset Description

- **Homepage:**

- **Repository:** https://huggingface.co/jondurbin/airoboros-l2-13b-2.2.1

- **Paper:**

- **Leaderboard:** https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

- **Point of Contact:** clementine@hf.co

### Dataset Summary

Dataset automatically created during the evaluation run of model [jondurbin/airoboros-l2-13b-2.2.1](https://huggingface.co/jondurbin/airoboros-l2-13b-2.2.1) on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).

The dataset is composed of 64 configurations, each one corresponding to one of the evaluated tasks.

The dataset has been created from 2 runs. Each run can be found as a specific split in each configuration, the split being named using the timestamp of the run. The "train" split always points to the latest results.

An additional configuration, "results", stores all the aggregated results of the run (and is used to compute and display the aggregated metrics on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).

To load the details from a run, you can for instance do the following:

```python

from datasets import load_dataset

data = load_dataset("open-llm-leaderboard/details_jondurbin__airoboros-l2-13b-2.2.1",

"harness_winogrande_5",

split="train")

```
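The split and file names in this card's configuration follow a visible pattern derived from the run timestamp. The sketch below reproduces that pattern in plain Python; `split_name` and `results_file` are hypothetical helper names introduced here for illustration, not part of the `datasets` library, and the pattern is inferred from the names listed in this card rather than from any documented rule.

```python
from datetime import datetime


def split_name(run_timestamp: str) -> str:
    """Return the split name that matches an ISO run timestamp (hypothetical helper)."""
    # Raises ValueError if the timestamp is not valid ISO 8601.
    datetime.fromisoformat(run_timestamp)
    # Split names in this card replace "-" and ":" with "_".
    return run_timestamp.replace("-", "_").replace(":", "_")


def results_file(run_timestamp: str) -> str:
    """Return the aggregated-results file name for a run (hypothetical helper)."""
    datetime.fromisoformat(run_timestamp)
    # File names keep the date dashes and replace only ":" with "-".
    return f"results_{run_timestamp.replace(':', '-')}.parquet"


if __name__ == "__main__":
    run = "2023-10-28T06:36:10.303707"
    print(split_name(run))
    print(results_file(run))
```

The resulting split name can be passed as the `split` argument of `load_dataset` in place of the split used above, assuming the run covered the chosen configuration.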

## Latest results

These are the [latest results from run 2023-10-28T06:36:10.303707](https://huggingface.co/datasets/open-llm-leaderboard/details_jondurbin__airoboros-l2-13b-2.2.1/blob/main/results_2023-10-28T06-36-10.303707.json) (note that there might be results for other tasks in the repo if successive evals didn't cover the same tasks; you can find each in the "results" configuration and in the "latest" split of each eval):

```python

{

"all": {

"em": 0.04467281879194631,

"em_stderr": 0.0021156186992613577,

"f1": 0.10597210570469756,

"f1_stderr": 0.0024082864478827287,

"acc": 0.438030054339078,

"acc_stderr": 0.010411282060464109

},

"harness|drop|3": {

"em": 0.04467281879194631,

"em_stderr": 0.0021156186992613577,

"f1": 0.10597210570469756,

"f1_stderr": 0.0024082864478827287

},

"harness|gsm8k|5": {

"acc": 0.11599696739954511,

"acc_stderr": 0.008820485491442476

},

"harness|winogrande|5": {

"acc": 0.7600631412786109,

"acc_stderr": 0.01200207862948574

}

}

```
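The figures under `"all"` are consistent with an unweighted mean of each metric over the tasks that report it. This is an observation from the numbers shown above, not a documented rule of the leaderboard. A minimal sketch that checks it, with `mean_metric` as a hypothetical helper name:

```python
import math

# Values copied from the results shown above.
latest = {
    "all": {
        "em": 0.04467281879194631,
        "f1": 0.10597210570469756,
        "acc": 0.438030054339078,
        "acc_stderr": 0.010411282060464109,
    },
    "harness|drop|3": {
        "em": 0.04467281879194631,
        "f1": 0.10597210570469756,
    },
    "harness|gsm8k|5": {
        "acc": 0.11599696739954511,
        "acc_stderr": 0.008820485491442476,
    },
    "harness|winogrande|5": {
        "acc": 0.7600631412786109,
        "acc_stderr": 0.01200207862948574,
    },
}


def mean_metric(results: dict, metric: str) -> float:
    """Average a metric over every task that reports it, skipping "all"."""
    values = [
        scores[metric]
        for task, scores in results.items()
        if task != "all" and metric in scores
    ]
    return sum(values) / len(values)


if __name__ == "__main__":
    for metric, reported in latest["all"].items():
        computed = mean_metric(latest, metric)
        matches = math.isclose(computed, reported, rel_tol=1e-9)
        print(f"{metric}: reported={reported} computed={computed} match={matches}")
```

Here `acc` averages the gsm8k and winogrande scores, while `em` and `f1` come from the drop task alone, so the aggregate `acc` should not be read as a score on any single benchmark.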

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

yalkgam/talmudbavli | ---

license: mit

---

|

mespinosami/map2sat-edi5k20-samples | ---

dataset_info:

features:

- name: input_image

dtype: image

- name: edit_prompt

dtype: string

- name: edited_image

dtype: image

splits:

- name: train

num_bytes: 453584.8

num_examples: 16

- name: test

num_bytes: 125145.2

num_examples: 4

download_size: 596502

dataset_size: 578730.0

---

# Dataset Card for "map2sat-edi5k20-samples"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Sowmya15/English_Profanity_Full | ---

license: apache-2.0

---

|

benayas/massive_llm_v4 | ---

dataset_info:

features:

- name: id

dtype: string

- name: locale

dtype: string

- name: partition

dtype: string

- name: scenario

dtype:

class_label:

names:

'0': social

'1': transport

'2': calendar

'3': play

'4': news

'5': datetime

'6': recommendation

'7': email

'8': iot

'9': general

'10': audio

'11': lists

'12': qa

'13': cooking

'14': takeaway

'15': music

'16': alarm

'17': weather

- name: intent

dtype:

class_label:

names:

'0': datetime_query

'1': iot_hue_lightchange

'2': transport_ticket

'3': takeaway_query

'4': qa_stock

'5': general_greet

'6': recommendation_events

'7': music_dislikeness

'8': iot_wemo_off

'9': cooking_recipe

'10': qa_currency

'11': transport_traffic

'12': general_quirky

'13': weather_query

'14': audio_volume_up

'15': email_addcontact

'16': takeaway_order

'17': email_querycontact

'18': iot_hue_lightup

'19': recommendation_locations

'20': play_audiobook

'21': lists_createoradd

'22': news_query

'23': alarm_query

'24': iot_wemo_on

'25': general_joke

'26': qa_definition

'27': social_query

'28': music_settings

'29': audio_volume_other

'30': calendar_remove

'31': iot_hue_lightdim

'32': calendar_query

'33': email_sendemail

'34': iot_cleaning

'35': audio_volume_down

'36': play_radio

'37': cooking_query

'38': datetime_convert

'39': qa_maths

'40': iot_hue_lightoff

'41': iot_hue_lighton

'42': transport_query

'43': music_likeness

'44': email_query

'45': play_music

'46': audio_volume_mute

'47': social_post

'48': alarm_set

'49': qa_factoid

'50': calendar_set

'51': play_game

'52': alarm_remove

'53': lists_remove

'54': transport_taxi

'55': recommendation_movies

'56': iot_coffee

'57': music_query

'58': play_podcasts

'59': lists_query

- name: utt

dtype: string

- name: annot_utt

dtype: string

- name: worker_id

dtype: string

- name: slot_method

sequence:

- name: slot

dtype: string

- name: method

dtype: string

- name: judgments

sequence:

- name: worker_id

dtype: string

- name: intent_score

dtype: int8

- name: slots_score

dtype: int8

- name: grammar_score

dtype: int8

- name: spelling_score

dtype: int8

- name: language_identification

dtype: string

- name: category

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 17839343

num_examples: 11514

- name: validation

num_bytes: 3144099

num_examples: 2033

- name: test

num_bytes: 4598528

num_examples: 2974

download_size: 2975271

dataset_size: 25581970

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

---

|

pavanBuduguppa/asr_inverse_text_normalization | ---

license: gpl-3.0

---

|

result-kand2-sdxl-wuerst-karlo/e8491cc1 | ---

dataset_info:

features:

- name: result

dtype: string

- name: id

dtype: int64

splits:

- name: train

num_bytes: 168

num_examples: 10

download_size: 1314

dataset_size: 168

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "e8491cc1"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

weijiawu/DSText | ---

license: cc-by-4.0

---

|

fiatrete/dan-used-apps | ---

license: mit

---

|

JelleWo/common_voice_13_0_en_VALTEST_pseudo_labelled | ---

dataset_info:

config_name: en

features:

- name: client_id

dtype: string